Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 5 112 | repo_url stringlengths 34 141 | action stringclasses 3 values | title stringlengths 1 855 | labels stringlengths 4 721 | body stringlengths 1 261k | index stringclasses 13 values | text_combine stringlengths 96 261k | label stringclasses 2 values | text stringlengths 96 240k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

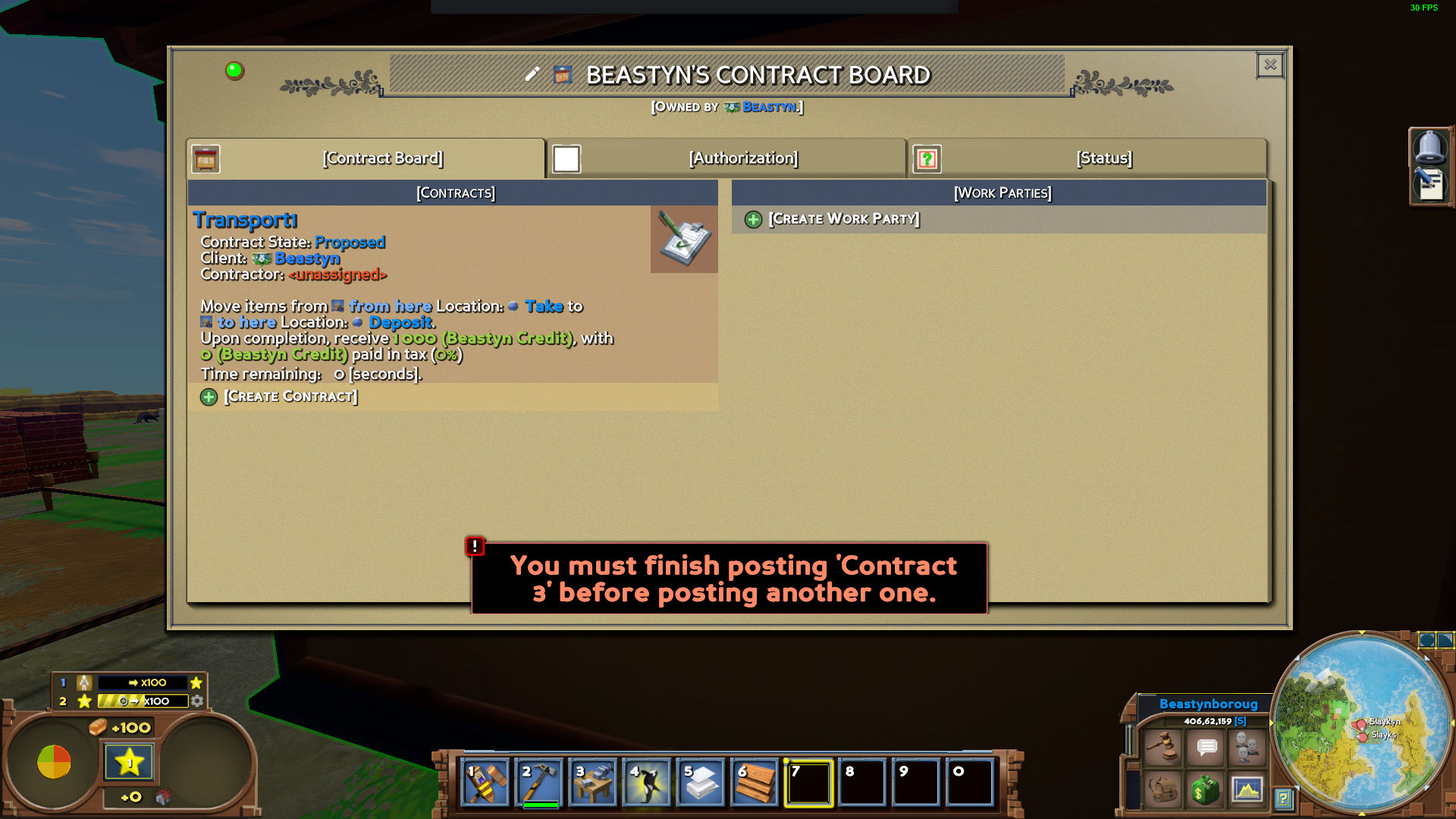

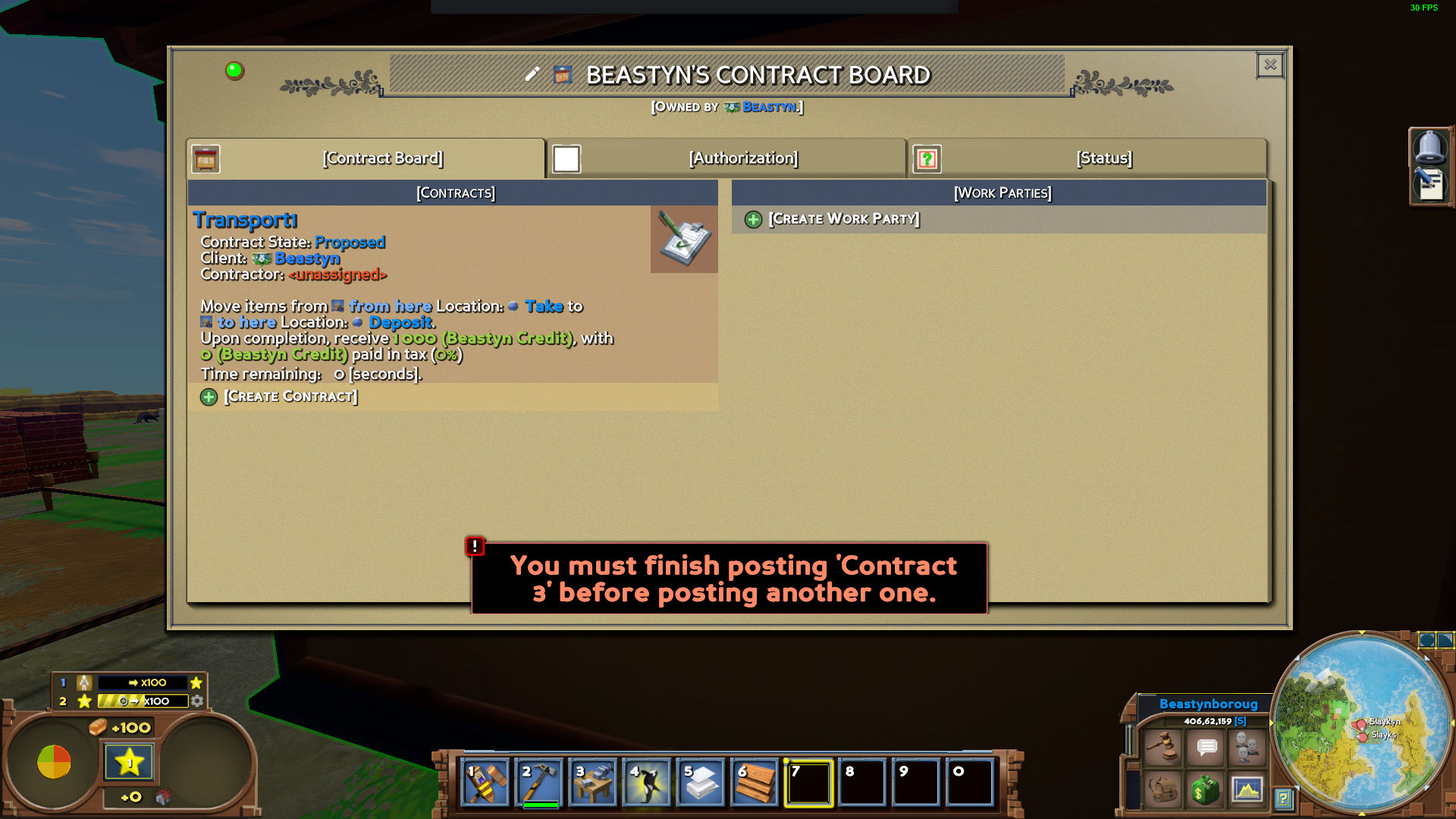

386,701 | 11,449,129,359 | IssuesEvent | 2020-02-06 06:09:51 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | closed | [0.9.0 staging-1384] Contracts: broken creation and acceptance | Priority: High Status: Fixed | 1. Try to create a contract

Don't finish it

2. Try to create the new one

3. And now try to open non-finished one from the Work tab

You can't

And from Economy viewer too

And you can't accept a contract too with the same reason (no contract UI)

| 1.0 | [0.9.0 staging-1384] Contracts: broken creation and acceptance - 1. Try to create a contract

Don't finish it

2. Try to create the new one

3. And now try to open non-finished one from the Work tab

You can't

And from Economy viewer too

And you can't accept a contract too with the same reason (no contract UI)

| priority | contracts broken creation and acceptance try to create a contract don t finish it try to create the new one and now try to open non finished one from the work tab you can t and from economy viewer too and you can t accept a contract too with the same reason no contract ui | 1 |

398,796 | 11,742,347,795 | IssuesEvent | 2020-03-12 00:27:27 | statechannels/monorepo | https://api.github.com/repos/statechannels/monorepo | closed | Create workflow that opens and directly funds a ledger channel | High Priority xstate-wallet | We need a workflow that opens and directly funds a ledger channel with the hub. | 1.0 | Create workflow that opens and directly funds a ledger channel - We need a workflow that opens and directly funds a ledger channel with the hub. | priority | create workflow that opens and directly funds a ledger channel we need a workflow that opens and directly funds a ledger channel with the hub | 1 |

480,800 | 13,867,143,216 | IssuesEvent | 2020-10-16 08:01:30 | wso2/product-is | https://api.github.com/repos/wso2/product-is | opened | Misleading description in Account disable setting | Priority/High identity-core improvement ux | **Is your suggestion related to an experience ? Please describe.**

Under Account Disable setting, there is a config to enable the feature and the description says `Allow an administrative user to disable user accounts`.

However, regardless of the feature is enabled or not, administrators are able to disable the account by management console or via API.

However, the configuration is taken into consideration for sending the account disable notifications to the user.

**Describe the improvement**

Some suggestions,

- Change the label/description of the configuration to a more meaningful one

- Prevent allowing the administrators to disable accounts as the configuration explains

**Additional context**

- if the labels/description is changed it must be coming from the underneath governance connector implementation

| 1.0 | Misleading description in Account disable setting - **Is your suggestion related to an experience ? Please describe.**

Under Account Disable setting, there is a config to enable the feature and the description says `Allow an administrative user to disable user accounts`.

However, regardless of the feature is enabled or not, administrators are able to disable the account by management console or via API.

However, the configuration is taken into consideration for sending the account disable notifications to the user.

**Describe the improvement**

Some suggestions,

- Change the label/description of the configuration to a more meaningful one

- Prevent allowing the administrators to disable accounts as the configuration explains

**Additional context**

- if the labels/description is changed it must be coming from the underneath governance connector implementation

| priority | misleading description in account disable setting is your suggestion related to an experience please describe under account disable setting there is a config to enable the feature and the description says allow an administrative user to disable user accounts however regardless of the feature is enabled or not administrators are able to disable the account by management console or via api however the configuration is taken into consideration for sending the account disable notifications to the user describe the improvement some suggestions change the label description of the configuration to a more meaningful one prevent allowing the administrators to disable accounts as the configuration explains additional context if the labels description is changed it must be coming from the underneath governance connector implementation | 1 |

580,458 | 17,258,680,611 | IssuesEvent | 2021-07-22 02:20:59 | TestCentric/testcentric-gui | https://api.github.com/repos/TestCentric/testcentric-gui | closed | Restore TestPropertiesDialog for use with the mini-GUI | Feature High Priority | The older `TestPropertiesDialog` was removed in favor of `TestPropertiesView`. However, this leaves the mini-GUI without any way to display test or result details.

We'll restore and modify the original dialog to work with the mini-GUI. Subsequently, we may be able to merge the two views into one, housing it either in a dialog or a tabbed window according to the layout selected. | 1.0 | Restore TestPropertiesDialog for use with the mini-GUI - The older `TestPropertiesDialog` was removed in favor of `TestPropertiesView`. However, this leaves the mini-GUI without any way to display test or result details.

We'll restore and modify the original dialog to work with the mini-GUI. Subsequently, we may be able to merge the two views into one, housing it either in a dialog or a tabbed window according to the layout selected. | priority | restore testpropertiesdialog for use with the mini gui the older testpropertiesdialog was removed in favor of testpropertiesview however this leaves the mini gui without any way to display test or result details we ll restore and modify the original dialog to work with the mini gui subsequently we may be able to merge the two views into one housing it either in a dialog or a tabbed window according to the layout selected | 1 |

434,639 | 12,520,997,495 | IssuesEvent | 2020-06-03 16:46:12 | scality/metalk8s | https://api.github.com/repos/scality/metalk8s | opened | Custom salt k8s module to List do not work with CustomObjects | complexity:easy kind:bug priority:high | <!-- Please use this template while reporting a bug and provide as much info as possible. Not doing so may result in your bug not being addressed in a timely manner. Thanks!

If the matter is security related, please disclose it privately to moonshot-platform@scality.com

-->

**Component**:

'salt'

<!-- E.g. 'salt', 'containers', 'kubernetes', 'build', 'tests'... -->

**What happened**:

If you try to list custom object using salt the output is wrong

```

[root@bootstrap /]# salt-run salt.cmd metalk8s_kubernetes.list_objects kind="PrometheusRule" apiVersion="monitoring.coreos.com/v1" namespace="metalk8s-monitoring"

- items

- kind

- apiVersion

- metadata

```

**What was expected**:

Real object output

**Steps to reproduce**

:arrow_double_up:

**Resolution proposal** (optional):

```

diff --git a/salt/_utils/kubernetes_utils.py b/salt/_utils/kubernetes_utils.py

index f7849436..ed2926a4 100644

--- a/salt/_utils/kubernetes_utils.py

+++ b/salt/_utils/kubernetes_utils.py

@@ -433,13 +433,6 @@ class CustomApiClient(ApiClient):

result = base_method(*args, **kwargs)

- if verb == 'list':

- return CustomObject({

- 'kind': '{}List'.format(self.kind),

- 'apiVersion': '{s.group}/{s.version}'.format(s=self),

- 'items': [CustomObject(obj) for obj in result],

- })

-

# TODO: do we have a result for `delete` methods?

return CustomObject(result)

``` | 1.0 | Custom salt k8s module to List do not work with CustomObjects - <!-- Please use this template while reporting a bug and provide as much info as possible. Not doing so may result in your bug not being addressed in a timely manner. Thanks!

If the matter is security related, please disclose it privately to moonshot-platform@scality.com

-->

**Component**:

'salt'

<!-- E.g. 'salt', 'containers', 'kubernetes', 'build', 'tests'... -->

**What happened**:

If you try to list custom object using salt the output is wrong

```

[root@bootstrap /]# salt-run salt.cmd metalk8s_kubernetes.list_objects kind="PrometheusRule" apiVersion="monitoring.coreos.com/v1" namespace="metalk8s-monitoring"

- items

- kind

- apiVersion

- metadata

```

**What was expected**:

Real object output

**Steps to reproduce**

:arrow_double_up:

**Resolution proposal** (optional):

```

diff --git a/salt/_utils/kubernetes_utils.py b/salt/_utils/kubernetes_utils.py

index f7849436..ed2926a4 100644

--- a/salt/_utils/kubernetes_utils.py

+++ b/salt/_utils/kubernetes_utils.py

@@ -433,13 +433,6 @@ class CustomApiClient(ApiClient):

result = base_method(*args, **kwargs)

- if verb == 'list':

- return CustomObject({

- 'kind': '{}List'.format(self.kind),

- 'apiVersion': '{s.group}/{s.version}'.format(s=self),

- 'items': [CustomObject(obj) for obj in result],

- })

-

# TODO: do we have a result for `delete` methods?

return CustomObject(result)

``` | priority | custom salt module to list do not work with customobjects please use this template while reporting a bug and provide as much info as possible not doing so may result in your bug not being addressed in a timely manner thanks if the matter is security related please disclose it privately to moonshot platform scality com component salt what happened if you try to list custom object using salt the output is wrong salt run salt cmd kubernetes list objects kind prometheusrule apiversion monitoring coreos com namespace monitoring items kind apiversion metadata what was expected real object output steps to reproduce arrow double up resolution proposal optional diff git a salt utils kubernetes utils py b salt utils kubernetes utils py index a salt utils kubernetes utils py b salt utils kubernetes utils py class customapiclient apiclient result base method args kwargs if verb list return customobject kind list format self kind apiversion s group s version format s self items todo do we have a result for delete methods return customobject result | 1 |

671,981 | 22,782,524,594 | IssuesEvent | 2022-07-08 21:52:19 | mskcc/pluto-cwl | https://api.github.com/repos/mskcc/pluto-cwl | closed | fix duplicate file output in portal workflow | bug high priority | [`portal_cna_data_file`](https://github.com/mskcc/pluto-cwl/blob/3bc4fab5503e58521ed0eb9c0d035ac18460dc13/cwl/portal-workflow.cwl#L499) and [`merged_cna_file`](https://github.com/mskcc/pluto-cwl/blob/3bc4fab5503e58521ed0eb9c0d035ac18460dc13/cwl/portal-workflow.cwl#L517) are being output with the same filename (`data_CNA.txt`) which is causing one file or the other to get automatically renamed by cwltool / Toil

this is breaking test cases that expect both files to be named `data_CNA.txt`

in most test cases these two files also end up with the same sha1 hash so they are identical files

Need to figure out what the handling method for this should be | 1.0 | fix duplicate file output in portal workflow - [`portal_cna_data_file`](https://github.com/mskcc/pluto-cwl/blob/3bc4fab5503e58521ed0eb9c0d035ac18460dc13/cwl/portal-workflow.cwl#L499) and [`merged_cna_file`](https://github.com/mskcc/pluto-cwl/blob/3bc4fab5503e58521ed0eb9c0d035ac18460dc13/cwl/portal-workflow.cwl#L517) are being output with the same filename (`data_CNA.txt`) which is causing one file or the other to get automatically renamed by cwltool / Toil

this is breaking test cases that expect both files to be named `data_CNA.txt`

in most test cases these two files also end up with the same sha1 hash so they are identical files

Need to figure out what the handling method for this should be | priority | fix duplicate file output in portal workflow and are being output with the same filename data cna txt which is causing one file or the other to get automatically renamed by cwltool toil this is breaking test cases that expect both files to be named data cna txt in most test cases these two files also end up with the same hash so they are identical files need to figure out what the handling method for this should be | 1 |

315,850 | 9,632,839,797 | IssuesEvent | 2019-05-15 17:09:23 | epam/cloud-pipeline | https://api.github.com/repos/epam/cloud-pipeline | closed | Expose Git SSH clone URL to the GUI | kind/enhancement priority/high state/verify sys/gui | Extends #245

**API**

1. All the API methods, that provide clone URL (e.g. `/pipeline/{id}/load`) via the `repository`attribute - shall expose additional field `repository_ssh`

2. `repository_ssh` shall be retrieved from the GitLab, same as https URL

**GUI**

1. Add `HTTP/SSH` selector to the `GIT REPOSITORY` popup (e.g. [ant dropdown](https://2x.ant.design/components/dropdown/))

2. Default selection - `HTTP`, which shall display the same URL as now (`repository`). Popup header shall contain: `Clone repository via HTTPS` (where HTTPS is a dropdown)

3. If `SSH` is used - a value of the new `repository_ssh` attribute shall be used. Popup header shall contain: `Clone repository via SSH` (where SSH is a dropdown) | 1.0 | Expose Git SSH clone URL to the GUI - Extends #245

**API**

1. All the API methods, that provide clone URL (e.g. `/pipeline/{id}/load`) via the `repository`attribute - shall expose additional field `repository_ssh`

2. `repository_ssh` shall be retrieved from the GitLab, same as https URL

**GUI**

1. Add `HTTP/SSH` selector to the `GIT REPOSITORY` popup (e.g. [ant dropdown](https://2x.ant.design/components/dropdown/))

2. Default selection - `HTTP`, which shall display the same URL as now (`repository`). Popup header shall contain: `Clone repository via HTTPS` (where HTTPS is a dropdown)

3. If `SSH` is used - a value of the new `repository_ssh` attribute shall be used. Popup header shall contain: `Clone repository via SSH` (where SSH is a dropdown) | priority | expose git ssh clone url to the gui extends api all the api methods that provide clone url e g pipeline id load via the repository attribute shall expose additional field repository ssh repository ssh shall be retrieved from the gitlab same as https url gui add http ssh selector to the git repository popup e g default selection http which shall display the same url as now repository popup header shall contain clone repository via https where https is a dropdown if ssh is used a value of the new repository ssh attribute shall be used popup header shall contain clone repository via ssh where ssh is a dropdown | 1 |

412,614 | 12,053,179,839 | IssuesEvent | 2020-04-15 08:57:23 | TheOnlineJudge/ojudge | https://api.github.com/repos/TheOnlineJudge/ojudge | opened | Implement migration mechanism from joomla users | enhancement priority: high | The current Online Judge uses joomla. We have to implement a migration mechanism for those users to the new systems. This should include:

- Implement a 'joomla' password type, so the current joomla hashes can be used, probably forcing the user to update the password on first login, so it can be migrated to 'bcrypt', or automatically changing the hash to 'bcrypt' on first successful login.

- Keep track of the old userid, to correctly migrate submissions, or, maintain the same user IDs (I guess that migration would be preferred to allow the new user management to keep its own sequence for user IDs).

- Link all of the submissions to the correct new IDs.

- Same thing with contests, but this needs more study, as the contest system will be different. | 1.0 | Implement migration mechanism from joomla users - The current Online Judge uses joomla. We have to implement a migration mechanism for those users to the new systems. This should include:

- Implement a 'joomla' password type, so the current joomla hashes can be used, probably forcing the user to update the password on first login, so it can be migrated to 'bcrypt', or automatically changing the hash to 'bcrypt' on first successful login.

- Keep track of the old userid, to correctly migrate submissions, or, maintain the same user IDs (I guess that migration would be preferred to allow the new user management to keep its own sequence for user IDs).

- Link all of the submissions to the correct new IDs.

- Same thing with contests, but this needs more study, as the contest system will be different. | priority | implement migration mechanism from joomla users the current online judge uses joomla we have to implement a migration mechanism for those users to the new systems this should include implement a joomla password type so the current joomla hashes can be used probably forcing the user to update the password on first login so it can be migrated to bcrypt or automatically changing the hash to bcrypt on first succesfull login keep track of the old userid to correctly migrate submissions or maintain the same user ids i guess that migration would be preferred to allow the new user management to keep it s own sequence for user ids link all of the submissions to the correct new ids same thing with contests but this needs more study as the contest system will be different | 1 |

667,493 | 22,475,431,436 | IssuesEvent | 2022-06-22 11:53:25 | ballerina-platform/ballerina-dev-website | https://api.github.com/repos/ballerina-platform/ballerina-dev-website | closed | Fix the Formatting of the Release Note Template | Priority/Highest Type/Task Points/0.5 Area/CommonPages | ## Description

Need to fix the formatting of the release note templates below as per the latest updates for both Swan Lake and 1.2.x:

https://github.com/ballerina-platform/ballerina-release/blob/master/release-notes/release-note-template.md

## Related website/documentation area

> Add/Uncomment the relevant area label out of the following.

<!--Area/BBEs-->

<!--Area/HomePageSamples-->

<!--Area/LearnPages-->

<!--Area/CommonPages-->

<!--Area/Backend-->

<!--Area/UIUX-->

<!--Area/Workflows-->

<!--Area/Blog-->

## Describe your task(s)

> A detailed description of the task.

## Related issue(s) (optional)

> Any related issues such as sub tasks and issues reported in other repositories (e.g., component repositories), similar problems, etc.

## Suggested label(s) (optional)

> Optional comma-separated list of suggested labels. Non committers can’t assign labels to issues, and thereby, this will help issue creators who are not a committer to suggest possible labels.

## Suggested assignee(s) (optional)

> Optional comma-separated list of suggested team members who should attend the issue. Non committers can’t assign issues to assignees, and thereby, this will help issue creators who are not a committer to suggest possible assignees.

| 1.0 | Fix the Formatting of the Release Note Template - ## Description

Need to fix the formatting of the release note templates below as per the latest updates for both Swan Lake and 1.2.x:

https://github.com/ballerina-platform/ballerina-release/blob/master/release-notes/release-note-template.md

## Related website/documentation area

> Add/Uncomment the relevant area label out of the following.

<!--Area/BBEs-->

<!--Area/HomePageSamples-->

<!--Area/LearnPages-->

<!--Area/CommonPages-->

<!--Area/Backend-->

<!--Area/UIUX-->

<!--Area/Workflows-->

<!--Area/Blog-->

## Describe your task(s)

> A detailed description of the task.

## Related issue(s) (optional)

> Any related issues such as sub tasks and issues reported in other repositories (e.g., component repositories), similar problems, etc.

## Suggested label(s) (optional)

> Optional comma-separated list of suggested labels. Non committers can’t assign labels to issues, and thereby, this will help issue creators who are not a committer to suggest possible labels.

## Suggested assignee(s) (optional)

> Optional comma-separated list of suggested team members who should attend the issue. Non committers can’t assign issues to assignees, and thereby, this will help issue creators who are not a committer to suggest possible assignees.

| priority | fix the formatting of the release note template description need to fix the formatting of the release note templates below as per the latest updates for both swan lake and x related website documentation area add uncomment the relevant area label out of the following describe your task s a detailed description of the task related issue s optional any related issues such as sub tasks and issues reported in other repositories e g component repositories similar problems etc suggested label s optional optional comma separated list of suggested labels non committers can’t assign labels to issues and thereby this will help issue creators who are not a committer to suggest possible labels suggested assignee s optional optional comma separated list of suggested team members who should attend the issue non committers can’t assign issues to assignees and thereby this will help issue creators who are not a committer to suggest possible assignees | 1 |

225,268 | 7,480,265,911 | IssuesEvent | 2018-04-04 16:51:05 | ECP-CANDLE/Supervisor | https://api.github.com/repos/ECP-CANDLE/Supervisor | closed | Add test modules to workflows from report | priority=high | Add a test module for each workflow. This feeds into #20 . | 1.0 | Add test modules to workflows from report - Add a test module for each workflow. This feeds into #20 . | priority | add test modules to workflows from report add a test module for each workflow this feeds into | 1 |

503,820 | 14,598,393,190 | IssuesEvent | 2020-12-21 00:37:00 | Algalish/SmartIssueTracker | https://api.github.com/repos/Algalish/SmartIssueTracker | closed | Can't Rotate Foundation Block Anymore [HOLD mode question] | high-priority question | Ever since the update, the blocks are now fixed in one orientation and cannot be rotated at all. This mod can't be used until that's fixed. | 1.0 | Can't Rotate Foundation Block Anymore [HOLD mode question] - Ever since the update, the blocks are now fixed in one orientation and cannot be rotated at all. This mod can't be used until that's fixed. | priority | can t rotate foundation block anymore ever since the update the blocks are now fixed in one orientation and cannot be rotated at all this mod can t be used until that s fixed | 1 |

822,242 | 30,859,499,054 | IssuesEvent | 2023-08-03 00:53:18 | DiscoTrayStudios/WaterQualityTester | https://api.github.com/repos/DiscoTrayStudios/WaterQualityTester | opened | Streamline image-taking process | type: incomplete category: I/O priority: high size: small category: ui | **Describe the incomplete feature**

When an image is taken which cannot be fully processed by the computer vision library, the user is taken to a different screen where they have the choice to proceed to the results page (and view invalid data) or go back to the camera page to take another picture. This can be slow and tedious.

**Describe the solution you'd like**

If the image cannot be completely processed, stay on the camera page and give the user a pop-up notification describing the error (currently, one of "cannot find color key" or "cannot find test strip"). If the image is completely processed, go straight to the results page.

| 1.0 | Streamline image-taking process - **Describe the incomplete feature**

When an image is taken which cannot be fully processed by the computer vision library, the user is taken to a different screen where they have the choice to proceed to the results page (and view invalid data) or go back to the camera page to take another picture. This can be slow and tedious.

**Describe the solution you'd like**

If the image cannot be completely processed, stay on the camera page and give the user a pop-up notification describing the error (currently, one of "cannot find color key" or "cannot find test strip"). If the image is completely processed, go straight to the results page.

| priority | streamline image taking process describe the incomplete feature when an image is taken which cannot be fully processed by the computer vision library the user is taken to a different screen where they have the choice to proceed to the results page and view invalid data or go back to the camera page to take another picture this can be slow and tedious describe the solution you d like if the image cannot be completely processed stay on the camera page and give the user a pop up notification describing the error currently one of cannot find color key or cannot find test strip if the image is completely processed go straight to the results page | 1 |

490,561 | 14,135,966,660 | IssuesEvent | 2020-11-10 03:03:17 | bitrise-io/bb | https://api.github.com/repos/bitrise-io/bb | closed | Task: Combine V1 and V2 Slack apps | Category: Development Priority: High Status: Complete | ### Description:

The Slack integration overhaul has been pushed to a future release. We are going to combine the V1 and V2 Slack apps and re-deploy it to the new Heroku environment.

#### Subtasks

- [x] Remove credentials from V1 application.

- [x] Upload V1 application to GitHub => #3.

- [x] Adopt command logic in favour of parameter logic => #4.

- [x] Write "coming soon" messages for unimplemented features => #5.

- [x] Deploy to Heroku.

- [x] Request Simon to delete the old deployment.

### Implemented in:

Rust in the Slack app.

| 1.0 | Task: Combine V1 and V2 Slack apps - ### Description:

The Slack integration overhaul has been pushed to a future release. We are going to combine the V1 and V2 Slack apps and re-deploy it to the new Heroku environment.

#### Subtasks

- [x] Remove credentials from V1 application.

- [x] Upload V1 application to GitHub => #3.

- [x] Adopt command logic in favour of parameter logic => #4.

- [x] Write "coming soon" messages for unimplemented features => #5.

- [x] Deploy to Heroku.

- [x] Request Simon to delete the old deployment.

### Implemented in:

Rust in the Slack app.

| priority | task combine and slack apps description the slack integration overhaul has been pushed to a future release we are going to combine the and slack apps and re deploy it to the new heroku environment subtasks remove credentials from application upload application to github adopt command logic in favour of parameter logic write coming soon messages for unimplemented features deploy to heroku request simon to delete the old deployment implemented in rust in the slack app | 1 |

339,225 | 10,244,663,154 | IssuesEvent | 2019-08-20 10:58:59 | codetapacademy/codetap.academy | https://api.github.com/repos/codetapacademy/codetap.academy | opened | feat: add publish date to lecture | Priority: High Status: Available Type: Enhancement |

this is part of feat: enhance lecture with publish date and member level #173 | 1.0 | feat: add publish date to lecture -

this is part of feat: enhance lecture with publish date and member level #173 | priority | feat add publish date to lecture this is part of feat enhance lecture with publish date and member level | 1 |

239,690 | 7,799,928,042 | IssuesEvent | 2018-06-09 02:14:13 | tine20/Tine-2.0-Open-Source-Groupware-and-CRM | https://api.github.com/repos/tine20/Tine-2.0-Open-Source-Groupware-and-CRM | closed | 0006206:

relation type field can be empty | Bug Crm Mantis high priority | **Reported by pschuele on 4 Apr 2012 12:12**

**Version:** Maischa (2011-05-9)

relation type field can be empty

**Steps to reproduce:** - add contact to lead

- click into relation type col in contact grid

- remove value

- click 'Ok' without leaving the field

-> contact relation is added without relation type

**Additional information:** Relation type not supported.

.../Tinebase/Record/Abstract.php(937): Crm_Model_Lead->_setFromJson()

.../Tinebase/Record/Abstract.php(327): Tinebase_Record_Abstract->setFromJson()

.../Tinebase/Frontend/Json/Abstract.php(180): Tinebase_Record_Abstract->setFromJsonInUsersTimezone()

.../Crm/Frontend/Json.php(89): Tinebase_Frontend_Json_Abstract->_save()

[internal function]: Crm_Frontend_Json->saveLead()

.../library/Zend/Server/Abstract.php(232): call_user_func_array()

.../Zend/Json/Server.php(558): Zend_Server_Abstract->_dispatch()

.../Zend/Json/Server.php(197): Zend_Json_Server->_handle()

.../Tinebase/Server/Json.php(140): Zend_Json_Server->handle()

.../Tinebase/Server/Json.php(76): Tinebase_Server_Json->_handle()

.../Tinebase/Core.php(235): Tinebase_Server_Json->handle()

.../index.php(57): Tinebase_Core::dispatchRequest()

| 1.0 | 0006206:

relation type field can be empty - **Reported by pschuele on 4 Apr 2012 12:12**

**Version:** Maischa (2011-05-9)

relation type field can be empty

**Steps to reproduce:** - add contact to lead

- click into relation type col in contact grid

- remove value

- click 'Ok' without leaving the field

-> contact relation is added without relation type

**Additional information:** Relation type not supported.

.../Tinebase/Record/Abstract.php(937): Crm_Model_Lead->_setFromJson()

.../Tinebase/Record/Abstract.php(327): Tinebase_Record_Abstract->setFromJson()

.../Tinebase/Frontend/Json/Abstract.php(180): Tinebase_Record_Abstract->setFromJsonInUsersTimezone()

.../Crm/Frontend/Json.php(89): Tinebase_Frontend_Json_Abstract->_save()

[internal function]: Crm_Frontend_Json->saveLead()

.../library/Zend/Server/Abstract.php(232): call_user_func_array()

.../Zend/Json/Server.php(558): Zend_Server_Abstract->_dispatch()

.../Zend/Json/Server.php(197): Zend_Json_Server->_handle()

.../Tinebase/Server/Json.php(140): Zend_Json_Server->handle()

.../Tinebase/Server/Json.php(76): Tinebase_Server_Json->_handle()

.../Tinebase/Core.php(235): Tinebase_Server_Json->handle()

.../index.php(57): Tinebase_Core::dispatchRequest()

| priority | relation type field can be empty reported by pschuele on apr version maischa relation type field can be empty steps to reproduce add contact to lead click into relation type col in contact grid remove value click ok without leaving the field gt contact relation is added without relation type additional information relation type not supported tinebase record abstract php crm model lead gt setfromjson tinebase record abstract php tinebase record abstract gt setfromjson tinebase frontend json abstract php tinebase record abstract gt setfromjsoninuserstimezone crm frontend json php tinebase frontend json abstract gt save crm frontend json gt savelead library zend server abstract php call user func array zend json server php zend server abstract gt dispatch zend json server php zend json server gt handle tinebase server json php zend json server gt handle tinebase server json php tinebase server json gt handle tinebase core php tinebase server json gt handle index php tinebase core dispatchrequest | 1 |

424,629 | 12,321,164,333 | IssuesEvent | 2020-05-13 08:16:34 | incognitochain/incognito-chain | https://api.github.com/repos/incognitochain/incognito-chain | opened | [Portal][Local] List issues | Priority: High Type: Bug | - [ ] Liquidation by exchange rate wrong

- [ ] Unlock collateral incorrect when redeem success

| 1.0 | [Portal][Local] List issues - - [ ] Liquidation by exchange rate wrong

- [ ] Unlock collateral incorrect when redeem success

| priority | list issues liquidation by exchange rate wrong unlock collateral incorrect when redeem success | 1 |

735,094 | 25,379,046,409 | IssuesEvent | 2022-11-21 16:07:26 | zowe/zowe-cli | https://api.github.com/repos/zowe/zowe-cli | closed | Zowe CLI Exit Code 0 for Errors | bug good first issue priority-high | For example (on windows): `zowe jobs -g && echo %ERRORLEVEL%` results in an exit code `0` although the command itself reports `Command Error:`.

This exit code should probably be a non-zero value. | 1.0 | Zowe CLI Exit Code 0 for Errors - For example (on windows): `zowe jobs -g && echo %ERRORLEVEL%` results in an exit code `0` although the command itself reports `Command Error:`.

This exit code should probably be a non-zero value. | priority | zowe cli exit code for errors for example on windows zowe jobs g echo errorlevel results in an exit code although the command itself reports command error this exit code should probably be a non zero value | 1 |
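
The Zowe CLI report above comes down to callers being able to trust the process exit code. A minimal Python sketch of that contract follows. It does not call Zowe at all: the two commands are hypothetical stand-ins that only mimic a tool printing `Command Error:` with and without a non-zero exit code, and `run_and_check` is an illustrative helper name.

```python
import subprocess
import sys


def run_and_check(argv):
    """Run a command and return (exit_code, failed) based only on the exit code."""
    completed = subprocess.run(argv, capture_output=True, text=True)
    return completed.returncode, completed.returncode != 0


# Hypothetical stand-in for the requested behaviour: error text AND a non-zero exit code.
failing = [sys.executable, "-c", "import sys; print('Command Error:'); sys.exit(1)"]

# Hypothetical stand-in for the reported behaviour: error text, but exit code 0.
silent = [sys.executable, "-c", "print('Command Error:')"]

if __name__ == "__main__":
    for name, argv in (("failing", failing), ("silent", silent)):
        code, failed = run_and_check(argv)
        print(name, "exit code:", code, "detected as failure:", failed)
```

A script that chains commands with `&&` sees only the exit code, so in the second case the failure goes unnoticed even though an error message was printed.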

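636,438 | 20,600,433,577 | IssuesEvent | 2022-03-06 06:41:44 | ut-issl/tlm-cmd-db | https://api.github.com/repos/ut-issl/tlm-cmd-db | opened | Keeping the enums in C2A consistent with the status conversions in the tlm DB is far too tedious | help wanted priority::high tools WINGS | ## Overview

Keeping the enums in C2A consistent with the status conversions in the tlm DB is far too tedious

## Details

- Status conversions such as https://github.com/ut-issl/tlm-cmd-db/blob/d7e6a2115ef33a595c749fbd87781328a40f4dda/TLM_DB/SAMPLE_TLM_DB_HK.csv#L25 have to be reconciled with the source-code enums by hand every time, which is far too tedious.

- Updates also get missed as a matter of course.

## Close condition

Once this is somehow resolved

| 1.0 | Keeping the enums in C2A consistent with the status conversions in the tlm DB is far too tedious - ## Overview

Keeping the enums in C2A consistent with the status conversions in the tlm DB is far too tedious

## Details

- Status conversions such as https://github.com/ut-issl/tlm-cmd-db/blob/d7e6a2115ef33a595c749fbd87781328a40f4dda/TLM_DB/SAMPLE_TLM_DB_HK.csv#L25 have to be reconciled with the source-code enums by hand every time, which is far too tedious.

- Updates also get missed as a matter of course.

## Close condition

Once this is somehow resolved

| priority | keeping the enums in consistent with the status conversions in the tlm db is far too tedious overview keeping the enums in consistent with the status conversions in the tlm db is far too tedious details status conversions such as have to be reconciled with the source code enums by hand every time which is far too tedious updates also get missed as a matter of course close condition once this is somehow resolved | 1 |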

492,664 | 14,217,253,946 | IssuesEvent | 2020-11-17 10:06:05 | bounswe/bounswe2020group4 | https://api.github.com/repos/bounswe/bounswe2020group4 | closed | (BKND) Finalize the Project Plan | Backend Effort: Medium Priority: High Status: Completed Task: Assignment | Backend team will update the Project Plan to shape it into its final form.

Deadline: 19/11/20 | 1.0 | (BKND) Finalize the Project Plan - Backend team will update the Project Plan to shape it into its final form.

Deadline: 19/11/20 | priority | bknd finalize the project plan backend team will update the project plan to shape it into its final form deadline | 1 |

179,826 | 6,628,922,841 | IssuesEvent | 2017-09-24 01:08:20 | KeplerGO/PyKE | https://api.github.com/repos/KeplerGO/PyKE | closed | Release PyKE v3.0.0 | high priority | Thanks to @mirca's incredibly hard work, the master branch of PyKE is now compatible with Python 3 and no longer depends on PyRAF (cf #12), which were the key goals for "PyKe v3.0" aka "PyKE3". This means we can now start planning the first official release of PyKE3!

Let's start by releasing a "3.0.beta" and announce it to a small audience on social media, followed by an official "3.0.0" a few weeks later announced on the website.

Tasks to complete ahead of the release are likely to include the following:

- [x] Review the documentation and its hosting

- [x] Edit the keplerscience website to reflect PyKE 3.0

- [x] Ensure installation and citation instructions in the README are up to date

- [x] Add the `kepdraw` tool [#18]

- [x] Add the `kepsff` tool [#19]

- [x] Add a simple tutorial to the sphinx docs that explains how to get a lightcurve from K2 pixels using `kepmask` + `kepextract` + `kepflatten` + `kepsff` + `kepdraw` (cf. https://keplerscience.arc.nasa.gov/PyKEprimerWalkthroughE.shtml)

- [x] Decide on a name for PyKE to use in PyPI (`pyke`, `pykep`, `pykepler` are all taken by others). Let's use `pyketools`? | 1.0 | Release PyKE v3.0.0 - Thanks to @mirca's incredibly hard work, the master branch of PyKE is now compatible with Python 3 and no longer depends on PyRAF (cf #12), which were the key goals for "PyKe v3.0" aka "PyKE3". This means we can now start planning the first official release of PyKE3!

Let's start by releasing a "3.0.beta" and announce it to a small audience on social media, followed by an official "3.0.0" a few weeks later announced on the website.

Tasks to complete ahead of the release are likely to include the following:

- [x] Review the documentation and its hosting

- [x] Edit the keplerscience website to reflect PyKE 3.0

- [x] Ensure installation and citation instructions in the README are up to date

- [x] Add the `kepdraw` tool [#18]

- [x] Add the `kepsff` tool [#19]

- [x] Add a simple tutorial to the sphinx docs that explains how to get a lightcurve from K2 pixels using `kepmask` + `kepextract` + `kepflatten` + `kepsff` + `kepdraw` (cf. https://keplerscience.arc.nasa.gov/PyKEprimerWalkthroughE.shtml)

- [x] Decide on a name for PyKE to use in PyPI (`pyke`, `pykep`, `pykepler` are all taken by others). Let's use `pyketools`? | priority | release pyke thanks to mirca s incredibly hard work the master branch of pyke is now compatible with python and no longer depends on pyraf cf which were the key goals for pyke aka this means we can now start planning the first official release of let s start by releasing a beta and announce it to a small audience on social media followed by an official a few weeks later announced on the website tasks to complete ahead of the release are likely to include the following review the documentation and its hosting edit the keplerscience website to reflect pyke ensure installation and citation instructions in the readme are up to date add the kepdraw tool add the kepsff tool add a simple tutorial to the sphinx docs that explains how to get a lightcurve from pixels using kepmask kepextract kepflatten kepsff kepdraw cf decide on a name for pyke to use in pypi pyke pykep pykepler are all taken by others let s use pyketools | 1 |

178,576 | 6,612,160,922 | IssuesEvent | 2017-09-20 01:55:23 | portworx/torpedo | https://api.github.com/repos/portworx/torpedo | closed | Generate application specs from yaml files | framework priority/high | Currently the applications are programmatically defined in golang. This imposes a learning curve on anyone who wants to add a new application.

End users are more familiar with yaml config files for describing k8s primitives. So we need a stub module that takes yaml files and auto-generates golang objects which subsequently get used by the torpedo framework. | 1.0 | Generate application specs from yaml files - Currently the applications are programmatically defined in golang. This imposes a learning curve on anyone who wants to add a new application.

End users are more familiar with yaml config files for describing k8s primitives. So we need a stub module that takes yaml files and auto-generates golang objects which subsequently get used by the torpedo framework. | priority | generate application specs from yaml files currently the applications are programmatically defined in golang this imposes a learning curve on anyone who wants to add a new application end users are more familiar with yaml config files for describing primitives so we need a stub module that takes yaml files and auto generates golang objects which subsequently get used by the torpedo framework | 1 |

394,573 | 11,645,444,196 | IssuesEvent | 2020-03-01 01:28:44 | Thorium-Sim/thorium | https://api.github.com/repos/Thorium-Sim/thorium | closed | Messages freezing core | priority/high type/bug | ### Requested By: Jordan

### Priority: High

### Version: 2.6.0

If the messaging core is open and a new message is received (tab changes color and the notification sound plays) core will freeze. Clicking won't do anything, key commands won't do anything. Closing the tab and opening a new one will resume functionality.

### Steps to Reproduce

Have the messaging core open but no messages selected.

Have a message sent from somewhere else.

Click in futility | 1.0 | Messages freezing core - ### Requested By: Jordan

### Priority: High

### Version: 2.6.0

If the messaging core is open and a new message is received (tab changes color and the notification sound plays) core will freeze. Clicking won't do anything, key commands won't do anything. Closing the tab and opening a new one will resume functionality.

### Steps to Reproduce

Have the messaging core open but no messages selected.

Have a message sent from somewhere else.

Click in futility | priority | messages freezing core requested by jordan priority high version if the messaging core is open and a new message is received tab changes color and the notification sound plays core will freeze clicking won t do anything key commands won t do anything closing the tab and opening a new one will resume functionality steps to reproduce have the messaging core open but no messages selected have a message sent from somewhere else click in futility | 1 |

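610,053 | 18,892,927,737 | IssuesEvent | 2021-11-15 15:02:06 | 50ra4/clock-in-app | https://api.github.com/repos/50ra4/clock-in-app | closed | Refactor the service | priority high | ref: #61

- [x] Extract functions that can be shared and reference them from one place (e.g. `queryToxxx`) #132

- [x] Include order in the dairyTimeRecord array as well #132

- [x] Prevent firestore and firebase types, functions, etc. from being used outside the service #136

- [x] Compare the data before and after an update to reduce the number of updates #137 | 1.0 | Refactor the service - ref: #61

- [x] Extract functions that can be shared and reference them from one place (e.g. `queryToxxx`) #132

- [x] Include order in the dairyTimeRecord array as well #132

- [x] Prevent firestore and firebase types, functions, etc. from being used outside the service #136

- [x] Compare the data before and after an update to reduce the number of updates #137 | priority | refactor the service ref extract functions that can be shared and reference them from one place e g querytoxxx include order in the dairytimerecord array as well prevent firestore and firebase types functions etc from being used outside the service compare the data before and after an update to reduce the number of updates | 1 |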

679,653 | 23,241,025,352 | IssuesEvent | 2022-08-03 15:35:27 | bigbio/quantms | https://api.github.com/repos/bigbio/quantms | closed | DIANN changes in the current pipeline | enhancement high-priority dia analysis | ### Description of feature

Dear @daichengxin @vdemichev @jpfeuffer:

I have started testing the DIANN pipeline in quantms. The main problem I found is that still the pipeline is **really slow**. However, after talking to @vdemichev some other issues should be solved. First two ideas about what do we want to solve between DIANN and quantms:

quantms + diann should be able to:

- reanalyze DIA-based data in PRIDE Archive annotated in SDRFs and Uniprot FASTA files.

- export the results into standard file formats including mzTab + mzML (still pending issue here #119 that we will continue discussing)

- use the capabilities of quantms to run DIANN distributed and cloud as much as possible.

The current pipeline/parallelization does the following :

1. Generate the "generic config files" for the analysis including the PTMs, Enzyme rules, etc. For that, we use the following command in one node:

```

prepare_diann_parameters.py generate \

--enzyme "Trypsin" \

--fix_mod "" \

--var_mod "Oxidation (M),Carbamidomethyl (C)" \

--precursor_tolerence 10 \

--precursor_tolerence_unit ppm \

--fragment_tolerence 0.05 \

--fragment_tolerence_unit Da \

> GENERATE_DIANN_CFG.log

```

This actually generates a file (**diann_config.cfg**) like containing the following information:

```

--dir ./mzMLs --cut K*,R*,!*P --var-mod Oxidation,15.994915,M --var-mod Carbamidomethyl,57.021464,C --mass-acc 10 --mass-acc-ms1 20 --matrices --report-lib-info

```

2. Each raw file in the SDRF is converted to mzML; or the mzML that was converted outside the pipeline (ABSiex case) is used for the analysis.

3. For each mzML, we generate in parallel the corresponding **theoretical spectral library** using the following command:

```

diann `cat library_config.cfg` \

--fasta Homo-sapiens-uniprot-reviewed-isoforms-contaminants-decoy-202105.fasta \

--fasta-search \

--f 20181116_QEHFX3_BP_RSLCcap_hHeart_DIA_58.mzML \

--out-lib 20181116_QEHFX3_BP_RSLCcap_hHeart_DIA_58_lib.tsv \

--min-pr-mz 350 \

--max-pr-mz 1650 \

\

\

--missed-cleavages 2 \

--min-pep-len 6 \

--max-pep-len 40 \

--min-pr-charge 2 \

--max-pr-charge 4 \

--var-mods 3 \

--threads 25 \

--predictor \

--verbose 3 \

> diann.log

```

**Note**: This step is extremely slow. In addition, @vdemichev has commented to me that generating a spectral library per raw file will give wrong results in the next step (step 4), when all the data are searched together and the quantification is performed (the **q-value** will be wrong).

4. In the quantification step, the pipeline uses all the mzMLs and libraries generated in the individual steps to perform the quantification and statistical assessment:

```

diann `cat diann_config.cfg` \

--lib N294-1_lib.tsv--lib N294-2_lib.tsv--lib N295-1_lib.tsv--lib N295-2_lib.tsv--lib N296-1_lib.tsv--lib N299-1_lib.tsv--lib N296-2_lib.tsv--lib N299-2_lib.tsv \

--relaxed-prot-inf \

--fasta Homo-sapiens-uniprot-reviewed-isoforms-contaminants-decoy-202105.fasta \

\

\

\

\

--threads 25 \

--missed-cleavages 2 \

--min-pep-len 6 \

--max-pep-len 40 \

--min-pr-charge 2 \

--max-pr-charge 4 \

--var-mods 3 \

--matrix-spec-q 0.01 \

\

--reannotate \

\

--out diann_report.tsv \

--verbose 3 \

> diann.log

```

Discussing with @vdemichev about performance, he mentioned that this approach will give you wrong statistical results.

@vdemichev

- can you suggest which changes can be done to parallelize DIANN?

- can you suggest which parameters can improve the performance of all the steps?

Lets discuss the ideas in this thread in details.

| 1.0 | DIANN changes in the current pipeline - ### Description of feature

Dear @daichengxin @vdemichev @jpfeuffer:

I have started testing the DIANN pipeline in quantms. The main problem I found is that still the pipeline is **really slow**. However, after talking to @vdemichev some other issues should be solved. First two ideas about what do we want to solve between DIANN and quantms:

quantms + diann should be able to:

- reanalyze DIA-based data in PRIDE Archive annotated in SDRFs and Uniprot FASTA files.

- export the results into standard file formats including mzTab + mzML (still pending issue here #119 that we will continue discussing)

- use the capabilities of quantms to run DIANN distributed and cloud as much as possible.

The current pipeline/parallelization does the following :

1. Generate the "generic config files" for the analysis including the PTMs, Enzyme rules, etc. For that, we use the following command in one node:

```

prepare_diann_parameters.py generate \

--enzyme "Trypsin" \

--fix_mod "" \

--var_mod "Oxidation (M),Carbamidomethyl (C)" \

--precursor_tolerence 10 \

--precursor_tolerence_unit ppm \

--fragment_tolerence 0.05 \

--fragment_tolerence_unit Da \

> GENERATE_DIANN_CFG.log

```

This actually generates a file (**diann_config.cfg**) like containing the following information:

```

--dir ./mzMLs --cut K*,R*,!*P --var-mod Oxidation,15.994915,M --var-mod Carbamidomethyl,57.021464,C --mass-acc 10 --mass-acc-ms1 20 --matrices --report-lib-info

```

2. Each raw file in the SDRF is converted to mzML; or the mzML that was converted outside the pipeline (ABSiex case) is used for the analysis.

3. For each mzML, we generate in parallel the corresponding **theoretical spectral library** using the following command:

```

diann `cat library_config.cfg` \

--fasta Homo-sapiens-uniprot-reviewed-isoforms-contaminants-decoy-202105.fasta \

--fasta-search \

--f 20181116_QEHFX3_BP_RSLCcap_hHeart_DIA_58.mzML \

--out-lib 20181116_QEHFX3_BP_RSLCcap_hHeart_DIA_58_lib.tsv \

--min-pr-mz 350 \

--max-pr-mz 1650 \

\

\

--missed-cleavages 2 \

--min-pep-len 6 \

--max-pep-len 40 \

--min-pr-charge 2 \

--max-pr-charge 4 \

--var-mods 3 \

--threads 25 \

--predictor \

--verbose 3 \

> diann.log

```

**Note**: This step is extremely slow. In addition, @vdemichev has commented to me that generating a spectral library per raw file will give wrong results in the next step (step 4), when all the data are searched together and the quantification is performed (the **q-value** will be wrong).

4. In the quantification step, the pipeline uses all the mzMLs and libraries generated in the individual steps to perform the quantification and statistical assessment:

```

diann `cat diann_config.cfg` \

--lib N294-1_lib.tsv--lib N294-2_lib.tsv--lib N295-1_lib.tsv--lib N295-2_lib.tsv--lib N296-1_lib.tsv--lib N299-1_lib.tsv--lib N296-2_lib.tsv--lib N299-2_lib.tsv \

--relaxed-prot-inf \

--fasta Homo-sapiens-uniprot-reviewed-isoforms-contaminants-decoy-202105.fasta \

\

\

\

\

--threads 25 \

--missed-cleavages 2 \

--min-pep-len 6 \

--max-pep-len 40 \

--min-pr-charge 2 \

--max-pr-charge 4 \

--var-mods 3 \

--matrix-spec-q 0.01 \

\

--reannotate \

\

--out diann_report.tsv \

--verbose 3 \

> diann.log

```

Discussing with @vdemichev about performance, he mentioned that this approach will give you wrong statistical results.

@vdemichev

- can you suggest which changes can be done to parallelize DIANN?

- can you suggest which parameters can improve the performance of all the steps?

Lets discuss the ideas in this thread in details.

| priority | diann changes in the current pipeline description of feature dear daichengxin vdemichev jpfeuffer i have started testing the diann pipeline in quantms the main problem i found is that still the pipeline is really slow however after talking to vdemichev some other issues should be solved first two ideas about what do we want to solve between diann and quantms quantms diann should be able to reanalyze dia based data in pride archive annotated in sdrfs and uniprot fasta files export the results into standard file formats including mztab mzml still pending issue here that we will continue discussing use the capabilities of quantms to run diann distributed and cloud as much as possible the current pipeline parallelization does the following generate the generic config files for the analysis including the ptms enzyme rules etc for that we use the following command in one node prepare diann parameters py generate enzyme trypsin fix mod var mod oxidation m carbamidomethyl c precursor tolerence precursor tolerence unit ppm fragment tolerence fragment tolerence unit da generate diann cfg log this actually generates a file diann config cfg like containing the following information dir mzmls cut k r p var mod oxidation m var mod carbamidomethyl c mass acc mass acc matrices report lib info each raw file in the sdrf is converted to mzml or the mzml that was converted outside the pipeline absiex case is used for the analysis for each mzml we generate in parallel the corresponding theoretical spectral library using the following command diann cat library config cfg fasta homo sapiens uniprot reviewed isoforms contaminants decoy fasta fasta search f bp rslccap hheart dia mzml out lib bp rslccap hheart dia lib tsv min pr mz max pr mz missed cleavages min pep len max pep len min pr charge max pr charge var mods threads predictor verbose diann log note this step is extremely slow in addition vdemichev as commented me that generating a spectral library by raw file will 
give you wrong results in the next step step when searching all the data together and perform the quantification q value wrong the quantification step the pipeline uses all the mzmls and libraries generated in the individual steps to perform the quantification and statistical assessment diann cat diann config cfg lib lib tsv lib lib tsv lib lib tsv lib lib tsv lib lib tsv lib lib tsv lib lib tsv lib lib tsv relaxed prot inf fasta homo sapiens uniprot reviewed isoforms contaminants decoy fasta threads missed cleavages min pep len max pep len min pr charge max pr charge var mods matrix spec q reannotate out diann report tsv verbose diann log discussing with vdemichev about performance he mentioned that this approach will give you wrong statistical results vdemichev can you suggest which changes can be done to parallelize diann can you suggest which parameters can improve the performance of all the steps lets discuss the ideas in this thread in details | 1 |

679,195 | 23,223,952,427 | IssuesEvent | 2022-08-02 21:13:38 | netxs-group/vtm | https://api.github.com/repos/netxs-group/vtm | closed | Run applications as standalone processes | enhancement Terminal high-priority | The goal is to free the memory allocated by the application along with its termination.

Desktopio process can signal with OSC to use IPC instead of TTY (something like extended alternate screen). | 1.0 | Run applications as standalone processes - The goal is to free the memory allocated by the application along with its termination.

Desktopio process can signal with OSC to use IPC instead of TTY (something like extended alternate screen). | priority | run applications as standalone processes the goal is to free the memory allocated by the application along with its termination desktopio process can signal with osc to use ipc instead of tty something like extended alternate screen | 1 |

605,715 | 18,739,415,233 | IssuesEvent | 2021-11-04 11:50:46 | lima-vm/lima | https://api.github.com/repos/lima-vm/lima | closed | `lima busybox nslookup storage.googleapis.com 192.168.5.3` fails with `Can't find storage.googleapis.com: Parse error` | bug priority/high | `lima busybox nslookup storage.googleapis.com 192.168.5.3` fails with `Can't find storage.googleapis.com: Parse error`

```console

$ lima busybox nslookup storage.googleapis.com 192.168.5.3

Server: 192.168.5.3

Address: 192.168.5.3:53

Non-authoritative answer:

*** Can't find storage.googleapis.com: Parse error

Non-authoritative answer:

```

But it works with the real `nslookup`

```console

$ lima nslookup storage.googleapis.com 192.168.5.3

Server: 192.168.5.3

Address: 192.168.5.3#53

Non-authoritative answer:

Name: storage.googleapis.com

Address: 172.217.175.240

Name: storage.googleapis.com

Address: 142.250.196.112

Name: storage.googleapis.com

Address: 216.58.220.144

Name: storage.googleapis.com

Address: 172.217.175.16

Name: storage.googleapis.com

Address: 172.217.175.48

Name: storage.googleapis.com

Address: 172.217.175.80

Name: storage.googleapis.com

Address: 172.217.175.112

Name: storage.googleapis.com

Address: 216.58.197.208

Name: storage.googleapis.com

Address: 216.58.197.240

Name: storage.googleapis.com

Address: 142.250.199.112

Name: storage.googleapis.com

Address: 142.250.207.16

Name: storage.googleapis.com

Address: 142.250.207.48

Name: storage.googleapis.com

Address: 172.217.31.176

Name: storage.googleapis.com

Address: 172.217.161.48

Name: storage.googleapis.com

Address: 172.217.174.112

Name: storage.googleapis.com

Address: 216.58.220.112

```

Also, `busybox nslookup` with 8.8.8.8 works, too

```console

$ lima busybox nslookup storage.googleapis.com 8.8.8.8

Server: 8.8.8.8

Address: 8.8.8.8:53

Non-authoritative answer:

Name: storage.googleapis.com

Address: 142.250.196.144

Name: storage.googleapis.com

Address: 142.251.42.144

Name: storage.googleapis.com

Address: 142.251.42.176

Name: storage.googleapis.com

Address: 172.217.31.144

Name: storage.googleapis.com

Address: 172.217.161.80

Name: storage.googleapis.com

Address: 216.58.220.144

Name: storage.googleapis.com

Address: 172.217.175.16

Name: storage.googleapis.com

Address: 172.217.175.48

Name: storage.googleapis.com

Address: 172.217.175.80

Name: storage.googleapis.com

Address: 172.217.175.112

Name: storage.googleapis.com

Address: 216.58.197.208

Name: storage.googleapis.com

Address: 216.58.197.240

Name: storage.googleapis.com

Address: 172.217.25.80

Name: storage.googleapis.com

Address: 172.217.25.112

Name: storage.googleapis.com

Address: 142.250.199.112

Name: storage.googleapis.com

Address: 142.250.207.16

Non-authoritative answer:

Name: storage.googleapis.com

Address: 2404:6800:4004:822::2010

Name: storage.googleapis.com

Address: 2404:6800:4004:825::2010

Name: storage.googleapis.com

Address: 2404:6800:4004:826::2010

Name: storage.googleapis.com

Address: 2404:6800:4004:808::2010

```

---

Lima 0f9483b3ba568c86823cada0d6a9681d3e0d1f66 with the default Ubuntu 21.10 template

# Workaround

Set `useHostResolver: false` in the YAML

| 1.0 | `lima busybox nslookup storage.googleapis.com 192.168.5.3` fails with `Can't find storage.googleapis.com: Parse error` - `lima busybox nslookup storage.googleapis.com 192.168.5.3` fails with `Can't find storage.googleapis.com: Parse error`

```console

$ lima busybox nslookup storage.googleapis.com 192.168.5.3

Server: 192.168.5.3

Address: 192.168.5.3:53

Non-authoritative answer:

*** Can't find storage.googleapis.com: Parse error

Non-authoritative answer:

```

But it works with the real `nslookup`

```console

$ lima nslookup storage.googleapis.com 192.168.5.3

Server: 192.168.5.3

Address: 192.168.5.3#53

Non-authoritative answer:

Name: storage.googleapis.com

Address: 172.217.175.240

Name: storage.googleapis.com

Address: 142.250.196.112

Name: storage.googleapis.com

Address: 216.58.220.144

Name: storage.googleapis.com

Address: 172.217.175.16

Name: storage.googleapis.com

Address: 172.217.175.48

Name: storage.googleapis.com

Address: 172.217.175.80

Name: storage.googleapis.com

Address: 172.217.175.112

Name: storage.googleapis.com

Address: 216.58.197.208

Name: storage.googleapis.com

Address: 216.58.197.240

Name: storage.googleapis.com

Address: 142.250.199.112

Name: storage.googleapis.com

Address: 142.250.207.16

Name: storage.googleapis.com

Address: 142.250.207.48

Name: storage.googleapis.com

Address: 172.217.31.176

Name: storage.googleapis.com

Address: 172.217.161.48

Name: storage.googleapis.com

Address: 172.217.174.112

Name: storage.googleapis.com

Address: 216.58.220.112

```

Also, `busybox nslookup` with 8.8.8.8 works, too

```console

$ lima busybox nslookup storage.googleapis.com 8.8.8.8

Server: 8.8.8.8

Address: 8.8.8.8:53

Non-authoritative answer:

Name: storage.googleapis.com

Address: 142.250.196.144

Name: storage.googleapis.com

Address: 142.251.42.144

Name: storage.googleapis.com

Address: 142.251.42.176

Name: storage.googleapis.com

Address: 172.217.31.144

Name: storage.googleapis.com

Address: 172.217.161.80

Name: storage.googleapis.com

Address: 216.58.220.144

Name: storage.googleapis.com

Address: 172.217.175.16

Name: storage.googleapis.com

Address: 172.217.175.48

Name: storage.googleapis.com

Address: 172.217.175.80

Name: storage.googleapis.com

Address: 172.217.175.112

Name: storage.googleapis.com

Address: 216.58.197.208

Name: storage.googleapis.com

Address: 216.58.197.240

Name: storage.googleapis.com

Address: 172.217.25.80

Name: storage.googleapis.com

Address: 172.217.25.112

Name: storage.googleapis.com

Address: 142.250.199.112

Name: storage.googleapis.com

Address: 142.250.207.16

Non-authoritative answer:

Name: storage.googleapis.com

Address: 2404:6800:4004:822::2010

Name: storage.googleapis.com

Address: 2404:6800:4004:825::2010

Name: storage.googleapis.com

Address: 2404:6800:4004:826::2010

Name: storage.googleapis.com

Address: 2404:6800:4004:808::2010

```

---

Lima 0f9483b3ba568c86823cada0d6a9681d3e0d1f66 with the default Ubuntu 21.10 template

# Workaround

Set `useHostResolver: false` in the YAML

| priority | lima busybox nslookup storage googleapis com fails with can t find storage googleapis com parse error lima busybox nslookup storage googleapis com fails with can t find storage googleapis com parse error console lima busybox nslookup storage googleapis com server address non authoritative answer can t find storage googleapis com parse error non authoritative answer but it works with the real nslookup console lima nslookup storage googleapis com server address non authoritative answer name storage googleapis com address name storage googleapis com address name storage googleapis com address name storage googleapis com address name storage googleapis com address name storage googleapis com address name storage googleapis com address name storage googleapis com address name storage googleapis com address name storage googleapis com address name storage googleapis com address name storage googleapis com address name storage googleapis com address name storage googleapis com address name storage googleapis com address name storage googleapis com address also busybox nerdctl with works too console lima busybox nslookup storage googleapis com server address non authoritative answer name storage googleapis com address name storage googleapis com address name storage googleapis com address name storage googleapis com address name storage googleapis com address name storage googleapis com address name storage googleapis com address name storage googleapis com address name storage googleapis com address name storage googleapis com address name storage googleapis com address name storage googleapis com address name storage googleapis com address name storage googleapis com address name storage googleapis com address name storage googleapis com address non authoritative answer name storage googleapis com address name storage googleapis com address name storage googleapis com address name storage googleapis com address lima with the default ubuntu template 
workaround set usehostresolver false in the yaml | 1 |

140,037 | 5,396,234,381 | IssuesEvent | 2017-02-27 11:03:05 | Cxbx-Reloaded/Cxbx-Reloaded | https://api.github.com/repos/Cxbx-Reloaded/Cxbx-Reloaded | opened | Try to reserve memory ranges via PE sections | enhancement help wanted high-priority kernel needs-developer-discussion | Research if there's a method by which memory ranges can be reserved, by linking in sections at absolute addresses. This would allow us to reserve an address range for the kernel, contiguous memory, etc.

Surely, VirtualAllocEx can be used, but that's unreliable. I wonder if we could (ab)use the PE loader for this.

(unreliable in a sense that the preferred address might not be claimable)

One lead is here :

http://stackoverflow.com/questions/33400783/how-can-i-declare-a-variable-at-an-absolute-address-with-gcc | 1.0 | Try to reserve memory ranges via PE sections - Research if there's a method by which memory ranges can be reserved, by linking in sections at absolute addresses. This would allow us to reserve an address range for the kernel, contiguous memory, etc.

Surely, VirtualAllocEx can be used, but that's unreliable. I wonder if we could (ab)use the PE loader for this.

(unreliable in a sense that the preferred address might not be claimable)

One lead is here :

http://stackoverflow.com/questions/33400783/how-can-i-declare-a-variable-at-an-absolute-address-with-gcc | priority | try to reserve memory ranges via pe sections research if there s a method by which memory ranges can be reserved by linking in sections at absolute addresses this would allow us to reserve an address range for the kernel contiguous memory etc surely virtualallocex can be used but that s unreliable i wonder if we could ab use the pe loader for this unreliable in a sense that the preferred address might not be claimable one lead is here | 1 |

226,467 | 7,519,581,639 | IssuesEvent | 2018-04-12 12:06:41 | boissierflorian/projet_webservices | https://api.github.com/repos/boissierflorian/projet_webservices | closed | Database access | app/config core/model discuss high priority in progress todo | - Load the database connection.

- Create a configuration file? | 1.0 | Database access - - Load the database connection.

- Create a configuration file? | priority | database access load the database connection create a configuration file | 1 |

121,540 | 4,817,811,586 | IssuesEvent | 2016-11-04 14:46:05 | aaronang/cong-the-ripper | https://api.github.com/repos/aaronang/cong-the-ripper | opened | Job.runningTasks is not updated | Priority: High | This part, and probably others too, does not call `decreaseRunningTasks`; in fact, this function is not used anywhere in our code...

```Go
case addr := <-m.heartbeatMissChan:
	// move the scheduled tasks back to new tasks to be re-scheduled
	for i := range m.instances[addr].tasks {
		task := m.instances[addr].tasks[i]
		m.scheduledTasks = removeTaskFrom(m.scheduledTasks, task.JobID, task.ID)
		m.newTasks = append([]*lib.Task{task}, m.newTasks...)
	}
	delete(m.instances, addr)
``` | 1.0 | Job.runningTasks is not updated - This part, and probably others too, does not call `decreaseRunningTasks`; in fact, this function is not used anywhere in our code...

```Go
case addr := <-m.heartbeatMissChan:
	// move the scheduled tasks back to new tasks to be re-scheduled
	for i := range m.instances[addr].tasks {
		task := m.instances[addr].tasks[i]
		m.scheduledTasks = removeTaskFrom(m.scheduledTasks, task.JobID, task.ID)
		m.newTasks = append([]*lib.Task{task}, m.newTasks...)
	}
	delete(m.instances, addr)
``` | priority | job runningtasks is not updated this part and probably others too do not call decreaserunningtasks actually this function is not used anywhere in our code go case addr m heartbeatmisschan moved the scheduled tasks back to new tasks to be re scheduled for i range m instances tasks task m instances tasks m scheduledtasks removetaskfrom m scheduledtasks task jobid task id m newtasks append lib task task m newtasks delete m instances addr | 1 |
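
A possible shape for the fix to the `Job.runningTasks` issue above, as a minimal sketch only. The `Task`, `Job`, `Instance`, and `Master` types, the `handleHeartbeatMiss` method, and the signature of `decreaseRunningTasks` are all assumptions made for illustration; the issue names `decreaseRunningTasks` and `removeTaskFrom` but does not show their definitions. The idea is simply that each task moved back to the new-task queue should also decrement its job's running-task counter.

```Go
package main

import "fmt"

// Task, Job, Instance and Master are simplified, hypothetical stand-ins
// for the project's own types; only the fields needed here are modeled.
type Task struct {
	JobID int
	ID    int
}

type Job struct {
	RunningTasks int
}

type Instance struct {
	Tasks []*Task
}

type Master struct {
	Jobs           map[int]*Job
	Instances      map[string]*Instance
	ScheduledTasks []*Task
	NewTasks       []*Task
}

// decreaseRunningTasks is an assumed version of the helper named in the issue.
// It decrements the counter of the given job and never goes below zero.
func (m *Master) decreaseRunningTasks(jobID int) {
	if job, ok := m.Jobs[jobID]; ok && job.RunningTasks > 0 {
		job.RunningTasks--
	}
}

// removeTaskFrom returns the tasks that do not match jobID and taskID.
func removeTaskFrom(tasks []*Task, jobID, taskID int) []*Task {
	kept := make([]*Task, 0, len(tasks))
	for _, t := range tasks {
		if t.JobID != jobID || t.ID != taskID {
			kept = append(kept, t)
		}
	}
	return kept
}

// handleHeartbeatMiss re-queues every task of the lost instance and,
// unlike the snippet in the issue, also updates the running-task counter.
func (m *Master) handleHeartbeatMiss(addr string) {
	inst, ok := m.Instances[addr]
	if !ok {
		return
	}
	for _, task := range inst.Tasks {
		m.ScheduledTasks = removeTaskFrom(m.ScheduledTasks, task.JobID, task.ID)
		m.decreaseRunningTasks(task.JobID)
		m.NewTasks = append([]*Task{task}, m.NewTasks...)
	}
	delete(m.Instances, addr)
}

func main() {
	task := &Task{JobID: 1, ID: 7}
	m := &Master{
		Jobs:           map[int]*Job{1: {RunningTasks: 1}},
		Instances:      map[string]*Instance{"10.0.0.5": {Tasks: []*Task{task}}},
		ScheduledTasks: []*Task{task},
	}
	m.handleHeartbeatMiss("10.0.0.5")
	fmt.Println(m.Jobs[1].RunningTasks, len(m.NewTasks), len(m.ScheduledTasks))
}
```

Whether the decrement belongs in the heartbeat handler or inside a shared re-queue helper depends on how many other code paths have the same gap, which the issue leaves open.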

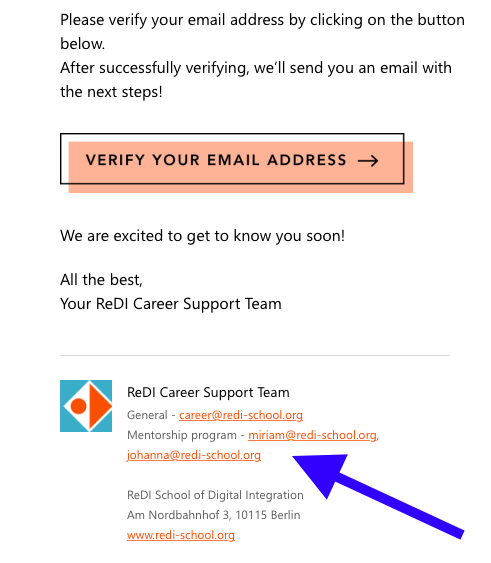

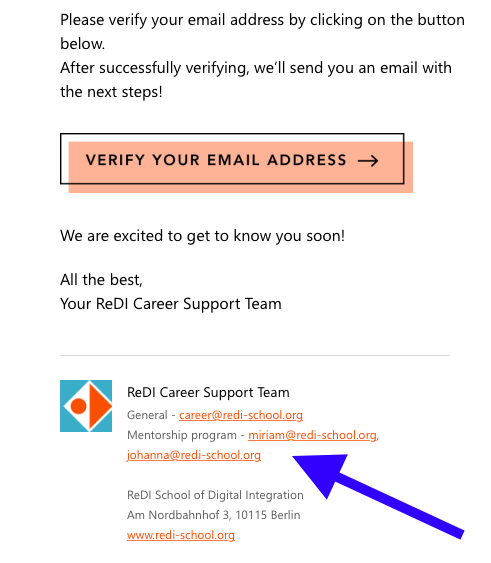

731,502 | 25,219,396,223 | IssuesEvent | 2022-11-14 11:36:19 | talent-connect/connect | https://api.github.com/repos/talent-connect/connect | closed | [CON:] Update contact information on page and emails | Area/frontend [react] Area/email [mjml] Task Ready Priority: High | ## Context/background

When a new mentor or mentee signs up for ReDI Connect, they receive information about contact people (both on the website and emails). With recent changes in the team, it is necessary to update such info so the mentors and mentees are referred to the correct team members.

## What needs to be done?

1) PAGE

Change contact information on the page (confirmation upon signing up): replace current info (screenshot) with the following:

ReDI Career Support Team

General - [career@redi-school.org](mailto:career@redi-school.org)

Mentorship program - [hadeer@redi-school.org](mailto:hadeer@redi-school.org)

2) EMAILS

Please do the same for the emails. There are several confirmation and follow-up emails sent to users - "verify your email address", "checking in: we would love to hear about your mentorship", "XX has accepted your application". All of them need to be updated - the signature must contain Hadeer's contact information.

ReDI Career Support Team

General - [career@redi-school.org](mailto:career@redi-school.org)

Mentorship program - [hadeer@redi-school.org](mailto:hadeer@redi-school.org)

<img width="644" alt="Screenshot 2022-11-08 at 16 48 48" src="https://user-images.githubusercontent.com/114068119/200615790-cf968850-2a21-49ed-97fc-00776430cb6a.png">

<img width="644" alt="Screenshot 2022-11-08 at 16 48 58" src="https://user-images.githubusercontent.com/114068119/200615834-3d0f056a-3688-4202-851b-6c71784f5af8.png">

| 1.0 | [CON:] Update contact information on page and emails - ## Context/background

When a new mentor or mentee signs up for ReDI Connect, they receive information about contact people (both on the website and emails). With recent changes in the team, it is necessary to update such info so the mentors and mentees are referred to the correct team members.

## What needs to be done?

1) PAGE

Change contact information on the page (confirmation upon signing up): replace current info (screenshot) with the following:

ReDI Career Support Team

General - [career@redi-school.org](mailto:career@redi-school.org)

Mentorship program - [hadeer@redi-school.org](mailto:hadeer@redi-school.org)

2) EMAILS

Please do the same for the emails. There are several confirmation and follow-up emails sent to users - "verify your email address", "checking in: we would love to hear about your mentorship", "XX has accepted your application". All of them need to be updated - the signature must contain Hadeer's contact information.

ReDI Career Support Team

General - [career@redi-school.org](mailto:career@redi-school.org)

Mentorship program - [hadeer@redi-school.org](mailto:hadeer@redi-school.org)

<img width="644" alt="Screenshot 2022-11-08 at 16 48 48" src="https://user-images.githubusercontent.com/114068119/200615790-cf968850-2a21-49ed-97fc-00776430cb6a.png">

<img width="644" alt="Screenshot 2022-11-08 at 16 48 58" src="https://user-images.githubusercontent.com/114068119/200615834-3d0f056a-3688-4202-851b-6c71784f5af8.png">

| priority | update contact information on page and emails context background when a new mentor or mentee signs up for redi connect they receive information about contact people both on the website and emails with recent changes in the team it is necessary to update such info so the mentors and mentees are referred to the correct team members what needs to be done page change contact information on the page confirmation upon signing up replace current info screenshot with the following redi career support team general mailto career redi school org mentorship program mailto hadeer redi school org emails please to the same for the emails there are several confirmation and follow up emails sent to users verify your email address checking in we would love to hear about your mentorship xx has accepted your application all of them need to be updated the signature must contain hadeer s contact information redi career support team general mailto career redi school org mentorship program mailto hadeer redi school org img width alt screenshot at src img width alt screenshot at src | 1 |

701,145 | 24,088,141,365 | IssuesEvent | 2022-09-19 12:46:53 | GlodoUK/helm-charts | https://api.github.com/repos/GlodoUK/helm-charts | closed | Change pullPolicy default | good first issue priority: high breaking change | `pullPolicy` has historically been set to `Always`.

This is somewhat wasteful given how we tag images internally (usually `$ODOO_VERSION-$timestamp`), when we forget to change the policy to `IfNotPresent`.

If third parties have any concerns, please raise this with us over the next 6 weeks.

| 1.0 | Change pullPolicy default - `pullPolicy` has historically been set to `Always`.

This is somewhat wasteful given how we tag images internally (usually `$ODOO_VERSION-$timestamp`), when we forget to change the policy to `IfNotPresent`.

If third parties have any concerns, please raise this with us over the next 6 weeks.

| priority | change pullpolicy default pullpolicy has historically been to always this is somewhat wasteful given how we tag images internally usually odoo version timestamp when we forget to change the policy to ifnotpresent if third parties have any concerns please raise this with us over the next weeks | 1 |

64,423 | 3,211,551,812 | IssuesEvent | 2015-10-06 11:27:08 | CoderDojo/community-platform | https://api.github.com/repos/CoderDojo/community-platform | closed | Docklands Dojo not searchable on map | bug high priority | I searched for "Docklands" and "CHQ" but I can't find it.

When I zoom in to the location also I cannot find it - I just get a cluster of "2" and when I click on "2" nothing happens:

Dojo listing is here: http://zen.coderdojo.com/dashboard/dojo/ie/the-chq-building-ifsc-dublin-docklands-dublin-1-ireland/dublin-docklands-chq

Are we indexing the "name" field for the map search? If not I think we need to be. | 1.0 | Docklands Dojo not searchable on map - I searched for "Docklands" and "CHQ" but I can't find it.

When I zoom in to the location also I cannot find it - I just get a cluster of "2" and when I click on "2" nothing happens:

Dojo listing is here: http://zen.coderdojo.com/dashboard/dojo/ie/the-chq-building-ifsc-dublin-docklands-dublin-1-ireland/dublin-docklands-chq

Are we indexing the "name" field for the map search? If not I think we need to be. | priority | docklands dojo not searchable on map i searched for docklands and chq but i can t find it when i zoom in to the location also i cannot find it i just get a cluster of and when i click on nothing happens dojo listing is here are we indexing the name field for the map search if not i think we need to be | 1 |

215,850 | 7,298,714,812 | IssuesEvent | 2018-02-26 17:47:37 | zom/Zom-iOS | https://api.github.com/repos/zom/Zom-iOS | closed | Add setup options in 'me' tab if no account is setup | FOR REVIEW high-priority | Add the options to 'Create an account' or 'Sign into an existing account' in the me tab if there is no account. | 1.0 | Add setup options in 'me' tab if no account is setup - Add the options to 'Create an account' or 'Sign into an existing account' in the me tab if there is no account. | priority | add setup options in me tab if no account is setup add the options to create an account or sign into an existing account in the me tab if there is no account | 1 |

246,598 | 7,895,420,599 | IssuesEvent | 2018-06-29 03:10:02 | aowen87/BAR | https://api.github.com/repos/aowen87/BAR | closed | Average value query doesn't take into account the actual data | Likelihood: 3 - Occasional OS: All Priority: High Severity: 4 - Crash / Wrong Results Support Group: Any bug version: 2.10.0 | Carly Whitmore is working with an SPH dataset, and when she ran an average value query, it did not appear to take into account what is actually being displayed. In her case she has a portion of the mesh that isn't moving and a portion of the mesh that is moving at a constant speed. When she selects just the portion that is moving, she expects to get the speed of just that portion; instead she is getting a lower value, which is probably the average of all the values.

-----------------------REDMINE MIGRATION-----------------------

This ticket was migrated from Redmine. The following information

could not be accurately captured in the new ticket:

Original author: Eric Brugger

Original creation: 09/15/2016 03:03 pm

Original update: 08/02/2017 04:11 pm

Ticket number: 2680 | 1.0 | Average value query doesn't take into account the actual data - Carly Whitmore is working with an SPH dataset, and when she ran an average value query, it did not appear to take into account what is actually being displayed. In her case she has a portion of the mesh that isn't moving and a portion of the mesh that is moving at a constant speed. When she selects just the portion that is moving, she expects to get the speed of just that portion; instead she is getting a lower value, which is probably the average of all the values.

-----------------------REDMINE MIGRATION-----------------------

This ticket was migrated from Redmine. The following information

could not be accurately captured in the new ticket:

Original author: Eric Brugger

Original creation: 09/15/2016 03:03 pm

Original update: 08/02/2017 04:11 pm

Ticket number: 2680 | priority | average value query doesn t take into account the actual data carly whitmore is working with an sph dataset and when she did an average value query that doesn t appear to be taking into account what is actually being displayed in her case she has a portion of the mesh that isn t moving and a portion of the mesh that is moving at a constant speed when she selects just the portion that is moving she expects to get the speed of just the portion that is moving instead she is getting a value that is lower and is probably averaging all the values redmine migration this ticket was migrated from redmine the following information could not be accurately captured in the new ticket original author eric brugger original creation pm original update pm ticket number | 1 |

675,228 | 23,085,263,563 | IssuesEvent | 2022-07-26 10:48:18 | fyusuf-a/ft_transcendence | https://api.github.com/repos/fyusuf-a/ft_transcendence | closed | Frontend won't build in development (and in production) | bug frontend ci-cd HIGH PRIORITY | # To reproduce

### Env

```
# Build values
NODE_IMAGE=lts-alpine
NGINX_IMAGE=stable-alpine
BACKEND_DOCKERFILE=Dockerfile
FRONTEND_DOCKERFILE=Dockerfile
```

### Command

`docker-compose --profile debug --profile frontend up`

### Error

```

Build finish