Unnamed: 0 | id | type | created_at | repo | repo_url | action | title | labels | body | index | text_combine | label | text | binary_label

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

439,922 | 12,690,512,198 | IssuesEvent | 2020-06-21 12:32:54 | uksf/website-issues | https://api.github.com/repos/uksf/website-issues | closed | Add RAMC to specialization preferences during applications | area/both priority/high type/enhancement | Add an option to select RAMC or Medic to the select a specialization preference part of the application. | 1.0 | Add RAMC to specialization preferences during applications - Add an option to select RAMC or Medic to the select a specialization preference part of the application. | priority | add ramc to specialization preferences during applications add an option to select ramc or medic to the select a specialization preference part of the application | 1 |

244,561 | 7,876,604,764 | IssuesEvent | 2018-06-26 02:06:01 | bahmutov/rolling-task | https://api.github.com/repos/bahmutov/rolling-task | closed | Clean app iframe before adding rolled bundle | bug high priority | Currently before each test, we bundle and include the script. This leads to multiple script tags (and style elements)

<img width="841" alt="screen shot 2018-06-20 at 10 08 56 am" src="https://user-images.githubusercontent.com/2212006/41663760-2fb77b4a-7472-11e8-8f76-1e6d3472ca7a.png">

```js

beforeEach(() => {

// filename from the root of the repo

cy.task('roll', 'app.js').then(({ code }) => {

const doc = cy.state('document')

const script_tag = doc.createElement('script')

script_tag.type = 'text/javascript'

script_tag.text = code

doc.body.appendChild(script_tag)

})

})

```

How does Cypress clear the iframe between the tests? Probably during `cy.visit` | 1.0 | Clean app iframe before adding rolled bundle - Currently before each test, we bundle and include the script. This leads to multiple script tags (and style elements)

<img width="841" alt="screen shot 2018-06-20 at 10 08 56 am" src="https://user-images.githubusercontent.com/2212006/41663760-2fb77b4a-7472-11e8-8f76-1e6d3472ca7a.png">

```js

beforeEach(() => {

// filename from the root of the repo

cy.task('roll', 'app.js').then(({ code }) => {

const doc = cy.state('document')

const script_tag = doc.createElement('script')

script_tag.type = 'text/javascript'

script_tag.text = code

doc.body.appendChild(script_tag)

})

})

```

How does Cypress clear the iframe between the tests? Probably during `cy.visit` | priority | clean app iframe before adding rolled bundle currently before each test we bundle and include the script this leads to multiple script tags and style elements img width alt screen shot at am src js beforeeach filename from the root of the repo cy task roll app js then code const doc cy state document const script tag doc createelement script script tag type text javascript script tag text code doc body appendchild script tag how does cypress clear the iframe between the tests probably during cy visit | 1 |

580,001 | 17,202,820,177 | IssuesEvent | 2021-07-17 16:04:59 | ooni/probe | https://api.github.com/repos/ooni/probe | opened | Mobile release 3.1.0 | effort/M ooni/probe-mobile priority/high | Release notes:

- Added a reminder encouraging users to enable automated testing

- Added a badge to indicate that a proxy is being used

- Added support for minimizing a running test

- Added a progress bar in the dashboard when a test is running

* fix the play store warning

| 1.0 | Mobile release 3.1.0 - Release notes:

- Added a reminder encouraging users to enable automated testing

- Added a badge to indicate that a proxy is being used

- Added support for minimizing a running test

- Added a progress bar in the dashboard when a test is running

* fix the play store warning

| priority | mobile release release notes added a reminder encouraging users to enable automated testing added a badge to indicate that a proxy is being used added support for minimizing a running test added a progress bar in the dashboard when a test is running fix the play store warning | 1 |

140,147 | 5,397,833,887 | IssuesEvent | 2017-02-27 15:36:01 | swkoubou/molt | https://api.github.com/repos/swkoubou/molt | closed | Docker操作のためのイメージ作成 | enhancement high priority | molt では Docker の操作を行う必要があるのでそれ用のイメージを作成する必要がある。

現状 [dind](https://hub.docker.com/_/docker/) が存在するけど、これをベースにすると Python のバージョン固定ができないのでどうしようかなと | 1.0 | Docker操作のためのイメージ作成 - molt では Docker の操作を行う必要があるのでそれ用のイメージを作成する必要がある。

現状 [dind](https://hub.docker.com/_/docker/) が存在するけど、これをベースにすると Python のバージョン固定ができないのでどうしようかなと | priority | docker操作のためのイメージ作成 molt では docker の操作を行う必要があるのでそれ用のイメージを作成する必要がある。 現状 が存在するけど、これをベースにすると python のバージョン固定ができないのでどうしようかなと | 1 |

527,184 | 15,325,151,638 | IssuesEvent | 2021-02-26 00:39:16 | jcsnorlax97/rentr | https://api.github.com/repos/jcsnorlax97/rentr | closed | [TASK] Cleaning up naming conventions & Update Listing entity table | High Priority backend database dev-task | ### Associated User Story:

N/A

### Task Description:

- [X] Rename `getUser()` to be `getUserViaId()` in UserController & UserDao & all associated test files.

- [X] Rename `getListing()` to be `getListingViaId()`

- [X] Rename `unAuthenticated()` to be `unauthenticated()`

- [X] Replace `res.status(401).json(...)` in `authenticateUser()` in `UserController` with `ApiError.unauthenticated(...)`

- [X] Ensure the following attributes are in the "Listing" entity in `init.sql`:

- [X] listing id (int) — `id`

- [X] Title (String; < 100 chars) — `title`

- [X] Price (String) — `price`

- [X] Number of bedrooms (String) — `num_bedroom`

- [X] Number of washrooms (String) — `num_bathroom`

- [X] Laundry Room? (Boolean) — `is_laundry_available`

- [X] Pet Allowed? (Boolean) — `is_pet_allowed`

- [X] Parking Available? (Boolean) — `is_parking_available`

- [X] Image (Url/Base64) (Array of String) — `images`

- [X] Description (String; < 5000 chars) — `description`

- [X] Update all places that uses "Listing" entity and is affected:

- [X] Update `services/listing.js`

- [X] Update `services/listing.test.js`

- [X] Update `dto/listing.js`

- [X] Update `dao/listing.js`

- [X] Ensure all API endpoints are working via Postman (See Screenshots Below):

- [X] GET `/api/v1/ping`

- [X] GET `/api/v1/user/:id`

- [X] POST `/api/v1/user/registration`

- [X] POST `/api/v1/user/login`

- [X] GET `/api/v1/listing`

- [X] GET `/api/v1/listing/:id`

- [X] POST `/api/v1/listing`

- [X] Environment variable, JWT_KEY, for authentication

- [X] Decide shared secret key & initial user password

- [X] Re-generate the hashed password used in `init.sql` & Update them

- [X] Update `.env.template` as documentations

### Dependencies:

All Sprint 2 Dev Tasks

### Acceptance Criteria:

N/A | 1.0 | [TASK] Cleaning up naming conventions & Update Listing entity table - ### Associated User Story:

N/A

### Task Description:

- [X] Rename `getUser()` to be `getUserViaId()` in UserController & UserDao & all associated test files.

- [X] Rename `getListing()` to be `getListingViaId()`

- [X] Rename `unAuthenticated()` to be `unauthenticated()`

- [X] Replace `res.status(401).json(...)` in `authenticateUser()` in `UserController` with `ApiError.unauthenticated(...)`

- [X] Ensure the following attributes are in the "Listing" entity in `init.sql`:

- [X] listing id (int) — `id`

- [X] Title (String; < 100 chars) — `title`

- [X] Price (String) — `price`

- [X] Number of bedrooms (String) — `num_bedroom`

- [X] Number of washrooms (String) — `num_bathroom`

- [X] Laundry Room? (Boolean) — `is_laundry_available`

- [X] Pet Allowed? (Boolean) — `is_pet_allowed`

- [X] Parking Available? (Boolean) — `is_parking_available`

- [X] Image (Url/Base64) (Array of String) — `images`

- [X] Description (String; < 5000 chars) — `description`

- [X] Update all places that uses "Listing" entity and is affected:

- [X] Update `services/listing.js`

- [X] Update `services/listing.test.js`

- [X] Update `dto/listing.js`

- [X] Update `dao/listing.js`

- [X] Ensure all API endpoints are working via Postman (See Screenshots Below):

- [X] GET `/api/v1/ping`

- [X] GET `/api/v1/user/:id`

- [X] POST `/api/v1/user/registration`

- [X] POST `/api/v1/user/login`

- [X] GET `/api/v1/listing`

- [X] GET `/api/v1/listing/:id`

- [X] POST `/api/v1/listing`

- [X] Environment variable, JWT_KEY, for authentication

- [X] Decide shared secret key & initial user password

- [X] Re-generate the hashed password used in `init.sql` & Update them

- [X] Update `.env.template` as documentations

### Dependencies:

All Sprint 2 Dev Tasks

### Acceptance Criteria:

N/A | priority | cleaning up naming conventions update listing entity table associated user story n a task description rename getuser to be getuserviaid in usercontroller userdao all associated test files rename getlisting to be getlistingviaid rename unauthenticated to be unauthenticated replace res status json in authenticateuser in usercontroller with apierror unauthenticated ensure the following attributes are in the listing entity in init sql listing id int — id title string chars — title price string — price number of bedrooms string — num bedroom number of washrooms string — num bathroom laundry room boolean — is laundry available pet allowed boolean — is pet allowed parking available boolean — is parking available image url array of string — images description string chars — description update all places that uses listing entity and is affected update services listing js update services listing test js update dto listing js update dao listing js ensure all api endpoints are working via postman see screenshots below get api ping get api user id post api user registration post api user login get api listing get api listing id post api listing environment variable jwt key for authentication decide shared secret key initial user password re generate the hashed password used in init sql update them update env template as documentations dependencies all sprint dev tasks acceptance criteria n a | 1 |

420,372 | 12,237,182,559 | IssuesEvent | 2020-05-04 17:35:19 | yugabyte/yugabyte-db | https://api.github.com/repos/yugabyte/yugabyte-db | closed | [docdb] Make ConflictResolver code path async | area/docdb priority/high | UPDATE:

Seems like we can get all the RPC threads stuck during conflict resolution. The main thread does a `latch.Wait`, but it is expecting a new resolution RPC thread to actually wake them up. If *all* threads wait at the same time though, there's no thread to do the work that would wake them up.

Relevant stack:

```

#0 pthread_cond_wait@@GLIBC_2.3.2 () at ../sysdeps/unix/sysv/linux/x86_64/pthread_cond_wait.S:185

#1 0x00007f97c8511d1e in yb::ConditionVariable::Wait (this=<optimized out>) at ../../src/yb/util/condition_variable.cc:84

#2 0x00007f97c8511f48 in yb::CountDownLatch::Wait (this=this@entry=0x7f97a08ca020) at ../../src/yb/util/countdown_latch.cc:54

#3 0x00007f97cf96e88e in yb::docdb::(anonymous namespace)::ConflictResolver::FetchTransactionStatuses (this=0x7f97a08ca200) at ../../src/yb/docdb/conflict_resolution.cc:330

#4 yb::docdb::(anonymous namespace)::ConflictResolver::DoResolveConflicts (this=this@entry=0x7f97a08ca200) at ../../src/yb/docdb/conflict_resolution.cc:232

#5 0x00007f97cf97365f in yb::docdb::(anonymous namespace)::ConflictResolver::ResolveConflicts (this=0x7f97a08ca200) at ../../src/yb/docdb/conflict_resolution.cc:222

#6 yb::docdb::(anonymous namespace)::ConflictResolver::Resolve (this=0x7f97a08ca200) at ../../src/yb/docdb/conflict_resolution.cc:132

#7 yb::docdb::ResolveTransactionConflicts (doc_ops=..., write_batch=..., hybrid_time=..., read_time=..., doc_db=..., partial_range_key_intents=..., status_manager=0x34cbe190, conflicts_metric=0x33a4e800) at ../../src/yb/docdb/conflict_resolution.cc:750

#8 0x00007f97d04e0833 in yb::tablet::Tablet::StartDocWriteOperation (this=this@entry=0x28e94410, operation=0xec355560) at ../../src/yb/tablet/tablet.cc:2388

#9 0x00007f97d04e2ca1 in yb::tablet::Tablet::KeyValueBatchFromQLWriteBatch (this=this@entry=0x28e94410, operation=...) at ../../src/yb/tablet/tablet.cc:1267

#10 0x00007f97d04e3d1a in yb::tablet::Tablet::AcquireLocksAndPerformDocOperations (this=0x28e94410, operation=...) at ../../src/yb/tablet/tablet.cc:1581

#11 0x00007f97d05099f1 in yb::tablet::TabletPeer::WriteAsync (this=this@entry=0x255db80, state=..., term=term@entry=19, deadline=...) at ../../src/yb/tablet/tablet_peer.cc:590

#12 0x00007f97d0dc7f09 in yb::tserver::TabletServiceImpl::Write (this=this@entry=0x1055040, req=req@entry=0xb33db970, resp=resp@entry=0xd18b1820, context=...) at ../../src/yb/tserver/tablet_service.cc:1276

#13 0x00007f97ce069aba in yb::tserver::TabletServerServiceIf::Handle (this=0x1055040, call=...) at src/yb/tserver/tserver_service.service.cc:148

#14 0x00007f97c9ddecf9 in yb::rpc::ServicePoolImpl::Handle (this=0x176cb40, incoming=...) at ../../src/yb/rpc/service_pool.cc:262

#15 0x00007f97c9d82e04 in yb::rpc::InboundCall::InboundCallTask::Run (this=<optimized out>) at ../../src/yb/rpc/inbound_call.cc:212

#16 0x00007f97c9dea948 in yb::rpc::(anonymous namespace)::Worker::Execute (this=<optimized out>) at ../../src/yb/rpc/thread_pool.cc:99

#17 0x00007f97c86234af in std::function<void ()>::operator()() const (this=0x24e3258) at /usr/scratch/yugabyte/yugabyte-2.1.3.0-linux/linuxbrew-xxxxxxxxxxxxxxxxxxxxxxxxxxxxxx/Cellar/gcc/5.5.0_4/include/c++/5.5.0/functional:2267

#18 yb::Thread::SuperviseThread (arg=0x24e3200) at ../../src/yb/util/thread.cc:744

#19 0x00007f97c2e5d694 in start_thread (arg=0x7f97a08cc700) at pthread_create.c:333

#20 0x00007f97c259a41d in clone () at ../sysdeps/unix/sysv/linux/x86_64/clone.S:109

```

PREVIOUS ASSUMPTIONS:

In /threadz, seeing 146 stacks of:

```

@ 0x7fccde2e711e (unknown)

@ 0x7fccde396066 syscall

@ 0x7fccdf446746 std::__atomic_futex_unsigned_base::_M_futex_wait_until()

@ 0x7fcceda5f38e yb::ql::ExecContext::PrepareChildTransaction()

@ 0x7fcceda68755 yb::ql::Executor::UpdateIndexes()

@ 0x7fcceda667e7 yb::ql::Executor::AddOperation()

@ 0x7fcceda66b3a yb::ql::Executor::ExecPTNode()

@ 0x7fcceda6d53f yb::ql::Executor::ExecTreeNode()

@ 0x7fcceda6d6e9 yb::ql::Executor::Execute()

@ 0x7fcceda6db8d yb::ql::Executor::ExecuteAsync()

@ 0x7fccedec1918 yb::ql::QLProcessor::ExecuteAsync()

@ 0x7fcceebfc44f yb::cqlserver::CQLProcessor::ProcessRequest()

@ 0x7fcceebfc983 yb::cqlserver::CQLProcessor::ProcessRequest()

@ 0x7fcceebfcd44 yb::cqlserver::CQLProcessor::ProcessCall()

@ 0x7fcceec1590c yb::cqlserver::CQLServiceImpl::Handle()

@ 0x7fcce5bdf5e7 yb::rpc::ServicePoolImpl::Handle()

```

Background was 9 node, 3 region, RF3. Stopped a region, brought it back after 15m (so followers would be marked failed). On recovery, multiple RBSs would start and at some point, the nodes in this region get stuck, many on these stacks.

This could be caused by #4035 or a completely different problem in the same space!

cc @kmuthukk @ameyb | 1.0 | [docdb] Make ConflictResolver code path async - UPDATE:

Seems like we can get all the RPC threads stuck during conflict resolution. The main thread does a `latch.Wait`, but it is expecting a new resolution RPC thread to actually wake them up. If *all* threads wait at the same time though, there's no thread to do the work that would wake them up.

Relevant stack:

```

#0 pthread_cond_wait@@GLIBC_2.3.2 () at ../sysdeps/unix/sysv/linux/x86_64/pthread_cond_wait.S:185

#1 0x00007f97c8511d1e in yb::ConditionVariable::Wait (this=<optimized out>) at ../../src/yb/util/condition_variable.cc:84

#2 0x00007f97c8511f48 in yb::CountDownLatch::Wait (this=this@entry=0x7f97a08ca020) at ../../src/yb/util/countdown_latch.cc:54

#3 0x00007f97cf96e88e in yb::docdb::(anonymous namespace)::ConflictResolver::FetchTransactionStatuses (this=0x7f97a08ca200) at ../../src/yb/docdb/conflict_resolution.cc:330

#4 yb::docdb::(anonymous namespace)::ConflictResolver::DoResolveConflicts (this=this@entry=0x7f97a08ca200) at ../../src/yb/docdb/conflict_resolution.cc:232

#5 0x00007f97cf97365f in yb::docdb::(anonymous namespace)::ConflictResolver::ResolveConflicts (this=0x7f97a08ca200) at ../../src/yb/docdb/conflict_resolution.cc:222

#6 yb::docdb::(anonymous namespace)::ConflictResolver::Resolve (this=0x7f97a08ca200) at ../../src/yb/docdb/conflict_resolution.cc:132

#7 yb::docdb::ResolveTransactionConflicts (doc_ops=..., write_batch=..., hybrid_time=..., read_time=..., doc_db=..., partial_range_key_intents=..., status_manager=0x34cbe190, conflicts_metric=0x33a4e800) at ../../src/yb/docdb/conflict_resolution.cc:750

#8 0x00007f97d04e0833 in yb::tablet::Tablet::StartDocWriteOperation (this=this@entry=0x28e94410, operation=0xec355560) at ../../src/yb/tablet/tablet.cc:2388

#9 0x00007f97d04e2ca1 in yb::tablet::Tablet::KeyValueBatchFromQLWriteBatch (this=this@entry=0x28e94410, operation=...) at ../../src/yb/tablet/tablet.cc:1267

#10 0x00007f97d04e3d1a in yb::tablet::Tablet::AcquireLocksAndPerformDocOperations (this=0x28e94410, operation=...) at ../../src/yb/tablet/tablet.cc:1581

#11 0x00007f97d05099f1 in yb::tablet::TabletPeer::WriteAsync (this=this@entry=0x255db80, state=..., term=term@entry=19, deadline=...) at ../../src/yb/tablet/tablet_peer.cc:590

#12 0x00007f97d0dc7f09 in yb::tserver::TabletServiceImpl::Write (this=this@entry=0x1055040, req=req@entry=0xb33db970, resp=resp@entry=0xd18b1820, context=...) at ../../src/yb/tserver/tablet_service.cc:1276

#13 0x00007f97ce069aba in yb::tserver::TabletServerServiceIf::Handle (this=0x1055040, call=...) at src/yb/tserver/tserver_service.service.cc:148

#14 0x00007f97c9ddecf9 in yb::rpc::ServicePoolImpl::Handle (this=0x176cb40, incoming=...) at ../../src/yb/rpc/service_pool.cc:262

#15 0x00007f97c9d82e04 in yb::rpc::InboundCall::InboundCallTask::Run (this=<optimized out>) at ../../src/yb/rpc/inbound_call.cc:212

#16 0x00007f97c9dea948 in yb::rpc::(anonymous namespace)::Worker::Execute (this=<optimized out>) at ../../src/yb/rpc/thread_pool.cc:99

#17 0x00007f97c86234af in std::function<void ()>::operator()() const (this=0x24e3258) at /usr/scratch/yugabyte/yugabyte-2.1.3.0-linux/linuxbrew-xxxxxxxxxxxxxxxxxxxxxxxxxxxxxx/Cellar/gcc/5.5.0_4/include/c++/5.5.0/functional:2267

#18 yb::Thread::SuperviseThread (arg=0x24e3200) at ../../src/yb/util/thread.cc:744

#19 0x00007f97c2e5d694 in start_thread (arg=0x7f97a08cc700) at pthread_create.c:333

#20 0x00007f97c259a41d in clone () at ../sysdeps/unix/sysv/linux/x86_64/clone.S:109

```

PREVIOUS ASSUMPTIONS:

In /threadz, seeing 146 stacks of:

```

@ 0x7fccde2e711e (unknown)

@ 0x7fccde396066 syscall

@ 0x7fccdf446746 std::__atomic_futex_unsigned_base::_M_futex_wait_until()

@ 0x7fcceda5f38e yb::ql::ExecContext::PrepareChildTransaction()

@ 0x7fcceda68755 yb::ql::Executor::UpdateIndexes()

@ 0x7fcceda667e7 yb::ql::Executor::AddOperation()

@ 0x7fcceda66b3a yb::ql::Executor::ExecPTNode()

@ 0x7fcceda6d53f yb::ql::Executor::ExecTreeNode()

@ 0x7fcceda6d6e9 yb::ql::Executor::Execute()

@ 0x7fcceda6db8d yb::ql::Executor::ExecuteAsync()

@ 0x7fccedec1918 yb::ql::QLProcessor::ExecuteAsync()

@ 0x7fcceebfc44f yb::cqlserver::CQLProcessor::ProcessRequest()

@ 0x7fcceebfc983 yb::cqlserver::CQLProcessor::ProcessRequest()

@ 0x7fcceebfcd44 yb::cqlserver::CQLProcessor::ProcessCall()

@ 0x7fcceec1590c yb::cqlserver::CQLServiceImpl::Handle()

@ 0x7fcce5bdf5e7 yb::rpc::ServicePoolImpl::Handle()

```

Background was 9 node, 3 region, RF3. Stopped a region, brought it back after 15m (so followers would be marked failed). On recovery, multiple RBSs would start and at some point, the nodes in this region get stuck, many on these stacks.

This could be caused by #4035 or a completely different problem in the same space!

cc @kmuthukk @ameyb | priority | make conflictresolver code path async update seems like we can get all the rpc threads stuck during conflict resolution the main thread does a latch wait but it is expecting a new resolution rpc thread to actually wake them up if all threads wait at the same time though there s no thread to do the work that would wake them up relevant stack pthread cond wait glibc at sysdeps unix sysv linux pthread cond wait s in yb conditionvariable wait this at src yb util condition variable cc in yb countdownlatch wait this this entry at src yb util countdown latch cc in yb docdb anonymous namespace conflictresolver fetchtransactionstatuses this at src yb docdb conflict resolution cc yb docdb anonymous namespace conflictresolver doresolveconflicts this this entry at src yb docdb conflict resolution cc in yb docdb anonymous namespace conflictresolver resolveconflicts this at src yb docdb conflict resolution cc yb docdb anonymous namespace conflictresolver resolve this at src yb docdb conflict resolution cc yb docdb resolvetransactionconflicts doc ops write batch hybrid time read time doc db partial range key intents status manager conflicts metric at src yb docdb conflict resolution cc in yb tablet tablet startdocwriteoperation this this entry operation at src yb tablet tablet cc in yb tablet tablet keyvaluebatchfromqlwritebatch this this entry operation at src yb tablet tablet cc in yb tablet tablet acquirelocksandperformdocoperations this operation at src yb tablet tablet cc in yb tablet tabletpeer writeasync this this entry state term term entry deadline at src yb tablet tablet peer cc in yb tserver tabletserviceimpl write this this entry req req entry resp resp entry context at src yb tserver tablet service cc in yb tserver tabletserverserviceif handle this call at src yb tserver tserver service service cc in yb rpc servicepoolimpl handle this incoming at src yb rpc service pool cc in yb rpc inboundcall inboundcalltask run this at src yb rpc inbound call cc in yb rpc anonymous namespace worker execute this at src yb rpc thread pool cc in std function operator const this at usr scratch yugabyte yugabyte linux linuxbrew xxxxxxxxxxxxxxxxxxxxxxxxxxxxxx cellar gcc include c functional yb thread supervisethread arg at src yb util thread cc in start thread arg at pthread create c in clone at sysdeps unix sysv linux clone s previous assumptions in threadz seeing stacks of unknown syscall std atomic futex unsigned base m futex wait until yb ql execcontext preparechildtransaction yb ql executor updateindexes yb ql executor addoperation yb ql executor execptnode yb ql executor exectreenode yb ql executor execute yb ql executor executeasync yb ql qlprocessor executeasync yb cqlserver cqlprocessor processrequest yb cqlserver cqlprocessor processrequest yb cqlserver cqlprocessor processcall yb cqlserver cqlserviceimpl handle yb rpc servicepoolimpl handle background was node region stopped a region brought it back after so followers would be marked failed on recovery multiple rbss would start and at some point the nodes in this region get stuck many on these stacks this could be caused by or a completely different problem in the same space cc kmuthukk ameyb | 1 |

285,640 | 8,767,413,047 | IssuesEvent | 2018-12-17 19:40:54 | torchgan/torchgan | https://api.github.com/repos/torchgan/torchgan | closed | Improve Documentation | enhancement high priority v0.0.2 work in progress | Since we are pushing for the release and putting more focus on feature completeness, I am listing all the current modules. All of these need to be documented before the next release can be tagged.

- [x] Loss Functions: New functions lack the equations. We also don't have a dedicated documentation for the functional forms of the losses. However, the `train_ops` need to have proper documentation as it is necessary for customizability.

- [x] Models: Models are fairly well documented. They just need some minor clean ups.

- [x] Metrics: The metrics API is a bit difficult to use. If we can't find a cleaner way to deal with metrics the only way is to have well-documented examples.

- [x] Logger: Lacks Documentation

- [x] Layers: Lacks Documentation

- [x] Trainer: Documentation is fine. However, needs a few demonstrative examples.

| 1.0 | Improve Documentation - Since we are pushing for the release and putting more focus on feature completeness, I am listing all the current modules. All of these need to be documented before the next release can be tagged.

- [x] Loss Functions: New functions lack the equations. We also don't have a dedicated documentation for the functional forms of the losses. However, the `train_ops` need to have proper documentation as it is necessary for customizability.

- [x] Models: Models are fairly well documented. They just need some minor clean ups.

- [x] Metrics: The metrics API is a bit difficult to use. If we can't find a cleaner way to deal with metrics the only way is to have well-documented examples.

- [x] Logger: Lacks Documentation

- [x] Layers: Lacks Documentation

- [x] Trainer: Documentation is fine. However, needs a few demonstrative examples.

| priority | improve documentation since we are pushing for the release and putting more focus on feature completeness i am listing all the current modules all of these need to be documented before the next release can be tagged loss functions new functions lack the equations we also don t have a dedicated documentation for the functional forms of the losses however the train ops need to have proper documentation as it is necessary for customizability models models are fairly well documented they just need some minor clean ups metrics the metrics api is a bit difficult to use if we can t find a cleaner way to deal with metrics the only way is to have well documented examples logger lacks documentation layers lacks documentation trainer documentation is fine however needs a few demonstrative examples | 1 |

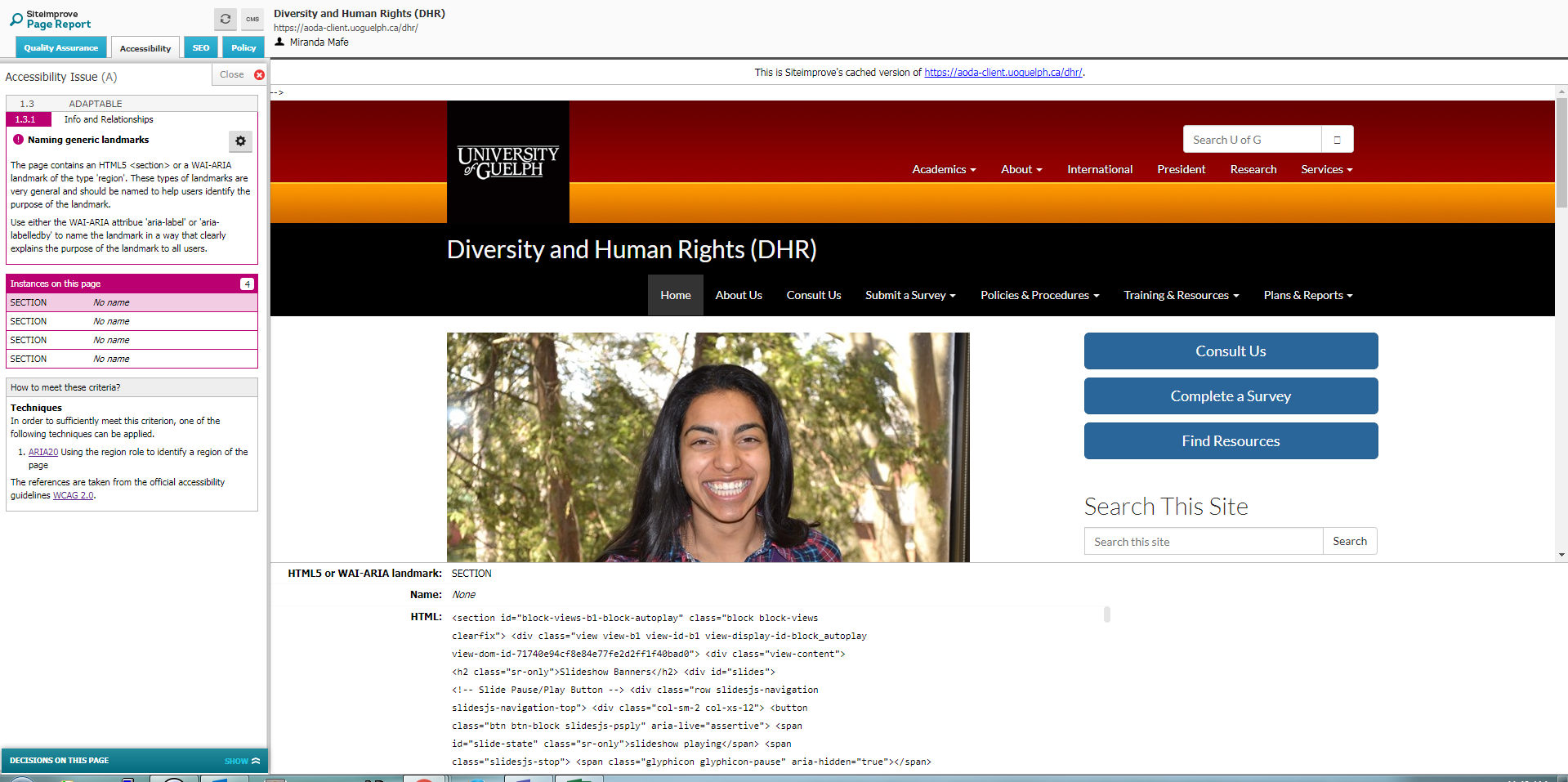

204,951 | 7,092,986,088 | IssuesEvent | 2018-01-12 18:38:14 | ArctosDB/arctos | https://api.github.com/repos/ArctosDB/arctos | closed | funky sorting on loan list | Display/Interface Function-Transactions Priority-High | If you review loan items in Arctos, the list is different if you have it on 100 or 250 per page. I was double checking the list of specimens loaned, and looking at it with 100 per page, it looked like lots of specimens were missing. I had forgotten there were greater than 100 specimens. I sorted the list more than once. Somehow it does not sort the entire list by cat number when on 100 per page, just those 100 that happen to make it onto the page under some other criteria. See attached images. One is for when set for 100 per page and sorted by MVZ number, the other for the 250 per page, again sorted by MVZ number. Can this be fixed?

<img width="328" alt="screen shot 2017-02-02 at 10 18 58 am 1" src="https://user-images.githubusercontent.com/5749672/33914502-51d466f2-df53-11e7-8238-c8b78d85117c.png">

<img width="328" alt="screen shot 2017-02-02 at 10 18 58 am" src="https://user-images.githubusercontent.com/5749672/33914504-51e84186-df53-11e7-9620-8ae2aced3d9c.png">

| 1.0 | funky sorting on loan list - If you review loan items in Arctos, the list is different if you have it on 100 or 250 per page. I was double checking the list of specimens loaned, and looking at it with 100 per page, it looked like lots of specimens were missing. I had forgotten there were greater than 100 specimens. I sorted the list more than once. Somehow it does not sort the entire list by cat number when on 100 per page, just those 100 that happen to make it onto the page under some other criteria. See attached images. One is for when set for 100 per page and sorted by MVZ number, the other for the 250 per page, again sorted by MVZ number. Can this be fixed?

<img width="328" alt="screen shot 2017-02-02 at 10 18 58 am 1" src="https://user-images.githubusercontent.com/5749672/33914502-51d466f2-df53-11e7-8238-c8b78d85117c.png">

<img width="328" alt="screen shot 2017-02-02 at 10 18 58 am" src="https://user-images.githubusercontent.com/5749672/33914504-51e84186-df53-11e7-9620-8ae2aced3d9c.png">

| priority | funky sorting on loan list if you review loan items in arctos the list is different if you have it on or per page i was double checking the list of specimens loaned and looking at it with per page it looked like lots of specimens were missing i had forgotten there were greater than specimens i sorted the list more than once somehow it does not sort the entire list by cat number when on per page just those that happen to make it onto the page under some other criteria see attached images one is for when set for per page and sorted by mvz number the other for the per page again sorted by mvz number can this be fixed img width alt screen shot at am src img width alt screen shot at am src | 1 |

133,457 | 5,203,788,465 | IssuesEvent | 2017-01-24 13:56:18 | CCAFS/MARLO | https://api.github.com/repos/CCAFS/MARLO | closed | Replace Management Liaison typo for A4NH | Priority - High Type -Task | These fields are necessary, instead we change the labels for A4NH. Replace Management Liaison = Flagship and Management Liaison contact person = Flagship Leader | 1.0 | Replace Management Liaison typo for A4NH - These fields are necessary, instead we change the labels for A4NH. Replace Management Liaison = Flagship and Management Liaison contact person = Flagship Leader | priority | replace management liaison typo for these fields are necessary instead we change the labels for replace management liaison flagship and management liaison contact person flagship leader | 1 |

793,568 | 28,001,798,423 | IssuesEvent | 2023-03-27 13:04:08 | AY2223S2-CS2113-W15-1/tp | https://api.github.com/repos/AY2223S2-CS2113-W15-1/tp | closed | Track Calories Intake | priority.High type.Story | As a busy Office Worker, I want to track my calories intake over the course of a working day so that I do not have to remember everything that I have eaten. | 1.0 | Track Calories Intake - As a busy Office Worker, I want to track my calories intake over the course of a working day so that I do not have to remember everything that I have eaten. | priority | track calories intake as a busy office worker i want to track my calories intake over the course of a working day so that i do not have to remember everything that i have eaten | 1 |

557,821 | 16,520,217,302 | IssuesEvent | 2021-05-26 13:52:00 | django-cms/django-cms | https://api.github.com/repos/django-cms/django-cms | opened | [BUG] add DJANGO_3_2 to certain conditions | priority: high | ## Description

https://github.com/django-cms/django-cms/blob/develop/cms/management/commands/subcommands/base.py#L40

https://github.com/django-cms/django-cms/blob/34a26bd1b543ee14eebc0b95ce550bf91eb7d236/cms/views.py#L57

There are also some places used inside the tests.

``DJANGO_3_2`` should be added to those conditions

This only affects django CMS 3.9.x

| 1.0 | [BUG] add DJANGO_3_2 to certain conditions - ## Description

https://github.com/django-cms/django-cms/blob/develop/cms/management/commands/subcommands/base.py#L40

https://github.com/django-cms/django-cms/blob/34a26bd1b543ee14eebc0b95ce550bf91eb7d236/cms/views.py#L57

There are also some places used inside the tests.

``DJANGO_3_2`` should be added to those conditions

This only affects django CMS 3.9.x

| priority | add django to certain conditions description there are also some places used inside the tests django should be added to those conditions this only affects django cms x | 1 |

780,789 | 27,408,316,445 | IssuesEvent | 2023-03-01 08:47:51 | giantswarm/roadmap | https://api.github.com/repos/giantswarm/roadmap | closed | [Spike] Improving Crossplane severe performance issues | priority/high team/honeybadger impact/high | We are experiencing a lot of client side throttling on clusters with Crossplane installed, which seemingly became worse with the switch to official providers (they have a magnitude more CRDs, getting over 900 / 1000 on some clusters).

Also experiencing more frequent CRD install job OOM kills for some apps (flux, external-secrets) on clusters with Crossplane.

All these combined causes Flux helm releases to fail often / get stuck in upgrade e.g. helm-controller sees slowness, or crossplane takes too long to come up / get healthy and the CRD jobs getting OOM killed fails the pre install hooks and we end up with a timed out / failed release.

Having these on live clusters (e.g. banana did trigger alert on Friday) will end up with more alerts for team on-call. Current clusters where we have these are mostly test clusters which are ignored and helm releases are normally reconciled when chart version changes or the flux helm releases is suspended and then resumed. These are the reasons why we don't have the alerts triggering at the moment.

```[tasklist]

### Tasks

- [ ] https://github.com/giantswarm/giantswarm/issues/25892

```

| 1.0 | [Spike] Improving Crossplane severe performance issues - We are experiencing a lot of client side throttling on clusters with Crossplane installed, which seemingly became worse with the switch to official providers (they have a magnitude more CRDs, getting over 900 / 1000 on some clusters).

Also experiencing more frequent CRD install job OOM kills for some apps (flux, external-secrets) on clusters with Crossplane.

All these combined causes Flux helm releases to fail often / get stuck in upgrade e.g. helm-controller sees slowness, or crossplane takes too long to come up / get healthy and the CRD jobs getting OOM killed fails the pre install hooks and we end up with a timed out / failed release.

Having these on live clusters (e.g. banana did trigger alert on Friday) will end up with more alerts for team on-call. Current clusters where we have these are mostly test clusters which are ignored and helm releases are normally reconciled when chart version changes or the flux helm releases is suspended and then resumed. These are the reasons why we don't have the alerts triggering at the moment.

```[tasklist]

### Tasks

- [ ] https://github.com/giantswarm/giantswarm/issues/25892

```

| priority | improving crossplane severe performance issues we are experiencing a lot of client side throttling on clusters with crossplane installed which seemingly became worse with the switch to official providers they have a magnitude more crds getting over on some clusters also experiencing more frequent crd install job oom kills for some apps flux external secrets on clusters with crossplane all these combined causes flux helm releases to fail often get stuck in upgrade e g helm controller sees slowness or crossplane takes too long to come up get healthy and the crd jobs getting oom killed fails the pre install hooks and we end up with a timed out failed release having these on live clusters e g banana did trigger alert on friday will end up with more alerts for team on call current clusters where we have these are mostly test clusters which are ignored and helm releases are normally reconciled when chart version changes or the flux helm releases is suspended and then resumed these are the reasons why we don t have the alerts triggering at the moment tasks | 1 |

563,103 | 16,676,094,980 | IssuesEvent | 2021-06-07 16:21:43 | pytorch/pytorch | https://api.github.com/repos/pytorch/pytorch | closed | binary_cross_entropy misses derivative for target even if the variable is unused for backward | high priority module: autograd triaged | I don't understand why it breaks in the forward pass? The backprop through the loss variable may not even be required (e.g. when it's used as matching affinity for non-differentiated algorithm).

I would porpose:

- it should have a derivative formula for target (it can be useful for bringing two probability distributions together in contrastive formulations)

- if it doesn't have it, it should fail at backprop time when the variable is known to be backpropped through in the graph

```python

import torch

import torch.nn.functional as F

a = torch.ones(1, 2) / 2

a.requires_grad_()

b = torch.ones(1, 2) / 2

matching_matrix_unused_for_backprop = F.binary_cross_entropy(a, b.requires_grad_(), reduce = 'none')

# File ".../vadim/prefix/miniconda/lib/python3.8/site-packages/torch/nn/functional.py", line 2525, in binary_cross_entropy

# return torch._C._nn.binary_cross_entropy(

# RuntimeError: the derivative for 'target' is not implemented

```

cc @ezyang @gchanan @zou3519 @bdhirsh @jbschlosser @anjali411 @albanD @gqchen @pearu @nikitaved @soulitzer | 1.0 | binary_cross_entropy misses derivative for target even if the variable is unused for backward - I don't understand why it breaks in the forward pass? The backprop through the loss variable may not even be required (e.g. when it's used as matching affinity for non-differentiated algorithm).

I would porpose:

- it should have a derivative formula for target (it can be useful for bringing two probability distributions together in contrastive formulations)

- if it doesn't have it, it should fail at backprop time when the variable is known to be backpropped through in the graph

```python

import torch

import torch.nn.functional as F

a = torch.ones(1, 2) / 2

a.requires_grad_()

b = torch.ones(1, 2) / 2

matching_matrix_unused_for_backprop = F.binary_cross_entropy(a, b.requires_grad_(), reduce = 'none')

# File ".../vadim/prefix/miniconda/lib/python3.8/site-packages/torch/nn/functional.py", line 2525, in binary_cross_entropy

# return torch._C._nn.binary_cross_entropy(

# RuntimeError: the derivative for 'target' is not implemented

```

cc @ezyang @gchanan @zou3519 @bdhirsh @jbschlosser @anjali411 @albanD @gqchen @pearu @nikitaved @soulitzer | priority | binary cross entropy misses derivative for target even if the variable is unused for backward i don t understand why it breaks in the forward pass the backprop through the loss variable may not even be required e g when it s used as matching affinity for non differentiated algorithm i would porpose it should have a derivative formula for target it can be useful for bringing two probability distributions together in contrastive formulations if it doesn t have it it should fail at backprop time when the variable is known to be backpropped through in the graph python import torch import torch nn functional as f a torch ones a requires grad b torch ones matching matrix unused for backprop f binary cross entropy a b requires grad reduce none file vadim prefix miniconda lib site packages torch nn functional py line in binary cross entropy return torch c nn binary cross entropy runtimeerror the derivative for target is not implemented cc ezyang gchanan bdhirsh jbschlosser alband gqchen pearu nikitaved soulitzer | 1 |

239,134 | 7,787,024,625 | IssuesEvent | 2018-06-06 20:54:58 | EQuimper/react-native-google-autocomplete | https://api.github.com/repos/EQuimper/react-native-google-autocomplete | closed | Clear the locationResults when all text is erased | Improvement Priority:High | Great library ! Love the simplicity.

Any way to have the `locationResults` array cleared up when all text is erased ? For example using the `clear` method on the `Textinput` | 1.0 | Clear the locationResults when all text is erased - Great library ! Love the simplicity.

Any way to have the `locationResults` array cleared up when all text is erased ? For example using the `clear` method on the `Textinput` | priority | clear the locationresults when all text is erased great library love the simplicity any way to have the locationresults array cleared up when all text is erased for example using the clear method on the textinput | 1 |

430,814 | 12,466,381,452 | IssuesEvent | 2020-05-28 15:23:46 | windchime-yk/novel-support.js | https://api.github.com/repos/windchime-yk/novel-support.js | opened | v2.0.0として更新 | Priority: High Type: Release | Vueでも使えるように、テキストのみを変換する機能を追加する。

それに伴って名前付きエクスポートに戻し、関数名を変更する。

そのため、SemVerの理念に則って、メジャーバージョンを更新する。 | 1.0 | v2.0.0として更新 - Vueでも使えるように、テキストのみを変換する機能を追加する。

それに伴って名前付きエクスポートに戻し、関数名を変更する。

そのため、SemVerの理念に則って、メジャーバージョンを更新する。 | priority | vueでも使えるように、テキストのみを変換する機能を追加する。 それに伴って名前付きエクスポートに戻し、関数名を変更する。 そのため、semverの理念に則って、メジャーバージョンを更新する。 | 1 |

634,495 | 20,363,167,846 | IssuesEvent | 2022-02-21 00:01:17 | flix/flix | https://api.github.com/repos/flix/flix | closed | Add `fix` modifier | high-priority | - [x] Add `fix` keyword to frontend.

- [x] Add check for `fix` and `not`.

- [x] Add `fix` keyword support to Stratifier/Ullman.

- [x] Add `fix` keyword support to Datalog runtime.

- [x] Add check that rejects illegal use of lattice variables. (Suggest to use `fix`).

- [ ] Add positive test cases that use fix explicitly.

- [ ] Add negative test cases that use fix explicitly and wrongly.

- [x] Add test cases that uses lattices.

- [ ] Cleanup `Stratifier.run` to avoid too many intermediate collections.

- [x] Refactor query to use `fix` and get rid of `SelectFragment`.

- [x] Refactor documentation and error messages

- [x] https://github.com/flix/flix/issues/3179

- [x] VSCode keyword

| 1.0 | Add `fix` modifier - - [x] Add `fix` keyword to frontend.

- [x] Add check for `fix` and `not`.

- [x] Add `fix` keyword support to Stratifier/Ullman.

- [x] Add `fix` keyword support to Datalog runtime.

- [x] Add check that rejects illegal use of lattice variables. (Suggest to use `fix`).

- [ ] Add positive test cases that use fix explicitly.

- [ ] Add negative test cases that use fix explicitly and wrongly.

- [x] Add test cases that uses lattices.

- [ ] Cleanup `Stratifier.run` to avoid too many intermediate collections.

- [x] Refactor query to use `fix` and get rid of `SelectFragment`.

- [x] Refactor documentation and error messages

- [x] https://github.com/flix/flix/issues/3179

- [x] VSCode keyword

| priority | add fix modifier add fix keyword to frontend add check for fix and not add fix keyword support to stratifier ullman add fix keyword support to datalog runtime add check that rejects illegal use of lattice variables suggest to use fix add positive test cases that use fix explicitly add negative test cases that use fix explicitly and wrongly add test cases that uses lattices cleanup stratifier run to avoid too many intermediate collections refactor query to use fix and get rid of selectfragment refactor documentation and error messages vscode keyword | 1 |

94,732 | 3,931,602,942 | IssuesEvent | 2016-04-25 13:09:01 | 0mp/io-touchpad | https://api.github.com/repos/0mp/io-touchpad | opened | The final check list for the second iteration | priority: high status: in progress type: task | This is what we need to to before submitting the second iteration:

- [ ] Close related issues and pull requests.

- [ ] Help @RjiukYagami with the test suite. The check list for this issues is here: #22

- [ ] Sum up the second iteration in this document. Every one should add some information about how they contributed to the project during this iteration.

- [ ] @RjiukYagami

- [ ] @piotrekp1

- [ ] @michal7352

- [ ] me

- [ ] Clean up your code (@piotrekp1 especially :wink: ) :

- [ ] @RjiukYagami

- [ ] @piotrekp1

- [ ] @michal7352

- [ ] me

- [ ] Document your code

- [ ] @RjiukYagami

- [ ] @piotrekp1

- [ ] @michal7352

- [ ] me

Comment below that you've read this issue please. | 1.0 | The final check list for the second iteration - This is what we need to to before submitting the second iteration:

- [ ] Close related issues and pull requests.

- [ ] Help @RjiukYagami with the test suite. The check list for this issues is here: #22

- [ ] Sum up the second iteration in this document. Every one should add some information about how they contributed to the project during this iteration.

- [ ] @RjiukYagami

- [ ] @piotrekp1

- [ ] @michal7352

- [ ] me

- [ ] Clean up your code (@piotrekp1 especially :wink: ) :

- [ ] @RjiukYagami

- [ ] @piotrekp1

- [ ] @michal7352

- [ ] me

- [ ] Document your code

- [ ] @RjiukYagami

- [ ] @piotrekp1

- [ ] @michal7352

- [ ] me

Comment below that you've read this issue please. | priority | the final check list for the second iteration this is what we need to to before submitting the second iteration close related issues and pull requests help rjiukyagami with the test suite the check list for this issues is here sum up the second iteration in this document every one should add some information about how they contributed to the project during this iteration rjiukyagami me clean up your code especially wink rjiukyagami me document your code rjiukyagami me comment below that you ve read this issue please | 1 |

487,120 | 14,019,597,899 | IssuesEvent | 2020-10-29 18:24:43 | woocommerce/woocommerce-gateway-stripe | https://api.github.com/repos/woocommerce/woocommerce-gateway-stripe | opened | Update for PHP8 | Priority: High | PHP8 will be released on Thursday, November 26, 2020 (Thanksgiving in the US, and the start of the holiday shopping season). Ensuring this extension works well with PHP8 is a high priority.

Task list in progress:

- [ ] Test with PHP8 to identify any problems

- [ ] Fix PHP8 related problems

- [ ] Update Travis to include PHP 8 in the versions of PHP to be tested with | 1.0 | Update for PHP8 - PHP8 will be released on Thursday, November 26, 2020 (Thanksgiving in the US, and the start of the holiday shopping season). Ensuring this extension works well with PHP8 is a high priority.

Task list in progress:

- [ ] Test with PHP8 to identify any problems

- [ ] Fix PHP8 related problems

- [ ] Update Travis to include PHP 8 in the versions of PHP to be tested with | priority | update for will be released on thursday november thanksgiving in the us and the start of the holiday shopping season ensuring this extension works well with is a high priority task list in progress test with to identify any problems fix related problems update travis to include php in the versions of php to be tested with | 1 |

535,061 | 15,681,428,589 | IssuesEvent | 2021-03-25 05:20:11 | DeepakVelmurugan/virtualdata | https://api.github.com/repos/DeepakVelmurugan/virtualdata | opened | Add the score card | enhancement high-priority | Score card for displaying scores of each model and if score is bad it's background color should change try it with javascript | 1.0 | Add the score card - Score card for displaying scores of each model and if score is bad it's background color should change try it with javascript | priority | add the score card score card for displaying scores of each model and if score is bad it s background color should change try it with javascript | 1 |

296,845 | 9,126,570,461 | IssuesEvent | 2019-02-24 22:33:52 | Game-technology-group-2/project-teleport | https://api.github.com/repos/Game-technology-group-2/project-teleport | closed | Implement the Assimp library | high priority | The project should use the Assimp library to import external 3D assets. | 1.0 | Implement the Assimp library - The project should use the Assimp library to import external 3D assets. | priority | implement the assimp library the project should use the assimp library to import external assets | 1 |

140,562 | 5,412,267,763 | IssuesEvent | 2017-03-01 14:08:52 | k0shk0sh/FastHub | https://api.github.com/repos/k0shk0sh/FastHub | closed | bug links in readme | bug Future release High Priority | clicking on a link [non GitHub] in a readme file fails and tries to open it as a repo instead of opening it inside a webview | 1.0 | bug links in readme - clicking on a link [non GitHub] in a readme file fails and tries to open it as a repo instead of opening it inside a webview | priority | bug links in readme clicking on a link in a readme file fails and tries to open it as a repo instead of opening it inside a webview | 1 |

399,283 | 11,746,001,930 | IssuesEvent | 2020-03-12 10:51:05 | JuliaDynamics/DrWatson.jl | https://api.github.com/repos/JuliaDynamics/DrWatson.jl | closed | Add new travis key | high priority | Hi @sebastianpech , @tamasgal , @asinghvi17 @JonasIsensee or whoever else uses Linux.

Can someone please add a new documenter key to our travis builds, as is instructed by here: https://juliadocs.github.io/Documenter.jl/stable/man/hosting/#travis-ssh-1 ?

our old key got erased somehow... I can't do it because this key generator doesn't work on windows.

thanks! | 1.0 | Add new travis key - Hi @sebastianpech , @tamasgal , @asinghvi17 @JonasIsensee or whoever else uses Linux.

Can someone please add a new documenter key to our travis builds, as is instructed by here: https://juliadocs.github.io/Documenter.jl/stable/man/hosting/#travis-ssh-1 ?

our old key got erased somehow... I can't do it because this key generator doesn't work on windows.

thanks! | priority | add new travis key hi sebastianpech tamasgal jonasisensee or whoever else uses linux can someone please add a new documenter key to our travis builds as is instructed by here our old key got erased somehow i can t do it because this key generator doesn t work on windows thanks | 1 |

208,693 | 7,157,455,341 | IssuesEvent | 2018-01-26 19:55:28 | broadinstitute/gatk | https://api.github.com/repos/broadinstitute/gatk | closed | GenomicsDBImport: add support for samples with spaces in the name | GenomicsDB PRIORITY_HIGH bug | We are unable to run joint genotyping on the cloud when it includes a sample with a space in the name; it fails when calling GATK's GenomicsDBImport functionality (see error log below).

This practice (samples with spaces in the name) is unfortunately not uncommon, so we've had to modify our various workflows one by one to add support for this case. Please update GenomicsDBImport to handle samples with a space in the name.

Example error log:

gsutil cat gs://broad-jg-dev-cromwell-execution/JointGenotyping/6918095f-ca06-4883-bcb5-f5c2e343bb6d/call-ImportGVCFs/shard-0/ImportGVCFs-0-stderr.log

Using GATK jar /usr/gitc/gatk-package-4.beta.6-local.jar

Running:

java -Dsamjdk.use_async_io_read_samtools=false -Dsamjdk.use_async_io_write_samtools=true -Dsamjdk.use_async_io_write_tribble=false -Dsamjdk.compression_level=1 -Xmx4g -Xms4g -jar /usr/gitc/gatk-package-4.beta.6-local.jar GenomicsDBImport --genomicsDBWorkspace genomicsdb --batchSize 50 -L chr1:1-391754 --sampleNameMap /cromwell_root/broad-jg-dev-storage/freimer_dutch_fin_wgs_v1/v1/sample_map --readerThreads 5 -ip 500

Picked up _JAVA_OPTIONS: -Djava.io.tmpdir=/cromwell_root/tmp.H9t5pC

[December 14, 2017 7:41:30 PM UTC] GenomicsDBImport --genomicsDBWorkspace genomicsdb --batchSize 50 --sampleNameMap /cromwell_root/broad-jg-dev-storage/freimer_dutch_fin_wgs_v1/v1/sample_map --readerThreads 5 --intervals chr1:1-391754 --interval_padding 500 --genomicsDBSegmentSize 1048576 --genomicsDBVCFBufferSize 16384 --overwriteExistingGenomicsDBWorkspace false --consolidate false --validateSampleNameMap false --interval_set_rule UNION --interval_exclusion_padding 0 --interval_merging_rule ALL --readValidationStringency SILENT --secondsBetweenProgressUpdates 10.0 --disableSequenceDictionaryValidation false --createOutputBamIndex true --createOutputBamMD5 false --createOutputVariantIndex true --createOutputVariantMD5 false --lenient false --addOutputSAMProgramRecord true --addOutputVCFCommandLine true --cloudPrefetchBuffer 0 --cloudIndexPrefetchBuffer 0 --disableBamIndexCaching false --help false --version false --showHidden false --verbosity INFO --QUIET false --use_jdk_deflater false --use_jdk_inflater false --gcs_max_retries 20 --disableToolDefaultReadFilters false

[December 14, 2017 7:41:30 PM UTC] Executing as root@7ca892f01ff3 on Linux 4.9.0-0.bpo.3-amd64 amd64; OpenJDK 64-Bit Server VM 1.8.0_111-8u111-b14-2~bpo8+1-b14; Version: 4.beta.6

[December 14, 2017 7:41:30 PM UTC] org.broadinstitute.hellbender.tools.genomicsdb.GenomicsDBImport done. Elapsed time: 0.01 minutes.

Runtime.totalMemory()=4116185088

***********************************************************************

A USER ERROR has occurred: Bad input: Expected a file of format

Sample File

but found line: I-PAL_FR02_000639 001 gs://broad-gotc-prod-storage/pipeline/G87944/gvcfs/I-PAL_FR02_000639_001.023ca2f7-4fba-4617-9f65-cb989818c858.g.vcf.gz

***********************************************************************

Set the system property GATK_STACKTRACE_ON_USER_EXCEPTION (--javaOptions '-DGATK_STACKTRACE_ON_USER_EXCEPTION=true') to print the stack trace. | 1.0 | GenomicsDBImport: add support for samples with spaces in the name - We are unable to run joint genotyping on the cloud when it includes a sample with a space in the name; it fails when calling GATK's GenomicsDBImport functionality (see error log below).

This practice (samples with spaces in the name) is unfortunately not uncommon, so we've had to modify our various workflows one by one to add support for this case. Please update GenomicsDBImport to handle samples with a space in the name.

Example error log:

gsutil cat gs://broad-jg-dev-cromwell-execution/JointGenotyping/6918095f-ca06-4883-bcb5-f5c2e343bb6d/call-ImportGVCFs/shard-0/ImportGVCFs-0-stderr.log

Using GATK jar /usr/gitc/gatk-package-4.beta.6-local.jar

Running:

java -Dsamjdk.use_async_io_read_samtools=false -Dsamjdk.use_async_io_write_samtools=true -Dsamjdk.use_async_io_write_tribble=false -Dsamjdk.compression_level=1 -Xmx4g -Xms4g -jar /usr/gitc/gatk-package-4.beta.6-local.jar GenomicsDBImport --genomicsDBWorkspace genomicsdb --batchSize 50 -L chr1:1-391754 --sampleNameMap /cromwell_root/broad-jg-dev-storage/freimer_dutch_fin_wgs_v1/v1/sample_map --readerThreads 5 -ip 500

Picked up _JAVA_OPTIONS: -Djava.io.tmpdir=/cromwell_root/tmp.H9t5pC

[December 14, 2017 7:41:30 PM UTC] GenomicsDBImport --genomicsDBWorkspace genomicsdb --batchSize 50 --sampleNameMap /cromwell_root/broad-jg-dev-storage/freimer_dutch_fin_wgs_v1/v1/sample_map --readerThreads 5 --intervals chr1:1-391754 --interval_padding 500 --genomicsDBSegmentSize 1048576 --genomicsDBVCFBufferSize 16384 --overwriteExistingGenomicsDBWorkspace false --consolidate false --validateSampleNameMap false --interval_set_rule UNION --interval_exclusion_padding 0 --interval_merging_rule ALL --readValidationStringency SILENT --secondsBetweenProgressUpdates 10.0 --disableSequenceDictionaryValidation false --createOutputBamIndex true --createOutputBamMD5 false --createOutputVariantIndex true --createOutputVariantMD5 false --lenient false --addOutputSAMProgramRecord true --addOutputVCFCommandLine true --cloudPrefetchBuffer 0 --cloudIndexPrefetchBuffer 0 --disableBamIndexCaching false --help false --version false --showHidden false --verbosity INFO --QUIET false --use_jdk_deflater false --use_jdk_inflater false --gcs_max_retries 20 --disableToolDefaultReadFilters false

[December 14, 2017 7:41:30 PM UTC] Executing as root@7ca892f01ff3 on Linux 4.9.0-0.bpo.3-amd64 amd64; OpenJDK 64-Bit Server VM 1.8.0_111-8u111-b14-2~bpo8+1-b14; Version: 4.beta.6

[December 14, 2017 7:41:30 PM UTC] org.broadinstitute.hellbender.tools.genomicsdb.GenomicsDBImport done. Elapsed time: 0.01 minutes.

Runtime.totalMemory()=4116185088

***********************************************************************

A USER ERROR has occurred: Bad input: Expected a file of format

Sample File

but found line: I-PAL_FR02_000639 001 gs://broad-gotc-prod-storage/pipeline/G87944/gvcfs/I-PAL_FR02_000639_001.023ca2f7-4fba-4617-9f65-cb989818c858.g.vcf.gz

***********************************************************************

Set the system property GATK_STACKTRACE_ON_USER_EXCEPTION (--javaOptions '-DGATK_STACKTRACE_ON_USER_EXCEPTION=true') to print the stack trace. | priority | genomicsdbimport add support for samples with spaces in the name we are unable to run joint genotyping on the cloud when it includes a sample with a space in the name it fails when calling gatk s genomicsdbimport functionality see error log below this practice samples with spaces in the name is unfortunately not uncommon so we ve had to modify our various workflows one by one to add support for this case please update genomicsdbimport to handle samples with a space in the name example error log gsutil cat gs broad jg dev cromwell execution jointgenotyping call importgvcfs shard importgvcfs stderr log using gatk jar usr gitc gatk package beta local jar running java dsamjdk use async io read samtools false dsamjdk use async io write samtools true dsamjdk use async io write tribble false dsamjdk compression level jar usr gitc gatk package beta local jar genomicsdbimport genomicsdbworkspace genomicsdb batchsize l samplenamemap cromwell root broad jg dev storage freimer dutch fin wgs sample map readerthreads ip picked up java options djava io tmpdir cromwell root tmp genomicsdbimport genomicsdbworkspace genomicsdb batchsize samplenamemap cromwell root broad jg dev storage freimer dutch fin wgs sample map readerthreads intervals interval padding genomicsdbsegmentsize genomicsdbvcfbuffersize overwriteexistinggenomicsdbworkspace false consolidate false validatesamplenamemap false interval set rule union interval exclusion padding interval merging rule all readvalidationstringency silent secondsbetweenprogressupdates disablesequencedictionaryvalidation false createoutputbamindex true false createoutputvariantindex true false lenient false addoutputsamprogramrecord true addoutputvcfcommandline true cloudprefetchbuffer cloudindexprefetchbuffer disablebamindexcaching false help false version false showhidden false verbosity 
info quiet false use jdk deflater false use jdk inflater false gcs max retries disabletooldefaultreadfilters false executing as root on linux bpo openjdk bit server vm version beta org broadinstitute hellbender tools genomicsdb genomicsdbimport done elapsed time minutes runtime totalmemory a user error has occurred bad input expected a file of format sample file but found line i pal gs broad gotc prod storage pipeline gvcfs i pal g vcf gz set the system property gatk stacktrace on user exception javaoptions dgatk stacktrace on user exception true to print the stack trace | 1 |

508,015 | 14,688,346,792 | IssuesEvent | 2021-01-02 02:06:18 | blchelle/collabogreat | https://api.github.com/repos/blchelle/collabogreat | closed | Design/Implement UI For the "Task Card" Component | Priority: High Status: In Progress Type: Enhancement | ## Description

The BoardCard component is used in ProjectBoard Page of the app. The BoardCard component should give the following information

* The name of the associated test

* The description of the associated task

* The name and image of the user who is assigned to the task

The board card app should also allow the user to

* Change the status of the associated task (already implemented on the front-end, will be completed in #57)

* Delete the task

* Edit the task | 1.0 | Design/Implement UI For the "Task Card" Component - ## Description

The BoardCard component is used in ProjectBoard Page of the app. The BoardCard component should give the following information

* The name of the associated test

* The description of the associated task

* The name and image of the user who is assigned to the task

The board card app should also allow the user to

* Change the status of the associated task (already implemented on the front-end, will be completed in #57)

* Delete the task

* Edit the task | priority | design implement ui for the task card component description the boardcard component is used in projectboard page of the app the boardcard component should give the following information the name of the associated test the description of the associated task the name and image of the user who is assigned to the task the board card app should also allow the user to change the status of the associated task already implemented on the front end will be completed in delete the task edit the task | 1 |

811,857 | 30,302,999,789 | IssuesEvent | 2023-07-10 07:34:31 | yugabyte/yugabyte-db | https://api.github.com/repos/yugabyte/yugabyte-db | opened | yb-tserver crash: Segmentation fault during sidecars flush operation | area/docdb priority/high status/awaiting-triage | ### Description

Test1:

http://stress.dev.yugabyte.com/stress_test/da7af025-6c6c-4319-9b21-64af6a27bb7d

Test2:

http://stress.dev.yugabyte.com/stress_test/b5c4b879-cdeb-4578-91a9-3a957b515760

```

* thread #1, name = 'yb-tserver', stop reason = signal SIGSEGV

* frame #0: 0x000055e27683b395 yb-tserver`yb::rpc::Sidecars::Flush(boost::container::small_vector_base<yb::RefCntSlice, void, void>*) [inlined] yb::WriteBuffer::Block::Shrink(this=<unavailable>, size=<unavailable>) at write_buffer.h:131:27

frame #1: 0x000055e27683b395 yb-tserver`yb::rpc::Sidecars::Flush(boost::container::small_vector_base<yb::RefCntSlice, void, void>*) [inlined] yb::WriteBuffer::ShrinkLastBlock(this=0x000026887d9d7c28) at write_buffer.cc:138:20

frame #2: 0x000055e27683b364 yb-tserver`yb::rpc::Sidecars::Flush(boost::container::small_vector_base<yb::RefCntSlice, void, void>*) [inlined] yb::WriteBuffer::Flush(this=0x000026887d9d7c28, output=<unavailable>) at write_buffer.cc:184:3

frame #3: 0x000055e27683b364 yb-tserver`yb::rpc::Sidecars::Flush(this=0x000026887d9d7c20, output=0x000026887cb9b160) at sidecars.cc:110:11

frame #4: 0x000055e276789e26 yb-tserver`yb::rpc::OutboundCall::Serialize(this=0x00002688791ab420, output=0x000026887cb9b160) at outbound_call.cc:212:14

frame #5: 0x000055e27683d62a yb-tserver`yb::rpc::TcpStream::Send(std::__1::shared_ptr<yb::rpc::OutboundData>) [inlined] yb::rpc::TcpStreamSendingData::TcpStreamSendingData(this=0x000026887cb9b150, data_=<unavailable>, mem_tracker=std::__1::shared_ptr<yb::MemTracker>::element_type @ 0x000026887f9206e0) at tcp_stream.cc:530:9

frame #6: 0x000055e27683d5ee yb-tserver`yb::rpc::TcpStream::Send(std::__1::shared_ptr<yb::rpc::OutboundData>) [inlined] yb::rpc::TcpStreamSendingData* std::__1::construct_at[abi:v15007]<yb::rpc::TcpStreamSendingData, std::__1::shared_ptr<yb::rpc::OutboundData>, std::__1::shared_ptr<yb::MemTracker>&, yb::rpc::TcpStreamSendingData*>(__location=0x000026887cb9b150, __args=<unavailable>, __args=std::__1::shared_ptr<yb::MemTracker>::element_type @ 0x000026887f9206e0) at construct_at.h:35:48

frame #7: 0x000055e27683d5dd yb-tserver`yb::rpc::TcpStream::Send(std::__1::shared_ptr<yb::rpc::OutboundData>) [inlined] void std::__1::allocator_traits<std::__1::allocator<yb::rpc::TcpStreamSendingData>>::construct[abi:v15007]<yb::rpc::TcpStreamSendingData, std::__1::shared_ptr<yb::rpc::OutboundData>, std::__1::shared_ptr<yb::MemTracker>&, void, void>((null)=0x000026887f8e9c88, __p=0x000026887cb9b150, __args=<unavailable>, __args=std::__1::shared_ptr<yb::MemTracker>::element_type @ 0x000026887f9206e0) at allocator_traits.h:298:9

frame #8: 0x000055e27683d5dd yb-tserver`yb::rpc::TcpStream::Send(std::__1::shared_ptr<yb::rpc::OutboundData>) [inlined] yb::rpc::TcpStreamSendingData& std::__1::deque<yb::rpc::TcpStreamSendingData, std::__1::allocator<yb::rpc::TcpStreamSendingData>>::emplace_back<std::__1::shared_ptr<yb::rpc::OutboundData>, std::__1::shared_ptr<yb::MemTracker>&>(this=0x000026887f8e9c60, __args=<unavailable>, __args=std::__1::shared_ptr<yb::MemTracker>::element_type @ 0x000026887f9206e0) at deque:1984:5

frame #9: 0x000055e27683cbe9 yb-tserver`yb::rpc::TcpStream::Send(this=0x000026887f8e9b80, data=<unavailable>) at tcp_stream.cc:468:12

frame #10: 0x000055e2767a2c0c yb-tserver`yb::rpc::RefinedStream::Send(this=<unavailable>, data=<unavailable>) at refined_stream.cc:89:27

frame #11: 0x000055e2767a4b37 yb-tserver`yb::rpc::RefinedStream::Established(this=0x00002688775b8000, state=<unavailable>) at refined_stream.cc:215:5

frame #12: 0x000055e276763fbf yb-tserver`yb::rpc::(anonymous namespace)::CompressedRefiner::Handshake(this=0x0000268878bd01c0) at compressed_stream.cc:0

frame #13: 0x000055e2767a3993 yb-tserver`yb::rpc::RefinedStream::Connected(this=0x00002688775b8000) at refined_stream.cc:172:24

frame #14: 0x000055e276841513 yb-tserver`yb::rpc::TcpStream::Handler(this=0x000026887f8e9b80, watcher=<unavailable>, revents=<unavailable>) at tcp_stream.cc:286:17

frame #15: 0x000055e275b4f81b yb-tserver`ev_invoke_pending + 91

frame #16: 0x000055e275b52b99 yb-tserver`ev_run + 3577

frame #17: 0x000055e27679e147 yb-tserver`yb::rpc::Reactor::RunThread() [inlined] ev::loop_ref::run(this=0x000026887f9be638, flags=0) at ev++.h:211:7

frame #18: 0x000055e27679e13e yb-tserver`yb::rpc::Reactor::RunThread(this=0x000026887f9be600) at reactor.cc:630:9

frame #19: 0x000055e276f40f22 yb-tserver`yb::Thread::SuperviseThread(void*) [inlined] std::__1::__function::__value_func<void ()>::operator(this=0x000026887f939800)[abi:v15007]() const at function.h:512:16

frame #20: 0x000055e276f40f0c yb-tserver`yb::Thread::SuperviseThread(void*) [inlined] std::__1::function<void ()>::operator(this=0x000026887f939800)() const at function.h:1197:12

frame #21: 0x000055e276f40f0c yb-tserver`yb::Thread::SuperviseThread(arg=0x000026887f9397a0) at thread.cc:842:3

frame #22: 0x00007f058c994694 libpthread.so.0`start_thread(arg=0x00007f058555f700) at pthread_create.c:333

frame #23: 0x00007f058cc9141d libc.so.6`__clone at clone.S:109

```

### Warning: Please confirm that this issue does not contain any sensitive information

- [X] I confirm this issue does not contain any sensitive information. | 1.0 | yb-tserver crash: Segmentation fault during sidecars flush operation - ### Description

Test1:

http://stress.dev.yugabyte.com/stress_test/da7af025-6c6c-4319-9b21-64af6a27bb7d

Test2:

http://stress.dev.yugabyte.com/stress_test/b5c4b879-cdeb-4578-91a9-3a957b515760

```

* thread #1, name = 'yb-tserver', stop reason = signal SIGSEGV

* frame #0: 0x000055e27683b395 yb-tserver`yb::rpc::Sidecars::Flush(boost::container::small_vector_base<yb::RefCntSlice, void, void>*) [inlined] yb::WriteBuffer::Block::Shrink(this=<unavailable>, size=<unavailable>) at write_buffer.h:131:27

frame #1: 0x000055e27683b395 yb-tserver`yb::rpc::Sidecars::Flush(boost::container::small_vector_base<yb::RefCntSlice, void, void>*) [inlined] yb::WriteBuffer::ShrinkLastBlock(this=0x000026887d9d7c28) at write_buffer.cc:138:20

frame #2: 0x000055e27683b364 yb-tserver`yb::rpc::Sidecars::Flush(boost::container::small_vector_base<yb::RefCntSlice, void, void>*) [inlined] yb::WriteBuffer::Flush(this=0x000026887d9d7c28, output=<unavailable>) at write_buffer.cc:184:3

frame #3: 0x000055e27683b364 yb-tserver`yb::rpc::Sidecars::Flush(this=0x000026887d9d7c20, output=0x000026887cb9b160) at sidecars.cc:110:11

frame #4: 0x000055e276789e26 yb-tserver`yb::rpc::OutboundCall::Serialize(this=0x00002688791ab420, output=0x000026887cb9b160) at outbound_call.cc:212:14

frame #5: 0x000055e27683d62a yb-tserver`yb::rpc::TcpStream::Send(std::__1::shared_ptr<yb::rpc::OutboundData>) [inlined] yb::rpc::TcpStreamSendingData::TcpStreamSendingData(this=0x000026887cb9b150, data_=<unavailable>, mem_tracker=std::__1::shared_ptr<yb::MemTracker>::element_type @ 0x000026887f9206e0) at tcp_stream.cc:530:9

frame #6: 0x000055e27683d5ee yb-tserver`yb::rpc::TcpStream::Send(std::__1::shared_ptr<yb::rpc::OutboundData>) [inlined] yb::rpc::TcpStreamSendingData* std::__1::construct_at[abi:v15007]<yb::rpc::TcpStreamSendingData, std::__1::shared_ptr<yb::rpc::OutboundData>, std::__1::shared_ptr<yb::MemTracker>&, yb::rpc::TcpStreamSendingData*>(__location=0x000026887cb9b150, __args=<unavailable>, __args=std::__1::shared_ptr<yb::MemTracker>::element_type @ 0x000026887f9206e0) at construct_at.h:35:48

frame #7: 0x000055e27683d5dd yb-tserver`yb::rpc::TcpStream::Send(std::__1::shared_ptr<yb::rpc::OutboundData>) [inlined] void std::__1::allocator_traits<std::__1::allocator<yb::rpc::TcpStreamSendingData>>::construct[abi:v15007]<yb::rpc::TcpStreamSendingData, std::__1::shared_ptr<yb::rpc::OutboundData>, std::__1::shared_ptr<yb::MemTracker>&, void, void>((null)=0x000026887f8e9c88, __p=0x000026887cb9b150, __args=<unavailable>, __args=std::__1::shared_ptr<yb::MemTracker>::element_type @ 0x000026887f9206e0) at allocator_traits.h:298:9

frame #8: 0x000055e27683d5dd yb-tserver`yb::rpc::TcpStream::Send(std::__1::shared_ptr<yb::rpc::OutboundData>) [inlined] yb::rpc::TcpStreamSendingData& std::__1::deque<yb::rpc::TcpStreamSendingData, std::__1::allocator<yb::rpc::TcpStreamSendingData>>::emplace_back<std::__1::shared_ptr<yb::rpc::OutboundData>, std::__1::shared_ptr<yb::MemTracker>&>(this=0x000026887f8e9c60, __args=<unavailable>, __args=std::__1::shared_ptr<yb::MemTracker>::element_type @ 0x000026887f9206e0) at deque:1984:5

frame #9: 0x000055e27683cbe9 yb-tserver`yb::rpc::TcpStream::Send(this=0x000026887f8e9b80, data=<unavailable>) at tcp_stream.cc:468:12

frame #10: 0x000055e2767a2c0c yb-tserver`yb::rpc::RefinedStream::Send(this=<unavailable>, data=<unavailable>) at refined_stream.cc:89:27

frame #11: 0x000055e2767a4b37 yb-tserver`yb::rpc::RefinedStream::Established(this=0x00002688775b8000, state=<unavailable>) at refined_stream.cc:215:5

frame #12: 0x000055e276763fbf yb-tserver`yb::rpc::(anonymous namespace)::CompressedRefiner::Handshake(this=0x0000268878bd01c0) at compressed_stream.cc:0

frame #13: 0x000055e2767a3993 yb-tserver`yb::rpc::RefinedStream::Connected(this=0x00002688775b8000) at refined_stream.cc:172:24

frame #14: 0x000055e276841513 yb-tserver`yb::rpc::TcpStream::Handler(this=0x000026887f8e9b80, watcher=<unavailable>, revents=<unavailable>) at tcp_stream.cc:286:17

frame #15: 0x000055e275b4f81b yb-tserver`ev_invoke_pending + 91

frame #16: 0x000055e275b52b99 yb-tserver`ev_run + 3577

frame #17: 0x000055e27679e147 yb-tserver`yb::rpc::Reactor::RunThread() [inlined] ev::loop_ref::run(this=0x000026887f9be638, flags=0) at ev++.h:211:7

frame #18: 0x000055e27679e13e yb-tserver`yb::rpc::Reactor::RunThread(this=0x000026887f9be600) at reactor.cc:630:9

frame #19: 0x000055e276f40f22 yb-tserver`yb::Thread::SuperviseThread(void*) [inlined] std::__1::__function::__value_func<void ()>::operator(this=0x000026887f939800)[abi:v15007]() const at function.h:512:16

frame #20: 0x000055e276f40f0c yb-tserver`yb::Thread::SuperviseThread(void*) [inlined] std::__1::function<void ()>::operator(this=0x000026887f939800)() const at function.h:1197:12

frame #21: 0x000055e276f40f0c yb-tserver`yb::Thread::SuperviseThread(arg=0x000026887f9397a0) at thread.cc:842:3

frame #22: 0x00007f058c994694 libpthread.so.0`start_thread(arg=0x00007f058555f700) at pthread_create.c:333

frame #23: 0x00007f058cc9141d libc.so.6`__clone at clone.S:109

```

### Warning: Please confirm that this issue does not contain any sensitive information

- [X] I confirm this issue does not contain any sensitive information. | priority | yb tserver crash segmentation fault during sidecars flush operation description thread name yb tserver stop reason signal sigsegv frame yb tserver yb rpc sidecars flush boost container small vector base yb writebuffer block shrink this size at write buffer h frame yb tserver yb rpc sidecars flush boost container small vector base yb writebuffer shrinklastblock this at write buffer cc frame yb tserver yb rpc sidecars flush boost container small vector base yb writebuffer flush this output at write buffer cc frame yb tserver yb rpc sidecars flush this output at sidecars cc frame yb tserver yb rpc outboundcall serialize this output at outbound call cc frame yb tserver yb rpc tcpstream send std shared ptr yb rpc tcpstreamsendingdata tcpstreamsendingdata this data mem tracker std shared ptr element type at tcp stream cc frame yb tserver yb rpc tcpstream send std shared ptr yb rpc tcpstreamsendingdata std construct at std shared ptr yb rpc tcpstreamsendingdata location args args std shared ptr element type at construct at h frame yb tserver yb rpc tcpstream send std shared ptr void std allocator traits construct std shared ptr void void null p args args std shared ptr element type at allocator traits h frame yb tserver yb rpc tcpstream send std shared ptr yb rpc tcpstreamsendingdata std deque emplace back std shared ptr this args args std shared ptr element type at deque frame yb tserver yb rpc tcpstream send this data at tcp stream cc frame yb tserver yb rpc refinedstream send this data at refined stream cc frame yb tserver yb rpc refinedstream established this state at refined stream cc frame yb tserver yb rpc anonymous namespace compressedrefiner handshake this at compressed stream cc frame yb tserver yb rpc refinedstream connected this at refined stream cc frame yb tserver yb rpc tcpstream handler this watcher revents at tcp stream cc frame yb tserver ev invoke pending frame yb 
tserver ev run frame yb tserver yb rpc reactor runthread ev loop ref run this flags at ev h frame yb tserver yb rpc reactor runthread this at reactor cc frame yb tserver yb thread supervisethread void std function value func operator this const at function h frame yb tserver yb thread supervisethread void std function operator this const at function h frame yb tserver yb thread supervisethread arg at thread cc frame libpthread so start thread arg at pthread create c frame libc so clone at clone s warning please confirm that this issue does not contain any sensitive information i confirm this issue does not contain any sensitive information | 1 |

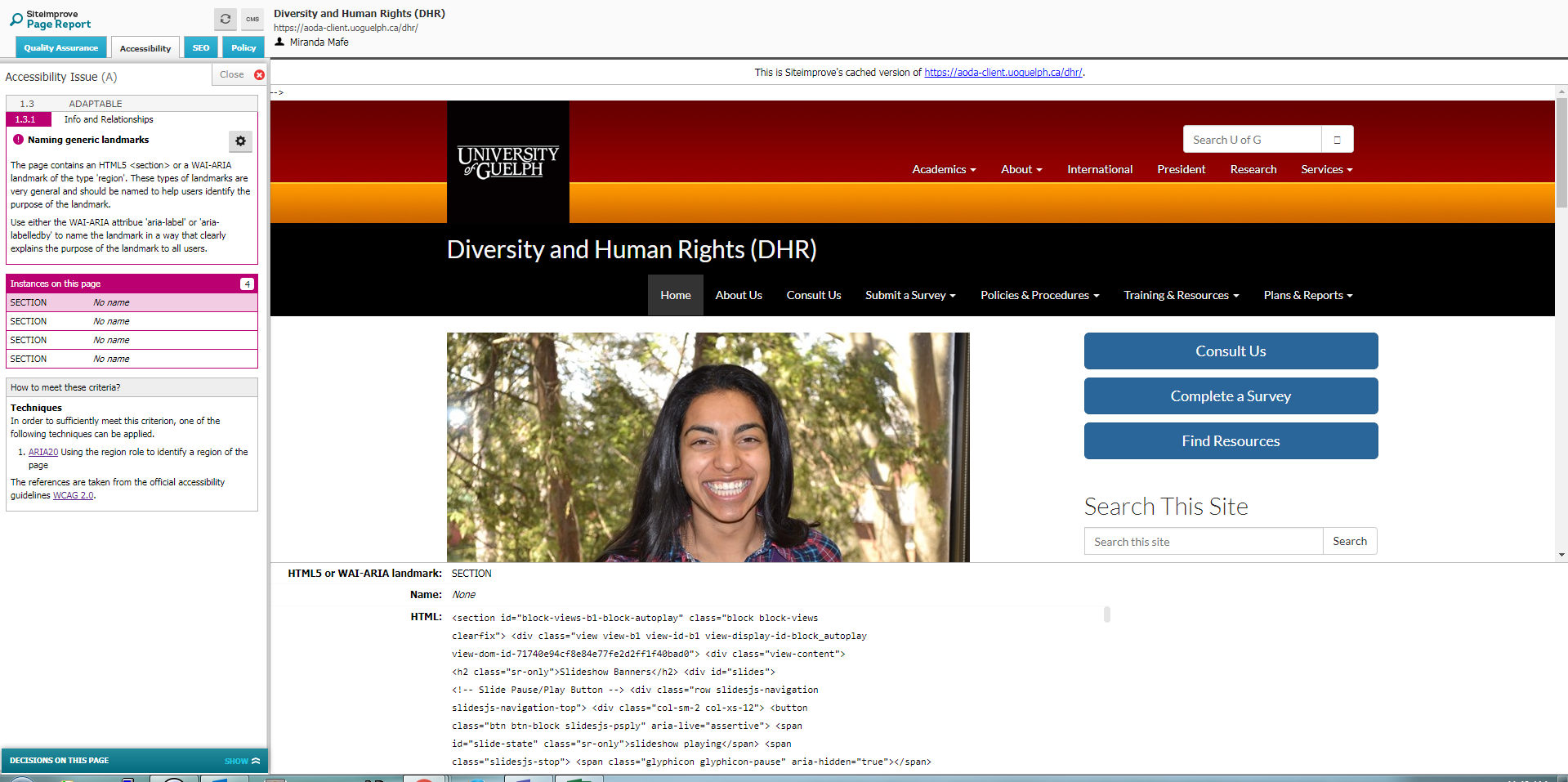

680,158 | 23,260,969,731 | IssuesEvent | 2022-08-04 13:28:51 | DDMAL/cantus | https://api.github.com/repos/DDMAL/cantus | closed | Improve volpiano spacing | High Priority | Volpiano excerpts sometimes have overlapping text.

<img width="440" alt="image" src="https://user-images.githubusercontent.com/11023634/180817413-0de38f70-2848-43fe-b1e7-74c4ba070192.png">

| 1.0 | Improve volpiano spacing - Volpiano excerpts sometimes have overlapping text.

<img width="440" alt="image" src="https://user-images.githubusercontent.com/11023634/180817413-0de38f70-2848-43fe-b1e7-74c4ba070192.png">

| priority | improve volpiano spacing volpiano excerpts sometimes have overlapping text img width alt image src | 1 |

457,827 | 13,162,965,683 | IssuesEvent | 2020-08-10 22:56:40 | zulip/zulip-mobile | https://api.github.com/repos/zulip/zulip-mobile | closed | When message fetch fails, show error instead of loading-animation | P1 high-priority a-data-sync a-message list | When you go to read a conversation, we load the messages that are in it if we don't already have them. While we're doing that fetch, we show a "loading" animation of placeholder messages. But then if that fetch fails with an exception, we carry on showing that loading-animation forever.

That's bad because after the fetch has failed, we are not in fact still working on loading the messages, so the animation is effectively telling the user something that isn't true. It's also misleading when trying to debug, as it obscures the fact there was an error and not just something taking a long time.

Cases where we've seen this come up recently:

* #4156 [turned out to be](https://chat.zulip.org/#narrow/stream/243-mobile-team/topic/.23M4156.20Message.20List.20placeholders) a server bug, where the server returned garbled data on fetching any messages that had emoji reactions.

* We'd actually seen this bug [before](https://chat.zulip.org/#narrow/stream/243-mobile-team/topic/Streams.20not.20loading.20in.20android/near/883392), when it was live on chat.zulip.org and caused the same symptom. It was fixed within a couple of days, but perhaps unsurprisingly there was a deployment that happened to have upgraded to master within that window of a couple of days, and stayed there.

* #4033 may in part reflect another case of this -- at least when the loading is "endless" and not merely long. (Though because that one goes away on quitting and relaunching the app, it's definitely not the same server bug and probably is a purely client-side bug.)

Instead, when the fetch fails we should show a widget that's not animated and says there was an error. Preferably also with a button (low-emphasis, like a [text button](https://material.io/components/buttons#text-button)) to retry.

Some chat discussion of how to implement this starts [here](https://chat.zulip.org/#narrow/stream/243-mobile-team/topic/.23M4156.20Message.20List.20placeholders/near/928698), and particularly [here](https://chat.zulip.org/#narrow/stream/243-mobile-team/topic/.23M4156.20Message.20List.20placeholders/near/928758) and after.

| 1.0 | When message fetch fails, show error instead of loading-animation - When you go to read a conversation, we load the messages that are in it if we don't already have them. While we're doing that fetch, we show a "loading" animation of placeholder messages. But then if that fetch fails with an exception, we carry on showing that loading-animation forever.

That's bad because after the fetch has failed, we are not in fact still working on loading the messages, so the animation is effectively telling the user something that isn't true. It's also misleading when trying to debug, as it obscures the fact there was an error and not just something taking a long time.

Cases where we've seen this come up recently:

* #4156 [turned out to be](https://chat.zulip.org/#narrow/stream/243-mobile-team/topic/.23M4156.20Message.20List.20placeholders) a server bug, where the server returned garbled data on fetching any messages that had emoji reactions.