Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 7 to 112) | repo_url (string, length 36 to 141) | action (string, 3 classes) | title (string, length 2 to 665) | labels (string, length 4 to 554) | body (string, length 3 to 235k) | index (string, 6 classes) | text_combine (string, length 96 to 235k) | label (string, 2 classes) | text (string, length 96 to 196k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

89,704 | 3,798,826,724 | IssuesEvent | 2016-03-23 14:04:19 | cs2103jan2016-t11-3j/main | https://api.github.com/repos/cs2103jan2016-t11-3j/main | closed | Parse command - For viewing all dates in a recurring task | priority.high type.enhancement type.task | Needs a command for user to see when are all the recurring dates for one task, if possible | 1.0 | Parse command - For viewing all dates in a recurring task - Needs a command for user to see when are all the recurring dates for one task, if possible | non_infrastructure | parse command for viewing all dates in a recurring task needs a command for user to see when are all the recurring dates for one task if possible | 0 |

10,464 | 3,391,674,943 | IssuesEvent | 2015-11-30 16:25:19 | ujh/iomrascalai | https://api.github.com/repos/ujh/iomrascalai | closed | Documentation for Config | documentation | When you run `cargo doc` there is currently no documentation at all. As a start it would be nice to document all the variables you can set in `Config`. | 1.0 | Documentation for Config - When you run `cargo doc` there is currently no documentation at all. As a start it would be nice to document all the variables you can set in `Config`. | non_infrastructure | documentation for config when you run cargo doc there is currently no documentation at all as a start it would be nice to document all the variables you can set in config | 0 |

1,665 | 3,320,792,137 | IssuesEvent | 2015-11-09 02:37:34 | AtlasOfLivingAustralia/data-management | https://api.github.com/repos/AtlasOfLivingAustralia/data-management | opened | Species Interactions | Infrastructure | build capability to store, search and navigate interactions

Sample data available - see email re fusarium species interaction data | 1.0 | Species Interactions - build capability to store, search and navigate interactions

Sample data available - see email re fusarium species interaction data | infrastructure | species interactions build capability to store search and navigate interactions sample data available see email re fusarium species interaction data | 1 |

11,438 | 9,368,307,781 | IssuesEvent | 2019-04-03 08:24:14 | mozilla/fxa | https://api.github.com/repos/mozilla/fxa | closed | CODE_OF_CONDUCT.md file missing | fxa-email-service train-135 |

As of January 1 2019, Mozilla requires that all GitHub projects include this [CODE_OF_CONDUCT.md](https://github.com/mozilla/repo-templates/blob/master/templates/CODE_OF_CONDUCT.md) file in the project root. The file has two parts:

1. Required Text - All text under the headings *Community Participation Guidelines and How to Report*, are required, and should not be altered.

2. Optional Text - The Project Specific Etiquette heading provides a space to speak more specifically about ways people can work effectively and inclusively together. Some examples of those can be found on the [Firefox Debugger](https://github.com/devtools-html/debugger.html/blob/master/CODE_OF_CONDUCT.md) project, and [Common Voice](https://github.com/mozilla/voice-web/blob/master/CODE_OF_CONDUCT.md). (The optional part is commented out in the [raw template file](https://raw.githubusercontent.com/mozilla/repo-templates/blob/master/templates/CODE_OF_CONDUCT.md), and will not be visible until you modify and uncomment that part.)

If you have any questions about this file, or Code of Conduct policies and procedures, please see [Mozilla-GitHub-Standards](https://wiki.mozilla.org/GitHub/Repository_Requirements) or email Mozilla-GitHub-Standards+CoC@mozilla.com.

_(Message COC001)_ | 1.0 | CODE_OF_CONDUCT.md file missing -

As of January 1 2019, Mozilla requires that all GitHub projects include this [CODE_OF_CONDUCT.md](https://github.com/mozilla/repo-templates/blob/master/templates/CODE_OF_CONDUCT.md) file in the project root. The file has two parts:

1. Required Text - All text under the headings *Community Participation Guidelines and How to Report*, are required, and should not be altered.

2. Optional Text - The Project Specific Etiquette heading provides a space to speak more specifically about ways people can work effectively and inclusively together. Some examples of those can be found on the [Firefox Debugger](https://github.com/devtools-html/debugger.html/blob/master/CODE_OF_CONDUCT.md) project, and [Common Voice](https://github.com/mozilla/voice-web/blob/master/CODE_OF_CONDUCT.md). (The optional part is commented out in the [raw template file](https://raw.githubusercontent.com/mozilla/repo-templates/blob/master/templates/CODE_OF_CONDUCT.md), and will not be visible until you modify and uncomment that part.)

If you have any questions about this file, or Code of Conduct policies and procedures, please see [Mozilla-GitHub-Standards](https://wiki.mozilla.org/GitHub/Repository_Requirements) or email Mozilla-GitHub-Standards+CoC@mozilla.com.

_(Message COC001)_ | non_infrastructure | code of conduct md file missing as of january mozilla requires that all github projects include this file in the project root the file has two parts required text all text under the headings community participation guidelines and how to report are required and should not be altered optional text the project specific etiquette heading provides a space to speak more specifically about ways people can work effectively and inclusively together some examples of those can be found on the project and the optional part is commented out in the and will not be visible until you modify and uncomment that part if you have any questions about this file or code of conduct policies and procedures please see or email mozilla github standards coc mozilla com message | 0 |

2,531 | 3,738,834,287 | IssuesEvent | 2016-03-09 00:45:46 | RIOT-OS/RIOT | https://api.github.com/repos/RIOT-OS/RIOT | closed | strider: restarting builds | CI-Infrastructure question | IIRC @phiros told that restarting strider builds is currently disabled due to a bug in strider causing docker instances not being properly destroyed. Is this correct and is there any estimation until when this issue will remain? | 1.0 | strider: restarting builds - IIRC @phiros told that restarting strider builds is currently disabled due to a bug in strider causing docker instances not being properly destroyed. Is this correct and is there any estimation until when this issue will remain? | infrastructure | strider restarting builds iirc phiros told that restarting strider builds is currently disabled due to a bug in strider causing docker instances not being properly destroyed is this correct and is there any estimation until when this issue will remain | 1 |

455,115 | 13,111,942,320 | IssuesEvent | 2020-08-05 00:35:59 | nicknadeau/Spin | https://api.github.com/repos/nicknadeau/Spin | closed | Spin should be able to scale memory requirements dynamically | LOW priority feature improvement | Two things here.

1. The real feature: we can specify a number, 1MB for instance, and ensure that the system itself does not use more memory than this (note I mean our system not the memory usage of the tests, which cannot be known or controlled; in other words, running the system with N tests but all tests are empty methods .. we should stay under 1MB in such a scenario).

2. The dynamic part: we should be able to change this number on the fly (realistically between running suites). This may call for further investigation into plausibility and trade-offs incurred. | 1.0 | Spin should be able to scale memory requirements dynamically - Two things here.

1. The real feature: we can specify a number, 1MB for instance, and ensure that the system itself does not use more memory than this (note I mean our system not the memory usage of the tests, which cannot be known or controlled; in other words, running the system with N tests but all tests are empty methods .. we should stay under 1MB in such a scenario).

2. The dynamic part: we should be able to change this number on the fly (realistically between running suites). This may call for further investigation into plausibility and trade-offs incurred. | non_infrastructure | spin should be able to scale memory requirements dynamically two things here the real feature we can specify a number for instance and ensure that the system itself does not use more memory than this note i mean our system not the memory usage of the tests which cannot be known or controlled in other words running the system with n tests but all tests are empty methods we should stay under in such a scenario the dynamic part we should be able to change this number on the fly realistically between running suites this may call for further investigation into plausibility and trade offs incurred | 0 |

802,830 | 29,047,139,012 | IssuesEvent | 2023-05-13 18:05:13 | bounswe/bounswe2023group2 | https://api.github.com/repos/bounswe/bounswe2023group2 | closed | Milestone 2 Tools Discussion | type: good first issue priority: high required: feedback effort: level 3 group work | ### Issue Description

I created some discussions [for APIs](https://github.com/bounswe/bounswe2023group2/discussions/157), [web service](https://github.com/bounswe/bounswe2023group2/discussions/158) and [git actions](https://github.com/bounswe/bounswe2023group2/discussions/159). You can share your ideas below these topics.

Additionally, you should revise the [meeting 9 notes](https://github.com/bounswe/bounswe2023group2/wiki/Meeting-%239) and learn the tools that we will use in this milestone. We will meet on Tuesday evening to discuss and pick the tools that we will use.

### Deadline of the Issue

18.04.2023

### Reviewer

Merve Gürbüz | 1.0 | Milestone 2 Tools Discussion - ### Issue Description

I created some discussions [for APIs](https://github.com/bounswe/bounswe2023group2/discussions/157), [web service](https://github.com/bounswe/bounswe2023group2/discussions/158) and [git actions](https://github.com/bounswe/bounswe2023group2/discussions/159). You can share your ideas below these topics.

Additionally, you should revise the [meeting 9 notes](https://github.com/bounswe/bounswe2023group2/wiki/Meeting-%239) and learn the tools that we will use in this milestone. We will meet on Tuesday evening to discuss and pick the tools that we will use.

### Deadline of the Issue

18.04.2023

### Reviewer

Merve Gürbüz | non_infrastructure | milestone tools discussion issue description i created some discussions and you can share your ideas below these topics additionally you should revise the and learn the tools that we will use in this milestone we will meet on tuesday evening to discuss and pick the tools that we will use deadline of the issue reviewer merve gürbüz | 0 |

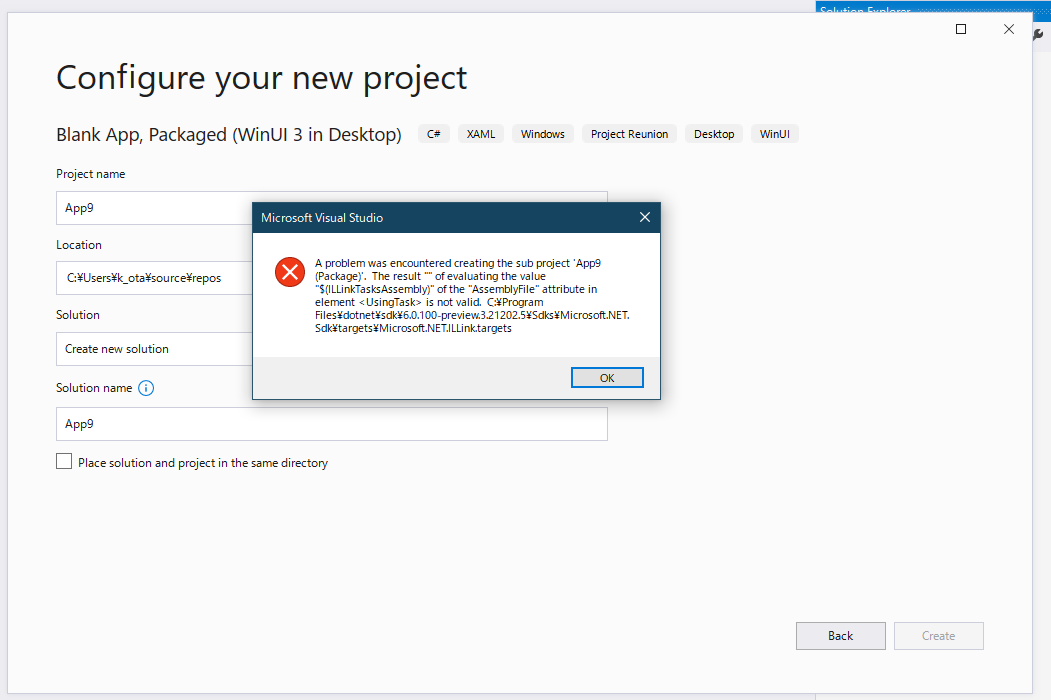

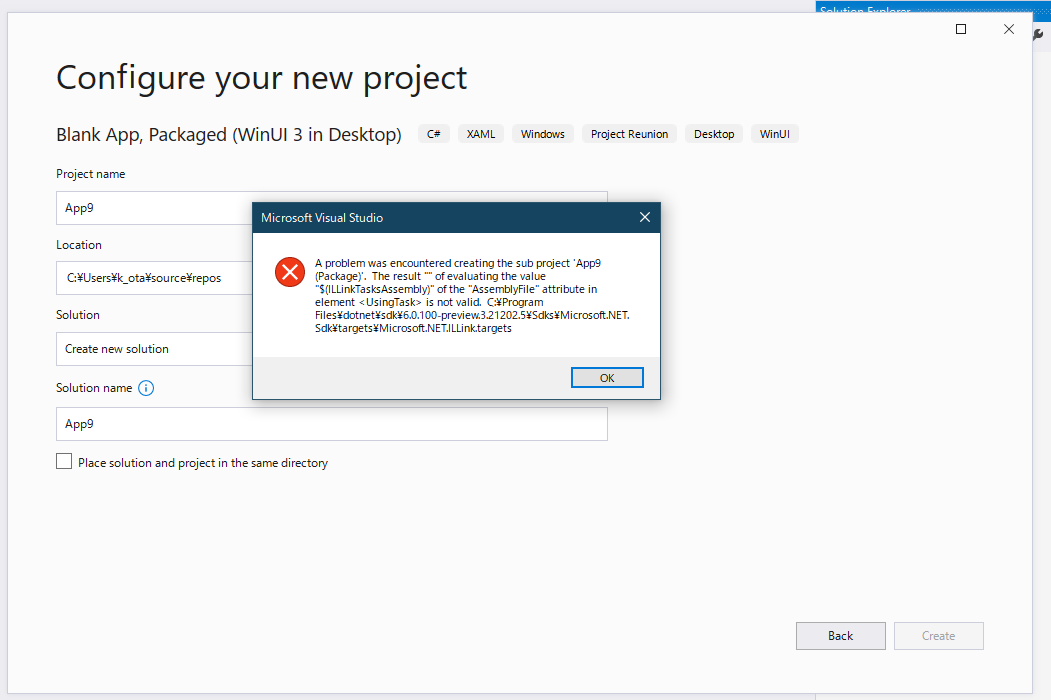

23,357 | 16,090,471,405 | IssuesEvent | 2021-04-26 16:04:42 | microsoft/ProjectReunion | https://api.github.com/repos/microsoft/ProjectReunion | closed | Project Reunion 0.5.5 Extension doesn't work on Visual Studio 2019 16.10 preview 2.0 | area-Infrastructure bug | **Describe the bug**

<!-- Please enter a short, clear description of the bug -->

<!-- For bugs related to WinUI, please open a bug on the WinUI3 repository: https://github.com/microsoft/microsoft-ui-xaml -->

I'm using Project Reunion 0.5.5 extension on Visual Studio 2019 Version 16.10.0 Preview 2.0. When I was trying to create a Blank App, Packaged (WinUI 3 in Desktop) project. Then the following error was occurred:

```

---------------------------

Microsoft Visual Studio

---------------------------

A problem was encountered creating the sub project 'App7 (Package)'. The result "" of evaluating the value "$(ILLinkTasksAssembly)" of the "AssemblyFile" attribute in element <UsingTask> is not valid. C:\Program Files\dotnet\sdk\6.0.100-preview.3.21202.5\Sdks\Microsoft.NET.Sdk\targets\Microsoft.NET.ILLink.targets

---------------------------

OK

---------------------------

```

**Steps to reproduce the bug**

<!-- Please provide any required setup and steps to reproduce the behavior -->

Steps to reproduce the behavior:

1. Setup dev env: https://docs.microsoft.com/en-us/windows/apps/project-reunion/get-started-with-project-reunion

- Visual Studio 2019 Version 16.10.0 Preview 2.0

- Project Reunion 0.5.5

2. Create a project using Blank App, Packaged (WinUI 3 in Desktop) item template.

1. Input a project name

2. Select OK button

3. Select target platforms, and then select OK button.

**Expected behavior**

A .NET 5 project and a packaged project are created.

**Screenshots**

**Version Info**

| Windows 10 version | Saw the problem? |

| :--------------------------------- | :-------------------- |

| Insider Build (xxxxx) | <!-- Yes/No? --> |

| May 2020 Update (19041) | Yes |

| November 2019 Update (18363) | <!-- Yes/No? --> |

| May 2019 Update (18362) | <!-- Yes/No? --> |

| October 2018 Update (17763) | <!-- Yes/No? --> |

| 1.0 | Project Reunion 0.5.5 Extension doesn't work on Visual Studio 2019 16.10 preview 2.0 - **Describe the bug**

<!-- Please enter a short, clear description of the bug -->

<!-- For bugs related to WinUI, please open a bug on the WinUI3 repository: https://github.com/microsoft/microsoft-ui-xaml -->

I'm using Project Reunion 0.5.5 extension on Visual Studio 2019 Version 16.10.0 Preview 2.0. When I was trying to create a Blank App, Packaged (WinUI 3 in Desktop) project. Then the following error was occurred:

```

---------------------------

Microsoft Visual Studio

---------------------------

A problem was encountered creating the sub project 'App7 (Package)'. The result "" of evaluating the value "$(ILLinkTasksAssembly)" of the "AssemblyFile" attribute in element <UsingTask> is not valid. C:\Program Files\dotnet\sdk\6.0.100-preview.3.21202.5\Sdks\Microsoft.NET.Sdk\targets\Microsoft.NET.ILLink.targets

---------------------------

OK

---------------------------

```

**Steps to reproduce the bug**

<!-- Please provide any required setup and steps to reproduce the behavior -->

Steps to reproduce the behavior:

1. Setup dev env: https://docs.microsoft.com/en-us/windows/apps/project-reunion/get-started-with-project-reunion

- Visual Studio 2019 Version 16.10.0 Preview 2.0

- Project Reunion 0.5.5

2. Create a project using Blank App, Packaged (WinUI 3 in Desktop) item template.

1. Input a project name

2. Select OK button

3. Select target platforms, and then select OK button.

**Expected behavior**

A .NET 5 project and a packaged project are created.

**Screenshots**

**Version Info**

| Windows 10 version | Saw the problem? |

| :--------------------------------- | :-------------------- |

| Insider Build (xxxxx) | <!-- Yes/No? --> |

| May 2020 Update (19041) | Yes |

| November 2019 Update (18363) | <!-- Yes/No? --> |

| May 2019 Update (18362) | <!-- Yes/No? --> |

| October 2018 Update (17763) | <!-- Yes/No? --> |

| infrastructure | project reunion extension doesn t work on visual studio preview describe the bug i m using project reunion extension on visual studio version preview when i was trying to create a blank app packaged winui in desktop project then the following error was occurred microsoft visual studio a problem was encountered creating the sub project package the result of evaluating the value illinktasksassembly of the assemblyfile attribute in element is not valid c program files dotnet sdk preview sdks microsoft net sdk targets microsoft net illink targets ok steps to reproduce the bug steps to reproduce the behavior setup dev env visual studio version preview project reunion create a project using blank app packaged winui in desktop item template input a project name select ok button select target platforms and then select ok button expected behavior a net project and a packaged project are created screenshots version info windows version saw the problem insider build xxxxx may update yes november update may update october update | 1 |

238 | 2,589,455,311 | IssuesEvent | 2015-02-18 12:51:48 | rust-lang/rust | https://api.github.com/repos/rust-lang/rust | closed | Publish guidelines somewhere official | A-docs A-infrastructure P-high | The coding guidelines are currently hosted at @aturon's github page: http://aturon.github.io/. These should be hosted somewhere official. Requires some network setup and possibly automation since there's a build step. | 1.0 | Publish guidelines somewhere official - The coding guidelines are currently hosted at @aturon's github page: http://aturon.github.io/. These should be hosted somewhere official. Requires some network setup and possibly automation since there's a build step. | infrastructure | publish guidelines somewhere official the coding guidelines are currently hosted at aturon s github page these should be hosted somewhere official requires some network setup and possibly automation since there s a build step | 1 |

315,663 | 23,590,886,821 | IssuesEvent | 2022-08-23 15:05:59 | arturo-lang/arturo | https://api.github.com/repos/arturo-lang/arturo | closed | [Reflection\complex?] add documentation example | documentation library todo easy | [Reflection\complex?] add documentation example

https://github.com/arturo-lang/arturo/blob/3a89302d1b1f33da097ed6e7bd6dfb05fc338482/src/library/Reflection.nim#L292

```text

builtin "color?",

alias = unaliased,

rule = PrefixPrecedence,

description = "checks if given value is of type :color",

args = {

"value" : {Any}

},

attrs = NoAttrs,

returns = {Logical},

# TODO(Reflection\color?) add documentation example

# labels: library, documentation, easy

example = """

""":

##########################################################

push(newLogical(x.kind==Color))

builtin "complex?",

alias = unaliased,

rule = PrefixPrecedence,

description = "checks if given value is of type :complex",

args = {

"value" : {Any}

},

attrs = NoAttrs,

returns = {Logical},

# TODO(Reflection\complex?) add documentation example

# labels: library, documentation, easy

example = """

""":

##########################################################

push(newLogical(x.kind==Complex))

builtin "database?",

alias = unaliased,

rule = PrefixPrecedence,

```

618f5b7315934d100b1650e62313cf8d69700ce6 | 1.0 | [Reflection\complex?] add documentation example - [Reflection\complex?] add documentation example

https://github.com/arturo-lang/arturo/blob/3a89302d1b1f33da097ed6e7bd6dfb05fc338482/src/library/Reflection.nim#L292

```text

builtin "color?",

alias = unaliased,

rule = PrefixPrecedence,

description = "checks if given value is of type :color",

args = {

"value" : {Any}

},

attrs = NoAttrs,

returns = {Logical},

# TODO(Reflection\color?) add documentation example

# labels: library, documentation, easy

example = """

""":

##########################################################

push(newLogical(x.kind==Color))

builtin "complex?",

alias = unaliased,

rule = PrefixPrecedence,

description = "checks if given value is of type :complex",

args = {

"value" : {Any}

},

attrs = NoAttrs,

returns = {Logical},

# TODO(Reflection\complex?) add documentation example

# labels: library, documentation, easy

example = """

""":

##########################################################

push(newLogical(x.kind==Complex))

builtin "database?",

alias = unaliased,

rule = PrefixPrecedence,

```

618f5b7315934d100b1650e62313cf8d69700ce6 | non_infrastructure | add documentation example add documentation example text builtin color alias unaliased rule prefixprecedence description checks if given value is of type color args value any attrs noattrs returns logical todo reflection color add documentation example labels library documentation easy example push newlogical x kind color builtin complex alias unaliased rule prefixprecedence description checks if given value is of type complex args value any attrs noattrs returns logical todo reflection complex add documentation example labels library documentation easy example push newlogical x kind complex builtin database alias unaliased rule prefixprecedence | 0 |

117,498 | 15,108,785,518 | IssuesEvent | 2021-02-08 17:02:20 | department-of-veterans-affairs/va.gov-team | https://api.github.com/repos/department-of-veterans-affairs/va.gov-team | closed | [Design] FL Mobile search experience | Q121-priority design vsa vsa-facilities | ## Issue Description

As a Veteran using a mobile device to search on Facility Locator, I want the experience to be consistent with industry standards.

## Background

- In a mobile month study, veterans were not interested in using the map view of facility locator #14484

- The Facility Locator search experience has not been approached from a mobile-first perspective

- Planned changes include the implementation of two new usability features ("use my location" and "clear field") and the resolution of accessibility defects

- A separate mobile research session will be conducted with a specific assistive tech focus #18685

## Hypotheses

- The FL search experience could be better for mobile users. It is not aligned with Veteran expectations or other private industry map-based mobile search experiences.

- Veterans searching on FL using a mobile device want to view useful facility information displayed independently from the list view.

- Veterans need to be able to clear field inputs

- Veterans want to be able to opt-in to geolocation at search level, rather than via browser.

- ---

## Tasks

- [x] Adapt [Mobile exploration designs from hackathon](https://github.com/department-of-veterans-affairs/va.gov-team/blob/master/teams/vsa/teams/facility-locator/images/Facility%20Locator%20Mobile%20Chris.pdf) to create a testable prototype to test hypotheses.

- [x] Include planned ["clear field"](https://github.com/department-of-veterans-affairs/va.gov-team/issues/16513) and ["use my location"](https://github.com/department-of-veterans-affairs/va.gov-team/issues/17319) designs in prototype

## Acceptance Criteria

- [x] Testable mobile first prototype

---

## How to configure this issue

- [ ] **Attached to a Milestone** (when will this be completed?)

- [ ] **Attached to an Epic** (what body of work is this a part of?)

- [ ] **Labeled with Team** (`product support`, `analytics-insights`, `operations`, `service-design`, `tools-be`, `tools-fe`)

- [ ] **Labeled with Practice Area** (`backend`, `frontend`, `devops`, `design`, `research`, `product`, `ia`, `qa`, `analytics`, `contact center`, `research`, `accessibility`, `content`)

- [ ] **Labeled with Type** (`bug`, `request`, `discovery`, `documentation`, etc.)

| 1.0 | [Design] FL Mobile search experience - ## Issue Description

As a Veteran using a mobile device to search on Facility Locator, I want the experience to be consistent with industry standards.

## Background

- In a mobile month study, veterans were not interested in using the map view of facility locator #14484

- The Facility Locator search experience has not been approached from a mobile-first perspective

- Planned changes include the implementation of two new usability features ("use my location" and "clear field") and the resolution of accessibility defects

- A separate mobile research session will be conducted with a specific assistive tech focus #18685

## Hypotheses

- The FL search experience could be better for mobile users. It is not aligned with Veteran expectations or other private industry map-based mobile search experiences.

- Veterans searching on FL using a mobile device want to view useful facility information displayed independently from the list view.

- Veterans need to be able to clear field inputs

- Veterans want to be able to opt-in to geolocation at search level, rather than via browser.

- ---

## Tasks

- [x] Adapt [Mobile exploration designs from hackathon](https://github.com/department-of-veterans-affairs/va.gov-team/blob/master/teams/vsa/teams/facility-locator/images/Facility%20Locator%20Mobile%20Chris.pdf) to create a testable prototype to test hypotheses.

- [x] Include planned ["clear field"](https://github.com/department-of-veterans-affairs/va.gov-team/issues/16513) and ["use my location"](https://github.com/department-of-veterans-affairs/va.gov-team/issues/17319) designs in prototype

## Acceptance Criteria

- [x] Testable mobile first prototype

---

## How to configure this issue

- [ ] **Attached to a Milestone** (when will this be completed?)

- [ ] **Attached to an Epic** (what body of work is this a part of?)

- [ ] **Labeled with Team** (`product support`, `analytics-insights`, `operations`, `service-design`, `tools-be`, `tools-fe`)

- [ ] **Labeled with Practice Area** (`backend`, `frontend`, `devops`, `design`, `research`, `product`, `ia`, `qa`, `analytics`, `contact center`, `research`, `accessibility`, `content`)

- [ ] **Labeled with Type** (`bug`, `request`, `discovery`, `documentation`, etc.)

| non_infrastructure | fl mobile search experience issue description as a veteran using a mobile device to search on facility locator i want the experience to be consistent with industry standards background in a mobile month study veterans were not interested in using the map view of facility locator the facility locator search experience has not been approached from a mobile first perspective planned changes include the implementation of two new usability features use my location and clear field and the resolution of accessibility defects a separate mobile research session will be conducted with a specific assistive tech focus hypotheses the fl search experience could be better for mobile users it is not aligned with veteran expectations or other private industry map based mobile search experiences veterans searching on fl using a mobile device want to view useful facility information displayed independently from the list view veterans need to be able to clear field inputs veterans want to be able to opt in to geolocation at search level rather than via browser tasks adapt to create a testable prototype to test hypotheses include planned and designs in prototype acceptance criteria testable mobile first prototype how to configure this issue attached to a milestone when will this be completed attached to an epic what body of work is this a part of labeled with team product support analytics insights operations service design tools be tools fe labeled with practice area backend frontend devops design research product ia qa analytics contact center research accessibility content labeled with type bug request discovery documentation etc | 0 |

3,579 | 4,417,648,070 | IssuesEvent | 2016-08-15 07:00:15 | camptocamp/c2cgeoportal | https://api.github.com/repos/camptocamp/c2cgeoportal | closed | Error while make (new version 2.0.0) | Infrastructure Ready | ```

...

info closure-util Reading build config

info closure-util Getting Closure dependencies

ERR! closure-util Could not find base.js

CONST_Makefile:801: recipe for target '.build/mobile.js' failed

make: *** [.build/mobile.js] Error 1

```

Seen on the instance of @BenjaminZaugg and @marionb, OK on the instance of @eleu .

Might it be a permission problem?

Workaround: disable mobile in <user>.mk:

```

MOBILE ?= FALSE

``` | 1.0 | Error while make (new version 2.0.0) - ```

...

info closure-util Reading build config

info closure-util Getting Closure dependencies

ERR! closure-util Could not find base.js

CONST_Makefile:801: recipe for target '.build/mobile.js' failed

make: *** [.build/mobile.js] Error 1

```

Seen on the instance of @BenjaminZaugg and @marionb, OK on the instance of @eleu .

Might it be a permission problem?

Workaround: disable mobile in <user>.mk:

```

MOBILE ?= FALSE

``` | infrastructure | error while make new version info closure util reading build config info closure util getting closure dependencies err closure util could not find base js const makefile recipe for target build mobile js failed make error seen on the instance of benjaminzaugg and marionb ok on the instance of eleu might it be a permission problem workaround disable mobile in mk mobile false | 1 |

22,547 | 15,265,996,459 | IssuesEvent | 2021-02-22 08:12:22 | ansible-collections/community.general | https://api.github.com/repos/ansible-collections/community.general | closed | deploy_helper: missing release parameter for state=clean causes an error | affects_2.10 bug module needs_triage plugins python3 traceback web_infrastructure | **Summary**

The description of `release` parameter says that it is optional for `state=present` and required for `state=finalize`, but says nothing about `state=clean`. Executing a task with `state=clean` but without the `release` parameter causes an error

**Issue Type**

Bug Report

**Component Name**

deploy_helper

**Ansible Version**

```

ansible 2.10.5

config file = /Users/maxim/Projects/XXX/ansible.cfg

configured module search path = ['/Users/maxim/.ansible/plugins/modules', '/usr/share/ansible/plugins/modules']

ansible python module location = /Users/maxim/Library/Python/3.7/lib/python/site-packages/ansible

executable location = /Users/maxim/Library/Python/3.7/bin/ansible

python version = 3.7.3 (default, Apr 24 2020, 18:51:23) [Clang 11.0.3 (clang-1103.0.32.62)]

```

**Configuration**

_No response_

**OS / Environment**

MacOS Catalina (10.15) on control node, and Ubuntu 20.04 on managed node

**Steps To Reproduce**

```yaml

- community.general.deploy_helper:

path: '{{ deploy_helper.project_path }}'

state: clean

```

**Expected Results**

I don't know exactly how this should work, but I think we should add that the `release` parameter is required for `state=clean`, and handle this in code. Also the examples of use look wrong

**Actual Results**

```

The full traceback is:

Traceback (most recent call last):

File "/home/XXX/.ansible/tmp/ansible-tmp-1613593157.5376189-33857-233175515274164/AnsiballZ_deploy_helper.py", line 102, in <module>

_ansiballz_main()

File "/home/XXX/.ansible/tmp/ansible-tmp-1613593157.5376189-33857-233175515274164/AnsiballZ_deploy_helper.py", line 94, in _ansiballz_main

invoke_module(zipped_mod, temp_path, ANSIBALLZ_PARAMS)

File "/home/XXX/.ansible/tmp/ansible-tmp-1613593157.5376189-33857-233175515274164/AnsiballZ_deploy_helper.py", line 40, in invoke_module

runpy.run_module(mod_name='ansible_collections.community.general.plugins.modules.deploy_helper', init_globals=None, run_name='__main__', alter_sys=True)

File "/usr/lib/python3.8/runpy.py", line 207, in run_module

return _run_module_code(code, init_globals, run_name, mod_spec)

File "/usr/lib/python3.8/runpy.py", line 97, in _run_module_code

_run_code(code, mod_globals, init_globals,

File "/usr/lib/python3.8/runpy.py", line 87, in _run_code

exec(code, run_globals)

File "/tmp/ansible_community.general.deploy_helper_payload_zq3sjtgk/ansible_community.general.deploy_helper_payload.zip/ansible_collections/community/general/plugins/modules/deploy_helper.py", line 524, in <module>

File "/tmp/ansible_community.general.deploy_helper_payload_zq3sjtgk/ansible_community.general.deploy_helper_payload.zip/ansible_collections/community/general/plugins/modules/deploy_helper.py", line 506, in main

File "/tmp/ansible_community.general.deploy_helper_payload_zq3sjtgk/ansible_community.general.deploy_helper_payload.zip/ansible_collections/community/general/plugins/modules/deploy_helper.py", line 411, in remove_unfinished_link

TypeError: unsupported operand type(s) for +: 'NoneType' and 'str'

fatal: [XXX]: FAILED! => {

"changed": false,

"module_stderr": "Shared connection to XXX closed.\r\n",

"module_stdout": "Traceback (most recent call last):\r\n File \"/home/XXX/.ansible/tmp/ansible-tmp-1613593157.5376189-33857-233175515274164/AnsiballZ_deploy_helper.py\", line 102, in <module>\r\n _ansiballz_main()\r\n File \"/home/XXX/.ansible/tmp/ansible-tmp-1613593157.5376189-33857-233175515274164/AnsiballZ_deploy_helper.py\", line 94, in _ansiballz_main\r\n invoke_module(zipped_mod, temp_path, ANSIBALLZ_PARAMS)\r\n File \"/home/XXX/.ansible/tmp/ansible-tmp-1613593157.5376189-33857-233175515274164/AnsiballZ_deploy_helper.py\", line 40, in invoke_module\r\n runpy.run_module(mod_name='ansible_collections.community.general.plugins.modules.deploy_helper', init_globals=None, run_name='__main__', alter_sys=True)\r\n File \"/usr/lib/python3.8/runpy.py\", line 207, in run_module\r\n return _run_module_code(code, init_globals, run_name, mod_spec)\r\n File \"/usr/lib/python3.8/runpy.py\", line 97, in _run_module_code\r\n _run_code(code, mod_globals, init_globals,\r\n File \"/usr/lib/python3.8/runpy.py\", line 87, in _run_code\r\n exec(code, run_globals)\r\n File \"/tmp/ansible_community.general.deploy_helper_payload_zq3sjtgk/ansible_community.general.deploy_helper_payload.zip/ansible_collections/community/general/plugins/modules/deploy_helper.py\", line 524, in <module>\r\n File \"/tmp/ansible_community.general.deploy_helper_payload_zq3sjtgk/ansible_community.general.deploy_helper_payload.zip/ansible_collections/community/general/plugins/modules/deploy_helper.py\", line 506, in main\r\n File \"/tmp/ansible_community.general.deploy_helper_payload_zq3sjtgk/ansible_community.general.deploy_helper_payload.zip/ansible_collections/community/general/plugins/modules/deploy_helper.py\", line 411, in remove_unfinished_link\r\nTypeError: unsupported operand type(s) for +: 'NoneType' and 'str'\r\n",

"msg": "MODULE FAILURE\nSee stdout/stderr for the exact error",

"rc": 1

}

```

| 1.0 | deploy_helper: missing release parameter for state=clean causes an error - **Summary**

The description of `release` parameter says that it is optional for `state=present` and required for `state=finalize`, but says nothing about `state=clean`. Executing a task with `state=clean` but without the `release` parameter causes an error

**Issue Type**

Bug Report

**Component Name**

deploy_helper

**Ansible Version**

```

ansible 2.10.5

config file = /Users/maxim/Projects/XXX/ansible.cfg

configured module search path = ['/Users/maxim/.ansible/plugins/modules', '/usr/share/ansible/plugins/modules']

ansible python module location = /Users/maxim/Library/Python/3.7/lib/python/site-packages/ansible

executable location = /Users/maxim/Library/Python/3.7/bin/ansible

python version = 3.7.3 (default, Apr 24 2020, 18:51:23) [Clang 11.0.3 (clang-1103.0.32.62)]

```

**Configuration**

_No response_

**OS / Environment**

MacOS Catalina (10.15) on control node, and Ubuntu 20.04 on managed node

**Steps To Reproduce**

```yaml

- community.general.deploy_helper:

path: '{{ deploy_helper.project_path }}'

state: clean

```

**Expected Results**

I don't know exactly how this should work, but I think we should add that the `release` parameter is required for `state=clean`, and handle this in code. Also the examples of use look wrong

**Actual Results**

```

The full traceback is:

Traceback (most recent call last):

File "/home/XXX/.ansible/tmp/ansible-tmp-1613593157.5376189-33857-233175515274164/AnsiballZ_deploy_helper.py", line 102, in <module>

_ansiballz_main()

File "/home/XXX/.ansible/tmp/ansible-tmp-1613593157.5376189-33857-233175515274164/AnsiballZ_deploy_helper.py", line 94, in _ansiballz_main

invoke_module(zipped_mod, temp_path, ANSIBALLZ_PARAMS)

File "/home/XXX/.ansible/tmp/ansible-tmp-1613593157.5376189-33857-233175515274164/AnsiballZ_deploy_helper.py", line 40, in invoke_module

runpy.run_module(mod_name='ansible_collections.community.general.plugins.modules.deploy_helper', init_globals=None, run_name='__main__', alter_sys=True)

File "/usr/lib/python3.8/runpy.py", line 207, in run_module

return _run_module_code(code, init_globals, run_name, mod_spec)

File "/usr/lib/python3.8/runpy.py", line 97, in _run_module_code

_run_code(code, mod_globals, init_globals,

File "/usr/lib/python3.8/runpy.py", line 87, in _run_code

exec(code, run_globals)

File "/tmp/ansible_community.general.deploy_helper_payload_zq3sjtgk/ansible_community.general.deploy_helper_payload.zip/ansible_collections/community/general/plugins/modules/deploy_helper.py", line 524, in <module>

File "/tmp/ansible_community.general.deploy_helper_payload_zq3sjtgk/ansible_community.general.deploy_helper_payload.zip/ansible_collections/community/general/plugins/modules/deploy_helper.py", line 506, in main

File "/tmp/ansible_community.general.deploy_helper_payload_zq3sjtgk/ansible_community.general.deploy_helper_payload.zip/ansible_collections/community/general/plugins/modules/deploy_helper.py", line 411, in remove_unfinished_link

TypeError: unsupported operand type(s) for +: 'NoneType' and 'str'

fatal: [XXX]: FAILED! => {

"changed": false,

"module_stderr": "Shared connection to XXX closed.\r\n",

"module_stdout": "Traceback (most recent call last):\r\n File \"/home/XXX/.ansible/tmp/ansible-tmp-1613593157.5376189-33857-233175515274164/AnsiballZ_deploy_helper.py\", line 102, in <module>\r\n _ansiballz_main()\r\n File \"/home/XXX/.ansible/tmp/ansible-tmp-1613593157.5376189-33857-233175515274164/AnsiballZ_deploy_helper.py\", line 94, in _ansiballz_main\r\n invoke_module(zipped_mod, temp_path, ANSIBALLZ_PARAMS)\r\n File \"/home/XXX/.ansible/tmp/ansible-tmp-1613593157.5376189-33857-233175515274164/AnsiballZ_deploy_helper.py\", line 40, in invoke_module\r\n runpy.run_module(mod_name='ansible_collections.community.general.plugins.modules.deploy_helper', init_globals=None, run_name='__main__', alter_sys=True)\r\n File \"/usr/lib/python3.8/runpy.py\", line 207, in run_module\r\n return _run_module_code(code, init_globals, run_name, mod_spec)\r\n File \"/usr/lib/python3.8/runpy.py\", line 97, in _run_module_code\r\n _run_code(code, mod_globals, init_globals,\r\n File \"/usr/lib/python3.8/runpy.py\", line 87, in _run_code\r\n exec(code, run_globals)\r\n File \"/tmp/ansible_community.general.deploy_helper_payload_zq3sjtgk/ansible_community.general.deploy_helper_payload.zip/ansible_collections/community/general/plugins/modules/deploy_helper.py\", line 524, in <module>\r\n File \"/tmp/ansible_community.general.deploy_helper_payload_zq3sjtgk/ansible_community.general.deploy_helper_payload.zip/ansible_collections/community/general/plugins/modules/deploy_helper.py\", line 506, in main\r\n File \"/tmp/ansible_community.general.deploy_helper_payload_zq3sjtgk/ansible_community.general.deploy_helper_payload.zip/ansible_collections/community/general/plugins/modules/deploy_helper.py\", line 411, in remove_unfinished_link\r\nTypeError: unsupported operand type(s) for +: 'NoneType' and 'str'\r\n",

"msg": "MODULE FAILURE\nSee stdout/stderr for the exact error",

"rc": 1

}

```

| infrastructure | deploy helper missing release parameter for state clean causes an error summary the description of release parameter says that it is optional for state present and required for state finalize but says nothing about state clean executing a task with state clean but without the release parameter causes an error issue type bug report component name deploy helper ansible version ansible config file users maxim projects xxx ansible cfg configured module search path ansible python module location users maxim library python lib python site packages ansible executable location users maxim library python bin ansible python version default apr configuration no response os environment macos catalina on control node and ubuntu on managed node steps to reproduce yaml community general deploy helper path deploy helper project path state clean expected results i don t know exactly how this should work but i think we should add that the release parameter is required for state clean and handle this in code also the examples of use look wrong actual results the full traceback is traceback most recent call last file home xxx ansible tmp ansible tmp ansiballz deploy helper py line in ansiballz main file home xxx ansible tmp ansible tmp ansiballz deploy helper py line in ansiballz main invoke module zipped mod temp path ansiballz params file home xxx ansible tmp ansible tmp ansiballz deploy helper py line in invoke module runpy run module mod name ansible collections community general plugins modules deploy helper init globals none run name main alter sys true file usr lib runpy py line in run module return run module code code init globals run name mod spec file usr lib runpy py line in run module code run code code mod globals init globals file usr lib runpy py line in run code exec code run globals file tmp ansible community general deploy helper payload ansible community general deploy helper payload zip ansible collections community general plugins modules 
deploy helper py line in file tmp ansible community general deploy helper payload ansible community general deploy helper payload zip ansible collections community general plugins modules deploy helper py line in main file tmp ansible community general deploy helper payload ansible community general deploy helper payload zip ansible collections community general plugins modules deploy helper py line in remove unfinished link typeerror unsupported operand type s for nonetype and str fatal failed changed false module stderr shared connection to xxx closed r n module stdout traceback most recent call last r n file home xxx ansible tmp ansible tmp ansiballz deploy helper py line in r n ansiballz main r n file home xxx ansible tmp ansible tmp ansiballz deploy helper py line in ansiballz main r n invoke module zipped mod temp path ansiballz params r n file home xxx ansible tmp ansible tmp ansiballz deploy helper py line in invoke module r n runpy run module mod name ansible collections community general plugins modules deploy helper init globals none run name main alter sys true r n file usr lib runpy py line in run module r n return run module code code init globals run name mod spec r n file usr lib runpy py line in run module code r n run code code mod globals init globals r n file usr lib runpy py line in run code r n exec code run globals r n file tmp ansible community general deploy helper payload ansible community general deploy helper payload zip ansible collections community general plugins modules deploy helper py line in r n file tmp ansible community general deploy helper payload ansible community general deploy helper payload zip ansible collections community general plugins modules deploy helper py line in main r n file tmp ansible community general deploy helper payload ansible community general deploy helper payload zip ansible collections community general plugins modules deploy helper py line in remove unfinished link r ntypeerror unsupported operand 
type s for nonetype and str r n msg module failure nsee stdout stderr for the exact error rc | 1 |
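
The `TypeError` in the deploy_helper record above comes from adding `None` to a string when `release` is not supplied. A minimal sketch of an early guard follows. It is not the module's actual code: the function name `unfinished_link_name`, its parameters, and the default file name are hypothetical and chosen for illustration only.

```python
import os


def unfinished_link_name(path, release, unfinished_filename="DEPLOY_UNFINISHED"):
    """Build a temporary link name, failing early when release is missing.

    Hypothetical helper: the name, signature, and default value are assumptions.
    """
    if release is None:
        # Without this check, `None + "."` raises the TypeError seen in the traceback.
        raise ValueError("'release' is required to build the unfinished link name")
    return os.path.join(path, release + "." + unfinished_filename)
```

A guard of this kind would turn the opaque `MODULE FAILURE` into a message that names the missing parameter. Whether `release` should instead be optional for `state=clean` is a design decision the issue leaves open.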

25,010 | 11,137,384,138 | IssuesEvent | 2019-12-20 19:11:25 | tomkerkhove/promitor | https://api.github.com/repos/tomkerkhove/promitor | closed | Provide support for Azure Managed Identities | feature-request security | From a security perspective, it is more secure to authenticate to the Azure subscription via Managed Identity rather than passing client ID and client secret via parameter. There is an alternative way of passing secure credentials via Docker secrets, but our enterprise requires that credentials are managed via Active Directory or KeyVault.

Is there a roadmap to implement Managed Identities as a feature in the near future?

| True | Provide support for Azure Managed Identities - From a security perspective, it is more secure to authenticate to the Azure subscription via Managed Identity rather than passing client ID and client secret via parameter. There is an alternative way of passing secure credentials via Docker secrets, but our enterprise requires that credentials are managed via Active Directory or KeyVault.

Is there a roadmap to implement Managed Identities as a feature in the near future?

| non_infrastructure | provide support for azure managed identities from a security perspective it is more secure to authenticate to the azure subscription via managed identity rather than passing client id and client secret via parameter there is an alternative way of passing secure credentials via docker secrets but our enterprise requires that credentials are managed via active directory or keyvault is there a roadmap to implement managed identities as a feature in the near future | 0 |

23,154 | 15,866,601,711 | IssuesEvent | 2021-04-08 15:55:35 | google/iree | https://api.github.com/repos/google/iree | closed | Submodule check failes at ToT (https://github.com/google/iree/pull/5343) | bug 🐞 infrastructure 🛠️ | If you are an IREE team member, please consider doing further triage: remove the "help wanted" label, add other relevant labels, and redirect to a more appropriate assignee.

**Describe the bug**

Submodule check fails with the following error:

```

third_party/mlir-emitc : actual=3c265bf59bf2515a63ec35571c66954349749a62 written=dde739ffd00a6fa99175cf3c0f28e4b763dc6f5f

```

It seems https://github.com/google/iree/pull/5326 updated the submodule but left `SUBMODULE_VERSIONS.txt` unchanged.

**To Reproduce**

All the opened PRs since 4/7/21 show this error.

Example:

https://github.com/google/iree/pull/5339/checks?check_run_id=2292642678

| 1.0 | Submodule check failes at ToT (https://github.com/google/iree/pull/5343) - If you are an IREE team member, please consider doing further triage: remove the "help wanted" label, add other relevant labels, and redirect to a more appropriate assignee.

**Describe the bug**

Submodule check fails with the following error:

```

third_party/mlir-emitc : actual=3c265bf59bf2515a63ec35571c66954349749a62 written=dde739ffd00a6fa99175cf3c0f28e4b763dc6f5f

```

It seems https://github.com/google/iree/pull/5326 updated the submodule but left `SUBMODULE_VERSIONS.txt` unchanged.

**To Reproduce**

All the opened PRs since 4/7/21 show this error.

Example:

https://github.com/google/iree/pull/5339/checks?check_run_id=2292642678

| infrastructure | submodule check failes at tot if you are an iree team member please consider doing further triage remove the help wanted label add other relevant labels and redirect to a more appropriate assignee describe the bug submodule check fails with the following error third party mlir emitc actual written it seems updated the submodule but left submodule versions txt unchanged to reproduce all the opened prs since show this error example | 1 |

30,946 | 25,190,777,541 | IssuesEvent | 2022-11-12 00:27:26 | flutter/website | https://api.github.com/repos/flutter/website | closed | Document how to run Travis scripts locally for testing/fixing build issues of Website | infrastructure passed first triage from:flutter-sdk | Recently there have been some website builds break as a result of changes in Flutter (eg. deprecating constants). Since the website doesn't rebuild on flutter changes but does pull flutter, this means the next push to the website will fail (and may not be related to the change made).

To debug these (for ex. flutter/flutter#16153) it'd be useful to be able to run the Travis script locally (since the build is slow, shotgun fixing by pushing to Travis is tedious) however when I tried to run it manually it seemed to fail:

```text

Running "flutter packages get" in animate1... 0.5s

Run flutter analyze on _includes/code/animation/animate1

ProcessException: No such file or directory

Command: /usr/bin/xcodebuild -project /Users/dantup/Dev/Google/flutter-website/ios/Runner.xcodeproj -target Runner -showBuildSettings

```

I had a scan through the scripts, but I couldn't figure out where that ios folder was supposed to come from. | 1.0 | Document how to run Travis scripts locally for testing/fixing build issues of Website - Recently there have been some website builds break as a result of changes in Flutter (eg. deprecating constants). Since the website doesn't rebuild on flutter changes but does pull flutter, this means the next push to the website will fail (and may not be related to the change made).

To debug these (for ex. flutter/flutter#16153) it'd be useful to be able to run the Travis script locally (since the build is slow, shotgun fixing by pushing to Travis is tedious) however when I tried to run it manually it seemed to fail:

```text

Running "flutter packages get" in animate1... 0.5s

Run flutter analyze on _includes/code/animation/animate1

ProcessException: No such file or directory

Command: /usr/bin/xcodebuild -project /Users/dantup/Dev/Google/flutter-website/ios/Runner.xcodeproj -target Runner -showBuildSettings

```

I had a scan through the scripts, but I couldn't figure out where that ios folder was supposed to come from. | infrastructure | document how to run travis scripts locally for testing fixing build issues of website recently there have been some website builds break as a result of changes in flutter eg deprecating constants since the website doesn t rebuild on flutter changes but does pull flutter this means the next push to the website will fail and may not be related to the change made to debug these for ex flutter flutter it d be useful to be able to run the travis script locally since the build is slow shotgun fixing by pushing to travis is tedious however when i tried to run it manually it seemed to fail text running flutter packages get in run flutter analyze on includes code animation processexception no such file or directory command usr bin xcodebuild project users dantup dev google flutter website ios runner xcodeproj target runner showbuildsettings i had a scan through the scripts but i couldn t figure out where that ios folder was supposed to come from | 1 |

94,330 | 10,819,409,521 | IssuesEvent | 2019-11-08 14:20:28 | fgypas/panoptes | https://api.github.com/repos/fgypas/panoptes | closed | Overview of project | documentation | Hi all

Here is an overview of our project (second slide) in Google slides:

https://docs.google.com/presentation/d/1UmLvAA4Z1ZQw16iythcOJQqe6h0GQuOeZCxu9xkuDTM/edit?usp=sharing

It's just what I have in my mind at the moment. Please let me know if I am missing something, or you think that there is a better way to implement some part. Everyone with the link has "write" permissions. Feel free to make changes or add comments.

Note that I marked with red, what I think it's not urgent to implement at the moment.

Best

Foivos

| 1.0 | Overview of project - Hi all

Here is an overview of our project (second slide) in Google slides:

https://docs.google.com/presentation/d/1UmLvAA4Z1ZQw16iythcOJQqe6h0GQuOeZCxu9xkuDTM/edit?usp=sharing

It's just what I have in my mind at the moment. Please let me know if I am missing something, or you think that there is a better way to implement some part. Everyone with the link has "write" permissions. Feel free to make changes or add comments.

Note that I marked with red, what I think it's not urgent to implement at the moment.

Best

Foivos

| non_infrastructure | overview of project hi all here is an overview of our project second slide in google slides it s just what i have in my mind at the moment please let me know if i am missing something or you think that there is a better way to implement some part everyone with the link has write permissions feel free to make changes or add comments note that i marked with red what i think it s not urgent to implement at the moment best foivos | 0 |

33,053 | 27,174,214,112 | IssuesEvent | 2023-02-17 22:47:36 | dotnet/roslyn | https://api.github.com/repos/dotnet/roslyn | closed | [Automated] PRs inserted in VS build main-33417.173 | Area-Infrastructure untriaged vs-insertion | [View Complete Diff of Changes](https://github.com/dotnet/roslyn/compare/79a819ed4b0d89cea36749cd864e348c34f6456b...475293444be097e8a7cab8658b99d3c706b6e8e6?w=1)

- [[PERF] Remove all partial analysis state tracking in CompilationWithAnalyzers (66750)](https://github.com/dotnet/roslyn/pull/66750)

- [Fix parsing of 'file[]' and 'required[]' in old language versions (66769)](https://github.com/dotnet/roslyn/pull/66769)

- [Move the 'make method async' fixer down to shared layer (66863)](https://github.com/dotnet/roslyn/pull/66863)

- [Update 'make local function static' to support capturing 'this' as a parameter to that local funtion. (66875)](https://github.com/dotnet/roslyn/pull/66875)

- [Move the 'make method synchronous' fixer down to shared layer (66862)](https://github.com/dotnet/roslyn/pull/66862)

- [Make F1 work for DirectCast and TryCast (66882)](https://github.com/dotnet/roslyn/pull/66882)

- [Remove UI dependencies from VisualBasicPackage constructor (66797)](https://github.com/dotnet/roslyn/pull/66797)

- [Move 'doc comment' related code fixers down to shared layer (66860)](https://github.com/dotnet/roslyn/pull/66860)

- [Fix the span of 'introduce parameter' (66887)](https://github.com/dotnet/roslyn/pull/66887)

- [Move 'convert to record' fixer down to shared layer (66859)](https://github.com/dotnet/roslyn/pull/66859)

- [Move the 'remove new modifier' fixer down to shared layer (66857)](https://github.com/dotnet/roslyn/pull/66857)

- [Fix enum preselection in switch expressions (66883)](https://github.com/dotnet/roslyn/pull/66883)

- [Move the 'remove in keyword' fixer down to shared layer (66856)](https://github.com/dotnet/roslyn/pull/66856)

- [Remove some outdated configurations in tools (52800)](https://github.com/dotnet/roslyn/pull/52800)

- [Move 'conflict resolution' fixer down to shared layer (66855)](https://github.com/dotnet/roslyn/pull/66855)

- [move the 'make struct a ref struct' fixer down to shared layer (66853)](https://github.com/dotnet/roslyn/pull/66853)

- [Don't include star when committing promoted item (66858)](https://github.com/dotnet/roslyn/pull/66858)

- [move the 'remove async modifier' fixer down to shared layer (66852)](https://github.com/dotnet/roslyn/pull/66852)

- [move 'remove unused local function' down to shared layer (66851)](https://github.com/dotnet/roslyn/pull/66851)

| 1.0 | [Automated] PRs inserted in VS build main-33417.173 - [View Complete Diff of Changes](https://github.com/dotnet/roslyn/compare/79a819ed4b0d89cea36749cd864e348c34f6456b...475293444be097e8a7cab8658b99d3c706b6e8e6?w=1)

- [[PERF] Remove all partial analysis state tracking in CompilationWithAnalyzers (66750)](https://github.com/dotnet/roslyn/pull/66750)

- [Fix parsing of 'file[]' and 'required[]' in old language versions (66769)](https://github.com/dotnet/roslyn/pull/66769)

- [Move the 'make method async' fixer down to shared layer (66863)](https://github.com/dotnet/roslyn/pull/66863)

- [Update 'make local function static' to support capturing 'this' as a parameter to that local funtion. (66875)](https://github.com/dotnet/roslyn/pull/66875)

- [Move the 'make method synchronous' fixer down to shared layer (66862)](https://github.com/dotnet/roslyn/pull/66862)

- [Make F1 work for DirectCast and TryCast (66882)](https://github.com/dotnet/roslyn/pull/66882)

- [Remove UI dependencies from VisualBasicPackage constructor (66797)](https://github.com/dotnet/roslyn/pull/66797)

- [Move 'doc comment' related code fixers down to shared layer (66860)](https://github.com/dotnet/roslyn/pull/66860)

- [Fix the span of 'introduce parameter' (66887)](https://github.com/dotnet/roslyn/pull/66887)

- [Move 'convert to record' fixer down to shared layer (66859)](https://github.com/dotnet/roslyn/pull/66859)

- [Move the 'remove new modifier' fixer down to shared layer (66857)](https://github.com/dotnet/roslyn/pull/66857)

- [Fix enum preselection in switch expressions (66883)](https://github.com/dotnet/roslyn/pull/66883)

- [Move the 'remove in keyword' fixer down to shared layer (66856)](https://github.com/dotnet/roslyn/pull/66856)

- [Remove some outdated configurations in tools (52800)](https://github.com/dotnet/roslyn/pull/52800)

- [Move 'conflict resolution' fixer down to shared layer (66855)](https://github.com/dotnet/roslyn/pull/66855)

- [move the 'make struct a ref struct' fixer down to shared layer (66853)](https://github.com/dotnet/roslyn/pull/66853)

- [Don't include star when committing promoted item (66858)](https://github.com/dotnet/roslyn/pull/66858)

- [move the 'remove async modifier' fixer down to shared layer (66852)](https://github.com/dotnet/roslyn/pull/66852)

- [move 'remove unused local function' down to shared layer (66851)](https://github.com/dotnet/roslyn/pull/66851)

| infrastructure | prs inserted in vs build main remove all partial analysis state tracking in compilationwithanalyzers and required in old language versions | 1 |

8,233 | 7,299,889,267 | IssuesEvent | 2018-02-26 21:38:00 | dotnet/wcf | https://api.github.com/repos/dotnet/wcf | closed | Update RIDs for test execution. | Infrastructure | _Removals:_

Fedora 25 - EOL 12/20/17

OpenSUSE 42.2 - EOL 01/26/18

RedHat 7.2 - EOL 11/30/17

_Additions:_

OpenSUSE 42.3

Fedora 27

VSTS

- [x] Master Pipeline

- [x] 2.1 Pipeline

- [x] UWP6.0 Pipeline

- [x] 2.0 Pipeline

- [x] 1.1 Pipeline

- [x] 1.0 Pipeline | 1.0 | Update RIDs for test execution. - _Removals:_

Fedora 25 - EOL 12/20/17

OpenSUSE 42.2 - EOL 01/26/18

RedHat 7.2 - EOL 11/30/17

_Additions:_

OpenSUSE 42.3

Fedora 27

VSTS

- [x] Master Pipeline

- [x] 2.1 Pipeline

- [x] UWP6.0 Pipeline

- [x] 2.0 Pipeline

- [x] 1.1 Pipeline

- [x] 1.0 Pipeline | infrastructure | update rids for test execution removals fedora eol opensuse eol redhat eol additions opensuse fedora vsts master pipeline pipeline pipeline pipeline pipeline pipeline | 1 |

34,479 | 30,018,140,143 | IssuesEvent | 2023-06-26 20:27:59 | woocommerce/woocommerce | https://api.github.com/repos/woocommerce/woocommerce | closed | Investigate Unit Test Execution Performance | type: task tool: monorepo infrastructure | ### Summary

Currently it can take anywhere between 15 and 30 minutes for our unit tests to run on Pull Requests. This is a significant blocker to getting pull requests merged, and as such, we need to dive into why this is the case. The goal of this issue is to profile our unit tests, figure out which ones are slow, why, and what we can do to address it.

### Acceptance Criteria

We should deliver a list of the types of tests that are slow, why they are slow, what we can do to prevent this in the future, and what we can do to speed the tests up now. Ideally we can create some GitHub Issues to either do this work ourselves or outsource it to other teams if that would be more appropriate. | 1.0 | Investigate Unit Test Execution Performance - ### Summary

Currently it can take anywhere between 15 and 30 minutes for our unit tests to run on Pull Requests. This is a significant blocker to getting pull requests merged, and as such, we need to dive into why this is the case. The goal of this issue is to profile our unit tests, figure out which ones are slow, why, and what we can do to address it.

### Acceptance Criteria

We should deliver a list of the types of tests that are slow, why they are slow, what we can do to prevent this in the future, and what we can do to speed the tests up now. Ideally we can create some GitHub Issues to either do this work ourselves or outsource it to other teams if that would be more appropriate. | infrastructure | investigate unit test execution performance summary currently it can take anywhere between and minutes for our unit tests to run on pull requests this is a significant blocker to getting pull requests merged and as such we need to dive into why this is the case the goal of this issue is to profile our unit tests figure out which ones are slow why and what we can do to address it acceptance criteria we should deliver a list of the types of tests that are slow why they are slow what we can do to prevent this in the future and what we can do to speed the tests up now ideally we can create some github issues to either do this work ourselves or outsource it to other teams if that would be more appropriate | 1 |

24,112 | 16,856,321,492 | IssuesEvent | 2021-06-21 07:15:03 | pytest-dev/pytest | https://api.github.com/repos/pytest-dev/pytest | reopened | integrate twine check in the linting process after the warehouse validation got moved | type: infrastructure | @di metioned on irc that there is an effort to move parts of the warehouse validation into the`packaging` library in order to use it in `twine check`

https://github.com/pypa/warehouse/blob/8ac3770668c50f69f4e211d937a701d37e20155a/warehouse/forklift/legacy.py#L341-L343 | 1.0 | integrate twine check in the linting process after the warehouse validation got moved - @di metioned on irc that there is an effort to move parts of the warehouse validation into the`packaging` library in order to use it in `twine check`

https://github.com/pypa/warehouse/blob/8ac3770668c50f69f4e211d937a701d37e20155a/warehouse/forklift/legacy.py#L341-L343 | infrastructure | integrate twine check in the linting process after the warehouse validation got moved di metioned on irc that there is an effort to move parts of the warehouse validation into the packaging library in order to use it in twine check | 1 |

708,785 | 24,354,685,943 | IssuesEvent | 2022-10-03 06:04:57 | longhorn/longhorn | https://api.github.com/repos/longhorn/longhorn | closed | [BACKPORT][v1.3.2][BUG] Can not pull a backup created by another Longhorn system from the remote backup target | kind/bug area/manager priority/0 kind/regression feature/backup-restore kind/backport | backport https://github.com/longhorn/longhorn/issues/4637 | 1.0 | [BACKPORT][v1.3.2][BUG] Can not pull a backup created by another Longhorn system from the remote backup target - backport https://github.com/longhorn/longhorn/issues/4637 | non_infrastructure | can not pull a backup created by another longhorn system from the remote backup target backport | 0 |

21,568 | 14,640,140,185 | IssuesEvent | 2020-12-25 00:08:16 | nanoMFG/community | https://api.github.com/repos/nanoMFG/community | closed | Create a template for nanoMFG leaning lab courses | infrastructure | ## Description

Create basic template to use when spinning up a new course

## Details

- [x] Include basic elements needed

- `config.yml`

- Include commented out steps, say 1-10

- `course-details.md`

- `Readme.md`

- `responses/` directory with one or more canned responses.

- `LICENSE` - Use MIT with "University of Illinois" copyright

- [x] Update repo setting to make it a template

- [x] Test and validate `config.yml`. | 1.0 | Create a template for nanoMFG leaning lab courses - ## Description

Create basic template to use when spinning up a new course

## Details

- [x] Include basic elements needed

- `config.yml`

- Include commented out steps, say 1-10

- `course-details.md`

- `Readme.md`

- `responses/` directory with one or more canned responses.

- `LICENSE` - Use MIT with "University of Illinois" copyright

- [x] Update repo setting to make it a template

- [x] Test and validate `config.yml`. | infrastructure | create a template for nanomfg leaning lab courses description create basic template to use when spinning up a new course details include basic elements needed config yml include commented out steps say course details md readme md responses directory with one or more canned responses license use mit with university of illinois copyright update repo setting to make it a template test and validate config yml | 1 |

368,426 | 25,793,539,821 | IssuesEvent | 2022-12-10 09:54:08 | billionsjoel/sh-app | https://api.github.com/repos/billionsjoel/sh-app | closed | Change statement on the homepage | documentation | Change the statement **_Scribe House is recommended by hundreds upon hundreds of authors_** to **Scribe House is recommended by many authors.** Be mindful of correct spellings,

please.

** | 1.0 | Change statement on the homepage - Change the statement **_Scribe House is recommended by hundreds upon hundreds of authors_** to **Scribe House is recommended by many authors.** Be mindful of correct spellings,

please.

** | non_infrastructure | change statement on the homepage change the statement scribe house is recommended by hundreds upon hundreds of authors to scribe house is recommended by many authors be mindful of correct spellings please | 0 |

776,108 | 27,247,248,352 | IssuesEvent | 2023-02-22 03:47:10 | ansible-collections/azure | https://api.github.com/repos/ansible-collections/azure | closed | azure_rm_privatednszonelink - vNet subscription_id being overridden by value from profile | medium_priority new_featrue work in | <!--- Verify first that your issue is not already reported on GitHub -->

<!--- Also test if the latest release and devel branch are affected too -->

<!--- Complete *all* sections as described, this form is processed automatically -->

##### SUMMARY

<!--- Explain the problem briefly below -->

When executing module, supplying entire resource ID to the "virtual_network" parameter, seeing unexpected behavior. Ansible module seems to be reading the information provided to it correctly, however reviewing Azure logs would indicate that the wrong subscription ID is being provided to the call to ARM. The correct name and resource groups are being parsed and used.

##### ISSUE TYPE

- Bug Report

##### COMPONENT NAME

<!--- Write the short name of the module, plugin, task or feature below, use your best guess if unsure -->

azure_rm_privatednszonelink

##### ANSIBLE VERSION

<!--- Paste verbatim output from "ansible --version" between quotes -->

```paste below
ansible [core 2.13.6]
  config file = None
  configured module search path = ['/home/username/.ansible/plugins/modules', '/usr/share/ansible/plugins/modules']
  ansible python module location = /usr/local/lib/python3.9/dist-packages/ansible
  ansible collection location = /home/username/.ansible/collections:/usr/share/ansible/collections
  executable location = /usr/local/bin/ansible
  python version = 3.9.2 (default, Feb 28 2021, 17:03:44) [GCC 10.2.1 20210110]
  jinja version = 3.1.2
  libyaml = True
```

##### COLLECTION VERSION

<!--- Paste verbatim output from "ansible-galaxy collection list <namespace>.<collection>" between the quotes

for example: ansible-galaxy collection list community.general

-->

```paste below

# /home/username/.ansible/collections/ansible_collections

Collection Version

------------------ -------

azure.azcollection 1.14.0

# /usr/local/lib/python3.9/dist-packages/ansible_collections

Collection Version

------------------ -------

azure.azcollection 1.13.0

```

##### CONFIGURATION

<!--- Paste verbatim output from "ansible-config dump --only-changed" between quotes -->

```paste below

<empty>

```

##### OS / ENVIRONMENT

<!--- Provide all relevant information below, e.g. target OS versions, network device firmware, etc. -->

username@hostname:~$ cat /etc/debian_version

11.5

##### STEPS TO REPRODUCE

<!--- Describe exactly how to reproduce the problem, using a minimal test-case -->

<!--- Paste example playbooks or commands between quotes below -->

```yaml
---
- hosts: all
  connection: local
  vars:
    core_subscription_id: "55555555-4444-3333-2222-111111111111"
    vnets:
      - name: vnet-1
        region: eastus
        subscription: "11111111-2222-3333-4444-555555555555"
        resource_group: rg-networking
        dnsvnetlinks:
          - zone_name: zone.example.com
            registration_enabled: yes
            resource_group: rg-networking
  tasks:
    - name: Add Private DNS Zone vnet links
      azure.azcollection.azure_rm_privatednszonelink:
        name: "dnsvnetlink-{{ item.0['name']|regex_replace('^vnet-', '') }}"
        profile: core
        registration_enabled: "{{ item.1['registration_enabled'] }}"
        resource_group: "{{ item.1['resource_group'] }}"
        subscription_id: "{{ core_subscription_id }}"
        virtual_network: "/subscriptions/{{ item.0['subscription'] }}/resourceGroups/{{ item.0['resource_group'] }}/providers/Microsoft.Network/virtualNetworks/{{ item.0['name'] }}"
        zone_name: "{{ item.1['zone_name'] }}"
      loop: "{{ vnets|subelements('dnsvnetlinks') }}"
```

<!--- HINT: You can paste gist.github.com links for larger files -->

##### EXPECTED RESULTS

<!--- Describe what you expected to happen when running the steps above -->

The vNet link should be created, or, if it already exists, the module should report that and make no changes.

##### ACTUAL RESULTS

<!--- Describe what actually happened. If possible run with extra verbosity (-vvvv) -->

In the command output I get an error as seen below. Also worth noting that in the verbose output (invocation section) I see the vNet with the correct ID.

In the Azure portal activity logs I get ```"message": "Virtual network resource not found for '/subscriptions/55555555-4444-3333-2222-111111111111/resourceGroups/rg-networking/providers/Microsoft.Network/virtualNetworks/vnet-1'"```. The subscription_id for the vNet is the one related to the profile being used in the task, *NOT* the one provided in the virtual_network parameter nor the one showing in the verbose ```ansible-playbook``` output.

The error text in the ```ansible-playbook``` output suggests an operational conflict, but that message is less conclusive than Azure Resource Manager's report that an incorrect resource ID is being used.
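To make the expected behavior concrete, here is a minimal Python sketch of how a `virtual_network` value could be resolved so that a full resource ID keeps its own subscription. This is not the collection's actual code: the helper name `resolve_vnet_id` and its parameters are hypothetical, and it assumes the module falls back to the task's `subscription_id` and `resource_group` only when a bare vNet name is given.

```python
import re

# Hypothetical pattern for a full virtual network resource ID.
# Azure resource IDs are matched case-insensitively here as an assumption.
_VNET_ID = re.compile(
    r"^/subscriptions/(?P<subscription_id>[^/]+)"
    r"/resourceGroups/(?P<resource_group>[^/]+)"
    r"/providers/Microsoft\.Network/virtualNetworks/(?P<name>[^/]+)$",
    re.IGNORECASE,
)


def resolve_vnet_id(virtual_network, default_subscription_id, default_resource_group):
    """Return a full vNet resource ID (hypothetical helper, for illustration).

    A full resource ID keeps its own subscription and resource group.
    A bare name is completed with the defaults taken from the task.
    """
    match = _VNET_ID.match(virtual_network)
    if match:
        parts = match.groupdict()
    else:
        parts = {
            "subscription_id": default_subscription_id,
            "resource_group": default_resource_group,
            "name": virtual_network,
        }
    return (
        "/subscriptions/{subscription_id}"
        "/resourceGroups/{resource_group}"
        "/providers/Microsoft.Network/virtualNetworks/{name}"
    ).format(**parts)
```

With the values from this report, passing the full ID for `vnet-1` together with the profile's subscription should return the ID unchanged, with subscription `11111111-2222-3333-4444-555555555555`. The Azure activity log instead shows the profile's subscription substituted in.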

<!--- Paste verbatim command output between quotes -->

```paste below

<azure-prod> ESTABLISH LOCAL CONNECTION FOR USER: username

<azure-prod> EXEC /bin/sh -c 'echo ~username && sleep 0'

<azure-prod> EXEC /bin/sh -c '( umask 77 && mkdir -p "` echo /home/username/.ansible/tmp `"&& mkdir "` echo /home/username/.ansible/tmp/ansible-tmp-1668013990.5085132-7259-92530896260601 `" && echo ansible-tmp-1668013990.5085132-7259-92530896260601="` echo /home/username/.ansible/tmp/ansible-tmp-1668013990.5085132-7259-92530896260601 `" ) && sleep 0'

Using module file /home/username/.ansible/collections/ansible_collections/azure/azcollection/plugins/modules/azure_rm_privatednszonelink.py

<azure-prod> PUT /home/username/.ansible/tmp/ansible-local-7040joyl_665/tmp9usjihry TO /home/username/.ansible/tmp/ansible-tmp-1668013990.5085132-7259-92530896260601/AnsiballZ_azure_rm_privatednszonelink.py

<azure-prod> EXEC /bin/sh -c 'chmod u+x /home/username/.ansible/tmp/ansible-tmp-1668013990.5085132-7259-92530896260601/ /home/username/.ansible/tmp/ansible-tmp-1668013990.5085132-7259-92530896260601/AnsiballZ_azure_rm_privatednszonelink.py && sleep 0'

<azure-prod> EXEC /bin/sh -c '/usr/bin/python3 /home/username/.ansible/tmp/ansible-tmp-1668013990.5085132-7259-92530896260601/AnsiballZ_azure_rm_privatednszonelink.py && sleep 0'

<azure-prod> EXEC /bin/sh -c 'rm -f -r /home/username/.ansible/tmp/ansible-tmp-1668013990.5085132-7259-92530896260601/ > /dev/null 2>&1 && sleep 0'

The full traceback is:

File "/tmp/ansible_azure.azcollection.azure_rm_privatednszonelink_payload_n7i7562_/ansible_azure.azcollection.azure_rm_privatednszonelink_payload.zip/ansible_collections/azure/azcollection/plugins/modules/azure_rm_privatednszonelink.py", line 291, in create_or_update_network_link

File "/usr/local/lib/python3.9/dist-packages/azure/mgmt/privatedns/operations/_virtual_network_links_operations.py", line 163, in begin_create_or_update

raw_result = self._create_or_update_initial(

File "/usr/local/lib/python3.9/dist-packages/azure/mgmt/privatedns/operations/_virtual_network_links_operations.py", line 101, in _create_or_update_initial

map_error(status_code=response.status_code, response=response, error_map=error_map)

File "/usr/local/lib/python3.9/dist-packages/azure/core/exceptions.py", line 107, in map_error

raise error

[WARNING]: Azure API profile latest does not define an entry for PrivateDnsManagementClient

failed: [azure-prod] (item=[{'name': 'vnet-1', 'region': 'eastus', 'subscription': '11111111-2222-3333-4444-555555555555', 'resource_group': 'rg-networking', 'dnsvnetlinks': [{'zone_name': 'zone.example.com', 'registration_enabled': True, 'resource_group': 'rg-networking'}]}, {'zone_name': 'zone.example.com', 'registration_enabled': True}]) => {

"ansible_loop_var": "item",

"changed": false,

"invocation": {

"module_args": {

"ad_user": null,

"adfs_authority_url": null,

"api_profile": "latest",

"append_tags": true,

"auth_source": "auto",

"cert_validation_mode": null,

"client_id": null,

"cloud_environment": "AzureCloud",

"log_mode": null,

"log_path": null,

"name": "dnsvnetlink-1",

"password": null,

"profile": "core",

"registration_enabled": true,

"resource_group": "rg-networking",

"secret": null,

"state": "present",

"subscription_id": "55555555-4444-3333-2222-111111111111",

"tags": null,

"tenant": null,

"thumbprint": null,

"virtual_network": "/subscriptions/11111111-2222-3333-4444-555555555555/resourceGroups/rg-networking/providers/Microsoft.Network/virtualNetworks/vnet-1",

"x509_certificate_path": null,

"zone_name": "zone.example.com"

}

},

"item": [

{

"dnsvnetlinks": [

{

"registration_enabled": true,

"zone_name": "zone.example.com",

"resource_group": "rg-networking"

}

],

"name": "vnet-1",

"resource_group": "rg-networking",

"subscription": "11111111-2222-3333-4444-555555555555"

},

{

"registration_enabled": true,

"zone_name": "zone.example.com",

"resource_group": "rg-networking"

}

],

"msg": "Error creating or updating virtual network link dnsvnetlink-1 - (Conflict) Operation group '/operations/groups/id/|virtualNetworkLinks|55555555-4444-3333-2222-111111111111|rg-networking|zone.example.com|dnsvnetlink-1' already has 1 operations like '/operations/type/UpsertVirtualNetworkLink/id/5c97da98-4765-4cf8-86f1-088ce764027b_650f74e6-1373-4e94-8eac-a63e81064d9b' queued.\nCode: Conflict\nMessage: Operation group '/operations/groups/id/|virtualNetworkLinks|55555555-4444-3333-2222-111111111111|rg-networking|zone.example.com|dnsvnetlink-1' already has 1 operations like '/operations/type/UpsertVirtualNetworkLink/id/5c97da98-4765-4cf8-86f1-088ce764027b_650f74e6-1373-4e94-8eac-a63e81064d9b' queued."

```

| 1.0 | azure_rm_privatednszonelink - vNet subscription_id being overridden by value from profile - <!--- Verify first that your issue is not already reported on GitHub -->

<!--- Also test if the latest release and devel branch are affected too -->

<!--- Complete *all* sections as described, this form is processed automatically -->

##### SUMMARY

<!--- Explain the problem briefly below -->

When the module is executed with a full resource ID supplied to the "virtual_network" parameter, it behaves unexpectedly. The Ansible module appears to read the supplied value correctly; however, the Azure logs indicate that the wrong subscription ID is passed in the call to ARM. The correct name and resource group are parsed and used.

##### ISSUE TYPE

- Bug Report

##### COMPONENT NAME

<!--- Write the short name of the module, plugin, task or feature below, use your best guess if unsure -->

azure_rm_privatednszonelink

##### ANSIBLE VERSION

<!--- Paste verbatim output from "ansible --version" between quotes -->

```paste below

ansible [core 2.13.6]

config file = None

configured module search path = ['/home/username/.ansible/plugins/modules', '/usr/share/ansible/plugins/modules']

ansible python module location = /usr/local/lib/python3.9/dist-packages/ansible

ansible collection location = /home/username/.ansible/collections:/usr/share/ansible/collections

executable location = /usr/local/bin/ansible

python version = 3.9.2 (default, Feb 28 2021, 17:03:44) [GCC 10.2.1 20210110]

jinja version = 3.1.2

libyaml = True

```

##### COLLECTION VERSION

<!--- Paste verbatim output from "ansible-galaxy collection list <namespace>.<collection>" between the quotes

for example: ansible-galaxy collection list community.general

-->

```paste below

# /home/username/.ansible/collections/ansible_collections

Collection Version

------------------ -------

azure.azcollection 1.14.0

# /usr/local/lib/python3.9/dist-packages/ansible_collections

Collection Version

------------------ -------

azure.azcollection 1.13.0

```

##### CONFIGURATION

<!--- Paste verbatim output from "ansible-config dump --only-changed" between quotes -->

```paste below

<empty>

```

##### OS / ENVIRONMENT