Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 5 112 | repo_url stringlengths 34 141 | action stringclasses 3 values | title stringlengths 1 844 | labels stringlengths 4 721 | body stringlengths 1 261k | index stringclasses 12 values | text_combine stringlengths 96 261k | label stringclasses 2 values | text stringlengths 96 248k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

310,192 | 23,325,204,052 | IssuesEvent | 2022-08-08 20:26:09 | wazuh/wazuh-documentation | https://api.github.com/repos/wazuh/wazuh-documentation | closed | Script for jira integration blog post doesn't work anymore | operations integrator blog documentation | Hello team,

I had to configure this integration many times lately and found that the script we provide in this blog post: https://wazuh.com/blog/how-to-integrate-external-software-using-integrator/

... doesn't work anymore.

I kept getting the following error (the message is Spanish for "Specify a valid project ID or key"):

```

{

"errorMessages": [],

"errors": {

"project": "Especifica un ID o una clave de proyecto válida"

}

}

```

This is because Jira now requires an `issuetype` ID for issues created via the API. But even after adding the issue type ID to the script, it still didn't work. This time it was because they also changed the format of the `description` field, which now requires additional parameters.

I managed to adjust the script to this:

```python

#!/usr/bin/env python

import sys

import json

import requests

from requests.auth import HTTPBasicAuth

# Read configuration parameters

alert_file = open(sys.argv[1])

user = sys.argv[2].split(':')[0]

api_key = sys.argv[2].split(':')[1]

hook_url = sys.argv[3]

# Read the alert file

alert_json = json.loads(alert_file.read())

alert_file.close()

# Extract issue fields

alert_level = alert_json['rule']['level']

ruleid = alert_json['rule']['id']

description = alert_json['rule']['description']

agentid = alert_json['agent']['id']

agentname = alert_json['agent']['name']

path = alert_json['syscheck']['path']

# Set the project attributes ===> This section needs to be manually configured before running!

project_key = 'WT' # You can get this from the beginning of an issue key. E.g.: For WS-5018 it's "WS"

issuetypeid = '10002' # Check https://confluence.atlassian.com/jirakb/finding-the-id-for-issue-types-646186508.html. There's also an API endpoint to get it.

# Generate request

headers = {'content-type': 'application/json'}

issue_data = {

"update": {},

"fields": {

"summary": 'FIM alert on [' + path + ']',

"issuetype": {

"id": issuetypeid

},

"project": {

"key": project_key

},

"description": {

'version': 1,

'type': 'doc',

'content': [

{

"type": "paragraph",

"content": [

{

"text": '- State: ' + description + '\n- Rule ID: ' + str(ruleid) + '\n- Alert level: ' + str(alert_level) + '\n- Agent: ' + str(agentid) + ' ' + agentname,

"type": "text"

}

]

}

],

},

}

}

# Send the request

response = requests.post(hook_url, data=json.dumps(issue_data), headers=headers, auth=(user, api_key))

#print(json.dumps(json.loads(response.text), sort_keys=True, indent=4, separators=(",", ": ")))

sys.exit(0)

```
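The `description` payload in the script above packs every field into a single text node separated by `\n`. As a minimal sketch (the helper name `build_adf_description` is hypothetical, and splitting into one paragraph per line is a design assumption, not something the source says Jira requires), the same `doc` / `paragraph` / `text` structure can be built from a list of lines:

```python
import json


def build_adf_description(lines):
    """Build a description document with one paragraph node per non-empty line.

    Hypothetical helper: it reuses the 'doc' / 'paragraph' / 'text' structure
    from the script above and only changes how the text is split up.
    """
    paragraphs = [
        {"type": "paragraph", "content": [{"type": "text", "text": str(line)}]}
        for line in lines
        if str(line).strip()  # skip empty lines
    ]
    return {"version": 1, "type": "doc", "content": paragraphs}


if __name__ == "__main__":
    # Illustrative values only, not taken from a real alert
    doc = build_adf_description(["- Rule ID: 550", "- Alert level: 7"])
    print(json.dumps(doc, indent=4))
```

The returned dictionary could then be assigned to `issue_data["fields"]["description"]` in place of the inline literal.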

Also, here's a script for general use (not tied to FIM):

```python

#!/usr/bin/env python

import sys

import json

import requests

from requests.auth import HTTPBasicAuth

# Read configuration parameters

alert_file = open(sys.argv[1])

user = sys.argv[2].split(':')[0]

api_key = sys.argv[2].split(':')[1]

hook_url = sys.argv[3]

# Read the alert file

alert_json = json.loads(alert_file.read())

alert_file.close()

# Extract issue fields

alert_level = alert_json['rule']['level']

ruleid = alert_json['rule']['id']

description = alert_json['rule']['description']

agentid = alert_json['agent']['id']

agentname = alert_json['agent']['name']

#path = alert_json['syscheck']['path']

# Set the project attributes ===> This section needs to be manually configured before running!

project_key = 'WT' # You can get this from the beginning of an issue key. E.g.: For WS-5018 it's "WS"

issuetypeid = '10002' # Check https://confluence.atlassian.com/jirakb/finding-the-id-for-issue-types-646186508.html. There's also an API endpoint to get it.

# Generate request

headers = {'content-type': 'application/json'}

issue_data = {

"update": {},

"fields": {

"summary": 'Wazuh Alert: ' + description,

"issuetype": {

"id": issuetypeid

},

"project": {

"key": project_key

},

"description": {

'version': 1,

'type': 'doc',

'content': [

{

"type": "paragraph",

"content": [

{

"text": '- Rule ID: ' + str(ruleid) + '\n- Alert level: ' + str(alert_level) + '\n- Agent: ' + str(agentid) + ' ' + agentname,

"type": "text"

}

]

}

],

},

}

}

# Send the request

response = requests.post(hook_url, data=json.dumps(issue_data), headers=headers, auth=(user, api_key))

#print(json.dumps(json.loads(response.text), sort_keys=True, indent=4, separators=(",", ": ")))

sys.exit(0)

```

It would be great if we could have both scripts in the blog. Users (and the team) will appreciate it :)

It is worth mentioning that users will need to set both the `project_key` and `issuetypeid` variables to their own values. I also commented out a `print` call at the end; uncommenting it is very useful when troubleshooting.
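For troubleshooting, a condensed summary can be easier to scan than the full JSON dump. The following is only a sketch: the function name `summarize_jira_response` is hypothetical, and it assumes error bodies have the `errorMessages` / `errors` shape shown in the error quoted earlier.

```python
import json


def summarize_jira_response(status_code, body_text):
    """Return a one-line summary of a Jira create-issue response.

    Assumes error bodies are JSON objects with "errorMessages" (a list)
    and "errors" (a dict), as in the error quoted earlier in this issue.
    """
    if 200 <= status_code < 300:
        return "OK (HTTP %d)" % status_code
    try:
        body = json.loads(body_text)
    except ValueError:
        # Not JSON (for example an HTML error page): show the start of the raw text
        return "HTTP %d: %s" % (status_code, body_text[:200])
    if not isinstance(body, dict):
        return "HTTP %d: %s" % (status_code, body_text[:200])
    problems = list(body.get("errorMessages", []))
    problems += ["%s: %s" % (field, message)
                 for field, message in sorted(body.get("errors", {}).items())]
    return "HTTP %d: %s" % (status_code, "; ".join(problems) or body_text[:200])
```

In either script it could be called as `print(summarize_jira_response(response.status_code, response.text))` right after the `requests.post` call.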

It is also useful to know that the issue type ID can be queried from the Jira API by running the following command:

```bash

curl --request GET \

--url 'https://your-domain.atlassian.net/rest/api/3/issuetype' \

--user 'email@example.com:<api_token>' \

--header 'Accept: application/json'

```
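The response to that request is a JSON list of issue types. As a rough sketch, assuming each entry carries `id` and `name` fields (the helper name `find_issuetype_id` and the sample data are hypothetical), the ID can be looked up by name instead of reading it off manually:

```python
import json


def find_issuetype_id(response_text, wanted_name):
    """Return the ID of the issue type called `wanted_name`, or None if absent."""
    for issue_type in json.loads(response_text):
        # Compare names case-insensitively so "task" matches "Task"
        if issue_type.get("name", "").lower() == wanted_name.lower():
            return issue_type.get("id")
    return None


if __name__ == "__main__":
    # Made-up sample data, not real output from a Jira instance
    sample = '[{"id": "10001", "name": "Story"}, {"id": "10002", "name": "Task"}]'
    print(find_issuetype_id(sample, "task"))
```

The returned value would be the string to place in the `issuetypeid` variable of the scripts above.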

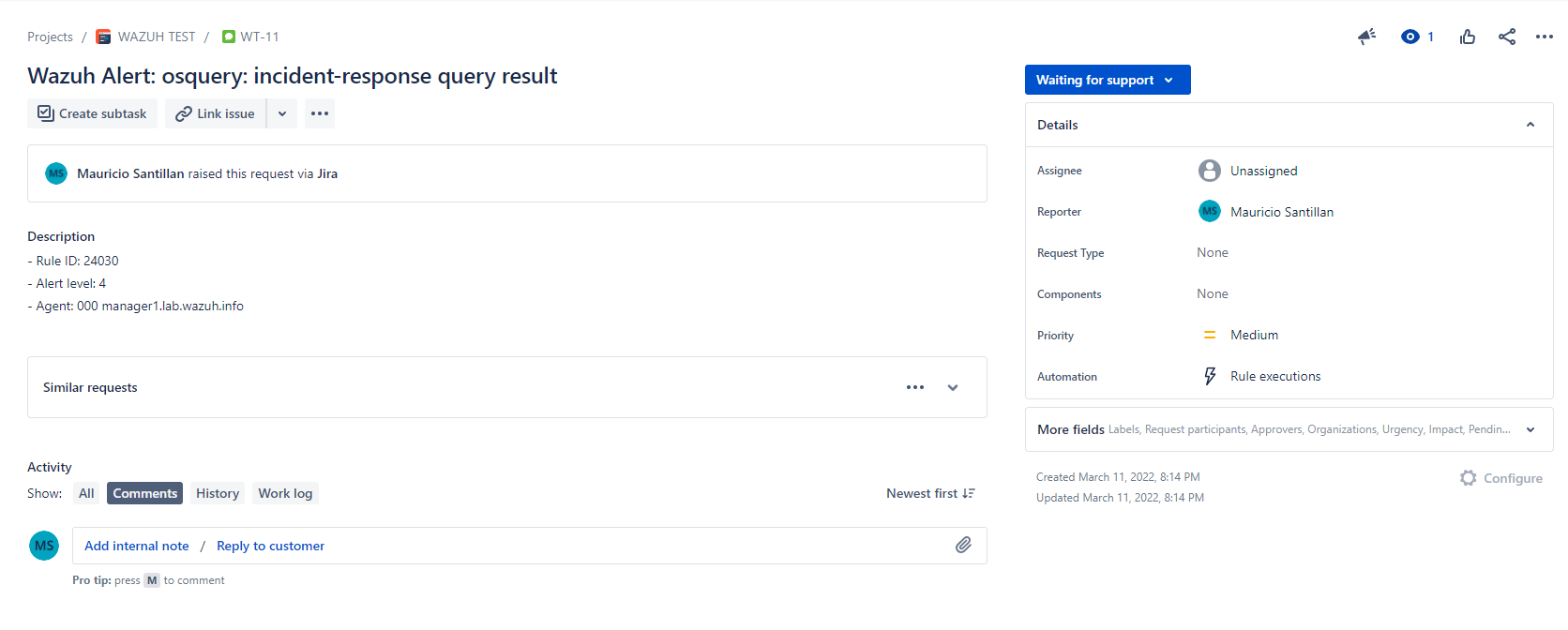

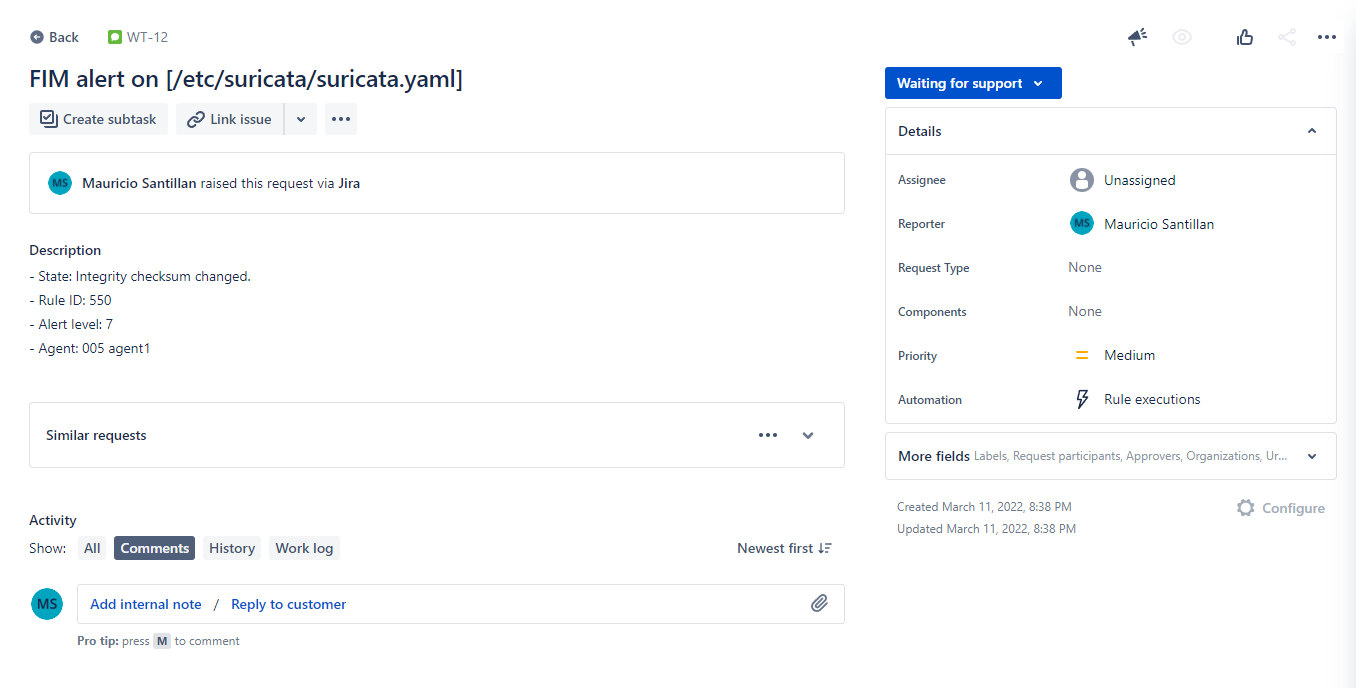

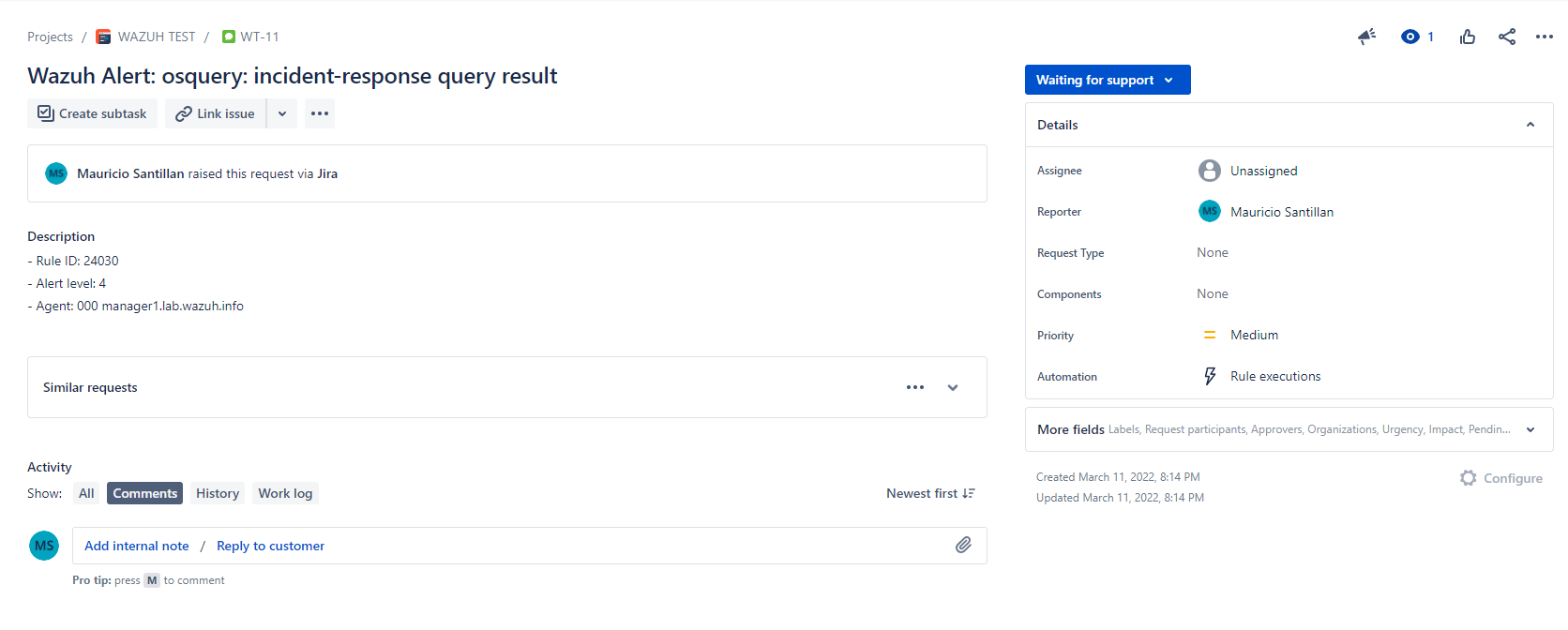

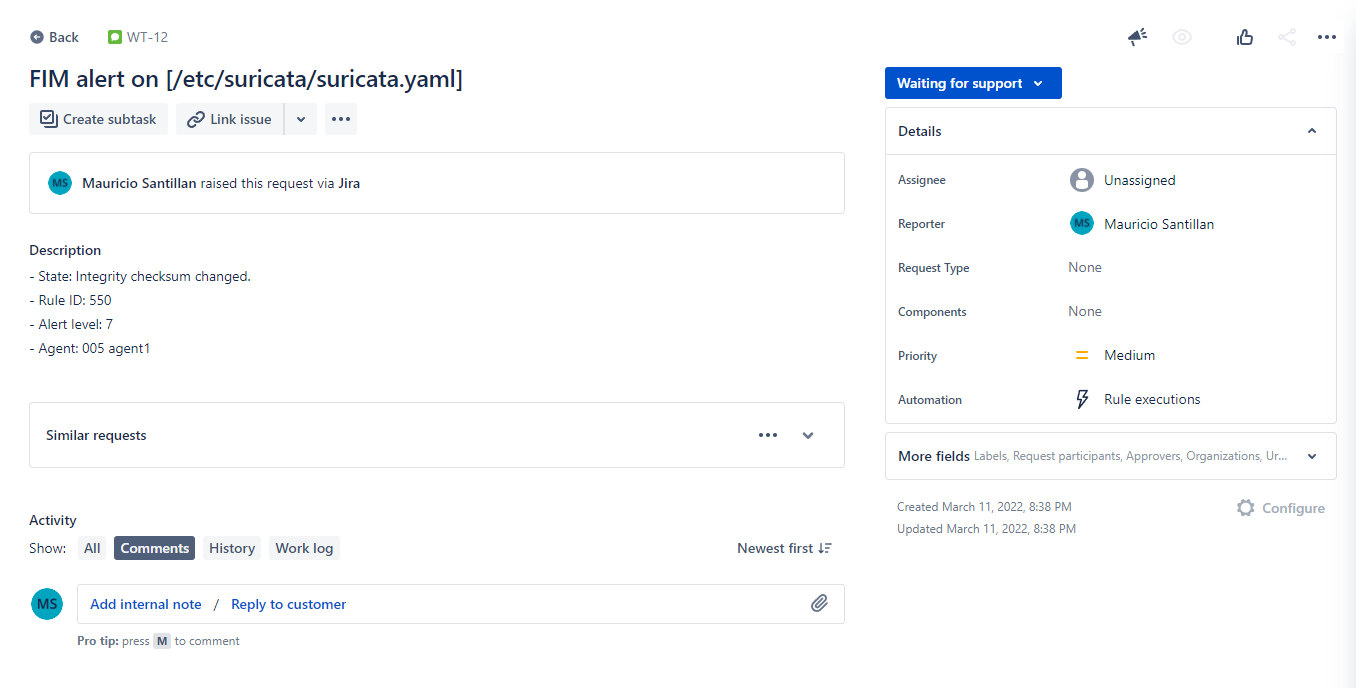

With these scripts I got next results in Jira:

| 1.0 | Script for jira integration blog post doesn't work anymore - Hello team,

I had to configure this integration many times lately and found that the script we provide in this blog post: https://wazuh.com/blog/how-to-integrate-external-software-using-integrator/

... doesn't work anymore.

I kept getting the following error (the message is Spanish for "Specify a valid project ID or key"):

```

{

"errorMessages": [],

"errors": {

"project": "Especifica un ID o una clave de proyecto válida"

}

}

```

This is because Jira now requires an `issuetype` ID for issues created via the API. But even after adding the issue type ID to the script, it still didn't work. This time it was because they also changed the format of the `description` field, which now requires additional parameters.

I managed to adjust the script to this:

```python

#!/usr/bin/env python

import sys

import json

import requests

from requests.auth import HTTPBasicAuth

# Read configuration parameters

alert_file = open(sys.argv[1])

user = sys.argv[2].split(':')[0]

api_key = sys.argv[2].split(':')[1]

hook_url = sys.argv[3]

# Read the alert file

alert_json = json.loads(alert_file.read())

alert_file.close()

# Extract issue fields

alert_level = alert_json['rule']['level']

ruleid = alert_json['rule']['id']

description = alert_json['rule']['description']

agentid = alert_json['agent']['id']

agentname = alert_json['agent']['name']

path = alert_json['syscheck']['path']

# Set the project attributes ===> This section needs to be manually configured before running!

project_key = 'WT' # You can get this from the beginning of an issue key. E.g.: For WS-5018 it's "WS"

issuetypeid = '10002' # Check https://confluence.atlassian.com/jirakb/finding-the-id-for-issue-types-646186508.html. There's also an API endpoint to get it.

# Generate request

headers = {'content-type': 'application/json'}

issue_data = {

"update": {},

"fields": {

"summary": 'FIM alert on [' + path + ']',

"issuetype": {

"id": issuetypeid

},

"project": {

"key": project_key

},

"description": {

'version': 1,

'type': 'doc',

'content': [

{

"type": "paragraph",

"content": [

{

"text": '- State: ' + description + '\n- Rule ID: ' + str(ruleid) + '\n- Alert level: ' + str(alert_level) + '\n- Agent: ' + str(agentid) + ' ' + agentname,

"type": "text"

}

]

}

],

},

}

}

# Send the request

response = requests.post(hook_url, data=json.dumps(issue_data), headers=headers, auth=(user, api_key))

#print(json.dumps(json.loads(response.text), sort_keys=True, indent=4, separators=(",", ": ")))

sys.exit(0)

```

Also, here's a script for general use (not tied to FIM):

```python

#!/usr/bin/env python

import sys

import json

import requests

from requests.auth import HTTPBasicAuth

# Read configuration parameters

alert_file = open(sys.argv[1])

user = sys.argv[2].split(':')[0]

api_key = sys.argv[2].split(':')[1]

hook_url = sys.argv[3]

# Read the alert file

alert_json = json.loads(alert_file.read())

alert_file.close()

# Extract issue fields

alert_level = alert_json['rule']['level']

ruleid = alert_json['rule']['id']

description = alert_json['rule']['description']

agentid = alert_json['agent']['id']

agentname = alert_json['agent']['name']

#path = alert_json['syscheck']['path']

# Set the project attributes ===> This section needs to be manually configured before running!

project_key = 'WT' # You can get this from the beginning of an issue key. E.g.: For WS-5018 it's "WS"

issuetypeid = '10002' # Check https://confluence.atlassian.com/jirakb/finding-the-id-for-issue-types-646186508.html. There's also an API endpoint to get it.

# Generate request

headers = {'content-type': 'application/json'}

issue_data = {

"update": {},

"fields": {

"summary": 'Wazuh Alert: ' + description,

"issuetype": {

"id": issuetypeid

},

"project": {

"key": project_key

},

"description": {

'version': 1,

'type': 'doc',

'content': [

{

"type": "paragraph",

"content": [

{

"text": '- Rule ID: ' + str(ruleid) + '\n- Alert level: ' + str(alert_level) + '\n- Agent: ' + str(agentid) + ' ' + agentname,

"type": "text"

}

]

}

],

},

}

}

# Send the request

response = requests.post(hook_url, data=json.dumps(issue_data), headers=headers, auth=(user, api_key))

#print(json.dumps(json.loads(response.text), sort_keys=True, indent=4, separators=(",", ": ")))

sys.exit(0)

```

It would be great if we could have both scripts in the blog. Users (and the team) will appreciate it :)

It is worth mentioning that users will need to set both the `project_key` and `issuetypeid` variables to their own values. I also commented out a `print` call at the end; uncommenting it is very useful when troubleshooting.

It is also useful to know that the issue type ID can be queried from the Jira API by running the following command:

```bash

curl --request GET \

--url 'https://your-domain.atlassian.net/rest/api/3/issuetype' \

--user 'email@example.com:<api_token>' \

--header 'Accept: application/json'

```

With these scripts I got the following results in Jira:

| non_priority | script for jira integration blog post doesn t work anymore hello team i had to configure this integration many times lately and found that the script we provide in this blog post doesn t work anymore i kept getting next error errormessages errors project especifica un id o una clave de proyecto válida this is because jira now requires an issuetype id for issues to be created via api but even inserting the issuetype id into the script still didn t work this time it was because they also changed the format for the description field it now requires additional parameters i managed to adjust the script to this python usr bin env python import sys import json import requests from requests auth import httpbasicauth read configuration parameters alert file open sys argv user sys argv split api key sys argv split hook url sys argv read the alert file alert json json loads alert file read alert file close extract issue fields alert level alert json ruleid alert json description alert json agentid alert json agentname alert json path alert json set the project attributes this sections needs to be manually configured before running project key wt you can get this from the beggining of an issue key e g for ws its ws issuetypeid check there s also an api endpoint to get it generate request headers content type application json issue data update fields summary fim alert on issuetype id issuetypeid project key project key description version type doc content type paragraph content text state description n rule id str ruleid n alert level str alert level n agent str agentid agentname type text send the request response requests post hook url data json dumps issue data headers headers auth user api key print json dumps json loads response text sort keys true indent separators sys exit also here s a script for general use nor tied to fim python usr bin env python import sys import json import requests from requests auth import httpbasicauth read configuration 
parameters alert file open sys argv user sys argv split api key sys argv split hook url sys argv read the alert file alert json json loads alert file read alert file close extract issue fields alert level alert json ruleid alert json description alert json agentid alert json agentname alert json path alert json set the project attributes this sections needs to be manually configured before running project key wt you can get this from the beggining of an issue key e g for ws its ws issuetypeid check there s also an api endpoint to get it generate request headers content type application json issue data update fields summary wazuh alert description issuetype id issuetypeid project key project key description version type doc content type paragraph content text rule id str ruleid n alert level str alert level n agent str agentid agentname type text send the request response requests post hook url data json dumps issue data headers headers auth user api key print json dumps json loads response text sort keys true indent separators sys exit it would be great if we could have both scripts in the blog users and the team will appreciate it it is worth mentioning that the user will need to adjust both project key and issuetypeid variables with theirs i also commented out a print command at the end in case of troubleshooting it will be very useful to uncomment it it is also useful to know that the issuetype id can be queried to jira api as well by running next command bash curl request get url user email example com header accept application json with these scripts i got next results in jira | 0 |

194,010 | 14,667,214,784 | IssuesEvent | 2020-12-29 18:04:28 | github-vet/rangeloop-pointer-findings | https://api.github.com/repos/github-vet/rangeloop-pointer-findings | closed | itsivareddy/terrafrom-Oci: oci/autoscaling_auto_scaling_configuration_test.go; 14 LoC | fresh small test |

Found a possible issue in [itsivareddy/terrafrom-Oci](https://www.github.com/itsivareddy/terrafrom-Oci) at [oci/autoscaling_auto_scaling_configuration_test.go](https://github.com/itsivareddy/terrafrom-Oci/blob/075608a9e201ee0e32484da68d5ba5370dfde1be/oci/autoscaling_auto_scaling_configuration_test.go#L501-L514)

Below is the message reported by the analyzer for this snippet of code. Beware that the analyzer only reports the first issue it finds, so please do not limit your consideration to the contents of the below message.

> reference to autoScalingConfigurationId is reassigned at line 505

[Click here to see the code in its original context.](https://github.com/itsivareddy/terrafrom-Oci/blob/075608a9e201ee0e32484da68d5ba5370dfde1be/oci/autoscaling_auto_scaling_configuration_test.go#L501-L514)

<details>

<summary>Click here to show the 14 line(s) of Go which triggered the analyzer.</summary>

```go

for _, autoScalingConfigurationId := range autoScalingConfigurationIds {

if ok := SweeperDefaultResourceId[autoScalingConfigurationId]; !ok {

deleteAutoScalingConfigurationRequest := oci_auto_scaling.DeleteAutoScalingConfigurationRequest{}

deleteAutoScalingConfigurationRequest.AutoScalingConfigurationId = &autoScalingConfigurationId

deleteAutoScalingConfigurationRequest.RequestMetadata.RetryPolicy = getRetryPolicy(true, "auto_scaling")

_, error := autoScalingClient.DeleteAutoScalingConfiguration(context.Background(), deleteAutoScalingConfigurationRequest)

if error != nil {

fmt.Printf("Error deleting AutoScalingConfiguration %s %s, It is possible that the resource is already deleted. Please verify manually \n", autoScalingConfigurationId, error)

continue

}

}

}

```

</details>

Leave a reaction on this issue to contribute to the project by classifying this instance as a **Bug** :-1:, **Mitigated** :+1:, or **Desirable Behavior** :rocket:

See the descriptions of the classifications [here](https://github.com/github-vet/rangeclosure-findings#how-can-i-help) for more information.

commit ID: 075608a9e201ee0e32484da68d5ba5370dfde1be

| 1.0 | itsivareddy/terrafrom-Oci: oci/autoscaling_auto_scaling_configuration_test.go; 14 LoC -

Found a possible issue in [itsivareddy/terrafrom-Oci](https://www.github.com/itsivareddy/terrafrom-Oci) at [oci/autoscaling_auto_scaling_configuration_test.go](https://github.com/itsivareddy/terrafrom-Oci/blob/075608a9e201ee0e32484da68d5ba5370dfde1be/oci/autoscaling_auto_scaling_configuration_test.go#L501-L514)

Below is the message reported by the analyzer for this snippet of code. Beware that the analyzer only reports the first issue it finds, so please do not limit your consideration to the contents of the below message.

> reference to autoScalingConfigurationId is reassigned at line 505

[Click here to see the code in its original context.](https://github.com/itsivareddy/terrafrom-Oci/blob/075608a9e201ee0e32484da68d5ba5370dfde1be/oci/autoscaling_auto_scaling_configuration_test.go#L501-L514)

<details>

<summary>Click here to show the 14 line(s) of Go which triggered the analyzer.</summary>

```go

for _, autoScalingConfigurationId := range autoScalingConfigurationIds {

if ok := SweeperDefaultResourceId[autoScalingConfigurationId]; !ok {

deleteAutoScalingConfigurationRequest := oci_auto_scaling.DeleteAutoScalingConfigurationRequest{}

deleteAutoScalingConfigurationRequest.AutoScalingConfigurationId = &autoScalingConfigurationId

deleteAutoScalingConfigurationRequest.RequestMetadata.RetryPolicy = getRetryPolicy(true, "auto_scaling")

_, error := autoScalingClient.DeleteAutoScalingConfiguration(context.Background(), deleteAutoScalingConfigurationRequest)

if error != nil {

fmt.Printf("Error deleting AutoScalingConfiguration %s %s, It is possible that the resource is already deleted. Please verify manually \n", autoScalingConfigurationId, error)

continue

}

}

}

```

</details>

Leave a reaction on this issue to contribute to the project by classifying this instance as a **Bug** :-1:, **Mitigated** :+1:, or **Desirable Behavior** :rocket:

See the descriptions of the classifications [here](https://github.com/github-vet/rangeclosure-findings#how-can-i-help) for more information.

commit ID: 075608a9e201ee0e32484da68d5ba5370dfde1be

| non_priority | itsivareddy terrafrom oci oci autoscaling auto scaling configuration test go loc found a possible issue in at below is the message reported by the analyzer for this snippet of code beware that the analyzer only reports the first issue it finds so please do not limit your consideration to the contents of the below message reference to autoscalingconfigurationid is reassigned at line click here to show the line s of go which triggered the analyzer go for autoscalingconfigurationid range autoscalingconfigurationids if ok sweeperdefaultresourceid ok deleteautoscalingconfigurationrequest oci auto scaling deleteautoscalingconfigurationrequest deleteautoscalingconfigurationrequest autoscalingconfigurationid autoscalingconfigurationid deleteautoscalingconfigurationrequest requestmetadata retrypolicy getretrypolicy true auto scaling error autoscalingclient deleteautoscalingconfiguration context background deleteautoscalingconfigurationrequest if error nil fmt printf error deleting autoscalingconfiguration s s it is possible that the resource is already deleted please verify manually n autoscalingconfigurationid error continue leave a reaction on this issue to contribute to the project by classifying this instance as a bug mitigated or desirable behavior rocket see the descriptions of the classifications for more information commit id | 0 |

1,280 | 2,603,746,679 | IssuesEvent | 2015-02-24 17:42:47 | chrsmith/bwapi | https://api.github.com/repos/chrsmith/bwapi | closed | Training queue in nexus is never more than 1 unit | auto-migrated Type-Defect | ```

What steps will reproduce the problem?

1. Create a nexus

2. Train probes non-stop and make them gather minerals

3. The training queue is never more than 1 unit - they don't queue up?

What version of the product are you using? On what operating system?

Rev 1610 - Windows XP

Please provide any additional information below.

I would love the nexus to use the queue to make sure that the nexus is never

idle no matter the lag.

```

-----

Original issue reported on code.google.com by `wizuffeg...@gmail.com` on 29 Nov 2009 at 4:36 | 1.0 | Training queue in nexus is never more than 1 unit - ```

What steps will reproduce the problem?

1. Create a nexus

2. Train probes non-stop and make them gather minerals

3. The training queue is never more than 1 unit - they don't queue up?

What version of the product are you using? On what operating system?

Rev 1610 - Windows XP

Please provide any additional information below.

I would love the nexus to use the queue to make sure that the nexus is never

idle no matter the lag.

```

-----

Original issue reported on code.google.com by `wizuffeg...@gmail.com` on 29 Nov 2009 at 4:36 | non_priority | training queue in nexus is never more than unit what steps will reproduce the problem create a nexus train probes non stop and make them gather minerals the training queue is never more than unit they dont queue up what version of the product are you using on what operating system rev windows xp please provide any additional information below i will love the nexus to use the queue to make sure that the nexus is never idle no matter the lag original issue reported on code google com by wizuffeg gmail com on nov at | 0 |

227,688 | 17,396,303,994 | IssuesEvent | 2021-08-02 13:52:26 | TheSingleOneYT/FNLevel-DiscordBot | https://api.github.com/repos/TheSingleOneYT/FNLevel-DiscordBot | opened | Documentation | documentation | I have made a wiki for this project explaining how some of it works.

https://github.com/TheSingleOneYT/FNLevel-DiscordBot/wiki

Enjoy reading! | 1.0 | Documentation - I have made a wiki for this project explaining how some of it works.

https://github.com/TheSingleOneYT/FNLevel-DiscordBot/wiki

Enjoy reading! | non_priority | documentation i have made a wiki for this project explaining how some of it works enjoy reading | 0 |

85,842 | 15,755,290,436 | IssuesEvent | 2021-03-31 01:31:01 | ChenLuigi/GitHubScannerBower4 | https://api.github.com/repos/ChenLuigi/GitHubScannerBower4 | opened | CVE-2020-24025 (Medium) detected in node-sass-1.2.3.tgz | security vulnerability | ## CVE-2020-24025 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>node-sass-1.2.3.tgz</b></p></summary>

<p>Wrapper around libsass</p>

<p>Library home page: <a href="https://registry.npmjs.org/node-sass/-/node-sass-1.2.3.tgz">https://registry.npmjs.org/node-sass/-/node-sass-1.2.3.tgz</a></p>

<p>Path to dependency file: GitHubScannerBower4/GoldenPanel_Lighter/GoldenPanel/c3-0.4.10/package/package.json</p>

<p>Path to vulnerable library: GitHubScannerBower4/GoldenPanel_Lighter/GoldenPanel/c3-0.4.10/package/node_modules/node-sass/package.json</p>

<p>

Dependency Hierarchy:

- grunt-sass-0.17.0.tgz (Root Library)

- :x: **node-sass-1.2.3.tgz** (Vulnerable Library)

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Certificate validation in node-sass 2.0.0 to 4.14.1 is disabled when requesting binaries even if the user is not specifying an alternative download path.

<p>Publish Date: 2021-01-11

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-24025>CVE-2020-24025</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.3</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: Low

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | True | CVE-2020-24025 (Medium) detected in node-sass-1.2.3.tgz - ## CVE-2020-24025 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>node-sass-1.2.3.tgz</b></p></summary>

<p>Wrapper around libsass</p>

<p>Library home page: <a href="https://registry.npmjs.org/node-sass/-/node-sass-1.2.3.tgz">https://registry.npmjs.org/node-sass/-/node-sass-1.2.3.tgz</a></p>

<p>Path to dependency file: GitHubScannerBower4/GoldenPanel_Lighter/GoldenPanel/c3-0.4.10/package/package.json</p>

<p>Path to vulnerable library: GitHubScannerBower4/GoldenPanel_Lighter/GoldenPanel/c3-0.4.10/package/node_modules/node-sass/package.json</p>

<p>

Dependency Hierarchy:

- grunt-sass-0.17.0.tgz (Root Library)

- :x: **node-sass-1.2.3.tgz** (Vulnerable Library)

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Certificate validation in node-sass 2.0.0 to 4.14.1 is disabled when requesting binaries even if the user is not specifying an alternative download path.

<p>Publish Date: 2021-01-11

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-24025>CVE-2020-24025</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.3</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: Low

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | non_priority | cve medium detected in node sass tgz cve medium severity vulnerability vulnerable library node sass tgz wrapper around libsass library home page a href path to dependency file goldenpanel lighter goldenpanel package package json path to vulnerable library goldenpanel lighter goldenpanel package node modules node sass package json dependency hierarchy grunt sass tgz root library x node sass tgz vulnerable library vulnerability details certificate validation in node sass to is disabled when requesting binaries even if the user is not specifying an alternative download path publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction none scope unchanged impact metrics confidentiality impact none integrity impact low availability impact none for more information on scores click a href step up your open source security game with whitesource | 0 |

27,317 | 12,540,308,580 | IssuesEvent | 2020-06-05 10:07:54 | terraform-providers/terraform-provider-azurerm | https://api.github.com/repos/terraform-providers/terraform-provider-azurerm | closed | x509_certificate_properties should be required to create certificate | question service/keyvault | <!---

Please note the following potential times when an issue might be in Terraform core:

* [Configuration Language](https://www.terraform.io/docs/configuration/index.html) or resource ordering issues

* [State](https://www.terraform.io/docs/state/index.html) and [State Backend](https://www.terraform.io/docs/backends/index.html) issues

* [Provisioner](https://www.terraform.io/docs/provisioners/index.html) issues

* [Registry](https://registry.terraform.io/) issues

* Spans resources across multiple providers

If you are running into one of these scenarios, we recommend opening an issue in the [Terraform core repository](https://github.com/hashicorp/terraform/) instead.

--->

<!--- Please keep this note for the community --->

### Community Note

* Please vote on this issue by adding a 👍 [reaction](https://blog.github.com/2016-03-10-add-reactions-to-pull-requests-issues-and-comments/) to the original issue to help the community and maintainers prioritize this request

* Please do not leave "+1" or "me too" comments, they generate extra noise for issue followers and do not help prioritize the request

* If you are interested in working on this issue or have submitted a pull request, please leave a comment

<!--- Thank you for keeping this note for the community --->

### Terraform (and AzureRM Provider) Version

<!--- Please run `terraform -v` to show the Terraform core version and provider version(s). If you are not running the latest version of Terraform or the provider, please upgrade because your issue may have already been fixed. [Terraform documentation on provider versioning](https://www.terraform.io/docs/configuration/providers.html#provider-versions). --->

### Affected Resource(s)

<!--- Please list the affected resources and data sources. --->

* `azurerm v2.4.0 `

### Terraform Configuration Files

<!--- Information about code formatting: https://help.github.com/articles/basic-writing-and-formatting-syntax/#quoting-code --->

```hcl

resource "azurerm_key_vault_certificate" "sshkey" {

name = "nancyc-kv-cert-01"

key_vault_id = azurerm_key_vault.kv.id

certificate_policy {

issuer_parameters {

name = "Self"

}

key_properties {

exportable = true

key_size = 2048

key_type = "RSA"

reuse_key = true

}

secret_properties {

content_type = "application/x-pem-file"

}

}

}

```

### Debug Output

<!---

Please provide a link to a GitHub Gist containing the complete debug output. Please do NOT paste the debug output in the issue; just paste a link to the Gist.

To obtain the debug output, see the [Terraform documentation on debugging](https://www.terraform.io/docs/internals/debugging.html).

--->

### Panic Output

<!--- If Terraform produced a panic, please provide a link to a GitHub Gist containing the output of the `crash.log`. --->

### Expected Behavior

https://www.terraform.io/docs/providers/azurerm/r/key_vault_certificate.html

> certificate_policy supports the following:

>

> issuer_parameters - (Required) A issuer_parameters block as defined below.

> key_properties - (Required) A key_properties block as defined below.

> lifetime_action - (Optional) A lifetime_action block as defined below.

> secret_properties - (Required) A secret_properties block as defined below.

> **x509_certificate_properties - (Optional) A x509_certificate_properties block as defined below.**

However this should be **Required**.

### Actual Behavior

```

Error: keyvault.BaseClient#CreateCertificate: Failure responding to request: StatusCode=400 -- Original Error: autorest/azure: Service returned an error. Status=400 Code="BadParameter" Message="Property policy has invalid value\r\n"

on main.tf line 89, in resource "azurerm_key_vault_certificate" "sshkey":

89: resource "azurerm_key_vault_certificate" "sshkey" {

```

### Steps to Reproduce

<!--- Please list the steps required to reproduce the issue. --->

1. `terraform apply`

### Important Factoids

<!--- Are there anything atypical about your accounts that we should know? For example: Running in a Azure China/Germany/Government? --->

### References

<!---

Information about referencing Github Issues: https://help.github.com/articles/basic-writing-and-formatting-syntax/#referencing-issues-and-pull-requests

Are there any other GitHub issues (open or closed) or pull requests that should be linked here? Such as vendor documentation?

--->

* #0000

| 1.0 | x509_certificate_properties should be required to create certificate - <!---

Please note the following potential times when an issue might be in Terraform core:

* [Configuration Language](https://www.terraform.io/docs/configuration/index.html) or resource ordering issues

* [State](https://www.terraform.io/docs/state/index.html) and [State Backend](https://www.terraform.io/docs/backends/index.html) issues

* [Provisioner](https://www.terraform.io/docs/provisioners/index.html) issues

* [Registry](https://registry.terraform.io/) issues

* Spans resources across multiple providers

If you are running into one of these scenarios, we recommend opening an issue in the [Terraform core repository](https://github.com/hashicorp/terraform/) instead.

--->

<!--- Please keep this note for the community --->

### Community Note

* Please vote on this issue by adding a 👍 [reaction](https://blog.github.com/2016-03-10-add-reactions-to-pull-requests-issues-and-comments/) to the original issue to help the community and maintainers prioritize this request

* Please do not leave "+1" or "me too" comments, they generate extra noise for issue followers and do not help prioritize the request

* If you are interested in working on this issue or have submitted a pull request, please leave a comment

<!--- Thank you for keeping this note for the community --->

### Terraform (and AzureRM Provider) Version

<!--- Please run `terraform -v` to show the Terraform core version and provider version(s). If you are not running the latest version of Terraform or the provider, please upgrade because your issue may have already been fixed. [Terraform documentation on provider versioning](https://www.terraform.io/docs/configuration/providers.html#provider-versions). --->

### Affected Resource(s)

<!--- Please list the affected resources and data sources. --->

* `azurerm v2.4.0 `

### Terraform Configuration Files

<!--- Information about code formatting: https://help.github.com/articles/basic-writing-and-formatting-syntax/#quoting-code --->

```hcl
resource "azurerm_key_vault_certificate" "sshkey" {
  name         = "nancyc-kv-cert-01"
  key_vault_id = azurerm_key_vault.kv.id

  certificate_policy {
    issuer_parameters {
      name = "Self"
    }

    key_properties {
      exportable = true
      key_size   = 2048
      key_type   = "RSA"
      reuse_key  = true
    }

    secret_properties {
      content_type = "application/x-pem-file"
    }
  }
}
```

### Debug Output

<!---

Please provide a link to a GitHub Gist containing the complete debug output. Please do NOT paste the debug output in the issue; just paste a link to the Gist.

To obtain the debug output, see the [Terraform documentation on debugging](https://www.terraform.io/docs/internals/debugging.html).

--->

### Panic Output

<!--- If Terraform produced a panic, please provide a link to a GitHub Gist containing the output of the `crash.log`. --->

### Expected Behavior

https://www.terraform.io/docs/providers/azurerm/r/key_vault_certificate.html

> certificate_policy supports the following:

>

> issuer_parameters - (Required) A issuer_parameters block as defined below.

> key_properties - (Required) A key_properties block as defined below.

> lifetime_action - (Optional) A lifetime_action block as defined below.

> secret_properties - (Required) A secret_properties block as defined below.

> **x509_certificate_properties - (Optional) A x509_certificate_properties block as defined below.**

However, this block should be marked **Required**.

### Actual Behavior

```

Error: keyvault.BaseClient#CreateCertificate: Failure responding to request: StatusCode=400 -- Original Error: autorest/azure: Service returned an error. Status=400 Code="BadParameter" Message="Property policy has invalid value\r\n"

on main.tf line 89, in resource "azurerm_key_vault_certificate" "sshkey":

89: resource "azurerm_key_vault_certificate" "sshkey" {

```

### Steps to Reproduce

<!--- Please list the steps required to reproduce the issue. --->

1. `terraform apply`

### Important Factoids

<!--- Are there anything atypical about your accounts that we should know? For example: Running in a Azure China/Germany/Government? --->

### References

<!---

Information about referencing Github Issues: https://help.github.com/articles/basic-writing-and-formatting-syntax/#referencing-issues-and-pull-requests

Are there any other GitHub issues (open or closed) or pull requests that should be linked here? Such as vendor documentation?

--->

* #0000

| non_priority | certificate properties should be required to create certificate please note the following potential times when an issue might be in terraform core or resource ordering issues and issues issues issues spans resources across multiple providers if you are running into one of these scenarios we recommend opening an issue in the instead community note please vote on this issue by adding a 👍 to the original issue to help the community and maintainers prioritize this request please do not leave or me too comments they generate extra noise for issue followers and do not help prioritize the request if you are interested in working on this issue or have submitted a pull request please leave a comment terraform and azurerm provider version affected resource s azurerm terraform configuration files hcl resource azurerm key vault certificate sshkey name nancyc kv cert key vault id azurerm key vault kv id certificate policy issuer parameters name self key properties exportable true key size key type rsa reuse key true secret properties content type application x pem file debug output please provide a link to a github gist containing the complete debug output please do not paste the debug output in the issue just paste a link to the gist to obtain the debug output see the panic output expected behavior certificate policy supports the following issuer parameters required a issuer parameters block as defined below key properties required a key properties block as defined below lifetime action optional a lifetime action block as defined below secret properties required a secret properties block as defined below certificate properties optional a certificate properties block as defined below however this should be required actual behavior error keyvault baseclient createcertificate failure responding to request statuscode original error autorest azure service returned an error status code badparameter message property policy has invalid value r n on main tf line in 
resource azurerm key vault certificate sshkey resource azurerm key vault certificate sshkey steps to reproduce terraform apply important factoids references information about referencing github issues are there any other github issues open or closed or pull requests that should be linked here such as vendor documentation | 0 |

210,306 | 23,750,846,983 | IssuesEvent | 2022-08-31 20:27:17 | ManageIQ/manageiq | https://api.github.com/repos/ManageIQ/manageiq | opened | Move all server certificates to /etc/pki/tls | enhancement core/security | Followup from: https://github.com/ManageIQ/manageiq/issues/21722

- [ ] https://github.com/ManageIQ/manageiq-pods/pull/770 update [orchestrator](https://github.com/ManageIQ/manageiq-pods/blob/bc27d1d70f451d7f52abe62db94bba540df34960/manageiq-operator/pkg/helpers/miq-components/orchestrator.go#L176-L179) to not copy to `/root/.postgres` | True | Move all server certificates to /etc/pki/tls - Followup from: https://github.com/ManageIQ/manageiq/issues/21722

- [ ] https://github.com/ManageIQ/manageiq-pods/pull/770 update [orchestrator](https://github.com/ManageIQ/manageiq-pods/blob/bc27d1d70f451d7f52abe62db94bba540df34960/manageiq-operator/pkg/helpers/miq-components/orchestrator.go#L176-L179) to not copy to `/root/.postgres` | non_priority | move all server certificates to etc pki tls followup from update to not copy to root postgres | 0 |

217,759 | 16,887,561,310 | IssuesEvent | 2021-06-23 03:48:35 | microsoft/vscode | https://api.github.com/repos/microsoft/vscode | closed | [Test API] A newly added test will be triggered again in auto-run mode | testing under-discussion | Version: 1.58.0-insider (user setup)

Commit: a81fff00c9dab105800118fcf8b044cd84620419

Date: 2021-06-17T05:17:34.858Z

Electron: 12.0.11

Chrome: 89.0.4389.128

Node.js: 14.16.0

V8: 8.9.255.25-electron.0

OS: Windows_NT x64 10.0.19043

Steps to Reproduce:

1. In the `test-provider-sample` project, in the `run()` method of the `TestCase` class, change the else block to:

```ts
if (actual === this.expected) {
  ...
} else {
  const child1 = vscode.test.createTestItem<TestCase>({
    id: `fakeTest/${this.item.uri!.toString()}/${this.item.label}#1`,
    label: this.item.label,
    uri: this.item.uri!,
  });

  child1.range = this.item.range;
  child1.runnable = false;
  child1.debuggable = false;
  child1.status = vscode.TestItemStatus.Resolved;
  this.item.addChild(child1);

  const message = vscode.TestMessage.diff(`Expected ${this.item.label}`, String(this.expected), String(actual));
  message.location = new vscode.Location(this.item.uri!, this.item.range!);
  options.appendMessage(child1, message);
  options.setState(child1, vscode.TestResultState.Failed, duration);
}
```

_This imitates parameterized tests, where a test method is invoked multiple times with different parameters during execution, so each invocation is added as a child of the test method while the run is in progress._

2. Turn on auto-run mode.

3. `runTests()` is triggered twice. The second time, the tests array contains only the newly added test item `child1`.

There are two reasons why I think the second `runTests()` should not be triggered:

1. The test state of `child1` was just set during the previous run.

2. The test item `child1` is neither runnable nor debuggable.

// cc @connor4312

| 1.0 | [Test API] A newly added test will be triggered again in auto-run mode - Version: 1.58.0-insider (user setup)

Commit: a81fff00c9dab105800118fcf8b044cd84620419

Date: 2021-06-17T05:17:34.858Z

Electron: 12.0.11

Chrome: 89.0.4389.128

Node.js: 14.16.0

V8: 8.9.255.25-electron.0

OS: Windows_NT x64 10.0.19043

Steps to Reproduce:

1. In the `test-provider-sample` project, in the `run()` method of the `TestCase` class, change the else block to:

```ts
if (actual === this.expected) {
  ...
} else {
  const child1 = vscode.test.createTestItem<TestCase>({
    id: `fakeTest/${this.item.uri!.toString()}/${this.item.label}#1`,
    label: this.item.label,
    uri: this.item.uri!,
  });

  child1.range = this.item.range;
  child1.runnable = false;
  child1.debuggable = false;
  child1.status = vscode.TestItemStatus.Resolved;
  this.item.addChild(child1);

  const message = vscode.TestMessage.diff(`Expected ${this.item.label}`, String(this.expected), String(actual));
  message.location = new vscode.Location(this.item.uri!, this.item.range!);
  options.appendMessage(child1, message);
  options.setState(child1, vscode.TestResultState.Failed, duration);
}
```

_This imitates parameterized tests, where a test method is invoked multiple times with different parameters during execution, so each invocation is added as a child of the test method while the run is in progress._

2. Turn on auto-run mode.

3. `runTests()` is triggered twice. The second time, the tests array contains only the newly added test item `child1`.

There are two reasons why I think the second `runTests()` should not be triggered:

1. The test state of `child1` was just set during the previous run.

2. The test item `child1` is neither runnable nor debuggable.

// cc @connor4312

| non_priority | a newly added test will be triggered again in auto run mode version insider user setup commit date electron chrome node js electron os windows nt steps to reproduce in test provider sample project in run method of the testcase class change the else block to ts if actual this expected else const vscode test createtestitem id faketest this item uri tostring this item label label this item label uri this item uri range this item range runnable false debuggable false status vscode testitemstatus resolved this item addchild const message vscode testmessage diff expected this item label string this expected string actual message location new vscode location this item uri this item range options appendmessage message options setstate vscode testresultstate failed duration this is to imitate the case that when running parameterized tests a test method will be triggered multiple times with different parameters during execution so each invocation will be a added as a child of the test method during the execution turn on the autorun mode runtests will be triggered twice for the second time only the newly added test item is contained in the tests array there are two reasons why i think the second runtests should not be triggered the test state of has just been set for the last run the test item is not runnable neither debuggable cc | 0 |

3,279 | 3,873,268,040 | IssuesEvent | 2016-04-11 16:22:20 | Fermat-ORG/beta-testing-program | https://api.github.com/repos/Fermat-ORG/beta-testing-program | closed | Bit Coin Wallet _Cripto Wallet User | Performance | ### Template issue error report

#### Screen name

Cripto Wallet users

### Bitcoin Wallet

-----

##### Home

- [x] Received transactions screen (the one on the right)

- [x] Sent transactions screen (the one on the left)

- [x] Balance (the circle showing the bitcoin amount)

- [x] Welcome pop-up

- [x] Help pop-up

##### Error report:

* When I open the screen, users sometimes appear and at other times nothing appears, as if it were empty. This has happened to me several times today; I reinstalled the app, but the problem persists.

#### Device used for the test

* Brand: ZTE

* Model: apex2

* Android version: 4.4.2

| True | Bit Coin Wallet _Cripto Wallet User - ### Template issue error report

#### Screen name

Cripto Wallet users

### Bitcoin Wallet

-----

##### Home

- [x] Received transactions screen (the one on the right)

- [x] Sent transactions screen (the one on the left)

- [x] Balance (the circle showing the bitcoin amount)

- [x] Welcome pop-up

- [x] Help pop-up

##### Error report:

* When I open the screen, users sometimes appear and at other times nothing appears, as if it were empty. This has happened to me several times today; I reinstalled the app, but the problem persists.

#### Device used for the test

* Brand: ZTE

* Model: apex2

* Android version: 4.4.2

| non_priority | bit coin wallet cripto wallet user template issue error report nombre pantalla cripto wallet users bitcoin wallet home pantalla de transacciones recibidas es la de la derecha pantalla de transacciones enviadas es la de la izquierda balance es la circunferencia con la cantidad de bitcoin pop up de bienvenida pop up de ayuda reporte de error al ingresar a la pantalla en ocasiones salen usuarios y en otras no sale nada como si estuviera vacio me ha pasado varias veces el dia de hoy volvi a instalar el app pero el problema sigue dispositivo desde donde se realizo la prueba marca zte modelo version de android | 0 |

1,368 | 3,925,265,252 | IssuesEvent | 2016-04-22 18:17:48 | e-government-ua/iBP | https://api.github.com/repos/e-government-ua/iBP | closed | Dnipropetrovsk Oblast - Issuing a certificate of non-receipt of alimony/alimony amount | In process of testing | [Послуга 3 Отримання довідки про аліменти.docx](https://github.com/e-government-ua/iBP/files/198059/3.docx)

[ЗАЯВКА ПРО ВИДАЧУ ДОВІДКИ ПРО РОЗМІР АЛІМЕНТІВ.docx](https://github.com/e-government-ua/iBP/files/198062/default.docx)

[ЗАЯВКА ПО ДОВІДКЕ ПО АЛІМЕНТАМ.docx](https://github.com/e-government-ua/iBP/files/198063/default.docx)

| 1.0 | Dnipropetrovsk Oblast - Issuing a certificate of non-receipt of alimony/alimony amount - [Послуга 3 Отримання довідки про аліменти.docx](https://github.com/e-government-ua/iBP/files/198059/3.docx)

[ЗАЯВКА ПРО ВИДАЧУ ДОВІДКИ ПРО РОЗМІР АЛІМЕНТІВ.docx](https://github.com/e-government-ua/iBP/files/198062/default.docx)

[ЗАЯВКА ПО ДОВІДКЕ ПО АЛІМЕНТАМ.docx](https://github.com/e-government-ua/iBP/files/198063/default.docx)

| non_priority | дніпропетровська область видача довідки про неотримання аліментів розмір аліментів | 0 |

334,049 | 24,401,793,234 | IssuesEvent | 2022-10-05 02:38:50 | dankrzeminski32/BirthdayDiscordBot | https://api.github.com/repos/dankrzeminski32/BirthdayDiscordBot | closed | Adding @dataclasses | documentation enhancement | Current:

We have a bunch of classes that define `__init__` and `__repr__` by hand just to set up and display their instance variables.

Expect:

Import and use `dataclasses`, so that we no longer need to write `__init__` and `__repr__` ourselves; the `@dataclass` decorator generates them. You only have to declare the instance variables, with type annotations, in the class body.

EX:

@dataclass

class Test:

name: str

id: int
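
As a rough illustration of the change proposed above, the sketch below puts a hand-written class next to its `@dataclass` equivalent. The class names `ManualUser` and `User` are hypothetical and are not taken from the bot's code.

```python
from dataclasses import dataclass


class ManualUser:
    """Hand-written version: __init__ and __repr__ are spelled out."""

    def __init__(self, name: str, id: int) -> None:
        self.name = name
        self.id = id

    def __repr__(self) -> str:
        return f"ManualUser(name={self.name!r}, id={self.id!r})"


@dataclass
class User:
    """Dataclass version: __init__, __repr__ and __eq__ are generated."""

    name: str
    id: int


if __name__ == "__main__":
    # Both classes are constructed and printed the same way.
    print(ManualUser("Ada", 1))
    print(User("Ada", 1))
```

Besides `__init__` and `__repr__`, `@dataclass` also generates `__eq__` by default, so two instances with the same field values compare equal, which the hand-written class does not do unless `__eq__` is added as well.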

Reason:

Cuts the amount of lines in each file down a tad. | 1.0 | Adding @dataclasses - Current:

We have a bunch of classes that define `__init__` and `__repr__` by hand just to set up and display their instance variables.

Expect:

Import and use `dataclasses`, so that we no longer need to write `__init__` and `__repr__` ourselves; the `@dataclass` decorator generates them. You only have to declare the instance variables, with type annotations, in the class body.

EX:

@dataclass

class Test:

name: str

id: int

Reason:

Cuts the amount of lines in each file down a tad. | non_priority | adding dataclasses current we have a bunch of classes that use init and repr which create instance variables expect import and use dataclasses which in turn makes it so you dont need the init and repr it does it for you you just have to name the instance variables below the class ex dataclass class test name str id int reason cuts the amount of lines in each file down a tad | 0 |

118,050 | 25,240,154,036 | IssuesEvent | 2022-11-15 06:32:31 | hemx0147/TDVFuzz | https://api.github.com/repos/hemx0147/TDVFuzz | closed | read into CodeQL | code locations | read into the [CodeQL analysis engine](https://github.com/github/codeql) and figure out whether it could be used for **RQ2**: _find code locations that consume untrusted VMM input_

this is important to estimate the effort and time required for **RQ2** | 1.0 | read into CodeQL - read into the [CodeQL analysis engine](https://github.com/github/codeql) and figure out whether it could be used for **RQ2**: _find code locations that consume untrusted VMM input_

this is important to estimate the effort and time required for **RQ2** | non_priority | read into codeql read into the and figure out whether it could be used for find code locations that consume untrusted vmm input this is important to estimate the effort and time required for | 0 |

83,980 | 24,187,924,007 | IssuesEvent | 2022-09-23 14:48:06 | xamarin/xamarin-macios | https://api.github.com/repos/xamarin/xamarin-macios | closed | FileCopier.cs does not correctly Log\Error when used in msbuild | enhancement macOS iOS msbuild | https://github.com/xamarin/xamarin-macios/pull/5167#discussion_r238684107

This PR uses CWL\throwing an exception to report when run as part of an msbuild task.

Fixing this is tricky, as the LogError\LogMessage APIs are instance variables only accessible from the task.

We could stash the task somewhere in a static for reporting or bubble up some event to the task or something else, but in any case we need to fix this. | 1.0 | FileCopier.cs does not correctly Log\Error when used in msbuild - https://github.com/xamarin/xamarin-macios/pull/5167#discussion_r238684107

This PR uses CWL\throwing an exception to report when run as part of an msbuild task.

Fixing this is tricky, as the LogError\LogMessage APIs are instance variables only accessible from the task.

We could stash the task somewhere in a static for reporting or bubble up some event to the task or something else, but in any case we need to fix this. | non_priority | filecopier cs does not correctly log error when used in msbuild this pr uses cwl throwing an exception to report when run as part of an msbuild task fixing this is tricky as the logerror logmessage apis are instance variables only accessible from the task we could stash the task somewhere in a static for reporting or bubble up some event to the task or something else but in any case we need to fix this | 0 |

19,499 | 10,361,331,065 | IssuesEvent | 2019-09-06 09:46:03 | hisptz/nacp-dashboard-v2 | https://api.github.com/repos/hisptz/nacp-dashboard-v2 | opened | CVE-2019-6283 (Medium) detected in opennms-opennms-source-23.0.0-1 | security vulnerability | ## CVE-2019-6283 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>opennmsopennms-source-23.0.0-1</b></p></summary>

<p>

<p>A Java based fault and performance management system</p>

<p>Library home page: <a href=https://sourceforge.net/projects/opennms/>https://sourceforge.net/projects/opennms/</a></p>

<p>Found in HEAD commit: <a href="https://github.com/hisptz/nacp-dashboard-v2/commit/769865a5eb38141c1edeb82f84f2ccacac36048a">769865a5eb38141c1edeb82f84f2ccacac36048a</a></p>

</p>

</details>

</p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Library Source Files (62)</summary>

<p></p>

<p> * The source files were matched to this source library based on a best effort match. Source libraries are selected from a list of probable public libraries.</p>

<p>

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/expand.hpp

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/expand.cpp

- /nacp-dashboard-v2/node_modules/node-sass/src/sass_types/factory.cpp

- /nacp-dashboard-v2/node_modules/node-sass/src/sass_types/boolean.cpp

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/util.hpp

- /nacp-dashboard-v2/node_modules/node-sass/src/sass_types/value.h

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/emitter.hpp

- /nacp-dashboard-v2/node_modules/node-sass/src/callback_bridge.h

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/file.cpp

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/sass.cpp

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/operation.hpp

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/operators.hpp

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/constants.hpp

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/error_handling.hpp

- /nacp-dashboard-v2/node_modules/node-sass/src/custom_importer_bridge.cpp

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/parser.hpp

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/constants.cpp

- /nacp-dashboard-v2/node_modules/node-sass/src/sass_types/list.cpp

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/cssize.cpp

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/functions.hpp

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/util.cpp

- /nacp-dashboard-v2/node_modules/node-sass/src/custom_function_bridge.cpp

- /nacp-dashboard-v2/node_modules/node-sass/src/custom_importer_bridge.h

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/bind.cpp

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/eval.hpp

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/backtrace.cpp

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/extend.cpp

- /nacp-dashboard-v2/node_modules/node-sass/src/sass_context_wrapper.h

- /nacp-dashboard-v2/node_modules/node-sass/src/sass_types/sass_value_wrapper.h

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/error_handling.cpp

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/debugger.hpp

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/emitter.cpp

- /nacp-dashboard-v2/node_modules/node-sass/src/sass_types/number.cpp

- /nacp-dashboard-v2/node_modules/node-sass/src/sass_types/color.h

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/sass_values.cpp

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/ast.hpp

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/output.cpp

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/check_nesting.cpp

- /nacp-dashboard-v2/node_modules/node-sass/src/sass_types/null.cpp

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/ast_def_macros.hpp

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/functions.cpp

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/cssize.hpp

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/prelexer.cpp

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/ast.cpp

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/to_c.cpp

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/to_value.hpp

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/ast_fwd_decl.hpp

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/inspect.hpp

- /nacp-dashboard-v2/node_modules/node-sass/src/sass_types/color.cpp

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/values.cpp

- /nacp-dashboard-v2/node_modules/node-sass/src/sass_context_wrapper.cpp

- /nacp-dashboard-v2/node_modules/node-sass/src/sass_types/list.h

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/check_nesting.hpp

- /nacp-dashboard-v2/node_modules/node-sass/src/sass_types/map.cpp

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/to_value.cpp

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/context.cpp

- /nacp-dashboard-v2/node_modules/node-sass/src/sass_types/string.cpp

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/sass_context.cpp

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/prelexer.hpp

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/context.hpp

- /nacp-dashboard-v2/node_modules/node-sass/src/sass_types/boolean.h

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/eval.cpp

</p>

</details>

<p></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

In LibSass 3.5.5, a heap-based buffer over-read exists in Sass::Prelexer::parenthese_scope in prelexer.hpp.

<p>Publish Date: 2019-01-14

<p>URL: <a href=https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2019-6283>CVE-2019-6283</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>6.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2019-6284">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2019-6284</a></p>

<p>Release Date: 2019-08-06</p>

<p>Fix Resolution: 3.6.0</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | True | CVE-2019-6283 (Medium) detected in opennms-opennms-source-23.0.0-1 - ## CVE-2019-6283 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>opennmsopennms-source-23.0.0-1</b></p></summary>

<p>

<p>A Java based fault and performance management system</p>

<p>Library home page: <a href=https://sourceforge.net/projects/opennms/>https://sourceforge.net/projects/opennms/</a></p>

<p>Found in HEAD commit: <a href="https://github.com/hisptz/nacp-dashboard-v2/commit/769865a5eb38141c1edeb82f84f2ccacac36048a">769865a5eb38141c1edeb82f84f2ccacac36048a</a></p>

</p>

</details>

</p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Library Source Files (62)</summary>

<p></p>

<p> * The source files were matched to this source library based on a best effort match. Source libraries are selected from a list of probable public libraries.</p>

<p>

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/expand.hpp

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/expand.cpp

- /nacp-dashboard-v2/node_modules/node-sass/src/sass_types/factory.cpp

- /nacp-dashboard-v2/node_modules/node-sass/src/sass_types/boolean.cpp

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/util.hpp

- /nacp-dashboard-v2/node_modules/node-sass/src/sass_types/value.h

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/emitter.hpp

- /nacp-dashboard-v2/node_modules/node-sass/src/callback_bridge.h

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/file.cpp

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/sass.cpp

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/operation.hpp

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/operators.hpp

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/constants.hpp

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/error_handling.hpp

- /nacp-dashboard-v2/node_modules/node-sass/src/custom_importer_bridge.cpp

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/parser.hpp

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/constants.cpp

- /nacp-dashboard-v2/node_modules/node-sass/src/sass_types/list.cpp

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/cssize.cpp

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/functions.hpp

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/util.cpp

- /nacp-dashboard-v2/node_modules/node-sass/src/custom_function_bridge.cpp

- /nacp-dashboard-v2/node_modules/node-sass/src/custom_importer_bridge.h

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/bind.cpp

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/eval.hpp

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/backtrace.cpp

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/extend.cpp

- /nacp-dashboard-v2/node_modules/node-sass/src/sass_context_wrapper.h

- /nacp-dashboard-v2/node_modules/node-sass/src/sass_types/sass_value_wrapper.h

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/error_handling.cpp

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/debugger.hpp

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/emitter.cpp

- /nacp-dashboard-v2/node_modules/node-sass/src/sass_types/number.cpp

- /nacp-dashboard-v2/node_modules/node-sass/src/sass_types/color.h

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/sass_values.cpp

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/ast.hpp

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/output.cpp

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/check_nesting.cpp

- /nacp-dashboard-v2/node_modules/node-sass/src/sass_types/null.cpp

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/ast_def_macros.hpp

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/functions.cpp

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/cssize.hpp

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/prelexer.cpp

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/ast.cpp

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/to_c.cpp

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/to_value.hpp

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/ast_fwd_decl.hpp

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/inspect.hpp

- /nacp-dashboard-v2/node_modules/node-sass/src/sass_types/color.cpp

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/values.cpp

- /nacp-dashboard-v2/node_modules/node-sass/src/sass_context_wrapper.cpp

- /nacp-dashboard-v2/node_modules/node-sass/src/sass_types/list.h

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/check_nesting.hpp

- /nacp-dashboard-v2/node_modules/node-sass/src/sass_types/map.cpp

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/to_value.cpp

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/context.cpp

- /nacp-dashboard-v2/node_modules/node-sass/src/sass_types/string.cpp

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/sass_context.cpp

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/prelexer.hpp

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/context.hpp

- /nacp-dashboard-v2/node_modules/node-sass/src/sass_types/boolean.h

- /nacp-dashboard-v2/node_modules/node-sass/src/libsass/src/eval.cpp

</p>

</details>

<p></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

In LibSass 3.5.5, a heap-based buffer over-read exists in Sass::Prelexer::parenthese_scope in prelexer.hpp.

<p>Publish Date: 2019-01-14</p>

<p>URL: <a href=https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2019-6283>CVE-2019-6283</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>6.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

  - Attack Vector: Network

  - Attack Complexity: Low

  - Privileges Required: None

  - User Interaction: Required

  - Scope: Unchanged

- Impact Metrics:

  - Confidentiality Impact: None

  - Integrity Impact: None

  - Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>
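
As a rough cross-check of the 6.5 base score reported above, the sketch below applies the CVSS v3.0 base score equations to the listed metrics. The numeric weights are taken from the FIRST CVSS v3.0 specification; the function and variable names are illustrative assumptions, not part of any WhiteSource tooling, and only the Scope: Unchanged case is handled.

```python
import math

# Metric weights from the CVSS v3.0 specification (Scope: Unchanged only).
ATTACK_VECTOR = {"Network": 0.85, "Adjacent": 0.62, "Local": 0.55, "Physical": 0.2}
ATTACK_COMPLEXITY = {"Low": 0.77, "High": 0.44}
PRIVILEGES_REQUIRED = {"None": 0.85, "Low": 0.62, "High": 0.27}
USER_INTERACTION = {"None": 0.85, "Required": 0.62}
IMPACT = {"High": 0.56, "Low": 0.22, "None": 0.0}


def round_up(value):
    """Round up to one decimal place.

    Uses the integer-based procedure described in CVSS v3.1 so that
    floating-point noise does not push a value over a boundary.
    """
    scaled = round(value * 100000)
    if scaled % 10000 == 0:
        return scaled / 100000.0
    return (math.floor(scaled / 10000) + 1) / 10.0


def base_score_unchanged_scope(av, ac, pr, ui, c, i, a):
    """Hypothetical helper: CVSS v3.0 base score when Scope is Unchanged."""
    # Impact sub-score
    iss = 1 - (1 - IMPACT[c]) * (1 - IMPACT[i]) * (1 - IMPACT[a])
    impact = 6.42 * iss
    # Exploitability sub-score
    exploitability = (
        8.22
        * ATTACK_VECTOR[av]
        * ATTACK_COMPLEXITY[ac]
        * PRIVILEGES_REQUIRED[pr]
        * USER_INTERACTION[ui]
    )
    if impact <= 0:
        return 0.0
    return round_up(min(impact + exploitability, 10))


# Metrics as listed in this report.
score = base_score_unchanged_scope(
    "Network", "Low", "None", "Required", "None", "None", "High"
)
print(score)
```

With these inputs the impact sub-score is about 3.60 and the exploitability sub-score about 2.84, so the sum of roughly 6.43 rounds up to the 6.5 shown in the summary line.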

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2019-6284">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2019-6284</a></p>

<p>Release Date: 2019-08-06</p>

<p>Fix Resolution: 3.6.0</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | non_priority | cve medium detected in opennms opennms source cve medium severity vulnerability vulnerable library opennmsopennms source a java based fault and performance management system library home page a href found in head commit a href library source files the source files were matched to this source library based on a best effort match source libraries are selected from a list of probable public libraries nacp dashboard node modules node sass src libsass src expand hpp nacp dashboard node modules node sass src libsass src expand cpp nacp dashboard node modules node sass src sass types factory cpp nacp dashboard node modules node sass src sass types boolean cpp nacp dashboard node modules node sass src libsass src util hpp nacp dashboard node modules node sass src sass types value h nacp dashboard node modules node sass src libsass src emitter hpp nacp dashboard node modules node sass src callback bridge h nacp dashboard node modules node sass src libsass src file cpp nacp dashboard node modules node sass src libsass src sass cpp nacp dashboard node modules node sass src libsass src operation hpp nacp dashboard node modules node sass src libsass src operators hpp nacp dashboard node modules node sass src libsass src constants hpp nacp dashboard node modules node sass src libsass src error handling hpp nacp dashboard node modules node sass src custom importer bridge cpp nacp dashboard node modules node sass src libsass src parser hpp nacp dashboard node modules node sass src libsass src constants cpp nacp dashboard node modules node sass src sass types list cpp nacp dashboard node modules node sass src libsass src cssize cpp nacp dashboard node modules node sass src libsass src functions hpp nacp dashboard node modules node sass src libsass src util cpp nacp dashboard node modules node sass src custom function bridge cpp nacp 
dashboard node modules node sass src custom importer bridge h nacp dashboard node modules node sass src libsass src bind cpp nacp dashboard node modules node sass src libsass src eval hpp nacp dashboard node modules node sass src libsass src backtrace cpp nacp dashboard node modules node sass src libsass src extend cpp nacp dashboard node modules node sass src sass context wrapper h nacp dashboard node modules node sass src sass types sass value wrapper h nacp dashboard node modules node sass src libsass src error handling cpp nacp dashboard node modules node sass src libsass src debugger hpp nacp dashboard node modules node sass src libsass src emitter cpp nacp dashboard node modules node sass src sass types number cpp nacp dashboard node modules node sass src sass types color h nacp dashboard node modules node sass src libsass src sass values cpp nacp dashboard node modules node sass src libsass src ast hpp nacp dashboard node modules node sass src libsass src output cpp nacp dashboard node modules node sass src libsass src check nesting cpp nacp dashboard node modules node sass src sass types null cpp nacp dashboard node modules node sass src libsass src ast def macros hpp nacp dashboard node modules node sass src libsass src functions cpp nacp dashboard node modules node sass src libsass src cssize hpp nacp dashboard node modules node sass src libsass src prelexer cpp nacp dashboard node modules node sass src libsass src ast cpp nacp dashboard node modules node sass src libsass src to c cpp nacp dashboard node modules node sass src libsass src to value hpp nacp dashboard node modules node sass src libsass src ast fwd decl hpp nacp dashboard node modules node sass src libsass src inspect hpp nacp dashboard node modules node sass src sass types color cpp nacp dashboard node modules node sass src libsass src values cpp nacp dashboard node modules node sass src sass context wrapper cpp nacp dashboard node modules node sass src sass types list h nacp dashboard node 
modules node sass src libsass src check nesting hpp nacp dashboard node modules node sass src sass types map cpp nacp dashboard node modules node sass src libsass src to value cpp nacp dashboard node modules node sass src libsass src context cpp nacp dashboard node modules node sass src sass types string cpp nacp dashboard node modules node sass src libsass src sass context cpp nacp dashboard node modules node sass src libsass src prelexer hpp nacp dashboard node modules node sass src libsass src context hpp nacp dashboard node modules node sass src sass types boolean h nacp dashboard node modules node sass src libsass src eval cpp vulnerability details in libsass a heap based buffer over read exists in sass prelexer parenthese scope in prelexer hpp publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction required scope unchanged impact metrics confidentiality impact none integrity impact none availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution step up your open source security game with whitesource | 0 |

76,664 | 9,478,855,345 | IssuesEvent | 2019-04-20 02:11:24 | AgileVentures/sfn-client | https://api.github.com/repos/AgileVentures/sfn-client | closed | Make Trending Artists more responsive | css design help wanted review styling | Make Trending Artists more responsive

## Expected Behavior

Trending Artists should be more responsive

## Current Behavior

<img width="351" alt="Screenshot 2019-04-16 13 36 21" src="https://user-images.githubusercontent.com/11988089/56235338-c3dda100-6076-11e9-82e4-a4d6644db1ad.png">

## Your Environment

* Version used:

* Operating System and version (desktop or mobile): iPhoneX

| 1.0 | Make Trending Artists more responsive - Make Trending Artists more responsive

## Expected Behavior

Trending Artists should be more responsive

## Current Behavior

<img width="351" alt="Screenshot 2019-04-16 13 36 21" src="https://user-images.githubusercontent.com/11988089/56235338-c3dda100-6076-11e9-82e4-a4d6644db1ad.png">

## Your Environment

* Version used:

* Operating System and version (desktop or mobile): iPhoneX

| non_priority | make trending artists more responsive make trending artists more responsive expected behavior trending artists should be more responsive current behavior img width alt screenshot src your environment version used operating system and version desktop or mobile iphonex | 0 |

239,304 | 26,223,042,695 | IssuesEvent | 2023-01-04 16:15:53 | RG4421/Prebid.js | https://api.github.com/repos/RG4421/Prebid.js | reopened | CVE-2011-4969 (Low) detected in jquery-1.4.2.min.js | security vulnerability | ## CVE-2011-4969 - Low Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jquery-1.4.2.min.js</b></p></summary>

<p>JavaScript library for DOM operations</p>

<p>Library home page: <a href="https://cdnjs.cloudflare.com/ajax/libs/jquery/1.4.2/jquery.min.js">https://cdnjs.cloudflare.com/ajax/libs/jquery/1.4.2/jquery.min.js</a></p>

<p>Path to dependency file: /node_modules/faker/examples/browser/index.html</p>

<p>Path to vulnerable library: /node_modules/faker/examples/browser/js/jquery.js</p>

<p>

Dependency Hierarchy: