Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 5 112 | repo_url stringlengths 34 141 | action stringclasses 3 values | title stringlengths 1 844 | labels stringlengths 4 721 | body stringlengths 1 261k | index stringclasses 12 values | text_combine stringlengths 96 261k | label stringclasses 2 values | text stringlengths 96 248k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

126,469 | 17,892,210,396 | IssuesEvent | 2021-09-08 02:12:42 | matteobaccan/Web3jClient | https://api.github.com/repos/matteobaccan/Web3jClient | opened | CVE-2020-24750 (High) detected in jackson-databind-2.8.1.jar | security vulnerability | ## CVE-2020-24750 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.8.1.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core streamin... | True | CVE-2020-24750 (High) detected in jackson-databind-2.8.1.jar - ## CVE-2020-24750 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.8.1.jar</b></p></summary>

<p>General... | non_priority | cve high detected in jackson databind jar cve high severity vulnerability vulnerable library jackson databind jar general data binding functionality for jackson works on core streaming api library home page a href path to dependency file pom xml path to vulnerable ... | 0 |

217 | 5,415,605,487 | IssuesEvent | 2017-03-01 22:00:48 | dotnet/corefx | https://api.github.com/repos/dotnet/corefx | closed | Sockets test failures SendToAsyncV4IPEndPointToV6Host_NotReceived & BeginSendToV4IPEndPointToV6Host_NotReceived | area-System.Net.Sockets os-mac-os-x tenet-reliability | ```

Test Result (2 failures / +2)

```

System.Net.Sockets.Tests.DualMode.SendToAsyncV4IPEndPointToV6Host_NotReceived

System.Net.Sockets.Tests.DualMode.BeginSendToV4IPEndPointToV6Host_NotReceived

http://dotnet-ci.cloudapp.net/job/dotnet_corefx/job/release_1.0.0/job/osx_debug_prtest/58/testReport/

Regression

System.Net... | True | Sockets test failures SendToAsyncV4IPEndPointToV6Host_NotReceived & BeginSendToV4IPEndPointToV6Host_NotReceived - ```

Test Result (2 failures / +2)

```

System.Net.Sockets.Tests.DualMode.SendToAsyncV4IPEndPointToV6Host_NotReceived

System.Net.Sockets.Tests.DualMode.BeginSendToV4IPEndPointToV6Host_NotReceived

http://dotn... | non_priority | sockets test failures notreceived notreceived test result failures system net sockets tests dualmode notreceived system net sockets tests dualmode notreceived regression system net sockets tests dualmode notreceived from empty failing for the past build since failed took ... | 0 |

242,583 | 26,277,738,316 | IssuesEvent | 2023-01-07 01:04:20 | mgh3326/nuber-eats-frontend | https://api.github.com/repos/mgh3326/nuber-eats-frontend | opened | CVE-2021-23382 (High) detected in postcss-8.2.5.tgz | security vulnerability | ## CVE-2021-23382 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>postcss-8.2.5.tgz</b></p></summary>

<p>Tool for transforming styles with JS plugins</p>

<p>Library home page: <a href=... | True | CVE-2021-23382 (High) detected in postcss-8.2.5.tgz - ## CVE-2021-23382 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>postcss-8.2.5.tgz</b></p></summary>

<p>Tool for transforming sty... | non_priority | cve high detected in postcss tgz cve high severity vulnerability vulnerable library postcss tgz tool for transforming styles with js plugins library home page a href path to dependency file package json path to vulnerable library node modules postcss package json ... | 0 |

109,260 | 13,756,693,426 | IssuesEvent | 2020-10-06 20:21:12 | department-of-veterans-affairs/va.gov-team | https://api.github.com/repos/department-of-veterans-affairs/va.gov-team | closed | [Design] Conduct My Documents usability testing sessions | design eFolders research vsa vsa-benefits-2 | ## Issue Description

_In order to validate that the My Documents design is intuitive to our user base, we need to conduct a task-based usability study to verify the UI and uncover opportunities for improvement._

---

## Tasks

- [ ] _Conduct usability test sessions_

- [ ] _Upload transcripts (scrubbed of PII) to GH_

- [... | 1.0 | [Design] Conduct My Documents usability testing sessions - ## Issue Description

_In order to validate that the My Documents design is intuitive to our user base, we need to conduct a task-based usability study to verify the UI and uncover opportunities for improvement._

---

## Tasks

- [ ] _Conduct usability test sessi... | non_priority | conduct my documents usability testing sessions issue description in order to validate that the my documents design is intuitive to our user base we need to conduct a task based usability study to verify the ui and uncover opportunities for improvement tasks conduct usability test sessions ... | 0 |

140,755 | 18,920,688,661 | IssuesEvent | 2021-11-17 01:03:12 | billmcchesney1/concord | https://api.github.com/repos/billmcchesney1/concord | opened | CVE-2021-23436 (High) detected in immer-1.10.0.tgz | security vulnerability | ## CVE-2021-23436 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>immer-1.10.0.tgz</b></p></summary>

<p>Create your next immutable state by mutating the current one</p>

<p>Library home... | True | CVE-2021-23436 (High) detected in immer-1.10.0.tgz - ## CVE-2021-23436 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>immer-1.10.0.tgz</b></p></summary>

<p>Create your next immutable ... | non_priority | cve high detected in immer tgz cve high severity vulnerability vulnerable library immer tgz create your next immutable state by mutating the current one library home page a href path to dependency file concord package json path to vulnerable library concord node ... | 0 |

215,120 | 24,126,435,045 | IssuesEvent | 2022-09-21 01:10:08 | Killy85/game_ai_trainer | https://api.github.com/repos/Killy85/game_ai_trainer | opened | CVE-2022-35998 (Medium) detected in tensorflow-1.13.1-cp27-cp27mu-manylinux1_x86_64.whl | security vulnerability | ## CVE-2022-35998 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>tensorflow-1.13.1-cp27-cp27mu-manylinux1_x86_64.whl</b></p></summary>

<p>TensorFlow is an open source machine learni... | True | CVE-2022-35998 (Medium) detected in tensorflow-1.13.1-cp27-cp27mu-manylinux1_x86_64.whl - ## CVE-2022-35998 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>tensorflow-1.13.1-cp27-cp27... | non_priority | cve medium detected in tensorflow whl cve medium severity vulnerability vulnerable library tensorflow whl tensorflow is an open source machine learning framework for everyone library home page a href dependency hierarchy x tensorflow ... | 0 |

147,190 | 13,202,668,755 | IssuesEvent | 2020-08-14 12:47:27 | proyecto7000/gimnasio | https://api.github.com/repos/proyecto7000/gimnasio | opened | Add formal colors to the page | documentation | The page looks very dull. It needs more colors.

Markdown format example

**Bold**

_Italic_

* item

* item2

* item3

`public void main()` | 1.0 | Add formal colors to the page - The page looks very dull. It needs more colors.

Markdown format example

**Bold**

_Italic_

* item

* item2

* item3

`public void main()` | non_priority | add formal colors to the page the page looks very dull it needs more colors markdown format example bold italic item public void main | 0 |

82,550 | 10,257,904,143 | IssuesEvent | 2019-08-21 21:16:49 | standardhealth/shr_design | https://api.github.com/repos/standardhealth/shr_design | closed | SHR as Language: Signs, Meaning, Code, and a Way Forward | design rant | ### Background

Language is traditionally seen as consisting of three parts: signs, meanings, and a code connecting signs with their meanings. **Signs** are composed of symbols which are encoded and transmitted by the sender through a channel to the receiver, where signs are decoded. [[1](https://en.wikipedia.org/wiki/... | 1.0 | SHR as Language: Signs, Meaning, Code, and a Way Forward - ### Background

Language is traditionally seen as consisting of three parts: signs, meanings, and a code connecting signs with their meanings. **Signs** are composed of symbols which are encoded and transmitted by the sender through a channel to the receiver, w... | non_priority | shr as language signs meaning code and a way forward background language is traditionally seen as consisting of three parts signs meanings and a code connecting signs with their meanings signs are composed of symbols which are encoded and transmitted by the sender through a channel to the receiver w... | 0 |

49,297 | 7,493,977,773 | IssuesEvent | 2018-04-07 03:07:37 | coala/coala | https://api.github.com/repos/coala/coala | closed | Modify installing from git instructions in development_setup documentation | area/documentation difficulty/newcomer | https://github.com/coala/coala/blob/master/docs/Developers/Development_Setup.rst#installing-from-git

Change ```cd -``` to ```cd ..```

This makes it compatible with windows cmd as well

(and also will run in case the OLDPWD variable is not set by chance in any linux environment)

| 1.0 | Modify installing from git instructions in development_setup documentation - https://github.com/coala/coala/blob/master/docs/Developers/Development_Setup.rst#installing-from-git

Change ```cd -``` to ```cd ..```

This makes it compatible with windows cmd as well

(and also will run in case the OLDPWD variable is not ... | non_priority | modify installing from git instructions in development setup documentation change cd to cd this makes it compatible with windows cmd as well and also will run in case the oldpwd variable is not set by chance in any linux environment | 0 |

76,179 | 26,276,535,522 | IssuesEvent | 2023-01-06 22:48:59 | openzfs/zfs | https://api.github.com/repos/openzfs/zfs | closed | Linux Kernel 6.2 removed bio_set_op_attrs | Type: Defect | ### System information

Type | Version/Name

--- | ---

Distribution Name | Gentoo

Distribution Version | -

Kernel Version | `next-20221220`

Architecture | LoongArch

OpenZFS Version | 2.1.99-1641_gc935fe2e9

### Describe the problem you're observing

```

In file included from /var/tmp/portage/sys-fs/zfs-loo... | 1.0 | Linux Kernel 6.2 removed bio_set_op_attrs - ### System information

Type | Version/Name

--- | ---

Distribution Name | Gentoo

Distribution Version | -

Kernel Version | `next-20221220`

Architecture | LoongArch

OpenZFS Version | 2.1.99-1641_gc935fe2e9

### Describe the problem you're observing

```

In file i... | non_priority | linux kernel removed bio set op attrs system information type version name distribution name gentoo distribution version kernel version next architecture loongarch openzfs version describe the problem you re observing in file included from var tm... | 0 |

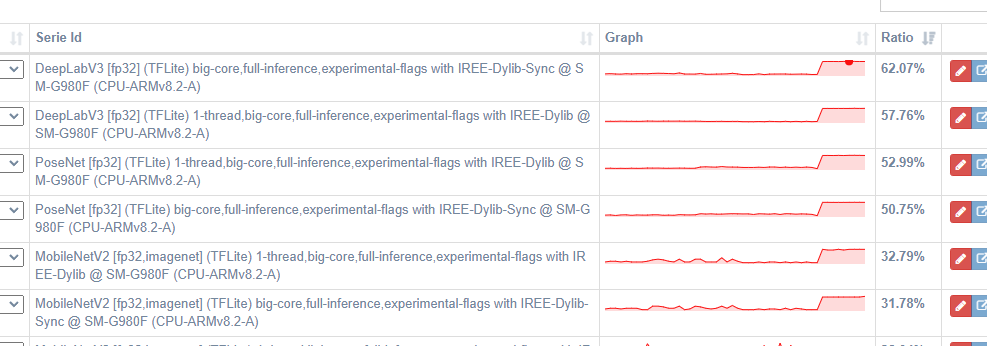

38,640 | 19,471,285,442 | IssuesEvent | 2021-12-24 01:52:58 | google/iree | https://api.github.com/repos/google/iree | opened | Large performance regression in tosa conv ops after upstream merge. | performance ⚡ | This merge commit caused a significant regression in tosa models with conv ops:

https://github.com/google/iree/commit/63a4724e168243a4697d654e90eb521a5e293bcf

From looking at the IR before/after the merge... | True | Large performance regression in tosa conv ops after upstream merge. - This merge commit caused a significant regression in tosa models with conv ops:

https://github.com/google/iree/commit/63a4724e168243a4697d654e90eb521a5e293bcf

` should be cancelable. With current impl, cancelling Reader context will not work if stream.Recv blocks infinitely. In addition, both Reader and Writer should use same context instance.

| 1.0 | Implement graceful shutdown for Kadcast peer - **Describe the bug**

`stream.Recv()` should be cancelable. With the current implementation, cancelling the Reader context has no effect if `stream.Recv` blocks indefinitely. In addition, both Reader and Writer should use the same context instance.

| non_priority | implement graceful shutdown for kadcast peer describe the bug stream recv should be cancelable with current impl cancelling reader context will not work if stream recv blocks infinitely in addition both reader and writer should use same context instance | 0 |

39,298 | 5,072,091,870 | IssuesEvent | 2016-12-26 19:10:46 | USGS-CIDA/metab_tests | https://api.github.com/repos/USGS-CIDA/metab_tests | closed | Experiment with model approaches | design factor | Leading models:

- the non-state-space, "shortcut" model by Charles

- hierarchical state-space model run with Stan

Others:

- MLE-PRK (observation error only)

- nighttime regression plus MLE-PR, probably linked by a K~Q regression

- MLE-PRK with process error only

- Bayesian with observation error only

| 1.0 | Experiment with model approaches - Leading models:

- the non-state-space, "shortcut" model by Charles

- hierarchical state-space model run with Stan

Others:

- MLE-PRK (observation error only)

- nighttime regression plus MLE-PR, probably linked by a K~Q regression

- MLE-PRK with process error only

- Bayesian with obser... | non_priority | experiment with model approaches leading models the non state space shortcut model by charles hierarchical state space model run with stan others mle prk observation error only nighttime regression plus mle pr probably linked by a k q regression mle prk with process error only bayesian with obser... | 0 |

114,495 | 24,609,685,591 | IssuesEvent | 2022-10-14 19:56:58 | lucasferreiram3/PyGoat | https://api.github.com/repos/lucasferreiram3/PyGoat | opened | Improper Neutralization of Script-Related HTML Tags in a Web Page (Basic XSS) [VID:80:pygoat/introduction/apis.py:83] | VeracodeFlaw: Medium Veracode Pipeline Scan | **Filename:** pygoat/introduction/apis.py

**Line:** 83

**CWE:** 80 (Improper Neutralization of Script-Related HTML Tags in a Web Page (Basic XSS))

<span>This call to django.http.JsonResponse() contains a cross-site scripting (XSS) flaw. The application populates the HTTP response with user-supplied input, allowing ... | 2.0 | Improper Neutralization of Script-Related HTML Tags in a Web Page (Basic XSS) [VID:80:pygoat/introduction/apis.py:83] - **Filename:** pygoat/introduction/apis.py

**Line:** 83

**CWE:** 80 (Improper Neutralization of Script-Related HTML Tags in a Web Page (Basic XSS))

<span>This call to django.http.JsonResponse() cont... | non_priority | improper neutralization of script related html tags in a web page basic xss filename pygoat introduction apis py line cwe improper neutralization of script related html tags in a web page basic xss this call to django http jsonresponse contains a cross site scripting xss flaw the ... | 0 |

111,188 | 11,726,360,025 | IssuesEvent | 2020-03-10 14:24:29 | dillon435/Activities-Project | https://api.github.com/repos/dillon435/Activities-Project | closed | boards | documentation | Your boards look like you have completed the project. This may be true. If you have other tasks to do, be sure to add them to the boards. | 1.0 | boards - Your boards look like you have completed the project. This may be true. If you have other tasks to do, be sure to add them to the boards. | non_priority | boards your boards look like you have completed the project this may be true if you have other tasks to do be sure to add them to the boards | 0 |

300,291 | 25,956,621,321 | IssuesEvent | 2022-12-18 10:14:37 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | opened | kv/kvserver: TestReplicaClosedTimestamp failed | C-test-failure O-robot branch-master | kv/kvserver.TestReplicaClosedTimestamp [failed](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_StressBazel/8008755?buildTab=log) with [artifacts](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_StressBazel/8008755?buildTab=artifacts#/) on master @ [93ed65565357538c9048ff... | 1.0 | kv/kvserver: TestReplicaClosedTimestamp failed - kv/kvserver.TestReplicaClosedTimestamp [failed](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_StressBazel/8008755?buildTab=log) with [artifacts](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_StressBazel/8008755?buildTab... | non_priority | kv kvserver testreplicaclosedtimestamp failed kv kvserver testreplicaclosedtimestamp with on master run testreplicaclosedtimestamp test log scope go test logs captured to artifacts tmp tmp test log scope go use show logs to present logs inline cont testreplicaclos... | 0 |

17,180 | 10,617,723,764 | IssuesEvent | 2019-10-12 21:19:19 | cityofaustin/atd-vz-data | https://api.github.com/repos/cityofaustin/atd-vz-data | opened | VZE: Person/Primary Person death_cnt | Need: 2-Should Have Project: Vision Zero Crash Data System Service: Dev Workgroup: VZ | It appears the death count in the location page aggregates data from two tables: primary person and person.

These tables have their own death_cnt column, separate from the crash's table.

Two things can be done to fix this:

1. Create two columns that provide revisions for APD for (ie. apd_confirmed_death_count), just... | 1.0 | VZE: Person/Primary Person death_cnt - It appears the death count in the location page aggregates data from two tables: primary person and person.

These tables have their own death_cnt column, separate from the crash's table.

Two things can be done to fix this:

1. Create two columns that provide revisions for APD fo... | non_priority | vze person primary person death cnt it appears the death count in the location page aggregates data from two tables primary person and person these tables have their own death cnt column separate from the crash s table two things can be done to fix this create two columns that provide revisions for apd fo... | 0 |

17,963 | 23,973,941,737 | IssuesEvent | 2022-09-13 09:58:56 | MicrosoftDocs/azure-docs | https://api.github.com/repos/MicrosoftDocs/azure-docs | closed | Update the Note in Runbook types section | automation/svc triaged cxp doc-enhancement process-automation/subsvc Pri2 | Note in [Runbook types](https://docs.microsoft.com/en-us/azure/automation/automation-child-runbooks#runbook-types) section needs update. It also needs to provide a reference to [Start a child runbook by using a cmdlet](https://docs.microsoft.com/en-us/azure/automation/automation-child-runbooks#start-a-child-runbook-by-... | 1.0 | Update the Note in Runbook types section - Note in [Runbook types](https://docs.microsoft.com/en-us/azure/automation/automation-child-runbooks#runbook-types) section needs update. It also needs to provide a reference to [Start a child runbook by using a cmdlet](https://docs.microsoft.com/en-us/azure/automation/automati... | non_priority | update the note in runbook types section note in section needs update it also needs to provide a reference to section and explain issues associated with starting a child runbook using the cmdlet reference document details ⚠ do not edit this section it is required for docs microsoft com ... | 0 |

17,031 | 10,593,568,601 | IssuesEvent | 2019-10-09 15:05:45 | prometheus/prometheus | https://api.github.com/repos/prometheus/prometheus | closed | Service discovery errors during startup in v2.13.0 | component/service discovery | ## Bug Report

**What did you do?**

We've upgraded Prometheus from 2.6.1 to 2.13.0.

When Prometheus is starting up there are service discovery errors logged, partly because of context cancellation. Service discovery otherwise works.

Also it seems the config is read twice.

**What did you expect to see?**

... | 1.0 | Service discovery errors during startup in v2.13.0 - ## Bug Report

**What did you do?**

We've upgraded Prometheus from 2.6.1 to 2.13.0.

When Prometheus is starting up there are service discovery errors logged, partly because of context cancellation. Service discovery otherwise works.

Also it seems the confi... | non_priority | service discovery errors during startup in bug report what did you do we ve upgraded prometheus from to when prometheus is starting up there are service discovery errors logged partly because of context cancellation service discovery otherwise works also it seems the config i... | 0 |

170,107 | 14,240,793,148 | IssuesEvent | 2020-11-18 22:15:40 | matplotlib/matplotlib | https://api.github.com/repos/matplotlib/matplotlib | closed | Mention rasterized option in more methods | Documentation Good first issue | We should mention rasterized in a few places in the docstrings of relevant methods, e.g. pcolormesh, contour, etc. This option is probably the number one reason I started to use matplotlib, but it seems a lot of people don’t realize it exists. | 1.0 | Mention rasterized option in more methods - We should mention rasterized in a few places in the docstrings of relevant methods, e.g. pcolormesh, contour, etc. This option is probably the number one reason I started to use matplotlib, but it seems a lot of people don’t realize it exists. | non_priority | mention rasterized option in more methods we should mention rasterized in a few places in the docstrings of relevant methods eg pcolormesh contour etc this option is probably the number one reason i started to use matplotlib but it seems a lot of people don’t realize it exists | 0 |

387,052 | 26,711,326,939 | IssuesEvent | 2023-01-28 00:35:58 | gbowne1/reactsocialnetwork | https://api.github.com/repos/gbowne1/reactsocialnetwork | opened | Fix these minor CSS issues | bug documentation enhancement help wanted good first issue question | Describe the bug

[App]

Fix these minor CSS issues.

CSS Issue(s):

- Footer.css: `<Footer class=Footer-body>`

Error is in browser console. `Error in parsing value for ‘float’. Declaration dropped.`

- App.css <.Register-button>

Error is in browser console. `Error in parsing value for ‘align-items’. ... | 1.0 | Fix these minor CSS issues - Describe the bug

[App]

Fix these minor CSS issues.

CSS Issue(s):

- Footer.css: `<Footer class=Footer-body>`

Error is in browser console. `Error in parsing value for ‘float’. Declaration dropped.`

- App.css <.Register-button>

Error is in browser console. `Error in pars... | non_priority | fix these minor css issues describe the bug fix these minor css issues css issue s footer css error is in browser console error in parsing value for ‘float’ declaration dropped app css error is in browser console error in parsing value for ‘align items’ declaration drop... | 0 |

283,158 | 30,889,610,083 | IssuesEvent | 2023-08-04 02:59:12 | maddyCode23/linux-4.1.15 | https://api.github.com/repos/maddyCode23/linux-4.1.15 | reopened | CVE-2022-4129 (Medium) detected in linux-stable-rtv4.1.33, linux-stable-rtv4.1.33 | Mend: dependency security vulnerability | ## CVE-2022-4129 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>linux-stable-rtv4.1.33</b>, <b>linux-stable-rtv4.1.33</b></p></summary>

<p>

</p>

</details>

<p></p>

<details><summ... | True | CVE-2022-4129 (Medium) detected in linux-stable-rtv4.1.33, linux-stable-rtv4.1.33 - ## CVE-2022-4129 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>linux-stable-rtv4.1.33</b>, <b>l... | non_priority | cve medium detected in linux stable linux stable cve medium severity vulnerability vulnerable libraries linux stable linux stable vulnerability details a flaw was found in the linux kernel s layer tunneling protocol a missing lock when... | 0 |

28,271 | 4,087,278,908 | IssuesEvent | 2016-06-01 09:28:39 | fossasia/open-event-webapp | https://api.github.com/repos/fossasia/open-event-webapp | closed | Best way to integrate the webapp with gentelella | design | Hi,

Since [gentelella](https://github.com/puikinsh/gentelella) was accepted as a theme by everyone, what would be the best way to integrate it?

Because gentelella is jQuery-based, it does not follow the MVC architecture of AngularJS. Hence, a good modular approach is needed.

The snapshot is the MVC architecture w... | 1.0 | Best way to integrate the webapp with gentelella - Hi,

Since [gentelella](https://github.com/puikinsh/gentelella) was accepted as a theme by everyone, what would be the best way to integrate it?

Because gentelella is jQuery-based, it does not follow the MVC architecture of AngularJS. Hence, a good modular approach ... | non_priority | best way to integrate the webapp with gentelella hi as was accepted as a theme by everyone what should be the best way for the integration as gentelella is jquery based it does not follow mvc architecture of angular js hence a best modular approach is needed the snapshot is the mvc architecture... | 0 |

7,625 | 10,742,076,520 | IssuesEvent | 2019-10-29 21:38:37 | ennukee/aniupdater | https://api.github.com/repos/ennukee/aniupdater | reopened | Enhance landing screen | UX big deal enhancement release requirement | Provide more on the initial landing screen (where you input an anilist token) so that it's more user friendly to first-time visitors.

(Also allow Enter to be used to submit tokens)

Criteria

- [ ] Update "submit" button to be less... odd?

- [ ] Add logo from #19 to be bigger on this screen only

- [ ] Informati... | 1.0 | Enhance landing screen - Provide more on the initial landing screen (where you input an anilist token) so that it's more user friendly to first-time visitors.

(Also allow Enter to be used to submit tokens)

Criteria

- [ ] Update "submit" button to be less... odd?

- [ ] Add logo from #19 to be bigger on this scr... | non_priority | enhance landing screen provide more on the initial landing screen where you input an anilist token so that it s more user friendly to first time visitors also allow enter to be used to submit tokens criteria update submit button to be less odd add logo from to be bigger on this screen o... | 0 |

172,034 | 14,349,553,833 | IssuesEvent | 2020-11-29 17:04:38 | SAP/fundamental-ngx | https://api.github.com/repos/SAP/fundamental-ngx | opened | documentation versions are missing | documentation | on https://sap.github.io/fundamental-ngx/ and https://fundamental-ngx.netlify.app/ the last documentation is for 0.21.0. We are on 0.25 rc right now. We need to find a way to automate that.

Meanwhile we need to add the missing versions. | 1.0 | documentation versions are missing - on https://sap.github.io/fundamental-ngx/ and https://fundamental-ngx.netlify.app/ the last documentation is for 0.21.0. We are on 0.25 rc right now. We need to find a way to automate that.

Meanwhile we need to add the missing versions. | non_priority | documentation versions are missing on and the last documentation is for we are on rc right now we need to find a way to automate that meanwhile we need to add the missing versions | 0 |

350,229 | 24,974,119,725 | IssuesEvent | 2022-11-02 05:45:50 | cse110-fa22-group28/cse110-fa22-group28 | https://api.github.com/repos/cse110-fa22-group28/cse110-fa22-group28 | closed | User Stories Document | documentation | # Administrative or Organizational Tasks

What is the purpose of this task?

Create a document with user stories - how a user would interact with the app while using it based on their persona. Here is a link with more [information](https://en.wikipedia.org/wiki/User_story) :)

Steps to complete the task:

- [x] D... | 1.0 | User Stories Document - # Administrative or Organizational Tasks

What is the purpose of this task?

Create a document with user stories - how a user would interact with the app while using it based on their persona. Here is a link with more [information](https://en.wikipedia.org/wiki/User_story) :)

Steps to co... | non_priority | user stories document administrative or organizational tasks what is the purpose of this task create a document with user stories how a user would interact with the app while using it based on their persona here is a link with more steps to complete the task do some research on user stori... | 0 |

120,978 | 10,144,841,604 | IssuesEvent | 2019-08-05 00:50:31 | kubernetes/kubernetes | https://api.github.com/repos/kubernetes/kubernetes | closed | Enable node e2e tests on Windows | area/test kind/feature lifecycle/rotten sig/windows | <!-- Please only use this template for submitting enhancement requests -->

**What would you like to be added**:

Currently there are no node e2e tests for Windows. Since the Windows features in Kubernetes are going to GA, it is important to set up the job on PRs to prevent build failures and regressions for Window... | 1.0 | Enable node e2e tests on Windows - <!-- Please only use this template for submitting enhancement requests -->

**What would you like to be added**:

Currently there are no node e2e tests for Windows. Since the Windows features in Kubernetes are going to GA, it is important to set up the job on PRs to prevent build ... | non_priority | enable node tests on windows what would you like to be added currently there are no node tests for windows since the windows features in kubernetes are going to ga it is important to set up the job on prs to prevent build failures and regressions for windows why is this needed node te... | 0 |

65,191 | 12,539,245,069 | IssuesEvent | 2020-06-05 08:16:05 | galasa-dev/projectmanagement | https://api.github.com/repos/galasa-dev/projectmanagement | closed | The vscode workspace OBR is not taking the custom maven repository into consideration | bug vscode | The workspace obr build is failing because it is not taking any notice of the Java Maven repository or the Galasa local repository settings, I had mine set to /Users/mikebyls/git/galasa/m2/repository, I would have expected to see a --settings /Users/mikebyls/git/galasa/m2/settings.xml from the Java Maven extension:-

`... | 1.0 | The vscode workspace OBR is not taking the custom maven repository into consideration - The workspace obr build is failing because it is not taking any notice of the Java Maven repository or the Galasa local repository settings, I had mine set to /Users/mikebyls/git/galasa/m2/repository, I would have expected to see a... | non_priority | the vscode workspace obr is not taking the custom maven repository into consideration the workspace obr build is failing because it is not taking any notice of the java maven repository or the galasa local repository settings i had mine set to users mikebyls git galasa repository i would have expected to see a ... | 0 |

90,161 | 18,068,307,588 | IssuesEvent | 2021-09-20 22:00:11 | PyTorchLightning/pytorch-lightning | https://api.github.com/repos/PyTorchLightning/pytorch-lightning | closed | Deprecate `LightningLoggerBase.close` | enhancement good first issue let's do it! refactors / code health logger deprecation | ## Proposed refactoring or deprecation

<!-- A clear and concise description of the code improvement -->

### Motivation

This is a follow up to https://github.com/PyTorchLightning/pytorch-lightning/discussions/9004#discussioncomment-1212966

and

https://github.com/PyTorchLightning/pytorch-lightning/issues/9037

... | 1.0 | Deprecate `LightningLoggerBase.close` - ## Proposed refactoring or deprecation

<!-- A clear and concise description of the code improvement -->

### Motivation

This is a follow up to https://github.com/PyTorchLightning/pytorch-lightning/discussions/9004#discussioncomment-1212966

and

https://github.com/PyTorch... | non_priority | deprecate lightningloggerbase close proposed refactoring or deprecation motivation this is a follow up to and the base logger api has close defined this is only implemented on given the test tube logger has since been deprecated we can also deprecate this method off the base... | 0 |

165,460 | 20,591,868,084 | IssuesEvent | 2022-03-05 00:25:16 | dotnet/runtime | https://api.github.com/repos/dotnet/runtime | closed | Question: are APIs in System.Security.Cryptography.Cng and System.Security.Cryptography.Pkcs available in Mono? | question area-System.Security | Hi, we've called APIs in those two packages in net5.0 code path and they worked correctly.

I'm wondering if the APIs work if calling them in Mono from non-Windows platforms?

I think that is,

1. Does the full framework code path of those APIs in the two packages depend on any windows platform specific APIs?

2. If a... | True | Question: are APIs in System.Security.Cryptography.Cng and System.Security.Cryptography.Pkcs available in Mono? - Hi, we've called APIs in those two packages in net5.0 code path and they worked correctly.

I'm wondering if the APIs work if calling them in Mono from non-Windows platforms?

I think that is,

1. Does the... | non_priority | question are apis in system security cryptography cng and system security cryptography pkcs available in mono hi we ve called apis in those two packages in code path and they worked correctly i m wondering if the apis work if calling them in mono from non windows platforms i think that is does the fu... | 0 |

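176,368 | 14,579,717,194 | IssuesEvent | 2020-12-18 07:53:22 | qudgns5129/pill_classification | https://api.github.com/repos/qudgns5129/pill_classification | closed | Problem definition | documentation | Problem background: In large hospitals, when a prescription changes, for example because a patient's condition improves, medication that was dispensed but not administered is collected back. Pharmacists then re-sort the hundreds of kinds of pills collected this way.

Problem definition: Predict the drug name from the image's color information, without character recognition -> to build a model close to human intuition (more intuitive)

< Model structure >

detection + classification

Preprocessing step or layer within the model: detect pills in the image

INPUT: pixel values of the image's 3 RGB channels

OUTPUT... | 1.0 | Problem definition - Problem background: In large hospitals, when a prescription changes, for example because a patient's condition improves, medication that was dispensed but not administered is collected back. Pharmacists then re-sort the hundreds of kinds of pills collected this way.

Problem definition: Predict the drug name from the image's color information, without character recognition -> to build a model close to human intuition (more intuitive)

< Model structure >

detection + classification

Preprocessing step or layer within the model: detect pills in the image

INPUT: pixel values of the image's 3 RGB channels... | non_priority | problem definition problem background in large hospitals when a prescription changes for example because a patient s condition improves medication that was dispensed but not administered is collected back pharmacists then re sort the hundreds of kinds of pills collected this way problem definition predict the drug name from the image s color information without character recognition to build a model close to human intuition more intuitive detection classification preprocessing step or layer within the model detect pills in the image input pixel values of the image s rgb channels output ... | 0 |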

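221,307 | 17,011,976,289 | IssuesEvent | 2021-07-02 06:36:19 | a8119037/isfw2 | https://api.github.com/repos/a8119037/isfw2 | closed | Feature discussion for the group-splitting app | documentation | # Brainstorming

It might be good to build the model based on these

## Can split into groups under conditions

- Split with a configured gender balance

- Split randomly with a configured group size

- Distribute specific people (for example, those in charge of chapter 4) across the groups, and assign everyone else randomly

## Aside

It might be better to move this discussion to GitHub Discussions | 1.0 | Feature discussion for the group-splitting app - # Brainstorming

It might be good to build the model based on these

## Can split into groups under conditions

- Split with a configured gender balance

- Split randomly with a configured group size

- Distribute specific people (for example, those in charge of chapter 4) across the groups, and assign everyone else randomly

## Aside

It might be better to move this discussion to GitHub Discussions | non_priority | feature discussion for the group splitting app brainstorming it might be good to build the model based on these can split into groups under conditions split with a configured gender balance split randomly with a configured group size distribute specific people across the groups and assign everyone else randomly aside it might be better to move this discussion to github discussions | 0 |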

32,298 | 4,761,189,095 | IssuesEvent | 2016-10-25 07:17:09 | hazelcast/hazelcast | https://api.github.com/repos/hazelcast/hazelcast | closed | MapQueryEngineImpl_queryLocalPartition_resultSizeLimitTest.checkResultSize_limitExceeded & checkResultSize_limitNotExceeded | Team: Core Type: Test-Failure | ```

java.lang.AssertionError: Expected exception: com.hazelcast.map.QueryResultSizeExceededException

at org.junit.internal.runners.statements.ExpectException.evaluate(ExpectException.java:32)

at com.hazelcast.test.FailOnTimeoutStatement$CallableStatement.call(FailOnTimeoutStatement.java:88)

at com.hazelcast.test... | 1.0 | MapQueryEngineImpl_queryLocalPartition_resultSizeLimitTest.checkResultSize_limitExceeded & checkResultSize_limitNotExceeded - ```

java.lang.AssertionError: Expected exception: com.hazelcast.map.QueryResultSizeExceededException

at org.junit.internal.runners.statements.ExpectException.evaluate(ExpectException.java:32... | non_priority | mapqueryengineimpl querylocalpartition resultsizelimittest checkresultsize limitexceeded checkresultsize limitnotexceeded java lang assertionerror expected exception com hazelcast map queryresultsizeexceededexception at org junit internal runners statements expectexception evaluate expectexception java ... | 0 |

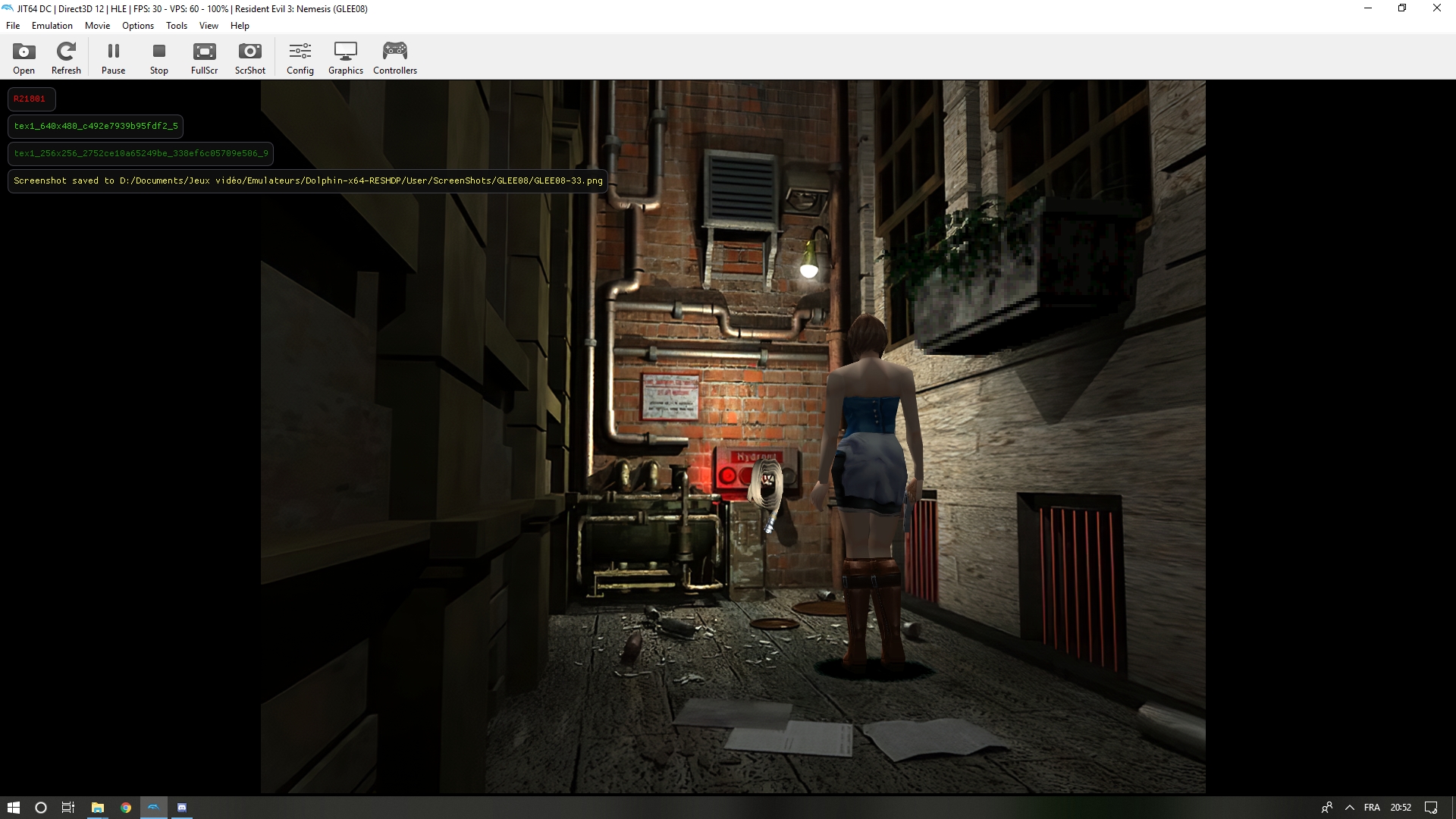

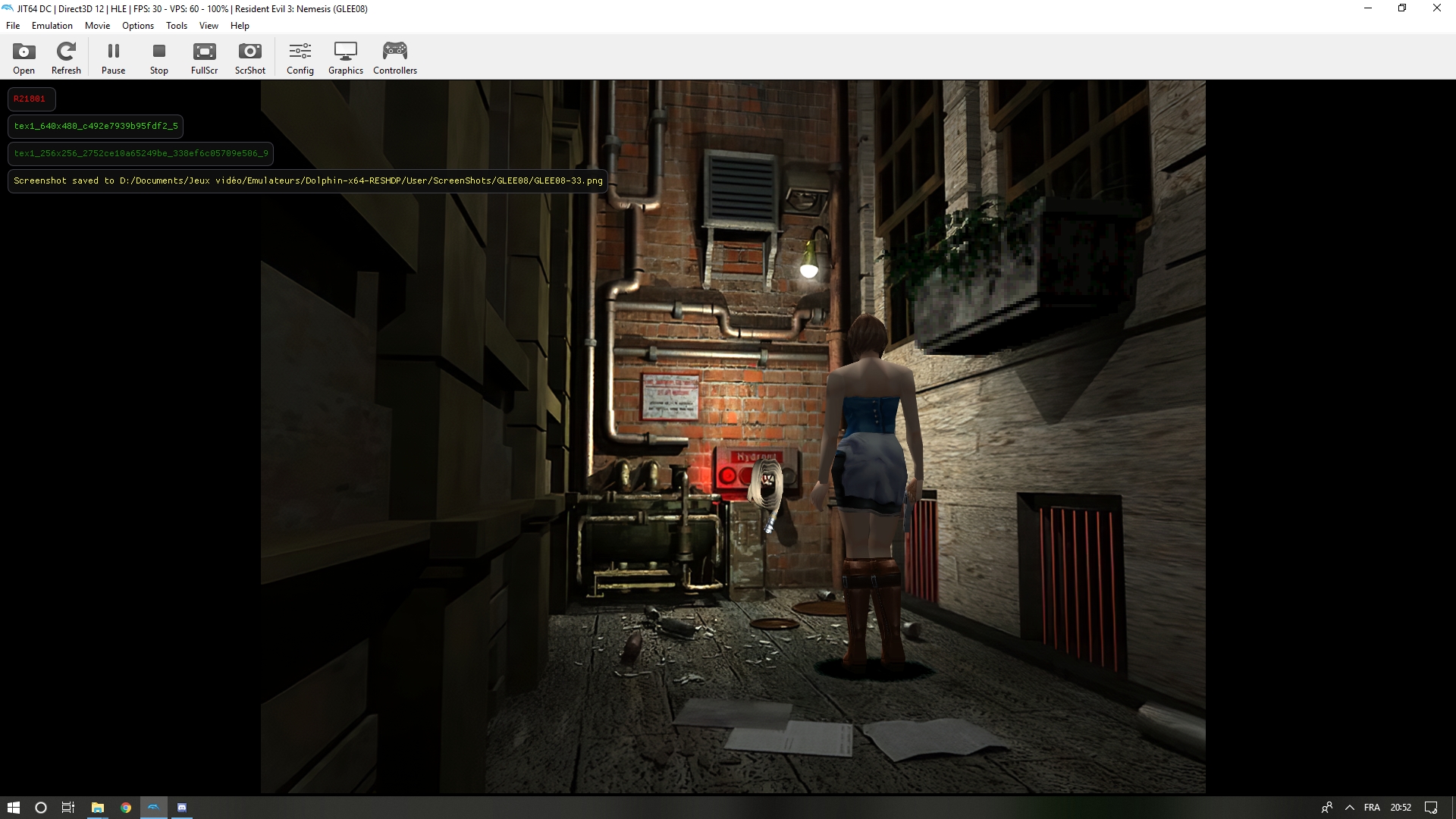

117,938 | 9,965,874,866 | IssuesEvent | 2019-07-08 09:46:31 | MoArtis/ResidentEvilSeamlessHdProject | https://api.github.com/repos/MoArtis/ResidentEvilSeamlessHdProject | closed | R21801 mask problem | 🎮 to be tested 💥 broken texture 🙎♀️🏙 Resident Evil 3 | It's one of the rooms where we can't wildcard the mask.

The mask can have 2 different tlut : 338ef6c05709e506 or 91fbb229c7fa0f59. That is also the case for R21807. Of course the masks are unique for e... | 1.0 | R21801 mask problem - It's one of the rooms where we can't wildcard the mask.

The mask can have 2 different tlut : 338ef6c05709e506 or 91fbb229c7fa0f59. That is also the case for R21807. Of course the ... | non_priority | mask problem it s one of the rooms where we cant wildcard the mask the mask can have different tlut or that is also the case for of course the masks are unique for each room i guess you have updated dolphin on the gdrive so i should have the latest version | 0 |

238,327 | 18,238,908,263 | IssuesEvent | 2021-10-01 10:24:41 | appsmithorg/appsmith-docs | https://api.github.com/repos/appsmithorg/appsmith-docs | opened | How to group multiple widgets on Appsmith | documentation good first issue hacktoberfest widget | Please make sure that your documentation has the following -

1. An Example app that shows the required widget.

2. Add screenshots for a better understanding of the topic.

3. Add code snippets wherever necessary.

For documentation guidelines, click [here.](https://github.com/appsmithorg/appsmith/blob/release/contr... | 1.0 | How to group multiple widgets on Appsmith - Please make sure that your documentation has the following -

1. An Example app that shows the required widget.

2. Add screenshots for a better understanding of the topic.

3. Add code snippets wherever necessary.

For documentation guidelines, click [here.](https://github... | non_priority | how to group multiple widgets on appsmith please make sure that your documentation has the following an example app that shows the required widget add screenshots for a better understanding of the topic add code snippets wherever necessary for documentation guidelines click | 0 |

467 | 2,534,107,957 | IssuesEvent | 2015-01-24 16:14:34 | numixproject/numix-icon-theme-circle | https://api.github.com/repos/numixproject/numix-icon-theme-circle | closed | Icon for UberWriter | hardcoded | Name=UberWriter

Icon=/opt/extras.ubuntu.com/uberwriter/share/uberwriter/media/uberwriter.svg

| 1.0 | Icon for UberWriter - Name=UberWriter

Icon=/opt/extras.ubuntu.com/uberwriter/share/uberwriter/media/uberwriter.svg

| non_priority | icon for uberwriter name uberwriter icon opt extras ubuntu com uberwriter share uberwriter media uberwriter svg | 0 |

110,767 | 16,988,568,352 | IssuesEvent | 2021-06-30 17:13:14 | MicrosoftDocs/windows-itpro-docs | https://api.github.com/repos/MicrosoftDocs/windows-itpro-docs | closed | [Feedback & Questions] BitLocker: How to enable Network Unlock (https://docs.microsoft.com/en-us/windows/security/information-protection/bitlocker/bitlocker-how-to-enable-network-unlock) | bitlocker security | Hello Bitlocker Experts,

I'm new to BitLocker and I want a deeper understanding of how "Network Unlock" works.

Made reference to the:

1) BitLocker: How to enable Network Unlock

2) [MS-NKPU]: Network Key Protector Unlock Protocol

and draw a very simple diagram to try to get a more clear und... | True | [Feedback & Questions] BitLocker: How to enable Network Unlock (https://docs.microsoft.com/en-us/windows/security/information-protection/bitlocker/bitlocker-how-to-enable-network-unlock) - Hello Bitlocker Experts,

I'm new to BitLocker and I want a deeper understanding of how "Network Unlock" works.

Made ... | non_priority | bitlocker how to enable network unlock hello bitlocker experts i m new to bitlocker and i wanna have a deeper understanding on how the network unlock work made reference to the bitlocker how to enable network unlock network key protector unlock protocol and draw a very simple... | 0 |

44,515 | 5,842,080,295 | IssuesEvent | 2017-05-10 04:07:58 | openMF/community-app | https://api.github.com/repos/openMF/community-app | closed | Side nav is not scrolling with the page in firefox browser | design gsoc p2 reskin | As you scroll down the page in community-app, the side-nav remains on top which should not happen.

This could be observed in the following link:

(https://demo.openmf.org/res... | 1.0 | Side nav is not scrolling with the page in firefox browser - As you scroll down the page in community-app, the side-nav remains on top which should not happen.

This could be... | non_priority | side nav is not scrolling with the page in firefox browser as you scroll down the page in community app the side nav remains on top which should not happen this could be observed in the following link | 0 |

267,914 | 20,250,949,355 | IssuesEvent | 2022-02-14 17:49:20 | department-of-veterans-affairs/va.gov-team | https://api.github.com/repos/department-of-veterans-affairs/va.gov-team | opened | NOD: Update tech documentation | documentation vsa vsa-claims-appeals NOD | ## Description

- Document what a new team member would want to know about this app

- What were some choices that were made that might require explanation?

- What are some things we'd make better if we had more time?

- If something went wrong how might we investigate?

- Is there anything we should update in our p... | 1.0 | NOD: Update tech documentation - ## Description

- Document what a new team member would want to know about this app

- What were some choices that were made that might require explanation?

- What are some things we'd make better if we had more time?

- If something went wrong how might we investigate?

- Is there a... | non_priority | nod update tech documentation description document what a new team member would want to know about this app what were some choices that were made that might require explanation what are some things we d make better if we had more time if something went wrong how might we investigate is there a... | 0 |

278,807 | 30,702,405,196 | IssuesEvent | 2023-07-27 01:27:21 | Trinadh465/linux-4.1.15_CVE-2022-45934 | https://api.github.com/repos/Trinadh465/linux-4.1.15_CVE-2022-45934 | closed | CVE-2016-9685 (Medium) detected in linuxlinux-4.6 - autoclosed | Mend: dependency security vulnerability | ## CVE-2016-9685 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.6</b></p></summary>

<p>

<p>The Linux Kernel</p>

<p>Library home page: <a href=https://mirrors.edge.kerne... | True | CVE-2016-9685 (Medium) detected in linuxlinux-4.6 - autoclosed - ## CVE-2016-9685 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.6</b></p></summary>

<p>

<p>The Linux Ke... | non_priority | cve medium detected in linuxlinux autoclosed cve medium severity vulnerability vulnerable library linuxlinux the linux kernel library home page a href found in head commit a href found in base branch master vulnerable source files fs ... | 0 |

66,815 | 8,971,422,043 | IssuesEvent | 2019-01-29 15:53:12 | Samsung/Universum | https://api.github.com/repos/Samsung/Universum | opened | Add user guide | documentation | Originally created on Wed, 26 Dec 2018 13:58:39 +0200

Add simple step-by-step guide as an example of usage to documentation. | 1.0 | Add user guide - Originally created on Wed, 26 Dec 2018 13:58:39 +0200

Add simple step-by-step guide as an example of usage to documentation. | non_priority | add user guide originally created on wed dec add simple step by step guide as an example of usage to documentation | 0 |

32,097 | 8,794,163,982 | IssuesEvent | 2018-12-21 23:39:13 | tensorflow/tensorflow | https://api.github.com/repos/tensorflow/tensorflow | reopened | Build from source issue - Bazel test fails | type:build/install | ### System information

- **OS Platform and Distribution: Linux Ubuntu 18.04:

- **TensorFlow version (git cloned from https://github.com/tensorflow/tensorflow (master):

- **Python version 3.6+ (virtual environment created with anaconda 5.3.1:

- **Bazel version 0.20.0:

- **GCC/Compiler version gcc (Ubuntu 7.3.0-27ub... | 1.0 | Build from source issue - Bazel test fails - ### System information

- **OS Platform and Distribution: Linux Ubuntu 18.04:

- **TensorFlow version (git cloned from https://github.com/tensorflow/tensorflow (master):

- **Python version 3.6+ (virtual environment created with anaconda 5.3.1:

- **Bazel version 0.20.0:

- ... | non_priority | build from source issue bazel test fails system information os platform and distribution linux ubuntu tensorflow version git cloned from master python version virtual environment created with anaconda bazel version gcc compiler version gcc ubuntu ... | 0 |

80,761 | 10,056,128,615 | IssuesEvent | 2019-07-22 08:25:59 | spring-projects/spring-boot | https://api.github.com/repos/spring-projects/spring-boot | closed | JavaVersion does not cover all available versions of Java | status: pending-design-work type: bug | We're missing Java 11 in 2.1.x. We also need to decide what to do about non-LTS versions (12 and 13) in 2.2. | 1.0 | JavaVersion does not cover all available versions of Java - We're missing Java 11 in 2.1.x. We also need to decide what to do about non-LTS versions (12 and 13) in 2.2. | non_priority | javaversion does not cover all available versions of java we re missing java in x we also need to decide what to do about non lts versions and in | 0 |

63,823 | 6,885,309,118 | IssuesEvent | 2017-11-21 15:45:04 | appium/appium | https://api.github.com/repos/appium/appium | closed | [XCUITest] Failed to create WDA session. Retrying... | NeedsInfo NotABug XCUITest |

Hi All,

I am getting a similar error when trying to launch the app, even though I have not specified the bundle ID. I am running this on a Bitrise Mac box.

Also, I have attached the iOS logs

https://gist.github.com/zusmani-mbo-com/3dd2f3069ecfa0137bdbbc02f7fbedae

I have also looked at this issue, can not get it w... | 1.0 | [XCUITest] Failed to create WDA session. Retrying... -

Hi All,

I am getting a similar error when trying to launch the app, even though I have not specified the bundle ID. I am running this on a Bitrise Mac box.

Also, I have attached the iOS logs

https://gist.github.com/zusmani-mbo-com/3dd2f3069ecfa0137bdbbc02f7fbe... | non_priority | failed to create wda session retrying hi all i am getting similar error when trying to launch the app even though i have not specified the bundle id i am running this on bitrise mac box also i have attached the ios logs i have also looked at this issue can not get it working still app... | 0 |

276,040 | 20,966,158,238 | IssuesEvent | 2022-03-28 06:57:45 | deepnight/ldtk | https://api.github.com/repos/deepnight/ldtk | closed | 0.10.0: EntityReferenceInfos doesn't appear to exist after quicktype | bug documentation Json | In the latest 0.10.0 commit, the Json Schema file doesn't appear to output this type of definition. `EntityReferenceInfos`

There appears to only be one match found, which is in the description of the value field: (Image)

detected in guava-23.6.1-jre.jar, guava-23.6-jre.jar | security vulnerability | ## CVE-2018-10237 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>guava-23.6.1-jre.jar</b>, <b>guava-23.6-jre.jar</b></p></summary>

<p>

<details><summary><b>guava-23.6.1-jre.jar</b... | True | CVE-2018-10237 (Medium) detected in guava-23.6.1-jre.jar, guava-23.6-jre.jar - ## CVE-2018-10237 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>guava-23.6.1-jre.jar</b>, <b>guava-2... | non_priority | cve medium detected in guava jre jar guava jre jar cve medium severity vulnerability vulnerable libraries guava jre jar guava jre jar guava jre jar guava is a suite of core and expanded libraries that include utility classes google s collections ... | 0 |

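58,611 | 11,899,794,065 | IssuesEvent | 2020-03-30 09:35:06 | linewalks/MDwalks-UI | https://api.github.com/repos/linewalks/MDwalks-UI | closed | Refactor BarChart for the isScroll case | Code clean | ## Feature description or link

Adding the scroll feature introduced code duplication

## Description of the desired solution

Remove the code duplication and add test code

## Requirements

- [ ] Remove code duplication

- [ ] test code

## Test items

- [ ]

## After completion

Open a PR

| 1.0 | Refactor BarChart for the isScroll case - ## Feature description or link

Adding the scroll feature introduced code duplication

## Description of the desired solution

Remove the code duplication and add test code

## Requirements

- [ ] Remove code duplication

- [ ] test code

## Test items

- [ ]

## After completion

Open a PR

| non_priority | refactor barchart for the isscroll case feature description or link adding the scroll feature introduced code duplication description of the desired solution remove the code duplication and add test code requirements remove code duplication test code test items after completion open a pr | 0 |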

25,286 | 6,648,523,989 | IssuesEvent | 2017-09-28 09:38:21 | Porucznik/Nexia-Home | https://api.github.com/repos/Porucznik/Nexia-Home | closed | Group and tag packages in archiso | code refactor | We need to clean up our project a bit. Move non-default packages to packages.x86_64, group them thematically, and tag groups. | 1.0 | Group and tag packages in archiso - We need to clean up our project a bit. Move non-default packages to packages.x86_64, group them thematically, and tag groups. | non_priority | group and tag packages in archiso we need to clean up our project a bit move non default packages to packages group them thematically and tag groups | 0 |

224,091 | 24,769,674,560 | IssuesEvent | 2022-10-23 01:06:17 | ChoeMinji/react-17.0.2 | https://api.github.com/repos/ChoeMinji/react-17.0.2 | opened | CVE-2022-37598 (High) detected in uglify-js-3.7.3.tgz, uglify-js-3.4.9.tgz | security vulnerability | ## CVE-2022-37598 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>uglify-js-3.7.3.tgz</b>, <b>uglify-js-3.4.9.tgz</b></p></summary>

<p>

<details><summary><b>uglify-js-3.7.3.tgz</b></... | True | CVE-2022-37598 (High) detected in uglify-js-3.7.3.tgz, uglify-js-3.4.9.tgz - ## CVE-2022-37598 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>uglify-js-3.7.3.tgz</b>, <b>uglify-js-3.... | non_priority | cve high detected in uglify js tgz uglify js tgz cve high severity vulnerability vulnerable libraries uglify js tgz uglify js tgz uglify js tgz javascript parser mangler compressor and beautifier toolkit library home page a href path to de... | 0 |

84,832 | 24,440,262,300 | IssuesEvent | 2022-10-06 14:10:21 | epicmaxco/vuestic-ui | https://api.github.com/repos/epicmaxco/vuestic-ui | closed | sandbox's tsconfig extends tsconfig from src/.nuxt folder which is empty after clean install. | BUG build sandbox | Need a postinstall script with mock.

see https://github.com/epicmaxco/vuestic-ui/issues/2407 | 1.0 | sandbox's tsconfig extends tsconfig from src/.nuxt folder which is empty after clean install. - Need a postinstall script with mock.

see https://github.com/epicmaxco/vuestic-ui/issues/2407 | non_priority | sandbox s tsconfig extends tsconfig from src nuxt folder which is empty after clean install need a postinstall script with mock see | 0 |

53,045 | 10,980,221,175 | IssuesEvent | 2019-11-30 12:39:03 | tobiasanker/SakuraTree | https://api.github.com/repos/tobiasanker/SakuraTree | closed | rework blossom-output | code cleanup / QA documentation feature / enhancement usability | ## Feature-request

### Description

The current implementation of writing blossom-output into a variable works, but doesn't scale very well. With many variables, it's hard to read, so there should be another solution.

### Related Issue

#29

### Kitsunemimi-Repos, which have to be updated

### Possible Im... | 1.0 | rework blossom-output - ## Feature-request

### Description

The current implementation of writing blossom-output into a variable works, but doesn't scale very well. With many variables, it's hard to read, so there should be another solution.

### Related Issue

#29

### Kitsunemimi-Repos, which have to be up... | non_priority | rework blossom output feature request description the current implementation of writing blossom output into a variable work but doesn t scales very good with many variables its hard to read so there should be another solution related issue kitsunemimi repos which have to be upd... | 0 |

93,619 | 8,439,225,747 | IssuesEvent | 2018-10-18 00:37:01 | QubesOS/qubes-issues | https://api.github.com/repos/QubesOS/qubes-issues | reopened | Idea: qvm-sync-appmenus also parsing /usr/local/share/applications | C: desktop-linux P: minor enhancement help wanted r4.0-buster-cur-test r4.0-centos7-cur-test r4.0-fc26-cur-test r4.0-fc27-cur-test r4.0-fc28-cur-test r4.0-jessie-cur-test r4.0-stretch-cur-test | ### Qubes OS version:

4.0

### Affected component(s):

Application menu syncing

### Steps to reproduce the behavior:

1. Install any locally installed app that a user may want in a particular VM, but not other VMs and they do not want to make it installed in all VMs and make a bunch of clones of VMs (as that cre... | 7.0 | Idea: qvm-sync-appmenus also parsing /usr/local/share/applications - ### Qubes OS version:

4.0

### Affected component(s):

Application menu syncing

### Steps to reproduce the behavior:

1. Install any locally installed app that a user may want in a particular VM, but not other VMs and they do not want to make i... | non_priority | idea qvm sync appmenus also parsing usr local share applications qubes os version affected component s application menu syncing steps to reproduce the behavior install any locally installed app that a user may want in a particular vm but not other vms and they do not want to make i... | 0 |

70,896 | 8,596,520,140 | IssuesEvent | 2018-11-15 16:10:42 | WordPress/gutenberg | https://api.github.com/repos/WordPress/gutenberg | closed | Add permalinks to the document sidebar | Needs Design Feedback Needs Dev | The current problem is permalinks aren't that discoverable. This has been reported in feedback. Right now, selecting the title shows the permalink. In phase 2 this likely won't be the case as everything moves to being a block, so changing it now is important.

@melchoyce worked on a suggested design for this which adds to ... | 1.0 | Add permalinks to the document sidebar - The current problem is permalinks aren't that discoverable. This has been reported in feedback. Right now, selecting the title shows the permalink. In phase 2 this likely won't be the case as everything moves to being a block, so changing it now is important.

@melchoyce worked on a... | non_priority | add permalinks to the document sidebar the current problem is permalinks aren t that discoverable this has been reported in feedback right now selecting the title shows the permalink in phase this likely won t be the case as everything moves to being a block so changing it now is important melchoyce worked on a... | 0 |

11,088 | 13,930,014,131 | IssuesEvent | 2020-10-22 01:15:25 | fluent/fluent-bit | https://api.github.com/repos/fluent/fluent-bit | closed | WinLog INPUT: include the StringInserts key-value pairs into the log record | work-in-process | **Is your feature request related to a problem? Please describe.**

In [this pull request](https://github.com/fluent/fluent-bit/pull/2322), the `StringInserts` [were removed from the resulting log record](https://github.com/fluent/fluent-bit/pull/2322/files#diff-0890d3b5666d8c56708c223ac7bc54a3L267-L269). A formatted ... | 1.0 | WinLog INPUT: include the StringInserts key-value pairs into the log record - **Is your feature request related to a problem? Please describe.**

In [this pull request](https://github.com/fluent/fluent-bit/pull/2322), the `StringInserts` [were removed from the resulting log record](https://github.com/fluent/fluent-bit... | non_priority | winlog input include the stringinserts key value pairs into the log record is your feature request related to a problem please describe in the stringinserts a formatted message containing the human readable message this solution is really useful to visualize the resulting message but it w... | 0 |

99,177 | 12,403,809,020 | IssuesEvent | 2020-05-21 14:31:29 | gitcoinco/web | https://api.github.com/repos/gitcoinco/web | opened | Workshops Tab | Gitcoin Hackathon design | <!--

Hello Gitcoiner!

Please use the template below for feature requests for Gitcoin.

If it is general support you need, reach out to us at

gitcoin.co/slack

-->

### User Story

As Gitcoin we'd like hackers to know all the workshops happening and allow devs to participate.

### Why Is this Needed

No c... | 1.0 | Workshops Tab - <!--

Hello Gitcoiner!

Please use the template below for feature requests for Gitcoin.

If it is general support you need, reach out to us at

gitcoin.co/slack

-->

### User Story

As Gitcoin we'd like hackers to know all the workshops happening and allow devs to participate.

### Why Is th... | non_priority | workshops tab hello gitcoiner please use the template below for feature requests for gitcoin if it is general support you need reach out to us at gitcoin co slack user story as gitcoin we d like hackers to know all the workshops happening and allow devs to participate why is th... | 0 |

220,258 | 24,564,790,771 | IssuesEvent | 2022-10-13 01:13:01 | turkdevops/desktop | https://api.github.com/repos/turkdevops/desktop | closed | CVE-2022-0355 (High) detected in simple-get-2.8.1.tgz, simple-get-3.1.0.tgz - autoclosed | security vulnerability | ## CVE-2022-0355 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>simple-get-2.8.1.tgz</b>, <b>simple-get-3.1.0.tgz</b></p></summary>

<p>

<details><summary><b>simple-get-2.8.1.tgz</b>... | True | CVE-2022-0355 (High) detected in simple-get-2.8.1.tgz, simple-get-3.1.0.tgz - autoclosed - ## CVE-2022-0355 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>simple-get-2.8.1.tgz</b>, <... | non_priority | cve high detected in simple get tgz simple get tgz autoclosed cve high severity vulnerability vulnerable libraries simple get tgz simple get tgz simple get tgz simplest way to make http get requests supports https redirects gzip deflate strea... | 0 |

17,128 | 3,594,474,897 | IssuesEvent | 2016-02-01 23:52:35 | googlefonts/fontbakery | https://api.github.com/repos/googlefonts/fontbakery | closed | Extend checker to allow automated fixes | testing | Following #221

Tests can have associated automatic fixes, so if a test fails, it can be automatically fixed.

For example, there should be a test for a nbsp glyph present, a space glyph present, correct unicode points assigned to each, and both with the same width

If this test fails, the fix is to make a nbsp... | 1.0 | Extend checker to allow automated fixes - Following #221

Tests can have associated automatic fixes, so if a test fails, it can be automatically fixed.

For example, there should be a test for a nbsp glyph present, a space glyph present, correct unicode points assigned to each, and both with the same width

If ... | non_priority | extend checker to allow automated fixes following tests can have associated automatic fixes so if a test fails it can be automatically fixed for example there should be a test for a nbsp glyph present a space glyph present correct unicode points assigned to each and both with the same width if th... | 0 |

153,785 | 19,708,600,905 | IssuesEvent | 2022-01-13 01:44:17 | artsking/linux-4.19.72_CVE-2020-14386 | https://api.github.com/repos/artsking/linux-4.19.72_CVE-2020-14386 | opened | CVE-2019-19067 (Medium) detected in linux-yoctov5.4.51 | security vulnerability | ## CVE-2019-19067 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-yoctov5.4.51</b></p></summary>

<p>

<p>Yocto Linux Embedded kernel</p>

<p>Library home page: <a href=https://gi... | True | CVE-2019-19067 (Medium) detected in linux-yoctov5.4.51 - ## CVE-2019-19067 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-yoctov5.4.51</b></p></summary>

<p>

<p>Yocto Linux Emb... | non_priority | cve medium detected in linux cve medium severity vulnerability vulnerable library linux yocto linux embedded kernel library home page a href found in base branch master vulnerable source files drivers gpu drm amd amdgpu amdgpu acp c ... | 0 |

19,641 | 10,382,671,245 | IssuesEvent | 2019-09-10 08:00:55 | AOSC-Dev/aosc-os-abbs | https://api.github.com/repos/AOSC-Dev/aosc-os-abbs | closed | graphviz: CVE-2019-11023 | needs-triage security to-stable | <!-- Please remove items do not apply. -->

**CVE IDs:** CVE-2019-11023

**Other security advisory IDs:** openSUSE-SU-2019:1434-1

**Descriptions:**

- CVE-2019-11023: Fixed a denial of service vulnerability, which was

caused by a NULL pointer dereference in agroot() (bsc#1132091).

**Patches:** from o... | True | graphviz: CVE-2019-11023 - <!-- Please remove items do not apply. -->

**CVE IDs:** CVE-2019-11023

**Other security advisory IDs:** openSUSE-SU-2019:1434-1

**Descriptions:**

- CVE-2019-11023: Fixed a denial of service vulnerability, which was

caused by a NULL pointer dereference in agroot() (bsc#11320... | non_priority | graphviz cve cve ids cve other security advisory ids opensuse su descriptions cve fixed a denial of service vulnerability which was caused by a null pointer dereference in agroot bsc patches from opensuse poc s architectural pro... | 0 |

151,590 | 12,044,125,301 | IssuesEvent | 2020-04-14 13:34:11 | Oldes/Rebol-issues | https://api.github.com/repos/Oldes/Rebol-issues | closed | UNIQUE/DIFFERENCE/INTERSECT/UNION do not accept blocks containing values of type NONE! or UNSET! | Test.written Type.bug | _Submitted by:_ **Ch.Ensel**

Unsure whether this is a bug or a feature.

``` rebol

>> unique [#[none]]

** Script error: none! type is not allowed here

** Where: unique

** Near: unique [none]

>> union [#[none]] []

** Script error: none! type is not allowed here

** Where: union

** Near: union [none] []

>> difference [... | 1.0 | UNIQUE/DIFFERENCE/INTERSECT/UNION do not accept blocks containing values of type NONE! or UNSET! - _Submitted by:_ **Ch.Ensel**

Unsure whether this is a bug or a feature.

``` rebol

>> unique [#[none]]

** Script error: none! type is not allowed here

** Where: unique

** Near: unique [none]

>> union [#[none]] []

** Scr... | non_priority | unique difference intersect union do not accept blocks containing values of type none or unset submitted by ch ensel unsure whether this is a bug or a feature rebol unique script error none type is not allowed here where unique near unique union script error none typ... | 0 |

82,053 | 15,646,496,183 | IssuesEvent | 2021-03-23 01:03:32 | LevyForchh/calm-dsl | https://api.github.com/repos/LevyForchh/calm-dsl | opened | CVE-2021-25290 (Medium) detected in Pillow-6.2.2-cp27-cp27mu-manylinux1_x86_64.whl | security vulnerability | ## CVE-2021-25290 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>Pillow-6.2.2-cp27-cp27mu-manylinux1_x86_64.whl</b></p></summary>

<p>Python Imaging Library (Fork)</p>

<p>Library hom... | True | CVE-2021-25290 (Medium) detected in Pillow-6.2.2-cp27-cp27mu-manylinux1_x86_64.whl - ## CVE-2021-25290 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>Pillow-6.2.2-cp27-cp27mu-manylin... | non_priority | cve medium detected in pillow whl cve medium severity vulnerability vulnerable library pillow whl python imaging library fork library home page a href path to dependency file calm dsl requirements txt path to vulnerable library calm dsl requirem... | 0 |

109,450 | 9,381,632,878 | IssuesEvent | 2019-04-04 20:08:28 | open-apparel-registry/open-apparel-registry | https://api.github.com/repos/open-apparel-registry/open-apparel-registry | closed | Manually rejecting all potential matches when the FacilityListItem has no geocoded address raises an IntegrityError | + bug rollbar tested/verified | ## Overview

Manually rejecting all potential matches when the FacilityListItem has no geocoded address raises an IntegrityError

### Expected Behavior

Manually rejecting all potential matches when the FacilityListItem has no geocoded address sets the status to "ERROR_MATCHING"

### Actual Behavior

An unhan... | 1.0 | Manually rejecting all potential matches when the FacilityListItem has no geocoded address raises an IntegrityError - ## Overview

Manually rejecting all potential matches when the FacilityListItem has no geocoded address raises an IntegrityError

### Expected Behavior

Manually rejecting all potential matches wh... | non_priority | manually rejecting all potential matches when the facilitylistitem has no geocoded address raises an integrityerror overview manually rejecting all potential matches when the facilitylistitem has no geocoded address raises an integrityerror expected behavior manually rejecting all potential matches wh... | 0 |

121,952 | 10,208,115,476 | IssuesEvent | 2019-08-14 09:21:22 | input-output-hk/chain-libs | https://api.github.com/repos/input-output-hk/chain-libs | opened | Update Proposal is not removed after expiry grace period (proposal_expiration) | bug test | UpdateState::process_proposals has bug in my opinion, which leads to problem in which proposal which was not accepted before proposal_expiration period won't be removed from proposal collection at all.

there are two conditions in mentioned method:

```

if prev_date.epoch < new_date.epoch

```

and

```

else i... | 1.0 | Update Proposal is not removed after expiry grace period (proposal_expiration) - UpdateState::process_proposals has bug in my opinion, which leads to problem in which proposal which was not accepted before proposal_expiration period won't be removed from proposal collection at all.

there are two conditions in menti... | non_priority | update proposal is not removed after expiry grace period proposal expiration updatestate process proposals has bug in my opinion which leads to problem in which proposal which was not accepted before proposal expiration period won t be removed from proposal collection at all there are two conditions in menti... | 0 |

261,174 | 19,701,303,819 | IssuesEvent | 2022-01-12 16:53:15 | web-illinois/illinois_framework_theme | https://api.github.com/repos/web-illinois/illinois_framework_theme | closed | Document how to remove the /news archive listing page | documentation News | By default we list all of the news items in a view at /news, but not all sites will want to do this. We need to document that proper way to change this and still list news is to disable the view-page and embed the (to be created) view-block into a Content Page. | 1.0 | Document how to remove the /news archive listing page - By default we list all of the news items in a view at /news, but not all sites will want to do this. We need to document that proper way to change this and still list news is to disable the view-page and embed the (to be created) view-block into a Content Page. | non_priority | document how to remove the news archive listing page by default we list all of the news items in a view at news but not all sites will want to do this we need to document that proper way to change this and still list news is to disable the view page and embed the to be created view block into a content page | 0 |

9,769 | 25,166,879,073 | IssuesEvent | 2022-11-10 21:45:19 | MicrosoftDocs/architecture-center | https://api.github.com/repos/MicrosoftDocs/architecture-center | closed | Multiple Virtual WAN Hubs per region | doc-enhancement assigned-to-author triaged architecture-center/svc example-scenario/subsvc Pri2 | Hey Team,

I sent some feedback for the Azure VWAN FAQ page yesterday regarding the same thing. It looks like some of this documentation needs to be updated now that it's possible to provision multiple VWAN hubs per region.

In the section **"Virtual WAN Hub"** it states _"There can only be one hub per Azure region... | 1.0 | Multiple Virtual WAN Hubs per region - Hey Team,

I sent some feedback for the Azure VWAN FAQ page yesterday regarding the same thing. It looks like some of this documentation needs to be updated now that it's possible to provision multiple VWAN hubs per region.

In the section **"Virtual WAN Hub"** it states _"The... | non_priority | multiple virtual wan hubs per region hey team i sent some feedback for the azure vwan faq page yesterday regarding the same thing it looks like some of this documentation needs to be updated now that it s possible to provision multiple vwan hubs per region in the section virtual wan hub it states the... | 0 |

21,841 | 4,754,692,663 | IssuesEvent | 2016-10-24 08:16:14 | cra-ros-pkg/robot_localization | https://api.github.com/repos/cra-ros-pkg/robot_localization | closed | Example launch file for using multiple robot_localization ekf nodes | documentation | I have seen a lot of people writing about using multiple ekf nodes (and a navsat node) for odom & map localization. Would it be possible to put together an example launch file for configuring both of these nodes together? | 1.0 | Example launch file for using multiple robot_localization ekf nodes - I have seen a lot of people writing about using multiple ekf nodes (and a navsat node) for odom & map localization. Would it be possible to put together an example launch file for configuring both of these nodes together? | non_priority | example launch file for using multiple robot localization ekf nodes i have seen a lot of people writing about using multiple ekf nodes and a navsat node for odom map localization would it be possible to put together an example launch file for configuring both of these nodes together | 0 |

19,574 | 4,424,361,388 | IssuesEvent | 2016-08-16 12:16:02 | spring-projects/spring-boot | https://api.github.com/repos/spring-projects/spring-boot | closed | Issue with SpringApplicationBuilder example in docs | documentation | Bug report

If you add SpringApplicationBuilder as per docs

```

new SpringApplicationBuilder()

.bannerMode(Banner.Mode.OFF)

.sources(Parent.class)

.child(Application.class)

.run(args);

```

This wont trigger banner off as it is trying to switch banner off on parent. It should be on child and... | 1.0 | Issue with SpringApplicationBuilder example in docs - Bug report

If you add SpringApplicationBuilder as per docs

```

new SpringApplicationBuilder()

.bannerMode(Banner.Mode.OFF)

.sources(Parent.class)

.child(Application.class)

.run(args);

```

This wont trigger banner off as it is trying to ... | non_priority | issue with springapplicationbuilder example in docs bug report if you add springapplicationbuilder as per docs new springapplicationbuilder bannermode banner mode off sources parent class child application class run args this wont trigger banner off as it is trying to ... | 0 |

83,312 | 10,340,520,085 | IssuesEvent | 2019-09-03 22:14:32 | MozillaReality/FirefoxReality | https://api.github.com/repos/MozillaReality/FirefoxReality | opened | Allow per-site use of location | Final Design PM/UX review enhancement | For sites that request location data, users should be able to grant permission once or always for that site. Users should also be able to revoke permission from sites they have allowed in the past.

Current behavior allows a site to ask for permissions multiple times in a single session, with no way for a user to say... | 1.0 | Allow per-site use of location - For sites that request location data, users should be able to grant permission once or always for that site. Users should also be able to revoke permission from sites they have allowed in the past.

Current behavior allows a site to ask for permissions multiple times in a single sess... | non_priority | allow per site use of location for sites that request location data users should be able to grant permission once or always for that site users should also be able to revoke permission from sites they have allowed in the past current behavior allows a site to ask for permissions multiple times in a single sess... | 0 |

127,537 | 10,474,462,913 | IssuesEvent | 2019-09-23 14:33:20 | jpmorganchase/tessera | https://api.github.com/repos/jpmorganchase/tessera | closed | Azure Key Vault - SSLPeerUnverifiedException when running disabled acceptance tests with jdk11 | 0.11 bug testing | AKV tests are currently disabled for jdk11 as Tessera throws a `javax.net.ssl.SSLPeerUnverifiedException: Hostname localhost not verified (no certificates)` when trying to communicate with the mock AKV (WireMock) server used in the tests.

Travis's `oraclejdk11` is currently `jdk11.0.2`. The test is reproducible loc... | 1.0 | Azure Key Vault - SSLPeerUnverifiedException when running disabled acceptance tests with jdk11 - AKV tests are currently disabled for jdk11 as Tessera throws a `javax.net.ssl.SSLPeerUnverifiedException: Hostname localhost not verified (no certificates)` when trying to communicate with the mock AKV (WireMock) server use... | non_priority | azure key vault sslpeerunverifiedexception when running disabled acceptance tests with akv tests are currently disabled for as tessera throws a javax net ssl sslpeerunverifiedexception hostname localhost not verified no certificates when trying to communicate with the mock akv wiremock server used in the... | 0 |

114,591 | 14,601,003,567 | IssuesEvent | 2020-12-21 07:56:27 | AtB-AS/mittatb-app | https://api.github.com/repos/AtB-AS/mittatb-app | reopened | [Designsync] Update journey details | Designsync | ## Origin

_Links to received feedback, user research or other findings._

## Motivation

_A short description of what user needs or business goals this feature will solve._

## Hypotheses and assumptions

_A list of hypotheses and assumptions we have made about the user or the proposed solution._

##... | 1.0 | [Designsync] Update journey details - ## Origin

_Links to received feedback, user research or other findings._

## Motivation

_A short description of what user needs or business goals this feature will solve._

## Hypotheses and assumptions

_A list of hypotheses and assumptions we have made about the use... | non_priority | update journey details origin links to received feedback user research or other findings motivation a short description of what user needs or business goals this feature will solve hypotheses and assumptions a list of hypotheses and assumptions we have made about the user or the pr... | 0 |