Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 5 to 112) | repo_url (string, length 34 to 141) | action (string, 3 classes) | title (string, length 1 to 844) | labels (string, length 4 to 721) | body (string, length 1 to 261k) | index (string, 12 classes) | text_combine (string, length 96 to 261k) | label (string, 2 classes) | text (string, length 96 to 248k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

125,326 | 4,955,587,017 | IssuesEvent | 2016-12-01 20:51:27 | caitlynmayers/dukes | https://api.github.com/repos/caitlynmayers/dukes | opened | Shopping Cart: Create Account Alignment | Low Priority Style | Upon checking out, "create an account" is on a separate line than the check box

<img width="189" alt="screen shot 2016-12-01 at 3 47 42 pm" src="https://cloud.githubusercontent.com/assets/24302252/20812010/fac027ea-b7dd-11e6-9e92-bb4a97466f82.png">

| 1.0 | Shopping Cart: Create Account Alignment - Upon checking out, "create an account" is on a separate line than the check box

<img width="189" alt="screen shot 2016-12-01 at 3 47 42 pm" src="https://cloud.githubusercontent.com/assets/24302252/20812010/fac027ea-b7dd-11e6-9e92-bb4a97466f82.png">

| priority | shopping cart create account alignment upon checking out create an account is on a separate line than the check box img width alt screen shot at pm src | 1 |

17,492 | 2,615,145,540 | IssuesEvent | 2015-03-01 06:21:26 | chrsmith/html5rocks | https://api.github.com/repos/chrsmith/html5rocks | closed | Need hook script for site update | auto-migrated Maintenance Milestone-2 Priority-Low Type-Enhancement | ```

- one for updating/building the slides cache manifest

- one for .zip'ing the studio samples

First is done, but should be put into a .sh script on in place of running

appcfg.py update.

```

Original issue reported on code.google.com by `ericbide...@html5rocks.com` on 2 Aug 2010 at 4:52 | 1.0 | Need hook script for site update - ```

- one for updating/building the slides cache manifest

- one for .zip'ing the studio samples

First is done, but should be put into a .sh script on in place of running

appcfg.py update.

```

Original issue reported on code.google.com by `ericbide...@html5rocks.com` on 2 Aug 2010 a... | priority | need hook script for site update one for updating building the slides cache manifest one for zip ing the studio samples first is done but should be put into a sh script on in place of running appcfg py update original issue reported on code google com by ericbide com on aug at | 1 |

683,499 | 23,384,600,029 | IssuesEvent | 2022-08-11 12:45:19 | TheYellowArchitect/doubledamnation | https://api.github.com/repos/TheYellowArchitect/doubledamnation | opened | Remake Enemy Behaviour | enemy ai low priority | It is no secret that the code for the enemies is **not** well-designed. After all, it was made back in 2018, I didn't even know a design pattern back then.

I didn't read on AI, it was quite literally handmade without any guidance, I still remember the "breakthroughs" and how making the AI felt like exploring, happy ti... | 1.0 | Remake Enemy Behaviour - It is no secret that the code for the enemies is **not** well-designed. After all, it was made back in 2018, I didn't even know a design pattern back then.

I didn't read on AI, it was quite literally handmade without any guidance, I still remember the "breakthroughs" and how making the AI felt... | priority | remake enemy behaviour it is no secret that the code for the enemies is not well designed after all it was made back in i didn t even know a design pattern back then i didn t read on ai it was quite literally handmade without any guidance i still remember the breakthroughs and how making the ai felt li... | 1 |

780,854 | 27,411,010,206 | IssuesEvent | 2023-03-01 10:31:25 | horizon-efrei/HorizonBot | https://api.github.com/repos/horizon-efrei/HorizonBot | closed | Feature for class representatives: homework TODO list | type: feature difficulty: complex status: awaiting approval scope: class groups priority: lowest | Set of commands for semi-automatically managing a homework to-do list for class representatives in their classes

Commands to provide: !todo create <list of subjects>, !todo add <subject> <title> <due date>, !todo remove <subject/title>, !todo edit <title>, !todo archive

Example layout (de... | 1.0 | Feature for class representatives: homework TODO list - Set of commands for semi-automatically managing a homework to-do list for class representatives in their classes

Commands to provide: !todo create <list of subjects>, !todo add <subject> <title> <due date>, !todo remove <subject/title>, !todo edit <inti... | priority | feature for class representatives homework todo list set of commands for semi automatically managing a homework to do list for class representatives in their classes commands to provide todo create todo add todo remove todo edit todo archive example layout homework to do de... | 1 |

250,614 | 7,979,146,271 | IssuesEvent | 2018-07-17 20:41:58 | conveyal/analysis-ui | https://api.github.com/repos/conveyal/analysis-ui | closed | Support email should not be hard-coded | low priority small task | We may want to specify the support email in the same place we specify API keys, instead of https://github.com/conveyal/analysis-ui/blob/c9a53e9f74386de40450d5d9e4d3b0f852b14b0a/lib/components/application.js#L245

This would make sure that our support email address only shows up for our supported deployments. | 1.0 | Support email should not be hard-coded - We may want to specify the support email in the same place we specify API keys, instead of https://github.com/conveyal/analysis-ui/blob/c9a53e9f74386de40450d5d9e4d3b0f852b14b0a/lib/components/application.js#L245

This would make sure that our support email address only shows u... | priority | support email should not be hard coded we may want to specify the support email in the same place we specify api keys instead of this would make sure that our support email address only shows up for our supported deployments | 1 |

470,732 | 13,543,433,083 | IssuesEvent | 2020-09-16 18:57:16 | zephyrproject-rtos/zephyr | https://api.github.com/repos/zephyrproject-rtos/zephyr | closed | [Coverity CID :212426] Unrecoverable parse warning in drivers/wifi/eswifi/eswifi_socket_offload.c | Coverity bug priority: low |

Static code scan issues found in file:

https://github.com/zephyrproject-rtos/zephyr/tree/66bd06a7d1f9e4682faafbc551046af695fa1060/drivers/wifi/eswifi/eswifi_socket_offload.c#L502

Category: Parse warnings

Function: ``

Component: Drivers

CID: [212426](https://scan9.coverity.com/reports.htm#v29726/p12996/mergedDefectId... | 1.0 | [Coverity CID :212426] Unrecoverable parse warning in drivers/wifi/eswifi/eswifi_socket_offload.c -

Static code scan issues found in file:

https://github.com/zephyrproject-rtos/zephyr/tree/66bd06a7d1f9e4682faafbc551046af695fa1060/drivers/wifi/eswifi/eswifi_socket_offload.c#L502

Category: Parse warnings

Function: ``

... | priority | unrecoverable parse warning in drivers wifi eswifi eswifi socket offload c static code scan issues found in file category parse warnings function component drivers cid details const struct fd op vtable eswifi socket fd op vtable ... | 1 |

806,219 | 29,806,536,787 | IssuesEvent | 2023-06-16 12:08:16 | penrose/penrose | https://api.github.com/repos/penrose/penrose | opened | feat: Make it possible to "bake" diagrams | kind:enhancement system:optimization system:style kind:usability priority:feature request layout priority:low | ### Issue

Penrose uses random sampling to initialize variables (e.g., those marked `?` in a Style program, or shape properties that are not explicitly specified). If a user is happy with a particular instance of a diagram, they would like to be able to reproduce this diagram later down the road. For this reason, w... | 2.0 | feat: Make it possible to "bake" diagrams - ### Issue

Penrose uses random sampling to initialize variables (e.g., those marked `?` in a Style program, or shape properties that are not explicitly specified). If a user is happy with a particular instance of a diagram, they would like to be able to reproduce this diag... | priority | feat make it possible to bake diagrams issue penrose uses random sampling to initialize variables e g those marked in a style program or shape properties that are not explicitly specified if a user is happy with a particular instance of a diagram they would like to be able to reproduce this diag... | 1 |

78,014 | 3,508,687,744 | IssuesEvent | 2016-01-08 19:02:54 | LunaNode/lobster | https://api.github.com/repos/LunaNode/lobster | closed | SolusVM with OpenVZ: burst memory on creation | low-priority | Context: for SolusVM we only require setup of a single Lobster plan. Then we use customdisk, customcpu, etc. to configure the plan so that it matches the one in the Lobster database.

For OpenVZ, the vserver-create returns an invalid custom burst memory error if we set custommemory. Currently we are using a work-arou... | 1.0 | SolusVM with OpenVZ: burst memory on creation - Context: for SolusVM we only require setup of a single Lobster plan. Then we use customdisk, customcpu, etc. to configure the plan so that it matches the one in the Lobster database.

For OpenVZ, the vserver-create returns an invalid custom burst memory error if we set ... | priority | solusvm with openvz burst memory on creation context for solusvm we only require setup of a single lobster plan then we use customdisk customcpu etc to configure the plan so that it matches the one in the lobster database for openvz the vserver create returns an invalid custom burst memory error if we set ... | 1 |

159,825 | 6,062,201,755 | IssuesEvent | 2017-06-14 08:50:56 | python/mypy | https://api.github.com/repos/python/mypy | opened | Use plugin to support SQLAlchemy table definitions | feature priority-2-low topic-plugins | We'll also need stubs for SQLAlchemy as they were removed from typeshed some time ago. Current work on stubs is happening at https://github.com/JelleZijlstra/sqlalchemy-stubs. | 1.0 | Use plugin to support SQLAlchemy table definitions - We'll also need stubs for SQLAlchemy as they were removed from typeshed some time ago. Current work on stubs is happening at https://github.com/JelleZijlstra/sqlalchemy-stubs. | priority | use plugin to support sqlalchemy table definitions we ll also need stubs for sqlalchemy as they were removed from typeshed some time ago current work on stubs is happening at | 1 |

777,495 | 27,281,970,566 | IssuesEvent | 2023-02-23 10:43:19 | uhh-cms/columnflow | https://api.github.com/repos/uhh-cms/columnflow | opened | Add tasks and helpers to write pyhf workspaces | enhancement low-priority | We define our statistical models in an experiment agnostic way, allowing for various formats for exporting actual fit models (stat. model + data). So far, we have a combine datacard writer and an accompanying task that creates cards.

We should provide the same mechanism for Pyhf workspaces, which should be fairly ea... | 1.0 | Add tasks and helpers to write pyhf workspaces - We define our statistical models in an experiment agnostic way, allowing for various formats for exporting actual fit models (stat. model + data). So far, we have a combine datacard writer and an accompanying task that creates cards.

We should provide the same mechani... | priority | add tasks and helpers to write pyhf workspaces we define our statistical models in an experiment agnostic way allowing for various formats for exporting actual fit models stat model data so far we have a combine datacard writer and an accompanying task that creates cards we should provide the same mechani... | 1 |

445,948 | 12,838,084,003 | IssuesEvent | 2020-07-07 16:49:55 | wp-media/wp-rocket | https://api.github.com/repos/wp-media/wp-rocket | closed | Duplicate ID caused by Optimize CSS Delivery option | community effort: [S] module: file optimization needs: acceptance criteria priority: low type: bug | **Before submitting an issue please check that you’ve completed the following steps:**

- Made sure you’re on the latest version ✓

- Used the search feature to ensure that the bug hasn’t been reported before ✓

**Describe the bug**

Have developed a theme for a client and checked my theme with w3 validator. There ar... | 1.0 | Duplicate ID caused by Optimize CSS Delivery option - **Before submitting an issue please check that you’ve completed the following steps:**

- Made sure you’re on the latest version ✓

- Used the search feature to ensure that the bug hasn’t been reported before ✓

**Describe the bug**

Have developed a theme for a c... | priority | duplicate id caused by optimize css delivery option before submitting an issue please check that you’ve completed the following steps made sure you’re on the latest version ✓ used the search feature to ensure that the bug hasn’t been reported before ✓ describe the bug have developed a theme for a c... | 1 |

824,789 | 31,199,304,361 | IssuesEvent | 2023-08-18 00:36:00 | awslabs/aws-ec2rescue-linux | https://api.github.com/repos/awslabs/aws-ec2rescue-linux | closed | Parse metrics from metrics collect modules | enhancement low priority request for comment | Similar reasoning behind this as #72

We could potentially use the postdiag modules here for this - basically, the ask would be to take the relevant metrics from collect modules and parse them into json for use with a monitoring system.

Since the output from tools is nonstandard we'd need to handle parsing on a p... | 1.0 | Parse metrics from metrics collect modules - Similar reasoning behind this as #72

We could potentially use the postdiag modules here for this - basically, the ask would be to take the relevant metrics from collect modules and parse them into json for use with a monitoring system.

Since the output from tools is n... | priority | parse metrics from metrics collect modules similar reasoning behind this as we could potentially use the postdiag modules here for this basically the ask would be to take the relevant metrics from collect modules and parse them into json for use with a monitoring system since the output from tools is no... | 1 |

604,790 | 18,718,973,372 | IssuesEvent | 2021-11-03 09:34:37 | aau-giraf/weekplanner | https://api.github.com/repos/aau-giraf/weekplanner | closed | As a user I would like messages that are displayed to me to be consistent and grammatically correct | Type: chore Priority: low Good First Issue Trustee | **Is your feature request related to a problem? Please describe.**

It seems a bit lazy to not use the correct plural or singular form when managing items in the application, such as deleting and copying. There are also many instances where a question mark is missing.

**Describe the solution you'd like**

A ternary... | 1.0 | As a user I would like messages that are displayed to me to be consistent and grammatically correct - **Is your feature request related to a problem? Please describe.**

It seems a bit lazy to not use the correct plural or singular form when managing items in the application, such as deleting and copying. There are als... | priority | as a user i would like messages that are displayed to me to be consistent and grammatically correct is your feature request related to a problem please describe it seems a bit lazy to not use the correct plural or singular form when managing items in the application such as deleting and copying there are als... | 1 |

306,274 | 9,383,218,664 | IssuesEvent | 2019-04-05 02:15:21 | squizlabs/PHP_CodeSniffer | https://api.github.com/repos/squizlabs/PHP_CodeSniffer | closed | --config-set installed_paths overwrites itself | Enhancement Low Priority | phpcs 2.8.1

attempted

```php

phpcs --config-set installed_paths /path/to/one

phpcs --config-set installed_paths /path/to/two

```

### result

only `/path/to/two` was added to the installed_paths

### expected

both `/path/to/one` and `/path/to/two` should be added to the installed_paths.

### note

`phpcs --... | 1.0 | --config-set installed_paths overwrites itself - phpcs 2.8.1

attempted

```php

phpcs --config-set installed_paths /path/to/one

phpcs --config-set installed_paths /path/to/two

```

### result

only `/path/to/two` was added to the installed_paths

### expected

both `/path/to/one` and `/path/to/two` should be adde... | priority | config set installed paths overwrites itself phpcs attempted php phpcs config set installed paths path to one phpcs config set installed paths path to two result only path to two was added to the installed paths expected both path to one and path to two should be adde... | 1 |

416,129 | 12,140,046,616 | IssuesEvent | 2020-04-23 19:52:20 | containrrr/watchtower | https://api.github.com/repos/containrrr/watchtower | closed | Watchtower HTTP API proposal | Do not close Priority: Low Status: Available Type: Enhancement | Since Watchtower basically watches for docker registries and actively pulls images in order to check for outdated containers, it keeps continuously incrementing the registries' pull counter, making them useless. The counts can no longer be considered as user downloads/pulls.

This issue has been impacting some of my ... | 1.0 | Watchtower HTTP API proposal - Since Watchtower basically watches for docker registries and actively pulls images in order to check for outdated containers, it keeps continuously incrementing the registries' pull counter, making them useless. The counts can no longer be considered as user downloads/pulls.

This issue... | priority | watchtower http api proposal since watchtower basically watches for docker registries and actively pulls images in order to check for outdated containers it keeps continuously incrementing the registries pull counter making them useless the counts can no longer be considered as user downloads pulls this issue... | 1 |

831,251 | 32,042,808,542 | IssuesEvent | 2023-09-22 21:01:45 | cucapra/filament | https://api.github.com/repos/cucapra/filament | opened | Testing floating point/iterative divider implementations for accuracy instead of conformity | low priority C: tools | Currently, the `floating-point` directory of implementations tests for conformity among a couple other implementations, most of which are directly derived from an implementation in verilog. The adder was taken externally from [here](https://github.com/suhasr1991/5-Stage-Pipelined-IEEE-Single-Precision-Floating-Point-Ad... | 1.0 | Testing floating point/iterative divider implementations for accuracy instead of conformity - Currently, the `floating-point` directory of implementations tests for conformity among a couple other implementations, most of which are directly derived from an implementation in verilog. The adder was taken externally from ... | priority | testing floating point iterative divider implementations for accuracy instead of conformity currently the floating point directory of implementations tests for conformity among a couple other implementations most of which are directly derived from an implementation in verilog the adder was taken externally from ... | 1 |

405,392 | 11,872,516,730 | IssuesEvent | 2020-03-26 15:55:48 | ComPWA/pycompwa | https://api.github.com/repos/ComPWA/pycompwa | closed | Improve urls of subpages of the pycompwa website | Priority: Low Status: In Progress Type: Enhancement Type: Maintenance | Currently, some urls of our website, contain an underscore, like so:

* https://compwa.github.io/_api/pycompwa.html

* https://compwa.github.io/_examples/Quickstart.html

This is only case for pages that have been included through a [`toctree`](https://www.sphinx-doc.org/en/master/usage/restructuredtext/directives.... | 1.0 | Improve urls of subpages of the pycompwa website - Currently, some urls of our website, contain an underscore, like so:

* https://compwa.github.io/_api/pycompwa.html

* https://compwa.github.io/_examples/Quickstart.html

This is only case for pages that have been included through a [`toctree`](https://www.sphinx-d... | priority | improve urls of subpages of the pycompwa website currently some urls of our website contain an underscore like so this is only case for pages that have been included through a the underscores were added to render the pages correctly through the it could be however that this is no longe... | 1 |

544,578 | 15,894,731,289 | IssuesEvent | 2021-04-11 11:21:47 | marcusolsson/grafana-hourly-heatmap-panel | https://api.github.com/repos/marcusolsson/grafana-hourly-heatmap-panel | closed | Color option for null values | priority/low type/enhancement | If there is a null value in the data, the heatmap prints black color. It would be nice to have a color palette to select a color for null values. | 1.0 | Color option for null values - If there is a null value in the data, the heatmap prints black color. It would be nice to have a color palette to select a color for null values. | priority | color option for null values if there is a null value in the data the heatmap prints black color it would be nice to have a color palette to select a color for null values | 1 |

92,501 | 3,871,643,981 | IssuesEvent | 2016-04-11 10:40:52 | osm2vectortiles/osm2vectortiles | https://api.github.com/repos/osm2vectortiles/osm2vectortiles | opened | Implement network and shield field | low-priority | - [ ] Implement field shield of layer road_label

- [ ] Implement field network of layer rail_station_label

- The OSM key [network](http://wiki.openstreetmap.org/wiki/Key:network) can be used to determine in which country/region (network) the road or railway is. | 1.0 | Implement network and shield field - - [ ] Implement field shield of layer road_label

- [ ] Implement field network of layer rail_station_label

- The OSM key [network](http://wiki.openstreetmap.org/wiki/Key:network) can be used to determine in which country/region (network) the road or railway is. | priority | implement network and shield field implement field shield of layer road label implement field network of layer rail station label the osm key can be used to determine in which country region network the road or railway is | 1 |

438,923 | 12,663,474,754 | IssuesEvent | 2020-06-18 01:29:11 | vmware/clarity | https://api.github.com/repos/vmware/clarity | closed | Datagrid memory leak and DOM elements leak. | component: datagrid flag: has workaround priority: 1 low status: needs investigation type: bug | ```

[x] bug

[ ] feature request

[ ] enhancement

```

### Expected behavior

Datagrid not to leak memory and DOM elements.

### Actual behavior

After constantly updating the table using a socket, memory leaks and element leaks are created.

### Reproduction of behavior

- https://stackblitz.com/edit/angular-4... | 1.0 | Datagrid memory leak and DOM elements leak. - ```

[x] bug

[ ] feature request

[ ] enhancement

```

### Expected behavior

Datagrid not to leak memory and DOM elements.

### Actual behavior

After constantly updating the table using a socket, memory leaks and element leaks are created.

### Reproduction of beh... | priority | datagrid memory leak and dom elements leak bug feature request enhancement expected behavior datagrid not to leak memory and dom elements actual behavior after constantly updating the table using a socket memory leaks and element leaks are created reproduction of behavior ... | 1 |

438,120 | 12,619,567,073 | IssuesEvent | 2020-06-13 01:16:00 | skylight-hq/skylight.digital | https://api.github.com/repos/skylight-hq/skylight.digital | closed | Auto generate pages for blog post authors, blog post tags, and project team members | priority:low | Right now each page has to be set up manually. | 1.0 | Auto generate pages for blog post authors, blog post tags, and project team members - Right now each page has to be set up manually. | priority | auto generate pages for blog post authors blog post tags and project team members right now each page has to be set up manually | 1 |

256,216 | 8,127,038,622 | IssuesEvent | 2018-08-17 06:17:52 | aowen87/BAR | https://api.github.com/repos/aowen87/BAR | closed | Append version number onto build_visit and visit-install that we put on the Web. | Expected Use: 3 - Occasional Feature Impact: 2 - Low Priority: Normal Support Group: DOE/ASC | cq-id: VisIt00008818

cq-submitter: Brad Whitlock

cq-submit-date: 12/01/08

A couple of external users have become confused with build_visit and visit-install and have ended up using them with wrong versions of the binary distributions and source code. The users both suggested that we append the version number to th... | 1.0 | Append version number onto build_visit and visit-install that we put on the Web. - cq-id: VisIt00008818

cq-submitter: Brad Whitlock

cq-submit-date: 12/01/08

A couple of external users have become confused with build_visit and visit-install and have ended up using them with wrong versions of the binary distribution... | priority | append version number onto build visit and visit install that we put on the web cq id cq submitter brad whitlock cq submit date a couple of external users have become confused with build visit and visit install and have ended up using them with wrong versions of the binary distributions and source co... | 1 |

671,447 | 22,761,544,955 | IssuesEvent | 2022-07-07 21:47:20 | markmac99/UKmon-shared | https://api.github.com/repos/markmac99/UKmon-shared | closed | Add container support for cartopy | enhancement Low priority | This would allow OS maps to be used as the backdrop for the ground-track maps.

However cartopy is poorly supported and has to be built from source, along with GEOS, PROJ4 and SQLITE3, which makes it quite a task. | 1.0 | Add container support for cartopy - This would allow OS maps to be used as the backdrop for the ground-track maps.

However cartopy is poorly supported and has to be built from source, along with GEOS, PROJ4 and SQLITE3, which makes it quite a task. | priority | add container support for cartopy this would allow os maps to be used as the backdrop for the ground track maps however cartopy is poorly supported and has to be built from source along with geos and which makes it quite a task | 1 |

29,254 | 2,714,206,549 | IssuesEvent | 2015-04-10 00:43:08 | hamiltont/clasp | https://api.github.com/repos/hamiltont/clasp | opened | Option for custom Android system, boot, and kernel images. | Low priority | _From @bamos on September 24, 2014 15:33_

Specifically to run nbd -- http://bamos.github.io/2014/09/08/nbd-android/

_Copied from original issue: hamiltont/attack#53_ | 1.0 | Option for custom Android system, boot, and kernel images. - _From @bamos on September 24, 2014 15:33_

Specifically to run nbd -- http://bamos.github.io/2014/09/08/nbd-android/

_Copied from original issue: hamiltont/attack#53_ | priority | option for custom android system boot and kernel images from bamos on september specifically to run nbd copied from original issue hamiltont attack | 1 |

228,531 | 7,552,579,968 | IssuesEvent | 2018-04-19 01:09:32 | OperationCode/operationcode_backend | https://api.github.com/repos/OperationCode/operationcode_backend | closed | Rake task to tag community leaders | Priority: Low Status: In Progress Type: Feature | <!-- Please fill out one of the sections below based on the type of issue you're creating -->

# Feature

## Why is this feature being added?

<!-- What problem is it solving? What value does it add? -->

Now that https://github.com/OperationCode/operationcode_backend/pull/292 is merged, we need a rake task to initiall... | 1.0 | Rake task to tag community leaders - <!-- Please fill out one of the sections below based on the type of issue you're creating -->

# Feature

## Why is this feature being added?

<!-- What problem is it solving? What value does it add? -->

Now that https://github.com/OperationCode/operationcode_backend/pull/292 is me... | priority | rake task to tag community leaders feature why is this feature being added now that is merged we need a rake task to initially tag the appropriate users in prod with the community leader tag what should your feature do collaborate with hollomancer or whomever he deems appropriate to... | 1 |

35,022 | 2,789,753,415 | IssuesEvent | 2015-05-08 21:16:41 | google/google-visualization-api-issues | https://api.github.com/repos/google/google-visualization-api-issues | opened | Visualization to understand XML | Priority-Low Type-Enhancement | Original [issue 55](https://code.google.com/p/google-visualization-api-issues/issues/detail?id=55) created by google-admin on 2009-09-16T14:22:48.000Z:

<b>What would you like to see us add to this API?</b>

It would have been nice if all visualization also understands XML.

In that case we have to only fetch the XML ... | 1.0 | Visualization to understand XML - Original [issue 55](https://code.google.com/p/google-visualization-api-issues/issues/detail?id=55) created by google-admin on 2009-09-16T14:22:48.000Z:

<b>What would you like to see us add to this API?</b>

It would have been nice if all visualization also understands XML.

In that c... | priority | visualization to understand xml original created by google admin on what would you like to see us add to this api it would have been nice if all visualization also understands xml in that case we have to only fetch the xml from the server and pass it to visualization this will remove the co... | 1 |

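614,576 | 19,185,841,565 | IssuesEvent | 2021-12-05 06:59:01 | eshoku/frontend | https://api.github.com/repos/eshoku/frontend | opened | Non-organizers can access the edit page via URL | bug Priority: low | ## Overview

There is a bug where users who are not the organizer can access the edit page via its URL.

The backend checks permissions, so room information cannot actually be edited, but this confuses users and should be fixed. | 1.0 | Non-organizers can access the edit page via URL - ## Overview

There is a bug where users who are not the organizer can access the edit page via its URL.

The backend checks permissions, so room information cannot actually be edited, but this confuses users and should be fixed. | priority | non organizers can access the edit page via url overview there is a bug where users who are not the organizer can access the edit page via its url the backend checks permissions so room information cannot actually be edited but this confuses users and should be fixed | 1 |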

121,182 | 4,805,925,332 | IssuesEvent | 2016-11-02 17:13:31 | FrozenSand/UrbanTerror4 | https://api.github.com/repos/FrozenSand/UrbanTerror4 | closed | Auto-completion is not working for several commands | confirmed low priority | Auto completion in 4.3 isn't working anymore for all auth commands, and potentially others.

| 1.0 | Auto-completion is not working for several commands - Auto completion in 4.3 isn't working anymore for all auth commands, and potentially others.

| priority | auto completion is not working for several commands auto completion in isnt t working anymore for all auth commands and potentially others | 1 |

545,698 | 15,955,375,664 | IssuesEvent | 2021-04-15 14:32:38 | Gird-the-Grid/Grid-the-Grid | https://api.github.com/repos/Gird-the-Grid/Grid-the-Grid | opened | Frontend: Register | enhancement low priority | * Show loading button while waiting for register response

* Display error message if `Success: false` (Email user / other error) | 1.0 | Frontend: Register - * Show loading button while waiting for register response

* Display error message if `Success: false` (Email user / other error) | priority | frontend register show loading button while waiting for register response display error message if success false email user other error | 1 |

35,101 | 2,789,798,526 | IssuesEvent | 2015-05-08 21:33:42 | google/google-visualization-api-issues | https://api.github.com/repos/google/google-visualization-api-issues | opened | Comparing of different time-periods | Priority-Low Type-Enhancement | Original [issue 140](https://code.google.com/p/google-visualization-api-issues/issues/detail?id=140) created by orwant on 2009-11-30T21:45:51.000Z:

<b>What would you like to see us add to this API?</b>

A feature where you can compare values to different time-periods would be

very helpful.

E.G. How developed my sa... | 1.0 | Comparing of different time-periods - Original [issue 140](https://code.google.com/p/google-visualization-api-issues/issues/detail?id=140) created by orwant on 2009-11-30T21:45:51.000Z:

<b>What would you like to see us add to this API?</b>

A feature where you can compare values to different time-periods would be

v... | priority | comparing of different time periods original created by orwant on what would you like to see us add to this api a feature where you can compare values to different time periods would be very helpful e g how developed my sales in the last month to the same month in the previous year or ... | 1 |

353,970 | 10,561,380,709 | IssuesEvent | 2019-10-04 15:45:01 | fgpv-vpgf/fgpv-vpgf | https://api.github.com/repos/fgpv-vpgf/fgpv-vpgf | closed | API projectFromPoint function is wrong | bug-type: unexpected behavior priority: low problem: bug type: corrective | API `XY.projectFromPoint()` has a few things off with it.

It's marking the result with the incoming projection instead of the outcome projection.

Also allows you to default to the XY object's co-ords, which makes no sense as they are already in latlong.

The function itself really doesn't need to be on the XY... | 1.0 | API projectFromPoint function is wrong - API `XY.projectFromPoint()` has a few things off with it.

It's marking the result with the incoming projection instead of the outcome projection.

Also allows you to default to the XY object's co-ords, which makes no sense as they are already in latlong.

The function i... | priority | api projectfrompoint function is wrong api xy projectfrompoint has a few things off with it it s marking the result with the incoming projection instead of the outcome projection also allows you to default to the xy object s co ords which makes no sense as they are already in latlong the function i... | 1 |

414,706 | 12,110,534,493 | IssuesEvent | 2020-04-21 10:35:06 | Icyr/DnDApp | https://api.github.com/repos/Icyr/DnDApp | closed | Add loading indicators. | change request priority: low | There is no delay on add (thanks to Firestore).

EDIT: Room has a delay on create, we need to add loading for it too.

But there is a delay on initial load.

We need to display loading indicator when list is updated. | 1.0 | Add loading indicators. - There is no delay on add (thanks to Firestore).

EDIT: Room has a delay on create, we need to add loading for it too.

But there is a delay on initial load.

We need to display loading indicator when list is updated. | priority | add loading indicators there is no delay on add thanks to firestore edit room has a delay on create we need to add loading for it too but there is a delay on initial load we need to display loading indicator when list is updated | 1 |

467,203 | 13,443,404,207 | IssuesEvent | 2020-09-08 08:18:47 | input-output-hk/cardano-wallet | https://api.github.com/repos/input-output-hk/cardano-wallet | closed | Listing transaction when node is still in the Byron era may fail with 500 | BUG PRIORITY:LOW SEVERITY:HIGH | # Context

<!-- WHEN CREATED

Any information that is useful to understand the bug and the subsystem

it evolves in. References to documentation and or other tickets are

welcome.

-->

| Information | - |

| --- | --- ... | 1.0 | Listing transaction when node is still in the Byron era may fail with 500 - # Context

<!-- WHEN CREATED

Any information that is useful to understand the bug and the subsystem

it evolves in. References to documentation and or other tickets are

welcome.

-->

| Information | - ... | priority | listing transaction when node is still in the byron era may fail with context when created any information that is useful to understand the bug and the subsystem it evolves in references to documentation and or other tickets are welcome information ... | 1 |

646,526 | 21,051,694,848 | IssuesEvent | 2022-03-31 21:08:36 | rathena/rathena | https://api.github.com/repos/rathena/rathena | closed | Vending Table store wrong coordinate if merchant warped during vending state. | status:confirmed component:core priority:low mode:renewal mode:prerenewal type:bug | <!-- NOTE: Anything within these brackets will be hidden on the preview of the Issue. -->

* **rAthena Hash**:

https://github.com/rathena/rathena/commit/73a8d1365e84252e37185f8c579a8825abd925c4

<!-- Please specify the rAthena [GitHub hash](https://help.github.com/articles/autolinked-references-and-urls/#commit-sha... | 1.0 | Vending Table store wrong coordinate if merchant warped during vending state. - <!-- NOTE: Anything within these brackets will be hidden on the preview of the Issue. -->

* **rAthena Hash**:

https://github.com/rathena/rathena/commit/73a8d1365e84252e37185f8c579a8825abd925c4

<!-- Please specify the rAthena [GitHub h... | priority | vending table store wrong coordinate if merchant warped during vending state rathena hash please specify the rathena on which you encountered this issue how to get your github hash cd your rathena directory git rev parse short head copy the resulting hash cli... | 1 |

674,664 | 23,061,359,382 | IssuesEvent | 2022-07-25 10:13:29 | SeldonIO/alibi-detect | https://api.github.com/repos/SeldonIO/alibi-detect | opened | Review content of metadata in detector config files | Priority: Low Type: Engineering | Our detector `self.meta` attribute currently contains the following information:

```python

DEFAULT_META = {

"name": None,

"online": None, # true or false

"data_type": None, # tabular, image or time-series

"version": None,

"detector_type": None # drift, outlier or adversarial

} # type: ... | 1.0 | Review content of metadata in detector config files - Our detector `self.meta` attribute currently contains the following information:

```python

DEFAULT_META = {

"name": None,

"online": None, # true or false

"data_type": None, # tabular, image or time-series

"version": None,

"detector_typ... | priority | review content of metadata in detector config files our detector self meta attribute currently contains the following information python default meta name none online none true or false data type none tabular image or time series version none detector typ... | 1 |

226,261 | 7,516,866,513 | IssuesEvent | 2018-04-12 00:12:56 | atlassian/react-beautiful-dnd | https://api.github.com/repos/atlassian/react-beautiful-dnd | closed | Add a real browser test | engineering health good first issue idea priority: low | I am not sure if we need this - but I thought I would raise it anyway

Generally I am quite against browser tests. However, I think it could be worthwhile having one smoke test that runs in a real browser. I am fairly keen to use [puppeteer](https://github.com/GoogleChrome/puppeteer) for this one. It would not need t... | 1.0 | Add a real browser test - I am not sure if we need this - but I thought I would raise it anyway

Generally I am quite against browser tests. However, I think it could be worthwhile having one smoke test that runs in a real browser. I am fairly keen to use [puppeteer](https://github.com/GoogleChrome/puppeteer) for thi... | priority | add a real browser test i am not sure if we need this but i thought i would raise it anyway generally i am quite against browser tests however i think it could be worthwhile having one smoke test that runs in a real browser i am fairly keen to use for this one it would not need to run in all browsers th... | 1 |

416,897 | 12,152,421,797 | IssuesEvent | 2020-04-24 22:13:32 | TykTechnologies/tyk | https://api.github.com/repos/TykTechnologies/tyk | closed | Support authorisation time based ( business-hours / business-days / etc.) | Priority: Low customer request enhancement wontfix | **Do you want to request a *feature* or report a *bug*?**

Feature

**What is the current behavior?**

No option to have time based access control to apis.

Use case - backend services are responding only during biz hours and are shutdown or going through maintenance outside this slot.

**What is the expected beha... | 1.0 | Support authorisation time based ( business-hours / business-days / etc.) - **Do you want to request a *feature* or report a *bug*?**

Feature

**What is the current behavior?**

No option to have time based access control to apis.

Use case - backend services are responding only during biz hours and are shutdown or ... | priority | support authorisation time based business hours business days etc do you want to request a feature or report a bug feature what is the current behavior no option to have time based access control to apis use case backend services are responding only during biz hours and are shutdown or ... | 1 |

450,597 | 13,016,984,889 | IssuesEvent | 2020-07-26 09:53:17 | kubesphere/kubesphere | https://api.github.com/repos/kubesphere/kubesphere | closed | duplicated workspace role alert msg should be displayed before saving role | area/console kind/bug kind/need-to-verify priority/low | ## English only!

**Note! GitHub Issues only support English; please submit Chinese-language issues on the [forum](https://kubesphere.com.cn/forum/).**

**General remarks**

> Please delete this section including header before submitting

>

> This form is to report bugs. For general usage questions refer to our Slack channel

> [KubeSphere-users](https://jo... | 1.0 | duplicated workspace role alert msg should be displayed before saving role - ## English only!

**Note! GitHub Issues only support English; please submit Chinese-language issues on the [forum](https://kubesphere.com.cn/forum/).**

**General remarks**

> Please delete this section including header before submitting

>

> This form is to report bugs. For general usage... | priority | duplicated workspace role alert msg should be displayed before saving role english only note github issues only support english please submit chinese language issues on the forum general remarks please delete this section including header before submitting this form is to report bugs for general usage questions refer to our slack chann... | 1 |

31,452 | 2,732,910,563 | IssuesEvent | 2015-04-17 10:10:13 | tiku01/oryx-editor | https://api.github.com/repos/tiku01/oryx-editor | closed | Layout Management | auto-migrated Priority-Low Schedule-LongTerm Type-Enhancement | ```

The current layouting possibilities are very rudimentary. The goal is to

offer process model layout manager. Because the layouts of different

stencil sets differ, layouts must be stencil set specific.

```

Original issue reported on code.google.com by `NicoPete...@gmail.com` on 17 Apr 2008 at 1:44 | 1.0 | Layout Management - ```

The current layouting possibilities are very rudimentary. The goal is to

offer process model layout manager. Because the layouts of different

stencil sets differ, layouts must be stencil set specific.

```

Original issue reported on code.google.com by `NicoPete...@gmail.com` on 17 Apr 2008 at... | priority | layout management the current layouting possibilities are very rudimentary the goal is to offer process model layout manager because the layouts of different stencil sets differ layouts must be stencil set specific original issue reported on code google com by nicopete gmail com on apr at | 1 |

491,192 | 14,146,644,219 | IssuesEvent | 2020-11-10 19:32:17 | powercomm/PCM-Dashboard | https://api.github.com/repos/powercomm/PCM-Dashboard | opened | When a new PCM is added, history chart is blank, user experience needs improvement | Deficiency Priority: Low | Type 30 Edit form needs to fill history chart when no history points are returned from PCM_AC, a straight line will make it easier to see the threshold alignments. | 1.0 | When a new PCM is added, history chart is blank, user experience needs improvement - Type 30 Edit form needs to fill history chart when no history points are returned from PCM_AC, a straight line will make it easier to see the threshold alignments. | priority | when a new pcm is added history chart is blank user experience needs improvement type edit form needs to fill history chart when no history points are returned from pcm ac a straight line will make it easier to see the threshold alignments | 1 |

493,989 | 14,243,036,089 | IssuesEvent | 2020-11-19 03:20:16 | containrrr/watchtower | https://api.github.com/repos/containrrr/watchtower | closed | Update images that have never been launched as a container | Priority: Low Status: Available Status: Stale Type: Enhancement | I have a use case where I want images updated on a machine but I haven't necessarily ran the image yet as a container. In this case, `--include-stopped` takes no effect (a container never ran), and there are no options to look at images only.

Expanded problem:

I have a CI system. The worker instances, when created,... | 1.0 | Update images that have never been launched as a container - I have a use case where I want images updated on a machine but I haven't necessarily ran the image yet as a container. In this case, `--include-stopped` takes no effect (a container never ran), and there are no options to look at images only.

Expanded prob... | priority | update images that have never been launched as a container i have a use case where i want images updated on a machine but i haven t necessarily ran the image yet as a container in this case include stopped takes no effect a container never ran and there are no options to look at images only expanded prob... | 1 |

664,399 | 22,268,774,705 | IssuesEvent | 2022-06-10 10:05:27 | mozilla/perfcompare | https://api.github.com/repos/mozilla/perfcompare | closed | export store creating function for tests | priority: low | from Julien:

> I believe the next step would be to export a store creating function instead of the store object itself, and create an empty store for each test, so that tests are always fully isolated. Happy to discuss about it more later! I was thinking that as a first step, if you don't want to change existing tests... | 1.0 | export store creating function for tests - from Julien:

> I believe the next step would be to export a store creating function instead of the store object itself, and create an empty store for each test, so that tests are always fully isolated. Happy to discuss about it more later! I was thinking that as a first step,... | priority | export store creating function for tests from julien i believe the next step would be to export a store creating function instead of the store object itself and create an empty store for each test so that tests are always fully isolated happy to discuss about it more later i was thinking that as a first step ... | 1 |

41,341 | 2,868,999,142 | IssuesEvent | 2015-06-05 22:28:46 | dart-lang/args | https://api.github.com/repos/dart-lang/args | opened | zsh/bash autocompletion generator from ArgParser | enhancement Priority-Low | <a href="https://github.com/amouravski"><img src="https://avatars.githubusercontent.com/u/264967?v=3" align="left" width="96" height="96"hspace="10"></img></a> **Issue by [amouravski](https://github.com/amouravski)**

_Originally opened as dart-lang/sdk#8389_

----

zsh and bash both have a shell autocomplete capability... | 1.0 | zsh/bash autocompletion generator from ArgParser - <a href="https://github.com/amouravski"><img src="https://avatars.githubusercontent.com/u/264967?v=3" align="left" width="96" height="96"hspace="10"></img></a> **Issue by [amouravski](https://github.com/amouravski)**

_Originally opened as dart-lang/sdk#8389_

----

zsh... | priority | zsh bash autocompletion generator from argparser issue by originally opened as dart lang sdk zsh and bash both have a shell autocomplete capability so that you can quickly complete flags arguments etc it d be amazing if we could ingest an argparser and spit out zsh bash completion files so t... | 1 |

306,404 | 9,392,654,150 | IssuesEvent | 2019-04-07 03:18:03 | generative-music/generative.fm | https://api.github.com/repos/generative-music/generative.fm | closed | Update indicator animation | enhancement high priority low effort | Animate the update indicator above the "About" tab label so users click on it. | 1.0 | Update indicator animation - Animate the update indicator above the "About" tab label so users click on it. | priority | update indicator animation animate the update indicator above the about tab label so users click on it | 1 |

827,297 | 31,765,057,160 | IssuesEvent | 2023-09-12 08:16:30 | filamentphp/filament | https://api.github.com/repos/filamentphp/filament | opened | Test | bug unconfirmed low priority | ### Package

filament/filament

### Package Version

vNothing

### Laravel Version

vNothing

### Livewire Version

vNothing

### PHP Version

vNothing

### Problem description

N/A

### Expected behavior

N/A

### Steps to reproduce

N/A

### Reproduction repository

N/A

### Relevant log output

```shell

N/A

```

| 1.0 | Test - ### Package

filament/filament

### Package Version

vNothing

### Laravel Version

vNothing

### Livewire Version

vNothing

### PHP Version

vNothing

### Problem description

N/A

### Expected behavior

N/A

### Steps to reproduce

N/A

### Reproduction repository

N/A

### Relevant log output

```shell

N/A

`... | priority | test package filament filament package version vnothing laravel version vnothing livewire version vnothing php version vnothing problem description n a expected behavior n a steps to reproduce n a reproduction repository n a relevant log output shell n a ... | 1 |

35,609 | 2,791,519,588 | IssuesEvent | 2015-05-10 06:42:47 | minj/foxtrick | https://api.github.com/repos/minj/foxtrick | closed | Consider adding 'force English flags' in CountryList | feature Misc needs-feedback Priority-Low | Original [issue 1217](https://code.google.com/p/foxtrick/issues/detail?id=1217) created by [minj](mailto:4mr.minj@gmail.com) on 2014-07-14T08:32:13.000Z:

**From:** knullig

**PostID:** [(16412248.177)](https://www.hattrick.org/goto.ashx?path=/Forum/Read.aspx%3Ft%3D16412248%26n%3D177%26v%3D4)

**Reply:** [(16412248.1)](h... | 1.0 | Consider adding 'force English flags' in CountryList - Original [issue 1217](https://code.google.com/p/foxtrick/issues/detail?id=1217) created by [minj](mailto:4mr.minj@gmail.com) on 2014-07-14T08:32:13.000Z:

**From:** knullig

**PostID:** [(16412248.177)](https://www.hattrick.org/goto.ashx?path=/Forum/Read.aspx%3Ft%3D... | priority | consider adding force english flags in countrylist original created by mailto minj gmail com on from knullig postid reply to everyone datetime message i like to request options country names everywhere in same language when i check... | 1 |

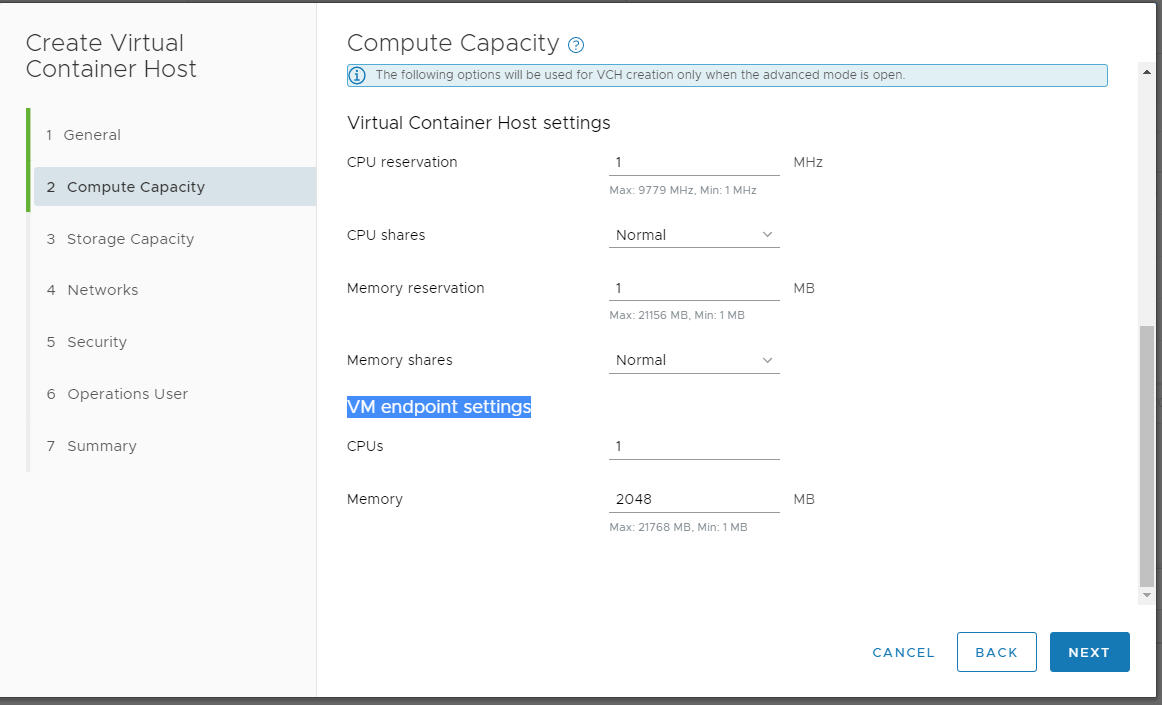

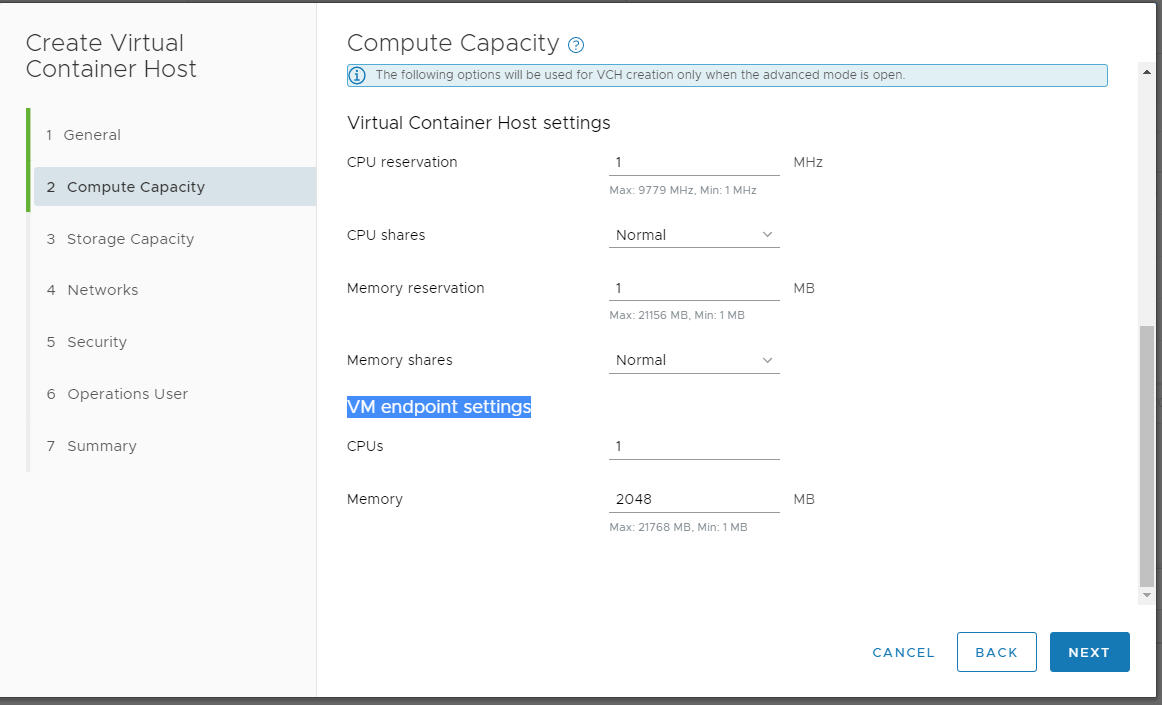

196,323 | 6,926,823,400 | IssuesEvent | 2017-11-30 20:32:44 | vmware/vic-ui | https://api.github.com/repos/vmware/vic-ui | closed | Create VCH wizard says "VM endpoint" instead of "Endpoint VM" | area/ui priority/low team/lifecycle | In the Compute page of the VCH deployment wizard, the text "VM endpoint settings" should be "VCH endpoint VM settings:

| 1.0 | Create VCH wizard says "VM endpoint" instead of "Endpoint VM" - In the Compute page of the VCH deployment wizard, the text "VM endpoint settings" should be "VCH endpoint VM settings:

| priority | create vch wizard says vm endpoint instead of endpoint vm in the compute page of the vch deployment wizard the text vm endpoint settings should be vch endpoint vm settings | 1 |

396,031 | 11,700,391,601 | IssuesEvent | 2020-03-06 17:23:50 | department-of-veterans-affairs/caseflow | https://api.github.com/repos/department-of-veterans-affairs/caseflow | closed | Investigate cache job runtime increase, part 2! | Priority: Low Team: Echo 🐬 Type: Investigation Type: Tech-Improvement | Runtimes for [the `UpdateCachedAppealsAttributesJob`](https://github.com/department-of-veterans-affairs/caseflow/blob/master/app/jobs/update_cached_appeals_attributes_job.rb) increased from significantly after [recent changes](https://github.com/department-of-veterans-affairs/caseflow/pull/13285). Short runtimes allow ... | 1.0 | Investigate cache job runtime increase, part 2! - Runtimes for [the `UpdateCachedAppealsAttributesJob`](https://github.com/department-of-veterans-affairs/caseflow/blob/master/app/jobs/update_cached_appeals_attributes_job.rb) increased from significantly after [recent changes](https://github.com/department-of-veterans-a... | priority | investigate cache job runtime increase part runtimes for increased from significantly after short runtimes allow us to run the job more frequently and keep the cache more up to date this ticket exists to determine why runtimes increased and address it if possible acceptance criter... | 1 |

298,069 | 9,195,552,186 | IssuesEvent | 2019-03-07 02:54:58 | gw2efficiency/issues | https://api.github.com/repos/gw2efficiency/issues | closed | Miscalculating Deaths/hour and/or Playtime | 1-Type: Bug 2-Priority: C 3-Complexity: Low 4-Impact: Low 5-Area: Account 9-Status: For next release 9-Status: Ready for Release | I have 4,142h 33m Playtime and 7,666 deaths as per the https://gw2efficiency.com/account/statistics page.

In the https://gw2efficiency.com/account/statistics/statistics.deathCountPerHour Page i have 1.85 deaths/hour which seems correct.

In the https://gw2efficiency.com/account/overview Bottom it shows:

You playe... | 1.0 | Miscalculating Deaths/hour and/or Playtime - I have 4,142h 33m Playtime and 7,666 deaths as per the https://gw2efficiency.com/account/statistics page.

In the https://gw2efficiency.com/account/statistics/statistics.deathCountPerHour Page i have 1.85 deaths/hour which seems correct.

In the https://gw2efficiency.com... | priority | miscalculating deaths hour and or playtime i have playtime and deaths as per the page in the page i have deaths hour which seems correct in the bottom it shows you played a total of hours across all characters during that time you died a total of times that s deaths per hou... | 1 |

712,032 | 24,482,597,367 | IssuesEvent | 2022-10-09 02:30:26 | chaotic-aur/packages | https://api.github.com/repos/chaotic-aur/packages | closed | [Request] openssl3-git | request:new-pkg priority:low | ### Link to the package(s) in the AUR

https://aur.archlinux.org/packages/openssl3-git

### Utility this package has for you

provides library file for ruffle, a flash emulator

### Do you consider the package(s) to be useful for every Chaotic-AUR user?

No, but for a few.

### Do you consider the package to be useful ... | 1.0 | [Request] openssl3-git - ### Link to the package(s) in the AUR

https://aur.archlinux.org/packages/openssl3-git

### Utility this package has for you

provides library file for ruffle, a flash emulator

### Do you consider the package(s) to be useful for every Chaotic-AUR user?

No, but for a few.

### Do you consider ... | priority | git link to the package s in the aur utility this package has for you provides library file for ruffle a flash emulator do you consider the package s to be useful for every chaotic aur user no but for a few do you consider the package to be useful for feature testing preview ye... | 1 |

185,817 | 6,730,853,842 | IssuesEvent | 2017-10-18 03:48:51 | wireservice/csvkit | https://api.github.com/repos/wireservice/csvkit | closed | Install with Homebrew | feature Low Priority | [Homebrew](http://brew.sh)

Tried with `brew diy` but

```

Error: Couldn't determine build system

```

| 1.0 | Install with Homebrew - [Homebrew](http://brew.sh)

Tried with `brew diy` but

```

Error: Couldn't determine build system

```

| priority | install with homebrew tried with brew diy but error couldn t determine build system | 1 |

485,031 | 13,960,082,618 | IssuesEvent | 2020-10-24 19:20:47 | learnweb/moodle-mod_ratingallocate | https://api.github.com/repos/learnweb/moodle-mod_ratingallocate | closed | Adapt the plugin's code style to Moodle's code style | Effort: Very High Priority: Low enhancement | This is definitely not the most important issue, but should be tackled sometime:

If the code style would conform to Moodle's code style, the plugin would (hopefully) be

- easier to understand (especially for those who are new to the plugin but have experience with Moodle)

- easier to maintain (as a result from the fir... | 1.0 | Adapt the plugin's code style to Moodle's code style - This is definitely not the most important issue, but should be tackled sometime:

If the code style would conform to Moodle's code style, the plugin would (hopefully) be

- easier to understand (especially for those who are new to the plugin but have experience with... | priority | adapt the plugin s code style to moodle s code style this is definitely not the most important issue but should be tackled sometime if the code style would conform to moodle s code style the plugin would hopefully be easier to understand especially for those who are new to the plugin but have experience with... | 1 |

539,924 | 15,797,128,845 | IssuesEvent | 2021-04-02 16:07:58 | neurostuff/NiMARE | https://api.github.com/repos/neurostuff/NiMARE | closed | Generic data type check and initialization function | effort: low impact: low priority: low refactoring | ## Summary

<!--What would you like changed/added and why?-->

There are a number of methods where we expect an object of a specific class (e.g., a KernelTransformer), either initialized or not. The check we have (e.g., see below) is pretty straightforward, and could easily be abstracted out to its own function, which ... | 1.0 | Generic data type check and initialization function - ## Summary

<!--What would you like changed/added and why?-->

There are a number of methods where we expect an object of a specific class (e.g., a KernelTransformer), either initialized or not. The check we have (e.g., see below) is pretty straightforward, and coul... | priority | generic data type check and initialization function summary there are a number of methods where we expect an object of a specific class e g a kerneltransformer either initialized or not the check we have e g see below is pretty straightforward and could easily be abstracted out to its own function ... | 1 |

355,314 | 10,579,271,950 | IssuesEvent | 2019-10-08 02:00:25 | momentum-mod/game | https://api.github.com/repos/momentum-mod/game | closed | OSX: map selector color inconsistencies | Priority: Low Size: Small Type: Bug | (not sure if it only happens on osx)

1. right click map haven't downloaded > add to library (map red) > download map (map green) > restart game > map turns red again (not intentional) > map will appear as not downloaded > right click to "download" map again > map turns white but doesn't download because it's already... | 1.0 | OSX: map selector color inconsistencies - (not sure if it only happens on osx)

1. right click map haven't downloaded > add to library (map red) > download map (map green) > restart game > map turns red again (not intentional) > map will appear as not downloaded > right click to "download" map again > map turns white... | priority | osx map selector color inconsistencies not sure if it only happens on osx right click map haven t downloaded add to library map red download map map green restart game map turns red again not intentional map will appear as not downloaded right click to download map again map turns white... | 1 |

552,959 | 16,331,928,287 | IssuesEvent | 2021-05-12 10:16:10 | stackabletech/t2 | https://api.github.com/repos/stackabletech/t2 | closed | configure firewalld properly | priority/low size/M type/enhancement | On the host t2.stackable.tech, we disabled firewalld (host firewall) because it stood in our way and we didn't take the time to configure it properly. As we have an enabled firewall in the network, this isn't risky as of now. Nevertheless, we should take the time to configure it properly "some time". | 1.0 | configure firewalld properly - On the host t2.stackable.tech, we disabled firewalld (host firewall) because it stood in our way and we didn't take the time to configure it properly. As we have an enabled firewall in the network, this isn't risky as of now. Nevertheless, we should take the time to configure it properly ... | priority | configure firewalld properly on the host stackable tech we disabled firewalld host firewall because it stood in our way and we didn t take the time to configure it properly as we have an enabled firewall in the network this isn t risky as of now nevertheless we should take the time to configure it properly ... | 1 |

208,438 | 7,154,496,884 | IssuesEvent | 2018-01-26 08:47:53 | qutebrowser/qutebrowser | https://api.github.com/repos/qutebrowser/qutebrowser | closed | view-source: scheme doesn't load qutebrowser's internal JavaScript | component: QtWebEngine priority: 2 - low qt | As stated in the title, keybindings for scrolling - gg, G, ctrl+d and ctrl+u - don't work in view-source mode, though 'j' and 'k' work normally. I understand that most users don't scroll that much through source, but it would still be a nice thing to have, and doesn't seem much different from ordinary page scrolling.

... | 1.0 | view-source: scheme doesn't load qutebrowser's internal JavaScript - As stated in the title, keybindings for scrolling - gg, G, ctrl+d and ctrl+u - don't work in view-source mode, though 'j' and 'k' work normally. I understand that most users don't scroll that much through source, but it would still be a nice thing to ... | priority | view source scheme doesn t load qutebrowser s internal javascript as stated in the title keybindings for scrolling gg g ctrl d and ctrl u don t work in view source mode though j and k work normally i understand that most users don t scroll that much through source but it would still be a nice thing to ... | 1 |

531,371 | 15,496,195,473 | IssuesEvent | 2021-03-11 02:14:07 | ankidroid/Anki-Android | https://api.github.com/repos/ankidroid/Anki-Android | closed | Note Editor: Add Icons to Multimedia Menu | Enhancement Good First Issue! Help Wanted Multimedia Note Editor Priority-Low Stale UI | Good first issue - need to replace icons with XML drawables (Via Add - Vector Icon), then set a style/tint on the XML icons.

https://github.com/ankidroid/Anki-Android/blob/71fdd0445f020ec7f798e29bc08dc13d658d9234/AnkiDroid/src/main/java/com/ichi2/anki/NoteEditor.java#L1405-L1406

Menu currently looks like:

![im... | 1.0 | Note Editor: Add Icons to Multimedia Menu - Good first issue - need to replace icons with XML drawables (Via Add - Vector Icon), then set a style/tint on the XML icons.

https://github.com/ankidroid/Anki-Android/blob/71fdd0445f020ec7f798e29bc08dc13d658d9234/AnkiDroid/src/main/java/com/ichi2/anki/NoteEditor.java#L1405... | priority | note editor add icons to multimedia menu good first issue need to replace icons with xml drawables via add vector icon then set a style tint on the xml icons menu currently looks like | 1 |

81,406 | 3,590,557,716 | IssuesEvent | 2016-02-01 06:52:18 | jamesmontemagno/Xamarin.Plugins | https://api.github.com/repos/jamesmontemagno/Xamarin.Plugins | closed | [Feature Request] (Media/Android) Add ability to push MediaIntent so that returning doesn't force application resume with Xamarin.Forms | enhancement Media priority-low | Currently on Android, media is taken using an intent. The intent is always launched with the "NewTask" flag, which means that when the user is done getting/taking media, a Xamarin.Forms application will go through the OnResume method. Is it possible to keep the intent in the current application's Task to prevent the On... | 1.0 | [Feature Request] (Media/Android) Add ability to push MediaIntent so that returning doesn't force application resume with Xamarin.Forms - Currently on Android, media is taken using an intent. The intent is always launched with the "NewTask" flag, which means that when the user is done getting/taking media, a Xamarin.Fo... | priority | media android add ability to push mediaintent so that returning doesn t force application resume with xamarin forms currently on android media is taken using an intent the intent is always launched with the newtask flag which means that when the user is done getting taking media a xamarin forms application ... | 1 |

216,394 | 7,307,618,145 | IssuesEvent | 2018-02-28 03:47:22 | dmwm/WMCore | https://api.github.com/repos/dmwm/WMCore | closed | Update CMSSW in the json templates | Low Priority | The following workflows fail when run on Singularity nodes:

TaskChain_LumiMask_multiRun

ReDigi_AllFlag

ReDigi_cmsRun2

StepChain_ReDigi3

StepChain_LumiMask

ReDigi_cmsRun3

ReDigi_LumiMask

at least...

Just in case, the error is:

```

An exception of category 'PluginLibraryLoadError' occurred w... | 1.0 | Update CMSSW in the json templates - The following workflows fail when run on Singularity nodes:

TaskChain_LumiMask_multiRun

ReDigi_AllFlag

ReDigi_cmsRun2

StepChain_ReDigi3

StepChain_LumiMask

ReDigi_cmsRun3

ReDigi_LumiMask

at least...

Just in case, the error is:

```

An exception of categor... | priority | update cmssw in the json templates the following workflows fail when run on singularity nodes taskchain lumimask multirun redigi allflag redigi stepchain stepchain lumimask redigi redigi lumimask at least just in case the error is an exception of category pluginlibrarylo... | 1 |

798,548 | 28,289,559,052 | IssuesEvent | 2023-04-09 02:44:23 | AY2223S2-CS2103T-W09-4/tp | https://api.github.com/repos/AY2223S2-CS2103T-W09-4/tp | closed | [PE-D][Tester D] [Minor] days instead of day | priority.Low type.FeatureFlaw |

<!--session: 1680242808805-81f07706-8824-452e-a98e-ad93d1f47077--><!--Version: Web v3.4.7-->

-------------

Labels: `severity.VeryLow` `type.FunctionalityBug`

original: rockman007372/ped#9 | 1.0 | [PE-D][Tester D] [Minor] days instead of day -

<!--session: 1680242808805-81f07706-8824-452e-a98e-ad93d1f47077--><!--Version: Web v3.4.7-->

-------------

Labels: `severity.VeryLow` `type.Functionalit... | priority | days instead of day labels severity verylow type functionalitybug original ped | 1 |

644,285 | 20,972,829,348 | IssuesEvent | 2022-03-28 12:58:05 | mito-ds/monorepo | https://api.github.com/repos/mito-ds/monorepo | closed | Pivot table aggregation methods like `median` should be disabled for non-number columns | type: mitosheet effort: 2 priority: low | **Describe the bug**

Add a row to a pivot table, and then a value that is a string column. Set the aggregation method to `mean`, and get an error.

**Expected behavior**

The invalid options for the value aggregation should be disabled (with an error message) on non-number columns (e.g. everything but count, and... | 1.0 | Pivot table aggregation methods like `median` should be disabled for non-number columns - **Describe the bug**

Add a row to a pivot table, and then a value that is a string column. Set the aggregation method to `mean`, and get an error.

**Expected behavior**

The invalid options for the value aggregation should... | priority | pivot table aggregation methods like median should be disabled for non number columns describe the bug add a row to a pivot table and then a value that is a string column set the aggregation method to mean and get an error expected behavior the invalid options for the value aggregation should... | 1 |

56,099 | 3,078,216,071 | IssuesEvent | 2015-08-21 08:43:38 | pavel-pimenov/flylinkdc-r5xx | https://api.github.com/repos/pavel-pimenov/flylinkdc-r5xx | closed | Folders with media files in the share have a "sound quality" | enhancement imported Priority-Low | _From [reaor...@gmail.com](https://code.google.com/u/102418317896447533964/) on March 11, 2011 19:29:43_

Screenshot below

**Attachment:** [Безымянный.JPG](http://code.google.com/p/flylinkdc/issues/detail?id=392)

_Original issue: http://code.google.com/p/flylinkdc/issues/detail?id=392_ | 1.0 | Folders with media files in the share have a "sound quality" - _From [reaor...@gmail.com](https://code.google.com/u/102418317896447533964/) on March 11, 2011 19:29:43_

Screenshot below

**Attachment:** [Безымянный.JPG](http://code.google.com/p/flylinkdc/issues/detail?id=392)

_Original issue: http://code.google.com/p/flylinkdc/issues... | priority | folders with media files in the share have a sound quality from on march screenshot below attachment original issue | 1 |

491,427 | 14,163,820,106 | IssuesEvent | 2020-11-12 03:20:55 | momentum-mod/game | https://api.github.com/repos/momentum-mod/game | closed | info_particle_systems with "start_active 1" don't appear on map load | Priority: Low Size: Medium Type: Bug | **Describe the bug**

Any info_particle_system with the "start_active" keyvalue doesn't appear unless restarted via entity inputs.

**To Reproduce**

Steps to reproduce the behavior:

- Load a [map](https://github.com/momentum-mod/game/files/4366426/firetest.zip) with the aforementioned info_particle_system.

- Do `e... | 1.0 | info_particle_systems with "start_active 1" don't appear on map load - **Describe the bug**

Any info_particle_system with the "start_active" keyvalue doesn't appear unless restarted via entity inputs.

**To Reproduce**

Steps to reproduce the behavior:

- Load a [map](https://github.com/momentum-mod/game/files/43664... | priority | info particle systems with start active don t appear on map load describe the bug any info particle system with the start active keyvalue doesn t appear unless restarted via entity inputs to reproduce steps to reproduce the behavior load a with the aforementioned info particle system d... | 1 |

477,684 | 13,766,373,003 | IssuesEvent | 2020-10-07 14:29:47 | ansible/awx | https://api.github.com/repos/ansible/awx | opened | Host details variables showing JSON format without indentation on load | component:ui_next priority:low state:needs_devel type:bug | <!-- Issues are for **concrete, actionable bugs and feature requests** only - if you're just asking for debugging help or technical support, please use:

- http://webchat.freenode.net/?channels=ansible-awx

- https://groups.google.com/forum/#!forum/awx-project

We have to limit this because of limited volunteer tim... | 1.0 | Host details variables showing JSON format without indentation on load - <!-- Issues are for **concrete, actionable bugs and feature requests** only - if you're just asking for debugging help or technical support, please use:

- http://webchat.freenode.net/?channels=ansible-awx

- https://groups.google.com/forum/#!fo... | priority | host details variables showing json format without indentation on load issues are for concrete actionable bugs and feature requests only if you re just asking for debugging help or technical support please use we have to limit this because of limited volunteer time to respond to issues ... | 1 |

709,032 | 24,365,653,485 | IssuesEvent | 2022-10-03 14:58:49 | NCAR/wrfcloud | https://api.github.com/repos/NCAR/wrfcloud | opened | Add workflow manager to runtime components | priority: low alert: NEED MORE DEFINITION component: NWP components | ## Describe the New Feature ##

Consider adding a workflow manager, e.g. ecflow or rocoto, to the runtime components to help manage the monitoring and job dependencies.

### Acceptance Testing ###

*List input data types and sources.*

*Describe tests required for new functionality.*

### Time Estimate ###

*Estim... | 1.0 | Add workflow manager to runtime components - ## Describe the New Feature ##

Consider adding a workflow manager, e.g. ecflow or rocoto, to the runtime components to help manage the monitoring and job dependencies.

### Acceptance Testing ###

*List input data types and sources.*

*Describe tests required for new fun... | priority | add workflow manager to runtime components describe the new feature consider adding a workflow manager e g ecflow or rocoto to the runtime components to help manage the monitoring and job dependencies acceptance testing list input data types and sources describe tests required for new fun... | 1 |

157,577 | 6,008,757,547 | IssuesEvent | 2017-06-06 08:46:16 | lxde/lxqt | https://api.github.com/repos/lxde/lxqt | closed | Some widgets can not be themed | low-priority lxqt-panel qss/themes | Maybe I'm missing something, but these are some small issues I have found while theming:

**LXQT Runner**

The search results (QListView or "commandList") use the system theme, but still refuse to be styled with lxqt-runner.qss. The last update did not change this, or I did not notice how to do it.

**Panel Plugins*... | 1.0 | Some widgets can not be themed - Maybe I'm missing something, but these are some small issues I have found while theming:

**LXQT Runner**

The search results (QListView or "commandList") use the system theme, but still refuse to be styled with lxqt-runner.qss. The last update did not change this, or I did not notic... | priority | some widgets can not be themed maybe i m missing something but these are some small issues i have found while theming lxqt runner the search results qlistview or commandlist use the system theme but still refuse to be styled with lxqt runner qss the last update did not change this or i did not notic... | 1 |

194,819 | 6,899,517,119 | IssuesEvent | 2017-11-24 14:08:07 | highcharts/highcharts | https://api.github.com/repos/highcharts/highcharts | closed | One data label not showing. | Bug Priority:Low | When I create a stacked bar chart with horizontal bars and data labels shown on the stacked bars, one data label is not displayed.

As shown in the snapshot,

![1](https://user-images.githubusercontent.com/33360132/33087258-ef7c0c7a-cf12-11e7-8ddf-fc1aa1f5f3ed.PNG)

The data la... | 1.0 | One data label not showing. - When I create a stacked bar chart with horizontal bars and data labels shown on the stacked bars, one data label is not displayed.

As shown in the snapshot,

` which returns domain size of initial/final state sets. Those might contain “deleted” states (final/initial at some point but not now, yet still allocated with `false` value in `NumberPredicate`), so when... | 1.0 | Complementation over non-existent states - In classical complement algorithm implemented in `Mata::Nfa::complement_classical`, we call `Mata::Nfa::Nfa::size()` which returns domain size of initial/final state sets. Those might contain “deleted” states (final/initial at some point but not now, yet still allocated with `... | priority | complementation over non existent states in classical complement algorithm implemented in mata nfa complement classical we call mata nfa nfa size which returns domain size of initial final state sets those might contain “deleted” states final initial at some point but not now yet still allocated with ... | 1 |

585,245 | 17,483,511,838 | IssuesEvent | 2021-08-09 07:53:18 | chaosblade-io/chaosblade | https://api.github.com/repos/chaosblade-io/chaosblade | closed | Does the dd command in the mac system not support the iflag attribute? There will be problems when simulating the disk fill scene | priority/low type/feature chaosblade-exec-os | Running the following command on my Mac produces an error:

blade create disk fill -d --mount-point /home --size 1024

The error is:

{"code":604,"success":false,"error":"dd: unknown operand iflag\n exit status 1 exit status 1"}

After reading the discussion above, I ran the following command directly and it also produces an error

The command is:

dd if=/dev/zero of=/home/chaos_filldisk.log.dat bs=1b count=1 iflag=fullblock

The error is:

dd: unknown operand if... | 1.0 | Does the dd command in the mac system not support the iflag attribute? There will be problems when simulating the disk fill scene - Running the following command on my Mac produces an error:

blade create disk fill -d --mount-point /home --size 1024

The error is:

{"code":604,"success":false,"error":"dd: unknown operand iflag\n exit status 1 exit status 1"}

After reading the above... | priority | does the dd command in the mac system not support the iflag attribute there will be problems when simulating the disk fill scene running the following command on my mac produces an error blade create disk fill d mount point home size the error is code success false error dd unknown operand iflag n exit status exit status after reading the discussion above i... | 1 |

59,326 | 3,105,473,842 | IssuesEvent | 2015-08-31 21:06:11 | UniVR/GolfVR | https://api.github.com/repos/UniVR/GolfVR | opened | Explain rules introduction | priority:low type:idea | Explain the rules before the game begins

_Watch the ball to shoot (the time the ball is watched will define the power)

_... (to be defined) | 1.0 | Explain rules introduction - Explain the rules before the game begins

_Watch the ball to shoot (the time the ball is watched will define the power)

_... (to be defined) | priority | explain rules introduction explain the rules before the game begins watch the ball to shoot the time the ball is watched will define the power to be defined | 1 |

233,092 | 7,693,577,662 | IssuesEvent | 2018-05-18 04:38:28 | ElektraInitiative/libelektra | https://api.github.com/repos/ElektraInitiative/libelektra | opened | doc: changes to icheck script | low priority | Forgot to add section to doc/news/_preparation_next_release.md mentioning that the icheck script now no longer leaves the base directory. | 1.0 | doc: changes to icheck script - Forgot to add section to doc/news/_preparation_next_release.md mentioning that the icheck script now no longer leaves the base directory. | priority | doc changes to icheck script forgot to add section to doc news preparation next release md mentioning that the icheck script now no longer leaves the base directory | 1 |

744,867 | 25,958,769,527 | IssuesEvent | 2022-12-18 15:51:19 | CosmosOS/Cosmos | https://api.github.com/repos/CosmosOS/Cosmos | closed | Deleting file and directory not working | Bug Up for Grabs Complexity: Medium Priority: Low Area: File System | #### Area of Cosmos - What area of Cosmos are we dealing with?

File system

#### Expected Behaviour - What do you think that should happen?

I should be able to delete files and directories when I want.