Unnamed: 0 int64 1 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 7 112 | repo_url stringlengths 36 141 | action stringclasses 3 values | title stringlengths 3 438 | labels stringlengths 4 308 | body stringlengths 7 254k | index stringclasses 7 values | text_combine stringlengths 96 254k | label stringclasses 2 values | text stringlengths 96 246k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

1,824 | 6,577,335,030 | IssuesEvent | 2017-09-12 00:11:21 | ansible/ansible-modules-core | https://api.github.com/repos/ansible/ansible-modules-core | closed | postgresql_db owner for tables and views | affects_2.0 bug_report feature_idea waiting_on_maintainer | ##### ISSUE TYPE

- Bug

- Feature Idea

##### COMPONENT NAME

postgresql_db

##### ANSIBLE VERSION

```

ansible 2.0.2.0

config file = /home/toga/PycharmProjects/ansible/ansible.cfg

configured module search path = Default w/o overrides

```

##### CONFIGURATION

ansible.cfg

```

[defaults]

inventory = hosts

ask_vault_pass=True

[privilege_escalation]

become=True

become_ask_pass=True

```

##### OS / ENVIRONMENT

```

Ubuntu 14.04.4 LTS

virtualenv with ansible

PostgreSQL 9.3

```

##### SUMMARY

The module `postgresql_db` should recursively set the database owner for all included tables, views and sequences.

`ALTER DATABASE %s OWNER TO %s` only affects the database but not the tables in the database.

After restoring a dump as the postgres user, all tables are owned by postgres and are not reassigned to the user named in the task.
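A minimal sketch of what a recursive ownership change could look like, assuming the caller already has a list of `(schema, name, kind)` tuples for the relations in the database. The helper names `quote_ident` and `owner_statements` are hypothetical and are not part of the `postgresql_db` module; the sketch only builds the `ALTER ... OWNER TO` statements and does not execute them.

```python
def quote_ident(name: str) -> str:
    """Quote a PostgreSQL identifier: wrap in double quotes, double any embedded quote."""
    return '"' + name.replace('"', '""') + '"'


# Hypothetical mapping from a relation kind to the keyword used in ALTER.
ALTER_KEYWORD = {"table": "TABLE", "view": "VIEW", "sequence": "SEQUENCE"}


def owner_statements(relations, new_owner):
    """Build one ALTER ... OWNER TO statement per relation.

    relations: iterable of (schema, name, kind) tuples, kind in ALTER_KEYWORD.
    new_owner: role that should own every listed relation.
    """
    statements = []
    for schema, name, kind in relations:
        keyword = ALTER_KEYWORD[kind]
        target = f"{quote_ident(schema)}.{quote_ident(name)}"
        statements.append(
            f"ALTER {keyword} {target} OWNER TO {quote_ident(new_owner)};"
        )
    return statements


if __name__ == "__main__":
    # The table from the reproduction steps in this report.
    for statement in owner_statements([("public", "films", "table")], "test"):
        print(statement)
```

Generating one statement per object keeps the change explicit and reviewable. Whether the module should do this by default or behind an option is a design question the report leaves open.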

##### STEPS TO REPRODUCE

Connect to psql

```

CREATE USER test;

CREATE DATABASE test OWNER postgres;

\c test

CREATE TABLE films (

code char(5) CONSTRAINT firstkey PRIMARY KEY,

title varchar(40) NOT NULL,

did integer NOT NULL,

date_prod date,

kind varchar(10),

len interval hour to minute

);

```

User ansible

```

ansible localhost --connection=local -b --become-user postgres -m postgresql_db -a "name=test owner=test"

```

##### EXPECTED RESULTS

```

test=# \l

Name | Owner | Encoding | Collate | Ctype | Access privileges

-----------+----------+----------+-------------+-------------+-----------------------

test | test | UTF8 | de_DE.UTF-8 | de_DE.UTF-8 | =Tc/test2 +

| | | | | test2=CTc/test2

test=# \d

List of relations

Schema | Name | Type | Owner

--------+-------+-------+----------

public | films | table | test

(1 row)

```

##### ACTUAL RESULTS

```

test=# \l

Name | Owner | Encoding | Collate | Ctype | Access privileges

-----------+----------+----------+-------------+-------------+-----------------------

test | test | UTF8 | de_DE.UTF-8 | de_DE.UTF-8 | =Tc/test2 +

| | | | | test2=CTc/test2

test=# \d

List of relations

Schema | Name | Type | Owner

--------+-------+-------+----------

public | films | table | postgres

(1 row)

```

| True | postgresql_db owner for tables and views - ##### ISSUE TYPE

- Bug

- Feature Idea

##### COMPONENT NAME

postgresql_db

##### ANSIBLE VERSION

```

ansible 2.0.2.0

config file = /home/toga/PycharmProjects/ansible/ansible.cfg

configured module search path = Default w/o overrides

```

##### CONFIGURATION

ansible.cfg

```

[defaults]

inventory = hosts

ask_vault_pass=True

[privilege_escalation]

become=True

become_ask_pass=True

```

##### OS / ENVIRONMENT

```

Ubuntu 14.04.4 LTS

virtualenv with ansible

PostgreSQL 9.3

```

##### SUMMARY

The module `postgresql_db` should recursively set the database owner for all included tables, views and sequences.

`ALTER DATABASE %s OWNER TO %s` only affects the database but not the tables in the database.

After restoring a dump as the postgres user, all tables are owned by postgres and are not reassigned to the user named in the task.

##### STEPS TO REPRODUCE

Connect to psql

```

CREATE USER test;

CREATE DATABASE test OWNER postgres;

\c test

CREATE TABLE films (

code char(5) CONSTRAINT firstkey PRIMARY KEY,

title varchar(40) NOT NULL,

did integer NOT NULL,

date_prod date,

kind varchar(10),

len interval hour to minute

);

```

User ansible

```

ansible localhost --connection=local -b --become-user postgres -m postgresql_db -a "name=test owner=test"

```

##### EXPECTED RESULTS

```

test=# \l

Name | Owner | Encoding | Collate | Ctype | Access privileges

-----------+----------+----------+-------------+-------------+-----------------------

test | test | UTF8 | de_DE.UTF-8 | de_DE.UTF-8 | =Tc/test2 +

| | | | | test2=CTc/test2

test=# \d

List of relations

Schema | Name | Type | Owner

--------+-------+-------+----------

public | films | table | test

(1 row)

```

##### ACTUAL RESULTS

```

test=# \l

Name | Owner | Encoding | Collate | Ctype | Access privileges

-----------+----------+----------+-------------+-------------+-----------------------

test | test | UTF8 | de_DE.UTF-8 | de_DE.UTF-8 | =Tc/test2 +

| | | | | test2=CTc/test2

test=# \d

List of relations

Schema | Name | Type | Owner

--------+-------+-------+----------

public | films | table | postgres

(1 row)

```

| main | postgresql db owner for tables and views issue type bug feature idea component name postgresql db ansible version ansible config file home toga pycharmprojects ansible ansible cfg configured module search path default w o overrides configuration ansible cfg inventory hosts ask vault pass true become true become ask pass true os environment ubuntu lts virtualenv with ansible postgresql summary the module postgresql db should recursively set the database owner for all included tables views and sequences alter database s owner to s only affects the database but not the tables in the database after restoring a dump with the postgres user the owner of all tables where set to postgres and won t be reassigned to the user named in the task steps to reproduce connect to psql create user test create database test owner postgres c test create table films code char constraint firstkey primary key title varchar not null did integer not null date prod date kind varchar len interval hour to minute user ansible ansible localhost connection local b become user postgres m postgresql db a name test owner test expected results test l name owner encoding collate ctype access privileges test test de de utf de de utf tc ctc test d list of relations schema name type owner public films table test row actual results test l name owner encoding collate ctype access privileges test test de de utf de de utf tc ctc test d list of relations schema name type owner public films table postgres row | 1 |

19,853 | 10,428,319,387 | IssuesEvent | 2019-09-16 22:11:53 | gate5/test2 | https://api.github.com/repos/gate5/test2 | opened | CVE-2018-10054 (High) detected in h2-1.4.187.jar | security vulnerability | ## CVE-2018-10054 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>h2-1.4.187.jar</b></p></summary>

<p>null</p>

<p>Path to dependency file: /tmp/ws-scm/test2/pom.xml</p>

<p>Path to vulnerable library: epository/com/h2database/h2/1.4.187/h2-1.4.187.jar</p>

<p>

Dependency Hierarchy:

- :x: **h2-1.4.187.jar** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/gate5/test2/commit/9caf3d214cc15f500423a2e431ea111cf9526739">9caf3d214cc15f500423a2e431ea111cf9526739</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

H2 1.4.197, as used in Datomic before 0.9.5697 and other products, allows remote code execution because CREATE ALIAS can execute arbitrary Java code.

<p>Publish Date: 2018-04-11

<p>URL: <a href=https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2018-10054>CVE-2018-10054</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>8.8</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: Low

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2018-10054">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2018-10054</a></p>

<p>Release Date: 2018-04-11</p>

<p>Fix Resolution: 1.4.198</p>

</p>

</details>

<p></p>

| True | CVE-2018-10054 (High) detected in h2-1.4.187.jar - ## CVE-2018-10054 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>h2-1.4.187.jar</b></p></summary>

<p>null</p>

<p>Path to dependency file: /tmp/ws-scm/test2/pom.xml</p>

<p>Path to vulnerable library: epository/com/h2database/h2/1.4.187/h2-1.4.187.jar</p>

<p>

Dependency Hierarchy:

- :x: **h2-1.4.187.jar** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/gate5/test2/commit/9caf3d214cc15f500423a2e431ea111cf9526739">9caf3d214cc15f500423a2e431ea111cf9526739</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

H2 1.4.197, as used in Datomic before 0.9.5697 and other products, allows remote code execution because CREATE ALIAS can execute arbitrary Java code.

<p>Publish Date: 2018-04-11

<p>URL: <a href=https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2018-10054>CVE-2018-10054</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>8.8</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: Low

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2018-10054">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2018-10054</a></p>

<p>Release Date: 2018-04-11</p>

<p>Fix Resolution: 1.4.198</p>

</p>

</details>

<p></p>

| non_main | cve high detected in jar cve high severity vulnerability vulnerable library jar null path to dependency file tmp ws scm pom xml path to vulnerable library epository com jar dependency hierarchy x jar vulnerable library found in head commit a href vulnerability details as used in datomic before and other products allows remote code execution because create alias can execute arbitrary java code publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required low user interaction none scope unchanged impact metrics confidentiality impact high integrity impact high availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution | 0 |

342,333 | 24,738,950,544 | IssuesEvent | 2022-10-21 02:10:16 | AY2223S1-CS2103T-W16-2/tp | https://api.github.com/repos/AY2223S1-CS2103T-W16-2/tp | closed | Remove hard line breaks from markdown files | priority.High documentation.UserGuide documentation.DeveloperGuide documentation bug | * They make it harder to change text

* They don't follow our module's markdown coding style

* They cause rendering issues as different parsers interpret hard breaks differently

We need to wrap this up quickly as we have a draft UG/DG submission due. | 3.0 | Remove hard line breaks from markdown files - * They make it harder to change text

* They don't follow our module's markdown coding style

* They cause rendering issues as different parsers interpret hard breaks differently

We need to wrap this up quickly as we have a draft UG/DG submission due. | non_main | remove hard line breaks from markdown files they make it harder to change text they don t follow our module s markdown coding style they cause rendering issues as different parsers interpret hard breaks differently we need to wrap this up quickly as we have a draft ug dg submission due | 0 |

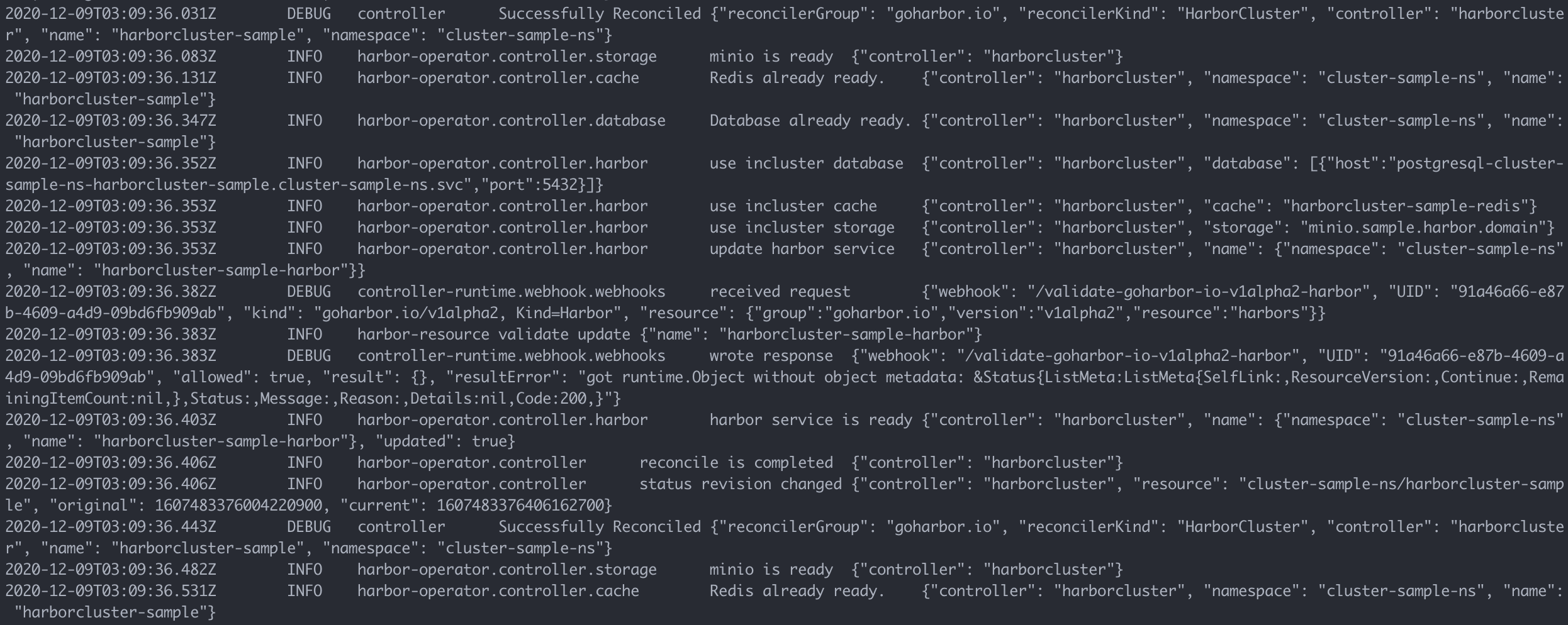

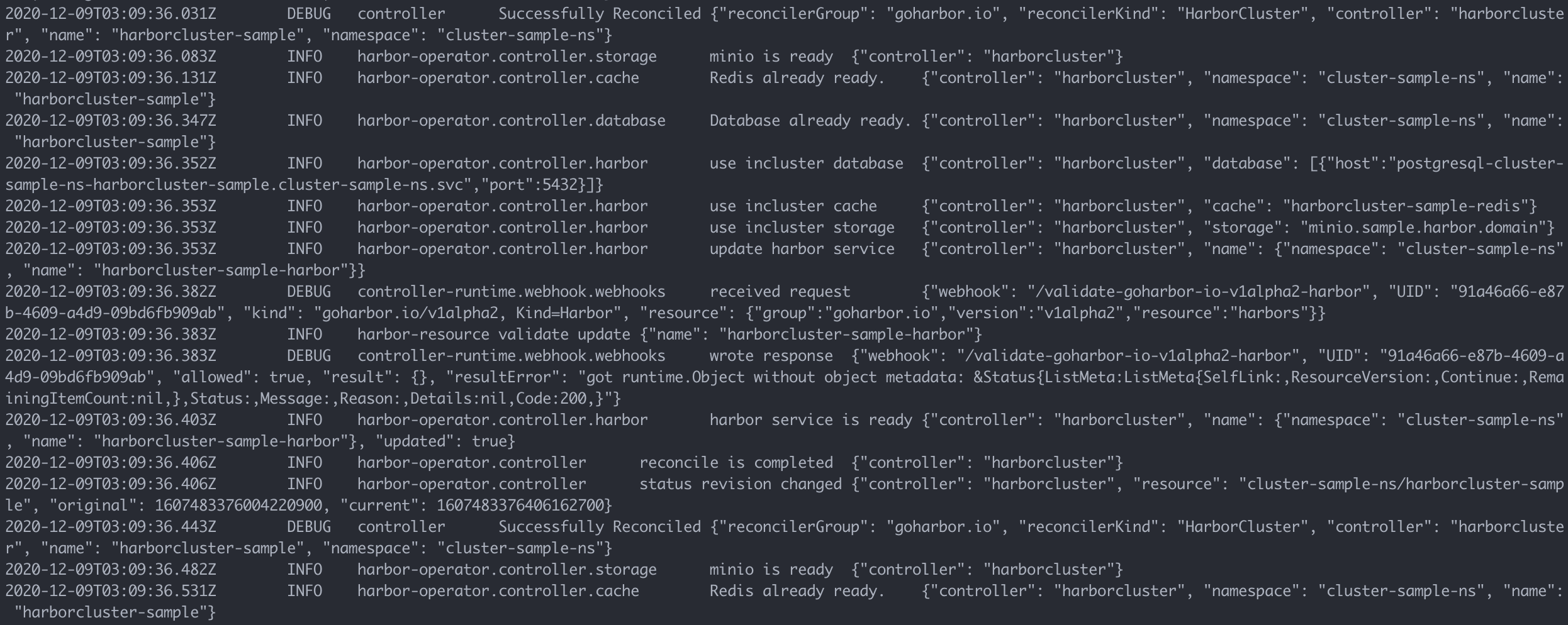

501,797 | 14,533,719,975 | IssuesEvent | 2020-12-15 01:14:30 | goharbor/harbor-operator | https://api.github.com/repos/goharbor/harbor-operator | closed | harbor-operator harbor-cluster controller reconcile dead loop. | area/reconciler kind/bug priority/high reconciler/harbor-cluster release/1.0 | What can we help you?

All service is ready, but harbor-cluster is always reconciled into infinite cycles. | 1.0 | harbor-operator harbor-cluster controller reconcile dead loop. - What can we help you?

All service is ready, but harbor-cluster is always reconciled into infinite cycles. | non_main | harbor operator harbor cluster controller reconcile dead loop what can we help you all service is ready but harbor cluster is always reconciled into infinite cycles | 0 |

5,280 | 26,682,333,414 | IssuesEvent | 2023-01-26 18:45:34 | centerofci/mathesar | https://api.github.com/repos/centerofci/mathesar | opened | Persist visibility of table inspector and its sections within localStorage | type: enhancement work: frontend status: ready restricted: maintainers | ## Current behavior

- The table inspector is enabled when loading a page. Users can hide it, but it always re-appears when reloading.

- The sections within the table inspector work in a similar manner, though some are set to hidden by default.

## Desired behavior

- The visibility of the table inspector and each of its sections is synchronized with localStorage.

- We'll need to figure out a proper schema for storing this data in localStorage, taking into account the likelihood of eventually putting more data in localStorage and possibly needing to migrate existing localStorage data.
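One way to approach the schema question is to store a version number next to the data and run migrations on load. The sketch below is language-neutral in spirit and written in Python only for illustration; the key names (`tableInspectorVisible`, `tableInspector`, `sections`), the version numbers, and the function names are all assumptions, not an existing Mathesar format.

```python
import json

CURRENT_VERSION = 2  # hypothetical current schema version

DEFAULT_PREFS = {"tableInspector": {"visible": True, "sections": {}}}


def migrate_v1_to_v2(data):
    """Hypothetical migration: v1 stored a single flag, v2 nests it per component."""
    return {
        "tableInspector": {
            "visible": data.get("tableInspectorVisible", True),
            "sections": {},
        }
    }


# Each entry upgrades data from the given version to the next one.
MIGRATIONS = {1: migrate_v1_to_v2}


def load_prefs(raw):
    """Parse a stored string and upgrade it step by step to CURRENT_VERSION."""
    if raw is None:
        return DEFAULT_PREFS
    stored = json.loads(raw)
    version = stored.get("version", 1)
    data = stored.get("data", {})
    while version < CURRENT_VERSION:
        data = MIGRATIONS[version](data)
        version += 1
    return data


def dump_prefs(data):
    """Serialize preferences together with the schema version."""
    return json.dumps({"version": CURRENT_VERSION, "data": data})
```

Keeping the version outside the data payload means old entries can be upgraded in place without guessing their shape.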

This is marked as restricted because we're not yet persisting anything to localStorage and I want to make sure we set this up in a manner that provides a clean integration with our Svelte stores.

| True | Persist visibility of table inspector and its sections within localStorage - ## Current behavior

- The table inspector is enabled when loading a page. Users can hide it, but it always re-appears when reloading.

- The sections within the table inspector work in a similar manner, though some are set to hidden by default.

## Desired behavior

- The visibility of the table inspector and each of its sections is synchronized with localStorage.

- We'll need to figure out a proper schema for storing this data in localStorage, taking into account the likelihood of eventually putting more data in localStorage and possibly needing to migrate existing localStorage data.

This is marked as restricted because we're not yet persisting anything to localStorage and I want to make sure we set this up in a manner that provides a clean integration with our Svelte stores.

| main | persist visibility of table inspector and its sections within localstorage current behavior the table inspector is enabled when loading a page users can hide it but it always re appears when reloading the sections within the table inspector work in a similar manner though some are set to hidden by default desired behavior the visibility of the table inspector and each of its sections is synchronized with localstorage we ll need to figure out a proper schema for storing this data in localstorage taking into account the likelihood of eventually putting more data in localstorage and possibly needing to migrate existing localstorage data this is marked as restricted because we re not yet persisting anything to localstorage and i want to make sure this we set this up in a manner that provides a clean integration with our svelte stores | 1 |

322,071 | 27,579,121,405 | IssuesEvent | 2023-03-08 15:03:50 | onc-healthit/onc-certification-g10-test-kit | https://api.github.com/repos/onc-healthit/onc-certification-g10-test-kit | closed | Check bulk export status of cancelled job in bulk v2 | g10-test-kit add constraint v3.5.0 | > Following the delete request, when subsequent requests are made to the polling location, the server SHALL return a 404 Not Found error and an associated FHIR OperationOutcome in JSON format.

http://hl7.org/fhir/uv/bulkdata/STU2/export.html#bulk-data-delete-request
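The quoted requirement could be checked roughly as follows. This is a sketch in Python rather than the test kit's own code: the function name `check_cancelled_export_status` is hypothetical, and it assumes the caller has already sent the delete request, polled the status location again, and captured the status code and raw response body.

```python
import json


def check_cancelled_export_status(status_code, body_text):
    """Return a list of problems found in a poll response after a delete request.

    An empty list means the response matches the quoted requirement:
    HTTP 404 with a FHIR OperationOutcome in JSON format.
    """
    problems = []
    if status_code != 404:
        problems.append(f"expected status 404, got {status_code}")
    try:
        body = json.loads(body_text)
    except (TypeError, ValueError):
        problems.append("response body is not valid JSON")
        return problems
    if not isinstance(body, dict) or body.get("resourceType") != "OperationOutcome":
        problems.append("response body is not an OperationOutcome resource")
    return problems
```

Returning every problem at once, instead of stopping at the first, gives a fuller picture when a server misses more than one part of the requirement.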

A test needs to be added for these requirements. | 1.0 | Check bulk export status of cancelled job in bulk v2 - > Following the delete request, when subsequent requests are made to the polling location, the server SHALL return a 404 Not Found error and an associated FHIR OperationOutcome in JSON format.

http://hl7.org/fhir/uv/bulkdata/STU2/export.html#bulk-data-delete-request

A test needs to be added for these requirements. | non_main | check bulk export status of cancelled job in bulk following the delete request when subsequent requests are made to the polling location the server shall return a not found error and an associated fhir operationoutcome in json format a test needs to be added for these requirements | 0 |

5,863 | 31,768,815,833 | IssuesEvent | 2023-09-12 10:25:17 | markus-wa/demoinfocs-golang | https://api.github.com/repos/markus-wa/demoinfocs-golang | closed | CS2 corrupt input | needs: investigation (maintainer) | **Describe the bug**

This demo causes a parser error:

```

panic: snappy: corrupt input [recovered]

panic: snappy: corrupt input

goroutine 1 [running]:

github.com/markus-wa/demoinfocs-golang/v4/pkg/demoinfocs.recoverFromUnexpectedEOF({0x81a3e0, 0xc0000482e0})

C:/Users/runie/go/pkg/mod/github.com/markus-wa/demoinfocs-golang/v4@v4.0.0-beta.0/pkg/demoinfocs/parsing.go:171 +0x274

github.com/markus-wa/demoinfocs-golang/v4/pkg/demoinfocs.(*parser).ParseToEnd.func1()

C:/Users/runie/go/pkg/mod/github.com/markus-wa/demoinfocs-golang/v4@v4.0.0-beta.0/pkg/demoinfocs/parsing.go:124 +0x9f

panic({0x81a3e0?, 0xc0000482e0?})

C:/Program Files/Go/src/runtime/panic.go:920 +0x290

github.com/markus-wa/demoinfocs-golang/v4/pkg/demoinfocs.(*parser).parseFrameS2(0xc000069a00)

C:/Users/runie/go/pkg/mod/github.com/markus-wa/demoinfocs-golang/v4@v4.0.0-beta.0/pkg/demoinfocs/parsing.go:349 +0x905

github.com/markus-wa/demoinfocs-golang/v4/pkg/demoinfocs.(*parser).ParseToEnd(0xc000069a00)

C:/Users/runie/go/pkg/mod/github.com/markus-wa/demoinfocs-golang/v4@v4.0.0-beta.0/pkg/demoinfocs/parsing.go:147 +0x210

```

Not familiar enough with the library to tell if this is a bugged demo or not.

**To Reproduce**

Replay: http://replay183.valve.net/730/003636925493187444888_0599554593.dem.bz2

**Library version**

v4.0.0-beta.0

| True | CS2 corrupt input - **Describe the bug**

This demo causes a parser error:

```

panic: snappy: corrupt input [recovered]

panic: snappy: corrupt input

goroutine 1 [running]:

github.com/markus-wa/demoinfocs-golang/v4/pkg/demoinfocs.recoverFromUnexpectedEOF({0x81a3e0, 0xc0000482e0})

C:/Users/runie/go/pkg/mod/github.com/markus-wa/demoinfocs-golang/v4@v4.0.0-beta.0/pkg/demoinfocs/parsing.go:171 +0x274

github.com/markus-wa/demoinfocs-golang/v4/pkg/demoinfocs.(*parser).ParseToEnd.func1()

C:/Users/runie/go/pkg/mod/github.com/markus-wa/demoinfocs-golang/v4@v4.0.0-beta.0/pkg/demoinfocs/parsing.go:124 +0x9f

panic({0x81a3e0?, 0xc0000482e0?})

C:/Program Files/Go/src/runtime/panic.go:920 +0x290

github.com/markus-wa/demoinfocs-golang/v4/pkg/demoinfocs.(*parser).parseFrameS2(0xc000069a00)

C:/Users/runie/go/pkg/mod/github.com/markus-wa/demoinfocs-golang/v4@v4.0.0-beta.0/pkg/demoinfocs/parsing.go:349 +0x905

github.com/markus-wa/demoinfocs-golang/v4/pkg/demoinfocs.(*parser).ParseToEnd(0xc000069a00)

C:/Users/runie/go/pkg/mod/github.com/markus-wa/demoinfocs-golang/v4@v4.0.0-beta.0/pkg/demoinfocs/parsing.go:147 +0x210

```

Not familiar enough with the library to tell if this is a bugged demo or not.

**To Reproduce**

Replay: http://replay183.valve.net/730/003636925493187444888_0599554593.dem.bz2

**Library version**

v4.0.0-beta.0

| main | corrupt input describe the bug this demo causes a parser error panic snappy corrupt input panic snappy corrupt input goroutine github com markus wa demoinfocs golang pkg demoinfocs recoverfromunexpectedeof c users runie go pkg mod github com markus wa demoinfocs golang beta pkg demoinfocs parsing go github com markus wa demoinfocs golang pkg demoinfocs parser parsetoend c users runie go pkg mod github com markus wa demoinfocs golang beta pkg demoinfocs parsing go panic c program files go src runtime panic go github com markus wa demoinfocs golang pkg demoinfocs parser c users runie go pkg mod github com markus wa demoinfocs golang beta pkg demoinfocs parsing go github com markus wa demoinfocs golang pkg demoinfocs parser parsetoend c users runie go pkg mod github com markus wa demoinfocs golang beta pkg demoinfocs parsing go not familiar enough with the library to tell if this is a bugged demo or not to reproduce replay library version beta | 1 |

104,324 | 16,613,611,299 | IssuesEvent | 2021-06-02 14:18:10 | Thanraj/linux-4.1.15 | https://api.github.com/repos/Thanraj/linux-4.1.15 | opened | CVE-2020-8992 (Medium) detected in linux-stable-rtv4.1.33 | security vulnerability | ## CVE-2020-8992 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-stable-rtv4.1.33</b></p></summary>

<p>

<p>Julia Cartwright's fork of linux-stable-rt.git</p>

<p>Library home page: <a href=https://git.kernel.org/pub/scm/linux/kernel/git/julia/linux-stable-rt.git>https://git.kernel.org/pub/scm/linux/kernel/git/julia/linux-stable-rt.git</a></p>

<p>Found in HEAD commit: <a href="https://api.github.com/repos/Thanraj/linux-4.1.15/commits/5e3fb3e332499e1ad10a0969e55582af1027b085">5e3fb3e332499e1ad10a0969e55582af1027b085</a></p>

<p>Found in base branch: <b>master</b></p></p>

</details>

</p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Source Files (2)</summary>

<p></p>

<p>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>linux-4.1.15/fs/ext4/block_validity.c</b>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>linux-4.1.15/fs/ext4/block_validity.c</b>

</p>

</details>

<p></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

ext4_protect_reserved_inode in fs/ext4/block_validity.c in the Linux kernel through 5.5.3 allows attackers to cause a denial of service (soft lockup) via a crafted journal size.

<p>Publish Date: 2020-02-14

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-8992>CVE-2020-8992</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Local

- Attack Complexity: Low

- Privileges Required: Low

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://www.linuxkernelcves.com/cves/CVE-2020-8992">https://www.linuxkernelcves.com/cves/CVE-2020-8992</a></p>

<p>Release Date: 2020-07-22</p>

<p>Fix Resolution: v5.6-rc2,v5.4.21,v5.5.5</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | True | CVE-2020-8992 (Medium) detected in linux-stable-rtv4.1.33 - ## CVE-2020-8992 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-stable-rtv4.1.33</b></p></summary>

<p>

<p>Julia Cartwright's fork of linux-stable-rt.git</p>

<p>Library home page: <a href=https://git.kernel.org/pub/scm/linux/kernel/git/julia/linux-stable-rt.git>https://git.kernel.org/pub/scm/linux/kernel/git/julia/linux-stable-rt.git</a></p>

<p>Found in HEAD commit: <a href="https://api.github.com/repos/Thanraj/linux-4.1.15/commits/5e3fb3e332499e1ad10a0969e55582af1027b085">5e3fb3e332499e1ad10a0969e55582af1027b085</a></p>

<p>Found in base branch: <b>master</b></p></p>

</details>

</p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Source Files (2)</summary>

<p></p>

<p>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>linux-4.1.15/fs/ext4/block_validity.c</b>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>linux-4.1.15/fs/ext4/block_validity.c</b>

</p>

</details>

<p></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

ext4_protect_reserved_inode in fs/ext4/block_validity.c in the Linux kernel through 5.5.3 allows attackers to cause a denial of service (soft lockup) via a crafted journal size.

<p>Publish Date: 2020-02-14

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-8992>CVE-2020-8992</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Local

- Attack Complexity: Low

- Privileges Required: Low

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://www.linuxkernelcves.com/cves/CVE-2020-8992">https://www.linuxkernelcves.com/cves/CVE-2020-8992</a></p>

<p>Release Date: 2020-07-22</p>

<p>Fix Resolution: v5.6-rc2,v5.4.21,v5.5.5</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | non_main | cve medium detected in linux stable cve medium severity vulnerability vulnerable library linux stable julia cartwright s fork of linux stable rt git library home page a href found in head commit a href found in base branch master vulnerable source files linux fs block validity c linux fs block validity c vulnerability details protect reserved inode in fs block validity c in the linux kernel through allows attackers to cause a denial of service soft lockup via a crafted journal size publish date url a href cvss score details base score metrics exploitability metrics attack vector local attack complexity low privileges required low user interaction none scope unchanged impact metrics confidentiality impact none integrity impact none availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution step up your open source security game with whitesource | 0 |

5,653 | 28,961,043,329 | IssuesEvent | 2023-05-10 02:31:19 | MozillaFoundation/foundation.mozilla.org | https://api.github.com/repos/MozillaFoundation/foundation.mozilla.org | opened | Investigate `test_library_page.py` | engineering backend maintain nice to have needs grooming | While working on #10488, the test `test_filter_multiple_author_profiles` was giving me some trouble whenever I tried to update its implementation from using a .get() request to instead using the `_get_research_detail_pages()` method directly, as all the other tests do.

What I found was that using `_get_research_detail_pages()` directly would return an empty queryset of no pages, even though using the test client and a .get() request would still technically send the same author ID's to the method in the end.

I also found that both using the method directly and using the test client would return the same Author Profiles using the same translation keys when using print statements [here](https://github.com/MozillaFoundation/foundation.mozilla.org/blob/96654723c6a3e82e209f14bfe84db651ec4d6d03/network-api/networkapi/wagtailpages/pagemodels/research_hub/library_page.py#L126-L135).

However, it seems that this may be part of a bigger issue, because if you rename this function, other tests begin to fail as well.

I think we should investigate this further in its own ticket to see what is going on here.

| True | Investigate `test_library_page.py` - While working on #10488, the test `test_filter_multiple_author_profiles` was giving me some trouble whenever I tried to update its implementation from using a .get() request to instead using the `_get_research_detail_pages()` method directly, as all the other tests do.

What I found was that using `_get_research_detail_pages()` directly would return an empty queryset of no pages, even though using the test client and a .get() request would still technically send the same author ID's to the method in the end.

I also found that both using the method directly and using the test client would return the same Author Profiles using the same translation keys when using print statements [here](https://github.com/MozillaFoundation/foundation.mozilla.org/blob/96654723c6a3e82e209f14bfe84db651ec4d6d03/network-api/networkapi/wagtailpages/pagemodels/research_hub/library_page.py#L126-L135).

However, it seems that this may be part of a bigger issue, because if you rename this function, other tests begin to fail as well.

I think we should investigate this further in its own ticket to see what is going on here.

| main | investigate test library page py while working on the test test filter multiple author profiles was giving me some trouble whenever i tried to update its implementation from using a get request to instead using the get research detail pages method directly as all the other tests do what i found was that using get research detail pages directly would return an empty queryset of no pages even though using the test client and a get request would still technically send the same author id s to the method in the end i also found that both using the method directly and using the test client would return the same author profiles using the same translation keys when using print statements however it seems that this may part of a bigger issue as if you rename this function other tests begin to fail as well i think we should investigate this further in its own ticket to see what is going on here | 1 |

2,132 | 7,302,364,406 | IssuesEvent | 2018-02-27 09:28:36 | RalfKoban/MiKo-Analyzers | https://api.github.com/repos/RalfKoban/MiKo-Analyzers | reopened | Test classes should contain tests | Area: analyzer Area: maintainability feature | Classes that are marked as tests should include tests. Otherwise it does not make sense to mark them as test classes. | True | Test classes should contain tests - Classes that are marked as tests should include tests. Otherwise it does not make sense to mark them as test classes. | main | test classes should contain tests classes that are marked as tests should include tests otherwise it does not make sense to mark them as test classes | 1 |

4,613 | 23,879,134,688 | IssuesEvent | 2022-09-07 22:28:52 | aws/aws-sam-cli | https://api.github.com/repos/aws/aws-sam-cli | closed | "sam local start-api" should use HTTP/1.1 | contributors/good-first-issue type/feature area/local/start-api maintainer/need-followup | ### Describe your idea/feature/enhancement

It would be nice if `sam local start-api` responses used HTTP/1.1. I ran into this idea while trying to test my API locally with Postman. Requests keep failing there with the console message `Error: Parse Error: Expected HTTP/`, even though the local server output shows a successful response and `curl` requests also work correctly. After some googling, it appears that Postman cannot handle HTTP/1.0 responses. Frankly, I'm annoyed with Postman for not being able to support different HTTP versions (and [others](https://github.com/postmanlabs/postman-app-support/issues/6242) [have](https://stackoverflow.com/questions/20359149/how-can-you-set-the-http-protocol-version-in-postman) already opened tickets with Postman to resolve that), but I see no reason why SAM CLI should use such an ancient version of the protocol either. HTTP/1.1 or /2 would be preferable (though I think /2 would also break Postman 😬). I believe this is happening because `sam local start-api` uses Flask internally (v1.1.2, at the time of this writing), which apparently defaults to using HTTP/1.0.

### Proposal

The `sam local start-api` command handler should configure its internal Flask instance to use HTTP/1.1. Based on [this SO post](https://stackoverflow.com/questions/59027260/make-flask-return-response-header-http1-1-instead-http1-0) and a quick search through SAM CLI's source, I think you would just need to add the following line to the [`LocalApigwService.create`](https://github.com/aws/aws-sam-cli/blob/2b2a921172a22f43ef8580eb8ad0be1558e9cb7d/samcli/local/apigw/local_apigw_service.py#L154) method:

```python
from werkzeug.serving import WSGIRequestHandler

WSGIRequestHandler.protocol_version = "HTTP/1.1"
```

I would have opened a PR for this, but I'm not very familiar with Python.
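
The effect of that attribute can be sketched with the standard library alone. This is a minimal illustration, not SAM CLI's actual code: Werkzeug's `WSGIRequestHandler` subclasses `http.server.BaseHTTPRequestHandler`, whose `protocol_version` defaults to `"HTTP/1.0"`. The handler class `Http11Handler` and the helper `fetch_response_version` are hypothetical names introduced here for the example.

```python
import http.client
import threading
from http.server import BaseHTTPRequestHandler, HTTPServer


class Http11Handler(BaseHTTPRequestHandler):
    # The stdlib default is "HTTP/1.0"; overriding the class attribute
    # switches the status line of every response to HTTP/1.1.
    protocol_version = "HTTP/1.1"

    def do_GET(self):
        body = b"ok"
        self.send_response(200)
        # HTTP/1.1 connections are persistent by default, so the body
        # length has to be stated explicitly.
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, format, *args):
        pass  # keep the demo quiet


def fetch_response_version() -> int:
    """Serve one request on an ephemeral local port and return the HTTP
    version the client saw (10 for HTTP/1.0, 11 for HTTP/1.1)."""
    server = HTTPServer(("127.0.0.1", 0), Http11Handler)
    thread = threading.Thread(target=server.serve_forever, daemon=True)
    thread.start()
    try:
        conn = http.client.HTTPConnection("127.0.0.1", server.server_port, timeout=5)
        conn.request("GET", "/")
        response = conn.getresponse()
        response.read()
        conn.close()
        return response.version
    finally:
        server.shutdown()
        server.server_close()
```

Calling `fetch_response_version()` reports the protocol version seen by the client, which is a quick way to check the change without Postman.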

This would not require any updates to the SAM Spec, though it wouldn't hurt to add a warning to the docs about what HTTP version `sam local start-api` uses. | True | "sam local start-api" should use HTTP/1.1 - ### Describe your idea/feature/enhancement

It would be nice if `sam local start-api` responses used HTTP/1.1. I ran into this idea while trying to test my API locally with Postman. Requests keep failing there with the console message `Error: Parse Error: Expected HTTP/`, even though the local server output shows a successful response and `curl` requests also work correctly. After some googling, it appears that Postman cannot handle HTTP/1.0 responses. Frankly, I'm annoyed with Postman for not being able to support different HTTP versions (and [others](https://github.com/postmanlabs/postman-app-support/issues/6242) [have](https://stackoverflow.com/questions/20359149/how-can-you-set-the-http-protocol-version-in-postman) already opened tickets with Postman to resolve that), but I see no reason why SAM CLI should use such an ancient version of the protocol either. HTTP/1.1 or /2 would be preferable (though I think /2 would also break Postman 😬). I believe this is happening because `sam local start-api` uses Flask internally (v1.1.2, at the time of this writing), which apparently defaults to using HTTP/1.0.

### Proposal

The `sam local start-api` command handler should configure its internal Flask instance to use HTTP/1.1. Based on [this SO post](https://stackoverflow.com/questions/59027260/make-flask-return-response-header-http1-1-instead-http1-0) and a quick search through SAM CLI's source, I think you would just need to add the following line to the [`LocalApigwService.create`](https://github.com/aws/aws-sam-cli/blob/2b2a921172a22f43ef8580eb8ad0be1558e9cb7d/samcli/local/apigw/local_apigw_service.py#L154) method:

```python
from werkzeug.serving import WSGIRequestHandler

WSGIRequestHandler.protocol_version = "HTTP/1.1"
```

I would have opened a PR for this, but I'm not very familiar with Python.

This would not require any updates to the SAM Spec, though it wouldn't hurt to add a warning to the docs about what HTTP version `sam local start-api` uses. | main | sam local start api should use http describe your idea feature enhancement it would be nice if sam local start api responses used http i ran into this idea while trying to test my api locally with postman requests keep failing there with the console message error parse error expected http even though the local server output shows a successful response and curl requests also work correctly after some googling it appears that postman cannot handle http responses frankly i m annoyed with postman for not being able to support different http versions and already opened tickets with postman to resolve that but i see no reason why sam cli should use such an ancient version of the protocol either http or would be preferrable though i think would also break postman 😬 i believe this is happening because sam local start api uses flask internally at time of this writing which apparently defaults to using http proposal the sam local start api command handler should configure its internal flask instance to use http based on and a quick search through sam cli s source i think you would just need to add the following line to the method python wsgirequesthandler protocol version http i would have opened a pr for this but i m not very familiar with python this would not require any updates to the sam spec though it wouldn t hurt to add a warning to the docs about what http version sam local start api uses | 1 |

877 | 4,541,075,367 | IssuesEvent | 2016-09-09 16:34:13 | coniks-sys/coniks-go | https://api.github.com/repos/coniks-sys/coniks-go | closed | Use script to gather all package test coverage profiles | maintainability | Instead of having to add each new package and subpackage to our .travis.yml file manually to collect the test coverage profiles, we should use a script like https://github.com/dedis/cosi/blob/master/coveralls.sh to handle this automatically. | True | Use script to gather all package test coverage profiles - Instead of having to add each new package and subpackage to our .travis.yml file manually to collect the test coverage profiles, we should use a script like https://github.com/dedis/cosi/blob/master/coveralls.sh to handle this automatically. | main | use script to gather all package test coverage profiles instead of having to add each new package and subpackage to our travis yml file manually to collect the test coverage profiles we should use a script like to handle this automatically | 1 |

276,908 | 8,614,579,025 | IssuesEvent | 2018-11-19 17:53:36 | Qiskit/qiskit-terra | https://api.github.com/repos/Qiskit/qiskit-terra | closed | No method to override use of interactive plot visualizations | priority: medium | <!-- ⚠️ If you do not respect this template, your issue will be closed -->

<!-- ⚠️ Make sure to browse the opened and closed issues to confirm this idea does not exist. -->

### What is the expected enhancement?

When running visualizations that have an interactive mode, we unconditionally use the interactive version if your system meets the requirements. We need to provide an option that lets users override this and use the non-interactive visualizations.

| 1.0 | No method to override use of interactive plot visualizations - <!-- ⚠️ If you do not respect this template, your issue will be closed -->

<!-- ⚠️ Make sure to browse the opened and closed issues to confirm this idea does not exist. -->

### What is the expected enhancement?

When running visualizations that have an interactive mode, we unconditionally use the interactive version if your system meets the requirements. We need to provide an option that lets users override this and use the non-interactive visualizations.

| non_main | no method to override use of interactive plot visualizations what is the expected enhancement when running visualizations that have an interactive mode if you re system meets the requirements we unconditionally use the interactive visualizations we need to provide an option to let users override this and use the non interactive visualizations | 0 |

640,442 | 20,789,895,821 | IssuesEvent | 2022-03-17 00:04:37 | JeffreyCHChan/SOEN390 | https://api.github.com/repos/JeffreyCHChan/SOEN390 | closed | As a patient, I want to be able to get an 8-digit ID so that I can better share information with my doctor | Priority 4 Back End USP 1 | - [ ] Store 8-digit ID in patients database. | 1.0 | As a patient, I want to be able to get an 8-digit ID so that I can better share information with my doctor - - [ ] Store 8-digit ID in patients database. | non_main | as a patient i want to be able to get an digit id so that i can better share information with my doctor store digit id in patients database | 0 |

117,055 | 11,945,666,626 | IssuesEvent | 2020-04-03 06:25:53 | grrrrnt/ped | https://api.github.com/repos/grrrrnt/ped | opened | 3.1. preface missing | severity.Low type.DocumentationBug | Will be better to have a preface for 3.1. Viewing help

e.g. This command will allow you to view a list of help options.

| 1.0 | 3.1. preface missing - Will be better to have a preface for 3.1. Viewing help

e.g. This command will allow you to view a list of help options.

| non_main | preface missing will be better to have a preface for viewing help e g this command will allow you to view a list of help options | 0 |

3,153 | 12,179,471,794 | IssuesEvent | 2020-04-28 10:44:29 | ipfs/js-ipfs | https://api.github.com/repos/ipfs/js-ipfs | opened | Run E2E tests against version at API port's /webui | need/analysis need/maintainers-input need/triage | > Needs #2984 to land first

Thanks to https://github.com/ipfs/js-ipfs/pull/2706 we have E2E tests that check if js-ipfs is compatible with ipfs-webui's `master` branch, but we don't run those tests against the webui version aliased at `/webui` on API port.

Over time, support for the older version at `/webui` may break, while the `master` of webui continues to work with js-ipfs `master`, which means we miss some regressions.

A fix here would be to run the ipfs-webui E2E test suite against the version at `/webui` as well:

- extract CID from `packages/ipfs/src/http/api/routes/webui.js`

- prefetch it and cache so CI is not slowed down

- make it a separate task in CI with distinct name (eg. ipfs-webui(/webui))

- no need to run it twice
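
The first step could be sketched as below. This is only an illustration under stated assumptions: the layout of `webui.js` is not shown here, so the sketch assumes the CID appears somewhere in the file as a plain string literal, and `extract_first_cid` is a hypothetical helper name.

```python
import re
from typing import Optional

# CIDv0: "Qm" followed by 44 base58 characters.
# CIDv1 (base32): "b" followed by lowercase letters and the digits 2-7.
CID_PATTERN = re.compile(r"\b(Qm[1-9A-HJ-NP-Za-km-z]{44}|b[a-z2-7]{58,})\b")


def extract_first_cid(source_text: str) -> Optional[str]:
    """Return the first CID-looking token in the text, or None if absent."""
    match = CID_PATTERN.search(source_text)
    return match.group(1) if match else None
```

A CI step could read the route file, pass its contents to this helper, and prefetch the result before the E2E job starts.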

| True | Run E2E tests against version at API port's /webui - > Needs #2984 to land first

Thanks to https://github.com/ipfs/js-ipfs/pull/2706 we have E2E tests that check if js-ipfs is compatible with ipfs-webui's `master` branch, but we don't run those tests against the webui version aliased at `/webui` on API port.

Over time, support for the older version at `/webui` may break, while the `master` of webui continues to work with js-ipfs `master`, which means we miss some regressions.

A fix here would be to run the ipfs-webui E2E test suite against the version at `/webui` as well:

- extract CID from `packages/ipfs/src/http/api/routes/webui.js`

- prefetch it and cache so CI is not slowed down

- make it a separate task in CI with distinct name (eg. ipfs-webui(/webui))

- no need to run it twice

| main | run tests against version at api port s webui needs to land first thanks to we have tests that check if js ipfs is compatible with ipfs webui s master branch but we don t run those tests against the webui version aliased at webui on api port over time support for older version at webui may break while the master of webui continues to work with js ipfs master which means we miss some regressions a fix here would be to run ipfs webui test suite against version at webui at well extract cid from packages ipfs src http api routes webui js prefetch it and cache so ci is not slowed down make it a separate task in ci with distinct name eg ipfs webui webui no need to run it twice | 1 |

41 | 2,588,002,698 | IssuesEvent | 2015-02-17 22:00:24 | jenkinsci/slack-plugin | https://api.github.com/repos/jenkinsci/slack-plugin | closed | Having trouble performing plugin release | maintainer communication | I'm currently having issues releasing the plugin. Here's an excerpt from slack...

> 21:01 < sag47> kohsuke: I'm having trouble releasing a plugin. My GitHub username is samrocketman and my jenkins-ci.org ID is sag47. I have added my credentials to ~/.m2/settings.xml from this article

https://wiki.jenkins-ci.org/display/JENKINS/Hosting+Plugins

21:02 < sag47> kohsuke: Do I have the capability to release if I'm able to be a contributor for a plugin? If not, what do I need to do in order to be able to release?

21:02 < sag47> kohsuke: I have taken over maintaining this as it was previously abandoned.

21:03 < sag47> kohsuke: Plugin in question: https://github.com/jenkinsci/slack-plugin

I'll be working through this and keep any followers for the next release updated via this issue. | True | Having trouble performing plugin release - I'm currently having issues releasing the plugin. Here's an excerpt from slack...

> 21:01 < sag47> kohsuke: I'm having trouble releasing a plugin. My GitHub username is samrocketman and my jenkins-ci.org ID is sag47. I have added my credentials to ~/.m2/settings.xml from this article

https://wiki.jenkins-ci.org/display/JENKINS/Hosting+Plugins

21:02 < sag47> kohsuke: Do I have the capability to release if I'm able to be a contributor for a plugin? If not, what do I need to do in order to be able to release?

21:02 < sag47> kohsuke: I have taken over maintaining this as it was previously abandoned.

21:03 < sag47> kohsuke: Plugin in question: https://github.com/jenkinsci/slack-plugin

I'll be working through this and keep any followers for the next release updated via this issue. | main | having trouble performing plugin release i m currently having issues releasing the plugin here s an excerpt from slack kohsuke i m having trouble releasing a plugin my github username is samrocketman and my jenkins ci org id is i have added my credentials to settings xml from this article kohsuke do i have the capability to release if i m able to be a contributor for a plugin if not what do i need to do in order to be able to release kohsuke i have taken over maintaining this as it was previously abandoned kohsuke plugin in question i ll be working through this and keep any followers for the next release updated via this issue | 1 |

550,507 | 16,114,391,082 | IssuesEvent | 2021-04-28 04:38:26 | calyco-yale/calyco | https://api.github.com/repos/calyco-yale/calyco | closed | Add Push Notifications | priority: high | Notify users whenever an invite or friend request is sent to them, and (by default) 10 minutes prior to the event. | 1.0 | Add Push Notifications - Notify users whenever an invite or friend request is sent to them, and (by default) 10 minutes prior to the event. | non_main | add push notifications notify users whenever an invite or friend request is sent to them and by default minutes prior to the event | 0 |

329,517 | 28,264,540,636 | IssuesEvent | 2023-04-07 05:01:15 | EunChanNam/We-Share-Wish-Hair | https://api.github.com/repos/EunChanNam/We-Share-Wish-Hair | closed | MyPage 서비스 테스트 및 Point 서비스 추가테스트 | test | ## 🤷 구현할 기능

* 리뷰 테스트를 마친 후 MyPageService 테스트

* PointSearchService - 정렬 테스팅 추가

## 📄 참고 사항

| 1.0 | MyPage 서비스 테스트 및 Point 서비스 추가테스트 - ## 🤷 구현할 기능

* 리뷰 테스트를 마친 후 MyPageService 테스트

* PointSearchService - 정렬 테스팅 추가

## 📄 참고 사항

| non_main | mypage 서비스 테스트 및 point 서비스 추가테스트 🤷 구현할 기능 리뷰 테스트를 마친 후 mypageservice 테스트 pointsearchservice 정렬 테스팅 추가 📄 참고 사항 | 0 |

343,031 | 10,324,603,565 | IssuesEvent | 2019-09-01 10:40:43 | weaveworks/ignite | https://api.github.com/repos/weaveworks/ignite | closed | Implement "docker attach" using custom Go code instead of execing | help wanted kind/enhancement priority/backlog | This is a follow-up from https://github.com/weaveworks/ignite/issues/67, that was left as a non-goal for v0.5.0. This should be completely doable, and we have to do the same for containerd anyways (#209). So we'll target this at the same time as containerd support, v0.6.0

This would be a really nice community contribution. | 1.0 | Implement "docker attach" using custom Go code instead of execing - This is a follow-up from https://github.com/weaveworks/ignite/issues/67, that was left as a non-goal for v0.5.0. This should be completely doable, and we have to do the same for containerd anyways (#209). So we'll target this at the same time as containerd support, v0.6.0

This would be a really nice community contribution. | non_main | implement docker attach using custom go code instead of execing this is a follow up from that was left as a non goal for this should be completely doable and we have to do the same for containerd anyways so we ll target this at the same time as containerd support this would be a really nice community contribution | 0 |

4,259 | 21,259,081,395 | IssuesEvent | 2022-04-13 00:43:57 | aws/aws-sam-cli | https://api.github.com/repos/aws/aws-sam-cli | closed | Lambda SAM build (ARM64 archi) - Python libs fails | blocked/more-info-needed stage/bug-repro maintainer/need-followup platform/linux/arm | I am trying to build a **Lambda** function using **sam** (1.36.0) within **CodeBuild**, the Lambda has **arm64** architecture as well as the CodeBuild configuration (**aws/codebuild/amazonlinux2-aarch64-standard:2.0**).

Using Python 3.8.10

I have in my _requirements.txt_ the dependencies below:

```

PyYAML

pandas

s3fs

xlsxwriter

```

CodeBuild throws error:

```

Build Failed

Error: PythonPipBuilder:ResolveDependencies - {wrapt==1.13.3(sdist)}

```

My build details:

```
version: 0.2
phases:
  install:
    runtime-versions:
      python: 3.8
    commands:
      - which python
      - python --version
      - pip install aws-sam-cli --upgrade
      - sam build --template $(pwd)/template.yaml
      - aws cloudformation package --template $(pwd)/.aws-sam/build/template.yaml --s3-bucket XXXXX --s3-prefix PREFIX_PATH_DUMMY --output-template-file product.template-eu-west-1.yaml
      - sam deploy --template-file ./product.template-eu-west-1.yaml --stack-name DUMMY --capabilities CAPABILITY_IAM
```

I also manually tested this on **t4g Graviton instance**, doing pip install requirements.txt, there it works. But when using **CodeBuild it fails**. | True | Lambda SAM build (ARM64 archi) - Python libs fails - I am trying to build a **Lambda** function using **sam** (1.36.0) within **CodeBuild**, the Lambda has **arm64** architecture as well as the CodeBuild configuration (**aws/codebuild/amazonlinux2-aarch64-standard:2.0**).

Using Python 3.8.10

I have in my _requirements.txt_ the dependencies below:

```

PyYAML

pandas

s3fs

xlsxwriter

```

CodeBuild throws error:

```

Build Failed

Error: PythonPipBuilder:ResolveDependencies - {wrapt==1.13.3(sdist)}

```

My build details:

```
version: 0.2
phases:
  install:
    runtime-versions:
      python: 3.8
    commands:
      - which python
      - python --version
      - pip install aws-sam-cli --upgrade
      - sam build --template $(pwd)/template.yaml
      - aws cloudformation package --template $(pwd)/.aws-sam/build/template.yaml --s3-bucket XXXXX --s3-prefix PREFIX_PATH_DUMMY --output-template-file product.template-eu-west-1.yaml
      - sam deploy --template-file ./product.template-eu-west-1.yaml --stack-name DUMMY --capabilities CAPABILITY_IAM
```

I also manually tested this on **t4g Graviton instance**, doing pip install requirements.txt, there it works. But when using **CodeBuild it fails**. | main | lambda sam build archi python libs fails i am trying to build a lambda function using sam within codebuild the lambda has architecture as well as the codebuild configuration aws codebuild standard using python i have in my requirements txt the dependencies below pyyaml pandas xlsxwriter codebuild throws error build failed error pythonpipbuilder resolvedependencies wrapt sdist my build details version phases install runtime versions python commands which python python version pip install aws sam cli upgrade sam build template pwd template yaml aws cloudformation package template pwd aws sam build template yaml bucket xxxxx prefix prefix path dummy output template file product template eu west yaml sam deploy template file product template eu west yaml stack name dummy capabilities capability iam i also manually tested this on graviton instance doing pip install requirements txt there it works but when using codebuild it fails | 1 |

1,559 | 6,572,254,405 | IssuesEvent | 2017-09-11 00:39:41 | ansible/ansible-modules-core | https://api.github.com/repos/ansible/ansible-modules-core | closed | unable to set mysql root password the first time | affects_1.9 bug_report waiting_on_maintainer | Issue Type:

Bug Report

Component Name:

mysql_user

Ansible Version:

```

ansible 1.9.4 (stable-1.9 2d914d4b1e) last updated 2015/11/16 21:35:26 (GMT +100)

lib/ansible/modules/core: (stable-1.9 4b65a4a8b5) last updated 2015/11/16 21:35:36 (GMT +100)

lib/ansible/modules/extras: (stable-1.9 29c3e31a92) last updated 2015/11/16 21:35:50 (GMT +100)

configured module search path = None

```

Ansible Configuration:

```

[defaults]

inventory = ./hosts

host_key_checking=False

forks = 40

display_skipped_hosts=False

retry_files_save_path = ~/.ansible-retry

log_path=/var/log/ansible.log

gathering = smart

# pipelining=True

```

Environment:

```

lsb_release -a

No LSB modules are available.

Distributor ID: Ubuntu

Description: Ubuntu 14.04.3 LTS

Release: 14.04

Codename: trusty

```

Summary:

I'm unable to set the MySQL root password when deploying it with Ansible.

Steps To Reproduce:

1. run [this](https://gist.github.com/serialdoom/427b246ab52b229da52b#file-mysq-yml) playbook once and get [this](https://gist.github.com/serialdoom/427b246ab52b229da52b#file-first_run-txt) result.

2. ssh to the server and try mysql without any luck. ([log](https://gist.github.com/serialdoom/427b246ab52b229da52b#file-first_sql_attempt-txt))

3. re-run playbook [log](https://gist.github.com/serialdoom/427b246ab52b229da52b#file-second_run-txt)

4. Run sql with success ([log](https://gist.github.com/serialdoom/427b246ab52b229da52b#file-second_sql-attempt-txt))

Expected Results:

Be able to start a mysql root shell at step 2 (and not at step 4).

Actual Results:

The MySQL password was not set until I applied the same playbook twice.

| True | unable to set mysql root password the first time - Issue Type:

Bug Report

Component Name:

mysql_user

Ansible Version:

```

ansible 1.9.4 (stable-1.9 2d914d4b1e) last updated 2015/11/16 21:35:26 (GMT +100)

lib/ansible/modules/core: (stable-1.9 4b65a4a8b5) last updated 2015/11/16 21:35:36 (GMT +100)

lib/ansible/modules/extras: (stable-1.9 29c3e31a92) last updated 2015/11/16 21:35:50 (GMT +100)

configured module search path = None

```

Ansible Configuration:

```

[defaults]

inventory = ./hosts

host_key_checking=False

forks = 40

display_skipped_hosts=False

retry_files_save_path = ~/.ansible-retry

log_path=/var/log/ansible.log

gathering = smart

# pipelining=True

```

Environment:

```

lsb_release -a

No LSB modules are available.

Distributor ID: Ubuntu

Description: Ubuntu 14.04.3 LTS

Release: 14.04

Codename: trusty

```

Summary:

I'm unable to set the MySQL root password when deploying it with Ansible.

Steps To Reproduce:

1. run [this](https://gist.github.com/serialdoom/427b246ab52b229da52b#file-mysq-yml) playbook once and get [this](https://gist.github.com/serialdoom/427b246ab52b229da52b#file-first_run-txt) result.

2. ssh to the server and try mysql without any luck. ([log](https://gist.github.com/serialdoom/427b246ab52b229da52b#file-first_sql_attempt-txt))

3. re-run playbook [log](https://gist.github.com/serialdoom/427b246ab52b229da52b#file-second_run-txt)

4. Run sql with success ([log](https://gist.github.com/serialdoom/427b246ab52b229da52b#file-second_sql-attempt-txt))

Expected Results:

Be able to start a mysql root shell at step 2 (and not at step 4).

Actual Results:

The MySQL password was not set until I applied the same playbook twice.

| main | unable to set mysql root password the first time issue type bug report component name mysql user ansible version ansible stable last updated gmt lib ansible modules core stable last updated gmt lib ansible modules extras stable last updated gmt configured module search path none ansible configuration inventory hosts host key checking false forks display skipped hosts false retry files save path ansible retry log path var log ansible log gathering smart pipelining true environment lsb release a no lsb modules are available distributor id ubuntu description ubuntu lts release codename trusty summary im unable to set the root password for mysql when deploying it with ansible steps to reproduce run playbook once and get result ssh to the server and try mysql without any luck re run playbook run sql with success expected results be able to start a mysql root shell at step and not at step actual results mysql password was not set until i applied the same playbook twice | 1 |

366 | 3,355,023,564 | IssuesEvent | 2015-11-18 14:57:20 | Homebrew/homebrew | https://api.github.com/repos/Homebrew/homebrew | closed | brew install --HEAD ghostscript fails | maintainer feedback | command fails with an error:

```

make: *** No rule to make target `src/png.h', needed by `obj/xpspng.o'. Stop.

make: *** Waiting for unfinished jobs....

```

I suppose this is a mixup in using system vs local libpng (autogen.sh finds system wide libpng):

```

checking for local zlib source... yes

checking for local png library source... no

checking for png_create_write_struct in -lpng... yes

checking png.h usability... yes

checking png.h presence... yes

```

Logs:

/.../Library/Logs/Homebrew/ghostscript/01.autogen.sh

/.../Library/Logs/Homebrew/ghostscript/01.autogen.sh.cc

/.../Library/Logs/Homebrew/ghostscript/02.make

/.../Library/Logs/Homebrew/ghostscript/02.make.cc

/.../Library/Logs/Homebrew/ghostscript/config.log

uploaded to: https://gist.github.com/a0cfb6f3ab1bd4e3429b

| True | brew install --HEAD ghostscript fails - command fails with an error:

```

make: *** No rule to make target `src/png.h', needed by `obj/xpspng.o'. Stop.

make: *** Waiting for unfinished jobs....

```

I suppose this is a mixup in using system vs local libpng (autogen.sh finds system wide libpng):

```

checking for local zlib source... yes

checking for local png library source... no

checking for png_create_write_struct in -lpng... yes

checking png.h usability... yes

checking png.h presence... yes

```

Logs:

/.../Library/Logs/Homebrew/ghostscript/01.autogen.sh

/.../Library/Logs/Homebrew/ghostscript/01.autogen.sh.cc

/.../Library/Logs/Homebrew/ghostscript/02.make

/.../Library/Logs/Homebrew/ghostscript/02.make.cc

/.../Library/Logs/Homebrew/ghostscript/config.log

uploaded to: https://gist.github.com/a0cfb6f3ab1bd4e3429b

| main | brew install head ghostscript fails command fails with an error make no rule to make target src png h needed by obj xpspng o stop make waiting for unfinished jobs i suppose this is a mixup in using system vs local libpng autogen sh finds system wide libpng checking for local zlib source yes checking for local png library source no checking for png create write struct in lpng yes checking png h usability yes checking png h presence yes logs library logs homebrew ghostscript autogen sh library logs homebrew ghostscript autogen sh cc library logs homebrew ghostscript make library logs homebrew ghostscript make cc library logs homebrew ghostscript config log uploaded to | 1 |

259,446 | 22,475,117,487 | IssuesEvent | 2022-06-22 11:36:22 | FOLIO-FSE/folio_migration_tools | https://api.github.com/repos/FOLIO-FSE/folio_migration_tools | closed | Add test files for all migration tasks | improve_test_coverage | In an effort to up the coverage, I am working on creating tests and setup methods that allows everyone to write tests for exactly what they need to test. | 1.0 | Add test files for all migration tasks - In an effort to up the coverage, I am working on creating tests and setup methods that allows everyone to write tests for exactly what they need to test. | non_main | add test files for all migration tasks in an effort to up the coverage i am working on creating tests and setup methods that allows everyone to write tests for exactly what they need to test | 0 |

196,696 | 22,498,602,407 | IssuesEvent | 2022-06-23 09:44:43 | jonazbot/IPington | https://api.github.com/repos/jonazbot/IPington | closed | Allow bot to share source-code on demand | enhancement security | Open source-code should be easily and readily available to end-users who want it.

### Options:

- **Post link to repo**

- Post source to channel

- Upload and post source-files | True | Allow bot to share source-code on demand - Open source-code should be easily and readily available to end-users who want it.

### Options:

- **Post link to repo**

- Post source to channel

- Upload and post source-files | non_main | allow bot to share source code on demand open source code should be easily and readily available to end users who want it options post link to repo post source to channel upload and post source files | 0 |

5,512 | 27,558,554,087 | IssuesEvent | 2023-03-07 19:57:59 | cosmos/ibc-rs | https://api.github.com/repos/cosmos/ibc-rs | closed | Investigate moving `verify_delay_passed` to a more appropriate section | A: breaking I: logic O: maintainability | <!-- < < < < < < < < < < < < < < < < < < < < < < < < < < < < < < < < < ☺

v ✰ Thanks for opening an issue! ✰

v Before smashing the submit button please review the template.

v Please also ensure that this is not a duplicate issue :)

☺ > > > > > > > > > > > > > > > > > > > > > > > > > > > > > > > > > -->

## Summary

Context: https://github.com/cosmos/ibc-rs/pull/393#discussion_r1097850720

The `verify_delay_passed` method implemented inside `07_tendermint` doesn't seem to be a client-specific process, and it should be placed somewhere in the "connection" part of the stack. The issue arose because we wanted to stop passing the `ctx` field into some of the verification methods, like `verify_packet_acknowledgement`.

| True | Investigate moving `verify_delay_passed` to a more appropriate section - <!-- < < < < < < < < < < < < < < < < < < < < < < < < < < < < < < < < < ☺

v ✰ Thanks for opening an issue! ✰

v Before smashing the submit button please review the template.

v Please also ensure that this is not a duplicate issue :)

☺ > > > > > > > > > > > > > > > > > > > > > > > > > > > > > > > > > -->

## Summary

Context: https://github.com/cosmos/ibc-rs/pull/393#discussion_r1097850720

The `verify_delay_passed` method implemented inside `07_tendermint` doesn't seem to be a client-specific process, and it should be placed somewhere in the "connection" part of the stack. The issue arose because we wanted to stop passing the `ctx` field into some of the verification methods, like `verify_packet_acknowledgement`.

| main | investigate moving verify delay passed to a more appropriate section ☺ v ✰ thanks for opening an issue ✰ v before smashing the submit button please review the template v please also ensure that this is not a duplicate issue ☺ summary context the implemented verify delay passed method inside the tendermint doesn t seem a client specific process and it should be placed somewhere in the connection part of the stack the issue arose because we wanted to stop passing the ctx field into some of the verification methods like verify packet acknowledgement | 1 |

95,971 | 16,112,999,992 | IssuesEvent | 2021-04-28 01:20:29 | RG4421/developers | https://api.github.com/repos/RG4421/developers | opened | CVE-2021-23382 (Medium) detected in multiple libraries | security vulnerability | ## CVE-2021-23382 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>postcss-5.2.18.tgz</b>, <b>postcss-7.0.35.tgz</b>, <b>postcss-6.0.23.tgz</b></summary>

<p>

<details><summary><b>postcss-5.2.18.tgz</b></summary>

<p>Tool for transforming styles with JS plugins</p>

<p>Library home page: <a href="https://registry.npmjs.org/postcss/-/postcss-5.2.18.tgz">https://registry.npmjs.org/postcss/-/postcss-5.2.18.tgz</a></p>

<p>Path to dependency file: developers/package.json</p>

<p>Path to vulnerable library: developers/node_modules/perfectionist/node_modules/postcss/package.json,developers/node_modules/postcss-scss/node_modules/postcss/package.json</p>

<p>

Dependency Hierarchy:

- tailwindcss-0.7.4.tgz (Root Library)

- perfectionist-2.4.0.tgz

- postcss-scss-0.3.1.tgz

- :x: **postcss-5.2.18.tgz** (Vulnerable Library)

</details>

<details><summary><b>postcss-7.0.35.tgz</b></summary>

<p>Tool for transforming styles with JS plugins</p>

<p>Library home page: <a href="https://registry.npmjs.org/postcss/-/postcss-7.0.35.tgz">https://registry.npmjs.org/postcss/-/postcss-7.0.35.tgz</a></p>

<p>Path to dependency file: developers/package.json</p>

<p>Path to vulnerable library: developers/node_modules/postcss/package.json</p>

<p>

Dependency Hierarchy:

- tailwindcss-0.7.4.tgz (Root Library)

- :x: **postcss-7.0.35.tgz** (Vulnerable Library)

</details>

<details><summary><b>postcss-6.0.23.tgz</b></summary>

<p>Tool for transforming styles with JS plugins</p>

<p>Library home page: <a href="https://registry.npmjs.org/postcss/-/postcss-6.0.23.tgz">https://registry.npmjs.org/postcss/-/postcss-6.0.23.tgz</a></p>

<p>Path to dependency file: developers/package.json</p>

<p>Path to vulnerable library: developers/node_modules/postcss-modules-values/node_modules/postcss/package.json,developers/node_modules/css-loader/node_modules/postcss/package.json,developers/node_modules/postcss-functions/node_modules/postcss/package.json,developers/node_modules/postcss-easy-import/node_modules/postcss/package.json,developers/node_modules/postcss-modules-local-by-default/node_modules/postcss/package.json,developers/node_modules/postcss-modules-extract-imports/node_modules/postcss/package.json,developers/node_modules/postcss-modules-scope/node_modules/postcss/package.json,developers/node_modules/icss-utils/node_modules/postcss/package.json,developers/node_modules/postcss-import/node_modules/postcss/package.json</p>

<p>

Dependency Hierarchy:

- postcss-easy-import-3.0.0.tgz (Root Library)

- postcss-import-10.0.0.tgz

- :x: **postcss-6.0.23.tgz** (Vulnerable Library)

</details>

<p>Found in base branch: <b>development</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

The package postcss before 8.2.13 is vulnerable to Regular Expression Denial of Service (ReDoS) via getAnnotationURL() and loadAnnotation() in lib/previous-map.js. The vulnerable regexes are caused mainly by the sub-pattern \/\*\s* sourceMappingURL=(.*).

<p>Publish Date: 2021-04-26</p>

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-23382>CVE-2021-23382</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.3</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: Low

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2021-23382">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2021-23382</a></p>

<p>Release Date: 2021-04-26</p>

<p>Fix Resolution: postcss - 8.2.13</p>

</p>

</details>

<p></p>

<!-- <REMEDIATE>{"isOpenPROnVulnerability":false,"isPackageBased":true,"isDefaultBranch":true,"packages":[{"packageType":"javascript/Node.js","packageName":"postcss","packageVersion":"5.2.18","packageFilePaths":["/package.json"],"isTransitiveDependency":true,"dependencyTree":"tailwindcss:0.7.4;perfectionist:2.4.0;postcss-scss:0.3.1;postcss:5.2.18","isMinimumFixVersionAvailable":true,"minimumFixVersion":"postcss - 8.2.13"},{"packageType":"javascript/Node.js","packageName":"postcss","packageVersion":"7.0.35","packageFilePaths":["/package.json"],"isTransitiveDependency":true,"dependencyTree":"tailwindcss:0.7.4;postcss:7.0.35","isMinimumFixVersionAvailable":true,"minimumFixVersion":"postcss - 8.2.13"},{"packageType":"javascript/Node.js","packageName":"postcss","packageVersion":"6.0.23","packageFilePaths":["/package.json"],"isTransitiveDependency":true,"dependencyTree":"postcss-easy-import:3.0.0;postcss-import:10.0.0;postcss:6.0.23","isMinimumFixVersionAvailable":true,"minimumFixVersion":"postcss - 8.2.13"}],"baseBranches":["development"],"vulnerabilityIdentifier":"CVE-2021-23382","vulnerabilityDetails":"The package postcss before 8.2.13 are vulnerable to Regular Expression Denial of Service (ReDoS) via getAnnotationURL() and loadAnnotation() in lib/previous-map.js. The vulnerable regexes are caused mainly by the sub-pattern \\/\\*\\s* sourceMappingURL\u003d(.*).","vulnerabilityUrl":"https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-23382","cvss3Severity":"medium","cvss3Score":"5.3","cvss3Metrics":{"A":"Low","AC":"Low","PR":"None","S":"Unchanged","C":"None","UI":"None","AV":"Network","I":"None"},"extraData":{}}</REMEDIATE> --> | True | CVE-2021-23382 (Medium) detected in multiple libraries - ## CVE-2021-23382 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>postcss-5.2.18.tgz</b>, <b>postcss-7.0.35.tgz</b>, <b>postcss-6.0.23.tgz</b></summary>

<p>

<details><summary><b>postcss-5.2.18.tgz</b></summary>

<p>Tool for transforming styles with JS plugins</p>

<p>Library home page: <a href="https://registry.npmjs.org/postcss/-/postcss-5.2.18.tgz">https://registry.npmjs.org/postcss/-/postcss-5.2.18.tgz</a></p>

<p>Path to dependency file: developers/package.json</p>

<p>Path to vulnerable library: developers/node_modules/perfectionist/node_modules/postcss/package.json,developers/node_modules/postcss-scss/node_modules/postcss/package.json</p>

<p>

Dependency Hierarchy:

- tailwindcss-0.7.4.tgz (Root Library)

- perfectionist-2.4.0.tgz

- postcss-scss-0.3.1.tgz

- :x: **postcss-5.2.18.tgz** (Vulnerable Library)

</details>

<details><summary><b>postcss-7.0.35.tgz</b></summary>

<p>Tool for transforming styles with JS plugins</p>

<p>Library home page: <a href="https://registry.npmjs.org/postcss/-/postcss-7.0.35.tgz">https://registry.npmjs.org/postcss/-/postcss-7.0.35.tgz</a></p>

<p>Path to dependency file: developers/package.json</p>

<p>Path to vulnerable library: developers/node_modules/postcss/package.json</p>

<p>

Dependency Hierarchy:

- tailwindcss-0.7.4.tgz (Root Library)

- :x: **postcss-7.0.35.tgz** (Vulnerable Library)

</details>

<details><summary><b>postcss-6.0.23.tgz</b></summary>

<p>Tool for transforming styles with JS plugins</p>

<p>Library home page: <a href="https://registry.npmjs.org/postcss/-/postcss-6.0.23.tgz">https://registry.npmjs.org/postcss/-/postcss-6.0.23.tgz</a></p>

<p>Path to dependency file: developers/package.json</p>

<p>Path to vulnerable library: developers/node_modules/postcss-modules-values/node_modules/postcss/package.json,developers/node_modules/css-loader/node_modules/postcss/package.json,developers/node_modules/postcss-functions/node_modules/postcss/package.json,developers/node_modules/postcss-easy-import/node_modules/postcss/package.json,developers/node_modules/postcss-modules-local-by-default/node_modules/postcss/package.json,developers/node_modules/postcss-modules-extract-imports/node_modules/postcss/package.json,developers/node_modules/postcss-modules-scope/node_modules/postcss/package.json,developers/node_modules/icss-utils/node_modules/postcss/package.json,developers/node_modules/postcss-import/node_modules/postcss/package.json</p>

<p>

Dependency Hierarchy:

- postcss-easy-import-3.0.0.tgz (Root Library)

- postcss-import-10.0.0.tgz

- :x: **postcss-6.0.23.tgz** (Vulnerable Library)

</details>

<p>Found in base branch: <b>development</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

The package postcss before 8.2.13 is vulnerable to Regular Expression Denial of Service (ReDoS) via getAnnotationURL() and loadAnnotation() in lib/previous-map.js. The vulnerable regexes are caused mainly by the sub-pattern \/\*\s* sourceMappingURL=(.*).

<p>Publish Date: 2021-04-26</p>

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-23382>CVE-2021-23382</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.3</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: Low

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2021-23382">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2021-23382</a></p>

<p>Release Date: 2021-04-26</p>

<p>Fix Resolution: postcss - 8.2.13</p>

</p>

</details>

<p></p>