Unnamed: 0 int64 1 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 7 112 | repo_url stringlengths 36 141 | action stringclasses 3 values | title stringlengths 3 438 | labels stringlengths 4 308 | body stringlengths 7 254k | index stringclasses 7 values | text_combine stringlengths 96 254k | label stringclasses 2 values | text stringlengths 96 246k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

3,104 | 11,852,478,317 | IssuesEvent | 2020-03-24 20:01:04 | tgstation/tgstation-server | https://api.github.com/repos/tgstation/tgstation-server | opened | Refactor the Chat Manager | Area: Chat Component Issue Maintainability Issue | Just the component. Everything around it is sane, it's just full of shit code that's a mess to understand. | True | Refactor the Chat Manager - Just the component. Everything around it is sane, it's just full of shit code that's a mess to understand. | main | refactor the chat manager just the component everything around it is sane it s just full of shit code that s a mess to understand | 1 |

3,073 | 11,641,039,394 | IssuesEvent | 2020-02-29 01:08:48 | plotly/dash-table | https://api.github.com/repos/plotly/dash-table | closed | Clean up server-side tests | dash-attribute-maintainability dash-stage-estimate_needed size: 3 | Server-side tests are built using n-apps for different flavours of the table (e.g. https://github.com/plotly/dash-table/blob/dev/package.json#L25) -- instead, use a single app with routing to simplify writing & running server-side tests for the table.

Alternatively, investigate rewriting the tests with Selenium. We've been having significant issues with Cypress for a while now and those issues don't look like they are going away. Work done in the last year in DCC also greatly improved our Selenium testing environment to the point that maintaining two different sets of testing environments might be moot. | True | Clean up server-side tests - Server-side tests are built using n-apps for different flavours of the table (e.g. https://github.com/plotly/dash-table/blob/dev/package.json#L25) -- instead, use a single app with routing to simplify writing & running server-side tests for the table.

Alternatively, investigate rewriting the tests with Selenium. We've been having significant issues with Cypress for a while now and those issues don't look like they are going away. Work done in the last year in DCC also greatly improved our Selenium testing environment to the point that maintaining two different sets of testing environments might be moot. | main | clean up server side tests server side tests are built using n apps for different flavours of the table e g instead use a single app with routing to simplify writing running server side tests for the table alternatively investigate rewriting the tests with selenium we ve been having significant issues with cypress for a while now and those issues don t look like they are going away work done in the last year in dcc also greatly improved our selenium testing environment to the point that maintaining two different sets of testing environments might be moot | 1 |

15,443 | 27,200,383,640 | IssuesEvent | 2023-02-20 09:18:15 | EEHPCWG/PowerMeasurementMethodology | https://api.github.com/repos/EEHPCWG/PowerMeasurementMethodology | opened | Accuracy requirements | enhancement affects requirements | # Problem statement

It is perceived to be hard to figure out the right level for a measurement with respect to accuracy.

# Solution

- Add the IEC 62053-21 accuracy classes

- Reconsider level accuracy thresholds based on information on accuracy of submitted Green500 runs

| 1.0 | Accuracy requirements - # Problem statement

It is perceived to be hard to figure out the right level for a measurement with respect to accuracy.

# Solution

- Add the IEC 62053-21 accuracy classes

- Reconsider level accuracy thresholds based on information on accuracy of submitted Green500 runs

| non_main | accuracy requirements problem statement it is perceived to be hard to figure our the right level for a measurement with respect to accuracy solution add the iec accuracy classes reconsider level accuracy thresholds based on information on accuracy of submitted runs | 0 |

393,876 | 11,625,915,409 | IssuesEvent | 2020-02-27 13:35:02 | googleapis/elixir-google-api | https://api.github.com/repos/googleapis/elixir-google-api | opened | Synthesis failed for OAuth2 | autosynth failure priority: p1 type: bug | Hello! Autosynth couldn't regenerate OAuth2. :broken_heart:

Here's the output from running `synth.py`:

```

Cloning into 'working_repo'...

Switched to branch 'autosynth-oauth2'

Running synthtool

['/tmpfs/src/git/autosynth/env/bin/python3', '-m', 'synthtool', '--metadata', 'clients/o_auth2/synth.metadata', 'synth.py', '--']

synthtool > Executing /tmpfs/src/git/autosynth/working_repo/synth.py.

synthtool > Cloning https://github.com/googleapis/elixir-google-api.git.

synthtool > Running: docker run --rm -v/home/kbuilder/.cache/synthtool/elixir-google-api:/workspace -v/var/run/docker.sock:/var/run/docker.sock -e USER_GROUP=1000:1000 -w /workspace gcr.io/cloud-devrel-public-resources/elixir19 scripts/generate_client.sh OAuth2

synthtool > Wrote metadata to clients/o_auth2/synth.metadata.

Changed files:

M clients/o_auth2/README.md

D clients/o_auth2/lib/google_api/o_auth2/v2/model/jwk.ex

D clients/o_auth2/lib/google_api/o_auth2/v2/model/jwk_keys.ex

M clients/o_auth2/mix.exs

M clients/o_auth2/synth.metadata

[autosynth-oauth2 68dbbdf5e] Regenerate OAuth2 client

5 files changed, 5 insertions(+), 111 deletions(-)

delete mode 100644 clients/o_auth2/lib/google_api/o_auth2/v2/model/jwk.ex

delete mode 100644 clients/o_auth2/lib/google_api/o_auth2/v2/model/jwk_keys.ex

To https://github.com/googleapis/elixir-google-api.git

+ 04b3645ca...68dbbdf5e autosynth-oauth2 -> autosynth-oauth2 (forced update)

Traceback (most recent call last):

File "/home/kbuilder/.pyenv/versions/3.6.9/lib/python3.6/runpy.py", line 193, in _run_module_as_main

"__main__", mod_spec)

File "/home/kbuilder/.pyenv/versions/3.6.9/lib/python3.6/runpy.py", line 85, in _run_code

exec(code, run_globals)

File "/tmpfs/src/git/autosynth/autosynth/synth.py", line 321, in <module>

main()

File "/tmpfs/src/git/autosynth/autosynth/synth.py", line 309, in main

args.repository, branch=branch, title=pr_title, body=pr_body

File "/tmpfs/src/git/autosynth/autosynth/github.py", line 65, in create_pull_request

response.raise_for_status()

File "/tmpfs/src/git/autosynth/env/lib/python3.6/site-packages/requests/models.py", line 941, in raise_for_status

raise HTTPError(http_error_msg, response=self)

requests.exceptions.HTTPError: 502 Server Error: Bad Gateway for url: https://api.github.com/repos/googleapis/elixir-google-api/pulls

```

Google internal developers can see the full log [here](https://sponge/b6c13870-fef3-4917-bf8f-a5d752845525).

| 1.0 | Synthesis failed for OAuth2 - Hello! Autosynth couldn't regenerate OAuth2. :broken_heart:

Here's the output from running `synth.py`:

```

Cloning into 'working_repo'...

Switched to branch 'autosynth-oauth2'

Running synthtool

['/tmpfs/src/git/autosynth/env/bin/python3', '-m', 'synthtool', '--metadata', 'clients/o_auth2/synth.metadata', 'synth.py', '--']

synthtool > Executing /tmpfs/src/git/autosynth/working_repo/synth.py.

synthtool > Cloning https://github.com/googleapis/elixir-google-api.git.

synthtool > Running: docker run --rm -v/home/kbuilder/.cache/synthtool/elixir-google-api:/workspace -v/var/run/docker.sock:/var/run/docker.sock -e USER_GROUP=1000:1000 -w /workspace gcr.io/cloud-devrel-public-resources/elixir19 scripts/generate_client.sh OAuth2

synthtool > Wrote metadata to clients/o_auth2/synth.metadata.

Changed files:

M clients/o_auth2/README.md

D clients/o_auth2/lib/google_api/o_auth2/v2/model/jwk.ex

D clients/o_auth2/lib/google_api/o_auth2/v2/model/jwk_keys.ex

M clients/o_auth2/mix.exs

M clients/o_auth2/synth.metadata

[autosynth-oauth2 68dbbdf5e] Regenerate OAuth2 client

5 files changed, 5 insertions(+), 111 deletions(-)

delete mode 100644 clients/o_auth2/lib/google_api/o_auth2/v2/model/jwk.ex

delete mode 100644 clients/o_auth2/lib/google_api/o_auth2/v2/model/jwk_keys.ex

To https://github.com/googleapis/elixir-google-api.git

+ 04b3645ca...68dbbdf5e autosynth-oauth2 -> autosynth-oauth2 (forced update)

Traceback (most recent call last):

File "/home/kbuilder/.pyenv/versions/3.6.9/lib/python3.6/runpy.py", line 193, in _run_module_as_main

"__main__", mod_spec)

File "/home/kbuilder/.pyenv/versions/3.6.9/lib/python3.6/runpy.py", line 85, in _run_code

exec(code, run_globals)

File "/tmpfs/src/git/autosynth/autosynth/synth.py", line 321, in <module>

main()

File "/tmpfs/src/git/autosynth/autosynth/synth.py", line 309, in main

args.repository, branch=branch, title=pr_title, body=pr_body

File "/tmpfs/src/git/autosynth/autosynth/github.py", line 65, in create_pull_request

response.raise_for_status()

File "/tmpfs/src/git/autosynth/env/lib/python3.6/site-packages/requests/models.py", line 941, in raise_for_status

raise HTTPError(http_error_msg, response=self)

requests.exceptions.HTTPError: 502 Server Error: Bad Gateway for url: https://api.github.com/repos/googleapis/elixir-google-api/pulls

```

Google internal developers can see the full log [here](https://sponge/b6c13870-fef3-4917-bf8f-a5d752845525).

| non_main | synthesis failed for hello autosynth couldn t regenerate broken heart here s the output from running synth py cloning into working repo switched to branch autosynth running synthtool synthtool executing tmpfs src git autosynth working repo synth py synthtool cloning synthtool running docker run rm v home kbuilder cache synthtool elixir google api workspace v var run docker sock var run docker sock e user group w workspace gcr io cloud devrel public resources scripts generate client sh synthtool wrote metadata to clients o synth metadata changed files m clients o readme md d clients o lib google api o model jwk ex d clients o lib google api o model jwk keys ex m clients o mix exs m clients o synth metadata regenerate client files changed insertions deletions delete mode clients o lib google api o model jwk ex delete mode clients o lib google api o model jwk keys ex to autosynth autosynth forced update traceback most recent call last file home kbuilder pyenv versions lib runpy py line in run module as main main mod spec file home kbuilder pyenv versions lib runpy py line in run code exec code run globals file tmpfs src git autosynth autosynth synth py line in main file tmpfs src git autosynth autosynth synth py line in main args repository branch branch title pr title body pr body file tmpfs src git autosynth autosynth github py line in create pull request response raise for status file tmpfs src git autosynth env lib site packages requests models py line in raise for status raise httperror http error msg response self requests exceptions httperror server error bad gateway for url google internal developers can see the full log | 0 |

3,115 | 11,904,959,842 | IssuesEvent | 2020-03-30 17:44:10 | diofant/diofant | https://api.github.com/repos/diofant/diofant | opened | Port rootisolation module to use sparse polys | maintainability polys | This includes implementing the PolyElement.sturm() method to address [this TODO](https://github.com/diofant/diofant/blob/3d08f9ab8cd77359f97411382ad754b5dc09b96e/diofant/polys/rings.py#L2058-L2059).

| True | Port rootisolation module to use sparse polys - This includes implementing the PolyElement.sturm() method to address [this TODO](https://github.com/diofant/diofant/blob/3d08f9ab8cd77359f97411382ad754b5dc09b96e/diofant/polys/rings.py#L2058-L2059).

| main | port rootisolation module to use sparse polys this include implementing polyelement sturm method to address | 1 |

325,753 | 27,961,266,142 | IssuesEvent | 2023-03-24 15:49:43 | wazuh/wazuh-qa | https://api.github.com/repos/wazuh/wazuh-qa | closed | Red Hat Enterprise Linux 9 SCA policy rework - checks 6 to 6.2.16 | team/qa feature/sca dev-testing subteam/qa-main level/task type/test | | Target version | Related issue | Related PR |

|--------------------|--------------------|-----------------|

| 4.4.x | #3391 | https://github.com/wazuh/wazuh/pull/16016 |

|Check Id and Name| Status| Ready for QA|

|---|---|---|

||||

|6 System Maintenance|||

|6.1 System File Permissions|||

|6.1.1 Ensure permissions on /etc/passwd are configured (Automated)|🟢|🟢|

|6.1.2 Ensure permissions on /etc/passwd- are configured (Automated)|🟢|🟢|

|6.1.3 Ensure permissions on /etc/group are configured (Automated)|🟢|🟢|

|6.1.4 Ensure permissions on /etc/group- are configured (Automated)|🟢|🟢|

|6.1.5 Ensure permissions on /etc/shadow are configured (Automated)|🟢|🟢|

|6.1.6 Ensure permissions on /etc/shadow- are configured (Automated)|🟢|🟢|

|6.1.7 Ensure permissions on /etc/gshadow are configured (Automated)|🟢|🟢|

|6.1.8 Ensure permissions on /etc/gshadow- are configured (Automated)|🟢|🟢|

|6.1.9 Ensure no world writable files exist (Automated)|⚫||

|6.1.10 Ensure no unowned files or directories exist (Automated)|⚫||

|6.1.11 Ensure no ungrouped files or directories exist (Automated)|⚫||

|6.1.12 Ensure sticky bit is set on all world-writable directories (Automated)|⚫||

|6.1.13 Audit SUID executables (Manual)|⚫||

|6.1.14 Audit SGID executables (Manual)|⚫||

|6.1.15 Audit system file permissions (Manual)|⚫||

||||

|6.2 Local User and Group Settings|||

|6.2.1 Ensure accounts in /etc/passwd use shadowed passwords (Automated)|⚫||

|6.2.2 Ensure /etc/shadow password fields are not empty (Automated)|🟢|🟢|

|6.2.3 Ensure all groups in /etc/passwd exist in /etc/group (Automated)|⚫||

|6.2.4 Ensure no duplicate UIDs exist (Automated)|⚫||

|6.2.5 Ensure no duplicate GIDs exist (Automated)|⚫||

|6.2.6 Ensure no duplicate user names exist (Automated)|⚫||

|6.2.7 Ensure no duplicate group names exist (Automated)|⚫||

|6.2.8 Ensure root PATH Integrity (Automated)|⚫||

|6.2.9 Ensure root is the only UID 0 account (Automated)|🟢|🟢|

|6.2.10 Ensure local interactive user home directories exist (Automated)|⚫||

|6.2.11 Ensure local interactive users own their home directories (Automated)|⚫||

|6.2.12 Ensure local interactive user home directories are mode 750 or more restrictive (Automated)|⚫||

|6.2.13 Ensure no local interactive user has .netrc files (Automated)|⚫||

|6.2.14 Ensure no local interactive user has .forward files (Automated)|⚫||

|6.2.15 Ensure no local interactive user has .rhosts files (Automated)|⚫||

|6.2.16 Ensure local interactive user dot files are not group or world writable (Automated)|⚫||

|||| | 2.0 | Red Hat Enterprise Linux 9 SCA policy rework - checks 6 to 6.2.16 - | Target version | Related issue | Related PR |

|--------------------|--------------------|-----------------|

| 4.4.x | #3391 | https://github.com/wazuh/wazuh/pull/16016 |

|Check Id and Name| Status| Ready for QA|

|---|---|---|

||||

|6 System Maintenance|||

|6.1 System File Permissions|||

|6.1.1 Ensure permissions on /etc/passwd are configured (Automated)|🟢|🟢|

|6.1.2 Ensure permissions on /etc/passwd- are configured (Automated)|🟢|🟢|

|6.1.3 Ensure permissions on /etc/group are configured (Automated)|🟢|🟢|

|6.1.4 Ensure permissions on /etc/group- are configured (Automated)|🟢|🟢|

|6.1.5 Ensure permissions on /etc/shadow are configured (Automated)|🟢|🟢|

|6.1.6 Ensure permissions on /etc/shadow- are configured (Automated)|🟢|🟢|

|6.1.7 Ensure permissions on /etc/gshadow are configured (Automated)|🟢|🟢|

|6.1.8 Ensure permissions on /etc/gshadow- are configured (Automated)|🟢|🟢|

|6.1.9 Ensure no world writable files exist (Automated)|⚫||

|6.1.10 Ensure no unowned files or directories exist (Automated)|⚫||

|6.1.11 Ensure no ungrouped files or directories exist (Automated)|⚫||

|6.1.12 Ensure sticky bit is set on all world-writable directories (Automated)|⚫||

|6.1.13 Audit SUID executables (Manual)|⚫||

|6.1.14 Audit SGID executables (Manual)|⚫||

|6.1.15 Audit system file permissions (Manual)|⚫||

||||

|6.2 Local User and Group Settings|||

|6.2.1 Ensure accounts in /etc/passwd use shadowed passwords (Automated)|⚫||

|6.2.2 Ensure /etc/shadow password fields are not empty (Automated)|🟢|🟢|

|6.2.3 Ensure all groups in /etc/passwd exist in /etc/group (Automated)|⚫||

|6.2.4 Ensure no duplicate UIDs exist (Automated)|⚫||

|6.2.5 Ensure no duplicate GIDs exist (Automated)|⚫||

|6.2.6 Ensure no duplicate user names exist (Automated)|⚫||

|6.2.7 Ensure no duplicate group names exist (Automated)|⚫||

|6.2.8 Ensure root PATH Integrity (Automated)|⚫||

|6.2.9 Ensure root is the only UID 0 account (Automated)|🟢|🟢|

|6.2.10 Ensure local interactive user home directories exist (Automated)|⚫||

|6.2.11 Ensure local interactive users own their home directories (Automated)|⚫||

|6.2.12 Ensure local interactive user home directories are mode 750 or more restrictive (Automated)|⚫||

|6.2.13 Ensure no local interactive user has .netrc files (Automated)|⚫||

|6.2.14 Ensure no local interactive user has .forward files (Automated)|⚫||

|6.2.15 Ensure no local interactive user has .rhosts files (Automated)|⚫||

|6.2.16 Ensure local interactive user dot files are not group or world writable (Automated)|⚫||

|||| | non_main | red hat enterprise linux sca policy rework checks to target version related issue related pr x check id and name status ready for qa system maintenance system file permissions ensure permissions on etc passwd are configured automated 🟢 🟢 ensure permissions on etc passwd are configured automated 🟢 🟢 ensure permissions on etc group are configured automated 🟢 🟢 ensure permissions on etc group are configured automated 🟢 🟢 ensure permissions on etc shadow are configured automated 🟢 🟢 ensure permissions on etc shadow are configured automated 🟢 🟢 ensure permissions on etc gshadow are configured automated 🟢 🟢 ensure permissions on etc gshadow are configured automated 🟢 🟢 ensure no world writable files exist automated ⚫ ensure no unowned files or directories exist automated ⚫ ensure no ungrouped files or directories exist automated ⚫ ensure sticky bit is set on all world writable directories automated ⚫ audit suid executables manual ⚫ audit sgid executables manual ⚫ audit system file permissions manual ⚫ local user and group settings ensure accounts in etc passwd use shadowed passwords automated ⚫ ensure etc shadow password fields are not empty automated 🟢 🟢 ensure all groups in etc passwd exist in etc group automated ⚫ ensure no duplicate uids exist automated ⚫ ensure no duplicate gids exist automated ⚫ ensure no duplicate user names exist automated ⚫ ensure no duplicate group names exist automated ⚫ ensure root path integrity automated ⚫ ensure root is the only uid account automated 🟢 🟢 ensure local interactive user home directories exist automated ⚫ ensure local interactive users own their home directories automated ⚫ ensure local interactive user home directories are mode or more restrictive automated ⚫ ensure no local interactive user has netrc files automated ⚫ ensure no local interactive user has forward files automated ⚫ ensure no local interactive user has rhosts files automated ⚫ ensure local interactive user dot files are not group or world 
writable automated ⚫ | 0 |

17,066 | 23,542,658,287 | IssuesEvent | 2022-08-20 16:43:13 | sekiguchi-nagisa/ydsh | https://api.github.com/repos/sekiguchi-nagisa/ydsh | closed | control space insertion behavior in linenoise | incompatible change Interactive API | currently, if size of completion candidates is 1, always insert space after inserting a candidate.

in some situation, space insertion is not needed (ex. variable, field, method name completion).

need to change `DSState_complete` api | True | control space insertion behavior in linenoise - currently, if size of completion candidates is 1, always insert space after inserting a candidate.

in some situation, space insertion is not needed (ex. variable, field, method name completion).

need to change `DSState_complete` api | non_main | control space insertion behavior in linenoise currently if size of completion candidates is always insert space after inserting a candidate in some situation space insertion is not needed ex variable field method name completion need to change dsstate complete api | 0 |

344,597 | 30,751,816,649 | IssuesEvent | 2023-07-28 20:03:09 | rancher/rancher | https://api.github.com/repos/rancher/rancher | closed | [Backport v2.7.6] Node gets kicked out of Cluster after snapshots are restored. | kind/bug internal [zube]: To Test QA/S area/provisioning-v2 team/area2 regression JIRA | This is a backport issue for https://github.com/rancher/rancher/issues/42201, automatically created via rancherbot by @Sahota1225

Original issue description:

<!--------- For bugs and general issues --------->

**Setup**

Rancher version: 2.7.5

Downstream cluster: Custom cluster

Nodes: 3

CNI: Cilium

Kubernetes version: v1.25.7+rke2r1

**Describe the bug**

When restoring a snapshot on a custom cluster a node gets deleted from the cluster.

**To Reproduce**

1. Deploy a fresh RKE2 custom cluster

2. Take a snapshot

3. Restore snapshot

4. repeat steps 2-3 until the bug is hit. (usually 2 tries)

**Result**

A worker node gets deleted from the cluster.

**Expected Result**

All nodes remain in the cluster and the restore occurs properly.

**Screenshots**

<img width="1100" alt="image" src="https://github.com/rancher/dashboard/assets/136753565/3f0a0484-0733-4582-b47f-ede6da8053a7">

**Additional context**

Tried to reproduce this on 2.7.4 but was unable to do so after 5-10 restores

SURE-6669 | 1.0 | [Backport v2.7.6] Node gets kicked out of Cluster after snapshots are restored. - This is a backport issue for https://github.com/rancher/rancher/issues/42201, automatically created via rancherbot by @Sahota1225

Original issue description:

<!--------- For bugs and general issues --------->

**Setup**

Rancher version: 2.7.5

Downstream cluster: Custom cluster

Nodes: 3

CNI: Cilium

Kubernetes version: v1.25.7+rke2r1

**Describe the bug**

When restoring a snapshot on a custom cluster a node gets deleted from the cluster.

**To Reproduce**

1. Deploy a fresh RKE2 custom cluster

2. Take a snapshot

3. Restore snapshot

4. repeat steps 2-3 until the bug is hit. (usually 2 tries)

**Result**

A worker node gets deleted from the cluster.

**Expected Result**

All nodes remain in the cluster and the restore occurs properly.

**Screenshots**

<img width="1100" alt="image" src="https://github.com/rancher/dashboard/assets/136753565/3f0a0484-0733-4582-b47f-ede6da8053a7">

**Additional context**

Tried to reproduce this on 2.7.4 but was unable to do so after 5-10 restores

SURE-6669 | non_main | node gets kicked out of cluster after snapshots are restored this is a backport issue for automatically created via rancherbot by original issue description setup rancher version downstream cluster custom cluster nodes cni cilium kubernetes version describe the bug when restoring a snapshot on a custom cluster a node gets deleted from the cluster to reproduce deploy a fresh custom cluster take a snapshot restore snapshot repeat steps until the bug is hit usually tries result a worker node gets deleted from the cluster expected result all nodes remain in the cluster and the restore occurs properly screenshots img width alt image src additional context tried to reproduce this on but was unable to do so after restores sure | 0 |

234,953 | 7,733,088,437 | IssuesEvent | 2018-05-26 06:58:06 | dbpiper/PathOfTrading | https://api.github.com/repos/dbpiper/PathOfTrading | opened | Issues with decreasing the height of page (2) | Medium Priority bug | When the height is severely decreased the following issues are observed:

- The body can go below the fixed header

- The menu icon can be hidden by the tabs

These issues should be investigated and fixed. | 1.0 | Issues with decreasing the height of page (2) - When the height is severely decreased the following issues are observed:

- The body can go below the fixed header

- The menu icon can be hidden by the tabs

These issues should be investigated and fixed. | non_main | issues with decreasing the height of page when the height is severely decreased the following issues are observed the body can go below the fixed header the menu icon can be hidden by the tabs these issues should be investigated and fixed | 0 |

2,338 | 8,365,342,640 | IssuesEvent | 2018-10-04 04:30:30 | chocolatey/chocolatey-package-requests | https://api.github.com/repos/chocolatey/chocolatey-package-requests | closed | RFP - openjdk11 | Status: Available For Maintainer(s) | https://jdk.java.net/11/

There is [concern about Oracle JDK](https://www.reddit.com/r/programming/comments/9j25je/do_not_fall_into_oracles_java_11_trap/), so I think OpenJDK would be useful in addition to future `javaruntime` 11. | True | RFP - openjdk11 - https://jdk.java.net/11/

There is [concern about Oracle JDK](https://www.reddit.com/r/programming/comments/9j25je/do_not_fall_into_oracles_java_11_trap/), so I think OpenJDK would be useful in addition to future `javaruntime` 11. | main | rfp there is so i think openjdk would be useful in addition to future javaruntime | 1 |

197,605 | 22,596,929,113 | IssuesEvent | 2022-06-29 04:46:06 | dmyers87/headerstrip | https://api.github.com/repos/dmyers87/headerstrip | opened | CVE-2021-23440 (High) detected in set-value-2.0.0.tgz, set-value-0.4.3.tgz | security vulnerability | ## CVE-2021-23440 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>set-value-2.0.0.tgz</b>, <b>set-value-0.4.3.tgz</b></p></summary>

<p>

<details><summary><b>set-value-2.0.0.tgz</b></p></summary>

<p>Create nested values and any intermediaries using dot notation (`'a.b.c'`) paths.</p>

<p>Library home page: <a href="https://registry.npmjs.org/set-value/-/set-value-2.0.0.tgz">https://registry.npmjs.org/set-value/-/set-value-2.0.0.tgz</a></p>

<p>Path to dependency file: /package.json</p>

<p>Path to vulnerable library: /node_modules/set-value</p>

<p>

Dependency Hierarchy:

- lint-staged-7.2.0.tgz (Root Library)

- micromatch-3.1.10.tgz

- snapdragon-0.8.2.tgz

- base-0.11.2.tgz

- cache-base-1.0.1.tgz

- :x: **set-value-2.0.0.tgz** (Vulnerable Library)

</details>

<details><summary><b>set-value-0.4.3.tgz</b></p></summary>

<p>Create nested values and any intermediaries using dot notation (`'a.b.c'`) paths.</p>

<p>Library home page: <a href="https://registry.npmjs.org/set-value/-/set-value-0.4.3.tgz">https://registry.npmjs.org/set-value/-/set-value-0.4.3.tgz</a></p>

<p>Path to dependency file: /package.json</p>

<p>Path to vulnerable library: /node_modules/set-value</p>

<p>

Dependency Hierarchy:

- lint-staged-7.2.0.tgz (Root Library)

- micromatch-3.1.10.tgz

- snapdragon-0.8.2.tgz

- base-0.11.2.tgz

- cache-base-1.0.1.tgz

- union-value-1.0.0.tgz

- :x: **set-value-0.4.3.tgz** (Vulnerable Library)

</details>

<p>Found in HEAD commit: <a href="https://github.com/dmyers87/headerstrip/commit/8442716a2567dd4d8cfb426df6ecf4129181aacc">8442716a2567dd4d8cfb426df6ecf4129181aacc</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

This affects the package set-value before <2.0.1, >=3.0.0 <4.0.1. A type confusion vulnerability can lead to a bypass of CVE-2019-10747 when the user-provided keys used in the path parameter are arrays.

<p>Publish Date: 2021-09-12

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-23440>CVE-2021-23440</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>9.8</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2021-23440">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2021-23440</a></p>

<p>Release Date: 2021-09-12</p>

<p>Fix Resolution (set-value): 2.0.1</p>

<p>Direct dependency fix Resolution (lint-staged): 7.2.1</p><p>Fix Resolution (set-value): 2.0.1</p>

<p>Direct dependency fix Resolution (lint-staged): 7.2.1</p>

</p>

</details>

<p></p>

***

<!-- REMEDIATE-OPEN-PR-START -->

- [ ] Check this box to open an automated fix PR

<!-- REMEDIATE-OPEN-PR-END -->

| True | CVE-2021-23440 (High) detected in set-value-2.0.0.tgz, set-value-0.4.3.tgz - ## CVE-2021-23440 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>set-value-2.0.0.tgz</b>, <b>set-value-0.4.3.tgz</b></p></summary>

<p>

<details><summary><b>set-value-2.0.0.tgz</b></p></summary>

<p>Create nested values and any intermediaries using dot notation (`'a.b.c'`) paths.</p>

<p>Library home page: <a href="https://registry.npmjs.org/set-value/-/set-value-2.0.0.tgz">https://registry.npmjs.org/set-value/-/set-value-2.0.0.tgz</a></p>

<p>Path to dependency file: /package.json</p>

<p>Path to vulnerable library: /node_modules/set-value</p>

<p>

Dependency Hierarchy:

- lint-staged-7.2.0.tgz (Root Library)

- micromatch-3.1.10.tgz

- snapdragon-0.8.2.tgz

- base-0.11.2.tgz

- cache-base-1.0.1.tgz

- :x: **set-value-2.0.0.tgz** (Vulnerable Library)

</details>

<details><summary><b>set-value-0.4.3.tgz</b></p></summary>

<p>Create nested values and any intermediaries using dot notation (`'a.b.c'`) paths.</p>

<p>Library home page: <a href="https://registry.npmjs.org/set-value/-/set-value-0.4.3.tgz">https://registry.npmjs.org/set-value/-/set-value-0.4.3.tgz</a></p>

<p>Path to dependency file: /package.json</p>

<p>Path to vulnerable library: /node_modules/set-value</p>

<p>

Dependency Hierarchy:

- lint-staged-7.2.0.tgz (Root Library)

- micromatch-3.1.10.tgz

- snapdragon-0.8.2.tgz

- base-0.11.2.tgz

- cache-base-1.0.1.tgz

- union-value-1.0.0.tgz

- :x: **set-value-0.4.3.tgz** (Vulnerable Library)

</details>

<p>Found in HEAD commit: <a href="https://github.com/dmyers87/headerstrip/commit/8442716a2567dd4d8cfb426df6ecf4129181aacc">8442716a2567dd4d8cfb426df6ecf4129181aacc</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

This affects the package set-value before <2.0.1, >=3.0.0 <4.0.1. A type confusion vulnerability can lead to a bypass of CVE-2019-10747 when the user-provided keys used in the path parameter are arrays.

<p>Publish Date: 2021-09-12

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-23440>CVE-2021-23440</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>9.8</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2021-23440">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2021-23440</a></p>

<p>Release Date: 2021-09-12</p>

<p>Fix Resolution (set-value): 2.0.1</p>

<p>Direct dependency fix Resolution (lint-staged): 7.2.1</p><p>Fix Resolution (set-value): 2.0.1</p>

<p>Direct dependency fix Resolution (lint-staged): 7.2.1</p>

</p>

</details>

<p></p>

***

<!-- REMEDIATE-OPEN-PR-START -->

- [ ] Check this box to open an automated fix PR

<!-- REMEDIATE-OPEN-PR-END -->

| non_main | cve high detected in set value tgz set value tgz cve high severity vulnerability vulnerable libraries set value tgz set value tgz set value tgz create nested values and any intermediaries using dot notation a b c paths library home page a href path to dependency file package json path to vulnerable library node modules set value dependency hierarchy lint staged tgz root library micromatch tgz snapdragon tgz base tgz cache base tgz x set value tgz vulnerable library set value tgz create nested values and any intermediaries using dot notation a b c paths library home page a href path to dependency file package json path to vulnerable library node modules set value dependency hierarchy lint staged tgz root library micromatch tgz snapdragon tgz base tgz cache base tgz union value tgz x set value tgz vulnerable library found in head commit a href found in base branch master vulnerability details this affects the package set value before a type confusion vulnerability can lead to a bypass of cve when the user provided keys used in the path parameter are arrays publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction none scope unchanged impact metrics confidentiality impact high integrity impact high availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution set value direct dependency fix resolution lint staged fix resolution set value direct dependency fix resolution lint staged check this box to open an automated fix pr | 0 |

2,646 | 9,040,018,074 | IssuesEvent | 2019-02-10 12:48:58 | beefproject/beef | https://api.github.com/repos/beefproject/beef | closed | GeoLite Legacy databases are now discontinued | Core Maintainability | [GeoLite Legacy databases are discontinued](https://support.maxmind.com/geolite-legacy-discontinuation-notice/) as of January 2, 2019.

The GeoIP gem and associated GeoIP functionality will need to be replaced with https://github.com/maxmind/MaxMind-DB-Reader-ruby

```

# wget 'https://geolite.maxmind.com/download/geoip/database/GeoLite2-City.tar.gz'

--2019-02-10 02:32:42-- https://geolite.maxmind.com/download/geoip/database/GeoLite2-City.tar.gz

Resolving geolite.maxmind.com (geolite.maxmind.com)... 104.16.38.47, 104.16.37.47, 2606:4700::6810:252f, ...

Connecting to geolite.maxmind.com (geolite.maxmind.com)|104.16.38.47|:443... connected.

HTTP request sent, awaiting response... 200 OK

Length: 27849181 (27M) [application/gzip]

Saving to: ‘GeoLite2-City.tar.gz’

GeoLite2-City.tar.gz 100%[===========================================>] 26.56M 10.3MB/s in 2.6s

2019-02-10 02:32:45 (10.3 MB/s) - ‘GeoLite2-City.tar.gz’ saved [27849181/27849181]

# gunzip GeoLite2-City.tar.gz

# tar xvf GeoLite2-City.tar

GeoLite2-City_20190205/

GeoLite2-City_20190205/COPYRIGHT.txt

GeoLite2-City_20190205/README.txt

GeoLite2-City_20190205/LICENSE.txt

GeoLite2-City_20190205/GeoLite2-City.mmdb

root@kali-2018:~/Desktop/beef# ./asdf.rb

{"continent"=>{"code"=>"NA", "geoname_id"=>6255149, "names"=>{"de"=>"Nordamerika", "en"=>"North America", "es"=>"Norteamérica", "fr"=>"Amérique du Nord", "ja"=>"北アメリカ", "pt-BR"=>"América do Norte", "ru"=>"Северная Америка", "zh-CN"=>"北美洲"}}, "country"=>{"geoname_id"=>6252001, "iso_code"=>"US", "names"=>{"de"=>"USA", "en"=>"United States", "es"=>"Estados Unidos", "fr"=>"États-Unis", "ja"=>"アメリカ合衆国", "pt-BR"=>"Estados Unidos", "ru"=>"США", "zh-CN"=>"美国"}}, "location"=>{"accuracy_radius"=>1000, "latitude"=>37.751, "longitude"=>-97.822}, "registered_country"=>{"geoname_id"=>6252001, "iso_code"=>"US", "names"=>{"de"=>"USA", "en"=>"United States", "es"=>"Estados Unidos", "fr"=>"États-Unis", "ja"=>"アメリカ合衆国", "pt-BR"=>"Estados Unidos", "ru"=>"США", "zh-CN"=>"美国"}}}

# cat asdf.rb

#!/usr/bin/env ruby

#

require 'maxmind/db'

reader = MaxMind::DB.new('GeoLite2-City_20190205/GeoLite2-City.mmdb', mode: MaxMind::DB::MODE_MEMORY)

record = reader.get('8.8.8.8')

if record.nil?

  puts '8.8.8.8 not found'

else

  puts record.inspect

end

```

| True | GeoLite Legacy databases are now discontinued - [GeoLite Legacy databases are discontinued](https://support.maxmind.com/geolite-legacy-discontinuation-notice/) as of January 2, 2019.

The GeoIP gem and associated GeoIP functionality will need to be replaced with https://github.com/maxmind/MaxMind-DB-Reader-ruby

```

# wget 'https://geolite.maxmind.com/download/geoip/database/GeoLite2-City.tar.gz'

--2019-02-10 02:32:42-- https://geolite.maxmind.com/download/geoip/database/GeoLite2-City.tar.gz

Resolving geolite.maxmind.com (geolite.maxmind.com)... 104.16.38.47, 104.16.37.47, 2606:4700::6810:252f, ...

Connecting to geolite.maxmind.com (geolite.maxmind.com)|104.16.38.47|:443... connected.

HTTP request sent, awaiting response... 200 OK

Length: 27849181 (27M) [application/gzip]

Saving to: ‘GeoLite2-City.tar.gz’

GeoLite2-City.tar.gz 100%[===========================================>] 26.56M 10.3MB/s in 2.6s

2019-02-10 02:32:45 (10.3 MB/s) - ‘GeoLite2-City.tar.gz’ saved [27849181/27849181]

# gunzip GeoLite2-City.tar.gz

# tar xvf GeoLite2-City.tar

GeoLite2-City_20190205/

GeoLite2-City_20190205/COPYRIGHT.txt

GeoLite2-City_20190205/README.txt

GeoLite2-City_20190205/LICENSE.txt

GeoLite2-City_20190205/GeoLite2-City.mmdb

root@kali-2018:~/Desktop/beef# ./asdf.rb

{"continent"=>{"code"=>"NA", "geoname_id"=>6255149, "names"=>{"de"=>"Nordamerika", "en"=>"North America", "es"=>"Norteamérica", "fr"=>"Amérique du Nord", "ja"=>"北アメリカ", "pt-BR"=>"América do Norte", "ru"=>"Северная Америка", "zh-CN"=>"北美洲"}}, "country"=>{"geoname_id"=>6252001, "iso_code"=>"US", "names"=>{"de"=>"USA", "en"=>"United States", "es"=>"Estados Unidos", "fr"=>"États-Unis", "ja"=>"アメリカ合衆国", "pt-BR"=>"Estados Unidos", "ru"=>"США", "zh-CN"=>"美国"}}, "location"=>{"accuracy_radius"=>1000, "latitude"=>37.751, "longitude"=>-97.822}, "registered_country"=>{"geoname_id"=>6252001, "iso_code"=>"US", "names"=>{"de"=>"USA", "en"=>"United States", "es"=>"Estados Unidos", "fr"=>"États-Unis", "ja"=>"アメリカ合衆国", "pt-BR"=>"Estados Unidos", "ru"=>"США", "zh-CN"=>"美国"}}}

# cat asdf.rb

#!/usr/bin/env ruby

#

require 'maxmind/db'

reader = MaxMind::DB.new('GeoLite2-City_20190205/GeoLite2-City.mmdb', mode: MaxMind::DB::MODE_MEMORY)

record = reader.get('8.8.8.8')

if record.nil?

  puts '8.8.8.8 not found'

else

  puts record.inspect

end

```

| main | geolite legacy databases are now discontinued as of january the geoip gem and associated geoip functionality will need to be replaced with wget resolving geolite maxmind com geolite maxmind com connecting to geolite maxmind com geolite maxmind com connected http request sent awaiting response ok length saving to ‘ city tar gz’ city tar gz s in mb s ‘ city tar gz’ saved gunzip city tar gz tar xvf city tar city city copyright txt city readme txt city license txt city city mmdb root kali desktop beef asdf rb continent code na geoname id names de nordamerika en north america es norteamérica fr amérique du nord ja 北アメリカ pt br américa do norte ru северная америка zh cn 北美洲 country geoname id iso code us names de usa en united states es estados unidos fr états unis ja アメリカ合衆国 pt br estados unidos ru сша zh cn 美国 location accuracy radius latitude longitude registered country geoname id iso code us names de usa en united states es estados unidos fr états unis ja アメリカ合衆国 pt br estados unidos ru сша zh cn 美国 cat asdf rb usr bin env ruby require maxmind db reader maxmind db new city city mmdb mode maxmind db mode memory record reader get if record nil puts not found else puts record inspect end | 1 |
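Once the GeoLite2 reader is in place, the calling code mostly needs a few fields from the nested record rather than the whole structure. The following is a minimal Python sketch of that extraction step, working on a plain dictionary that copies a subset of the 8.8.8.8 output shown in the issue. The `summarize` helper and its return keys are hypothetical names for illustration and are not part of BeEF or of any MaxMind library.

```python
# Subset of the record printed in the issue for 8.8.8.8 (other keys omitted).
record = {
    "country": {
        "geoname_id": 6252001,
        "iso_code": "US",
        "names": {"en": "United States"},
    },
    "location": {
        "accuracy_radius": 1000,
        "latitude": 37.751,
        "longitude": -97.822,
    },
}


def summarize(record):
    """Extract country code, English country name, and coordinates.

    Hypothetical helper. It uses .get() throughout because a record may
    omit whole sections (the 8.8.8.8 record above has no "city" entry).
    """
    if record is None:
        # Mirrors the "not found" branch in the issue's script.
        return None
    country = record.get("country", {})
    location = record.get("location", {})
    return {
        "iso_code": country.get("iso_code"),
        "country_name": country.get("names", {}).get("en"),
        "latitude": location.get("latitude"),
        "longitude": location.get("longitude"),
    }
```

Returning `None` for a missing record keeps the "address not found" case distinct from a record that exists but lacks some fields.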

44,642 | 5,637,696,353 | IssuesEvent | 2017-04-06 09:50:15 | Promact/trappist | https://api.github.com/repos/Promact/trappist | opened | Test Question Selection page | Test Creation and Management | We are assuming that the test name, Edit button, and Preview button will be bound throughout the test page. Is our assumption right? | 1.0 | Test Question Selection page - We are assuming that the test name, Edit button, and Preview button will be bound throughout the test page. Is our assumption right? | non_main | test question selection page we are supposing that the test name edit button and preview button will be bound throughout the test page is our assumption right | 0 |

4,258 | 21,186,110,221 | IssuesEvent | 2022-04-08 12:55:26 | jesus2099/konami-command | https://api.github.com/repos/jesus2099/konami-command | opened | Duplicate JASRAC work domain selection feature | ninja jasrac-mb-minc_WORK-IMPORT-CROSS-LINKING jasrac_DIRECT-LINK maintainability | [selectDomain()](https://github.com/jesus2099/konami-command/blob/f8c0f3af88395c7ba05cd6ab1e6dd4644f25d9e2/jasrac_DIRECT-LINK.user.js#L69) and [Select music release rights](https://github.com/jesus2099/konami-command/blob/40c4ef3565548d829d1db1ea77e398a95ddefb7a/jasrac-mb-minc_WORK-IMPORT-CROSS-LINKING.user.js#L417) want to do the same thing, concurrently. | True | Duplicate JASRAC work domain selection feature - [selectDomain()](https://github.com/jesus2099/konami-command/blob/f8c0f3af88395c7ba05cd6ab1e6dd4644f25d9e2/jasrac_DIRECT-LINK.user.js#L69) and [Select music release rights](https://github.com/jesus2099/konami-command/blob/40c4ef3565548d829d1db1ea77e398a95ddefb7a/jasrac-mb-minc_WORK-IMPORT-CROSS-LINKING.user.js#L417) want to do the same thing, concurrently. | main | duplicate jasrac work domain selection feature and want to do the same thing concurrently | 1 |

3,255 | 12,402,316,546 | IssuesEvent | 2020-05-21 11:43:31 | ocaml/opam-repository | https://api.github.com/repos/ocaml/opam-repository | closed | lambda-term.1.12.0 doesn't compile on 4.02.3 | Stale needs maintainer action | ```#=== ERROR while installing lambda-term.1.12.0 ================================#

# opam-version 1.2.2

# os linux

# command jbuilder build -p lambda-term -j 4

# path /home/jonathandav/.opam/4.02.3/build/lambda-term.1.12.0

# compiler 4.02.3

# exit-code 1

# env-file /home/jonathandav/.opam/4.02.3/build/lambda-term.1.12.0/lambda-term-23227-c266f8.env

# stdout-file /home/jonathandav/.opam/4.02.3/build/lambda-term.1.12.0/lambda-term-23227-c266f8.out

# stderr-file /home/jonathandav/.opam/4.02.3/build/lambda-term.1.12.0/lambda-term-23227-c266f8.err

### stderr ###

# Warning 3: deprecated: Lwt_unix.execute_job

# [...]

# ocamlopt src/lTerm_resource_lexer.{cmx,o}

# ocamlopt src/lTerm_mouse.{cmx,o}

# ocamlc src/lTerm.{cmi,cmti}

# ocamlc src/lTerm_draw.{cmo,cmt}

# ocamlc src/lTerm_unix.{cmo,cmt} (exit 2)

# (cd _build/default && /home/jonathandav/.opam/4.02.3/bin/ocamlc.opt -w -40 -safe-string -g -bin-annot -I /home/jonathandav/.opam/4.02.3/lib/bytes -I /home/jonathandav/.opam/4.02.3/lib/camomile -I /home/jonathandav/.opam/4.02.3/lib/lwt -I /home/jonathandav/.opam/4.02.3/lib/lwt_react -I /home/jonathandav/.opam/4.02.3/lib/ocaml -I /home/jonathandav/.opam/4.02.3/lib/react -I /home/jonathandav/.opam/4.02.3/lib/result -I /home/jonathandav/.opam/4.02.3/lib/zed -no-alias-deps -I src -o src/lTerm_unix.cmo -c -impl src/lTerm_unix.ml)

# File "src/lTerm_unix.ml", line 342, characters 32-51:

# Error: This expression has type bytes but an expression was expected of type

# string

``` | True | lambda-term.1.12.0 doesn't compile on 4.02.3 - ```#=== ERROR while installing lambda-term.1.12.0 ================================#

# opam-version 1.2.2

# os linux

# command jbuilder build -p lambda-term -j 4

# path /home/jonathandav/.opam/4.02.3/build/lambda-term.1.12.0

# compiler 4.02.3

# exit-code 1

# env-file /home/jonathandav/.opam/4.02.3/build/lambda-term.1.12.0/lambda-term-23227-c266f8.env

# stdout-file /home/jonathandav/.opam/4.02.3/build/lambda-term.1.12.0/lambda-term-23227-c266f8.out

# stderr-file /home/jonathandav/.opam/4.02.3/build/lambda-term.1.12.0/lambda-term-23227-c266f8.err

### stderr ###

# Warning 3: deprecated: Lwt_unix.execute_job

# [...]

# ocamlopt src/lTerm_resource_lexer.{cmx,o}

# ocamlopt src/lTerm_mouse.{cmx,o}

# ocamlc src/lTerm.{cmi,cmti}

# ocamlc src/lTerm_draw.{cmo,cmt}

# ocamlc src/lTerm_unix.{cmo,cmt} (exit 2)

# (cd _build/default && /home/jonathandav/.opam/4.02.3/bin/ocamlc.opt -w -40 -safe-string -g -bin-annot -I /home/jonathandav/.opam/4.02.3/lib/bytes -I /home/jonathandav/.opam/4.02.3/lib/camomile -I /home/jonathandav/.opam/4.02.3/lib/lwt -I /home/jonathandav/.opam/4.02.3/lib/lwt_react -I /home/jonathandav/.opam/4.02.3/lib/ocaml -I /home/jonathandav/.opam/4.02.3/lib/react -I /home/jonathandav/.opam/4.02.3/lib/result -I /home/jonathandav/.opam/4.02.3/lib/zed -no-alias-deps -I src -o src/lTerm_unix.cmo -c -impl src/lTerm_unix.ml)

# File "src/lTerm_unix.ml", line 342, characters 32-51:

# Error: This expression has type bytes but an expression was expected of type

# string

``` | main | lambda term doesn t compile on error while installing lambda term opam version os linux command jbuilder build p lambda term j path home jonathandav opam build lambda term compiler exit code env file home jonathandav opam build lambda term lambda term env stdout file home jonathandav opam build lambda term lambda term out stderr file home jonathandav opam build lambda term lambda term err stderr warning deprecated lwt unix execute job ocamlopt src lterm resource lexer cmx o ocamlopt src lterm mouse cmx o ocamlc src lterm cmi cmti ocamlc src lterm draw cmo cmt ocamlc src lterm unix cmo cmt exit cd build default home jonathandav opam bin ocamlc opt w safe string g bin annot i home jonathandav opam lib bytes i home jonathandav opam lib camomile i home jonathandav opam lib lwt i home jonathandav opam lib lwt react i home jonathandav opam lib ocaml i home jonathandav opam lib react i home jonathandav opam lib result i home jonathandav opam lib zed no alias deps i src o src lterm unix cmo c impl src lterm unix ml file src lterm unix ml line characters error this expression has type bytes but an expression was expected of type string | 1 |

909 | 4,579,007,012 | IssuesEvent | 2016-09-18 01:40:42 | daemonraco/toobasic | https://api.github.com/repos/daemonraco/toobasic | opened | JSON Validator for Database Specs | Core Logic Database Database Structure Maintainer JSONValidator | ## What to do

Implement validations for database specifications using _JSON Validator_, both version 1 and 2. | True | JSON Validator for Database Specs - ## What to do

Implement validations for database specifications using _JSON Validator_, both version 1 and 2. | main | json validator for database specs what to do implement validations for database specifications using json validator both version and | 1 |

6,564 | 9,550,086,414 | IssuesEvent | 2019-05-02 11:03:45 | adaptlearning/adapt_authoring | https://api.github.com/repos/adaptlearning/adapt_authoring | opened | Tenant management alternatives | T: requirements | The purpose to this issue is to explore alternatives to tenant management. Please list any requirements you may have of tenant management. | 1.0 | Tenant management alternatives - The purpose to this issue is to explore alternatives to tenant management. Please list any requirements you may have of tenant management. | non_main | tenant management alternatives the purpose to this issue is to explore alternatives to tenant management please list any requirements you may have of tenant management | 0 |

2,770 | 27,590,655,135 | IssuesEvent | 2023-03-08 23:59:00 | Azure/azure-functions-host | https://api.github.com/repos/Azure/azure-functions-host | opened | Add heartbeat process to detect thread pool exhaustion | reliability | #### What problem would the feature you're requesting solve? Please describe.

Currently, there is no automated diagnostic in place that would detect deadlock/thread pool exhaustion in the Host. The only mode we have right now to detect thread pool exhaustion is by manually capturing and examining a memory dump.

#### Describe the solution you'd like

Add a heartbeat monitor process that pings the host on a regular schedule, and detects thread pool exhaustion scenarios.

#### Additional context

Once the heartbeat is in place, we will add one of two options for mitigation of the condition detected:

1. Auto-restart the host

or

2. Report this information through the status API's to the Antares platform for auto-mitigation/ memory dump collection. | True | Add heartbeat process to detect thread pool exhaustion - #### What problem would the feature you're requesting solve? Please describe.

Currently, there is no automated diagnostic in place that would detect deadlock/thread pool exhaustion in the Host. The only mode we have right now to detect thread pool exhaustion is by manually capturing and examining a memory dump.

#### Describe the solution you'd like

Add a heartbeat monitor process that pings the host on a regular schedule, and detects thread pool exhaustion scenarios.

#### Additional context

Once the heartbeat is in place, we will add one of two options for mitigation of the condition detected:

1. Auto-restart the host

or

2. Report this information through the status API's to the Antares platform for auto-mitigation/ memory dump collection. | non_main | add heartbeat process to detect thread pool exhaustion what problem would the feature you re requesting solve please describe currently there is no automated diagnostic in place that would detect deadlock thread pool exhaustion in the host the only mode we have right now to detect thread pool exhaustion is by manually capturing and examining a memory dump describe the solution you d like add a heartbeat monitor process that pings the host on a regular schedule and detects thread pool exhaustion scenarios additional context once the heartbeat is in place we will add one of two options for mitigation of the condition detected auto restart the host or report this information through the status api s to the antares platform for auto mitigation memory dump collection | 0 |
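The heartbeat idea in this issue can be illustrated with a small probe: submit a trivial task to the pool and treat a missed deadline as a sign that no worker is free. The sketch below is in Python using `concurrent.futures`, purely as an illustration of the technique. It is not the Azure Functions host implementation (which is .NET), and `pool_is_responsive`, `start_heartbeat`, and their parameters are hypothetical names.

```python
import threading
from concurrent.futures import ThreadPoolExecutor
from concurrent.futures import TimeoutError as FutureTimeout


def pool_is_responsive(pool, timeout_s=1.0):
    """Return True if a trivial task submitted to `pool` runs within `timeout_s`."""
    probe = pool.submit(lambda: True)
    try:
        return probe.result(timeout=timeout_s)
    except FutureTimeout:
        # The probe is still queued behind blocked work; drop it.
        probe.cancel()
        return False


def start_heartbeat(pool, interval_s, on_unresponsive, timeout_s=1.0):
    """Probe `pool` every `interval_s` seconds from a dedicated thread.

    The heartbeat runs outside the pool it monitors, so it keeps working
    even when every pool worker is blocked. Returns an Event; set it to
    stop the heartbeat.
    """
    stop = threading.Event()

    def loop():
        # Event.wait returns False on timeout and True once `stop` is set.
        while not stop.wait(interval_s):
            if not pool_is_responsive(pool, timeout_s):
                on_unresponsive()

    threading.Thread(target=loop, daemon=True).start()
    return stop
```

In this sketch `on_unresponsive` is where either mitigation from the issue would hook in: restarting the host, or reporting the condition to a status endpoint.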

105,666 | 13,205,237,148 | IssuesEvent | 2020-08-14 17:31:18 | toggl/mobileapp | https://api.github.com/repos/toggl/mobileapp | opened | Visual changes in CalendarSettingsViewController | estimate:M ios needs-design | ## Description

Implement the visual changes in CalendarSettingsViewController

## Dependencies

Depends on #7713

## Definition of done

- [ ] The visual changes are implemented | 1.0 | Visual changes in CalendarSettingsViewController - ## Description

Implement the visual changes in CalendarSettingsViewController

## Dependencies

Depends on #7713

## Definition of done

- [ ] The visual changes are implemented | non_main | visual changes in calendarsettingsviewcontroller description implement the visual changes in calendarsettingsviewcontroller dependencies depends on definition of done the visual changes are implemented | 0 |

96,929 | 3,975,632,230 | IssuesEvent | 2016-05-05 06:54:51 | Sententiaregum/Sententiaregum | https://api.github.com/repos/Sententiaregum/Sententiaregum | opened | improve configuration handler of capistrano | Deployment Improvement Low priority | ### Description

the capistrano deployment handler is responsible for setting up the whole configuration.

Instead multiple files should be merged, so the heavy config loader can be simplified. | 1.0 | improve configuration handler of capistrano - ### Description

the capistrano deployment handler is responsible for setting up the whole configuration.

Instead multiple files should be merged, so the heavy config loader can be simplified. | non_main | improve configuration handler of capistrano description the capistrano deployment handler is responsible for setting up the whole configuration instead multiple files should be merged so the heavy config loader can be simplified | 0 |
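Merging several configuration files usually comes down to a recursive dictionary merge in which later files override earlier ones. The sketch below shows that step in Python on already-parsed dictionaries. It is an illustration only: the actual project uses Capistrano (Ruby), and `deep_merge`, `merge_configs`, and the example keys are hypothetical.

```python
def deep_merge(base, override):
    """Return a new dict with `override` merged into `base`.

    Nested dicts are merged recursively; any other value in `override`
    replaces the value in `base`. Neither input is modified.
    """
    merged = dict(base)
    for key, value in override.items():
        if isinstance(value, dict) and isinstance(merged.get(key), dict):
            merged[key] = deep_merge(merged[key], value)
        else:
            merged[key] = value
    return merged


def merge_configs(*configs):
    """Merge parsed config dicts left to right; later ones take precedence."""
    result = {}
    for config in configs:
        result = deep_merge(result, config)
    return result
```

With this shape, each file stays small and the loader only has to parse the files and pass the results to `merge_configs` in priority order.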

14,577 | 2,829,609,333 | IssuesEvent | 2015-05-23 02:05:30 | awesomebing1/fuzzdb | https://api.github.com/repos/awesomebing1/fuzzdb | closed | http://www.rf-dimension.com/forum/entry.php?70656-Football%28Chicago-Bears-vs-Green-Bay-Packers%29-Live-Streaming | auto-migrated Priority-Medium Type-Defect | ```

What steps will reproduce the problem?

1.

2.

3.

http://www.rf-dimension.com/forum/entry.php?70656-Football%28Chicago-Bears-vs-Gr

een-Bay-Packers%29-Live-Streaming

http://www.rf-dimension.com/forum/entry.php?70656-Football%28Chicago-Bears-vs-Gr

een-Bay-Packers%29-Live-Streaming

What is the expected output? What do you see instead?

What version of the product are you using? On what operating system?

Please provide any additional information below.

```

Original issue reported on code.google.com by `sabujhos...@gmail.com` on 9 Nov 2014 at 2:47 | 1.0 | http://www.rf-dimension.com/forum/entry.php?70656-Football%28Chicago-Bears-vs-Green-Bay-Packers%29-Live-Streaming - ```

What steps will reproduce the problem?

1.

2.

3.

http://www.rf-dimension.com/forum/entry.php?70656-Football%28Chicago-Bears-vs-Gr

een-Bay-Packers%29-Live-Streaming

http://www.rf-dimension.com/forum/entry.php?70656-Football%28Chicago-Bears-vs-Gr

een-Bay-Packers%29-Live-Streaming

What is the expected output? What do you see instead?

What version of the product are you using? On what operating system?

Please provide any additional information below.

```

Original issue reported on code.google.com by `sabujhos...@gmail.com` on 9 Nov 2014 at 2:47 | non_main | what steps will reproduce the problem een bay packers live streaming een bay packers live streaming what is the expected output what do you see instead what version of the product are you using on what operating system please provide any additional information below original issue reported on code google com by sabujhos gmail com on nov at | 0 |

321,843 | 27,560,601,127 | IssuesEvent | 2023-03-07 21:39:27 | pytorch/pytorch | https://api.github.com/repos/pytorch/pytorch | opened | DISABLED test_dynamic_shapes_right_side_dynamic_shapes (torch._dynamo.testing.DynamicShapesReproTests) | triaged module: flaky-tests skipped module: dynamo | Platforms: linux

This test was disabled because it is failing in CI. See [recent examples](https://hud.pytorch.org/flakytest?name=test_dynamic_shapes_right_side_dynamic_shapes&suite=torch._dynamo.testing.DynamicShapesReproTests) and the most recent trunk [workflow logs](https://github.com/pytorch/pytorch/runs/11828458327).

Over the past 3 hours, it has been determined flaky in 2 workflow(s) with 2 failures and 2 successes.

**Debugging instructions (after clicking on the recent samples link):**

DO NOT ASSUME THINGS ARE OKAY IF THE CI IS GREEN. We now shield flaky tests from developers so CI will thus be green but it will be harder to parse the logs.

To find relevant log snippets:

1. Click on the workflow logs linked above

2. Click on the Test step of the job so that it is expanded. Otherwise, the grepping will not work.

3. Grep for `test_dynamic_shapes_right_side_dynamic_shapes`

4. There should be several instances run (as flaky tests are rerun in CI) from which you can study the logs.

Test file path: `/opt/conda/envs/py_3.10/lib/python3.10/site-packages/torch/_dynamo/testing.py` or `/opt/conda/envs/py_3.10/lib/python3.10/site-packages/torch/_dynamo/testing.py` | 1.0 | DISABLED test_dynamic_shapes_right_side_dynamic_shapes (torch._dynamo.testing.DynamicShapesReproTests) - Platforms: linux

This test was disabled because it is failing in CI. See [recent examples](https://hud.pytorch.org/flakytest?name=test_dynamic_shapes_right_side_dynamic_shapes&suite=torch._dynamo.testing.DynamicShapesReproTests) and the most recent trunk [workflow logs](https://github.com/pytorch/pytorch/runs/11828458327).

Over the past 3 hours, it has been determined flaky in 2 workflow(s) with 2 failures and 2 successes.

**Debugging instructions (after clicking on the recent samples link):**

DO NOT ASSUME THINGS ARE OKAY IF THE CI IS GREEN. We now shield flaky tests from developers so CI will thus be green but it will be harder to parse the logs.

To find relevant log snippets:

1. Click on the workflow logs linked above

2. Click on the Test step of the job so that it is expanded. Otherwise, the grepping will not work.

3. Grep for `test_dynamic_shapes_right_side_dynamic_shapes`

4. There should be several instances run (as flaky tests are rerun in CI) from which you can study the logs.

Test file path: `/opt/conda/envs/py_3.10/lib/python3.10/site-packages/torch/_dynamo/testing.py` or `/opt/conda/envs/py_3.10/lib/python3.10/site-packages/torch/_dynamo/testing.py` | non_main | disabled test dynamic shapes right side dynamic shapes torch dynamo testing dynamicshapesreprotests platforms linux this test was disabled because it is failing in ci see and the most recent trunk over the past hours it has been determined flaky in workflow s with failures and successes debugging instructions after clicking on the recent samples link do not assume things are okay if the ci is green we now shield flaky tests from developers so ci will thus be green but it will be harder to parse the logs to find relevant log snippets click on the workflow logs linked above click on the test step of the job so that it is expanded otherwise the grepping will not work grep for test dynamic shapes right side dynamic shapes there should be several instances run as flaky tests are rerun in ci from which you can study the logs test file path opt conda envs py lib site packages torch dynamo testing py or opt conda envs py lib site packages torch dynamo testing py | 0 |

5,235 | 26,551,134,376 | IssuesEvent | 2023-01-20 07:51:49 | AhmadWaleed/laravel-blanket | https://api.github.com/repos/AhmadWaleed/laravel-blanket | opened | Looking for maintainer. | help wanted Looking For Maintainer | It's been a while since I worked in PHP/Laravel, and I no longer have time to maintain this package. If anybody is interested in volunteering to become an active maintainer of this project, feel free to reach out to me.

If I cannot find a maintainer in a few weeks, unfortunately I will archive this repository. | True | Looking for maintainer. - It's been a while since I worked in PHP/Laravel, and I no longer have time to maintain this package. If anybody is interested in volunteering to become an active maintainer of this project, feel free to reach out to me.

If I cannot find a maintainer in a few weeks, unfortunately I will archive this repository. | main | looking for maintainer it s been a while since i worked in php laravel i don t have to time to maintain this package anymore if anybody interested volunteering himself for becoming active maintainer for this project feel free to reach out to me if i could not find any maintainer in few weeks unfortunately i will archive this repository | 1 |

349,254 | 24,939,968,298 | IssuesEvent | 2022-10-31 18:04:32 | music-encoding/music-encoding.github.io | https://api.github.com/repos/music-encoding/music-encoding.github.io | closed | Link Checker Report | documentation bug | ## Summary

| Status | Count |

|---------------|-------|

| 🔍 Total | 432 |

| ✅ Successful | 199 |

| ⏳ Timeouts | 0 |

| 🔀 Redirected | 0 |

| 👻 Excluded | 232 |

| ❓ Unknown | 0 |

| 🚫 Errors | 1 |

## Errors per input

### Errors in ./resources/tools.md

* [http://custom.music-encoding.org/](http://custom.music-encoding.org/): Failed: Network error (status code: 404)

[Full Github Actions output](https://github.com/music-encoding/music-encoding.github.io/actions/runs/3355658640?check_suite_focus=true)

| 1.0 | Link Checker Report - ## Summary

| Status | Count |

|---------------|-------|

| 🔍 Total | 432 |

| ✅ Successful | 199 |

| ⏳ Timeouts | 0 |

| 🔀 Redirected | 0 |

| 👻 Excluded | 232 |

| ❓ Unknown | 0 |

| 🚫 Errors | 1 |

## Errors per input

### Errors in ./resources/tools.md

* [http://custom.music-encoding.org/](http://custom.music-encoding.org/): Failed: Network error (status code: 404)

[Full Github Actions output](https://github.com/music-encoding/music-encoding.github.io/actions/runs/3355658640?check_suite_focus=true)

| non_main | link checker report summary status count 🔍 total ✅ successful ⏳ timeouts 🔀 redirected 👻 excluded ❓ unknown 🚫 errors errors per input errors in resources tools md failed network error status code | 0 |

627,699 | 19,912,356,818 | IssuesEvent | 2022-01-25 18:29:25 | exalearn/EXARL | https://api.github.com/repos/exalearn/EXARL | closed | Do we need to save current_state and next_state? | Low Priority | learner_base.py: 158

train_writer.writerow([current_state,action,reward,next_state,total_reward,done])

Basically, each state is saved twice... | 1.0 | Do we need to save current_state and next_state? - learner_base.py: 158

train_writer.writerow([current_state,action,reward,next_state,total_reward,done])

Basically, each state is saved twice... | non_main | do we need to save current state and next state learner base py train writer writerow basically each state is saved twice | 0 |
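One way to avoid writing each state twice is to store `next_state` only on the last step of an episode and recover it from the following row everywhere else. The Python sketch below shows that idea with the standard `csv` module. It assumes rows are written in step order by a single agent, so that one row's `next_state` equals the next row's `current_state`; `write_transitions` and `read_transitions` are hypothetical helpers and not part of EXARL.

```python
import csv
import io


def write_transitions(writer, transitions):
    """Write one row per step, storing next_state only on terminal steps."""
    for current_state, action, reward, next_state, total_reward, done in transitions:
        final_state = next_state if done else ""
        writer.writerow([current_state, action, reward, final_state, total_reward, done])


def read_transitions(rows):
    """Rebuild (state, action, reward, next_state, total_reward, done) tuples.

    Values come back as strings because csv does not preserve types.
    """
    rows = list(rows)
    rebuilt = []
    for i, (state, action, reward, final_state, total_reward, done) in enumerate(rows):
        if done == "True":
            next_state = final_state
        elif i + 1 < len(rows):
            # Assumption: the next row continues the same episode.
            next_state = rows[i + 1][0]
        else:
            # Log ended mid-episode; the next state was never written.
            next_state = None
        rebuilt.append((state, action, reward, next_state, total_reward, done))
    return rebuilt
```

The trade-off is that reading now depends on row order, so this only fits logs that are written and consumed sequentially.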

315,739 | 9,631,612,191 | IssuesEvent | 2019-05-15 14:33:35 | canonical-web-and-design/snapcraft.io | https://api.github.com/repos/canonical-web-and-design/snapcraft.io | closed | Automate notifications in snapcraft.io | Priority: Medium | Recently we have been adding banners to promote live stream notifications on YouTube, but it would be good to automate this work so that (a) we don't need to add and remove code manually, (b) external people such as advocacy can schedule banners without our intervention, and (c) #winning.

| 1.0 | Automate notifications in snapcraft.io - Recently we have been adding banners to promote live stream notifications on YouTube, but it would be good to automate this work so that (a) we don't need to add and remove code manually, (b) external people such as advocacy can schedule banners without our intervention, and (c) #winning.

| non_main | automate notifications in snapcraft io recently we have been including banners to promote live stream notifications on youtube but would be good to find a way to automate this piece of work so we dont need to a add and remove code manually b external people like advocacy can schedule without our intervention and c winning | 0 |

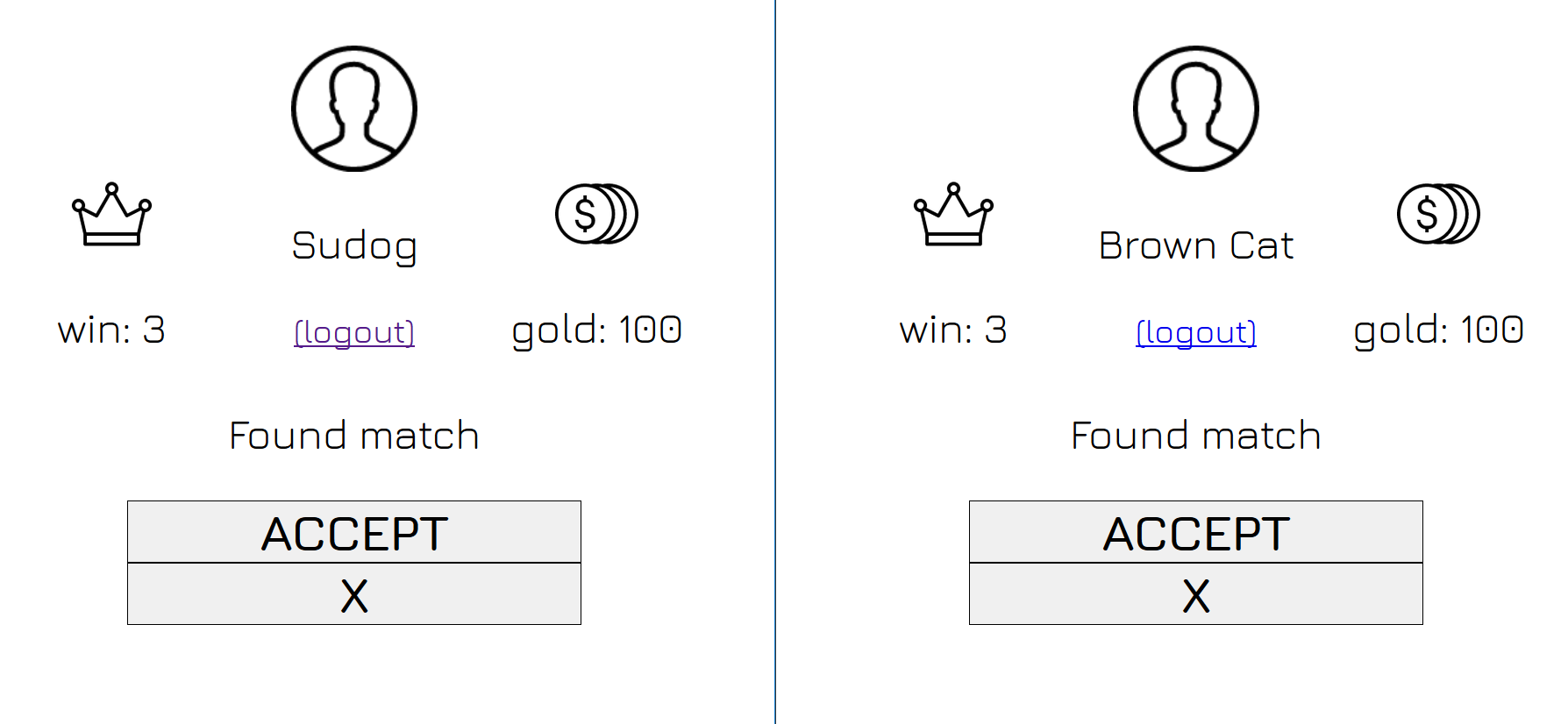

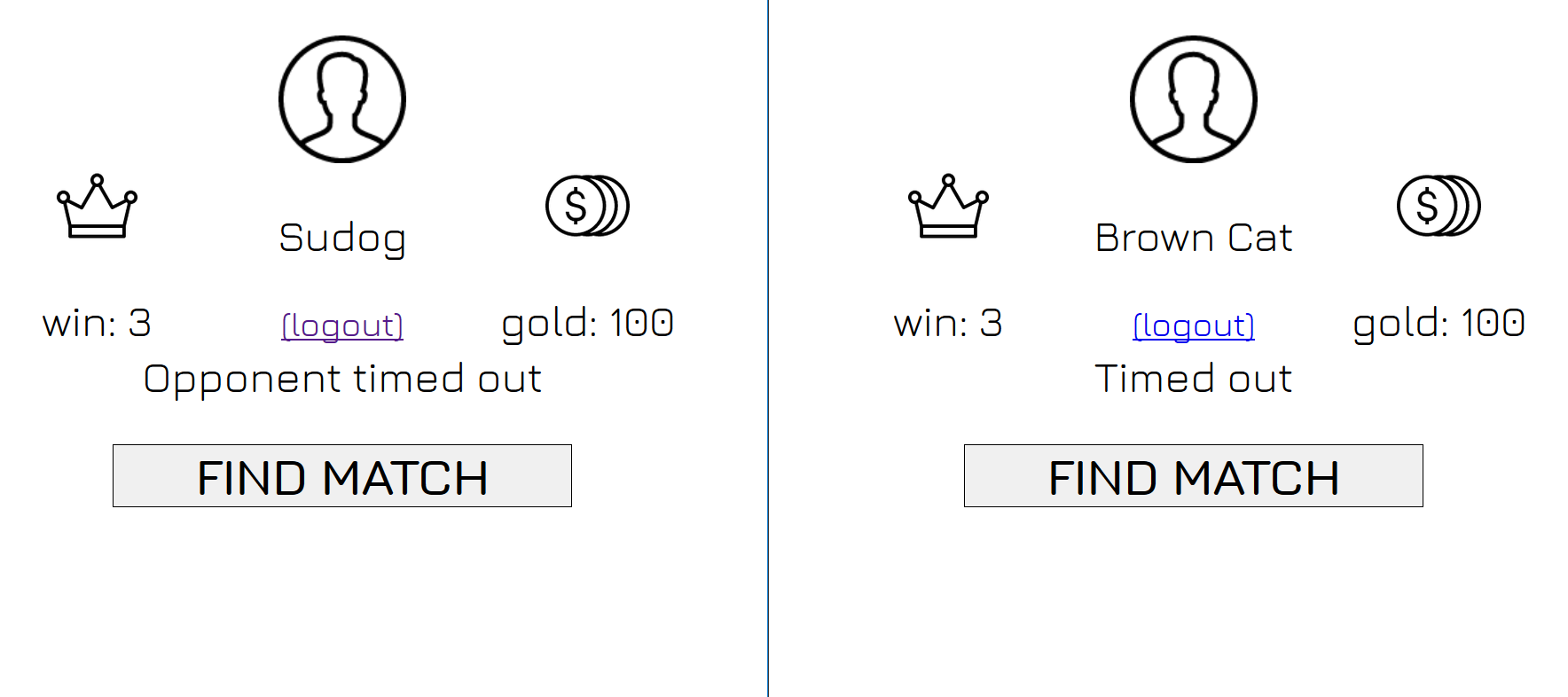

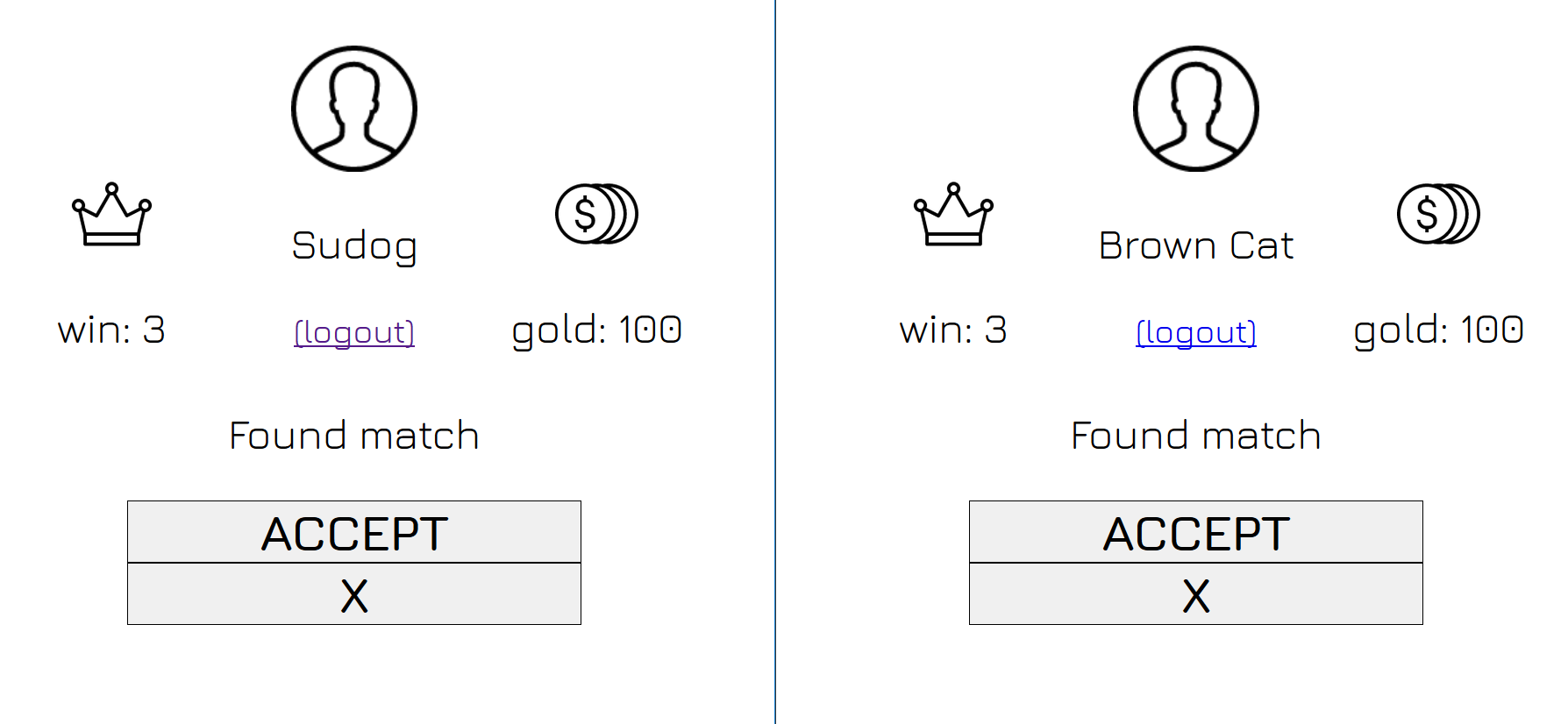

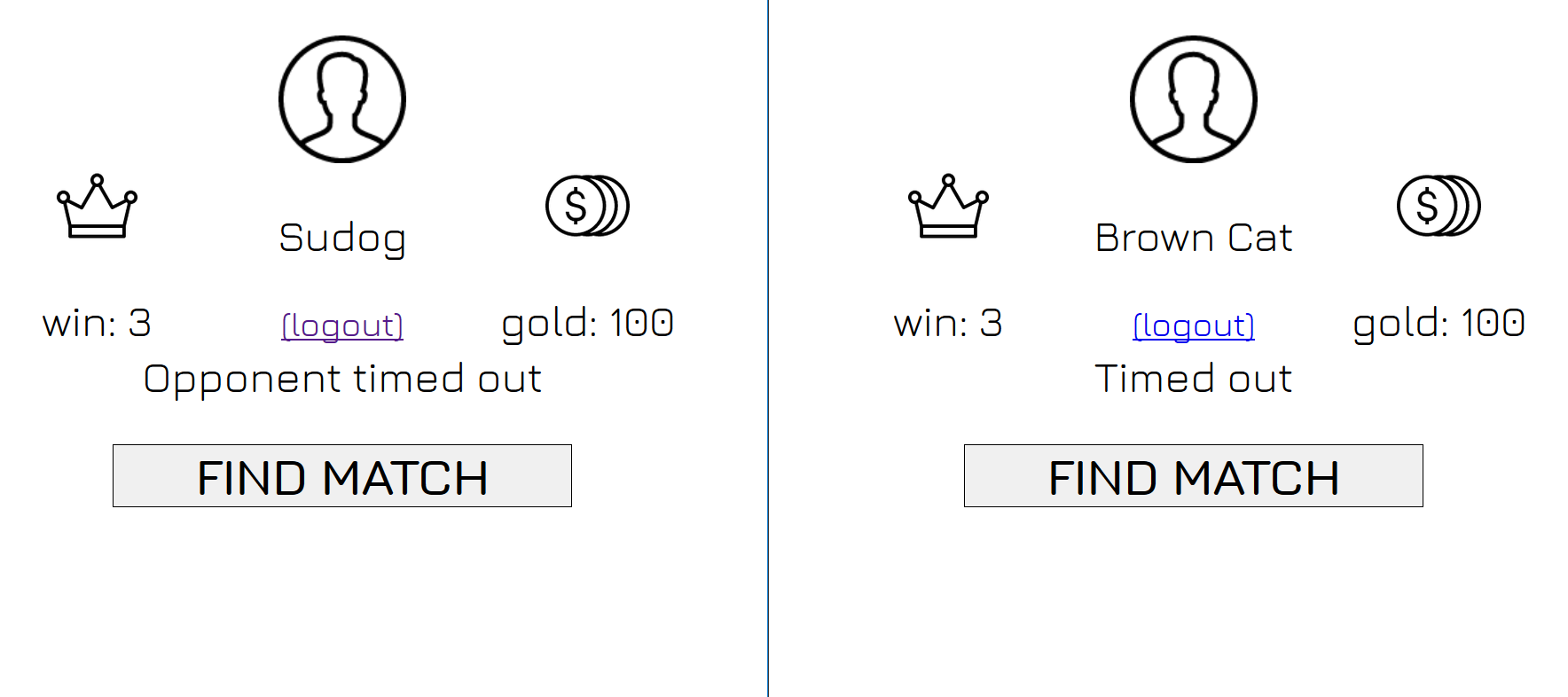

199,996 | 6,996,998,843 | IssuesEvent | 2017-12-16 08:51:00 | giangm9/enduel | https://api.github.com/repos/giangm9/enduel | closed | Fix the timing and display when accepting a match | High priority | - [x] The time allowed for pressing Accept is too short; it is buggy

- [x] "Found match" needs to show a countdown before it times out, e.g. Found match 10->9->8.....

| 1.0 | Fix the timing and display when accepting a match - - [x] The time allowed for pressing Accept is too short; it is buggy

- [x] "Found match" needs to show a countdown before it times out, e.g. Found match 10->9->8.....

| non_main | fix the timing and display when accepting a match the time allowed for pressing accept is too short it is buggy found match needs to show a countdown before it times out e g found match | 0 |

220,928 | 7,372,235,202 | IssuesEvent | 2018-03-13 14:15:18 | mlibrary/heliotrope | https://api.github.com/repos/mlibrary/heliotrope | opened | Stop Unity's pop-up alert for every JS error | bug gabii high priority | - [ ] Add a NOP error handler (see link) to the 2 places in Heliotrope where the Unity engine is instantiated

- [ ] verify this stops unrelated JS errors from giving a pop-up alert

https://forum.unity.com/threads/make-javascript-errors-not-alert-in-5-6.466772/

| 1.0 | Stop Unity's pop-up alert for every JS error - - [ ] Add a NOP error handler (see link) to the 2 places in Heliotrope where the Unity engine is instantiated

- [ ] verify this stops unrelated JS errors from giving a pop-up alert

https://forum.unity.com/threads/make-javascript-errors-not-alert-in-5-6.466772/

| non_main | stop unity s pop up alert for every js error add a nop error handler see link to the places in heliotrope where the unity engine is instantiated verify this stops unrelated js errors from giving a pop up alert | 0 |

148,342 | 11,848,162,831 | IssuesEvent | 2020-03-24 13:21:02 | elastic/elasticsearch | https://api.github.com/repos/elastic/elasticsearch | closed | [CI][master] DocsClientYamlTestSuiteIT put-auto-follow-pattern | :Distributed/CCR >test-failure | Log : https://elasticsearch-ci.elastic.co/job/elastic+elasticsearch+master+multijob-unix-compatibility/os=centos-8&&immutable/627/console

Build Scans: https://gradle-enterprise.elastic.co/s/t7pibrqbzjvsm

REPRODUCE WITH: ./gradlew ':docs:integTestRunner' --tests "org.elasticsearch.smoketest.DocsClientYamlTestSuiteIT.test {yaml=reference/ccr/apis/auto-follow/put-auto-follow-pattern/line_15}" \

-Dtests.seed=1FB43FBC4EBFC245 \

-Dtests.security.manager=true \

-Dtests.locale=da \

-Dtests.timezone=America/Cuiaba \

-Dcompiler.java=13

Doesn't reproduce for me

```