Unnamed: 0 int64 1 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 7 112 | repo_url stringlengths 36 141 | action stringclasses 3 values | title stringlengths 3 438 | labels stringlengths 4 308 | body stringlengths 7 254k | index stringclasses 7 values | text_combine stringlengths 96 254k | label stringclasses 2 values | text stringlengths 96 246k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

770,464 | 27,040,772,629 | IssuesEvent | 2023-02-13 05:03:00 | GSM-MSG/GCMS-BackEnd | https://api.github.com/repos/GSM-MSG/GCMS-BackEnd | closed | 동아리 생성할때 같은 이름과 같은 타입의 동아리가 있는지 검사 | 1️⃣ Priority: High ⚡️ Simple | ### Describe

동아리 생성할때 같은 이름이면서 같은 타입인 동아리가 있는지 검사해야되는데 안했음;;

### Additional

_No response_ | 1.0 | 동아리 생성할때 같은 이름과 같은 타입의 동아리가 있는지 검사 - ### Describe

동아리 생성할때 같은 이름이면서 같은 타입인 동아리가 있는지 검사해야되는데 안했음;;

### Additional

_No response_ | non_main | 동아리 생성할때 같은 이름과 같은 타입의 동아리가 있는지 검사 describe 동아리 생성할때 같은 이름이면서 같은 타입인 동아리가 있는지 검사해야되는데 안했음 additional no response | 0 |

368,641 | 25,801,279,445 | IssuesEvent | 2022-12-11 02:03:27 | external-secrets/external-secrets | https://api.github.com/repos/external-secrets/external-secrets | closed | Documentation does not state that CreationPolicy=Owner setting ownerReference field | good first issue area/documentation Stale | **Describe the solution you'd like**

Update the creationPolicy=Merge (or create a new policy) that also sets the `ownerReference` on the managed secret.

**What is the added value?**

This would be useful for bootstrapping the `Secret` used by a `SecretStore`, and having external-secrets automatically syncronise changes to the secret after the initial secret creation.

This is possible with the Merge strategy already, however my GitOps tooling (argocd) is attempting to self-heal (converge on declared state) and clean up the secret as without the `ownerReference` metadata it does not understand the `Secret`s relationship to the `ExternalSecret` resource.

**Give us examples of the outcome**

I think this could be achieved by extending the check in the `mutationFunc` in `pkg/controllers/externalsecret/externalsecret_controller.go` to also set apply owner fields for the merge creationPolicy.

**Observations (Constraints, Context, etc):**

The initial secret is being created directly with `kubectl create secret generic ...`. | 1.0 | Documentation does not state that CreationPolicy=Owner setting ownerReference field - **Describe the solution you'd like**

Update the creationPolicy=Merge (or create a new policy) that also sets the `ownerReference` on the managed secret.

**What is the added value?**

This would be useful for bootstrapping the `Secret` used by a `SecretStore`, and having external-secrets automatically syncronise changes to the secret after the initial secret creation.

This is possible with the Merge strategy already, however my GitOps tooling (argocd) is attempting to self-heal (converge on declared state) and clean up the secret as without the `ownerReference` metadata it does not understand the `Secret`s relationship to the `ExternalSecret` resource.

**Give us examples of the outcome**

I think this could be achieved by extending the check in the `mutationFunc` in `pkg/controllers/externalsecret/externalsecret_controller.go` to also set apply owner fields for the merge creationPolicy.

**Observations (Constraints, Context, etc):**

The initial secret is being created directly with `kubectl create secret generic ...`. | non_main | documentation does not state that creationpolicy owner setting ownerreference field describe the solution you d like update the creationpolicy merge or create a new policy that also sets the ownerreference on the managed secret what is the added value this would be useful for bootstrapping the secret used by a secretstore and having external secrets automatically syncronise changes to the secret after the initial secret creation this is possible with the merge strategy already however my gitops tooling argocd is attempting to self heal converge on declared state and clean up the secret as without the ownerreference metadata it does not understand the secret s relationship to the externalsecret resource give us examples of the outcome i think this could be achieved by extending the check in the mutationfunc in pkg controllers externalsecret externalsecret controller go to also set apply owner fields for the merge creationpolicy observations constraints context etc the initial secret is being created directly with kubectl create secret generic | 0 |

1,247 | 5,308,979,794 | IssuesEvent | 2017-02-12 04:04:32 | ansible/ansible-modules-extras | https://api.github.com/repos/ansible/ansible-modules-extras | closed | vmware_guest scsi controller type ignored | affects_2.2 bug_report cloud vmware waiting_on_maintainer | <!--- Verify first that your issue/request is not already reported in GitHub -->

##### ISSUE TYPE

<!--- Pick one below and delete the rest: -->

- Bug Report

##### COMPONENT NAME

<!--- Name of the plugin/module/task -->

vmware_guest

##### ANSIBLE VERSION

<!--- Paste verbatim output from “ansible --version” between quotes below -->

```

ansible 2.2.0.0

```

##### CONFIGURATION

<!---

Mention any settings you have changed/added/removed in ansible.cfg

(or using the ANSIBLE_* environment variables).

-->

Default

##### OS / ENVIRONMENT

<!---

Mention the OS you are running Ansible from, and the OS you are

managing, or say “N/A” for anything that is not platform-specific.

-->

N/A

##### SUMMARY

<!--- Explain the problem briefly -->

When cloning a new vm from template the given value for parameter scsi is ignored and controller in vm is always paravirtual even if controller in template is LSI_parallel

##### STEPS TO REPRODUCE

<!---

For bugs, show exactly how to reproduce the problem.

For new features, show how the feature would be used.

-->

Create vm from template using vmware_guest and set scsi parameter to other than paravirtual.

<!--- Paste example playbooks or commands between quotes below -->

```

- name: Create the VM

vmware_guest:

validate_certs: False

hostname: "{{ vcenter_hostname }}"

username: "{{ vcenter_user }}"

password: "{{ vcenter_pass }}"

name: "{{ inventory_hostname }}"

state: poweredon

disk:

- size_gb: "{{ vm_hdd }}"

type: thick

datastore: "{{ esx_datastore }}"

nic:

- type: e1000e

network: "{{ vm_network }}"

network_type: standard

hardware:

memory_mb: "{{ vm_mem }}"

num_cpus: "{{ vm_cpu }}"

osid: centos64guest

scsi: lsi

datacenter: "{{ vcenter_dc }}"

esxi_hostname: "{{ esx_host }}"

template: "{{ vm_template }}"

wait_for_ip_address: yes

register: deploy

```

<!--- You can also paste gist.github.com links for larger files -->

##### EXPECTED RESULTS

<!--- What did you expect to happen when running the steps above? -->

SCSI Controller is LSI Parallel

##### ACTUAL RESULTS

<!--- What actually happened? If possible run with high verbosity (-vvvv) -->

SCSI Controller is paravirtual

<!--- Paste verbatim command output between quotes below -->

```

``` | True | vmware_guest scsi controller type ignored - <!--- Verify first that your issue/request is not already reported in GitHub -->

##### ISSUE TYPE

<!--- Pick one below and delete the rest: -->

- Bug Report

##### COMPONENT NAME

<!--- Name of the plugin/module/task -->

vmware_guest

##### ANSIBLE VERSION

<!--- Paste verbatim output from “ansible --version” between quotes below -->

```

ansible 2.2.0.0

```

##### CONFIGURATION

<!---

Mention any settings you have changed/added/removed in ansible.cfg

(or using the ANSIBLE_* environment variables).

-->

Default

##### OS / ENVIRONMENT

<!---

Mention the OS you are running Ansible from, and the OS you are

managing, or say “N/A” for anything that is not platform-specific.

-->

N/A

##### SUMMARY

<!--- Explain the problem briefly -->

When cloning a new vm from template the given value for parameter scsi is ignored and controller in vm is always paravirtual even if controller in template is LSI_parallel

##### STEPS TO REPRODUCE

<!---

For bugs, show exactly how to reproduce the problem.

For new features, show how the feature would be used.

-->

Create vm from template using vmware_guest and set scsi parameter to other than paravirtual.

<!--- Paste example playbooks or commands between quotes below -->

```

- name: Create the VM

vmware_guest:

validate_certs: False

hostname: "{{ vcenter_hostname }}"

username: "{{ vcenter_user }}"

password: "{{ vcenter_pass }}"

name: "{{ inventory_hostname }}"

state: poweredon

disk:

- size_gb: "{{ vm_hdd }}"

type: thick

datastore: "{{ esx_datastore }}"

nic:

- type: e1000e

network: "{{ vm_network }}"

network_type: standard

hardware:

memory_mb: "{{ vm_mem }}"

num_cpus: "{{ vm_cpu }}"

osid: centos64guest

scsi: lsi

datacenter: "{{ vcenter_dc }}"

esxi_hostname: "{{ esx_host }}"

template: "{{ vm_template }}"

wait_for_ip_address: yes

register: deploy

```

<!--- You can also paste gist.github.com links for larger files -->

##### EXPECTED RESULTS

<!--- What did you expect to happen when running the steps above? -->

SCSI Controller is LSI Parallel

##### ACTUAL RESULTS

<!--- What actually happened? If possible run with high verbosity (-vvvv) -->

SCSI Controller is paravirtual

<!--- Paste verbatim command output between quotes below -->

```

``` | main | vmware guest scsi controller type ignored issue type bug report component name vmware guest ansible version ansible configuration mention any settings you have changed added removed in ansible cfg or using the ansible environment variables default os environment mention the os you are running ansible from and the os you are managing or say “n a” for anything that is not platform specific n a summary when cloning a new vm from template the given value for parameter scsi is ignored and controller in vm is always paravirtual even if controller in template is lsi parallel steps to reproduce for bugs show exactly how to reproduce the problem for new features show how the feature would be used create vm from template using vmware guest and set scsi parameter to other than paravirtual name create the vm vmware guest validate certs false hostname vcenter hostname username vcenter user password vcenter pass name inventory hostname state poweredon disk size gb vm hdd type thick datastore esx datastore nic type network vm network network type standard hardware memory mb vm mem num cpus vm cpu osid scsi lsi datacenter vcenter dc esxi hostname esx host template vm template wait for ip address yes register deploy expected results scsi controller is lsi parallel actual results scsi controller is paravirtual | 1 |

4,459 | 23,219,113,613 | IssuesEvent | 2022-08-02 16:26:37 | centerofci/mathesar | https://api.github.com/repos/centerofci/mathesar | opened | Troubleshoot slow vertical scrolling of table rows | type: bug work: frontend status: ready restricted: maintainers | As reported [on Matrix](https://matrix.to/#/!UnujZDUxGuMrYdvgTU:matrix.mathesar.org/$vG7lSFfzzDySrzpjHQRc3j8pYCnHhgx5P0xWA_J2oRo?via=matrix.mathesar.org&via=matrix.org), some users experience significant lags when scrolling the table vertically. ([example video](https://www.loom.com/share/f9284adfe82440a3b360223028219de4))

We should troubleshoot this to better understand the cause and reproduction scenarios.

| True | Troubleshoot slow vertical scrolling of table rows - As reported [on Matrix](https://matrix.to/#/!UnujZDUxGuMrYdvgTU:matrix.mathesar.org/$vG7lSFfzzDySrzpjHQRc3j8pYCnHhgx5P0xWA_J2oRo?via=matrix.mathesar.org&via=matrix.org), some users experience significant lags when scrolling the table vertically. ([example video](https://www.loom.com/share/f9284adfe82440a3b360223028219de4))

We should troubleshoot this to better understand the cause and reproduction scenarios.

| main | troubleshoot slow vertical scrolling of table rows as reported some users experience significant lags when scrolling the table vertically we should troubleshoot this to better understand the cause and reproduction scenarios | 1 |

5,328 | 26,903,222,365 | IssuesEvent | 2023-02-06 17:03:12 | centerofci/mathesar | https://api.github.com/repos/centerofci/mathesar | closed | Remove "Short Name" field on user form | type: enhancement work: frontend status: ready restricted: maintainers | I'd like to remove the "Short Name" field on the user form. In a [Matrix discussion](https://matrix.to/#/!xInuTkBwjXZXYatIlm:matrix.mathesar.org/$2e6ULgkergV1BZwlHxpNBNUKcG4W-bnetG9bApfg23M?via=matrix.mathesar.org&via=matrix.org) @kgodey said this was fine. It's an optional field so we can keep it in the API but just remove the form UI until we have a use-case for short names.

| True | Remove "Short Name" field on user form - I'd like to remove the "Short Name" field on the user form. In a [Matrix discussion](https://matrix.to/#/!xInuTkBwjXZXYatIlm:matrix.mathesar.org/$2e6ULgkergV1BZwlHxpNBNUKcG4W-bnetG9bApfg23M?via=matrix.mathesar.org&via=matrix.org) @kgodey said this was fine. It's an optional field so we can keep it in the API but just remove the form UI until we have a use-case for short names.

| main | remove short name field on user form i d like to remove the short name field on the user form in a kgodey said this was fine it s an optional field so we can keep it in the api but just remove the form ui until we have a use case for short names | 1 |

148,116 | 13,226,769,197 | IssuesEvent | 2020-08-18 00:59:37 | material-components/material-components-web-components | https://api.github.com/repos/material-components/material-components-web-components | closed | Update folder structure for Sass theme files | Focus Area: Components Severity: Medium Type: Feature Why: Enable new use cases Why: Improve documentation Why: improve ergonomics | ## Description

Folder structure for packages should be updated to compensate for Sass theming files. e.g. [linear-progress](https://github.com/material-components/material-components-web-components/tree/master/packages/linear-progress/src)

## Acceptance criteria

Users should be able to use theme mixins from an `_index.scss` partial at the root of the package.

```scss

@use '@material/linear-progress';

html {

@include linear-progress.theme((bar: secondary));

}

```

| 1.0 | Update folder structure for Sass theme files - ## Description

Folder structure for packages should be updated to compensate for Sass theming files. e.g. [linear-progress](https://github.com/material-components/material-components-web-components/tree/master/packages/linear-progress/src)

## Acceptance criteria

Users should be able to use theme mixins from an `_index.scss` partial at the root of the package.

```scss

@use '@material/linear-progress';

html {

@include linear-progress.theme((bar: secondary));

}

```

| non_main | update folder structure for sass theme files description folder structure for packages should be updated to compensate for sass theming files e g acceptance criteria users should be able to use theme mixins from an index scss partial at the root of the package scss use material linear progress html include linear progress theme bar secondary | 0 |

791 | 4,389,802,065 | IssuesEvent | 2016-08-08 23:41:08 | ansible/ansible-modules-core | https://api.github.com/repos/ansible/ansible-modules-core | closed | unarchive on OSX does not extract the archive and fails with an error | bug_report P2 waiting_on_maintainer | <!--- Verify first that your issue/request is not already reported in GitHub -->

##### ISSUE TYPE

Bug

##### COMPONENT NAME

unarchive module

##### ANSIBLE VERSION

Ansible on OSX 10.10, installed through Homebrew.

```

$ ansible --version

ansible 2.1.0.0

config file =

configured module search path = Default w/o overrides

```

##### CONFIGURATION

Not settings changed (to my knowledge ;-)).

##### OS / ENVIRONMENT

OSX 10.10, Homebrew

##### SUMMARY

When executing the play pasted below Ansible fails when excuting the unarchive command.

EDIT:

This is a regression because it happens with my existing plays which worked perfectly not long ago. I am pretty sure that this problem did not exist in the Ansible 2.0.x release on OSX using Homebrew.

##### STEPS TO REPRODUCE

1. Copy the play below into a file

2. Download the archive file (http://share.astina.io/openssl-certs.tar.gz) and put it besides the file. Alternatively you can just create your own archive, I guess the contents are irrelevant (maybe it is relevant it being a `tar.gz` archive)

3. Run `ansible-playbook -vvvv main.yml`

<!--- Paste example playbooks or commands between quotes below -->

```

---

- name: Test unarchive

hosts: 127.0.0.1

connection: local

tasks:

- name: Create destination folder

file: path=test-certificates state=directory

- name: Install public certificates for OpenSSL

unarchive: src=openssl-certs.tar.gz dest=test-certificates creates=test-certificates/VeriSign_Universal_Root_Certification_Authority.pem

```

<!--- You can also paste gist.github.com links for larger files -->

##### EXPECTED RESULTS

The play runs through and in the play's directory there is a directory called `test-certificates` with the contents of the archive.

##### ACTUAL RESULTS

Ansible fails with the following error:

```

fatal: [127.0.0.1]: FAILED! => {"changed": false, "failed": true, "invocation": {"module_args": {"backup":

null, "content": null, "copy": true, "creates":

"test-certificates/VeriSign_Universal_Root_Certification_Authority.pem", "delimiter": null, "dest":

"test-certificates", "directory_mode": null, "exclude": [], "extra_opts": [], "follow": false, "force": null,

"group": null, "keep_newer": false, "list_files": false, "mode": null, "original_basename":

"openssl-certs.tar.gz", "owner": null, "regexp": null, "remote_src": null, "selevel": null, "serole": null,

"setype": null, "seuser": null, "src":

"/Users/raffaele/.ansible/tmp/ansible-tmp-1465980002.6-168001143856730/source"}}, "msg":

"Unexpected error when accessing exploded file: [Errno 2] No such file or directory:

'test-certificates/ACCVRAIZ1.pem'", "stat": {"exists": false}}

```

| True | unarchive on OSX does not extract the archive and fails with an error - <!--- Verify first that your issue/request is not already reported in GitHub -->

##### ISSUE TYPE

Bug

##### COMPONENT NAME

unarchive module

##### ANSIBLE VERSION

Ansible on OSX 10.10, installed through Homebrew.

```

$ ansible --version

ansible 2.1.0.0

config file =

configured module search path = Default w/o overrides

```

##### CONFIGURATION

Not settings changed (to my knowledge ;-)).

##### OS / ENVIRONMENT

OSX 10.10, Homebrew

##### SUMMARY

When executing the play pasted below Ansible fails when excuting the unarchive command.

EDIT:

This is a regression because it happens with my existing plays which worked perfectly not long ago. I am pretty sure that this problem did not exist in the Ansible 2.0.x release on OSX using Homebrew.

##### STEPS TO REPRODUCE

1. Copy the play below into a file

2. Download the archive file (http://share.astina.io/openssl-certs.tar.gz) and put it besides the file. Alternatively you can just create your own archive, I guess the contents are irrelevant (maybe it is relevant it being a `tar.gz` archive)

3. Run `ansible-playbook -vvvv main.yml`

<!--- Paste example playbooks or commands between quotes below -->

```

---

- name: Test unarchive

hosts: 127.0.0.1

connection: local

tasks:

- name: Create destination folder

file: path=test-certificates state=directory

- name: Install public certificates for OpenSSL

unarchive: src=openssl-certs.tar.gz dest=test-certificates creates=test-certificates/VeriSign_Universal_Root_Certification_Authority.pem

```

<!--- You can also paste gist.github.com links for larger files -->

##### EXPECTED RESULTS

The play runs through and in the play's directory there is a directory called `test-certificates` with the contents of the archive.

##### ACTUAL RESULTS

Ansible fails with the following error:

```

fatal: [127.0.0.1]: FAILED! => {"changed": false, "failed": true, "invocation": {"module_args": {"backup":

null, "content": null, "copy": true, "creates":

"test-certificates/VeriSign_Universal_Root_Certification_Authority.pem", "delimiter": null, "dest":

"test-certificates", "directory_mode": null, "exclude": [], "extra_opts": [], "follow": false, "force": null,

"group": null, "keep_newer": false, "list_files": false, "mode": null, "original_basename":

"openssl-certs.tar.gz", "owner": null, "regexp": null, "remote_src": null, "selevel": null, "serole": null,

"setype": null, "seuser": null, "src":

"/Users/raffaele/.ansible/tmp/ansible-tmp-1465980002.6-168001143856730/source"}}, "msg":

"Unexpected error when accessing exploded file: [Errno 2] No such file or directory:

'test-certificates/ACCVRAIZ1.pem'", "stat": {"exists": false}}

```

| main | unarchive on osx does not extract the archive and fails with an error issue type bug component name unarchive module ansible version ansible on osx installed through homebrew ansible version ansible config file configured module search path default w o overrides configuration not settings changed to my knowledge os environment osx homebrew summary when executing the play pasted below ansible fails when excuting the unarchive command edit this is a regression because it happens with my existing plays which worked perfectly not long ago i am pretty sure that this problem did not exist in the ansible x release on osx using homebrew steps to reproduce copy the play below into a file download the archive file and put it besides the file alternatively you can just create your own archive i guess the contents are irrelevant maybe it is relevant it being a tar gz archive run ansible playbook vvvv main yml name test unarchive hosts connection local tasks name create destination folder file path test certificates state directory name install public certificates for openssl unarchive src openssl certs tar gz dest test certificates creates test certificates verisign universal root certification authority pem expected results the play runs through and in the play s directory there is a directory called test certificates with the contents of the archive actual results ansible fails with the following error fatal failed changed false failed true invocation module args backup null content null copy true creates test certificates verisign universal root certification authority pem delimiter null dest test certificates directory mode null exclude extra opts follow false force null group null keep newer false list files false mode null original basename openssl certs tar gz owner null regexp null remote src null selevel null serole null setype null seuser null src users raffaele ansible tmp ansible tmp source msg unexpected error when accessing exploded file no such file or directory test certificates pem stat exists false | 1 |

750,814 | 26,219,054,912 | IssuesEvent | 2023-01-04 13:28:13 | AxonFramework/AxonFramework | https://api.github.com/repos/AxonFramework/AxonFramework | closed | Configurable Locking Scheme in SagaStore | Priority 4: Would Type: Feature Status: Under Discussion | It would be beneficial if the `SagaStore` could adjust it's locking scheme in regards to retrieving sagas from the database.

Ideally the `Builder` pattern would provide a toggle to change the query performed to retrieve a Saga instance. Doing so, a Saga instance could be enforce to not be acted upon concurrently by two distinct threads, for example when a Saga it's `SagaManager` is backed by a `SubscribingEventProcessor`.

Kick-off for this idea comes from Steven Grimm in [this](https://groups.google.com/forum/#!msg/axonframework/HAKofptqz0Q/B0KxDc9SCQAJ) user group issue. | 1.0 | Configurable Locking Scheme in SagaStore - It would be beneficial if the `SagaStore` could adjust it's locking scheme in regards to retrieving sagas from the database.

Ideally the `Builder` pattern would provide a toggle to change the query performed to retrieve a Saga instance. Doing so, a Saga instance could be enforce to not be acted upon concurrently by two distinct threads, for example when a Saga it's `SagaManager` is backed by a `SubscribingEventProcessor`.

Kick-off for this idea comes from Steven Grimm in [this](https://groups.google.com/forum/#!msg/axonframework/HAKofptqz0Q/B0KxDc9SCQAJ) user group issue. | non_main | configurable locking scheme in sagastore it would be beneficial if the sagastore could adjust it s locking scheme in regards to retrieving sagas from the database ideally the builder pattern would provide a toggle to change the query performed to retrieve a saga instance doing so a saga instance could be enforce to not be acted upon concurrently by two distinct threads for example when a saga it s sagamanager is backed by a subscribingeventprocessor kick off for this idea comes from steven grimm in user group issue | 0 |

2,821 | 10,119,928,966 | IssuesEvent | 2019-07-31 12:41:27 | precice/precice | https://api.github.com/repos/precice/precice | opened | Investigate pybind11 to replace Python actions and Python bindings | maintainability | The project pybind11 promises:

> Seamless operability between C++11 and Python

https://github.com/pybind/pybind11

This could be a single solution to generate both

1. the interoperability between preCICE and the user-defined python actions as well as

2. the generation of the python bindings.

I think we should investigate this and evaluate if we can use it to reduce the pythonic pain of maintenance. | True | Investigate pybind11 to replace Python actions and Python bindings - The project pybind11 promises:

> Seamless operability between C++11 and Python

https://github.com/pybind/pybind11

This could be a single solution to generate both

1. the interoperability between preCICE and the user-defined python actions as well as

2. the generation of the python bindings.

I think we should investigate this and evaluate if we can use it to reduce the pythonic pain of maintenance. | main | investigate to replace python actions and python bindings the project promises seamless operability between c and python this could be a single solution to generate both the interoperability between precice and the user defined python actions as well as the generation of the python bindings i think we should investigate this and evaluate if we can use it to reduce the pythonic pain of maintenance | 1 |

4,590 | 23,821,280,282 | IssuesEvent | 2022-09-05 11:29:05 | centerofci/mathesar | https://api.github.com/repos/centerofci/mathesar | opened | New Explorations should not auto-save. Editing an existing exploration should auto-save. | type: bug work: backend work: frontend status: ready restricted: maintainers | New Explorations are currently persistent, any change made immediately saves the exploration. This behaviour is not preferred since we'd like the user to be able to run and discard queries.

[Mail thread containing related discussion](https://groups.google.com/a/mathesar.org/g/mathesar-developers/c/RQJSiDQu1Tg/m/uLHj30yFAgAJ).

New behaviour proposed:

* New Exploration: Auto-save is not preferred

- User opens the Data Explorer

- User joins tables, does any number of operations

- This should not get saved automatically

- It should get saved when user manually clicks Save button

* Existing Exploration: Auto-save is preferred

- Users edits an existing exploration in the Data Explorer

- User makes changes to it

- The changes are auto-saved

- We have undo-redo to improve the user's editing experience

@kgodey @mathemancer @ghislaine @seancolsen does this sound good? | True | New Explorations should not auto-save. Editing an existing exploration should auto-save. - New Explorations are currently persistent, any change made immediately saves the exploration. This behaviour is not preferred since we'd like the user to be able to run and discard queries.

[Mail thread containing related discussion](https://groups.google.com/a/mathesar.org/g/mathesar-developers/c/RQJSiDQu1Tg/m/uLHj30yFAgAJ).

New behaviour proposed:

* New Exploration: Auto-save is not preferred

- User opens the Data Explorer

- User joins tables, does any number of operations

- This should not get saved automatically

- It should get saved when user manually clicks Save button

* Existing Exploration: Auto-save is preferred

- Users edits an existing exploration in the Data Explorer

- User makes changes to it

- The changes are auto-saved

- We have undo-redo to improve the user's editing experience

@kgodey @mathemancer @ghislaine @seancolsen does this sound good? | main | new explorations should not auto save editing an existing exploration should auto save new explorations are currently persistent any change made immediately saves the exploration this behaviour is not preferred since we d like the user to be able to run and discard queries new behaviour proposed new exploration auto save is not preferred user opens the data explorer user joins tables does any number of operations this should not get saved automatically it should get saved when user manually clicks save button existing exploration auto save is preferred users edits an existing exploration in the data explorer user makes changes to it the changes are auto saved we have undo redo to improve the user s editing experience kgodey mathemancer ghislaine seancolsen does this sound good | 1 |

126,990 | 17,148,533,527 | IssuesEvent | 2021-07-13 17:19:49 | eoscostarica/eosio-dashboard | https://api.github.com/repos/eoscostarica/eosio-dashboard | reopened | Add descriptive paragraphs | Design / UX content | Users can use a bit of text to help guide them on the dashboard features.

## Accounts Page

Enter a valid EOSIO account name to see information on the account and also interact with any smart contract deployed on that account.

## Node Performance

## Rewards Distributon | 1.0 | Add descriptive paragraphs - Users can use a bit of text to help guide them on the dashboard features.

## Accounts Page

Enter a valid EOSIO account name to see information on the account and also interact with any smart contract deployed on that account.

## Node Performance

## Rewards Distributon | non_main | add descriptive paragraphs users can use a bit of text to help guide them on the dashboard features accounts page enter a valid eosio account name to see information on the account and also interact with any smart contract deployed on that account node performance rewards distributon | 0 |

5,508 | 27,497,976,519 | IssuesEvent | 2023-03-05 11:17:39 | nordtheme/nord | https://api.github.com/repos/nordtheme/nord | opened | Migrate Nord repositories from `arcticicestudio` to `nordtheme` | context-docs context-port type-epic scope-maintainability scope-ux | # Migrate Nord repositories from `arcticicestudio` to `nordtheme`

The latest [“Northern Post — The state and roadmap of Nord“][1] announcement described the plans for the migration to [Nord‘s new home home on GitHub][2] and this _epic_ (GitHub [_tasklist_][3]) is used to track to overall process.

For a better visualization, with a time-based structure and general overview see [the “Roadmap ⧚“ view of Nord‘s “Planning & Roadmaps“ project board][4] as well as the [“Active ↻“ view][5] for the current iteration.

The main goal is to migrate the repositories itself to this new `nordtheme` organization, including…

- …adjustments of any hyperlinks to previous `arcticicestudio` references as well as other, already migrated, repositories.

- …adjustments of any copyright information, removing [_Arctic Ice Studio_ as Nord brand due to its retirement][6].

- …adaption to updated documentation and style conventions which reduces the need to solve this through individual issues again afterwards.

- …adaption to [Nord‘s new “Planning & Roadmaps“ project board][7] by adding existing, already triaged or active, issues and preparing “fresh“ issues for the follow-up triaging later on.

```[tasklist]

### Tasks

```

[1]: https://github.com/orgs/nordtheme/discussions/183

[2]: https://github.com/orgs/nordtheme/discussions/183#user-content-nords-new-home-on-github

[3]: https://docs.github.com/en/issues/tracking-your-work-with-issues/about-tasklists

[4]: https://github.com/orgs/nordtheme/projects/1/views/11

[5]: https://github.com/orgs/nordtheme/projects/1/views/7

[6]: https://github.com/orgs/nordtheme/discussions/183#user-content-retire-arctic-ice-studio-as-nord-brand

[7]: https://github.com/orgs/nordtheme/projects/1 | True | Migrate Nord repositories from `arcticicestudio` to `nordtheme` - # Migrate Nord repositories from `arcticicestudio` to `nordtheme`

The latest [“Northern Post — The state and roadmap of Nord“][1] announcement described the plans for the migration to [Nord‘s new home home on GitHub][2] and this _epic_ (GitHub [_tasklist_][3]) is used to track to overall process.

For a better visualization, with a time-based structure and general overview see [the “Roadmap ⧚“ view of Nord‘s “Planning & Roadmaps“ project board][4] as well as the [“Active ↻“ view][5] for the current iteration.

The main goal is to migrate the repositories itself to this new `nordtheme` organization, including…

- …adjustments of any hyperlinks to previous `arcticicestudio` references as well as other, already migrated, repositories.

- …adjustments of any copyright information, removing [_Arctic Ice Studio_ as Nord brand due to its retirement][6].

- …adaption to updated documentation and style conventions which reduces the need to solve this through individual issues again afterwards.

- …adaption to [Nord‘s new “Planning & Roadmaps“ project board][7] by adding existing, already triaged or active, issues and preparing “fresh“ issues for the follow-up triaging later on.

```[tasklist]

### Tasks

```

[1]: https://github.com/orgs/nordtheme/discussions/183

[2]: https://github.com/orgs/nordtheme/discussions/183#user-content-nords-new-home-on-github

[3]: https://docs.github.com/en/issues/tracking-your-work-with-issues/about-tasklists

[4]: https://github.com/orgs/nordtheme/projects/1/views/11

[5]: https://github.com/orgs/nordtheme/projects/1/views/7

[6]: https://github.com/orgs/nordtheme/discussions/183#user-content-retire-arctic-ice-studio-as-nord-brand

[7]: https://github.com/orgs/nordtheme/projects/1 | main | migrate nord repositories from arcticicestudio to nordtheme migrate nord repositories from arcticicestudio to nordtheme the latest announcement described the plans for the migration to and this epic github is used to track to overall process for a better visualization with a time based structure and general overview see as well as the for the current iteration the main goal is to migrate the repositories itself to this new nordtheme organization including… …adjustments of any hyperlinks to previous arcticicestudio references as well as other already migrated repositories …adjustments of any copyright information removing …adaption to updated documentation and style conventions which reduces the need to solve this through individual issues again afterwards …adaption to by adding existing already triaged or active issues and preparing “fresh“ issues for the follow up triaging later on tasks | 1 |

5,852 | 31,278,944,117 | IssuesEvent | 2023-08-22 08:21:22 | camunda/zeebe | https://api.github.com/repos/camunda/zeebe | closed | Do not shutdown `LogManager` during on application shutdown; let Spring's shutdown hook do that. | area/maintainability kind/task | **Description**

> **Note**

> See linked epic issue for motivation

Acceptance criteria:

- We don't shutdown the `LogManager` in the `onApplicationEvent(ContextClosedEvent event)` of the `StandaloneBroker` and `StandaloneGateway`

- Instead, we enable the `logging.register-shutdown-hook` property in our default `application.properties`

One thing to keep in mind, when we'll launch the test applications, will we registering tons of hooks over time? It might be that we need to also disable it (via an overridden property) then. | True | Do not shutdown `LogManager` during on application shutdown; let Spring's shutdown hook do that. - **Description**

> **Note**

> See linked epic issue for motivation

Acceptance criteria:

- We don't shutdown the `LogManager` in the `onApplicationEvent(ContextClosedEvent event)` of the `StandaloneBroker` and `StandaloneGateway`

- Instead, we enable the `logging.register-shutdown-hook` property in our default `application.properties`

One thing to keep in mind, when we'll launch the test applications, will we registering tons of hooks over time? It might be that we need to also disable it (via an overridden property) then. | main | do not shutdown logmanager during on application shutdown let spring s shutdown hook do that description note see linked epic issue for motivation acceptance criteria we don t shutdown the logmanager in the onapplicationevent contextclosedevent event of the standalonebroker and standalonegateway instead we enable the logging register shutdown hook property in our default application properties one thing to keep in mind when we ll launch the test applications will we registering tons of hooks over time it might be that we need to also disable it via an overridden property then | 1 |

147,988 | 19,526,253,634 | IssuesEvent | 2021-12-30 08:24:25 | panasalap/linux-4.1.15 | https://api.github.com/repos/panasalap/linux-4.1.15 | opened | CVE-2020-27820 (Medium) detected in linux-stable-rtv4.1.33 | security vulnerability | ## CVE-2020-27820 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-stable-rtv4.1.33</b></p></summary>

<p>

<p>Julia Cartwright's fork of linux-stable-rt.git</p>

<p>Library home page: <a href=https://git.kernel.org/pub/scm/linux/kernel/git/julia/linux-stable-rt.git>https://git.kernel.org/pub/scm/linux/kernel/git/julia/linux-stable-rt.git</a></p>

<p>Found in HEAD commit: <a href="https://github.com/panasalap/linux-4.1.15/commit/9c15ec31637ff4ee4a4c14fb9b3264a31f75aa69">9c15ec31637ff4ee4a4c14fb9b3264a31f75aa69</a></p>

<p>Found in base branch: <b>master</b></p></p>

</details>

</p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Source Files (1)</summary>

<p></p>

<p>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>/drivers/gpu/drm/nouveau/nouveau_drm.c</b>

</p>

</details>

<p></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

A vulnerability was found in Linux kernel, where a use-after-frees in nouveau's postclose() handler could happen if removing device (that is not common to remove video card physically without power-off, but same happens if "unbind" the driver).

<p>Publish Date: 2021-11-03

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-27820>CVE-2020-27820</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>4.7</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Local

- Attack Complexity: High

- Privileges Required: Low

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | True | CVE-2020-27820 (Medium) detected in linux-stable-rtv4.1.33 - ## CVE-2020-27820 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-stable-rtv4.1.33</b></p></summary>

<p>

<p>Julia Cartwright's fork of linux-stable-rt.git</p>

<p>Library home page: <a href=https://git.kernel.org/pub/scm/linux/kernel/git/julia/linux-stable-rt.git>https://git.kernel.org/pub/scm/linux/kernel/git/julia/linux-stable-rt.git</a></p>

<p>Found in HEAD commit: <a href="https://github.com/panasalap/linux-4.1.15/commit/9c15ec31637ff4ee4a4c14fb9b3264a31f75aa69">9c15ec31637ff4ee4a4c14fb9b3264a31f75aa69</a></p>

<p>Found in base branch: <b>master</b></p></p>

</details>

</p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Source Files (1)</summary>

<p></p>

<p>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>/drivers/gpu/drm/nouveau/nouveau_drm.c</b>

</p>

</details>

<p></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

A vulnerability was found in Linux kernel, where a use-after-frees in nouveau's postclose() handler could happen if removing device (that is not common to remove video card physically without power-off, but same happens if "unbind" the driver).

<p>Publish Date: 2021-11-03

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-27820>CVE-2020-27820</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>4.7</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Local

- Attack Complexity: High

- Privileges Required: Low

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | non_main | cve medium detected in linux stable cve medium severity vulnerability vulnerable library linux stable julia cartwright s fork of linux stable rt git library home page a href found in head commit a href found in base branch master vulnerable source files drivers gpu drm nouveau nouveau drm c vulnerability details a vulnerability was found in linux kernel where a use after frees in nouveau s postclose handler could happen if removing device that is not common to remove video card physically without power off but same happens if unbind the driver publish date url a href cvss score details base score metrics exploitability metrics attack vector local attack complexity high privileges required low user interaction none scope unchanged impact metrics confidentiality impact none integrity impact none availability impact high for more information on scores click a href step up your open source security game with whitesource | 0 |

1,533 | 6,572,225,408 | IssuesEvent | 2017-09-11 00:17:05 | ansible/ansible-modules-extras | https://api.github.com/repos/ansible/ansible-modules-extras | closed | ec2_vpc_route_table error not clear when Name tag already in use | affects_2.1 aws bug_report cloud waiting_on_maintainer | <!--- Verify first that your issue/request is not already reported in GitHub -->

##### ISSUE TYPE

<!--- Pick one below and delete the rest: -->

- Bug Report

##### COMPONENT NAME

<!--- Name of the plugin/module/task -->

ec2_vpc_route_table

##### ANSIBLE VERSION

```

<!--- Paste verbatim output from “ansible --version” between quotes -->

ansible --version

ansible 2.1.0 (devel 22467a0de8) last updated 2016/04/13 09:48:50 (GMT -400)

lib/ansible/modules/core: (detached HEAD 99cd31140d) last updated 2016/04/13 09:49:08 (GMT -400)

lib/ansible/modules/extras: (detached HEAD ab2f4c4002) last updated 2016/04/13 09:49:08 (GMT -400)

config file = /etc/ansible/ansible.cfg

configured module search path = Default w/o overrides

```

##### CONFIGURATION

<!---

Mention any settings you have changed/added/removed in ansible.cfg

(or using the ANSIBLE_* environment variables).

-->

##### OS / ENVIRONMENT

<!---

Mention the OS you are running Ansible from, and the OS you are

managing, or say “N/A” for anything that is not platform-specific.

-->

Ansible Host: Fedora 23

Ansible Target: N/A AWS API

##### SUMMARY

<!--- Explain the problem briefly -->

Attempting to create a VPC route table for NAT purposes, I accidentally re-used the `Name` resource tag from an previously created route table. I wasn't trying to update the existing route table with the matching `Name`, it was a copy/paste error. The resulting error was not at all useful trying to troubleshoot the error.

The error appears to be thrown in this block:

```

for route_spec in route_specs:

i = index_of_matching_route(route_spec, routes_to_match)

if i is None:

route_specs_to_create.append(route_spec)

else:

del routes_to_match[i]

routes_to_delete = [r for r in routes_to_match

if r.gateway_id != 'local'

and r.gateway_id not in propagating_vgw_ids]

```

specifically iterating `routes_to_match`. I'm not familiar enough with the code to figure out what's going wrong with that list. It appears there's some matching going on against the resource Name that can empty the `routes_to_match` list.

##### STEPS TO REPRODUCE

<!---

For bugs, show exactly how to reproduce the problem.

For new features, show how the feature would be used.

-->

<!--- Paste example playbooks or commands between quotes -->

```

$ ansible-playbook -i hosts site.yml -vvv

```

Failing role task:

```

- name: Create VPC route table - Public

ec2_vpc_route_table:

state: present

region: us-west-2

vpc_id: "{{ vpc.vpc_id }}"

resource_tags:

Environment: Testing

Name: Public Routes

subnets:

- "{{public_subnet.subnet.id}}"

routes:

- dest: 0.0.0.0/0

gateway_id: "{{ vpc.igw_id }}"

- name: Create VPC route table - Internal NAT

ec2_vpc_route_table:

state: present

region: us-west-2

vpc_id: "{{ vpc.vpc_id }}"

resource_tags:

Environment: Testing

Name: Public Routes

subnets:

- "{{ priv1_subnet.subnet.id }}"

- "{{ priv2_subnet.subnet.id }}"

routes:

- dest: 0.0.0.0/0

instance_id: "{{ item }}"

with_items:

- "{{ nat_host.instance_ids }}"

```

Note the reuse of

```

resource_tags:

Environment: Testing

Name: Public Routes

```

in each task

<!--- You can also paste gist.github.com links for larger files -->

##### EXPECTED RESULTS

<!--- What did you expect to happen when running the steps above? -->

Not entirely sure, but if the intent is to allow for updates of a route table based on the `Name` resource tag, then something that has the syntax for that update. Otherwise an error that specifies that the match list was somehow empty.

##### ACTUAL RESULTS

<!--- What actually happened? If possible run with high verbosity (-vvvv) -->

```

TASK [vpc : Create VPC route table - Internal NAT] *****************************

task path: /home/matt.micene/Projects/github/aws_ansible/roles/vpc/tasks/main.yml:82

<127.0.0.1> ESTABLISH LOCAL CONNECTION FOR USER: matt.micene

<127.0.0.1> EXEC /bin/sh -c '( umask 77 && mkdir -p "` echo $HOME/.ansible/tmp/ansible-tmp-1460558374.83-191553459311880 `" && echo "` echo $HOME/.ansible/tmp/ansible-tmp-1460558374.83-191553459311880 `" )'

<127.0.0.1> PUT /tmp/tmpLMeNP3 TO /home/matt.micene/.ansible/tmp/ansible-tmp-1460558374.83-191553459311880/ec2_vpc_route_table

<127.0.0.1> EXEC /bin/sh -c 'LANG=C LC_ALL=C LC_MESSAGES=C /usr/bin/python /home/matt.micene/.ansible/tmp/ansible-tmp-1460558374.83-191553459311880/ec2_vpc_route_table; rm -rf "/home/matt.micene/.ansible/tmp/ansible-tmp-1460558374.83-191553459311880/" > /dev/null 2>&1'

An exception occurred during task execution. The full traceback is:

Traceback (most recent call last):

File "/usr/lib64/python2.7/runpy.py", line 162, in _run_module_as_main

"__main__", fname, loader, pkg_name)

File "/usr/lib64/python2.7/runpy.py", line 72, in _run_code

exec code in run_globals

File "/tmp/ansible_dQA9Zm/ansible/module_exec/ec2_vpc_route_table/__main__.py", line 611, in <module>

File "/tmp/ansible_dQA9Zm/ansible/module_exec/ec2_vpc_route_table/__main__.py", line 599, in main

File "/tmp/ansible_dQA9Zm/ansible/module_exec/ec2_vpc_route_table/__main__.py", line 522, in ensure_route_table_present

File "/tmp/ansible_dQA9Zm/ansible/module_exec/ec2_vpc_route_table/__main__.py", line 322, in ensure_routes

TypeError: argument of type 'NoneType' is not iterable

failed: [localhost] (item=i-8358025b) => {"failed": true, "invocation": {"module_name": "ec2_vpc_route_table"}, "item": "i-8358025b", "module_stderr": "Traceback (most recent call last):\n File \"/usr/lib64/python2.7/runpy.py\", line 162, in _run_module_as_main\n \"__main__\", fname, loader, pkg_name)\n File \"/usr/lib64/python2.7/runpy.py\", line 72, in _run_code\n exec code in run_globals\n File \"/tmp/ansible_dQA9Zm/ansible/module_exec/ec2_vpc_route_table/__main__.py\", line 611, in <module>\n File \"/tmp/ansible_dQA9Zm/ansible/module_exec/ec2_vpc_route_table/__main__.py\", line 599, in main\n File \"/tmp/ansible_dQA9Zm/ansible/module_exec/ec2_vpc_route_table/__main__.py\", line 522, in ensure_route_table_present\n File \"/tmp/ansible_dQA9Zm/ansible/module_exec/ec2_vpc_route_table/__main__.py\", line 322, in ensure_routes\nTypeError: argument of type 'NoneType' is not iterable\n", "module_stdout": "", "msg": "MODULE FAILURE", "parsed": false}

```

| True | ec2_vpc_route_table error not clear when Name tag already in use - <!--- Verify first that your issue/request is not already reported in GitHub -->

##### ISSUE TYPE

<!--- Pick one below and delete the rest: -->

- Bug Report

##### COMPONENT NAME

<!--- Name of the plugin/module/task -->

ec2_vpc_route_table

##### ANSIBLE VERSION

```

<!--- Paste verbatim output from “ansible --version” between quotes -->

ansible --version

ansible 2.1.0 (devel 22467a0de8) last updated 2016/04/13 09:48:50 (GMT -400)

lib/ansible/modules/core: (detached HEAD 99cd31140d) last updated 2016/04/13 09:49:08 (GMT -400)

lib/ansible/modules/extras: (detached HEAD ab2f4c4002) last updated 2016/04/13 09:49:08 (GMT -400)

config file = /etc/ansible/ansible.cfg

configured module search path = Default w/o overrides

```

##### CONFIGURATION

<!---

Mention any settings you have changed/added/removed in ansible.cfg

(or using the ANSIBLE_* environment variables).

-->

##### OS / ENVIRONMENT

<!---

Mention the OS you are running Ansible from, and the OS you are

managing, or say “N/A” for anything that is not platform-specific.

-->

Ansible Host: Fedora 23

Ansible Target: N/A AWS API

##### SUMMARY

<!--- Explain the problem briefly -->

Attempting to create a VPC route table for NAT purposes, I accidentally re-used the `Name` resource tag from an previously created route table. I wasn't trying to update the existing route table with the matching `Name`, it was a copy/paste error. The resulting error was not at all useful trying to troubleshoot the error.

The error appears to be thrown in this block:

```

for route_spec in route_specs:

i = index_of_matching_route(route_spec, routes_to_match)

if i is None:

route_specs_to_create.append(route_spec)

else:

del routes_to_match[i]

routes_to_delete = [r for r in routes_to_match

if r.gateway_id != 'local'

and r.gateway_id not in propagating_vgw_ids]

```

specifically iterating `routes_to_match`. I'm not familiar enough with the code to figure out what's going wrong with that list. It appears there's some matching going on against the resource Name that can empty the `routes_to_match` list.

##### STEPS TO REPRODUCE

<!---

For bugs, show exactly how to reproduce the problem.

For new features, show how the feature would be used.

-->

<!--- Paste example playbooks or commands between quotes -->

```

$ ansible-playbook -i hosts site.yml -vvv

```

Failing role task:

```

- name: Create VPC route table - Public

ec2_vpc_route_table:

state: present

region: us-west-2

vpc_id: "{{ vpc.vpc_id }}"

resource_tags:

Environment: Testing

Name: Public Routes

subnets:

- "{{public_subnet.subnet.id}}"

routes:

- dest: 0.0.0.0/0

gateway_id: "{{ vpc.igw_id }}"

- name: Create VPC route table - Internal NAT

ec2_vpc_route_table:

state: present

region: us-west-2

vpc_id: "{{ vpc.vpc_id }}"

resource_tags:

Environment: Testing

Name: Public Routes

subnets:

- "{{ priv1_subnet.subnet.id }}"

- "{{ priv2_subnet.subnet.id }}"

routes:

- dest: 0.0.0.0/0

instance_id: "{{ item }}"

with_items:

- "{{ nat_host.instance_ids }}"

```

Note the reuse of

```

resource_tags:

Environment: Testing

Name: Public Routes

```

in each task

<!--- You can also paste gist.github.com links for larger files -->

##### EXPECTED RESULTS

<!--- What did you expect to happen when running the steps above? -->

Not entirely sure, but if the intent is to allow for updates of a route table based on the `Name` resource tag, then something that has the syntax for that update. Otherwise an error that specifies that the match list was somehow empty.

##### ACTUAL RESULTS

<!--- What actually happened? If possible run with high verbosity (-vvvv) -->

```

TASK [vpc : Create VPC route table - Internal NAT] *****************************

task path: /home/matt.micene/Projects/github/aws_ansible/roles/vpc/tasks/main.yml:82

<127.0.0.1> ESTABLISH LOCAL CONNECTION FOR USER: matt.micene

<127.0.0.1> EXEC /bin/sh -c '( umask 77 && mkdir -p "` echo $HOME/.ansible/tmp/ansible-tmp-1460558374.83-191553459311880 `" && echo "` echo $HOME/.ansible/tmp/ansible-tmp-1460558374.83-191553459311880 `" )'

<127.0.0.1> PUT /tmp/tmpLMeNP3 TO /home/matt.micene/.ansible/tmp/ansible-tmp-1460558374.83-191553459311880/ec2_vpc_route_table

<127.0.0.1> EXEC /bin/sh -c 'LANG=C LC_ALL=C LC_MESSAGES=C /usr/bin/python /home/matt.micene/.ansible/tmp/ansible-tmp-1460558374.83-191553459311880/ec2_vpc_route_table; rm -rf "/home/matt.micene/.ansible/tmp/ansible-tmp-1460558374.83-191553459311880/" > /dev/null 2>&1'

An exception occurred during task execution. The full traceback is:

Traceback (most recent call last):

File "/usr/lib64/python2.7/runpy.py", line 162, in _run_module_as_main

"__main__", fname, loader, pkg_name)

File "/usr/lib64/python2.7/runpy.py", line 72, in _run_code

exec code in run_globals

File "/tmp/ansible_dQA9Zm/ansible/module_exec/ec2_vpc_route_table/__main__.py", line 611, in <module>

File "/tmp/ansible_dQA9Zm/ansible/module_exec/ec2_vpc_route_table/__main__.py", line 599, in main

File "/tmp/ansible_dQA9Zm/ansible/module_exec/ec2_vpc_route_table/__main__.py", line 522, in ensure_route_table_present

File "/tmp/ansible_dQA9Zm/ansible/module_exec/ec2_vpc_route_table/__main__.py", line 322, in ensure_routes

TypeError: argument of type 'NoneType' is not iterable

failed: [localhost] (item=i-8358025b) => {"failed": true, "invocation": {"module_name": "ec2_vpc_route_table"}, "item": "i-8358025b", "module_stderr": "Traceback (most recent call last):\n File \"/usr/lib64/python2.7/runpy.py\", line 162, in _run_module_as_main\n \"__main__\", fname, loader, pkg_name)\n File \"/usr/lib64/python2.7/runpy.py\", line 72, in _run_code\n exec code in run_globals\n File \"/tmp/ansible_dQA9Zm/ansible/module_exec/ec2_vpc_route_table/__main__.py\", line 611, in <module>\n File \"/tmp/ansible_dQA9Zm/ansible/module_exec/ec2_vpc_route_table/__main__.py\", line 599, in main\n File \"/tmp/ansible_dQA9Zm/ansible/module_exec/ec2_vpc_route_table/__main__.py\", line 522, in ensure_route_table_present\n File \"/tmp/ansible_dQA9Zm/ansible/module_exec/ec2_vpc_route_table/__main__.py\", line 322, in ensure_routes\nTypeError: argument of type 'NoneType' is not iterable\n", "module_stdout": "", "msg": "MODULE FAILURE", "parsed": false}

```

| main | vpc route table error not clear when name tag already in use issue type bug report component name vpc route table ansible version ansible version ansible devel last updated gmt lib ansible modules core detached head last updated gmt lib ansible modules extras detached head last updated gmt config file etc ansible ansible cfg configured module search path default w o overrides configuration mention any settings you have changed added removed in ansible cfg or using the ansible environment variables os environment mention the os you are running ansible from and the os you are managing or say “n a” for anything that is not platform specific ansible host fedora ansible target n a aws api summary attempting to create a vpc route table for nat purposes i accidentally re used the name resource tag from an previously created route table i wasn t trying to update the existing route table with the matching name it was a copy paste error the resulting error was not at all useful trying to troubleshoot the error the error appears to be thrown in this block for route spec in route specs i index of matching route route spec routes to match if i is none route specs to create append route spec else del routes to match routes to delete r for r in routes to match if r gateway id local and r gateway id not in propagating vgw ids specifically iterating routes to match i m not familiar enough with the code to figure out what s going wrong with that list it appears there s some matching going on against the resource name that can empty the routes to match list steps to reproduce for bugs show exactly how to reproduce the problem for new features show how the feature would be used ansible playbook i hosts site yml vvv failing role task name create vpc route table public vpc route table state present region us west vpc id vpc vpc id resource tags environment testing name public routes subnets public subnet subnet id routes dest gateway id vpc igw id name create vpc route table internal nat vpc route table state present region us west vpc id vpc vpc id resource tags environment testing name public routes subnets subnet subnet id subnet subnet id routes dest instance id item with items nat host instance ids note the reuse of resource tags environment testing name public routes in each task expected results not entirely sure but if the intent is to allow for updates of a route table based on the name resource tag then something that has the syntax for that update otherwise an error that specifies that the match list was somehow empty actual results task task path home matt micene projects github aws ansible roles vpc tasks main yml establish local connection for user matt micene exec bin sh c umask mkdir p echo home ansible tmp ansible tmp echo echo home ansible tmp ansible tmp put tmp to home matt micene ansible tmp ansible tmp vpc route table exec bin sh c lang c lc all c lc messages c usr bin python home matt micene ansible tmp ansible tmp vpc route table rm rf home matt micene ansible tmp ansible tmp dev null an exception occurred during task execution the full traceback is traceback most recent call last file usr runpy py line in run module as main main fname loader pkg name file usr runpy py line in run code exec code in run globals file tmp ansible ansible module exec vpc route table main py line in file tmp ansible ansible module exec vpc route table main py line in main file tmp ansible ansible module exec vpc route table main py line in ensure route table present file tmp ansible ansible module exec vpc route table main py line in ensure routes typeerror argument of type nonetype is not iterable failed item i failed true invocation module name vpc route table item i module stderr traceback most recent call last n file usr runpy py line in run module as main n main fname loader pkg name n file usr runpy py line in run code n exec code in run globals n file tmp ansible ansible module exec vpc route table main py line in n file tmp ansible ansible module exec vpc route table main py line in main n file tmp ansible ansible module exec vpc route table main py line in ensure route table present n file tmp ansible ansible module exec vpc route table main py line in ensure routes ntypeerror argument of type nonetype is not iterable n module stdout msg module failure parsed false | 1 |

5,249 | 26,567,532,633 | IssuesEvent | 2023-01-20 21:57:34 | mozilla/foundation.mozilla.org | https://api.github.com/repos/mozilla/foundation.mozilla.org | closed | Fix CI deprecation warnings | engineering devops maintain | We currently get a bunch of deprecation warnings for every GitHub action run. I think we need to fix this to make sure CI is not going to break.

| True | Fix CI deprecation warnings - We currently get a bunch of deprecation warnings for every GitHub action run. I think we need to fix this to make sure CI is not going to break.

| main | fix ci deprecation warnings we currently get a bunch of deprecation warnings for every github action run i think we need to fix this to make sure ci is not going to break | 1 |

40,852 | 5,318,879,037 | IssuesEvent | 2017-02-14 04:01:11 | NorthBridge/nexus-community | https://api.github.com/repos/NorthBridge/nexus-community | closed | enrollment packet did not come through with Cats network | ready to test | New enrollment emails did not come through | 1.0 | enrollment packet did not come through with Cats network - New enrollment emails did not come through | non_main | enrollment packet did not come through with cats network new enrollment emails did not come through | 0 |

64,511 | 18,722,101,109 | IssuesEvent | 2021-11-03 12:58:05 | department-of-veterans-affairs/va.gov-team | https://api.github.com/repos/department-of-veterans-affairs/va.gov-team | closed | [Components and pattern standards] Design components or patterns don't align with Design System guidelines. (04.07.1) | content design 508/Accessibility ia education 508-defect-3 collab-cycle-feedback afs-education Staging CCIssue04.07 CC-Dashboard | ### General Information

#### VFS team name

Education Application (BAH)

#### VFS product name

Comparison Tool Redesign

#### Point of Contact/Reviewers

Trevor Pierce (Accessibility)

Allison Christman (Design)

---

### Platform Issue

Design components or patterns don't align with Design System guidelines.

### Issue Details

Search bar on Comparison Tool view doesn't match other search bars like /find-forms

### Link, screenshot or steps to recreate

https://docs.google.com/spreadsheets/d/1KnkaMDBeOZUR9n1sG_w3JB1cpAWvg-rWOLuJPjbUwsI/edit#gid=0

### VA.gov Experience Standard

[Cateogy Number 04, Issue Number 07](https://depo-platform-documentation.scrollhelp.site/collaboration-cycle/VA.gov-experience-standards.1683980311.html)

### Other References

WCAG SC 3.2.4 AA

---

### Platform Recommendation

Update visual style to match existing search bars.

Style the search button to be attached and same color/size as the rest of the search on VA.gov. Put the icon in front of the text. See excel file under links for example

### VFS Team Tasks to Complete

- [ ] Comment on the ticket if there are questions or concerns

- [ ] VFS team closes the ticket when the issue has been resolved | 1.0 | [Components and pattern standards] Design components or patterns don't align with Design System guidelines. (04.07.1) - ### General Information

#### VFS team name

Education Application (BAH)

#### VFS product name

Comparison Tool Redesign

#### Point of Contact/Reviewers

Trevor Pierce (Accessibility)

Allison Christman (Design)

---

### Platform Issue

Design components or patterns don't align with Design System guidelines.

### Issue Details

Search bar on Comparison Tool view doesn't match other search bars like /find-forms

### Link, screenshot or steps to recreate

https://docs.google.com/spreadsheets/d/1KnkaMDBeOZUR9n1sG_w3JB1cpAWvg-rWOLuJPjbUwsI/edit#gid=0

### VA.gov Experience Standard

[Cateogy Number 04, Issue Number 07](https://depo-platform-documentation.scrollhelp.site/collaboration-cycle/VA.gov-experience-standards.1683980311.html)

### Other References

WCAG SC 3.2.4 AA

---

### Platform Recommendation

Update visual style to match existing search bars.

Style the search button to be attached and same color/size as the rest of the search on VA.gov. Put the icon in front of the text. See excel file under links for example

### VFS Team Tasks to Complete

- [ ] Comment on the ticket if there are questions or concerns

- [ ] VFS team closes the ticket when the issue has been resolved | non_main | design components or patterns don t align with design system guidelines general information vfs team name education application bah vfs product name comparison tool redesign point of contact reviewers trevor pierce accessibility allison christman design platform issue design components or patterns don t align with design system guidelines issue details search bar on comparison tool view doesn t match other search bars like find forms link screenshot or steps to recreate va gov experience standard other references wcag sc aa platform recommendation update visual style to match existing search bars style the search button to be attached and same color size as the rest of the search on va gov put the icon in front of the text see excel file under links for example vfs team tasks to complete comment on the ticket if there are questions or concerns vfs team closes the ticket when the issue has been resolved | 0 |

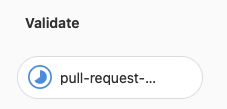

240,392 | 20,026,270,279 | IssuesEvent | 2022-02-01 21:42:33 | phetsims/sun | https://api.github.com/repos/phetsims/sun | closed | API change for `slider.enabledProperty` | dev:phet-io type:automated-testing status:blocks-publication | Several sims have been failing CT due to PhET-iO API changes to Slider `enabledProperty`. For example:

```

molecule-polarity : phet-io-api-compatibility : unbuilt

https://bayes.colorado.edu/continuous-testing/ct-snapshots/1643631899761/molecule-polarity/molecule-polarity_en.html?continuousTest=%7B%22test%22%3A%5B%22molecule-polarity%22%2C%22phet-io-api-compatibility%22%2C%22unbuilt%22%5D%2C%22snapshotName%22%3A%22snapshot-1643631899761%22%2C%22timestamp%22%3A1643637936709%7D&ea&brand=phet-io&phetioStandalone&phetioCompareAPI&randomSeed=332211

Query: ea&brand=phet-io&phetioStandalone&phetioCompareAPI&randomSeed=332211

Uncaught Error: Assertion failed: Designed API changes detected, please roll them back or revise the reference API:

PhET-iO Element missing: moleculePolarity.threeAtomsScreen.view.electronegativityPanels.atomAElectronegativityPanel.slider.enabledProperty

PhET-iO Element missing: moleculePolarity.threeAtomsScreen.view.electronegativityPanels.atomBElectronegativityPanel.slider.enabledProperty

PhET-iO Element missing: moleculePolarity.threeAtomsScreen.view.electronegativityPanels.atomCElectronegativityPanel.slider.enabledProperty

PhET-iO Element missing: moleculePolarity.twoAtomsScreen.view.electronegativityPanels.atomAElectronegativityPanel.slider.enabledProperty

PhET-iO Element missing: moleculePolarity.twoAtomsScreen.view.electronegativityPanels.atomBElectronegativityPanel.slider.enabledProperty

Error: Assertion failed: Designed API changes detected, please roll them back or revise the reference API:

PhET-iO Element missing: moleculePolarity.threeAtomsScreen.view.electronegativityPanels.atomAElectronegativityPanel.slider.enabledProperty

PhET-iO Element missing: moleculePolarity.threeAtomsScreen.view.electronegativityPanels.atomBElectronegativityPanel.slider.enabledProperty

PhET-iO Element missing: moleculePolarity.threeAtomsScreen.view.electronegativityPanels.atomCElectronegativityPanel.slider.enabledProperty

PhET-iO Element missing: moleculePolarity.twoAtomsScreen.view.electronegativityPanels.atomAElectronegativityPanel.slider.enabledProperty

PhET-iO Element missing: moleculePolarity.twoAtomsScreen.view.electronegativityPanels.atomBElectronegativityPanel.slider.enabledProperty

at window.assertions.assertFunction (https://bayes.colorado.edu/continuous-testing/ct-snapshots/1643631899761/assert/js/assert.js:25:13)

at XMLHttpRequest.<anonymous> (https://bayes.colorado.edu/continuous-testing/ct-snapshots/1643631899761/chipper/dist/js/phet-io/js/phetioEngine.js:345:23)

id: Bayes Chrome

Snapshot from 1/31/2022, 5:24:59 AM

```

There have been no sim-specific changes to the sims that I’m responsible for.

I see changes to AccessibleSlider and AccessibleValueHandler by @mjkauzmann that seem to coincide with the appearance of this problem. For example https://github.com/phetsims/sun/commit/1ad9e76414658b3797009662e651d3d41b48d34a and https://github.com/phetsims/sun/commit/877f09991f4ae29029c2a7c8215d814318b6a91d.

If this was an intentional change, please summarize "why", and update PhET-iO APIs. If it was unintentional, please correct the regression.

| 1.0 | API change for `slider.enabledProperty` - Several sims have been failing CT due to PhET-iO API changes to Slider `enabledProperty`. For example:

```

molecule-polarity : phet-io-api-compatibility : unbuilt

https://bayes.colorado.edu/continuous-testing/ct-snapshots/1643631899761/molecule-polarity/molecule-polarity_en.html?continuousTest=%7B%22test%22%3A%5B%22molecule-polarity%22%2C%22phet-io-api-compatibility%22%2C%22unbuilt%22%5D%2C%22snapshotName%22%3A%22snapshot-1643631899761%22%2C%22timestamp%22%3A1643637936709%7D&ea&brand=phet-io&phetioStandalone&phetioCompareAPI&randomSeed=332211

Query: ea&brand=phet-io&phetioStandalone&phetioCompareAPI&randomSeed=332211

Uncaught Error: Assertion failed: Designed API changes detected, please roll them back or revise the reference API:

PhET-iO Element missing: moleculePolarity.threeAtomsScreen.view.electronegativityPanels.atomAElectronegativityPanel.slider.enabledProperty

PhET-iO Element missing: moleculePolarity.threeAtomsScreen.view.electronegativityPanels.atomBElectronegativityPanel.slider.enabledProperty

PhET-iO Element missing: moleculePolarity.threeAtomsScreen.view.electronegativityPanels.atomCElectronegativityPanel.slider.enabledProperty

PhET-iO Element missing: moleculePolarity.twoAtomsScreen.view.electronegativityPanels.atomAElectronegativityPanel.slider.enabledProperty

PhET-iO Element missing: moleculePolarity.twoAtomsScreen.view.electronegativityPanels.atomBElectronegativityPanel.slider.enabledProperty

Error: Assertion failed: Designed API changes detected, please roll them back or revise the reference API:

PhET-iO Element missing: moleculePolarity.threeAtomsScreen.view.electronegativityPanels.atomAElectronegativityPanel.slider.enabledProperty

PhET-iO Element missing: moleculePolarity.threeAtomsScreen.view.electronegativityPanels.atomBElectronegativityPanel.slider.enabledProperty

PhET-iO Element missing: moleculePolarity.threeAtomsScreen.view.electronegativityPanels.atomCElectronegativityPanel.slider.enabledProperty

PhET-iO Element missing: moleculePolarity.twoAtomsScreen.view.electronegativityPanels.atomAElectronegativityPanel.slider.enabledProperty

PhET-iO Element missing: moleculePolarity.twoAtomsScreen.view.electronegativityPanels.atomBElectronegativityPanel.slider.enabledProperty

at window.assertions.assertFunction (https://bayes.colorado.edu/continuous-testing/ct-snapshots/1643631899761/assert/js/assert.js:25:13)

at XMLHttpRequest.<anonymous> (https://bayes.colorado.edu/continuous-testing/ct-snapshots/1643631899761/chipper/dist/js/phet-io/js/phetioEngine.js:345:23)

id: Bayes Chrome

Snapshot from 1/31/2022, 5:24:59 AM

```

There have been no sim-specific changes to the sims that I’m responsible for.

I see changes to AccessibleSlider and AccessibleValueHandler by @mjkauzmann that seem to coincide with the appearance of this problem. For example https://github.com/phetsims/sun/commit/1ad9e76414658b3797009662e651d3d41b48d34a and https://github.com/phetsims/sun/commit/877f09991f4ae29029c2a7c8215d814318b6a91d.

If this was an intentional change, please summarize "why", and update PhET-iO APIs. If it was unintentional, please correct the regression.

| non_main | api change for slider enabledproperty several sims have been failing ct due to phet io api changes to slider enabledproperty for example molecule polarity phet io api compatibility unbuilt query ea brand phet io phetiostandalone phetiocompareapi randomseed uncaught error assertion failed designed api changes detected please roll them back or revise the reference api phet io element missing moleculepolarity threeatomsscreen view electronegativitypanels atomaelectronegativitypanel slider enabledproperty phet io element missing moleculepolarity threeatomsscreen view electronegativitypanels atombelectronegativitypanel slider enabledproperty phet io element missing moleculepolarity threeatomsscreen view electronegativitypanels atomcelectronegativitypanel slider enabledproperty phet io element missing moleculepolarity twoatomsscreen view electronegativitypanels atomaelectronegativitypanel slider enabledproperty phet io element missing moleculepolarity twoatomsscreen view electronegativitypanels atombelectronegativitypanel slider enabledproperty error assertion failed designed api changes detected please roll them back or revise the reference api phet io element missing moleculepolarity threeatomsscreen view electronegativitypanels atomaelectronegativitypanel slider enabledproperty phet io element missing moleculepolarity threeatomsscreen view electronegativitypanels atombelectronegativitypanel slider enabledproperty phet io element missing moleculepolarity threeatomsscreen view electronegativitypanels atomcelectronegativitypanel slider enabledproperty phet io element missing moleculepolarity twoatomsscreen view electronegativitypanels atomaelectronegativitypanel slider enabledproperty phet io element missing moleculepolarity twoatomsscreen view electronegativitypanels atombelectronegativitypanel slider enabledproperty at window assertions assertfunction at xmlhttprequest id bayes chrome snapshot from am there have been no sim specific changes to the sims that i’m responsible for i see changes to accessibleslider and accessiblevaluehandler by mjkauzmann that seem to coincide with the appearance of this problem for example and if this was an intentional change please summarize why and update phet io apis if it was unintentional please correct the regression | 0 |

119,923 | 25,707,249,578 | IssuesEvent | 2022-12-07 02:10:20 | Rich2/openstrat | https://api.github.com/repos/Rich2/openstrat | opened | Removal of Grid Managers | code elimination | Consider removing grid managers. I'm not sure the encapsulation they enable justifies the increase in obfuscation. | 1.0 | Removal of Grid Managers - Consider removing grid managers. I'm not sure the encapsulation they enable justifies the increase in obfuscation. | non_main | removal of grid managers consider removing grid managers i m not sure the encapsulation they enable justifies the increase in obfuscation | 0 |