Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19 to 19) | repo (stringlengths, 5 to 112) | repo_url (stringlengths, 34 to 141) | action (stringclasses, 3 values) | title (stringlengths, 1 to 957) | labels (stringlengths, 4 to 795) | body (stringlengths, 1 to 259k) | index (stringclasses, 12 values) | text_combine (stringlengths, 96 to 259k) | label (stringclasses, 2 values) | text (stringlengths, 96 to 252k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

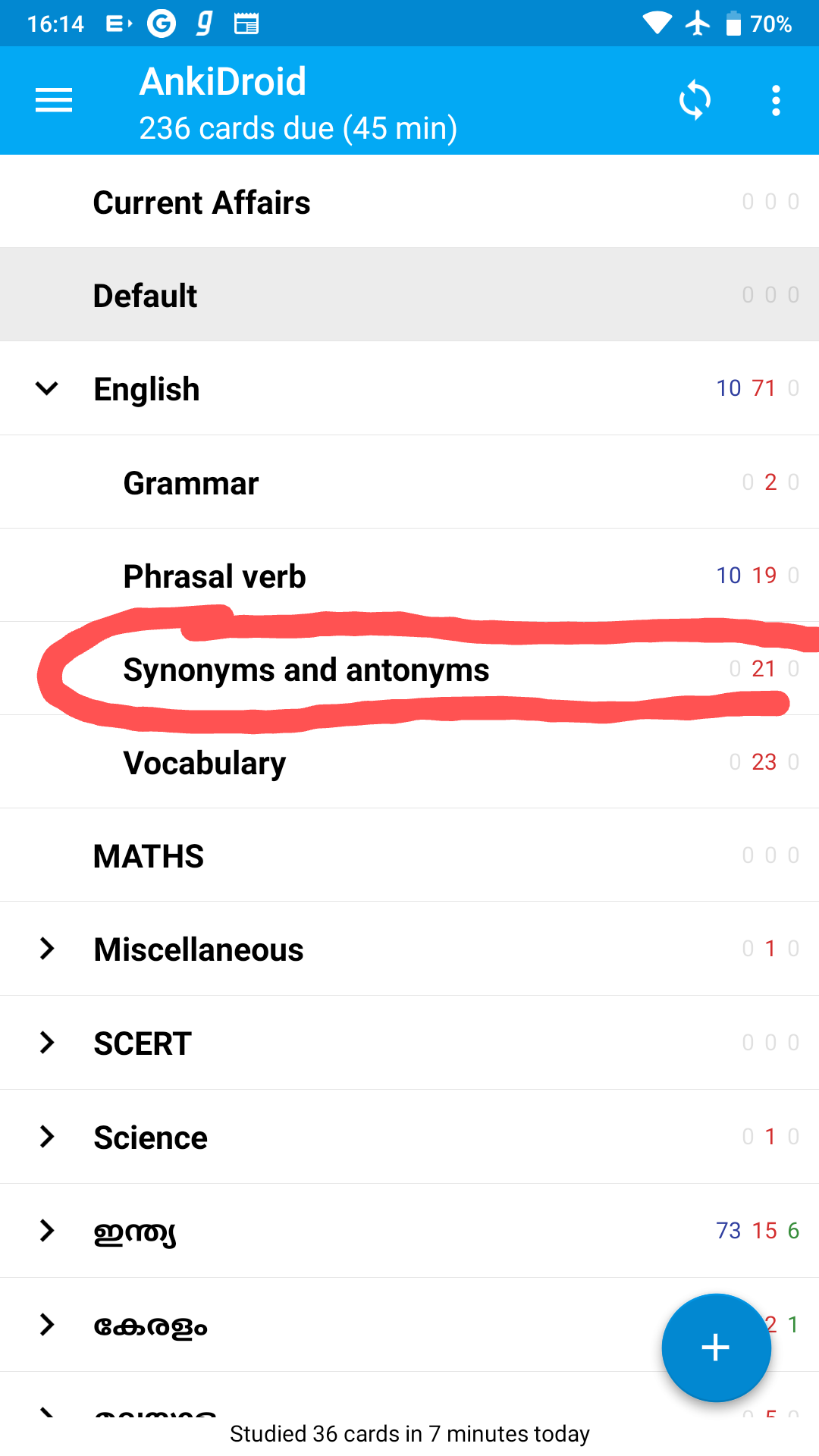

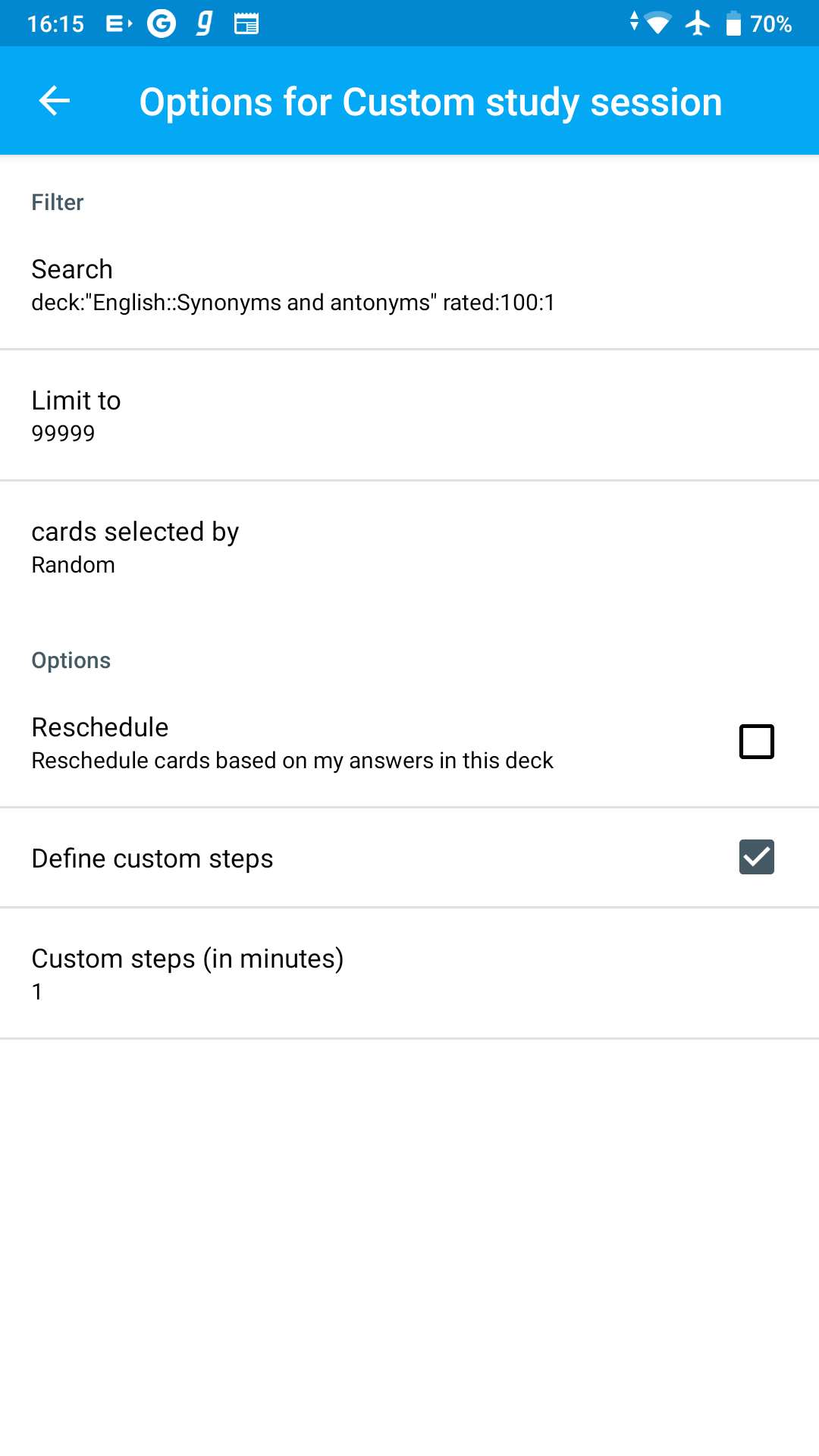

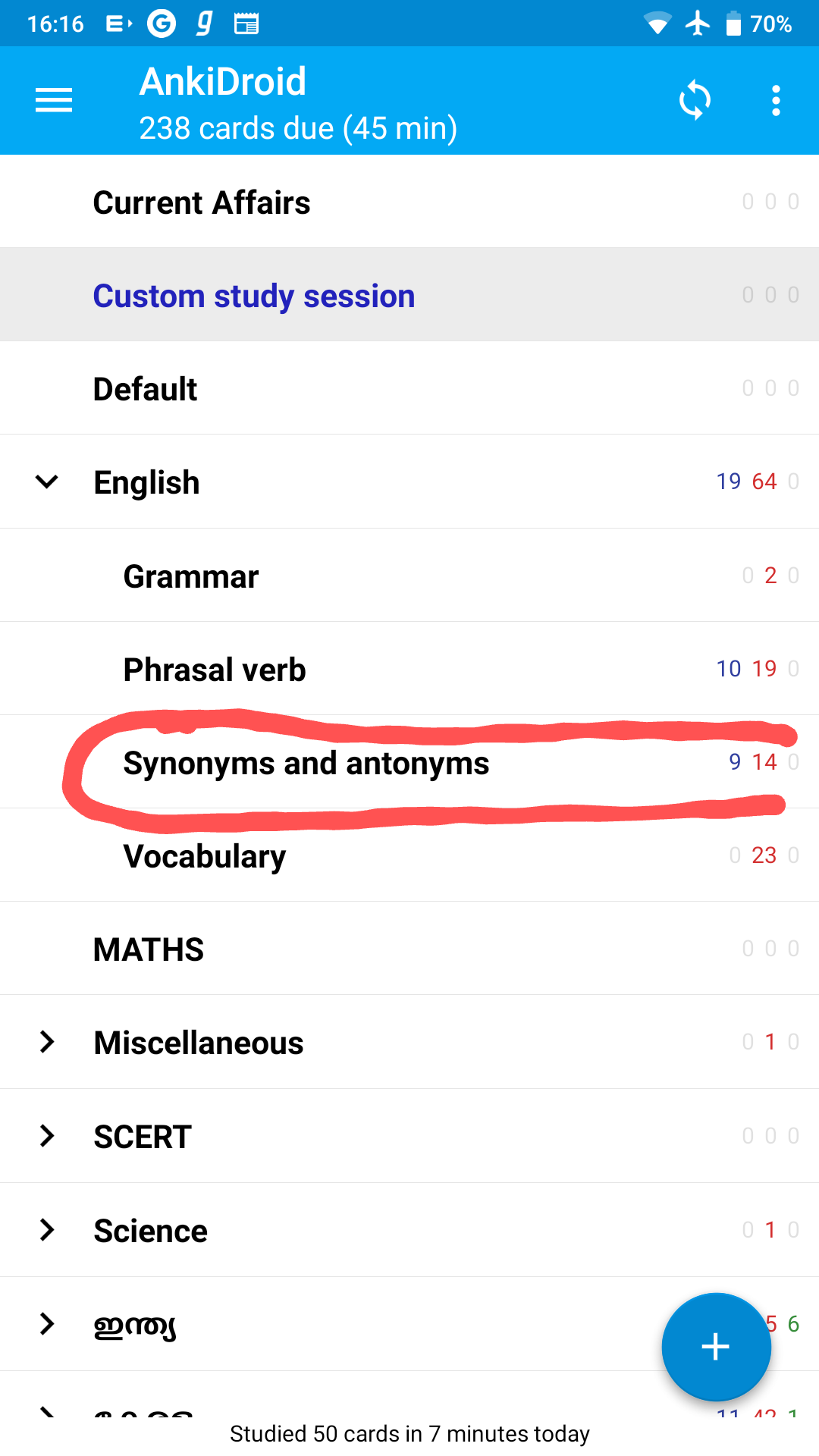

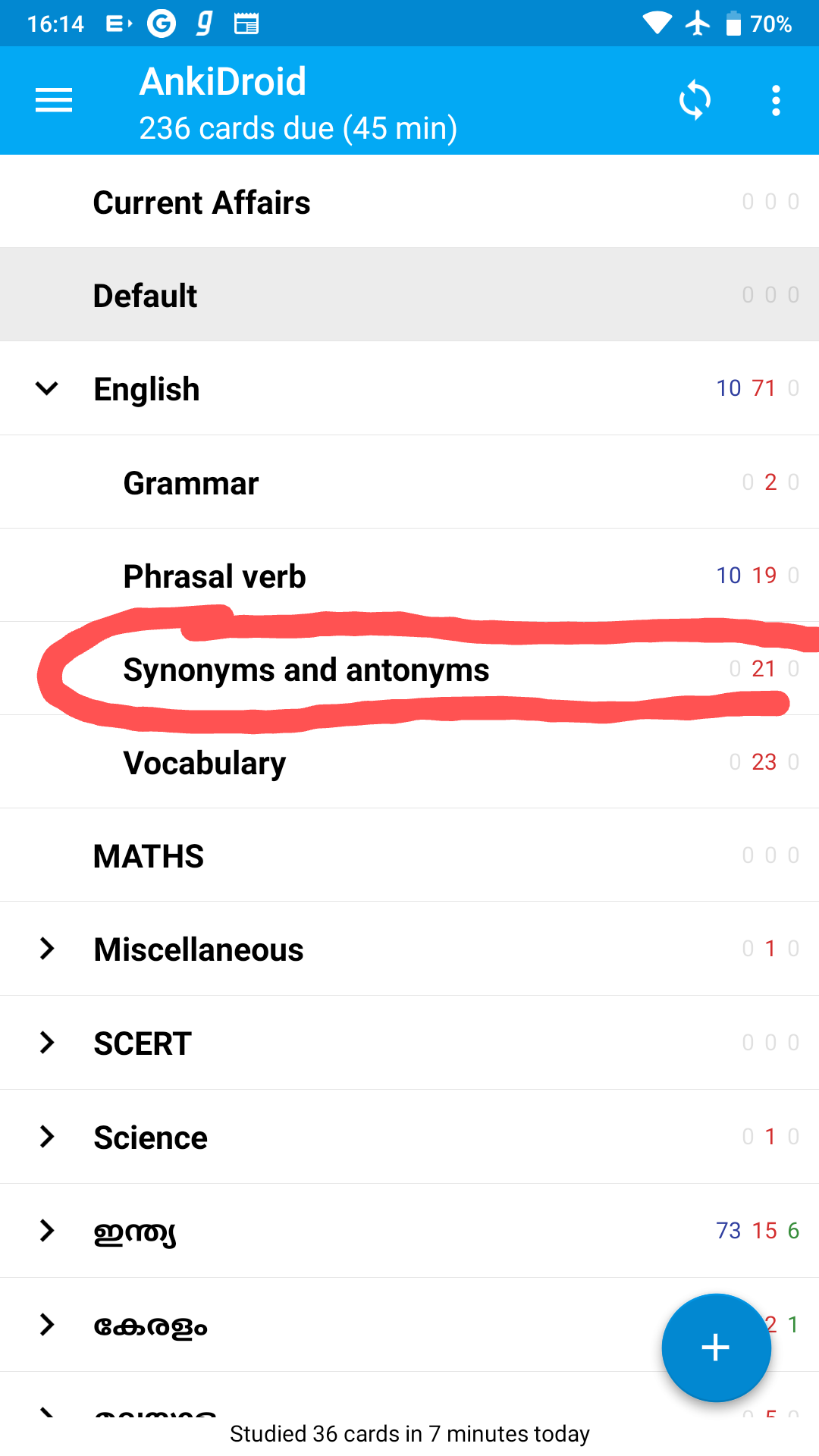

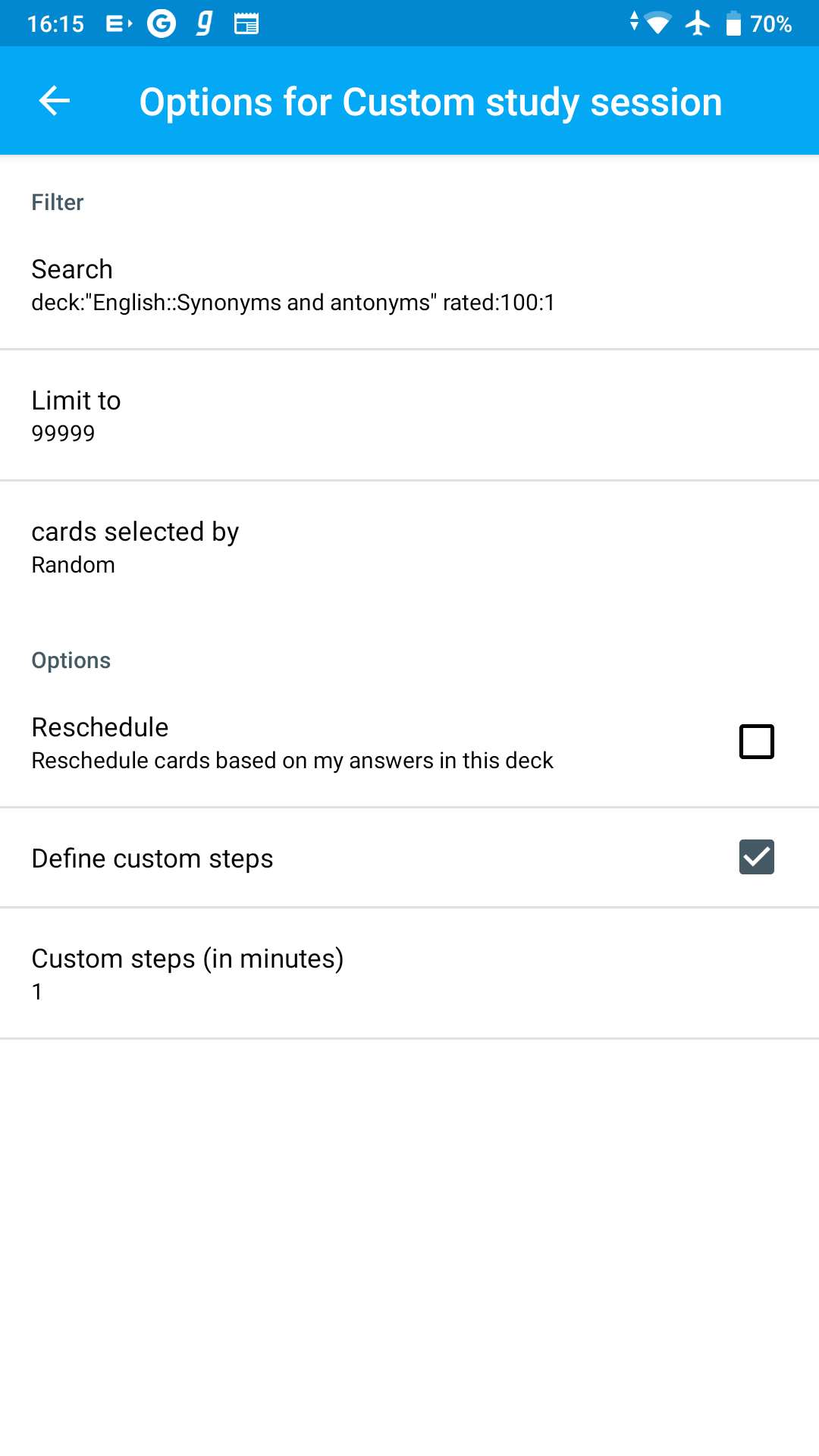

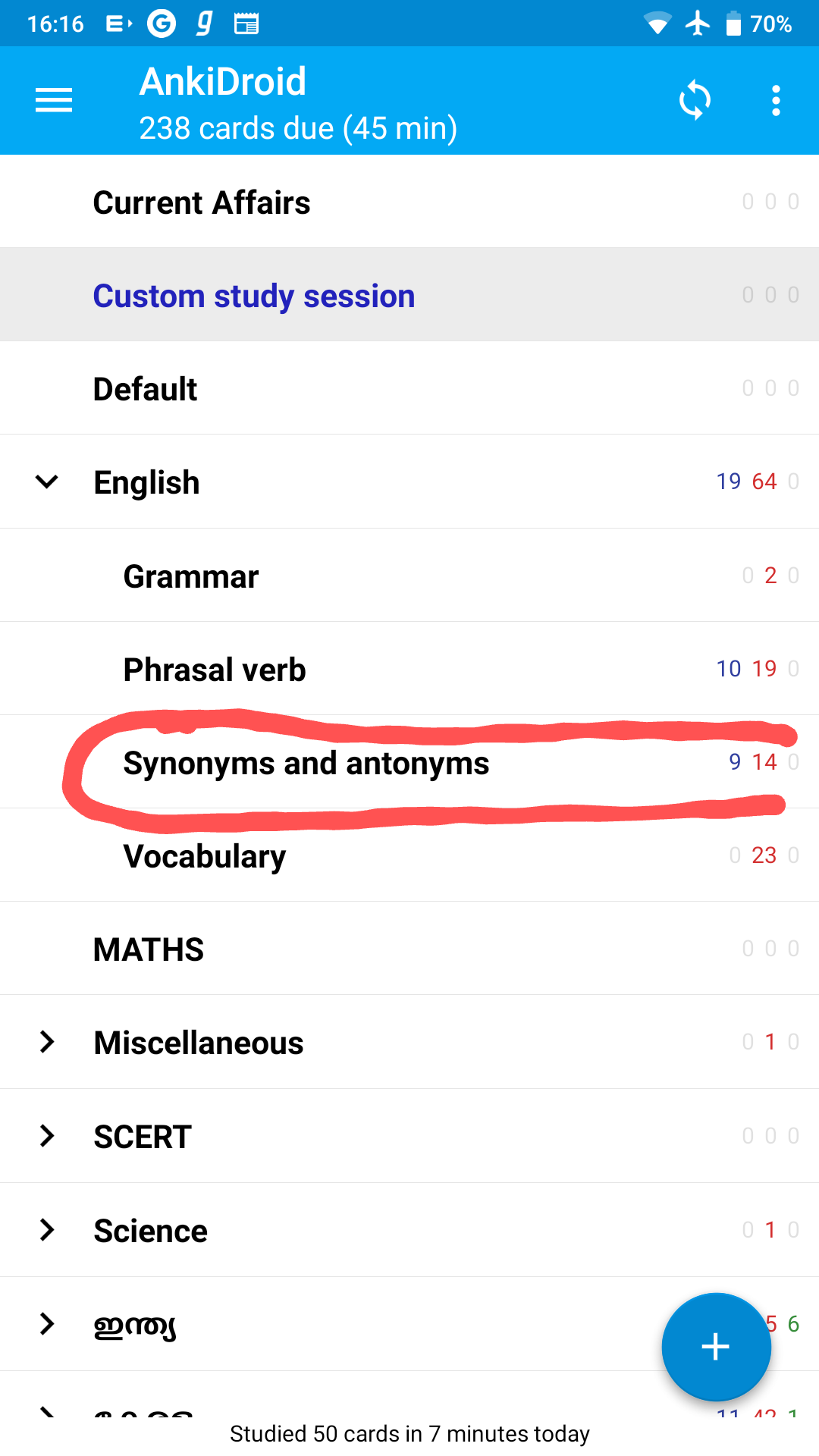

427,105 | 12,392,975,797 | IssuesEvent | 2020-05-20 14:46:03 | ankidroid/Anki-Android | https://api.github.com/repos/ankidroid/Anki-Android | closed | Anki custom study session changes original card state | Bug Priority-Medium Reproduced | ###### Reproduction Steps

1. I started custom study session on Synonyms and antonyms

2. Reschedule turned off

3. [Apkg file of the session](https://drive.google.com/file/d/1e4s_lQewsdVVjzqYu1jzUZxKI5hBafY6/view?usp=drivesdk)

###### Expected Result

###### Actual Result

The original card session state changed

###### Debug info

AnkiDroid Version = 2.10.2

Android Version = 9

ACRA UUID = 6e2a8e53-f25c-4cc6-a183-7dab54dad6a0

###### Research

*Enter an [x] character to confirm the points below:*

- [x] I have read the [support page](https://ankidroid.org/docs/help.html) and am reporting a bug or enhancement request specific to AnkiDroid

- [x] I have checked the [manual](https://ankidroid.org/docs/manual.html) and the [FAQ](https://github.com/ankidroid/Anki-Android/wiki/FAQ) and could not find a solution to my issue

- [x] I have searched for similar existing issues here and on the user forum

- [x] (Optional) I have confirmed the issue is not resolved in the latest alpha release ([instructions](https://docs.ankidroid.org/manual.html#betaTesting))

| 1.0 | Anki custom study session changes original card state - ###### Reproduction Steps

1. I started custom study session on Synonyms and antonyms

2. Reschedule turned off

3. [Apkg file of the session](https://drive.google.com/file/d/1e4s_lQewsdVVjzqYu1jzUZxKI5hBafY6/view?usp=drivesdk)

###### Expected Result

###### Actual Result

The original card session state changed

###### Debug info

AnkiDroid Version = 2.10.2

Android Version = 9

ACRA UUID = 6e2a8e53-f25c-4cc6-a183-7dab54dad6a0

###### Research

*Enter an [x] character to confirm the points below:*

- [x] I have read the [support page](https://ankidroid.org/docs/help.html) and am reporting a bug or enhancement request specific to AnkiDroid

- [x] I have checked the [manual](https://ankidroid.org/docs/manual.html) and the [FAQ](https://github.com/ankidroid/Anki-Android/wiki/FAQ) and could not find a solution to my issue

- [x] I have searched for similar existing issues here and on the user forum

- [x] (Optional) I have confirmed the issue is not resolved in the latest alpha release ([instructions](https://docs.ankidroid.org/manual.html#betaTesting))

| priority | anki custom study session changes original card state reproduction steps i started custom study session on synonyms and antonyms reschedule turned off expected result actual result the original card session state changed debug info ankidroid version android version acra uuid research enter an character to confirm the points below i have read the and am reporting a bug or enhancement request specific to ankidroid i have checked the and the and could not find a solution to my issue i have searched for similar existing issues here and on the user forum optional i have confirmed the issue is not resolved in the latest alpha release | 1 |

280,616 | 8,684,068,899 | IssuesEvent | 2018-12-02 23:32:22 | MarcusWolschon/osmeditor4android | https://api.github.com/repos/MarcusWolschon/osmeditor4android | closed | suggest recently/frequently used keys/values | Enhancement Medium Priority Work in progress | During my editing I use small set of tags. JOSM behaviour where recently used tags are suggested is very useful in such situation.

Currently Vespucci is showing possible keys/values in alphabetical order. Maybe at least part of that list may be reserved for popular/recently used keys/values? | 1.0 | suggest recently/frequently used keys/values - During my editing I use small set of tags. JOSM behaviour where recently used tags are suggested is very useful in such situation.

Currently Vespucci is showing possible keys/values in alphabetical order. Maybe at least part of that list may be reserved for popular/recently used keys/values? | priority | suggest recently frequently used keys values during my editing i use small set of tags josm behaviour where recently used tags are suggested is very useful in such situation currently vespucci is showing possible keys values in alphabetical order maybe at least part of that list may be reserved for popular recently used keys values | 1 |

684,489 | 23,419,980,840 | IssuesEvent | 2022-08-13 14:45:02 | ankidroid/Anki-Android | https://api.github.com/repos/ankidroid/Anki-Android | closed | [Bug] Kotlin: can no longer commit hunks | Priority-Medium Keep Open Dev | It seems that due to the pre-commit hook, the full file is committed

https://github.com/ankidroid/Anki-Android/blob/master/pre-commit | 1.0 | [Bug] Kotlin: can no longer commit hunks - It seems that due to the pre-commit hook, the full file is committed

https://github.com/ankidroid/Anki-Android/blob/master/pre-commit | priority | kotlin can no longer commit hunks it seems that due to the pre commit hook the full file is committed | 1 |

183,491 | 6,689,098,860 | IssuesEvent | 2017-10-08 22:02:50 | compodoc/compodoc | https://api.github.com/repos/compodoc/compodoc | closed | [BUG] Error when generating docs (Parse error on line 33, Expecting 'CLOSE', got 'ID') | Priority: Medium Time: ~1 hour Type: Bug | ##### **Overview of the issue**

When trying to generate the documentation, the following error occurs:

``` bash
[11:17:26] Process page : typealiases
[11:17:26] Process page : enumerations
[11:17:26] Process page : coverage
[11:17:26] Error: Parse error on line 33:
... {{> menu data menu='normal' }}
-----------------------^
Expecting 'CLOSE', got 'ID'
```

##### **Operating System, Node.js, npm, compodoc version(s)**

- Issue occuring with `compodoc 1.0.0-beta.14` and `compodoc 1.0.0-beta.15`

(`compodoc 1.0.0-beta.13` worked fine although the generation took forever)

- Windows 7

- NodeJS 8.2.1

- Angular 4.3.2

##### **Angular configuration, a `package.json` file in the root folder**

The command we use:

``` bash

compodoc src/lib --tsconfig src/tsconfig.docs.json

```

The referenced tsconfig file:

``` json
{
  "compilerOptions": {
    "baseUrl": "",
    "declaration": false,
    "emitDecoratorMetadata": true,
    "experimentalDecorators": true,
    "lib": [
      "es2016",
      "dom"
    ],
    "module": "es2015",
    "moduleResolution": "node",
    "outDir": "../out-tsc/app",
    "sourceMap": true,
    "target": "es6",
    "types": []
  },
  "exclude": [
    "test.ts",
    "polyfills.ts",
    "main.ts",
    "index.ts",
    "**/*.spec.ts",
    "app/**/*"
  ]
}
```

##### **Compodoc installed globally or locally ?**

locally

##### **Motivation for or Use Case**

The documentation won't build at all. | 1.0 | [BUG] Error when generating docs (Parse error on line 33, Expecting 'CLOSE', got 'ID') - ##### **Overview of the issue**

When trying to generate the documentation, the following error occurs:

``` bash
[11:17:26] Process page : typealiases
[11:17:26] Process page : enumerations
[11:17:26] Process page : coverage
[11:17:26] Error: Parse error on line 33:
... {{> menu data menu='normal' }}
-----------------------^
Expecting 'CLOSE', got 'ID'
```

##### **Operating System, Node.js, npm, compodoc version(s)**

- Issue occuring with `compodoc 1.0.0-beta.14` and `compodoc 1.0.0-beta.15`

(`compodoc 1.0.0-beta.13` worked fine although the generation took forever)

- Windows 7

- NodeJS 8.2.1

- Angular 4.3.2

##### **Angular configuration, a `package.json` file in the root folder**

The command we use:

``` bash

compodoc src/lib --tsconfig src/tsconfig.docs.json

```

The referenced tsconfig file:

``` json
{
  "compilerOptions": {
    "baseUrl": "",
    "declaration": false,
    "emitDecoratorMetadata": true,
    "experimentalDecorators": true,
    "lib": [
      "es2016",
      "dom"
    ],
    "module": "es2015",
    "moduleResolution": "node",
    "outDir": "../out-tsc/app",
    "sourceMap": true,
    "target": "es6",
    "types": []
  },
  "exclude": [
    "test.ts",
    "polyfills.ts",
    "main.ts",
    "index.ts",
    "**/*.spec.ts",
    "app/**/*"
  ]
}
```

##### **Compodoc installed globally or locally ?**

locally

##### **Motivation for or Use Case**

The documentation won't build at all. | priority | error when generating docs parse error on line expecting close got id overview of the issue when trying to generate the documentation the following error occurs bash process page typealiases process page enumerations process page coverage error parse error on line menu data menu normal expecting close got id operating system node js npm compodoc version s issue occuring with compodoc beta and compodoc beta compodoc beta worked fine although the generation took forever windows nodejs angular angular configuration a package json file in the root folder the command we use bash compodoc src lib tsconfig src tsconfig docs json the referenced tsconfig file json compileroptions baseurl declaration false emitdecoratormetadata true experimentaldecorators true lib dom module moduleresolution node outdir out tsc app sourcemap true target types exclude test ts polyfills ts main ts index ts spec ts app compodoc installed globally or locally locally motivation for or use case the documentation won t build at all | 1 |

411,655 | 12,027,202,138 | IssuesEvent | 2020-04-12 17:21:27 | AY1920S2-CS2103-W15-2/main | https://api.github.com/repos/AY1920S2-CS2103-W15-2/main | closed | Generate Report of Interviews in the form of PDF | priority.Medium severity.Medium type.Enhancement | The interview report might be needed in the form of a PDF, this feature is very beneficial for the interviewee. | 1.0 | Generate Report of Interviews in the form of PDF - The interview report might be needed in the form of a PDF, this feature is very beneficial for the interviewee. | priority | generate report of interviews in the form of pdf the interview report might be needed in the form of a pdf this feature is very beneficial for the interviewee | 1 |

432,932 | 12,500,228,908 | IssuesEvent | 2020-06-01 21:47:00 | OrangeJuice7/SDL-OpenGL-Game-Framework | https://api.github.com/repos/OrangeJuice7/SDL-OpenGL-Game-Framework | opened | Better animated sprites | assets.graphics priority.medium work.medium | Also create sprites that face different directions, and track that direction in the Entity. | 1.0 | Better animated sprites - Also create sprites that face different directions, and track that direction in the Entity. | priority | better animated sprites also create sprites that face different directions and track that direction in the entity | 1 |

348,469 | 10,442,792,736 | IssuesEvent | 2019-09-18 13:44:56 | CLOSER-Cohorts/archivist | https://api.github.com/repos/CLOSER-Cohorts/archivist | closed | Unable to change topic linked to variable | Database Mappings Medium priority | In the dataset view changing the topic linked to a variable to blank, none or selecting a new topic, it processes for a while and then eventually times out. | 1.0 | Unable to change topic linked to variable - In the dataset view changing the topic linked to a variable to blank, none or selecting a new topic, it processes for a while and then eventually times out. | priority | unable to change topic linked to variable in the dataset view changing the topic linked to a variable to blank none or selecting a new topic it processes for a while and then eventually times out | 1 |

517,855 | 15,020,469,114 | IssuesEvent | 2021-02-01 14:45:03 | ansible/galaxy_ng | https://api.github.com/repos/ansible/galaxy_ng | closed | UI: Disable groups and repo management in navigation for users with no permissions | area/frontend priority/medium status/in-progress type/enhancement | Disable groups and and repo management in the navigation menu for users that don't have permissions to view these pages.

Additionally, controls for creating, editing and deleting groups as well as the button for syncing and editing remotes should be disabled based on the users permissions.

| 1.0 | UI: Disable groups and repo management in navigation for users with no permissions - Disable groups and and repo management in the navigation menu for users that don't have permissions to view these pages.

Additionally, controls for creating, editing and deleting groups as well as the button for syncing and editing remotes should be disabled based on the users permissions.

| priority | ui disable groups and repo management in navigation for users with no permissions disable groups and and repo management in the navigation menu for users that don t have permissions to view these pages additionally controls for creating editing and deleting groups as well as the button for syncing and editing remotes should be disabled based on the users permissions | 1 |

121,461 | 4,816,836,900 | IssuesEvent | 2016-11-04 11:31:17 | SuperTux/supertux | https://api.github.com/repos/SuperTux/supertux | closed | Resizable Sprites | involves:functionality priority:medium status:needs-work type:idea | Sprites and Surfaces should be resizable. This is needed for the storage of powerups and items, because the user should be allowed to add his own collectible items and it's easier for the user to be able to use a Sprite in any resolution.

It might be a good idea to resize the images, and store them in a specail directory, because else the images would have to be resized too often, and it will ensure that the game is fast. For avoiding storing too many images they should be kept and maybe deleted if they weren't used in a long time.

TODO

- [ ] Implement a scaling algorithm for PNGs

- [ ] Implement the resized image container

- [ ] Extend this to Sprites

| 1.0 | Resizable Sprites - Sprites and Surfaces should be resizable. This is needed for the storage of powerups and items, because the user should be allowed to add his own collectible items and it's easier for the user to be able to use a Sprite in any resolution.

It might be a good idea to resize the images, and store them in a specail directory, because else the images would have to be resized too often, and it will ensure that the game is fast. For avoiding storing too many images they should be kept and maybe deleted if they weren't used in a long time.

TODO

- [ ] Implement a scaling algorithm for PNGs

- [ ] Implement the resized image container

- [ ] Extend this to Sprites

| priority | resizable sprites sprites and surfaces should be resizable this is needed for the storage of powerups and items because the user should be allowed to add his own collectible items and it s easier for the user to be able to use a sprite in any resolution it might be a good idea to resize the images and store them in a specail directory because else the images would have to be resized too often and it will ensure that the game is fast for avoiding storing too many images they should be kept and maybe deleted if they weren t used in a long time todo implement a scaling algorithm for pngs implement the resized image container extend this to sprites | 1 |

104,949 | 4,227,015,495 | IssuesEvent | 2016-07-02 21:44:32 | octobercms/october | https://api.github.com/repos/octobercms/october | closed | Crop and insert can produce "Invalid selection data" when a fixed ratio is used | Priority: Medium Status: Completed Type: Bug | Trying to crop an image using a fixed ratio can produce a _Invalid selection data_ message. Since [this commit](https://github.com/tapmodo/Jcrop/commit/3a51e67f8dbced3b4f763cb832f75c0a0750ec72#diff-f93c6ae36034c63a1c3e1d298a9af0d0L656) selection data can contain decimal places, which [prevents](https://github.com/octobercms/october/blob/ab4abb9bc5c42f09028e25206cd6d575fa3b1f31/modules/cms/widgets/MediaManager.php#L1124) images from being created.

##### Expected behavior

A cropped image should be created.

##### Actual behavior

An error for _Invalid selection data_ is shown.

##### Reproduce steps

1. Select crop and insert

2. Set selection mode to fixed ratio

3. Set width and height to 4/3

4. Make a selection with width and/or height that contain decimal places

5. Clip Crop and insert

##### October build

318 | 1.0 | Crop and insert can produce "Invalid selection data" when a fixed ratio is used - Trying to crop an image using a fixed ratio can produce a _Invalid selection data_ message. Since [this commit](https://github.com/tapmodo/Jcrop/commit/3a51e67f8dbced3b4f763cb832f75c0a0750ec72#diff-f93c6ae36034c63a1c3e1d298a9af0d0L656) selection data can contain decimal places, which [prevents](https://github.com/octobercms/october/blob/ab4abb9bc5c42f09028e25206cd6d575fa3b1f31/modules/cms/widgets/MediaManager.php#L1124) images from being created.

##### Expected behavior

A cropped image should be created.

##### Actual behavior

An error for _Invalid selection data_ is shown.

##### Reproduce steps

1. Select crop and insert

2. Set selection mode to fixed ratio

3. Set width and height to 4/3

4. Make a selection with width and/or height that contain decimal places

5. Clip Crop and insert

##### October build

318 | priority | crop and insert can produce invalid selection data when a fixed ratio is used trying to crop an image using a fixed ratio can produce a invalid selection data message since selection data can contain decimal places which images from being created expected behavior a cropped image should be created actual behavior an error for invalid selection data is shown reproduce steps select crop and insert set selection mode to fixed ratio set width and height to make a selection with width and or height that contain decimal places clip crop and insert october build | 1 |

412,254 | 12,037,249,657 | IssuesEvent | 2020-04-13 21:25:37 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | closed | Eco Tree dev features | Category: Web Priority: Medium Status: Fixed |

I'd like to expand the role of the dev icons here and make it part of our daily updates. The idea is that we make this a center for public development and personal connection between the team and community.

- [x] Jeff: add HTML code and styles for all the improvements of Tooltip, so Denys would just filled them with logic afterwards. Like `status`, `title`, `bio` and `email` links.

- [x] Denys: Add the ability to set a 'status' for each developer of what they're working on, with the date that the status was applied. Display this status in the tooltip.

- [x] Denys: Display a title for each dev (mentioned this before but would like to do it). Programmer, designer, artist, QA.

- [x] Jeff: Have two links in the tooltip that can be clicked, bio and email (not all devs have slg email, some signed up to wordpress with their private email. we dont want to publish private emails, so create new user field for email. should be optional).

- [x] Denys: Have an easy way for the team to set their status. They will be doing this everyday, so make sure it's easy. Ideally they can just open a page, click the node they're working on, and type a short status.

Bonus:

- [ ] Have 'recent updates' for each user, that links to the last few posts they made in the eco tree somewhere. | 1.0 | Eco Tree dev features -

I'd like to expand the role of the dev icons here and make it part of our daily updates. The idea is that we make this a center for public development and personal connection between the team and community.

- [x] Jeff: add HTML code and styles for all the improvements of Tooltip, so Denys would just filled them with logic afterwards. Like `status`, `title`, `bio` and `email` links.

- [x] Denys: Add the ability to set a 'status' for each developer of what they're working on, with the date that the status was applied. Display this status in the tooltip.

- [x] Denys: Display a title for each dev (mentioned this before but would like to do it). Programmer, designer, artist, QA.

- [x] Jeff: Have two links in the tooltip that can be clicked, bio and email (not all devs have slg email, some signed up to wordpress with their private email. we dont want to publish private emails, so create new user field for email. should be optional).

- [x] Denys: Have an easy way for the team to set their status. They will be doing this everyday, so make sure it's easy. Ideally they can just open a page, click the node they're working on, and type a short status.

Bonus:

- [ ] Have 'recent updates' for each user, that links to the last few posts they made in the eco tree somewhere. | priority | eco tree dev features i d like to expand the role of the dev icons here and make it part of our daily updates the idea is that we make this a center for public development and personal connection between the team and community jeff add html code and styles for all the improvements of tooltip so denys would just filled them with logic afterwards like status title bio and email links denys add the ability to set a status for each developer of what they re working on with the date that the status was applied display this status in the tooltip denys display a title for each dev mentioned this before but would like to do it programmer designer artist qa jeff have two links in the tooltip that can be clicked bio and email not all devs have slg email some signed up to wordpress with their private email we dont want to publish private emails so create new user field for email should be optional denys have an easy way for the team to set their status they will be doing this everyday so make sure it s easy ideally they can just open a page click the node they re working on and type a short status bonus have recent updates for each user that links to the last few posts they made in the eco tree somewhere | 1 |

392,208 | 11,584,825,490 | IssuesEvent | 2020-02-22 19:38:41 | Warcraft-GoA-Development-Team/Warcraft-Guardians-of-Azeroth | https://api.github.com/repos/Warcraft-GoA-Development-Team/Warcraft-Guardians-of-Azeroth | closed | Has a class in Artifact Conditions | :beetle: bug - localisation :scroll: :grey_exclamation: priority medium | <!--

**DO NOT REMOVE PRE-EXISTING LINES**

------------------------------------------------------------------------------------------------------------

-->

**Mod Version**

0317ee1deb744cfa454aa5f410a1fb475d84b255

**Are you using any submods/mods? If so, which?**

No

**Please explain your issue in as much detail as possible:**

Localization is wrong. I have a class, but it returns false.

**Upload screenshots of the problem localization:**

<details>

<summary>Click to expand</summary>

</details>

| 1.0 | Has a class in Artifact Conditions - <!--

**DO NOT REMOVE PRE-EXISTING LINES**

------------------------------------------------------------------------------------------------------------

-->

**Mod Version**

0317ee1deb744cfa454aa5f410a1fb475d84b255

**Are you using any submods/mods? If so, which?**

No

**Please explain your issue in as much detail as possible:**

Localization is wrong. I have a class, but it returns false.

**Upload screenshots of the problem localization:**

<details>

<summary>Click to expand</summary>

</details>

| priority | has a class in artifact conditions do not remove pre existing lines mod version are you using any submods mods if so which no please explain your issue in as much detail as possible localization is wrong i have a class but it returns false upload screenshots of the problem localization click to expand | 1 |

68,387 | 3,286,940,858 | IssuesEvent | 2015-10-29 07:23:03 | cs2103aug2015-t16-4j/main | https://api.github.com/repos/cs2103aug2015-t16-4j/main | closed | As a user, Jim wants to be able to have the program warn him if he places 2 events at the same time | priority.medium type.story | In case he mistakenly plans his timetable with clashes | 1.0 | As a user, Jim wants to be able to have the program warn him if he places 2 events at the same time - In case he mistakenly plans his timetable with clashes | priority | as a user jim wants to be able to have the program warn him if he places events at the same time in case he mistakenly plans his timetable with clashes | 1 |

2,281 | 2,525,001,611 | IssuesEvent | 2015-01-20 21:32:07 | graybeal/ont | https://api.github.com/repos/graybeal/ont | closed | Create device ontology instantiation | 1 star enhancement imported OntDev Priority-Medium | _From [caru...@gmail.com](https://code.google.com/u/113886747689301365533/) on November 23, 2009 12:47:36_

Description of the action item Create device ontology instantiation and present lessons learned to the team

(thanks Bob!) Please provide any relevant information/links below http://marinemetadata.org/community/teams/ontdevices/agendas/am20091027 http://marinemetadata.org/pipermail/ontdev/2009/000344.html http://marinemetadata.org/pipermail/ontdev/2009/000356.html

_Original issue: http://code.google.com/p/mmisw/issues/detail?id=224_ | 1.0 | Create device ontology instantiation - _From [caru...@gmail.com](https://code.google.com/u/113886747689301365533/) on November 23, 2009 12:47:36_

Description of the action item Create device ontology instantiation and present lessons learned to the team

(thanks Bob!) Please provide any relevant information/links below http://marinemetadata.org/community/teams/ontdevices/agendas/am20091027 http://marinemetadata.org/pipermail/ontdev/2009/000344.html http://marinemetadata.org/pipermail/ontdev/2009/000356.html

_Original issue: http://code.google.com/p/mmisw/issues/detail?id=224_ | priority | create device ontology instantiation from on november description of the action item create device ontology instantiation and present lessons learned to the team thanks bob please provide any relevant information links below original issue | 1 |

217,828 | 7,328,432,224 | IssuesEvent | 2018-03-04 20:40:19 | PaulL48/SOEN341-SC4 | https://api.github.com/repos/PaulL48/SOEN341-SC4 | opened | Improvement: Login after registering | enhancement priority: low risk: medium | It would make sense if once the user registers, they would be logged in. | 1.0 | Improvement: Login after registering - It would make sense if once the user registers, they would be logged in. | priority | improvement login after registering it would make sense if once the user registers they would be logged in | 1 |

641,984 | 20,864,112,261 | IssuesEvent | 2022-03-22 04:11:32 | AY2122S2-CS2103T-T12-4/tp | https://api.github.com/repos/AY2122S2-CS2103T-T12-4/tp | closed | Allow users to add links to tasks | priority.Medium | As a user, I can add links to a task so that I can access the link. | 1.0 | Allow users to add links to tasks - As a user, I can add links to a task so that I can access the link. | priority | allow users to add links to tasks as a user i can add links to a task so that i can access the link | 1 |

413,359 | 12,065,992,831 | IssuesEvent | 2020-04-16 10:57:14 | TykTechnologies/tyk | https://api.github.com/repos/TykTechnologies/tyk | closed | Dashboard should be able to provide a list of all my current keys | Priority: Medium customer request enhancement | **Do you want to request a *feature* or report a *bug*?**

Feature

**What is the current behavior?**

- ~~Key listing on the gateway is possible only if the keys are not hashed.~~ Possible directly with the gateway by setting `"enable_hashed_keys_listing": true,` in tyk.conf.

- Even if you enabled key listing on both dashboard and gateway we list keys under apis, users need to fetch all the apis and then call /keys per api.

- We provide keys listing in the analytics. As such, admins need to wait until the keys are being used before they can see the keys on the system.

**What is the expected behavior?**

We should add the ability to see the keys as soon as they get created since we have this info already. It will be very powerful from the dashboard users perspective.

Since we have this info why not create an appropriate endpoint?

**If the current behavior is a bug, please provide the steps to reproduce and if possible a minimal demo of the problem**

**Which versions of Tyk affected by this issue? Did this work in previous versions of Tyk?**

2.8 | 1.0 | Dashboard should be able to provide a list of all my current keys - **Do you want to request a *feature* or report a *bug*?**

Feature

**What is the current behavior?**

- ~~Key listing on the gateway is possible only if the keys are not hashed.~~ Possible directly with the gateway by setting `"enable_hashed_keys_listing": true,` in tyk.conf.

- Even if you enabled key listing on both dashboard and gateway we list keys under apis, users need to fetch all the apis and then call /keys per api.

- We provide keys listing in the analytics. As such, admins need to wait until the keys are being used before they can see the keys on the system.

**What is the expected behavior?**

We should add the ability to see the keys as soon as they get created since we have this info already. It will be very powerful from the dashboard users perspective.

Since we have this info why not create an appropriate endpoint?

**If the current behavior is a bug, please provide the steps to reproduce and if possible a minimal demo of the problem**

**Which versions of Tyk affected by this issue? Did this work in previous versions of Tyk?**

2.8 | priority | dashboard should be able to provide a list of all my current keys do you want to request a feature or report a bug feature what is the current behavior key listing on the gateway is possible only if the keys are not hashed possible directly with the gateway by setting enable hashed keys listing true in tyk conf even if you enabled key listing on both dashboard and gateway we list keys under apis users need to fetch all the apis and then call keys per api we provide keys listing in the analytics as such admins need to wait until the keys are being used before they can see the keys on the system what is the expected behavior we should add the ability to see the keys as soon as they get created since we have this info already it will be very powerful from the dashboard users perspective since we have this info why not create an appropriate endpoint if the current behavior is a bug please provide the steps to reproduce and if possible a minimal demo of the problem which versions of tyk affected by this issue did this work in previous versions of tyk | 1 |

726,442 | 24,999,504,975 | IssuesEvent | 2022-11-03 06:07:38 | factly/validly | https://api.github.com/repos/factly/validly | opened | Add new validations to check the PSU name upto proper standardisation | enhancement good first issue priority:medium | Description

Similar to States we must add PSU names from the data dictionary as a part of validly-server.

Task

Column mapping should include a new column that will incorporate PSU name columns

Then the validation rule will be added similar to how state validation is present | 1.0 | Add new validations to check the PSU name upto proper standardisation - Description

Similar to States we must add PSU names from the data dictionary as a part of validly-server.

Task

Column mapping should include a new column that will incorporate PSU name columns

Then the validation rule will be added similar to how state validation is present | priority | add new validations to check the psu name upto proper standardisation description similar to states we must add psu names from the data dictionary as a part of validly server task column mapping should include a new column that will incorporate psu name columns then the validation rule will be added similar to how state validation is present | 1 |

552,647 | 16,246,279,808 | IssuesEvent | 2021-05-07 14:57:14 | curiouslearning/followthelearners | https://api.github.com/repos/curiouslearning/followthelearners | closed | Shell scripts for Firebase emulators on dev.followthelearners.org | medium priority | - Initialization script for Firebase emulator.

- Shutdown script for Firebase emulator.

- Export script to export the data from the emulator to a file before shutdown. | 1.0 | Shell scripts for Firebase emulators on dev.followthelearners.org - - Initialization script for Firebase emulator.

- Shutdown script for Firebase emulator.

- Export script to export the data from the emulator to a file before shutdown. | priority | shell scripts for firebase emulators on dev followthelearners org initalization script for firebase emulator shutdown script for firebase emulator export script to export the data from the emulator to a file before shutdown | 1 |
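
The three scripts requested in this issue could be sketched roughly as the shell functions below. This is only a sketch under stated assumptions, not the project's actual scripts: `FIREBASE_BIN`, `EMULATOR_DATA_DIR`, and the function names are hypothetical, and it assumes the Firebase CLI is installed and that the emulator process reacts to SIGINT the way it does to Ctrl+C. Note that `emulators:export` writes a directory rather than a single file.

```shell
#!/bin/sh
# Sketch only: FIREBASE_BIN and EMULATOR_DATA_DIR are hypothetical names,
# not taken from the followthelearners repository.
FIREBASE_BIN="${FIREBASE_BIN:-firebase}"
EMULATOR_DATA_DIR="${EMULATOR_DATA_DIR:-./emulator-data}"

# Initialization: start the emulators, importing earlier data if the directory exists.
emulator_start() {
    if [ -d "$EMULATOR_DATA_DIR" ]; then
        "$FIREBASE_BIN" emulators:start --import="$EMULATOR_DATA_DIR"
    else
        "$FIREBASE_BIN" emulators:start
    fi
}

# Export: ask the running emulators to write their data to the directory.
emulator_export() {
    "$FIREBASE_BIN" emulators:export "$EMULATOR_DATA_DIR"
}

# Shutdown: export first, then signal the emulator process whose PID is passed in.
# Assumption: the process handles SIGINT like an interactive Ctrl+C.
emulator_stop() {
    emulator_export && kill -INT "$1"
}
```

Keeping the export as its own function means it can be run on demand as well as from the shutdown path; the shutdown function only signals the process if the export succeeded, so a failed export does not silently discard emulator data.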

257,745 | 8,141,070,923 | IssuesEvent | 2018-08-20 23:58:48 | minio/minio | https://api.github.com/repos/minio/minio | closed | Crash: Unsupported bitrot algorithm | community duplicate priority: medium | unable to heal minio

## Expected Behavior

I should be able to heal minio bucket

## Current Behavior

executing "mc admin heal --recursive --json --debug live-minio-4" ends with minio crashing.

## Logs

<details>

<summary>Logs</summary>

<pre><code>

Aug 16 22:45:51 live-minio-4 minio[11564]: API: SYSTEM()

Aug 16 22:45:51 live-minio-4 minio[11564]: Time: 22:45:51 EEST 08/16/2018

Aug 16 22:45:51 live-minio-4 minio[11564]: Error: Unsupported bitrot algorithm

Aug 16 22:45:51 live-minio-4 minio[11564]: 1: cmd/logger/logger.go:326:logger.CriticalIf()

Aug 16 22:45:51 live-minio-4 minio[11564]: 2: cmd/xl-v1-metadata.go:77:cmd.BitrotAlgorithm.New()

Aug 16 22:45:51 live-minio-4 minio[11564]: 3: cmd/erasure.go:102:cmd.NewBitrotVerifier()

Aug 16 22:45:51 live-minio-4 minio[11564]: 4: cmd/xl-v1-healing-common.go:182:cmd.disksWithAllParts()

Aug 16 22:45:51 live-minio-4 minio[11564]: 5: cmd/xl-v1-healing.go:308:cmd.healObject()

Aug 16 22:45:51 live-minio-4 minio[11564]: 6: cmd/xl-v1-healing.go:627:cmd.xlObjects.HealObject()

Aug 16 22:45:51 live-minio-4 minio[11564]: 7: cmd/xl-sets.go:1230:cmd.(*xlSets).HealObject()

Aug 16 22:45:51 live-minio-4 minio[11564]: 8: cmd/admin-heal-ops.go:658:cmd.(*healSequence).healObject()

Aug 16 22:45:51 live-minio-4 minio[11564]: 9: cmd/admin-heal-ops.go:635:cmd.(*healSequence).healBucket()

Aug 16 22:45:51 live-minio-4 minio[11564]: 10: cmd/admin-heal-ops.go:577:cmd.(*healSequence).healBuckets()

Aug 16 22:45:51 live-minio-4 minio[11564]: 11: cmd/admin-heal-ops.go:523:cmd.healBuckets)-fm()

Aug 16 22:45:51 live-minio-4 minio[11564]: 12: cmd/admin-heal-ops.go:516:cmd.(*healSequence).traverseAndHeal.func1()

Aug 16 22:45:51 live-minio-4 minio[11564]: 13: cmd/admin-heal-ops.go:523:cmd.(*healSequence).traverseAndHeal()

Aug 16 22:45:51 live-minio-4 minio[11564]: panic: (struct {}) (0x1158e60,0x1eac830)

Aug 16 22:45:51 live-minio-4 minio[11564]: goroutine 2130102 [running]:

Aug 16 22:45:51 live-minio-4 minio[11564]: github.com/minio/minio/cmd/logger.CriticalIf(0x146cee0, 0xc42003c960, 0x145c4c0, 0xc4233c3860)

Aug 16 22:45:51 live-minio-4 minio[11564]: #011/q/.q/sources/gopath/src/github.com/minio/minio/cmd/logger/logger.go:327 +0x74

Aug 16 22:45:51 live-minio-4 minio[11564]: github.com/minio/minio/cmd.BitrotAlgorithm.New(0x0, 0x2, 0x2)

Aug 16 22:45:51 live-minio-4 minio[11564]: #011/q/.q/sources/gopath/src/github.com/minio/minio/cmd/xl-v1-metadata.go:77 +0xac

Aug 16 22:45:51 live-minio-4 minio[11564]: github.com/minio/minio/cmd.NewBitrotVerifier(0x0, 0x0, 0x0, 0x0, 0xa00000)

Aug 16 22:45:51 live-minio-4 minio[11564]: #011/q/.q/sources/gopath/src/github.com/minio/minio/cmd/erasure.go:102 +0x2f

Aug 16 22:45:51 live-minio-4 minio[11564]: github.com/minio/minio/cmd.disksWithAllParts(0x146cf60, 0xc424872360, 0xc422774900, 0x10, 0x10, 0xc420e18000, 0x10, 0x10, 0xc422774300, 0x10, ...)

Aug 16 22:45:51 live-minio-4 minio[11564]: #011/q/.q/sources/gopath/src/github.com/minio/minio/cmd/xl-v1-healing-common.go:182 +0x306

Aug 16 22:45:51 live-minio-4 minio[11564]: github.com/minio/minio/cmd.healObject(0x146cf60, 0xc424872360, 0xc422774200, 0x10, 0x10, 0xc42010a3eb, 0x7, 0xc42984b800, 0x34, 0xb, ...)

Aug 16 22:45:51 live-minio-4 minio[11564]: #011/q/.q/sources/gopath/src/github.com/minio/minio/cmd/xl-v1-healing.go:308 +0x28e

Aug 16 22:45:51 live-minio-4 minio[11564]: github.com/minio/minio/cmd.xlObjects.HealObject(0xc42045f340, 0xc42045f380, 0xc42045f360, 0x0, 0x0, 0x0, 0x0, 0x146cf60, 0xc424872360, 0xc42010a3eb, ...)

Aug 16 22:45:51 live-minio-4 minio[11564]: #011/q/.q/sources/gopath/src/github.com/minio/minio/cmd/xl-v1-healing.go:627 +0x38d

Aug 16 22:45:51 live-minio-4 minio[11564]: github.com/minio/minio/cmd.(*xlSets).HealObject(0xc420432b40, 0x146cf60, 0xc424872360, 0xc42010a3eb, 0x7, 0xc42984b800, 0x34, 0x0, 0x0, 0x0, ...)

Aug 16 22:45:51 live-minio-4 minio[11564]: #011/q/.q/sources/gopath/src/github.com/minio/minio/cmd/xl-sets.go:1230 +0x128

Aug 16 22:45:51 live-minio-4 minio[11564]: github.com/minio/minio/cmd.(*healSequence).healObject(0xc420738300, 0xc42010a3eb, 0x7, 0xc42984b800, 0x34, 0x0, 0x0)

Aug 16 22:45:51 live-minio-4 minio[11564]: #011/q/.q/sources/gopath/src/github.com/minio/minio/cmd/admin-heal-ops.go:658 +0xf4

Aug 16 22:45:51 live-minio-4 minio[11564]: github.com/minio/minio/cmd.(*healSequence).healBucket(0xc420738300, 0xc42010a3eb, 0x7, 0xc422bb05a0, 0x2)

Aug 16 22:45:51 live-minio-4 minio[11564]: #011/q/.q/sources/gopath/src/github.com/minio/minio/cmd/admin-heal-ops.go:635 +0x2ec

Aug 16 22:45:51 live-minio-4 minio[11564]: github.com/minio/minio/cmd.(*healSequence).healBuckets(0xc420738300, 0xc4200a41e0, 0xc42523df00)

Aug 16 22:45:51 live-minio-4 minio[11564]: #011/q/.q/sources/gopath/src/github.com/minio/minio/cmd/admin-heal-ops.go:577 +0x157

Aug 16 22:45:51 live-minio-4 minio[11564]: github.com/minio/minio/cmd.(*healSequence).(github.com/minio/minio/cmd.healBuckets)-fm(0xc420738300, 0x0)

Aug 16 22:45:51 live-minio-4 minio[11564]: #011/q/.q/sources/gopath/src/github.com/minio/minio/cmd/admin-heal-ops.go:523 +0x2a

Aug 16 22:45:51 live-minio-4 minio[11564]: github.com/minio/minio/cmd.(*healSequence).traverseAndHeal.func1(0xc420566bd0)

Aug 16 22:45:51 live-minio-4 minio[11564]: #011/q/.q/sources/gopath/src/github.com/minio/minio/cmd/admin-heal-ops.go:516 +0x8f

Aug 16 22:45:51 live-minio-4 minio[11564]: github.com/minio/minio/cmd.(*healSequence).traverseAndHeal(0xc420738300)

Aug 16 22:45:51 live-minio-4 minio[11564]: #011/q/.q/sources/gopath/src/github.com/minio/minio/cmd/admin-heal-ops.go:523 +0xd9

Aug 16 22:45:51 live-minio-4 minio[11564]: created by github.com/minio/minio/cmd.(*healSequence).healSequenceStart

Aug 16 22:45:51 live-minio-4 minio[11564]: #011/q/.q/sources/gopath/src/github.com/minio/minio/cmd/admin-heal-ops.go:466 +0xf0

Aug 16 22:45:51 live-minio-4 systemd[1]: minio.service: Main process exited, code=exited, status=2/INVALIDARGUMENT

Aug 16 22:45:51 live-minio-4 systemd[1]: minio.service: Unit entered failed state.

Aug 16 22:45:51 live-minio-4 systemd[1]: minio.service: Failed with result 'exit-code'.

</code></pre>

</details>

## Your Environment

* Version used (`minio version`): 2018-08-02T23:11:36Z

* Operating System and version (`uname -a`): 4.9.0-6-amd64 #1 SMP Debian 4.9.88-1+deb9u1 (2018-05-07) x86_64 GNU/Linux

| 1.0 | Crash: Unsupported bitrot algorithm - unable to heal minio

## Expected Behavior

I should be able to heal minio bucket

## Current Behavior

executing "mc admin heal --recursive --json --debug live-minio-4" ends with minio crashing.

## Logs

<details>

<summary>Logs</summary>

<pre><code>

Aug 16 22:45:51 live-minio-4 minio[11564]: API: SYSTEM()

Aug 16 22:45:51 live-minio-4 minio[11564]: Time: 22:45:51 EEST 08/16/2018

Aug 16 22:45:51 live-minio-4 minio[11564]: Error: Unsupported bitrot algorithm

Aug 16 22:45:51 live-minio-4 minio[11564]: 1: cmd/logger/logger.go:326:logger.CriticalIf()

Aug 16 22:45:51 live-minio-4 minio[11564]: 2: cmd/xl-v1-metadata.go:77:cmd.BitrotAlgorithm.New()

Aug 16 22:45:51 live-minio-4 minio[11564]: 3: cmd/erasure.go:102:cmd.NewBitrotVerifier()

Aug 16 22:45:51 live-minio-4 minio[11564]: 4: cmd/xl-v1-healing-common.go:182:cmd.disksWithAllParts()

Aug 16 22:45:51 live-minio-4 minio[11564]: 5: cmd/xl-v1-healing.go:308:cmd.healObject()

Aug 16 22:45:51 live-minio-4 minio[11564]: 6: cmd/xl-v1-healing.go:627:cmd.xlObjects.HealObject()

Aug 16 22:45:51 live-minio-4 minio[11564]: 7: cmd/xl-sets.go:1230:cmd.(*xlSets).HealObject()

Aug 16 22:45:51 live-minio-4 minio[11564]: 8: cmd/admin-heal-ops.go:658:cmd.(*healSequence).healObject()

Aug 16 22:45:51 live-minio-4 minio[11564]: 9: cmd/admin-heal-ops.go:635:cmd.(*healSequence).healBucket()

Aug 16 22:45:51 live-minio-4 minio[11564]: 10: cmd/admin-heal-ops.go:577:cmd.(*healSequence).healBuckets()

Aug 16 22:45:51 live-minio-4 minio[11564]: 11: cmd/admin-heal-ops.go:523:cmd.healBuckets)-fm()

Aug 16 22:45:51 live-minio-4 minio[11564]: 12: cmd/admin-heal-ops.go:516:cmd.(*healSequence).traverseAndHeal.func1()

Aug 16 22:45:51 live-minio-4 minio[11564]: 13: cmd/admin-heal-ops.go:523:cmd.(*healSequence).traverseAndHeal()

Aug 16 22:45:51 live-minio-4 minio[11564]: panic: (struct {}) (0x1158e60,0x1eac830)

Aug 16 22:45:51 live-minio-4 minio[11564]: goroutine 2130102 [running]:

Aug 16 22:45:51 live-minio-4 minio[11564]: github.com/minio/minio/cmd/logger.CriticalIf(0x146cee0, 0xc42003c960, 0x145c4c0, 0xc4233c3860)

Aug 16 22:45:51 live-minio-4 minio[11564]: #011/q/.q/sources/gopath/src/github.com/minio/minio/cmd/logger/logger.go:327 +0x74

Aug 16 22:45:51 live-minio-4 minio[11564]: github.com/minio/minio/cmd.BitrotAlgorithm.New(0x0, 0x2, 0x2)

Aug 16 22:45:51 live-minio-4 minio[11564]: #011/q/.q/sources/gopath/src/github.com/minio/minio/cmd/xl-v1-metadata.go:77 +0xac

Aug 16 22:45:51 live-minio-4 minio[11564]: github.com/minio/minio/cmd.NewBitrotVerifier(0x0, 0x0, 0x0, 0x0, 0xa00000)

Aug 16 22:45:51 live-minio-4 minio[11564]: #011/q/.q/sources/gopath/src/github.com/minio/minio/cmd/erasure.go:102 +0x2f

Aug 16 22:45:51 live-minio-4 minio[11564]: github.com/minio/minio/cmd.disksWithAllParts(0x146cf60, 0xc424872360, 0xc422774900, 0x10, 0x10, 0xc420e18000, 0x10, 0x10, 0xc422774300, 0x10, ...)

Aug 16 22:45:51 live-minio-4 minio[11564]: #011/q/.q/sources/gopath/src/github.com/minio/minio/cmd/xl-v1-healing-common.go:182 +0x306

Aug 16 22:45:51 live-minio-4 minio[11564]: github.com/minio/minio/cmd.healObject(0x146cf60, 0xc424872360, 0xc422774200, 0x10, 0x10, 0xc42010a3eb, 0x7, 0xc42984b800, 0x34, 0xb, ...)

Aug 16 22:45:51 live-minio-4 minio[11564]: #011/q/.q/sources/gopath/src/github.com/minio/minio/cmd/xl-v1-healing.go:308 +0x28e

Aug 16 22:45:51 live-minio-4 minio[11564]: github.com/minio/minio/cmd.xlObjects.HealObject(0xc42045f340, 0xc42045f380, 0xc42045f360, 0x0, 0x0, 0x0, 0x0, 0x146cf60, 0xc424872360, 0xc42010a3eb, ...)

Aug 16 22:45:51 live-minio-4 minio[11564]: #011/q/.q/sources/gopath/src/github.com/minio/minio/cmd/xl-v1-healing.go:627 +0x38d

Aug 16 22:45:51 live-minio-4 minio[11564]: github.com/minio/minio/cmd.(*xlSets).HealObject(0xc420432b40, 0x146cf60, 0xc424872360, 0xc42010a3eb, 0x7, 0xc42984b800, 0x34, 0x0, 0x0, 0x0, ...)

Aug 16 22:45:51 live-minio-4 minio[11564]: #011/q/.q/sources/gopath/src/github.com/minio/minio/cmd/xl-sets.go:1230 +0x128

Aug 16 22:45:51 live-minio-4 minio[11564]: github.com/minio/minio/cmd.(*healSequence).healObject(0xc420738300, 0xc42010a3eb, 0x7, 0xc42984b800, 0x34, 0x0, 0x0)

Aug 16 22:45:51 live-minio-4 minio[11564]: #011/q/.q/sources/gopath/src/github.com/minio/minio/cmd/admin-heal-ops.go:658 +0xf4

Aug 16 22:45:51 live-minio-4 minio[11564]: github.com/minio/minio/cmd.(*healSequence).healBucket(0xc420738300, 0xc42010a3eb, 0x7, 0xc422bb05a0, 0x2)

Aug 16 22:45:51 live-minio-4 minio[11564]: #011/q/.q/sources/gopath/src/github.com/minio/minio/cmd/admin-heal-ops.go:635 +0x2ec

Aug 16 22:45:51 live-minio-4 minio[11564]: github.com/minio/minio/cmd.(*healSequence).healBuckets(0xc420738300, 0xc4200a41e0, 0xc42523df00)

Aug 16 22:45:51 live-minio-4 minio[11564]: #011/q/.q/sources/gopath/src/github.com/minio/minio/cmd/admin-heal-ops.go:577 +0x157

Aug 16 22:45:51 live-minio-4 minio[11564]: github.com/minio/minio/cmd.(*healSequence).(github.com/minio/minio/cmd.healBuckets)-fm(0xc420738300, 0x0)

Aug 16 22:45:51 live-minio-4 minio[11564]: #011/q/.q/sources/gopath/src/github.com/minio/minio/cmd/admin-heal-ops.go:523 +0x2a

Aug 16 22:45:51 live-minio-4 minio[11564]: github.com/minio/minio/cmd.(*healSequence).traverseAndHeal.func1(0xc420566bd0)

Aug 16 22:45:51 live-minio-4 minio[11564]: #011/q/.q/sources/gopath/src/github.com/minio/minio/cmd/admin-heal-ops.go:516 +0x8f

Aug 16 22:45:51 live-minio-4 minio[11564]: github.com/minio/minio/cmd.(*healSequence).traverseAndHeal(0xc420738300)

Aug 16 22:45:51 live-minio-4 minio[11564]: #011/q/.q/sources/gopath/src/github.com/minio/minio/cmd/admin-heal-ops.go:523 +0xd9

Aug 16 22:45:51 live-minio-4 minio[11564]: created by github.com/minio/minio/cmd.(*healSequence).healSequenceStart

Aug 16 22:45:51 live-minio-4 minio[11564]: #011/q/.q/sources/gopath/src/github.com/minio/minio/cmd/admin-heal-ops.go:466 +0xf0

Aug 16 22:45:51 live-minio-4 systemd[1]: minio.service: Main process exited, code=exited, status=2/INVALIDARGUMENT

Aug 16 22:45:51 live-minio-4 systemd[1]: minio.service: Unit entered failed state.

Aug 16 22:45:51 live-minio-4 systemd[1]: minio.service: Failed with result 'exit-code'.

</code></pre>

</details>

## Your Environment

* Version used (`minio version`): 2018-08-02T23:11:36Z

* Operating System and version (`uname -a`): 4.9.0-6-amd64 #1 SMP Debian 4.9.88-1+deb9u1 (2018-05-07) x86_64 GNU/Linux

| priority | crash unsupported bitrot algorithm unable to heal minio expected behavior i should be able to heal minio bucket current behavior executing mc admin heal recursive json debug live minio ends with minio crashing logs logs aug live minio minio api system aug live minio minio time eest aug live minio minio error unsupported bitrot algorithm aug live minio minio cmd logger logger go logger criticalif aug live minio minio cmd xl metadata go cmd bitrotalgorithm new aug live minio minio cmd erasure go cmd newbitrotverifier aug live minio minio cmd xl healing common go cmd diskswithallparts aug live minio minio cmd xl healing go cmd healobject aug live minio minio cmd xl healing go cmd xlobjects healobject aug live minio minio cmd xl sets go cmd xlsets healobject aug live minio minio cmd admin heal ops go cmd healsequence healobject aug live minio minio cmd admin heal ops go cmd healsequence healbucket aug live minio minio cmd admin heal ops go cmd healsequence healbuckets aug live minio minio cmd admin heal ops go cmd healbuckets fm aug live minio minio cmd admin heal ops go cmd healsequence traverseandheal aug live minio minio cmd admin heal ops go cmd healsequence traverseandheal aug live minio minio panic struct aug live minio minio goroutine aug live minio minio github com minio minio cmd logger criticalif aug live minio minio q q sources gopath src github com minio minio cmd logger logger go aug live minio minio github com minio minio cmd bitrotalgorithm new aug live minio minio q q sources gopath src github com minio minio cmd xl metadata go aug live minio minio github com minio minio cmd newbitrotverifier aug live minio minio q q sources gopath src github com minio minio cmd erasure go aug live minio minio github com minio minio cmd diskswithallparts aug live minio minio q q sources gopath src github com minio minio cmd xl healing common go aug live minio minio github com minio minio cmd healobject aug live minio minio q q sources gopath src github com 
minio minio cmd xl healing go aug live minio minio github com minio minio cmd xlobjects healobject aug live minio minio q q sources gopath src github com minio minio cmd xl healing go aug live minio minio github com minio minio cmd xlsets healobject aug live minio minio q q sources gopath src github com minio minio cmd xl sets go aug live minio minio github com minio minio cmd healsequence healobject aug live minio minio q q sources gopath src github com minio minio cmd admin heal ops go aug live minio minio github com minio minio cmd healsequence healbucket aug live minio minio q q sources gopath src github com minio minio cmd admin heal ops go aug live minio minio github com minio minio cmd healsequence healbuckets aug live minio minio q q sources gopath src github com minio minio cmd admin heal ops go aug live minio minio github com minio minio cmd healsequence github com minio minio cmd healbuckets fm aug live minio minio q q sources gopath src github com minio minio cmd admin heal ops go aug live minio minio github com minio minio cmd healsequence traverseandheal aug live minio minio q q sources gopath src github com minio minio cmd admin heal ops go aug live minio minio github com minio minio cmd healsequence traverseandheal aug live minio minio q q sources gopath src github com minio minio cmd admin heal ops go aug live minio minio created by github com minio minio cmd healsequence healsequencestart aug live minio minio q q sources gopath src github com minio minio cmd admin heal ops go aug live minio systemd minio service main process exited code exited status invalidargument aug live minio systemd minio service unit entered failed state aug live minio systemd minio service failed with result exit code your environment version used minio version operating system and version uname a smp debian gnu linux | 1 |

816,504 | 30,601,258,518 | IssuesEvent | 2023-07-22 12:15:47 | anuthapaliy/Coursework-Planner | https://api.github.com/repos/anuthapaliy/Coursework-Planner | opened | [TECH ED] 🏝️ Stretch challenges | Week 1 🏝️ Priority Stretch 🐂 Size Medium 📅 Node | From Module-Node created by [Dedekind561](https://github.com/Dedekind561): CodeYourFuture/Module-Node#11

### Link to the coursework

https://github.com/CodeYourFuture/Module-Node/edit/main/quote-server/README.md

### Why are we doing this?

These tasks will get you to further develop your skills by implementing more functionality for your server projects.

You can find the stretch section in the README under the 🏝 **Stretch challenge** heading.

### Maximum time in hours

2

### How to get help

Share your blockers in your class channel

https://syllabus.codeyourfuture.io/guides/asking-questions

### How to submit

Follow the instructions on the linked repo | 1.0 | [TECH ED] 🏝️ Stretch challenges - From Module-Node created by [Dedekind561](https://github.com/Dedekind561): CodeYourFuture/Module-Node#11

### Link to the coursework

https://github.com/CodeYourFuture/Module-Node/edit/main/quote-server/README.md

### Why are we doing this?

These tasks will get you to further develop your skills by implementing more functionality for your server projects.

You can find the stretch section in the README under the 🏝 **Stretch challenge** heading.

### Maximum time in hours

2

### How to get help

Share your blockers in your class channel

https://syllabus.codeyourfuture.io/guides/asking-questions

### How to submit

Follow the instructions on the linked repo | priority | 🏝️ stretch challenges from module node created by codeyourfuture module node link to the coursework why are we doing this these tasks will get you to further develop your skills by implementing more functionality for your server projects you can find the stretch section in the readme under the 🏝 stretch challenge heading maximum time in hours how to get help share your blockers in your class channel how to submit follow the instructions on the linked repo | 1 |

120,536 | 4,791,031,282 | IssuesEvent | 2016-10-31 10:59:37 | JKGDevs/JediKnightGalaxies | https://api.github.com/repos/JKGDevs/JediKnightGalaxies | closed | Switching teams makes the client think they have a second starting weapon...that they don't have. | bug priority:medium | Self-explanatory. Switch teams when you have only the starter weapon, and there will be two in your inventory. The second one cannot be equipped.

| 1.0 | Switching teams makes the client think they have a second starting weapon...that they don't have. - Self-explanatory. Switch teams when you have only the starter weapon, and there will be two in your inventory. The second one cannot be equipped.

| priority | switching teams makes the client think they have a second starting weapon that they don t have self explanatory switch teams when you have only the starter weapon and there will be two in your inventory the second one cannot be equipped | 1 |

208,348 | 7,153,261,278 | IssuesEvent | 2018-01-26 00:41:57 | vmware/vic-product | https://api.github.com/repos/vmware/vic-product | closed | Support bundle of integrated OVA should collect Admiral logs | component/ova kind/enhancement priority/medium team/lifecycle triage/proposed-1.4 | Support bundle should collect Admiral container(vic-admiral) logs.

It might also be useful to collect the logs of the Docker instance running in the integrated OVA, for more debugging information. | 1.0 | Support bundle of integrated OVA should collect Admiral logs - Support bundle should collect Admiral container(vic-admiral) logs.

It might also be useful to collect the logs of the Docker instance running in the integrated OVA, for more debugging information. | priority | support bundle of integrated ova should collect admiral logs support bundle should collect admiral container vic admiral logs also it might be useful if logs of the docker which is running in the integrated ova to be collected too for more debugging information | 1 |

333,710 | 10,130,497,028 | IssuesEvent | 2019-08-01 17:06:04 | SELinuxProject/selinux-kernel | https://api.github.com/repos/SELinuxProject/selinux-kernel | closed | BUG: selinux-testsuite fails on binder tests in v5.1-rc1 | bug priority/medium | When running the selinux-testsuite, the binder tests cause a kernel panic/BUG which causes the test to block.

The test output:

```

Running as user root with context unconfined_u:unconfined_r:unconfined_t

domain_trans/test ........... ok

...

netlink_socket/test ......... ok

prlimit/test ................ ok

binder/test ................. 1/6

<test hang>

```

The relevant console output:

```

[ 823.210062] binder: release 3645:3645 transaction 2 out, still active

[ 823.214047] binder: 3644:3644 transaction failed 29189/0, size 24-8 line 2926

[ 823.218009] binder: send failed reply for transaction 2, target dead

[ 823.221329] binder: 3646:3646 transaction failed 29201/-1, size 24-8 line 3002

[ 823.232432] ------------[ cut here ]------------

[ 823.234746] kernel BUG at drivers/android/binder_alloc.c:1141!

[ 823.237447] invalid opcode: 0000 [#1] SMP PTI

[ 823.239421] CPU: 1 PID: 3644 Comm: test_binder Not tainted 5.1.0-0.rc1.git0.1.2.secnext.fc31.x86_64 #1

[ 823.243538] Hardware name: Red Hat KVM, BIOS 0.5.1 01/01/2011

[ 823.246079] RIP: 0010:binder_alloc_do_buffer_copy+0x34/0x210

[ 823.248613] Code: 0a 41 55 49 89 fb 41 54 41 89 f4 48 8d 77 38 48 8b 42 58 55 53 48 39 f1 0f 84 17 01 00 00 48 8b 49 58 48 29 c1 49 39 c9 76 02 <0f> 0b 4c 29 c9 49 39 ca 77 f6 41 f6 c2 03 75 f0 0f b6 4a 28 f6 c1

[ 823.256404] RSP: 0018:ffffb04e41093b68 EFLAGS: 00010202

[ 823.258513] RAX: 00007fb600c52000 RBX: a0d48e24a0213e28 RCX: 0000000000000020

[ 823.261375] RDX: ffff9c09b058a9c0 RSI: ffff9c09189165b0 RDI: ffff9c0918916578

[ 823.264225] RBP: ffff9c09b058a9c0 R08: ffffb04e41093c80 R09: 0000000000000028

[ 823.267044] R10: a0d48e24a0213e28 R11: ffff9c0918916578 R12: 0000000000000000

[ 823.269758] R13: ffff9c09b67c9660 R14: ffff9c09b116fb40 R15: ffffffff8acd4d08

[ 823.272482] FS: 00007fbeb3438800(0000) GS:ffff9c09b7a80000(0000) knlGS:0000000000000000

[ 823.275595] CS: 0010 DS: 0000 ES: 0000 CR0: 0000000080050033

[ 823.277676] CR2: 000055b102d31cc9 CR3: 0000000234648000 CR4: 00000000001406e0

[ 823.280347] Call Trace:

[ 823.281287] binder_get_object+0x60/0xf0

[ 823.282728] binder_transaction+0xc2e/0x2370

[ 823.284268] ? __check_object_size+0x41/0x15d

[ 823.285849] ? binder_thread_read+0x9e2/0x1460

[ 823.287342] ? binder_update_ref_for_handle+0x83/0x1a0

[ 823.289066] binder_thread_write+0x2ae/0xfc0

[ 823.290513] ? finish_wait+0x80/0x80

[ 823.291729] binder_ioctl+0x659/0x836

[ 823.292980] do_vfs_ioctl+0x40a/0x670

[ 823.294234] ksys_ioctl+0x5e/0x90

[ 823.295364] __x64_sys_ioctl+0x16/0x20

[ 823.296609] do_syscall_64+0x5b/0x150

[ 823.297796] entry_SYSCALL_64_after_hwframe+0x44/0xa9

[ 823.299423] RIP: 0033:0x7fbeb35e782b

[ 823.300580] Code: 0f 1e fa 48 8b 05 5d 96 0c 00 64 c7 00 26 00 00 00 48 c7 c0 ff ff ff ff c3 66 0f 1f 44 00 00 f3 0f 1e fa b8 10 00 00 00 0f 05 <48> 3d 01 f0 ff ff 73 01 c3 48 8b 0d 2d 96 0c 00 f7 d8 64 89 01 48

[ 823.306473] RSP: 002b:00007ffdfae2f198 EFLAGS: 00000287 ORIG_RAX: 0000000000000010

[ 823.308868] RAX: ffffffffffffffda RBX: 0000000000000000 RCX: 00007fbeb35e782b

[ 823.311029] RDX: 00007ffdfae2f1b0 RSI: 00000000c0306201 RDI: 0000000000000003

[ 823.313206] RBP: 00007ffdfae30210 R08: 00000000010fa330 R09: 0000000000000000

[ 823.315379] R10: 0000000000400644 R11: 0000000000000287 R12: 0000000000401190

[ 823.317459] R13: 00007ffdfae304c0 R14: 0000000000000000 R15: 0000000000000000

[ 823.319510] Modules linked in: crypto_user nfnetlink xt_multiport bluetooth ecdh_generic rfkill sctp overlay ip6table_security xt_CONNSECMARK xt_SECMARK xt_state xt_conntrack nf_conntrack nf_defrag_ipv6 nf_defrag_ipv4 libcrc32c iptable_security ah6 xfrm6_mode_transport ah4 xfrm4_mode_transport ip6table_mangle ip6table_filter ip6_tables iptable_mangle xt_mark xt_AUDIT ib_isert iscsi_target_mod ib_srpt target_core_mod ib_srp scsi_transport_srp rpcrdma rdma_ucm ib_iser ib_umad ib_ipoib rdma_cm iw_cm libiscsi scsi_transport_iscsi ib_cm mlx5_ib ib_uverbs ib_core sunrpc crct10dif_pclmul crc32_pclmul ghash_clmulni_intel joydev virtio_balloon i2c_piix4 drm_kms_helper virtio_net net_failover failover ttm drm mlx5_core crc32c_intel virtio_blk ata_generic virtio_console mlxfw serio_raw pata_acpi qemu_fw_cfg [last unloaded: arp_tables]

[ 823.339786] ---[ end trace 6f761f654b297775 ]---

```

The related code in Linus' tree (it's the BUG_ON(...) at the top):

```

static void binder_alloc_do_buffer_copy(struct binder_alloc *alloc,
                                        bool to_buffer,
                                        struct binder_buffer *buffer,
                                        binder_size_t buffer_offset,
                                        void *ptr,
                                        size_t bytes)
{
        /* All copies must be 32-bit aligned and 32-bit size */
        BUG_ON(!check_buffer(alloc, buffer, buffer_offset, bytes));

        while (bytes) {
                unsigned long size;
                struct page *page;
                pgoff_t pgoff;
                void *tmpptr;
                void *base_ptr;

                page = binder_alloc_get_page(alloc, buffer,
                                             buffer_offset, &pgoff);
                size = min_t(size_t, bytes, PAGE_SIZE - pgoff);
                base_ptr = kmap_atomic(page);
                tmpptr = base_ptr + pgoff;
                if (to_buffer)
                        memcpy(tmpptr, ptr, size);
                else
                        memcpy(ptr, tmpptr, size);
                /*
                 * kunmap_atomic() takes care of flushing the cache
                 * if this device has VIVT cache arch
                 */
                kunmap_atomic(base_ptr);
                bytes -= size;
                pgoff = 0;
                ptr = ptr + size;
                buffer_offset += size;
        }
}

```

| 1.0 | BUG: selinux-testsuite fails on binder tests in v5.1-rc1 - When running the selinux-testsuite, the binder tests cause a kernel panic/BUG which causes the test to block.

The test output:

```

Running as user root with context unconfined_u:unconfined_r:unconfined_t

domain_trans/test ........... ok

...

netlink_socket/test ......... ok

prlimit/test ................ ok

binder/test ................. 1/6

<test hang>

```

The relevant console output:

```

[ 823.210062] binder: release 3645:3645 transaction 2 out, still active

[ 823.214047] binder: 3644:3644 transaction failed 29189/0, size 24-8 line 2926

[ 823.218009] binder: send failed reply for transaction 2, target dead

[ 823.221329] binder: 3646:3646 transaction failed 29201/-1, size 24-8 line 3002

[ 823.232432] ------------[ cut here ]------------

[ 823.234746] kernel BUG at drivers/android/binder_alloc.c:1141!

[ 823.237447] invalid opcode: 0000 [#1] SMP PTI

[ 823.239421] CPU: 1 PID: 3644 Comm: test_binder Not tainted 5.1.0-0.rc1.git0.1.2.secnext.fc31.x86_64 #1

[ 823.243538] Hardware name: Red Hat KVM, BIOS 0.5.1 01/01/2011

[ 823.246079] RIP: 0010:binder_alloc_do_buffer_copy+0x34/0x210

[ 823.248613] Code: 0a 41 55 49 89 fb 41 54 41 89 f4 48 8d 77 38 48 8b 42 58 55 53 48 39 f1 0f 84 17 01 00 00 48 8b 49 58 48 29 c1 49 39 c9 76 02 <0f> 0b 4c 29 c9 49 39 ca 77 f6 41 f6 c2 03 75 f0 0f b6 4a 28 f6 c1

[ 823.256404] RSP: 0018:ffffb04e41093b68 EFLAGS: 00010202

[ 823.258513] RAX: 00007fb600c52000 RBX: a0d48e24a0213e28 RCX: 0000000000000020

[ 823.261375] RDX: ffff9c09b058a9c0 RSI: ffff9c09189165b0 RDI: ffff9c0918916578

[ 823.264225] RBP: ffff9c09b058a9c0 R08: ffffb04e41093c80 R09: 0000000000000028

[ 823.267044] R10: a0d48e24a0213e28 R11: ffff9c0918916578 R12: 0000000000000000

[ 823.269758] R13: ffff9c09b67c9660 R14: ffff9c09b116fb40 R15: ffffffff8acd4d08

[ 823.272482] FS: 00007fbeb3438800(0000) GS:ffff9c09b7a80000(0000) knlGS:0000000000000000

[ 823.275595] CS: 0010 DS: 0000 ES: 0000 CR0: 0000000080050033

[ 823.277676] CR2: 000055b102d31cc9 CR3: 0000000234648000 CR4: 00000000001406e0

[ 823.280347] Call Trace:

[ 823.281287] binder_get_object+0x60/0xf0

[ 823.282728] binder_transaction+0xc2e/0x2370

[ 823.284268] ? __check_object_size+0x41/0x15d

[ 823.285849] ? binder_thread_read+0x9e2/0x1460

[ 823.287342] ? binder_update_ref_for_handle+0x83/0x1a0

[ 823.289066] binder_thread_write+0x2ae/0xfc0

[ 823.290513] ? finish_wait+0x80/0x80

[ 823.291729] binder_ioctl+0x659/0x836

[ 823.292980] do_vfs_ioctl+0x40a/0x670

[ 823.294234] ksys_ioctl+0x5e/0x90

[ 823.295364] __x64_sys_ioctl+0x16/0x20

[ 823.296609] do_syscall_64+0x5b/0x150

[ 823.297796] entry_SYSCALL_64_after_hwframe+0x44/0xa9

[ 823.299423] RIP: 0033:0x7fbeb35e782b

[ 823.300580] Code: 0f 1e fa 48 8b 05 5d 96 0c 00 64 c7 00 26 00 00 00 48 c7 c0 ff ff ff ff c3 66 0f 1f 44 00 00 f3 0f 1e fa b8 10 00 00 00 0f 05 <48> 3d 01 f0 ff ff 73 01 c3 48 8b 0d 2d 96 0c 00 f7 d8 64 89 01 48

[ 823.306473] RSP: 002b:00007ffdfae2f198 EFLAGS: 00000287 ORIG_RAX: 0000000000000010

[ 823.308868] RAX: ffffffffffffffda RBX: 0000000000000000 RCX: 00007fbeb35e782b

[ 823.311029] RDX: 00007ffdfae2f1b0 RSI: 00000000c0306201 RDI: 0000000000000003

[ 823.313206] RBP: 00007ffdfae30210 R08: 00000000010fa330 R09: 0000000000000000

[ 823.315379] R10: 0000000000400644 R11: 0000000000000287 R12: 0000000000401190

[ 823.317459] R13: 00007ffdfae304c0 R14: 0000000000000000 R15: 0000000000000000

[ 823.319510] Modules linked in: crypto_user nfnetlink xt_multiport bluetooth ecdh_generic rfkill sctp overlay ip6table_security xt_CONNSECMARK xt_SECMARK xt_state xt_conntrack nf_conntrack nf_defrag_ipv6 nf_defrag_ipv4 libcrc32c iptable_security ah6 xfrm6_mode_transport ah4 xfrm4_mode_transport ip6table_mangle ip6table_filter ip6_tables iptable_mangle xt_mark xt_AUDIT ib_isert iscsi_target_mod ib_srpt target_core_mod ib_srp scsi_transport_srp rpcrdma rdma_ucm ib_iser ib_umad ib_ipoib rdma_cm iw_cm libiscsi scsi_transport_iscsi ib_cm mlx5_ib ib_uverbs ib_core sunrpc crct10dif_pclmul crc32_pclmul ghash_clmulni_intel joydev virtio_balloon i2c_piix4 drm_kms_helper virtio_net net_failover failover ttm drm mlx5_core crc32c_intel virtio_blk ata_generic virtio_console mlxfw serio_raw pata_acpi qemu_fw_cfg [last unloaded: arp_tables]

[ 823.339786] ---[ end trace 6f761f654b297775 ]---

```

The related code in Linus' tree (it's the BUG_ON(...) at the top):

```

static void binder_alloc_do_buffer_copy(struct binder_alloc *alloc,
                                        bool to_buffer,
                                        struct binder_buffer *buffer,
                                        binder_size_t buffer_offset,
                                        void *ptr,
                                        size_t bytes)
{
        /* All copies must be 32-bit aligned and 32-bit size */
        BUG_ON(!check_buffer(alloc, buffer, buffer_offset, bytes));

        while (bytes) {
                unsigned long size;
                struct page *page;
                pgoff_t pgoff;
                void *tmpptr;
                void *base_ptr;

                page = binder_alloc_get_page(alloc, buffer,
                                             buffer_offset, &pgoff);
                size = min_t(size_t, bytes, PAGE_SIZE - pgoff);
                base_ptr = kmap_atomic(page);
                tmpptr = base_ptr + pgoff;
                if (to_buffer)
                        memcpy(tmpptr, ptr, size);
                else
                        memcpy(ptr, tmpptr, size);
                /*
                 * kunmap_atomic() takes care of flushing the cache
                 * if this device has VIVT cache arch
                 */
                kunmap_atomic(base_ptr);
                bytes -= size;
                pgoff = 0;
                ptr = ptr + size;
                buffer_offset += size;
        }
}

```
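
For anyone reading the loop in the function quoted above: as far as I can tell it splits one copy into per-page chunks, each limited to what is left in the current page. Below is a rough userspace sketch of just that arithmetic in Python. It is not kernel code. `split_copy` is a made-up helper name, the 4096-byte page size is an assumption, and it assumes the buffer starts on a page boundary, which the real `binder_alloc_get_page()` does not require.

```python
PAGE_SIZE = 4096  # assumed page size; the real value depends on the architecture


def split_copy(buffer_offset, nbytes, page_size=PAGE_SIZE):
    """Return (page_index, offset_in_page, size) chunks for one copy.

    Hypothetical helper that mirrors the chunking arithmetic of the quoted
    loop, assuming the buffer begins at a page boundary.
    """
    chunks = []
    while nbytes:
        # Which page the offset falls in, and how far into that page.
        page_index, pgoff = divmod(buffer_offset, page_size)
        # Copy at most up to the end of the current page.
        size = min(nbytes, page_size - pgoff)
        chunks.append((page_index, pgoff, size))
        nbytes -= size
        buffer_offset += size
    return chunks


if __name__ == "__main__":
    # A 200-byte copy starting 96 bytes before a page boundary.
    for chunk in split_copy(4000, 200):
        print(chunk)
```

A copy that crosses a page boundary comes out as two chunks, and the second one starts at offset 0 of the next page, which seems to be what the `pgoff = 0` line in the quoted loop is for.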

| priority | bug selinux testsuite failes on binder tests in when running the selinux testsuite the binder tests cause a kernel panic bug which causes the test to block the test output running as user root with context unconfined u unconfined r unconfined t domain trans test ok netlink socket test ok prlimit test ok binder test the relevant console output binder release transaction out still active binder transaction failed size line binder send failed reply for transaction target dead binder transaction failed size line kernel bug at drivers android binder alloc c invalid opcode smp pti cpu pid comm test binder not tainted secnext hardware name red hat kvm bios rip binder alloc do buffer copy code fb ca rsp eflags rax rbx rcx rdx rsi rdi rbp fs gs knlgs cs ds es call trace binder get object binder transaction check object size binder thread read binder update ref for handle binder thread write finish wait binder ioctl do vfs ioctl ksys ioctl sys ioctl do syscall entry syscall after hwframe rip code fa ff ff ff ff fa ff ff rsp eflags orig rax rax ffffffffffffffda rbx rcx rdx rsi rdi rbp modules linked in crypto user nfnetlink xt multiport bluetooth ecdh generic rfkill sctp overlay security xt connsecmark xt secmark xt state xt conntrack nf conntrack nf defrag nf defrag iptable security mode transport mode transport mangle filter tables iptable mangle xt mark xt audit ib isert iscsi target mod ib srpt target core mod ib srp scsi transport srp rpcrdma rdma ucm ib iser ib umad ib ipoib rdma cm iw cm libiscsi scsi transport iscsi ib cm ib ib uverbs ib core sunrpc pclmul pclmul ghash clmulni intel joydev virtio balloon drm kms helper virtio net net failover failover ttm drm core intel virtio blk ata generic virtio console mlxfw serio raw pata acpi qemu fw cfg the related code in linus tree it s the bug on at the top static void binder alloc do buffer copy struct binder alloc alloc bool to buffer struct binder buffer buffer binder size t buffer offset void ptr size t 
bytes all copies must be bit aligned and bit size bug on check buffer alloc buffer buffer offset bytes while bytes unsigned long size struct page page pgoff t pgoff void tmpptr void base ptr page binder alloc get page alloc buffer buffer offset pgoff size min t size t bytes page size pgoff base ptr kmap atomic page tmpptr base ptr pgoff if to buffer memcpy tmpptr ptr size else memcpy ptr tmpptr size kunmap atomic takes care of flushing the cache if this device has vivt cache arch kunmap atomic base ptr bytes size pgoff ptr ptr size buffer offset size | 1 |

271,937 | 8,494,026,977 | IssuesEvent | 2018-10-28 17:44:14 | nimona/go-nimona | https://api.github.com/repos/nimona/go-nimona | closed | Allow specifying own hostname | Good first issue Priority: Medium Status: In Review Type: Enhancement | Allow for either a `--announce-address` arg or a `NIMONA_ANNOUNCE_ADDRESS` env var to force the local address to be set to its value. This should allow bootstrap nodes to always announce their addresses as their hostnames. | 1.0 | Allow specifying own hostname - Allow for either a `--announce-address` arg or a `NIMONA_ANNOUNCE_ADDRESS` env var to force the local address to be set to its value. This should allow bootstrap nodes to always announce their addresses as their hostnames. | priority | allow specifying own hostname allow for either a announce address arg or a nimona announce address env var to force the local address to be set to its value this should allow bootstrap nodes to always announce their addresses as their hostnames | 1 |
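
The lookup requested in the issue above could be sketched roughly as follows. This is an illustration in Python, not go-nimona's implementation. The function name `resolve_announce_address`, the example hostnames, and the rule that the flag wins over the environment variable are all assumptions; the issue only says that either source should force the address.

```python
import argparse


def resolve_announce_address(argv, environ, detected):
    """Pick the address a node should announce.

    Assumed precedence: --announce-address flag, then the
    NIMONA_ANNOUNCE_ADDRESS environment variable, then the
    locally detected address.
    """
    parser = argparse.ArgumentParser(add_help=False, allow_abbrev=False)
    parser.add_argument("--announce-address", default=None)
    # parse_known_args lets unrelated flags pass through untouched.
    args, _unknown = parser.parse_known_args(argv)
    if args.announce_address:
        return args.announce_address
    env_value = environ.get("NIMONA_ANNOUNCE_ADDRESS")
    if env_value:
        return env_value
    return detected


if __name__ == "__main__":
    import os
    import sys

    # "10.0.0.5" stands in for whatever address the node detected locally.
    print(resolve_announce_address(sys.argv[1:], os.environ, "10.0.0.5"))
```

Passing the argument list and environment in as parameters keeps the function easy to test. A bootstrap node would set either source to its hostname so that peers never see the detected local address.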

100,425 | 4,087,516,626 | IssuesEvent | 2016-06-01 10:21:19 | pombase/canto | https://api.github.com/repos/pombase/canto | closed | Allow distinct genotypes that differ only by mating type | enhancement genotype_enhancements medium priority user interface | At present, Canto ignores the text in the background box for the purpose of determining whether one genotype differs from another in a session. That's usually OK, because if an allele is important we'll put in the genotype proper. But for mating type, we don't have a convenient single gene to use to add an allele as usual.

What we'd like is for Canto to let us use the most common mating type designations -- i.e. h-, h+, and h90 -- as alleles somehow.

Antonia suggested tickboxes; I wouldn't mind entering them in an interface more like the usual allele-addition setup, maybe with a "mating type" button analogous to the gene buttons. Whatever's easy to code ... my goal is simply to be able to represent mating type in genotypes so we can capture the phenotypes where it makes a difference.

Let me know if I should also open a Chado ticket for anything connected with this.

| 1.0 | Allow distinct genotypes that differ only by mating type - At present, Canto ignores the text in the background box for the purpose of determining whether one genotype differs from another in a session. That's usually OK, because if an allele is important we'll put in the genotype proper. But for mating type, we don't have a convenient single gene to use to add an allele as usual.

What we'd like is for Canto to let us use the most common mating type designations -- i.e. h-, h+, and h90 -- as alleles somehow.

Antonia suggested tickboxes; I wouldn't mind entering them in an interface more like the usual allele-addition setup, maybe with a "mating type" button analogous to the gene buttons. Whatever's easy to code ... my goal is simply to be able to represent mating type in genotypes so we can capture the phenotypes where it makes a difference.

Let me know if I should also open a Chado ticket for anything connected with this.

| priority | allow distinct genotypes that differ only by mating type at present canto ignores the text in the background box for the purpose of determining whether one genotype differs from another in a session that s usually ok because if an allele is important we ll put in the genotype proper but for mating type we don t have a convenient single gene to use to add an allele as usual what we d like is for canto to let us use the most common mating type designations i e h h and as alleles somehow antonia suggested tickboxes i wouldn t mind entering them in an interface more like the usual allele addition setup maybe with a mating type button analogous to the gene buttons whatever s easy to code my goal is simply to be able to represent mating type in genotypes so we can capture the phenotypes where it makes a difference let me know if i should also open a chado ticket for anything connected with this | 1 |

40,423 | 2,868,918,771 | IssuesEvent | 2015-06-05 21:57:35 | dart-lang/pub | https://api.github.com/repos/dart-lang/pub | closed | Pub should respect a DART_HOME environment variable | enhancement Priority-Medium wontfix | <a href="https://github.com/sethladd"><img src="https://avatars.githubusercontent.com/u/5479?v=3" align="left" width="96" height="96"hspace="10"></img></a> **Issue by [sethladd](https://github.com/sethladd)**

_Originally opened as dart-lang/sdk#3713_

----

Setting a DART_HOME to the binary SDK would allow us to avoid passing in --sdkdir to the pub command | 1.0 | Pub should respect a DART_HOME environment variable - <a href="https://github.com/sethladd"><img src="https://avatars.githubusercontent.com/u/5479?v=3" align="left" width="96" height="96"hspace="10"></img></a> **Issue by [sethladd](https://github.com/sethladd)**

_Originally opened as dart-lang/sdk#3713_

----

Setting a DART_HOME to the binary SDK would allow us to avoid passing in --sdkdir to the pub command | priority | pub should respect a dart home environment variable issue by originally opened as dart lang sdk setting a dart home to the binary sdk would allow us to avoid passing in sdkdir to the pub command | 1 |

800,047 | 28,323,833,389 | IssuesEvent | 2023-04-11 05:05:14 | WordPress/openverse | https://api.github.com/repos/WordPress/openverse | opened | Flaky playwright test `visual-regression/pages/pages-single-result.spec.ts:30:13` | 🟨 priority: medium 🛠 goal: fix 🤖 aspect: dx 🧱 stack: frontend | ## Description

<!-- Concisely describe the bug. Compare your experience with what you expected to happen. -->

<!-- For example: "I clicked the 'submit' button and instead of seeing a thank you message, I saw a blank page." -->

`visual-regression/pages/pages-single-result.spec.ts:30:13 › image ltr single-result page snapshots › screen at breakpoint lg with width 1024 › from search results` is flaky: https://github.com/WordPress/openverse/actions/runs/4663921765/jobs/8255702950?pr=907

## Reproduction

<!-- Provide detailed steps to reproduce the bug. -->

See the linked failure. | 1.0 | Flaky playwright test `visual-regression/pages/pages-single-result.spec.ts:30:13` - ## Description

<!-- Concisely describe the bug. Compare your experience with what you expected to happen. -->

<!-- For example: "I clicked the 'submit' button and instead of seeing a thank you message, I saw a blank page." -->

`visual-regression/pages/pages-single-result.spec.ts:30:13 › image ltr single-result page snapshots › screen at breakpoint lg with width 1024 › from search results` is flaky: https://github.com/WordPress/openverse/actions/runs/4663921765/jobs/8255702950?pr=907

## Reproduction

<!-- Provide detailed steps to reproduce the bug. -->

See the linked failure. | priority | flaky playwright test visual regression pages pages single result spec ts description visual regression pages pages single result spec ts › image ltr single result page snapshots › screen at breakpoint lg with width › from search results is flaky reproduction see the linked failure | 1 |

584,827 | 17,465,276,028 | IssuesEvent | 2021-08-06 15:54:52 | CookieJarApps/SmartCookieWeb | https://api.github.com/repos/CookieJarApps/SmartCookieWeb | closed | ad blocker issue | enhancement stale P2: Medium priority | some website use Ad blocker detection script (notification visible ) , please add specific website ad block enable/disable option

example

https://disableadblock.com/developers/ | 1.0 | ad blocker issue - some website use Ad blocker detection script (notification visible ) , please add specific website ad block enable/disable option

example

https://disableadblock.com/developers/ | priority | ad blocker issue some website use ad blocker detection script notification visible please add specific website ad block enable disable option example | 1 |

607,068 | 18,772,751,721 | IssuesEvent | 2021-11-07 05:21:10 | space-wizards/space-station-14 | https://api.github.com/repos/space-wizards/space-station-14 | closed | Windows can exist without low walls beneath them | Type: Bug Priority: 3-Not Required Difficulty: 2-Medium | <!-- To automatically tag this issue, add the uppercase label(s) surrounded by brackets below, for example: [LABEL] -->

## Description

windows shouldnt be able to float like that, eris' solution is to just have the window fall and break after a delay if the low wall is destroyed first

related to #3730

**Screenshots**

**Additional context**

<!-- Add any other context about the problem here. -->

| 1.0 | Windows can exist without low walls beneath them - <!-- To automatically tag this issue, add the uppercase label(s) surrounded by brackets below, for example: [LABEL] -->

## Description

windows shouldnt be able to float like that, eris' solution is to just have the window fall and break after a delay if the low wall is destroyed first

related to #3730

**Screenshots**

**Additional context**

<!-- Add any other context about the problem here. -->

| priority | windows can exist without low walls beneath them description windows shouldnt be able to float like that eris solution is to just have the window fall and break after a delay if the low wall is destroyed first related to screenshots additional context | 1 |

631,986 | 20,167,277,157 | IssuesEvent | 2022-02-10 06:37:15 | way-of-elendil/3.3.5 | https://api.github.com/repos/way-of-elendil/3.3.5 | closed | Changeliche selfheal dk | bug type-class priority-medium | **Description**