Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 5 to 112) | repo_url (string, length 34 to 141) | action (string, 3 classes) | title (string, length 1 to 957) | labels (string, length 4 to 795) | body (string, length 1 to 259k) | index (string, 12 classes) | text_combine (string, length 96 to 259k) | label (string, 2 classes) | text (string, length 96 to 252k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

54,741 | 3,071,164,643 | IssuesEvent | 2015-08-19 10:13:37 | pavel-pimenov/flylinkdc-r5xx | https://api.github.com/repos/pavel-pimenov/flylinkdc-r5xx | closed | Problems with URL recognition | bug Component-Logic Component-UI imported Priority-Medium Usability | _From [sa.stol...@gmail.com](https://code.google.com/u/103855734279594150839/) on April 19, 2010 10:40:16_

When recognizing URLs, a space is used as the terminating character. Quotation marks (") and brackets ((, ), [, ]) must also be treated as terminating characters. Maybe something else as well?

_Original issue: http://code.google.com/p/flylinkdc/issues/detail?id=80_ | 1.0 | Problems with URL recognition - _From [sa.stol...@gmail.com](https://code.google.com/u/103855734279594150839/) on April 19, 2010 10:40:16_

When recognizing URLs, a space is used as the terminating character. Quotation marks (") and brackets ((, ), [, ]) must also be treated as terminating characters. Maybe something else as well?

_Original issue: http://code.google.com/p/flylinkdc/issues/detail?id=80_ | priority | problems with url recognition from on april when recognizing urls a space is used as the terminating character quotation marks and brackets must also be treated as terminating characters maybe something else as well original issue | 1 |
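The terminating-character rule requested in the issue above can be sketched with a regular expression. This is a minimal Python illustration, not FlylinkDC's actual (C++) parser; the function name `extract_urls` and the restriction to `http`/`https` schemes are assumptions made for the example.

```python
import re

# Assumption: a URL starts with http:// or https:// and runs until the first
# terminating character: whitespace, a double quote, or a round/square bracket.
URL_PATTERN = re.compile(r'https?://[^\s"()\[\]]+')


def extract_urls(text: str) -> list[str]:
    """Return every URL in `text`, stopping each one at a terminator."""
    return URL_PATTERN.findall(text)


if __name__ == "__main__":
    sample = 'See (http://example.com/a) and "https://example.org/b?x=1" [http://c.net]'
    print(extract_urls(sample))
```

Keeping all terminators in one character class means that answering the issue's "maybe something else?" (for example `<` or `>`) only requires extending that class.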

364,292 | 10,761,791,035 | IssuesEvent | 2019-10-31 21:37:37 | ix/notewell | https://api.github.com/repos/ix/notewell | opened | Markdown image support | medium priority | Notewell should _probably_ support images. It's not pivotal in my opinion and it'd be quite a lot of hassle to support, but it's not exactly complete unless it does. | 1.0 | Markdown image support - Notewell should _probably_ support images. It's not pivotal in my opinion and it'd be quite a lot of hassle to support, but it's not exactly complete unless it does. | priority | markdown image support notewell should probably support images it s not pivotal in my opinion and it d be quite a lot of hassle to support but it s not exactly complete unless it does | 1 |

315,476 | 9,621,072,863 | IssuesEvent | 2019-05-14 09:46:30 | cms-gem-daq-project/cmsgemos | https://api.github.com/repos/cms-gem-daq-project/cmsgemos | opened | [feature] Send calibration parameters to manager applications | Priority: Medium Type: Feature Request | ## Brief summary of issue

In order to have the hardware receive correct scan configuration, the parameters need to be sent from the `CalibrationSuite` to the appropriate `<HW>Manager` applications.

### Types of issue

- [x] Feature request (request for change which adds functionality)

## Expected Behavior

This can happen by doing the following:

* Define a `CalibrationParameters` object in `CalibrationSuite`

* Should contain `xdata::<Type>` objects (see, e.g., [`OptoHybridManager.h`](https://github.com/cms-gem-daq-project/cmsgemos/blob/feature/gemcalib/gemhardware/include/gem/hw/optohybrid/OptoHybridManager.h#L64-L93))

* Define a SOAP callback in the manager applications `xoap::bind()`, (see, e.g., [`GEMSupervisor`](https://github.com/cms-gem-daq-project/cmsgemos/blob/1fdff44b5089203c526de06419de766c46cddd1b/gemsupervisor/src/common/GEMSupervisor.cc#L65) [and](https://github.com/cms-gem-daq-project/cmsgemos/blob/1fdff44b5089203c526de06419de766c46cddd1b/gemsupervisor/src/common/GEMSupervisor.cc#L1291-L1370))

* This callback should parse the calibration parameters object and set the necessary ones in the manager

* For a first test, you can print out the parameters received in the manager log message

* From the `CalibrationSuite` send the SOAP message to the appropriate application(s) using: [`gem::utils::soap::GEMSOAPToolBox::sendCommandWithParameterBag`](https://github.com/jsturdy/cmsgemos/blob/subfeature/hw-db-interface/gemutils/src/common/soap/GEMSOAPToolBox.cc#L344-L434)

* You will have to translate the `CalibrationParameters` into the appropriate type for serialization (it *may* be possible to do this as an `xdata::Bag` object) | 1.0 | [feature] Send calibration parameters to manager applications - ## Brief summary of issue

In order to have the hardware receive correct scan configuration, the parameters need to be sent from the `CalibrationSuite` to the appropriate `<HW>Manager` applications.

### Types of issue

- [x] Feature request (request for change which adds functionality)

## Expected Behavior

This can happen by doing the following:

* Define a `CalibrationParameters` object in `CalibrationSuite`

* Should contain `xdata::<Type>` objects (see, e.g., [`OptoHybridManager.h`](https://github.com/cms-gem-daq-project/cmsgemos/blob/feature/gemcalib/gemhardware/include/gem/hw/optohybrid/OptoHybridManager.h#L64-L93))

* Define a SOAP callback in the manager applications `xoap::bind()`, (see, e.g., [`GEMSupervisor`](https://github.com/cms-gem-daq-project/cmsgemos/blob/1fdff44b5089203c526de06419de766c46cddd1b/gemsupervisor/src/common/GEMSupervisor.cc#L65) [and](https://github.com/cms-gem-daq-project/cmsgemos/blob/1fdff44b5089203c526de06419de766c46cddd1b/gemsupervisor/src/common/GEMSupervisor.cc#L1291-L1370))

* This callback should parse the calibration parameters object and set the necessary ones in the manager

* For a first test, you can print out the parameters received in the manager log message

* From the `CalibrationSuite` send the SOAP message to the appropriate application(s) using: [`gem::utils::soap::GEMSOAPToolBox::sendCommandWithParameterBag`](https://github.com/jsturdy/cmsgemos/blob/subfeature/hw-db-interface/gemutils/src/common/soap/GEMSOAPToolBox.cc#L344-L434)

* You will have to translate the `CalibrationParameters` into the appropriate type for serialization (it *may* be possible to do this as an `xdata::Bag` object) | priority | send calibration parameters to manager applications brief summary of issue in order to have the hardware receive correct scan configuration the parameters need to be sent from the calibrationsuite to the appropriate manager applications types of issue feature request request for change which adds functionality expected behavior this can happen by doing the following define a calibrationparameters object in calibrationsuite should contain xdata objects see e g define a soap callback in the manager applications xoap bind see e g this callback should parse the calibration parameters object and set the necessary ones in the manager for a first test you can print out the parameters received in the manager log message from the calibrationsuite send the soap message to the appropriate application s using you will have to translate the calibrationparameters into the appropriate type for serialization it may be possible to do this as an xdata bag object | 1 |

537,284 | 15,726,489,421 | IssuesEvent | 2021-03-29 11:23:15 | AY2021S2-CS2103T-T11-4/tp | https://api.github.com/repos/AY2021S2-CS2103T-T11-4/tp | closed | FindCommand - Modify to cater to more functionalities | priority.Medium type.Enhancement | Aligning to the purpose of CakeCollate to track orders, enable the FindCommand to be flexible and have more fields to find keywords from so that user can properly track specific orders.

Flexibility - to allow for finding of substrings instead of having to key in a full keyword. Case-insensitive maybe.

More fields - to allow for finding of keywords in fields other than n/NAME. For instance, user may key in "find a/Orchard" to find orders with address that contains "Orchard". | 1.0 | FindCommand - Modify to cater to more functionalities - Aligning to the purpose of CakeCollate to track orders, enable the FindCommand to be flexible and have more fields to find keywords from so that user can properly track specific orders.

Flexibility - to allow for finding of substrings instead of having to key in a full keyword. Case-insensitive maybe.

More fields - to allow for finding of keywords in fields other than n/NAME. For instance, user may key in "find a/Orchard" to find orders with address that contains "Orchard". | priority | findcommand modify to cater to more functionalities aligning to the purpose of cakecollate to track orders enable the findcommand to be flexible and have more fields to find keywords from so that user can properly track specific orders flexibility to allow for finding of substrings instead of having to key in a full keyword case insensitive maybe more field to allow for finding of keywords in fields other than n name for instance user may key in find a orchard to find orders with address that contains orchard | 1 |
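The two requested behaviours (case-insensitive substring matching, and searching a field chosen by its prefix such as `a/`) can be sketched as below. CakeCollate itself is a Java application, so this is only a Python illustration of the matching logic. `Order`, `PREFIX_TO_FIELD` and `find_orders` are hypothetical names, and matching on *any* keyword is an assumption, since the issue leaves that choice open.

```python
from dataclasses import dataclass


@dataclass
class Order:
    name: str
    address: str


# Hypothetical mapping from command prefixes to Order fields.
PREFIX_TO_FIELD = {"n/": "name", "a/": "address"}


def find_orders(orders: list[Order], query: str) -> list[Order]:
    """Return orders whose selected field contains any keyword as a
    case-insensitive substring, e.g. query "a/orch" matches "Orchard"."""
    for prefix, field in PREFIX_TO_FIELD.items():
        if query.startswith(prefix):
            keywords = [k.lower() for k in query[len(prefix):].split()]
            break
    else:
        raise ValueError(f"unknown field prefix in {query!r}")
    if not keywords:
        raise ValueError("at least one keyword is required")
    return [
        order for order in orders
        if any(k in getattr(order, field).lower() for k in keywords)
    ]


if __name__ == "__main__":
    orders = [Order("Alice Tan", "12 Orchard Road"), Order("Bob Lim", "5 Jurong West")]
    print(find_orders(orders, "a/orch"))  # partial keyword, different case
    print(find_orders(orders, "n/BOB"))
```

Lower-casing both the keywords and the field value before the `in` check is what provides the case-insensitivity. Supporting a new field only requires adding an entry to the prefix table.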

176,125 | 6,556,785,188 | IssuesEvent | 2017-09-06 15:11:13 | buttercup/buttercup-mobile | https://api.github.com/repos/buttercup/buttercup-mobile | opened | Remote explorer item icons | Effort: Low Priority: Medium Status: Available Type: Enhancement | The remote explorer, when selecting or creating an archive, needs **local** icons. | 1.0 | Remote explorer item icons - The remote explorer, when selecting or creating an archive, needs **local** icons. | priority | remote explorer item icons the remote explorer when selecting or creating an archive needs local icons | 1 |

108,188 | 4,328,764,814 | IssuesEvent | 2016-07-26 14:56:48 | DigitalCampus/django-oppia | https://api.github.com/repos/DigitalCampus/django-oppia | closed | Summary cron - oppia.models.DoesNotExist: Tracker matching query does not exist | bug medium priority | Get the following error when running the summary cron task against an empty tracker table...

Starting Oppia Summary cron...

Traceback (most recent call last):

File "/home/oppiamobile/django-oppia/oppia/summary/cron.py", line 104, in <module>

run()

File "/home/oppiamobile/django-oppia/oppia/summary/cron.py", line 24, in run

newest_tracker_pk = Tracker.objects.latest('id').id

File "/home/oppiamobile/env/local/lib/python2.7/site-packages/django/db/models/manager.py", line 92, in manager_method

return getattr(self.get_queryset(), name)(*args, **kwargs)

File "/home/oppiamobile/env/local/lib/python2.7/site-packages/django/db/models/query.py", line 502, in latest

return self._earliest_or_latest(field_name=field_name, direction="-")

File "/home/oppiamobile/env/local/lib/python2.7/site-packages/django/db/models/query.py", line 496, in _earliest_or_latest

return obj.get()

File "/home/oppiamobile/env/local/lib/python2.7/site-packages/django/db/models/query.py", line 357, in get

self.model._meta.object_name)

oppia.models.DoesNotExist: Tracker matching query does not exist.

| 1.0 | Summary cron - oppia.models.DoesNotExist: Tracker matching query does not exist - Get the following error when running the summary cron task against an empty tracker table...

Starting Oppia Summary cron...

Traceback (most recent call last):

File "/home/oppiamobile/django-oppia/oppia/summary/cron.py", line 104, in <module>

run()

File "/home/oppiamobile/django-oppia/oppia/summary/cron.py", line 24, in run

newest_tracker_pk = Tracker.objects.latest('id').id

File "/home/oppiamobile/env/local/lib/python2.7/site-packages/django/db/models/manager.py", line 92, in manager_method

return getattr(self.get_queryset(), name)(*args, **kwargs)

File "/home/oppiamobile/env/local/lib/python2.7/site-packages/django/db/models/query.py", line 502, in latest

return self._earliest_or_latest(field_name=field_name, direction="-")

File "/home/oppiamobile/env/local/lib/python2.7/site-packages/django/db/models/query.py", line 496, in _earliest_or_latest

return obj.get()

File "/home/oppiamobile/env/local/lib/python2.7/site-packages/django/db/models/query.py", line 357, in get

self.model._meta.object_name)

oppia.models.DoesNotExist: Tracker matching query does not exist.

| priority | summary cron oppia models doesnotexist tracker matching query does not exist get the following error when running the summary cron task against an empty tracker table starting oppia summary cron traceback most recent call last file home oppiamobile django oppia oppia summary cron py line in run file home oppiamobile django oppia oppia summary cron py line in run newest tracker pk tracker objects latest id id file home oppiamobile env local lib site packages django db models manager py line in manager method return getattr self get queryset name args kwargs file home oppiamobile env local lib site packages django db models query py line in latest return self earliest or latest field name field name direction file home oppiamobile env local lib site packages django db models query py line in earliest or latest return obj get file home oppiamobile env local lib site packages django db models query py line in get self model meta object name oppia models doesnotexist tracker matching query does not exist | 1 |

80,043 | 3,549,870,549 | IssuesEvent | 2016-01-20 19:42:38 | bbengfort/commis | https://api.github.com/repos/bbengfort/commis | opened | Label Command | priority: medium type: feature | A management command which takes one or more arbitrary arguments (labels) on the command line, and does something with each of them.

Rather than implementing `handle()`, subclasses must implement `handle_label()`, which will be called once for each label. | 1.0 | Label Command - A management command which takes one or more arbitrary arguments (labels) on the command line, and does something with each of them.

Rather than implementing `handle()`, subclasses must implement `handle_label()`, which will be called once for each label. | priority | label command a management command which takes one or more arbitrary arguments labels on the command line and does something with each of them rather than implementing handle subclasses must implement handle label which will be called once for each label | 1 |
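The pattern described in this issue resembles Django's `LabelCommand`. The sketch below is standalone Python built on `argparse`. It does not use commis's actual API, and every name other than `handle()` and `handle_label()` (`create_parser`, `run`, `GreetCommand`) is hypothetical.

```python
import argparse


class LabelCommand:
    """Hypothetical base class: parses one or more labels and calls
    handle_label() once per label, joining any returned output."""

    label = "label"  # name shown in --help for the positional arguments

    def create_parser(self) -> argparse.ArgumentParser:
        parser = argparse.ArgumentParser()
        parser.add_argument("labels", metavar=self.label, nargs="+")
        return parser

    def handle(self, labels: list[str]) -> str:
        output = []
        for label in labels:
            result = self.handle_label(label)
            if result:
                output.append(result)
        return "\n".join(output)

    def handle_label(self, label: str) -> str:
        raise NotImplementedError("subclasses must implement handle_label()")

    def run(self, argv: list[str]) -> str:
        args = self.create_parser().parse_args(argv)
        return self.handle(args.labels)


class GreetCommand(LabelCommand):
    """Example subclass: only handle_label() needs to be written."""

    label = "name"

    def handle_label(self, label: str) -> str:
        return f"Hello, {label}!"


if __name__ == "__main__":
    print(GreetCommand().run(["Alice", "Bob"]))
```

Because the loop lives in the base class's `handle()`, subclasses only have to implement the per-label logic. `nargs="+"` makes `argparse` reject an invocation that supplies no labels.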

420,924 | 12,246,010,307 | IssuesEvent | 2020-05-05 13:51:06 | ansible/galaxy_ng | https://api.github.com/repos/ansible/galaxy_ng | closed | TASK [pulp-database : Run database auth migrations] in playbook install.yml fails. | area/installer priority/medium status/new type/bug | TASK [pulp-database : Run database auth migrations] fails when running playbook install.yml

TASK [pulp-database : Run database auth migrations] ******************************************************************************************************************************

fatal: [35.178.172.220]: FAILED! => {"changed": true, "cmd": ["/usr/local/lib/pulp/bin/django-admin", "migrate", "auth", "--no-input"], "delta": "0:00:00.658146", "end": "2020-04-19 11:08:35.107148", "msg": "non-zero return code", "rc": 1, "start": "2020-04-19 11:08:34.449002", "stderr": "Traceback (most recent call last):\n File \"/usr/local/lib/pulp/bin/django-admin\", line 8, in <module>\n sys.exit(execute_from_command_line())\n File \"/usr/local/lib/pulp/lib/python3.6/site-packages/django/core/management/__init__.py\", line 381, in execute_from_command_line\n utility.execute()\n File \"/usr/local/lib/pulp/lib/python3.6/site-packages/django/core/management/__init__.py\", line 325, in execute\n settings.INSTALLED_APPS\n File \"/usr/local/lib/pulp/lib/python3.6/site-packages/django/conf/__init__.py\", line 79, in __getattr__\n self._setup(name)\n File \"/usr/local/lib/pulp/lib/python3.6/site-packages/django/conf/__init__.py\", line 66, in _setup\n self._wrapped = Settings(settings_module)\n File \"/usr/local/lib/pulp/lib/python3.6/site-packages/django/conf/__init__.py\", line 157, in __init__\n mod = importlib.import_module(self.SETTINGS_MODULE)\n File \"/usr/lib/python3.6/importlib/__init__.py\", line 126, in import_module\n return _bootstrap._gcd_import(name[level:], package, level)\n File \"<frozen importlib._bootstrap>\", line 994, in _gcd_import\n File \"<frozen importlib._bootstrap>\", line 971, in _find_and_load\n File \"<frozen importlib._bootstrap>\", line 955, in _find_and_load_unlocked\n File \"<frozen importlib._bootstrap>\", line 665, in _load_unlocked\n File \"<frozen importlib._bootstrap_external>\", line 678, in exec_module\n File \"<frozen importlib._bootstrap>\", line 219, in _call_with_frames_removed\n File \"/usr/local/lib/pulp/src/pulpcore/pulpcore/app/settings.py\", line 73, in <module>\n plugin_app_config = entry_point.load()\n File \"/usr/local/lib/pulp/lib/python3.6/site-packages/pkg_resources/__init__.py\", line 2449, in load\n 
self.require(*args, **kwargs)\n File \"/usr/local/lib/pulp/lib/python3.6/site-packages/pkg_resources/__init__.py\", line 2472, in require\n items = working_set.resolve(reqs, env, installer, extras=self.extras)\n File \"/usr/local/lib/pulp/lib/python3.6/site-packages/pkg_resources/__init__.py\", line 792, in resolve\n raise VersionConflict(dist, req).with_context(dependent_req)\npkg_resources.VersionConflict: (pulpcore 3.4.0.dev0 (/usr/local/lib/pulp/src/pulpcore), Requirement.parse('pulpcore<3.3,>=3.0'))", "stderr_lines": ["Traceback (most recent call last):", " File \"/usr/local/lib/pulp/bin/django-admin\", line 8, in <module>", " sys.exit(execute_from_command_line())", " File \"/usr/local/lib/pulp/lib/python3.6/site-packages/django/core/management/__init__.py\", line 381, in execute_from_command_line", " utility.execute()", " File \"/usr/local/lib/pulp/lib/python3.6/site-packages/django/core/management/__init__.py\", line 325, in execute", " settings.INSTALLED_APPS", " File \"/usr/local/lib/pulp/lib/python3.6/site-packages/django/conf/__init__.py\", line 79, in __getattr__", " self._setup(name)", " File \"/usr/local/lib/pulp/lib/python3.6/site-packages/django/conf/__init__.py\", line 66, in _setup", " self._wrapped = Settings(settings_module)", " File \"/usr/local/lib/pulp/lib/python3.6/site-packages/django/conf/__init__.py\", line 157, in __init__", " mod = importlib.import_module(self.SETTINGS_MODULE)", " File \"/usr/lib/python3.6/importlib/__init__.py\", line 126, in import_module", " return _bootstrap._gcd_import(name[level:], package, level)", " File \"<frozen importlib._bootstrap>\", line 994, in _gcd_import", " File \"<frozen importlib._bootstrap>\", line 971, in _find_and_load", " File \"<frozen importlib._bootstrap>\", line 955, in _find_and_load_unlocked", " File \"<frozen importlib._bootstrap>\", line 665, in _load_unlocked", " File \"<frozen importlib._bootstrap_external>\", line 678, in exec_module", " File \"<frozen importlib._bootstrap>\", line 
219, in _call_with_frames_removed", " File \"/usr/local/lib/pulp/src/pulpcore/pulpcore/app/settings.py\", line 73, in <module>", " plugin_app_config = entry_point.load()", " File \"/usr/local/lib/pulp/lib/python3.6/site-packages/pkg_resources/__init__.py\", line 2449, in load", " self.require(*args, **kwargs)", " File \"/usr/local/lib/pulp/lib/python3.6/site-packages/pkg_resources/__init__.py\", line 2472, in require", " items = working_set.resolve(reqs, env, installer, extras=self.extras)", " File \"/usr/local/lib/pulp/lib/python3.6/site-packages/pkg_resources/__init__.py\", line 792, in resolve", " raise VersionConflict(dist, req).with_context(dependent_req)", "pkg_resources.VersionConflict: (pulpcore 3.4.0.dev0 (/usr/local/lib/pulp/src/pulpcore), Requirement.parse('pulpcore<3.3,>=3.0'))"], "stdout": "", "stdout_lines": []}

| 1.0 | TASK [pulp-database : Run database auth migrations] in playbook install.yml fails. - TASK [pulp-database : Run database auth migrations] fails when running playbook install.yml

TASK [pulp-database : Run database auth migrations] ******************************************************************************************************************************

fatal: [35.178.172.220]: FAILED! => {"changed": true, "cmd": ["/usr/local/lib/pulp/bin/django-admin", "migrate", "auth", "--no-input"], "delta": "0:00:00.658146", "end": "2020-04-19 11:08:35.107148", "msg": "non-zero return code", "rc": 1, "start": "2020-04-19 11:08:34.449002", "stderr": "Traceback (most recent call last):\n File \"/usr/local/lib/pulp/bin/django-admin\", line 8, in <module>\n sys.exit(execute_from_command_line())\n File \"/usr/local/lib/pulp/lib/python3.6/site-packages/django/core/management/__init__.py\", line 381, in execute_from_command_line\n utility.execute()\n File \"/usr/local/lib/pulp/lib/python3.6/site-packages/django/core/management/__init__.py\", line 325, in execute\n settings.INSTALLED_APPS\n File \"/usr/local/lib/pulp/lib/python3.6/site-packages/django/conf/__init__.py\", line 79, in __getattr__\n self._setup(name)\n File \"/usr/local/lib/pulp/lib/python3.6/site-packages/django/conf/__init__.py\", line 66, in _setup\n self._wrapped = Settings(settings_module)\n File \"/usr/local/lib/pulp/lib/python3.6/site-packages/django/conf/__init__.py\", line 157, in __init__\n mod = importlib.import_module(self.SETTINGS_MODULE)\n File \"/usr/lib/python3.6/importlib/__init__.py\", line 126, in import_module\n return _bootstrap._gcd_import(name[level:], package, level)\n File \"<frozen importlib._bootstrap>\", line 994, in _gcd_import\n File \"<frozen importlib._bootstrap>\", line 971, in _find_and_load\n File \"<frozen importlib._bootstrap>\", line 955, in _find_and_load_unlocked\n File \"<frozen importlib._bootstrap>\", line 665, in _load_unlocked\n File \"<frozen importlib._bootstrap_external>\", line 678, in exec_module\n File \"<frozen importlib._bootstrap>\", line 219, in _call_with_frames_removed\n File \"/usr/local/lib/pulp/src/pulpcore/pulpcore/app/settings.py\", line 73, in <module>\n plugin_app_config = entry_point.load()\n File \"/usr/local/lib/pulp/lib/python3.6/site-packages/pkg_resources/__init__.py\", line 2449, in load\n 
self.require(*args, **kwargs)\n File \"/usr/local/lib/pulp/lib/python3.6/site-packages/pkg_resources/__init__.py\", line 2472, in require\n items = working_set.resolve(reqs, env, installer, extras=self.extras)\n File \"/usr/local/lib/pulp/lib/python3.6/site-packages/pkg_resources/__init__.py\", line 792, in resolve\n raise VersionConflict(dist, req).with_context(dependent_req)\npkg_resources.VersionConflict: (pulpcore 3.4.0.dev0 (/usr/local/lib/pulp/src/pulpcore), Requirement.parse('pulpcore<3.3,>=3.0'))", "stderr_lines": ["Traceback (most recent call last):", " File \"/usr/local/lib/pulp/bin/django-admin\", line 8, in <module>", " sys.exit(execute_from_command_line())", " File \"/usr/local/lib/pulp/lib/python3.6/site-packages/django/core/management/__init__.py\", line 381, in execute_from_command_line", " utility.execute()", " File \"/usr/local/lib/pulp/lib/python3.6/site-packages/django/core/management/__init__.py\", line 325, in execute", " settings.INSTALLED_APPS", " File \"/usr/local/lib/pulp/lib/python3.6/site-packages/django/conf/__init__.py\", line 79, in __getattr__", " self._setup(name)", " File \"/usr/local/lib/pulp/lib/python3.6/site-packages/django/conf/__init__.py\", line 66, in _setup", " self._wrapped = Settings(settings_module)", " File \"/usr/local/lib/pulp/lib/python3.6/site-packages/django/conf/__init__.py\", line 157, in __init__", " mod = importlib.import_module(self.SETTINGS_MODULE)", " File \"/usr/lib/python3.6/importlib/__init__.py\", line 126, in import_module", " return _bootstrap._gcd_import(name[level:], package, level)", " File \"<frozen importlib._bootstrap>\", line 994, in _gcd_import", " File \"<frozen importlib._bootstrap>\", line 971, in _find_and_load", " File \"<frozen importlib._bootstrap>\", line 955, in _find_and_load_unlocked", " File \"<frozen importlib._bootstrap>\", line 665, in _load_unlocked", " File \"<frozen importlib._bootstrap_external>\", line 678, in exec_module", " File \"<frozen importlib._bootstrap>\", line 
219, in _call_with_frames_removed", " File \"/usr/local/lib/pulp/src/pulpcore/pulpcore/app/settings.py\", line 73, in <module>", " plugin_app_config = entry_point.load()", " File \"/usr/local/lib/pulp/lib/python3.6/site-packages/pkg_resources/__init__.py\", line 2449, in load", " self.require(*args, **kwargs)", " File \"/usr/local/lib/pulp/lib/python3.6/site-packages/pkg_resources/__init__.py\", line 2472, in require", " items = working_set.resolve(reqs, env, installer, extras=self.extras)", " File \"/usr/local/lib/pulp/lib/python3.6/site-packages/pkg_resources/__init__.py\", line 792, in resolve", " raise VersionConflict(dist, req).with_context(dependent_req)", "pkg_resources.VersionConflict: (pulpcore 3.4.0.dev0 (/usr/local/lib/pulp/src/pulpcore), Requirement.parse('pulpcore<3.3,>=3.0'))"], "stdout": "", "stdout_lines": []}

| priority | task in playbook install yml fails task fails when running playbook install yml task fatal failed changed true cmd delta end msg non zero return code rc start stderr traceback most recent call last n file usr local lib pulp bin django admin line in n sys exit execute from command line n file usr local lib pulp lib site packages django core management init py line in execute from command line n utility execute n file usr local lib pulp lib site packages django core management init py line in execute n settings installed apps n file usr local lib pulp lib site packages django conf init py line in getattr n self setup name n file usr local lib pulp lib site packages django conf init py line in setup n self wrapped settings settings module n file usr local lib pulp lib site packages django conf init py line in init n mod importlib import module self settings module n file usr lib importlib init py line in import module n return bootstrap gcd import name package level n file line in gcd import n file line in find and load n file line in find and load unlocked n file line in load unlocked n file line in exec module n file line in call with frames removed n file usr local lib pulp src pulpcore pulpcore app settings py line in n plugin app config entry point load n file usr local lib pulp lib site packages pkg resources init py line in load n self require args kwargs n file usr local lib pulp lib site packages pkg resources init py line in require n items working set resolve reqs env installer extras self extras n file usr local lib pulp lib site packages pkg resources init py line in resolve n raise versionconflict dist req with context dependent req npkg resources versionconflict pulpcore usr local lib pulp src pulpcore requirement parse pulpcore stderr lines package level file line in gcd import file line in find and load file line in find and load unlocked file line in load unlocked file line in exec module file line in call with frames removed file usr 
local lib pulp src pulpcore pulpcore app settings py line in plugin app config entry point load file usr local lib pulp lib site packages pkg resources init py line in load self require args kwargs file usr local lib pulp lib site packages pkg resources init py line in require items working set resolve reqs env installer extras self extras file usr local lib pulp lib site packages pkg resources init py line in resolve raise versionconflict dist req with context dependent req pkg resources versionconflict pulpcore usr local lib pulp src pulpcore requirement parse pulpcore stdout stdout lines | 1 |

435,999 | 12,543,918,058 | IssuesEvent | 2020-06-05 16:21:07 | graknlabs/grakn | https://api.github.com/repos/graknlabs/grakn | opened | Incorrect Graql behaviour in some scenarios (major issues) | priority: medium type: bug | ## Description

A number of minor issues have been found while crafting BDD scenarios, where the actual behaviour of Graql does not match the expected behaviour.

## Environment

1. OS (where Grakn server runs): Mac OS 10

2. Grakn version: Grakn Core 1.7.2

3. Grakn client: client-java

## Scenarios

### Scenario: define relation subtype inherits 'relates' from supertypes without role subtyping

#### expected behaviour

given

```

define

employment sub relation, relates employee;

part-time-employment sub employment;

```

then `part-time-employment` should `relates employee`.

#### actual behaviour

The roleplayer `employee` is not inherited.

### Scenario: define additional 'key' on a type throws if it is not added to existing instances prior to commit

#### expected behaviour

given

```

define

name sub attribute, value string;

barcode sub attribute, value string;

product sub entity, has name;

insert

$x isa product, has name "Cheese";

$y isa product, has name "Ham";

```

then `define product key barcode;` should throw on commit if values for `barcode` have not been added to the existing products.

#### actual behaviour

It doesn't throw, and we now have instances that don't have the correct keys. | 1.0 | Incorrect Graql behaviour in some scenarios (major issues) - ## Description

A number of minor issues have been found while crafting BDD scenarios, where the actual behaviour of Graql does not match the expected behaviour.

## Environment

1. OS (where Grakn server runs): Mac OS 10

2. Grakn version: Grakn Core 1.7.2

3. Grakn client: client-java

## Scenarios

### Scenario: define relation subtype inherits 'relates' from supertypes without role subtyping

#### expected behaviour

given

```

define

employment sub relation, relates employee;

part-time-employment sub employment;

```

then `part-time-employment` should `relates employee`.

#### actual behaviour

The roleplayer `employee` is not inherited.

### Scenario: define additional 'key' on a type throws if it is not added to existing instances prior to commit

#### expected behaviour

given

```

define

name sub attribute, value string;

barcode sub attribute, value string;

product sub entity, has name;

insert

$x isa product, has name "Cheese";

$y isa product, has name "Ham";

```

then `define product key barcode;` should throw on commit if values for `barcode` have not been added to the existing products.

#### actual behaviour

It doesn't throw, and we now have instances that don't have the correct keys. | priority | incorrect graql behaviour in some scenarios major issues description a number of minor issues have been found while crafting bdd scenarios where the actual behaviour of graql does not match the expected behaviour environment os where grakn server runs mac os grakn version grakn core grakn client client java scenarios scenario define relation subtype inherits relates from supertypes without role subtyping expected behaviour given define employment sub relation relates employee part time employment sub employment then part time employment should relates employee actual behaviour the roleplayer employee is not inherited scenario define additional key on a type throws if it is not added to existing instances prior to commit expected behaviour given define name sub attribute value string barcode sub attribute value string product sub entity has name insert x isa product has name cheese y isa product has name ham then define product key barcode should throw on commit if values for barcode have not been added to the existing products actual behaviour it doesn t throw and we now have instances that don t have the correct keys | 1 |

89,080 | 3,789,517,527 | IssuesEvent | 2016-03-21 18:12:10 | PolarisSS13/Polaris | https://api.github.com/repos/PolarisSS13/Polaris | closed | Changeling armblade and flavortext fuckery | Bug Priority: Medium | Getting stunned or otherwise disarmed as a ling will instantly remove the armblade you just shelled 20 chems for, leaving lings to constantly reform it in combat.

The armblade does not seem to deflect any projectile weapons. Not sure if this is an issue or if it's just intended to work with lasers.

Changing identities while having snowflake text will retain the previous snowflake text, making lings a dead giveaway to the powergaymen in red suits. | 1.0 | Changeling armblade and flavortext fuckery - Getting stunned or otherwise disarmed as a ling will instantly remove the armblade you just shelled 20 chems for, leaving lings to constantly reform it in combat.

The armblade does not seem to deflect any projectile weapons. Not sure if this is an issue or if it's just intended to work with lasers.

Changing identities while having snowflake text will retain the previous snowflake text, making lings a dead giveaway to the powergaymen in red suits. | priority | changeling armblade and flavortext fuckery getting stunned or otherwise disarmed as a ling will instantly remove the armblade you just shelled chems for leaving lings to constantly reform it in combat the armblade does not seem deflect any projectile weapons not sure if this is an issue or if its just intended to work with lasers changing identities while having snowflake text will retain the previous snowflake text making lings a dead giveaway to the powergaymen in red suits | 1 |

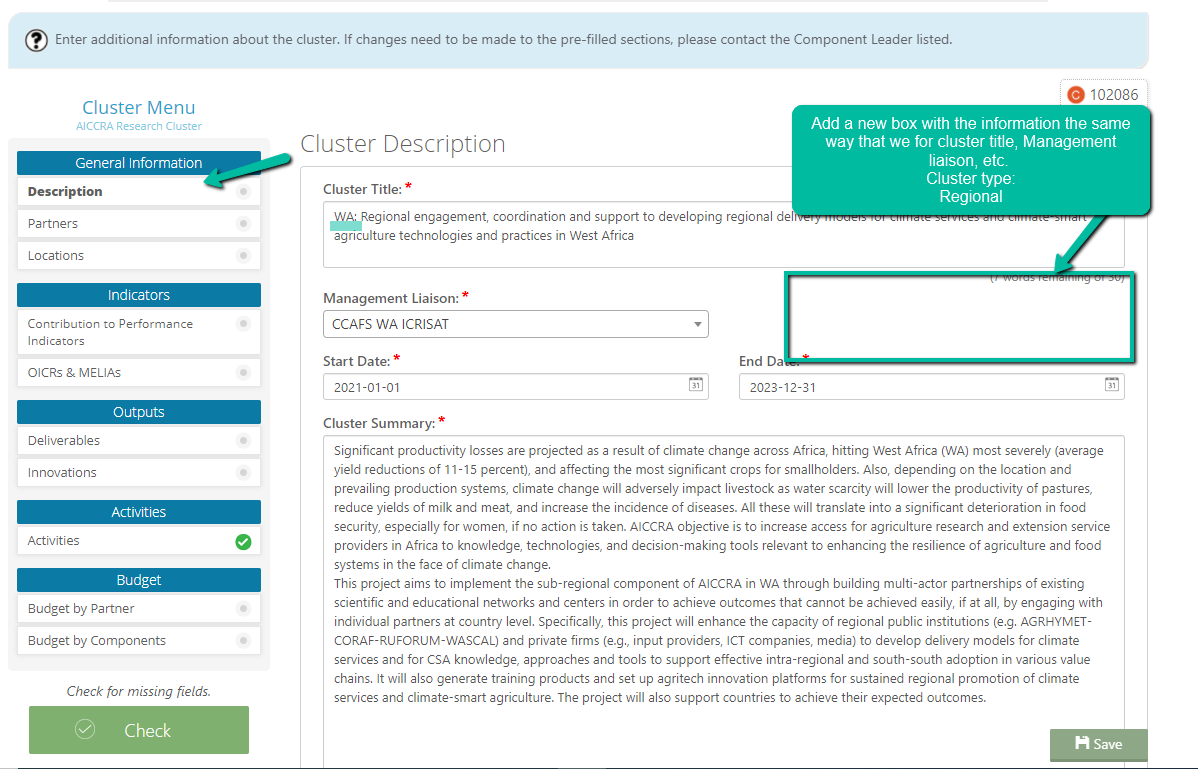

576,415 | 17,086,634,503 | IssuesEvent | 2021-07-08 12:41:53 | CCAFS/MARLO | https://api.github.com/repos/CCAFS/MARLO | closed | [KT] (AICCRA) Cluster tags (Management, Flagship, Regional and Country) | AICCRA Priority - Medium Type - Enhancement | In AICCRA, we have 4 types of projects, and it would be really great if we could tag them somehow.

1. Flagship led, 2. Regional led, 3. Country led, 4. PMU led (Cluster Type)

- [x] Database

- [x] Manager

- [x] Migration

- [x] Front end components

| 1.0 | [KT] (AICCRA) Cluster tags (Management, Flagship, Regional and Country) - In AICCRA, we have 4 types of projects, and it would be really great if we could tag them somehow.

1. Flagship led, 2. Regional led, 3. Country led, 4. PMU led (Cluster Type)

- [x] Database

- [x] Manager

- [x] Migration

- [x] Front end components

| priority | aiccra cluster tags management flagship regional and country in aiccra we have types of projects which would be really great we can tag somehow flagship led regional led country led pmu led cluster type database manager migration front end componets | 1 |

387,662 | 11,464,132,137 | IssuesEvent | 2020-02-07 17:23:06 | telerik/kendo-ui-core | https://api.github.com/repos/telerik/kendo-ui-core | opened | MultiSelect on adding a new item the dataSource requestEnd event args return "read" type of request | Bug C: MultiSelect FP: Planned Kendo2 Priority 5 SEV: Medium | ### Bug report

When a new item is added and the dataSource's sync method is called, the requestEnd event handler data (**arg.type**) returns the type of request as "read", instead of "create".

As a result, the [Add new item](https://demos.telerik.com/kendo-ui/multiselect/addnewitem) demo does not work as expected: it checks the request type in the requestEnd handler and expects it to be "create". Since the request type comes out as "read", the logic for selecting the newly added item is not executed.

In previous versions, the request was correctly identified as "create". The issue occurs only in the MultiSelect; the ComboBox and the DropDownList report the request as "create".

This behavior was introduced in R3 2017. It is reproducible in Chrome, Firefox and Chromium Edge, but not in IE11 and Spartan Edge.

As a **workaround**, the addNew function can be modified as follows:

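```javascript
function addNew(widgetId, value) {
    var widget = $("#" + widgetId).getKendoMultiSelect();
    var dataSource = widget.dataSource;

    if (confirm("Are you sure?")) {
        // ProductID: 0 (the default id) marks the item as new
        dataSource.add({
            ProductID: 0,
            ProductName: value
        });

        // Handle the next "sync" event only once and select the newly added item,
        // instead of relying on the request type reported by requestEnd
        dataSource.one("sync", function() {
            var index = dataSource.view().length - 1;
            var newValue = dataSource.at(index).ProductID;
            widget.value(widget.value().concat([newValue]));
        });

        // Send the pending create request to the server
        dataSource.sync();
    }
}
```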

### Reproduction of the problem

[Dojo ](https://dojo.telerik.com/eSohATUz) example.

1. Open the browser's console.

2. Focus the input and type in some random text.

3. Click the button in the popup to add a new item.

4. The type of the request returned by the requestEnd event data is logged in the console.

### Current behavior

The event data returns "read" as the type of the request.

### Expected/desired behavior

The event data returns "create" as the type of the request.

### Environment

* **Kendo UI version:** 2020.1.114

* **jQuery version:** x.y

* **Browser:** [Chrome XX | Firefox XX | Chromium Edge ]

| 1.0 | MultiSelect on adding a new item the dataSource requestEnd event args return "read" type of request - ### Bug report

When a new item is added and the dataSource's sync method is called, the requestEnd event handler data (**arg.type**) returns the type of request as "read", instead of "create".

As a result, the [Add new item](https://demos.telerik.com/kendo-ui/multiselect/addnewitem) demo does not work as expected: it checks the request type in the requestEnd handler and expects it to be "create". Since the request type comes out as "read", the logic for selecting the newly added item is not executed.

In previous versions, the request was correctly identified as "create". The issue occurs only in the MultiSelect; the ComboBox and the DropDownList report the request as "create".

This behavior was introduced in R3 2017. It is reproducible in Chrome, Firefox and Chromium Edge, but not in IE11 and Spartan Edge.

As a **workaround**, the addNew function can be modified as follows:

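```javascript
function addNew(widgetId, value) {
    var widget = $("#" + widgetId).getKendoMultiSelect();
    var dataSource = widget.dataSource;

    if (confirm("Are you sure?")) {
        // ProductID: 0 (the default id) marks the item as new
        dataSource.add({
            ProductID: 0,
            ProductName: value
        });

        // Handle the next "sync" event only once and select the newly added item,
        // instead of relying on the request type reported by requestEnd
        dataSource.one("sync", function() {
            var index = dataSource.view().length - 1;
            var newValue = dataSource.at(index).ProductID;
            widget.value(widget.value().concat([newValue]));
        });

        // Send the pending create request to the server
        dataSource.sync();
    }
}
```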

### Reproduction of the problem

[Dojo ](https://dojo.telerik.com/eSohATUz) example.

1. Open the browser's console.

2. Focus the input and type in some random text.

3. Click the button in the popup to add a new item.

4. The type of the request returned by the requestEnd event data is logged in the console.

### Current behavior

The event data returns "read" as the type of the request.

### Expected/desired behavior

The event data returns "create" as the type of the request.

### Environment

* **Kendo UI version:** 2020.1.114

* **jQuery version:** x.y

* **Browser:** [Chrome XX | Firefox XX | Chromium Edge ]

| priority | multiselect on adding a new item the datasource requestend event args return read type of request bug report when a new item is added and the datasource s sync method is called the requestend event handler data arg type returns the type of request as read instead of create as a result the demo does not work as expected because it has a check for the type of the request in the requestend handler and expects the request to be create since the request type comes out as read the logic for selecting the newly added item is not executed in previous versions the request has been correctly identified as create the issue is exhibited only in the multiselect the combobox and the dropdownlist return the request as create this behavior has been introduced in reproducible in chrome firefox and chromium edge not reproducible in and spartan edge as a workaround the addnew function can be modified as shown below function addnew widgetid value var widget widgetid getkendomultiselect var datasource widget datasource if confirm are you sure datasource add productid productname value datasource one sync function var index datasource view length var newvalue datasource at index productid widget value widget value concat datasource sync reproduction of the problem example open the browser s console focus the input and type in some random text click the button in the popup to add a new item the type of the request returned by the requestend event data is logged in the console current behavior the event data returns read as the type of the request expected desired behavior the event data returns create as the type of the request environment kendo ui version jquery version x y browser | 1 |

56,366 | 3,079,497,047 | IssuesEvent | 2015-08-21 16:34:53 | pavel-pimenov/flylinkdc-r5xx | https://api.github.com/repos/pavel-pimenov/flylinkdc-r5xx | closed | Favorite hub properties -> Direct connection -> IP (allow DNS name) | Component-Logic enhancement imported Priority-Medium Usability | _From [vts...@gmail.com](https://code.google.com/u/103957436465684378630/) on November 06, 2011 13:41:11_

Add the ability to enter a DNS name in the address field for direct connection.

Details:

There is the ISP's private (gray) network, for which the main settings work,

and there is a VPN connection with a real (public) IP. The public IP changes every time, but DynDns is configured and can return the current IP for the DNS name.

_Original issue: http://code.google.com/p/flylinkdc/issues/detail?id=587_ | 1.0 | Favorite hub properties -> Direct connection -> IP (allow DNS name) - _From [vts...@gmail.com](https://code.google.com/u/103957436465684378630/) on November 06, 2011 13:41:11_

Add the ability to enter a DNS name in the address field for direct connection.

Details:

There is the ISP's private (gray) network, for which the main settings work,

and there is a VPN connection with a real (public) IP. The public IP changes every time, but DynDns is configured and can return the current IP for the DNS name.

_Original issue: http://code.google.com/p/flylinkdc/issues/detail?id=587_ | priority | favorite hub properties direct connection ip allow dns name from on november add the ability to enter a dns name in the address field for direct connection details there is the isp s private gray network for which the main settings work and there is a vpn connection with a real public ip the public ip changes every time but dyndns is configured and can return the current ip for the dns name original issue | 1 |

749,646 | 26,172,936,477 | IssuesEvent | 2023-01-02 04:34:44 | battlecode/galaxy | https://api.github.com/repos/battlecode/galaxy | closed | error_handling.js can obscure error messages; dump in console | type: bug type: feature module: frontend priority: p2 medium | error_handling.js is good at parsing many error messages, and turning them into human-readable form.

However, sometimes the error message we get is uncommon, or the result of a deeper error. In this case, our parser will parse it...but because it's not getting expected input, it gives bad output. This can confuse devs, make for extra digging, etc.

We _could_ have a more robust handler. But this is really hard -- it's hard to balance human-readability with covering all cases. There's a reason we have a custom parser, after all.

The better idea is to simply **dump the full error message/array in the console.** (Not insecure -- since the network response in console can give it to us, and motivated ppl would just check that anyways) And it's much more convenient.

Side-note: from dev experience, you should prob learn that error_handling misparses.

**Should we include a note in readme? "If response looks weird, open your console and retry and see what's printed".** | 1.0 | error_handling.js can obscure error messages; dump in console - error_handling.js is good at parsing many error messages, and turning them into human-readable form.

However, sometimes the error message we get is uncommon, or the result of a deeper error. In this case, our parser will parse it...but because it's not getting expected input, it gives bad output. This can confuse devs, make for extra digging, etc.

We _could_ have a more robust handler. But this is really hard -- it's hard to balance human-readability with covering all cases. There's a reason we have a custom parser, after all.

The better idea is to simply **dump the full error message/array in the console.** (Not insecure -- since the network response in console can give it to us, and motivated ppl would just check that anyways) And it's much more convenient.

Side-note: from dev experience, you should prob learn that error_handling misparses.

**Should we include a note in readme? "If response looks weird, open your console and retry and see what's printed".** | priority | error handling js can obscure error messages dump in console error handling js is good at parsing many error messages and turning them into human readable form however sometimes the error message we get is uncommon or the result of a deeper error in this case our parser will parse it but because it s not getting expected input it gives bad output this can confuse devs make for extra digging etc we could have a more robust handler but this is really hard it s hard to balance human readability with covering all cases there s a reason we have a custom parser after all the better idea is to simply dump the full error message array in the console not insecure since the network response in console can give it to us and motivated ppl would just check that anyways and it s much more convenient side note from dev experience you should prob learn that error handling misparses should we include a note in readme if response looks weird open your console and retry and see what s printed | 1 |

663,298 | 22,172,297,832 | IssuesEvent | 2022-06-06 03:11:29 | authzed/spicedb | https://api.github.com/repos/authzed/spicedb | closed | Migration functions should have a context.Context parameter | priority/2 medium area/datastore | Also, we should probably be able to execute the migration and the part that writes the version in a single transaction. | 1.0 | Migration functions should have a context.Context parameter - Also, we should probably be able to execute the migration and the part that writes the version in a single transaction. | priority | migration functions should have a context context parameter also we should probably be able to execute the migration and the part that writes the version in a single transaction | 1 |

354,050 | 10,562,347,131 | IssuesEvent | 2019-10-04 18:06:00 | Warcraft-GoA-Development-Team/Warcraft-Guardians-of-Azeroth | https://api.github.com/repos/Warcraft-GoA-Development-Team/Warcraft-Guardians-of-Azeroth | closed | Occultism events are not adapted | :beetle: bug :beetle: :grey_exclamation: priority medium | **Mod Version**

46bfcd20

**What expansions do you have installed?**

All

**Please explain your issue in as much detail as possible:**

Occultism events (69040-69047) can fire for any religion

**Steps to reproduce the issue:**

`---`

**Upload an attachment below: .zip of your save, or screenshots:**

<details>

<summary>Click to expand</summary>

</details> | 1.0 | Occultism events are not adapted - **Mod Version**

46bfcd20

**What expansions do you have installed?**

All

**Please explain your issue in as much detail as possible:**

Occultism events (69040-69047) can fire for any religion

**Steps to reproduce the issue:**

`---`

**Upload an attachment below: .zip of your save, or screenshots:**

<details>

<summary>Click to expand</summary>

</details> | priority | occultism events are not adapted mod version what expansions do you have installed all please explain your issue in as much detail as possible occultism events can fire for any religion steps to reproduce the issue upload an attachment below zip of your save or screenshots click to expand | 1 |

621,295 | 19,582,557,928 | IssuesEvent | 2022-01-04 23:56:00 | bats-core/bats-core | https://api.github.com/repos/bats-core/bats-core | closed | bats waits for bg processes in `setup_file` even when FD3 is closed | Type: Bug Component: Docs Priority: Medium | I'm following the instructions in [https://bats-core.readthedocs.io/en/stable/writing-tests.html#file-descriptor-3-read-this-if-bats-hangs](https://bats-core.readthedocs.io/en/stable/writing-tests.html#file-descriptor-3-read-this-if-bats-hangs), and they seem to work when background processes like `sleep 5s 3>- &` are launched from the test body or the (per-test) `setup` function. But when launched from the (per-file) `setup_file` function, `sleep 5s 3>- &` still blocks bats execution after all tests are done.

To reproduce:

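```
@test "bats does not hang on bg process in setup_file" {
  cd "$BATS_TEST_TMPDIR"
  # Write an inner test file whose setup_file starts a background sleep;
  # $(echo @test) keeps the outer bats run from preprocessing that line
  cat <<EOF >foo.bats
setup_file (){
  sleep 5s 3>- &
}
$(echo @test) "foo" {
  true
}
EOF
  # The inner run should finish well before the 5-second sleep ends
  SECONDS=0
  run bats foo.bats
  test $SECONDS -lt 2
}
```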

Is this a known issue? I have seen very recent work on this topic in [https://github.com/bats-core/bats-core/pull/525](https://github.com/bats-core/bats-core/pull/525), but I'm not sure whether it applies to this particular issue.

**Environment:**

- Bats 1.5.0

- OS: Fedora 33

- Bash version: GNU bash, version 5.0.17(1)-release (x86_64-redhat-linux-gnu)

| 1.0 | bats waits for bg processes in `setup_file` even when FD3 is closed - I'm following the instructions in [https://bats-core.readthedocs.io/en/stable/writing-tests.html#file-descriptor-3-read-this-if-bats-hangs](https://bats-core.readthedocs.io/en/stable/writing-tests.html#file-descriptor-3-read-this-if-bats-hangs), and they seem to work when background processes like `sleep 5s 3>- &` are launched from the test body or the (per-test) `setup` function. But when launched from the (per-file) `setup_file` function, `sleep 5s 3>- &` still blocks bats execution after all tests are done.

To reproduce:

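```
@test "bats does not hang on bg process in setup_file" {
  cd "$BATS_TEST_TMPDIR"
  # Write an inner test file whose setup_file starts a background sleep;
  # $(echo @test) keeps the outer bats run from preprocessing that line
  cat <<EOF >foo.bats
setup_file (){
  sleep 5s 3>- &
}
$(echo @test) "foo" {
  true
}
EOF
  # The inner run should finish well before the 5-second sleep ends
  SECONDS=0
  run bats foo.bats
  test $SECONDS -lt 2
}
```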

Is this a known issue? I have seen very recent work on this topic in [https://github.com/bats-core/bats-core/pull/525](https://github.com/bats-core/bats-core/pull/525), but I'm not sure whether it applies to this particular issue.

**Environment:**

- Bats 1.5.0

- OS: Fedora 33

- Bash version: GNU bash, version 5.0.17(1)-release (x86_64-redhat-linux-gnu)

| priority | bats waits for bg processes in setup file even when is closed i m following the indications in and that seems to work when bg processes like sleep are launched from the test body or the per test setup function but when launched from the per file setup file function sleep is still blocking the bats execution after all tests are done to reproduce test bats does not hang on bg process in setup file cd bats test tmpdir cat foo bats setup file sleep echo test foo true eof seconds run bats foo bats test seconds lt is this a known issue i have seen very recent work around this topic in but not sure if that will apply to this particular issue environment bats os fedora bash version gnu bash version release redhat linux gnu | 1 |

424,604 | 12,313,533,367 | IssuesEvent | 2020-05-12 15:26:25 | inverse-inc/packetfence | https://api.github.com/repos/inverse-inc/packetfence | closed | Unable to reconfigure existing filter | Priority: Medium Type: Bug | **Describe the bug**

I'm trying to reconfigure an existing filter and change its logic, but moving objects around is glitchy.

**To Reproduce**

1. Shown live to @satkunas

2. Use the following config:

```
[02_no_employees_on_student_ssid]
status=enabled
condition=node_info.category != "Eleves" && ssid == "pi" || ssid == "niz"
role=REJECT
scopes=RegisteredRole
```

| 1.0 | Unable to reconfigure existing filter - **Describe the bug**

I'm trying to reconfigure an existing filter and change its logic, but moving objects around is glitchy.

**To Reproduce**

1. Shown live to @satkunas

2. Use the following config:

```
[02_no_employees_on_student_ssid]
status=enabled
condition=node_info.category != "Eleves" && ssid == "pi" || ssid == "niz"
role=REJECT
scopes=RegisteredRole
```

| priority | unable to reconfigure existing filter describe the bug i m trying to reconfigure an existing filter and change its logic but moving objects around is glitchy to reproduce showed in live to satkunas use the following config status enabled condition node info category eleves ssid pi ssid niz role reject scopes registeredrole | 1 |

721,194 | 24,820,835,674 | IssuesEvent | 2022-10-25 16:18:00 | AY2223S1-CS2103-F14-3/tp | https://api.github.com/repos/AY2223S1-CS2103-F14-3/tp | closed | As a user, I can easily view upcoming interviews happening within 1 week from now | type.Story priority.Medium | ... so that I can prepare for them accordingly. | 1.0 | As a user, I can easily view upcoming interviews happening within 1 week from now - ... so that I can prepare for them accordingly. | priority | as a user i can easily view upcoming interviews happening within week from now so that i can prepare for them accordingly | 1 |

809,861 | 30,215,177,959 | IssuesEvent | 2023-07-05 15:07:43 | fmv1001/F1RacePredictor | https://api.github.com/repos/fmv1001/F1RacePredictor | closed | Develop the application logic for interacting with the model | medium priority | This task will build what is needed for the application to interact with the model and return the results to the interface. | 1.0 | Develop the application logic for interacting with the model - This task will build what is needed for the application to interact with the model and return the results to the interface. | priority | develop the application logic for interacting with the model this task will build what is needed for the application to interact with the model and return the results to the interface | 1 |

204,049 | 7,079,882,942 | IssuesEvent | 2018-01-10 11:19:17 | gluster/glusterd2 | https://api.github.com/repos/gluster/glusterd2 | closed | New format for Volume create request | FW: ReST priority: medium | The current Volume Create request format is very limited, since it converts the list of bricks into sub volumes based on other parameters and on the order of the bricks. With the new format, each sub volume can have its own type and list of bricks.

For example, to create a distributed-replicated volume:

Existing:

    {
        "name": "gv1",
        "replica": 2,
        "bricks": [
            "b4e2a4a5-103f-4ae7-8545-706b7c5039e9:/bricks/b1",
            "72b66b1e-e29c-450a-a4af-bfc8499aae54:/bricks/b2",
            "b0e0b26c-fecc-4e24-8edc-f2f328258301:/bricks/b3",
            "9fd10082-84b7-431d-a58c-9a6d060fd7cd:/bricks/b4"
        ],
        "transport": "tcp"
    }

Proposed:

    {
        "name": "gv1",
        "subvols": [
            {
                "name": "s1",
                "type": "replicate",
                "bricks": [
                    {"nodeid": "b4e2a4a5-103f-4ae7-8545-706b7c5039e9", "path": "/bricks/b1"},
                    {"nodeid": "72b66b1e-e29c-450a-a4af-bfc8499aae54", "path": "/bricks/b2"}
                ]
            },
            {
                "name": "s2",
                "type": "replicate",
                "bricks": [
                    {"nodeid": "b0e0b26c-fecc-4e24-8edc-f2f328258301", "path": "/bricks/b3"},
                    {"nodeid": "9fd10082-84b7-431d-a58c-9a6d060fd7cd", "path": "/bricks/b4"}
                ]
            }
        ],
        "transport": "tcp"
    }

For an arbiter volume (note: brick order is not strict):

    {
        "name": "gv1",
        "subvols": [
            {
                "name": "s1",
                "type": "arbiter",
                "bricks": [
                    {"nodeid": "b4e2a4a5-103f-4ae7-8545-706b7c5039e9", "path": "/bricks/b1"},
                    {"nodeid": "72b66b1e-e29c-450a-a4af-bfc8499aae54", "path": "/bricks/b2"},
                    {"nodeid": "59a6033e-07c3-49bb-92a0-f38c8dfbdb0a", "path": "/bricks/b3", "arbiter": true}
                ]
            },
            {
                "name": "s2",
                "type": "arbiter",
                "bricks": [
                    {"nodeid": "b0e0b26c-fecc-4e24-8edc-f2f328258301", "path": "/bricks/b4"},
                    {"nodeid": "9fd10082-84b7-431d-a58c-9a6d060fd7cd", "path": "/bricks/b5", "arbiter": true},
                    {"nodeid": "1b9f7313-a85b-46ba-a2ff-e9620f0f06bb", "path": "/bricks/b6"}
                ]
            }
        ],
        "transport": "tcp"
    }

Let me know if any changes to the format or any new fields are required.

## Expected changes

- REST Client package: CLI to REST Client communication remains the same

- CLI changes while displaying volinfo

- Volinfo struct changes

- Volume command changes when accessing information

- REST API documentation changes

| 1.0 | New format for Volume create request - The current Volume Create request format is very limited, since it converts the list of bricks into sub volumes based on other parameters and on the order of the bricks. With the new format, each sub volume can have its own type and list of bricks.

For example, to create a distributed-replicated volume:

Existing:

    {
        "name": "gv1",
        "replica": 2,
        "bricks": [
            "b4e2a4a5-103f-4ae7-8545-706b7c5039e9:/bricks/b1",
            "72b66b1e-e29c-450a-a4af-bfc8499aae54:/bricks/b2",
            "b0e0b26c-fecc-4e24-8edc-f2f328258301:/bricks/b3",
            "9fd10082-84b7-431d-a58c-9a6d060fd7cd:/bricks/b4"
        ],
        "transport": "tcp"
    }

Proposed:

    {
        "name": "gv1",
        "subvols": [
            {
                "name": "s1",
                "type": "replicate",
                "bricks": [
                    {"nodeid": "b4e2a4a5-103f-4ae7-8545-706b7c5039e9", "path": "/bricks/b1"},
                    {"nodeid": "72b66b1e-e29c-450a-a4af-bfc8499aae54", "path": "/bricks/b2"}
                ]
            },
            {
                "name": "s2",
                "type": "replicate",
                "bricks": [
                    {"nodeid": "b0e0b26c-fecc-4e24-8edc-f2f328258301", "path": "/bricks/b3"},
                    {"nodeid": "9fd10082-84b7-431d-a58c-9a6d060fd7cd", "path": "/bricks/b4"}
                ]
            }
        ],
        "transport": "tcp"
    }

For an arbiter volume (note: brick order is not strict):

    {
        "name": "gv1",
        "subvols": [
            {
                "name": "s1",
                "type": "arbiter",
                "bricks": [
                    {"nodeid": "b4e2a4a5-103f-4ae7-8545-706b7c5039e9", "path": "/bricks/b1"},
                    {"nodeid": "72b66b1e-e29c-450a-a4af-bfc8499aae54", "path": "/bricks/b2"},
                    {"nodeid": "59a6033e-07c3-49bb-92a0-f38c8dfbdb0a", "path": "/bricks/b3", "arbiter": true}
                ]
            },
            {
                "name": "s2",
                "type": "arbiter",
                "bricks": [
                    {"nodeid": "b0e0b26c-fecc-4e24-8edc-f2f328258301", "path": "/bricks/b4"},
                    {"nodeid": "9fd10082-84b7-431d-a58c-9a6d060fd7cd", "path": "/bricks/b5", "arbiter": true},
                    {"nodeid": "1b9f7313-a85b-46ba-a2ff-e9620f0f06bb", "path": "/bricks/b6"}
                ]
            }
        ],
        "transport": "tcp"
    }

Let me know if any changes to the format or any new fields are required.

## Expected changes

- REST Client package: CLI to REST Client communication remains the same

- CLI changes while displaying volinfo

- Volinfo struct changes

- Volume command changes when accessing information

- REST API documentation changes

| priority | new format for volume create request current volume create request format is very limited since it converts the list of bricks into sub volumes based on other parameters and order of bricks with new format each sub volume can have its own type and list of bricks for example to create distribute replicate volume existing name replica bricks bricks bricks fecc bricks bricks transport tcp proposed name subvols name type replicate bricks nodeid path bricks nodeid path bricks name type replicate bricks nodeid fecc path bricks nodeid path bricks transport tcp in case of arbiter volume note bricks order is not strict name subvols name type arbiter bricks nodeid path bricks nodeid path bricks nodeid path bricks arbiter true name type arbiter bricks nodeid fecc path bricks nodeid path bricks arbiter true nodeid path bricks transport tcp let me know if any changes required to the format or any new fields required expected changes rest client package cli to rest client communication remains same cli changes while displaying volinfo volinfo struct changes volume commands changes while accessing information rest api documentation changes | 1 |

272,343 | 8,507,509,779 | IssuesEvent | 2018-10-30 19:16:27 | RobotLocomotion/drake | https://api.github.com/repos/RobotLocomotion/drake | opened | bazel: Use `try-import %workspace%/user.bazelrc` | configuration: bazel priority: medium team: kitware | Follow-up from #9864; super useful to not have to mutate `$HOME` dotfiles.

Can use once we have Bazel 0.18.

\cc @jamiesnape @sammy-tri | 1.0 | bazel: Use `try-import %workspace%/user.bazelrc` - Follow-up from #9864; super useful to not have to mutate `$HOME` dotfiles.

Can use once we have Bazel 0.18.

\cc @jamiesnape @sammy-tri | priority | bazel use try import workspace user bazelrc follow up from super useful to not have to mutate home dotfiles can use once we have bazel cc jamiesnape sammy tri | 1 |

274,762 | 8,565,045,582 | IssuesEvent | 2018-11-09 18:36:40 | sdss/marvin | https://api.github.com/repos/sdss/marvin | closed | VACs break pickling | marvin-tools priority-medium | <!-- **NEVER INCLUDE PLAINTEXT PASSWORDS OR PRIVATE INFORMATION IN THE BUG REPORT** -->

**Describe the bug**

Pickling seems broken everywhere with the introduction of the VACs.

**Additional context**

One example:

```python
test_spaxel.py:383:
_ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _
../../tools/spaxel.py:249: in save
    return marvin.core.marvin_pickle.save(self, path=path, overwrite=overwrite)
_ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _

obj = <Marvin Spaxel (plateifu=8485-1901, x=25, y=15; x_cen=8, y_cen=-2, loaded=maps/modelcube)>
path = '/private/var/folders/gp/vsl4fq4d47s9mpywmcbrsj7c0000gn/T/pytest-of-albireo/pytest-3/scratch7/test_spaxel.mpf'
overwrite = True

    def save(obj, path=None, overwrite=False):
        """Pickles the object.

        If ``path=None``, uses the default location of the file in the tree
        but changes the extension of the file to ``.mpf`` (MaNGA Pickle File).
        Returns the path of the saved pickle file.

        Parameters:
            obj:
                Marvin object to pickle.
            path (str):
                Path of saved file. Default is ``None``.
            overwrite (bool):
                If ``True``, overwrite existing file. Default is ``False``.

        Returns:
            str:
                Path of saved file.

        """

        from ..tools.core import MarvinToolsClass

        if path is None:
            assert isinstance(obj, MarvinToolsClass), 'path=None is only allowed for core objects.'
            path = obj._getFullPath()
            assert isinstance(path, string_types), 'path must be a string.'

        if path is None:
            raise MarvinError('cannot determine the default path in the '
                              'tree for this file. You can overcome this '
                              'by calling save with a path keyword with '
                              'the path to which the file should be saved.')

        # Replaces the extension (normally fits) with mpf
        if '.fits.gz' in path:
            path = path.strip('.fits.gz')
        else:
            path = os.path.splitext(path)[0]

        path += '.mpf'

        path = os.path.realpath(os.path.expanduser(path))

        if os.path.isdir(path):
            raise MarvinError('path must be a full route, including the filename.')

        if os.path.exists(path) and not overwrite:
            warnings.warn('file already exists. Not overwriting.', MarvinUserWarning)
            return

        dirname = os.path.dirname(path)
        if not os.path.exists(dirname):
            os.makedirs(dirname)

        try:
            with open(path, 'wb') as fout:
                pickle.dump(obj, fout, protocol=-1)
        except Exception as ee:
            if os.path.exists(path):
                os.remove(path)
>           raise MarvinError('error found while pickling: {0}'.format(str(ee)))
E           marvin.core.exceptions.MarvinError: error found while pickling: Can't pickle local object 'VACMixIn.get_vacs.<locals>.VACContainer'.
```

| 1.0 | VACs break pickling - <!-- **NEVER INCLUDE PLAINTEXT PASSWORDS OR PRIVATE INFORMATION IN THE BUG REPORT** -->

**Describe the bug**

Pickling seems broken everywhere with the introduction of the VACs.

**Additional context**

One example:

```python
test_spaxel.py:383:
_ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _
../../tools/spaxel.py:249: in save
    return marvin.core.marvin_pickle.save(self, path=path, overwrite=overwrite)
_ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _

obj = <Marvin Spaxel (plateifu=8485-1901, x=25, y=15; x_cen=8, y_cen=-2, loaded=maps/modelcube)>
path = '/private/var/folders/gp/vsl4fq4d47s9mpywmcbrsj7c0000gn/T/pytest-of-albireo/pytest-3/scratch7/test_spaxel.mpf'
overwrite = True

    def save(obj, path=None, overwrite=False):
        """Pickles the object.

        If ``path=None``, uses the default location of the file in the tree
        but changes the extension of the file to ``.mpf`` (MaNGA Pickle File).
        Returns the path of the saved pickle file.

        Parameters:
            obj:
                Marvin object to pickle.
            path (str):
                Path of saved file. Default is ``None``.
            overwrite (bool):
                If ``True``, overwrite existing file. Default is ``False``.

        Returns:
            str:
                Path of saved file.

        """

        from ..tools.core import MarvinToolsClass

        if path is None:
            assert isinstance(obj, MarvinToolsClass), 'path=None is only allowed for core objects.'
            path = obj._getFullPath()
            assert isinstance(path, string_types), 'path must be a string.'

        if path is None:
            raise MarvinError('cannot determine the default path in the '
                              'tree for this file. You can overcome this '
                              'by calling save with a path keyword with '
                              'the path to which the file should be saved.')

        # Replaces the extension (normally fits) with mpf
        if '.fits.gz' in path:
            path = path.strip('.fits.gz')
        else:
            path = os.path.splitext(path)[0]

        path += '.mpf'

        path = os.path.realpath(os.path.expanduser(path))

        if os.path.isdir(path):
            raise MarvinError('path must be a full route, including the filename.')

        if os.path.exists(path) and not overwrite:
            warnings.warn('file already exists. Not overwriting.', MarvinUserWarning)
            return

        dirname = os.path.dirname(path)
        if not os.path.exists(dirname):
            os.makedirs(dirname)

        try:
            with open(path, 'wb') as fout:
                pickle.dump(obj, fout, protocol=-1)
        except Exception as ee:
            if os.path.exists(path):
                os.remove(path)
>           raise MarvinError('error found while pickling: {0}'.format(str(ee)))
E           marvin.core.exceptions.MarvinError: error found while pickling: Can't pickle local object 'VACMixIn.get_vacs.<locals>.VACContainer'.
```

| priority | vacs break pickling describe the bug pickling seems broken everywhere with the introduction of the vacs additional context one example python test spaxel py tools spaxel py in save return marvin core marvin pickle save self path path overwrite overwrite obj path private var folders gp t pytest of albireo pytest test spaxel mpf overwrite true def save obj path none overwrite false pickles the object if path none uses the default location of the file in the tree but changes the extension of the file to mpf manga pickle file returns the path of the saved pickle file parameters obj marvin object to pickle path str path of saved file default is none overwrite bool if true overwrite existing file default is false returns str path of saved file from tools core import marvintoolsclass if path is none assert isinstance obj marvintoolsclass path none is only allowed for core objects path obj getfullpath assert isinstance path string types path must be a string if path is none raise marvinerror cannot determine the default path in the tree for this file you can overcome this by calling save with a path keyword with the path to which the file should be saved replaces the extension normally fits with mpf if fits gz in path path path strip fits gz else path os path splitext path path mpf path os path realpath os path expanduser path if os path isdir path raise marvinerror path must be a full route including the filename if os path exists path and not overwrite warnings warn file already exists not overwriting marvinuserwarning return dirname os path dirname path if not os path exists dirname os makedirs dirname try with open path wb as fout pickle dump obj fout protocol except exception as ee if os path exists path os remove path raise marvinerror error found while pickling format str ee e marvin core exceptions marvinerror error found while pickling can t pickle local object vacmixin get vacs vaccontainer | 1 |

338,445 | 10,229,415,212 | IssuesEvent | 2019-08-17 12:30:45 | ifedchankau/trading-risk-manager | https://api.github.com/repos/ifedchankau/trading-risk-manager | opened | Position size calculation | Priority: Medium Type: feature | Realize position size calculation based on:

- stop-loss level (calculated in `find_order_levels` function) and position open price

- exchange or broker comissions (fetching by API or stored in file: consider both realizations)

- slippage of market orders and stop-losses (stored in file for every market) | 1.0 | Position size calculation - Realize position size calculation based on:

- stop-loss level (calculated in `find_order_levels` function) and position open price

- exchange or broker comissions (fetching by API or stored in file: consider both realizations)

- slippage of market orders and stop-losses (stored in file for every market) | priority | position size calculation realize position size calculation based on stop loss level calculated in find order levels function and position open price exchange or broker comissions fetching by api or stored in file consider both realizations slippage of market orders and stop losses stored in file for every market | 1 |

362,305 | 10,725,978,661 | IssuesEvent | 2019-10-28 08:17:30 | she-code-africa/SCA-Website | https://api.github.com/repos/she-code-africa/SCA-Website | opened | Convert blog post editor to markdown editor | back-end priority: medium | This is to convert the current WYSIWYG editor used when creating or editing a blog post to a markdown equivalent which is also provided by Keystone - https://v4.keystonejs.com/api/field/markdown

The markdown section of this article may also be helpful with implementation - https://modernweb.com/building-blog-keystone-cms-node-js/ | 1.0 | Convert blog post editor to markdown editor - This is to convert the current WYSIWYG editor used when creating or editing a blog post to a markdown equivalent which is also provided by Keystone - https://v4.keystonejs.com/api/field/markdown

The markdown section of this article may also be helpful with implementation - https://modernweb.com/building-blog-keystone-cms-node-js/ | priority | convert blog post editor to markdown editor this is to convert the current wysiwyg editor used when creating or editing a blog post to a markdown equivalent which is also provided by keystone the markdown section of this article may also be helpful with implementation | 1 |

700,558 | 24,064,417,839 | IssuesEvent | 2022-09-17 09:10:23 | renovatebot/renovate | https://api.github.com/repos/renovatebot/renovate | closed | Group preset for `@tanstack/react-query` and `@tanstack/react-query-devtools` | type:feature priority-3-medium status:in-progress core:config | ### What would you like Renovate to be able to do?

I think you're supposed to update the `@tanstack/react-query` and `@tanstack/react-query-devtools` packages in one go together.

### If you have any ideas on how this should be implemented, please tell us here.

Create a new `group:tanstack` preset, like this:

```json

{

"packageRules": [

{

"matchDatasources": [

"npm"

],

"matchPackageNames": [

"@tanstack/react-query",

"@tanstack/react-query-devtools"

],

"groupName": "tanstack react-query packages"

}

]

}

```

### Is this a feature you are interested in implementing yourself?

Maybe | 1.0 | Group preset for `@tanstack/react-query` and `@tanstack/react-query-devtools` - ### What would you like Renovate to be able to do?

I think you're supposed to update the `@tanstack/react-query` and `@tanstack/react-query-devtools` packages in one go together.

### If you have any ideas on how this should be implemented, please tell us here.

Create a new `group:tanstack` preset, like this:

```json

{

"packageRules": [

{

"matchDatasources": [

"npm"

],

"matchPackageNames": [

"@tanstack/react-query",

"@tanstack/react-query-devtools"

],

"groupName": "tanstack react-query packages"

}

]

}

```

### Is this a feature you are interested in implementing yourself?

Maybe | priority | group preset for tanstack react query and tanstack react query devtools what would you like renovate to be able to do i think you re supposed to update the tanstack react query and tanstack react query devtools packages in one go together if you have any ideas on how this should be implemented please tell us here create a new group tanstack preset like this json packagerules matchdatasources npm matchpackagenames tanstack react query tanstack react query devtools groupname tanstack react query packages is this a feature you are interested in implementing yourself maybe | 1 |

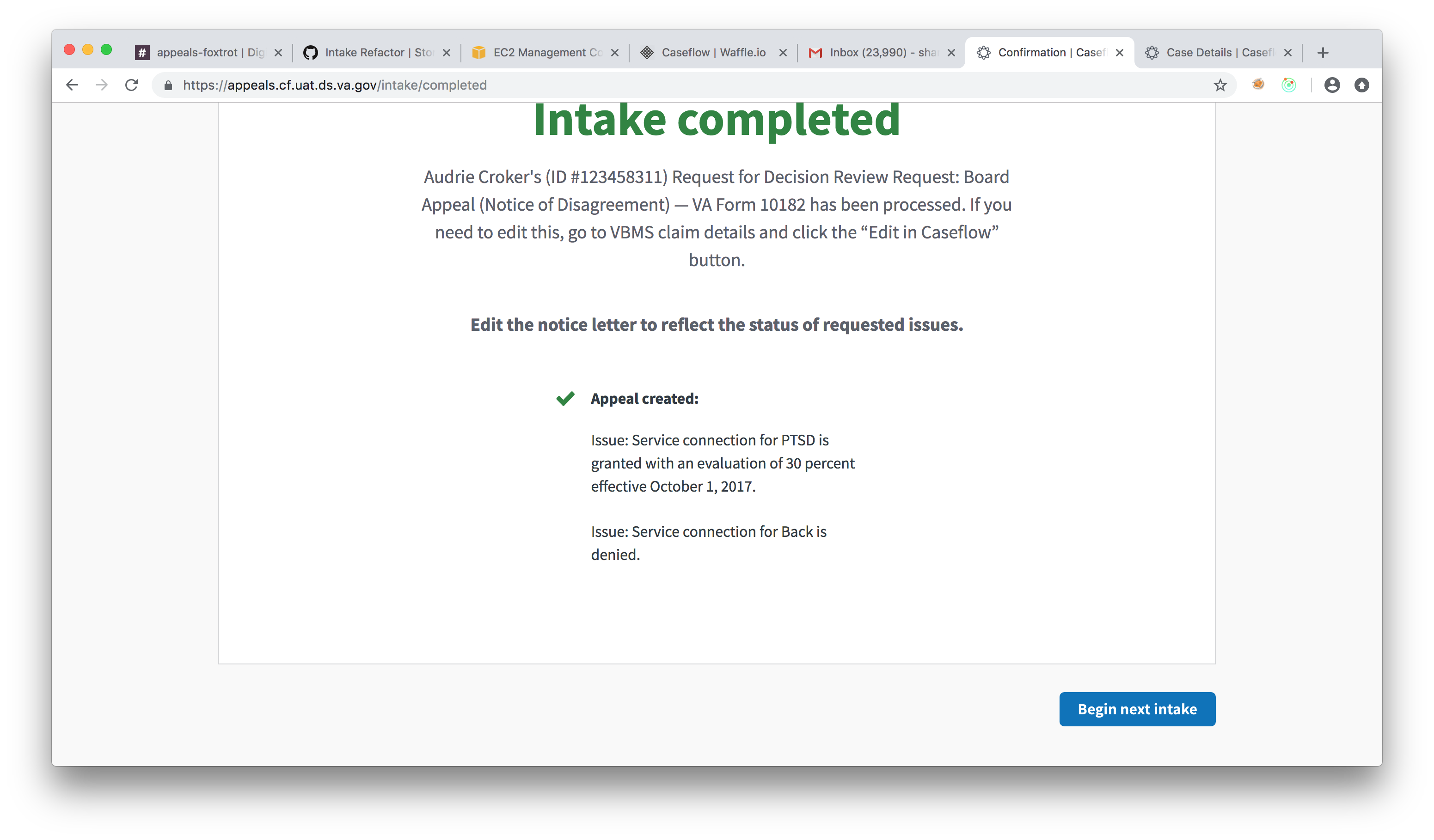

276,952 | 8,614,824,748 | IssuesEvent | 2018-11-19 18:39:42 | department-of-veterans-affairs/caseflow | https://api.github.com/repos/department-of-veterans-affairs/caseflow | closed | AMA Intake | Don't show ineligible issues as having been added to an appeal | Ready-for-Dev bug-medium-priority caseflow-intake sierra | Currently, ineligible issues are shown as having been added to an appeal. Even though we save them to the database, they should not considered to be issues on the appeal (similar to HLRs and SCs, where we do not create a contention)

## Acceptance Criteria

- Ineligible issues should not show up on the confirmation page of an appeal intake

## Screenshot

In this screen shot, one of these two issues was ineligible

| 1.0 | AMA Intake | Don't show ineligible issues as having been added to an appeal - Currently, ineligible issues are shown as having been added to an appeal. Even though we save them to the database, they should not considered to be issues on the appeal (similar to HLRs and SCs, where we do not create a contention)

## Acceptance Criteria

- Ineligible issues should not show up on the confirmation page of an appeal intake

## Screenshot

In this screen shot, one of these two issues was ineligible

| priority | ama intake don t show ineligible issues as having been added to an appeal currently ineligible issues are shown as having been added to an appeal even though we save them to the database they should not considered to be issues on the appeal similar to hlrs and scs where we do not create a contention acceptance criteria ineligible issues should not show up on the confirmation page of an appeal intake screenshot in this screen shot one of these two issues was ineligible | 1 |

154,232 | 5,916,172,221 | IssuesEvent | 2017-05-22 09:49:02 | harryshipton/secsplit | https://api.github.com/repos/harryshipton/secsplit | closed | Add a migration command | feature request medium priority | You should be able to migrate to the latest shard version by a single command, rather than having to merge and then resplit.

Extension to #8 (which is a prerequisite). | 1.0 | Add a migration command - You should be able to migrate to the latest shard version by a single command, rather than having to merge and then resplit.

Extension to #8 (which is a prerequisite). | priority | add a migration command you should be able to migrate to the latest shard version by a single command rather than having to merge and then resplit extension to which is a prerequisite | 1 |

520,369 | 15,085,248,554 | IssuesEvent | 2021-02-05 18:22:29 | dtcenter/MET | https://api.github.com/repos/dtcenter/MET | closed | Update the plotting R-scripts to handle output from different versions of MET. | alert: NEED ACCOUNT KEY component: user support component: utility scripts priority: medium requestor: Community type: enhancement | ## Describe the Enhancement ##

This issue is based on a request that came in via met-help:

https://rt.rap.ucar.edu/rt/Ticket/Display.html?id=98517

I actually already posted updates for plot_cnt.R and plot_mpr.R prior to realizing that these scripts actually do live in the MET repository. So this task is to make the same updates in the develop branch. Also, check if the same changes are needed in any of the other R-scripts. The change is this...

- add a -met_base command line argument

- if not specified there, check for a MET_BASE environment variable

- if neither are set, error out

- read the data and extract the MET version number from the first column of the first line

- read the corresponding header for that line type/version number from MET_BASE/table_files/met_header_columns_vX.Y.txt

- remove the hard-coded headers from the top of these scripts

### Time Estimate ###

4 hours.

### Sub-Issues ###

Consider breaking the enhancement down into sub-issues.

No sub-issues required.

### Relevant Deadlines ###

None.

### Funding Source ###

?

## Define the Metadata ##

### Assignee ###

- [x] Select **engineer(s)** or **no engineer** required: John HG

- [x] Select **scientist(s)** or **no scientist** required: no scientist required

### Labels ###

- [x] Select **component(s)**

- [x] Select **priority**

- [x] Select **requestor(s)**

### Projects and Milestone ###

- [x] Review **projects** and select relevant **Repository** and **Organization** ones or add "alert:NEED PROJECT ASSIGNMENT" label

- [x] Select **milestone** to next major version milestone or "Future Versions"

## Define Related Issue(s) ##

Consider the impact to the other METplus components.