Unnamed: 0 | id | type | created_at | repo | repo_url | action | title | labels | body | index | text_combine | label | text | binary_label |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

99,828 | 4,068,919,919 | IssuesEvent | 2016-05-27 00:17:18 | flashxyz/BookMe | https://api.github.com/repos/flashxyz/BookMe | opened | BUG: reading of room's time restrictions not working well | AdminSettingsTeam bug Development priority : medium | reading of fromTime and toTime in room options showing blank fields. | 1.0 | BUG: reading of room's time restrictions not working well - reading of fromTime and toTime in room options showing blank fields. | priority | bug reading of room s time restrictions not working well reading of fromtime and totime in room options showing blank fields | 1 |

387,412 | 11,460,986,929 | IssuesEvent | 2020-02-07 10:53:15 | project-koku/koku | https://api.github.com/repos/project-koku/koku | closed | Missing cluster name | bug priority - medium | ## Background

We recently removed an implicit group by cluster from the API for project/node which means that we don't have a single cluster to return here.

## Proposed Solution

- We can convert to return an array of clusters. This way if the project exists in multiple clusters we can still deliver this information in a single row in the details page / API resultset.

- See https://docs.djangoproject.com/en/3.0/ref/contrib/postgres/aggregates/#arrayagg for Django use of Postgres specific array_agg method. We could use ArrayAgg to package up cluster ids and cluster aliases in our annotations and aggregates.
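To make the proposed result shape concrete, here is a minimal plain-Python sketch of what an `array_agg`-style grouping would hand back: one row per project, with its clusters collected into arrays. It does not use Django or Postgres; the field names (`project`, `cluster_id`, `cluster_alias`, `cost`) and the sample values are hypothetical and not taken from the koku schema.

```python
from collections import defaultdict


def aggregate_clusters(rows):
    """Group rows by project and collect distinct clusters into lists.

    Mimics the shape that array_agg(DISTINCT ...) would produce.
    All field names are illustrative assumptions.
    """
    grouped = defaultdict(lambda: {"clusters": set(), "cost": 0.0})
    for row in rows:
        entry = grouped[row["project"]]
        # Keep id and alias together so the two arrays stay aligned.
        entry["clusters"].add((row["cluster_id"], row["cluster_alias"]))
        entry["cost"] += row["cost"]

    result = []
    for project, entry in sorted(grouped.items()):
        pairs = sorted(entry["clusters"])
        result.append(
            {
                "project": project,
                "clusters": [cluster_id for cluster_id, _ in pairs],
                "cluster_aliases": [alias for _, alias in pairs],
                "cost": entry["cost"],
            }
        )
    return result


# Hypothetical sample: project "web" runs in two clusters.
sample_rows = [
    {"project": "web", "cluster_id": "c1", "cluster_alias": "East", "cost": 10.0},
    {"project": "web", "cluster_id": "c2", "cluster_alias": "West", "cost": 5.0},
    {"project": "db", "cluster_id": "c1", "cluster_alias": "East", "cost": 2.5},
]

for line in aggregate_clusters(sample_rows):
    print(line)
```

With this shape, a project that exists in several clusters still occupies a single row, and the UI can list every cluster name when the row is expanded.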

**Describe the bug**

As a user, I want to see the cluster associated with each Ocp and Ocp on AWS project. The UI had provided this information in the details page, via table expandable rows.

Unfortunately, the cost reports no longer appear to provide the cluster. As a result, the cluster is undefined and the UI no longer shows cluster names for each project.

**To Reproduce**

Steps to reproduce the behavior:

1. Go to 'OpenShift on cloud details' or 'OpenShift details'

2. Click on 'Group cost by' project

3. Expand any table row

4. See missing cluster name

**Expected behavior**

We need the cluster name associated with each project

**Screenshots**

This is the API used in the OpenShift details:

/v1/reports/openshift/costs/?delta=cost&filter[limit]=10&filter[offset]=0&filter[resolution]=monthly&filter[time_scope_units]=month&filter[time_scope_value]=-1&group_by[project]=*&order_by[cost]=desc

This is the API used in the OpenShift on cloud details:

/v1/reports/openshift/infrastructures/aws/costs/?delta=cost&filter[limit]=10&filter[offset]=0&filter[resolution]=monthly&filter[time_scope_units]=month&filter[time_scope_value]=-1&group_by[project]=*&order_by[cost]=desc

| 1.0 | Missing cluster name - ## Background

We recently removed an implicit group by cluster from the API for project/node which means that we don't have a single cluster to return here.

## Proposed Solution

- We can convert to return an array of clusters. This way if the project exists in multiple clusters we can still deliver this information in a single row in the details page / API resultset.

- See https://docs.djangoproject.com/en/3.0/ref/contrib/postgres/aggregates/#arrayagg for Django use of Postgres specific array_agg method. We could use ArrayAgg to package up cluster ids and cluster aliases in our annotations and aggregates.

**Describe the bug**

As a user, I want to see the cluster associated with each Ocp and Ocp on AWS project. The UI had provided this information in the details page, via table expandable rows.

Unfortunately, the cost reports no longer appear to provide the cluster. As a result, the cluster is undefined and the UI no longer shows cluster names for each project.

**To Reproduce**

Steps to reproduce the behavior:

1. Go to 'OpenShift on cloud details' or 'OpenShift details'

2. Click on 'Group cost by' project

3. Expand any table row

4. See missing cluster name

**Expected behavior**

We need the cluster name associated with each project

**Screenshots**

This is the API used in the OpenShift details:

/v1/reports/openshift/costs/?delta=cost&filter[limit]=10&filter[offset]=0&filter[resolution]=monthly&filter[time_scope_units]=month&filter[time_scope_value]=-1&group_by[project]=*&order_by[cost]=desc

This is the API used in the OpenShift on cloud details:

/v1/reports/openshift/infrastructures/aws/costs/?delta=cost&filter[limit]=10&filter[offset]=0&filter[resolution]=monthly&filter[time_scope_units]=month&filter[time_scope_value]=-1&group_by[project]=*&order_by[cost]=desc

| priority | missing cluster name background we recently removed an implicit group by cluster from the api for project node which means that we don t have a single cluster to return here proposed solution we can convert to return an array of clusters this way if the project exists in multiple clusters we can still deliver this information in a single row in the details page api resultset see for django use of postgres specific array agg method we could use arrayagg to package up cluster ids and cluster aliases in our annotations and aggregates describe the bug as a user i want to see the cluster associated with each ocp and ocp on aws project the ui had provided this information in the details page via table expandable rows unfortunately the cost reports no longer appear to provide the cluster as a result the cluster is undefined and the ui no longer shows cluster names for each project to reproduce steps to reproduce the behavior go to openshift on cloud details or openshift details click on group cost by project expand any table row see missing cluster name expected behavior we need the cluster name associated with each project screenshots this is the api used in the openshift details reports openshift costs delta cost filter filter filter monthly filter month filter group by order by desc this is the api used in the openshift on cloud details reports openshift infrastructures aws costs delta cost filter filter filter monthly filter month filter group by order by desc | 1 |

57,784 | 3,083,786,163 | IssuesEvent | 2015-08-24 11:18:46 | zaproxy/zaproxy | https://api.github.com/repos/zaproxy/zaproxy | closed | DOM XSS detection | Priority-Medium Type-Enhancement | ```

The current XSS detection only really works for server side issues.

For DOM XSS we really need to launch a browser, attack that.

```

Original issue reported on code.google.com by `psiinon` on 2014-10-17 09:38:50 | 1.0 | DOM XSS detection - ```

The current XSS detection only really works for server side issues.

For DOM XSS we really need to launch a browser, attack that.

```

Original issue reported on code.google.com by `psiinon` on 2014-10-17 09:38:50 | priority | dom xss detection the current xss detection only really works for server side issues for dom xss we really need to launch a browser attack that original issue reported on code google com by psiinon on | 1 |

108,886 | 4,357,337,586 | IssuesEvent | 2016-08-02 01:14:31 | pombase/canto | https://api.github.com/repos/pombase/canto | closed | Add option to export all publication data with canto_export.pl | medium priority | For pombase/pombase-chado#67 we need to get the publication triage data into Chado.

Add it to the JSON output as an option. | 1.0 | Add option to export all publication data with canto_export.pl - For pombase/pombase-chado#67 we need to get the publication triage data into Chado.

Add it to the JSON output as an option. | priority | add option to export all publication data with canto export pl for pombase pombase chado we need to get the publication triage data into chado add it to the json output as an option | 1 |

494,697 | 14,263,526,980 | IssuesEvent | 2020-11-20 14:33:36 | ooni/backend | https://api.github.com/repos/ooni/backend | opened | Review runbooks for on-call readiness | ooni/backend priority/medium | This is about going through some scenarios of what might break while on-call and ensuring I have all the knowledge (and tools) to be able to handle incidents while on-call. | 1.0 | Review runbooks for on-call readiness - This is about going through some scenarios of what might break while on-call and ensuring I have all the knowledge (and tools) to be able to handle incidents while on-call. | priority | review runbooks for on call readiness this is about going through some scenarios of what might break while on call and ensuring i have all the knowledge and tools to be able to handle incidents while on call | 1 |

584,075 | 17,405,423,141 | IssuesEvent | 2021-08-03 04:47:19 | Vurv78/ExpressionScript | https://api.github.com/repos/Vurv78/ExpressionScript | opened | Fix indexing operator | bug parser priority.medium | For some reason even though we accept a comma token it won't move past there. It will instead stay at the comma and not be able to accept a type token.

Repro

```golo

E[1,number]

``` | 1.0 | Fix indexing operator - For some reason even though we accept a comma token it won't move past there. It will instead stay at the comma and not be able to accept a type token.

Repro

```golo

E[1,number]

``` | priority | fix indexing operator for some reason even though we accept a comma token it won t move past there it will instead stay at the comma and not be able to accept a type token repro golo e | 1 |

606,678 | 18,767,459,476 | IssuesEvent | 2021-11-06 06:56:27 | argosp/trialdash | https://api.github.com/repos/argosp/trialdash | closed | Cloning trial keeps previous cloned trial name | enhancement Priority Medium done | When cloning a trial, the name of the new trial is similar to the name of the last cloned one.

Please change to [trial name] clone.

| 1.0 | Cloning trial keeps previous cloned trial name - When cloning a trial, the name of the new trial is similar to the name of the last cloned one.

Please change to [trial name] clone.

| priority | cloning trial keeps previous cloned trial name when cloning a trial the name of the new trial is similar to the name of the last cloned one please change to clone | 1 |

746,836 | 26,048,162,110 | IssuesEvent | 2022-12-22 16:05:37 | mi6/ic-ui-kit | https://api.github.com/repos/mi6/ic-ui-kit | closed | [ic-ui-kit dx] UI Kit storybook will not run on node version >= 17 | type: bug 🐛 dependencies priority: medium type: needs investigation development dx | ## Summary of the bug

The command `npm run storybook` fails when run on any node version greater than 16.18.1 (also working on v16.13.0 LTS). Worth noting that `npm run build` actually succeeds, it's just Storybook that's having the issue.

The error returned is:

```

@ukic/react: Error: error:0308010C:digital envelope routines::unsupported

@ukic/react: at new Hash (node:internal/crypto/hash:67:19)

@ukic/react: at Object.createHash (node:crypto:135:10)

@ukic/react: at module.exports (C:\Users\Administrator\dev\ic-ui-kit\packages\react\node_modules\webpack\lib\util\createHash.js:135:53)

@ukic/react: at NormalModule._initBuildHash (C:\Users\Administrator\dev\ic-ui-kit\packages\react\node_modules\webpack\lib\NormalModule.js:417:16)

@ukic/react: at handleParseError (C:\Users\Administrator\dev\ic-ui-kit\packages\react\node_modules\webpack\lib\NormalModule.js:471:10)

@ukic/react: at C:\Users\Administrator\dev\ic-ui-kit\packages\react\node_modules\webpack\lib\NormalModule.js:503:5

@ukic/react: at C:\Users\Administrator\dev\ic-ui-kit\packages\react\node_modules\webpack\lib\NormalModule.js:358:12

@ukic/react: at C:\Users\Administrator\dev\ic-ui-kit\packages\react\node_modules\loader-runner\lib\LoaderRunner.js:373:3

@ukic/react: at iterateNormalLoaders (C:\Users\Administrator\dev\ic-ui-kit\packages\react\node_modules\loader-runner\lib\LoaderRunner.js:214:10)

@ukic/react: at C:\Users\Administrator\dev\ic-ui-kit\packages\react\node_modules\loader-runner\lib\LoaderRunner.js:205:4 {

@ukic/react: opensslErrorStack: [ 'error:03000086:digital envelope routines::initialization error' ],

@ukic/react: library: 'digital envelope routines',

@ukic/react: reason: 'unsupported',

@ukic/react: code: 'ERR_OSSL_EVP_UNSUPPORTED'

@ukic/react: }

```

## How to reproduce

Tell us the steps to reproduce the problem:

1. Use one of the failing node versions (below), say v17.9.1.

### Failing node versions

These versions are tested to fail:

- v17.9.1

- v18.12.1

- v19.2.0

### OK node versions

Version 16.13.0 and 16.18.1 are tested to work.

## 🧐 Expected behaviour

Storybook should launch without the `` error, as it does on node v16.18.1

## 🖥 Desktop

Tested on Windows Server 2022 and macOS Monterey 12.6.

## Possible fix

This is reported in Storybook: https://github.com/storybookjs/storybook/issues/19692, looks like changing to Webpack 5 might fix this.
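Until a Webpack upgrade lands, a commonly cited stopgap is to start Storybook with `NODE_OPTIONS=--openssl-legacy-provider` on Node 17 and later, which ship OpenSSL 3. The sketch below only decides when to add the flag, since older Node versions may reject it; it has not been tried against this repo, and the helper names are hypothetical.

```python
LEGACY_FLAG = "--openssl-legacy-provider"


def needs_legacy_openssl(node_version):
    """Return True for Node major versions 17 and above."""
    major = int(node_version.lstrip("v").split(".")[0])
    return major >= 17


def storybook_env(node_version, base_env):
    """Return a copy of base_env with the legacy flag added when needed."""
    env = dict(base_env)
    if needs_legacy_openssl(node_version):
        existing = env.get("NODE_OPTIONS", "")
        env["NODE_OPTIONS"] = (existing + " " + LEGACY_FLAG).strip()
    return env


# Versions taken from the failing and working lists in this report.
for version in ["v16.13.0", "v16.18.1", "v17.9.1", "v18.12.1", "v19.2.0"]:
    print(version, storybook_env(version, {}))
```

The resulting mapping could then be passed as the environment when spawning `npm run storybook`.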

| 1.0 | [ic-ui-kit dx] UI Kit storybook will not run on node version >= 17 - ## Summary of the bug

The command `npm run storybook` fails when run on any node version greater than 16.18.1 (also working on v16.13.0 LTS). Worth noting that `npm run build` actually succeeds, it's just Storybook that's having the issue.

The error returned is:

```

@ukic/react: Error: error:0308010C:digital envelope routines::unsupported

@ukic/react: at new Hash (node:internal/crypto/hash:67:19)

@ukic/react: at Object.createHash (node:crypto:135:10)

@ukic/react: at module.exports (C:\Users\Administrator\dev\ic-ui-kit\packages\react\node_modules\webpack\lib\util\createHash.js:135:53)

@ukic/react: at NormalModule._initBuildHash (C:\Users\Administrator\dev\ic-ui-kit\packages\react\node_modules\webpack\lib\NormalModule.js:417:16)

@ukic/react: at handleParseError (C:\Users\Administrator\dev\ic-ui-kit\packages\react\node_modules\webpack\lib\NormalModule.js:471:10)

@ukic/react: at C:\Users\Administrator\dev\ic-ui-kit\packages\react\node_modules\webpack\lib\NormalModule.js:503:5

@ukic/react: at C:\Users\Administrator\dev\ic-ui-kit\packages\react\node_modules\webpack\lib\NormalModule.js:358:12

@ukic/react: at C:\Users\Administrator\dev\ic-ui-kit\packages\react\node_modules\loader-runner\lib\LoaderRunner.js:373:3

@ukic/react: at iterateNormalLoaders (C:\Users\Administrator\dev\ic-ui-kit\packages\react\node_modules\loader-runner\lib\LoaderRunner.js:214:10)

@ukic/react: at C:\Users\Administrator\dev\ic-ui-kit\packages\react\node_modules\loader-runner\lib\LoaderRunner.js:205:4 {

@ukic/react: opensslErrorStack: [ 'error:03000086:digital envelope routines::initialization error' ],

@ukic/react: library: 'digital envelope routines',

@ukic/react: reason: 'unsupported',

@ukic/react: code: 'ERR_OSSL_EVP_UNSUPPORTED'

@ukic/react: }

```

## How to reproduce

Tell us the steps to reproduce the problem:

1. Use one of the failing node versions (below), say v17.9.1.

### Failing node versions

These versions are tested to fail:

- v17.9.1

- v18.12.1

- v19.2.0

### OK node versions

Version 16.13.0 and 16.18.1 are tested to work.

## 🧐 Expected behaviour

Storybook should launch without the `` error, as it does on node v16.18.1

## 🖥 Desktop

Tested on Windows Server 2022 and macOS Monterey 12.6.

## Possible fix

This is reported in Storybook: https://github.com/storybookjs/storybook/issues/19692, looks like changing to Webpack 5 might fix this.

| priority | ui kit storybook will not run on node version summary of the bug the command npm run storybook fails when run on any node version greater than also working on lts worth noting that npm run build actually succeeds it s just storybook that s having the issue the error returned is ukic react error error digital envelope routines unsupported ukic react at new hash node internal crypto hash ukic react at object createhash node crypto ukic react at module exports c users administrator dev ic ui kit packages react node modules webpack lib util createhash js ukic react at normalmodule initbuildhash c users administrator dev ic ui kit packages react node modules webpack lib normalmodule js ukic react at handleparseerror c users administrator dev ic ui kit packages react node modules webpack lib normalmodule js ukic react at c users administrator dev ic ui kit packages react node modules webpack lib normalmodule js ukic react at c users administrator dev ic ui kit packages react node modules webpack lib normalmodule js ukic react at c users administrator dev ic ui kit packages react node modules loader runner lib loaderrunner js ukic react at iteratenormalloaders c users administrator dev ic ui kit packages react node modules loader runner lib loaderrunner js ukic react at c users administrator dev ic ui kit packages react node modules loader runner lib loaderrunner js ukic react opensslerrorstack ukic react library digital envelope routines ukic react reason unsupported ukic react code err ossl evp unsupported ukic react how to reproduce tell us the steps to reproduce the problem use one of the failing node versions below say failing node versions these versions are tested to fail ok node versions version and are tested to work 🧐 expected behaviour storybook should launch without the error as it does on node 🖥 desktop tested on windows server and macos monterey possible fix this is reported in storybook looks like changing to webpack might fix this | 1 |

89,937 | 3,807,039,631 | IssuesEvent | 2016-03-25 04:27:24 | TheValarProject/TheValarProjectWebsite | https://api.github.com/repos/TheValarProject/TheValarProjectWebsite | opened | Make themed video player | enhancement priority-medium | Make video player with custom themed controls. This will be used later to show videos that showcase the server/mod. This should use the HTML5 video tags. | 1.0 | Make themed video player - Make video player with custom themed controls. This will be used later to show videos that showcase the server/mod. This should use the HTML5 video tags. | priority | make themed video player make video player with custom themed controls this will be used later to show videos that showcase the server mod this should use the video tags | 1 |

289,651 | 8,873,572,942 | IssuesEvent | 2019-01-11 18:34:16 | Coders-After-Dark/Reskillable | https://api.github.com/repos/Coders-After-Dark/Reskillable | closed | Is the "none" config option working? | Medium Priority bug | I have tried:

tp=reskillable:mining|3

tp:cooked_apple:*="none"

and I'm left with a cooked apple requiring mining 3. What am I doing wrong? Thanks. | 1.0 | Is the "none" config option working? - I have tried:

tp=reskillable:mining|3

tp:cooked_apple:*="none"

and I'm left with a cooked apple requiring mining 3. What am I doing wrong? Thanks. | priority | is the none config option working i have tried tp reskillable mining tp cooked apple none and i m left with a cooked apple requiring mining what am i doing wrong thanks | 1 |

490,047 | 14,114,980,522 | IssuesEvent | 2020-11-07 18:32:50 | apowers313/nhai | https://api.github.com/repos/apowers313/nhai | opened | Shell: run / step | priority:medium 🛠 type:tools | Create shell commands to `run` until the next break point or `step` until the next event | 1.0 | Shell: run / step - Create shell commands to `run` until the next break point or `step` until the next event | priority | shell run step create shell commands to run until the next break point or step until the next event | 1 |

326,310 | 9,954,961,279 | IssuesEvent | 2019-07-05 09:43:59 | Baystation12/Baystation12 | https://api.github.com/repos/Baystation12/Baystation12 | closed | [Master] APLU Classic modkit has no sprite | Priority: Medium Sprites | When you apply the Classic modkit to a Ripley, then enter and eject from it, it then has no sprite. If you reenter it, it once again has a sprite. Basically, the sprite for when no one is in it is missing.

| 1.0 | [Master] APLU Classic modkit has no sprite - When you apply the Classic modkit to a Ripley, then enter and eject from it, it then has no sprite. If you reenter it, it once again has a sprite. Basically, the sprite for when no one is in it is missing.

| priority | aplu classic modkit has no sprite when you apply the classic modkit to a ripley then enter and eject from it it then has no sprite if you reenter it it once again has a sprite basically the sprite for when no one is in it is missing | 1 |

666,960 | 22,393,412,241 | IssuesEvent | 2022-06-17 09:56:45 | opencrvs/opencrvs-core | https://api.github.com/repos/opencrvs/opencrvs-core | closed | After clicking any office it should show user lists but it takes time and shows no user lists | Priority: medium | steps:

1. log in as Sys admin

2. make your network slow a little bit

3. Search Ibombo office

https://www.loom.com/share/25882c44fb4c4b67816039b3567872b3 | 1.0 | After clicking any office it should show user lists but it takes time and shows no user lists - steps:

1. log in as Sys admin

2. make your network slow a little bit

3. Search Ibombo office

https://www.loom.com/share/25882c44fb4c4b67816039b3567872b3 | priority | after clicking any office it should show user lists but it takes time and shows no user lists steps log in as sys admin make your network slow a little bit search ibombo office | 1 |

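409,932 | 11,980,607,984 | IssuesEvent | 2020-04-07 09:37:45 | numbersprotocol/lifebox | https://api.github.com/repos/numbersprotocol/lifebox | opened | [Feature] One-time input data should be recorded separately | medium priority | Android: 8.0.0

Mobile: Exodus 1s

App version: v0.1.11

One-time input data, such as height, home location, gender, and birthday (used to estimate age), should be on a separate dedicated page and entered when the user first uses the app. | 1.0 | [Feature] One-time input data should be recorded separately - Android: 8.0.0

Mobile: Exodus 1s

App version: v0.1.11

One-time input data, such as height, home location, gender, and birthday (used to estimate age), should be on a separate dedicated page and entered when the user first uses the app. | priority | one time input data should be recorded separately android mobile exodus app version one time input data such as height home location gender and birthday used to estimate age should be on a separate dedicated page and entered when the user first uses the app | 1 |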

309,041 | 9,460,641,893 | IssuesEvent | 2019-04-17 11:32:45 | Fabian-Sommer/HeroesLounge | https://api.github.com/repos/Fabian-Sommer/HeroesLounge | closed | Rework time formats for NA users | high priority medium | Times displayed (upcoming matches, schedule matches) should be in AM/PM for sloths with NA as their region. Scheduling should also be in this format for them. | 1.0 | Rework time formats for NA users - Times displayed (upcoming matches, schedule matches) should be in AM/PM for sloths with NA as their region. Scheduling should also be in this format for them. | priority | rework time formats for na users times displayed upcoming matches schedule matches should be in am pm for sloths with na as their region scheduling should also be in this format for them | 1 |

777,101 | 27,268,369,855 | IssuesEvent | 2023-02-22 20:00:52 | yugabyte/yugabyte-db | https://api.github.com/repos/yugabyte/yugabyte-db | closed | [YSQL] Strict inequality filter combined with IN condition gives extra rows | kind/bug area/ysql priority/medium status/awaiting-triage | Strict inequality filter combined with IN condition gives extra rows. With hybrid scans we push both the inequality filter and IN query as a part of scan options. However, when there is a strict inequality and an IN condition on the query, the strict inequality ends up not being adhered to completely.

Jira Link: [DB-5465](https://yugabyte.atlassian.net/browse/DB-5465)

### Description

```

./bin/ysqlsh

ysqlsh (11.2-YB-2.17.2.0-b0)

Type "help" for help.

create table test(r1 int, r2 int, primary key(r1 asc, r2 asc));

insert into test select i/5, i%5 from generate_series(1,20) i;

select * from test;

r1 | r2

----+----

0 | 1

0 | 2

0 | 3

0 | 4

1 | 0

1 | 1

1 | 2

1 | 3

1 | 4

2 | 0

2 | 1

2 | 2

2 | 3

2 | 4

3 | 0

3 | 1

3 | 2

3 | 3

3 | 4

4 | 0

(20 rows)

// Case where extra row is present -- INCORRECT

select * from test where r1 in (1, 3) and r2 > 2;

r1 | r2

----+----

1 | 3

1 | 4

3 | 2 <-- extra row

3 | 3

3 | 4

(5 rows)

// Case where extra row is present -- INCORRECT

select * from test where r1 in (0, 1, 3) and r2 > 2;

r1 | r2

----+----

0 | 3

0 | 4

1 | 3

1 | 4

3 | 2 <-- extra row

3 | 3

3 | 4

(7 rows)

```

An extra row is returned for queries that combine an IN condition with a range filter. In the examples above, the extra row `3, 2` is printed. The issue does not occur when `yb_bypass_cond_recheck` is set to false.
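For reference, the expected result sets can be derived independently of the database. The sketch below rebuilds the 20 rows in plain Python and applies the same predicates; it assumes that integer division and modulo behave the same as in the `insert` statement for these positive values, and the helper name `expected` is only illustrative.

```python
# Rebuild the table contents: generate_series(1, 20) mapped to (i/5, i%5).
rows = [(i // 5, i % 5) for i in range(1, 21)]


def expected(r1_values, r2_lower_bound):
    """Rows with r1 in r1_values and r2 strictly greater than the bound."""
    return sorted(
        (r1, r2)
        for r1, r2 in rows
        if r1 in r1_values and r2 > r2_lower_bound
    )


print(expected({1, 3}, 2))
# [(1, 3), (1, 4), (3, 3), (3, 4)]
print(expected({0, 1, 3}, 2))
# [(0, 3), (0, 4), (1, 3), (1, 4), (3, 3), (3, 4)]
```

Neither expected set contains `(3, 2)`, so the correct row counts are 4 and 6 rather than 5 and 7.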

[DB-5465]: https://yugabyte.atlassian.net/browse/DB-5465?atlOrigin=eyJpIjoiNWRkNTljNzYxNjVmNDY3MDlhMDU5Y2ZhYzA5YTRkZjUiLCJwIjoiZ2l0aHViLWNvbS1KU1cifQ | 1.0 | [YSQL] Strict inequality filter combined with IN condition gives extra rows - Strict inequality filter combined with IN condition gives extra rows. With hybrid scans we push both the inequality filter and IN query as a part of scan options. However, when there is a strict inequality and an IN condition on the query, the strict inequality ends up not being adhered to completely.

Jira Link: [DB-5465](https://yugabyte.atlassian.net/browse/DB-5465)

### Description

```

./bin/ysqlsh

ysqlsh (11.2-YB-2.17.2.0-b0)

Type "help" for help.

create table test(r1 int, r2 int, primary key(r1 asc, r2 asc));

insert into test select i/5, i%5 from generate_series(1,20) i;

select * from test;

r1 | r2

----+----

0 | 1

0 | 2

0 | 3

0 | 4

1 | 0

1 | 1

1 | 2

1 | 3

1 | 4

2 | 0

2 | 1

2 | 2

2 | 3

2 | 4

3 | 0

3 | 1

3 | 2

3 | 3

3 | 4

4 | 0

(20 rows)

// Case where extra row is present -- INCORRECT

select * from test where r1 in (1, 3) and r2 > 2;

r1 | r2

----+----

1 | 3

1 | 4

3 | 2 <-- extra row

3 | 3

3 | 4

(5 rows)

// Case where extra row is present -- INCORRECT

select * from test where r1 in (0, 1, 3) and r2 > 2;

r1 | r2

----+----

0 | 3

0 | 4

1 | 3

1 | 4

3 | 2 <-- extra row

3 | 3

3 | 4

(7 rows)

```

An extra row is returned for queries that combine an IN condition with a range filter. In the examples above, the extra row `3, 2` is printed. The issue does not occur when `yb_bypass_cond_recheck` is set to false.

[DB-5465]: https://yugabyte.atlassian.net/browse/DB-5465?atlOrigin=eyJpIjoiNWRkNTljNzYxNjVmNDY3MDlhMDU5Y2ZhYzA5YTRkZjUiLCJwIjoiZ2l0aHViLWNvbS1KU1cifQ | priority | strict inequality filter combined with in condition gives extra rows strict inequality filter combined with in condition gives extra rows with hybrid scans we push both the inequality filter and in query as a part of scan options however when there is a strict inequality and an in condition on the query the strict inequality ends up not being adhered to completely jira link description bin ysqlsh ysqlsh yb type help for help create table test int int primary key asc asc insert into test select i i from generate series i select from test rows case where extra row is present incorrect select from test where in and extra row rows case where extra row is present incorrect select from test where in and extra row rows an extra row is given for queries with both in condition and a range filter for the examples shown above the extra row is being printed this issue does not persist when yb bypass cond recheck to set to false | 1 |

552,506 | 16,241,826,142 | IssuesEvent | 2021-05-07 10:26:54 | edwisely-ai/Marketing | https://api.github.com/repos/edwisely-ai/Marketing | closed | Social Media - Rabindranath Tagore Post on May 7th | Criticality Medium Priority Low | "The highest education is that which does not merely give us information but makes our life in harmony with all existence."

Post Content :

In 1913, Rabindranath Tagore was the first non-European to win a Nobel Prize in Literature for his poetry collection titled, 'Gitanjali' which is originally written in Bengali and later translated into English. He is also referred to as "the Bard of Bengal".

Happy Rabindranath Tagore Jayanti !!

#literature #poetry #education #highereducation #Tagore #polymath

#highered #college #teaching #students #university #nobelprize

#pandemic #staysafe

| 1.0 | Social Media - Rabindranath Tagore Post on May 7th - "The highest education is that which does not merely give us information but makes our life in harmony with all existence."

Post Content :

In 1913, Rabindranath Tagore was the first non-European to win a Nobel Prize in Literature for his poetry collection titled, 'Gitanjali' which is originally written in Bengali and later translated into English. He is also referred to as "the Bard of Bengal".

Happy Rabindranath Tagore Jayanti !!

#literature #poetry #education #highereducation #Tagore #polymath

#highered #college #teaching #students #university #nobelprize

#pandemic #staysafe

| priority | social media rabindranath tagore post on may the highest education is that which does not merely give us information but makes our life in harmony with all existence post content in rabindranath tagore was the first non european to win a nobel prize in literature for his poetry collection titled gitanjali which is originally written in bengali and later translated into english he is also referred to as the bard of bengal happy rabindranath tagore jayanti literature poetry education highereducation tagore polymath highered college teaching students university nobelprize pandemic staysafe | 1 |

222,179 | 7,430,483,234 | IssuesEvent | 2018-03-25 02:20:09 | cuappdev/podcast-ios | https://api.github.com/repos/cuappdev/podcast-ios | closed | Fix facebook friends endpoint to reflect new backend | Priority: Medium Type: Maintenance | This will fix facebook suggestions on Feed to show up to 20 friends you are not following

https://github.com/cuappdev/podcast-backend/pull/212 | 1.0 | Fix facebook friends endpoint to reflect new backend - This will fix facebook suggestions on Feed to show up to 20 friends you are not following

https://github.com/cuappdev/podcast-backend/pull/212 | priority | fix facebook friends endpoint to reflect new backend this will fix facebook suggestions on feed to show up to friends you are not following | 1 |

636,205 | 20,594,960,225 | IssuesEvent | 2022-03-05 10:43:21 | AY2122S2-CS2103-W17-3/tp | https://api.github.com/repos/AY2122S2-CS2103-W17-3/tp | closed | Add skeleton to UG | type.Story priority.Medium | User story: As a user I can have an updated and useful user guide to teach me how to use the application.

Outline the rough skeleton for other teammates to refine later on. | 1.0 | Add skeleton to UG - User story: As a user I can have an updated and useful user guide to teach me how to use the application.

Outline the rough skeleton for other teammates to refine later on. | priority | add skeleton to ug user story as a user i can have an updated and useful user guide to teach me how to use the application outline the rough skeleton for other teammates to refine later on | 1 |

149,899 | 5,730,852,303 | IssuesEvent | 2017-04-21 10:33:39 | status-im/status-react | https://api.github.com/repos/status-im/status-react | opened | Tap near options button should work as tap on the button | bug intermediate medium-priority | ### Description

[comment]: # (Feature or Bug? i.e Type: Bug)

*Type*: Bug

| 1.0 | Tap near options button should work as tap on the button - ### Description

[comment]: # (Feature or Bug? i.e Type: Bug)

*Type*: Bug

| priority | tap near options button should work as tap on the button description feature or bug i e type bug type bug | 1 |

388,462 | 11,488,066,085 | IssuesEvent | 2020-02-11 13:14:46 | DigitalCampus/django-oppia | https://api.github.com/repos/DigitalCampus/django-oppia | closed | Media API, also return the individual elements/params | enhancement medium priority | Required for generating Oppia export package in ORB - since this won't be processed via Moodle | 1.0 | Media API, also return the individual elements/params - Required for generating Oppia export package in ORB - since this won't be processed via Moodle | priority | media api also return the individual elements params required for generating oppia export package in orb since this won t be processed via moodle | 1 |

57,187 | 3,081,247,157 | IssuesEvent | 2015-08-22 14:38:02 | bitfighter/bitfighter | https://api.github.com/repos/bitfighter/bitfighter | closed | checkArgList failure prints empty string | 020 bug imported Priority-Medium | _From [buckyballreaction](https://code.google.com/u/buckyballreaction/) on March 11, 2014 22:56:36_

What steps will reproduce the problem? 1. call an API method that internally uses checkArgList()

2. use an invalid argument

3. see the stacktrace? it doesn't print the error

For example, do this in a levelgen, in main():

bf:subscribe(Event.ShipEnteredTheTwilightZone)

That will fail the args check and trigger a stack trace... which prints empty strings for the stack.

_Original issue: http://code.google.com/p/bitfighter/issues/detail?id=411_ | 1.0 | checkArgList failure prints empty string - _From [buckyballreaction](https://code.google.com/u/buckyballreaction/) on March 11, 2014 22:56:36_

What steps will reproduce the problem? 1. call an API method that internally uses checkArgList()

2. use an invalid argument

3. see the stacktrace? it doesn't print the error

For example, do this in a levelgen, in main():

bf:subscribe(Event.ShipEnteredTheTwilightZone)

That will fail the args check and trigger a stack trace... which prints empty strings for the stack.

_Original issue: http://code.google.com/p/bitfighter/issues/detail?id=411_ | priority | checkarglist failure prints empty string from on march what steps will reproduce the problem call an api method that internally uses checkarglist use an invalid argument see the stacktrace it doesn t print the error for example do this in a levelgen in main bf subscribe event shipenteredthetwilightzone that will fail the args check and trigger a stack trace which prints empty strings for the stack original issue | 1 |

165,817 | 6,286,842,042 | IssuesEvent | 2017-07-19 13:51:12 | FezVrasta/popper.js | https://api.github.com/repos/FezVrasta/popper.js | closed | include typescript definitions in npm package | # ENHANCEMENT DIFFICULTY: medium PRIORITY: low TARGETS: core | @FezVrasta would you consider publishing a `.d.ts` file in the NPM package for typescript consumers? [`@types/popper.js`](https://www.npmjs.com/package/@types/popper.js) is already a thing, but including types in the source package itself provides a more consistent experience. it also allows libraries to depend on your interfaces without introducing an `@types` dependency that is unnecessary for JS consumers.

i've been improving the `@types` definitions and could be ready with a PR today or tomorrow? | 1.0 | include typescript definitions in npm package - @FezVrasta would you consider publishing a `.d.ts` file in the NPM package for typescript consumers? [`@types/popper.js`](https://www.npmjs.com/package/@types/popper.js) is already a thing, but including types in the source package itself provides a more consistent experience. it also allows libraries to depend on your interfaces without introducing an `@types` dependency that is unnecessary for JS consumers.

i've been improving the `@types` definitions and could be ready with a PR today or tomorrow? | priority | include typescript definitions in npm package fezvrasta would you consider publishing a d ts file in the npm package for typescript consumers is already a thing but including types in the source package itself provides a more consistent experience it also allows libraries to depend on your interfaces without introducing an types dependency that is unnecessary for js consumers i ve been improving the types definitions and could be ready with a pr today or tomorrow | 1 |

703,371 | 24,155,869,594 | IssuesEvent | 2022-09-22 07:38:18 | enviroCar/enviroCar-app | https://api.github.com/repos/enviroCar/enviroCar-app | closed | App crash when disabling auto connect | bug 3 - Done Priority - 2 - Medium | **Description**

The app crashes when you try to deactivate the "Auto Connect" setting.

**Branches**

master, develop

**How to reproduce**

Go to the settings screen, enable "Auto Connect" and try to disable it again.

**How to fix**

TBD | 1.0 | App crash when disabling auto connect - **Description**

The app crashes when you try to deactivate the "Auto Connect" setting.

**Branches**

master, develop

**How to reproduce**

Go to the settings screen, enable "Auto Connect" and try to disable it again.

**How to fix**

TBD | priority | app crash when disabling auto connect description the app crashes when you try to deactivate the auto connect setting branches master develop how to reproduce go to the settings screen enable auto connect and try to disable it again how to fix tbd | 1 |

40,791 | 2,868,942,355 | IssuesEvent | 2015-06-05 22:06:00 | dart-lang/pub | https://api.github.com/repos/dart-lang/pub | closed | update the uploader on bignum | bug Done Priority-Medium | <a href="https://github.com/financeCoding"><img src="https://avatars.githubusercontent.com/u/654526?v=3" align="left" width="96" height="96"hspace="10"></img></a> **Issue by [financeCoding](https://github.com/financeCoding)**

_Originally opened as dart-lang/sdk#8533_

----

The uploader email address is no longer valid, can someone update it to financeCoding@gmail.com

http://pub.dartlang.org/packages/bignum

Thanks! | 1.0 | update the uploader on bignum - <a href="https://github.com/financeCoding"><img src="https://avatars.githubusercontent.com/u/654526?v=3" align="left" width="96" height="96"hspace="10"></img></a> **Issue by [financeCoding](https://github.com/financeCoding)**

_Originally opened as dart-lang/sdk#8533_

----

The uploader email address is no longer valid, can someone update it to financeCoding@gmail.com

http://pub.dartlang.org/packages/bignum

Thanks! | priority | update the uploader on bignum issue by originally opened as dart lang sdk the uploader email address is no longer valid can someone update it to financecoding gmail com thanks | 1 |

781,053 | 27,420,457,848 | IssuesEvent | 2023-03-01 16:22:38 | yugabyte/yugabyte-db | https://api.github.com/repos/yugabyte/yugabyte-db | closed | [YSQL] tserver failed starting PG during server restart | kind/bug area/ysql priority/medium | Jira Link: [DB-2626](https://yugabyte.atlassian.net/browse/DB-2626)

### Description

The tserver failed to start PG with logs:

```

...

Failed when waiting for PostgreSQL server to exit: Illegal state (yb/util/subprocess.cc:444): DoWait called on a process that is not running, waiting a bit

<repeat>

...

```

~~This issue might be related to https://github.com/yugabyte/yugabyte-db/issues/12784.~~ The log in this issue is from the server. We should locate the actual log in PG, which should tell us more details.

Ref:

discussion thread: https://yugabyte.slack.com/archives/CAR5BCH29/p1655144419422059 | 1.0 | [YSQL] tserver failed starting PG during server restart - Jira Link: [DB-2626](https://yugabyte.atlassian.net/browse/DB-2626)

### Description

The tserver failed to start PG with logs:

```

...

Failed when waiting for PostgreSQL server to exit: Illegal state (yb/util/subprocess.cc:444): DoWait called on a process that is not running, waiting a bit

<repeat>

...

```

~~This issue might be related to https://github.com/yugabyte/yugabyte-db/issues/12784.~~ The log in this issue is from the server. We should locate the actual log in PG, which should tell us more details.

Ref:

discussion thread: https://yugabyte.slack.com/archives/CAR5BCH29/p1655144419422059 | priority | tserver failed starting pg during server restart jira link description the tserver failed to start pg with logs failed when waiting for postgresql server to exit illegal state yb util subprocess cc dowait called on a process that is not running waiting a bit this issue might be related to the log in this issue is from server we should locate the actual in pg to tell us more details ref discussion thread | 1 |

71,738 | 3,367,617,952 | IssuesEvent | 2015-11-22 10:19:05 | music-encoding/music-encoding | https://api.github.com/repos/music-encoding/music-encoding | closed | Allow <head> in more places with meiHead and text-containing elements | Component: Core Schema Priority: Medium Status: Needs Patch Type: Enhancement | _From [pd...@virginia.edu](https://code.google.com/u/103686026181985548448/) on January 28, 2015 11:29:01_

Allowing \<head> in more elements that occur in the header (not just \<projectDesc> as covered by issue `#187` ) will permit the header to better capture existing (printed) thematic catalogs. In addition, wherever head is allowed, it should be allowed to occur multiple times in order to capture subheadings.

_Original issue: http://code.google.com/p/music-encoding/issues/detail?id=221_ | 1.0 | Allow <head> in more places with meiHead and text-containing elements - _From [pd...@virginia.edu](https://code.google.com/u/103686026181985548448/) on January 28, 2015 11:29:01_

Allowing \<head> in more elements that occur in the header (not just \<projectDesc> as covered by issue `#187` ) will permit the header to better capture existing (printed) thematic catalogs. In addition, wherever head is allowed, it should be allowed to occur multiple times in order to capture subheadings.

_Original issue: http://code.google.com/p/music-encoding/issues/detail?id=221_ | priority | allow in more places with meihead and text containing elements from on january allowing in more elements that occur in the header not just as covered by issue will permit the header to better capture existing printed thematic catalogs in addition wherever head is allowed it should be allowed to occur multiple times in order to capture subheadings original issue | 1 |

162,191 | 6,148,687,004 | IssuesEvent | 2017-06-27 18:23:19 | cloudius-systems/osv | https://api.github.com/repos/cloudius-systems/osv | closed | TCP cork implementation | enhancement medium priority | Postpone TCP writes in order to batch data in existing packets and reduce exits

| 1.0 | TCP cork implementation - Postpone TCP writes in order to batch data in existing packets and reduce exits

| priority | tcp cork implementation postpone tcp writes in order to batch data in existing packets and reduce exists | 1 |

509,443 | 14,731,099,039 | IssuesEvent | 2021-01-06 14:13:47 | TeamChocoQuest/ChocolateQuestRepoured | https://api.github.com/repos/TeamChocoQuest/ChocolateQuestRepoured | closed | Possible memory leaks | Priority: Medium Status: Reproduced Type: Bug Waiting for feedback | **Common sense Info**

- I play...

- [X] With a large modpack

- [ ] Only with CQR and it's dependencies

- The issue occurs in...

- [X] Singleplayer

- [X] Multiplayer

- [X] I have searched for this or a similar issue before reporting and it was either (1) not previously reported, or (2) previously fixed and I'm having the same problem.

- [X] I am using the latest version of the mod (all versions can be found on github under releases)

- [X] I read through the FAQ and i could not find something helpful ([FAQ](https://wiki.cq-repoured.net/index.php?title=FAQ))

- [ ] I reproduced the bug without any other mod's except forge, cqr and it's dependencies

- [X] The game crashes because of this bug

**Versions**

Chocolate Quest Repoured: Latest

Forge: 2854

Minecraft: 1.12.2

**Describe the bug**

Generate world without protections. CQR generates protection_region files anyways in the thousands which are continuously loaded and written to on every world save causing a massive memory leak. A world without these files or CQR data on a modpack will boot using 1GB of ram, with these files over 6-8GB on boot up. And the ram keeps increasing until it runs out of memory and deadlocks/crashes.

When updating the mod, it spams that all of the files are from an older version, even when the conversion from old to new versions is enabled in the config. Combined that with the above problem it is impossible to use a world generated or created on a newer version of CQR.

I have seen CQR itself use up to 28GB of ram on my server, no matter how much memory you give it, it will use it all up.

Every world save takes 30-60+ seconds, and in that time nothing ticks, everything's deadlocked.

This has been confirmed to be a reproducible issue by multiple server owners/people i know.

**To Reproduce**

Steps to reproduce the behavior:

This error is produced on the Tekxit 3.14 (PI) modpack by Slayer5934

1) Create world and pregenerate to spawn many dungeons.

2) Watch: as the generated world size increases, the RAM required to run the server skyrockets.

3) Update CQR

4) Your console now has no purpose, it only passes CQR errors. And maybe butter.

5) Enable conversion of old to new files in the config. The version errors don't vanish until the structures.dat file is deleted; the entire CQR folder in the world folder needs to be purged to fix the errors.

6) Every world save will take 60 seconds or longer, basically deadlocking the server AND client (world was moved to single player, same issues occurred).

**Expected behavior**

Not to generate protection_region files if protection is disabled in config.

Not to continuously load files into memory when the world is saved.

Not to cause an infinite memory leak.

Not to cause world saves to take longer than 1 second. (On modpacks with over 300 mods and a 25000x25000 world a world save takes less than a second).

| 1.0 | Possible memory leaks - **Common sense Info**

- I play...

- [X] With a large modpack

- [ ] Only with CQR and it's dependencies

- The issue occurs in...

- [X] Singleplayer

- [X] Multiplayer

- [X] I have searched for this or a similar issue before reporting and it was either (1) not previously reported, or (2) previously fixed and I'm having the same problem.

- [X] I am using the latest version of the mod (all versions can be found on github under releases)

- [X] I read through the FAQ and i could not find something helpful ([FAQ](https://wiki.cq-repoured.net/index.php?title=FAQ))

- [ ] I reproduced the bug without any other mod's except forge, cqr and it's dependencies

- [X] The game crashes because of this bug

**Versions**

Chocolate Quest Repoured: Latest

Forge: 2854

Minecraft: 1.12.2

**Describe the bug**

Generate world without protections. CQR generates protection_region files anyways in the thousands which are continuously loaded and written to on every world save causing a massive memory leak. A world without these files or CQR data on a modpack will boot using 1GB of ram, with these files over 6-8GB on boot up. And the ram keeps increasing until it runs out of memory and deadlocks/crashes.

When updating the mod, it spams that all of the files are from an older version, even when the conversion from old to new versions is enabled in the config. Combined that with the above problem it is impossible to use a world generated or created on a newer version of CQR.

I have seen CQR itself use up to 28GB of ram on my server, no matter how much memory you give it, it will use it all up.

Every world save takes 30-60+ seconds, and in that time nothing ticks, everything's deadlocked.

This has been confirmed to be a reproducible issue by multiple server owners/people i know.

**To Reproduce**

Steps to reproduce the behavior:

This error is produced on the Tekxit 3.14 (PI) modpack by Slayer5934

1) Create world and pregenerate to spawn many dungeons.

2) Watch: as the generated world size increases, the RAM required to run the server skyrockets.

3) Update CQR

4) Your console now has no purpose, it only passes CQR errors. And maybe butter.

5) Enable conversion of old to new files in the config. The version errors don't vanish until the structures.dat file is deleted; the entire CQR folder in the world folder needs to be purged to fix the errors.

6) Every world save will take 60 seconds or longer, basically deadlocking the server AND client (world was moved to single player, same issues occurred).

**Expected behavior**

Not to generate protection_region files if protection is disabled in config.

Not to continuously load files into memory when the world is saved.

Not to cause an infinite memory leak.

Not to cause world saves to take longer than 1 second. (On modpacks with over 300 mods and a 25000x25000 world a world save takes less than a second).

| priority | possible memory leaks common sense info i play with a large modpack only with cqr and it s dependencies the issue occurs in singleplayer multiplayer i have searched for this or a similar issue before reporting and it was either not previously reported or previously fixed and i m having the same problem i am using the latest version of the mod all versions can be found on github under releases i read through the faq and i could not find something helpful i reproduced the bug without any other mod s except forge cqr and it s dependencies the game crashes because of this bug versions chocolate quest repoured latest forge minecraft describe the bug generate world without protections cqr generates protection region files anyways in the thousands which are continuously loaded and written to on every world save causing a massive memory leak a world without these files or cqr data on a modpack will boot using of ram with these files over on boot up and the ram keeps increasing until it runs out of memory and deadlocks crashes when updating the mod it spams that all of the files are from an older version even when the conversion from old to new versions is enabled in the config combined that with the above problem it is impossible to use a world generated or created on a newer version of cqr i have seen cqr itself use up to of ram on my server no matter how much memory you give it it will use it all up every world save takes seconds and in that time nothing ticks everything s deadlocked this has been confirmed to be a reproducible issue by multiple server owners people i know to reproduce steps to reproduce the behavior this error is produced on the tekxit pi modpack by create world and pregenerate to spawn many dungeons watch as the world size generated increases the ram required to run the server sky rockets update cqr your console now has no purpose it only passes cqr errors and maybe butter enable conversion of old to new files in config the version errors 
dont vanish until the structures dat file is deleted the entire cqr folder in the world folder needs to be purged to fix the errors every world save will take seconds or longer basically deadlocking the server and client world was moved to single player same issues occured expected behavior not to generate protection region files if protection is disabled in config not to continuously load files into memory when the world is saved not to cause an infinite memory leak not to cause world saves to take longer then second on modpacks with over mods and a world a world save takes less than a second | 1 |

251,628 | 8,019,665,305 | IssuesEvent | 2018-07-26 00:02:11 | MARKETProtocol/dApp | https://api.github.com/repos/MARKETProtocol/dApp | closed | [Deploy Contract] Retry in deploy contract throws error | Priority: Medium Status: Review Needed Type: Bug | ### Description

*Type*: Bug

### Current Behavior

Clicking retry button after cancelling transaction in Metamask throws below error --

```

Unhandled Rejection (TypeError): Cannot read property 'priceFloor' of undefined

```

### Expected Behavior

Clicking retry button after cancelling transaction in Metamask should start contract deployment flow with the provided user input | 1.0 | [Deploy Contract] Retry in deploy contract throws error - ### Description

*Type*: Bug

### Current Behavior

Clicking retry button after cancelling transaction in Metamask throws below error --

```

Unhandled Rejection (TypeError): Cannot read property 'priceFloor' of undefined

```

### Expected Behavior

Clicking retry button after cancelling transaction in Metamask should start contract deployment flow with the provided user input | priority | retry in deploy contract throws error description type bug current behavior clicking retry button after cancelling transaction in metamask throws below error unhandled rejection typeerror cannot read property pricefloor of undefined expected behavior clicking retry button after cancelling transaction in metamask should start contract deployment flow with the provided user input | 1 |

26,239 | 2,684,260,116 | IssuesEvent | 2015-03-28 20:18:12 | ConEmu/old-issues | https://api.github.com/repos/ConEmu/old-issues | closed | post-update command is not executed | 1 star bug imported Priority-Medium | _From [sim....@gmail.com](https://code.google.com/u/105258257765487351754/) on January 09, 2013 10:56:59_

Required information! OS version: Win7 SP1 x86 ConEmu version: 130108

Far version (if you are using Far Manager): 3.0.3067

The post-update command is not executed after an automatic update. *Steps to reproduction* 1. I made a .bat file that deletes unneeded files from the ConMan plugins and copies my Background.xml file

2. I received a notification about a new ConEmu version and started the update

3. After the update, my .bat file did not run.

_Original issue: http://code.google.com/p/conemu-maximus5/issues/detail?id=877_ | 1.0 | post-update command is not executed - _From [sim....@gmail.com](https://code.google.com/u/105258257765487351754/) on January 09, 2013 10:56:59_

Required information! OS version: Win7 SP1 x86 ConEmu version: 130108

Far version (if you are using Far Manager): 3.0.3067

The post-update command is not executed after an automatic update. *Steps to reproduction* 1. I made a .bat file that deletes unneeded files from the ConMan plugins and copies my Background.xml file

2. I received a notification about a new ConEmu version and started the update

3. After the update, my .bat file did not run.

_Original issue: http://code.google.com/p/conemu-maximus5/issues/detail?id=877_ | priority | post update command is not executed from on january required information os version conemu version far version if you are using far manager the post update command is not executed after an automatic update steps to reproduction i made a bat file that deletes unneeded files from the conman plugins and copies my background xml file i received a notification about a new conemu version and started the update after the update my bat file did not run original issue | 1 |

22,789 | 2,650,925,099 | IssuesEvent | 2015-03-16 06:49:23 | grepper/tovid | https://api.github.com/repos/grepper/tovid | closed | mplayer renders OSD | bug imported Priority-Medium wontfix | _From [zaar...@gmail.com](https://code.google.com/u/116363330358804917839/) on January 14, 2008 16:46:05_

if /etc/mplayer/mplayer.conf is configured to always show the OSD (osdlevel

option) it will be rendered into converted videos by tovid.

either try to check for that and warn the user,

or override it with the command line parameter.
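
A minimal sketch of the second option (overriding on the command line), written in Python purely for illustration; tovid's own scripts may assemble the mplayer call quite differently. It relies on mplayer's `-osdlevel` option, where level 0 shows subtitles only, and on command-line options taking precedence over `mplayer.conf`. The helper name `build_mplayer_cmd` and the sample file name are hypothetical.

```python
def build_mplayer_cmd(infile, extra_args=()):
    """Build an mplayer argument list that forces the OSD off.

    Hypothetical helper, not part of tovid. The -osdlevel option is placed
    after any extra arguments so that it is the last one given and overrides
    an osdlevel setting coming from /etc/mplayer/mplayer.conf.
    """
    return ["mplayer", *extra_args, "-osdlevel", "0", infile]


if __name__ == "__main__":
    # Print the command instead of running it, so the sketch has no side effects.
    print(" ".join(build_mplayer_cmd("input.avi", ["-quiet"])))
```

The first option (check and warn) would instead scan the config file for an `osdlevel` line before encoding; that variant is not shown here.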

_Original issue: http://code.google.com/p/tovid/issues/detail?id=27_ | 1.0 | mplayer renders OSD - _From [zaar...@gmail.com](https://code.google.com/u/116363330358804917839/) on January 14, 2008 16:46:05_

if /etc/mplayer/mplayer.conf is configured to always show the OSD (osdlevel

option) it will be rendered into converted videos by tovid.

either try to check for that and warn the user,

or override it with the command line parameter.

_Original issue: http://code.google.com/p/tovid/issues/detail?id=27_ | priority | mplayer renders osd from on january if etc mplayer mplayer conf is configured to always show the osd osdlevel option it will be rendered into converted videos by tovid either try to check for that and warn the user or override it with the command line parameter original issue | 1 |

609,285 | 18,870,252,631 | IssuesEvent | 2021-11-13 03:21:02 | scilus/fibernavigator | https://api.github.com/repos/scilus/fibernavigator | closed | Equalize button disabled for specific T1 file | bug imported Priority-Medium OpSys-All Component-UI | _Original author: Jean.Chr...@gmail.com (October 18, 2011 19:58:22)_

<b>What steps will reproduce the problem?</b>

1. Load a specific T1 file.

The equalize button is disabled, when it should be enabled.

The cause is that, for an unknown reason, the nifti file is encoded as a pure 3D data file. The number of bands data is not set, and therefore is set to 0 by default by the nifti library. When updating the property sizer, the check fails.

Suggested fix: when the m_bands member is set to 0, fix it to 1 (anyway, this is logical). This is related to Issue #40.
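
A minimal sketch of the suggested fix, in Python rather than the project's C++ and with a hypothetical function name (`normalize_bands`); it only illustrates the idea of treating an unset band count of 0 as a single band, and is not the actual fibernavigator code.

```python
def normalize_bands(bands: int) -> int:
    """Return a usable band count for a loaded dataset.

    Hypothetical helper: a pure 3D file may leave the band count unset,
    in which case it arrives as 0. Such a file still holds exactly one
    value per voxel, so 0 is mapped to 1.
    """
    if bands < 0:
        raise ValueError(f"invalid band count: {bands}")
    return 1 if bands == 0 else bands
```

Applying such a normalization once at load time would keep later checks, like the one guarding the equalize button, from having to special-case 0.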

_Original issue: http://code.google.com/p/fibernavigator/issues/detail?id=41_

| 1.0 | Equalize button disabled for specific T1 file - _Original author: Jean.Chr...@gmail.com (October 18, 2011 19:58:22)_

<b>What steps will reproduce the problem?</b>

1. Load a specific T1 file.

The equalize button is disabled, when it should be enabled.

The cause is that, for an unknown reason, the nifti file is encoded as a pure 3D data file. The number of bands data is not set, and therefore is set to 0 by default by the nifti library. When updating the property sizer, the check fails.

Suggested fix: when the m_bands member is set to 0, fix it to 1 (anyway, this is logical). This is related to Issue #40.

_Original issue: http://code.google.com/p/fibernavigator/issues/detail?id=41_

| priority | equalize button disabled for specific file original author jean chr gmail com october what steps will reproduce the problem load a specific file the equalize button is disabled when it should be enabled the cause is that for an unknown reason the nifti file is encoded as a pure data file the number of bands data is not set and therefore is set to by default by the nifti library when updating the property sizer the check fails suggested fix when the m bands member is set to fix it to anyway this is logical this is related to issue original issue | 1 |

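615,021 | 19,211,357,751 | IssuesEvent | 2021-12-07 02:33:38 | MTWGA/thoughtworks-code-review-tools | https://api.github.com/repos/MTWGA/thoughtworks-code-review-tools | closed | Sort the people selection list alphabetically | Medium Priority | **Is your feature request related to a problem? Please describe.**

Selecting a name is too cumbersome. Sorting the list alphabetically would greatly narrow the range to search through.

**Describe the solution you'd like**

Sort the people selection list alphabetically.

| 1.0 | Sort the people selection list alphabetically - **Is your feature request related to a problem? Please describe.**

Selecting a name is too cumbersome. Sorting the list alphabetically would greatly narrow the range to search through.

**Describe the solution you'd like**

Sort the people selection list alphabetically.

| priority | sort the people selection list alphabetically is your feature request related to a problem please describe selecting a name is too cumbersome sorting the list alphabetically would greatly narrow the range to search through describe the solution you d like sort the people selection list alphabetically | 1 |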

585,006 | 17,468,620,464 | IssuesEvent | 2021-08-06 21:08:50 | status-im/status-desktop | https://api.github.com/repos/status-im/status-desktop | closed | Status app is not responding when right click -> Quit the app after sleep | bug macos crash general priority 2: medium | **Steps:**

1. install latest master and create account

2. click yellow button Hide to collapse the app to dock

3. run any other app on foreground

4. lock the laptop and move it to sleep (i just close laptop)

5. wait for a while , usually several minutes

6. open the laptop and unlock

7. right click the status app in dock -> quit

**As a result**, the app can't be closed and becomes not responsive

https://user-images.githubusercontent.com/82375995/120779456-c1c54d80-c52f-11eb-9820-6cbc94f8851e.mov

| 1.0 | Status app is not responding when right click -> Quit the app after sleep - **Steps:**

1. install latest master and create account

2. click yellow button Hide to collapse the app to dock

3. run any other app on foreground

4. lock the laptop and move it to sleep (i just close laptop)

5. wait for a while , usually several minutes

6. open the laptop and unlock

7. right click the status app in dock -> quit

**As a result**, the app can't be closed and becomes not responsive

https://user-images.githubusercontent.com/82375995/120779456-c1c54d80-c52f-11eb-9820-6cbc94f8851e.mov

| priority | status app is not responding when right click quit the app after sleep steps install latest master and create account click yellow button hide to collapse the app to dock run any other app on foreground lock the laptop and move it to sleep i just close laptop wait for a while usually several minutes open the laptop and unlock right click the status app in dock quit as a result the app can t be closed and becomes not responsive | 1 |

764,313 | 26,794,482,078 | IssuesEvent | 2023-02-01 10:51:37 | ooni/probe | https://api.github.com/repos/ooni/probe | closed | Investigate feasibility of using Dart for the CLI | priority/medium research prototype ooni/probe-cli ooni/probe-engine | This issue is about exploring a potential future development direction where we try to integrate tightly Android, iOS, Desktop, and CLI by using Dart and Flutter and by using FFI to access the OONI engine's functionality.

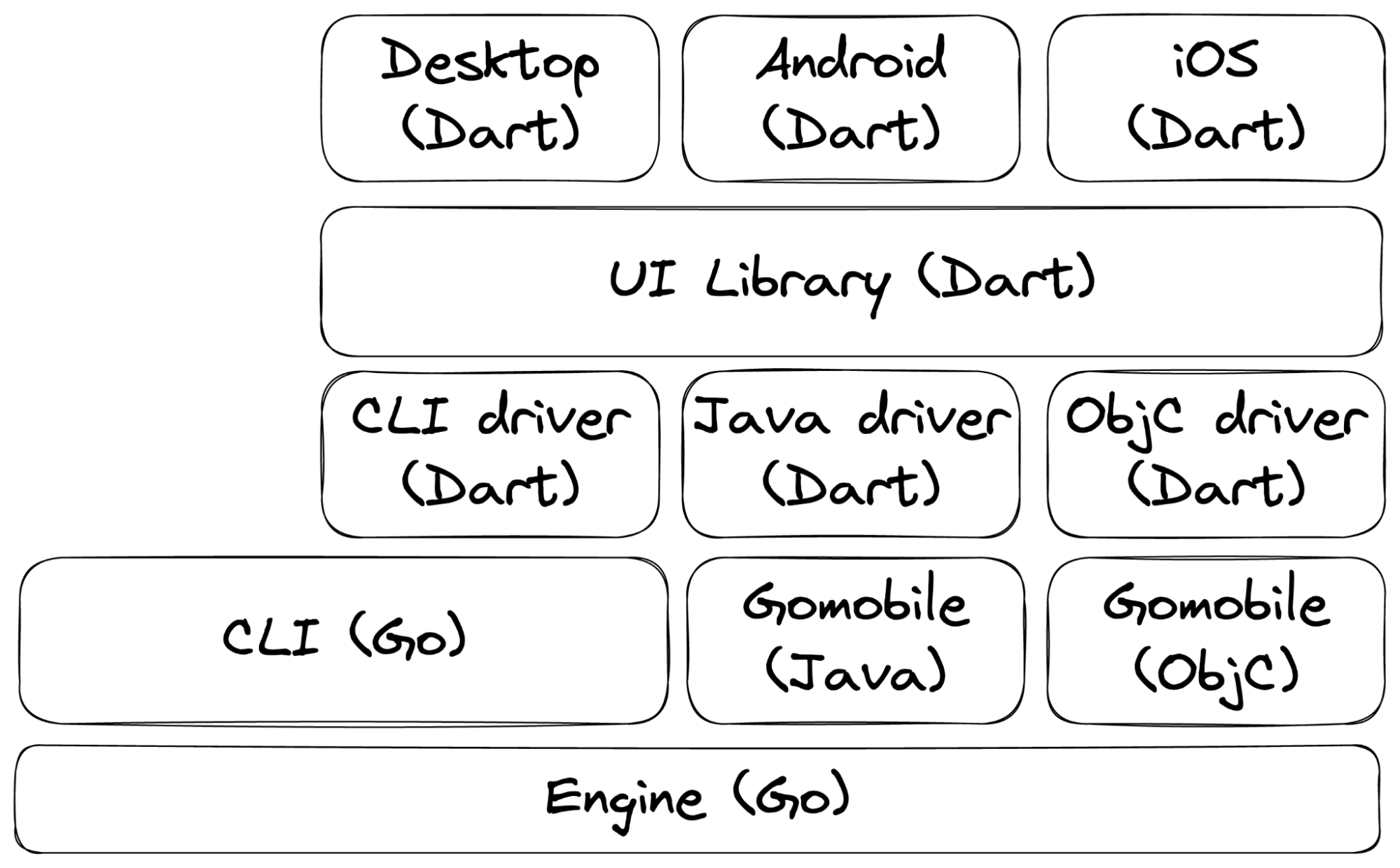

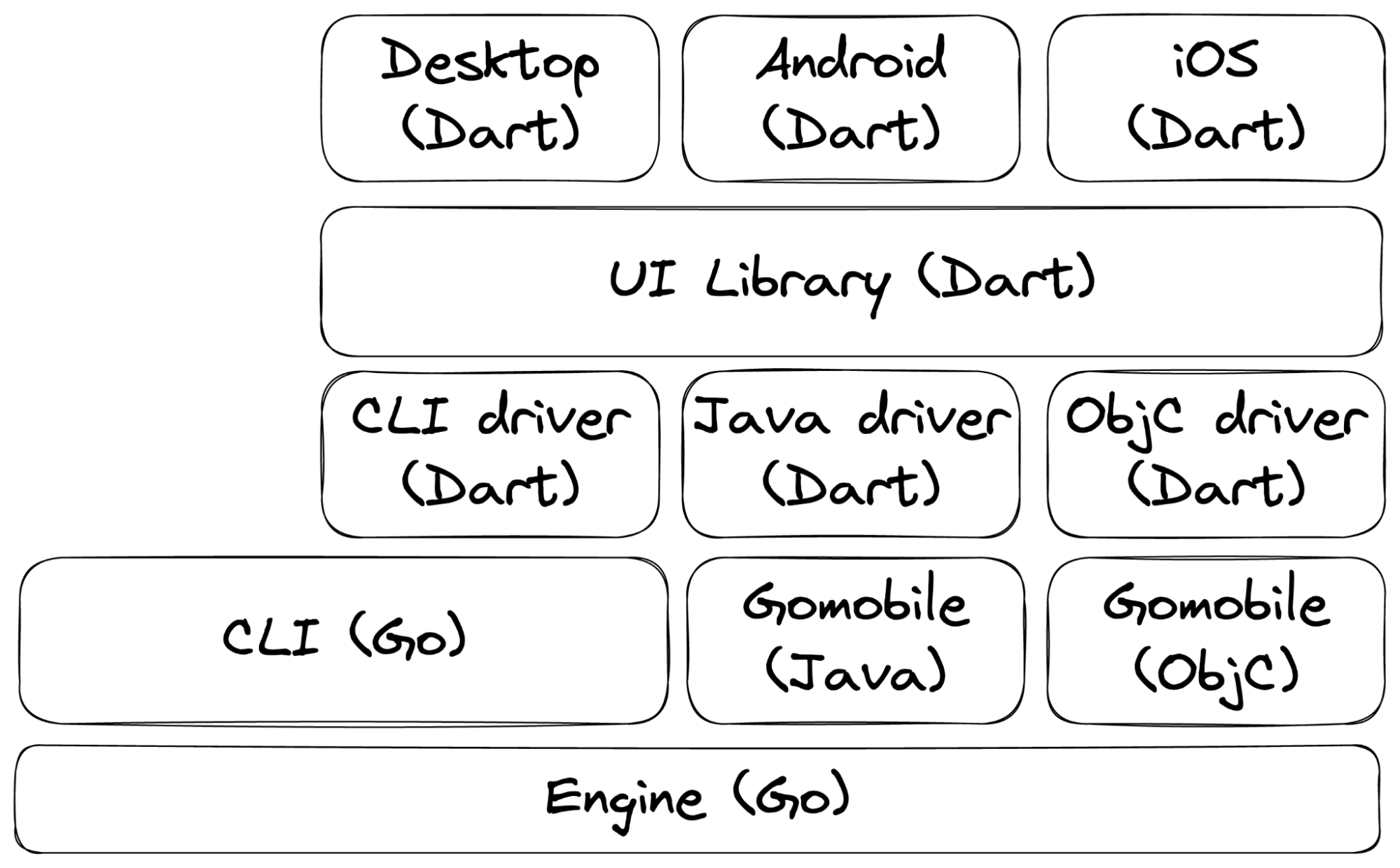

We are [already evaluating](https://github.com/aanorbel/probe-shared) whether to use Flutter for all the user-facing applications to increase code reuse. If we determine that this is doable and desirable, then we'll end up in a situation like the following one:

With this proposal, we hope to consolidate lots of algorithms inside a common library (called “UI Library”). We do not mean to modify the way in which we generate mobile bindings (that is, [go mobile](https://github.com/golang/mobile)). This means that the UI Library will need to implement three distinct drivers for interacting with the OONI Engine:

1. We need a CLI driver for the desktop app that executes [ooniprobe](https://github.com/ooni/probe-cli/) and parses its output.

2. We also need an Android driver for interfacing with Java code generated using go mobile.

3. We also need an iOS driver for interfacing with Objective-C code generated using go mobile.

While this restructuring would certainly reduce the overall complexity, observing this consolidation also begs the question of whether we could further reduce complexity. Let’s explore this possibility.

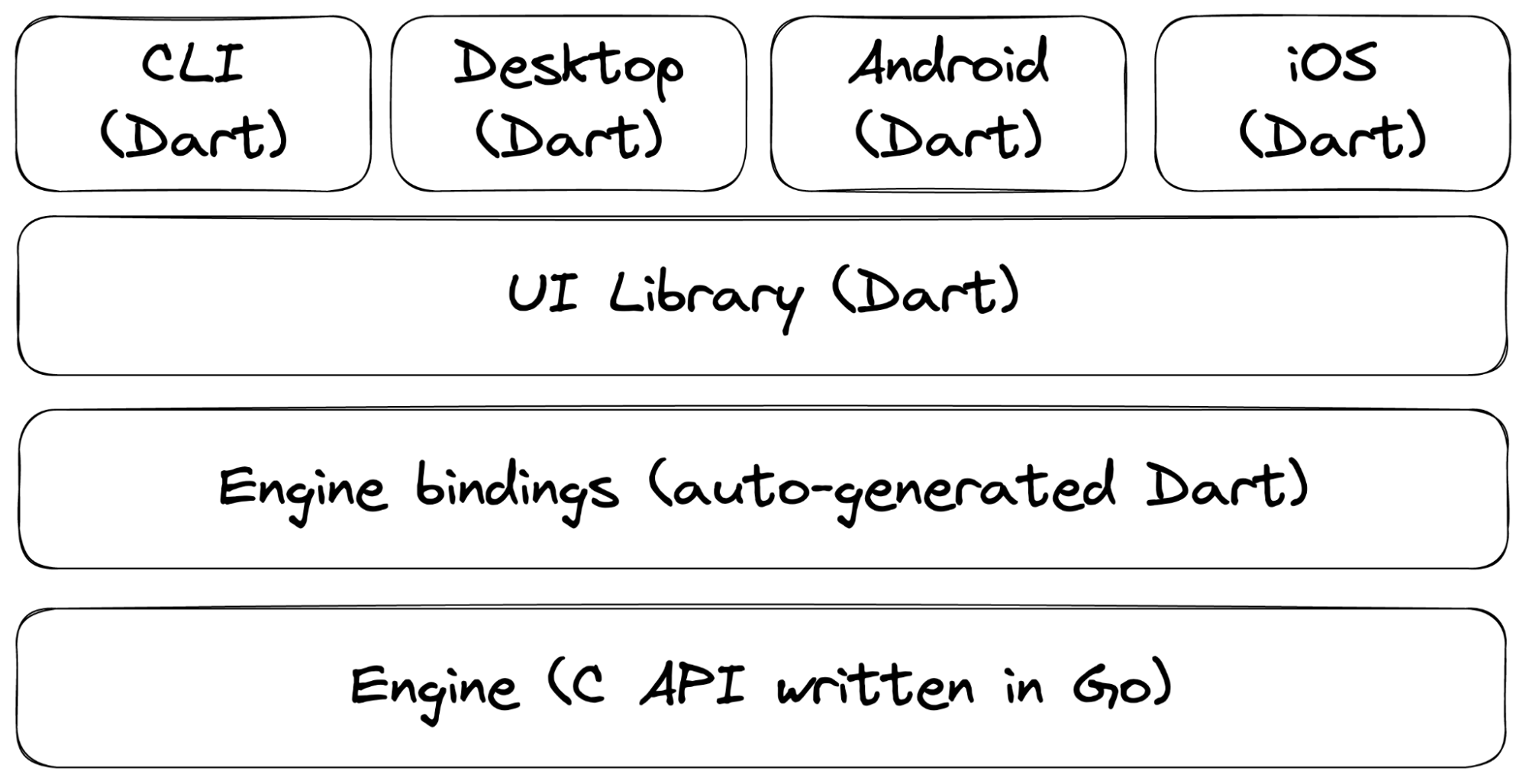

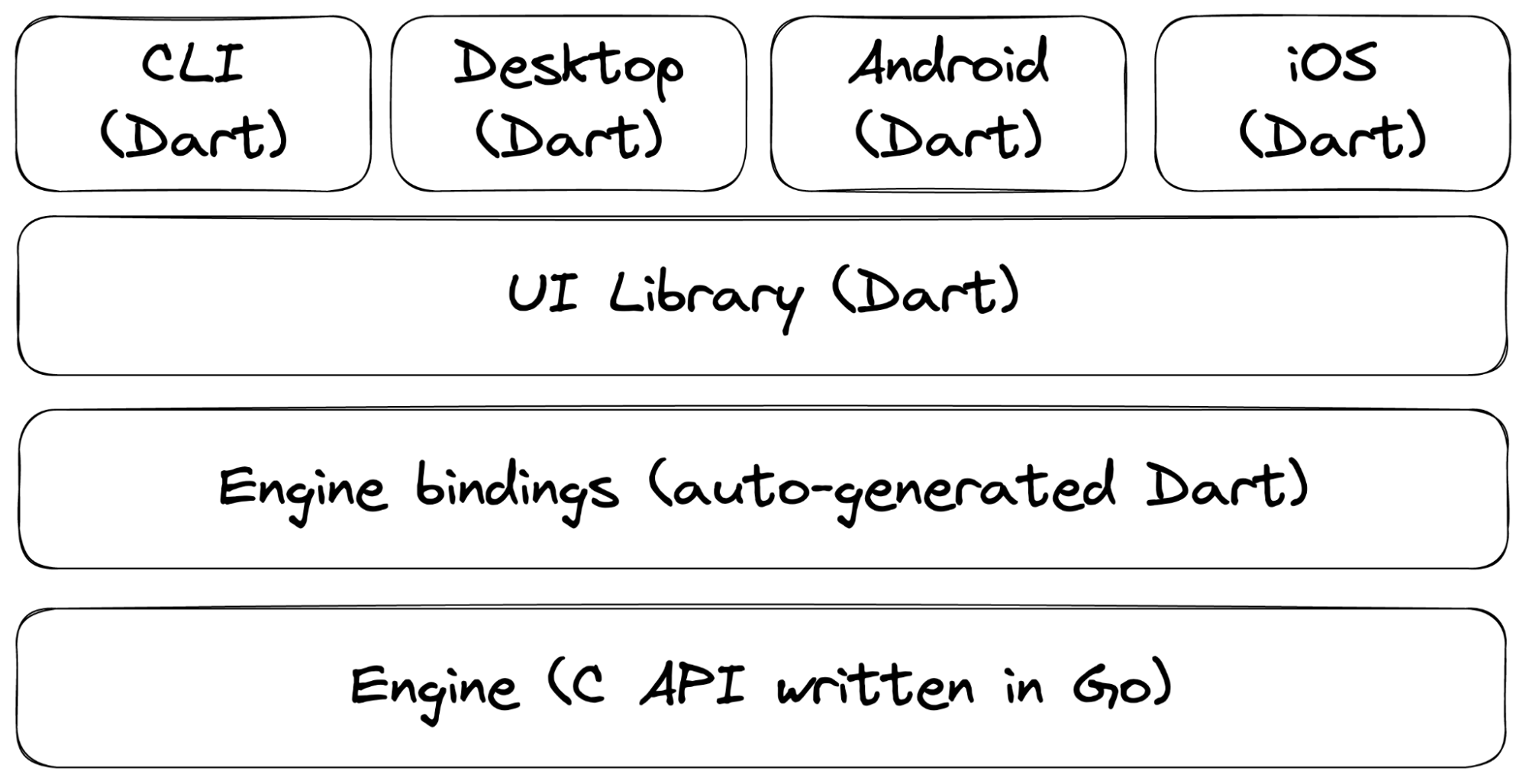

Maybe a possibility would be to stop using go mobile and to get rid of the Go implementation of `ooniprobe`. We could instead use FFI and a C API to make the same API and data ABI available to all Flutter/Dart clients.

Here's what it would look like:

The objective of the work described in this issue is thus to evaluate this alternative design. We need to understand what API we can expose from Go and which strategy to use to auto-generate messages parsers and serializers.

We have already experimented with this concept in https://github.com/ooni/probe-cli/pull/849, https://github.com/ooni/probe-cli/pull/850, https://github.com/ooni/probe-cli/pull/851. So far, it seems like a good idea to use protobuf. We should continue discussing these prototypes and move a bit forward by trying this code out inside https://github.com/aanorbel/probe-shared. | 1.0 | Investigate feasibility of using Dart for the CLI - This issue is about exploring a potential future development direction where we try to integrate tightly Android, iOS, Desktop, and CLI by using Dart and Flutter and by using FFI to access the OONI engine's functionality.

We are [already evaluating](https://github.com/aanorbel/probe-shared) whether to use Flutter for all the user-facing applications to increase code reuse. If we determine that this is doable and desirable, then we'll end up in a situation like the following one:

With this proposal, we hope to consolidate lots of algorithms inside a common library (called “UI Library”). We do not mean to modify the way in which we generate mobile bindings (that is, [go mobile](https://github.com/golang/mobile)). This means that the UI Library will need to implement three distinct drivers for interacting with the OONI Engine:

1. We need a CLI driver for the desktop app that executes [ooniprobe](https://github.com/ooni/probe-cli/) and parses its output.

2. We also need an Android driver for interfacing with Java code generated using go mobile.

3. We also need an iOS driver for interfacing with Objective-C code generated using go mobile.

While this restructuring would certainly reduce the overall complexity, it also raises the question of whether we could reduce complexity further. Let’s explore this possibility.

One possibility would be to stop using go mobile and to get rid of the Go implementation of `ooniprobe`. We could instead use FFI and a C API to make the same API and data ABI available to all Flutter/Dart clients.

Here's what it would look like:

The objective of the work described in this issue is thus to evaluate this alternative design. We need to understand what API we can expose from Go and which strategy to use to auto-generate message parsers and serializers.

We have already experimented with this concept in https://github.com/ooni/probe-cli/pull/849, https://github.com/ooni/probe-cli/pull/850, and https://github.com/ooni/probe-cli/pull/851. So far, using protobuf seems like a good idea. We should continue discussing these prototypes and move a bit forward by trying this code out inside https://github.com/aanorbel/probe-shared. | priority | investigate feasibility of using dart for the cli this issue is about exploring a potential future development direction where we try to integrate tightly android ios desktop and cli by using dart and flutter and by using ffi to access the ooni engine s functionality we are whether to use flutter for all the user facing applications to increase code reuse if we determine that this is doable and desirable then we ll end up in a situation like the following one with this proposal we hope to consolidate lots of algorithms inside a common library called “ui library” we do not mean to modify the way in which we generate mobile bindings that is this means that the ui library will need to implement three distinct drivers for interacting with the ooni engine we need a cli driver for the desktop app that executes and parses its output we also need an android driver for interfacing with java code generated using go mobile we also need an ios driver for interfacing with objective c code generated using go mobile while this restructuring would certainly reduce the overall complexity observing this consolidation also begs the question of whether we could further reduce complexity let’s explore this possibility maybe a possibility would be to stop using go mobile and to get rid of the go implementation of ooniprobe we could instead use ffi and a c api to make the same api and data abi available to all flutter dart clients here s how it would look like the objective of the work described in this issue is thus to evaluate this alternative design we need to understand what api we can expose from go and
which strategy to use to auto generate messages parsers and serializers we have already experimented with this concept in so far it seems a good idea to use protobuf we should continue discussing these prototypes and move a bit forward by trying this code out inside | 1 |
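
The alternative design in the issue above (one C API exchanging serialized messages, with generated parsers and serializers on the client side) can be illustrated with a rough sketch. Everything here is an assumption made for illustration: `engine_call`, the `Echo` message pair, and the handler table are hypothetical names, JSON stands in for protobuf, and a plain Python function stands in for the C symbol that would be exported from Go. It is not the API from the linked pull requests.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class EchoRequest:
    """Hypothetical request message (would be a protobuf message)."""
    text: str


@dataclass
class EchoResponse:
    """Hypothetical response message (would be a protobuf message)."""
    text: str
    length: int


def _handle_echo(request: EchoRequest) -> EchoResponse:
    # Engine-side logic; a real engine would run a measurement task here.
    return EchoResponse(text=request.text, length=len(request.text))


# Hypothetical dispatch table: message name -> (request type, handler).
_HANDLERS = {"Echo": (EchoRequest, _handle_echo)}


def engine_call(name: str, payload: bytes) -> bytes:
    """Stand-in for the single FFI entry point: bytes in, bytes out."""
    request_type, handler = _HANDLERS[name]
    request = request_type(**json.loads(payload.decode("utf-8")))
    response = handler(request)
    return json.dumps(asdict(response)).encode("utf-8")


def echo(text: str) -> EchoResponse:
    """Client-side wrapper of the kind one would auto-generate."""
    payload = json.dumps(asdict(EchoRequest(text=text))).encode("utf-8")
    raw = engine_call("Echo", payload)
    return EchoResponse(**json.loads(raw.decode("utf-8")))
```

The point of the sketch is that every client (Android, iOS, desktop, CLI) would talk to the engine through the same byte-oriented call, so only the thin wrappers around it need to be generated per message type.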

429,355 | 12,423,169,537 | IssuesEvent | 2020-05-24 03:34:48 | scprogramming/Python-Security-Analysis-Toolkit | https://api.github.com/repos/scprogramming/Python-Security-Analysis-Toolkit | opened | Consider GUI/User Interface in General | Analysis Medium Priority | Django is not my forte, so I should consider how I want user interaction with the application to look going forward | 1.0 | Consider GUI/User Interface in General - Django is not my forte, so I should consider how I want user interaction with the application to look going forward | priority | consider gui user interface in general django is not my forte so i should consider how i want user interaction with the application to look going forward | 1 |

27,702 | 2,695,255,959 | IssuesEvent | 2015-04-02 03:05:30 | LK/nullpomino | https://api.github.com/repos/LK/nullpomino | closed | Netadmin login should be disabled by default | auto-migrated Priority-Medium Type-Enhancement | ```

It is not as though netadmin can do anything really dangerous, but it is not good practice
to have remote admin login enabled with a default password in the default config.

```

Original issue reported on code.google.com by `w.kowa...@gmail.com` on 21 Jan 2012 at 11:38 | 1.0 | Netadmin login should be disabled by default - ```

It is not as though netadmin can do anything really dangerous, but it is not good practice
to have remote admin login enabled with a default password in the default config.

```

Original issue reported on code.google.com by `w.kowa...@gmail.com` on 21 Jan 2012 at 11:38 | priority | netadmin login should be disabled on default not like netadmin can do anything really dangerous but it is not good practice to have remote admin login with default password in default config original issue reported on code google com by w kowa gmail com on jan at | 1 |

37,041 | 2,814,466,234 | IssuesEvent | 2015-05-18 20:12:31 | geoffhumphrey/brewcompetitiononlineentry | https://api.github.com/repos/geoffhumphrey/brewcompetitiononlineentry | closed | Contact form on website broken? | auto-migrated Priority-Medium Type-Other | ```

What steps will reproduce the problem?

1. Send a message using the contact form at http://brewcompetition.com/contact

2. Never receive reply.

Just curious if you're getting those messages or not... I've sent several over

the last few months and have not received any response.

```

Original issue reported on code.google.com by `br...@brewdrinkrepeat.com` on 14 Apr 2015 at 6:34 | 1.0 | Contact form on website broken? - ```

What steps will reproduce the problem?

1. Send a message using the contact form at http://brewcompetition.com/contact

2. Never receive reply.

Just curious if you're getting those messages or not... I've sent several over

the last few months and have not received any response.

```

Original issue reported on code.google.com by `br...@brewdrinkrepeat.com` on 14 Apr 2015 at 6:34 | priority | contact form on website broken what steps will reproduce the problem send a message using the contact form at never receive reply just curious if you re getting those messages or not i ve sent several over the last few months and have not received any response original issue reported on code google com by br brewdrinkrepeat com on apr at | 1 |

627,367 | 19,902,886,167 | IssuesEvent | 2022-01-25 09:48:44 | canonical-web-and-design/ubuntu.com | https://api.github.com/repos/canonical-web-and-design/ubuntu.com | closed | ubuntu.com is vulnerable to clickjacking | Priority: Medium | Hello,

We got an RT (135497) telling us that ubuntu.com is vulnerable to clickjacking, basically because we don't send an `X-Frame-Options` header (https://cheatsheetseries.owasp.org/cheatsheets/Clickjacking_Defense_Cheat_Sheet.html).

Could you please take a look and fix it, if appropriate?

Thanks! | 1.0 | ubuntu.com is vulnerable to clickjacking - Hello,

We got an RT (135497) telling us that ubuntu.com is vulnerable to clickjacking, basically because we don't send an `X-Frame-Options` header (https://cheatsheetseries.owasp.org/cheatsheets/Clickjacking_Defense_Cheat_Sheet.html).

Could you please take a look and fix it, if appropriate?

Thanks ! | priority | ubuntu com is vulnerable to click jacking hello we got an rt telling us that ubuntu com was vulnerable to click jacking basically because we don t send an x frame options header could you please take a look and fix if appropriate thanks | 1 |
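
As an illustration of the fix requested in the issue above, here is a minimal sketch of a WSGI middleware that adds an `X-Frame-Options` header to every response. It assumes the site is served through a WSGI application, which the issue does not state; `add_frame_protection`, `demo_app`, and `fetch_headers` are hypothetical names used only for this example. The OWASP cheat sheet linked in the issue also recommends a `Content-Security-Policy: frame-ancestors` directive, which this sketch does not set.

```python
from wsgiref.util import setup_testing_defaults


def add_frame_protection(app, policy="SAMEORIGIN"):
    """Wrap a WSGI app so every response carries X-Frame-Options."""

    def wrapped(environ, start_response):
        def patched_start_response(status, headers, exc_info=None):
            # Drop any existing value so the header is not duplicated.
            headers = [
                (key, value)
                for key, value in headers
                if key.lower() != "x-frame-options"
            ]
            headers.append(("X-Frame-Options", policy))
            return start_response(status, headers, exc_info)

        return app(environ, patched_start_response)

    return wrapped


def demo_app(environ, start_response):
    """Tiny stand-in application used only for the demonstration."""
    start_response("200 OK", [("Content-Type", "text/plain")])
    return [b"hello"]


def fetch_headers(app):
    """Call a WSGI app once and return (status, headers dict, body)."""
    environ = {}
    setup_testing_defaults(environ)
    captured = {}

    def start_response(status, headers, exc_info=None):
        captured["status"] = status
        captured["headers"] = dict(headers)

    body = b"".join(app(environ, start_response))
    return captured["status"], captured["headers"], body
```

Calling `fetch_headers(add_frame_protection(demo_app))` shows the header on the response. Passing `policy="DENY"` instead forbids framing entirely, including by pages on the same origin.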

220,740 | 7,370,347,098 | IssuesEvent | 2018-03-13 08:05:23 | teamforus/research-and-development | https://api.github.com/repos/teamforus/research-and-development | closed | POC: state/payment channels | fill-template priority-medium proposal | ## poc-state-and-payment-channels

### Background / Context

**Goal/user story:**

**More:**

- Perform safe transactions off-chain, backed by a transaction on-chain.

### Hypothesis:

### Method

*documentation/code*

### Result

*present findings*

### Recommendation

*write recommendation*

| 1.0 | POC: state/payment channels - ## poc-state-and-payment-channels

### Background / Context

**Goal/user story:**

**More:**

- Perform safe transactions off-chain, backed by a transaction on-chain.

### Hypothesis:

### Method

*documentation/code*

### Result

*present findings*

### Recommendation

*write recommendation*

| priority | poc state payment channels poc state and payment channels background context goal user story more perform safe transactions off chain backed by a transaction on chain hypothesis method documentation code result present findings recommendation write recomendation | 1 |

25,933 | 2,684,049,386 | IssuesEvent | 2015-03-28 16:13:53 | ConEmu/old-issues | https://api.github.com/repos/ConEmu/old-issues | closed | Debug builds complain about "Version not available" | 1 star bug imported Priority-Medium | _From [thecybershadow](https://code.google.com/u/thecybershadow/) on March 02, 2012 10:52:32_

"ConEmu latest version location info" points to:

file://T:\VCProject\FarPlugin\ ConEmu \Maximus5\version.ini

I assume this is the case only in debug builds. (Do alpha releases now contain only debug builds?) The debug build is the first one in the list available via the "Latest version" link.

It is not entirely obvious where this error message comes from.

_Original issue: http://code.google.com/p/conemu-maximus5/issues/detail?id=498_ | 1.0 | Debug builds complain about "Version not available" - _From [thecybershadow](https://code.google.com/u/thecybershadow/) on March 02, 2012 10:52:32_

"ConEmu latest version location info" points to:

file://T:\VCProject\FarPlugin\ ConEmu \Maximus5\version.ini

I assume this is the case only in debug builds. (Do alpha releases now contain only debug builds?) The debug build is the first one in the list available via the "Latest version" link.

It is not entirely obvious where this error message comes from.

_Original issue: http://code.google.com/p/conemu-maximus5/issues/detail?id=498_ | priority | debug builds complain about version not available from on march conemu latest version location info points to file t vcproject farplugin conemu version ini i assume this is the case only in debug builds do alpha releases now contain only debug builds the debug build is the first one in the list available via the latest version link it is not entirely obvious where this error message comes from original issue | 1 |

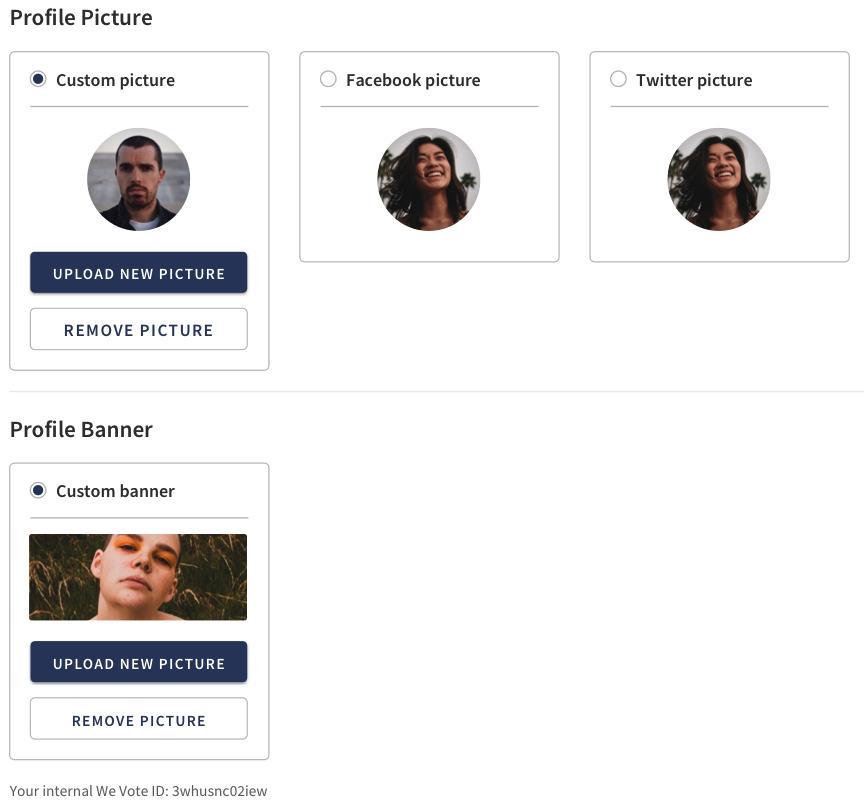

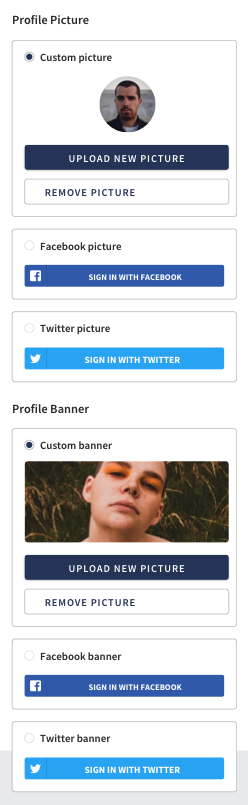

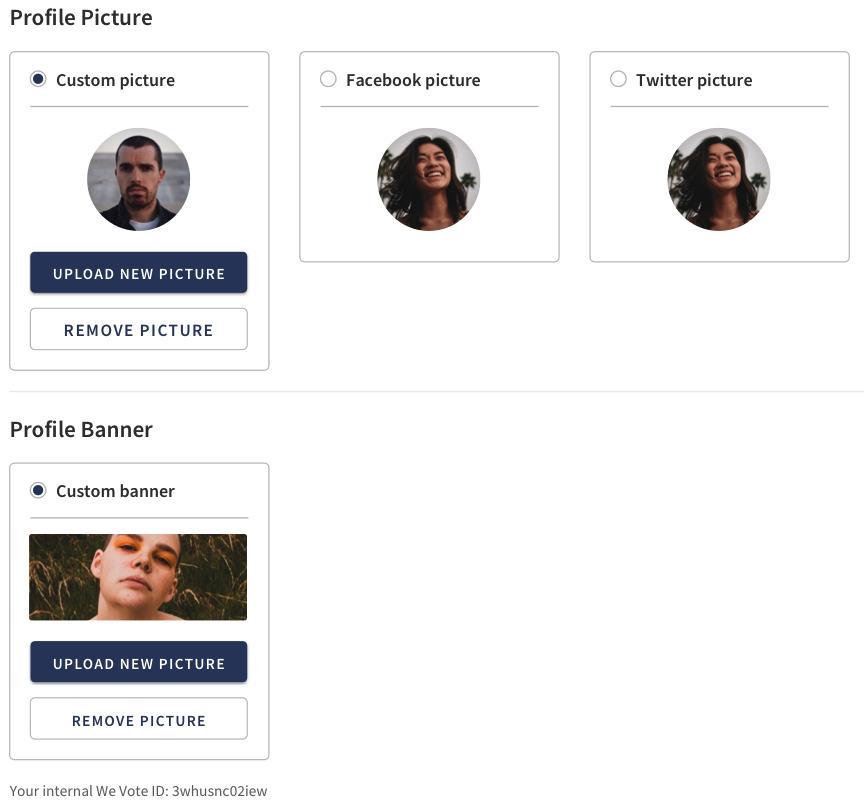

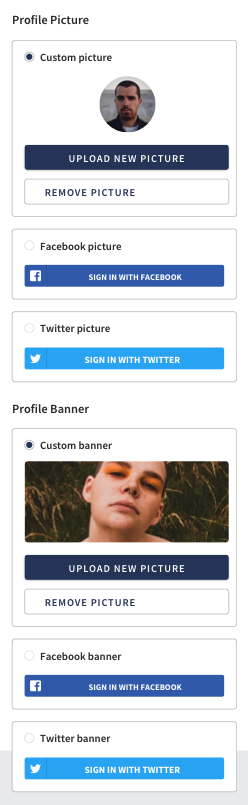

523,316 | 15,178,153,350 | IssuesEvent | 2021-02-14 14:19:24 | wevote/WebApp | https://api.github.com/repos/wevote/WebApp | closed | Add interface for uploading your own Profile Photo and Profile Banner | Difficulty: Medium Priority: 1 | Please implement the React interface for these on the "Settings" > "General Settings" page:

1. Uploading your own profile photo

2. Uploading your own profile banner

3. Choosing which profile photo you would like to have displayed

4. Choosing which profile banner you would like to have displayed

(There is no need to implement the API calls.)

Please add to: http://localhost:3000/settings/profile

src/js/components/Settings/SettingsProfile.jsx

I would recommend implementing the Profile picture interface and the Profile Banner interface in their own components.

PLEASE NOTE: In these mockups, the photos are round, but in We Vote all voter photos are square (we reserve round photos for candidates)

NOTE 2: Given time (not part of this issue), I would like us to find a package that allows drag-and-drop upload of a photo on Desktop

NOTE 3: Given time (not part of this issue), I would like to find a react tool that lets us crop and resize photos before we submit them to the API server.

DESKTOP

MOBILE

We have code that lets you upload a photo on the "Logo & Sharing" page -- there is good example code there:

http://localhost:3000/settings/sharing

See: src/js/components/Settings/SettingsSharing.jsx

| 1.0 | Add interface for uploading your own Profile Photo and Profile Banner - Please implement the React interface for these on the "Settings" > "General Settings" page:

1. Uploading your own profile photo

2. Uploading your own profile banner

3. Choosing which profile photo you would like to have displayed

4. Choosing which profile banner you would like to have displayed

(There is no need to implement the API calls.)

Please add to: http://localhost:3000/settings/profile

src/js/components/Settings/SettingsProfile.jsx

I would recommend implementing the Profile picture interface and the Profile Banner interface in their own components.

PLEASE NOTE: In these mockups, the photos are round, but in We Vote all voter photos are square (we reserve round photos for candidates)