Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 5 to 112) | repo_url (string, length 34 to 141) | action (string, 3 classes) | title (string, length 1 to 957) | labels (string, length 4 to 795) | body (string, length 1 to 259k) | index (string, 12 classes) | text_combine (string, length 96 to 259k) | label (string, 2 classes) | text (string, length 96 to 252k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

741,306 | 25,787,984,397 | IssuesEvent | 2022-12-09 22:54:36 | Automattic/abacus | https://api.github.com/repos/Automattic/abacus | closed | Impact on email experiments are incorrect | [!priority] medium [type] bug [section] experiment results [!team] explat [!milestone] current | <!-- Brief description/ context of issue. Provide links to p2 posts and relevant GitHub issues and PRs. -->

I was looking at the impact of the recent black friday email experiment and noticed that the impact is incorrect as we are extrapolating the experiment over time when the experiment is a one-time thing.

We ... | 1.0 | Impact on email experiments are incorrect - <!-- Brief description/ context of issue. Provide links to p2 posts and relevant GitHub issues and PRs. -->

I was looking at the impact of the recent black friday email experiment and noticed that the impact is incorrect as we are extrapolating the experiment over time whe... | priority | impact on email experiments are incorrect i was looking at the impact of the recent black friday email experiment and noticed that the impact is incorrect as we are extrapolating the experiment over time when the experiment is a one time thing we should disable impact for email experiments | 1 |

174,695 | 6,542,465,365 | IssuesEvent | 2017-09-02 07:11:07 | uclouvain/openjpeg | https://api.github.com/repos/uclouvain/openjpeg | closed | set reduce_factor_may_fail | bug Priority-Medium | Originally reported on Google Code with ID 474

```

This is the simplest solution (patch attached):

bin/opj_decompress -i Bretagne2.j2k -o Bretagne2.j2k.png -r 8

[INFO] Start to read j2k main header (0).

[ERROR] Error decoding component 0.

The number of resolutions 8 to remove is >= the maximum of resolutions 6 of t... | 1.0 | set reduce_factor_may_fail - Originally reported on Google Code with ID 474

```

This is the simplest solution (patch attached):

bin/opj_decompress -i Bretagne2.j2k -o Bretagne2.j2k.png -r 8

[INFO] Start to read j2k main header (0).

[ERROR] Error decoding component 0.

The number of resolutions 8 to remove is >= the ... | priority | set reduce factor may fail originally reported on google code with id this is the simplest solution patch attached bin opj decompress i o png r start to read main header error decoding component the number of resolutions to remove is the maximum of resolutions of this co... | 1 |

53,923 | 3,052,400,515 | IssuesEvent | 2015-08-12 14:32:54 | jkall/qgis-midvatten-plugin | https://api.github.com/repos/jkall/qgis-midvatten-plugin | closed | allow null values in w_levels_logger "level_masl" | enhancement Priority-Medium | I can not see no reason to set default value -999 | 1.0 | allow null values in w_levels_logger "level_masl" - I can not see no reason to set default value -999 | priority | allow null values in w levels logger level masl i can not see no reason to set default value | 1 |

766,854 | 26,901,845,758 | IssuesEvent | 2023-02-06 16:09:35 | BIDMCDigitalPsychiatry/LAMP-platform | https://api.github.com/repos/BIDMCDigitalPsychiatry/LAMP-platform | opened | Step Count Contains Duplicates and Inaccuracies | bug native core priority MEDIUM | Recently we have noticed that for multiple participants, some of their daily step count values calculated using `cortex.secondary.step_count.step_count` have been incredibly high (>200,000 on some days). We investigated this issue by looking at data from `cortex.raw.steps.steps`.

iPhone example:

Output from this ... | 1.0 | Step Count Contains Duplicates and Inaccuracies - Recently we have noticed that for multiple participants, some of their daily step count values calculated using `cortex.secondary.step_count.step_count` have been incredibly high (>200,000 on some days). We investigated this issue by looking at data from `cortex.raw.st... | priority | step count contains duplicates and inaccuracies recently we have noticed that for multiple participants some of their daily step count values calculated using cortex secondary step count step count have been incredibly high on some days we investigated this issue by looking at data from cortex raw steps ... | 1 |

653,429 | 21,582,037,190 | IssuesEvent | 2022-05-02 19:50:42 | vdjagilev/nmap-formatter | https://api.github.com/repos/vdjagilev/nmap-formatter | closed | Custom variables for custom templates | priority/medium tech/go type/feature tech/html | Add a possibility to pass custom variables for custom templates.

Example:

`--x-opt "foo=${bar}"`

Then this value can be used in a custom template like this:

```html

Some custom variables:

<ul>

<li><b>Foo value:</b> {{.custom.foo}}</li>

</ul>

```

This could be used in automated environments (pipe... | 1.0 | Custom variables for custom templates - Add a possibility to pass custom variables for custom templates.

Example:

`--x-opt "foo=${bar}"`

Then this value can be used in a custom template like this:

```html

Some custom variables:

<ul>

<li><b>Foo value:</b> {{.custom.foo}}</li>

</ul>

```

This could... | priority | custom variables for custom templates add a possibility to pass custom variables for custom templates example x opt foo bar then this value can be used in a custom template like this html some custom variables foo value custom foo this could be used in automat... | 1 |

388,505 | 11,488,471,173 | IssuesEvent | 2020-02-11 13:58:08 | DigitalCampus/oppia-mobile-android | https://api.github.com/repos/DigitalCampus/oppia-mobile-android | closed | Re-organise settings screen | Medium priority enhancement est-4-hours good-first-issue | It's grown and expanded quite a bit, so the ordering is a bit scattered now.

Suggested re-arrangement (keep on same page for now, unless very easy to divide into separate pages:

Visualization

- Preferred language

- Text size

- Display suggested activities

- Num suggested activities to display

- Highlight compl... | 1.0 | Re-organise settings screen - It's grown and expanded quite a bit, so the ordering is a bit scattered now.

Suggested re-arrangement (keep on same page for now, unless very easy to divide into separate pages:

Visualization

- Preferred language

- Text size

- Display suggested activities

- Num suggested activities... | priority | re organise settings screen it s grown and expanded quite a bit so the ordering is a bit scattered now suggested re arrangement keep on same page for now unless very easy to divide into separate pages visualization preferred language text size display suggested activities num suggested activities... | 1 |

526,639 | 15,297,377,891 | IssuesEvent | 2021-02-24 08:20:25 | erlang/otp | https://api.github.com/repos/erlang/otp | closed | ERL-1365: macOS build fails: call_error_handler_size < sizeof(BeamInstr) | bug priority:medium team:VM |

Original reporter: `dmorneau`

Affected version: `OTP-24.0`

Component: `erts`

Migrated from: https://bugs.erlang.org/browse/ERL-1365

---

```

I get this compilation error building the master branch on macOS 11.0 beta:

{code:java}

beam/jit/beam_asm.cpp:726:beamasm_emit_call_error_handler() Assertion failed: buff_len - ... | 1.0 | ERL-1365: macOS build fails: call_error_handler_size < sizeof(BeamInstr) -

Original reporter: `dmorneau`

Affected version: `OTP-24.0`

Component: `erts`

Migrated from: https://bugs.erlang.org/browse/ERL-1365

---

```

I get this compilation error building the master branch on macOS 11.0 beta:

{code:java}

beam/jit/beam_... | priority | erl macos build fails call error handler size sizeof beaminstr original reporter dmorneau affected version otp component erts migrated from i get this compilation error building the master branch on macos beta code java beam jit beam asm cpp beamasm emit call error handler ... | 1 |

625,258 | 19,723,450,410 | IssuesEvent | 2022-01-13 17:29:20 | hashicorp/terraform-cdk | https://api.github.com/repos/hashicorp/terraform-cdk | closed | Use `local` backend instead of `-state` CLI option for local state | enhancement cdktf priority/important-longterm size/medium | <!--- Please keep this note for the community --->

### Community Note

- Please vote on this issue by adding a 👍 [reaction](https://blog.github.com/2016-03-10-add-reactions-to-pull-requests-issues-and-comments/) to the original issue to help the community and maintainers prioritize this request

- Please do not l... | 1.0 | Use `local` backend instead of `-state` CLI option for local state - <!--- Please keep this note for the community --->

### Community Note

- Please vote on this issue by adding a 👍 [reaction](https://blog.github.com/2016-03-10-add-reactions-to-pull-requests-issues-and-comments/) to the original issue to help the... | priority | use local backend instead of state cli option for local state community note please vote on this issue by adding a 👍 to the original issue to help the community and maintainers prioritize this request please do not leave or other comments that do not add relevant new information or que... | 1 |

48,069 | 2,990,142,532 | IssuesEvent | 2015-07-21 07:16:00 | jayway/rest-assured | https://api.github.com/repos/jayway/rest-assured | closed | Add JsonPath support for Spring Hateoas (EnableHateoas) | Duplicate enhancement imported Priority-Medium | _From [johan.ha...@gmail.com](https://code.google.com/u/105676376875942041029/) on December 05, 2013 12:39:09_

https://github.com/spring-projects/spring-hateoas#enablehypermediasupport

_Original issue: http://code.google.com/p/rest-assured/issues/detail?id=287_ | 1.0 | Add JsonPath support for Spring Hateoas (EnableHateoas) - _From [johan.ha...@gmail.com](https://code.google.com/u/105676376875942041029/) on December 05, 2013 12:39:09_

https://github.com/spring-projects/spring-hateoas#enablehypermediasupport

_Original issue: http://code.google.com/p/rest-assured/issues/detail?id=287... | priority | add jsonpath support for spring hateoas enablehateoas from on december original issue | 1 |

665,222 | 22,304,025,588 | IssuesEvent | 2022-06-13 11:23:07 | virtual-tech-school/youtube-clone | https://api.github.com/repos/virtual-tech-school/youtube-clone | opened | Channel Page | :pushpin: task :orange_square: priority: medium :orange_circle: level: medium | Create a channel page, similar to how it is on YouTube. Reference - https://www.youtube.com/c/ApoorvGoyalMain

The page would container -

- [ ] Banner (with links on top)

- [ ] Profile Image, Channel Name, Subscribe Button, etc.

- [ ] Tabs (Home, Videos, Playlists, etc.)

Branch Name - issue-9 | 1.0 | Channel Page - Create a channel page, similar to how it is on YouTube. Reference - https://www.youtube.com/c/ApoorvGoyalMain

The page would container -

- [ ] Banner (with links on top)

- [ ] Profile Image, Channel Name, Subscribe Button, etc.

- [ ] Tabs (Home, Videos, Playlists, etc.)

Branch Name - issue-9 | priority | channel page create a channel page similar to how it is on youtube reference the page would container banner with links on top profile image channel name subscribe button etc tabs home videos playlists etc branch name issue | 1 |

617,273 | 19,346,655,463 | IssuesEvent | 2021-12-15 11:32:16 | numba/numba | https://api.github.com/repos/numba/numba | closed | Unify more of the CPU and GPU implementations | mediumpriority feature_request | Is there redundant code between the different targets? (Probably yes.)

| 1.0 | Unify more of the CPU and GPU implementations - Is there redundant code between the different targets? (Probably yes.)

| priority | unify more of the cpu and gpu implementations is there redundant code between the different targets probably yes | 1 |

174,287 | 6,538,859,443 | IssuesEvent | 2017-09-01 08:35:40 | apache/incubator-openwhisk-wskdeploy | https://api.github.com/repos/apache/incubator-openwhisk-wskdeploy | closed | Add more CI test cases. | priority: medium | Now as we started the job of support CI with Openwhisk deployments, we still lack util codes to support CI test, we need to add this infra/shim. | 1.0 | Add more CI test cases. - Now as we started the job of support CI with Openwhisk deployments, we still lack util codes to support CI test, we need to add this infra/shim. | priority | add more ci test cases now as we started the job of support ci with openwhisk deployments we still lack util codes to support ci test we need to add this infra shim | 1 |

363,489 | 10,741,716,869 | IssuesEvent | 2019-10-29 20:50:16 | PlasmaPy/PlasmaPy | https://api.github.com/repos/PlasmaPy/PlasmaPy | opened | Where should we put example interfaces to simulation packages? | Needs decision Priority: medium simulations | While discussing PlasmaPy top-level package structure during our community meeting today and at the APS DPP meeting last week (https://github.com/PlasmaPy/PlasmaPy-PLEPs/pull/26), the issue came up of where to put example interfaces to existing simulation packages.

For some background, our current plan is to creat... | 1.0 | Where should we put example interfaces to simulation packages? - While discussing PlasmaPy top-level package structure during our community meeting today and at the APS DPP meeting last week (https://github.com/PlasmaPy/PlasmaPy-PLEPs/pull/26), the issue came up of where to put example interfaces to existing simulation... | priority | where should we put example interfaces to simulation packages while discussing plasmapy top level package structure during our community meeting today and at the aps dpp meeting last week the issue came up of where to put example interfaces to existing simulation packages for some background our current pl... | 1 |

584,386 | 17,422,783,979 | IssuesEvent | 2021-08-04 05:00:50 | staynomad/Nomad-Back | https://api.github.com/repos/staynomad/Nomad-Back | closed | sendVerificationEmail() has incorrect parameters | dev:bug difficulty:easy priority:medium | # Background

<!--- Put any relevant background information here. --->

`sendVerificationEmail` should have name, email, and userId as parameters. All instances of `sendVerificationEmail` only have two parameters (email and userId).

# Task

<!--- Put the task here (ideally bullet points). --->

- Update all instanc... | 1.0 | sendVerificationEmail() has incorrect parameters - # Background

<!--- Put any relevant background information here. --->

`sendVerificationEmail` should have name, email, and userId as parameters. All instances of `sendVerificationEmail` only have two parameters (email and userId).

# Task

<!--- Put the task here (... | priority | sendverificationemail has incorrect parameters background sendverificationemail should have name email and userid as parameters all instances of sendverificationemail only have two parameters email and userid task update all instances of sendverificationemail to pass in parameters ... | 1 |

721,566 | 24,831,656,173 | IssuesEvent | 2022-10-26 04:26:47 | AY2223S1-CS2103T-T12-4/tp | https://api.github.com/repos/AY2223S1-CS2103T-T12-4/tp | closed | Add information for patient showing medication allergies | type.Story priority.Medium type.NoteBased | As a private nurse I want to know what type of medication my patient is allergic to so that I can avoid any potential mistake

| 1.0 | Add information for patient showing medication allergies - As a private nurse I want to know what type of medication my patient is allergic to so that I can avoid any potential mistake

| priority | add information for patient showing medication allergies as a private nurse i want to know what type of medication my patient is allergic to so that i can avoid any potential mistake | 1 |

492,384 | 14,201,511,201 | IssuesEvent | 2020-11-16 07:49:41 | onaio/reveal-frontend | https://api.github.com/repos/onaio/reveal-frontend | opened | Add ability to Edit the Activities on Draft Case Triggered Plans | Priority: Medium | Presently, a user cannot edit (remove) the activities on draft a case triggered plan. The client would want this feature developed. | 1.0 | Add ability to Edit the Activities on Draft Case Triggered Plans - Presently, a user cannot edit (remove) the activities on draft a case triggered plan. The client would want this feature developed. | priority | add ability to edit the activities on draft case triggered plans presently a user cannot edit remove the activities on draft a case triggered plan the client would want this feature developed | 1 |

289,220 | 8,861,854,675 | IssuesEvent | 2019-01-10 02:43:42 | sussol/mobile | https://api.github.com/repos/sussol/mobile | opened | "Monthly usage" in current stock page row expansion not translated | Effort small Priority: Medium | Build Number: 2.2.0-rc0

Description:

https://github.com/sussol/mobile/blob/529c4459f61c7f934c9af3d8272a14c75242929b/src/pages/StockPage.js#L94

This line doesn't use localisation, and is a typo "Montly Usage"

Should have typo corrected for English and should use localisation.

Reproducible: Yes

Reproduction... | 1.0 | "Monthly usage" in current stock page row expansion not translated - Build Number: 2.2.0-rc0

Description:

https://github.com/sussol/mobile/blob/529c4459f61c7f934c9af3d8272a14c75242929b/src/pages/StockPage.js#L94

This line doesn't use localisation, and is a typo "Montly Usage"

Should have typo corrected for Engl... | priority | monthly usage in current stock page row expansion not translated build number description this line doesn t use localisation and is a typo montly usage should have typo corrected for english and should use localisation reproducible yes reproduction steps go there and look comments... | 1 |

205,670 | 7,104,589,060 | IssuesEvent | 2018-01-16 10:29:33 | AnSyn/ansyn | https://api.github.com/repos/AnSyn/ansyn | closed | Bug- shadow mouse- cursor on inactive screen | Bug Priority: High Severity: Medium |

**Current behavior**

When hovering an inactive screen with the cursor- the user can see both the shadow mouse cross and the cursor in the same screen.

**Expected behavior**

the shadow mouse cross should not appear, so the user wont be confused.

**Minimal reproduction of the problem with instructions**

... | 1.0 | Bug- shadow mouse- cursor on inactive screen -

**Current behavior**

When hovering an inactive screen with the cursor- the user can see both the shadow mouse cross and the cursor in the same screen.

**Expected behavior**

the shadow mouse cross should not appear, so the user wont be confused.

**Minimal rep... | priority | bug shadow mouse cursor on inactive screen current behavior when hovering an inactive screen with the cursor the user can see both the shadow mouse cross and the cursor in the same screen expected behavior the shadow mouse cross should not appear so the user wont be confused minimal rep... | 1 |

448,252 | 12,946,283,832 | IssuesEvent | 2020-07-18 18:26:12 | status-im/nim-beacon-chain | https://api.github.com/repos/status-im/nim-beacon-chain | opened | [SEC] Brittle AES-GCM Tag/IV Construction in Nimcrypto | difficulty:medium low priority nbc-audit-2020-0 :passport_control: security status:reported | ## Description

The [`bcmode.nim`](https://github.com/cheatfate/nimcrypto/blob/f767595f4ddec2b5570b5194feb96954c00a6499/nimcrypto/bcmode.nim) source file provides support for large range of block ciphers including AES-ECB and AES-GCM.

For the AES-GCM cipher mode, the code provides a `getTag()` function that gives... | 1.0 | [SEC] Brittle AES-GCM Tag/IV Construction in Nimcrypto - ## Description

The [`bcmode.nim`](https://github.com/cheatfate/nimcrypto/blob/f767595f4ddec2b5570b5194feb96954c00a6499/nimcrypto/bcmode.nim) source file provides support for large range of block ciphers including AES-ECB and AES-GCM.

For the AES-GCM cipher... | priority | brittle aes gcm tag iv construction in nimcrypto description the source file provides support for large range of block ciphers including aes ecb and aes gcm for the aes gcm cipher mode the code provides a gettag function that gives the caller access to the authentication tag for both encryption ... | 1 |

522,633 | 15,164,147,480 | IssuesEvent | 2021-02-12 13:19:28 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | closed | [0.9.2.0 beta develop-111]Map Filter UX improvement: Adding folders/sorting for different layers by category | Category: UI Priority: Medium Squad: Wild Turkey Status: Fixed Type: Quality of Life | Would like to see a massive quality of life improvement when using the map filters and looking at different layers.

Right now for example when looking into Plants we have a list of 120+ items which is very annoying when interacting with the map

this could get some very good improvements by categorizing further into su... | 1.0 | [0.9.2.0 beta develop-111]Map Filter UX improvement: Adding folders/sorting for different layers by category - Would like to see a massive quality of life improvement when using the map filters and looking at different layers.

Right now for example when looking into Plants we have a list of 120+ items which is very an... | priority | map filter ux improvement adding folders sorting for different layers by category would like to see a massive quality of life improvement when using the map filters and looking at different layers right now for example when looking into plants we have a list of items which is very annoying when interacting wit... | 1 |

177,811 | 6,587,465,975 | IssuesEvent | 2017-09-13 21:11:17 | craftercms/craftercms | https://api.github.com/repos/craftercms/craftercms | closed | [craftercms] Add craftercms-test-suite to the craftercms bundle | Priority: Medium task | Please add https://github.com/craftercms/craftercms-test-suite to the core bundle and allow testing.

`gradle init` should pull this repo along with others.

`gradle test` should check if started, if not, error out and ask the user to `start` first (I'm open to suggestions here) -- this should also tell the user wher... | 1.0 | [craftercms] Add craftercms-test-suite to the craftercms bundle - Please add https://github.com/craftercms/craftercms-test-suite to the core bundle and allow testing.

`gradle init` should pull this repo along with others.

`gradle test` should check if started, if not, error out and ask the user to `start` first (I'... | priority | add craftercms test suite to the craftercms bundle please add to the core bundle and allow testing gradle init should pull this repo along with others gradle test should check if started if not error out and ask the user to start first i m open to suggestions here this should also tell the user... | 1 |

91,907 | 3,863,517,333 | IssuesEvent | 2016-04-08 09:45:49 | iamxavier/elmah | https://api.github.com/repos/iamxavier/elmah | closed | Add a timestamp column to the database schema | auto-migrated Priority-Medium Type-Enhancement | ```

What new or enhanced feature are you proposing?

Add a timestamp column to the database schema.

What goal would this enhancement help you achieve?

It would support Linq-To-Sql better, by allowing it to intrinsically

resolve optimistic concurrency when using a disconnected Linq-To-Sql model.

Furthermore, this wo... | 1.0 | Add a timestamp column to the database schema - ```

What new or enhanced feature are you proposing?

Add a timestamp column to the database schema.

What goal would this enhancement help you achieve?

It would support Linq-To-Sql better, by allowing it to intrinsically

resolve optimistic concurrency when using a disco... | priority | add a timestamp column to the database schema what new or enhanced feature are you proposing add a timestamp column to the database schema what goal would this enhancement help you achieve it would support linq to sql better by allowing it to intrinsically resolve optimistic concurrency when using a disco... | 1 |

87,299 | 3,744,701,288 | IssuesEvent | 2016-03-10 03:30:15 | rettigs/cs-senior-capstone | https://api.github.com/repos/rettigs/cs-senior-capstone | closed | Make manual overrides apply until next rule change rather than set time period | enhancement priority:medium | Currently, manual overrides last for a configurable amount of time (2 hours by default). They should last until a rule would have otherwise changed the state. For example, if your lights are set to turn off every morning at 6am, but you manually turn them on at 7am, they will stay on all day until the next morning at... | 1.0 | Make manual overrides apply until next rule change rather than set time period - Currently, manual overrides last for a configurable amount of time (2 hours by default). They should last until a rule would have otherwise changed the state. For example, if your lights are set to turn off every morning at 6am, but you ... | priority | make manual overrides apply until next rule change rather than set time period currently manual overrides last for a configurable amount of time hours by default they should last until a rule would have otherwise changed the state for example if your lights are set to turn off every morning at but you ma... | 1 |

451,624 | 13,039,427,997 | IssuesEvent | 2020-07-28 16:44:04 | department-of-veterans-affairs/caseflow | https://api.github.com/repos/department-of-veterans-affairs/caseflow | opened | AOD Cases should sort to the top | Priority: Medium Product: caseflow-queue Stakeholder: BVA Team: Echo 🐬 Type: Bug | ## Description

While investigating a seperate issue in production, I observed that AOD cases are not bubbling up to the top of a users queue, like we want. Specifically, non-original cases were appearing first in a Judges Assign queue.

## Acceptance criteria

- [ ] AOD cases should always sort to the top of a users... | 1.0 | AOD Cases should sort to the top - ## Description

While investigating a seperate issue in production, I observed that AOD cases are not bubbling up to the top of a users queue, like we want. Specifically, non-original cases were appearing first in a Judges Assign queue.

## Acceptance criteria

- [ ] AOD cases shoul... | priority | aod cases should sort to the top description while investigating a seperate issue in production i observed that aod cases are not bubbling up to the top of a users queue like we want specifically non original cases were appearing first in a judges assign queue acceptance criteria aod cases should ... | 1 |

181,312 | 6,658,276,638 | IssuesEvent | 2017-09-30 17:15:11 | zulip/zulip | https://api.github.com/repos/zulip/zulip | closed | Outgoing webhooks shouldn't throw an exception on network errors | area: api area: bots bug in progress priority: medium | I think we try to suppress these in `do_rest_call`, but the exception list is clearly not complete.

```

Traceback (most recent call last):

File "/home/zulip/deployments/2017-08-14-16-02-48/zerver/lib/outgoing_webhook.py", line 192, in do_rest_call

response = requests.request(http_method, final_url, data=req... | 1.0 | Outgoing webhooks shouldn't throw an exception on network errors - I think we try to suppress these in `do_rest_call`, but the exception list is clearly not complete.

```

Traceback (most recent call last):

File "/home/zulip/deployments/2017-08-14-16-02-48/zerver/lib/outgoing_webhook.py", line 192, in do_rest_cal... | priority | outgoing webhooks shouldn t throw an exception on network errors i think we try to suppress these in do rest call but the exception list is clearly not complete traceback most recent call last file home zulip deployments zerver lib outgoing webhook py line in do rest call res... | 1 |

787,635 | 27,725,294,521 | IssuesEvent | 2023-03-15 01:21:52 | vrchatapi/vrchatapi.github.io | https://api.github.com/repos/vrchatapi/vrchatapi.github.io | closed | Missing Websocket events | Type: Undocumented Endpoint Priority: Medium | The defined ones on web are:

notification, notification-v2, notification-v2-update, notification-v2-delete, see-notification, hide-notification, clear-notification, friend-add, friend-delete, friend-online, friend-active, friend-offline, friend-update, friend-location, user-update, user-location

The new ones are:

... | 1.0 | Missing Websocket events - The defined ones on web are:

notification, notification-v2, notification-v2-update, notification-v2-delete, see-notification, hide-notification, clear-notification, friend-add, friend-delete, friend-online, friend-active, friend-offline, friend-update, friend-location, user-update, user-loca... | priority | missing websocket events the defined ones on web are notification notification notification update notification delete see notification hide notification clear notification friend add friend delete friend online friend active friend offline friend update friend location user update user locatio... | 1 |

690,808 | 23,673,098,027 | IssuesEvent | 2022-08-27 17:16:00 | crcn/tandem | https://api.github.com/repos/crcn/tandem | opened | support different rendering engines | priority: medium effort: medium | Good exercise for figuring out a generic library.

Examples:

- ThreeJS

Testing how to:

- Add custom components

- Generic sidebar controls

- generic canvas controls

| 1.0 | support different rendering engines - Good exercise for figuring out a generic library.

Examples:

- ThreeJS

Testing how to:

- Add custom components

- Generic sidebar controls

- generic canvas controls

| priority | support different rendering engines good exercise for figuring out a generic library examples threejs testing how to add custom components generic sidebar controls generic canvas controls | 1 |

419,624 | 12,226,075,035 | IssuesEvent | 2020-05-03 09:06:51 | dita-ot/dita-ot | https://api.github.com/repos/dita-ot/dita-ot | closed | Provide topicref to topics handled in processTopic mode called from generatePageSequenceFromTopicref | enhancement plugin/pdf priority/medium stale | org.dita.pdf2 plugin

## Expected Behavior

Templates handling topics have easy access to the topicref that referred to the topic.

## Actual Behavior

Topicref is not available.

## Possible Solution

Pass the topicref as a tunnel parameter to templates in mode processTopic in root-processing.xsl:

```

... | 1.0 | Provide topicref to topics handled in processTopic mode called from generatePageSequenceFromTopicref - org.dita.pdf2 plugin

## Expected Behavior

Templates handling topics have easy access to the topicref that referred to the topic.

## Actual Behavior

Topicref is not available.

## Possible Solution

Pas... | priority | provide topicref to topics handled in processtopic mode called from generatepagesequencefromtopicref org dita plugin expected behavior templates handling topics have easy access to the topicref that referred to the topic actual behavior topicref is not available possible solution pass t... | 1 |

413,788 | 12,092,155,253 | IssuesEvent | 2020-04-19 14:35:40 | lorenzwalthert/precommit | https://api.github.com/repos/lorenzwalthert/precommit | closed | Allow to choose installation environment | Complexity: Medium Priority: High Status: WIP Type: Enhancement | As mentioned in [#113 ](https://github.com/lorenzwalthert/precommit/issues/113#issuecomment-603808455). Recently I've tried to run `keras::install_keras()` inside the Docker image with already installed precommit. Looks like both keras and tensorflow are now [installed by default to `r-reticulate`](https://github.com/r... | 1.0 | Allow to choose installation environment - As mentioned in [#113 ](https://github.com/lorenzwalthert/precommit/issues/113#issuecomment-603808455). Recently I've tried to run `keras::install_keras()` inside the Docker image with already installed precommit. Looks like both keras and tensorflow are now [installed by defa... | priority | allow to choose installation environment as mentioned in recently i ve tried to run keras install keras inside the docker image with already installed precommit looks like both keras and tensorflow are now this results in the following error collecting package metadata current repodata json ... | 1 |

224,254 | 7,468,230,018 | IssuesEvent | 2018-04-02 18:14:25 | IfyAniefuna/experiment_metadata | https://api.github.com/repos/IfyAniefuna/experiment_metadata | closed | Implement drag and drop functionality for Yara and Richard's master spreadsheet | medium priority | Include the feature to specify which rows (I believe it was the serial number that was used as a label) in the imported CSV to output, avoiding generating CSVs for a massive spreadsheet. | 1.0 | Implement drag and drop functionality for Yara and Richard's master spreadsheet - Include the feature to specify which rows (I believe it was the serial number that was used as a label) in the imported CSV to output, avoiding generating CSVs for a massive spreadsheet. | priority | implement drag and drop functionality for yara and richard s master spreadsheet include the feature to specify which rows i believe it was the serial number that was used as a label in the imported csv to output avoiding generating csvs for a massive spreadsheet | 1 |

77,076 | 3,506,258,706 | IssuesEvent | 2016-01-08 05:02:24 | OregonCore/OregonCore | https://api.github.com/repos/OregonCore/OregonCore | closed | .server restart (BB #137) | migrated Priority: Medium Type: Bug | This issue was migrated from bitbucket.

**Original Reporter:** digerago

**Original Date:** 02.05.2010 17:18:46 GMT+0000

**Original Priority:** major

**Original Type:** bug

**Original State:** resolved

**Direct Link:** https://bitbucket.org/oregon/oregoncore/issues/137

<hr>

I have long observed this bug.

when I use the ... | 1.0 | .server restart (BB #137) - This issue was migrated from bitbucket.

**Original Reporter:** digerago

**Original Date:** 02.05.2010 17:18:46 GMT+0000

**Original Priority:** major

**Original Type:** bug

**Original State:** resolved

**Direct Link:** https://bitbucket.org/oregon/oregoncore/issues/137

<hr>

I have long observ... | priority | server restart bb this issue was migrated from bitbucket original reporter digerago original date gmt original priority major original type bug original state resolved direct link i have long observed this bug when i use the command server restart time se... | 1 |

36,158 | 2,796,141,580 | IssuesEvent | 2015-05-12 04:21:59 | twogee/ant-http | https://api.github.com/repos/twogee/ant-http | opened | Create test cases for ant task | auto-migrated Milestone-1.2 Priority-Medium Project-ant-http Type-Task | <a href="https://github.com/GoogleCodeExporter"><img src="https://avatars.githubusercontent.com/u/9614759?v=3" align="left" width="96" height="96" hspace="10"></img></a> **Issue by [GoogleCodeExporter](https://github.com/GoogleCodeExporter)**

_Monday May 11, 2015 at 22:05 GMT_

_Originally opened as https://github.com/t... | 1.0 | Create test cases for ant task - <a href="https://github.com/GoogleCodeExporter"><img src="https://avatars.githubusercontent.com/u/9614759?v=3" align="left" width="96" height="96" hspace="10"></img></a> **Issue by [GoogleCodeExporter](https://github.com/GoogleCodeExporter)**

_Monday May 11, 2015 at 22:05 GMT_

_Original... | priority | create test cases for ant task issue by monday may at gmt originally opened as create test cases for ant task original issue reported on code google com by alex she gmail com on mar at | 1 |

48,053 | 2,990,137,908 | IssuesEvent | 2015-07-21 07:13:31 | jayway/rest-assured | https://api.github.com/repos/jayway/rest-assured | closed | Add peek and prettyPeek to XmlPath | enhancement imported Priority-Medium | _From [johan.ha...@gmail.com](https://code.google.com/u/105676376875942041029/) on November 21, 2013 16:41:00_

Should print the value to the console but return an instance of XmlPath

_Original issue: http://code.google.com/p/rest-assured/issues/detail?id=271_ | 1.0 | Add peek and prettyPeek to XmlPath - _From [johan.ha...@gmail.com](https://code.google.com/u/105676376875942041029/) on November 21, 2013 16:41:00_

Should print the value to the console but return an instance of XmlPath

_Original issue: http://code.google.com/p/rest-assured/issues/detail?id=271_ | priority | add peek and prettypeek to xmlpath from on november should print the value to the console but return an instance of xmlpath original issue | 1 |

600,064 | 18,288,680,677 | IssuesEvent | 2021-10-05 13:09:42 | qlicker/qlicker | https://api.github.com/repos/qlicker/qlicker | closed | Bug in group managements | bug Medium priority | Suppose you go to Manage Groups, and there are 10 groups numbered 1 to 10. Suppose you delete group #5.

Now go to Group 1, and click the Next Group -> button. You will go Group 1, Group 2, Group 3, Group 4, Group 6, Group 8, Group 10.

The <- Previous Group button only works when you are on Groups 1-4, but doesn... | 1.0 | Bug in group managements - Suppose you go to Manage Groups, and there are 10 groups numbered 1 to 10. Suppose you delete group #5.

Now go to Group 1, and click the Next Group -> button. You will go Group 1, Group 2, Group 3, Group 4, Group 6, Group 8, Group 10.

The <- Previous Group button only works when you a... | priority | bug in group managements suppose you go to manage groups and there are groups numbered to suppose you delete group now go to group and click the next group button you will go group group group group group group group the previous group button only works when you are ... | 1 |
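
The skipping described in this record is what one would expect if navigation steps through list positions while the display uses group numbers, although the record does not confirm the cause. A minimal sketch in Python of navigation that tolerates gaps, assuming the group numbers are kept in a sorted list; `next_group` and `previous_group` are hypothetical names and are not taken from the qlicker code base:

```python
from bisect import bisect_left, bisect_right


def next_group(numbers, current):
    """Return the smallest existing group number above `current`, or None."""
    i = bisect_right(numbers, current)
    return numbers[i] if i < len(numbers) else None


def previous_group(numbers, current):
    """Return the largest existing group number below `current`, or None."""
    i = bisect_left(numbers, current)
    return numbers[i - 1] if i > 0 else None


# Groups 1 to 10 with group 5 deleted, as in the report.
groups = [1, 2, 3, 4, 6, 7, 8, 9, 10]
print(next_group(groups, 4), previous_group(groups, 6))
```

Looking up the neighbour by value rather than by index means a deleted group only removes one stop from the sequence.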

283,012 | 8,712,895,467 | IssuesEvent | 2018-12-06 23:59:53 | aowen87/TicketTester | https://api.github.com/repos/aowen87/TicketTester | closed | VisIt hangs during re-execution prompted by pick. | bug crash likelihood medium priority reviewed severity high wrong results | This is a bug Bruce Hammel claims to have been experiencing with VisIt for years, so I've set the priority as high, now that I have reproducible steps.

This seems only to occur with 2 nodes. Multiple processors on single node does not replicate.

Also seems only to occur in conjunction with CoordSwap operator, and w... | 1.0 | VisIt hangs during re-execution prompted by pick. - This is a bug Bruce Hammel claims to have been experiencing with VisIt for years, so I've set the priority as high, now that I have reproducible steps.

This seems only to occur with 2 nodes. Multiple processors on single node does not replicate.

Also seems only to... | priority | visit hangs during re execution prompted by pick this is a bug bruce hammel claims to have been experiencing with visit for years so i ve set the priority as high now that i have reproducible steps this seems only to occur with nodes multiple processors on single node does not replicate also seems only to... | 1 |

174,071 | 6,536,220,774 | IssuesEvent | 2017-08-31 17:17:28 | OperationCode/operationcode_frontend | https://api.github.com/repos/OperationCode/operationcode_frontend | closed | Update Raven Config | beginner friendly Priority: Medium Type: Feature | <!-- Please fill out one of the sections below based on the type of issue you're creating -->

# Feature

## Why is this feature being added?

<!-- What problem is it solving? What value does it add? -->

Raven is reporting into the backend project in Sentry and there's no good way to separate out issues.

## What sh... | 1.0 | Update Raven Config - <!-- Please fill out one of the sections below based on the type of issue you're creating -->

# Feature

## Why is this feature being added?

<!-- What problem is it solving? What value does it add? -->

Raven is reporting into the backend project in Sentry and there's no good way to separate out... | priority | update raven config feature why is this feature being added raven is reporting into the backend project in sentry and there s no good way to separate out issues what should your feature do replace the existing raven config with this line raven config | 1 |

85,498 | 3,691,042,387 | IssuesEvent | 2016-02-25 22:18:03 | BCGamer/website | https://api.github.com/repos/BCGamer/website | closed | Replace built-in mezzanine account/email | enhancement medium priority | -Signup Verify - This email is sent when a user signs up, this is OOB and looks pretty terrible. Not a good start for the LANtasy user experience.

-Account Approved - This is the email that is sent when a user has been verified.

-Password Reset - This email is sent when a user need to reset their password.

Will ne... | 1.0 | Replace built-in mezzanine account/email - -Signup Verify - This email is sent when a user signs up, this is OOB and looks pretty terrible. Not a good start for the LANtasy user experience.

-Account Approved - This is the email that is sent when a user has been verified.

-Password Reset - This email is sent when a us... | priority | replace built in mezzanine account email signup verify this email is sent when a user signs up this is oob and looks pretty terrible not a good start for the lantasy user experience account approved this is the email that is sent when a user has been verified password reset this email is sent when a us... | 1 |

450,792 | 13,019,374,203 | IssuesEvent | 2020-07-26 22:13:21 | dreadnoughtsix/dbmakerpy | https://api.github.com/repos/dreadnoughtsix/dbmakerpy | opened | Handle databases in the current working directory | priority: medium status: in progress type: feature | As the client uses the script in a specific directory, the DBMakerPy must be able to handle `.db` files.

- [ ] Check the working directory for `.db` files

- [ ] Ask the client if they want to access one of the specific databases | 1.0 | Handle databases in the current working directory - As the client uses the script in a specific directory, the DBMakerPy must be able to handle `.db` files.

- [ ] Check the working directory for `.db` files

- [ ] Ask the client if they want to access one of the specific databases | priority | handle databases in the current working directory as the client uses the script in a specific directory the dbmakerpy must be able to handle db files check the working directory for db files ask the client if they want to access one of the specific databases | 1 |
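
The two checklist items in this record could be sketched roughly as follows. This is an illustration only: `find_db_files` and `choose_database` are hypothetical helpers, not functions from DBMakerPy, and the prompt itself is left to the caller.

```python
from pathlib import Path


def find_db_files(directory="."):
    """Return the sorted names of `.db` files directly inside `directory`."""
    return sorted(
        p.name
        for p in Path(directory).iterdir()
        if p.is_file() and p.suffix == ".db"
    )


def choose_database(names, answer):
    """Map a 1-based menu answer (a string) to a file name, or None if invalid."""
    if answer.isdecimal() and 1 <= int(answer) <= len(names):
        return names[int(answer) - 1]
    return None
```

A caller might print the names with their numbers, read the answer with `input()`, and pass it to `choose_database`; a `None` result means the client declined or typed something that is not on the menu.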

753,617 | 26,355,879,999 | IssuesEvent | 2023-01-11 09:40:27 | pystardust/ani-cli | https://api.github.com/repos/pystardust/ani-cli | opened | ani-cli -U not working No search results found ani-cli | type: bug priority 2: medium | **Metadata (please complete the following information)**

Version: [e.g. 2.0.2]

OS: [e.g. Windows 10 / Linux Mint 20.3]

Shell: [e.g. zsh, run `readlink /bin/sh` to get your shell]

Anime: [e.g. flcl] (if applicable)

**Describe the bug**

Downloading is broken.

It says something about an unsupported protocol, see ... | 1.0 | ani-cli -U not working No search results found ani-cli - **Metadata (please complete the following information)**

Version: [e.g. 2.0.2]

OS: [e.g. Windows 10 / Linux Mint 20.3]

Shell: [e.g. zsh, run `readlink /bin/sh` to get your shell]

Anime: [e.g. flcl] (if applicable)

**Describe the bug**

Downloading is broke... | priority | ani cli u not working no search results found ani cli metadata please complete the following information version os shell anime if applicable describe the bug downloading is broken it says something about an unsupported protocol see screenshot steps to reproduce run ... | 1 |

20,590 | 2,622,854,065 | IssuesEvent | 2015-03-04 08:06:48 | max99x/pagemon-chrome-ext | https://api.github.com/repos/max99x/pagemon-chrome-ext | closed | email notifications | auto-migrated Priority-Medium | ```

This seems like the perfect app for what I need except that I need to get

notifications by text or email so that I can be aware of changes immediately

even when I am not near my computer. Is that possible with this app?

```

Original issue reported on code.google.com by `flordech...@gmail.com` on 6 Feb 2014 at ... | 1.0 | email notifications - ```

This seems like the perfect app for what I need except that I need to get

notifications by text or email so that I can be aware of changes immediately

even when I am not near my computer. Is that possible with this app?

```

Original issue reported on code.google.com by `flordech...@gmail.... | priority | email notifications this seems like the perfect app for what i need except that i need to get notifications by text or email so that i can be aware of changes immediately even when i am not near my computer is that possible with this app original issue reported on code google com by flordech gmail ... | 1 |

3,229 | 2,537,516,740 | IssuesEvent | 2015-01-26 21:08:07 | web2py/web2py | https://api.github.com/repos/web2py/web2py | opened | T.is_writable cannot be disabled | 1 star bug imported Priority-Medium | _From [mca..._at_gmail.com](https://code.google.com/u/109910092030153265550/) on May 20, 2014 21:48:48_

* What steps will reproduce the problem?

-Test this in a controller:

T.is_writable = False

def index():

db = DAL('sqlite:memory:')

db.define_table('event',

Field('date_time', 'datetime')

... | 1.0 | T.is_writable cannot be disabled - _From [mca..._at_gmail.com](https://code.google.com/u/109910092030153265550/) on May 20, 2014 21:48:48_

* What steps will reproduce the problem?

-Test this in a controller:

T.is_writable = False

def index():

db = DAL('sqlite:memory:')

db.define_table('event',

... | priority | t is writable cannot be disabled from on may what steps will reproduce the problem test this in a controller t is writable false def index db dal sqlite memory db define table event field date time datetime return dict html sqlform ... | 1 |

133,184 | 5,198,494,482 | IssuesEvent | 2017-01-23 18:16:34 | Innovate-Inc/EnviroAtlas | https://api.github.com/repos/Innovate-Inc/EnviroAtlas | closed | Enable both National and Community geography toggle switches at start | Medium Priority MVP tableOfContents | This is a very tiny request, but creating an issue so we don't forget and can check it off the list! | 1.0 | Enable both National and Community geography toggle switches at start - This is a very tiny request, but creating an issue so we don't forget and can check it off the list! | priority | enable both national and community geography toggle switches at start this is a very tiny request but creating an issue so we don t forget and can check it off the list | 1 |

49,348 | 3,002,141,395 | IssuesEvent | 2015-07-24 15:32:33 | jayway/powermock | https://api.github.com/repos/jayway/powermock | opened | PowerMockRule interacts poorly with mocked member variables | bug imported Priority-Medium | _From [nachtr...@gmail.com](https://code.google.com/u/112111327311455846174/) on January 18, 2012 23:15:32_

What steps will reproduce the problem? Following the methodology here: https://code.google.com/p/powermock/wiki/PowerMockRule Set up a test that mocks some sort of static class. In this case it's a custom SQLUt... | 1.0 | PowerMockRule interacts poorly with mocked member variables - _From [nachtr...@gmail.com](https://code.google.com/u/112111327311455846174/) on January 18, 2012 23:15:32_

What steps will reproduce the problem? Following the methodology here: https://code.google.com/p/powermock/wiki/PowerMockRule Set up a test that mock... | priority | powermockrule interacts poorly with mocked member variables from on january what steps will reproduce the problem following the methodology here set up a test that mocks some sort of static class in this case it s a custom sqlutils object that takes a java sql connection and a string for our... | 1 |

140,577 | 5,412,556,667 | IssuesEvent | 2017-03-01 14:50:20 | stats4sd/wordcloud-app | https://api.github.com/repos/stats4sd/wordcloud-app | opened | Add ability to edit own comments | 1 - Ready Impact-Medium Priority-Medium Size-Medium Type-feature | Would be nice for users to be able to review and edit the comments they've submitted.

- edit comment text

- add / edit tags attached to the comment

**How:**

- Generate comment IDs locally to store them in local cache

- update newComment <firebase-document> to push key instead of letting firebase generate th... | 1.0 | Add ability to edit own comments - Would be nice for users to be able to review and edit the comments they've submitted.

- edit comment text

- add / edit tags attached to the comment

**How:**

- Generate comment IDs locally to store them in local cache

- update newComment <firebase-document> to push key inst... | priority | add ability to edit own comments would be nice for users to be able to review and edit the comments they ve submitted edit comment text add edit tags attached to the comment how generate comment ids locally to store them in local cache update newcomment to push key instead of letting fir... | 1 |

614,822 | 19,190,335,074 | IssuesEvent | 2021-12-05 22:01:05 | RE-SS3D/SS3D | https://api.github.com/repos/RE-SS3D/SS3D | opened | Implement Wire Adjacency Connections | Type: Feature (Addition) Asset: Script Coding: C# Priority: 2 - High Difficulty: 2 - Medium System: Tilemaps | <!-- The notes within these arrows are for you but can be deleted. -->

## Summary

Implement a new tilemap adjacency connection script (similar to the others) for "wire connections". This should follow the design located in the link below.

## Goal

This will allow for wires to be added to the map via the edit... | 1.0 | Implement Wire Adjacency Connections - <!-- The notes within these arrows are for you but can be deleted. -->

## Summary

Implement a new tilemap adjacency connection script (similar to the others) for "wire connections". This should follow the design located in the link below.

## Goal

This will allow for wi... | priority | implement wire adjacency connections summary implement a new tilemap adjacency connection script similar to the others for wire connections this should follow the design located in the link below goal this will allow for wires to be added to the map via the editor and perform intended connec... | 1 |

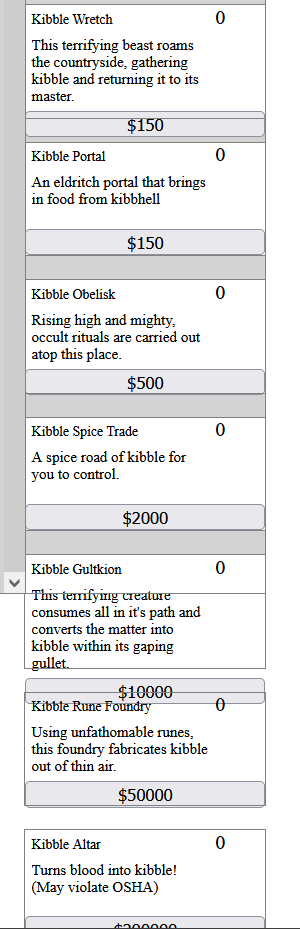

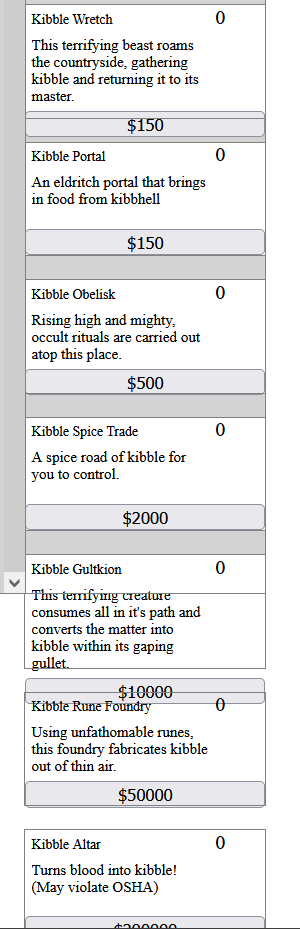

769,569 | 27,012,078,603 | IssuesEvent | 2023-02-10 16:09:28 | TimidBagel/Group-Clicker-Game | https://api.github.com/repos/TimidBagel/Group-Clicker-Game | closed | Buildings go outside of their container | bug CSS/Styling Medium Priority HTML |

The buildings go outside of the container instead of hiding them and making a scroll bar. | 1.0 | Buildings go outside of their container -

The buildings go outside of the container instead of hiding them and making a scroll bar. | priority | buildings go outside of their container the buildings go outside of the container instead of hiding them and making a scroll bar | 1 |

642,261 | 20,871,942,716 | IssuesEvent | 2022-03-22 12:47:48 | owncloud/web | https://api.github.com/repos/owncloud/web | closed | Renaming object via hamburger icon does't work | Type:Bug Priority:p3-medium | web: branch v5.2.0-rc.3:

ocis: docker v.1.17.0

Steps:

- admin creates folder

- admin tries to rename folder via hamburger icon

Expected: succufuly

Actual: method `https://localhost:9200/remote.php/dav/Shares` is used instead of `https://localhost:9200/remote.php/dav/files/marie/Shares`

![Screenshot 2022... | 1.0 | Renaming object via hamburger icon does't work - web: branch v5.2.0-rc.3:

ocis: docker v.1.17.0

Steps:

- admin creates folder

- admin tries to rename folder via hamburger icon

Expected: succufuly

Actual: method `https://localhost:9200/remote.php/dav/Shares` is used instead of `https://localhost:9200/remote... | priority | renaming object via hamburger icon does t work web branch rc ocis docker v steps admin creates folder admin tries to rename folder via hamburger icon expected succufuly actual method is used instead of | 1 |

826,147 | 31,558,721,638 | IssuesEvent | 2023-09-03 01:25:11 | wanderer-moe/site | https://api.github.com/repos/wanderer-moe/site | closed | search by [multiple] tags | api priority: medium db | allow for searching by multiple tags in addition to the existing new search feature that allows for searching multiple games / categories at once | 1.0 | search by [multiple] tags - allow for searching by multiple tags in addition to the existing new search feature that allows for searching multiple games / categories at once | priority | search by tags allow for searching by multiple tags in addition to the existing new search feature that allows for searching multiple games categories at once | 1 |

825,778 | 31,471,370,388 | IssuesEvent | 2023-08-30 07:47:12 | renovatebot/renovate | https://api.github.com/repos/renovatebot/renovate | opened | Review/revise logger warn and error messages | priority-3-medium type:refactor status:ready | ### Describe the proposed change(s).

We should clarify the correct situations for warns and errors. Importantly:

- Warn messages will result in _repository users_ being alerted, e.g. in their Dependency Dashboard

- Error messages will result in the _bot admin_ being alerted, because the bot will exit with a non-zero... | 1.0 | Review/revise logger warn and error messages - ### Describe the proposed change(s).

We should clarify the correct situations for warns and errors. Importantly:

- Warn messages will result in _repository users_ being alerted, e.g. in their Dependency Dashboard

- Error messages will result in the _bot admin_ being ale... | priority | review revise logger warn and error messages describe the proposed change s we should clarify the correct situations for warns and errors importantly warn messages will result in repository users being alerted e g in their dependency dashboard error messages will result in the bot admin being ale... | 1 |

739,447 | 25,597,449,542 | IssuesEvent | 2022-12-01 17:13:49 | yugabyte/yugabyte-db | https://api.github.com/repos/yugabyte/yugabyte-db | closed | [YSQL] TServer crash when a table is accessed again in a transaction after another session drops it | kind/bug area/ysql priority/medium | Jira Link: [DB-4368](https://yugabyte.atlassian.net/browse/DB-4368)

### Description

Steps to reproduce:

(1) create a table

```

ysqlsh (11.2-YB-2.17.1.0-b0)

Type "help" for help.

yugabyte=# create table t(id int);

CREATE TABLE

yugabyte=# \q

```

(2) In a new session, start a transaction with

```

ysqlsh... | 1.0 | [YSQL] TServer crash when a table is accessed again in a transaction after another session drops it - Jira Link: [DB-4368](https://yugabyte.atlassian.net/browse/DB-4368)

### Description

Steps to reproduce:

(1) create a table

```

ysqlsh (11.2-YB-2.17.1.0-b0)

Type "help" for help.

yugabyte=# create table t(id ... | priority | tserver crash when a table is accessed again in a transaction after another session drops it jira link description steps to reproduce create a table ysqlsh yb type help for help yugabyte create table t id int create table yugabyte q in a new sessi... | 1 |

614,397 | 19,181,887,324 | IssuesEvent | 2021-12-04 14:50:25 | BlueBubblesApp/bluebubbles-app | https://api.github.com/repos/BlueBubblesApp/bluebubbles-app | opened | Fix issue where image disappears after sending. Comes back after leave and re enter | Bug priority: high Alpha Difficulty: Medium | Not sure if this will work for you, but here is what I did:

1. Send an image with text

2. Before the image fully sends, leave the app

3. Wait a sec

4. Re enter the app

5. Image seems to disappear or flicker

6. Send a message and it comes back | 1.0 | Fix issue where image disappears after sending. Comes back after leave and re enter - Not sure if this will work for you, but here is what I did:

1. Send an image with text

2. Before the image fully sends, leave the app

3. Wait a sec

4. Re enter the app

5. Image seems to disappear or flicker

6. Send a message a... | priority | fix issue where image disappears after sending comes back after leave and re enter not sure if this will work for you but here is what i did send an image with text before the image fully sends leave the app wait a sec re enter the app image seems to disappear or flicker send a message a... | 1 |

311,740 | 9,538,586,448 | IssuesEvent | 2019-04-30 15:01:18 | Terrastories/terrastories | https://api.github.com/repos/Terrastories/terrastories | opened | [CMS] Ensure that duplicate items cannot be added to the database (importer) | difficulty: easy priority: medium status: help wanted type: cms | Currently when the stories csv file is imported, if it's run again it will add duplicate records.

Add validations to the stories model to ensure that duplicates cannot be created. Consider adding a database migration to add unique indexes on these fields as well.

Please add tests to verify this behavior as well.

... | 1.0 | [CMS] Ensure that duplicate items cannot be added to the database (importer) - Currently when the stories csv file is imported, if it's run again it will add duplicate records.

Add validations to the stories model to ensure that duplicates cannot be created. Consider adding a database migration to add unique indexes... | priority | ensure that duplicate items cannot be added to the database importer currently when the stories csv file is imported if it s run again it will add duplicate records add validations to the stories model to ensure that duplicates cannot be created consider adding a database migration to add unique indexes on ... | 1 |
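
The record asks for model validations and unique indexes in the Rails application. The same idea, a uniqueness constraint that makes a repeated import a no-op, can be sketched in Python with the standard `sqlite3` module. The `stories` table, its `title` and `body` columns, and the choice of `title` as the unique key are all assumptions made for illustration, not the Terrastories schema:

```python
import csv
import io
import sqlite3


def import_stories(conn, csv_text):
    """Insert CSV rows, skipping rows whose title is already stored.

    Returns the total number of stories after the import.
    """
    conn.execute(
        "CREATE TABLE IF NOT EXISTS stories (title TEXT NOT NULL, body TEXT)"
    )
    # The unique index is what rejects duplicates at the database level.
    conn.execute(
        "CREATE UNIQUE INDEX IF NOT EXISTS idx_stories_title ON stories (title)"
    )
    rows = [(r["title"], r["body"]) for r in csv.DictReader(io.StringIO(csv_text))]
    conn.executemany(
        "INSERT OR IGNORE INTO stories (title, body) VALUES (?, ?)", rows
    )
    conn.commit()
    return conn.execute("SELECT COUNT(*) FROM stories").fetchone()[0]
```

Running the import twice on the same file leaves the row count unchanged, which is the behavior the requested tests would verify.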

40,793 | 2,868,942,498 | IssuesEvent | 2015-06-05 22:06:03 | dart-lang/pub | https://api.github.com/repos/dart-lang/pub | closed | pub: cannot use git dependency except for leaf packages. | bug duplicate Priority-Medium | <a href="https://github.com/jmesserly"><img src="https://avatars.githubusercontent.com/u/1081711?v=3" align="left" width="96" height="96"hspace="10"></img></a> **Issue by [jmesserly](https://github.com/jmesserly)**

_Originally opened as dart-lang/sdk#8553_

----

Use case: you want to use a "git" dependency f... | 1.0 | pub: cannot use git dependency except for leaf packages. - <a href="https://github.com/jmesserly"><img src="https://avatars.githubusercontent.com/u/1081711?v=3" align="left" width="96" height="96"hspace="10"></img></a> **Issue by [jmesserly](https://github.com/jmesserly)**

_Originally opened as dart-lang/sdk#8553_

---... | priority | pub cannot use git dependency except for leaf packages issue by originally opened as dart lang sdk use case you want to use a quot git quot dependency for web ui you re also using quot foo quot that depends on web ui via hosted dep it won t work what is best course of action here it ... | 1 |

610,789 | 18,924,461,652 | IssuesEvent | 2021-11-17 07:56:45 | MadsBalslev/P3 | https://api.github.com/repos/MadsBalslev/P3 | closed | Refactor ```frontend.Shared.Manager``` and associated files | Type: Maintenance Priority: Medium Domain: Client | Limit this to a one-person, one-day assignment. | 1.0 | Refactor ```frontend.Shared.Manager``` and associated files - Limit this to a one-person, one-day assignment. | priority | refactor frontend shared manager and associated files limit this to a one person one day assignment | 1 |

725,358 | 24,959,796,839 | IssuesEvent | 2022-11-01 14:41:43 | AY2223S1-CS2103T-T15-3/tp | https://api.github.com/repos/AY2223S1-CS2103T-T15-3/tp | closed | [PE-D][Tester A] Edit after filter | wontfix priority.Medium severity.Medium | I think it is quite inconvenient that after every edit, the list would reset back to all tutors. I don't think this is optimised for convenience as I would have to look for the edited person again. The user can decide to list all the tutors again if he chooses instead of being forced to filter/sort the whole list again... | 1.0 | [PE-D][Tester A] Edit after filter - I think it is quite inconvenient that after every edit, the list would reset back to all tutors. I don't think this is optimised for convenience as I would have to look for the edited person again. The user can decide to list all the tutors again if he chooses instead of being force... | priority | edit after filter i think it is quite inconvenient that after every edit the list would reset back to all tutors i don t think this is optimised for convenience as i would have to look for the edited person again the user can decide to list all the tutors again if he chooses instead of being forced to filter so... | 1 |

31,381 | 2,732,898,844 | IssuesEvent | 2015-04-17 10:04:54 | tiku01/oryx-editor | https://api.github.com/repos/tiku01/oryx-editor | closed | Edit common properties of multiple selected shapes | auto-migrated Component-Editor OpSys-All Priority-Medium Type-Enhancement | ```

If multiple shapes are selected, it should be possible to edit the

properties they have in common. That would save a great deal of work,

e.g. when setting colors.

```

Original issue reported on code.google.com by `falko.me...@gmail.com` on 22 Oct 2008 at 3:11 | 1.0 | Edit common properties of multiple selected shapes - ```

If multiple shapes are selected, it should be possible to edit the

properties they have in common. That would save a great deal of work,

e.g. when setting colors.

```

Original issue reported on code.google.com by `falko.me...@gmail.com` on 22 Oct 2008 at 3:11 | priority | edit common properties of multiple selected shapes if multiple shapes are selected it should be possible to edit the properties they have in common that would save a great deal of work e g when setting colors original issue reported on code google com by falko me gmail com on oct at | 1 |

593,349 | 17,971,035,608 | IssuesEvent | 2021-09-14 02:03:22 | hackforla/design-systems | https://api.github.com/repos/hackforla/design-systems | closed | Week of September 13 2021 | priority: medium Role: Whole DS team Feature - Agenda | ### Overview

This issue tracks the agenda for the design systems meetings and weekly roll call for projects.

Please check in on the [Roll Call Spreadsheet](https://docs.google.com/spreadsheets/d/1JtJGxSpVQR3t3wN8P5iHtLZ-QEk-NtewGurNTikw1t0/edit#gid=0)

### Action Items

- [x] House keeping

- [x] New team membe... | 1.0 | Week of September 13 2021 - ### Overview

This issue tracks the agenda for the design systems meetings and weekly roll call for projects.

Please check in on the [Roll Call Spreadsheet](https://docs.google.com/spreadsheets/d/1JtJGxSpVQR3t3wN8P5iHtLZ-QEk-NtewGurNTikw1t0/edit#gid=0)

### Action Items

- [x] House k... | priority | week of september overview this issue tracks the agenda for the design systems meetings and weekly roll call for projects please check in on the action items house keeping new team member introduction change sizing on issues update from research update from ux ui tea... | 1 |

97,605 | 3,996,592,606 | IssuesEvent | 2016-05-10 19:18:13 | datreant/datreant.core | https://api.github.com/repos/datreant/datreant.core | closed | provide conda packages | packaging/distribution priority medium | Since MDAnalysis has joined the conda hotness I thought datreant could do the same. I got packages for all dependencies already except for scandir.

https://anaconda.org/kain88-de/packages | 1.0 | provide conda packages - Since MDAnalysis has joined the conda hotness I thought datreant could do the same. I got packages for all dependencies already except for scandir.

https://anaconda.org/kain88-de/packages | priority | provide conda packages since mdanalysis has joined the conda hotness i thought datreant could do the same i got packages for all dependencies already except for scandir | 1 |

653,673 | 21,610,170,395 | IssuesEvent | 2022-05-04 09:16:58 | MadsBalslev/SW4-2022 | https://api.github.com/repos/MadsBalslev/SW4-2022 | closed | 4.2.x.x Step declaration transition system | Priority: Medium | - [ ] Simon

- [ ] Nicolai

- [ ] Mads

- [ ] Lukas

- [ ] Patrick

- [x] Casper

- [ ] Sean | 1.0 | 4.2.x.x Step declaration transition system - - [ ] Simon

- [ ] Nicolai

- [ ] Mads

- [ ] Lukas

- [ ] Patrick

- [x] Casper

- [ ] Sean | priority | x x step declaration transition system simon nicolai mads lukas patrick casper sean | 1 |

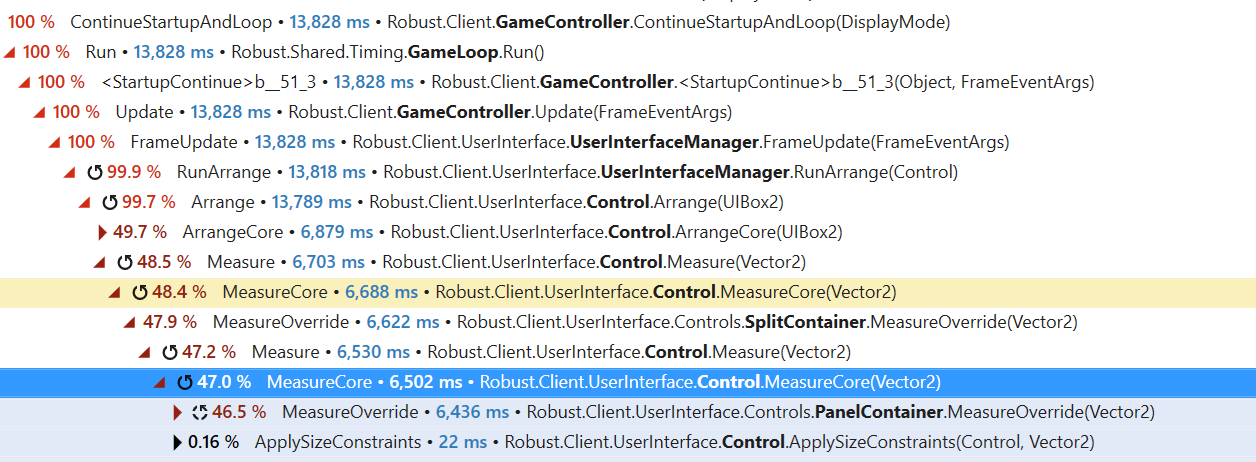

797,736 | 28,153,920,379 | IssuesEvent | 2023-04-03 05:26:16 | space-wizards/RobustToolbox | https://api.github.com/repos/space-wizards/RobustToolbox | opened | Making your game too narrow crashes it | Issue: Bug Priority: 2-Important Difficulty: 2-Medium | Repro:

Turn on separated chat

Make game narrow

Game hard locks

Seems to be some kind of infinite loop in measure.

| 1.0 | Making your game too narrow crashes it - Repro:

Turn on separated chat

Make game narrow

Game hard locks

Seems to be some kind of infinite loop in measure.

| priority | making your game too narrow crashes it repro turn on separated chat make game narrow game hard locks seems to be some kind of infinite loop in measure | 1 |

710,034 | 24,401,607,318 | IssuesEvent | 2022-10-05 02:20:10 | craftercms/craftercms | https://api.github.com/repos/craftercms/craftercms | closed | [studio] Editorial BP search script with multiple categories not work | bug priority: medium triage validate | ### Duplicates

- [X] I have searched the existing issues

### Latest version

- [X] The issue is in the latest released 4.0.x

- [ ] The issue is in the latest released 3.1.x

### Describe the issue

Search script `scripts/rest/search.get.groovy` does not work when given multiple category values.

### Steps to ... | 1.0 | [studio] Editorial BP search script with multiple categories not work - ### Duplicates

- [X] I have searched the existing issues

### Latest version

- [X] The issue is in the latest released 4.0.x

- [ ] The issue is in the latest released 3.1.x

### Describe the issue

Search script `scripts/rest/search.ge... | priority | editorial bp search script with multiple categories not work duplicates i have searched the existing issues latest version the issue is in the latest released x the issue is in the latest released x describe the issue search script scripts rest search get groovy wit... | 1 |

301,245 | 9,217,992,852 | IssuesEvent | 2019-03-11 12:16:15 | Bill-Ferny/CS1830-LightsOut | https://api.github.com/repos/Bill-Ferny/CS1830-LightsOut | closed | 007: handling scores in game_over | Medium priority task | # Handling scores in game_over

* Connect the scores, add_score method to game_over | 1.0 | 007: handling scores in game_over - # Handling scores in game_over

* Connect the scores, add_score method to game_over | priority | handling scores in game over handling scores in game over connect the scores add score method to game over | 1 |

2,686 | 2,532,468,343 | IssuesEvent | 2015-01-23 16:18:00 | IQSS/dataverse | https://api.github.com/repos/IQSS/dataverse | closed | Download button - one file selected | Priority: Medium Status: Dev Type: Feature | per Gustavo, opening a new ticket in relation to https://github.com/IQSS/dataverse/issues/1310 last question.

Download button action:

when one file is selected > actions grayed out > it should enable 'download selected' | 1.0 | Download button - one file selected - per Gustavo, opening a new ticket in relation to https://github.com/IQSS/dataverse/issues/1310 last question.

Download button action:

when one file is selected > actions grayed out > it should enable 'download selected' | priority | download button one file selected per gustavo opening a new ticket in relation to last question download button action when one file is selected actions grayed out it should enable download selected | 1 |

91,908 | 3,863,517,336 | IssuesEvent | 2016-04-08 09:45:49 | iamxavier/elmah | https://api.github.com/repos/iamxavier/elmah | closed | Create a FAQ Page | auto-migrated Priority-Medium Type-Enhancement | ```

What new or enhanced feature are you proposing?

A FAQ page in the wiki

What goal would this enhancement help you achieve?

Reduce the amount of head-scratching required to use ELMAH

```

Original issue reported on code.google.com by `Zian.C...@gmail.com` on 3 Sep 2009 at 3:20 | 1.0 | Create a FAQ Page - ```

What new or enhanced feature are you proposing?

A FAQ page in the wiki

What goal would this enhancement help you achieve?

Reduce the amount of head-scratching required to use ELMAH

```

Original issue reported on code.google.com by `Zian.C...@gmail.com` on 3 Sep 2009 at 3:20 | priority | create a faq page what new or enhanced feature are you proposing a faq page in the wiki what goal would this enhancement help you achieve reduce the amount of head scratching required to use elmah original issue reported on code google com by zian c gmail com on sep at | 1 |

285,647 | 8,767,494,867 | IssuesEvent | 2018-12-17 19:55:57 | webhintio/hint | https://api.github.com/repos/webhintio/hint | closed | [create hint] EventEmitter warning + script never ends on macOS and node 10+ | priority:medium type:bug | Node: v10.10.0

npm: 6.4.1

hint: v3.4.10

```sh

$ npm create hint

npx: installed 1 in 1.18s

? Is this a package with multiple hints? (yes) No

? What's the name of this new hint? tmp

? What's the description of this new hint 'tmp'? ...

? Please select the category of this new hint: development

? Please sel... | 1.0 | [create hint] EventEmitter warning + script never ends on macOS and node 10+ - Node: v10.10.0

npm: 6.4.1

hint: v3.4.10

```sh

$ npm create hint

npx: installed 1 in 1.18s

? Is this a package with multiple hints? (yes) No

? What's the name of this new hint? tmp

? What's the description of this new hint 'tmp'... | priority | eventemitter warning script never ends on macos and node node npm hint sh npm create hint npx installed in is this a package with multiple hints yes no what s the name of this new hint tmp what s the description of this new hint tmp please sele... | 1 |

499,795 | 14,479,380,752 | IssuesEvent | 2020-12-10 09:44:03 | amanianai/covid-conscious | https://api.github.com/repos/amanianai/covid-conscious | closed | Add categories /home to the database | Development Medium Priority ToDo | **Category/Keyword tags on /austin (city homepage) view**

Feel free to implement this the easiest way possible :)

**Tasks**

- [ ] add categories to the database via seed files (wellness, food, etc.)

- [ ] clicking on one of the thumbnails should run a query based on that category or keyword tag

**Categories th... | 1.0 | Add categories /home to the database - **Category/Keyword tags on /austin (city homepage) view**

Feel free to implement this the easiest way possible :)

**Tasks**

- [ ] add categories to the database via seed files (wellness, food, etc.)

- [ ] clicking on one of the thumbnails should run a query based on that cat... | priority | add categories home to the database category keyword tags on austin city homepage view feel free to implement this the easiest way possible tasks add categories to the database via seed files wellness food etc clicking on one of the thumbnails should run a query based on that categor... | 1 |

588,231 | 17,650,345,215 | IssuesEvent | 2021-08-20 12:22:31 | francheska-vicente/cssweng | https://api.github.com/repos/francheska-vicente/cssweng | opened | Dates that are not today are highlighted the same as today's date | bug priority: medium issue: front-end severity: low | ### Summary:

Dates that are not today but fall on the same day of the month as today get highlighted in the same color as today's date.

E.g. if today is August 20, then September 20 also gets the same highlight

### Steps to Reproduce:

1. Login

2. Go to calendar

3. Go through various months
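
The symptom in the summary is consistent with a check that compares only the day of the month. The project's actual code is not shown here, so the following is a minimal Python sketch under that assumption; `is_today_buggy` and `is_today` are hypothetical helper names used only for illustration.

```python
from datetime import date


def is_today_buggy(cell: date, today: date) -> bool:
    # Assumed cause: only the day of the month is compared,
    # so 20 September matches when today is 20 August.
    return cell.day == today.day


def is_today(cell: date, today: date) -> bool:
    # Comparing whole dates also takes month and year into account.
    return cell == today
```

If this is indeed the cause, comparing the full date (or the day, month, and year together) would leave only the real current date highlighted.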

### Visual Proof:

Clean-up of #7454. You can visit that ticket to see the full history of discussion on this issue.

`memberCount` in group records is a stored computed value that we currently attempt to keep up to date by incrementing and decre... | 1.0 | Member Counts Inaccurate in Parties and Guilds - ### Description

[//]: # (Describe bug in detail here. Include screenshots if helpful.)

Clean-up of #7454. You can visit that ticket to see the full history of discussion on this issue.

`memberCount` in group records is a stored computed value that we currently att... | priority | member counts inaccurate in parties and guilds description describe bug in detail here include screenshots if helpful clean up of you can visit that ticket to see the full history of discussion on this issue membercount in group records is a stored computed value that we currently attempt t... | 1 |
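
A stored counter that is incremented and decremented can drift from the real membership whenever one update is missed. One common way to repair such a value is to recompute it from the membership records themselves. The sketch below illustrates that idea only; it is not the project's code, and `recount_members` and the `(user_id, group_id)` pair layout are assumptions made for the example.

```python
def recount_members(group_id: str, memberships: list[tuple[str, str]]) -> int:
    """Count the users whose membership record points at group_id.

    memberships is a hypothetical list of (user_id, group_id) pairs
    standing in for whatever the real source of truth is.
    """
    return sum(1 for _user_id, member_of in memberships if member_of == group_id)
```

A periodic job could compare this recomputed number against the stored `memberCount` and overwrite the stored value when the two differ.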

345,943 | 10,375,170,754 | IssuesEvent | 2019-09-09 11:22:37 | telerik/kendo-ui-core | https://api.github.com/repos/telerik/kendo-ui-core | closed | TreeView template leaks memory on changes in the observable | Bug C: TreeView F: MVVM FP: In Development Kendo2 Next LIB Priority 1 SEV: Medium Triaged | ### Bug report

[HtmlPage1.zip](https://github.com/telerik/kendo-ui-core/files/3519331/HtmlPage1.zip)

### Reproduction of the problem

1. Run the attached page in the browser.

2. Take a 20 sec long heap snapshot. During these 20 sec click the "change" button to make changes to a specific node's data in the observ... | 1.0 | TreeView template leaks memory on changes in the observable - ### Bug report

[HtmlPage1.zip](https://github.com/telerik/kendo-ui-core/files/3519331/HtmlPage1.zip)

### Reproduction of the problem

1. Run the attached page in the browser.

2. Take a 20 sec long heap snapshot. During these 20 sec click the "change" ... | priority | treeview template leaks memory on changes in the observable bug report reproduction of the problem run the attached page in the browser take a sec long heap snapshot during these sec click the change button to make changes to a specific node s data in the observable current b... | 1 |

81,778 | 3,594,411,991 | IssuesEvent | 2016-02-01 23:34:11 | jkotlinski/durexforth | https://api.github.com/repos/jkotlinski/durexforth | closed | Cartridge version? | auto-migrated enhancement Priority-Medium | ```

Would free up some RAM

```

Original issue reported on code.google.com by `kotlin...@gmail.com` on 27 Oct 2012 at 11:59 | 1.0 | Cartridge version? - ```

Would free up some RAM

```

Original issue reported on code.google.com by `kotlin...@gmail.com` on 27 Oct 2012 at 11:59 | priority | cartridge version would free up some ram original issue reported on code google com by kotlin gmail com on oct at | 1 |

65,067 | 3,226,198,523 | IssuesEvent | 2015-10-10 03:22:19 | ankidroid/Anki-Android | https://api.github.com/repos/ankidroid/Anki-Android | closed | Make undo on 'Congratulations! ...' screen show the reviewer again | accepted enhancement Priority-Medium | When a study session is done, the 'Congratulations! You have finished for now.' screen is shown. In this screen, the 'undo' button to undo the last operation is shown (but why would it be there?) and clicking it changes the state of the deck (I guess it really undoes the operations) - this can be seen as the screen cha... | 1.0 | Make undo on 'Congratulations! ...' screen show the reviewer again - When a study session is done, the 'Congratulations! You have finished for now.' screen is shown. In this screen, the 'undo' button to undo the last operation is shown (but why would it be there?) and clicking it changes the state of the deck (I guess... | priority | make undo on congratulations screen show the reviewer again when a study session is done the congratulations you have finished for now screen is shown in this screen the undo button to undo the last operation is shown but why would it be there and clicking it changes the state of the deck i guess... | 1 |

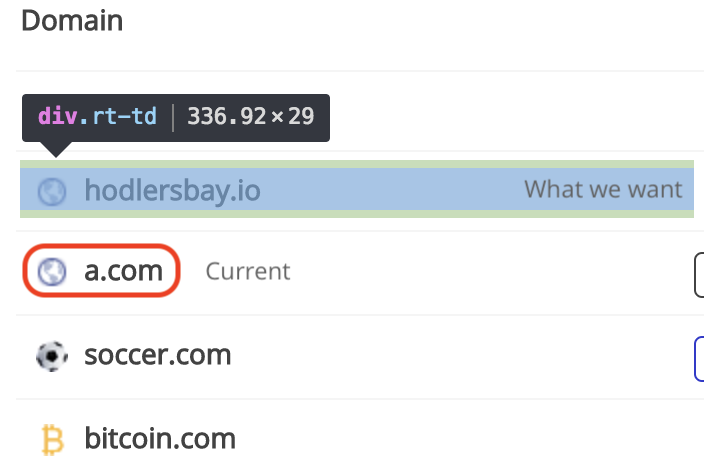

226,647 | 7,522,011,738 | IssuesEvent | 2018-04-12 19:00:25 | AdChain/AdChainRegistryDapp | https://api.github.com/repos/AdChain/AdChainRegistryDapp | closed | Extend Clickable Domains in Domain Table | Priority: Medium Type: UX Enhancement | Within the domains table, extend the ability to click into the single-domain view from `current` to `what we want`.

| 1.0 | Extend Clickable Domains in Domain Table - Within the domains table, extend the ability to click into the single-domain view from `current` to `what we want`.

| priority | extend clickable domains in domain table within the domains table extend the ability to click into the single domain view from current to what we want | 1 |

76,344 | 3,487,304,118 | IssuesEvent | 2016-01-01 19:14:23 | nanovad/ifios | https://api.github.com/repos/nanovad/ifios | opened | Finish ACPI table support | medium priority | Support needs to be finished for the RSDT and FADT for the century register ( #3 ). | 1.0 | Finish ACPI table support - Support needs to be finished for the RSDT and FADT for the century register ( #3 ). | priority | finish acpi table support support needs to be finished for the rsdt and fadt for the century register | 1 |