Unnamed: 0 | id | type | created_at | repo | repo_url | action | title | labels | body | index | text_combine | label | text | binary_label |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

384,735 | 11,402,487,999 | IssuesEvent | 2020-01-31 03:25:02 | PlasmaPy/PlasmaPy | https://api.github.com/repos/PlasmaPy/PlasmaPy | opened | Create plasmapy.analysis [PLEP 7] | Changes existing API Feature request Needs change log entry Priority: medium Refactoring | As part of the changes associated with the forthcoming PLEP 7 (PlasmaPy/PlasmaPy-PLEPs#26), we decided create a ``plasmapy.analysis`` sub-package.

This sub-package is intended to be your analysis toolbox, whereas, the ``plasmapy.diagnostics`` sub-package is your tool organizer. Consider a swept langmuir probe diagn... | 1.0 | Create plasmapy.analysis [PLEP 7] - As part of the changes associated with the forthcoming PLEP 7 (PlasmaPy/PlasmaPy-PLEPs#26), we decided create a ``plasmapy.analysis`` sub-package.

This sub-package is intended to be your analysis toolbox, whereas, the ``plasmapy.diagnostics`` sub-package is your tool organizer. C... | priority | create plasmapy analysis as part of the changes associated with the forthcoming plep plasmapy plasmapy pleps we decided create a plasmapy analysis sub package this sub package is intended to be your analysis toolbox whereas the plasmapy diagnostics sub package is your tool organizer consider ... | 1 |

88,098 | 3,771,482,610 | IssuesEvent | 2016-03-16 17:43:49 | ngageoint/hootenanny-ui | https://api.github.com/repos/ngageoint/hootenanny-ui | reopened | Basemap upload ignores defined Basemap Name | Category: UI Priority: High Priority: Medium Type: Bug | If you specify a Basemap Name for custom basemap it does not use that name but rather saves it using the file name. | 2.0 | Basemap upload ignores defined Basemap Name - If you specify a Basemap Name for custom basemap it does not use that name but rather saves it using the file name. | priority | basemap upload ignores defined basemap name if you specify a basemap name for custom basemap it does not use that name but rather saves it using the file name | 1 |

149,733 | 5,724,794,055 | IssuesEvent | 2017-04-20 15:14:06 | Osslack/HANA_SSBM | https://api.github.com/repos/Osslack/HANA_SSBM | closed | Unterschied in Laufzeit bei mehreren Befehlen gleichzeitig oder nacheinander. | Priority_medium | Macht es einen unterschied, ob man q1 oder q1.1,q1.2,q1.3 ausführt? | 1.0 | Unterschied in Laufzeit bei mehreren Befehlen gleichzeitig oder nacheinander. - Macht es einen unterschied, ob man q1 oder q1.1,q1.2,q1.3 ausführt? | priority | unterschied in laufzeit bei mehreren befehlen gleichzeitig oder nacheinander macht es einen unterschied ob man oder ausführt | 1 |

593,698 | 18,014,503,610 | IssuesEvent | 2021-09-16 12:32:38 | azerothcore/azerothcore-wotlk | https://api.github.com/repos/azerothcore/azerothcore-wotlk | closed | (Creature): Bosses do not reset correctly [phase+respawn] | Priority-Medium Confirmed | ### Current Behaviour

All bosses run back to their spawn point once a reset is called

### Expected Blizzlike Behaviour

There are some bosses that do a simple reset and other "more complex" bosses do a "phased reset" and then respawn.

**Seems** there is a pattern for which bosses do a simple or phased reset as... | 1.0 | (Creature): Bosses do not reset correctly [phase+respawn] - ### Current Behaviour

All bosses run back to their spawn point once a reset is called

### Expected Blizzlike Behaviour

There are some bosses that do a simple reset and other "more complex" bosses do a "phased reset" and then respawn.

**Seems** there ... | priority | creature bosses do not reset correctly current behaviour all bosses run back to their spawn point once a reset is called expected blizzlike behaviour there are some bosses that do a simple reset and other more complex bosses do a phased reset and then respawn seems there is a pattern f... | 1 |

284,580 | 8,744,038,513 | IssuesEvent | 2018-12-12 20:59:33 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | opened | Popup info appears too high to see on starter camp | Medium Priority |

Lets make this really easy to spot since the player isnt familiar with the game at this point.

... | 1.0 | Popup info appears too high to see on starter camp -

Lets make this really easy to spot since th... | priority | popup info appears too high to see on starter camp lets make this really easy to spot since the player isnt familiar with the game at this point | 1 |

270,173 | 8,453,023,113 | IssuesEvent | 2018-10-20 11:20:03 | jredfox/evilnotchlib | https://api.github.com/repos/jredfox/evilnotchlib | closed | Pick Block Event TE issues | bug fixed next release good first issue priority=medium | Version: snapshot 76

Issue: the pickblock event data gets it on client side only rather then from the server this is invalid behavior that should have been removed in the integrated mc server update and especially 1.8

Steps to reproduce:

place spawener with multiple indexes go out of chunks far away

go back middl... | 1.0 | Pick Block Event TE issues - Version: snapshot 76

Issue: the pickblock event data gets it on client side only rather then from the server this is invalid behavior that should have been removed in the integrated mc server update and especially 1.8

Steps to reproduce:

place spawener with multiple indexes go out of c... | priority | pick block event te issues version snapshot issue the pickblock event data gets it on client side only rather then from the server this is invalid behavior that should have been removed in the integrated mc server update and especially steps to reproduce place spawener with multiple indexes go out of ch... | 1 |

127,217 | 5,026,192,842 | IssuesEvent | 2016-12-15 11:42:46 | GafferHQ/gaffer | https://api.github.com/repos/GafferHQ/gaffer | closed | Ensure all reader nodes have a 'Reload' button | component-image component-renderman component-scene component-ui priority-medium type-enhancement | - [ ] ImageReader

- [ ] SceneReader

- [ ] ObjectReader

- [ ] RenderManShader

- [ ] ?

| 1.0 | Ensure all reader nodes have a 'Reload' button - - [ ] ImageReader

- [ ] SceneReader

- [ ] ObjectReader

- [ ] RenderManShader

- [ ] ?

| priority | ensure all reader nodes have a reload button imagereader scenereader objectreader rendermanshader | 1 |

663,660 | 22,201,098,558 | IssuesEvent | 2022-06-07 11:16:34 | COS301-SE-2022/Vote-Vault | https://api.github.com/repos/COS301-SE-2022/Vote-Vault | closed | 💄 (Website) Edit the sizing properties of logo in header | enhancement scope:ui priority:medium | Fixed the width and height of the image logo in the heade navbar | 1.0 | 💄 (Website) Edit the sizing properties of logo in header - Fixed the width and height of the image logo in the heade navbar | priority | 💄 website edit the sizing properties of logo in header fixed the width and height of the image logo in the heade navbar | 1 |

815,679 | 30,567,231,956 | IssuesEvent | 2023-07-20 18:46:21 | yugabyte/yugabyte-db | https://api.github.com/repos/yugabyte/yugabyte-db | closed | [YSQL] YugabyteDB locks nonexistent rows where PostgreSQL doesn't | kind/bug area/ysql priority/medium | Jira Link: [DB-7309](https://yugabyte.atlassian.net/browse/DB-7309)

### Description

Setup:

```

CREATE TABLE t (k int PRIMARY KEY);

```

Session 1:

```

BEGIN ISOLATION LEVEL SERIALIZABLE;

SELECT * FROM t WHERE k=1 FOR UPDATE;

```

Session 2:

```

BEGIN ISOLATION LEVEL SERIALIZABLE;

INSERT INTO t VALUES (1);

... | 1.0 | [YSQL] YugabyteDB locks nonexistent rows where PostgreSQL doesn't - Jira Link: [DB-7309](https://yugabyte.atlassian.net/browse/DB-7309)

### Description

Setup:

```

CREATE TABLE t (k int PRIMARY KEY);

```

Session 1:

```

BEGIN ISOLATION LEVEL SERIALIZABLE;

SELECT * FROM t WHERE k=1 FOR UPDATE;

```

Session 2:

`... | priority | yugabytedb locks nonexistent rows where postgresql doesn t jira link description setup create table t k int primary key session begin isolation level serializable select from t where k for update session begin isolation level serializable insert into t val... | 1 |

782,963 | 27,512,552,099 | IssuesEvent | 2023-03-06 09:49:31 | scaleway/terraform-provider-scaleway | https://api.github.com/repos/scaleway/terraform-provider-scaleway | closed | add data source for scaleway_lb_ips | enhancement load-balancer priority:medium | <!--- Please keep this note for the community --->

### Community Note

* Please vote on this issue by adding a 👍 [reaction](https://blog.github.com/2016-03-10-add-reactions-to-pull-requests-issues-and-comments/) to the original issue to help the community and maintainers prioritize this request

* Please do not l... | 1.0 | add data source for scaleway_lb_ips - <!--- Please keep this note for the community --->

### Community Note

* Please vote on this issue by adding a 👍 [reaction](https://blog.github.com/2016-03-10-add-reactions-to-pull-requests-issues-and-comments/) to the original issue to help the community and maintainers prio... | priority | add data source for scaleway lb ips community note please vote on this issue by adding a 👍 to the original issue to help the community and maintainers prioritize this request please do not leave or other comments that do not add relevant new information or questions they generate extra noi... | 1 |

752,380 | 26,283,625,785 | IssuesEvent | 2023-01-07 15:36:30 | renovatebot/renovate | https://api.github.com/repos/renovatebot/renovate | closed | Overriding `commitMessageTopic` fails for github-action | priority-3-medium type:docs status:in-progress | ### How are you running Renovate?

Mend Renovate hosted app on github.com

### If you're self-hosting Renovate, tell us what version of Renovate you run.

_No response_

### If you're self-hosting Renovate, select which platform you are using.

_No response_

### If you're self-hosting Renovate, tell us wha... | 1.0 | Overriding `commitMessageTopic` fails for github-action - ### How are you running Renovate?

Mend Renovate hosted app on github.com

### If you're self-hosting Renovate, tell us what version of Renovate you run.

_No response_

### If you're self-hosting Renovate, select which platform you are using.

_No res... | priority | overriding commitmessagetopic fails for github action how are you running renovate mend renovate hosted app on github com if you re self hosting renovate tell us what version of renovate you run no response if you re self hosting renovate select which platform you are using no res... | 1 |

281,000 | 8,689,281,623 | IssuesEvent | 2018-12-03 18:15:56 | minio/minio | https://api.github.com/repos/minio/minio | closed | Minio in docker does not generate a config.json file in the desired location | community priority: medium working as intended | I am having a problem making minio generates a config.json file outside the docker container.

it does generate the JSON file when using the local server, however, when using b2/aws gateway it fails to generate the JSON file.

also, I can not find it in docker itself under '/root/.minio' directory.

## Expecte... | 1.0 | Minio in docker does not generate a config.json file in the desired location - I am having a problem making minio generates a config.json file outside the docker container.

it does generate the JSON file when using the local server, however, when using b2/aws gateway it fails to generate the JSON file.

also, I ca... | priority | minio in docker does not generate a config json file in the desired location i am having a problem making minio generates a config json file outside the docker container it does generate the json file when using the local server however when using aws gateway it fails to generate the json file also i can... | 1 |

22,917 | 2,651,354,105 | IssuesEvent | 2015-03-16 10:50:28 | nfprojects/nfengine | https://api.github.com/repos/nfprojects/nfengine | closed | Prepare nfCommon for Linux port | low priority medium new feature | This will be the first step in porting the whole engine.

* Write CMake files.

* Run compilation.

* Make sure tests pass

* If minor fixes are needed in multi-platform parts of the code, do them

* Extract parts of platform-dependent code (for example WinAPI vs. X usage for managing windows) to separate cpp file an... | 1.0 | Prepare nfCommon for Linux port - This will be the first step in porting the whole engine.

* Write CMake files.

* Run compilation.

* Make sure tests pass

* If minor fixes are needed in multi-platform parts of the code, do them

* Extract parts of platform-dependent code (for example WinAPI vs. X usage for managin... | priority | prepare nfcommon for linux port this will be the first step in porting the whole engine write cmake files run compilation make sure tests pass if minor fixes are needed in multi platform parts of the code do them extract parts of platform dependent code for example winapi vs x usage for managin... | 1 |

99,140 | 4,048,280,179 | IssuesEvent | 2016-05-23 09:41:59 | OCHA-DAP/liverpool16 | https://api.github.com/repos/OCHA-DAP/liverpool16 | closed | Using the config selector on Android devices opens the keyboard which messes with the page | bug Medium Priority Mobile | Check screenshot @danmihaila

| 1.0 | Using the config selector on Android devices opens the keyboard which messes with the page - Check screenshot @danmihaila

| priority | using the config selector on android devices opens the keyboard which messes with the page check screenshot danmihaila | 1 |

773,657 | 27,165,297,711 | IssuesEvent | 2023-02-17 14:58:43 | episphere/connectApp | https://api.github.com/repos/episphere/connectApp | closed | Edit language on last PWA electronic consent screen | Medium Priority MVP E consent | Update the language on the final screen of the electronic consent modules for review. Edits tracked here:

https://nih.box.com/s/awqoyltghbolwm5ky5rerdqneu8yzo2y

The edits are restricted to the electronic signature portion of the final screen under the heading "Informed Consent." Please loop in @cunnaneaq and @depie... | 1.0 | Edit language on last PWA electronic consent screen - Update the language on the final screen of the electronic consent modules for review. Edits tracked here:

https://nih.box.com/s/awqoyltghbolwm5ky5rerdqneu8yzo2y

The edits are restricted to the electronic signature portion of the final screen under the heading "I... | priority | edit language on last pwa electronic consent screen update the language on the final screen of the electronic consent modules for review edits tracked here the edits are restricted to the electronic signature portion of the final screen under the heading informed consent please loop in cunnaneaq and depi... | 1 |

576,427 | 17,086,884,650 | IssuesEvent | 2021-07-08 12:58:59 | netdata/netdata | https://api.github.com/repos/netdata/netdata | closed | dismiss alarms from the dashboard | area/health area/web feature request priority/medium | I was setting up a new dockerized project on my server yesterday. The `docker-compose.yml` file contained a definition of an internal network that was shared by a few local services. Because I was running `docker-compose up` and `docker-compose down` until I felt satisfied with the result, this network was killed and r... | 1.0 | dismiss alarms from the dashboard - I was setting up a new dockerized project on my server yesterday. The `docker-compose.yml` file contained a definition of an internal network that was shared by a few local services. Because I was running `docker-compose up` and `docker-compose down` until I felt satisfied with the r... | priority | dismiss alarms from the dashboard i was setting up a new dockerized project on my server yesterday the docker compose yml file contained a definition of an internal network that was shared by a few local services because i was running docker compose up and docker compose down until i felt satisfied with the r... | 1 |

753,469 | 26,347,752,991 | IssuesEvent | 2023-01-11 00:18:19 | belav/csharpier | https://api.github.com/repos/belav/csharpier | closed | Consider always putting generic type constraints onto a new line | area:formatting priority:medium | According to [this stylecop rule](https://github.com/DotNetAnalyzers/StyleCopAnalyzers/blob/master/documentation/SA1127.md) generic type constraints should always be on a new line.

I think I am on board with modifying this

```c#

public static T CreatePipelineResult<T>(

T result,

ResultCode re... | 1.0 | Consider always putting generic type constraints onto a new line - According to [this stylecop rule](https://github.com/DotNetAnalyzers/StyleCopAnalyzers/blob/master/documentation/SA1127.md) generic type constraints should always be on a new line.

I think I am on board with modifying this

```c#

public static T... | priority | consider always putting generic type constraints onto a new line according to generic type constraints should always be on a new line i think i am on board with modifying this c public static t createpipelineresult t result resultcode resultcode subcode subcode ... | 1 |

67,608 | 3,275,472,653 | IssuesEvent | 2015-10-26 15:42:25 | nikcross/open-forum | https://api.github.com/repos/nikcross/open-forum | closed | Refactor Wiki code into plugable modules | auto-migrated Priority-Medium Type-Enhancement | ```

Break out:

* Search

* Authentication

* File System

into separate modules defined by interface

Have them under Jar Manager control

```

Original issue reported on code.google.com by `nicholas...@gmail.com` on 14 May 2008 at 10:32 | 1.0 | Refactor Wiki code into plugable modules - ```

Break out:

* Search

* Authentication

* File System

into separate modules defined by interface

Have them under Jar Manager control

```

Original issue reported on code.google.com by `nicholas...@gmail.com` on 14 May 2008 at 10:32 | priority | refactor wiki code into plugable modules break out search authentication file system into separate modules defined by interface have them under jar manager control original issue reported on code google com by nicholas gmail com on may at | 1 |

485,871 | 14,000,705,944 | IssuesEvent | 2020-10-28 12:42:52 | AY2021S1-CS2103T-T12-2/tp | https://api.github.com/repos/AY2021S1-CS2103T-T12-2/tp | closed | As a store manager, I want to know who are working on the next day | priority.Medium type.Story | ... so that I can make plan for next day beforehand. | 1.0 | As a store manager, I want to know who are working on the next day - ... so that I can make plan for next day beforehand. | priority | as a store manager i want to know who are working on the next day so that i can make plan for next day beforehand | 1 |

701,447 | 24,098,434,988 | IssuesEvent | 2022-09-19 21:07:45 | radical-cybertools/radical.pilot | https://api.github.com/repos/radical-cybertools/radical.pilot | closed | Feature Request: run in an interactive job | type:feature topic:api topic:resource priority:medium comp:agent:bootstrapper comp:agent topic:configuration | The agent will always assume it's running with the local resource manager - but we should be able to configure other resource managers. However, those will not see the batch system's environment - that would need to be communicated from client to agent.

See radical-collaboration/hpc-workflows/issues/154 | 1.0 | Feature Request: run in an interactive job - The agent will always assume it's running with the local resource manager - but we should be able to configure other resource managers. However, those will not see the batch system's environment - that would need to be communicated from client to agent.

See radical-colla... | priority | feature request run in an interactive job the agent will always assume it s running with the local resource manager but we should be able to configure other resource managers however those will not see the batch system s environment that would need to be communicated from client to agent see radical colla... | 1 |

200,231 | 7,001,664,798 | IssuesEvent | 2017-12-18 11:04:52 | sunpy/sunpy | https://api.github.com/repos/sunpy/sunpy | closed | Normalize SunPy sample data | Effort Medium Feature Request Hacktoberfest Package Novice Priority Medium Refactoring | The current sample data for SunPy (e.g. sunpy.AIA_171_IMAGE, sunpy.RHESSI_IMAGE, etc) were chosen somewhat arbitrarily and have do not share anything in common.

I think it would be useful to take a more systematic approach to selecting sample data to include with SunPy.

Things to consider:

1. Choose data for same tim... | 1.0 | Normalize SunPy sample data - The current sample data for SunPy (e.g. sunpy.AIA_171_IMAGE, sunpy.RHESSI_IMAGE, etc) were chosen somewhat arbitrarily and have do not share anything in common.

I think it would be useful to take a more systematic approach to selecting sample data to include with SunPy.

Things to conside... | priority | normalize sunpy sample data the current sample data for sunpy e g sunpy aia image sunpy rhessi image etc were chosen somewhat arbitrarily and have do not share anything in common i think it would be useful to take a more systematic approach to selecting sample data to include with sunpy things to consider ... | 1 |

502,082 | 14,539,819,107 | IssuesEvent | 2020-12-15 12:26:48 | robotframework/robotframework | https://api.github.com/repos/robotframework/robotframework | closed | Line starting with single space followed by `#` is not considered comment | beta 2 bug priority: medium | Hello ,

We're in process of migrating from Robot3.0.4 to 3.2.1, and found the following issues, I did search through the release notes of 3.1 and 3.2.1 I couldn't find any related tickets. So thought report them here and get some insights.

resources files with keywords in it had comments starting '#' character which ... | 1.0 | Line starting with single space followed by `#` is not considered comment - Hello ,

We're in process of migrating from Robot3.0.4 to 3.2.1, and found the following issues, I did search through the release notes of 3.1 and 3.2.1 I couldn't find any related tickets. So thought report them here and get some insights.

re... | priority | line starting with single space followed by is not considered comment hello we re in process of migrating from to and found the following issues i did search through the release notes of and i couldn t find any related tickets so thought report them here and get some insights resourc... | 1 |

430,163 | 12,440,839,895 | IssuesEvent | 2020-05-26 12:43:22 | BgeeDB/bgee_apps | https://api.github.com/repos/BgeeDB/bgee_apps | opened | Show information about confidence in ranks | priority: medium | In GitLab by @fbastian on Jul 1, 2016, 14:45

For conditions with only ESTs and/or in situ data, a high rank is not likely to mean that the gene is lowly expressed, but most likely that we had no granularity enough in the data to appropriately rank the gene.

We should find a way to convey this information to the us... | 1.0 | Show information about confidence in ranks - In GitLab by @fbastian on Jul 1, 2016, 14:45

For conditions with only ESTs and/or in situ data, a high rank is not likely to mean that the gene is lowly expressed, but most likely that we had no granularity enough in the data to appropriately rank the gene.

We should fi... | priority | show information about confidence in ranks in gitlab by fbastian on jul for conditions with only ests and or in situ data a high rank is not likely to mean that the gene is lowly expressed but most likely that we had no granularity enough in the data to appropriately rank the gene we should find a ... | 1 |

499,196 | 14,442,833,672 | IssuesEvent | 2020-12-07 18:44:04 | ngageoint/hootenanny | https://api.github.com/repos/ngageoint/hootenanny | closed | UnconnectedWaySnapper sometimes chooses a poor way node insert index | Category: Algorithms Priority: Medium Status: Ready For Review Type: Bug | This causes malformed ways when road snapping is used with Diff Conflate.

See output of `ServiceDiffNetworkRoadSnapTest`. Snaps to fix:

* way -206 snapped to way 31 via node -301 - snap insert index probably should be 2 instead of 3

* way -72 snapped to way -289 via node -225

* way -67 snapped to way -169 via n... | 1.0 | UnconnectedWaySnapper sometimes chooses a poor way node insert index - This causes malformed ways when road snapping is used with Diff Conflate.

See output of `ServiceDiffNetworkRoadSnapTest`. Snaps to fix:

* way -206 snapped to way 31 via node -301 - snap insert index probably should be 2 instead of 3

* way -72... | priority | unconnectedwaysnapper sometimes chooses a poor way node insert index this causes malformed ways when road snapping is used with diff conflate see output of servicediffnetworkroadsnaptest snaps to fix way snapped to way via node snap insert index probably should be instead of way snapp... | 1 |

430,558 | 12,462,949,726 | IssuesEvent | 2020-05-28 09:45:37 | RevivalPMMP/PureEntitiesX | https://api.github.com/repos/RevivalPMMP/PureEntitiesX | closed | Monsters crossing walls, sheeps flying around | Category: Bug Priority: Medium Status: Confirmed | <!-- REQUIRED INFORMATION - Labels should be self explainatory, failure to fill out this section will get your issued closed with Resolution status of "Invalid". -->

## Required Information

__PocketMine-MP Version:__ 1.7dev-318

__Plugin Version:__ 0.2.8-3.alpha9

Where you got the plugin: Cloned from github

... | 1.0 | Monsters crossing walls, sheeps flying around - <!-- REQUIRED INFORMATION - Labels should be self explainatory, failure to fill out this section will get your issued closed with Resolution status of "Invalid". -->

## Required Information

__PocketMine-MP Version:__ 1.7dev-318

__Plugin Version:__ 0.2.8-3.alpha9

... | priority | monsters crossing walls sheeps flying around required information pocketmine mp version plugin version where you got the plugin cloned from github optional information php version php cli other installed plugins none os version li... | 1 |

253,465 | 8,056,504,523 | IssuesEvent | 2018-08-02 12:56:11 | NREL/EnergyPlus | https://api.github.com/repos/NREL/EnergyPlus | closed | Site:WaterMainsTemperature Default | IDDChange NewFeature Priority2 S2 - Medium | ## Issue overview

Based on the Engineering Reference, 'If there is no Site:WaterMainsTemperature object in the input file, a default constant value of 10 C is assumed.' I have found that the actual calculated profile for the water mains temperature is significantly higher for the majority of climates, resulting in an... | 1.0 | Site:WaterMainsTemperature Default - ## Issue overview

Based on the Engineering Reference, 'If there is no Site:WaterMainsTemperature object in the input file, a default constant value of 10 C is assumed.' I have found that the actual calculated profile for the water mains temperature is significantly higher for the ... | priority | site watermainstemperature default issue overview based on the engineering reference if there is no site watermainstemperature object in the input file a default constant value of c is assumed i have found that the actual calculated profile for the water mains temperature is significantly higher for the m... | 1 |

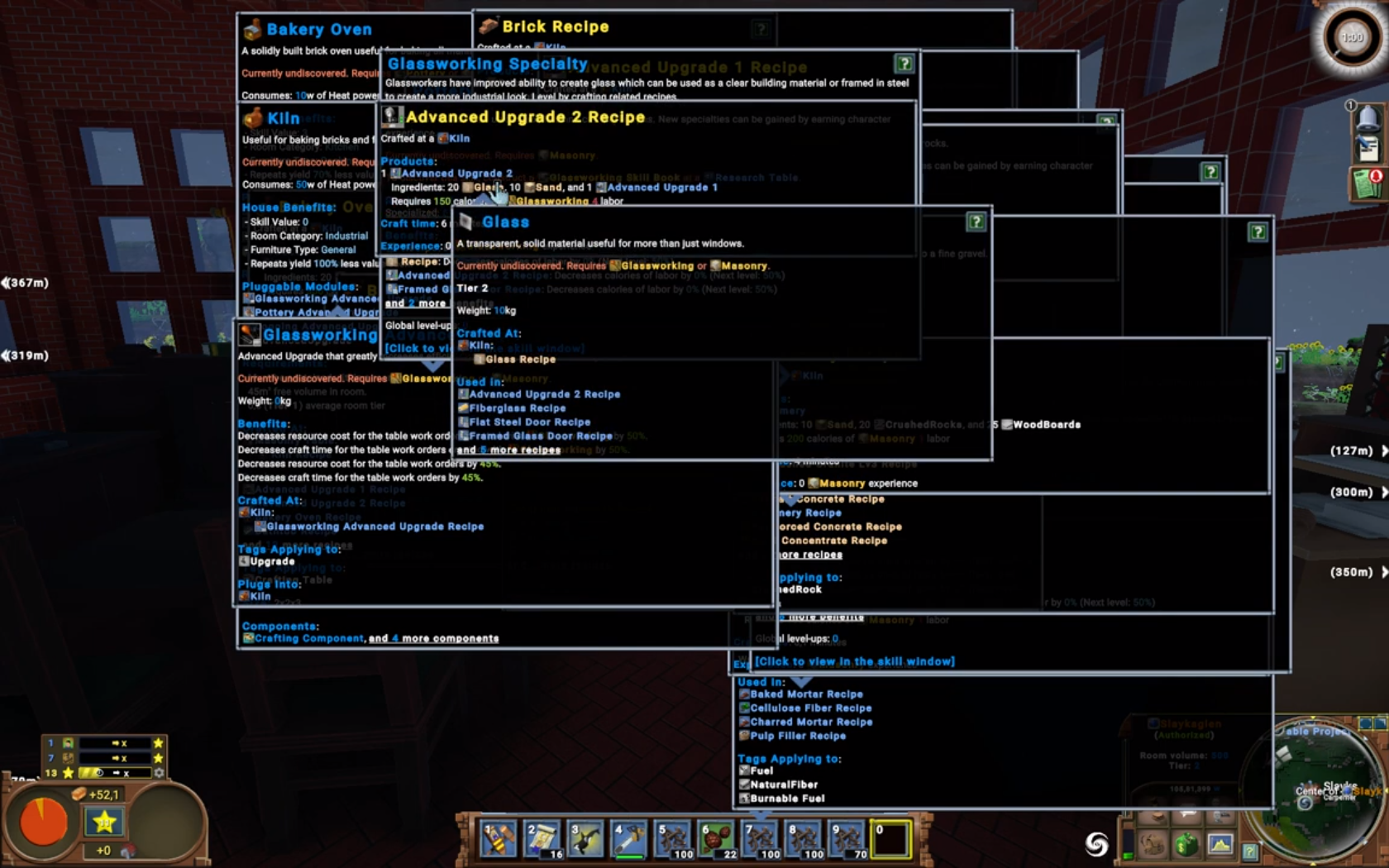

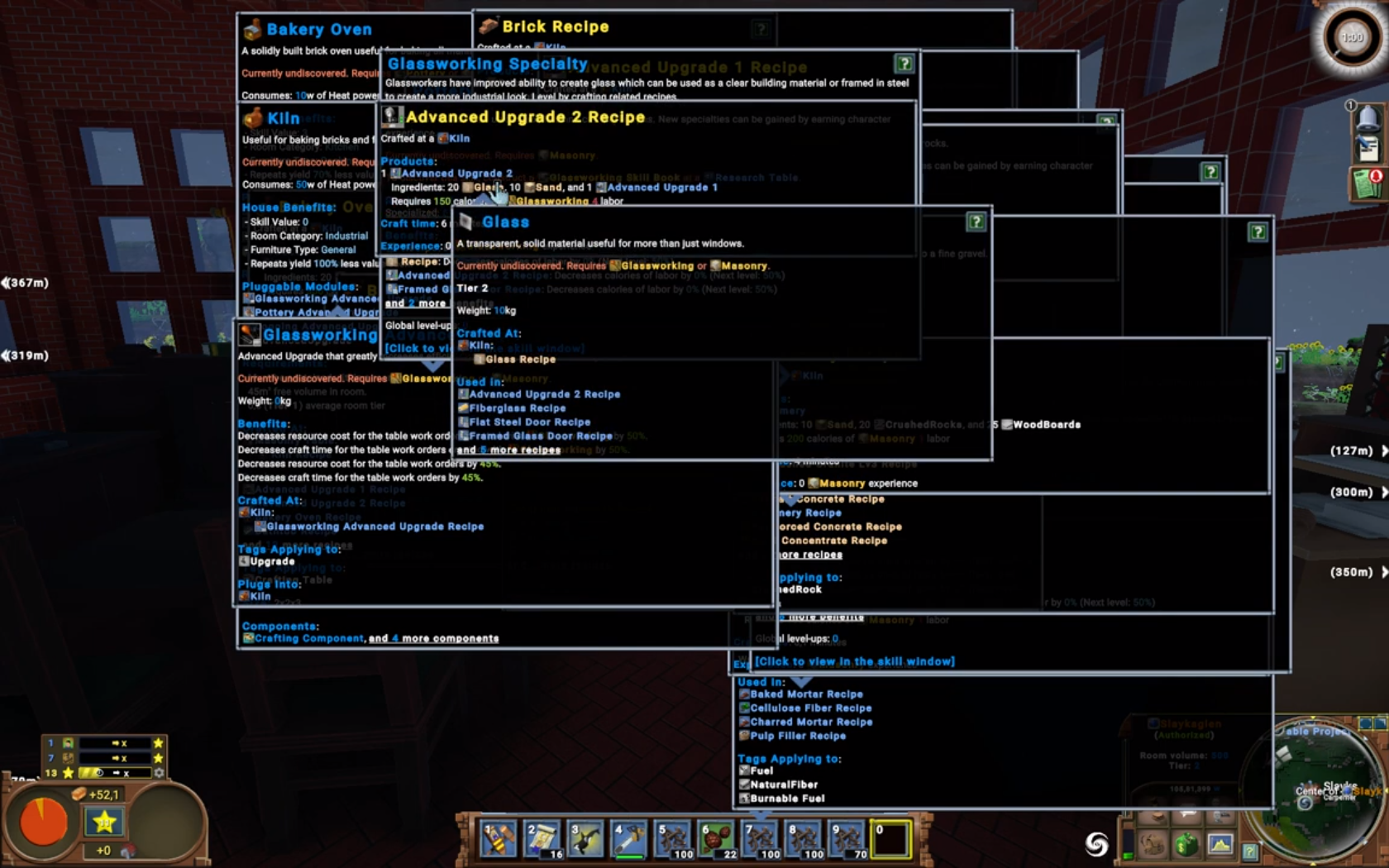

497,407 | 14,369,641,490 | IssuesEvent | 2020-12-01 10:01:49 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | opened | [0.9.2 staging-1860] Tooltips are closed one by one and take a long time | Category: UI Priority: Medium Type: Regression | Step to reproduce:

- open a lot of tooltips:

- mouseover a free space:

https://drive.google.com/file/d/1kNEwNnayn1vSCC8Ix3geydpsdOg7t7DB/view?usp=sharing | 1.0 | [0.9.2 staging-1860] Tooltips are closed one by one and take a long time - Step to reproduce:

- open a lot of tooltips:

- mouseover a free space:

https://drive.google.com/file/d/1kNEwNnayn1vSCC8Ix3geydpsd... | priority | tooltips are closed one by one and take a long time step to reproduce open a lot of tooltips mouseover a free space | 1 |

718,473 | 24,718,355,836 | IssuesEvent | 2022-10-20 08:47:53 | SkriptLang/Skript | https://api.github.com/repos/SkriptLang/Skript | closed | Fix default variables for 2.7 | bug priority: medium variables core task | ### Description

https://github.com/SkriptLang/Skript/pull/4566/

This needs to be ported over to 2.7 after merge

| 1.0 | Fix default variables for 2.7 - ### Description

https://github.com/SkriptLang/Skript/pull/4566/

This needs to be ported over to 2.7 after merge

| priority | fix default variables for description this needs to be ported over to after merge | 1 |

566,836 | 16,831,848,880 | IssuesEvent | 2021-06-18 06:35:34 | jqwidgets/jQWidgets | https://api.github.com/repos/jqwidgets/jQWidgets | closed | jqxGrid - using of the "cellsrenderer" callback broke the formatting | medium priority | Also, if return the _"**defaulthtml**" argument_ it does not look aligned fine.

**Example:**

http://jsfiddle.net/tw503hbd/

**Topic:**

https://www.jqwidgets.com/community/topic/jqxgrid-with-property-autorowheight-and-cellsrenderer/ | 1.0 | jqxGrid - using of the "cellsrenderer" callback broke the formatting - Also, if return the _"**defaulthtml**" argument_ it does not look aligned fine.

**Example:**

http://jsfiddle.net/tw503hbd/

**Topic:**

https://www.jqwidgets.com/community/topic/jqxgrid-with-property-autorowheight-and-cellsrenderer/ | priority | jqxgrid using of the cellsrenderer callback broke the formatting also if return the defaulthtml argument it does not look aligned fine example topic | 1 |

209,969 | 7,181,853,920 | IssuesEvent | 2018-02-01 07:28:57 | dkpro/dkpro-tc | https://api.github.com/repos/dkpro/dkpro-tc | closed | Adapter and report for statistics evaluation package | Priority-Medium bug | Originally reported on Google Code with ID 224

```

This issue was created by revision r1296.

Created reports, adapter and a demo (20newsgroup)

```

Reported by `daxenberger.j` on 2014-12-10 21:56:03

| 1.0 | Adapter and report for statistics evaluation package - Originally reported on Google Code with ID 224

```

This issue was created by revision r1296.

Created reports, adapter and a demo (20newsgroup)

```

Reported by `daxenberger.j` on 2014-12-10 21:56:03

| priority | adapter and report for statistics evaluation package originally reported on google code with id this issue was created by revision created reports adapter and a demo reported by daxenberger j on | 1 |

754,005 | 26,370,331,138 | IssuesEvent | 2023-01-11 20:05:15 | Slicer/Slicer | https://api.github.com/repos/Slicer/Slicer | closed | Independent top level view windows so they can be on separate monitors | type:enhancement priority:medium | _This issue was created automatically from an original [Mantis Issue](https://mantisarchive.slicer.org/view.php?id=2104). Further discussion may take place here._ | 1.0 | Independent top level view windows so they can be on separate monitors - _This issue was created automatically from an original [Mantis Issue](https://mantisarchive.slicer.org/view.php?id=2104). Further discussion may take place here._ | priority | independent top level view windows so they can be on separate monitors this issue was created automatically from an original further discussion may take place here | 1 |

80,676 | 3,573,006,362 | IssuesEvent | 2016-01-27 02:51:50 | DistrictDataLabs/trinket | https://api.github.com/repos/DistrictDataLabs/trinket | closed | SSL 404 Redirect | priority: medium type: bug | Accessing Trinket on Heroku via http (instead of https) leads to a 404 redirect error when signing in with Google. | 1.0 | SSL 404 Redirect - Accessing Trinket on Heroku via http (instead of https) leads to a 404 redirect error when signing in with Google. | priority | ssl redirect accessing trinket on heroku via http instead of https leads to a redirect error when signing in with google | 1 |

709,487 | 24,379,786,168 | IssuesEvent | 2022-10-04 06:40:51 | renovatebot/renovate | https://api.github.com/repos/renovatebot/renovate | closed | Integrate Zod validator with http utils | priority-3-medium type:refactor status:ready | ### Describe the proposed change(s).

- [ ] Introduce optional parameter for response data validation

- [ ] Create validation-specific error type that fits our exception handling flow

| 1.0 | Integrate Zod validator with http utils - ### Describe the proposed change(s).

- [ ] Introduce optional parameter for response data validation

- [ ] Create validation-specific error type that fits our exception handling flow

| priority | integrate zod validator with http utils describe the proposed change s introduce optional parameter for response data validation create validation specific error type that fits our exception handling flow | 1 |

199,005 | 6,979,944,649 | IssuesEvent | 2017-12-12 23:01:17 | craftercms/craftercms | https://api.github.com/repos/craftercms/craftercms | closed | [Search] RestClient is missing file size when indexing binary documents. | bug priority: medium | When using this method to index binary documents:

```java

public String updateFile(String site, String id, File file, Map<String, List<String>> additionalFields) throws SearchException

```

https://github.com/craftercms/search/blob/2.5.x/crafter-search-client/src/main/java/org/craftercms/search/service/impl/RestCl... | 1.0 | [Search] RestClient is missing file size when indexing binary documents. - When using this method to index binary documents:

```java

public String updateFile(String site, String id, File file, Map<String, List<String>> additionalFields) throws SearchException

```

https://github.com/craftercms/search/blob/2.5.x/cr... | priority | restclient is missing file size when indexing binary documents when using this method to index binary documents java public string updatefile string site string id file file map additionalfields throws searchexception the indexed data is missing the stream size field meta ... | 1 |

113,173 | 4,544,110,845 | IssuesEvent | 2016-09-10 14:09:22 | 4-20ma/ModbusMaster | https://api.github.com/repos/4-20ma/ModbusMaster | opened | Add continuous integration testing with travis | Priority: Medium Status: In Progress Type: Feature Request | <!----------------------------------------------------------------------------

Title - ensure the issue title is clear & concise

- QUESTIONS - describe the specific question

- BUG REPORTS - describe an activity

- FEATURE REQUESTS - describe an activity

-->

<!-----------------------------------------------------... | 1.0 | Add continuous integration testing with travis - <!----------------------------------------------------------------------------

Title - ensure the issue title is clear & concise

- QUESTIONS - describe the specific question

- BUG REPORTS - describe an activity

- FEATURE REQUESTS - describe an activity

-->

<!----... | priority | add continuous integration testing with travis title ensure the issue title is clear concise questions describe the specific question bug reports describe an activity feature requests describe an activity ... | 1 |

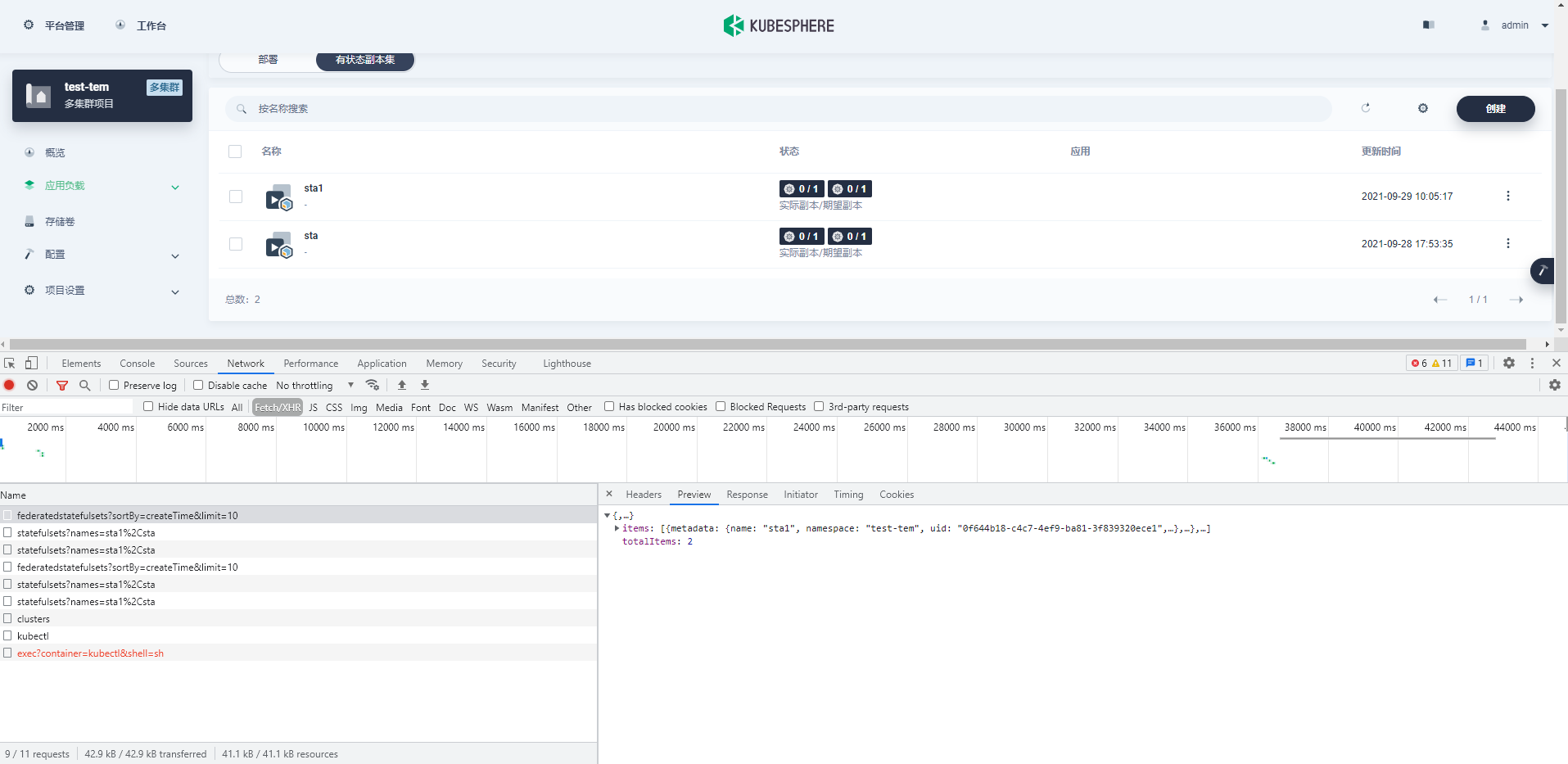

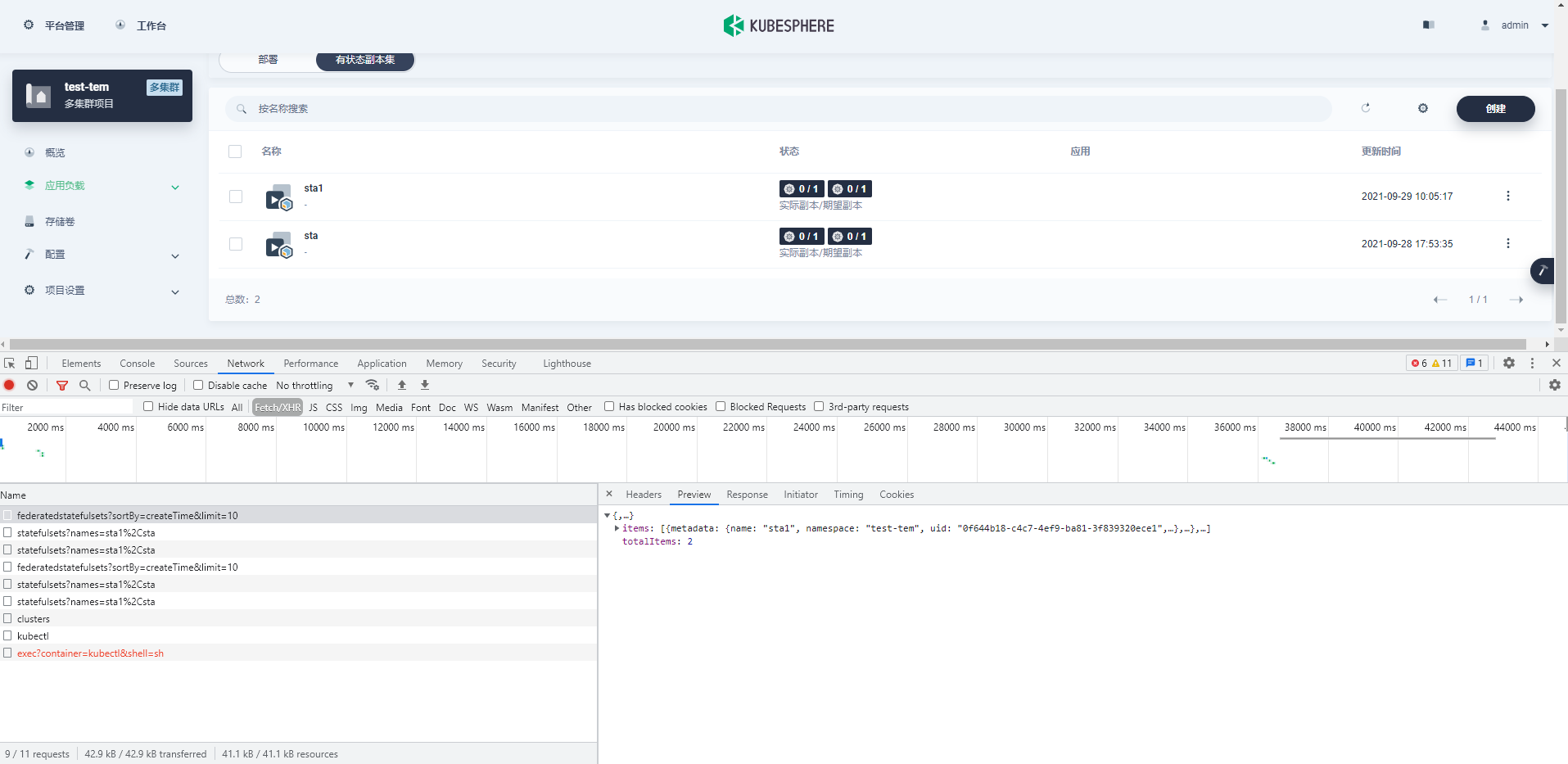

673,761 | 23,029,996,998 | IssuesEvent | 2022-07-22 13:04:53 | frappe/erpnext | https://api.github.com/repos/frappe/erpnext | opened | Capacity for service unit missing in Quick Entry for Healthcare Service Unit | bug healthcare Enhancement Medium Priority V14 Pre Merge | ### Information about bug

The capacity for service unit is missing in Quick Entry for Healthcare Service Unit. So after filling in all details when a user tries submitting through quick entry it throws appropriate validation error and redirects to full form view

Expected :

**Versions used(KubeSphere/Kubernetes)**

KubeSphere: nightly-20... | 1.0 | The StatefulSets list shows an error - **Describe the bug**

**Versions used(KubeSpher... | priority | the statefulsets list shows an error describe the bug versions used kubesphere kubernetes kubesphere nightly kind bug kubesphere sig console priority medium | 1 |

421,153 | 12,254,457,008 | IssuesEvent | 2020-05-06 08:31:20 | BingLingGroup/autosub | https://api.github.com/repos/BingLingGroup/autosub | closed | Remove required dependency langcodes | Priority: Medium Status: Accepted Type: Enhancement | **Describe the solution you'd like**

Remove required dependency langcodes to avoid using marisa-trie because it needs C++ environment.

| 1.0 | Remove required dependency langcodes - **Describe the solution you'd like**

Remove required dependency langcodes to avoid using marisa-trie because it needs C++ environment.

| priority | remove required dependency langcodes describe the solution you d like remove required dependency langcodes to avoid using marisa trie because it needs c environment | 1 |

164,409 | 6,225,739,895 | IssuesEvent | 2017-07-10 16:51:34 | craftercms/craftercms | https://api.github.com/repos/craftercms/craftercms | closed | [studio-ui] certain dialog boxes break preview tools labels | bug Priority: Medium | <img width="1398" alt="screen shot 2017-06-23 at 10 42 40 am" src="https://user-images.githubusercontent.com/169432/27487290-4fe4e652-5801-11e7-8c8f-3e7741552a42.png">

# steps to reproduce

1. open a page in preview

2. open review tools, note the labels look fine

3. refresh, note the labels look fine

4. click on ... | 1.0 | [studio-ui] certain dialog boxes break preview tools labels - <img width="1398" alt="screen shot 2017-06-23 at 10 42 40 am" src="https://user-images.githubusercontent.com/169432/27487290-4fe4e652-5801-11e7-8c8f-3e7741552a42.png">

# steps to reproduce

1. open a page in preview

2. open review tools, note the labels ... | priority | certain dialog boxes break preview tools labels img width alt screen shot at am src steps to reproduce open a page in preview open review tools note the labels look fine refresh note the labels look fine click on any context nav operations for the page approve and publi... | 1 |

49,641 | 3,003,799,036 | IssuesEvent | 2015-07-25 08:25:43 | jayway/powermock | https://api.github.com/repos/jayway/powermock | opened | powermock not working in IntelliJ but working in eclipse | bug imported Priority-Medium | _From [goldbric...@gmail.com](https://code.google.com/u/108697047924162601890/) on November 28, 2014 08:28:24_

What steps will reproduce the problem? 1.create a static method

2.write to unit test by using powerMockito

3.not working in IntelliJ but working in eclipse What is the expected output? What do you see inst... | 1.0 | powermock not working in IntelliJ but working in eclipse - _From [goldbric...@gmail.com](https://code.google.com/u/108697047924162601890/) on November 28, 2014 08:28:24_

What steps will reproduce the problem? 1.create a static method

2.write to unit test by using powerMockito

3.not working in IntelliJ but working i... | priority | powermock not working in intellij but working in eclipse from on november what steps will reproduce the problem create a static method write to unit test by using powermockito not working in intellij but working in eclipse what is the expected output what do you see instead expected outp... | 1 |

576,006 | 17,068,786,627 | IssuesEvent | 2021-07-07 10:39:34 | gnosis/ido-ux | https://api.github.com/repos/gnosis/ido-ux | closed | Minimum Funding/Estimated Tokens Sold are not calculated when Minimum Funding value =<1 | QA QA passed bug medium priority | 1. Create an auction with Minimum Funding vale <1

2. Create an order for this auction with bidding amount < Minimum Funding

3. Check Minimum Funding/Estimated Tokens Sold fields

**AR**: Minimum Funding/Estimated Tokens Sold = 0

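- [x] Enter the title, work title, post content, and attachments (give the type, title, content, and work title fields the required attribute)

- [ ] Show a preview of the attached file (image)

- [x] On clicking Submit, send a request to save the data

- [x] When editing a post, load all saved content

| 1.0 | FE_[Feat]: Post writing page - ## 📃To do List

- [x] On clicking the back icon, go to the previous page

## 📃Writing a post

- [x] Select the post type from a dropdown (review, chat)

- [x] Enter the title, work title, post content, and attachments (give the type, title, content, and work title fields the required attribute)

- [ ] Show a preview of the attached file (image)

- [x] On clicking Submit, send a request to save the data

- [x] When editing a post, load all saved content

| priority | fe post writing page 📃to do list on clicking the back icon go to the previous page 📃writing a post select the post type from a dropdown review chat enter the title work title post content and attachments give the type title content and work title fields the required attribute show a preview of the attached file image on clicking submit send a request to save the data when editing a post load all saved content | 1 |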

584,577 | 17,458,747,758 | IssuesEvent | 2021-08-06 07:23:39 | ansible-collections/azure | https://api.github.com/repos/ansible-collections/azure | closed | Add support for spot instances | has_pr medium_priority new_featrue | <!--- Verify first that your feature was not already discussed on GitHub -->

<!--- Complete *all* sections as described, this form is processed automatically -->

##### SUMMARY

<!--- Describe the new feature/improvement briefly below -->

Add support for creating spot instances in AWS and would like to be able to d... | 1.0 | Add support for spot instances - <!--- Verify first that your feature was not already discussed on GitHub -->

<!--- Complete *all* sections as described, this form is processed automatically -->

##### SUMMARY

<!--- Describe the new feature/improvement briefly below -->

Add support for creating spot instances in A... | priority | add support for spot instances summary add support for creating spot instances in aws and would like to be able to do the same in azure rather than having to spin up only full price instances in the cli this is done by adding a priority spot to the vm instance creation command see ... | 1 |

508,046 | 14,688,990,924 | IssuesEvent | 2021-01-02 06:42:35 | emre1702/TDS-V-Public | https://api.github.com/repos/emre1702/TDS-V-Public | closed | [BUG] Winner in round ranking has wrong rotation | bug medium priority | He is looking to the wrong direction (back), why?

Tested with 2 players. | 1.0 | [BUG] Winner in round ranking has wrong rotation - He is looking to the wrong direction (back), why?

Tested with 2 players. | priority | winner in round ranking has wrong rotation he is looking to the wrong direction back why tested with players | 1 |

39,653 | 2,858,049,555 | IssuesEvent | 2015-06-02 23:03:38 | pmem/issues | https://api.github.com/repos/pmem/issues | closed | pmemobj: user is able to open pool with NULL layout | Exposure: Medium Priority: 3 medium Type: Bug | It is possible to open pool with passed NULL as layout.

Steps to reproduce:

1. pop = pmemobj_create(/mnt/psmem_0/myfile, "pmemobj_layout", PMEMOBJ_MIN_POOL, 0666)

2. pmemobj_close(pop)

3. pop = pmemobj_open(/mnt/psmem_0/myfile, NULL)

Expected result:

Pool should not be opened, NULL pointer returned

Current... | 1.0 | pmemobj: user is able to open pool with NULL layout - It is possible to open pool with passed NULL as layout.

Steps to reproduce:

1. pop = pmemobj_create(/mnt/psmem_0/myfile, "pmemobj_layout", PMEMOBJ_MIN_POOL, 0666)

2. pmemobj_close(pop)

3. pop = pmemobj_open(/mnt/psmem_0/myfile, NULL)

Expected result:

Pool ... | priority | pmemobj user is able to open pool with null layout it is possible to open pool with passed null as layout steps to reproduce pop pmemobj create mnt psmem myfile pmemobj layout pmemobj min pool pmemobj close pop pop pmemobj open mnt psmem myfile null expected result pool sho... | 1 |

319,501 | 9,745,178,895 | IssuesEvent | 2019-06-03 09:00:36 | poanetwork/blockscout | https://api.github.com/repos/poanetwork/blockscout | opened | Failed to decode Ethereum JSONRPC response in debug_traceTransaction | bug :bug: chain: Go :chains: priority: medium | ```

2019-06-03T08:53:25.460 fetcher=internal_transaction count=10 [error] Task #PID<0.20387.48> started from Indexer.Fetcher.InternalTransaction terminating

** (EthereumJSONRPC.DecodeError) Failed to decode Ethereum JSONRPC response:

request:

url: http://XXX

body: [{"id":0,"jsonrpc":"2.0","method":... | 1.0 | Failed to decode Ethereum JSONRPC response in debug_traceTransaction - ```

2019-06-03T08:53:25.460 fetcher=internal_transaction count=10 [error] Task #PID<0.20387.48> started from Indexer.Fetcher.InternalTransaction terminating

** (EthereumJSONRPC.DecodeError) Failed to decode Ethereum JSONRPC response:

request:... | priority | failed to decode ethereum jsonrpc response in debug tracetransaction fetcher internal transaction count task pid started from indexer fetcher internaltransaction terminating ethereumjsonrpc decodeerror failed to decode ethereum jsonrpc response request url body ... | 1 |

592,835 | 17,931,995,242 | IssuesEvent | 2021-09-10 10:29:47 | fangohr/nmag | https://api.github.com/repos/fangohr/nmag | closed | Put static html from Wiki into new github pages location | medium priority | The scraped and convert html is here: https://github.com/mhanberry1/nmag-www-archive/tree/master/nmag.soton.ac.uk

- This needs to be moved to http://nmag-project.github.io to be browsable

- Need to update link to Wiki in tabs on the left to point to the new location

@venkat004 - can you help? | 1.0 | Put static html from Wiki into new github pages location - The scraped and convert html is here: https://github.com/mhanberry1/nmag-www-archive/tree/master/nmag.soton.ac.uk

- This needs to be moved to http://nmag-project.github.io to be browsable

- Need to update link to Wiki in tabs on the left to point to the new... | priority | put static html from wiki into new github pages location the scraped and convert html is here this needs to be moved to to be browsable need to update link to wiki in tabs on the left to point to the new location can you help | 1 |

734,741 | 25,360,872,081 | IssuesEvent | 2022-11-20 21:44:11 | bounswe/bounswe2022group6 | https://api.github.com/repos/bounswe/bounswe2022group6 | closed | Implementing locmgr for location services | Priority: Medium State: In Progress Type: Development Backend | An API for retrieving location information should be implemented for requirements [1.1.1.2.5](https://github.com/bounswe/bounswe2022group6/wiki/Requirements#1112-adding-information-to-an-account), [1.1.1.3.7](https://github.com/bounswe/bounswe2022group6/wiki/Requirements#1113-editing-the-information-in-an-account), and... | 1.0 | Implementing locmgr for location services - An API for retrieving location information should be implemented for requirements [1.1.1.2.5](https://github.com/bounswe/bounswe2022group6/wiki/Requirements#1112-adding-information-to-an-account), [1.1.1.3.7](https://github.com/bounswe/bounswe2022group6/wiki/Requirements#1113... | priority | implementing locmgr for location services an api for retrieving location information should be implemented for requirements and location information precision should be in this manner country state city city district | 1 |

56,217 | 3,078,574,086 | IssuesEvent | 2015-08-21 11:12:54 | MinetestForFun/minetest-minetestforfun-server | https://api.github.com/repos/MinetestForFun/minetest-minetestforfun-server | closed | New mod idea for fishing | Modding Priority: Medium | To have better luck when fishing, we would need a set of fisherman gear

something like fisherman's helmet + fisherman's boots = fishing speed increased by 50%, for example | 1.0 | New mod idea for fishing - To have better luck when fishing, we would need a set of fisherman gear

something like fisherman's helmet + fisherman's boots = fishing speed increased by 50%, for example | priority | new mod idea for fishing to have better luck when fishing we would need a set of fisherman gear something like fisherman s helmet fisherman s boots fishing speed increased by for example | 1 |

674,243 | 23,044,084,117 | IssuesEvent | 2022-07-23 16:11:28 | capawesome-team/capacitor-firebase | https://api.github.com/repos/capawesome-team/capacitor-firebase | closed | feat(authentication): expose `AdditionalUserInfo` | feature priority: medium package: authentication | **Is your feature request related to an issue? Please describe:**

<!-- A clear and concise explanation of what the problem is. Former. [...] -->

Yeah. In social media entries (Facebook, Google, GooglePlay, Twitter, Apple etc.), the user's id value connected to the provider does not appear. (GoogleId value does not ... | 1.0 | feat(authentication): expose `AdditionalUserInfo` - **Is your feature request related to an issue? Please describe:**

<!-- A clear and concise explanation of what the problem is. Former. [...] -->

Yeah. In social media entries (Facebook, Google, GooglePlay, Twitter, Apple etc.), the user's id value connected to the... | priority | feat authentication expose additionaluserinfo is your feature request related to an issue please describe yeah in social media entries facebook google googleplay twitter apple etc the user s id value connected to the provider does not appear googleid value does not come for login with googl... | 1 |

530,438 | 15,428,719,204 | IssuesEvent | 2021-03-06 00:36:40 | marklogic/marklogic-data-hub | https://api.github.com/repos/marklogic/marklogic-data-hub | closed | On redeploy, only remove hub modules | Enhancement priority:medium | When Quick Start redeploys, it wipes the modules database. Need to only remove the modules that DHF puts in the modules database and replace those. Anything put into the modules database by a different process or application (for instance, a slush-generator search app) should be left alone. | 1.0 | On redeploy, only remove hub modules - When Quick Start redeploys, it wipes the modules database. Need to only remove the modules that DHF puts in the modules database and replace those. Anything put into the modules database by a different process or application (for instance, a slush-generator search app) should be l... | priority | on redeploy only remove hub modules when quick start redeploys it wipes the modules database need to only remove the modules that dhf puts in the modules database and replace those anything put into the modules database by a different process or application for instance a slush generator search app should be l... | 1 |

89,580 | 3,797,121,207 | IssuesEvent | 2016-03-23 05:31:42 | RickyGAkl/yahoo-finance-managed | https://api.github.com/repos/RickyGAkl/yahoo-finance-managed | closed | cannot downlload options data for OEX Index | auto-migrated Priority-Medium Type-Enhancement | ```

What steps will reproduce the problem?

1.trying to get options data

2.

3.

What is the expected output? What do you see instead?

Strikeprice, type, symbol etc..

Do you have other informations?

method getlastdetofstock works for cash equities

Suggestions to fix the defect?

```

Original issue reported on code.g... | 1.0 | cannot downlload options data for OEX Index - ```

What steps will reproduce the problem?

1.trying to get options data

2.

3.

What is the expected output? What do you see instead?

Strikeprice, type, symbol etc..

Do you have other informations?

method getlastdetofstock works for cash equities

Suggestions to fix the d... | priority | cannot downlload options data for oex index what steps will reproduce the problem trying to get options data what is the expected output what do you see instead strikeprice type symbol etc do you have other informations method getlastdetofstock works for cash equities suggestions to fix the d... | 1 |

103,912 | 4,187,296,994 | IssuesEvent | 2016-06-23 17:01:29 | isawnyu/isaw.web | https://api.github.com/repos/isawnyu/isaw.web | closed | cropping option missing from multiple content types (events, pages, possibly more) | deploy medium priority | When editing an Event Item with a lead image, I don't have the Cropping option to adjust cropping settings for that lead image (the way that it's an option for news items). | 1.0 | cropping option missing from multiple content types (events, pages, possibly more) - When editing an Event Item with a lead image, I don't have the Cropping option to adjust cropping settings for that lead image (the way that it's an option for news items). | priority | cropping option missing from multiple content types events pages possibly more when editing an event item with a lead image i don t have the cropping option to adjust cropping settings for that lead image the way that it s an option for news items | 1 |

786,505 | 27,657,441,702 | IssuesEvent | 2023-03-12 05:17:26 | prgrms-web-devcourse/Team-Kkini-Mukvengers-FE | https://api.github.com/repos/prgrms-web-devcourse/Team-Kkini-Mukvengers-FE | closed | Add a back button to the meal meetup detail page | Priority: Medium Feature | ## 📕 Task description

> Add a back button to the meal meetup detail page

## 📖 To-Do list

- [ ] Add a back button to the meal meetup detail page | 1.0 | Add a back button to the meal meetup detail page - ## 📕 Task description

> Add a back button to the meal meetup detail page

## 📖 To-Do list

- [ ] Add a back button to the meal meetup detail page | priority | add a back button to the meal meetup detail page 📕 task description add a back button to the meal meetup detail page 📖 to do list add a back button to the meal meetup detail page | 1 |

226,816 | 7,523,222,793 | IssuesEvent | 2018-04-12 23:42:06 | Fireboyd78/mm2hook | https://api.github.com/repos/Fireboyd78/mm2hook | opened | Impose upper limit for speedometer/tachometer values | RV6 Roadmap enhancement help wanted medium priority | May require to be hardcoded, as new data cannot be added to existing vehicle information. | 1.0 | Impose upper limit for speedometer/tachometer values - May require to be hardcoded, as new data cannot be added to existing vehicle information. | priority | impose upper limit for speedometer tachometer values may require to be hardcoded as new data cannot be added to existing vehicle information | 1 |

630,379 | 20,107,305,429 | IssuesEvent | 2022-02-07 11:49:03 | eclipse/dirigible | https://api.github.com/repos/eclipse/dirigible | closed | [IDE] CSVIM Editor not opening csv files in the csv editor | bug web-ide usability priority-medium efforts-low | **Describe the bug**

The CSVIM editor does not specify an editor id, resulting in the csv file being opened by the default editor which is Monaco.

**To Reproduce**

Steps to reproduce the behavior:

1. Open a csvim file

2. Click on 'Open'

3. See issue

**Expected behavior**

The Monaco editor should be used onl... | 1.0 | [IDE] CSVIM Editor not opening csv files in the csv editor - **Describe the bug**

The CSVIM editor does not specify an editor id, resulting in the csv file being opened by the default editor which is Monaco.

**To Reproduce**

Steps to reproduce the behavior:

1. Open a csvim file

2. Click on 'Open'

3. See issue

... | priority | csvim editor not opening csv files in the csv editor describe the bug the csvim editor does not specify an editor id resulting in the csv file being opened by the default editor which is monaco to reproduce steps to reproduce the behavior open a csvim file click on open see issue ... | 1 |

535,615 | 15,691,608,564 | IssuesEvent | 2021-03-25 18:04:41 | CS506-Oversight/autorack-front | https://api.github.com/repos/CS506-Oversight/autorack-front | opened | Dark theme | Priority-Medium Type-Enhancements | Check if material-ui provides one. If not, customize one.

Ideal settings:

- Background color: `#323232` ~ `#505050`

- Font color: `#FFFFFF` | 1.0 | Dark theme - Check if material-ui provides one. If not, customize one.

Ideal settings:

- Background color: `#323232` ~ `#505050`

- Font color: `#FFFFFF` | priority | dark theme check if material ui provides one if not customize one ideal settings background color font color ffffff | 1 |

514,792 | 14,944,092,009 | IssuesEvent | 2021-01-26 00:35:27 | zephyrproject-rtos/zephyr | https://api.github.com/repos/zephyrproject-rtos/zephyr | closed | mimxrt1050_evk: testcase tests/kernel/fatal/exception/ failed to be ran | bug priority: medium | To Reproduce

Steps to reproduce the behavior:

sanitycheck -p mimxrt1050_evk --device-testing --device-serial /dev/ttyACM0 -T tests/kernel/fatal/exception/

see error

*** Booting Zephyr OS build zephyr-v2.4.0-2092-g748e7b6d7515 ***

E: ***** MPU FAULT *****

E: Stacking error (context area might be not valid)

... | 1.0 | mimxrt1050_evk: testcase tests/kernel/fatal/exception/ failed to be ran - To Reproduce

Steps to reproduce the behavior:

sanitycheck -p mimxrt1050_evk --device-testing --device-serial /dev/ttyACM0 -T tests/kernel/fatal/exception/

see error

*** Booting Zephyr OS build zephyr-v2.4.0-2092-g748e7b6d7515 ***

E: ****... | priority | evk testcase tests kernel fatal exception failed to be ran to reproduce steps to reproduce the behavior sanitycheck p evk device testing device serial dev t tests kernel fatal exception see error booting zephyr os build zephyr e mpu fault e stacking error ... | 1 |

565,730 | 16,768,285,211 | IssuesEvent | 2021-06-14 11:48:29 | canonical-web-and-design/vanilla-framework | https://api.github.com/repos/canonical-web-and-design/vanilla-framework | closed | Typo in navigation examples | Priority: Medium | In the navigation examples the last item is using the wrong class name. | 1.0 | Typo in navigation examples - In the navigation examples the last item is using the wrong class name. | priority | typo in navigation examples in the navigation examples the last item is using the wrong class name | 1 |

612,563 | 19,025,799,147 | IssuesEvent | 2021-11-24 03:13:31 | crombird/meta | https://api.github.com/repos/crombird/meta | opened | Include prompts in slash command outputs | type/feature-request priority/3-medium integration/discord | Users can't see the slash command parameters until they click on the command name in the interaction. And this is not always intuitive. In the message format, the prompt immediately preceded the response, so you could tell what the response was for, i.e.

> **user**

> ?? search query

>

> **CROM**

> (response)

... | 1.0 | Include prompts in slash command outputs - Users can't see the slash command parameters until they click on the command name in the interaction. And this is not always intuitive. In the message format, the prompt immediately preceded the response, so you could tell what the response was for, i.e.

> **user**

> ?? se... | priority | include prompts in slash command outputs users can t see the slash command parameters until they click on the command name in the interaction and this is not always intuitive in the message format the prompt immediately preceded the response so you could tell what the response was for i e user se... | 1 |

114,690 | 4,642,737,507 | IssuesEvent | 2016-09-30 10:46:59 | softdevteam/krun | https://api.github.com/repos/softdevteam/krun | closed | Rework run_shell_cmd() | enhancement medium priority (a clear improvement but not a blocker for publication) | As discussed with @snim2:

* Move `util.run_shell_cmd()` into the platform instance.

* It should accept an optional argument `user`, which if not `None` uses `sudo` or `doas` to invoke the command as another user

* It should accept a list of arguments, and never a string.

* We should replace any manual `os.s... | 1.0 | Rework run_shell_cmd() - As discussed with @snim2:

* Move `util.run_shell_cmd()` into the platform instance.

* It should accept an optional argument `user`, which if not `None` uses `sudo` or `doas` to invoke the command as another user

* It should accept a list of arguments, and never a string.

* We should... | priority | rework run shell cmd as discussed with move util run shell cmd into the platform instance it should accept an optional argument user which if not none uses sudo or doas to invoke the command as another user it should accept a list of arguments and never a string we should rep... | 1 |
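The rework requested in the row above could look roughly like the sketch below. It is only an illustration under stated assumptions, not krun's actual code: the class name `PlatformSketch`, the `change_user_cmd` attribute, and the helper `build_argv` are hypothetical, and prefixing the command with `sudo -u <user>` (or `doas -u <user>`) is one assumed way of running it as another user.

```python
import subprocess


class PlatformSketch:
    """Hypothetical stand-in for a platform instance (not krun's real class)."""

    def __init__(self, change_user_cmd="sudo"):
        # Assumption: "sudo" on most systems, "doas" where that is the norm.
        self.change_user_cmd = change_user_cmd

    def build_argv(self, args, user=None):
        """Return the argument vector to execute, optionally as another user."""
        if isinstance(args, str):
            # The issue asks for a list of arguments, never a string.
            raise TypeError("args must be a list of arguments, not a string")
        argv = list(args)
        if user is not None:
            # Both sudo and doas accept "-u USER" before the command.
            argv = [self.change_user_cmd, "-u", user] + argv
        return argv

    def run_shell_cmd(self, args, user=None):
        """Run the command without a shell; return (returncode, stdout, stderr)."""
        proc = subprocess.run(
            self.build_argv(args, user),
            capture_output=True,
            text=True,
        )
        return proc.returncode, proc.stdout, proc.stderr
```

Taking a list and never invoking a shell avoids quoting problems, and keeping the user switch in one helper means callers do not need to know whether the platform uses `sudo` or `doas`.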

84,188 | 3,655,018,015 | IssuesEvent | 2016-02-17 14:57:03 | miracle091/transmission-remote-dotnet | https://api.github.com/repos/miracle091/transmission-remote-dotnet | closed | folder structure when adding torrent | Priority-Medium Type-Enhancement | ```

When adding a torrent, you see the files contained as a flat list.

If you download a 0-day warez pack wich sometimes conatinas thousands of files,

it can be rather messy selecting just some of the items in there.

In for example utorrent, the files are in a tree-view. I usually deselect

everything except for the... | 1.0 | folder structure when adding torrent - ```

When adding a torrent, you see the files contained as a flat list.

If you download a 0-day warez pack wich sometimes conatinas thousands of files,

it can be rather messy selecting just some of the items in there.

In for example utorrent, the files are in a tree-view. I usua... | priority | folder structure when adding torrent when adding a torrent you see the files contained as a flat list if you download a day warez pack wich sometimes conatinas thousands of files it can be rather messy selecting just some of the items in there in for example utorrent the files are in a tree view i usua... | 1 |

179,017 | 6,620,901,525 | IssuesEvent | 2017-09-21 17:08:13 | crcn/tandem | https://api.github.com/repos/crcn/tandem | closed | History | Feature Medium priority | Not so important with Tandem since history exists with the users text editor. This feature will be required if the user decides to use the built-in text editor option (when it's implemented). Or if the editor is used online. | 1.0 | History - Not so important with Tandem since history exists with the users text editor. This feature will be required if the user decides to use the built-in text editor option (when it's implemented). Or if the editor is used online. | priority | history not so important with tandem since history exists with the users text editor this feature will be required if the user decides to use the built in text editor option when it s implemented or if the editor is used online | 1 |

794,862 | 28,052,549,823 | IssuesEvent | 2023-03-29 07:09:03 | AY2223S2-CS2103-F11-3/tp | https://api.github.com/repos/AY2223S2-CS2103-F11-3/tp | closed | `APPEND`, `REMOVE`, `REPLACE` for list attributes | priority.Medium type.Enhancement | Currently list attributes only support replace.

`APPEND` and `REMOVE` would be nice so that the user will not have to retype the entire attribute. | 1.0 | `APPEND`, `REMOVE`, `REPLACE` for list attributes - Currently list attributes only support replace.

`APPEND` and `REMOVE` would be nice so that the user will not have to retype the entire attribute. | priority | append remove replace for list attributes currently list attributes only support replace append and remove would be nice so that the user will not have to retype the entire attribute | 1 |
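The three modes requested in the row above can be illustrated with a short sketch. The function name `edit_list_attribute` and its signature are hypothetical and are not taken from the project, which may model list attributes quite differently; the sketch only shows the intended semantics of each mode.

```python
def edit_list_attribute(current, mode, values):
    """Return a new list after applying REPLACE, APPEND, or REMOVE.

    current: the attribute's existing values
    mode:    "REPLACE", "APPEND", or "REMOVE" (case-insensitive)
    values:  the values supplied by the user
    """
    mode = mode.upper()
    if mode == "REPLACE":
        # Existing behaviour: the user retypes the whole attribute.
        return list(values)
    if mode == "APPEND":
        # Keep what is there and add the new values at the end.
        return list(current) + list(values)
    if mode == "REMOVE":
        # Drop every occurrence of the listed values, keep the rest in order.
        to_remove = set(values)
        return [v for v in current if v not in to_remove]
    raise ValueError("unknown mode: " + mode)
```

With `APPEND` and `REMOVE` the user supplies only the delta, which is the convenience the request is after; `REPLACE` stays available for wholesale changes.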

347,036 | 10,423,587,641 | IssuesEvent | 2019-09-16 11:49:12 | Th3-Fr3d/pmdbs | https://api.github.com/repos/Th3-Fr3d/pmdbs | closed | Redesign MainForm Header | medium priority tweak | Change the MainForm headers / titles to match the CertificateForm and BreachForm design | 1.0 | Redesign MainForm Header - Change the MainForm headers / titles to match the CertificateForm and BreachForm design | priority | redesign mainform header change the mainform headers titles to match the certificateform and breachform design | 1 |

566,238 | 16,816,236,747 | IssuesEvent | 2021-06-17 07:44:16 | ansible-collections/azure | https://api.github.com/repos/ansible-collections/azure | closed | Include data_disk name in return values for "azure_rm_virtualmachine_info" | has_pr medium_priority | ##### SUMMARY

Include data_disk name in return values for "azure_rm_virtualmachine_info"

##### ISSUE TYPE

- Current return values for the module doesn't capture the data disk names

##### COMPONENT NAME

azure_rm_virtualmachine_info

##### ADDITIONAL INFORMATION

- Have been working on an use case Azure VM dis... | 1.0 | Include data_disk name in return values for "azure_rm_virtualmachine_info" - ##### SUMMARY

Include data_disk name in return values for "azure_rm_virtualmachine_info"

##### ISSUE TYPE

- Current return values for the module doesn't capture the data disk names

##### COMPONENT NAME

azure_rm_virtualmachine_info

... | priority | include data disk name in return values for azure rm virtualmachine info summary include data disk name in return values for azure rm virtualmachine info issue type current return values for the module doesn t capture the data disk names component name azure rm virtualmachine info ... | 1 |

249,862 | 7,964,843,790 | IssuesEvent | 2018-07-13 23:58:24 | SETI/pds-opus | https://api.github.com/repos/SETI/pds-opus | closed | Inconsistent tooltip interface | A-Bug Effort 2 Medium Priority 3 | In some cases (like the search categories) you hover over the (i) to get the tooltip, but in other cases (widget titles) you click on the (i) to get the tooltip. | 1.0 | Inconsistent tooltip interface - In some cases (like the search categories) you hover over the (i) to get the tooltip, but in other cases (widget titles) you click on the (i) to get the tooltip. | priority | inconsistent tooltip interface in some cases like the search categories you hover over the i to get the tooltip but in other cases widget titles you click on the i to get the tooltip | 1 |

247,158 | 7,904,319,464 | IssuesEvent | 2018-07-02 03:38:09 | medic/medic-webapp | https://api.github.com/repos/medic/medic-webapp | closed | Determine how we migrate large instances from CouchDB 1.x to CouchDB 2.0 | Priority: 2 - Medium Status: 1 - Triaged Type: Technical issue Upgrading | We have some large instances, and it's going to be a pain to migrate to CouchDB 2.0, primary because it will force all long term sessions to be logged out (ie all CHWs will have to log back in).

We should look into how we can get around this, and if we definitely can't, strategies for migrating people over slowly (i... | 1.0 | Determine how we migrate large instances from CouchDB 1.x to CouchDB 2.0 - We have some large instances, and it's going to be a pain to migrate to CouchDB 2.0, primary because it will force all long term sessions to be logged out (ie all CHWs will have to log back in).

We should look into how we can get around this,... | priority | determine how we migrate large instances from couchdb x to couchdb we have some large instances and it s going to be a pain to migrate to couchdb primary because it will force all long term sessions to be logged out ie all chws will have to log back in we should look into how we can get around this ... | 1 |

434,240 | 12,515,922,225 | IssuesEvent | 2020-06-03 08:32:21 | canonical-web-and-design/build.snapcraft.io | https://api.github.com/repos/canonical-web-and-design/build.snapcraft.io | closed | Can't change registered name when repo not configured | Priority: Medium | If the repo isn't configured with a snapcraft.yaml, you don't seem to be able to change the registered name. | 1.0 | Can't change registered name when repo not configured - If the repo isn't configured with a snapcraft.yaml, you don't seem to be able to change the registered name. | priority | can t change registered name when repo not configured if the repo isn t configured with a snapcraft yaml you don t seem to be able to change the registered name | 1 |

370,175 | 10,926,207,339 | IssuesEvent | 2019-11-22 14:15:42 | RobotLocomotion/drake | https://api.github.com/repos/RobotLocomotion/drake | closed | Need for a composite MBP + SG | priority: medium team: dynamics | We had several talks about this. #9665 is the most recent discussion that references this design.

The ability to easily merge MBP with SG is blocking in that many of our new IK and planning tools really need both in sync, with the ability to make changes and with simple APIs to perform both geometric queries and multi... | 1.0 | Need for a composite MBP + SG - We had several talks about this. #9665 is the most recent discussion that references this design.

The ability to easily merge MBP with SG is blocking in that many of our new IK and planning tools really need both in sync, with the ability to make changes and with simple APIs to perform ... | priority | need for a composite mbp sg we had several talks about this is the most recent discussion that references this design the ability to easily merge mbp with sg is blocking in that many of our new ik and planning tools really need both in sync with the ability to make changes and with simple apis to perform bot... | 1 |

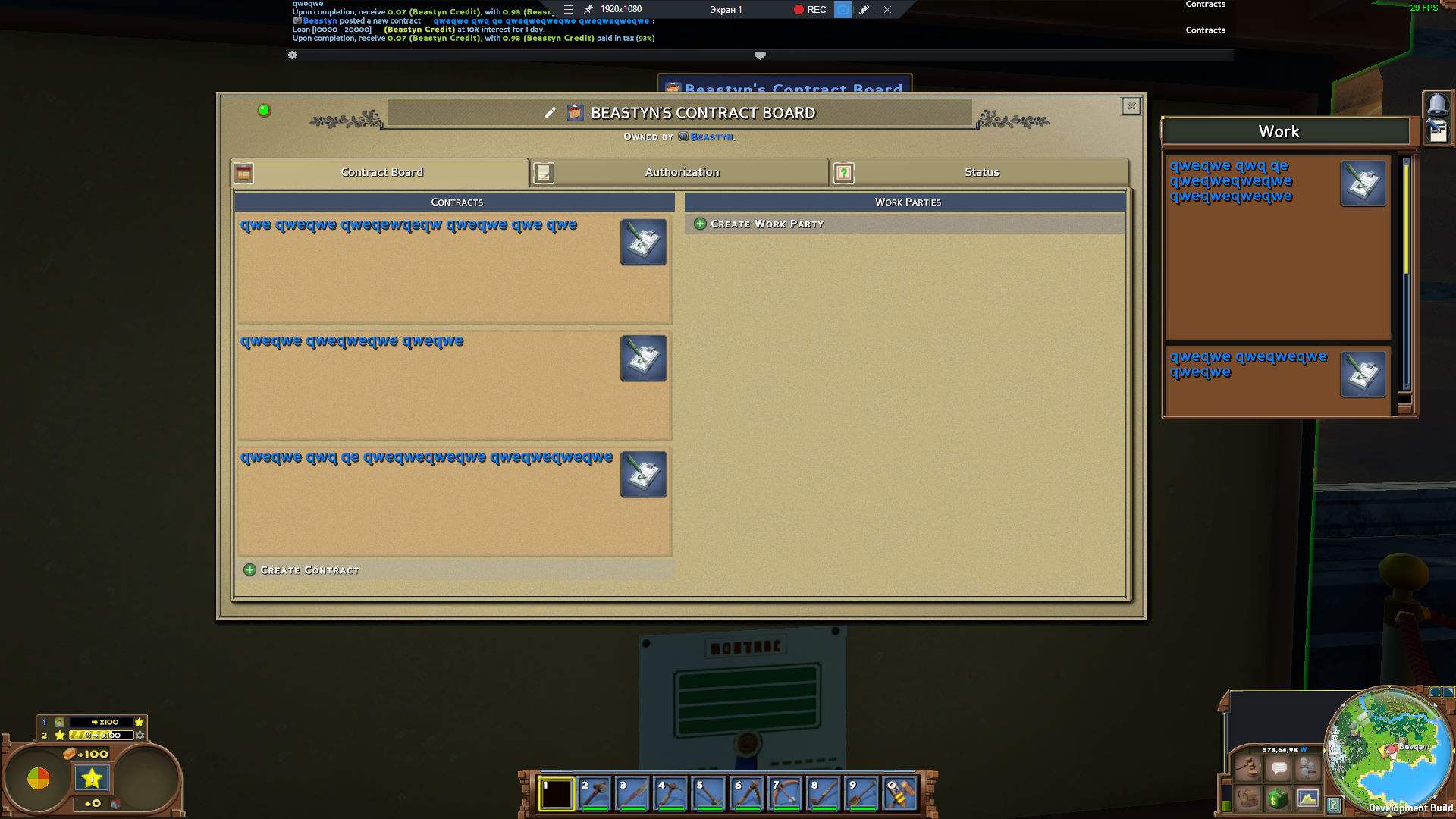

438,720 | 12,643,593,389 | IssuesEvent | 2020-06-16 10:03:51 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | opened | [0.9.0 staging-1607] Empty information in some places | Priority: Medium | It just happens

When you have created a contract

- Empty info about contract on contract board

- Empty information in the right panel side

Can be connected to #16681

| 1.0 | [0.9.0 staging-1607] Empty information in some places - It just happens

When you have created a contract

- Empty info about contract on contract board

- Empty information in the right panel side

on September 06, 2011 01:34:39_

What steps will reproduce the problem? 1. Create a list with no string "needle".

2. call solo.waitForText("needle", 1, 2000, true)

What is the expected output?

needle wouldn't be found in searchFor call, but... | 1.0 | waitForText timeout is ignored if text not found - _From [gaz...@gmail.com](https://code.google.com/u/113313170396315103068/) on September 06, 2011 01:34:39_

What steps will reproduce the problem? 1. Create a list with no string "needle".

2. call solo.waitForText("needle", 1, 2000, true)

What is the expected outpu... | priority | waitfortext timeout is ignored if text not found from on september what steps will reproduce the problem create a list with no string needle call solo waitfortext needle true what is the expected output needle wouldn t be found in searchfor call but after some seconds yo... | 1 |

203,644 | 7,068,145,180 | IssuesEvent | 2018-01-08 06:25:57 | gluster/glusterd2 | https://api.github.com/repos/gluster/glusterd2 | closed | Quorum support in GD2 | feature priority: medium | To have parity with GD1, GD2 needs to have server & client side quorum support. | 1.0 | Quorum support in GD2 - To have parity with GD1, GD2 needs to have server & client side quorum support. | priority | quorum support in to have parity with needs to have server client side quorum support | 1 |

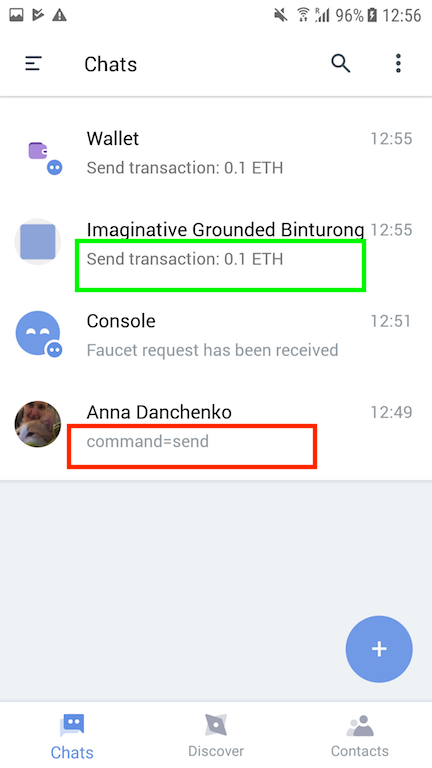

426,740 | 12,378,825,604 | IssuesEvent | 2020-05-19 11:24:03 | threefoldtech/3bot_wallet | https://api.github.com/repos/threefoldtech/3bot_wallet | closed | Stellar Staging - Currency is shown in send even when the user doesn't have that currency, results in no From accounts to be selectable. | priority_medium type_bug | **Repro steps**

1) Have an account with no FreeTFT

2) Attempt to send FreeTFT

**Expected Result**

Option should not exist in the currency dropdown, only display currencies the user actually owns in the send transaction.

**Actual Result**

Have an account with no FreeTFT

2) Attempt to send FreeTFT

**Expected Result**

Option should not exist in the currency dropdown, only display currencies the... | priority | stellar staging currency is shown in send even when the user doesn t have that currency results in no from accounts to be selectable repro steps have an account with no freetft attempt to send freetft expected result option should not exist in the currency dropdown only display currencies the... | 1 |

2,788 | 2,533,459,081 | IssuesEvent | 2015-01-23 23:36:45 | srabbelier-google/issue-export-test-3 | https://api.github.com/repos/srabbelier-google/issue-export-test-3 | closed | Migrate python SRC bindings into merged tree | Lang-Python Priority-Medium Type-Task | Original [issue 7](https://code.google.com/p/selenium/issues/detail?id=7) created by srabbelier-google on 2009-11-28T13:59:29.000Z:

The code and tests from:

selenium-rc/trunk/clients/python

should be integrated into the merged tree at:

branches/merge/selenium/{src|test}/py

The target to run them from the b... | 1.0 | Migrate python SRC bindings into merged tree - Original [issue 7](https://code.google.com/p/selenium/issues/detail?id=7) created by srabbelier-google on 2009-11-28T13:59:29.000Z:

The code and tests from:

selenium-rc/trunk/clients/python

should be integrated into the merged tree at:

branches/merge/selenium/{sr... | priority | migrate python src bindings into merged tree original created by srabbelier google on the code and tests from selenium rc trunk clients python should be integrated into the merged tree at branches merge selenium src test py the target to run them from the build should be rake tes... | 1 |

547,349 | 16,041,565,545 | IssuesEvent | 2021-04-22 08:32:39 | SAP/xsk | https://api.github.com/repos/SAP/xsk | closed | [Engines] Explore the capabilities of testsconteiners library | core effort-medium priority-medium | Testcontainers is a Java library that supports JUnit tests, providing lightweight, throwaway instances of common databases, Selenium web browsers, or anything else that can run in a Docker container.

It could be quite useful for testing our DB access layer. | 1.0 | [Engines] Explore the capabilities of testsconteiners library - Testcontainers is a Java library that supports JUnit tests, providing lightweight, throwaway instances of common databases, Selenium web browsers, or anything else that can run in a Docker container.

It could be quite useful for testing our DB access la... | priority | explore the capabilities of testsconteiners library testcontainers is a java library that supports junit tests providing lightweight throwaway instances of common databases selenium web browsers or anything else that can run in a docker container it could be quite useful for testing our db access layer | 1 |

55,141 | 3,072,162,398 | IssuesEvent | 2015-08-19 15:38:47 | RobotiumTech/robotium | https://api.github.com/repos/RobotiumTech/robotium | closed | How to create test cases for Android PhoneGap Application? | bug imported invalid Priority-Medium | _From [vidhya.p...@hcl.com](https://code.google.com/u/118222096880548246241/) on September 20, 2011 21:15:35_

What steps will reproduce the problem? 1.solo.EditText(0,"name")

2.In test result it showing there is no EditText 3. What is the expected output? What do you see instead? What version of the product are you u... | 1.0 | How to create test cases for Android PhoneGap Application? - _From [vidhya.p...@hcl.com](https://code.google.com/u/118222096880548246241/) on September 20, 2011 21:15:35_

What steps will reproduce the problem? 1.solo.EditText(0,"name")

2.In test result it showing there is no EditText 3. What is the expected output? W... | priority | how to create test cases for android phonegap application from on september what steps will reproduce the problem solo edittext name in test result it showing there is no edittext what is the expected output what do you see instead what version of the product are you using on what ... | 1 |

680,965 | 23,291,969,532 | IssuesEvent | 2022-08-06 01:42:57 | twidi/quantifier | https://api.github.com/repos/twidi/quantifier | closed | Try to keep the current date as when navigating | Type: Bug Workflow: 8 - Done Priority: 3 - Medium Scope: Core Scope: Interface Status: Confirmed Complexity: 2 - Medium | If we hare the 2022-08-05 and are in monthly mode, all links will have the date set to 2022-08-01, so the context is lost for example if we want to go in daily mode | 1.0 | Try to keep the current date as when navigating - If we hare the 2022-08-05 and are in monthly mode, all links will have the date set to 2022-08-01, so the context is lost for example if we want to go in daily mode | priority | try to keep the current date as when navigating if we hare the and are in monthly mode all links will have the date set to so the context is lost for example if we want to go in daily mode | 1 |

419,256 | 12,219,628,233 | IssuesEvent | 2020-05-01 22:16:46 | codidact/qpixel | https://api.github.com/repos/codidact/qpixel | closed | Per-category access restriction | area: backend priority: medium type: change request | A user on Writing Meta suggested a category where people could post their work, either for critique or just to share. A concern is that publishing something, even informally, can impede or even prevent selling that work to a publisher later -- some publishers won't buy what was previously publicly available for free, ... | 1.0 | Per-category access restriction - A user on Writing Meta suggested a category where people could post their work, either for critique or just to share. A concern is that publishing something, even informally, can impede or even prevent selling that work to a publisher later -- some publishers won't buy what was previo... | priority | per category access restriction a user on writing meta suggested a category where people could post their work either for critique or just to share a concern is that publishing something even informally can impede or even prevent selling that work to a publisher later some publishers won t buy what was previo... | 1 |

553,781 | 16,381,908,528 | IssuesEvent | 2021-05-17 05:03:35 | clabe45/vidar | https://api.github.com/repos/clabe45/vidar | opened | Audio fade in effect | priority:medium type:enhancement | Add an audio effect that makes the target fade in from silence. It should have a duration property that controls how many seconds the effect should last after the start of the layer.