| Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 value) | created_at (string, length 19) | repo (string, length 5 to 112) | repo_url (string, length 34 to 141) | action (string, 3 values) | title (string, length 1 to 957) | labels (string, length 4 to 795) | body (string, length 1 to 259k) | index (string, 12 values) | text_combine (string, length 96 to 259k) | label (string, 2 values) | text (string, length 96 to 252k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 691,815 | 23,711,820,585 | IssuesEvent | 2022-08-30 08:30:18 | zephyrproject-rtos/zephyr | https://api.github.com/repos/zephyrproject-rtos/zephyr | closed | Bluetooth: Scan responses with info about periodic adv. sometimes stops being reported | bug priority: medium area: Bluetooth area: Bluetooth Controller | **Describe the bug**<br>Sometimes after for example a sync termination then no new advertisments with periodic adv. are reported in the `bt_le_scan_cb recv` callback. Restarting scanning will result again with scan responses containing info about periodic adv.<br>Please also mention any information which could help other... | 1.0 | Bluetooth: Scan responses with info about periodic adv. sometimes stops being reported - **Describe the bug**<br>Sometimes after for example a sync termination then no new advertisments with periodic adv. are reported in the `bt_le_scan_cb recv` callback. Restarting scanning will result again with scan responses containi... | priority | bluetooth scan responses with info about periodic adv sometimes stops being reported describe the bug sometimes after for example a sync termination then no new advertisments with periodic adv are reported in the bt le scan cb recv callback restarting scanning will result again with scan responses containi... | 1 |
| 53,757 | 3,047,320,801 | IssuesEvent | 2015-08-11 03:25:22 | piccolo2d/piccolo2d.java | https://api.github.com/repos/piccolo2d/piccolo2d.java | closed | PArea, a wrapper for java.awt.geom.Area to allow Constructive Area Geometry (CAG) operations | Component-Core Effort-Medium Milestone-2.0 OpSys-All Priority-Medium Status-Verified Toolkit-Piccolo2D.Java Type-Enhancement | Originally reported on Google Code with ID 153<br>```<br>It would be desirable to have a node wrapper for java.awt.geom.Area to<br>allow Constructive Area Geometry (CAG) operations, such as area addition,<br>subtraction, intersection, and exclusive or.<br>```<br>Reported by `heuermh` on 2009-12-15 20:36:09 | 1.0 | PArea, a wrapper for java.awt.geom.Area to allow Constructive Area Geometry (CAG) operations - Originally reported on Google Code with ID 153<br>```<br>It would be desirable to have a node wrapper for java.awt.geom.Area to<br>allow Constructive Area Geometry (CAG) operations, such as area addition,<br>subtraction, intersection, ... | priority | parea a wrapper for java awt geom area to allow constructive area geometry cag operations originally reported on google code with id it would be desirable to have a node wrapper for java awt geom area to allow constructive area geometry cag operations such as area addition subtraction intersection an... | 1 |
| 188,202 | 6,773,966,455 | IssuesEvent | 2017-10-27 08:35:58 | status-im/status-react | https://api.github.com/repos/status-im/status-react | closed | "Insufficient funds" error is not visible if try to send ETH from Wallet via Enter address/Scan QR | android bug medium-priority wontfix | ### Description<br>[comment]: # (Feature or Bug? i.e Type: Bug)<br>*Type*: Bug<br>[comment]: # (Describe the feature you would like, or briefly summarise the bug and what you did, what you expected to happen, and what actually happens. Sections below)<br>*Summary*: If try to send more ETH than available to someone who is n... | 1.0 | "Insufficient funds" error is not visible if try to send ETH from Wallet via Enter address/Scan QR - ### Description<br>[comment]: # (Feature or Bug? i.e Type: Bug)<br>*Type*: Bug<br>[comment]: # (Describe the feature you would like, or briefly summarise the bug and what you did, what you expected to happen, and what ac... | priority | insufficient funds error is not visible if try to send eth from wallet via enter address scan qr description feature or bug i e type bug type bug describe the feature you would like or briefly summarise the bug and what you did what you expected to happen and what actually happens ... | 1 |
| 711,733 | 24,473,502,133 | IssuesEvent | 2022-10-07 23:38:16 | turbot/steampipe-plugin-oci | https://api.github.com/repos/turbot/steampipe-plugin-oci | closed | Deprecate columns ipv6_cidr_block and ipv6_public_cidr_block in oci_core_vcn table | enhancement priority:medium stale | **Describe the bug**<br>The VCN List/Get API does not return values for `ipv6_cidr_block` and `ipv6_public_cidr_block`, so it will always show as null.<br>**Steampipe version (`steampipe -v`)**<br>Example: v0.3.0<br>**Plugin version (`steampipe plugin list`)**<br>Example: v0.5.0<br>**To reproduce**<br>Steps to reproduce the be... | 1.0 | Deprecate columns ipv6_cidr_block and ipv6_public_cidr_block in oci_core_vcn table - **Describe the bug**<br>The VCN List/Get API does not return values for `ipv6_cidr_block` and `ipv6_public_cidr_block`, so it will always show as null.<br>**Steampipe version (`steampipe -v`)**<br>Example: v0.3.0<br>**Plugin version (`stea... | priority | deprecate columns cidr block and public cidr block in oci core vcn table describe the bug the vcn list get api does not return values for cidr block and public cidr block so it will always show as null steampipe version steampipe v example plugin version steampipe plugin ... | 1 |
| 54,682 | 3,070,961,113 | IssuesEvent | 2015-08-19 09:00:00 | pavel-pimenov/flylinkdc-r5xx | https://api.github.com/repos/pavel-pimenov/flylinkdc-r5xx | opened | Disable the Windows cache for shared (and probably for all) files | enhancement imported Performance Priority-Medium | _From [a.rain...@gmail.com](https://code.google.com/u/117892482479228821242/) on July 21, 2009 09:14:33_<br>When you share popular large files and a crowd of lemmings<br>swoops in to download them (heavy upload), the Windows cache fills up --<br>it gets devoted to serving Flylink -- so that it can comfortably serve<br>... | 1.0 | Disable the Windows cache for shared (and probably for all) files - _From [a.rain...@gmail.com](https://code.google.com/u/117892482479228821242/) on July 21, 2009 09:14:33_<br>When you share popular large files and a crowd of lemmings<br>swoops in to download them (heavy upload), the Windows cache fills up --<br>... | priority | disable the windows cache for shared and probably for all files from on july when you share popular large files and a crowd of lemmings swoops in to download them heavy upload the windows cache fills up it gets devoted to serving flylink so that it can comfortably serve previously... | 1 |
| 576,422 | 17,086,781,144 | IssuesEvent | 2021-07-08 12:51:58 | netdata/netdata | https://api.github.com/repos/netdata/netdata | closed | Intel Performance Counters | area/collectors data-collection-mteam feature request new collector priority/medium | Found this: https://www.quora.com/How-can-I-monitor-PCI-Express-bus-usage-on-Linux<br>with a lead to this: https://software.intel.com/en-us/articles/intel-performance-counter-monitor<br>It would be nice if we could monitor such system internals... | 1.0 | Intel Performance Counters - Found this: https://www.quora.com/How-can-I-monitor-PCI-Express-bus-usage-on-Linux<br>with a lead to this: https://software.intel.com/en-us/articles/intel-performance-counter-monitor<br>It would be nice if we could monitor such system internals... | priority | intel performance counters found this with a lead to this it would be nice if we could monitor such system internals | 1 |
| 709,131 | 24,368,124,589 | IssuesEvent | 2022-10-03 16:47:23 | diba-io/bitmask-core | https://api.github.com/repos/diba-io/bitmask-core | opened | Wallet Contract Storage | priority-medium | This allows us to store contracts on the user's behalf. Earlier, assets would have to be tracked out of band.<br>GET and POST for both `/store_global` and `/store_wallet` on local `bitmaskd` and lambdas<br>File Schema:<br>- global<br>- rgbc<br>- rgb1... (rgbid asset genesis)<br>- wallet<br>- (pkh)<br>- assets (file)<br>... | 1.0 | Wallet Contract Storage - This allows us to store contracts on the user's behalf. Earlier, assets would have to be tracked out of band.<br>GET and POST for both `/store_global` and `/store_wallet` on local `bitmaskd` and lambdas<br>File Schema:<br>- global<br>- rgbc<br>- rgb1... (rgbid asset genesis)<br>- wallet<br>- (... | priority | wallet contract storage this allows us to store contracts on the user s behalf earlier assets would have to be tracked out of band get and post for both store global and store wallet on local bitmaskd and lambdas file schema global rgbc rgbid asset genesis wallet pkh... | 1 |
| 372,070 | 11,008,735,167 | IssuesEvent | 2019-12-04 11:07:00 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | closed | Laws: Location conditions available for actions without location | Fixed Medium Priority Reopen | I.e. for PolluteAir action (which doesn't implement `IPositionGameAction` it still allows to add `Location` related conditions). | 1.0 | Laws: Location conditions available for actions without location - I.e. for PolluteAir action (which doesn't implement `IPositionGameAction` it still allows to add `Location` related conditions). | priority | laws location conditions available for actions without location i e for polluteair action which doesn t implement ipositiongameaction it still allows to add location related conditions | 1 |
| 677,899 | 23,179,434,674 | IssuesEvent | 2022-07-31 22:33:20 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | closed | Exhaustion Limit extra bits | Priority: Medium Type: Feature Category: Gameplay Squad: Redwood | - [ ] Make flags in Balance Manager that define what general activities are affected by exhaustion. Make them all on by default. Should be: farming, transport (vehicles), labor on tables, starting work order<br>- [ ] Make picking vegetables and starting work orders check exhaustion limit if flags set.<br>- [ ] Add a warni... | 1.0 | Exhaustion Limit extra bits - - [ ] Make flags in Balance Manager that define what general activities are affected by exhaustion. Make them all on by default. Should be: farming, transport (vehicles), labor on tables, starting work order<br>- [ ] Make picking vegetables and starting work orders check exhaustion limit if ... | priority | exhaustion limit extra bits make flags in balance manager that define what general activities are affected by exhaustion make them all on by default should be farming transport vehicles labor on tables starting work order make picking vegetables and starting work orders check exhaustion limit if flag... | 1 |
| 111,967 | 4,499,890,163 | IssuesEvent | 2016-09-01 01:09:40 | benvenutti/hasm | https://api.github.com/repos/benvenutti/hasm | closed | Load command accepts negative numbers | priority: medium status: completed type: bug | Loading signed integers with the load command is an invalid operation. The assembler should output an error message and halt the assembling process. | 1.0 | Load command accepts negative numbers - Loading signed integers with the load command is an invalid operation. The assembler should output an error message and halt the assembling process. | priority | load command accepts negative numbers loading signed integers with the load command is an invalid operation the assembler should output an error message and halt the assembling process | 1 |
| 791,233 | 27,856,657,648 | IssuesEvent | 2023-03-20 23:53:45 | conan-io/conan | https://api.github.com/repos/conan-io/conan | closed | [workspaces] Consumer (conanfile.txt) as root of workspace | type: feature stage: queue priority: medium complex: medium | Current Workspace feature does not support conan "final consumer project" (configured by conanfile.txt) to be root of the workspace - it requires root to be full-featured package reference. As temp hack, we converted our conanfile.txt into conanfile.py with dummy names and versions, but we are not sure how safe such a ... | 1.0 | [workspaces] Consumer (conanfile.txt) as root of workspace - Current Workspace feature does not support conan "final consumer project" (configured by conanfile.txt) to be root of the workspace - it requires root to be full-featured package reference. As temp hack, we converted our conanfile.txt into conanfile.py with d... | priority | consumer conanfile txt as root of workspace current workspace feature does not support conan final consumer project configured by conanfile txt to be root of the workspace it requires root to be full featured package reference as temp hack we converted our conanfile txt into conanfile py with dummy names ... | 1 |
| 638,837 | 20,739,897,336 | IssuesEvent | 2022-03-14 16:43:26 | bounswe/bounswe2022group5 | https://api.github.com/repos/bounswe/bounswe2022group5 | closed | Research about Telegram’s Chatbot API | Type: Research Medium Priority Status: In Progress | To Do:<br>- Research will be made about Telegram's Chatbot API to get the idea of how to use the bot in our Medical Experience Sharing Platform Project, and the advantages of the Chatbot API.<br>- Information about the research will be documented in our [Research Items for Project](https://github.com/bounswe/bounswe2022g... | 1.0 | Research about Telegram’s Chatbot API - To Do:<br>- Research will be made about Telegram's Chatbot API to get the idea of how to use the bot in our Medical Experience Sharing Platform Project, and the advantages of the Chatbot API.<br>- Information about the research will be documented in our [Research Items for Project]... | priority | research about telegram’s chatbot api to do research will be made about telegram s chatbot api to get the idea of how to use the bot in our medical experience sharing platform project and the advantages of the chatbot api information about the research will be documented in our page reviewers ... | 1 |
| 303,337 | 9,305,666,228 | IssuesEvent | 2019-03-25 07:24:05 | TNG/ngqp | https://api.github.com/repos/TNG/ngqp | closed | Array values with multi: false need to be boxed | Comp: Core Priority: Medium Type: Bug | I think that right now, if `multi: false` is used with an array-types value, we end up spreading the value across multiple URL query parameters anway. We probably need to box the URL parameter for non-multi parameters to avoid this. | 1.0 | Array values with multi: false need to be boxed - I think that right now, if `multi: false` is used with an array-types value, we end up spreading the value across multiple URL query parameters anway. We probably need to box the URL parameter for non-multi parameters to avoid this. | priority | array values with multi false need to be boxed i think that right now if multi false is used with an array types value we end up spreading the value across multiple url query parameters anway we probably need to box the url parameter for non multi parameters to avoid this | 1 |
| 401,145 | 11,786,333,892 | IssuesEvent | 2020-03-17 12:04:17 | ooni/probe | https://api.github.com/repos/ooni/probe | closed | Fix OONI Explorer links linked in the mobile & desktop apps | effort/XS ooni/probe-desktop ooni/probe-mobile priority/medium | The OONI Probe mobile & desktop apps link to an old version of OONI Explorer (https://explorer.ooni.io/world/) which no longer works.<br>We need to replace these links with the new URL of the revamped OONI Explorer: https://explorer.ooni.org/ | 1.0 | Fix OONI Explorer links linked in the mobile & desktop apps - The OONI Probe mobile & desktop apps link to an old version of OONI Explorer (https://explorer.ooni.io/world/) which no longer works.<br>We need to replace these links with the new URL of the revamped OONI Explorer: https://explorer.ooni.org/ | priority | fix ooni explorer links linked in the mobile desktop apps the ooni probe mobile desktop apps link to an old version of ooni explorer which no longer works we need to replace these links with the new url of the revamped ooni explorer | 1 |
| 83,944 | 3,645,311,306 | IssuesEvent | 2016-02-15 14:07:57 | MBB-team/VBA-toolbox | https://api.github.com/repos/MBB-team/VBA-toolbox | closed | wiki is incomplete! | auto-migrated Priority-Medium Type-Enhancement | _From @GoogleCodeExporter on October 7, 2015 9:29_<br>```<br>What steps will reproduce the problem?<br>1. SVN checkout<br>2. list directories<br>3.<br>What is the expected output? What do you see instead?<br>Well, it should work.<br>What version of the product are you using? On what operating system?<br>I'm using version 0.0<br>Please provide... | 1.0 | wiki is incomplete! - _From @GoogleCodeExporter on October 7, 2015 9:29_<br>```<br>What steps will reproduce the problem?<br>1. SVN checkout<br>2. list directories<br>3.<br>What is the expected output? What do you see instead?<br>Well, it should work.<br>What version of the product are you using? On what operating system?<br>I'm using versio... | priority | wiki is incomplete from googlecodeexporter on october what steps will reproduce the problem svn checkout list directories what is the expected output what do you see instead well it should work what version of the product are you using on what operating system i m using version ... | 1 |
| 461,529 | 13,231,787,226 | IssuesEvent | 2020-08-18 12:18:35 | input-output-hk/ouroboros-network | https://api.github.com/repos/input-output-hk/ouroboros-network | closed | Introduce header revalidation | consensus optimisation priority medium | As mentioned in https://github.com/input-output-hk/cardano-ledger-specs/pull/1785#issuecomment-675035681, the majority of block replay time is spent in C functions doing crypto checks, see the two top of the profiling report:<br>```<br>COST CENTRE SRC %time %alloc<br>verify ... | 1.0 | Introduce header revalidation - As mentioned in https://github.com/input-output-hk/cardano-ledger-specs/pull/1785#issuecomment-675035681, the majority of block replay time is spent in C functions doing crypto checks, see the two top of the profiling report:<br>```<br>COST CENTRE SRC ... | priority | introduce header revalidation as mentioned in the majority of block replay time is spent in c functions doing crypto checks see the two top of the profiling report cost centre src time alloc verify src cardano crypto vrf praos hs ... | 1 |
| 479,705 | 13,804,751,355 | IssuesEvent | 2020-10-11 10:31:33 | amplication/amplication | https://api.github.com/repos/amplication/amplication | closed | Run E2E tests in CI | priority: medium | **Is your feature request related to a problem? Please describe.**<br>We currently have no indication in the CI E2E tests are passing<br>**Describe the solution you'd like**<br>Run the E2E tests in a dedicated GitHub action<br>**Describe alternatives you've considered**<br>RUn the E2E tests in Google Cloud Build<br>**Additio... | 1.0 | Run E2E tests in CI - **Is your feature request related to a problem? Please describe.**<br>We currently have no indication in the CI E2E tests are passing<br>**Describe the solution you'd like**<br>Run the E2E tests in a dedicated GitHub action<br>**Describe alternatives you've considered**<br>RUn the E2E tests in Google Cl... | priority | run tests in ci is your feature request related to a problem please describe we currently have no indication in the ci tests are passing describe the solution you d like run the tests in a dedicated github action describe alternatives you ve considered run the tests in google cloud buil... | 1 |
| 198,932 | 6,979,238,243 | IssuesEvent | 2017-12-12 20:17:51 | sagesharp/outreachy-django-wagtail | https://api.github.com/repos/sagesharp/outreachy-django-wagtail | opened | Set up test server | medium priority | Need to have a test server managed by dokku at test.outreachy.org. Currently we're just crossing our fingers and pushing to production after local testing. 😱<br>Ideally in the future we would set up a test server for each developer to push to. We need to have a way to copy the Django database, scrub any personal data... | 1.0 | Set up test server - Need to have a test server managed by dokku at test.outreachy.org. Currently we're just crossing our fingers and pushing to production after local testing. 😱<br>Ideally in the future we would set up a test server for each developer to push to. We need to have a way to copy the Django database, sc... | priority | set up test server need to have a test server managed by dokku at test outreachy org currently we re just crossing our fingers and pushing to production after local testing 😱 ideally in the future we would set up a test server for each developer to push to we need to have a way to copy the django database sc... | 1 |
| 523,756 | 15,189,184,663 | IssuesEvent | 2021-02-15 16:04:12 | staxrip/staxrip | https://api.github.com/repos/staxrip/staxrip | closed | StaxRip MP4Box Crash on AAC 7.1 MKV File Open | added/fixed/done bug priority medium tool issue | I get StaxRip crash on opening MKV files with AAC 7.1 audio. ALWAYS occurs on ALL MKV files with aac 7.1 audio. MKV files with aac 5.1 audio open without issue. If I leave video and audio untouched and convert the MKV to an MP4 container the file opens fine. The culprit appears to be MP4Box. It reads the 7.1 aac in MKV... | 1.0 | StaxRip MP4Box Crash on AAC 7.1 MKV File Open - I get StaxRip crash on opening MKV files with AAC 7.1 audio. ALWAYS occurs on ALL MKV files with aac 7.1 audio. MKV files with aac 5.1 audio open without issue. If I leave video and audio untouched and convert the MKV to an MP4 container the file opens fine. The culprit a... | priority | staxrip crash on aac mkv file open i get staxrip crash on opening mkv files with aac audio always occurs on all mkv files with aac audio mkv files with aac audio open without issue if i leave video and audio untouched and convert the mkv to an container the file opens fine the culprit appears ... | 1 |
| 40,679 | 2,868,935,667 | IssuesEvent | 2015-06-05 22:03:34 | dart-lang/pub | https://api.github.com/repos/dart-lang/pub | closed | oauth authorized redirect should use given server | bug Priority-Medium wontfix | <a href="https://github.com/munificent"><img src="https://avatars.githubusercontent.com/u/46275?v=3" align="left" width="96" height="96"hspace="10"></img></a> **Issue by [munificent](https://github.com/munificent)**<br>_Originally opened as dart-lang/sdk#7035_<br>----<br>If you pub lish to a custom server, it goes through oat... | 1.0 | oauth authorized redirect should use given server - <a href="https://github.com/munificent"><img src="https://avatars.githubusercontent.com/u/46275?v=3" align="left" width="96" height="96"hspace="10"></img></a> **Issue by [munificent](https://github.com/munificent)**<br>_Originally opened as dart-lang/sdk#7035_<br>----<br>If ... | priority | oauth authorized redirect should use given server issue by originally opened as dart lang sdk if you pub lish to a custom server it goes through oath and then redirects you to pub dartlang org authorized it should redirect to lt custom server gt authorized | 1 |
| 26,116 | 2,684,179,527 | IssuesEvent | 2015-03-28 18:42:42 | ConEmu/old-issues | https://api.github.com/repos/ConEmu/old-issues | closed | powershell scripts will not execute under ConEmu, but execute ok with native PowerShell directly | 1 star bug imported Priority-Medium | _From [ois...@gmail.com](https://code.google.com/u/105345745417239788864/) on September 17, 2012 14:19:44_<br>Required information! OS version: Win8 x64 ConEmu version: started with 120909 (I think?) -> 120916 (current)<br>PowerShell scripts will not execute with execution policy = remotesigned when run under ConEmu . Th... | 1.0 | powershell scripts will not execute under ConEmu, but execute ok with native PowerShell directly - _From [ois...@gmail.com](https://code.google.com/u/105345745417239788864/) on September 17, 2012 14:19:44_<br>Required information! OS version: Win8 x64 ConEmu version: started with 120909 (I think?) -> 120916 (current)<br>... | priority | powershell scripts will not execute under conemu but execute ok with native powershell directly from on september required information os version conemu version started with i think current powershell scripts will not execute with execution policy remotesigned when run under... | 1 |
| 447,278 | 12,887,471,406 | IssuesEvent | 2020-07-13 11:18:23 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | closed | Oil Refinery has significant issues | Category: Balance Priority: Medium | During the most recent playtest, we have tracked the major source of over-pollution to the Oil Refinery. There are a few reasons for this:<br>- Multiple refineries are running 24/7. With barrels being refunded 100%, we can setup a loop where the oil refineries run indefinitely as long as the labor as been added.<br>- It i... | 1.0 | Oil Refinery has significant issues - During the most recent playtest, we have tracked the major source of over-pollution to the Oil Refinery. There are a few reasons for this:<br>- Multiple refineries are running 24/7. With barrels being refunded 100%, we can setup a loop where the oil refineries run indefinitely as lon... | priority | oil refinery has significant issues during the most recent playtest we have tracked the major source of over pollution to the oil refinery there are a few reasons for this multiple refineries are running with barrels being refunded we can setup a loop where the oil refineries run indefinitely as long a... | 1 |
| 772,853 | 27,139,195,589 | IssuesEvent | 2023-02-16 15:14:32 | boostercloud/booster | https://api.github.com/repos/boostercloud/booster | closed | Add support for notification events | size: XL dev-experience difficulty: high priority: medium | ## Feature Request<br>## Description<br>Following up with the conversation in #894, we've found many scenarios whose implementation could be significantly simplified if we had support for notification events, that is, events that are not tied to any specific entity. The only thing these events can do is trigger an even... | 1.0 | Add support for notification events - ## Feature Request<br>## Description<br>Following up with the conversation in #894, we've found many scenarios whose implementation could be significantly simplified if we had support for notification events, that is, events that are not tied to any specific entity. The only thing ... | priority | add support for notification events feature request description following up with the conversation in we ve found many scenarios whose implementation could be significantly simplified if we had support for notification events that is events that are not tied to any specific entity the only thing th... | 1 |
| 536,269 | 15,707,018,403 | IssuesEvent | 2021-03-26 18:15:04 | sopra-fs21-group-03/Client | https://api.github.com/repos/sopra-fs21-group-03/Client | opened | Every user can see what the users before have done so far | medium priority task | Time estimate: 0.7h<br>"This task is part of user story #13" | 1.0 | Every user can see what the users before have done so far - Time estimate: 0.7h<br>"This task is part of user story #13" | priority | every user can see what the users before have done so far time estimate this task is part of user story | 1 |
| 655,695 | 21,705,322,563 | IssuesEvent | 2022-05-10 09:05:11 | epiphany-platform/epiphany | https://api.github.com/repos/epiphany-platform/epiphany | closed | [FEATURE REQUEST] Create more accurate exception output for dnf_repoquery.py command | area/development priority/medium | **Is your feature request related to a problem? Please describe.**<br>After changes in dnf_repoquery command we run list of all packages in one repoquery call. That means when some of paczage is not found we get excpetion with list of all packages and it difficult to find package is the reason of issue.<br>```code<br>... | 1.0 | [FEATURE REQUEST] Create more accurate exception output for dnf_repoquery.py command - **Is your feature request related to a problem? Please describe.**<br>After changes in dnf_repoquery command we run list of all packages in one repoquery call. That means when some of paczage is not found we get excpetion with list of ... | priority | create more accurate exception output for dnf repoquery py command is your feature request related to a problem please describe after changes in dnf repoquery command we run list of all packages in one repoquery call that means when some of paczage is not found we get excpetion with list of all packages and... | 1 |
| 763,505 | 26,760,696,902 | IssuesEvent | 2023-01-31 06:30:32 | JeYeongR/repo-setup-sample | https://api.github.com/repos/JeYeongR/repo-setup-sample | closed | Sample Backlog 1 | For: CI/CD Priority: Medium Type: Idea Status: Available | ## Description<br>I created the issue (backlog) that has to be done before starting project work, using the template.<br>## Tasks(Process)<br>- [ ] Decide on the dinner menu<br>- [ ] Eat dinner<br>- [ ] Go home<br>## References<br>- [google](https://www.google.com/) | 1.0 | Sample Backlog 1 - ## Description<br>I created the issue (backlog) that has to be done before starting project work, using the template.<br>## Tasks(Process)<br>- [ ] Decide on the dinner menu<br>- [ ] Eat dinner<br>- [ ] Go home<br>## References<br>- [google](https://www.google.com/) | priority | sample backlog description i created the issue backlog that has to be done before starting project work using the template tasks process decide on the dinner menu eat dinner go home references | 1 |
| 77,693 | 3,507,216,950 | IssuesEvent | 2016-01-08 11:58:05 | OregonCore/OregonCore | https://api.github.com/repos/OregonCore/OregonCore | closed | Bugged Global Cooldown (BB #673) | migrated Priority: Medium Type: Bug | This issue was migrated from bitbucket.<br>**Original Reporter:** Alex_Step<br>**Original Date:** 27.08.2014 09:29:57 GMT+0000<br>**Original Priority:** major<br>**Original Type:** bug<br>**Original State:** invalid<br>**Direct Link:** https://bitbucket.org/oregon/oregoncore/issues/673<br><hr><br>If you are in combat change equip (weapon, shi... | 1.0 | Bugged Global Cooldown (BB #673) - This issue was migrated from bitbucket.<br>**Original Reporter:** Alex_Step<br>**Original Date:** 27.08.2014 09:29:57 GMT+0000<br>**Original Priority:** major<br>**Original Type:** bug<br>**Original State:** invalid<br>**Direct Link:** https://bitbucket.org/oregon/oregoncore/issues/673<br><hr><br>If you are ... | priority | bugged global cooldown bb this issue was migrated from bitbucket original reporter alex step original date gmt original priority major original type bug original state invalid direct link if you are in combat change equip weapon shield relic appears a global... | 1 |
| 216,677 | 7,310,826,930 | IssuesEvent | 2018-02-28 16:01:53 | canonical-websites/maas.io | https://api.github.com/repos/canonical-websites/maas.io | closed | Need event on the contact us button on the contact us page | Priority: Medium Type: Enhancement | ## Summary<br>Ideally need an event when button is clicked.<br>## Process<br><button type="submit" class="mktoButton p-button--positive" onclick="dataLayer.push({'event' : 'GAEvent', 'eventCategory' : 'Form', 'eventAction' : 'MaaS.io contact-us', 'eventLabel' : maas.io-cloud', 'eventValue' : undefined });">Submit</button... | 1.0 | Need event on the contact us button on the contact us page - ## Summary<br>Ideally need an event when button is clicked.<br>## Process<br><button type="submit" class="mktoButton p-button--positive" onclick="dataLayer.push({'event' : 'GAEvent', 'eventCategory' : 'Form', 'eventAction' : 'MaaS.io contact-us', 'eventLabel' :... | priority | need event on the contact us button on the contact us page summary ideally need an event when button is clicked process submit current and expected result screenshot | 1 |
| 289,165 | 8,855,483,643 | IssuesEvent | 2019-01-09 06:42:06 | visit-dav/issues-test | https://api.github.com/repos/visit-dav/issues-test | closed | Use Coord System Fix for MFEM Mesh Constructor | bug likelihood medium priority reviewed severity low | From Tzanio Kolev & Dan White We have a bug in the MFEM/VisIt integration that prevents us from visualizing high-order Nedelec elements on tet meshes. Cyrus: the problem is in line 462 of VisIts src/databases/MFEM/avtMFEMFileFormat.C. Can you switch mesh = new Mesh(imesh, 1, 1);to mesh = new Mesh(imesh, 1, 1, false); T... | 1.0 | Use Coord System Fix for MFEM Mesh Constructor - From Tzanio Kolev & Dan White We have a bug in the MFEM/VisIt integration that prevents us from visualizing high-order Nedelec elements on tet meshes. Cyrus: the problem is in line 462 of VisIts src/databases/MFEM/avtMFEMFileFormat.C. Can you switch mesh = new Mesh(imesh... | priority | use coord system fix for mfem mesh constructor from tzanio kolev dan white we have a bug in the mfem visit integration that prevents us from visualizing high order nedelec elements on tet meshes cyrus the problem is in line of visits src databases mfem avtmfemfileformat c can you switch mesh new mesh imesh ... | 1 |

220,486 | 7,360,330,094 | IssuesEvent | 2018-03-10 17:28:52 | bounswe/bounswe2018group5 | https://api.github.com/repos/bounswe/bounswe2018group5 | opened | Revise User Stories page | Effort: Medium Priority: High Status: In Progress Type: Wiki | Per Cihat's comment:

> Mockups

> * Do not forget to provide real data within the all of the pages :)

> * You can progress the scenario between each page with a couple of sentences.

> * That would be good to add 1-2 more pages to the 3rd scenario. Is this information (most bided project etc.) are available at the ... | 1.0 | Revise User Stories page - Per Cihat's comment:

> Mockups

> * Do not forget to provide real data within the all of the pages :)

> * You can progress the scenario between each page with a couple of sentences.

> * That would be good to add 1-2 more pages to the 3rd scenario. Is this information (most bided project ... | priority | revise user stories page per cihat s comment mockups do not forget to provide real data within the all of the pages you can progress the scenario between each page with a couple of sentences that would be good to add more pages to the scenario is this information most bided project et... | 1 |

583,090 | 17,376,940,149 | IssuesEvent | 2021-07-30 23:46:14 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | closed | Trying to start the election for a constitution on tiger leads to darkened screen with no hint whats going on | Category: UI Priority: Medium Squad: Mountain Goat Type: Bug |

| 1.0 | Trying to start the election for a constitution on tiger leads to darkened screen with no hint whats going on -

| priority | trying to start the election for a constitution on tiger leads to darkened screen with no hint whats going on | 1 |

829,310 | 31,863,630,272 | IssuesEvent | 2023-09-15 12:45:06 | telerik/kendo-ui-core | https://api.github.com/repos/telerik/kendo-ui-core | opened | Grid's pager breaks if you use the setOptions method on the Pager. | Bug SEV: Medium C: Grid jQuery Priority 5 | ### Bug report

The Pager breaks if you use the setOptions method to alter its options.

**Regression introduced with 2023.2.829**

### Reproduction of the problem

1. Open the Pager Grid demo - https://demos.telerik.com/kendo-ui/grid/pager-functionality

2. Check either of the checkboxes to change the Pager op... | 1.0 | Grid's pager breaks if you use the setOptions method on the Pager. - ### Bug report

The Pager breaks if you use the setOptions method to alter its options.

**Regression introduced with 2023.2.829**

### Reproduction of the problem

1. Open the Pager Grid demo - https://demos.telerik.com/kendo-ui/grid/pager-fu... | priority | grid s pager breaks if you use the setoptions method on the pager bug report the pager breaks if you use the setoptions method to alter its options regression introduced with reproduction of the problem open the pager grid demo check either of the checkboxes to change the pa... | 1 |

783,295 | 27,525,594,361 | IssuesEvent | 2023-03-06 17:49:03 | telabotanica/pollinisateurs | https://api.github.com/repos/telabotanica/pollinisateurs | closed | Profile formulaire style | priority::medium | en plus des corrections de fond qui sont en cours (ajout d'un champ et modif 1 champ & txts et skills) il faut modifier du style, un peu comme pour

#72

lien https://staging.nospollinisateurs.fr/user/profile/edit

font, hauteur de ligne, parcourir en rose clair sans survol

:

Woodruff

| 1.0 | American Journal of Roentgenology - ### Example:star::star: :

https://www.ajronline.org/

### Priority:

Medium

### Subscriber (Library):

Woodruff

| priority | american journal of roentgenology example star star priority medium subscriber library woodruff | 1 |

16,707 | 2,615,122,167 | IssuesEvent | 2015-03-01 05:49:20 | chrsmith/google-api-java-client | https://api.github.com/repos/chrsmith/google-api-java-client | opened | Google Custom Search Api | auto-migrated Priority-Medium Type-Sample | ```

Which Google API and version (e.g. Google Calendar Data API version 2)?

Google Custom Search Api

What format (e.g. JSON, Atom)?

JSON

What Authentation (e.g. OAuth, OAuth 2, Android, ClientLogin)?

ClientLogin

Java environment (e.g. Java 6, Android 2.3, App Engine 1.4.2)?

Java 6

External references, such as API r... | 1.0 | Google Custom Search Api - ```

Which Google API and version (e.g. Google Calendar Data API version 2)?

Google Custom Search Api

What format (e.g. JSON, Atom)?

JSON

What Authentation (e.g. OAuth, OAuth 2, Android, ClientLogin)?

ClientLogin

Java environment (e.g. Java 6, Android 2.3, App Engine 1.4.2)?

Java 6

Externa... | priority | google custom search api which google api and version e g google calendar data api version google custom search api what format e g json atom json what authentation e g oauth oauth android clientlogin clientlogin java environment e g java android app engine java externa... | 1 |

101,606 | 4,120,652,224 | IssuesEvent | 2016-06-08 18:36:18 | ocadotechnology/rapid-router | https://api.github.com/repos/ocadotechnology/rapid-router | closed | Night / underground mode | estimate: 8 priority: medium | A mode were you can't see the road and try to solve the route using a generic solution. | 1.0 | Night / underground mode - A mode were you can't see the road and try to solve the route using a generic solution. | priority | night underground mode a mode were you can t see the road and try to solve the route using a generic solution | 1 |

310,180 | 9,486,747,955 | IssuesEvent | 2019-04-22 14:54:06 | craftercms/craftercms | https://api.github.com/repos/craftercms/craftercms | closed | [studio-ui] Duplicate dialog is not clickable after publishing an item | bug priority: medium | ## Describe the bug

Duplicate dialog is not clickable after publish an item

## To Reproduce

Steps to reproduce the behavior:

1. Create a new site using a BP

2. Go to Preview

3. Go to sidebar, right click and select duplicate opt, see it is working

4. Go to sidebar, right click and select publish opt, and then ... | 1.0 | [studio-ui] Duplicate dialog is not clickable after publishing an item - ## Describe the bug

Duplicate dialog is not clickable after publish an item

## To Reproduce

Steps to reproduce the behavior:

1. Create a new site using a BP

2. Go to Preview

3. Go to sidebar, right click and select duplicate opt, see it is... | priority | duplicate dialog is not clickable after publishing an item describe the bug duplicate dialog is not clickable after publish an item to reproduce steps to reproduce the behavior create a new site using a bp go to preview go to sidebar right click and select duplicate opt see it is working ... | 1 |

559,710 | 16,574,249,357 | IssuesEvent | 2021-05-31 00:01:46 | factly/vidcheck | https://api.github.com/repos/factly/vidcheck | closed | Add Featured Image to Rating entity | priority:medium | Add `featured_image` as another field to Rating entity to keep it consistent with Dega. | 1.0 | Add Featured Image to Rating entity - Add `featured_image` as another field to Rating entity to keep it consistent with Dega. | priority | add featured image to rating entity add featured image as another field to rating entity to keep it consistent with dega | 1 |

418,719 | 12,202,703,184 | IssuesEvent | 2020-04-30 09:22:40 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | closed | [0.9.0 staging-1308] Default world generation | Priority: Medium Status: Fixed Type: Regression | We need to have it properly generated with builds and be actual.

It seems to be old and have some issues for now

- no trees with start from client

- wrong yield potential

the old one #11062 | 1.0 | [0.9.0 staging-1308] Default world generation - We need to have it properly generated with builds and be actual.

It seems to be old and have some issues for now

- no trees with start from client

- wrong yield potential

the old one #11062 | priority | default world generation we need to have it properly generated with builds and be actual it seems to be old and have some issues for now no trees with start from client wrong yield potential the old one | 1 |

237,721 | 7,763,559,240 | IssuesEvent | 2018-06-01 16:59:47 | geosolutions-it/MapStore2 | https://api.github.com/repos/geosolutions-it/MapStore2 | opened | Fix tests randomly failing on the build | Priority: Medium bug | ### Description

Some tests sometimes fail becouse of slowdown or some wrong race condition:

Mainly in 2 or 3 different failure sequence:

- [Sample 1](https://travis-ci.org/geosolutions-it/MapStore2/builds/380775634)

- [Sample 2](https://travis-ci.org/geosolutions-it/MapStore2/builds/380747290)

- [Sample 3](htt... | 1.0 | Fix tests randomly failing on the build - ### Description

Some tests sometimes fail becouse of slowdown or some wrong race condition:

Mainly in 2 or 3 different failure sequence:

- [Sample 1](https://travis-ci.org/geosolutions-it/MapStore2/builds/380775634)

- [Sample 2](https://travis-ci.org/geosolutions-it/MapS... | priority | fix tests randomly failing on the build description some tests sometimes fail becouse of slowdown or some wrong race condition mainly in or different failure sequence sample and are similar so they may have the same reason in case of bug otherwise remove this parag... | 1 |

163,438 | 6,198,303,274 | IssuesEvent | 2017-07-05 18:50:57 | Polymer/polymer-bundler | https://api.github.com/repos/Polymer/polymer-bundler | closed | Shell's import gets included in all the bundles | Priority: Medium Type: Question | Suppose we have an application having **4 entrypoints** (named Entrypoint 1, 2, 3, 4).

There dependencies are as flow

### Dependency tree

Entrypoint | Dependencies

------------ | -------------

Entrypoint 1 | Dep1.html, common.html

Entrypoint 2 | Dep2.html, common.html

Entrypoint 3 | common.html

Entrypoint ... | 1.0 | Shell's import gets included in all the bundles - Suppose we have an application having **4 entrypoints** (named Entrypoint 1, 2, 3, 4).

There dependencies are as flow

### Dependency tree

Entrypoint | Dependencies

------------ | -------------

Entrypoint 1 | Dep1.html, common.html

Entrypoint 2 | Dep2.html, co... | priority | shell s import gets included in all the bundles suppose we have an application having entrypoints named entrypoint there dependencies are as flow dependency tree entrypoint dependencies entrypoint html common html entrypoint html common h... | 1 |

421,853 | 12,262,195,882 | IssuesEvent | 2020-05-06 21:34:11 | craftercms/craftercms | https://api.github.com/repos/craftercms/craftercms | closed | [studio-ui][studio] Create site dialog updates | enhancement priority: medium | Studio UI:

Update the Create Site dialog, marketplace tab to:

- Invoke the updated API with include incompatible plugins

- Have a checkbox that's checked on by default and controls that parameter

- Remove the Crafter CMS version from the detailed plugin/blueprint view

- Remove the "Use" button from the listing i... | 1.0 | [studio-ui][studio] Create site dialog updates - Studio UI:

Update the Create Site dialog, marketplace tab to:

- Invoke the updated API with include incompatible plugins

- Have a checkbox that's checked on by default and controls that parameter

- Remove the Crafter CMS version from the detailed plugin/blueprint v... | priority | create site dialog updates studio ui update the create site dialog marketplace tab to invoke the updated api with include incompatible plugins have a checkbox that s checked on by default and controls that parameter remove the crafter cms version from the detailed plugin blueprint view remove the... | 1 |

58,865 | 3,092,304,015 | IssuesEvent | 2015-08-26 17:08:00 | TheLens/demolitions | https://api.github.com/repos/TheLens/demolitions | closed | change "support this project" text to "about this project" | Medium priority | i think it's incongruous. we'll leave donation stuff at the bottom of the about page. | 1.0 | change "support this project" text to "about this project" - i think it's incongruous. we'll leave donation stuff at the bottom of the about page. | priority | change support this project text to about this project i think it s incongruous we ll leave donation stuff at the bottom of the about page | 1 |

97,052 | 3,984,636,467 | IssuesEvent | 2016-05-07 09:43:12 | BugBusterSWE/documentation | https://api.github.com/repos/BugBusterSWE/documentation | opened | Attività di aggiunta alle norme | activity Manager priority:medium | *Documento in cui si trova il problema*:

Norme di progetto

*Descrizione del problema*:

- [ ] Aggiungere processo di validazione

- [ ] Aggiungere decisioni riunione

Link task: [https://bugbusters.teamwork.com/tasks/6601072](https://bugbusters.teamwork.com/tasks/6601072) | 1.0 | Attività di aggiunta alle norme - *Documento in cui si trova il problema*:

Norme di progetto

*Descrizione del problema*:

- [ ] Aggiungere processo di validazione

- [ ] Aggiungere decisioni riunione

Link task: [https://bugbusters.teamwork.com/tasks/6601072](https://bugbusters.teamwork.com/tasks/6601072) | priority | attività di aggiunta alle norme documento in cui si trova il problema norme di progetto descrizione del problema aggiungere processo di validazione aggiungere decisioni riunione link task | 1 |

721,615 | 24,832,668,922 | IssuesEvent | 2022-10-26 05:54:24 | AY2223S1-CS2103T-T09-1/tp | https://api.github.com/repos/AY2223S1-CS2103T-T09-1/tp | closed | Change date format in transaction | priority.Medium type.Task | Currently shows `2000-11-11`, but it should be changed to `11/11/2000` | 1.0 | Change date format in transaction - Currently shows `2000-11-11`, but it should be changed to `11/11/2000` | priority | change date format in transaction currently shows but it should be changed to | 1 |

131,338 | 5,146,242,320 | IssuesEvent | 2017-01-13 00:19:34 | RobotLocomotion/drake | https://api.github.com/repos/RobotLocomotion/drake | closed | Create a cmake only way of packaging Drake. | priority: medium team: kitware type: installation and distribution | Currently, this shell script is used:

https://github.com/RobotLocomotion/drake-admin/tree/master/packaging

Would be better if this was a cmake only script so it can be run cross platform. Maybe CPack, or other.

| 1.0 | Create a cmake only way of packaging Drake. - Currently, this shell script is used:

https://github.com/RobotLocomotion/drake-admin/tree/master/packaging

Would be better if this was a cmake only script so it can be run cross platform. Maybe CPack, or other.

| priority | create a cmake only way of packaging drake currently this shell script is used would be better if this was a cmake only script so it can be run cross platform maybe cpack or other | 1 |

22,701 | 2,649,880,980 | IssuesEvent | 2015-03-15 11:41:06 | prikhi/pencil | https://api.github.com/repos/prikhi/pencil | closed | Feature request: Object cloning | 2–5 stars duplicate enhancement imported Priority-Medium | _From [leschilo...@ok.by](https://code.google.com/u/100258333374114659683/) on December 27, 2013 07:08:54_

Hi,

Please add a feature "object cloning" (hotkey "ctrl+D" or "+" on numpad)

Regards,

Alex

_Original issue: http://code.google.com/p/evoluspencil/issues/detail?id=611_ | 1.0 | Feature request: Object cloning - _From [leschilo...@ok.by](https://code.google.com/u/100258333374114659683/) on December 27, 2013 07:08:54_

Hi,

Please add a feature "object cloning" (hotkey "ctrl+D" or "+" on numpad)

Regards,

Alex

_Original issue: http://code.google.com/p/evoluspencil/issues/detail?id=611_ | priority | feature request object cloning from on december hi please add a feature object cloning hotkey ctrl d or on numpad regards alex original issue | 1 |

19,576 | 2,622,154,031 | IssuesEvent | 2015-03-04 00:07:20 | byzhang/terrastore | https://api.github.com/repos/byzhang/terrastore | opened | Add streaming support to Java API | auto-migrated Priority-Medium Project-Terrastore-JavaClient Type-Feature | ```

It would be nice if the Java Client API supported streaming via the

getValue and putValue operations. This would be nice for the following

situations:

- storing large documents (ie. 100MB)

- can pass the stream directly through to the client connected to the

webapp, thus consuming no disk or memory on the web serv... | 1.0 | Add streaming support to Java API - ```

It would be nice if the Java Client API supported streaming via the

getValue and putValue operations. This would be nice for the following

situations:

- storing large documents (ie. 100MB)

- can pass the stream directly through to the client connected to the

webapp, thus consumi... | priority | add streaming support to java api it would be nice if the java client api supported streaming via the getvalue and putvalue operations this would be nice for the following situations storing large documents ie can pass the stream directly through to the client connected to the webapp thus consuming n... | 1 |

153,192 | 5,886,993,855 | IssuesEvent | 2017-05-17 05:41:42 | ThoughtWorksInc/treadmill | https://api.github.com/repos/ThoughtWorksInc/treadmill | closed | Set up FreeIPA server for LDAP-based directory of host and user accounts | Feature-Security inDev Priority-Critical Role-Administrator Size-Medium (M) | So that :

I have a centralized way of managing host and user accounts across Treadmill

Tasks:

"Assumption:

Can initialize a Treadmill cell on the cloud. (i.e. Stories '44844e' and '0dae3b' have been played.)

FreeIPA could be a probable candidate for centrally managing host and user accounts."

Tasks:

Create a... | 1.0 | Set up FreeIPA server for LDAP-based directory of host and user accounts - So that :

I have a centralized way of managing host and user accounts across Treadmill

Tasks:

"Assumption:

Can initialize a Treadmill cell on the cloud. (i.e. Stories '44844e' and '0dae3b' have been played.)

FreeIPA could be a probable ca... | priority | set up freeipa server for ldap based directory of host and user accounts so that i have a centralized way of managing host and user accounts across treadmill tasks assumption can initialize a treadmill cell on the cloud i e stories and have been played freeipa could be a probable candidate fo... | 1 |

4,743 | 2,563,500,067 | IssuesEvent | 2015-02-06 13:35:27 | olga-jane/prizm | https://api.github.com/repos/olga-jane/prizm | closed | Open only "Pipe" tab from menu "Settings" | bug bug - functional Coding MEDIUM priority Settings to_share_students | Scenario:

1. Open Prizm application

2. Go to "Settings" -> ANY menu item

Result:

Open only Pipe tab

Expected:

Open tab corresponding to menu item | 1.0 | Open only "Pipe" tab from menu "Settings" - Scenario:

1. Open Prizm application

2. Go to "Settings" -> ANY menu item

Result:

Open only Pipe tab

Expected:

Open tab corresponding to menu item | priority | open only pipe tab from menu settings scenario open prizm application go to settings any menu item result open only pipe tab expected open tab corresponding to menu item | 1 |

802,443 | 28,962,630,688 | IssuesEvent | 2023-05-10 04:50:59 | Park-Station/Parkstation | https://api.github.com/repos/Park-Station/Parkstation | closed | Shuttle changes | Priority: 3-Medium Type: Feature Request Status: Derelict | - [x] Longer shuttle transit

- [x] Auto call shuttle

- [x] Restart vote calls shuttle

- [x] Make evac a "lose condition" if the shift wasn't ended via Autocall

- [ ] Make the condition message appear at the bottom of the round end panel

- [x] Shuttle collision damage

---

- [x] Cargo should cost something to fly

... | 1.0 | Shuttle changes - - [x] Longer shuttle transit

- [x] Auto call shuttle

- [x] Restart vote calls shuttle

- [x] Make evac a "lose condition" if the shift wasn't ended via Autocall

- [ ] Make the condition message appear at the bottom of the round end panel

- [x] Shuttle collision damage

---

- [x] Cargo should cost... | priority | shuttle changes longer shuttle transit auto call shuttle restart vote calls shuttle make evac a lose condition if the shift wasn t ended via autocall make the condition message appear at the bottom of the round end panel shuttle collision damage cargo should cost something to ... | 1 |

265,085 | 8,337,063,174 | IssuesEvent | 2018-09-28 09:49:47 | edenlabllc/ehealth.api | https://api.github.com/repos/edenlabllc/ehealth.api | opened | The role MIS USER is not able to receive its scopes, PreProd, #J355 | kind/support priority/medium | Steps to reproduce:

GET https://api-preprod.ehealth-ukraine.org/admin/roles

Для ролі MIS USER у скоупах містяться скоупи: user:request_factor user:approve_factor

Із цими скоупами неможливо виконати процедуру логіну від користувача МІС.

Expected results:

Скоупи відповідають документації та досзволяють пройти Oauth ... | 1.0 | The role MIS USER is not able to receive its scopes, PreProd, #J355 - Steps to reproduce:

GET https://api-preprod.ehealth-ukraine.org/admin/roles

Для ролі MIS USER у скоупах містяться скоупи: user:request_factor user:approve_factor

Із цими скоупами неможливо виконати процедуру логіну від користувача МІС.

Expected r... | priority | the role mis user is not able to receive its scopes preprod steps to reproduce get для ролі mis user у скоупах містяться скоупи user request factor user approve factor із цими скоупами неможливо виконати процедуру логіну від користувача міс expected results скоупи відповідають документації та досзволя... | 1 |

512,483 | 14,897,845,748 | IssuesEvent | 2021-01-21 12:20:16 | igroglaz/srvmgr | https://api.github.com/repos/igroglaz/srvmgr | closed | unhardcode starting exp | Medium priority enhancement | allow to create character with 0 exp (atm character created with 20 main skill and 10 secondary.. we need to create characters with 0 skill) | 1.0 | unhardcode starting exp - allow to create character with 0 exp (atm character created with 20 main skill and 10 secondary.. we need to create characters with 0 skill) | priority | unhardcode starting exp allow to create character with exp atm character created with main skill and secondary we need to create characters with skill | 1 |

23,800 | 2,663,652,511 | IssuesEvent | 2015-03-20 08:23:25 | less/less.js | https://api.github.com/repos/less/less.js | closed | Add color scaling functions | Feature Request Medium Priority ReadyForImplementation | As evidenced by #282, many people feel the color functions should work relatively instead of absolutely. For example, calling ```darken(hsla(0,0,10%,1), 10%)``` should result in ```hsla(0,0,9%,1)``` instead of ```hsla(0,0,0,1)```.

Due to obvious backwards compatibility issues, we shouldn't change the behavior of the... | 1.0 | Add color scaling functions - As evidenced by #282, many people feel the color functions should work relatively instead of absolutely. For example, calling ```darken(hsla(0,0,10%,1), 10%)``` should result in ```hsla(0,0,9%,1)``` instead of ```hsla(0,0,0,1)```.

Due to obvious backwards compatibility issues, we should... | priority | add color scaling functions as evidenced by many people feel the color functions should work relatively instead of absolutely for example calling darken hsla should result in hsla instead of hsla due to obvious backwards compatibility issues we shouldn t ... | 1 |

425,485 | 12,341,059,445 | IssuesEvent | 2020-05-14 21:07:32 | craftercms/craftercms | https://api.github.com/repos/craftercms/craftercms | closed | [studio-ui] Nested drop-zone created component but it's not binding it to the drop-zone | bug priority: medium | ## Describe the bug

I used the empty blueprint to test the nested dropzones. I added a freemarker block which accept a Component content type called `section` and when i drag `section` to that dropzone it works fine. Now that section component also accept a dropzone of any Component content type exists in the site. Wh... | 1.0 | [studio-ui] Nested drop-zone created component but it's not binding it to the drop-zone - ## Describe the bug

I used the empty blueprint to test the nested dropzones. I added a freemarker block which accept a Component content type called `section` and when i drag `section` to that dropzone it works fine. Now that sec... | priority | nested drop zone created component but it s not binding it to the drop zone describe the bug i used the empty blueprint to test the nested dropzones i added a freemarker block which accept a component content type called section and when i drag section to that dropzone it works fine now that section compo... | 1 |

792,099 | 27,946,316,686 | IssuesEvent | 2023-03-24 03:37:58 | ncaq/google-search-title-qualified | https://api.github.com/repos/ncaq/google-search-title-qualified | closed | タイトル幅を広めるCSSを追加 | Type: Feature Priority: Medium | 現在私は自作の、

<https://userstyles.org/styles/95431/google-expand>

を使っているのですが、

タイトル文字列が多くなる場合ほとんどの環境で改行が発生しやすくなるので、

拡張機能にそういうCSSを同梱したほうが良いかもしれません。 | 1.0 | タイトル幅を広めるCSSを追加 - 現在私は自作の、

<https://userstyles.org/styles/95431/google-expand>

を使っているのですが、

タイトル文字列が多くなる場合ほとんどの環境で改行が発生しやすくなるので、

拡張機能にそういうCSSを同梱したほうが良いかもしれません。 | priority | タイトル幅を広めるcssを追加 現在私は自作の、 を使っているのですが、 タイトル文字列が多くなる場合ほとんどの環境で改行が発生しやすくなるので、 拡張機能にそういうcssを同梱したほうが良いかもしれません。 | 1 |

145,923 | 5,584,008,570 | IssuesEvent | 2017-03-29 02:55:16 | awslabs/s2n | https://api.github.com/repos/awslabs/s2n | closed | Add Support for ChaCha20-Poly1305 | priority/high size/medium status/work_in_progress type/enhancement type/new_crypto | Recent vulnerabilities in 3DES-CBC3[1] pushed us to remove it from our default preference list. That has left us with only suites that use AES-{CBC,GCM}. To diversify our offerings, we consider adding the stream cipher ChaCha20-Poly1305 . ChaCha20-Poly1305 was added in Openssl 1.1.0 [2]. Supporting 1.1.0's libcrypto is... | 1.0 | Add Support for ChaCha20-Poly1305 - Recent vulnerabilities in 3DES-CBC3[1] pushed us to remove it from our default preference list. That has left us with only suites that use AES-{CBC,GCM}. To diversify our offerings, we consider adding the stream cipher ChaCha20-Poly1305 . ChaCha20-Poly1305 was added in Openssl 1.1.0 ... | priority | add support for recent vulnerabilities in pushed us to remove it from our default preference list that has left us with only suites that use aes cbc gcm to diversify our offerings we consider adding the stream cipher was added in openssl supporting s libcrypto is a prerequisite ... | 1 |

421,674 | 12,260,114,444 | IssuesEvent | 2020-05-06 17:44:19 | onicagroup/runway | https://api.github.com/repos/onicagroup/runway | opened | [REQUEST] escape runway.yml lookup syntax | feature priority:medium | In some cases, there is a need to pass a `parameters` or `options` in a format that looks like our lookup syntax (e.g. `${<something>}`). There needs to be a way to _escape_ the lookup, causing it to pass the literal value instead of resolving it.

### Possible Syntax

- `{{<something>}}` in the runway or CFNgin co... | 1.0 | [REQUEST] escape runway.yml lookup syntax - In some cases, there is a need to pass a `parameters` or `options` in a format that looks like our lookup syntax (e.g. `${<something>}`). There needs to be a way to _escape_ the lookup, causing it to pass the literal value instead of resolving it.

### Possible Syntax

- ... | priority | escape runway yml lookup syntax in some cases there is a need to pass a parameters or options in a format that looks like our lookup syntax e g there needs to be a way to escape the lookup causing it to pass the literal value instead of resolving it possible syntax in the run... | 1 |

396,348 | 11,708,283,488 | IssuesEvent | 2020-03-08 12:23:23 | naipaka/PinMusubi-iOS | https://api.github.com/repos/naipaka/PinMusubi-iOS | opened | add favorite spot feature | Enhancement Medium Priority | # Overview

- Make enable to add only spot to favorite list

- add favorite button on spot cell on spot list view

# Purpose

- To make easier to reach spot information which user wants to look | 1.0 | add favorite spot feature - # Overview

- Make enable to add only spot to favorite list

- add favorite button on spot cell on spot list view

# Purpose

- To make easier to reach spot information which user wants to look | priority | add favorite spot feature overview make enable to add only spot to favorite list add favorite button on spot cell on spot list view purpose to make easier to reach spot information which user wants to look | 1 |

317,613 | 9,666,973,066 | IssuesEvent | 2019-05-21 12:12:32 | conan-io/conan | https://api.github.com/repos/conan-io/conan | opened | SCM auto mode improvments | complex: medium priority: high stage: queue type: feature | This is a new issue to manage together #5126 and #4732 (probably some other).

**Current pains**

- `scm_folder.txt` is tricky and ugly (internal concern mostly).

- Since the usage of the local sources should be avoided when running a `conan install` command (see next section), could be still a good mechanism to t... | 1.0 | SCM auto mode improvments - This is a new issue to manage together #5126 and #4732 (probably some other).

**Current pains**

- `scm_folder.txt` is tricky and ugly (internal concern mostly).

- Since the usage of the local sources should be avoided when running a `conan install` command (see next section), could be... | priority | scm auto mode improvments this is a new issue to manage together and probably some other current pains scm folder txt is tricky and ugly internal concern mostly since the usage of the local sources should be avoided when running a conan install command see next section could be still... | 1 |

764,046 | 26,782,889,636 | IssuesEvent | 2023-01-31 22:59:47 | yugabyte/yugabyte-db | https://api.github.com/repos/yugabyte/yugabyte-db | closed | [YSQL] Batch nested loops closing connection when querying PG tables | kind/bug area/ysql priority/medium status/awaiting-triage | Jira Link: [DB-5294](https://yugabyte.atlassian.net/browse/DB-5294)

### Description

When using batched nested loops, certain queries to PG tables cause the connection to close due to the following error seen on the postgres logs:

```

TRAP: FailedAssertion("!(bms_overlap(rinfo->clause_relids, batchedrelids))", Fil... | 1.0 | [YSQL] Batch nested loops closing connection when querying PG tables - Jira Link: [DB-5294](https://yugabyte.atlassian.net/browse/DB-5294)

### Description

When using batched nested loops, certain queries to PG tables cause the connection to close due to the following error seen on the postgres logs:

```

TRAP: Fai... | priority | batch nested loops closing connection when querying pg tables jira link description when using batched nested loops certain queries to pg tables cause the connection to close due to the following error seen on the postgres logs trap failedassertion bms overlap rinfo clause relids batchedr... | 1 |

121,204 | 4,806,371,886 | IssuesEvent | 2016-11-02 18:22:06 | worona/worona-dashboard-layout | https://api.github.com/repos/worona/worona-dashboard-layout | closed | We've to decide the errors to show when check site fail | Priority: Medium | I'd say something like this...

**If "Site online and available" fails:**

> ### Oops!

>

> Looks like your site is not online or available. This may occur if:

> - You have entered an incorrect URL. Please make sure your site is correct: _http://www.site.com_. Want to fix it? Edit your URL [here](url).

> - Your WordPre... | 1.0 | We've to decide the errors to show when check site fail - I'd say something like this...

**If "Site online and available" fails:**

> ### Oops!

>

> Looks like your site is not online or available. This may occur if:

> - You have entered an incorrect URL. Please make sure your site is correct: _http://www.site.com_. W... | priority | we ve to decide the errors to show when check site fail i d say something like this if site online and available fails oops looks like your site is not online or available this may occur if you have entered an incorrect url please make sure your site is correct want to fix it edit ... | 1 |

55,868 | 3,075,081,191 | IssuesEvent | 2015-08-20 11:31:34 | pavel-pimenov/flylinkdc-r5xx | https://api.github.com/repos/pavel-pimenov/flylinkdc-r5xx | closed | Download queue was not cleared after the download finished | bug imported Priority-Medium | _From [franc...@mail.ru](https://code.google.com/u/108356571090263611971/) on February 08, 2011 23:07:34_

Issue 114, as I understand it, says that not all files are shown in the download queue; I got the opposite situation (but also with Interns, and maybe this will point to something):

I queued for download ... | 1.0 | Download queue was not cleared after the download finished - _From [franc...@mail.ru](https://code.google.com/u/108356571090263611971/) on February 08, 2011 23:07:34_

Issue 114, as I understand it, says that not all files are shown in the download queue; I got the opposite situation (but also with Interns, ... | priority | download queue was not cleared after the download finished from on february issue as i understand it says that not all files are shown in the download queue i got the opposite situation but also with interns and maybe this will point to something i queued files for download one... | 1 |

105,278 | 4,233,497,925 | IssuesEvent | 2016-07-05 08:10:21 | BugBusterSWE/documentation | https://api.github.com/repos/BugBusterSWE/documentation | opened | Slides on the budget | priority:medium | *Document where the problem is found*:

Admin Manual

Activity #580

*Problem description*:

Create slides on the budget.

Task link: [https://bugbusters.teamwork.com/tasks/7482386](https://bugbusters.teamwork.com/tasks/7482386) | 1.0 | Slides on the budget - *Document where the problem is found*:

Admin Manual

Activity #580

*Problem description*:

Create slides on the budget.

Task link: [https://bugbusters.teamwork.com/tasks/7482386](https://bugbusters.teamwork.com/tasks/7482386) | priority | slides on the budget document where the problem is found admin manual activity problem description create slides on the budget task link | 1 |

749,031 | 26,148,233,298 | IssuesEvent | 2022-12-30 09:22:24 | NomicFoundation/hardhat | https://api.github.com/repos/NomicFoundation/hardhat | closed | Can not change gas price of local mainet fork | type:feature priority:medium | Here is my hardhat setting:

```js

module.exports = {

solidity: "0.7.3",

networks: {

hardhat: {

forking: {

url: "http://127.0.0.1:18545"

},

gasPrice: 150000000000

}

}

};

```

But the gas price is still 8000000000:

```js

const gasPrice = await web3.eth.getGas... | 1.0 | Can not change gas price of local mainet fork - Here is my hardhat setting:

```js

module.exports = {

solidity: "0.7.3",

networks: {

hardhat: {

forking: {

url: "http://127.0.0.1:18545"

},

gasPrice: 150000000000

}

}

};

```

But the gas price is still 8000000000:

... | priority | can not change gas price of local mainet fork here is my hardhat setting js module exports solidity networks hardhat forking url gasprice but the gas price is still js const gasprice await eth get... | 1 |

581,270 | 17,290,107,525 | IssuesEvent | 2021-07-24 15:04:38 | leokraft/QuickKey | https://api.github.com/repos/leokraft/QuickKey | opened | Autogenerate version numbers. | Priority: Medium Type: Feature | This would be usefull to keep track of build number and update wix info. | 1.0 | Autogenerate version numbers. - This would be usefull to keep track of build number and update wix info. | priority | autogenerate version numbers this would be usefull to keep track of build number and update wix info | 1 |

393,486 | 11,616,560,629 | IssuesEvent | 2020-02-26 15:54:02 | arkhn/pyrog | https://api.github.com/repos/arkhn/pyrog | closed | Clicking issues | Client Enhancement Medium Priority | When we change resources, attributes are no longer clickable (page needs to be refreshed) | 1.0 | Clicking issues - When we change resources, attributes are no longer clickable (page needs to be refreshed) | priority | clicking issues when we change resources attributes are no longer clickable page needs to be refreshed | 1 |

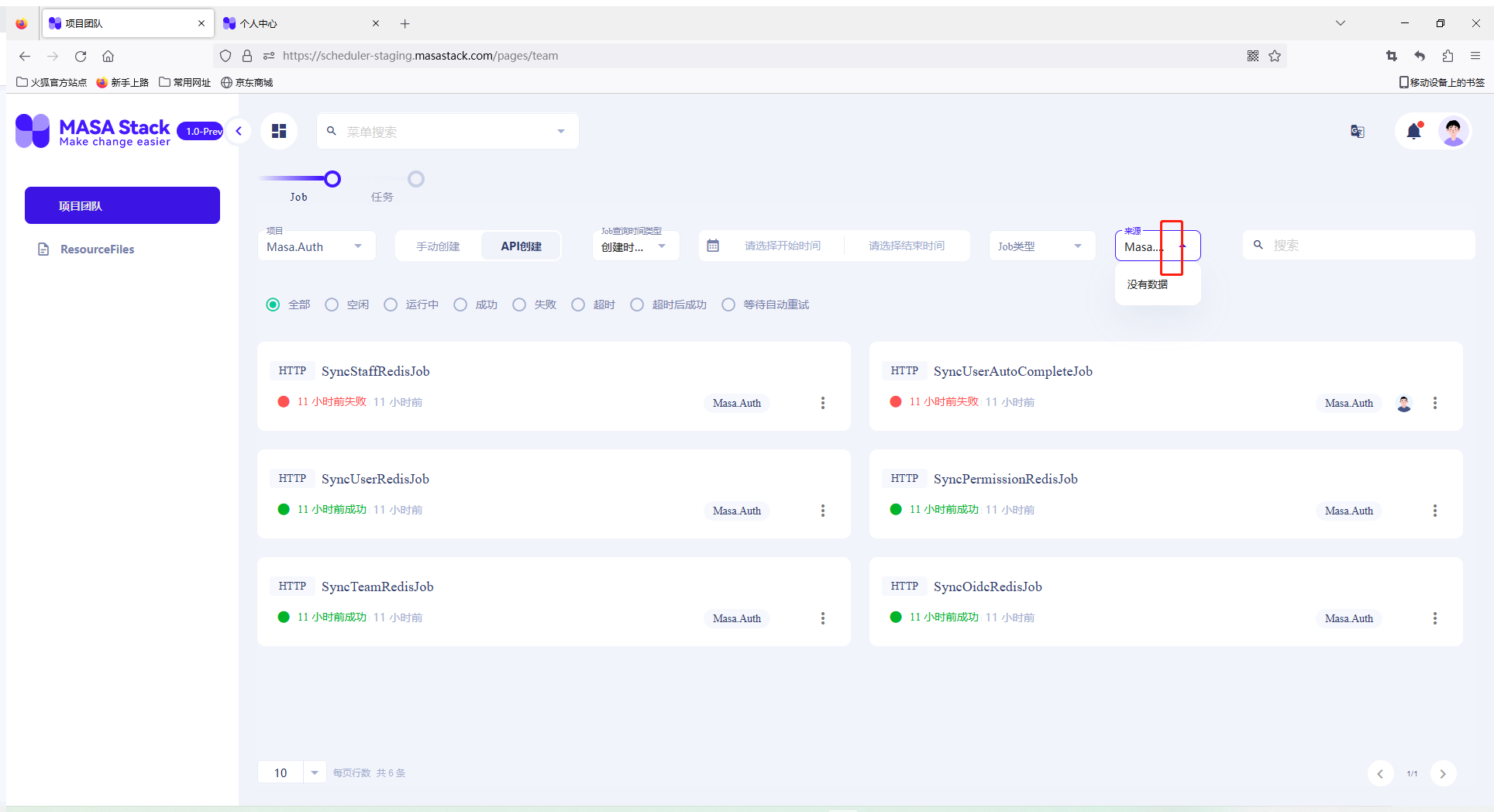

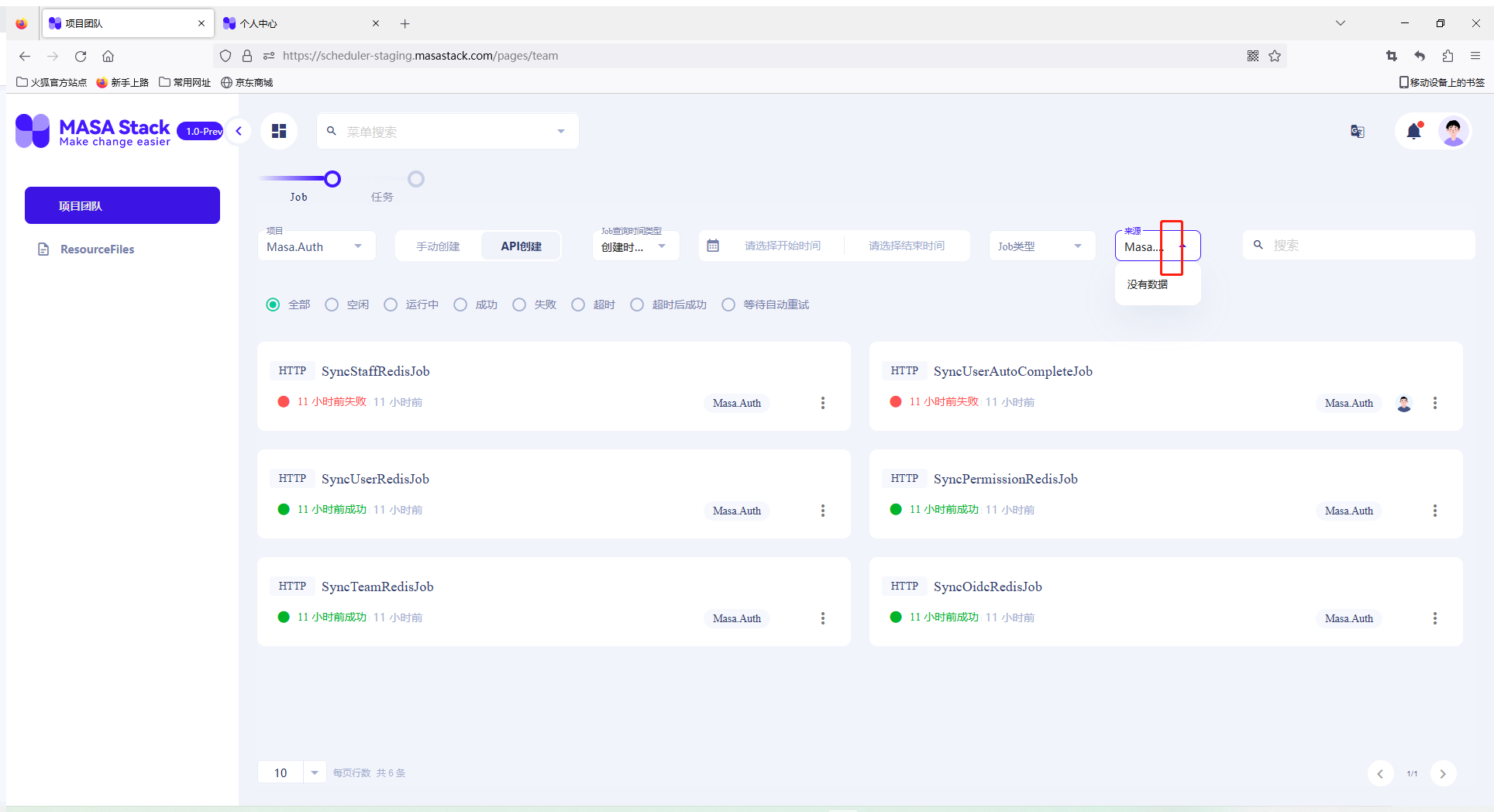

801,062 | 28,452,506,225 | IssuesEvent | 2023-04-17 02:55:40 | masastack/MASA.Scheduler | https://api.github.com/repos/masastack/MASA.Scheduler | closed | The source drop-down box is missing a clear button. Click to clear the options in the drop-down box | type/bug status/resolved severity/medium site/staging priority/p3 | The source drop-down box is missing a clear button; clicking it should clear the selected options in the drop-down box

| 1.0 | The source drop-down box is missing a clear button. Click to clear the options in the drop-down box - The source drop-down box is missing a clear button; clicking it should clear the selected options in the drop-down box

| priority | the source drop down box is missing a clear button click to clear the options in the drop down box the source drop down box is missing a clear button clicking it should clear the selected options in the drop down box | 1 |

173,768 | 6,530,669,791 | IssuesEvent | 2017-08-30 15:50:23 | assemblee-virtuelle/mmmfest | https://api.github.com/repos/assemblee-virtuelle/mmmfest | closed | Harmonize the display fonts on the banner | medium priority | On the banner, at least for Code social, Programme, etc...

Use the Grands Voisins font?

Remove the introduction, and maybe increase the spacing a bit, or even the font, for Domaine de Millemont / 4 to 10 October... | 1.0 | Harmonize the display fonts on the banner - On the banner, at least for Code social, Programme, etc...

Use the Grands Voisins font?

Remove the introduction, and maybe increase the spacing a bit, or even the font, for Domaine de Millemont / 4 to 10 October... | priority | harmonize the display fonts on the banner on the banner at least for code social programme etc use the grands voisins font remove the introduction and maybe increase the spacing a bit or even the font for domaine de millemont to october | 1 |

603,294 | 18,537,066,747 | IssuesEvent | 2021-10-21 12:40:52 | HabitRPG/habitica-ios | https://api.github.com/repos/HabitRPG/habitica-ios | closed | New task drag and drop logic for when filters are applied | Type: Enhancement Priority: medium | this is to help fix issues with task shuffling their order when users try to reorganize them. Filtering to 'is due' is extremely common, and the filtered tasks you can't see behind the scenes can cause a very strange experience when dragging and dropping tasks around.

When a user tries to drag and drop a task when f... | 1.0 | New task drag and drop logic for when filters are applied - this is to help fix issues with task shuffling their order when users try to reorganize them. Filtering to 'is due' is extremely common, and the filtered tasks you can't see behind the scenes can cause a very strange experience when dragging and dropping tasks... | priority | new task drag and drop logic for when filters are applied this is to help fix issues with task shuffling their order when users try to reorganize them filtering to is due is extremely common and the filtered tasks you can t see behind the scenes can cause a very strange experience when dragging and dropping tasks... | 1 |

22,611 | 2,649,565,063 | IssuesEvent | 2015-03-15 01:43:03 | prikhi/pencil | https://api.github.com/repos/prikhi/pencil | closed | Pencil not compatible with Firefox 4.0 | 6–10 stars bug imported Priority-Medium | _From [seaplane...@gmail.com](https://code.google.com/u/109609509405567341253/) on March 22, 2011 17:13:36_

What steps will reproduce the problem? 1. Install Pencil

2. Install Firefox 4.0

3. Revert installation when prompted with add-on warning What is the expected output? What do you see instead? Hopefully an updat... | 1.0 | Pencil not compatible with Firefox 4.0 - _From [seaplane...@gmail.com](https://code.google.com/u/109609509405567341253/) on March 22, 2011 17:13:36_

What steps will reproduce the problem? 1. Install Pencil

2. Install Firefox 4.0

3. Revert installation when prompted with add-on warning What is the expected output? Wh... | priority | pencil not compatible with firefox from on march what steps will reproduce the problem install pencil install firefox revert installation when prompted with add on warning what is the expected output what do you see instead hopefully an update to pencil to make it compatible wi... | 1 |

770,912 | 27,060,750,450 | IssuesEvent | 2023-02-13 19:33:35 | yugabyte/yugabyte-db | https://api.github.com/repos/yugabyte/yugabyte-db | closed | [YSQL] LIKE ALL is not supported | kind/enhancement area/ysql priority/medium pgcm | Jira Link: [DB-974](https://yugabyte.atlassian.net/browse/DB-974)

### Description

```

CREATE TABLE p1_c1 (LIKE p1 INCLUDING ALL, CHECK (id <= 100)) INHERITS(p1);

ERROR: LIKE ALL not supported yet

LINE 1: CREATE TABLE p1_c1 (LIKE p1 INCLUDING ALL, CHECK (id <= 100)...

... | 1.0 | [YSQL] LIKE ALL is not supported - Jira Link: [DB-974](https://yugabyte.atlassian.net/browse/DB-974)

### Description

```

CREATE TABLE p1_c1 (LIKE p1 INCLUDING ALL, CHECK (id <= 100)) INHERITS(p1);

ERROR: LIKE ALL not supported yet

LINE 1: CREATE TABLE p1_c1 (LIKE p1 INCLUDING ALL, CHECK (id <= 100)...

... | priority | like all is not supported jira link description create table like including all check id inherits error like all not supported yet line create table like including all check id | 1 |

658,574 | 21,897,163,114 | IssuesEvent | 2022-05-20 09:44:29 | bounswe/bounswe2022group2 | https://api.github.com/repos/bounswe/bounswe2022group2 | closed | Practice App: Frontend for Creating Event | priority-medium status-inprogress feature practice-app practice-app:front-end | ### Issue Description

Since the backend development of event creation and getting attended events is done and can be viewed in detail from the below issues, now I will be implementing the frontend (related web pages) of the named functionalities of our project:

* #193

* #195

* #211

### Step Details

Steps tha... | 1.0 | Practice App: Frontend for Creating Event - ### Issue Description

Since the backend development of event creation and getting attended events is done and can be viewed in detail from the below issues, now I will be implementing the frontend (related web pages) of the named functionalities of our project:

* #193

* #... | priority | practice app frontend for creating event issue description since the backend development of event creation and getting attended events is done and can be viewed in detail from the below issues now i will be implementing the frontend related web pages of the named functionalities of our project ... | 1 |

248,848 | 7,936,865,096 | IssuesEvent | 2018-07-09 10:51:42 | nlbdev/pipeline | https://api.github.com/repos/nlbdev/pipeline | closed | Class to force volume break | CSS Priority:2 - Medium enhancement | As a convenience instead of using style attributes or attaching CSS stylesheets, we should have a class that can be manually inserted if there are some locations that we need to force a volume break.

Maybe `braille-volume-break-before`. | 1.0 | Class to force volume break - As a convenience instead of using style attributes or attaching CSS stylesheets, we should have a class that can be manually inserted if there are some locations that we need to force a volume break.

Maybe `braille-volume-break-before`. | priority | class to force volume break as a convenience instead of using style attributes or attaching css stylesheets we should have a class that can be manually inserted if there are some locations that we need to force a volume break maybe braille volume break before | 1 |

311,848 | 9,539,639,528 | IssuesEvent | 2019-04-30 17:28:14 | department-of-veterans-affairs/caseflow | https://api.github.com/repos/department-of-veterans-affairs/caseflow | opened | Do not treat "unidentified" request issues as non-comp BusinessLine | bug-medium-priority caseflow-intake sierra | See https://dsva.slack.com/archives/C2ZAMLK88/p1556644556118700