Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 5 to 112) | repo_url (string, length 34 to 141) | action (string, 3 classes) | title (string, length 1 to 957) | labels (string, length 4 to 795) | body (string, length 1 to 259k) | index (string, 12 classes) | text_combine (string, length 96 to 259k) | label (string, 2 classes) | text (string, length 96 to 252k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

438,071 | 12,610,637,765 | IssuesEvent | 2020-06-12 05:37:33 | confidantstation/Confidant-Station | https://api.github.com/repos/confidantstation/Confidant-Station | closed | Optimize confidant friends, file transfer data storage structure | Priority: Medium Status: In Progress Type: Enhancement | Currently, a user reserves n friends. The number of file transfers is changed to a global pool | 1.0 | Optimize confidant friends, file transfer data storage structure - Currently, a user reserves n friends. The number of file transfers is changed to a global pool | priority | optimize confidant friends file transfer data storage structure currently a user reserves n friends the number of file transfers is changed to a global pool | 1 |

554,487 | 16,430,524,636 | IssuesEvent | 2021-05-20 00:28:23 | MeAmAnUsername/pie | https://api.github.com/repos/MeAmAnUsername/pie | opened | Remove warning on single element in multi-import if element is renamed | Component: editor Priority: medium Status: specified Type: bug | Remove warning on single element in multi-import if element is renamed.

Could also add a note suggesting a rewrite if the renaming happens for a single import.

Example of how it should work

```

import a:b:{c}:d:{someFunc, someOtherFunc} // warning: single element multi-import (quick fix: remove braces)

import a:... | 1.0 | Remove warning on single element in multi-import if element is renamed - Remove warning on single element in multi-import if element is renamed.

Could also add a note suggesting a rewrite if the renaming happens for a single import.

Example of how it should work

```

import a:b:{c}:d:{someFunc, someOtherFunc} // w... | priority | remove warning on single element in multi import if element is renamed remove warning on single element in multi import if element is renamed could also add a note suggesting a rewrite if the renaming happens for a single import example of how it should work import a b c d somefunc someotherfunc w... | 1 |

427,511 | 12,396,183,081 | IssuesEvent | 2020-05-20 20:03:02 | react-figma/react-figma | https://api.github.com/repos/react-figma/react-figma | closed | Create polyfills for functions as ’fetch’ in main thread | complexity: hard priority: medium type: feature or enhancement | Currently things such as `fetch` are undefined in the main execution thread where all components are rendered. We need to polyfill these functions using a bridge between UI and main threads | 1.0 | Create polyfills for functions as ’fetch’ in main thread - Currently things such as `fetch` are undefined in the main execution thread where all components are rendered. We need to polyfill these functions using a bridge between UI and main threads | priority | create polyfills for functions as ’fetch’ in main thread currently things such as fetch are undefined in the main execution thread where all components are rendered we need to polyfill these functions using a bridge between ui and main threads | 1 |

791,994 | 27,884,351,923 | IssuesEvent | 2023-03-21 22:13:25 | agrc/electrofishing | https://api.github.com/repos/agrc/electrofishing | closed | Gut Check Metrics Before Submission | waiting medium priority | >When submitting a report create a summary that allows for easy QA/QC using metrics like Condition Factor, Average length, Maximum/Minimum length by species that could serve as red flags. Prior to submitting report the verification of the data includes some measure of condition based on length and weight relationship f... | 1.0 | Gut Check Metrics Before Submission - >When submitting a report create a summary that allows for easy QA/QC using metrics like Condition Factor, Average length, Maximum/Minimum length by species that could serve as red flags. Prior to submitting report the verification of the data includes some measure of condition bas... | priority | gut check metrics before submission when submitting a report create a summary that allows for easy qa qc using metrics like condition factor average length maximum minimum length by species that could serve as red flags prior to submitting report the verification of the data includes some measure of condition bas... | 1 |

57,809 | 3,083,990,705 | IssuesEvent | 2015-08-24 12:45:07 | StefanIsidorovic/salira | https://api.github.com/repos/StefanIsidorovic/salira | closed | Unary minus | auto-migrated Priority-Medium Type-Other | ```

Look at PPJ->ispit->aritmeticki izrazi

UNARY MINUS

(-1) have to be interpreted like negative number and (n-1) like

functor("minus", ...)

```

Original issue reported on code.google.com by `missuchi...@gmail.com` on 7 Jun 2015 at 2:13 | 1.0 | Unary minus - ```

Look at PPJ->ispit->aritmeticki izrazi

UNARY MINUS

(-1) have to be interpreted like negative number and (n-1) like

functor("minus", ...)

```

Original issue reported on code.google.com by `missuchi...@gmail.com` on 7 Jun 2015 at 2:13 | priority | unary minus look at ppj ispit aritmeticki izrazi unary minus have to be interpreted like negative number and n like functor minus original issue reported on code google com by missuchi gmail com on jun at | 1 |

339,862 | 10,263,332,877 | IssuesEvent | 2019-08-22 14:10:23 | dmwm/WMCore | https://api.github.com/repos/dmwm/WMCore | closed | Separate MSTransferor and MSMonitor in different process | Enhancement Medium Priority New Feature ReqMgr2MS | **Impact of the new feature**

ReqMgr2MS

**Is your feature request related to a problem? Please describe.**

Once ReqMgr2MS hit production, it will be under heavy load and we better allocate a different CPU for each of those threads.

With the thread/service separation, we also need to take into consideration the lo... | 1.0 | Separate MSTransferor and MSMonitor in different process - **Impact of the new feature**

ReqMgr2MS

**Is your feature request related to a problem? Please describe.**

Once ReqMgr2MS hit production, it will be under heavy load and we better allocate a different CPU for each of those threads.

With the thread/service... | priority | separate mstransferor and msmonitor in different process impact of the new feature is your feature request related to a problem please describe once hit production it will be under heavy load and we better allocate a different cpu for each of those threads with the thread service separation we ... | 1 |

517,384 | 15,008,360,404 | IssuesEvent | 2021-01-31 09:44:22 | bounswe/bounswe2020group9 | https://api.github.com/repos/bounswe/bounswe2020group9 | closed | iOS - Customer/ Add Review | Estimation - Medium Mobile Priority - High Status - Completed | Implement "adding reviews" feature.

As discussed in the meeting, customer shall be able to add review only for the products they have already purchased. Therefore, it should be carried out on the Orders Page.

**Deadline: 25.01.2021** | 1.0 | iOS - Customer/ Add Review - Implement "adding reviews" feature.

As discussed in the meeting, customer shall be able to add review only for the products they have already purchased. Therefore, it should be carried out on the Orders Page.

**Deadline: 25.01.2021** | priority | ios customer add review implement adding reviews feature as discussed in the meeting customer shall be able to add review only for the products they have already purchased therefore it should be carried out on the orders page deadline | 1 |

642,057 | 20,866,178,239 | IssuesEvent | 2022-03-22 07:26:31 | AY2122S2-CS2103T-T17-4/tp | https://api.github.com/repos/AY2122S2-CS2103T-T17-4/tp | closed | Add status for each person | type.Story priority.Medium | As an advanced user, I can check the status of a person so that I can focus on contacting people that have not yet been contacted. | 1.0 | Add status for each person - As an advanced user, I can check the status of a person so that I can focus on contacting people that have not yet been contacted. | priority | add status for each person as an advanced user i can check the status of a person so that i can focus on contacting people that have not yet been contacted | 1 |

246,493 | 7,895,376,819 | IssuesEvent | 2018-06-29 02:52:56 | aowen87/BAR | https://api.github.com/repos/aowen87/BAR | closed | Make IceT the default when available. | Expected Use: 3 - Occasional Feature Impact: 3 - Medium OS: All Priority: Normal Support Group: Any | Several developers (Mark Miller, Cyrus Harrison, Brad Whitlock, Tom Fogal and Eric Brugger) have been discussing making IceT the default when available. The consensus was that we should. Mark and Tom suggested it would still be nice to have a way to disable it for debugging and other purposes. Mark proposed adding a fl... | 1.0 | Make IceT the default when available. - Several developers (Mark Miller, Cyrus Harrison, Brad Whitlock, Tom Fogal and Eric Brugger) have been discussing making IceT the default when available. The consensus was that we should. Mark and Tom suggested it would still be nice to have a way to disable it for debugging and o... | priority | make icet the default when available several developers mark miller cyrus harrison brad whitlock tom fogal and eric brugger have been discussing making icet the default when available the consensus was that we should mark and tom suggested it would still be nice to have a way to disable it for debugging and o... | 1 |

48,013 | 2,990,117,627 | IssuesEvent | 2015-07-21 07:02:47 | jayway/rest-assured | https://api.github.com/repos/jayway/rest-assured | closed | Lazily merge path arguments | bug imported invalid Priority-Medium | _From [johan.ha...@gmail.com](https://code.google.com/u/105676376875942041029/) on May 02, 2013 08:45:15_

Make this work:

... rootPath("x.y.%s.z").body("w", withArguments("u"), .. ).

_Original issue: http://code.google.com/p/rest-assured/issues/detail?id=232_ | 1.0 | Lazily merge path arguments - _From [johan.ha...@gmail.com](https://code.google.com/u/105676376875942041029/) on May 02, 2013 08:45:15_

Make this work:

... rootPath("x.y.%s.z").body("w", withArguments("u"), .. ).

_Original issue: http://code.google.com/p/rest-assured/issues/detail?id=232_ | priority | lazily merge path arguments from on may make this work rootpath x y s z body w witharguments u original issue | 1 |

525,033 | 15,228,015,416 | IssuesEvent | 2021-02-18 10:56:03 | ivpn/ios-app | https://api.github.com/repos/ivpn/ios-app | closed | UI issue regarding the circles animation when changing networks | Network Protection priority: medium type: bug | **Description:**

In the App Store build 2.0.4, as well as in the latest beta 2.1.0 (23), there is an UI issue with the connecting circle animation while changing networks and having the following network trust settings (Mobile data: Untrusted, WIFI: Trusted or vide versa).

When changing networks, it is observed two c... | 1.0 | UI issue regarding the circles animation when changing networks - **Description:**

In the App Store build 2.0.4, as well as in the latest beta 2.1.0 (23), there is an UI issue with the connecting circle animation while changing networks and having the following network trust settings (Mobile data: Untrusted, WIFI: Tru... | priority | ui issue regarding the circles animation when changing networks description in the app store build as well as in the latest beta there is an ui issue with the connecting circle animation while changing networks and having the following network trust settings mobile data untrusted wifi trus... | 1 |

819,070 | 30,718,765,989 | IssuesEvent | 2023-07-27 14:36:13 | containrrr/watchtower | https://api.github.com/repos/containrrr/watchtower | opened | ghcr Private Repo Issue | Type: Bug Priority: Medium Status: Available | ### Describe the bug

I become a 404 Error because the URL what Watchtower tries to reach is unavailable.

### Steps to reproduce

1. Go to '...'

2. Click on '....'

3. Scroll down to '....'

4. See error

### Expected behavior

That WatchTower checks the repo and loads updates.

### Screenshots

_No response_

###... | 1.0 | ghcr Private Repo Issue - ### Describe the bug

I become a 404 Error because the URL what Watchtower tries to reach is unavailable.

### Steps to reproduce

1. Go to '...'

2. Click on '....'

3. Scroll down to '....'

4. See error

### Expected behavior

That WatchTower checks the repo and loads updates.

### Scree... | priority | ghcr private repo issue describe the bug i become a error because the url what watchtower tries to reach is unavailable steps to reproduce go to click on scroll down to see error expected behavior that watchtower checks the repo and loads updates screens... | 1 |

69,965 | 3,316,353,453 | IssuesEvent | 2015-11-06 16:33:13 | TeselaGen/Peony-Issue-Tracking | https://api.github.com/repos/TeselaGen/Peony-Issue-Tracking | opened | Rationalize right-click menu for all library views | Customer: DAS Phase I Priority: Medium Status: In Progress Type: Enhancement | _From @mfero on September 24, 2015 20:37_

We should rationalize the right-click menu across all library views. Menu items specific to a particular library can sit below a separator.

My Protocols: Rename, Edit, Delete, Create Copy, Export

My Strains: Rename, Edit, Delete, Create Copy, Export

My Sequence: Rename, Edit... | 1.0 | Rationalize right-click menu for all library views - _From @mfero on September 24, 2015 20:37_

We should rationalize the right-click menu across all library views. Menu items specific to a particular library can sit below a separator.

My Protocols: Rename, Edit, Delete, Create Copy, Export

My Strains: Rename, Edit, ... | priority | rationalize right click menu for all library views from mfero on september we should rationalize the right click menu across all library views menu items specific to a particular library can sit below a separator my protocols rename edit delete create copy export my strains rename edit delete... | 1 |

685,290 | 23,451,453,556 | IssuesEvent | 2022-08-16 03:31:39 | yugabyte/yugabyte-db | https://api.github.com/repos/yugabyte/yugabyte-db | closed | [YSQL] Inequality is shown to be getting pushed down to docDB when yb_enable_optimizer_statistics is set to true | kind/bug area/ysql priority/medium | Jira Link: [DB-2897](https://yugabyte.atlassian.net/browse/DB-2897)

### Description

When `yb_enable_optimizer_statistics` is set to true, an inequality filter on the hash indexed primary key seems to be pushed down to docDB. The query plan shows an Index Scan. A range index scan cannot be performed on the hash inde... | 1.0 | [YSQL] Inequality is shown to be getting pushed down to docDB when yb_enable_optimizer_statistics is set to true - Jira Link: [DB-2897](https://yugabyte.atlassian.net/browse/DB-2897)

### Description

When `yb_enable_optimizer_statistics` is set to true, an inequality filter on the hash indexed primary key seems to b... | priority | inequality is shown to be getting pushed down to docdb when yb enable optimizer statistics is set to true jira link description when yb enable optimizer statistics is set to true an inequality filter on the hash indexed primary key seems to be pushed down to docdb the query plan shows an index scan... | 1 |

213,178 | 7,246,466,448 | IssuesEvent | 2018-02-14 21:45:59 | Motoxpro/WorldCupStatsSite | https://api.github.com/repos/Motoxpro/WorldCupStatsSite | closed | Need to get track length for world championships | Medium Priority Data Issue | need to download and parse the worlds pdfs for track length | 1.0 | Need to get track length for world championships - need to download and parse the worlds pdfs for track length | priority | need to get track length for world championships need to download and parse the worlds pdfs for track length | 1 |

160,765 | 6,102,038,199 | IssuesEvent | 2017-06-20 15:41:47 | OperationCode/operationcode_frontend | https://api.github.com/repos/OperationCode/operationcode_frontend | closed | Add sentry | beginner friendly Priority: Medium Status: Available Type: Feature | <!-- Please fill out one of the sections below based on the type of issue you're creating -->

# Feature

## Why is this feature being added?

<!-- What problem is it solving? What value does it add? -->

Sentry helps track errors.

## What should your feature do?

Follow https://sentry.io/for/react/

Our public DN i... | 1.0 | Add sentry - <!-- Please fill out one of the sections below based on the type of issue you're creating -->

# Feature

## Why is this feature being added?

<!-- What problem is it solving? What value does it add? -->

Sentry helps track errors.

## What should your feature do?

Follow https://sentry.io/for/react/

Ou... | priority | add sentry feature why is this feature being added sentry helps track errors what should your feature do follow our public dn is | 1 |

659,086 | 21,916,230,207 | IssuesEvent | 2022-05-21 21:42:06 | SkriptLang/Skript | https://api.github.com/repos/SkriptLang/Skript | closed | Cant remove saturation effect | bug priority: medium completed | ### Skript/Server Version

```

[23:45:09 INFO]: [Skript] Skript's aliases can be found here: https://github.com/SkriptLang/skript-aliases

[23:45:09 INFO]: [Skript] Skript's documentation can be found here: https://skriptlang.github.io/Skript

[23:45:09 INFO]: [Skript] Server Version: git-Purpur-"4ff0630" (MC: 1.17.1)... | 1.0 | Cant remove saturation effect - ### Skript/Server Version

```

[23:45:09 INFO]: [Skript] Skript's aliases can be found here: https://github.com/SkriptLang/skript-aliases

[23:45:09 INFO]: [Skript] Skript's documentation can be found here: https://skriptlang.github.io/Skript

[23:45:09 INFO]: [Skript] Server Version: g... | priority | cant remove saturation effect skript server version skript s aliases can be found here skript s documentation can be found here server version git purpur mc skript version installed skript addons skript yaml skript reflect ... | 1 |

749,535 | 26,166,800,480 | IssuesEvent | 2023-01-01 11:45:47 | docker-mailserver/docker-mailserver | https://api.github.com/repos/docker-mailserver/docker-mailserver | opened | [BUG] postfix: reject_unknown_client_hostname prevents legitimate mail from being received | kind/bug meta/needs triage priority/medium | ### Miscellaneous first checks

- [X] I checked that all ports are open and not blocked by my ISP / hosting provider.

- [X] I know that SSL errors are likely the result of a wrong setup on the user side and not caused by DMS itself. I'm confident my setup is correct.

### Affected Component(s)

postfix

### What happen... | 1.0 | [BUG] postfix: reject_unknown_client_hostname prevents legitimate mail from being received - ### Miscellaneous first checks

- [X] I checked that all ports are open and not blocked by my ISP / hosting provider.

- [X] I know that SSL errors are likely the result of a wrong setup on the user side and not caused by DMS it... | priority | postfix reject unknown client hostname prevents legitimate mail from being received miscellaneous first checks i checked that all ports are open and not blocked by my isp hosting provider i know that ssl errors are likely the result of a wrong setup on the user side and not caused by dms itself i ... | 1 |

617,243 | 19,345,995,265 | IssuesEvent | 2021-12-15 10:51:46 | google/android-fhir | https://api.github.com/repos/google/android-fhir | closed | Support evaluation of FHIRPath expressions and calculation within Questionnaire | enhancement medium priority Q4 2021 | **Is your feature request related to a problem? Please describe.**

There is a need to be able to evaluate expressions within a Questionnaire. This is currently not supported by the data-capture library

**Describe the solution you'd like**

Support for FHIRPath expressions

**Describe alternatives you've considere... | 1.0 | Support evaluation of FHIRPath expressions and calculation within Questionnaire - **Is your feature request related to a problem? Please describe.**

There is a need to be able to evaluate expressions within a Questionnaire. This is currently not supported by the data-capture library

**Describe the solution you'd li... | priority | support evaluation of fhirpath expressions and calculation within questionnaire is your feature request related to a problem please describe there is a need to be able to evaluate expressions within a questionnaire this is currently not supported by the data capture library describe the solution you d li... | 1 |

684,158 | 23,409,453,335 | IssuesEvent | 2022-08-12 15:53:17 | Kong/kubernetes-ingress-controller | https://api.github.com/repos/Kong/kubernetes-ingress-controller | closed | Make Gateway API enabled by default | priority/medium area/gateway-api | ### Problem Statement

Now that [Gateway API](https://github.com/kubernetes-sigs/gateway-api) has APIs in `v1beta1` with the release of `v0.5.0` we are ready to call our Gateway API implementation beta as well. The purpose of this task is to mark the beta APIs as beta and enable them by default for future KIC release... | 1.0 | Make Gateway API enabled by default - ### Problem Statement

Now that [Gateway API](https://github.com/kubernetes-sigs/gateway-api) has APIs in `v1beta1` with the release of `v0.5.0` we are ready to call our Gateway API implementation beta as well. The purpose of this task is to mark the beta APIs as beta and enable ... | priority | make gateway api enabled by default problem statement now that has apis in with the release of we are ready to call our gateway api implementation beta as well the purpose of this task is to mark the beta apis as beta and enable them by default for future kic releases alpha apis should remain... | 1 |

671,620 | 22,769,284,204 | IssuesEvent | 2022-07-08 08:27:39 | canonical-web-and-design/ubuntu.com | https://api.github.com/repos/canonical-web-and-design/ubuntu.com | closed | Blog cards are missing the coloured strip at the top | Priority: Medium | There is a fallback class for cards that don't have a specific colour assigned that is over riding everything https://github.com/canonical-web-and-design/ubuntu.com/blob/main/static/sass/_pattern_blog-card.scss#L119

- the runtime should then use the correct keystore based on the `keyTypeID` that's passed into ext_c... | 1.0 | update runtime keystore to use GlobalKeystore, use key type IDs in ext_crypto funcs - ## Task summary

<!-- A clear and concise description of what the task is. -->

- currently the runtime uses only the `Acco` keystore

- it should be using the `GlobalKeystore` instead (see dot/node.go `createRuntime`)

- the runtime ... | priority | update runtime keystore to use globalkeystore use key type ids in ext crypto funcs task summary currently the runtime uses only the acco keystore it should be using the globalkeystore instead see dot node go createruntime the runtime should then use the correct keystore based on the keytypeid... | 1 |

707,528 | 24,309,123,912 | IssuesEvent | 2022-09-29 20:19:46 | georchestra/georchestra | https://api.github.com/repos/georchestra/georchestra | closed | mapfishapp: produce SLD v1.1.0 compliant documents | feature 0 - Backlog priority-medium | ... targeted to WMS >= 1.3.0 servers.

Keep current SLD 1.0 service for WMS version <= 1.1.1 servers.

| 1.0 | mapfishapp: produce SLD v1.1.0 compliant documents - ... targeted to WMS >= 1.3.0 servers.

Keep current SLD 1.0 service for WMS version <= 1.1.1 servers.

| priority | mapfishapp produce sld compliant documents targeted to wms servers keep current sld service for wms version servers | 1 |

509,570 | 14,739,817,832 | IssuesEvent | 2021-01-07 07:59:23 | konveyor/forklift-ui | https://api.github.com/repos/konveyor/forklift-ui | closed | Non-ready providers should be excluded from any provider selections in forms | medium-priority | If a provider does not have the Ready condition, inventory API requests for resources in that provider will fail. We should either filter out or disable those options in Select fields for providers (and if filtering out, show a message when no providers remain instead of showing an empty dropdown). This might also be a... | 1.0 | Non-ready providers should be excluded from any provider selections in forms - If a provider does not have the Ready condition, inventory API requests for resources in that provider will fail. We should either filter out or disable those options in Select fields for providers (and if filtering out, show a message when ... | priority | non ready providers should be excluded from any provider selections in forms if a provider does not have the ready condition inventory api requests for resources in that provider will fail we should either filter out or disable those options in select fields for providers and if filtering out show a message when ... | 1 |

642,165 | 20,868,857,197 | IssuesEvent | 2022-03-22 10:01:23 | LiskHQ/lisk-desktop | https://api.github.com/repos/LiskHQ/lisk-desktop | closed | Distorted UI on wallet balance card when in discrete mode | type: bug unplanned priority: medium | ### Expected behavior

There should be no distorted view on the balance card when discrete mode is toggled on.

### Actual behavior

Switching to discrete mode cause a misplacement in the content of the balance card as seen in the screenshot.

nearly 100% of users would choose the separate described version.

- It is extremely... | 1.0 | Eliminate options related to description - User testing with screen reader users has led to some insights related to description:

- If description is available as _both_ a separate described version of the video and text-based description (i.e., a WebVTT description track) nearly 100% of users would choose the sep... | priority | eliminate options related to description user testing with screen reader users has led to some insights related to description if description is available as both a separate described version of the video and text based description i e a webvtt description track nearly of users would choose the separ... | 1 |

548,352 | 16,062,580,992 | IssuesEvent | 2021-04-23 14:27:49 | enso-org/ide | https://api.github.com/repos/enso-org/ide | opened | Strange things happens when putting colon as a separator in list. | Category: Controllers Priority: Medium Type: Bug | <!--

Please ensure that you are using the latest version of Enso IDE before reporting

the bug! It may have been fixed since.

-->

### What did you do?

I put `:` as a separator list by mistake, trying to make node `["x": [4.0]]`

### What did you expect to see?

The node with that exact expression, probably with syntax... | 1.0 | Strange things happens when putting colon as a separator in list. - <!--

Please ensure that you are using the latest version of Enso IDE before reporting

the bug! It may have been fixed since.

-->

### What did you do?

I put `:` as a separator list by mistake, trying to make node `["x": [4.0]]`

### What did you expe... | priority | strange things happens when putting colon as a separator in list please ensure that you are using the latest version of enso ide before reporting the bug it may have been fixed since what did you do i put as a separator list by mistake trying to make node what did you expect to see ... | 1 |

738,649 | 25,570,643,406 | IssuesEvent | 2022-11-30 17:23:43 | uhh-cms/columnflow | https://api.github.com/repos/uhh-cms/columnflow | opened | Allow selection masks being passed to muon / electron weight producers | enhancement medium-priority | As it is right now, the muon and electron weight producers in https://github.com/uhh-cms/columnflow/blob/master/columnflow/production/muon.py and https://github.com/uhh-cms/columnflow/blob/master/columnflow/production/electron.py are very generic, but still they automatically read all leptons available.

In some use ... | 1.0 | Allow selection masks being passed to muon / electron weight producers - As it is right now, the muon and electron weight producers in https://github.com/uhh-cms/columnflow/blob/master/columnflow/production/muon.py and https://github.com/uhh-cms/columnflow/blob/master/columnflow/production/electron.py are very generic,... | priority | allow selection masks being passed to muon electron weight producers as it is right now the muon and electron weight producers in and are very generic but still they automatically read all leptons available in some use cases where the unwanted leptons were already removed in an upstream reduction step t... | 1 |

713,679 | 24,535,334,769 | IssuesEvent | 2022-10-11 20:11:32 | RobotLocomotion/drake | https://api.github.com/repos/RobotLocomotion/drake | closed | Install Python auto-complete helper files (`*.pyi`) | component: distribution priority: medium | See https://github.com/pybind/pybind11/issues/2350#issuecomment-668879301.

As I understand it, some IDEs will not help the user auto-complete their function-call arguments unless we also install `*.pyi` files for our native modules.

As part of our build process, we should import `mypy` (maybe `python3-mypy` if it... | 1.0 | Install Python auto-complete helper files (`*.pyi`) - See https://github.com/pybind/pybind11/issues/2350#issuecomment-668879301.

As I understand it, some IDEs will not help the user auto-complete their function-call arguments unless we also install `*.pyi` files for our native modules.

As part of our build proces... | priority | install python auto complete helper files pyi see as i understand it some ides will not help the user auto complete their function call arguments unless we also install pyi files for our native modules as part of our build process we should import mypy maybe mypy if it s new enough otherw... | 1 |

1,566 | 2,515,613,855 | IssuesEvent | 2015-01-15 19:50:18 | adobe/brackets | https://api.github.com/repos/adobe/brackets | reopened | LiveDevelopmentMultiBrowser unit test issues | F Live Preview MultiBrowser medium priority | **1)** <del>The Jasmine test-runner window always logs an exception when it is first loaded, before any tests are run:</del> [console messages hidden]

<!--

```

TypeError: undefined is not a function

at _showStatusChangeReason (file:///C:/code/Brackets/brackets-app/brackets/src/LiveDevelopment/main.js:145:21)

... | 1.0 | LiveDevelopmentMultiBrowser unit test issues - **1)** <del>The Jasmine test-runner window always logs an exception when it is first loaded, before any tests are run:</del> [console messages hidden]

<!--

```

TypeError: undefined is not a function

at _showStatusChangeReason (file:///C:/code/Brackets/brackets-ap... | priority | livedevelopmentmultibrowser unit test issues the jasmine test runner window always logs an exception when it is first loaded before any tests are run typeerror undefined is not a function at showstatuschangereason file c code brackets brackets app brackets src livedevelopment ma... | 1 |

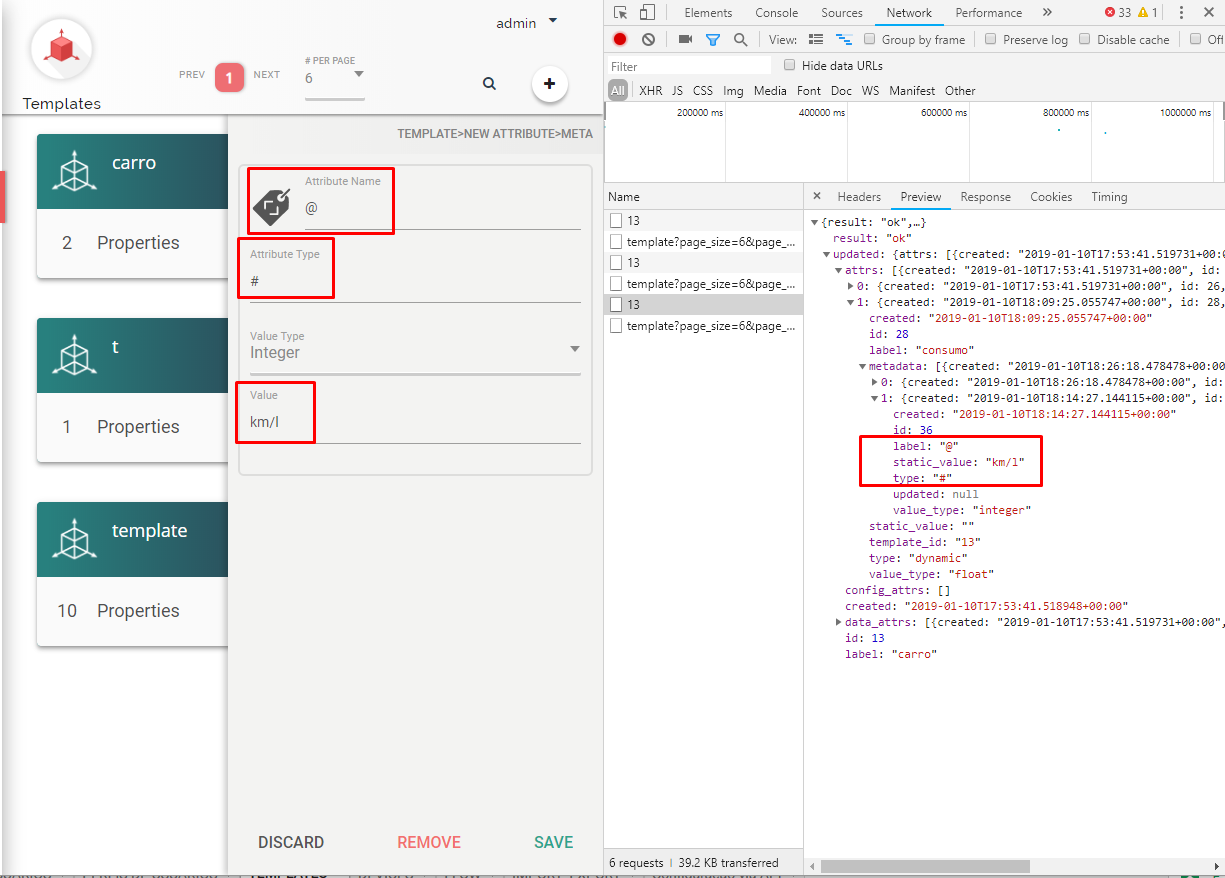

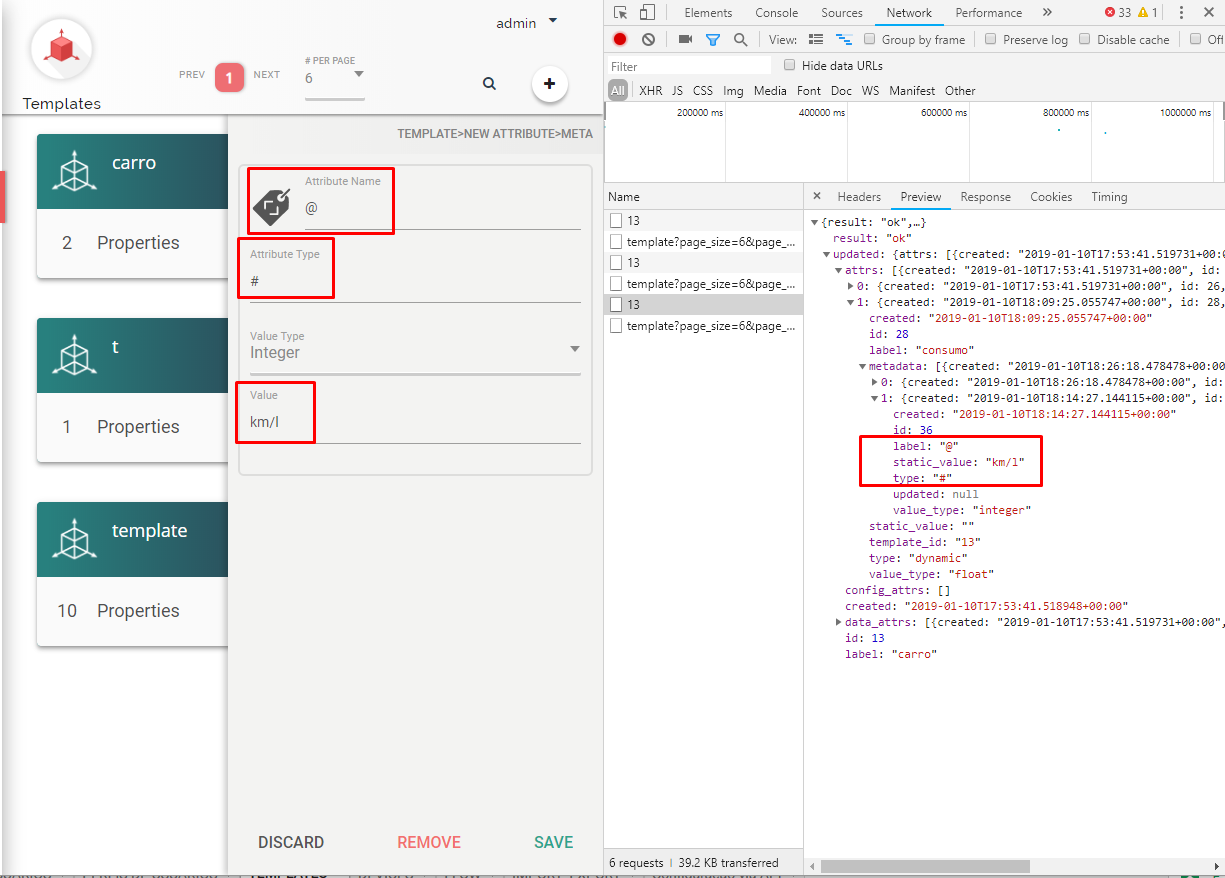

321,053 | 9,792,850,663 | IssuesEvent | 2019-06-10 18:27:02 | dojot/dojot | https://api.github.com/repos/dojot/dojot | closed | [GUI] Template: Change metadata - does not validate fields populated with invalid values | Priority:Medium Status:Treated Team:Frontend Type:Bug |

**Note:** validation is done by adding the metadata

**Affected Version:** 0.3.0-nightly_20181227 | 1.0 | [GUI] Template: Change metadata - does not validate fields populated with invalid values -

**Note:** validation is done by adding the metadata

**Affected Version:** 0.3.0-nightly_20181227 | priority | template change metadata does not validate fields populated with invalid values note validation is done by adding the metadata affected version nightly | 1 |

75,196 | 3,456,583,548 | IssuesEvent | 2015-12-18 02:28:41 | dkpro/dkpro-core | https://api.github.com/repos/dkpro/dkpro-core | closed | Use current directory as default target location for writers | enhancement Module-api.io Priority-Medium wontfix | ```

For writers that write to directories, e.g. Conll2006Writer, we could maybe use the

current directory as default output location.

```

Original issue reported on code.google.com by `richard.eckart` on 2014-06-24 17:20:17 | 1.0 | Use current directory as default target location for writers - ```

For writers that write to directories, e.g. Conll2006Writer, we could maybe use the

current directory as default output location.

```

Original issue reported on code.google.com by `richard.eckart` on 2014-06-24 17:20:17 | priority | use current directory as default target location for writers for writers that write to directories e g we could maybe use the current directory as default output location original issue reported on code google com by richard eckart on | 1 |

1,024 | 2,506,912,178 | IssuesEvent | 2015-01-12 14:54:17 | ukwa/w3act | https://api.github.com/repos/ukwa/w3act | closed | CSV download from reports pages not working | bug Medium Priority | Hi @kinmanli : an attempt to download CSV from this just hangs up the browser:

http://www.webarchive.org.uk/actdev/reportscreation/targets/?s=createdAt&o=desc&crawlFrequency=DAILY&tld=either

| 1.0 | CSV download from reports pages not working - Hi @kinmanli : an attempt to download CSV from this just hangs up the browser:

http://www.webarchive.org.uk/actdev/reportscreation/targets/?s=createdAt&o=desc&crawlFrequency=DAILY&tld=either

| priority | csv download from reports pages not working hi kinmanli an attempt to download csv from this just hangs up the browser | 1 |

369,603 | 10,915,304,423 | IssuesEvent | 2019-11-21 10:53:28 | react-figma/react-figma | https://api.github.com/repos/react-figma/react-figma | closed | Different color formats support | complexity: medium priority: medium topic: components topic: primitives support type: feature or enhancement | ERROR: type should be string, got "https://facebook.github.io/react-native/docs/colors\r\n\r\n* 'rgb(255, 0, 255)'\r\n* 'rgba(255, 255, 255, 1.0)'\r\n* '#ff00ff00' \r\n* 'hsl(360, 100%, 100%)'\r\n* 'transparent'\r\n* Named colors: aliceblue, antiquewhite, etc." | 1.0 | Different color formats support - https://facebook.github.io/react-native/docs/colors

* 'rgb(255, 0, 255)'

* 'rgba(255, 255, 255, 1.0)'

* '#ff00ff00'

* 'hsl(360, 100%, 100%)'

* 'transparent'

* Named colors: aliceblue, antiquewhite, etc. | priority | different color formats support rgb rgba hsl transparent named colors aliceblue antiquewhite etc | 1 |

4,374 | 2,550,873,794 | IssuesEvent | 2015-02-02 00:37:23 | SO-Close-Vote-Reviewers/SOCVR-Chatbot | https://api.github.com/repos/SO-Close-Vote-Reviewers/SOCVR-Chatbot | closed | Add commands to get tags | enhancement help wanted medium-priority | The following commands need to get added:

- current tag

- next x tags

- start event

Details on those commands are int the setup.md file.

Most of the work is in the sede branch. | 1.0 | Add commands to get tags - The following commands need to get added:

- current tag

- next x tags

- start event

Details on those commands are int the setup.md file.

Most of the work is in the sede branch. | priority | add commands to get tags the following commands need to get added current tag next x tags start event details on those commands are int the setup md file most of the work is in the sede branch | 1 |

826,164 | 31,559,398,126 | IssuesEvent | 2023-09-03 03:53:48 | ubiquity/ubiquibot | https://api.github.com/repos/ubiquity/ubiquibot | opened | Linked Pull Request Not Closed On Expired Task | Priority: 2 (Medium) Time: <4 Hours | This should be diagnosed and fixed.

> @wannacfuture - Releasing the bounty back to dev pool because the allocated duration already ended!

Last activity time: Fri Aug 18 2023 23:07:15 GMT+0000 (Coordinated Universal Time)

_Originally posted by @ubiquibot in https://github.com/ubiquity/ubiquibot/issues/431#issuecomme... | 1.0 | Linked Pull Request Not Closed On Expired Task - This should be diagnosed and fixed.

> @wannacfuture - Releasing the bounty back to dev pool because the allocated duration already ended!

Last activity time: Fri Aug 18 2023 23:07:15 GMT+0000 (Coordinated Universal Time)

_Originally posted by @ubiquibot in https://gi... | priority | linked pull request not closed on expired task this should be diagnosed and fixed wannacfuture releasing the bounty back to dev pool because the allocated duration already ended last activity time fri aug gmt coordinated universal time originally posted by ubiquibot in | 1 |

733,473 | 25,307,451,687 | IssuesEvent | 2022-11-17 15:07:10 | Fiserv/Support | https://api.github.com/repos/Fiserv/Support | closed | .docignore Articles Showing in Search | bug CommerceHub Priority - Medium Severity - Medium | # Reporting new issue for Commerce Hub

**Region** (if applicable)

Dev

**Page**

https://dev-developer.fiserv.com/search?q=test%20response&p=%5B%22CommerceHub%22%5D

**Describe the bug**

Search results contain articles that are in the .docignore

**Expected behavior**

.docignore articles should not show in ... | 1.0 | .docignore Articles Showing in Search - # Reporting new issue for Commerce Hub

**Region** (if applicable)

Dev

**Page**

https://dev-developer.fiserv.com/search?q=test%20response&p=%5B%22CommerceHub%22%5D

**Describe the bug**

Search results contain articles that are in the .docignore

**Expected behavior**

... | priority | docignore articles showing in search reporting new issue for commerce hub region if applicable dev page describe the bug search results contain articles that are in the docignore expected behavior docignore articles should not show in search results they result in a scr... | 1 |

507,013 | 14,678,259,527 | IssuesEvent | 2020-12-31 02:36:33 | rubyforgood/casa | https://api.github.com/repos/rubyforgood/casa | closed | Automate github issue weekly "is this still in progress?" checkins | :sparkles: :computer: Contributor Friendly / Devel Help Wanted Priority: Medium | Automate github issue weekly "is this still in progress?" checkins on "in progress" github issues without recent comments / commits. After two weeks (14 days) of checking-in and no comments or PRs, unassign the assignee and move the issue back to "to do" (automate as many parts of this as possible! Anything is better t... | 1.0 | Automate github issue weekly "is this still in progress?" checkins - Automate github issue weekly "is this still in progress?" checkins on "in progress" github issues without recent comments / commits. After two weeks (14 days) of checking-in and no comments or PRs, unassign the assignee and move the issue back to "to... | priority | automate github issue weekly is this still in progress checkins automate github issue weekly is this still in progress checkins on in progress github issues without recent comments commits after two weeks days of checking in and no comments or prs unassign the assignee and move the issue back to to ... | 1 |

55,593 | 3,073,806,877 | IssuesEvent | 2015-08-20 00:42:14 | RobotiumTech/robotium | https://api.github.com/repos/RobotiumTech/robotium | closed | setDatePicker set wrong moth. | bug imported invalid Priority-Medium | _From [zoumy...@gmail.com](https://code.google.com/u/115552050981841755101/) on August 07, 2013 01:12:46_

What steps will reproduce the problem? 1.open DatePicker.

2.set Date 1986,7,21 but the date has been set Aug 21 1986 3. What is the expected output? What do you see instead? Jul 21 1986 What version of the produc... | 1.0 | setDatePicker set wrong moth. - _From [zoumy...@gmail.com](https://code.google.com/u/115552050981841755101/) on August 07, 2013 01:12:46_

What steps will reproduce the problem? 1.open DatePicker.

2.set Date 1986,7,21 but the date has been set Aug 21 1986 3. What is the expected output? What do you see instead? Jul 2... | priority | setdatepicker set wrong moth from on august what steps will reproduce the problem open datepicker set date but the date has been set aug what is the expected output what do you see instead jul what version of the product are you using on what operating system version ... | 1 |

349,853 | 10,474,561,773 | IssuesEvent | 2019-09-23 14:43:32 | LifeMC/LifeSkript | https://api.github.com/repos/LifeMC/LifeSkript | opened | Method 'loadScript' is too complex to analyze by data flow algorithm | priority: longtime goal priority: medium state: help wanted type: enhancement | **Describe the bug**

IntelliJ gives warning "Method 'loadScript' is too complex to analyze by data flow algorithm" in the ScriptLoader#loadScript method.

**To Reproduce**

Open ScriptLoader and navigate to loadScript method in IntelliJ (tested on IntelliJ IDEA Ultimate Edition 2019.2)

**Expected behavior**

Expe... | 2.0 | Method 'loadScript' is too complex to analyze by data flow algorithm - **Describe the bug**

IntelliJ gives warning "Method 'loadScript' is too complex to analyze by data flow algorithm" in the ScriptLoader#loadScript method.

**To Reproduce**

Open ScriptLoader and navigate to loadScript method in IntelliJ (tested o... | priority | method loadscript is too complex to analyze by data flow algorithm describe the bug intellij gives warning method loadscript is too complex to analyze by data flow algorithm in the scriptloader loadscript method to reproduce open scriptloader and navigate to loadscript method in intellij tested o... | 1 |

789,765 | 27,804,979,962 | IssuesEvent | 2023-03-17 18:59:40 | knative/docs | https://api.github.com/repos/knative/docs | closed | Fix tab formatting in code-samples folder | kind/bug kind/good-first-issue priority/medium kind/cleanup hacktoberfest good first issue help wanted | ## Background

The files in `code-samples` folder are no longer viewable on the website, only in GitHub. However, this has caused broken formatting because tabbed elements are not available in GitHub markdown.

## Task

Remove the tabbed formatting, for example `=== "yaml"`, in the files in the `code-samples` folder... | 1.0 | Fix tab formatting in code-samples folder - ## Background

The files in `code-samples` folder are no longer viewable on the website, only in GitHub. However, this has caused broken formatting because tabbed elements are not available in GitHub markdown.

## Task

Remove the tabbed formatting, for example `=== "yaml"... | priority | fix tab formatting in code samples folder background the files in code samples folder are no longer viewable on the website only in github however this has caused broken formatting because tabbed elements are not available in github markdown task remove the tabbed formatting for example yaml ... | 1 |

163,186 | 6,192,717,545 | IssuesEvent | 2017-07-05 03:28:44 | start-jsk/jsk_apc | https://api.github.com/repos/start-jsk/jsk_apc | opened | Make additional fingers, finger bases and gears | enhancement issue/priority/medium | - [ ] Print additional fingers, finger bases and gears (fix magnet fitting)

- [ ] Mold rubber to fingers

- [ ] Print additional other parts | 1.0 | Make additional fingers, finger bases and gears - - [ ] Print additional fingers, finger bases and gears (fix magnet fitting)

- [ ] Mold rubber to fingers

- [ ] Print additional other parts | priority | make additional fingers finger bases and gears print additional fingers finger bases and gears fix magnet fitting mold rubber to fingers print additional other parts | 1 |

350,723 | 10,500,868,163 | IssuesEvent | 2019-09-26 11:31:23 | code4romania/monitorizare-vot-android | https://api.github.com/repos/code4romania/monitorizare-vot-android | closed | [Research] Investigate replacing realm with room | android enhancement help wanted medium priority research | We are planning a complete redo of the app, using kotlin.

Please research the possibility of replacing Realm db with [Room](https://developer.android.com/topic/libraries/architecture/room). | 1.0 | [Research] Investigate replacing realm with room - We are planning a complete redo of the app, using kotlin.

Please research the possibility of replacing Realm db with [Room](https://developer.android.com/topic/libraries/architecture/room). | priority | investigate replacing realm with room we are planning a complete redo of the app using kotlin please research the possibility of replacing realm db with | 1 |

67,714 | 3,277,566,036 | IssuesEvent | 2015-10-27 01:40:51 | saxifrage/caac-map | https://api.github.com/repos/saxifrage/caac-map | closed | Hover-over highlighting does not work for pathway colors | Medium Priority | The darker colors of the pathway blocks don't allow hover-overs to do anything. Seems like if we shift the color scheme at large to echo Chelsea's design, then the really dark blue could be used for all hover-overs.

The ultra-light blue is the non-highlighted color, and the middle blue is the default color.

on May 20, 2011 18:51:43_

Create a method on JsWindow class to get the parent window

_Original issue: http://code.google.com/p/crux-framework/issues/detail?id=249_ | 1.0 | Create a method on JsWindow class to get the parent window - _From [ge...@cruxframework.org](https://code.google.com/u/108728025643241132101/) on May 20, 2011 18:51:43_

Create a method on JsWindow class to get the parent window

_Original issue: http://code.google.com/p/crux-framework/issues/detail?id=249_ | priority | create a method on jswindow class to get the parent window from on may create a method on jswindow class to get the parent window original issue | 1 |

160,956 | 6,106,076,560 | IssuesEvent | 2017-06-21 02:28:04 | minio/minio-go | https://api.github.com/repos/minio/minio-go | closed | TestPutObjectStream is not passing with latest and master minio | priority: medium | --- FAIL: TestPutObjectStreaming (0.00s)

api_functional_v4_test.go:406: Test 1 Error: The request signature we calculated does not match the signature you provided. Check your key and signing method. minio-go-

testh1owqaacnr26h95qc test-object

| 1.0 | TestPutObjectStream is not passing with latest and master minio - --- FAIL: TestPutObjectStreaming (0.00s)

api_functional_v4_test.go:406: Test 1 Error: The request signature we calculated does not match the signature you provided. Check your key and signing method. minio-go-

testh1owqaacnr26h95qc test-object ... | priority | testputobjectstream is not passing with latest and master minio fail testputobjectstreaming api functional test go test error the request signature we calculated does not match the signature you provided check your key and signing method minio go test object | 1 |

522,088 | 15,148,714,826 | IssuesEvent | 2021-02-11 11:00:55 | FraunhoferISST/IDS-Connector-Framework | https://api.github.com/repos/FraunhoferISST/IDS-Connector-Framework | opened | Forward received RejectionMessage to Connector | Priority: Medium Type: Enhancement | Currently a received RejectionMessage is not forwarded to the connector-developer upon receiving it at the endpoints of the IDS-Framework due to failed DAT-validation of the received RejectionMessage within the IDS-Framework.

The received RejectionMessage should be forwarded to the connector developer so that the de... | 1.0 | Forward received RejectionMessage to Connector - Currently a received RejectionMessage is not forwarded to the connector-developer upon receiving it at the endpoints of the IDS-Framework due to failed DAT-validation of the received RejectionMessage within the IDS-Framework.

The received RejectionMessage should be fo... | priority | forward received rejectionmessage to connector currently a received rejectionmessage is not forwarded to the connector developer upon receiving it at the endpoints of the ids framework due to failed dat validation of the received rejectionmessage within the ids framework the received rejectionmessage should be fo... | 1 |

710,668 | 24,427,238,851 | IssuesEvent | 2022-10-06 04:37:49 | renovatebot/renovate | https://api.github.com/repos/renovatebot/renovate | closed | Renovate is stuck in an infinite loop overwriting a ci bot's commits | type:bug priority-3-medium status:in-progress regression | ### How are you running Renovate?

Mend Renovate hosted app on github.com

### If you're self-hosting Renovate, tell us what version of Renovate you run.

_No response_

### If you're self-hosting Renovate, select which platform you are using.

_No response_

### If you're self-hosting Renovate, tell us what version of... | 1.0 | Renovate is stuck in an infinite loop overwriting a ci bot's commits - ### How are you running Renovate?

Mend Renovate hosted app on github.com

### If you're self-hosting Renovate, tell us what version of Renovate you run.

_No response_

### If you're self-hosting Renovate, select which platform you are using.

_No ... | priority | renovate is stuck in an infinite loop overwriting a ci bot s commits how are you running renovate mend renovate hosted app on github com if you re self hosting renovate tell us what version of renovate you run no response if you re self hosting renovate select which platform you are using no ... | 1 |

180,393 | 6,649,277,897 | IssuesEvent | 2017-09-28 12:41:23 | herbiehp/unicenta | https://api.github.com/repos/herbiehp/unicenta | closed | Modify Customer Form | 3-Medium Priority enhancement help wanted | Modify customer form to add additional attributes that are check boxes (like visible) and add columns to the customers table to store them.

To make it generic and usable by any business the names displayed in the GUI (and field names) should be something like CustAttr1, CustAttr2, etc with the ability to change the na... | 1.0 | Modify Customer Form - Modify customer form to add additional attributes that are check boxes (like visible) and add columns to the customers table to store them.

To make it generic and usable by any business the names displayed in the GUI (and field names) should be something like CustAttr1, CustAttr2, etc with the a... | priority | modify customer form modify customer form to add additional attributes that are check boxes like visible and add columns to the customers table to store them to make it generic and usable by any business the names displayed in the gui and field names should be something like etc with the ability to change... | 1 |

559,360 | 16,557,057,288 | IssuesEvent | 2021-05-28 15:02:32 | guardicore/monkey | https://api.github.com/repos/guardicore/monkey | closed | Configure MongoDB on Monkey Island initialization | Complexity: Medium Enhancement Priority: High python | Monkey Island needs to write runtime artifacts to a writable location and assume that the source code directory is read-only. Currently, MongoDB is started by the `linux/run.sh`, `windows\run_mongodb.bat`, and `appimage/run_appimage.sh`. These scripts do not have access to the `data_dir` property in `server_config.json... | 1.0 | Configure MongoDB on Monkey Island initialization - Monkey Island needs to write runtime artifacts to a writable location and assume that the source code directory is read-only. Currently, MongoDB is started by the `linux/run.sh`, `windows\run_mongodb.bat`, and `appimage/run_appimage.sh`. These scripts do not have acce... | priority | configure mongodb on monkey island initialization monkey island needs to write runtime artifacts to a writable location and assume that the source code directory is read only currently mongodb is started by the linux run sh windows run mongodb bat and appimage run appimage sh these scripts do not have acce... | 1 |

247,239 | 7,915,576,371 | IssuesEvent | 2018-07-04 00:08:31 | facelessuser/pymdown-extensions | https://api.github.com/repos/facelessuser/pymdown-extensions | closed | SuperFences: Preserve Tabs and \r | Bug Priority - Medium Severity - Major | The preserve tabs feature is useful for preserving the tab character in code blocks, but it makes fences get processed before whitespace normalization. This means before `\r\n` is transformed to `\n`. On a Windows system, this can cause an issue as the content is scanned assuming normalization. We should strip traili... | 1.0 | SuperFences: Preserve Tabs and \r - The preserve tabs feature is useful for preserving the tab character in code blocks, but it makes fences get processed before whitespace normalization. This means before `\r\n` is transformed to `\n`. On a Windows system, this can cause an issue as the content is scanned assuming n... | priority | superfences preserve tabs and r the preserve tabs feature is useful for preserving the tab character in code blocks but it makes fences get processed before whitespace normalization this means before r n is transformed to n on a windows system this can cause an issue as the content is scanned assuming n... | 1 |

476,723 | 13,749,106,737 | IssuesEvent | 2020-10-06 10:00:03 | buddyboss/buddyboss-platform | https://api.github.com/repos/buddyboss/buddyboss-platform | closed | User group invitation tab invited message not showing | bug hacktoberfest priority: medium | **Describe the bug**

When you go into the user profile groups tab and under that Invitation you will not show the invitation message. E.g https://learndash/members/mateo/groups/invites

**To Reproduce**

Steps to reproduce the behavior:

1. Invite member into your group with some invitation text.

2. Now login in... | 1.0 | User group invitation tab invited message not showing - **Describe the bug**

When you go into the user profile groups tab and under that Invitation you will not show the invitation message. E.g https://learndash/members/mateo/groups/invites

**To Reproduce**

Steps to reproduce the behavior:

1. Invite member int... | priority | user group invitation tab invited message not showing describe the bug when you go into the user profile groups tab and under that invitation you will not show the invitation message e g to reproduce steps to reproduce the behavior invite member into your group with some invitation text n... | 1 |

289,431 | 8,870,684,399 | IssuesEvent | 2019-01-11 10:13:44 | georchestra/georchestra | https://api.github.com/repos/georchestra/georchestra | closed | CAS - Relicates of config.jar in the JSP files | 2018 bug priority-medium | **Bug description**

We can find some traces of the shared config here:

https://github.com/georchestra/georchestra/blob/master/cas-server-webapp/src/main/webapp/WEB-INF/view/jsp/default/ui/includes/top.jsp#L38-L42

**geOrchestra version or branch**

since 18.06, also present on master currently

**Expected b... | 1.0 | CAS - Relicates of config.jar in the JSP files - **Bug description**

We can find some traces of the shared config here:

https://github.com/georchestra/georchestra/blob/master/cas-server-webapp/src/main/webapp/WEB-INF/view/jsp/default/ui/includes/top.jsp#L38-L42

**geOrchestra version or branch**

since 18.06... | priority | cas relicates of config jar in the jsp files bug description we can find some traces of the shared config here georchestra version or branch since also present on master currently expected behavior we should get rid of these shared variables previously defined in the old con... | 1 |

100,528 | 4,097,848,842 | IssuesEvent | 2016-06-03 04:41:54 | Putaitu/mondai | https://api.github.com/repos/Putaitu/mondai | closed | Reloading resources doesn't work immediately | estimate:1h priority:medium type:bug version:0.1.3 | This appears to be a GitHub issue, that there is an extended output cache on the API. Is there anything that can be done to circumvent it? | 1.0 | Reloading resources doesn't work immediately - This appears to be a GitHub issue, that there is an extended output cache on the API. Is there anything that can be done to circumvent it? | priority | reloading resources doesn t work immediately this appears to be a github issue that there is an extended output cache on the api is there anything that can be done to circumvent it | 1 |

438,820 | 12,651,991,474 | IssuesEvent | 2020-06-17 02:09:13 | minio/minio | https://api.github.com/repos/minio/minio | closed | "Invalid argument" errors due to setrlimit calls on macOS | community priority: medium | Hello!

When using MinIO on macOS, we frequently see log lines that look like the following:

```

API: SYSTEM()

Time: <time>

Error: invalid argument

1: /Users/user/go/pkg/mod/github.com/minio/minio@v0.0.0-20180508161510-54cd29b51c38/cmd/gateway-main.go:166:cmd.StartGateway()

```

These are ignorable and ever... | 1.0 | "Invalid argument" errors due to setrlimit calls on macOS - Hello!

When using MinIO on macOS, we frequently see log lines that look like the following:

```

API: SYSTEM()

Time: <time>

Error: invalid argument

1: /Users/user/go/pkg/mod/github.com/minio/minio@v0.0.0-20180508161510-54cd29b51c38/cmd/gateway-main.go... | priority | invalid argument errors due to setrlimit calls on macos hello when using minio on macos we frequently see log lines that look like the following api system time error invalid argument users user go pkg mod github com minio minio cmd gateway main go cmd startgateway ... | 1 |

533,130 | 15,577,446,931 | IssuesEvent | 2021-03-17 13:33:01 | Proof-Of-Humanity/proof-of-humanity-web | https://api.github.com/repos/Proof-Of-Humanity/proof-of-humanity-web | opened | Submit Profile Page - Reword Primary Document Button Text | priority: medium status: available type: enhancement :sparkles: | Instead of "primary document", we should say registry rules or something like that. | 1.0 | Submit Profile Page - Reword Primary Document Button Text - Instead of "primary document", we should say registry rules or something like that. | priority | submit profile page reword primary document button text instead of primary document we should say registry rules or something like that | 1 |

368,912 | 10,886,082,145 | IssuesEvent | 2019-11-18 11:46:39 | canonical-web-and-design/vanilla-framework | https://api.github.com/repos/canonical-web-and-design/vanilla-framework | closed | Contextual menu (left/center) doesn't grow to fit item's size | Priority: Medium |

Contextual menu pattern is defined to have a with between 10rem - 21rem and should adapt to contents (menu items) width.

This only works for default (right aligned) menu:

<img width="631" alt="screen shot 2018-11-19 at 17 10 16" src="https://user-images.githubusercontent.com/83575/48719886-cb5f6200-ec1e-11e8-8c66... | 1.0 | Contextual menu (left/center) doesn't grow to fit item's size -

Contextual menu pattern is defined to have a with between 10rem - 21rem and should adapt to contents (menu items) width.

This only works for default (right aligned) menu:

<img width="631" alt="screen shot 2018-11-19 at 17 10 16" src="https://user-ima... | priority | contextual menu left center doesn t grow to fit item s size contextual menu pattern is defined to have a with between and should adapt to contents menu items width this only works for default right aligned menu img width alt screen shot at src left aligned or centred menus... | 1 |

804,612 | 29,495,147,921 | IssuesEvent | 2023-06-02 16:17:54 | SolarWindss/Hearthstone.js | https://api.github.com/repos/SolarWindss/Hearthstone.js | closed | Move `validateCard` from interact to functions | priority: medium time: short improvement | This doesn't belong in interact

- [x] Move

- [x] Update src & tests | 1.0 | Move `validateCard` from interact to functions - This doesn't belong in interact

- [x] Move

- [x] Update src & tests | priority | move validatecard from interact to functions this doesn t belong in interact move update src tests | 1 |

99,940 | 4,074,675,549 | IssuesEvent | 2016-05-28 16:29:15 | BugBusterSWE/documentation | https://api.github.com/repos/BugBusterSWE/documentation | reopened | Aggiungere automazione APIDoc | priority:medium | *Documento in cui si trova il problema*:

Norme di Progetto

Activity #494

*Descrizione del problema*:

Aggiungere automazione APIDoc

Link task: [https://bugbusters.teamwork.com/tasks/6938623](https://bugbusters.teamwork.com/tasks/6938623) | 1.0 | Aggiungere automazione APIDoc - *Documento in cui si trova il problema*:

Norme di Progetto

Activity #494

*Descrizione del problema*:

Aggiungere automazione APIDoc

Link task: [https://bugbusters.teamwork.com/tasks/6938623](https://bugbusters.teamwork.com/tasks/6938623) | priority | aggiungere automazione apidoc documento in cui si trova il problema norme di progetto activity descrizione del problema aggiungere automazione apidoc link task | 1 |

481,788 | 13,891,889,024 | IssuesEvent | 2020-10-19 11:22:33 | sunpy/sunpy | https://api.github.com/repos/sunpy/sunpy | closed | Maps using the CD matrix are not correctly modified by resample | Bug(?) Close? Effort High Package Intermediate Priority Medium map | resample only changes the CDELT flags not CD if present.

| 1.0 | Maps using the CD matrix are not correctly modified by resample - resample only changes the CDELT flags not CD if present.

| priority | maps using the cd matrix are not correctly modified by resample resample only changes the cdelt flags not cd if present | 1 |

747,678 | 26,095,245,726 | IssuesEvent | 2022-12-26 18:18:08 | canaltin-byte/SWE573-SDP-Can | https://api.github.com/repos/canaltin-byte/SWE573-SDP-Can | closed | Home Page Name and surname | enhancement priority : Low Front-end Effort: Medium Home Page | User id should not be accessible. User Name and Surname should be there | 1.0 | Home Page Name and surname - User id should not be accessible. User Name and Surname should be there | priority | home page name and surname user id should not be accessible user name and surname should be there | 1 |

696,650 | 23,909,701,924 | IssuesEvent | 2022-09-09 06:54:44 | dmwm/CRABServer | https://api.github.com/repos/dmwm/CRABServer | opened | If extraJDL contains DESIRED_Sites, then submission fails after 25 tries | Priority: Medium | I submitted the task `220907_161045:dmapelli_crab_20220907_181041` that contained an `extraJDL` similar to

```python

config.Debug.extraJDL = [ '+DESIRED_Sites = "T1_DE_KIT,T2_US_Purdue,T3_UK_London_QMUL"' ]

```

and the submission failed with the error

```plaintext

Failure message from server: The CRAB se... | 1.0 | If extraJDL contains DESIRED_Sites, then submission fails after 25 tries - I submitted the task `220907_161045:dmapelli_crab_20220907_181041` that contained an `extraJDL` similar to

```python

config.Debug.extraJDL = [ '+DESIRED_Sites = "T1_DE_KIT,T2_US_Purdue,T3_UK_London_QMUL"' ]

```

and the submission failed ... | priority | if extrajdl contains desired sites then submission fails after tries i submitted the task dmapelli crab that contained an extrajdl similar to python config debug extrajdl and the submission failed with the error plaintext failure message from server the crab server backend... | 1 |

556,151 | 16,476,186,850 | IssuesEvent | 2021-05-24 05:47:30 | Repair-DeskPOS/RepairDesk-BUGS-IMPROVEMENTS | https://api.github.com/repos/Repair-DeskPOS/RepairDesk-BUGS-IMPROVEMENTS | closed | iPAD - Deposit Feature | Cellular Surgeons Medium Priority enhancement iPAD POS Register | **Suggestion # 1**

I've created a product under iPhone 7 >> deposit / bench fee which is not refundable. Lets say customer comes to our store and using the iPAD I check in this customer >> tap on checkout and collect the deposit amount. This works fine

However when I open the device to find device issue and edit th... | 1.0 | iPAD - Deposit Feature - **Suggestion # 1**

I've created a product under iPhone 7 >> deposit / bench fee which is not refundable. Lets say customer comes to our store and using the iPAD I check in this customer >> tap on checkout and collect the deposit amount. This works fine

However when I open the device to fin... | priority | ipad deposit feature suggestion i ve created a product under iphone deposit bench fee which is not refundable lets say customer comes to our store and using the ipad i check in this customer tap on checkout and collect the deposit amount this works fine however when i open the device to fin... | 1 |

252,702 | 8,039,294,016 | IssuesEvent | 2018-07-30 17:55:36 | systers/communities | https://api.github.com/repos/systers/communities | closed | Set up the repository with basic angular files | Category: Coding Difficulty: MEDIUM Priority: HIGH Program: GSoC Type: Enhancement | ## Description

As a user,

I need set up the repository,

so that I can restart the project with angular framework

## Acceptance Criteria

- Basic working Angular App

- Basic files and modules established

### Update [Required]

- README

- Create multiple new files

## Definition of Done

- [ ] All of the re... | 1.0 | Set up the repository with basic angular files - ## Description

As a user,

I need set up the repository,

so that I can restart the project with angular framework

## Acceptance Criteria

- Basic working Angular App

- Basic files and modules established

### Update [Required]

- README

- Create multiple new f... | priority | set up the repository with basic angular files description as a user i need set up the repository so that i can restart the project with angular framework acceptance criteria basic working angular app basic files and modules established update readme create multiple new files ... | 1 |

68,233 | 3,285,102,990 | IssuesEvent | 2015-10-28 19:07:44 | pantheon-systems/WordPress | https://api.github.com/repos/pantheon-systems/WordPress | closed | Allow for cache TTL of zero in cache plugin | priority:medium | In some instances such as development users dont want to have the minimum TTL of 600s. | 1.0 | Allow for cache TTL of zero in cache plugin - In some instances such as development users dont want to have the minimum TTL of 600s. | priority | allow for cache ttl of zero in cache plugin in some instances such as development users dont want to have the minimum ttl of | 1 |

725,678 | 24,971,367,564 | IssuesEvent | 2022-11-02 01:42:23 | aws-samples/aws-last-mile-delivery-hyperlocal | https://api.github.com/repos/aws-samples/aws-last-mile-delivery-hyperlocal | closed | Setup yarn dependency checks for all packages | enhancement dependencies priority:medium component:all effort:low | * first candidate would be [depcheck](https://github.com/depcheck/depcheck).

* to make sure that there are no missing dependencies in any packages | 1.0 | Setup yarn dependency checks for all packages - * first candidate would be [depcheck](https://github.com/depcheck/depcheck).

* to make sure that there are no missing dependencies in any packages | priority | setup yarn dependency checks for all packages first candidate would be to make sure that there are no missing dependencies in any packages | 1 |

94,280 | 3,923,997,027 | IssuesEvent | 2016-04-22 13:46:00 | EnvironmentAgency/pafs-user | https://api.github.com/repos/EnvironmentAgency/pafs-user | opened | CR:: User Requirements::Funding Sources:: Change the word 'expected' | Change Priority - 3 Medium Sprint 3 | **Issue:**

The word 'expected' caused participants to assume the funding types listed on this page, represent funding that is yet to be secured.

For example, if you have already secured some pri... | 1.0 | CR:: User Requirements::Funding Sources:: Change the word 'expected' - **Issue:**

The word 'expected' caused participants to assume the funding types listed on this page, represent funding that is yet to be secured.

| Difficulty: Easy Priority: Medium Status: Available Type: Enhancement good first issue | We'd like to measure the quality of apps written in Swift.

There are "language detectors" to determine what language is on which path.

It's necessary to implement **SwiftLanguageDetector** the same way as is [JavaScriptLanguageDetector](https://github.com/DXHeroes/dx-scanner/blob/master/src/detectors/JavaScript/J... | 1.0 | Create a language detector for Swift (~100 new lines of code) - We'd like to measure the quality of apps written in Swift.

There are "language detectors" to determine what language is on which path.

It's necessary to implement **SwiftLanguageDetector** the same way as is [JavaScriptLanguageDetector](https://githu... | priority | create a language detector for swift new lines of code we d like to measure the quality of apps written in swift there are language detectors to determine what language is on which path it s necessary to implement swiftlanguagedetector the same way as is implemented it s around new lines of ... | 1 |

650,780 | 21,416,982,859 | IssuesEvent | 2022-04-22 11:54:59 | sahar-avsh/SWE-599 | https://api.github.com/repos/sahar-avsh/SWE-599 | closed | Q&A - Creating a question UI | enhancement Show stopper Hard medium priority Q&A | For creating question:

- [x] A **form** shall open

- [x] User shall enter **title**

- [x] User shall be able to enter **description**

- [x] User shall be able to **attach** her/his **decks**

- [x] User shall be able to **attach** her/his **resources** | 1.0 | Q&A - Creating a question UI - For creating question:

- [x] A **form** shall open

- [x] User shall enter **title**

- [x] User shall be able to enter **description**

- [x] User shall be able to **attach** her/his **decks**

- [x] User shall be able to **attach** her/his **resources** | priority | q a creating a question ui for creating question a form shall open user shall enter title user shall be able to enter description user shall be able to attach her his decks user shall be able to attach her his resources | 1 |

224,225 | 7,467,858,395 | IssuesEvent | 2018-04-02 16:52:53 | enforcer574/smashclub | https://api.github.com/repos/enforcer574/smashclub | opened | Photo Slideshow on Home Page | Complexity: Medium Priority: 3 - Medium Type: User Request | Replace the static image on the "about" portlet of the home page with a timed slideshow. Admins can upload photos to a directory in the assets folder and they will be displayed in the rotation. A setting in the site_settings table controls the slideshow speed. | 1.0 | Photo Slideshow on Home Page - Replace the static image on the "about" portlet of the home page with a timed slideshow. Admins can upload photos to a directory in the assets folder and they will be displayed in the rotation. A setting in the site_settings table controls the slideshow speed. | priority | photo slideshow on home page replace the static image on the about portlet of the home page with a timed slideshow admins can upload photos to a directory in the assets folder and they will be displayed in the rotation a setting in the site settings table controls the slideshow speed | 1 |

406,501 | 11,894,049,716 | IssuesEvent | 2020-03-29 14:14:33 | robotframework/robotframework | https://api.github.com/repos/robotframework/robotframework | closed | Dynamic API: Add new `get_keyword_source` method | enhancement priority: medium rc 1 | This is needed to make it possible to add source information to Libdoc spec files (#3507) as well as to Libdoc's model objects (#3448). External tools like editors can then use this information to implement "go to definition" functionality.

This method needs to be able to return both the path to the source file and ... | 1.0 | Dynamic API: Add new `get_keyword_source` method - This is needed to make it possible to add source information to Libdoc spec files (#3507) as well as to Libdoc's model objects (#3448). External tools like editors can then use this information to implement "go to definition" functionality.

This method needs to be a... | priority | dynamic api add new get keyword source method this is needed to make it possible to add source information to libdoc spec files as well as to libdoc s model objects external tools like editors can then use this information to implement go to definition functionality this method needs to be able to... | 1 |

707,107 | 24,295,583,272 | IssuesEvent | 2022-09-29 09:46:52 | renovatebot/renovate | https://api.github.com/repos/renovatebot/renovate | closed | Upgrade versions in .tool-versions | type:feature help wanted priority-3-medium new package manager status:in-progress | **What would you like Renovate to be able to do?**

When upgrading node, ruby or the like, it should also change the version in `.tool-versions`, used by [asdf](https://github.com/asdf-vm/asdf).

**Describe the solution you'd like**

See above.

**Describe alternatives you've considered**

Doing it manually.

**A... | 1.0 | Upgrade versions in .tool-versions - **What would you like Renovate to be able to do?**

When upgrading node, ruby or the like, it should also change the version in `.tool-versions`, used by [asdf](https://github.com/asdf-vm/asdf).

**Describe the solution you'd like**

See above.

**Describe alternatives you've co... | priority | upgrade versions in tool versions what would you like renovate to be able to do when upgrading node ruby or the like it should also change the version in tool versions used by describe the solution you d like see above describe alternatives you ve considered doing it manually a... | 1 |

457,584 | 13,158,552,952 | IssuesEvent | 2020-08-10 14:30:06 | canonical-web-and-design/jaas-dashboard | https://api.github.com/repos/canonical-web-and-design/jaas-dashboard | opened | Leader information not shown in unit list | Model Details Priority: Medium | When a unit is in HA and a leader has been elected we should indicate as such.

I'd expect to see the leader status in the unit list but also in the unit details. @ziheliu214 can you add this to the unit list/details designs please.

related: https://discourse.juju.is/t/leadership-in-juju-operations-perspective/34... | 1.0 | Leader information not shown in unit list - When a unit is in HA and a leader has been elected we should indicate as such.

I'd expect to see the leader status in the unit list but also in the unit details. @ziheliu214 can you add this to the unit list/details designs please.

related: https://discourse.juju.is/t/... | priority | leader information not shown in unit list when a unit is in ha and a leader has been elected we should indicate as such i d expect to see the leader status in the unit list but also in the unit details can you add this to the unit list details designs please related | 1 |

375,306 | 11,102,411,831 | IssuesEvent | 2019-12-16 23:58:12 | AugurProject/augur | https://api.github.com/repos/AugurProject/augur | closed | ZeroXTrade does not enforce ERC1155_PROXY_ID in AssetData | Priority: Medium V2 Audit | While the off-chain calls to ZeroXTrade (createZeroXOrderFor) will encode the ERC1155_PROXY_ID (`0xa7cb5fb7`) into the first four bytes of the order that is submitted to 0x.fillOrder, there is no check inside the actual trade function to ensure that is what is being passed along to the 0x Exchange. Alternate PROXY_IDs ... | 1.0 | ZeroXTrade does not enforce ERC1155_PROXY_ID in AssetData - While the off-chain calls to ZeroXTrade (createZeroXOrderFor) will encode the ERC1155_PROXY_ID (`0xa7cb5fb7`) into the first four bytes of the order that is submitted to 0x.fillOrder, there is no check inside the actual trade function to ensure that is what is... | priority | zeroxtrade does not enforce proxy id in assetdata while the off chain calls to zeroxtrade createzeroxorderfor will encode the proxy id into the first four bytes of the order that is submitted to fillorder there is no check inside the actual trade function to ensure that is what is being passed along to... | 1 |

204,028 | 7,079,438,127 | IssuesEvent | 2018-01-10 09:34:02 | Automattic/liveblog | https://api.github.com/repos/Automattic/liveblog | closed | Remove reliance on GET params for single entry ajax requests | Priority::Medium enhancement | Scenario:

* A user loads a liveblog

* There are several key events shown in the key events widget

* Some of the key events have not loaded in the initial set of entries that are loaded by default

* The user clicks on one of the entries that isn't visible

* The plugin fires off an ajax request [appending an `inde... | 1.0 | Remove reliance on GET params for single entry ajax requests - Scenario:

* A user loads a liveblog

* There are several key events shown in the key events widget

* Some of the key events have not loaded in the initial set of entries that are loaded by default

* The user clicks on one of the entries that isn't visi... | priority | remove reliance on get params for single entry ajax requests scenario a user loads a liveblog there are several key events shown in the key events widget some of the key events have not loaded in the initial set of entries that are loaded by default the user clicks on one of the entries that isn t visi... | 1 |

828,734 | 31,840,844,496 | IssuesEvent | 2023-09-14 16:12:04 | bcgov/foi-flow | https://api.github.com/repos/bcgov/foi-flow | closed | Single Source of Truth - Divisions | Task dev medium priority | Title of ticket:

#### Description

This task has been created to Remove existing `Program Area Divisions ` Tables and related dependencies like `PROGRAM AREAS ?? ` inside DOC Reviewer DB , in order to keep single source of truth of Divisions across FOI FLOW app. At present DOC REVIEWER Web App (only?) using this tabl... | 1.0 | Single Source of Truth - Divisions - Title of ticket:

#### Description