Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 5 112 | repo_url stringlengths 34 141 | action stringclasses 3 values | title stringlengths 1 957 | labels stringlengths 4 795 | body stringlengths 1 259k | index stringclasses 12 values | text_combine stringlengths 96 259k | label stringclasses 2 values | text stringlengths 96 252k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

94,445 | 3,926,005,312 | IssuesEvent | 2016-04-22 21:17:41 | CCAFS/ccafs-ap | https://api.github.com/repos/CCAFS/ccafs-ap | closed | Create the CRP indicators section | auto-migrated enhancement Priority-Medium Section-General | ```

This section will be used by the coordinating unit users

```

Original issue reported on code.google.com by `carvajal.hernandavid@gmail.com` on 15 Apr 2014 at 7:39 | 1.0 | Create the CRP indicators section - ```

This section will be used by the coordinating unit users

```

Original issue reported on code.google.com by `carvajal.hernandavid@gmail.com` on 15 Apr 2014 at 7:39 | priority | create the crp indicators section this section will be used by the coordinating unit users original issue reported on code google com by carvajal hernandavid gmail com on apr at | 1 |

746,452 | 26,030,707,075 | IssuesEvent | 2022-12-21 20:55:18 | bounswe/bounswe2022group8 | https://api.github.com/repos/bounswe/bounswe2022group8 | opened | BE-39: Backend Unit Tests | Effort: Medium Priority: Medium Status: In Progress Coding Team: Backend | ### What's up?

We have already implemented some unit tests, which can be found [here](https://github.com/bounswe/bounswe2022group8/tree/master/App/backend/api/tests), to test our helper functions and models. Within the scope of this task, my aim is to enrich the content of our unit tests to cover all the API endpoints... | 1.0 | BE-39: Backend Unit Tests - ### What's up?

We have already implemented some unit tests, which can be found [here](https://github.com/bounswe/bounswe2022group8/tree/master/App/backend/api/tests), to test our helper functions and models. Within the scope of this task, my aim is to enrich the content of our unit tests t... | priority | be backend unit tests what s up we have already implemented some unit tests which can be found to test our helper functions and models within the scope of this task my aim is to enrich the content of our unit tests to cover all the api endpoints i ve implemented so far to do implement uni... | 1 |

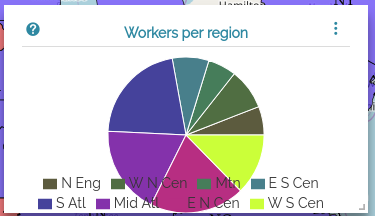

487,656 | 14,049,933,750 | IssuesEvent | 2020-11-02 10:59:05 | geosolutions-it/MapStore2 | https://api.github.com/repos/geosolutions-it/MapStore2 | closed | Widgets: Improve legend presentation for pie chart | Good first issue Priority: Medium Widgets enhancement investigation | # Description

The legend provided by recharts for pie charts is ugly.

It should be aligned on a side.

# Acceptance criteria AC

- The legend should be aligned on one side for pie charts

# Implementa... | 1.0 | Widgets: Improve legend presentation for pie chart - # Description

The legend provided by recharts for pie charts is ugly.

It should be aligned on a side.

# Acceptance criteria AC

- The legend should... | priority | widgets improve legend presentation for pie chart description the legend provided by recharts for pie charts is ugly it should be aligned on a side acceptance criteria ac the legend should be aligned on one side for pie charts implementation notes this is a sample with legend aligned to... | 1 |

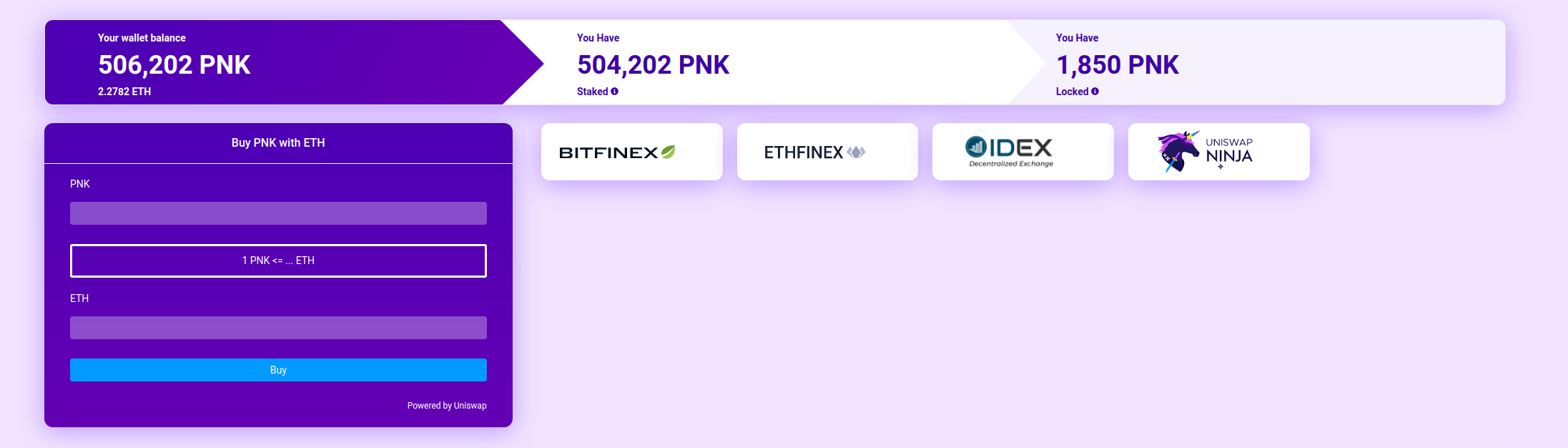

576,314 | 17,084,063,402 | IssuesEvent | 2021-07-08 09:29:57 | kleros/court | https://api.github.com/repos/kleros/court | closed | Maintenance on 'Where to Buy' | Priority: Medium Status: In Progress Type: Enhancement :sparkles: |

Ninja is retired, Ethfinex no longer exists, IDEX is dried up on liquidity. Also, cards have excess space on the right side (a.k.a. not centered). | 1.0 | Maintenance on 'Where to Buy' -

Ninja is retired, Ethfinex no longer exists, IDEX is dried up on liquidity. Also, cards have excess space on the right side (a.k.a. not centered... | priority | maintenance on where to buy ninja is retired ethfinex no longer exists idex is dried up on liquidity also cards have excess space on the right side a k a not centered | 1 |

499,970 | 14,483,486,328 | IssuesEvent | 2020-12-10 15:13:17 | xournalpp/xournalpp | https://api.github.com/repos/xournalpp/xournalpp | closed | Command line option --page not working in 1.1.0+dev | bug priority::medium regression | **Affects versions :**

- OS: Linux, Windows

- (Linux only) Desktop environment: X11

- Which version of libgtk do you use: 3.24.23

- Version of Xournal++: 1.1.0+dev (at least from git commit 8afb3841 to the latest version)

- Installation method: All of PPA, flatpak and building from source

**Describe the bug**... | 1.0 | Command line option --page not working in 1.1.0+dev - **Affects versions :**

- OS: Linux, Windows

- (Linux only) Desktop environment: X11

- Which version of libgtk do you use: 3.24.23

- Version of Xournal++: 1.1.0+dev (at least from git commit 8afb3841 to the latest version)

- Installation method: All of PPA, ... | priority | command line option page not working in dev affects versions os linux windows linux only desktop environment which version of libgtk do you use version of xournal dev at least from git commit to the latest version installation method all of ppa flatpak and... | 1 |

412,816 | 12,056,562,399 | IssuesEvent | 2020-04-15 14:37:54 | AY1920S2-CS2103-W14-1/main | https://api.github.com/repos/AY1920S2-CS2103-W14-1/main | closed | As a user, I want to make analysis based on student sign-up/ drop out rate, so that I can know the current running business makes money | feature.Student priority.Medium type.Story | We do not delete students from the database!? | 1.0 | As a user, I want to make analysis based on student sign-up/ drop out rate, so that I can know the current running business makes money - We do not delete students from the database!? | priority | as a user i want to make analysis based on student sign up drop out rate so that i can know the current running business makes money we do not delete students from the database | 1 |

754,646 | 26,397,192,566 | IssuesEvent | 2023-01-12 20:36:14 | inlang/inlang | https://api.github.com/repos/inlang/inlang | closed | auto-rebase when changes from main to inlang branch | type: improvement scope: editor priority: medium | ## Problem

#219 introduced an automatic switch to a branch called "inlang". Changes from other branches are not auto-merged into this branch. This is fine as long as a user is aware of that fact. The current flow does not make the user aware of the fact that an inlang branch is created that could be outdated.

... | 1.0 | auto-rebase when changes from main to inlang branch - ## Problem

#219 introduced an automatic switch to a branch called "inlang". Changes from other branches are not auto-merged into this branch. This is fine as long as a user is aware of that fact. The current flow does not make the user aware of the fact that an ... | priority | auto rebase when changes from main to inlang branch problem introduced an automatic switch to a branch called inlang changes from other branches are not auto merged into this branch this is fine as long as a user is aware of that fact the current flow does not make the user aware of the fact that an in... | 1 |

650,699 | 21,413,872,781 | IssuesEvent | 2022-04-22 08:59:10 | ros-controls/ros2_control | https://api.github.com/repos/ros-controls/ros2_control | closed | As a ros2_control user I would like to define transmissions for my robots | medium-priority | # Design

Port over [transmission_interface](https://github.com/ros-controls/ros_control/tree/melodic-devel/transmission_interface) | 1.0 | As a ros2_control user I would like to define transmissions for my robots - # Design

Port over [transmission_interface](https://github.com/ros-controls/ros_control/tree/melodic-devel/transmission_interface) | priority | as a control user i would like to define transmissions for my robots design port over | 1 |

138,378 | 5,332,746,618 | IssuesEvent | 2017-02-15 22:56:06 | cuappdev/tcat-ios | https://api.github.com/repos/cuappdev/tcat-ios | closed | Design Data Model | Priority: Medium | Regardless of what the design ends up looking like, we will need models of stops, routes, etc. We should plan out what models we want, what fields/functions they have, and how they're related (at least roughly).

| 1.0 | Design Data Model - Regardless of what the design ends up looking like, we will need models of stops, routes, etc. We should plan out what models we want, what fields/functions they have, and how they're related (at least roughly).

| priority | design data model regardless of what the design ends up looking like we will need models of stops routes etc we should plan out what models we want what fields functions they have and how they re related at least roughly | 1 |

718,411 | 24,716,334,697 | IssuesEvent | 2022-10-20 07:16:54 | AY2223S1-CS2103T-T14-3/tp | https://api.github.com/repos/AY2223S1-CS2103T-T14-3/tp | closed | Add birthdate field for patients | enhancement priority.Medium | - Implement `Birthdate` class to encapsulate birthdate of a patient, and modify relevant classes and tests to reflect latest change. | 1.0 | Add birthdate field for patients - - Implement `Birthdate` class to encapsulate birthdate of a patient, and modify relevant classes and tests to reflect latest change. | priority | add birthdate field for patients implement birthdate class to encapsulate birthdate of a patient and modify relevant classes and tests to reflect latest change | 1 |

523,402 | 15,181,072,903 | IssuesEvent | 2021-02-15 02:17:49 | silentium-labs/merlin-gql | https://api.github.com/repos/silentium-labs/merlin-gql | closed | new command should also allow to setup ngrok for `basic` template | Priority: Medium Status: Pending Type: Enhancement | otherwise we would be forcing everyone to start with the `example` template to get all the features and the intention is the opposite. We want people to use the `example` template to learn the framework and the `basic` template to start an actual project from scratch

| 1.0 | new command should also allow to setup ngrok for `basic` template - otherwise we would be forcing everyone to start with the `example` template to get all the features and the intention is the opposite. We want people to use the `example` template to learn the framework and the `basic` template to start an actual proj... | priority | new command should also allow to setup ngrok for basic template otherwise we would be forcing everyone to start with the example template to get all the features and the intention is the opposite we want people to use the example template to learn the framework and the basic template to start an actual proj... | 1 |

57,199 | 3,081,247,695 | IssuesEvent | 2015-08-22 14:38:35 | bitfighter/bitfighter | https://api.github.com/repos/bitfighter/bitfighter | opened | Playback games enhancements | enhancement imported Priority-Medium | _From [buckyballreaction](https://code.google.com/u/buckyballreaction/) on April 04, 2014 23:54:58_

When playing back games we should add (at least) the following features:

1. Some way to tell the name of the current player you're following

2. Have a quick key to travel to a 'person of interest'. Example CTRL+SPA... | 1.0 | Playback games enhancements - _From [buckyballreaction](https://code.google.com/u/buckyballreaction/) on April 04, 2014 23:54:58_

When playing back games we should add (at least) the following features:

1. Some way to tell the name of the current player you're following

2. Have a quick key to travel to a 'person o... | priority | playback games enhancements from on april when playing back games we should add at least the following features some way to tell the name of the current player you re following have a quick key to travel to a person of interest example ctrl space in a ctf game might go to whoever ha... | 1 |

458,634 | 13,179,023,757 | IssuesEvent | 2020-08-12 10:09:20 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | closed | Cron: scanDownloadServer get versions from both buckets | Category: Accounts Priority: Medium Status: Fixed | Get versions from both buckets with paging (re-calling url with marker of a last version) | 1.0 | Cron: scanDownloadServer get versions from both buckets - Get versions from both buckets with paging (re-calling url with marker of a last version) | priority | cron scandownloadserver get versions from both buckets get versions from both buckets with paging re calling url with marker of a last version | 1 |

430,490 | 12,453,876,746 | IssuesEvent | 2020-05-27 14:28:00 | bounswe/bounswe2020group7 | https://api.github.com/repos/bounswe/bounswe2020group7 | closed | Practice App - Search | Priority: Medium Status: Done Status: Under review Type: New Feature | Implementation of the search functionality of **practice-app**.

- [x] Implementation of the Code

- [x] Documentation

- [x] Endpoint Documentation

- [x] Unit Tests | 1.0 | Practice App - Search - Implementation of the search functionality of **practice-app**.

- [x] Implementation of the Code

- [x] Documentation

- [x] Endpoint Documentation

- [x] Unit Tests | priority | practice app search implementation of the search functionality of practice app implementation of the code documentation endpoint documentation unit tests | 1 |

54,856 | 3,071,449,147 | IssuesEvent | 2015-08-19 12:12:08 | pavel-pimenov/flylinkdc-r5xx | https://api.github.com/repos/pavel-pimenov/flylinkdc-r5xx | closed | Show search queries in quick search | Component-UI enhancement imported Priority-Medium Usability | _From [Tirael...@gmail.com](https://code.google.com/u/108935377450235604965/) on September 26, 2010 15:38:53_

1. The quick-search button in the Fly window does expand, but the list is always empty; please make it show the same queries as those saved in the regular search.

2. Properly implement the reset item in the saved... | 1.0 | Show search queries in quick search - _From [Tirael...@gmail.com](https://code.google.com/u/108935377450235604965/) on September 26, 2010 15:38:53_

1. The quick-search button in the Fly window does expand, but the list is always empty; please make it show the same queries as those saved in the regular search... | priority | show search queries in quick search from on september the quick search button in the fly window does expand but the list is always empty please make it show the same queries as those saved in the regular search properly implement the reset item in the saved queries otherwise the list... | 1 |

109,970 | 4,417,088,805 | IssuesEvent | 2016-08-15 02:04:21 | slackhq/node-slack-sdk | https://api.github.com/repos/slackhq/node-slack-sdk | closed | Support for incoming webhooks? | Feature Request Priority—Medium | Are y'all interested in exposing an object for posting [incoming webhooks](https://api.slack.com/incoming-webhooks)?

I was thinking an interface like this:

```js

var IncomingWebhook = require('@slack/client').IncomingWebhook;

// slackUrl is the webhook url provided when creating a new incoming webhook

// opt... | 1.0 | Support for incoming webhooks? - Are y'all interested in exposing an object for posting [incoming webhooks](https://api.slack.com/incoming-webhooks)?

I was thinking an interface like this:

```js

var IncomingWebhook = require('@slack/client').IncomingWebhook;

// slackUrl is the webhook url provided when creati... | priority | support for incoming webhooks are y all interested in exposing an object for posting i was thinking an interface like this js var incomingwebhook require slack client incomingwebhook slackurl is the webhook url provided when creating a new incoming webhook options would mirror the pay... | 1 |

9,052 | 2,607,904,819 | IssuesEvent | 2015-02-26 00:15:10 | chrsmithdemos/zen-coding | https://api.github.com/repos/chrsmithdemos/zen-coding | opened | Textpad Support | auto-migrated Priority-Medium Type-EditorSupport | ```

Any chance for Textpad support?

```

-----

Original issue reported on code.google.com by `Alarzel...@gmail.com` on 19 May 2010 at 6:31 | 1.0 | Textpad Support - ```

Any chance for Textpad support?

```

-----

Original issue reported on code.google.com by `Alarzel...@gmail.com` on 19 May 2010 at 6:31 | priority | textpad support any chance for textpad support original issue reported on code google com by alarzel gmail com on may at | 1 |

25,631 | 2,683,870,288 | IssuesEvent | 2015-03-28 12:09:16 | ConEmu/old-issues | https://api.github.com/repos/ConEmu/old-issues | closed | ConEmu.exe 2010.2.17 Conemu/Conhost crash on turn bufferheight ON | 2–5 stars bug imported Priority-Medium | _From [Zero...@gmail.com](https://code.google.com/u/103642962356045697092/) on February 18, 2010 00:30:19_

OS version: Win7 x86 Ultimate/W2003r2sp2

FAR version: 1.75 build 2621 x86/v2.0 (build 1406) x86 Bug description... 1) Conemu crashes when working with FAR2. The only plugin is conemu.dll (Without

the conemu.dll plugin there is no ... | 1.0 | ConEmu.exe 2010.2.17 Conemu/Conhost crash on turn bufferheight ON - _From [Zero...@gmail.com](https://code.google.com/u/103642962356045697092/) on February 18, 2010 00:30:19_

OS version: Win7 x86 Ultimate/W2003r2sp2

FAR version: 1.75 build 2621 x86/v2.0 (build 1406) x86 Bug description... 1) Conemu crashes when working with... | priority | conemu exe conemu conhost crash on turn bufferheight on from on february os version ultimate far version build build bug description conemu crashes when working with the only plugin is conemu dll without the conemu dll plugin there is no crash either via the button we do tu... | 1 |

3,887 | 2,541,648,936 | IssuesEvent | 2015-01-28 10:34:37 | jackjonesfashion/tasks | https://api.github.com/repos/jackjonesfashion/tasks | closed | Content - Update brand_footer with job links | In progress Priority: Medium Task | The current footer job links need to be split into stores and office.

Original ticket: jackjonesfashion/wiz/issues/77

Danish copy:

Job & Karriere butik

Job & Karriere kontor

English copy:

Jobs & Careers Stores

Jobs & Careers Office

Links:

JACK & JONES store: http://www.aboutbestseller.com/en/JobsConten... | 1.0 | Content - Update brand_footer with job links - The current footer job links need to be split into stores and office.

Original ticket: jackjonesfashion/wiz/issues/77

Danish copy:

Job & Karriere butik

Job & Karriere kontor

English copy:

Jobs & Careers Stores

Jobs & Careers Office

Links:

JACK & JONES stor... | priority | content update brand footer with job links the current footer job links need to be split into stores and office original ticket jackjonesfashion wiz issues danish copy job karriere butik job karriere kontor english copy jobs careers stores jobs careers office links jack jones store... | 1 |

57,296 | 3,081,254,854 | IssuesEvent | 2015-08-22 14:46:44 | bitfighter/bitfighter | https://api.github.com/repos/bitfighter/bitfighter | closed | Levels From Pleadies | enhancement imported invalid Priority-Medium | _From [Xelor41...@gmail.com](https://code.google.com/u/116935753572643655650/) on May 16, 2015 19:39:36_

Implement a System Where players can play Levels by getting them from pleadies from Pleiades Have a Search and and filter systems as well.

This would alow for Greater Level Variety as well as reduce Bitfighters ... | 1.0 | Levels From Pleadies - _From [Xelor41...@gmail.com](https://code.google.com/u/116935753572643655650/) on May 16, 2015 19:39:36_

Implement a System Where players can play Levels by getting them from pleadies from Pleiades Have a Search and and filter systems as well.

This would alow for Greater Level Variety as well... | priority | levels from pleadies from on may implement a system where players can play levels by getting them from pleadies from pleiades have a search and and filter systems as well this would alow for greater level variety as well as reduce bitfighters memory size original issue | 1 |

160,468 | 6,098,109,474 | IssuesEvent | 2017-06-20 06:27:48 | intel-analytics/BigDL | https://api.github.com/repos/intel-analytics/BigDL | opened | SpatialConvolutionMap's function for creating connection table is unimplemented in python. | medium priority python | SpatialConvolutionMap.full(nin: Int, nout: Int)

SpatialConvolutionMap.oneToOne(nfeat: Int)

SpatialConvolutionMap.random(nin: Int, nout: Int, nto: Int) | 1.0 | SpatialConvolutionMap's function for creating connection table is unimplemented in python. - SpatialConvolutionMap.full(nin: Int, nout: Int)

SpatialConvolutionMap.oneToOne(nfeat: Int)

SpatialConvolutionMap.random(nin: Int, nout: Int, nto: Int) | priority | spatialconvolutionmap s function for creating connection table is unimplemented in python spatialconvolutionmap full nin int nout int spatialconvolutionmap onetoone nfeat int spatialconvolutionmap random nin int nout int nto int | 1 |

30,657 | 2,724,508,145 | IssuesEvent | 2015-04-14 18:13:50 | CruxFramework/crux-widgets | https://api.github.com/repos/CruxFramework/crux-widgets | closed | Rest proxies does not recognizes methods on parent interfaces | bug imported Milestone-M14-C2 Priority-Medium | _From [thi...@cruxframework.org](https://code.google.com/u/114650528804514463329/) on April 08, 2014 17:01:44_

What steps will reproduce the problem? 1. Create a rest service that implements an interface

2. On that interface, declare a method with crux rest annotations What is the expected output? What do you see ins... | 1.0 | Rest proxies does not recognizes methods on parent interfaces - _From [thi...@cruxframework.org](https://code.google.com/u/114650528804514463329/) on April 08, 2014 17:01:44_

What steps will reproduce the problem? 1. Create a rest service that implements an interface

2. On that interface, declare a method with crux r... | priority | rest proxies does not recognizes methods on parent interfaces from on april what steps will reproduce the problem create a rest service that implements an interface on that interface declare a method with crux rest annotations what is the expected output what do you see instead crux does ... | 1 |

31,743 | 2,736,708,171 | IssuesEvent | 2015-04-19 18:04:44 | devsnd/cherrymusic | https://api.github.com/repos/devsnd/cherrymusic | closed | Cannot load playlists with single quote in the name | bug priority medium | Cannot load playlists with single quote in the name. :crying_cat_face: | 1.0 | Cannot load playlists with single quote in the name - Cannot load playlists with single quote in the name. :crying_cat_face: | priority | cannot load playlists with single quote in the name cannot load playlists with single quote in the name crying cat face | 1 |

196,036 | 6,923,547,512 | IssuesEvent | 2017-11-30 09:27:35 | datavisyn/tdp_core | https://api.github.com/repos/datavisyn/tdp_core | opened | implement option for custom security check for views | priority: medium type: feature | e.g. whether the user has a certain role

```python

.security(lambda user: user.has_role('role_name'))

``` | 1.0 | implement option for custom security check for views - e.g. whether the user has a certain role

```python

.security(lambda user: user.has_role('role_name'))

``` | priority | implement option for custom security check for views e g whether the user has a certain role python security lambda user user has role role name | 1 |

637,158 | 20,622,341,846 | IssuesEvent | 2022-03-07 18:41:12 | hapi-server/data-specification | https://api.github.com/repos/hapi-server/data-specification | closed | Need to revise old docs about Unicode | priority-medium | State that Unicode is not allowed in JSON and Unicode parameters are not allowed. This will be a minor revision. | 1.0 | Need to revise old docs about Unicode - State that Unicode is not allowed in JSON and Unicode parameters are not allowed. This will be a minor revision. | priority | need to revise old docs about unicode state that unicode is not allowed in json and unicode parameters are not allowed this will be a minor revision | 1 |

98,787 | 4,031,271,469 | IssuesEvent | 2016-05-18 16:37:17 | navacohen90/Click-a-Table | https://api.github.com/repos/navacohen90/Click-a-Table | opened | Course details page | 0 - Backlog points: 10 priority2 - MEDIUM | client side - andular + routing

<!---

@huboard:{"milestone_order":0.000244140625,"order":0.000244140625}

-->

| 1.0 | Course details page - client side - andular + routing

<!---

@huboard:{"milestone_order":0.000244140625,"order":0.000244140625}

-->

| priority | course details page client side andular routing huboard milestone order order | 1 |

807,363 | 29,997,890,886 | IssuesEvent | 2023-06-26 07:15:21 | gamefreedomgit/Maelstrom | https://api.github.com/repos/gamefreedomgit/Maelstrom | closed | [World Event] [Midsummer] [Quest] More Torch Tossing | Priority: Medium Quest Achievement | [//]: # (REMBEMBER! Add links to things related to the bug using for example:)

[//]: # (http://wowhead.com/)

[//]: # (cata-twinhead.twinstar.cz)

**Description:**

Until today I did not have any issues with the quest but today I have failed the quest 6 times, when you pick up the quest 2 braziers are disabled and... | 1.0 | [World Event] [Midsummer] [Quest] More Torch Tossing - [//]: # (REMBEMBER! Add links to things related to the bug using for example:)

[//]: # (http://wowhead.com/)

[//]: # (cata-twinhead.twinstar.cz)

**Description:**

Until today I did not have any issues with the quest but today I have failed the quest 6 times,... | priority | more torch tossing rembember add links to things related to the bug using for example cata twinhead twinstar cz description until today i did not have any issues with the quest but today i have failed the quest times when you pick up the quest braziers are disabled and ... | 1 |

600,234 | 18,291,968,197 | IssuesEvent | 2021-10-05 16:09:06 | parzh/iso4217 | https://api.github.com/repos/parzh/iso4217 | opened | Automatically export all definitions from `*.type.ts` files | Change: patch Domain: meta Pending: blocked Priority: medium Type: improvement | Currently, when another entity definition is added to a `*.type.ts` file, to re-export it through entry-point `index.ts` file it is necessary to reference this definition again via <code>export { <I>Entity</i> } from "<I>entity</I>.type.ts";</code> statement:

```diff

export type Type1 = any;

+ export type Type2 = ... | 1.0 | Automatically export all definitions from `*.type.ts` files - Currently, when another entity definition is added to a `*.type.ts` file, to re-export it through entry-point `index.ts` file it is necessary to reference this definition again via <code>export { <I>Entity</i> } from "<I>entity</I>.type.ts";</code> statement... | priority | automatically export all definitions from type ts files currently when another entity definition is added to a type ts file to re export it through entry point index ts file it is necessary to reference this definition again via export entity from entity type ts statement diff export ... | 1 |

458,715 | 13,180,247,570 | IssuesEvent | 2020-08-12 12:28:36 | mjuenema/python-terrascript | https://api.github.com/repos/mjuenema/python-terrascript | opened | Looking for new maintainer. | Priority: Medium help wanted | As many of you will have already noticed, unfortunately I don't have sufficient time to properly maintain python-terrascript anymore.

What was intended to be a just small experiment turned into my most popular Github project with more than 250 stars. In turn this means that at least 250 other people have a certain l... | 1.0 | Looking for new maintainer. - As many of you will have already noticed, unfortunately I don't have sufficient time to properly maintain python-terrascript anymore.

What was intended to be a just small experiment turned into my most popular Github project with more than 250 stars. In turn this means that at least 250... | priority | looking for new maintainer as many of you will have already noticed unfortunately i don t have sufficient time to properly maintain python terrascript anymore what was intended to be a just small experiment turned into my most popular github project with more than stars in turn this means that at least oth... | 1 |

583,585 | 17,393,141,183 | IssuesEvent | 2021-08-02 10:00:57 | stackabletech/t2 | https://api.github.com/repos/stackabletech/t2 | closed | Remove cluster-signing params in K3s installation | priority/medium status/blocked | Due to a bug in K3s, we had to add heaps of params to the installation: https://github.com/stackabletech/t2/commit/cd3047437e25fbb2fb6de0be4987c568216f76f1

The newest release (https://github.com/k3s-io/k3s/releases/tag/v1.21.1%2Bk3s1) has this problem fixed and we should be able to go without these params

- [x] r... | 1.0 | Remove cluster-signing params in K3s installation - Due to a bug in K3s, we had to add heaps of params to the installation: https://github.com/stackabletech/t2/commit/cd3047437e25fbb2fb6de0be4987c568216f76f1

The newest release (https://github.com/k3s-io/k3s/releases/tag/v1.21.1%2Bk3s1) has this problem fixed and we ... | priority | remove cluster signing params in installation due to a bug in we had to add heaps of params to the installation the newest release has this problem fixed and we should be able to go without these params remove params test | 1 |

242,268 | 7,840,282,019 | IssuesEvent | 2018-06-18 15:54:31 | DistrictDataLabs/yellowbrick | https://api.github.com/repos/DistrictDataLabs/yellowbrick | closed | Add a new parameter to the PCADecomposition visualizer in order to plot the feature columns in the projected space (biplot) | level: novice priority: medium type: feature | ### Proposal

Add a new parameter to the PCADecomposition class in order to have the option to plot the input columns.

I would like to have something like a biplot.

I can start working on the code but... | 1.0 | Add a new parameter to the PCADecomposition visualizer in order to plot the feature columns in the projected space (biplot) - ### Proposal

Add a new parameter to the PCADecomposition class in order to have the option to plot the input columns.

I would like to have something like a biplot.

are added at the very end of the exported file.

This is related to... | 1.0 | [Bug] Bookmark Issues - When setting bookmarks as start page, you will find that:

New bookmarks are not being sorted alphabetically as before,

although they are still sorted in the bookmark menu.

When exporting bookmarks, you will find that:

New bookmarks (see above) are added at the very end of the exported fil... | priority | bookmark issues when setting bookmarks as start page you will find that new bookmarks are not being sorted alphabetically as before although they are still sorted in the bookmark menu when exporting bookmarks you will find that new bookmarks see above are added at the very end of the exported file ... | 1 |

538,072 | 15,761,951,595 | IssuesEvent | 2021-03-31 10:31:59 | geosolutions-it/MapStore2 | https://api.github.com/repos/geosolutions-it/MapStore2 | closed | Review of layer Identify behavior from Search tool | Accepted Internal Priority: Medium enhancement | ## Description

<!-- A few sentences describing new feature -->

<!-- screenshot, video, or link to mockup/prototype are welcome -->

Currently, if you perform an Identify request from the Search tool, configuring it as reported below

is same as loaded schematisation (3Di API Client) | ⏰ Priority: 3. Medium | If this isn't the case, warn the user about this

Perhaps we can include this in the overview page of the upload wizard? | 1.0 | When new upload starts, check if loaded model (3Di Toolbox) is same as loaded schematisation (3Di API Client) - If this isn't the case, warn the user about this

Perhaps we can include this in the overview page of the upload wizard? | priority | when new upload starts check if loaded model toolbox is same as loaded schematisation api client if this isn t the case warn the user about this perhaps we can include this in the overview page of the upload wizard | 1 |

20,631 | 2,622,855,194 | IssuesEvent | 2015-03-04 08:07:22 | max99x/pagemon-chrome-ext | https://api.github.com/repos/max99x/pagemon-chrome-ext | opened | Highlighting almost never works. | auto-migrated Priority-Medium | ```

What steps will reproduce the problem? Please include a URL.

Trying to use Page Monitor on URL:

http://www.amazon.com/gp/movers-and-shakers/toys-and-games/ref=zg_bsms_toys-and-

games_home_all?pf_rd_p=1712313502&pf_rd_s=center-1&pf_rd_t=2301&pf_rd_i=home&pf_

rd_m=ATVPDKIKX0DER&pf_rd_r=1Y81NK8KQZRX8VS8Y3JW It show... | 1.0 | Highlighting almost never works. - ```

What steps will reproduce the problem? Please include a URL.

Trying to use Page Monitor on URL:

http://www.amazon.com/gp/movers-and-shakers/toys-and-games/ref=zg_bsms_toys-and-

games_home_all?pf_rd_p=1712313502&pf_rd_s=center-1&pf_rd_t=2301&pf_rd_i=home&pf_

rd_m=ATVPDKIKX0DER&pf... | priority | highlighting almost never works what steps will reproduce the problem please include a url trying to use page monitor on url games home all pf rd p pf rd s center pf rd t pf rd i home pf rd m pf rd r it shows alerts often but i almost never see any highlighting which basically makes it useles... | 1 |

239,201 | 7,787,271,034 | IssuesEvent | 2018-06-06 21:47:54 | robotframework/robotframework | https://api.github.com/repos/robotframework/robotframework | closed | Change source distribution format from `tar.gz` to `zip` | alpha 1 enhancement priority: medium | Our current source distribution format is `tar.gz` but we should change it to `zip` for these reasons:

- On Windows you can open `zip` files using the file explorer but `tar.gz` requires installing a separate tool.

- There have been problems using `pip` on Windows both with Jython and IronPython, requiring users to ... | 1.0 | Change source distribution format from `tar.gz` to `zip` - Our current source distribution format is `tar.gz` but we should change it to `zip` for these reasons:

- On Windows you can open `zip` files using the file explorer but `tar.gz` requires installing a separate tool.

- There have been problems using `pip` on W... | priority | change source distribution format from tar gz to zip our current source distribution format is tar gz but we should change it to zip for these reasons on windows you can open zip files using the file explorer but tar gz requires installing a separate tool there have been problems using pip on w... | 1 |

66,246 | 3,251,417,086 | IssuesEvent | 2015-10-19 09:41:21 | cs2103aug2015-w15-3j/main | https://api.github.com/repos/cs2103aug2015-w15-3j/main | closed | Different modes of working | priority.medium type.epic | ## Insert mode

Put the focus on input bar and start adding task without typing "add"

## Search mode

Put the focus on search bar | 1.0 | Different modes of working - ## Insert mode

Put the focus on input bar and start adding task without typing "add"

## Search mode

Put the focus on search bar | priority | different modes of working insert mode put the focus on input bar and start adding task without typing add search mode put the focus on search bar | 1 |

66,645 | 3,256,835,452 | IssuesEvent | 2015-10-20 15:22:30 | remkos/rads | https://api.github.com/repos/remkos/rads | opened | Replace GIA model | enhancement Priority-Medium | Peltier produced a new GIA model, called ICE6G. Apparently this appears to be a significant improvement for present-day GIA ...

http://www.atmosp.physics.utoronto.ca/~peltier/data.php | 1.0 | Replace GIA model - Peltier produced a new GIA model, called ICE6G. Apparently this appears to be a significant improvement for present-day GIA ...

http://www.atmosp.physics.utoronto.ca/~peltier/data.php | priority | replace gia model peltier produced a new gia model called apparently this appears to be a significant improvement for present day gia | 1 |

154,295 | 5,917,211,826 | IssuesEvent | 2017-05-22 12:43:03 | ngageoint/hootenanny | https://api.github.com/repos/ngageoint/hootenanny | opened | Finish ref ID preservation mod | Category: Algorithms Priority: Medium Status: Defined Type: Feature |

* BuildingMerger

* DualWaySplitter

* DuplicateWayRemover - Do this in #1518 instead

* ~~HighwaySnapMerger~~

* ~~IntersectionSplitter~~

* MergeNearbyNodes

* PartialNetworkMerger

* PoiPolygonMerger

* RemoveDuplicateAreaVisitor

* ~~SmallWayMerger~~

* WaySplitter | 1.0 | Finish ref ID preservation mod -

* BuildingMerger

* DualWaySplitter

* DuplicateWayRemover - Do this in #1518 instead

* ~~HighwaySnapMerger~~

* ~~IntersectionSplitter~~

* MergeNearbyNodes

* PartialNetworkMerger

* PoiPolygonMerger

* RemoveDuplicateAreaVisitor

* ~~SmallWayMerger~~

* WaySplitter | priority | finish ref id preservation mod buildingmerger dualwaysplitter duplicatewayremover do this in instead highwaysnapmerger intersectionsplitter mergenearbynodes partialnetworkmerger poipolygonmerger removeduplicateareavisitor smallwaymerger waysplitter | 1 |

207,839 | 7,134,200,441 | IssuesEvent | 2018-01-22 20:01:06 | salesagility/SuiteCRM | https://api.github.com/repos/salesagility/SuiteCRM | closed | Unable to Edit/Delete Group User after Creation | Fix Proposed Medium Priority Resolved: Next Release bug |

<!--- Provide a general summary of the issue in the **Title** above -->

<!--- Before you open an issue, please check if a similar issue already exists or has been closed before. --->

<!--- If you have discovered a security risk please report it by emailing security@suitecrm.com. This will be delivered to the prod... | 1.0 | Unable to Edit/Delete Group User after Creation -

<!--- Provide a general summary of the issue in the **Title** above -->

<!--- Before you open an issue, please check if a similar issue already exists or has been closed before. --->

<!--- If you have discovered a security risk please report it by emailing securit... | priority | unable to edit delete group user after creation issue after creating a new group user none of the action menu items work i cannot edit the group user or delete it expected behavior i should be able to edit or delete a group user actual behavior clicking on the ... | 1 |

23,748 | 2,663,014,708 | IssuesEvent | 2015-03-20 00:03:57 | FuturePilot/Pi2buntu | https://api.github.com/repos/FuturePilot/Pi2buntu | closed | Timezone is not configured | bug Fix Released Image Medium Priority | The timezone is not configured. Either set the timezone to something (which one?) or better, offer to configure it on first login. | 1.0 | Timezone is not configured - The timezone is not configured. Either set the timezone to something (which one?) or better, offer to configure it on first login. | priority | timezone is not configured the timezone is not configured either set the timezone to something which one or better offer to configure it on first login | 1 |

61,409 | 3,145,556,348 | IssuesEvent | 2015-09-14 18:34:54 | fusioneng/reactor | https://api.github.com/repos/fusioneng/reactor | opened | Automatically launch `vagrant rsync-auto` on `vagrant up` | priority:medium | Because we're dependent on `vagrant rsync-auto` to get our editable files in the machine, it would be nice if `vagrant up` spawned this process automatically.

I did some googlin' and couldn't find an out of the box solution for this.

Here's a related request on the Vagrant repo: https://github.com/mitchellh/vagr... | 1.0 | Automatically launch `vagrant rsync-auto` on `vagrant up` - Because we're dependent on `vagrant rsync-auto` to get our editable files in the machine, it would be nice if `vagrant up` spawned this process automatically.

I did some googlin' and couldn't find an out of the box solution for this.

Here's a related re... | priority | automatically launch vagrant rsync auto on vagrant up because we re dependent on vagrant rsync auto to get our editable files in the machine it would be nice if vagrant up spawned this process automatically i did some googlin and couldn t find an out of the box solution for this here s a related re... | 1 |

30,663 | 2,724,514,750 | IssuesEvent | 2015-04-14 18:15:58 | CruxFramework/crux-widgets | https://api.github.com/repos/CruxFramework/crux-widgets | closed | DeviceAdaptiveGrid Smallcolumn layout bug | bug imported Priority-Medium | _From [wes...@triggolabs.com](https://code.google.com/u/114691046055037037756/) on April 22, 2014 15:57:16_

The ActionColumn of DeviceAdaptiveGrid had its CSS changed and the size of detail button was improved to guarantee a better usability.

_Original issue: http://code.google.com/p/crux-framework/issues/detail?id=3... | 1.0 | DeviceAdaptiveGrid Smallcolumn layout bug - _From [wes...@triggolabs.com](https://code.google.com/u/114691046055037037756/) on April 22, 2014 15:57:16_

The ActionColumn of DeviceAdaptiveGrid had its CSS changed and the size of detail button was improved to guarantee a better usability.

_Original issue: http://code.go... | priority | deviceadaptivegrid smallcolumn layout bug from on april the actioncolumn of deviceadaptivegrid had its css changed and the size of detail button was improved to guarantee a better usability original issue | 1 |

101,987 | 4,149,291,307 | IssuesEvent | 2016-06-15 14:03:53 | dhis2/dhis2-gis | https://api.github.com/repos/dhis2/dhis2-gis | closed | Earth Engine: Support storing color scale and elevation value and | enhancement medium priority Needs server work | User should be able to select colour scale and specify an elevation value for improved visualisation. This should be stored with the favorite. | 1.0 | Earth Engine: Support storing color scale and elevation value and - User should be able to select colour scale and specify an elevation value for improved visualisation. This should be stored with the favorite. | priority | earth engine support storing color scale and elevation value and user should be able to select colour scale and specify an elevation value for improved visualisation this should be stored with the favorite | 1 |

76,688 | 3,491,051,722 | IssuesEvent | 2016-01-04 13:53:51 | ngageoint/hootenanny-ui | https://api.github.com/repos/ngageoint/hootenanny-ui | opened | Click on review table zooms in too much | Category: UI Priority: Medium Status: New/Undefined Type: Bug | Create zoom threshold (level 18) for clicking on review table (red/blue). | 1.0 | Click on review table zooms in too much - Create zoom threshold (level 18) for clicking on review table (red/blue). | priority | click on review table zooms in too much create zoom threshold level for clicking on review table red blue | 1 |

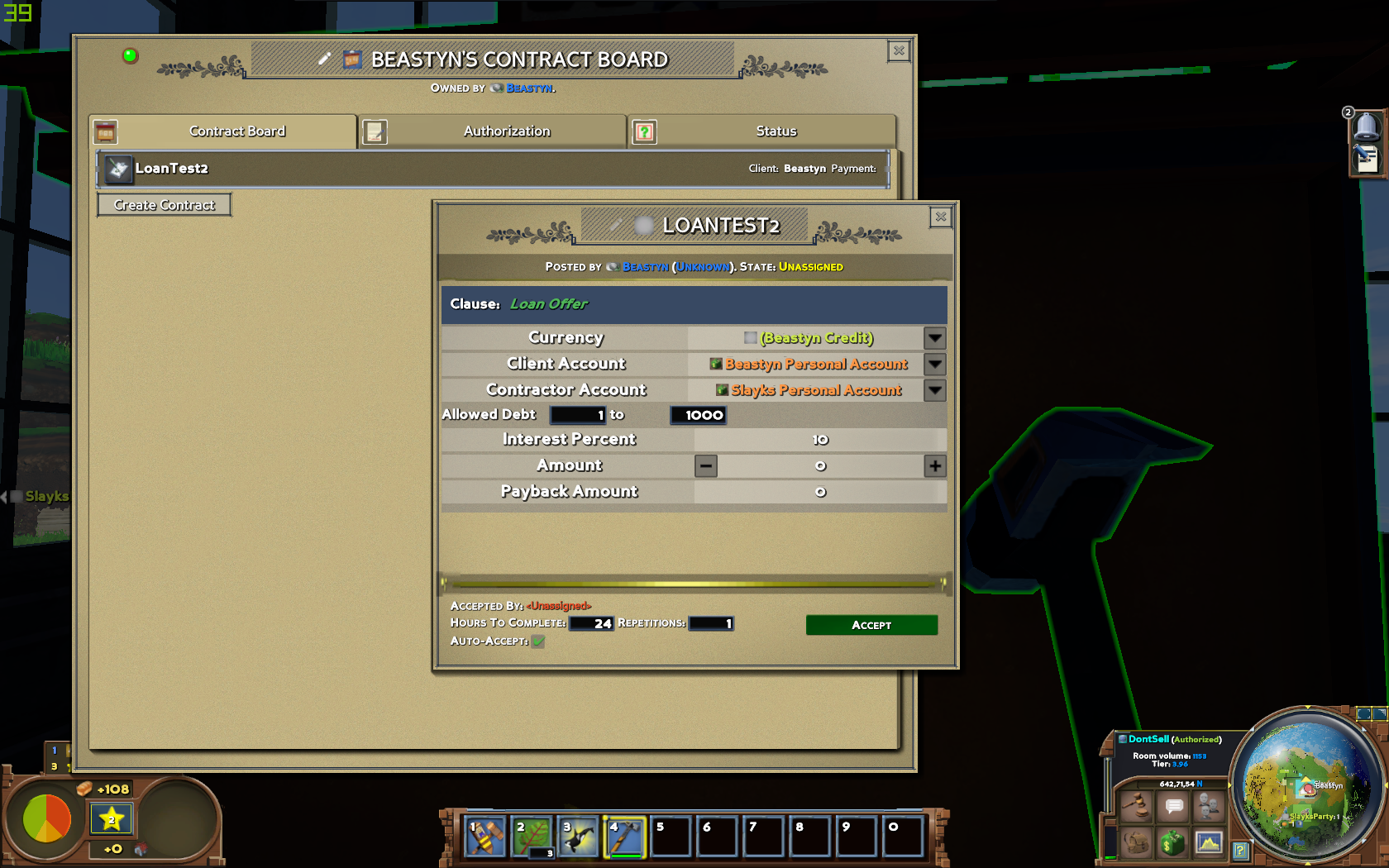

378,848 | 11,209,794,694 | IssuesEvent | 2020-01-06 11:25:34 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | closed | [0.9.0 staging-1308] Contract notification when start to join | Fixed Medium Priority | Step to reproduce:

- spawn contract board.

- another player add contract like:

- take it.

- pay off all debt:

- take it.

- pay off all debt:

.

Currently, we're waiting for discord.js to release v13 whi... | 1.0 | Add support for Slash Commands. - #### :zap: Describe the New Feature

Add support for slash commands. This will be necessary for when Discord starts [requiring verified bots a especial application to access message content](https://support-dev.discord.com/hc/en-us/articles/4404772028055).

Currently, we're waiting... | priority | add support for slash commands zap describe the new feature add support for slash commands this will be necessary for when discord starts currently we re waiting for discord js to release which will have support for slash commands | 1 |

704,389 | 24,195,163,315 | IssuesEvent | 2022-09-23 22:27:11 | MetaMask/metamask-mobile | https://api.github.com/repos/MetaMask/metamask-mobile | closed | Error message while connecting Ledger, even though it did connect successfully | type-bug needs-qa Priority - Medium team-key-management Ledger | **Describe the bug**

_A clear and concise description of what the bug is_

When connecting my Ledger to my Android device an error screen appeared when it shouldn't have

**Screenshots**

_If applicable, add screenshots or links to help explain your problem_

on March 25, 2014 00:09:07_

<b>What steps will reproduce the problem?</b>

1. msm + JavaSerilization + spring mvc

2. <Manager className="de.javakaffee.web.msm.MemcachedBackupSessionManager"

sticky="false"

... | 1.0 | Multiple session id's getting created in msm + memcached - _From [yoga.und...@gmail.com](https://code.google.com/u/111652832360445102669/) on March 25, 2014 00:09:07_

<b>What steps will reproduce the problem?</b>

1. msm + JavaSerilization + spring mvc

2. <Manager className="de.javakaffee.web.msm.Memcac... | priority | multiple session id s getting created in msm memcached from on march what steps will reproduce the problem msm javaserilization spring mvc lt manager classname de javakaffee web msm memcachedbackupsessionmanager sticky false memcachednod... | 1 |

577,187 | 17,104,963,964 | IssuesEvent | 2021-07-09 16:16:02 | umple/umple | https://api.github.com/repos/umple/umple | closed | Crash in SQL generation of Association Specializations first manual example | Component-SemanticsAndGen Diffic-Med Priority-Medium associations bug sql | There is a compiler error on example of Association Specialization when execute SQL generate; I tested on-line and command line; below, first lines of log errors:

Couldn't read results from the Umple compiler!

java.lang.StackOverflowError

at cruise.umple.compiler.Multiplicity.parseInt(Umple_CodeAssociation.ump:65... | 1.0 | Crash in SQL generation of Association Specializations first manual example - There is a compiler error on example of Association Specialization when execute SQL generate; I tested on-line and command line; below, first lines of log errors:

Couldn't read results from the Umple compiler!

java.lang.StackOverflowError... | priority | crash in sql generation of association specializations first manual example there is a compiler error on example of association specialization when execute sql generate i tested on line and command line below first lines of log errors couldn t read results from the umple compiler java lang stackoverflowerror... | 1 |

540,409 | 15,811,267,185 | IssuesEvent | 2021-04-05 01:54:41 | Kedyn/fusliez-notes | https://api.github.com/repos/Kedyn/fusliez-notes | closed | The crew mate icon is now very small. | Priority: Medium Status: In Progress Type: Maintenance | First of all, thank you for continuing to support a very useful tool.

With this update, the crew mate icon is now very small.

I want the size to be selectable.

Also, the previous drag-drop placement was convenient.

I want it to be selectable.

| 1.0 | The crew mate icon is now very small. - First of all, thank you for continuing to support a very useful tool.

With this update, the crew mate icon is now very small.

I want the size to be selectable.

Also, the previous drag-drop placement was convenient.

I want it to be selectable.

| priority | the crew mate icon is now very small first of all thank you for continuing to support a very useful tool with this update the crew mate icon is now very small i want the size to be selectable also the previous drag drop placement was convenient i want it to be selectable | 1 |

781,762 | 27,448,116,493 | IssuesEvent | 2023-03-02 15:33:23 | zowe/vscode-extension-for-zowe | https://api.github.com/repos/zowe/vscode-extension-for-zowe | closed | Regression in job-search through favorites | bug priority-medium severity-medium | There might be a slight issue with the updates made to the labels.

Based on @adam-wolfe's comments during yesterday's call, we looked into things a bit closer regarding favorites and found that the updated labels break the searching capabilities from a favorited job-search.

### Description

x86_64 macOS release:

https://gist.githubusercontent.com/mbautin/190048c188690a44da31fa43ab8cf0c2/raw

pgregress part only: https://gist.githubusercontent.com/mbautin/172bf6d956705c47c9a75b8676a082dc/raw

Important part: `Query error... | 1.0 | [DocDB] [YSQL] org.yb.pgsql.TestPgRegressTablegroup fails in multiple build types, cannot decode value type kGroupEnd - Jira Link: [DB-4155](https://yugabyte.atlassian.net/browse/DB-4155)

### Description

x86_64 macOS release:

https://gist.githubusercontent.com/mbautin/190048c188690a44da31fa43ab8cf0c2/raw

pgregress ... | priority | org yb pgsql testpgregresstablegroup fails in multiple build types cannot decode value type kgroupend jira link description macos release pgregress part only important part query error cannot decode value type kgroupend from the key encoding format release lto w... | 1 |

379,911 | 11,244,049,937 | IssuesEvent | 2020-01-10 05:45:07 | buttercup/buttercup-browser-extension | https://api.github.com/repos/buttercup/buttercup-browser-extension | closed | Focus on search field | Priority: Medium Status: Pending Type: Enhancement | I would like to request a little but handy feature - focus on search field in Buttercup dialog when a Buttercup button in an input field is pressed, as the relevant entry is not always displayed. Well, the search field can be focused by pressing `Tab` key, but those are four strokes.

For instance, the search field has... | 1.0 | Focus on search field - I would like to request a little but handy feature - focus on search field in Buttercup dialog when a Buttercup button in an input field is pressed, as the relevant entry is not always displayed. Well, the search field can be focused by pressing `Tab` key, but those are four strokes.

For instan... | priority | focus on search field i would like to request a little but handy feature focus on search field in buttercup dialog when a buttercup button in an input field is pressed as the relevant entry is not always displayed well the search field can be focused by pressing tab key but those are four strokes for instan... | 1 |

748,616 | 26,129,250,950 | IssuesEvent | 2022-12-29 00:43:11 | michelegargiulo/MineCrashRespawn | https://api.github.com/repos/michelegargiulo/MineCrashRespawn | opened | Add Final Tier or Ore Processing, using Impetus | Enhancement Priority Medium Multiblocked | And possibly also other capabilities (Thaumcraft aspects, EMC, GP, Starlight, etc) | 1.0 | Add Final Tier or Ore Processing, using Impetus - And possibly also other capabilities (Thaumcraft aspects, EMC, GP, Starlight, etc) | priority | add final tier or ore processing using impetus and possibly also other capabilities thaumcraft aspects emc gp starlight etc | 1 |

577,093 | 17,103,336,923 | IssuesEvent | 2021-07-09 14:17:39 | jpmorganchase/modular | https://api.github.com/repos/jpmorganchase/modular | closed | Global coverage vs local package coverage. | discussion medium priority | Since adding my partial test change back to Credit, times have improved a lot (a recent build took 12mins as opposed to 1h 30mins), but now instead of reporting an average coverage in the changed package, we now report the average coverage of the changed file(s).

First complaint from someone with a failing build, "b... | 1.0 | Global coverage vs local package coverage. - Since adding my partial test change back to Credit, times have improved a lot (a recent build took 12mins as opposed to 1h 30mins), but now instead of reporting an average coverage in the changed package, we now report the average coverage of the changed file(s).

First com... | priority | global coverage vs local package coverage since adding my partial test change back to credit times have improved a lot a recent build took as opposed to but now instead of reporting an average coverage in the changed package we now report the average coverage of the changed file s first complaint from... | 1 |

673,794 | 23,031,500,644 | IssuesEvent | 2022-07-22 14:19:54 | yugabyte/yugabyte-db | https://api.github.com/repos/yugabyte/yugabyte-db | closed | [backup] Restore can't create tables dropped after backup creation | kind/bug area/docdb priority/medium status/awaiting-triage | Jira Link: [DB-3031](https://yugabyte.atlassian.net/browse/DB-3031)

### Description

Case:

1. Create cluster RF=3, 5 nodes, c5.2xlarge

2. Create 10 tables and load 100 MB data each (does not matter how many). YSQL, all columns is `varchar`

3. Wait 1 min and create a backup with platform UI, on AWS S3

4. Drop tab... | 1.0 | [backup] Restore can't create tables dropped after backup creation - Jira Link: [DB-3031](https://yugabyte.atlassian.net/browse/DB-3031)

### Description

Case:

1. Create cluster RF=3, 5 nodes, c5.2xlarge

2. Create 10 tables and load 100 MB data each (does not matter how many). YSQL, all columns is `varchar`

3. Wa... | priority | restore can t create tables dropped after backup creation jira link description case create cluster rf nodes create tables and load mb data each does not matter how many ysql all columns is varchar wait min and create a backup with platform ui on aws drop table... | 1 |

496,351 | 14,345,580,869 | IssuesEvent | 2020-11-28 19:44:54 | NikitaKit1998/datakyt | https://api.github.com/repos/NikitaKit1998/datakyt | closed | Write employee's name and equipment id on QR code | priority: medium type: feature | **Is your feature request related to a problem? Please describe.**

It would be convenient to have the employee's first/last name and equipment id written on the QR code image (sticker). This would help to quickly identify who owns some equipment without looking into the db.

**Describe the solution you'd like**

Method that writes... | 1.0 | Write employee's name and equipment id on QR code - **Is your feature request related to a problem? Please describe.**

It would be convenient to have the employee's first/last name and equipment id written on the QR code image (sticker). This would help to quickly identify who owns some equipment without looking into the db.

**D... | priority | write employee s name and equipment id on qr code is your feature request related to a problem please describe it would be convenient to have the employee s first last name and equipment id written on the qr code image sticker this would help to quickly identify who owns some equipment without looking into the db d... | 1 |

577,095 | 17,103,383,873 | IssuesEvent | 2021-07-09 14:20:42 | f-lab-edu/conference-reservation | https://api.github.com/repos/f-lab-edu/conference-reservation | opened | Fix the code convention of Id in UserLoginDto | Priority: Medium Type: Feature/Function | - Additions / improvements

* The private field of UserLoginDto is named `Id`. I suggest renaming it to `id`.

| 1.0 | Fix the code convention of Id in UserLoginDto - - Additions / improvements

* The private field of UserLoginDto is named `Id`. I suggest renaming it to `id`.

| priority | fix the code convention of id in userlogindto additions improvements the private field of userlogindto is named id i suggest renaming it to id | 1 |

671,230 | 22,749,664,145 | IssuesEvent | 2022-07-07 12:09:42 | argosp/trialdash | https://api.github.com/repos/argosp/trialdash | closed | Dump and load an experiment | enhancement Wait for QA Priority Medium done | Add a feature to dump the entire experiment to a JSON file, and the possibility to upload it.

I think the images should be saved as binary-ascii data in the file.

If there are problems, let's talk about it. | 1.0 | Dump and load an experiment - Add a feature to dump the entire experiment to a JSON file, and the possibility to upload it.

I think the images should be saved as binary-ascii data in the file.

If there are problems, let's talk about it. | priority | dump and load an experiment add a feature to dump the entire experiment to a json file and the possibility to upload it i think the images should be saved as binary ascii data in the file if there are problems lets talk about it | 1 |

487,045 | 14,018,365,301 | IssuesEvent | 2020-10-29 16:44:40 | AY2021S1-CS2103-F09-2/tp | https://api.github.com/repos/AY2021S1-CS2103-F09-2/tp | closed | Update Suspects of Investigation Case | priority.Medium type.Story | As an investigator, I can update the list of suspects that is linked to an investigation case. | 1.0 | Update Suspects of Investigation Case - As an investigator, I can update the list of suspects that is linked to an investigation case. | priority | update suspects of investigation case as an investigator i can update the list of suspects that is linked to an investigation case | 1 |

423,323 | 12,294,396,544 | IssuesEvent | 2020-05-10 23:42:40 | minio/minio | https://api.github.com/repos/minio/minio | closed | Minio Internal Blobstore for PCF - Static IP Address for Load Balancer | community priority: medium | Feature Request:

Currently the Minio Internal Blobstore for PCF tile does not have a way to assign a static IP address to the Minio load balancer. Currently you can add IPs for the Minio nodes but not the load balancer. This makes it a problem when you redeploy and the load balancer IP address may change. It would be ... | 1.0 | Minio Internal Blobstore for PCF - Static IP Address for Load Balancer - Feature Request:

Currently the Minio Internal Blobstore for PCF tile does not have a way to assign a static IP address to the Minio load balancer. Currently you can add IPs for the Minio nodes but not the load balancer. This makes it a problem wh... | priority | minio internal blobstore for pcf static ip address for load balancer feature request currently the minio internal blobstore for pcf tile does not have a way to assign a static ip address to the minio load balancer currently you can add ips for the minio nodes but not the load balancer this makes it a problem wh... | 1 |

232,848 | 7,680,841,557 | IssuesEvent | 2018-05-16 04:10:05 | socialappslab/appcivist-pb-client | https://api.github.com/repos/socialappslab/appcivist-pb-client | closed | Fix translation of type of contribution in the stats of campaign page | Priority: Medium |

### What's the problem?

The text that describes the status of proposals in the little stats of the campaign is translated in the wrong gender (should be "Publicadas" not "Publicado" because it refers to Propostas).

See example at => `https://testpb.appcivist.org/#/v2/p/assembly/cc699ccf-ffb1-47e9-8b96-2a7e7012324d/ca... | 1.0 | Fix translation of type of contribution in the stats of campaign page -

### What's the problem?

The text that describes the status of proposals in the little stats of the campaign is translated in the wrong gender (should be "Publicadas" not "Publicado" because it refers to Propostas).

See example at => `https://test... | priority | fix translation of type of contribution in the stats of campaign page what s the problem the text that describes the status of proposals in the little stats of the campaign is translated in the wrong gender should be publicadas not publicado because it refers to propostas see example at ... | 1 |

142,015 | 5,448,151,960 | IssuesEvent | 2017-03-07 15:16:25 | stats4sd/wordcloud-app | https://api.github.com/repos/stats4sd/wordcloud-app | closed | Add front page with big, easy buttons | 1 - Ready Impact-High Priority-Medium Size-Small Type-feature | 2 buttons - data session and "end of day review" (or something like that!) | 1.0 | Add front page with big, easy buttons - 2 buttons - data session and "end of day review" (or something like that!) | priority | add front page with big easy buttons buttons data session and end of day review or something like that | 1 |

763,324 | 26,752,446,964 | IssuesEvent | 2023-01-30 20:47:37 | yugabyte/yugabyte-db | https://api.github.com/repos/yugabyte/yugabyte-db | closed | [DocDB] Deleting snapshot never finishes | kind/bug area/docdb priority/medium | Jira Link: [[DB-417]](https://yugabyte.atlassian.net/browse/DB-417)

### Description

On our cluster a backup was attempted on the 25th April 2022, this created a database snapshot, around 15 minutes after creating the snapshot the snapshot was deleted (or it's deletion was started), since then it has been unsuccessful... | 1.0 | [DocDB] Deleting snapshot never finishes - Jira Link: [[DB-417]](https://yugabyte.atlassian.net/browse/DB-417)

### Description

On our cluster a backup was attempted on the 25th April 2022, this created a database snapshot, around 15 minutes after creating the snapshot the snapshot was deleted (or it's deletion was st... | priority | deleting snapshot never finishes jira link description on our cluster a backup was attempted on the april this created a database snapshot around minutes after creating the snapshot the snapshot was deleted or it s deletion was started since then it has been unsuccessfully trying to find the ... | 1 |

41,498 | 2,869,010,362 | IssuesEvent | 2015-06-05 22:33:38 | dart-lang/intl | https://api.github.com/repos/dart-lang/intl | closed | Escaping for plural and gender expressions using the JSON format seems to be broken | AssumedStale bug Priority-Medium | <a href="https://github.com/alan-knight"><img src="https://avatars.githubusercontent.com/u/3476088?v=3" align="left" width="96" height="96"hspace="10"></img></a> **Issue by [alan-knight](https://github.com/alan-knight)**

_Originally opened as dart-lang/sdk#19403_

----

From the dart mailing list:

...

I translated it,... | 1.0 | Escaping for plural and gender expressions using the JSON format seems to be broken - <a href="https://github.com/alan-knight"><img src="https://avatars.githubusercontent.com/u/3476088?v=3" align="left" width="96" height="96"hspace="10"></img></a> **Issue by [alan-knight](https://github.com/alan-knight)**

_Originally o... | priority | escaping for plural and gender expressions using the json format seems to be broken issue by originally opened as dart lang sdk from the dart mailing list i translated it and produced the following file for generate from json dart quot locale quot quot en quot quot playertexts... | 1 |

51,817 | 3,014,304,322 | IssuesEvent | 2015-07-29 14:15:33 | scalan/scalan | https://api.github.com/repos/scalan/scalan | opened | Implement Shapeless-based tuples/case classes access | enhancement medium priority | This should help with #1 and make for simpler graphs and more efficient output. | 1.0 | Implement Shapeless-based tuples/case classes access - This should help with #1 and make for simpler graphs and more efficient output. | priority | implement shapeless based tuples case classes access this should help with and make for simpler graphs and more efficient output | 1 |

15,944 | 2,611,533,928 | IssuesEvent | 2015-02-27 06:04:36 | chrsmith/hedgewars | https://api.github.com/repos/chrsmith/hedgewars | closed | keyboard problems in engine chat | auto-migrated Component-Engine Milestone-NextRelease Priority-Medium Type-Regression | ```

1. chat history navigation is not working

2. nick completion is not working

3. commands like '/fullscreen' do not work in multiplayer mode

Tested under OS X

```

Original issue reported on code.google.com by `vittorio...@gmail.com` on 23 Oct 2013 at 2:00 | 1.0 | keyboard problems in engine chat - ```

1. chat history navigation is not working

2. nick completion is not working

3. commands like '/fullscreen' do not work in multiplayer mode

Tested under OS X

```

Original issue reported on code.google.com by `vittorio...@gmail.com` on 23 Oct 2013 at 2:00 | priority | keyboard problems in engine chat chat history navigation is not working nick completion is not working commands like fullscreen do not work in multiplayer mode tested under os x original issue reported on code google com by vittorio gmail com on oct at | 1 |

376,949 | 11,160,574,978 | IssuesEvent | 2019-12-26 10:12:38 | qlcchain/go-qlc | https://api.github.com/repos/qlcchain/go-qlc | closed | sign the released binaries with gpg | Priority: Medium Type: Maintenance | ### Description of the issue

import gpg private key to CI

### Issue-Type

- [ ] bug report

- [x] feature request

- [ ] Documentation improvement | 1.0 | sign the released binaries with gpg - ### Description of the issue

import gpg private key to CI

### Issue-Type

- [ ] bug report

- [x] feature request

- [ ] Documentation improvement | priority | sign the released binaries with gpg description of the issue import gpg private key to ci issue type bug report feature request documentation improvement | 1 |

734,081 | 25,337,710,092 | IssuesEvent | 2022-11-18 18:20:51 | zowe/zowe-explorer-intellij | https://api.github.com/repos/zowe/zowe-explorer-intellij | closed | 'Zowe Explorer 0.2.1' installation does remove 'For Mainframe 0.6.1' from IntellJ 2021.3.2 and vice versa | bug priority-medium severity-medium | Hi,

I have upgraded 'For Mainframe' plugin to v 0.6.1. Then I installed 'Zowe Explorer' v0.2.1.

I expected to see both plugins available in my IntellJ 2021.3.2 Community Edition.

But it seems 'Zowe Explorer' replaced 'For Mainframe'.

I tried to install 'For Mainframe' plugin once again.

This time 'Zowe Explorer' d... | 1.0 | 'Zowe Explorer 0.2.1' installation does remove 'For Mainframe 0.6.1' from IntellJ 2021.3.2 and vice versa - Hi,

I have upgraded 'For Mainframe' plugin to v 0.6.1. Then I installed 'Zowe Explorer' v0.2.1.

I expected to see both plugins available in my IntellJ 2021.3.2 Community Edition.

But it seems 'Zowe Explorer' r... | priority | zowe explorer installation does remove for mainframe from intellj and vice versa hi i have upgraded for mainframe plugin to v then i installed zowe explorer i expected to see both plugins available in my intellj community edition but it seems zowe explorer replaced... | 1 |

71,739 | 3,367,617,954 | IssuesEvent | 2015-11-22 10:19:05 | music-encoding/music-encoding | https://api.github.com/repos/music-encoding/music-encoding | closed | <instrumentation> and subelements should allow att.edit | Priority: Medium | _From [klaus.re...@gmail.com](https://code.google.com/u/105834722588578911678/) on January 29, 2015 08:11:10_

At some point it may not be possible to identify the original instrumentation of a work (completely). So it should be possible to mark the coding with @-cert.

_Original issue: http://code.google.com/p/music-enco... | 1.0 | <instrumentation> and subelements should allow att.edit - _From [klaus.re...@gmail.com](https://code.google.com/u/105834722588578911678/) on January 29, 2015 08:11:10_

At some point it may not be possible to identify the original instrumentation of a work (completely). So it should be possible to mark the coding with @-c... | priority | and subelements should allow att edit from on january at some point it may not be possible to identify the original instrumentation of a work completely so it should be possible to mark the coding with cert original issue | 1 |

174,119 | 6,536,793,083 | IssuesEvent | 2017-08-31 19:35:21 | k0shk0sh/FastHub | https://api.github.com/repos/k0shk0sh/FastHub | closed | Enabling the Android Move To SD Card Feature? | Priority: Medium Status: Completed Type: Feature Request | I didn't find an issue about this topic so I wanted to ask if it is possible to add the optional "Move to SD Card" feature.

I myself haven't yet tried to add it or even developed Android Apps yet but after looking at the following two websites it doesn't seem very difficult (from my point of view - I am just interes... | 1.0 | Enabling the Android Move To SD Card Feature? - I didn't find an issue about this topic so I wanted to ask if it is possible to add the optional "Move to SD Card" feature.

I myself haven't yet tried to add it or even developed Android Apps yet but after looking at the following two websites it doesn't seem very diff... | priority | enabling the android move to sd card feature i didn t find an issue about this topic so i wanted to ask if it is possible to add the optional move to sd card feature i myself haven t yet tried to add it or even developed android apps yet but after looking at the following two websites it doesn t seem very diff... | 1 |

727,784 | 25,046,314,732 | IssuesEvent | 2022-11-05 09:43:33 | Chatterino/chatterino2 | https://api.github.com/repos/Chatterino/chatterino2 | closed | Migrate /commercial command to Helix API | Platform: Twitch Priority: Medium Deprecation: Twitch IRC Commands hacktoberfest | As part of Twitch's announced deprecation of IRC-based commands ([see here for more info](https://discuss.dev.twitch.tv/t/deprecation-of-chat-commands-through-irc/40486), the `/commercial` command needs to be migrated to use the relevant Helix API endpoint.

Helix API reference: https://dev.twitch.tv/docs/api/referen... | 1.0 | Migrate /commercial command to Helix API - As part of Twitch's announced deprecation of IRC-based commands ([see here for more info](https://discuss.dev.twitch.tv/t/deprecation-of-chat-commands-through-irc/40486), the `/commercial` command needs to be migrated to use the relevant Helix API endpoint.

Helix API refere... | priority | migrate commercial command to helix api as part of twitch s announced deprecation of irc based commands the commercial command needs to be migrated to use the relevant helix api endpoint helix api reference split from | 1 |
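
For illustration only, the request that replaces the IRC `/commercial` command could be shaped roughly as below. This is a sketch under assumptions: it assumes the Helix "Start Commercial" endpoint (`POST https://api.twitch.tv/helix/channels/commercial`) taking a JSON body with `broadcaster_id` and `length`. The helper name `build_commercial_request` and all IDs and tokens are hypothetical placeholders. Chatterino itself is written in C++, so this Python only shows the request shape and does not send anything.

```python
import json
import urllib.request

# Assumed Helix endpoint for starting a commercial (not taken from the issue text).
HELIX_COMMERCIAL_URL = "https://api.twitch.tv/helix/channels/commercial"


def build_commercial_request(broadcaster_id, length_seconds, client_id, oauth_token):
    """Build (but do not send) the HTTP request for a commercial break.

    All arguments are caller-supplied; the values used in examples are placeholders.
    """
    body = json.dumps(
        {"broadcaster_id": broadcaster_id, "length": length_seconds}
    ).encode("utf-8")
    return urllib.request.Request(
        HELIX_COMMERCIAL_URL,
        data=body,
        method="POST",
        headers={
            "Authorization": f"Bearer {oauth_token}",
            "Client-Id": client_id,
            "Content-Type": "application/json",
        },
    )
```

Building the request separately from sending it keeps the command parsing testable without network access; the actual send would go through whatever HTTP layer the client already uses.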

527,397 | 15,342,070,838 | IssuesEvent | 2021-02-27 14:46:05 | buddyboss/buddyboss-platform | https://api.github.com/repos/buddyboss/buddyboss-platform | opened | Show the Photo Albums in the main photo directory | feature: enhancement priority: medium | **Is your feature request related to a problem? Please describe.**

There is a link to create an album on the main photos directory but there is no way to see them on the main photos directory. Currently, you will need to go to each of the profile pages to see the available photo albums.

**Support ticket links**

ht... | 1.0 | Show the Photo Albums in the main photo directory - **Is your feature request related to a problem? Please describe.**

There is a link to create an album on the main photos directory but there is no way to see them on the main photos directory. Currently, you will need to go to each of the profile pages to see the ava... | priority | show the photo albums in the main photo directory is your feature request related to a problem please describe there is a link to create an album on the main photos directory but there is no way to see them on the main photos directory currently you will need to go to each of the profile pages to see the ava... | 1 |

789,358 | 27,787,790,320 | IssuesEvent | 2023-03-17 05:51:08 | ainc/ainc-gatsby-sanity | https://api.github.com/repos/ainc/ainc-gatsby-sanity | opened | Create /team-alpha page | Priority: High Difficulty: Medium | https://www.awesomeinc.org/team-alpha

- Sanity Doc

- Name

- Picture

- Team/Role

- Favorite Rule

- Favorite Song

- Favorite Person

- Random Fact | 1.0 | Create /team-alpha page - https://www.awesomeinc.org/team-alpha

- Sanity Doc

- Name

- Picture

- Team/Role

- Favorite Rule

- Favorite Song

- Favorite Person

- Random Fact | priority | create team alpha page sanity doc name picture team role favorite rule favorite song favorite person random fact | 1 |

242,832 | 7,849,149,416 | IssuesEvent | 2018-06-20 01:45:58 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | closed | USER ISSUE: eEvveerryytthhiinngg iiss ddoouubblleedd tteexxtt.. | Medium Priority | **Version:** 0.7.3.2 beta

**Steps to Reproduce:**

lLaauunncchh tthhee llaatteesstt eeccoo oonn lliinnuuxx..

**Expected behavior:**

wweellccoommee ssccrreeeenn wwiitthh uusseerrnnaammee//ppaasssswwoorrdd

**Actual behavior:**

aAss yyoouu ccaann sseeee eevveerryytthhiinngg iinn ggaammee iiss d... | 1.0 | USER ISSUE: eEvveerryytthhiinngg iiss ddoouubblleedd tteexxtt.. - **Version:** 0.7.3.2 beta

**Steps to Reproduce:**

lLaauunncchh tthhee llaatteesstt eeccoo oonn lliinnuuxx..

**Expected behavior:**

wweellccoommee ssccrreeeenn wwiitthh uusseerrnnaammee//ppaasssswwoorrdd

**Actual behavior:**

aAss ... | priority | user issue eevveerryytthhiinngg iiss ddoouubblleedd tteexxtt version beta steps to reproduce llaauunncchh tthhee llaatteesstt eeccoo oonn lliinnuuxx expected behavior wweellccoommee ssccrreeeenn wwiitthh uusseerrnnaammee ppaasssswwoorrdd actual behavior aass ... | 1 |

583,364 | 17,383,366,056 | IssuesEvent | 2021-08-01 06:13:44 | eatmyvenom/hyarcade | https://api.github.com/repos/eatmyvenom/hyarcade | closed | [Refractor] improve leaderboard generation speed. | Medium priority disc:overall refractor t:discord | utilizing some advanced JS features this can be sped up a bit. This should be done to improve quality of life overall.

| 1.0 | [Refractor] improve leaderboard generation speed. - utilizing some advanced JS features this can be sped up a bit. This should be done to improve quality of life overall.

| priority | improve leaderboard generation speed utilizing some advanced js features this can be sped up a bit this should be done to improve quality of life overall | 1 |

804,449 | 29,488,573,818 | IssuesEvent | 2023-06-02 11:45:55 | zephyrproject-rtos/zephyr | https://api.github.com/repos/zephyrproject-rtos/zephyr | closed | [Coverity CID: 316635] Macro compares unsigned to 0 in subsys/net/l2/ethernet/gptp/gptp_md.c | bug priority: medium area: Networking Coverity |

Static code scan issues found in file:

https://github.com/zephyrproject-rtos/zephyr/tree/dae79cefaabf63086946a48ccca4094f26f146c8/subsys/net/l2/ethernet/gptp/gptp_md.c#L478

Category: Integer handling issues

Function: `gptp_md_follow_up_receipt_timeout`

Component: Networking

CID: [316635](https://scan9.scan.coverity.... | 1.0 | [Coverity CID: 316635] Macro compares unsigned to 0 in subsys/net/l2/ethernet/gptp/gptp_md.c -

Static code scan issues found in file:

https://github.com/zephyrproject-rtos/zephyr/tree/dae79cefaabf63086946a48ccca4094f26f146c8/subsys/net/l2/ethernet/gptp/gptp_md.c#L478

Category: Integer handling issues

Function: `gptp... | priority | macro compares unsigned to in subsys net ethernet gptp gptp md c static code scan issues found in file category integer handling issues function gptp md follow up receipt timeout component networking cid details struct gptp sync rcv state state int port ... | 1 |

286,243 | 8,785,665,487 | IssuesEvent | 2018-12-20 13:41:56 | pravega/pravega-operator | https://api.github.com/repos/pravega/pravega-operator | opened | Investigate Bookkeeper disruption cases | kind/enhancement priority/medium status/needs-investigation | We need to investigate and implement an action plan to cover all BookKeeper disruption cases. Some disruption cases that come to my mind are:

- Graceful termination. Pod will receive an TERM signal to gracefully shutdown. Some situations that cause this are:

- `kubectl drain` to remove a node from the K8 cluster.... | 1.0 | Investigate Bookkeeper disruption cases - We need to investigate and implement an action plan to cover all BookKeeper disruption cases. Some disruption cases that come to my mind are:

- Graceful termination. Pod will receive an TERM signal to gracefully shutdown. Some situations that cause this are:

- `kubectl dr... | priority | investigate bookkeeper disruption cases we need to investigate and implement an action plan to cover all bookkeeper disruption cases some disruption cases that come to my mind are graceful termination pod will receive an term signal to gracefully shutdown some situations that cause this are kubectl dr... | 1 |

46,677 | 2,964,265,421 | IssuesEvent | 2015-07-10 15:41:17 | Sonarr/Sonarr | https://api.github.com/repos/Sonarr/Sonarr | opened | Remove Failing Pending Items | priority:medium proposal suboptimal | If a pending download fails a number of times we should remove it from the pending queue instead of trying over and over. Recently I've seen cases where the torrent has been removed or there is no torrent client to be sent to. | 1.0 | Remove Failing Pending Items - If a pending download fails a number of times we should remove it from the pending queue instead of trying over and over. Recently I've seen cases where the torrent has been removed or there is no torrent client to be sent to. | priority | remove failing pending items if a pending download fails a number of times we should remove it from the pending queue instead of trying over and over recently i ve seen cases where the torrent has been removed or there is no torrent client to be sent to | 1 |
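
The behaviour proposed in the record above (drop a pending item after repeated failures instead of retrying forever) can be sketched as a failure counter per queued item. This is a minimal illustration, not Sonarr's implementation: the class `PendingQueue`, the `MAX_FAILURES` threshold, and the release identifiers are all hypothetical.

```python
from dataclasses import dataclass, field

MAX_FAILURES = 3  # hypothetical threshold; the issue does not specify a number


@dataclass
class PendingQueue:
    """Tracks pending releases and how often each has failed to be sent."""

    max_failures: int = MAX_FAILURES
    failures: dict = field(default_factory=dict)

    def add(self, release_id):
        # A newly queued item starts with zero recorded failures.
        self.failures.setdefault(release_id, 0)

    def record_failure(self, release_id):
        """Count one failed attempt; return True if the item was removed."""
        if release_id not in self.failures:
            return False
        self.failures[release_id] += 1
        if self.failures[release_id] >= self.max_failures:
            # Give up: remove the item rather than retrying indefinitely.
            del self.failures[release_id]
            return True
        return False

    def pending(self):
        return list(self.failures)
```

A caller would invoke `record_failure` whenever sending to the download client fails, for example because the torrent was removed or no client is configured, and log the removal when it returns `True`.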

438,325 | 12,626,399,331 | IssuesEvent | 2020-06-14 16:24:13 | rikatz/kubepug | https://api.github.com/repos/rikatz/kubepug | closed | Feature: Use YAML instead of Kubernetes as validation source | Priority/Medium enhancement | The tool currently checks objects in Kubernetes, which is great. I would also like to add it to our CI/CD to check manifests before they make it to Kubernetes. In that regards, I'd like to either pipe YAML/JSON into the tool and it provides the same output including error codes for the pipeline to fail.

Let me know ... | 1.0 | Feature: Use YAML instead of Kubernetes as validation source - The tool currently checks objects in Kubernetes, which is great. I would also like to add it to our CI/CD to check manifests before they make it to Kubernetes. In that regards, I'd like to either pipe YAML/JSON into the tool and it provides the same output ... | priority | feature use yaml instead of kubernetes as validation source the tool currently checks objects in kubernetes which is great i would also like to add it to our ci cd to check manifests before they make it to kubernetes in that regards i d like to either pipe yaml json into the tool and it provides the same output ... | 1 |
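
As a rough sketch of the CI use case requested in the record above, the following reads manifests from a stream and returns a non-zero status when a deprecated API is found. It is not kubepug's code: the `DEPRECATED` table is a tiny hand-written sample, the function names are hypothetical, and it accepts only JSON so that it needs nothing beyond the standard library (YAML input would require a YAML parser).

```python
import json
import sys

# Hypothetical sample table; a real tool would derive this from Kubernetes API data.
DEPRECATED = {
    ("extensions/v1beta1", "Deployment"): "apps/v1",
    ("extensions/v1beta1", "Ingress"): "networking.k8s.io/v1",
}


def find_deprecated(manifests):
    """Return (name, apiVersion, kind, replacement) for each deprecated manifest."""
    findings = []
    for manifest in manifests:
        key = (manifest.get("apiVersion"), manifest.get("kind"))
        if key in DEPRECATED:
            name = manifest.get("metadata", {}).get("name", "<unnamed>")
            findings.append((name, key[0], key[1], DEPRECATED[key]))
    return findings


def main(stream=sys.stdin):
    """Read one manifest, a list of manifests, or a List object; return an exit code."""
    doc = json.load(stream)
    manifests = doc.get("items", [doc]) if isinstance(doc, dict) else doc
    findings = find_deprecated(manifests)
    for name, api_version, kind, replacement in findings:
        print(f"{kind}/{name}: {api_version} is deprecated, use {replacement}")
    return 1 if findings else 0
```

In a pipeline, the script would end with `sys.exit(main())` and be fed JSON on standard input, so that a deprecated manifest fails the build before it reaches the cluster.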