Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 5 to 112) | repo_url (string, length 34 to 141) | action (string, 3 classes) | title (string, length 1 to 957) | labels (string, length 4 to 795) | body (string, length 1 to 259k) | index (string, 12 classes) | text_combine (string, length 96 to 259k) | label (string, 2 classes) | text (string, length 96 to 252k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

730,077 | 25,158,354,039 | IssuesEvent | 2022-11-10 15:05:06 | ooni/backend | https://api.github.com/repos/ooni/backend | closed | auth: remove the nickname field | ooni/api priority/medium | We decided that the nickname field has limited usefulness.

The reasons are the following:

- It's confusing to users what this is

- Every time somebody logs in they have to respecify it since it's not stored

- It looks like an identity (it's called nickname), but it's not unique and for the same email can change

... | 1.0 | auth: remove the nickname field - We decided that the nickname field has limited usefulness.

The reasons are the following:

- It's confusing to users what this is

- Every time somebody logs in they have to respecify it since it's not stored

- It looks like an identity (it's called nickname), but it's not unique a... | priority | auth remove the nickname field we decided that the nickname field has limited usefulness the reasons are the following it s confusing to users what this is every time somebody logs in they have to respecify it since it s not stored it looks like an identity it s called nickname but it s not unique a... | 1 |

181,912 | 6,665,196,397 | IssuesEvent | 2017-10-02 23:33:23 | classifiedz/classifiedz.github.io | https://api.github.com/repos/classifiedz/classifiedz.github.io | opened | On homepage, show ads that have been created most recently first + limit to 25 ads (implement pagination in another sprint). | Low Priority Low Risk Medium Priority | This can be fixed in HomeController as seen here

Resource

https://laravel.com/docs/5.5/eloquent | 2.0 | On homepage, show ads that have been created most recently first + limit to 25 ads (implement pagination in another sprint). - This can be fixed in HomeController as seen here

Resource

https://laravel.com/docs/5.5/eloquent | priority | on homepage show ads that have been created most recently first limit to ads implement pagination in another sprint this can be fixed in homecontroller as seen here resource | 1 |

499,082 | 14,439,772,805 | IssuesEvent | 2020-12-07 14:47:15 | projectdissolve/dissolve | https://api.github.com/repos/projectdissolve/dissolve | opened | Epic / Documentation 0.7 | Priority: Medium | ### Focus

Provide basic information on all aspects in the code to accompany 0.7 release.

### Tasks

- [ ] #205

- [ ] #209

- [ ] #204

- [ ] #197

- [ ] #172

- [ ] #176

- [ ] #180

- [ ] #182

- [ ] #184

| 1.0 | Epic / Documentation 0.7 - ### Focus

Provide basic information on all aspects in the code to accompany 0.7 release.

### Tasks

- [ ] #205

- [ ] #209

- [ ] #204

- [ ] #197

- [ ] #172

- [ ] #176

- [ ] #180

- [ ] #182

- [ ] #184

| priority | epic documentation focus provide basic information on all aspects in the code to accompany release tasks | 1 |

141,502 | 5,437,040,122 | IssuesEvent | 2017-03-06 04:44:54 | CS2103JAN2017-W10-B4/main | https://api.github.com/repos/CS2103JAN2017-W10-B4/main | opened | As a user I can search for event/deadline/task by attribute | priority.medium type.story | ... so that I can find out details on specific event/deadline/task | 1.0 | As a user I can search for event/deadline/task by attribute - ... so that I can find out details on specific event/deadline/task | priority | as a user i can search for event deadline task by attribute so that i can find out details on specific event deadline task | 1 |

98,904 | 4,039,403,395 | IssuesEvent | 2016-05-20 04:36:01 | shelljs/shelljs | https://api.github.com/repos/shelljs/shelljs | reopened | Plugin System. | feat medium priority question refactor | **[NOTE: This thread was originally about the `open` command. I'm hijacking it. -@ariporad]**

It would be nice to have a plugin system for shelljs which allows custom commands. | 1.0 | Plugin System. - **[NOTE: This thread was originally about the `open` command. I'm hijacking it. -@ariporad]**

It would be nice to have a plugin system for shelljs which allows custom commands. | priority | plugin system it would be nice to have a plugin system for shelljs which allows custom commands | 1 |

55,445 | 3,073,445,120 | IssuesEvent | 2015-08-19 22:00:20 | RobotiumTech/robotium | https://api.github.com/repos/RobotiumTech/robotium | closed | Add more getXXX(int resID) & clickOnXXX(int resID) methods | bug imported Priority-Medium wontfix | _From [courag...@gmail.com](https://code.google.com/u/111939886635846010704/) on December 05, 2012 16:11:40_

It would be nice if we have more of those getXXX() & clickOnXXX() methods by Resource ID as parameter so that it's easier for us to pin-point exactly which visual controls we are referencing to. Especially whe... | 1.0 | Add more getXXX(int resID) & clickOnXXX(int resID) methods - _From [courag...@gmail.com](https://code.google.com/u/111939886635846010704/) on December 05, 2012 16:11:40_

It would be nice if we have more of those getXXX() & clickOnXXX() methods by Resource ID as parameter so that it's easier for us to pin-point exactly... | priority | add more getxxx int resid clickonxxx int resid methods from on december it would be nice if we have more of those getxxx clickonxxx methods by resource id as parameter so that it s easier for us to pin point exactly which visual controls we are referencing to especially when now consider... | 1 |

103,988 | 4,188,185,482 | IssuesEvent | 2016-06-23 19:54:01 | duckduckgo/zeroclickinfo-spice | https://api.github.com/repos/duckduckgo/zeroclickinfo-spice | closed | Make sure we're using Sort blocks where applicable | Improvement Low-Hanging Fruit Priority: Medium | Many Spice IA's sort their results but aren't using a sorting block, which they really should.

A quick check shows several Spice's that aren't correctly sorting their results:

- [x] Airlines

- [ ] Book

- [ ] Detect Lang

- [x] People In Space

- [ ] DNS

- [ ] Bootic

- [x] GitHub

- [x] Congress

- [x] Recipes... | 1.0 | Make sure we're using Sort blocks where applicable - Many Spice IA's sort their results but aren't using a sorting block, which they really should.

A quick check shows several Spice's that aren't correctly sorting their results:

- [x] Airlines

- [ ] Book

- [ ] Detect Lang

- [x] People In Space

- [ ] DNS

- [ ... | priority | make sure we re using sort blocks where applicable many spice ia s sort their results but aren t using a sorting block which they really should a quick check shows several spice s that aren t correctly sorting their results airlines book detect lang people in space dns bootic ... | 1 |

467,269 | 13,444,815,970 | IssuesEvent | 2020-09-08 10:22:39 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | closed | [9.0 staging-1756] Faulty tooltips in Host Private World menu | Category: UI Priority: Medium | - All tooltips in the custom settings to host a private world have the same title: "Specialty Cost Multiplier"

- The tooltip descriptions for Craft Resource Multiplier and Craft Time Multiplier are reversed.

To avoid vendor lock-in, such an API should not be called Seamless. Future libraries should be free to re-implement the API, and as long as they stick to SHA3-256 (and Seamless's scheme of semantic checksumming), their transformations ... | 1.0 | Imperative transformations inside transformer code - Allow transformations to be launched within transformer code (distinct from #130)

To avoid vendor lock-in, such an API should not be called Seamless. Future libraries should be free to re-implement the API, and as long as they stick to SHA3-256 (and Seamless's sch... | priority | imperative transformations inside transformer code allow transformations to be launched within transformer code distinct from to avoid vendor lock in such an api should not be called seamless future libraries should be free to re implement the api and as long as they stick to and seamless s scheme of ... | 1 |

543,028 | 15,876,520,819 | IssuesEvent | 2021-04-09 08:28:38 | eclipse/dirigible | https://api.github.com/repos/eclipse/dirigible | opened | [IDE] Monaco - Add support for SignatureHelpProvider | component-ide efforts-medium enhancement priority-medium usability web-ide | Add support for [SignatureHelpProvider ](https://microsoft.github.io/monaco-editor/api/interfaces/monaco.languages.signaturehelpprovider.html#providesignaturehelp)

Sample: https://jsfiddle.net/hec12da1/

Related Monaco Issues:

- https://github.com/microsoft/monaco-editor/issues/243

- https://github.com/microsoft... | 1.0 | [IDE] Monaco - Add support for SignatureHelpProvider - Add support for [SignatureHelpProvider ](https://microsoft.github.io/monaco-editor/api/interfaces/monaco.languages.signaturehelpprovider.html#providesignaturehelp)

Sample: https://jsfiddle.net/hec12da1/

Related Monaco Issues:

- https://github.com/microsoft/... | priority | monaco add support for signaturehelpprovider add support for sample related monaco issues | 1 |

109,029 | 4,366,561,996 | IssuesEvent | 2016-08-03 14:41:29 | LearningLocker/learninglocker | https://api.github.com/repos/LearningLocker/learninglocker | closed | Is there a way to do a webhook per LRS? | priority:medium status:confirmed type:question | Like this: https://github.com/LearningLocker/learninglocker/settings/hooks/new

our client have an internal progression tracking system, so just wonder if LRS can do a webhook to other services?

E.g.: listen on statement verb

```

On 'passed' verb

Make a request with custom payload to designated service

On 'c... | 1.0 | Is there a way to do a webhook per LRS? - Like this: https://github.com/LearningLocker/learninglocker/settings/hooks/new

our client have an internal progression tracking system, so just wonder if LRS can do a webhook to other services?

E.g.: listen on statement verb

```

On 'passed' verb

Make a request with cus... | priority | is there a way to do a webhook per lrs like this our client have an internal progression tracking system so just wonder if lrs can do a webhook to other services e g listen on statement verb on passed verb make a request with custom payload to designated service on completed verb make a r... | 1 |

686,650 | 23,500,072,531 | IssuesEvent | 2022-08-18 07:36:24 | yugabyte/yugabyte-db | https://api.github.com/repos/yugabyte/yugabyte-db | closed | [YCQL] Support additional bind formats for multi-column IN clause | kind/enhancement priority/medium area/ycql | Jira Link: [DB-2990](https://yugabyte.atlassian.net/browse/DB-2990)

### Description

With https://github.com/yugabyte/yugabyte-db/issues/12938, we added support for the multi-column IN clause. It added support for the below bind format:

```

SELECT ... WHERE (r1, r2) IN ((?, ?), (?, ?));

```

The task is to sup... | 1.0 | [YCQL] Support additional bind formats for multi-column IN clause - Jira Link: [DB-2990](https://yugabyte.atlassian.net/browse/DB-2990)

### Description

With https://github.com/yugabyte/yugabyte-db/issues/12938, we added support for the multi-column IN clause. It added support for the below bind format:

```

SELEC... | priority | support additional bind formats for multi column in clause jira link description with we added support for the multi column in clause it added support for the below bind format select where in the task is to support the below formats as well ... | 1 |

249,154 | 7,953,925,342 | IssuesEvent | 2018-07-12 04:49:56 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | closed | USER ISSUE: Stuck inside a players building | Medium Priority | **Version:** 0.7.3.3 beta

**Steps to Reproduce:**

Log off close to a players building

Log back on.

**Expected behavior:**

I expected to log back into the game outside a players building where I was standing at the time

**Actual behavior:**

I spawned inside a players building instead | 1.0 | USER ISSUE: Stuck inside a players building - **Version:** 0.7.3.3 beta

**Steps to Reproduce:**

Log off close to a players building

Log back on.

**Expected behavior:**

I expected to log back into the game outside a players building where I was standing at the time

**Actual behavior:**

I spawned inside a pl... | priority | user issue stuck inside a players building version beta steps to reproduce log off close to a players building log back on expected behavior i expected to log back into the game outside a players building where i was standing at the time actual behavior i spawned inside a pl... | 1 |

704,939 | 24,215,330,650 | IssuesEvent | 2022-09-26 06:05:01 | OpenMined/PySyft | https://api.github.com/repos/OpenMined/PySyft | closed | Hagrid launch show docker compose version instead of docker version | Type: Bug :bug: Priority: 3 - Medium :unamused: PwP | ## Description

A clear and concise description of the bug.

## How to Reproduce

1. hagrid launch domain_name to docker:8081 --tail=false --tag=latest --silent

2. See the Docker version printed. It prints the docker compose version instead of docker version

## Expected Behavior

A clear and concise description o... | 1.0 | Hagrid launch show docker compose version instead of docker version - ## Description

A clear and concise description of the bug.

## How to Reproduce

1. hagrid launch domain_name to docker:8081 --tail=false --tag=latest --silent

2. See the Docker version printed. It prints the docker compose version instead of doc... | priority | hagrid launch show docker compose version instead of docker version description a clear and concise description of the bug how to reproduce hagrid launch domain name to docker tail false tag latest silent see the docker version printed it prints the docker compose version instead of docker... | 1 |

357,501 | 10,607,546,473 | IssuesEvent | 2019-10-11 04:17:08 | canonical-web-and-design/maas-ui | https://api.github.com/repos/canonical-web-and-design/maas-ui | closed | Provide UI feedback if websocket disconnects & reconnects | Enhancement ✨ Priority: Medium | Having implemented `reconnecting-websocket` in https://github.com/canonical-web-and-design/maas-ui/pull/192 it would be nice if we also provided some UI feedback when the connection drops and reconnects. | 1.0 | Provide UI feedback if websocket disconnects & reconnects - Having implemented `reconnecting-websocket` in https://github.com/canonical-web-and-design/maas-ui/pull/192 it would be nice if we also provided some UI feedback when the connection drops and reconnects. | priority | provide ui feedback if websocket disconnects reconnects having implemented reconnecting websocket in it would be nice if we also provided some ui feedback when the connection drops and reconnects | 1 |

623,064 | 19,660,313,028 | IssuesEvent | 2022-01-10 16:22:51 | buddyboss/buddyboss-platform | https://api.github.com/repos/buddyboss/buddyboss-platform | closed | Add a profile type dropdown in the Profile Search form filed option | priority-medium feature Stale | **Describe the bug**

Add a profile type dropdown in the Profile Search form filed option. So users can search members more effectively and narrow down the search result.

**Screenshots**

https://prnt.sc/

**Jira issue** : [PROD-926]

[PROD-926]: https://buddyboss.atlassian.net/browse/PROD-926?atlOrigin=eyJpIjoiNW... | 1.0 | Add a profile type dropdown in the Profile Search form filed option - **Describe the bug**

Add a profile type dropdown in the Profile Search form filed option. So users can search members more effectively and narrow down the search result.

**Screenshots**

https://prnt.sc/

**Jira issue** : [PROD-926]

[PROD-926]... | priority | add a profile type dropdown in the profile search form filed option describe the bug add a profile type dropdown in the profile search form filed option so users can search members more effectively and narrow down the search result screenshots jira issue | 1 |

690,789 | 23,672,385,580 | IssuesEvent | 2022-08-27 15:06:26 | ArjunSharda/TimeConv | https://api.github.com/repos/ArjunSharda/TimeConv | closed | Credits page on mobile has text below footer | bug help wanted Priority: Medium | Credits page on mobile has some text below the footer. I have tried fixing it, but need help with solving it. Any help would be greatly appreciated. | 1.0 | Credits page on mobile has text below footer - Credits page on mobile has some text below the footer. I have tried fixing it, but need help with solving it. Any help would be greatly appreciated. | priority | credits page on mobile has text below footer credits page on mobile has some text below the footer i have tried fixing it but need help with solving it any help would be greatly appreciated | 1 |

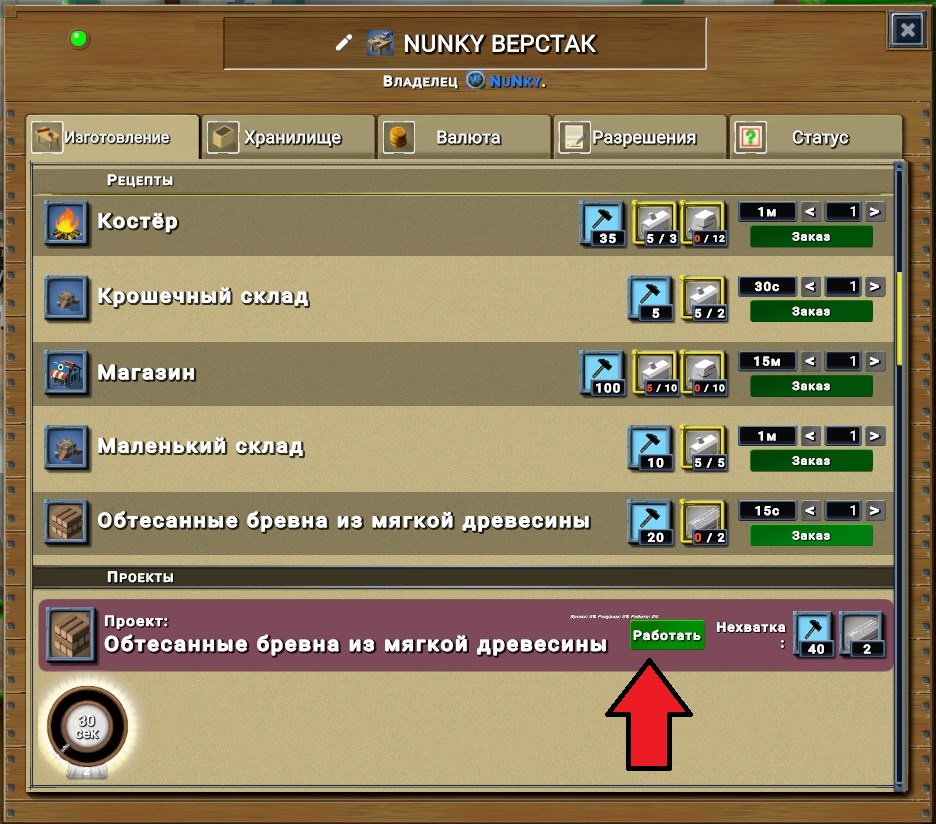

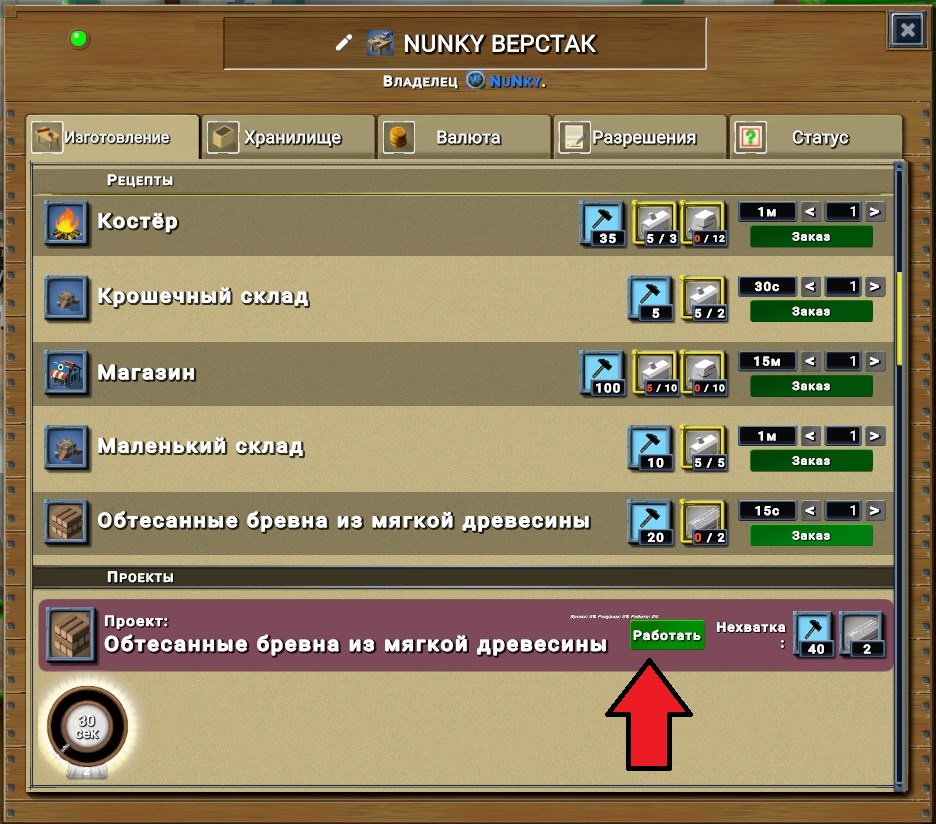

471,375 | 13,565,764,303 | IssuesEvent | 2020-09-18 12:15:56 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | closed | [0.9.0 staging-1722] Incorrect progress display of Work Orders | Category: Localization Category: UI Priority: Medium Status: Fixed Status: Reopen | When using a translation (currently Russian) with a long text, the progress bar and buttons are displayed incorrectly

Here's how it looks in the original

with a long text, the progress bar and buttons are displayed incorrectly

Here's how it looks i... | priority | incorrect progress display of work orders when using a translation currently russian with a long text the progress bar and buttons are displayed incorrectly here s how it looks in the original | 1 |

655,376 | 21,687,641,930 | IssuesEvent | 2022-05-09 12:50:13 | SimplyVC/panic | https://api.github.com/repos/SimplyVC/panic | opened | Installation wizard - Node setup test buttons - Substrate base-chain | UI iteration 2 Priority: Medium | ### User Story

As a node operator, I want to be able to verify that the Node exporter URL is correctly configured before my settings are finalised.

### Description

The scope of this task is **limited exclusively to the frontend aspect** of the test button associated with the nodes setup step - see #48.

### ... | 1.0 | Installation wizard - Node setup test buttons - Substrate base-chain - ### User Story

As a node operator, I want to be able to verify that the Node exporter URL is correctly configured before my settings are finalised.

### Description

The scope of this task is **limited exclusively to the frontend aspect** of ... | priority | installation wizard node setup test buttons substrate base chain user story as a node operator i want to be able to verify that the node exporter url is correctly configured before my settings are finalised description the scope of this task is limited exclusively to the frontend aspect of ... | 1 |

407,949 | 11,939,895,224 | IssuesEvent | 2020-04-02 15:51:36 | RobotLocomotion/drake | https://api.github.com/repos/RobotLocomotion/drake | closed | launching jupyter notebook on tutorials errors out | configuration: linux priority: medium team: kitware type: bug | Testing #12646 locally on my ubuntu machine demonstrated a failure to launch jupyter:

```

russt@Puget-179850-01:~/drake/tutorials$ bazel run rendering_multibody_plant

Starting local Bazel server and connecting to it...

INFO: Invocation ID: 4d6b6aea-e675-4fa1-b8e4-4cde0f9435dd

INFO: Analyzed target //tutorials:rend... | 1.0 | launching jupyter notebook on tutorials errors out - Testing #12646 locally on my ubuntu machine demonstrated a failure to launch jupyter:

```

russt@Puget-179850-01:~/drake/tutorials$ bazel run rendering_multibody_plant

Starting local Bazel server and connecting to it...

INFO: Invocation ID: 4d6b6aea-e675-4fa1-b8e4... | priority | launching jupyter notebook on tutorials errors out testing locally on my ubuntu machine demonstrated a failure to launch jupyter russt puget drake tutorials bazel run rendering multibody plant starting local bazel server and connecting to it info invocation id info analyzed target ... | 1 |

207,848 | 7,134,205,348 | IssuesEvent | 2018-01-22 20:02:06 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | closed | Economy viewer contracts tooltips not displaying | Medium Priority | In economy viewer the contracts tooltips don't display at all. Sometimes they will work if the contract window is also opened, but it's very inconsistent. | 1.0 | Economy viewer contracts tooltips not displaying - In economy viewer the contracts tooltips don't display at all. Sometimes they will work if the contract window is also opened, but it's very inconsistent. | priority | economy viewer contracts tooltips not displaying in economy viewer the contracts tooltips don t display at all sometimes they will work if the contract window is also opened but it s very inconsistent | 1 |

461,891 | 13,237,893,040 | IssuesEvent | 2020-08-18 22:45:49 | buddyboss/buddyboss-platform | https://api.github.com/repos/buddyboss/buddyboss-platform | opened | Activity page Document file name overlapping on Firefox web browser | bug priority: medium | **Describe the bug**

Overlapping text when the document file name is too long on firefox web browse only.

I have provided a CSS code to client to fix, but this is an issue in our platform so I considered posting this issue here.

CSS code that I provided to the client to fix the issue:

.bb-activity-media-wrap .bb-... | 1.0 | Activity page Document file name overlapping on Firefox web browser - **Describe the bug**

Overlapping text when the document file name is too long on firefox web browse only.

I have provided a CSS code to client to fix, but this is an issue in our platform so I considered posting this issue here.

CSS code that I ... | priority | activity page document file name overlapping on firefox web browser describe the bug overlapping text when the document file name is too long on firefox web browse only i have provided a css code to client to fix but this is an issue in our platform so i considered posting this issue here css code that i ... | 1 |

24,223 | 2,667,010,872 | IssuesEvent | 2015-03-22 04:45:54 | NewCreature/EOF | https://api.github.com/repos/NewCreature/EOF | closed | I deleted all lyrics, and EOF replaced them with strange text such as "4u^^g^" repeating in the lyric preview in the 3d panel. | bug imported Priority-Medium | _From [xander4j...@yahoo.com](https://code.google.com/u/111302640723734240985/) on May 10, 2010 01:19:40_

I deleted all lyrics, and EOF replaced them with strange text such as

"4u^^g^" repeating in the lyric preview in the 3d panel.

_Original issue: http://code.google.com/p/editor-on-fire/issues/detail?id=4_ | 1.0 | I deleted all lyrics, and EOF replaced them with strange text such as "4u^^g^" repeating in the lyric preview in the 3d panel. - _From [xander4j...@yahoo.com](https://code.google.com/u/111302640723734240985/) on May 10, 2010 01:19:40_

I deleted all lyrics, and EOF replaced them with strange text such as

"4u^^g^" rep... | priority | i deleted all lyrics and eof replaced them with strange text such as g repeating in the lyric preview in the panel from on may i deleted all lyrics and eof replaced them with strange text such as g repeating in the lyric preview in the panel original issue | 1 |

796,370 | 28,108,488,622 | IssuesEvent | 2023-03-31 04:17:36 | renovatebot/renovate | https://api.github.com/repos/renovatebot/renovate | closed | Renovate not detecting docker image in Kubernetes file if there's a comment behind the image name | type:bug priority-3-medium manager:kubernetes status:ready reproduction:provided | ### How are you running Renovate?

Self-hosted Renovate

### If you're self-hosting Renovate, tell us what version of Renovate you run.

35.24.6

### If you're self-hosting Renovate, select which platform you are using.

Gitlab

### Was this something which used to work for you, and then stopped?

I don't... | 1.0 | Renovate not detecting docker image in Kubernetes file if there's a comment behind the image name - ### How are you running Renovate?

Self-hosted Renovate

### If you're self-hosting Renovate, tell us what version of Renovate you run.

35.24.6

### If you're self-hosting Renovate, select which platform you are... | priority | renovate not detecting docker image in kubernetes file if there s a comment behind the image name how are you running renovate self hosted renovate if you re self hosting renovate tell us what version of renovate you run if you re self hosting renovate select which platform you are u... | 1 |

650,776 | 21,416,940,008 | IssuesEvent | 2022-04-22 11:52:06 | sahar-avsh/SWE-599 | https://api.github.com/repos/sahar-avsh/SWE-599 | closed | Mindspace - Asking question functionality | enhancement Hard medium priority mindspace UI | There shall be a place to ask question about any **note** and **resource** or even a **mindspace** | 1.0 | Mindspace - Asking question functionality - There shall be a place to ask question about any **note** and **resource** or even a **mindspace** | priority | mindspace asking question functionality there shall be a place to ask question about any note and resource or even a mindspace | 1 |

239,039 | 7,785,999,021 | IssuesEvent | 2018-06-06 17:32:40 | DistrictDataLabs/yellowbrick | https://api.github.com/repos/DistrictDataLabs/yellowbrick | closed | CVScores | priority: medium review type: feature | Implement a visualizer that shows cross-validation scores as a bar chart along with the final score as an annotated horizontal line.

We want to start moving toward better cross-validation and model selection. Create `yb.model_selection.CVScores` visualizer that extends `ModelVisualizer` and wraps an estimator. Acce... | 1.0 | CVScores - Implement a visualizer that shows cross-validation scores as a bar chart along with the final score as an annotated horizontal line.

We want to start moving toward better cross-validation and model selection. Create `yb.model_selection.CVScores` visualizer that extends `ModelVisualizer` and wraps an esti... | priority | cvscores implement a visualizer that shows cross validation scores as a bar chart along with the final score as an annotated horizontal line we want to start moving toward better cross validation and model selection create yb model selection cvscores visualizer that extends modelvisualizer and wraps an esti... | 1 |

819,038 | 30,717,524,727 | IssuesEvent | 2023-07-27 13:56:06 | yugabyte/yugabyte-db | https://api.github.com/repos/yugabyte/yugabyte-db | closed | [DocDB] Add Node task fails after Backup/Restores with EAR rotations | kind/bug area/docdb priority/medium 2.18 Backport Required | Jira Link: [DB-7062](https://yugabyte.atlassian.net/browse/DB-7062)

### Description

The Update Universe Task fails after the following operations on a EAR Enabled universe:

Create a 3 RF and 4 node universe

Run Sample Apps

Take Backup

Rotate KMS key

Restore in the same universe by renaming the keyspa... | 1.0 | [DocDB] Add Node task fails after Backup/Restores with EAR rotations - Jira Link: [DB-7062](https://yugabyte.atlassian.net/browse/DB-7062)

### Description

The Update Universe Task fails after the following operations on a EAR Enabled universe:

Create a 3 RF and 4 node universe

Run Sample Apps

Take Backup

... | priority | add node task fails after backup restores with ear rotations jira link description the update universe task fails after the following operations on a ear enabled universe create a rf and node universe run sample apps take backup rotate kms key restore in the same universe by renamin... | 1 |

599,824 | 18,283,945,848 | IssuesEvent | 2021-10-05 08:12:22 | lea927/drop-that-beat | https://api.github.com/repos/lea927/drop-that-beat | closed | As a Player, I want to know if I got the correct answer. | Priority: Medium Type: Feature State: Ongoing Review | ## User Story

As a Player, I want to know if I got the correct answer.

## Acceptance criteria

- [x] Given that I'm playing the game, when I click a choice, the system generates a message that the answer is correct.

- [x] Given that I'm playing the game, when I click the correct choice, the button changes color fr... | 1.0 | As a Player, I want to know if I got the correct answer. - ## User Story

As a Player, I want to know if I got the correct answer.

## Acceptance criteria

- [x] Given that I'm playing the game, when I click a choice, the system generates a message that the answer is correct.

- [x] Given that I'm playing the game, w... | priority | as a player i want to know if i got the correct answer user story as a player i want to know if i got the correct answer acceptance criteria given that i m playing the game when i click a choice the system generates a message that the answer is correct given that i m playing the game when ... | 1 |

212,867 | 7,243,488,546 | IssuesEvent | 2018-02-14 11:55:26 | bounswe/bounswe2018group2 | https://api.github.com/repos/bounswe/bounswe2018group2 | closed | Create a wiki page that describes yourself and update your links on both README.md and sidebar | good first issue medium priority | After you have created a new page and filled that page with your personal information, you can update your links on README.md by clicking the file and clicking edit (something like this: ✏️ 😄 ) and you can update the sidebar links on the wiki by clicking the same icon on the sidebar. | 1.0 | Create a wiki page that describes yourself and update your links on both README.md and sidebar - After you have created a new page and filled that page with your personal information, you can update your links on README.md by clicking the file and clicking edit (something like this: ✏️ 😄 ) and you can update the sideb... | priority | create a wiki page that describes yourself and update your links on both readme md and sidebar after you have created a new page and filled that page with your personal information you can update your links on readme md by clicking the file and clicking edit something like this ✏️ 😄 and you can update the sideb... | 1 |

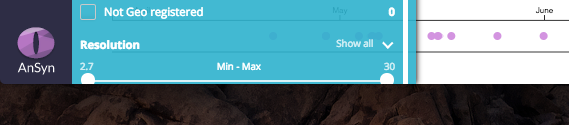

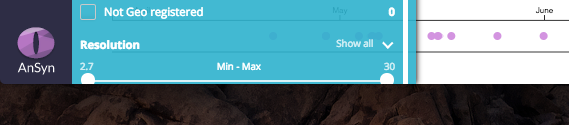

222,904 | 7,440,707,123 | IssuesEvent | 2018-03-27 11:00:35 | AnSyn/ansyn | https://api.github.com/repos/AnSyn/ansyn | opened | Filters menu - resolution slider | Bug Priority: Medium | 1. when menu has scroll the slider is partially visible

| 1.0 | Filters menu - resolution slider - 1. when menu has scroll the slider is partially visible

| priority | filters menu resolution slider when menu has scroll the slider is partially visible | 1 |

462,580 | 13,249,519,033 | IssuesEvent | 2020-08-19 20:57:31 | phetsims/scenery-phet | https://api.github.com/repos/phetsims/scenery-phet | closed | Change StepButton constructor? | priority:3-medium | Related to https://github.com/phetsims/wave-interference/issues/342.

Compare `PlayButton` and `StepButton` constructor APIs:

```js

function PlayPauseButton( isPlayingProperty, options )

function StepButton( options ) {

options = _.extend( {

...

// {Property.<boolean>|null} is the sim play... | 1.0 | Change StepButton constructor? - Related to https://github.com/phetsims/wave-interference/issues/342.

Compare `PlayButton` and `StepButton` constructor APIs:

```js

function PlayPauseButton( isPlayingProperty, options )

function StepButton( options ) {

options = _.extend( {

...

// {Propert... | priority | change stepbutton constructor related to compare playbutton and stepbutton constructor apis js function playpausebutton isplayingproperty options function stepbutton options options extend property null is the sim playing this is a convenience opti... | 1 |

799,563 | 28,309,562,201 | IssuesEvent | 2023-04-10 14:15:28 | telerik/kendo-ui-core | https://api.github.com/repos/telerik/kendo-ui-core | closed | Editing any item in the TreeList InCell will add the k-dirty indicator to its top-left data cell | Bug SEV: Medium C: Gantt C: TreeList jQuery Priority 5 | ### Bug report

Editing any item in the TreeList InCell will add the k-dirty indicator to its top-left data cell

**Regression introduced with R1 2023**

### Reproduction of the problem

1. Open the TreeList InCell editing demo - https://demos.telerik.com/kendo-ui/treelist/editing-incell

2. Edit any cell in th... | 1.0 | Editing any item in the TreeList InCell will add the k-dirty indicator to its top-left data cell - ### Bug report

Editing any item in the TreeList InCell will add the k-dirty indicator to its top-left data cell

**Regression introduced with R1 2023**

### Reproduction of the problem

1. Open the TreeList InCel... | priority | editing any item in the treelist incell will add the k dirty indicator to its top left data cell bug report editing any item in the treelist incell will add the k dirty indicator to its top left data cell regression introduced with reproduction of the problem open the treelist incell ed... | 1 |

734,483 | 25,351,053,721 | IssuesEvent | 2022-11-19 19:31:42 | bounswe/bounswe2022group4 | https://api.github.com/repos/bounswe/bounswe2022group4 | opened | Backend: Modification of the authentication mechanism. | Category - To Do Priority - Medium Language - Python Team - Backend | **Description**:

The backend team decided to migrate from JWT Authentication to the token authentication mechanism provided by the Django REST framework.

**Tasks:**

- Implement the token authentication.

**Deadline: 22/11/2022, 22.00 (GMT+3)** | 1.0 | Backend: Modification of the authentication mechanism. - **Description**:

The backend team decided to migrate from JWT Authentication to the token authentication mechanism provided by the Django REST framework.

**Tasks:**

- Implement the token authentication.

**Deadline: 22/11/2022, 22.00 (GMT+3)** | priority | backend modification of the authentication mechanism description the backend team decided to migrate from jwt authentication to the token authentication mechanism provided by the django rest framework tasks implement the token authentication deadline gmt | 1 |
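The migration described in the row above can be sketched as a Django settings fragment. This is a minimal sketch, assuming a standard Django REST framework setup; the surrounding app list is illustrative and not taken from the issue. Only `rest_framework.authtoken` and `TokenAuthentication` are the framework's own names.

```python
# settings.py fragment (sketch): enabling DRF token authentication.
# The other entries in INSTALLED_APPS are assumed for illustration only.

INSTALLED_APPS = [
    "django.contrib.auth",
    "django.contrib.contenttypes",
    "rest_framework",
    # Provides the Token model; run `python manage.py migrate` after adding it.
    "rest_framework.authtoken",
]

REST_FRAMEWORK = {
    # Clients then send "Authorization: Token <key>" with each request.
    "DEFAULT_AUTHENTICATION_CLASSES": [
        "rest_framework.authentication.TokenAuthentication",
    ],
}
```

Any JWT-specific authentication class previously listed under `DEFAULT_AUTHENTICATION_CLASSES` would presumably be removed as part of the same change.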

830,056 | 31,986,917,372 | IssuesEvent | 2023-09-21 00:37:33 | LBL-EESA/TECA | https://api.github.com/repos/LBL-EESA/TECA | opened | add temporal index select stage to the cf_restripe | feature 2_medium_priority | add the teca_temporal_index_select stage, which takes a list of indices that will be presented to the system to be processed.

currently the index_select gets the list of indices externally. there's some ad hoc code in the temporal_reduction app to load the list of indices, the table reader could potentially be used (the... | 1.0 | add temporal index select stage to the cf_restripe - add the teca_temporal_index_select stage, which takes a list of indices that will be presented to the system to be processed.

currently the index_select gets the list of indices externally. there's some ad hoc code in the temporal_reduction app to load the list of in... | priority | add temporal index select stage to the cf restripe add the teca temporal index select stage which takes a list of indices that will be presented to the system to be processed currently the index select gets the list indices externally there s some ad hoc code in the temporal reduction app to load the list of in... | 1 |

538,637 | 15,774,378,669 | IssuesEvent | 2021-04-01 00:57:34 | sonia-auv/octopus-telemetry | https://api.github.com/repos/sonia-auv/octopus-telemetry | closed | Fix Dockerfile | Priority: Medium Type: Bug | ## Expected Behavior

We expect to be able to build the project.

## Current Behavior

The `docker build...` step fails because of a missing `package-lock.json`.

## Possible Solution

We can remove the `package-lock.json` - not necessary for the Docker instance.

## Comments

We could test on as most Doc... | 1.0 | Fix Dockerfile - ## Expected Behavior

We expect to be able to build the project.

## Current Behavior

The `docker build...` step fails because of a missing `package-lock.json`.

## Possible Solution

We can remove the `package-lock.json` - not necessary for the Docker instance.

## Comments

We could te... | priority | fix dockerfile expected behavior we expect to be able to build the project current behavior the docker build step fails because of a missing package lock json possible solution we can remove the package lock json not necessary for the docker instance comments we could te... | 1 |

78,191 | 3,509,507,843 | IssuesEvent | 2016-01-08 23:08:29 | OregonCore/OregonCore | https://api.github.com/repos/OregonCore/OregonCore | closed | Quest: [Portals of the Legion] (BB #960) | Category: Quests migrated Priority: Medium Type: Bug | This issue was migrated from bitbucket.

**Original Reporter:** GazelleMag

**Original Date:** 03.06.2015 13:06:07 GMT+0000

**Original Priority:** major

**Original Type:** bug

**Original State:** resolved

**Direct Link:** https://bitbucket.org/oregon/oregoncore/issues/960

<hr>

When you banish the portals they aren't bein... | 1.0 | Quest: [Portals of the Legion] (BB #960) - This issue was migrated from bitbucket.

**Original Reporter:** GazelleMag

**Original Date:** 03.06.2015 13:06:07 GMT+0000

**Original Priority:** major

**Original Type:** bug

**Original State:** resolved

**Direct Link:** https://bitbucket.org/oregon/oregoncore/issues/960

<hr>

W... | priority | quest bb this issue was migrated from bitbucket original reporter gazellemag original date gmt original priority major original type bug original state resolved direct link when you banish the portals they aren t being counted as banished the quest objective... | 1 |

454,170 | 13,095,914,813 | IssuesEvent | 2020-08-03 14:51:56 | RobotLocomotion/drake | https://api.github.com/repos/RobotLocomotion/drake | closed | ycb objects missing textures in drake visualizer | priority: medium team: robot locomotion group type: bug | Running ` bazel run //examples/manipulation_station:end_effector_teleop_sliders -- --setup=clutter_clearing` currently results in textureless YCB objects.

They used to have t... | 1.0 | ycb objects missing textures in drake visualizer - Running ` bazel run //examples/manipulation_station:end_effector_teleop_sliders -- --setup=clutter_clearing` currently results in textureless YCB objects.

devrait être du helvetica et pas du century gothic

| 1.0 | Changer font page discussion d'un groupe - La font ici https://staging.nospollinisateurs.fr/groups/communaute/discussions qui est en grey light "actif il y a x", "2 messages, "véro schäfer y'a 2 jours" (mis dans #68 pour parler de sa couleur)

devrait être du helvetica et pas du century gothic

| priority | changer font page discussion d un groupe la font ici qui est en grey light actif il y a x messages véro schäfer y a jours mis dans pour parler de sa couleur devrait être du helvetica et pas du century gothic | 1 |

57,772 | 3,083,773,966 | IssuesEvent | 2015-08-24 11:13:10 | pavel-pimenov/flylinkdc-r5xx | https://api.github.com/repos/pavel-pimenov/flylinkdc-r5xx | closed | The file-download sound should not play when downloading a file list or requesting an IP. | bug Component-UI imported Priority-Medium | _From [tret2...@gmail.com](https://code.google.com/u/116508191076211387118/) on July 17, 2013 06:43:44_

The file-download sound should not play when downloading a file list, which, when requesting the IP of all users [on all hubs], is clearly noticeable...

_Original issue: http://code.google.com/p/flylinkdc/issues/detail... | 1.0 | The file-download sound should not play when downloading a file list or requesting an IP. - _From [tret2...@gmail.com](https://code.google.com/u/116508191076211387118/) on July 17, 2013 06:43:44_

The file-download sound should not play when downloading a file list, which, when requesting the IP of all users [on all hubs], ... | priority | the file download sound should not play when downloading a file list or requesting an ip from on july the file download sound should not play when downloading a file list which when requesting the ip of all users is clearly noticeable original issue | 1 |

427,449 | 12,395,429,582 | IssuesEvent | 2020-05-20 18:38:52 | department-of-veterans-affairs/caseflow | https://api.github.com/repos/department-of-veterans-affairs/caseflow | opened | Add ability for VLJs to edit attorney comments/feedback | Priority: Medium Product: caseflow-queue Team: Echo 🐬 Type: New Development | <!-- The goal of this template is to be a tool to communicate the requirements for a story related task. It is not intended as a mandate, adapt as needed. -->

## User or job story

User story: As a VLJ, I need the ability to view and edit attorney feedback/comments after judge checkout and/or dispatch, so that report... | 1.0 | Add ability for VLJs to edit attorney comments/feedback - <!-- The goal of this template is to be a tool to communicate the requirements for a story related task. It is not intended as a mandate, adapt as needed. -->

## User or job story

User story: As a VLJ, I need the ability to view and edit attorney feedback/com... | priority | add ability for vljs to edit attorney comments feedback user or job story user story as a vlj i need the ability to view and edit attorney feedback comments after judge checkout and or dispatch so that reporting can be as accurate as possible acceptance criteria vljs are able to view the attorne... | 1 |

637,340 | 20,625,762,167 | IssuesEvent | 2022-03-07 22:20:28 | zephyrproject-rtos/zephyr | https://api.github.com/repos/zephyrproject-rtos/zephyr | closed | lvgl: upgrade LVGL to 8.1 build error | bug priority: medium | **Describe the bug**

`[60/413] Building C object modules/lvgl/CMakeFiles/..__modules__lib__gui__lvgl__zephyr.dir/D_/personal/pinetime/modules/lib/gui/lvgl/src/extra/widgets/win/lv_win.c.obj

FAILED: modules/lvgl/CMakeFiles/..__modules__lib__gui__lvgl__zephyr.dir/D_/personal/pinetime/modules/lib/gui/lvgl/src/extra/wi... | 1.0 | lvgl: upgrade LVGL to 8.1 build error - **Describe the bug**

`[60/413] Building C object modules/lvgl/CMakeFiles/..__modules__lib__gui__lvgl__zephyr.dir/D_/personal/pinetime/modules/lib/gui/lvgl/src/extra/widgets/win/lv_win.c.obj

FAILED: modules/lvgl/CMakeFiles/..__modules__lib__gui__lvgl__zephyr.dir/D_/personal/pi... | priority | lvgl upgrade lvgl to build error describe the bug building c object modules lvgl cmakefiles modules lib gui lvgl zephyr dir d personal pinetime modules lib gui lvgl src extra widgets win lv win c obj failed modules lvgl cmakefiles modules lib gui lvgl zephyr dir d personal pinetime ... | 1 |

640,355 | 20,781,351,360 | IssuesEvent | 2022-03-16 15:01:58 | wasmerio/wasmer | https://api.github.com/repos/wasmerio/wasmer | closed | macOS function calls can be optimized | 🎉 enhancement priority-medium | Right now we are using `sigsetjmp` when calling functions from host to wasm, which adds significant overhead.

We can either use `setjmp` or macho exception handling directly.

Performance from `sigsetjmp` to `setjmp` reported here: https://github.com/wasmerio/wasmer/pull/2102 (from 140.14ns to 29.684ns in some examp... | 1.0 | macOS function calls can be optimized - Right now we are using `sigsetjmp` when calling functions from host to wasm, which adds significant overhead.

We can either use `setjmp` or macho exception handling directly.

Performance from `sigsetjmp` to `setjmp` reported here: https://github.com/wasmerio/wasmer/pull/2102 ... | priority | macos function calls can be optimized right now we are using sigsetjmp when calling functions from host to wasm which adds significant overhead we can either use setjmp or macho exception handling directly performance from sigsetjmp to setjmp reported here from to in some examples | 1 |

657,992 | 21,874,456,157 | IssuesEvent | 2022-05-19 08:54:00 | bounswe/bounswe2022group2 | https://api.github.com/repos/bounswe/bounswe2022group2 | closed | Frontend for the Categories | priority-medium status-new practice-app practice-app:front-end | ### Issue Description

Now that we initialized categories, we can begin implementing our pages.

This issue is for implementation of a page regarding listing all of existing categories.

### Step Details

Steps that will be performed:

- [x] Determine what will be shown in the page

- [x] Add the page to the r... | 1.0 | Frontend for the Categories - ### Issue Description

Now that we initialized categories, we can begin implementing our pages.

This issue is for implementation of a page regarding listing all of existing categories.

### Step Details

Steps that will be performed:

- [x] Determine what will be shown in the pag... | priority | frontend for the categories issue description now that we initialized categories we can begin implementing our pages this issue is for implementation of a page regarding listing all of existing categories step details steps that will be performed determine what will be shown in the page ... | 1 |

509,459 | 14,737,037,968 | IssuesEvent | 2021-01-07 00:41:34 | codidact/qpixel | https://api.github.com/repos/codidact/qpixel | closed | Need a way to specify rep gains for new post types | area: html/css/js area: ruby complexity: unassessed priority: medium type: change request | With post unification, we now have the ability to create new post types, like wiki (already created). Site settings includes a reputation section, which lets an admin specify the rep gained for questions, answers, or articles and the rep lost for downvotes (anywhere). Wikis don't have voting and thus don't affect rep... | 1.0 | Need a way to specify rep gains for new post types - With post unification, we now have the ability to create new post types, like wiki (already created). Site settings includes a reputation section, which lets an admin specify the rep gained for questions, answers, or articles and the rep lost for downvotes (anywhere... | priority | need a way to specify rep gains for new post types with post unification we now have the ability to create new post types like wiki already created site settings includes a reputation section which lets an admin specify the rep gained for questions answers or articles and the rep lost for downvotes anywhere... | 1 |

365,096 | 10,775,555,847 | IssuesEvent | 2019-11-03 15:06:47 | AY1920S1-CS2113T-F09-4/main | https://api.github.com/repos/AY1920S1-CS2113T-F09-4/main | closed | Exception message was shown | priority.High severity.Medium status.Ongoing |

The exception error IndexOutOfBounds Exception message should not be shown.

<hr><sub>[original: JasonLeeWeiHern/ped#8]<br/>

</sub> | 1.0 | Exception message was shown -

The exception error IndexOutOfBounds Exception message should not be shown.

<hr><sub>[original: JasonLeeWeiHern/ped#8]<br/>

</sub> | priority | exception message was shown the exception error indexoutofbounds exception message should not be shown | 1 |

668,129 | 22,553,720,128 | IssuesEvent | 2022-06-27 08:24:00 | medialab/portic-storymaps-2022 | https://api.github.com/repos/medialab/portic-storymaps-2022 | closed | Linechart improvements | enhancement priority:medium | - [x] handle negative values

- [x] handle vertical layout

- [ ] API : add an option to format axis ticks with pretty numbers (use `misc/formatNumber`) | 1.0 | Linechart improvements - - [x] handle negative values

- [x] handle vertical layout

- [ ] API : add an option to format axis ticks with pretty numbers (use `misc/formatNumber`) | priority | linechart improvements handle negative values handle vertical layout api add an option to format axis ticks with pretty numbers use misc formatnumber | 1 |

71,200 | 3,353,752,632 | IssuesEvent | 2015-11-18 08:30:00 | Apollo-Community/ApolloStation | https://api.github.com/repos/Apollo-Community/ApolloStation | closed | Reagents taking forever to metabolize | bug priority: medium | It seems every time we lower the tickrate, metabolism rates decrease exponentially. I saw someone who was injected with sleep toxin and 10 minutes later they had metabolized less than 0.01 units. | 1.0 | Reagents taking forever to metabolize - It seems every time we lower the tickrate, metabolism rates decrease exponentially. I saw someone who was injected with sleep toxin and 10 minutes later they had metabolized less than 0.01 units. | priority | reagents taking forever to metabolize it seems every time we lower the tickrate metabolism rates decrease exponentially i saw someone who was injected with sleep toxin and minutes later they had metabolized less than units | 1 |

720,190 | 24,782,811,891 | IssuesEvent | 2022-10-24 07:16:55 | trustwallet/wallet-core | https://api.github.com/repos/trustwallet/wallet-core | closed | [NewChain]: add Aptos support | chain-integration priority:medium size:large | **Motivation**

[Aptos](https://aptoslabs.com/) is a proposed Layer 1 blockchain that uses the [Move programming language](https://101blockchains.com/move-programming-language-tutorial/)

The goal is to support the blockchain prior to the main net launch.

**Checklist**

<!--- Group checklist per issue needed, ... | 1.0 | [NewChain]: add Aptos support - **Motivation**

[Aptos](https://aptoslabs.com/) is a proposed Layer 1 blockchain that uses the [Move programming language](https://101blockchains.com/move-programming-language-tutorial/)

The goal is to support the blockchain prior to the main net launch.

**Checklist**

<!--- Gr... | priority | add aptos support motivation is a proposed layer blockchain that uses the the goal is to support the blockchain prior to the main net launch checklist skeleton registry json update implemented in address support implemented in transaction support ... | 1 |

391,547 | 11,575,668,886 | IssuesEvent | 2020-02-21 10:14:37 | luna/enso | https://api.github.com/repos/luna/enso | closed | File Manager Binary File Support | Category: GUI Change: Non-Breaking Difficulty: Core Contributor Priority: Medium Type: Enhancement | ### Summary

With the protocol specified in #395 we have a good idea of how we want to handle binary file transfers.

### Value

We give the IDE the tools to provide users with a way to transfer binary files to our backend through the IDE.

### Specification

- [ ] Implement the transport specified as part of #39... | 1.0 | File Manager Binary File Support - ### Summary

With the protocol specified in #395 we have a good idea of how we want to handle binary file transfers.

### Value

We give the IDE the tools to provide users with a way to transfer binary files to our backend through the IDE.

### Specification

- [ ] Implement the... | priority | file manager binary file support summary with the protocol specified in we have a good idea of how we want to handle binary file transfers value we give the ide the tools to provide users with a way to transfer binary files to our backend through the ide specification implement the tra... | 1 |

170,709 | 6,469,764,340 | IssuesEvent | 2017-08-17 07:10:07 | vmware/admiral | https://api.github.com/repos/vmware/admiral | closed | Problem accessing Admiral service on VIC OVA deployment | kind/bug priority/medium | I have deployed the VIC OVA (build 1dc0021a) using DHCP.

After I enter the vCenter credentials, I cannot access the management portal:

- Service is running on port 8282. I get certificate warning (self-signed) but then I get the error:

using DHCP.

After I enter the vCenter credentials, I cannot access the management portal:

- Service is running on port 8282. I get certificate warning (self-signed) but then I get the error:

` is called:

- for `Addresses#get`, `Servers#get`, and others, if the resource isn't found, it returns `nil`, (this seems to be the preferred behavior,) whereas

- for `UrlMaps#get`, `TargetHttpProxies#... | 1.0 | get and other business logic should be DRY-ed up in the models - This issue is coming from the pain point that different resources behave differently when `#get('nonexistent-identity')` is called:

- for `Addresses#get`, `Servers#get`, and others, if the resource isn't found, it returns `nil`, (this seems to be the pref... | priority | get and other business logic should be dry ed up in the models this issue is coming from the pain point that different resources behave differently when get nonexistent identity is called for addresses get servers get and others if the resource isn t found it returns nil this seems to be the pref... | 1 |
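The inconsistency described in the row above (some collections return `nil` for a missing resource while others behave differently) can be illustrated with a small sketch. It is written in Python rather than the project's Ruby, and every name except `Addresses` is hypothetical: `NotFound`, `Collection`, and `_fetch` are assumptions made for illustration, not the library's API.

```python
class NotFound(Exception):
    """Hypothetical error raised by a backend when a resource is missing."""


class Collection:
    """Base class: the not-found policy for get() lives in one place."""

    def _fetch(self, identity):
        # Subclasses implement the actual lookup and raise NotFound on a miss.
        raise NotImplementedError

    def get(self, identity):
        # Every collection returns None for a missing resource,
        # so callers see one consistent behavior.
        try:
            return self._fetch(identity)
        except NotFound:
            return None


class Addresses(Collection):
    def __init__(self, records):
        self._records = records

    def _fetch(self, identity):
        if identity not in self._records:
            raise NotFound(identity)
        return self._records[identity]
```

With this shape, each concrete collection only supplies its lookup, and the "return nothing when not found" rule is defined once in the base class.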

619,071 | 19,515,244,631 | IssuesEvent | 2021-12-29 09:06:39 | edwisely-ai/Marketing | https://api.github.com/repos/edwisely-ai/Marketing | closed | Blog - Create Help Documents for Product | Criticality Low Priority Medium | 1. Also write blog posts such as "Why we are releasing a Web App" or something like that. | 1.0 | Blog - Create Help Documents for Product - 1. Also write blog posts such as "Why we are releasing a Web App" or something like that. | priority | blog create help documents for product also write blog posts such as why we are releasing a web app or something like that | 1 |

687,645 | 23,533,933,088 | IssuesEvent | 2022-08-19 18:18:26 | cthit/Gamma | https://api.github.com/repos/cthit/Gamma | closed | Add monitoring for backend | Type: Enhancement good first issue Where: Backend Priority: Medium | Should be pretty easy. You can add a dependency to Spring, with actuators. Then you only need to protect the endpoint | 1.0 | Add monitoring for backend - Should be pretty easy. You can add a dependency to Spring, with actuators. Then you only need to protect the endpoint | priority | add monitoring for backend should be pretty easy you can add a dependency to spring with actuators then you only need to protect the endpoint | 1 |

756,353 | 26,467,865,370 | IssuesEvent | 2023-01-17 02:52:27 | OffprintStudios/Sailfish | https://api.github.com/repos/OffprintStudios/Sailfish | closed | Fix scroll on mobile | bug medium priority | currently, the mobile view auto-scrolls to the top of the content on navigation and hides the nav bar. this needs to be fixed | 1.0 | Fix scroll on mobile - currently, the mobile view auto-scrolls to the top of the content on navigation and hides the nav bar. this needs to be fixed | priority | fix scroll on mobile currently the mobile view auto scrolls to the top of the content on navigation and hides the nav bar this needs to be fixed | 1 |

78,148 | 3,509,486,432 | IssuesEvent | 2016-01-08 23:00:56 | OregonCore/OregonCore | https://api.github.com/repos/OregonCore/OregonCore | closed | Windfury Totem (BB #917) | Category: Spells migrated Priority: Medium Type: Bug | This issue was migrated from bitbucket.

**Original Reporter:** cent0s

**Original Date:** 26.05.2015 23:20:09 GMT+0000

**Original Priority:** major

**Original Type:** bug

**Original State:** invalid

**Direct Link:** https://bitbucket.org/oregon/oregoncore/issues/917

<hr>

[http://wowwiki.wikia.com/Windfury_Totem](http://... | 1.0 | Windfury Totem (BB #917) - This issue was migrated from bitbucket.

**Original Reporter:** cent0s

**Original Date:** 26.05.2015 23:20:09 GMT+0000

**Original Priority:** major

**Original Type:** bug

**Original State:** invalid

**Direct Link:** https://bitbucket.org/oregon/oregoncore/issues/917

<hr>

[http://wowwiki.wikia.... | priority | windfury totem bb this issue was migrated from bitbucket original reporter original date gmt original priority major original type bug original state invalid direct link no chance of proc proceed almost constantly as the machine | 1 |

578,966 | 17,169,343,453 | IssuesEvent | 2021-07-15 00:22:09 | AtlasOfLivingAustralia/biocache-service | https://api.github.com/repos/AtlasOfLivingAustralia/biocache-service | closed | Improve downloads multimedia implementation | Downloads enhancement images priority-medium | Download API provides an option to specify `extra=multimedia,image_url` to be able to get image info in download file. There are two problems with this:

- the `image_url` field is just an `image_uuid` string and thus relies on the client to encode a URL themselves, which is not documented anywhere that I could find. Be... | 1.0 | Improve downloads multimedia implementation - Download API provides an option to specify `extra=multimedia,image_url` to be able to get image info in download file. There are two problems with this:

- the `image_url` field is just an `image_uuid` string and thus relies on the client to encode a URL themselves, which is... | priority | improve downloads multimedia implementation download api provides an option to specify extra multimedia image url to be able to get image info in download file there are two problems with this the image url field is just an image uuid string and thus relies on the client to encode a url themselves which is... | 1 |

203,042 | 7,057,410,193 | IssuesEvent | 2018-01-04 16:21:32 | ImageEngine/cortex | https://api.github.com/repos/ImageEngine/cortex | closed | Support GeometricData::Interpretation in IECoreRI | priority-medium renderer-RenderMan type-enhancement | This needs to be used in ParameterList and PrimitiveVariableList to decide the renderman type of the data. Once this is done we should entirely abandon the typeHints system, which should simplify both those classes and also the Renderer.

| 1.0 | Support GeometricData::Interpretation in IECoreRI - This needs to be used in ParameterList and PrimitiveVariableList to decide the renderman type of the data. Once this is done we should entirely abandon the typeHints system, which should simplify both those classes and also the Renderer.

| priority | support geometricdata interpretation in iecoreri this needs to be used in parameterlist and primitivevariablelist to decide the renderman type of the data once this is done we should entirely abandon the typehints system which should simplify both those classes and also the renderer | 1 |

280,104 | 8,678,061,135 | IssuesEvent | 2018-11-30 18:42:41 | linterhub/usage-parser | https://api.github.com/repos/linterhub/usage-parser | closed | Refactoring of junk code | Priority: Medium Status: In Progress Type: Maintenance | The following solutions aren't good:

* function handle of handle.js file

```

const context = require('./template/context.js');

context.options = [];

```

* function templatizer of templatizer.js file

```

const argumentsTemplate = JSON.parse(fs.readFileSync('./src/template/args.json'));

argumentsTemplate.definitions.arg... | 1.0 | Refactoring of junk code - The following solutions aren't good:

* function handle of handle.js file

```

const context = require('./template/context.js');

context.options = [];

```

* function templatizer of templatizer.js file

```

const argumentsTemplate = JSON.parse(fs.readFileSync('./src/template/args.json'));

argume... | priority | refactoring of junk code next solutions isn t good function handle of handle js file const context require template context js context options function templatizer of templatizer js file const argumentstemplate json parse fs readfilesync src template args json argumen... | 1 |

361,386 | 10,708,002,726 | IssuesEvent | 2019-10-24 18:42:34 | Gelbpunkt/IdleRPG | https://api.github.com/repos/Gelbpunkt/IdleRPG | closed | Profile idea | Priority: Medium enhancement | **Is your feature request related to a problem? Please describe.**

This isn't related to a problem; it's a suggestion: showing race and god on the profile.

**Describe the solution you'd like**

Not sure where it should go but it would be nice to see my race and god on my profile. Maybe next to where class is on the profile.

... | 1.0 | Profile idea - **Is your feature request related to a problem? Please describe.**

This isn't related to a problem; it's a suggestion: showing race and god on the profile.

**Describe the solution you'd like**

Not sure where it should go but it would be nice to see my race and god on my profile. Maybe next to where class is o... | priority | profile idea is your feature request related to a problem please describe this isn t to a problem but a suggestion showing race and god on the profile describe the solution you d like not sure where it should go but it would be nice to see my race and god on my profile maybe next to where class is o... | 1 |

57,545 | 3,082,706,470 | IssuesEvent | 2015-08-24 00:22:26 | magro/memcached-session-manager | https://api.github.com/repos/magro/memcached-session-manager | closed | what kind of service should I use for memcached-session manager | bug imported invalid Priority-Medium | _From [xiaolian...@gmail.com](https://code.google.com/u/111950605265423305308/) on June 27, 2012 05:00:57_

membase or couchbase?

and another question:

when I use 2 or more services to support sticky sessions, should the services be a cluster? Or just individual services?

the same question to non-sticky session ... | 1.0 | what kind of service should I use for memcached-session manager - _From [xiaolian...@gmail.com](https://code.google.com/u/111950605265423305308/) on June 27, 2012 05:00:57_

membase or couchbase?

and another question:

when I use 2 or more services to support sticky sessions, should the services be a cluster? Or ju... | priority | what kind of service should i use for memcached session manager from on june membase or couchbase and another question when i use or more services to support sticky sessions should the services be a cluster or just individual services the same question to non sticky session configurati... | 1 |

177,976 | 6,589,171,841 | IssuesEvent | 2017-09-14 07:51:50 | edenlabllc/ehealth.api | https://api.github.com/repos/edenlabllc/ehealth.api | opened | Migration for reimbursement | kind/task priority/medium project/reimbursement |

I want to have full DB's in all environments (dev, demo, etc.)

So it is necessary to prepare a migration:

- add **PHARMACY** to il.dictionaries “Legal_entity_type”

```

update dictionaries set values=('{"MIS": "Medical Information system", "MSP": "заклад з надання медичних послуг", "PHARMACY": "Аптека"}') wh... | 1.0 | Migration for reimbursement -

I want to have full DB's in all environments (dev, demo, etc.)

So it is necessary to prepare a migration:

- add **PHARMACY** to il.dictionaries “Legal_entity_type”

```

update dictionaries set values=('{"MIS": "Medical Information system", "MSP": "заклад з надання медичних послу... | priority | migration for reimbursement i want to have full db s in all environments dev demo etc so that it is necessary to prepare migration add pharmacy to il dictionaries “legal entity type” update dictionaries set values mis medical information system msp заклад з надання медичних послу... | 1 |

261,880 | 8,247,256,960 | IssuesEvent | 2018-09-11 15:04:46 | trimstray/htrace.sh | https://api.github.com/repos/trimstray/htrace.sh | closed | Added ssl owner 'Organization' and 'OrganizationalUnit'. | Priority: Medium Status: Completed Type: Feature | - `_ssl_domain_subject_o`

- `_ssl_domain_subject_ou` | 1.0 | Added ssl owner 'Organization' and 'OrganizationalUnit'. - - `_ssl_domain_subject_o`

- `_ssl_domain_subject_ou` | priority | added ssl owner organization and organizationalunit ssl domain subject o ssl domain subject ou | 1 |
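The two fields named in the row above correspond to the Organization (O) and Organizational Unit (OU) attributes of a certificate subject. As a rough sketch in Python (the project itself is a shell script, so this is not its implementation), the values can be read from a subject in the nested-tuple form returned by Python's `ssl.SSLSocket.getpeercert()`. The helper name `subject_field` and the sample data are assumptions for illustration.

```python
def subject_field(subject, name):
    """Return the first value of `name` in a getpeercert()-style subject, or None."""
    # The subject is a tuple of RDNs; each RDN is a tuple of (key, value) pairs.
    for rdn in subject:
        for key, value in rdn:
            if key == name:
                return value
    return None


# Invented sample data in the shape of cert["subject"] from getpeercert().
sample_subject = (
    (("countryName", "US"),),
    (("organizationName", "Example Org"),),
    (("organizationalUnitName", "Example Unit"),),
    (("commonName", "example.com"),),
)

ssl_domain_subject_o = subject_field(sample_subject, "organizationName")
ssl_domain_subject_ou = subject_field(sample_subject, "organizationalUnitName")
```

Certificates often omit the OU attribute, which is why the helper returns `None` rather than raising when a field is absent.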

35,832 | 2,793,218,975 | IssuesEvent | 2015-05-11 09:28:08 | umutafacan/bounswe2015group3 | https://api.github.com/repos/umutafacan/bounswe2015group3 | closed | Crowdsourcing software development and creative work | auto-migrated duplicate Priority-Medium Type-Task | ```

I am gonna do my research on Crowdsourcing, especially on the topics of

"Crowdsourcing creative work" and "Crowdsourcing software development"

Estimated time: 3 hours of research and 1.5 hours of documenting. 4.5 hours in

total

```

Original issue reported on code.google.com by `ozn....@gmail.com` on 22 Feb 201... | 1.0 | Crowdsourcing software development and creative work - ```

I am gonna do my research on Crowdsourcing, especially on the topics of

"Crowdsourcing creative work" and "Crowdsourcing software development"

Estimated time: 3 hours of research and 1.5 hours of documenting. 4.5 hours in

total

```

Original issue reported ... | priority | crowdsourcing software development and creative work i am gonna do my research on crowdsourcing especially on the topics of crowdsourcing creative work and crowdsourcing software development estimated time hours of research and hours of documenting hours in total original issue reported ... | 1 |

32,590 | 2,756,221,566 | IssuesEvent | 2015-04-27 06:19:15 | bwapi/bwapi | https://api.github.com/repos/bwapi/bwapi | closed | Unloading a unit causes them to be in an attack frame | bug Priority-Medium | Unloading an SCV from a Dropship causes `isAttackFrame()` to return true.

| 1.0 | Unloading a unit causes them to be in an attack frame - Unloading an SCV from a Dropship causes `isAttackFrame()` to return true.

| priority | unloading a unit causes them to be in an attack frame unloading an scv from a dropship causes isattackframe to return true | 1 |

169,319 | 6,399,430,330 | IssuesEvent | 2017-08-05 00:18:05 | phetsims/kite | https://api.github.com/repos/phetsims/kite | closed | SVG-style ellipticalArcTo not implemented | dev:enhancement priority:3-medium | This is needed for full SVG path handling generated by our parser.

| 1.0 | SVG-style ellipticalArcTo not implemented - This is needed for full SVG path handling generated by our parser.

| priority | svg style ellipticalarcto not implemented this is needed for full svg path handling generated by our parser | 1 |

525,154 | 15,239,121,981 | IssuesEvent | 2021-02-19 03:38:31 | actually-colab/editor | https://api.github.com/repos/actually-colab/editor | opened | Endpoint Input Schema Validation | REST difficulty: medium priority: low server | - [ ] Create a validation middleware

- [ ] Implement on POST /notebook

- [ ] Implement on POST /notebook/:id/share

- [ ] Implement on GET /notebooks | 1.0 | Endpoint Input Schema Validation - - [ ] Create a validation middleware

- [ ] Implement on POST /notebook

- [ ] Implement on POST /notebook/:id/share

- [ ] Implement on GET /notebooks | priority | endpoint input schema validation create a validation middleware implement on post notebook implement on post notebook id share implement on get notebooks | 1 |

192,312 | 6,848,513,448 | IssuesEvent | 2017-11-13 18:47:07 | osuosl/streamwebs | https://api.github.com/repos/osuosl/streamwebs | opened | Unit Typo: In view soil survey distance is incorrectly labeled | bug medium priority | In the View Soil Survey view the "Distance from stream" is labeled as having units of feet when the code has converted feet to meters. The unit label just needs to be changed from _ft_ to _m_. | 1.0 | Unit Typo: In view soil survey distance is incorrectly labeled - In the View Soil Survey view the "Distance from stream" is labeled as having units of feet when the code has converted feet to meters. The unit label just needs to be changed from _ft_ to _m_. | priority | unit typo in view soil survey distance is incorrectly labeled in the view soil survey view the distance from stream is labeled as having units of feet when the code has converted feet to meters the unit label just needs to be changed from ft to m | 1 |

666,669 | 22,363,065,484 | IssuesEvent | 2022-06-15 23:05:08 | diffgram/diffgram | https://api.github.com/repos/diffgram/diffgram | reopened | Fix hard crash if blob can't load | medium-priority | In some cases lifecycle rules can break a task from rendering properly:

https://diffgram.com/task/99297

Double check if all URLs are regenerating correctly and that the error handling does not block the task from being rendered. | 1.0 | Fix hard crash if blob can't load - In some cases lifecycle rules can break a task from rendering properly:

https://diffgram.com/task/99297

Double check if all URLs are regenerating correctly and that the error handling does not block the task from being rendered. | priority | fix hard crash if blob can t load in some cases lifecycle rules can break a task from rendering properly double check if all urls are regenerating correctly and that the error handling does not block the task from being rendered | 1 |

56,561 | 3,080,249,941 | IssuesEvent | 2015-08-21 20:57:43 | pavel-pimenov/flylinkdc-r5xx | https://api.github.com/repos/pavel-pimenov/flylinkdc-r5xx | opened | Update progress is displayed incorrectly in the Win7 status bar. | bug Component-UI imported Priority-Medium | _From [Tirael...@gmail.com](https://code.google.com/u/108935377450235604965/) on May 23, 2012 19:30:06_

During auto-update, Fly constantly shows a fully filled progress bar. Win7 x64, flylink x64, the very latest build from the Night Orion server. This started quite a while ago; there was no time to write it up.

**Attachment:** [Сн... | 1.0 | Update progress is displayed incorrectly in the Win7 status bar. - _From [Tirael...@gmail.com](https://code.google.com/u/108935377450235604965/) on May 23, 2012 19:30:06_

During auto-update, Fly constantly shows a fully filled progress bar. Win7 x64, flylink x64, the very latest build from the Night Orion server. Проя... | priority | update progress is displayed incorrectly in the status bar from on may during auto update fly constantly shows a fully filled progress bar flylink the very latest build from the night orion server this started quite a while ago there was no time to write it up attachment original i... | 1 |

610,706 | 18,922,072,950 | IssuesEvent | 2021-11-17 03:44:58 | Sage-Bionetworks/rocc-app | https://api.github.com/repos/Sage-Bionetworks/rocc-app | closed | Add metrics to the home page | Priority: Medium | Per user feedback (see below), we will investigate adding metrics to the Home Page.

We can start with our current set of past challenges.

Organizer Feedback

"So the first thing that I would, I would want to see as I'm seeing that page right now is somewhere indicating the community engagement of this challenge.... | 1.0 | Add metrics to the home page - Per user feedback (see below), we will investigate adding metrics to the Home Page.

We can start with our current set of past challenges.

Organizer Feedback

"So the first thing that I would, I would want to see as I'm seeing that page right now is somewhere indicating the communit... | priority | add metrics to the home page per user feedback see below we will investigate adding metrics to the home page we can start with our current set of past challenges organizer feedback so the first thing that i would i would want to see as i m seeing that page right now is somewhere indicating the communit... | 1 |

68,040 | 3,283,957,033 | IssuesEvent | 2015-10-28 14:56:09 | marvinlabs/customer-area | https://api.github.com/repos/marvinlabs/customer-area | closed | "spectator" role for projects | enhancement Premium add-ons Priority - medium | From: http://wp-customerarea.com/support/topic/third-role-for-projects/

Would be nice to have a 3rd category of users on a project (managers, contributors, spectators/guests) | 1.0 | "spectator" role for projects - From: http://wp-customerarea.com/support/topic/third-role-for-projects/

Would be nice to have a 3rd category of users on a project (managers, contributors, spectators/guests) | priority | spectator role for projects from would be nice to have a category of users on a project managers contributors spectators guests | 1 |

107,904 | 4,321,744,452 | IssuesEvent | 2016-07-25 11:30:48 | richelbilderbeek/Cer2016 | https://api.github.com/repos/richelbilderbeek/Cer2016 | closed | Use nLTT::nltt_stat instead of approximation | medium priority | Sure, it was useful to add `get_nltt_values` to the `nLTT` package. But now, I could just calculate the exact nLTT statistic instead. | 1.0 | Use nLTT::nltt_stat instead of approximation - Sure, it was useful to add `get_nltt_values` to the `nLTT` package. But now, I could just calculate the exact nLTT statistic instead. | priority | use nltt nltt stat instead of approximation sure it was useful to add get nltt values to the nltt package but now i could just calculate the exact nltt statistic instead | 1 |

72,606 | 3,388,399,029 | IssuesEvent | 2015-11-29 08:19:44 | crutchcorn/stagger | https://api.github.com/repos/crutchcorn/stagger | closed | stagger --print fails with AttributeError | bug Priority Medium | ```

What steps will reproduce the problem?

1. download attached id3 file

2. run stagger --print dump2.id3

3. File "/home/michael/omg/trunk/stagger/tags.py", line 324, in getter

(track, sep, total) = frame.text[0].partition("/")

AttributeError: 'list' object has no attribute 'text'

For some reason, the 'TRCK' attri... | 1.0 | stagger --print fails with AttributeError - ```

What steps will reproduce the problem?

1. download attached id3 file

2. run stagger --print dump2.id3

3. File "/home/michael/omg/trunk/stagger/tags.py", line 324, in getter

(track, sep, total) = frame.text[0].partition("/")

AttributeError: 'list' object has no attribu... | priority | stagger print fails with attributeerror what steps will reproduce the problem download attached file run stagger print file home michael omg trunk stagger tags py line in getter track sep total frame text partition attributeerror list object has no attribute text f... | 1 |

735,589 | 25,405,067,921 | IssuesEvent | 2022-11-22 14:46:10 | PHI-base/PHI5_web_display | https://api.github.com/repos/PHI-base/PHI5_web_display | closed | Display wild type host genotypes as 'wild type' in metagenotypes | medium priority | (Extracted from issue https://github.com/PHI-base/PHI5_web_display/issues/52)

In the display names for metagenotypes, hosts with no genes are displayed with the string "(wild_type)", which is an allele type instead of a genotype name. For example:

TRI5+ (wild_type) [Wild type product level] F. graminearum (PH-1) ... | 1.0 | Display wild type host genotypes as 'wild type' in metagenotypes - (Extracted from issue https://github.com/PHI-base/PHI5_web_display/issues/52)

In the display names for metagenotypes, hosts with no genes are displayed with the string "(wild_type)", which is an allele type instead of a genotype name. For example:

... | priority | display wild type host genotypes as wild type in metagenotypes extracted from issue in the display names for metagenotypes hosts with no genes are displayed with the string wild type which is an allele type instead of a genotype name for example wild type f graminearum ph wild type... | 1 |

698,793 | 23,991,791,930 | IssuesEvent | 2022-09-14 02:19:27 | yugabyte/yugabyte-db | https://api.github.com/repos/yugabyte/yugabyte-db | closed | [YSQL] Unique index involving custom FUNCTION results in ERRORDATA_STACK_SIZE | kind/bug area/ysql priority/medium | Jira Link: [[DB-296]](https://yugabyte.atlassian.net/browse/DB-296)

### Description

When porting commit 34ff15660b4f752e3941d661c3896fd96b1571f9 from upstream, we would see:

```

+CREATE MATERIALIZED VIEW sro_index_mv AS SELECT 1 AS c;

+CREATE UNIQUE INDEX ON sro_index_mv (c) WHERE unwanted_grant_nofail(1) > 0;

+W... | 1.0 | [YSQL] Unique index involving custom FUNCTION results in ERRORDATA_STACK_SIZE - Jira Link: [[DB-296]](https://yugabyte.atlassian.net/browse/DB-296)

### Description

When porting commit 34ff15660b4f752e3941d661c3896fd96b1571f9 from upstream, we would see:

```

+CREATE MATERIALIZED VIEW sro_index_mv AS SELECT 1 AS c;

... | priority | unique index involving custom function results in errordata stack size jira link description when porting commit from upstream we would see create materialized view sro index mv as select as c create unique index on sro index mv c where unwanted grant nofail warning abortsu... | 1 |

246,535 | 7,895,389,951 | IssuesEvent | 2018-06-29 02:58:04 | aowen87/BAR | https://api.github.com/repos/aowen87/BAR | closed | Add the ability to control all the viewing settings in the x ray image query. | Expected Use: 3 - Occasional Feature Impact: 3 - Medium OS: All Priority: Normal Support Group: Any | John Fields, who is working with Steve Langer, would like more control over the image view. In particular he is interested in setting a perspective view. We should just make all the controls available.

-----------------------REDMINE MIGRATION-----------------------

This ticket was migrated from Redmine. The followin... | 1.0 | Add the ability to control all the viewing settings in the x ray image query. - John Fields, who is working with Steve Langer, would like more control over the image view. In particular he is interested in setting a perspective view. We should just make all the controls available.

-----------------------REDMINE MIGR... | priority | add the ability to control all the viewing settings in the x ray image query john fields who is working with steve langer would like more control over the image view in particular he is interested in setting a perspective view we should just make all the controls available redmine migr... | 1 |

100,243 | 4,081,742,863 | IssuesEvent | 2016-05-31 10:03:52 | nim-lang/Nim | https://api.github.com/repos/nim-lang/Nim | closed | Missing docs/*.txt causes doc2 to fail | Medium Priority | While playing with gradha's github pages documentation generator thingie, doc2 failed, with macros.nim complaining that it couldn't find ../doc/astspec.txt

````

$ ~/.babel/bin/gh_nimrod_doc_pages -c .

Generating docs for target 'master'