Unnamed: 0 (int64, 0–832k) | id (float64, 2.49B–32.1B) | type (string, 1 class) | created_at (string, length 19–19) | repo (string, length 4–112) | repo_url (string, length 33–141) | action (string, 3 classes) | title (string, length 1–970) | labels (string, length 4–625) | body (string, length 3–247k) | index (string, 9 classes) | text_combine (string, length 96–247k) | label (string, 2 classes) | text (string, length 96–218k) | binary_label (int64, 0–1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

490,443 | 14,121,486,316 | IssuesEvent | 2020-11-09 02:10:52 | AY2021S1-CS2113T-W12-2/tp | https://api.github.com/repos/AY2021S1-CS2113T-W12-2/tp | closed | [PE-D] [W12-2] Duration for flexible tasks too long | priority.High type.Bug |

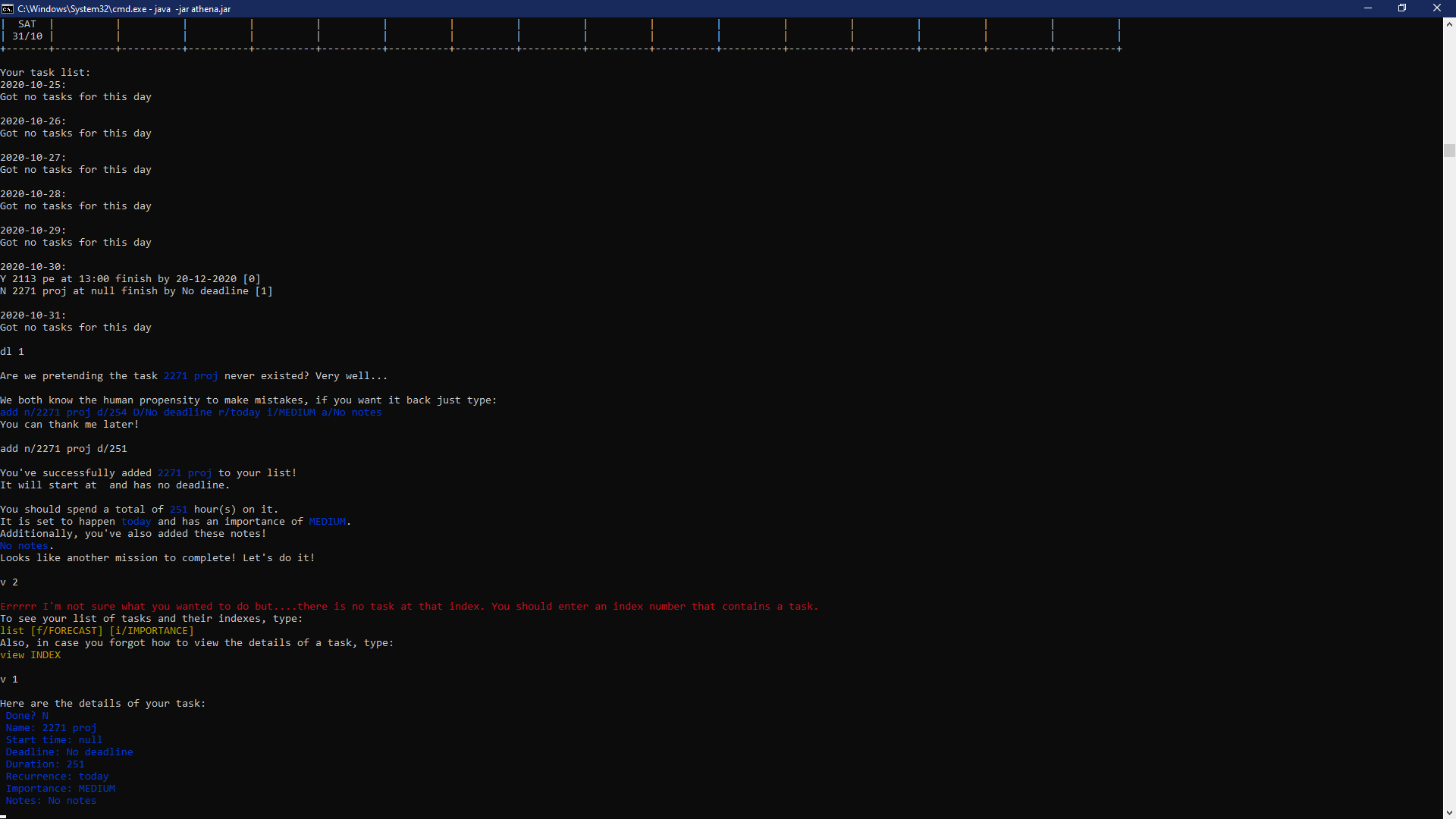

When I add a task that lasts 254 hours long, start time isn't set as default, and it doesn't show up when I use the list command.

<!--session: 1604047703205-acf0f73b-f2fb-4dd8-aff5-c649ca198eba-->

-------------

Labels: `severity.Medium` `type.FunctionalityBug`

original: thngyuxuan/ped#3 | 1.0 | [PE-D] [W12-2] Duration for flexible tasks too long -

When I add a task that lasts 254 hours long, start time isn't set as default, and it doesn't show up when I use the list command.

<!--session: 1604047703205-acf0f73b-f2fb-4dd8-aff5-c649ca198eba-->

-------------

Labels: `severity.Medium` `type.FunctionalityBug`

original: thngyuxuan/ped#3 | non_perf | duration for flexible tasks too long when i add a task that lasts hours long start time isn t set as default and it doesn t show up when i use the list command labels severity medium type functionalitybug original thngyuxuan ped | 0 |

35,814 | 17,269,192,734 | IssuesEvent | 2021-07-22 17:23:03 | erayerdin/levelheadbrowser | https://api.github.com/repos/erayerdin/levelheadbrowser | closed | Do not request for null icons | bug performance | Icons on profiles, levels and tower trials page are requested even if the related field is `null`. A real request is made just to get 404 in this case, which is not performant. | True | Do not request for null icons - Icons on profiles, levels and tower trials page are requested even if the related field is `null`. A real request is made just to get 404 in this case, which is not performant. | perf | do not request for null icons icons on profiles levels and tower trials page are requested even if the related field is null a real request is made just to get in this case which is not performant | 1 |

293,321 | 22,052,447,822 | IssuesEvent | 2022-05-30 09:50:38 | felangel/bloc | https://api.github.com/repos/felangel/bloc | opened | question: Cancel event in separate event handlers | documentation | How to cancel event processing?

For example i have `start` and `stop` events in separated event handlers.

I know i can make a flag like `isWorking`, but is there any way to cancel event?

| 1.0 | question: Cancel event in separate event handlers - How to cancel event processing?

For example i have `start` and `stop` events in separated event handlers.

I know i can make a flag like `isWorking`, but is there any way to cancel event?

| non_perf | question cancel event in separate event handlers how to cancel event processing for example i have start and stop events in separated event handlers i know i can make a flag like isworking but is there any way to cancel event | 0 |

47,785 | 25,187,884,023 | IssuesEvent | 2022-11-11 20:06:15 | godotengine/godot | https://api.github.com/repos/godotengine/godot | closed | Godot 4.0 is very slow to start on macOS compared to 3.x | bug platform:macos topic:core topic:rendering topic:porting confirmed regression performance | ### Godot version

4.0.alpha10.official

### System information

macOS 12.4 (Monterey), Vulkan, Intel Iris Pro 5200

### Issue description

Latest release of Godot 4.0 (alpha 10) seems to be super slow on macOS 12.4 (Monterey), at least when tested on my MacBook Pro setup (meanwhile, Godot 3.4.4 works without any noticeable performance issues). Here are some benchmarks:

- time to start Godot (to open project list): **33 seconds**

- time to open new project: 35 seconds

- time to start an empty 2D scene app: 33 seconds

On the other hand, editor itself is generally responsive when interacting with its UI (creating new nodes etc.).

Among important details, here are some errors that are raised during the 2D application start (empty scene):

```
E 0:00:00:0276 _debug_messenger_callback: - Message Id Number: 0 | Message Id Name:
VK_ERROR_INITIALIZATION_FAILED: Render pipeline compile failed (Error code 2):
Compiler encountered an internal error.
Objects - 1
Object[0] - VK_OBJECT_TYPE_PIPELINE, Handle 140389825172992
<C++ Source> drivers/vulkan/vulkan_context.cpp:159 @ _debug_messenger_callback()
E 0:00:00:0276 render_pipeline_create: vkCreateGraphicsPipelines failed with error -3 for shader 'ClusterRenderShaderRD:0'.
<C++ Error> Condition "err" is true. Returning: RID()
<C++ Source> drivers/vulkan/rendering_device_vulkan.cpp:6614 @ render_pipeline_create()
E 0:00:30:0395 _debug_messenger_callback: - Message Id Number: 0 | Message Id Name:
VK_ERROR_INITIALIZATION_FAILED: Render pipeline compile failed (Error code 2):
Compiler encountered an internal error.
Objects - 1
Object[0] - VK_OBJECT_TYPE_PIPELINE, Handle 140389832876544
<C++ Source> drivers/vulkan/vulkan_context.cpp:159 @ _debug_messenger_callback()
E 0:00:30:0395 render_pipeline_create: vkCreateGraphicsPipelines failed with error -3 for shader 'ClusterRenderShaderRD:0'.
<C++ Error> Condition "err" is true. Returning: RID()
<C++ Source> drivers/vulkan/rendering_device_vulkan.cpp:6614 @ render_pipeline_create()
```

Also, here's the editor output after Godot start, for the record:

```
--- Debugging process started ---
Godot Engine v4.0.alpha10.official.4bbe7f0b9 - https://godotengine.org
Vulkan API 1.1.198 - Using Vulkan Device #0: Intel - Intel Iris Pro Graphics
Registered camera FaceTime HD Camera with id 1 position 0 at index 0
--- Debugging process stopped ---
```

It seems that Vulkan support is not an issue on my MacBook Pro. I've just installed Vulkan SDK 1.3.216.0 for macOS from [LunarG website](https://vulkan.lunarg.com/sdk/home#mac) and their `vkcube.app` demo works without any issues (thanks to [MoltenVK](https://github.com/KhronosGroup/MoltenVK) underneath, of course).

I can provide any additional information or testing if that would help.

### System details

- laptop: MacBook Pro (Retina, 15-inch, Mid 2015)

- CPU: Intel Core i7-4870HQ 2.5 GHz (Haswell)

- OS: macOS Monterey 12.4 (build [21F79](https://en.wikipedia.org/wiki/MacOS_Monterey#Release_history))

### GPU details (from `system_profiler`)

```
Chipset Model: Intel Iris Pro
Type: GPU
Bus: Built-In
VRAM (Dynamic, Max): 1536 MB
Vendor: Intel
Device ID: 0x0d26
Revision ID: 0x0008
Metal Family: Supported, Metal GPUFamily macOS 1
```

And by the way, thank you all for Godot! 🎉 It's absolutely amazing that a game engine like this is available out there.

### Steps to reproduce

No extra steps are needed to reproduce the issue. It seems that any MacBook with a similar spec would be prone to this problem.

### Minimal reproduction project

_No response_ | True | Godot 4.0 is very slow to start on macOS compared to 3.x - ### Godot version

4.0.alpha10.official

### System information

macOS 12.4 (Monterey), Vulkan, Intel Iris Pro 5200

### Issue description

Latest release of Godot 4.0 (alpha 10) seems to be super slow on macOS 12.4 (Monterey), at least when tested on my MacBook Pro setup (meanwhile, Godot 3.4.4 works without any noticeable performance issues). Here are some benchmarks:

- time to start Godot (to open project list): **33 seconds**

- time to open new project: 35 seconds

- time to start an empty 2D scene app: 33 seconds

On the other hand, editor itself is generally responsive when interacting with its UI (creating new nodes etc.).

Among important details, here are some errors that are raised during the 2D application start (empty scene):

```
E 0:00:00:0276 _debug_messenger_callback: - Message Id Number: 0 | Message Id Name:
VK_ERROR_INITIALIZATION_FAILED: Render pipeline compile failed (Error code 2):
Compiler encountered an internal error.
Objects - 1
Object[0] - VK_OBJECT_TYPE_PIPELINE, Handle 140389825172992
<C++ Source> drivers/vulkan/vulkan_context.cpp:159 @ _debug_messenger_callback()
E 0:00:00:0276 render_pipeline_create: vkCreateGraphicsPipelines failed with error -3 for shader 'ClusterRenderShaderRD:0'.
<C++ Error> Condition "err" is true. Returning: RID()
<C++ Source> drivers/vulkan/rendering_device_vulkan.cpp:6614 @ render_pipeline_create()
E 0:00:30:0395 _debug_messenger_callback: - Message Id Number: 0 | Message Id Name:
VK_ERROR_INITIALIZATION_FAILED: Render pipeline compile failed (Error code 2):
Compiler encountered an internal error.
Objects - 1
Object[0] - VK_OBJECT_TYPE_PIPELINE, Handle 140389832876544
<C++ Source> drivers/vulkan/vulkan_context.cpp:159 @ _debug_messenger_callback()
E 0:00:30:0395 render_pipeline_create: vkCreateGraphicsPipelines failed with error -3 for shader 'ClusterRenderShaderRD:0'.
<C++ Error> Condition "err" is true. Returning: RID()
<C++ Source> drivers/vulkan/rendering_device_vulkan.cpp:6614 @ render_pipeline_create()
```

Also, here's the editor output after Godot start, for the record:

```
--- Debugging process started ---
Godot Engine v4.0.alpha10.official.4bbe7f0b9 - https://godotengine.org
Vulkan API 1.1.198 - Using Vulkan Device #0: Intel - Intel Iris Pro Graphics
Registered camera FaceTime HD Camera with id 1 position 0 at index 0
--- Debugging process stopped ---
```

It seems that Vulkan support is not an issue on my MacBook Pro. I've just installed Vulkan SDK 1.3.216.0 for macOS from [LunarG website](https://vulkan.lunarg.com/sdk/home#mac) and their `vkcube.app` demo works without any issues (thanks to [MoltenVK](https://github.com/KhronosGroup/MoltenVK) underneath, of course).

I can provide any additional information or testing if that would help.

### System details

- laptop: MacBook Pro (Retina, 15-inch, Mid 2015)

- CPU: Intel Core i7-4870HQ 2.5 GHz (Haswell)

- OS: macOS Monterey 12.4 (build [21F79](https://en.wikipedia.org/wiki/MacOS_Monterey#Release_history))

### GPU details (from `system_profiler`)

```
Chipset Model: Intel Iris Pro
Type: GPU
Bus: Built-In
VRAM (Dynamic, Max): 1536 MB
Vendor: Intel
Device ID: 0x0d26
Revision ID: 0x0008
Metal Family: Supported, Metal GPUFamily macOS 1
```

And by the way, thank you all for Godot! 🎉 It's absolutely amazing that a game engine like this is available out there.

### Steps to reproduce

No extra steps are needed to reproduce the issue. It seems that any MacBook with a similar spec would be prone to this problem.

### Minimal reproduction project

_No response_ | perf | godot is very slow to start on macos compared to x godot version official system information macos monterey vulkan intel iris pro issue description latest release of godot alpha seems to be super slow on macos monterey at least when tested on my macbook pro setup meanwhile godot works without any noticeable performance issues here are some benchmarks time to start godot to open project list seconds time to open new project seconds time to start an empty scene app seconds on the other hand editor itself is generally responsive when interacting with its ui creating new nodes etc among important details here are some errors that are raised during the application start empty scene e debug messenger callback message id number message id name vk error initialization failed render pipeline compile failed error code compiler encountered an internal error objects object vk object type pipeline handle drivers vulkan vulkan context cpp debug messenger callback e render pipeline create vkcreategraphicspipelines failed with error for shader clusterrendershaderrd condition err is true returning rid drivers vulkan rendering device vulkan cpp render pipeline create e debug messenger callback message id number message id name vk error initialization failed render pipeline compile failed error code compiler encountered an internal error objects object vk object type pipeline handle drivers vulkan vulkan context cpp debug messenger callback e render pipeline create vkcreategraphicspipelines failed with error for shader clusterrendershaderrd condition err is true returning rid drivers vulkan rendering device vulkan cpp render pipeline create also here s the editor output after godot start for the record debugging process started godot engine official vulkan api using vulkan device intel intel iris pro graphics registered camera facetime hd camera with id position at index debugging process stopped it seems that vulkan support is not an issue on my macbook pro i ve just installed vulkan sdk for macos from and their vkcube app demo works without any issues thanks to underneath of course i can provide any additional information or testing if that would help system details laptop macbook pro retina inch mid cpu intel core ghz haswell os macos monterey build gpu details from system profiler chipset model intel iris pro type gpu bus built in vram dynamic max mb vendor intel device id revision id metal family supported metal gpufamily macos and by the way thank you all for godot 🎉 it s absolutely amazing that a game engine like this is available out there steps to reproduce no extra steps are needed to reproduce the issue it seems that any macbook with a similar spec would be prone to this problem minimal reproduction project no response | 1 |

52,031 | 27,340,801,136 | IssuesEvent | 2023-02-26 19:22:02 | starlite-api/starlite | https://api.github.com/repos/starlite-api/starlite | closed | Refactor: Reduce reliance on `pydantic.BaseModel` | enhancement help wanted refactor performance | Internally we use pydantic models for the following types:

- `config.allowed_hosts.AllowedHostsConfig`

- `config.app.AppConfig`

- `config.cache.CacheConfig`

- `config.compression.CompressionConfig`

- `config.cors.CORSConfig`

- `config.csrf.CSRFConfig`

- `config.openapi.OpenAPIConfig`

- `config.static_files.StaticFilesConfig`

- `contrib.jwt.jwt_auth.OAuth2Login`

- `contrib.jwt.jwt_auth.Token`

- `contrib.opentelemetry.config.OpenTelemetryConfig`

- `middleware.logging.LoggingMiddlewareConfig`

- `middleware.rate_limit.RateLimitConfig`

- `middleware.session.base.BaseBackendConfig`

- `openapi.datastructures.ResponseSpec`

- `plugins.sql_alchemy.config.SQLAlchemySessionConfig`

- `plugins.sql_alchemy.config.SQLAlchemyEngineConfig`

- `plugins.sql_alchemy.config.SQLAlchemyConfig`

- `utils.exception.ExceptionResponseContent`

Any of these that do not explicitly rely on pydantic functionality for some reason should probably be migrated to dataclasses. | True | Refactor: Reduce reliance on `pydantic.BaseModel` - Internally we use pydantic models for the following types:

- `config.allowed_hosts.AllowedHostsConfig`

- `config.app.AppConfig`

- `config.cache.CacheConfig`

- `config.compression.CompressionConfig`

- `config.cors.CORSConfig`

- `config.csrf.CSRFConfig`

- `config.openapi.OpenAPIConfig`

- `config.static_files.StaticFilesConfig`

- `contrib.jwt.jwt_auth.OAuth2Login`

- `contrib.jwt.jwt_auth.Token`

- `contrib.opentelemetry.config.OpenTelemetryConfig`

- `middleware.logging.LoggingMiddlewareConfig`

- `middleware.rate_limit.RateLimitConfig`

- `middleware.session.base.BaseBackendConfig`

- `openapi.datastructures.ResponseSpec`

- `plugins.sql_alchemy.config.SQLAlchemySessionConfig`

- `plugins.sql_alchemy.config.SQLAlchemyEngineConfig`

- `plugins.sql_alchemy.config.SQLAlchemyConfig`

- `utils.exception.ExceptionResponseContent`

Any of these that do not explicitly rely on pydantic functionality for some reason should probably be migrated to dataclasses. | perf | refactor reduce reliance on pydantic basemodel internally we use pydantic models for the following types config allowed hosts allowedhostsconfig config app appconfig config cache cacheconfig config compression compressionconfig config cors corsconfig config csrf csrfconfig config openapi openapiconfig config static files staticfilesconfig contrib jwt jwt auth contrib jwt jwt auth token contrib opentelemetry config opentelemetryconfig middleware logging loggingmiddlewareconfig middleware rate limit ratelimitconfig middleware session base basebackendconfig openapi datastructures responsespec plugins sql alchemy config sqlalchemysessionconfig plugins sql alchemy config sqlalchemyengineconfig plugins sql alchemy config sqlalchemyconfig utils exception exceptionresponsecontent any of these that do not explicitly rely on pydantic functionality for some reason should probably be migrated to dataclasses | 1 |

24,447 | 12,299,584,010 | IssuesEvent | 2020-05-11 12:38:54 | bazelbuild/bazel | https://api.github.com/repos/bazelbuild/bazel | closed | Mention changed file with `--verbose_explanations` | P3 team-Performance type: feature request | Please provide the following information. The more we know about your system and use case, the more easily and likely we can help.

### Description of the problem / feature request / question:

I have a mid-sized C++ codebase. I'd like to understand which header files are commonly being changed that require rebuilds of the ~entire codebase. I added `build --explain=bazel.log --verbose_explanations` to my `.bazelrc`, in the hopes of consulting `bazel.log` after rebuilds to determine which header change(s) necessitated the full rebuild.

However, the log file is just full of information like

```
Executing action 'Compiling common/counters.cc': One of the files has changed.
```

which doesn't tell me the information I actually want -- *which* dependency file changed?

Would it be possible (probably only under `--verbose_explanations`) for bazel to log *which* file has changed, or maybe a subset of them, if an exact list is too verbose or expensive somehow?

### If possible, provide a minimal example to reproduce the problem:

### Environment info

* Operating System:

Linux Ubuntu 16.04

* Bazel version (output of `bazel info release`):

```
release 0.8.0
```

* If `bazel info release` returns "development version" or "(@non-git)", please tell us what source tree you compiled Bazel from; git commit hash is appreciated (`git rev-parse HEAD`):

### Have you found anything relevant by searching the web?

(e.g. [StackOverflow answers](http://stackoverflow.com/questions/tagged/bazel),

[GitHub issues](https://github.com/bazelbuild/bazel/issues),

email threads on the [`bazel-discuss`](https://groups.google.com/forum/#!forum/bazel-discuss) Google group)

### Anything else, information or logs or outputs that would be helpful?

(If they are large, please upload as attachment or provide link).

| True | Mention changed file with `--verbose_explanations` - Please provide the following information. The more we know about your system and use case, the more easily and likely we can help.

### Description of the problem / feature request / question:

I have a mid-sized C++ codebase. I'd like to understand which header files are commonly being changed that require rebuilds of the ~entire codebase. I added `build --explain=bazel.log --verbose_explanations` to my `.bazelrc`, in the hopes of consulting `bazel.log` after rebuilds to determine which header change(s) necessitated the full rebuild.

However, the log file is just full of information like

```
Executing action 'Compiling common/counters.cc': One of the files has changed.
```

which doesn't tell me the information I actually want -- *which* dependency file changed?

Would it be possible (probably only under `--verbose_explanations`) for bazel to log *which* file has changed, or maybe a subset of them, if an exact list is too verbose or expensive somehow?

### If possible, provide a minimal example to reproduce the problem:

### Environment info

* Operating System:

Linux Ubuntu 16.04

* Bazel version (output of `bazel info release`):

```
release 0.8.0
```

* If `bazel info release` returns "development version" or "(@non-git)", please tell us what source tree you compiled Bazel from; git commit hash is appreciated (`git rev-parse HEAD`):

### Have you found anything relevant by searching the web?

(e.g. [StackOverflow answers](http://stackoverflow.com/questions/tagged/bazel),

[GitHub issues](https://github.com/bazelbuild/bazel/issues),

email threads on the [`bazel-discuss`](https://groups.google.com/forum/#!forum/bazel-discuss) Google group)

### Anything else, information or logs or outputs that would be helpful?

(If they are large, please upload as attachment or provide link).

| perf | mention changed file with verbose explanations please provide the following information the more we know about your system and use case the more easily and likely we can help description of the problem feature request question i have a mid sized c codebase i d like to understand which header files are commonly being changed that require rebuilds of the entire codebase i added build explain bazel log verbose explanations to my bazelrc in the hopes of consulting bazel log after rebuilds to determine which header change s necessitated the full rebuild however the log file is just full of information like executing action compiling common counters cc one of the files has changed which doesn t tell me the information i actually want which dependency file changed would it be possible probably only under verbose explanations for bazel to log which file has changed or maybe a subset of them if an exact list is too verbose or expensive somehow if possible provide a minimal example to reproduce the problem environment info operating system linux ubuntu bazel version output of bazel info release release if bazel info release returns development version or non git please tell us what source tree you compiled bazel from git commit hash is appreciated git rev parse head have you found anything relevant by searching the web e g email threads on the google group anything else information or logs or outputs that would be helpful if they are large please upload as attachment or provide link | 1 |

8,665 | 6,618,295,026 | IssuesEvent | 2017-09-21 07:31:47 | TorXakis/TorXakis | https://api.github.com/repos/TorXakis/TorXakis | opened | Optimal translation of STAUTDEF? | performace-improvement | When experimenting with MovingArms I made the following observation:

The following manual generated LPE

```
PROCDEF singleAxisMovementLPE [ UpX, DownX, StopX, MinX, MaxX,
UpY, DownY, StopY, MinY, MaxY,
UpZ, DownZ, StopZ, MinZ, MaxZ] ( pc :: Int ) ::=
[[ pc == 0 ]] =>> UpX >-> singleAxisMovementLPE [ UpX, DownX, StopX, MinX, MaxX, UpY, DownY, StopY, MinY, MaxY, UpZ, DownZ, StopZ, MinZ, MaxZ] ( 1 )
## [[ pc == 0 ]] =>> DownX >-> singleAxisMovementLPE [ UpX, DownX, StopX, MinX, MaxX, UpY, DownY, StopY, MinY, MaxY, UpZ, DownZ, StopZ, MinZ, MaxZ] ( 2 )
## [[ pc == 0 ]] =>> UpY >-> singleAxisMovementLPE [ UpX, DownX, StopX, MinX, MaxX, UpY, DownY, StopY, MinY, MaxY, UpZ, DownZ, StopZ, MinZ, MaxZ] ( 3 )
## [[ pc == 0 ]] =>> DownY >-> singleAxisMovementLPE [ UpX, DownX, StopX, MinX, MaxX, UpY, DownY, StopY, MinY, MaxY, UpZ, DownZ, StopZ, MinZ, MaxZ] ( 4 )
## [[ pc == 0 ]] =>> UpZ >-> singleAxisMovementLPE [ UpX, DownX, StopX, MinX, MaxX, UpY, DownY, StopY, MinY, MaxY, UpZ, DownZ, StopZ, MinZ, MaxZ] ( 5 )
## [[ pc == 0 ]] =>> DownZ >-> singleAxisMovementLPE [ UpX, DownX, StopX, MinX, MaxX, UpY, DownY, StopY, MinY, MaxY, UpZ, DownZ, StopZ, MinZ, MaxZ] ( 6 )
## [[ (pc == 1) \/ (pc == 2) ]] =>> StopX >-> singleAxisMovementLPE [ UpX, DownX, StopX, MinX, MaxX, UpY, DownY, StopY, MinY, MaxY, UpZ, DownZ, StopZ, MinZ, MaxZ] ( 0 )
## [[ (pc == 1) ]] =>> MaxX >-> singleAxisMovementLPE [ UpX, DownX, StopX, MinX, MaxX, UpY, DownY, StopY, MinY, MaxY, UpZ, DownZ, StopZ, MinZ, MaxZ] ( 0 )
## [[ (pc == 2) ]] =>> MinX >-> singleAxisMovementLPE [ UpX, DownX, StopX, MinX, MaxX, UpY, DownY, StopY, MinY, MaxY, UpZ, DownZ, StopZ, MinZ, MaxZ] ( 0 )
## [[ (pc == 3) \/ (pc == 4) ]] =>> StopY >-> singleAxisMovementLPE [ UpX, DownX, StopX, MinX, MaxX, UpY, DownY, StopY, MinY, MaxY, UpZ, DownZ, StopZ, MinZ, MaxZ] ( 0 )
## [[ (pc == 3) ]] =>> MaxY >-> singleAxisMovementLPE [ UpX, DownX, StopX, MinX, MaxX, UpY, DownY, StopY, MinY, MaxY, UpZ, DownZ, StopZ, MinZ, MaxZ] ( 0 )
## [[ (pc == 4) ]] =>> MinY >-> singleAxisMovementLPE [ UpX, DownX, StopX, MinX, MaxX, UpY, DownY, StopY, MinY, MaxY, UpZ, DownZ, StopZ, MinZ, MaxZ] ( 0 )
## [[ (pc == 5) \/ (pc == 6) ]] =>> StopZ >-> singleAxisMovementLPE [ UpX, DownX, StopX, MinX, MaxX, UpY, DownY, StopY, MinY, MaxY, UpZ, DownZ, StopZ, MinZ, MaxZ] ( 0 )
## [[ (pc == 5) ]] =>> MaxZ >-> singleAxisMovementLPE [ UpX, DownX, StopX, MinX, MaxX, UpY, DownY, StopY, MinY, MaxY, UpZ, DownZ, StopZ, MinZ, MaxZ] ( 0 )
## [[ (pc == 6) ]] =>> MinZ >-> singleAxisMovementLPE [ UpX, DownX, StopX, MinX, MaxX, UpY, DownY, StopY, MinY, MaxY, UpZ, DownZ, StopZ, MinZ, MaxZ] ( 0 )
ENDDEF

MODELDEF TestPurposeLPE ::=
CHAN IN UpX, DownX, StopX,
UpY, DownY, StopY,
UpZ, DownZ, StopZ
CHAN OUT MinX, MaxX,
MinY, MaxY,
MinZ, MaxZ
BEHAVIOUR
allowedBehaviour [ UpX, DownX, StopX, MinX, MaxX,
UpY, DownY, StopY, MinY, MaxY,
UpZ, DownZ, StopZ, MinZ, MaxZ] ( )
|[ UpX, DownX, StopX, MinX, MaxX,
UpY, DownY, StopY, MinY, MaxY,
UpZ, DownZ, StopZ, MinZ, MaxZ]|
singleAxisMovementLPE [ UpX, DownX, StopX, MinX, MaxX,
UpY, DownY, StopY, MinY, MaxY,
UpZ, DownZ, StopZ, MinZ, MaxZ] ( 0 )
ENDDEF
```

executes 100 steps in 17.7086647s

Yet, the comparable manual made STAUTDEF

```
STAUTDEF singleAxisMovementSTAUTDEF[ UpX, DownX, StopX, MinX, MaxX,
UpY, DownY, StopY, MinY, MaxY,
UpZ, DownZ, StopZ, MinZ, MaxZ] () ::=
STATE idle, moveX, moveY, moveZ
VAR up :: Bool
INIT idle
TRANS idle -> UpX { up := True } -> moveX
idle -> DownX { up := False } -> moveX
idle -> UpY { up := True } -> moveY
idle -> DownY { up := False } -> moveY
idle -> UpZ { up := True } -> moveZ
idle -> DownZ { up := False } -> moveZ
moveX -> StopX -> idle
moveX -> MaxX [[ up ]] -> idle
moveX -> MinX [[ not( up ) ]] -> idle
moveY -> StopY -> idle
moveY -> MaxY [[ up ]] -> idle
moveY -> MinY [[ not( up ) ]] -> idle
moveZ -> StopZ -> idle
moveZ -> MaxZ [[ up ]] -> idle
moveZ -> MinZ [[ not( up ) ]] -> idle
ENDDEF

MODELDEF TestPurposeSTAUTDEF ::=
CHAN IN UpX, DownX, StopX,
UpY, DownY, StopY,
UpZ, DownZ, StopZ
CHAN OUT MinX, MaxX,
MinY, MaxY,
MinZ, MaxZ
BEHAVIOUR
allowedBehaviour [ UpX, DownX, StopX, MinX, MaxX,
UpY, DownY, StopY, MinY, MaxY,
UpZ, DownZ, StopZ, MinZ, MaxZ] ( )
|[ UpX, DownX, StopX, MinX, MaxX,
UpY, DownY, StopY, MinY, MaxY,
UpZ, DownZ, StopZ, MinZ, MaxZ]|
singleAxisMovementSTAUTDEF [ UpX, DownX, StopX, MinX, MaxX,
UpY, DownY, StopY, MinY, MaxY,
UpZ, DownZ, StopZ, MinZ, MaxZ] ( )
ENDDEF
```

takes longer 21.4246334s (with the same seed)

This observation raises the question: is the translation of STAUTDEF optimal?

For convenience the MovingArms.txs file and the commando files are attached below

[MovingArms.zip](https://github.com/TorXakis/TorXakis/files/1320302/MovingArms.zip)

| True | Optimal translation of STAUTDEF? - When experimenting with MovingArms I made the following observation:

The following manual generated LPE

```
PROCDEF singleAxisMovementLPE [ UpX, DownX, StopX, MinX, MaxX,
UpY, DownY, StopY, MinY, MaxY,
UpZ, DownZ, StopZ, MinZ, MaxZ] ( pc :: Int ) ::=
[[ pc == 0 ]] =>> UpX >-> singleAxisMovementLPE [ UpX, DownX, StopX, MinX, MaxX, UpY, DownY, StopY, MinY, MaxY, UpZ, DownZ, StopZ, MinZ, MaxZ] ( 1 )
## [[ pc == 0 ]] =>> DownX >-> singleAxisMovementLPE [ UpX, DownX, StopX, MinX, MaxX, UpY, DownY, StopY, MinY, MaxY, UpZ, DownZ, StopZ, MinZ, MaxZ] ( 2 )
## [[ pc == 0 ]] =>> UpY >-> singleAxisMovementLPE [ UpX, DownX, StopX, MinX, MaxX, UpY, DownY, StopY, MinY, MaxY, UpZ, DownZ, StopZ, MinZ, MaxZ] ( 3 )
## [[ pc == 0 ]] =>> DownY >-> singleAxisMovementLPE [ UpX, DownX, StopX, MinX, MaxX, UpY, DownY, StopY, MinY, MaxY, UpZ, DownZ, StopZ, MinZ, MaxZ] ( 4 )
## [[ pc == 0 ]] =>> UpZ >-> singleAxisMovementLPE [ UpX, DownX, StopX, MinX, MaxX, UpY, DownY, StopY, MinY, MaxY, UpZ, DownZ, StopZ, MinZ, MaxZ] ( 5 )
## [[ pc == 0 ]] =>> DownZ >-> singleAxisMovementLPE [ UpX, DownX, StopX, MinX, MaxX, UpY, DownY, StopY, MinY, MaxY, UpZ, DownZ, StopZ, MinZ, MaxZ] ( 6 )
## [[ (pc == 1) \/ (pc == 2) ]] =>> StopX >-> singleAxisMovementLPE [ UpX, DownX, StopX, MinX, MaxX, UpY, DownY, StopY, MinY, MaxY, UpZ, DownZ, StopZ, MinZ, MaxZ] ( 0 )
## [[ (pc == 1) ]] =>> MaxX >-> singleAxisMovementLPE [ UpX, DownX, StopX, MinX, MaxX, UpY, DownY, StopY, MinY, MaxY, UpZ, DownZ, StopZ, MinZ, MaxZ] ( 0 )
## [[ (pc == 2) ]] =>> MinX >-> singleAxisMovementLPE [ UpX, DownX, StopX, MinX, MaxX, UpY, DownY, StopY, MinY, MaxY, UpZ, DownZ, StopZ, MinZ, MaxZ] ( 0 )
## [[ (pc == 3) \/ (pc == 4) ]] =>> StopY >-> singleAxisMovementLPE [ UpX, DownX, StopX, MinX, MaxX, UpY, DownY, StopY, MinY, MaxY, UpZ, DownZ, StopZ, MinZ, MaxZ] ( 0 )
## [[ (pc == 3) ]] =>> MaxY >-> singleAxisMovementLPE [ UpX, DownX, StopX, MinX, MaxX, UpY, DownY, StopY, MinY, MaxY, UpZ, DownZ, StopZ, MinZ, MaxZ] ( 0 )
## [[ (pc == 4) ]] =>> MinY >-> singleAxisMovementLPE [ UpX, DownX, StopX, MinX, MaxX, UpY, DownY, StopY, MinY, MaxY, UpZ, DownZ, StopZ, MinZ, MaxZ] ( 0 )
## [[ (pc == 5) \/ (pc == 6) ]] =>> StopZ >-> singleAxisMovementLPE [ UpX, DownX, StopX, MinX, MaxX, UpY, DownY, StopY, MinY, MaxY, UpZ, DownZ, StopZ, MinZ, MaxZ] ( 0 )
## [[ (pc == 5) ]] =>> MaxZ >-> singleAxisMovementLPE [ UpX, DownX, StopX, MinX, MaxX, UpY, DownY, StopY, MinY, MaxY, UpZ, DownZ, StopZ, MinZ, MaxZ] ( 0 )
## [[ (pc == 6) ]] =>> MinZ >-> singleAxisMovementLPE [ UpX, DownX, StopX, MinX, MaxX, UpY, DownY, StopY, MinY, MaxY, UpZ, DownZ, StopZ, MinZ, MaxZ] ( 0 )
ENDDEF

MODELDEF TestPurposeLPE ::=
CHAN IN UpX, DownX, StopX,
UpY, DownY, StopY,
UpZ, DownZ, StopZ
CHAN OUT MinX, MaxX,
MinY, MaxY,
MinZ, MaxZ
BEHAVIOUR
allowedBehaviour [ UpX, DownX, StopX, MinX, MaxX,
UpY, DownY, StopY, MinY, MaxY,
UpZ, DownZ, StopZ, MinZ, MaxZ] ( )
|[ UpX, DownX, StopX, MinX, MaxX,
UpY, DownY, StopY, MinY, MaxY,
UpZ, DownZ, StopZ, MinZ, MaxZ]|
singleAxisMovementLPE [ UpX, DownX, StopX, MinX, MaxX,
UpY, DownY, StopY, MinY, MaxY,
UpZ, DownZ, StopZ, MinZ, MaxZ] ( 0 )
ENDDEF
```

executes 100 steps in 17.7086647s

Yet, the comparable manual made STAUTDEF

```
STAUTDEF singleAxisMovementSTAUTDEF[ UpX, DownX, StopX, MinX, MaxX,
UpY, DownY, StopY, MinY, MaxY,
UpZ, DownZ, StopZ, MinZ, MaxZ] () ::=
STATE idle, moveX, moveY, moveZ
VAR up :: Bool
INIT idle
TRANS idle -> UpX { up := True } -> moveX
idle -> DownX { up := False } -> moveX
idle -> UpY { up := True } -> moveY
idle -> DownY { up := False } -> moveY
idle -> UpZ { up := True } -> moveZ
idle -> DownZ { up := False } -> moveZ
moveX -> StopX -> idle
moveX -> MaxX [[ up ]] -> idle
moveX -> MinX [[ not( up ) ]] -> idle
moveY -> StopY -> idle
moveY -> MaxY [[ up ]] -> idle
moveY -> MinY [[ not( up ) ]] -> idle
moveZ -> StopZ -> idle
moveZ -> MaxZ [[ up ]] -> idle
moveZ -> MinZ [[ not( up ) ]] -> idle
ENDDEF

MODELDEF TestPurposeSTAUTDEF ::=
CHAN IN UpX, DownX, StopX,
UpY, DownY, StopY,
UpZ, DownZ, StopZ
CHAN OUT MinX, MaxX,
MinY, MaxY,
MinZ, MaxZ
BEHAVIOUR
allowedBehaviour [ UpX, DownX, StopX, MinX, MaxX,
UpY, DownY, StopY, MinY, MaxY,
UpZ, DownZ, StopZ, MinZ, MaxZ] ( )
|[ UpX, DownX, StopX, MinX, MaxX,
UpY, DownY, StopY, MinY, MaxY,
UpZ, DownZ, StopZ, MinZ, MaxZ]|
singleAxisMovementSTAUTDEF [ UpX, DownX, StopX, MinX, MaxX,
UpY, DownY, StopY, MinY, MaxY,
UpZ, DownZ, StopZ, MinZ, MaxZ] ( )
ENDDEF
```

takes longer 21.4246334s (with the same seed)

This observation raises the question: is the translation of STAUTDEF optimal?

For convenience the MovingArms.txs file and the commando files are attached below

[MovingArms.zip](https://github.com/TorXakis/TorXakis/files/1320302/MovingArms.zip)

| perf | optimal translation of stautdef when experimenting with movingarms i made the following observation the following manual generated lpe procdef singleaxismovementlpe upx downx stopx minx maxx upy downy stopy miny maxy upz downz stopz minz maxz pc int upx singleaxismovementlpe downx singleaxismovementlpe upy singleaxismovementlpe downy singleaxismovementlpe upz singleaxismovementlpe downz singleaxismovementlpe stopx singleaxismovementlpe maxx singleaxismovementlpe minx singleaxismovementlpe stopy singleaxismovementlpe maxy singleaxismovementlpe miny singleaxismovementlpe stopz singleaxismovementlpe maxz singleaxismovementlpe minz singleaxismovementlpe enddef modeldef testpurposelpe chan in upx downx stopx upy downy stopy upz downz stopz chan out minx maxx miny maxy minz maxz behaviour allowedbehaviour upx downx stopx minx maxx upy downy stopy miny maxy upz downz stopz minz maxz upx downx stopx minx maxx upy downy stopy miny maxy upz downz stopz minz maxz singleaxismovementlpe upx downx stopx minx maxx upy downy stopy miny maxy upz downz stopz minz maxz enddef executes steps in yet the comparable manual made stautdef stautdef singleaxismovementstautdef upx downx stopx minx maxx upy downy stopy miny maxy upz downz stopz minz maxz state idle movex movey movez var up bool init idle trans idle upx up true movex idle downx up false movex idle upy up true movey idle downy up false movey idle upz up true movez idle downz up false movez movex stopx idle movex maxx idle movex minx idle movey stopy idle movey maxy idle movey miny idle movez stopz idle movez maxz idle movez minz idle enddef modeldef testpurposestautdef chan in upx downx stopx upy downy stopy upz downz stopz chan out minx maxx miny maxy minz maxz behaviour allowedbehaviour upx downx stopx minx maxx upy downy stopy miny maxy upz downz stopz minz maxz upx downx stopx minx maxx upy downy stopy miny maxy upz downz stopz minz maxz singleaxismovementstautdef upx downx stopx minx maxx upy downy stopy miny maxy upz downz stopz minz maxz enddef takes longer with the same seed this observation raises the question is the translation of stautdef optimal for convenience the movingarms txs file and the commando files are attached below | 1 |

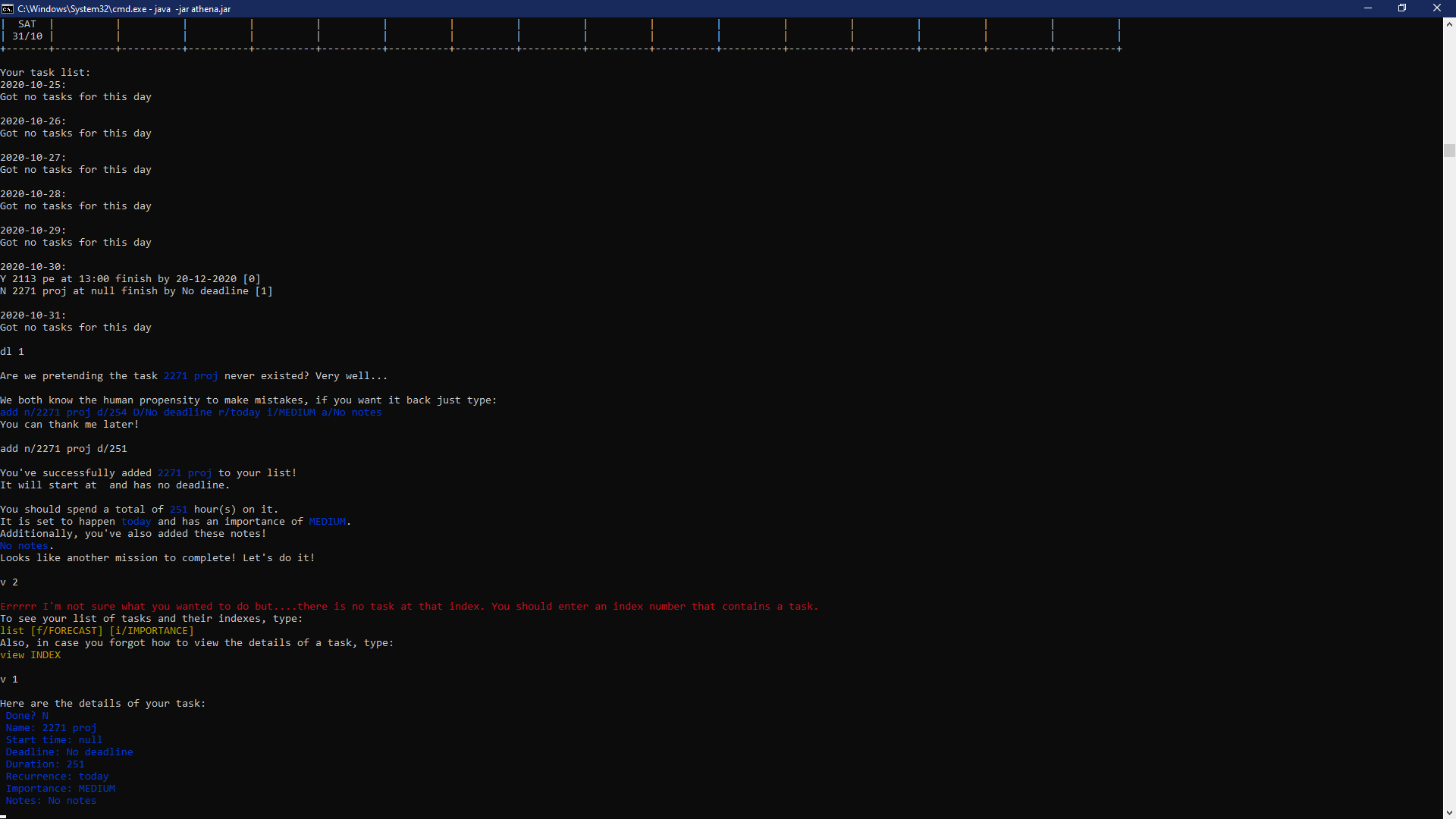

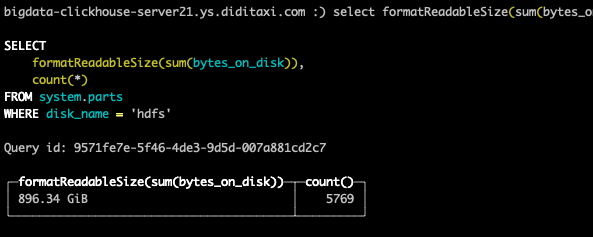

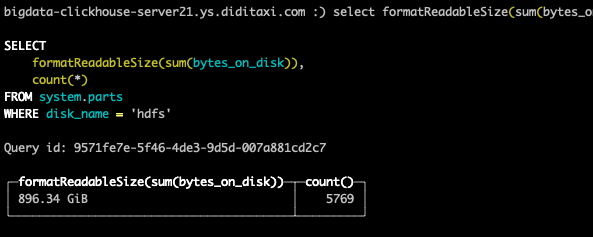

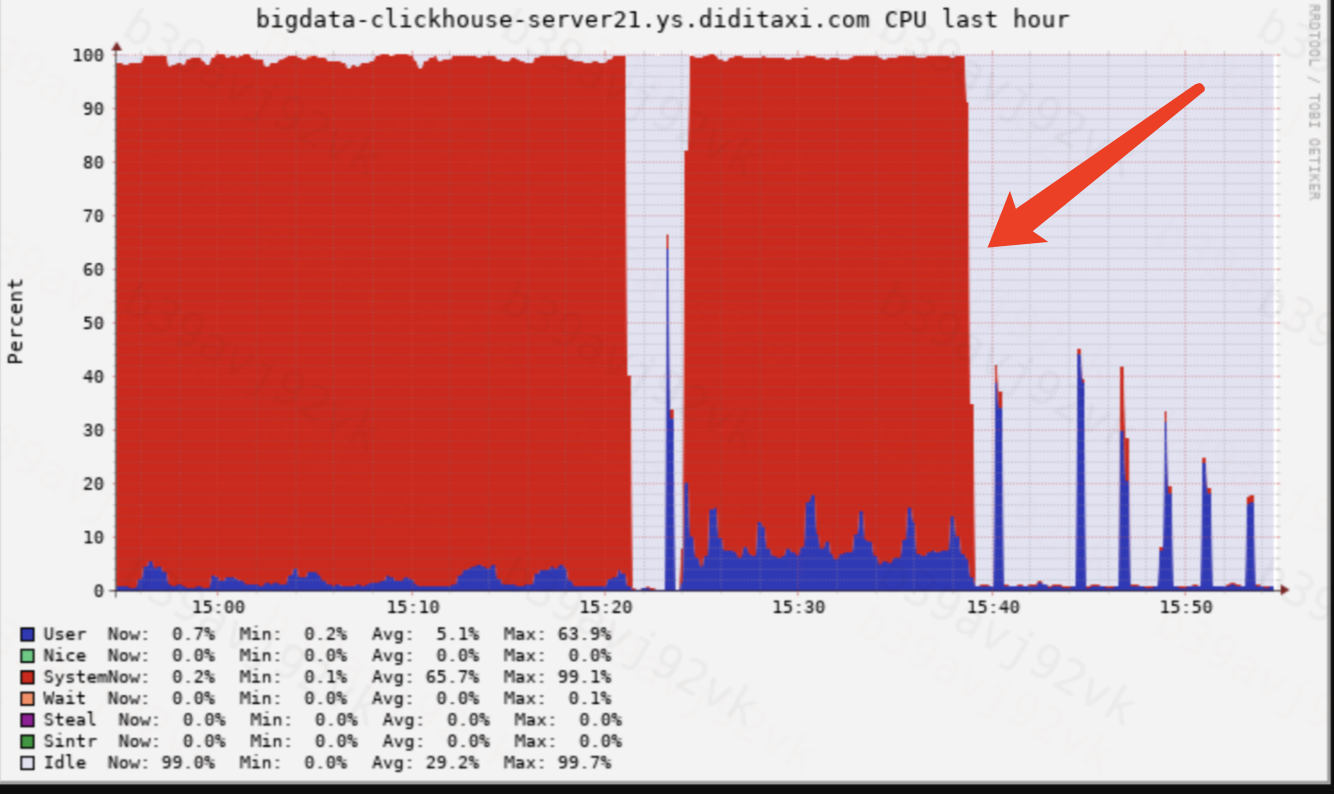

53,625 | 28,316,304,433 | IssuesEvent | 2023-04-10 19:56:30 | ClickHouse/ClickHouse | https://api.github.com/repos/ClickHouse/ClickHouse | closed | Service startup is slow to load Hdfs files concurrently | performance | (you don't have to strictly follow this form)

**Describe the situation**

Using Hdfs Disk, when there are many tables and parts, the service startup will lead to high system cpu, and the startup time is very long.

**How to reproduce**

1. Import a certain number of parts and write to hdfs.

2. Change the merge thread pool to 0 to prevent the part from being merged after restarting.

3. Set the max_part_loading_threads parameter to 300.

4. Restarting the System cpu will be full.

* Which ClickHouse server version to use

v21.8.15.1-lts

| True | Service startup is slow to load Hdfs files concurrently - (you don't have to strictly follow this form)

**Describe the situation**

Using Hdfs Disk, when there are many tables and parts, the service startup will lead to high system cpu, and the startup time is very long.

**How to reproduce**

1. Import a certain number of parts and write to hdfs.

2. Change the merge thread pool to 0 to prevent the part from being merged after restarting.

3. Set the max_part_loading_threads parameter to 300.

4. Restarting the System cpu will be full.

* Which ClickHouse server version to use

v21.8.15.1-lts

| perf | service startup is slow to load hdfs files concurrently you don t have to strictly follow this form describe the situation using hdfs disk when there are many tables and parts the service startup will lead to high system cpu and the startup time is very long how to reproduce import a certain number of parts and write to hdfs change the merge thread pool to to prevent the part from being merged after restarting set the max part loading threads parameter to restarting the system cpu will be full which clickhouse server version to use lts | 1 |

28,071 | 13,523,403,737 | IssuesEvent | 2020-09-15 09:54:47 | prisma/docs | https://api.github.com/repos/prisma/docs | closed | Clicking on some links is sometimes really slow | topic: performance website/improvement | It happens to me sometimes that I click on in the sidebar menu on a link and it takes “a while” to update the page, see example recording:

- I click on some links it works fast enough for me until…

- I click on “REST API”, only once (timestamp 8sec)

- And wait for rendering… 7 seconds later :slow-parrot:

This happens kind of randomly but only once per link, looking at the Dev Tools I see it loads:

`/docs/page-data/app-data.json`

`/docs/page-data/guides/upgrade-guides/upgrade-from-prisma-1/upgrading-a-rest-api/page-data.json`

I forgot to open Dev Tools to record what was happening, it could be Netlify slow response or client side rendering taking a long time somehow?

Tested on Firefox Developer Edition 79.0b3 (64-bit) on macOS Catalina | True | Clicking on some links is sometimes really slow - It happens to me sometimes that I click on in the sidebar menu on a link and it takes “a while” to update the page, see example recording:

- I click on some links it works fast enough for me until…

- I click on “REST API”, only once (timestamp 8sec)

- And wait for rendering… 7 seconds later :slow-parrot:

This happens kind of randomly but only once per link, looking at the Dev Tools I see it loads:

`/docs/page-data/app-data.json`

`/docs/page-data/guides/upgrade-guides/upgrade-from-prisma-1/upgrading-a-rest-api/page-data.json`

I forgot to open Dev Tools to record what was happening, it could be Netlify slow response or client side rendering taking a long time somehow?

Tested on Firefox Developer Edition 79.0b3 (64-bit) on macOS Catalina | perf | clicking on some links is sometimes really slow it happens to me sometimes that i click on in the sidebar menu on a link and it takes “a while” to update the page see example recording i click on some links it works fast enough for me until… i click on “rest api” only once timestamp and wait for rendering… seconds later slow parrot this happens kind of randomly but only once per link looking at the dev tools i see it loads docs page data app data json docs page data guides upgrade guides upgrade from prisma upgrading a rest api page data json i forgot to open dev tools to record what was happening it could be netlify slow response or client side rendering taking a long time somehow tested on firefox developer edition bit on macos catalina | 1 |

27,350 | 13,229,568,630 | IssuesEvent | 2020-08-18 08:23:31 | PLhery/unfollowNinja | https://api.github.com/repos/PLhery/unfollowNinja | closed | Store followers usernames in postresql instead of redis | performance | All twittos usernames are currently stored in redis, to retrieve them if some of them leave Twitter.

I estimated that these were using 25% of redis space.

There is not that much read/write operations so there is no reason to store these in an in-memory db.

(We should copy/keep/update our users's usernames in redis though, as these are currently accessed ~10 000 times/minute) | True | Store followers usernames in postresql instead of redis - All twittos usernames are currently stored in redis, to retrieve them if some of them leave Twitter.

I estimated that these were using 25% of redis space.

There is not that much read/write operations so there is no reason to store these in an in-memory db.

(We should copy/keep/update our users's usernames in redis though, as these are currently accessed ~10 000 times/minute) | perf | store followers usernames in postresql instead of redis all twittos usernames are currently stored in redis to retrieve them if some of them leave twitter i estimated that these were using of redis space there is not that much read write operations so there is no reason to store these in an in memory db we should copy keep update our users s usernames in redis though as these are currently accessed times minute | 1 |

33,945 | 16,325,812,941 | IssuesEvent | 2021-05-12 00:55:27 | pytorch/pytorch | https://api.github.com/repos/pytorch/pytorch | closed | torch.one_hot causes multiple DeviceToHost transfers when input tensor is a cuda tensor | module: cuda module: performance triaged | ## 🐛 Bug

<!-- A clear and concise description of what the bug is. -->

## To Reproduce

Steps to reproduce the behavior:

1.

1.

1.

<!-- If you have a code sample, error messages, stack traces, please provide it here as well -->

```

x = torch.tensor([1, 2, 3]).long().cuda()

F.one_hot(x, 4)

```

One example:

https://github.com/pytorch/pytorch/blob/release/1.8/aten/src/ATen/native/Onehot.cpp#L21

## Expected behavior

<!-- A clear and concise description of what you expected to happen. -->

No DeviceToHost transfers. There are multiple .item() calls in the ATen one_hot implementation. These are mostly unnecessary except for when `num_classes=-1`

.

## Environment

Please copy and paste the output from our

[environment collection script](https://raw.githubusercontent.com/pytorch/pytorch/master/torch/utils/collect_env.py)

(or fill out the checklist below manually).

You can get the script and run it with:

```

wget https://raw.githubusercontent.com/pytorch/pytorch/master/torch/utils/collect_env.py

# For security purposes, please check the contents of collect_env.py before running it.

python collect_env.py

```

- PyTorch Version (e.g., 1.0):

- OS (e.g., Linux):

- How you installed PyTorch (`conda`, `pip`, source):

- Build command you used (if compiling from source):

- Python version:

- CUDA/cuDNN version:

- GPU models and configuration:

- Any other relevant information:

## Additional context

<!-- Add any other context about the problem here. -->

cc @ngimel @VitalyFedyunin | True | torch.one_hot causes multiple DeviceToHost transfers when input tensor is a cuda tensor - ## 🐛 Bug

<!-- A clear and concise description of what the bug is. -->

## To Reproduce

Steps to reproduce the behavior:

1.

1.

1.

<!-- If you have a code sample, error messages, stack traces, please provide it here as well -->

```

x = torch.tensor([1, 2, 3]).long().cuda()

F.one_hot(x, 4)

```

One example:

https://github.com/pytorch/pytorch/blob/release/1.8/aten/src/ATen/native/Onehot.cpp#L21

## Expected behavior

<!-- A clear and concise description of what you expected to happen. -->

No DeviceToHost transfers. There are multiple .item() calls in the ATen one_hot implementation. These are mostly unnecessary except for when `num_classes=-1`

.

## Environment

Please copy and paste the output from our

[environment collection script](https://raw.githubusercontent.com/pytorch/pytorch/master/torch/utils/collect_env.py)

(or fill out the checklist below manually).

You can get the script and run it with:

```

wget https://raw.githubusercontent.com/pytorch/pytorch/master/torch/utils/collect_env.py

# For security purposes, please check the contents of collect_env.py before running it.

python collect_env.py

```

- PyTorch Version (e.g., 1.0):

- OS (e.g., Linux):

- How you installed PyTorch (`conda`, `pip`, source):

- Build command you used (if compiling from source):

- Python version:

- CUDA/cuDNN version:

- GPU models and configuration:

- Any other relevant information:

## Additional context

<!-- Add any other context about the problem here. -->

cc @ngimel @VitalyFedyunin | perf | torch one hot causes multiple devicetohost transfers when input tensor is a cuda tensor 🐛 bug to reproduce steps to reproduce the behavior x torch tensor long cuda f one hot x one example expected behavior no devicetohost transfers there are multiple item calls in the aten one hot implementation these are mostly unnecessary except for when num classes environment please copy and paste the output from our or fill out the checklist below manually you can get the script and run it with wget for security purposes please check the contents of collect env py before running it python collect env py pytorch version e g os e g linux how you installed pytorch conda pip source build command you used if compiling from source python version cuda cudnn version gpu models and configuration any other relevant information additional context cc ngimel vitalyfedyunin | 1 |

517,791 | 15,020,012,515 | IssuesEvent | 2021-02-01 14:14:10 | mozilla-lockwise/lockwise-ios | https://api.github.com/repos/mozilla-lockwise/lockwise-ios | reopened | Include explicit message when forcing users to re-authenticate due to FxA error | archived feature needs-content priority-P2 | The UX for this ticket will be displaying a dialog on the welcome screen when forcing users to sign in despite a saved account instance.

This could look like "Your local account has been corrupted, please login again" or something. We've previously had behavior like this when we forced all users to migrate to OAuth. | 1.0 | Include explicit message when forcing users to re-authenticate due to FxA error - The UX for this ticket will be displaying a dialog on the welcome screen when forcing users to sign in despite a saved account instance.

This could look like "Your local account has been corrupted, please login again" or something. We've previously had behavior like this when we forced all users to migrate to OAuth. | non_perf | include explicit message when forcing users to re authenticate due to fxa error the ux for this ticket will be displaying a dialog on the welcome screen when forcing users to sign in despite a saved account instance this could look like your local account has been corrupted please login again or something we ve previously had behavior like this when we forced all users to migrate to oauth | 0 |

6,619 | 5,544,358,870 | IssuesEvent | 2017-03-22 18:56:55 | broadinstitute/gatk | https://api.github.com/repos/broadinstitute/gatk | closed | implement parallel copy from NFS (or IFS) to HDFS | performance Spark | A lot of data we have lives on NFS (or underlying IFS - Isilon FS). Copying files in and out is a bottleneck and a pain. This ticket for an implementation of a parallel copy of a BAM/CRAM file to HDFS (sharded or unsharded)

| True | implement parallel copy from NFS (or IFS) to HDFS - A lot of data we have lives on NFS (or underlying IFS - Isilon FS). Copying files in and out is a bottleneck and a pain. This ticket for an implementation of a parallel copy of a BAM/CRAM file to HDFS (sharded or unsharded)

| perf | implement parallel copy from nfs or ifs to hdfs a lot of data we have lives on nfs or underlying ifs isilon fs copying files in and out is a bottleneck and a pain this ticket for an implementation of a parallel copy of a bam cram file to hdfs sharded or unsharded | 1 |

131,021 | 27,812,299,414 | IssuesEvent | 2023-03-18 09:06:11 | trunisam/codeboard | https://api.github.com/repos/trunisam/codeboard | opened | Reiter für Helpersysteme | Codeboard | Der Zugriff auf die Helpersysteme (Tipps und Compiler-Meldungen) soll über entsprechende Reiter in der rechten Navigationsleiste des Codeboards gewährleistet sein. | 1.0 | Reiter für Helpersysteme - Der Zugriff auf die Helpersysteme (Tipps und Compiler-Meldungen) soll über entsprechende Reiter in der rechten Navigationsleiste des Codeboards gewährleistet sein. | non_perf | reiter für helpersysteme der zugriff auf die helpersysteme tipps und compiler meldungen soll über entsprechende reiter in der rechten navigationsleiste des codeboards gewährleistet sein | 0 |

36,702 | 17,869,033,804 | IssuesEvent | 2021-09-06 13:11:05 | kframework/kore | https://api.github.com/repos/kframework/kore | closed | Investigate long running z3 commands. | type: investigation type: performance | While working on https://github.com/kframework/evm-semantics/pull/1102, I noticed that the proof takes roughly an hour to complete. About 40 minutes are spent waiting for z3.

So far, I've recorded an smt-transcript, which shows some suspicious behavior. There are multiple very long sequences of `declare-fun`-commands followed by one `assert`-command with one giant conjunction over all the declared symbols:

~~~lisp

(declare-fun <0> () Bool )

; success

(declare-fun <1> () Bool )

; success

; ...

(declare-fun <9183> () Bool )

; success

(assert (and <2> (and <3> (and <4> .... (and <9182> <9183>) ... ))))

~~~

I am not sure if this is where z3 spends most of its 40 minutes, but it seems suspicious.

[z3.1.log](https://github.com/kframework/kore/files/6929746/z3.1.log)

| True | Investigate long running z3 commands. - While working on https://github.com/kframework/evm-semantics/pull/1102, I noticed that the proof takes roughly an hour to complete. About 40 minutes are spent waiting for z3.

So far, I've recorded an smt-transcript, which shows some suspicious behavior. There are multiple very long sequences of `declare-fun`-commands followed by one `assert`-command with one giant conjunction over all the declared symbols:

~~~lisp

(declare-fun <0> () Bool )

; success

(declare-fun <1> () Bool )

; success

; ...

(declare-fun <9183> () Bool )

; success

(assert (and <2> (and <3> (and <4> .... (and <9182> <9183>) ... ))))

~~~

I am not sure if this is where z3 spends most of its 40 minutes, but it seems suspicious.

[z3.1.log](https://github.com/kframework/kore/files/6929746/z3.1.log)

| perf | investigate long running commands while working on i noticed that the proof takes roughly an hour to complete about minutes are spent waiting for so far i ve recorded an smt transcript which shows some suspicious behavior there are multiple very long sequences of declare fun commands followed by one assert command with one giant conjunction over all the declared symbols lisp declare fun bool success declare fun bool success declare fun bool success assert and and and and i am not sure if this is where spends most of its minutes but it seems suspicious | 1 |

53,444 | 28,131,451,712 | IssuesEvent | 2023-04-01 00:00:03 | dotnet/runtime | https://api.github.com/repos/dotnet/runtime | closed | [Performance] XmlSerializationWriter.WriteTypedPrimitive is converting primitive types to string before writing them to the XmlWriter | area-Serialization tenet-performance |

### Description

Possible optimization found.

Current implementation of writing primitive types fallbacks to string using XmlConvert.ToString(..).

https://github.com/dotnet/runtime/blob/5c8aade5384cbad2d086e7fae482ba0b692d3601/src/libraries/System.Private.Xml/src/System/Xml/Serialization/XmlSerializationWriter.cs#L247

### Regression?

No

### Analysis

How to optimize:

Write to char[] and then use XmlWriter.WriteChars(...). This should eliminate all temporary string for int/DateTime/guid.... types, which should be majority when serializing custom types. This change will help us later to use XmlSerializationWriter.WriteTypedPrimitive method in XmlSerializationWriterILGen instead of again creating strings and using XmlConvert.ToString(...)

| True | [Performance] XmlSerializationWriter.WriteTypedPrimitive is converting primitive types to string before writing them to the XmlWriter -

### Description

Possible optimization found.

Current implementation of writing primitive types fallbacks to string using XmlConvert.ToString(..).

https://github.com/dotnet/runtime/blob/5c8aade5384cbad2d086e7fae482ba0b692d3601/src/libraries/System.Private.Xml/src/System/Xml/Serialization/XmlSerializationWriter.cs#L247

### Regression?

No

### Analysis

How to optimize:

Write to char[] and then use XmlWriter.WriteChars(...). This should eliminate all temporary string for int/DateTime/guid.... types, which should be majority when serializing custom types. This change will help us later to use XmlSerializationWriter.WriteTypedPrimitive method in XmlSerializationWriterILGen instead of again creating strings and using XmlConvert.ToString(...)

| perf | xmlserializationwriter writetypedprimitive is converting primitive types to string before writing them to the xmlwriter description possible optimization found current implementation of writing primitive types fallbacks to string using xmlconvert tostring regression no analysis how to optimize write to char and then use xmlwriter writechars this should eliminate all temporary string for int datetime guid types which should be majority when serializing custom types this change will help us later to use xmlserializationwriter writetypedprimitive method in xmlserializationwriterilgen instead of again creating strings and using xmlconvert tostring | 1 |

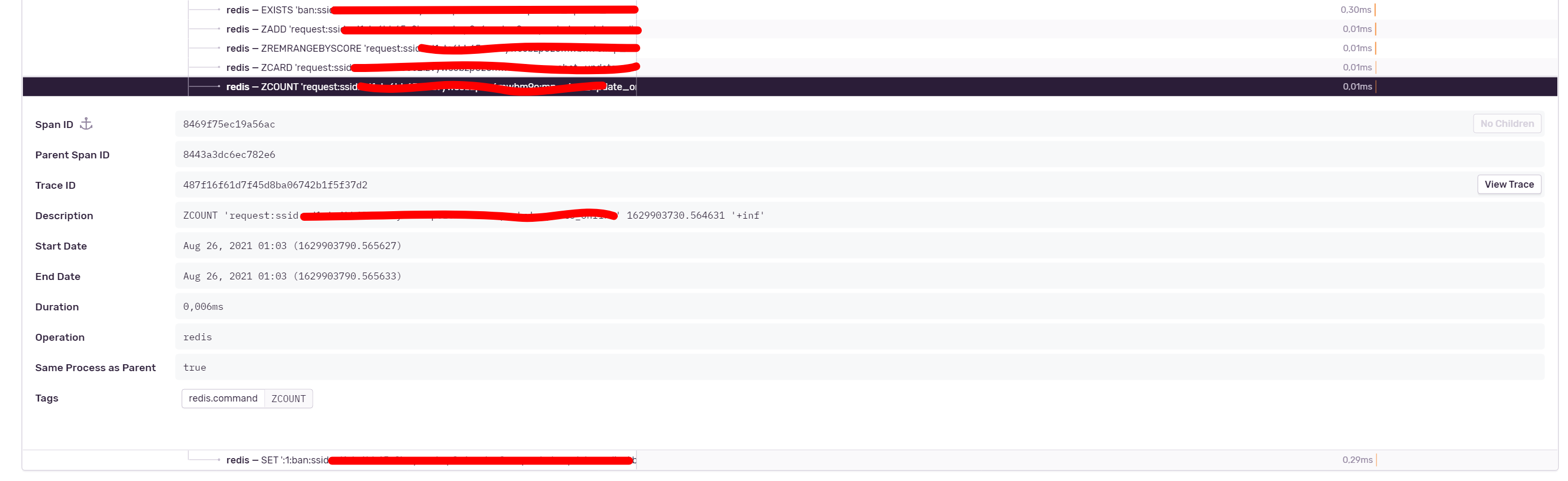

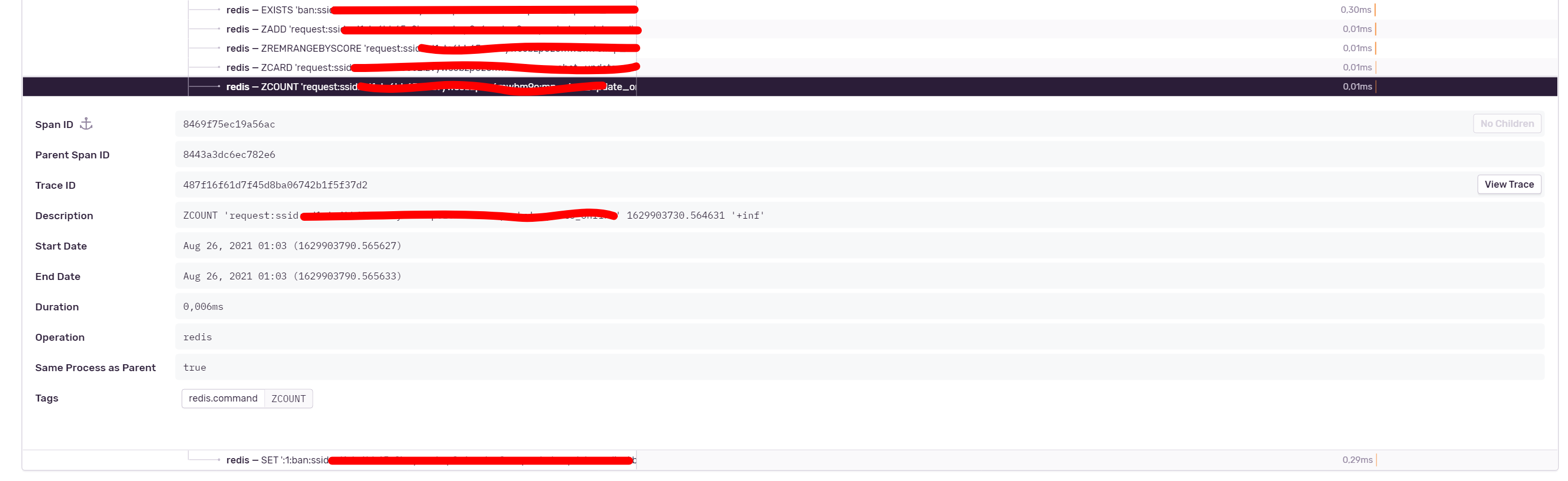

56,614 | 32,077,940,429 | IssuesEvent | 2023-09-25 12:16:37 | getsentry/sentry-python | https://api.github.com/repos/getsentry/sentry-python | closed | Add Redis Pipeline to RedisIntegration | enhancement Help wanted Feature: Performance Integration: Redis | Hi! Pipeline in redis-py has also have method [execute_command](https://github.com/andymccurdy/redis-py/blob/e19a76c58f2a998d86e51c5a2a0f1db37563efce/redis/client.py#L1531). Can you add redis.client.Pipeline to patching in RedisIntegration? It work: all Z* command on screenshot from one pipeline.

And maybe PubSub. | True | Add Redis Pipeline to RedisIntegration - Hi! Pipeline in redis-py has also have method [execute_command](https://github.com/andymccurdy/redis-py/blob/e19a76c58f2a998d86e51c5a2a0f1db37563efce/redis/client.py#L1531). Can you add redis.client.Pipeline to patching in RedisIntegration? It work: all Z* command on screenshot from one pipeline.

And maybe PubSub. | perf | add redis pipeline to redisintegration hi pipeline in redis py has also have method can you add redis client pipeline to patching in redisintegration it work all z command on screenshot from one pipeline and maybe pubsub | 1 |

11,717 | 7,680,172,490 | IssuesEvent | 2018-05-16 00:00:09 | Microsoft/DirectXShaderCompiler | https://api.github.com/repos/Microsoft/DirectXShaderCompiler | closed | Slow PCF shader performance with dxc vs. fxc | performance | Hello,

Now that I have the shader from issue #604 compiling, I've noticed that the performance of the DXIL version is much worse than when the same shader is compiled to DXBC. When testing with the experimental branch of [this project](https://github.com/TheRealMJP/DeferredTexturing), I'm getting about 20.40 milliseconds to complete the forward path, vs about 3.20ms when running the DXBC version. This was measured on an Nvidia GTX 1070 running driver 384.94.

You can compile and run the project yourself if you'd like, or if you'd like to look at the compiler output I've attached the pre-processed code, compiled DXBC, and compiled DXIL for the main pixel shader:

[Mesh_PP.txt](https://github.com/Microsoft/DirectXShaderCompiler/files/1262512/Mesh_PP.txt)

[Mesh_DXBC.txt](https://github.com/Microsoft/DirectXShaderCompiler/files/1262513/Mesh_DXBC.txt)

[Mesh_DXIL.txt](https://github.com/Microsoft/DirectXShaderCompiler/files/1262514/Mesh_DXIL.txt)

I had a quick look myself through the DXIL, and I didn't see anything immediately bad. I was initially concerned that perhaps the looks in the PCF kernel hadn't been unrolled, but it looks like that was handled properly.

Thanks in advance, and please let me know if I can provide any additional information (such as PIX captures, or pre-compiled binaries).

| True | Slow PCF shader performance with dxc vs. fxc - Hello,

Now that I have the shader from issue #604 compiling, I've noticed that the performance of the DXIL version is much worse than when the same shader is compiled to DXBC. When testing with the experimental branch of [this project](https://github.com/TheRealMJP/DeferredTexturing), I'm getting about 20.40 milliseconds to complete the forward path, vs about 3.20ms when running the DXBC version. This was measured on an Nvidia GTX 1070 running driver 384.94.

You can compile and run the project yourself if you'd like, or if you'd like to look at the compiler output I've attached the pre-processed code, compiled DXBC, and compiled DXIL for the main pixel shader:

[Mesh_PP.txt](https://github.com/Microsoft/DirectXShaderCompiler/files/1262512/Mesh_PP.txt)

[Mesh_DXBC.txt](https://github.com/Microsoft/DirectXShaderCompiler/files/1262513/Mesh_DXBC.txt)

[Mesh_DXIL.txt](https://github.com/Microsoft/DirectXShaderCompiler/files/1262514/Mesh_DXIL.txt)

I had a quick look myself through the DXIL, and I didn't see anything immediately bad. I was initially concerned that perhaps the looks in the PCF kernel hadn't been unrolled, but it looks like that was handled properly.

Thanks in advance, and please let me know if I can provide any additional information (such as PIX captures, or pre-compiled binaries).

| perf | slow pcf shader performance with dxc vs fxc hello now that i have the shader from issue compiling i ve noticed that the performance of the dxil version is much worse than when the same shader is compiled to dxbc when testing with the experimental branch of i m getting about milliseconds to complete the forward path vs about when running the dxbc version this was measured on an nvidia gtx running driver you can compile and run the project yourself if you d like or if you d like to look at the compiler output i ve attached the pre processed code compiled dxbc and compiled dxil for the main pixel shader i had a quick look myself through the dxil and i didn t see anything immediately bad i was initially concerned that perhaps the looks in the pcf kernel hadn t been unrolled but it looks like that was handled properly thanks in advance and please let me know if i can provide any additional information such as pix captures or pre compiled binaries | 1 |

50,901 | 26,834,963,306 | IssuesEvent | 2023-02-02 18:44:27 | runtimeverification/haskell-backend | https://api.github.com/repos/runtimeverification/haskell-backend | closed | Compute function call graph and memoize non-recursive functions | investigation performance | Following @virgil-serbanuta's issue https://github.com/kframework/kore/issues/1520, I did some experiment:

To run 10 steps,

without `memo` attribute added to `#computeValidJumpDest`, the runtime is 2m9s.

After adding the `memo` attribute, the runtime goes down to 59s.

There seems a regression on enabling caching for all functions of constructor-like arguments. | True | Compute function call graph and memoize non-recursive functions - Following @virgil-serbanuta's issue https://github.com/kframework/kore/issues/1520, I did some experiment:

To run 10 steps,

without `memo` attribute added to `#computeValidJumpDest`, the runtime is 2m9s.

After adding the `memo` attribute, the runtime goes down to 59s.

There seems a regression on enabling caching for all functions of constructor-like arguments. | perf | compute function call graph and memoize non recursive functions following virgil serbanuta s issue i did some experiment to run steps without memo attribute added to computevalidjumpdest the runtime is after adding the memo attribute the runtime goes down to there seems a regression on enabling caching for all functions of constructor like arguments | 1 |

19,653 | 10,478,416,534 | IssuesEvent | 2019-09-23 23:57:35 | 0xProject/starkcrypto | https://api.github.com/repos/0xProject/starkcrypto | opened | We can use macro generated specializations till then. | performance tracker | *On 2019-08-26 @Recmo wrote in [`996bf59`](https://github.com/0xProject/starkcrypto/commit/996bf5959c56749af107d7cfd2b8b6a06dc6a84a) “Add comments”:*

We can use macro generated specializations till then.

```rust

debug_assert!(divisor.len() >= 2);

debug_assert!(numerator.len() > divisor.len());

debug_assert!(*divisor.last().unwrap() > 0);

debug_assert!(*numerator.last().unwrap() == 0);

// OPT: Once const generics are in, unroll for lengths.

// OPT: We can use macro generated specializations till then.

let n = divisor.len();

let m = numerator.len() - n - 1;

// D1. Normalize.

let shift = divisor[n - 1].leading_zeros();

```

*From [`algebra/u256/src/division.rs:102`](https://github.com/0xProject/starkcrypto/blob/96ec7dde35194aefec933e560fc28a69090e8e9b/algebra/u256/src/division.rs#L102)*

<!--{"commit-hash": "996bf5959c56749af107d7cfd2b8b6a06dc6a84a", "author": "Remco Bloemen", "author-mail": "<remco@0x.org>", "author-time": 1566843820, "author-tz": "-0700", "committer": "Remco Bloemen", "committer-mail": "<remco@0x.org>", "committer-time": 1566843820, "committer-tz": "-0700", "summary": "Add comments", "previous": "5573d5a660682ab30bcc879a6ab601a90f82eca6 u256/src/division.rs", "filename": "algebra/u256/src/division.rs", "line": 101, "line_end": 102, "kind": "OPT", "issue": "We can use macro generated specializations till then.", "head": "We can use macro generated specializations till then.", "context": " debug_assert!(divisor.len() >= 2);\n debug_assert!(numerator.len() > divisor.len());\n debug_assert!(*divisor.last().unwrap() > 0);\n debug_assert!(*numerator.last().unwrap() == 0);\n // OPT: Once const generics are in, unroll for lengths.\n // OPT: We can use macro generated specializations till then.\n let n = divisor.len();\n let m = numerator.len() - n - 1;\n\n // D1. Normalize.\n let shift = divisor[n - 1].leading_zeros();\n", "repo": "0xProject/starkcrypto", "branch-hash": "96ec7dde35194aefec933e560fc28a69090e8e9b"}--> | True | We can use macro generated specializations till then. - *On 2019-08-26 @Recmo wrote in [`996bf59`](https://github.com/0xProject/starkcrypto/commit/996bf5959c56749af107d7cfd2b8b6a06dc6a84a) “Add comments”:*

We can use macro generated specializations till then.

```rust

debug_assert!(divisor.len() >= 2);

debug_assert!(numerator.len() > divisor.len());

debug_assert!(*divisor.last().unwrap() > 0);

debug_assert!(*numerator.last().unwrap() == 0);

// OPT: Once const generics are in, unroll for lengths.

// OPT: We can use macro generated specializations till then.

let n = divisor.len();

let m = numerator.len() - n - 1;

// D1. Normalize.

let shift = divisor[n - 1].leading_zeros();

```

*From [`algebra/u256/src/division.rs:102`](https://github.com/0xProject/starkcrypto/blob/96ec7dde35194aefec933e560fc28a69090e8e9b/algebra/u256/src/division.rs#L102)*

<!--{"commit-hash": "996bf5959c56749af107d7cfd2b8b6a06dc6a84a", "author": "Remco Bloemen", "author-mail": "<remco@0x.org>", "author-time": 1566843820, "author-tz": "-0700", "committer": "Remco Bloemen", "committer-mail": "<remco@0x.org>", "committer-time": 1566843820, "committer-tz": "-0700", "summary": "Add comments", "previous": "5573d5a660682ab30bcc879a6ab601a90f82eca6 u256/src/division.rs", "filename": "algebra/u256/src/division.rs", "line": 101, "line_end": 102, "kind": "OPT", "issue": "We can use macro generated specializations till then.", "head": "We can use macro generated specializations till then.", "context": " debug_assert!(divisor.len() >= 2);\n debug_assert!(numerator.len() > divisor.len());\n debug_assert!(*divisor.last().unwrap() > 0);\n debug_assert!(*numerator.last().unwrap() == 0);\n // OPT: Once const generics are in, unroll for lengths.\n // OPT: We can use macro generated specializations till then.\n let n = divisor.len();\n let m = numerator.len() - n - 1;\n\n // D1. Normalize.\n let shift = divisor[n - 1].leading_zeros();\n", "repo": "0xProject/starkcrypto", "branch-hash": "96ec7dde35194aefec933e560fc28a69090e8e9b"}--> | perf | we can use macro generated specializations till then on recmo wrote in “add comments” we can use macro generated specializations till then rust debug assert divisor len debug assert numerator len divisor len debug assert divisor last unwrap debug assert numerator last unwrap opt once const generics are in unroll for lengths opt we can use macro generated specializations till then let n divisor len let m numerator len n normalize let shift divisor leading zeros from author time author tz committer remco bloemen committer mail committer time committer tz summary add comments previous src division rs filename algebra src division rs line line end kind opt issue we can use macro generated specializations till then head we can use macro generated specializations till then context debug assert divisor len n debug assert numerator len divisor len n debug assert divisor last unwrap n debug assert numerator last unwrap n opt once const generics are in unroll for lengths n opt we can use macro generated specializations till then n let n divisor len n let m numerator len n n n normalize n let shift divisor leading zeros n repo starkcrypto branch hash | 1 |

68,338 | 17,257,904,397 | IssuesEvent | 2021-07-22 00:18:23 | o3de/o3de | https://api.github.com/repos/o3de/o3de | closed | PhysX Gem can't be used as build dependency in engine SDK Part 2 | kind/bug needs-triage sig/build | **Describe the bug**

This is a continuation of this bug: https://github.com/o3de/o3de/issues/1971

Adding the PhysX Gem as a build dependency to an empty projects leads to the following build errors:

```

-- Build files have been written to: D:/dev/open3d/projects/PhysXTestProject/build/windows_vs2019

Microsoft (R) Build Engine version 16.10.2+857e5a733 for .NET Framework

Copyright (C) Microsoft Corporation. All rights reserved.

Checking Build System

PhysXTestProjectSystemComponent.cpp

PhysXTestProject.Static.vcxproj -> D:\dev\open3d\projects\PhysXTestProject\build\windows_vs2019\lib\profile\PhysXTestProject.Static.lib

PhysXTestProjectModule.cpp

LINK : fatal error LNK1104: cannot open file '$<TARGET_PROPERTY:LmbrCentral,INTERFACE_LINK_LIBRARIES>.obj' [D:\dev\open3d\projects\PhysXTestProject\build\windows_vs2019\o3de\PhysXTestProject-84ec4707\Code\PhysXTestProject.vcxproj]

```

**To Reproduce**

The same as last time:

1. Build the engine as an SDK

2. Create a new project from the Project Manager

3. In the projects CMakeLists.txt, add `Gem::PhysX` to BUILD_DEPENDENCIES:

```

ly_add_target(

NAME PhysXTest.Static STATIC

NAMESPACE Gem

FILES_CMAKE

physxtest_files.cmake

${pal_dir}/physxtest_${PAL_PLATFORM_NAME_LOWERCASE}_files.cmake

INCLUDE_DIRECTORIES

PUBLIC

Include

BUILD_DEPENDENCIES

PRIVATE

AZ::AzGameFramework

Gem::Atom_AtomBridge.Static

Gem::PhysX

)

```

4. Build the project in the Project Manager or Visual Studio.

**Expected behavior**

No build errors.

**Additional context**

Commenting out all lines containing `$<TARGET_PROPERTY:LmbrCentral,INTERFACE_LINK_LIBRARIES>` in `o3de-install\Gems\PhysX\Code\CMakeLists.txt` fixes the problem (4 lines in my case). The Gem works after this change. | 1.0 | PhysX Gem can't be used as build dependency in engine SDK Part 2 - **Describe the bug**

This is a continuation of this bug: https://github.com/o3de/o3de/issues/1971

Adding the PhysX Gem as a build dependency to an empty projects leads to the following build errors:

```

-- Build files have been written to: D:/dev/open3d/projects/PhysXTestProject/build/windows_vs2019

Microsoft (R) Build Engine version 16.10.2+857e5a733 for .NET Framework

Copyright (C) Microsoft Corporation. All rights reserved.

Checking Build System

PhysXTestProjectSystemComponent.cpp

PhysXTestProject.Static.vcxproj -> D:\dev\open3d\projects\PhysXTestProject\build\windows_vs2019\lib\profile\PhysXTestProject.Static.lib

PhysXTestProjectModule.cpp

LINK : fatal error LNK1104: cannot open file '$<TARGET_PROPERTY:LmbrCentral,INTERFACE_LINK_LIBRARIES>.obj' [D:\dev\open3d\projects\PhysXTestProject\build\windows_vs2019\o3de\PhysXTestProject-84ec4707\Code\PhysXTestProject.vcxproj]

```

**To Reproduce**

The same as last time:

1. Build the engine as an SDK

2. Create a new project from the Project Manager

3. In the projects CMakeLists.txt, add `Gem::PhysX` to BUILD_DEPENDENCIES:

```

ly_add_target(

NAME PhysXTest.Static STATIC

NAMESPACE Gem

FILES_CMAKE

physxtest_files.cmake

${pal_dir}/physxtest_${PAL_PLATFORM_NAME_LOWERCASE}_files.cmake

INCLUDE_DIRECTORIES

PUBLIC

Include

BUILD_DEPENDENCIES

PRIVATE

AZ::AzGameFramework

Gem::Atom_AtomBridge.Static

Gem::PhysX

)

```

4. Build the project in the Project Manager or Visual Studio.

**Expected behavior**

No build errors.

**Additional context**

Commenting out all lines containing `$<TARGET_PROPERTY:LmbrCentral,INTERFACE_LINK_LIBRARIES>` in `o3de-install\Gems\PhysX\Code\CMakeLists.txt` fixes the problem (4 lines in my case). The Gem works after this change. | non_perf | physx gem can t be used as build dependency in engine sdk part describe the bug this is a continuation of this bug adding the physx gem as a build dependency to an empty projects leads to the following build errors build files have been written to d dev projects physxtestproject build windows microsoft r build engine version for net framework copyright c microsoft corporation all rights reserved checking build system physxtestprojectsystemcomponent cpp physxtestproject static vcxproj d dev projects physxtestproject build windows lib profile physxtestproject static lib physxtestprojectmodule cpp link fatal error cannot open file obj to reproduce the same as last time build the engine as an sdk create a new project from the project manager in the projects cmakelists txt add gem physx to build dependencies ly add target name physxtest static static namespace gem files cmake physxtest files cmake pal dir physxtest pal platform name lowercase files cmake include directories public include build dependencies private az azgameframework gem atom atombridge static gem physx build the project in the project manager or visual studio expected behavior no build errors additional context commenting out all lines containing in install gems physx code cmakelists txt fixes the problem lines in my case the gem works after this change | 0 |

8,116 | 6,414,770,988 | IssuesEvent | 2017-08-08 11:03:08 | fabd/kanji-koohii | https://api.github.com/repos/fabd/kanji-koohii | opened | Refactor the Study search dropdown to VueJS | help-wanted performance refactor vuejs | The old "autocomplete" Javascript needs to be removed and replaced with a (likely) smaller implementation in VueJS.

## Goals

1. pre-requisite to consider improvements to the search box functionality #9

2. may improve response on mobile (we have to include Vue on all pages anyway, so in theory we end up with less Javascript). The dropdown functionality can then be included as part of the Study page Javascript bundle.

**Refactoring** search box to Vue is a client-side / front-end task, which is suitable for contribution.

## Notes

Until this is solved, #9 is on hold. | True | Refactor the Study search dropdown to VueJS - The old "autocomplete" Javascript needs to be removed and replaced with a (likely) smaller implementation in VueJS.

## Goals

1. pre-requisite to consider improvements to the search box functionality #9

2. may improve response on mobile (we have to include Vue on all pages anyway, so in theory we end up with less Javascript). The dropdown functionality can then be included as part of the Study page Javascript bundle.

**Refactoring** search box to Vue is a client-side / front-end task, which is suitable for contribution.

## Notes

Until this is solved, #9 is on hold. | perf | refactor the study search dropdown to vuejs the old autocomplete javascript needs to be removed and replaced with a likely smaller implementation in vuejs goals pre requisite to consider improvements to the search box functionality may improve response on mobile we have to include vue on all pages anyway so in theory we end up with less javascript the dropdown functionality can then be included as part of the study page javascript bundle refactoring search box to vue is a client side front end task which is suitable for contribution notes until this is solved is on hold | 1 |

30,590 | 14,613,470,895 | IssuesEvent | 2020-12-22 08:17:51 | dotnet/runtime | https://api.github.com/repos/dotnet/runtime | opened | GetHostAddressesAsync on Arm64 | tenet-performance | ### Description

```

[InProcess]

public class DnsBenchmark

{

[Benchmark]

public Task<IPAddress[]> GetHostAddressesAsync() => Dns.GetHostAddressesAsync("microsoft.com");

}

public class Program

{

public static async Task Main(string[] args)

{

var summary = BenchmarkRunner.Run<DnsBenchmark>();

}

}

```

### Data

#### Arm64

``` ini

BenchmarkDotNet=v0.12.1, OS=debian 10 (container)

Unknown processor

.NET Core SDK=5.0.101

[Host] : .NET Core 5.0.1 (CoreCLR 5.0.120.57516, CoreFX 5.0.120.57516), Arm64 RyuJIT

Job=InProcess Toolchain=InProcessEmitToolchain

```

| Method | Mean | Error | StdDev |

|---------------------- |---------:|--------:|--------:|

| GetHostAddressesAsync | 6.671 ms | 0.5831 ms | 1.692 ms |

#### X64

``` ini

BenchmarkDotNet=v0.12.1, OS=debian 10 (container)

Intel Xeon CPU E5-2620 0 2.00GHz, 2 CPU, 24 logical and 12 physical cores

.NET Core SDK=5.0.101

[Host] : .NET Core 5.0.1 (CoreCLR 5.0.120.57516, CoreFX 5.0.120.57516), X64 RyuJIT

Job=InProcess Toolchain=InProcessEmitToolchain

```

| Method | Mean | Error | StdDev |

|---------------------- |---------:|----------:|----------:|

| GetHostAddressesAsync | 1.817 ms | 0.0234 ms | 0.0207 ms |

| True | GetHostAddressesAsync on Arm64 - ### Description

```

[InProcess]

public class DnsBenchmark

{

[Benchmark]

public Task<IPAddress[]> GetHostAddressesAsync() => Dns.GetHostAddressesAsync("microsoft.com");

}

public class Program

{

public static async Task Main(string[] args)

{

var summary = BenchmarkRunner.Run<DnsBenchmark>();

}

}

```

### Data

#### Arm64

``` ini

BenchmarkDotNet=v0.12.1, OS=debian 10 (container)

Unknown processor

.NET Core SDK=5.0.101

[Host] : .NET Core 5.0.1 (CoreCLR 5.0.120.57516, CoreFX 5.0.120.57516), Arm64 RyuJIT

Job=InProcess Toolchain=InProcessEmitToolchain

```

| Method | Mean | Error | StdDev |

|---------------------- |---------:|--------:|--------:|

| GetHostAddressesAsync | 6.671 ms | 0.5831 ms | 1.692 ms |

#### X64

``` ini

BenchmarkDotNet=v0.12.1, OS=debian 10 (container)

Intel Xeon CPU E5-2620 0 2.00GHz, 2 CPU, 24 logical and 12 physical cores

.NET Core SDK=5.0.101

[Host] : .NET Core 5.0.1 (CoreCLR 5.0.120.57516, CoreFX 5.0.120.57516), X64 RyuJIT

Job=InProcess Toolchain=InProcessEmitToolchain

```

| Method | Mean | Error | StdDev |

|---------------------- |---------:|----------:|----------:|

| GetHostAddressesAsync | 1.817 ms | 0.0234 ms | 0.0207 ms |

| perf | gethostaddressesasync on description public class dnsbenchmark public task gethostaddressesasync dns gethostaddressesasync microsoft com public class program public static async task main string args var summary benchmarkrunner run data ini benchmarkdotnet os debian container unknown processor net core sdk net core coreclr corefx ryujit job inprocess toolchain inprocessemittoolchain method mean error stddev gethostaddressesasync ms ms ms ini benchmarkdotnet os debian container intel xeon cpu cpu logical and physical cores net core sdk net core coreclr corefx ryujit job inprocess toolchain inprocessemittoolchain method mean error stddev gethostaddressesasync ms ms ms | 1 |

328,033 | 9,985,128,837 | IssuesEvent | 2019-07-10 15:51:45 | geosolutions-it/MapStore2-C027 | https://api.github.com/repos/geosolutions-it/MapStore2-C027 | closed | MapStore Update v2019.01.xx | Priority: High Project: C027 backlog | - Aggiornamento all'ultima revision di MS2 e relativi tests

- Abilitazione dashboard

- Abilitazione widgets

- Abilitazione timeline

- Test e documentazione | 1.0 | MapStore Update v2019.01.xx - - Aggiornamento all'ultima revision di MS2 e relativi tests

- Abilitazione dashboard

- Abilitazione widgets

- Abilitazione timeline

- Test e documentazione | non_perf | mapstore update xx aggiornamento all ultima revision di e relativi tests abilitazione dashboard abilitazione widgets abilitazione timeline test e documentazione | 0 |