Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 7 112 | repo_url stringlengths 36 141 | action stringclasses 3 values | title stringlengths 1 744 | labels stringlengths 4 574 | body stringlengths 9 211k | index stringclasses 10 values | text_combine stringlengths 96 211k | label stringclasses 2 values | text stringlengths 96 188k | binary_label int64 0 1 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

16,018 | 20,188,227,598 | IssuesEvent | 2022-02-11 01:19:45 | savitamittalmsft/WAS-SEC-TEST | https://api.github.com/repos/savitamittalmsft/WAS-SEC-TEST | opened | Leverage a cloud application security broker (CASB) | WARP-Import WAF FEB 2021 Security Performance and Scalability Capacity Management Processes Networking & Connectivity Data flow | <a href="https://docs.microsoft.com/cloud-app-security/what-is-cloud-app-security">Leverage a cloud application security broker (CASB)</a>

<p><b>Why Consider This?</b></p>

CASBs can provide rich visibility, control over data travel, and sophisticated analytics to identify and combat cyberthreats across Microsof... | 1.0 | Leverage a cloud application security broker (CASB) - <a href="https://docs.microsoft.com/cloud-app-security/what-is-cloud-app-security">Leverage a cloud application security broker (CASB)</a>

<p><b>Why Consider This?</b></p>

CASBs can provide rich visibility, control over data travel, and sophisticated analyti... | process | leverage a cloud application security broker casb why consider this casbs can provide rich visibility control over data travel and sophisticated analytics to identify and combat cyberthreats across microsoft and third party cloud services context moving to the cloud increases flexibility... | 1 |

75,068 | 15,391,330,370 | IssuesEvent | 2021-03-03 14:27:01 | Madhusuthanan-B/FOO | https://api.github.com/repos/Madhusuthanan-B/FOO | opened | CVE-2020-11022 (Medium) detected in jquery-1.7.1.min.js | security vulnerability | ## CVE-2020-11022 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jquery-1.7.1.min.js</b></p></summary>

<p>JavaScript library for DOM operations</p>

<p>Library home page: <a href="ht... | True | CVE-2020-11022 (Medium) detected in jquery-1.7.1.min.js - ## CVE-2020-11022 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jquery-1.7.1.min.js</b></p></summary>

<p>JavaScript librar... | non_process | cve medium detected in jquery min js cve medium severity vulnerability vulnerable library jquery min js javascript library for dom operations library home page a href path to dependency file foo node modules sockjs examples hapi html index html path to vulnerable libr... | 0 |

16,480 | 21,427,989,317 | IssuesEvent | 2022-04-23 00:47:57 | hashgraph/hedera-mirror-node | https://api.github.com/repos/hashgraph/hedera-mirror-node | opened | Cleanup job fails with multi-arch manifests | bug process | ### Description

https://github.com/hashgraph/hedera-mirror-node/runs/6136689862

### Steps to reproduce

Run cleanup workflow

### Additional context

_No response_

### Hedera network

other

### Version

main

### Operating system

_No response_ | 1.0 | Cleanup job fails with multi-arch manifests - ### Description

https://github.com/hashgraph/hedera-mirror-node/runs/6136689862

### Steps to reproduce

Run cleanup workflow

### Additional context

_No response_

### Hedera network

other

### Version

main

### Operating system

_No response_ | process | cleanup job fails with multi arch manifests description steps to reproduce run cleanup workflow additional context no response hedera network other version main operating system no response | 1 |

5,518 | 8,380,770,929 | IssuesEvent | 2018-10-07 18:01:32 | bitshares/bitshares-community-ui | https://api.github.com/repos/bitshares/bitshares-community-ui | closed | Basic Login to Bitshares | Login feature process | Basic Login to Bitshares

+ prevent unauthorized access

+ validate account name

+ validate fields: both required & show errors

| 1.0 | Basic Login to Bitshares - Basic Login to Bitshares

+ prevent unauthorized access

+ validate account name

+ validate fields: both required & show errors

| process | basic login to bitshares basic login to bitshares prevent unauthorized access validate account name validate fields both required show errors | 1 |

547,656 | 16,044,492,591 | IssuesEvent | 2021-04-22 12:07:43 | grpc/grpc | https://api.github.com/repos/grpc/grpc | closed | Armhf Runtime problem | kind/bug lang/C# priority/P2 | <!--

PLEASE DO NOT POST A QUESTION HERE.

This form is for bug reports and feature requests ONLY!

For general questions and troubleshooting, please ask/look for answers at StackOverflow, with "grpc" tag: https://stackoverflow.com/questions/tagged/grpc

For questions that specifically need to be answered by gRPC t... | 1.0 | Armhf Runtime problem - <!--

PLEASE DO NOT POST A QUESTION HERE.

This form is for bug reports and feature requests ONLY!

For general questions and troubleshooting, please ask/look for answers at StackOverflow, with "grpc" tag: https://stackoverflow.com/questions/tagged/grpc

For questions that specifically need ... | non_process | armhf runtime problem please do not post a question here this form is for bug reports and feature requests only for general questions and troubleshooting please ask look for answers at stackoverflow with grpc tag for questions that specifically need to be answered by grpc team members please as... | 0 |

340,806 | 10,278,787,926 | IssuesEvent | 2019-08-25 17:16:07 | krzychu124/Cities-Skylines-Traffic-Manager-President-Edition | https://api.github.com/repos/krzychu124/Cities-Skylines-Traffic-Manager-President-Edition | opened | [EPIC] WIP: Collating issues relating to pathfinding tweaks | EPIC JUNCTION RESTRICTIONS LANE ROUTING PARKING PATHFINDER PRIORITY SIGNS investigating | Will tidy this up later but just jotting down a few initial links (there are probably more that need adding, will search later)

https://github.com/krzychu124/Cities-Skylines-Traffic-Manager-President-Edition/issues/19

https://github.com/krzychu124/Cities-Skylines-Traffic-Manager-President-Edition/issues/189

ht... | 1.0 | [EPIC] WIP: Collating issues relating to pathfinding tweaks - Will tidy this up later but just jotting down a few initial links (there are probably more that need adding, will search later)

https://github.com/krzychu124/Cities-Skylines-Traffic-Manager-President-Edition/issues/19

https://github.com/krzychu124/Citi... | non_process | wip collating issues relating to pathfinding tweaks will tidy this up later but just jotting down a few initial links there are probably more that need adding will search later | 0 |

20,787 | 27,525,489,883 | IssuesEvent | 2023-03-06 17:44:20 | metabase/metabase | https://api.github.com/repos/metabase/metabase | closed | [Epic] Time zone metadata improvements for 46 | Querying/Processor .Backend .Epic | A handful of improvements to the way we we deal with time zones in the Query Processor.

### Shovel-Ready

- [ ] #14056

- [ ] #27177

- [x] ~#6439~

### Details Being Hashed Out

- [ ] #4284

- [ ] Support per-Query overrides

### Related

- [ ] #5927

### Internal Documents

[Brain dump](https://www.no... | 1.0 | [Epic] Time zone metadata improvements for 46 - A handful of improvements to the way we we deal with time zones in the Query Processor.

### Shovel-Ready

- [ ] #14056

- [ ] #27177

- [x] ~#6439~

### Details Being Hashed Out

- [ ] #4284

- [ ] Support per-Query overrides

### Related

- [ ] #5927

###... | process | time zone metadata improvements for a handful of improvements to the way we we deal with time zones in the query processor shovel ready details being hashed out support per query overrides related internal documents ... | 1 |

451,574 | 13,038,743,162 | IssuesEvent | 2020-07-28 15:39:12 | metabase/metabase | https://api.github.com/repos/metabase/metabase | closed | Dashboards with questions, that user doesn't haven't permissions to, breaks completely | Administration/Permissions Priority:P1 Reporting/Dashboards Type:Bug | **Describe the bug**

If a dashboard contains a single question, which a user does not have permissions to, then the entire dashboard breaks for that user.

**To Reproduce**

1. Create a collection "C1" under root

2. Create dashboard "D1" in "C1"

3. Simple question > Sample Dataset > Orders - save as "Q1" in "C1" a... | 1.0 | Dashboards with questions, that user doesn't haven't permissions to, breaks completely - **Describe the bug**

If a dashboard contains a single question, which a user does not have permissions to, then the entire dashboard breaks for that user.

**To Reproduce**

1. Create a collection "C1" under root

2. Create dash... | non_process | dashboards with questions that user doesn t haven t permissions to breaks completely describe the bug if a dashboard contains a single question which a user does not have permissions to then the entire dashboard breaks for that user to reproduce create a collection under root create dashb... | 0 |

765,969 | 26,867,032,122 | IssuesEvent | 2023-02-04 01:59:45 | ArctosDB/arctos | https://api.github.com/repos/ArctosDB/arctos | closed | Entity Components Search Not Working | Priority-High (Needed for work) Bug | **Describe the bug**

In an Entity record, the See Components in Results is returning inappropriate records.

**To Reproduce**

Steps to reproduce the behavior:

1. Go to for example https://arctos.database.museum/guid/Arctos:Entity:235

2. Note that this is an Elephant at the Albuquerque Biopark, with multiple bloo... | 1.0 | Entity Components Search Not Working - **Describe the bug**

In an Entity record, the See Components in Results is returning inappropriate records.

**To Reproduce**

Steps to reproduce the behavior:

1. Go to for example https://arctos.database.museum/guid/Arctos:Entity:235

2. Note that this is an Elephant at the ... | non_process | entity components search not working describe the bug in an entity record the see components in results is returning inappropriate records to reproduce steps to reproduce the behavior go to for example note that this is an elephant at the albuquerque biopark with multiple blood and serum s... | 0 |

75,613 | 9,878,826,021 | IssuesEvent | 2019-06-24 08:35:58 | ktbs/ktbs | https://api.github.com/repos/ktbs/ktbs | closed | ktbs:hasSubject is required | documentation | In the [kTBS documentation](http://kernel-for-trace-based-systems.readthedocs.org/en/v0.4/tutorials/rest-turtle.html#add-obsels-to-trace) the first POST trace example (on a kTBS 0.4 installed via PIP) lead to:

```

403 Forbidden

403 Forbidden - Invalid data

check_new_graph: ko

* Property <http://liris.cnrs.fr/silex/20... | 1.0 | ktbs:hasSubject is required - In the [kTBS documentation](http://kernel-for-trace-based-systems.readthedocs.org/en/v0.4/tutorials/rest-turtle.html#add-obsels-to-trace) the first POST trace example (on a kTBS 0.4 installed via PIP) lead to:

```

403 Forbidden

403 Forbidden - Invalid data

check_new_graph: ko

* Property ... | non_process | ktbs hassubject is required in the the first post trace example on a ktbs installed via pip lead to forbidden forbidden invalid data check new graph ko property of should have at least objects it only has it s like hasdefaultsubject me doesn t behave as we would expe... | 0 |

3,598 | 2,683,745,976 | IssuesEvent | 2015-03-28 08:34:03 | Jasig/cas | https://api.github.com/repos/Jasig/cas | closed | [CAS-721] Functional Testing | Compatibility Testing Future Major Task | Create functional tests to ensure CAS features. These tests can be Selenium tests, etc.

Reported by: Scott Battaglia, id: battags

Created: Fri, 17 Oct 2008 11:56:54 -0700

Updated: Fri, 17 Oct 2008 11:56:54 -0700

JIRA: https://issues.jasig.org/browse/CAS-721 | 1.0 | [CAS-721] Functional Testing - Create functional tests to ensure CAS features. These tests can be Selenium tests, etc.

Reported by: Scott Battaglia, id: battags

Created: Fri, 17 Oct 2008 11:56:54 -0700

Updated: Fri, 17 Oct 2008 11:56:54 -0700

JIRA: https://issues.jasig.org/browse/CAS-721 | non_process | functional testing create functional tests to ensure cas features these tests can be selenium tests etc reported by scott battaglia id battags created fri oct updated fri oct jira | 0 |

212,677 | 16,492,866,491 | IssuesEvent | 2021-05-25 07:04:42 | bounswe/2021SpringGroup5 | https://api.github.com/repos/bounswe/2021SpringGroup5 | closed | Arranging RAM section for Milestone 1 | documentation | I will prepare a draft for RAM (Responsibility Assignment Matrix) and list the responsibilities and group them. Then, the group members will fill their columns with the corresponding letters. If there exists a responsibility I missed, group members may add it. When finished, I will organize it and prepare for the Miles... | 1.0 | Arranging RAM section for Milestone 1 - I will prepare a draft for RAM (Responsibility Assignment Matrix) and list the responsibilities and group them. Then, the group members will fill their columns with the corresponding letters. If there exists a responsibility I missed, group members may add it. When finished, I wi... | non_process | arranging ram section for milestone i will prepare a draft for ram responsibility assignment matrix and list the responsibilities and group them then the group members will fill their columns with the corresponding letters if there exists a responsibility i missed group members may add it when finished i wi... | 0 |

325,601 | 24,055,017,965 | IssuesEvent | 2022-09-16 15:59:40 | lightdash/lightdash | https://api.github.com/repos/lightdash/lightdash | closed | Upgrade dbt to 1.2.0 | 📖 documentation ⚙️ backend | Unable to run lightdash on top of jaffle_shop_metrics:

https://github.com/dbt-labs/jaffle_shop_metrics/

I tried to downgrade the project's version but some of the features used there are unsupported by previous DBT versions.

I think lightdash on top of dbt-metrics can be a huge win for lightdash. | 1.0 | Upgrade dbt to 1.2.0 - Unable to run lightdash on top of jaffle_shop_metrics:

https://github.com/dbt-labs/jaffle_shop_metrics/

I tried to downgrade the project's version but some of the features used there are unsupported by previous DBT versions.

I think lightdash on top of dbt-metrics can be a huge win for lig... | non_process | upgrade dbt to unable to run lightdash on top of jaffle shop metrics i tried to downgrade the project s version but some of the features used there are unsupported by previous dbt versions i think lightdash on top of dbt metrics can be a huge win for lightdash | 0 |

333,103 | 29,508,132,841 | IssuesEvent | 2023-06-03 15:19:17 | unifyai/ivy | https://api.github.com/repos/unifyai/ivy | closed | Fix jax_numpy_math.test_jax_numpy_around | JAX Frontend Sub Task Failing Test | | | |

|---|---|

|tensorflow|<a href="https://github.com/unifyai/ivy/actions/runs/5163696541/jobs/9302175979" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-success-success></a>

|torch|<a href="https://github.com/unifyai/ivy/actions/runs/5163696541/jobs/9302175979" rel="noopener ... | 1.0 | Fix jax_numpy_math.test_jax_numpy_around - | | |

|---|---|

|tensorflow|<a href="https://github.com/unifyai/ivy/actions/runs/5163696541/jobs/9302175979" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-success-success></a>

|torch|<a href="https://github.com/unifyai/ivy/actions/runs... | non_process | fix jax numpy math test jax numpy around tensorflow img src torch img src numpy img src jax img src paddle img src | 0 |

22,360 | 31,075,054,684 | IssuesEvent | 2023-08-12 11:31:58 | h4sh5/npm-auto-scanner | https://api.github.com/repos/h4sh5/npm-auto-scanner | opened | meadow-connection-mssql 1.0.7 has 60 guarddog issues | npm-install-script shady-links npm-silent-process-execution | ```{"npm-install-script":[{"code":" \"prepare\": \"npm run compile\"","location":"package/retold-harness/node_modules/@isaacs/cliui/package.json:29","message":"The package.json has a script automatically running when the package is installed"},{"code":" \"prepare\": \"node ./scripts/transpile-to-esm.js\",","locat... | 1.0 | meadow-connection-mssql 1.0.7 has 60 guarddog issues - ```{"npm-install-script":[{"code":" \"prepare\": \"npm run compile\"","location":"package/retold-harness/node_modules/@isaacs/cliui/package.json:29","message":"The package.json has a script automatically running when the package is installed"},{"code":" \"pre... | process | meadow connection mssql has guarddog issues npm install script license mit author jake luer alogicalparadox com npm silent process execution n t t tdetached true n t t tstdio ignore n t t unref location package retold harness node modules update notifier up... | 1 |

10,673 | 13,460,674,867 | IssuesEvent | 2020-09-09 13:54:38 | MicrosoftDocs/azure-devops-docs | https://api.github.com/repos/MicrosoftDocs/azure-devops-docs | closed | Add physical machines to environment? | Pri1 devops-cicd-process/tech devops/prod doc-enhancement | Is it possible to define environment as set of physical machines

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: 77d95db6-9983-7346-d0eb-4b7443e4e252

* Version Independent ID: 0a22cccc-318d-592f-d1ab-09ec01d88087

* Content: [Environment - Az... | 1.0 | Add physical machines to environment? - Is it possible to define environment as set of physical machines

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: 77d95db6-9983-7346-d0eb-4b7443e4e252

* Version Independent ID: 0a22cccc-318d-592f-d1ab-0... | process | add physical machines to environment is it possible to define environment as set of physical machines document details ⚠ do not edit this section it is required for docs microsoft com ➟ github issue linking id version independent id content content source pro... | 1 |

2,220 | 5,070,761,910 | IssuesEvent | 2016-12-26 08:20:48 | kerubistan/kerub | https://api.github.com/repos/kerubistan/kerub | opened | mirrored and striped configurations for gvinum | component:data processing enhancement priority: high | work left from #167

* mirrored gvinum configuration

* striped gvinum configuration | 1.0 | mirrored and striped configurations for gvinum - work left from #167

* mirrored gvinum configuration

* striped gvinum configuration | process | mirrored and striped configurations for gvinum work left from mirrored gvinum configuration striped gvinum configuration | 1 |

1,807 | 4,541,933,728 | IssuesEvent | 2016-09-09 19:28:18 | neuropoly/spinalcordtoolbox | https://api.github.com/repos/neuropoly/spinalcordtoolbox | closed | ValueError: min() arg is an empty sequence | bug priority: high sct_process_segmentation | batch_processing:

~~~

sct_process_segmentation -i t2_seg.nii.gz -p csa -vert 3:4

--

Spinal Cord Toolbox (version dev-26e7a1e783bed24b6cd5cee29244a28bd60e7508)

/Users/julien/code/spinalcordtoolbox/scripts/sct_process_segmentation.py -i t2_seg.nii.gz -p csa -vert 3:4

Check parameters:

.. segmentation file: ... | 1.0 | ValueError: min() arg is an empty sequence - batch_processing:

~~~

sct_process_segmentation -i t2_seg.nii.gz -p csa -vert 3:4

--

Spinal Cord Toolbox (version dev-26e7a1e783bed24b6cd5cee29244a28bd60e7508)

/Users/julien/code/spinalcordtoolbox/scripts/sct_process_segmentation.py -i t2_seg.nii.gz -p csa -vert 3:4

C... | process | valueerror min arg is an empty sequence batch processing sct process segmentation i seg nii gz p csa vert spinal cord toolbox version dev users julien code spinalcordtoolbox scripts sct process segmentation py i seg nii gz p csa vert check parameters segmentation file ... | 1 |

81,370 | 23,449,111,774 | IssuesEvent | 2022-08-15 23:26:30 | dotnet/runtime | https://api.github.com/repos/dotnet/runtime | closed | [wasm] Perftracing build broken due to trimming errors | arch-wasm blocking-release blocking-clean-ci area-Build-mono in-pr | [Build](https://dev.azure.com/dnceng/public/_build/results?buildId=1943226&view=logs&jobId=7f41eb05-1c5f-5922-38da-d308f495fb31&j=92c81310-cb26-5923-f96e-b7c73963fa3d&t=11318e79-3fe2-58cb-1121-19fe8295c832):

```

/__w/1/s/artifacts/bin/microsoft.netcore.app.runtime.browser-wasm/Release/runtimes/browser-wasm/native/S... | 1.0 | [wasm] Perftracing build broken due to trimming errors - [Build](https://dev.azure.com/dnceng/public/_build/results?buildId=1943226&view=logs&jobId=7f41eb05-1c5f-5922-38da-d308f495fb31&j=92c81310-cb26-5923-f96e-b7c73963fa3d&t=11318e79-3fe2-58cb-1121-19fe8295c832):

```

/__w/1/s/artifacts/bin/microsoft.netcore.app.ru... | non_process | perftracing build broken due to trimming errors w s artifacts bin microsoft netcore app runtime browser wasm release runtimes browser wasm native system private corelib dll error assembly system private corelib produced trim warnings for more information see artifacts bin microsoft ... | 0 |

92,576 | 3,872,560,185 | IssuesEvent | 2016-04-11 14:17:26 | cs2103jan2016-w14-3j/main | https://api.github.com/repos/cs2103jan2016-w14-3j/main | closed | A user can set reminder intervals | not done priority.medium type.story | so that he will not forget about the task if he choose to ignore it the first time. | 1.0 | A user can set reminder intervals - so that he will not forget about the task if he choose to ignore it the first time. | non_process | a user can set reminder intervals so that he will not forget about the task if he choose to ignore it the first time | 0 |

184,187 | 21,784,826,000 | IssuesEvent | 2022-05-14 01:27:47 | bsbtd/Teste | https://api.github.com/repos/bsbtd/Teste | opened | CVE-2022-1650 (High) detected in eventsource-1.0.7.tgz | security vulnerability | ## CVE-2022-1650 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>eventsource-1.0.7.tgz</b></p></summary>

<p>W3C compliant EventSource client for Node.js and browser (polyfill)</p>

<p>L... | True | CVE-2022-1650 (High) detected in eventsource-1.0.7.tgz - ## CVE-2022-1650 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>eventsource-1.0.7.tgz</b></p></summary>

<p>W3C compliant Event... | non_process | cve high detected in eventsource tgz cve high severity vulnerability vulnerable library eventsource tgz compliant eventsource client for node js and browser polyfill library home page a href path to dependency file aws mobile appsync chat starter angular package js... | 0 |

104,084 | 8,960,943,135 | IssuesEvent | 2019-01-28 08:08:04 | fedora-infra/bodhi | https://api.github.com/repos/fedora-infra/bodhi | closed | bodhi-ci clean should clean up the integration test images | RFE Tests | The ```bodhi-ci``` clean should clean up the integration test images as well. | 1.0 | bodhi-ci clean should clean up the integration test images - The ```bodhi-ci``` clean should clean up the integration test images as well. | non_process | bodhi ci clean should clean up the integration test images the bodhi ci clean should clean up the integration test images as well | 0 |

855 | 3,316,511,329 | IssuesEvent | 2015-11-06 17:12:30 | technofreaky/woocomerce-quick-donation | https://api.github.com/repos/technofreaky/woocomerce-quick-donation | closed | Automatic confirmation email not sent when donation made through payment gateway | Feature Request Issue Processing | When I make a new donation using <b>cheque/DD method</b>, the status of the donation is <b>"on-hold"</b>, I am getting the automatic confirmation email in this scenario.

But, when I make a donation using <b>payment gateway</b>, the status of the donation is <b>"processing"</b>. I am not getting the automatic confirm... | 1.0 | Automatic confirmation email not sent when donation made through payment gateway - When I make a new donation using <b>cheque/DD method</b>, the status of the donation is <b>"on-hold"</b>, I am getting the automatic confirmation email in this scenario.

But, when I make a donation using <b>payment gateway</b>, the st... | process | automatic confirmation email not sent when donation made through payment gateway when i make a new donation using cheque dd method the status of the donation is on hold i am getting the automatic confirmation email in this scenario but when i make a donation using payment gateway the status of the don... | 1 |

7,440 | 10,554,571,886 | IssuesEvent | 2019-10-03 19:47:00 | pelias/pelias | https://api.github.com/repos/pelias/pelias | closed | pelias testing module | processed question | it might be nice to have all our repositories linking to a testing module we control, so `npm test` would run the same thing in every module.

having an npm module called something like `pelias-testing` would allow us to add new functions to that repo and update our testing tools in a single place, we could even use th... | 1.0 | pelias testing module - it might be nice to have all our repositories linking to a testing module we control, so `npm test` would run the same thing in every module.

having an npm module called something like `pelias-testing` would allow us to add new functions to that repo and update our testing tools in a single pla... | process | pelias testing module it might be nice to have all our repositories linking to a testing module we control so npm test would run the same thing in every module having an npm module called something like pelias testing would allow us to add new functions to that repo and update our testing tools in a single pla... | 1 |

10,926 | 13,726,724,979 | IssuesEvent | 2020-10-04 01:44:45 | fluent/fluent-bit | https://api.github.com/repos/fluent/fluent-bit | closed | Fluent Bit v1.5.6 stops outputting logs to stackdriver | work-in-process | ## Bug Report

**Describe the bug**

Fluent Bit v1.5.6 stops outputting logs to stackdriver. The `kubectl top pods` command shows CPU stuck at 1m. The fluentbit_output_proc_records_total metric would show 0 log sent to stackdriver. But the pod would show that it is running. Once this happens, the fluent-bit pod s... | 1.0 | Fluent Bit v1.5.6 stops outputting logs to stackdriver - ## Bug Report

**Describe the bug**

Fluent Bit v1.5.6 stops outputting logs to stackdriver. The `kubectl top pods` command shows CPU stuck at 1m. The fluentbit_output_proc_records_total metric would show 0 log sent to stackdriver. But the pod would show tha... | process | fluent bit stops outputting logs to stackdriver bug report describe the bug fluent bit stops outputting logs to stackdriver the kubectl top pods command shows cpu stuck at the fluentbit output proc records total metric would show log sent to stackdriver but the pod would show that i... | 1 |

2,499 | 5,272,152,537 | IssuesEvent | 2017-02-06 11:58:00 | nodejs/node | https://api.github.com/repos/nodejs/node | closed | I spawn through the creation of sub-thread How non-stdio: 'inherit' set tty to true | child_process question tty | [I spawn through the creation of sub-thread How non-stdio: 'inherit' set tty to true](https://github.com/nodejs/node/issues/10573)

, it should be reflected in the mozilla-recommendations.json file when signed, under “states” (values: “sponsored” for Verified tier 1, “verified” for Verified tier 2, “line” for Line).

Probable Autograph side changes needed too. | 1.0 | Add details of promoted status to xpi when signing - > When an add-on is added to either of the Verified or Line lists (not Spotlight), it should be reflected in the mozilla-recommendations.json file when signed, under “states” (values: “sponsored” for Verified tier 1, “verified” for Verified tier 2, “line” for Line).

... | non_process | add details of promoted status to xpi when signing when an add on is added to either of the verified or line lists not spotlight it should be reflected in the mozilla recommendations json file when signed under “states” values “sponsored” for verified tier “verified” for verified tier “line” for line ... | 0 |

17,092 | 22,600,776,272 | IssuesEvent | 2022-06-29 08:56:16 | open-telemetry/opentelemetry-collector-contrib | https://api.github.com/repos/open-telemetry/opentelemetry-collector-contrib | opened | [processor/resourcedetection] 'docker' detector does not work in official contrib images | bug comp: resourcedetectionprocessor | **Describe the bug**

The [docker detector](https://github.com/open-telemetry/opentelemetry-collector-contrib/tree/main/processor/resourcedetectionprocessor#docker-metadata) from the resource detection processor does not work on official opentelemetry-collector-contrib images, or any other image that runs the Collect... | 1.0 | [processor/resourcedetection] 'docker' detector does not work in official contrib images - **Describe the bug**

The [docker detector](https://github.com/open-telemetry/opentelemetry-collector-contrib/tree/main/processor/resourcedetectionprocessor#docker-metadata) from the resource detection processor does not work o... | process | docker detector does not work in official contrib images describe the bug the from the resource detection processor does not work on official opentelemetry collector contrib images or any other image that runs the collector under a user other than root steps to reproduce run the resource d... | 1 |

785,660 | 27,621,710,714 | IssuesEvent | 2023-03-10 01:05:54 | responsible-ai-collaborative/aiid | https://api.github.com/repos/responsible-ai-collaborative/aiid | closed | Production Minified React Error | Type:Bug Priority:High | Production has a series of what are likely hydration errors. The app behaves fine, but this has been popping up periodically for a while and we need to take care of it.

`react-dom.production.min.js:131 Uncaught Error: Minified React error #418; visit https://reactjs.org/docs/error-decoder.html?invariant=418 for the ... | 1.0 | Production Minified React Error - Production has a series of what are likely hydration errors. The app behaves fine, but this has been popping up periodically for a while and we need to take care of it.

`react-dom.production.min.js:131 Uncaught Error: Minified React error #418; visit https://reactjs.org/docs/error-d... | non_process | production minified react error production has a series of what are likely hydration errors the app behaves fine but this has been popping up periodically for a while and we need to take care of it react dom production min js uncaught error minified react error visit for the full message or use the no... | 0 |

18,039 | 24,049,836,250 | IssuesEvent | 2022-09-16 11:46:55 | scikit-learn/scikit-learn | https://api.github.com/repos/scikit-learn/scikit-learn | closed | Rename OneHotEncoder option sparse to sparse_output | module:preprocessing | ### Task

Introduce new parameter `sparse_output` in `OneHotEncoder` and deprecate the then old `sparse` parameter.

### Background

Several estimators have an option to return sparse output.

- `RandomTreesEmbedding(sparse_output=True)`

- `LabelBinarizer(sparse_output=True)`

- `MultiLabelBinarizer(sparse_output=Tr... | 1.0 | Rename OneHotEncoder option sparse to sparse_output - ### Task

Introduce new parameter `sparse_output` in `OneHotEncoder` and deprecate the then old `sparse` parameter.

### Background

Several estimators have an option to return sparse output.

- `RandomTreesEmbedding(sparse_output=True)`

- `LabelBinarizer(sparse_... | process | rename onehotencoder option sparse to sparse output task introduce new parameter sparse output in onehotencoder and deprecate the then old sparse parameter background several estimators have an option to return sparse output randomtreesembedding sparse output true labelbinarizer sparse ... | 1 |

811,729 | 30,297,901,835 | IssuesEvent | 2023-07-10 01:44:54 | ppy/osu | https://api.github.com/repos/ppy/osu | closed | Add length limit to chat text box | type:behavioural area:overlay-chat priority:2 | Currently there is no length limit applied client-side, and any message that exceed the server-side limit will get thrown away. Ideally such errors would be elegantly handled and the message would be returned back to the user, but I've attempted at least adding a client-side length limit of 100 characters (which is the... | 1.0 | Add length limit to chat text box - Currently there is no length limit applied client-side, and any message that exceed the server-side limit will get thrown away. Ideally such errors would be elegantly handled and the message would be returned back to the user, but I've attempted at least adding a client-side length l... | non_process | add length limit to chat text box currently there is no length limit applied client side and any message that exceed the server side limit will get thrown away ideally such errors would be elegantly handled and the message would be returned back to the user but i ve attempted at least adding a client side length l... | 0 |

13,388 | 2,755,261,347 | IssuesEvent | 2015-04-26 13:51:39 | bridgedotnet/Bridge | https://api.github.com/repos/bridgedotnet/Bridge | closed | Linq API help error: XML comment contains invalid XML | defect in progress | For example, by mouse over the `Intersect()` method below you get the following help message "XML comment contains invalid XML: Enat tag 'param' does not match the start tag 'T'"

```

using System;

using System.Collections.Generic;

using System.Linq;

using Bridge;

namespace ClientTestLibrary.Linq

{

class T... | 1.0 | Linq API help error: XML comment contains invalid XML - For example, by mouse over the `Intersect()` method below you get the following help message "XML comment contains invalid XML: Enat tag 'param' does not match the start tag 'T'"

```

using System;

using System.Collections.Generic;

using System.Linq;

using Bri... | non_process | linq api help error xml comment contains invalid xml for example by mouse over the intersect method below you get the following help message xml comment contains invalid xml enat tag param does not match the start tag t using system using system collections generic using system linq using bri... | 0 |

652,587 | 21,556,491,307 | IssuesEvent | 2022-04-30 14:05:58 | bounswe/bounswe2022group2 | https://api.github.com/repos/bounswe/bounswe2022group2 | closed | Practice App: Initialization Steps of the Project | priority-high status-needreview practice-app | ### Issue Description

We will implement a practice app which will be a practice for the main project we will develop. This is the first issue of our practice app project and we will define the initialization steps that should be performed under this issue before starting to code implementation of the project. Steps ... | 1.0 | Practice App: Initialization Steps of the Project - ### Issue Description

We will implement a practice app which will be a practice for the main project we will develop. This is the first issue of our practice app project and we will define the initialization steps that should be performed under this issue before st... | non_process | practice app initialization steps of the project issue description we will implement a practice app which will be a practice for the main project we will develop this is the first issue of our practice app project and we will define the initialization steps that should be performed under this issue before st... | 0 |

150,747 | 5,786,665,992 | IssuesEvent | 2017-05-01 12:15:54 | esikachev/abc-server | https://api.github.com/repos/esikachev/abc-server | opened | Encrypt of password for github account | feature request priority/P2 | Now we have a password in a config file in a not encrypted state. This is not secure. We need to implement the method for safer storing of passwords. | 1.0 | Encrypt of password for github account - Now we have a password in a config file in a not encrypted state. This is not secure. We need to implement the method for safer storing of passwords. | non_process | encrypt of password for github account now we have a password in a config file in a not encrypted state this is not secure we need to implement the method for safer storing of passwords | 0 |

569,607 | 17,015,495,532 | IssuesEvent | 2021-07-02 11:24:28 | tomhughes/trac-tickets | https://api.github.com/repos/tomhughes/trac-tickets | opened | Show users of a tag | Component: taginfo Priority: major Type: enhancement | **[Submitted to the original trac issue database at 3.58pm, Tuesday, 19th April 2011]**

It would be helpful to show users of a given key or key/value combination, sorted by percentage. This will help to indicate if a certain tag is only being used by a few individuals, or by a large group of people. I'm assuming that ... | 1.0 | Show users of a tag - **[Submitted to the original trac issue database at 3.58pm, Tuesday, 19th April 2011]**

It would be helpful to show users of a given key or key/value combination, sorted by percentage. This will help to indicate if a certain tag is only being used by a few individuals, or by a large group of peop... | non_process | show users of a tag it would be helpful to show users of a given key or key value combination sorted by percentage this will help to indicate if a certain tag is only being used by a few individuals or by a large group of people i m assuming that this would use the last user to edit an object rather than ... | 0 |

145,089 | 11,648,327,666 | IssuesEvent | 2020-03-01 20:05:54 | Sleep-tracker-1/Back_End | https://api.github.com/repos/Sleep-tracker-1/Back_End | closed | Staging and CI | deployment testing | Setup a staging server runs tests and merges with master if all tests pass.

- [x] Staging branch

- [x] Staging DB

- [x] Staging environment config

- [ ] Auto merge into master on test pass | 1.0 | Staging and CI - Setup a staging server runs tests and merges with master if all tests pass.

- [x] Staging branch

- [x] Staging DB

- [x] Staging environment config

- [ ] Auto merge into master on test pass | non_process | staging and ci setup a staging server runs tests and merges with master if all tests pass staging branch staging db staging environment config auto merge into master on test pass | 0 |

155,889 | 12,281,106,513 | IssuesEvent | 2020-05-08 15:15:54 | d-r-q/qbit | https://api.github.com/repos/d-r-q/qbit | opened | Add child to parent tree test | api choose enhancement refactoring research tests | Requires API change to allow user to persist several entities in single call

Add test for factoring of tree of

```kotlin

data class ChildToParent(val id: Long?, parent: ChildToParent?)

``` | 1.0 | Add child to parent tree test - Requires API change to allow user to persist several entities in single call

Add test for factoring of tree of

```kotlin

data class ChildToParent(val id: Long?, parent: ChildToParent?)

``` | non_process | add child to parent tree test requires api change to allow user to persist several entities in single call add test for factoring of tree of kotlin data class childtoparent val id long parent childtoparent | 0 |

229,299 | 25,319,001,549 | IssuesEvent | 2022-11-18 01:03:47 | tlkh/transformers-benchmarking | https://api.github.com/repos/tlkh/transformers-benchmarking | opened | CVE-2022-45198 (High) detected in Pillow-6.2.2-cp27-cp27mu-manylinux1_x86_64.whl | security vulnerability | ## CVE-2022-45198 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>Pillow-6.2.2-cp27-cp27mu-manylinux1_x86_64.whl</b></p></summary>

<p>Python Imaging Library (Fork)</p>

<p>Library home ... | True | CVE-2022-45198 (High) detected in Pillow-6.2.2-cp27-cp27mu-manylinux1_x86_64.whl - ## CVE-2022-45198 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>Pillow-6.2.2-cp27-cp27mu-manylinux1_... | non_process | cve high detected in pillow whl cve high severity vulnerability vulnerable library pillow whl python imaging library fork library home page a href path to dependency file requirements txt path to vulnerable library requirements txt depende... | 0 |

13,591 | 16,163,269,078 | IssuesEvent | 2021-05-01 02:57:51 | tdwg/chrono | https://api.github.com/repos/tdwg/chrono | closed | New Term - materialDatedRelationship | Process - prepare for Executive review Term - add | ## New term

* Submitter: Laura Brenskelle

* Justification (why is this term necessary?): See https://github.com/tdwg/chrono/issues/20.

* Proponents (at least two independent parties who need this term): Public review.

Proposed attributes of the new term:

* Organized in Class: ChronometricAge

* Term name (in... | 1.0 | New Term - materialDatedRelationship - ## New term

* Submitter: Laura Brenskelle

* Justification (why is this term necessary?): See https://github.com/tdwg/chrono/issues/20.

* Proponents (at least two independent parties who need this term): Public review.

Proposed attributes of the new term:

* Organized in ... | process | new term materialdatedrelationship new term submitter laura brenskelle justification why is this term necessary see proponents at least two independent parties who need this term public review proposed attributes of the new term organized in class chronometricage term name in ... | 1 |

13,097 | 15,495,138,350 | IssuesEvent | 2021-03-11 00:20:29 | metabase/metabase | https://api.github.com/repos/metabase/metabase | opened | Should be able to run a card with another card as its source query with just perms for the former | Administration/Permissions Priority:P1 Querying/Processor Type:Bug | Suppose we have two Cards, Card 1 and Card 2. Card 2 has a query like `{:query {:source-table "card__1}}` (i.e., Card 2 uses Card 1 as a source query). Now suppose the current User has permissions to see Card 2, but no perms for Card 1. They should still be able to run Card 2 -- we don't need to check perms for Card 1 ... | 1.0 | Should be able to run a card with another card as its source query with just perms for the former - Suppose we have two Cards, Card 1 and Card 2. Card 2 has a query like `{:query {:source-table "card__1}}` (i.e., Card 2 uses Card 1 as a source query). Now suppose the current User has permissions to see Card 2, but no p... | process | should be able to run a card with another card as its source query with just perms for the former suppose we have two cards card and card card has a query like query source table card i e card uses card as a source query now suppose the current user has permissions to see card but no p... | 1 |

2,630 | 5,410,114,991 | IssuesEvent | 2017-03-01 07:29:57 | FujiXeroxNZ-Wellington/Indigo | https://api.github.com/repos/FujiXeroxNZ-Wellington/Indigo | closed | form data is displaying feedback even after saving the data in the contract processing modal | 0-4-Contract Processing 0-Contract Management Client Side KB Article v1.0 | The labels and input elements are all green and are not resetting their colors to defaults. | 1.0 | form data is displaying feedback even after saving the data in the contract processing modal - The labels and input elements are all green and are not resetting their colors to defaults. | process | form data is displaying feedback even after saving the data in the contract processing modal the labels and input elements are all green and are not resetting their colors to defaults | 1 |

509,875 | 14,750,711,514 | IssuesEvent | 2021-01-08 02:56:45 | kubesphere/kubesphere | https://api.github.com/repos/kubesphere/kubesphere | closed | How to compile multiple languages in jenkins by switching node | area/devops kind/feature priority/medium | ## Devops in microservices

1. Most companies use kubernetes because of microservices.Kubernetes is a natural fit for microservices

2. Jenkins supports devops on kubernetes, but it's very troublesome to configure. You need to write a yaml file or configure your docker image in casc.

3. There may be dependencies... | 1.0 | How to compile multiple languages in jenkins by switching node - ## Devops in microservices

1. Most companies use kubernetes because of microservices.Kubernetes is a natural fit for microservices

2. Jenkins supports devops on kubernetes, but it's very troublesome to configure. You need to write a yaml file or con... | non_process | how to compile multiple languages in jenkins by switching node devops in microservices most companies use kubernetes because of microservices kubernetes is a natural fit for microservices jenkins supports devops on kubernetes but it s very troublesome to configure you need to write a yaml file or con... | 0 |

2,029 | 4,847,083,247 | IssuesEvent | 2016-11-10 13:59:24 | woesterduolf/Mission-reisbureau | https://api.github.com/repos/woesterduolf/Mission-reisbureau | closed | Reisstad selecteren | Boekingsprocess priority: highest Type:Feature | **Mockup design (page 2)**

When the consumer gets to this page, he is greeted by the text in the top asking him where he wants to travel. Below that are all the possible cities he can choose from, represented by an image of a well-known building in the city. Since the customer needs to be able to see more than just ... | 1.0 | Reisstad selecteren - **Mockup design (page 2)**

When the consumer gets to this page, he is greeted by the text in the top asking him where he wants to travel. Below that are all the possible cities he can choose from, represented by an image of a well-known building in the city. Since the customer needs to be able ... | process | reisstad selecteren mockup design page when the consumer gets to this page he is greeted by the text in the top asking him where he wants to travel below that are all the possible cities he can choose from represented by an image of a well known building in the city since the customer needs to be able ... | 1 |

175,709 | 27,963,323,892 | IssuesEvent | 2023-03-24 17:17:16 | MNK-photoday/photoday | https://api.github.com/repos/MNK-photoday/photoday | closed | [Design] User Page 구현 | 아현 Design | Description

> User Page 구현

Progress

- [x] 프로필 부분 구현

- [x] 프로필 소개 부분 구현

- [x] 수정 클릭 시, 보이는 input 박스 구현

- [x] 비밀 번호 변경 클릭 시, 보이는 input 박스 구현

- [x] 팔로우 클릭 시 나오는 모달 구현

| 1.0 | [Design] User Page 구현 - Description

> User Page 구현

Progress

- [x] 프로필 부분 구현

- [x] 프로필 소개 부분 구현

- [x] 수정 클릭 시, 보이는 input 박스 구현

- [x] 비밀 번호 변경 클릭 시, 보이는 input 박스 구현

- [x] 팔로우 클릭 시 나오는 모달 구현

| non_process | user page 구현 description user page 구현 progress 프로필 부분 구현 프로필 소개 부분 구현 수정 클릭 시 보이는 input 박스 구현 비밀 번호 변경 클릭 시 보이는 input 박스 구현 팔로우 클릭 시 나오는 모달 구현 | 0 |

249,515 | 7,962,811,113 | IssuesEvent | 2018-07-13 15:24:05 | containous/traefik | https://api.github.com/repos/containous/traefik | closed | v1.7 RC Basic Auth KV and Web UI | area/provider/kv area/webui kind/bug/confirmed priority/P1 | ### Do you want to request a *feature* or report a *bug*?

Bug

### What did you do?

The old format for KV Basic Auth was

`traefik/frontends/frontend1/basicauth/0 'user:password'`

The new value is

`traefik/frontends/frontend1/auth/basic/users/0 'user:password'`

When I remove the old format and replac... | 1.0 | v1.7 RC Basic Auth KV and Web UI - ### Do you want to request a *feature* or report a *bug*?

Bug

### What did you do?

The old format for KV Basic Auth was

`traefik/frontends/frontend1/basicauth/0 'user:password'`

The new value is

`traefik/frontends/frontend1/auth/basic/users/0 'user:password'`

When... | non_process | rc basic auth kv and web ui do you want to request a feature or report a bug bug what did you do the old format for kv basic auth was traefik frontends basicauth user password the new value is traefik frontends auth basic users user password when i remove the old... | 0 |

83,403 | 7,870,086,743 | IssuesEvent | 2018-06-24 21:29:12 | SunwellTracker/issues | https://api.github.com/repos/SunwellTracker/issues | closed | [Quest - Nekrum's Medallion] | Works locally | Requires testing question | Decription: The quest [Nekrum's Medallion] is a chain from the hinterlands

How it works: Nekrum is suppose to drop the quest item

How it should work: Nekrum did not drop the quest item

Source (you should point out proofs of your report, please give us some source): His body disappeared before I could tak... | 1.0 | [Quest - Nekrum's Medallion] - Decription: The quest [Nekrum's Medallion] is a chain from the hinterlands

How it works: Nekrum is suppose to drop the quest item

How it should work: Nekrum did not drop the quest item

Source (you should point out proofs of your report, please give us some source): His bod... | non_process | decription the quest is a chain from the hinterlands how it works nekrum is suppose to drop the quest item how it should work nekrum did not drop the quest item source you should point out proofs of your report please give us some source his body disappeared before i could take a screenshot... | 0 |

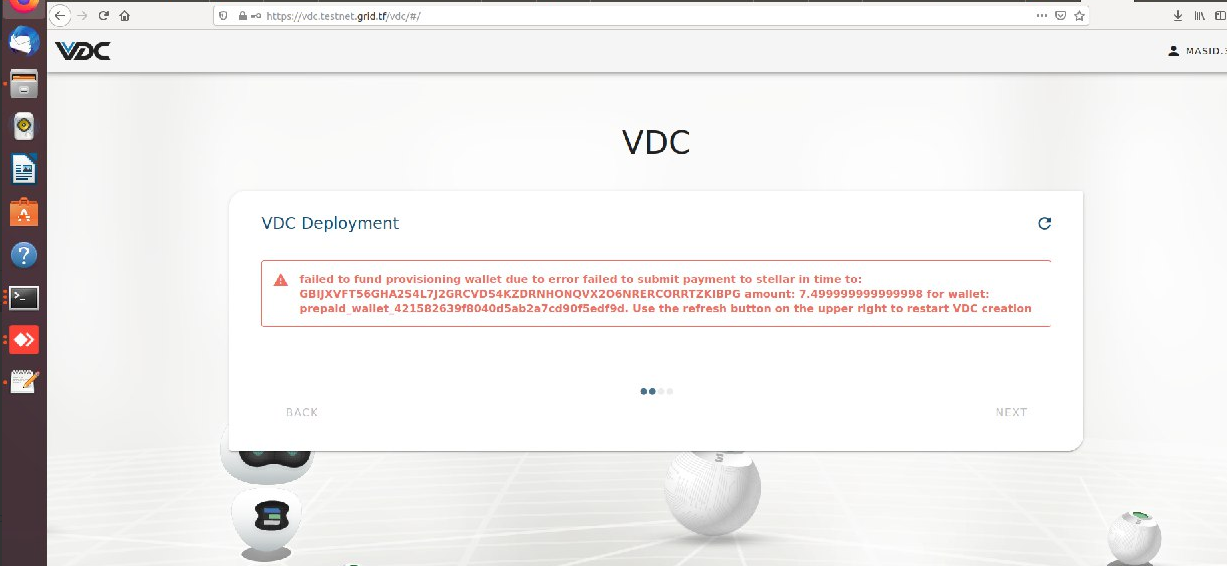

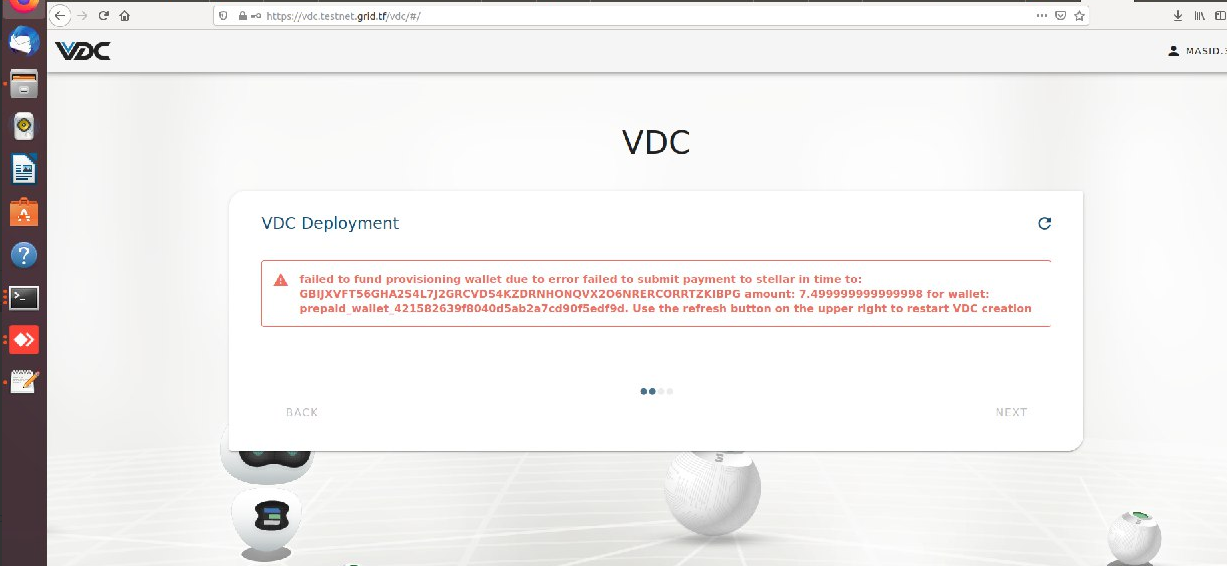

12,636 | 15,016,577,936 | IssuesEvent | 2021-02-01 09:46:05 | threefoldtech/js-sdk | https://api.github.com/repos/threefoldtech/js-sdk | closed | Deploying new VDC failing - failed to fund provisioning wallet error | process_wontfix type_bug | Was trying to deploy a new VDC , after the payment , it took a lot of time and in the end , got the following error :

3bot : masid.3bot | 1.0 | Deploying new VDC failing - failed to fund provisioning wallet error - Was trying to deploy a new VDC , after the payment , it took a lot of time and in the end , got the following error :

3bot :... | process | deploying new vdc failing failed to fund provisioning wallet error was trying to deploy a new vdc after the payment it took a lot of time and in the end got the following error masid | 1 |

59,337 | 24,733,723,938 | IssuesEvent | 2022-10-20 19:56:21 | hashicorp/terraform-provider-azurerm | https://api.github.com/repos/hashicorp/terraform-provider-azurerm | closed | define a cosmos DB connection string into an Azure function app_settings | enhancement good first issue service/cosmosdb | I feel the need to document the use case where you need to link an azure function to the cosmos DB database using the connection string.

Assume you have declared a cosmosdb resource in your TF script like this

```

resource "azurerm_cosmosdb_account" "db" {

..

}

```

and now you want to define the connection... | 1.0 | define a cosmos DB connection string into an Azure function app_settings - I feel the need to document the use case where you need to link an azure function to the cosmos DB database using the connection string.

Assume you have declared a cosmosdb resource in your TF script like this

```

resource "azurerm_cosmo... | non_process | define a cosmos db connection string into an azure function app settings i feel the need to document the use case where you need to link an azure function to the cosmos db database using the connection string assume you have declared a cosmosdb resource in your tf script like this resource azurerm cosmo... | 0 |

802,095 | 28,633,767,796 | IssuesEvent | 2023-04-25 00:02:36 | zulip/zulip | https://api.github.com/repos/zulip/zulip | closed | Make Stream settings and Create stream UIs more consistent | help wanted good first issue area: stream settings priority: high | Following up on #19519 / #23013, we should make the following changes to make the UIs of the Stream settings > General panel and the Create stream panel more consistent:

- [ ] Move the "Announce stream" option just below "Stream description" (with no changes to the logic for whether it's shown).

- [ ] While we're h... | 1.0 | Make Stream settings and Create stream UIs more consistent - Following up on #19519 / #23013, we should make the following changes to make the UIs of the Stream settings > General panel and the Create stream panel more consistent:

- [ ] Move the "Announce stream" option just below "Stream description" (with no chang... | non_process | make stream settings and create stream uis more consistent following up on we should make the following changes to make the uis of the stream settings general panel and the create stream panel more consistent move the announce stream option just below stream description with no changes to the ... | 0 |

30,222 | 4,568,399,811 | IssuesEvent | 2016-09-15 14:22:06 | kubernetes/kubernetes | https://api.github.com/repos/kubernetes/kubernetes | closed | [k8s.io] Pods should not start app containers if init containers fail on a RestartAlways pod | area/platform/gke area/test kind/upgrade-test-failure priority/P0 team/gke | [k8s.io] Pods should not start app containers if init containers fail on a RestartAlways pod

https://k8s-testgrid.appspot.com/release-1.4-blocking#gke-1.3-1.4-upgrade-cluster

https://k8s-gubernator.appspot.com/build/kubernetes-jenkins/logs/kubernetes-e2e-gke-1.3-1.4-upgrade-cluster/559 | 2.0 | [k8s.io] Pods should not start app containers if init containers fail on a RestartAlways pod - [k8s.io] Pods should not start app containers if init containers fail on a RestartAlways pod

https://k8s-testgrid.appspot.com/release-1.4-blocking#gke-1.3-1.4-upgrade-cluster

https://k8s-gubernator.appspot.com/build/kub... | non_process | pods should not start app containers if init containers fail on a restartalways pod pods should not start app containers if init containers fail on a restartalways pod | 0 |

3,240 | 6,302,337,392 | IssuesEvent | 2017-07-21 10:34:18 | nodejs/node | https://api.github.com/repos/nodejs/node | closed | Can not getting complete stdout | child_process windows | Version: v4.4.7

Platform: windows 10 (64 bit)

``` javascript

const spawn = require('child_process').spawn;

const ls = spawn("D:\\Documents\\Nauman Umer\\New folder\\electron-quick-start\\PC-BASIC\\a.bat", []);

ls.stdout.on('data', (data) => {

console.log(`stdout: ${data}`);

});

ls.stderr.on('data', (data... | 1.0 | Can not getting complete stdout - Version: v4.4.7

Platform: windows 10 (64 bit)

``` javascript

const spawn = require('child_process').spawn;

const ls = spawn("D:\\Documents\\Nauman Umer\\New folder\\electron-quick-start\\PC-BASIC\\a.bat", []);

ls.stdout.on('data', (data) => {

console.log(`stdout: ${data}`);

... | process | can not getting complete stdout version platform windows bit javascript const spawn require child process spawn const ls spawn d documents nauman umer new folder electron quick start pc basic a bat ls stdout on data data console log stdout data ... | 1 |

10,760 | 13,549,206,279 | IssuesEvent | 2020-09-17 07:51:30 | timberio/vector | https://api.github.com/repos/timberio/vector | closed | New `sha1` remap function | domain: mapping domain: processing type: feature | As requested in #3691, the `sha1` remap function hashes the provided argument with the SHA1 algorithm.

## Examples

For all examples assume the following event:

```js

{

"message": "Hello world",

"remote_addr": "54.23.22.123"

}

```

### Path

```

.fingerprint = sha1(.message)

```

### String lit... | 1.0 | New `sha1` remap function - As requested in #3691, the `sha1` remap function hashes the provided argument with the SHA1 algorithm.

## Examples

For all examples assume the following event:

```js

{

"message": "Hello world",

"remote_addr": "54.23.22.123"

}

```

### Path

```

.fingerprint = sha1(.mes... | process | new remap function as requested in the remap function hashes the provided argument with the algorithm examples for all examples assume the following event js message hello world remote addr path fingerprint message st... | 1 |

10,717 | 13,520,231,870 | IssuesEvent | 2020-09-15 04:11:20 | knative/serving | https://api.github.com/repos/knative/serving | closed | Updating placeholder k8s services fails with Service is invalid: spec.clusterIP: Invalid value: "" | area/API area/networking kind/bug kind/process | <!-- If you need to report a security issue with Knative, send an email to knative-security@googlegroups.com. -->

/area API

/kind process

## What version of Knative?

<!-- Delete all but your choice -->

0.13.x

## Expected Behavior

<!-- Briefly describe what you expect to happen -->

Route reconciliation... | 1.0 | Updating placeholder k8s services fails with Service is invalid: spec.clusterIP: Invalid value: "" - <!-- If you need to report a security issue with Knative, send an email to knative-security@googlegroups.com. -->

/area API

/kind process

## What version of Knative?

<!-- Delete all but your choice -->

0.13.x... | process | updating placeholder services fails with service is invalid spec clusterip invalid value area api kind process what version of knative x expected behavior route reconciliation to successfully complete when updating placeholder services actual behavior route... | 1 |

10,380 | 13,193,976,496 | IssuesEvent | 2020-08-13 16:02:49 | MicrosoftDocs/azure-devops-docs | https://api.github.com/repos/MicrosoftDocs/azure-devops-docs | closed | "Only a few tasks are supported in a server job at present." which ones? | Pri2 devops-cicd-process/tech devops/prod doc-enhancement |

> Only a few tasks are supported in a server job at present.

Please list which are supported.

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: 67504b34-d64b-02a4-2e10-ab99f3b8cfe4

* Version Independent ID: 2cf63b2e-184b-7726-3b... | 1.0 | "Only a few tasks are supported in a server job at present." which ones? -

> Only a few tasks are supported in a server job at present.

Please list which are supported.

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: 67504b34-d... | process | only a few tasks are supported in a server job at present which ones only a few tasks are supported in a server job at present please list which are supported document details ⚠ do not edit this section it is required for docs microsoft com ➟ github issue linking id ... | 1 |

682,652 | 23,351,709,680 | IssuesEvent | 2022-08-10 01:11:05 | Jonius7/SteamUI-OldGlory | https://api.github.com/repos/Jonius7/SteamUI-OldGlory | closed | [28 July] JS TWEAKS NOT WORKING | JS Priority | With the Steam Update on 28 July, using any of the **JS Tweaks** in OldGlory will cause the library to blackscreen.

I have not found a fix yet. In the meantime you can use the Reset button and install CSS tweaks only (don't touch the JS ones) | 1.0 | [28 July] JS TWEAKS NOT WORKING - With the Steam Update on 28 July, using any of the **JS Tweaks** in OldGlory will cause the library to blackscreen.

I have not found a fix yet. In the meantime you can use the Reset button and install CSS tweaks only (don't touch the JS ones) | non_process | js tweaks not working with the steam update on july using any of the js tweaks in oldglory will cause the library to blackscreen i have not found a fix yet in the meantime you can use the reset button and install css tweaks only don t touch the js ones | 0 |

11,078 | 13,920,285,765 | IssuesEvent | 2020-10-21 10:13:05 | geneontology/go-ontology | https://api.github.com/repos/geneontology/go-ontology | closed | Cleaning up the 'GO:0007534 gene conversion at mating-type locus' | cell cycle and DNA processes obsoletion ready term merge | From #18777 - other changes needed in the area of homologous recombination, specifically under 'GO:0007534 gene conversion at mating-type locus'

- [x] 'GO:0034624 DNA recombinase assembly involved in gene conversion at mating-type locus' -> 0 annotations -> obsolete, GO-CAM model

- [x] 'GO:0000728 gene conversio... | 1.0 | Cleaning up the 'GO:0007534 gene conversion at mating-type locus' - From #18777 - other changes needed in the area of homologous recombination, specifically under 'GO:0007534 gene conversion at mating-type locus'

- [x] 'GO:0034624 DNA recombinase assembly involved in gene conversion at mating-type locus' -> 0 anno... | process | cleaning up the go gene conversion at mating type locus from other changes needed in the area of homologous recombination specifically under go gene conversion at mating type locus go dna recombinase assembly involved in gene conversion at mating type locus annotations obsolete go ... | 1 |

23,427 | 3,851,750,482 | IssuesEvent | 2016-04-06 04:28:46 | ysdn-2016/ysdn-2016.github.io | https://api.github.com/repos/ysdn-2016/ysdn-2016.github.io | closed | Mobile ribbon "Attend the Show" -> "Attend the Grad Show" | design |

The mobile nav still has the older copy. We should change it to "Attend the Grad Show" | 1.0 | Mobile ribbon "Attend the Show" -> "Attend the Grad Show" -

The mobile nav still has the older copy. We should change it to "Attend the Grad Show" | non_process | mobile ribbon attend the show attend the grad show the mobile nav still has the older copy we should change it to attend the grad show | 0 |

8,371 | 11,520,305,916 | IssuesEvent | 2020-02-14 14:34:27 | geneontology/go-ontology | https://api.github.com/repos/geneontology/go-ontology | closed | Obsoletion notice: phagocytosis modulation by symbiont terms | multi-species process obsoletion ready term merge |

Very strange.

The corresponding positive and negative regulation terms are in different parts of the ontology.

<img width="779" alt="phagocytosis" src="https://user-images.githubusercontent.com/7359272/72616541-1c580c80-392f-11ea-8553-d2650b04a4ce.png">

| 1.0 | Obsoletion notice: phagocytosis modulation by symbiont terms -

Very strange.

The corresponding positive and negative regulation terms are in different parts of the ontology.

<img width="779" alt="phagocytosis" src="https://user-images.githubusercontent.com/7359272/72616541-1c580c80-392f-11ea-8553-d2650b04a4ce.p... | process | obsoletion notice phagocytosis modulation by symbiont terms very strange the corresponding positive and negative regulation terms are in different parts of the ontology img width alt phagocytosis src | 1 |

248,818 | 21,075,170,356 | IssuesEvent | 2022-04-02 03:16:20 | Uuvana-Studios/longvinter-windows-client | https://api.github.com/repos/Uuvana-Studios/longvinter-windows-client | closed | NON-PVP server very very serious bug | Bug Not Tested | **Describe the bug**

I have found some serious bug in NON-PK server setting.

i have set my server as PVP=false

so my server should not able to kill each other and should indestroiable other player's stucture

and I have found the way to destroy other player's structure in PVP=false server setting.

1. unequ... | 1.0 | NON-PVP server very very serious bug - **Describe the bug**

I have found some serious bug in NON-PK server setting.

i have set my server as PVP=false

so my server should not able to kill each other and should indestroiable other player's stucture

and I have found the way to destroy other player's structure in P... | non_process | non pvp server very very serious bug describe the bug i have found some serious bug in non pk server setting i have set my server as pvp false so my server should not able to kill each other and should indestroiable other player s stucture and i have found the way to destroy other player s structure in p... | 0 |

130 | 2,570,625,905 | IssuesEvent | 2015-02-10 10:56:37 | FG-Team/HCJ-Website-Builder | https://api.github.com/repos/FG-Team/HCJ-Website-Builder | closed | Team organization Team 2 | No Processing | betrifft allgemeine Aufgaben (Merging ...)

-> evtl. im Moment nicht notwendig | 1.0 | Team organization Team 2 - betrifft allgemeine Aufgaben (Merging ...)

-> evtl. im Moment nicht notwendig | process | team organization team betrifft allgemeine aufgaben merging evtl im moment nicht notwendig | 1 |

108,698 | 23,649,227,760 | IssuesEvent | 2022-08-26 03:54:04 | MarlinFirmware/Marlin | https://api.github.com/repos/MarlinFirmware/Marlin | closed | [BUG] Some arguments in G-code are parsed as Hexadecimal values | Bug: Confirmed ! C: G-code Parser | ### Did you test the latest `bugfix-2.1.x` code?

Yes, and the problem still exists.

### Bug Description

Sending the G-code `G0Y0X10' results as Y target position parsed as 16 and X as 10.

G0Y0X20 is parsed as Y=32 and X=20.

### Bug Timeline

_No response_

### Expected behavior

If a hexadecimal value is intended ... | 1.0 | [BUG] Some arguments in G-code are parsed as Hexadecimal values - ### Did you test the latest `bugfix-2.1.x` code?

Yes, and the problem still exists.

### Bug Description

Sending the G-code `G0Y0X10' results as Y target position parsed as 16 and X as 10.

G0Y0X20 is parsed as Y=32 and X=20.

### Bug Timeline

_No res... | non_process | some arguments in g code are parsed as hexadecimal values did you test the latest bugfix x code yes and the problem still exists bug description sending the g code results as y target position parsed as and x as is parsed as y and x bug timeline no response expected... | 0 |

285,090 | 24,642,299,826 | IssuesEvent | 2022-10-17 12:35:47 | hazelcast/hazelcast | https://api.github.com/repos/hazelcast/hazelcast | opened | com.hazelcast.client.ClientReconnectTest.testCallbackAfterServerShutdown | Team: Client Type: Test-Failure Source: Internal | _5.2.z_ (commit ecf1b1a7c4572a759b5b4d0f277b646e2a9ccfab)

Failed on CorrettoJDK8: https://jenkins.hazelcast.com/view/Official%20Builds/job/Hazelcast-5.maintenance-CorrettoJDK8/55/testReport/junit/com.hazelcast.client/ClientReconnectTest/testCallbackAfterServerShutdown/

<details><summary>Stacktrace:</summary>

```

... | 1.0 | com.hazelcast.client.ClientReconnectTest.testCallbackAfterServerShutdown - _5.2.z_ (commit ecf1b1a7c4572a759b5b4d0f277b646e2a9ccfab)

Failed on CorrettoJDK8: https://jenkins.hazelcast.com/view/Official%20Builds/job/Hazelcast-5.maintenance-CorrettoJDK8/55/testReport/junit/com.hazelcast.client/ClientReconnectTest/testCal... | non_process | com hazelcast client clientreconnecttest testcallbackafterservershutdown z commit failed on stacktrace java lang nullpointerexception at com hazelcast client test clienttestsupport lambda makesuredisconnectedfromserver clienttestsupport java at com hazelcast test hazelcasttestsup... | 0 |

13,378 | 15,839,096,952 | IssuesEvent | 2021-04-07 00:01:08 | googleapis/gapic-generator-go | https://api.github.com/repos/googleapis/gapic-generator-go | closed | bazel: generate go_gapic_repositories based on google-cloud-go go.mod | type: process | Currently, [`go_gapic_repositories.bzl`](https://github.com/googleapis/gapic-generator-go/blob/master/rules_go_gapic/go_gapic_repositories.bzl) imports the extra dependencies for the `go_gapic_library` targets. This is updated manually and ad hoc. Instead, it should be updated based on the google-cloud-go `go.mod` and ... | 1.0 | bazel: generate go_gapic_repositories based on google-cloud-go go.mod - Currently, [`go_gapic_repositories.bzl`](https://github.com/googleapis/gapic-generator-go/blob/master/rules_go_gapic/go_gapic_repositories.bzl) imports the extra dependencies for the `go_gapic_library` targets. This is updated manually and ad hoc. ... | process | bazel generate go gapic repositories based on google cloud go go mod currently imports the extra dependencies for the go gapic library targets this is updated manually and ad hoc instead it should be updated based on the google cloud go go mod and via gazelle just like this project s repositories bzl... | 1 |

118,409 | 11,968,287,089 | IssuesEvent | 2020-04-06 08:23:19 | cjolowicz/cookiecutter-hypermodern-python | https://api.github.com/repos/cjolowicz/cookiecutter-hypermodern-python | closed | Override theme on Read the Docs | documentation | The Cookiecutter documentation on RTD gets their theme instead of Alabaster with the configured logo. | 1.0 | Override theme on Read the Docs - The Cookiecutter documentation on RTD gets their theme instead of Alabaster with the configured logo. | non_process | override theme on read the docs the cookiecutter documentation on rtd gets their theme instead of alabaster with the configured logo | 0 |

270,002 | 8,445,436,039 | IssuesEvent | 2018-10-18 21:30:06 | jjplay175/Project-N2DE | https://api.github.com/repos/jjplay175/Project-N2DE | closed | Project - Losing integrity after cloning | Medium Priority Review bug | It appears that if you download the project fresh, the files are not recognised as part of the project | 1.0 | Project - Losing integrity after cloning - It appears that if you download the project fresh, the files are not recognised as part of the project | non_process | project losing integrity after cloning it appears that if you download the project fresh the files are not recognised as part of the project | 0 |

42,037 | 12,867,876,131 | IssuesEvent | 2020-07-10 07:48:51 | benchmarkdebricked/sentry | https://api.github.com/repos/benchmarkdebricked/sentry | opened | CVE-2020-10994 (Medium) detected in Pillow-4.2.1-cp27-cp27mu-manylinux1_x86_64.whl | security vulnerability | ## CVE-2020-10994 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>Pillow-4.2.1-cp27-cp27mu-manylinux1_x86_64.whl</b></p></summary>

<p>Python Imaging Library (Fork)</p>

<p>Library hom... | True | CVE-2020-10994 (Medium) detected in Pillow-4.2.1-cp27-cp27mu-manylinux1_x86_64.whl - ## CVE-2020-10994 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>Pillow-4.2.1-cp27-cp27mu-manylin... | non_process | cve medium detected in pillow whl cve medium severity vulnerability vulnerable library pillow whl python imaging library fork library home page a href path to dependency file tmp ws scm sentry path to vulnerable library sentry dependency h... | 0 |

138,154 | 20,362,090,437 | IssuesEvent | 2022-02-20 20:40:33 | executablebooks/sphinx-book-theme | https://api.github.com/repos/executablebooks/sphinx-book-theme | closed | Improvements to PDF printing CSS | enhancement :label: design | ### Description / Summary

Currently the CSS that is applied when we print a page to PDF is a little bit hacky. It doesn't quite match the design principles you'd want with a printed PDF, and there is likely some low-hanging fruit that we can tackle to improve this.

### Value / benefit

The most common way for a p... | 1.0 | Improvements to PDF printing CSS - ### Description / Summary

Currently the CSS that is applied when we print a page to PDF is a little bit hacky. It doesn't quite match the design principles you'd want with a printed PDF, and there is likely some low-hanging fruit that we can tackle to improve this.

### Value / b... | non_process | improvements to pdf printing css description summary currently the css that is applied when we print a page to pdf is a little bit hacky it doesn t quite match the design principles you d want with a printed pdf and there is likely some low hanging fruit that we can tackle to improve this value b... | 0 |

4,864 | 7,748,484,092 | IssuesEvent | 2018-05-30 08:28:56 | dita-ot/dita-ot | https://api.github.com/repos/dita-ot/dita-ot | closed | dvrResourcePrefix/dvrResourceSuffix not used [DOT 2.x develop branch] | DITA 1.3 feature preprocess preprocess/branch-filtering | If my DITA Map has a construct like this:

```

<topicref href="UserManual.ditamap" format="ditamap" keyscope="A1">

<ditavalref href="author.ditaval">

<ditavalmeta>

<dvrResourcePrefix>mr</dvrResourcePrefix>

<dvrKeyscopePrefix>ks</dvrKeyscopePrefix>

</ditavalmeta>

</ditavalref>

</topicref>

```... | 2.0 | dvrResourcePrefix/dvrResourceSuffix not used [DOT 2.x develop branch] - If my DITA Map has a construct like this:

```

<topicref href="UserManual.ditamap" format="ditamap" keyscope="A1">

<ditavalref href="author.ditaval">

<ditavalmeta>

<dvrResourcePrefix>mr</dvrResourcePrefix>

<dvrKeyscopePrefix>ks</... | process | dvrresourceprefix dvrresourcesuffix not used if my dita map has a construct like this mr ks the topics on disk either have the original name or they have the suffix but the dvrresourceprefix dvrresourcesuffix do not seem to be used | 1 |

755,340 | 26,425,763,935 | IssuesEvent | 2023-01-14 06:00:21 | GLEF1X/glQiwiApi | https://api.github.com/repos/GLEF1X/glQiwiApi | closed | Compatibility with python 3.11 | bug help wanted good first issue priority level: medium | **[Reported in the official telegram group ](https://t.me/glQiwiAPIOfficial/947)**

Detected issues(fixed / not fixed):

- [X] QIWI API webhook config that is using `dataclasses` have to have only immutable defaults due to changes in python3.11 | 1.0 | Compatibility with python 3.11 - **[Reported in the official telegram group ](https://t.me/glQiwiAPIOfficial/947)**

Detected issues(fixed / not fixed):

- [X] QIWI API webhook config that is using `dataclasses` have to have only immutable defaults due to changes in python3.11 | non_process | compatibility with python detected issues fixed not fixed qiwi api webhook config that is using dataclasses have to have only immutable defaults due to changes in | 0 |

184,907 | 14,290,116,021 | IssuesEvent | 2020-11-23 20:21:48 | github-vet/rangeclosure-findings | https://api.github.com/repos/github-vet/rangeclosure-findings | closed | hongdoctor/magiccube-wsserv: wsserv/go-v1.4.linux-amd64/src/sync/atomic/atomic_test.go; 21 LoC | fresh small test |

Found a possible issue in [hongdoctor/magiccube-wsserv](https://www.github.com/hongdoctor/magiccube-wsserv) at [wsserv/go-v1.4.linux-amd64/src/sync/atomic/atomic_test.go](https://github.com/hongdoctor/magiccube-wsserv/blob/93f30dec73ea9df939c4a208f56445deacdf8382/wsserv/go-v1.4.linux-amd64/src/sync/atomic/atomic_test.... | 1.0 | hongdoctor/magiccube-wsserv: wsserv/go-v1.4.linux-amd64/src/sync/atomic/atomic_test.go; 21 LoC -

Found a possible issue in [hongdoctor/magiccube-wsserv](https://www.github.com/hongdoctor/magiccube-wsserv) at [wsserv/go-v1.4.linux-amd64/src/sync/atomic/atomic_test.go](https://github.com/hongdoctor/magiccube-wsserv/blob... | non_process | hongdoctor magiccube wsserv wsserv go linux src sync atomic atomic test go loc found a possible issue in at the below snippet of go code triggered static analysis which searches for goroutines and or defer statements which capture loop variables click here to show the line s of go whi... | 0 |

12,806 | 15,182,090,255 | IssuesEvent | 2021-02-15 05:28:20 | yuta252/startlens_learning | https://api.github.com/repos/yuta252/startlens_learning | closed | pytestによるテストの実施とバグ修正 | dev process | ## 概要

テストとしてpytestを導入しUnittestを実施する。併せてコードのリファクタリングとバグ修正を行う。

ただし、TripletNetworkやKNNモデルによる訓練・推論過程は除く。

## 変更点

- testsディレクトリでunitテストを実施した。

- resource.pyによるS3のファイルハンドリングはmotoモジュールを利用しmockする

```

pip install moto

```

- model/knn.pyにおけるファイルの書き込み読み込み処理をfixtureを利用しながらテストを実施する。併せてテストがしやすいようなモジュールに分けて関数をリファクタ... | 1.0 | pytestによるテストの実施とバグ修正 - ## 概要

テストとしてpytestを導入しUnittestを実施する。併せてコードのリファクタリングとバグ修正を行う。

ただし、TripletNetworkやKNNモデルによる訓練・推論過程は除く。

## 変更点

- testsディレクトリでunitテストを実施した。

- resource.pyによるS3のファイルハンドリングはmotoモジュールを利用しmockする

```

pip install moto

```

- model/knn.pyにおけるファイルの書き込み読み込み処理をfixtureを利用しながらテストを実施する。併せてテストがし... | process | pytestによるテストの実施とバグ修正 概要 テストとしてpytestを導入しunittestを実施する。併せてコードのリファクタリングとバグ修正を行う。 ただし、tripletnetworkやknnモデルによる訓練・推論過程は除く。 変更点 testsディレクトリでunitテストを実施した。 resource pip install moto model knn pyにおけるファイルの書き込み読み込み処理をfixtureを利用しながらテストを実施する。併せてテストがしやすいようなモジュールに分けて関数をリファクタリングする。 ... | 1 |

4,537 | 7,373,525,183 | IssuesEvent | 2018-03-13 17:30:39 | MicrosoftDocs/azure-docs | https://api.github.com/repos/MicrosoftDocs/azure-docs | closed | Problem with included file | app-service-web assigned-to-author doc-bug in-process triaged | I wanted to fix a couple lines in the include:

[!INCLUDE [Create resource group](../../../includes/app-service-web-create-resource-group.md)]

but could not figure out how to navigate to the embedded page. The two problems I see are that for the command:

az appservice list-locations

you must specify the -... | 1.0 | Problem with included file - I wanted to fix a couple lines in the include:

[!INCLUDE [Create resource group](../../../includes/app-service-web-create-resource-group.md)]

but could not figure out how to navigate to the embedded page. The two problems I see are that for the command:

az appservice list-locatio... | process | problem with included file i wanted to fix a couple lines in the include includes app service web create resource group md but could not figure out how to navigate to the embedded page the two problems i see are that for the command az appservice list locations you must specify the sd... | 1 |