Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 distinct value) | created_at (string, length 19) | repo (string, length 7 to 112) | repo_url (string, length 36 to 141) | action (string, 3 distinct values) | title (string, length 1 to 744) | labels (string, length 4 to 574) | body (string, length 9 to 211k) | index (string, 10 distinct values) | text_combine (string, length 96 to 211k) | label (string, 2 distinct values) | text (string, length 96 to 188k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

113,748 | 11,813,322,274 | IssuesEvent | 2020-03-19 22:06:13 | carla-simulator/carla | https://api.github.com/repos/carla-simulator/carla | closed | Remove Autopilot dependencies on CarlaMapGenerator | backlog documentation | Right now our autopilot needs a CarlaMapGenerator Built in the world for it to work correctly, even in maps using the new roadrunner pipeline. Remove those to clean the map generation pipeline. | 1.0 | Remove Autopilot dependencies on CarlaMapGenerator - Right now our autopilot needs a CarlaMapGenerator Built in the world for it to work correctly, even in maps using the new roadrunner pipeline. Remove those to clean the map generation pipeline. | non_process | remove autopilot dependencies on carlamapgenerator right now our autopilot needs a carlamapgenerator built in the world for it to work correctly even in maps using the new roadrunner pipeline remove those to clean the map generation pipeline | 0 |

244,902 | 20,729,111,087 | IssuesEvent | 2022-03-14 07:31:16 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | opened | rpc: TestHeartbeatHealthTransport failed | C-test-failure O-robot branch-master | rpc.TestHeartbeatHealthTransport [failed](https://teamcity.cockroachdb.com/viewLog.html?buildId=4566826&tab=buildLog) with [artifacts](https://teamcity.cockroachdb.com/viewLog.html?buildId=4566826&tab=artifacts#/) on master @ [231fd420c61e39b6ad08c982c496d82cf1910bc5](https://github.com/cockroachdb/cockroach/commits/23... | 1.0 | rpc: TestHeartbeatHealthTransport failed - rpc.TestHeartbeatHealthTransport [failed](https://teamcity.cockroachdb.com/viewLog.html?buildId=4566826&tab=buildLog) with [artifacts](https://teamcity.cockroachdb.com/viewLog.html?buildId=4566826&tab=artifacts#/) on master @ [231fd420c61e39b6ad08c982c496d82cf1910bc5](https://... | non_process | rpc testheartbeathealthtransport failed rpc testheartbeathealthtransport with on master run testheartbeathealthtransport context test go rpc error code unknown desc client cluster id doesn t match server cluster id fail testheartbeathealthtransport ... | 0 |

33,002 | 4,454,519,174 | IssuesEvent | 2016-08-23 01:16:38 | geetsisbac/WCVVENIXYFVIRBXH3BYTI6TE | https://api.github.com/repos/geetsisbac/WCVVENIXYFVIRBXH3BYTI6TE | reopened | TRCzT13XAfird313adxPKWEkiZ6/yzHdBaXmIZu7TqEOs2TFFdJUegDeYNAbMT48In0nMC2KqZrpbOren73zewmspGGp9Bdw0/5rf7vjuICQpmucJGuwpUgBIZ9EKgiWNiS4Kmz6io6bmfVqvh+VhlmjQfJFCX7Oi2+0/SolQ90= | design | HyGVnNiduvLezui3rYzmQR8X9fVvL0/wjW5BIXRxK3XCEW5pyxTQY0g11YLEqXc4/0eahojwjYr2/Thqp1ZwsCwIi3XEES0Mc3tEYRj0N7xkgj/7ZAwKXGMrvEsLZv4m+nLpempNtcqOH7x9/vGyvjiP0YP+iDqwu2+4OpaPGqd0N/BEcQHcj68i5eqeJkEOib0NSlj7nCYZ/4YaF0rE2znbC5sClxfKoOciCi/lStr87ZkoKBXgbwtdf3gct9ipp9R+aw4Iqdo0+m7ykLKU7qwUXQ9e5guFUHwGkQupZAH4NiE62QQKiIQ2BCp8PU1z... | 1.0 | TRCzT13XAfird313adxPKWEkiZ6/yzHdBaXmIZu7TqEOs2TFFdJUegDeYNAbMT48In0nMC2KqZrpbOren73zewmspGGp9Bdw0/5rf7vjuICQpmucJGuwpUgBIZ9EKgiWNiS4Kmz6io6bmfVqvh+VhlmjQfJFCX7Oi2+0/SolQ90= - HyGVnNiduvLezui3rYzmQR8X9fVvL0/wjW5BIXRxK3XCEW5pyxTQY0g11YLEqXc4/0eahojwjYr2/Thqp1ZwsCwIi3XEES0Mc3tEYRj0N7xkgj/7ZAwKXGMrvEsLZv4m+nLpempNtcqOH7x9/... | non_process | v upjjmllguhpg bqnl jocm aefj lbekshin naee du hysmd y lmteshy osgz wnt smz s... | 0 |

6,583 | 9,661,775,338 | IssuesEvent | 2019-05-20 18:57:53 | googleapis/nodejs-pubsub | https://api.github.com/repos/googleapis/nodejs-pubsub | closed | Publish 0.29.1 to npm | type: process | It seems as though the `0.29.1` release has been made in the repo but not published to `npm`. Could this be addressed? Thanks. | 1.0 | Publish 0.29.1 to npm - It seems as though the `0.29.1` release has been made in the repo but not published to `npm`. Could this be addressed? Thanks. | process | publish to npm it seems as though the release has been made in the repo but not published to npm could this be addressed thanks | 1 |

231,552 | 18,778,081,367 | IssuesEvent | 2021-11-08 00:17:30 | kubernetes/minikube | https://api.github.com/repos/kubernetes/minikube | closed | Frequent test failures of `TestDownloadOnly/v1.22.3-rc.0/preload-exists` | priority/backlog kind/failing-test | This test has high flake rates for the following environments:

|Environment|Flake Rate (%)|

|---|---|

|[Docker_Linux_crio_arm64](https://storage.googleapis.com/minikube-flake-rate/flake_chart.html?env=Docker_Linux_crio_arm64&test=TestDownloadOnly/v1.22.3-rc.0/preload-exists)|23.08|

|[Docker_macOS](https://storage.goog... | 1.0 | Frequent test failures of `TestDownloadOnly/v1.22.3-rc.0/preload-exists` - This test has high flake rates for the following environments:

|Environment|Flake Rate (%)|

|---|---|

|[Docker_Linux_crio_arm64](https://storage.googleapis.com/minikube-flake-rate/flake_chart.html?env=Docker_Linux_crio_arm64&test=TestDownloadOn... | non_process | frequent test failures of testdownloadonly rc preload exists this test has high flake rates for the following environments environment flake rate | 0 |

70,712 | 15,099,758,134 | IssuesEvent | 2021-02-08 03:33:57 | fedora-infra/noggin | https://api.github.com/repos/fedora-infra/noggin | closed | [secaudit-blocking] Missing justification for `nosec` lines | security | Part of secaudit #316, blocking.

As per the [Fedora Infrastructure Application Security Policy](https://docs.pagure.org/infra-docs/dev-guide/security_policy.html#static-security-checking), any `# nosec` lines must be properly justified.

These lines have no documentation why they are ignored from bandit checks:

- h... | True | [secaudit-blocking] Missing justification for `nosec` lines - Part of secaudit #316, blocking.

As per the [Fedora Infrastructure Application Security Policy](https://docs.pagure.org/infra-docs/dev-guide/security_policy.html#static-security-checking), any `# nosec` lines must be properly justified.

These lines have ... | non_process | missing justification for nosec lines part of secaudit blocking as per the any nosec lines must be properly justified these lines have no documentation why they are ignored from bandit checks | 0 |

48,303 | 7,404,153,732 | IssuesEvent | 2018-03-20 02:56:42 | ufal/neuralmonkey | https://api.github.com/repos/ufal/neuralmonkey | closed | tutorial/docs for continuing a model | documentation | The tutorial or another page in the documentation needs a clear example on how to 'continue a training run', i.e. how to start the training based on some existing variables file. | 1.0 | tutorial/docs for continuing a model - The tutorial or another page in the documentation needs a clear example on how to 'continue a training run', i.e. how to start the training based on some existing variables file. | non_process | tutorial docs for continuing a model the tutorial or another page in the documentation needs a clear example on how to continue a training run i e how to start the training based on some existing variables file | 0 |

262,506 | 19,806,754,263 | IssuesEvent | 2022-01-19 07:50:51 | TheThingsIndustries/lorawan-stack-docs | https://api.github.com/repos/TheThingsIndustries/lorawan-stack-docs | opened | Update the command-line interface documentation | documentation |

#### Summary

The Enterprise CLI (`tti-lw-cli`) has additional commands for tenant management and OpenID Connect, which are not available with `ttn-lw-cli`. Currently, in the [command-line interface documentation](https://www.thethingsindustries.com/docs/reference/cli/), the enterprise CLI (`tti-lw-cli`) commands are... | 1.0 | Update the command-line interface documentation -

#### Summary

The Enterprise CLI (`tti-lw-cli`) has additional commands for tenant management and OpenID Connect, which are not available with `ttn-lw-cli`. Currently, in the [command-line interface documentation](https://www.thethingsindustries.com/docs/reference/cli... | non_process | update the command line interface documentation summary the enterprise cli tti lw cli has additional commands for tenant management and openid connect which are not available with ttn lw cli currently in the the enterprise cli tti lw cli commands are also documented to use with the open sourc... | 0 |

6,619 | 9,702,774,519 | IssuesEvent | 2019-05-27 09:35:28 | plazi/arcadia-project | https://api.github.com/repos/plazi/arcadia-project | opened | website development | Article processing BLR Outreach website | @mguidoti @teodorgeorgiev @millerjemey

can we figure out a time Wednesday of Thursday to discuss the usecases we would like to be covered for the BLR website?

For me, any time from 1pm to 6pm would be ok each day, with a preference on Wednesday.

* The reason we should do this now is that Marcus and Puneet meet... | 1.0 | website development - @mguidoti @teodorgeorgiev @millerjemey

can we figure out a time Wednesday of Thursday to discuss the usecases we would like to be covered for the BLR website?

For me, any time from 1pm to 6pm would be ok each day, with a preference on Wednesday.

* The reason we should do this now is that ... | process | website development mguidoti teodorgeorgiev millerjemey can we figure out a time wednesday of thursday to discuss the usecases we would like to be covered for the blr website for me any time from to would be ok each day with a preference on wednesday the reason we should do this now is that marc... | 1 |

61,706 | 14,634,015,924 | IssuesEvent | 2020-12-24 03:56:27 | LuanP/list-of-common-names | https://api.github.com/repos/LuanP/list-of-common-names | opened | CVE-2020-7693 (Medium) detected in sockjs-0.3.19.tgz | security vulnerability | ## CVE-2020-7693 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>sockjs-0.3.19.tgz</b></p></summary>

<p>SockJS-node is a server counterpart of SockJS-client a JavaScript library that... | True | CVE-2020-7693 (Medium) detected in sockjs-0.3.19.tgz - ## CVE-2020-7693 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>sockjs-0.3.19.tgz</b></p></summary>

<p>SockJS-node is a server... | non_process | cve medium detected in sockjs tgz cve medium severity vulnerability vulnerable library sockjs tgz sockjs node is a server counterpart of sockjs client a javascript library that provides a websocket like object in the browser sockjs gives you a coherent cross browser javascrip... | 0 |

15,370 | 19,548,039,451 | IssuesEvent | 2022-01-02 08:13:54 | ethereum/EIPs | https://api.github.com/repos/ethereum/EIPs | closed | Extend EIP1 with an option for "EIP editor" | type: Meta type: EIP1 (Process) stale | There are a lot of specifications which could be published as an EIP/ERC, which have been around and (un)maintained in random places. Some of these include: ABI specification, NatSpec, secure private key storage in clients, RPC methods, etc.

Since these have been specification efforts by many parties, it may look we... | 1.0 | Extend EIP1 with an option for "EIP editor" - There are a lot of specifications which could be published as an EIP/ERC, which have been around and (un)maintained in random places. Some of these include: ABI specification, NatSpec, secure private key storage in clients, RPC methods, etc.

Since these have been specifi... | process | extend with an option for eip editor there are a lot of specifications which could be published as an eip erc which have been around and un maintained in random places some of these include abi specification natspec secure private key storage in clients rpc methods etc since these have been specificat... | 1 |

13,983 | 16,759,521,348 | IssuesEvent | 2021-06-13 13:53:16 | darktable-org/darktable | https://api.github.com/repos/darktable-org/darktable | closed | "Cube lut out of range" on export leads to random failed exporting and freeze up | no-issue-activity scope: image processing understood: unclear |

The issue is described at end of the thread...copied here

https://discuss.pixls.us/t/cube-lut-lines-number-not-correct-error/17413/14

On exporting images using .cube lut made with 3d lut creator (50 in this bunch), i get an error. Then only a few of the images actually export, and the border of the DT window st... | 1.0 | "Cube lut out of range" on export leads to random failed exporting and freeze up -

The issue is described at end of the thread...copied here

https://discuss.pixls.us/t/cube-lut-lines-number-not-correct-error/17413/14

On exporting images using .cube lut made with 3d lut creator (50 in this bunch), i get an error... | process | cube lut out of range on export leads to random failed exporting and freeze up the issue is described at end of the thread copied here on exporting images using cube lut made with lut creator in this bunch i get an error then only a few of the images actually export and the border of the dt wi... | 1 |

8,036 | 11,210,864,206 | IssuesEvent | 2020-01-06 14:14:06 | 10up/wp-component-library | https://api.github.com/repos/10up/wp-component-library | closed | Add Functional Testing to UI Components | enhancement in process | This should be added after the ES6 conversion is complete. | 1.0 | Add Functional Testing to UI Components - This should be added after the ES6 conversion is complete. | process | add functional testing to ui components this should be added after the conversion is complete | 1 |

103,316 | 4,166,943,779 | IssuesEvent | 2016-06-20 07:21:31 | TheNOOFClan/S.C.S.I. | https://api.github.com/repos/TheNOOFClan/S.C.S.I. | closed | Check time without needing a message to be sent | help wanted High Priority Independent TODO | Find a way to Check time without needing a message.

Possible Solution:

- Store time stamped commands/actions in a JSON file

- Create a new thread or process to check each command/action (maybe not every tick)

- Run command/action when time stamp is reached

- Remove command/action from JSON file | 1.0 | Check time without needing a message to be sent - Find a way to Check time without needing a message.

Possible Solution:

- Store time stamped commands/actions in a JSON file

- Create a new thread or process to check each command/action (maybe not every tick)

- Run command/action when time stamp is reached

- Remove... | non_process | check time without needing a message to be sent find a way to check time without needing a message possible solution store time stamped commands actions in a json file create a new thread or process to check each command action maybe not every tick run command action when time stamp is reached remove... | 0 |

94,466 | 3,926,236,265 | IssuesEvent | 2016-04-22 22:25:53 | kdahlquist/GRNmap | https://api.github.com/repos/kdahlquist/GRNmap | closed | Download transcription factor data from SGD and YEASTRACT for constructing networks | data analysis priority 1 | Will download transcription factor data from SGD and YEASTRACT so that we have a standard set of data to use when building new networks. We don't want to be confused by data version issues if those databases get updated while we are developing our process for creating networks. | 1.0 | Download transcription factor data from SGD and YEASTRACT for constructing networks - Will download transcription factor data from SGD and YEASTRACT so that we have a standard set of data to use when building new networks. We don't want to be confused by data version issues if those databases get updated while we are ... | non_process | download transcription factor data from sgd and yeastract for constructing networks will download transcription factor data from sgd and yeastract so that we have a standard set of data to use when building new networks we don t want to be confused by data version issues if those databases get updated while we are ... | 0 |

6,454 | 9,546,536,567 | IssuesEvent | 2019-05-01 20:14:21 | openopps/openopps-platform | https://api.github.com/repos/openopps/openopps-platform | closed | Department of State: Add content to Next Steps Page | Apply Process Approved Requirements Ready | Who: Student

What: Add content to let the student know we are pulling their information from their USAJOBS profile

Why: As a student I want to know where the information that is populated in my application came from.

A/C

- Change the content under the "Change your profile" to the following:

To save time, we'... | 1.0 | Department of State: Add content to Next Steps Page - Who: Student

What: Add content to let the student know we are pulling their information from their USAJOBS profile

Why: As a student I want to know where the information that is populated in my application came from.

A/C

- Change the content under the "Change... | process | department of state add content to next steps page who student what add content to let the student know we are pulling their information from their usajobs profile why as a student i want to know where the information that is populated in my application came from a c change the content under the change... | 1 |

640,525 | 20,791,793,011 | IssuesEvent | 2022-03-17 03:23:30 | AY2122S2-CS2103T-W13-4/tp | https://api.github.com/repos/AY2122S2-CS2103T-W13-4/tp | closed | As a first time user I can update an existing contact | priority.High type.Story | so that I can accommodate changes in contact details or fix any mistakes that I made when entering the contact details | 1.0 | As a first time user I can update an existing contact - so that I can accommodate changes in contact details or fix any mistakes that I made when entering the contact details | non_process | as a first time user i can update an existing contact so that i can accommodate changes in contact details or fix any mistakes that i made when entering the contact details | 0 |

66,911 | 14,813,513,107 | IssuesEvent | 2021-01-14 02:15:59 | MValle21/lamby_site | https://api.github.com/repos/MValle21/lamby_site | opened | CVE-2020-8162 (High) detected in activestorage-5.2.3.gem | security vulnerability | ## CVE-2020-8162 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>activestorage-5.2.3.gem</b></p></summary>

<p>Attach cloud and local files in Rails applications.</p>

<p>Library home pa... | True | CVE-2020-8162 (High) detected in activestorage-5.2.3.gem - ## CVE-2020-8162 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>activestorage-5.2.3.gem</b></p></summary>

<p>Attach cloud an... | non_process | cve high detected in activestorage gem cve high severity vulnerability vulnerable library activestorage gem attach cloud and local files in rails applications library home page a href dependency hierarchy rails gem root library x activestorage ... | 0 |

18,257 | 24,339,110,043 | IssuesEvent | 2022-10-01 13:16:13 | fertadeo/ISPC-2do-Cuat-Proyecto | https://api.github.com/repos/fertadeo/ISPC-2do-Cuat-Proyecto | reopened | #TK 3.0 Creación de un formulario de géneros literarios | in process | Generar opciones desplegables en un "SELECT" de HTML para los géneros literarios (drama, ficción, romance, etc.) | 1.0 | #TK 3.0 Creación de un formulario de géneros literarios - Generar opciones desplegables en un "SELECT" de HTML para los géneros literarios (drama, ficción, romance, etc.) | process | tk creación de un formulario de géneros literarios generar opciones desplegables en un select de html para los géneros literarios drama ficción romance etc | 1 |

449,872 | 12,976,255,253 | IssuesEvent | 2020-07-21 18:26:45 | GoogleContainerTools/skaffold | https://api.github.com/repos/GoogleContainerTools/skaffold | opened | Add ability to suppress status check output when configured | area/logging area/ux july-chill kind/feature-request priority/p0 | depends on #4512

wire up flag/config option to suppress status check output in favor of status. | 1.0 | Add ability to suppress status check output when configured - depends on #4512

wire up flag/config option to suppress status check output in favor of status. | non_process | add ability to suppress status check output when configured depends on wire up flag config option to suppress status check output in favor of status | 0 |

18,720 | 24,610,987,525 | IssuesEvent | 2022-10-14 21:26:07 | hashgraph/hedera-mirror-node | https://api.github.com/repos/hashgraph/hedera-mirror-node | closed | Release checklist 0.65 | enhancement process | ### Problem

We need a checklist to verify the release is rolled out successfully.

### Solution

- [x] Milestone field populated on relevant [issues](https://github.com/hashgraph/hedera-mirror-node/issues?q=is%3Aclosed+no%3Amilestone+sort%3Aupdated-desc)

- [x] Nothing open for [milestone](https://github.com/hashgraph... | 1.0 | Release checklist 0.65 - ### Problem

We need a checklist to verify the release is rolled out successfully.

### Solution

- [x] Milestone field populated on relevant [issues](https://github.com/hashgraph/hedera-mirror-node/issues?q=is%3Aclosed+no%3Amilestone+sort%3Aupdated-desc)

- [x] Nothing open for [milestone](htt... | process | release checklist problem we need a checklist to verify the release is rolled out successfully solution milestone field populated on relevant nothing open for github checks for branch are passing automated kubernetes deployment successful tag release upload release... | 1 |

17,960 | 23,966,996,090 | IssuesEvent | 2022-09-13 02:39:46 | openxla/stablehlo | https://api.github.com/repos/openxla/stablehlo | closed | Add python bindings test to GitHub Actions | Process | ### Request description

Per feedback in #49 - Add tests for python API to CI workflows.

https://github.com/openxla/stablehlo/tree/main/build_tools#python-api

### Additional context

_No response_ | 1.0 | Add python bindings test to GitHub Actions - ### Request description

Per feedback in #49 - Add tests for python API to CI workflows.

https://github.com/openxla/stablehlo/tree/main/build_tools#python-api

### Additional context

_No response_ | process | add python bindings test to github actions request description per feedback in add tests for python api to ci workflows additional context no response | 1 |

16,294 | 20,923,992,407 | IssuesEvent | 2022-03-24 20:24:24 | biocodellc/localcontexts_db | https://api.github.com/repos/biocodellc/localcontexts_db | opened | Add preparation step screen to the registration process when it is approved | medium priority registration process Figma | This will be a screen that will prepare the user to go through community or institution account creation process.

It is created in Figma, just needs to be approved by Jane or Maui before it is added to the hub. | 1.0 | Add preparation step screen to the registration process when it is approved - This will be a screen that will prepare the user to go through community or institution account creation process.

It is created in Figma, just needs to be approved by Jane or Maui before it is added to the hub. | process | add preparation step screen to the registration process when it is approved this will be a screen that will prepare the user to go through community or institution account creation process it is created in figma just needs to be approved by jane or maui before it is added to the hub | 1 |

20,288 | 26,921,767,489 | IssuesEvent | 2023-02-07 10:58:00 | firebase/firebase-cpp-sdk | https://api.github.com/repos/firebase/firebase-cpp-sdk | closed | [C++] Nightly Integration Testing Report | type: process nightly-testing | Note: This report excludes firestore. Please also check **[the report for firestore](https://github.com/firebase/firebase-cpp-sdk/issues/1178)**

***

<hidden value="integration-test-status-comment"></hidden>

### ❌ [build against repo] Integration test FAILED

Requested by @DellaBitta on commit 5c0eebe6cdffa6007b... | 1.0 | [C++] Nightly Integration Testing Report - Note: This report excludes firestore. Please also check **[the report for firestore](https://github.com/firebase/firebase-cpp-sdk/issues/1178)**

***

<hidden value="integration-test-status-comment"></hidden>

### ❌ [build against repo] Integration test FAILED

Requested ... | process | nightly integration testing report note this report excludes firestore please also check ❌ nbsp integration test failed requested by dellabitta on commit last updated mon feb pst failures configs missing log storage ... | 1 |

272,061 | 23,651,233,371 | IssuesEvent | 2022-08-26 06:52:25 | kubernetes-sigs/cluster-api-provider-aws | https://api.github.com/repos/kubernetes-sigs/cluster-api-provider-aws | closed | Divide presubmit e2e tests to shorten test duration | priority/backlog lifecycle/rotten area/testing triage/accepted | Our e2e test suite is growing, and as a consequence takes more time ( ~1.5 hours) to finish.

Not all the tests are giving valuable signal to run as a presubmit job, running them in periodic jobs are enough.

We could divide existing tests as pr-e2e-full and pr-e2e-essential (or better naming) to run on PRs.

For ins... | 1.0 | Divide presubmit e2e tests to shorten test duration - Our e2e test suite is growing, and as a consequence takes more time ( ~1.5 hours) to finish.

Not all the tests are giving valuable signal to run as a presubmit job, running them in periodic jobs are enough.

We could divide existing tests as pr-e2e-full and pr-e2... | non_process | divide presubmit tests to shorten test duration our test suite is growing and as a consequence takes more time hours to finish not all the tests are giving valuable signal to run as a presubmit job running them in periodic jobs are enough we could divide existing tests as pr full and pr essent... | 0 |

14,334 | 17,364,973,654 | IssuesEvent | 2021-07-30 05:33:36 | googleapis/google-cloud-ruby | https://api.github.com/repos/googleapis/google-cloud-ruby | closed | Failure in nightly rubocop test | type: process | https://github.com/googleapis/google-cloud-ruby/runs/3006986561

One fairly straightforward fix in the security_center samples acceptance test. | 1.0 | Failure in nightly rubocop test - https://github.com/googleapis/google-cloud-ruby/runs/3006986561

One fairly straightforward fix in the security_center samples acceptance test. | process | failure in nightly rubocop test one fairly straightforward fix in the security center samples acceptance test | 1 |

6,163 | 9,048,488,751 | IssuesEvent | 2019-02-12 00:17:16 | googleapis/nodejs-error-reporting | https://api.github.com/repos/googleapis/nodejs-error-reporting | closed | Determine if escape-regexp-component@1.0.2 can be used | type: process | The `escape-regexp-component@1.0.2` package does not have a license specified in `package.json` or a LICENSE file. However, its `Readme.md` file contains a License section that just says `MIT`. Determine if this is enough to know that the library is under the MIT license. | 1.0 | Determine if escape-regexp-component@1.0.2 can be used - The `escape-regexp-component@1.0.2` package does not have a license specified in `package.json` or a LICENSE file. However, its `Readme.md` file contains a License section that just says `MIT`. Determine if this is enough to know that the library is under the M... | process | determine if escape regexp component can be used the escape regexp component package does not have a license specified in package json or a license file however its readme md file contains a license section that just says mit determine if this is enough to know that the library is under the m... | 1 |

19,410 | 25,556,308,142 | IssuesEvent | 2022-11-30 07:02:35 | lizhihao6/get-daily-arxiv-noti | https://api.github.com/repos/lizhihao6/get-daily-arxiv-noti | opened | New submissions for Wed, 30 Nov 22 | event camera white balance isp compression image signal processing image signal process raw raw image | ## Keyword: event camera

There is no result

## Keyword: white balance

There is no result

## Keyword: isp

### FETI-DP preconditioners for 2D Biot model with discontinuous Galerkin discretization

- **Authors:** Pilhwa Lee

- **Subjects:** Numerical Analysis (math.NA); Analysis of PDEs (math.AP)

- **Arxiv link:** htt... | 2.0 | New submissions for Wed, 30 Nov 22 - ## Keyword: event camera

There is no result

## Keyword: white balance

There is no result

## Keyword: isp

### FETI-DP preconditioners for 2D Biot model with discontinuous Galerkin discretization

- **Authors:** Pilhwa Lee

- **Subjects:** Numerical Analysis (math.NA); Analysis of ... | process | new submissions for wed nov keyword event camera there is no result keyword white balance there is no result keyword isp feti dp preconditioners for biot model with discontinuous galerkin discretization authors pilhwa lee subjects numerical analysis math na analysis of pde... | 1 |

231,714 | 18,790,245,604 | IssuesEvent | 2021-11-08 16:05:35 | Azure/azure-sdk-for-js | https://api.github.com/repos/Azure/azure-sdk-for-js | closed | Azure Form Recognizer Samples Issue | bug Client Docs Cognitive - Form Recognizer test-manual-pass | 1.

Section [link1](https://github.com/Azure/azure-sdk-for-js/blob/main/sdk/formrecognizer/ai-form-recognizer/samples-dev/buildModel.ts),[link2](https://github.com/Azure/azure-sdk-for-js/blob/main/sdk/formrecognizer/ai-form-recognizer/samples-dev/copyModel.ts),[link3](https://github.com/Azure/azure-sdk-for-js/blob/main... | 1.0 | Azure Form Recognizer Samples Issue - 1.

Section [link1](https://github.com/Azure/azure-sdk-for-js/blob/main/sdk/formrecognizer/ai-form-recognizer/samples-dev/buildModel.ts),[link2](https://github.com/Azure/azure-sdk-for-js/blob/main/sdk/formrecognizer/ai-form-recognizer/samples-dev/copyModel.ts),[link3](https://githu... | non_process | azure form recognizer samples issue section suggestion if in ts file add the code as follow import as dotenv from dotenv dotenv config if in js file add the code as follow const dotenv require dotenv dotenv config ... | 0 |

79,795 | 10,142,059,663 | IssuesEvent | 2019-08-03 20:02:28 | brotkrueml/schema | https://api.github.com/repos/brotkrueml/schema | closed | Don't embed schema markup when no_index=1 | documentation enhancement | When the page should not be indexed by search engines (the page field "no_index" is activated) no schema markup should be rendered. This saves some bytes.

**Acceptance criteria:**

- [x] If the "no_index" checkbox (from seo extension) is activated in the page properties, the field for selecting the web page type is ... | 1.0 | Don't embed schema markup when no_index=1 - When the page should not be indexed by search engines (the page field "no_index" is activated) no schema markup should be rendered. This saves some bytes.

**Acceptance criteria:**

- [x] If the "no_index" checkbox (from seo extension) is activated in the page properties, t... | non_process | don t embed schema markup when no index when the page should not be indexed by search engines the page field no index is activated no schema markup should be rendered this saves some bytes acceptance criteria if the no index checkbox from seo extension is activated in the page properties the... | 0 |

19,653 | 26,010,586,445 | IssuesEvent | 2022-12-21 01:02:33 | ossf/package-analysis | https://api.github.com/repos/ossf/package-analysis | closed | Disable "Dismiss stale pull request approvals when new commits are pushed" | process | Rationale from offline chat:

- when enabled, it limits the ability to do "LGTM with nits" and ends up needing more back and forth for small issues to be resolved

- also applies to rebases and things like fixing commit signoffs where approval status is essentially unchanged | 1.0 | Disable "Dismiss stale pull request approvals when new commits are pushed" - Rationale from offline chat:

- when enabled, it limits the ability to do "LGTM with nits" and ends up needing more back and forth for small issues to be resolved

- also applies to rebases and things like fixing commit signoffs where approv... | process | disable dismiss stale pull request approvals when new commits are pushed rationale from offline chat when enabled it limits the ability to do lgtm with nits and ends up needing more back and forth for small issues to be resolved also applies to rebases and things like fixing commit signoffs where approv... | 1 |

85 | 2,533,378,389 | IssuesEvent | 2015-01-23 22:52:04 | MozillaFoundation/plan | https://api.github.com/repos/MozillaFoundation/plan | opened | Create resources for quicker design implementation | process | We have a need to ship design more quickly, in particular to ship design and dev within a single heartbeat.

Other than ensuring heartbeats are scoped appropriately, what are some other tools or methods that would make design move faster without compromising quality or burning out the team?

Some ideas:

* update t... | 1.0 | Create resources for quicker design implementation - We have a need to ship design more quickly, in particular to ship design and dev within a single heartbeat.

Other than ensuring heartbeats are scoped appropriately, what are some other tools or methods that would make design move faster without compromising qualit... | process | create resources for quicker design implementation we have a need to ship design more quickly in particular to ship design and dev within a single heartbeat other than ensuring heartbeats are scoped appropriately what are some other tools or methods that would make design move faster without compromising qualit... | 1 |

282,133 | 24,452,003,518 | IssuesEvent | 2022-10-07 00:35:13 | yugabyte/yugabyte-db | https://api.github.com/repos/yugabyte/yugabyte-db | opened | [DocDB] flaky test: YBBackupTest.TestYSQLTabletSplitRangeUniqueIndexOnHiddenColumn | kind/failing-test area/docdb priority/high status/awaiting-triage | ### Description

https://detective-gcp.dev.yugabyte.com/stability/test?branch=master&buckets=20&build_type=all&class=YBBackupTest&fail_tag=all&name=TestYSQLTabletSplitRangeUniqueIndexOnHiddenColumn&platform=linux

Traces back to https://github.com/yugabyte/yugabyte-db/commit/7583bcc0ea272ef8742adff796b36e354f9b6767 | 1.0 | [DocDB] flaky test: YBBackupTest.TestYSQLTabletSplitRangeUniqueIndexOnHiddenColumn - ### Description

https://detective-gcp.dev.yugabyte.com/stability/test?branch=master&buckets=20&build_type=all&class=YBBackupTest&fail_tag=all&name=TestYSQLTabletSplitRangeUniqueIndexOnHiddenColumn&platform=linux

Traces back to http... | non_process | flaky test ybbackuptest testysqltabletsplitrangeuniqueindexonhiddencolumn description traces back to | 0 |

2,278 | 5,105,231,767 | IssuesEvent | 2017-01-05 06:10:38 | opentrials/opentrials | https://api.github.com/repos/opentrials/opentrials | opened | Process new data contributions | 0. Ready for Analysis Collectors Processors | @georgiana-b @nightsh this is quite urgent, especially the Ebola-related contributions. | 1.0 | Process new data contributions - @georgiana-b @nightsh this is quite urgent, especially the Ebola-related contributions. | process | process new data contributions georgiana b nightsh this is quite urgent especially the ebola related contributions | 1 |

529,608 | 15,392,304,414 | IssuesEvent | 2021-03-03 15:29:17 | Prodigy-Hacking/ProdigyMathGameHacking | https://api.github.com/repos/Prodigy-Hacking/ProdigyMathGameHacking | closed | [BUG] Error's on startup, tested multiple times, repeatable. | Bug Confirmed Priority: High | ***Description***:

"Uncaught (in promise) TypeError: Failed to fetch"

(async () => {

const debug = false;

const redirectorDomain = debug ? "http://localhost:1337" : "https://prodigyhacking.ml"

if (!window.abortion) {

// only run inject script once on the page, even if game.min is requested multiple t... | 1.0 | [BUG] Error's on startup, tested multiple times, repeatable. - ***Description***:

"Uncaught (in promise) TypeError: Failed to fetch"

(async () => {

const debug = false;

const redirectorDomain = debug ? "http://localhost:1337" : "https://prodigyhacking.ml"

if (!window.abortion) {

// only run inject sc... | non_process | error s on startup tested multiple times repeatable description uncaught in promise typeerror failed to fetch async const debug false const redirectordomain debug if window abortion only run inject script once on the page even if game min is request... | 0 |

136,482 | 19,824,485,521 | IssuesEvent | 2022-01-20 03:47:04 | microsoft/pyright | https://api.github.com/repos/microsoft/pyright | closed | nonlocal type assignment error not caught | as designed | **Describe the bug**

```py

from typing import Callable

def patch_method() -> Callable[[], None]:

captured = 5

def inner() -> None:

nonlocal captured

reveal_type(captured) # T: int

captured = 'a' # should error

reveal_type(captured) # T: Literal['a']

retur... | 1.0 | nonlocal type assignment error not caught - **Describe the bug**

```py

from typing import Callable

def patch_method() -> Callable[[], None]:

captured = 5

def inner() -> None:

nonlocal captured

reveal_type(captured) # T: int

captured = 'a' # should error

reveal_ty... | non_process | nonlocal type assignment error not caught describe the bug py from typing import callable def patch method callable none captured def inner none nonlocal captured reveal type captured t int captured a should error reveal type... | 0 |

335,412 | 30,028,854,221 | IssuesEvent | 2023-06-27 08:15:53 | unifyai/ivy | https://api.github.com/repos/unifyai/ivy | closed | Fix jax_devicearray.test_jax_special_add | JAX Frontend Sub Task Failing Test | | | |

|---|---|

|jax|<a href="https://github.com/unifyai/ivy/actions/runs/5386564860/jobs/9776762610"><img src=https://img.shields.io/badge/-success-success></a>

|numpy|<a href="https://github.com/unifyai/ivy/actions/runs/5386564860/jobs/9776762610"><img src=https://img.shields.io/badge/-success-success></a>

|t... | 1.0 | Fix jax_devicearray.test_jax_special_add - | | |

|---|---|

|jax|<a href="https://github.com/unifyai/ivy/actions/runs/5386564860/jobs/9776762610"><img src=https://img.shields.io/badge/-success-success></a>

|numpy|<a href="https://github.com/unifyai/ivy/actions/runs/5386564860/jobs/9776762610"><img src=https://img... | non_process | fix jax devicearray test jax special add jax a href src numpy a href src tensorflow a href src torch a href src paddle img src | 0 |

443,563 | 12,796,086,271 | IssuesEvent | 2020-07-02 09:49:51 | mozilla/addons-server | https://api.github.com/repos/mozilla/addons-server | closed | hard delete addons from *all* users deleted over 7 years old | priority: p4 | #14494 adds a cron job that deletes the addons of user accounts that were deleted over 7 years ago, but only for user accounts that are being cleared of fxa_id, email, or last_login_ip. This is fine for new accounts, but we've only started retaining fxa_id and email a few weeks ago, and last_login_ip was previously cl... | 1.0 | hard delete addons from *all* users deleted over 7 years old - #14494 adds a cron job that deletes the addons of user accounts that were deleted over 7 years ago, but only for user accounts that are being cleared of fxa_id, email, or last_login_ip. This is fine for new accounts, but we've only started retaining fxa_id... | non_process | hard delete addons from all users deleted over years old adds a cron job that deletes the addons of user accounts that were deleted over years ago but only for user accounts that are being cleared of fxa id email or last login ip this is fine for new accounts but we ve only started retaining fxa id and... | 0 |
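
The row above describes selecting accounts that were deleted more than 7 years ago. A minimal sketch of that cutoff check follows. It is not the addons-server implementation: `Account`, `deleted_at`, `RETENTION`, and `accounts_past_retention` are hypothetical names chosen for illustration, and "7 years" is approximated as 7 × 365 days.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List, Optional

# Assumption: "7 years" is approximated in days; the real job may compute it differently.
RETENTION = timedelta(days=7 * 365)


@dataclass
class Account:
    """Hypothetical stand-in for a user record; not the addons-server model."""
    user_id: int
    deleted_at: Optional[datetime]  # None means the account was never deleted


def accounts_past_retention(accounts: List[Account], now: datetime) -> List[Account]:
    """Return every deleted account whose deletion is older than the retention window."""
    cutoff = now - RETENTION
    return [
        account
        for account in accounts
        if account.deleted_at is not None and account.deleted_at < cutoff
    ]
```

The filter in this sketch depends only on the deletion timestamp, which is what the title's "*all* users" asks for, rather than on whether other fields are being cleared in the same run.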

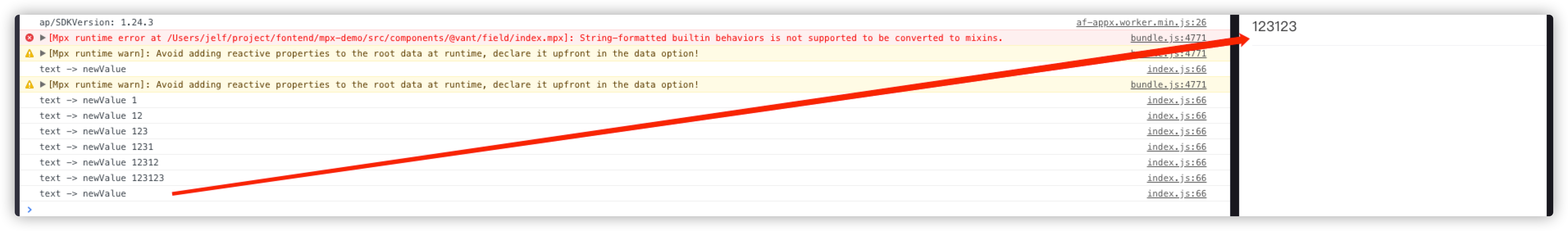

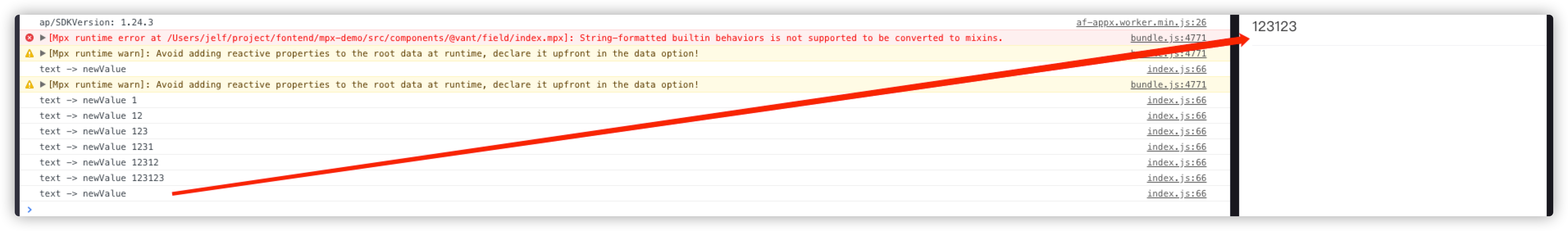

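10,272 | 13,125,343,965 | IssuesEvent | 2020-08-06 06:27:51 | didi/mpx | https://api.github.com/repos/didi/mpx | closed | [Bug report] On Alipay, the vant field component's clearable option cannot clear the input box content | processing | **Problem description**

On Alipay, the vant field component's clearable option cannot clear the content of the input box.

With wx:model two-way binding, the bound value is cleared, but the content in the input box remains.

**Environment description**

Include at least the following:

1. Mac

2. mpx version

"@mpxjs/core": "^2.5.33"

"@mpxjs/webpack-plugin": "^2.5.33"

3. Alipay IDE 1... | 1.0 | [Bug report] On Alipay, the vant field component's clearable option cannot clear the input box content - **Problem description**

On Alipay, the vant field component's clearable option cannot clear the content of the input box.

With wx:model two-way binding, the bound value is cleared, but the content in the input box remains.

**Environment description**

Include at least the following:

1. Mac

2. mpx version

"@mpxjs/core": "^2.5... | process | on alipay the vant field component s clearable option cannot clear the input box content problem description on alipay the vant field component s clearable option cannot clear the content of the input box with wx model two way binding the bound value is cleared but the content in the input box remains environment description include at least the following mac mpx version mpxjs core mpxjs webpack plugin alipay ide minimal reproduction demo | 1 |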

131,052 | 10,679,237,937 | IssuesEvent | 2019-10-21 18:52:08 | HERA-Team/hera-validation | https://api.github.com/repos/HERA-Team/hera-validation | opened | Step 0.5: Sharp-Feature P(k) | formal-test | <!-- Give a brief description here, if necessary, of what this proposed test should do -->

This is a test of `pspec`s ability to recover a Gaussian random sky with known P(k), where that P(k) has a reasonably "sharp" feature within the range of interest.

<!-- Fill out the following meta-data for the issue (it will ... | 1.0 | Step 0.5: Sharp-Feature P(k) - <!-- Give a brief description here, if necessary, of what this proposed test should do -->

This is a test of `pspec`s ability to recover a Gaussian random sky with known P(k), where that P(k) has a reasonably "sharp" feature within the range of interest.

<!-- Fill out the following me... | non_process | step sharp feature p k this is a test of pspec s ability to recover a gaussian random sky with known p k where that p k has a reasonably sharp feature within the range of interest simulation component eor with sharp feature simulators rimez pipeline components pspec depen... | 0 |

12,712 | 15,084,826,066 | IssuesEvent | 2021-02-05 17:43:41 | NationalSecurityAgency/ghidra | https://api.github.com/repos/NationalSecurityAgency/ghidra | closed | arm v7 b.w instructions now decoding as vst4.32 (with ghidra 9.2) | Feature: Processor/ARM | **Describe the bug**

byte sequence c2 f4 b4 bf used to decode (with ghidra 9.1.1) as:

b.w [address]

now decodes (with ghidra 9.2) as:

vst4.32 {d27[],d29[],d31[],d1[]},[r2@64],r4

**To Reproduce**

Upgrade to ghidra 9.2 and re-disassemble.

**Environment (please complete the following information):**... | 1.0 | arm v7 b.w instructions now decoding as vst4.32 (with ghidra 9.2) - **Describe the bug**

byte sequence c2 f4 b4 bf used to decode (with ghidra 9.1.1) as:

b.w [address]

now decodes (with ghidra 9.2) as:

vst4.32 {d27[],d29[],d31[],d1[]},[r2@64],r4

**To Reproduce**

Upgrade to ghidra 9.2 and re-disassembl... | process | arm b w instructions now decoding as with ghidra describe the bug byte sequence bf used to decode with ghidra as b w now decodes with ghidra as to reproduce upgrade to ghidra and re disassemble environment please complete... | 1 |
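
The byte sequence in the row above can be checked by hand against the Thumb-2 `B.W` layout (encoding T4 in the ARM architecture manual). The sketch below is an illustration only, not Ghidra's decoder: `decode_thumb2_bw` is a hypothetical helper, and it assumes the four bytes are listed in memory order as two little-endian halfwords.

```python
def decode_thumb2_bw(data: bytes) -> int:
    """Decode a Thumb-2 B.W (encoding T4) and return its signed branch offset.

    Assumption: `data` holds two little-endian halfwords in memory order.
    """
    if len(data) != 4:
        raise ValueError("B.W is a 32-bit instruction (4 bytes)")
    hw1 = int.from_bytes(data[0:2], "little")
    hw2 = int.from_bytes(data[2:4], "little")

    # First halfword:  1 1 1 1 0 S imm10
    # Second halfword: 1 0 J1 1 J2 imm11
    if (hw1 >> 11) != 0b11110 or (hw2 & 0xD000) != 0x9000:
        raise ValueError("not a B.W (T4) encoding")

    s = (hw1 >> 10) & 1
    imm10 = hw1 & 0x3FF
    j1 = (hw2 >> 13) & 1
    j2 = (hw2 >> 11) & 1
    imm11 = hw2 & 0x7FF

    # I1 = NOT(J1 XOR S), I2 = NOT(J2 XOR S)
    i1 = 1 - (j1 ^ s)
    i2 = 1 - (j2 ^ s)

    # imm32 = SignExtend(S:I1:I2:imm10:imm11:'0'), a 25-bit signed value
    offset = (s << 24) | (i1 << 23) | (i2 << 22) | (imm10 << 12) | (imm11 << 1)
    if s:
        offset -= 1 << 25
    return offset


# The bytes quoted in the report; the branch target is the Thumb PC
# (instruction address + 4) plus this offset.
print(hex(decode_thumb2_bw(bytes.fromhex("c2f4b4bf"))))
```

Under these assumptions the four bytes pass the T4 bit-pattern check, which is consistent with the `b.w` disassembly the report attributes to Ghidra 9.1.1.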

21,206 | 28,242,944,744 | IssuesEvent | 2023-04-06 08:37:27 | geneontology/go-ontology | https://api.github.com/repos/geneontology/go-ontology | closed | GO:0044658 pore formation in membrane of host by symbiont missing parent | multi-species process | GO:0044658 pore formation in membrane of host by symbiont

- move to disruption by symbiont of host cellular component

- change label to "pore formation in host plasma membrane" | 1.0 | GO:0044658 pore formation in membrane of host by symbiont missing parent - GO:0044658 pore formation in membrane of host by symbiont

- move to disruption by symbiont of host cellular component

- change label to "pore formation in host plasma membrane" | process | go pore formation in membrane of host by symbiont missing parent go pore formation in membrane of host by symbiont move to disruption by symbiont of host cellular component change label to pore formation in host plasma membrane | 1 |

17,608 | 23,428,322,413 | IssuesEvent | 2022-08-14 18:33:28 | ankidroid/Anki-Android | https://api.github.com/repos/ankidroid/Anki-Android | closed | 'DeckPickerTest > version16CollectionOpens' is flaky on windows | Priority-Medium Needs Triage Stale Dev Test process | 'DeckPickerTest > version16CollectionOpens' is flaky on windows

Seen in another instance or two as well? Will re-run failed unit test once it's possible (other parts of OS matrix have to finish first)

```

com.ichi2.anki.DeckPickerTest > checkDisplayOfStudyOptionsOnTablet[0] SKIPPED

thread '<unnamed>' panicked... | 1.0 | 'DeckPickerTest > version16CollectionOpens' is flaky on windows - 'DeckPickerTest > version16CollectionOpens' is flaky on windows

Seen in another instance or two as well? Will re-run failed unit test once it's possible (other parts of OS matrix have to finish first)

```

com.ichi2.anki.DeckPickerTest > checkDis... | process | deckpickertest is flaky on windows deckpickertest is flaky on windows seen in another instance or two as well will re run failed unit test once it s possible other parts of os matrix have to finish first com anki deckpickertest checkdisplayofstudyoptionsontablet skipped thread pa... | 1 |

284 | 2,722,778,372 | IssuesEvent | 2015-04-14 07:47:51 | mkdocs/mkdocs | https://api.github.com/repos/mkdocs/mkdocs | closed | Release 0.12 | Process | - [x] Update website DNS. #405

- [x] Fix blocker #441

- [x] Verify potential blocker #439

- [x] Finalise and merge release notes #437

- [x] Bump version

- [x] Publish to PyPI | 1.0 | Release 0.12 - - [x] Update website DNS. #405

- [x] Fix blocker #441

- [x] Verify potential blocker #439

- [x] Finalise and merge release notes #437

- [x] Bump version

- [x] Publish to PyPI | process | release update website dns fix blocker verify potential blocker finalise and merge release notes bump version publish to pypi | 1 |

539,089 | 15,783,144,001 | IssuesEvent | 2021-04-01 13:39:32 | enso-org/enso | https://api.github.com/repos/enso-org/enso | closed | Database Grouped Columns Report Wrong Counts | Category: Libraries Change: Non-Breaking Difficulty: Intermediate Priority: High Type: Bug | <!--

Please ensure that you are running the latest version of Enso before reporting

the bug! It may have been fixed since.

-->

### General Summary

<!--

- Please include a high-level description of your bug here.

-->

The generated SQL code results in wrong counting when grouping is involved.

For example... | 1.0 | Database Grouped Columns Report Wrong Counts - <!--

Please ensure that you are running the latest version of Enso before reporting

the bug! It may have been fixed since.

-->

### General Summary

<!--

- Please include a high-level description of your bug here.

-->

The generated SQL code results in wrong cou... | non_process | database grouped columns report wrong counts please ensure that you are running the latest version of enso before reporting the bug it may have been fixed since general summary please include a high level description of your bug here the generated sql code results in wrong cou... | 0 |

16,390 | 21,158,103,422 | IssuesEvent | 2022-04-07 06:43:32 | pytorch/pytorch | https://api.github.com/repos/pytorch/pytorch | opened | DISABLED test_exception_single (__main__.ForkTest) | module: multiprocessing module: flaky-tests skipped | Platforms: asan, linux

This test was disabled because it is failing in CI. See [recent examples](http://torch-ci.com/failure/test_exception_single%2C%20ForkTest) and the most recent trunk [workflow logs](https://github.com/pytorch/pytorch/runs/5863105042).

Over the past 3 hours, it has been determined flaky in 1 work... | 1.0 | DISABLED test_exception_single (__main__.ForkTest) - Platforms: asan, linux

This test was disabled because it is failing in CI. See [recent examples](http://torch-ci.com/failure/test_exception_single%2C%20ForkTest) and the most recent trunk [workflow logs](https://github.com/pytorch/pytorch/runs/5863105042).

Over the... | process | disabled test exception single main forktest platforms asan linux this test was disabled because it is failing in ci see and the most recent trunk over the past hours it has been determined flaky in workflow s with red and green | 1 |

29,001 | 8,248,310,343 | IssuesEvent | 2018-09-11 18:03:32 | idris-lang/Idris-dev | https://api.github.com/repos/idris-lang/Idris-dev | closed | pow broken with C backend? | C-Low Hanging Fruit G-C Backend S-Normal U-Build System W-Debugging | When I compile the following code

``` idris

module Main

main : IO ()

main = do

print $ (pow 2 200)

```

with `idris test.idr -o test` then all I get is

``` shell

# ./test

0

#

```

| 1.0 | pow broken with C backend? - When I compile the following code

``` idris

module Main

main : IO ()

main = do

print $ (pow 2 200)

```

with `idris test.idr -o test` then all I get is

``` shell

# ./test

0

#

```

| non_process | pow broken with c backend when i compile the following code idris module main main io main do print pow with idris test idr o test then all i get is shell test | 0 |
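
For reference, the value the Idris program above is expected to print can be computed with arbitrary-precision integers. The sketch also shows that the low 64 bits of 2^200 are all zero. Whether fixed-width truncation is what actually happened in the C backend is an assumption made here for illustration; the report does not confirm the cause.

```python
# Exact value of 2^200 using Python's arbitrary-precision integers.
value = pow(2, 200)

# What would remain if the result were truncated to 64 bits
# (a hypothetical failure mode, not confirmed by the issue).
low64 = value % (1 << 64)

print(value)
print(low64)
```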

766,254 | 26,875,249,972 | IssuesEvent | 2023-02-05 00:05:15 | apache/hudi | https://api.github.com/repos/apache/hudi | closed | [SUPPORT][CDC]UnresolvedUnionException: Not in union ["null","double"]: 20230202105806923_0_1 | schema-and-data-types priority:blocker spark change-data-capture | **_Tips before filing an issue_**

- Have you gone through our [FAQs](https://hudi.apache.org/learn/faq/)?

- Join the mailing list to engage in conversations and get faster support at dev-subscribe@hudi.apache.org.

- If you have triaged this as a bug, then file an [issue](https://issues.apache.org/jira/projects... | 1.0 | [SUPPORT][CDC]UnresolvedUnionException: Not in union ["null","double"]: 20230202105806923_0_1 - **_Tips before filing an issue_**

- Have you gone through our [FAQs](https://hudi.apache.org/learn/faq/)?

- Join the mailing list to engage in conversations and get faster support at dev-subscribe@hudi.apache.org.

-... | non_process | unresolvedunionexception not in union tips before filing an issue have you gone through our join the mailing list to engage in conversations and get faster support at dev subscribe hudi apache org if you have triaged this as a bug then file an directly describe the pro... | 0 |

166,499 | 6,305,815,806 | IssuesEvent | 2017-07-21 19:19:12 | DashboardHub/PipelineDashboard | https://api.github.com/repos/DashboardHub/PipelineDashboard | closed | Create Generic Widgets to accept data from MicroServices | priority: high | - [ ] Large number blocks (collection)

- [ ] Simple line graph

- [ ] Tabular data

| 1.0 | Create Generic Widgets to accept data from MicroServices - - [ ] Large number blocks (collection)

- [ ] Simple line graph

- [ ] Tabular data

| non_process | create generic widgets to accept data from microservices large number blocks collection simple line graph tabular data | 0 |

12,470 | 14,940,217,386 | IssuesEvent | 2021-01-25 17:58:11 | threefoldtech/js-sdk | https://api.github.com/repos/threefoldtech/js-sdk | closed | 3bots activate the testnet wallet using friendbot, not the testnet activation service | process_wontfix type_bug | ### Description

Although this works now and prevents our testnet activation service from being drained, this does not test the actual activation service/flow itself.

It also does not test real life scenario's where the activation service is in need of new funding and operations needs to step in

| 1.0 | 3bots activate the testnet wallet using friendbot, not the testnet activation service - ### Description

Although this works now and prevents our testnet activation service from being drained, this does not test the actual activation service/flow itself.

It also does not test real life scenario's where the activatio... | process | activate the testnet wallet using friendbot not the testnet activation service description although this works now and prevents our testnet activation service from being drained this does not test the actual activation service flow itself it also does not test real life scenario s where the activation se... | 1 |

17,044 | 22,421,400,668 | IssuesEvent | 2022-06-20 03:56:11 | camunda/zeebe | https://api.github.com/repos/camunda/zeebe | closed | Reject ProcessInstanceCreation command targeting element inside multi-instance | team/process-automation | A target element of the ProcessInstanceCreation command with start instructions, should not be part of a multi-instance embedded sub-process. It would be unclear which specific iteration the instance should be part of.

The `ProcessInstanceCreation` command should be rejected:

- when one of the target element ids re... | 1.0 | Reject ProcessInstanceCreation command targeting element inside multi-instance - A target element of the ProcessInstanceCreation command with start instructions, should not be part of a multi-instance embedded sub-process. It would be unclear which specific iteration the instance should be part of.

The `ProcessInsta... | process | reject processinstancecreation command targeting element inside multi instance a target element of the processinstancecreation command with start instructions should not be part of a multi instance embedded sub process it would be unclear which specific iteration the instance should be part of the processinsta... | 1 |

107,681 | 23,465,791,058 | IssuesEvent | 2022-08-16 16:38:05 | pulumi/pulumi-yaml | https://api.github.com/repos/pulumi/pulumi-yaml | closed | [Go] Convert for Kubernetes provider generates invalid code | resolution/fixed kind/bug area/codegen | ### What happened?

`pulumi convert` generates invalid Go code for Kubernetes providers.

### Steps to reproduce

```yaml

name: pulumi-yaml-patch

runtime: yaml

description: A minimal Kubernetes Pulumi YAML program

resources:

provider:

type: pulumi:providers:kubernetes

properties:

enableServerSid... | 1.0 | [Go] Convert for Kubernetes provider generates invalid code - ### What happened?

`pulumi convert` generates invalid Go code for Kubernetes providers.

### Steps to reproduce

```yaml

name: pulumi-yaml-patch

runtime: yaml

description: A minimal Kubernetes Pulumi YAML program

resources:

provider:

type: pulum... | non_process | convert for kubernetes provider generates invalid code what happened pulumi convert generates invalid go code for kubernetes providers steps to reproduce yaml name pulumi yaml patch runtime yaml description a minimal kubernetes pulumi yaml program resources provider type pulumi p... | 0 |

4,513 | 7,359,401,952 | IssuesEvent | 2018-03-10 06:02:25 | hashicorp/packer | https://api.github.com/repos/hashicorp/packer | closed | Builder vmware-iso type:esx5 post-processor:vagrant format:ovf can't find vm-name-flat.vmdk | post-processor/vagrant |

FOR BUGS:

The machine gets provisioned correctly on remote on Esxi 6.0 box. However when doing the post processing it is unable to get the files to run through the ovftool. Then resources are deleted and unless I use debug mode I can not grab the disk image to convert manually.

I made sure that VNC ports were... | 1.0 | Builder vmware-iso type:esx5 post-processor:vagrant format:ovf can't find vm-name-flat.vmdk -

FOR BUGS:

The machine gets provisioned correctly on remote on Esxi 6.0 box. However when doing the post processing it is unable to get the files to run through the ovftool. Then resources are deleted and unless I use de... | process | builder vmware iso type post processor vagrant format ovf can t find vm name flat vmdk for bugs the machine gets provisioned correctly on remote on esxi box however when doing the post processing it is unable to get the files to run through the ovftool then resources are deleted and unless i use debug... | 1 |

41,542 | 10,512,188,585 | IssuesEvent | 2019-09-27 17:15:40 | google/caliper | https://api.github.com/repos/google/caliper | closed | Method not found: org.apache.commons.math.stat.descriptive.rank.Percentile.setData | type=defect | ```

What steps will reproduce the problem?

1. This very simple benchmark:

public class ArrayDigestBench {

double[] data = new double[10000];

@Param({"16", "32"})

int pageSize;

@BeforeExperiment

protected void setUp() throws Exception {

Random random = new Random();

for (int i = 0;... | 1.0 | Method not found: org.apache.commons.math.stat.descriptive.rank.Percentile.setData - ```

What steps will reproduce the problem?

1. This very simple benchmark:

public class ArrayDigestBench {

double[] data = new double[10000];

@Param({"16", "32"})

int pageSize;

@BeforeExperiment

protected void set... | non_process | method not found org apache commons math stat descriptive rank percentile setdata what steps will reproduce the problem this very simple benchmark public class arraydigestbench double data new double param int pagesize beforeexperiment protected void setup thro... | 0 |

315,754 | 23,596,095,244 | IssuesEvent | 2022-08-23 19:24:01 | comp426-2022-fall/a00 | https://api.github.com/repos/comp426-2022-fall/a00 | closed | Changing a UNIX username | documentation |

#### URL of file with confusing thing

https://www.cyberciti.biz/faq/howto-change-rename-user-name-id/

#### Line number of confusing thing

Linux Change or Rename User Command Syntax

#### What is confusing?

When I first downloaded Ubuntu, I accidentally closed the window where you create your UNIX login in... | 1.0 | Changing a UNIX username -

#### URL of file with confusing thing

https://www.cyberciti.biz/faq/howto-change-rename-user-name-id/

#### Line number of confusing thing

Linux Change or Rename User Command Syntax

#### What is confusing?

When I first downloaded Ubuntu, I accidentally closed the window where yo... | non_process | changing a unix username url of file with confusing thing line number of confusing thing linux change or rename user command syntax what is confusing when i first downloaded ubuntu i accidentally closed the window where you create your unix login info i was able to figure out how to... | 0 |

503,400 | 14,591,042,980 | IssuesEvent | 2020-12-19 10:59:58 | Energy-Innovation/eps-us | https://api.github.com/repos/Energy-Innovation/eps-us | closed | Calculate the domestic content share of consumption for ISIC 05T06 in Vensim to account for differences in coal vs. crude oil | 3.1.2 high priority | India has significant coal production, but little crude oil production. But coal and crude production are both lumped under ISIC 05T06. In a scenario with lower transportation fuel use, for example, you see a substantial negative change in ISIC 05T06 output, which should be pretty much entirely due to changes in crude ... | 1.0 | Calculate the domestic content share of consumption for ISIC 05T06 in Vensim to account for differences in coal vs. crude oil - India has significant coal production, but little crude oil production. But coal and crude production are both lumped under ISIC 05T06. In a scenario with lower transportation fuel use, for ex... | non_process | calculate the domestic content share of consumption for isic in vensim to account for differences in coal vs crude oil india has significant coal production but little crude oil production but coal and crude production are both lumped under isic in a scenario with lower transportation fuel use for example y... | 0 |

2,366 | 5,166,999,579 | IssuesEvent | 2017-01-17 17:35:45 | opentrials/opentrials | https://api.github.com/repos/opentrials/opentrials | closed | Avoid a single error stopping our processors/collectors | 3. In Development Collectors Processors | We should keep trying with the other records when it makes sense. For example, check https://github.com/opentrials/processors/pull/52.

| 1.0 | Avoid a single error stopping our processors/collectors - We should keep trying with the other records when it makes sense. For example, check https://github.com/opentrials/processors/pull/52.

| process | avoid a single error stopping our processors collectors we should keep trying with the other records when it makes sense for example check | 1 |

7,586 | 10,697,510,412 | IssuesEvent | 2019-10-23 16:40:27 | IIIF/api | https://api.github.com/repos/IIIF/api | opened | Image and Presentation 3.0 Feature Implementations | editorial process | The [Evaluation and Testing](https://iiif.io/community/policy/editorial/#evaluation-and-testing) criteria in the IIIF Editorial Process are:

> In order to be considered ready for final review, new features must have two open-source server-side implementations, at least one of which should be in production. New featu... | 1.0 | Image and Presentation 3.0 Feature Implementations - The [Evaluation and Testing](https://iiif.io/community/policy/editorial/#evaluation-and-testing) criteria in the IIIF Editorial Process are:

> In order to be considered ready for final review, new features must have two open-source server-side implementations, at ... | process | image and presentation feature implementations the criteria in the iiif editorial process are in order to be considered ready for final review new features must have two open source server side implementations at least one of which should be in production new features must also have at least one open... | 1 |

474 | 2,911,381,539 | IssuesEvent | 2015-06-22 09:11:57 | haskell-distributed/distributed-process-azure | https://api.github.com/repos/haskell-distributed/distributed-process-azure | opened | Make all distributed-process-demos work on Azure | distributed-process-azure Feature Request | _From @edsko on October 16, 2012 9:44_

_Copied from original issue: haskell-distributed/distributed-process#45_ | 1.0 | Make all distributed-process-demos work on Azure - _From @edsko on October 16, 2012 9:44_

_Copied from original issue: haskell-distributed/distributed-process#45_ | process | make all distributed process demos work on azure from edsko on october copied from original issue haskell distributed distributed process | 1 |

20,796 | 14,167,960,484 | IssuesEvent | 2020-11-12 11:01:26 | pymor/pymor | https://api.github.com/repos/pymor/pymor | opened | Remove need for ignoring coverage errors | bug infrastructure | Currently generating the coverage xml report needs `--ignore-errors`. Otherwise we fail with a `source for pymor/(builtin) missing` error from coverage. This is triggered in the mpirun_demos for the [ipython test](https://github.com/pymor/pymor/blob/master/src/pymortests/demos.py#L260). Even an empty function body will... | 1.0 | Remove need for ignoring coverage errors - Currently generating the coverage xml report needs `--ignore-errors`. Otherwise we fail with a `source for pymor/(builtin) missing` error from coverage. This is triggered in the mpirun_demos for the [ipython test](https://github.com/pymor/pymor/blob/master/src/pymortests/demos... | non_process | remove need for ignoring coverage errors currently generating the coverage xml report needs ignore errors otherwise we fail with a source for pymor builtin missing error from coverage this is triggered in the mpirun demos for the even an empty function body will trigger the issue excluding the function... | 0 |

352,387 | 25,064,958,148 | IssuesEvent | 2022-11-07 07:22:44 | crispindeity/issue-tracker | https://api.github.com/repos/crispindeity/issue-tracker | opened | Add Issue API Documentation | 📃 Documentation 📬 API BE | # Description

- Document the Issue API using Spring Rest Doc.

# Progress

- [ ] Issue API Documentation

| 1.0 | Add Issue API Documentation - # Description

- Document the Issue API using Spring Rest Doc.

# Progress

- [ ] Issue API Documentation

| non_process | add issue api documentation description document the issue api using spring rest doc progress issue api documentation | 0 |

390 | 2,838,856,862 | IssuesEvent | 2015-05-27 10:15:20 | Graylog2/graylog2-server | https://api.github.com/repos/Graylog2/graylog2-server | closed | Alert on field content? | processing | I am sure that this has been mentioned somewhere previously but it would be awesome to be able to alert based on field content (string and regex matching) - currently field condition alerts only support metrics & statistics.

Inverting matches should be supported, too.

| 1.0 | Alert on field content? - I am sure that this has been mentioned somewhere previously but it would be awesome to be able to alert based on field content (string and regex matching) - currently field condition alerts only support metrics & statistics.

Inverting matches should be supported, too.

| process | alert on field content i am sure that this has been mentioned somewhere previously but it would be awesome to be able to alert based on field content string and regex matching currently field condition alerts only support metrics statistics inverting matches should be supported too | 1 |

17,201 | 22,778,056,433 | IssuesEvent | 2022-07-08 16:21:39 | open-telemetry/opentelemetry-collector-contrib | https://api.github.com/repos/open-telemetry/opentelemetry-collector-contrib | closed | Can't use traceID in transform processor because it returns struct | bug priority:p2 processor/transform | trace_id: Currently accessed as a TraceID struct so does not work in conditions.

The transform processor's Getters normally returns a field as the type it is represented in pdata. This can cause problems when the field is used in set expression. I cannot use traceId as it is returned as struct. | 1.0 | Can't use traceID in transform processor because it returns struct - trace_id: Currently accessed as a TraceID struct so does not work in conditions.

The transform processor's Getters normally returns a field as the type it is represented in pdata. This can cause problems when the field is used in set expression. I ca... | process | can t use traceid in transform processor because it returns struct trace id currently accessed as a traceid struct so does not work in conditions the transform processor s getters normally returns a field as the type it is represented in pdata this can cause problems when the field is used in set expression i ca... | 1 |

55,915 | 13,697,032,373 | IssuesEvent | 2020-10-01 01:49:11 | dotnet/runtime | https://api.github.com/repos/dotnet/runtime | closed | IL Link warning failures in System.Private.DataContractSerialization breaking the build | area-Serialization blocking-clean-ci blocking-official-build blocking-outerloop linkable-framework untriaged | I'm seeing a variety of trim analysis warnings causing build failures in master and locally due to #42824

Here's some of the warnings I've seen:

> D:\source\runtime\src\libraries\System.Private.DataContractSerialization\src\System\Runtime\Serialization\ClassDataContract.cs(390,17): Trim analysis error IL2070: Sy... | 1.0 | IL Link warning failures in System.Private.DataContractSerialization breaking the build - I'm seeing a variety of trim analysis warnings causing build failures in master and locally due to #42824

Here's some of the warnings I've seen:

> D:\source\runtime\src\libraries\System.Private.DataContractSerialization\src... | non_process | il link warning failures in system private datacontractserialization breaking the build i m seeing a variety of trim analysis warnings causing build failures in master and locally due to here s some of the warnings i ve seen d source runtime src libraries system private datacontractserialization src sys... | 0 |

617,849 | 19,406,365,681 | IssuesEvent | 2021-12-20 01:40:11 | eatmyvenom/HyArcade | https://api.github.com/repos/eatmyvenom/HyArcade | closed | [FEATURE] add a configurable json replacer to database queries | enhancement question Medium priority t:database | When getting the `/db` endpoint the pure time of stringifying and sending the data slows down everything due to the blocking nature of JSON handling. Not only can the json stringing process be changed slightly to allow more ticks to pass for other queries to be handled. But the data can be dramatically reduced with the... | 1.0 | [FEATURE] add a configurable json replacer to database queries - When getting the `/db` endpoint the pure time of stringifying and sending the data slows down everything due to the blocking nature of JSON handling. Not only can the json stringing process be changed slightly to allow more ticks to pass for other queries... | non_process | add a configurable json replacer to database queries when getting the db endpoint the pure time of stringifying and sending the data slows down everything due to the blocking nature of json handling not only can the json stringing process be changed slightly to allow more ticks to pass for other queries to be h... | 0 |

3,721 | 6,732,894,517 | IssuesEvent | 2017-10-18 13:13:04 | lockedata/rcms | https://api.github.com/repos/lockedata/rcms | opened | Register | attendee osem processes | ## Detailed task

Buy a ticket for the conference

## Assessing the task

Try to perform the task. Use google and the system documentation to help - part of what we're trying to assess how easy it is for people to work out how to do tasks.

Use a 👍 (`:+1:`) reaction to this task if you were able to perform the tas... | 1.0 | Register - ## Detailed task

Buy a ticket for the conference

## Assessing the task

Try to perform the task. Use google and the system documentation to help - part of what we're trying to assess how easy it is for people to work out how to do tasks.

Use a 👍 (`:+1:`) reaction to this task if you were able to perf... | process | register detailed task buy a ticket for the conference assessing the task try to perform the task use google and the system documentation to help part of what we re trying to assess how easy it is for people to work out how to do tasks use a 👍 reaction to this task if you were able to perf... | 1 |

12,123 | 14,740,777,733 | IssuesEvent | 2021-01-07 09:36:51 | kdjstudios/SABillingGitlab | https://api.github.com/repos/kdjstudios/SABillingGitlab | closed | Laser - New account error | anc-process anp-important ant-bug ant-parent/primary | In GitLab by @kdjstudios on Dec 3, 2018, 10:03

**Submitted by:** "'Jessica Hinkle'" <jhinkle@laseranswering.com>

**Helpdesk:** http://www.servicedesk.answernet.com/profiles/ticket/2018-12-03-19324

**Server:** External

**Client/Site:** Laser

**Account:** ALL

**Issue:**

I am trying to enter a new customer in SA B... | 1.0 | Laser - New account error - In GitLab by @kdjstudios on Dec 3, 2018, 10:03

**Submitted by:** "'Jessica Hinkle'" <jhinkle@laseranswering.com>

**Helpdesk:** http://www.servicedesk.answernet.com/profiles/ticket/2018-12-03-19324

**Server:** External

**Client/Site:** Laser

**Account:** ALL

**Issue:**

I am trying to ... | process | laser new account error in gitlab by kdjstudios on dec submitted by jessica hinkle helpdesk server external client site laser account all issue i am trying to enter a new customer in sa billing and it wont allow me to save or enter the recurring fees etc t... | 1 |

6,160 | 2,584,002,073 | IssuesEvent | 2015-02-16 12:07:14 | TrinityCore/TrinityCore | https://api.github.com/repos/TrinityCore/TrinityCore | closed | [Bug]Tome of Mel'Thandris event missing | Comp-Database Feedback-FixOutdatedMissingWip Priority-Low Sub-Quests | How it should work:

1. Accept "The Howling Vale".

2. Use "Tome of Mel'Thandris".

3. "Velinde Starsong" will appear and say:

"The numbers of my companions dwindles, goddess, and my own power shall soon be insufficient to hold back the demons of Felwood."

"Goddess, grant me the power to overcome my enemies! Hear me,... | 1.0 | [Bug]Tome of Mel'Thandris event missing - How it should work:

1. Accept "The Howling Vale".

2. Use "Tome of Mel'Thandris".

3. "Velinde Starsong" will appear and say:

"The numbers of my companions dwindles, goddess, and my own power shall soon be insufficient to hold back the demons of Felwood."

"Goddess, grant me ... | non_process | tome of mel thandris event missing how it should work accept the howling vale use tome of mel thandris velinde starsong will appear and say the numbers of my companions dwindles goddess and my own power shall soon be insufficient to hold back the demons of felwood goddess grant me the ... | 0 |

809,858 | 30,214,999,681 | IssuesEvent | 2023-07-05 15:01:36 | sdatkinson/NeuralAmpModelerPlugin | https://api.github.com/repos/sdatkinson/NeuralAmpModelerPlugin | closed | Please make "Select model" and "Select IR" text labels clickable, would improve GUI accessibility | enhancement priority:high | Hi,

I'm a totally blind guitar player who uses open source screen reader software and DAW parameters to control plug-ins. Just been checking out NAM, and it's good that you've already got all adjustable controls exposed to DAW automation, but at the moment I'm not able to load models or IRs because the iPlug2 GUI is... | 1.0 | Please make "Select model" and "Select IR" text labels clickable, would improve GUI accessibility - Hi,

I'm a totally blind guitar player who uses open source screen reader software and DAW parameters to control plug-ins. Just been checking out NAM, and it's good that you've already got all adjustable controls expos... | non_process | please make select model and select ir text labels clickable would improve gui accessibility hi i m a totally blind guitar player who uses open source screen reader software and daw parameters to control plug ins just been checking out nam and it s good that you ve already got all adjustable controls expos... | 0 |

9,492 | 12,484,677,222 | IssuesEvent | 2020-05-30 15:54:26 | Arch666Angel/mods | https://api.github.com/repos/Arch666Angel/mods | closed | [BUG] Unknown key: "recipe-name.puffer-puffing-12" to "...-15" | Angels Bio Processing Impact: Bug | **Describe the bug**

The new puffer puffing recipes are missing the locale key:

* recipe-name.puffer-puffing-12

* recipe-name.puffer-puffing-13

* recipe-name.puffer-puffing-14

* recipe-name.puffer-puffing-15

**To Reproduce**

Factorio 0.18.28 with Angel's Bio Processing 0.7.9.

Hover over the recipe in either... | 1.0 | [BUG] Unknown key: "recipe-name.puffer-puffing-12" to "...-15" - **Describe the bug**

The new puffer puffing recipes are missing the locale key:

* recipe-name.puffer-puffing-12

* recipe-name.puffer-puffing-13

* recipe-name.puffer-puffing-14

* recipe-name.puffer-puffing-15

**To Reproduce**

Factorio 0.18.28 with... | process | unknown key recipe name puffer puffing to describe the bug the new puffer puffing recipes are missing the locale key recipe name puffer puffing recipe name puffer puffing recipe name puffer puffing recipe name puffer puffing to reproduce factorio with angel s bio... | 1 |

6,854 | 9,992,266,751 | IssuesEvent | 2019-07-11 13:06:55 | bisq-network/bisq | https://api.github.com/repos/bisq-network/bisq | closed | Timeout error when taking an offer | in:network in:trade-process was:dropped | I am trying to take a HalCash sell offer but I am getting a timeout error. I am using Windows 7 Professional 64 bits and Bisq version is 0.9.7. Logs attached:

[Bisq_log_20190403.txt](https://github.com/bisq-network/bisq/files/3038137/Bisq_log_20190403.txt)

| 1.0 | Timeout error when taking an offer - I am trying to take a HalCash sell offer but I am getting a timeout error. I am using Windows 7 Professional 64 bits and Bisq version is 0.9.7. Logs attached:

[Bisq_log_20190403.txt](https://github.com/bisq-network/bisq/files/3038137/Bisq_log_20190403.txt)

| process | timeout error when taking an offer i am trying to take a halcash sell offer but i am getting a timeout error i am using windows professional bits and bisq version is logs attached | 1 |

8,921 | 12,032,269,998 | IssuesEvent | 2020-04-13 11:43:15 | nanoframework/Home | https://api.github.com/repos/nanoframework/Home | closed | MDP error with some device libs | Area: Metadata Processor Status: FIXED Type: Bug | #Details about Problem

When building some device libraries with the new MDP, it fails. due to a missing reference table for System.DateTime

Found so far:

Windows.Devices.SerialCommunication

Windows.Devices.GPIO

nanoFramework.Devices.Can

**VS version**

2019

**VS extension version**

2019.1.8.10

## Det... | 1.0 | MDP error with some device libs - #Details about Problem

When building some device libraries with the new MDP, it fails. due to a missing reference table for System.DateTime

Found so far:

Windows.Devices.SerialCommunication

Windows.Devices.GPIO

nanoFramework.Devices.Can

**VS version**

2019

**VS extension ... | process | mdp error with some device libs details about problem when building some device libraries with the new mdp it fails due to a missing reference table for system datetime found so far windows devices serialcommunication windows devices gpio nanoframework devices can vs version vs extension ver... | 1 |