Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1

value | created_at stringlengths 19 19 | repo stringlengths 7 112 | repo_url stringlengths 36 141 | action stringclasses 3

values | title stringlengths 1 744 | labels stringlengths 4 574 | body stringlengths 9 211k | index stringclasses 10

values | text_combine stringlengths 96 211k | label stringclasses 2

values | text stringlengths 96 188k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

15,777 | 19,916,724,768 | IssuesEvent | 2022-01-25 23:56:34 | alphagov/govuk-design-system | https://api.github.com/repos/alphagov/govuk-design-system | closed | Revise support model | epic refining team processes | ## What

Revise the support model for a bigger team and decide how Prototype team will fit into the model

## Why

- Team has grown since the support model was created.

- Prototype team is operational

- Users of prototype and design system team may need different types of support

- Support takes up ~17% of th... | 1.0 | Revise support model - ## What

Revise the support model for a bigger team and decide how Prototype team will fit into the model

## Why

- Team has grown since the support model was created.

- Prototype team is operational

- Users of prototype and design system team may need different types of support

- Supp... | process | revise support model what revise the support model for a bigger team and decide how prototype team will fit into the model why team has grown since the support model was created prototype team is operational users of prototype and design system team may need different types of support supp... | 1 |

17,005 | 22,386,195,677 | IssuesEvent | 2022-06-17 00:49:27 | figlesias221/ProyectoDevOps_Grupo3_IglesiasPerezMolinoloJuan | https://api.github.com/repos/figlesias221/ProyectoDevOps_Grupo3_IglesiasPerezMolinoloJuan | closed | Retrospectiva | process | Integrantes: Todos

Esfuerzo en HS-P:

- Estimado: 0 (no hay estimaciones la primera iteración)

- Real: 1 (por persona) | 1.0 | Retrospectiva - Integrantes: Todos

Esfuerzo en HS-P:

- Estimado: 0 (no hay estimaciones la primera iteración)

- Real: 1 (por persona) | process | retrospectiva integrantes todos esfuerzo en hs p estimado no hay estimaciones la primera iteración real por persona | 1 |

463,650 | 13,285,534,118 | IssuesEvent | 2020-08-24 08:16:53 | zephyrproject-rtos/zephyr | https://api.github.com/repos/zephyrproject-rtos/zephyr | closed | Bluetooth: host: GATT service request is not able to trigger the authentication procedure while in SC only mode | Bluetooth Qualification area: Bluetooth bug priority: high | **Describe the bug**

While the Zephyr host is in SC only mode, it is not able to trigger the authentication procedure when accessing an GATT characteristic requiring READ_AUTHEN permission. In the case of insufficient authentication, the security level will be escalated gradually (att.c#L1976). As stated in the SPEC, ... | 1.0 | Bluetooth: host: GATT service request is not able to trigger the authentication procedure while in SC only mode - **Describe the bug**

While the Zephyr host is in SC only mode, it is not able to trigger the authentication procedure when accessing an GATT characteristic requiring READ_AUTHEN permission. In the case of ... | non_process | bluetooth host gatt service request is not able to trigger the authentication procedure while in sc only mode describe the bug while the zephyr host is in sc only mode it is not able to trigger the authentication procedure when accessing an gatt characteristic requiring read authen permission in the case of ... | 0 |

62,250 | 8,583,480,980 | IssuesEvent | 2018-11-13 19:51:18 | pjmc-oliveira/HubListener | https://api.github.com/repos/pjmc-oliveira/HubListener | closed | Requirements Specifications Document : Project Drivers & Project Constraints # 6 | Documentation | Complete the following sections of the Requirements Specifications Template :

\section{Project Drivers}

\subsection{The Purpose of the Project}

\subsection{The Client the Customer, and Other Stakeholders}

\subsection{Users of the Product}

\section{Project Constraints}

\subsection{Relevant Facts and Assumptio... | 1.0 | Requirements Specifications Document : Project Drivers & Project Constraints # 6 - Complete the following sections of the Requirements Specifications Template :

\section{Project Drivers}

\subsection{The Purpose of the Project}

\subsection{The Client the Customer, and Other Stakeholders}

\subsection{Users of the... | non_process | requirements specifications document project drivers project constraints complete the following sections of the requirements specifications template section project drivers subsection the purpose of the project subsection the client the customer and other stakeholders subsection users of the... | 0 |

65,822 | 27,244,149,703 | IssuesEvent | 2023-02-21 23:40:09 | MicrosoftDocs/azure-docs | https://api.github.com/repos/MicrosoftDocs/azure-docs | closed | Page on azure kubernetes storage has no mention of Azure Shared Disks | container-service/svc triaged assigned-to-author doc-bug Pri1 |

Announcement blogpost: https://azure.microsoft.com/en-us/blog/announcing-the-general-availability-of-azure-shared-disks-and-new-azure-disk-storage-enhancements/

Dynamic provisioning example: https://github.com/kubernetes-sigs/azuredisk-csi-driver/tree/v1.6.0/deploy/example/sharedisk

---

#### Document Details

... | 1.0 | Page on azure kubernetes storage has no mention of Azure Shared Disks -

Announcement blogpost: https://azure.microsoft.com/en-us/blog/announcing-the-general-availability-of-azure-shared-disks-and-new-azure-disk-storage-enhancements/

Dynamic provisioning example: https://github.com/kubernetes-sigs/azuredisk-csi-drive... | non_process | page on azure kubernetes storage has no mention of azure shared disks announcement blogpost dynamic provisioning example document details ⚠ do not edit this section it is required for docs microsoft com ➟ github issue linking id version independent id co... | 0 |

721,737 | 24,836,181,718 | IssuesEvent | 2022-10-26 09:01:43 | wso2/api-manager | https://api.github.com/repos/wso2/api-manager | closed | Continuous WARN logs in the EI carbon logs. | Type/Bug Priority/Normal Missing/Component | ### Description

The following WARN log can be seen continuously in the carbon logs of the EI nodes.

`TID: [-1234] [] [2022-10-07 04:30:16,926] WARN {org.apache.synapse.aspects.flow.statistics.collectors.RuntimeStatisticCollector} - Events occur after event collection is finished, event - urn_uuid_B45589D5E71C4A548... | 1.0 | Continuous WARN logs in the EI carbon logs. - ### Description

The following WARN log can be seen continuously in the carbon logs of the EI nodes.

`TID: [-1234] [] [2022-10-07 04:30:16,926] WARN {org.apache.synapse.aspects.flow.statistics.collectors.RuntimeStatisticCollector} - Events occur after event collection i... | non_process | continuous warn logs in the ei carbon logs description the following warn log can be seen continuously in the carbon logs of the ei nodes tid warn org apache synapse aspects flow statistics collectors runtimestatisticcollector events occur after event collection is finished event urn uuid ... | 0 |

12,597 | 14,995,115,015 | IssuesEvent | 2021-01-29 13:53:45 | prisma/prisma | https://api.github.com/repos/prisma/prisma | closed | Panic when querying an M:N model after inserting/updating records | bug/2-confirmed kind/bug process/candidate team/client | ## Bug description

If you have a simple m:n schema, and try to add to an existing relationship, a findMany() query will throw a panic.

## Schema

```

model Post {

author String @Id

lastUpdated DateTime @default(now())

categories Category[]

}

model Category {

id Int @id

posts Po... | 1.0 | Panic when querying an M:N model after inserting/updating records - ## Bug description

If you have a simple m:n schema, and try to add to an existing relationship, a findMany() query will throw a panic.

## Schema

```

model Post {

author String @Id

lastUpdated DateTime @default(now())

categories C... | process | panic when querying an m n model after inserting updating records bug description if you have a simple m n schema and try to add to an existing relationship a findmany query will throw a panic schema model post author string id lastupdated datetime default now categories c... | 1 |

72,248 | 24,018,144,578 | IssuesEvent | 2022-09-15 04:14:18 | vector-im/element-android | https://api.github.com/repos/vector-im/element-android | opened | Unnecessary indicator in spaces selection | T-Defect | ### Steps to reproduce

1. Enable the new layout in Labs

2. Tap on the

icon

### Outcome

#### What did you expect?

No unnecessary indicator for selected space

#### What ha... | 1.0 | Unnecessary indicator in spaces selection - ### Steps to reproduce

1. Enable the new layout in Labs

2. Tap on the

icon

### Outcome

#### What did you expect?

No unnecessary ... | non_process | unnecessary indicator in spaces selection steps to reproduce enable the new layout in labs tap on the icon outcome what did you expect no unnecessary indicator for selected space what happened instead your phone model sharp aquos operating system version ... | 0 |

24,009 | 23,208,020,012 | IssuesEvent | 2022-08-02 07:40:03 | Elgg/Elgg | https://api.github.com/repos/Elgg/Elgg | closed | Provide a standard way to track forms with analytics | usability forms | For example, I imagine all sites want to know how many visitors try to use the sign up form but fail.

Designing the system such that all forms are automatically tracked in similar fashion would be sweet.

This would be library agnostic ideally so you wouldn't be locked to Google analytics.

| True | Provide a standard way to track forms with analytics - For example, I imagine all sites want to know how many visitors try to use the sign up form but fail.

Designing the system such that all forms are automatically tracked in similar fashion would be sweet.

This would be library agnostic ideally so you wouldn't be l... | non_process | provide a standard way to track forms with analytics for example i imagine all sites want to know how many visitors try to use the sign up form but fail designing the system such that all forms are automatically tracked in similar fashion would be sweet this would be library agnostic ideally so you wouldn t be l... | 0 |

10,835 | 13,616,805,392 | IssuesEvent | 2020-09-23 16:06:07 | CDLUC3/Make-Data-Count | https://api.github.com/repos/CDLUC3/Make-Data-Count | closed | Public documentation for log processor | Log Processing S07: Document Log Processor review | Write documentation sufficient for non-affiliated repositories to be able to implement the log processing. | 2.0 | Public documentation for log processor - Write documentation sufficient for non-affiliated repositories to be able to implement the log processing. | process | public documentation for log processor write documentation sufficient for non affiliated repositories to be able to implement the log processing | 1 |

329,943 | 10,027,064,778 | IssuesEvent | 2019-07-17 08:22:44 | wso2/product-ei | https://api.github.com/repos/wso2/product-ei | closed | ESB Tooling installation from p2/hosted p2 shows 2 certificates | Integration Studio Priority/Low | **Description:**

When installing ESB tooling pack to Eclipse it shows 2 different certificates. In further investigation, found that this other certificate comes from 3 dependency jars(org.milyn.smooks.osgi_1.5.0.SNAPSHOT.jar, org.smooks.edi.editor_1.0.1.201105031731.jar, org.smooks.edi.editor.model_1.0.1.201105031731... | 1.0 | ESB Tooling installation from p2/hosted p2 shows 2 certificates - **Description:**

When installing ESB tooling pack to Eclipse it shows 2 different certificates. In further investigation, found that this other certificate comes from 3 dependency jars(org.milyn.smooks.osgi_1.5.0.SNAPSHOT.jar, org.smooks.edi.editor_1.0.... | non_process | esb tooling installation from hosted shows certificates description when installing esb tooling pack to eclipse it shows different certificates in further investigation found that this other certificate comes from dependency jars org milyn smooks osgi snapshot jar org smooks edi editor ... | 0 |

5,827 | 21,331,820,069 | IssuesEvent | 2022-04-18 09:24:17 | mozilla-mobile/firefox-ios | https://api.github.com/repos/mozilla-mobile/firefox-ios | opened | Improve automatic string import to not take changes in unrelated files | eng:automation | In this PR the automation is changing the package.resolved file by removing one line: https://github.com/mozilla-mobile/firefox-ios/pull/10505/files#diff-6edf4db475d69aa9d1d8c8cc7cba4419a30e16fddfb130b90bf06e2a5b809cb4L142

In this case that's not critical but it could be in case there is a package change. We need to... | 1.0 | Improve automatic string import to not take changes in unrelated files - In this PR the automation is changing the package.resolved file by removing one line: https://github.com/mozilla-mobile/firefox-ios/pull/10505/files#diff-6edf4db475d69aa9d1d8c8cc7cba4419a30e16fddfb130b90bf06e2a5b809cb4L142

In this case that's n... | non_process | improve automatic string import to not take changes in unrelated files in this pr the automation is changing the package resolved file by removing one line in this case that s not critical but it could be in case there is a package change we need to be sure that only locale lproj files are changed | 0 |

11,656 | 14,519,042,826 | IssuesEvent | 2020-12-14 01:42:52 | Arch666Angel/mods | https://api.github.com/repos/Arch666Angel/mods | closed | [BUG] All 3 of the Agriculture Module Techs are using the same tier 1 tech icon | Angels Bio Processing Impact: Bug | All three Agriculture Module techs are using icon:

"__angelsbioprocessing__/graphics/technology/module-bio-productivity-1-tech.png"

Tier 2 and 3 should be using their respective tech icons. | 1.0 | [BUG] All 3 of the Agriculture Module Techs are using the same tier 1 tech icon - All three Agriculture Module techs are using icon:

"__angelsbioprocessing__/graphics/technology/module-bio-productivity-1-tech.png"

Tier 2 and 3 should be using their respective tech icons. | process | all of the agriculture module techs are using the same tier tech icon all three agriculture module techs are using icon angelsbioprocessing graphics technology module bio productivity tech png tier and should be using their respective tech icons | 1 |

10,294 | 13,147,956,100 | IssuesEvent | 2020-08-08 18:33:01 | jyn514/saltwater | https://api.github.com/repos/jyn514/saltwater | opened | [ICE] Macro expansion assertion failed | ICE fuzz preprocessor | ### Code

<!-- The code that caused the panic goes here.

This should also include the error message you got. -->

```c

#define T()

T( ` )

```

Message:

```

The application panicked (crashed).

Message: assertion failed: !matches!(args . last(), Some(Token :: Whitespace(_)))

Location: src/lex/replace.rs... | 1.0 | [ICE] Macro expansion assertion failed - ### Code

<!-- The code that caused the panic goes here.

This should also include the error message you got. -->

```c

#define T()

T( ` )

```

Message:

```

The application panicked (crashed).

Message: assertion failed: !matches!(args . last(), Some(Token :: Whit... | process | macro expansion assertion failed code the code that caused the panic goes here this should also include the error message you got c define t t message the application panicked crashed message assertion failed matches args last some token whitespa... | 1 |

1,459 | 4,039,343,275 | IssuesEvent | 2016-05-20 04:03:47 | inasafe/inasafe | https://api.github.com/repos/inasafe/inasafe | closed | Impact on Buildings: we need a new data type | Aggregation Bug Postprocessing | # problem

All impact functions on buildings assume that the data are classified and have a 'type; field. This is fine if buildings data have been downloaded through OSM and data type field is added regardless of whether the data have any attributes or system of classification. This means that users can not use their o... | 1.0 | Impact on Buildings: we need a new data type - # problem

All impact functions on buildings assume that the data are classified and have a 'type; field. This is fine if buildings data have been downloaded through OSM and data type field is added regardless of whether the data have any attributes or system of classifica... | process | impact on buildings we need a new data type problem all impact functions on buildings assume that the data are classified and have a type field this is fine if buildings data have been downloaded through osm and data type field is added regardless of whether the data have any attributes or system of classifica... | 1 |

77,732 | 15,569,831,872 | IssuesEvent | 2021-03-17 01:05:39 | benlazarine/atmosphere | https://api.github.com/repos/benlazarine/atmosphere | opened | CVE-2020-14422 (Medium) detected in ipaddress-1.0.18-py2-none-any.whl | security vulnerability | ## CVE-2020-14422 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>ipaddress-1.0.18-py2-none-any.whl</b></p></summary>

<p>IPv4/IPv6 manipulation library</p>

<p>Library home page: <a h... | True | CVE-2020-14422 (Medium) detected in ipaddress-1.0.18-py2-none-any.whl - ## CVE-2020-14422 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>ipaddress-1.0.18-py2-none-any.whl</b></p></su... | non_process | cve medium detected in ipaddress none any whl cve medium severity vulnerability vulnerable library ipaddress none any whl manipulation library library home page a href path to dependency file atmosphere dev requirements txt path to vulnerable library atmosphe... | 0 |

1,489 | 4,059,145,977 | IssuesEvent | 2016-05-25 08:30:11 | e-government-ua/iBP | https://api.github.com/repos/e-government-ua/iBP | closed | Дніпропетровська область - Надання довідки з Державної статистичної звітності про наявність земель та розподіл їх за власниками земель, землекористувачами, угіддями (за даними форми 6-ЗЕМ) | In process of testing in work | Розкрити/створити послугу на наступні міста Дніпропетровської області:

- [ ] Жовті Води

- [x] Марганець

- [ ] Новомосковськ

- [ ] Орджонікідзе

- [ ] Павлоград

- [ ] Першотравенськ

- [ ] Синельникове

- [ ] Тернівка

- [ ] Васильківський р-н

- [ ] Верхньодніпровський р-н

- [ ] Криворізький р-н

- [ ]... | 1.0 | Дніпропетровська область - Надання довідки з Державної статистичної звітності про наявність земель та розподіл їх за власниками земель, землекористувачами, угіддями (за даними форми 6-ЗЕМ) - Розкрити/створити послугу на наступні міста Дніпропетровської області:

- [ ] Жовті Води

- [x] Марганець

- [ ] Новомосковськ ... | process | дніпропетровська область надання довідки з державної статистичної звітності про наявність земель та розподіл їх за власниками земель землекористувачами угіддями за даними форми зем розкрити створити послугу на наступні міста дніпропетровської області жовті води марганець новомосковськ ... | 1 |

14,313 | 17,330,898,355 | IssuesEvent | 2021-07-28 02:04:28 | monetr/rest-api | https://api.github.com/repos/monetr/rest-api | closed | jobs: failed to retrieve bank accounts from plaid: failed to retrieve plaid accounts | Job Processing Links Plaid bug | Sentry Issue: [REST-API-19](https://sentry.io/organizations/monetr/issues/2505852012/?referrer=github_integration)

```

plaid.Error: Plaid Error - request ID: s0Y03f9LxHJbKBL, http status: 400, type: ITEM_ERROR, code: ITEM_LOGIN_REQUIRED, message: the login details of this item have changed (credentials, MFA, or requir... | 1.0 | jobs: failed to retrieve bank accounts from plaid: failed to retrieve plaid accounts - Sentry Issue: [REST-API-19](https://sentry.io/organizations/monetr/issues/2505852012/?referrer=github_integration)

```

plaid.Error: Plaid Error - request ID: s0Y03f9LxHJbKBL, http status: 400, type: ITEM_ERROR, code: ITEM_LOGIN_REQU... | process | jobs failed to retrieve bank accounts from plaid failed to retrieve plaid accounts sentry issue plaid error plaid error request id http status type item error code item login required message the login details of this item have changed credentials mfa or required user action and a user... | 1 |

67,425 | 12,957,737,080 | IssuesEvent | 2020-07-20 10:11:15 | oSoc20/ArTIFFact-Control | https://api.github.com/repos/oSoc20/ArTIFFact-Control | opened | Create the configuration creation flow | code frontend | Create the flow that enables users to create a new configuration. This configuration needs to be stored on disk as well. (see #60)

Styling the flow is not inside scope of this issue. | 1.0 | Create the configuration creation flow - Create the flow that enables users to create a new configuration. This configuration needs to be stored on disk as well. (see #60)

Styling the flow is not inside scope of this issue. | non_process | create the configuration creation flow create the flow that enables users to create a new configuration this configuration needs to be stored on disk as well see styling the flow is not inside scope of this issue | 0 |

15,088 | 18,798,433,136 | IssuesEvent | 2021-11-09 02:39:56 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | Output.vrt adds itself to the buildvrtInputFiles.txt even when "overwrite output" is set. | Feedback stale Processing Bug | ### What is the bug or the crash?

Undesireable 'feature'.

If any the folders containing the rasters to be put into your VRT contains the VRT file you are recreating, the VRT file is added to the end of the buildvrtInputFiles.txt file.

Whilst this is obvious (logical) if the VRT file happens to already be in your ... | 1.0 | Output.vrt adds itself to the buildvrtInputFiles.txt even when "overwrite output" is set. - ### What is the bug or the crash?

Undesireable 'feature'.

If any the folders containing the rasters to be put into your VRT contains the VRT file you are recreating, the VRT file is added to the end of the buildvrtInputFiles.... | process | output vrt adds itself to the buildvrtinputfiles txt even when overwrite output is set what is the bug or the crash undesireable feature if any the folders containing the rasters to be put into your vrt contains the vrt file you are recreating the vrt file is added to the end of the buildvrtinputfiles ... | 1 |

16,985 | 2,964,848,594 | IssuesEvent | 2015-07-10 19:02:22 | bridgedotnet/Bridge | https://api.github.com/repos/bridgedotnet/Bridge | opened | [TypeScript] Keyword constructor can be used in C# | defect | ```C#

using Bridge.Html5;

namespace basicTypes

{

public class Keywords

{

public string constructor = "constructor";

[Ready]

public static void Main()

{

var k = new Keywords();

Console.Log(k.constructor);

}

}

}

```

Generated... | 1.0 | [TypeScript] Keyword constructor can be used in C# - ```C#

using Bridge.Html5;

namespace basicTypes

{

public class Keywords

{

public string constructor = "constructor";

[Ready]

public static void Main()

{

var k = new Keywords();

Console.Log(k... | non_process | keyword constructor can be used in c c using bridge namespace basictypes public class keywords public string constructor constructor public static void main var k new keywords console log k constructor ... | 0 |

749,009 | 26,147,464,371 | IssuesEvent | 2022-12-30 08:05:45 | hbrs-cse/Modellbildung-und-Simulation | https://api.github.com/repos/hbrs-cse/Modellbildung-und-Simulation | closed | Modellbildung-und-Simulation/intro | priority-low 💬 comment | # Modellbildung und Simulation — Modellbildung und Simulation

[https://joergbrech.github.io/Modellbildung-und-Simulation/intro.html](https://joergbrech.github.io/Modellbildung-und-Simulation/intro.html) | 1.0 | Modellbildung-und-Simulation/intro - # Modellbildung und Simulation — Modellbildung und Simulation

[https://joergbrech.github.io/Modellbildung-und-Simulation/intro.html](https://joergbrech.github.io/Modellbildung-und-Simulation/intro.html) | non_process | modellbildung und simulation intro modellbildung und simulation — modellbildung und simulation | 0 |

4,644 | 3,875,579,902 | IssuesEvent | 2016-04-12 02:01:33 | lionheart/openradar-mirror | https://api.github.com/repos/lionheart/openradar-mirror | opened | 22018195: Cntrl-drag to create outlet connection adds the declaration for the outlet to the wrong class | classification:ui/usability reproducible:always status:open | #### Description

Summary:

In a workspace with multiple projects in it, if any of the projects contain a class with the same name as a class in another project (ie. ViewController), cntrl-dragging to create an outlet connection to that class will create the declaration in the other project's class.

Steps to Reprodu... | True | 22018195: Cntrl-drag to create outlet connection adds the declaration for the outlet to the wrong class - #### Description

Summary:

In a workspace with multiple projects in it, if any of the projects contain a class with the same name as a class in another project (ie. ViewController), cntrl-dragging to create an out... | non_process | cntrl drag to create outlet connection adds the declaration for the outlet to the wrong class description summary in a workspace with multiple projects in it if any of the projects contain a class with the same name as a class in another project ie viewcontroller cntrl dragging to create an outlet con... | 0 |

20,855 | 27,635,294,742 | IssuesEvent | 2023-03-10 14:01:58 | Open-EO/openeo-processes | https://api.github.com/repos/Open-EO/openeo-processes | closed | Process to load a vector cube | new process minor vector | While there are various discussions about how to conceptually define and handle vector cubes,

I don't think we have already a standardized solution to *load* the vector data in the first place (except for inline GeoJSON).

I'll first try to list a couple of vector loading scenario's (with varying degrees of practic... | 1.0 | Process to load a vector cube - While there are various discussions about how to conceptually define and handle vector cubes,

I don't think we have already a standardized solution to *load* the vector data in the first place (except for inline GeoJSON).

I'll first try to list a couple of vector loading scenario's ... | process | process to load a vector cube while there are various discussions about how to conceptually define and handle vector cubes i don t think we have already a standardized solution to load the vector data in the first place except for inline geojson i ll first try to list a couple of vector loading scenario s ... | 1 |

21,269 | 28,441,846,687 | IssuesEvent | 2023-04-16 01:35:43 | home-climate-control/dz | https://api.github.com/repos/home-climate-control/dz | closed | Reduce PidEconomizer jitter on reaching the target temperature | reactive process control economizer | Relevant as of rev. 224da0bafb7f68c8c427f85f899ed9b6026ae3ac

### Expected Behavior

When the indoor temperature reaches the target temperature, control system issues a signal consistent with the usual PID controller system behavior.

### Actual Behavior

Due to math used, properties of this PID controller must... | 1.0 | Reduce PidEconomizer jitter on reaching the target temperature - Relevant as of rev. 224da0bafb7f68c8c427f85f899ed9b6026ae3ac

### Expected Behavior

When the indoor temperature reaches the target temperature, control system issues a signal consistent with the usual PID controller system behavior.

### Actual Beh... | process | reduce pideconomizer jitter on reaching the target temperature relevant as of rev expected behavior when the indoor temperature reaches the target temperature control system issues a signal consistent with the usual pid controller system behavior actual behavior due to math used properties o... | 1 |

351,912 | 32,035,484,785 | IssuesEvent | 2023-09-22 15:03:30 | brave/brave-browser | https://api.github.com/repos/brave/brave-browser | closed | Manual test run on macOS (Intel) for 1.58.x - Release #5 | tests OS/macOS QA/Yes release-notes/exclude OS/Desktop | ### Installer

- [x] Check signature:

- [x] If macOS, using x64 binary run `spctl --assess --verbose` for the installed version and make sure it returns `accepted`

- [x] If macOS, using universal binary run `spctl --assess --verbose` for the installed version and make sure it returns `accepted`

### Widev... | 1.0 | Manual test run on macOS (Intel) for 1.58.x - Release #5 - ### Installer

- [x] Check signature:

- [x] If macOS, using x64 binary run `spctl --assess --verbose` for the installed version and make sure it returns `accepted`

- [x] If macOS, using universal binary run `spctl --assess --verbose` for the installe... | non_process | manual test run on macos intel for x release installer check signature if macos using binary run spctl assess verbose for the installed version and make sure it returns accepted if macos using universal binary run spctl assess verbose for the installed version... | 0 |

13,152 | 15,573,052,313 | IssuesEvent | 2021-03-17 08:02:46 | bitpal/bitpal_umbrella | https://api.github.com/repos/bitpal/bitpal_umbrella | opened | Support other cryptocurrencies | Payment processor enhancement | Should focus on cryptos people wants to use as payments. It might be beneficial to focus on those similar to each other (like the Bitcoin forks). | 1.0 | Support other cryptocurrencies - Should focus on cryptos people wants to use as payments. It might be beneficial to focus on those similar to each other (like the Bitcoin forks). | process | support other cryptocurrencies should focus on cryptos people wants to use as payments it might be beneficial to focus on those similar to each other like the bitcoin forks | 1 |

69,320 | 14,988,344,094 | IssuesEvent | 2021-01-29 01:01:31 | orenavitov/promoted-builds-plugin | https://api.github.com/repos/orenavitov/promoted-builds-plugin | opened | CVE-2021-21610 (Medium) detected in jenkins-core-2.121.1.jar | security vulnerability | ## CVE-2021-21610 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jenkins-core-2.121.1.jar</b></p></summary>

<p>Jenkins core code and view files to render HTML.</p>

<p>Path to depend... | True | CVE-2021-21610 (Medium) detected in jenkins-core-2.121.1.jar - ## CVE-2021-21610 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jenkins-core-2.121.1.jar</b></p></summary>

<p>Jenkins... | non_process | cve medium detected in jenkins core jar cve medium severity vulnerability vulnerable library jenkins core jar jenkins core code and view files to render html path to dependency file promoted builds plugin pom xml path to vulnerable library epository org jenkins ci main je... | 0 |

6,890 | 10,029,118,248 | IssuesEvent | 2019-07-17 13:17:50 | habitat-sh/habitat | https://api.github.com/repos/habitat-sh/habitat | closed | Restarting a service when any hook changes is too extreme and leads to unnecessary restarts | A-process-management A-supervisor C-bug E-easy V-sup X-change | Currently, whenever _any_ lifecycle hook file is updated in response to census changes, we trigger a restart of the service.

Based on how the hooks in question are actually run, this is actually far too aggressive, and could lead to services restarting more than is strictly necessary.

For example, the `post-stop`... | 1.0 | Restarting a service when any hook changes is too extreme and leads to unnecessary restarts - Currently, whenever _any_ lifecycle hook file is updated in response to census changes, we trigger a restart of the service.

Based on how the hooks in question are actually run, this is actually far too aggressive, and coul... | process | restarting a service when any hook changes is too extreme and leads to unnecessary restarts currently whenever any lifecycle hook file is updated in response to census changes we trigger a restart of the service based on how the hooks in question are actually run this is actually far too aggressive and coul... | 1 |

15,472 | 19,683,505,935 | IssuesEvent | 2022-01-11 19:16:34 | bridgetownrb/bridgetown | https://api.github.com/repos/bridgetownrb/bridgetown | closed | Deprecate generators in plugins prior to 1.0 | process | The "generate" step in Bridgetown is superfluous. Adding either a post_read or pre_render hook accomplishes exactly the same thing. All a generator "does" is get called by the site build in between post_read and pre_render.

Either we should sunset this API in favor of Builders + Hooks, or we should expand the generato... | 1.0 | Deprecate generators in plugins prior to 1.0 - The "generate" step in Bridgetown is superfluous. Adding either a post_read or pre_render hook accomplishes exactly the same thing. All a generator "does" is get called by the site build in between post_read and pre_render.

Either we should sunset this API in favor of Bui... | process | deprecate generators in plugins prior to the generate step in bridgetown is superfluous adding either a post read or pre render hook accomplishes exactly the same thing all a generator does is get called by the site build in between post read and pre render either we should sunset this api in favor of bui... | 1 |

41,348 | 12,831,922,413 | IssuesEvent | 2020-07-07 06:37:47 | rvvergara/fazebuk-api | https://api.github.com/repos/rvvergara/fazebuk-api | closed | CVE-2020-7595 (High) detected in nokogiri-1.10.5.gem | security vulnerability | ## CVE-2020-7595 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>nokogiri-1.10.5.gem</b></p></summary>

<p>Nokogiri (���) is an HTML, XML, SAX, and Reader parser. Among

Nokogiri's many... | True | CVE-2020-7595 (High) detected in nokogiri-1.10.5.gem - ## CVE-2020-7595 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>nokogiri-1.10.5.gem</b></p></summary>

<p>Nokogiri (���) is an HT... | non_process | cve high detected in nokogiri gem cve high severity vulnerability vulnerable library nokogiri gem nokogiri ��� is an html xml sax and reader parser among nokogiri s many features is the ability to search documents via xpath or selectors library home page a href ... | 0 |

1,561 | 4,160,901,245 | IssuesEvent | 2016-06-17 14:50:50 | BEP-store/final_report | https://api.github.com/repos/BEP-store/final_report | closed | Methodology | process | Is the software development methodology chosen by the team clear and well justified? | 1.0 | Methodology - Is the software development methodology chosen by the team clear and well justified? | process | methodology is the software development methodology chosen by the team clear and well justified | 1 |

47,654 | 19,686,974,117 | IssuesEvent | 2022-01-11 23:42:48 | microsoft/BotFramework-Composer | https://api.github.com/repos/microsoft/BotFramework-Composer | closed | CICD Azure Devops or Visual Studio | Type: Bug customer-reported Bot Services Needs-triage | <!-- Please search for your feature request before creating a new one. >

<!-- Complete the necessary portions of this template and delete the rest. -->

## Describe the bug

<!-- Give a clear and concise description of what the bug is. -->

I have done compilations from Azure Devops Pipeline and also test compil... | 1.0 | CICD Azure Devops or Visual Studio - <!-- Please search for your feature request before creating a new one. >

<!-- Complete the necessary portions of this template and delete the rest. -->

## Describe the bug

<!-- Give a clear and concise description of what the bug is. -->

I have done compilations from Azure... | non_process | cicd azure devops or visual studio describe the bug i have done compilations from azure devops pipeline and also test compilation from visual studio but i see that the output contains fewer files than the one published directly from bf composer version os ... | 0 |

11,332 | 14,144,989,957 | IssuesEvent | 2020-11-10 17:08:05 | googleapis/nodejs-asset | https://api.github.com/repos/googleapis/nodejs-asset | opened | cleanup old nodejs-asset resources | type: process | I'm wondering whether the reason this repository is slowing down is that we're collecting old vms and buckets in our account (_leading to gradual slowdown over time).

We should add logic that cleans up the old VMs and storage buckets created for the asset client. | 1.0 | cleanup old nodejs-asset resources - I'm wondering whether the reason this repository is slowing down is that we're collecting old vms and buckets in our account (_leading to gradual slowdown over time).

We should add logic that cleans up the old VMs and storage buckets created for the asset client. | process | cleanup old nodejs asset resources i m wondering whether the reason this repository is slowing down is that we re collecting old vms and buckets in our account leading to gradual slowdown over time we should add logic that cleans up the old vms and storage buckets created for the asset client | 1 |

4,057 | 6,988,877,644 | IssuesEvent | 2017-12-14 14:30:13 | w3c/transitions | https://api.github.com/repos/w3c/transitions | closed | need adequate implementation when entering Edited CR | Process Issue | s/will be demonstrated/is demonstrated/ in

https://www.w3.org/Guide/transitions?profile=CR&cr=rec-update | 1.0 | need adequate implementation when entering Edited CR - s/will be demonstrated/is demonstrated/ in

https://www.w3.org/Guide/transitions?profile=CR&cr=rec-update | process | need adequate implementation when entering edited cr s will be demonstrated is demonstrated in | 1 |

127,567 | 17,296,470,506 | IssuesEvent | 2021-07-25 20:45:15 | UEH-Squad/VMS | https://api.github.com/repos/UEH-Squad/VMS | opened | Body Part - Unit Organization Logo Banner | UI/UX Design requirements | As a user,

I want to know which unit organizations are involved in this website

So that I can know the scope of this website.

**Acceptance Criteria:**

1. A big banner with freestyle design is compulsory to be blue, green and white.

2. @Meenomenal will provide to you all of the logo (including the unit organiza... | 1.0 | Body Part - Unit Organization Logo Banner - As a user,

I want to know which unit organizations are involved in this website

So that I can know the scope of this website.

**Acceptance Criteria:**

1. A big banner with freestyle design is compulsory to be blue, green and white.

2. @Meenomenal will provide to you ... | non_process | body part unit organization logo banner as a user i want to know which unit organizations are involved in this website so that i can know the scope of this website acceptance criteria a big banner with freestyle design is compulsory to be blue green and white meenomenal will provide to you ... | 0 |

76,576 | 26,493,990,247 | IssuesEvent | 2023-01-18 02:43:19 | zed-industries/feedback | https://api.github.com/repos/zed-industries/feedback | closed | vim mode changes to insert after code navigation | defect vim | ### Check for existing issues

- [X] Completed

### Describe the bug

When I navigate to a new position using <kbd>Command + Shift + O</kbd> or <kbd>Command + P</kbd> with vim mode set to Normal mode, vim keeps switching to Insert mode which is unexpected because my next action will usually be moving my cursor un... | 1.0 | vim mode changes to insert after code navigation - ### Check for existing issues

- [X] Completed

### Describe the bug

When I navigate to a new position using <kbd>Command + Shift + O</kbd> or <kbd>Command + P</kbd> with vim mode set to Normal mode, vim keeps switching to Insert mode which is unexpected because... | non_process | vim mode changes to insert after code navigation check for existing issues completed describe the bug when i navigate to a new position using command shift o or command p with vim mode set to normal mode vim keeps switching to insert mode which is unexpected because my next action will... | 0 |

411,694 | 27,828,178,551 | IssuesEvent | 2023-03-20 00:26:18 | BizTheHabesha/bug-free-code-quiz | https://api.github.com/repos/BizTheHabesha/bug-free-code-quiz | closed | Improve readme | documentation enhancement | ### readme needs:

- Screenshot

- Description

- Deployed application link

- Notes for devs | 1.0 | Improve readme - ### readme needs:

- Screenshot

- Description

- Deployed application link

- Notes for devs | non_process | improve readme readme needs screenshot description deployed application link notes for devs | 0 |

13,170 | 2,735,063,879 | IssuesEvent | 2015-04-18 01:54:56 | STEllAR-GROUP/hpx | https://api.github.com/repos/STEllAR-GROUP/hpx | reopened | HPX Compilation Fails | compiler: intel type: defect | Revision: 682730ca36ff2eca4462ab497071b3c036ec4f84

Compiler: Intel 14.0.2 on SuperMIC

Log:

```

$ make

[ 0%] Building CXX object src/CMakeFiles/hpx.dir/runtime_impl.cpp.o

/usr/include/c++/4.4.7/bits/stl_pair.h(73): error: function "std::unique_ptr<_Tp, _Tp_Deleter>::unique_ptr(const std::unique_ptr<_Tp, _Tp_Dele... | 1.0 | HPX Compilation Fails - Revision: 682730ca36ff2eca4462ab497071b3c036ec4f84

Compiler: Intel 14.0.2 on SuperMIC

Log:

```

$ make

[ 0%] Building CXX object src/CMakeFiles/hpx.dir/runtime_impl.cpp.o

/usr/include/c++/4.4.7/bits/stl_pair.h(73): error: function "std::unique_ptr<_Tp, _Tp_Deleter>::unique_ptr(const std::... | non_process | hpx compilation fails revision compiler intel on supermic log make building cxx object src cmakefiles hpx dir runtime impl cpp o usr include c bits stl pair h error function std unique ptr unique ptr const std unique ptr declared at line of usr include c ... | 0 |

8,699 | 11,841,355,192 | IssuesEvent | 2020-03-23 20:37:57 | john-kurkowski/tldextract | https://api.github.com/repos/john-kurkowski/tldextract | closed | Support for IPv6 | icebox: needs clarification low priority: caller can pre/post-process | Hi!

It looks like TLDExtract does not support IPv6 (IPv4 works fine):

```

In [5]: tldextract.extract('http://[2001:0db8:85a3:08d3::0370:7344]:8080/')

Out[5]: ExtractResult(subdomain='', domain='[2001', suffix='')

```

Do you think it's possible to add it?

Thanks a lot.

Regards | 1.0 | Support for IPv6 - Hi!

It looks like TLDExtract does not support IPv6 (IPv4 works fine):

```

In [5]: tldextract.extract('http://[2001:0db8:85a3:08d3::0370:7344]:8080/')

Out[5]: ExtractResult(subdomain='', domain='[2001', suffix='')

```

Do you think it's possible to add it?

Thanks a lot.

Regards | process | support for hi it looks like tldextract does not support works fine in tldextract extract http out extractresult subdomain domain suffix do you think it s possible to add it thanks a lot regards | 1 |

12,486 | 14,952,620,015 | IssuesEvent | 2021-01-26 15:44:44 | ORNL-AMO/AMO-Tools-Desktop | https://api.github.com/repos/ORNL-AMO/AMO-Tools-Desktop | closed | Process Heating Opening View Factor Warnings | Process Heating | If the user entered value gets too far away from the calculated value (5%), then give a warning

Like FLA in pumps/fans | 1.0 | Process Heating Opening View Factor Warnings - If the user entered value gets too far away from the calculated value (5%), then give a warning

Like FLA in pumps/fans | process | process heating opening view factor warnings if the user entered value gets too far away from the calculated value then give a warning like fla in pumps fans | 1 |

268,791 | 8,414,543,546 | IssuesEvent | 2018-10-13 03:47:18 | insidegui/Sharecuts | https://api.github.com/repos/insidegui/Sharecuts | opened | Implement Analytics | priority / low type / idea | I believe we should implement **privacy-friendly** analytics to help us determine areas where we can improve and/or focus on. These would be strictly for improving the app for users and nothing else.

### Immediate

1. Visits

2. Page Views

-- Detailed shortcut views would be included.

3. Session Time

4. Bounce ... | 1.0 | Implement Analytics - I believe we should implement **privacy-friendly** analytics to help us determine areas where we can improve and/or focus on. These would be strictly for improving the app for users and nothing else.

### Immediate

1. Visits

2. Page Views

-- Detailed shortcut views would be included.

3. Se... | non_process | implement analytics i believe we should implement privacy friendly analytics to help us determine areas where we can improve and or focus on these would be strictly for improving the app for users and nothing else immediate visits page views detailed shortcut views would be included se... | 0 |

10,079 | 13,044,161,973 | IssuesEvent | 2020-07-29 03:47:28 | tikv/tikv | https://api.github.com/repos/tikv/tikv | closed | UCP: Migrate scalar function `UnixTimestampDec` from TiDB | challenge-program-2 component/coprocessor difficulty/easy sig/coprocessor |

## Description

Port the scalar function `UnixTimestampDec` from TiDB to coprocessor.

## Score

* 50

## Mentor(s)

* @sticnarf

## Recommended Skills

* Rust programming

## Learning Materials

Already implemented expressions ported from TiDB

- https://github.com/tikv/tikv/tree/master/components/tidb_query/src/r... | 2.0 | UCP: Migrate scalar function `UnixTimestampDec` from TiDB -

## Description

Port the scalar function `UnixTimestampDec` from TiDB to coprocessor.

## Score

* 50

## Mentor(s)

* @sticnarf

## Recommended Skills

* Rust programming

## Learning Materials

Already implemented expressions ported from TiDB

- https://... | process | ucp migrate scalar function unixtimestampdec from tidb description port the scalar function unixtimestampdec from tidb to coprocessor score mentor s sticnarf recommended skills rust programming learning materials already implemented expressions ported from tidb | 1 |

816,649 | 30,605,783,171 | IssuesEvent | 2023-07-23 01:19:27 | JeffreyGaydos/te-custom-mods | https://api.github.com/repos/JeffreyGaydos/te-custom-mods | closed | Merge Existing Video Styling with Recent Video Styling | enhancement Priority | Since adding the recent video automatic embed, the articles with actual videos have different styles. Bring them closer to being the same by:

- Ensuring the X is visible on mobile devices

- Adding a maximize feature for both desktop and mobile devices

Originally brought up here: https://discord.com/channels/278658... | 1.0 | Merge Existing Video Styling with Recent Video Styling - Since adding the recent video automatic embed, the articles with actual videos have different styles. Bring them closer to being the same by:

- Ensuring the X is visible on mobile devices

- Adding a maximize feature for both desktop and mobile devices

Origin... | non_process | merge existing video styling with recent video styling since adding the recent video automatic embed the articles with actual videos have different styles bring them closer to being the same by ensuring the x is visible on mobile devices adding a maximize feature for both desktop and mobile devices origin... | 0 |

6,224 | 9,161,748,478 | IssuesEvent | 2019-03-01 11:20:29 | JudicialAppointmentsCommission/documentation | https://api.github.com/repos/JudicialAppointmentsCommission/documentation | closed | Write up findings from the two postmortems | process | ## Background

We ran two postmortems on 2018-10-30. Details can be found here:

https://drive.google.com/drive/u/0/folders/0AAn1hbRIW4_hUk9PVA

All of the details are current in photos of post-its. These need to be written up as tickets/recommendation or passed over to policy for inclusion in their templates.

As... | 1.0 | Write up findings from the two postmortems - ## Background

We ran two postmortems on 2018-10-30. Details can be found here:

https://drive.google.com/drive/u/0/folders/0AAn1hbRIW4_hUk9PVA

All of the details are current in photos of post-its. These need to be written up as tickets/recommendation or passed over to ... | process | write up findings from the two postmortems background we ran two postmortems on details can be found here all of the details are current in photos of post its these need to be written up as tickets recommendation or passed over to policy for inclusion in their templates assigned this to the ja... | 1 |

83,457 | 7,872,154,538 | IssuesEvent | 2018-06-25 10:13:49 | ethersphere/go-ethereum | https://api.github.com/repos/ethersphere/go-ethereum | opened | Run longrunning and benchmark tests somehow | test | We need to run long running and benchmark tests somehow, e.g. once a day | 1.0 | Run longrunning and benchmark tests somehow - We need to run long running and benchmark tests somehow, e.g. once a day | non_process | run longrunning and benchmark tests somehow we need to run long running and benchmark tests somehow e g once a day | 0 |

17,117 | 22,635,800,987 | IssuesEvent | 2022-06-30 18:51:03 | qgis/QGIS-Documentation | https://api.github.com/repos/qgis/QGIS-Documentation | closed | Allow input parameter values for qgis_process to be specified as a JSON object passed via stdin (Request in QGIS) | Processing 3.24 | ### Request for documentation

From pull request QGIS/qgis#46497

Author: @nyalldawson

QGIS version: 3.24

**Allow input parameter values for qgis_process to be specified as a JSON object passed via stdin**

### PR Description:

This provides a mechanism to support complex input parameters for algorithms, and a way for qg... | 1.0 | Allow input parameter values for qgis_process to be specified as a JSON object passed via stdin (Request in QGIS) - ### Request for documentation

From pull request QGIS/qgis#46497

Author: @nyalldawson

QGIS version: 3.24

**Allow input parameter values for qgis_process to be specified as a JSON object passed via stdin**... | process | allow input parameter values for qgis process to be specified as a json object passed via stdin request in qgis request for documentation from pull request qgis qgis author nyalldawson qgis version allow input parameter values for qgis process to be specified as a json object passed via stdin ... | 1 |

35,392 | 4,652,438,602 | IssuesEvent | 2016-10-03 14:01:42 | prdxn-org/tagster | https://api.github.com/repos/prdxn-org/tagster | closed | Device (iPhone/Nexus) - Instagram Users section - Long names are misaligned | bug Design development | **Issue Images:**

**What should happen:**

Long names is misaligned. The two worlds should be one below the other and aligned. | 1.0 | Device (iPhone/Nexus) - Instagram Users section - Long names are misaligned - **Issue Images:**

**What should happen:**

Long names is misaligned. The two worlds should be one below t... | non_process | device iphone nexus instagram users section long names are misaligned issue images what should happen long names is misaligned the two worlds should be one below the other and aligned | 0 |

271,131 | 8,476,487,279 | IssuesEvent | 2018-10-24 22:08:22 | wevote/WebApp | https://api.github.com/repos/wevote/WebApp | opened | Update styles of "Suggest Organization" buttons | HTML / CSS Priority: 1 | When the “Suggest” buttons below are clicked, we open a new browser tab to the link: https://api.wevoteusa.org/vg/create/

In our recent upgrade to Bootstrap 4, these buttons lost their basic Bootstrap formatting.

- [ ] “Suggest Organization” button on the “Listen to Organizations” page (at the bottom of the “Who... | 1.0 | Update styles of "Suggest Organization" buttons - When the “Suggest” buttons below are clicked, we open a new browser tab to the link: https://api.wevoteusa.org/vg/create/

In our recent upgrade to Bootstrap 4, these buttons lost their basic Bootstrap formatting.

- [ ] “Suggest Organization” button on the “Listen... | non_process | update styles of suggest organization buttons when the “suggest” buttons below are clicked we open a new browser tab to the link in our recent upgrade to bootstrap these buttons lost their basic bootstrap formatting “suggest organization” button on the “listen to organizations” page at the botto... | 0 |

1,365 | 3,923,626,005 | IssuesEvent | 2016-04-22 12:14:55 | SpongePowered/Mixin | https://api.github.com/repos/SpongePowered/Mixin | closed | Annotation Processor Chokes on Inner Classes | annotation processor bug | Throughout various injections in Sponge's implementation, there are a few that inject into methods where either the target or the targeted method itself uses a nested class type as an argument and Mixin AP will chuck a warning at compile time, even though the injection is perfectly valid at runtime.

@Mumfrey was sug... | 1.0 | Annotation Processor Chokes on Inner Classes - Throughout various injections in Sponge's implementation, there are a few that inject into methods where either the target or the targeted method itself uses a nested class type as an argument and Mixin AP will chuck a warning at compile time, even though the injection is ... | process | annotation processor chokes on inner classes throughout various injections in sponge s implementation there are a few that inject into methods where either the target or the targeted method itself uses a nested class type as an argument and mixin ap will chuck a warning at compile time even though the injection is ... | 1 |

400,286 | 11,771,859,598 | IssuesEvent | 2020-03-16 01:46:45 | AY1920S2-CS2103-W14-3/main | https://api.github.com/repos/AY1920S2-CS2103-W14-3/main | closed | As a busy university student I want to be reminded of my friend's birthdays as and when they are approaching | priority.High type.Story | so that I do not need to memorise all my friend's birthdays but will still be able to celebrate it for them. | 1.0 | As a busy university student I want to be reminded of my friend's birthdays as and when they are approaching - so that I do not need to memorise all my friend's birthdays but will still be able to celebrate it for them. | non_process | as a busy university student i want to be reminded of my friend s birthdays as and when they are approaching so that i do not need to memorise all my friend s birthdays but will still be able to celebrate it for them | 0 |

15,295 | 19,304,118,977 | IssuesEvent | 2021-12-13 09:41:50 | codeanit/til | https://api.github.com/repos/codeanit/til | opened | Shu-Ha-Ri - A way of thinking about how you learn a technique | wip leader process | Shu-Ha-Ri - A way of thinking about how you learn a technique.

Aikido – first learn, then detach, finally transcend

# Resource

- [ ] https://www.martinfowler.com/bliki/ShuHaRi.html

- [ ] https://en.wikipedia.org/wiki/Shuhari

- [ ] https://www.accenture.com/us-en/blogs/software-engineering-blog/shuhari-agile-ad... | 1.0 | Shu-Ha-Ri - A way of thinking about how you learn a technique - Shu-Ha-Ri - A way of thinking about how you learn a technique.

Aikido – first learn, then detach, finally transcend

# Resource

- [ ] https://www.martinfowler.com/bliki/ShuHaRi.html

- [ ] https://en.wikipedia.org/wiki/Shuhari

- [ ] https://www.acce... | process | shu ha ri a way of thinking about how you learn a technique shu ha ri a way of thinking about how you learn a technique aikido – first learn then detach finally transcend resource | 1 |

221,403 | 17,348,771,631 | IssuesEvent | 2021-07-29 05:29:33 | WPChill/strong-testimonials | https://api.github.com/repos/WPChill/strong-testimonials | closed | Read more link is sometimes under / stuck to the bottom of the wrapper | bug need testing tested | **Describe the bug**

Used Slideshow with small widget style

**Screenshots**

If applicable, add screenshots to help explain your problem.

| 2.0 | Read more link is sometimes under / stuck to the bottom of the wrapper - **Describe the bug**

Used Slideshow with small widget style

**Screenshots**

If applicable, add screenshots to help explain your problem.

| 1.0 | Service Jobs invoiced to Manufacturer appear on Customers > Make Payment tab - If a service job is invoiced to Manufacturer, it should NOT show as on the customer's account.

| non_process | service jobs invoiced to manufacturer appear on customers make payment tab if a service job is invoiced to manufacturer it should not show as on the customer s account | 0 |

356,242 | 10,590,558,669 | IssuesEvent | 2019-10-09 09:01:12 | yosefalnajjarofficial/handyman | https://api.github.com/repos/yosefalnajjarofficial/handyman | closed | BACK BUTTON | High Priority | - [x] Back button work to go back to the previous path using history

- [ ] Back button in the home page must be removed | 1.0 | BACK BUTTON - - [x] Back button work to go back to the previous path using history

- [ ] Back button in the home page must be removed | non_process | back button back button work to go back to the previous path using history back button in the home page must be removed | 0 |

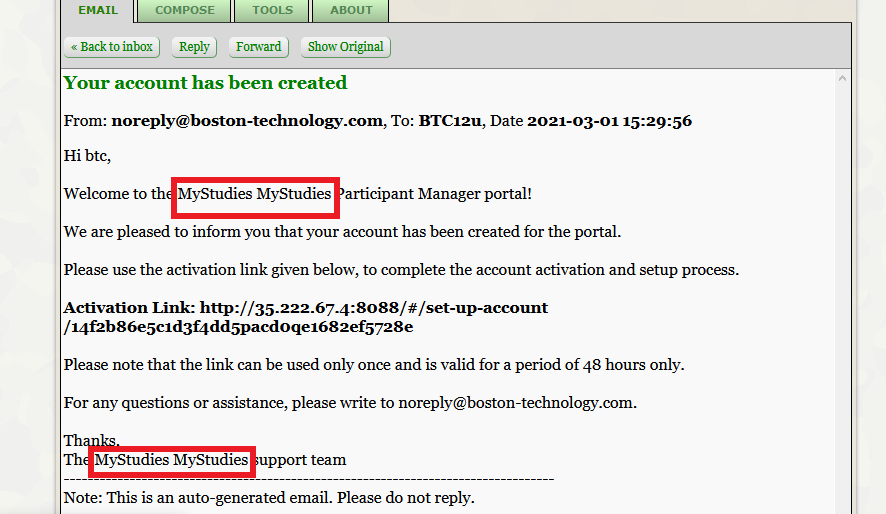

13,440 | 15,882,145,474 | IssuesEvent | 2021-04-09 15:39:11 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | Email templates > Org name issue | Auth server Bug P2 Participant datastore Process: Fixed Process: Tested QA Process: Tested dev Study datastore | AR : Org name is displayed as 'MyStudies MyStudies'

ER : Org name should be displayed as 'Organization' for all the email templates

| 3.0 | Email templates > Org name issue - AR : Org name is displayed as 'MyStudies MyStudies'

ER : Org name should be displayed as 'Organization' for all the email templates

| process | email templates org name issue ar org name is displayed as mystudies mystudies er org name should be displayed as organization for all the email templates | 1 |

335,252 | 10,151,024,127 | IssuesEvent | 2019-08-05 19:13:24 | ilakeful/LakeBot | https://api.github.com/repos/ilakeful/LakeBot | closed | Possible removal of animal commands | changes: patch possible priority: medium | The feature implies possible removing of deprecated animal commands. | 1.0 | Possible removal of animal commands - The feature implies possible removing of deprecated animal commands. | non_process | possible removal of animal commands the feature implies possible removing of deprecated animal commands | 0 |

15,452 | 19,667,417,203 | IssuesEvent | 2022-01-11 00:54:37 | dotnet/runtime | https://api.github.com/repos/dotnet/runtime | closed | `Process.Kill(entireProcessTree: true)` does not fire `Exited` event on Windows | area-System.Diagnostics.Process untriaged | ### Description

The `Kill(bool entireProcessTree)` overload on `System.Diagnostics.Process` does not cause the `Exited` event to be fired on Windows when `entireProcessTree` is `true`, even when `EnableRaisingEvents` is `true` and `WaitForExit()` is called.

### Reproduction Steps

```csharp

using System.Diagno... | 1.0 | `Process.Kill(entireProcessTree: true)` does not fire `Exited` event on Windows - ### Description

The `Kill(bool entireProcessTree)` overload on `System.Diagnostics.Process` does not cause the `Exited` event to be fired on Windows when `entireProcessTree` is `true`, even when `EnableRaisingEvents` is `true` and `Wai... | process | process kill entireprocesstree true does not fire exited event on windows description the kill bool entireprocesstree overload on system diagnostics process does not cause the exited event to be fired on windows when entireprocesstree is true even when enableraisingevents is true and wai... | 1 |

4,041 | 6,973,206,380 | IssuesEvent | 2017-12-11 19:40:40 | orbisgis/orbisgis | https://api.github.com/repos/orbisgis/orbisgis | closed | Error with the projections | Bug Processing and analysis Rendering & cartography Severity Critical | Issue get by @gpetit with the the following SQL script (simplified). OrbisGIS has a strange behavior :

``` sql

DROP TABLE IF EXISTS FRANCE_L93, FRANCE_L2E;

--Save the union of all the geometries of the table COMMUNE in FRANCE_L93

CREATE TABLE FRANCE_L93 AS SELECT ST_UNION(ST_ACCUM(THE_GEOM)) as THE_GEOM FROM COMMUNE;... | 1.0 | Error with the projections - Issue get by @gpetit with the the following SQL script (simplified). OrbisGIS has a strange behavior :

``` sql

DROP TABLE IF EXISTS FRANCE_L93, FRANCE_L2E;

--Save the union of all the geometries of the table COMMUNE in FRANCE_L93

CREATE TABLE FRANCE_L93 AS SELECT ST_UNION(ST_ACCUM(THE_GEO... | process | error with the projections issue get by gpetit with the the following sql script simplified orbisgis has a strange behavior sql drop table if exists france france save the union of all the geometries of the table commune in france create table france as select st union st accum the geom as t... | 1 |

629,902 | 20,070,490,515 | IssuesEvent | 2022-02-04 05:49:23 | lowRISC/opentitan | https://api.github.com/repos/lowRISC/opentitan | closed | prim_count may never trigger an error | Priority:P1 Type:Task Component:RTL Component:IP:prim |

In otp, this prim_count is parameterized to a up increasing CrossCnt, but `set_i` is tie to 0 as it uses the default max value (all 1s).

https://github.com/lowRISC/opentitan/blob/68e86515989c047e28915f6d5d534c578c9bdb3d/hw/ip/otp_ctrl/rtl/otp_ctrl_part_buf.sv#L585-L599

However, since `set_i` is always 0. `cmp_val... | 1.0 | prim_count may never trigger an error -

In otp, this prim_count is parameterized to a up increasing CrossCnt, but `set_i` is tie to 0 as it uses the default max value (all 1s).

https://github.com/lowRISC/opentitan/blob/68e86515989c047e28915f6d5d534c578c9bdb3d/hw/ip/otp_ctrl/rtl/otp_ctrl_part_buf.sv#L585-L599

Howe... | non_process | prim count may never trigger an error in otp this prim count is parameterized to a up increasing crosscnt but set i is tie to as it uses the default max value all however since set i is always cmp valid is always invalid which causes the error is always regardless the counter value ... | 0 |

6,558 | 9,648,700,419 | IssuesEvent | 2019-05-17 16:59:38 | googleapis/google-cloud-python | https://api.github.com/repos/googleapis/google-cloud-python | closed | Release the world to support 'client_info' for manual clients. | api: bigquery api: bigtable api: clouderrorreporting api: cloudresourcemanager api: cloudtrace api: datastore api: dns api: firestore api: logging api: pubsub api: runtimeconfig api: spanner api: translation api:bigquerystorage packaging type: process | /cc @crwilcox, @busunkim96, @tswast

Follow-on to #7825. Because we are releasing new features in `google-api-core` and (more importantly) `google-cloud-core`, we need to handle this release phase delicately. Current clients which depend on `google-cloud-core` use a too-narrow pin:

```bash

$ grep google-cloud-... | 1.0 | Release the world to support 'client_info' for manual clients. - /cc @crwilcox, @busunkim96, @tswast

Follow-on to #7825. Because we are releasing new features in `google-api-core` and (more importantly) `google-cloud-core`, we need to handle this release phase delicately. Current clients which depend on `google-c... | process | release the world to support client info for manual clients cc crwilcox tswast follow on to because we are releasing new features in google api core and more importantly google cloud core we need to handle this release phase delicately current clients which depend on google cloud core u... | 1 |

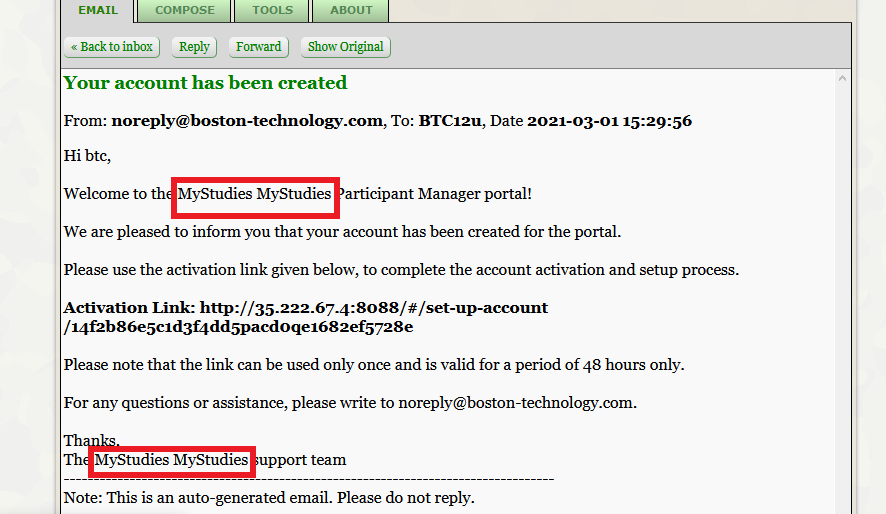

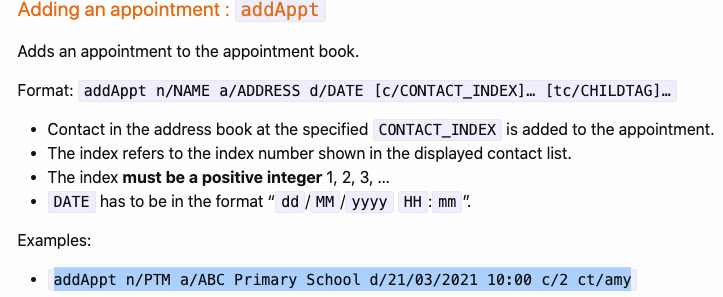

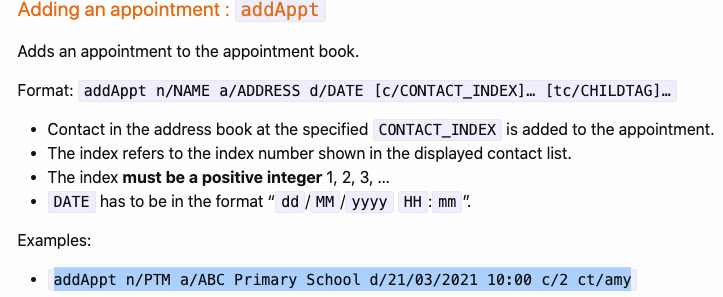

200,488 | 15,801,721,306 | IssuesEvent | 2021-04-03 06:17:08 | habi39/ped | https://api.github.com/repos/habi39/ped | opened | Typo error in UG | severity.Medium type.DocumentationBug |

Used the example provided above and realised that the ct is a typo error. Should be tc instead.

... | 1.0 | Typo error in UG -

Used the example provided above and realised that the ct is a typo error. Should be tc instead.

" is called; if returning a Promise, ensure it resolves. | process: flaky test topic: flake ❄️ stage: fire watch "topic: done()" E2E stale | ### Link to dashboard or CircleCI failure

https://app.circleci.com/pipelines/github/cypress-io/cypress/42199/workflows/8284d2ab-4a4d-4254-b1a8-37702a8eaea4/jobs/1751398

### Link to failing test in GitHub

https://github.com/cypress-io/cypress/blob/develop/packages/server/test/unit/fixture_spec.js#L156

### An... | 1.0 | Flaky test: Error: Timeout of 10000ms exceeded. For async tests and hooks, ensure "done()" is called; if returning a Promise, ensure it resolves. - ### Link to dashboard or CircleCI failure

https://app.circleci.com/pipelines/github/cypress-io/cypress/42199/workflows/8284d2ab-4a4d-4254-b1a8-37702a8eaea4/jobs/175139... | process | flaky test error timeout of exceeded for async tests and hooks ensure done is called if returning a promise ensure it resolves link to dashboard or circleci failure link to failing test in github analysis img width alt screen shot at am src ... | 1 |

4,221 | 7,179,784,193 | IssuesEvent | 2018-01-31 20:52:59 | geneontology/go-ontology | https://api.github.com/repos/geneontology/go-ontology | closed | Improve definition of neurological system and nervous system | organism-level process | This was brought up in #13824

Uberon has 'neurological system' as an exact synonym for 'nervous system'.

http://www.ontobee.org/ontology/UBERON?iri=http://purl.obolibrary.org/obo/UBERON_0001016

@dosumis writes (by email)

> I just got the impression from the asserted child classes that something broader than w... | 1.0 | Improve definition of neurological system and nervous system - This was brought up in #13824

Uberon has 'neurological system' as an exact synonym for 'nervous system'.

http://www.ontobee.org/ontology/UBERON?iri=http://purl.obolibrary.org/obo/UBERON_0001016

@dosumis writes (by email)

> I just got the impressio... | process | improve definition of neurological system and nervous system this was brought up in uberon has neurological system as an exact synonym for nervous system dosumis writes by email i just got the impression from the asserted child classes that something broader than what is usually understood by... | 1 |

59,449 | 6,651,900,083 | IssuesEvent | 2017-09-28 21:56:53 | nodejs/node | https://api.github.com/repos/nodejs/node | closed | Investigate flaky parallel/test-net-better-error-messages-port-hostname | CI / flaky test net test | * **Version**: v8.0.0-pre

* **Platform**: centos5-32

* **Subsystem**: test, net

<!-- Enter your issue details below this comment. -->

https://ci.nodejs.org/job/node-test-commit-linux/8716/nodes=centos5-32/console

```console

not ok 740 parallel/test-net-better-error-messages-port-hostname

---

duration... | 2.0 | Investigate flaky parallel/test-net-better-error-messages-port-hostname - * **Version**: v8.0.0-pre

* **Platform**: centos5-32

* **Subsystem**: test, net

<!-- Enter your issue details below this comment. -->

https://ci.nodejs.org/job/node-test-commit-linux/8716/nodes=centos5-32/console

```console

not ok 74... | non_process | investigate flaky parallel test net better error messages port hostname version pre platform subsystem test net console not ok parallel test net better error messages port hostname duration ms severity fail stack assert js ... | 0 |

11,086 | 13,929,153,260 | IssuesEvent | 2020-10-21 22:57:39 | Team-MoXie/InventoryManager | https://api.github.com/repos/Team-MoXie/InventoryManager | closed | Very slow order processing | OrderProcessor enhancement | https://github.com/Team-MoXie/InventoryManager/blob/a76cd88d6339656488d9d2e4e7c57c5c16f1e682/OrderProcessor/src/main/java/team/moxie/OrderProcessor.java#L24

This is pretty slow, it is roughly about 13.5 orders per second, this is entirely due to going out to the table 2 times for each one. I will have to research ho... | 1.0 | Very slow order processing - https://github.com/Team-MoXie/InventoryManager/blob/a76cd88d6339656488d9d2e4e7c57c5c16f1e682/OrderProcessor/src/main/java/team/moxie/OrderProcessor.java#L24

This is pretty slow, it is roughly about 13.5 orders per second, this is entirely due to going out to the table 2 times for each on... | process | very slow order processing this is pretty slow it is roughly about orders per second this is entirely due to going out to the table times for each one i will have to research how to do this faster as far as i can tell there is a faster way to do this by creating a local table and then using join but i ... | 1 |

21,109 | 28,069,348,742 | IssuesEvent | 2023-03-29 17:50:33 | AvaloniaUI/Avalonia | https://api.github.com/repos/AvaloniaUI/Avalonia | closed | Emoji panel input is not working for TextBox on Win11 | bug area-textprocessing | **Describe the bug**

Emoji panel input is not working for TextBox on Win11

**To Reproduce**

Steps to reproduce the behavior:

1. Open control catalog

2. open text box tab

3. select any text box

4. open emoji panel (Win+.)

5. click on any emoji

6. Nothing happens

**Expected behavior**

Emoji is added to t... | 1.0 | Emoji panel input is not working for TextBox on Win11 - **Describe the bug**

Emoji panel input is not working for TextBox on Win11

**To Reproduce**

Steps to reproduce the behavior:

1. Open control catalog

2. open text box tab

3. select any text box

4. open emoji panel (Win+.)

5. click on any emoji

6. Nothi... | process | emoji panel input is not working for textbox on describe the bug emoji panel input is not working for textbox on to reproduce steps to reproduce the behavior open control catalog open text box tab select any text box open emoji panel win click on any emoji nothing happe... | 1 |

7,221 | 10,349,562,713 | IssuesEvent | 2019-09-04 22:59:45 | edgi-govdata-archiving/web-monitoring | https://api.github.com/repos/edgi-govdata-archiving/web-monitoring | closed | ☂ Pull Versions from IA for diffing | deployment priority processing | Useful links:

* https://archive.org/help/wayback_api.php

* https://blog.archive.org/2013/07/04/metadata-api/#read

* https://archive.org/help/abouts3.txt

* http://ws-dl.blogspot.fr/2013/07/2013-07-15-wayback-machine-upgrades.html (timemap)

<!---

@huboard:{"order":23.0,"milestone_order":23,"custom_state":""}

--... | 1.0 | ☂ Pull Versions from IA for diffing - Useful links:

* https://archive.org/help/wayback_api.php

* https://blog.archive.org/2013/07/04/metadata-api/#read

* https://archive.org/help/abouts3.txt

* http://ws-dl.blogspot.fr/2013/07/2013-07-15-wayback-machine-upgrades.html (timemap)

<!---

@huboard:{"order":23.0,"mil... | process | ☂ pull versions from ia for diffing useful links timemap huboard order milestone order custom state | 1 |

6,667 | 9,782,643,162 | IssuesEvent | 2019-06-08 01:14:56 | hppod/movie-api | https://api.github.com/repos/hppod/movie-api | closed | User controller | Controllers Implement In process | - [x] GET BY ID

- [x] PUT

- [x] DELETE

---

- [x] MY REVIEWS

---

- [x] CREATE USER

- [x] LOGIN | 1.0 | User controller - - [x] GET BY ID

- [x] PUT

- [x] DELETE

---

- [x] MY REVIEWS

---

- [x] CREATE USER

- [x] LOGIN | process | user controller get by id put delete my reviews create user login | 1 |

285,746 | 24,693,417,054 | IssuesEvent | 2022-10-19 10:12:49 | harvester/harvester | https://api.github.com/repos/harvester/harvester | closed | [BUG] enable storage-network, Networks statistics are incorrect | kind/bug area/ui priority/2 severity/2 reproduce/always not-require/test-plan | **Describe the bug**

<!-- A clear and concise description of what the bug is. -->

![image.pn... | 1.0 | [BUG] enable storage-network, Networks statistics are incorrect - **Describe the bug**

<!-- A clear and concise description of what the bug is. -->

detected in golang.org/x/tools-v0.1.12 - autoclosed | Mend: dependency security vulnerability | ## CVE-2015-9251 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>golang.org/x/tools-v0.1.12</b></p></summary>

<p></p>

<p>Library home page: <a href="https://proxy.golang.org/golang.o... | True | CVE-2015-9251 (Medium) detected in golang.org/x/tools-v0.1.12 - autoclosed - ## CVE-2015-9251 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>golang.org/x/tools-v0.1.12</b></p></summa... | non_process | cve medium detected in golang org x tools autoclosed cve medium severity vulnerability vulnerable library golang org x tools library home page a href dependency hierarchy x golang org x tools vulnerable library found in head commit a href f... | 0 |

9,754 | 12,737,221,848 | IssuesEvent | 2020-06-25 18:21:25 | dotnet/runtime | https://api.github.com/repos/dotnet/runtime | closed | Request - Add process architecture to Process object | api-suggestion area-System.Diagnostics.Process untriaged | It would be nice if there was property for the Process object that indicated whether a process was x64 / x86 (WOW64 for x64 systems).

A simple call to [IsWow64Process](https://docs.microsoft.com/en-us/windows/desktop/api/wow64apiset/nf-wow64apiset-iswow64process) would do the trick for Windows (not sure about the eq... | 1.0 | Request - Add process architecture to Process object - It would be nice if there was property for the Process object that indicated whether a process was x64 / x86 (WOW64 for x64 systems).

A simple call to [IsWow64Process](https://docs.microsoft.com/en-us/windows/desktop/api/wow64apiset/nf-wow64apiset-iswow64process... | process | request add process architecture to process object it would be nice if there was property for the process object that indicated whether a process was for systems a simple call to would do the trick for windows not sure about the equivalent functions for linux osx and would save everyone from... | 1 |

7,966 | 11,147,737,293 | IssuesEvent | 2019-12-23 13:33:18 | prisma/prisma2 | https://api.github.com/repos/prisma/prisma2 | closed | Invalid state in `prisma2 init` | bug/2-confirmed kind/bug process/candidate topic: cli-init | Was playing around with `preview-10` and these steps:

```

Blank project

SQLite

Photon + Lift

Javascript

Demo Script

```

Somehow I ended up here:

The "Back" has no functionali... | 1.0 | Invalid state in `prisma2 init` - Was playing around with `preview-10` and these steps:

```

Blank project

SQLite

Photon + Lift

Javascript

Demo Script

```

Somehow I ended up here:

detected in postcss-8.2.4.tgz, postcss-7.0.35.tgz | security vulnerability | ## CVE-2021-23382 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>postcss-8.2.4.tgz</b>, <b>postcss-7.0.35.tgz</b></p></summary>

<p>

<details><summary><b>postcss-8.2.4.tgz</b></p><... | True | CVE-2021-23382 (Medium) detected in postcss-8.2.4.tgz, postcss-7.0.35.tgz - ## CVE-2021-23382 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>postcss-8.2.4.tgz</b>, <b>postcss-7.0.3... | non_process | cve medium detected in postcss tgz postcss tgz cve medium severity vulnerability vulnerable libraries postcss tgz postcss tgz postcss tgz tool for transforming styles with js plugins library home page a href path to dependency file js snac... | 0 |

1,680 | 4,320,705,115 | IssuesEvent | 2016-07-25 06:59:11 | Jumpscale/jscockpit | https://api.github.com/repos/Jumpscale/jscockpit | closed | Various macro errors on Cockpit | priority_critical process_duplicate type_bug | See:

- https://moehaha.barcelona.aydo.com/cockpit/AYSRepos

- https://moehaha.barcelona.aydo.com/cockpit/AYSInstances

- https://moehaha.barcelona.aydo.com/cockpit/AYSTemplates

- https://moehaha.barcelona.aydo.com/cockpit/AYSRuns

- https://moehaha.barcelona.aydo.com/cockpit/AYSBlueprints

- https://moehaha.barcelona... | 1.0 | Various macro errors on Cockpit - See:

- https://moehaha.barcelona.aydo.com/cockpit/AYSRepos

- https://moehaha.barcelona.aydo.com/cockpit/AYSInstances

- https://moehaha.barcelona.aydo.com/cockpit/AYSTemplates

- https://moehaha.barcelona.aydo.com/cockpit/AYSRuns

- https://moehaha.barcelona.aydo.com/cockpit/AYSBluep... | process | various macro errors on cockpit see also error in raml console | 1 |