Unnamed: 0 | id | type | created_at | repo | repo_url | action | title | labels | body | index | text_combine | label | text | binary_label |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

16,534 | 21,563,296,774 | IssuesEvent | 2022-05-01 13:44:14 | MartinBruun/P6 | https://api.github.com/repos/MartinBruun/P6 | opened | (process) Update the labels-on-pr.yml workflow to update PRs when they fix an Issue | Need grooming 4: Could have Process | **What and why is it needed?**

When linking an issue from a PR (writing Fixes #some-number), it should automatically update the label from "Increment" to "Fix" to signal the change, so it is obvious which pull request actually fixes issues, and which just increment the general quality of the program.

The logic to i... | 1.0 | (process) Update the labels-on-pr.yml workflow to update PRs when they fix an Issue - **What and why is it needed?**

When linking an issue from a PR (writing Fixes #some-number), it should automatically update the label from "Increment" to "Fix" to signal the change, so it is obvious which pull request actually fixes ... | process | process update the labels on pr yml workflow to update prs when they fix an issue what and why is it needed when linking an issue from a pr writing fixes some number it should automatically update the label from increment to fix to signal the change so it is obvious which pull request actually fixes ... | 1 |

20,321 | 26,961,255,960 | IssuesEvent | 2023-02-08 18:21:51 | syncfusion/ej2-react-ui-components | https://api.github.com/repos/syncfusion/ej2-react-ui-components | closed | Export in DocumentEditorContainerComponent | word-processor | Exporting function in DocumentEditorComponent is working.

But exporting in DocumentEditorContainerComponent is not working.

Is it issue, or expected? | 1.0 | Export in DocumentEditorContainerComponent - Exporting function in DocumentEditorComponent is working.

But exporting in DocumentEditorContainerComponent is not working.

Is it issue, or expected? | process | export in documenteditorcontainercomponent exporting function in documenteditorcomponent is working but exporting in documenteditorcontainercomponent is not working is it issue or expected | 1 |

11,151 | 13,957,693,246 | IssuesEvent | 2020-10-24 08:10:55 | alexanderkotsev/geoportal | https://api.github.com/repos/alexanderkotsev/geoportal | opened | BE: Bounding box GetCapabilities | BE - Belgium Geoportal Harvesting process | Dear Angelo,

I hope you are all fine. I have a question about WMS GetCapibilites. It concerns tag wms:BoundingBox. I see here (http://inspire-geoportal.ec.europa.eu/resources/INSPIRE-b285fced-4eb6-11e8-a459-52540023a883_20190118-042017/services/1/PullResults/1-110/services/107/resourceLocator1/view/services/1/layers/... | 1.0 | BE: Bounding box GetCapabilities - Dear Angelo,

I hope you are all fine. I have a question about WMS GetCapibilites. It concerns tag wms:BoundingBox. I see here (http://inspire-geoportal.ec.europa.eu/resources/INSPIRE-b285fced-4eb6-11e8-a459-52540023a883_20190118-042017/services/1/PullResults/1-110/services/107/resou... | process | be bounding box getcapabilities dear angelo i hope you are all fine i have a question about wms getcapibilites it concerns tag wms boundingbox i see here it is written that the metadata element quot bounding box for each coordinate reference system in which the layer is available quot is missing empty or... | 1 |

5,445 | 7,158,511,947 | IssuesEvent | 2018-01-27 01:22:05 | aspnet/Identity | https://api.github.com/repos/aspnet/Identity | closed | Improve the extensibility of the default identity UI | 2 - Working identity-service | Support changing the user type from IdentityUser to TUser while using the default UI. | 1.0 | Improve the extensibility of the default identity UI - Support changing the user type from IdentityUser to TUser while using the default UI. | non_process | improve the extensibility of the default identity ui support changing the user type from identityuser to tuser while using the default ui | 0 |

4,489 | 7,345,950,655 | IssuesEvent | 2018-03-07 19:04:46 | UKHomeOffice/dq-aws-transition | https://api.github.com/repos/UKHomeOffice/dq-aws-transition | closed | Add data-transfer job for OAG data to S3 archive | DQ Data Pipeline DQ Tranche 1 Production SSM processing | Add Data Transfer job for OAG data to S3 archive.

Pre requisites:

- [x] `sftp_oag_client_maytech.py` downloads files successfully.

## Acceptance Criteria

- [x] PM2 logs show no errors for OAG to S3 Archive configuration

- [x] OAG data is moved to S3 Archive bucket

- [x] Data Transfer configuration repeats | 1.0 | Add data-transfer job for OAG data to S3 archive - Add Data Transfer job for OAG data to S3 archive.

Pre requisites:

- [x] `sftp_oag_client_maytech.py` downloads files successfully.

## Acceptance Criteria

- [x] PM2 logs show no errors for OAG to S3 Archive configuration

- [x] OAG data is moved to S3 Archive b... | process | add data transfer job for oag data to archive add data transfer job for oag data to archive pre requisites sftp oag client maytech py downloads files successfully acceptance criteria logs show no errors for oag to archive configuration oag data is moved to archive bucket d... | 1 |

5,251 | 8,039,527,291 | IssuesEvent | 2018-07-30 18:38:00 | GoogleCloudPlatform/google-cloud-python | https://api.github.com/repos/GoogleCloudPlatform/google-cloud-python | opened | BigQuery: 'test_undelete_table' systest | api: bigquery flaky testing type: process | See: https://circleci.com/gh/GoogleCloudPlatform/google-cloud-python/7481

```python

_____________________________ test_undelete_table ______________________________

client = <google.cloud.bigquery.client.Client object at 0x7f7bd1a56e50>

to_delete = [Dataset(DatasetReference(u'precise-truck-742', u'undelete_tabl... | 1.0 | BigQuery: 'test_undelete_table' systest - See: https://circleci.com/gh/GoogleCloudPlatform/google-cloud-python/7481

```python

_____________________________ test_undelete_table ______________________________

client = <google.cloud.bigquery.client.Client object at 0x7f7bd1a56e50>

to_delete = [Dataset(DatasetRefer... | process | bigquery test undelete table systest see python test undelete table client to delete def test undelete table client to delete dataset id undelete table dataset format millis table id undelet... | 1 |

16,540 | 9,439,793,759 | IssuesEvent | 2019-04-14 13:20:20 | friendica/friendica | https://api.github.com/repos/friendica/friendica | closed | Base URL and Base Path shouldn't be guessed on every single request | Enhancement Performance | On the heel of #6679, I'd like to move the App auto-detection of the base path and base URL to the install phase. These values then could be written in the config file skeleton and not be detected/re-written on each call.

Not only it is useless for correctly configured nodes, but it can produce spectacular failures ... | True | Base URL and Base Path shouldn't be guessed on every single request - On the heel of #6679, I'd like to move the App auto-detection of the base path and base URL to the install phase. These values then could be written in the config file skeleton and not be detected/re-written on each call.

Not only it is useless fo... | non_process | base url and base path shouldn t be guessed on every single request on the heel of i d like to move the app auto detection of the base path and base url to the install phase these values then could be written in the config file skeleton and not be detected re written on each call not only it is useless for c... | 0 |

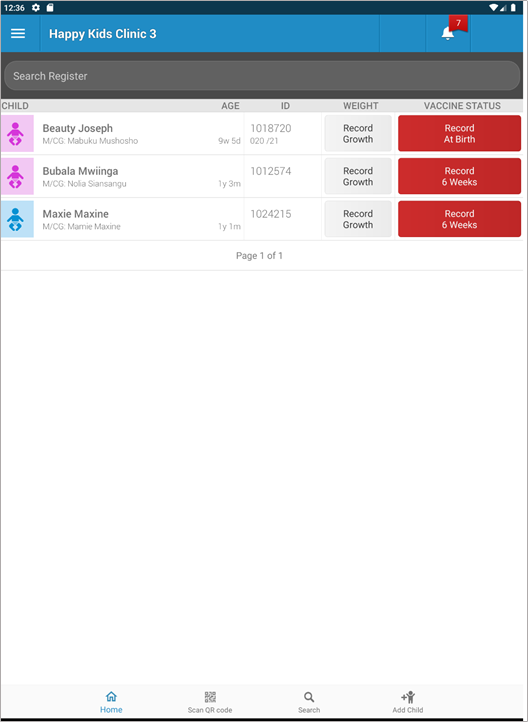

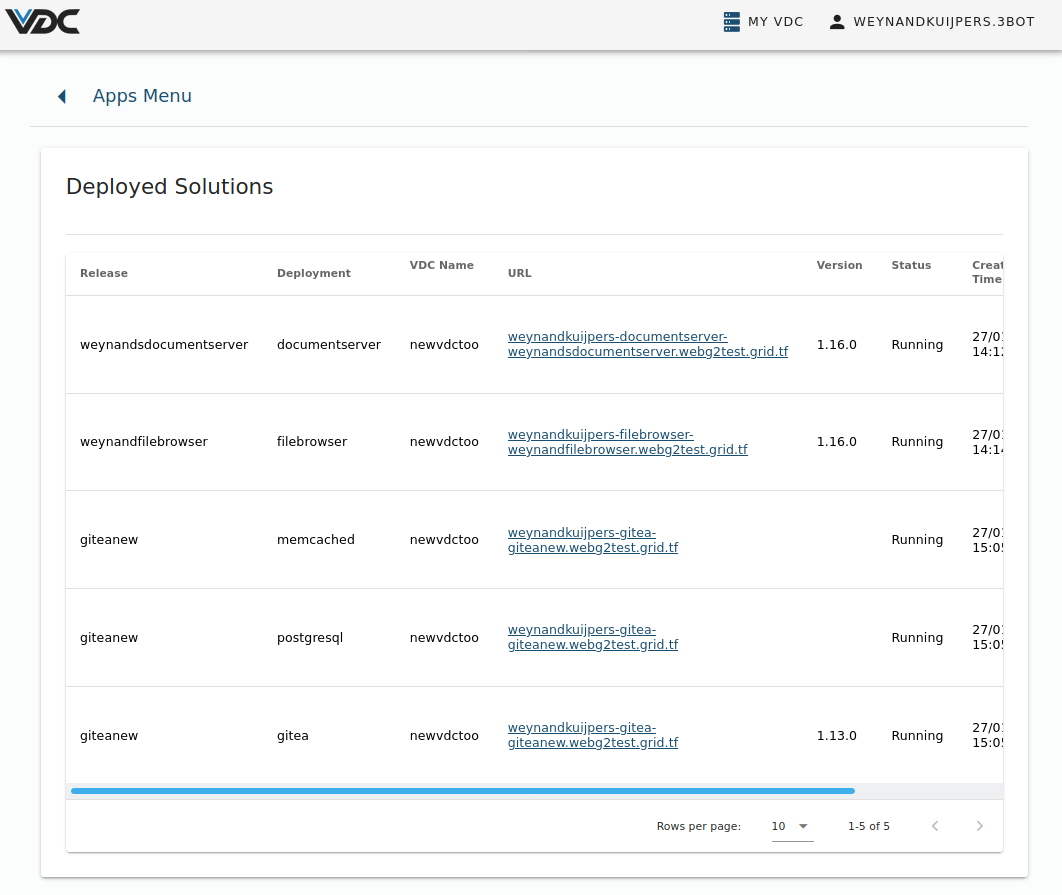

542,711 | 15,865,323,981 | IssuesEvent | 2021-04-08 14:38:02 | OpenSRP/opensrp-client-path-zeir | https://api.github.com/repos/OpenSRP/opensrp-client-path-zeir | closed | The filter flag showing incorrect figures | Show stopper Top priority Under discussion | v.0.0.8- preview

The filter button is showing incorrect figures - this client has only 3 clients but the filter shows 7 children (user: Demo3) I also checked with Demo1 and the same issue is happening.

| 1.0 | The filter flag showing incorrect figures - v.0.0.8- preview

The filter button is showing incorrect figures - this client has only 3 clients but the filter shows 7 children (user: Demo3) I also checked with Demo1 and the same issue is happening.

Author Name: **opi**

---

Actually, on a fresh 2.6.3 testing install, my cron file are located in `/etc/cron.daily` :

```

$ ls -1B /etc/cron.*/yunohost*

/etc/cron.daily/yunohost-certificate-renew

/etc/cron.daily/yunohost-fetch-appslists

``... | 1.0 | Backup cron hook only look at /etc/cron.d/ folder - ###### Original Redmine Issue: [933](https://dev.yunohost.org/issues/933)

Author Name: **opi**

---

Actually, on a fresh 2.6.3 testing install, my cron file are located in `/etc/cron.daily` :

```

$ ls -1B /etc/cron.*/yunohost*

/etc/cron.daily/yunohost-certificate... | non_process | backup cron hook only look at etc cron d folder original redmine issue author name opi actually on a fresh testing install my cron file are located in etc cron daily ls etc cron yunohost etc cron daily yunohost certificate renew etc cron daily yunohost fetch a... | 0 |

507,264 | 14,679,956,604 | IssuesEvent | 2020-12-31 08:36:28 | k8smeetup/website-tasks | https://api.github.com/repos/k8smeetup/website-tasks | opened | /docs/reference/glossary/aggregation-layer.md | lang/zh priority/P0 sync/update version/master welcome | Source File: [/docs/reference/glossary/aggregation-layer.md](https://github.com/kubernetes/website/blob/master/content/en/docs/reference/glossary/aggregation-layer.md)

Diff command reference:

```bash

# View the differences between the original document and the translated document

git diff --no-index -- content/en/docs/reference/glossary/aggregation-layer.md content/zh/docs/reference/glossar... | 1.0 | /docs/reference/glossary/aggregation-layer.md - Source File: [/docs/reference/glossary/aggregation-layer.md](https://github.com/kubernetes/website/blob/master/content/en/docs/reference/glossary/aggregation-layer.md)

Diff command reference:

```bash

# View the differences between the original document and the translated document

git diff --no-index -- content/en/docs/reference/glossary/aggreg... | non_process | docs reference glossary aggregation layer md source file diff command reference bash view the differences between the original document and the translated document git diff no index content en docs reference glossary aggregation layer md content zh docs reference glossary aggregation layer md view original document changes across branches git diff release master content en docs reference gloss... | 0 |

16,773 | 21,951,173,074 | IssuesEvent | 2022-05-24 08:04:20 | deepset-ai/haystack | https://api.github.com/repos/deepset-ai/haystack | closed | Add launch_tika() method to simplify usage of TikaConverter | type:feature good first issue Contributions wanted! topic:preprocessing | `TikaConverter` requires tika to be running, which can be achieved by executing `docker run -p 9998:9998 apache/tika:1.24.1`. However, to simplify the usage of `TikaConverter` for users, it would be nice to have a method to launch the docker container from within Haystack. As an example, for our document stores, we hav... | 1.0 | Add launch_tika() method to simplify usage of TikaConverter - `TikaConverter` requires tika to be running, which can be achieved by executing `docker run -p 9998:9998 apache/tika:1.24.1`. However, to simplify the usage of `TikaConverter` for users, it would be nice to have a method to launch the docker container from w... | process | add launch tika method to simplify usage of tikaconverter tikaconverter requires tika to be running which can be achieved by executing docker run p apache tika however to simplify the usage of tikaconverter for users it would be nice to have a method to launch the docker container from within h... | 1 |

2,898 | 5,887,002,032 | IssuesEvent | 2017-05-17 05:44:09 | Jumpscale/jumpscale_core8 | https://api.github.com/repos/Jumpscale/jumpscale_core8 | closed | IPFS support for AYS build process | process_wontfix type_feature |

- make sure js82 supports everything required to build towards IPFS so it can be used for core0 in 0-complexity/g8os | 1.0 | IPFS support for AYS build process -

- make sure js82 supports everything required to build towards IPFS so it can be used for core0 in 0-complexity/g8os | process | ipfs support for ays build process make sure supports everything required to build towards ipfs so it can be used for in complexity | 1 |

5,640 | 8,499,451,150 | IssuesEvent | 2018-10-29 17:11:42 | easy-software-ufal/annotations_repos | https://api.github.com/repos/easy-software-ufal/annotations_repos | opened | aspnet/Routing The RegEx inline constraint doesnt take care of Escape characters | C# RPV test wrong processing | Issue: `https://github.com/aspnet/Routing/issues/136`

PR: `https://github.com/aspnet/Routing/commit/4e5fc2e2dd4f7dd7a8c2fd8a936f6b2a002a1078` | 1.0 | aspnet/Routing The RegEx inline constraint doesnt take care of Escape characters - Issue: `https://github.com/aspnet/Routing/issues/136`

PR: `https://github.com/aspnet/Routing/commit/4e5fc2e2dd4f7dd7a8c2fd8a936f6b2a002a1078` | process | aspnet routing the regex inline constraint doesnt take care of escape characters issue pr | 1 |

3,370 | 6,497,143,975 | IssuesEvent | 2017-08-22 13:02:28 | zero-os/0-orchestrator | https://api.github.com/repos/zero-os/0-orchestrator | closed | OVS container not started after a reboot of a node | process_duplicate type_bug | ```

[Wed16 13:11] - Run.py :108 :j.atyourservice.server - ERROR - error during execution of step 1 in run fbd466193ee8fcf66a5363bb223f78ef

Error of job: container!geertsgw (install):

*TRACEBACK*********************************************************************************

Traceback (most r... | 1.0 | OVS container not started after a reboot of a node - ```

[Wed16 13:11] - Run.py :108 :j.atyourservice.server - ERROR - error during execution of step 1 in run fbd466193ee8fcf66a5363bb223f78ef

Error of job: container!geertsgw (install):

*TRACEBACK***********************************************... | process | ovs container not started after a reboot of a node run py j atyourservice server error error during execution of step in run error of job container geertsgw install traceback traceback... | 1 |

95,718 | 27,591,566,360 | IssuesEvent | 2023-03-09 01:02:46 | dotnet/runtime | https://api.github.com/repos/dotnet/runtime | closed | OSX infra issue - prereq check for 'pkg-config' missing | os-mac-os-x blocking-clean-ci blocking-official-build area-Infrastructure untriaged | Library + OSX tests failing with this:

```

__DistroRid: osx-x64

Setting up directories for build

Checking prerequisites...

Please install pkg-config before running this script, see https://github.com/dotnet/runtime/blob/main/docs/workflow/requirements/macos-requirements.md

```

See errors on https://git... | 1.0 | OSX infra issue - prereq check for 'pkg-config' missing - Library + OSX tests failing with this:

```

__DistroRid: osx-x64

Setting up directories for build

Checking prerequisites...

Please install pkg-config before running this script, see https://github.com/dotnet/runtime/blob/main/docs/workflow/requiremen... | non_process | osx infra issue prereq check for pkg config missing library osx tests failing with this distrorid osx setting up directories for build checking prerequisites please install pkg config before running this script see see errors on known issue error message fill th... | 0 |

246,357 | 7,895,166,959 | IssuesEvent | 2018-06-29 01:30:05 | aowen87/BAR | https://api.github.com/repos/aowen87/BAR | closed | User settable axis label scaling is backwards (inverted?) | Likelihood: 3 - Occasional OS: All Priority: Normal Severity: 4 - Crash / Wrong Results Support Group: Any bug version: 2.8.2 | If I choose to change the axis label scaling, and change it to '2' (for 10^2), I get the results for 10^-2 instead (and vice-versa).

eg, if my label is 5, and I change label scaling to '2', I get 0.05 instead of 500.

-----------------------REDMINE MIGRATION-----------------------

This ticket was migrated from Red... | 1.0 | User settable axis label scaling is backwards (inverted?) - If I choose to change the axis label scaling, and change it to '2' (for 10^2), I get the results for 10^-2 instead (and vice-versa).

eg, if my label is 5, and I change label scaling to '2', I get 0.05 instead of 500.

-----------------------REDMINE MIGRAT... | non_process | user settable axis label scaling is backwards inverted if i choose to change the axis label scaling and change it to for i get the results for instead and vice versa eg if my label is and i change label scaling to i get instead of redmine migration ... | 0 |

262,345 | 19,783,749,698 | IssuesEvent | 2022-01-18 02:24:51 | Rabbittee/JavaScript30 | https://api.github.com/repos/Rabbittee/JavaScript30 | closed | Skip no.6 on day 04 | documentation day04 | > 6. create a list of Boulevards in Paris that contain 'de' anywhere in the name

> https://en.wikipedia.org/wiki/Category:Boulevards_in_Paris

Skip it.

@Rabbittee/dunnojs | 1.0 | Skip no.6 on day 04 - > 6. create a list of Boulevards in Paris that contain 'de' anywhere in the name

> https://en.wikipedia.org/wiki/Category:Boulevards_in_Paris

Skip it.

@Rabbittee/dunnojs | non_process | skip no on day create a list of boulevards in paris that contain de anywhere in the name skip it rabbittee dunnojs | 0 |

6,703 | 9,814,881,706 | IssuesEvent | 2019-06-13 11:16:40 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | [Processing] In batch mode, the "Remove row" button does not remove the selected row but the one at the bottom of the table | Feature Request Processing | Author Name: **Harrissou Santanna** (@DelazJ)

Original Redmine Issue: [20167](https://issues.qgis.org/issues/20167)

Redmine category:processing/gui

---

Open an algorithm dialog

switch to batch mode

add a bunch of files from system

Try to delete one file in the middle: I'm not really sure how selection proceeds bu... | 1.0 | [Processing] In batch mode, the "Remove row" button does not remove the selected row but the one at the bottom of the table - Author Name: **Harrissou Santanna** (@DelazJ)

Original Redmine Issue: [20167](https://issues.qgis.org/issues/20167)

Redmine category:processing/gui

---

Open an algorithm dialog

switch to bat... | process | in batch mode the remove row button does not remove the selected row but the one at the bottom of the table author name harrissou santanna delazj original redmine issue redmine category processing gui open an algorithm dialog switch to batch mode add a bunch of files from system try to del... | 1 |

615,676 | 19,272,618,066 | IssuesEvent | 2021-12-10 08:04:13 | Vyxal/Vyxal | https://api.github.com/repos/Vyxal/Vyxal | closed | Escape sequences don't actually escape stuff | bug difficulty: average priority:high | and y'all nerds said "use the `!r` flag when using f strings" smh

(created by lyxal [here](https://chat.stackexchange.com/transcript/message/59839487)) | 1.0 | Escape sequences don't actually escape stuff - and y'all nerds said "use the `!r` flag when using f strings" smh

(created by lyxal [here](https://chat.stackexchange.com/transcript/message/59839487)) | non_process | escape sequences don t actually escape stuff and y all nerds said use the r flag when using f strings smh created by lyxal | 0 |

207,496 | 7,130,396,438 | IssuesEvent | 2018-01-22 06:19:23 | taniman/profit-trailer | https://api.github.com/repos/taniman/profit-trailer | closed | Monitor Enhancement: Daily log summary | enhancement low priority | I guess I am OK with the sell log being 24 hours rolling... but it would be sweet if daily we could write a summary log of the days activity... based on the sell log.

Maybe AVG Profit, AVG Trigger, Sum Profit, number of trades

If not, that's fine - I'll work up something to scrape the data into a google sheet ;) | 1.0 | Monitor Enhancement: Daily log summary - I guess I am OK with the sell log being 24 hours rolling... but it would be sweet if daily we could write a summary log of the days activity... based on the sell log.

Maybe AVG Profit, AVG Trigger, Sum Profit, number of trades

If not, that's fine - I'll work up something t... | non_process | monitor enhancement daily log summary i guess i am ok with the sell log being hours rolling but it would be sweet if daily we could write a summary log of the days activity based on the sell log maybe avg profit avg trigger sum profit number of trades if not that s fine i ll work up something to... | 0 |

8,064 | 11,233,126,478 | IssuesEvent | 2020-01-09 00:04:17 | googleapis/nodejs-spanner | https://api.github.com/repos/googleapis/nodejs-spanner | closed | Spanner: Adjust timeouts for CreateDatabase and retries for other methods | type: process | As part of a recent change to GAPIC Configuration, timeouts and retries need to be updated.

Ensure that the timeouts and retries specified in https://github.com/googleapis/googleapis/commit/cc233544aa39b8947c9b929819aeb08e2cb71feb#diff-4501db3e3507bcce8496c9aea2017d96 are reflected in this client library.

| 1.0 | Spanner: Adjust timeouts for CreateDatabase and retries for other methods - As part of a recent change to GAPIC Configuration, timeouts and retries need to be updated.

Ensure that the timeouts and retries specified in https://github.com/googleapis/googleapis/commit/cc233544aa39b8947c9b929819aeb08e2cb71feb#diff-4501... | process | spanner adjust timeouts for createdatabase and retries for other methods as part of a recent change to gapic configuration timeouts and retries need to be updated ensure that the timeouts and retries specified in are reflected in this client library | 1 |

70,353 | 9,411,821,220 | IssuesEvent | 2019-04-10 01:14:08 | ove/ove-docs | https://api.github.com/repos/ove/ove-docs | closed | Documentation on Spaces.json | documentation enhancement | There needs to be a document that explains what the Spaces.json file is and how to make changes to it and replace the default. The instructions that we have right now are very high level and scattered across many parts of the documentation. | 1.0 | Documentation on Spaces.json - There needs to be a document that explains what the Spaces.json file is and how to make changes to it and replace the default. The instructions that we have right now are very high level and scattered across many parts of the documentation. | non_process | documentation on spaces json there needs to be a document that explains what the spaces json file is and how to make changes to it and replace the default the instructions that we have right now are very high level and scattered across many parts of the documentation | 0 |

16,335 | 20,990,770,947 | IssuesEvent | 2022-03-29 09:05:48 | equinor/MAD-VSM-WEB | https://api.github.com/repos/equinor/MAD-VSM-WEB | closed | Improve API release pipeline | back-end process improvement | We need to look into improving the release pipeline so that a release can be done in an expected timeframe.

The main issue is situations where APIM update fails to find the swagger.json file as a basis for its update.

In order to fix this, we are experimenting with adding a Webserver warmup step to our deploy proce... | 1.0 | Improve API release pipeline - We need to look into improving the release pipeline so that a release can be done in an expected timeframe.

The main issue is situations where APIM update fails to find the swagger.json file as a basis for its update.

In order to fix this, we are experimenting with adding a Webserver ... | process | improve api release pipeline we need to look into improving the release pipeline so that a release can be done in an expected timeframe the main issue is situations where apim update fails to find the swagger json file as a basis for its update in order to fix this we are experimenting with adding a webserver ... | 1 |

44,912 | 9,659,203,136 | IssuesEvent | 2019-05-20 12:56:43 | Regalis11/Barotrauma | https://api.github.com/repos/Regalis11/Barotrauma | closed | Add a cooldown to GUI Use | Code Feature request | Come to think about it - there is no cooldown for the GUI Use functionality on the HUD, you could have say, a bandage which can only be applied on another X times per second (Such as once or maybe twice) - however self-using does not have any such restriction and can simply be spammed very fast.

Could we either get ... | 1.0 | Add a cooldown to GUI Use - Come to think about it - there is no cooldown for the GUI Use functionality on the HUD, you could have say, a bandage which can only be applied on another X times per second (Such as once or maybe twice) - however self-using does not have any such restriction and can simply be spammed very f... | non_process | add a cooldown to gui use come to think about it there is no cooldown for the gui use functionality on the hud you could have say a bandage which can only be applied on another x times per second such as once or maybe twice however self using does not have any such restriction and can simply be spammed very f... | 0 |

12,644 | 15,018,422,197 | IssuesEvent | 2021-02-01 12:12:09 | ethereumclassic/ECIPs | https://api.github.com/repos/ethereumclassic/ECIPs | closed | ECIP 1000: Nominate new ECIP Editors | meta:1 governance meta:3 process | To prevent the Ethereum Classic governance from halting, please use this ticket to nominate candidates for the ECIP Editor position.

Ideally, we need at least two more ECIP editors. An ideal candidate should be well-recognized in the Ethereum Classic community and widely acting independently.

Everyone can nominat... | 1.0 | ECIP 1000: Nominate new ECIP Editors - To prevent the Ethereum Classic governance from halting, please use this ticket to nominate candidates for the ECIP Editor position.

Ideally, we need at least two more ECIP editors. An ideal candidate should be well-recognized in the Ethereum Classic community and widely acting... | process | ecip nominate new ecip editors to prevent the ethereum classic governance from halting please use this ticket to nominate candidates for the ecip editor position ideally we need at least two more ecip editors an ideal candidate should be well recognized in the ethereum classic community and widely acting in... | 1 |

62,036 | 8,570,156,951 | IssuesEvent | 2018-11-11 17:35:59 | mor1001/GESPRO_GESTIONTAREAS | https://api.github.com/repos/mor1001/GESPRO_GESTIONTAREAS | opened | Document relevant aspects | Documentation | **Project start:**

Introduction

**Methodologies**

Trial / error

TDD

**Training:**

OpenCV

Android

**Algorithm development:**

Literature search

Development platform

**App development:**

Prototypes

Bugs

MVP

Material design

Patterns

Monitoring service

Open... | 1.0 | Document relevant aspects - **Project start:**

Introduction

**Methodologies**

Trial / error

TDD

**Training:**

OpenCV

Android

**Algorithm development:**

Literature search

Development platform

**App development:**

Prototypes

Bugs

MVP

Material design

Patterns

... | non_process | document relevant aspects project start introduction methodologies trial error tdd training opencv android algorithm development literature search development platform app development prototypes bugs mvp material design patterns ... | 0 |

85,545 | 10,618,369,745 | IssuesEvent | 2019-10-13 03:48:44 | carbon-design-system/ibm-dotcom-library | https://api.github.com/repos/carbon-design-system/ibm-dotcom-library | closed | Finalize site map for DDS Cupcake site | Sprint Must Have design migrate sprint demo website: cupcake | _chsanche created the following on Aug 26:_

### User Story

As a member of the DDS team, I need a site map to guide my Cupcake website development work so that I can assure that any updates or new pages to the DDS site are within the site map plan.

### Deliverables

- [x] DDS Cupcake website v1.0 sitemap

### ... | 1.0 | Finalize site map for DDS Cupcake site - _chsanche created the following on Aug 26:_

### User Story

As a member of the DDS team, I need a site map to guide my Cupcake website development work so that I can assure that any updates or new pages to the DDS site are within the site map plan.

### Deliverables

- [x]... | non_process | finalize site map for dds cupcake site chsanche created the following on aug user story as a member of the dds team i need a site map to guide my cupcake website development work so that i can assure that any updates or new pages to the dds site are within the site map plan deliverables dd... | 0 |

97,200 | 8,651,570,800 | IssuesEvent | 2018-11-27 03:49:47 | elastic/kibana | https://api.github.com/repos/elastic/kibana | closed | Failing test: Browser Unit Tests.geohash_layer - geohash_layer GeohashGridLayer Scaled Circle Markers | failed-test | A test failed on a tracked branch

```

[object Object]

```

First failure: [Jenkins Build](https://kibana-ci.elastic.co/job/elastic+kibana+gis-plugin+multijob-intake/40/)

<!-- kibanaCiData = {"failed-test":{"test.class":"Browser Unit Tests.geohash_layer","test.name":"geohash_layer GeohashGridLayer Scaled Circle Markers"... | 1.0 | Failing test: Browser Unit Tests.geohash_layer - geohash_layer GeohashGridLayer Scaled Circle Markers - A test failed on a tracked branch

```

[object Object]

```

First failure: [Jenkins Build](https://kibana-ci.elastic.co/job/elastic+kibana+gis-plugin+multijob-intake/40/)

<!-- kibanaCiData = {"failed-test":{"test.clas... | non_process | failing test browser unit tests geohash layer geohash layer geohashgridlayer scaled circle markers a test failed on a tracked branch first failure | 0 |

395,547 | 11,688,385,920 | IssuesEvent | 2020-03-05 14:27:48 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | playtech.ro - Missing "Like" button when returning from Facebook page | browser-fenix engine-gecko priority-normal severity-critical | <!-- @browser: Firefox Mobile 75.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 9; Mobile; rv:75.0) Gecko/75.0 Firefox/75.0 -->

<!-- @reported_with: -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/49600 -->

<!-- @extra_labels: browser-fenix -->

**URL**: https://playtech.ro/echipa-playtech/

**Browser ... | 1.0 | playtech.ro - Missing "Like" button when returning from Facebook page - <!-- @browser: Firefox Mobile 75.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 9; Mobile; rv:75.0) Gecko/75.0 Firefox/75.0 -->

<!-- @reported_with: -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/49600 -->

<!-- @extra_labels: brow... | non_process | playtech ro missing like button when returning from facebook page url browser version firefox mobile operating system android tested another browser yes problem type design is broken description firefox blocks social media like button scripts steps to reproduc... | 0 |

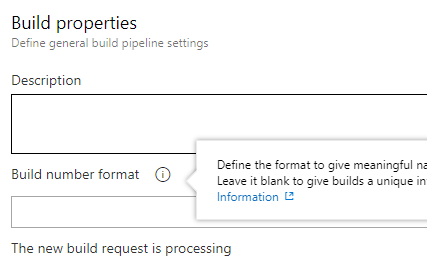

19,675 | 26,031,615,170 | IssuesEvent | 2022-12-21 21:59:13 | MicrosoftDocs/azure-devops-docs | https://api.github.com/repos/MicrosoftDocs/azure-devops-docs | closed | The default build number is now a blank field, not `$(Date:yyyyMMdd).$(Rev:r)` | devops/prod doc-bug Pri2 devops-cicd-process/tech | From a new empty pipeline...

Personally I think the docs page is right and this is a bug in the program, but how am I supposed to know which it is?

---

#### Document Details

⚠ *Do not edit this s... | 1.0 | The default build number is now a blank field, not `$(Date:yyyyMMdd).$(Rev:r)` - From a new empty pipeline...

Personally I think the docs page is right and this is a bug in the program, but how am I suppo... | process | the default build number is now a blank field not date yyyymmdd rev r from a new empty pipeline personally i think the docs page is right and this is a bug in the program but how am i supposed to know which it is document details ⚠ do not edit this section it is required for... | 1 |

114,639 | 24,633,744,176 | IssuesEvent | 2022-10-17 05:58:36 | joomla/joomla-cms | https://api.github.com/repos/joomla/joomla-cms | closed | Start and Finished featured article settings cleared when saving article at frontend | No Code Attached Yet Information Required | In Joomla 4 you can set at "Publishing" the settings for Start Featured and Finish Featured in the backend.

After saving it works well on the website.

But in article management on the frontend, these settings are not available.

When someone edits and saves an article on the frontend, the Start Featured and Finish Fe... | 1.0 | Start and Finished featured article settings cleared when saving article at frontend - In Joomla 4 you can set at "Publishing" the settings for Start Featured and Finish Featured in the backend.

After saving it works well on the website.

But in article management on the frontend, these settings are not available.

Wh... | non_process | start and finished featured article settings cleared when saving article at frontend in joomla you can set at publishing the settings for start featured and finish featured in the backend after saving it works well on the website but in article management on the frontend these settings are not available wh... | 0 |

564 | 3,024,046,230 | IssuesEvent | 2015-08-02 06:05:12 | HazyResearch/dd-genomics | https://api.github.com/repos/HazyResearch/dd-genomics | opened | REDO SCHEMA: separate doc_id, section_id, sent_id | Preprocessing | This should be a mostly superficial change but I think it is worth doing now to avoid future confusions / hack-ey workarounds. Basically `doc_id` will be renamed `section_id` and remain the primary key that e.g. the tables are partitioned on in greenplum, queries are joined on etc. `sent_id` counts will restart for e... | 1.0 | REDO SCHEMA: separate doc_id, section_id, sent_id - This should be a mostly superficial change but I think it is worth doing now to avoid future confusions / hack-ey workarounds. Basically `doc_id` will be renamed `section_id` and remain the primary key that e.g. the tables are partitioned on in greenplum, queries are... | process | redo schema separate doc id section id sent id this should be a mostly superficial change but i think it is worth doing now to avoid future confusions hack ey workarounds basically doc id will be renamed section id and remain the primary key that e g the tables are partitioned on in greenplum queries are... | 1 |

15,759 | 19,912,465,815 | IssuesEvent | 2022-01-25 18:36:29 | MunchBit/MunchLove | https://api.github.com/repos/MunchBit/MunchLove | opened | Connect to Munch Love Payment account | feature Payment Process | **Title**

Connect to Munch Love Payment account

**Description**

Connect to Munch Love Payment account

| 1.0 | Connect to Munch Love Payment account - **Title**

Connect to Munch Love Payment account

**Description**

Connect to Munch Love Payment account

| process | connect to munch love payment account title connect to munch love payment account description connect to munch love payment account | 1 |

45,315 | 7,177,781,697 | IssuesEvent | 2018-01-31 14:43:07 | npm/npm | https://api.github.com/repos/npm/npm | closed | Clarify documentation for package-lock.json behaviour | documentation | #### I'm opening this issue because:

- [ ] npm is crashing.

- [ ] npm is producing an incorrect install.

- [ ] npm is doing something I don't understand.

- [x] Other (_see below for feature requests_):

#### What's going wrong?

[doc/files/npm-package-locks.md](https://github.com/npm/npm/blob/latest/d... | 1.0 | Clarify documentation for package-lock.json behaviour - #### I'm opening this issue because:

- [ ] npm is crashing.

- [ ] npm is producing an incorrect install.

- [ ] npm is doing something I don't understand.

- [x] Other (_see below for feature requests_):

#### What's going wrong?

[doc/files/npm-pa... | non_process | clarify documentation for package lock json behaviour i m opening this issue because npm is crashing npm is producing an incorrect install npm is doing something i don t understand other see below for feature requests what s going wrong should be updated acc... | 0 |

3,328 | 6,447,256,361 | IssuesEvent | 2017-08-14 06:00:35 | gaocegege/Processing.R | https://api.github.com/repos/gaocegege/Processing.R | closed | docs--broken reference link | community/processing difficulty/low priority/p0 size/small type/bug | 1. go to: https://processing-r.github.io/reference/index.html

2. click on Reference

3. 404

It looks like the link probably points to `reference/`, not `/reference/` -- so it works from the top level, but not anywhere else.

| 1.0 | docs--broken reference link - 1. go to: https://processing-r.github.io/reference/index.html

2. click on Reference

3. 404

It looks like the link probably points to `reference/`, not `/reference/` -- so it works from the top level, but not anywhere else.

| process | docs broken reference link go to click on reference it looks like the link probably points to reference not reference so it works from the top level but not anywhere else | 1 |

37,859 | 10,092,173,485 | IssuesEvent | 2019-07-26 15:58:02 | apollographql/apollo-ios | https://api.github.com/repos/apollographql/apollo-ios | closed | Node LTS Version Out of Date | build-issue | Currently, the CLI script installs the exact Node version specified using nvm (#434) and switches to it, then installs Apollo locally (which is not ideal in my opinion #401). That Node version should be LTS but appears to be out of date (currently 8.15.0, [LTS](https://nodejs.org/) is at 10.16.0). I'm not sure if simpl... | 1.0 | Node LTS Version Out of Date - Currently, the CLI script installs the exact Node version specified using nvm (#434) and switches to it, then installs Apollo locally (which is not ideal in my opinion #401). That Node version should be LTS but appears to be out of date (currently 8.15.0, [LTS](https://nodejs.org/) is at ... | non_process | node lts version out of date currently the cli script installs the exact node version specified using nvm and switches to it then installs apollo locally which is not ideal in my opinion that node version should be lts but appears to be out of date currently is at i m not sure if simp... | 0 |

267,012 | 8,378,234,889 | IssuesEvent | 2018-10-06 12:01:14 | matthiaskoenig/pkdb | https://api.github.com/repos/matthiaskoenig/pkdb | closed | Add authors to reference endpoint & study_pk & study_name | backend priority | Authors should be directly part of the reference endpoint. Makes it much easier to consume the reference | 1.0 | Add authors to reference endpoint & study_pk & study_name - Authors should be directly part of the reference endpoint. Makes it much easier to consume the reference | non_process | add authors to reference endpoint study pk study name authors should be directly part of the reference endpoint makes it much easier to consume the reference | 0 |

8,103 | 4,160,734,247 | IssuesEvent | 2016-06-17 14:19:19 | NuGet/Home | https://api.github.com/repos/NuGet/Home | opened | [1] Enable Solution level restore in msbuild w/o 2 passes | Area:PJ2MsBuild CLI 1.1 Type:Feature | It is critical that Restore operates on a solution, not a project for:

- correctness (only by understand all the project to project references in a solution, and the set of nuget packages that the projects all use, are we able to get the right answers for version conflict resolution)

- performance (restore used t... | 1.0 | [1] Enable Solution level restore in msbuild w/o 2 passes - It is critical that Restore operates on a solution, not a project for:

- correctness (only by understand all the project to project references in a solution, and the set of nuget packages that the projects all use, are we able to get the right answers for v... | non_process | enable solution level restore in msbuild w o passes it is critical that restore operates on a solution not a project for correctness only by understand all the project to project references in a solution and the set of nuget packages that the projects all use are we able to get the right answers for ver... | 0 |

11,446 | 14,264,499,757 | IssuesEvent | 2020-11-20 15:50:17 | dotnet/runtime | https://api.github.com/repos/dotnet/runtime | closed | StartInfo_NotepadWithContent* tests are failing in CI | area-System.Diagnostics.Process os-windows test-run-core | We have 348 failures in last 30 days. This is predominately on `server20h1` queue:

```

Assert.StartsWith() Failure:\r\nExpected: StartInfo_NotepadWithContent_withArgumentList_1156_a34ea3f3\r\nActual:

at System.Diagnostics.Tests.ProcessStartInfoTests.StartInfo_NotepadWithContent_withArgumentList(Boolean useS... | 1.0 | StartInfo_NotepadWithContent* tests are failing in CI - We have 348 failures in last 30 days. This is predominately on `server20h1` queue:

```

Assert.StartsWith() Failure:\r\nExpected: StartInfo_NotepadWithContent_withArgumentList_1156_a34ea3f3\r\nActual:

at System.Diagnostics.Tests.ProcessStartInfoTests.St... | process | startinfo notepadwithcontent tests are failing in ci we have failures in last days this is predominately on queue assert startswith failure r nexpected startinfo notepadwithcontent withargumentlist r nactual at system diagnostics tests processstartinfotests startinfo notepadwithcon... | 1 |

13,755 | 16,504,800,295 | IssuesEvent | 2021-05-25 17:55:16 | threefoldtech/0-stor_v2 | https://api.github.com/repos/threefoldtech/0-stor_v2 | closed | ETCD reachability is only checked if there is a single endpoint | process_wontfix type_bug | If more than one endpoint is set, there is no check to see if the cluster is reachable. Since we rely on library behavior for this, it might be best to somehow add a check of our own for this | 1.0 | ETCD reachability is only checked if there is a single endpoint - If more than one endpoint is set, there is no check to see if the cluster is reachable. Since we rely on library behavior for this, it might be best to somehow add a check of our own for this | process | etcd reachability is only checked if there is a single endpoint if more than one endpoint is set there is no check to see if the cluster is reachable since we rely on library behavior for this it might be best to somehow add a check of our own for this | 1 |

55,260 | 3,072,590,423 | IssuesEvent | 2015-08-19 17:41:54 | RobotiumTech/robotium | https://api.github.com/repos/RobotiumTech/robotium | closed | Provider -src.jar for download | bug imported Priority-Medium wontfix | _From [zorze...@google.com](https://code.google.com/u/115535067556298780337/) on March 27, 2012 15:02:43_

Along with the .jar download, it would be useful to have a -src.jar to be able to browse the sources from my project.

_Original issue: http://code.google.com/p/robotium/issues/detail?id=240_ | 1.0 | Provider -src.jar for download - _From [zorze...@google.com](https://code.google.com/u/115535067556298780337/) on March 27, 2012 15:02:43_

Along with the .jar download, it would be useful to have a -src.jar to be able to browse the sources from my project.

_Original issue: http://code.google.com/p/robotium/issues/det... | non_process | provider src jar for download from on march along with the jar download it would be useful to have a src jar to be able to browse the sources from my project original issue | 0 |

5,753 | 8,597,862,128 | IssuesEvent | 2018-11-15 19:58:21 | knative/serving | https://api.github.com/repos/knative/serving | closed | Clean up some of the Knative Github Team Details | kind/process | <!--

/kind process

/assign @mchmarny @dewitt

-->

Few suggestions:

- rename 'Knative Users' to 'Knative Members' so it matches our [ROLES.md](https://github.com/knative/docs/blob/master/community/ROLES.md)

- 'Serving Maintainers' description mentions 'elafros'

- 'Pkg admins' -> 'Pkg Admins' for consistency (wh... | 1.0 | Clean up some of the Knative Github Team Details - <!--

/kind process

/assign @mchmarny @dewitt

-->

Few suggestions:

- rename 'Knative Users' to 'Knative Members' so it matches our [ROLES.md](https://github.com/knative/docs/blob/master/community/ROLES.md)

- 'Serving Maintainers' description mentions 'elafros'

... | process | clean up some of the knative github team details kind process assign mchmarny dewitt few suggestions rename knative users to knative members so it matches our serving maintainers description mentions elafros pkg admins pkg admins for consistency why not | 1 |

173,627 | 13,433,938,490 | IssuesEvent | 2020-09-07 10:34:15 | nathanbaleeta/ureport-mobile | https://api.github.com/repos/nathanbaleeta/ureport-mobile | closed | Write widget test for top navigation using tabs | good first issue test | To ensure the U-Report app continues to work as developers add more features or change existing functionality, writing tests for every custom-built widget should be an integral part of the development process.

Unit tests are handy for verifying the behavior of a single function, method, or class. The test package pro... | 1.0 | Write widget test for top navigation using tabs - To ensure the U-Report app continues to work as developers add more features or change existing functionality, writing tests for every custom-built widget should be an integral part of the development process.

Unit tests are handy for verifying the behavior of a singl... | non_process | write widget test for top navigation using tabs to ensure the u report app continues to work as developers add more features or change existing functionality writing tests for every custom built widget should be an integral part of the development process unit tests are handy for verifying the behavior of a singl... | 0 |

15,732 | 10,265,614,380 | IssuesEvent | 2019-08-22 19:17:56 | ualbertalib/avalon | https://api.github.com/repos/ualbertalib/avalon | closed | Resource Description Page - agreement in wrong stage of workflow and must be accepted each time metadata is edited | Post-launch usability | ### Descriptive summary

The user agreement is the last metadata field on the resource description page and users must click "I agree" before saving changes. However, each time an edit is made on the resource description page, users must click "I agree". Also, the user agreement is placed in the wrong stage of the dep... | True | Resource Description Page - agreement in wrong stage of workflow and must be accepted each time metadata is edited - ### Descriptive summary

The user agreement is the last metadata field on the resource description page and users must click "I agree" before saving changes. However, each time an edit is made on the res... | non_process | resource description page agreement in wrong stage of workflow and must be accepted each time metadata is edited descriptive summary the user agreement is the last metadata field on the resource description page and users must click i agree before saving changes however each time an edit is made on the res... | 0 |

9,422 | 3,906,343,438 | IssuesEvent | 2016-04-19 08:27:43 | joomla/joomla-cms | https://api.github.com/repos/joomla/joomla-cms | closed | Error when trying to access system information | No Code Attached Yet | #### Steps to reproduce the issue

After updating from 3.4.8 to 3.5.1 and fix the database

When trying to access Information pages (Menu System/System Information) I'm having the following error:

> An error has occured

1682 Native table 'performance_schema'.'session_variables' has the wrong structure SQL=SHOW VARIAB... | 1.0 | Error when trying to access system information - #### Steps to reproduce the issue

After updating from 3.4.8 to 3.5.1 and fix the database

When trying to access Information pages (Menu System/System Information) I'm having the following error:

> An error has occured

1682 Native table 'performance_schema'.'session_v... | non_process | error when trying to access system information steps to reproduce the issue after updating from to and fix the database when trying to access information pages menu system system information i m having the following error an error has occured native table performance schema session vari... | 0 |

144,350 | 22,334,047,483 | IssuesEvent | 2022-06-14 16:49:09 | lexml/lexml-eta | https://api.github.com/repos/lexml/lexml-eta | closed | Apply CSS class for existing or nonexistent in the amended norm | enhancement design | For added provisions, if the existeNaNormaAlterada attribute has been provided, a corresponding CSS class should be applied to allow some visual differentiation. | 1.0 | Apply CSS class for existing or nonexistent in the amended norm - For added provisions, if the existeNaNormaAlterada attribute has been provided, a corresponding CSS class should be applied to allow some visual differentiation. | non_process | apply css class for existing or nonexistent in the amended norm for added provisions if the existenanormaalterada attribute has been provided a corresponding css class should be applied to allow some visual differentiation | 0 |

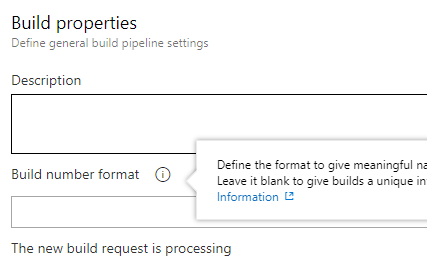

12,543 | 14,975,345,402 | IssuesEvent | 2021-01-28 05:55:33 | threefoldtech/js-sdk | https://api.github.com/repos/threefoldtech/js-sdk | closed | deploy Gitea and 3 entries appear in the "deployed solutions overview | process_wontfix | Deploying Gitea leads to a list of 3 things that appear in the "deployed solutions page". 1 deletes all three.

While in the Gitea specific deployed solutions overview it is only one:

While in the Gitea ... | process | deploy gitea and entries appear in the deployed solutions overview deploying gitea leads to a list of things that appear in the deployed solutions page deletes all three while in the gitea specific deployed solutions overview it is only one | 1 |

12,189 | 14,742,264,864 | IssuesEvent | 2021-01-07 11:59:42 | kdjstudios/SABillingGitlab | https://api.github.com/repos/kdjstudios/SABillingGitlab | closed | Laser - Error msg when processing billing | anc-external anc-process anp-0.5 ant-enhancement ant-support has attachment | In GitLab by @kdjstudios on Apr 2, 2019, 13:24

**Submitted by:** Sharon Carver <scarver@laseranswering.com>

**Helpdesk:** http://www.servicedesk.answernet.com/profiles/ticket/2019-04-02-56436

**Server:** External

**Client/Site:** Laser

**Account:** NA

**Issue:**

At the end of processing our monthly billing yest... | 1.0 | Laser - Error msg when processing billing - In GitLab by @kdjstudios on Apr 2, 2019, 13:24

**Submitted by:** Sharon Carver <scarver@laseranswering.com>

**Helpdesk:** http://www.servicedesk.answernet.com/profiles/ticket/2019-04-02-56436

**Server:** External

**Client/Site:** Laser

**Account:** NA

**Issue:**

At th... | process | laser error msg when processing billing in gitlab by kdjstudios on apr submitted by sharon carver helpdesk server external client site laser account na issue at the end of processing our monthly billing yesterday sa billing had an error message on the screen tha... | 1 |

5,079 | 7,873,889,132 | IssuesEvent | 2018-06-25 15:26:06 | qgis/QGIS-Documentation | https://api.github.com/repos/qgis/QGIS-Documentation | opened | [Processing] QGIS Network Analysis algorithms are not documented | Processing help | The tools under Network Analysis group in the Processing Toolbox are not documented (and I can't find reference to any issue report). The initial commit seems to be https://github.com/qgis/QGIS/pull/4869. | 1.0 | [Processing] QGIS Network Analysis algorithms are not documented - The tools under Network Analysis group in the Processing Toolbox are not documented (and I can't find reference to any issue report). The initial commit seems to be https://github.com/qgis/QGIS/pull/4869. | process | qgis network analysis algorithms are not documented the tools under network analysis group in the processing toolbox are not documented and i can t find reference to any issue report the initial commit seems to be | 1 |

255,348 | 21,919,332,047 | IssuesEvent | 2022-05-22 10:25:58 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | opened | roachtest: ruby-pg failed | C-test-failure O-robot O-roachtest release-blocker branch-release-21.2 | roachtest.ruby-pg [failed](https://teamcity.cockroachdb.com/viewLog.html?buildId=5230923&tab=buildLog) with [artifacts](https://teamcity.cockroachdb.com/viewLog.html?buildId=5230923&tab=artifacts#/ruby-pg) on release-21.2 @ [ef393bba2596c5b680a8fd07ba6fa34cca47ce4e](https://github.com/cockroachdb/cockroach/commits/ef39... | 2.0 | roachtest: ruby-pg failed - roachtest.ruby-pg [failed](https://teamcity.cockroachdb.com/viewLog.html?buildId=5230923&tab=buildLog) with [artifacts](https://teamcity.cockroachdb.com/viewLog.html?buildId=5230923&tab=artifacts#/ruby-pg) on release-21.2 @ [ef393bba2596c5b680a8fd07ba6fa34cca47ce4e](https://github.com/cockro... | non_process | roachtest ruby pg failed roachtest ruby pg with on release the test failed on branch release cloud gce test artifacts and logs in home agent work go src github com cockroachdb cockroach artifacts ruby pg run orm helpers go orm helpers go ruby pg go ruby pg go test runner g... | 0 |

520,810 | 15,094,190,752 | IssuesEvent | 2021-02-07 04:56:52 | softmatterlab/Braph-2.0-Matlab | https://api.github.com/repos/softmatterlab/Braph-2.0-Matlab | closed | BrainSurface value checks | BRAPH2Genesis atlas low priority | Add checks values (¡check_value!) to the props

- [x] VERTEX_NUMBER

- [x] COORDINATES

- [x] TRIANGLES_NUMBER

- [x] TRIANGLES | 1.0 | BrainSurface value checks - Add checks values (¡check_value!) to the props

- [x] VERTEX_NUMBER

- [x] COORDINATES

- [x] TRIANGLES_NUMBER

- [x] TRIANGLES | non_process | brainsurface value checks add checks values ¡check value to the props vertex number coordinates triangles number triangles | 0 |

16,643 | 21,707,726,178 | IssuesEvent | 2022-05-10 11:11:39 | ModdingCommonwealth/Keepers-of-the-Stones | https://api.github.com/repos/ModdingCommonwealth/Keepers-of-the-Stones | closed | Adding structures with artifacts | new feature In process | Structures where you can find these artifacts:

- [ ] Fire Temple

- [ ] Aerial Temple

- [ ] Water Temple

- [ ] Earth Temple

- [ ] Cosmic Temple

- [ ] Light Temple

- [ ] Shadow Temple

- [ ] Natural Temple | 1.0 | Adding structures with artifacts - Structures where you can find these artifacts:

- [ ] Fire Temple

- [ ] Aerial Temple

- [ ] Water Temple

- [ ] Earth Temple

- [ ] Cosmic Temple

- [ ] Light Temple

- [ ] Shadow Temple

- [ ] Natural Temple | process | adding structures with artifacts structures where you can find these artifacts fire temple aerial temple water temple earth temple cosmic temple light temple shadow temple natural temple | 1 |

239,655 | 26,232,021,213 | IssuesEvent | 2023-01-05 01:39:46 | tharun453/samples | https://api.github.com/repos/tharun453/samples | opened | CVE-2021-44906 (High) detected in minimist-1.2.5.tgz | security vulnerability | ## CVE-2021-44906 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>minimist-1.2.5.tgz</b></p></summary>

<p>parse argument options</p>

<p>Library home page: <a href="https://registry.npm... | True | CVE-2021-44906 (High) detected in minimist-1.2.5.tgz - ## CVE-2021-44906 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>minimist-1.2.5.tgz</b></p></summary>

<p>parse argument options<... | non_process | cve high detected in minimist tgz cve high severity vulnerability vulnerable library minimist tgz parse argument options library home page a href path to dependency file core tutorials buggyamb buggyamb wwwroot scripts jquery ui package json path to vulnerable ... | 0 |

1,331 | 3,881,425,623 | IssuesEvent | 2016-04-13 04:20:24 | dataproofer/Dataproofer | https://api.github.com/repos/dataproofer/Dataproofer | closed | Add tree-checkbox view for tests | engine: processing engine: rendering | Want to refactor Step 2's test selection and Step 3's test results into one view.

The first step of this is introducing a new tree-checkbox view instead of our current toggle spaghetti.

Suites (what we are now calling Sets) are able to be turned on and off, and by default turn all the tests in the suit on or off... | 1.0 | Add tree-checkbox view for tests - Want to refactor Step 2's test selection and Step 3's test results into one view.

The first step of this is introducing a new tree-checkbox view instead of our current toggle spaghetti.

Suites (what we are now calling Sets) are able to be turned on and off, and by default turn ... | process | add tree checkbox view for tests want to refactor step s test selection and step s test results into one view the first step of this is introducing a new tree checkbox view instead of our current toggle spaghetti suites what we are now calling sets are able to be turned on and off and by default turn ... | 1 |

283,715 | 30,913,529,797 | IssuesEvent | 2023-08-05 02:08:54 | hshivhare67/kernel_v4.19.72 | https://api.github.com/repos/hshivhare67/kernel_v4.19.72 | reopened | WS-2022-0015 (Medium) detected in linuxlinux-4.19.282 | Mend: dependency security vulnerability | ## WS-2022-0015 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.19.282</b></p></summary>

<p>

<p>The Linux Kernel</p>

<p>Library home page: <a href=https://mirrors.edge.k... | True | WS-2022-0015 (Medium) detected in linuxlinux-4.19.282 - ## WS-2022-0015 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.19.282</b></p></summary>

<p>

<p>The Linux Kernel<... | non_process | ws medium detected in linuxlinux ws medium severity vulnerability vulnerable library linuxlinux the linux kernel library home page a href found in head commit a href found in base branch master vulnerable source files vu... | 0 |

19,533 | 25,842,314,556 | IssuesEvent | 2022-12-13 02:00:07 | lizhihao6/get-daily-arxiv-noti | https://api.github.com/repos/lizhihao6/get-daily-arxiv-noti | opened | New submissions for Tue, 13 Dec 22 | event camera white balance isp compression image signal processing image signal process raw raw image events camera color contrast events AWB | ## Keyword: events

There is no result

## Keyword: event camera

### Recurrent Vision Transformers for Object Detection with Event Cameras

- **Authors:** Mathias Gehrig, Davide Scaramuzza

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV)

- **Arxiv link:** https://arxiv.org/abs/2212.05598

- **Pdf link:*... | 2.0 | New submissions for Tue, 13 Dec 22 - ## Keyword: events

There is no result

## Keyword: event camera

### Recurrent Vision Transformers for Object Detection with Event Cameras

- **Authors:** Mathias Gehrig, Davide Scaramuzza

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV)

- **Arxiv link:** https://arx... | process | new submissions for tue dec keyword events there is no result keyword event camera recurrent vision transformers for object detection with event cameras authors mathias gehrig davide scaramuzza subjects computer vision and pattern recognition cs cv arxiv link pdf li... | 1 |

6,973 | 10,121,569,538 | IssuesEvent | 2019-07-31 15:53:30 | bazelbuild/bazel | https://api.github.com/repos/bazelbuild/bazel | opened | Make Bazel itself depend on `@rules_python` | P3 team-Rules-Python type: process | As part of the work for #9006, we're migrating Bazel's own use of native Python rules to load these rules from a bzl file. Ideally, the load statements should reference the macros in `@rules_python//python:defs.bzl` just like user code should. However, this isn't possible for the `@bazel_tools` repository, which is not... | 1.0 | Make Bazel itself depend on `@rules_python` - As part of the work for #9006, we're migrating Bazel's own use of native Python rules to load these rules from a bzl file. Ideally, the load statements should reference the macros in `@rules_python//python:defs.bzl` just like user code should. However, this isn't possible f... | process | make bazel itself depend on rules python as part of the work for we re migrating bazel s own use of native python rules to load these rules from a bzl file ideally the load statements should reference the macros in rules python python defs bzl just like user code should however this isn t possible for ... | 1 |

240,161 | 20,014,425,876 | IssuesEvent | 2022-02-01 10:33:22 | TeamGalacticraft/Galacticraft-Legacy | https://api.github.com/repos/TeamGalacticraft/Galacticraft-Legacy | closed | Various generators crash the game when placed next to energy condensers | Bug [Priority] [Status] Requires Testing [Status] Triage | ### Forge Version

14.23.5.2859

### Galacticraft Version

4.0.2.280

### Log or Crash Report

https://gist.github.com/Sethy152/13f8d21edc150e28b431de291363d809

I placed a simple coal generator next to the energy storage unit from Galacticraft. Instant crash. When I try to load back in it crashes in the same way.

... | 1.0 | Various generators crash the game when placed next to energy condensers - ### Forge Version

14.23.5.2859

### Galacticraft Version

4.0.2.280

### Log or Crash Report

https://gist.github.com/Sethy152/13f8d21edc150e28b431de291363d809

I placed a simple coal generator next to the energy storage unit from Galacticraf... | non_process | various generators crash the game when placed next to energy condensers forge version galacticraft version log or crash report i placed a simple coal generator next to the energy storage unit from galacticraft instant crash when i try to load back in it crashes in the same way... | 0 |

735,708 | 25,411,400,101 | IssuesEvent | 2022-11-22 19:19:11 | status-im/status-desktop | https://api.github.com/repos/status-im/status-desktop | closed | Own replies not visible | bug priority 1: high | # Bug Report

## Steps to reproduce

1. go to a community channel

2. reply to a message

result: can't see own reply | 1.0 | Own replies not visible - # Bug Report

## Steps to reproduce

1. go to a community channel

2. reply to a message

result: can't see own reply | non_process | own replies not visible bug report steps to reproduce go to a community channel reply to a message result can t see own reply | 0 |

137,139 | 18,752,642,688 | IssuesEvent | 2021-11-05 05:43:23 | madhans23/linux-4.15 | https://api.github.com/repos/madhans23/linux-4.15 | opened | CVE-2018-12233 (High) detected in linux-yoctov4.17 | security vulnerability | ## CVE-2018-12233 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-yoctov4.17</b></p></summary>

<p>

<p>Yocto Linux Embedded kernel</p>

<p>Library home page: <a href=https://git.yo... | True | CVE-2018-12233 (High) detected in linux-yoctov4.17 - ## CVE-2018-12233 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-yoctov4.17</b></p></summary>

<p>

<p>Yocto Linux Embedded ke... | non_process | cve high detected in linux cve high severity vulnerability vulnerable library linux yocto linux embedded kernel library home page a href found in head commit a href found in base branch master vulnerable source files vulnera... | 0 |

54,087 | 7,873,157,102 | IssuesEvent | 2018-06-25 13:33:10 | WordPress/gutenberg | https://api.github.com/repos/WordPress/gutenberg | closed | Dead / Broken links in Documentation | Documentation Good First Issue | Learning to support Gutenberg is hard enough already.

Broken links in the handbook don't need to complicate things even more.

Let's hunt them down and report them here! | 1.0 | Dead / Broken links in Documentation - Learning to support Gutenberg is hard enough already.

Broken links in the handbook don't need to complicate things even more.

Let's hunt them down and report them here! | non_process | dead broken links in documentation learning to support gutenberg is hard enough already broken links in the handbook don t need to complicate things even more let s hunt them down and report them here | 0 |

4,084 | 6,905,654,506 | IssuesEvent | 2017-11-27 08:15:15 | AdguardTeam/AdguardForAndroid | https://api.github.com/repos/AdguardTeam/AdguardForAndroid | opened | com.androbin.newsrucoil does not work with AdGuard enabled | compatibility | Link to the app:

https://play.google.com/store/apps/details?id=com.androbin.newsrucoil

AG does not let it load news. | True | com.androbin.newsrucoil does not work with AdGuard enabled - Link to the app:

https://play.google.com/store/apps/details?id=com.androbin.newsrucoil

AG does not let it load news. | non_process | com androbin newsrucoil does not work with adguard enabled link to the app ag does not let it load news | 0 |

14,965 | 11,273,267,079 | IssuesEvent | 2020-01-14 16:15:56 | ForNeVeR/AvaloniaRider | https://api.github.com/repos/ForNeVeR/AvaloniaRider | opened | Use Gradle JVM Wrapper | infrastructure | As many people who want to build the plugin don't usually do JVM development, we could benefit from automated JVM installation using the [gradle-jvm-wrapper](https://github.com/mfilippov/gradle-jvm-wrapper).

I am still investigating whether it will work well for all of our users, and want to make its usage optional (but enab... | 1.0 | Use Gradle JVM Wrapper - As many people who want to build the plugin don't usually do JVM development, we could benefit from automated JVM installation using the [gradle-jvm-wrapper](https://github.com/mfilippov/gradle-jvm-wrapper).

I am still investigating whether it will work well for all of our users, and want to make its... | non_process | use gradle jvm wrapper as many people who want to build the plugin don t usually do jvm development we could benefit from automated jvm installation using the i am still investigating whether it will work well for all of our users and want to make its usage optional but enabled by default we ll see about that | 0 |

1,217 | 3,749,530,616 | IssuesEvent | 2016-03-11 00:24:29 | metabase/metabase | https://api.github.com/repos/metabase/metabase | opened | QP doesn't return full name of columns that include slashes | Limitation Query Processor | This came up while working on calculated columns:

The SQL generated looks like this;

```sql

SELECT ("PUBLIC"."ORDERS"."TOTAL" - "PUBLIC"."ORDERS"."TAX") AS "TOTAL - TAX",

("PUBLIC"."ORDERS"."TAX" / "PUBLIC"."ORDERS"."TOTAL") AS "TAX / TOTAL"

FROM "PUBLIC"."ORDERS"

LIMIT 2000

```

What ends up happenin... | 1.0 | QP doesn't return full name of columns that include slashes - This came up while working on calculated columns:

The SQL generated looks like this;

```sql

SELECT ("PUBLIC"."ORDERS"."TOTAL" - "PUBLIC"."ORDERS"."TAX") AS "TOTAL - TAX",

("PUBLIC"."ORDERS"."TAX" / "PUBLIC"."ORDERS"."TOTAL") AS "TAX / TOTAL"

FR... | process | qp doesn t return full name of columns that include slashes this came up while working on calculated columns the sql generated looks like this sql select public orders total public orders tax as total tax public orders tax public orders total as tax total fr... | 1 |

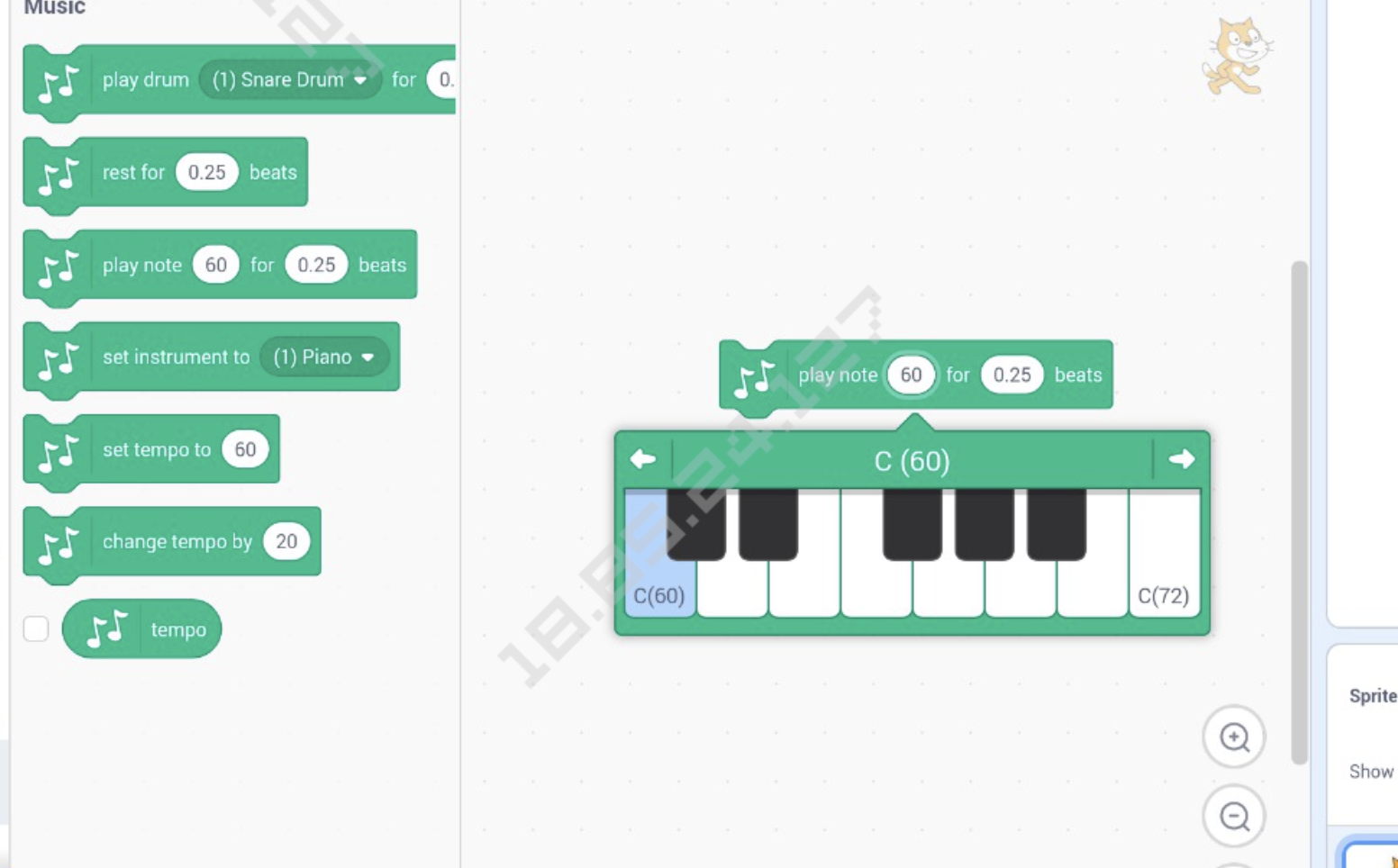

95,977 | 8,582,201,962 | IssuesEvent | 2018-11-13 16:25:32 | LLK/scratch-gui | https://api.github.com/repos/LLK/scratch-gui | opened | Issues found in bughunt and exquisite playtest on 11/9/18 | smoke-testing | * [ ] On Android Tablet Chrome: Piano notepicker appeared fine at first, but then later it appeared at half width @benjiwheeler

. However, it should not be saved. | 1.0 | Twitter messages should be collected. - Twitter messages should be collected via Twitter API (tweepy). However, it should not be saved. | non_process | twitter messages should be collected twitter messages should be collected via twitter api tweepy however it should not be saved | 0 |

193,392 | 6,884,554,463 | IssuesEvent | 2017-11-21 13:28:01 | zero-os/0-stor | https://api.github.com/repos/zero-os/0-stor | closed | rename db/badger.BadgerDB to db/badger.DB | mentor priority_minor state_inprogress | We should rename `BadgerDB` to `DB` in `server/db/badger/badger.go`. The reason being is that when you import it, currently you would stutter, should you reference the DB type:

```go

import "github.com/zero-os/0-stor/server/db/badger"

var db *badger.BadgerDB

```

If we rename it to `DB` we no longer stutter and... | 1.0 | rename db/badger.BadgerDB to db/badger.DB - We should rename `BadgerDB` to `DB` in `server/db/badger/badger.go`. The reason being is that when you import it, currently you would stutter, should you reference the DB type:

```go

import "github.com/zero-os/0-stor/server/db/badger"

var db *badger.BadgerDB

```

If w... | non_process | rename db badger badgerdb to db badger db we should rename badgerdb to db in server db badger badger go the reason being is that when you import it currently you would stutter should you reference the db type go import github com zero os stor server db badger var db badger badgerdb if w... | 0 |

57,616 | 14,166,818,805 | IssuesEvent | 2020-11-12 09:26:59 | resindrake/frontiersmen | https://api.github.com/repos/resindrake/frontiersmen | closed | Premise of Game | question worldbuilding | If we do eventually want to make this game into a survival game, what should the premise and lore be?

### I propose the following:

* Game is set in a frontier, such as Alaska or Yukon

* Player and NPCs move to the frontier for gold or oil operations

* Connections to the rest of North America exist, but is very re... | 1.0 | Premise of Game - If we do eventually want to make this game into a survival game, what should the premise and lore be?

### I propose the following:

* Game is set in a frontier, such as Alaska or Yukon

* Player and NPCs move to the frontier for gold or oil operations

* Connections to the rest of North America exi... | non_process | premise of game if we do eventually want to make this game into a survival game what should the premise and lore be i propose the following game is set in a frontier such as alaska or yukon player and npcs move to the frontier for gold or oil operations connections to the rest of north america exi... | 0 |

1,781 | 4,511,916,388 | IssuesEvent | 2016-09-03 09:42:02 | sysown/proxysql | https://api.github.com/repos/sysown/proxysql | closed | Implement stats interface for Query Processor | ADMIN development MYSQL QUERY PROCESSOR STATISTICS | We need to export internal statistics about Query Processor | 1.0 | Implement stats interface for Query Processor - We need to export internal statistics about Query Processor | process | implement stats interface for query processor we need to export internal statistics about query processor | 1 |

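19,958 | 26,433,277,194 | IssuesEvent | 2023-01-15 03:33:23 | liuzihaohao/myoj | https://api.github.com/repos/liuzihaohao/myoj | closed | [New feature] Add a user homepage | Feature Request / 功能请求 Need Processing / 需要处理 Work in Progress / 施工中 | Before submitting a report, please answer a few questions first:

- [x] I have carefully searched all issues and confirmed this has not already been submitted.

- [x] I already have a detailed design

- [ ] I have already written the detailed code

Please explain in detail and include a detailed description and other information

Add a user homepage feature

 | 1.0 | [New feature] Add a user homepage - Before submitting a report, please answer a few questions first:

- [x] I have carefully searched all issues and confirmed this has not already been submitted.

- [x] I already have a detailed design

- [ ] I have already written the detailed code

Please explain in detail and include a detailed description and other information

Add a user homepage feature

 | process | add a user homepage before submitting a report please answer a few questions first i have carefully searched all issues and confirmed this has not already been submitted i already have a detailed design i have already written the detailed code please explain in detail and include a detailed description and other information add a user homepage feature | 1 |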

10,859 | 13,631,444,006 | IssuesEvent | 2020-09-24 18:01:09 | eddieantonio/predictive-text-studio | https://api.github.com/repos/eddieantonio/predictive-text-studio | closed | Create a bare-minimum kmp.json file | data-processing worker 🔥 High priority | This is the metadata file that is included in the generated `.kmp` zip archive.

A bare-minimum KMP must have the following:

```json

{

"license": "mit",

"languages": ["crl", "crj"]

}

```

The license is ALWAYS `"mit"` 😂

And the languages is a list of supported languages (BCP-47), but for now, we sh... | 1.0 | Create a bare-minimum kmp.json file - This is the metadata file that is included in the generated `.kmp` zip archive.

A bare-minimum KMP must have the following:

```json

{

"license": "mit",

"languages": ["crl", "crj"]

}

```

The license is ALWAYS `"mit"` 😂

And the languages is a list of supported ... | process | create a bare minimum kmp json file this is the metadata file that is included in the generated kmp zip archive a bare minimum kmp must have the following json license mit languages the license is always mit 😂 and the languages is a list of supported languages bc... | 1 |

983 | 3,439,051,414 | IssuesEvent | 2015-12-14 06:53:02 | snorberhuis/MasterThesis | https://api.github.com/repos/snorberhuis/MasterThesis | closed | Upload final version to TUD Repo | Process | At least five working days before the defense the student uploads a pdf of the final version of the thesis report in the electronic TU Delft repository. (www.library.tudelft.nl/collecties/tu-delft-repository/) | 1.0 | Upload final version to TUD Repo - At least five working days before the defense the student uploads a pdf of the final version of the thesis report in the electronic TU Delft repository. (www.library.tudelft.nl/collecties/tu-delft-repository/) | process | upload final version to tud repo at least five working days before the defense the student uploads a pdf of the final version of the thesis report in the electronic tu delft repository | 1 |

39,080 | 5,217,083,792 | IssuesEvent | 2017-01-26 12:42:26 | IgniteUI/igniteui-js-blocks | https://api.github.com/repos/IgniteUI/igniteui-js-blocks | closed | Improve the navigation drawer tests | medium priority nav-drawer testing | Some of the tests or part of them are commented because of the last RC4. They need to be improved so they can work with RC4.

| 1.0 | Improve the navigation drawer tests - Some of the tests or part of them are commented because of the last RC4. They need to be improved so they can work with RC4.

| non_process | improve the navigation drawer tests some of the tests or part of them are commented because of the last they need to be improved so they can work with | 0 |

20,739 | 27,439,715,015 | IssuesEvent | 2023-03-02 10:08:35 | xataio/xata-py | https://api.github.com/repos/xataio/xata-py | opened | Slow bulk processing down on rate limited requests | enhancement bulk-processor | If a `429` status code is returned, increase the processing timeout to avoid future rate limit hits. | 1.0 | Slow bulk processing down on rate limited requests - If a `429` status code is returned, increase the processing timeout to avoid future rate limit hits. | process | slow bulk processing down on rate limited requests if a status code is returned increase the processing timeout to avoid future rate limit hits | 1 |

18,220 | 24,280,625,523 | IssuesEvent | 2022-09-28 17:03:55 | geneontology/go-ontology | https://api.github.com/repos/geneontology/go-ontology | closed | NTR: [ mitotic nuclear envelope segregation] | New term request cell cycle and DNA processes | Please provide as much information as you can:

* **Suggested term label:**

mitotic nuclear envelope segregation

* **Definition (free text)**

The mitotic cell cycle process in which the nuclear envelope, including nuclear pores, is equally distributed to the two daughter cells during the mitotic cell cycle.

... | 1.0 | NTR: [ mitotic nuclear envelope segregation] - Please provide as much information as you can:

* **Suggested term label:**

mitotic nuclear envelope segregation

* **Definition (free text)**

The mitotic cell cycle process in which the nuclear envelope, including nuclear pores, is equally distributed to the two ... | process | ntr please provide as much information as you can suggested term label mitotic nuclear envelope segregation definition free text the mitotic cell cycle process in which the nuclear envelope including nuclear pores is equally distributed to the two daughter cells during the mitotic cell... | 1 |

4,067 | 6,997,821,508 | IssuesEvent | 2017-12-16 19:14:55 | eclipse/microprofile-open-api | https://api.github.com/repos/eclipse/microprofile-open-api | closed | Publish jars to appropriate repository | process | We need to plug into MicroProfile's repository so that the jars we have in this spec (annotations, models, programming interfaces, etc) are available in the proper place. | 1.0 | Publish jars to appropriate repository - We need to plug into MicroProfile's repository so that the jars we have in this spec (annotations, models, programming interfaces, etc) are available in the proper place. | process | publish jars to appropriate repository we need to plug into microprofile s repository so that the jars we have in this spec annotations models programming interfaces etc are available in the proper place | 1 |