Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 7 to 112) | repo_url (string, length 36 to 141) | action (string, 3 classes) | title (string, length 1 to 744) | labels (string, length 4 to 574) | body (string, length 9 to 211k) | index (string, 10 classes) | text_combine (string, length 96 to 211k) | label (string, 2 classes) | text (string, length 96 to 188k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

171,439 | 27,119,847,218 | IssuesEvent | 2023-02-15 21:48:18 | pandas-dev/pandas | https://api.github.com/repos/pandas-dev/pandas | closed | API deprecate date_parser, add date_format | Timeseries API Design IO CSV | **TLDR** the conversation here goes on for a bit, but to summarise, the suggestion is:

- deprecate `date_parser`, because it always hurts performance (counter examples welcome!)

- add `date_format`, because that can boost performance

- for anything else, amend docs to make clear that users should first in the data... | 1.0 | API deprecate date_parser, add date_format - **TLDR** the conversation here goes on for a bit, but to summarise, the suggestion is:

- deprecate `date_parser`, because it always hurts performance (counter examples welcome!)

- add `date_format`, because that can boost performance

- for anything else, amend docs to m... | non_process | api deprecate date parser add date format tldr the conversation here goes on for a bit but to summarise the suggestion is deprecate date parser because it always hurts performance counter examples welcome add date format because that can boost performance for anything else amend docs to m... | 0 |

270,882 | 23,545,252,395 | IssuesEvent | 2022-08-21 02:16:48 | puppetlabs/pdk | https://api.github.com/repos/puppetlabs/pdk | closed | Long time from starting pdk test unit until unit tests start | needs information pdk-test no-issue-activity | **Describe the bug**

When running `pdk test unit` it takes a very long time where PDK is doing something prior the unit tests start

**To Reproduce**

run `time pdk test unit`

**Expected behavior**

Unit tests start immediately after running `pdk test unit`

**Additional context**

Installed via DEB Package v1.... | 1.0 | Long time from starting pdk test unit until unit tests start - **Describe the bug**

When running `pdk test unit` it takes a very long time where PDK is doing something prior the unit tests start

**To Reproduce**

run `time pdk test unit`

**Expected behavior**

Unit tests start immediately after running `pdk test... | non_process | long time from starting pdk test unit until unit tests start describe the bug when running pdk test unit it takes a very long time where pdk is doing something prior the unit tests start to reproduce run time pdk test unit expected behavior unit tests start immediately after running pdk test... | 0 |

166,965 | 6,329,306,251 | IssuesEvent | 2017-07-26 02:19:58 | tgstation/tgstation | https://api.github.com/repos/tgstation/tgstation | closed | Timers are crashing (or something else is that is causing temp_visuals to not delete) consistently nearing 2 hours into the round | Bug Priority: High | For the past few days every time it's nearing 14:00 shift time shit just starts going wack.

What happened to the robust MC that can handle everything? | 1.0 | Timers are crashing (or something else is that is causing temp_visuals to not delete) consistently nearing 2 hours into the round - For the past few days every time it's nearing 14:00 shift time shit just starts going wack.

What happened to the robust MC that can handle everything? | non_process | timers are crashing or something else is that is causing temp visuals to not delete consistently nearing hours into the round for the past few days every time it s nearing shift time shit just starts going wack what happened to the robust mc that can handle everything | 0 |

5,988 | 2,996,822,144 | IssuesEvent | 2015-07-23 00:47:03 | swagger-api/swagger-js | https://api.github.com/repos/swagger-api/swagger-js | closed | adding clientAuthorization broken | Documentation | The example in readme.md for adding a new authorization token is busted.

Using the boilerplate code plus

`client.clientAuthorizations.add("apiKey", new client.ApiKeyAuthorization("api_key","special-key","query"));`

results in a TypeError:

`client.clientAuthorizations.add("api_key", ...`

` ... | 1.0 | adding clientAuthorization broken - The example in readme.md for adding a new authorization token is busted.

Using the boilerplate code plus

`client.clientAuthorizations.add("apiKey", new client.ApiKeyAuthorization("api_key","special-key","query"));`

results in a TypeError:

`client.clientAuthorizations.add("api... | non_process | adding clientauthorization broken the example in readme md for adding a new authorization token is busted using the boilerplate code plus client clientauthorizations add apikey new client apikeyauthorization api key special key query results in a typeerror client clientauthorizations add api... | 0 |

8,772 | 23,421,737,082 | IssuesEvent | 2022-08-13 20:06:55 | nix-community/dream2nix | https://api.github.com/repos/nix-community/dream2nix | closed | per-subsystem subsystemInfo definition/parser? | enhancement architecture | It feels weird to let subsystemInfo be handled by translator only, especially since there can be many translators, passing settings to the builder is arbitrary, and I guess the discoverer can't see them?

How about a per-subsystem settings module that defines the flags and optionally transforms them?

Then they cou... | 1.0 | per-subsystem subsystemInfo definition/parser? - It feels weird to let subsystemInfo be handled by translator only, especially since there can be many translators, passing settings to the builder is arbitrary, and I guess the discoverer can't see them?

How about a per-subsystem settings module that defines the flags... | non_process | per subsystem subsysteminfo definition parser it feels weird to let subsysteminfo be handled by translator only especially since there can be many translators passing settings to the builder is arbitrary and i guess the discoverer can t see them how about a per subsystem settings module that defines the flags... | 0 |

12,825 | 15,210,184,604 | IssuesEvent | 2021-02-17 06:58:38 | gfx-rs/naga | https://api.github.com/repos/gfx-rs/naga | opened | Global usage flags are not correctly derived | area: processing kind: bug | Related to #486

I don't think we correctly identify things like `buffer.array[a+4] = b`. Here, the LHS expression is `Access`, not the global itself. We need to make it so `buffer` is identified as being written to, and `a` with `b` are read. | 1.0 | Global usage flags are not correctly derived - Related to #486

I don't think we correctly identify things like `buffer.array[a+4] = b`. Here, the LHS expression is `Access`, not the global itself. We need to make it so `buffer` is identified as being written to, and `a` with `b` are read. | process | global usage flags are not correctly derived related to i don t think we correctly identify things like buffer array b here the lhs expression is access not the global itself we need to make it so buffer is identified as being written to and a with b are read | 1 |

12,542 | 14,974,454,200 | IssuesEvent | 2021-01-28 03:35:48 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [iOS] Resources > PDFs uploaded in resources are broken on accessing for the first time | Bug P1 Process: Dev Process: Fixed iOS | **Describe the bug**

PDFs uploaded in resources are broken on accessing it for the first time

**To Reproduce**

Steps to reproduce the behavior:

1. Join the study successfully

2. Go to 'Resources'

3. Click on any resources having PDF

4. Observe the format

5. Navigate back and again click the same PDF

6. Obse... | 2.0 | [iOS] Resources > PDFs uploaded in resources are broken on accessing for the first time - **Describe the bug**

PDFs uploaded in resources are broken on accessing it for the first time

**To Reproduce**

Steps to reproduce the behavior:

1. Join the study successfully

2. Go to 'Resources'

3. Click on any resource... | process | resources pdfs uploaded in resources are broken on accessing for the first time describe the bug pdfs uploaded in resources are broken on accessing it for the first time to reproduce steps to reproduce the behavior join the study successfully go to resources click on any resources ha... | 1 |

59,700 | 8,376,996,575 | IssuesEvent | 2018-10-05 22:02:25 | opal/opal | https://api.github.com/repos/opal/opal | closed | Browser support | discuss documentation | > On an unrelated subject (well, maybe not so unrelated), I believe the claim that Opal runs on IE 6 is no longer true. I have seen things like Object.keys used in corelib (I think in the Array or Hash implementation), and that only became available in IE 9 I think. Not sure what if anything needs to be done about that... | 1.0 | Browser support - > On an unrelated subject (well, maybe not so unrelated), I believe the claim that Opal runs on IE 6 is no longer true. I have seen things like Object.keys used in corelib (I think in the Array or Hash implementation), and that only became available in IE 9 I think. Not sure what if anything needs to ... | non_process | browser support on an unrelated subject well maybe not so unrelated i believe the claim that opal runs on ie is no longer true i have seen things like object keys used in corelib i think in the array or hash implementation and that only became available in ie i think not sure what if anything needs to ... | 0 |

1,087 | 3,550,200,172 | IssuesEvent | 2016-01-20 21:02:22 | nodejs/node | https://api.github.com/repos/nodejs/node | closed | hrtime regression | process | I have the feeling https://github.com/nodejs/node/commit/89f056bdf323a77a48997f14196b41ddf76f90b1 introduced a regression. The nanoseconds might return negative values if you compare the actual value with the one before. This does not occur in 5.3 or before.

```

> process.hrtime(t)

[ 45, 373756947 ]

> process.hrtim... | 1.0 | hrtime regression - I have the feeling https://github.com/nodejs/node/commit/89f056bdf323a77a48997f14196b41ddf76f90b1 introduced a regression. The nanoseconds might return negative values if you compare the actual value with the one before. This does not occur in 5.3 or before.

```

> process.hrtime(t)

[ 45, 37375694... | process | hrtime regression i have the feeling introduced a regression the nanoseconds might return negative values if you compare the actual value with the one before this does not occur in or before process hrtime t process hrtime t easy to reproduce with js var t process hrtime ... | 1 |

6,582 | 9,661,750,759 | IssuesEvent | 2019-05-20 18:53:45 | openopps/openopps-platform | https://api.github.com/repos/openopps/openopps-platform | opened | Transcript: Default radio button if only one | Apply Process State Dept. | Who: Internship applicants

What: Default transcript selection if only one uploaded

Why: To avoid confusion

Acceptance Criteria:

- When an internship applicant selects to upload a transcript and then selects "Refresh", default the radio button to select the transcript IF there was only one uploaded. | 1.0 | Transcript: Default radio button if only one - Who: Internship applicants

What: Default transcript selection if only one uploaded

Why: To avoid confusion

Acceptance Criteria:

- When an internship applicant selects to upload a transcript and then selects "Refresh", default the radio button to select the transcript... | process | transcript default radio button if only one who internship applicants what default transcript selection if only one uploaded why to avoid confusion acceptance criteria when an internship applicant selects to upload a transcript and then selects refresh default the radio button to select the transcript... | 1 |

14,667 | 17,786,803,265 | IssuesEvent | 2021-08-31 12:05:55 | googleapis/google-api-python-client | https://api.github.com/repos/googleapis/google-api-python-client | reopened | Dependency Dashboard | type: process | This issue provides visibility into Renovate updates and their statuses. [Learn more](https://docs.renovatebot.com/key-concepts/dashboard/)

## Other Branches

These updates are pending. To force PRs open, click the checkbox below.

- [ ] <!-- other-branch=renovate/actions-github-script-4.x -->chore(deps): update acti... | 1.0 | Dependency Dashboard - This issue provides visibility into Renovate updates and their statuses. [Learn more](https://docs.renovatebot.com/key-concepts/dashboard/)

## Other Branches

These updates are pending. To force PRs open, click the checkbox below.

- [ ] <!-- other-branch=renovate/actions-github-script-4.x -->c... | process | dependency dashboard this issue provides visibility into renovate updates and their statuses other branches these updates are pending to force prs open click the checkbox below chore deps update actions github script action to open these updates have all been created already click a ... | 1 |

51,929 | 12,828,632,103 | IssuesEvent | 2020-07-06 20:56:53 | google/blockly | https://api.github.com/repos/google/blockly | opened | Updata metadata size as part of release script | component: build process type: feature request | **Is your feature request related to a problem? Please describe.**

We have to update the metadata size for every release.

<!-- A clear and concise description of what the problem is. Ex. I'm always frustrated when [...] -->

**Describe the solution you'd like**

Change npm run recompile -> npm run recompile:bump & ... | 1.0 | Updata metadata size as part of release script - **Is your feature request related to a problem? Please describe.**

We have to update the metadata size for every release.

<!-- A clear and concise description of what the problem is. Ex. I'm always frustrated when [...] -->

**Describe the solution you'd like**

Chan... | non_process | updata metadata size as part of release script is your feature request related to a problem please describe we have to update the metadata size for every release describe the solution you d like change npm run recompile npm run recompile bump npm run recompile all npm run recompile all will b... | 0 |

280,702 | 24,324,753,152 | IssuesEvent | 2022-09-30 13:53:54 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | closed | roachtest: unoptimized-query-oracle/disable-rules=half failed | C-test-failure O-robot O-roachtest T-sql-queries branch-release-22.2 | roachtest.unoptimized-query-oracle/disable-rules=half [failed](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_RoachtestNightlyGceBazel/6704805?buildTab=log) with [artifacts](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_RoachtestNightlyGceBazel/6704805?buildTab=artifac... | 2.0 | roachtest: unoptimized-query-oracle/disable-rules=half failed - roachtest.unoptimized-query-oracle/disable-rules=half [failed](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_RoachtestNightlyGceBazel/6704805?buildTab=log) with [artifacts](https://teamcity.cockroachdb.com/buildConfiguration/Cockr... | non_process | roachtest unoptimized query oracle disable rules half failed roachtest unoptimized query oracle disable rules half with on release null strings join e à... | 0 |

19,397 | 25,539,287,997 | IssuesEvent | 2022-11-29 14:16:30 | ESMValGroup/ESMValCore | https://api.github.com/repos/ESMValGroup/ESMValCore | closed | Preprocessor function multi_model_statistics does not work if no time coordinate or only single time point in data | bug preprocessor | Because the code assumes a time coordinate is present and does `cube.coord('time').guess_bounds()` | 1.0 | Preprocessor function multi_model_statistics does not work if no time coordinate or only single time point in data - Because the code assumes a time coordinate is present and does `cube.coord('time').guess_bounds()` | process | preprocessor function multi model statistics does not work if no time coordinate or only single time point in data because the code assumes a time coordinate is present and does cube coord time guess bounds | 1 |

4,963 | 7,806,051,135 | IssuesEvent | 2018-06-11 12:59:15 | sysown/proxysql | https://api.github.com/repos/sysown/proxysql | closed | Per connection rate limit | ADMIN CONNECTION POOL QUERY PROCESSOR | We need to define a way to throttle bandwidth usage per connection/query, client side. | 1.0 | Per connection rate limit - We need to define a way to throttle bandwidth usage per connection/query, client side. | process | per connection rate limit we need to define a way to throttle bandwidth usage per connection query client side | 1 |

414 | 7,703,837,749 | IssuesEvent | 2018-05-21 09:53:35 | DrewAPicture/ensemble | https://api.github.com/repos/DrewAPicture/ensemble | opened | Term query support for Unit Directors | ::People ::Taxonomy Enhancement | Like contests and venues, Unit Directors (users) are connected with various taxonomies, but most notably the Units taxonomy. It would be nice if there were a way to bridge between directors and the terms assigned to them, even if it means going outside of the core API pathways to accomplish it. | 1.0 | Term query support for Unit Directors - Like contests and venues, Unit Directors (users) are connected with various taxonomies, but most notably the Units taxonomy. It would be nice if there were a way to bridge between directors and the terms assigned to them, even if it means going outside of the core API pathways to... | non_process | term query support for unit directors like contests and venues unit directors users are connected with various taxonomies but most notably the units taxonomy it would be nice if there were a way to bridge between directors and the terms assigned to them even if it means going outside of the core api pathways to... | 0 |

4,769 | 3,882,931,791 | IssuesEvent | 2016-04-13 12:02:37 | lionheart/openradar-mirror | https://api.github.com/repos/lionheart/openradar-mirror | opened | 20627241: Swift.LazySequence should have a .first property | classification:ui/usability reproducible:always status:open | #### Description

Summary:

LazySequence should have a `first` property of type `S.Generator.Element?`.

Steps to Reproduce:

1. Try to find the first element matching a given criterion:

return lazy(start…end).filter { $0 % 2 == 0 }.first

Expected Results:

This code, or something very similar, works fine.

Actual R... | True | 20627241: Swift.LazySequence should have a .first property - #### Description

Summary:

LazySequence should have a `first` property of type `S.Generator.Element?`.

Steps to Reproduce:

1. Try to find the first element matching a given criterion:

return lazy(start…end).filter { $0 % 2 == 0 }.first

Expected Results... | non_process | swift lazysequence should have a first property description summary lazysequence should have a first property of type s generator element steps to reproduce try to find the first element matching a given criterion return lazy start…end filter first expected results this ... | 0 |

6,978 | 10,129,759,576 | IssuesEvent | 2019-08-01 15:25:34 | ptddatateam/PublicTransitDataReportingPortal | https://api.github.com/repos/ptddatateam/PublicTransitDataReportingPortal | opened | Prep for meeting to discuss Summary of Public Transportation Vision | process | Prep for meeting to discuss Summary of Public Transportation Vision | 1.0 | Prep for meeting to discuss Summary of Public Transportation Vision - Prep for meeting to discuss Summary of Public Transportation Vision | process | prep for meeting to discuss summary of public transportation vision prep for meeting to discuss summary of public transportation vision | 1 |

816,133 | 30,590,165,097 | IssuesEvent | 2023-07-21 16:19:34 | Memmy-App/memmy | https://api.github.com/repos/Memmy-App/memmy | closed | Minor Traverse Tweaks: Favorite and Subscriptions Counts and Padding. | enhancement medium priority visual | **Describe the solution you'd like**

Beside the Favorites and Subscriptions titles I would like a count of number of favorites and subscriptions.

I would also like the padding between Subscriptions and the post above to match the padding between the search box and favorite title. Looks cramped.

**Additional context**... | 1.0 | Minor Traverse Tweaks: Favorite and Subscriptions Counts and Padding. - **Describe the solution you'd like**

Beside the Favorites and Subscriptions titles I would like a count of number of favorites and subscriptions.

I would also like the padding between Subscriptions and the post above to match the padding between t... | non_process | minor traverse tweaks favorite and subscriptions counts and padding describe the solution you d like beside the favorites and subscriptions titles i would like a count of number of favorites and subscriptions i would also like the padding between subscriptions and the post above to match the padding between t... | 0 |

343,230 | 30,653,536,342 | IssuesEvent | 2023-07-25 10:29:20 | unifyai/ivy | https://api.github.com/repos/unifyai/ivy | closed | Fix paddle_math.test_paddle_atanh | Sub Task Failing Test Paddle Frontend | | | |

|---|---|

|tensorflow|<a href="https://github.com/unifyai/ivy/actions/runs/5522136127"><img src=https://img.shields.io/badge/-success-success></a>

|jax|<a href="https://github.com/unifyai/ivy/actions/runs/5522136127"><img src=https://img.shields.io/badge/-success-success></a>

|paddle|<a href="https://gith... | 1.0 | Fix paddle_math.test_paddle_atanh - | | |

|---|---|

|tensorflow|<a href="https://github.com/unifyai/ivy/actions/runs/5522136127"><img src=https://img.shields.io/badge/-success-success></a>

|jax|<a href="https://github.com/unifyai/ivy/actions/runs/5522136127"><img src=https://img.shields.io/badge/-success-success... | non_process | fix paddle math test paddle atanh tensorflow a href src jax a href src paddle a href src torch a href src numpy a href src | 0 |

2,628 | 5,400,490,947 | IssuesEvent | 2017-02-27 22:10:22 | metabase/metabase | https://api.github.com/repos/metabase/metabase | closed | Non admin users can't see Questions that are using GA as data source | Bug Database/Google Analytics Query Processor | ### Bug

Regular User can't access Questions and Cards using data from GoogleAnalytics database but they can ask a question and get data as long as they are not saving it.

- Browser and the version: Chrome 55.0.2883.95

- Operating system: macOS Sierra

- Databases: MongoDB, GoogleAnalytics

- Metabase version: 0.2... | 1.0 | Non admin users can't see Questions that are using GA as data source - ### Bug

Regular User can't access Questions and Cards using data from GoogleAnalytics database but they can ask a question and get data as long as they are not saving it.

- Browser and the version: Chrome 55.0.2883.95

- Operating system: macOS ... | process | non admin users can t see questions that are using ga as data source bug regular user can t access questions and cards using data from googleanalytics database but they can ask a question and get data as long as they are not saving it browser and the version chrome operating system macos sierr... | 1 |

20,196 | 26,772,809,498 | IssuesEvent | 2023-01-31 15:11:56 | Open-EO/openeo-processes | https://api.github.com/repos/Open-EO/openeo-processes | closed | Cropping cumbersome | new process | Feedback from METER group is that cropping to a GeoJSON is cumbersome as you need to run two processes:

- [filter_spatial](https://processes.openeo.org/#filter_spatial) and

- [mask_polygon](https://processes.openeo.org/#mask_polygon)

Might be a client improvement, but maybe we can also enforce implicit filtering i... | 1.0 | Cropping cumbersome - Feedback from METER group is that cropping to a GeoJSON is cumbersome as you need to run two processes:

- [filter_spatial](https://processes.openeo.org/#filter_spatial) and

- [mask_polygon](https://processes.openeo.org/#mask_polygon)

Might be a client improvement, but maybe we can also enforc... | process | cropping cumbersome feedback from meter group is that cropping to a geojson is cumbersome as you need to run two processes and might be a client improvement but maybe we can also enforce implicit filtering in mask polygon or implicit masking in filter spatial not sure yet | 1 |

18,072 | 24,089,078,497 | IssuesEvent | 2022-09-19 13:26:59 | geneontology/go-ontology | https://api.github.com/repos/geneontology/go-ontology | closed | NTR pore forming activity | multi-species process | For the work on multiorganisms, to (re)annotate cytolysis terms, I will create a new MF, "pore forming activity", definition:

An activity in which a protein is inserted into the membrane of another cell where it forms transmembrane pores.

Added: Pores disrupts the integrity of the cell membrane, resulting in der... | 1.0 | NTR pore forming activity - For the work on multiorganisms, to (re)annotate cytolysis terms, I will create a new MF, "pore forming activity", definition:

An activity in which a protein is inserted into the membrane of another cell where it forms transmembrane pores.

Added: Pores disrupts the integrity of the ce... | process | ntr pore forming activity for the work on multiorganisms to re annotate cytolysis terms i will create a new mf pore forming activity definition an activity in which a protein is inserted into the membrane of another cell where it forms transmembrane pores added pores disrupts the integrity of the ce... | 1 |

696,004 | 23,879,200,542 | IssuesEvent | 2022-09-07 22:34:26 | oncokb/oncokb | https://api.github.com/repos/oncokb/oncokb | closed | Support cancer type exclusion | high priority Epic next-minor-release | In the recent BRAF/V600E/All Solid Tumors FDA approval, we need to exclude colorectal cancer per approval. | 1.0 | Support cancer type exclusion - In the recent BRAF/V600E/All Solid Tumors FDA approval, we need to exclude colorectal cancer per approval. | non_process | support cancer type exclusion in the recent braf all solid tumors fda approval we need to exclude colorectal cancer per approval | 0 |

21,969 | 30,464,224,117 | IssuesEvent | 2023-07-17 09:13:27 | MicrosoftDocs/azure-devops-docs | https://api.github.com/repos/MicrosoftDocs/azure-devops-docs | closed | Invalid environment specification in pipeline YAML targeting resource | doc-bug Pri1 azure-devops-pipelines/svc azure-devops-pipelines-process/subsvc | I believe the YAML in the "Target an environment from a deployment job" section is invalid. When I copy the code block from this section into the YAML pipeline editor, it reports a bunch of errors starting with the `resourceName` line. If I simple move `smarthotel-dev` onto it's own line with the `name` tag, then the e... | 1.0 | Invalid environment specification in pipeline YAML targeting resource - I believe the YAML in the "Target an environment from a deployment job" section is invalid. When I copy the code block from this section into the YAML pipeline editor, it reports a bunch of errors starting with the `resourceName` line. If I simple ... | process | invalid environment specification in pipeline yaml targeting resource i believe the yaml in the target an environment from a deployment job section is invalid when i copy the code block from this section into the yaml pipeline editor it reports a bunch of errors starting with the resourcename line if i simple ... | 1 |

271,363 | 8,483,195,071 | IssuesEvent | 2018-10-25 20:48:46 | pulumi/pulumi | https://api.github.com/repos/pulumi/pulumi | closed | Concurrent `update` and `preview` can lead to confusing errors | priority/P1 | During a deployment to `pulumi.io` we saw a failure which appears to be related to a concurrent preview and update occuring: https://github.com/pulumi/docs/pull/583#pullrequestreview-154478411

```

error:the current deployment has 6 resource(s) with pending operations:

* urn:pulumi:pulumi.io-staging::pulumi.io::a... | 1.0 | Concurrent `update` and `preview` can lead to confusing errors - During a deployment to `pulumi.io` we saw a failure which appears to be related to a concurrent preview and update occuring: https://github.com/pulumi/docs/pull/583#pullrequestreview-154478411

```

error:the current deployment has 6 resource(s) with pe... | non_process | concurrent update and preview can lead to confusing errors during a deployment to pulumi io we saw a failure which appears to be related to a concurrent preview and update occuring error the current deployment has resource s with pending operations urn pulumi pulumi io staging pulumi io aw... | 0 |

185,375 | 14,351,563,318 | IssuesEvent | 2020-11-30 01:39:52 | github-vet/rangeclosure-findings | https://api.github.com/repos/github-vet/rangeclosure-findings | opened | zuoralabs/zsec-go-examples: goroutine_test.go; 9 LoC | fresh test tiny |

Found a possible issue in [zuoralabs/zsec-go-examples](https://www.github.com/zuoralabs/zsec-go-examples) at [goroutine_test.go](https://github.com/zuoralabs/zsec-go-examples/blob/605d1788c03ea7e9d2c7594b16f46137ad6e1025/goroutine_test.go#L243-L251)

The below snippet of Go code triggered static analysis which searche... | 1.0 | zuoralabs/zsec-go-examples: goroutine_test.go; 9 LoC -

Found a possible issue in [zuoralabs/zsec-go-examples](https://www.github.com/zuoralabs/zsec-go-examples) at [goroutine_test.go](https://github.com/zuoralabs/zsec-go-examples/blob/605d1788c03ea7e9d2c7594b16f46137ad6e1025/goroutine_test.go#L243-L251)

The below sni... | non_process | zuoralabs zsec go examples goroutine test go loc found a possible issue in at the below snippet of go code triggered static analysis which searches for goroutines and or defer statements which capture loop variables click here to show the line s of go which triggered the analyzer go... | 0 |

15,963 | 20,177,192,988 | IssuesEvent | 2022-02-10 15:24:19 | ossf/tac | https://api.github.com/repos/ossf/tac | closed | TAC Election: Officials | ElectionProcess | Who should be the Election Officials?

Suggestion:

- OpenSSF General Manager

- OpenSSF Program Director

- OpenSSF TAC Chair

- OpenSSF TAC rep from an org that is not the same org as the TAC Chair | 1.0 | TAC Election: Officials - Who should be the Election Officials?

Suggestion:

- OpenSSF General Manager

- OpenSSF Program Director

- OpenSSF TAC Chair

- OpenSSF TAC rep from an org that is not the same org as the TAC Chair | process | tac election officials who should be the election officials suggestion openssf general manager openssf program director openssf tac chair openssf tac rep from an org that is not the same org as the tac chair | 1 |

14,097 | 16,920,313,411 | IssuesEvent | 2021-06-25 03:58:34 | pingcap/tidb | https://api.github.com/repos/pingcap/tidb | closed | UPDATE result is not compatible with MySQL | compatibility-breaker severity/moderate sig/sql-infra status/future type/bug type/wontfix | ## Bug Report

Please answer these questions before submitting your issue. Thanks!

### 1. Minimal reproduce step (Required)

```

create table companies(id bigint primary key, ida bigint);

insert into companies values(14, 14);

UPDATE companies SET id = id + 1, ida = id * 2;

```

<!-- a step by step guide fo... | True | UPDATE result is not compatible with MySQL - ## Bug Report

Please answer these questions before submitting your issue. Thanks!

### 1. Minimal reproduce step (Required)

```

create table companies(id bigint primary key, ida bigint);

insert into companies values(14, 14);

UPDATE companies SET id = id + 1, ida ... | non_process | update result is not compatible with mysql bug report please answer these questions before submitting your issue thanks minimal reproduce step required create table companies id bigint primary key ida bigint insert into companies values update companies set id id ida ... | 0 |

470,748 | 13,543,702,414 | IssuesEvent | 2020-09-16 19:23:51 | zephyrproject-rtos/zephyr | https://api.github.com/repos/zephyrproject-rtos/zephyr | closed | Errors in the HL7800.c file | bug priority: low | **Describe the bug**

I have a Pinnacle 100 dvk board that I integrated to Platformio to be used with Zephyr 2.3. I need to use the HL7800 semiconductor that is not available in the current version of Zephyr but may be for the 2.4 version. So I decided to add what is missing manually, so far so good. I end up with thes... | 1.0 | Errors in the HL7800.c file - **Describe the bug**

I have a Pinnacle 100 dvk board that I integrated to Platformio to be used with Zephyr 2.3. I need to use the HL7800 semiconductor that is not available in the current version of Zephyr but may be for the 2.4 version. So I decided to add what is missing manually, so f... | non_process | errors in the c file describe the bug i have a pinnacle dvk board that i integrated to platformio to be used with zephyr i need to use the semiconductor that is not available in the current version of zephyr but may be for the version so i decided to add what is missing manually so far so good ... | 0 |

195,995 | 15,570,791,696 | IssuesEvent | 2021-03-17 03:24:53 | gravitational/teleport | https://api.github.com/repos/gravitational/teleport | opened | Fixes for next docs linter | documentation release-engineering | - Backport `test-docs` to at least `branch/v6` so that docs PRs to that branch are linted.

- Maybe `branch/5.0` as well if we're planning to make any changes to those docs as a matter of course

- Maybe it even needs to be backported to all older branches which run Drone as they're all using the new docs engine no... | 1.0 | Fixes for next docs linter - - Backport `test-docs` to at least `branch/v6` so that docs PRs to that branch are linted.

- Maybe `branch/5.0` as well if we're planning to make any changes to those docs as a matter of course

- Maybe it even needs to be backported to all older branches which run Drone as they're all... | non_process | fixes for next docs linter backport test docs to at least branch so that docs prs to that branch are linted maybe branch as well if we re planning to make any changes to those docs as a matter of course maybe it even needs to be backported to all older branches which run drone as they re all ... | 0 |

17,722 | 23,625,566,596 | IssuesEvent | 2022-08-25 03:17:09 | lynnandtonic/nestflix.fun | https://api.github.com/repos/lynnandtonic/nestflix.fun | closed | Add The Attack of the Amazing Electrified Man from Popcorn (1991) | suggested title in process | Title: The Attack of the Amazing Electrified Man

Type (film/tv show): Film

Film or show in which it appears: Popcorn (1991)

Is the parent film/show streaming anywhere? Yes (Shudder and AMC+); https://www.justwatch.com/us/movie/popcorn-1991

About when in the parent film/show does it appear? 0:39:00

Actual... | 1.0 | Add The Attack of the Amazing Electrified Man from Popcorn (1991) - Title: The Attack of the Amazing Electrified Man

Type (film/tv show): Film

Film or show in which it appears: Popcorn (1991)

Is the parent film/show streaming anywhere? Yes (Shudder and AMC+); https://www.justwatch.com/us/movie/popcorn-1991

... | process | add the attack of the amazing electrified man from popcorn title the attack of the amazing electrified man type film tv show film film or show in which it appears popcorn is the parent film show streaming anywhere yes shudder and amc about when in the parent film show does it appear ... | 1 |

4,440 | 3,024,885,908 | IssuesEvent | 2015-08-03 01:55:11 | catapult-project/catapult | https://api.github.com/repos/catapult-project/catapult | opened | Clarify role of IR type, IR title using an override of userFriendlyName | Code Health | <a href="https://github.com/natduca"><img src="https://avatars.githubusercontent.com/u/412396?v=3" align="left" width="96" height="96" hspace="10"></img></a> **Issue by [natduca](https://github.com/natduca)**

_Thursday Jul 16, 2015 at 22:52 GMT_

_Originally opened as https://github.com/google/trace-viewer/issues/1099_

... | 1.0 | Clarify role of IR type, IR title using an override of userFriendlyName - <a href="https://github.com/natduca"><img src="https://avatars.githubusercontent.com/u/412396?v=3" align="left" width="96" height="96" hspace="10"></img></a> **Issue by [natduca](https://github.com/natduca)**

_Thursday Jul 16, 2015 at 22:52 GMT_

... | non_process | clarify role of ir type ir title using an override of userfriendlyname issue by thursday jul at gmt originally opened as ir displayname returns type title title is what the user is doing e g click drag keytyping ir type is r a i l types upper | 0 |

42,276 | 2,869,997,020 | IssuesEvent | 2015-06-06 18:37:38 | thexerteproject/xerteonlinetoolkits | https://api.github.com/repos/thexerteproject/xerteonlinetoolkits | opened | Turning on DB authentication causes error loading /index.php | bug high priority | You get this error

Strict Standards: Declaration of Xerte_Authentication_Db::addUser() should be compatible with Xerte_Authentication_Abstract::addUser($username, $passwd, $firstname, $lastname) in /home/domain/library/Xerte/Authentication/Db.php on line 35 | 1.0 | Turning on DB authentication causes error loading /index.php - You get this error

Strict Standards: Declaration of Xerte_Authentication_Db::addUser() should be compatible with Xerte_Authentication_Abstract::addUser($username, $passwd, $firstname, $lastname) in /home/domain/library/Xerte/Authentication/Db.php on line... | non_process | turning on db authentication causes error loading index php you get this error strict standards declaration of xerte authentication db adduser should be compatible with xerte authentication abstract adduser username passwd firstname lastname in home domain library xerte authentication db php on line... | 0 |

8,381 | 11,543,404,307 | IssuesEvent | 2020-02-18 09:32:39 | google/go-jsonnet | https://api.github.com/repos/google/go-jsonnet | closed | Add tests for 32-bit to Travis | process | As far as I can tell, there is no i686 arch in Travis available, but we can just compile stuff in 32-bit mode.

It should let us catch the problems like #376 early. | 1.0 | Add tests for 32-bit to Travis - As far as I can tell, there is no i686 arch in Travis available, but we can just compile stuff in 32-bit mode.

It should let us catch the problems like #376 early. | process | add tests for bit to travis as far as i can tell there is no arch in travis available but we can just compile stuff in bit mode it should let us catch the problems like early | 1 |

726,490 | 25,000,784,634 | IssuesEvent | 2022-11-03 07:40:39 | submariner-io/enhancements | https://api.github.com/repos/submariner-io/enhancements | closed | Epic: Support OCP with submariner on VMWare | enhancement priority:high | **What would you like to be added**:

Proposal is to fully support connecting multiple OCP clusters on VMWare using Submariner.

**Why is this needed**:

Aim of this proposal is to list and track steps needed to fill any gaps and allow full support for such deployments.

**UPDATE**

Investigations have confirmed th... | 1.0 | Epic: Support OCP with submariner on VMWare - **What would you like to be added**:

Proposal is to fully support connecting multiple OCP clusters on VMWare using Submariner.

**Why is this needed**:

Aim of this proposal is to list and track steps needed to fill any gaps and allow full support for such deployments.

... | non_process | epic support ocp with submariner on vmware what would you like to be added proposal is to fully support connecting multiple ocp clusters on vmware using submariner why is this needed aim of this proposal is to list and track steps needed to fill any gaps and allow full support for such deployments ... | 0 |

99,955 | 8,719,190,487 | IssuesEvent | 2018-12-07 23:21:08 | OpenLiberty/open-liberty | https://api.github.com/repos/OpenLiberty/open-liberty | closed | Update com.ibm.ws.microprofile.metrics_fat_tck dependencies | team:Lumberjack test bug | com.ibm.ws.microprofile.metrics_fat_tck was still using an internal dependency on Arquillian and external contributors couldn't run it.

| 1.0 | Update com.ibm.ws.microprofile.metrics_fat_tck dependencies - com.ibm.ws.microprofile.metrics_fat_tck was still using an internal dependency on Arquillian and external contributors couldn't run it.

| non_process | update com ibm ws microprofile metrics fat tck dependencies com ibm ws microprofile metrics fat tck was still using an internal dependency on arquillian and external contributors couldn t run it | 0 |

271 | 5,315,392,461 | IssuesEvent | 2017-02-13 17:12:18 | PopulateTools/gobierto | https://api.github.com/repos/PopulateTools/gobierto | closed | Mark days with events in the calendar | enhancement gobierto-people | The days that have events should be highlighted and differentiated from the days without events. | 1.0 | Mark days with events in the calendar - The days that have events should be highlighted and differentiated from the days without events. | non_process | mark days with events in the calendar the days that have events should be highlighted and differentiated from the days without events | 0 |

367 | 6,225,170,496 | IssuesEvent | 2017-07-10 15:38:58 | offa/danek | https://api.github.com/repos/offa/danek | closed | Refactor BufferedFileReader class | enhancement portability | Refactor `BufferedFileReader` class.

- [x] Use RAII

- [x] Disable copying

- [x] Clean up the special member functions

- [x] Proper portability

- [x] Use better error handling

- [x] Remove preprocessor usage

- [x] Avoid C API's as much as possible

- [x] Use better types whenever possible

- [x] Move to a dedic... | True | Refactor BufferedFileReader class - Refactor `BufferedFileReader` class.

- [x] Use RAII

- [x] Disable copying

- [x] Clean up the special member functions

- [x] Proper portability

- [x] Use better error handling

- [x] Remove preprocessor usage

- [x] Avoid C API's as much as possible

- [x] Use better types when... | non_process | refactor bufferedfilereader class refactor bufferedfilereader class use raii disable copying clean up the special member functions proper portability use better error handling remove preprocessor usage avoid c api s as much as possible use better types whenever possible ... | 0 |

315,569 | 27,085,615,951 | IssuesEvent | 2023-02-14 16:47:05 | arfc/openmcyclus | https://api.github.com/repos/arfc/openmcyclus | closed | Need CI in repo | Comp:Core Difficulty:1-Beginner Priority:2-Normal Status:1-New Type:Test | This repository needs CI implemented to run tests.

This issue can be closed when:

- [ ] CI is set up

- [ ] CI is called when a new PR is created.

I recommend using GitHub actions to keep things contained in this repository instead of calling a third-party. | 1.0 | Need CI in repo - This repository needs CI implemented to run tests.

This issue can be closed when:

- [ ] CI is set up

- [ ] CI is called when a new PR is created.

I recommend using GitHub actions to keep things contained in this repository instead of calling a third-party. | non_process | need ci in repo this repository needs ci implemented to run tests this issue can be closed when ci is set up ci is called when a new pr is created i recommend using github actions to keep things contained in this repository instead of calling a third party | 0 |

295,845 | 9,101,457,720 | IssuesEvent | 2019-02-20 11:08:52 | jetstack/cert-manager | https://api.github.com/repos/jetstack/cert-manager | closed | Validation stops certificate creation | area/api kind/bug priority/important-soon | While trying to use cert-manager 0.6.1 with the no-webhook configuration, on a GKE cluster running 1.11.6, I encountered this error in the cert-manager logs `status.certificate in body must be of type string: "null"`. Context with minor redaction...:

```

cert-manager-7476cc944f-7m9cg cert-manager I0216 04:11:06.01... | 1.0 | Validation stops certificate creation - While trying to use cert-manager 0.6.1 with the no-webhook configuration, on a GKE cluster running 1.11.6, I encountered this error in the cert-manager logs `status.certificate in body must be of type string: "null"`. Context with minor redaction...:

```

cert-manager-7476cc9... | non_process | validation stops certificate creation while trying to use cert manager with the no webhook configuration on a gke cluster running i encountered this error in the cert manager logs status certificate in body must be of type string null context with minor redaction cert manager cert... | 0 |

8,389 | 11,562,704,650 | IssuesEvent | 2020-02-20 03:25:53 | geneontology/go-ontology | https://api.github.com/repos/geneontology/go-ontology | closed | Obsolete negative regulation by symbiont of entry into host and children | multi-species process | Following up on #18778. The symbiont doesn't negatively regulate its own entry into the host.

Will obsolete

* GO:0052374 negative regulation by symbiont of entry into host

* GO:0075056 negative regulation of symbiont penetration peg formation for entry into host

* GO:0075200 negative regulation of symbiont... | 1.0 | Obsolete negative regulation by symbiont of entry into host and children - Following up on #18778. The symbiont doesn't negatively regulate its own entry into the host.

Will obsolete

* GO:0052374 negative regulation by symbiont of entry into host

* GO:0075056 negative regulation of symbiont penetration peg f... | process | obsolete negative regulation by symbiont of entry into host and children following up on the symbiont doesn t negatively regulate its own entry into the host will obsolete go negative regulation by symbiont of entry into host go negative regulation of symbiont penetration peg formation for ent... | 1 |

269,940 | 8,444,657,077 | IssuesEvent | 2018-10-18 19:05:06 | DoSomething/blink | https://api.github.com/repos/DoSomething/blink | closed | RabbitMQ uses about 70% of the memory available. | Maintenance Priority (High) | The memory utilization reported by the node in the RabbitMQ interface averages about 70%. We should research what is making it consume so much memory and if it's a signal that we should scale and/or optimize anything. | 1.0 | RabbitMQ uses about 70% of the memory available. - The memory utilization reported by the node in the RabbitMQ interface averages about 70%. We should research what is making it consume so much memory and if it's a signal that we should scale and/or optimize anything. | non_process | rabbitmq uses about of the memory available the memory utilization reported by the node in the rabbitmq interface averages about we should research what is making it consume so much memory and if it s a signal that we should scale and or optimize anything | 0 |

818,771 | 30,703,317,107 | IssuesEvent | 2023-07-27 02:41:47 | Memmy-App/memmy | https://api.github.com/repos/Memmy-App/memmy | closed | Apply „Display total score“ option everywhere | enhancement medium priority visual help wanted | **Is your feature request related to a problem? Please describe.**

Currently, the option to display the total score instead of up/downvotes only applies to the posts on standard feed view.

**Describe the solution you'd like**

Have the option also apply to other instances where we see up and downvotes, like posts i... | 1.0 | Apply „Display total score“ option everywhere - **Is your feature request related to a problem? Please describe.**

Currently, the option to display the total score instead of up/downvotes only applies to the posts on standard feed view.

**Describe the solution you'd like**

Have the option also apply to other insta... | non_process | apply „display total score“ option everywhere is your feature request related to a problem please describe currently the option to display the total score instead of up downvotes only applies to the posts on standard feed view describe the solution you d like have the option also apply to other insta... | 0 |

13,779 | 16,537,543,973 | IssuesEvent | 2021-05-27 13:27:20 | encode/uvicorn | https://api.github.com/repos/encode/uvicorn | closed | Hot-reloading gets stuck after interacting with multiprocessing / Twisted signals | multiprocessing need confirmation workers | ### Checklist

<!-- Please make sure you check all these items before submitting your bug report. -->

- [x] The bug is reproducible against the latest release and/or `master`.

- [x] There are no similar issues or pull requests to fix it yet.

### Describe the bug

Launched with `--reload` option gets stuck af... | 1.0 | Hot-reloading gets stuck after interacting with multiprocessing / Twisted signals - ### Checklist

<!-- Please make sure you check all these items before submitting your bug report. -->

- [x] The bug is reproducible against the latest release and/or `master`.

- [x] There are no similar issues or pull requests to ... | process | hot reloading gets stuck after interacting with multiprocessing twisted signals checklist the bug is reproducible against the latest release and or master there are no similar issues or pull requests to fix it yet describe the bug launched with reload option gets stuck after e... | 1 |

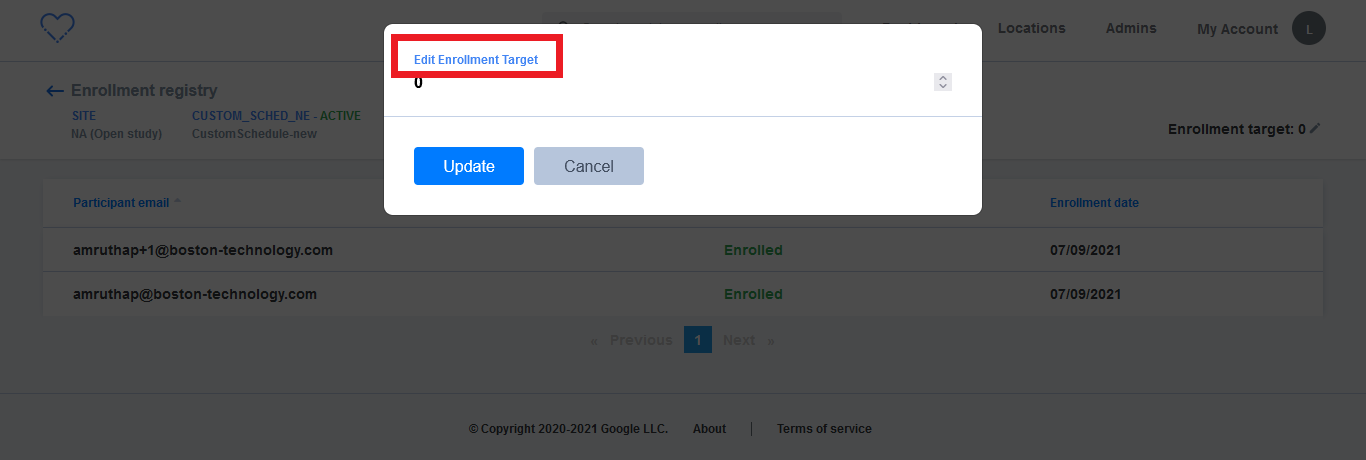

14,280 | 17,260,316,565 | IssuesEvent | 2021-07-22 06:29:33 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [PM] Open study > Enrollment registry > Enrollment target > Text case issue | Bug P2 Participant manager Process: Fixed Process: Tested QA Process: Tested dev | Open study > Enrollment registry > Click on enrollment target > 'Edit Enrollment Target' should be changed to 'Edit enrollment target'

| 3.0 | [PM] Open study > Enrollment registry > Enrollment target > Text case issue - Open study > Enrollment registry > Click on enrollment target > 'Edit Enrollment Target' should be changed to 'Edit enrollment target'

can be found here

- https://github.com/keras-team/keras-cv/blob/master/keras_cv/layer... | 1.0 | Add augment_bounding_boxes support to AugMix layer - The augment_bounding_boxes should be implemented for AugMix Layer in keras_cv. The PR should contain implementation, test scripts and a demo script to verify implementation.

Example code for implementing augment_bounding_boxes() can be found here

- https://gith... | process | add augment bounding boxes support to augmix layer the augment bounding boxes should be implemented for augmix layer in keras cv the pr should contain implementation test scripts and a demo script to verify implementation example code for implementing augment bounding boxes can be found here ... | 1 |

51,013 | 13,612,770,954 | IssuesEvent | 2020-09-23 10:49:12 | jgeraigery/angular-breadcrumb | https://api.github.com/repos/jgeraigery/angular-breadcrumb | opened | CVE-2020-7608 (Medium) detected in multiple libraries | security vulnerability | ## CVE-2020-7608 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>yargs-parser-9.0.2.tgz</b>, <b>yargs-parser-5.0.0.tgz</b>, <b>yargs-parser-10.1.0.tgz</b>, <b>yargs-parser-7.0.0.tgz... | True | CVE-2020-7608 (Medium) detected in multiple libraries - ## CVE-2020-7608 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>yargs-parser-9.0.2.tgz</b>, <b>yargs-parser-5.0.0.tgz</b>, <... | non_process | cve medium detected in multiple libraries cve medium severity vulnerability vulnerable libraries yargs parser tgz yargs parser tgz yargs parser tgz yargs parser tgz yargs parser tgz the mighty option parser used by yargs library home page ... | 0 |

49,582 | 6,033,529,701 | IssuesEvent | 2017-06-09 08:35:14 | xcat2/xcat-core | https://api.github.com/repos/xcat2/xcat-core | closed | [FVT]update script pre_deploy_sn to support PostgreSQL database in automation test bucket | component:test priority:low sprint2 status:pending test:testcase_added | We need to support both MySQL and PostgreSQL in automation bucket. Need to update pre_deploy_sn to run PostgreSQL setup script.

```

$cmd = "XCATMYSQLADMIN_PW=12345 XCATMYSQLROOT_PW=12345 /opt/xcat/bin/mysqlsetup -i -V";

runcmd("$cmd");

$cmd = "echo \"GRANT ALL on xcatdb.* TO xcatadmin@\'%\' IDENTIFIED BY \'1234... | 3.0 | [FVT]update script pre_deploy_sn to support PostgreSQL database in automation test bucket - We need to support both MySQL and PostgreSQL in automation bucket. Need to update pre_deploy_sn to run PostgreSQL setup script.

```

$cmd = "XCATMYSQLADMIN_PW=12345 XCATMYSQLROOT_PW=12345 /opt/xcat/bin/mysqlsetup -i -V";

run... | non_process | update script pre deploy sn to support postgresql database in automation test bucket we need to support both mysql and postgresql in automation bucket need to update pre deploy sn to run postgresql setup script cmd xcatmysqladmin pw xcatmysqlroot pw opt xcat bin mysqlsetup i v runcmd cmd ... | 0 |

723,223 | 24,889,920,229 | IssuesEvent | 2022-10-28 11:02:50 | robotframework/robotframework | https://api.github.com/repos/robotframework/robotframework | closed | Libdoc's `DocumentationBuilder` doesn't anymore work with resource files with `.robot` extension | bug priority: medium | When support for generating documentation for suite files was added (#4493), the `DocumentationBuilder` factory method was changed so that it returns a `SuiteDocBuilder` with all files having a `.robot` extension. This builder only works with suite files and not with resource files. The `LibraryDocumentation` factory m... | 1.0 | Libdoc's `DocumentationBuilder` doesn't anymore work with resource files with `.robot` extension - When support for generating documentation for suite files was added (#4493), the `DocumentationBuilder` factory method was changed so that it returns a `SuiteDocBuilder` with all files having a `.robot` extension. This bu... | non_process | libdoc s documentationbuilder doesn t anymore work with resource files with robot extension when support for generating documentation for suite files was added the documentationbuilder factory method was changed so that it returns a suitedocbuilder with all files having a robot extension this build... | 0 |

18,562 | 24,555,700,393 | IssuesEvent | 2022-10-12 15:42:16 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [iOS] Enrollment flow > Data sharing permission > Participant is able to select both data sharing options present on the screen | Bug P0 iOS Process: Fixed Process: Tested dev | AR: Enrollment flow > Data sharing permission > Participant is able to select both data sharing options present on the screen

ER: Participants should be able to select only one option at a time on the data sharing permission screen

.

In the Windows world, this can be done by assembling the list of descendant processes to terminate using [WMI's Win32_Process management object](https://stackoverflow.com/a/7... | 1.0 | Expand Process.Kill to Optionally Kill a Process Tree - ## Issue

.Net Standard does not provide a means to kill a process tree (that is, a given process and all of its child/descendant processes).

In the Windows world, this can be done by assembling the list of descendant processes to terminate using [WMI's Win32_... | process | expand process kill to optionally kill a process tree issue net standard does not provide a means to kill a process tree that is a given process and all of its child descendant processes in the windows world this can be done by assembling the list of descendant processes to terminate using or ... | 1 |

776,884 | 27,264,742,308 | IssuesEvent | 2023-02-22 17:10:27 | ascheid/itsg33-pbmm-issue-gen | https://api.github.com/repos/ascheid/itsg33-pbmm-issue-gen | opened | SA-17(4): Developer Security Architecture And Design | Informal Correspondence | Priority: P3 ITSG-33 Suggested Assignment: IT Projects Class: Management Control: SA-17 | # Control Definition

DEVELOPER SECURITY ARCHITECTURE AND DESIGN | INFORMAL CORRESPONDENCE

The organization requires the developer of the information system, system component, or information system service to:

(a) Produce, as an integral part of the development process, an informal descriptive top-level specification t... | 1.0 | SA-17(4): Developer Security Architecture And Design | Informal Correspondence - # Control Definition

DEVELOPER SECURITY ARCHITECTURE AND DESIGN | INFORMAL CORRESPONDENCE

The organization requires the developer of the information system, system component, or information system service to:

(a) Produce, as an integral p... | non_process | sa developer security architecture and design informal correspondence control definition developer security architecture and design informal correspondence the organization requires the developer of the information system system component or information system service to a produce as an integral pa... | 0 |

6,923 | 10,082,811,085 | IssuesEvent | 2019-07-25 12:14:54 | linnovate/root | https://api.github.com/repos/linnovate/root | closed | Multipale selecte does not work on the tasks I following to | Fixed Process bug bug critical | - I have marked one task, in fact all have been marked | 1.0 | Multipale selecte does not work on the tasks I following to - - I have marked one task, in fact all have been marked | process | multipale selecte does not work on the tasks i following to i have marked one task in fact all have been marked | 1 |

41,296 | 8,957,841,021 | IssuesEvent | 2019-01-27 08:41:34 | opencodeiiita/Competitive_Coding | https://api.github.com/repos/opencodeiiita/Competitive_Coding | closed | Logical error | Everyone OpenCode19 Skilled: 20 points bug open | Task 3, Issue 3: Find and fix the logical error by modifying not more than one line. | 1.0 | Logical error - Task 3, Issue 3: Find and fix the logical error by modifying not more than one line. | non_process | logical error task issue find and fix the logical error by modifying not more than one line | 0 |

261,488 | 27,809,782,609 | IssuesEvent | 2023-03-18 01:43:07 | madhans23/linux-4.1.15 | https://api.github.com/repos/madhans23/linux-4.1.15 | closed | CVE-2022-21504 (Medium) detected in linux-stable-rtv4.1.33, linux-yocto-4.1v4.1.17 - autoclosed | Mend: dependency security vulnerability | ## CVE-2022-21504 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>linux-stable-rtv4.1.33</b>, <b>linux-yocto-4.1v4.1.17</b></p></summary>

<p>

</p>

</details>

<p></p>

<details><sum... | True | CVE-2022-21504 (Medium) detected in linux-stable-rtv4.1.33, linux-yocto-4.1v4.1.17 - autoclosed - ## CVE-2022-21504 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>linux-stable-rtv4... | non_process | cve medium detected in linux stable linux yocto autoclosed cve medium severity vulnerability vulnerable libraries linux stable linux yocto vulnerability details the code in was missing an appropiate file descriptor count to be missi... | 0 |

2,895 | 2,607,964,946 | IssuesEvent | 2015-02-26 00:41:55 | chrsmithdemos/leveldb | https://api.github.com/repos/chrsmithdemos/leveldb | closed | Provide "close" interface to leveldb | auto-migrated Priority-Medium Type-Defect | ```

According to the API document, for Closing A Database, just delete the database

object. Example:

... open the db as described above ...

... do something with db ...

delete db;

It might leads to some encapsulation error. For example, if there were a

storage encapsuator with "clear" interface as well as it... | 1.0 | Provide "close" interface to leveldb - ```

According to the API document, for Closing A Database, just delete the database

object. Example:

... open the db as described above ...

... do something with db ...

delete db;

It might leads to some encapsulation error. For example, if there were a

storage encapsuat... | non_process | provide close interface to leveldb according to the api document for closing a database just delete the database object example open the db as described above do something with db delete db it might leads to some encapsulation error for example if there were a storage encapsuat... | 0 |

257,472 | 8,137,809,664 | IssuesEvent | 2018-08-20 13:03:26 | themuseblockchain/Muse-Source | https://api.github.com/repos/themuseblockchain/Muse-Source | closed | Provide a way to pay to content | enhancement hardfork high priority | Currently the smart contract made up by a piece of content is only used for paying out content rewards.

It would be nice if there was a way to pay an amount of money to a piece of content that is automatically split among the content's payees. | 1.0 | Provide a way to pay to content - Currently the smart contract made up by a piece of content is only used for paying out content rewards.

It would be nice if there was a way to pay an amount of money to a piece of content that is automatically split among the content's payees. | non_process | provide a way to pay to content currently the smart contract made up by a piece of content is only used for paying out content rewards it would be nice if there was a way to pay an amount of money to a piece of content that is automatically split among the content s payees | 0 |

456,494 | 13,150,825,940 | IssuesEvent | 2020-08-09 13:43:20 | chrisjsewell/docutils | https://api.github.com/repos/chrisjsewell/docutils | closed | UTF8 in stylesheets [SF:bugs:79] | bugs closed-fixed priority-5 |

author: sonderblade

created: 2007-04-02 10:44:35

assigned: None

SF_url: https://sourceforge.net/p/docutils/bugs/79

rst2html.py does not like this ":Author: Björn Lindqvist" in stylesheets:

Traceback \(most recent call last\):

File "C:\Python25\rst2html.py", line 25, in <module>

publish\_cmdline\(writer\_name='... | 1.0 | UTF8 in stylesheets [SF:bugs:79] -

author: sonderblade

created: 2007-04-02 10:44:35

assigned: None

SF_url: https://sourceforge.net/p/docutils/bugs/79

rst2html.py does not like this ":Author: Björn Lindqvist" in stylesheets:

Traceback \(most recent call last\):

File "C:\Python25\rst2html.py", line 25, in <modul... | non_process | in stylesheets author sonderblade created assigned none sf url py does not like this author björn lindqvist in stylesheets traceback most recent call last file c py line in publish cmdline writer name html description description file c lib site pack... | 0 |

123,466 | 26,262,570,445 | IssuesEvent | 2023-01-06 09:23:32 | dotnet/runtime | https://api.github.com/repos/dotnet/runtime | closed | [Mono] Remainder example fails for LLVMFullAot | area-Codegen-AOT-mono | ```csharp

// Licensed to the .NET Foundation under one or more agreements.

// The .NET Foundation licenses this file to you under the MIT license.

public class Program

{

public static ulong[,] s_1;

public static int Main()

{

// This should not assert.

try

{

u... | 1.0 | [Mono] Remainder example fails for LLVMFullAot - ```csharp

// Licensed to the .NET Foundation under one or more agreements.

// The .NET Foundation licenses this file to you under the MIT license.

public class Program

{

public static ulong[,] s_1;

public static int Main()

{

// This should n... | non_process | remainder example fails for llvmfullaot csharp licensed to the net foundation under one or more agreements the net foundation licenses this file to you under the mit license public class program public static ulong s public static int main this should not asse... | 0 |

20,165 | 26,719,010,759 | IssuesEvent | 2023-01-28 22:32:18 | bisq-network/proposals | https://api.github.com/repos/bisq-network/proposals | closed | Set the minimum payout at mediation to be 5% of trade amount | was:approved a:proposal re:processes | ## Proposal

Currently the minimum payout at mediation is [2.5% of trade amount](https://github.com/bisq-network/bisq/pull/6362). This proposal suggests to increase this amount to be 5% of trade amount.

### Reasoning

With the introduction [do not pay out the security deposit of the trade peer to the arbitration... | 1.0 | Set the minimum payout at mediation to be 5% of trade amount - ## Proposal

Currently the minimum payout at mediation is [2.5% of trade amount](https://github.com/bisq-network/bisq/pull/6362). This proposal suggests to increase this amount to be 5% of trade amount.

### Reasoning

With the introduction [do not p... | process | set the minimum payout at mediation to be of trade amount proposal currently the minimum payout at mediation is this proposal suggests to increase this amount to be of trade amount reasoning with the introduction there needs to be a bigger incentive for the penalized party to accept th... | 1 |
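
The percentages in this proposal can be made concrete with a small worked example. This is a minimal sketch, not Bisq code: `minimum_payout` and the 0.1 BTC trade amount are hypothetical, chosen only to show what moving from 2.5% to 5% of the trade amount means in absolute terms.

```python
from decimal import Decimal


def minimum_payout(trade_amount, rate):
    """Return the minimum mediation payout as a fraction of the trade amount."""
    # Decimal avoids binary floating-point rounding on monetary values.
    return Decimal(trade_amount) * Decimal(rate)


trade = "0.1"  # hypothetical trade amount in BTC
current = minimum_payout(trade, "0.025")  # 2.5% rule referenced in the proposal
proposed = minimum_payout(trade, "0.05")  # 5% rule suggested by the proposal
print(current, proposed)
```

Under these assumptions the proposed floor is exactly twice the current one for any trade amount.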

265,642 | 20,105,886,153 | IssuesEvent | 2022-02-07 10:26:22 | Katolus/functions | https://api.github.com/repos/Katolus/functions | closed | Add support environments document | documentation | It should include information about support environments and runtimes. | 1.0 | Add support environments document - It should include information about support environments and runtimes. | non_process | add support environments document it should include information about support environments and runtimes | 0 |

8,931 | 12,041,150,699 | IssuesEvent | 2020-04-14 08:20:32 | Inria-Visages/BIDS-prov | https://api.github.com/repos/Inria-Visages/BIDS-prov | closed | List all features that are in the examples but not yet in the specification [0.5D]. | Spec update process | Deliverable: Itemized list in a markdown document in [current repository](https://github.com/Inria-Visages/BIDS-prov/). | 1.0 | List all features that are in the examples but not yet in the specification [0.5D]. - Deliverable: Itemized list in a markdown document in [current repository](https://github.com/Inria-Visages/BIDS-prov/). | process | list all features that are in the examples but not yet in the specification deliverable itemized list in a markdown document in | 1 |

5,389 | 8,213,270,862 | IssuesEvent | 2018-09-04 18:59:52 | PennyDreadfulMTG/perf-reports | https://api.github.com/repos/PennyDreadfulMTG/perf-reports | closed | 500 error at /api/gitpull | CalledProcessError decksite wontfix | Command '['pip', 'install', '-U', '--user', '-r', 'requirements.txt', '--no-cache']' returned non-zero exit status 1.

Reported on decksite by logged_out

--------------------------------------------------------------------------------

Request Method: POST

Path: /api/gitpull?

Cookies: {}

Endpoint: process_github_webhoo... | 1.0 | 500 error at /api/gitpull - Command '['pip', 'install', '-U', '--user', '-r', 'requirements.txt', '--no-cache']' returned non-zero exit status 1.

Reported on decksite by logged_out

--------------------------------------------------------------------------------

Request Method: POST

Path: /api/gitpull?

Cookies: {}

End... | process | error at api gitpull command returned non zero exit status reported on decksite by logged out request method post path api gitpull cookies endpoint process github webhook view args person logged out referrer no... | 1 |
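
The message in this row has the shape Python produces for `subprocess.CalledProcessError`, which is raised when a child command exits with a non-zero status and the caller asked for checking. The sketch below reproduces that behaviour in isolation. It assumes the webhook handler runs its command with `check=True`; the handler's real code is not shown in this row, and `run_checked` is a hypothetical helper name.

```python
import subprocess
import sys


def run_checked(cmd):
    """Run cmd and return (ok, returncode) instead of letting the error escape."""
    try:
        subprocess.run(cmd, check=True, capture_output=True, text=True)
    except subprocess.CalledProcessError as exc:
        # str(exc) has the form "Command '[...]' returned non-zero exit status N."
        return False, exc.returncode
    return True, 0


# A stand-in for the failing pip call: a child process that exits with status 1.
failing = [sys.executable, "-c", "import sys; sys.exit(1)"]
ok, code = run_checked(failing)
```

Catching the exception at the call site is one possible way to turn a failed install into a handled response rather than a 500 error; whether that is appropriate for this endpoint is a design decision the row does not settle.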

333,047 | 10,114,645,889 | IssuesEvent | 2019-07-30 19:42:53 | rstudio/gt | https://api.github.com/repos/rstudio/gt | closed | cols_label overrides tab_styles settings | Difficulty: ② Intermediate Effort: ② Medium Priority: ♨︎ Critical Type: ☹︎ Bug | If you use the cols_label function the formatting of the column header cells seems to be overridden by that function such that you can no longer control the formatting with tab_style.

For example, this

```

gt::gtcars %>%

dplyr::select(mfr, model, year, hp) %>%

dplyr::top_n(n = 10) %>%

gt::gt() %>%

gt::... | 1.0 | cols_label overrides tab_styles settings - If you use the cols_label function the formatting of the column header cells seems to be overridden by that function such that you can no longer control the formatting with tab_style.

For example, this

```

gt::gtcars %>%

dplyr::select(mfr, model, year, hp) %>%

dplyr... | non_process | cols label overrides tab styles settings if you use the cols label function the formatting of the column header cells seems to be overridden by that function such that you can no longer control the formatting with tab style for example this gt gtcars dplyr select mfr model year hp dplyr... | 0 |

196,542 | 14,878,206,924 | IssuesEvent | 2021-01-20 05:10:26 | felipexpert1996/biblioteca-django-angular | https://api.github.com/repos/felipexpert1996/biblioteca-django-angular | closed | Create unit tests for the book category CRUD API | back-end test | - [ ] Create a unit test for the book category creation API;

- [ ] Create a unit test for the book category update API;

- [ ] Create a unit test for the book category deletion API;

- [ ] Create a unit test for the book category listing API; | 1.0 | Create unit tests for the book category CRUD API - - [ ] Create a unit test for the book category creation API;

- [ ] Create a unit test for the book category update API;

- [ ] Create a unit test for the book category deletion API;

- [ ] Create a unit test for the ap... | non_process | create unit tests for the book category crud api create a unit test for the book category creation api create a unit test for the book category update api create a unit test for the book category deletion api create a unit test for the api for lis... | 0 |

17,494 | 23,305,507,970 | IssuesEvent | 2022-08-07 23:50:04 | lynnandtonic/nestflix.fun | https://api.github.com/repos/lynnandtonic/nestflix.fun | closed | Add [Reality Bites] | suggested title in process | Please add as much of the following info as you can:

Title: Reality Bites

Type (film/tv show): show

Film or show in which it appears: Reality Bites

Is the parent film/show streaming anywhere?

About when in the parent film/show does it appear? Toward the end when Ben Stiller's character shows Winona Ryder... | 1.0 | Add [Reality Bites] - Please add as much of the following info as you can:

Title: Reality Bites

Type (film/tv show): show

Film or show in which it appears: Reality Bites

Is the parent film/show streaming anywhere?

About when in the parent film/show does it appear? Toward the end when Ben Stiller's charac... | process | add please add as much of the following info as you can title reality bites type film tv show show film or show in which it appears reality bites is the parent film show streaming anywhere about when in the parent film show does it appear toward the end when ben stillers character shows wino... | 1 |

6,255 | 9,215,603,022 | IssuesEvent | 2019-03-11 04:05:33 | dotnet/corefx | https://api.github.com/repos/dotnet/corefx | closed | UseShellExecute on macOS passes Arguments to `open` instead of specified `filename` | area-System.Diagnostics.Process bug os-mac-os-x | Using PSCore6:

```powershell

$si = [System.Diagnostics.ProcessStartInfo]::new()

$si.UseShellExecute = $true

$si.FileName = (Get-Command pwsh).Source

$si.Arguments = "-c 1+1;read-host"

[System.Diagnostics.Process]::Start($si)

```

Expected:

Start new instance of PowerShell outputting answer of `2` and wait... | 1.0 | UseShellExecute on macOS passes Arguments to `open` instead of specified `filename` - Using PSCore6:

```powershell

$si = [System.Diagnostics.ProcessStartInfo]::new()

$si.UseShellExecute = $true

$si.FileName = (Get-Command pwsh).Source

$si.Arguments = "-c 1+1;read-host"

[System.Diagnostics.Process]::Start($si)

... | process | useshellexecute on macos passes arguments to open instead of specified filename using powershell si new si useshellexecute true si filename get command pwsh source si arguments c read host start si expected start new instance of powershell outputting answe... | 1 |

6,817 | 9,959,692,195 | IssuesEvent | 2019-07-06 09:24:59 | artisan-roaster-scope/artisan | https://api.github.com/repos/artisan-roaster-scope/artisan | closed | Overwrite batch counter from settings file; No.... Does not overwrite batch prefix. | in process | ## Expected Behavior

Batch prefix may continue to be loaded from settings file, even if we choose not to overwrite batch counter from the settings file.

## Actual Behavior... | 1.0 | Overwrite batch counter from settings file; No.... Does not overwrite batch prefix. - ## Expected Behavior

Batch prefix may continue to be loaded from settings file, even if we choose not to overwrite batch counter from the settings file.

```

0.7:

```

julia> @time @code_warntype 1+1

5.536148 seconds (2.12 M allocations: 121.450 MiB, 1.51% gc time)

```

Profiling shows a bunch of type inference stuff. | True | code_warntype is significantly slower than on 0.6 - 0.6:

```

julia> @time @code_warntype 1+1;

1.695684 seconds (522.70 k allocations: 27.840 MiB, 8.88% gc time)

```

0.7:

```

julia> @time @code_warntype 1+1

5.536148 seconds (2.12 M allocations: 121.450 MiB, 1.51% gc time)

```

Profiling shows a bunc... | non_process | code warntype is significantly slower than on julia time code warntype seconds k allocations mib gc time julia time code warntype seconds m allocations mib gc time profiling shows a bunch of type inference stu... | 0 |

16,754 | 21,922,071,612 | IssuesEvent | 2022-05-22 18:21:09 | googleapis/gapic-generator-python | https://api.github.com/repos/googleapis/gapic-generator-python | closed | Snippetgen: PRs are not prefixed with type docs | type: process | When snippetgen updates are rolled out, their PRs are prefixed with `chore`, so they do not trigger a release, even though a release is normally required for a docs update.

For example: this PR https://github.com/googleapis/python-scheduler/pull/233 updated problematic snippets, however a docs refresh was not m... | 1.0 | Snippetgen: PRs are not prefixed with type docs - When snippetgen updates are rolled out, their PRs are prefixed with `chore`, so they do not trigger a release, even though a release is normally required for a docs update.

For example: this PR https://github.com/googleapis/python-scheduler/pull/233 updated prob... | process | snippetgen prs are not prefixed with type docs when snippetgen updates are rolled out their prs are prefixed with chore so they do not trigger a release even though a release is normally required for a docs update for example this pr updated problematic snippets however a docs refresh was not made ... | 1 |

5,148 | 7,928,260,214 | IssuesEvent | 2018-07-06 10:57:38 | Open-EO/openeo-api | https://api.github.com/repos/Open-EO/openeo-api | opened | Change the definition of a process_graph | process graphs vote | Issues such as #89 or #106 require a change in the process graph definition as objects are required to/should be used.

Values of a process argument are defined as follows:

`<Value> := <string|number|array|boolean|null|Process>`

Source: https://open-eo.github.io/openeo-api/v/0.3.0/processgraphs/index.html

For th... | 1.0 | Change the definition of a process_graph - Issues such as #89 or #106 require a change in the process graph definition as objects are required to/should be used.

Values of a process argument are defined as follows:

`<Value> := <string|number|array|boolean|null|Process>`

Source: https://open-eo.github.io/openeo-api... | process | change the definition of a process graph issues such as or require a change in the process graph definition as objects are required to should be used values of a process argument are defined as follows source for the mentioned issues and probably others we probably need to change the def... | 1 |
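
The grammar quoted in this row, `<Value> := <string|number|array|boolean|null|Process>`, can be read as a recursive type check. The sketch below is an illustration under stated assumptions, not the openEO reference implementation: `is_value` is a hypothetical name, and treating a Process as an object that carries a string `process_id` with all other entries as arguments is an assumption about the 0.3.0 format.

```python
def is_value(value):
    """Check a JSON-like value against <string|number|array|boolean|null|Process>."""
    # Scalars: string, number, boolean, null.
    if value is None or isinstance(value, (str, bool, int, float)):
        return True
    # Arrays are accepted when every element is itself a Value.
    if isinstance(value, list):
        return all(is_value(item) for item in value)
    if isinstance(value, dict):
        # Assumption: a Process is an object identified by a string "process_id";
        # every other entry is an argument and must itself be a Value.
        if not isinstance(value.get("process_id"), str):
            return False
        return all(is_value(v) for k, v in value.items() if k != "process_id")
    return False


process = {"process_id": "filter_bands", "bands": ["B4", "B8"]}
plain_object = {"west": 16.1, "east": 16.6}
```

Under this reading a plain object such as `plain_object` is rejected because it is not a Process, which illustrates why issues that need object-valued arguments call for a change to the definition.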

254,707 | 27,413,650,073 | IssuesEvent | 2023-03-01 12:19:34 | scm-automation-project/npm-7-with-workspaces-without-lock-file-project | https://api.github.com/repos/scm-automation-project/npm-7-with-workspaces-without-lock-file-project | closed | package-b-1.0.0.tgz: 1 vulnerabilities (highest severity is: 5.0) - autoclosed | Mend: dependency security vulnerability | <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>package-b-1.0.0.tgz</b></p></summary>

<p></p>

<p>Path to dependency file: /package.json</p>

<p>Path to vulnerable library: /node_modules/express/node_modules/mime/pac... | True | package-b-1.0.0.tgz: 1 vulnerabilities (highest severity is: 5.0) - autoclosed - <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>package-b-1.0.0.tgz</b></p></summary>

<p></p>

<p>Path to dependency file: /package.js... | non_process | package b tgz vulnerabilities highest severity is autoclosed vulnerable library package b tgz path to dependency file package json path to vulnerable library node modules express node modules mime package json node modules serve static node modules mime package json ... | 0 |