| Unnamed: 0 (int64, 0–832k) | id (float64, 2.49B–32.1B) | type (string, 1 value) | created_at (string, length 19) | repo (string, length 7–112) | repo_url (string, length 36–141) | action (string, 3 values) | title (string, length 1–744) | labels (string, length 4–574) | body (string, length 9–211k) | index (string, 10 values) | text_combine (string, length 96–211k) | label (string, 2 values) | text (string, length 96–188k) | binary_label (int64, 0–1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
10,536 | 13,311,772,054 | IssuesEvent | 2020-08-26 08:46:37 | prisma/prisma | https://api.github.com/repos/prisma/prisma | opened | Not prettified error `This line is invalid. It does not start with any known Prisma schema keyword.` in introspection for invalid schema. | kind/improvement process/candidate topic: errors topic: introspection | ## Bug description<br>I get this strange, not prettified error in introspection (with or without reintrospect flag):<br>```<br>j42@Pluto ~/D/p/s/p/introspection> env DEBUG="*" ts-node src/bin.ts introspect --experimental-reintrospection<br>Introspecting based on datasource defined in schema.prisma …<br>IntrospectionEngin... | 1.0 | Not prettified error `This line is invalid. It does not start with any known Prisma schema keyword.` in introspection for invalid schema. - ## Bug description<br>I get this strange, not prettified error in introspection (with or without reintrospect flag):<br>```<br>j42@Pluto ~/D/p/s/p/introspection> env DEBUG="*" ts-nod... | process | not prettified error this line is invalid it does not start with any known prisma schema keyword in introspection for invalid schema bug description i get this strange not prettified error in introspection with or without reintrospect flag pluto d p s p introspection env debug ts node ... | 1 |
255,653 | 27,488,305,168 | IssuesEvent | 2023-03-04 10:03:36 | ckt1031/cktidy-manager | https://api.github.com/repos/ckt1031/cktidy-manager | opened | mobile-1.0.7.tgz: 1 vulnerabilities (highest severity is: 9.8) | security vulnerability | <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>mobile-1.0.7.tgz</b></p></summary><br><p></p><br><p>Path to dependency file: /package.json</p><br><p><br><p>Found in HEAD commit: <a href="https://github.com/ckt1031/cktidy-man... | True | mobile-1.0.7.tgz: 1 vulnerabilities (highest severity is: 9.8) - <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>mobile-1.0.7.tgz</b></p></summary><br><p></p><br><p>Path to dependency file: /package.json</p><br><p><br><p>Fou... | non_process | mobile tgz vulnerabilities highest severity is vulnerable library mobile tgz path to dependency file package json found in head commit a href vulnerabilities cve severity cvss dependency type fixed in mobile version remediation available ... | 0 |
6,493 | 9,559,778,800 | IssuesEvent | 2019-05-03 17:41:25 | aiidateam/aiida_core | https://api.github.com/repos/aiidateam/aiida_core | closed | Use function name/docstring for label/description of CalcFunctioNode | priority/nice-to-have topic/processes type/accepted feature | When running a calcfunction, the name of the function (here: `testing`) is shown as the process label in the `verdi process list` command. This is obviously very useful information.<br>```<br>$ verdi process list -a<br>...<br>104 7s ago ⏹ Finished [0] testing<br>...<br>```<br>However, when looking at the generated `Cal... | 1.0 | Use function name/docstring for label/description of CalcFunctioNode - When running a calcfunction, the name of the function (here: `testing`) is shown as the process label in the `verdi process list` command. This is obviously very useful information.<br>```<br>$ verdi process list -a<br>...<br>104 7s ago ⏹ Finished... | process | use function name docstring for label description of calcfunctionode when running a calcfunction the name of the function here testing is shown as the process label in the verdi process list command this is obviously very useful information verdi process list a ago ⏹ finished ... | 1 |
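19,614 | 25,969,368,544 | IssuesEvent | 2022-12-19 09:58:50 | didi/mpx | https://api.github.com/repos/didi/mpx | closed | [Bug report] When developing pages with the tailwindcss plugin, hot reloading does not trigger tailwindcss, so app.wxss is not regenerated and tailwindcss stops taking effect | processing | **Problem description**<br>Please describe the bug you encountered concisely, covering at least the following parts; if you provide screenshots, make them as complete as possible:<br>1. When developing pages with the postcss tailwindcss plugin, saving files such as `pages/*.mpx` and `components/*.mpx` does not cause HMR to trigger the tailwindcss plugin, so app.wxss is not regenerated.<br>2. Saving `app.mpx` / `tailwind.config.js` / `package.json` triggers a full rebuild, which regenerates `app.wxss`<br>**Environment description**<br>Include at least the following:<br>1. Windows11<br>3. Mpx 2.8 (LTS), freshly created from the official website tutorial... | 1.0 | [Bug report] When developing pages with the tailwindcss plugin, hot reloading does not trigger tailwindcss, so app.wxss is not regenerated and tailwindcss stops taking effect - **Problem description**<br>Please describe the bug you encountered concisely, covering at least the following parts; if you provide screenshots, make them as complete as possible:<br>1. When developing pages with the postcss tailwindcss plugin, saving files such as `pages/*.mpx` and `components/*.mpx` does not cause HMR to trigger the tailwindcss plugin, so app.wxss is not regenerated.<br>2. Saving `app.mpx` / `tailwind.config.js` / `package.... | process | when developing pages with the tailwindcss plugin hot reloading does not trigger tailwindcss so app wxss is not regenerated and tailwindcss stops taking effect problem description please describe the bug you encountered concisely covering at least the following parts if you provide screenshots make them as complete as possible when developing pages with the postcss tailwindcss plugin saving files such as pages mpx components mpx does not cause hmr to trigger the tailwindcss plugin so app wxss is not regenerated saving app mpx tailwind config js package json triggers a full rebuild ... | 1 |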
24,273 | 11,026,892,349 | IssuesEvent | 2019-12-06 08:06:13 | rammatzkvosky/saleor | https://api.github.com/repos/rammatzkvosky/saleor | opened | CVE-2019-11358 (Medium) detected in jquery-2.1.4.min.js | security vulnerability | ## CVE-2019-11358 - Medium Severity Vulnerability<br><details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jquery-2.1.4.min.js</b></p></summary><br><p>JavaScript library for DOM operations</p><br><p>Library home page: <a href="ht... | True | CVE-2019-11358 (Medium) detected in jquery-2.1.4.min.js - ## CVE-2019-11358 - Medium Severity Vulnerability<br><details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jquery-2.1.4.min.js</b></p></summary><br><p>JavaScript librar... | non_process | cve medium detected in jquery min js cve medium severity vulnerability vulnerable library jquery min js javascript library for dom operations library home page a href path to dependency file tmp ws scm saleor node modules js test moment index html path to vulnerabl... | 0 |
5,722 | 8,567,918,379 | IssuesEvent | 2018-11-10 16:34:32 | Great-Hill-Corporation/quickBlocks | https://api.github.com/repos/Great-Hill-Corporation/quickBlocks | closed | Error string in revert | libs-etherlib status-inprocess type-enhancement | Error string are encoded in the same way as a function call would be using this function:<br>```<br>Error(string msg)<br>``` | 1.0 | Error string in revert - Error string are encoded in the same way as a function call would be using this function:<br>```<br>Error(string msg)<br>``` | process | error string in revert error string are encoded in the same way as a function call would be using this function error string msg | 1 |
2,036 | 4,847,429,429 | IssuesEvent | 2016-11-10 14:56:59 | Alfresco/alfresco-ng2-components | https://api.github.com/repos/Alfresco/alfresco-ng2-components | opened | Typeahead does not display suggestions | browser: all bug comp: activiti-processList | If typeahead widget is part of form attached to start event no suggestions are displayed when starting a process<br>**Component**<br><img width="1362" alt="screen shot 2016-11-10 at 14 54 22" src="https://cloud.githubusercontent.com/assets/13200338/20181336/b08df488-a755-11e6-8331-debfc29c284f.png"><br>**Activiti**<br>!... | 1.0 | Typeahead does not display suggestions - If typeahead widget is part of form attached to start event no suggestions are displayed when starting a process<br>**Component**<br><img width="1362" alt="screen shot 2016-11-10 at 14 54 22" src="https://cloud.githubusercontent.com/assets/13200338/20181336/b08df488-a755-11e6-83... | process | typeahead does not display suggestions if typeahead widget is part of form attached to start event no suggestions are displayed when starting a process component img width alt screen shot at src activiti | 1 |
49,137 | 26,004,440,760 | IssuesEvent | 2022-12-20 17:58:18 | RafaelGB/obsidian-db-folder | https://api.github.com/repos/RafaelGB/obsidian-db-folder | closed | [FR]: Refresh | Performance epic enhancement | ### Contact Details<br>_No response_<br>### Present your request<br>When I complete a task in the task property it doesn't disappear from view until the database is refreshed. I thought that was a trigger for a refresh.<br>When a page is added to my vault and should appear in a view it doesn't until I manually refresh the da... | True | [FR]: Refresh - ### Contact Details<br>_No response_<br>### Present your request<br>When I complete a task in the task property it doesn't disappear from view until the database is refreshed. I thought that was a trigger for a refresh.<br>When a page is added to my vault and should appear in a view it doesn't until I manuall... | non_process | refresh contact details no response present your request when i complete a task in the task property it doesn t disappear from view until the database is refreshed i thought that was a trigger for a refresh when a page is added to my vault and should appear in a view it doesn t until i manually r... | 0 |
19,175 | 25,284,196,711 | IssuesEvent | 2022-11-16 17:54:24 | googleapis/nodejs-compute | https://api.github.com/repos/googleapis/nodejs-compute | closed | Missing typescript declaration file | type: process type: feature request api: compute | #### Environment details<br>- OS: Linux<br>- npm version: latest<br>- `@google-cloud/compute` version: 2.1.0<br>#### Steps to reproduce<br>1. Install it with npm/yarn<br>2. Try to use it from typescript with `import Compute from '@google-cloud/compute`<br>3. See errors re: missing typescript declaration<br>It seems... | 1.0 | Missing typescript declaration file - #### Environment details<br>- OS: Linux<br>- npm version: latest<br>- `@google-cloud/compute` version: 2.1.0<br>#### Steps to reproduce<br>1. Install it with npm/yarn<br>2. Try to use it from typescript with `import Compute from '@google-cloud/compute`<br>3. See errors re: miss... | process | missing typescript declaration file environment details os linux npm version latest google cloud compute version steps to reproduce install it with npm yarn try to use it from typescript with import compute from google cloud compute see errors re miss... | 1 |
11,712 | 14,546,521,170 | IssuesEvent | 2020-12-15 21:22:17 | MicrosoftDocs/azure-devops-docs | https://api.github.com/repos/MicrosoftDocs/azure-devops-docs | closed | Pipeline Trigger on Stage Completion needs more info | Pri2 devops-cicd-process/tech devops/prod product-feedback | I am trying to trigger a pipeline on the completion of a stage:<br>```<br>resources:<br>pipelines:<br>- pipeline: MyPipelineResource<br>source: 'My Pipeline'<br>trigger:<br>stages:<br>- Release_To_Production<br>```<br>I am expecting this to trigger this pipeline anytime the Release_To_Production stage completes... | 1.0 | Pipeline Trigger on Stage Completion needs more info - I am trying to trigger a pipeline on the completion of a stage:<br>```<br>resources:<br>pipelines:<br>- pipeline: MyPipelineResource<br>source: 'My Pipeline'<br>trigger:<br>stages:<br>- Release_To_Production<br>```<br>I am expecting this to trigger this pip... | process | pipeline trigger on stage completion needs more info i am trying to trigger a pipeline on the completion of a stage resources pipelines pipeline mypipelineresource source my pipeline trigger stages release to production i am expecting this to trigger this pip... | 1 |
384,947 | 26,609,245,374 | IssuesEvent | 2023-01-23 22:10:56 | NetAppDocs/ontap | https://api.github.com/repos/NetAppDocs/ontap | closed | Root SVM volume with a space guarantee set to "volume" can be added to FabricPool. | documentation good first issue | Page: [Considerations and requirements for using FabricPool](https://docs.netapp.com/us-en/ontap/fabricpool/requirements-concept.html)<br>In the Functionality or features not supported by FabricPool section of this article there is a line indicating that a volume with a space guarantee set to anything other than "none"... | 1.0 | Root SVM volume with a space guarantee set to "volume" can be added to FabricPool. - Page: [Considerations and requirements for using FabricPool](https://docs.netapp.com/us-en/ontap/fabricpool/requirements-concept.html)<br>In the Functionality or features not supported by FabricPool section of this article there is a l... | non_process | root svm volume with a space guarantee set to volume can be added to fabricpool page in the functionality or features not supported by fabricpool section of this article there is a line indicating that a volume with a space guarantee set to anything other than none will not allow the owning aggregate to ... | 0 |
19,826 | 26,217,009,184 | IssuesEvent | 2023-01-04 11:51:27 | OpenEnergyPlatform/open-MaStR | https://api.github.com/repos/OpenEnergyPlatform/open-MaStR | closed | Adapt MaStRDownload.download_power_plants() to be working with postprocess() | :scissors: post processing | **Tasks**<br>- [ ] Replace column renaming in `MaStRDownload.download_power_plants()` by renaming pattern used in `MaStRMirror.to_csv`<br>- [ ] Adapt documentation `postprocessing.rst` and remove warning about data from `MaStRDownload.download_power_plants()` | 1.0 | Adapt MaStRDownload.download_power_plants() to be working with postprocess() - **Tasks**<br>- [ ] Replace column renaming in `MaStRDownload.download_power_plants()` by renaming pattern used in `MaStRMirror.to_csv`<br>- [ ] Adapt documentation `postprocessing.rst` and remove warning about data from `MaStRDownload.downlo... | process | adapt mastrdownload download power plants to be working with postprocess tasks replace column renaming in mastrdownload download power plants by renaming pattern used in mastrmirror to csv adapt documentation postprocessing rst and remove warning about data from mastrdownload download p... | 1 |
430,782 | 12,465,814,584 | IssuesEvent | 2020-05-28 14:35:05 | luna/ide | https://api.github.com/repos/luna/ide | opened | Disable Zoom/Pan When Visualization is in Fullscreen Mode | Category: IDE Change: Non-Breaking Difficulty: Core Contributor Priority: Medium Type: Enhancement | ### Summary<br>The zooming/panning functionality needs to be disabled if the general scene is not visible.<br>### Value<br>Avoids confusion when unexpected interactions happen that are not visible to the user.<br>### Specification<br>When the visualization fullscreen mode is enabled, at the same time the zoom/pan should be ... | 1.0 | Disable Zoom/Pan When Visualization is in Fullscreen Mode - ### Summary<br>The zooming/panning functionality needs to be disabled if the general scene is not visible.<br>### Value<br>Avoids confusion when unexpected interactions happen that are not visible to the user.<br>### Specification<br>When the visualization fullscre... | non_process | disable zoom pan when visualization is in fullscreen mode summary the zooming panning functionality needs to be disabled if the general scene is not visible value avoids confusion when unexpected interactions happen that are not visible to the user specification when the visualization fullscre... | 0 |
17,106 | 22,627,975,458 | IssuesEvent | 2022-06-30 12:26:19 | camunda/feel-scala | https://api.github.com/repos/camunda/feel-scala | opened | Functions `put all` and `context` do not propagate errors to the result | type: bug team/process-automation | **Describe the bug**<br>Currently the built-in functions `put all` and `context` do not propagate errors that are passed in as argument. Instead, they return `null` in these cases and the error gets lost.<br>This is the root cause for: https://github.com/camunda/zeebe/issues/9543<br>**To Reproduce**<br>see ticket linked ab... | 1.0 | Functions `put all` and `context` do not propagate errors to the result - **Describe the bug**<br>Currently the built-in functions `put all` and `context` do not propagate errors that are passed in as argument. Instead, they return `null` in these cases and the error gets lost.<br>This is the root cause for: https://gith... | process | functions put all and context do not propagate errors to the result describe the bug currently the built in functions put all and context do not propagate errors that are passed in as argument instead they return null in these cases and the error gets lost this is the root cause for to re... | 1 |
10,577 | 13,388,377,615 | IssuesEvent | 2020-09-02 17:16:59 | neuropoly/spinalcordtoolbox | https://api.github.com/repos/neuropoly/spinalcordtoolbox | closed | Adding CSA results in QC report | feature sct_process_segmentation sct_qc | It would be welcome to introduce a QC report for CSA results.<br>This report would also facilitate the optimization of default parameter for `get_centerline` (used for angle correction in CSA computation), see https://github.com/neuropoly/spinalcordtoolbox/pull/2299 | 1.0 | Adding CSA results in QC report - It would be welcome to introduce a QC report for CSA results.<br>This report would also facilitate the optimization of default parameter for `get_centerline` (used for angle correction in CSA computation), see https://github.com/neuropoly/spinalcordtoolbox/pull/2299 | process | adding csa results in qc report it would be welcome to introduce a qc report for csa results this report would also facilitate the optimization of default parameter for get centerline used for angle correction in csa computation see | 1 |
306,542 | 9,396,301,931 | IssuesEvent | 2019-04-08 06:45:46 | aeternity/aepp-base | https://api.github.com/repos/aeternity/aepp-base | closed | iPhone SE in Simulator: Strange behavior of password input fields | bug priority review | Can be reproduced via iPhone Simulator > iPhone SE device.<br>### Steps to Reproduce<br>- Go to lists experiment<br>- Press Add new Experiment<br>- Fill the name and description<br>- Save<br>### Acceptance Criteria<br>-... | 1.0 | [UI] Modal not removed after created Experiment - ### Parent Issue<br>https://github.com/dotCMS/core/issues/23095<br>### Problem Statement<br>After update PrimeNg when we destroy the sidebar not remove the background modal (Blur)<br>### Steps to Reproduce<br>- Go to lists experiment<br>- Press Add new Experiment<br>- Fill the name a... | non_process | modal not removed after created experiment parent issue problem statement after update primeng when we destroy the sidebar not remove the background modal blur steps to reproduce go to lists experiment press add new experiment fill the name and description save acceptance criter... | 0 |
18,898 | 24,837,415,188 | IssuesEvent | 2022-10-26 09:56:00 | altillimity/SatDump | https://api.github.com/repos/altillimity/SatDump | closed | GOES HRIT leaves corrupted GIFs | bug Processing | Whenever images are downloaded from GOES-18 the NWS folder is filled with unopenable or obviously corrupted images. If needed I can upload some for testing or you can view them in my github repository. | 1.0 | GOES HRIT leaves corrupted GIFs - Whenever images are downloaded from GOES-18 the NWS folder is filled with unopenable or obviously corrupted images. If needed I can upload some for testing or you can view them in my github repository. | process | goes hrit leaves corrupted gifs whenever images are downloaded from goes the nws folder is filled with unopenable or obviously corrupted images if needed i can upload some for testing or you can view them in my github repository | 1 |
261,671 | 8,244,934,957 | IssuesEvent | 2018-09-11 08:10:01 | nlbdev/nordic-epub3-dtbook-migrator | https://api.github.com/repos/nlbdev/nordic-epub3-dtbook-migrator | closed | Move semantic classes and ZedAI types to EDUPUB namespace | 0 - Low priority guidelines revision | All ZedAI types and semantic classes we use should be moved to the EDUPUB vocabulary if possible. We should compile a list of suggested additions and submit it to the EDUPUB WG.<br><!---<br>@huboard:{"milestone_order":55.5}<br>--> | 1.0 | Move semantic classes and ZedAI types to EDUPUB namespace - All ZedAI types and semantic classes we use should be moved to the EDUPUB vocabulary if possible. We should compile a list of suggested additions and submit it to the EDUPUB WG.<br><!---<br>@huboard:{"milestone_order":55.5}<br>--> | non_process | move semantic classes and zedai types to edupub namespace all zedai types and semantic classes we use should be moved to the edupub vocabulary if possible we should compile a list of suggested additions and submit it to the edupub wg huboard milestone order | 0 |
279,142 | 24,202,785,500 | IssuesEvent | 2022-09-24 20:12:13 | red/red | https://api.github.com/repos/red/red | closed | [Core] Object path access failure within loops scope | status.built status.tested type.bug test.written | **Describe the bug**<br>Could be related to #4854<br>**To reproduce**<br>Run this:<br>```<br>Red []<br>obj1: object []<br>obj2: object [owner: 'obj1]<br>list: function [obj [object!]] [<br>print ">>>"<br>?? obj/owner ;) works outside of loop!<br>; test: copy/deep [ ;) no problem if copied!<br>test: [<br>?? obj/ow... | 2.0 | [Core] Object path access failure within loops scope - **Describe the bug**<br>Could be related to #4854<br>**To reproduce**<br>Run this:<br>```<br>Red []<br>obj1: object []<br>obj2: object [owner: 'obj1]<br>list: function [obj [object!]] [<br>print ">>>"<br>?? obj/owner ;) works outside of loop!<br>; test: copy/deep [ ... | non_process | object path access failure within loops scope describe the bug could be related to to reproduce run this red object object list function print obj owner works outside of loop test copy deep no problem if copied test ... | 0 |
12,194 | 14,742,346,955 | IssuesEvent | 2021-01-07 12:08:15 | kdjstudios/SABillingGitlab | https://api.github.com/repos/kdjstudios/SABillingGitlab | closed | Exceptions report | anc-external anc-process anc-report anp-1 ant-support has attachment | In GitLab by @kdjstudios on Apr 10, 2019, 11:02<br>**Submitted by:** Gaylan Garrett <Gaylan.Garrett@Nexa.com><br>**Helpdesk:** http://www.servicedesk.answernet.com/profiles/ticket/2019-04-10-13579<br>**Server:** External<br>**Client/Site:** Keener<br>**Account:** NA<br>**Issue:**<br>I just ran the exceptions report for the Keener 4... | 1.0 | Exceptions report - In GitLab by @kdjstudios on Apr 10, 2019, 11:02<br>**Submitted by:** Gaylan Garrett <Gaylan.Garrett@Nexa.com><br>**Helpdesk:** http://www.servicedesk.answernet.com/profiles/ticket/2019-04-10-13579<br>**Server:** External<br>**Client/Site:** Keener<br>**Account:** NA<br>**Issue:**<br>I just ran the exceptions rep... | process | exceptions report in gitlab by kdjstudios on apr submitted by gaylan garrett helpdesk server external client site keener account na issue i just ran the exceptions report for the keener billing i am still in draft mode as doing some research of usage before... | 1 |
4,822 | 7,717,886,378 | IssuesEvent | 2018-05-23 14:50:22 | openvstorage/framework | https://api.github.com/repos/openvstorage/framework | closed | Arakoon deployment configurable via GUI/framework | process_wontfix state_question type_feature | The use of external Arakoons has cost OPS and framework team a lot of work and has lots of disadvantages<br>The user (customer / OPS / ...) should be able to determine where each and every Arakoon cluster should be deployed by using the GUI and/or the API calls delivered by the framework team | 1.0 | Arakoon deployment configurable via GUI/framework - The use of external Arakoons has cost OPS and framework team a lot of work and has lots of disadvantages<br>The user (customer / OPS / ...) should be able to determine where each and every Arakoon cluster should be deployed by using the GUI and/or the API calls delivere... | process | arakoon deployment configurable via gui framework the use of external arakoons has cost ops and framework team a lot of work and has lots of disadvantages the user customer ops should be able to determine where each and every arakoon cluster should be deployed by using the gui and or the api calls delivere... | 1 |
10,453 | 13,233,452,615 | IssuesEvent | 2020-08-18 14:49:19 | prisma/prisma-client-js | https://api.github.com/repos/prisma/prisma-client-js | opened | node script hanging after disconnect | bug/1-repro-available kind/bug process/candidate | <!--<br>Thanks for helping us improve Prisma! 🙏 Please follow the sections in the template and provide as much information as possible about your problem, e.g. by setting the `DEBUG="*"` environment variable and enabling additional logging output in Prisma Client.<br>Learn more about writing proper bug reports here: ht... | 1.0 | node script hanging after disconnect - <!--<br>Thanks for helping us improve Prisma! 🙏 Please follow the sections in the template and provide as much information as possible about your problem, e.g. by setting the `DEBUG="*"` environment variable and enabling additional logging output in Prisma Client.<br>Learn more ab... | process | node script hanging after disconnect thanks for helping us improve prisma 🙏 please follow the sections in the template and provide as much information as possible about your problem e g by setting the debug environment variable and enabling additional logging output in prisma client learn more ab... | 1 |
337,840 | 24,559,337,679 | IssuesEvent | 2022-10-12 18:44:59 | osPrims/chatApp | https://api.github.com/repos/osPrims/chatApp | closed | fix inconsistent badges in README | documentation good first issue reserved-for-uni | https://github.com/osPrims/chatApp/blob/fcf7cd9731fc05c4e014a789434151c0be03a022/README.md?plain=1#L6-L9<br>The 'Issues' and 'Pull Requests' badges -<br>* should have consistent style with the 'forks' and 'stars' issue (flat-square)<br>The 'Forks' and 'Star' badges -<br>* should not have a logo in them<br>Required fix woul... | 1.0 | fix inconsistent badges in README - https://github.com/osPrims/chatApp/blob/fcf7cd9731fc05c4e014a789434151c0be03a022/README.md?plain=1#L6-L9<br>The 'Issues' and 'Pull Requests' badges -<br>* should have consistent style with the 'forks' and 'stars' issue (flat-square)<br>The 'Forks' and 'Star' badges -<br>* should not have... | non_process | fix inconsistent badges in readme the issues and pull requests badges should have consistent style with the forks and stars issue flat square the forks and star badges should not have a logo in them required fix would be to correct the query parameters used for the badges | 0 |
736,226 | 25,463,609,699 | IssuesEvent | 2022-11-24 23:52:19 | diffgram/diffgram | https://api.github.com/repos/diffgram/diffgram | opened | Standard process for surfacing sql timeout errors | lowpriority performance sqlalchemy optimization | `"error":"(psycopg2.errors.QueryCanceled) canceling statement due to statement timeout`<br>Can sometimes cause cascading errors if exception is not caught.<br>We may want to think about how we expect key operations to work and surface an error to the user when a timeout occurs. | 1.0 | Standard process for surfacing sql timeout errors - `"error":"(psycopg2.errors.QueryCanceled) canceling statement due to statement timeout`<br>Can sometimes cause cascading errors if exception is not caught.<br>We may want to think about how we expect key operations to work and surface an error to the user when a timeout... | non_process | standard process for surfacing sql timeout errors error errors querycanceled canceling statement due to statement timeout can sometimes cause cascading errors if exception is not caught we may want to think about how we expect key operations to work and surface an error to the user when a timeout occurs... | 0 |
13,905 | 16,664,707,468 | IssuesEvent | 2021-06-07 00:07:58 | turnkeylinux/tracker | https://api.github.com/repos/turnkeylinux/tracker | opened | Processmaker - include pre-configured cron job | bug processmaker | It appears that we aren't shipping a pre-configured cron job in the Processmaker appliance. We should!<br>Here's a workaround to create a cron job (as suggested for [Processmaker 3.2 -> 3.6](https://wiki.processmaker.com/3.2/Executing_cron.php#Configuring_crontab_in_Linux.2FUNIX) - adjusted for TurnKey):<br>```<br>cat > ... | 1.0 | Processmaker - include pre-configured cron job - It appears that we aren't shipping a pre-configured cron job in the Processmaker appliance. We should!<br>Here's a workaround to create a cron job (as suggested for [Processmaker 3.2 -> 3.6](https://wiki.processmaker.com/3.2/Executing_cron.php#Configuring_crontab_in_Linu... | process | processmaker include pre configured cron job it appears that we aren t shipping a pre configured cron job in the processmaker appliance we should here s a workaround to create a cron job as suggested for adjusted for turnkey cat etc cron d processmaker eof www data usr bin ph... | 1 |
16,576 | 21,606,800,009 | IssuesEvent | 2022-05-04 04:55:34 | jamandujanoa/WASA | https://api.github.com/repos/jamandujanoa/WASA | opened | Implement a solution to configure unique local admin credentials | WARP-Import WAF-Assessment Security Operational Procedures Patch & Update Process (PNU) | <a href="https://techcommunity.microsoft.com/t5/itops-talk-blog/step-by-step-guide-how-to-configure-microsoft-local/ba-p/2806185">Implement a solution to configure unique local admin credentials</a><br><p><b>Why Consider This?</b></p><br>Use of consistent local administrator passwords leaves the organization susceptibl... | 1.0 | Implement a solution to configure unique local admin credentials - <a href="https://techcommunity.microsoft.com/t5/itops-talk-blog/step-by-step-guide-how-to-configure-microsoft-local/ba-p/2806185">Implement a solution to configure unique local admin credentials</a><br><p><b>Why Consider This?</b></p><br>Use of consiste... | process | implement a solution to configure unique local admin credentials why consider this use of consistent local administrator passwords leaves the organization susceptible to rapid lateral account movement as a compromised credential can be used on multiple hosts in attempt to escalate privilege context... | 1 |
18,623 | 24,579,634,482 | IssuesEvent | 2022-10-13 14:44:10 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [FHIR] Questionnaire responses > Response records are not getting created in the FHIR store | Bug Blocker P0 Response datastore Process: Fixed Process: Tested dev | Steps:<br>1. Sign up or sign in to the mobile app<br>2. Enroll to the study or click on enrolled study<br>3. Submit the responses for the activities<br>4. Go to the eFHIR store<br>5. Go to the Questionnaire responses and observe<br>AR: Questionnaire responses > Responses records are not getting created in the FHIR store<br>ER: Re... | 2.0 | [FHIR] Questionnaire responses > Response records are not getting created in the FHIR store - Steps:<br>1. Sign up or sign in to the mobile app<br>2. Enroll to the study or click on enrolled study<br>3. Submit the responses for the activities<br>4. Go to the eFHIR store<br>5. Go to the Questionnaire responses and observe<br>AR:... | process | questionnaire responses response records are not getting created in the fhir store steps sign up or sign in to the mobile app enroll to the study or click on enrolled study submit the responses for the activities go to the efhir store go to the questionnaire responses and observe ar ques... | 1 |
290,299 | 8,886,665,359 | IssuesEvent | 2019-01-15 01:39:35 | bcgov/ols-router | https://api.github.com/repos/bcgov/ols-router | closed | Add cardinal directions to Route Directions | api enhancement functional route planner low priority usability | @mraross commented on [Sat Apr 07 2018](https://github.com/bcgov/api-specs/issues/325)<br>Add cardinal directions to start of route and start of any reversals (going out the way you came). For example: Try this route in demo app:<br>16357 Hwy 2, Tupper, BC<br>16972 201 Rd, Tupper, BC<br>562 188 Rd, Tupper, BC<br>The first in... | 1.0 | Add cardinal directions to Route Directions - @mraross commented on [Sat Apr 07 2018](https://github.com/bcgov/api-specs/issues/325)<br>Add cardinal directions to start of route and start of any reversals (going out the way you came). For example: Try this route in demo app:<br>16357 Hwy 2, Tupper, BC<br>16972 201 Rd, Tupp... | non_process | add cardinal directions to route directions mraross commented on add cardinal directions to start of route and start of any reversals going out the way you came for example try this route in demo app hwy tupper bc rd tupper bc rd tupper bc the first instruction is continue o... | 0 |
234,117 | 25,800,871,007 | IssuesEvent | 2022-12-11 01:07:32 | praneethpanasala/linux | https://api.github.com/repos/praneethpanasala/linux | reopened | CVE-2019-19448 (High) detected in linuxv4.19 | security vulnerability | ## CVE-2019-19448 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxv4.19</b></p></summary>

<p>

<p>Linux kernel source tree</p>

<p>Library home page: <a href=https://github.com/torv... | True | CVE-2019-19448 (High) detected in linuxv4.19 - ## CVE-2019-19448 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxv4.19</b></p></summary>

<p>

<p>Linux kernel source tree</p>

<p>Lib... | non_process | cve high detected in cve high severity vulnerability vulnerable library linux kernel source tree library home page a href found in head commit a href found in base branch master vulnerable source files fs btrfs free space cache c ... | 0 |

14,377 | 17,399,997,365 | IssuesEvent | 2021-08-02 18:12:15 | googleapis/doc-templates | https://api.github.com/repos/googleapis/doc-templates | closed | docfx: update Go golden | type: process | Go doesn't include READMEs any more. There have been other changes, too. | 1.0 | docfx: update Go golden - Go doesn't include READMEs any more. There have been other changes, too. | process | docfx update go golden go doesn t include readmes any more there have been other changes too | 1 |

957 | 3,419,124,280 | IssuesEvent | 2015-12-08 07:55:02 | e-government-ua/iBP | https://api.github.com/repos/e-government-ua/iBP | closed | Днепр обл. Видача довідки про: наявність та розмір земельної частки (паю), наявність у Державному земельному кадастрі відомостей про одержання у власність земельної ділянки у межах норм безоплатної приватизації за певним видом її цільового призначення (використання) | In process of testing in work | переработка процесса issue 78 с дополнениями.

Инфокарта http://e-services.dp.gov.ua/_layouts/WordViewer.aspx?id=/Lists/PermitStages/Attachments/8129/%D0%86%D0%9A_006.doc

Заява

https://drive.google.com/file/d/0B68lQ-z45GpYMGUyZnhxdzJDcHM/view?usp=sharing | 1.0 | Днепр обл. Видача довідки про: наявність та розмір земельної частки (паю), наявність у Державному земельному кадастрі відомостей про одержання у власність земельної ділянки у межах норм безоплатної приватизації за певним видом її цільового призначення (використання) - переработка процесса issue 78 с дополнениями.

Инф... | process | днепр обл видача довідки про наявність та розмір земельної частки паю наявність у державному земельному кадастрі відомостей про одержання у власність земельної ділянки у межах норм безоплатної приватизації за певним видом її цільового призначення використання переработка процесса issue с дополнениями инфо... | 1 |

407,321 | 11,912,014,561 | IssuesEvent | 2020-03-31 09:34:09 | hazelcast/hazelcast | https://api.github.com/repos/hazelcast/hazelcast | closed | SQL thread pools | Estimation: M Module: SQL Priority: High Source: Internal Team: Core Type: Enhancement | We need to introduce thread pools for query processing. This includes:

1. Processing of query operations

1. Execution of query fragments | 1.0 | SQL thread pools - We need to introduce thread pools for query processing. This includes:

1. Processing of query operations

1. Execution of query fragments | non_process | sql thread pools we need to introduce thread pools for query processing this includes processing of query operations execution of query fragments | 0 |

11,909 | 14,699,401,870 | IssuesEvent | 2021-01-04 08:29:41 | dotnet/runtime | https://api.github.com/repos/dotnet/runtime | closed | Console Aplication (C#) - Memory Leak | area-System.Diagnostics.Process question | I have a program "Console Aplication" install in Windows that execute every time. Initial consume 32 MB RAM, but grow infinitely. My program execute connection with DB oracle through provider "Oracle.ManagedDataAccess". To Diagnostics what was happening, i executed o PerfView, the result show high consume/execute in na... | 1.0 | Console Aplication (C#) - Memory Leak - I have a program "Console Aplication" install in Windows that execute every time. Initial consume 32 MB RAM, but grow infinitely. My program execute connection with DB oracle through provider "Oracle.ManagedDataAccess". To Diagnostics what was happening, i executed o PerfView, th... | process | console aplication c memory leak i have a program console aplication install in windows that execute every time initial consume mb ram but grow infinitely my program execute connection with db oracle through provider oracle manageddataaccess to diagnostics what was happening i executed o perfview the... | 1 |

10,789 | 13,608,996,931 | IssuesEvent | 2020-09-23 03:58:27 | googleapis/java-redis | https://api.github.com/repos/googleapis/java-redis | closed | Dependency Dashboard | api: redis type: process | This issue contains a list of Renovate updates and their statuses.

## Open

These updates have all been created already. Click a checkbox below to force a retry/rebase of any.

- [ ] <!-- rebase-branch=renovate/org.apache.maven.plugins-maven-project-info-reports-plugin-3.x -->build(deps): update dependency org.apache... | 1.0 | Dependency Dashboard - This issue contains a list of Renovate updates and their statuses.

## Open

These updates have all been created already. Click a checkbox below to force a retry/rebase of any.

- [ ] <!-- rebase-branch=renovate/org.apache.maven.plugins-maven-project-info-reports-plugin-3.x -->build(deps): updat... | process | dependency dashboard this issue contains a list of renovate updates and their statuses open these updates have all been created already click a checkbox below to force a retry rebase of any build deps update dependency org apache maven plugins maven project info reports plugin to deps up... | 1 |

12,953 | 15,327,261,502 | IssuesEvent | 2021-02-26 05:43:17 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [PD] Emails sent to study participants must use study-specific support email address | P1 Participant datastore Process: Enhancement Process: Fixed Process: Tested QA Process: Tested dev | Emails sent to participants of a specific study (for study invitations) must contain the support contact email address specific to the study, and as configured in the Study Builder | 4.0 | [PD] Emails sent to study participants must use study-specific support email address - Emails sent to participants of a specific study (for study invitations) must contain the support contact email address specific to the study, and as configured in the Study Builder | process | emails sent to study participants must use study specific support email address emails sent to participants of a specific study for study invitations must contain the support contact email address specific to the study and as configured in the study builder | 1 |

5,333 | 8,150,064,095 | IssuesEvent | 2018-08-22 11:47:04 | allinurl/goaccess | https://api.github.com/repos/allinurl/goaccess | closed | I find the value of "unique visitors" is not right! | log-processing question | env:

GoAccess - 1.2

ubuntu 14.04

my goaccess command:

```

goaccess -f /srv/logs/access.log -o report.html

```

The result of "unique visitors" is : '10704"

But if I use shell command:

```

cat /srv/logs/access.log |awk '{print $1}'|sort|uniq -c|wc -l

````

The result is : "5025"

| 1.0 | I find the value of "unique visitors" is not right! - env:

GoAccess - 1.2

ubuntu 14.04

my goaccess command:

```

goaccess -f /srv/logs/access.log -o report.html

```

The result of "unique visitors" is : '10704"

But if I use shell command:

```

cat /srv/logs/access.log |awk '{print $1}'|sort|uniq -c|wc -... | process | i find the value of unique visitors is not right env: goaccess ubuntu my goaccess command goaccess f srv logs access log o report html the result of unique visitors is but if i use shell command cat srv logs access log awk print sort uniq c wc l ... | 1 |

10,041 | 13,044,161,625 | IssuesEvent | 2020-07-29 03:47:24 | tikv/tikv | https://api.github.com/repos/tikv/tikv | closed | UCP: Migrate scalar function `SubDateDurationInt` from TiDB | challenge-program-2 component/coprocessor difficulty/easy sig/coprocessor |

## Description

Port the scalar function `SubDateDurationInt` from TiDB to coprocessor.

## Score

* 50

## Mentor(s)

* @lonng

## Recommended Skills

* Rust programming

## Learning Materials

Already implemented expressions ported from TiDB

- https://github.com/tikv/tikv/tree/master/components/tidb_query/src/rp... | 2.0 | UCP: Migrate scalar function `SubDateDurationInt` from TiDB -

## Description

Port the scalar function `SubDateDurationInt` from TiDB to coprocessor.

## Score

* 50

## Mentor(s)

* @lonng

## Recommended Skills

* Rust programming

## Learning Materials

Already implemented expressions ported from TiDB

- https:/... | process | ucp migrate scalar function subdatedurationint from tidb description port the scalar function subdatedurationint from tidb to coprocessor score mentor s lonng recommended skills rust programming learning materials already implemented expressions ported from tidb | 1 |

769,682 | 27,016,358,526 | IssuesEvent | 2023-02-10 19:47:43 | layer5io/layer5 | https://api.github.com/repos/layer5io/layer5 | closed | [Site Performance] Incorporate SVGO | help wanted kind/chore hacktoberfest area/ci priority/high kind/child issue/remind kind/performance | **Current Behavior**

The layer5.io website has poor performance (see #3366). Portions of its performance can be enhanced by reducing the file size of images, concluding SVG images.

**Desired Situation**

Incorporate https://github.com/svg/svgo into the site's build process or commit hooks or make targets to ensure ... | 1.0 | [Site Performance] Incorporate SVGO - **Current Behavior**

The layer5.io website has poor performance (see #3366). Portions of its performance can be enhanced by reducing the file size of images, concluding SVG images.

**Desired Situation**

Incorporate https://github.com/svg/svgo into the site's build process or ... | non_process | incorporate svgo current behavior the io website has poor performance see portions of its performance can be enhanced by reducing the file size of images concluding svg images desired situation incorporate into the site s build process or commit hooks or make targets to ensure that svg ima... | 0 |

34,277 | 29,188,338,711 | IssuesEvent | 2023-05-19 17:25:59 | casangi/astrohack | https://api.github.com/repos/casangi/astrohack | closed | Warnings about the deprecation of the numpy matrix class | Infrastructure | The matrix class is now deprecated in numpy and should not be used anymore.

PendingDeprecationWarning: the matrix subclass is not the recommended way to represent matrices or deal with linear algebra (see https://docs.scipy.org/doc/numpy/user/numpy-for-matlab-users.html). Please adjust your code to use regular ndarr... | 1.0 | Warnings about the deprecation of the numpy matrix class - The matrix class is now deprecated in numpy and should not be used anymore.

PendingDeprecationWarning: the matrix subclass is not the recommended way to represent matrices or deal with linear algebra (see https://docs.scipy.org/doc/numpy/user/numpy-for-matla... | non_process | warnings about the deprecation of the numpy matrix class the matrix class is now deprecated in numpy and should not be used anymore pendingdeprecationwarning the matrix subclass is not the recommended way to represent matrices or deal with linear algebra see please adjust your code to use regular ndarray ... | 0 |

6,992 | 4,717,123,221 | IssuesEvent | 2016-10-16 13:02:12 | lionheart/openradar-mirror | https://api.github.com/repos/lionheart/openradar-mirror | opened | 27516243: iCloud Keychain should not be required to use Home app | classification:ui/usability reproducible:always status:open | #### Description

Summary:

Currently if you try to use the Home app, you must first enable iCloud Keychain. I think user's should be able to use the Home app without this, and live with the limitations this creates.

Steps to Reproduce:

1) Make sure iCloud Keychain is disabled in Settings -> iCloud -> Keychain

2) O... | True | 27516243: iCloud Keychain should not be required to use Home app - #### Description

Summary:

Currently if you try to use the Home app, you must first enable iCloud Keychain. I think user's should be able to use the Home app without this, and live with the limitations this creates.

Steps to Reproduce:

1) Make sure... | non_process | icloud keychain should not be required to use home app description summary currently if you try to use the home app you must first enable icloud keychain i think user s should be able to use the home app without this and live with the limitations this creates steps to reproduce make sure icloud... | 0 |

1,311 | 14,916,335,241 | IssuesEvent | 2021-01-22 18:02:48 | hashicorp/consul | https://api.github.com/repos/hashicorp/consul | opened | Add emergency server write rate limit to allow controlled recovery from replication failure | theme/reliability | ## Background

This is a follow up to several incidents where the failure described in #9609 was the root cause.

## Proposal

In the outage situation described in the linked ticket above, there is currently no easy way in Consul to reduce the write load on the servers without external coordination like shutting ... | True | Add emergency server write rate limit to allow controlled recovery from replication failure - ## Background

This is a follow up to several incidents where the failure described in #9609 was the root cause.

## Proposal

In the outage situation described in the linked ticket above, there is currently no easy way ... | non_process | add emergency server write rate limit to allow controlled recovery from replication failure background this is a follow up to several incidents where the failure described in was the root cause proposal in the outage situation described in the linked ticket above there is currently no easy way in ... | 0 |

178,458 | 13,780,627,021 | IssuesEvent | 2020-10-08 15:08:58 | elastic/kibana | https://api.github.com/repos/elastic/kibana | opened | Failing test: X-Pack Saved Object API Integration Tests -- security_and_spaces.x-pack/test/saved_object_api_integration/security_and_spaces/apis/create·ts - saved objects security and spaces enabled _create dual-privileges user within the space_1 space with overwrite enabled should return 200 success [globaltype/global... | failed-test | A test failed on a tracked branch

```

Error: expected 200 "OK", got 503 "Service Unavailable"

at Test._assertStatus (/dev/shm/workspace/kibana/node_modules/supertest/lib/test.js:268:12)

at Test._assertFunction (/dev/shm/workspace/kibana/node_modules/supertest/lib/test.js:283:11)

at Test.assert (/dev/shm/wo... | 1.0 | Failing test: X-Pack Saved Object API Integration Tests -- security_and_spaces.x-pack/test/saved_object_api_integration/security_and_spaces/apis/create·ts - saved objects security and spaces enabled _create dual-privileges user within the space_1 space with overwrite enabled should return 200 success [globaltype/global... | non_process | failing test x pack saved object api integration tests security and spaces x pack test saved object api integration security and spaces apis create·ts saved objects security and spaces enabled create dual privileges user within the space space with overwrite enabled should return success a test failed on... | 0 |

155,805 | 13,633,444,519 | IssuesEvent | 2020-09-24 21:26:42 | Witekio/pluma-automation | https://api.github.com/repos/Witekio/pluma-automation | opened | CopyToDeviceAction is not documented | bug documentation good first issue | Requires documentation in README.md, we missed that part. @mjftw , just FYI | 1.0 | CopyToDeviceAction is not documented - Requires documentation in README.md, we missed that part. @mjftw , just FYI | non_process | copytodeviceaction is not documented requires documentation in readme md we missed that part mjftw just fyi | 0 |

5,560 | 8,403,419,839 | IssuesEvent | 2018-10-11 09:43:16 | kiwicom/orbit-components | https://api.github.com/repos/kiwicom/orbit-components | closed | <Select />: longer help text is getting hidden underneath the input | bug processing | ## Current Behavior

Help text which is long enough so that it must break onto a new line gets partially covered by the input.

In the screenshot below, the text is:

`Must be appropriately covered to avoid dents and scratches.`

but only the word is visible.

detected in postcss-7.0.30.tgz, postcss-7.0.21.tgz | security vulnerability | ## CVE-2021-23368 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>postcss-7.0.30.tgz</b>, <b>postcss-7.0.21.tgz</b></p></summary>

<p>

<details><summary><b>postcss-7.0.30.tgz</b></p... | True | CVE-2021-23368 (Medium) detected in postcss-7.0.30.tgz, postcss-7.0.21.tgz - ## CVE-2021-23368 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>postcss-7.0.30.tgz</b>, <b>postcss-7.0... | non_process | cve medium detected in postcss tgz postcss tgz cve medium severity vulnerability vulnerable libraries postcss tgz postcss tgz postcss tgz tool for transforming styles with js plugins library home page a href path to dependency file swot we... | 0 |

166,992 | 6,329,899,642 | IssuesEvent | 2017-07-26 05:18:24 | HouraiTeahouse/FantasyCrescendo | https://api.github.com/repos/HouraiTeahouse/FantasyCrescendo | closed | Changing stages without stopping game causes softlock | Category:Game Engine Priority:0 Severity:0 Status:Assigned Type:Bug | ### When reporting a bug/issue:

- Fantasy Crescendo Version: Build #276

- Operating System: Windows 7

- Expected Behavior: Proper character clearing and restarting.

- Actual Behavior: Softlock - "P1" indicator shown, but character is not loaded.

- Steps to reproduce the behavior:

1. Enter any stage and load a c... | 1.0 | Changing stages without stopping game causes softlock - ### When reporting a bug/issue:

- Fantasy Crescendo Version: Build #276

- Operating System: Windows 7

- Expected Behavior: Proper character clearing and restarting.

- Actual Behavior: Softlock - "P1" indicator shown, but character is not loaded.

- Steps to r... | non_process | changing stages without stopping game causes softlock when reporting a bug issue fantasy crescendo version build operating system windows expected behavior proper character clearing and restarting actual behavior softlock indicator shown but character is not loaded steps to repr... | 0 |

259,534 | 27,639,781,938 | IssuesEvent | 2023-03-10 17:05:08 | MatBenfield/news | https://api.github.com/repos/MatBenfield/news | opened | [SecurityWeek] Cyber Madness Bracket Challenge – Register to Play | SecurityWeek |

SecurityWeek’s Cyber Madness Bracket Challenge is a contest designed to bring the community together in a fun, competitive way through one of America’s top sporting events.

The post [Cyber Madness Bracket Challenge – Register to Play](https://www.securityweek.com/cyber-madness-bracket-challenge-register-to-play/) ap... | True | [SecurityWeek] Cyber Madness Bracket Challenge – Register to Play -

SecurityWeek’s Cyber Madness Bracket Challenge is a contest designed to bring the community together in a fun, competitive way through one of America’s top sporting events.

The post [Cyber Madness Bracket Challenge – Register to Play](https://www.se... | non_process | cyber madness bracket challenge – register to play securityweek’s cyber madness bracket challenge is a contest designed to bring the community together in a fun competitive way through one of america’s top sporting events the post appeared first on | 0 |

2,677 | 5,502,844,774 | IssuesEvent | 2017-03-16 01:17:36 | metabase/metabase | https://api.github.com/repos/metabase/metabase | closed | Return relevant FK dest fields automatically in "rows" queries | Duplicate Proposal Query Processor | User Story: as a user looking at row level data I would like to see human readable identifiers in my data rather than cryptic id values (numbers, hashes) so that I can better understand what I'm looking at.

Example if pulling up the list of Invoices from a db I would want to see the name of the User or Customer the in... | 1.0 | Return relevant FK dest fields automatically in "rows" queries - User Story: as a user looking at row level data I would like to see human readable identifiers in my data rather than cryptic id values (numbers, hashes) so that I can better understand what I'm looking at.

Example if pulling up the list of Invoices from... | process | return relevant fk dest fields automatically in rows queries user story as a user looking at row level data i would like to see human readable identifiers in my data rather than cryptic id values numbers hashes so that i can better understand what i m looking at example if pulling up the list of invoices from... | 1 |

21,371 | 29,202,227,386 | IssuesEvent | 2023-05-21 00:36:39 | devssa/onde-codar-em-salvador | https://api.github.com/repos/devssa/onde-codar-em-salvador | closed | [Remoto] Data Analyst na Coodesh | SALVADOR HOME OFFICE PJ BANCO DE DADOS DATA SCIENCE PYTHON SQL GIT STARTUP NOSQL SOLID REQUISITOS REMOTO PROCESSOS GITHUB INGLÊS CI UMA ESPANHOL BI BIGQUERY NEGÓCIOS Stale | ## Descrição da vaga:

Esta é uma vaga de um parceiro da plataforma Coodesh, ao candidatar-se você terá acesso as informações completas sobre a empresa e benefícios.

Fique atento ao redirecionamento que vai te levar para uma url [https://coodesh.com](https://coodesh.com/vagas/data-analyst-185949334?utm_source=git... | 1.0 | [Remoto] Data Analyst na Coodesh - ## Descrição da vaga:

Esta é uma vaga de um parceiro da plataforma Coodesh, ao candidatar-se você terá acesso as informações completas sobre a empresa e benefícios.

Fique atento ao redirecionamento que vai te levar para uma url [https://coodesh.com](https://coodesh.com/vagas/da... | process | data analyst na coodesh descrição da vaga esta é uma vaga de um parceiro da plataforma coodesh ao candidatar se você terá acesso as informações completas sobre a empresa e benefícios fique atento ao redirecionamento que vai te levar para uma url com o pop up personalizado de candidatura 👋 a fh... | 1 |

151,317 | 12,031,698,299 | IssuesEvent | 2020-04-13 10:17:38 | mozilla-mobile/fenix | https://api.github.com/repos/mozilla-mobile/fenix | closed | [Bug] Fix intermittent UI test tabMediaControlButtonTest | Feature:Media intermittent-test 🐞 bug | https://console.firebase.google.com/u/0/project/moz-fenix/testlab/histories/bh.66b7091e15d53d45/matrices/8046457654635721978/executions/bs.4d9073ffb2260f3d/testcases/2

```Log

androidx.test.espresso.base.DefaultFailureHandler$AssertionFailedWithCauseError: 'with content description text: is "Play"' doesn't match the... | 1.0 | [Bug] Fix intermittent UI test tabMediaControlButtonTest - https://console.firebase.google.com/u/0/project/moz-fenix/testlab/histories/bh.66b7091e15d53d45/matrices/8046457654635721978/executions/bs.4d9073ffb2260f3d/testcases/2

```Log

androidx.test.espresso.base.DefaultFailureHandler$AssertionFailedWithCauseError: ... | non_process | fix intermittent ui test tabmediacontrolbuttontest log androidx test espresso base defaultfailurehandler assertionfailedwithcauseerror with content description text is play doesn t match the selected view expected with content description text is play got appcompatimagebutton id res name... | 0 |

14,086 | 16,977,309,872 | IssuesEvent | 2021-06-30 02:14:23 | q191201771/lal | https://api.github.com/repos/q191201771/lal | closed | arm32交叉编译失败 | #Bug *In process |

1.尝试交叉编译arm包出现以下错误,arm64没问题。

2.测试环境

go version go1.16.5 linux/amd64

ubuntu18.04

lal 最新代码

3.测试过程

编译命令

`CGO_ENABLED=0 GOOS=linux GOARCH=arm go build app/lalserver/main.go`

错误日志

` github.com/q191201771/naza/pkg/nazaatomic

/root/go/pkg/mod/github.com/q191201771/naza@v0.19.1/pkg/nazaatomic/atomic_64bit.g... | 1.0 | arm32交叉编译失败 -

1.尝试交叉编译arm包出现以下错误,arm64没问题。

2.测试环境

go version go1.16.5 linux/amd64

ubuntu18.04

lal 最新代码

3.测试过程

编译命令

`CGO_ENABLED=0 GOOS=linux GOARCH=arm go build app/lalserver/main.go`

错误日志

` github.com/q191201771/naza/pkg/nazaatomic

/root/go/pkg/mod/github.com/q191201771/naza@v0.19.1/pkg/nazaatomic/... | process | 尝试交叉编译arm包出现以下错误, 。 测试环境 go version linux lal 最新代码 测试过程 编译命令 cgo enabled goos linux goarch arm go build app lalserver main go 错误日志 github com naza pkg nazaatomic root go pkg mod github com naza pkg nazaatomic atomic go redeclared in this block ... | 1 |

2,812 | 5,738,574,655 | IssuesEvent | 2017-04-23 05:50:54 | SIMEXP/niak | https://api.github.com/repos/SIMEXP/niak | closed | A progress verbose while doing QC | enhancement preprocessing quality control | Note from @amanbadhwar : it would be a good add to have a verbose like progression bar or percentage when doing QC

| 1.0 | A progress verbose while doing QC - Note from @amanbadhwar : it would be a good add to have a verbose like progression bar or percentage when doing QC

| process | a progress verbose while doing qc note from amanbadhwar it would be a good add to have a verbose like progression bar or percentage when doing qc | 1 |

265,826 | 28,298,760,040 | IssuesEvent | 2023-04-10 02:38:11 | nidhi7598/linux-4.19.72 | https://api.github.com/repos/nidhi7598/linux-4.19.72 | closed | CVE-2021-26931 (Medium) detected in linuxlinux-4.19.254, linuxlinux-4.19.254 - autoclosed | Mend: dependency security vulnerability | ## CVE-2021-26931 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>linuxlinux-4.19.254</b>, <b>linuxlinux-4.19.254</b></p></summary>

<p>

</p>

</details>

<p></p>

<details><summary><... | True | CVE-2021-26931 (Medium) detected in linuxlinux-4.19.254, linuxlinux-4.19.254 - autoclosed - ## CVE-2021-26931 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>linuxlinux-4.19.254</b>... | non_process | cve medium detected in linuxlinux linuxlinux autoclosed cve medium severity vulnerability vulnerable libraries linuxlinux linuxlinux vulnerability details an issue was discovered in the linux kernel through as used in xen bloc... | 0 |

10,987 | 13,783,874,237 | IssuesEvent | 2020-10-08 19:56:22 | googleapis/gax-php | https://api.github.com/repos/googleapis/gax-php | closed | The latest release does not comply with psr-4 autoloading standard | type: process | Please release the current dev-master as our project strictly requires compatibility with Composer v2.0 (the release should contain #278 and #279).

Due to this I can't use your library (google/cloud-pubsub) in our project to support RTDN from Google Play.

Thank you. | 1.0 | The latest release does not comply with psr-4 autoloading standard - Please release the current dev-master as our project strictly requires compatibility with Composer v2.0 (the release should contain #278 and #279).

Due to this I can't use your library (google/cloud-pubsub) in our project to support RTDN from Googl... | process | the latest release does not comply with psr autoloading standard please release the current dev master as our project strictly requires compatibility with composer the release should contain and due to this i can t use your library google cloud pubsub in our project to support rtdn from google pla... | 1 |

6,626 | 9,725,756,550 | IssuesEvent | 2019-05-30 09:34:55 | linnovate/root | https://api.github.com/repos/linnovate/root | closed | Delete multiple choice - button not clickable | 2.0.7 Process bug | go to Settings -> Folders

create new item

go to Documents -> Manage Documents

create few items

choose some items and delete them

the items are deleted only after refreshing the page or going to another tab and back to the deleted item's list

- Also happens in: Folders from Office, Tasks from Discussion, Tasks f... | 1.0 | Delete multiple choice - button not clickable - go to Settings -> Folders

create new item

go to Documents -> Manage Documents

create few items

choose some items and delete them

the items are deleted only after refreshing the page or going to another tab and back to the deleted item's list

- Also happens in: Fol... | process | delete multiple choice button not clickable go to settings folders create new item go to documents manage documents create few items choose some items and delete them the items are deleted only after refreshing the page or going to another tab and back to the deleted item s list also happens in fol... | 1 |

19,007 | 25,006,597,169 | IssuesEvent | 2022-11-03 12:21:58 | Tencent/tdesign-miniprogram | https://api.github.com/repos/Tencent/tdesign-miniprogram | closed | [tabs] 希望在选项卡名称旁边增加标签 | in process | ### 这个功能解决了什么问题

选项卡现在遇到情况:

1.分选项卡的消息,显示不同页卡的数量,并且有样式(现在仅仅能显示数量)

2.该选项卡,用于商品,新品,热卖等有样式标签,无法设置

### 你建议的方案是什么

单独设置一个badge,可以支持slot,标签可以放在名称的前面或后面 | 1.0 | [tabs] 希望在选项卡名称旁边增加标签 - ### 这个功能解决了什么问题

选项卡现在遇到情况:

1.分选项卡的消息,显示不同页卡的数量,并且有样式(现在仅仅能显示数量)

2.该选项卡,用于商品,新品,热卖等有样式标签,无法设置

### 你建议的方案是什么

单独设置一个badge,可以支持slot,标签可以放在名称的前面或后面 | process | 希望在选项卡名称旁边增加标签 这个功能解决了什么问题 选项卡现在遇到情况: 分选项卡的消息,显示不同页卡的数量,并且有样式(现在仅仅能显示数量) 该选项卡,用于商品,新品,热卖等有样式标签,无法设置 你建议的方案是什么 单独设置一个badge,可以支持slot,标签可以放在名称的前面或后面 | 1 |

198,342 | 14,974,029,018 | IssuesEvent | 2021-01-28 02:31:53 | microsoft/AzureStorageExplorer | https://api.github.com/repos/microsoft/AzureStorageExplorer | closed | The folder name displays as strange strings when using non-ENU characters to create one ADLS Gen2 folder | :beetle: regression :gear: adls gen2 :heavy_check_mark: merged 🧪 testing | **Storage Explorer Version:** 1.17.0

**Build Number:** 20210127.3

**Branch:** main

**Platform/OS:** Windows 10/ Linux Ubuntu 18.04/ MacOS Catalina

**Architecture:** ia32/ x64

**Language**: Czech/ Hungarian/ Japanese/ Korean/ Russian/ Swedish/ ZH-CN/ ZH-TW

**Regression From:** Previous release (1.17.0)

**Steps... | 1.0 | The folder name displays as strange strings when using non-ENU characters to create one ADLS Gen2 folder - **Storage Explorer Version:** 1.17.0

**Build Number:** 20210127.3

**Branch:** main

**Platform/OS:** Windows 10/ Linux Ubuntu 18.04/ MacOS Catalina

**Architecture:** ia32/ x64

**Language**: Czech/ Hungarian/... | non_process | the folder name displays as strange strings when using non enu characters to create one adls folder storage explorer version build number branch main platform os windows linux ubuntu macos catalina architecture language czech hungarian japanese korean ... | 0 |

71,349 | 18,714,538,776 | IssuesEvent | 2021-11-03 01:27:05 | NVIDIA/spark-rapids | https://api.github.com/repos/NVIDIA/spark-rapids | opened | [FEA] build and test pipelines for databricks 9.1 | feature request build P1 | https://github.com/NVIDIA/spark-rapids/pull/3767 has add shim layer for databricks 9.1 runtime,

we will need to update our nightly build and test pipeline to support build new shims.

also our pre-merge pipeline should also cover databricks 9.1 and keep compatibility w/ databricks 8.2 for plugin version lower than 21.... | 1.0 | [FEA] build and test pipelines for databricks 9.1 - https://github.com/NVIDIA/spark-rapids/pull/3767 has add shim layer for databricks 9.1 runtime,

we will need to update our nightly build and test pipeline to support build new shims.

also our pre-merge pipeline should also cover databricks 9.1 and keep compatibility... | non_process | build and test pipelines for databricks has add shim layer for databricks runtime we will need to update our nightly build and test pipeline to support build new shims also our pre merge pipeline should also cover databricks and keep compatibility w databricks for plugin version lower than ... | 0 |

16,192 | 20,674,120,624 | IssuesEvent | 2022-03-10 07:21:16 | prisma/prisma | https://api.github.com/repos/prisma/prisma | opened | CockroachDB: Remove the Json native type | process/candidate topic: schema engines/data model parser team/migrations topic: cockroachdb team/psl-wg | On cockroachdb, [JSON is an alias for JSONB](https://www.cockroachlabs.com/docs/v21.2/jsonb#alias). We should reflect that in our native type definitions and remove `Json`. | 1.0 | CockroachDB: Remove the Json native type - On cockroachdb, [JSON is an alias for JSONB](https://www.cockroachlabs.com/docs/v21.2/jsonb#alias). We should reflect that in our native type definitions and remove `Json`. | process | cockroachdb remove the json native type on cockroachdb we should reflect that in our native type definitions and remove json | 1 |

17,717 | 23,619,046,225 | IssuesEvent | 2022-08-24 18:37:58 | open-telemetry/opentelemetry-collector-contrib | https://api.github.com/repos/open-telemetry/opentelemetry-collector-contrib | opened | [processors/transform] Add `drop` action support | enhancement processor/transform | `drop` action is pretty important functionality to replace filter processors. It's already being mentioned in the docs but not implemented yet.

Subtasks per data type:

- [ ] Add `drop` action support for metrics

- [ ] Add `drop` action support for traces

- [ ] Add `drop` action support for logs | 1.0 | [processors/transform] Add `drop` action support - `drop` action is pretty important functionality to replace filter processors. It's already being mentioned in the docs but not implemented yet.

Subtasks per data type:

- [ ] Add `drop` action support for metrics

- [ ] Add `drop` action support for traces

- [ ] ... | process | add drop action support drop action is pretty important functionality to replace filter processors it s already being mentioned in the docs but not implemented yet subtasks per data type add drop action support for metrics add drop action support for traces add drop action support f... | 1 |

203,259 | 15,875,896,886 | IssuesEvent | 2021-04-09 07:39:40 | zkat/big-brain | https://api.github.com/repos/zkat/big-brain | opened | Write guide | documentation help wanted | There should be a step-by-step guide on how to get started with big-brain and do incrementally more complex things. | 1.0 | Write guide - There should be a step-by-step guide on how to get started with big-brain and do incrementally more complex things. | non_process | write guide there should be a step by step guide on how to get started with big brain and do incrementally more complex things | 0 |

18,494 | 24,550,979,314 | IssuesEvent | 2022-10-12 12:35:42 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [iOS] [Offline indicator] Share button should be disabled in the below mentioned screens when participant is offline | Bug P1 iOS Process: Fixed Process: Tested QA Process: Tested dev | Share button should be disabled in the below mentioned screens when the participant is offline

1. App glossary

2. Dashboard

3. Consent pdf ( both resources screen and study overview screen)

| 3.0 | [iOS] [Offline indicator] Share button should be disabled in the below mentioned screens when participant is offline - Share button should be disabled in the below mentioned screens when the participant is offline

1. App glossary

2. Dashboard

3. Consent pdf ( both resources screen and study overview screen)


There is no warning in the browser console when I use the SimpleForm tag with redirect={false}

```

<SimpleForm... | 1.0 | SimpleForm redirect attribute entails Warning: Received `false` for a non-boolean attribute `redirect`. - **What you were expecting:**

(According to the [documentation](https://github.com/marmelab/react-admin/blob/0ead4754e847a25aea57db81ce8468cf054e7534/packages/ra-ui-materialui/src/form/Toolbar.tsx#L42))

There is... | non_process | simpleform redirect attribute entails warning received false for a non boolean attribute redirect what you were expecting according to the there is no warning in the browser console when i use the simpleform tag with redirect false what happened instead there is a warn... | 0 |

425,093 | 29,191,820,276 | IssuesEvent | 2023-05-19 20:51:26 | caproto/caproto | https://api.github.com/repos/caproto/caproto | closed | Document meaning of "High load. Batched 2 commands" and warn only if above a threshold | help wanted documentation server | I am getting this message but I do not how to make sense out of it. See below. Is it something to do with sending too much data or sending to many updates to PVs?

```

[I 11:50:39.604 common: 390] High load. Batched 2 commands (168B) with 0.0003s latency.

[I 11:50:49.370 common: 390] High load. Batch... | 1.0 | Document meaning of "High load. Batched 2 commands" and warn only if above a threshold - I am getting this message but I do not how to make sense out of it. See below. Is it something to do with sending too much data or sending to many updates to PVs?

```

[I 11:50:39.604 common: 390] High load. Batched 2 co... | non_process | document meaning of high load batched commands and warn only if above a threshold i am getting this message but i do not how to make sense out of it see below is it something to do with sending too much data or sending to many updates to pvs high load batched commands with latency hi... | 0 |

123,058 | 4,851,953,873 | IssuesEvent | 2016-11-11 08:23:03 | MatchboxDorry/dorry-web | https://api.github.com/repos/MatchboxDorry/dorry-web | closed | After starting multiple aiphine 3:2, the service cannot be found | effort: 1 (easy) feature: controller flag: fixed priority: 1 (urgent) type: bug | **Dorry UI Build Versin:**

Version: 0.1.2-alpha

**Operation System:**

Name: Ubuntu

Version: 16.04-LTS(64bit)

**Browser:**

Browser name: Chrome

Browser version: 54.0.2840.59

**What I want to do**

I want to start multiple alphine services

**Where I am**

App page

**What I have done**

Clicked start about 6 times

**What I expect:**

6 additional running services

**What r... | 1.0 | After starting multiple aiphine 3:2, the service cannot be found - **Dorry UI Build Versin:**

Version: 0.1.2-alpha

**Operation System:**

Name: Ubuntu

Version: 16.04-LTS(64bit)

**Browser:**

Browser name: Chrome

Browser version: 54.0.2840.59

**What I want to do**

I want to start multiple alphine services

**Where I am**

App page

**What I have done**

Clicked start about 6 times

**What I expe... | non_process | after starting multiple aiphine the service cannot be found dorry ui build versin version alpha operation system name ubuntu version lts browser browser name chrome browser version what i want to do i want to start multiple alphine services where i am app page what i have done clicked start what i expect service... | 0 |

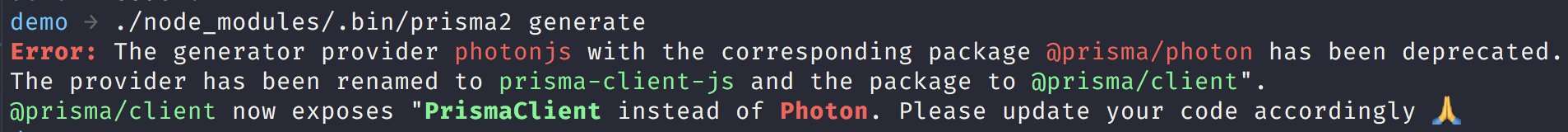

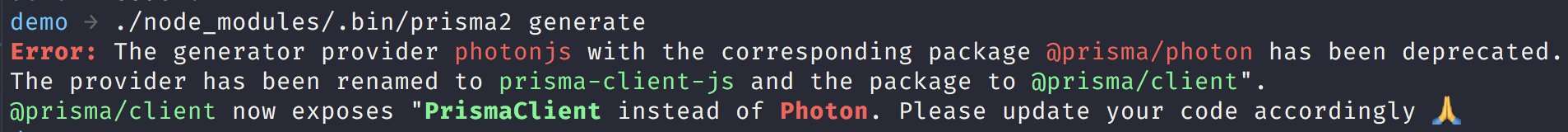

8,204 | 11,396,669,078 | IssuesEvent | 2020-01-30 13:59:30 | prisma/prisma-client-js | https://api.github.com/repos/prisma/prisma-client-js | closed | Improve photon to prisma client error | kind/improvement process/candidate | Right now we show this:

It's great that we detect this, but it's not very actionable. I propose we reword this to the following:

---

Oops! Photon has been renamed to Prisma Client. Please make the fo... | 1.0 | Improve photon to prisma client error - Right now we show this:

It's great that we detect this, but it's not very actionable. I propose we reword this to the following:

---

Oops! Photon has been rena... | process | improve photon to prisma client error right now we show this it s great that we detect this but it s not very actionable i propose we reword this to the following oops photon has been renamed to prisma client please make the following adjustments rename provider photonjs to p... | 1 |

21,551 | 29,865,435,491 | IssuesEvent | 2023-06-20 03:06:26 | cncf/tag-security | https://api.github.com/repos/cncf/tag-security | closed | [Sec Assess WG] Time and Effort of Security Assessments | help wanted assessment-process suggestion inactive | This issue was created from results of the Security Assessment Improvement Working Group (https://github.com/cncf/sig-security/issues/167#issuecomment-714514142).

# Time and Effort of Security Assessments

## Premise

- The result time span of assessments tend to stretch

- There is little awareness or lack of ... | 1.0 | [Sec Assess WG] Time and Effort of Security Assessments - This issue was created from results of the Security Assessment Improvement Working Group (https://github.com/cncf/sig-security/issues/167#issuecomment-714514142).

# Time and Effort of Security Assessments

## Premise

- The result time span of assessment... | process | time and effort of security assessments this issue was created from results of the security assessment improvement working group time and effort of security assessments premise the result time span of assessments tend to stretch there is little awareness or lack of clarity of the current sche... | 1 |

405,285 | 11,870,909,487 | IssuesEvent | 2020-03-26 13:35:56 | WebAhead/wajjbat-social | https://api.github.com/repos/WebAhead/wajjbat-social | opened | Add share button functionality | T4h priority-2 | Make the share button send all the collected data to a specific route | 1.0 | Add share button functionality - Make the share button send all the collected data to a specific route | non_process | add share button functionality make the share button send all the collected data to a specific route | 0 |

9,432 | 12,422,312,275 | IssuesEvent | 2020-05-23 21:24:56 | MicrosoftDocs/azure-docs | https://api.github.com/repos/MicrosoftDocs/azure-docs | closed | Runbooks missing | Pri1 automation/svc cxp process-automation/subsvc product-question triaged | None of the runbooks listed in the table were auto-deployed by the solution to my Automation account.

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: 225c9d05-83dd-b006-0025-3753f5ab25bf

* Version Independent ID: 9eecef0c-b1cb-1136-faf7-542... | 1.0 | Runbooks missing - None of the runbooks listed in the table were auto-deployed by the solution to my Automation account.

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: 225c9d05-83dd-b006-0025-3753f5ab25bf

* Version Independent ID: 9eecef0c... | process | runbooks missing none of the runbooks listed in the table were auto deployed by the solution to my automation account document details ⚠ do not edit this section it is required for docs microsoft com ➟ github issue linking id version independent id content content ... | 1 |

547,516 | 16,043,447,355 | IssuesEvent | 2021-04-22 10:46:20 | googleapis/java-dialogflow | https://api.github.com/repos/googleapis/java-dialogflow | reopened | com.example.dialogflow.DetectIntentWithAudioTest: testDetectIntentAudio failed | api: dialogflow flakybot: flaky flakybot: issue priority: p1 type: bug | This test failed!

To configure my behavior, see [the Flaky Bot documentation](https://github.com/googleapis/repo-automation-bots/tree/master/packages/flakybot).

If I'm commenting on this issue too often, add the `flakybot: quiet` label and

I will stop commenting.

---

commit: 122d52c2f89d0e831926eabe6ff43a614523041a... | 1.0 | com.example.dialogflow.DetectIntentWithAudioTest: testDetectIntentAudio failed - This test failed!

To configure my behavior, see [the Flaky Bot documentation](https://github.com/googleapis/repo-automation-bots/tree/master/packages/flakybot).

If I'm commenting on this issue too often, add the `flakybot: quiet` label a... | non_process | com example dialogflow detectintentwithaudiotest testdetectintentaudio failed this test failed to configure my behavior see if i m commenting on this issue too often add the flakybot quiet label and i will stop commenting commit buildurl status failed test output com google api ga... | 0 |