Unnamed: 0 | id | type | created_at | repo | repo_url | action | title | labels | body | index | text_combine | label | text | binary_label |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

13,922 | 16,679,257,670 | IssuesEvent | 2021-06-07 20:35:16 | Leviatan-Analytics/LA-data-processing | https://api.github.com/repos/Leviatan-Analytics/LA-data-processing | closed | Test open rofl file with League of Legends [2] | Data Processing Sprint 2 Week 2 | Research alternatives for opening league of legends replay files.

Output: Document including the research done on how to open this type of file. | 1.0 | Test open rofl file with League of Legends [2] - Research alternatives for opening league of legends replay files.

Output: Document including the research done on how to open this type of file. | process | test open rofl file with league of legends research alternatives for opening league of legends replay files output document including the research done on how to open this type of file | 1 |

36,144 | 2,795,869,777 | IssuesEvent | 2015-05-12 01:24:58 | TypeStrong/atom-typescript | https://api.github.com/repos/TypeStrong/atom-typescript | reopened | Renaming with "compile on save" affects focus | priority:high up-for-grabs | GIVEN the editor has unsaved changes,

AND the "compile on save" option is on,

AND the rename dialog appears

THEN the focus is moved to the dialog briefly until it moves back to the editor (which is in the background).

Atom version: 0.190

atom-typescript version: 2.15... | 1.0 | Renaming with "compile on save" affects focus - GIVEN the editor has unsaved changes,

AND the "compile on save" option is on,

AND the rename dialog appears

THEN the focus is moved to the dialog briefly until it moves back to the editor (which is in the background).

At... | non_process | renaming with compile on save affects focus given the editor has unsaved changes and the compile on save option is on and the rename dialog appears then the focus is moved to the dialog briefly until it moves back to the editor which is in the background atom version atom typescript versi... | 0 |

10,273 | 13,127,903,186 | IssuesEvent | 2020-08-06 11:16:30 | keep-network/keep-core | https://api.github.com/repos/keep-network/keep-core | closed | Remove redundant Address property in [ethereum.account] config section | process & client team 📟 client | Beacon client expects `Address` property to be present in `[ethereum.account]` config section. This information is redundant because we can read address from the key file as we do in `keep-ecdsa`. We should remove `Address` property altogether and instead of having:

```

[ethereum.account]

Address = "0x65ea55c1f104... | 1.0 | Remove redundant Address property in [ethereum.account] config section - Beacon client expects `Address` property to be present in `[ethereum.account]` config section. This information is redundant because we can read address from the key file as we do in `keep-ecdsa`. We should remove `Address` property altogether and... | process | remove redundant address property in config section beacon client expects address property to be present in config section this information is redundant because we can read address from the key file as we do in keep ecdsa we should remove address property altogether and instead of having add... | 1 |

21,057 | 28,005,674,679 | IssuesEvent | 2023-03-27 15:07:16 | oxidecomputer/hubris | https://api.github.com/repos/oxidecomputer/hubris | closed | update over management network should interlock against image for incorrect board | service processor robustness gimlet | We're expecting a mixed population of system board revisions in test environments shortly, and there is currently (to my knowledge) no protection against using the update server over the management network to install software for board revision A into a board of revision B. The results of such an operation are presuma... | 1.0 | update over management network should interlock against image for incorrect board - We're expecting a mixed population of system board revisions in test environments shortly, and there is currently (to my knowledge) no protection against using the update server over the management network to install software for board ... | process | update over management network should interlock against image for incorrect board we re expecting a mixed population of system board revisions in test environments shortly and there is currently to my knowledge no protection against using the update server over the management network to install software for board ... | 1 |

67,517 | 3,274,746,219 | IssuesEvent | 2015-10-26 12:39:51 | YetiForceCompany/YetiForceCRM | https://api.github.com/repos/YetiForceCompany/YetiForceCRM | closed | [Question] Looking for possibility to link doc with service contract | Label::MoreInfoRequired Priority::#2 Normal Type::VerificationRequired | Shortly speaking I would like to have possibility to link document upload to the system with record of service contract. Mean that when create service contract and sign physical doc with my customer I would like to have link between this record and scanned and upload signed contract...

Is it possible?

Thanks in advan... | 1.0 | [Question] Looking for possibility to link doc with service contract - Shortly speaking I would like to have possibility to link document upload to the system with record of service contract. Mean that when create service contract and sign physical doc with my customer I would like to have link between this record and ... | non_process | looking for possibility to link doc with service contract shortly speaking i would like to have possibility to link document upload to the system with record of service contract mean that when create service contract and sign physical doc with my customer i would like to have link between this record and scanned a... | 0 |

20,421 | 27,081,807,252 | IssuesEvent | 2023-02-14 14:30:30 | barrycumbie/studious-engine-feb2023-githubPd | https://api.github.com/repos/barrycumbie/studious-engine-feb2023-githubPd | closed | start here on day two | process | let's do

- a recap... with a gist! (I ❤️ gist)

- better yet, gist to our REPO (wiki) | 1.0 | start here on day two - let's do

- a recap... with a gist! (I ❤️ gist)

- better yet, gist to our REPO (wiki) | process | start here on day two let s do a recap with a gist i ❤️ gist better yet gist to our repo wiki | 1 |

99,713 | 30,539,596,208 | IssuesEvent | 2023-07-19 20:11:30 | DeiseCAPBarbosa/gha-build-homolog | https://api.github.com/repos/DeiseCAPBarbosa/gha-build-homolog | closed | Build Homolog feat help + about | build-homolog | ## Description

Realiza deploy automatizado da aplicação.

## Environments

environment_1

## Branches

feat/help

feat/about | 1.0 | Build Homolog feat help + about - ## Description

Realiza deploy automatizado da aplicação.

## Environments

environment_1

## Branches

feat/help

feat/about | non_process | build homolog feat help about description realiza deploy automatizado da aplicação environments environment branches feat help feat about | 0 |

4,813 | 7,700,773,055 | IssuesEvent | 2018-05-20 06:48:00 | nodejs/node | https://api.github.com/repos/nodejs/node | closed | parallel/test-child-process-exec-timeout not cleaning up child process on win7 | child_process test windows | <!--

Thank you for reporting an issue.

This issue tracker is for bugs and issues found within Node.js core.

If you require more general support please file an issue on our help

repo. https://github.com/nodejs/help

Please fill in as much of the template below as you're able.

Version: output of `node -v`

P... | 1.0 | parallel/test-child-process-exec-timeout not cleaning up child process on win7 - <!--

Thank you for reporting an issue.

This issue tracker is for bugs and issues found within Node.js core.

If you require more general support please file an issue on our help

repo. https://github.com/nodejs/help

Please fill in... | process | parallel test child process exec timeout not cleaning up child process on thank you for reporting an issue this issue tracker is for bugs and issues found within node js core if you require more general support please file an issue on our help repo please fill in as much of the template below a... | 1 |

7,352 | 10,483,219,182 | IssuesEvent | 2019-09-24 13:37:49 | Hurence/logisland | https://api.github.com/repos/Hurence/logisland | opened | add OpenFAAS support | feature processor | - controllerService: faas_service

component: com.hurence.logisland.service.faas

configuration:

faas.server: http://myfaas.org

# add field if defect found

- processor: defect_tagger

component: com.hurence.logisland.processor.FAASExecute

configuration:

... | 1.0 | add OpenFAAS support - - controllerService: faas_service

component: com.hurence.logisland.service.faas

configuration:

faas.server: http://myfaas.org

# add field if defect found

- processor: defect_tagger

component: com.hurence.logisland.processor.FAASExecute

... | process | add openfaas support controllerservice faas service component com hurence logisland service faas configuration faas server add field if defect found processor defect tagger component com hurence logisland processor faasexecute configur... | 1 |

16,904 | 22,216,225,972 | IssuesEvent | 2022-06-08 02:08:38 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | Vector grid tool - result with wrong extent | Raster Processing Bug Vectors | ### What is the bug or the crash?

Using Vector>Research tools>Create grid results in a grid with an incorrect extent when this extent is given manually.

See steps below.

### Steps to reproduce the issue

Step 1 -Create a random raster with the following extent

372783.6000,372830.4000,7026751.5000,7029915.6000 [EP... | 1.0 | Vector grid tool - result with wrong extent - ### What is the bug or the crash?

Using Vector>Research tools>Create grid results in a grid with an incorrect extent when this extent is given manually.

See steps below.

### Steps to reproduce the issue

Step 1 -Create a random raster with the following extent

372783.... | process | vector grid tool result with wrong extent what is the bug or the crash using vector research tools create grid results in a grid with an incorrect extent when this extent is given manually see steps below steps to reproduce the issue step create a random raster with the following extent ... | 1 |

262,658 | 8,272,300,517 | IssuesEvent | 2018-09-16 18:41:22 | javaee/glassfish | https://api.github.com/repos/javaee/glassfish | closed | InjectionManager.inject doesn't handle PostConstruct methods properly | Component: naming ERR: Assignee Priority: Minor Type: Bug | The InjectionManagerImpl.inject method takes a boolean that controls whether postConstruct

methods are called. Fortunately, most of the time it's called with "false". I think the

only time it's called with "true" is when injecting an app client main class.

If called with true, the PostConstruct invocation logic ignore... | 1.0 | InjectionManager.inject doesn't handle PostConstruct methods properly - The InjectionManagerImpl.inject method takes a boolean that controls whether postConstruct

methods are called. Fortunately, most of the time it's called with "false". I think the

only time it's called with "true" is when injecting an app client mai... | non_process | injectionmanager inject doesn t handle postconstruct methods properly the injectionmanagerimpl inject method takes a boolean that controls whether postconstruct methods are called fortunately most of the time it s called with false i think the only time it s called with true is when injecting an app client mai... | 0 |

13,203 | 15,648,952,796 | IssuesEvent | 2021-03-23 06:45:08 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [PM] Password should be expire for every 90 days and enforce the user to reset the Password | P1 Participant manager datastore Process: Enhancement Process: Tested dev Unknown backend | Password should be expired for every 90 days and enforce the user to reset the Password, in case the password is not changed within that period by the user.

| 2.0 | [PM] Password should be expire for every 90 days and enforce the user to reset the Password - Password should be expired for every 90 days and enforce the user to reset the Password, in case the password is not changed within that period by the user.

| process | password should be expire for every days and enforce the user to reset the password password should be expired for every days and enforce the user to reset the password in case the password is not changed within that period by the user | 1 |

8,638 | 11,787,295,341 | IssuesEvent | 2020-03-17 13:49:06 | MicrosoftDocs/vsts-docs | https://api.github.com/repos/MicrosoftDocs/vsts-docs | closed | Pipeline resource variables available at compile time? | Pri1 devops-cicd-process/tech devops/prod doc-bug | Can you clarify if the pipeline resource variables (e.g. `variables.resources.pipeline.Alias.sourceBranch`) are available at compile time or only at run time? These variables act like they are only available at run time but I'd like to know if I'm missing something.

Background: I've been trying to use expressions to... | 1.0 | Pipeline resource variables available at compile time? - Can you clarify if the pipeline resource variables (e.g. `variables.resources.pipeline.Alias.sourceBranch`) are available at compile time or only at run time? These variables act like they are only available at run time but I'd like to know if I'm missing somethi... | process | pipeline resource variables available at compile time can you clarify if the pipeline resource variables e g variables resources pipeline alias sourcebranch are available at compile time or only at run time these variables act like they are only available at run time but i d like to know if i m missing somethi... | 1 |

9,893 | 12,891,011,577 | IssuesEvent | 2020-07-13 16:58:12 | MicrosoftDocs/azure-devops-docs | https://api.github.com/repos/MicrosoftDocs/azure-devops-docs | closed | Many versions wait for approval | Pri1 devops-cicd-process/tech devops/prod product-question | Let's assume YAML multi-release pipeline with stages.

Run many releases of pipeline and stage-X with approval is reached by many versions.

In old Release pipeline, new version cancels waiting for approval by old version.

In YAML pipeline, both of them are active for approval.

Is it expected behavior?

Is it pos... | 1.0 | Many versions wait for approval - Let's assume YAML multi-release pipeline with stages.

Run many releases of pipeline and stage-X with approval is reached by many versions.

In old Release pipeline, new version cancels waiting for approval by old version.

In YAML pipeline, both of them are active for approval.

I... | process | many versions wait for approval let s assume yaml multi release pipeline with stages run many releases of pipeline and stage x with approval is reached by many versions in old release pipeline new version cancels waiting for approval by old version in yaml pipeline both of them are active for approval i... | 1 |

68,608 | 8,310,096,441 | IssuesEvent | 2018-09-24 09:28:52 | python-trio/trio | https://api.github.com/repos/python-trio/trio | opened | make trio.BlockingTrioPortal and trio.Lock work together | design discussion user happiness | In [this SO question](https://stackoverflow.com/questions/52468911/python-ways-to-synchronize-trio-tasks-and-regular-threads), @Nikratio asks about how to share a lock between Trio and a thread.

There are some advantages to using `trio.Lock`, and having the thread access it through a `BlockingTrioPortal` – in partic... | 1.0 | make trio.BlockingTrioPortal and trio.Lock work together - In [this SO question](https://stackoverflow.com/questions/52468911/python-ways-to-synchronize-trio-tasks-and-regular-threads), @Nikratio asks about how to share a lock between Trio and a thread.

There are some advantages to using `trio.Lock`, and having the ... | non_process | make trio blockingtrioportal and trio lock work together in nikratio asks about how to share a lock between trio and a thread there are some advantages to using trio lock and having the thread access it through a blockingtrioportal – in particular it means that the trio side code can use cancellation a... | 0 |

21,602 | 30,004,925,160 | IssuesEvent | 2023-06-26 11:50:32 | firebase/firebase-cpp-sdk | https://api.github.com/repos/firebase/firebase-cpp-sdk | reopened | [C++] Nightly Integration Testing Report for Firestore | type: process nightly-testing |

<hidden value="integration-test-status-comment"></hidden>

### ✅ [build against repo] Integration test succeeded!

Requested by @sunmou99 on commit 9723100f301952fdfdeb31a412dec9b27873683a

Last updated: Sun Jun 25 04:35 PDT 2023

**[View integration test log & download artifacts](https://github.com/firebase/fire... | 1.0 | [C++] Nightly Integration Testing Report for Firestore -

<hidden value="integration-test-status-comment"></hidden>

### ✅ [build against repo] Integration test succeeded!

Requested by @sunmou99 on commit 9723100f301952fdfdeb31a412dec9b27873683a

Last updated: Sun Jun 25 04:35 PDT 2023

**[View integration test l... | process | nightly integration testing report for firestore ✅ nbsp integration test succeeded requested by on commit last updated sun jun pdt ✅ nbsp integration test succeeded requested by firebase workflow trigger on commit last updated sun jun pdt ... | 1 |

10,282 | 13,132,926,585 | IssuesEvent | 2020-08-06 19:52:52 | RockefellerArchiveCenter/request_broker | https://api.github.com/repos/RockefellerArchiveCenter/request_broker | closed | Check each requested item for available delivery formats | process request | ## Describe the solution you'd like

Each item in a request must be deliverable in the reading room or for duplication.

Requests must meet one of the following two conditions:

```

One or more instances are associated with the records

One or more digital object links are associated with the records

```

| 1.0 | Check each requested item for available delivery formats - ## Describe the solution you'd like

Each item in a request must be deliverable in the reading room or for duplication.

Requests must meet one of the following two conditions:

```

One or more instances are associated with the records

One or more digita... | process | check each requested item for available delivery formats describe the solution you d like each item in a request must be deliverable in the reading room or for duplication requests must meet one of the following two conditions one or more instances are associated with the records one or more digita... | 1 |

772,300 | 27,115,376,592 | IssuesEvent | 2023-02-15 18:08:52 | dtcenter/METplus | https://api.github.com/repos/dtcenter/METplus | closed | Bugfix: StatAnalysis cannot be configured to run once for each valid time | type: bug component: use case wrapper priority: blocker requestor: NOAA/EMC required: FOR DEVELOPMENT RELEASE METplus: Configuration MET: Gridded Analysis Tools | *Replace italics below with details for this issue.*

## Describe the Problem ##

*Provide a clear and concise description of the bug here.*

### Expected Behavior ###

*Provide a clear and concise description of what you expected to happen here.*

### Environment ###

Describe your runtime environment:

*1. Mach... | 1.0 | Bugfix: StatAnalysis cannot be configured to run once for each valid time - *Replace italics below with details for this issue.*

## Describe the Problem ##

*Provide a clear and concise description of the bug here.*

### Expected Behavior ###

*Provide a clear and concise description of what you expected to happen... | non_process | bugfix statanalysis cannot be configured to run once for each valid time replace italics below with details for this issue describe the problem provide a clear and concise description of the bug here expected behavior provide a clear and concise description of what you expected to happen... | 0 |

20,608 | 27,273,818,793 | IssuesEvent | 2023-02-23 02:00:07 | lizhihao6/get-daily-arxiv-noti | https://api.github.com/repos/lizhihao6/get-daily-arxiv-noti | opened | New submissions for Thu, 23 Feb 23 | event camera white balance isp compression image signal processing image signal process raw raw image events camera color contrast events AWB | ## Keyword: events

### Connecting Vision and Language with Video Localized Narratives

- **Authors:** Paul Voigtlaender, Soravit Changpinyo, Jordi Pont-Tuset, Radu Soricut, Vittorio Ferrari

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV)

- **Arxiv link:** https://arxiv.org/abs/2302.11217

- **Pdf link... | 2.0 | New submissions for Thu, 23 Feb 23 - ## Keyword: events

### Connecting Vision and Language with Video Localized Narratives

- **Authors:** Paul Voigtlaender, Soravit Changpinyo, Jordi Pont-Tuset, Radu Soricut, Vittorio Ferrari

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV)

- **Arxiv link:** https://a... | process | new submissions for thu feb keyword events connecting vision and language with video localized narratives authors paul voigtlaender soravit changpinyo jordi pont tuset radu soricut vittorio ferrari subjects computer vision and pattern recognition cs cv arxiv link pdf ... | 1 |

184,527 | 31,997,598,145 | IssuesEvent | 2023-09-21 10:09:49 | NIAEFEUP/nitsig | https://api.github.com/repos/NIAEFEUP/nitsig | opened | Multiline info cards | bug ui-design | The current info cards implementation does not take multiple lines into account, this should be fixed

| 1.0 | Multiline info cards - The current info cards implementation does not take multiple lines into account, this should be fixed

| non_process | multiline info cards the current info cards implementation does not take multiple lines into account this should be fixed | 0 |

728 | 3,214,216,124 | IssuesEvent | 2015-10-06 23:58:04 | GsDevKit/GsDevKit | https://api.github.com/repos/GsDevKit/GsDevKit | closed | Upgrade Grease reference to 1.1.10 | in process | I noticed GLASS1 is still referencing Grease 1.0.7.x

Seems to me that an upgrade to 1.1.10 would be good to do. We need (and load) it in Seaside. | 1.0 | Upgrade Grease reference to 1.1.10 - I noticed GLASS1 is still referencing Grease 1.0.7.x

Seems to me that an upgrade to 1.1.10 would be good to do. We need (and load) it in Seaside. | process | upgrade grease reference to i noticed is still referencing grease x seems to me that an upgrade to would be good to do we need and load it in seaside | 1 |

5,951 | 8,775,454,331 | IssuesEvent | 2018-12-18 23:11:47 | kerubistan/kerub | https://api.github.com/repos/kerubistan/kerub | opened | monitor physical disks | component:data processing enhancement priority: normal | check the drives periodically for predicted errors, create a problem when a drive fails | 1.0 | monitor physical disks - check the drives periodically for predicted errors, create a problem when a drive fails | process | monitor physical disks check the drives periodically for predicted errors create a problem when a drive fails | 1 |

102,358 | 31,927,006,480 | IssuesEvent | 2023-09-19 03:08:39 | apache/camel-quarkus | https://api.github.com/repos/apache/camel-quarkus | closed | [CI] - Camel Main Branch Build Failure | build/camel-main | This is a placeholder issue used by the [nightly sync workflow](https://github.com/apache/camel-quarkus/actions/workflows/camel-master-cron.yaml) for the [`camel-main`](https://github.com/apache/camel-quarkus/tree/camel-main) branch. | 1.0 | [CI] - Camel Main Branch Build Failure - This is a placeholder issue used by the [nightly sync workflow](https://github.com/apache/camel-quarkus/actions/workflows/camel-master-cron.yaml) for the [`camel-main`](https://github.com/apache/camel-quarkus/tree/camel-main) branch. | non_process | camel main branch build failure this is a placeholder issue used by the for the branch | 0 |

351,248 | 10,514,571,364 | IssuesEvent | 2019-09-28 01:43:42 | AY1920S1-CS2113T-W17-4/main | https://api.github.com/repos/AY1920S1-CS2113T-W17-4/main | opened | As a Computing student, I can have a do-after task | priority.Medium type.Story | so that I know what tasks need to be done after completing a specific task. | 1.0 | As a Computing student, I can have a do-after task - so that I know what tasks need to be done after completing a specific task. | non_process | as a computing student i can have a do after task so that i know what tasks need to be done after completing a specific task | 0 |

12,446 | 14,934,581,563 | IssuesEvent | 2021-01-25 10:43:32 | pystatgen/sgkit | https://api.github.com/repos/pystatgen/sgkit | closed | Run build on a schedule | process + tools | We should run the build daily to pick up changes to dependencies that cause our tests to fail (like #421). | 1.0 | Run build on a schedule - We should run the build daily to pick up changes to dependencies that cause our tests to fail (like #421). | process | run build on a schedule we should run the build daily to pick up changes to dependencies that cause our tests to fail like | 1 |

160,895 | 12,520,995,353 | IssuesEvent | 2020-06-03 16:45:59 | aliasrobotics/RVD | https://api.github.com/repos/aliasrobotics/RVD | opened | Use of possibly insecure function - consider using safer ast, ./src/ros_comm/rosparam/src/rosparam/__init__.py:113 | bandit bug components software robot component: ROS static analysis testing triage version: melodic | ```yaml

{

"id": 1,

"title": "Use of possibly insecure function - consider using safer ast, ./src/ros_comm/rosparam/src/rosparam/__init__.py:113",

"type": "bug",

"description": "HIGH confidence of MEDIUM severity bug. Use of possibly insecure function - consider using safer ast.literal_eval. ./src/ros_co... | 1.0 | Use of possibly insecure function - consider using safer ast, ./src/ros_comm/rosparam/src/rosparam/__init__.py:113 - ```yaml

{

"id": 1,

"title": "Use of possibly insecure function - consider using safer ast, ./src/ros_comm/rosparam/src/rosparam/__init__.py:113",

"type": "bug",

"description": "HIGH confi... | non_process | use of possibly insecure function consider using safer ast src ros comm rosparam src rosparam init py yaml id title use of possibly insecure function consider using safer ast src ros comm rosparam src rosparam init py type bug description high confidenc... | 0 |

10,166 | 13,044,162,691 | IssuesEvent | 2020-07-29 03:47:35 | tikv/tikv | https://api.github.com/repos/tikv/tikv | closed | UCP: Migrate scalar function `AesEncrypt` from TiDB | challenge-program-2 component/coprocessor difficulty/easy sig/coprocessor |

## Description

Port the scalar function `AesEncrypt` from TiDB to coprocessor.

## Score

* 50

## Mentor(s)

* @lonng

## Recommended Skills

* Rust programming

## Learning Materials

Already implemented expressions ported from TiDB

- https://github.com/tikv/tikv/tree/master/components/tidb_query/src/rpn_expr) ... | 2.0 | UCP: Migrate scalar function `AesEncrypt` from TiDB -

## Description

Port the scalar function `AesEncrypt` from TiDB to coprocessor.

## Score

* 50

## Mentor(s)

* @lonng

## Recommended Skills

* Rust programming

## Learning Materials

Already implemented expressions ported from TiDB

- https://github.com/tikv... | process | ucp migrate scalar function aesencrypt from tidb description port the scalar function aesencrypt from tidb to coprocessor score mentor s lonng recommended skills rust programming learning materials already implemented expressions ported from tidb | 1 |

3,211 | 6,266,406,261 | IssuesEvent | 2017-07-17 01:45:32 | nodejs/node | https://api.github.com/repos/nodejs/node | closed | spawnSync can be used to perform OOB memory write | child_process security | <!--

Thank you for reporting an issue.

This issue tracker is for bugs and issues found within Node.js core.

If you require more general support please file an issue on our help

repo. https://github.com/nodejs/help

Please fill in as much of the template below as you're able.

Version: output of `node -v`

P... | 1.0 | spawnSync can be used to perform OOB memory write - <!--

Thank you for reporting an issue.

This issue tracker is for bugs and issues found within Node.js core.

If you require more general support please file an issue on our help

repo. https://github.com/nodejs/help

Please fill in as much of the template belo... | process | spawnsync can be used to perform oob memory write thank you for reporting an issue this issue tracker is for bugs and issues found within node js core if you require more general support please file an issue on our help repo please fill in as much of the template below as you re able version ... | 1 |

438,785 | 12,651,412,594 | IssuesEvent | 2020-06-17 00:14:11 | linkerd/linkerd2 | https://api.github.com/repos/linkerd/linkerd2 | closed | multicluster: support per-mirror credentials | area/cli priority/P0 | #4457 raises an issue I didn't notice -- `setup-remote` currently creates an SA/RBAC to be used by all remote gateways. This makes it impossible to revoke access to any one cluster. It's all-or-nothing. It also creates some unnecessary install-time-concerns.

Can we change the way that Service Accounts are allocated ... | 1.0 | multicluster: support per-mirror credentials - #4457 raises an issue I didn't notice -- `setup-remote` currently creates an SA/RBAC to be used by all remote gateways. This makes it impossible to revoke access to any one cluster. It's all-or-nothing. It also creates some unnecessary install-time-concerns.

Can we chan... | non_process | multicluster support per mirror credentials raises an issue i didn t notice setup remote currently creates an sa rbac to be used by all remote gateways this makes it impossible to revoke access to any one cluster it s all or nothing it also creates some unnecessary install time concerns can we change ... | 0 |

149,019 | 19,562,654,829 | IssuesEvent | 2022-01-03 18:28:29 | ibm-cio-vulnerability-scanning/insomnia | https://api.github.com/repos/ibm-cio-vulnerability-scanning/insomnia | opened | CVE-2021-37712 (High) detected in tar-4.4.13.tgz, tar-6.1.0.tgz | security vulnerability | ## CVE-2021-37712 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>tar-4.4.13.tgz</b>, <b>tar-6.1.0.tgz</b></p></summary>

<p>

<details><summary><b>tar-4.4.13.tgz</b></p></summary>

<p... | True | CVE-2021-37712 (High) detected in tar-4.4.13.tgz, tar-6.1.0.tgz - ## CVE-2021-37712 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>tar-4.4.13.tgz</b>, <b>tar-6.1.0.tgz</b></p></summa... | non_process | cve high detected in tar tgz tar tgz cve high severity vulnerability vulnerable libraries tar tgz tar tgz tar tgz tar for node library home page a href dependency hierarchy webpack tgz root library watchpack tgz ... | 0 |

7,840 | 11,013,477,332 | IssuesEvent | 2019-12-04 20:33:18 | thewca/wca-regulations | https://api.github.com/repos/thewca/wca-regulations | closed | WRC Projects (Mid-2019) | 2020 process | Here's a rough list of what I want to tackle with new WRC members in 2019:

# [Simplifications](https://github.com/orgs/thewca/projects/4#column-6349209)

Changes that reduce what a competitor/judge/Delegate needs to know without significantly impacting the nature of the sport.

- "Solved or Not"

- No +2 for mis... | 1.0 | WRC Projects (Mid-2019) - Here's a rough list of what I want to tackle with new WRC members in 2019:

# [Simplifications](https://github.com/orgs/thewca/projects/4#column-6349209)

Changes that reduce what a competitor/judge/Delegate needs to know without significantly impacting the nature of the sport.

- "Solved ... | process | wrc projects mid here s a rough list of what i want to tackle with new wrc members in changes that reduce what a competitor judge delegate needs to know without significantly impacting the nature of the sport solved or not no for misalignment disallow missing misplaced pieces in ... | 1 |

306,416 | 23,159,597,567 | IssuesEvent | 2022-07-29 16:12:53 | alibaba/ilogtail | https://api.github.com/repos/alibaba/ilogtail | opened | [DOC]: Add manual page for metric_http | documentation | <!--

Please describe any errors, ambiguities, or other improvement opportunities that you can find in the documentation.

-->

| 1.0 | [DOC]: Add manual page for metric_http - <!--

Please describe any errors, ambiguities, or other improvement opportunities that you can find in the documentation.

-->

| non_process | add manual page for metric http please describe any errors ambiguities or other improvement opportunities that you can find in the documentation | 0 |

2,504 | 5,277,587,390 | IssuesEvent | 2017-02-07 03:56:00 | rubberduck-vba/Rubberduck | https://api.github.com/repos/rubberduck-vba/Rubberduck | closed | an underscore is not always a continuation line | antlr bug parse-tree-preprocessing | ````vb

Sub testRD2()

Dim i As Long

10 For i = 1 To 10

20 Debug.Print i ' comment_

30 Next i

' putting an underscore on the end of comment gets:

' Consider renaming line label '30' VBAProject.Module1

End Sub

````

same happens if a variable name ends in an underscore and is last on the line and the ... | 1.0 | an underscore is not always a continuation line - ````vb

Sub testRD2()

Dim i As Long

10 For i = 1 To 10

20 Debug.Print i ' comment_

30 Next i

' putting an underscore on the end of comment gets:

' Consider renaming line label '30' VBAProject.Module1

End Sub

````

same happens if a variable name ends... | process | an underscore is not always a continuation line vb sub dim i as long for i to debug print i comment next i putting an underscore on the end of comment gets consider renaming line label vbaproject end sub same happens if a variable name ends in an underscore... | 1 |
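
A minimal sketch of the distinction this report points at, written in Python purely for illustration. It is not Rubberduck's parser, and `is_line_continuation` is a hypothetical helper. It assumes the usual VBA rule that a line continues only when its last character is an underscore preceded by whitespace, so a trailing `comment_` or an identifier ending in `_` should not join the next line.

```python
def is_line_continuation(line: str) -> bool:
    """Return True if a physical line ends with the VBA continuation marker.

    Assumption: the marker is whitespace followed by an underscore,
    with the underscore as the last character on the line.
    """
    stripped = line.rstrip("\r\n")
    # Need at least two characters: one whitespace plus the underscore.
    return len(stripped) >= 2 and stripped[-1] == "_" and stripped[-2] in " \t"


# The line from the report: the underscore is glued to the word "comment".
print(is_line_continuation("20 Debug.Print i ' comment_"))
# A genuine continuation: space, then underscore, at end of line.
print(is_line_continuation("x = 1 + _"))
```

A preprocessing step that joins physical lines could apply a check like this before merging, rather than testing only for a trailing underscore.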

21,218 | 28,299,926,119 | IssuesEvent | 2023-04-10 04:34:24 | CATcher-org/WATcher | https://api.github.com/repos/CATcher-org/WATcher | closed | Issues/PRs not reloaded before data gets fetched when changing repository from header | bug aspect-Process aspect-UIX difficulty.Moderate category.Bug | **Describe the bug**

When changing repository from the header, the original repository is as below:

<img width="1311" alt="Screenshot 2023-03-20 at 12 45 04 PM" src="https://user-images.githubusercontent.com/25452497/226249290-77f22e25-1b43-4c05-9b1f-1e759b9c93c3.png">

But after changing to another repository ... | 1.0 | Issues/PRs not reloaded before data gets fetched when changing repository from header - **Describe the bug**

When changing repository from the header, the original repository is as below:

<img width="1311" alt="Screenshot 2023-03-20 at 12 45 04 PM" src="https://user-images.githubusercontent.com/25452497/226249290... | process | issues prs not reloaded before data gets fetched when changing repository from header describe the bug when changing repository from the header the original repository is as below img width alt screenshot at pm src but after changing to another repository from the header img... | 1 |

16,533 | 21,560,232,834 | IssuesEvent | 2022-05-01 03:31:58 | ctmccull/CPP-528 | https://api.github.com/repos/ctmccull/CPP-528 | closed | Utilization of Kanban boards | Team process | [ ] Steps have task lists

[ ] Each card assigned to a team member

[ ] Update card status weekly | 1.0 | Utilization of Kanban boards - [ ] Steps have task lists

[ ] Each card assigned to a team member

[ ] Update card status weekly | process | utilization of kanban boards steps have task lists each card assigned to a team member update card status weekly | 1 |

335,213 | 30,018,244,840 | IssuesEvent | 2023-06-26 20:33:25 | microsoft/vscode-python-debugger | https://api.github.com/repos/microsoft/vscode-python-debugger | opened | TPI: Debugging Python, Test automatic configuration | testplan-item | Refs: https://github.com/microsoft/vscode-python/issues/19503

- [ ] macOS

- [ ] linux

- [ ] windows

Complexity: 3

Author: @paulacamargo25

---

Prerequisites:

- Install python, version >= 3.7

- Install the [`debugpy`](https://marketplace.visualstudio.com/items?itemName=ms-python.debugpy&ssr=false) exten... | 1.0 | TPI: Debugging Python, Test automatic configuration - Refs: https://github.com/microsoft/vscode-python/issues/19503

- [ ] macOS

- [ ] linux

- [ ] windows

Complexity: 3

Author: @paulacamargo25

---

Prerequisites:

- Install python, version >= 3.7

- Install the [`debugpy`](https://marketplace.visualstudio... | non_process | tpi debugging python test automatic configuration refs macos linux windows complexity author prerequisites install python version install the extension automatically detect a flask application and run it with the correct debug configuration step... | 0 |

6,313 | 9,312,961,574 | IssuesEvent | 2019-03-26 03:36:52 | theamrzaki/text_summurization_abstractive_methods | https://api.github.com/repos/theamrzaki/text_summurization_abstractive_methods | closed | asking for instructions !!! | Data Processing | Hi Amrzaki!

I read your blogs on Medium, they are very good. I am new to text summarization and was wondering how to run the pointer-generator model with coverage on new data, I mean how to use it to summarize new articles?

your help is appreciated. | 1.0 | asking for instructions !!! - Hi Amrzaki!

I read your blogs on Medium, they are very good. I am new to text summarization and was wondering how to run the pointer-generator model with coverage on new data, I mean how to use it to summarize new articles?

your help is appreciated. | process | asking for instructions hi amrzaki i read your blogs on medium they are very good i am new to text summarization and was wondering how to run the pointer generator model with coverage on new data i mean how to use it to summarize new articles your help is appreciated | 1 |

627,914 | 19,956,681,402 | IssuesEvent | 2022-01-28 00:40:03 | microsoft/terminal | https://api.github.com/repos/microsoft/terminal | closed | Settings UI: bad spacing between pivot header and its content | Help Wanted Issue-Bug Product-Terminal In-PR Priority-3 Area-Settings UI | <!--

🚨🚨🚨🚨🚨🚨🚨🚨🚨🚨

I ACKNOWLEDGE THE FOLLOWING BEFORE PROCEEDING:

1. If I delete this entire template and go my own path, the core team may close my issue without further explanation or engagement.

2. If I list multiple bugs/concerns in this one issue, the core team may close my issue without further expl... | 1.0 | Settings UI: bad spacing between pivot header and its content - <!--

🚨🚨🚨🚨🚨🚨🚨🚨🚨🚨

I ACKNOWLEDGE THE FOLLOWING BEFORE PROCEEDING:

1. If I delete this entire template and go my own path, the core team may close my issue without further explanation or engagement.

2. If I list multiple bugs/concerns in this ... | non_process | settings ui bad spacing between pivot header and its content 🚨🚨🚨🚨🚨🚨🚨🚨🚨🚨 i acknowledge the following before proceeding if i delete this entire template and go my own path the core team may close my issue without further explanation or engagement if i list multiple bugs concerns in this ... | 0 |

8,020 | 11,206,983,520 | IssuesEvent | 2020-01-06 01:19:57 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | r.series broken in Processing due to wrong "range=0,0" pre-definition | Bug High Priority Processing Regression | Author Name: **Markus Neteler** (Markus Neteler)

Original Redmine Issue: [21452](https://issues.qgis.org/issues/21452)

Affected QGIS version: 3.7(master)

Redmine category:processing/grass

---

In QGIS 3.4, 3.6 r.series is broken in Processing due to wrong "range=0,0" pre-definition. It should be empty but is pre-popul... | 1.0 | r.series broken in Processing due to wrong "range=0,0" pre-definition - Author Name: **Markus Neteler** (Markus Neteler)

Original Redmine Issue: [21452](https://issues.qgis.org/issues/21452)

Affected QGIS version: 3.7(master)

Redmine category:processing/grass

---

In QGIS 3.4, 3.6 r.series is broken in Processing due ... | process | r series broken in processing due to wrong range pre definition author name markus neteler markus neteler original redmine issue affected qgis version master redmine category processing grass in qgis r series is broken in processing due to wrong range pre definition it sh... | 1 |

10,202 | 13,066,528,356 | IssuesEvent | 2020-07-30 21:54:07 | googleapis/python-bigquery | https://api.github.com/repos/googleapis/python-bigquery | closed | Testing: reduce / remove warning spew | api: bigquery testing type: process | ```python

$ nox -re unit-3.6

nox > Running session unit-3.6

nox > Re-using existing virtual environment at .nox/unit-3-6.

nox > pip install mock pytest google-cloud-testutils pytest-cov freezegun

nox > pip install grpcio

nox > pip install -e .[all,fastparquet]

nox > pip install ipython

nox > py.test --quiet --c... | 1.0 | Testing: reduce / remove warning spew - ```python

$ nox -re unit-3.6

nox > Running session unit-3.6

nox > Re-using existing virtual environment at .nox/unit-3-6.

nox > pip install mock pytest google-cloud-testutils pytest-cov freezegun

nox > pip install grpcio

nox > pip install -e .[all,fastparquet]

nox > pip in... | process | testing reduce remove warning spew python nox re unit nox running session unit nox re using existing virtual environment at nox unit nox pip install mock pytest google cloud testutils pytest cov freezegun nox pip install grpcio nox pip install e nox pip install ipython n... | 1 |

53,519 | 3,040,717,694 | IssuesEvent | 2015-08-07 16:56:40 | scamille/simc_issue_test3 | https://api.github.com/repos/scamille/simc_issue_test3 | closed | Some minor things in sc_warlock.cpp | bug imported Priority-Medium | _From [niarbeht@gmx.de](https://code.google.com/u/niarbeht@gmx.de/) on March 09, 2009 19:38:02_

In warlock_spell_t::target_debuff:

target_multiplier *= 1.01 + p -> talents.master_conjuror * 0.01;

Master Conjuror is a 150/300% increase in 3.1. The multiplier is 1.02 with

1/2 (rounded down) and 1.04 with 2/2. A... | 1.0 | Some minor things in sc_warlock.cpp - _From [niarbeht@gmx.de](https://code.google.com/u/niarbeht@gmx.de/) on March 09, 2009 19:38:02_

In warlock_spell_t::target_debuff:

target_multiplier *= 1.01 + p -> talents.master_conjuror * 0.01;

Master Conjuror is a 150/300% increase in 3.1. The multiplier is 1.02 with

1... | non_process | some minor things in sc warlock cpp from on march in warlock spell t target debuff target multiplier p talents master conjuror master conjuror is a increase in the multiplier is with rounded down and with and i wonder why you aren t doing the... | 0 |

105,595 | 11,455,022,490 | IssuesEvent | 2020-02-06 18:14:29 | CarlaValverde/Tarjeta- | https://api.github.com/repos/CarlaValverde/Tarjeta- | closed | Implementation of a class | documentation enhancement implementation | Implementation of the Tarjeta class; this class will have the private attributes id, dniTitular, pin, and saldo. It will also have a constructor and the necessary getter and setter methods (get and set). | 1.0 | Implementation of a class - Implementation of the Tarjeta class; this class will have the private attributes id, dniTitular, pin, and saldo. It will also have a constructor and the necessary getter and setter methods (get and set). | non_process | implementation of a class implementation of the tarjeta class this class will have the private attributes id dnititular pin and saldo it will also have a constructor and the necessary getter and setter methods get and set | 0 |
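
A rough sketch of what the class described in this issue could look like, shown in Python only as an illustration; the issue does not say which language the repository uses. The attribute types, the snake_case spelling of `dniTitular`, and the sample values are all assumptions.

```python
class Tarjeta:
    """Card with private attributes and explicit get/set methods (illustrative only)."""

    def __init__(self, id: int, dni_titular: str, pin: str, saldo: float):
        # A leading underscore marks the attributes as private by convention.
        self._id = id
        self._dni_titular = dni_titular
        self._pin = pin
        self._saldo = saldo

    def get_id(self) -> int:
        return self._id

    def set_id(self, id: int) -> None:
        self._id = id

    def get_dni_titular(self) -> str:
        return self._dni_titular

    def set_dni_titular(self, dni_titular: str) -> None:
        self._dni_titular = dni_titular

    def get_pin(self) -> str:
        return self._pin

    def set_pin(self, pin: str) -> None:
        self._pin = pin

    def get_saldo(self) -> float:
        return self._saldo

    def set_saldo(self, saldo: float) -> None:
        self._saldo = saldo


# Made-up sample values, only to exercise the accessors.
tarjeta = Tarjeta(1, "00000000X", "0000", 10.0)
tarjeta.set_saldo(25.5)
print(tarjeta.get_saldo())
```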

270,550 | 20,603,070,610 | IssuesEvent | 2022-03-06 15:16:55 | SciML/NeuralOperators.jl | https://api.github.com/repos/SciML/NeuralOperators.jl | closed | Notebooks are broken | documentation question | I tried running the super resolution example notebook and it errors with:

```julia

SystemError: opening file "/Desktop/dev/NeuralOperators.jl/example/SuperResolution/src/../model/model.jld2": No such file or directory

```

It appears that the ```model.jld2``` file is actually missing from the repo? This look... | 1.0 | Notebooks are broken - I tried running the super resolution example notebook and it errors with:

```julia

SystemError: opening file "/Desktop/dev/NeuralOperators.jl/example/SuperResolution/src/../model/model.jld2": No such file or directory

```

It appears that the ```model.jld2``` file is actually missing f... | non_process | notebooks are broken i tried running the super resolution example notebook and it errors with julia systemerror opening file desktop dev neuraloperators jl example superresolution src model model no such file or directory it appears that the model file is actually missing from th... | 0 |

84,802 | 10,564,377,051 | IssuesEvent | 2019-10-05 01:19:49 | ScoreWin/letsgoraiding | https://api.github.com/repos/ScoreWin/letsgoraiding | closed | "Cooldown" Fatigue | designer app feature | Abilities should be able to permanently increase their own cooldown. This will specifically be useful for balancing certain ultimate abilities which we want to be available early-on in the game. | 1.0 | "Cooldown" Fatigue - Abilities should be able to permanently increase their own cooldown. This will specifically be useful for balancing certain ultimate abilities which we want to be available early-on in the game. | non_process | cooldown fatigue abilities should be able to permanently increase their own cooldown this will specifically be useful for balancing certain ultimate abilities which we want to be available early on in the game | 0 |

7,946 | 11,137,527,183 | IssuesEvent | 2019-12-20 19:36:16 | openopps/openopps-platform | https://api.github.com/repos/openopps/openopps-platform | closed | Update What's next page to include content on updating application | Apply Process Approved Requirements Ready State Dept. | Who: Applicants

What: View information on what happens next

Why: In order to provide information about how to update and submit prior to closing

Acceptance Criteria:

Update the What's next page to include additional information related to updating the application, clicking submit, and all before 11:59 p.m. EST

... | 1.0 | Update What's next page to include content on updating application - Who: Applicants

What: View information on what happens next

Why: In order to provide information about how to update and submit prior to closing

Acceptance Criteria:

Update the What's next page to include additional information related to updati... | process | update what s next page to include content on updating application who applicants what view information on what happens next why in order to provide information about how to update and submit prior to closing acceptance criteria update the what s next page to include additional information related to updati... | 1 |

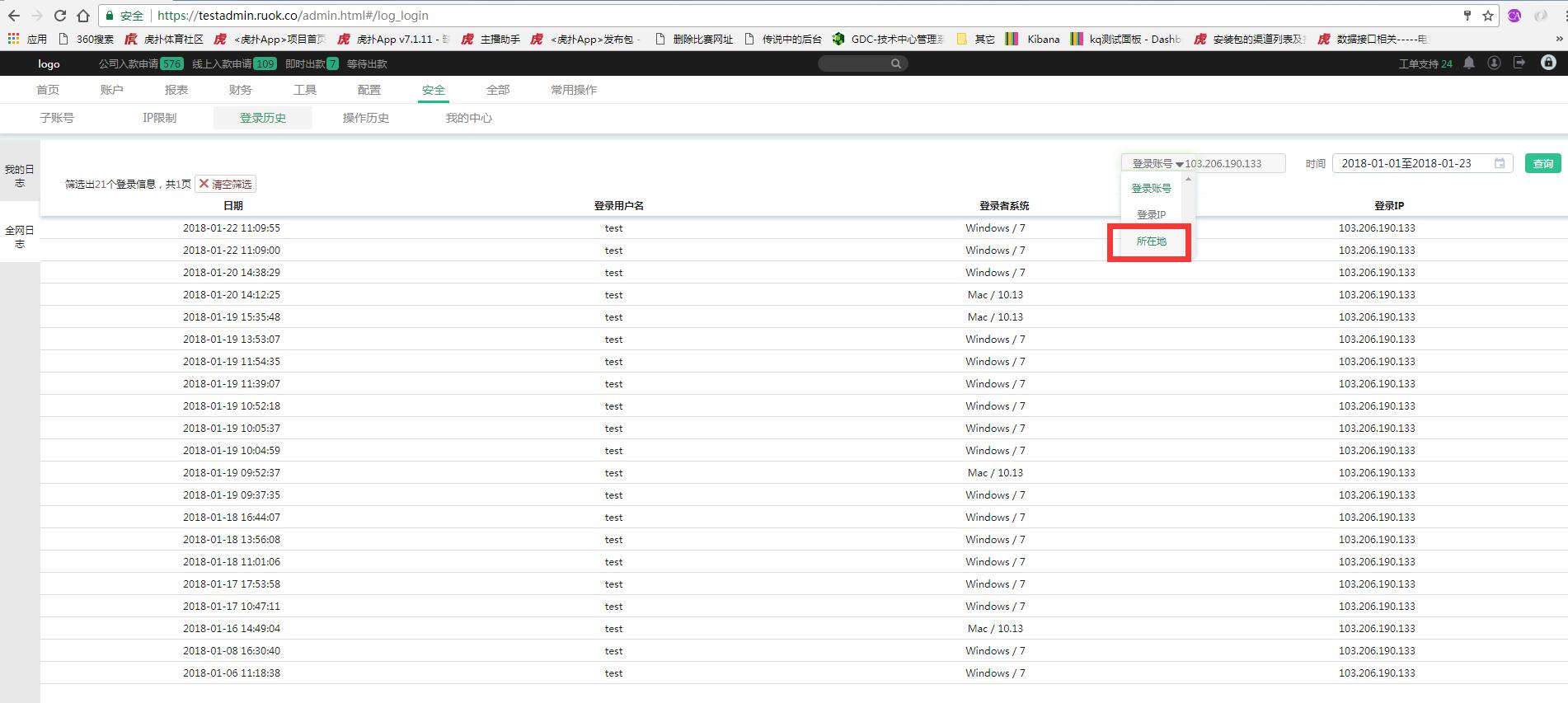

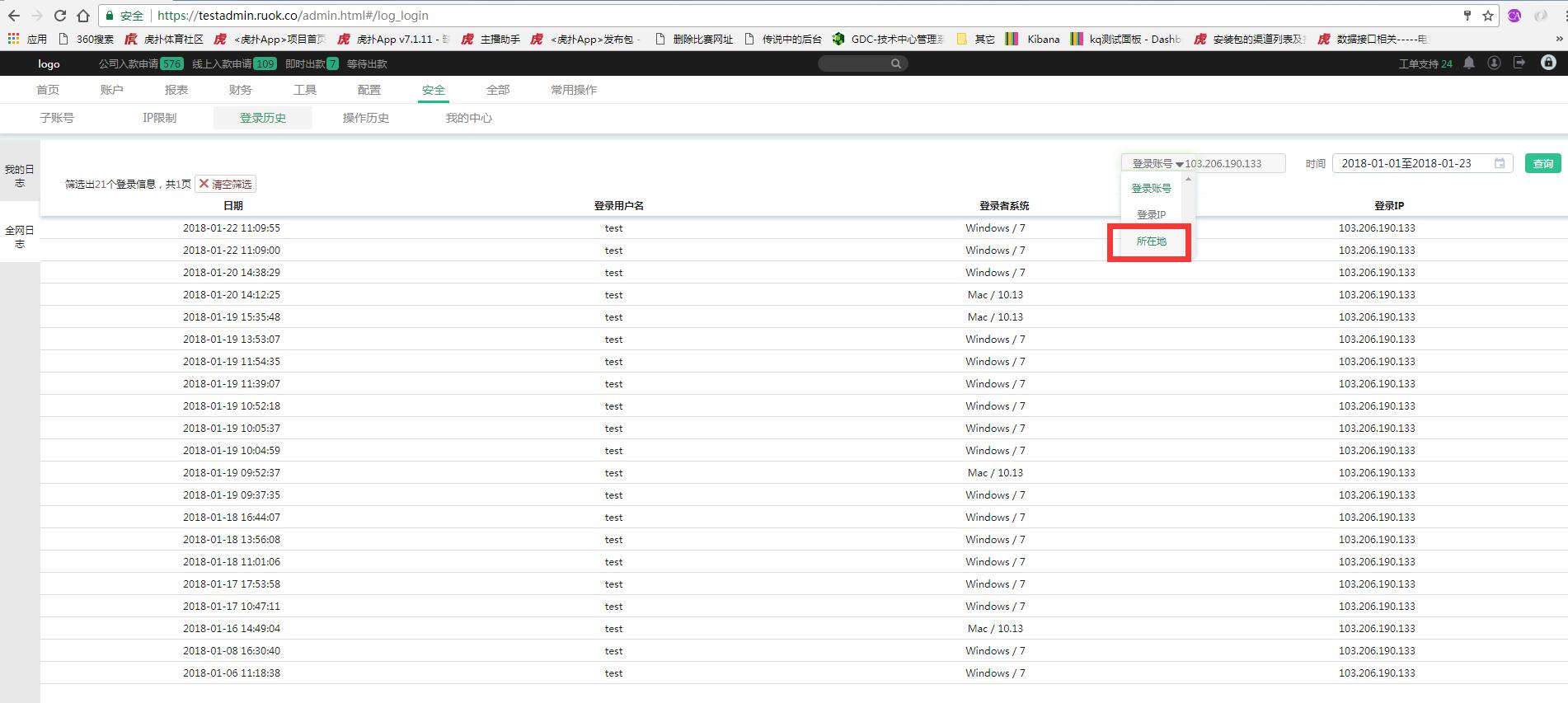

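58,396 | 7,136,223,990 | IssuesEvent | 2018-01-23 05:52:38 | ruoklive/lottery_bug | https://api.github.com/repos/ruoklive/lottery_bug | closed | [Admin backend] Login history in the Security section: is the "location" search condition actually required? | UI-Design fixed | 1. In the login history under the Security section, the search conditions include a "location" input, but the list has no column that shows the location. Please confirm whether "location" needs to exist in the search-condition dropdown.

 | 1.0 | [Admin backend] Login history in the Security section: is the "location" search condition actually required? - 1. In the login history under the Security section, the search conditions include a "location" input, but the list has no column that shows the location. Please confirm whether "location" needs to exist in the search-condition dropdown.

 | non_process | admin backend login history in the security section is the location search condition actually required in the login history under the security section the search conditions include a location input but the list has no column that shows the location please confirm whether location needs to exist in the search condition dropdown | 0 |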

2,686 | 5,536,938,050 | IssuesEvent | 2017-03-21 20:51:33 | metabase/metabase | https://api.github.com/repos/metabase/metabase | closed | Attach card ids to dataset query executions | Query Processor | For things like #609, and #2743 it would be very useful to be able to link a card to its executions.

| 1.0 | Attach card ids to dataset query executions - For things like #609, and #2743 it would be very useful to be able to link a card to its executions.

| process | attach card ids to dataset query executions for things like and it would be very useful to be able to link a card to its executions | 1 |

136,816 | 5,289,019,774 | IssuesEvent | 2017-02-08 16:27:15 | jeveloper/jayrock | https://api.github.com/repos/jeveloper/jayrock | closed | Have JsonRpcClient use .NET 4.0 ExpandoObject to dynamically generate API | auto-migrated Priority-Medium Type-Enhancement | ```

Most JSON-RPC client implementations dynamically generate their API according

to what is returned by system.listMethods. Therefore instead of this:

Console.WriteLine(client.Invoke("sum", new JsonObject { { "a", 123 }, { "b",

456 } }));

... one simply does this:

Console.WriteLine(client.sum(123, 456));

.NET no... | 1.0 | Have JsonRpcClient use .NET 4.0 ExpandoObject to dynamically generate API - ```

Most JSON-RPC client implementations dynamically generate their API according

to what is returned by system.listMethods. Therefore instead of this:

Console.WriteLine(client.Invoke("sum", new JsonObject { { "a", 123 }, { "b",

456 } }));

... | non_process | have jsonrpcclient use net expandoobject to dynamically generate api most json rpc client implementations dynamically generate their api according to what is returned by system listmethods therefore instead of this console writeline client invoke sum new jsonobject a b ... | 0 |
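
The request above is about .NET dynamic objects. As a language-neutral illustration of the same idea, here is a small Python sketch in which attribute access is turned into a remote call. `DynamicRpcClient` and `fake_invoke` are hypothetical names; the transport is a local stand-in rather than Jayrock's `JsonRpcClient`, and positional parameters are an assumption.

```python
class DynamicRpcClient:
    """Turns attribute access into calls through a supplied invoke function."""

    def __init__(self, invoke):
        # `invoke(method_name, params)` stands in for the real JSON-RPC transport.
        self._invoke = invoke

    def __getattr__(self, name):
        # Only reached for attributes that normal lookup does not find,
        # so `_invoke` itself is unaffected.
        def call(*args):
            return self._invoke(name, list(args))
        return call


def fake_invoke(method, params):
    # Stand-in server that only knows a "sum" method.
    if method == "sum":
        return sum(params)
    raise ValueError(f"unknown method: {method}")


client = DynamicRpcClient(fake_invoke)
print(client.sum(123, 456))  # prints 579
```

The trade-off is the usual one for dynamic dispatch: call sites get shorter, but a misspelled method name is only detected when the call is made.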

178,440 | 21,509,400,489 | IssuesEvent | 2022-04-28 01:37:05 | bsbtd/Teste | https://api.github.com/repos/bsbtd/Teste | closed | WS-2017-3740 (Medium) detected in elasticsearchv8.0.0-alpha1 - autoclosed | security vulnerability | ## WS-2017-3740 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>elasticsearchv8.0.0-alpha1</b></p></summary>

<p>

<p>Free and Open, Distributed, RESTful Search Engine</p>

<p>Library h... | True | WS-2017-3740 (Medium) detected in elasticsearchv8.0.0-alpha1 - autoclosed - ## WS-2017-3740 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>elasticsearchv8.0.0-alpha1</b></p></summary... | non_process | ws medium detected in autoclosed ws medium severity vulnerability vulnerable library free and open distributed restful search engine library home page a href found in head commit a href vulnerable source files elasticsearch x pac... | 0 |

7,728 | 10,852,841,069 | IssuesEvent | 2019-11-13 13:37:44 | geneontology/go-ontology | https://api.github.com/repos/geneontology/go-ontology | closed | obsolete precomposed defense response | multi-species process obsoletion | GO:1901244

positive regulation of transcription from RNA polymerase II promoter involved in defense response to fungus | 1.0 | obsolete precomposed defense response - GO:1901244

positive regulation of transcription from RNA polymerase II promoter involved in defense response to fungus | process | obsolete precomposed defense response go positive regulation of transcription from rna polymerase ii promoter involved in defense response to fungus | 1 |

21,395 | 29,202,232,602 | IssuesEvent | 2023-05-21 00:37:48 | devssa/onde-codar-em-salvador | https://api.github.com/repos/devssa/onde-codar-em-salvador | closed | [Hybrid / Belo Horizonte, Minas Gerais, Brazil] Backend Java Developer (Mid-level - Hybrid in Belo Horizonte - MG) at Coodesh | SALVADOR BACK-END PJ JAVA MYSQL JAVASCRIPT PLENO PRIMEFACES JSF SPRING SQL GIT HIBERNATE MAVEN REST SOAP JSON ANGULAR REQUISITOS NGINX PROCESSOS INOVAÇÃO BACKEND GITHUB APACHE UMA C DOCUMENTAÇÃO WILDFLY HTTP MANUTENÇÃO HIBRIDO ALOCADO Stale | ## Job description:

This is a position from a partner of the Coodesh platform; by applying you will get access to the complete information about the company and its benefits.

Watch for the redirect that will take you to a URL [https://coodesh.com](https://coodesh.com/vagas/backend-java-developer-pleno-hibrido-... | 1.0 | [Hybrid / Belo Horizonte, Minas Gerais, Brazil] Backend Java Developer (Mid-level - Hybrid in Belo Horizonte - MG) at Coodesh - ## Job description:

This is a position from a partner of the Coodesh platform; by applying you will get access to the complete information about the company and its benefits.

Watch for the redire... | process | backend java developer mid level hybrid in belo horizonte mg at coodesh job description this is a position from a partner of the coodesh platform by applying you will get access to the complete information about the company and its benefits watch for the redirect that will take you to a url co... | 1 |

310,074 | 23,320,130,038 | IssuesEvent | 2022-08-08 15:38:21 | atsign-foundation/at_libraries | https://api.github.com/repos/atsign-foundation/at_libraries | closed | Unlist at_server_status on Pub.dev | documentation | Unlist the following packages ~ @nickelskevin to provide further details

- [ ] at_server_status | 1.0 | Unlist at_server_status on Pub.dev - Unlist the following packages ~ @nickelskevin to provide further details

- [ ] at_server_status | non_process | unlist at server status on pub dev unlist the following packages nickelskevin to provide further details at server status | 0 |

1,256 | 3,789,946,068 | IssuesEvent | 2016-03-21 19:42:57 | opattison/olivermakes | https://api.github.com/repos/opattison/olivermakes | opened | Patterns: add configuration details | content maintenance process | - [ ] options

- [ ] Liquid-specific implementation

Link to code where appropriate. | 1.0 | Patterns: add configuration details - - [ ] options

- [ ] Liquid-specific implementation

Link to code where appropriate. | process | patterns add configuration details options liquid specific implementation link to code where appropriate | 1 |

215,247 | 24,154,771,121 | IssuesEvent | 2022-09-22 06:36:02 | opfab/operatorfabric-core | https://api.github.com/repos/opfab/operatorfabric-core | closed | CVE-2022-25857 (High) detected in snakeyaml-1.30.jar, snakeyaml-1.29.jar - autoclosed | security vulnerability | ## CVE-2022-25857 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>snakeyaml-1.30.jar</b>, <b>snakeyaml-1.29.jar</b></p></summary>

<p>

<details><summary><b>snakeyaml-1.30.jar</b></p><... | True | CVE-2022-25857 (High) detected in snakeyaml-1.30.jar, snakeyaml-1.29.jar - autoclosed - ## CVE-2022-25857 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>snakeyaml-1.30.jar</b>, <b>sn... | non_process | cve high detected in snakeyaml jar snakeyaml jar autoclosed cve high severity vulnerability vulnerable libraries snakeyaml jar snakeyaml jar snakeyaml jar yaml parser and emitter for java library home page a href path to dependency file tools... | 0 |

11,447 | 14,265,099,695 | IssuesEvent | 2020-11-20 16:38:29 | ORNL-AMO/AMO-Tools-Suite | https://api.github.com/repos/ORNL-AMO/AMO-Tools-Suite | closed | ESC Further Backend | Calculator Process Heating | Will need to add some things to

GasFlueGasMaterials.h

GasFlueGasMaterials.cpp

SolidLiquidFlueGasMaterials.h

SolidLiquidFlueGasMaterials.cpp | 1.0 | ESC Further Backend - Will need to add some things to

GasFlueGasMaterials.h

GasFlueGasMaterials.cpp

SolidLiquidFlueGasMaterials.h

SolidLiquidFlueGasMaterials.cpp | process | esc further backend will need to add some things to gasfluegasmaterials h gasfluegasmaterials cpp solidliquidfluegasmaterials h solidliquidfluegasmaterials cpp | 1 |

55,342 | 14,394,281,313 | IssuesEvent | 2020-12-03 00:55:02 | scipy/scipy | https://api.github.com/repos/scipy/scipy | closed | Key appears twice in `test_optimize.test_show_options` | defect scipy.optimize | @AtsushiSakai, the maximize key appears twice in `test_optimize.test_show_options`, on line 2205. I think you just added this. Can you rejig the test as appropriate so that any key only appears once in the definition of a dict? | 1.0 | Key appears twice in `test_optimize.test_show_options` - @AtsushiSakai, the maximize key appears twice in `test_optimize.test_show_options`, on line 2205. I think you just added this. Can you rejig the test as appropriate so that any key only appears once in the definition of a dict? | non_process | key appears twice in test optimize test show options atsushisakai the maximize key appears twice in test optimize test show options on line i think you just added this can you rejig the test as appropriate so that any key only appears once in the definition of a dict | 0 |
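
For context on why a repeated key matters: Python accepts a dict literal with a duplicated key and silently keeps the last value, so the earlier entry is never used. The sketch below shows that behaviour and one possible way to detect it with the standard `ast` module. `duplicate_dict_keys` is a hypothetical helper, not part of SciPy or its test suite, and the keys are made up.

```python
import ast


def duplicate_dict_keys(source: str) -> list:
    """Return constant keys that occur more than once within one dict literal."""
    duplicates = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Dict):
            seen = set()
            for key in node.keys:
                # `key` is None for `**mapping` entries and may be a non-constant
                # expression; only literal constants are compared here.
                if isinstance(key, ast.Constant):
                    if key.value in seen:
                        duplicates.append(key.value)
                    seen.add(key.value)
    return duplicates


# The later value wins and no error is raised.
options = {"a": 1, "a": 2}
print(options)  # prints {'a': 2}

print(duplicate_dict_keys('d = {"a": 1, "b": 2, "a": 3}'))  # prints ['a']
```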

53,152 | 6,690,492,881 | IssuesEvent | 2017-10-09 09:20:10 | WordPress/gutenberg | https://api.github.com/repos/WordPress/gutenberg | opened | Improve flow for inserting between blocks | Chrome Design Needs Design Feedback | There's an increasing need to improve the flow for inserting content between existing blocks. See #2752, #833, #2890, #2755, #2043.

It becomes notably important when we start to look at nested blocks, or inserting images inline into paragraph blocks. A sticky inserter (as previously proposed) probably isn't accessib... | 2.0 | Improve flow for inserting between blocks - There's an increasing need to improve the flow for inserting content between existing blocks. See #2752, #833, #2890, #2755, #2043.

It becomes notably important when we start to look at nested blocks, or inserting images inline into paragraph blocks. A sticky inserter (as ... | non_process | improve flow for inserting between blocks there s an increasing need to improve the flow for inserting content between existing blocks see it becomes notably important when we start to look at nested blocks or inserting images inline into paragraph blocks a sticky inserter as previously pro... | 0 |

2,541 | 5,300,476,420 | IssuesEvent | 2017-02-10 05:08:36 | dita-ot/dita-ot | https://api.github.com/repos/dita-ot/dita-ot | closed | "uri and file may not be null" when having a reference to a Markdown file in DITA Map + preprocessing plugin installed | bug P1 preprocess | Having the "com.elovirta.dita.markdown_1.1.0" plugin installed in DITA OT 2.4, with a small DITA Map like this:

<!DOCTYPE map PUBLIC "-//OASIS//DTD DITA Map//EN" "map.dtd">

<map>

<topicref format="markdown" href="markdowntest.md"/>

</map>

and a markdown file "m... | 1.0 | "uri and file may not be null" when having a reference to a Markdown file in DITA Map + preprocessing plugin installed - Having the "com.elovirta.dita.markdown_1.1.0" plugin installed in DITA OT 2.4, with a small DITA Map like this:

<!DOCTYPE map PUBLIC "-//OASIS//DTD DITA Map//EN" "map.dtd">

... | process | uri and file may not be null when having a reference to a markdown file in dita map preprocessing plugin installed having the com elovirta dita markdown plugin installed in dita ot with a small dita map like this and a markdown file ... | 1 |

93,468 | 11,782,471,328 | IssuesEvent | 2020-03-17 02:03:34 | greatnewcls/KNLWKKGOTE62XGFSVAZIMVUH | https://api.github.com/repos/greatnewcls/KNLWKKGOTE62XGFSVAZIMVUH | reopened | kdy1odzo9IbSN7E381YyfxPUVBnCa6jEjLbHOnsDql2EgBOV+5AOj/ssG4vXzI844dDC2UdZ4Wq8jc8tOHwC8YFQ3XH42uiIKAhbLQ/qGFlLbBAeL9ZOjpWY3v66kwnyYAk6/TrjMSEVQB5xywqbJUD4xKiaQE424+mdzkxRvaM= | design | PVxJWv0+wuWJMM2i7gwPZnKMAGJVavU2SowxS5l3nMi845JrgLpyALhSwUPoUWbPMnJQxg/adiBCaKOglWFd0zPEI7nlI3MFVEDzxxexK4uWtIG27qhXete36XxGkiobM1xtkimC4b/w/dgjYQc//V3m+/P7yEA7kdgX07i3cs/Ay/CZTYmo1KBM38KlZWUlH+X3FRY1ZYtZ8nT526YwV9Sql0y5Tm/NhVJg0aEBhhy85dMQTAK/SlzKlR87yyIgwMvwmU2JqNSgTN/CpWVlJYXsAgjGSLTtgo6NRHK+qrU7h1ZM812lY0utBz57Lklg... | 1.0 | kdy1odzo9IbSN7E381YyfxPUVBnCa6jEjLbHOnsDql2EgBOV+5AOj/ssG4vXzI844dDC2UdZ4Wq8jc8tOHwC8YFQ3XH42uiIKAhbLQ/qGFlLbBAeL9ZOjpWY3v66kwnyYAk6/TrjMSEVQB5xywqbJUD4xKiaQE424+mdzkxRvaM= - PVxJWv0+wuWJMM2i7gwPZnKMAGJVavU2SowxS5l3nMi845JrgLpyALhSwUPoUWbPMnJQxg/adiBCaKOglWFd0zPEI7nlI3MFVEDzxxexK4uWtIG27qhXete36XxGkiobM1xtkimC4b/w/dgjY... | non_process | mdzkxrvam w dgjyqc ay uxmnray d ypty jltkjvmsigv ay dctn q cpwvljdpxm | 0 |

7,784 | 10,924,743,613 | IssuesEvent | 2019-11-22 10:48:38 | hashicorp/packer | https://api.github.com/repos/hashicorp/packer | closed | Array of tags for `docker-tag` post processor. | enhancement good first issue post-processor/docker-tag | Hello,

I create a new issue, copy of ##2097 to be able to tag a Docker image with several tags.

#### Feature Description

Be able to tag a Docker image with several tags using docker-tag post-processor. Currently, it's possible to use only one tag.

#### Use Case(s)

I want to tag a Docker image with severa... | 1.0 | Array of tags for `docker-tag` post processor. - Hello,

I create a new issue, copy of ##2097 to be able to tag a Docker image with several tags.

#### Feature Description

Be able to tag a Docker image with several tags using docker-tag post-processor. Currently, it's possible to use only one tag.

#### Use C... | process | array of tags for docker tag post processor hello i create a new issue copy of to be able to tag a docker image with several tags feature description be able to tag a docker image with several tags using docker tag post processor currently it s possible to use only one tag use case... | 1 |

13,923 | 16,679,957,599 | IssuesEvent | 2021-06-07 21:40:57 | google/android-fhir | https://api.github.com/repos/google/android-fhir | closed | Regarding android-fhir client | process | **Describe the Issue**

[https://github.com/google/android-fhir/wiki/GSoC-Project-Ideas#android-fhir-client](url)

**There are a few doubts regarding the project.**

1. I think the project will act as a combination of the reference app and the dc gallery app. Am I correct here? How should we get started with it?

2. Should w... | 1.0 | Regarding android-fhir client - **Describe the Issue**

[https://github.com/google/android-fhir/wiki/GSoC-Project-Ideas#android-fhir-client](url)

**There are a few doubts regarding the project.**

1. I think the project will act as a combination of the reference app and the dc gallery app. Am I correct here? How should we g... | process | regarding android fhir client describe the issue url there are few doubts regarding the project i think the project will act as a combination of reference app and dc gallery app am i correct here how should we get started with it should we make a sample design for the app | 1 |

2,280 | 5,106,939,704 | IssuesEvent | 2017-01-05 13:22:47 | Hurence/logisland | https://api.github.com/repos/Hurence/logisland | opened | design a facial recognition processor with Convolutional Neural Network | feature help wanted processor | need a lot of design

https://opendatascience.com/blog/an-intuitive-explanation-of-convolutional-neural-networks/ | 1.0 | design a facial recognition processor with Convolutional Neural Network - need a lot of design

https://opendatascience.com/blog/an-intuitive-explanation-of-convolutional-neural-networks/ | process | design a facial recognition processor with convolutional neural network need a lot of design | 1 |

312,917 | 23,447,840,556 | IssuesEvent | 2022-08-15 21:40:50 | Interacao-Humano-Computador/2022.1-Faculdade-de-Arquitetura-e-Urbanismo | https://api.github.com/repos/Interacao-Humano-Computador/2022.1-Faculdade-de-Arquitetura-e-Urbanismo | closed | Create the planning document for the paper prototype evaluation | documentation entrega5 | Create the document that plans the evaluation of the paper prototype | 1.0 | Create the planning document for the paper prototype evaluation - Create the document that plans the evaluation of the paper prototype | non_process | create the planning document for the paper prototype evaluation create the document that plans the evaluation of the paper prototype | 0 |

162,613 | 13,892,943,180 | IssuesEvent | 2020-10-19 12:54:29 | GroupOneIncorporated/acme-infrastructure | https://api.github.com/repos/GroupOneIncorporated/acme-infrastructure | closed | Add README files. | documentation | - Add/fix README with instructions for terraglue script

- Add README with instructions for Ansible

- Add README with instructions for ssh config

- Add README with instructions for rke

- Add README with instructions for k8s | 1.0 | Add README files. - - Add/fix README with instructions for terraglue script

- Add README with instructions for Ansible

- Add README with instructions for ssh config

- Add README with instructions for rke

- Add README with instructions for k8s | non_process | add readme files add fix readme with instructions for terraglue script add readme with instructions for ansible add readme with instructions for ssh config add readme with instructions for rke add readme with instructions for | 0 |

594,609 | 18,049,237,075 | IssuesEvent | 2021-09-19 12:56:58 | AY2122S1-CS2103T-W17-1/tp | https://api.github.com/repos/AY2122S1-CS2103T-W17-1/tp | opened | Complete an application | type.Story :book: priority.Medium :2nd_place_medal: | As a user, I want to mark a specified entry in my application list as completed, so that I can be clear about the status of each application. | 1.0 | Complete an application - As a user, I want to mark a specified entry in my application list as completed, so that I can be clear about the status of each application. | non_process | complete an application as a user i want to mark a specified entry in my application list as completed so that i can be clear about the status of each application | 0 |

552,714 | 16,253,765,573 | IssuesEvent | 2021-05-08 00:14:59 | apcountryman/picolibrary-microchip-megaavr0 | https://api.github.com/repos/apcountryman/picolibrary-microchip-megaavr0 | closed | Add PORT peripheral based GPIO push-pull I/O pin | priority-normal status-awaiting_development type-feature | Add PORT peripheral based GPIO push-pull I/O pin (`::picolibrary::Microchip::megaAVR0::GPIO::Push_Pull_IO_Pin<::picolibrary::Microchip::megaAVR0::Peripheral::PORT>`).

- [ ] The PORT peripheral based GPIO push-pull I/O pin should be defined in the `include/picolibrary/microchip/megaavr0/gpio.h`/`source/picolibrary/micr... | 1.0 | Add PORT peripheral based GPIO push-pull I/O pin - Add PORT peripheral based GPIO push-pull I/O pin (`::picolibrary::Microchip::megaAVR0::GPIO::Push_Pull_IO_Pin<::picolibrary::Microchip::megaAVR0::Peripheral::PORT>`).

- [ ] The PORT peripheral based GPIO push-pull I/O pin should be defined in the `include/picolibrary/... | non_process | add port peripheral based gpio push pull i o pin add port peripheral based gpio push pull i o pin picolibrary microchip gpio push pull io pin the port peripheral based gpio push pull i o pin should be defined in the include picolibrary microchip gpio h source picolibrary microchip gpio cc h... | 0 |

254,562 | 21,793,877,447 | IssuesEvent | 2022-05-15 10:29:05 | suterma/replayer-pwa | https://api.github.com/repos/suterma/replayer-pwa | closed | Opening the linked compilations in the About page does not work | bug Regression Test | No compilation is actually loaded, although it should. | 1.0 | Opening the linked compilations in the About page does not work - No compilation is actually loaded, although it should. | non_process | opening the linked compilations in the about page does not work no compilation is actually loaded although it should | 0 |

38,305 | 6,667,002,815 | IssuesEvent | 2017-10-03 10:40:09 | facebook/react | https://api.github.com/repos/facebook/react | closed | [website] Buttons in Live Code sections have bad styling | Component: Documentation & Website Difficulty: beginner | On the new site, the button in the "todo" example looks strange (non-standard):

Generally this happens when border styling is overridden and no nice styling is applied to the button. This appears to be the case here. Removing `border-color: inherit` fixes the sty... | 1.0 | [website] Buttons in Live Code sections have bad styling - On the new site, the button in the "todo" example looks strange (non-standard):

Generally this happens when border styling is overridden and no nice styling is applied to the button. This appears to be th... | non_process | buttons in live code sections have bad styling on the new site the button in the todo example looks strange non standard generally this happens when border styling is overridden and no nice styling is applied to the button this appears to be the case here removing border color inherit fixes the ... | 0 |

91,729 | 3,862,260,022 | IssuesEvent | 2016-04-08 01:30:57 | Captianrock/android_PV | https://api.github.com/repos/Captianrock/android_PV | closed | Restructure Database | High Priority | Database should have multiple tables to account for multiple users and multiple applications for multiple traces done on the web app | 1.0 | Restructure Database - Database should have multiple tables to account for multiple users and multiple applications for multiple traces done on the web app | non_process | restructure database database should have multiple tables to account for multiple users and multiple applications for multiple traces done on the web app | 0 |

21,541 | 29,864,298,223 | IssuesEvent | 2023-06-20 01:25:15 | cncf/tag-security | https://api.github.com/repos/cncf/tag-security | closed | Review existing frameworks wrt. cloud-native | assessment-process inactive | This has come up in the calls that referencing a standardized model and/or vocabulary might be useful. Here's a list of frameworks that we might consider in no particular order...none of these seems immediately applicable to CN projects, but can be mapped to CN or a derivative vocabulary/model can learn from these:

... | 1.0 | Review existing frameworks wrt. cloud-native - This has come up in the calls that referencing a standardized model and/or vocabulary might be useful. Here's a list of frameworks that we might consider in no particular order...none of these seems immediately applicable to CN projects, but can be mapped to CN or a deriv... | process | review existing frameworks wrt cloud native this has come up in the calls that referencing a standardized model and or vocabulary might be useful here s a list of frameworks that we might consider in no particular order none of these seems immediately applicable to cn projects but can be mapped to cn or a deriv... | 1 |

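18,953 | 24,913,677,760 | IssuesEvent | 2022-10-30 06:04:29 | osamhack2022-v2/APP_FreshPlus_TakeCareMyRefrigerator | https://api.github.com/repos/osamhack2022-v2/APP_FreshPlus_TakeCareMyRefrigerator | closed | AI camera security issues | question imgae process | I think security concerns may be raised about keeping a camera permanently installed within the military.

Personally, I would point to the following:

1. The camera is installed so that it mainly views the inside of the refrigerator

2. Photos taken by the camera are not stored or processed anywhere other than locally

3. Only the item positions processed by the AI are sent to the server

4.

I think responding along these lines would work well.

If you have other good ideas, please suggest them! | 1.0 | AI camera security issues - I think security concerns may be raised about keeping a camera permanently installed within the military.

Personally, I would point to the following:

1. The camera is installed so that it mainly views the inside of the refrigerator

2. Photos taken by the camera are not stored or processed anywhere other than locally

3. Only the item positions processed by the AI are sent to the server

4.

I think responding along these lines would work well.

If you have other good ideas, please suggest them! | process | ai camera security issues i think security concerns may be raised about keeping a camera permanently installed within the military personally i would point to the following the camera is installed so that it mainly views the inside of the refrigerator photos taken by the camera are not stored or processed anywhere other than locally only the item positions processed by the ai are sent to the server i think responding along these lines would work well if you have other good ideas please suggest them | 1 |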

20,111 | 26,650,714,509 | IssuesEvent | 2023-01-25 13:29:35 | MPMG-DCC-UFMG/C01 | https://api.github.com/repos/MPMG-DCC-UFMG/C01 | closed | Fix for the exception: '_UnixSelectorEventLoop' object has no attribute '_closed' in dynamic crawls | [1] Bug [2] Alta Prioridade [0] Desenvolvimento [3] Processamento Dinâmico | ## Expected Behavior

The system should run without any exceptions; however, as noted in the description of issue #782, this exception is raised in some crawls, although the system ignores it.

## Current Behavior

The exception above occurs in some crawls, as noted in issue #782.

## P... | 1.0 | Fix for the exception: '_UnixSelectorEventLoop' object has no attribute '_closed' in dynamic crawls - ## Expected Behavior

The system should run without any exceptions; however, as noted in the description of issue #782, this exception is raised in some crawls, although the system ignores it.

## Comp... | process | fix for the exception unixselectoreventloop object has no attribute closed in dynamic crawls expected behavior the system should run without any exceptions however as noted in the description of issue this exception is raised in some crawls although the system ignores it compor... | 1 |

17,976 | 24,822,868,676 | IssuesEvent | 2022-10-25 17:57:32 | elementor/elementor | https://api.github.com/repos/elementor/elementor | closed | [⌛ Awaiting Feedback] 🧐 Possible Bug: Icon Box not able to display its own uploaded SVG | compatibility/3rd_party status/needs_feedback compatibility/assets component/icon-box | ### Prerequisites

- [X] I have searched for similar issues in both open and closed tickets and cannot find a duplicate.

- [X] The issue still exists against the latest stable version of Elementor.

### Description

I am working on a few pages that use a grid of Icon Box widgets. Since Elementor has not updated to Font... | True | [⌛ Awaiting Feedback] 🧐 Possible Bug: Icon Box not able to display its own uploaded SVG - ### Prerequisites

- [X] I have searched for similar issues in both open and closed tickets and cannot find a duplicate.

- [X] The issue still exists against the latest stable version of Elementor.

### Description

I am working ... | non_process | 🧐 possible bug icon box not able to display its own uploaded svg prerequisites i have searched for similar issues in both open and closed tickets and cannot find a duplicate the issue still exists against the latest stable version of elementor description i am working on a few pages that use ... | 0 |

15,436 | 19,648,405,837 | IssuesEvent | 2022-01-10 01:38:33 | plazi/community | https://api.github.com/repos/plazi/community | closed | to be processed: 10.1080/00305316.2021.2023056 | process request | * please process, including holotype and GBIF

First record of the ant genus Agraulomyrmex Prins, 1983 (Formicidae: Formicinae) from India, with description of a new species

[oritentalInsects.56.2021.2023056.pdf](https://github.com/plazi/community/files/7835576/oritentalInsects.56.2021.2023056.pdf)

| 1.0 | to be processed: 10.1080/00305316.2021.2023056 - * please process, including holotype and GBIF

First record of the ant genus Agraulomyrmex Prins, 1983 (Formicidae: Formicinae) from India, with description of a new species

[oritentalInsects.56.2021.2023056.pdf](https://github.com/plazi/community/files/7835576/oriten... | process | to be processed please process including holotype and gbif first record of the ant genus agraulomyrmex prins formicidae formicinae from india with description of a new species | 1 |

19,204 | 25,338,614,036 | IssuesEvent | 2022-11-18 19:11:37 | bazelbuild/bazel | https://api.github.com/repos/bazelbuild/bazel | closed | How to get valgrind xml report | under investigation type: support / not a bug (process) team-OSS | Bazel version is 5.3.1. Here is my test job

`bazel test --test_verbose_timeout_warnings --run_under='valgrind --tool=memcheck --leak-check=full --xml=yes --xml-file=valgrind.xml' --test_output=streamed //...`

The tests running is ok, but where is the valgrind.xml that should be produced?

Anyone can help? Thanks. | 1.0 | How to get valgrind xml report - Bazel version is 5.3.1. Here is my test job

`bazel test --test_verbose_timeout_warnings --run_under='valgrind --tool=memcheck --leak-check=full --xml=yes --xml-file=valgrind.xml' --test_output=streamed //...`

The tests running is ok, but where is the valgrind.xml that should be produ... | process | how to get valgrind xml report bazel version is here is my test job bazel test test verbose timeout warnings run under valgrind tool memcheck leak check full xml yes xml file valgrind xml test output streamed the tests running is ok but where is the valgrind xml that should be produ... | 1 |

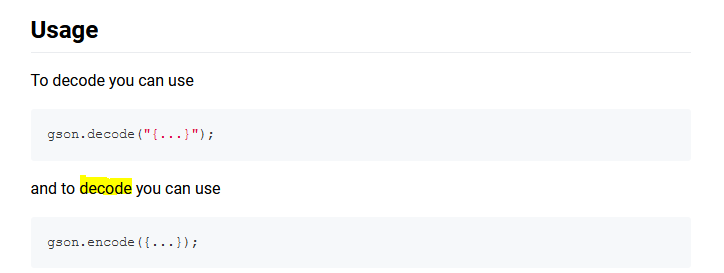

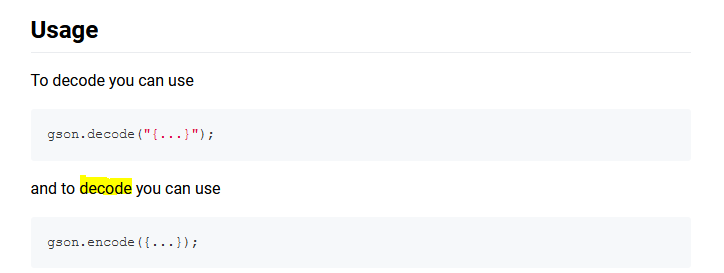

108,666 | 11,597,868,741 | IssuesEvent | 2020-02-24 21:47:13 | MinimineLP/dart-gson | https://api.github.com/repos/MinimineLP/dart-gson | closed | Documentation Error | documentation | In your documentation you put decode where I think you meant encode.

| 1.0 | Documentation Error - In your documentation you put decode where I think you meant encode.

| non_process | documentation error in your documentation you put decode where i think you meant encode | 0 |