Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 value) | created_at (string, length 19) | repo (string, length 7 to 112) | repo_url (string, length 36 to 141) | action (string, 3 values) | title (string, length 1 to 744) | labels (string, length 4 to 574) | body (string, length 9 to 211k) | index (string, 10 values) | text_combine (string, length 96 to 211k) | label (string, 2 values) | text (string, length 96 to 188k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

10,603 | 13,429,439,759 | IssuesEvent | 2020-09-07 01:50:31 | tikv/tikv | https://api.github.com/repos/tikv/tikv | closed | Support ENUM / SET / BIT in Coprocessor | PCP-S1 difficulty/hard sig/coprocessor status/help-wanted | ## Description

The Coprocessor does not support the ENUM / SET / BIT type in the current implementation, which cause some expressions cannot be pushed down if the expression contains the ENUM / SET / BIT type. We can improve this situation by implementing ENUM / SET / BIT operations.

## Difficulty

- Hard

#... | 1.0 | Support ENUM / SET / BIT in Coprocessor - ## Description

The Coprocessor does not support the ENUM / SET / BIT type in the current implementation, which cause some expressions cannot be pushed down if the expression contains the ENUM / SET / BIT type. We can improve this situation by implementing ENUM / SET / BIT o... | process | support enum set bit in coprocessor description the coprocessor does not support the enum set bit type in the current implementation which cause some expressions cannot be pushed down if the expression contains the enum set bit type we can improve this situation by implementing enum set bit o... | 1 |

15,703 | 19,848,413,026 | IssuesEvent | 2022-01-21 09:32:04 | ooi-data/CE07SHSM-SBD12-05-WAVSSA000-recovered_host-wavss_a_dcl_motion_recovered | https://api.github.com/repos/ooi-data/CE07SHSM-SBD12-05-WAVSSA000-recovered_host-wavss_a_dcl_motion_recovered | opened | 🛑 Processing failed: ValueError | process | ## Overview

`ValueError` found in `processing_task` task during run ended on 2022-01-21T09:32:03.501616.

## Details

Flow name: `CE07SHSM-SBD12-05-WAVSSA000-recovered_host-wavss_a_dcl_motion_recovered`

Task name: `processing_task`

Error type: `ValueError`

Error message: not enough values to unpack (expected 3, got 0)... | 1.0 | 🛑 Processing failed: ValueError - ## Overview

`ValueError` found in `processing_task` task during run ended on 2022-01-21T09:32:03.501616.

## Details

Flow name: `CE07SHSM-SBD12-05-WAVSSA000-recovered_host-wavss_a_dcl_motion_recovered`

Task name: `processing_task`

Error type: `ValueError`

Error message: not enough v... | process | 🛑 processing failed valueerror overview valueerror found in processing task task during run ended on details flow name recovered host wavss a dcl motion recovered task name processing task error type valueerror error message not enough values to unpack expected got ... | 1 |

197,602 | 14,935,429,046 | IssuesEvent | 2021-01-25 11:56:50 | zephyrproject-rtos/zephyr | https://api.github.com/repos/zephyrproject-rtos/zephyr | closed | test: kernel: Test kernel.common.stack_protection_arm_fpu_sharing.fatal fails on nrf52 platforms | area: Kernel area: Tests bug platform: nRF priority: medium | **Describe the bug**

The test kernel.common.kernel.common.stack_protection_arm_fpu_sharing.fatal fails on nrf52 platforms.

**To Reproduce**

Steps to reproduce the behavior:

1. go to zephyr dir

2. have nrf52 platform connected (e.g. nrf52dk_nrf52832)

3. run `./scripts/sanitycheck --device-testing -T tests/kerne... | 1.0 | test: kernel: Test kernel.common.stack_protection_arm_fpu_sharing.fatal fails on nrf52 platforms - **Describe the bug**

The test kernel.common.kernel.common.stack_protection_arm_fpu_sharing.fatal fails on nrf52 platforms.

**To Reproduce**

Steps to reproduce the behavior:

1. go to zephyr dir

2. have nrf52 platfo... | non_process | test kernel test kernel common stack protection arm fpu sharing fatal fails on platforms describe the bug the test kernel common kernel common stack protection arm fpu sharing fatal fails on platforms to reproduce steps to reproduce the behavior go to zephyr dir have platform connected... | 0 |

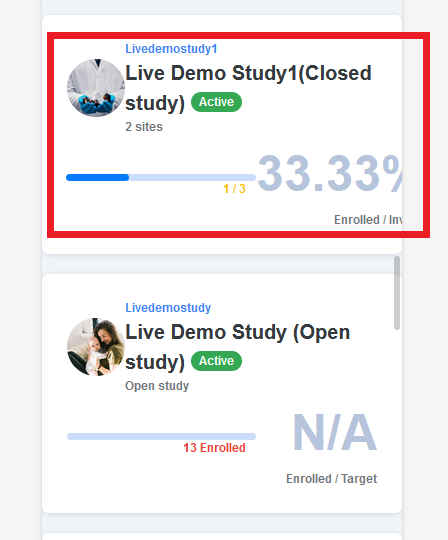

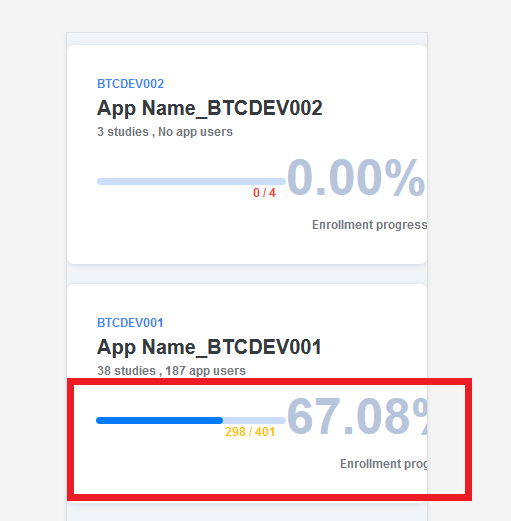

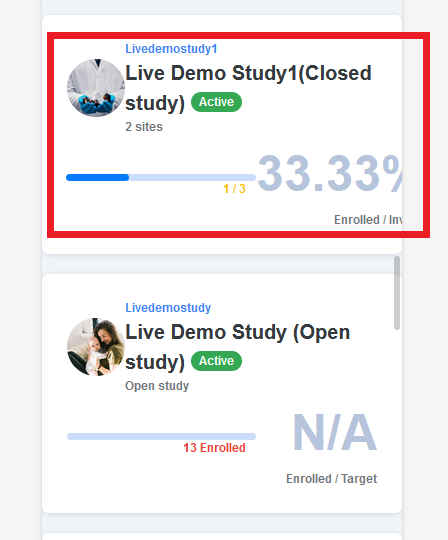

13,883 | 16,654,740,624 | IssuesEvent | 2021-06-05 10:07:48 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [PM] Responsive issues in Studies and Apps tab > UI issues | Bug P2 Participant manager Process: Fixed Process: Tested QA Process: Tested dev | Responsive issues in Studies tab > UI issues

1. Studies tab

2. Apps app

| 3.0 | [PM] Responsive issues in Studies and Apps tab > UI issues - Responsive issues in Studies tab > UI issues

1. Studies tab

2. Apps app

- [x] ... | 2.0 | Check components that aren't yet wired up - As seen in #5725, it is possible that components that have been completed already aren't wired up in the list of components. Currently, this seems to be the list of exporters and receivers that haven't been wired up yet as of 29-Nov-2022:

- [x] logicmonitorexporter (under ... | process | check components that aren t yet wired up as seen in it is possible that components that have been completed already aren t wired up in the list of components currently this seems to be the list of exporters and receivers that haven t been wired up yet as of nov logicmonitorexporter under developme... | 1 |

332,403 | 24,342,161,836 | IssuesEvent | 2022-10-01 21:06:23 | Blockception/Minecraft-bedrock-json-schemas | https://api.github.com/repos/Blockception/Minecraft-bedrock-json-schemas | closed | Check entity ai behaviour: minecraft:behavior.circle_around_anchor | documentation help wanted Hacktoberfest | Check if the ai behaviour: minecraft:behavior.circle_around_anchor is update to date | 1.0 | Check entity ai behaviour: minecraft:behavior.circle_around_anchor - Check if the ai behaviour: minecraft:behavior.circle_around_anchor is update to date | non_process | check entity ai behaviour minecraft behavior circle around anchor check if the ai behaviour minecraft behavior circle around anchor is update to date | 0 |

17,571 | 23,384,503,206 | IssuesEvent | 2022-08-11 12:40:30 | MicrosoftDocs/windows-uwp | https://api.github.com/repos/MicrosoftDocs/windows-uwp | closed | URI for a settings page is missing | uwp/prod processes-and-threading/tech Pri1 | In the list of settings pages and URIs there is no listing for the page "Bluetooth & devices".

Both "ms-settings:bluetooth" and "ms-settings:connecteddevices" will open "Bluetooth & devices > Devices"

I dont know if there is no URI for this page or it is missing from the list.

But a URI for this page would be ver... | 1.0 | URI for a settings page is missing - In the list of settings pages and URIs there is no listing for the page "Bluetooth & devices".

Both "ms-settings:bluetooth" and "ms-settings:connecteddevices" will open "Bluetooth & devices > Devices"

I dont know if there is no URI for this page or it is missing from the list.

... | process | uri for a settings page is missing in the list of settings pages and uris there is no listing for the page bluetooth devices both ms settings bluetooth and ms settings connecteddevices will open bluetooth devices devices i dont know if there is no uri for this page or it is missing from the list ... | 1 |

32,750 | 7,588,647,687 | IssuesEvent | 2018-04-26 02:52:13 | shawkinsl/mtga-tracker | https://api.github.com/repos/shawkinsl/mtga-tracker | opened | travis-ci tests should use a local database | code-cleanup dogfood enhancement good first issue help wanted task | Main benefit is that we could run tests concurrently (if Travis allows) | 1.0 | travis-ci tests should use a local database - Main benefit is that we could run tests concurrently (if Travis allows) | non_process | travis ci tests should use a local database main benefit is that we could run tests concurrently if travis allows | 0 |

12,025 | 14,738,536,031 | IssuesEvent | 2021-01-07 05:02:40 | kdjstudios/SABillingGitlab | https://api.github.com/repos/kdjstudios/SABillingGitlab | closed | Keener - simple question - email customer/account | anc-external anc-ops anc-process anp-0.5 ant-bug ant-support | In GitLab by @kdjstudios on Jun 8, 2018, 12:57

**Submitted by:** Gaylan Garrett <gaylan@keenercom.net>

**Helpdesk:** http://www.servicedesk.answernet.com/profiles/ticket/2018-06-08-44383/conversation

**Server:** External (Both)

**Client/Site:** Keener (All)

**Account:** NA

**Issue:**

I was curious if you have o... | 1.0 | Keener - simple question - email customer/account - In GitLab by @kdjstudios on Jun 8, 2018, 12:57

**Submitted by:** Gaylan Garrett <gaylan@keenercom.net>

**Helpdesk:** http://www.servicedesk.answernet.com/profiles/ticket/2018-06-08-44383/conversation

**Server:** External (Both)

**Client/Site:** Keener (All)

**Acc... | process | keener simple question email customer account in gitlab by kdjstudios on jun submitted by gaylan garrett helpdesk server external both client site keener all account na issue i was curious if you have one email in customer and a different email in account ... | 1 |

12,721 | 15,093,600,186 | IssuesEvent | 2021-02-07 01:28:57 | Maximus5/ConEmu | https://api.github.com/repos/Maximus5/ConEmu | closed | Executing batch scripts from FAR fails if command line contains quotes | processes | ### Versions

ConEmu build: 210128 x64

OS version: Windows 10 20H2 x64

Used shell version: FAR x64 3.0.0.5737

### Problem description

Starting with ConEmu 210128, executing batch files will instantly fail if the command line contains parameters with quotes.

Using ConEmu before 210128 does not have this bug (21... | 1.0 | Executing batch scripts from FAR fails if command line contains quotes - ### Versions

ConEmu build: 210128 x64

OS version: Windows 10 20H2 x64

Used shell version: FAR x64 3.0.0.5737

### Problem description

Starting with ConEmu 210128, executing batch files will instantly fail if the command line contains paramet... | process | executing batch scripts from far fails if command line contains quotes versions conemu build os version windows used shell version far problem description starting with conemu executing batch files will instantly fail if the command line contains parameters with quotes usi... | 1 |

61,560 | 14,630,196,351 | IssuesEvent | 2020-12-23 17:14:16 | silinternational/hipchat-php-client | https://api.github.com/repos/silinternational/hipchat-php-client | closed | CVE-2019-11358 (Medium) detected in jquery-1.11.3.min.js | security vulnerability | ## CVE-2019-11358 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jquery-1.11.3.min.js</b></p></summary>

<p>JavaScript library for DOM operations</p>

<p>Library home page: <a href="h... | True | CVE-2019-11358 (Medium) detected in jquery-1.11.3.min.js - ## CVE-2019-11358 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jquery-1.11.3.min.js</b></p></summary>

<p>JavaScript libr... | non_process | cve medium detected in jquery min js cve medium severity vulnerability vulnerable library jquery min js javascript library for dom operations library home page a href path to vulnerable library hipchat php client vendor phpunit php code coverage src codecoverage report... | 0 |

9,632 | 12,597,771,699 | IssuesEvent | 2020-06-11 00:53:07 | pytorch/pytorch | https://api.github.com/repos/pytorch/pytorch | closed | Training using “mp.spawn”, can not reproduce the training results | module: distributed module: multiprocessing triaged | I used a distributed training method. And use **mp.spawn(main_worker, nprocs=ngpus_per_node)** to open up multiple processes.But the random number seeds for each process are different. This has led me to fail to reproduce previous results. My code is as follows:

```

import torch

import torch.nn as nn

import tor... | 1.0 | Training using “mp.spawn”, can not reproduce the training results - I used a distributed training method. And use **mp.spawn(main_worker, nprocs=ngpus_per_node)** to open up multiple processes.But the random number seeds for each process are different. This has led me to fail to reproduce previous results. My code is ... | process | training using “mp spawn” can not reproduce the training results i used a distributed training method and use mp spawn main worker nprocs ngpus per node to open up multiple processes but the random number seeds for each process are different this has led me to fail to reproduce previous results my code is ... | 1 |

220,090 | 16,887,220,883 | IssuesEvent | 2021-06-23 02:59:11 | sideway/joi | https://api.github.com/repos/sideway/joi | opened | Document message templating | documentation | <!--

⚠️ ⚠️ ⚠️ ⚠️ ⚠️ ⚠️

You must complete this entire issue template to receive support. You MUST NOT remove, change, or replace the template with your own format. A missing or incomplete report will cause your issue to be closed without comment. Please respect the time and experience that went into this template.... | 1.0 | Document message templating - <!--

⚠️ ⚠️ ⚠️ ⚠️ ⚠️ ⚠️

You must complete this entire issue template to receive support. You MUST NOT remove, change, or replace the template with your own format. A missing or incomplete report will cause your issue to be closed without comment. Please respect the time and experience... | non_process | document message templating ⚠️ ⚠️ ⚠️ ⚠️ ⚠️ ⚠️ you must complete this entire issue template to receive support you must not remove change or replace the template with your own format a missing or incomplete report will cause your issue to be closed without comment please respect the time and experience... | 0 |

20,678 | 27,350,280,632 | IssuesEvent | 2023-02-27 09:05:33 | bitfocus/companion-module-requests | https://api.github.com/repos/bitfocus/companion-module-requests | opened | Extron IPCP Pro... | NOT YET PROCESSED | - [ ] **I have researched the list of existing Companion modules and requests and have determined this has not yet been requested**

The name of the device, hardware, or software you would like to control: IPCP PRO

What you would like to be able to make it do from Companion:

I want to send strings over etherne... | 1.0 | Extron IPCP Pro... - - [ ] **I have researched the list of existing Companion modules and requests and have determined this has not yet been requested**

The name of the device, hardware, or software you would like to control: IPCP PRO

What you would like to be able to make it do from Companion:

I want to send... | process | extron ipcp pro i have researched the list of existing companion modules and requests and have determined this has not yet been requested the name of the device hardware or software you would like to control ipcp pro what you would like to be able to make it do from companion i want to send s... | 1 |

737,188 | 25,505,037,882 | IssuesEvent | 2022-11-28 08:46:55 | bash-lsp/bash-language-server | https://api.github.com/repos/bash-lsp/bash-language-server | closed | VSCode: Repo with not really 21K shell scripts locks up language server in "Analyzing" loop | bug priority ⭐️ | I have a repo with a few submodules; nothing out of ordinary: gRPC, Kaldi, and a couple of lesser libs. gRPC has a dozen submodules on its own. Kaldi is written half in C++, half in Bash as a glue. gRPC and friends also have a non-negligible count of shell scripts. But some of these files are accounted multiple times, ... | 1.0 | VSCode: Repo with not really 21K shell scripts locks up language server in "Analyzing" loop - I have a repo with a few submodules; nothing out of ordinary: gRPC, Kaldi, and a couple of lesser libs. gRPC has a dozen submodules on its own. Kaldi is written half in C++, half in Bash as a glue. gRPC and friends also have a... | non_process | vscode repo with not really shell scripts locks up language server in analyzing loop i have a repo with a few submodules nothing out of ordinary grpc kaldi and a couple of lesser libs grpc has a dozen submodules on its own kaldi is written half in c half in bash as a glue grpc and friends also have a n... | 0 |

398,654 | 27,207,273,534 | IssuesEvent | 2023-02-20 14:01:44 | 5G-ERA/middleware | https://api.github.com/repos/5G-ERA/middleware | closed | Middleware - Create contribution guidelines | bug documentation | **Describe the bug**

Middleware lacks clear contribution guidelines and a roadmap.

An increased focus on the documentation has to be put in.

| 1.0 | Middleware - Create contribution guidelines - **Describe the bug**

Middleware lacks clear contribution guidelines and a roadmap.

An increased focus on the documentation has to be put in.

| non_process | middleware create contribution guidelines describe the bug middleware lacks clear contribution guidelines and a roadmap an increased focus on the documentation has to be put in | 0 |

13,101 | 15,496,326,445 | IssuesEvent | 2021-03-11 02:28:13 | dluiscosta/weather_api | https://api.github.com/repos/dluiscosta/weather_api | opened | Support on-demand custom execution of tests | development process enhancement | - Allow configuration of automatic testing upon Docker container startup;

- Provide an entrypoint to run tests by demand and allow usage of custom pytest parameters; | 1.0 | Support on-demand custom execution of tests - - Allow configuration of automatic testing upon Docker container startup;

- Provide an entrypoint to run tests by demand and allow usage of custom pytest parameters; | process | support on demand custom execution of tests allow configuration of automatic testing upon docker container startup provide an entrypoint to run tests by demand and allow usage of custom pytest parameters | 1 |

414,723 | 12,110,791,803 | IssuesEvent | 2020-04-21 11:03:21 | AbsaOSS/enceladus | https://api.github.com/repos/AbsaOSS/enceladus | opened | Add tag "Latest" to the latest version in version pickers | Menas UX feature priority: undecided under discussion | ## Feature

When picking a version add a tag latest next to the update date of the latest version

| 1.0 | Add tag "Latest" to the latest version in version pickers - ## Feature

When picking a version add a tag latest next to the update date of the latest version

| non_process | add tag latest to the latest version in version pickers feature when picking a version add a tag latest next to the update date of the latest version | 0 |

16,306 | 20,960,721,738 | IssuesEvent | 2022-03-27 19:05:27 | lynnandtonic/nestflix.fun | https://api.github.com/repos/lynnandtonic/nestflix.fun | closed | Vital Social Issues N' Stuff | suggested title in process | Please add as much of the following info as you can:

Title:Vital Social Issues N' Stuff

Type (film/tv show):Public access TV Show

Film or show in which it appears:Married with Children

Is the parent film/show streaming anywhere?Hulu

About when in the parent film/show does it appear?S6 Ep 9 10m:08s

sAc... | 1.0 | Vital Social Issues N' Stuff - Please add as much of the following info as you can:

Title:Vital Social Issues N' Stuff

Type (film/tv show):Public access TV Show

Film or show in which it appears:Married with Children

Is the parent film/show streaming anywhere?Hulu

About when in the parent film/show does ... | process | vital social issues n stuff please add as much of the following info as you can title vital social issues n stuff type film tv show public access tv show film or show in which it appears married with children is the parent film show streaming anywhere hulu about when in the parent film show does ... | 1 |

16,561 | 21,573,346,087 | IssuesEvent | 2022-05-02 11:00:34 | elastic/beats | https://api.github.com/repos/elastic/beats | closed | [filebeat] Allow wildcards for drop_fields | enhancement Filebeat libbeat :Processors Team:Integrations | **Describe the enhancement:**

`drop_fields.fields` should support glob or regex patterns.

**Describe a specific use case for the enhancement or feature:**

I'm using the `add_docker_metadata` processor to extract container metadata, which also includes Docker labels. For some containers, there are quite a lot that... | 1.0 | [filebeat] Allow wildcards for drop_fields - **Describe the enhancement:**

`drop_fields.fields` should support glob or regex patterns.

**Describe a specific use case for the enhancement or feature:**

I'm using the `add_docker_metadata` processor to extract container metadata, which also includes Docker labels. Fo... | process | allow wildcards for drop fields describe the enhancement drop fields fields should support glob or regex patterns describe a specific use case for the enhancement or feature i m using the add docker metadata processor to extract container metadata which also includes docker labels for some co... | 1 |

582,555 | 17,364,391,642 | IssuesEvent | 2021-07-30 04:08:09 | neogcamp/platform-93by4 | https://api.github.com/repos/neogcamp/platform-93by4 | opened | Proposed: Link logo change on Student dashboard. | Priority: Low Type: Enhancement Type: good first issue | The logo on the dashboard for Submit your portfolio states it is an external link while it's not,

So I believe we need to change the logo to the logo in the picture below.

So I believe we need to c... | non_process | proposed link logo change on student dashboard the logo on the dashboard for submit your portfolio states it is an external link while it s not so i believe we need to change the logo to the logo in the picture below | 0 |

7,014 | 10,165,584,452 | IssuesEvent | 2019-08-07 14:11:15 | MicrosoftDocs/azure-docs | https://api.github.com/repos/MicrosoftDocs/azure-docs | closed | Why are the examples AzureRM command? | Pri2 automation/svc cxp process-automation/subsvc product-question triaged | Didn't Microsoft (you) replace the powershell AzureRM module with the powershell Az module.

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: 805a6236-70b7-7dd5-ac86-eea6efceff3a

* Version Independent ID: e8078e34-bdf0-32b1-fac4-550091f2a06a

*... | 1.0 | Why are the examples AzureRM command? - Didn't Microsoft (you) replace the powershell AzureRM module with the powershell Az module.

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: 805a6236-70b7-7dd5-ac86-eea6efceff3a

* Version Independent ID... | process | why are the examples azurerm command didn t microsoft you replace the powershell azurerm module with the powershell az module document details ⚠ do not edit this section it is required for docs microsoft com ➟ github issue linking id version independent id content ... | 1 |

104,667 | 11,418,292,383 | IssuesEvent | 2020-02-03 03:56:21 | vuetifyjs/vuetify | https://api.github.com/repos/vuetifyjs/vuetify | reopened | [Bug Report] [v-autocomplete] Wrong menu position on items change if it is already open | C: VSelect T: documentation | ### Environment

**Vuetify Version:** 2.2.8

**Vue Version:** 2.6.11

**Browsers:** Chrome 79.0.3945.117

**OS:** Mac OS 10.15.2

### Steps to reproduce

While the autocomplete menu is open and items of the autocomplete change (in the example from empty array to a 5 elements array), the menu doesn't change position u... | 1.0 | [Bug Report] [v-autocomplete] Wrong menu position on items change if it is already open - ### Environment

**Vuetify Version:** 2.2.8

**Vue Version:** 2.6.11

**Browsers:** Chrome 79.0.3945.117

**OS:** Mac OS 10.15.2

### Steps to reproduce

While the autocomplete menu is open and items of the autocomplete change (... | non_process | wrong menu position on items change if it is already open environment vuetify version vue version browsers chrome os mac os steps to reproduce while the autocomplete menu is open and items of the autocomplete change in the example from empty array to ... | 0 |

203,940 | 7,078,216,009 | IssuesEvent | 2018-01-10 02:19:14 | internetarchive/openlibrary | https://api.github.com/repos/internetarchive/openlibrary | opened | availability.js getUsersLoansAndWaitlists should be rolled back | blocker easy performance priority | A feature was started in `availability.js` which fetches a user's active waitlists to be used when checking `getAvailabilityV2` -- `getUsersLoansAndWaitlists`. This method is incomplete and should be rolled back and moved to its own branch (until correctly implemented, it just negatively impacts performance) | 1.0 | availability.js getUsersLoansAndWaitlists should be rolled back - A feature was started in `availability.js` which fetches a user's active waitlists to be used when checking `getAvailabilityV2` -- `getUsersLoansAndWaitlists`. This method is incomplete and should be rolled back and moved to its own branch (until correct... | non_process | availability js getusersloansandwaitlists should be rolled back a feature was started in availability js which fetches a user s active waitlists to be used when checking getusersloansandwaitlists this method is incomplete and should be rolled back and moved to its own branch until correctly implemented ... | 0 |

534,410 | 15,618,284,947 | IssuesEvent | 2021-03-20 00:33:22 | ainslec/adventuron-issue-tracker | https://api.github.com/repos/ainslec/adventuron-issue-tracker | closed | Be able to scan deeply inside an outer container | priority | Chris M's reminder about what he'd like to be able to do.

`This reminds me of a thing I asked ages ago and I don't think it was possible then (I but don't recall there being a clear answer). I was trying to check if any of the children of a container object had a particular trait. I ended up checking individual obj... | 1.0 | Be able to scan deeply inside an outer container - Chris M's reminder about what he'd like to be able to do.

`This reminds me of a thing I asked ages ago and I don't think it was possible then (I but don't recall there being a clear answer). I was trying to check if any of the children of a container object had a pa... | non_process | be able to scan deeply inside an outer container chris m s reminder about what he d like to be able to do this reminds me of a thing i asked ages ago and i don t think it was possible then i but don t recall there being a clear answer i was trying to check if any of the children of a container object had a pa... | 0 |

19,101 | 25,148,589,804 | IssuesEvent | 2022-11-10 08:11:56 | EBIvariation/eva-opentargets | https://api.github.com/repos/EBIvariation/eva-opentargets | closed | Evidence string generation for 2022.11 release | Processing | **Deadline for submission: 4 November 2022**

Refer to [documentation](https://github.com/EBIvariation/eva-opentargets/blob/master/docs/generate-evidence-strings.md) for full description of steps.

| 1.0 | Evidence string generation for 2022.11 release - **Deadline for submission: 4 November 2022**

Refer to [documentation](https://github.com/EBIvariation/eva-opentargets/blob/master/docs/generate-evidence-strings.md) for full description of steps.

| process | evidence string generation for release deadline for submission november refer to for full description of steps | 1 |

20,117 | 26,656,032,567 | IssuesEvent | 2023-01-25 16:55:39 | open-telemetry/opentelemetry-collector-contrib | https://api.github.com/repos/open-telemetry/opentelemetry-collector-contrib | closed | Change the hashing algorithm for probabilistic sampler | enhancement good first issue processor/probabilisticsampler | In the probabilistic sampler, we seem to use a hashing algorithm of our own, instead of relying on a library to do so, as it's done in other modules. We should try to follow the guideline we have on this:

https://github.com/open-telemetry/opentelemetry-collector/blob/main/CONTRIBUTING.md#recommended-libraries--defau... | 1.0 | Change the hashing algorithm for probabilistic sampler - In the probabilistic sampler, we seem to use a hashing algorithm of our own, instead of relying on a library to do so, as it's done in other modules. We should try to follow the guideline we have on this:

https://github.com/open-telemetry/opentelemetry-collect... | process | change the hashing algorithm for probabilistic sampler in the probabilistic sampler we seem to use a hashing algorithm of our own instead of relying on a library to do so as it s done in other modules we should try to follow the guideline we have on this originally posted by moviestoreguy in | 1 |

27,120 | 11,439,781,743 | IssuesEvent | 2020-02-05 08:13:10 | kyma-project/test-infra | https://api.github.com/repos/kyma-project/test-infra | reopened | Prow Security | area/ci area/security ci/future | Renew Prow secrets.

`All Secret used in the Prow production cluster must be changed every six months. Follow Prow secret management to create a new key ring and new Secrets. Then, use Secrets populator to change all Secrets in the Prow cluster.`

`NOTE: The next Secrets changewas planned for August 1, 2019.`

ht... | True | Prow Security - Renew Prow secrets.

`All Secret used in the Prow production cluster must be changed every six months. Follow Prow secret management to create a new key ring and new Secrets. Then, use Secrets populator to change all Secrets in the Prow cluster.`

`NOTE: The next Secrets changewas planned for August... | non_process | prow security renew prow secrets all secret used in the prow production cluster must be changed every six months follow prow secret management to create a new key ring and new secrets then use secrets populator to change all secrets in the prow cluster note the next secrets changewas planned for august... | 0 |

21,369 | 29,202,226,676 | IssuesEvent | 2023-05-21 00:36:29 | devssa/onde-codar-em-salvador | https://api.github.com/repos/devssa/onde-codar-em-salvador | closed | [Hibrido / Belo Horizonte, Minas Gerais, Brazil] Product Owner (Hibrido em Belo horizonte) na Coodesh | SALVADOR SCRUM REQUISITOS PROCESSOS GITHUB CI SEGURANÇA UMA LÓGICA DE PROGRAMAÇÃO METODOLOGIAS ÁGEIS PRODUCT OWNER BACKLOG HIBRIDO B2B ALOCADO Stale | ## Descrição da vaga:

Esta é uma vaga de um parceiro da plataforma Coodesh, ao candidatar-se você terá acesso as informações completas sobre a empresa e benefícios.

Fique atento ao redirecionamento que vai te levar para uma url [https://coodesh.com](https://coodesh.com/vagas/product-owner-hibrido-em-belo-horizon... | 1.0 | [Hibrido / Belo Horizonte, Minas Gerais, Brazil] Product Owner (Hibrido em Belo horizonte) na Coodesh - ## Descrição da vaga:

Esta é uma vaga de um parceiro da plataforma Coodesh, ao candidatar-se você terá acesso as informações completas sobre a empresa e benefícios.

Fique atento ao redirecionamento que vai te ... | process | product owner hibrido em belo horizonte na coodesh descrição da vaga esta é uma vaga de um parceiro da plataforma coodesh ao candidatar se você terá acesso as informações completas sobre a empresa e benefícios fique atento ao redirecionamento que vai te levar para uma url com o pop up personaliza... | 1 |

3,946 | 6,886,371,433 | IssuesEvent | 2017-11-21 19:14:28 | mcellteam/neuropil_tools | https://api.github.com/repos/mcellteam/neuropil_tools | opened | Improve search function with regex in trace list | processor | Possible need to rectify with Blender regex pecularities | 1.0 | Improve search function with regex in trace list - Possible need to rectify with Blender regex pecularities | process | improve search function with regex in trace list possible need to rectify with blender regex pecularities | 1 |

6,189 | 9,103,768,287 | IssuesEvent | 2019-02-20 16:35:16 | googleapis/cloud-debug-nodejs | https://api.github.com/repos/googleapis/cloud-debug-nodejs | closed | Move samples to this repo | priority: p2 type: process | Hi there, our docs-samples repo still have some [debugger samples](https://github.com/GoogleCloudPlatform/nodejs-docs-samples/tree/master/debugger). For Node.js, all samples live with their client library if they have one. Can you move the samples over please. Thanks! cc @ofrobots | 1.0 | Move samples to this repo - Hi there, our docs-samples repo still have some [debugger samples](https://github.com/GoogleCloudPlatform/nodejs-docs-samples/tree/master/debugger). For Node.js, all samples live with their client library if they have one. Can you move the samples over please. Thanks! cc @ofrobots | process | move samples to this repo hi there our docs samples repo still have some for node js all samples live with their client library if they have one can you move the samples over please thanks cc ofrobots | 1 |

20,667 | 27,334,852,478 | IssuesEvent | 2023-02-26 03:51:00 | cse442-at-ub/project_s23-team-infinity | https://api.github.com/repos/cse442-at-ub/project_s23-team-infinity | closed | Create frontend documentation in order to collate and organize instructions for easier on-boarding and general guidance in the frontend. | IO Task Processing Task Sprint 1 UI Task | **Task Test**

*Test 1*

1) Research about what is React.js

2) Follow the tutorial from w3schools.com to understand how to set up the environment of React.js

3) Keep reading from the tutorial to understand the basic component of React.js

4) Set up the environment and checked if it works

5) Verify that the application I ... | 1.0 | Create frontend documentation in order to collate and organize instructions for easier on-boarding and general guidance in the frontend. - **Task Test**

*Test 1*

1) Research about what is React.js

2) Follow the tutorial from w3schools.com to understand how to set up the environment of React.js

3) Keep reading from the... | process | create frontend documentation in order to collate and organize instructions for easier on boarding and general guidance in the frontend task test test research about what is react js follow the tutorial from com to understand how to set up the environment of react js keep reading from the tutoria... | 1 |

10,662 | 13,453,183,584 | IssuesEvent | 2020-09-09 00:11:11 | MicrosoftDocs/azure-devops-docs | https://api.github.com/repos/MicrosoftDocs/azure-devops-docs | reopened | Workspaces are always cleaned. | Pri2 cba devops-cicd-process/tech devops/prod doc-bug needs-more-info | In the documentation it's stated that:

> “When you run a pipeline on a self-hosted agent, by default, none of the sub-directories are cleaned in between two consecutive runs.”

is not what I am observing. Looking at my build it seems that outputs are cleaned:

```

##[command]git version

git version 2.26.2

##[... | 1.0 | Workspaces are always cleaned. - In the documentation it's stated that:

> “When you run a pipeline on a self-hosted agent, by default, none of the sub-directories are cleaned in between two consecutive runs.”

is not what I am observing. Looking at my build it seems that outputs are cleaned:

```

##[command]git... | process | workspaces are always cleaned in the documentation it s stated that “when you run a pipeline on a self hosted agent by default none of the sub directories are cleaned in between two consecutive runs ” is not what i am observing looking at my build it seems that outputs are cleaned git version... | 1 |

329,793 | 28,308,814,574 | IssuesEvent | 2023-04-10 13:39:40 | envoyproxy/envoy | https://api.github.com/repos/envoyproxy/envoy | opened | CI flake in building docker images | bug area/test flakes area/docker area/ci | This seems to be a new flake in the docker publishing, altho i may have seen previously

```console

10 | ARG TARGETPLATFORM

11 | ENV TARGETPLATFORM="${TARGETPLATFORM:-linux/amd64}"

12 | >>> ADD "${TARGETPLATFORM}/release.tar.zst" /usr/local/bin/

13 |

14 |

--------------------

ERRO... | 1.0 | CI flake in building docker images - This seems to be a new flake in the docker publishing, altho i may have seen previously

```console

10 | ARG TARGETPLATFORM

11 | ENV TARGETPLATFORM="${TARGETPLATFORM:-linux/amd64}"

12 | >>> ADD "${TARGETPLATFORM}/release.tar.zst" /usr/local/bin/

13 |

... | non_process | ci flake in building docker images this seems to be a new flake in the docker publishing altho i may have seen previously console arg targetplatform env targetplatform targetplatform linux add targetplatform release tar zst usr local bin ... | 0 |

369,996 | 10,923,821,975 | IssuesEvent | 2019-11-22 08:46:02 | bounswe/bounswe2019group6 | https://api.github.com/repos/bounswe/bounswe2019group6 | closed | Deciding "Trading Equipments" Endpoints | priority:high related:android related:backend related:frontend type:discussion / question | Hi fellows,

Observing the previous endpoints changes, I think we should discuss about how endpoints should be defined and the what data they should include in themselves. First of all, I request to Backend team about their opinion about these two points which should be considered according to Project Requirements.

... | 1.0 | Deciding "Trading Equipments" Endpoints - Hi fellows,

Observing the previous endpoints changes, I think we should discuss about how endpoints should be defined and the what data they should include in themselves. First of all, I request to Backend team about their opinion about these two points which should be consi... | non_process | deciding trading equipments endpoints hi fellows observing the previous endpoints changes i think we should discuss about how endpoints should be defined and the what data they should include in themselves first of all i request to backend team about their opinion about these two points which should be consi... | 0 |

19,325 | 25,472,123,761 | IssuesEvent | 2022-11-25 11:05:46 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [IDP] [PM] UI issue in the admin details screen | Bug P2 Participant manager Process: Fixed Process: Tested QA Process: Tested dev | **Pre-condition:** mfa should be disabled in the PM

**Steps:**

1. Login to PM

2. Click on 'Admins' tab

3. Edit admin in the list and Verify

**AR:** UI issue is observed in the admin details screen

**ER:** All the field should be aligned properly

url = env("PRISMA_URL")

}

generator photon {

provider = "photonjs"

}

model Role {

id String @default(cuid()) @id

role String @unique

}

```

and `package.json`

```json

{

"private": true,

"scripts"... | 1.0 | Lift and Photon search for database in different folders - Given a schema

```groovy

datasource db {

provider = env("PRISMA_PROVIDER")

url = env("PRISMA_URL")

}

generator photon {

provider = "photonjs"

}

model Role {

id String @default(cuid()) @id

role String @unique

}

```

and `p... | process | lift and photon search for database in different folders given a schema groovy datasource db provider env prisma provider url env prisma url generator photon provider photonjs model role id string default cuid id role string unique and p... | 1 |

5,858 | 31,388,243,560 | IssuesEvent | 2023-08-26 02:42:27 | backdrop-contrib/rules | https://api.github.com/repos/backdrop-contrib/rules | closed | Error: Class 'RulesLog' not found in rules_update_1000() | type - bug pr - maintainer review requested | I'm trying to Migrate a Drupal 7.92 site using Rules 7.x-2.13 to a fresh Backdrop 1.23.0 site using Rules 1.x-2.3.2. When I get to the core/update.php step I'm getting an error:

```Internal Server Error ResponseText: Error: Class 'RulesLog' not found in rules_update_1000() (line 205 of /var/www/html/modules/contrib/... | True | Error: Class 'RulesLog' not found in rules_update_1000() - I'm trying to Migrate a Drupal 7.92 site using Rules 7.x-2.13 to a fresh Backdrop 1.23.0 site using Rules 1.x-2.3.2. When I get to the core/update.php step I'm getting an error:

```Internal Server Error ResponseText: Error: Class 'RulesLog' not found in rule... | non_process | error class ruleslog not found in rules update i m trying to migrate a drupal site using rules x to a fresh backdrop site using rules x when i get to the core update php step i m getting an error internal server error responsetext error class ruleslog not found in rules upda... | 0 |

22,081 | 30,603,767,686 | IssuesEvent | 2023-07-22 18:29:06 | h4sh5/pypi-auto-scanner | https://api.github.com/repos/h4sh5/pypi-auto-scanner | opened | roblox-pyc 1.21.89 has 5 GuardDog issues | guarddog silent-process-execution | https://pypi.org/project/roblox-pyc

https://inspector.pypi.io/project/roblox-pyc

```{

"dependency": "roblox-pyc",

"version": "1.21.89",

"result": {

"issues": 5,

"errors": {},

"results": {

"silent-process-execution": [

{

"location": "roblox-pyc-1.21.89/robloxpyc/robloxpy.py:133"... | 1.0 | roblox-pyc 1.21.89 has 5 GuardDog issues - https://pypi.org/project/roblox-pyc

https://inspector.pypi.io/project/roblox-pyc

```{

"dependency": "roblox-pyc",

"version": "1.21.89",

"result": {

"issues": 5,

"errors": {},

"results": {

"silent-process-execution": [

{

"location": "ro... | process | roblox pyc has guarddog issues dependency roblox pyc version result issues errors results silent process execution location roblox pyc robloxpyc robloxpy py code subprocess call ... | 1 |

72,972 | 8,797,386,756 | IssuesEvent | 2018-12-23 18:58:16 | mohitkh7/Easy-Learning | https://api.github.com/repos/mohitkh7/Easy-Learning | closed | Design 404 Error Page | Design Easy | Design a new template for custom 404 Error Page.

Update the file `learn/templates/404.html` using bootstrap. | 1.0 | Design 404 Error Page - Design a new template for custom 404 Error Page.

Update the file `learn/templates/404.html` using bootstrap. | non_process | design error page design a new template for custom error page update the file learn templates html using bootstrap | 0 |

21,927 | 30,446,558,931 | IssuesEvent | 2023-07-15 18:48:27 | h4sh5/pypi-auto-scanner | https://api.github.com/repos/h4sh5/pypi-auto-scanner | opened | pyutils 0.0.1b13 has 2 GuardDog issues | guarddog typosquatting silent-process-execution | https://pypi.org/project/pyutils

https://inspector.pypi.io/project/pyutils

```{

"dependency": "pyutils",

"version": "0.0.1b13",

"result": {

"issues": 2,

"errors": {},

"results": {

"typosquatting": "This package closely ressembles the following package names, and might be a typosquatting attempt:... | 1.0 | pyutils 0.0.1b13 has 2 GuardDog issues - https://pypi.org/project/pyutils

https://inspector.pypi.io/project/pyutils

```{

"dependency": "pyutils",

"version": "0.0.1b13",

"result": {

"issues": 2,

"errors": {},

"results": {

"typosquatting": "This package closely ressembles the following package nam... | process | pyutils has guarddog issues dependency pyutils version result issues errors results typosquatting this package closely ressembles the following package names and might be a typosquatting attempt pytils python utils silen... | 1 |

736,968 | 25,494,695,996 | IssuesEvent | 2022-11-27 14:28:37 | bounswe/bounswe2022group2 | https://api.github.com/repos/bounswe/bounswe2022group2 | opened | Mobile: Implementing Search Page | enhancement priority-high status-new mobile | ### Issue Description

I will create search screen for our mobile app. There will be search bar and results will be separated into two sections: Users and Learnifies. Further implementations details can be found under the PR.

### Step Details

Steps that will be performed:

- [ ] Building app bar

- [ ] Building searc... | 1.0 | Mobile: Implementing Search Page - ### Issue Description

I will create search screen for our mobile app. There will be search bar and results will be separated into two sections: Users and Learnifies. Further implementations details can be found under the PR.

### Step Details

Steps that will be performed:

- [ ] Bui... | non_process | mobile implementing search page issue description i will create search screen for our mobile app there will be search bar and results will be separated into two sections users and learnifies further implementations details can be found under the pr step details steps that will be performed build... | 0 |

3,974 | 6,904,988,328 | IssuesEvent | 2017-11-27 03:49:40 | Great-Hill-Corporation/quickBlocks | https://api.github.com/repos/Great-Hill-Corporation/quickBlocks | closed | New options for getBalance and getTokenBal | status-inprocess tools-getBalance tools-getTokenBal type-enhancement | Need to add --showOnlyChange in getBalance and getTokenBal | 1.0 | New options for getBalance and getTokenBal - Need to add --showOnlyChange in getBalance and getTokenBal | process | new options for getbalance and gettokenbal need to add showonlychange in getbalance and gettokenbal | 1 |

662,685 | 22,149,623,461 | IssuesEvent | 2022-06-03 15:26:23 | cypress-io/cypress | https://api.github.com/repos/cypress-io/cypress | closed | Button to open Specs panel is hard to see | unification stage: internal bug-hunt epic:ui-ux-improvements jira-migration fast-follows-1 priority: medium | ## **Summary**

The expandable specs icon is very hard to see and may also not meet accessibility requirements.I am unsure if the feedback from a month or so ago was addressed regarding overall accessibility issues for the new test runner UI.

!image (48).png|width=108,height=141!

!image (49).png|width=106,height=58!

... | 1.0 | Button to open Specs panel is hard to see - ## **Summary**

The expandable specs icon is very hard to see and may also not meet accessibility requirements.I am unsure if the feedback from a month or so ago was addressed regarding overall accessibility issues for the new test runner UI.

!image (48).png|width=108,height=... | non_process | button to open specs panel is hard to see summary the expandable specs icon is very hard to see and may also not meet accessibility requirements i am unsure if the feedback from a month or so ago was addressed regarding overall accessibility issues for the new test runner ui image png width height ... | 0 |

18,734 | 24,632,057,549 | IssuesEvent | 2022-10-17 03:37:08 | Sunbird-cQube/community | https://api.github.com/repos/Sunbird-cQube/community | closed | Configuration Testing | enhancement Processing Analyze | Configuration testing with positive and negative scenarios and capturing the output in the document | 1.0 | Configuration Testing - Configuration testing with positive and negative scenarios and capturing the output in the document | process | configuration testing configuration testing with positive and negative scenarios and capturing the output in the document | 1 |

77,367 | 9,993,395,215 | IssuesEvent | 2019-07-11 15:16:21 | opengeospatial/LANDRS | https://api.github.com/repos/opengeospatial/LANDRS | closed | Add new proposed OAS for LANDRS coverages | documentation enhancement help wanted | Starting with an [OpenAPI document](https://github.com/opengeospatial/LANDRS/tree/master/DesignDocs/DesignHack1/openapi/openapi.yaml) largely influenced by the [OGC Coverages OpenAPI](https://github.com/opengeospatial/ogc_api_coverages/blob/master/core/openapi/openapi.yaml) we will determine whether the API meets a pre... | 1.0 | Add new proposed OAS for LANDRS coverages - Starting with an [OpenAPI document](https://github.com/opengeospatial/LANDRS/tree/master/DesignDocs/DesignHack1/openapi/openapi.yaml) largely influenced by the [OGC Coverages OpenAPI](https://github.com/opengeospatial/ogc_api_coverages/blob/master/core/openapi/openapi.yaml) w... | non_process | add new proposed oas for landrs coverages starting with an largely influenced by the we will determine whether the api meets a predefined well understood use case before moving on to writing the service implementation in nodejs additionally we need to merge in the existing api design by which exists ... | 0 |

129,034 | 17,668,694,507 | IssuesEvent | 2021-08-23 00:21:03 | Automattic/wp-calypso | https://api.github.com/repos/Automattic/wp-calypso | closed | Write Button: Make it visually more clear that the number of posts shown to the right is a separate button | [Type] Enhancement Navigation Design [Size] XS [Closed] Fixed Back to Basics | **What**

I only recently found out that the number next to the Write button in the WordPress.com toolbar is a separate button! If you click it, it shows the list of the drafts you have.

https://user-images.githubusercontent.com/39308239/114265504-d1c22480-99f9-11eb-9208-f9e9cc06467b.mov

**Why**

Users may ... | 1.0 | Write Button: Make it visually more clear that the number of posts shown to the right is a separate button - **What**

I only recently found out that the number next to the Write button in the WordPress.com toolbar is a separate button! If you click it, it shows the list of the drafts you have.

https://user-images... | non_process | write button make it visually more clear that the number of posts shown to the right is a separate button what i only recently found out that the number next to the write button in the wordpress com toolbar is a separate button if you click it it shows the list of the drafts you have why u... | 0 |

178,722 | 29,996,820,289 | IssuesEvent | 2023-06-26 06:25:33 | SeaGL/organization | https://api.github.com/repos/SeaGL/organization | opened | 2023 Linux Magazine site ads | design | They prefer png or jpg.

We can get ads on the Linux Magazine site.

Static or animated images under 1MB in size.

728x90

300x250

160x600

Let's start with announcing basics of conference with a space for the tagline of the time, e.g. location announcement, keynotes, etc.

This is likely highest priority f... | 1.0 | 2023 Linux Magazine site ads - They prefer png or jpg.

We can get ads on the Linux Magazine site.

Static or animated images under 1MB in size.

728x90

300x250

160x600

Let's start with announcing basics of conference with a space for the tagline of the time, e.g. location announcement, keynotes, etc.

Th... | non_process | linux magazine site ads they prefer png or jpg we can get ads on the linux magazine site static or animated images under in size let s start with announcing basics of conference with a space for the tagline of the time e g location announcement keynotes etc this is likely highest p... | 0 |

5,346 | 8,178,571,469 | IssuesEvent | 2018-08-28 14:09:39 | GoogleCloudPlatform/google-cloud-dotnet | https://api.github.com/repos/GoogleCloudPlatform/google-cloud-dotnet | closed | Release Storage library | type: process | Hi,

GCS CMEK is now GA. Based on developer feedback, developers are unable to use the CMEK features for DotNet as the features are only in `2.2.0-beta01`, [based on release history](https://github.com/GoogleCloudPlatform/google-cloud-dotnet/releases/tag/Google.Cloud.Storage.V1-2.2.0-beta01).

If possible, request... | 1.0 | Release Storage library - Hi,

GCS CMEK is now GA. Based on developer feedback, developers are unable to use the CMEK features for DotNet as the features are only in `2.2.0-beta01`, [based on release history](https://github.com/GoogleCloudPlatform/google-cloud-dotnet/releases/tag/Google.Cloud.Storage.V1-2.2.0-beta01)... | process | release storage library hi gcs cmek is now ga based on developer feedback developers are unable to use the cmek features for dotnet as the features are only in if possible request is to release a non beta version of library so it will be picked up when a developer runs dotnet add ... | 1 |

20,333 | 26,985,170,222 | IssuesEvent | 2023-02-09 15:40:31 | dita-ot/dita-ot | https://api.github.com/repos/dita-ot/dita-ot | closed | Images are not displayed when transforming ditamap with chunk="to-content" using HTML5 with preprocess2 | bug preprocess2 | ## Expected Behavior

When transforming ditamap having chunk="to-content" set on it, images should be displayed.

## Actual Behavior

No images are present in the output.

## Steps to Reproduce

[chunk.zip](https://github.com/dita-ot/dita-ot/files/9972528/chunk.zip)

1. Download and unzip the archive

2. Run the ... | 1.0 | Images are not displayed when transforming ditamap with chunk="to-content" using HTML5 with preprocess2 - ## Expected Behavior

When transforming ditamap having chunk="to-content" set on it, images should be displayed.

## Actual Behavior

No images are present in the output.

## Steps to Reproduce

[chunk.zip](htt... | process | images are not displayed when transforming ditamap with chunk to content using with expected behavior when transforming ditamap having chunk to content set on it images should be displayed actual behavior no images are present in the output steps to reproduce download and unzip ... | 1 |

20,013 | 26,486,339,940 | IssuesEvent | 2023-01-17 18:21:04 | nion-software/nionswift | https://api.github.com/repos/nion-software/nionswift | opened | Line profile on distinct x-y calibrations should choose based on line orientation | type - enhancement level - easy f - line-profile f - processing f - calibration | Email 2022-05-19:

#### N

if I draw a vertical line profile on an image that has different x and y

units of calibration, then the width gets to be specified in vertical

units, and the length in horizontal units, obviously it should be the

other way around.

One can discuss about what to use for a tilted line,... | 1.0 | Line profile on distinct x-y calibrations should choose based on line orientation - Email 2022-05-19:

#### N

if I draw a vertical line profile on an image that has different x and y

units of calibration, then the width gets to be specified in vertical

units, and the length in horizontal units, obviously it shou... | process | line profile on distinct x y calibrations should choose based on line orientation email n if i draw a vertical line profile on an image that has different x and y units of calibration then the width gets to be specified in vertical units and the length in horizontal units obviously it should be... | 1 |

11,981 | 14,737,101,132 | IssuesEvent | 2021-01-07 00:52:26 | kdjstudios/SABillingGitlab | https://api.github.com/repos/kdjstudios/SABillingGitlab | closed | SA Billing Login Issues | anc-ops anc-process anp-1 ant-bug ant-support has attachment | In GitLab by @kdjstudios on Apr 16, 2018, 08:39

**Submitted by:** "Trawana Ervin" <trawana.ervin@answernet.com>

**Helpdesk:** http://www.servicedesk.answernet.com/profiles/ticket/2018-04-09-11294

**Server:** Internal

**Client/Site:** Chattanooga

**Account:** NA

**Issue:**

There is an issue with SABilling and I... | 1.0 | SA Billing Login Issues - In GitLab by @kdjstudios on Apr 16, 2018, 08:39

**Submitted by:** "Trawana Ervin" <trawana.ervin@answernet.com>

**Helpdesk:** http://www.servicedesk.answernet.com/profiles/ticket/2018-04-09-11294

**Server:** Internal

**Client/Site:** Chattanooga

**Account:** NA

**Issue:**

There is an ... | process | sa billing login issues in gitlab by kdjstudios on apr submitted by trawana ervin helpdesk server internal client site chattanooga account na issue there is an issue with sabilling and i am unable to log into the system chrome firefox the following error me... | 1 |

7,213 | 10,568,001,988 | IssuesEvent | 2019-10-06 09:41:19 | lastunicorn/MedicX | https://api.github.com/repos/lastunicorn/MedicX | opened | Create new clinic when "New Clinic" button is clicked | requirement | As a user,

When I press the "New Clinic" button, I want a new empty clinic item to be created in the clinics list, to be selected as the current one and to be displayed in the details panel,

So that I can add information in the system about a new clinic.

Epic: #3 | 1.0 | Create new clinic when "New Clinic" button is clicked - As a user,

When I press the "New Clinic" button, I want a new empty clinic item to be created in the clinics list, to be selected as the current one and to be displayed in the details panel,

So that I can add information in the system about a new clinic.

Epic... | non_process | create new clinic when new clinic button is clicked as a user when i press the new clinic button i want a new empty clinic item to be created in the clinics list to be selected as the current one and to be displayed in the details panel so that i can add information in the system about a new clinic epic... | 0 |

12,772 | 15,158,152,022 | IssuesEvent | 2021-02-12 00:27:11 | darktable-org/darktable | https://api.github.com/repos/darktable-org/darktable | closed | local contrast / local laplacian broken | no-issue-activity scope: image processing understood: incomplete | **Describe the bug**

dt 3.1 displays wrong/different colors than in the exported image

This issue has been already reported several times - apparently there was an attempt fix it but now the result is worse than before - in dt 2.6 the colors were correct when the top/bottom/left/right panels were hidden at 100% view,... | 1.0 | local contrast / local laplacian broken - **Describe the bug**

dt 3.1 displays wrong/different colors than in the exported image

This issue has been already reported several times - apparently there was an attempt fix it but now the result is worse than before - in dt 2.6 the colors were correct when the top/bottom/l... | process | local contrast local laplacian broken describe the bug dt displays wrong different colors than in the exported image this issue has been already reported several times apparently there was an attempt fix it but now the result is worse than before in dt the colors were correct when the top bottom l... | 1 |

16,000 | 20,188,207,743 | IssuesEvent | 2022-02-11 01:18:05 | savitamittalmsft/WAS-SEC-TEST | https://api.github.com/repos/savitamittalmsft/WAS-SEC-TEST | opened | Deprecate legacy network security controls | WARP-Import WAF FEB 2021 Security Performance and Scalability Capacity Management Processes Security & Compliance Network Security | <a href="https://docs.microsoft.com/azure/architecture/framework/Security/network-security-containment#discontinue-legacy-network-security-technology">Deprecate legacy network security controls</a>

<p><b>Why Consider This?</b></p>

Network-based DLP is decreasingly effective at identifying both inadvertent and d... | 1.0 | Deprecate legacy network security controls - <a href="https://docs.microsoft.com/azure/architecture/framework/Security/network-security-containment#discontinue-legacy-network-security-technology">Deprecate legacy network security controls</a>

<p><b>Why Consider This?</b></p>

Network-based DLP is decreasingly ef... | process | deprecate legacy network security controls why consider this network based dlp is decreasingly effective at identifying both inadvertent and deliberate data loss the reason for this is that most modern protocols and attackers use network level encryption for inbound and outbound communications while ... | 1 |

22,061 | 30,579,451,100 | IssuesEvent | 2023-07-21 08:34:03 | LAAC-LSCP/ChildProject | https://api.github.com/repos/LAAC-LSCP/ChildProject | opened | audio conversion should have a default 'standard' conversion | enhancement audio-processing | **Is your feature request related to a problem? Please describe.**

The audio processor pipeline gives a lot of feature that allow people to handle their audio the way they want. But for the vast majority of the time, we just convert them to the standard format. So people have to figure out what the standard is and the... | 1.0 | audio conversion should have a default 'standard' conversion - **Is your feature request related to a problem? Please describe.**

The audio processor pipeline gives a lot of feature that allow people to handle their audio the way they want. But for the vast majority of the time, we just convert them to the standard fo... | process | audio conversion should have a default standard conversion is your feature request related to a problem please describe the audio processor pipeline gives a lot of feature that allow people to handle their audio the way they want but for the vast majority of the time we just convert them to the standard fo... | 1 |

824,812 | 31,224,618,956 | IssuesEvent | 2023-08-19 00:42:02 | googleapis/google-cloud-go | https://api.github.com/repos/googleapis/google-cloud-go | opened | spanner: TestClient_ReadWriteTransaction_Tag failed | type: bug priority: p1 flakybot: issue | Note: #7762 was also for this test, but it was closed more than 10 days ago. So, I didn't mark it flaky.

----

commit: 255bdf21a1f781ad79ce746cf0c320a6b90cd61f

buildURL: [Build Status](https://source.cloud.google.com/results/invocations/2da27176-6669-4007-a3ee-7b837244d04e), [Sponge](http://sponge2/2da27176-6669-4007-... | 1.0 | spanner: TestClient_ReadWriteTransaction_Tag failed - Note: #7762 was also for this test, but it was closed more than 10 days ago. So, I didn't mark it flaky.

----

commit: 255bdf21a1f781ad79ce746cf0c320a6b90cd61f

buildURL: [Build Status](https://source.cloud.google.com/results/invocations/2da27176-6669-4007-a3ee-7b83... | non_process | spanner testclient readwritetransaction tag failed note was also for this test but it was closed more than days ago so i didn t mark it flaky commit buildurl status failed test output warning data race write at by goroutine cloud google com go spanner inte... | 0 |

15,415 | 19,602,665,264 | IssuesEvent | 2022-01-06 04:23:49 | microsoft/vscode | https://api.github.com/repos/microsoft/vscode | closed | better triaging of terminal launch failures | feature-request terminal-process | I see at least a few terminal launch failure issues each week.

I propose when a terminal process exits during launch (likely due to bad shell/shell args), we show a notification with a link to docs that might help.

Even if it just points to docs that say: "in your issue, include your settings.json file", it wou... | 1.0 | better triaging of terminal launch failures - I see at least a few terminal launch failure issues each week.

I propose when a terminal process exits during launch (likely due to bad shell/shell args), we show a notification with a link to docs that might help.

Even if it just points to docs that say: "in your i... | process | better triaging of terminal launch failures i see at least a few terminal launch failure issues each week i propose when a terminal process exits during launch likely due to bad shell shell args we show a notification with a link to docs that might help even if it just points to docs that say in your i... | 1 |

13,273 | 15,757,018,121 | IssuesEvent | 2021-03-31 04:33:46 | bazelbuild/bazel | https://api.github.com/repos/bazelbuild/bazel | closed | How to support grpclb protocol in the remote execution scenarios. | type: support / not a bug (process) | Hello.

We now use remote cache and remote execution in our building scenario, and set --remote_cache build parameter to our remote cache server DNS. But if we want to use load balance,we should setup LVS or nginx as Layer4/7 proxy and set --remote_cache to the proxy. That is not our ideal deployment model. We want... | 1.0 | How to support grpclb protocol in the remote execution scenarios. - Hello.

We now use remote cache and remote execution in our building scenario, and set --remote_cache build parameter to our remote cache server DNS. But if we want to use load balance,we should setup LVS or nginx as Layer4/7 proxy and set --remote_... | process | how to support grpclb protocol in the remote execution scenarios hello we now use remote cache and remote execution in our building scenario and set remote cache build parameter to our remote cache server dns but if we want to use load balance we should setup lvs or nginx as proxy and set remote cache... | 1 |

274,772 | 30,173,601,633 | IssuesEvent | 2023-07-04 01:04:11 | turkdevops/snyk | https://api.github.com/repos/turkdevops/snyk | reopened | CVE-2016-1902 (High) detected in symfony/symfony-v2.3.1 | Mend: dependency security vulnerability | ## CVE-2016-1902 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>symfony/symfony-v2.3.1</b></p></summary>

<p>The Symfony PHP framework</p>

<p>Library home page: <a href="https://api.gi... | True | CVE-2016-1902 (High) detected in symfony/symfony-v2.3.1 - ## CVE-2016-1902 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>symfony/symfony-v2.3.1</b></p></summary>

<p>The Symfony PHP f... | non_process | cve high detected in symfony symfony cve high severity vulnerability vulnerable library symfony symfony the symfony php framework library home page a href dependency hierarchy x symfony symfony vulnerable library found in head commit a href fo... | 0 |

4,588 | 7,431,219,646 | IssuesEvent | 2018-03-25 12:38:10 | nodejs/node | https://api.github.com/repos/nodejs/node | closed | Investigate flaky test-stdout-buffer-flush-on-exit | CI / flaky test arm process test | * **Version**: master

* **Platform**: arm/linux

* **Subsystem**: test

https://ci.nodejs.org/job/node-test-commit-arm/10285/nodes=ubuntu1604-arm64/console

```

not ok 1448 known_issues/test-stdout-buffer-flush-on-exit

---

duration_ms: 11.38

severity: fail

stack: |-

``` | 1.0 | Investigate flaky test-stdout-buffer-flush-on-exit - * **Version**: master

* **Platform**: arm/linux

* **Subsystem**: test

https://ci.nodejs.org/job/node-test-commit-arm/10285/nodes=ubuntu1604-arm64/console

```

not ok 1448 known_issues/test-stdout-buffer-flush-on-exit

---

duration_ms: 11.38

severity: ... | process | investigate flaky test stdout buffer flush on exit version master platform arm linux subsystem test not ok known issues test stdout buffer flush on exit duration ms severity fail stack | 1 |

18,781 | 24,684,378,200 | IssuesEvent | 2022-10-19 01:32:06 | unicode-org/icu4x | https://api.github.com/repos/unicode-org/icu4x | closed | Fix up npm installation and caching on CI | T-docs-tests C-process S-small | We should never run `npm install` on CI, and in general we should avoid hitting npmjs.org in CI. We should leverage caching. We do this in general, but it doesn't seem to always work. | 1.0 | Fix up npm installation and caching on CI - We should never run `npm install` on CI, and in general we should avoid hitting npmjs.org in CI. We should leverage caching. We do this in general, but it doesn't seem to always work. | process | fix up npm installation and caching on ci we should never run npm install on ci and in general we should avoid hitting npmjs org in ci we should leverage caching we do this in general but it doesn t seem to always work | 1 |

115,252 | 17,293,850,259 | IssuesEvent | 2021-07-25 10:19:21 | atlslscsrv-app/B82B787C-E596 | https://api.github.com/repos/atlslscsrv-app/B82B787C-E596 | opened | WS-2017-0247 (Low) detected in ms-0.7.2.tgz | security vulnerability | ## WS-2017-0247 - Low Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>ms-0.7.2.tgz</b></p></summary>

<p>Tiny milisecond conversion utility</p>

<p>Library home page: <a href="https://registry.... | True | WS-2017-0247 (Low) detected in ms-0.7.2.tgz - ## WS-2017-0247 - Low Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>ms-0.7.2.tgz</b></p></summary>

<p>Tiny milisecond conversion utility</p>

<p... | non_process | ws low detected in ms tgz ws low severity vulnerability vulnerable library ms tgz tiny milisecond conversion utility library home page a href path to dependency file dist resources app package json path to vulnerable library dist resources app node modules po... | 0 |

354,769 | 25,174,879,375 | IssuesEvent | 2022-11-11 08:19:59 | cliftonfelix/pe | https://api.github.com/repos/cliftonfelix/pe | opened | javascript launch warning | type.DocumentationBug severity.Low |

When I hover over and click the part "Links in blue" and "Words with a dotted underline", I got a warning

When I hover over and click the part "Links in blue" and "Words with a dotted underline", I got a warning

while inserting new declaration to ops, fill in ops.declarations.reason [conf](https://edenlab.atlassian.net/wiki/x/Y_oQ)

- if declaration_reque... | 1.0 | Fill "reason" on sign declaration request - On [sign declaration request](https://uaehealthapi.docs.apiary.io/#reference/public.-medical-service-provider-integration-layer/declaration-requests/sign-declaration-request) while inserting new declaration to ops, fill in ops.declarations.reason [conf](https://edenlab.atlass... | non_process | fill reason on sign declaration request on while inserting new declaration to ops fill in ops declarations reason if declaration request person authentication methods type offline set reason offline if declaration request person no tax id true set reason no tax id if d... | 0 |

148,965 | 23,407,872,398 | IssuesEvent | 2022-08-12 14:29:59 | zuri-training/Col-films-Team-120 | https://api.github.com/repos/zuri-training/Col-films-Team-120 | closed | Notifications Page | design figma | Create the lo-fi and hi-fi designs for the website notifications page using the defined style guide | 1.0 | Notifications Page - Create the lo-fi and hi-fi designs for the website notifications page using the defined style guide | non_process | notifications page create the lo fi and hi fi designs for the website notifications page using the defined style guide | 0 |

18,048 | 24,057,772,585 | IssuesEvent | 2022-09-16 18:38:21 | openxla/stablehlo | https://api.github.com/repos/openxla/stablehlo | closed | Document compatibility guarantees | Process | As discussed in https://github.com/openxla/stablehlo/pull/1, one of the main reasons for introducing StableHLO is being able to provide compatibility guarantees for MHLO while keeping MHLO as flexible as possible. This is something that we can start doing right now, and within this ticket I'll work on putting together ... | 1.0 | Document compatibility guarantees - As discussed in https://github.com/openxla/stablehlo/pull/1, one of the main reasons for introducing StableHLO is being able to provide compatibility guarantees for MHLO while keeping MHLO as flexible as possible. This is something that we can start doing right now, and within this t... | process | document compatibility guarantees as discussed in one of the main reasons for introducing stablehlo is being able to provide compatibility guarantees for mhlo while keeping mhlo as flexible as possible this is something that we can start doing right now and within this ticket i ll work on putting together a propo... | 1 |

1,972 | 4,797,105,586 | IssuesEvent | 2016-11-01 10:45:36 | DevExpress/testcafe-hammerhead | https://api.github.com/repos/DevExpress/testcafe-hammerhead | closed | Systematize tests with url processing with the flag "i" (iframe) | AREA: client SYSTEM: URL processing | Cases of using the `i` flag:

- Assign src to iframe

- Assign a url attribute to elements (`a`, `form`, `area`, `base`) with the `target` attribute (`_blank`, `_self`, `_parent`, `_top` or framename)

- Assign the `target` attribute to elements with a url attribute

- Change iframe name

- some `target` attributes cease ... | 1.0 | Systematize tests with url processing with the flag "i" (iframe) - Cases of using the `i` flag:

- Assign src to iframe

- Assign a url attribute to elements (`a`, `form`, `area`, `base`) with the `target` attribute (`_blank`, `_self`, `_parent`, `_top` or framename)

- Assign the `target` attribute to elements with a url... | process | systematize tests with url processing with the flag i iframe cases of using the i flag assign src to iframe assign a url attribute to elements a form area base with the target attribute blank self parent top or framename assign the target attribute to elements with a url... | 1 |

61,001 | 6,720,705,724 | IssuesEvent | 2017-10-16 08:53:03 | consul/consul | https://api.github.com/repos/consul/consul | closed | Right answer resize should reorder | polls production testable | # Current Behaviour

Right now when you click expand the gallery of the second answer on a Poll you'll get it expanded but below the first answer:

# Desired Behaviour

Instead of expanding below ... | 1.0 | Right answer resize should reorder - # Current Behaviour

Right now when you click expand the gallery of the second answer on a Poll you'll get it expanded but below the first answer:

# Desired B... | non_process | right answer resize should reorder current behaviour right now when you click expand the gallery of the second answer on a poll you ll get it expanded but below the first answer desired behaviour instead of expanding below the first answer it should be on top of it with the same effect as expanding ... | 0 |

93,445 | 8,416,469,968 | IssuesEvent | 2018-10-14 02:42:45 | brave/brave-browser | https://api.github.com/repos/brave/brave-browser | closed | chrome:// urls should display as brave:// | QA/Test-Plan-Specified QA/Yes feature/url-bar feature/user-interface | It may be too much work to redirect all chrome:// urls to brave:// and have the appropriate handlers and checks still work reliable.

Luckily, the ToolbarModel provides GetURLForDisplay which can be overridden so that brave:// displays most of the time (until the URL is edited).

When paired with https://github.com... | 1.0 | chrome:// urls should display as brave:// - It may be too much work to redirect all chrome:// urls to brave:// and have the appropriate handlers and checks still work reliable.

Luckily, the ToolbarModel provides GetURLForDisplay which can be overridden so that brave:// displays most of the time (until the URL is edi... | non_process | chrome urls should display as brave it may be too much work to redirect all chrome urls to brave and have the appropriate handlers and checks still work reliable luckily the toolbarmodel provides geturlfordisplay which can be overridden so that brave displays most of the time until the url is edi... | 0 |

439,702 | 12,685,480,976 | IssuesEvent | 2020-06-20 04:44:01 | wso2/product-apim | https://api.github.com/repos/wso2/product-apim | opened | Show validation errors in the initial state | 3.2.0 Priority/Normal Type/Bug Type/React-UI | ### Description:

### Steps to reproduce:

### Affected Product Version:

<!-- Members can use Affected/*** labels -->

### Environment details (with versions):

- OS:

- Client:

- Env (Docker/... | 1.0 | Show validation errors in the initial state - ### Description:

### Steps to reproduce:

### Affected Product Version:

<!-- Members can use Affected/*** labels -->

### Environment details (wit... | non_process | show validation errors in the initial state description steps to reproduce affected product version environment details with versions os client env docker optional fields related issues suggested labels suggested ass... | 0 |

5,584 | 8,442,054,263 | IssuesEvent | 2018-10-18 12:11:22 | kiwicom/orbit-components | https://api.github.com/repos/kiwicom/orbit-components | closed | <Modal />: controllable content overflow | bug processing | **A component designed to overflow Modal's area is hidden**

<img width="376" alt="screen shot 2018-10-12 at 16 45 44" src="https://user-images.githubusercontent.com/3975660/46877195-93efdf80-ce40-11e8-8c22-9186dcbdde86.png">

**There should be available some property to turn on/off overflow on Modal**

And our c... | 1.0 | <Modal />: controllable content overflow - **A component designed to overflow Modal's area is hidden**

<img width="376" alt="screen shot 2018-10-12 at 16 45 44" src="https://user-images.githubusercontent.com/3975660/46877195-93efdf80-ce40-11e8-8c22-9186dcbdde86.png">

**There should be available some property to... | process | controllable content overflow a component designed to overflow modal s area is hidden img width alt screen shot at src there should be available some property to turn on off overflow on modal and our component could overflow the modal img width alt screen shot at... | 1 |

175,825 | 21,334,306,824 | IssuesEvent | 2022-04-18 12:47:29 | Gal-Doron/spring-bot | https://api.github.com/repos/Gal-Doron/spring-bot | opened | CVE-2021-44550 (High) detected in stanford-corenlp-3.9.2.jar, stanford-corenlp-3.9.2.jar | security vulnerability | ## CVE-2021-44550 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>stanford-corenlp-3.9.2.jar</b>, <b>stanford-corenlp-3.9.2.jar</b></p></summary>

<p>

<details><summary><b>stanford-co... | True | CVE-2021-44550 (High) detected in stanford-corenlp-3.9.2.jar, stanford-corenlp-3.9.2.jar - ## CVE-2021-44550 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>stanford-corenlp-3.9.2.jar... | non_process | cve high detected in stanford corenlp jar stanford corenlp jar cve high severity vulnerability vulnerable libraries stanford corenlp jar stanford corenlp jar stanford corenlp jar stanford corenlp provides a set of natural language analysis tools wh... | 0 |