Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 7 to 112) | repo_url (string, length 36 to 141) | action (string, 3 classes) | title (string, length 1 to 744) | labels (string, length 4 to 574) | body (string, length 9 to 211k) | index (string, 10 classes) | text_combine (string, length 96 to 211k) | label (string, 2 classes) | text (string, length 96 to 188k) | binary_label (int64, 0 or 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

4,464 | 7,331,139,677 | IssuesEvent | 2018-03-05 12:28:28 | zotero/zotero | https://api.github.com/repos/zotero/zotero | closed | Show "Automatically update citations" in document preferences after document creation | Blocker Word Processor Integration | People should be able to turn on delayed citations manually after document creation. It shouldn't show up during document creation, but it should show up if you return to the doc prefs.

Discussed here: https://forums.zotero.org/discussion/comment/302684/#Comment_302684 | 1.0 | Show "Automatically update citations" in document preferences after document creation - People should be able to turn on delayed citations manually after document creation. It shouldn't show up during document creation, but it should show up if you return to the doc prefs.

Discussed here: https://forums.zotero.org/d... | process | show automatically update citations in document preferences after document creation people should be able to turn on delayed citations manually after document creation it shouldn t show up during document creation but it should show up if you return to the doc prefs discussed here | 1 |

5,176 | 7,960,135,641 | IssuesEvent | 2018-07-13 05:38:11 | Rokid/ShadowNode | https://api.github.com/repos/Rokid/ShadowNode | closed | child_process: process.send causes memory leaks | bug child_process | ```js

// parent.js
var child = require('child_process').fork(__dirname + '/child.js', {
  env: {
    isSubprocess: 'true',
  }
})
child.on('message', data => {
  // console.log(data.toString())
})

// child.js
setInterval(() => {
  process.send(Math.random())
}, 0)

```

child's memory increases fast | 1.0 | child_process: process.send causes memory leaks - ```js

// parent.js
var child = require('child_process').fork(__dirname + '/child.js', {
  env: {
    isSubprocess: 'true',
  }
})
child.on('message', data => {
  // console.log(data.toString())
})

// child.js
setInterval(() => {
process.send(Math.random()... | process | child process process send causes memory leaks js parent js var child require child process fork dirname child js env issubprocess true child on message data console log data tostring child js setinterval process send math random ... | 1 |

10,017 | 13,043,914,329 | IssuesEvent | 2020-07-29 03:02:12 | tikv/tikv | https://api.github.com/repos/tikv/tikv | closed | UCP: Migrate scalar function `Uncompress` from TiDB | challenge-program-2 component/coprocessor difficulty/easy sig/coprocessor |

## Description

Port the scalar function `Uncompress` from TiDB to coprocessor.

## Score

* 50

## Mentor(s)

* @sticnarf

## Recommended Skills

* Rust programming

## Learning Materials

Already implemented expressions ported from TiDB

- https://github.com/tikv/tikv/tree/master/components/tidb_query/src/rpn_exp... | 2.0 | UCP: Migrate scalar function `Uncompress` from TiDB -

## Description

Port the scalar function `Uncompress` from TiDB to coprocessor.

## Score

* 50

## Mentor(s)

* @sticnarf

## Recommended Skills

* Rust programming

## Learning Materials

Already implemented expressions ported from TiDB

- https://github.com/t... | process | ucp migrate scalar function uncompress from tidb description port the scalar function uncompress from tidb to coprocessor score mentor s sticnarf recommended skills rust programming learning materials already implemented expressions ported from tidb | 1 |

16,836 | 9,536,669,789 | IssuesEvent | 2019-04-30 10:20:25 | Garados007/Werwolf | https://api.github.com/repos/Garados007/Werwolf | closed | Optimize requests on game round change | difficult performance | When the current game round changes, most of the data (e.g. chat entries) is discarded and must be fetched again. Some of it, however, does not change in the next round and should only be hidden, or invalid registered periodic queries exist. These queries can be op... | True | Optimize requests on game round change - When the current game round changes, most of the data (e.g. chat entries) is discarded and must be fetched again. Some of it, however, does not change in the next round and should only be hidden, or invalid registered periodic queries... | non_process | optimize requests on game round change when the current game round changes most of the data e g chat entries is discarded and must be fetched again some of it however does not change in the next round and should only be hidden or invalid registered periodic queries... | 0 |

54,772 | 13,920,332,315 | IssuesEvent | 2020-10-21 10:17:05 | jOOQ/jOOQ | https://api.github.com/repos/jOOQ/jOOQ | closed | Cannot convert from UUID to JSON in H2's JSON_OBJECT() and related functions | C: DB: H2 C: Functionality E: All Editions P: Medium R: Fixed T: Defect | ### Expected behavior

Query result containing records with UUID successfully mapped to DTO via JSON document.

### Actual behavior

Exception is thrown when JOOQ attempts to map the record:

```

org.springframework.dao.DataIntegrityViolationException: jOOQ; SQL [select json_object(key ? value "PARENT"."ID", key ? v... | 1.0 | Cannot convert from UUID to JSON in H2's JSON_OBJECT() and related functions - ### Expected behavior

Query result containing records with UUID successfully mapped to DTO via JSON document.

### Actual behavior

Exception is thrown when JOOQ attempts to map the record:

```

org.springframework.dao.DataIntegrityViola... | non_process | cannot convert from uuid to json in s json object and related functions expected behavior query result containing records with uuid successfully mapped to dto via json document actual behavior exception is thrown when jooq attempts to map the record org springframework dao dataintegrityviolat... | 0 |

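17,812 | 23,739,991,520 | IssuesEvent | 2022-08-31 11:34:59 | Tencent/tdesign-miniprogram | https://api.github.com/repos/Tencent/tdesign-miniprogram | closed | [dialog] Docs and examples lack a usage example for open-type-event | good first issue in process | ### What problem does this feature solve?

If the dialog's confirm button should use the button's open capability to get the phone number, which field should be used, and how is getPhoneNumber declared?

### What solution do you propose?

Please add a usage example to the examples. | 1.0 | [dialog] Docs and examples lack a usage example for open-type-event - ### What problem does this feature solve?

If the dialog's confirm button should use the button's open capability to get the phone number, which field should be used, and how is getPhoneNumber declared?

### What solution do you propose?

Please add a usage example to the examples. | process | docs and examples lack a usage example for open type event what problem does this feature solve if the dialog s confirm button should use the button s open capability to get the phone number which field should be used and how is getphonenumber declared what solution do you propose please add a usage example to the examples | 1 |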

105,725 | 9,100,269,255 | IssuesEvent | 2019-02-20 08:01:42 | humera987/FXLabs-Test-Automation | https://api.github.com/repos/humera987/FXLabs-Test-Automation | opened | Test : ApiV1OrgsIdPutOrgorgplanbasicUsercDisallowAbact2 | test | Project : Test

Job : Default

Env : Default

Category : null

Tags : null

Severity : null

Region : US_WEST

Result : fail

Status Code : 500

Headers : {}

Endpoint : http://13.56.210.25/api/v1/api/v1/orgs/

Request :

{

"billingEmail" : "katrina.reynolds@yahoo.com",

"company" : "Kuvalis-... | 1.0 | Test : ApiV1OrgsIdPutOrgorgplanbasicUsercDisallowAbact2 - Project : Test

Job : Default

Env : Default

Category : null

Tags : null

Severity : null

Region : US_WEST

Result : fail

Status Code : 500

Headers : {}

Endpoint : http://13.56.210.25/api/v1/api/v1/orgs/

Request :

{

"billingEmai... | non_process | test project test job default env default category null tags null severity null region us west result fail status code headers endpoint request billingemail katrina reynolds yahoo com company kuvalis kuvalis createdby ... | 0 |

77,675 | 3,507,208,456 | IssuesEvent | 2016-01-08 11:54:51 | OregonCore/OregonCore | https://api.github.com/repos/OregonCore/OregonCore | closed | Crash Alert (BB #655) | Category: Crash migrated Priority: Medium Type: Bug | This issue was migrated from bitbucket.

**Original Reporter:** PadreWoW

**Original Date:** 20.08.2014 09:22:23 GMT+0000

**Original Priority:** major

**Original Type:** bug

**Original State:** closed

**Direct Link:** https://bitbucket.org/oregon/oregoncore/issues/655

<hr>

2014-08-20 13:20:33 CRASH ALERT: Unit (Entry 129... | 1.0 | Crash Alert (BB #655) - This issue was migrated from bitbucket.

**Original Reporter:** PadreWoW

**Original Date:** 20.08.2014 09:22:23 GMT+0000

**Original Priority:** major

**Original Type:** bug

**Original State:** closed

**Direct Link:** https://bitbucket.org/oregon/oregoncore/issues/655

<hr>

2014-08-20 13:20:33 CRAS... | non_process | crash alert bb this issue was migrated from bitbucket original reporter padrewow original date gmt original priority major original type bug original state closed direct link crash alert unit entry is trying to delete its updating mg type ... | 0 |

453,359 | 13,068,578,819 | IssuesEvent | 2020-07-31 04:01:52 | ProjectSidewalk/SidewalkWebpage | https://api.github.com/repos/ProjectSidewalk/SidewalkWebpage | closed | In Version 2.1, No Smooth Animated Interpolation Between Locations When Double Clicking | Priority: Low wontfix | In version 2.1, there is no smooth animated interpolation between locations when double clicking to move. See below. Is there some function parameter we have to pass to enable this smooth movement animation on the double click to move? It's enabled when you click the arrow to move.

for full description of steps.

| 1.0 | Evidence string generation for 2023.09 release - **Deadline for submission: 8 August 2023**

Refer to [documentation](https://github.com/EBIvariation/eva-opentargets/blob/master/docs/generate-evidence-strings.md) for full description of steps.

| process | evidence string generation for release deadline for submission august refer to for full description of steps | 1 |

770,751 | 27,054,804,634 | IssuesEvent | 2023-02-13 15:29:58 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | www.zeit.de - site is not usable | priority-important browser-firefox-tablet engine-gecko | <!-- @browser: Firefox Mobile (Tablet) 68.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 4.2.2; Tablet; rv:68.0) Gecko/68.0 Firefox/68.0 -->

<!-- @reported_with: mobile-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/118150 -->

**URL**: https://www.zeit.de/zustimmung?url=https%3A%2F%2Fwww.zeit... | 1.0 | www.zeit.de - site is not usable - <!-- @browser: Firefox Mobile (Tablet) 68.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 4.2.2; Tablet; rv:68.0) Gecko/68.0 Firefox/68.0 -->

<!-- @reported_with: mobile-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/118150 -->

**URL**: https://www.zeit.de/zu... | non_process | site is not usable url browser version firefox mobile tablet operating system android tested another browser yes chrome problem type site is not usable description missing items steps to reproduce i can t choice the button einverstanden und weiter mit... | 0 |

780,618 | 27,401,754,735 | IssuesEvent | 2023-03-01 01:34:29 | hotosm/fmtm | https://api.github.com/repos/hotosm/fmtm | closed | Add ODK->OSM conversion | enhancement Priority: Must have in progress | Use the odkconvert project conversion program that takes a CSV submission file and converts it to good OSM XML. | 1.0 | Add ODK->OSM conversion - Use the odkconvert project conversion program that takes a CSV submission file and converts it to good OSM XML. | non_process | add odk osm conversion use the odkconvert project conversion program that takes a csv submission file and converts it to good osm xml | 0 |

20,880 | 31,466,909,721 | IssuesEvent | 2023-08-30 03:16:35 | BG3-Community-Library-Team/BG3-Community-Library | https://api.github.com/repos/BG3-Community-Library-Team/BG3-Community-Library | closed | Bladesinger Subclass Compatibility Support | Subclass Compatibilty Framework | **Is your feature request related to a problem? Please describe.**

We now have permission to add support for the [Bladesinger](https://www.nexusmods.com/baldursgate3/mods/279) subclass.

**Describe the solution you'd like**

It will need to be implemented in the same way as [PR 39](https://github.com/BG3-Community-L... | True | Bladesinger Subclass Compatibility Support - **Is your feature request related to a problem? Please describe.**

We now have permission to add support for the [Bladesinger](https://www.nexusmods.com/baldursgate3/mods/279) subclass.

**Describe the solution you'd like**

It will need to be implemented in the same way ... | non_process | bladesinger subclass compatibility support is your feature request related to a problem please describe we now have permission to add support for the subclass describe the solution you d like it will need to be implemented in the same way as which handled some fighter subclasses | 0 |

9,794 | 12,807,791,993 | IssuesEvent | 2020-07-03 12:17:12 | solid/process | https://api.github.com/repos/solid/process | reopened | Improving the effectiveness of panels | process issue |

We [originally introduced](https://github.com/solid/process/pull/24) panels as part of the Solid process ten months ago. Our aim was to drive focused and thoughtful collaboration around specific topics, leading to meaningful contributions to the Solid Specification and ecosystem. We held off on adding a lot of struct... | 1.0 | Improving the effectiveness of panels -

We [originally introduced](https://github.com/solid/process/pull/24) panels as part of the Solid process ten months ago. Our aim was to drive focused and thoughtful collaboration around specific topics, leading to meaningful contributions to the Solid Specification and ecosyste... | process | improving the effectiveness of panels we panels as part of the solid process ten months ago our aim was to drive focused and thoughtful collaboration around specific topics leading to meaningful contributions to the solid specification and ecosystem we held off on adding a lot of structure around how panels ... | 1 |

460,844 | 13,219,005,217 | IssuesEvent | 2020-08-17 09:42:58 | MyDataTaiwan/logboard | https://api.github.com/repos/MyDataTaiwan/logboard | opened | When user switch data window by "Today", "This Week" .. buttons, the calendar on top should also sync | QA priority-high | **Steps to Reproduce**

1. User upload data to LogBoard

2. User switch the data window by the buttons "Today", "This Week"...... etc.

**Results**

run "npm start" in the terminal, this will open the homepage in a browser

- look at the navbar and ensure it has the following buttons: "profile", "account settings", "login"![navbar-profile-account-login.... | 1.0 | Add routing to profile, account settings, and login button on navbar - *Task Tests*

run in "Sprint2-Navbar-Buttons" branch in github

test1:

- in the project folder (project_s23-cinco) run "npm start" in the terminal, this will open the homepage in a browser

- look at the navbar and ensure it has the following buttons... | process | add routing to profile account settings and login button on navbar task tests run in navbar buttons branch in github in the project folder project cinco run npm start in the terminal this will open the homepage in a browser look at the navbar and ensure it has the following buttons profile ... | 1 |

4,623 | 4,489,147,864 | IssuesEvent | 2016-08-30 09:55:32 | syl20bnr/spacemacs | https://api.github.com/repos/syl20bnr/spacemacs | closed | Spaceline performance bug? | - Bug tracker - Fixed upstream Performance | #### Description

with Emacs 25.1.50.3 (built from source, git commit 8cfd9ba…, with -O2 and gtk toolkit on Linux Mint 17.3, using the spacemacs develop branch and freshly updated packages as of May 1st 2016), I experience extremely laggy cursor motion. Just writing a single line of text into an otherwise empty text-mo... | True | Spaceline performance bug? - #### Description

with Emacs 25.1.50.3 (built from source, git commit 8cfd9ba…, with -O2 and gtk toolkit on Linux Mint 17.3, using the spacemacs develop branch and freshly updated packages as of May 1st 2016), I experience extremely laggy cursor motion. Just writing a single line of text in... | non_process | spaceline performance bug description with emacs built from source git commit … with and gtk toolkit on linux mint using the spacemacs develop branch and freshly updated packages as of may i experience extremely laggy cursor motion just writing a single line of text into an otherwise... | 0 |

195,159 | 14,705,589,952 | IssuesEvent | 2021-01-04 18:23:34 | rancher/rancher | https://api.github.com/repos/rancher/rancher | closed | Number of secrets rewritten during encryption process is seen incorrect | [zube]: To Test kind/bug-qa | **What kind of request is this (question/bug/enhancement/feature request):** bug

**Steps to reproduce (least amount of steps as possible):**

- Deploy a cluster, and create some secrets

- K get secrets -A --> On the cluster gives 137 secrets

- enable secrets encryption

```

services:

kube-api:

secre... | 1.0 | Number of secrets rewritten during encryption process is seen incorrect - **What kind of request is this (question/bug/enhancement/feature request):** bug

**Steps to reproduce (least amount of steps as possible):**

- Deploy a cluster, and create some secrets

- K get secrets -A --> On the cluster gives 137 secr... | non_process | number of secrets rewritten during encryption process is seen incorrect what kind of request is this question bug enhancement feature request bug steps to reproduce least amount of steps as possible deploy a cluster and create some secrets k get secrets a on the cluster gives secret... | 0 |

284,719 | 21,466,688,488 | IssuesEvent | 2022-04-26 05:02:22 | appsmithorg/appsmith | https://api.github.com/repos/appsmithorg/appsmith | closed | [Docs] #13254 [Bug]-[800]:Close button can be clicked on a disabled select widget | Documentation User Education Pod | > TODO

- [ ] Evaluate if this task is needed. If not add the "Skip Docs" label on the parent ticket

- [ ] Fill these fields

- [ ] Prepare first draft

- [ ] Add label: "Ready for Docs Team"

Field | Details

-----|-----

**POD** | App Viewers Pod

**Parent Ticket** | #13254

Engineer |

Release Date |

Live Date |... | 1.0 | [Docs] #13254 [Bug]-[800]:Close button can be clicked on a disabled select widget - > TODO

- [ ] Evaluate if this task is needed. If not add the "Skip Docs" label on the parent ticket

- [ ] Fill these fields

- [ ] Prepare first draft

- [ ] Add label: "Ready for Docs Team"

Field | Details

-----|-----

**POD** |... | non_process | close button can be clicked on a disabled select widget todo evaluate if this task is needed if not add the skip docs label on the parent ticket fill these fields prepare first draft add label ready for docs team field details pod app viewers pod paren... | 0 |

136,102 | 30,475,790,514 | IssuesEvent | 2023-07-17 16:24:23 | ita-social-projects/StreetCode | https://api.github.com/repos/ita-social-projects/StreetCode | opened | [Admin/History map] The number of the streetcode plate with a negative value is added to the map in the "Мапа історії" block | bug (Epic#2) Admin/New StreetCode | **Environment:** OS: Windows 10 Pro

Browser: Google Chrome Version 111.0.5563.112.

**Reproducible:** always.

**Build found:** commit [d494c37](https://github.com/ita-social-projects/StreetCode/commit/d494c372c230bf30fef322fdb50405a1c708c55b)

**Type:** Functional

**Priority:** Low

**Severity:** Low

**Precondi... | 1.0 | [Admin/History map] The number of the streetcode plate with a negative value is added to the map in the "Мапа історії" block - **Environment:** OS: Windows 10 Pro

Browser: Google Chrome Version 111.0.5563.112.

**Reproducible:** always.

**Build found:** commit [d494c37](https://github.com/ita-social-projects/StreetCo... | non_process | the number of the streetcode plate with a negative value is added to the map in the мапа історії block environment os windows pro browser google chrome version reproducible always build found commit type functional priority low severity low precondition... | 0 |

16,784 | 21,970,817,600 | IssuesEvent | 2022-05-25 03:25:18 | quark-engine/quark-engine | https://api.github.com/repos/quark-engine/quark-engine | closed | Update author information | issue-processing-state-06 | Since the main author was changed, we should update the author information in setup.py. | 1.0 | Update author information - Since the main author was changed, we should update the author information in setup.py. | process | update author information since the main author was changed we should update the author information in setup py | 1 |

3,589 | 6,621,672,102 | IssuesEvent | 2017-09-21 20:06:36 | WikiWatershed/rapid-watershed-delineation | https://api.github.com/repos/WikiWatershed/rapid-watershed-delineation | closed | Expose unsimplified result | BigCZ Geoprocessing API | Provide a way for users to get the unsimplified geojson results. The MMW Geoprocessing API would like to expose them.

| 1.0 | Expose unsimplified result - Provide a way for users to get the unsimplified geojson results. The MMW Geoprocessing API would like to expose them.

| process | expose unsimplified result provide a way for users to get the unsimplified geojson results the mmw geoprocessing api would like to expose them | 1 |

19,591 | 25,932,202,326 | IssuesEvent | 2022-12-16 10:59:19 | nodejs/node | https://api.github.com/repos/nodejs/node | closed | Node hangs when running a stand-alone script with captured output | help wanted windows process | * **Version**: v8.1.3

* **Platform**: Windows 10 version 10.0.14393 x64

* **Subsystem**:

I am running into an issue on our build servers where Node hangs after running a stand-alone script if it is launched with its standard handles redirected to pipes. Specifically, I am using Ruby's `popen3` function, which wrap... | 1.0 | Node hangs when running a stand-alone script with captured output - * **Version**: v8.1.3

* **Platform**: Windows 10 version 10.0.14393 x64

* **Subsystem**:

I am running into an issue on our build servers where Node hangs after running a stand-alone script if it is launched with its standard handles redirected to ... | process | node hangs when running a stand alone script with captured output version platform windows version subsystem i am running into an issue on our build servers where node hangs after running a stand alone script if it is launched with its standard handles redirected to pipes sp... | 1 |

288,708 | 8,850,587,584 | IssuesEvent | 2019-01-08 13:41:30 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | jobs.jobvite.com - design is broken | browser-firefox priority-normal | <!-- @browser: Firefox 65.0 -->

<!-- @ua_header: Mozilla/5.0 (X11; Linux x86_64; rv:65.0) Gecko/20100101 Firefox/65.0 -->

<!-- @reported_with: desktop-reporter -->

**URL**: http://jobs.jobvite.com/careers/jbs/job/oTp08fwa?__jvst=Job%20Board&__jvsd=Indeed

**Browser / Version**: Firefox 65.0

**Operating System**: Linux... | 1.0 | jobs.jobvite.com - design is broken - <!-- @browser: Firefox 65.0 -->

<!-- @ua_header: Mozilla/5.0 (X11; Linux x86_64; rv:65.0) Gecko/20100101 Firefox/65.0 -->

<!-- @reported_with: desktop-reporter -->

**URL**: http://jobs.jobvite.com/careers/jbs/job/oTp08fwa?__jvst=Job%20Board&__jvsd=Indeed

**Browser / Version**: Fi... | non_process | jobs jobvite com design is broken url browser version firefox operating system linux tested another browser yes problem type design is broken description site will not submit application steps to reproduce last button does not function browser configura... | 0 |

674,133 | 23,040,439,666 | IssuesEvent | 2022-07-23 04:00:40 | space-wizards/space-station-14 | https://api.github.com/repos/space-wizards/space-station-14 | closed | Shuttle/vehicle movement structure seems dodgy | Priority: 3-Not Required Needs Discussion Difficulty: 3-Hard | ## Description

This issue is very much an abstract code-structure issue right now, but I think it'll be a problem later.

I was told to open an issue on this.

Right now, there is no actual concept of movement states (i.e. no interface for such).

However, there are three movement modes regardless. They are laid... | 1.0 | Shuttle/vehicle movement structure seems dodgy - ## Description

This issue is very much an abstract code-structure issue right now, but I think it'll be a problem later.

I was told to open an issue on this.

Right now, there is no actual concept of movement states (i.e. no interface for such).

However, there a... | non_process | shuttle vehicle movement structure seems dodgy description this issue is very much an abstract code structure issue right now but i think it ll be a problem later i was told to open an issue on this right now there is no actual concept of movement states i e no interface for such however there a... | 0 |

13,506 | 16,045,207,919 | IssuesEvent | 2021-04-22 12:55:48 | prisma/prisma | https://api.github.com/repos/prisma/prisma | opened | Prisma Format: Fill in native type attributes on foreign key relations | kind/bug process/candidate team/client topic: mongodb | ## Problem

This problem popped up while working with Mongo. Given the following:

```prisma

datasource db {

provider = "mongodb"

url = env("DATABASE_URL")

}

generator client {

provider = "prisma-client-js"

previewFeatures = ["mongodb"]

}

model User {

id String @id @default(dbg... | 1.0 | Prisma Format: Fill in native type attributes on foreign key relations - ## Problem

This problem popped up while working with Mongo. Given the following:

```prisma

datasource db {

provider = "mongodb"

url = env("DATABASE_URL")

}

generator client {

provider = "prisma-client-js"

preview... | process | prisma format fill in native type attributes on foreign key relations problem this problem popped up while working with mongo given the following prisma datasource db provider mongodb url env database url generator client provider prisma client js preview... | 1 |

212,504 | 16,487,440,007 | IssuesEvent | 2021-05-24 20:16:25 | anitab-org/stem-diverse-tv | https://api.github.com/repos/anitab-org/stem-diverse-tv | closed | Bug : Incorrect link to bug_report.md in contributing_guidelines.md | Category: Documentation/Training First Timers Only Type: Bug | ### Describe the bug

The links to bug report is incorrect in the Contributing Guidelines file you can see this by also scrolling to the [Contributing section](https://github.com/anitab-org/stem-diverse-tv#contributing) in readme

### To Reproduce

Steps to reproduce the behavior:

1. Go to 'https://github.com/anita... | 1.0 | Bug : Incorrect link to bug_report.md in contributing_guidelines.md - ### Describe the bug

The links to bug report is incorrect in the Contributing Guidelines file you can see this by also scrolling to the [Contributing section](https://github.com/anitab-org/stem-diverse-tv#contributing) in readme

### To Reproduce... | non_process | bug incorrect link to bug report md in contributing guidelines md describe the bug the links to bug report is incorrect in the contributing guidelines file you can see this by also scrolling to the in readme to reproduce steps to reproduce the behavior go to scroll down to contribution... | 0 |

344,704 | 24,824,023,485 | IssuesEvent | 2022-10-25 18:56:29 | pnp/powershell | https://api.github.com/repos/pnp/powershell | closed | Documentation: Using pnp/powershell Docker Image | documentation | **Is your feature request related to a problem? Please describe.**

Starting using pnp/powershell Docker Image might be tricky for beginners

**Describe the solution you'd like**

An article, similar to https://pnp.github.io/powershell/articles/azurecloudshell.html, that presents different options and different examp... | 1.0 | Documentation: Using pnp/powershell Docker Image - **Is your feature request related to a problem? Please describe.**

Starting using pnp/powershell Docker Image might be tricky for beginners

**Describe the solution you'd like**

An article, similar to https://pnp.github.io/powershell/articles/azurecloudshell.html, ... | non_process | documentation using pnp powershell docker image is your feature request related to a problem please describe starting using pnp powershell docker image might be tricky for beginners describe the solution you d like an article similar to that presents different options and different examples of usin... | 0 |

14,913 | 18,296,692,751 | IssuesEvent | 2021-10-05 21:11:06 | pavel-one/Logger | https://api.github.com/repos/pavel-one/Logger | opened | Registration and login via social networks | Process | Implement registration via the following social networks:

1. Github

2. Google | 1.0 | Registration and login via social networks - Implement registration via the following social networks:

1. Github

2. Google | process | registration and login via social networks implement registration via the following social networks github google | 1 |

9,642 | 12,603,535,545 | IssuesEvent | 2020-06-11 13:38:21 | HackYourFutureBelgium/class-9-10 | https://api.github.com/repos/HackYourFutureBelgium/class-9-10 | opened | Your Name: module, week | class-10 process-week wednesday-check-in | # Wednesday Check-In

__Debugging, Week 1__

## Progress

I learned about JavaScript concepts.

## Blocked

So far the process is clear.

## Next Steps

Learn more about JavaScript since it requires more practicing to understand it very well.

## Tip(s) of the week

I watched videos on YouTube and th... | 1.0 | Your Name: module, week - # Wednesday Check-In

__Debugging, Week 1__

## Progress

I learned about JavaScript concepts.

## Blocked

So far the process is clear.

## Next Steps

Learn more about JavaScript since it requires more practicing to understand it very well.

## Tip(s) of the week

I watche... | process | your name module week wednesday check in debugging week progress i learned about javascript concepts blocked so far the process is clear next steps learn more about javascript since it requires more practicing to understand it very well tip s of the week i watche... | 1 |

17,367 | 23,191,007,326 | IssuesEvent | 2022-08-01 12:40:05 | apache/arrow-rs | https://api.github.com/repos/apache/arrow-rs | opened | Release `object_store` `0.4.0` | development-process | As discussed, we will release a new version of object_store from the arrow-rs repo / under ASF process: https://github.com/apache/arrow-rs/issues/2180

* Planned Release Candidate: 2022-08-08

* Planned Release and Publish to crates.io: 2022-08-11

Items:

- [ ] Update changelog and readme:

- [ ] Create release s... | 1.0 | Release `object_store` `0.4.0` - As discussed, we will release a new version of object_store from the arrow-rs repo / under ASF process: https://github.com/apache/arrow-rs/issues/2180

* Planned Release Candidate: 2022-08-08

* Planned Release and Publish to crates.io: 2022-08-11

Items:

- [ ] Update changelog and... | process | release object store as discussed we will release a new version of object store from the arrow rs repo under asf process planned release candidate planned release and publish to crates io items update changelog and readme create release scripts create release... | 1 |

18,042 | 24,052,944,827 | IssuesEvent | 2022-09-16 14:19:03 | hashgraph/hedera-json-rpc-relay | https://api.github.com/repos/hashgraph/hedera-json-rpc-relay | closed | Add IERC transferFrom to htsPrecompile approval acceptance tests | enhancement limechain P1 process | ### Problem

Currently the `htsPrecompile.spec.ts` has tests for approval flow.

However, it doesn't follow up with actual transfer tests.

### Solution

Add an additional step to each `approval` test to test the `transferFrom()` call.

Ensure both `IERC20` and `IERC721` get coverage

- `IERC20(token).transferFrom(... | 1.0 | Add IERC transferFrom to htsPrecompile approval acceptance tests - ### Problem

Currently the `htsPrecompile.spec.ts` has tests for approval flow.

However, it doesn't follow up with actual transfer tests.

### Solution

Add an additional step to each `approval` test to test the `transferFrom()` call.

Ensure both `IER... | process | add ierc transferfrom to htsprecompile approval acceptance tests problem currently the htsprecompile spec ts has tests for approval flow however it doesn t follow up with actual transfer tests solution add an additional step to each approval test to test the transferfrom call ensure both ... | 1 |

11,884 | 14,680,446,267 | IssuesEvent | 2020-12-31 10:05:46 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [PM] [Dev] Studies created from SB having maximum Research Sponsor text are not reflected in Sites/Studies tab in PM | Bug P1 Participant manager Process: Dev Process: Tested QA | Steps:

1. Create a study from SB by giving maximum Research Sponsor text in basic information

2. Launch the study

3. Navigate to PM --> Sites tab

4. Observe the list

A/R: Some of the studies created from SB are not reflected in Sites/Studies tab in PM

E/R: All studies created from SB should be reflected

St... | 2.0 | [PM] [Dev] Studies created from SB having maximum Research Sponsor text are not reflected in Sites/Studies tab in PM - Steps:

1. Create a study from SB by giving maximum Research Sponsor text in basic information

2. Launch the study

3. Navigate to PM --> Sites tab

4. Observe the list

A/R: Some of the studies cre... | process | studies created from sb having maximum research sponsor text are not reflected in sites studies tab in pm steps create a study from sb by giving maximum research sponsor text in basic information launch the study navigate to pm sites tab observe the list a r some of the studies created fr... | 1 |

139,368 | 18,850,349,884 | IssuesEvent | 2021-11-11 19:59:18 | snowdensb/sonar-xanitizer | https://api.github.com/repos/snowdensb/sonar-xanitizer | opened | CVE-2020-24616 (High) detected in jackson-databind-2.6.3.jar | security vulnerability | ## CVE-2020-24616 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.6.3.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core streamin... | True | CVE-2020-24616 (High) detected in jackson-databind-2.6.3.jar - ## CVE-2020-24616 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.6.3.jar</b></p></summary>

<p>General... | non_process | cve high detected in jackson databind jar cve high severity vulnerability vulnerable library jackson databind jar general data binding functionality for jackson works on core streaming api library home page a href path to vulnerable library src test resources webgoat ... | 0 |

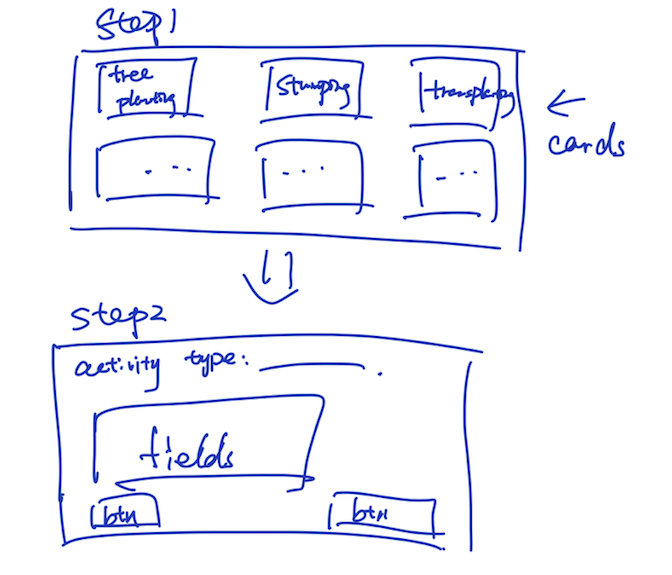

3,728 | 6,733,142,338 | IssuesEvent | 2017-10-18 13:58:37 | york-region-tpss/stp | https://api.github.com/repos/york-region-tpss/stp | closed | Contract Preparation Detail Wizard | form process workflow | Two Steps Wizard Wireframe

Dynamically load fields that are applicable for the chosen activity type.

**After submitted, only update in the collection** | 1.0 | Contract Preparation Detail Wizard - Two Steps Wizard Wireframe

Dynamically load fields that are applicable for the chosen activity type.

**After submitted, only update in the collection** | process | contract preparation detail wizard two steps wizard wireframe dynamically load fields that are applicable for the chosen activity type after submitted only update in the collection | 1 |

614,226 | 19,161,598,265 | IssuesEvent | 2021-12-03 01:15:45 | returntocorp/semgrep | https://api.github.com/repos/returntocorp/semgrep | closed | Opposite conditional matching behavior in PHP and Java | priority:low lang:java lang:php feature:matching stale | In Java matching conditionals with

```

if (...) { ... }

```

matches an if-block as well as an if-else-block. In PHP only the if-block is matched.

However, changing the pattern to

```

if (...) { ... } else { ... }

```

will have the opposite behavior in either languages.

Links:

https://semgrep.dev/s/bashpr... | 1.0 | Opposite conditional matching behavior in PHP and Java - In Java matching conditionals with

```

if (...) { ... }

```

matches an if-block as well as an if-else-block. In PHP only the if-block is matched.

However, changing the pattern to

```

if (...) { ... } else { ... }

```

will have the opposite behavior in ... | non_process | opposite conditional matching behavior in php and java in java matching conditionals with if matches an if block as well as an if else block in php only the if block is matched however changing the pattern to if else will have the opposite behavior in ... | 0 |

121,701 | 16,016,616,584 | IssuesEvent | 2021-04-20 16:48:04 | microsoft/BotFramework-Composer | https://api.github.com/repos/microsoft/BotFramework-Composer | closed | Restart selected bot with single click | Bot Services UX Design customer-reported feature-request | ## Is your feature request related to a problem? Please describe.

#7049

If multiple bots are running, there is no easy way to restart a particular bot; the current approach is to restart all the bots, or to stop and start the selected bot.

: Windows 10

- Mobile device (e.g. iPhone 8, Pixel 2, Samsung Galaxy) if the issue happens on mobile device: -

- TensorFlow.js installed from (npm or script link): npm

- TensorFlow.js version: 2.7.0

- CUDA/cuDNN version: -

**Desc... | 1.0 | Compile error with @tensorflow/tfjs-backend-webgl for TS target > ES5 - **System information**

- OS Platform and Distribution (e.g., Linux Ubuntu 16.04): Windows 10

- Mobile device (e.g. iPhone 8, Pixel 2, Samsung Galaxy) if the issue happens on mobile device: -

- TensorFlow.js installed from (npm or script link): n... | non_process | compile error with tensorflow tfjs backend webgl for ts target system information os platform and distribution e g linux ubuntu windows mobile device e g iphone pixel samsung galaxy if the issue happens on mobile device tensorflow js installed from npm or script link npm ... | 0 |

258,224 | 27,563,872,095 | IssuesEvent | 2023-03-08 01:12:29 | billmcchesney1/t-vault | https://api.github.com/repos/billmcchesney1/t-vault | opened | CVE-2021-23362 (Medium) detected in hosted-git-info-2.8.8.tgz | security vulnerability | ## CVE-2021-23362 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>hosted-git-info-2.8.8.tgz</b></p></summary>

<p>Provides metadata and conversions from repository urls for Github, Bi... | True | CVE-2021-23362 (Medium) detected in hosted-git-info-2.8.8.tgz - ## CVE-2021-23362 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>hosted-git-info-2.8.8.tgz</b></p></summary>

<p>Provi... | non_process | cve medium detected in hosted git info tgz cve medium severity vulnerability vulnerable library hosted git info tgz provides metadata and conversions from repository urls for github bitbucket and gitlab library home page a href path to dependency file tvaultui package... | 0 |

40,869 | 10,586,892,721 | IssuesEvent | 2019-10-08 20:45:33 | ged/ruby-pg | https://api.github.com/repos/ged/ruby-pg | closed | gem install pg on windows 7 fails | 0.10.1 Build System bug major | **[Original report](https://bitbucket.org/ged/ruby-pg/issue/64) by Anonymous.**

----------------------------------------

When I run "bundle install", I get this error:

checking for pg_config... yes

Using config values from c:\PostgreSQL\9.0\bin/pg_config.exe

checking for libpq-fe.h... yes

checki... | 1.0 | gem install pg on windows 7 fails - **[Original report](https://bitbucket.org/ged/ruby-pg/issue/64) by Anonymous.**

----------------------------------------

When I run "bundle install", I get this error:

checking for pg_config... yes

Using config values from c:\PostgreSQL\9.0\bin/pg_config.exe

check... | non_process | gem install pg on windows fails by anonymous when i run bundle install i get this error checking for pg config yes using config values from c postgresql bin pg config exe checking for libpq fe h yes checking for libpq libpq fs ... | 0 |

184,588 | 14,289,501,639 | IssuesEvent | 2020-11-23 19:21:45 | github-vet/rangeclosure-findings | https://api.github.com/repos/github-vet/rangeclosure-findings | closed | zhanshicai/zhanshicai: gossip/gossip/orgs_test.go; 14 LoC | fresh small test |

Found a possible issue in [zhanshicai/zhanshicai](https://www.github.com/zhanshicai/zhanshicai) at [gossip/gossip/orgs_test.go](https://github.com/zhanshicai/zhanshicai/blob/fc4298bdbe0e2f79f8a3a80c31ba0ac46dc91096/gossip/gossip/orgs_test.go#L397-L410)

The below snippet of Go code triggered static analysis which sear... | 1.0 | zhanshicai/zhanshicai: gossip/gossip/orgs_test.go; 14 LoC -

Found a possible issue in [zhanshicai/zhanshicai](https://www.github.com/zhanshicai/zhanshicai) at [gossip/gossip/orgs_test.go](https://github.com/zhanshicai/zhanshicai/blob/fc4298bdbe0e2f79f8a3a80c31ba0ac46dc91096/gossip/gossip/orgs_test.go#L397-L410)

The b... | non_process | zhanshicai zhanshicai gossip gossip orgs test go loc found a possible issue in at the below snippet of go code triggered static analysis which searches for goroutines and or defer statements which capture loop variables click here to show the line s of go which triggered the analyzer ... | 0 |

359,175 | 25,224,407,260 | IssuesEvent | 2022-11-14 15:01:57 | stratosphereips/nist-cve-search-tool | https://api.github.com/repos/stratosphereips/nist-cve-search-tool | closed | Add documentation on how to use the Docker image | documentation | There's now a docker image, mostly for ensuring LTS. Add to the readme that the docker image exists, how to get it and a few examples of how to use it. | 1.0 | Add documentation on how to use the Docker image - There's now a docker image, mostly for ensuring LTS. Add to the readme that the docker image exists, how to get it and a few examples of how to use it. | non_process | add documentation on how to use the docker image there s now a docker image mostly for ensuring lts add to the readme that the docker image exists how to get it and a few examples of how to use it | 0 |

452,730 | 32,066,489,936 | IssuesEvent | 2023-09-25 03:45:46 | apache/incubator-opendal | https://api.github.com/repos/apache/incubator-opendal | closed | docs: Update the announcement email template to ensure that disclaimers have been added | documentation good first issue help wanted | As mentioned in the discussion at https://lists.apache.org/thread/0d8dxxbhb28m7w7kqxs1d2n0hsdybocg, Sebb reminded us to include disclaimers in our announcement.

We should add the following content:

```

---

Apache OpenDAL (incubating) is an effort undergoing incubation at the Apache

Software Foundation (ASF), s... | 1.0 | docs: Update the announcement email template to ensure that disclaimers have been added - As mentioned in the discussion at https://lists.apache.org/thread/0d8dxxbhb28m7w7kqxs1d2n0hsdybocg, Sebb reminded us to include disclaimers in our announcement.

We should add the following content:

```

---

Apache OpenDAL (... | non_process | docs update the announcement email template to ensure that disclaimers have been added as mentioned in the discussion at sebb reminded us to include disclaimers in our announcement we should add the following content apache opendal incubating is an effort undergoing incubation at the apache so... | 0 |

21,315 | 11,188,430,039 | IssuesEvent | 2020-01-02 04:57:52 | 0xProject/OpenZKP | https://api.github.com/repos/0xProject/OpenZKP | closed | Special case z == FieldElement::ONE? | performance tracker | *On 2019-04-23 @Recmo wrote in [`87f22ab`](https://github.com/0xProject/OpenZKP/commit/87f22ab866dbb5241a13c5916a726dd6047ed33d) “Implement edge cases in Jacobian”:*

Special case z == FieldElement::ONE?

See http://www.hyperelliptic.org/EFD/g1p/auto-shortw-jacobian.html#addition-madd-2007-bl

```rust

... | True | Special case z == FieldElement::ONE? - *On 2019-04-23 @Recmo wrote in [`87f22ab`](https://github.com/0xProject/OpenZKP/commit/87f22ab866dbb5241a13c5916a726dd6047ed33d) “Implement edge cases in Jacobian”:*

Special case z == FieldElement::ONE?

See http://www.hyperelliptic.org/EFD/g1p/auto-shortw-jacobian.html#addition-m... | non_process | special case z fieldelement one on recmo wrote in “implement edge cases in jacobian” special case z fieldelement one see rust self x x clone self y y clone self z fieldelement one return ... | 0 |
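The linked EFD page describes mixed addition, where the second operand is affine (its Z is 1). The following is a rough Python sketch of that formula on a toy curve, to show the kind of work a `z == 1` special case can skip. The modulus, curve coefficients, and function names are made up for illustration and are not OpenZKP's code; the sketch also assumes distinct, non-inverse, finite points.

```python
# Toy parameters (assumptions for illustration only, not OpenZKP's curve).
P = 97          # field modulus
A, B = 2, 3     # curve y^2 = x^3 + A*x + B over F_P


def to_affine(point):
    """Convert Jacobian (X, Y, Z) with Z != 0 to affine (X/Z^2, Y/Z^3)."""
    x, y, z = point
    z_inv = pow(z, -1, P)
    return (x * z_inv**2 % P, y * z_inv**3 % P)


def mixed_add(jac, aff):
    """Add Jacobian `jac` and affine `aff` (Z2 == 1), following madd-2007-bl.

    Assumes the points are distinct, not inverses, and neither is infinity.
    """
    x1, y1, z1 = jac
    x2, y2 = aff
    z1z1 = z1 * z1 % P
    u2 = x2 * z1z1 % P           # if z1 == 1 too, u2 is just x2
    s2 = y2 * z1 * z1z1 % P      # if z1 == 1 too, s2 is just y2
    h = (u2 - x1) % P
    hh = h * h % P
    i = 4 * hh % P
    j = h * i % P
    r = 2 * (s2 - y1) % P
    v = x1 * i % P
    x3 = (r * r - j - 2 * v) % P
    y3 = (r * (v - x3) - 2 * y1 * j) % P
    z3 = ((z1 + h) ** 2 - z1z1 - hh) % P
    return (x3, y3, z3)


def affine_add(p, q):
    """Textbook affine addition for distinct, non-inverse points."""
    (x1, y1), (x2, y2) = p, q
    lam = (y2 - y1) * pow((x2 - x1) % P, -1, P) % P
    x3 = (lam * lam - x1 - x2) % P
    y3 = (lam * (x1 - x3) - y1) % P
    return (x3, y3)
```

On this toy curve, `to_affine(mixed_add((0, 86, 5), (1, 43)))` and `affine_add((0, 10), (1, 43))` describe the same sum, since `(0, 86, 5)` is the point `(0, 10)` rescaled to Z = 5.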

1,880 | 4,019,362,958 | IssuesEvent | 2016-05-16 14:41:41 | psu-libraries/library_services_model | https://api.github.com/repos/psu-libraries/library_services_model | opened | Join everyone to the repo | services development | ### Requirements

- [ ] Rama invited

- [ ] Stephen invited

- [ ] Patricia invited

- [ ] Nathan invited

- [ ] Robyn invited | 1.0 | Join everyone to the repo - ### Requirements

- [ ] Rama invited

- [ ] Stephen invited

- [ ] Patricia invited

- [ ] Nathan invited

- [ ] Robyn invited | non_process | join everyone to the repo requirements rama invited stephen invited patricia invited nathan invited robyn invited | 0 |

12,357 | 14,887,185,073 | IssuesEvent | 2021-01-20 17:58:12 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [PM] Open study > Enrollment target > Entered data is getting removed | Bug P2 Participant manager Process: Fixed Process: Tested QA Process: Tested dev | AR : Open study > Enrollment target > Entered data is getting removed when user enters the 6th digit

ER : It should not allow the user to enter 6th digit and entered data should not be removed

[ Note : It should also be fixed when user enters other characters after numbers ]

https://user-images.githubuserconte... | 3.0 | [PM] Open study > Enrollment target > Entered data is getting removed - AR : Open study > Enrollment target > Entered data is getting removed when user enters the 6th digit

ER : It should not allow the user to enter 6th digit and entered data should not be removed

[ Note : It should also be fixed when user enters o... | process | open study enrollment target entered data is getting removed ar open study enrollment target entered data is getting removed when user enters the digit er it should not allow the user to enter digit and entered data should not be removed | 1 |
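The expected behaviour in this report can be sketched as a small validation step: reject the edit and keep the previous value, rather than clearing the field. This is a hypothetical Python illustration only; the function name and the five-digit limit are assumptions inferred from the "6th digit" wording, not the Participant Manager's actual code.

```python
MAX_DIGITS = 5  # assumed limit, inferred from "6th digit" in the report


def next_enrollment_target(current: str, proposed: str) -> str:
    """Return the value the field should hold after an edit.

    An empty string or up to MAX_DIGITS ASCII digits is accepted;
    anything else is rejected and the current value is kept as-is.
    """
    is_number = proposed.isascii() and proposed.isdigit()
    if proposed == "" or (is_number and len(proposed) <= MAX_DIGITS):
        return proposed
    return current
```

With this rule, typing a sixth digit after `12345` or a letter after `123` leaves the field unchanged instead of emptying it.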

271,851 | 20,719,592,014 | IssuesEvent | 2022-03-13 06:52:02 | christian-cahig/Masterarbeit-APF | https://api.github.com/repos/christian-cahig/Masterarbeit-APF | opened | Better naming and notation for "generalized branch connection matrix" and "intermediate state vector" | documentation enhancement PyAPF APF.m | To be consistent with the "full" and "reduced" versions of some vectors and matrices, there should be a _full_ and a _reduced intermediate state vectors_ denoted by `$ \boldsymbol{x} $` (`x`) and `$ \boldsymbol{w} $` (`w`), respectively.

Moreover, it is a bit misleading to use the term "generalized branch connection... | 1.0 | Better naming and notation for "generalized branch connection matrix" and "intermediate state vector" - To be consistent with the "full" and "reduced" versions of some vectors and matrices, there should be a _full_ and a _reduced intermediate state vectors_ denoted by `$ \boldsymbol{x} $` (`x`) and `$ \boldsymbol{w} $`... | non_process | better naming and notation for generalized branch connection matrix and intermediate state vector to be consistent with the full and reduced versions of some vectors and matrices there should be a full and a reduced intermediate state vectors denoted by boldsymbol x x and boldsymbol w ... | 0 |

22,146 | 7,124,795,382 | IssuesEvent | 2018-01-19 20:16:27 | linode/manager | https://api.github.com/repos/linode/manager | closed | Upgrade to React 16 | Backlog Build & Organization | This builds off of #2676

React 16 provides fibers, which should offer performance updates to our app, error boundaries, and a smaller footprint:

- https://edgecoders.com/react-16-features-and-fiber-explanation-e779544bb1b7

- https://reactjs.org/blog/2017/09/26/react-v16.0.html

- https://medium.com/netscape/whats... | 1.0 | Upgrade to React 16 - This builds off of #2676

React 16 provides fibers, which should offer performance updates to our app, error boundaries, and a smaller footprint:

- https://edgecoders.com/react-16-features-and-fiber-explanation-e779544bb1b7

- https://reactjs.org/blog/2017/09/26/react-v16.0.html

- https://med... | non_process | upgrade to react this builds off of react provides fibers which should offer performance updates to our app error boundaries and a smaller footprint i took a stab at this but found that some of our external components have not been updated for react a few others we ... | 0 |

22,169 | 30,719,861,849 | IssuesEvent | 2023-07-27 15:13:22 | h4sh5/npm-auto-scanner | https://api.github.com/repos/h4sh5/npm-auto-scanner | opened | @saltcorn/mobile-builder 0.8.7 has 3 guarddog issues | npm-install-script npm-silent-process-execution | ```{"npm-install-script":[{"code":" \"postinstall\": \"node ./docker/post-installer.js\"","location":"package/package.json:10","message":"The package.json has a script automatically running when the package is installed"}],"npm-silent-process-execution":[{"code":" const child = spawn(\"docker\", dArgs, {\n ... | 1.0 | @saltcorn/mobile-builder 0.8.7 has 3 guarddog issues - ```{"npm-install-script":[{"code":" \"postinstall\": \"node ./docker/post-installer.js\"","location":"package/package.json:10","message":"The package.json has a script automatically running when the package is installed"}],"npm-silent-process-execution":[{"code"... | process | saltcorn mobile builder has guarddog issues npm install script npm silent process execution | 1 |

17,847 | 23,785,049,575 | IssuesEvent | 2022-09-02 09:18:09 | prisma/prisma | https://api.github.com/repos/prisma/prisma | opened | Improve logs in basic error reports | process/candidate kind/improvement tech/typescript topic: error reporting team/client | In many crash reports the logs look like this:

```

prisma:client:libraryEngine sending request, this.libraryStarted: true

prisma:client:libraryEngine sending request, this.libraryStarted: true

prisma:client:libraryEngine sending request, this.libraryStarted: true

prisma:client:libraryEngine sending request, this... | 1.0 | Improve logs in basic error reports - In many crash reports the logs look like this:

```

prisma:client:libraryEngine sending request, this.libraryStarted: true

prisma:client:libraryEngine sending request, this.libraryStarted: true

prisma:client:libraryEngine sending request, this.libraryStarted: true

prisma:clie... | process | improve logs in basic error reports in many crash reports the logs look like this prisma client libraryengine sending request this librarystarted true prisma client libraryengine sending request this librarystarted true prisma client libraryengine sending request this librarystarted true prisma clie... | 1 |

5,371 | 8,202,228,676 | IssuesEvent | 2018-09-02 06:12:52 | bio-miga/miga | https://api.github.com/repos/bio-miga/miga | closed | Re-registration of previous results not working | API Processing bug | Some steps involve (un)zipping files from previous steps, and registering the result should trigger a re-registration. However, the presence of the previous .json file stops this from happening.

Also, this re-registration should be moved from the `miga-base` Ruby code to the execution Bash scripts. It may need a new... | 1.0 | Re-registration of previous results not working - Some steps involve (un)zipping files from previous steps, and registering the result should trigger a re-registration. However, the presence of the previous .json file stops this from happening.

Also, this re-registration should be moved from the `miga-base` Ruby cod... | process | re registration of previous results not working some steps involve un zipping files from previous steps and registering the result should trigger a re registration however the presence of the previous json file stops this from happening also this re registration should be moved from the miga base ruby cod... | 1 |

21,406 | 11,660,229,153 | IssuesEvent | 2020-03-03 02:37:44 | cityofaustin/atd-data-tech | https://api.github.com/repos/cityofaustin/atd-data-tech | opened | Feature: Unpaid Fee Flag on Permit Issuance | Product: AMANDA Project: ATD AMANDA Backlog Service: Apps Type: Enhancement Workgroup: ROW migrated | ## Unpaid Fee Flag

_AKA # 46 Unpaid Fee Flag on Permit Issuance (Audit Request)_

**Stakeholders:** Paloma, Kim, Mooney

**User Story:** As a ROW permit analyst (PLA), I need to know if any of the folder people have unpaid ROW fees BEFORE the permit is issued, so I can place the permit on hold and attempt to colle... | 1.0 | Feature: Unpaid Fee Flag on Permit Issuance - ## Unpaid Fee Flag

_AKA # 46 Unpaid Fee Flag on Permit Issuance (Audit Request)_

**Stakeholders:** Paloma, Kim, Mooney

**User Story:** As a ROW permit analyst (PLA), I need to know if any of the folder people have unpaid ROW fees BEFORE the permit is issued, so I can... | non_process | feature unpaid fee flag on permit issuance unpaid fee flag aka unpaid fee flag on permit issuance audit request stakeholders paloma kim mooney user story as a row permit analyst pla i need to know if any of the folder people have unpaid row fees before the permit is issued so i can ... | 0 |

21,365 | 29,194,080,353 | IssuesEvent | 2023-05-20 00:31:48 | devssa/onde-codar-em-salvador | https://api.github.com/repos/devssa/onde-codar-em-salvador | closed | [Hibrido / Caieiras, São Paulo, Brazil] C# Developer (Júnior) (Híbrido) na Coodesh | SALVADOR PJ BANCO DE DADOS FULL-STACK HTML JUNIOR SQL REST SOAP JSON ANGULAR REACT XML REQUISITOS PROCESSOS GITHUB UMA C APIs AUTOMAÇÃO DE PROCESSOS ALOCADO Stale | ## Job description:

This is a job opening from a partner of the Coodesh platform; by applying, you will get access to complete information about the company and its benefits.

Watch for the redirect that will take you to a URL [https://coodesh.com](https://coodesh.com/vagas/analista-de-sistemas-junior-172722002... | 2.0 | [Hibrido / Caieiras, São Paulo, Brazil] C# Developer (Júnior) (Híbrido) na Coodesh - ## Job description:

This is a job opening from a partner of the Coodesh platform; by applying, you will get access to complete information about the company and its benefits.

Fique atento ao redirecionamento que vai te levar para uma url ... | process | c developer júnior híbrido na coodesh descrição da vaga esta é uma vaga de um parceiro da plataforma coodesh ao candidatar se você terá acesso as informações completas sobre a empresa e benefícios fique atento ao redirecionamento que vai te levar para uma url com o pop up personalizado de cand... | 1 |

20,426 | 3,812,781,005 | IssuesEvent | 2016-03-27 20:52:32 | briansmith/ring | https://api.github.com/repos/briansmith/ring | closed | Remove all code that supports AES-192 | enhancement good-first-bug performance static-analysis-and-type-safety test-coverage | See https://github.com/briansmith/ring/blob/c882c2c3ec837f14267991122dc20e6aba5700bc/crypto/aes/aes.c#L549-L551

and

https://github.com/briansmith/ring/blob/c882c2c3ec837f14267991122dc20e6aba5700bc/crypto/aes/aes.c#L549-L551

Also, I guess the assembly language code must have some bits that support 192-bit AES.

... | 1.0 | Remove all code that supports AES-192 - See https://github.com/briansmith/ring/blob/c882c2c3ec837f14267991122dc20e6aba5700bc/crypto/aes/aes.c#L549-L551

and

https://github.com/briansmith/ring/blob/c882c2c3ec837f14267991122dc20e6aba5700bc/crypto/aes/aes.c#L549-L551

Also, I guess the assembly language code must h... | non_process | remove all code that supports aes see and also i guess the assembly language code must have some bits that support bit aes we don t need any of this since we don t and don t plan to expose aes | 0 |

6,147 | 2,814,174,529 | IssuesEvent | 2015-05-18 18:32:45 | joyent/node | https://api.github.com/repos/joyent/node | closed | test: gc/test-net-timeout fails consistently | test | ```

Done: 500/500

Collected: 499/500

All should be collected now.

Collected: 499/500

timers.js:102

if (!process.listeners('uncaughtException').length) throw e;

^

AssertionError: false == true

at null._onTimeout (/home/bnoordh... | 1.0 | test: gc/test-net-timeout fails consistently - ```

Done: 500/500

Collected: 499/500

All should be collected now.

Collected: 499/500

timers.js:102

if (!process.listeners('uncaughtException').length) throw e;

^

AssertionError: false... | non_process | test gc test net timeout fails consistently done collected all should be collected now collected timers js if process listeners uncaughtexception length throw e assertionerror false true ... | 0 |

371,868 | 10,982,278,821 | IssuesEvent | 2019-12-01 06:03:33 | Luna-Interactive/catastrophe | https://api.github.com/repos/Luna-Interactive/catastrophe | closed | Winning | Priority: Critical SFX | **Is your feature request related to a problem? Please describe.**

Missing sound for when a player wins the game. | 1.0 | Winning - **Is your feature request related to a problem? Please describe.**

Missing sound for when a player wins the game. | non_process | winning is your feature request related to a problem please describe missing sound for when a player wins the game | 0 |

5,241 | 8,036,963,753 | IssuesEvent | 2018-07-30 10:55:24 | Open-EO/openeo-api | https://api.github.com/repos/Open-EO/openeo-api | closed | Variables in process_graphs? | process graph management processes vote work in progress | In issue #52 about product name differences, I had the idea that stored process graphs, i.e. /process_graphs/:id, may need some variables in them to be really portable. Otherwise sharing as proposed in #85 might not be so useful. An example would be the product id in case we can't come up with something useful to solve... | 2.0 | Variables in process_graphs? - In issue #52 about product name differences, I had the idea that stored process graphs, i.e. /process_graphs/:id, may need some variables in them to be really portable. Otherwise sharing as proposed in #85 might not be so useful. An example would be the product id in case we can't come up... | process | variables in process graphs in issue about product name differences i had the idea that stored process graphs i e process graphs id may need some variables in them to be really portable otherwise sharing as proposed in might not be so useful an example would be the product id in case we can t come up w... | 1 |

10,118 | 13,044,162,241 | IssuesEvent | 2020-07-29 03:47:31 | tikv/tikv | https://api.github.com/repos/tikv/tikv | closed | UCP: Migrate scalar function `GetFormat` from TiDB | challenge-program-2 component/coprocessor difficulty/easy sig/coprocessor |

## Description

Port the scalar function `GetFormat` from TiDB to coprocessor.

## Score

* 50

## Mentor(s)

* @mapleFU

## Recommended Skills

* Rust programming

## Learning Materials

Already implemented expressions ported from TiDB

- https://github.com/tikv/tikv/tree/master/components/tidb_query/src/rpn_expr)... | 2.0 | UCP: Migrate scalar function `GetFormat` from TiDB -

## Description

Port the scalar function `GetFormat` from TiDB to coprocessor.

## Score

* 50

## Mentor(s)

* @mapleFU

## Recommended Skills

* Rust programming

## Learning Materials

Already implemented expressions ported from TiDB

- https://github.com/tikv... | process | ucp migrate scalar function getformat from tidb description port the scalar function getformat from tidb to coprocessor score mentor s maplefu recommended skills rust programming learning materials already implemented expressions ported from tidb | 1 |

13,102 | 15,496,467,659 | IssuesEvent | 2021-03-11 02:44:05 | dluiscosta/weather_api | https://api.github.com/repos/dluiscosta/weather_api | opened | Establish test dependency hierarchy | development process enhancement | Establish test dependency hierarchy where some test ```A``` might assert the proper operation of a feature, which in turn requires the proper operation of a second feature, asserted independently by some test ```B```, thus making ```A``` dependent on ```B```.

That way, when ```B``` fails, ```A``` can be skipped (as ... | 1.0 | Establish test dependency hierarchy - Establish test dependency hierarchy where some test ```A``` might assert the proper operation of a feature, which in turn requires the proper operation of a second feature, asserted independently by some test ```B```, thus making ```A``` dependent on ```B```.

That way, when ```B... | process | establish test dependency hierarchy establish test dependency hierarchy where some test a might assert the proper operation of a feature which in turn requires the proper operation of a second feature asserted independently by some test b thus making a dependent on b that way when b... | 1 |
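The dependency idea in this issue can be sketched with a small runner that skips a test when any test it depends on did not pass. This is a minimal illustration under stated assumptions: the function name, the result strings, and the dictionary-based wiring are hypothetical and not part of the weather_api project, and the dependency graph is assumed to have no cycles.

```python
from typing import Callable, Dict, List


def run_with_dependencies(
    tests: Dict[str, Callable[[], None]],
    depends_on: Dict[str, List[str]],
) -> Dict[str, str]:
    """Run tests, skipping any test whose dependencies did not pass.

    Returns a mapping of test name to "passed", "failed", or "skipped".
    Assumes the dependency graph has no cycles.
    """
    results: Dict[str, str] = {}

    def run(name: str) -> str:
        if name in results:
            return results[name]
        # Run dependencies first; skip this test if any did not pass.
        if any(run(dep) != "passed" for dep in depends_on.get(name, [])):
            results[name] = "skipped"
            return "skipped"
        try:
            tests[name]()
            results[name] = "passed"
        except AssertionError:
            results[name] = "failed"
        return results[name]

    for name in tests:
        run(name)
    return results
```

If `B` fails and `A` is declared as depending on `B`, the runner reports `B` as failed and `A` as skipped, so the report points at the root cause rather than at both tests.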

18,067 | 24,080,244,816 | IssuesEvent | 2022-09-19 05:32:02 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | Processing algorithm "Lines to polygons" produces incorrect polygons | Processing Bug | ### What is the bug or the crash?

When using the processing algorithm "Lines to polygons" with certain data, it produces incorrect polygons.

Here is a simple (topologically correct) line layer to test the bug with: [lines_simplified.zip](https://github.com/qgis/QGIS/files/9581708/lines_simplified.zip)

### ... | 1.0 | Processing algorithm "Lines to polygons" produces incorrect polygons - ### What is the bug or the crash?

When using the processing algorithm "Lines to polygons" with certain data, it produces incorrect polygons.

Here is a simple (topologically correct) line layer to test the bug with: [lines_simplified.zip](https... | process | processing algorithm lines to polygons produces incorrect polygons what is the bug or the crash when using the processing algorithm lines to polygons with certain data it produces incorrect polygons here is a simple topologically correct line layer to test the bug with steps to repr... | 1 |

408,546 | 27,695,438,910 | IssuesEvent | 2023-03-14 01:33:12 | cityofaustin/atd-data-tech | https://api.github.com/repos/cityofaustin/atd-data-tech | closed | [Gitbook Documentation] Complete 'Extracting Location Coordinates to add a Map Marker Map' article | Workgroup: DTS Service: Apps Type: Documentation Product: TDS Portal | As exemplified in TDS or S&M | 1.0 | [Gitbook Documentation] Complete 'Extracting Location Coordinates to add a Map Marker Map' article - As exemplified in TDS or S&M | non_process | complete extracting location coordinates to add a map marker map article as exemplified in tds or s m | 0 |

19,615 | 25,970,594,587 | IssuesEvent | 2022-12-19 10:54:07 | toggl/track-windows-feedback | https://api.github.com/repos/toggl/track-windows-feedback | closed | Is there a Beta "Release channel"? | solved processed | I see the option for changing to the Beta "Release channel" but when I select it and then close the window and re-open it switches back to the Stable channel. Is there a Beta channel anymore? I know in the past (old app) there was and I was on the Beta channel but not sure if now with new native app if maybe there is o... | 1.0 | Is there a Beta "Release channel"? - I see the option for changing to the Beta "Release channel" but when I select it and then close the window and re-open it switches back to the Stable channel. Is there a Beta channel anymore? I know in the past (old app) there was and I was on the Beta channel but not sure if now wi... | process | is there a beta release channel i see the option for changing to the beta release channel but when i select it and then close the window and re open it switches back to the stable channel is there a beta channel anymore i know in the past old app there was and i was on the beta channel but not sure if now wi... | 1 |

37,342 | 15,262,770,210 | IssuesEvent | 2021-02-22 00:38:34 | Azure/azure-sdk-for-net | https://api.github.com/repos/Azure/azure-sdk-for-net | closed | Error while using Azure.Messaging.ServiceBus library for Service bus trigger function | Client Functions Service Bus customer-reported needs-author-feedback question | Azure.Messaging.ServiceBus 7.1.0

Microsoft.Azure.WebJobs.Extensions.ServiceBus 4.2.1

Microsoft.NET.Sdk.Functions 3.0.11

Azure Cloud.

Windows/Linux

VS 2019

Hello

I have an Azure function, with a Service Bus trigger,

using Azure.Messaging.ServiceBus;

public static void Run(ServiceBusReceivedMessage myQueueI... | 1.0 | Error while using Azure.Messaging.ServiceBus library for Service bus trigger function - Azure.Messaging.ServiceBus 7.1.0

Microsoft.Azure.WebJobs.Extensions.ServiceBus 4.2.1

Microsoft.NET.Sdk.Functions 3.0.11

Azure Cloud.

Windows/Linux

VS 2019

Hello

I have an Azure function, with a Service Bus trigger,

usin... | non_process | error while using azure messaging servicebus library for service bus trigger function azure messaging servicebus microsoft azure webjobs extensions servicebus microsoft net sdk functions azure cloud windows linux vs hello i have an azure function with a service bus trigger using az... | 0 |

217,525 | 16,855,802,384 | IssuesEvent | 2021-06-21 06:27:36 | tikv/tikv | https://api.github.com/repos/tikv/tikv | closed | raftstore::test_merge::test_node_merge_prerequisites_check failed | component/test-bench | raftstore::test_merge::test_node_merge_prerequisites_check

Latest failed builds:

https://internal.pingcap.net/idc-jenkins/job/tikv_ghpr_test/18842/display/redirect

| 1.0 | raftstore::test_merge::test_node_merge_prerequisites_check failed - raftstore::test_merge::test_node_merge_prerequisites_check

Latest failed builds:

https://internal.pingcap.net/idc-jenkins/job/tikv_ghpr_test/18842/display/redirect

| non_process | raftstore test merge test node merge prerequisites check failed raftstore test merge test node merge prerequisites check latest failed builds | 0 |

719,588 | 24,764,745,723 | IssuesEvent | 2022-10-22 11:17:00 | DISSINET/InkVisitor | https://api.github.com/repos/DISSINET/InkVisitor | closed | New reference; changing the parsing notation for references | data parsing priority | 1. Please include a new col. in Actions and Concepts into the parsing: wordnet_sense_key.

2. I had an inconsistency in the notation of wordnet cols between Actions and Concepts ("special" vs. "reference"). Now unified to "reference".

3. In cols. to be parsed as "reference", I now added the Resource ID (R entity) to ... | 1.0 | New reference; changing the parsing notation for references - 1. Please include a new col. in Actions and Concepts into the parsing: wordnet_sense_key.

2. I had an inconsistency in the notation of wordnet cols between Actions and Concepts ("special" vs. "reference"). Now unified to "reference".

3. In cols. to be par... | non_process | new reference changing the parsing notation for references please include a new col in actions and concepts into the parsing wordnet sense key i had an inconsistency in the notation of wordnet cols between actions and concepts special vs reference now unified to reference in cols to be par... | 0 |

113,790 | 4,569,032,192 | IssuesEvent | 2016-09-15 16:02:01 | isobar-techchallenge/isobar-a9f8d858-fb06-4865-833f-f03fc2d03cd1 | https://api.github.com/repos/isobar-techchallenge/isobar-a9f8d858-fb06-4865-833f-f03fc2d03cd1 | opened | Target Button in Email too Small | priority:low | Within email to user the CTA button target space is too small. Need to make the target click area the full width of the button. | 1.0 | Target Button in Email too Small - Within email to user the CTA button target space is too small. Need to make the target click area the full width of the button. | non_process | target button in email too small within email to user the cta button target space is too small need to make the target click area the full width of the button | 0 |

18,148 | 24,187,126,890 | IssuesEvent | 2022-09-23 14:12:03 | apache/arrow-rs | https://api.github.com/repos/apache/arrow-rs | closed | Golang Integration Tests Failing | bug development-process | **Describe the bug**

<!--

A clear and concise description of what the bug is.

-->

The arrow integration tests are failing on master, https://github.com/apache/arrow-rs/runs/8249872730?check_suite_focus=true, this appears to be caused by https://github.com/apache/arrow/pull/14067 which bumps the minimum required G... | 1.0 | Golang Integration Tests Failing - **Describe the bug**

<!--

A clear and concise description of what the bug is.

-->

The arrow integration tests are failing on master, https://github.com/apache/arrow-rs/runs/8249872730?check_suite_focus=true, this appears to be caused by https://github.com/apache/arrow/pull/14067... | process | golang integration tests failing describe the bug a clear and concise description of what the bug is the arrow integration tests are failing on master this appears to be caused by which bumps the minimum required golang version to unfortunately the version in apache arrow dev conda... | 1 |

445,518 | 12,832,125,680 | IssuesEvent | 2020-07-07 07:04:31 | Automattic/abacus | https://api.github.com/repos/Automattic/abacus | opened | Apply consistent object normalisation across the codebase | [!priority] medium [component] experimenter interface [type] enhancement | Mapping nested objects (e.g., metrics to an experiment's metric assignments) is currently handled inconsistently throughout the code. @jessie-ross suggested we use [normalizr](https://github.com/paularmstrong/normalizr) for this.

See: https://github.com/Automattic/abacus/pull/198#discussion_r449975708 | 1.0 | Apply consistent object normalisation across the codebase - Mapping nested objects (e.g., metrics to an experiment's metric assignments) is currently handled inconsistently throughout the code. @jessie-ross suggested we use [normalizr](https://github.com/paularmstrong/normalizr) for this.

See: https://github.com/Aut... | non_process | apply consistent object normalisation across the codebase mapping nested objects e g metrics to an experiment s metric assignments is currently handled inconsistently throughout the code jessie ross suggested we use for this see | 0 |

3,666 | 6,694,824,296 | IssuesEvent | 2017-10-10 04:46:39 | york-region-tpss/stp | https://api.github.com/repos/york-region-tpss/stp | opened | View Watering Assignments - Finished Assignment Upload | enhancement process workflow | Create a data uploader to upload the finished assignment upload.

| 1.0 | View Watering Assignments - Finished Assignment Upload - Create a data uploader to upload the finished assignment upload.

| process | view watering assignments finished assignment upload create a data uploader to upload the finished assignment upload | 1 |

57,769 | 14,219,807,762 | IssuesEvent | 2020-11-17 13:47:01 | Automattic/wp-calypso | https://api.github.com/repos/Automattic/wp-calypso | closed | Modularise `userDevices` | Build | The `userDevices` portion of the state tree needs to be modularised. See the [modularised state documentation](https://github.com/Automattic/wp-calypso/blob/master/docs/modularized-state.md) for more details. | 1.0 | Modularise `userDevices` - The `userDevices` portion of the state tree needs to be modularised. See the [modularised state documentation](https://github.com/Automattic/wp-calypso/blob/master/docs/modularized-state.md) for more details. | non_process | modularise userdevices the userdevices portion of the state tree needs to be modularised see the for more details | 0 |

13,338 | 15,800,886,211 | IssuesEvent | 2021-04-03 01:47:32 | PyCQA/flake8 | https://api.github.com/repos/PyCQA/flake8 | closed | Order is nondeterministic with multiprocessing and piped output | bug:confirmed component:docs component:multiprocessing feature:accepted fix:committed fix:released | In GitLab by @quentinp on Nov 17, 2014, 03:24

Steps to reproduce:

* In a directory, create two files a.py and b.py with an error each (eg. both containing a=1).

* From the directory, run `flake8 -j2 . | tee` multiple times (``for i in `seq 1 10` ; do flake8 -j2 . | tee; echo; done`` can be useful).

Expected... | 1.0 | Order is nondeterministic with multiprocessing and piped output - In GitLab by @quentinp on Nov 17, 2014, 03:24

Steps to reproduce:

* In a directory, create two files a.py and b.py with an error each (eg. both containing a=1).

* From the directory, run `flake8 -j2 . | tee` multiple times (``for i in `seq 1 10`... | process | order is nondeterministic with multiprocessing and piped output in gitlab by quentinp on nov steps to reproduce in a directory create two files a py and b py with an error each eg both containing a from the directory run tee multiple times for i in seq do ... | 1 |

42,271 | 9,199,573,387 | IssuesEvent | 2019-03-07 15:13:47 | sourcegraph/sourcegraph | https://api.github.com/repos/sourcegraph/sourcegraph | closed | Buttons contributed to the extensions toolbar should allow to specify "toggled" state | code-nav extensions feature-request | Many buttons contributed to the toolbar are to toggle something on or off, e.g. Coverage colors, soon code intel, ...

The on/off state can currently only be communicated through the label "Enable"/"Disable". This makes it impossible to omit the label to save space, and looks very weird on GitHub where the buttons look... | 1.0 | Buttons contributed to the extensions toolbar should allow to specify "toggled" state - Many buttons contributed to the toolbar are to toggle something on or off, e.g. Coverage colors, soon code intel, ...

The on/off state can currently only be communicated through the label "Enable"/"Disable". This makes it impossibl... | non_process | buttons contributed to the extensions toolbar should allow to specify toggled state many buttons contributed to the toolbar are to toggle something on or off e g coverage colors soon code intel the on off state can currently only be communicated through the label enable disable this makes it impossibl... | 0 |

13,241 | 15,708,282,161 | IssuesEvent | 2021-03-26 20:16:17 | xatkit-bot-platform/xatkit-runtime | https://api.github.com/repos/xatkit-bot-platform/xatkit-runtime | opened | Remove @Ignore for language detection tests | Processors Testing | We have to ignore these tests for the moment because we don't have the infrastructure to run them in a CI/CD environment.

| 1.0 | Remove @Ignore for language detection tests - We have to ignore these tests for the moment because we don't have the infrastructure to run them in a CI/CD environment.

| process | remove ignore for language detection tests we have to ignore these tests for the moment because we don t have the infrastructure to run them in a ci cd environment | 1 |

5,108 | 7,885,450,349 | IssuesEvent | 2018-06-27 12:31:08 | Open-EO/openeo-api | https://api.github.com/repos/Open-EO/openeo-api | opened | Debugging and getting intermediate result and metadata | in discussion other process graphs processes | A big topic during the hackathon was debugging of our process graphs and getting information of intermediate result and other meta data. For example, after a filter one might want to know what data is left. GEE allows to add print() calls to get information about a variable with results between different steps. How cou... | 2.0 | Debugging and getting intermediate result and metadata - A big topic during the hackathon was debugging of our process graphs and getting information of intermediate result and other meta data. For example, after a filter one might want to know what data is left. GEE allows to add print() calls to get information about... | process | debugging and getting intermediate result and metadata a big topic during the hackathon was debugging of our process graphs and getting information of intermediate result and other meta data for example after a filter one might want to know what data is left gee allows to add print calls to get information about... | 1 |