Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 7 to 112) | repo_url (string, length 36 to 141) | action (string, 3 classes) | title (string, length 1 to 744) | labels (string, length 4 to 574) | body (string, length 9 to 211k) | index (string, 10 classes) | text_combine (string, length 96 to 211k) | label (string, 2 classes) | text (string, length 96 to 188k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

290,152 | 8,882,979,643 | IssuesEvent | 2019-01-14 14:39:19 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | m-in.gearbest.com - site is not usable | browser-firefox priority-important | <!-- @browser: Firefox 65.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 6.1; WOW64; rv:65.0) Gecko/20100101 Firefox/65.0 -->

<!-- @reported_with: desktop-reporter -->

**URL**: https://m-in.gearbest.com/money-bag.html?lkid=18124852&cid=108621863625691136

**Browser / Version**: Firefox 65.0

**Operating System**: Windo... | 1.0 | m-in.gearbest.com - site is not usable - <!-- @browser: Firefox 65.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 6.1; WOW64; rv:65.0) Gecko/20100101 Firefox/65.0 -->

<!-- @reported_with: desktop-reporter -->

**URL**: https://m-in.gearbest.com/money-bag.html?lkid=18124852&cid=108621863625691136

**Browser / Version**:... | non_process | m in gearbest com site is not usable url browser version firefox operating system windows tested another browser yes problem type site is not usable description some virus attach steps to reproduce no need browser configuration mixed active conten... | 0 |

14,628 | 25,288,019,028 | IssuesEvent | 2022-11-16 21:05:35 | NASA-PDS/validate | https://api.github.com/repos/NASA-PDS/validate | opened | As a use, I want to validate the DOI referenced in the PDS4 label | requirement needs:triage | <!--

For more information on how to populate this new feature request, see the PDS Wiki on User Story Development:

https://github.com/NASA-PDS/nasa-pds.github.io/wiki/Issue-Tracking#user-story-development

-->

## 🧑🔬 User Persona(s)

<!-- Ideally this would be consistent within a repo and documented with t... | 1.0 | As a use, I want to validate the DOI referenced in the PDS4 label - <!--

For more information on how to populate this new feature request, see the PDS Wiki on User Story Development:

https://github.com/NASA-PDS/nasa-pds.github.io/wiki/Issue-Tracking#user-story-development

-->

## 🧑🔬 User Persona(s)

<!-- ... | non_process | as a use i want to validate the doi referenced in the label for more information on how to populate this new feature request see the pds wiki on user story development 🧑🔬 user persona s node data manager 💪 motivation so that i can requested during face face ma... | 0 |

13,435 | 8,461,159,638 | IssuesEvent | 2018-10-22 20:57:14 | burtonator/polar-bookshelf | https://api.github.com/repos/burtonator/polar-bookshelf | closed | Display tag values in the main document repository | good first issue usability | It would be really nice to be able to see what tags have been applied to a document without clicking on the tag icon. Is it possible to have the tag values visible in a column? | True | Display tag values in the main document repository - It would be really nice to be able to see what tags have been applied to a document without clicking on the tag icon. Is it possible to have the tag values visible in a column? | non_process | display tag values in the main document repository it would be really nice to be able to see what tags have been applied to a document without clicking on the tag icon is it possible to have the tag values visible in a column | 0 |

356,132 | 10,589,148,744 | IssuesEvent | 2019-10-09 04:59:50 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | www.nytimes.com - see bug description | browser-focus-geckoview engine-gecko priority-important | <!-- @browser: Firefox Mobile 68.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 9; Mobile; rv:68.0) Gecko/68.0 Firefox/68.0 -->

<!-- @reported_with: -->

<!-- @extra_labels: browser-focus-geckoview -->

**URL**: https://www.nytimes.com/2017/06/29/automobiles/wheels/why-fog-lamps-are-starting-to-disappear.html

**Browser /... | 1.0 | www.nytimes.com - see bug description - <!-- @browser: Firefox Mobile 68.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 9; Mobile; rv:68.0) Gecko/68.0 Firefox/68.0 -->

<!-- @reported_with: -->

<!-- @extra_labels: browser-focus-geckoview -->

**URL**: https://www.nytimes.com/2017/06/29/automobiles/wheels/why-fog-lamps-are... | non_process | see bug description url browser version firefox mobile operating system android tested another browser yes problem type something else description indicates i am in private mode unable to read article we all should have freedom to read articles in private mode ... | 0 |

64,939 | 26,922,332,339 | IssuesEvent | 2023-02-07 11:21:10 | MicrosoftDocs/azure-docs | https://api.github.com/repos/MicrosoftDocs/azure-docs | closed | Operating system is also a restriction to moving | app-service/svc triaged cxp doc-enhancement Pri1 escalated-content-team |

You also can't move an app service to an app service plan that is of a different operating system, but that isnt specified here.

---

#### Document Details

⚠ *Do not edit this section. It is required for learn.microsoft.com ➟ GitHub issue linking.*

* ID: 25ec88c2-d5ff-e727-cbe1-69bac94141ae

* Version Inde... | 1.0 | Operating system is also a restriction to moving -

You also can't move an app service to an app service plan that is of a different operating system, but that isnt specified here.

---

#### Document Details

⚠ *Do not edit this section. It is required for learn.microsoft.com ➟ GitHub issue linking.*

* ID: 2... | non_process | operating system is also a restriction to moving you also can t move an app service to an app service plan that is of a different operating system but that isnt specified here document details ⚠ do not edit this section it is required for learn microsoft com ➟ github issue linking id ... | 0 |

3,774 | 6,743,651,736 | IssuesEvent | 2017-10-20 13:00:10 | Great-Hill-Corporation/quickBlocks | https://api.github.com/repos/Great-Hill-Corporation/quickBlocks | closed | Use of ethabi should be removed | libs-etherlib status-inprocess type-bug | In the function **getEncoding** in the file ./etherlib/abi.cpp, there is code that looks for and tries to use an external program called `ethabi`. This almost certainly does not work and should be removed. | 1.0 | Use of ethabi should be removed - In the function **getEncoding** in the file ./etherlib/abi.cpp, there is code that looks for and tries to use an external program called `ethabi`. This almost certainly does not work and should be removed. | process | use of ethabi should be removed in the function getencoding in the file etherlib abi cpp there is code that looks for and tries to use an external program called ethabi this almost certainly does not work and should be removed | 1 |

14,842 | 18,236,769,989 | IssuesEvent | 2021-10-01 07:59:55 | quark-engine/quark-engine | https://api.github.com/repos/quark-engine/quark-engine | closed | Error occurred while analyzing APK | issue-processing-state-01 | ## Description

Error occurred while analyzing APK with the rule.

## Rule content

```

{

"crime": "Steal Sensitive Information - Get directory info of the SD cards and active network info",

"x1_permission": [

"android.permission.WRITE_EXTERNAL_STORAGE",

"android.permission.ACCESS_NETWORK_S... | 1.0 | Error occurred while analyzing APK - ## Description

Error occurred while analyzing APK with the rule.

## Rule content

```

{

"crime": "Steal Sensitive Information - Get directory info of the SD cards and active network info",

"x1_permission": [

"android.permission.WRITE_EXTERNAL_STORAGE",

... | process | error occurred while analyzing apk description error occurred while analyzing apk with the rule rule content crime steal sensitive information get directory info of the sd cards and active network info permission android permission write external storage ... | 1 |

700,000 | 24,041,148,644 | IssuesEvent | 2022-09-16 02:01:33 | googleapis/gapic-generator-ruby | https://api.github.com/repos/googleapis/gapic-generator-ruby | opened | Spurious cross-reference YARD conversions happen when the target is in a gem dependency | type: bug priority: p2 | This appears in `google-cloud-firestore-admin-v1/proto_docs/google/firestore/admin/v1/location.rb`

It is currently converting `[google.cloud.location.Location.metadata][google.cloud.location.Location.metadata]` to `{::Google::Cloud::Location::Location#metadata google.cloud.location.Location.metadata}`, but the class... | 1.0 | Spurious cross-reference YARD conversions happen when the target is in a gem dependency - This appears in `google-cloud-firestore-admin-v1/proto_docs/google/firestore/admin/v1/location.rb`

It is currently converting `[google.cloud.location.Location.metadata][google.cloud.location.Location.metadata]` to `{::Google::C... | non_process | spurious cross reference yard conversions happen when the target is in a gem dependency this appears in google cloud firestore admin proto docs google firestore admin location rb it is currently converting to google cloud location location metadata google cloud location location metadata but t... | 0 |

3,897 | 6,821,572,542 | IssuesEvent | 2017-11-07 17:08:37 | WormBase/wormbase-pipeline | https://api.github.com/repos/WormBase/wormbase-pipeline | closed | Knocking in human variants into the worm ortholog - Ranjana | MODELS Processing | This is for Tim Schedl’s point, relevant quote below:

"However, there are already a number of published examples of knocking in human variants into the worm ortholog. Examples below. (In this case, the allele designation used would just be the existing designation of the worm lab.) The important point is that th... | 1.0 | Knocking in human variants into the worm ortholog - Ranjana - This is for Tim Schedl’s point, relevant quote below:

"However, there are already a number of published examples of knocking in human variants into the worm ortholog. Examples below. (In this case, the allele designation used would just be the existing... | process | knocking in human variants into the worm ortholog ranjana this is for tim schedl’s point relevant quote below however there are already a number of published examples of knocking in human variants into the worm ortholog examples below in this case the allele designation used would just be the existing... | 1 |

2,845 | 5,808,169,366 | IssuesEvent | 2017-05-04 09:55:04 | Hurence/logisland | https://api.github.com/repos/Hurence/logisland | closed | add Netflow telemetry Processor | cyber-security feature processor | find the right external to collect netflow data into Kafka

write ParseNetflowProcessor to handle this data source

write Avro schema wich conforms to Spot Open Data Model

- code / test / doc

- tutorial

- kibana dashboard

| 1.0 | add Netflow telemetry Processor - find the right external to collect netflow data into Kafka

write ParseNetflowProcessor to handle this data source

write Avro schema wich conforms to Spot Open Data Model

- code / test / doc

- tutorial

- kibana dashboard

| process | add netflow telemetry processor find the right external to collect netflow data into kafka write parsenetflowprocessor to handle this data source write avro schema wich conforms to spot open data model code test doc tutorial kibana dashboard | 1 |

20,156 | 26,708,115,229 | IssuesEvent | 2023-01-27 20:14:10 | eosnetworkfoundation/devrel | https://api.github.com/repos/eosnetworkfoundation/devrel | closed | Define create/update/rename process for content using GitHub | Process | Document a process to create/update/rename

AC:

- All documents need to provide an example of doing this internally and externally

- Define the process for creating a new document

- Define the process for updating existing documents

- Define the process for renaming a document

- Place process in devrel repo | 1.0 | Define create/update/rename process for content using GitHub - Document a process to create/update/rename

AC:

- All documents need to provide an example of doing this internally and externally

- Define the process for creating a new document

- Define the process for updating existing documents

- Define the process for... | process | define create update rename process for content using github document a process to create update rename ac all documents need to provide an example of doing this internally and externally define the process for creating a new document define the process for updating existing documents define the process for... | 1 |

3,330 | 6,447,624,966 | IssuesEvent | 2017-08-14 08:17:46 | geneontology/go-ontology | https://api.github.com/repos/geneontology/go-ontology | reopened | ntr tRNA 2'-O-methyltransferase | New term request PomBase RNA processes |

I could only find

tRNA (guanosine-2'-O-)-methyltransferase activity

child of

GO:0008171 O-methyltransferase activity

GO:0008175 tRNA methyltransferase activity

http://www.yeastgenome.org/reference/S000120470/overview

(I'm annotating the ortholog)

| 1.0 | ntr tRNA 2'-O-methyltransferase -

I could only find

tRNA (guanosine-2'-O-)-methyltransferase activity

child of

GO:0008171 O-methyltransferase activity

GO:0008175 tRNA methyltransferase activity

http://www.yeastgenome.org/reference/S000120470/overview

(I'm annotating the ortholog)

| process | ntr trna o methyltransferase i could only find trna guanosine o methyltransferase activity child of go o methyltransferase activity go trna methyltransferase activity i m annotating the ortholog | 1 |

7,183 | 10,323,338,549 | IssuesEvent | 2019-08-31 20:28:38 | rusty-snake/firejailed-tor-browser | https://api.github.com/repos/rusty-snake/firejailed-tor-browser | opened | firejailed-tor-browser.profile backporting | process tracking | # observation of backporting

Previous issue: #6

## 0.9.60

- [ ] seccomp changes

## 0.9.58

- [ ] seccomp changes

## 0.9.56

ALL

## 0.9.52

ALL | 1.0 | firejailed-tor-browser.profile backporting - # observation of backporting

Previous issue: #6

## 0.9.60

- [ ] seccomp changes

## 0.9.58

- [ ] seccomp changes

## 0.9.56

ALL

## 0.9.52

ALL | process | firejailed tor browser profile backporting observation of backporting previous issue seccomp changes seccomp changes all all | 1 |

461,862 | 13,237,337,782 | IssuesEvent | 2020-08-18 21:28:14 | Railcraft/Railcraft | https://api.github.com/repos/Railcraft/Railcraft | closed | java.lang.NullPointerException when loading railcraft with forge 14.23.5.2838 | bug configuration low priority | **Description of the Bug**

minecraft 1.12.2 using forge 14.23.5.2838 crashes an reports the following error while loading

net.minecraftforge.fml.common.LoaderExceptionModCrash: Caught exception from Railcraft (railcraft)

Caused by: java.lang.NullPointerException

Please find the crash report below:

---- Minecraf... | 1.0 | java.lang.NullPointerException when loading railcraft with forge 14.23.5.2838 - **Description of the Bug**

minecraft 1.12.2 using forge 14.23.5.2838 crashes an reports the following error while loading

net.minecraftforge.fml.common.LoaderExceptionModCrash: Caught exception from Railcraft (railcraft)

Caused by: java... | non_process | java lang nullpointerexception when loading railcraft with forge description of the bug minecraft using forge crashes an reports the following error while loading net minecraftforge fml common loaderexceptionmodcrash caught exception from railcraft railcraft caused by java lang nullp... | 0 |

15,891 | 20,075,037,828 | IssuesEvent | 2022-02-04 11:43:39 | climatepolicyradar/navigator | https://api.github.com/repos/climatepolicyradar/navigator | opened | Identify main document language | Document processing | Following detection of passage level language, Navigator should identify the main language for the document and store this in the database.

The main language would be considered to be the language detected having the most passages in the document.

| 1.0 | Identify main document language - Following detection of passage level language, Navigator should identify the main language for the document and store this in the database.

The main language would be considered to be the language detected having the most passages in the document.

| process | identify main document language following detection of passage level language navigator should identify the main language for the document and store this in the database the main language would be considered to be the language detected having the most passages in the document | 1 |
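The rule described in the record above (the main language is the one detected for the most passages) can be sketched as follows. This is a minimal illustration, not Navigator's implementation: the function name `main_language` and the plain list of per-passage language codes are assumptions, and ties are resolved in favor of the language seen first.

```python
from collections import Counter


def main_language(passage_languages):
    """Return the language detected for the most passages, or None if none were detected.

    `passage_languages` is a hypothetical list with one detected language
    code per passage; entries that are None or empty are ignored.
    """
    counts = Counter(lang for lang in passage_languages if lang)
    if not counts:
        return None
    # most_common(1) returns a one-element list of (language, count) pairs.
    return counts.most_common(1)[0][0]
```

The returned value is what would be stored as the document-level language; how it is persisted is outside the scope of this sketch.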

52,491 | 12,974,002,395 | IssuesEvent | 2020-07-21 14:49:39 | infor-design/enterprise | https://api.github.com/repos/infor-design/enterprise | closed | Date Format with no separator not supported in masking | [3] team: appbuilder type: bug :bug: | **Describe the bug**

I want to use format yyyyMMdd in input-masking but I can not write anything in the textbox with this format.

**To Reproduce**

Steps to reproduce the behavior:

1. Have a textbox

2. Add a mask such as yyyyMMdd

3. Try to write anything in the textbox

**Expected behavior**

The input should ... | 1.0 | Date Format with no separator not supported in masking - **Describe the bug**

I want to use format yyyyMMdd in input-masking but I can not write anything in the textbox with this format.

**To Reproduce**

Steps to reproduce the behavior:

1. Have a textbox

2. Add a mask such as yyyyMMdd

3. Try to write anything i... | non_process | date format with no separator not supported in masking describe the bug i want to use format yyyymmdd in input masking but i can not write anything in the textbox with this format to reproduce steps to reproduce the behavior have a textbox add a mask such as yyyymmdd try to write anything i... | 0 |

15,105 | 18,844,149,697 | IssuesEvent | 2021-11-11 13:09:06 | DSE511-Project3-Team/DSE511-Project-3-Code-Repo | https://api.github.com/repos/DSE511-Project3-Team/DSE511-Project-3-Code-Repo | opened | Exploratory Data Analysis (EDA) | Preprocess | Analyze accident data sets to summarize its main characteristics. | 1.0 | Exploratory Data Analysis (EDA) - Analyze accident data sets to summarize its main characteristics. | process | exploratory data analysis eda analyze accident data sets to summarize its main characteristics | 1 |

8,058 | 11,222,198,018 | IssuesEvent | 2020-01-07 19:36:53 | googleapis/google-cloud-go | https://api.github.com/repos/googleapis/google-cloud-go | opened | Unflake Spanner integration tests | api: spanner type: process | Flakes for multiple spanner integration tests over the past day:

TestIntegration_DML: https://sponge.corp.google.com/target?id=88c22d5e-bb5c-4965-acb8-021c8346d4cc&target=cloud-devrel/client-libraries/go/google-cloud-go/continuous/go113&searchFor=&show=ALL&sortBy=STATUS

TestBatchDML_TwoStatements: https://sponge.... | 1.0 | Unflake Spanner integration tests - Flakes for multiple spanner integration tests over the past day:

TestIntegration_DML: https://sponge.corp.google.com/target?id=88c22d5e-bb5c-4965-acb8-021c8346d4cc&target=cloud-devrel/client-libraries/go/google-cloud-go/continuous/go113&searchFor=&show=ALL&sortBy=STATUS

TestBat... | process | unflake spanner integration tests flakes for multiple spanner integration tests over the past day testintegration dml testbatchdml twostatements testintegration queryexpressions multiple tests failed please fix these and or disable flaky tests for the time being if there s not a quick fix... | 1 |

5,891 | 8,709,157,532 | IssuesEvent | 2018-12-06 13:10:36 | aiidateam/aiida_core | https://api.github.com/repos/aiidateam/aiida_core | closed | Remove entry point group from the `process_type` column | priority/important requires discussion topic/DatabaseSchemaAndOptimization topic/JobCalculationAndProcess | Currently, if the process has an associated entry point, the entry point string will be stored in the `process_type` column of the `Node` instance. The format of this string is `{entry_point_group}:{entry_point_name}`. However, since the group to which the entry point name should belong is already contained in the `typ... | 1.0 | Remove entry point group from the `process_type` column - Currently, if the process has an associated entry point, the entry point string will be stored in the `process_type` column of the `Node` instance. The format of this string is `{entry_point_group}:{entry_point_name}`. However, since the group to which the entry... | process | remove entry point group from the process type column currently if the process has an associated entry point the entry point string will be stored in the process type column of the node instance the format of this string is entry point group entry point name however since the group to which the entry... | 1 |
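The `{entry_point_group}:{entry_point_name}` format mentioned in the record above can be taken apart with a single string split. The sketch below is illustrative only and does not use any AiiDA API; the helper name `split_process_type` and the example strings in the usage note are hypothetical.

```python
def split_process_type(process_type):
    """Split a 'group:name' string into (group, name).

    Returns (None, process_type) when the string has no ':' separator,
    i.e. when no entry point group is present.
    """
    group, separator, name = process_type.partition(":")
    if not separator:
        return None, process_type
    return group, name
```

For a made-up value such as `"example.group:example.name"`, the helper returns the group and the name separately, which is the information the issue proposes to stop storing redundantly.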

1,518 | 4,111,068,405 | IssuesEvent | 2016-06-07 03:21:21 | nodejs/node | https://api.github.com/repos/nodejs/node | closed | child-process: data loss with piped stdout | child_process duplicate stream | * **Version**: v6.2.1

* **Platform**: Linux rachmaninoff 4.5.3-1-ARCH #1 SMP PREEMPT Sat May 7 20:43:57 CEST 2016 x86_64 GNU/Linux

* **Subsystem**: child-process

When the stdout of a spawned process is piped into a writable stream that doesn't read fast enough, and the spawned process exits, node will put it into ... | 1.0 | child-process: data loss with piped stdout - * **Version**: v6.2.1

* **Platform**: Linux rachmaninoff 4.5.3-1-ARCH #1 SMP PREEMPT Sat May 7 20:43:57 CEST 2016 x86_64 GNU/Linux

* **Subsystem**: child-process

When the stdout of a spawned process is piped into a writable stream that doesn't read fast enough, and the ... | process | child process data loss with piped stdout version platform linux rachmaninoff arch smp preempt sat may cest gnu linux subsystem child process when the stdout of a spawned process is piped into a writable stream that doesn t read fast enough and the spawned pr... | 1 |

18,580 | 24,562,626,440 | IssuesEvent | 2022-10-12 21:58:13 | NEARWEEK/NEWS | https://api.github.com/repos/NEARWEEK/NEWS | closed | Launch new Github process | Process | ## 🎉 Subtasks

- [x] Onboard everyone to Github, make sure everyone has 2FA enabled

- [x] Make sure all OKRs are reflected as milestones with sub-issues, labels & assignees

- [x] Use Github issues & project board as the go-to-place for meetings & asynch coordination

## 🤼♂️ Reviewer

@P3ter-NEARWEEK

#... | 1.0 | Launch new Github process - ## 🎉 Subtasks

- [x] Onboard everyone to Github, make sure everyone has 2FA enabled

- [x] Make sure all OKRs are reflected as milestones with sub-issues, labels & assignees

- [x] Use Github issues & project board as the go-to-place for meetings & asynch coordination

## 🤼♂️ Revi... | process | launch new github process 🎉 subtasks onboard everyone to github make sure everyone has enabled make sure all okrs are reflected as milestones with sub issues labels assignees use github issues project board as the go to place for meetings asynch coordination 🤼♂️ reviewer ... | 1 |

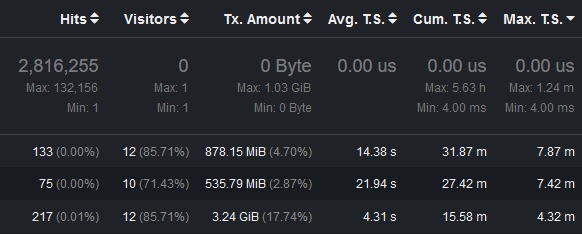

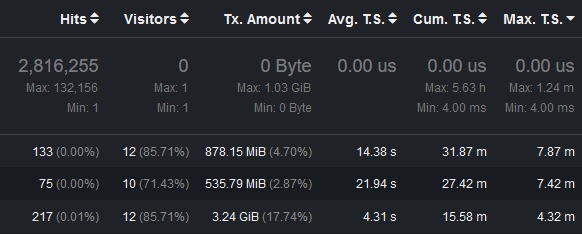

9,370 | 12,374,180,682 | IssuesEvent | 2020-05-19 00:45:49 | allinurl/goaccess | https://api.github.com/repos/allinurl/goaccess | closed | Max t.s. seems not calculated correctly | log-processing | Just noticed during some observation that there's a mismatch between max T.S. in a header row and ones presented in separate lines:

There is 1.24m in head and subsequent lines hold much higher values i.e. ... | 1.0 | Max t.s. seems not calculated correctly - Just noticed during some observation that there's a mismatch between max T.S. in a header row and ones presented in separate lines:

There is 1.24m in head and subs... | process | max t s seems not calculated correctly just noticed during some observation that there s a mismatch between max t s in a header row and ones presented in separate lines there is in head and subsequent lines hold much higher values i e i have verified the access log manually and there should be ... | 1 |

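9,097 | 12,168,617,985 | IssuesEvent | 2020-04-27 12:58:50 | Ghost-chu/QuickShop-Reremake | https://api.github.com/repos/Ghost-chu/QuickShop-Reremake | closed | [BUG] Most players cannot create shops | Bug In Process Priority:Major | **Describe the bug**

The new version of quickshop seems to have a problem with its residence permission check. Sometimes a player who holds permissions for the residence cannot create a residence and gets the message: No permission: third-party plugin [{0}].

When I change integration.residence.create to true, creating a shop still reports no permission. In addition, the floating display item appears above the chest; every creation attempt spawns another floating item and they stack up, but the shop still cannot be created, and nothing happens when other players click it.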

**Screenshots**

65.0 -->

<!-- @ua_header: QwantMobile/3.0 (Android 9; Tablet; rv:67.0) Gecko/67.0 Firefox/65.0 QwantBrowser/67.0.4 -->

<!-- @reported_with: mobile-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/59639 -->

**URL**: https://play.google.com/store/apps/det... | 1.0 | play.google.com - Unable to install Hangouts app from web page - <!-- @browser: Firefox Mobile (Tablet) 65.0 -->

<!-- @ua_header: QwantMobile/3.0 (Android 9; Tablet; rv:67.0) Gecko/67.0 Firefox/65.0 QwantBrowser/67.0.4 -->

<!-- @reported_with: mobile-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/... | non_process | play google com unable to install hangouts app from web page url browser version firefox mobile tablet operating system android tested another browser yes chrome problem type video or audio doesn t play description the video or audio does not play steps to reprod... | 0 |

176,706 | 21,435,761,374 | IssuesEvent | 2022-04-24 01:04:39 | rsoreq/grafana | https://api.github.com/repos/rsoreq/grafana | closed | CVE-2021-33587 (High) detected in css-what-2.1.3.tgz - autoclosed | security vulnerability | ## CVE-2021-33587 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>css-what-2.1.3.tgz</b></p></summary>

<p>a CSS selector parser</p>

<p>Library home page: <a href="https://registry.npmj... | True | CVE-2021-33587 (High) detected in css-what-2.1.3.tgz - autoclosed - ## CVE-2021-33587 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>css-what-2.1.3.tgz</b></p></summary>

<p>a CSS sele... | non_process | cve high detected in css what tgz autoclosed cve high severity vulnerability vulnerable library css what tgz a css selector parser library home page a href path to dependency file yarn lock path to vulnerable library node modules css what package json depende... | 0 |

106,309 | 9,126,122,165 | IssuesEvent | 2019-02-24 19:08:58 | svigerske/Bt | https://api.github.com/repos/svigerske/Bt | closed | make sure third party projects can be disabled | bug configuration tests minor | Issue created by migration from Trac.

Original creator: andreasw

Original creation time: 2009-07-07 03:23:21

Assignee: andreasw

Version: 0.5

For some of the third party projects, it does not work to specify --without-blabla to make sure that they are not compiled even when their source code is there.

* metis: --... | 1.0 | make sure third party projects can be disabled - Issue created by migration from Trac.

Original creator: andreasw

Original creation time: 2009-07-07 03:23:21

Assignee: andreasw

Version: 0.5

For some of the third party projects, it does not work to specify --without-blabla to make sure that they are not compiled ev... | non_process | make sure third party projects can be disabled issue created by migration from trac original creator andreasw original creation time assignee andreasw version for some of the third party projects it does not work to specify without blabla to make sure that they are not compiled even when ... | 0 |

2,656 | 5,433,986,597 | IssuesEvent | 2017-03-05 01:20:11 | dotnet/corefx | https://api.github.com/repos/dotnet/corefx | closed | System.Diagnostics.Process.Performance.Tests failed to start on CentOS in CI -- Linux kernel bug | area-System.Diagnostics.Process bug os-linux tracking-external-issue | From http://dotnet-ci.cloudapp.net/job/dotnet_corefx/job/master/job/centos7.1_release_prtest/397/

```

/mnt/resource/j/workspace/dotnet_corefx/master/centos7.1_release_prtest/Tools/Microsoft.CSharp.Core.targets(67,5): error MSB6006: "/mnt/resource/j/workspace/dotnet_corefx/master/centos7.1_release_prtest/Tools/dotnetcl... | 1.0 | System.Diagnostics.Process.Performance.Tests failed to start on CentOS in CI -- Linux kernel bug - From http://dotnet-ci.cloudapp.net/job/dotnet_corefx/job/master/job/centos7.1_release_prtest/397/

```

/mnt/resource/j/workspace/dotnet_corefx/master/centos7.1_release_prtest/Tools/Microsoft.CSharp.Core.targets(67,5): err... | process | system diagnostics process performance tests failed to start on centos in ci linux kernel bug from mnt resource j workspace dotnet corefx master release prtest tools microsoft csharp core targets error mnt resource j workspace dotnet corefx master release prtest tools dotnetcli dotnet e... | 1 |

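14,684 | 17,798,392,479 | IssuesEvent | 2021-09-01 02:58:06 | jim-king-2000/IndustryCamera | https://api.github.com/repos/jim-king-2000/IndustryCamera | closed | [bug]: The background image of “Client Download” on the web client has no caching configured | bug processing C | ### Problem description

<!-- Describe your problem here -->

### Expected behavior

<!-- What behavior the system should show -->

### Actual behavior

<!-- What behavior the system actually shows -->

### Steps to reproduce

<!-- How to reproduce the bug -->

### Additional information

- Browser version: Edge/Chrome 92

- Firmware version: v1.0 | 1.0 | [bug]: The background image of “Client Download” on the web client has no caching configured - ### Problem description

<!-- Describe your problem here -->

### Expected behavior

<!-- What behavior the system should show -->

### Actual behavior

<!-- What behavior the system actually shows -->

### Steps to reproduce

<!-- How to reproduce the bug -->

### Additional information

- Browser version: Edge/Chrome 92

- Firmware version: v1.0 | process | the background image of client download on the web client has no caching configured problem description expected behavior actual behavior steps to reproduce additional information browser version edge chrome firmware version | 1 |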

20,027 | 26,510,252,810 | IssuesEvent | 2023-01-18 16:34:29 | geneontology/go-ontology | https://api.github.com/repos/geneontology/go-ontology | closed | NTR: [proposed new term label] negative regulation of metaphase/anaphase transition of meiosis II | New term request cell cycle and DNA processes ready | Please provide as much information as you can:

* **Suggested term label:**

negative regulation of metaphase/anaphase transition of meiosis II

* **Definition (free text)**

standard of parents

: regulation of metaphase/anaphase transition of meiosis II (GO:1905189)

: negative regulation of metaphase/anapha... | 1.0 | NTR: [proposed new term label] negative regulation of metaphase/anaphase transition of meiosis II - Please provide as much information as you can:

* **Suggested term label:**

negative regulation of metaphase/anaphase transition of meiosis II

* **Definition (free text)**

standard of parents

: regulation o... | process | ntr negative regulation of metaphase anaphase transition of meiosis ii please provide as much information as you can suggested term label negative regulation of metaphase anaphase transition of meiosis ii definition free text standard of parents regulation of metaphase anaphase tra... | 1 |

23,264 | 3,784,952,808 | IssuesEvent | 2016-03-20 05:50:12 | recoilphp/recoil | https://api.github.com/repos/recoilphp/recoil | closed | Revisit behaviour of awaiting a strand. | defect status: in progress | As it stands, an assertion fails when attempting to await an already-exited strand. This makes it possible to use `yield $strand` as a "thread join" type operation, which is its main purpose.

Unlike, a regular thread join, awaiting a strand forwards the return value / exception to the waiting strand, essentially ma... | 1.0 | Revisit behaviour of awaiting a strand. - As it stands, an assertion fails when attempting to await an already-exited strand. This makes it possible to use `yield $strand` as a "thread join" type operation, which is its main purpose.

Unlike, a regular thread join, awaiting a strand forwards the return value / excep... | non_process | revisit behaviour of awaiting a strand as it stands an assertion fails when attempting to await an already exited strand this makes it possible to use yield strand as a thread join type operation which is its main purpose unlike a regular thread join awaiting a strand forwards the return value excep... | 0 |

52,211 | 13,728,285,598 | IssuesEvent | 2020-10-04 10:54:47 | tanmayc07/vue-calendar | https://api.github.com/repos/tanmayc07/vue-calendar | closed | CVE-2018-20190 (Medium) detected in opennmsopennms-source-25.1.0-1 | security vulnerability | ## CVE-2018-20190 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>opennmsopennms-source-25.1.0-1</b></p></summary>

<p>

<p>A Java based fault and performance management system</p>

<p>... | True | CVE-2018-20190 (Medium) detected in opennmsopennms-source-25.1.0-1 - ## CVE-2018-20190 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>opennmsopennms-source-25.1.0-1</b></p></summary>... | non_process | cve medium detected in opennmsopennms source cve medium severity vulnerability vulnerable library opennmsopennms source a java based fault and performance management system library home page a href found in head commit a href found in base branch master ... | 0 |

20,343 | 26,999,590,911 | IssuesEvent | 2023-02-10 06:13:36 | bobocode-blyznytsia/bring-framework | https://api.github.com/repos/bobocode-blyznytsia/bring-framework | closed | Implement AutowiredBeanPostProcessor | bean-post-processor | ### Description

The `BeanPostProcessor` abstraction is responsible for the construction and initialisation logic of `Bean`.

Basic implementation includes 2 implementations: `RawBeanPostProcessor` and `AutowiredBeanPostProcessor`.

### Solution

In context of this story the `AutowiredBeanPostProcessor` should be implemen... | 1.0 | Implement AutowiredBeanPostProcessor - ### Description

The `BeanPostProcessor` abstraction is responsible for the construction and initialisation logic of `Bean`.

Basic implementation includes 2 implementations: `RawBeanPostProcessor` and `AutowiredBeanPostProcessor`.

### Solution

In context of this story the `Autowir... | process | implement autowiredbeanpostprocessor description the beanpostprocessor abstraction is responsible for the construction and initialisation logic of bean basic implementation includes implementations rawbeanpostprocessor and autowiredbeanpostprocessor solution in context of this story the autowir... | 1 |

352,501 | 25,070,286,964 | IssuesEvent | 2022-11-07 11:34:34 | PyFstat/PyFstat | https://api.github.com/repos/PyFstat/PyFstat | closed | Type annotations seem to be a bit incompatible with the return field | documentation | See sphinx docs for `logging` and `injection_parameters`. | 1.0 | Type annotations seem to be a bit incompatible with the return field - See sphinx docs for `logging` and `injection_parameters`. | non_process | type annotations seem to be a bit incompatible with the return field see sphinx docs for logging and injection parameters | 0 |

33,741 | 16,095,353,239 | IssuesEvent | 2021-04-26 22:26:13 | tensorflow/tensorflow | https://api.github.com/repos/tensorflow/tensorflow | closed | why my 'workers=8,use_multiprocessing=True' do not work while training? | comp:dist-strat type:performance | **Here is my code:**

train_model_input=generate_arrays_from_dataframe( data.sample(frac=1) )

history = model.fit(train_model_input, epochs=1, verbose=1, validation_split=0.0,steps_per_epoch= math.ceil( train_row_len/256), workers=8,use_multiprocessing=True)

I see only 100% CPU utilization, not the expected 800%.

![... | True | why my 'workers=8,use_multiprocessing=True' do not work while training? - **Here is my code:**

train_model_input=generate_arrays_from_dataframe( data.sample(frac=1) )

history = model.fit(train_model_input, epochs=1, verbose=1, validation_split=0.0,steps_per_epoch= math.ceil( train_row_len/256), workers=8,use_multip... | non_process | why my workers use multiprocessing true do not work while training here is my code: train model input generate arrays from dataframe data sample frac history model fit train model input epochs verbose validation split steps per epoch math ceil train row len workers use multipro... | 0 |

129,615 | 5,099,871,543 | IssuesEvent | 2017-01-04 10:00:21 | hpi-swt2/workshop-portal | https://api.github.com/repos/hpi-swt2/workshop-portal | closed | US_2.1: Change my application | Medium Priority team-hendrik | **As**

pupil

**I want to**

be able to process my application up to the deadline. After this, no change is possible.

**in order to**

change my application anytime

**dependency:** #18

**estimate:** 3

**acceptance criteria:**

- [x] edit form displays the saved data

- [x] changes are saved in the datab... | 1.0 | US_2.1: Change my application - **As**

pupil

**I want to**

be able to process my application up to the deadline. After this, no change is possible.

**in order to**

change my application anytime

**dependency:** #18

**estimate:** 3

**acceptance criteria:**

- [x] edit form displays the saved data

- [x... | non_process | us change my application as pupil i want to be able to process my application up to the deadline after this no change is possible in order to change my application anytime dependency estimate acceptance criteria edit form displays the saved data cha... | 0 |

10,178 | 13,044,162,791 | IssuesEvent | 2020-07-29 03:47:36 | tikv/tikv | https://api.github.com/repos/tikv/tikv | closed | UCP: Migrate scalar function `TruncateDecimal` from TiDB | challenge-program-2 component/coprocessor difficulty/easy sig/coprocessor |

## Description

Port the scalar function `TruncateDecimal` from TiDB to coprocessor.

## Score

* 50

## Mentor(s)

* @sticnarf

## Recommended Skills

* Rust programming

## Learning Materials

Already implemented expressions ported from TiDB

- https://github.com/tikv/tikv/tree/master/components/tidb_query/src/rp... | 2.0 | UCP: Migrate scalar function `TruncateDecimal` from TiDB -

## Description

Port the scalar function `TruncateDecimal` from TiDB to coprocessor.

## Score

* 50

## Mentor(s)

* @sticnarf

## Recommended Skills

* Rust programming

## Learning Materials

Already implemented expressions ported from TiDB

- https://gi... | process | ucp migrate scalar function truncatedecimal from tidb description port the scalar function truncatedecimal from tidb to coprocessor score mentor s sticnarf recommended skills rust programming learning materials already implemented expressions ported from tidb | 1 |

10,215 | 13,080,489,353 | IssuesEvent | 2020-08-01 07:37:59 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | [Processing] GDAL Assign Projection does not update QgsRasterLayer CRS | Bug Processing | **Describe the bug**

Even if the projection is assigned, the `QgsRasterLayer` and the `QgsRasterDataProvider` were not updated.

In the case of a layer loaded in the project a fix has been provided https://github.com/qgis/QGIS/pull/37919

In the case of a layer provided by a model, the CRS is not updated even if the ... | 1.0 | [Processing] GDAL Assign Projection does not update QgsRasterLayer CRS - **Describe the bug**

Even if the projection is assigned, the `QgsRasterLayer` and the `QgsRasterDataProvider` were not updated.

In the case of a layer loaded in the project a fix has been provided https://github.com/qgis/QGIS/pull/37919

In the... | process | gdal assign projection does not update qgsrasterlayer crs describe the bug even if the projection is assign the qgsrasterlayer and the qgsrasterdataprovider was not updated in the case of a layer loaded in the project a fix has been provided in the case of a layer provided by a model the crs is ... | 1 |

2,354 | 5,164,510,503 | IssuesEvent | 2017-01-17 10:45:17 | coala/teams | https://api.github.com/repos/coala/teams | closed | core Team Member Application: Lasse | process/approved | # Bio

I'm Lasse. I'm the BLD of coala. I'm special. Also I'm western, white, male and in my 20ies so I fit the profile of an open source developer pretty well.

# coala Contributions so far

Let me see... I created it like thrice. The first version sucked so I had to make a second. It sucked as well so I made a ... | 1.0 | core Team Member Application: Lasse - # Bio

I'm Lasse. I'm the BLD of coala. I'm special. Also I'm western, white, male and in my 20ies so I fit the profile of an open source developer pretty well.

# coala Contributions so far

Let me see... I created it like thrice. The first version sucked so I had to make a ... | process | core team member application lasse bio i m lasse i m the bld of coala i m special also i m western white male and in my so i fit the profile of an open source developer pretty well coala contributions so far let me see i created it like thrice the first version sucked so i had to make a seco... | 1 |

13,785 | 16,543,114,552 | IssuesEvent | 2021-05-27 19:35:17 | scieloorg/search-journals | https://api.github.com/repos/scieloorg/search-journals | closed | Render HTML in abstracts | Processamento | Many abstracts contain HTML code that needs to be rendered.

This symbol should actually be <= (less than or equal).

http://homolog.search.scielo.org/?q=A+import%C3%A2ncia+da+an%C3%A1lise+de+especia... | 1.0 | Render HTML in abstracts - Many abstracts contain HTML code that needs to be rendered.

This symbol should actually be <= (less than or equal).

http://homolog.search.scielo.org/?q=A+import%C3%A2n... | process | render html in abstracts many abstracts contain html code that needs to be rendered this symbol should actually be less than or equal link to the original article | 1 |

128,364 | 27,247,686,846 | IssuesEvent | 2023-02-22 04:24:27 | wso2/ballerina-plugin-vscode | https://api.github.com/repos/wso2/ballerina-plugin-vscode | closed | [Record Editor] Failed to create separate record definitions from a give JSON | Type/Bug Priority/Highest Resolution/Fixed lowcode/component/record-editor | **Description:**

$Subject

sample JSON:

```json

{

"id": "0001",

"name": "Cake",

"score": 0.55,

"status": {

"kind": "D"

}

}

```

**Affected Versions:**

Ballerin... | 1.0 | [Record Editor] Failed to create separate record definitions from a give JSON - **Description:**

$Subject

sample JSON:

```json

{

"id": "0001",

"name": "Cake",

"score": 0.... | non_process | failed to create separate record definitions from a give json description subject sample json json id name cake score status kind d affected versions ballerina plugin | 0 |

157,706 | 19,981,583,564 | IssuesEvent | 2022-01-30 01:01:20 | LancelotLiu/CAP4 | https://api.github.com/repos/LancelotLiu/CAP4 | opened | CVE-2019-3774 (High) detected in spring-batch-infrastructure-3.0.7.RELEASE.jar | security vulnerability | ## CVE-2019-3774 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>spring-batch-infrastructure-3.0.7.RELEASE.jar</b></p></summary>

<p>Spring Batch Infrastructure</p>

<p>Library home page... | True | CVE-2019-3774 (High) detected in spring-batch-infrastructure-3.0.7.RELEASE.jar - ## CVE-2019-3774 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>spring-batch-infrastructure-3.0.7.RELEA... | non_process | cve high detected in spring batch infrastructure release jar cve high severity vulnerability vulnerable library spring batch infrastructure release jar spring batch infrastructure library home page a href path to dependency file cap batch pom xml path to vulnerable l... | 0 |

7,438 | 10,551,248,317 | IssuesEvent | 2019-10-03 12:58:17 | zephyrproject-rtos/zephyr | https://api.github.com/repos/zephyrproject-rtos/zephyr | closed | 2.0 Release Checklist | Meta area: Process | Add items as required

--------

- [x] Major enhancements:

- [x] BLE Split Link Layer: #12681

- [x] Support for PPP protocol: #14034

- [x] Initial support for ARM Cortex-R: #9316

- [x] Initial support for TEE for ARC: #9341

- [x] A new DTS/binding parser: #17660

- [x] External modules i... | 1.0 | 2.0 Release Checklist - Add items as required

--------

- [x] Major enhancements:

- [x] BLE Split Link Layer: #12681

- [x] Support for PPP protocol: #14034

- [x] Initial support for ARM Cortex-R: #9316

- [x] Initial support for TEE for ARC: #9341

- [x] A new DTS/binding parser: #17660

-... | process | release checklist add items as required major enhancements ble split link layer support for ppp protocol initial support for arm cortex r initial support for tee for arc a new dts binding parser external modules into stan... | 1 |

22,258 | 30,809,404,307 | IssuesEvent | 2023-08-01 09:23:01 | apache/arrow-rs | https://api.github.com/repos/apache/arrow-rs | closed | `ListArray::try_new` disallows null elements if `field.is_nullable() == false` | bug development-process | **Describe the bug**

Even if a field is non-nullable in its schema definition, it's only semantic and I think this should not [prevent](https://github.com/apache/arrow-rs/blob/414235e7630d05cccf0b9f5032ebfc0858b8ae5b/arrow-array/src/array/list_array.rs#L126-L132) the creation of arrays containing null values. Null val... | 1.0 | `ListArray::try_new` disallows null elements if `field.is_nullable() == false` - **Describe the bug**

Even if a field is non-nullable in its schema definition, it's only semantic and I think this should not [prevent](https://github.com/apache/arrow-rs/blob/414235e7630d05cccf0b9f5032ebfc0858b8ae5b/arrow-array/src/array... | process | listarray try new disallows null elements if field is nullable false describe the bug even if a field is non nullable in its schema definition it s only semantic and i think this should not the creation of arrays containing null values null values in semantically non nullable fields may arise fro... | 1 |

41,824 | 5,389,799,921 | IssuesEvent | 2017-02-25 06:57:48 | brave/browser-laptop | https://api.github.com/repos/brave/browser-laptop | closed | add windows specific font CSS for URL text and tab text | design polish QA/steps-specified release-notes/include | I found some changes needed to improve legibility and sync the styles between mac and windows.

With the changes below, we will have a match between OSs in the URL and Tab text.

(note: these are f... | 1.0 | add windows specific font CSS for URL text and tab text - I found some changes needed to improve legibility and sync the styles between mac and windows.

With the changes below, we will have a match between OSs in the URL and Tab text.

detected in multiple libraries | security vulnerability | ## CVE-2021-3807 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>ansi-regex-4.1.0.tgz</b>, <b>ansi-regex-3.0.0.tgz</b>, <b>ansi-regex-5.0.0.tgz</b></p></summary>

<p>

<details><summar... | True | CVE-2021-3807 (High) detected in multiple libraries - ## CVE-2021-3807 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>ansi-regex-4.1.0.tgz</b>, <b>ansi-regex-3.0.0.tgz</b>, <b>ansi-r... | non_process | cve high detected in multiple libraries cve high severity vulnerability vulnerable libraries ansi regex tgz ansi regex tgz ansi regex tgz ansi regex tgz regular expression for matching ansi escape codes library home page a href path to dependen... | 0 |

186,177 | 6,734,150,023 | IssuesEvent | 2017-10-18 17:01:18 | geosolutions-it/unesco-ihp | https://api.github.com/repos/geosolutions-it/unesco-ihp | closed | Final check on published Layers | blocked enhancement Priority: Medium question unesco-ihp | "UNESCO-GeoNode-Refactor-2017-Timeline" 'PHASE I'!N11

I changed my email address to my private one (we thought that maybe UNESCO system is filtering notifications email as spamming) and it works! However, for some reasons I received an email saying that "a layer has been updated" when it was actually uploaded, and I r... | 1.0 | Final check on published Layers - "UNESCO-GeoNode-Refactor-2017-Timeline" 'PHASE I'!N11

I changed my email address to my private one (we thought that maybe UNESCO system is filtering notifications email as spamming) and it works! However, for some reasons I received an email saying that "a layer has been updated" when... | non_process | final check on published layers unesco geonode refactor timeline phase i i changed my email address to my private one we thought that maybe unesco system is filtering notifications email as spamming and it works however for some reasons i received an email saying that a layer has been updated when it w... | 0 |

8,368 | 11,519,598,790 | IssuesEvent | 2020-02-14 13:10:08 | symfony/symfony | https://api.github.com/repos/symfony/symfony | closed | get starttime of process | Feature Process | It would be nice to have a getter for this property https://github.com/symfony/process/blob/master/Process.php#L58 this can help to calculate an ETA | 1.0 | get starttime of process - It would be nice to have a getter for this property https://github.com/symfony/process/blob/master/Process.php#L58 this can help to calculate an ETA | process | get starttime of process it would be nice to have a getter for this property this can help to calculate an eta | 1 |

3,706 | 6,731,424,596 | IssuesEvent | 2017-10-18 07:33:27 | nlbdev/pipeline | https://api.github.com/repos/nlbdev/pipeline | closed | braille CSS: "NLB", "Norge 2016", placement / plassering | enhancement pre-processing Priority:2 - Medium | (norwegian)

*from Trello-board*

NLB-CSS: title-page: The two lines «NLB» and «Norge 2016» should appear with no blank line between them (if that is difficult, they can be on the same line: «NLB, Norge 2016») | 1.0 | braille CSS: "NLB", "Norge 2016", placement / plassering - (norwegian)

*from Trello-board*

NLB-CSS: title-page: The two lines «NLB» and «Norge 2016» should appear with no blank line between them (if that is difficult, they can be on the same line: «NLB, Norge 2016») | process | braille css nlb norge placement plassering norwegian from trello board nlb css title page the two lines «nlb» and «norge » should appear with no blank line between them if that is difficult they can be on the same line «nlb norge » | 1 |

112,905 | 4,540,335,280 | IssuesEvent | 2016-09-09 14:21:50 | Signbank/NGT-signbank | https://api.github.com/repos/Signbank/NGT-signbank | closed | Create an easy way to hide the search fields | enhancement top priority | A simple button or small triangle at the top left that would hide all of the search page above the search results would be very useful, in order to focus on the list of hits, while still having access to the menu bar and the search boxes there. | 1.0 | Create an easy way to hide the search fields - A simple button or small triangle at the top left that would hide all of the search page above the search results would be very useful, in order to focus on the list of hits, while still having access to the menu bar and the search boxes there. | non_process | create an easy way to hide the search fields a simple button or small triangle at the top left that would hide all of the search page above the search results would be very useful in order to focus on the list of hits while still having access to the menu bar and the search boxes there | 0 |

19,234 | 25,387,016,200 | IssuesEvent | 2022-11-21 22:55:44 | open-telemetry/opentelemetry-collector-contrib | https://api.github.com/repos/open-telemetry/opentelemetry-collector-contrib | closed | [processor/transform] Add `drop` action support | enhancement priority:p2 processor/transform | `drop` action is pretty important functionality to replace filter processors. It's already being mentioned in the docs but not implemented yet.

Subtasks per data type:

- [ ] #13579

- [ ] #13580

- [ ] #13581 | 1.0 | [processor/transform] Add `drop` action support - `drop` action is pretty important functionality to replace filter processors. It's already being mentioned in the docs but not implemented yet.

Subtasks per data type:

- [ ] #13579

- [ ] #13580

- [ ] #13581 | process | add drop action support drop action is pretty important functionality to replace filter processors it s already being mentioned in the docs but not implemented yet subtasks per data type | 1 |

54,963 | 6,885,643,076 | IssuesEvent | 2017-11-21 16:42:05 | syndesisio/syndesis | https://api.github.com/repos/syndesisio/syndesis | opened | Use Patternfly for form elements | cat/design module/ui module/uxd | Reference: See Forms and Controls section: http://www.patternfly.org/pattern-library/

@seanforyou23 Added this issue to document and track your work, update as needed. Thanks. | 1.0 | Use Patternfly for form elements - Reference: See Forms and Controls section: http://www.patternfly.org/pattern-library/

@seanforyou23 Added this issue to document and track your work, update as needed. Thanks. | non_process | use patternfly for form elements reference see forms and controls section added this issue to document and track your work update as needed thanks | 0 |

175,607 | 6,552,522,886 | IssuesEvent | 2017-09-05 18:41:40 | SacredDuckwhale/TotalAP | https://api.github.com/repos/SacredDuckwhale/TotalAP | closed | Scanning of AP items is broken for ruRU clients (?) | info:help-needed module:core priority:high status:needs-confirmation type:bug | Can't confirm this because of the client-side restrictions when using the Russian client, but from a comment on Curse it appears that the scanner errors out. If someone can provide the correct pattern (format) that is in use currently the scanner could be updated accordingly and the validity can be checked via luaunit,... | 1.0 | Scanning of AP items is broken for ruRU clients (?) - Can't confirm this because of the client-side restrictions when using the Russian client, but from a comment on Curse it appears that the scanner errors out. If someone can provide the correct pattern (format) that is in use currently the scanner could be updated ac... | non_process | scanning of ap items is broken for ruru clients can t confirm this because of the client side restrictions when using the russian client but from a comment on curse it appears that the scanner errors out if someone can provide the correct pattern format that is in use currently the scanner could be updated ac... | 0 |

272,059 | 20,731,556,250 | IssuesEvent | 2022-03-14 09:55:12 | root-project/root | https://api.github.com/repos/root-project/root | closed | [doxy] upgrade to Mathjax3 | improvement in:Documentation | ### Explain what you would like to see improved

Mathjax2 is used by documentation.

### Optional: share how it could be improved

Mathjax3 brings some performance improvements.

Maybe it can be even downloaded on the fly, rather than storing it as part of the ROOT source code?

### To Reproduce

https://root.cer... | 1.0 | [doxy] upgrade to Mathjax3 - ### Explain what you would like to see improved

Mathjax2 is used by documentation.

### Optional: share how it could be improved

Mathjax3 brings some performance improvements.

Maybe it can be even downloaded on the fly, rather than storing it as part of the ROOT source code?

### T... | non_process | upgrade to explain what you would like to see improved is used by documentation optional share how it could be improved brings some performance improvements maybe it can be even downloaded on the fly rather than storing it as part of the root source code to reproduce setu... | 0 |

262,768 | 19,830,882,299 | IssuesEvent | 2022-01-20 11:51:42 | ngneat/falso | https://api.github.com/repos/ngneat/falso | closed | Interactive Playground within the Docs | documentation enhancement | ### Description

https://dev.to/mrmuhammadali/live-code-editing-in-docusaurus-ux-at-its-best-2hj1

### Proposed solution

_No response_

### Alternatives considered

_No response_

### Do you want to create a pull request?

No | 1.0 | Interactive Playground within the Docs - ### Description

https://dev.to/mrmuhammadali/live-code-editing-in-docusaurus-ux-at-its-best-2hj1

### Proposed solution

_No response_

### Alternatives considered

_No response_

### Do you want to create a pull request?

No | non_process | interactive playground within the docs description proposed solution no response alternatives considered no response do you want to create a pull request no | 0 |

18,516 | 24,551,723,146 | IssuesEvent | 2022-10-12 13:06:42 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | Display offline indicator for mobile apps | P2 iOS Android Process: Fixed Process: Tested QA Process: Tested dev Process: Enhancement | The mobile app must show a standard offline indicator when the user is not connected. Actions on the screen that cannot be used in such situations must get disabled (e.g. Sign up, profile updates, enrollment). Sections that support offline capability must continue to be enabled for use.

Message to be shown on scree... | 4.0 | Display offline indicator for mobile apps - The mobile app must show a standard offline indicator when the user is not connected. Actions on the screen that cannot be used in such situations must get disabled (e.g. Sign up, profile updates, enrollment). Sections that support offline capability must continue to be enabl... | process | display offline indicator for mobile apps the mobile app must show a standard offline indicator when the user is not connected actions on the screen that cannot be used in such situations must get disabled e g sign up profile updates enrollment sections that support offline capability must continue to be enabl... | 1 |

163,245 | 20,324,671,755 | IssuesEvent | 2022-02-18 03:51:37 | TreyM-WSS/terra-clinical | https://api.github.com/repos/TreyM-WSS/terra-clinical | opened | CVE-2020-7789 (Medium) detected in node-notifier-6.0.0.tgz | security vulnerability | ## CVE-2020-7789 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>node-notifier-6.0.0.tgz</b></p></summary>

<p>A Node.js module for sending notifications on native Mac, Windows (post ... | True | CVE-2020-7789 (Medium) detected in node-notifier-6.0.0.tgz - ## CVE-2020-7789 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>node-notifier-6.0.0.tgz</b></p></summary>

<p>A Node.js m... | non_process | cve medium detected in node notifier tgz cve medium severity vulnerability vulnerable library node notifier tgz a node js module for sending notifications on native mac windows post and pre and linux or growl as fallback library home page a href path to dependency... | 0 |

12,516 | 9,811,120,716 | IssuesEvent | 2019-06-12 22:26:02 | dotnet/core-setup | https://api.github.com/repos/dotnet/core-setup | closed | ToolsError build failures in 'prodcon/cli/release/2.2.1xx/' - '20190214.01' | area-Host area-Infrastructure | @robertborr commented on [Tue Feb 19 2019](https://github.com/dotnet/cli/issues/10832)

@dotnet-mc-bot commented on [Fri Feb 15 2019](https://github.com/dotnet/core-eng/issues/5249)

There were a set of failures during this build. Here is a summary of these:

* https://devdiv.visualstudio.com/DefaultCollection/DevDiv/_... | 1.0 | ToolsError build failures in 'prodcon/cli/release/2.2.1xx/' - '20190214.01' - @robertborr commented on [Tue Feb 19 2019](https://github.com/dotnet/cli/issues/10832)

@dotnet-mc-bot commented on [Fri Feb 15 2019](https://github.com/dotnet/core-eng/issues/5249)

There were a set of failures during this build. Here is a s... | non_process | toolserror build failures in prodcon cli release robertborr commented on dotnet mc bot commented on there were a set of failures during this build here is a summary of these agent error log total tests passed failed skipped robertborr ... | 0 |

240 | 2,664,624,975 | IssuesEvent | 2015-03-20 15:36:48 | AnalyticalGraphicsInc/cesium | https://api.github.com/repos/AnalyticalGraphicsInc/cesium | opened | Pro-active dead link checking | dev process doc | We should look into adding a build target to use a tool that checks the documentation for bad links. We can run this on the generated documentation rather than the source. Here's an example of one such project: https://github.com/wummel/linkchecker but I'm sure there are others that might better suit our current tool... | 1.0 | Pro-active dead link checking - We should look into adding a build target to use a tool that checks the documentation for bad links. We can run this on the generated documentation rather than the source. Here's an example of one such project: https://github.com/wummel/linkchecker but I'm sure there are others that mi... | process | pro active dead link checking we should look into adding a build target to use a tool that checks the documentation for bad links we can run this on the generated documentation rather than the source here s an example of one such project but i m sure there are others that might better suit our current toolsets... | 1 |

3,011 | 6,016,610,437 | IssuesEvent | 2017-06-07 07:30:23 | openvstorage/framework | https://api.github.com/repos/openvstorage/framework | closed | Allow a different nsm maxload for fragment/block namespaces | process_wontfix type_enhancement | Currently the limit of namespaces per NSM cluster is 75. For block cache and fragment cache name namespaces this limit could be higher, as they typically contain fewer fragments. | 1.0 | Allow a different nsm maxload for fragment/block namespaces - Currently the limit of namespaces per NSM cluster is 75. For block cache and fragment cache name namespaces this limit could be higher, as they typically contain fewer fragments. | process | allow a different nsm maxload for fragment block namespaces currently the limit of namespaces per nsm cluster is for block cache and fragment cache name namespaces this limit could be higher as they typically contain fewer fragments | 1 |

57,274 | 6,542,136,529 | IssuesEvent | 2017-09-02 01:06:19 | freeCodeCamp/freeCodeCamp | https://api.github.com/repos/freeCodeCamp/freeCodeCamp | closed | Tests now include tails | discussing tests | I'm working on a PR https://github.com/FreeCodeCamp/FreeCodeCamp/pull/11987, but the third test I'm adding (using `code.match()` to test for the number of times `quotient` is used in the editor) will not work because the tests now incorporate the tail, as referenced in #10258.

If I write the test to include the tails... | 1.0 | Tests now include tails - I'm working on a PR https://github.com/FreeCodeCamp/FreeCodeCamp/pull/11987, but the third test I'm adding (using `code.match()` to test for the number of times `quotient` is used in the editor) will not work because the tests now incorporate the tail, as referenced in #10258.

If I write the... | non_process | tests now include tails i m working on a pr but the third test i m adding using code match to test for the number of times quotient is used in the editor will not work because the tests now incorporate the tail as referenced in if i write the test to include the tails length instead of len... | 0 |

309,833 | 26,679,658,680 | IssuesEvent | 2023-01-26 16:41:43 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | closed | pkg/ccl/workloadccl/workloadccl_test: TestImportFixture failed | C-test-failure O-robot branch-master T-testeng | pkg/ccl/workloadccl/workloadccl_test.TestImportFixture [failed](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_StressBazel/8456234?buildTab=log) with [artifacts](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_StressBazel/8456234?buildTab=artifacts#/) on master @ [2ad8df... | 2.0 | pkg/ccl/workloadccl/workloadccl_test: TestImportFixture failed - pkg/ccl/workloadccl/workloadccl_test.TestImportFixture [failed](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_StressBazel/8456234?buildTab=log) with [artifacts](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightl... | non_process | pkg ccl workloadccl workloadccl test testimportfixture failed pkg ccl workloadccl workloadccl test testimportfixture with on master github com cockroachdb cockroach pkg sql create stats go github com cockroachdb cockroach pkg sql createstatsnode startjob github com cockroa... | 0 |

15,679 | 19,847,723,885 | IssuesEvent | 2022-01-21 08:48:15 | ooi-data/RS03INT2-MJ03D-06-BOTPTA303-streamed-botpt_nano_sample_15s | https://api.github.com/repos/ooi-data/RS03INT2-MJ03D-06-BOTPTA303-streamed-botpt_nano_sample_15s | opened | 🛑 Processing failed: InvalidIndexError | process | ## Overview

`InvalidIndexError` found in `processing_task` task during run ended on 2022-01-21T08:48:14.479559.

## Details

Flow name: `RS03INT2-MJ03D-06-BOTPTA303-streamed-botpt_nano_sample_15s`

Task name: `processing_task`

Error type: `InvalidIndexError`

Error message: Reindexing only valid with uniquely valued Ind... | 1.0 | 🛑 Processing failed: InvalidIndexError - ## Overview

`InvalidIndexError` found in `processing_task` task during run ended on 2022-01-21T08:48:14.479559.

## Details

Flow name: `RS03INT2-MJ03D-06-BOTPTA303-streamed-botpt_nano_sample_15s`

Task name: `processing_task`

Error type: `InvalidIndexError`

Error message: Rein... | process | 🛑 processing failed invalidindexerror overview invalidindexerror found in processing task task during run ended on details flow name streamed botpt nano sample task name processing task error type invalidindexerror error message reindexing only valid with uniquely value... | 1 |

237,063 | 18,151,677,402 | IssuesEvent | 2021-09-26 11:23:48 | girlscript/winter-of-contributing | https://api.github.com/repos/girlscript/winter-of-contributing | closed | Modular Arithmetic in C++ | documentation GWOC21 Assigned C/CPP | <hr>

## Description 📜

<!-- Please describe the issue in brief. -->

I want to Create an Documentation explaining the concepts of Modular Arithmetic in C++ with some code examples too.

<hr>

## Domain of Contribution 📊

<!----Please delete options that are not relevant.And in order to tick the check box j... | 1.0 | Modular Arithmetic in C++ - <hr>

## Description 📜

<!-- Please describe the issue in brief. -->

I want to Create an Documentation explaining the concepts of Modular Arithmetic in C++ with some code examples too.

<hr>

## Domain of Contribution 📊

<!----Please delete options that are not relevant.And in o... | non_process | modular arithmetic in c description 📜 i want to create an documentation explaining the concepts of modular arithmetic in c with some code examples too domain of contribution 📊 c cpp | 0 |

6,427 | 9,530,838,874 | IssuesEvent | 2019-04-29 14:43:56 | codefordenver/org | https://api.github.com/repos/codefordenver/org | closed | Create waffle card bookmarklet | Process Technical | As a member of CfD, I would like a quick way to create a waffle card based on an template, so that my waffle cards are of a consistent format, and I am reminded of useful info to fill out for others.

| 1.0 | Create waffle card bookmarklet - As a member of CfD, I would like a quick way to create a waffle card based on an template, so that my waffle cards are of a consistent format, and I am reminded of useful info to fill out for others.

| process | create waffle card bookmarklet as a member of cfd i would like a quick way to create a waffle card based on an template so that my waffle cards are of a consistent format and i am reminded of useful info to fill out for others | 1 |

424,982 | 29,187,380,129 | IssuesEvent | 2023-05-19 16:35:40 | randombit/botan | https://api.github.com/repos/randombit/botan | closed | Creating RSA keypair and Encrypting/Decrypting | usage question documentation | I have been having trouble locating a definitive guide or code examples to both create a RSA keypair and Encrypt/ decrypt using said keys.

My first method was using the given AEAD mode example given in the wiki but when I tried changing the code up to work with an RSA key. I was unable to find documentation or tutor... | 1.0 | Creating RSA keypair and Encrypting/Decrypting - I have been having trouble locating a definitive guide or code examples to both create a RSA keypair and Encrypt/ decrypt using said keys.

My first method was using the given AEAD mode example given in the wiki but when I tried changing the code up to work with an RSA... | non_process | creating rsa keypair and encrypting decrypting i have been having trouble locating a definitive guide or code examples to both create a rsa keypair and encrypt decrypt using said keys my first method was using the given aead mode example given in the wiki but when i tried changing the code up to work with an rsa... | 0 |

265,258 | 28,262,384,750 | IssuesEvent | 2023-04-07 01:16:32 | hshivhare67/platform_device_renesas_kernel_v4.19.72 | https://api.github.com/repos/hshivhare67/platform_device_renesas_kernel_v4.19.72 | closed | CVE-2019-19056 (Medium) detected in linuxlinux-4.19.279 - autoclosed | Mend: dependency security vulnerability | ## CVE-2019-19056 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.19.279</b></p></summary>

<p>

<p>The Linux Kernel</p>

<p>Library home page: <a href=https://mirrors.edge... | True | CVE-2019-19056 (Medium) detected in linuxlinux-4.19.279 - autoclosed - ## CVE-2019-19056 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.19.279</b></p></summary>

<p>

<p>... | non_process | cve medium detected in linuxlinux autoclosed cve medium severity vulnerability vulnerable library linuxlinux the linux kernel library home page a href found in head commit a href found in base branch main vulnerable source files ... | 0 |

634,211 | 20,356,231,849 | IssuesEvent | 2022-02-20 01:14:29 | therealbluepandabear/PyxlMoose | https://api.github.com/repos/therealbluepandabear/PyxlMoose | closed | [Improvement] Make it so when the user is previewing the rectangle, it only shows a border of it | mid priority improvement | #### Improvement description

Make it so when the user is moving their fingers to create/preview the rectangle, only show a border of it (just four lines), and fill in the rectangle only when the user lets go of their finger.

#### Why is this improvement important to add?

Because this will make the app quicker, ef... | 1.0 | [Improvement] Make it so when the user is previewing the rectangle, it only shows a border of it - #### Improvement description

Make it so when the user is moving their fingers to create/preview the rectangle, only show a border of it (just four lines), and fill in the rectangle only when the user lets go of their fi... | non_process | make it so when the user is previewing the rectangle it only shows a border of it improvement description make it so when the user is moving their fingers to create preview the rectangle only show a border of it just four lines and fill in the rectangle only when the user lets go of their finger ... | 0 |

9,359 | 12,369,296,758 | IssuesEvent | 2020-05-18 15:03:28 | bazelbuild/bazel | https://api.github.com/repos/bazelbuild/bazel | closed | 0.17.1rc3 fails the test 18.04 (no JDK) | P2 team-Rules-Java type: process | Test of Bazel 0.17.1rc3:

https://buildkite.com/bazel/bazel-bazel/builds/4403#03954ad5-9c7e-4155-a644-839f8566853f

cc @lberki

```

** test_bootstrap **************************************************************

readlink: missing operand

Try 'readlink --help' for more information.

Building Bazel from scratch... | 1.0 | 0.17.1rc3 fails the test 18.04 (no JDK) - Test of Bazel 0.17.1rc3:

https://buildkite.com/bazel/bazel-bazel/builds/4403#03954ad5-9c7e-4155-a644-839f8566853f

cc @lberki

```

** test_bootstrap **************************************************************

readlink: missing operand

Try 'readlink --help' for more... | process | fails the test no jdk test of bazel cc lberki test bootstrap readlink missing operand try readlink help for more information building bazel from scratch error jdk not found please set java home test... | 1 |

6,866 | 15,682,331,111 | IssuesEvent | 2021-03-25 07:07:56 | bithyve/hexa | https://api.github.com/repos/bithyve/hexa | closed | PoC - Use SQLite with persist to compare peformance | 1.5.1 Architecture DevTask NFR | Use sqlite with redux persist instead of AsyncStorage and compare performance on a low end android and ios device | 1.0 | PoC - Use SQLite with persist to compare peformance - Use sqlite with redux persist instead of AsyncStorage and compare performance on a low end android and ios device | non_process | poc use sqlite with persist to compare peformance use sqlite with redux persist instead of asyncstorage and compare performance on a low end android and ios device | 0 |

1,095 | 3,563,642,574 | IssuesEvent | 2016-01-25 05:44:28 | kerubistan/kerub | https://api.github.com/repos/kerubistan/kerub | opened | find a way in infinispan to query by UUID | cleanup component:data processing priority: low | Infinispan does not take UUID field as primitive (which is right) but because of that it does not want to perform 'eq' comparison with it (which is wrong) | 1.0 | find a way in infinispan to query by UUID - Infinispan does not take UUID field as primitive (which is right) but because of that it does not want to perform 'eq' comparison with it (which is wrong) | process | find a way in infinispan to query by uuid infinispan does not take uuid field as primitive which is right but because of that it does not want to perform eq comparison with it which is wrong | 1 |

36,849 | 8,166,693,549 | IssuesEvent | 2018-08-25 12:44:30 | cython/cython | https://api.github.com/repos/cython/cython | closed | Python 3.7, Windows: FAIL: with_outer_raising (pure_doctest__generators_py) | Python3 Semantics defect | A single test error when trying to generate Cython 0.28.3 Windows wheels for Python 3.7:

```

======================================================================

FAIL: with_outer_raising (pure_doctest__generators_py)

Doctest: pure_doctest__generators_py.with_outer_raising

--------------------------------------... | 1.0 | Python 3.7, Windows: FAIL: with_outer_raising (pure_doctest__generators_py) - A single test error when trying to generate Cython 0.28.3 Windows wheels for Python 3.7:

```

======================================================================

FAIL: with_outer_raising (pure_doctest__generators_py)

Doctest: pure_doc... | non_process | python windows fail with outer raising pure doctest generators py a single test error when trying to generate cython windows wheels for python fail with outer raising pure doctest generators py doctest pure doct... | 0 |

83,437 | 24,055,870,680 | IssuesEvent | 2022-09-16 16:48:51 | gradle/gradle | https://api.github.com/repos/gradle/gradle | closed | Improve error message when more than one build in the composite has the same name | a:feature in:composite-builds stale | @adammurdoch commented on [Tue Jul 04 2017](https://github.com/gradle/composite-builds/issues/118)

Currently the error message is something like "Included build '<name>' is not unique in composite".

The error should at least let the user know _which_ builds have the same name, and ideally what can be done about ... | 1.0 | Improve error message when more than one build in the composite has the same name - @adammurdoch commented on [Tue Jul 04 2017](https://github.com/gradle/composite-builds/issues/118)

Currently the error message is something like "Included build '<name>' is not unique in composite".

The error should at least let ... | non_process | improve error message when more than one build in the composite has the same name adammurdoch commented on currently the error message is something like included build lt name is not unique in composite the error should at least let the user know which builds have the same name and ideally what can... | 0 |

134,107 | 18,422,567,427 | IssuesEvent | 2021-10-13 17:48:38 | turkdevops/desktop | https://api.github.com/repos/turkdevops/desktop | closed | CVE-2018-19838 (Medium) detected in node-sass-4.13.1.tgz - autoclosed | security vulnerability | ## CVE-2018-19838 - Medium Severity Vulnerability