Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 7 to 112) | repo_url (string, length 36 to 141) | action (string, 3 classes) | title (string, length 1 to 744) | labels (string, length 4 to 574) | body (string, length 9 to 211k) | index (string, 10 classes) | text_combine (string, length 96 to 211k) | label (string, 2 classes) | text (string, length 96 to 188k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

21,449 | 29,487,795,596 | IssuesEvent | 2023-06-02 11:07:57 | openfoodfacts/openfoodfacts-server | https://api.github.com/repos/openfoodfacts/openfoodfacts-server | opened | Compute and display glycemic index | ✔ task ingredients processing | While it is possible to enter the glycemic index in the nutrients' form, we could try to **compute and display the glycemic index**, based on the ingredients.

Either we can find an open data database, or we could start to collect the values from different scientific articles (e.g. for [commonly consumed Thai frui... | 1.0 | Compute and display glycemic index - While it is possible to enter the glycemic index in the nutrients' form, we could try to **compute and display the glycemic index**, based on the ingredients.

Either we can find an open data database, or we could start to collect the values from different scientific articles ... | process | compute and display glycemic index while it is possible to enter the glycemic index in the nutrients form we could try to compute and display the glycemic index based on the ingredients either we can find an open data database or we could start to collect the values from different scientific articles ... | 1 |
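
The issue above proposes computing a glycemic index from a product's ingredients. A minimal sketch of one common way to do that, assuming a carbohydrate-weighted average over ingredients, could look like the following. The function name `estimate_glycemic_index`, the input shape, and the sample numbers are hypothetical and are not taken from the openfoodfacts-server code base.

```python
def estimate_glycemic_index(ingredients):
    """Estimate a product-level glycemic index (GI).

    `ingredients` is a list of (gi, carbs_g) pairs, where `gi` is the
    glycemic index of one ingredient and `carbs_g` is the amount of
    available carbohydrate (in grams) that ingredient contributes.
    This is an illustrative assumption, not the project's data model.
    """
    total_carbs = sum(carbs for _, carbs in ingredients)
    if total_carbs == 0:
        # No carbohydrate at all: a weighted GI is undefined.
        return None
    # Weight each ingredient's GI by its share of the total carbohydrate.
    return sum(gi * carbs for gi, carbs in ingredients) / total_carbs


# Hypothetical values, for illustration only.
example = [(50, 30.0), (100, 10.0)]
print(estimate_glycemic_index(example))
```

Ingredients whose GI is unknown would have to be skipped or flagged in a real implementation; this sketch assumes every ingredient already has a value.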

21,689 | 30,185,622,360 | IssuesEvent | 2023-07-04 11:54:39 | h4sh5/npm-auto-scanner | https://api.github.com/repos/h4sh5/npm-auto-scanner | opened | virtual-domain-proxy 2.0.0 has 2 guarddog issues | npm-install-script npm-silent-process-execution | ```{"npm-install-script":[{"code":" \"postinstall\": \"node ./scripts/postinstall.js\"","location":"package/package.json:13","message":"The package.json has a script automatically running when the package is installed"}],"npm-silent-process-execution":[{"code":" childProcess = (0, child_process_1.... | 1.0 | virtual-domain-proxy 2.0.0 has 2 guarddog issues - ```{"npm-install-script":[{"code":" \"postinstall\": \"node ./scripts/postinstall.js\"","location":"package/package.json:13","message":"The package.json has a script automatically running when the package is installed"}],"npm-silent-process-execution":[{"code":"... | process | virtual domain proxy has guarddog issues npm install script npm silent process execution n detached true n stdio ignore n location package dist server index js message this package is silently executing another executable ... | 1 |

651,699 | 21,485,436,885 | IssuesEvent | 2022-04-26 22:36:07 | ProjectG-Plugins/CrossplatForms | https://api.github.com/repos/ProjectG-Plugins/CrossplatForms | opened | Convert configs from GeyserHub | enhancement priority: high | ### What feature do you want to see added?

Automatically convert configs from GeyserHub or a command to convert the file.

If anyone is interested in attempting this you'll need to learn or know how to use Configurate's Transformations (see [1](https://github.com/SpongePowered/Configurate/wiki/Transformations-and-Vi... | 1.0 | Convert configs from GeyserHub - ### What feature do you want to see added?

Automatically convert configs from GeyserHub or a command to convert the file.

If anyone is interested in attempting this you'll need to learn or know how to use Configurate's Transformations (see [1](https://github.com/SpongePowered/Config... | non_process | convert configs from geyserhub what feature do you want to see added automatically convert configs from geyserhub or a command to convert the file if anyone is interested in attempting this you ll need to learn or know how to use configurate s transformations see and and everything that geyserhub a... | 0 |

15,866 | 6,048,680,330 | IssuesEvent | 2017-06-12 17:01:01 | meteor/meteor | https://api.github.com/repos/meteor/meteor | closed | Arbitrary architecture argument for packages | feature Project:Isobuild | api.use accepts an architecture as its second argument. Is it possible to let it accept arbitrary global constants that are defined somewhere on the server?

| 1.0 | Arbitrary architecture argument for packages - api.use accepts an architecture as its second argument. Is it possible to let it accept arbitrary global constants that are defined somewhere on the server?

| non_process | arbitrary architecture argument for packages api use accepts an architecture as its second argument is it possible to let it accept arbitrary global constants that are defined somewhere on the server | 0 |

472,028 | 13,614,950,154 | IssuesEvent | 2020-09-23 13:52:44 | googleapis/elixir-google-api | https://api.github.com/repos/googleapis/elixir-google-api | closed | Synthesis failed for Webmaster | autosynth failure priority: p1 type: bug | Hello! Autosynth couldn't regenerate Webmaster. :broken_heart:

Here's the output from running `synth.py`:

```

2020-09-22 06:51:05,019 autosynth [INFO] > logs will be written to: /tmpfs/src/logs/elixir-google-api

2020-09-22 06:51:05,489 autosynth [DEBUG] > Running: git config --global core.excludesfile /home/kbuilder/... | 1.0 | Synthesis failed for Webmaster - Hello! Autosynth couldn't regenerate Webmaster. :broken_heart:

Here's the output from running `synth.py`:

```

2020-09-22 06:51:05,019 autosynth [INFO] > logs will be written to: /tmpfs/src/logs/elixir-google-api

2020-09-22 06:51:05,489 autosynth [DEBUG] > Running: git config --global ... | non_process | synthesis failed for webmaster hello autosynth couldn t regenerate webmaster broken heart here s the output from running synth py autosynth logs will be written to tmpfs src logs elixir google api autosynth running git config global core excludesfile home kbuilde... | 0 |

2,946 | 2,534,568,476 | IssuesEvent | 2015-01-25 03:23:51 | xela144/CANaconda | https://api.github.com/repos/xela144/CANaconda | closed | Move the field unit text to outside the textbox | enhancement priority[low] | I like how the field range is displayed in grey in the textbox, and it makes sense that it's not visible once you enter a value, but the units should always be visible. So add a label to the right of the textfield that always displays the units. | 1.0 | Move the field unit text to outside the textbox - I like how the field range is displayed in grey in the textbox, and it makes sense that it's not visible once you enter a value, but the units should always be visible. So add a label to the right of the textfield that always displays the units. | non_process | move the field unit text to outside the textbox i like how the field range is displayed in grey in the textbox and it makes sense that it s not visible once you enter a value but the units should always be visible so add a label to the right of the textfield that always displays the units | 0 |

15,202 | 19,025,834,161 | IssuesEvent | 2021-11-24 03:17:38 | NationalSecurityAgency/ghidra | https://api.github.com/repos/NationalSecurityAgency/ghidra | closed | MIPS64 reverse instruction is found to be wrong, how to fix the disassembly? | Feature: Processor/MIPS |

ida:

```

text:0000000000019B08 A0 FF BD 67 daddiu $sp, -0x60 # Doubleword Add Immediate Unsigned

.text:0000000000019B0C 48 00 BC FF sd $gp, 0x60+var_18($sp) # Store Doubleword

.text:0000000000019B10 09 00 1C 3C lui $gp, 9 # Load Upper Im... | 1.0 | MIPS64 reverse instruction is found to be wrong, how to fix the disassembly? -

ida:

```

text:0000000000019B08 A0 FF BD 67 daddiu $sp, -0x60 # Doubleword Add Immediate Unsigned

.text:0000000000019B0C 48 00 BC FF sd $gp, 0x60+var_18($sp) # Store Doubleword

.text:000000... | process | reverse instruction is found to be wrong how to fix the disassembly ida text ff bd daddiu sp doubleword add immediate unsigned text bc ff sd gp var sp store doubleword text lui gp ... | 1 |

165,547 | 6,278,021,356 | IssuesEvent | 2017-07-18 13:35:22 | CS2103JUN2017-T3/main | https://api.github.com/repos/CS2103JUN2017-T3/main | closed | As a user I can view my task history | priority.medium type.story | So that I can look back at the tasks I have completed so far

| 1.0 | As a user I can view my task history - So that I can look back at the tasks I have completed so far

| non_process | as a user i can view my task history so that i can look back at the tasks i have completed so far | 0 |

12,594 | 14,992,390,485 | IssuesEvent | 2021-01-29 09:48:05 | dotnet/runtime | https://api.github.com/repos/dotnet/runtime | closed | [Linux/ARM] Access permission issue for System.Diagnostics.Process.GetProcessesByName()/GetProcesses() | area-System.Diagnostics.Process bug in pr up-for-grabs |

### Description

I ran the test code in an environment with Smack applied (Tizen, 32-bit).

I called two methods (```System.Diagnostics.Process.GetProcessesByName()```, ```System.Diagnostics.Process.GetProcesses()```).

* Sample code:

```

static void Main(string[] args)

{

string name = System.Diagnostics.Proce... | 1.0 | [Linux/ARM] Access permission issue for System.Diagnostics.Process.GetProcessesByName()/GetProcesses() -

### Description

I ran the test code in an environment with Smack applied (Tizen, 32-bit).

I called two methods (```System.Diagnostics.Process.GetProcessesByName()```, ```System.Diagnostics.Process.GetProcesses()```... | process | access permission issue for system diagnostics process getprocessesbyname getprocesses description i ran the test code in an environment with smack applied tizen bit i called two methods system diagnostics process getprocessesbyname system diagnostics process getprocesses sam... | 1 |

22,143 | 30,684,599,790 | IssuesEvent | 2023-07-26 11:28:38 | MicrosoftDocs/azure-docs | https://api.github.com/repos/MicrosoftDocs/azure-docs | closed | Extension Based Hybrid Worker on Linux limits CPU to 5% which is nowhere mentioned - Agent Based Hybrid Worker didn't have this limit | automation/svc triaged assigned-to-author doc-enhancement process-automation/subsvc Pri2 | Hi colleagues,

Please at least mention the default limit of 5% CPU, as the former Agent-Based Hybrid Worker didn't have this limit under Linux.

When upgrading to the new worker, existing CPU-intensive runbooks therefore run extremely slowly and might also hit errors due to the tightly constrained resources.

see entry ... | 1.0 | Extension Based Hybrid Worker on Linux limits CPU to 5% which is nowhere mentioned - Agent Based Hybrid Worker didn't have this limit - Hi colleagues,

Please at least mention the default limit of 5% CPU, as the former Agent-Based Hybrid Worker didn't have this limit under Linux.

When upgrading to the new worker,... | process | extension based hybrid worker on linux limits cpu to which is nowhere mentioned agent based hybrid worker didn t have this limit hi colleagues please at least mention the default limit to cpu as the former agent based hybrid worker didn t have this limit under linux when upgrading to the new worker ... | 1 |

2,891 | 5,871,928,141 | IssuesEvent | 2017-05-15 09:59:54 | ProgrammingLife2017/Desoxyribonucleinezuur | https://api.github.com/repos/ProgrammingLife2017/Desoxyribonucleinezuur | closed | Planning meeting | feedback priority: A process / tools time:2 | Have a meeting / brainstorming session about the organization (who does what) and the implementation (how do we do it) | 1.0 | Planning meeting - Have a meeting / brainstorming session about the organization (who does what) and the implementation (how do we do it) | process | planning meeting have a meeting brainstorming session about the organization who does what and the implementation how do we do it | 1 |

6,408 | 9,488,277,619 | IssuesEvent | 2019-04-22 19:07:48 | MicrosoftDocs/azure-docs | https://api.github.com/repos/MicrosoftDocs/azure-docs | closed | Manual Deployment, Step 2 confusing or inaccurate | assigned-to-author automation/svc doc-enhancement process-automation/subsvc triaged | In step 2 of the manual installation steps, it states "Add the Automation solution". What solution is this referring to? Is this Automation & Control or Automation Hybrid Worker or Azure Automation service? In the past this referred to the Automation & Control solution. I've noticed that this solution fails to inst... | 1.0 | Manual Deployment, Step 2 confusing or inaccurate - In step 2 of the manual installation steps, it states "Add the Automation solution". What solution is this referring to? Is this Automation & Control or Automation Hybrid Worker or Azure Automation service? In the past this referred to the Automation & Control solu... | process | manual deployment step confusing or inaccurate in step of the manual installation steps it states add the automation solution what solution is this referring to is this automation control or automation hybrid worker or azure automation service in the past this referred to the automation control solu... | 1 |

279,924 | 24,265,114,553 | IssuesEvent | 2022-09-28 05:09:24 | WPChill/download-monitor | https://api.github.com/repos/WPChill/download-monitor | closed | Versions don't add the meta to the count | Bug needs testing | **Describe the bug**

Need to add a filter same as for Download for backwards compatibility. Has commit https://github.com/WPChill/download-monitor/commit/a8b7be50401177618ef14dd13984c5078a6f2c16

| 1.0 | Versions don't add the meta to the count - **Describe the bug**

Need to add a filter same as for Download for backwards compatibility. Has commit https://github.com/WPChill/download-monitor/commit/a8b7be50401177618ef14dd13984c5078a6f2c16

| non_process | versions don t add the meta to the count describe the bug need to add a filter same as for download for backwards compatibility has commit | 0 |

221,094 | 16,994,948,835 | IssuesEvent | 2021-07-01 04:32:45 | faridlesosibirsk/TrainingRepository | https://api.github.com/repos/faridlesosibirsk/TrainingRepository | opened | 20. Moving files | documentation | Write a heading and a goal. Save the answer to the file "20. Перемещение файлов.md". | 1.0 | 20. Moving files - Write a heading and a goal. Save the answer to the file "20. Перемещение файлов.md". | non_process | moving files write a heading and a goal save the answer to the file перемещение файлов md | 0 |

3,315 | 6,419,221,963 | IssuesEvent | 2017-08-08 20:43:48 | TomorrowPartners/tomorrow-web | https://api.github.com/repos/TomorrowPartners/tomorrow-web | closed | Wireframe based on content | process_in progress | Based on #6. Here we go!

[Content for direction #1](https://docs.google.com/document/d/1q7xW9cDkjgN9PB4S_T0cG2szixjliL8q1127kuXAgWM/edit#)

[Content for direction #2](https://docs.google.com/document/d/1irOjuxS46GxVTsvZ5RG7udPn2gi1mcIYABwUhDRGlH0/edit)

Note: feel free to explore combinations of these, but just ... | 1.0 | Wireframe based on content - Based on #6. Here we go!

[Content for direction #1](https://docs.google.com/document/d/1q7xW9cDkjgN9PB4S_T0cG2szixjliL8q1127kuXAgWM/edit#)

[Content for direction #2](https://docs.google.com/document/d/1irOjuxS46GxVTsvZ5RG7udPn2gi1mcIYABwUhDRGlH0/edit)

Note: feel free to explore com... | process | wireframe based on content based on here we go note feel free to explore combinations of these but just make sure that you re clear on your north star for that particular sketch exploration wireframe | 1 |

2,786 | 5,718,274,449 | IssuesEvent | 2017-04-19 19:08:46 | imminfo/immdeep | https://api.github.com/repos/imminfo/immdeep | opened | Compare autoencoders | preprocessing | Fix the number of epochs = 150. Compare accuracy/loss of models with different latent dimensions and architectures.

- LSTM / GRU

- Bidirectional versions

- VAE

- (additional task) RNN with 1D convolutions | 1.0 | Compare autoencoders - Fix the number of epochs = 150. Compare accuracy/loss of models with different latent dimensions and architectures.

- LSTM / GRU

- Bidirectional versions

- VAE

- (additional task) RNN with 1D convolutions | process | compare autoencoders fix the number of epochs compare accuracy loss of models with different latent dimensions and architectures lstm gru bidirectional versions vae additional task rnn with convolutions | 1 |

96,449 | 12,130,260,565 | IssuesEvent | 2020-04-23 01:00:00 | solex2006/SELIProject | https://api.github.com/repos/solex2006/SELIProject | opened | Abbreviations | 2 - Ready Feature Design Notes :notebook: P3 - Normal role:Student | This is a "not-end" requirement. What I mean is that this should be considered for any new page you create that a Student can access. The old pages should also be corrected.

I will use the label **Feature Design Notes** for these cases

***************

A mechanism for identifying the expanded form or meaning of a... | 1.0 | Abbreviations - This is a "not-end" requirement. What I mean is that this should be considered for any new page you create that a Student can access. The old pages should also be corrected.

I will use the label **Feature Design Notes** for these cases

***************

A mechanism for identifying the expanded form... | non_process | abbreviations this is a not end requirement what i mean is that this should be considered for any new page you create that a student can access the old pages should also be corrected i will use the label feature design notes for these cases a mechanism for identifying the expanded form... | 0 |

269,827 | 20,507,756,156 | IssuesEvent | 2022-03-01 00:54:15 | ChrisMenkhus/SpaceCaseDev | https://api.github.com/repos/ChrisMenkhus/SpaceCaseDev | opened | High Level Components Should Be Documented | documentation | Add comments to functions to better explain what they do | 1.0 | High Level Components Should Be Documented - Add comments to functions to better explain what they do | non_process | high level components should be documented add comments to functions to better explain what they do | 0 |

13,450 | 15,896,626,110 | IssuesEvent | 2021-04-11 18:05:40 | hasura/ask-me-anything | https://api.github.com/repos/hasura/ask-me-anything | opened | What numeric data types does Hasura support in relation to the underlying database(s)? | data type question series next-up-for-ama processing-for-shortvid question | ## Postgres has the following Numeric Types:

<table border="1" class="colwidths-given docutils">

<colgroup>

<col width="24%">

<col width="20%">

<col width="45%">

<col width="11%">

</colgroup>

<thead valign="bottom">

<tr class="row-odd"><th class="head">Name</th>

<th class="head">Aliases</th>

<th class="hea... | 1.0 | What numeric data types does Hasura support in relation to the underlying database(s)? - ## Postgres has the following Numeric Types:

<table border="1" class="colwidths-given docutils">

<colgroup>

<col width="24%">

<col width="20%">

<col width="45%">

<col width="11%">

</colgroup>

<thead valign="bottom">

<tr ... | process | what numeric data types does hasura support in relation to the underlying database s postgres has the following numeric types name aliases description hasura type bigint signed eight byte integer string bigserial autoincrementing eig... | 1 |

61,106 | 14,615,287,882 | IssuesEvent | 2020-12-22 11:17:24 | rsoreq/NodeGoat | https://api.github.com/repos/rsoreq/NodeGoat | opened | CVE-2020-7656 (Medium) detected in jquery-1.4.4.min.js | security vulnerability | ## CVE-2020-7656 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jquery-1.4.4.min.js</b></p></summary>

<p>JavaScript library for DOM operations</p>

<p>Library home page: <a href="htt... | True | CVE-2020-7656 (Medium) detected in jquery-1.4.4.min.js - ## CVE-2020-7656 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jquery-1.4.4.min.js</b></p></summary>

<p>JavaScript library ... | non_process | cve medium detected in jquery min js cve medium severity vulnerability vulnerable library jquery min js javascript library for dom operations library home page a href path to dependency file nodegoat node modules selenium webdriver lib test data droppableitems html pa... | 0 |

26,285 | 4,213,423,558 | IssuesEvent | 2016-06-29 19:02:24 | ODM2/ODM2PythonAPI | https://api.github.com/repos/ODM2/ODM2PythonAPI | closed | Consolidate List of API Functions | enhancement ready for testing | After looking at the existing code, we have decided on a major consolidation of the functions in the API to avoid complexity and repetition. For a description of the planned signatures (inputs and outputs) for each of the functions, [click here](https://github.com/ODM2/ODM2PythonAPI/blob/master/doc/APIFunctionList.md)... | 1.0 | Consolidate List of API Functions - After looking at the existing code, we have decided on a major consolidation of the functions in the API to avoid complexity and repetition. For a description of the planned signatures (inputs and outputs) for each of the functions, [click here](https://github.com/ODM2/ODM2PythonAPI... | non_process | consolidate list of api functions after looking at the existing code we have decided on a major consolidation of the functions in the api to avoid complexity and repetition for a description of the planned signatures inputs and outputs for each of the functions consolidated list of api low level get f... | 0 |

267,536 | 23,306,488,890 | IssuesEvent | 2022-08-08 01:59:29 | void-linux/void-packages | https://api.github.com/repos/void-linux/void-packages | opened | godot 3.5: Font rendering in editor broken. | bug needs-testing | ### Is this a new report?

Yes

### System Info

Void 5.18.16_1 x86_64 GenuineIntel uptodate FFFFFFFFFF

### Package(s) Affected

godot-3.5_1

### Does a report exist for this bug with the project's home (upstream) and/or another distro?

_No response_

### Expected behaviour

Font rendering in the editor should work.

... | 1.0 | godot 3.5: Font rendering in editor broken. - ### Is this a new report?

Yes

### System Info

Void 5.18.16_1 x86_64 GenuineIntel uptodate FFFFFFFFFF

### Package(s) Affected

godot-3.5_1

### Does a report exist for this bug with the project's home (upstream) and/or another distro?

_No response_

### Expected behavio... | non_process | godot font rendering in editor broken is this a new report yes system info void genuineintel uptodate ffffffffff package s affected godot does a report exist for this bug with the project s home upstream and or another distro no response expected behaviour f... | 0 |

15,869 | 20,036,522,963 | IssuesEvent | 2022-02-02 12:30:39 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [iOS] Study activities > Incorrect start/end date and time displayed for mobile participants having different time zone | Bug P1 iOS Process: Fixed | **Scenario 1:** Incorrect start/end date and time displayed for mobile participants when participant having different timezone enrolls into study

Steps:

1. Keep iOS mobile to different timezone having DST (Daylight savings) eg. EDT

2. Enroll into any study

3. Observe the start/end date and time for scheduling ... | 1.0 | [iOS] Study activities > Incorrect start/end date and time displayed for mobile participants having different time zone - **Scenario 1:** Incorrect start/end date and time displayed for mobile participants when participant having different timezone enrolls into study

Steps:

1. Keep iOS mobile to different timezo... | process | study activities incorrect start end date and time displayed for mobile participants having different time zone scenario incorrect start end date and time displayed for mobile participants when participant having different timezone enrolls into study steps keep ios mobile to different timezone h... | 1 |

22,329 | 30,913,745,443 | IssuesEvent | 2023-08-05 02:48:09 | h4sh5/pypi-auto-scanner | https://api.github.com/repos/h4sh5/pypi-auto-scanner | opened | pih 1.48036 has 2 GuardDog issues | guarddog typosquatting silent-process-execution | https://pypi.org/project/pih

https://inspector.pypi.io/project/pih

```{

"dependency": "pih",

"version": "1.48036",

"result": {

"issues": 2,

"errors": {},

"results": {

"typosquatting": "This package closely ressembles the following package names, and might be a typosquatting attempt: pid, pip",

... | 1.0 | pih 1.48036 has 2 GuardDog issues - https://pypi.org/project/pih

https://inspector.pypi.io/project/pih

```{

"dependency": "pih",

"version": "1.48036",

"result": {

"issues": 2,

"errors": {},

"results": {

"typosquatting": "This package closely ressembles the following package names, and might be a... | process | pih has guarddog issues dependency pih version result issues errors results typosquatting this package closely ressembles the following package names and might be a typosquatting attempt pid pip silent process execution ... | 1 |

21,010 | 27,945,635,493 | IssuesEvent | 2023-03-24 02:39:48 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | QGIS crashes on MacOS 13.0 when using `fix geometries` | Processing Regression Bug Crash/Data Corruption macOS Linux | ### What is the bug or the crash?

QGIS crashes the moment I use `fix geometries` on a set of polygons. To be specific, the vector data format that I am using is `.geojson` and `.shp`. I also tried to use `fix geometries` on a single polygon, but that also crashes. Currently I am using QGIS 3.28 on MacOS 13.0.

###... | 1.0 | QGIS crashes on MacOS 13.0 when using `fix geometries` - ### What is the bug or the crash?

QGIS crashes the moment I use `fix geometries` on a set of polygons. To be specific, the vector data format that I am using is `.geojson` and `.shp`. I also tried to use `fix geometries` on a single polygon, but that also cras... | process | qgis crashes on macos when using fix geometries what is the bug or the crash qgis crashes the moment i use fix geometries on a set of polygons to be specific the vector data format that i am using is geojson and shp i also tried to use fix geometries on a single polygon but that also crash... | 1 |

56,537 | 11,594,338,228 | IssuesEvent | 2020-02-24 15:10:30 | Regalis11/Barotrauma | https://api.github.com/repos/Regalis11/Barotrauma | reopened | Fulgurium Battery Cell is just a regular Battery Cell outside of Stun Baton | Code Design Feature request | - [x] I have searched the issue tracker to check if the issue has already been reported.

**Description**

Fulgurium Battery Cell is just a regular Battery Cell outside of Stun Baton. Perhaps higher life span or stronger electronic effects?

Handheld Sonar: Rapid pings?

Sonar Beacon: Further receiving range for Na... | 1.0 | Fulgurium Battery Cell is just a regular Battery Cell outside of Stun Baton - - [x] I have searched the issue tracker to check if the issue has already been reported.

**Description**

Fulgurium Battery Cell is just a regular Battery Cell outside of Stun Baton. Perhaps higher life span or stronger electronic effects?... | non_process | fulgurium battery cell is just a regular battery cell outside of stun baton i have searched the issue tracker to check if the issue has already been reported description fulgurium battery cell is just a regular battery cell outside of stun baton perhaps higher life span or stronger electronic effects ... | 0 |

539,607 | 15,791,817,667 | IssuesEvent | 2021-04-02 05:37:46 | capitalone/DataProfiler | https://api.github.com/repos/capitalone/DataProfiler | closed | Multi-threaded column profiling | Medium Priority New Feature | **Is your feature request related to a problem? Please describe.**

Multithread the profiling of the columns:

| 1.0 | Multi-threaded column profiling - **Is your feature request related to a problem? Please describe.**

Multithread the profiling of the columns:

| non_process | multi threaded column profiling is your feature request related to a problem please describe multithread the profiling of the columns | 0 |
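
The feature request above asks for column profiling to run on multiple threads. A minimal sketch of that idea, assuming a table held as a plain dict of lists and one task per column, might look like this. The names `profile_column` and `profile_table` are hypothetical and do not reflect the DataProfiler API.

```python
from concurrent.futures import ThreadPoolExecutor
from statistics import mean


def profile_column(name, values):
    """Compute a small, illustrative profile for a single column."""
    non_null = [v for v in values if v is not None]
    profile = {
        "column": name,
        "count": len(values),
        "nulls": len(values) - len(non_null),
    }
    # Only add numeric statistics when every non-null value is a number.
    if non_null and all(isinstance(v, (int, float)) for v in non_null):
        profile.update(min=min(non_null), max=max(non_null), mean=mean(non_null))
    return profile


def profile_table(table, max_workers=4):
    """Profile every column of a dict-of-lists table, one task per column."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = {
            name: pool.submit(profile_column, name, values)
            for name, values in table.items()
        }
        # Collect results in the original column order.
        return {name: future.result() for name, future in futures.items()}


# Hypothetical sample data, for illustration only.
table = {"a": [1, 2, None, 4], "b": ["x", "y", None, "z"]}
print(profile_table(table))
```

In CPython, threads mainly help when the per-column work releases the global interpreter lock (for example inside NumPy or during I/O); for pure-Python statistics like the ones here, a process pool may be the better fit.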

1,487 | 4,059,115,678 | IssuesEvent | 2016-05-25 08:22:50 | e-government-ua/iBP | https://api.github.com/repos/e-government-ua/iBP | closed | Cherkasy Oblast - Katerynopil Raion - request for public information - disclosure | In process of testing | Coordinator:

Olena Kolodych - IGov coordinator in Cherkasy Oblast. Tel. +380674704730,

**a strong request from the coordinator** -

whenever any emails are sent to the contact persons (testing, questions, etc.),

put her in copy at elena.kolodich@privatbank.ua

and title the emails **IGov - raion/city - (name of the servic... | 1.0 | Cherkasy Oblast - Katerynopil Raion - request for public information - disclosure - Coordinator:

Olena Kolodych - IGov coordinator in Cherkasy Oblast. Tel. +380674704730,

**a strong request from the coordinator** -

whenever any emails are sent to the contact persons (testing, questions, etc.),

put... | process | cherkasy oblast katerynopil raion request for public information disclosure coordinator olena kolodych igov coordinator in cherkasy oblast tel a strong request from the coordinator whenever any emails are sent to the contact persons testing questions etc put her in ... | 1 |

12,827 | 15,211,828,193 | IssuesEvent | 2021-02-17 09:36:38 | emacs-ess/ESS | https://api.github.com/repos/emacs-ess/ESS | closed | Start delimiter of `ess-command` is not stripped with multiline inputs | process:command | Commands containing new lines:

```elisp

(ess-command "{ 1\n2 }\n")

```

currently produce this output:

```r

+ ess-output-delimiter226-START

[1] 2

```

This makes me realise that another benefit of the delimiters is to reliably strip the `+` characters (created by the parser with multiline inputs) from th... | 1.0 | Start delimiter of `ess-command` is not stripped with multiline inputs - Commands containing new lines:

```elisp

(ess-command "{ 1\n2 }\n")

```

currently produce this output:

```r

+ ess-output-delimiter226-START

[1] 2

```

This makes me realise that another benefit of the delimiters is to reliably strip... | process | start delimiter of ess command is not stripped with multiline inputs commands containing new lines elisp ess command n currently produce this output r ess output start this makes me realise that another benefit of the delimiters is to reliably strip the chara... | 1 |
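
The issue above describes output where the start delimiter, prefixed by a `+` continuation prompt, is left in place after a multiline command. A minimal sketch of the stripping step, written in Python rather than Emacs Lisp and assuming the delimiter always sits on its own line, might look like this. The function name `strip_before_start_delimiter` is hypothetical and is not part of ESS.

```python
import re


def strip_before_start_delimiter(raw_output, start_delimiter):
    """Return the output that follows the start delimiter line.

    Leading "+" continuation prompts on the delimiter line are ignored
    when matching. If no delimiter line is found, the output is
    returned unchanged.
    """
    lines = raw_output.splitlines()
    for index, line in enumerate(lines):
        # Remove any run of "+ " prompts before comparing with the delimiter.
        candidate = re.sub(r"^(\+\s*)+", "", line).strip()
        if candidate == start_delimiter:
            return "\n".join(lines[index + 1:])
    return raw_output


raw = "+ ess-output-delimiter226-START\n[1] 2"
print(strip_before_start_delimiter(raw, "ess-output-delimiter226-START"))
```

Matching on the delimiter instead of counting prompts means the number of `+` characters emitted by the parser does not matter.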

265,049 | 23,145,538,758 | IssuesEvent | 2022-07-29 00:04:11 | MPMG-DCC-UFMG/F01 | https://api.github.com/repos/MPMG-DCC-UFMG/F01 | closed | Generalization test for the tag Servidores - Proventos de aposentadoria - Divisa Alegre | generalization test development template-Síntese tecnologia informatica subtag-Proventos de Aposentadoria tag-Servidores | DoD: Run the generalization test of the validator for the tag Servidores - Proventos de aposentadoria for the municipality of Divisa Alegre. | 1.0 | Generalization test for the tag Servidores - Proventos de aposentadoria - Divisa Alegre - DoD: Run the generalization test of the validator for the tag Servidores - Proventos de aposentadoria for the municipality of Divisa Alegre. | non_process | generalization test for the tag servidores proventos de aposentadoria divisa alegre dod run the generalization test of the validator for the tag servidores proventos de aposentadoria for the municipality of divisa alegre | 0 |

421,379 | 28,314,652,465 | IssuesEvent | 2023-04-10 18:28:13 | bounswe/bounswe2023group6 | https://api.github.com/repos/bounswe/bounswe2023group6 | closed | Forming Individual Contribution Report for Milestone report 1 | type: documentation priority: high status: inprogress area: wiki area: milestone | ### Problem

For milestone report 1, everyone needs to prepare an Individual Contribution Report that describes their contributions.

### Solution

I will prepare my individual contribution report as follows (in the format given by the course):

* Member: Info about myself (name, group)

* Responsibili... | 1.0 | Forming Individual Contribution Report for Milestone report 1 - ### Problem

For the milestone report 1, everyone needs to prepare Individual Contribution Report which will include information about our contributions.

### Solution

I will prepare my individual contribution report as follow(which is given by the cours... | non_process | forming individual contribution report for milestone report problem for the milestone report everyone needs to prepare individual contribution report which will include information about our contributions solution i will prepare my individual contribution report as follow which is given by the cours... | 0 |

218,293 | 7,331,044,006 | IssuesEvent | 2018-03-05 12:06:17 | NCEAS/metacat | https://api.github.com/repos/NCEAS/metacat | closed | Metacat performance issue in Sanparks skin | Category: metacat Component: Bugzilla-Id Priority: Normal Status: Resolved Tracker: Bug | ---

Author Name: **Jing Tao** (Jing Tao)

Original Redmine Issue: 3174, https://projects.ecoinformatics.org/ecoinfo/issues/3174

Original Date: 2008-03-13

Original Assignee: Jing Tao

---

Matt and Mike reported it would like about 4 or 5 minutes to do a search in sanparks skin of production server. We should fixed bef... | 1.0 | Metacat performance issue in Sanparks skin - ---

Author Name: **Jing Tao** (Jing Tao)

Original Redmine Issue: 3174, https://projects.ecoinformatics.org/ecoinfo/issues/3174

Original Date: 2008-03-13

Original Assignee: Jing Tao

---

Matt and Mike reported it would like about 4 or 5 minutes to do a search in sanparks s... | non_process | metacat performance issue in sanparks skin author name jing tao jing tao original redmine issue original date original assignee jing tao matt and mike reported it would like about or minutes to do a search in sanparks skin of production server we should fixed before release... | 0 |

100,752 | 21,510,202,327 | IssuesEvent | 2022-04-28 03:02:45 | RobertsLab/resources | https://api.github.com/repos/RobertsLab/resources | opened | Problem with stringtie2 not matching gene names from gff file (TagSeq) | code | Hi! I am having a problem with generating a gene count matrix from some TagSeq data. It seems that I am having issues properly formatting the .gff3 file from the reference genome that I am using (Pocillopora acuta). Most of the genes in my count matrix are named as "STRG###", which indicates that stringtie2 did not fin... | 1.0 | Problem with stringtie2 not matching gene names from gff file (TagSeq) - Hi! I am having a problem with generating a gene count matrix from some TagSeq data. It seems that I am having issues properly formatting the .gff3 file from the reference genome that I am using (Pocillopora acuta). Most of the genes in my count m... | non_process | problem with not matching gene names from gff file tagseq hi i am having a problem with generating a gene count matrix from some tagseq data it seems that i am having issues properly formatting the file from the reference genome that i am using pocillopora acuta most of the genes in my count matrix are na... | 0 |

9,757 | 12,740,432,436 | IssuesEvent | 2020-06-26 02:32:30 | nodejs/node | https://api.github.com/repos/nodejs/node | closed | regression: data truncation with stream.writev in IPC channel | child_process help wanted stream | <!--

Thank you for reporting a possible bug in Node.js.

Please fill in as much of the template below as you can.

Version: output of `node -v`

Platform: output of `uname -a` (UNIX), or version and 32 or 64-bit (Windows)

Subsystem: if known, please specify the affected core module name

If possible, please pr... | 1.0 | regression: data truncation with stream.writev in IPC channel - <!--

Thank you for reporting a possible bug in Node.js.

Please fill in as much of the template below as you can.

Version: output of `node -v`

Platform: output of `uname -a` (UNIX), or version and 32 or 64-bit (Windows)

Subsystem: if known, please... | process | regression data truncation with stream writev in ipc channel thank you for reporting a possible bug in node js please fill in as much of the template below as you can version output of node v platform output of uname a unix or version and or bit windows subsystem if known please s... | 1 |

100,676 | 30,753,161,433 | IssuesEvent | 2023-07-28 21:32:39 | NixOS/nixpkgs | https://api.github.com/repos/NixOS/nixpkgs | closed | Build failure: keychain on 23.11 unstable | 0.kind: build failure | ### Steps To Reproduce

Steps to reproduce the behavior:

1. build a 23.11 unstable derivation (flake-enabled in configuration.nix, but not using any flakes yet)

2. `nix-env -iA nixos.keychain`

Edit: I updated both personal and root nix-channel to nixos-unstable and rebuilt a new derivation with keychain included... | 1.0 | Build failure: keychain on 23.11 unstable - ### Steps To Reproduce

Steps to reproduce the behavior:

1. build a 23.11 unstable derivation (flake-enabled in configuration.nix, but not using any flakes yet)

2. `nix-env -iA nixos.keychain`

Edit: I updated both personal and root nix-channel to nixos-unstable and reb... | non_process | build failure keychain on unstable steps to reproduce steps to reproduce the behavior build a unstable derivation flake enabled in configuration nix but not using any flakes yet nix env ia nixos keychain edit i updated both personal and root nix channel to nixos unstable and rebuilt... | 0 |

943 | 3,409,566,933 | IssuesEvent | 2015-12-04 16:16:46 | hammerlab/pileup.js | https://api.github.com/repos/hammerlab/pileup.js | closed | Move modules into packages | process | Source tree should probably look something like:

```

src/

formats/

viz/

components/

util/

``` | 1.0 | Move modules into packages - Source tree should probably look something like:

```

src/

formats/

viz/

components/

util/

``` | process | move modules into packages source tree should probably look something like src formats viz components util | 1 |

847 | 2,517,144,033 | IssuesEvent | 2015-01-16 12:10:42 | ajency/Foodstree | https://api.github.com/repos/ajency/Foodstree | closed | Subtitle for grouping seller's information is required on edit seller page | bug Pushed to test site | Steps:

1.Login as admin and click on seller

2. Edit any of the seller

Current behaviour: The seller information currently is shown below the subtitle 'Pincode Info'

Expected Behaviour: A subtitle is required to group the seller's info fields

: TV Show

Film or show in which it appears: _Gravity Falls_

Is the parent film/show streaming anywhere? [Disney+](https://www.disneyplus.com/series/gravity-falls/HZxayxzMJqed)

About when in the parent film/show does... | 1.0 | Add Tiger Fist - Please add as much of the following info as you can:

Title: Tiger Fist

Type (film/tv show): TV Show

Film or show in which it appears: _Gravity Falls_

Is the parent film/show streaming anywhere? [Disney+](https://www.disneyplus.com/series/gravity-falls/HZxayxzMJqed)

About when in the pare... | process | add tiger fist please add as much of the following info as you can title tiger fist type film tv show tv show film or show in which it appears gravity falls is the parent film show streaming anywhere about when in the parent film show does it appear season episode actual foota... | 1 |

470,265 | 13,534,722,009 | IssuesEvent | 2020-09-16 06:17:54 | pingcap/tidb-lightning | https://api.github.com/repos/pingcap/tidb-lightning | closed | Support importing data in Apache Parquet format | 5.0 request difficulty/3-hard feature/accepted priority/P0 | # Description

https://parquet.apache.org/

[AWS Aurora exported snapshot](https://docs.aws.amazon.com/AmazonRDS/latest/AuroraUserGuide/USER_ExportSnapshot.html#USER_ExportSnapshot.data-types) are encoded in Parquet format. We should investigate how to restore from this serialization to allow quick Aurora → TiDB d... | 1.0 | Support importing data in Apache Parquet format - # Description

https://parquet.apache.org/

[AWS Aurora exported snapshot](https://docs.aws.amazon.com/AmazonRDS/latest/AuroraUserGuide/USER_ExportSnapshot.html#USER_ExportSnapshot.data-types) are encoded in Parquet format. We should investigate how to restore from... | non_process | support importing data in apache parquet format description are encoded in parquet format we should investigate how to restore from this serialization to allow quick aurora → tidb data migration category feature value value description tbd value score tbd ... | 0 |

87,430 | 15,774,419,618 | IssuesEvent | 2021-04-01 01:02:04 | RG4421/spark-tpcds-benchmark | https://api.github.com/repos/RG4421/spark-tpcds-benchmark | opened | CVE-2021-21409 (Medium) detected in netty-all-4.1.50.Final.jar | security vulnerability | ## CVE-2021-21409 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>netty-all-4.1.50.Final.jar</b></p></summary>

<p>Netty is an asynchronous event-driven network application framework ... | True | CVE-2021-21409 (Medium) detected in netty-all-4.1.50.Final.jar - ## CVE-2021-21409 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>netty-all-4.1.50.Final.jar</b></p></summary>

<p>Net... | non_process | cve medium detected in netty all final jar cve medium severity vulnerability vulnerable library netty all final jar netty is an asynchronous event driven network application framework for rapid development of maintainable high performance protocol servers and clients ... | 0 |

50,224 | 12,484,695,198 | IssuesEvent | 2020-05-30 15:59:51 | metabase/metabase | https://api.github.com/repos/metabase/metabase | closed | Return to signing jars? | .Building & Releasing | We dropped signed jars in a recent release, mainly because of how long it takes to verify the jar and the fact that it seems like no one actually verifies them in production.

We should probably at least provide a checksum however.

Does anyone feel strongly about signed jars? | 1.0 | Return to signing jars? - We dropped signed jars in a recent release, mainly because of how long it takes to verify the jar and the fact that it seems like no one actually verifies them in production.

We should probably at least provide a checksum however.

Does anyone feel strongly about signed jars? | non_process | return to signing jars we dropped signed jars in a recent release mainly because of how long it takes to verify the jar and the fact that it seems like no one actually verifies them in production we should probably at least provide a checksum however does anyone feel strongly about signed jars | 0 |

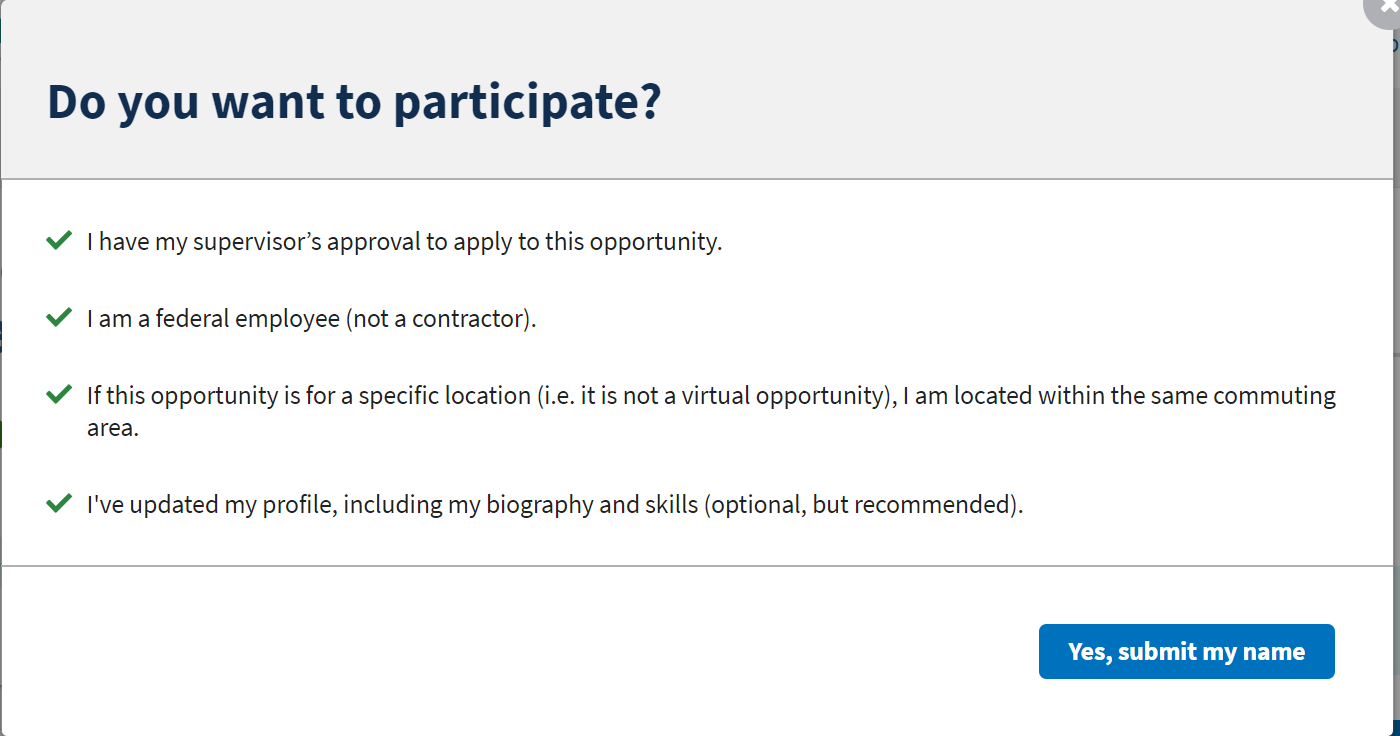

7,978 | 11,167,790,185 | IssuesEvent | 2019-12-27 18:41:34 | openopps/openopps-platform | https://api.github.com/repos/openopps/openopps-platform | closed | Updates to submit modal on opportunity application | Apply Process Approved Requirements Ready | Who: opportunity applicants

What: let them know if they have not updated their biography or skills

Why: so more applicants will input biography data

Acceptance Criteria:

Current Screen Shot

Fo... | 1.0 | Updates to submit modal on opportunity application - Who: opportunity applicants

What: let them know if they have not updated their biography or skills

Why: so more applicants will input biography data

Acceptance Criteria:

Current Screen Shot

| Migrated from Trac post_processing senkbeil@uwm.edu task | I just realized that VarName is being passed in to the MATLAB script; what is its intended purpose? Can we get rid of it? I'm not using it.

Attachments:

Migrated from http://carson.math.uwm.edu/trac/clubb/ticket/173

```json

{

"status": "closed",

"changetime": "2009-09-02T20:44:39",

"description": "I ju... | 1.0 | In the call to PlotCreator, what is VarName used for? (Trac #173) - I just realized that VarName is being passed in to the MATLAB script; what is its intended purpose? Can we get rid of it? I'm not using it.

Attachments:

Migrated from http://carson.math.uwm.edu/trac/clubb/ticket/173

```json

{

"status": "closed",... | process | in the call to plotcreator what is varname used for trac i just realized that varname is being passed in to the matlab script what is its intended purpose can we get rid of it i m not using it attachments migrated from json status closed changetime descriptio... | 1 |

14,094 | 16,983,796,099 | IssuesEvent | 2021-06-30 12:13:47 | deepset-ai/haystack | https://api.github.com/repos/deepset-ai/haystack | closed | Allow for batch indexing when using Pipelines | good first issue topic:preprocessing | Currently, the `BaseConverter` and `PreProcessor` classes only work on single files when used as nodes in a Pipeline. This means that we write documents one at a time to the DocumentStore which can significantly slow down performance.

We should fix this by allowing the `run()` methods of `BaseConverter` and `PreProc... | 1.0 | Allow for batch indexing when using Pipelines - Currently, the `BaseConverter` and `PreProcessor` classes only work on single files when used as nodes in a Pipeline. This means that we write documents one at a time to the DocumentStore which can significantly slow down performance.

We should fix this by allowing the... | process | allow for batch indexing when using pipelines currently the baseconverter and preprocessor classes only work on single files when used as nodes in a pipeline this means that we write documents one at a time to the documentstore which can significantly slow down performance we should fix this by allowing the... | 1 |

1,123 | 3,594,530,169 | IssuesEvent | 2016-02-02 00:09:32 | DarkEnergyScienceCollaboration/ComputingInfrastructure | https://api.github.com/repos/DarkEnergyScienceCollaboration/ComputingInfrastructure | opened | Perform reprocessing at NERSC | CI Reprocessing Stress Test Workflow Engine | We need to confirm that NERSC can handle our HTC workflows. Image level reprocessing has been identified as a good test of this. Should engage reprocessing with Workflow Engine (or think about it). @boutigny @tony-johnson @wenaus @nugent68 @salmanhabib are known interested parties. | 1.0 | Perform reprocessing at NERSC - We need to confirm that NERSC can handle our HTC workflows. Image level reprocessing has been identified as a good test of this. Should engage reprocessing with Workflow Engine (or think about it). @boutigny @tony-johnson @wenaus @nugent68 @salmanhabib are known interested parties. | process | perform reprocessing at nersc we need to confirm that nersc can handle our htc workflows image level reprocessing has been identified as a good test of this should engage reprocessing with workflow engine or think about it boutigny tony johnson wenaus salmanhabib are known interested parties | 1 |

6,610 | 9,694,879,388 | IssuesEvent | 2019-05-24 20:21:07 | kerubistan/kerub | https://api.github.com/repos/kerubistan/kerub | closed | get rid of the assumption that there can be only one allocation of a virtual storage | component:data processing priority: high | read only virtual storage can have any number of allocations | 1.0 | get rid of the assumption that there can be only one allocation of a virtual storage - read only virtual storage can have any number of allocations | process | get rid of the assumption that there can be only one allocation of a virtual storage read only virtual storage can have any number of allocations | 1 |

104,186 | 22,601,389,155 | IssuesEvent | 2022-06-29 09:26:12 | UnitTestBot/UTBotJava | https://api.github.com/repos/UnitTestBot/UTBotJava | closed | Invalid import of nested enums from default package | bug codegen top focus | **Description**

Invalid `import` statements are generated for inner enums declared in the default (unnamed) package. It seems that in this specific case (default package) `import` should not be generated, instead fully qualified references should be used in the test body.

**To Reproduce**

This seems to be a ra... | 1.0 | Invalid import of nested enums from default package - **Description**

Invalid `import` statements are generated for inner enums declared in the default (unnamed) package. It seems that in this specific case (default package) `import` should not be generated, instead fully qualified references should be used in the t... | non_process | invalid import of nested enums from default package description invalid import statements are generated for inner enums declared in the default unnamed package it seems that in this specific case default package import should not be generated instead fully qualified references should be used in the t... | 0 |

415 | 2,852,365,086 | IssuesEvent | 2015-06-01 13:17:00 | genomizer/genomizer-server | https://api.github.com/repos/genomizer/genomizer-server | opened | Change the upload API | enhancement Low priority Processing | This is something that I haven't had time to finish this year. Right now, the upload API is a bit bonkers: the user does a POST request that looks approx. like `POST /file \n\n {fileName : "foo", otherMetadata ...}` and then gets back a URL that is used for the actual upload (the usual `multipart/form-data` POST). This... | 1.0 | Change the upload API - This is something that I haven't had time to finish this year. Right now, the upload API is a bit bonkers: the user does a POST request that looks approx. like `POST /file \n\n {fileName : "foo", otherMetadata ...}` and then gets back a URL that is used for the actual upload (the usual `multipar... | process | change the upload api this is something that i haven t had time to finish this year right now the upload api is a bit bonkers the user does a post request that looks approx like post file n n filename foo othermetadata and then gets back a url that is used for the actual upload the usual multipar... | 1 |

269 | 2,699,150,978 | IssuesEvent | 2015-04-03 14:48:40 | appsgate2015/appsgate | https://api.github.com/repos/appsgate2015/appsgate | closed | Domicube state does not propagate up to the client | P1 PROCESSING | cf. email from Joëlle:

. The DomiCube is present but stubbornly stays in the "unknown" state whether you move it or not

Observation from Thibaud:

And I can reproduce the bug: the cube is there and stays in the unknown state.

However, in the client logs, I can see the messages arriving when the face is changed.

Do... | 1.0 | Domicube state does not propagate up to the client - cf. email from Joëlle:

. The DomiCube is present but stubbornly stays in the "unknown" state whether you move it or not

Observation from Thibaud:

And I can reproduce the bug: the cube is there and stays in the unknown state.

However, in the client logs, I can see ... | process | domicube state does not propagate up to the client cf email from joëlle the domicube is present but stubbornly stays in the unknown state whether you move it or not observation from thibaud and i can reproduce the bug the cube is there and stays in the unknown state however in the client logs i can see ... | 1 |

11,158 | 13,957,693,763 | IssuesEvent | 2020-10-24 08:11:04 | alexanderkotsev/geoportal | https://api.github.com/repos/alexanderkotsev/geoportal | opened | RO: Missing resources in Geoportal | Geoportal Harvesting process RO - Romania | Collected from the Geoportal Workshop online survey answers: (see attachment)

Missing download icon for SDS (Spatial Data Services).

The icon appear only for Network Services (ATOM or WFS).

Metadata available online at http://gmlid.eu/RO/GeoEcoMar/EUXINUS/MD | 1.0 | RO: Missing resources in Geoportal - Collected from the Geoportal Workshop online survey answers: (see attachment)

Missing download icon for SDS (Spatial Data Services).

The icon appear only for Network Services (ATOM or WFS).

Metadata available online at http://gmlid.eu/RO/GeoEcoMar/EUXINUS/MD | process | ro missing resources in geoportal collected from the geoportal workshop online survey answers see attachment missing download icon for sds spatial data services the icon appear only for network services atom or wfs metadata available online at | 1 |

30,539 | 11,839,201,978 | IssuesEvent | 2020-03-23 16:47:25 | Mohib-hub/TwxAzureDataLakeConnector | https://api.github.com/repos/Mohib-hub/TwxAzureDataLakeConnector | opened | WS-2019-0318 (Medium) detected in handlebars-4.1.0.tgz | security vulnerability | ## WS-2019-0318 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>handlebars-4.1.0.tgz</b></p></summary>

<p>Handlebars provides the power necessary to let you build semantic templates ... | True | WS-2019-0318 (Medium) detected in handlebars-4.1.0.tgz - ## WS-2019-0318 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>handlebars-4.1.0.tgz</b></p></summary>

<p>Handlebars provides... | non_process | ws medium detected in handlebars tgz ws medium severity vulnerability vulnerable library handlebars tgz handlebars provides the power necessary to let you build semantic templates effectively with no frustration library home page a href path to dependency file tmp ws s... | 0 |

382,357 | 11,304,974,020 | IssuesEvent | 2020-01-18 01:30:38 | rathena/rathena | https://api.github.com/repos/rathena/rathena | closed | General Egnigem Cenia Card HP/SP regen timer is reset when receiving buffs | component:core mode:prerenewal mode:renewal priority:low status:confirmed type:bug | * **rAthena Hash**: Latest

* **Client Date**: 2017-06-14bRagexeRE

* **Server Mode**: Pre-Renewal

* **Description of Issue**:

* Result: When wearing General Egnigem Cenia card, HP and SP are regen every 10 seconds. However, when we receive any type of buff (self skill, support skill from someone else, or it... | 1.0 | General Egnigem Cenia Card HP/SP regen timer is reset when receiving buffs - * **rAthena Hash**: Latest

* **Client Date**: 2017-06-14bRagexeRE

* **Server Mode**: Pre-Renewal

* **Description of Issue**:

* Result: When wearing General Egnigem Cenia card, HP and SP are regen every 10 seconds. However, when we... | non_process | general egnigem cenia card hp sp regen timer is reset when receiving buffs rathena hash latest client date server mode pre renewal description of issue result when wearing general egnigem cenia card hp and sp are regen every seconds however when we receive any ty... | 0 |

683,306 | 23,376,276,892 | IssuesEvent | 2022-08-11 03:40:12 | kubevela/kubevela | https://api.github.com/repos/kubevela/kubevela | closed | [Feature] add policy definitions for advanced internal policy | type/enhancement good first issue help wanted priority/nice-to-have effort/small area/policy | **Is your feature request related to a problem? Please describe.**

<!--

A clear and concise description of what the problem is. Ex. I'm always frustrated when [...]

-->

Previous PR(https://github.com/kubevela/kubevela/pull/3894) adds topology and override policy definition for schema.

Advanced policies' are al... | 1.0 | [Feature] add policy definitions for advanced internal policy - **Is your feature request related to a problem? Please describe.**

<!--

A clear and concise description of what the problem is. Ex. I'm always frustrated when [...]

-->

Previous PR(https://github.com/kubevela/kubevela/pull/3894) adds topology and ove... | non_process | add policy definitions for advanced internal policy is your feature request related to a problem please describe a clear and concise description of what the problem is ex i m always frustrated when previous pr adds topology and override policy definition for schema advanced policies ... | 0 |

5,974 | 8,793,573,851 | IssuesEvent | 2018-12-21 20:31:01 | docker/docker.github.io | https://api.github.com/repos/docker/docker.github.io | closed | Create diagrams for scanning and jobrunner | area/dtr area/ee process/images & screenshots | Awesome sketches from @venalen

## Scanning:

A:

B:

(**Note**: Follow up with... | 1.0 | Create diagrams for scanning and jobrunner - Awesome sketches from @venalen

## Scanning:

A:

B:

on June 07, 2011 18:14:14_

500-7250. WinXP Pro SP3

1. Two files hung in the download list for a very long time (downloaded, waiting). I got tired of waiting and clicked delete. The program crashed, creating a report (attached)

2. With automatic port forwarding, after a day ... | 1.0 | 1. crashes, 2. confusion with automatic port forwarding - _From [cep...@mail.ru](https://code.google.com/u/108682685495662585618/) on June 07, 2011 18:14:14_

500-7250. WinXP Pro SP3

1. Two files hung in the download list for a very long time (downloaded, waiting). I got tired of waiting and clicked delete. The program crashed, creating a report (attached)... | non_process | crashes confusion with automatic port forwarding from on june winxp pro two files hung in the download list for a very long time downloaded waiting i got tired of waiting and clicked delete the program crashed creating a report attached with automatic port forwarding after a day search stops working and ... | 0 |

18,812 | 24,712,881,836 | IssuesEvent | 2022-10-20 03:19:09 | TabooLib/chemdah | https://api.github.com/repos/TabooLib/chemdah | closed | Error that occurs when scroll-wheel reply switching is enabled | bug processed | ```

[16:06:18 ERROR]: Could not pass event PlayerItemHeldEvent to Chemdah v0.2.39

java.lang.NoClassDefFoundError: net/minecraft/server/v1_16_R3/PacketPlayOutHeldItemSlot

at ink.ptms.chemdah.core.conversation.theme.ThemeChat.onItemHeld(ThemeChat.kt:98) ~[Chemdah-0.2.39.jar:?]

at java.lang.invoke.Meth... | 1.0 | Error that occurs when scroll-wheel reply switching is enabled - ```

[16:06:18 ERROR]: Could not pass event PlayerItemHeldEvent to Chemdah v0.2.39

java.lang.NoClassDefFoundError: net/minecraft/server/v1_16_R3/PacketPlayOutHeldItemSlot

at ink.ptms.chemdah.core.conversation.theme.ThemeChat.onItemHeld(ThemeChat.kt:98) ~[Chemdah-0.2.39.jar:?]

at... | process | error that occurs when scroll wheel reply switching is enabled could not pass event playeritemheldevent to chemdah java lang noclassdeffounderror net minecraft server packetplayouthelditemslot at ink ptms chemdah core conversation theme themechat onitemheld themechat kt at java lang invoke methodhandle invokewitha... | 1 |

224,872 | 7,473,573,076 | IssuesEvent | 2018-04-03 15:43:27 | neuropoly/axondeepseg | https://api.github.com/repos/neuropoly/axondeepseg | closed | Implement continuous integration and integrity checking | priority:HIGH | to avoid this kind of issue: https://github.com/neuropoly/axondeepseg/issues/59

we could use continuous integration (CI) service such as [Travis](https://travis-ci.org/). | 1.0 | Implement continuous integration and integrity checking - to avoid this kind of issue: https://github.com/neuropoly/axondeepseg/issues/59

we could use continuous integration (CI) service such as [Travis](https://travis-ci.org/). | non_process | implement continuous integration and integrity checking to avoid this kind of issue we could use continuous integration ci service such as | 0 |

6,485 | 9,554,722,250 | IssuesEvent | 2019-05-02 23:11:36 | ncbo/bioportal-project | https://api.github.com/repos/ncbo/bioportal-project | closed | NEO: fails to load | in progress ontology processing problem | The [NEO ontology](http://bioportal.bioontology.org/ontologies/NEO) shows "Error Rdf" status in the BioPortal UI. Stack trace from parsing log file:

```

E, [2019-04-22T11:27:38.785943 #5770] ERROR -- : ["Exception: could not `LANG=C grep -v '_:genid' /tmp/file_nobnodes20190422-5770-1hw2ajk/data.nt > /tmp/file_nobno... | 1.0 | NEO: fails to load - The [NEO ontology](http://bioportal.bioontology.org/ontologies/NEO) shows "Error Rdf" status in the BioPortal UI. Stack trace from parsing log file:

```

E, [2019-04-22T11:27:38.785943 #5770] ERROR -- : ["Exception: could not `LANG=C grep -v '_:genid' /tmp/file_nobnodes20190422-5770-1hw2ajk/data... | process | neo fails to load the shows error rdf status in the bioportal ui stack trace from parsing log file e error exception could not lang c grep v genid tmp file data nt tmp file data no bnodes nt srv ncbo ncbo cron vendor bundle ruby bundler gems goo lib goo s... | 1 |

129,039 | 10,560,941,669 | IssuesEvent | 2019-10-04 14:53:01 | mozilla/iris_firefox | https://api.github.com/repos/mozilla/iris_firefox | opened | Fix default_search_code_yandex for treeherder Nightly | regression target-nightly test case | This is failing at the first code validation assert. There aren't any debug images here because it's a bunch of keyboard and copy to clipboard stuff. As such I can't tell if the search page was loaded or if it's being captcha's. I am going to have to add some sort of pattern check just to see what's on screen around t... | 1.0 | Fix default_search_code_yandex for treeherder Nightly - This is failing at the first code validation assert. There aren't any debug images here because it's a bunch of keyboard and copy to clipboard stuff. As such I can't tell if the search page was loaded or if it's being captcha's. I am going to have to add some sor... | non_process | fix default search code yandex for treeherder nightly this is failing at the first code validation assert there aren t any debug images here because it s a bunch of keyboard and copy to clipboard stuff as such i can t tell if the search page was loaded or if it s being captcha s i am going to have to add some sor... | 0 |

1,260 | 5,348,482,978 | IssuesEvent | 2017-02-18 05:30:02 | diofant/diofant | https://api.github.com/repos/diofant/diofant | opened | Use "new" style for string formatting | maintainability | I.e. ``"{0:s}".format("spam")`` instead of ``"%s" % "spam"`` | True | Use "new" style for string formatting - I.e. ``"{0:s}".format("spam")`` instead of ``"%s" % "spam"`` | non_process | use new style for string formatting i e s format spam instead of s spam | 0 |

10,784 | 13,608,982,336 | IssuesEvent | 2020-09-23 03:55:53 | googleapis/java-dataproc | https://api.github.com/repos/googleapis/java-dataproc | closed | Dependency Dashboard | api: dataproc type: process | This issue contains a list of Renovate updates and their statuses.

## Open

These updates have all been created already. Click a checkbox below to force a retry/rebase of any.

- [ ] <!-- rebase-branch=renovate/org.apache.maven.plugins-maven-project-info-reports-plugin-3.x -->build(deps): update dependency org.apache... | 1.0 | Dependency Dashboard - This issue contains a list of Renovate updates and their statuses.

## Open

These updates have all been created already. Click a checkbox below to force a retry/rebase of any.

- [ ] <!-- rebase-branch=renovate/org.apache.maven.plugins-maven-project-info-reports-plugin-3.x -->build(deps): updat... | process | dependency dashboard this issue contains a list of renovate updates and their statuses open these updates have all been created already click a checkbox below to force a retry rebase of any build deps update dependency org apache maven plugins maven project info reports plugin to chore de... | 1 |

174,163 | 27,586,500,326 | IssuesEvent | 2023-03-08 20:12:24 | department-of-veterans-affairs/vets-design-system-documentation | https://api.github.com/repos/department-of-veterans-affairs/vets-design-system-documentation | opened | Process List css bug | bug vsp-design-system-team | # Bug Report

- [x] I’ve searched for any related issues and avoided creating a duplicate issue.

## What happened

I found a minor css bug. There are a few lines of css that could be added to make [these process steps](https://github.com/department-of-veterans-affairs/va-digital-services-platform-docs/blob/06476... | 1.0 | Process List css bug - # Bug Report

- [x] I’ve searched for any related issues and avoided creating a duplicate issue.

## What happened

I found a minor css bug. There are a few lines of css that could be added to make [these process steps](https://github.com/department-of-veterans-affairs/va-digital-services-p... | non_process | process list css bug bug report i’ve searched for any related issues and avoided creating a duplicate issue what happened i found a minor css bug there are a few lines of css that could be added to make numbers have proper circles around them rather than ovals that they are now if we added... | 0 |

16,775 | 21,958,526,152 | IssuesEvent | 2022-05-24 14:03:11 | GoogleCloudPlatform/anthos-samples | https://api.github.com/repos/GoogleCloudPlatform/anthos-samples | closed | CI flakiness | type: bug type: process priority: p2 samples | @Shabirmean and I noticed flaky behaviour occurring.

We believe that it's due to github actions/checkout@v3, where the perms get overwritten

Every once in a while we have to ssh into the runners and change the permissions, or else the CI errors out with

```

Error: fatal: --local can only be used inside a git re... | 1.0 | CI flakiness - @Shabirmean and I noticed flaky behaviour occurring.

We believe that it's due to github actions/checkout@v3, where the perms get overwritten

Every once in a while we have to ssh into the runners and change the permissions, or else the CI errors out with

```

Error: fatal: --local can only be used... | process | ci flakiness shabirmean and i noticed flaky behaviour occurring we believe that it s due to github actions checkout where the perms get overwritten every once in a while we have to ssh into the runners and change the permissions or else the ci errors out with error fatal local can only be used ... | 1 |

4,685 | 7,522,508,585 | IssuesEvent | 2018-04-12 20:40:11 | googlegenomics/gcp-variant-transforms | https://api.github.com/repos/googlegenomics/gcp-variant-transforms | closed | Add documentation on how to run the pipeline in a particular zone/region | P1 process | There are both region [1] and zone arguments (and in two places! one in the pipelines API and one in the Dataflow API). We should document how to use these features.

[1] https://cloud.google.com/dataflow/docs/concepts/regional-endpoints#supported_regional_endpoints | 1.0 | Add documentation on how to run the pipeline in a particular zone/region - There are both region [1] and zone arguments (and in two places! one in the pipelines API and one in the Dataflow API). We should document how to use these features.

[1] https://cloud.google.com/dataflow/docs/concepts/regional-endpoints#suppor... | process | add documentation on how to run the pipeline in a particular zone region there are both region and zone arguments and in two places one in the pipelines api and one in the dataflow api we should document how to use these features | 1 |

14,206 | 17,103,899,225 | IssuesEvent | 2021-07-09 14:54:43 | 3drepo/3drepobouncer | https://api.github.com/repos/3drepo/3drepobouncer | closed | DWG/DXF support | In Staging feature file processing | <!-- FEATURE TEMPLATE (delete as appropriate) -->

<!-- Remember to tag this issue as a feature! -->

### Description

Add support to dwg/dxf via Teigha library

### Goals

- [ ] DWG model file should be accepted and correct geometry generated

- [ ] DXF model file should be accepted and correct geometry generated

... | 1.0 | DWG/DXF support - <!-- FEATURE TEMPLATE (delete as appropriate) -->

<!-- Remember to tag this issue as a feature! -->

### Description

Add support to dwg/dxf via Teigha library

### Goals

- [ ] DWG model file should be accepted and correct geometry generated

- [ ] DXF model file should be accepted and correct geo... | process | dwg dxf support description add support to dwg dxf via teigha library goals dwg model file should be accepted and correct geometry generated dxf model file should be accepted and correct geometry generated tasks add code to read dwg dxf use dgn exporter to convert to dgn db ... | 1 |

61,295 | 7,458,854,974 | IssuesEvent | 2018-03-30 12:41:38 | dotnet/project-system | https://api.github.com/repos/dotnet/project-system | closed | Selecting the Application page on AppDesigner, show "PropPageDesignerRootComponent" in Properties window | Bug Feature-AppDesigner Up for Grabs |

| 1.0 | Selecting the Application page on AppDesigner, show "PropPageDesignerRootComponent" in Properties window -

| non_process | selecting the application page on appdesigner show proppagedesignerrootcomponent in properties window | 0 |

14,775 | 18,051,321,816 | IssuesEvent | 2021-09-19 19:55:20 | Leviatan-Analytics/LA-data-processing | https://api.github.com/repos/Leviatan-Analytics/LA-data-processing | closed | Create pro match analysis data schema [2] | Data Processing Week 3 Sprint 4 | Develop schema for saving a pro match manual analysis. | 1.0 | Create pro match analysis data schema [2] - Develop schema for saving a pro match manual analysis. | process | create pro match analysis data schema develop schema for saving a pro match manual analysis | 1 |

751,388 | 26,242,885,854 | IssuesEvent | 2023-01-05 13:02:33 | matrixorigin/matrixone | https://api.github.com/repos/matrixorigin/matrixone | opened | [Feature Request]: add information function for the account; | priority/p0 kind/feature | ### Is there an existing issue for the same feature request?

- [X] I have checked the existing issues.

### Is your feature request related to a problem?

```Markdown

mo cloud

```

### Describe the feature you'd like

1, select current_account();

print the account information:

column1:account_name;

column2:accoun... | 1.0 | [Feature Request]: add information function for the account; - ### Is there an existing issue for the same feature request?

- [X] I have checked the existing issues.

### Is your feature request related to a problem?

```Markdown

mo cloud

```

### Describe the feature you'd like

1, select current_account();

print ... | non_process | add information function for the account is there an existing issue for the same feature request i have checked the existing issues is your feature request related to a problem markdown mo cloud describe the feature you d like select current account print the account inform... | 0 |

22,499 | 31,476,635,849 | IssuesEvent | 2023-08-30 11:11:14 | h4sh5/pypi-auto-scanner | https://api.github.com/repos/h4sh5/pypi-auto-scanner | opened | skypilot-nightly 1.0.0.dev20230830 has 2 GuardDog issues | guarddog exec-base64 silent-process-execution | https://pypi.org/project/skypilot-nightly

https://inspector.pypi.io/project/skypilot-nightly

```{

"dependency": "skypilot-nightly",

"version": "1.0.0.dev20230830",

"result": {

"issues": 2,

"errors": {},

"results": {

"exec-base64": [

{

"location": "skypilot-nightly-1.0.0.dev2023... | 1.0 | skypilot-nightly 1.0.0.dev20230830 has 2 GuardDog issues - https://pypi.org/project/skypilot-nightly

https://inspector.pypi.io/project/skypilot-nightly

```{

"dependency": "skypilot-nightly",

"version": "1.0.0.dev20230830",

"result": {

"issues": 2,

"errors": {},

"results": {

"exec-base64": [

... | process | skypilot nightly has guarddog issues dependency skypilot nightly version result issues errors results exec location skypilot nightly sky cloud stores py code p subpr... | 1 |

19,416 | 25,560,957,175 | IssuesEvent | 2022-11-30 10:43:22 | aiidateam/aiida-core | https://api.github.com/repos/aiidateam/aiida-core | opened | Restore support for dynamic nested namespaces in inputs for process functions | type/bug topic/engine topic/processes | Before v2.0 the following was possible:

```python

@workfunction

def function(**kwargs):

return kwargs

inputs = {

'nested': {

'namespace': {

'int': orm.Int(1).store()

}

}

}

results, node = function.run_get_node(**inputs)

assert results == inputs

assert node.get_inc... | 1.0 | Restore support for dynamic nested namespaces in inputs for process functions - Before v2.0 the following was possible:

```python

@workfunction

def function(**kwargs):

return kwargs

inputs = {

'nested': {

'namespace': {

'int': orm.Int(1).store()

}

}

}

results, node ... | process | restore support for dynamic nested namespaces in inputs for process functions before the following was possible python workfunction def function kwargs return kwargs inputs nested namespace int orm int store results node ... | 1 |
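
The nested-input behaviour described in the record above can be illustrated with a small plain-Python sketch. It does not use AiiDA itself. The helper name `flatten_namespace` and the double-underscore separator are assumptions made for illustration only; the truncated issue text does not show how the labels are actually formed.

```python
def flatten_namespace(inputs, separator="__", prefix=""):
    """Flatten a nested dict of inputs into a single-level dict.

    Hypothetical helper: the separator is an assumption, not taken
    from the issue text above.
    """
    flat = {}
    for key, value in inputs.items():
        # Build the label for this level, joining with the parent label if any.
        label = f"{prefix}{separator}{key}" if prefix else key
        if isinstance(value, dict):
            # Recurse into nested namespaces.
            flat.update(flatten_namespace(value, separator, label))
        else:
            flat[label] = value
    return flat


nested_inputs = {"nested": {"namespace": {"int": 1}}}
print(flatten_namespace(nested_inputs))
```

A plain integer stands in for the stored node here so that the sketch runs without any database or profile.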

15,825 | 20,018,702,159 | IssuesEvent | 2022-02-01 14:33:49 | elastic/beats | https://api.github.com/repos/elastic/beats | opened | Create Syslog Processor | enhancement :Processors Team:Security-External Integrations | ## Overview

Syslog parsing in Beats is currently implemented as a dedicated input. It supports accepting data over UDP, TCP, and Unix sockets. Because it is implemented as an input, the parsing cannot be used by other inputs such as filestream, httpjson, or kafka.

The goal is to decouple the input from parsing by... | 1.0 | Create Syslog Processor - ## Overview

Syslog parsing in Beats is currently implemented as a dedicated input. It supports accepting data over UDP, TCP, and Unix sockets. Because it is implemented as an input, the parsing cannot be used by other inputs such as filestream, httpjson, or kafka.

The goal is to decouple... | process | create syslog processor overview syslog parsing in beats is currently implemented as a dedicated input it supports accepting data over udp tcp and unix sockets because it is implemented as an input the parsing cannot be used by other inputs such as filestream httpjson or kafka the goal is to decouple... | 1 |
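
As a rough illustration of what input-independent syslog parsing involves, the sketch below decodes only the leading `<PRI>` field, which the syslog RFCs define as facility times 8 plus severity. It is written in Python rather than in the Beats codebase's language, and the function name `split_priority` is hypothetical; it is not the processor proposed in the issue.

```python
import re

# Matches the leading "<PRI>" field of a raw syslog message.
_PRI_RE = re.compile(r"^<(\d{1,3})>")


def split_priority(message):
    """Return (facility, severity, rest_of_message) for a raw syslog line."""
    match = _PRI_RE.match(message)
    if match is None:
        raise ValueError("message does not start with a <PRI> field")
    priority = int(match.group(1))
    if priority > 191:
        # 23 * 8 + 7 is the largest valid priority value.
        raise ValueError("priority out of range")
    # PRI = facility * 8 + severity.
    facility, severity = divmod(priority, 8)
    return facility, severity, message[match.end():]


print(split_priority("<34>Oct 11 22:14:15 mymachine su: 'su root' failed"))
```

Because the function takes a string and nothing else, the same logic could be applied to a line read from a file, an HTTP response, or a message queue, which is the kind of decoupling the issue asks for.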

21,068 | 3,455,776,753 | IssuesEvent | 2015-12-17 21:40:53 | netty/netty | https://api.github.com/repos/netty/netty | opened | HTTP/2 DefaultHttp2RemoteFlowController Stream writability notification broken | defect | `DefaultHttp2RemoteFlowController.ListenerWritabilityMonitor` no longer reliably detects when a stream's writability change occurs. `ListenerWritabilityMonitor` was implemented to avoid duplicating iteration over all streams when possible and instead was relying on the `PriorityStreamByteDistributor` to call `write` fo... | 1.0 | HTTP/2 DefaultHttp2RemoteFlowController Stream writability notification broken - `DefaultHttp2RemoteFlowController.ListenerWritabilityMonitor` no longer reliably detects when a stream's writability change occurs. `ListenerWritabilityMonitor` was implemented to avoid duplicating iteration over all streams when possible ... | non_process | http stream writability notification broken listenerwritabilitymonitor no longer reliably detects when a stream s writability change occurs listenerwritabilitymonitor was implemented to avoid duplicating iteration over all streams when possible and instead was relying on the prioritystreambytedistributor ... | 0 |

109,913 | 4,415,350,500 | IssuesEvent | 2016-08-14 01:11:02 | MinetestForFun/server-minetestforfun-skyblock | https://api.github.com/repos/MinetestForFun/server-minetestforfun-skyblock | opened | All passwords have been reset for accounts older than a month! | Priority: High | I have just verified ANY player that has not logged into the game for a month or longer has had their password reset!

:large_orange_diamond: | 1.0 | All passwords have been reset for accounts older than a month! - I have just verified ANY player that has not logged into the game for a month or longer has had their password reset!

:large_orange_diamond: | non_process | all passwords have been reset for accounts older than a month i have just verified any player that has not logged into the game for a month or longer has had their password reset large orange diamond | 0 |

3,346 | 6,486,457,873 | IssuesEvent | 2017-08-19 19:51:58 | OpenMined/Docs | https://api.github.com/repos/OpenMined/Docs | closed | Funneling new users into projects easier | enhancement help wanted process | I'm trying to wrap my mind around how we might guide new users exploring OpenMined for the first time to join a specific project. It's hard for people to grasp the entire ecosystem and so often they join Slack without much aim of where they can best fit in. If we formed teams, naturally this might fall into place... ... | 1.0 | Funneling new users into projects easier - I'm trying to wrap my mind around how we might guide new users exploring OpenMined for the first time to join a specific project. It's hard for people to grasp the entire ecosystem and so often they join Slack without much aim of where they can best fit in. If we formed team... | process | funneling new users into projects easier i m trying to wrap my mind around how we might guide new users exploring openmined for the first time to join a specific project it s hard for people to grasp the entire ecosystem and so often they join slack without much aim of where they can best fit in if we formed team... | 1 |

10,400 | 13,202,152,291 | IssuesEvent | 2020-08-14 11:40:54 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | Messed up data types after running SAGA snap points to lines | Bug Feedback Processing | **Describe the bug**

I ran the SAGA 'snap points to lines' tool using the following files:

points layer: CSV files contain x/y coordinates

Snap feature: OSM street shapefile