| Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 7 to 112) | repo_url (string, length 36 to 141) | action (string, 3 classes) | title (string, length 1 to 744) | labels (string, length 4 to 574) | body (string, length 9 to 211k) | index (string, 10 classes) | text_combine (string, length 96 to 211k) | label (string, 2 classes) | text (string, length 96 to 188k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

12,000

| 14,738,129,531

|

IssuesEvent

|

2021-01-07 03:50:37

|

kdjstudios/SABillingGitlab

|

https://api.github.com/repos/kdjstudios/SABillingGitlab

|

closed

|

068- portland - Payment issue

|

anc-ops anc-process anp-0.5 ant-bug ant-support

|

In GitLab by @kdjstudios on May 8, 2018, 12:13

**Submitted by:** "Lettice Ross" <lettice.ross@answernet.com>

**Helpdesk:** http://www.servicedesk.answernet.com/profiles/ticket/2018-05-07-23481/conversation

**Server:** Internal

**Client/Site:** 068

**Account:** Lucas Emergy B00671

**Issue:**

I’m sending this email, because I have received an error message when trying to process credit card payment. The account I’m trying to process payment for is Lucas Emergy B00671.

The error message read: We’re sorry, but something went wrong.

If you can please help, and let me know once finish.

|

1.0

|

068- portland - Payment issue - In GitLab by @kdjstudios on May 8, 2018, 12:13

**Submitted by:** "Lettice Ross" <lettice.ross@answernet.com>

**Helpdesk:** http://www.servicedesk.answernet.com/profiles/ticket/2018-05-07-23481/conversation

**Server:** Internal

**Client/Site:** 068

**Account:** Lucas Emergy B00671

**Issue:**

I’m sending this email, because I have received an error message when trying to process credit card payment. The account I’m trying to process payment for is Lucas Emergy B00671.

The error message read: We’re sorry, but something went wrong.

If you can please help, and let me know once finish.

|

process

|

portland payment issue in gitlab by kdjstudios on may submitted by lettice ross helpdesk server internal client site account lucas emergy issue i’m sending this email because i have received an error message when trying to process credit card payment the account i’m trying to process payment for is lucas emergy the error message read we’re sorry but something went wrong if you can please help and let me know once finish

| 1

|

54,869

| 7,928,548,666

|

IssuesEvent

|

2018-07-06 12:09:02

|

txtdirect/txtdirect

|

https://api.github.com/repos/txtdirect/txtdirect

|

opened

|

Document that arbitrary key/value pairs are allowed

|

documentation

|

<!--

This form is for bug reports and feature requests ONLY!

If you're looking for help check out [our support guidelines](/SUPPORT.md).

-->

**Is this a BUG REPORT or FEATURE REQUEST?**:

feature

Arbitrary key/value pairs should just be discarded (this allows to upgrade TXT records in case a newer version of TXTDirect uses new keys, without breaking older versions)

Arbitrary data, however, shouldn't be allowed.

Summary:

```

v=txtv0;to=https://txtdirect.org;code=302 -> works

v=txtv0;https://txtdirect.org;code=302 -> works

v=txtv0;to=https://txtdirect.org;code=302;tracking=true -> works

v=txtv0;https://txtdirect.org;302 -> fails

v=txtv0;to=https://txtdirect.org;302 -> fails

```

|

1.0

|

Document that arbitrary key/value pairs are allowed - <!--

This form is for bug reports and feature requests ONLY!

If you're looking for help check out [our support guidelines](/SUPPORT.md).

-->

**Is this a BUG REPORT or FEATURE REQUEST?**:

feature

Arbitrary key/value pairs should just be discarded (this allows to upgrade TXT records in case a newer version of TXTDirect uses new keys, without breaking older versions)

Arbitrary data, however, shouldn't be allowed.

Summary:

```

v=txtv0;to=https://txtdirect.org;code=302 -> works

v=txtv0;https://txtdirect.org;code=302 -> works

v=txtv0;to=https://txtdirect.org;code=302;tracking=true -> works

v=txtv0;https://txtdirect.org;302 -> fails

v=txtv0;to=https://txtdirect.org;302 -> fails

```

|

non_process

|

document that arbitrary key value pairs are allowed this form is for bug reports and feature requests only if you re looking for help check out support md is this a bug report or feature request feature arbitrary key value pairs should just be discarded this allows to upgrade txt records in case a newer version of txtdirect uses new keys without breaking older versions arbitrary data however shouldn t be allowed summary v to works v works v to works v fails v to fails

| 0

|
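The TXTDirect issue above proposes a concrete parsing rule with three parts:

- Unknown `key=value` pairs are discarded, so older releases tolerate records written for newer ones.
- A bare URL is accepted.
- Any other unkeyed data is rejected.

The following is a minimal Python sketch of that rule, not TXTDirect's own parser. Several details are assumptions:

- The `KNOWN_KEYS` set and the `parse_record` name are hypothetical.
- The key set only covers the keys that appear in the issue.
- The idea that a bare value is accepted only directly after the version field, and is then read as `to`, is inferred from the issue's examples.

```python
import re

# Hypothetical, illustrative key set: only the keys that appear in the issue.
KNOWN_KEYS = {"v", "to", "code"}
# A field counts as key=value only if it starts with an identifier-like key,
# so a bare URL such as "https://x.org" is not mistaken for a key.
KEY_VALUE = re.compile(r"^([A-Za-z][A-Za-z0-9_-]*)=(.*)$")


def parse_record(record: str) -> dict[str, str]:
    """Parse a TXTDirect-style TXT record under the rules proposed in the issue.

    Unknown keys are silently dropped. Unkeyed data raises ValueError, except
    a bare value directly after the version field, which is treated as `to`
    (an assumption drawn from the issue's examples).
    """
    fields = record.split(";")
    if fields[0] != "v=txtv0":
        raise ValueError("record must start with v=txtv0")
    result = {"v": "txtv0"}
    for position, field in enumerate(fields[1:], start=1):
        match = KEY_VALUE.match(field)
        if match:
            key, value = match.groups()
            if key in KNOWN_KEYS:
                result[key] = value
            # Unknown keys are ignored so newer records do not break older parsers.
            continue
        if position == 1:
            # Bare value right after the version: read it as the redirect target.
            result["to"] = field
            continue
        raise ValueError(f"arbitrary data not allowed: {field!r}")
    return result
```

Run against the five records in the issue's summary, the first three parse to the same mapping and the last two raise `ValueError`.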

677,122

| 23,151,777,742

|

IssuesEvent

|

2022-07-29 09:01:55

|

wasmerio/wasmer

|

https://api.github.com/repos/wasmerio/wasmer

|

closed

|

Re-add create exe to Wasmer

|

priority-high create-exe

|

This is a follow up from #2916 (it's blocked by it).

We need to re-add `create-exe` to Wasmer.

Steps:

1. Re-use the previous existing code (from C) to create the executable

2. Create a new `create-exe` that uses Zig under the hood.

3. Make zig on-create exe used with wax

5. Include the virtual filesystem into the generated executable (`python` should work) - not required for Wasmer 3.0

## Details for (2)

We need to Zig version of the C generated file, if zig is found in the system, we can use it by default, if not, we need to call a subprocess with `wax zig`.

Unknowns:

1. linker might not work the same way

## Details for (3)

We will include the virtual filesystem structure into Wasmer, so Python can be actually converted to a native executable

|

1.0

|

Re-add create exe to Wasmer - This is a follow up from #2916 (it's blocked by it).

We need to re-add `create-exe` to Wasmer.

Steps:

1. Re-use the previous existing code (from C) to create the executable

2. Create a new `create-exe` that uses Zig under the hood.

3. Make zig on-create exe used with wax

5. Include the virtual filesystem into the generated executable (`python` should work) - not required for Wasmer 3.0

## Details for (2)

We need to Zig version of the C generated file, if zig is found in the system, we can use it by default, if not, we need to call a subprocess with `wax zig`.

Unknowns:

1. linker might not work the same way

## Details for (3)

We will include the virtual filesystem structure into Wasmer, so Python can be actually converted to a native executable

|

non_process

|

re add create exe to wasmer this is a follow up from it s blocked by it we need to re add create exe to wasmer steps re use the previous existing code from c to create the executable create a new create exe that uses zig under the hood make zig on create exe used with wax include the virtual filesystem into the generated executable python should work not required for wasmer details for we need to zig version of the c generated file if zig is found in the system we can use it by default if not we need to call a subprocess with wax zig unknowns linker might not work the same way details for we will include the virtual filesystem structure into wasmer so python can be actually converted to a native executable

| 0

|
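The fallback described under "Details for (2)" in the Wasmer issue is a small lookup: use a `zig` binary on `PATH` if one exists, otherwise run it through `wax zig`. Here is a Python sketch of that selection. It is illustrative only, since Wasmer itself is written in Rust. The following details are assumptions:

- `zig_command` is a hypothetical name.
- The injectable `which` parameter exists only to make the logic testable.
- Raising when neither tool is present goes beyond what the issue specifies.

```python
import shutil
from typing import Callable, Optional


def zig_command(which: Callable[[str], Optional[str]] = shutil.which) -> list[str]:
    """Return the command prefix used to invoke Zig.

    Prefers a system-wide `zig`; otherwise falls back to `wax zig`, as the
    issue describes. The RuntimeError when neither is found is an assumption.
    """
    if which("zig") is not None:
        return ["zig"]
    if which("wax") is not None:
        return ["wax", "zig"]
    raise RuntimeError("neither `zig` nor `wax` was found on PATH")
```

The returned prefix would then be combined with whatever Zig arguments `create-exe` ends up needing, for example through `subprocess.run`. Those arguments are not given in the issue, so they are omitted here.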

22,276

| 30,826,946,846

|

IssuesEvent

|

2023-08-01 20:54:15

|

googleapis/google-cloud-go

|

https://api.github.com/repos/googleapis/google-cloud-go

|

closed

|

storage: determine what to do on chunksize=0 [GRPC]

|

api: storage priority: p2 type: process

|

Address TODOs around L1472 in grpc_client.go

Should we actually use the minimum of 256 KB here [chunksize=0] when the user indicates they want minimal memory usage? We cannot do a zero-copy, bufferless upload like HTTP/JSON can.

Must make a note to update documentation accordingly.

|

1.0

|

storage: determine what to do on chunksize=0 [GRPC] - Address TODOs around L1472 in grpc_client.go

Should we actually use the minimum of 256 KB here [chunksize=0] when the user indicates they want minimal memory usage? We cannot do a zero-copy, bufferless upload like HTTP/JSON can.

Must make a note to update documentation accordingly.

|

process

|

storage determine what to do on chunksize address todos around in grpc client go should we actually use the minimum of kb here when the user indicates they want minimal memory usage we cannot do a zero copy bufferless upload like http json can must make a note to update documentation accordingly

| 1

|
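One option the google-cloud-go issue weighs is to treat `chunksize=0` on the gRPC path as a request for the smallest possible buffer, because gRPC cannot do a bufferless upload. Below is a Python sketch of that policy. It is not the library's code, and `effective_grpc_chunk_size` is a hypothetical name. It rests on two assumptions not stated in the issue:

- "256 KB" means 256 KiB (262,144 bytes).
- Non-zero sizes are rounded up to a multiple of that minimum, as resumable-upload chunks generally must be.

```python
MIN_CHUNK_SIZE = 256 * 1024  # assumed: "256 KB" in the issue means 256 KiB


def effective_grpc_chunk_size(requested: int) -> int:
    """Map a user-requested chunk size to the buffer size a gRPC upload would use.

    Hypothetical policy: 0 ("minimal memory") becomes the 256 KiB minimum;
    any other size is rounded up to the next multiple of 256 KiB.
    """
    if requested < 0:
        raise ValueError("chunk size must be non-negative")
    if requested == 0:
        return MIN_CHUNK_SIZE
    # Ceiling division by the minimum, then scale back up to bytes.
    return -(-requested // MIN_CHUNK_SIZE) * MIN_CHUNK_SIZE
```

Under this policy, the documentation update the issue calls for would need to say that `chunksize=0` still allocates one 256 KiB buffer on gRPC, unlike HTTP/JSON.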

3,302

| 6,399,092,377

|

IssuesEvent

|

2017-08-04 22:42:53

|

dotnet/corefx

|

https://api.github.com/repos/dotnet/corefx

|

closed

|

System.ServiceProcess.ServiceController & ServiceBase

|

area-System.ServiceProcess enhancement port-to-core

|

We've had requests to port https://github.com/topshelf/topshelf to .NET Core. To do this, we would love to be able to query if we had access to the SCM and act as needed so we don't need to ship a version targeting both core & full profile.

|

1.0

|

System.ServiceProcess.ServiceController & ServiceBase - We've had requests to port https://github.com/topshelf/topshelf to .NET Core. To do this, we would love to be able to query if we had access to the SCM and act as needed so we don't need to ship a version targeting both core & full profile.

|

process

|

system serviceprocess servicecontroller servicebase we ve had requests to port to net core to do this we would love to be able to query if we had access to the scm and act as needed so we don t need to ship a version targeting both core full profile

| 1

|

751,657

| 26,252,677,466

|

IssuesEvent

|

2023-01-05 20:52:28

|

awesomemotive/affiliatewp-affiliate-area-shortcodes

|

https://api.github.com/repos/awesomemotive/affiliatewp-affiliate-area-shortcodes

|

closed

|

If AffiliateWP is not active, there is no longer a requirement admin notice

|

type-bug priority-high workflow-has-pr

|

While this addon exhibits the issue, it's likely also affecting any other addon using the same activation class. I'm opening it here because I need to do some work on this addon for the WordPress.org repo and this is going to hold us back. Let's fix it in this addon first and then push it out to other addons.

## The issue:

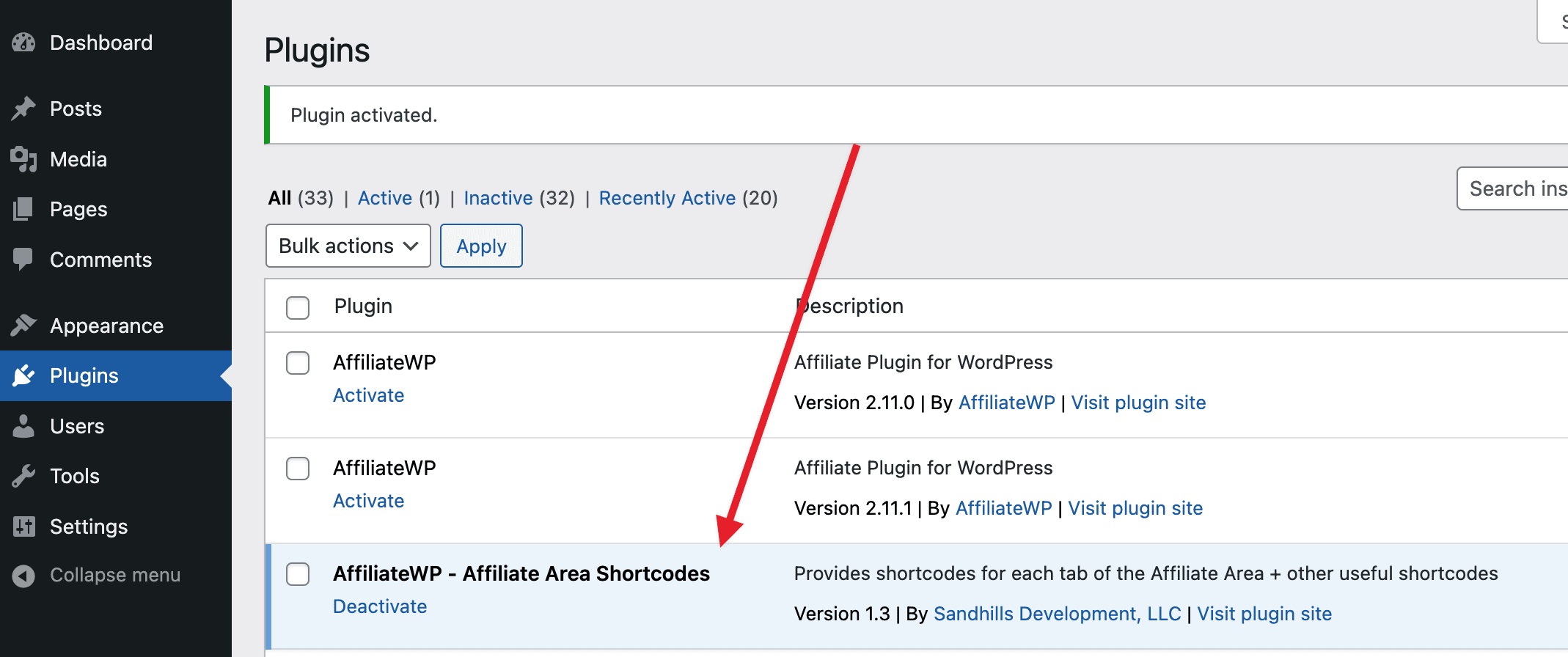

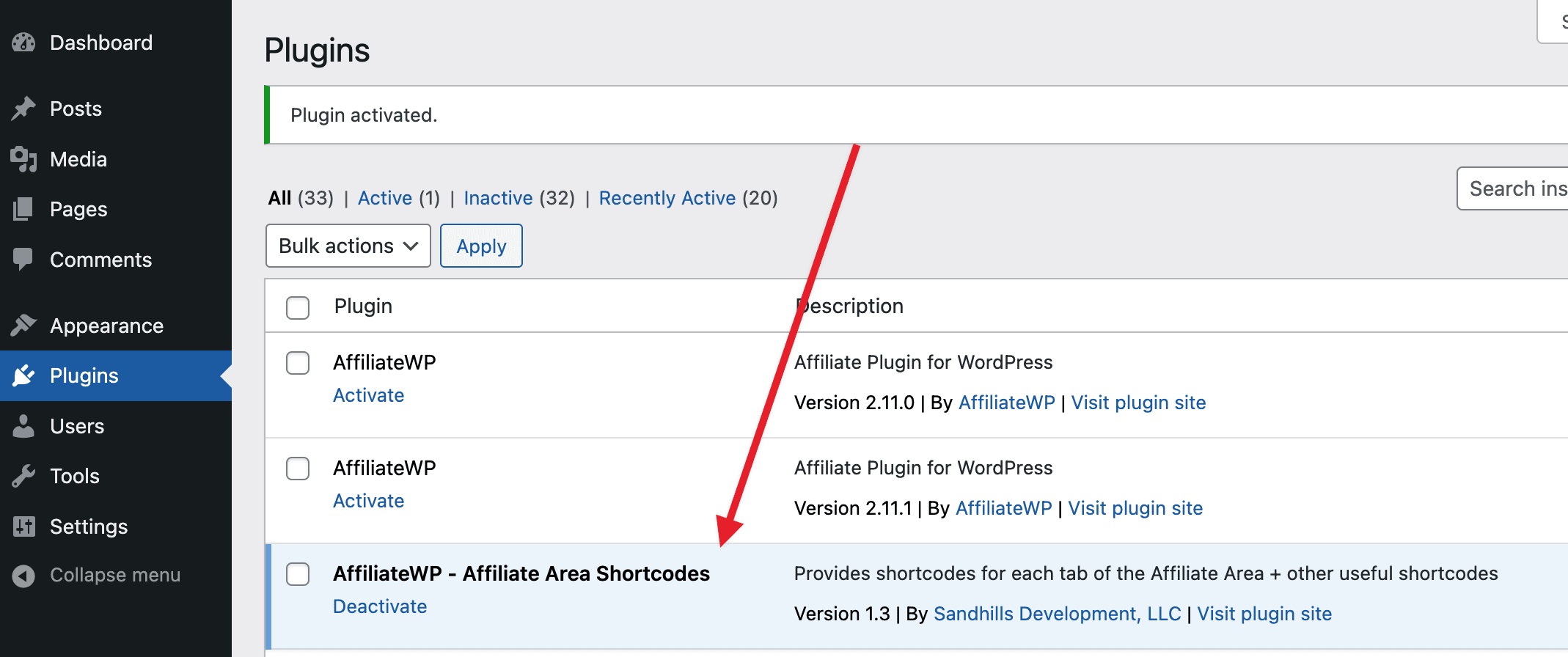

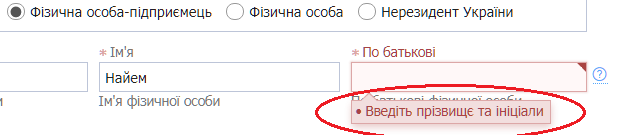

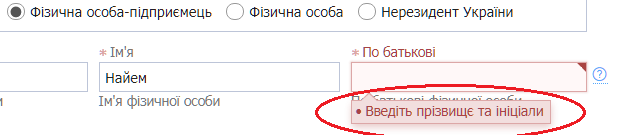

In the following screenshot I have just activated the Affiliate Area Shortcodes addon. Note how AffiliateWP is not activated and there is no notice that tells the user that AffiliateWP must be activated.

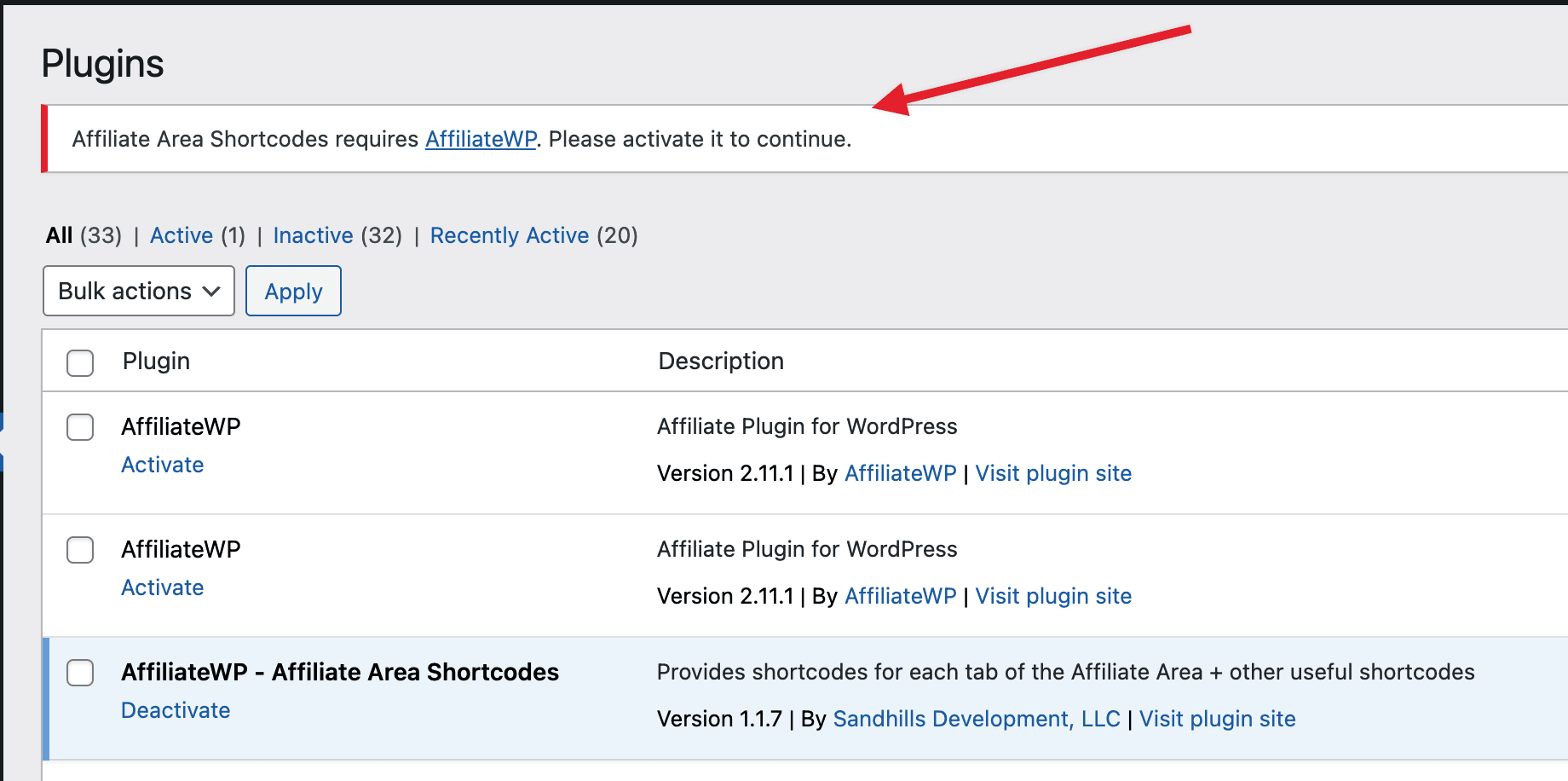

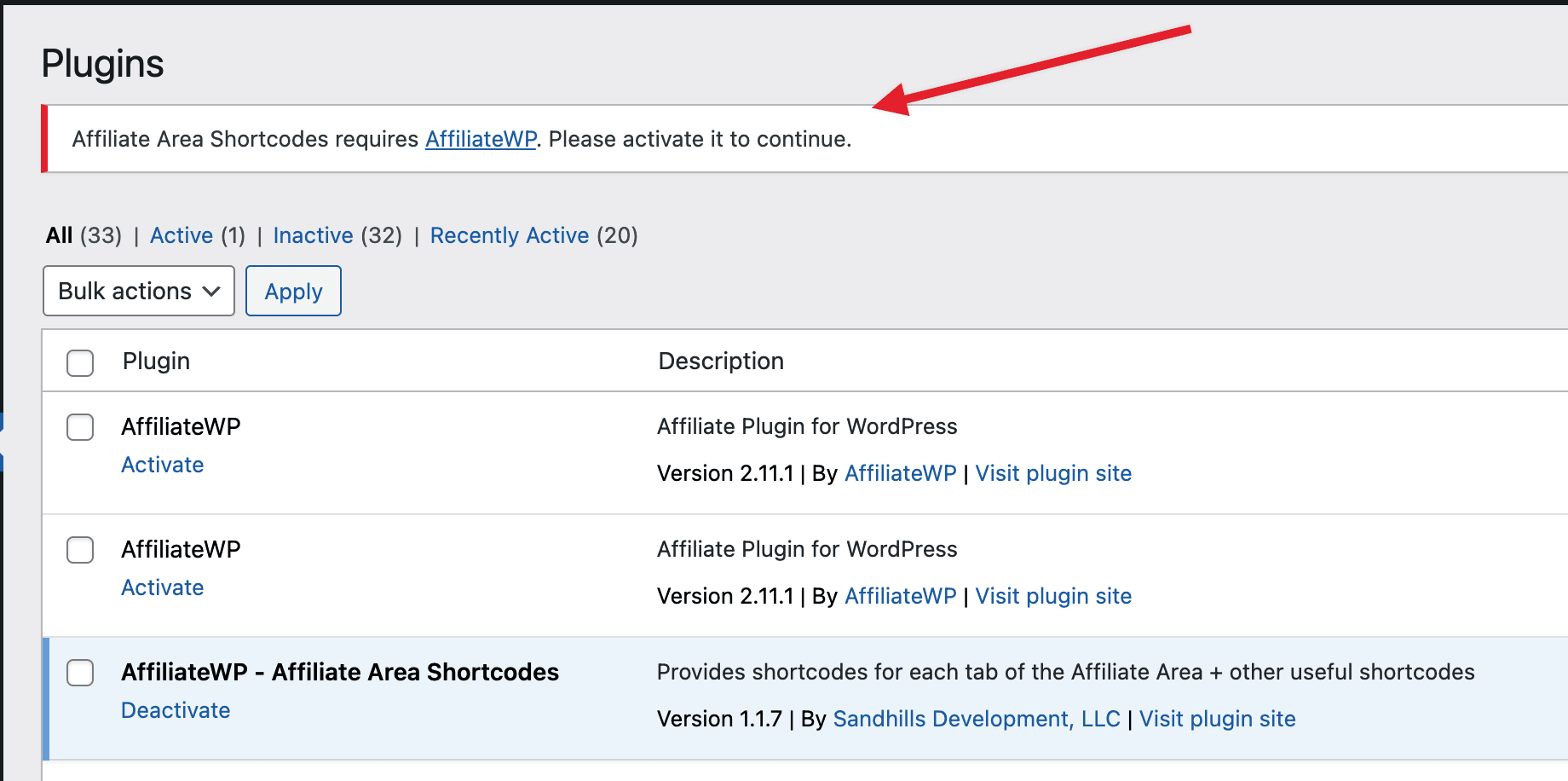

We used to show a message like this:

In the case of this addon, the notice worked for all versions up until `1.2` when this was added: https://github.com/awesomemotive/affiliatewp-affiliate-area-shortcodes/issues/37

Aside from the notice not displaying there are other issues:

1. There are two `AffiliateWP_Activation` classes included. See https://github.com/awesomemotive/affiliatewp-affiliate-area-shortcodes/blob/master/includes/lib/affwp/class-affiliatewp-activation.php and https://github.com/awesomemotive/affiliatewp-affiliate-area-shortcodes/blob/master/includes/class-activation.php

2. The activation code is super convoluted. No idea why it needs to have that much code.

3. There are incorrect textdomains, likely a copy/paste error when implementing on another addon. See `affiliatewp-afgf` in the codebase

This is probably only a small part of this but this conditional is preventing the banner from ever loading: https://github.com/awesomemotive/affiliatewp-affiliate-area-shortcodes/blob/master/affiliatewp-affiliate-area-shortcodes.php#L108-L112

The first conditional with `version_compare( $affwp_version, '2.7', '>=' )` is `true` for me since I have a version higher than `2.7`. Then it tries to load the `bootstrap` method but can't because it runs on the `affwp_plugins_loaded` hook. That hook is only available within AffiliateWP, which of course is not active.

To test and at least see the banner in the above screenshot, change `affwp_plugins_loaded` to `plugins_loaded`.

So what we need to do here is:

1. Get the notice working again. It should show when AffiliateWP is not active

2. Preserve any changes added which cater to the minimum requirements (you'll see these browsing through the code)

3. Simplify the activation code, remove the duplicate class, fix the text domains

After we have thoroughly tested this we need to roll this out to the other addons. It's a super broken experience when a user installs our free addons and there's zero indication of what to do next.

|

1.0

|

If AffiliateWP is not active, there is no longer a requirement admin notice - While this addon exhibits the issue, it's likely also affecting any other addon using the same activation class. I'm opening it here because I need to do some work on this addon for the WordPress.org repo and this is going to hold us back. Let's fix it in this addon first and then push it out to other addons.

## The issue:

In the following screenshot I have just activated the Affiliate Area Shortcodes addon. Note how AffiliateWP is not activated and there is no notice that tells the user that AffiliateWP must be activated.

We used to show a message like this:

In the case of this addon, the notice worked for all versions up until `1.2` when this was added: https://github.com/awesomemotive/affiliatewp-affiliate-area-shortcodes/issues/37

Aside from the notice not displaying there are other issues:

1. There are two `AffiliateWP_Activation` classes included. See https://github.com/awesomemotive/affiliatewp-affiliate-area-shortcodes/blob/master/includes/lib/affwp/class-affiliatewp-activation.php and https://github.com/awesomemotive/affiliatewp-affiliate-area-shortcodes/blob/master/includes/class-activation.php

2. The activation code is super convoluted. No idea why it needs to have that much code.

3. There are incorrect textdomains, likely a copy/paste error when implementing on another addon. See `affiliatewp-afgf` in the codebase

This is probably only a small part of this but this conditional is preventing the banner from ever loading: https://github.com/awesomemotive/affiliatewp-affiliate-area-shortcodes/blob/master/affiliatewp-affiliate-area-shortcodes.php#L108-L112

The first conditional with `version_compare( $affwp_version, '2.7', '>=' )` is `true` for me since I have a version higher than `2.7`. Then it tries to load the `bootstrap` method but can't because it runs on the `affwp_plugins_loaded` hook. That hook is only available within AffiliateWP, which of course is not active.

To test and at least see the banner in the above screenshot, change `affwp_plugins_loaded` to `plugins_loaded`.

So what we need to do here is:

1. Get the notice working again. It should show when AffiliateWP is not active

2. Preserve any changes added which cater to the minimum requirements (you'll see these browsing through the code)

3. Simplify the activation code, remove the duplicate class, fix the text domains

After we have thoroughly tested this we need to roll this out to the other addons. It's a super broken experience when a user installs our free addons and there's zero indication of what to do next.

|

non_process

|

if affiliatewp is not active there is no longer a requirement admin notice while this addon exhibits the issue it s likely also affecting any other addon using the same activation class i m opening it here because i need to do some work on this addon for the wordpress org repo and this is going to hold us back let s fix it in this addon first and then push it out to other addons the issue in the following screenshot i have just activated the affiliate area shortcodes addon note how affiliatewp is not activated and there is no notice that tells the user that affiliatewp must be activated we used to show a message like this in the case of this addon the notice worked for all versions up until when this was added aside from the notice not displaying there are other issues there are two affiliatewp activation classes included see and the activation code is super convoluted no idea why it needs to have that much code there are incorrect textdomains likely a copy paste error when implementing on another addon see affiliatewp afgf in the codebase this is probably only a small part of this but this conditional is preventing the banner from ever loading the first conditional with version compare affwp version is true for me since i have a version higher than then it tries to load the bootstrap method but can t because it runs on the affwp plugins loaded hook that hook is only available within affiliatewp which of course is not active to test and at least see the banner in the above screenshot change affwp plugins loaded to plugins loaded so what we need to do here is get the notice working again it should show when affiliatewp is not active preserve any changes added which cater to the minimum requirements you ll see these browsing through the code simplify the activation code remove the duplicate class fix the text domains after we have thoroughly tested this we need to roll this out to the other addons it s a super broken experience when a user installs our free addons and there s zero indication of what to do next

| 0

|
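The AffiliateWP issue traces the missing notice to an ordering problem. The bootstrap is attached to `affwp_plugins_loaded`, a hook that only AffiliateWP fires, so when AffiliateWP is inactive the requirement check never runs.

The toy Python model below imitates `add_action`/`do_action` to show why moving the check to core's `plugins_loaded` hook makes the notice appear. It is not WordPress or PHP code. `simulate_request`, its helpers, and the `NOTICE` text are hypothetical placeholders; the original message wording is only shown in a screenshot.

```python
from typing import Callable

# Placeholder wording; the real notice text is not given in the issue.
NOTICE = "AffiliateWP must be installed and activated."


def simulate_request(affiliatewp_active: bool, notice_hook: str) -> list[str]:
    """Simulate one page load and return the admin notices that were queued."""
    hooks: dict[str, list[Callable[[], None]]] = {}
    notices: list[str] = []

    def add_action(hook: str, callback: Callable[[], None]) -> None:
        hooks.setdefault(hook, []).append(callback)

    def do_action(hook: str) -> None:
        for callback in hooks.get(hook, []):
            callback()

    def maybe_show_requirement_notice() -> None:
        # The addon should warn only when its parent plugin is missing.
        if not affiliatewp_active:
            notices.append(NOTICE)

    add_action(notice_hook, maybe_show_requirement_notice)
    do_action("plugins_loaded")  # fired by WordPress core on every request
    if affiliatewp_active:
        do_action("affwp_plugins_loaded")  # fired only by an active AffiliateWP
    return notices
```

Calling `simulate_request(False, "affwp_plugins_loaded")` reproduces the reported bug: it returns no notice even though AffiliateWP is inactive. Passing `"plugins_loaded"` instead queues the notice when AffiliateWP is inactive and stays silent when it is active.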

1,209

| 3,711,323,307

|

IssuesEvent

|

2016-03-02 09:51:32

|

dita-ot/dita-ot

|

https://api.github.com/repos/dita-ot/dita-ot

|

closed

|

NPE in [keyref] processing (DITA-OT 2.2.2)

|

bug P1 preprocess/keyref

|

Following is the result log file.

```

[echo] com.antennahouse.i18n_index.2.3 is integrated.

init:

[echo] com.antennahouse.i18n_index.2.3 is integrated.

check-arg:

[mkdir] Created dir: D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227

log-arg:

[echo] *****************************************************************

[echo] * basedir = D:\DITA-OT\dita-ot-2.2.2

[echo] * dita.dir = D:\DITA-OT\dita-ot-2.2.2

[echo] * transtype = pdf5.ml

[echo] * tempdir = D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227

[echo] * outputdir = D:\DITA-OT\dita-ot-2.2.2\out

[echo] * clean.temp = true

[echo] * DITA-OT version = 2.2.2

[echo] * XML parser = Xerces

[echo] * XSLT processor = Saxon

[echo] * collator = ICU

[echo] *****************************************************************

[echo] #Ant properties

[echo] #Wed Mar 02 16:53:01 GMT+09:00 2016

[echo] args.grammar.cache=yes

[echo] args.input=samples/sample_en/sample_en.ditamap

[echo] args.logdir=D\:\\DITA-OT\\dita-ot-2.2.2\\out

[echo] args.xml.systemid.set=yes

[echo] dita.dir=D\:\\DITA-OT\\dita-ot-2.2.2

[echo] dita.plugin.com.antennahouse.dita.dita13.doctypes.dir=D\:\\DITA-OT\\dita-ot-2.2.2\\plugins\\com.antennahouse.dita.dita13.doctypes

[echo] dita.plugin.com.antennahouse.i18n_index.dir=D\:\\DITA-OT\\dita-ot-2.2.2\\plugins\\com.antennahouse.i18n_index.2.3

[echo] dita.plugin.com.antennahouse.pdf5.ml.dir=D\:\\DITA-OT\\dita-ot-2.2.2\\plugins\\com.antennahouse.pdf5.ml

[echo] dita.plugin.com.antennahouse.samples.form.dir=D\:\\DITA-OT\\dita-ot-2.2.2\\plugins\\com.antennahouse.samples.form

[echo] dita.plugin.com.sophos.tocjs.dir=D\:\\DITA-OT\\dita-ot-2.2.2\\plugins\\com.sophos.tocjs

[echo] dita.plugin.org.dita.base.dir=D\:\\DITA-OT\\dita-ot-2.2.2

[echo] dita.plugin.org.dita.docbook.dir=D\:\\DITA-OT\\dita-ot-2.2.2\\plugins\\org.dita.docbook

[echo] dita.plugin.org.dita.eclipsecontent.dir=D\:\\DITA-OT\\dita-ot-2.2.2\\plugins\\org.dita.eclipsecontent

[echo] dita.plugin.org.dita.eclipsehelp.dir=D\:\\DITA-OT\\dita-ot-2.2.2\\plugins\\org.dita.eclipsehelp

[echo] dita.plugin.org.dita.html5.dir=D\:\\DITA-OT\\dita-ot-2.2.2\\plugins\\org.dita.html5

[echo] dita.plugin.org.dita.htmlhelp.dir=D\:\\DITA-OT\\dita-ot-2.2.2\\plugins\\org.dita.htmlhelp

[echo] dita.plugin.org.dita.javahelp.dir=D\:\\DITA-OT\\dita-ot-2.2.2\\plugins\\org.dita.javahelp

[echo] dita.plugin.org.dita.odt.dir=D\:\\DITA-OT\\dita-ot-2.2.2\\plugins\\org.dita.odt

[echo] dita.plugin.org.dita.pdf2.axf.dir=D\:\\DITA-OT\\dita-ot-2.2.2\\plugins\\org.dita.pdf2.axf

[echo] dita.plugin.org.dita.pdf2.dir=D\:\\DITA-OT\\dita-ot-2.2.2\\plugins\\org.dita.pdf2

[echo] dita.plugin.org.dita.pdf2.fop.dir=D\:\\DITA-OT\\dita-ot-2.2.2\\plugins\\org.dita.pdf2.fop

[echo] dita.plugin.org.dita.pdf2.xep.dir=D\:\\DITA-OT\\dita-ot-2.2.2\\plugins\\org.dita.pdf2.xep

[echo] dita.plugin.org.dita.specialization.dita11.dir=D\:\\DITA-OT\\dita-ot-2.2.2\\plugins\\org.dita.specialization.dita11

[echo] dita.plugin.org.dita.specialization.eclipsemap.dir=D\:\\DITA-OT\\dita-ot-2.2.2\\plugins\\org.dita.specialization.eclipsemap

[echo] dita.plugin.org.dita.troff.dir=D\:\\DITA-OT\\dita-ot-2.2.2\\plugins\\org.dita.troff

[echo] dita.plugin.org.dita.wordrtf.dir=D\:\\DITA-OT\\dita-ot-2.2.2\\plugins\\org.dita.wordrtf

[echo] dita.plugin.org.dita.xhtml.dir=D\:\\DITA-OT\\dita-ot-2.2.2\\plugins\\org.dita.xhtml

[echo] dita.plugin.org.oasis-open.dita.v1_2.dir=D\:\\DITA-OT\\dita-ot-2.2.2\\plugins\\org.oasis-open.dita.v1_2

[echo] dita.plugin.org.oasis-open.dita.v1_3.dir=D\:\\DITA-OT\\dita-ot-2.2.2\\plugins\\org.oasis-open.dita.v1_3

[echo] dita.temp.dir=D\:\\DITA-OT\\dita-ot-2.2.2\\temp\\temp20160302165301227

[echo] preprocess.copy-html.skip=true

[echo] preprocess.copy-image.skip=true

[echo] *****************************************************************

build-init:

preprocess.init:

[echo] *****************************************************************

[echo] * input = samples/sample_en/sample_en.ditamap

[echo] *****************************************************************

gen-list:

[gen-list] Using Xerces grammar pool for DTD and schema caching.

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/sample_en.ditamap

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/dita_sample.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/c_preface.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_weirdtitle1.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_weirdtitle2.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/c_test_introduction.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_abstract.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_xref.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_note.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_bodyelements.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_miscellaneouselements.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_specializationelements.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_typographic.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_programmingelements.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_softwareelements.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_utilityelements.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_fig.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_table.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/r_properties.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/t_logging_in_to_client.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_dita12.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_longdescref.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_longquoteref.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/m_keydef.ditamap

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_sectiondiv.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_reference_to_no_print.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_complecated_index_example.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/r_xslt.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_dir_attribute.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_backmatter.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/glossary_en.ditamap

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_no_print.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/r_tys125f.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/gloss_XSLT.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/gloss_XSLFO.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/gloss_XMLandHTML.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/gloss_XMLSchema.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/gloss_DTD.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/gloss_DOM.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/gloss_SAX.xml

[gen-list] Serializing job specification

debug-filter:

[filter] Using Xerces grammar pool for DTD and schema caching.

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_programmingelements.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_longdescref.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/glossary_en.ditamap

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/c_preface.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_typographic.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_table.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/dita_sample.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_reference_to_no_print.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/r_tys125f.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_miscellaneouselements.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/sample_en.ditamap

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_weirdtitle1.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_fig.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_specializationelements.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/gloss_XSLFO.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_xref.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_note.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/r_properties.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/gloss_XMLandHTML.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_utilityelements.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/gloss_DTD.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/gloss_DOM.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_complecated_index_example.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/gloss_XMLSchema.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_dir_attribute.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/m_keydef.ditamap

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/gloss_SAX.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/r_xslt.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_abstract.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_dita12.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_no_print.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_bodyelements.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_sectiondiv.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_weirdtitle2.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/gloss_XSLT.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_longquoteref.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/c_test_introduction.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/t_logging_in_to_client.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_backmatter.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_softwareelements.xml

[job-helper] Processing D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\.job.xml to D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\outditafiles.list

[job-helper] Loading stylesheet D:\DITA-OT\dita-ot-2.2.2\xsl\job-helper.xsl

[job-helper] Processing D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\.job.xml to D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\fullditamapandtopic.list

[job-helper] Loading stylesheet D:\DITA-OT\dita-ot-2.2.2\xsl\job-helper.xsl

[job-helper] Processing D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\.job.xml to D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\fullditatopic.list

[job-helper] Loading stylesheet D:\DITA-OT\dita-ot-2.2.2\xsl\job-helper.xsl

[job-helper] Processing D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\.job.xml to D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\fullditamap.list

[job-helper] Loading stylesheet D:\DITA-OT\dita-ot-2.2.2\xsl\job-helper.xsl

[job-helper] Processing D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\.job.xml to D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\hrefditatopic.list

[job-helper] Loading stylesheet D:\DITA-OT\dita-ot-2.2.2\xsl\job-helper.xsl

[job-helper] Processing D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\.job.xml to D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\conref.list

[job-helper] Loading stylesheet D:\DITA-OT\dita-ot-2.2.2\xsl\job-helper.xsl

[job-helper] Processing D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\.job.xml to D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\image.list

[job-helper] Loading stylesheet D:\DITA-OT\dita-ot-2.2.2\xsl\job-helper.xsl

[job-helper] Processing D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\.job.xml to D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\flagimage.list

[job-helper] Loading stylesheet D:\DITA-OT\dita-ot-2.2.2\xsl\job-helper.xsl

[job-helper] Processing D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\.job.xml to D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\html.list

[job-helper] Loading stylesheet D:\DITA-OT\dita-ot-2.2.2\xsl\job-helper.xsl

[job-helper] Processing D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\.job.xml to D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\canditopics.list

[job-helper] Loading stylesheet D:\DITA-OT\dita-ot-2.2.2\xsl\job-helper.xsl

[job-helper] Processing D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\.job.xml to D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\subjectscheme.list

[job-helper] Loading stylesheet D:\DITA-OT\dita-ot-2.2.2\xsl\job-helper.xsl

[job-helper] Processing D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\.job.xml to D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\conreftargets.list

[job-helper] Loading stylesheet D:\DITA-OT\dita-ot-2.2.2\xsl\job-helper.xsl

[job-helper] Processing D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\.job.xml to D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\copytosource.list

[job-helper] Loading stylesheet D:\DITA-OT\dita-ot-2.2.2\xsl\job-helper.xsl

[job-helper] Processing D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\.job.xml to D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\subtargets.list

[job-helper] Loading stylesheet D:\DITA-OT\dita-ot-2.2.2\xsl\job-helper.xsl

[job-helper] Processing D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\.job.xml to D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\resourceonly.list

[job-helper] Loading stylesheet D:\DITA-OT\dita-ot-2.2.2\xsl\job-helper.xsl

[job-helper] Processing D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\.job.xml to D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\user.input.file.list

[job-helper] Loading stylesheet D:\DITA-OT\dita-ot-2.2.2\xsl\job-helper.xsl

[job-helper] Processing D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\.job.xml to D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\hreftargets.list

[job-helper] Loading stylesheet D:\DITA-OT\dita-ot-2.2.2\xsl\job-helper.xsl

[job-helper] Processing D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\.job.xml to D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\conref.list

[job-helper] Loading stylesheet D:\DITA-OT\dita-ot-2.2.2\xsl\job-helper.xsl

[job-helper] Processing D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\.job.xml to D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\hrefditatopic.list

[job-helper] Loading stylesheet D:\DITA-OT\dita-ot-2.2.2\xsl\job-helper.xsl

[job-helper] Processing D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\.job.xml to D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\fullditatopic.list

[job-helper] Loading stylesheet D:\DITA-OT\dita-ot-2.2.2\xsl\job-helper.xsl

[job-helper] Processing D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\.job.xml to D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\fullditamapandtopic.list

[job-helper] Loading stylesheet D:\DITA-OT\dita-ot-2.2.2\xsl\job-helper.xsl

[job-helper] Processing D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\.job.xml to D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\conreftargets.list

[job-helper] Loading stylesheet D:\DITA-OT\dita-ot-2.2.2\xsl\job-helper.xsl

[job-helper] Processing D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\.job.xml to D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\canditopics.list

[job-helper] Loading stylesheet D:\DITA-OT\dita-ot-2.2.2\xsl\job-helper.xsl

[job-helper] Processing D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\.job.xml to D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\resourceonly.list

[job-helper] Loading stylesheet D:\DITA-OT\dita-ot-2.2.2\xsl\job-helper.xsl

mapref-check:

mapref:

[mapref] Transforming into D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227

[mapref] Loading stylesheet D:\DITA-OT\dita-ot-2.2.2\xsl\preprocess\mapref.xsl

[mapref] Processing D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\topics-en\m_keydef.ditamap

[mapref] Processing D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\topics-en\glossary_en.ditamap

[mapref] Processing D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\sample_en.ditamap

branch-filter:

[branch-filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/temp/temp20160302165301227/sample_en.ditamap

copy-image:

copy-html:

copy-flag-check:

copy-flag:

copy-files:

keyref:

[keyref] Reading file:/D:/DITA-OT/dita-ot-2.2.2/temp/temp20160302165301227/sample_en.ditamap

[keyref] Processing file:/D:/DITA-OT/dita-ot-2.2.2/temp/temp20160302165301227/topics-en/p_longquoteref.xml

BUILD FAILED

D:\DITA-OT\dita-ot-2.2.2\build.xml:41: The following error occurred while executing this line:

D:\DITA-OT\dita-ot-2.2.2\plugins\org.dita.base\build_preprocess.xml:270: java.lang.NullPointerException

at org.dita.dost.writer.KeyrefPaser.processElement(KeyrefPaser.java:374)

at org.dita.dost.writer.KeyrefPaser.startElement(KeyrefPaser.java:364)

at org.xml.sax.helpers.XMLFilterImpl.startElement(Unknown Source)

at org.dita.dost.writer.TopicFragmentFilter.startElement(TopicFragmentFilter.java:62)

at org.dita.dost.writer.ConkeyrefFilter.startElement(ConkeyrefFilter.java:89)

at org.apache.xerces.parsers.AbstractSAXParser.startElement(Unknown Source)

at org.apache.xerces.parsers.AbstractXMLDocumentParser.emptyElement(Unknown Source)

at org.apache.xerces.impl.XMLNSDocumentScannerImpl.scanStartElement(Unknown Source)

at org.apache.xerces.impl.XMLDocumentFragmentScannerImpl$FragmentContentDispatcher.dispatch(Unknown Source)

at org.apache.xerces.impl.XMLDocumentFragmentScannerImpl.scanDocument(Unknown Source)

at org.apache.xerces.parsers.XML11Configuration.parse(Unknown Source)

at org.apache.xerces.parsers.XML11Configuration.parse(Unknown Source)

at org.apache.xerces.parsers.XMLParser.parse(Unknown Source)

at org.apache.xerces.parsers.AbstractSAXParser.parse(Unknown Source)

at org.xml.sax.helpers.XMLFilterImpl.parse(Unknown Source)

at org.xml.sax.helpers.XMLFilterImpl.parse(Unknown Source)

at org.xml.sax.helpers.XMLFilterImpl.parse(Unknown Source)

at net.sf.saxon.event.Sender.sendSAXSource(Sender.java:404)

at net.sf.saxon.event.Sender.send(Sender.java:193)

at net.sf.saxon.IdentityTransformer.transform(IdentityTransformer.java:30)

at org.dita.dost.util.XMLUtils.transform(XMLUtils.java:259)

at org.dita.dost.util.XMLUtils.transform(XMLUtils.java:219)

at org.dita.dost.module.KeyrefModule.processFile(KeyrefModule.java:234)

at org.dita.dost.module.KeyrefModule.execute(KeyrefModule.java:93)

at org.dita.dost.pipeline.PipelineFacade.execute(PipelineFacade.java:68)

at org.dita.dost.invoker.ExtensibleAntInvoker.execute(ExtensibleAntInvoker.java:193)

at org.apache.tools.ant.UnknownElement.execute(UnknownElement.java:292)

at sun.reflect.GeneratedMethodAccessor4.invoke(Unknown Source)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(Unknown Source)

at java.lang.reflect.Method.invoke(Unknown Source)

at org.apache.tools.ant.dispatch.DispatchUtils.execute(DispatchUtils.java:106)

at org.apache.tools.ant.Task.perform(Task.java:348)

at org.apache.tools.ant.Target.execute(Target.java:435)

at org.apache.tools.ant.Target.performTasks(Target.java:456)

at org.apache.tools.ant.Project.executeSortedTargets(Project.java:1393)

at org.apache.tools.ant.helper.SingleCheckExecutor.executeTargets(SingleCheckExecutor.java:38)

at org.apache.tools.ant.Project.executeTargets(Project.java:1248)

at org.apache.tools.ant.taskdefs.Ant.execute(Ant.java:441)

at org.apache.tools.ant.taskdefs.CallTarget.execute(CallTarget.java:105)

at org.apache.tools.ant.UnknownElement.execute(UnknownElement.java:292)

at sun.reflect.GeneratedMethodAccessor4.invoke(Unknown Source)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(Unknown Source)

at java.lang.reflect.Method.invoke(Unknown Source)

at org.apache.tools.ant.dispatch.DispatchUtils.execute(DispatchUtils.java:106)

at org.apache.tools.ant.Task.perform(Task.java:348)

at org.apache.tools.ant.Target.execute(Target.java:435)

at org.apache.tools.ant.Target.performTasks(Target.java:456)

at org.apache.tools.ant.Project.executeSortedTargets(Project.java:1393)

at org.apache.tools.ant.Project.executeTarget(Project.java:1364)

at org.apache.tools.ant.helper.DefaultExecutor.executeTargets(DefaultExecutor.java:41)

at org.apache.tools.ant.Project.executeTargets(Project.java:1248)

at org.apache.tools.ant.Main.runBuild(Main.java:851)

at org.apache.tools.ant.Main.startAnt(Main.java:235)

at org.apache.tools.ant.launch.Launcher.run(Launcher.java:280)

at org.apache.tools.ant.launch.Launcher.main(Launcher.java:109)

Total time: 4 seconds

```

p_longquoteref.xml appears to have no validation errors.

I have attached the DITA instance I used.

[sample_en.zip](https://github.com/dita-ot/dita-ot/files/154305/sample_en.zip)

|

1.0

|

NPE in [keyref] processing (DITA-OT 2.2.2) - The following is the resulting log file.

```

[echo] com.antennahouse.i18n_index.2.3 is integrated.

init:

[echo] com.antennahouse.i18n_index.2.3 is integrated.

check-arg:

[mkdir] Created dir: D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227

log-arg:

[echo] *****************************************************************

[echo] * basedir = D:\DITA-OT\dita-ot-2.2.2

[echo] * dita.dir = D:\DITA-OT\dita-ot-2.2.2

[echo] * transtype = pdf5.ml

[echo] * tempdir = D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227

[echo] * outputdir = D:\DITA-OT\dita-ot-2.2.2\out

[echo] * clean.temp = true

[echo] * DITA-OT version = 2.2.2

[echo] * XML parser = Xerces

[echo] * XSLT processor = Saxon

[echo] * collator = ICU

[echo] *****************************************************************

[echo] #Ant properties

[echo] #Wed Mar 02 16:53:01 GMT+09:00 2016

[echo] args.grammar.cache=yes

[echo] args.input=samples/sample_en/sample_en.ditamap

[echo] args.logdir=D\:\\DITA-OT\\dita-ot-2.2.2\\out

[echo] args.xml.systemid.set=yes

[echo] dita.dir=D\:\\DITA-OT\\dita-ot-2.2.2

[echo] dita.plugin.com.antennahouse.dita.dita13.doctypes.dir=D\:\\DITA-OT\\dita-ot-2.2.2\\plugins\\com.antennahouse.dita.dita13.doctypes

[echo] dita.plugin.com.antennahouse.i18n_index.dir=D\:\\DITA-OT\\dita-ot-2.2.2\\plugins\\com.antennahouse.i18n_index.2.3

[echo] dita.plugin.com.antennahouse.pdf5.ml.dir=D\:\\DITA-OT\\dita-ot-2.2.2\\plugins\\com.antennahouse.pdf5.ml

[echo] dita.plugin.com.antennahouse.samples.form.dir=D\:\\DITA-OT\\dita-ot-2.2.2\\plugins\\com.antennahouse.samples.form

[echo] dita.plugin.com.sophos.tocjs.dir=D\:\\DITA-OT\\dita-ot-2.2.2\\plugins\\com.sophos.tocjs

[echo] dita.plugin.org.dita.base.dir=D\:\\DITA-OT\\dita-ot-2.2.2

[echo] dita.plugin.org.dita.docbook.dir=D\:\\DITA-OT\\dita-ot-2.2.2\\plugins\\org.dita.docbook

[echo] dita.plugin.org.dita.eclipsecontent.dir=D\:\\DITA-OT\\dita-ot-2.2.2\\plugins\\org.dita.eclipsecontent

[echo] dita.plugin.org.dita.eclipsehelp.dir=D\:\\DITA-OT\\dita-ot-2.2.2\\plugins\\org.dita.eclipsehelp

[echo] dita.plugin.org.dita.html5.dir=D\:\\DITA-OT\\dita-ot-2.2.2\\plugins\\org.dita.html5

[echo] dita.plugin.org.dita.htmlhelp.dir=D\:\\DITA-OT\\dita-ot-2.2.2\\plugins\\org.dita.htmlhelp

[echo] dita.plugin.org.dita.javahelp.dir=D\:\\DITA-OT\\dita-ot-2.2.2\\plugins\\org.dita.javahelp

[echo] dita.plugin.org.dita.odt.dir=D\:\\DITA-OT\\dita-ot-2.2.2\\plugins\\org.dita.odt

[echo] dita.plugin.org.dita.pdf2.axf.dir=D\:\\DITA-OT\\dita-ot-2.2.2\\plugins\\org.dita.pdf2.axf

[echo] dita.plugin.org.dita.pdf2.dir=D\:\\DITA-OT\\dita-ot-2.2.2\\plugins\\org.dita.pdf2

[echo] dita.plugin.org.dita.pdf2.fop.dir=D\:\\DITA-OT\\dita-ot-2.2.2\\plugins\\org.dita.pdf2.fop

[echo] dita.plugin.org.dita.pdf2.xep.dir=D\:\\DITA-OT\\dita-ot-2.2.2\\plugins\\org.dita.pdf2.xep

[echo] dita.plugin.org.dita.specialization.dita11.dir=D\:\\DITA-OT\\dita-ot-2.2.2\\plugins\\org.dita.specialization.dita11

[echo] dita.plugin.org.dita.specialization.eclipsemap.dir=D\:\\DITA-OT\\dita-ot-2.2.2\\plugins\\org.dita.specialization.eclipsemap

[echo] dita.plugin.org.dita.troff.dir=D\:\\DITA-OT\\dita-ot-2.2.2\\plugins\\org.dita.troff

[echo] dita.plugin.org.dita.wordrtf.dir=D\:\\DITA-OT\\dita-ot-2.2.2\\plugins\\org.dita.wordrtf

[echo] dita.plugin.org.dita.xhtml.dir=D\:\\DITA-OT\\dita-ot-2.2.2\\plugins\\org.dita.xhtml

[echo] dita.plugin.org.oasis-open.dita.v1_2.dir=D\:\\DITA-OT\\dita-ot-2.2.2\\plugins\\org.oasis-open.dita.v1_2

[echo] dita.plugin.org.oasis-open.dita.v1_3.dir=D\:\\DITA-OT\\dita-ot-2.2.2\\plugins\\org.oasis-open.dita.v1_3

[echo] dita.temp.dir=D\:\\DITA-OT\\dita-ot-2.2.2\\temp\\temp20160302165301227

[echo] preprocess.copy-html.skip=true

[echo] preprocess.copy-image.skip=true

[echo] *****************************************************************

build-init:

preprocess.init:

[echo] *****************************************************************

[echo] * input = samples/sample_en/sample_en.ditamap

[echo] *****************************************************************

gen-list:

[gen-list] Using Xerces grammar pool for DTD and schema caching.

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/sample_en.ditamap

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/dita_sample.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/c_preface.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_weirdtitle1.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_weirdtitle2.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/c_test_introduction.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_abstract.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_xref.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_note.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_bodyelements.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_miscellaneouselements.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_specializationelements.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_typographic.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_programmingelements.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_softwareelements.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_utilityelements.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_fig.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_table.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/r_properties.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/t_logging_in_to_client.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_dita12.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_longdescref.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_longquoteref.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/m_keydef.ditamap

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_sectiondiv.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_reference_to_no_print.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_complecated_index_example.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/r_xslt.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_dir_attribute.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_backmatter.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/glossary_en.ditamap

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_no_print.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/r_tys125f.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/gloss_XSLT.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/gloss_XSLFO.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/gloss_XMLandHTML.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/gloss_XMLSchema.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/gloss_DTD.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/gloss_DOM.xml

[gen-list] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/gloss_SAX.xml

[gen-list] Serializing job specification

debug-filter:

[filter] Using Xerces grammar pool for DTD and schema caching.

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_programmingelements.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_longdescref.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/glossary_en.ditamap

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/c_preface.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_typographic.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_table.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/dita_sample.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_reference_to_no_print.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/r_tys125f.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_miscellaneouselements.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/sample_en.ditamap

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_weirdtitle1.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_fig.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_specializationelements.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/gloss_XSLFO.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_xref.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_note.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/r_properties.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/gloss_XMLandHTML.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_utilityelements.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/gloss_DTD.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/gloss_DOM.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_complecated_index_example.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/gloss_XMLSchema.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_dir_attribute.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/m_keydef.ditamap

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/gloss_SAX.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/r_xslt.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_abstract.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_dita12.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_no_print.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_bodyelements.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_sectiondiv.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_weirdtitle2.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/gloss_XSLT.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_longquoteref.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/c_test_introduction.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/t_logging_in_to_client.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_backmatter.xml

[filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/samples/sample_en/topics-en/p_softwareelements.xml

[job-helper] Processing D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\.job.xml to D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\outditafiles.list

[job-helper] Loading stylesheet D:\DITA-OT\dita-ot-2.2.2\xsl\job-helper.xsl

[job-helper] Processing D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\.job.xml to D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\fullditamapandtopic.list

[job-helper] Loading stylesheet D:\DITA-OT\dita-ot-2.2.2\xsl\job-helper.xsl

[job-helper] Processing D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\.job.xml to D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\fullditatopic.list

[job-helper] Loading stylesheet D:\DITA-OT\dita-ot-2.2.2\xsl\job-helper.xsl

[job-helper] Processing D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\.job.xml to D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\fullditamap.list

[job-helper] Loading stylesheet D:\DITA-OT\dita-ot-2.2.2\xsl\job-helper.xsl

[job-helper] Processing D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\.job.xml to D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\hrefditatopic.list

[job-helper] Loading stylesheet D:\DITA-OT\dita-ot-2.2.2\xsl\job-helper.xsl

[job-helper] Processing D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\.job.xml to D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\conref.list

[job-helper] Loading stylesheet D:\DITA-OT\dita-ot-2.2.2\xsl\job-helper.xsl

[job-helper] Processing D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\.job.xml to D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\image.list

[job-helper] Loading stylesheet D:\DITA-OT\dita-ot-2.2.2\xsl\job-helper.xsl

[job-helper] Processing D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\.job.xml to D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\flagimage.list

[job-helper] Loading stylesheet D:\DITA-OT\dita-ot-2.2.2\xsl\job-helper.xsl

[job-helper] Processing D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\.job.xml to D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\html.list

[job-helper] Loading stylesheet D:\DITA-OT\dita-ot-2.2.2\xsl\job-helper.xsl

[job-helper] Processing D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\.job.xml to D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\canditopics.list

[job-helper] Loading stylesheet D:\DITA-OT\dita-ot-2.2.2\xsl\job-helper.xsl

[job-helper] Processing D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\.job.xml to D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\subjectscheme.list

[job-helper] Loading stylesheet D:\DITA-OT\dita-ot-2.2.2\xsl\job-helper.xsl

[job-helper] Processing D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\.job.xml to D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\conreftargets.list

[job-helper] Loading stylesheet D:\DITA-OT\dita-ot-2.2.2\xsl\job-helper.xsl

[job-helper] Processing D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\.job.xml to D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\copytosource.list

[job-helper] Loading stylesheet D:\DITA-OT\dita-ot-2.2.2\xsl\job-helper.xsl

[job-helper] Processing D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\.job.xml to D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\subtargets.list

[job-helper] Loading stylesheet D:\DITA-OT\dita-ot-2.2.2\xsl\job-helper.xsl

[job-helper] Processing D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\.job.xml to D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\resourceonly.list

[job-helper] Loading stylesheet D:\DITA-OT\dita-ot-2.2.2\xsl\job-helper.xsl

[job-helper] Processing D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\.job.xml to D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\user.input.file.list

[job-helper] Loading stylesheet D:\DITA-OT\dita-ot-2.2.2\xsl\job-helper.xsl

[job-helper] Processing D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\.job.xml to D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\hreftargets.list

[job-helper] Loading stylesheet D:\DITA-OT\dita-ot-2.2.2\xsl\job-helper.xsl

[job-helper] Processing D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\.job.xml to D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\conref.list

[job-helper] Loading stylesheet D:\DITA-OT\dita-ot-2.2.2\xsl\job-helper.xsl

[job-helper] Processing D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\.job.xml to D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\hrefditatopic.list

[job-helper] Loading stylesheet D:\DITA-OT\dita-ot-2.2.2\xsl\job-helper.xsl

[job-helper] Processing D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\.job.xml to D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\fullditatopic.list

[job-helper] Loading stylesheet D:\DITA-OT\dita-ot-2.2.2\xsl\job-helper.xsl

[job-helper] Processing D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\.job.xml to D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\fullditamapandtopic.list

[job-helper] Loading stylesheet D:\DITA-OT\dita-ot-2.2.2\xsl\job-helper.xsl

[job-helper] Processing D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\.job.xml to D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\conreftargets.list

[job-helper] Loading stylesheet D:\DITA-OT\dita-ot-2.2.2\xsl\job-helper.xsl

[job-helper] Processing D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\.job.xml to D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\canditopics.list

[job-helper] Loading stylesheet D:\DITA-OT\dita-ot-2.2.2\xsl\job-helper.xsl

[job-helper] Processing D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\.job.xml to D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\resourceonly.list

[job-helper] Loading stylesheet D:\DITA-OT\dita-ot-2.2.2\xsl\job-helper.xsl

mapref-check:

mapref:

[mapref] Transforming into D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227

[mapref] Loading stylesheet D:\DITA-OT\dita-ot-2.2.2\xsl\preprocess\mapref.xsl

[mapref] Processing D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\topics-en\m_keydef.ditamap

[mapref] Processing D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\topics-en\glossary_en.ditamap

[mapref] Processing D:\DITA-OT\dita-ot-2.2.2\temp\temp20160302165301227\sample_en.ditamap

branch-filter:

[branch-filter] Processing file:/D:/DITA-OT/dita-ot-2.2.2/temp/temp20160302165301227/sample_en.ditamap

copy-image:

copy-html:

copy-flag-check:

copy-flag:

copy-files:

keyref:

[keyref] Reading file:/D:/DITA-OT/dita-ot-2.2.2/temp/temp20160302165301227/sample_en.ditamap

[keyref] Processing file:/D:/DITA-OT/dita-ot-2.2.2/temp/temp20160302165301227/topics-en/p_longquoteref.xml

BUILD FAILED

D:\DITA-OT\dita-ot-2.2.2\build.xml:41: The following error occurred while executing this line:

D:\DITA-OT\dita-ot-2.2.2\plugins\org.dita.base\build_preprocess.xml:270: java.lang.NullPointerException

at org.dita.dost.writer.KeyrefPaser.processElement(KeyrefPaser.java:374)

at org.dita.dost.writer.KeyrefPaser.startElement(KeyrefPaser.java:364)

at org.xml.sax.helpers.XMLFilterImpl.startElement(Unknown Source)

at org.dita.dost.writer.TopicFragmentFilter.startElement(TopicFragmentFilter.java:62)

at org.dita.dost.writer.ConkeyrefFilter.startElement(ConkeyrefFilter.java:89)

at org.apache.xerces.parsers.AbstractSAXParser.startElement(Unknown Source)

at org.apache.xerces.parsers.AbstractXMLDocumentParser.emptyElement(Unknown Source)

at org.apache.xerces.impl.XMLNSDocumentScannerImpl.scanStartElement(Unknown Source)

at org.apache.xerces.impl.XMLDocumentFragmentScannerImpl$FragmentContentDispatcher.dispatch(Unknown Source)

at org.apache.xerces.impl.XMLDocumentFragmentScannerImpl.scanDocument(Unknown Source)

at org.apache.xerces.parsers.XML11Configuration.parse(Unknown Source)

at org.apache.xerces.parsers.XML11Configuration.parse(Unknown Source)

at org.apache.xerces.parsers.XMLParser.parse(Unknown Source)

at org.apache.xerces.parsers.AbstractSAXParser.parse(Unknown Source)

at org.xml.sax.helpers.XMLFilterImpl.parse(Unknown Source)

at org.xml.sax.helpers.XMLFilterImpl.parse(Unknown Source)

at org.xml.sax.helpers.XMLFilterImpl.parse(Unknown Source)

at net.sf.saxon.event.Sender.sendSAXSource(Sender.java:404)

at net.sf.saxon.event.Sender.send(Sender.java:193)

at net.sf.saxon.IdentityTransformer.transform(IdentityTransformer.java:30)

at org.dita.dost.util.XMLUtils.transform(XMLUtils.java:259)

at org.dita.dost.util.XMLUtils.transform(XMLUtils.java:219)

at org.dita.dost.module.KeyrefModule.processFile(KeyrefModule.java:234)

at org.dita.dost.module.KeyrefModule.execute(KeyrefModule.java:93)

at org.dita.dost.pipeline.PipelineFacade.execute(PipelineFacade.java:68)

at org.dita.dost.invoker.ExtensibleAntInvoker.execute(ExtensibleAntInvoker.java:193)

at org.apache.tools.ant.UnknownElement.execute(UnknownElement.java:292)

at sun.reflect.GeneratedMethodAccessor4.invoke(Unknown Source)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(Unknown Source)

at java.lang.reflect.Method.invoke(Unknown Source)

at org.apache.tools.ant.dispatch.DispatchUtils.execute(DispatchUtils.java:106)

at org.apache.tools.ant.Task.perform(Task.java:348)

at org.apache.tools.ant.Target.execute(Target.java:435)

at org.apache.tools.ant.Target.performTasks(Target.java:456)

at org.apache.tools.ant.Project.executeSortedTargets(Project.java:1393)

at org.apache.tools.ant.helper.SingleCheckExecutor.executeTargets(SingleCheckExecutor.java:38)

at org.apache.tools.ant.Project.executeTargets(Project.java:1248)

at org.apache.tools.ant.taskdefs.Ant.execute(Ant.java:441)

at org.apache.tools.ant.taskdefs.CallTarget.execute(CallTarget.java:105)

at org.apache.tools.ant.UnknownElement.execute(UnknownElement.java:292)

at sun.reflect.GeneratedMethodAccessor4.invoke(Unknown Source)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(Unknown Source)

at java.lang.reflect.Method.invoke(Unknown Source)

at org.apache.tools.ant.dispatch.DispatchUtils.execute(DispatchUtils.java:106)

at org.apache.tools.ant.Task.perform(Task.java:348)

at org.apache.tools.ant.Target.execute(Target.java:435)

at org.apache.tools.ant.Target.performTasks(Target.java:456)

at org.apache.tools.ant.Project.executeSortedTargets(Project.java:1393)

at org.apache.tools.ant.Project.executeTarget(Project.java:1364)

at org.apache.tools.ant.helper.DefaultExecutor.executeTargets(DefaultExecutor.java:41)

at org.apache.tools.ant.Project.executeTargets(Project.java:1248)

at org.apache.tools.ant.Main.runBuild(Main.java:851)

at org.apache.tools.ant.Main.startAnt(Main.java:235)

at org.apache.tools.ant.launch.Launcher.run(Launcher.java:280)

at org.apache.tools.ant.launch.Launcher.main(Launcher.java:109)

Total time: 4 seconds

```

p_longquoteref.xml appears to have no validation errors.

I have attached the DITA instance I used.

[sample_en.zip](https://github.com/dita-ot/dita-ot/files/154305/sample_en.zip)

|

process

|