| Unnamed: 0 (int64, 0–832k) | id (float64, 2.49B–32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 7–112) | repo_url (string, length 36–141) | action (string, 3 classes) | title (string, length 1–744) | labels (string, length 4–574) | body (string, length 9–211k) | index (string, 10 classes) | text_combine (string, length 96–211k) | label (string, 2 classes) | text (string, length 96–188k) | binary_label (int64, 0–1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

666,147

| 22,344,608,304

|

IssuesEvent

|

2022-06-15 06:32:14

|

litmuschaos/litmus

|

https://api.github.com/repos/litmuschaos/litmus

|

closed

|

Need to upgrade docker images to use go1.15

|

priority/low

|

Changes:

- Litmus portal components

- Litmus core components

|

1.0

|

Need to upgrade docker images to use go1.15 - Changes:

- Litmus portal components

- Litmus core components

|

non_process

|

need to upgrade docker images to use changes litmus portal components litmus core components

| 0

|

20,079

| 26,575,519,472

|

IssuesEvent

|

2023-01-21 19:13:57

|

open-telemetry/opentelemetry-collector-contrib

|

https://api.github.com/repos/open-telemetry/opentelemetry-collector-contrib

|

closed

|

transform processor bug

|

bug priority:p2 processor/transform pkg/ottl

|

### Component(s)

_No response_

### What happened?

Create some spans:

```

tracer := otel.GetTracerProvider().Tracer("test")

c := context.Background()

_, s := tracer.Start(c, "/misc/ping")

s.SetAttributes([]attribute.KeyValue{attribute.String("http.target", "/misc/ping")}...)

s.End()

_, s1 := tracer.Start(c, "PING")

s1.SetAttributes([]attribute.KeyValue{attribute.String("db.operation", "PING")}...)

s1.End()

```

With the collector config below, the transform processor's IsMatch code will return a nil val:

```

val, err := target.Get(ctx, tCtx)

blabla

default:

return nil, errors.New("unsupported type")

```

This causes tail_sampling not to be executed.

Adding the following code to IsMatch fixes the problem:

```

if val == nil {

return false, err

}

```

### Collector version

v0.68.0

### Environment information

## Environment

OS: (e.g., "Ubuntu 20.04")

Compiler(if manually compiled): (e.g., "go 14.2")

### OpenTelemetry Collector configuration

```yaml

exporters:
  logging:
    loglevel: debug
  otlp:
    endpoint: "172.17.0.5:4317"
    tls:
      insecure: true
receivers:
  otlp:
    protocols:
      grpc:
        endpoint: 0.0.0.0:4317
      http:
        endpoint: 0.0.0.0:4318
processors:
  transform:
    trace_statements:
      - context: span
        statements:
          - set(attributes["sampling.priority"], 1) where IsMatch(attributes["http.target"], "/misc/ping|/actuator/health") == true
          - set(attributes["sampling.priority"], 1) where IsMatch(attributes["db.operation"], "PING") == true
          - set(attributes["sampling.priority"], 1) where IsMatch(attributes["http.url"], "xxl-job-admin") == true
  tail_sampling:
    decision_wait: 10s #Wait time since the first span of a trace before making a sampling decision
    num_traces: 50000 #Number of traces kept in memory
    policies:
      [
        {
          name: agent-policy,
          type: numeric_attribute,
          numeric_attribute: { key: "sampling.priority", min_value: 1, max_value: 1 }
        },
        {
          name: latency-policy-3,
          type: latency,
          latency: { threshold_ms: 1500 }
        },
        {
          name: error-policy-4,
          type: status_code,
          status_code: {status_codes: [ERROR]}
        },
        {
          name: probabilistic-policy,
          type: probabilistic,
          probabilistic: { sampling_percentage: 1 }
        }
      ]
service:
  telemetry:
    logs:
      level: "debug"
  pipelines:
    traces:
      processors: [transform,tail_sampling]
      receivers:
        - otlp
      exporters:
        - logging

```

### Log output

_No response_

### Additional context

_No response_

|

1.0

|

transform processor bug - ### Component(s)

_No response_

### What happened?

Create some spans:

```

tracer := otel.GetTracerProvider().Tracer("test")

c := context.Background()

_, s := tracer.Start(c, "/misc/ping")

s.SetAttributes([]attribute.KeyValue{attribute.String("http.target", "/misc/ping")}...)

s.End()

_, s1 := tracer.Start(c, "PING")

s1.SetAttributes([]attribute.KeyValue{attribute.String("db.operation", "PING")}...)

s1.End()

```

With the collector config below, the transform processor's IsMatch code will return a nil val:

```

val, err := target.Get(ctx, tCtx)

blabla

default:

return nil, errors.New("unsupported type")

```

This causes tail_sampling not to be executed.

Adding the following code to IsMatch fixes the problem:

```

if val == nil {

return false, err

}

```

### Collector version

v0.68.0

### Environment information

## Environment

OS: (e.g., "Ubuntu 20.04")

Compiler(if manually compiled): (e.g., "go 14.2")

### OpenTelemetry Collector configuration

```yaml

exporters:
  logging:
    loglevel: debug
  otlp:
    endpoint: "172.17.0.5:4317"
    tls:
      insecure: true
receivers:
  otlp:
    protocols:
      grpc:
        endpoint: 0.0.0.0:4317
      http:
        endpoint: 0.0.0.0:4318
processors:
  transform:
    trace_statements:
      - context: span
        statements:
          - set(attributes["sampling.priority"], 1) where IsMatch(attributes["http.target"], "/misc/ping|/actuator/health") == true
          - set(attributes["sampling.priority"], 1) where IsMatch(attributes["db.operation"], "PING") == true
          - set(attributes["sampling.priority"], 1) where IsMatch(attributes["http.url"], "xxl-job-admin") == true
  tail_sampling:
    decision_wait: 10s #Wait time since the first span of a trace before making a sampling decision
    num_traces: 50000 #Number of traces kept in memory
    policies:
      [
        {
          name: agent-policy,
          type: numeric_attribute,
          numeric_attribute: { key: "sampling.priority", min_value: 1, max_value: 1 }
        },
        {
          name: latency-policy-3,
          type: latency,
          latency: { threshold_ms: 1500 }
        },
        {
          name: error-policy-4,
          type: status_code,
          status_code: {status_codes: [ERROR]}
        },
        {
          name: probabilistic-policy,
          type: probabilistic,
          probabilistic: { sampling_percentage: 1 }
        }
      ]
service:
  telemetry:
    logs:
      level: "debug"
  pipelines:
    traces:
      processors: [transform,tail_sampling]
      receivers:
        - otlp
      exporters:
        - logging

```

### Log output

_No response_

### Additional context

_No response_

|

process

|

transform processor bug component s no response what happened make some span tracer otel gettracerprovider tracer test c context background s tracer start c misc ping s setattributes attribute keyvalue attribute string http target misc ping s end tracer start c ping setattributes attribute keyvalue attribute string db operation ping end as blow collector config transform processor ismatch code will return nil val val err target get ctx tctx blabla default return nil errors new unsupported type cause tail sampling will not be execute add code to ismatch to fix the promblem if val nil return false err collector version environment information environment os e g ubuntu compiler if manually compiled e g go opentelemetry collector configuration yaml exporters logging loglevel debug otlp endpoint tls insecure true receivers otlp protocols grpc endpoint http endpoint processors transform trace statements context span statements set attributes where ismatch attributes misc ping actuator health true set attributes where ismatch attributes ping true set attributes where ismatch attributes xxl job admin true tail sampling decision wait wait time since the first span of a trace before making a sampling decision num traces number of traces kept in memory policies name agent policy type numeric attribute numeric attribute key sampling priority min value max value name latency policy type latency latency threshold ms name error policy type status code status code status codes name probabilistic policy type probabilistic probabilistic sampling percentage service telemetry logs level debug pipelines traces processors receivers otlp exporters logging log output no response additional context no response

| 1

|

327,515

| 9,976,712,037

|

IssuesEvent

|

2019-07-09 15:34:57

|

ArctosDB/arctos

|

https://api.github.com/repos/ArctosDB/arctos

|

closed

|

verbatim event-stuff

|

Function-Locality/Event/Georeferencing Priority-High

|

ref: https://github.com/ArctosDB/arctos/issues/2038, https://github.com/ArctosDB/arctos/issues/1971

"verbatim coordinates" are the coordinates as received by Arctos before I transform them into DD.ddd for mapping and etc. We are overusing the existing structure; everything that does not involve triggers should be removed.

There is some apparent reluctance to use Verbatim Locality for things like coordinates as provided by the collector.

What are we trying to do here, what do we need to do it, etc.?

|

1.0

|

verbatim event-stuff - ref: https://github.com/ArctosDB/arctos/issues/2038, https://github.com/ArctosDB/arctos/issues/1971

"verbatim coordinates" are the coordinates as received by Arctos before I transform them into DD.ddd for mapping and etc. We are overusing the existing structure; everything that does not involve triggers should be removed.

There is some apparent reluctance to use Verbatim Locality for things like coordinates as provided by the collector.

What are we trying to do here, what do we need to do it, etc.?

|

non_process

|

verbatim event stuff ref verbatim coordinates are the coordinates as received by arctos before i transform them into dd ddd for mapping and etc we are overusing the existing structure everything that does not involve triggers should be removed there is some apparent reluctance to use verbatim locality for things like coordinates as provided by the collector what are we trying to do here what do we need to do it etc

| 0

|

21,219

| 28,301,408,834

|

IssuesEvent

|

2023-04-10 06:31:33

|

TeamAidemy/ds-paper-summaries

|

https://api.github.com/repos/TeamAidemy/ds-paper-summaries

|

opened

|

GPTs are GPTs: An Early Look at the Labor Market Impact Potential of Large Language Models

|

Natural language processing General Economics GPT family

|

Tyna Eloundou, Sam Manning, Pamela Mishkin, Daniel Rock. 2023. “GPTs are GPTs: An Early Look at the Labor Market Impact Potential of Large Language Models.” arXiv [econ.GN]. arXiv. https://arxiv.org/abs/2303.10130.

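- Labels each U.S. occupation by how much LLMs could reduce working time, and quantitatively evaluates the impact LLMs could have on the economy and society

- Predicts and analyzes the reduction in work not only when the language-generation capability of LLMs is used as-is, but also when complementary applications are developed and used

- Presents results broken down not only by occupation but also by task and required skill

- The labeling of each occupation's LLM-driven work reduction was done by both humans and GPT-4

- The labeling results did not differ greatly, but humans tended to estimate a larger LLM-driven work reduction

- From the aggregated results, it is estimated that for about 80% of U.S. workers, at least 10% of their tasks could have their working time halved by LLMs

- Furthermore, for 19% of workers, it is estimated that at least half of their tasks could have their working time halved by LLMs

- As a trend, the higher an occupation's wage, the more of its tasks could be shortened by LLMs

- After a broad investigation, the paper concludes that GPTs (Generative Pretrained Transformers) show the characteristics of GPTs in the sense of general-purpose technologies, and could have a large impact on society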

## Abstract

We investigate the potential implications of large language models (LLMs), such as Generative Pre-trained Transformers (GPTs), on the U.S. labor market, focusing on the increased capabilities arising from LLM-powered software compared to LLMs on their own. Using a new rubric, we assess occupations based on their alignment with LLM capabilities, integrating both human expertise and GPT-4 classifications. Our findings reveal that around 80% of the U.S. workforce could have at least 10% of their work tasks affected by the introduction of LLMs, while approximately 19% of workers may see at least 50% of their tasks impacted. We do not make predictions about the development or adoption timeline of such LLMs. The projected effects span all wage levels, with higher-income jobs potentially facing greater exposure to LLM capabilities and LLM-powered software. Significantly, these impacts are not restricted to industries with higher recent productivity growth. Our analysis suggests that, with access to an LLM, about 15% of all worker tasks in the US could be completed significantly faster at the same level of quality. When incorporating software and tooling built on top of LLMs, this share increases to between 47 and 56% of all tasks. This finding implies that LLM-powered software will have a substantial effect on scaling the economic impacts of the underlying models. We conclude that LLMs such as GPTs exhibit traits of general-purpose technologies, indicating that they could have considerable economic, social, and policy implications.


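## Problems addressed / comparison with prior work

- Compared with existing studies of how AI and automation technologies affect the labor market, this study examines the broader, potential impact of language models

- In addition, taking into account how past general-purpose technologies (e.g. printing, the steam engine) were used, it considers not only the capabilities of language models themselves but also the expected emergence of innovations that complement language models in actual work

## Key points of the technique and method

### Main analysis steps

1. For the 19,265 tasks and 2,087 DWAs (Detailed Work Activities) in the O*NET 27.2 database of occupational activities and tasks in the U.S., both humans and GPT-4 assigned one of the following three labels

- E0 : No Exposure : Using an LLM does not reduce the time needed to complete the task while maintaining equivalent quality (no exposure to LLMs)

- E1 : Direct Exposure : Using an LLM reduces the time needed to complete the task to 50% or less while maintaining equivalent quality (high exposure to LLMs)

- E2 : LLM+ Exposed : An LLM alone does not reduce the time needed to complete the task, but using it together with applications built on top of the LLM reduces the time to 50% or less. Access to image-generation systems is also taken into account. (Exposure to LLMs is plausible in the future)

2. Aggregate the task and DWA labels above by occupation

- At aggregation, weighted sums are taken in the following three patterns to give a range of estimates

- α = E1

- β = E1 + 0.5×E2

- ζ = E1 + E2

- α can be read as the degree to which the capability of an LLM alone reduces task time, ζ as the degree to which task time falls as software is developed over the long term, and β as something in between

3. Using the three scores (α, β, ζ) assigned to each occupation, analyze further by relating them to the skills each occupation requires and to annual income by occupation

- Run a regression with the three scores (α, β, ζ) as dependent variables and, as explanatory variables, the importance of each skill in the O*NET basic skills category associated with each occupation, to compute each skill's contribution

- Examine the correlation between annual income and the three scores (α, β, ζ), etc.

### Comparison of human and GPT-4 labeling results

- Correlation between human and GPT-4 labeling results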

<p align="center">

<img src="https://user-images.githubusercontent.com/33014616/229649328-e3351be8-5644-48e4-9299-bcad36f62001.png" width=600px>

</p>

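- Scatter plot of β scores aggregated by occupation

- The two are generally well correlated, but only in the upper-right region of the plot do humans tend to assign higher scores

- The reason is unclear, but for occupations with high LLM exposure, humans estimate higher exposure scores than GPT-4

### Limitations of the dataset and labeling method in this analysis

- Subjectivity of the assigned labels

- The human labelers were annotators retained by OpenAI

- They lack occupational diversity, so they tend to be unfamiliar with the individual tasks of each occupation and accustomed to using LLMs

- The estimated degree of exposure may be inaccurate

- Because LLMs are advancing extremely fast, the labels and the analysis results may change substantially

## Analysis results

### Summary statistics of the three scores (α, β, ζ)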

<p align="center">

<img src="https://user-images.githubusercontent.com/33014616/229652540-560dc4f6-758f-412d-8c8e-685f17573c74.png" width=200px>

</p>

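- What can be read from this

- For 80% of workers, 10% of their tasks are affected by LLMs

- For 19% of workers, at least half of their tasks are affected by LLMs

### Relationship with occupational income

- What can be read from this

- Higher-income occupations tend to have greater exposure to LLMs

### List of occupations with high exposure scores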

<p align="center">

<img src="https://user-images.githubusercontent.com/33014616/229653706-d74fca6f-efba-44a1-a32d-2ad4183e2d8a.png" width=400px>

</p>

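- What can be read from this

- Human α: occupations with high exposure to LLMs alone

- Translators

- Survey researchers

- Lyricists and creative writers

- Animal scientists

- Public relations specialists

- Human ζ: occupations with high exposure to LLMs together with accompanying applications

- Mathematicians

- Tax preparers

- Financial quantitative analysts

- Writers

- Web and digital interface designers

- Highest variance: occupations with high variance (a strong mix of tasks whose working time falls to 50% or less and tasks whose time does not fall)

- Search marketing strategists

- Graphic designers

- Investment fund managers

- Financial managers

- Auto damage insurance appraisers

### Contribution of occupations' basic skills to exposure scores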

<p align="center">

<img src="https://user-images.githubusercontent.com/33014616/229654827-9c51c6fa-4432-4533-adf3-2c79c192c2dd.png" width=600px>

</p>

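- What can be read from this

- Skills contributing strongly to α (skills highly exposed to LLMs alone)

- Programming 0.637

- Writing 0.368

- Reading Comprehension 0.153

- Skills contributing strongly to ζ (skills highly exposed to LLMs together with accompanying applications)

- Mathematics 0.787

- Programming 0.609

- Writing 0.566

- Active Listening 0.449

- Speaking 0.294

- Skills low in both α and ζ (skills with little exposure to the development of LLMs and surrounding applications)

- Science -0.346

- Learning Strategies -0.346

- Monitoring -0.232

- Critical Thinking -0.129

Note: for how to interpret these results, see also Impressions > Results that need caution > Interpreting basic skills, below.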

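## Open issues, discussion, and impressions

### Limitations of this study

- Does it apply outside the U.S.?

- Because industrial organization, technical infrastructure, regulatory frameworks, linguistic diversity, and cultural context differ greatly, applicability outside the U.S. is limited

- The authors want to address this by publishing the study's method so that other populations can be investigated as well

- The study does not perfectly reflect all capabilities of current LLMs

- For example, the α rating does not consider GPT-4's ability to handle images

- The exposure scores of more occupations may turn out to be higher

- The impact needs to be re-examined as LLM capabilities advance

### Can LLMs be called a general-purpose technology?

- They show characteristics that allow them to be regarded as one

- For LLMs to be regarded as a General Purpose Technology, the following three conditions must be met

- It improves over time

- It pervades the whole economy

- It spawns complementary innovations

- Of these three, the first is self-evident from research by OpenAI and others

- The second and third are suggested to some extent by the content of this paper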

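### Impressions

- Overall

- Industry's concerns about how LLM progress will affect occupations are verified quantitatively, and the resulting statistics are convincing

- Much of the content is worth consulting for future career choices and training

- That said, I also think there is no need to become overly pessimistic in light of these results

- LLMs are an accumulation of human knowledge to date, so it is not surprising that they produce opinions a person could anticipate. This is only a forecast within the bounds of humanity's current imagination

- From here on, humanity will live in a world premised on LLMs and innovate within it

- Results that need caution

- Applying the results to Japan

- The types of occupations and the labeling method still seem heavily biased, so I consider applicability limited

- I look forward to similar studies designed to better avoid bias

- Interpreting basic skills

- "Skills" and "occupations" should be considered separately

- For example, the results show the "Mathematics" skill as highly exposed to LLMs, but this cannot be taken to mean that the occupation "mathematician" is unnecessary

- The skills a "mathematician" needs also include "Critical Thinking" and "Science", which are skills with low exposure to LLMs [reference](https://www.onetonline.org/link/summary/15-2021.00)

- It should be understood only as a reduced burden for part of a mathematician's work, such as complex manipulation of expressions or bringing in commonly used mathematical knowledge

- Exposure due to technologies other than LLMs

- Because this study focuses solely on reductions in workload caused by LLMs, exposure from other automation technologies, industrial equipment, and the like may proceed independently of this study

- For example, in industries such as agriculture and forestry, where exposure has been driven by technologies other than LLMs, the human role is expected to keep changing through those other technologies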

## Key citations

- [GPT-4 System Card](https://cdn.openai.com/papers/gpt-4-system-card.pdf)

## Related papers

- Brynjolfsson, E., Frank, M. R., Mitchell, T., Rahwan, I., and Rock, D. (2023). Quantifying the Distribution of Machine Learning’s Impact on Work. Forthcoming.

- Listed as forthcoming (as of April 2023)

- Cited in the related work as the study that most influenced this paper

## Reference information

- [O*NET OnLine](https://www.onetonline.org/)

- The online resource for O*NET, the U.S. occupational database used in this paper

- [GPT-3 paper summary](https://github.com/TeamAidemy/ds-paper-summaries/issues/6)

- Introduces GPT-3, the major version preceding GPT-4, the main subject of this paper

- [InstructGPT paper summary](https://github.com/TeamAidemy/ds-paper-summaries/issues/11)

- Introduces InstructGPT, a model fine-tuned from GPT-3 with reinforcement learning from human feedback

|

1.0

|

GPTs are GPTs: An Early Look at the Labor Market Impact Potential of Large Language Models - Tyna Eloundou, Sam Manning, Pamela Mishkin, Daniel Rock. 2023. “GPTs are GPTs: An Early Look at the Labor Market Impact Potential of Large Language Models.” arXiv [econ.GN]. arXiv. https://arxiv.org/abs/2303.10130.

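- Labels each U.S. occupation by how much LLMs could reduce working time, and quantitatively evaluates the impact LLMs could have on the economy and society

- Predicts and analyzes the reduction in work not only when the language-generation capability of LLMs is used as-is, but also when complementary applications are developed and used

- Presents results broken down not only by occupation but also by task and required skill

- The labeling of each occupation's LLM-driven work reduction was done by both humans and GPT-4

- The labeling results did not differ greatly, but humans tended to estimate a larger LLM-driven work reduction

- From the aggregated results, it is estimated that for about 80% of U.S. workers, at least 10% of their tasks could have their working time halved by LLMs

- Furthermore, for 19% of workers, it is estimated that at least half of their tasks could have their working time halved by LLMs

- As a trend, the higher an occupation's wage, the more of its tasks could be shortened by LLMs

- After a broad investigation, the paper concludes that GPTs (Generative Pretrained Transformers) show the characteristics of GPTs in the sense of general-purpose technologies, and could have a large impact on society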

## Abstract

We investigate the potential implications of large language models (LLMs), such as Generative Pre-trained Transformers (GPTs), on the U.S. labor market, focusing on the increased capabilities arising from LLM-powered software compared to LLMs on their own. Using a new rubric, we assess occupations based on their alignment with LLM capabilities, integrating both human expertise and GPT-4 classifications. Our findings reveal that around 80% of the U.S. workforce could have at least 10% of their work tasks affected by the introduction of LLMs, while approximately 19% of workers may see at least 50% of their tasks impacted. We do not make predictions about the development or adoption timeline of such LLMs. The projected effects span all wage levels, with higher-income jobs potentially facing greater exposure to LLM capabilities and LLM-powered software. Significantly, these impacts are not restricted to industries with higher recent productivity growth. Our analysis suggests that, with access to an LLM, about 15% of all worker tasks in the US could be completed significantly faster at the same level of quality. When incorporating software and tooling built on top of LLMs, this share increases to between 47 and 56% of all tasks. This finding implies that LLM-powered software will have a substantial effect on scaling the economic impacts of the underlying models. We conclude that LLMs such as GPTs exhibit traits of general-purpose technologies, indicating that they could have considerable economic, social, and policy implications.


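## Problems addressed / comparison with prior work

- Compared with existing studies of how AI and automation technologies affect the labor market, this study examines the broader, potential impact of language models

- In addition, taking into account how past general-purpose technologies (e.g. printing, the steam engine) were used, it considers not only the capabilities of language models themselves but also the expected emergence of innovations that complement language models in actual work

## Key points of the technique and method

### Main analysis steps

1. For the 19,265 tasks and 2,087 DWAs (Detailed Work Activities) in the O*NET 27.2 database of occupational activities and tasks in the U.S., both humans and GPT-4 assigned one of the following three labels

- E0 : No Exposure : Using an LLM does not reduce the time needed to complete the task while maintaining equivalent quality (no exposure to LLMs)

- E1 : Direct Exposure : Using an LLM reduces the time needed to complete the task to 50% or less while maintaining equivalent quality (high exposure to LLMs)

- E2 : LLM+ Exposed : An LLM alone does not reduce the time needed to complete the task, but using it together with applications built on top of the LLM reduces the time to 50% or less. Access to image-generation systems is also taken into account. (Exposure to LLMs is plausible in the future)

2. Aggregate the task and DWA labels above by occupation

- At aggregation, weighted sums are taken in the following three patterns to give a range of estimates

- α = E1

- β = E1 + 0.5×E2

- ζ = E1 + E2

- α can be read as the degree to which the capability of an LLM alone reduces task time, ζ as the degree to which task time falls as software is developed over the long term, and β as something in between

3. Using the three scores (α, β, ζ) assigned to each occupation, analyze further by relating them to the skills each occupation requires and to annual income by occupation

- Run a regression with the three scores (α, β, ζ) as dependent variables and, as explanatory variables, the importance of each skill in the O*NET basic skills category associated with each occupation, to compute each skill's contribution

- Examine the correlation between annual income and the three scores (α, β, ζ), etc.

### Comparison of human and GPT-4 labeling results

- Correlation between human and GPT-4 labeling results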

<p align="center">

<img src="https://user-images.githubusercontent.com/33014616/229649328-e3351be8-5644-48e4-9299-bcad36f62001.png" width=600px>

</p>

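- Scatter plot of β scores aggregated by occupation

- The two are generally well correlated, but only in the upper-right region of the plot do humans tend to assign higher scores

- The reason is unclear, but for occupations with high LLM exposure, humans estimate higher exposure scores than GPT-4

### Limitations of the dataset and labeling method in this analysis

- Subjectivity of the assigned labels

- The human labelers were annotators retained by OpenAI

- They lack occupational diversity, so they tend to be unfamiliar with the individual tasks of each occupation and accustomed to using LLMs

- The estimated degree of exposure may be inaccurate

- Because LLMs are advancing extremely fast, the labels and the analysis results may change substantially

## Analysis results

### Summary statistics of the three scores (α, β, ζ)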

<p align="center">

<img src="https://user-images.githubusercontent.com/33014616/229652540-560dc4f6-758f-412d-8c8e-685f17573c74.png" width=200px>

</p>

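- What can be read from this

- For 80% of workers, 10% of their tasks are affected by LLMs

- For 19% of workers, at least half of their tasks are affected by LLMs

### Relationship with occupational income

- What can be read from this

- Higher-income occupations tend to have greater exposure to LLMs

### List of occupations with high exposure scores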

<p align="center">

<img src="https://user-images.githubusercontent.com/33014616/229653706-d74fca6f-efba-44a1-a32d-2ad4183e2d8a.png" width=400px>

</p>

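- What can be read from this

- Human α: occupations with high exposure to LLMs alone

- Translators

- Survey researchers

- Lyricists and creative writers

- Animal scientists

- Public relations specialists

- Human ζ: occupations with high exposure to LLMs together with accompanying applications

- Mathematicians

- Tax preparers

- Financial quantitative analysts

- Writers

- Web and digital interface designers

- Highest variance: occupations with high variance (a strong mix of tasks whose working time falls to 50% or less and tasks whose time does not fall)

- Search marketing strategists

- Graphic designers

- Investment fund managers

- Financial managers

- Auto damage insurance appraisers

### Contribution of occupations' basic skills to exposure scores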

<p align="center">

<img src="https://user-images.githubusercontent.com/33014616/229654827-9c51c6fa-4432-4533-adf3-2c79c192c2dd.png" width=600px>

</p>

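- What can be read from this

- Skills contributing strongly to α (skills highly exposed to LLMs alone)

- Programming 0.637

- Writing 0.368

- Reading Comprehension 0.153

- Skills contributing strongly to ζ (skills highly exposed to LLMs together with accompanying applications)

- Mathematics 0.787

- Programming 0.609

- Writing 0.566

- Active Listening 0.449

- Speaking 0.294

- Skills low in both α and ζ (skills with little exposure to the development of LLMs and surrounding applications)

- Science -0.346

- Learning Strategies -0.346

- Monitoring -0.232

- Critical Thinking -0.129

Note: for how to interpret these results, see also Impressions > Results that need caution > Interpreting basic skills, below.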

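## Open issues, discussion, and impressions

### Limitations of this study

- Does it apply outside the U.S.?

- Because industrial organization, technical infrastructure, regulatory frameworks, linguistic diversity, and cultural context differ greatly, applicability outside the U.S. is limited

- The authors want to address this by publishing the study's method so that other populations can be investigated as well

- The study does not perfectly reflect all capabilities of current LLMs

- For example, the α rating does not consider GPT-4's ability to handle images

- The exposure scores of more occupations may turn out to be higher

- The impact needs to be re-examined as LLM capabilities advance

### Can LLMs be called a general-purpose technology?

- They show characteristics that allow them to be regarded as one

- For LLMs to be regarded as a General Purpose Technology, the following three conditions must be met

- It improves over time

- It pervades the whole economy

- It spawns complementary innovations

- Of these three, the first is self-evident from research by OpenAI and others

- The second and third are suggested to some extent by the content of this paper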

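### Impressions

- Overall

- Industry's concerns about how LLM progress will affect occupations are verified quantitatively, and the resulting statistics are convincing

- Much of the content is worth consulting for future career choices and training

- That said, I also think there is no need to become overly pessimistic in light of these results

- LLMs are an accumulation of human knowledge to date, so it is not surprising that they produce opinions a person could anticipate. This is only a forecast within the bounds of humanity's current imagination

- From here on, humanity will live in a world premised on LLMs and innovate within it

- Results that need caution

- Applying the results to Japan

- The types of occupations and the labeling method still seem heavily biased, so I consider applicability limited

- I look forward to similar studies designed to better avoid bias

- Interpreting basic skills

- "Skills" and "occupations" should be considered separately

- For example, the results show the "Mathematics" skill as highly exposed to LLMs, but this cannot be taken to mean that the occupation "mathematician" is unnecessary

- The skills a "mathematician" needs also include "Critical Thinking" and "Science", which are skills with low exposure to LLMs [reference](https://www.onetonline.org/link/summary/15-2021.00)

- It should be understood only as a reduced burden for part of a mathematician's work, such as complex manipulation of expressions or bringing in commonly used mathematical knowledge

- Exposure due to technologies other than LLMs

- Because this study focuses solely on reductions in workload caused by LLMs, exposure from other automation technologies, industrial equipment, and the like may proceed independently of this study

- For example, in industries such as agriculture and forestry, where exposure has been driven by technologies other than LLMs, the human role is expected to keep changing through those other technologies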

## Key citations

- [GPT-4 System Card](https://cdn.openai.com/papers/gpt-4-system-card.pdf)

## Related papers

- Brynjolfsson, E., Frank, M. R., Mitchell, T., Rahwan, I., and Rock, D. (2023). Quantifying the Distribution of Machine Learning’s Impact on Work. Forthcoming.

- Listed as forthcoming (as of April 2023)

- Cited in the related work as the study that most influenced this paper

## Reference information

- [O*NET OnLine](https://www.onetonline.org/)

- The online resource for O*NET, the U.S. occupational database used in this paper

- [GPT-3 paper summary](https://github.com/TeamAidemy/ds-paper-summaries/issues/6)

- Introduces GPT-3, the major version preceding GPT-4, the main subject of this paper

- [InstructGPT paper summary](https://github.com/TeamAidemy/ds-paper-summaries/issues/11)

- Introduces InstructGPT, a model fine-tuned from GPT-3 with reinforcement learning from human feedback

|

process

|

gpts are gpts an early look at the labor market impact potential of large language models tyna eloundou sam manning pamela mishkin daniel rock “gpts are gpts an early look at the labor market impact potential of large language models ” arxiv arxiv 米国の各職業を対象に、どれほどllmによって仕事の時間が削減されうるかをラベリングし、経済社会にllmが及ぼしうる影響を定量的に評価 llmの言語生成能力をそのまま使用した場合の業務削減量だけでなく、補助的なアプリケーションが開発され、それを活用した場合の業務削減量も予測して分析 職業単位だけでなく、タスクや必要とされるスキルに分解した結果も提示 各職業の、llmによる業務削減量のラベリングは、人間とgpt ラベリング結果に大きな差はなかったものの、人間の方が、llmによる業務削減量を多めに見積もる傾向があった 集計された結果より、 のタスクの業務時間がllmにより半分に短縮されうると推定できる 更に、 の労働者については、半分以上のタスクの業務時間がllmにより半分に短縮されうると推定 傾向として、高賃金の職業になるほど、llmにより作業時間が短縮されるタスクが多い 広範な調査の末、gpt generative pretrained transformers は、いわゆる汎用技術としてのgpt general purpose technologies としての特徴を示し、社会に大きな影響を与えうると結論づけた abstract we investigate the potential implications of large language models llms such as generative pre trained transformers gpts on the u s labor market focusing on the increased capabilities arising from llm powered software compared to llms on their own using a new rubric we assess occupations based on their alignment with llm capabilities integrating both human expertise and gpt classifications our findings reveal that around of the u s workforce could have at least of their work tasks affected by the introduction of llms while approximately of workers may see at least of their tasks impacted we do not make predictions about the development or adoption timeline of such llms the projected effects span all wage levels with higher income jobs potentially facing greater exposure to llm capabilities and llm powered software significantly these impacts are not restricted to industries with higher recent productivity growth our analysis suggests that with access to an llm about of all worker tasks in the us could be completed significantly faster at the same level of quality when incorporating software and tooling built on top of llms this share increases to between and of all tasks this finding implies that llm powered 
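software will have a substantial effect on scaling the economic impacts of the underlying models we conclude that llms such as gpts exhibit traits of general purpose technologies indicating that they could have considerable economic social and policy implications deepl translation: we investigate the potential impact of large language models (llms) such as gpts (generative pre trained transformers) on the us labor market, focusing on the increased capabilities arising from llm powered software compared to llms on their own. using a new rubric, we assessed occupations based on human expertise and gpt , and on their alignment with llm capabilities. the results showed that  % could have  % of their work tasks affected, and  %  % of their work tasks affected. we do not make predictions about the development or adoption timeline of llms. the projected effects span all wage levels, and higher income occupations may face greater exposure to llm capabilities and llm powered software. importantly, these impacts are not limited to industries with high recent productivity growth. our analysis suggests that, by using llms,  % could be completed significantly faster at the same level of quality. when software and tooling built on top of llms are incorporated, this increases to  ~ . this finding suggests that llm powered software will have a substantial effect on scaling the economic impacts of the underlying models. we conclude that llms such as gpts are general purpose technologies and could have considerable economic, social, and policy implications. problem addressed and comparison with prior work: compared with existing investigations of the labor market impact of ai and automation technologies, this study investigates the broader, potential impact of language models. in addition, taking into account how past general purpose technologies (e g printing, the steam engine) were used, it considers not only the capabilities of language models themselves but also the anticipated emergence of innovations that complement language models in actual work. key points of the techniques and methods, main analysis procedure: for the detailed work activities contained in the o net database on occupational activities and tasks in the us,  , humans and gpt  no exposure: using an llm does not reduce the time needed to complete the task while maintaining an equivalent level of quality (no exposure to llms). direct exposure: using an llm reduces it to  or less (high exposure to llms). llm exposed: an llm alone does not reduce the task completion time, but using it together with applications built on top of the llm reduces it to  or less. access to image generation systems is also taken into account. (exposure to llms is conceivable in the future.) the labeling results for the above tasks and dwas are aggregated by occupation. in this aggregation,  , variations of the estimate are provided: α β × ζ . α can be understood as the degree to which task completion time is reduced by the capabilities of the llm alone, ζ as the degree to which completion time is reduced as software is developed over the long term, and β as something in between. using α β ζ , further analysis relates them to the skills required for each occupation and to annual income by occupation. a regression analysis is run with α β ζ as the dependent variables and the importance of each skill in the o net basic skills category associated with each occupation as the explanatory variables, to compute the contribution of each skill. the correlation of α β ζ is examined, etc. humans and gpt  humans and gpt  scatter plot of β scores aggregated by occupation: the two are basically well correlated, but only in the upper right region of the graph do humans tend to assign higher scores. the reason is unclear, but in occupations with high exposure to llms, humans  gpt  limitations of the dataset and labeling method in this analysis: subjectivity of the assigned labels. the humans who did the labeling were annotators retained by openai. because they lack occupational diversity, they tend to be unfamiliar with the individual tasks of each occupation and accustomed to using llms. the estimates of the degree of exposure may be inaccurate. because llms are developing extremely quickly, the labels and the analysis results may change substantially. analysis results, summary statistics of α β ζ , takeaways:  of workers have  % of their tasks affected by llms. 
 of workers have more than half of their tasks affected by llms. relationship with occupational income, takeaways: higher income occupations tend to have greater exposure to llms. list of occupations with high exposure scores, takeaways: human α, occupations with high exposure from llms alone: translators, survey researchers, lyricists and creative writers, animal scientists, public relations specialists. human ζ, occupations with high exposure from llms together with their accompanying applications: mathematicians, tax accountants, financial quantitative analysts, writers, web and digital interface designers. highest variance, occupations with large variance (occupations with a strong mix of tasks that are reduced to  or less and tasks that are not reduced): search marketing strategists, graphic designers, investment fund managers, financial managers, automobile damage insurance appraisers. contribution of basic occupational skills to the exposure score, takeaways: skills with a large contribution to α (skills with high exposure from llms alone): programming writing reading comprehension. skills with a large contribution to ζ (skills with high exposure from llms and accompanying applications): mathematics programming writing active listening speaking. skills low in both α and ζ (skills with low exposure from the development of llms and surrounding applications): science learning strategies monitoring critical thinking. note: on how to interpret the above results, see also the part on interpreting basic skills under results to note in the impressions section below. remaining issues, discussion, and impressions. limitations of this study. can it be applied outside the us? because there are large differences in industrial organization, technological infrastructure, regulatory frameworks, linguistic diversity, and cultural context, applicability outside the us is limited. the authors hope to address this by publishing the study's method so that other populations can also be investigated. the study does not perfectly reflect all of the capabilities of current llms. for example, in the α evaluation, gpt  the exposure scores of many more occupations could be higher. the impact needs to be examined as advances in llm capabilities unfold. can llms be called a general purpose technology? they show characteristics that support this view. for llms to be regarded as a general purpose technology, they must improve over time, pervade the entire economy, and spawn complementary innovations  ,  , this was suggested to some extent. impressions, overall: industry's concerns about the effect of llm development on occupations are examined quantitatively, and the statistical results are convincing. there is much here that should inform future career choices and training. that said, i do not think there is a need to be overly pessimistic about these results. since llms are an accumulation of human knowledge to date, it is not surprising that they produce opinions that people could anticipate. this is no more than a forecast of the future within the scope of humanity's current imagination. from now on, humanity will live in a world that takes llms for granted and will create innovations within it. results to note, applying this to japan: my impression is that bias is still large in the types of occupations and the labeling method, so applicability is limited. i look forward to similar studies designed to better avoid bias. interpreting basic skills: skills and occupations should be considered separately. for example, although the results show that the mathematics skill has high exposure to llms, this does not mean that mathematician as an occupation is unnecessary. the skills a mathematician needs also include critical thinking and science, which are skills with low exposure to llms. the result should be read only as a reduction in the load of some parts of a mathematician's work, such as complex manipulation of expressions and bringing in commonly used mathematical knowledge. effects of exposure to technologies other than llms: because this study focuses only on workload reductions attributable to llms, exposure from other automation technologies, industrial equipment, and the like may proceed independently of this study. for example, in industries such as agriculture and forestry, where exposure has been driven by technologies other than llms, the human role is likely to keep changing through non llm technologies. key citations and related papers: brynjolfsson e frank m r mitchell t rahwan i and rock d quantifying the distribution of machine learning’s impact on work 
forthcoming. cited in the related work as the study that most influenced this paper. reference information: online resource for o net, the us occupational database used in this paper. an introduction to gpt  of the gpt that is the subject of this paper. an introduction to instructgpt, a model obtained by fine tuning gpt  with reinforcement learning from human feedback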

| 1

|

10,456

| 13,235,285,512

|

IssuesEvent

|

2020-08-18 17:46:35

|

MicrosoftDocs/azure-devops-docs

|

https://api.github.com/repos/MicrosoftDocs/azure-devops-docs

|

closed

|

Need Example for setting Secret Variables to use in bash script

|

Pri1 devops-cicd-process/tech devops/prod doc-enhancement

|

Hi!

There is a nice example of how to set and use secret pipeline variables for PowerShell: https://docs.microsoft.com/en-us/azure/devops/pipelines/process/variables?view=azure-devops&tabs=yaml%2Cbatch#secret-variables

But I'm struggling to reproduce it with bash (on an Ubuntu hosted agent VM). A similar example would be super nice!

Cheers !

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: dd7e0bd3-1f7d-d7b6-cc72-5ef63c31b46a

* Version Independent ID: dae87abd-b73d-9120-bcdb-6097d4b40f2a

* Content: [Define variables - Azure Pipelines](https://docs.microsoft.com/en-us/azure/devops/pipelines/process/variables?view=azure-devops&tabs=yaml%2Cbatch)

* Content Source: [docs/pipelines/process/variables.md](https://github.com/MicrosoftDocs/azure-devops-docs/blob/master/docs/pipelines/process/variables.md)

* Product: **devops**

* Technology: **devops-cicd-process**

* GitHub Login: @juliakm

* Microsoft Alias: **jukullam**

|

1.0

|

Need Example for setting Secret Variables to use in bash script - Hi!

There is a nice example of how to set and use secret pipeline variables for PowerShell: https://docs.microsoft.com/en-us/azure/devops/pipelines/process/variables?view=azure-devops&tabs=yaml%2Cbatch#secret-variables

But I'm struggling to reproduce it with bash (on an Ubuntu hosted agent VM). A similar example would be super nice!

Cheers !

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: dd7e0bd3-1f7d-d7b6-cc72-5ef63c31b46a

* Version Independent ID: dae87abd-b73d-9120-bcdb-6097d4b40f2a

* Content: [Define variables - Azure Pipelines](https://docs.microsoft.com/en-us/azure/devops/pipelines/process/variables?view=azure-devops&tabs=yaml%2Cbatch)

* Content Source: [docs/pipelines/process/variables.md](https://github.com/MicrosoftDocs/azure-devops-docs/blob/master/docs/pipelines/process/variables.md)

* Product: **devops**

* Technology: **devops-cicd-process**

* GitHub Login: @juliakm

* Microsoft Alias: **jukullam**

|

process

|

need example for setting secret variables to use in bash script hi there is a nice example on how to set and use secret pipeline variables for powershell but i m struggling to reproduce with bash on a ubuntu hosted agent vm a similar example would be super nice cheers document details ⚠ do not edit this section it is required for docs microsoft com ➟ github issue linking id version independent id bcdb content content source product devops technology devops cicd process github login juliakm microsoft alias jukullam

| 1

|

3,456

| 6,543,916,108

|

IssuesEvent

|

2017-09-03 08:26:18

|

Jumpscale/portal9

|

https://api.github.com/repos/Jumpscale/portal9

|

closed

|

Import OS Templates/Images --- Feature Request

|

process_wontfix

|

Hi,

A Feature Request in the hope it makes sense.

A suggestion: from the admin point of view (Admin Broker Portal), it would be ideal to have the capability of uploading OS templates/images (these would be KVM-compatible img/qcow2 images).

Many thanks

|

1.0

|

Import OS Templates/Images --- Feature Request - Hi,

A Feature Request in the hope it makes sense.

A suggestion: from the admin point of view (Admin Broker Portal), it would be ideal to have the capability of uploading OS templates/images (these would be KVM-compatible img/qcow2 images).

Many thanks

|

process

|

import os templates images feature request hi a feature request in the hope it makes sense a suggestion as it would be ideal from the admin point of view admin broker portal to have the capability of uploading the os templates images those would be img images kvm compatible many thanks

| 1

|

421,913

| 28,365,702,545

|

IssuesEvent

|

2023-04-12 13:46:23

|

nvs-vocabs/ArgoVocabs

|

https://api.github.com/repos/nvs-vocabs/ArgoVocabs

|

opened

|

R08 - addition to the vocabulary description

|

documentation avtt

|

R08 is based on WMO "**Common Code Tables C-3 (CCT C-3): Instrument make and type for water temperature profile measurement with fall rate equation coefficients**"

Up-to-date versions of these tables are pretty hard to find online.

I would like to keep track of this information here and suggest adding it somewhere in the R08 description.

The official code table is available here: https://library.wmo.int/doc_num.php?explnum_id=11283

The github repository is here: https://github.com/wmo-im/CCT/blob/master/C03.csv

To suggest an addition to the CCT C-3, follow this example: https://github.com/wmo-im/CCT/issues/110

Note that R08 can be updated while we are still waiting for WMO CCT C-3 validation.

|

1.0

|

R08 - addition to the vocabulary description - R08 is based on WMO "**Common Code Tables C-3 (CCT C-3): Instrument make and type for water temperature profile measurement with fall rate equation coefficients**"

Up-to-date versions of these tables are pretty hard to find online.

I would like to keep track of this information here and suggest adding it somewhere in the R08 description.

The official code table is available here: https://library.wmo.int/doc_num.php?explnum_id=11283

The github repository is here: https://github.com/wmo-im/CCT/blob/master/C03.csv

To suggest an addition to the CCT C-3, follow this example: https://github.com/wmo-im/CCT/issues/110

Note that R08 can be updated while we are still waiting for WMO CCT C-3 validation.

|

non_process

|

addition to the vocabulary description is based on wmo common code tables c cct c instrument make and type for water temperature profile measurement with fall rate equation coefficients these tables are pretty hard to find online up to date i would like here to keep track on this information and suggest to add it somewhere in the description the official code table is available here the github repository is here to suggest an addition to the cct c follow this example note that can be updated while we are still waiting for wmo cct c validation

| 0

|

20,587

| 27,246,035,667

|

IssuesEvent

|

2023-02-22 02:08:13

|

cypress-io/cypress

|

https://api.github.com/repos/cypress-io/cypress

|

closed

|

Update Vite + associated dependencies

|

process: dependencies CT pkg/app pkg/launchpad

|

### Current behavior

I want to use Vitest in the monorepo for faster unit tests. I'd like to start adding more unit tests around the complex logic in app and launchpad, and since we use Vite there already, Vitest would be a good candidate.

I tried adding it but we have some incompatibilities, we need to update Vite and esbuild before we add Vitest

### Desired behavior

Update Vite related dependencies.

|

1.0

|

Update Vite + associated dependencies - ### Current behavior

I want to use Vitest in the monorepo for faster unit tests. I'd like to start adding more unit tests around the complex logic in app and launchpad, and since we use Vite there already, Vitest would be a good candidate.

I tried adding it but we have some incompatibilities, we need to update Vite and esbuild before we add Vitest

### Desired behavior

Update Vite related dependencies.

|

process

|

update vite associated dependencies current behavior i want to use vitest in the monorepo for faster unit tests i d like to start adding more unit tests around the complex logic in app and launchpad and since we use vite there already vitest would be a good candidate i tried adding it but we have some incompatibilities we need to update vite and esbuild before we add vitest desired behavior update vite related dependencies

| 1

|

10,899

| 13,676,042,654

|

IssuesEvent

|

2020-09-29 13:26:49

|

JustBru00/RenamePlugin

|

https://api.github.com/repos/JustBru00/RenamePlugin

|

closed

|

[Suggestion] Lore text above enchantment text

|

Addition Request Processing

|

My feature request is for new variants of existing commands (e.g., `/loreabove` and `/setlorelineabove`) that add lore text directly underneath the item’s name rather than below the enchantment text, if present.

This would be greatly useful. It was suggested by [HypersGamertag](https://www.spigotmc.org/members/hypersgamertag.105024) in his forum post referenced in #19, but I couldn’t find a specific issue created for this particular suggestion, as that issue was about his other suggestion.

I’m not sure if this is possible. I’ve seen it done in mods, but mods can do anything, so I’m not sure if this is a possibility within vanilla Minecraft.

|

1.0

|

[Suggestion] Lore text above enchantment text - My feature request is for new variants of existing commands (e.g., `/loreabove` and `/setlorelineabove`) that add lore text directly underneath the item’s name rather than below the enchantment text, if present.

This would be greatly useful. It was suggested by [HypersGamertag](https://www.spigotmc.org/members/hypersgamertag.105024) in his forum post referenced in #19, but I couldn’t find a specific issue created for this particular suggestion, as that issue was about his other suggestion.

I’m not sure if this is possible. I’ve seen it done in mods, but mods can do anything, so I’m not sure if this is a possibility within vanilla Minecraft.

|

process

|

lore text above enchantment text my feature request is new alternatives of existing commands e g loreabove and setlorelineabove to add lore text that appears directly underneath the item’s name rather than below enchantment text if present this would be greatly useful it was suggested by in his forum post referenced in but i couldn’t find a specific issue created for this particular suggestion as the issue was about his other suggestion i’m not sure if this is possible i’ve seen it done in mods but mods can do anything so i’m not sure if this is a possibility within vanilla minecraft

| 1

|

4,455

| 7,327,007,588

|

IssuesEvent

|

2018-03-04 03:50:22

|

MicrosoftDocs/azure-docs

|

https://api.github.com/repos/MicrosoftDocs/azure-docs

|

closed

|

Correction for Windows under supported client types

|

assigned-to-author automation bug in-process triaged

|

The table should say that Windows Server 2008 and higher only supports assessments, and then on the next line, it says that Windows Server 2008 R2 SP1 and higher is supported with .NET and WMF prerequisites. These two overlap with conflicting requirements

It might be clearer with three separate line items:

| Operating System | Notes |

|-|-|

|Windows Server 2008 - Windows Server 2008 R2 RTM | Only supports update assessments|

|Windows Server 2008 R2 SP1 and higher|fully supported with .NET 4.5+ and WMF 5.0+|

|Windows Server 2016 Nano Server | Not Supported|

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: db47901d-1664-058d-9407-47e932dc9661

* Version Independent ID: e90ec9ee-e7da-4f19-248f-4c825aaa8b9f

* [Content](https://docs.microsoft.com/en-us/azure/automation/automation-update-management#clients)

* [Content Source](https://github.com/Microsoft/azure-docs/blob/master/articles/automation/automation-update-management.md)

* Service: automation

|

1.0

|

Correction for Windows under supported client types - The table should say that Windows Server 2008 and higher only supports assessments, and then on the next line, it says that Windows Server 2008 R2 SP1 and higher is supported with .NET and WMF prerequisites. These two overlap with conflicting requirements

It might be clearer with three separate line items:

| Operating System | Notes |

|-|-|

|Windows Server 2008 - Windows Server 2008 R2 RTM | Only supports update assessments|

|Windows Server 2008 R2 SP1 and higher|fully supported with .NET 4.5+ and WMF 5.0+|

|Windows Server 2016 Nano Server | Not Supported|

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: db47901d-1664-058d-9407-47e932dc9661

* Version Independent ID: e90ec9ee-e7da-4f19-248f-4c825aaa8b9f

* [Content](https://docs.microsoft.com/en-us/azure/automation/automation-update-management#clients)

* [Content Source](https://github.com/Microsoft/azure-docs/blob/master/articles/automation/automation-update-management.md)

* Service: automation

|

process

|

correction for windows under supported client types the table should say that windows server and higher only supports assessments and then on the next line it says that windows server and higher is supported with net and wmf prerequisites these two overlap with conflicting requirements it might be clearer with three separate line items operating system notes windows server windows server rtm only supports update assessments windows server and higher fully supported with net and wmf windows server nano server not supported document details ⚠ do not edit this section it is required for docs microsoft com ➟ github issue linking id version independent id service automation

| 1

|

4,599

| 7,440,272,062

|

IssuesEvent

|

2018-03-27 09:34:11

|

qgis/QGIS-Documentation

|

https://api.github.com/repos/qgis/QGIS-Documentation

|

closed

|

Processing guidelines for help files is outdated

|

Guidelines Processing

|

(Picked from http://hub.qgis.org/issues/14599)

Here is the link to the official webpage: http://docs.qgis.org/2.8/en/docs/documentation_guidelines/README.html#documenting-processing-algorithms

some points are outdated:

- SAGA help files are not available anymore

- it is quite difficult for a potential doc writer to understand where the files are located

- QGIS algs have .yml syntax

- maybe it is worth having a standard template for all the providers (at the moment gdal/ogr points to its own docs, SAGA docs are missing, and GRASS and TauDEM have their own)

|

1.0

|

Processing guidelines for help files is outdated - (Picked from http://hub.qgis.org/issues/14599)

Here is the link to the official webpage: http://docs.qgis.org/2.8/en/docs/documentation_guidelines/README.html#documenting-processing-algorithms

some points are outdated:

- SAGA help files are not available anymore

- it is quite difficult for a potential doc writer to understand where the files are located

- QGIS algs have .yml syntax

- maybe it is worth having a standard template for all the providers (at the moment gdal/ogr points to its own docs, SAGA docs are missing, and GRASS and TauDEM have their own)

|

process

|

processing guidelines for help files is outdated picked from here the link of the official webpage some points are outdated saga help files are not available anymore it is quite difficult for a potential doc writer to understand where the files are located qgis algs have yml syntax maybe it is worth to have a standard template for all the providers at the moment gdal ogr points to their own docs saga are missing grass and taudem have their own

| 1

|

18,037

| 24,048,064,395

|

IssuesEvent

|

2022-09-16 10:07:05

|

prisma/prisma

|

https://api.github.com/repos/prisma/prisma

|

closed

|

sqlite: show better error when a permission denied error occurs

|

bug/1-unconfirmed kind/bug process/candidate topic: sqlite topic: error tech/engines topic: error reporting team/schema

|

<!-- If required, please update the title to be clear and descriptive -->

Command: `prisma migrate reset`

Version: `3.15.0`

Binary Version: `b9297dc3a59307060c1c39d7e4f5765066f38372`

Report: https://prisma-errors.netlify.app/report/14081

OS: `x64 linux 5.4.0-1018-aws`

JS Stacktrace:

```

Error: Error in migration engine.

Reason: [migration-engine/connectors/sql-migration-connector/src/flavour/sqlite.rs:194:46] failed to truncate sqlite file: Os { code: 13, kind: PermissionDenied, message: "Permission denied" }

Please create an issue with your `schema.prisma` at

https://github.com/prisma/prisma/issues/new

at handlePanic (/home/../app/node_modules/prisma/build/index.js:93373:25)

at ChildProcess.<anonymous> (/home/../app/node_modules/prisma/build/index.js:93382:15)

at ChildProcess.emit (node:events:390:28)

at Process.ChildProcess._handle.onexit (node:internal/child_process:290:12)

```

Rust Stacktrace:

```

Starting migration engine RPC server

[migration-engine/connectors/sql-migration-connector/src/flavour/sqlite.rs:194:46] failed to truncate sqlite file: Os { code: 13, kind: PermissionDenied, message: "Permission denied" }

```
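
A minimal sketch of what a friendlier message could look like, assuming the OS error from the trace is available as a plain object. The `OsError` shape and the `explainSqliteFileError` helper are hypothetical names used for illustration only; they are not part of Prisma or its migration engine.

```typescript
// Hypothetical shape mirroring the fields printed in the trace above
// (`Os { code, kind, message }`); this is not a Prisma type.
interface OsError {
  code: number;
  kind: string;
  message: string;
}

// Hypothetical helper: turn a raw OS error into an actionable message.
function explainSqliteFileError(path: string, err: OsError): string {
  if (err.kind === "PermissionDenied") {
    return (
      `Cannot write to SQLite file "${path}": permission denied. ` +
      `Check that the current user can write to the file and its directory.`
    );
  }
  // Fall back to the raw details for error kinds not handled above.
  return `Failed to access SQLite file "${path}": ${err.message} (os error ${err.code})`;
}

// Example with the error from the report; "dev.db" is a placeholder path.
const friendly = explainSqliteFileError("dev.db", {
  code: 13,
  kind: "PermissionDenied",
  message: "Permission denied",
});
console.log(friendly);
```

The point of the sketch is only that the permission case is recognized and reported without a panic-style stack trace; the real fix would live in the Rust migration engine.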

|

1.0

|

sqlite: show better error when a permission denied error occurs - <!-- If required, please update the title to be clear and descriptive -->

Command: `prisma migrate reset`

Version: `3.15.0`

Binary Version: `b9297dc3a59307060c1c39d7e4f5765066f38372`

Report: https://prisma-errors.netlify.app/report/14081

OS: `x64 linux 5.4.0-1018-aws`

JS Stacktrace:

```

Error: Error in migration engine.

Reason: [migration-engine/connectors/sql-migration-connector/src/flavour/sqlite.rs:194:46] failed to truncate sqlite file: Os { code: 13, kind: PermissionDenied, message: "Permission denied" }

Please create an issue with your `schema.prisma` at

https://github.com/prisma/prisma/issues/new

at handlePanic (/home/../app/node_modules/prisma/build/index.js:93373:25)

at ChildProcess.<anonymous> (/home/../app/node_modules/prisma/build/index.js:93382:15)

at ChildProcess.emit (node:events:390:28)

at Process.ChildProcess._handle.onexit (node:internal/child_process:290:12)

```

Rust Stacktrace:

```

Starting migration engine RPC server

[migration-engine/connectors/sql-migration-connector/src/flavour/sqlite.rs:194:46] failed to truncate sqlite file: Os { code: 13, kind: PermissionDenied, message: "Permission denied" }

```

|

process

|

sqlite show better error when a permission denied error occurs command prisma migrate reset version binary version report os linux aws js stacktrace error error in migration engine reason failed to truncate sqlite file os code kind permissiondenied message permission denied please create an issue with your schema prisma at at handlepanic home app node modules prisma build index js at childprocess home app node modules prisma build index js at childprocess emit node events at process childprocess handle onexit node internal child process rust stacktrace starting migration engine rpc server failed to truncate sqlite file os code kind permissiondenied message permission denied

| 1

|

264,712

| 23,135,186,174

|

IssuesEvent

|

2022-07-28 13:48:48

|

elastic/elasticsearch

|

https://api.github.com/repos/elastic/elasticsearch

|

closed

|

Simplify BalanceUnbalancedClusterTest

|

>test :Distributed/Allocation team-discuss Team:Distributed

|

This takes 10 seconds or more, while other allocation tests are almost instantaneous. Can we simplify this? It looks like it tries to do a basic allocation (5 shards, 1 replica) of a new index when a _ton_ of indexes already exist on just 4 nodes. Perhaps we could test similar circumstances without thousands of shards? Alternatively, we could just make this an integration test (leave the impl, but rename to IT). It doesn't really seem like a unit test as it is now.

Also, as a side note, this test is the only user of CatAllocationTestCase. Perhaps we can also eliminate this abstraction and just test directly (eliminating the zipped shard state)? @s1monw do you have any thoughts here?

|

1.0

|

Simplify BalanceUnbalancedClusterTest - This takes 10 seconds or more, while other allocation tests are almost instantaneous. Can we simplify this? It looks like it tries to do a basic allocation (5 shards, 1 replica) of a new index when a _ton_ of indexes already exist on just 4 nodes. Perhaps we could test similar circumstances without thousands of shards? Alternatively, we could just make this an integration test (leave the impl, but rename to IT). It doesn't really seem like a unit test as it is now.

Also, as a side note, this test is the only user of CatAllocationTestCase. Perhaps we can also eliminate this abstraction and just test directly (eliminating the zipped shard state)? @s1monw do you have any thoughts here?

|

non_process

|

simplify balanceunbalancedclustertest this takes seconds or more while other allocation tests are almost instantaneous can we simplify this it looks like it tries to do a basic allocation shards replica of a new index when a ton of indexes already exist on just nodes perhaps we could test similar circumstances without thousands of shards alternatively we could just make this an integration test leave the impl but rename to it it doesn t really seem like a unit test as it is now also as a side note this test is the only user of catallocationtestcase perhaps we can also eliminate this abstraction and just test directly eliminating the zipped shard state do you have any thoughts here

| 0

|

439,575

| 30,704,268,903

|

IssuesEvent

|

2023-07-27 04:05:06

|

aws/aws-cdk

|

https://api.github.com/repos/aws/aws-cdk

|

closed

|

AutoScalingGroup Constructor: Overloaded Constructor / Different Props

|

p2 feature-request response-requested @aws-cdk/aws-autoscaling documentation closed-for-staleness

|

### Describe the feature

Create overloaded constructor(s) for `AutoScalingGroup` with different properties. These alternate constructors can contain either `launchTemplate` or `mixedInstancesPolicy` while simultaneously removing all properties that must not be specified when either of these two props are passed into the original constructor.

### Use Case

When migrating my CFN templates to CDK, it is difficult for me to debug my AutoScalingGroup CDK code because of the way ASGs are described in the CDK documentation and because of the way ASGs are defined in code. Building my templates takes a long time (even for a single package), and running into an error with `launchTemplate`, `mixedInstancesPolicy` and `machineImage` is frustrating.

Properties such as `instanceType` specify that the `launchTemplate` / `mixedInstancesPolicy` must not be specified. However, `launchTemplate` and `mixedInstancesPolicy` do not specify the other properties that break them in return.

To fix these issues, we can both add more detail to `launchTemplate` / `mixedInstancesPolicy` in the documentation and split the AutoScalingGroupProps interface into different interfaces to prevent this collision in the first place.

### Proposed Solution

constructor(scope: Construct, id: string, props: ASGLaunchTemplateProps);

// ASGLaunchTemplateProps is the same as AutoScalingGroupProps minus the properties

// that state `launchTemplate` must not be specified

export interface ASGLaunchTemplateProps extends CommonASGLaunchTemplateProps {

readonly vpc: ec2.IVpc;

readonly launchTemplate?: ec2.ILaunchTemplate;

readonly init?: ec2.CloudFormationInit;

readonly initOptions?: ApplyCloudFormationInitOptions;

readonly requireImdsv2?: boolean;

}

// CommonASGLaunchTemplateProps is the same as CommonAutoScalingGroupProps minus the properties

// that state `launchTemplate` must not be specified

export interface CommonASGLaunchTemplateProps {

readonly minCapacity?: number;

readonly maxCapacity?: number;

readonly desiredCapacity?: number;

readonly vpcSubnets?: ec2.SubnetSelection;

readonly notifications?: NotificationConfiguration[];

readonly allowAllOutbound?: boolean;

readonly ignoreUnmodifiedSizeProperties?: boolean;

readonly cooldown?: Duration;

readonly healthCheck?: HealthCheck;

readonly maxInstanceLifetime?: Duration;

readonly groupMetrics?: GroupMetrics[];

readonly signals?: Signals;

readonly updatePolicy?: UpdatePolicy;

readonly newInstancesProtectedFromScaleIn?: boolean;

readonly autoScalingGroupName?: string;

readonly terminationPolicies?: TerminationPolicy[];

readonly defaultInstanceWarmup?: Duration;

readonly ssmSessionPermissions?: boolean;

}

// Note: The original CommonAutoScalingGroupProps can also be modified to move the properties

// that state "`launchTemplate` must not be specified" to AutoScalingGroupProps
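
A minimal sketch of how splitting the props could rule out the collision at compile time, using simplified stand-in types. All names below (`CommonProps`, `InlineInstanceProps`, `LaunchTemplateProps`, `AsgProps`, `describeAsg`) are hypothetical and are not part of aws-cdk-lib; real props would use CDK types such as `ec2.ILaunchTemplate` rather than strings.

```typescript
// Hypothetical, simplified stand-ins for the CDK props; not the real API.
interface CommonProps {
  readonly minCapacity?: number;
  readonly maxCapacity?: number;
}

// Variant 1: instance settings are given inline; launchTemplate is forbidden.
interface InlineInstanceProps extends CommonProps {
  readonly instanceType: string;
  readonly machineImage: string;
  readonly launchTemplate?: never;
}

// Variant 2: a launch template is given; the inline settings are forbidden.
interface LaunchTemplateProps extends CommonProps {
  readonly launchTemplate: string;
  readonly instanceType?: never;
  readonly machineImage?: never;
}

// A caller must satisfy exactly one of the two variants.
type AsgProps = InlineInstanceProps | LaunchTemplateProps;

function describeAsg(props: AsgProps): string {
  const template: string | undefined = props.launchTemplate;
  if (template !== undefined) {
    return `launch template ${template}`;
  }
  return `${props.instanceType} running ${props.machineImage}`;
}

console.log(describeAsg({ launchTemplate: "lt-web" }));
console.log(describeAsg({ instanceType: "t3.micro", machineImage: "al2" }));

// Mixing both variants should be rejected by the type checker:
// describeAsg({ launchTemplate: "lt-web", instanceType: "t3.micro", machineImage: "al2" });
```

With this shape the conflict shows up in the editor rather than after a long synth. A union type is one option; separate constructors or factory methods, as proposed above, would be another.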

### Other Information

_No response_

### Acknowledgements

- [x] I may be able to implement this feature request

- [ ] This feature might incur a breaking change

### CDK version used

2.87.0

### Environment details (OS name and version, etc.)

OSX / Using AL2_x86_64

|

1.0

|

AutoScalingGroup Constructor: Overloaded Constructor / Different Props - ### Describe the feature

Create overloaded constructor(s) for `AutoScalingGroup` with different properties. These alternate constructors can contain either `launchTemplate` or `mixedInstancesPolicy` while simultaneously removing all properties that must not be specified when either of these two props are passed into the original constructor.

### Use Case

When migrating my CFN templates to CDK, it is difficult for me to debug my AutoScalingGroup CDK code because of the way ASGs are described in the CDK documentation and because of the way ASGs are defined in code. Building my templates takes a long time (even for a single package), and running into an error with `launchTemplate`, `mixedInstancesPolicy` and `machineImage` is frustrating.

Properties such as `instanceType` specify that the `launchTemplate` / `mixedInstancesPolicy` must not be specified. However, `launchTemplate` and `mixedInstancesPolicy` do not specify the other properties that break them in return.

To fix these issues, we can both add more detail to `launchTemplate` / `mixedInstancesPolicy` in the documentation and split the AutoScalingGroupProps interface into different interfaces to prevent this collision in the first place.

### Proposed Solution

constructor(scope: Construct, id: string, props: ASGLaunchTemplateProps);

// ASGLaunchTemplateProps is the same as AutoScalingGroupProps minus the properties

// that state `launchTemplate` must not be specified

export interface ASGLaunchTemplateProps extends CommonASGLaunchTemplateProps {

readonly vpc: ec2.IVpc;

readonly launchTemplate?: ec2.ILaunchTemplate;

readonly init?: ec2.CloudFormationInit;

readonly initOptions?: ApplyCloudFormationInitOptions;

readonly requireImdsv2?: boolean;

}

// CommonASGLaunchTemplateProps is the same as CommonAutoScalingGroupProps minus the properties

// that state `launchTemplate` must not be specified

export interface CommonASGLaunchTemplateProps {

readonly minCapacity?: number;

readonly maxCapacity?: number;

readonly desiredCapacity?: number;

readonly vpcSubnets?: ec2.SubnetSelection;

readonly notifications?: NotificationConfiguration[];

readonly allowAllOutbound?: boolean;

readonly ignoreUnmodifiedSizeProperties?: boolean;

readonly cooldown?: Duration;

readonly healthCheck?: HealthCheck;

readonly maxInstanceLifetime?: Duration;

readonly groupMetrics?: GroupMetrics[];

readonly signals?: Signals;

readonly updatePolicy?: UpdatePolicy;

readonly newInstancesProtectedFromScaleIn?: boolean;

readonly autoScalingGroupName?: string;

readonly terminationPolicies?: TerminationPolicy[];

readonly defaultInstanceWarmup?: Duration;

readonly ssmSessionPermissions?: boolean;

}

// Note: The original CommonAutoScalingGroupProps can also be modified to move the properties

// that state "`launchTemplate` must not be specified" to AutoScalingGroupProps

### Other Information

_No response_

### Acknowledgements

- [x] I may be able to implement this feature request

- [ ] This feature might incur a breaking change

### CDK version used

2.87.0

### Environment details (OS name and version, etc.)

OSX / Using AL2_x86_64

|

non_process

|

autoscalinggroup constructor overloaded constructor different props describe the feature create overloaded constructor s for autoscalinggroup with different properties these alternate constructors can contain either launchtemplate or mixedinstancespolicy while simultaneously removing all properties that must not be specified when either of these two props are passed into the original constructor use case when migrating my cfn templates to cdk it is difficult for me to debug my autoscalinggroup cdk code because of the way asgs are described in the cdk documentation and because of the way asgs are defined in code building my templates takes a long time even for a single package and running into an error with launchtemplate mixedinstancespolicy and machineimage is frustrating properties such as instancetype specify that the launchtemplate mixedinstancespolicy must not be specified however launchtemplate and mixedinstancespolicy do not specify the other properties that break them in return to fix these issues we can both add more detail to launchtemplate mixedinstancespolicy in the documentation and split the autoscalinggroupprops interface into different interfaces to prevent this collision in the first place proposed solution constructor scope construct id string props asglaunchtemplateprops asglaunchtemplateprops is the same as autoscalinggroupprops minus the properties that state launchtemplate must not be specified export interface asglaunchtemplateprops extends commonasglaunchtemplateprops readonly vpc ivpc readonly launchtemplate ilaunchtemplate readonly init cloudformationinit readonly initoptions applycloudformationinitoptions readonly boolean commonasglaunchtemplateprops is the same as commonautoscalinggroupprops minus the properties that state launchtemplate must not be specified export interface commonautoscalinggroupprops readonly mincapacity number readonly maxcapacity number readonly desiredcapacity number readonly vpcsubnets subnetselection readonly 
notifications notificationconfiguration readonly allowalloutbound boolean readonly ignoreunmodifiedsizeproperties boolean readonly cooldown duration readonly healthcheck healthcheck readonly maxinstancelifetime duration readonly groupmetrics groupmetrics readonly signals signals readonly updatepolicy updatepolicy readonly newinstancesprotectedfromscalein boolean readonly autoscalinggroupname string readonly terminationpolicies terminationpolicy readonly defaultinstancewarmup duration readonly ssmsessionpermissions boolean note the original commonautoscalinggroupprops can also be modified to move the properties that state launchtemplate must not be specified to autoscalinggroupprops other information no response acknowledgements i may be able to implement this feature request this feature might incur a breaking change cdk version used environment details os name and version etc osx using

| 0

|

617,123

| 19,343,670,164

|

IssuesEvent

|

2021-12-15 08:34:14

|

webcompat/web-bugs

|

https://api.github.com/repos/webcompat/web-bugs

|

closed

|

samsung.com - see bug description

|

browser-firefox-mobile priority-important engine-gecko

|

<!-- @browser: Firefox Mobile 95.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 11; Mobile; rv:95.0) Gecko/95.0 Firefox/95.0 -->

<!-- @reported_with: unknown -->

**URL**: http://samsung.com

**Browser / Version**: Firefox Mobile 95.0

**Operating System**: windows 10

**Tested Another Browser**: Yes Edge

**Problem type**: Something else

**Description**: all SAMSUNG devices are hacked

**Steps to Reproduce**:

Accesses information on emails passwords bank details. TV ( sky) through TV laptop or and phone.

<details>

<summary>Browser Configuration</summary>

<ul>

<li>None</li>

</ul>

</details>

_From [webcompat.com](https://webcompat.com/) with ❤️_

|

1.0

|

samsung.com - see bug description - <!-- @browser: Firefox Mobile 95.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 11; Mobile; rv:95.0) Gecko/95.0 Firefox/95.0 -->

<!-- @reported_with: unknown -->

**URL**: http://samsung.com

**Browser / Version**: Firefox Mobile 95.0

**Operating System**: windows 10

**Tested Another Browser**: Yes Edge

**Problem type**: Something else

**Description**: all SAMSUNG devices are hacked

**Steps to Reproduce**:

Accesses information on emails passwords bank details. TV ( sky) through TV laptop or and phone.

<details>

<summary>Browser Configuration</summary>

<ul>

<li>None</li>

</ul>

</details>

_From [webcompat.com](https://webcompat.com/) with ❤️_

|

non_process

|

samsung com see bug description url browser version firefox mobile operating system windows tested another browser yes edge problem type something else description all samsung devices are hacked steps to reproduce accesses information on emails passwords bank details tv sky through tv laptop or and phone browser configuration none from with ❤️

| 0

|

85,938

| 3,700,656,950

|

IssuesEvent

|

2016-02-29 09:37:30

|

cs2103jan2016-t09-2j/main

|

https://api.github.com/repos/cs2103jan2016-t09-2j/main

|

closed

|

Parse Adding Commands to Scheduled Tasks

|

priority.high status.ongoing type.task

|

- Decide all the existing possibilities to add new tasks into the scheduled tasks list

- What about the keywords for date and time ?

- Study all existing libraries. Which ones can be imported ?

|

1.0

|

Parse Adding Commands to Scheduled Tasks - - Decide all the existing possibilities to add new tasks into the scheduled tasks list

- What about the keywords for date and time ?

- Study all existing libraries. Which ones can be imported ?

|

non_process

|

parse adding commands to scheduled tasks decide all the existing possibilities to add new tasks into the scheduled tasks list what about the keywords for date and time study all existing libraries which ones can be imported

| 0

|

18,542

| 24,554,962,348

|

IssuesEvent

|

2022-10-12 15:11:56

|

GoogleCloudPlatform/fda-mystudies

|

https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies

|

closed

|

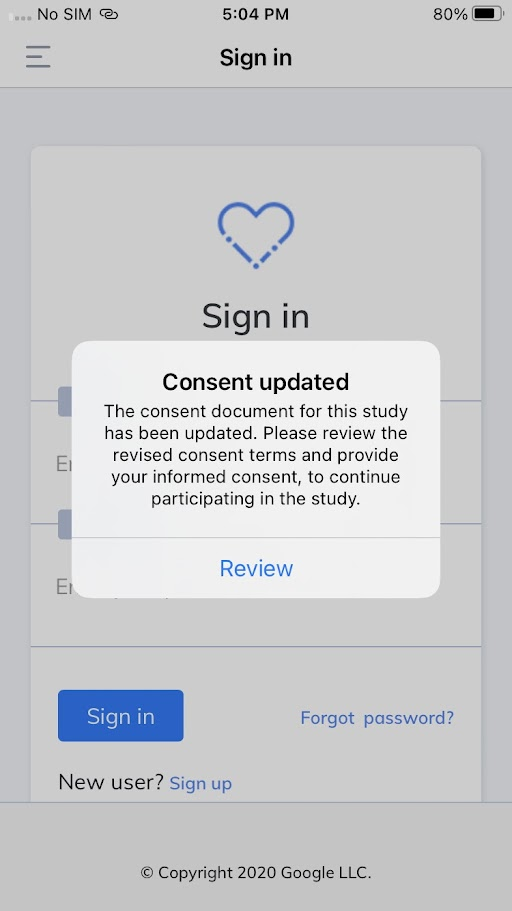

[iOS] [Standalone] 'Review' consent popup is getting displayed on sign in screen in the following scenario

|

Bug P1 iOS Process: Fixed Process: Tested QA Process: Tested dev

|

Steps:

1. Signup or sign in to the mobile app

2. Enroll to the study

3. In SB, update the consent for enrolled participants and publish the study updates

4. Go to the mobile app

5. Minimize the app once after getting the 'Review' consent popup

6. Maximize the app

7. On the Passcode screen, click on 'Forgot passcode? Sign in again' and observe

AR: 'Review' consent popup is getting displayed on the sign-in screen

ER: 'Review' consent popup should not be displayed on the sign-in screen

|

3.0

|

[iOS] [Standalone] 'Review' consent popup is getting displayed on sign in screen in the following scenario - Steps:

1. Signup or sign in to the mobile app

2. Enroll to the study

3. In SB, update the consent for enrolled participants and publish the study updates

4. Go to the mobile app

5. Minimize the app once after getting the 'Review' consent popup

6. Maximize the app

7. On the Passcode screen, click on 'Forgot passcode? Sign in again' and observe

AR: 'Review' consent popup is getting displayed on the sign-in screen

ER: 'Review' consent popup should not be displayed on the sign-in screen

|

process

|

review consent popup is getting displayed on sign in screen in the following scenario steps signup or sign in to the mobile app enroll to the study in sb update the consent for enrolled participants and publish the study updates go to the mobile app minimize the app once after getting the review consent popup maximize the app on the passcode screen click on forgot passcode sign in again and observe ar review consent popup is getting displayed on the sign in screen er review consent popup should not be displayed on the sign in screen

| 1

|

34,150

| 9,300,419,535

|

IssuesEvent

|

2019-03-23 13:39:02

|

ekeeke/Genesis-Plus-GX

|

https://api.github.com/repos/ekeeke/Genesis-Plus-GX

|

closed

|

Still doesnt compile with latest devkitPPC version

|

build error

|

/media/demetris/c4487cfd-d71a-441b-b83d-23d8ce19475d/genesis-plus-gx/gx/main.c:381:25: error: 'S_IRWXU' undeclared (first use in this function)

else mkdir(pathname,S_IRWXU);

|

1.0

|

Still doesnt compile with latest devkitPPC version - /media/demetris/c4487cfd-d71a-441b-b83d-23d8ce19475d/genesis-plus-gx/gx/main.c:381:25: error: 'S_IRWXU' undeclared (first use in this function)

else mkdir(pathname,S_IRWXU);

|

non_process

|

still doesnt compile with latest devkitppc version media demetris genesis plus gx gx main c error s irwxu undeclared first use in this function else mkdir pathname s irwxu

| 0

|

106,628

| 11,493,553,831

|

IssuesEvent

|

2020-02-11 23:19:16

|

5Guys-NC/CAA-Niagara-Event-Management-System

|

https://api.github.com/repos/5Guys-NC/CAA-Niagara-Event-Management-System

|

opened

|

Create good version of Data Model

|

TODO documentation

|

Someone in the group will have a sketch of the data model once final.

Please make a good copy of this.

|

1.0

|

Create good version of Data Model - Someone in the group will have a sketch of the data model once final.

Please make a good copy of this.

|

non_process

|

create good version of data model someone in the group will have a sketch of the data model once final please make a good copy of this

| 0

|

294,028

| 9,012,327,709

|

IssuesEvent

|

2019-02-05 16:38:16

|

canonical-websites/snapcraft.io

|

https://api.github.com/repos/canonical-websites/snapcraft.io

|

closed

|

Building snaps LIVE Stream notification

|

Priority: Critical

|

Advocacy is going to do a LIVE Stream of a keynote called [building snaps](https://www.youtube.com/watch?v=BEp_l2oUcD8&ab_channel=snapcraft) on Youtube which will be live on 6 Sept @ 8pm (GMT) so we would like to include this as part of the homepage and the store homepage.

|

1.0

|

Building snaps LIVE Stream notification - Advocacy is going to do a LIVE Stream of a keynote called [building snaps](https://www.youtube.com/watch?v=BEp_l2oUcD8&ab_channel=snapcraft) on Youtube which will be live on 6 Sept @ 8pm (GMT) so we would like to include this as part of the homepage and the store homepage.

|

non_process

|

building snaps live stream notification advocacy is going to do a live stream of a keynote called on youtube which will be live on sept gmt so we would like to include this as part of the homepage and the store homepage

| 0

|

73,229

| 14,012,118,879

|

IssuesEvent

|

2020-10-29 08:34:10

|

Regalis11/Barotrauma

|

https://api.github.com/repos/Regalis11/Barotrauma

|

closed

|

[v0.1100.0.0 (unstable)] - Ballast flora permanently jams hatch open even after its killed

|

Bug Code

|

**Description**

Ballast flora will permanently jam the hatch to ballast open since you're unable to plasma cut it to free it, even after its killed.

**Steps To Reproduce**

1) spawn ballast flora

2) open the hatch topside of it (using dugong)

3) attack it with flame thrower

**Version**

[v0.1100.0.0 (unstable)]

**Edit**

It seems to be able to permanently jam open doors too (picture below)

**Additional information**

|

1.0

|

[v0.1100.0.0 (unstable)] - Ballast flora permanently jams hatch open even after its killed - **Description**

Ballast flora will permanently jam the hatch to ballast open since you're unable to plasma cut it to free it, even after its killed.

**Steps To Reproduce**

1) spawn ballast flora

2) open the hatch topside of it (using dugong)

3) attack it with flame thrower

**Version**

[v0.1100.0.0 (unstable)]

**Edit**

It seems to be able to permanently jam open doors too (picture below)

**Additional information**

|

non_process

|