Unnamed: 0 (int64, 9 to 832k) | id (float64, 2.5B to 32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19 to 19) | repo (stringlengths, 7 to 112) | repo_url (stringlengths, 36 to 141) | action (stringclasses, 3 values) | title (stringlengths, 4 to 323) | labels (stringlengths, 4 to 2.67k) | body (stringlengths, 23 to 107k) | index (stringclasses, 4 values) | text_combine (stringlengths, 96 to 107k) | label (stringclasses, 2 values) | text (stringlengths, 96 to 56.1k) | binary_label (int64, 0 to 1)

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

794 | 10,550,587,885 | IssuesEvent | 2019-10-03 11:26:12 | Cha-OS/colabo | https://api.github.com/repos/Cha-OS/colabo | opened | Net or Server accessing errors | IMPORTANT UX.UsrOnBoard+AvoidUsrErr backend moderation performance reliability | - if the server is unavailable, inform the user, e.g. after several retries or HTTP request FAIL response

- let him TRY again.

- NOTIFICATIONS or TOOLBAR Status

- you're offline (re-introduce it from the old code)

- you're net is weak | True | Net or Server accessing errors - - if the server is unavailable, inform the user, e.g. after several retries or HTTP request FAIL response

- let him TRY again.

- NOTIFICATIONS or TOOLBAR Status

- you're offline (re-introduce it from the old code)

- you're net is weak | reli | net or server accessing errors if the server is unavailable inform the user e g after several retries or http request fail response let him try again notifications or toolbar status you re offline re introduce it from the old code you re net is weak | 1 |

534 | 8,391,959,645 | IssuesEvent | 2018-10-09 16:15:29 | dotnet/corefx | https://api.github.com/repos/dotnet/corefx | closed | System.Net.Sockets.SocketException: An attempt was made to access a socket in a way forbidden by its access permissions | area-System.Net.Http.SocketsHttpHandler bug needs more info tenet-reliability | Migrated from [#3575](https://github.com/aspnet/Home/issues/3575)...

The call from my Asp.NET Core app is just a standard http get to retrieve OData metadata, e.g. `GET /api/odata/asset/$metadata `- this works 99.9% of the time but occasionally it fails and I get a HttpRequestException reported in Application Insights..

```

System.Net.Http.HttpRequestException: An attempt was made to access a socket in a way forbidden by its access permissions

---> System.Net.Sockets.SocketException: An attempt was made to access a socket in a way forbidden by its access permissions

at System.Net.Http.ConnectHelper.ConnectAsync(String host, Int32 port, CancellationToken cancellationToken)

--- End of inner exception stack trace ---

at System.Net.Http.ConnectHelper.ConnectAsync(String host, Int32 port, CancellationToken cancellationToken)

at System.Net.Http.HttpConnectionPool.CreateConnectionAsync(HttpRequestMessage request, CancellationToken cancellationToken)

at System.Net.Http.HttpConnectionPool.WaitForCreatedConnectionAsync(ValueTask`1 creationTask)

at System.Net.Http.HttpConnectionPool.SendWithRetryAsync(HttpRequestMessage request, Boolean doRequestAuth, CancellationToken cancellationToken)

at System.Net.Http.RedirectHandler.SendAsync(HttpRequestMessage request, CancellationToken cancellationToken)

at System.Net.Http.DiagnosticsHandler.SendAsync(HttpRequestMessage request, CancellationToken cancellationToken)

```

There are webjobs in the same app service plan and [#1876](https://github.com/Azure/azure-webjobs-sdk/issues/1876) seems to be similar (see last two comments), what I'm not sure is whether this error gets raised per endpoint you talk to, or across all outbound requests.

There are no warnings at the app service plan level regarding port exhaustion and the endpoint I'm trying to talk to is in the same app service plan and is actually a virtual application within the same web site. | True | System.Net.Sockets.SocketException: An attempt was made to access a socket in a way forbidden by its access permissions - Migrated from [#3575](https://github.com/aspnet/Home/issues/3575)...

The call from my Asp.NET Core app is just a standard http get to retrieve OData metadata, e.g. `GET /api/odata/asset/$metadata `- this works 99.9% of the time but occasionally it fails and I get a HttpRequestException reported in Application Insights..

```

System.Net.Http.HttpRequestException: An attempt was made to access a socket in a way forbidden by its access permissions

---> System.Net.Sockets.SocketException: An attempt was made to access a socket in a way forbidden by its access permissions

at System.Net.Http.ConnectHelper.ConnectAsync(String host, Int32 port, CancellationToken cancellationToken)

--- End of inner exception stack trace ---

at System.Net.Http.ConnectHelper.ConnectAsync(String host, Int32 port, CancellationToken cancellationToken)

at System.Net.Http.HttpConnectionPool.CreateConnectionAsync(HttpRequestMessage request, CancellationToken cancellationToken)

at System.Net.Http.HttpConnectionPool.WaitForCreatedConnectionAsync(ValueTask`1 creationTask)

at System.Net.Http.HttpConnectionPool.SendWithRetryAsync(HttpRequestMessage request, Boolean doRequestAuth, CancellationToken cancellationToken)

at System.Net.Http.RedirectHandler.SendAsync(HttpRequestMessage request, CancellationToken cancellationToken)

at System.Net.Http.DiagnosticsHandler.SendAsync(HttpRequestMessage request, CancellationToken cancellationToken)

```

There are webjobs in the same app service plan and [#1876](https://github.com/Azure/azure-webjobs-sdk/issues/1876) seems to be similar (see last two comments), what I'm not sure is whether this error gets raised per endpoint you talk to, or across all outbound requests.

There are no warnings at the app service plan level regarding port exhaustion and the endpoint I'm trying to talk to is in the same app service plan and is actually a virtual application within the same web site. | reli | system net sockets socketexception an attempt was made to access a socket in a way forbidden by its access permissions migrated from the call from my asp net core app is just a standard http get to retrieve odata metadata e g get api odata asset metadata this works of the time but occasionally it fails and i get a httprequestexception reported in application insights system net http httprequestexception an attempt was made to access a socket in a way forbidden by its access permissions system net sockets socketexception an attempt was made to access a socket in a way forbidden by its access permissions at system net http connecthelper connectasync string host port cancellationtoken cancellationtoken end of inner exception stack trace at system net http connecthelper connectasync string host port cancellationtoken cancellationtoken at system net http httpconnectionpool createconnectionasync httprequestmessage request cancellationtoken cancellationtoken at system net http httpconnectionpool waitforcreatedconnectionasync valuetask creationtask at system net http httpconnectionpool sendwithretryasync httprequestmessage request boolean dorequestauth cancellationtoken cancellationtoken at system net http redirecthandler sendasync httprequestmessage request cancellationtoken cancellationtoken at system net http diagnosticshandler sendasync httprequestmessage request cancellationtoken cancellationtoken there are webjobs in the same app service plan and seems to be similar see last two comments what i m not sure is whether this error gets raised per endpoint you talk to or across all outbound requests there are no warnings at the app service plan level regarding port exhaustion and the endpoint i m trying to talk to is in the same app service plan and is actually a virtual application within the same web site | 1 |

32,279 | 8,824,395,070 | IssuesEvent | 2019-01-02 16:51:31 | docker/docker.github.io | https://api.github.com/repos/docker/docker.github.io | closed | FROM syntax spec is not clear about hash algo | content/builder | File: [engine/reference/builder.md](https://docs.docker.com/engine/reference/builder/), CC @gbarr01

I was getting really stuck on the docs for the `FROM` directive in the `Dockerfile` format, which says:

FROM <image>[@<digest>] [AS <name>]

So I was using:

FROM alpine@3d44fa76c2c83ed9296e4508b436ff583397cac0f4bad85c2b4ecc193ddb5106 AS build

which produces:

> invalid reference format

After much searching, and not finding any examples of pinning to a hash, I tried this on a whim:

FROM alpine@sha256:3d44fa76c2c83ed9296e4508b436ff583397cac0f4bad85c2b4ecc193ddb5106 AS build

That worked. Thus, since I don't think it is clear that `<digest>` needs to specify the hash algorithm and a colon, can we change this to:

FROM <image>[@<hash-algo>:<digest>] [AS <name>]

Or, can an example be added in this section, so it is clear that the algo is required?

| 1.0 | FROM syntax spec is not clear about hash algo - File: [engine/reference/builder.md](https://docs.docker.com/engine/reference/builder/), CC @gbarr01

I was getting really stuck on the docs for the `FROM` directive in the `Dockerfile` format, which says:

FROM <image>[@<digest>] [AS <name>]

So I was using:

FROM alpine@3d44fa76c2c83ed9296e4508b436ff583397cac0f4bad85c2b4ecc193ddb5106 AS build

which produces:

> invalid reference format

After much searching, and not finding any examples of pinning to a hash, I tried this on a whim:

FROM alpine@sha256:3d44fa76c2c83ed9296e4508b436ff583397cac0f4bad85c2b4ecc193ddb5106 AS build

That worked. Thus, since I don't think it is clear that `<digest>` needs to specify the hash algorithm and a colon, can we change this to:

FROM <image>[@<hash-algo>:<digest>] [AS <name>]

Or, can an example be added in this section, so it is clear that the algo is required?

| non_reli | from syntax spec is not clear about hash algo file cc i was getting really stuck on the docs for the from directive in the dockerfile format which says from so i was using from alpine as build which produces invalid reference format after much searching and not finding any examples of pinning to a hash i tried this on a whim from alpine as build that worked thus since i don t think it is clear that needs to specify the hash algorithm and a colon can we change this to from or can an example be added in this section so it is clear that the algo is required | 0 |

1,092 | 13,041,829,055 | IssuesEvent | 2020-07-28 21:08:34 | mozilla/hubs | https://api.github.com/repos/mozilla/hubs | closed | Move from node-sass to dart-sass | enhancement reliability | I think we could improve developer experience by moving from `node-sass` to `dart-sass`. `node-sass` works fine, but uses a native module using`node-gyp` that requires Python 2.7 to be installed. `node-dart` is a drop in replacement and doesn't have this dependency.

| True | Move from node-sass to dart-sass - I think we could improve developer experience by moving from `node-sass` to `dart-sass`. `node-sass` works fine, but uses a native module using`node-gyp` that requires Python 2.7 to be installed. `node-dart` is a drop in replacement and doesn't have this dependency.

| reli | move from node sass to dart sass i think we could improve developer experience by moving from node sass to dart sass node sass works fine but uses a native module using node gyp that requires python to be installed node dart is a drop in replacement and doesn t have this dependency | 1 |

57,977 | 11,812,356,942 | IssuesEvent | 2020-03-19 20:00:17 | dotnet/runtime | https://api.github.com/repos/dotnet/runtime | closed | Do not pass value types to Object.ReferenceEquals | api-suggestion area-System.Runtime code-analyzer untriaged | Calls to `ReferenceEquals` where we can detect a value type is being passed in are invariably wrong, as the value type will be boxed, and regardless of its value, `ReferenceEquals` will always return `false`.

**Category**: Reliability | 1.0 | Do not pass value types to Object.ReferenceEquals - Calls to `ReferenceEquals` where we can detect a value type is being passed in are invariably wrong, as the value type will be boxed, and regardless of its value, `ReferenceEquals` will always return `false`.

**Category**: Reliability | non_reli | do not pass value types to object referenceequals calls to referenceequals where we can detect a value type is being passed in are invariably wrong as the value type will be boxed and regardless of its value referenceequals will always return false category reliability | 0 |

570 | 8,656,706,433 | IssuesEvent | 2018-11-27 19:12:37 | m3db/m3 | https://api.github.com/repos/m3db/m3 | opened | Make peers bootstrapper auto-detect when all other peers are not bootstrapped and return success | C: Bootstrap G: Data Integrity P: Medium T: Reliability T: Usability area:db | Right now when catastrophic failures happen (all nodes crash due to datacenter powerloss or all the nodes run out of disk space or OOM around the same time), recovery can become very difficult if any of the nodes encounter corrupt commitlogs. The reason for this is that if the commitlog bootstrapper encounters any corrupt commitlog files, it will mark the entire bootstrap range as unfulfilled.

In normal situations, that is the desired behavior as it allows the peers bootstrapper to repair any corrupt data. However, in catastrophic failures, all the nodes will end up stuck in the peers bootstrapper unable to bootstrap from each other because they can't achieve read consistency.

We should add logic to the peers bootstrapper that detects when a read consistency cannot be achieved for a given shard (due to too many also being stuck in the bootstrapping phase) and if the host is in the LEAVING or AVAILABLE state for that shard then we should just succeed the bootstrap. | True | Make peers bootstrapper auto-detect when all other peers are not bootstrapped and return success - Right now when catastrophic failures happen (all nodes crash due to datacenter powerloss or all the nodes run out of disk space or OOM around the same time), recovery can become very difficult if any of the nodes encounter corrupt commitlogs. The reason for this is that if the commitlog bootstrapper encounters any corrupt commitlog files, it will mark the entire bootstrap range as unfulfilled.

In normal situations, that is the desired behavior as it allows the peers bootstrapper to repair any corrupt data. However, in catastrophic failures, all the nodes will end up stuck in the peers bootstrapper unable to bootstrap from each other because they can't achieve read consistency.

We should add logic to the peers bootstrapper that detects when a read consistency cannot be achieved for a given shard (due to too many also being stuck in the bootstrapping phase) and if the host is in the LEAVING or AVAILABLE state for that shard then we should just succeed the bootstrap. | reli | make peers bootstrapper auto detect when all other peers are not bootstrapped and return success right now when catastrophic failures happen all nodes crash due to datacenter powerloss or all the nodes run out of disk space or oom around the same time recovery can become very difficult if any of the nodes encounter corrupt commitlogs the reason for this is that if the commitlog bootstrapper encounters any corrupt commitlog files it will mark the entire bootstrap range as unfulfilled in normal situations that is the desired behavior as it allows the peers bootstrapper to repair any corrupt data however in catastrophic failures all the nodes will end up stuck in the peers bootstrapper unable to bootstrap from each other because they can t achieve read consistency we should add logic to the peers bootstrapper that detects when a read consistency cannot be achieved for a given shard due to too many also being stuck in the bootstrapping phase and if the host is in the leaving or available state for that shard then we should just succeed the bootstrap | 1 |

265,211 | 8,343,633,001 | IssuesEvent | 2018-09-30 07:14:52 | minio/minio | https://api.github.com/repos/minio/minio | closed | minio some node return 403 | community priority: medium triage | <!--- Provide a general summary of the issue in the Title above -->

## Expected Behavior

work better

<!--- If you're describing a bug, tell us what should happen -->

<!--- If you're suggesting a change/improvement, tell us how it should work -->

## Current Behavior

there is a clusters of 4 nodes ,but sometime will return 403 from http response, http header like this:

HTTP/1.1 403 Forbidden

Accept-Ranges: bytes

Content-Security-Policy: block-all-mixed-content

Server: Minio/RELEASE.2018-09-25T21-34-43Z (linux; amd64)

Vary: Origin

X-Amz-Request-Id: 1558352F1FFDC4D8

X-Xss-Protection: 1; mode=block

Date: Thu, 27 Sep 2018 08:42:29 GMT

minio error log:

Error: volume not found

disk=http://ipaddress:9090/app/minio

1: cmd/logger/logger.go:294:logger.LogIf()

2: cmd/xl-v1-utils.go:309:cmd.readXLMeta()

3: cmd/xl-v1-utils.go:341:cmd.readAllXLMetadata.func1()

minion start command:

MINIO_ACCESS_KEY=1 MINIO_SECRET_KEY=2 minio server --address ip:9090 http://ip1:9090/app/minio http://ip2:9090/app/minio http://ip3:9090/app/minio http://ip4:9090/app/minio

<!--- If describing a bug, tell us what happens instead of the expected behavior -->

<!--- If suggesting a change/improvement, explain the difference from current behavior -->

## Possible Solution

<!--- Not obligatory, but suggest a fix/reason for the bug, -->

<!--- or ideas how to implement the addition or change -->

## Steps to Reproduce (for bugs)

<!--- Provide a link to a live example, or an unambiguous set of steps to -->

<!--- reproduce this bug. Include code to reproduce, if relevant -->

1.

2.

3.

4.

## Context

<!--- How has this issue affected you? What are you trying to accomplish? -->

<!--- Providing context helps us come up with a solution that is most useful in the real world -->

## Regression

<!-- Is this issue a regression? (Yes / No) -->

<!-- If Yes, optionally please include minio version or commit id or PR# that caused this regression, if you have these details. -->

## Your Environment

<!--- Include as many relevant details about the environment you experienced the bug in -->

* Version used (`minio version`):

Version: 2018-09-25T21:34:43Z

Release-Tag: RELEASE.2018-09-25T21-34-43Z

Commit-ID: aa4e2b1542b98097e08680f21b790de0b776378c

* Environment name and version (e.g. nginx 1.9.1):

* Server type and version:

Ubuntu 16.04.3 LTS

* Operating System and version (`uname -a`):

Linux hostname 4.4.0-87-generic #110-Ubuntu SMP Tue Jul 18 12:55:35 UTC 2017 x86_64 x86_64 x86_64 GNU/Linux

* Link to your project:

| 1.0 | minio some node return 403 - <!--- Provide a general summary of the issue in the Title above -->

## Expected Behavior

work better

<!--- If you're describing a bug, tell us what should happen -->

<!--- If you're suggesting a change/improvement, tell us how it should work -->

## Current Behavior

there is a clusters of 4 nodes ,but sometime will return 403 from http response, http header like this:

HTTP/1.1 403 Forbidden

Accept-Ranges: bytes

Content-Security-Policy: block-all-mixed-content

Server: Minio/RELEASE.2018-09-25T21-34-43Z (linux; amd64)

Vary: Origin

X-Amz-Request-Id: 1558352F1FFDC4D8

X-Xss-Protection: 1; mode=block

Date: Thu, 27 Sep 2018 08:42:29 GMT

minio error log:

Error: volume not found

disk=http://ipaddress:9090/app/minio

1: cmd/logger/logger.go:294:logger.LogIf()

2: cmd/xl-v1-utils.go:309:cmd.readXLMeta()

3: cmd/xl-v1-utils.go:341:cmd.readAllXLMetadata.func1()

minion start command:

MINIO_ACCESS_KEY=1 MINIO_SECRET_KEY=2 minio server --address ip:9090 http://ip1:9090/app/minio http://ip2:9090/app/minio http://ip3:9090/app/minio http://ip4:9090/app/minio

<!--- If describing a bug, tell us what happens instead of the expected behavior -->

<!--- If suggesting a change/improvement, explain the difference from current behavior -->

## Possible Solution

<!--- Not obligatory, but suggest a fix/reason for the bug, -->

<!--- or ideas how to implement the addition or change -->

## Steps to Reproduce (for bugs)

<!--- Provide a link to a live example, or an unambiguous set of steps to -->

<!--- reproduce this bug. Include code to reproduce, if relevant -->

1.

2.

3.

4.

## Context

<!--- How has this issue affected you? What are you trying to accomplish? -->

<!--- Providing context helps us come up with a solution that is most useful in the real world -->

## Regression

<!-- Is this issue a regression? (Yes / No) -->

<!-- If Yes, optionally please include minio version or commit id or PR# that caused this regression, if you have these details. -->

## Your Environment

<!--- Include as many relevant details about the environment you experienced the bug in -->

* Version used (`minio version`):

Version: 2018-09-25T21:34:43Z

Release-Tag: RELEASE.2018-09-25T21-34-43Z

Commit-ID: aa4e2b1542b98097e08680f21b790de0b776378c

* Environment name and version (e.g. nginx 1.9.1):

* Server type and version:

Ubuntu 16.04.3 LTS

* Operating System and version (`uname -a`):

Linux hostname 4.4.0-87-generic #110-Ubuntu SMP Tue Jul 18 12:55:35 UTC 2017 x86_64 x86_64 x86_64 GNU/Linux

* Link to your project:

| non_reli | minio some node return expected behavior work better current behavior there is a clusters of nodes ,but sometime will return from http response, http header like this http forbidden accept ranges bytes content security policy block all mixed content server minio release linux vary origin x amz request id x xss protection mode block date thu sep gmt minio error log error volume not found disk cmd logger logger go logger logif cmd xl utils go cmd readxlmeta cmd xl utils go cmd readallxlmetadata minion start command minio access key minio secret key minio server address ip possible solution steps to reproduce for bugs context regression your environment version used minio version version release tag release commit id environment name and version e g nginx server type and version ubuntu lts operating system and version uname a linux hostname generic ubuntu smp tue jul utc gnu linux link to your project | 0 |

2,203 | 24,137,608,714 | IssuesEvent | 2022-09-21 12:37:30 | Azure/PSRule.Rules.Azure | https://api.github.com/repos/Azure/PSRule.Rules.Azure | opened | Enable purge protection for App Configuration stores | ms-hack-2022 rule: app-configuration pillar: reliability | # Rule request

## Suggested rule change

App Configuration supports purge protection to extend the protection provided by soft-delete. Purge protection limits data loss causes by accidental and malicious purges of deleted configuration stores by enforcing an mandatory retention interval.

This feature only applies to Standard SKU configuration stores. Free configuration stores should be ignored by still rule.

This is enabled by setting the `properties.enablePurgeProtection` property to `true`.

## Applies to the following

The rule applies to the following:

- Resource type: **Microsoft.AppConfiguration/configurationStores**

## Additional context

[Azure deployment reference](https://learn.microsoft.com/azure/templates/microsoft.appconfiguration/configurationstores)

[Purge protection](https://learn.microsoft.com/azure/azure-app-configuration/concept-soft-delete#purge-protection)

| True | Enable purge protection for App Configuration stores - # Rule request

## Suggested rule change

App Configuration supports purge protection to extend the protection provided by soft-delete. Purge protection limits data loss causes by accidental and malicious purges of deleted configuration stores by enforcing an mandatory retention interval.

This feature only applies to Standard SKU configuration stores. Free configuration stores should be ignored by still rule.

This is enabled by setting the `properties.enablePurgeProtection` property to `true`.

## Applies to the following

The rule applies to the following:

- Resource type: **Microsoft.AppConfiguration/configurationStores**

## Additional context

[Azure deployment reference](https://learn.microsoft.com/azure/templates/microsoft.appconfiguration/configurationstores)

[Purge protection](https://learn.microsoft.com/azure/azure-app-configuration/concept-soft-delete#purge-protection)

| reli | enable purge protection for app configuration stores rule request suggested rule change app configuration supports purge protection to extend the protection provided by soft delete purge protection limits data loss causes by accidental and malicious purges of deleted configuration stores by enforcing an mandatory retention interval this feature only applies to standard sku configuration stores free configuration stores should be ignored by still rule this is enabled by setting the properties enablepurgeprotection property to true applies to the following the rule applies to the following resource type microsoft appconfiguration configurationstores additional context | 1 |

28,870 | 11,705,970,798 | IssuesEvent | 2020-03-07 19:07:22 | vlaship/ws | https://api.github.com/repos/vlaship/ws | opened | CVE-2020-9547 (Medium) detected in jackson-databind-2.8.11.3.jar | security vulnerability | ## CVE-2020-9547 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.8.11.3.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core streaming API</p>

<p>Path to dependency file: /tmp/ws-scm/ws/build.gradle</p>

<p>Path to vulnerable library: /root/.gradle/caches/modules-2/files-2.1/com.fasterxml.jackson.core/jackson-databind/2.8.11.3/844df5aba5a1a56e00905b165b12bb34116ee858/jackson-databind-2.8.11.3.jar,/root/.gradle/caches/modules-2/files-2.1/com.fasterxml.jackson.core/jackson-databind/2.8.11.3/844df5aba5a1a56e00905b165b12bb34116ee858/jackson-databind-2.8.11.3.jar</p>

<p>

Dependency Hierarchy:

- spring-boot-starter-websocket-1.5.22.RELEASE.jar (Root Library)

- spring-boot-starter-web-1.5.22.RELEASE.jar

- :x: **jackson-databind-2.8.11.3.jar** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/vlaship/ws/commit/189f4086730e4a06b79e39bcd40240d46674604f">189f4086730e4a06b79e39bcd40240d46674604f</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

FasterXML jackson-databind 2.x before 2.9.10.4 mishandles the interaction between serialization gadgets and typing, related to com.ibatis.sqlmap.engine.transaction.jta.JtaTransactionConfig (aka ibatis-sqlmap).

<p>Publish Date: 2020-03-02

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-9547>CVE-2020-9547</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 2 Score Details (<b>5.0</b>)</summary>

<p>

Base Score Metrics not available</p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2020-9547">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2020-9547</a></p>

<p>Release Date: 2020-03-02</p>

<p>Fix Resolution: com.fasterxml.jackson.core:jackson-databind:2.10.3</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | True | CVE-2020-9547 (Medium) detected in jackson-databind-2.8.11.3.jar - ## CVE-2020-9547 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.8.11.3.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core streaming API</p>

<p>Path to dependency file: /tmp/ws-scm/ws/build.gradle</p>

<p>Path to vulnerable library: /root/.gradle/caches/modules-2/files-2.1/com.fasterxml.jackson.core/jackson-databind/2.8.11.3/844df5aba5a1a56e00905b165b12bb34116ee858/jackson-databind-2.8.11.3.jar,/root/.gradle/caches/modules-2/files-2.1/com.fasterxml.jackson.core/jackson-databind/2.8.11.3/844df5aba5a1a56e00905b165b12bb34116ee858/jackson-databind-2.8.11.3.jar</p>

<p>

Dependency Hierarchy:

- spring-boot-starter-websocket-1.5.22.RELEASE.jar (Root Library)

- spring-boot-starter-web-1.5.22.RELEASE.jar

- :x: **jackson-databind-2.8.11.3.jar** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/vlaship/ws/commit/189f4086730e4a06b79e39bcd40240d46674604f">189f4086730e4a06b79e39bcd40240d46674604f</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

FasterXML jackson-databind 2.x before 2.9.10.4 mishandles the interaction between serialization gadgets and typing, related to com.ibatis.sqlmap.engine.transaction.jta.JtaTransactionConfig (aka ibatis-sqlmap).

<p>Publish Date: 2020-03-02

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-9547>CVE-2020-9547</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 2 Score Details (<b>5.0</b>)</summary>

<p>

Base Score Metrics not available</p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2020-9547">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2020-9547</a></p>

<p>Release Date: 2020-03-02</p>

<p>Fix Resolution: com.fasterxml.jackson.core:jackson-databind:2.10.3</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | non_reli | cve medium detected in jackson databind jar cve medium severity vulnerability vulnerable library jackson databind jar general data binding functionality for jackson works on core streaming api path to dependency file tmp ws scm ws build gradle path to vulnerable library root gradle caches modules files com fasterxml jackson core jackson databind jackson databind jar root gradle caches modules files com fasterxml jackson core jackson databind jackson databind jar dependency hierarchy spring boot starter websocket release jar root library spring boot starter web release jar x jackson databind jar vulnerable library found in head commit a href vulnerability details fasterxml jackson databind x before mishandles the interaction between serialization gadgets and typing related to com ibatis sqlmap engine transaction jta jtatransactionconfig aka ibatis sqlmap publish date url a href cvss score details base score metrics not available suggested fix type upgrade version origin a href release date fix resolution com fasterxml jackson core jackson databind step up your open source security game with whitesource | 0 |

167,439 | 6,338,381,020 | IssuesEvent | 2017-07-27 04:11:25 | apex/up | https://api.github.com/repos/apex/up | opened | Better error when creds are missing | Priority UX | will do the wizard style thing later to get people set up, in Beta or 0.1.0, but for now the default AWS stuff sucks:

```

⨯ error deploying to us-west-2: fetching function config: NoCredentialProviders: no valid providers in chain. Deprecated.

For verbose messaging see aws.Config.CredentialsChainVerboseErrors

``` | 1.0 | Better error when creds are missing - will do the wizard style thing later to get people set up, in Beta or 0.1.0, but for now the default AWS stuff sucks:

```

⨯ error deploying to us-west-2: fetching function config: NoCredentialProviders: no valid providers in chain. Deprecated.

For verbose messaging see aws.Config.CredentialsChainVerboseErrors

``` | non_reli | better error when creds are missing will do the wizard style thing later to get people set up in beta or but for now the default aws stuff sucks ⨯ error deploying to us west fetching function config nocredentialproviders no valid providers in chain deprecated for verbose messaging see aws config credentialschainverboseerrors | 0 |

550 | 8,553,686,135 | IssuesEvent | 2018-11-08 02:04:49 | dotnet/corefx | https://api.github.com/repos/dotnet/corefx | opened | Repeatedly calling Utf8JsonReader.Read() after a JsonReaderException has been thrown should continue to fail deterministically. | area-System.Text.Json tenet-reliability up-for-grabs | This was brought up in the API review. We should validate that multiple read retries after we enter a failure state continue to fail reliably and deterministically.

cc @marek-safar, @GrabYourPitchforks | True | Repeatedly calling Utf8JsonReader.Read() after a JsonReaderException has been thrown should continue to fail deterministically. - This was brought up in the API review. We should validate that multiple read retries after we enter a failure state continue to fail reliably and deterministically.

cc @marek-safar, @GrabYourPitchforks | reli | repeatedly calling read after a jsonreaderexception has been thrown should continue to fail deterministically this was brought up in the api review we should validate that multi retries to read after we enter a failure state continues to fail reliably and deterministically cc marek safar grabyourpitchforks | 1 |

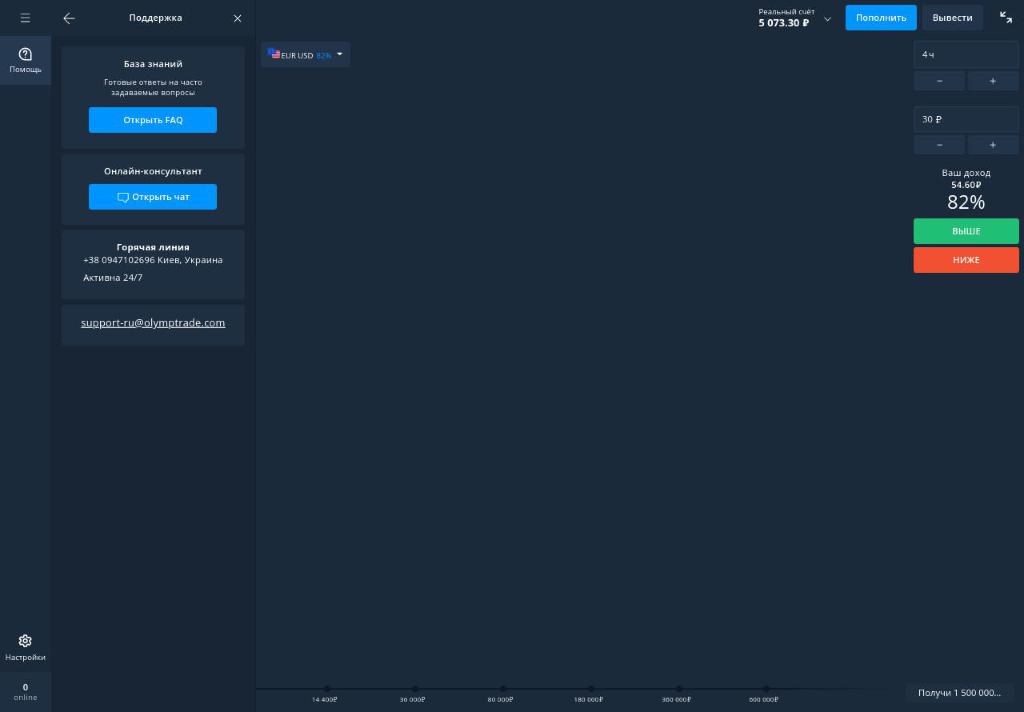

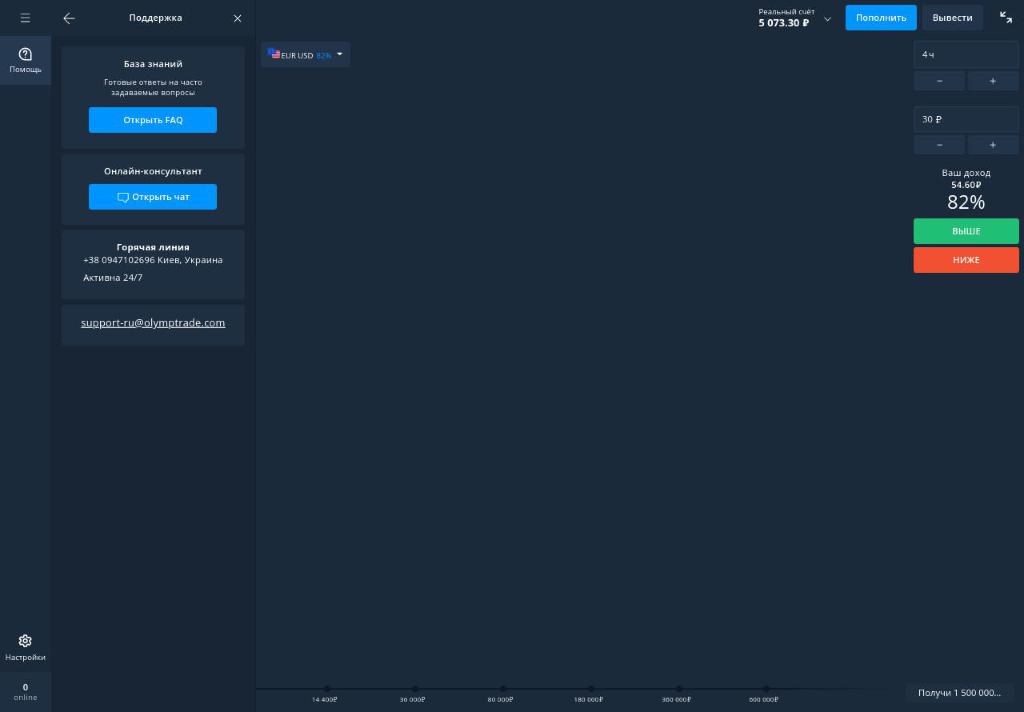

360,147 | 10,684,759,617 | IssuesEvent | 2019-10-22 11:13:22 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | olymptrade.com - see bug description | browser-firefox engine-gecko priority-normal | <!-- @browser: Firefox 71.0 -->

<!-- @ua_header: Mozilla/5.0 (X11; Linux x86_64; rv:71.0) Gecko/20100101 Firefox/71.0 -->

<!-- @reported_with: desktop-reporter -->

**URL**: https://olymptrade.com/platform

**Browser / Version**: Firefox 71.0

**Operating System**: Linux

**Tested Another Browser**: Yes

**Problem type**: Something else

**Description**: Charts on the trading site won't load

**Steps to Reproduce**:

This is a binary options trading site. Today the charts could not be loaded (displayed). In Opera they are loaded.

[](https://webcompat.com/uploads/2019/10/527b497c-079a-43c3-b2b0-b3de86e778ba.jpeg)

<details>

<summary>Browser Configuration</summary>

<ul>

<li>gfx.webrender.all: false</li><li>gfx.webrender.blob-images: true</li><li>gfx.webrender.enabled: false</li><li>image.mem.shared: true</li><li>buildID: 20191014171118</li><li>channel: aurora</li><li>hasTouchScreen: false</li><li>mixed active content blocked: false</li><li>mixed passive content blocked: false</li><li>tracking content blocked: false</li>

</ul>

<p>Console Messages:</p>

<pre>

[{'level': 'error', 'log': [' / ServiceWorker https://olymptrade.com/: .'], 'uri': 'https://cdn1.olymptrade.com/1.0.2187/public/js/platformBinary.e1776ab7.js', 'pos': '1:1839544'}, {'level': 'error', 'log': ['Firefox wss://olymptrade.com/ws_chat.'], 'uri': 'https://cdn1.olymptrade.com/1.0.2187/public/js/platformBinary.e1776ab7.js', 'pos': '1:549622'}, {'level': 'error', 'log': ['Firefox wss://olymptrade.com/ds/v4.'], 'uri': 'https://cdn1.olymptrade.com/1.0.2187/public/js/platformBinary.e1776ab7.js', 'pos': '1:921675'}, {'level': 'error', 'log': ['uncaught exception: Object'], 'uri': '', 'pos': '0:0'}, {'level': 'error', 'log': ['Firefox wss://olymptrade.com/ws_chat.'], 'uri': 'https://cdn1.olymptrade.com/1.0.2187/public/js/platformBinary.e1776ab7.js', 'pos': '1:549622'}, {'level': 'error', 'log': ['Firefox wss://olymptrade.com/ds/v4.'], 'uri': 'https://cdn1.olymptrade.com/1.0.2187/public/js/platformBinary.e1776ab7.js', 'pos': '1:921675'}, {'level': 'error', 'log': ['uncaught exception: Object'], 'uri': '', 'pos': '0:0'}, {'level': 'warn', 'log': ['This page uses the non standard property zoom. 
Consider using calc() in the relevant property values, or using transform along with transform-origin: 0 0.'], 'uri': 'https://livetex-widget.nanotech42.com/js/ui.js?v=7.1.362', 'pos': '1:481815'}, {'level': 'warn', 'log': [' https://balancer-cloud.livetex.ru/get-server/?site_id=154580&__fallback__&=&_m=GET&_c=njr_1_callback&_t=jsonp&_rnd=4scxgu7m96k&_h[lt-origin]=account%3A222283%3Asite%3A154580 , MIME- (text/plain) JavaScript.'], 'uri': 'https://livetex-widget.nanotech42.com/js/iframe.html', 'pos': '0:0'}, {'level': 'warn', 'log': [' <script> https://io5-production-3-ltx242.livetex.ru/visitor/auth?__fallback__&=&_m=POST&_c=njr_2_callback&_t=jsonp&_=%7B%22is_mobile%22%3Afalse%7D&_rnd=65nd8g7gt0n&_h[lt-origin]=account%3A222283%3Asite%3A154580 .'], 'uri': 'https://livetex-widget.nanotech42.com/js/iframe.html', 'pos': '1:1'}, {'level': 'error', 'log': ['Firefox wss://olymptrade.com/ws_chat.'], 'uri': 'https://cdn1.olymptrade.com/1.0.2187/public/js/platformBinary.e1776ab7.js', 'pos': '1:549622'}, {'level': 'error', 'log': ['Firefox wss://olymptrade.com/ds/v4.'], 'uri': 'https://cdn1.olymptrade.com/1.0.2187/public/js/platformBinary.e1776ab7.js', 'pos': '1:921675'}, {'level': 'error', 'log': ['uncaught exception: Object'], 'uri': '', 'pos': '0:0'}, {'level': 'error', 'log': ['Firefox wss://olymptrade.com/ws_chat.'], 'uri': 'https://cdn1.olymptrade.com/1.0.2187/public/js/platformBinary.e1776ab7.js', 'pos': '1:549622'}, {'level': 'error', 'log': ['Firefox wss://olymptrade.com/ds/v4.'], 'uri': 'https://cdn1.olymptrade.com/1.0.2187/public/js/platformBinary.e1776ab7.js', 'pos': '1:921675'}, {'level': 'error', 'log': ['uncaught exception: Object'], 'uri': '', 'pos': '0:0'}, {'level': 'error', 'log': ['Firefox wss://olymptrade.com/ws_chat.'], 'uri': 'https://cdn1.olymptrade.com/1.0.2187/public/js/platformBinary.e1776ab7.js', 'pos': '1:549622'}, {'level': 'error', 'log': ['Firefox wss://olymptrade.com/ds/v4.'], 'uri': 
'https://cdn1.olymptrade.com/1.0.2187/public/js/platformBinary.e1776ab7.js', 'pos': '1:921675'}, {'level': 'error', 'log': ['uncaught exception: Object'], 'uri': '', 'pos': '0:0'}, {'level': 'error', 'log': ['Firefox wss://olymptrade.com/ws_chat.'], 'uri': 'https://cdn1.olymptrade.com/1.0.2187/public/js/platformBinary.e1776ab7.js', 'pos': '1:549622'}, {'level': 'error', 'log': ['Firefox wss://olymptrade.com/ds/v4.'], 'uri': 'https://cdn1.olymptrade.com/1.0.2187/public/js/platformBinary.e1776ab7.js', 'pos': '1:921675'}, {'level': 'error', 'log': ['uncaught exception: Object'], 'uri': '', 'pos': '0:0'}, {'level': 'error', 'log': ['Firefox wss://olymptrade.com/ws_chat.'], 'uri': 'https://cdn1.olymptrade.com/1.0.2187/public/js/platformBinary.e1776ab7.js', 'pos': '1:549622'}, {'level': 'error', 'log': ['Firefox wss://olymptrade.com/ds/v4.'], 'uri': 'https://cdn1.olymptrade.com/1.0.2187/public/js/platformBinary.e1776ab7.js', 'pos': '1:921675'}, {'level': 'error', 'log': ['uncaught exception: Object'], 'uri': '', 'pos': '0:0'}, {'level': 'error', 'log': ['Firefox wss://olymptrade.com/ws_chat.'], 'uri': 'https://cdn1.olymptrade.com/1.0.2187/public/js/platformBinary.e1776ab7.js', 'pos': '1:549622'}, {'level': 'error', 'log': ['Firefox wss://olymptrade.com/ds/v4.'], 'uri': 'https://cdn1.olymptrade.com/1.0.2187/public/js/platformBinary.e1776ab7.js', 'pos': '1:921675'}, {'level': 'error', 'log': ['uncaught exception: Object'], 'uri': '', 'pos': '0:0'}, {'level': 'error', 'log': ['Firefox wss://olymptrade.com/ws_chat.'], 'uri': 'https://cdn1.olymptrade.com/1.0.2187/public/js/platformBinary.e1776ab7.js', 'pos': '1:549622'}, {'level': 'error', 'log': ['Firefox wss://olymptrade.com/ds/v4.'], 'uri': 'https://cdn1.olymptrade.com/1.0.2187/public/js/platformBinary.e1776ab7.js', 'pos': '1:921675'}, {'level': 'error', 'log': ['uncaught exception: Object'], 'uri': '', 'pos': '0:0'}, {'level': 'warn', 'log': [' onmozfullscreenchange .'], 'uri': 'https://olymptrade.com/platform', 'pos': 
'0:0'}, {'level': 'warn', 'log': [' onmozfullscreenerror .'], 'uri': 'https://olymptrade.com/platform', 'pos': '0:0'}]

</pre>

</details>

_From [webcompat.com](https://webcompat.com/) with ❤️_ | 1.0 | olymptrade.com - see bug description - <!-- @browser: Firefox 71.0 -->

<!-- @ua_header: Mozilla/5.0 (X11; Linux x86_64; rv:71.0) Gecko/20100101 Firefox/71.0 -->

<!-- @reported_with: desktop-reporter -->

**URL**: https://olymptrade.com/platform

**Browser / Version**: Firefox 71.0

**Operating System**: Linux

**Tested Another Browser**: Yes

**Problem type**: Something else

**Description**: Charts on the trading site won't load

**Steps to Reproduce**:

This is a binary options trading site. Today the charts could not be loaded (displayed). In Opera they are loaded.

[](https://webcompat.com/uploads/2019/10/527b497c-079a-43c3-b2b0-b3de86e778ba.jpeg)

<details>

<summary>Browser Configuration</summary>

<ul>

<li>gfx.webrender.all: false</li><li>gfx.webrender.blob-images: true</li><li>gfx.webrender.enabled: false</li><li>image.mem.shared: true</li><li>buildID: 20191014171118</li><li>channel: aurora</li><li>hasTouchScreen: false</li><li>mixed active content blocked: false</li><li>mixed passive content blocked: false</li><li>tracking content blocked: false</li>

</ul>

<p>Console Messages:</p>

<pre>

[{'level': 'error', 'log': [' / ServiceWorker https://olymptrade.com/: .'], 'uri': 'https://cdn1.olymptrade.com/1.0.2187/public/js/platformBinary.e1776ab7.js', 'pos': '1:1839544'}, {'level': 'error', 'log': ['Firefox wss://olymptrade.com/ws_chat.'], 'uri': 'https://cdn1.olymptrade.com/1.0.2187/public/js/platformBinary.e1776ab7.js', 'pos': '1:549622'}, {'level': 'error', 'log': ['Firefox wss://olymptrade.com/ds/v4.'], 'uri': 'https://cdn1.olymptrade.com/1.0.2187/public/js/platformBinary.e1776ab7.js', 'pos': '1:921675'}, {'level': 'error', 'log': ['uncaught exception: Object'], 'uri': '', 'pos': '0:0'}, {'level': 'error', 'log': ['Firefox wss://olymptrade.com/ws_chat.'], 'uri': 'https://cdn1.olymptrade.com/1.0.2187/public/js/platformBinary.e1776ab7.js', 'pos': '1:549622'}, {'level': 'error', 'log': ['Firefox wss://olymptrade.com/ds/v4.'], 'uri': 'https://cdn1.olymptrade.com/1.0.2187/public/js/platformBinary.e1776ab7.js', 'pos': '1:921675'}, {'level': 'error', 'log': ['uncaught exception: Object'], 'uri': '', 'pos': '0:0'}, {'level': 'warn', 'log': ['This page uses the non standard property zoom. 
Consider using calc() in the relevant property values, or using transform along with transform-origin: 0 0.'], 'uri': 'https://livetex-widget.nanotech42.com/js/ui.js?v=7.1.362', 'pos': '1:481815'}, {'level': 'warn', 'log': [' https://balancer-cloud.livetex.ru/get-server/?site_id=154580&__fallback__&=&_m=GET&_c=njr_1_callback&_t=jsonp&_rnd=4scxgu7m96k&_h[lt-origin]=account%3A222283%3Asite%3A154580 , MIME- (text/plain) JavaScript.'], 'uri': 'https://livetex-widget.nanotech42.com/js/iframe.html', 'pos': '0:0'}, {'level': 'warn', 'log': [' <script> https://io5-production-3-ltx242.livetex.ru/visitor/auth?__fallback__&=&_m=POST&_c=njr_2_callback&_t=jsonp&_=%7B%22is_mobile%22%3Afalse%7D&_rnd=65nd8g7gt0n&_h[lt-origin]=account%3A222283%3Asite%3A154580 .'], 'uri': 'https://livetex-widget.nanotech42.com/js/iframe.html', 'pos': '1:1'}, {'level': 'error', 'log': ['Firefox wss://olymptrade.com/ws_chat.'], 'uri': 'https://cdn1.olymptrade.com/1.0.2187/public/js/platformBinary.e1776ab7.js', 'pos': '1:549622'}, {'level': 'error', 'log': ['Firefox wss://olymptrade.com/ds/v4.'], 'uri': 'https://cdn1.olymptrade.com/1.0.2187/public/js/platformBinary.e1776ab7.js', 'pos': '1:921675'}, {'level': 'error', 'log': ['uncaught exception: Object'], 'uri': '', 'pos': '0:0'}, {'level': 'error', 'log': ['Firefox wss://olymptrade.com/ws_chat.'], 'uri': 'https://cdn1.olymptrade.com/1.0.2187/public/js/platformBinary.e1776ab7.js', 'pos': '1:549622'}, {'level': 'error', 'log': ['Firefox wss://olymptrade.com/ds/v4.'], 'uri': 'https://cdn1.olymptrade.com/1.0.2187/public/js/platformBinary.e1776ab7.js', 'pos': '1:921675'}, {'level': 'error', 'log': ['uncaught exception: Object'], 'uri': '', 'pos': '0:0'}, {'level': 'error', 'log': ['Firefox wss://olymptrade.com/ws_chat.'], 'uri': 'https://cdn1.olymptrade.com/1.0.2187/public/js/platformBinary.e1776ab7.js', 'pos': '1:549622'}, {'level': 'error', 'log': ['Firefox wss://olymptrade.com/ds/v4.'], 'uri': 
'https://cdn1.olymptrade.com/1.0.2187/public/js/platformBinary.e1776ab7.js', 'pos': '1:921675'}, {'level': 'error', 'log': ['uncaught exception: Object'], 'uri': '', 'pos': '0:0'}, {'level': 'error', 'log': ['Firefox wss://olymptrade.com/ws_chat.'], 'uri': 'https://cdn1.olymptrade.com/1.0.2187/public/js/platformBinary.e1776ab7.js', 'pos': '1:549622'}, {'level': 'error', 'log': ['Firefox wss://olymptrade.com/ds/v4.'], 'uri': 'https://cdn1.olymptrade.com/1.0.2187/public/js/platformBinary.e1776ab7.js', 'pos': '1:921675'}, {'level': 'error', 'log': ['uncaught exception: Object'], 'uri': '', 'pos': '0:0'}, {'level': 'error', 'log': ['Firefox wss://olymptrade.com/ws_chat.'], 'uri': 'https://cdn1.olymptrade.com/1.0.2187/public/js/platformBinary.e1776ab7.js', 'pos': '1:549622'}, {'level': 'error', 'log': ['Firefox wss://olymptrade.com/ds/v4.'], 'uri': 'https://cdn1.olymptrade.com/1.0.2187/public/js/platformBinary.e1776ab7.js', 'pos': '1:921675'}, {'level': 'error', 'log': ['uncaught exception: Object'], 'uri': '', 'pos': '0:0'}, {'level': 'error', 'log': ['Firefox wss://olymptrade.com/ws_chat.'], 'uri': 'https://cdn1.olymptrade.com/1.0.2187/public/js/platformBinary.e1776ab7.js', 'pos': '1:549622'}, {'level': 'error', 'log': ['Firefox wss://olymptrade.com/ds/v4.'], 'uri': 'https://cdn1.olymptrade.com/1.0.2187/public/js/platformBinary.e1776ab7.js', 'pos': '1:921675'}, {'level': 'error', 'log': ['uncaught exception: Object'], 'uri': '', 'pos': '0:0'}, {'level': 'error', 'log': ['Firefox wss://olymptrade.com/ws_chat.'], 'uri': 'https://cdn1.olymptrade.com/1.0.2187/public/js/platformBinary.e1776ab7.js', 'pos': '1:549622'}, {'level': 'error', 'log': ['Firefox wss://olymptrade.com/ds/v4.'], 'uri': 'https://cdn1.olymptrade.com/1.0.2187/public/js/platformBinary.e1776ab7.js', 'pos': '1:921675'}, {'level': 'error', 'log': ['uncaught exception: Object'], 'uri': '', 'pos': '0:0'}, {'level': 'warn', 'log': [' onmozfullscreenchange .'], 'uri': 'https://olymptrade.com/platform', 'pos': 
'0:0'}, {'level': 'warn', 'log': [' onmozfullscreenerror .'], 'uri': 'https://olymptrade.com/platform', 'pos': '0:0'}]

</pre>

</details>

_From [webcompat.com](https://webcompat.com/) with ❤️_ | non_reli | olymptrade com see bug description url browser version firefox operating system linux tested another browser yes problem type something else description charts on the trading site won t load steps to reproduce this is a binary options trading site today the charts could not be loaded displayed in opera they are loaded browser configuration gfx webrender all false gfx webrender blob images true gfx webrender enabled false image mem shared true buildid channel aurora hastouchscreen false mixed active content blocked false mixed passive content blocked false tracking content blocked false console messages uri pos level error log uri pos level error log uri pos level error log uri pos level error log uri pos level error log uri pos level error log uri pos level warn log uri pos level warn log account mime text plain javascript uri pos level warn log account uri pos level error log uri pos level error log uri pos level error log uri pos level error log uri pos level error log uri pos level error log uri pos level error log uri pos level error log uri pos level error log uri pos level error log uri pos level error log uri pos level error log uri pos level error log uri pos level error log uri pos level error log uri pos level error log uri pos level error log uri pos level error log uri pos level error log uri pos level error log uri pos level error log uri pos level warn log uri pos level warn log uri pos from with ❤️ | 0 |

181,654 | 14,072,873,509 | IssuesEvent | 2020-11-04 03:05:11 | red/red | https://api.github.com/repos/red/red | closed | Linux->Windows cross-compilation doesn't work | status.built status.tested type.bug | **Describe the bug**

Wanted to explore this opportunity because apparently R2 for Linux compiles about ~10% faster than R2 for Windows...

```

-=== Red Compiler 0.6.4 ===-

Compiling /home/test/1/3.red ...

...compilation time : 901 ms

Target: MSDOS

Compiling to native code...

*** Red/System Compiler Internal Error: Script Error : int-ptr! has no value

*** Where: none

*** Near: [file-sum: make struct! int-ptr! [0]]

```

**To reproduce**

1. `echo Red [] print \"windows\">3.red`

2. `red -r -e -t MSDOS 3.red`

(or -t Windows but that requires needs: view in the header)

**Expected behavior**

Compiles.

**Platform version**

```

Red 0.6.4 for Linux built 1-Nov-2020/23:51:29+03:00 commit #2d05900

```

| 1.0 | Linux->Windows cross-compilation doesn't work - **Describe the bug**

Wanted to explore this opportunity because apparently R2 for Linux compiles about ~10% faster than R2 for Windows...

```

-=== Red Compiler 0.6.4 ===-

Compiling /home/test/1/3.red ...

...compilation time : 901 ms

Target: MSDOS

Compiling to native code...

*** Red/System Compiler Internal Error: Script Error : int-ptr! has no value

*** Where: none

*** Near: [file-sum: make struct! int-ptr! [0]]

```

**To reproduce**

1. `echo Red [] print \"windows\">3.red`

2. `red -r -e -t MSDOS 3.red`

(or -t Windows but that requires needs: view in the header)

**Expected behavior**

Compiles.

**Platform version**

```

Red 0.6.4 for Linux built 1-Nov-2020/23:51:29+03:00 commit #2d05900

```

| non_reli | linux windows cross compilation doesn t work describe the bug wanted to explore this opportunity because apparently for linux compiles about faster than for windows red compiler compiling home test red compilation time ms target msdos compiling to native code red system compiler internal error script error int ptr has no value where none near to reproduce echo red print windows red red r e t msdos red or t windows but that requires needs view in the header expected behavior compiles platform version red for linux built nov commit | 0 |

3,631 | 3,509,383,387 | IssuesEvent | 2016-01-08 22:26:51 | godotengine/godot | https://api.github.com/repos/godotengine/godot | opened | GridMap is outdated when compared to TileMap | enhancement topic:core usability | There is no way to set friction for GridMap, or set physic layer/mask. The missing properties are in the 'Collision' section of TileMap:

| True | GridMap is outdated when compared to TileMap - There is no way to set friction for GridMap, or set physic layer/mask. The missing properties are in the 'Collision' section of TileMap:

| non_reli | gridmap is outdated when compared to tilemap there is no way to set friction for gridmap or set physic layer mask the missing properties are in the collision section of tilemap | 0 |

77,258 | 7,569,666,431 | IssuesEvent | 2018-04-23 06:00:47 | backdrop/backdrop-issues | https://api.github.com/repos/backdrop/backdrop-issues | closed | Use new JavaScript to detect timezone | pr - reviewed & tested by the community status - has pull request type - feature request | ## Describe your issue or idea

Modern JavaScript can now detect timezones directly from the browser, rather than just getting the offset from GMT. We should use this increased accuracy method to set the site and user timezones when displaying a timezone element.

~Because we can more accurately detect timezone, we should also then hide the timezone from the installer. Similar to Clean URLs detection: if we can accurately determine it through JavaScript, hide it from the form as a hidden element. Of course if needed, the timezone can always be changed later, and removing the timezone reduces friction in site configuration~

To limit the scope of this issue and get the clear improvement in, we'll just worry about adding the new JavaScript and leave the removal of the timezone for later (if at all).

### Steps to reproduce (if reporting a bug)

- Set your site timezone to an unpopular location that matches a major one. For example, I set my timezone to Phoenix, AZ as it has different daylight savings rules than the rest of the US.

- Install Backdrop, during the installer, note instead of "America/Phoenix", the timezone selected is "America/Los_Angeles" (in the winter) OR "America/Denver" (in the summer). Neither is the correct timezone as those areas have different daylight savings rules than Phoenix.

### Actual behavior (if reporting a bug)

- The wrong timezone is selected

### Expected behavior (if reporting a bug)

- The right timezone, matching my operating system should be selected.

- And we should ultimately remove this field entirely, once we know that the right value is being prepopulated.

---

PR by @quicksketch: https://github.com/backdrop/backdrop/pull/2127

| 1.0 | Use new JavaScript to detect timezone - ## Describe your issue or idea

Modern JavaScript can now detect timezones directly from the browser, rather than just getting the offset from GMT. We should use this increased accuracy method to set the site and user timezones when displaying a timezone element.

~Because we can more accurately detect timezone, we should also then hide the timezone from the installer. Similar to Clean URLs detection: if we can accurately determine it through JavaScript, hide it from the form as a hidden element. Of course if needed, the timezone can always be changed later, and removing the timezone reduces friction in site configuration~

To limit the scope of this issue and get the clear improvement in, we'll just worry about adding the new JavaScript and leave the removal of the timezone for later (if at all).

### Steps to reproduce (if reporting a bug)

- Set your site timezone to an unpopular location that matches a major one. For example, I set my timezone to Phoenix, AZ as it has different daylight savings rules than the rest of the US.

- Install Backdrop, during the installer, note instead of "America/Phoenix", the timezone selected is "America/Los_Angeles" (in the winter) OR "America/Denver" (in the summer). Neither is the correct timezone as those areas have different daylight savings rules than Phoenix.

### Actual behavior (if reporting a bug)

- The wrong timezone is selected

### Expected behavior (if reporting a bug)

- The right timezone, matching my operating system should be selected.

- And we should ultimately remove this field entirely, once we know that the right value is being prepopulated.

---

PR by @quicksketch: https://github.com/backdrop/backdrop/pull/2127

| non_reli | use new javascript to detect timezone describe your issue or idea modern javascript can now detect timezones directly from the browser rather than just getting the offset from gmt we should use this increased accuracy method to set the site and user timezones when displaying a timezone element because we can more accurately detect timezone we should also then hide the timezone from the installer similar to clean urls detection if we can accurately determine it through javascript hide it from the form as a hidden element of course if needed the timezone can always be changed later and removing the timezone reduces friction in site configuration to limit the scope of this issue and get the clear improvement in we ll just worry about adding the new javascript and leave the removal of the timezone for later if at all steps to reproduce if reporting a bug set your site timezone to an unpopular location that matches a major one for example i set my timezone to phoenix az as it has different daylight savings rules than the rest of the us install backdrop during the installer note instead of america phoenix the timezone selected is america los angeles in the winter or america denver in the summer neither is the correct timezone as those areas have different daylight savings rules than phoenix actual behavior if reporting a bug the wrong timezone is selected expected behavior if reporting a bug the right timezone matching my operating system should be selected and we should ultimately remove this field entirely once we know that the right value is being prepopulated pr by quicksketch | 0 |

681,713 | 23,321,031,748 | IssuesEvent | 2022-08-08 16:23:34 | TerryCavanagh/diceydungeons.com | https://api.github.com/repos/TerryCavanagh/diceydungeons.com | closed | Once per battle cards appear in Next Up even after they've been used | reported in launch v1.0 C - Rare/requires weird actions 3 - Has Positive/Neutral Effects Priority | Noticed with Encore finale card, used it to take another turn and then I cycled my deck. It was in the Next Up section despite not actually being drawn since it is once per battle. | 1.0 | Once per battle cards appear in Next Up even after they've been used - Noticed with Encore finale card, used it to take another turn and then I cycled my deck. It was in the Next Up section despite not actually being drawn since it is once per battle. | non_reli | once per battle cards appear in next up even after they ve been used noticed with encore finale card used it to take another turn and then i cycled my deck it was in the next up section despite not actually being drawn since it is once per battle | 0 |

269,688 | 23,459,219,502 | IssuesEvent | 2022-08-16 11:42:10 | wazuh/wazuh-qa | https://api.github.com/repos/wazuh/wazuh-qa | opened | Release 4.3.7 - Release Candidate 1 - E2E UX tests - Wazuh Dashboard | team/qa subteam/qa-storm type/manual-testing | The following issue aims to run the specified test for the current release candidate, report the results, and open new issues for any encountered errors.

## Modules tests information

|||

|----------------------------------|------ |

| **Main release candidate issue** | [#14188](https://github.com/wazuh/wazuh/issues/) |

| **Main E2E UX test issue** | [#14260](https://github.com/wazuh/wazuh/issues/) |

| **Version** | 4.3.7 |

| **Release candidate #** | RC1 |

| **Tag** | [v4.3.7-rc1](https://github.com/wazuh/wazuh/tree/v4.3.7-rc1) |

| **Previous modules tests issue** | |

## Installation procedure

- Wazuh Indexer

- [Step by Step](https://documentation.wazuh.com/current/installation-guide/wazuh-indexer/step-by-step.html)

- Wazuh Server

- [Step by Step](https://documentation.wazuh.com/current/installation-guide/wazuh-indexer/step-by-step.html)

- Wazuh Dashboard

- [Step by Step](https://documentation.wazuh.com/current/installation-guide/wazuh-indexer/step-by-step.html)

- Wazuh Agent

- Wazuh WUI one-liner deploy IP GROUP (created beforehand)

## Test description

Best effort to test the Wazuh dashboard package. Think critically and at least review/test:

- [ ] [Wazuh dashboard package specs](https://github.com/wazuh/wazuh/issues/14051#issuecomment-1167046213)

- [ ] [Dashboard package size](https://github.com/wazuh/wazuh/issues/14051#issuecomment-1167049008)

- [ ] [Dashboard package metadata (description)](https://github.com/wazuh/wazuh/issues/14051#issuecomment-1167054484)

- [ ] [Dashboard package digital signature](https://github.com/wazuh/wazuh/issues/14051#issuecomment-1167055864)

- [ ] [Installed files location, size and permissions](https://github.com/wazuh/wazuh/issues/14051#issuecomment-1167056232)

- [ ] [Installation footprint (check that no unnecessary files are modified/broken in the file system; for example, that operating system files keep their correct owner/permissions and that the installer did not break the system)](https://github.com/wazuh/wazuh/issues/14051#issuecomment-1167084021)

- [ ] [Installed service (test that it works correctly)](https://github.com/wazuh/wazuh/issues/14051#issuecomment-1167105075)

- [ ] [Wazuh Dashboard logs when installed](https://github.com/wazuh/wazuh/issues/14051#issuecomment-1167023715)

- [ ] [Wazuh Dashboard configuration (Try to find anomalies compared with 4.3.4)](https://github.com/wazuh/wazuh/issues/14051#issuecomment-1167113724)

- [ ] [Wazuh Dashboard (included the Wazuh WUI) communication with Wazuh manager API and Wazuh indexer](https://github.com/wazuh/wazuh/issues/14051#issuecomment-1167131188)

- [ ] [Register Wazuh Agents](https://github.com/wazuh/wazuh/issues/14051#issuecomment-1167152027)

- [ ] [Basic browsing through the WUI](https://github.com/wazuh/wazuh/issues/14051#issuecomment-1167224904)

- [ ] [Basic experience with WUI performance.](https://github.com/wazuh/wazuh/issues/14051#issuecomment-1167248521)

- [ ] Anything else that could have been overlooked when creating the new package

## Test report procedure

All test results must have one of the following statuses:

| | |

|---------------------------------|--------------------------------------------|

| :green_circle: | All checks passed. |

| :red_circle: | There is at least one failed result. |

| :yellow_circle: | There is at least one expected failure or skipped test and no failures. |

Any failing test must be properly addressed with a new issue, detailing the error and the possible cause.

An extended report of the test results can be attached as a ZIP or TXT file. Please attach any documents, screenshots, or tables to the issue update with the results. This report can be used by the auditors to dig deeper into any possible failures and details.

## Conclusions

All tests have been executed and the results can be found in the issue updates.

| **Status** | **Test** | **Failure type** | **Notes** |

|----------------|-------------|---------------------|----------------|

| ⚫ | Wazuh dashboard package specs | Functional |

| ⚫ | Dashboard package size | Functional |

| ⚫ | Dashboard package metadata (description) | Usability |

| ⚫ | Dashboard package digital signature | Usability |

| ⚫ | Installed files location, size and permissions | Functional |

| ⚫ | Installation footprint | Functional |

| ⚫ | Wazuh Dashboard logs when installed | Functional |

| ⚫ | Wazuh Dashboard configuration | Functional |

| ⚫ | Wazuh Dashboard (included the Wazuh WUI) communication with Wazuh manager API and Wazuh indexer | Functional |

| ⚫ | Register Wazuh Agents | Functional |

| ⚫ | Basic browsing through the WUI | Usability |

| ⚫ | Basic experience with WUI performance | Usability |

## Auditors validation

The definition of done for this one is the validation of the conclusions and the test results from all auditors.

All checks from below must be accepted in order to close this issue.

- [ ]

| 1.0 | Release 4.3.7 - Release Candidate 1 - E2E UX tests - Wazuh Dashboard - The following issue aims to run the specified test for the current release candidate, report the results, and open new issues for any encountered errors.

## Modules tests information

|||

|----------------------------------|------ |

| **Main release candidate issue** | [#14188](https://github.com/wazuh/wazuh/issues/) |

| **Main E2E UX test issue** | [#14260](https://github.com/wazuh/wazuh/issues/) |

| **Version** | 4.3.7 |

| **Release candidate #** | RC1 |

| **Tag** | [v4.3.7-rc1](https://github.com/wazuh/wazuh/tree/v4.3.7-rc1) |

| **Previous modules tests issue** | |

## Installation procedure

- Wazuh Indexer

- [Step by Step](https://documentation.wazuh.com/current/installation-guide/wazuh-indexer/step-by-step.html)

- Wazuh Server

- [Step by Step](https://documentation.wazuh.com/current/installation-guide/wazuh-indexer/step-by-step.html)

- Wazuh Dashboard

- [Step by Step](https://documentation.wazuh.com/current/installation-guide/wazuh-indexer/step-by-step.html)

- Wazuh Agent

- Wazuh WUI one-liner deploy IP GROUP (created beforehand)

## Test description

Best effort to test the Wazuh dashboard package. Think critically and at least review/test:

- [ ] [Wazuh dashboard package specs](https://github.com/wazuh/wazuh/issues/14051#issuecomment-1167046213)

- [ ] [Dashboard package size](https://github.com/wazuh/wazuh/issues/14051#issuecomment-1167049008)

- [ ] [Dashboard package metadata (description)](https://github.com/wazuh/wazuh/issues/14051#issuecomment-1167054484)

- [ ] [Dashboard package digital signature](https://github.com/wazuh/wazuh/issues/14051#issuecomment-1167055864)

- [ ] [Installed files location, size and permissions](https://github.com/wazuh/wazuh/issues/14051#issuecomment-1167056232)

- [ ] [Installation footprint (check that no unnecessary files are modified/broken in the file system. For example, check that operating system files keep their correct owner/permissions and that the installer did not break the system.)](https://github.com/wazuh/wazuh/issues/14051#issuecomment-1167084021)

- [ ] [Installed service (test that it works correctly)](https://github.com/wazuh/wazuh/issues/14051#issuecomment-1167105075)

- [ ] [Wazuh Dashboard logs when installed](https://github.com/wazuh/wazuh/issues/14051#issuecomment-1167023715)

- [ ] [Wazuh Dashboard configuration (Try to find anomalies compared with 4.3.4)](https://github.com/wazuh/wazuh/issues/14051#issuecomment-1167113724)

- [ ] [Wazuh Dashboard (included the Wazuh WUI) communication with Wazuh manager API and Wazuh indexer](https://github.com/wazuh/wazuh/issues/14051#issuecomment-1167131188)

- [ ] [Register Wazuh Agents](https://github.com/wazuh/wazuh/issues/14051#issuecomment-1167152027)

- [ ] [Basic browsing through the WUI](https://github.com/wazuh/wazuh/issues/14051#issuecomment-1167224904)

- [ ] [Basic experience with WUI performance.](https://github.com/wazuh/wazuh/issues/14051#issuecomment-1167248521)

- [ ] Anything else that could have been overlooked when creating the new package

## Test report procedure

All test results must have one of the following statuses:

| | |
|---------------------------------|--------------------------------------------|
| :green_circle: | All checks passed. |
| :red_circle: | There is at least one failed result. |
| :yellow_circle: | There is at least one expected failure or skipped test and no failures. |

Any failing test must be properly addressed with a new issue, detailing the error and the possible cause.

An extended report of the test results can be attached as a ZIP or TXT file. Please attach any documents, screenshots, or tables to the issue update with the results. This report can be used by the auditors to dig deeper into any possible failures and details.

## Conclusions

All tests have been executed and the results can be found in the issue updates.

| **Status** | **Test** | **Failure type** | **Notes** |
|----------------|-------------|---------------------|----------------|
| ⚫ | Wazuh dashboard package specs | Functional |
| ⚫ | Dashboard package size | Functional |
| ⚫ | Dashboard package metadata (description) | Usability |
| ⚫ | Dashboard package digital signature | Usability |
| ⚫ | Installed files location, size and permissions | Functional |
| ⚫ | Installation footprint | Functional |
| ⚫ | Wazuh Dashboard logs when installed | Functional |
| ⚫ | Wazuh Dashboard configuration | Functional |
| ⚫ | Wazuh Dashboard (included the Wazuh WUI) communication with Wazuh manager API and Wazuh indexer | Functional |
| ⚫ | Register Wazuh Agents | Functional |
| ⚫ | Basic browsing through the WUI | Usability |
| ⚫ | Basic experience with WUI performance | Usability |

## Auditors validation

The definition of done for this one is the validation of the conclusions and the test results from all auditors.

All checks from below must be accepted in order to close this issue.

- [ ]

| non_reli | release release candidate ux tests wazuh dashboard the following issue aims to run the specified test for the current release candidate report the results and open new issues for any encountered errors modules tests information main release candidate issue main ux test issue version release candidate tag previous modules tests issue installation procedure wazuh indexer wazuh server wazuh dashboard wazuh agent wazuh wui one liner deploy ip group created beforehand test description best efford to test wazuh dashboard package think critically and at least review test anything else that could have been overlooked when creating the new package test report procedure all test results must have one of the following statuses green circle all checks passed red circle there is at least one failed result yellow circle there is at least one expected failure or skipped test and no failures any failing test must be properly addressed with a new issue detailing the error and the possible cause an extended report of the test results can be attached as a zip or txt file please attach any documents screenshots or tables to the issue update with the results this report can be used by the auditors to dig deeper into any possible failures and details conclusions all tests have been executed and the results can be found in the issue updates status test failure type notes ⚫ wazuh dashboard package specs functional ⚫ dashboard package size functional ⚫ dashboard package metadata description usability ⚫ dashboard package digital signature usability ⚫ installed files location size and permissions functional ⚫ installation footprint functional ⚫ wazuh dashboard logs when installed functional ⚫ wazuh dashboard configuration functional ⚫ wazuh dashboard included the wazuh wui communication with wazuh manager api and wazuh indexer functional ⚫ register wazuh agents functional ⚫ basic browsing through the wui usability ⚫ basic experience with wui performance usability auditors 
validation the definition of done for this one is the validation of the conclusions and the test results from all auditors all checks from below must be accepted in order to close this issue | 0 |

260,630 | 19,679,330,167 | IssuesEvent | 2022-01-11 15:22:10 | elastic/ecs | https://api.github.com/repos/elastic/ecs | closed | Canvas representation of ECS fields | documentation | @MikePaquette created a Canvas representation of our ECS fields and offered that it might be helpful to link to it from an issue in this repo in order to make it accessible, later. I'm creating this issue so that we don't lose sight of this resource. | 1.0 | Canvas representation of ECS fields - @MikePaquette created a Canvas representation of our ECS fields and offered that it might be helpful to link to it from an issue in this repo in order to make it accessible, later. I'm creating this issue so that we don't lose sight of this resource. | non_reli | canvas representation of ecs fields mikepaquette created a canvas representation of our ecs fields and offered that it might be helpful to link to it from an issue in this repo in order to make it accessible later i m creating this issue so that we don t lose sight of this resource | 0 |

1,333 | 15,053,953,450 | IssuesEvent | 2021-02-03 16:51:35 | microsoft/VFSForGit | https://api.github.com/repos/microsoft/VFSForGit | closed | `gvfs clone` failing to find `GVFS.Hooks.exe` | affects: mount-reliability type: bug | Some users are struggling with `gvfs clone` with a warning that `GVFS.Hooks.exe` is missing. | True | `gvfs clone` failing to find `GVFS.Hooks.exe` - Some users are struggling with `gvfs clone` with a warning that `GVFS.Hooks.exe` is missing. | reli | gvfs clone failing to find gvfs hooks exe some users are struggling with gvfs clone with a warning that gvfs hooks exe is missing | 1 |

214,432 | 24,077,699,103 | IssuesEvent | 2022-09-19 01:01:30 | AkshayMukkavilli/Analyzing-the-Significance-of-Structure-in-Amazon-Review-Data-Using-Machine-Learning-Approaches | https://api.github.com/repos/AkshayMukkavilli/Analyzing-the-Significance-of-Structure-in-Amazon-Review-Data-Using-Machine-Learning-Approaches | opened | CVE-2022-36015 (Medium) detected in tensorflow-1.13.1-cp27-cp27mu-manylinux1_x86_64.whl | security vulnerability | ## CVE-2022-36015 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>tensorflow-1.13.1-cp27-cp27mu-manylinux1_x86_64.whl</b></p></summary>

<p>TensorFlow is an open source machine learning framework for everyone.</p>

<p>Library home page: <a href="https://files.pythonhosted.org/packages/d2/ea/ab2c8c0e81bd051cc1180b104c75a865ab0fc66c89be992c4b20bbf6d624/tensorflow-1.13.1-cp27-cp27mu-manylinux1_x86_64.whl">https://files.pythonhosted.org/packages/d2/ea/ab2c8c0e81bd051cc1180b104c75a865ab0fc66c89be992c4b20bbf6d624/tensorflow-1.13.1-cp27-cp27mu-manylinux1_x86_64.whl</a></p>

<p>Path to dependency file: /FinalProject/requirements.txt</p>

<p>Path to vulnerable library: /teSource-ArchiveExtractor_8b9e071c-3b11-4aa9-ba60-cdeb60d053b7/20190525011350_65403/20190525011256_depth_0/9/tensorflow-1.13.1-cp27-cp27mu-manylinux1_x86_64/tensorflow-1.13.1.data/purelib/tensorflow</p>

<p>

Dependency Hierarchy:

- :x: **tensorflow-1.13.1-cp27-cp27mu-manylinux1_x86_64.whl** (Vulnerable Library)

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

TensorFlow is an open source platform for machine learning. When `RangeSize` receives values that do not fit into an `int64_t`, it crashes. We have patched the issue in GitHub commit 37e64539cd29fcfb814c4451152a60f5d107b0f0. The fix will be included in TensorFlow 2.10.0. We will also cherrypick this commit on TensorFlow 2.9.1, TensorFlow 2.8.1, and TensorFlow 2.7.2, as these are also affected and still in supported range. There are no known workarounds for this issue.

<p>Publish Date: 2022-09-16

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2022-36015>CVE-2022-36015</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.9</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: High

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/tensorflow/tensorflow/security/advisories/GHSA-rh87-q4vg-m45j">https://github.com/tensorflow/tensorflow/security/advisories/GHSA-rh87-q4vg-m45j</a></p>

<p>Release Date: 2022-09-16</p>

<p>Fix Resolution: tensorflow - 2.7.2,2.8.1,2.9.1,2.10.0, tensorflow-cpu - 2.7.2,2.8.1,2.9.1,2.10.0, tensorflow-gpu - 2.7.2,2.8.1,2.9.1,2.10.0</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | True | CVE-2022-36015 (Medium) detected in tensorflow-1.13.1-cp27-cp27mu-manylinux1_x86_64.whl - ## CVE-2022-36015 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>tensorflow-1.13.1-cp27-cp27mu-manylinux1_x86_64.whl</b></p></summary>

<p>TensorFlow is an open source machine learning framework for everyone.</p>

<p>Library home page: <a href="https://files.pythonhosted.org/packages/d2/ea/ab2c8c0e81bd051cc1180b104c75a865ab0fc66c89be992c4b20bbf6d624/tensorflow-1.13.1-cp27-cp27mu-manylinux1_x86_64.whl">https://files.pythonhosted.org/packages/d2/ea/ab2c8c0e81bd051cc1180b104c75a865ab0fc66c89be992c4b20bbf6d624/tensorflow-1.13.1-cp27-cp27mu-manylinux1_x86_64.whl</a></p>

<p>Path to dependency file: /FinalProject/requirements.txt</p>

<p>Path to vulnerable library: /teSource-ArchiveExtractor_8b9e071c-3b11-4aa9-ba60-cdeb60d053b7/20190525011350_65403/20190525011256_depth_0/9/tensorflow-1.13.1-cp27-cp27mu-manylinux1_x86_64/tensorflow-1.13.1.data/purelib/tensorflow</p>

<p>

Dependency Hierarchy:

- :x: **tensorflow-1.13.1-cp27-cp27mu-manylinux1_x86_64.whl** (Vulnerable Library)

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>