| Unnamed: 0 (int64, 3 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 7 to 112) | repo_url (string, length 36 to 141) | action (string, 3 classes) | title (string, length 2 to 742) | labels (string, length 4 to 431) | body (string, length 5 to 239k) | index (string, 10 classes) | text_combine (string, length 96 to 240k) | label (string, 2 classes) | text (string, length 96 to 200k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

22,630 | 15,339,793,647 | IssuesEvent | 2021-02-27 03:36:04 | binarythistle/DevWorkbench | https://api.github.com/repos/binarythistle/DevWorkbench | closed | Set up Azure Dev Ops Pipeline | Infrastructure | - [x] Establish DevOps Pipeline

- [x] Card is done when commit leads to deploy on Azure

**NOTE:** At the time of writing BalzorWASM apps can only be deployed to a **Windows-based** Azure App Service, (deploy to Linux "Appears" to work, but does not). | 1.0 | Set up Azure Dev Ops Pipeline - - [x] Establish DevOps Pipeline

- [x] Card is done when commit leads to deploy on Azure

**NOTE:** At the time of writing BalzorWASM apps can only be deployed to a **Windows-based** Azure App Service, (deploy to Linux "Appears" to work, but does not). | non_usab | set up azure dev ops pipeline establish devops pipeline card is done when commit leads to deploy on azure note at the time of writing balzorwasm apps can only be deployed to a windows based azure app service deploy to linux appears to work but does not | 0 |

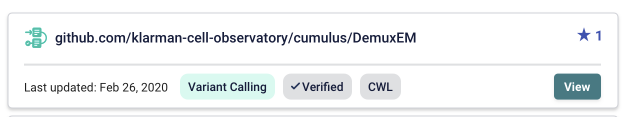

39,574 | 16,046,608,048 | IssuesEvent | 2021-04-22 14:17:47 | dockstore/dockstore | https://api.github.com/repos/dockstore/dockstore | opened | Web-service to return whether an entire entry is verified or not | enhancement gui web-service | **Is your feature request related to a problem? Please describe.**

What we want(verified tag) at e.g. https://dev.dockstore.net/organizations/bdcatalyst/collections/Cumulus:

Currently, the web service pro... | 1.0 | Web-service to return whether an entire entry is verified or not - **Is your feature request related to a problem? Please describe.**

What we want(verified tag) at e.g. https://dev.dockstore.net/organizations/bdcatalyst/collections/Cumulus:

edit dialog: day change resets time

| 1.0 | 0004858:

edit dialog: day change resets time - **Reported by cweiss on 21 Sep 2011 16:10**

**Version:** Maischa (2011-05-3)

edit dialog: day change resets time

| non_usab | edit dialog day change resets time reported by cweiss on sep version maischa edit dialog day change resets time | 0 |

673,796 | 23,031,568,928 | IssuesEvent | 2022-07-22 14:23:17 | epam/Indigo | https://api.github.com/repos/epam/Indigo | closed | Versions 1.5 and later conflict with xgboost | Bug Indigo API High priority | **Steps to Reproduce**

1. Install Indigo version 1.5 or later using pip3 from the repository or a downloaded wheel.

2. In a Python environment, import and initialize Indigo:

from indigo import *

indigo = Indigo()

3. Instantiate and run xgboost XGBClassifier on any dataset:

import num... | 1.0 | Versions 1.5 and later conflict with xgboost - **Steps to Reproduce**

1. Install Indigo version 1.5 or later using pip3 from the repository or a downloaded wheel.

2. In a Python environment, import and initialize Indigo:

from indigo import *

indigo = Indigo()

3. Instantiate and run xgboost XGBC... | non_usab | versions and later conflict with xgboost steps to reproduce install indigo version or later using from the repository or a downloaded wheel in a python environment import and initialize indigo from indigo import indigo indigo instantiate and run xgboost xgbclas... | 0 |

2,775 | 3,163,768,357 | IssuesEvent | 2015-09-20 16:38:00 | medialize/ally.js | https://api.github.com/repos/medialize/ally.js | opened | handle unwanted focus-events in focus/disable | bug focusable | * if an element gains focus that has ['data-ally-disabled'] it must be blur()ed

* if an element gains focus that matches the filter-critera it must be blur()ed

some elements might not dispatch `focus` event, so we need to observe `document.activeElement` to be sure. | True | handle unwanted focus-events in focus/disable - * if an element gains focus that has ['data-ally-disabled'] it must be blur()ed

* if an element gains focus that matches the filter-critera it must be blur()ed

some elements might not dispatch `focus` event, so we need to observe `document.activeElement` to be sure. | usab | handle unwanted focus events in focus disable if an element gains focus that has it must be blur ed if an element gains focus that matches the filter critera it must be blur ed some elements might not dispatch focus event so we need to observe document activeelement to be sure | 1 |

26,579 | 26,993,346,575 | IssuesEvent | 2023-02-09 21:56:49 | bevyengine/bevy | https://api.github.com/repos/bevyengine/bevy | closed | Need a way to call the `setup()` of the plugins that have been registered in the App manually | C-Usability A-App | ## What problem does this solve or what need does it fill?

Due to changes in [#7046](https://github.com/bevyengine/bevy/pull/7046), it is now necessary to have a way to call the `setup` function of the plugins that have been registered in the App when using an external event loop to drive a bevy App.

https://gith... | True | Need a way to call the `setup()` of the plugins that have been registered in the App manually - ## What problem does this solve or what need does it fill?

Due to changes in [#7046](https://github.com/bevyengine/bevy/pull/7046), it is now necessary to have a way to call the `setup` function of the plugins that have b... | usab | need a way to call the setup of the plugins that have been registered in the app manually what problem does this solve or what need does it fill due to changes in it is now necessary to have a way to call the setup function of the plugins that have been registered in the app when using an external ev... | 1 |

19,333 | 13,880,295,394 | IssuesEvent | 2020-10-17 18:08:04 | rubyforgood/circulate | https://api.github.com/repos/rubyforgood/circulate | closed | Librarian Appointments page: Adjust display of date at top of page so that it doesn't wrap unnecessarily. | Good First Issue Help Wanted UX / Usability hacktoberfest | On pages like https://circulate-staging.herokuapp.com/admin/appointments?day=2020-10-15 , the date at the top is wrapping. Adjust HTML/CSS so that it doesn't wrap unnecessarily. | True | Librarian Appointments page: Adjust display of date at top of page so that it doesn't wrap unnecessarily. - On pages like https://circulate-staging.herokuapp.com/admin/appointments?day=2020-10-15 , the date at the top is wrapping. Adjust HTML/CSS so that it doesn't wrap unnecessarily. | usab | librarian appointments page adjust display of date at top of page so that it doesn t wrap unnecessarily on pages like the date at the top is wrapping adjust html css so that it doesn t wrap unnecessarily | 1 |

92,134 | 8,352,332,745 | IssuesEvent | 2018-10-02 05:54:36 | EyeSeeTea/malariapp | https://api.github.com/repos/EyeSeeTea/malariapp | closed | Introduce nextScheduleMatrix | HNQIS complexity - med (1-5hr) priority - critical testing type - maintenance | - [ ] Instead of using hardcoded variables create an object returning the values. The object would obtain that value from a hardcoded matrix (List of Lists). When we ask for a value, we provide the server we are connected to. If the server doesn't exist, the default matrix is used (so the current values). Otherwise, th... | 1.0 | Introduce nextScheduleMatrix - - [ ] Instead of using hardcoded variables create an object returning the values. The object would obtain that value from a hardcoded matrix (List of Lists). When we ask for a value, we provide the server we are connected to. If the server doesn't exist, the default matrix is used (so the... | non_usab | introduce nextschedulematrix instead of using hardcoded variables create an object returning the values the object would obtain that value from a hardcoded matrix list of lists when we ask for a value we provide the server we are connected to if the server doesn t exist the default matrix is used so the c... | 0 |
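The lookup described in the record above (a hardcoded matrix selected by server, with a default fallback) could be sketched roughly as follows. This is only an illustration under stated assumptions: the class name `NextScheduleMatrix` is taken from the issue title, while the server URL, the matrix values, and the `value(row, column)` accessor are hypothetical placeholders and are not taken from the malariapp code base.

```python
from typing import Dict, List, Optional

# Hypothetical placeholder values; not the real HNQIS schedule numbers.
DEFAULT_MATRIX: List[List[int]] = [
    [6, 4, 2],
    [6, 3, 1],
]

# Hypothetical per-server overrides, keyed by server URL.
SERVER_MATRICES: Dict[str, List[List[int]]] = {
    "https://example-server.org": [
        [12, 6, 3],
        [6, 3, 1],
    ],
}


class NextScheduleMatrix:
    """Returns schedule values from a matrix chosen by the connected server."""

    def __init__(self, server_url: Optional[str]) -> None:
        # Unknown (or missing) servers fall back to the default matrix,
        # which stands in for the currently hardcoded values.
        self._matrix = SERVER_MATRICES.get(server_url, DEFAULT_MATRIX)

    def value(self, row: int, column: int) -> int:
        # Plain list-of-lists lookup; the meaning of row and column is assumed.
        return self._matrix[row][column]
```

Keeping the default matrix separate from the overrides means a server that is not listed behaves exactly as before the change.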

293,775 | 22,088,163,810 | IssuesEvent | 2022-06-01 02:10:46 | maevsi/maevsi | https://api.github.com/repos/maevsi/maevsi | opened | docs(readme): improve onboarding | documentation | Write a step to step guide to get started with maevsi for new developers. | 1.0 | docs(readme): improve onboarding - Write a step to step guide to get started with maevsi for new developers. | non_usab | docs readme improve onboarding write a step to step guide to get started with maevsi for new developers | 0 |

14,272 | 8,970,463,071 | IssuesEvent | 2019-01-29 13:43:09 | lumen-org/lumen | https://api.github.com/repos/lumen-org/lumen | closed | improve dragndrop | Usability | Pain point: drag and drop still does not work properly.

Suggested improvement:

- Increase the spacing between shelf items: this leaves more room for rearranging.

- Disallow replacing shelf items with other items: that is usually not wanted anyway! | True | improve dragndrop - Pain point: drag and drop still does not work properly.

Suggested improvement:

- Increase the spacing between shelf items: this leaves more room for rearranging.

- Disallow replacing shelf items with other items: that is usually not wanted anyway! | usab | improve dragndrop pain point drag and drop still does not work properly suggested improvement increase the spacing between shelf items this leaves more room for rearranging disallow replacing shelf items with other items that is usually not wanted anyway | 1 |

14,067 | 16,890,488,014 | IssuesEvent | 2021-06-23 08:39:58 | arcus-azure/arcus.messaging | https://api.github.com/repos/arcus-azure/arcus.messaging | opened | Move `ServiceBusReceiver` to options model for furture-proof message routing | area:message-processing enhancement integration:service-bus | **Is your feature request related to a problem? Please describe.**

Move our `ServiceBusReceiver` model from the router signature to an options model so that we are more safe in the future when we want to add stuff from the Azure Functions/message pump to the router.

**Describe alternatives you've considered**

Addi... | 1.0 | Move `ServiceBusReceiver` to options model for furture-proof message routing - **Is your feature request related to a problem? Please describe.**

Move our `ServiceBusReceiver` model from the router signature to an options model so that we are more safe in the future when we want to add stuff from the Azure Functions/m... | non_usab | move servicebusreceiver to options model for furture proof message routing is your feature request related to a problem please describe move our servicebusreceiver model from the router signature to an options model so that we are more safe in the future when we want to add stuff from the azure functions m... | 0 |

140,932 | 11,383,794,053 | IssuesEvent | 2020-01-29 07:14:43 | GoogleContainerTools/skaffold | https://api.github.com/repos/GoogleContainerTools/skaffold | closed | flake: TestGracefulBuildCancel | kind/bug meta/test-flake priority/p2 | Sometimes OSX travis tests fail with TestGracefulBuildCancel failures. We should eliminate these flakes. | 1.0 | flake: TestGracefulBuildCancel - Sometimes OSX travis tests fail with TestGracefulBuildCancel failures. We should eliminate these flakes. | non_usab | flake testgracefulbuildcancel sometimes osx travis tests fail with testgracefulbuildcancel failures we should eliminate these flakes | 0 |

326,617 | 24,094,200,394 | IssuesEvent | 2022-09-19 17:13:01 | psakievich/spack-manager | https://api.github.com/repos/psakievich/spack-manager | closed | Broken link in docs | documentation | On https://psakievich.github.io/spack-manager/user_profiles/developers/developer_spack_minimum.html, in the last full paragraph, there is a link to https://psakievich.github.io/spack-manager/user_profiles/developers/developer_tutorial.html, which is apparently broken.

@psakievich | 1.0 | Broken link in docs - On https://psakievich.github.io/spack-manager/user_profiles/developers/developer_spack_minimum.html, in the last full paragraph, there is a link to https://psakievich.github.io/spack-manager/user_profiles/developers/developer_tutorial.html, which is apparently broken.

@psakievich | non_usab | broken link in docs on in the last full paragraph there is a link to which is apparently broken psakievich | 0 |

204,335 | 15,438,876,575 | IssuesEvent | 2021-03-07 22:01:20 | trevorNgo/Measure2.0 | https://api.github.com/repos/trevorNgo/Measure2.0 | opened | CS4ZP6 Tester Feedback: Clicking "View Past Jobs" under the "Archive Year Term" section on the Admin homepage does not do anything | tester | **Description:** Clicking the **View Past Jobs** button under the **Archive Year Term** on the homepage when logged in as an **Admin** does not do anything.

**OS:** Windows 10 Enterprise

**Browser:** Chrome Version 89.0.4389.82

**Reproduction steps:**

* Sign in as an **Admin**.

* Click on **View Past Jobs**... | 1.0 | CS4ZP6 Tester Feedback: Clicking "View Past Jobs" under the "Archive Year Term" section on the Admin homepage does not do anything - **Description:** Clicking the **View Past Jobs** button under the **Archive Year Term** on the homepage when logged in as an **Admin** does not do anything.

**OS:** Windows 10 Enterpr... | non_usab | tester feedback clicking view past jobs under the archive year term section on the admin homepage does not do anything description clicking the view past jobs button under the archive year term on the homepage when logged in as an admin does not do anything os windows enterprise ... | 0 |

21,009 | 16,444,554,849 | IssuesEvent | 2021-05-20 17:56:18 | pulumi/pulumi-kubernetes-operator | https://api.github.com/repos/pulumi/pulumi-kubernetes-operator | closed | repoDir not working | bug impact/usability | Only stack files at the root of the repo are working, subdirs using `repoDir` property fails

## Expected behavior

moving the stack files in a subdir and defining the directory via `repoDir` should work

## Current behavior

error:

```json

{

"level": "error",

"ts": 1620396804.025099,

"logger": "co... | True | repoDir not working - Only stack files at the root of the repo are working, subdirs using `repoDir` property fails

## Expected behavior

moving the stack files in a subdir and defining the directory via `repoDir` should work

## Current behavior

error:

```json

{

"level": "error",

"ts": 1620396804.0250... | usab | repodir not working only stack files at the root of the repo are working subdirs using repodir property fails expected behavior moving the stack files in a subdir and defining the directory via repodir should work current behavior error json level error ts logg... | 1 |

23,871 | 23,065,612,977 | IssuesEvent | 2022-07-25 13:42:00 | pulumi/pulumi | https://api.github.com/repos/pulumi/pulumi | closed | deduplicate runtime error messages | area/cli kind/enhancement impact/usability resolution/duplicate | ## overview

we sandwhich error messages with..

```

error: Program failed with an unhandled exception:

```

and...

```

error: an unhandled error occurred: Program exited with a non-zero exit code: 1

```

are these useful to users? if not, should we consider removing them to reduce the wall of error text t... | True | deduplicate runtime error messages - ## overview

we sandwhich error messages with..

```

error: Program failed with an unhandled exception:

```

and...

```

error: an unhandled error occurred: Program exited with a non-zero exit code: 1

```

are these useful to users? if not, should we consider removing th... | usab | deduplicate runtime error messages overview we sandwhich error messages with error program failed with an unhandled exception and error an unhandled error occurred program exited with a non zero exit code are these useful to users if not should we consider removing th... | 1 |

77,900 | 22,037,674,489 | IssuesEvent | 2022-05-28 21:21:14 | dxx-rebirth/dxx-rebirth | https://api.github.com/repos/dxx-rebirth/dxx-rebirth | closed | Build failure: similar/main/automap.cpp:373:30: cannot convert ‘const d2x::player_marker_index’ to ‘d2x::game_marker_index’ | build-failure | <!--

These instructions are wrapped in comment markers. Write your answers outside the comment markers. You may delete the commented text as you go, or leave it in and let the system remove the comments when you submit the issue.

Use this template if the code in master fails to build for you. If your problem hap... | 1.0 | Build failure: similar/main/automap.cpp:373:30: cannot convert ‘const d2x::player_marker_index’ to ‘d2x::game_marker_index’ - <!--

These instructions are wrapped in comment markers. Write your answers outside the comment markers. You may delete the commented text as you go, or leave it in and let the system remove t... | non_usab | build failure similar main automap cpp cannot convert ‘const player marker index’ to ‘ game marker index’ these instructions are wrapped in comment markers write your answers outside the comment markers you may delete the commented text as you go or leave it in and let the system remove the comm... | 0 |

10,631 | 6,827,919,857 | IssuesEvent | 2017-11-08 18:37:54 | dssg/triage | https://api.github.com/repos/dssg/triage | closed | Helpfully report obvious problems with config file | usability-enhancement | There are some problems with input config files that can be easily and helpfully reported on. For instance: if no aggregates or categoricals are present in a feature_aggregation, that feature_aggregation will produce nothing.

Implementation idea: each component could define a common function to validate a config fil... | True | Helpfully report obvious problems with config file - There are some problems with input config files that can be easily and helpfully reported on. For instance: if no aggregates or categoricals are present in a feature_aggregation, that feature_aggregation will produce nothing.

Implementation idea: each component c... | usab | helpfully report obvious problems with config file there are some problems with input config files that can be easily and helpfully reported on for instance if no aggregates or categoricals are present in a feature aggregation that feature aggregation will produce nothing implementation idea each component c... | 1 |
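The validation idea in the record above might look something like the sketch below, which reports a `feature_aggregation` that has neither aggregates nor categoricals. It is a minimal sketch, not triage's actual API: the function name `validate_feature_aggregations`, the plural `feature_aggregations` key, and the `prefix` key used for naming are assumptions made for illustration.

```python
from typing import Any, Dict, List


def validate_feature_aggregations(config: Dict[str, Any]) -> List[str]:
    """Return human-readable problems found in the feature aggregation config.

    The key names ("feature_aggregations", "prefix") are assumed for this sketch.
    """
    problems: List[str] = []
    for position, aggregation in enumerate(config.get("feature_aggregations", [])):
        # Use the (assumed) prefix as a label, or the list position if absent.
        name = aggregation.get("prefix", f"#{position}")
        has_aggregates = bool(aggregation.get("aggregates"))
        has_categoricals = bool(aggregation.get("categoricals"))
        if not has_aggregates and not has_categoricals:
            problems.append(
                f"feature_aggregation '{name}' has no aggregates or categoricals "
                "and will produce no features"
            )
    return problems
```

Returning a list of messages rather than raising on the first problem lets a caller report every obvious issue in one pass.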

360,343 | 25,286,153,357 | IssuesEvent | 2022-11-16 19:28:49 | supabase/supabase-js | https://api.github.com/repos/supabase/supabase-js | closed | Update supabase-js v2 setSession() example | documentation | # Improve documentation

https://supabase.com/docs/reference/javascript/auth-setsession

## Describe the problem

The example given is inaccurate and doesn't work with the current version of supabase-js. This has been discussed and mentioned in:

[gotrue-js PR473](https://github.com/supabase/gotrue-js/pull/473)

... | 1.0 | Update supabase-js v2 setSession() example - # Improve documentation

https://supabase.com/docs/reference/javascript/auth-setsession

## Describe the problem

The example given is inaccurate and doesn't work with the current version of supabase-js. This has been discussed and mentioned in:

[gotrue-js PR473](http... | non_usab | update supabase js setsession example improve documentation describe the problem the example given is inaccurate and doesn t work with the current version of supabase js this has been discussed and mentioned in describe the improvement update the example to use the refresh tok... | 0 |

95,669 | 10,885,186,380 | IssuesEvent | 2019-11-18 09:54:06 | Perl/perl5 | https://api.github.com/repos/Perl/perl5 | opened | [doc] All man pages' SEE ALSO sections should have standard section numbers added | Needs Triage documentation | [Just the other day](https://debbugs.gnu.org/cgi/bugreport.cgi?bug=38154) we were having an argument about if

$ man perldoc

>

> SEE ALSO

> perlpod, Pod::Perldoc

should instead say

> SEE ALSO

> perlpod(1), Pod::Perldoc(3perl)

I.e., all perl man pages' SEE ALSO sections should have standard... | 1.0 | [doc] All man pages' SEE ALSO sections should have standard section numbers added - [Just the other day](https://debbugs.gnu.org/cgi/bugreport.cgi?bug=38154) we were having an argument about if

$ man perldoc

>

> SEE ALSO

> perlpod, Pod::Perldoc

should instead say

> SEE ALSO

> perlpod(1), Pod... | non_usab | all man pages see also sections should have standard section numbers added we were having an argument about if man perldoc see also perlpod pod perldoc should instead say see also perlpod pod perldoc i e all perl man pages see also sections should have st... | 0 |

347,418 | 31,163,359,708 | IssuesEvent | 2023-08-16 17:40:26 | gravitational/teleport | https://api.github.com/repos/gravitational/teleport | opened | `TestKubeServerWatcher ` flakiness | flaky tests | ## Failure

#### Link(s) to logs

- https://github.com/gravitational/teleport/actions/runs/5881833472/job/15951108628

#### Relevant snippet

```

=== FAIL: lib/services TestKubeServerWatcher (0.04s)

watcher_test.go:1073:

Error Trace: /__w/teleport/teleport/lib/services/watcher_test.go:1073

... | 1.0 | `TestKubeServerWatcher ` flakiness - ## Failure

#### Link(s) to logs

- https://github.com/gravitational/teleport/actions/runs/5881833472/job/15951108628

#### Relevant snippet

```

=== FAIL: lib/services TestKubeServerWatcher (0.04s)

watcher_test.go:1073:

Error Trace: /__w/teleport/teleport/l... | non_usab | testkubeserverwatcher flakiness failure link s to logs relevant snippet fail lib services testkubeserverwatcher watcher test go error trace w teleport teleport lib services watcher test go error an error is expected but got nil... | 0 |

527,909 | 15,355,968,835 | IssuesEvent | 2021-03-01 11:48:10 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | opened | [0.9.3 staging-1942] Spin Melter doesn't have Crafting Component. | Category: Gameplay Priority: Medium Type: Bug | You can craft it:

And we already have some recipes here:

| 1.0 | [0.9.3 staging-1942] Spin Melter doesn't have Crafting Component. - You can craft it:

And we already have some recipes here:

.*

Update the Backend and Frontend Wiki to reflect changes made to the Backend repository du... | 1.0 | Update Wiki - **Describe the task that needs to be done.**

*(If this issue is about a bug, please describe the problem and steps to reproduce the issue. You can also include screenshots of any stack traces, or any other supporting images).*

Update the Backend and Frontend Wiki to reflect changes made to the Backend... | non_usab | update wiki describe the task that needs to be done if this issue is about a bug please describe the problem and steps to reproduce the issue you can also include screenshots of any stack traces or any other supporting images update the backend and frontend wiki to reflect changes made to the backend... | 0 |

4,757 | 3,882,221,824 | IssuesEvent | 2016-04-13 09:02:42 | lionheart/openradar-mirror | https://api.github.com/repos/lionheart/openradar-mirror | opened | 20796421: "More" button isn't aligned with App update notes | classification:ui/usability reproducible:always status:open | #### Description

Summary:

The "More" button that loads the full notes view isn't aligned with the description text.

Steps to Reproduce:

See attached screen shots.

Version:

App Store Mac 2.0 (376.24), Mac OS, 10.10.4 (14E11f)

Configuration:

MacBook10,1

-

Product Version: 2.0 (376.24)

Created: 2015-05-04T... | True | 20796421: "More" button isn't aligned with App update notes - #### Description

Summary:

The "More" button that loads the full notes view isn't aligned with the description text.

Steps to Reproduce:

See attached screen shots.

Version:

App Store Mac 2.0 (376.24), Mac OS, 10.10.4 (14E11f)

Configuration:

Mac... | usab | more button isn t aligned with app update notes description summary the more button that loads the full notes view isn t aligned with the description text steps to reproduce see attached screen shots version app store mac mac os configuration product versi... | 1 |

61,053 | 17,023,589,562 | IssuesEvent | 2021-07-03 02:48:32 | tomhughes/trac-tickets | https://api.github.com/repos/tomhughes/trac-tickets | closed | Merkaartor does not load Sat Backgrounds | Component: merkaartor Priority: major Resolution: wontfix Type: defect | **[Submitted to the original trac issue database at 6.06pm, Wednesday, 12th May 2010]**

Merkaartor 0.15.3 does not load Sattelite backgrounds any more (Yahoo keeps Merkaartor blank and even if i want to use Digitalglobe or others i wont have sucess).

Digitalglobe will return a gray picture in seconds, Yahoo stays b... | 1.0 | Merkaartor does not load Sat Backgrounds - **[Submitted to the original trac issue database at 6.06pm, Wednesday, 12th May 2010]**

Merkaartor 0.15.3 does not load Sattelite backgrounds any more (Yahoo keeps Merkaartor blank and even if i want to use Digitalglobe or others i wont have sucess).

Digitalglobe will retu... | non_usab | merkaartor does not load sat backgrounds merkaartor does not load sattelite backgrounds any more yahoo keeps merkaartor blank and even if i want to use digitalglobe or others i wont have sucess digitalglobe will return a gray picture in seconds yahoo stays blank wmz and tiled what to do | 0 |

18,477 | 3,067,270,280 | IssuesEvent | 2015-08-18 09:22:51 | contao/core | https://api.github.com/repos/contao/core | closed | Cannot unset string offsets in Controller.php on line 1478 | defect | In [Controller.php:1478](https://github.com/contao/core/blob/ac68761904694febb7636efacf34c30575e720a0/system/modules/core/library/Contao/Controller.php#L1478) we try to unset the third array item, but `$size` isn’t always an array and this leads to the fatal error “Cannot unset string offsets”. I think we should change... | 1.0 | Cannot unset string offsets in Controller.php on line 1478 - In [Controller.php:1478](https://github.com/contao/core/blob/ac68761904694febb7636efacf34c30575e720a0/system/modules/core/library/Contao/Controller.php#L1478) we try to unset the third array item, but `$size` isn’t always an array and this leads to the fatal ... | non_usab | cannot unset string offsets in controller php on line in we try to unset the third array item but size isn’t always an array and this leads to the fatal error “cannot unset string offsets” i think we should change to a deserialize arritem true in related issue | 0 |

28,207 | 6,966,088,613 | IssuesEvent | 2017-12-09 14:43:55 | iaserrat/roadmapster | https://api.github.com/repos/iaserrat/roadmapster | closed | Fix "Rubocop/Performance/StringReplacement" issue in http/helpers.rb | Codeclimate help wanted | Use `delete!` instead of `gsub!`.

https://codeclimate.com/github/iaserrat/roadmapster/http/helpers.rb#issue_59a773521e1f110001000021 | 1.0 | Fix "Rubocop/Performance/StringReplacement" issue in http/helpers.rb - Use `delete!` instead of `gsub!`.

https://codeclimate.com/github/iaserrat/roadmapster/http/helpers.rb#issue_59a773521e1f110001000021 | non_usab | fix rubocop performance stringreplacement issue in http helpers rb use delete instead of gsub | 0 |

645,797 | 21,015,766,845 | IssuesEvent | 2022-03-30 10:51:16 | scribe-org/Scribe-Android | https://api.github.com/repos/scribe-org/Scribe-Android | opened | Link Kotlin codes to appropriate frameworks | help wanted -priority- | After the conversion of codes from Scribe-iOS is completed in #10, the next task is to try to get everything linked to appropriate Android frameworks. The codes translated from Swift will by no means be workable as they're based on UIKit, which cannot itself be translated. These will instead serve as guides for what ne... | 1.0 | Link Kotlin codes to appropriate frameworks - After the conversion of codes from Scribe-iOS is completed in #10, the next task is to try to get everything linked to appropriate Android frameworks. The codes translated from Swift will by no means be workable as they're based on UIKit, which cannot itself be translated. ... | non_usab | link kotlin codes to appropriate frameworks after the conversion of codes from scribe ios is completed in the next task is to try to get everything linked to appropriate android frameworks the codes translated from swift will by no means be workable as they re based on uikit which cannot itself be translated t... | 0 |

258,923 | 19,578,955,298 | IssuesEvent | 2022-01-04 18:35:03 | vicariousinc/PGMax | https://api.github.com/repos/vicariousinc/PGMax | closed | Add more text explanation to examples so they're more understandable to new users | documentation | Currently, our examples are not easy to understand for a new user. I think it'd be nice to:

- Provide an explanation at the top of the file that gives some intuition for why this example exists/what the model is doing

- Provide more of a walkthrough for each cell

- Explain what the various outputs are showing | 1.0 | Add more text explanation to examples so they're more understandable to new users - Currently, our examples are not easy to understand for a new user. I think it'd be nice to:

- Provide an explanation at the top of the file that gives some intuition for why this example exists/what the model is doing

- Provide more... | non_usab | add more text explanation to examples so they re more understandable to new users currently our examples are not easy to understand for a new user i think it d be nice to provide an explanation at the top of the file that gives some intuition for why this example exists what the model is doing provide more... | 0 |

318,505 | 23,724,332,014 | IssuesEvent | 2022-08-30 18:05:52 | tidymodels/parsnip | https://api.github.com/repos/tidymodels/parsnip | closed | Internals bug? `will_make_matrix()` returns `False` when given a matrix | documentation | Hello, I'm working on a PR to address #765. While doing so I ran into an issue with `will_make_matrix()`. Shouldn't the return value be True if `y` is a matrix or a vector?

When `y` is a numeric matrix it fails this check and converts it to a vector. This is stripping the colname of `y` whenever it is passed to fun... | 1.0 | Internals bug? `will_make_matrix()` returns `False` when given a matrix - Hello, I'm working on a PR to address #765. While doing so I ran into an issue with `will_make_matrix()`. Shouldn't the return value be True if `y` is a matrix or a vector?

When `y` is a numeric matrix it fails this check and converts it to a... | non_usab | internals bug will make matrix returns false when given a matrix hello i m working on a pr to address while doing so i ran into an issue with will make matrix shouldn t the return value be true if y is a matrix or a vector when y is a numeric matrix it fails this check and converts it to a v... | 0 |

85,932 | 10,697,677,183 | IssuesEvent | 2019-10-23 17:02:00 | phetsims/vector-addition | https://api.github.com/repos/phetsims/vector-addition | closed | Equations screen: change sum component color to black | design:polish status:ready-for-review | @kathy-phet pointed out that it's a bit odd that the sum components on the Equations screen are dark gray, but the other vector components have the same color as their parent.

I believe we chose dark gray t... | 1.0 | Equations screen: change sum component color to black - @kathy-phet pointed out that it's a bit odd that the sum components on the Equations screen are dark gray, but the other vector components have the same color as their parent.

less useful. | True | literate Cryptol imports? - saw doesn't seem to be able to `include` literate Cryptol. Obviously this makes literate Cryptol (latex, markdown, etc.?) less useful. | usab | literate cryptol imports saw doesn t seem to be able to include literate cryptol obviously this makes literate cryptol latex markdown etc less useful | 1 |

33,526 | 2,769,372,896 | IssuesEvent | 2015-05-01 00:20:40 | shgysk8zer0/core | https://api.github.com/repos/shgysk8zer0/core | opened | Enhance error events | enhancement High Priority PHP | # Update error class to be able to handle errors from `Error_Events`

Need to be able to handle:

* Database reporting

* Log reporting

* AJAX/JSON/JSON_Response

| 1.0 | Enhance error events - # Update error class to be able to handle errors from `Error_Events`

Need to be able to handle:

* Database reporting

* Log reporting

* AJAX/JSON/JSON_Response

| non_usab | enhance error events update error class to be able to handle errors from error events need to be able to handle database reporting log reporting ajax json json response | 0 |

20,060 | 14,971,391,200 | IssuesEvent | 2021-01-27 21:04:39 | micrometer-metrics/micrometer | https://api.github.com/repos/micrometer-metrics/micrometer | closed | Can't remove meters after a filter is added | usability | `MeterRegistry.remove()` fails to remove metrics that were created before a `commonTags()` filter was applied. It seems to apply the filter to the metric ID before trying to look it up, and so it finds nothing, and fails to delete it.

Tested using the latest version (`io.micrometer:micrometer-core:1.2.1`).

Here i... | True | Can't remove meters after a filter is added - `MeterRegistry.remove()` fails to remove metrics that were created before a `commonTags()` filter was applied. It seems to apply the filter to the metric ID before trying to look it up, and so it finds nothing, and fails to delete it.

Tested using the latest version (`io... | usab | can t remove meters after a filter is added meterregistry remove fails to remove metrics that were created before a commontags filter was applied it seems to apply the filter to the metric id before trying to look it up and so it finds nothing and fails to delete it tested using the latest version io... | 1 |

10,702 | 6,888,639,978 | IssuesEvent | 2017-11-22 07:08:19 | git-cola/git-cola | https://api.github.com/repos/git-cola/git-cola | closed | Option to increase the amount of recent repositories? | good first issue help wanted usability | At the moment, it seems that git-cola only shows the latest 6 or 7 repositories when opening up. However, it might happen to work with ~10 repositories, which then requires using the "Select manually" option to choose repositories that have been pushed out of the list.

Could we imagine an option to choose in the pre... | True | Option to increase the amount of recent repositories? - At the moment, it seems that git-cola only shows the latest 6 or 7 repositories when opening up. However, it might happen to work with ~10 repositories, which then requires using the "Select manually" option to choose repositories that have been pushed out of the ... | usab | option to increase the amount of recent repositories at the moment it seems that git cola only shows the latest or repositories when opening up however it might happen to work with repositories which then requires using the select manually option to choose repositories that have been pushed out of the l... | 1 |

1,489 | 2,860,406,132 | IssuesEvent | 2015-06-03 15:42:01 | OpenSourceFieldlinguistics/FieldDB | https://api.github.com/repos/OpenSourceFieldlinguistics/FieldDB | closed | Android UI designs and patterns for elicitation and gamified psycholing experiments | Software Enginneering Usability User Interface | hi @kondrann here is a very interesting video about UI design and best practices for you to watch while you design and think about the flow of your "game"

http://www.youtube.com/watch?v=Jl3-lzlzOJI

when you are done, assign the video to senhorzinho for his elicitation app | True | Android UI designs and patterns for elicitation and gamified psycholing experiments - hi @kondrann here is a very interesting video about UI design and best practices for you to watch while you design and think about the flow of your "game"

http://www.youtube.com/watch?v=Jl3-lzlzOJI

when you are done, assign th... | usab | android ui designs and patterns for elicitation and gamified psycholing experiments hi kondrann here is a very interesting video about ui design and best practices for you to watch while you design and think about the flow of your game when you are done assign the video to senhorzinho for his elicitatio... | 1 |

27,073 | 27,619,826,888 | IssuesEvent | 2023-03-09 22:44:36 | USEPA/TADA | https://api.github.com/repos/USEPA/TADA | closed | Ordering added columns | Usability | This topic applies to many TADA functions, such as TADAdataRetrieval, HarmonizeData, ConvertDepthUnits.

ConvertDepthUnits Example

- My original thought when we designed this function was that users would want to see the added columns next to the relevant columns in the dataframe, and not at the end. But I'm recons... | True | Ordering added columns - This topic applies to many TADA functions, such as TADAdataRetrieval, HarmonizeData, ConvertDepthUnits.

ConvertDepthUnits Example

- My original thought when we designed this function was that users would want to see the added columns next to the relevant columns in the dataframe, and not a... | usab | ordering added columns this topic applies to many tada functions such as tadadataretrieval harmonizedata convertdepthunits convertdepthunits example my original thought when we designed this function was that users would want to see the added columns next to the relevant columns in the dataframe and not a... | 1 |

17,946 | 12,439,817,503 | IssuesEvent | 2020-05-26 10:48:18 | godotengine/godot | https://api.github.com/repos/godotengine/godot | closed | File recovery on crash | feature proposal topic:editor usability | Godot shouldn't crash, but we all know that it does sometimes. It would be very nice if a data recovery function existed so that not much work is lost.

I believe an autosave to a temp path from time to time would be enough, along with a popup showing what can be recovered when opening a project that crashed.

| True | File recovery on crash - Godot shouldn't crash, but we all know that it does sometimes. It would be very nice if a data recovery function existed so that not much work is lost.

I believe an autosave to a temp path from time to time would be enough, along with a popup showing what can be recovered when opening a project t... | usab | file recovery on crash godot shouldn t crash but we all know that it does sometimes it would be very nice if a data recovery function existed so that not much work is lost i believe an autosave to a temp path from time to time would be enough along with a popup showing what can be recovered when opening a project t... | 1 |

233,610 | 17,872,926,642 | IssuesEvent | 2021-09-06 19:11:45 | fga-eps-mds/2021-1-Bot | https://api.github.com/repos/fga-eps-mds/2021-1-Bot | opened | GH Page optimization | documentation Time-PlusUltra GH Page | ## Issue Description

Issue aimed at improving the project page, in order to optimize it and improve the user experience.

## Tasks:

- [ ] Navigation improvements

- [ ] Add the logo

- [ ] Color palette on the site

## Acceptance Criteria:

- [ ] The page has better navigation

- [ ] The site has the logo

... | 1.0 | GH Page optimization - ## Issue Description

Issue aimed at improving the project page, in order to optimize it and improve the user experience.

## Tasks:

- [ ] Navigation improvements

- [ ] Add the logo

- [ ] Color palette on the site

## Acceptance Criteria:

- [ ] The page has better navigation

-... | non_usab | gh page optimization issue description issue aimed at improving the project page in order to optimize it and improve the user experience tasks navigation improvements add the logo color palette on the site acceptance criteria the page has better navigation site ... | 0 |

142,942 | 19,142,260,926 | IssuesEvent | 2021-12-02 01:05:35 | AlexWilson-GIS/WebGoat | https://api.github.com/repos/AlexWilson-GIS/WebGoat | opened | CVE-2021-22096 (Medium) detected in spring-web-5.3.1.jar | security vulnerability | ## CVE-2021-22096 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>spring-web-5.3.1.jar</b></p></summary>

<p>Spring Web</p>

<p>Library home page: <a href="https://github.com/spring-pr... | True | CVE-2021-22096 (Medium) detected in spring-web-5.3.1.jar - ## CVE-2021-22096 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>spring-web-5.3.1.jar</b></p></summary>

<p>Spring Web</p>

... | non_usab | cve medium detected in spring web jar cve medium severity vulnerability vulnerable library spring web jar spring web library home page a href path to dependency file webgoat webwolf pom xml path to vulnerable library home wss scanner repository org springframewor... | 0 |

17,502 | 12,102,910,157 | IssuesEvent | 2020-04-20 17:28:55 | publishpress/PublishPress-Permissions | https://api.github.com/repos/publishpress/PublishPress-Permissions | opened | Rename tabs in Permissions box | usability | Can we go with 3 name changes?

- User Roles

- Custom Groups

- Users

| True | Rename tabs in Permissions box - Can we go with 3 name changes?

- User Roles

- Custom Groups

- Users

| usab | rename tabs in permissions box can we go with name changes user roles custom groups users | 1 |

20,778 | 16,044,312,658 | IssuesEvent | 2021-04-22 11:54:41 | imchillin/Anamnesis | https://api.github.com/repos/imchillin/Anamnesis | closed | camera position temporary save/load | Scenes Usability | It would be cool if the temporary save/load for the camera from CMTool could be added. Very handy if you have your camera already setup, and then want to check/tweak something and can reload your camera setup.

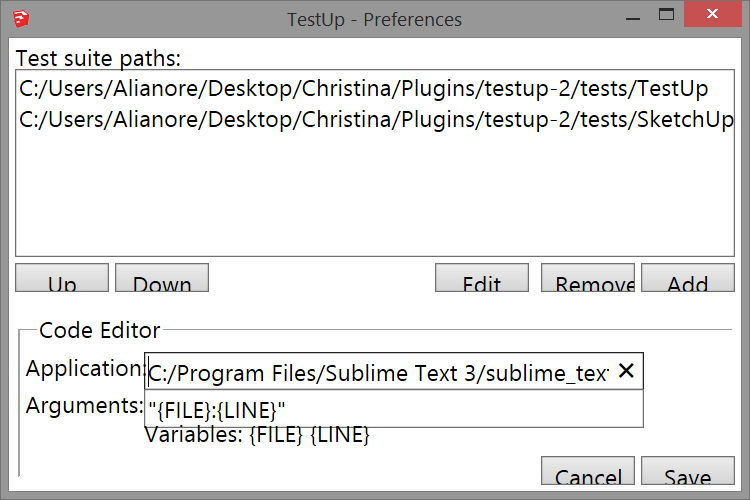

On Win 8 with DPI scaling set to 125%, text sizing and scaling of other elements seem to be out of sync. If I am not mistaken, the text alone is scaled but not the other elements. This applies to the ... | 1.0 | Text size on Win 8 for preferences window -

On Win 8 with DPI scaling set to 125%, text sizing and scaling of other elements seem to be out of sync. If I am not mistaken, the text alone is scaled but ... | non_usab | text size on win for preferences window on win with dpi scaling set to text sizing and scaling of other elements seem to be out of sync if i am not mistaken the text alone is scaled but not the other elements this applies to the preferences window but not the main window | 0 |

59,896 | 6,666,091,576 | IssuesEvent | 2017-10-03 06:26:17 | sgmap/mes-aides-ui | https://api.github.com/repos/sgmap/mes-aides-ui | closed | Specify where to look to declare year n-1 income | irritant needs-consensus needs-user-testing | On the page for declaring the previous year's resources at the end of the simulation, the following is asked:

"Your household's taxable income in 2015

This information can be found on your 2015 income tax return.

You can find it online at impots.gouv.fr."

However, the practice in France ... | 1.0 | Specify where to look to declare year n-1 income - On the page for declaring the previous year's resources at the end of the simulation, the following is asked:

"Your household's taxable income in 2015

This information can be found on your 2015 income tax return.

You can find it online ... | non_usab | specify where to look to declare year n income on the page for declaring the previous year s resources at the end of the simulation the following is asked your household s taxable income in this information can be found on your income tax return you can find it online at im... | 0 |

16,525 | 11,027,379,491 | IssuesEvent | 2019-12-06 09:20:01 | virtualsatellite/VirtualSatellite4-Core | https://api.github.com/repos/virtualsatellite/VirtualSatellite4-Core | opened | Have a warning message on deleting a discipline which has assigned elements | comfort/usability | Currently the role manager can remove a discipline, which leads to dangling references in its subsystem that only a superUser can fix.

Therefore, we need to make sure that a discipline cannot be deleted by mistake. | True | Have a warning message on deleting a discipline which has assigned elements - Currently the role manager can remove a discipline, which leads to dangling references in its subsystem that only a superUser can fix.

Therefore, we need to make sure that a discipline cannot be deleted by mistake. | usab | have a warning message on deleting a discipline which has assigned elements currently the role manager can remove a discipline which leads to dangling references in its subsystem that only a superuser can fix therefore we need to make sure that a discipline cannot be deleted by mistake | 1 |

59,276 | 17,016,791,235 | IssuesEvent | 2021-07-02 13:10:55 | tomhughes/trac-tickets | https://api.github.com/repos/tomhughes/trac-tickets | opened | Incomplete information Coqueiros hamlet. | Component: nominatim Priority: minor Type: defect | **[Submitted to the original trac issue database at 2.34pm, Friday, 7th September 2018]**

Reverse searching for a point in Coqueiros hamlet doesn't return complete information.

See example:

https://nominatim.openstreetmap.org/reverse?format=json&lat=-19.870104&lon=-49.635855

The city is Itapagipe - sta... | 1.0 | Incomplete information Coqueiros hamlet. - **[Submitted to the original trac issue database at 2.34pm, Friday, 7th September 2018]**

Reverse searching for a point in Coqueiros hamlet doesn't return complete information.

See example:

https://nominatim.openstreetmap.org/reverse?format=json&lat=-19.870104&lo... | non_usab | incomplete information coqueiros hamlet reverse searching for a point in coqueiros hamlet doesn t return complete information see example the city is itapagipe state of mg minas gerais thanks | 0 |

8,061 | 5,374,852,327 | IssuesEvent | 2017-02-23 01:58:09 | Tour-de-Force/btc-app | https://api.github.com/repos/Tour-de-Force/btc-app | opened | Hamburger Menu - Flex box needs to be fixed. | Priority: Medium usability | Hamburger menu - flex box needs to be fixed. The menu disappears when you

go into Settings, Download track page, Filter, Publish, About page, Logout page, and you do

anything to resize the window. I know the menu bar is still there but the color that makes you be

able to see it is removed on resize. Everything colli... | True | Hamburger Menu - Flex box needs to be fixed. - Hamburger menu - flex box needs to be fixed. The menu disappears when you

go into Settings, Download track page, Filter, Publish, About page, Logout page, and you do

anything to resize the window. I know the menu bar is still there but the color that makes you be

able t... | usab | hamburger menu flex box needs to be fixed hamburger menu flex box needs to be fixed the menu disappears when you go into settings download track page filter publish about page logout page and you do anything to resize the window i know the menu bar is still there but the color that makes you be able t... | 1 |

12,225 | 7,756,565,496 | IssuesEvent | 2018-05-31 13:58:55 | downshiftorg/prophoto7-issues | https://api.github.com/repos/downshiftorg/prophoto7-issues | closed | SPIKE: What should happen when you hide a column at a breakpoint? | usability | <a href="https://github.com/meatwad5675"><img src="https://avatars3.githubusercontent.com/u/11544705?v=4" align="left" width="96" height="96" hspace="10"></img></a> **Issue by [meatwad5675](https://github.com/meatwad5675)**

_Monday Mar 26, 2018 at 19:52 GMT_

_Originally opened as https://github.com/downshiftorg/prophot... | True | SPIKE: What should happen when you hide a column at a breakpoint? - <a href="https://github.com/meatwad5675"><img src="https://avatars3.githubusercontent.com/u/11544705?v=4" align="left" width="96" height="96" hspace="10"></img></a> **Issue by [meatwad5675](https://github.com/meatwad5675)**

_Monday Mar 26, 2018 at 19:5... | usab | spike what should happen when you hide a column at a breakpoint issue by monday mar at gmt originally opened as currently there is just an empty space where the column should be because we use display none users have communicated that they expect column spans of the remaining colu... | 1 |

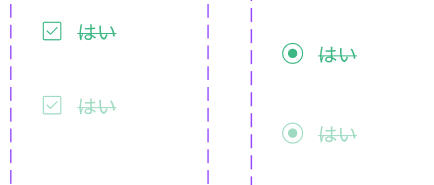

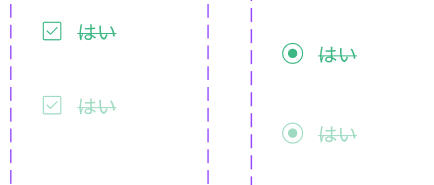

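790,906 | 27,841,584,349 | IssuesEvent | 2023-03-20 13:06:55 | Wizleap-Inc/wiz-ui | https://api.github.com/repos/Wizleap-Inc/wiz-ui | closed | Feat(checkbox, radio): add an option to show a strikethrough when checked | 📦 component 🔼 High Priority | **Reason for the feature and details**

**Proposed solution (optional)**

**Other considerations (optional)**

| 1.0 | Feat(checkbox, radio): add an option to show a strikethrough when checked - **Reason for the feature and details**

**Proposed solution (optional)**

**Other considerations (optional)**

| non_usab | feat checkbox radio add an option to show a strikethrough when checked reason for the feature and details proposed solution optional other considerations optional | 0 |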

755 | 2,622,257,016 | IssuesEvent | 2015-03-04 00:57:05 | golang/go | https://api.github.com/repos/golang/go | closed | build: too many open files in buildlet | builder | Just observed this new failure when starting a Windows buildlet:

```

builder: windows-amd64-gce

rev: 0cdf1d2bc265b6ed8e1b9834ec9f6c59dcecdcfd

vm name: buildlet-windows-amd64-gce-0cdf1d2b-rnbb9a0b

started: 2015-03-03 00:49:42.356721167 +0000 UTC

started: 2015-03-03 00:50:52.996985212 +0000 UTC

s... | 1.0 | build: too many open files in buildlet - Just observed this new failure when starting a Windows buildlet:

```

builder: windows-amd64-gce

rev: 0cdf1d2bc265b6ed8e1b9834ec9f6c59dcecdcfd

vm name: buildlet-windows-amd64-gce-0cdf1d2b-rnbb9a0b

started: 2015-03-03 00:49:42.356721167 +0000 UTC

started: 201... | non_usab | build too many open files in buildlet just observed this new failure when starting a windows buildlet builder windows gce rev vm name buildlet windows gce started utc started utc success false events instance crea... | 0 |

51,708 | 27,205,976,533 | IssuesEvent | 2023-02-20 13:07:05 | CommunityToolkit/dotnet | https://api.github.com/repos/CommunityToolkit/dotnet | closed | Add ArrayPoolBufferWriter<T>.DangerousGetArray() API | feature request :mailbox_with_mail: high-performance 🚂 | ### Overview

Related to #614. The `ArrayPoolBufferWriter<T>` lacks the `DangerousGetArray()` API which `MemoryOwner<T>` and `SpanOwner<T>` have. We should add it there too to make it easier and clearer how to get the underlying array from a writer.

### API breakdown

```csharp

namespace CommunityToolkit.HighPe... | True | Add ArrayPoolBufferWriter<T>.DangerousGetArray() API - ### Overview

Related to #614. The `ArrayPoolBufferWriter<T>` lacks the `DangerousGetArray()` API which `MemoryOwner<T>` and `SpanOwner<T>` have. We should add it there too to make it easier and clearer how to get the underlying array from a writer.

### API br... | non_usab | add arraypoolbufferwriter dangerousgetarray api overview related to the arraypoolbufferwriter lacks the dangerousgetarray api which memoryowner and spanowner have we should add it there too to make it easier and clearer how to get the underlying array from a writer api breakdown ... | 0 |

306,736 | 26,492,440,671 | IssuesEvent | 2023-01-18 00:32:38 | unifyai/ivy | https://api.github.com/repos/unifyai/ivy | opened | Fix jax_numpy_creation.test_jax_numpy_zeros_like | JAX Frontend Sub Task Failing Test | | | |

|---|---|

|tensorflow|<a href="https://github.com/unifyai/ivy/actions/runs/3923202675/jobs/6706647396" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-success-success></a>

|torch|<a href="null" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/... | 1.0 | Fix jax_numpy_creation.test_jax_numpy_zeros_like - | | |

|---|---|

|tensorflow|<a href="https://github.com/unifyai/ivy/actions/runs/3923202675/jobs/6706647396" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-success-success></a>

|torch|<a href="null" rel="noopener noreferrer" tar... | non_usab | fix jax numpy creation test jax numpy zeros like tensorflow img src torch img src numpy img src jax img src not found not found | 0 |

175,322 | 13,546,382,618 | IssuesEvent | 2020-09-17 01:09:17 | JoshSevy/dreamboard | https://api.github.com/repos/JoshSevy/dreamboard | opened | App Testing | testing | - [ ] Full integration testing -is everything tested for

- [ ] Async testing and event testing make sure all events are working correctly

- [ ] Check all fireEvents

- [ ] Full coverage in testing | 1.0 | App Testing - - [ ] Full integration testing -is everything tested for

- [ ] Async testing and event testing make sure all events are working correctly

- [ ] Check all fireEvents

- [ ] Full coverage in testing | non_usab | app testing full integration testing is everything tested for async testing and event testing make sure all events are working correctly check all fireevents full coverage in testing | 0 |

250,848 | 27,112,934,429 | IssuesEvent | 2023-02-15 16:29:32 | jgeraigery/jersey | https://api.github.com/repos/jgeraigery/jersey | opened | commons-codec-1.5.jar: 1 vulnerabilities (highest severity is: 6.5) | security vulnerability | <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>commons-codec-1.5.jar</b></p></summary>

<p>The codec package contains simple encoder and decoders for

various formats such as Base64 and Hexadecimal. In additio... | True | commons-codec-1.5.jar: 1 vulnerabilities (highest severity is: 6.5) - <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>commons-codec-1.5.jar</b></p></summary>

<p>The codec package contains simple encoder and decoder... | non_usab | commons codec jar vulnerabilities highest severity is vulnerable library commons codec jar the codec package contains simple encoder and decoders for various formats such as and hexadecimal in addition to these widely used encoders and decoders the codec package also maint... | 0 |

27,868 | 30,581,541,909 | IssuesEvent | 2023-07-21 10:03:45 | informalsystems/quint | https://api.github.com/repos/informalsystems/quint | opened | Estimate the number of visited states in the simulator | W8 usability Fsimulator (phase 5a) | This is a more useful coverage estimate asked for in #1067. We could store the hashes of the states visited by the simulator and print out the number of visited states. Moreover, we could prune the search when we visit a previously visited state, similar to stateful model checking.

This will not give us a qualitativ... | True | Estimate the number of visited states in the simulator - This is a more useful coverage estimate asked for in #1067. We could store the hashes of the states visited by the simulator and print out the number of visited states. Moreover, we could prune the search when we visit a previously visited state, similar to state... | usab | estimate the number of visited states in the simulator this is a more useful coverage estimate asked for in we could store the hashes of the states visited by the simulator and print out the number of visited states moreover we could prune the search when we visit a previously visited state similar to stateful... | 1 |

283,145 | 21,316,049,139 | IssuesEvent | 2022-04-16 09:41:35 | khseah/pe | https://api.github.com/repos/khseah/pe | opened | Incorrect notation | severity.VeryLow type.DocumentationBug | From the website, multiplicities should be "0..*" instead (2 dots only)

<!--session: 16500938... | 1.0 | Incorrect notation - From the website, multiplicities should be "0..*" instead (2 dots only)

... | non_usab | incorrect notation from the website multiplicities should be instead dots only | 0 |

40,260 | 16,439,656,504 | IssuesEvent | 2021-05-20 13:06:52 | microsoft/BotFramework-Composer | https://api.github.com/repos/microsoft/BotFramework-Composer | closed | issues with update-schema script | Bot Services Type: Bug customer-replied-to customer-reported | <!-- Please search for your feature request before creating a new one. >

<!-- Complete the necessary portions of this template and delete the rest. -->

## Describe the bug

I am using nightly Composer and I see the error below when I try to run the update-schema script

.\update-schema.ps1

Running schema merge.

module.j... | 1.0 | issues with update-schema script - <!-- Please search for your feature request before creating a new one. >

<!-- Complete the necessary portions of this template and delete the rest. -->

## Describe the bug

I am using nightly Composer and I see the error below when I try to run the update-schema script

.\update-schema.p... | non_usab | issues with update schema script describe the bug i am using nightly composer and i see the error below when i try to run the update schema script update schema running schema merge module js throw err error cannot find module c users prvavill appdata roaming npm node modules micros... | 0 |

22,534 | 3,663,764,336 | IssuesEvent | 2016-02-19 08:21:22 | wbsoft/frescobaldi | https://api.github.com/repos/wbsoft/frescobaldi | opened | Update layout control options to LilyPond 2.19.21 syntax | defect | Many of the layout control options are affected by a fundamental syntax change about using the `parser` argument in music-etc-functions.

The task is to wrap the offending calls in version predicate conditionals because Frescobaldi has to support older *and* newer LilyPonds. | 1.0 | Update layout control options to LilyPond 2.19.21 syntax - Many of the layout control options are affected by a fundamental syntax change about using the `parser` argument in music-etc-functions.

The task is to wrap the offending calls in version predicate conditionals because Frescobaldi has to support older *and* ... | non_usab | update layout control options to lilypond syntax many of the layout control options are affected by a fundamental syntax change about using the parser argument in music etc functions the task is to wrap the offending calls in version predicate conditionals because frescobaldi has to support older and ne... | 0 |

28,715 | 23,463,326,230 | IssuesEvent | 2022-08-16 14:44:23 | SasView/sasview | https://api.github.com/repos/SasView/sasview | closed | DataXD duplication | Infrastructure Discuss At The Call Housekeeping | There is a Data1D definition in both sasview (in Plotting for some reason) and sasmodels.data, are these expected to become a single type during the sasdata extraction? They probably should. | 1.0 | DataXD duplication - There is a Data1D definition in both sasview (in Plotting for some reason) and sasmodels.data, are these expected to become a single type during the sasdata extraction? They probably should. | non_usab | dataxd duplication there is a definition in both sasview in plotting for some reason and sasmodels data are these expected to become a single type during the sasdata extraction they probably should | 0 |

26,303 | 26,673,309,412 | IssuesEvent | 2023-01-26 12:17:10 | opentap/opentap | https://api.github.com/repos/opentap/opentap | closed | Let the user know which other version(s) of a package are available in case a release does not exist | Usability CLI | Can we have the CLI return a hint to the user when a package exists in versions other than a release?

e.g. "There is no release version of 'Runner', but pre-releases exist; use --all for a complete list".

or simply show the complete list after the user was informed about the fact that no release versions existed... | True | Let the user know which other version(s) of a package are available in case a release does not exist - Can we have the CLI return a hint to the user when a package exists in versions other than a release?

e.g. "There is no release version of 'Runner', but pre-releases exist; use --all for a complete list".

or simp... | usab | let the user know which other version s of a package are available in case a release does not exist can we have the cli return a hint to the user when a package exists in versions other than a release e g there is no release version of runner but pre releases exist use all for a complete list or simp... | 1 |

27,535 | 7,977,935,894 | IssuesEvent | 2018-07-17 16:43:49 | zooniverse/Panoptes-Front-End | https://api.github.com/repos/zooniverse/Panoptes-Front-End | closed | viewing subject info in project builder should show hidden values | project builder stale ui | When viewing subjects and info in the project builder, if I'm a collaborator, I'd like to be able to see the _full_ set of values, with the ones that will be hidden from public view marked in some way.

This just came up because I thought some essential columns were missing from a gold-standard dataset in the pulsar SG... | 1.0 | viewing subject info in project builder should show hidden values - When viewing subjects and info in the project builder, if I'm a collaborator, I'd like to be able to see the _full_ set of values, with the ones that will be hidden from public view marked in some way.

This just came up because I thought some essentia... | non_usab | viewing subject info in project builder should show hidden values when viewing subjects and info in the project builder if i m a collaborator i d like to be able to see the full set of values with the ones that will be hidden from public view marked in some way this just came up because i thought some essentia... | 0 |

132,090 | 12,498,119,532 | IssuesEvent | 2020-06-01 17:42:35 | kounch/knloader | https://api.github.com/repos/kounch/knloader | closed | Clarification on the knloader.bdt file | documentation | Not sure if you prefer issue here or FB, but starting here for now.

I suspect my own issue is not quite following the manual properly (and I'd be happy to help the docs via PR if you like).

The manual says

> Create knloader.bdt file (see below for more instructions).

But then below it reads "navigate to whe... | 1.0 | Clarification on the knloader.bdt file - Not sure if you prefer issue here or FB, but starting here for now.

I suspect my own issue is not quite following the manual properly (and I'd be happy to help the docs via PR if you like).

The manual says

> Create knloader.bdt file (see below for more instructions).

... | non_usab | clarification on the knloader bdt file not sure if you prefer issue here or fb but starting here for now i suspect my own issue is not quite following the manual properly and i d be happy to help the docs via pr if you like the manual says create knloader bdt file see below for more instructions ... | 0 |

159,290 | 20,048,346,840 | IssuesEvent | 2022-02-03 01:07:39 | kapseliboi/coronavirus-dashboard | https://api.github.com/repos/kapseliboi/coronavirus-dashboard | opened | CVE-2021-3918 (High) detected in json-schema-0.2.3.tgz | security vulnerability | ## CVE-2021-3918 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>json-schema-0.2.3.tgz</b></p></summary>

<p>JSON Schema validation and specifications</p>

<p>Library home page: <a href=... | True | CVE-2021-3918 (High) detected in json-schema-0.2.3.tgz - ## CVE-2021-3918 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>json-schema-0.2.3.tgz</b></p></summary>

<p>JSON Schema validat... | non_usab | cve high detected in json schema tgz cve high severity vulnerability vulnerable library json schema tgz json schema validation and specifications library home page a href path to dependency file package json path to vulnerable library node modules json schema packa... | 0 |

21,772 | 17,691,612,081 | IssuesEvent | 2021-08-24 10:39:12 | goblint/analyzer | https://api.github.com/repos/goblint/analyzer | closed | Rework system outputting warnings | cleanup feature usability practical-course | Categories should be introduced to create a better understanding of the warnings.

#198 #199 #200

@vandah

@edincitaku | True | Rework system outputting warnings - Categories should be introduced to create a better understanding of the warnings.

#198 #199 #200

@vandah

@edincitaku | usab | rework system outputting warnings categories should be introduced to create a better understanding of the warnings vandah edincitaku | 1 |

26,248 | 26,594,630,546 | IssuesEvent | 2023-01-23 11:27:59 | godotengine/godot | https://api.github.com/repos/godotengine/godot | closed | Clicking on `res://` folder is very slow | bug topic:editor usability regression performance | ### Godot version

0056acf

### System information

Windows 10 x64

### Issue description

When you click `res://` folder in filesystem dock, it hangs the editor for a few seconds:

(this is releas... | True | Clicking on `res://` folder is very slow - ### Godot version

0056acf

### System information

Windows 10 x64

### Issue description

When you click `res://` folder in filesystem dock, it hangs the editor for a few seconds:

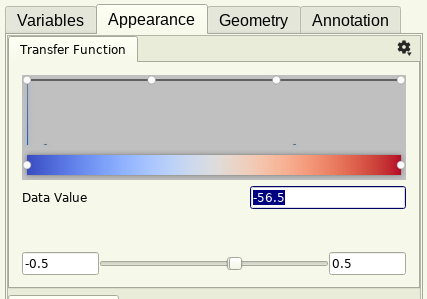

The Data value box highlighted in the picture within the appearance tab is no longer able to be typed in or edited with the keyboard.

To Reproduce

1. Go to appearance tab... | True | No longer able to edit Data Value -

The Data value box highlighted in the picture within the appearance tab is no longer able to be typed in or edited with the keyboard.

T... | usab | no longer able to edit data value the data value box highlighted in the picture within the appearance tab is no longer able to be typed in or edited with the keyboard to reproduce go to appearance tab of the image i was using a barb renderer attempt to click on and edit the highlighted data v... | 1 |

480,053 | 13,822,589,714 | IssuesEvent | 2020-10-13 05:27:03 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | opened | Bugreports: Update report page | Priority: Medium Type: Task | Update report page to list the data associated with the report, and a list of exceptions. | 1.0 | Bugreports: Update report page - Update report page to list the data associated with the report, and a list of exceptions. | non_usab | bugreports update report page update report page to list the data associated with the report and a list of exceptions | 0 |

22,815 | 20,243,130,677 | IssuesEvent | 2022-02-14 11:10:00 | ClickHouse/ClickHouse | https://api.github.com/repos/ClickHouse/ClickHouse | closed | Option `--format` for `clickhouse-local` should set both input and output formats. | easy task usability unexpected behaviour | `clickhouse-local` can take three options: `--input-format`, `--output-format` and `--format`.

Currently the `--format` option only sets the output format.

Let's make it set default input and output formats that can be overridden by `--input-format` and `--output-format`. | True | Option `--format` for `clickhouse-local` should set both input and output formats. - `clickhouse-local` can take three options: `--input-format`, `--output-format` and `--format`.

Currently the `--format` option only sets the output format.

Let's make it set default input and output formats that can be overridden by... | usab | option format for clickhouse local should set both input and output formats clickhouse local can take three options input format output format and format currently the format option only sets the output format let s make it set default input and output formats that can be overridden by... | 1 |

19,698 | 14,443,609,227 | IssuesEvent | 2020-12-07 19:55:15 | openstreetmap/iD | https://api.github.com/repos/openstreetmap/iD | closed | Use blue for "waterway" group and waterfall to make them easier to recognize | icon usability | Currently icons for both of them are not really recognizable. I see that at least some icons are using color, but I am not sure about strategy for doing this (I checked https://github.com/openstreetmap/iD/blob/master/data/presets/README.md ).

.... | usab | use blue for waterway group and waterfall to make them easier to recognize currently icons for both of them are not really recognizable i see that at least some icons are using color but i am not sure about strategy for doing this i checked | 1 |

173,899 | 6,534,018,909 | IssuesEvent | 2017-08-31 09:04:07 | Cadasta/cadasta-platform | https://api.github.com/repos/Cadasta/cadasta-platform | closed | AttributeError: 'WSGIRequest' object has no attribute 'user' | bug priority: critical | ### Steps to reproduce the error

Without logging in, attempt to load a platform URL for creation, such as:

https://platform-staging.cadasta.org/organizations/allthethings/projects/quiet-car/records/locations/new

Also applies to /delete, /edit

_**Edit:** Same error occurs with or without active user session,... | 1.0 | AttributeError: 'WSGIRequest' object has no attribute 'user' - ### Steps to reproduce the error

Without logging in, attempt to load a platform URL for creation, such as:

https://platform-staging.cadasta.org/organizations/allthethings/projects/quiet-car/records/locations/new

Also applies to /delete, /edit

_*... | non_usab | attributeerror wsgirequest object has no attribute user steps to reproduce the error without logging in attempt to load a platform url for creation such as also applies to delete edit edit same error occurs with or without active user session if trailing slash is not appended to ur... | 0 |

286,857 | 8,794,030,851 | IssuesEvent | 2018-12-21 22:46:19 | INN/umbrella-rivard-report | https://api.github.com/repos/INN/umbrella-rivard-report | closed | Donor logos for Businesses and Nonprofits | high priority | 1. Go to: https://therivardreport.com/membership-donate/ (privately published)

RESULT: Doesn't have list of donors yet

EXPECT: Rivard would like something similar to "OUR MEMBERS" here: https://inn.org/ to appear at the bottom of the page (below the tabs of info)

NOTES:

- needs to be easily updatable and using ... | 1.0 | Donor logos for Businesses and Nonprofits - 1. Go to: https://therivardreport.com/membership-donate/ (privately published)

RESULT: Doesn't have list of donors yet

EXPECT: Rivard would like something similar to "OUR MEMBERS" here: https://inn.org/ to appear at the bottom of the page (below the tabs of info)

NOTES... | non_usab | donor logos for businesses and nonprofits go to privately published result doesn t have list of donors yet expect rivard would like something similar to our members here to appear at the bottom of the page below the tabs of info notes needs to be easily updatable and using a class in the t... | 0 |

5,685 | 3,975,542,198 | IssuesEvent | 2016-05-05 06:12:44 | kolliSuman/issues | https://api.github.com/repos/kolliSuman/issues | closed | QA_Video Tutorial_Back to experiments_p2 | Category: Usability Developed By: VLEAD Release Number: Production Severity: S2 Status: Open | Defect Description :

In the "Video Tutorial" experiment,the back to experiments link is not present in the page instead the back to experiments link should be displayed on the screen in-order to view the list of experiments by the user.

Actual Result :

In the "Video Tutorial" experiment,the back to experiments link is... | True | QA_Video Tutorial_Back to experiments_p2 - Defect Description :

In the "Video Tutorial" experiment,the back to experiments link is not present in the page instead the back to experiments link should be displayed on the screen in-order to view the list of experiments by the user.

Actual Result :

In the "Video Tutorial"... | usab | qa video tutorial back to experiments defect description in the video tutorial experiment the back to experiments link is not present in the page instead the back to experiments link should be displayed on the screen in order to view the list of experiments by the user actual result in the video tutorial ... | 1 |

11,290 | 7,138,142,806 | IssuesEvent | 2018-01-23 13:34:30 | MISP/MISP | https://api.github.com/repos/MISP/MISP | closed | Disallow proposals that match an already existing attribute | bug enhancement usability | Disallow proposals that already have an attribute that matches on the triplet type/value/ids so that there is no possible duplicate.

| True | Disallow proposals that match an already existing attribute - Disallow proposals that already have an attribute that matches on the triplet type/value/ids so that there is no possible duplicate.

| usab | disallow proposals that match an already existing attribute disallow proposals that already have an attribute that matches on the triplet type value ids so that there is no possible duplicate | 1 |

3,378 | 3,428,212,543 | IssuesEvent | 2015-12-10 08:13:28 | tgstation/-tg-station | https://api.github.com/repos/tgstation/-tg-station | opened | Entering the gateway should immobilize you for a moment, to prevent you from instantly going through it again. | Feature Request Usability | What it says on the tin, it's very easy to accidentally jump back into the mission when you didn't mean to because you moved one tile too many. | True | Entering the gateway should immobilize you for a moment, to prevent you from instantly going through it again. - What it says on the tin, it's very easy to accidentally jump back into the mission when you didn't mean to because you moved one tile too many. | usab | entering the gateway should immobilize you for a moment to prevent you from instantly going through it again what it says on the tin it s very easy to accidentally jump back into the mission when you didn t mean to because you moved one tile too many | 1 |

293,017 | 8,971,817,770 | IssuesEvent | 2019-01-29 16:44:25 | griffithlab/civic-client | https://api.github.com/repos/griffithlab/civic-client | closed | Can't add new DOID to record. | bug enhancement high priority | Per our request, chronic neutrophilic leukemia was added to disease-ontology. https://github.com/DiseaseOntology/HumanDiseaseOntology/issues/280#issuecomment-315180267

Shown here: http://www.disease-ontology.org/?id=DOID:0080187

However, I still can't update this evidence item.

https://civicdb.org/events/genes/123... | 1.0 | Can't add new DOID to record. - Per our request, chronic neutrophilic leukemia was added to disease-ontology. https://github.com/DiseaseOntology/HumanDiseaseOntology/issues/280#issuecomment-315180267

Shown here: http://www.disease-ontology.org/?id=DOID:0080187

However, I still can't update this evidence item.

http... | non_usab | can t add new doid to record per our request chronic neutrophilic leukemia was added to disease ontology shown here however i still can t update this evidence item i assume it s missing from the doid file | 0 |

182,815 | 6,673,746,746 | IssuesEvent | 2017-10-04 16:00:34 | knipferrc/pastey | https://api.github.com/repos/knipferrc/pastey | closed | next.js? | Priority: Maximum Type: Refactor | Thinking of porting to next.js for their prefetching, and SSR. Should give better time to interactive and going to include the server with the app to simplify the app a little as far as deployment. | 1.0 | next.js? - Thinking of porting to next.js for their prefetching, and SSR. Should give better time to interactive and going to include the server with the app to simplify the app a little as far as deployment. | non_usab | next js thinking of porting to next js for their prefetching and ssr should give better time to interactive and going to include the server with the app to simplify the app a little as far as deployment | 0 |

26,899 | 27,334,327,188 | IssuesEvent | 2023-02-26 01:59:15 | MarkBind/markbind | https://api.github.com/repos/MarkBind/markbind | closed | "Cap" / remove panel closing animations | p.Medium a-ReaderUsability d.easy | <!--

Before opening a new issue, please search existing issues: https://github.com/MarkBind/markbind/issues

-->

**Is your request related to a problem?**

When the user closes the panel, the viewport is "dragged" back up to the panel's start.

This works well for smaller panels but becomes rather disorienti... | True | "Cap" / remove panel closing animations - <!--

Before opening a new issue, please search existing issues: https://github.com/MarkBind/markbind/issues

-->

**Is your request related to a problem?**

When the user closes the panel, the viewport is "dragged" back up to the panel's start.

This works well for sm... | usab | cap remove panel closing animations before opening a new issue please search existing issues is your request related to a problem when the user closes the panel the viewport is dragged back up to the panel s start this works well for smaller panels but becomes rather disorienti... | 1 |

4,572 | 3,872,477,357 | IssuesEvent | 2016-04-11 14:01:37 | lionheart/openradar-mirror | https://api.github.com/repos/lionheart/openradar-mirror | opened | 22513959: Xcode-beta (7A192o): Refactor does not take into account casting, ObjC generics and __kindof class uses | classification:ui/usability reproducible:always status:open | #### Description

Summary:

Xcode 7 introduces ObjC generics and __kindof, however the refactoring tools have not been updated with support. Result is, when renaming a class and compiling, there are compilation errors on casting, generics, __kindof.

Steps to Reproduce:

Create a class