Unnamed: 0 (int64, 3 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 7 to 112) | repo_url (string, length 36 to 141) | action (string, 3 classes) | title (string, length 2 to 742) | labels (string, length 4 to 431) | body (string, length 5 to 239k) | index (string, 10 classes) | text_combine (string, length 96 to 240k) | label (string, 2 classes) | text (string, length 96 to 200k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

29,022 | 23,672,857,794 | IssuesEvent | 2022-08-27 16:31:38 | jrsmith3/ibei | https://api.github.com/repos/jrsmith3/ibei | closed | Write GitHub action to post documentation to readthedocs.org for new releases | development infrastructure | # Overview

The scope of this issue is to upload the documentation build by the automation described in #55 to readthedocs.org via GitHub action. Documentation should only be updated in this way for releases.

# Related issues

* Depends on #52.

* Depends on #55. | 1.0 | Write GitHub action to post documentation to readthedocs.org for new releases - # Overview

The scope of this issue is to upload the documentation build by the automation described in #55 to readthedocs.org via GitHub action. Documentation should only be updated in this way for releases.

# Related issues

* Depends on #52.

* Depends on #55. | non_usab | write github action to post documentation to readthedocs org for new releases overview the scope of this issue is to upload the documentation build by the automation described in to readthedocs org via github action documentation should only be updated in this way for releases related issues depends on depends on | 0 |

755,600 | 26,434,323,525 | IssuesEvent | 2023-01-15 07:17:12 | fredo-ai/Fredo-Public | https://api.github.com/repos/fredo-ai/Fredo-Public | closed | Add mixpanel events for snooze | priority-1 current sprint | When you snooze event it create new event "REMINDER CREATED" since we are creating new reminder in 10 minutes.

in that case we should add a boolean property to that event called "snoozed" (True/False).

If Reminder created as a result of snooze the property of REMINDER CREATED event should be **_snoozed=true_** | 1.0 | Add mixpanel events for snooze - When you snooze event it create new event "REMINDER CREATED" since we are creating new reminder in 10 minutes.

in that case we should add a boolean property to that event called "snoozed" (True/False).

If Reminder created as a result of snooze the property of REMINDER CREATED event should be **_snoozed=true_** | non_usab | add mixpanel events for snooze when you snooze event it create new event reminder created since we are creating new reminder in minutes in that case we should add a boolean property to that event called snoozed true false if reminder created as a result of snooze the property of reminder created event should be snoozed true | 0 |

11,620 | 7,326,867,578 | IssuesEvent | 2018-03-04 01:43:52 | fennekki/cdparacord | https://api.github.com/repos/fennekki/cdparacord | closed | Nothing is currently configurable | enhancement usability | There should be a configuration file of some kind. `$XDG_CONFIG_HOME/cdparacord/config`, maybe. | True | Nothing is currently configurable - There should be a configuration file of some kind. `$XDG_CONFIG_HOME/cdparacord/config`, maybe. | usab | nothing is currently configurable there should be a configuration file of some kind xdg config home cdparacord config maybe | 1 |

6,037 | 4,119,206,825 | IssuesEvent | 2016-06-08 14:14:56 | prometheus/prometheus | https://api.github.com/repos/prometheus/prometheus | closed | Rename `target_groups` to `static_configs` | area/usability component/config kind/breaking change kind/friction | Our SD configurations are consistently suffixed with `_configs` and descriptive of what they do. `target_groups` seems out of order.

I'd suggest renaming it to `static_configs` as we have a break to configuration with the recent changes in file SD configs anyway. | True | Rename `target_groups` to `static_configs` - Our SD configurations are consistently suffixed with `_configs` and descriptive of what they do. `target_groups` seems out of order.

I'd suggest renaming it to `static_configs` as we have a break to configuration with the recent changes in file SD configs anyway. | usab | rename target groups to static configs our sd configurations are consistently suffixed with configs and descriptive of what they do target groups seems out of order i d suggest renaming it to static configs as we have a break to configuration with the recent changes in file sd configs anyway | 1 |

2,373 | 3,072,659,389 | IssuesEvent | 2015-08-19 18:01:43 | FieldDB/FieldDB | https://api.github.com/repos/FieldDB/FieldDB | closed | Add second try for "view all sessions" in spreadsheet if first times out, and warn user it will take a long time | Usability | When the user selects "view all sessions" in a large corpus in the spreadsheet app, the server will time out and ask the user to reload. It will then send them to the last session instead of all sessions.

The user is not warned that loading all sessions will take time (as in a popup in the prototype) | True | Add second try for "view all sessions" in spreadsheet if first times out, and warn user it will take a long time - When the user selects "view all sessions" in a large corpus in the spreadsheet app, the server will time out and ask the user to reload. It will then send them to the last session instead of all sessions.

The user is not warned that loading all sessions will take time (as in a popup in the prototype) | usab | add second try for view all sessions in spreadsheet if first times out and warn user it will take a long time when the user selects view all sessions in a large corpus in the spreadsheet app the server will time out and ask the user to reload it will then send them to the last session instead of all sessions the user is not warned that loading all sessions will take time as in a popup in the prototype | 1 |

20,215 | 15,147,950,326 | IssuesEvent | 2021-02-11 09:55:03 | elastic/rally | https://api.github.com/repos/elastic/rally | opened | Allow to selectively ignore response errors | :Usability enhancement | Currently Rally implements three different behaviors when a response error occurs:

1. `continue`: Regardless of the type of error, Rally will continue (even on network issues) and only record that an error has happened.

2. `continue-on-non-fatal` (default): Similar to `continue` but it will fail on network connection errors.

3. `abort`: The benchmark will be aborted as soon as any error happens.

While the differentiation between (1) and (2) is too fine-grained and we should instead never continue on network errors, `abort` is too coarse-grained. While we should still fail with `--on-error=abort` there are cases where we should give track authors more control to decide which tasks are ok to ignore certain errors and for which tasks it would be a problem if an error occurs.

### Proposal

1. We rename `continue-on-non-fatal` (the current default) to `continue` and remove the current behavior of `continue`.

2. We introduce a new task property called `ignore-response-error-level`. At the moment, only one value is allowed: `non-fatal`. When a benchmark is run with `--on-error=abort` and this property is present on a task, only [errors that are considered fatal](https://github.com/elastic/rally/blob/b8592a6071e549be99ac5538f960fe3026e513fb/esrally/driver/driver.py#L1448) will abort the benchmark when this task is run. On all other errors, Rally will continue. | True | Allow to selectively ignore response errors - Currently Rally implements three different behaviors when a response error occurs:

1. `continue`: Regardless of the type of error, Rally will continue (even on network issues) and only record that an error has happened.

2. `continue-on-non-fatal` (default): Similar to `continue` but it will fail on network connection errors.

3. `abort`: The benchmark will be aborted as soon as any error happens.

While the differentiation between (1) and (2) is too fine-grained and we should instead never continue on network errors, `abort` is too coarse-grained. While we should still fail with `--on-error=abort` there are cases where we should give track authors more control to decide which tasks are ok to ignore certain errors and for which tasks it would be a problem if an error occurs.

### Proposal

1. We rename `continue-on-non-fatal` (the current default) to `continue` and remove the current behavior of `continue`.

2. We introduce a new task property called `ignore-response-error-level`. At the moment, only one value is allowed: `non-fatal`. When a benchmark is run with `--on-error=abort` and this property is present on a task, only [errors that are considered fatal](https://github.com/elastic/rally/blob/b8592a6071e549be99ac5538f960fe3026e513fb/esrally/driver/driver.py#L1448) will abort the benchmark when this task is run. On all other errors, Rally will continue. | usab | allow to selectively ignore response errors currently rally implements three different behaviors when a response error occurs continue regardless of the type of error rally will continue even on network issues and only record that an error has happened continue on non fatal default similar to continue but it will fail on network connection errors abort the benchmark will be aborted as soon as any error happens while the differentiation between and is too fine grained and we should instead never continue on network errors abort is too coarse grained while we should still fail with on error abort there are cases where we should give track authors more control to decide which tasks are ok to ignore certain errors and for which tasks it would be a problem if an error occurs proposal we rename continue on non fatal the current default to continue and remove the current behavior of continue we introduce a new task property called ignore response error level at the moment only one value is allowed non fatal when a benchmark is run with on error abort and this property is present on a task only will abort the benchmark when this task is run on all other errors rally will continue | 1 |

10,743 | 6,901,555,540 | IssuesEvent | 2017-11-25 09:09:43 | the-tale/the-tale | https://api.github.com/repos/the-tale/the-tale | opened | The icon for a card available to take does not disappear immediately once the card is taken | comp_general cont_usability est_simple good first issue type_bug | Its status should be updated after every card taken. | True | The icon for a card available to take does not disappear immediately once the card is taken - Its status should be updated after every card taken. | usab | the icon for a card available to take does not disappear immediately once the card is taken its status should be updated after every card taken | 1 |

7,179 | 4,805,038,548 | IssuesEvent | 2016-11-02 15:07:15 | Elgg/Elgg | https://api.github.com/repos/Elgg/Elgg | closed | Should we support "plugin" settings for composer project? | dev usability discussion | Currently the root directory of composer project works like a plugin; You can add for example `views/` directory or `start.php` to it, and they will work as they would work within a plugin.

It currently isn't however possible to define plugin settings from the root, because all the features depend on `plugin_id`.

See for example:

- https://github.com/Elgg/Elgg/blob/2.1/views/default/admin/plugin_settings.php#L19

- https://github.com/Elgg/Elgg/blob/2.1/engine/lib/admin.php#L455

Should we add support for plugin settings and plugin user settings for composer projects?

| True | Should we support "plugin" settings for composer project? - Currently the root directory of composer project works like a plugin; You can add for example `views/` directory or `start.php` to it, and they will work as they would work within a plugin.

It currently isn't however possible to define plugin settings from the root, because all the features depend on `plugin_id`.

See for example:

- https://github.com/Elgg/Elgg/blob/2.1/views/default/admin/plugin_settings.php#L19

- https://github.com/Elgg/Elgg/blob/2.1/engine/lib/admin.php#L455

Should we add support for plugin settings and plugin user settings for composer projects?

| usab | should we support plugin settings for composer project currently the root directory of composer project works like a plugin you can add for example views directory or start php to it and they will work as they would work within a plugin it currently isn t however possible to define plugin settings from the root because all the features depend on plugin id see for example should we add support for plugin settings and plugin user settings for composer projects | 1 |

433,787 | 30,350,057,815 | IssuesEvent | 2023-07-11 18:14:09 | ManageIQ/manageiq.org | https://api.github.com/repos/ManageIQ/manageiq.org | closed | Remove scheduling of database backups from documentation | documentation | https://www.manageiq.org/docs/reference/latest/general_configuration/#scheduling-smartstate-analyses-and-backups

I believe it was removed in: https://github.com/ManageIQ/manageiq/pull/21415

I don't know if that section of the documentation needs new screenshots or updated guidance but it looks old to me. | 1.0 | Remove scheduling of database backups from documentation - https://www.manageiq.org/docs/reference/latest/general_configuration/#scheduling-smartstate-analyses-and-backups

I believe it was removed in: https://github.com/ManageIQ/manageiq/pull/21415

I don't know if that section of the documentation needs new screenshots or updated guidance but it looks old to me. | non_usab | remove scheduling of database backups from documentation i believe it was removed in i don t know if that section of the documentation needs new screenshots or updated guidance but it looks old to me | 0 |

274,233 | 8,558,783,217 | IssuesEvent | 2018-11-08 19:17:00 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | www.facebook.com - design is broken | browser-firefox priority-critical | <!-- @browser: Firefox 65.0 -->

<!-- @ua_header: Mozilla/5.0 (X11; Linux x86_64; rv:65.0) Gecko/20100101 Firefox/65.0 -->

<!-- @reported_with: -->

**URL**: https://www.facebook.com/permalink.php?story_fbid=267102693997732&id=178816082826394

**Browser / Version**: Firefox 65.0

**Operating System**: Linux

**Tested Another Browser**: Yes

**Problem type**: Design is broken

**Description**: "redwoodcity.org" title-text is covered up by paragraph below it, in Firefox and Edge

**Steps to Reproduce**:

Just visit https://www.facebook.com/permalink.php?story_fbid=267102693997732&id=178816082826394 and click "Not Now" on the create-an-account prompt (if you're prompted).

Edge and Firefox both agree on the unwanted rendering, whereas Chrome and Safari don't show any overlap. So this seems likely to be a Facebook bug where they're accidentally depending on a WebKit/Blink behavior.

[](https://webcompat.com/uploads/2018/11/76cb9e89-d23c-41bd-8c1f-3b804a4f2365.jpg)

<details>

<summary>Browser Configuration</summary>

<ul>

<li>None</li>

</ul>

</details>

_From [webcompat.com](https://webcompat.com/) with ❤️_ | 1.0 | www.facebook.com - design is broken - <!-- @browser: Firefox 65.0 -->

<!-- @ua_header: Mozilla/5.0 (X11; Linux x86_64; rv:65.0) Gecko/20100101 Firefox/65.0 -->

<!-- @reported_with: -->

**URL**: https://www.facebook.com/permalink.php?story_fbid=267102693997732&id=178816082826394

**Browser / Version**: Firefox 65.0

**Operating System**: Linux

**Tested Another Browser**: Yes

**Problem type**: Design is broken

**Description**: "redwoodcity.org" title-text is covered up by paragraph below it, in Firefox and Edge

**Steps to Reproduce**:

Just visit https://www.facebook.com/permalink.php?story_fbid=267102693997732&id=178816082826394 and click "Not Now" on the create-an-account prompt (if you're prompted).

Edge and Firefox both agree on the unwanted rendering, whereas Chrome and Safari don't show any overlap. So this seems likely to be a Facebook bug where they're accidentally depending on a WebKit/Blink behavior.

[](https://webcompat.com/uploads/2018/11/76cb9e89-d23c-41bd-8c1f-3b804a4f2365.jpg)

<details>

<summary>Browser Configuration</summary>

<ul>

<li>None</li>

</ul>

</details>

_From [webcompat.com](https://webcompat.com/) with ❤️_ | non_usab | design is broken url browser version firefox operating system linux tested another browser yes problem type design is broken description redwoodcity org title text is covered up by paragraph below it in firefox and edge steps to reproduce just visit and click not now on the create an account prompt if you re prompted edge and firefox both agree on the unwanted rendering whereas chrome and safari don t show any overlap so this seems likely to be a facebook bug where they re accidentally depending on a webkit blink behavior browser configuration none from with ❤️ | 0 |

284,384 | 21,416,579,492 | IssuesEvent | 2022-04-22 11:27:58 | opentelekomcloud/vault-plugin-secrets-openstack | https://api.github.com/repos/opentelekomcloud/vault-plugin-secrets-openstack | closed | README reference command inconsistency | documentation | I believe this

``` vault read /os/creds/example-role ```

should be:

``` vault read /openstack/creds/example-role ```

to be aligned with the previous commands.

https://github.com/opentelekomcloud/vault-plugin-secrets-openstack/blob/e56d944faeea6de3e601342395858182748e5c37/README.md?plain=1#L81 | 1.0 | README reference command inconsistency - I believe this

``` vault read /os/creds/example-role ```

should be:

``` vault read /openstack/creds/example-role ```

to be aligned with the previous commands.

https://github.com/opentelekomcloud/vault-plugin-secrets-openstack/blob/e56d944faeea6de3e601342395858182748e5c37/README.md?plain=1#L81 | non_usab | readme reference command inconsistency i believe this vault read os creds example role should be vault read openstack creds example role to be aligned with the previous commands | 0 |

235,566 | 7,740,291,878 | IssuesEvent | 2018-05-28 20:42:58 | GMDevinity/FloodIssues | https://api.github.com/repos/GMDevinity/FloodIssues | closed | Remove waterfall ambient | Modification Priority - Lower | ambient/levels/canals/dam_water_loop2.wav

This has been playing on the sewer pipes and is a major disturbance constantly having to listen to it, obscuring phase music, building

#363

players don't have to stopsound without also having the music stop | 1.0 | Remove waterfall ambient - ambient/levels/canals/dam_water_loop2.wav

This has been playing on the sewer pipes and is a major disturbance constantly having to listen to it, obscuring phase music, building

#363

players don't have to stopsound without also having the music stop | non_usab | remove waterfall ambient ambient levels canals dam water wav this has been playing on the sewer pipes and is a major disturbance constantly having to listen to it obscuring phase music building players don t have to stopsound without also having the music stop | 0 |

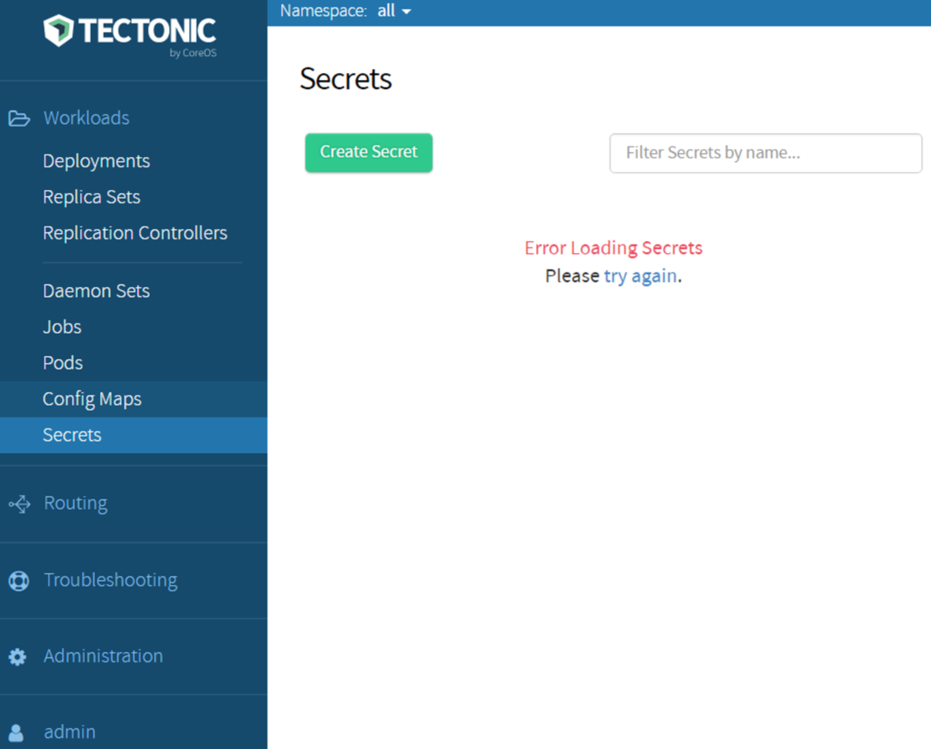

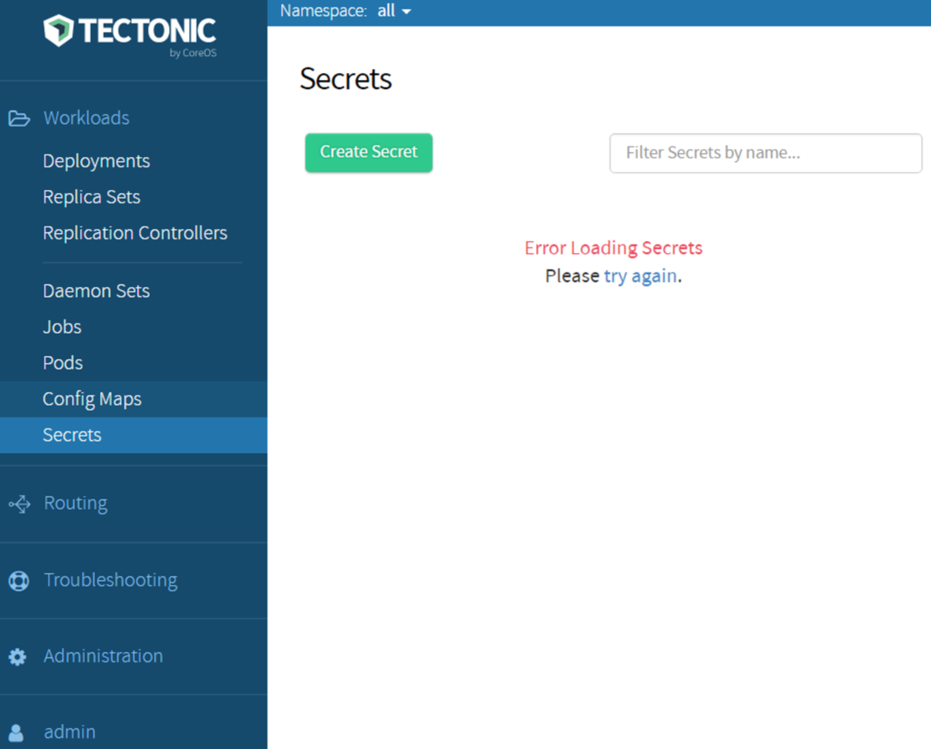

12,427 | 7,873,919,680 | IssuesEvent | 2018-06-25 15:30:50 | coreos/tectonic-installer | https://api.github.com/repos/coreos/tectonic-installer | closed | Tectonic console page turn to "Error Loading ... please try again" while accessing from places afar | kind/usabilty | Hi, Team,

I Installed Tectonic on AWS(Singapore) successfully.

But when I tried to access console page from California office, always telling me “Error Loading…”, then clicking “try again”, its content coming out as last. And accessing from Singapore office is fine.

**How to improve this use experience?** “Each sub-menu page need a double fresh **accessing for places afar**”

Best wishes,

Brant

| True | Tectonic console page turn to "Error Loading ... please try again" while accessing from places afar - Hi, Team,

I Installed Tectonic on AWS(Singapore) successfully.

But when I tried to access console page from California office, always telling me “Error Loading…”, then clicking “try again”, its content coming out as last. And accessing from Singapore office is fine.

**How to improve this use experience?** “Each sub-menu page need a double fresh **accessing for places afar**”

Best wishes,

Brant

| usab | tectonic console page turn to error loading please try again while accessing from places afar hi team i installed tectonic on aws singapore successfully but when i tried to access console page from california office always telling me “error loading…” then clicking “try again” its content coming out as last and accessing from singapore office is fine how to improve this use experience “each sub menu page need a double fresh accessing for places afar ” best wishes brant | 1 |

8,904 | 6,029,518,073 | IssuesEvent | 2017-06-08 18:12:41 | unfoldingWord-dev/translationCore | https://api.github.com/repos/unfoldingWord-dev/translationCore | closed | There should be informative text on all dialogs that indicate that something is happening | QA Passed Usability | The dialog with the progress bar and animated icon should always indicate to the user what is happening while he is waiting. | True | There should be informative text on all dialogs that indicate that something is happening - The dialog with the progress bar and animated icon should always indicate to the user what is happening while he is waiting. | usab | there should be informative text on all dialogs that indicate that something is happening the dialog with the progress bar and animated icon should always indicate to the user what is happening while he is waiting | 1 |

519,138 | 15,045,603,430 | IssuesEvent | 2021-02-03 05:48:14 | ryanclark/karma-webpack | https://api.github.com/repos/ryanclark/karma-webpack | closed | [4.0.0-rc4] Regression after removing lodash (when using multi-compiler mode) | help wanted priority: 3 (required) severity: 2 (regression) status: Approved type: Bug | In #364 `_.clone` was replaced with `Object.assign`.

This change assumed that `webpackOptions` is always an object, but in fact, it can be an array (multi-compiler mode, see https://github.com/webpack/webpack/tree/master/examples/multi-compiler).

So after this change, an array `[ objA, objB ]` becomes an object `{ 0: objA, 1: objB }` and then some subsequent logic gets changed, but also the subsequent `webpack(...)` call checks the passed config and throws an error:

```

WebpackOptionsValidationError: Invalid configuration object. Webpack has been initialised using a configuration object that does not match the API schema.

- configuration has an unknown property '1'. These properties are valid:

...

```

I propose to rollback this change then. But instead of using `lodash` we could use `lodash.clone`: https://www.npmjs.com/package/lodash.clone as it's the only lodash method used.

| 1.0 | [4.0.0-rc4] Regression after removing lodash (when using multi-compiler mode) - In #364 `_.clone` was replaced with `Object.assign`.

This change assumed that `webpackOptions` is always an object, but in fact, it can be an array (multi-compiler mode, see https://github.com/webpack/webpack/tree/master/examples/multi-compiler).

So after this change, an array `[ objA, objB ]` becomes an object `{ 0: objA, 1: objB }` and then some subsequent logic gets changed, but also the subsequent `webpack(...)` call checks the passed config and throws an error:

```

WebpackOptionsValidationError: Invalid configuration object. Webpack has been initialised using a configuration object that does not match the API schema.

- configuration has an unknown property '1'. These properties are valid:

...

```

I propose to rollback this change then. But instead of using `lodash` we could use `lodash.clone`: https://www.npmjs.com/package/lodash.clone as it's the only lodash method used.

| non_usab | regression after removing lodash when using multi compiler mode in clone was replaced with object assign this change assumed that webpackoptions is always an object but in fact it can be an array multi compiler mode see so after this change an array becomes an object obja objb and then some subsequent logic gets changed but also the subsequent webpack call checks the passed config and throws an error webpackoptionsvalidationerror invalid configuration object webpack has been initialised using a configuration object that does not match the api schema configuration has an unknown property these properties are valid i propose to rollback this change then but instead of using lodash we could use lodash clone as it s the only lodash method used | 0 |
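A minimal sketch of the regression described in the karma-webpack report above, assuming plain Node.js with no webpack installed. `cloneWebpackOptions` is a hypothetical helper and is not part of karma-webpack; it only illustrates one array-aware alternative to the bare `Object.assign` call. The report itself proposes `lodash.clone` instead.

```javascript
// Hypothetical helper (not karma-webpack code): shallow-copy webpack options
// while keeping multi-compiler configs (arrays) as arrays.
function cloneWebpackOptions(options) {
  if (Array.isArray(options)) {
    // Copy each config object so the caller's entries are not mutated.
    return options.map((entry) => Object.assign({}, entry));
  }
  return Object.assign({}, options);
}

// Stand-ins for the two config objects from the report.
const multi = [{ name: 'objA' }, { name: 'objB' }];

// The regression: Object.assign copies the array's index keys onto a plain
// object, so webpack later sees unknown properties such as '1'.
const broken = Object.assign({}, multi);

// The array-aware copy keeps the shape webpack expects in multi-compiler mode.
const fixed = cloneWebpackOptions(multi);

console.log(Array.isArray(broken), Object.keys(broken));
console.log(Array.isArray(fixed), fixed.length);
```

Unlike `_.clone`, which copies only the outer array, this sketch also copies each entry; either approach avoids turning the array into an index-keyed object.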

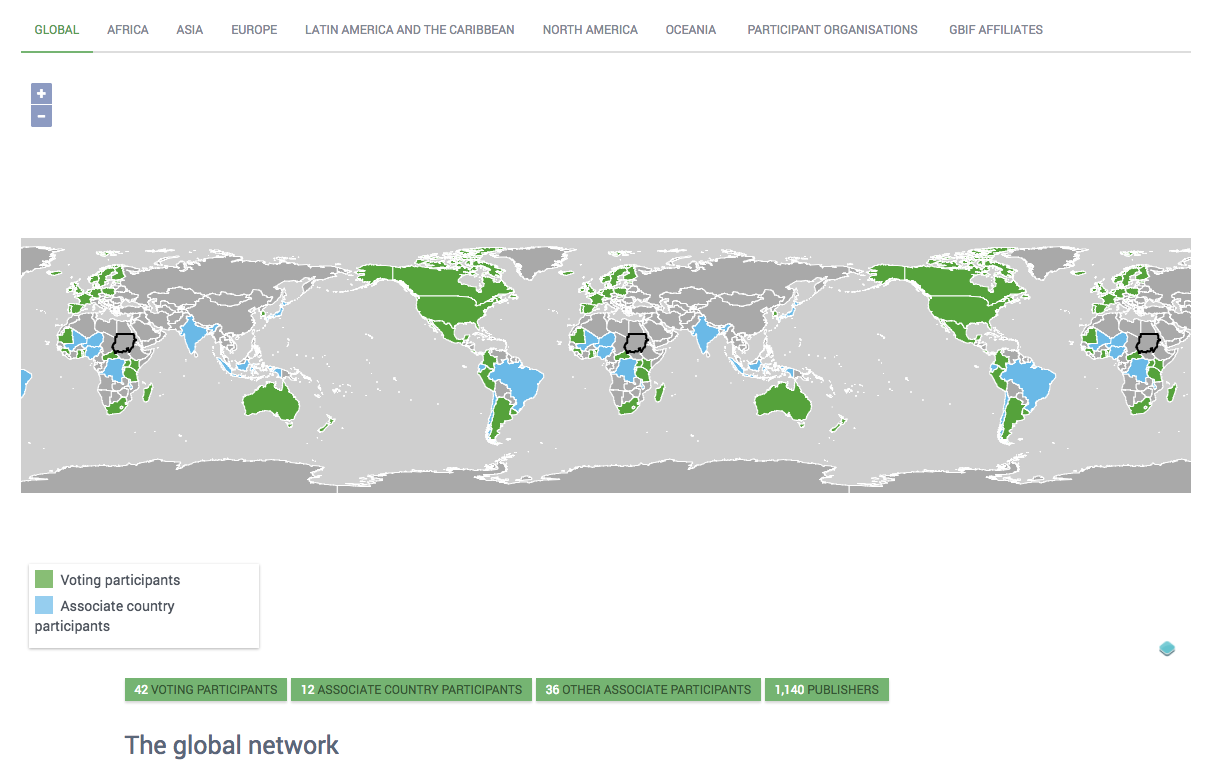

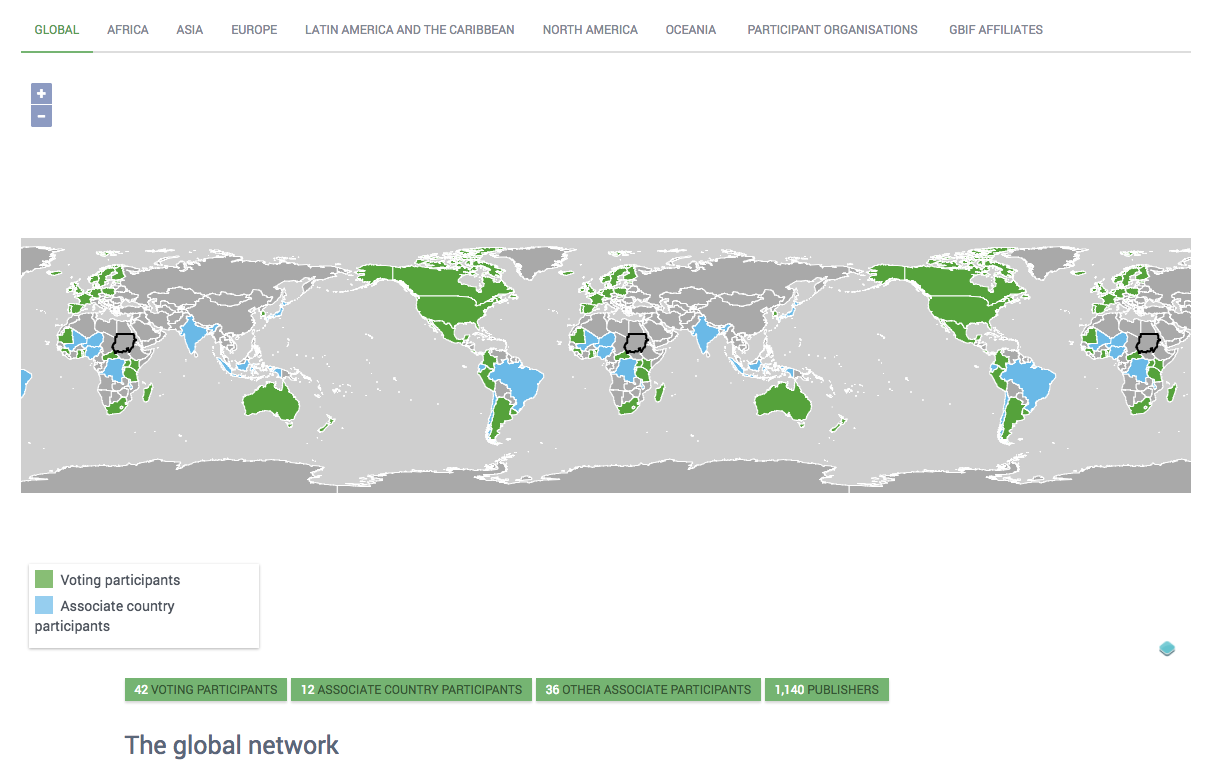

10,998 | 7,009,470,861 | IssuesEvent | 2017-12-19 19:16:29 | gbif/portal16 | https://api.github.com/repos/gbif/portal16 | closed | gbif network map zoomlevel and background | impact medium usability | 1) changing to affiliates and then to global the map zooms way out. the normal default looks better.

2) the lack of background or border makes it look a bit weird when zoom out. the attribution in the corner, the zoom buttons and the legend floats out of context.

Given that this page is used a lot for outreach I'll assign it medium impact. | True | gbif network map zoomlevel and background - 1) changing to affiliates and then to global the map zooms way out. the normal default looks better.

2) the lack of background or border makes it look a bit weird when zoom out. the attribution in the corner, the zoom buttons and the legend floats out of context.

Given that this page is used a lot for outreach I'll assign it medium impact. | usab | gbif network map zoomlevel and background changing to affiliates and then to global the map zooms way out the normal default looks better the lack of background or border makes it look a bit weird when zoom out the attribution in the corner the zoom buttons and the legend floats out of context given that this page is used a lot for outreach i ll assign it medium impact | 1 |

19,344 | 13,893,498,643 | IssuesEvent | 2020-10-19 13:36:17 | elastic/kibana | https://api.github.com/repos/elastic/kibana | closed | [ML] Anomaly explorer - links to single metric viewer don't open in new tab anymore | :ml Feature:Anomaly Detection regression usability v7.11.0 | **Found in version**

- 7.10.0-bc1

**Browser**

- Chrome

**Steps to reproduce**

- Open an AD job in the anomaly explorer

- Click the `View` button in a chart or click the `View series` for an anomalies list entry

**Expected result**

- The user should be able to open the single metric viewer in a new tab, either by default or by using the browser functionality (right click -> open link in new tab)

**Actual result**

- There's no way to open the single metric viewer in a new tab using the buttons. As a result the user loses the filtered anomaly explorer view and needs to manually restore the state if they wanted to continue with investigation.

**Additional information**

- This is a regression as in 7.9 both links opened in a new tab by default | True | [ML] Anomaly explorer - links to single metric viewer don't open in new tab anymore - **Found in version**

- 7.10.0-bc1

**Browser**

- Chrome

**Steps to reproduce**

- Open an AD job in the anomaly explorer

- Click the `View` button in a chart or click the `View series` for an anomalies list entry

**Expected result**

- The user should be able to open the single metric viewer in a new tab, either by default or by using the browser functionality (right click -> open link in new tab)

**Actual result**

- There's no way to open the single metric viewer in a new tab using the buttons. As a result the user loses the filtered anomaly explorer view and needs to manually restore the state if they wanted to continue with investigation.

**Additional information**

- This is a regression as in 7.9 both links opened in a new tab by default | usab | anomaly explorer links to single metric viewer don t open in new tab anymore found in version browser chrome steps to reproduce open an ad job in the anomaly explorer click the view button in a chart or click the view series for an anomalies list entry expected result the user should be able to open the single metric viewer in a new tab either by default or by using the browser functionality right click open link in new tab actual result there s no way to open the single metric viewer in a new tab using the buttons as a result the user loses the filtered anomaly explorer view and needs to manually restore the state if they wanted to continue with investigation additional information this is a regression as in both links opened in a new tab by default | 1 |

24,528 | 23,874,112,583 | IssuesEvent | 2022-09-07 17:18:07 | fabric-testbed/fabric-portal | https://api.github.com/repos/fabric-testbed/fabric-portal | closed | Update the Signup Step 1 UI | usability | - [x] Highlight the information that "Please note that ORCID listed as the first available provider does not work well, please choose your institution from the list instead." right above the "Proceed" button. | True | Update the Signup Step 1 UI - - [x] Highlight the information that "Please note that ORCID listed as the first available provider does not work well, please choose your institution from the list instead." right above the "Proceed" button. | usab | update the signup step ui highlight the information that please note that orcid listed as the first available provider does not work well please choose your institution from the list instead right above the proceed button | 1 |

14,994 | 9,639,284,585 | IssuesEvent | 2019-05-16 13:14:05 | peeringdb/peeringdb | https://api.github.com/repos/peeringdb/peeringdb | closed | number of connected networks in the Exchanges search results | Minor enhancement usability | http://ubersmith.peeringdb.com/admin/supportmgr/ticket_view.php?ticket=11850

very much miss the ability to see the number of connected

networks in the Exchanges search results.

```

Previously I'd be able to gauge the size of a set of IXP's using

```

PeeringDB...and now I have to click on each search result individually and count

while scrolling.

```

Please consider this feature request.

```

Thanks,

-Jacob Zack

Sr. DNS Administator - CIRA (.CA TLD)

| True | number of connected networks in the Exchanges search results - http://ubersmith.peeringdb.com/admin/supportmgr/ticket_view.php?ticket=11850

very much miss the ability to see the number of connected

networks in the Exchanges search results.

```

Previously I'd be able to gauge the size of a set of IXP's using

```

PeeringDB...and now I have to click on each search result individually and count

while scrolling.

```

Please consider this feature request.

```

Thanks,

-Jacob Zack

Sr. DNS Administator - CIRA (.CA TLD)

| usab | number of connected networks in the exchanges search results very much miss the ability to see the number of connected networks in the exchanges search results previously i d be able to gauge the size of a set of ixp s using peeringdb and now i have to click on each search result individually and count while scrolling please consider this feature request thanks jacob zack sr dns administator cira ca tld | 1 |

369,844 | 10,918,931,052 | IssuesEvent | 2019-11-21 17:54:15 | nemtech/catapult-rest | https://api.github.com/repos/nemtech/catapult-rest | closed | Incorrect transaction status codes | priority | Description:

Incorrect status codes end up on the client, while the server logs show correct status code.

Steps:

1. Run the following scenario

Scenario: 1. An account blocks receiving transactions containing a specific asset

Given Bobby blocks receiving transactions containing the following assets:

| ticket |

| voucher |

When Alex tries to send 1 asset "ticket" to Bobby

Then Bobby should receive a confirmation message

And Alex should receive the error "Failure_RestrictionAccount_Mosaic_Transfer_Prohibited"

2. Observe the server logs. You'll notice Failure_RestrictionAccount_Mosaic_Transfer_Prohibited in the logs. This means that the server returns the correct error code.

3. However, upon letting the error propagate to the client, you'll see Failure_RestrictionAccount_Operation_Type_Prohibited

Comparing https://github.com/nemtech/catapult-server/blob/v0.9.0.1/plugins/txes/restriction_account/src/validators/Results.h to catapult-sdk/src/model/status.js it looks like the codes are off by 1. For example,

/// Validation failed because the mosaic transfer is prohibited by the recipient.

DEFINE_RESTRICTION_ACCOUNT_RESULT(Mosaic_Transfer_Prohibited, 12);

**_should translate to_**

case 0x8050000**C**: return 'Failure_RestrictionAccount_Mosaic_Transfer_Prohibited';

**_but is_**

case 0x8050000**B**: return 'Failure_RestrictionAccount_Mosaic_Transfer_Prohibited'; | 1.0 | Incorrect transaction status codes - Description:

Incorrect status codes end up on the client, while the server logs show correct status code.

Steps:

1. Run the following scenario

Scenario: 1. An account blocks receiving transactions containing a specific asset

Given Bobby blocks receiving transactions containing the following assets:

| ticket |

| voucher |

When Alex tries to send 1 asset "ticket" to Bobby

Then Bobby should receive a confirmation message

And Alex should receive the error "Failure_RestrictionAccount_Mosaic_Transfer_Prohibited"

2. Observe the server logs. You'll notice Failure_RestrictionAccount_Mosaic_Transfer_Prohibited in the logs. This means that the server returns the correct error code.

3. However, upon letting the error propagate to the client, you'll see Failure_RestrictionAccount_Operation_Type_Prohibited

Comparing https://github.com/nemtech/catapult-server/blob/v0.9.0.1/plugins/txes/restriction_account/src/validators/Results.h to catapult-sdk/src/model/status.js it looks like the codes are off by 1. For example,

/// Validation failed because the mosaic transfer is prohibited by the recipient.

DEFINE_RESTRICTION_ACCOUNT_RESULT(Mosaic_Transfer_Prohibited, 12);

**_should translate to_**

case 0x8050000**C**: return 'Failure_RestrictionAccount_Mosaic_Transfer_Prohibited';

**_but is_**

case 0x8050000**B**: return 'Failure_RestrictionAccount_Mosaic_Transfer_Prohibited'; | non_usab | incorrect transaction status codes description incorrect status codes end up on the client while the server logs show correct status code steps run the following scenario scenario an account blocks receiving transactions containing a specific asset given bobby blocks receiving transactions containing the following assets ticket voucher when alex tries to send asset ticket to bobby then bobby should receive a confirmation message and alex should receive the error failure restrictionaccount mosaic transfer prohibited observe the server logs you ll notice failure restrictionaccount mosaic transfer prohibited in the logs this means that the server returns the correct error code however upon letting the error propagate to the client you ll see failure restrictionaccount operation type prohibited comparing to catapult sdk src model status js it looks like the codes are off by for example validation failed because the mosaic transfer is prohibited by the recipient define restriction account result mosaic transfer prohibited should translate to case c return failure restrictionaccount mosaic transfer prohibited but is case b return failure restrictionaccount mosaic transfer prohibited | 0 |
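The off-by-one described in the catapult-rest issue above can be checked with a little arithmetic. The sketch below assumes, based only on the example quoted in the issue, that a RestrictionAccount failure code is the base `0x80500000` combined with the result number from `Results.h`. The names `RESTRICTION_ACCOUNT_BASE`, `status_code` and `format_code` are hypothetical and are not taken from catapult-sdk.

```python
# Hypothetical helper for sanity-checking status.js entries against Results.h.
# Assumption: the base value is inferred from the 0x8050000C example in the issue.
RESTRICTION_ACCOUNT_BASE = 0x80500000


def status_code(result_number: int) -> int:
    """Combine the assumed facility base with a result number from Results.h."""
    return RESTRICTION_ACCOUNT_BASE | result_number


def format_code(code: int) -> str:
    """Render a status code the way it is written in status.js (upper-case hex)."""
    return f"0x{code:08X}"


if __name__ == "__main__":
    # Mosaic_Transfer_Prohibited is defined with result number 12 in Results.h.
    print(format_code(status_code(12)))
    # The value the issue reports in status.js corresponds to result number 11.
    print(format_code(status_code(11)))
```

Under that assumption, result number 12 maps to the `C` suffix, which matches the mapping the reporter expects.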

16,640 | 11,177,509,490 | IssuesEvent | 2019-12-30 10:57:01 | tobiasanker/SakuraTree | https://api.github.com/repos/tobiasanker/SakuraTree | opened | Add prebuild binaries | usability | ### Description

To make it easier for interested people to test this project, a set of prebuilt binaries should be made available.

### Possible Implementation

Upgrade the current gitlab-ci-runner to build binaries for the common Linux distributions, such as Ubuntu, Debian and CentOS, but only for merge requests.

| True | Add prebuild binaries - ### Description

To make it easier for interested people to test this project, a set of prebuilt binaries should be made available.

### Possible Implementation

Upgrade the current gitlab-ci-runner to build binaries for the common Linux distributions, such as Ubuntu, Debian and CentOS, but only for merge requests.

| usab | add prebuild binaries description to make it easier for interesten people to test this project a set of prebuild binaries should be made available possible implementation upgrade the current gitlab ci runner to build binaries for the common linux distributions like ubuntu debian and centos but only in case of merge requests | 1 |

43,837 | 2,893,238,809 | IssuesEvent | 2015-06-15 16:55:41 | roblox-linux-wrapper/roblox-linux-wrapper | https://api.github.com/repos/roblox-linux-wrapper/roblox-linux-wrapper | opened | Enable graphical improvements on wine-staging by default | enhancement feature-request priority:medium | `wine-staging` recently added performance enhancing settings, which should most definitely be enabled by default. The wrapper should enable CSMT, as well as ensure CUDA and PhysX are working correctly. This will hopefully improve the performance of the game for many, and may even reduce the frequency of crashes.

Here's a list of things that we can implement:

* [CSMT](https://github.com/wine-compholio/wine-staging/wiki/CSMT)

* [CUDA support](https://github.com/wine-compholio/wine-staging/wiki/CUDA)

* [PhysX acceleration support](https://github.com/wine-compholio/wine-staging/wiki/PhysX)

* [Some useful documentation on advanced wine-staging usage](https://github.com/wine-compholio/wine-staging/wiki/Usage)

| 1.0 | Enable graphical improvements on wine-staging by default - `wine-staging` recently added performance enhancing settings, which should most definitely be enabled by default. The wrapper should enable CSMT, as well as ensure CUDA and PhysX are working correctly. This will hopefully improve the performance of the game for many, and may even reduce the frequency of crashes.

Here's a list of things that we can implement:

* [CSMT](https://github.com/wine-compholio/wine-staging/wiki/CSMT)

* [CUDA support](https://github.com/wine-compholio/wine-staging/wiki/CUDA)

* [PhysX acceleration support](https://github.com/wine-compholio/wine-staging/wiki/PhysX)

* [Some useful documentation on advanced wine-staging usage](https://github.com/wine-compholio/wine-staging/wiki/Usage)

| non_usab | enable graphical improvements on wine staging by default wine staging recently added performance enhancing settings which should most definitely be enabled by default the wrapper should enable csmt as well as ensure cuda and physx are working correctly this will hopefully improve the performance of the game for many and may even reduce the frequency of crashes here s a list of things that we can implement | 0 |

14,177 | 8,886,911,916 | IssuesEvent | 2019-01-15 02:51:13 | fnielsen/scholia | https://api.github.com/repos/fnielsen/scholia | opened | In topic aspect, add panel on organizations associated with authors publishing on the topic | P108-employer P50-author P921-main-subject SPARQL panels usability | Here we go for Zika:

```SPARQL

# #defaultView:Graph

SELECT ?citing_organization ?citing_organizationLabel ?cited_organization ?cited_organizationLabel

WITH {

SELECT DISTINCT ?citing_organization ?cited_organization WHERE {

?citing_author (wdt:P108|wdt:P1416) ?citing_organization .

?cited_author (wdt:P108|wdt:P1416) ?cited_organization .

?citing_work wdt:P50 ?citing_author .

?citing_work wdt:P921 wd:Q15794049 .

?cited_work wdt:P921 wd:Q15794049 .

?citing_work wdt:P2860 ?cited_work .

?cited_work wdt:P50 ?cited_author .

FILTER (?citing_work != ?cited_work)

FILTER NOT EXISTS {

?citing_work wdt:P50 ?author .

?citing_work wdt:P2860 ?cited_work .

?cited_work wdt:P50 ?author .

}

}

} AS %results

WHERE {

INCLUDE %results

SERVICE wikibase:label { bd:serviceParam wikibase:language "[AUTO_LANGUAGE],en". }

}

```

Probably worth thinking about encoding the frequency of interaction in the colour of the arrows. | True | In topic aspect, add panel on organizations associated with authors publishing on the topic - Here we go for Zika:

```SPARQL

# #defaultView:Graph

SELECT ?citing_organization ?citing_organizationLabel ?cited_organization ?cited_organizationLabel

WITH {

SELECT DISTINCT ?citing_organization ?cited_organization WHERE {

?citing_author (wdt:P108|wdt:P1416) ?citing_organization .

?cited_author (wdt:P108|wdt:P1416) ?cited_organization .

?citing_work wdt:P50 ?citing_author .

?citing_work wdt:P921 wd:Q15794049 .

?cited_work wdt:P921 wd:Q15794049 .

?citing_work wdt:P2860 ?cited_work .

?cited_work wdt:P50 ?cited_author .

FILTER (?citing_work != ?cited_work)

FILTER NOT EXISTS {

?citing_work wdt:P50 ?author .

?citing_work wdt:P2860 ?cited_work .

?cited_work wdt:P50 ?author .

}

}

} AS %results

WHERE {

INCLUDE %results

SERVICE wikibase:label { bd:serviceParam wikibase:language "[AUTO_LANGUAGE],en". }

}

```

Probably worth thinking about encoding the frequency of interaction in the colour of the arrows. | usab | in topic aspect add panel on organizations associated with authors publishing on the topic here we go for zika sparql defaultview graph select citing organization citing organizationlabel cited organization cited organizationlabel with select distinct citing organization cited organization where citing author wdt wdt citing organization cited author wdt wdt cited organization citing work wdt citing author citing work wdt wd cited work wdt wd citing work wdt cited work cited work wdt cited author filter citing work cited work filter not exists citing work wdt author citing work wdt cited work cited work wdt author as results where include results service wikibase label bd serviceparam wikibase language en probably worth thinking about encoding the frequency of interaction in the colour of the arrows | 1 |

13,676 | 8,638,305,415 | IssuesEvent | 2018-11-23 14:20:11 | godotengine/godot | https://api.github.com/repos/godotengine/godot | closed | 3D Path - please add "select control point" button | enhancement topic:editor usability | The 3D Path editor does not have a "select control point" button, although selection is handled by Shift+drag.

| True | 3D Path - please add "select control point" button - The 3D Path editor does not have a "select control point" button, although selection is handled by Shift+drag.

| usab | path please add select control point button path in does not have select control point button but it s handled by shift drag | 1 |

26,858 | 27,275,839,003 | IssuesEvent | 2023-02-23 05:05:10 | ClickHouse/ClickHouse | https://api.github.com/repos/ClickHouse/ClickHouse | closed | `clickhouse-client` If the password is not specified in the command line, and no-password auth is rejected, ask for password interactively. | easy task usability | Currently it works as follows:

```

milovidov@milovidov-desktop:~$ clickhouse client --host xqr42pv6yb.eu-west-1.aws.clickhouse-staging.com --secure

ClickHouse client version 23.2.1.1.

Connecting to xqr42pv6yb.eu-west-1.aws.clickhouse-staging.com:9440 as user default.

Code: 516. DB::Exception: Received from xqr42pv6yb.eu-west-1.aws.clickhouse-staging.com:9440. DB::Exception: default: Authentication failed: password is incorrect, or there is no user with such name.

```

Should fall back to interactive password prompt (similarly if I added the `--password` argument):

```

milovidov@milovidov-desktop:~$ clickhouse client --host xqr42pv6yb.eu-west-1.aws.clickhouse-staging.com --secure --password

ClickHouse client version 23.2.1.1.

Password for user (default):

``` | True | `clickhouse-client` If the password is not specified in the command line, and no-password auth is rejected, ask for password interactively. - Currently it works as follows:

```

milovidov@milovidov-desktop:~$ clickhouse client --host xqr42pv6yb.eu-west-1.aws.clickhouse-staging.com --secure

ClickHouse client version 23.2.1.1.

Connecting to xqr42pv6yb.eu-west-1.aws.clickhouse-staging.com:9440 as user default.

Code: 516. DB::Exception: Received from xqr42pv6yb.eu-west-1.aws.clickhouse-staging.com:9440. DB::Exception: default: Authentication failed: password is incorrect, or there is no user with such name.

```

Should fall back to interactive password prompt (similarly if I added the `--password` argument):

```

milovidov@milovidov-desktop:~$ clickhouse client --host xqr42pv6yb.eu-west-1.aws.clickhouse-staging.com --secure --password

ClickHouse client version 23.2.1.1.

Password for user (default):

``` | usab | clickhouse client if the password is not specified in the command line and no password auth is rejected ask for password interactively currently it works as follows milovidov milovidov desktop clickhouse client host eu west aws clickhouse staging com secure clickhouse client version connecting to eu west aws clickhouse staging com as user default code db exception received from eu west aws clickhouse staging com db exception default authentication failed password is incorrect or there is no user with such name should fall back to interactive password prompt similarly if i added the password argument milovidov milovidov desktop clickhouse client host eu west aws clickhouse staging com secure password clickhouse client version password for user default | 1 |
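The fallback requested in the `clickhouse-client` issue above could look roughly like the following sketch. It is not ClickHouse code: `connect_with_fallback`, `AuthError` and the `connect` callable are hypothetical stand-ins, and the only detail taken from the issue is error code 516 for a failed authentication.

```python
import getpass
from typing import Callable, Optional

# Error code shown in the issue's output for "Authentication failed".
AUTHENTICATION_FAILED = 516


class AuthError(Exception):
    """Hypothetical exception carrying the server's error code."""

    def __init__(self, code: int, message: str = "") -> None:
        super().__init__(message)
        self.code = code


def connect_with_fallback(
    connect: Callable[[str, str], object],
    user: str,
    password: Optional[str] = None,
    prompt: Callable[[str], str] = getpass.getpass,
):
    """Try the given (or empty) password first; prompt only if none was supplied."""
    try:
        return connect(user, password or "")
    except AuthError as err:
        # Re-raise when a password was given explicitly or the failure is unrelated.
        if password is not None or err.code != AUTHENTICATION_FAILED:
            raise
    entered = prompt(f"Password for user ({user}): ")
    return connect(user, entered)
```

The prompt is injected as a parameter so the behaviour can be exercised without a terminal; a real client would also need to skip the prompt in non-interactive mode.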

196,554 | 22,442,140,348 | IssuesEvent | 2022-06-21 02:34:25 | valdisiljuconoks/AlloyTech | https://api.github.com/repos/valdisiljuconoks/AlloyTech | closed | WS-2019-0333 (High) detected in handlebars-1.3.0.tgz - autoclosed | security vulnerability | ## WS-2019-0333 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>handlebars-1.3.0.tgz</b></p></summary>

<p>Handlebars provides the power necessary to let you build semantic templates effectively with no frustration</p>

<p>Library home page: <a href="https://registry.npmjs.org/handlebars/-/handlebars-1.3.0.tgz">https://registry.npmjs.org/handlebars/-/handlebars-1.3.0.tgz</a></p>

<p>Path to dependency file: AlloyTech/AlloyTechEpi10/modules/_protected/Shell/Shell/10.1.0.0/ClientResources/lib/xstyle/package.json</p>

<p>Path to vulnerable library: AlloyTech/AlloyTechEpi10/modules/_protected/Shell/Shell/10.1.0.0/ClientResources/lib/xstyle/node_modules/handlebars/package.json</p>

<p>

Dependency Hierarchy:

- intern-geezer-2.2.3.tgz (Root Library)

- istanbul-0.2.16.tgz

- :x: **handlebars-1.3.0.tgz** (Vulnerable Library)

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

In handlebars, versions prior to v4.5.3 are vulnerable to prototype pollution. Using a malicious template, it's possible to add or modify properties on the Object prototype. This can also lead to DoS and RCE under certain conditions.

<p>Publish Date: 2019-11-18

<p>URL: <a href=https://github.com/wycats/handlebars.js/commit/f7f05d7558e674856686b62a00cde5758f3b7a08>WS-2019-0333</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>8.1</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: High

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://www.npmjs.com/advisories/1325">https://www.npmjs.com/advisories/1325</a></p>

<p>Release Date: 2019-11-18</p>

<p>Fix Resolution: handlebars - 4.5.3</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | True | WS-2019-0333 (High) detected in handlebars-1.3.0.tgz - autoclosed - ## WS-2019-0333 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>handlebars-1.3.0.tgz</b></p></summary>

<p>Handlebars provides the power necessary to let you build semantic templates effectively with no frustration</p>

<p>Library home page: <a href="https://registry.npmjs.org/handlebars/-/handlebars-1.3.0.tgz">https://registry.npmjs.org/handlebars/-/handlebars-1.3.0.tgz</a></p>

<p>Path to dependency file: AlloyTech/AlloyTechEpi10/modules/_protected/Shell/Shell/10.1.0.0/ClientResources/lib/xstyle/package.json</p>

<p>Path to vulnerable library: AlloyTech/AlloyTechEpi10/modules/_protected/Shell/Shell/10.1.0.0/ClientResources/lib/xstyle/node_modules/handlebars/package.json</p>

<p>

Dependency Hierarchy:

- intern-geezer-2.2.3.tgz (Root Library)

- istanbul-0.2.16.tgz

- :x: **handlebars-1.3.0.tgz** (Vulnerable Library)

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

In handlebars, versions prior to v4.5.3 are vulnerable to prototype pollution. Using a malicious template, it's possible to add or modify properties on the Object prototype. This can also lead to DoS and RCE under certain conditions.

<p>Publish Date: 2019-11-18

<p>URL: <a href=https://github.com/wycats/handlebars.js/commit/f7f05d7558e674856686b62a00cde5758f3b7a08>WS-2019-0333</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>8.1</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: High

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://www.npmjs.com/advisories/1325">https://www.npmjs.com/advisories/1325</a></p>

<p>Release Date: 2019-11-18</p>

<p>Fix Resolution: handlebars - 4.5.3</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | non_usab | ws high detected in handlebars tgz autoclosed ws high severity vulnerability vulnerable library handlebars tgz handlebars provides the power necessary to let you build semantic templates effectively with no frustration library home page a href path to dependency file alloytech modules protected shell shell clientresources lib xstyle package json path to vulnerable library alloytech modules protected shell shell clientresources lib xstyle node modules handlebars package json dependency hierarchy intern geezer tgz root library istanbul tgz x handlebars tgz vulnerable library vulnerability details in handlebars versions prior to are vulnerable to prototype pollution using a malicious template it s possbile to add or modify properties to the object prototype this can also lead to dos and rce in certain conditions publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity high privileges required none user interaction none scope unchanged impact metrics confidentiality impact high integrity impact high availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution handlebars step up your open source security game with whitesource | 0 |

6,971 | 4,705,916,176 | IssuesEvent | 2016-10-13 15:42:17 | bitsquare/bitsquare | https://api.github.com/repos/bitsquare/bitsquare | closed | Make all UI screens safe for min. window size | re: usability [ui] | 1. Find which min. window size we want to support (760x560)?

2. Check all screens if there are problems, add scroll panes if needed

<!---

@huboard:{"order":321.6875002384186,"milestone_order":154,"custom_state":""}

-->

| True | Make all UI screens safe for min. window size - 1. Find which min. window size we want to support (760x560)?

2. Check all screens if there are problems, add scroll panes if needed

<!---

@huboard:{"order":321.6875002384186,"milestone_order":154,"custom_state":""}

-->

| usab | make all ui screens safe for min window size find which min window size we want to support check all screens if there are problems add scroll panes if needed huboard order milestone order custom state | 1 |

22,844 | 20,356,723,079 | IssuesEvent | 2022-02-20 03:25:42 | TravelMapping/DataProcessing | https://api.github.com/repos/TravelMapping/DataProcessing | closed | Produce a "things to check out" in user logs? | enhancement user statistics usability TravelerList class | Thinking about some of the emails I get from users when they send in .list updates, where they find the errors in their lists from highway data updates over the course of a few days, it might be nice to have a report for users indicating which routes they have in their lists that have recent updates entries. | True | Produce a "things to check out" in user logs? - Thinking about some of the emails I get from users when they send in .list updates, where they find the errors in their lists from highway data updates over the course of a few days, it might be nice to have a report for users indicating which routes they have in their lists that have recent updates entries. | usab | produce a things to check out in user logs thinking about some of the emails i get from users when they send in list updates where they find the errors in their lists from highway data updates over the course of a few days it might be nice to have a report for users indicating which routes they have in their lists that have recent updates entries | 1 |

562,867 | 16,671,376,537 | IssuesEvent | 2021-06-07 11:19:35 | vaticle/typedb-benchmark | https://api.github.com/repos/vaticle/typedb-benchmark | opened | Agents for read performance with reasoning | priority: blocker type: feature | ## Description

Subsequent to the significant refactor this year, we must introduce new agents. We particularly want to focus on creating a variety of queries that demonstrate the different challenges in reasoner. | 1.0 | Agents for read performance with reasoning - ## Description

Subsequent to the significant refactor this year, we must introduce new agents. We particularly want to focus on creating a variety of queries that demonstrate the different challenges in reasoner. | non_usab | agents for read performance with reasoning description subsequent to the significant refactor this year we must introduce new agents we particularly want to focus on creating a variety of queries that demonstrate the different challenges in reasoner | 0 |

290,651 | 8,902,100,876 | IssuesEvent | 2019-01-17 06:00:18 | abpframework/abp | https://api.github.com/repos/abpframework/abp | opened | Implement CorrelationId | feature framework priority:normal | That is passed between service calls to track the same request/operation/transaction. It's also logged. | 1.0 | Implement CorrelationId - That is passed between service calls to track the same request/operation/transaction. It's also logged. | non_usab | implement correlationid that is passed between service calls to track the same request operation transaction it s also logged | 0 |

380,571 | 11,267,812,728 | IssuesEvent | 2020-01-14 03:41:18 | crcn/tandem | https://api.github.com/repos/crcn/tandem | closed | Only allow elements to be dropped in slots | bug estimate: small priority: high | Users are able to add children to component instances which:

1. don't appear in the editor since the behavior isn't supported

2. break the react compiler | 1.0 | Only allow elements to be dropped in slots - Users are able to add children to component instances which:

1. don't appear in the editor since the behavior isn't supported

2. break the react compiler | non_usab | only allow elements to be dropped in slots users are able to add children to component instances which don t appear in the editor since the behavior isn t supported break the react compiler | 0 |

84,295 | 10,369,117,961 | IssuesEvent | 2019-09-07 23:13:22 | ProyectoIntegrador2018/dr_movil | https://api.github.com/repos/ProyectoIntegrador2018/dr_movil | opened | Bugs | documentation | ### Bug *nombre*

### *Descripción de qué es el bug*

### *Descripción de cómo recrear el bug* | 1.0 | Bugs - ### Bug *nombre*

### *Descripción de qué es el bug*

### *Descripción de cómo recrear el bug* | non_usab | bugs bug nombre descripción de qué es el bug descripción de cómo recrear el bug | 0 |

240,609 | 7,803,505,454 | IssuesEvent | 2018-06-11 00:48:00 | kubeflow/kubeflow | https://api.github.com/repos/kubeflow/kubeflow | closed | Deadlocks configuring envoy for IAP | area/bootstrap area/front-end platform/gcp priority/p1 release/0.2.0 | I'm noticing deadlocks and other problems configuring envoy using the IAP script. Some of the problems I observe

1. iap.sh is never able to acquire the lock and is therefore never able to write the envoy-config.json

1. The envoy container is crash looping, which prevents the GCP load balancer from detecting that the backend is healthy

I think we should make the following changes

1. There should be a single pod responsible for enabling IAP and updating the envoy-config map as needed

* We should move this out of the sidecar and into a separate deployment

* Locking should be less important because there won't be contention

1. The envoy sidecars are now just responsible for updating envoy config based on the config map

* They no longer need to acquire a lock

* They can periodically check the config map and compute a hash to know when it changes

1. We should provide a default config that will allow envoy to startup but ensure non secure traffic is blocked

* This way we can avoid the problems with the ingress thinking the backend is unhealthy. | 1.0 | Deadlocks configuring envoy for IAP - I'm noticing deadlocks and other problems configuring envoy using the IAP script. Some of the problems I observe

1. iap.sh is never able to acquire the lock and is therefore never able to write the envoy-config.json

1. The envoy container is crash looping, which prevents the GCP load balancer from detecting that the backend is healthy

I think we should make the following changes

1. There should be a single pod responsible for enabling IAP and updating the envoy-config map as needed

* We should move this out of the sidecar and into a separate deployment

* Locking should be less important because there won't be contention

1. The envoy sidecars are now just responsible for updating envoy config based on the config map

* They no longer need to acquire a lock

* They can periodically check the config map and compute a hash to know when it changes

1. We should provide a default config that will allow envoy to startup but ensure non secure traffic is blocked

* This way we can avoid the problems with the ingress thinking the backend is unhealthy. | non_usab | deadlocks configuring envoy for iap i m noticing deadlocks and other problems configuring envoy using the iap script some of the problems i observe iap sh is never able to acquire the lock and therefore able to write the envoy config json envoy container is crash looping prevents gcp loadbalancer from detecting the backend is heathy i think we should make the following changes there should be a single pod responsible for enabling iap and updating the envoy config map as needed we should move this out of the sidecar and into a separate deployment locking should be less important because there won t be contention the envoy sidecars are now just responsible for updating envoy config based on the config map they no longer need to acquire a lock they can periodically check the config map and compute a hash to know when it changes we should provide a default config that will allow envoy to startup but ensure non secure traffic is blocked this way we can avoid the problems with the ingress thinking the backend is unhealthy | 0 |
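The "compute a hash to know when it changes" idea from the envoy issue above can be sketched as follows. This is only an illustration under assumptions: the config map is taken to be mounted as a regular file, and `config_digest` and `ConfigWatcher` are hypothetical names, not part of Kubeflow or envoy.

```python
import hashlib
from pathlib import Path
from typing import Optional


def config_digest(path: Path) -> str:
    """Return a SHA-256 digest of the mounted config file's contents."""
    return hashlib.sha256(path.read_bytes()).hexdigest()


class ConfigWatcher:
    """Remembers the last digest seen so a sidecar can detect config changes."""

    def __init__(self, path: str) -> None:
        self.path = Path(path)
        self.last_digest: Optional[str] = None

    def changed(self) -> bool:
        """Return True if the file differs from the last time it was checked."""
        digest = config_digest(self.path)
        if digest == self.last_digest:
            return False
        self.last_digest = digest
        return True
```

A sidecar could call `changed()` on a timer and reload envoy only when it returns `True`, which needs no lock because the sidecar only reads the file.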

57,730 | 14,199,765,734 | IssuesEvent | 2020-11-16 03:22:10 | rust-lang/rust | https://api.github.com/repos/rust-lang/rust | closed | Can't use download-ci-llvm any more | A-LLVM A-rustbuild C-bug T-infra | Enabling download-ci-llvm used to work just fine for me, until today.

After rebasing on top of 75042566d1c90d912f22e4db43b6d3af98447986 , doing anything with rustc beyond stage 1 fails:

```

$ ./x.py build

[...]

Building stage1 std artifacts (x86_64-unknown-linux-gnu -> x86_64-unknown-linux-gnu)

[...]

error while loading shared libraries: libLLVM-11-rust-1.49.0-nightly.so: cannot open shared object file: No such file or directory

```

To verify it's not due to some caching issue caused by my experiments in #79043 that temporarily turned the feature off I deleted the entire build directory. It correctly downloaded the stage0 and llvm artifacts, but still failed when trying to run stage1 rustc. | 1.0 | Can't use download-ci-llvm any more - Enabling download-ci-llvm used to work just fine for me, until today.

After rebasing on top of 75042566d1c90d912f22e4db43b6d3af98447986 , doing anything with rustc beyond stage 1 fails:

```

$ ./x.py build

[...]

Building stage1 std artifacts (x86_64-unknown-linux-gnu -> x86_64-unknown-linux-gnu)

[...]

error while loading shared libraries: libLLVM-11-rust-1.49.0-nightly.so: cannot open shared object file: No such file or directory

```

To verify it's not due to some caching issue caused by my experiments in #79043 that temporarily turned the feature off I deleted the entire build directory. It correctly downloaded the stage0 and llvm artifacts, but still failed when trying to run stage1 rustc. | non_usab | can t use download ci llvm any more enabling download ci llvm used to work just fine for me until today after rebasing on top of doing anything with rustc beyond stage fails x py build building std artifacts unknown linux gnu unknown linux gnu error while loading shared libraries libllvm rust nightly so cannot open shared object file no such file or directory to verify it s not due to some caching issue caused by my experiments in that temporarily turned the feature off i deleted the entire build directory it correctly downloaded the and llvm artifacts but still failed when trying to run rustc | 0 |

468,723 | 13,489,310,464 | IssuesEvent | 2020-09-11 13:42:50 | Eastrall/Rhisis | https://api.github.com/repos/Eastrall/Rhisis | opened | Provide a configuration parameter to send the world server port to client | comp: network enhancement feature-request good first issue priority: low srv: login | The FLYFF client tries to connect to the world server using the default 5400 port. This implies that the world servers cannot be hosted on the same machine.

In order to improve flexibility, Rhisis should provide an option to send the world servers port to the client once the `CERTIFY` packet is handled in the `LoginServer`. | 1.0 | Provide a configuration parameter to send the world server port to client - The FLYFF client tries to connect to the world server using the default 5400 port. This implies that the world servers cannot be hosted on the same machine.

In order to improve flexibility, Rhisis should provide an option to send the world servers port to the client once the `CERTIFY` packet is handled in the `LoginServer`. | non_usab | provide a configuration parameter to send the world server port to client the flyff client tries to connect to the world server using the default port this implies that the world servers cannot be hosted on the same machine in order to improve flexibility rhisis should provide an option to send the world servers port to the client once the certify packet is handled in the loginserver | 0 |

130,501 | 10,617,491,263 | IssuesEvent | 2019-10-12 19:28:48 | tstreamDOTh/firebase-swiss | https://api.github.com/repos/tstreamDOTh/firebase-swiss | closed | Setup a basic test case suite | firebase hacktoberfest help wanted javascript test | Explore Firebase emulator & create a basic test case suite. Should have a sample test case, probably using `CREATE` fire function. | 1.0 | Setup a basic test case suite - Explore Firebase emulator & create a basic test case suite. Should have a sample test case, probably using `CREATE` fire function. | non_usab | setup a basic test case suite explore firebase emulator create a basic test case suite should have a sample test case probably using create fire function | 0 |

500,464 | 14,500,131,152 | IssuesEvent | 2020-12-11 17:37:09 | magento/magento2 | https://api.github.com/repos/magento/magento2 | closed | Input type of customizable option doesn't get returned through GraphQL | Component: QuoteGraphQl Issue: Format is valid Priority: P1 Progress: done Project: GraphQL | ### Preconditions

GitHub branches `magento2/2.4-develop` and `architecture/master`

### Steps to reproduce

The GraphQL type `SelectedCustomizableOption` is used in multiple places across the Magento GraphQL modules to get the currently selected customizable option of a product through GraphQL. There are various field types for this, such as text, textarea, select, multiselect, checkbox, etc. Currently, there's no way for a frontend using GraphQL to know how to render the `SelectedCustomizableOption`. Yes, the format varies according to different types, but the format is the same for input types of the same category. I believe there's no way to differentiate between a text and a textarea of `SelectedCustomizableOption`. Same with the different select input types.

The associated resolver class _does_ return the 'type of input' from Magento (ref: https://github.com/magento/magento2/blob/2.4-develop/app/code/Magento/QuoteGraphQl/Model/CartItem/DataProvider/CustomizableOption.php#L60), but the associated GraphQL schema does not define a field for the same (ref: https://github.com/magento/magento2/blob/2.4-develop/app/code/Magento/QuoteGraphQl/etc/schema.graphqls#L346) and hence the 'type of input' doesn't get returned.

Upon some searching, the GraphQL coverage docs did have the 'type of input' as a field in `SelectedCustomizableOption` a couple of months ago (ref: https://github.com/magento/architecture/blob/673438109bbf63d819e96c373ef7622206ff7f9b/design-documents/graph-ql/coverage/add-items-to-cart/AddSimpleProductToCart.graphqls#L59) until the new coverage docs (ref: https://github.com/magento/architecture/blob/master/design-documents/graph-ql/coverage/Cart.graphqls#L114) was matched according to the current GraphQL schema and was thus removed.

### Expected result

Consistency.

Is the field `type` required to render the input type on the frontend?

- If yes, we need to add the field back to the schema.

- If not, we need to remove it from the resolver for consistency and to avoid confusion for developers in the future.

### Actual result

The field `type` was removed from the QuoteGraphQl module in this commit: https://github.com/magento/magento2/commit/1315577e2099637207f02deec48607d81f7cde46#diff-795a33fde881f18aba5165a5a8c7513fL317 and from the architecture coverage docs a couple months ago, and now the code is inconsistent and causes confusion for developers.

---

Please provide [Severity](https://devdocs.magento.com/guides/v2.3/contributor-guide/contributing.html#backlog) assessment for the Issue as Reporter. This information will help during Confirmation and Issue triage processes.

- [ ] Severity: **S0** _- Affects critical data or functionality and leaves users without workaround._

- [ ] Severity: **S1** _- Affects critical data or functionality and forces users to employ a workaround._

- [x] Severity: **S2** _- Affects non-critical data or functionality and forces users to employ a workaround._

- [ ] Severity: **S3** _- Affects non-critical data or functionality and does not force users to employ a workaround._

- [ ] Severity: **S4** _- Affects aesthetics, professional look and feel, “quality” or “usability”._

| 1.0 | Input type of customizable option doesn't get returned through GraphQL - ### Preconditions

GitHub branches `magento2/2.4-develop` and `architecture/master`

### Steps to reproduce

The GraphQL type `SelectedCustomizableOption` is used in multiple places across the Magento GraphQL modules to get the currently selected customizable option of a product through GraphQL. There are various field types for this, such as text, textarea, select, multiselect, checkbox, etc. Currently, there's no way for a frontend using GraphQL to know how to render the `SelectedCustomizableOption`. Yes, the format varies according to different types, but the format is the same for input types of the same category. I believe there's no way to differentiate between a text and a textarea of `SelectedCustomizableOption`. Same with the different select input types.

The associated resolver class _does_ return the 'type of input' from Magento (ref: https://github.com/magento/magento2/blob/2.4-develop/app/code/Magento/QuoteGraphQl/Model/CartItem/DataProvider/CustomizableOption.php#L60), but the associated GraphQL schema does not define a field for the same (ref: https://github.com/magento/magento2/blob/2.4-develop/app/code/Magento/QuoteGraphQl/etc/schema.graphqls#L346) and hence the 'type of input' doesn't get returned.

Upon some searching, the GraphQL coverage docs did have the 'type of input' as a field in `SelectedCustomizableOption` a couple of months ago (ref: https://github.com/magento/architecture/blob/673438109bbf63d819e96c373ef7622206ff7f9b/design-documents/graph-ql/coverage/add-items-to-cart/AddSimpleProductToCart.graphqls#L59) until the new coverage docs (ref: https://github.com/magento/architecture/blob/master/design-documents/graph-ql/coverage/Cart.graphqls#L114) was matched according to the current GraphQL schema and was thus removed.

### Expected result

Consistency.

Is the field `type` required to render the input type on the frontend?

- If yes, we need to add the field back to the schema.

- If not, we need to remove it from the resolver for consistency and to avoid confusion for developers in the future.

### Actual result

The field `type` was removed from the QuoteGraphQl module in this commit: https://github.com/magento/magento2/commit/1315577e2099637207f02deec48607d81f7cde46#diff-795a33fde881f18aba5165a5a8c7513fL317 and from the architecture coverage docs a couple months ago, and now the code is inconsistent and causes confusion for developers.

---

Please provide [Severity](https://devdocs.magento.com/guides/v2.3/contributor-guide/contributing.html#backlog) assessment for the Issue as Reporter. This information will help during Confirmation and Issue triage processes.

- [ ] Severity: **S0** _- Affects critical data or functionality and leaves users without workaround._

- [ ] Severity: **S1** _- Affects critical data or functionality and forces users to employ a workaround._

- [x] Severity: **S2** _- Affects non-critical data or functionality and forces users to employ a workaround._

- [ ] Severity: **S3** _- Affects non-critical data or functionality and does not force users to employ a workaround._

- [ ] Severity: **S4** _- Affects aesthetics, professional look and feel, “quality” or “usability”._

| non_usab | input type of customizable option doesn t get returned through graphql preconditions github branches develop and architecture master steps to reproduce the graphql type selectedcustomizableoption is used in multiple places across the magento graphql modules to get the currently selected customizable option of a product through graphql there are various field types for this such as text textarea select multiselect checkbox etc currently there s no way for a frontend using graphql to know how to render the selectedcustomizableoption yes the format varies according to different types but the format is the same for input types of the same category i believe there s no way to differentiate between a text and a textarea of selectedcustomizableoption same with the different select input types the associated resolver class does return the type of input from magento ref but the associated graphql schema does not define a field for the same ref and hence the type of input doesn t get returned upon some searching the graphql coverage docs did have the type of input as a field in selectedcustomizableoption a couple of months ago ref until the new coverage docs ref was matched according to the current graphql schema and was thus removed expected result consistency is the field type required to render the input type on the frontend if yes we need to add the field back to the schema if not we need to remove it from the resolver for consistency and to avoid confusion for developers in the future actual result the field type was removed from the quotegraphql module in this commit and from the architecture coverage docs a couple months ago and now the code is inconsistent and causes confusion for developers please provide assessment for the issue as reporter this information will help during confirmation and issue triage processes severity affects critical data or functionality and leaves users without workaround severity affects critical data or functionality and 
forces users to employ a workaround severity affects non critical data or functionality and forces users to employ a workaround severity affects non critical data or functionality and does not force users to employ a workaround severity affects aesthetics professional look and feel “quality” or “usability” | 0 |