Add files using upload-large-folder tool

Browse filesThis view is limited to 50 files because it contains too many changes. See raw diff

- All-In-One-Pixel-Model_finetunes_20250427_003734.csv_finetunes_20250427_003734.csv +23 -0

- AnimateDiff-Lightning_finetunes_20250424_223250.csv_finetunes_20250424_223250.csv +120 -0

- AnimateLCM-SVD-xt_finetunes_20250426_221535.csv_finetunes_20250426_221535.csv +40 -0

- AsianModel_finetunes_20250426_221535.csv_finetunes_20250426_221535.csv +5 -0

- Athene-V2-Chat_finetunes_20250426_171734.csv_finetunes_20250426_171734.csv +81 -0

- BiomedCLIP-PubMedBERT_256-vit_base_patch16_224_finetunes_20250426_171734.csv_finetunes_20250426_171734.csv +407 -0

- CLIP-ViT-H-14-laion2B-s32B-b79K_finetunes_20250426_014322.csv_finetunes_20250426_014322.csv +320 -0

- CodeLlama-34b-Instruct-hf_finetunes_20250426_171734.csv_finetunes_20250426_171734.csv +183 -0

- CodeLlama-34b-hf_finetunes_20250427_003734.csv_finetunes_20250427_003734.csv +540 -0

- CogVideoX-5b_finetunes_20250425_143346.csv_finetunes_20250425_143346.csv +0 -0

- DeepSeek-R1-Distill-Qwen-14B-GGUF_finetunes_20250426_215237.csv_finetunes_20250426_215237.csv +167 -0

- DeepSeek-V2_finetunes_20250426_121513.csv_finetunes_20250426_121513.csv +377 -0

- DialoGPT-large_finetunes_20250426_171734.csv_finetunes_20250426_171734.csv +0 -0

- DiffRhythm-base_finetunes_20250427_003734.csv_finetunes_20250427_003734.csv +79 -0

- GLM-4-32B-0414_finetunes_20250426_171734.csv_finetunes_20250426_171734.csv +0 -0

- GPT-SoVITS_finetunes_20250426_121513.csv_finetunes_20250426_121513.csv +189 -0

- Hentai-Diffusion_finetunes_20250426_215237.csv_finetunes_20250426_215237.csv +2 -0

- Illustrious-xl-early-release-v0_finetunes_20250426_014322.csv_finetunes_20250426_014322.csv +0 -0

- In-Context-LoRA_finetunes_20250425_143346.csv_finetunes_20250425_143346.csv +114 -0

- InstantMesh_finetunes_20250426_171734.csv_finetunes_20250426_171734.csv +18 -0

- LCM_Dreamshaper_v7_finetunes_20250426_014322.csv_finetunes_20250426_014322.csv +248 -0

- LLaMA-Pro-8B_finetunes_20250427_003734.csv_finetunes_20250427_003734.csv +44 -0

- Llama-3-Groq-70B-Tool-Use_finetunes_20250427_003734.csv_finetunes_20250427_003734.csv +108 -0

- MagicPrompt-Stable-Diffusion_finetunes_20250424_223250.csv_finetunes_20250424_223250.csv +31 -0

- MiniMax-Text-01_finetunes_20250425_143346.csv_finetunes_20250425_143346.csv +263 -0

- MistralLite_finetunes_20250425_165642.csv_finetunes_20250425_165642.csv +529 -0

- NSFW-gen-v2_finetunes_20250426_121513.csv_finetunes_20250426_121513.csv +36 -0

- Nous-Hermes-13B-GPTQ_finetunes_20250427_003734.csv_finetunes_20250427_003734.csv +237 -0

- OCR-Donut-CORD_finetunes_20250426_212347.csv_finetunes_20250426_212347.csv +33 -0

- OrangeMixs_finetunes_20250422_201036.csv +1645 -0

- Phi-3-mini-128k-instruct-onnx_finetunes_20250426_221535.csv_finetunes_20250426_221535.csv +165 -0

- Qwen-7B-Chat_finetunes_20250425_041137.csv_finetunes_20250425_041137.csv +749 -0

- Qwen2-72B-Instruct_finetunes_20250425_125929.csv_finetunes_20250425_125929.csv +0 -0

- SOLAR-0-70b-16bit_finetunes_20250426_171734.csv_finetunes_20250426_171734.csv +413 -0

- SpatialLM-Llama-1B_finetunes_20250424_223250.csv_finetunes_20250424_223250.csv +233 -0

- Wizard-Vicuna-30B-Uncensored-GPTQ_finetunes_20250425_143346.csv_finetunes_20250425_143346.csv +325 -0

- YandexGPT-5-Lite-8B-pretrain_finetunes_20250426_221535.csv_finetunes_20250426_221535.csv +1095 -0

- Yi-34B-Chat_finetunes_20250426_014322.csv_finetunes_20250426_014322.csv +0 -0

- aya-101_finetunes_20250425_165642.csv_finetunes_20250425_165642.csv +325 -0

- bart-large-mnli_finetunes_20250424_193500.csv_finetunes_20250424_193500.csv +0 -0

- bert-base-multilingual-uncased-sentiment_finetunes_20250426_014322.csv_finetunes_20250426_014322.csv +0 -0

- biogpt_finetunes_20250426_171734.csv_finetunes_20250426_171734.csv +0 -0

- bitnet_b1_58-3B_finetunes_20250426_171734.csv_finetunes_20250426_171734.csv +54 -0

- chilloutmix-ni_finetunes_20250426_171734.csv_finetunes_20250426_171734.csv +6 -0

- chilloutmix_NiPrunedFp32Fix_finetunes_20250427_003734.csv_finetunes_20250427_003734.csv +5 -0

- chinese-alpaca-2-7b_finetunes_20250427_003734.csv_finetunes_20250427_003734.csv +41 -0

- chinese-bert-wwm-ext_finetunes_20250427_003734.csv_finetunes_20250427_003734.csv +762 -0

- control_v1p_sd15_qrcode_monster_finetunes_20250424_223250.csv_finetunes_20250424_223250.csv +59 -0

- dalle-mini_finetunes_20250426_014322.csv_finetunes_20250426_014322.csv +203 -0

- deepseek-vl-7b-chat_finetunes_20250426_171734.csv_finetunes_20250426_171734.csv +129 -0

All-In-One-Pixel-Model_finetunes_20250427_003734.csv_finetunes_20250427_003734.csv

ADDED

|

@@ -0,0 +1,23 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

model_id,card,metadata,depth,children,children_count,adapters,adapters_count,quantized,quantized_count,merges,merges_count,spaces,spaces_count

|

| 2 |

+

PublicPrompts/All-In-One-Pixel-Model,"---

|

| 3 |

+

license: creativeml-openrail-m

|

| 4 |

+

---

|

| 5 |

+

Stable Diffusion model trained using dreambooth to create pixel art, in 2 styles

|

| 6 |

+

the sprite art can be used with the trigger word ""pixelsprite""

|

| 7 |

+

the scene art can be used with the trigger word ""16bitscene""

|

| 8 |

+

|

| 9 |

+

|

| 10 |

+

the art is not pixel perfect, but it can be fixed with pixelating tools like https://pinetools.com/pixelate-effect-image (they also have bulk pixelation)

|

| 11 |

+

|

| 12 |

+

|

| 13 |

+

some example generations

|

| 14 |

+

|

| 15 |

+

|

| 16 |

+

|

| 17 |

+

|

| 18 |

+

|

| 19 |

+

|

| 20 |

+

|

| 21 |

+

|

| 22 |

+

|

| 23 |

+

","{""id"": ""PublicPrompts/All-In-One-Pixel-Model"", ""author"": ""PublicPrompts"", ""sha"": ""b4330356edc9eaeb98571c144e8bbabe8bb15897"", ""last_modified"": ""2023-05-11 13:45:47+00:00"", ""created_at"": ""2022-11-09 17:01:47+00:00"", ""private"": false, ""gated"": false, ""disabled"": false, ""downloads"": 86, ""downloads_all_time"": null, ""likes"": 182, ""library_name"": ""diffusers"", ""gguf"": null, ""inference"": null, ""inference_provider_mapping"": null, ""tags"": [""diffusers"", ""license:creativeml-openrail-m"", ""autotrain_compatible"", ""endpoints_compatible"", ""diffusers:StableDiffusionPipeline"", ""region:us""], ""pipeline_tag"": ""text-to-image"", ""mask_token"": null, ""trending_score"": null, ""card_data"": ""license: creativeml-openrail-m"", ""widget_data"": null, ""model_index"": null, ""config"": {""diffusers"": {""_class_name"": ""StableDiffusionPipeline""}}, ""transformers_info"": null, ""siblings"": [""RepoSibling(rfilename='.gitattributes', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='Public-Prompts-Pixel-Model.ckpt', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='README.md', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='feature_extractor/preprocessor_config.json', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='model_index.json', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='safety_checker/config.json', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='safety_checker/pytorch_model.bin', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='scheduler/scheduler_config.json', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='text_encoder/config.json', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='text_encoder/pytorch_model.bin', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='tokenizer/merges.txt', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='tokenizer/special_tokens_map.json', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='tokenizer/tokenizer_config.json', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='tokenizer/vocab.json', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='unet/config.json', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='unet/diffusion_pytorch_model.bin', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='vae/config.json', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='vae/diffusion_pytorch_model.bin', size=None, blob_id=None, lfs=None)""], ""spaces"": [""xhxhkxh/sdp""], ""safetensors"": null, ""security_repo_status"": null, ""xet_enabled"": null, ""lastModified"": ""2023-05-11 13:45:47+00:00"", ""cardData"": ""license: creativeml-openrail-m"", ""transformersInfo"": null, ""_id"": ""636bdcfbf575d370514c8038"", ""modelId"": ""PublicPrompts/All-In-One-Pixel-Model"", ""usedStorage"": 7614306662}",0,,0,,0,,0,,0,"huggingface/InferenceSupport/discussions/new?title=PublicPrompts/All-In-One-Pixel-Model&description=React%20to%20this%20comment%20with%20an%20emoji%20to%20vote%20for%20%5BPublicPrompts%2FAll-In-One-Pixel-Model%5D(%2FPublicPrompts%2FAll-In-One-Pixel-Model)%20to%20be%20supported%20by%20Inference%20Providers.%0A%0A(optional)%20Which%20providers%20are%20you%20interested%20in%3F%20(Novita%2C%20Hyperbolic%2C%20Together%E2%80%A6)%0A, xhxhkxh/sdp",2

|

AnimateDiff-Lightning_finetunes_20250424_223250.csv_finetunes_20250424_223250.csv

ADDED

|

@@ -0,0 +1,120 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

model_id,card,metadata,depth,children,children_count,adapters,adapters_count,quantized,quantized_count,merges,merges_count,spaces,spaces_count

|

| 2 |

+

ByteDance/AnimateDiff-Lightning,"---

|

| 3 |

+

license: creativeml-openrail-m

|

| 4 |

+

tags:

|

| 5 |

+

- text-to-video

|

| 6 |

+

- stable-diffusion

|

| 7 |

+

- animatediff

|

| 8 |

+

library_name: diffusers

|

| 9 |

+

inference: false

|

| 10 |

+

---

|

| 11 |

+

# AnimateDiff-Lightning

|

| 12 |

+

|

| 13 |

+

<video src='https://huggingface.co/ByteDance/AnimateDiff-Lightning/resolve/main/animatediff_lightning_samples_t2v.mp4' width=""100%"" autoplay muted loop playsinline style='margin:0'></video>

|

| 14 |

+

<video src='https://huggingface.co/ByteDance/AnimateDiff-Lightning/resolve/main/animatediff_lightning_samples_v2v.mp4' width=""100%"" autoplay muted loop playsinline style='margin:0'></video>

|

| 15 |

+

|

| 16 |

+

AnimateDiff-Lightning is a lightning-fast text-to-video generation model. It can generate videos more than ten times faster than the original AnimateDiff. For more information, please refer to our research paper: [AnimateDiff-Lightning: Cross-Model Diffusion Distillation](https://arxiv.org/abs/2403.12706). We release the model as part of the research.

|

| 17 |

+

|

| 18 |

+

Our models are distilled from [AnimateDiff SD1.5 v2](https://huggingface.co/guoyww/animatediff). This repository contains checkpoints for 1-step, 2-step, 4-step, and 8-step distilled models. The generation quality of our 2-step, 4-step, and 8-step model is great. Our 1-step model is only provided for research purposes.

|

| 19 |

+

|

| 20 |

+

|

| 21 |

+

## Demo

|

| 22 |

+

|

| 23 |

+

Try AnimateDiff-Lightning using our text-to-video generation [demo](https://huggingface.co/spaces/ByteDance/AnimateDiff-Lightning).

|

| 24 |

+

|

| 25 |

+

|

| 26 |

+

## Recommendation

|

| 27 |

+

|

| 28 |

+

AnimateDiff-Lightning produces the best results when used with stylized base models. We recommend using the following base models:

|

| 29 |

+

|

| 30 |

+

Realistic

|

| 31 |

+

- [epiCRealism](https://civitai.com/models/25694)

|

| 32 |

+

- [Realistic Vision](https://civitai.com/models/4201)

|

| 33 |

+

- [DreamShaper](https://civitai.com/models/4384)

|

| 34 |

+

- [AbsoluteReality](https://civitai.com/models/81458)

|

| 35 |

+

- [MajicMix Realistic](https://civitai.com/models/43331)

|

| 36 |

+

|

| 37 |

+

Anime & Cartoon

|

| 38 |

+

- [ToonYou](https://civitai.com/models/30240)

|

| 39 |

+

- [IMP](https://civitai.com/models/56680)

|

| 40 |

+

- [Mistoon Anime](https://civitai.com/models/24149)

|

| 41 |

+

- [DynaVision](https://civitai.com/models/75549)

|

| 42 |

+

- [RCNZ Cartoon 3d](https://civitai.com/models/66347)

|

| 43 |

+

- [MajicMix Reverie](https://civitai.com/models/65055)

|

| 44 |

+

|

| 45 |

+

Additionally, feel free to explore different settings. We find using 3 inference steps on the 2-step model produces great results. We find certain base models produces better results with CFG. We also recommend using [Motion LoRAs](https://huggingface.co/guoyww/animatediff/tree/main) as they produce stronger motion. We use Motion LoRAs with strength 0.7~0.8 to avoid watermark.

|

| 46 |

+

|

| 47 |

+

## Diffusers Usage

|

| 48 |

+

|

| 49 |

+

```python

|

| 50 |

+

import torch

|

| 51 |

+

from diffusers import AnimateDiffPipeline, MotionAdapter, EulerDiscreteScheduler

|

| 52 |

+

from diffusers.utils import export_to_gif

|

| 53 |

+

from huggingface_hub import hf_hub_download

|

| 54 |

+

from safetensors.torch import load_file

|

| 55 |

+

|

| 56 |

+

device = ""cuda""

|

| 57 |

+

dtype = torch.float16

|

| 58 |

+

|

| 59 |

+

step = 4 # Options: [1,2,4,8]

|

| 60 |

+

repo = ""ByteDance/AnimateDiff-Lightning""

|

| 61 |

+

ckpt = f""animatediff_lightning_{step}step_diffusers.safetensors""

|

| 62 |

+

base = ""emilianJR/epiCRealism"" # Choose to your favorite base model.

|

| 63 |

+

|

| 64 |

+

adapter = MotionAdapter().to(device, dtype)

|

| 65 |

+

adapter.load_state_dict(load_file(hf_hub_download(repo ,ckpt), device=device))

|

| 66 |

+

pipe = AnimateDiffPipeline.from_pretrained(base, motion_adapter=adapter, torch_dtype=dtype).to(device)

|

| 67 |

+

pipe.scheduler = EulerDiscreteScheduler.from_config(pipe.scheduler.config, timestep_spacing=""trailing"", beta_schedule=""linear"")

|

| 68 |

+

|

| 69 |

+

output = pipe(prompt=""A girl smiling"", guidance_scale=1.0, num_inference_steps=step)

|

| 70 |

+

export_to_gif(output.frames[0], ""animation.gif"")

|

| 71 |

+

```

|

| 72 |

+

|

| 73 |

+

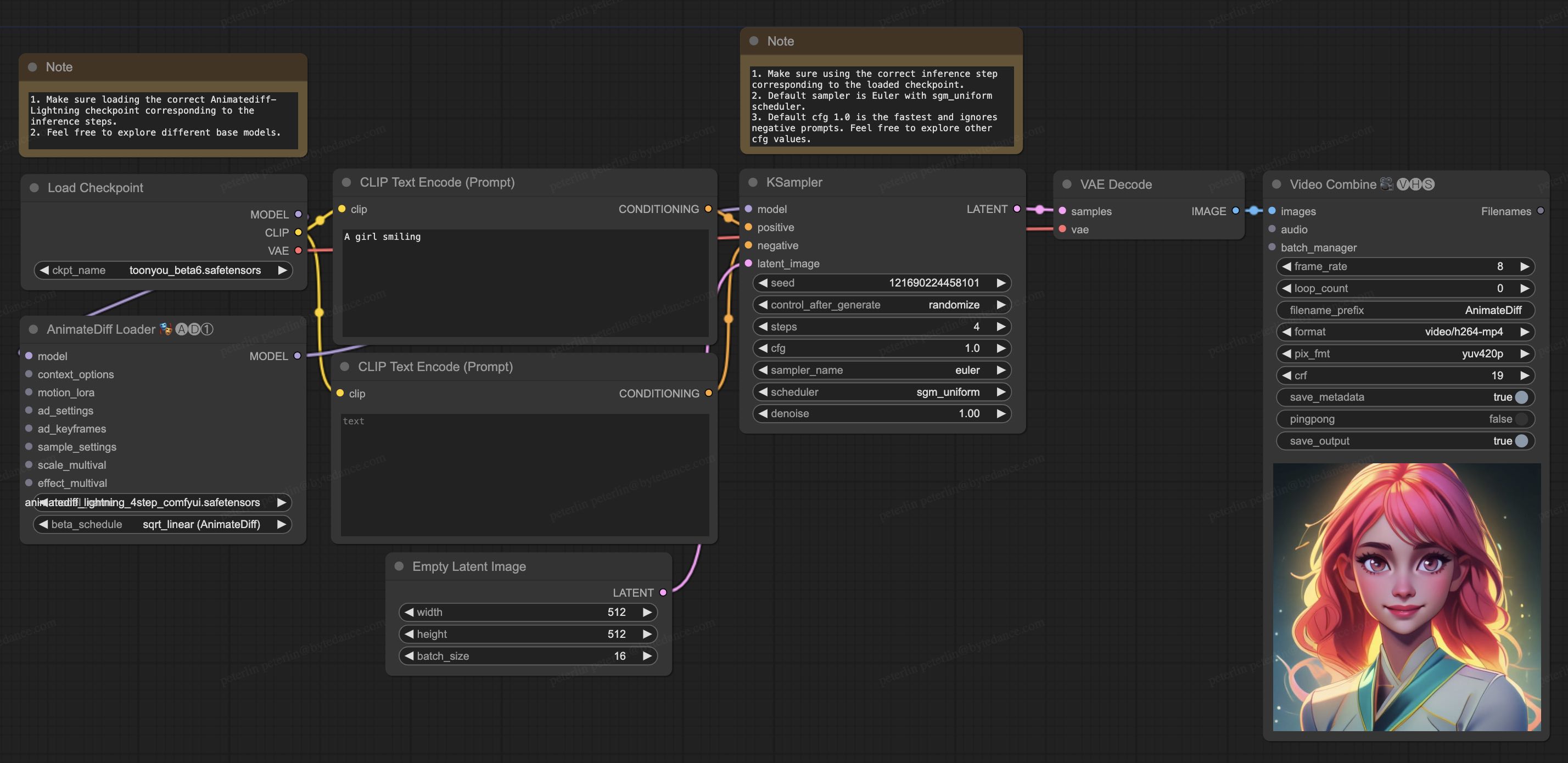

## ComfyUI Usage

|

| 74 |

+

|

| 75 |

+

1. Download [animatediff_lightning_workflow.json](https://huggingface.co/ByteDance/AnimateDiff-Lightning/raw/main/comfyui/animatediff_lightning_workflow.json) and import it in ComfyUI.

|

| 76 |

+

1. Install nodes. You can install them manually or use [ComfyUI-Manager](https://github.com/ltdrdata/ComfyUI-Manager).

|

| 77 |

+

* [ComfyUI-AnimateDiff-Evolved](https://github.com/Kosinkadink/ComfyUI-AnimateDiff-Evolved)

|

| 78 |

+

* [ComfyUI-VideoHelperSuite](https://github.com/Kosinkadink/ComfyUI-VideoHelperSuite)

|

| 79 |

+

1. Download your favorite base model checkpoint and put them under `/models/checkpoints/`

|

| 80 |

+

1. Download AnimateDiff-Lightning checkpoint `animatediff_lightning_Nstep_comfyui.safetensors` and put them under `/custom_nodes/ComfyUI-AnimateDiff-Evolved/models/`

|

| 81 |

+

|

| 82 |

+

|

| 83 |

+

|

| 84 |

+

|

| 85 |

+

|

| 86 |

+

## Video-to-Video Generation

|

| 87 |

+

|

| 88 |

+

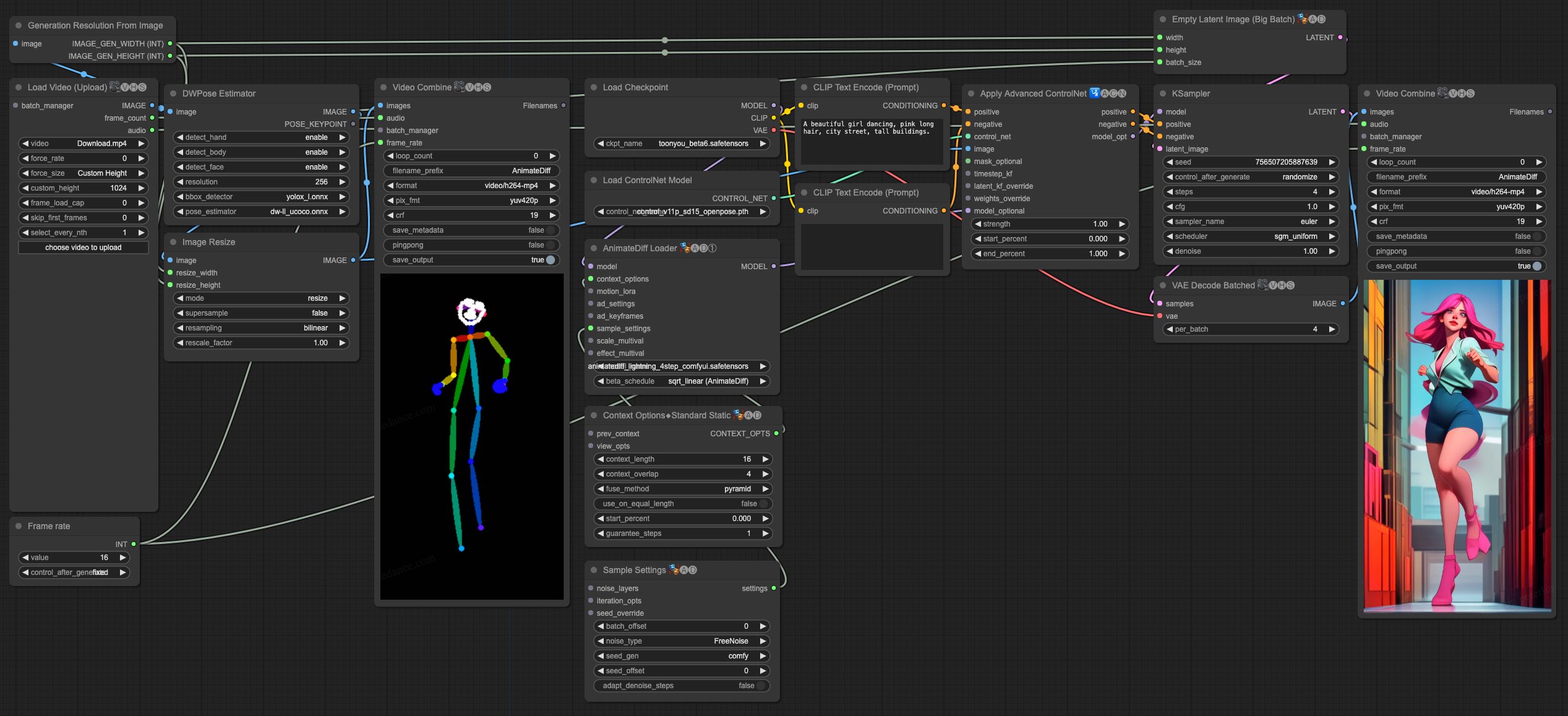

AnimateDiff-Lightning is great for video-to-video generation. We provide the simplist comfyui workflow using ControlNet.

|

| 89 |

+

|

| 90 |

+

1. Download [animatediff_lightning_v2v_openpose_workflow.json](https://huggingface.co/ByteDance/AnimateDiff-Lightning/raw/main/comfyui/animatediff_lightning_v2v_openpose_workflow.json) and import it in ComfyUI.

|

| 91 |

+

1. Install nodes. You can install them manually or use [ComfyUI-Manager](https://github.com/ltdrdata/ComfyUI-Manager).

|

| 92 |

+

* [ComfyUI-AnimateDiff-Evolved](https://github.com/Kosinkadink/ComfyUI-AnimateDiff-Evolved)

|

| 93 |

+

* [ComfyUI-VideoHelperSuite](https://github.com/Kosinkadink/ComfyUI-VideoHelperSuite)

|

| 94 |

+

* [ComfyUI-Advanced-ControlNet](https://github.com/Kosinkadink/ComfyUI-Advanced-ControlNet)

|

| 95 |

+

* [comfyui_controlnet_aux](https://github.com/Fannovel16/comfyui_controlnet_aux)

|

| 96 |

+

1. Download your favorite base model checkpoint and put them under `/models/checkpoints/`

|

| 97 |

+

1. Download AnimateDiff-Lightning checkpoint `animatediff_lightning_Nstep_comfyui.safetensors` and put them under `/custom_nodes/ComfyUI-AnimateDiff-Evolved/models/`

|

| 98 |

+

1. Download [ControlNet OpenPose](https://huggingface.co/lllyasviel/ControlNet-v1-1/tree/main) `control_v11p_sd15_openpose.pth` checkpoint to `/models/controlnet/`

|

| 99 |

+

1. Upload your video and run the pipeline.

|

| 100 |

+

|

| 101 |

+

Additional notes:

|

| 102 |

+

|

| 103 |

+

1. Video shouldn't be too long or too high resolution. We used 576x1024 8 second 30fps videos for testing.

|

| 104 |

+

1. Set the frame rate to match your input video. This allows audio to match with the output video.

|

| 105 |

+

1. DWPose will download checkpoint itself on its first run.

|

| 106 |

+

1. DWPose may get stuck in UI, but the pipeline is actually still running in the background. Check ComfyUI log and your output folder.

|

| 107 |

+

|

| 108 |

+

|

| 109 |

+

|

| 110 |

+

# Cite Our Work

|

| 111 |

+

```

|

| 112 |

+

@misc{lin2024animatedifflightning,

|

| 113 |

+

title={AnimateDiff-Lightning: Cross-Model Diffusion Distillation},

|

| 114 |

+

author={Shanchuan Lin and Xiao Yang},

|

| 115 |

+

year={2024},

|

| 116 |

+

eprint={2403.12706},

|

| 117 |

+

archivePrefix={arXiv},

|

| 118 |

+

primaryClass={cs.CV}

|

| 119 |

+

}

|

| 120 |

+

```","{""id"": ""ByteDance/AnimateDiff-Lightning"", ""author"": ""ByteDance"", ""sha"": ""027c893eec01df7330f5d4b733bc9485ee02e8b2"", ""last_modified"": ""2025-01-06 06:03:11+00:00"", ""created_at"": ""2024-03-19 12:58:46+00:00"", ""private"": false, ""gated"": false, ""disabled"": false, ""downloads"": 151443, ""downloads_all_time"": null, ""likes"": 924, ""library_name"": ""diffusers"", ""gguf"": null, ""inference"": null, ""inference_provider_mapping"": null, ""tags"": [""diffusers"", ""text-to-video"", ""stable-diffusion"", ""animatediff"", ""arxiv:2403.12706"", ""license:creativeml-openrail-m"", ""region:us""], ""pipeline_tag"": ""text-to-video"", ""mask_token"": null, ""trending_score"": null, ""card_data"": ""library_name: diffusers\nlicense: creativeml-openrail-m\ntags:\n- text-to-video\n- stable-diffusion\n- animatediff\ninference: false"", ""widget_data"": null, ""model_index"": null, ""config"": null, ""transformers_info"": null, ""siblings"": [""RepoSibling(rfilename='.gitattributes', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='LICENSE.md', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='README.md', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='animatediff_lightning_1step_comfyui.safetensors', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='animatediff_lightning_1step_diffusers.safetensors', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='animatediff_lightning_2step_comfyui.safetensors', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='animatediff_lightning_2step_diffusers.safetensors', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='animatediff_lightning_4step_comfyui.safetensors', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='animatediff_lightning_4step_diffusers.safetensors', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='animatediff_lightning_8step_comfyui.safetensors', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='animatediff_lightning_8step_diffusers.safetensors', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='animatediff_lightning_report.pdf', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='animatediff_lightning_samples_t2v.mp4', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='animatediff_lightning_samples_v2v.mp4', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='comfyui/animatediff_lightning_v2v_openpose_workflow.jpg', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='comfyui/animatediff_lightning_v2v_openpose_workflow.json', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='comfyui/animatediff_lightning_workflow.jpg', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='comfyui/animatediff_lightning_workflow.json', size=None, blob_id=None, lfs=None)""], ""spaces"": [""KingNish/Instant-Video"", ""ByteDance/AnimateDiff-Lightning"", ""marlonbarrios/Instant-Video"", ""orderlymirror/Text-to-Video"", ""Nymbo/Instant-Video"", ""SahaniJi/Instant-Video"", ""Martim-Ramos-Neural/AnimateDiffPipeline_text_to_video"", ""Gradio-Community/Animation_With_Sound"", ""AI-Platform/Mochi_1_Video"", ""SahaniJi/AnimateDiff-Lightning"", ""ruslanmv/Video-Generator-from-Story"", ""paulm0016/text_to_gif"", ""K00B404/AnimateDiff-Lightning"", ""rynmurdock/Blue_Tigers"", ""Uhhy/Instant-Video"", ""Harumiiii/text-to-image-api"", ""mrbeliever/Ins-Vid"", ""salomonsky/Mochi_1_Video"", ""LAJILAODEEAIQ/office-chat-Instant-Video"", ""jbilcke-hf/ai-tube-model-adl-1"", ""jbilcke-hf/ai-tube-model-parler-tts-mini"", ""Taf2023/Animation_With_Sound"", ""jbilcke-hf/ai-tube-model-adl-2"", ""Taf2023/AnimateDiff-Lightning"", ""Divergent007/Instant-Video"", ""sanaweb/AnimateDiff-Lightning"", ""pranavajay/Test"", ""cocktailpeanut/Instant-Video"", ""Festrcze/Instant-Video"", ""Alif737/Video-Generator-fron-text"", ""jbilcke-hf/ai-tube-model-adl-3"", ""jbilcke-hf/ai-tube-model-adl-4"", ""jbilcke-hf/huggingchat-tool-video"", ""qsdreams/AnimateDiff-Lightning"", ""saicharan1234/Video-Engine"", ""cbbstars/Instant-Video"", ""raymerjacque/Instant-Video"", ""saima730/text_to_video"", ""saima730/textToVideo"", ""Yhhxhfh/Instant-Video"", ""snehalsas/Instant-Video-Generation"", ""omgitsqing/hum_me_a_melody"", ""M-lai/Instant-Video"", ""SiddhantSahu/Project_for_collage-Text_to_Video"", ""Fre123/Frev123"", ""edu12378/My-space"", ""ahmdliaqat/animate"", ""quangnhat/QNT-ByteDance"", ""sk444/v3"", ""soiz1/ComfyUI-Demo"", ""armen425221356/Instant-Video"", ""sreepathi-ravikumar/AnimateDiff-Lightning"", ""taddymason/Instant-Video"", ""orderlymirror/demo"", ""orderlymirror/TIv2""], ""safetensors"": null, ""security_repo_status"": null, ""xet_enabled"": null, ""lastModified"": ""2025-01-06 06:03:11+00:00"", ""cardData"": ""library_name: diffusers\nlicense: creativeml-openrail-m\ntags:\n- text-to-video\n- stable-diffusion\n- animatediff\ninference: false"", ""transformersInfo"": null, ""_id"": ""65f98c0619efe1381b9514a5"", ""modelId"": ""ByteDance/AnimateDiff-Lightning"", ""usedStorage"": 7286508236}",0,,0,,0,,0,,0,"ByteDance/AnimateDiff-Lightning, Harumiiii/text-to-image-api, KingNish/Instant-Video, LAJILAODEEAIQ/office-chat-Instant-Video, Martim-Ramos-Neural/AnimateDiffPipeline_text_to_video, SahaniJi/AnimateDiff-Lightning, SahaniJi/Instant-Video, huggingface/InferenceSupport/discussions/1056, orderlymirror/TIv2, orderlymirror/Text-to-Video, paulm0016/text_to_gif, quangnhat/QNT-ByteDance, ruslanmv/Video-Generator-from-Story",13

|

AnimateLCM-SVD-xt_finetunes_20250426_221535.csv_finetunes_20250426_221535.csv

ADDED

|

@@ -0,0 +1,40 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

model_id,card,metadata,depth,children,children_count,adapters,adapters_count,quantized,quantized_count,merges,merges_count,spaces,spaces_count

|

| 2 |

+

wangfuyun/AnimateLCM-SVD-xt,"---

|

| 3 |

+

pipeline_tag: image-to-video

|

| 4 |

+

---

|

| 5 |

+

|

| 6 |

+

<p align=""center"">

|

| 7 |

+

<img src=""./demos/demo-01.gif"" width=""70%"" />

|

| 8 |

+

<img src=""./demos/demo-02.gif"" width=""70%"" />

|

| 9 |

+

<img src=""./demos/demo-03.gif"" width=""70%"" />

|

| 10 |

+

|

| 11 |

+

</p>

|

| 12 |

+

<p align=""center"">Samples generated by AnimateLCM-SVD-xt</p>

|

| 13 |

+

|

| 14 |

+

|

| 15 |

+

## Introduction

|

| 16 |

+

Consistency Distilled [Stable Video Diffusion Image2Video-XT (SVD-xt)](https://huggingface.co/stabilityai/stable-video-diffusion-img2vid-xt) following the strategy proposed in [AnimateLCM-paper](https://arxiv.org/abs/2402.00769).

|

| 17 |

+

AnimateLCM-SVD-xt can generate good quality image-conditioned videos with 25 frames in 2~8 steps with 576x1024 resolutions.

|

| 18 |

+

|

| 19 |

+

## Computation comparsion

|

| 20 |

+

AnimateLCM-SVD-xt can generally produces demos with good quality in 4 steps without requiring the classifier-free guidance, and therefore can save 25 x 2 / 4 = 12.5 times compuation resources compared with normal SVD models.

|

| 21 |

+

|

| 22 |

+

|

| 23 |

+

## Demos

|

| 24 |

+

|

| 25 |

+

| | | |

|

| 26 |

+

| :---: | :---: | :---: |

|

| 27 |

+

|  |  |  |

|

| 28 |

+

| 2 steps, cfg=1 | 4 steps, cfg=1 | 8 steps, cfg=1 |

|

| 29 |

+

|  |  |  |

|

| 30 |

+

| 2 steps, cfg=1 | 4 steps, cfg=1 | 8 steps, cfg=1 |

|

| 31 |

+

|  |  |  |

|

| 32 |

+

| 2 steps, cfg=1 | 4 steps, cfg=1 | 8 steps, cfg=1 |

|

| 33 |

+

|  |  |  |

|

| 34 |

+

| 2 steps, cfg=1 | 4 steps, cfg=1 | 8 steps, cfg=1 |

|

| 35 |

+

|  |  |  |

|

| 36 |

+

| 2 steps, cfg=1 | 4 steps, cfg=1 | 8 steps, cfg=1 |

|

| 37 |

+

|

| 38 |

+

|

| 39 |

+

|

| 40 |

+

I have launched a gradio demo at [AnimateLCM SVD space](https://huggingface.co/spaces/wangfuyun/AnimateLCM-SVD). Should you have any questions, please contact Fu-Yun Wang (fywang@link.cuhk.edu.hk). I might respond a bit later. Thank you!","{""id"": ""wangfuyun/AnimateLCM-SVD-xt"", ""author"": ""wangfuyun"", ""sha"": ""ef2753d97ea1bd8741b6b5287b834630f1c42fa0"", ""last_modified"": ""2024-02-27 08:05:01+00:00"", ""created_at"": ""2024-02-18 17:29:06+00:00"", ""private"": false, ""gated"": false, ""disabled"": false, ""downloads"": 0, ""downloads_all_time"": null, ""likes"": 196, ""library_name"": null, ""gguf"": null, ""inference"": null, ""inference_provider_mapping"": null, ""tags"": [""image-to-video"", ""arxiv:2402.00769"", ""region:us""], ""pipeline_tag"": ""image-to-video"", ""mask_token"": null, ""trending_score"": null, ""card_data"": ""pipeline_tag: image-to-video"", ""widget_data"": null, ""model_index"": null, ""config"": null, ""transformers_info"": null, ""siblings"": [""RepoSibling(rfilename='.gitattributes', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='AnimateLCM-SVD-xt-1.1.safetensors', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='AnimateLCM-SVD-xt.safetensors', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='README.md', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='demos/01-2.gif', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='demos/01-4.gif', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='demos/01-8.gif', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='demos/02-2.gif', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='demos/02-4.gif', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='demos/02-8.gif', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='demos/03-2.gif', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='demos/03-4.gif', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='demos/03-8.gif', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='demos/04-2.gif', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='demos/04-4.gif', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='demos/04-8.gif', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='demos/05-2.gif', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='demos/05-4.gif', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='demos/05-8.gif', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='demos/demo-01.gif', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='demos/demo-02.gif', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='demos/demo-03.gif', size=None, blob_id=None, lfs=None)""], ""spaces"": [""wangfuyun/AnimateLCM-SVD"", ""wangfuyun/AnimateLCM"", ""fantos/vidiani"", ""Ziaistan/AnimateLCM-SVD"", ""Taf2023/AnimateLCM"", ""svjack/AnimateLCM-SVD-Genshin-Impact-Demo""], ""safetensors"": null, ""security_repo_status"": null, ""xet_enabled"": null, ""lastModified"": ""2024-02-27 08:05:01+00:00"", ""cardData"": ""pipeline_tag: image-to-video"", ""transformersInfo"": null, ""_id"": ""65d23e62ad23a6740435a879"", ""modelId"": ""wangfuyun/AnimateLCM-SVD-xt"", ""usedStorage"": 12317372403}",0,,0,,0,,0,,0,"Taf2023/AnimateLCM, Ziaistan/AnimateLCM-SVD, fantos/vidiani, huggingface/InferenceSupport/discussions/new?title=wangfuyun/AnimateLCM-SVD-xt&description=React%20to%20this%20comment%20with%20an%20emoji%20to%20vote%20for%20%5Bwangfuyun%2FAnimateLCM-SVD-xt%5D(%2Fwangfuyun%2FAnimateLCM-SVD-xt)%20to%20be%20supported%20by%20Inference%20Providers.%0A%0A(optional)%20Which%20providers%20are%20you%20interested%20in%3F%20(Novita%2C%20Hyperbolic%2C%20Together%E2%80%A6)%0A, svjack/AnimateLCM-SVD-Genshin-Impact-Demo, wangfuyun/AnimateLCM, wangfuyun/AnimateLCM-SVD",7

|

AsianModel_finetunes_20250426_221535.csv_finetunes_20250426_221535.csv

ADDED

|

@@ -0,0 +1,5 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

model_id,card,metadata,depth,children,children_count,adapters,adapters_count,quantized,quantized_count,merges,merges_count,spaces,spaces_count

|

| 2 |

+

BanKaiPls/AsianModel,"---

|

| 3 |

+

license: openrail

|

| 4 |

+

---

|

| 5 |

+

","{""id"": ""BanKaiPls/AsianModel"", ""author"": ""BanKaiPls"", ""sha"": ""d6193514bb251acbf27e08c018c3fec891f037f9"", ""last_modified"": ""2023-07-16 06:56:26+00:00"", ""created_at"": ""2023-03-09 08:28:43+00:00"", ""private"": false, ""gated"": false, ""disabled"": false, ""downloads"": 0, ""downloads_all_time"": null, ""likes"": 186, ""library_name"": null, ""gguf"": null, ""inference"": null, ""inference_provider_mapping"": null, ""tags"": [""license:openrail"", ""region:us""], ""pipeline_tag"": null, ""mask_token"": null, ""trending_score"": null, ""card_data"": ""license: openrail"", ""widget_data"": null, ""model_index"": null, ""config"": null, ""transformers_info"": null, ""siblings"": [""RepoSibling(rfilename='.gitattributes', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='BRA5beta.safetensors', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='BRAV5beta.safetensors', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='BRAV5finalfp16.safetensors', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='BraV5Beta3.safetensors', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='BraV5Finaltest.safetensors', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='Brav6.safetensors', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='OpenBra.safetensors', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='README.md', size=None, blob_id=None, lfs=None)""], ""spaces"": [""willhill/stable-diffusion-webui-cpu"", ""goguenha123/stable-diffusion-webui-cpu"", ""caizhudiren/test""], ""safetensors"": null, ""security_repo_status"": null, ""xet_enabled"": null, ""lastModified"": ""2023-07-16 06:56:26+00:00"", ""cardData"": ""license: openrail"", ""transformersInfo"": null, ""_id"": ""640998bb582fb894c058d043"", ""modelId"": ""BanKaiPls/AsianModel"", ""usedStorage"": 127167632115}",0,,0,,0,,0,,0,"caizhudiren/test, goguenha123/stable-diffusion-webui-cpu, huggingface/InferenceSupport/discussions/new?title=BanKaiPls/AsianModel&description=React%20to%20this%20comment%20with%20an%20emoji%20to%20vote%20for%20%5BBanKaiPls%2FAsianModel%5D(%2FBanKaiPls%2FAsianModel)%20to%20be%20supported%20by%20Inference%20Providers.%0A%0A(optional)%20Which%20providers%20are%20you%20interested%20in%3F%20(Novita%2C%20Hyperbolic%2C%20Together%E2%80%A6)%0A, willhill/stable-diffusion-webui-cpu",4

|

Athene-V2-Chat_finetunes_20250426_171734.csv_finetunes_20250426_171734.csv

ADDED

|

@@ -0,0 +1,81 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

model_id,card,metadata,depth,children,children_count,adapters,adapters_count,quantized,quantized_count,merges,merges_count,spaces,spaces_count

|

| 2 |

+

Nexusflow/Athene-V2-Chat,N/A,N/A,0,"https://huggingface.co/Saxo/Linkbricks-Horizon-AI-Avengers-V1-108B, https://huggingface.co/dvishal18/chatbotapi",2,,0,"https://huggingface.co/lmstudio-community/Athene-V2-Chat-GGUF, https://huggingface.co/mradermacher/Athene-V2-Chat-i1-GGUF, https://huggingface.co/kosbu/Athene-V2-Chat-AWQ, https://huggingface.co/Dracones/Athene-V2-Chat_exl2_4.0bpw, https://huggingface.co/lee5j/Athene-V2-Chat-gptq4, https://huggingface.co/bartowski/Athene-V2-Chat-GGUF, https://huggingface.co/DevQuasar/Nexusflow.Athene-V2-Chat-GGUF, https://huggingface.co/mradermacher/Athene-V2-Chat-GGUF, https://huggingface.co/mlx-community/Athene-V2-Chat-8bit, https://huggingface.co/Jellon/Athene-V2-Chat-72b-exl2-3bpw, https://huggingface.co/JustinIrv/Athene-V2-Chat-Q4-mlx, https://huggingface.co/Dracones/Athene-V2-Chat_exl2_8.0bpw, https://huggingface.co/Dracones/Athene-V2-Chat_exl2_7.0bpw, https://huggingface.co/Dracones/Athene-V2-Chat_exl2_6.0bpw, https://huggingface.co/Dracones/Athene-V2-Chat_exl2_5.0bpw, https://huggingface.co/Dracones/Athene-V2-Chat_exl2_4.5bpw, https://huggingface.co/Dracones/Athene-V2-Chat_exl2_3.5bpw, https://huggingface.co/Dracones/Athene-V2-Chat_exl2_3.0bpw, https://huggingface.co/cotdp/Athene-V2-Chat-MLX-4bit, https://huggingface.co/mlx-community/Athene-V2-Chat-4bit, https://huggingface.co/Orion-zhen/Athene-V2-Chat-abliterated-bnb-4bit, https://huggingface.co/Dracones/Athene-V2-Chat_exl2_2.75bpw, https://huggingface.co/Dracones/Athene-V2-Chat_exl2_2.5bpw, https://huggingface.co/Dracones/Athene-V2-Chat_exl2_2.25bpw",24,"https://huggingface.co/SteelStorage/Q2.5-MS-Mistoria-72b-v2, https://huggingface.co/spow12/KoQwen_72B_v5.0, https://huggingface.co/sophosympatheia/Evathene-v1.2, https://huggingface.co/spow12/ChatWaifu_72B_v2.2, https://huggingface.co/sophosympatheia/Evathene-v1.0, https://huggingface.co/MikeRoz/sophosympatheia_Evathene-v1.0-8.0bpw-h8-exl2, https://huggingface.co/MikeRoz/sophosympatheia_Evathene-v1.0-6.0bpw-h6-exl2, https://huggingface.co/MikeRoz/sophosympatheia_Evathene-v1.0-5.0bpw-h6-exl2, https://huggingface.co/MikeRoz/sophosympatheia_Evathene-v1.0-4.25bpw-h6-exl2, https://huggingface.co/MikeRoz/sophosympatheia_Evathene-v1.0-3.5bpw-h6-exl2, https://huggingface.co/MikeRoz/sophosympatheia_Evathene-v1.0-3.0bpw-h6-exl2, https://huggingface.co/MikeRoz/sophosympatheia_Evathene-v1.0-2.25bpw-h6-exl2, https://huggingface.co/nitky/AtheneX-V2-72B-instruct, https://huggingface.co/CalamitousFelicitousness/Evathene-v1.0-FP8-Dynamic, https://huggingface.co/DBMe/Evathene-v1.0-4.86bpw-h6-exl2, https://huggingface.co/sophosympatheia/Evathene-v1.1, https://huggingface.co/MikeRoz/sophosympatheia_Evathene-v1.2-8.0bpw-h8-exl2, https://huggingface.co/MikeRoz/sophosympatheia_Evathene-v1.2-6.0bpw-h6-exl2, https://huggingface.co/MikeRoz/sophosympatheia_Evathene-v1.2-5.0bpw-h6-exl2, https://huggingface.co/MikeRoz/sophosympatheia_Evathene-v1.2-3.5bpw-h6-exl2, https://huggingface.co/MikeRoz/sophosympatheia_Evathene-v1.1-8.0bpw-h8-exl2, https://huggingface.co/MikeRoz/sophosympatheia_Evathene-v1.1-6.0bpw-h6-exl2, https://huggingface.co/MikeRoz/sophosympatheia_Evathene-v1.1-5.0bpw-h6-exl2, https://huggingface.co/MikeRoz/sophosympatheia_Evathene-v1.1-3.5bpw-h6-exl2, https://huggingface.co/MikeRoz/sophosympatheia_Evathene-v1.2-4.25bpw-h6-exl2, https://huggingface.co/MikeRoz/sophosympatheia_Evathene-v1.1-4.25bpw-h6-exl2, https://huggingface.co/gunman0/Evathene-v1.2_EXL2_8bpw, https://huggingface.co/Infermatic/Q2.5-MS-Mistoria-72b-v2-FP8-Dynamic, https://huggingface.co/ehristoforu/frqwen2.5-from72b-duable10layers, https://huggingface.co/Nohobby/Q2.5-Atess-72B, https://huggingface.co/chakchouk/BBA-ECE-TRIOMPHANT-Qwen2.5-72B",31,"Dynamitte63/Nexusflow-Athene-V2-Chat, FallnAI/Quantize-HF-Models, K00B404/LLM_Quantization, KBaba7/Quant, Lone7727/Nexusflow-Athene-V2-Chat, bazingapaa/compare-models, bhaskartripathi/LLM_Quantization, huggingface/InferenceSupport/discussions/new?title=Nexusflow/Athene-V2-Chat&description=React%20to%20this%20comment%20with%20an%20emoji%20to%20vote%20for%20%5BNexusflow%2FAthene-V2-Chat%5D(%2FNexusflow%2FAthene-V2-Chat)%20to%20be%20supported%20by%20Inference%20Providers.%0A%0A(optional)%20Which%20providers%20are%20you%20interested%20in%3F%20(Novita%2C%20Hyperbolic%2C%20Together%E2%80%A6)%0A, kaleidoskop-hug/StreamlitChat_Test, mikemin027/Nexusflow-Athene-V2-Chat, ruslanmv/convert_to_gguf, totolook/Quant, zhangjian95/Nexusflow-Athene-V2-Chat",13

|

| 3 |

+

Saxo/Linkbricks-Horizon-AI-Avengers-V1-108B,"---

|

| 4 |

+

library_name: transformers

|

| 5 |

+

license: apache-2.0

|

| 6 |

+

base_model: Nexusflow/Athene-V2-Chat

|

| 7 |

+

datasets:

|

| 8 |

+

- Saxo/ko_cn_translation_tech_social_science_linkbricks_single_dataset

|

| 9 |

+

- Saxo/ko_jp_translation_tech_social_science_linkbricks_single_dataset

|

| 10 |

+

- Saxo/en_ko_translation_tech_science_linkbricks_single_dataset_with_prompt_text_huggingface

|

| 11 |

+

- Saxo/en_ko_translation_social_science_linkbricks_single_dataset_with_prompt_text_huggingface

|

| 12 |

+

- Saxo/ko_aspect_sentiment_sns_mall_sentiment_linkbricks_single_dataset_with_prompt_text_huggingface

|

| 13 |

+

- Saxo/ko_summarization_linkbricks_single_dataset_with_prompt_text_huggingface

|

| 14 |

+

- Saxo/OpenOrca_cleaned_kor_linkbricks_single_dataset_with_prompt_text_huggingface

|

| 15 |

+

- Saxo/ko_government_qa_total_linkbricks_single_dataset_with_prompt_text_huggingface_sampled

|

| 16 |

+

- Saxo/ko-news-corpus-1

|

| 17 |

+

- Saxo/ko-news-corpus-2

|

| 18 |

+

- Saxo/ko-news-corpus-3

|

| 19 |

+

- Saxo/ko-news-corpus-4

|

| 20 |

+

- Saxo/ko-news-corpus-5

|

| 21 |

+

- Saxo/ko-news-corpus-6

|

| 22 |

+

- Saxo/ko-news-corpus-7

|

| 23 |

+

- Saxo/ko-news-corpus-8

|

| 24 |

+

- Saxo/ko-news-corpus-9

|

| 25 |

+

- maywell/ko_Ultrafeedback_binarized

|

| 26 |

+

- youjunhyeok/ko-orca-pair-and-ultrafeedback-dpo

|

| 27 |

+

- lilacai/glaive-function-calling-v2-sharegpt

|

| 28 |

+

- kuotient/gsm8k-ko

|

| 29 |

+

language:

|

| 30 |

+

- ko

|

| 31 |

+

- en

|

| 32 |

+

- jp

|

| 33 |

+

- cn

|

| 34 |

+

pipeline_tag: text-generation

|

| 35 |

+

---

|

| 36 |

+

|

| 37 |

+

# Model Card for Model ID

|

| 38 |

+

|

| 39 |

+

<div align=""center"">

|

| 40 |

+

<img src=""http://www.linkbricks.com/wp-content/uploads/2024/11/fulllogo.png"" />

|

| 41 |

+

</div>

|

| 42 |

+

|

| 43 |

+

AIとビッグデータ分析の専門企業であるLinkbricksのデータサイエンティストであるジ・ユンソン(Saxo)ディレクターが <br>

|

| 44 |

+

Nexusflow/Athene-V2-Chatベースモデルを使用し、H100-80G 8個で約35%程度のパラメータをSFT->DPO->ORPO->MERGEした多言語強化言語モデル。<br>

|

| 45 |

+

8千万件の様々な言語圏のニュースやウィキコーパスを基に、様々なタスク別の日本語・韓国語・中国語・英語クロス学習データと数学や論理判断データを通じて、日中韓英言語のクロスエンハンスメント処理と複雑な論理問題にも対応できるように訓練したモデルである。

|

| 46 |

+

-トークナイザーは、単語拡張なしでベースモデルのまま使用します。<br>

|

| 47 |

+

-カスタマーレビューやソーシャル投稿の高次元分析及びコーディングとライティング、数学、論理判断などが強化されたモデル。<br>

|

| 48 |

+

-Function Call<br>

|

| 49 |

+

-Deepspeed Stage=3、rslora及びBAdam Layer Modeを使用 <br>

|

| 50 |

+

-「transformers_version」: 「4.46.3」<br>

|

| 51 |

+

|

| 52 |

+

<br><br>

|

| 53 |

+

|

| 54 |

+

AI 와 빅데이터 분석 전문 기업인 Linkbricks의 데이터사이언티스트인 지윤성(Saxo) 이사가 <br>

|

| 55 |

+

Nexusflow/Athene-V2-Chat 베이스모델을 사용해서 H100-80G 8개를 통해 약 35%정도의 파라미터를 SFT->DPO->ORPO->MERGE 한 다국어 강화 언어 모델<br>

|

| 56 |

+

8천만건의 다양한 언어권의 뉴스 및 위키 코퍼스를 기준으로 다양한 테스크별 일본어-한국어-중국어-영어 교차 학습 데이터와 수학 및 논리판단 데이터를 통하여 한중일영 언어 교차 증강 처리와 복잡한 논리 문제 역시 대응 가능하도록 훈련한 모델이다.<br>

|

| 57 |

+

-토크나이저는 단어 확장 없이 베이스 모델 그대로 사용<br>

|

| 58 |

+

-고객 리뷰나 소셜 포스팅 고차원 분석 및 코딩과 작문, 수학, 논리판단 등이 강화된 모델<br>

|

| 59 |

+

-Function Call 및 Tool Calling 지원<br>

|

| 60 |

+

-Deepspeed Stage=3, rslora 및 BAdam Layer Mode 사용 <br>

|

| 61 |

+

-""transformers_version"": ""4.46.3""<br>

|

| 62 |

+

<br><br>

|

| 63 |

+

|

| 64 |

+

Finetuned by Mr. Yunsung Ji (Saxo), a data scientist at Linkbricks, a company specializing in AI and big data analytics <br>

|

| 65 |

+

about 35% of total parameters SFT->DPO->ORPO->MERGE training model based on Nexusflow/Athene-V2-Chat through 8 H100-80Gs as multi-lingual boosting language model <br>

|

| 66 |

+

It is a model that has been trained to handle Japanese-Korean-Chinese-English cross-training data and 80M multi-lingual news corpus and logic judgment data for various tasks to enable cross-fertilization processing and complex Korean logic & math problems. <br>

|

| 67 |

+

-Tokenizer uses the base model without word expansion<br>

|

| 68 |

+

-Models enhanced with high-dimensional analysis of customer reviews and social posts, as well as coding, writing, math and decision making<br>

|

| 69 |

+

-Function Calling<br>

|

| 70 |

+

-Deepspeed Stage=3, use rslora and BAdam Layer Mode<br>

|

| 71 |

+

<br><br>

|

| 72 |

+

|

| 73 |

+

<a href=""www.linkbricks.com"">www.linkbricks.com</a>, <a href=""www.linkbricks.vc"">www.linkbricks.vc</a>

|

| 74 |

+

","{""id"": ""Saxo/Linkbricks-Horizon-AI-Avengers-V1-108B"", ""author"": ""Saxo"", ""sha"": ""d631a3d20d4e85663bf508b58fccac5372b2c793"", ""last_modified"": ""2024-12-31 02:36:02+00:00"", ""created_at"": ""2024-12-30 07:05:27+00:00"", ""private"": false, ""gated"": false, ""disabled"": false, ""downloads"": 45, ""downloads_all_time"": null, ""likes"": 0, ""library_name"": ""transformers"", ""gguf"": null, ""inference"": null, ""inference_provider_mapping"": null, ""tags"": [""transformers"", ""safetensors"", ""qwen2"", ""text-generation"", ""conversational"", ""ko"", ""en"", ""jp"", ""cn"", ""dataset:Saxo/ko_cn_translation_tech_social_science_linkbricks_single_dataset"", ""dataset:Saxo/ko_jp_translation_tech_social_science_linkbricks_single_dataset"", ""dataset:Saxo/en_ko_translation_tech_science_linkbricks_single_dataset_with_prompt_text_huggingface"", ""dataset:Saxo/en_ko_translation_social_science_linkbricks_single_dataset_with_prompt_text_huggingface"", ""dataset:Saxo/ko_aspect_sentiment_sns_mall_sentiment_linkbricks_single_dataset_with_prompt_text_huggingface"", ""dataset:Saxo/ko_summarization_linkbricks_single_dataset_with_prompt_text_huggingface"", ""dataset:Saxo/OpenOrca_cleaned_kor_linkbricks_single_dataset_with_prompt_text_huggingface"", ""dataset:Saxo/ko_government_qa_total_linkbricks_single_dataset_with_prompt_text_huggingface_sampled"", ""dataset:Saxo/ko-news-corpus-1"", ""dataset:Saxo/ko-news-corpus-2"", ""dataset:Saxo/ko-news-corpus-3"", ""dataset:Saxo/ko-news-corpus-4"", ""dataset:Saxo/ko-news-corpus-5"", ""dataset:Saxo/ko-news-corpus-6"", ""dataset:Saxo/ko-news-corpus-7"", ""dataset:Saxo/ko-news-corpus-8"", ""dataset:Saxo/ko-news-corpus-9"", ""dataset:maywell/ko_Ultrafeedback_binarized"", ""dataset:youjunhyeok/ko-orca-pair-and-ultrafeedback-dpo"", ""dataset:lilacai/glaive-function-calling-v2-sharegpt"", ""dataset:kuotient/gsm8k-ko"", ""base_model:Nexusflow/Athene-V2-Chat"", ""base_model:finetune:Nexusflow/Athene-V2-Chat"", ""license:apache-2.0"", ""autotrain_compatible"", ""text-generation-inference"", ""endpoints_compatible"", ""region:us""], ""pipeline_tag"": ""text-generation"", ""mask_token"": null, ""trending_score"": null, ""card_data"": ""base_model: Nexusflow/Athene-V2-Chat\ndatasets:\n- Saxo/ko_cn_translation_tech_social_science_linkbricks_single_dataset\n- Saxo/ko_jp_translation_tech_social_science_linkbricks_single_dataset\n- Saxo/en_ko_translation_tech_science_linkbricks_single_dataset_with_prompt_text_huggingface\n- Saxo/en_ko_translation_social_science_linkbricks_single_dataset_with_prompt_text_huggingface\n- Saxo/ko_aspect_sentiment_sns_mall_sentiment_linkbricks_single_dataset_with_prompt_text_huggingface\n- Saxo/ko_summarization_linkbricks_single_dataset_with_prompt_text_huggingface\n- Saxo/OpenOrca_cleaned_kor_linkbricks_single_dataset_with_prompt_text_huggingface\n- Saxo/ko_government_qa_total_linkbricks_single_dataset_with_prompt_text_huggingface_sampled\n- Saxo/ko-news-corpus-1\n- Saxo/ko-news-corpus-2\n- Saxo/ko-news-corpus-3\n- Saxo/ko-news-corpus-4\n- Saxo/ko-news-corpus-5\n- Saxo/ko-news-corpus-6\n- Saxo/ko-news-corpus-7\n- Saxo/ko-news-corpus-8\n- Saxo/ko-news-corpus-9\n- maywell/ko_Ultrafeedback_binarized\n- youjunhyeok/ko-orca-pair-and-ultrafeedback-dpo\n- lilacai/glaive-function-calling-v2-sharegpt\n- kuotient/gsm8k-ko\nlanguage:\n- ko\n- en\n- jp\n- cn\nlibrary_name: transformers\nlicense: apache-2.0\npipeline_tag: text-generation"", ""widget_data"": null, ""model_index"": null, ""config"": {""architectures"": [""Qwen2ForCausalLM""], ""model_type"": ""qwen2"", ""tokenizer_config"": {""bos_token"": null, ""chat_template"": ""{%- if tools %}\n {{- '<|im_start|>system\\n' }}\n {%- if messages[0]['role'] == 'system' %}\n {{- messages[0]['content'] }}\n {%- else %}\n {{- 'You are Qwen, created by Alibaba Cloud. You are a helpful assistant.' }}\n {%- endif %}\n {{- \""\\n\\n# Tools\\n\\nYou may call one or more functions to assist with the user query.\\n\\nYou are provided with function signatures within <tools></tools> XML tags:\\n<tools>\"" }}\n {%- for tool in tools %}\n {{- \""\\n\"" }}\n {{- tool | tojson }}\n {%- endfor %}\n {{- \""\\n</tools>\\n\\nFor each function call, return a json object with function name and arguments within <tool_call></tool_call> XML tags:\\n<tool_call>\\n{\\\""name\\\"": <function-name>, \\\""arguments\\\"": <args-json-object>}\\n</tool_call><|im_end|>\\n\"" }}\n{%- else %}\n {%- if messages[0]['role'] == 'system' %}\n {{- '<|im_start|>system\\n' + messages[0]['content'] + '<|im_end|>\\n' }}\n {%- else %}\n {{- '<|im_start|>system\\nYou are Qwen, created by Alibaba Cloud. You are a helpful assistant.<|im_end|>\\n' }}\n {%- endif %}\n{%- endif %}\n{%- for message in messages %}\n {%- if (message.role == \""user\"") or (message.role == \""system\"" and not loop.first) or (message.role == \""assistant\"" and not message.tool_calls) %}\n {{- '<|im_start|>' + message.role + '\\n' + message.content + '<|im_end|>' + '\\n' }}\n {%- elif message.role == \""assistant\"" %}\n {{- '<|im_start|>' + message.role }}\n {%- if message.content %}\n {{- '\\n' + message.content }}\n {%- endif %}\n {%- for tool_call in message.tool_calls %}\n {%- if tool_call.function is defined %}\n {%- set tool_call = tool_call.function %}\n {%- endif %}\n {{- '\\n<tool_call>\\n{\""name\"": \""' }}\n {{- tool_call.name }}\n {{- '\"", \""arguments\"": ' }}\n {{- tool_call.arguments | tojson }}\n {{- '}\\n</tool_call>' }}\n {%- endfor %}\n {{- '<|im_end|>\\n' }}\n {%- elif message.role == \""tool\"" %}\n {%- if (loop.index0 == 0) or (messages[loop.index0 - 1].role != \""tool\"") %}\n {{- '<|im_start|>user' }}\n {%- endif %}\n {{- '\\n<tool_response>\\n' }}\n {{- message.content }}\n {{- '\\n</tool_response>' }}\n {%- if loop.last or (messages[loop.index0 + 1].role != \""tool\"") %}\n {{- '<|im_end|>\\n' }}\n {%- endif %}\n {%- endif %}\n{%- endfor %}\n{%- if add_generation_prompt %}\n {{- '<|im_start|>assistant\\n' }}\n{%- endif %}\n"", ""eos_token"": ""<|im_end|>"", ""pad_token"": ""<|endoftext|>"", ""unk_token"": null}}, ""transformers_info"": {""auto_model"": ""AutoModelForCausalLM"", ""custom_class"": null, ""pipeline_tag"": ""text-generation"", ""processor"": ""AutoTokenizer""}, ""siblings"": [""RepoSibling(rfilename='.gitattributes', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='README.md', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='added_tokens.json', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='config.json', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='merges.txt', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='model-00001-of-00045.safetensors', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='model-00002-of-00045.safetensors', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='model-00003-of-00045.safetensors', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='model-00004-of-00045.safetensors', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='model-00005-of-00045.safetensors', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='model-00006-of-00045.safetensors', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='model-00007-of-00045.safetensors', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='model-00008-of-00045.safetensors', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='model-00009-of-00045.safetensors', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='model-00010-of-00045.safetensors', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='model-00011-of-00045.safetensors', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='model-00012-of-00045.safetensors', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='model-00013-of-00045.safetensors', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='model-00014-of-00045.safetensors', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='model-00015-of-00045.safetensors', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='model-00016-of-00045.safetensors', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='model-00017-of-00045.safetensors', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='model-00018-of-00045.safetensors', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='model-00019-of-00045.safetensors', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='model-00020-of-00045.safetensors', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='model-00021-of-00045.safetensors', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='model-00022-of-00045.safetensors', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='model-00023-of-00045.safetensors', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='model-00024-of-00045.safetensors', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='model-00025-of-00045.safetensors', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='model-00026-of-00045.safetensors', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='model-00027-of-00045.safetensors', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='model-00028-of-00045.safetensors', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='model-00029-of-00045.safetensors', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='model-00030-of-00045.safetensors', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='model-00031-of-00045.safetensors', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='model-00032-of-00045.safetensors', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='model-00033-of-00045.safetensors', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='model-00034-of-00045.safetensors', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='model-00035-of-00045.safetensors', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='model-00036-of-00045.safetensors', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='model-00037-of-00045.safetensors', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='model-00038-of-00045.safetensors', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='model-00039-of-00045.safetensors', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='model-00040-of-00045.safetensors', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='model-00041-of-00045.safetensors', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='model-00042-of-00045.safetensors', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='model-00043-of-00045.safetensors', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='model-00044-of-00045.safetensors', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='model-00045-of-00045.safetensors', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='model.safetensors.index.json', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='special_tokens_map.json', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='tokenizer.json', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='tokenizer_config.json', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='vocab.json', size=None, blob_id=None, lfs=None)""], ""spaces"": [], ""safetensors"": {""parameters"": {""BF16"": 107807055872}, ""total"": 107807055872}, ""security_repo_status"": null, ""xet_enabled"": null, ""lastModified"": ""2024-12-31 02:36:02+00:00"", ""cardData"": ""base_model: Nexusflow/Athene-V2-Chat\ndatasets:\n- Saxo/ko_cn_translation_tech_social_science_linkbricks_single_dataset\n- Saxo/ko_jp_translation_tech_social_science_linkbricks_single_dataset\n- Saxo/en_ko_translation_tech_science_linkbricks_single_dataset_with_prompt_text_huggingface\n- Saxo/en_ko_translation_social_science_linkbricks_single_dataset_with_prompt_text_huggingface\n- Saxo/ko_aspect_sentiment_sns_mall_sentiment_linkbricks_single_dataset_with_prompt_text_huggingface\n- Saxo/ko_summarization_linkbricks_single_dataset_with_prompt_text_huggingface\n- Saxo/OpenOrca_cleaned_kor_linkbricks_single_dataset_with_prompt_text_huggingface\n- Saxo/ko_government_qa_total_linkbricks_single_dataset_with_prompt_text_huggingface_sampled\n- Saxo/ko-news-corpus-1\n- Saxo/ko-news-corpus-2\n- Saxo/ko-news-corpus-3\n- Saxo/ko-news-corpus-4\n- Saxo/ko-news-corpus-5\n- Saxo/ko-news-corpus-6\n- Saxo/ko-news-corpus-7\n- Saxo/ko-news-corpus-8\n- Saxo/ko-news-corpus-9\n- maywell/ko_Ultrafeedback_binarized\n- youjunhyeok/ko-orca-pair-and-ultrafeedback-dpo\n- lilacai/glaive-function-calling-v2-sharegpt\n- kuotient/gsm8k-ko\nlanguage:\n- ko\n- en\n- jp\n- cn\nlibrary_name: transformers\nlicense: apache-2.0\npipeline_tag: text-generation"", ""transformersInfo"": {""auto_model"": ""AutoModelForCausalLM"", ""custom_class"": null, ""pipeline_tag"": ""text-generation"", ""processor"": ""AutoTokenizer""}, ""_id"": ""67724637bb74df9800cc7e60"", ""modelId"": ""Saxo/Linkbricks-Horizon-AI-Avengers-V1-108B"", ""usedStorage"": 215625701192}",1,,0,,0,,0,,0,huggingface/InferenceSupport/discussions/new?title=Saxo/Linkbricks-Horizon-AI-Avengers-V1-108B&description=React%20to%20this%20comment%20with%20an%20emoji%20to%20vote%20for%20%5BSaxo%2FLinkbricks-Horizon-AI-Avengers-V1-108B%5D(%2FSaxo%2FLinkbricks-Horizon-AI-Avengers-V1-108B)%20to%20be%20supported%20by%20Inference%20Providers.%0A%0A(optional)%20Which%20providers%20are%20you%20interested%20in%3F%20(Novita%2C%20Hyperbolic%2C%20Together%E2%80%A6)%0A,1

|

| 75 |

+

dvishal18/chatbotapi,"---

|

| 76 |

+

license: apache-2.0

|

| 77 |

+

language:

|

| 78 |

+

- en

|

| 79 |

+

base_model:

|

| 80 |

+

- Nexusflow/Athene-V2-Chat

|

| 81 |

+

---","{""id"": ""dvishal18/chatbotapi"", ""author"": ""dvishal18"", ""sha"": ""7585f329aa754b85ed0bca750c2f867fc2ee3ab8"", ""last_modified"": ""2024-12-30 12:35:47+00:00"", ""created_at"": ""2024-12-30 12:34:42+00:00"", ""private"": false, ""gated"": false, ""disabled"": false, ""downloads"": 0, ""downloads_all_time"": null, ""likes"": 0, ""library_name"": null, ""gguf"": null, ""inference"": null, ""inference_provider_mapping"": null, ""tags"": [""en"", ""base_model:Nexusflow/Athene-V2-Chat"", ""base_model:finetune:Nexusflow/Athene-V2-Chat"", ""license:apache-2.0"", ""region:us""], ""pipeline_tag"": null, ""mask_token"": null, ""trending_score"": null, ""card_data"": ""base_model:\n- Nexusflow/Athene-V2-Chat\nlanguage:\n- en\nlicense: apache-2.0"", ""widget_data"": null, ""model_index"": null, ""config"": null, ""transformers_info"": null, ""siblings"": [""RepoSibling(rfilename='.gitattributes', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='README.md', size=None, blob_id=None, lfs=None)""], ""spaces"": [], ""safetensors"": null, ""security_repo_status"": null, ""xet_enabled"": null, ""lastModified"": ""2024-12-30 12:35:47+00:00"", ""cardData"": ""base_model:\n- Nexusflow/Athene-V2-Chat\nlanguage:\n- en\nlicense: apache-2.0"", ""transformersInfo"": null, ""_id"": ""67729362afe9fcdc219f7645"", ""modelId"": ""dvishal18/chatbotapi"", ""usedStorage"": 0}",1,,0,,0,,0,,0,huggingface/InferenceSupport/discussions/new?title=dvishal18/chatbotapi&description=React%20to%20this%20comment%20with%20an%20emoji%20to%20vote%20for%20%5Bdvishal18%2Fchatbotapi%5D(%2Fdvishal18%2Fchatbotapi)%20to%20be%20supported%20by%20Inference%20Providers.%0A%0A(optional)%20Which%20providers%20are%20you%20interested%20in%3F%20(Novita%2C%20Hyperbolic%2C%20Together%E2%80%A6)%0A,1

|

BiomedCLIP-PubMedBERT_256-vit_base_patch16_224_finetunes_20250426_171734.csv_finetunes_20250426_171734.csv

ADDED

|

@@ -0,0 +1,407 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|