Add files using upload-large-folder tool

Browse filesThis view is limited to 50 files because it contains too many changes. See raw diff

- AuraSR_finetunes_20250426_171734.csv_finetunes_20250426_171734.csv +38 -0

- BELLE-7B-2M_finetunes_20250426_221535.csv_finetunes_20250426_221535.csv +2 -0

- BiRefNet_finetunes_20250426_014322.csv_finetunes_20250426_014322.csv +228 -0

- ControlNet_finetunes_20250424_143024.csv_finetunes_20250424_143024.csv +90 -0

- DeepCoder-14B-Preview_finetunes_20250425_143346.csv_finetunes_20250425_143346.csv +551 -0

- DeepSeek-R1-Distill-Qwen-7B_finetunes_20250425_165642.csv_finetunes_20250425_165642.csv +0 -0

- DeepSeek-V3-0324_finetunes_20250424_145241.csv_finetunes_20250424_145241.csv +0 -0

- Fimbulvetr-11B-v2_finetunes_20250427_003734.csv_finetunes_20250427_003734.csv +702 -0

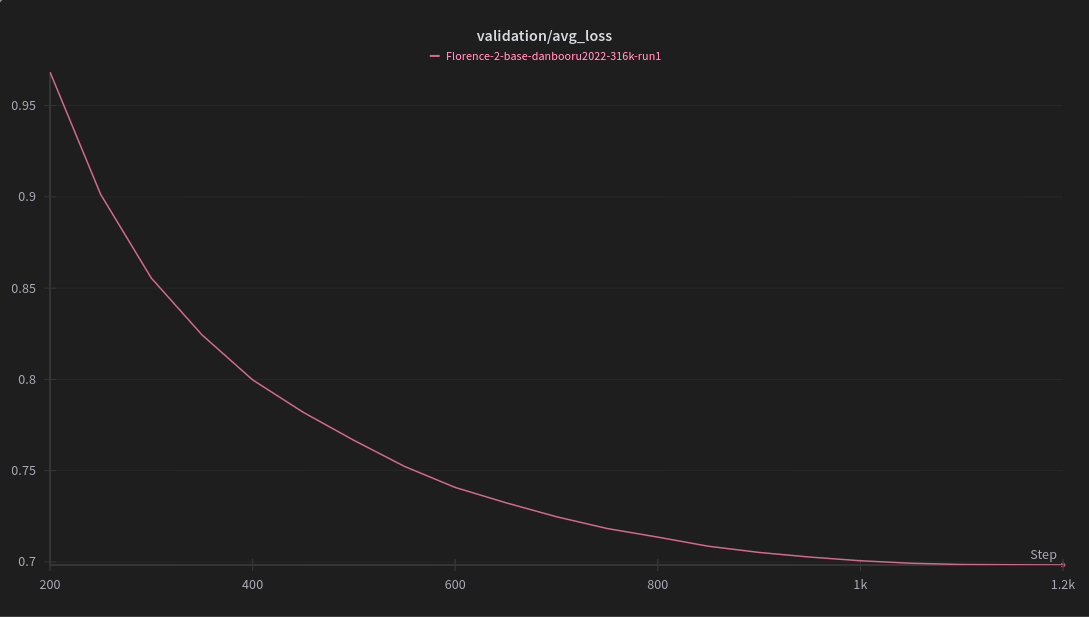

- Florence-2-base_finetunes_20250426_171734.csv_finetunes_20250426_171734.csv +739 -0

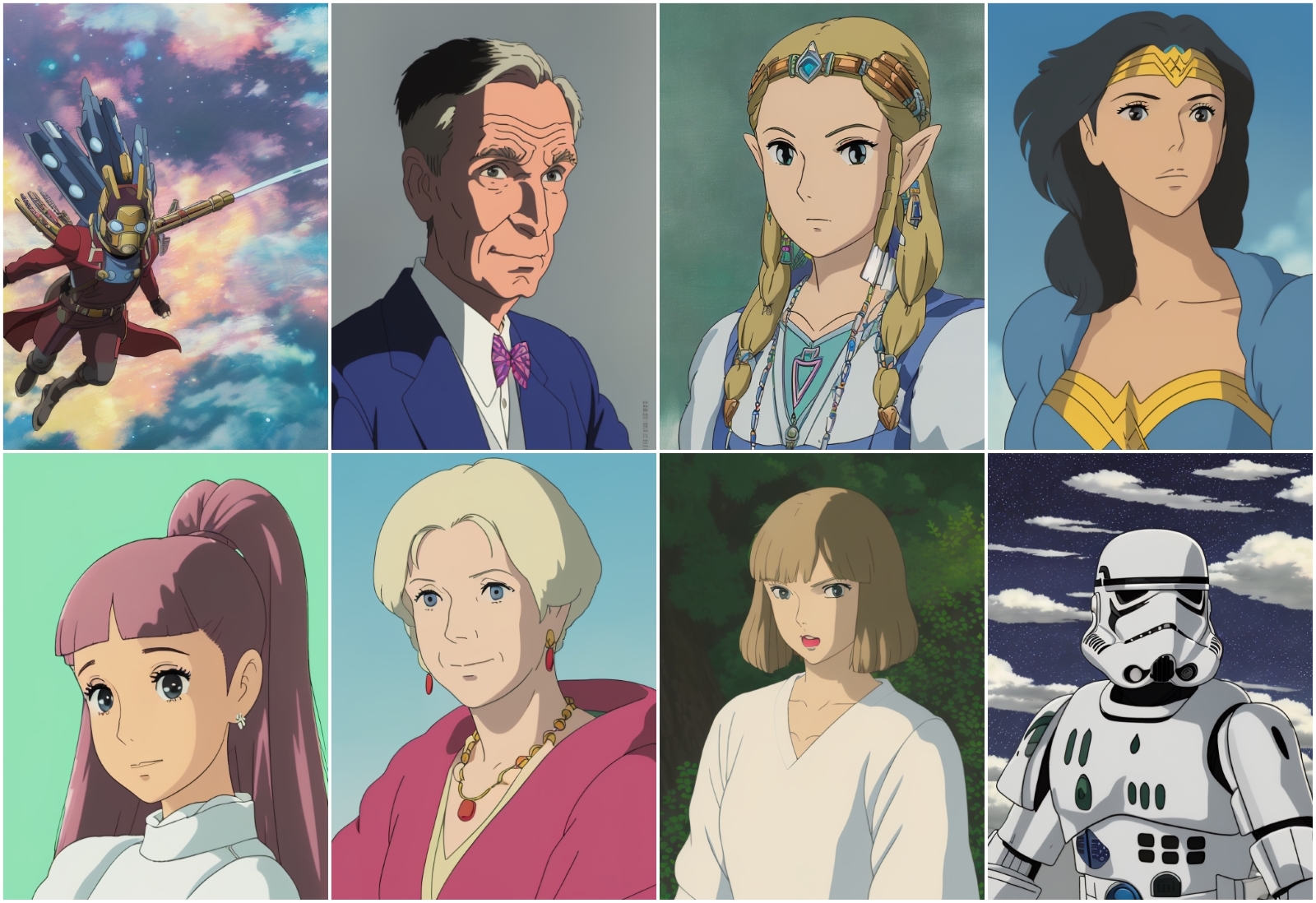

- Ghibli-Diffusion_finetunes_20250425_125929.csv_finetunes_20250425_125929.csv +99 -0

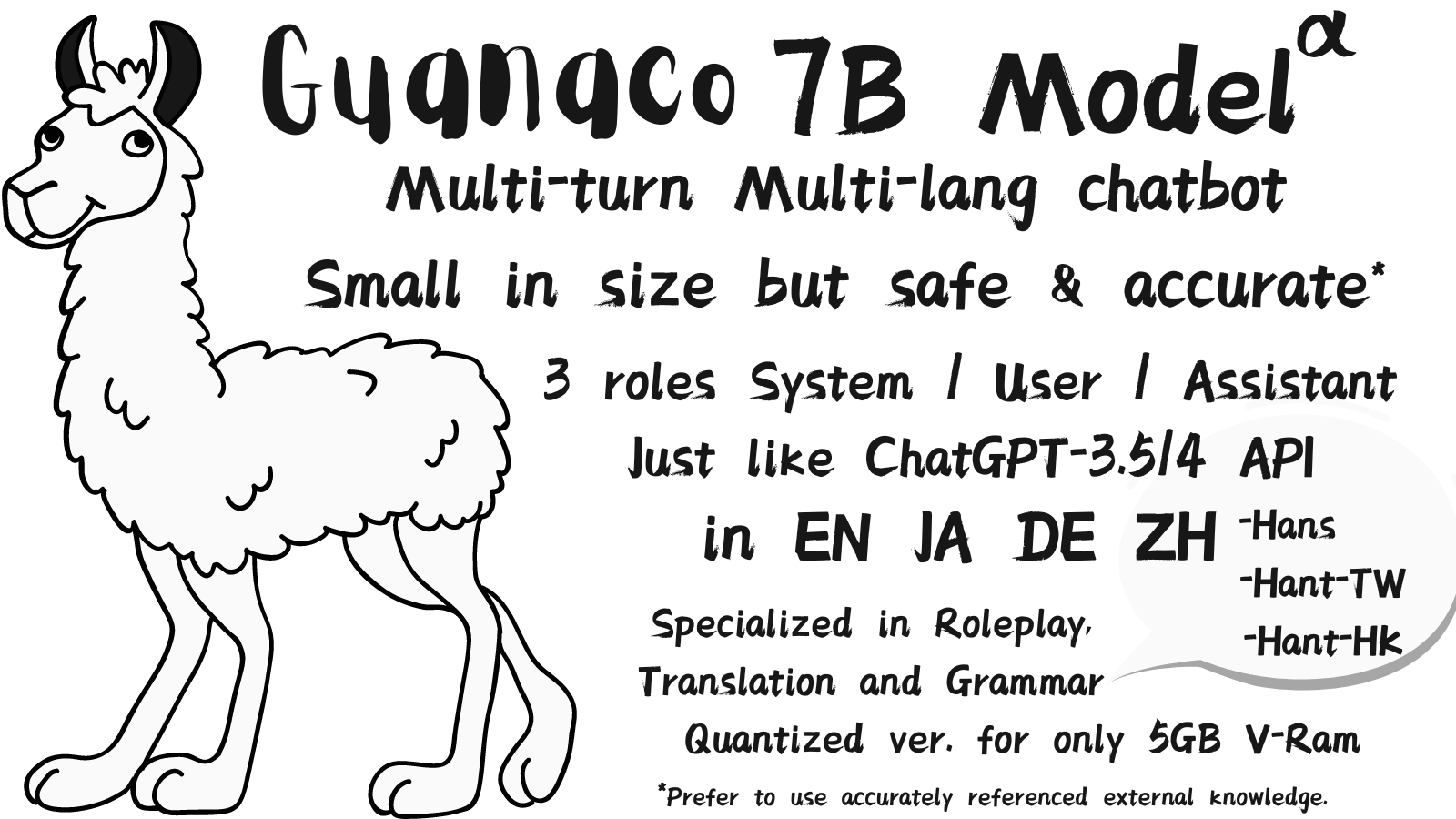

- Guanaco_finetunes_20250426_171734.csv_finetunes_20250426_171734.csv +108 -0

- HunyuanVideo-gguf_finetunes_20250427_003734.csv_finetunes_20250427_003734.csv +25 -0

- LaBSE_finetunes_20250426_171734.csv_finetunes_20250426_171734.csv +0 -0

- Llama-2-7B-Chat-GGML_finetunes_20250424_223250.csv_finetunes_20250424_223250.csv +372 -0

- Llama2-Chinese-7b-Chat_finetunes_20250426_171734.csv_finetunes_20250426_171734.csv +42 -0

- Llasa-3B_finetunes_20250425_165642.csv_finetunes_20250425_165642.csv +240 -0

- MARS5-TTS_finetunes_20250425_165642.csv_finetunes_20250425_165642.csv +170 -0

- MEETING_SUMMARY_finetunes_20250426_221535.csv_finetunes_20250426_221535.csv +0 -0

- MN-12B-Mag-Mell-R1_finetunes_20250427_003734.csv_finetunes_20250427_003734.csv +224 -0

- MaskGCT_finetunes_20250426_171734.csv_finetunes_20250426_171734.csv +301 -0

- Meta-Llama-3-8B_finetunes_20250422_180448.csv +0 -0

- Midjourney_finetunes_20250426_171734.csv_finetunes_20250426_171734.csv +325 -0

- MiniCPM-o-2_6_finetunes_20250424_223250.csv_finetunes_20250424_223250.csv +1565 -0

- Nemotron-Mini-4B-Instruct_finetunes_20250427_003734.csv_finetunes_20250427_003734.csv +131 -0

- NeverEnding-Dream_finetunes_20250427_003734.csv_finetunes_20250427_003734.csv +36 -0

- Nitro-Diffusion_finetunes_20250426_014322.csv_finetunes_20250426_014322.csv +83 -0

- OpenVoiceV2_finetunes_20250426_014322.csv_finetunes_20250426_014322.csv +195 -0

- Phi-3-small-8k-instruct_finetunes_20250427_003734.csv_finetunes_20250427_003734.csv +0 -0

- Qwen-14B-Chat_finetunes_20250426_014322.csv_finetunes_20250426_014322.csv +792 -0

- Qwen2-Audio-7B-Instruct_finetunes_20250426_014322.csv_finetunes_20250426_014322.csv +576 -0

- Real-ESRGAN_finetunes_20250427_003734.csv_finetunes_20250427_003734.csv +41 -0

- Ruyi-Mini-7B_finetunes_20250425_143346.csv_finetunes_20250425_143346.csv +196 -0

- SmallThinker-3B-Preview_finetunes_20250426_014322.csv_finetunes_20250426_014322.csv +0 -0

- TemporalDiff_finetunes_20250427_003734.csv_finetunes_20250427_003734.csv +2 -0

- Tifa-Deepsex-14b-CoT-Q8_finetunes_20250427_003734.csv_finetunes_20250427_003734.csv +201 -0

- Yarn-Mistral-7b-128k_finetunes_20250425_165642.csv_finetunes_20250425_165642.csv +344 -0

- alpaca-native_finetunes_20250426_171734.csv_finetunes_20250426_171734.csv +61 -0

- animatediff_finetunes_20250424_223250.csv_finetunes_20250424_223250.csv +5 -0

- anything-midjourney-v-4-1_finetunes_20250427_003734.csv_finetunes_20250427_003734.csv +17 -0

- bart-large-cnn_finetunes_20250424_193500.csv_finetunes_20250424_193500.csv +0 -0

- bge-large-en_finetunes_20250426_212347.csv_finetunes_20250426_212347.csv +0 -0

- bge-reranker-large_finetunes_20250426_014322.csv_finetunes_20250426_014322.csv +580 -0

- bge-reranker-v2-m3_finetunes_20250425_143346.csv_finetunes_20250425_143346.csv +800 -0

- biomedical-ner-all_finetunes_20250427_003734.csv_finetunes_20250427_003734.csv +428 -0

- blenderbot-400M-distill_finetunes_20250425_165642.csv_finetunes_20250425_165642.csv +0 -0

- blessed_vae_finetunes_20250426_221535.csv_finetunes_20250426_221535.csv +38 -0

- blue_pencil_finetunes_20250426_171734.csv_finetunes_20250426_171734.csv +0 -0

- clip-vit-large-patch14_finetunes_20250424_193500.csv_finetunes_20250424_193500.csv +0 -0

- deepseek-vl2-small_finetunes_20250427_003734.csv_finetunes_20250427_003734.csv +143 -0

- deepseek-vl2_finetunes_20250426_121513.csv_finetunes_20250426_121513.csv +143 -0

AuraSR_finetunes_20250426_171734.csv_finetunes_20250426_171734.csv

ADDED

|

@@ -0,0 +1,38 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

model_id,card,metadata,depth,children,children_count,adapters,adapters_count,quantized,quantized_count,merges,merges_count,spaces,spaces_count

|

| 2 |

+

fal/AuraSR,"---

|

| 3 |

+

license: cc

|

| 4 |

+

tags:

|

| 5 |

+

- art

|

| 6 |

+

- pytorch

|

| 7 |

+

- super-resolution

|

| 8 |

+

---

|

| 9 |

+

# AuraSR

|

| 10 |

+

|

| 11 |

+

|

| 12 |

+

GAN-based Super-Resolution for upscaling generated images, a variation of the [GigaGAN](https://mingukkang.github.io/GigaGAN/) paper for image-conditioned upscaling. Torch implementation is based on the unofficial [lucidrains/gigagan-pytorch](https://github.com/lucidrains/gigagan-pytorch) repository.

|

| 13 |

+

|

| 14 |

+

## Usage

|

| 15 |

+

|

| 16 |

+

```bash

|

| 17 |

+

$ pip install aura-sr

|

| 18 |

+

```

|

| 19 |

+

|

| 20 |

+

```python

|

| 21 |

+

from aura_sr import AuraSR

|

| 22 |

+

|

| 23 |

+

aura_sr = AuraSR.from_pretrained(""fal-ai/AuraSR"")

|

| 24 |

+

```

|

| 25 |

+

|

| 26 |

+

```python

|

| 27 |

+

import requests

|

| 28 |

+

from io import BytesIO

|

| 29 |

+

from PIL import Image

|

| 30 |

+

|

| 31 |

+

def load_image_from_url(url):

|

| 32 |

+

response = requests.get(url)

|

| 33 |

+

image_data = BytesIO(response.content)

|

| 34 |

+

return Image.open(image_data)

|

| 35 |

+

|

| 36 |

+

image = load_image_from_url(""https://mingukkang.github.io/GigaGAN/static/images/iguana_output.jpg"").resize((256, 256))

|

| 37 |

+

upscaled_image = aura_sr.upscale_4x(image)

|

| 38 |

+

```","{""id"": ""fal/AuraSR"", ""author"": ""fal"", ""sha"": ""8b70681ad0364f3221a9bc8c7ef07531df885509"", ""last_modified"": ""2024-07-15 16:44:58+00:00"", ""created_at"": ""2024-06-25 17:22:07+00:00"", ""private"": false, ""gated"": false, ""disabled"": false, ""downloads"": 707, ""downloads_all_time"": null, ""likes"": 303, ""library_name"": ""transformers"", ""gguf"": null, ""inference"": null, ""inference_provider_mapping"": null, ""tags"": [""transformers"", ""safetensors"", ""art"", ""pytorch"", ""super-resolution"", ""license:cc"", ""endpoints_compatible"", ""region:us""], ""pipeline_tag"": null, ""mask_token"": null, ""trending_score"": null, ""card_data"": ""license: cc\ntags:\n- art\n- pytorch\n- super-resolution"", ""widget_data"": null, ""model_index"": null, ""config"": {}, ""transformers_info"": {""auto_model"": ""AutoModel"", ""custom_class"": null, ""pipeline_tag"": null, ""processor"": null}, ""siblings"": [""RepoSibling(rfilename='.gitattributes', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='LICENSE.md', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='README.md', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='config.json', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='model.ckpt', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='model.safetensors', size=None, blob_id=None, lfs=None)""], ""spaces"": [""gokaygokay/AuraSR-v2"", ""philipp-zettl/OS-upscaler-AuraSR"", ""rupeshs/fastsdcpu"", ""ZENLLC/AuraUpscale"", ""EPFL-VILAB/FlexTok"", ""tejani/Another"", ""NataLobster/testspace"", ""cocktailpeanut/AuraSR"", ""Sunghokim/diversegpt"", ""ProPerNounpYK/PILAI"", ""Raven7/AuraSR"", ""ProPerNounpYK/PIL"", ""KaiShin1885/AuraSR"", ""cocktailpeanut/AuraSR-v2"", ""Rodneyontherock1067/fastsdcpu"", ""MartsoBodziu1994/AuraSR-v2"", ""YoBatM/FastStableDifussion"", ""svjack/OS-upscaler-AuraSR"", ""tejani/fastsdcpu"", ""tejani/NewApp""], ""safetensors"": {""parameters"": {""F32"": 617554917}, ""total"": 617554917}, ""security_repo_status"": null, ""xet_enabled"": null, ""lastModified"": ""2024-07-15 16:44:58+00:00"", ""cardData"": ""license: cc\ntags:\n- art\n- pytorch\n- super-resolution"", ""transformersInfo"": {""auto_model"": ""AutoModel"", ""custom_class"": null, ""pipeline_tag"": null, ""processor"": null}, ""_id"": ""667afcbfe005e1dbcc640477"", ""modelId"": ""fal/AuraSR"", ""usedStorage"": 7410876896}",0,,0,,0,,0,,0,"EPFL-VILAB/FlexTok, KaiShin1885/AuraSR, NataLobster/testspace, ProPerNounpYK/PILAI, Raven7/AuraSR, Sunghokim/diversegpt, ZENLLC/AuraUpscale, cocktailpeanut/AuraSR, gokaygokay/AuraSR-v2, huggingface/InferenceSupport/discussions/new?title=fal/AuraSR&description=React%20to%20this%20comment%20with%20an%20emoji%20to%20vote%20for%20%5Bfal%2FAuraSR%5D(%2Ffal%2FAuraSR)%20to%20be%20supported%20by%20Inference%20Providers.%0A%0A(optional)%20Which%20providers%20are%20you%20interested%20in%3F%20(Novita%2C%20Hyperbolic%2C%20Together%E2%80%A6)%0A, philipp-zettl/OS-upscaler-AuraSR, rupeshs/fastsdcpu, tejani/Another",13

|

BELLE-7B-2M_finetunes_20250426_221535.csv_finetunes_20250426_221535.csv

ADDED

|

@@ -0,0 +1,2 @@

|

|

|

|

|

|

|

|

|

|

| 1 |

+

model_id,card,metadata,depth,children,children_count,adapters,adapters_count,quantized,quantized_count,merges,merges_count,spaces,spaces_count

|

| 2 |

+

BelleGroup/BELLE-7B-2M,N/A,N/A,0,,0,,0,,0,,0,"for1988/BelleGroup-BELLE-7B-2M, gaoshine/BelleGroup-BELLE-7B-2M, huggingface/InferenceSupport/discussions/new?title=BelleGroup/BELLE-7B-2M&description=React%20to%20this%20comment%20with%20an%20emoji%20to%20vote%20for%20%5BBelleGroup%2FBELLE-7B-2M%5D(%2FBelleGroup%2FBELLE-7B-2M)%20to%20be%20supported%20by%20Inference%20Providers.%0A%0A(optional)%20Which%20providers%20are%20you%20interested%20in%3F%20(Novita%2C%20Hyperbolic%2C%20Together%E2%80%A6)%0A, markmagic/BelleGroup-BELLE-7B-2M, zgldh/BelleGroup-BELLE-7B-2M",5

|

BiRefNet_finetunes_20250426_014322.csv_finetunes_20250426_014322.csv

ADDED

|

@@ -0,0 +1,228 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

model_id,card,metadata,depth,children,children_count,adapters,adapters_count,quantized,quantized_count,merges,merges_count,spaces,spaces_count

|

| 2 |

+

ZhengPeng7/BiRefNet,"---

|

| 3 |

+

library_name: birefnet

|

| 4 |

+

tags:

|

| 5 |

+

- background-removal

|

| 6 |

+

- mask-generation

|

| 7 |

+

- Dichotomous Image Segmentation

|

| 8 |

+

- Camouflaged Object Detection

|

| 9 |

+

- Salient Object Detection

|

| 10 |

+

- pytorch_model_hub_mixin

|

| 11 |

+

- model_hub_mixin

|

| 12 |

+

- transformers

|

| 13 |

+

- transformers.js

|

| 14 |

+

repo_url: https://github.com/ZhengPeng7/BiRefNet

|

| 15 |

+

pipeline_tag: image-segmentation

|

| 16 |

+

license: mit

|

| 17 |

+

---

|

| 18 |

+

<h1 align=""center"">Bilateral Reference for High-Resolution Dichotomous Image Segmentation</h1>

|

| 19 |

+

|

| 20 |

+

<div align='center'>

|

| 21 |

+

<a href='https://scholar.google.com/citations?user=TZRzWOsAAAAJ' target='_blank'><strong>Peng Zheng</strong></a><sup> 1,4,5,6</sup>,

|

| 22 |

+

<a href='https://scholar.google.com/citations?user=0uPb8MMAAAAJ' target='_blank'><strong>Dehong Gao</strong></a><sup> 2</sup>,

|

| 23 |

+

<a href='https://scholar.google.com/citations?user=kakwJ5QAAAAJ' target='_blank'><strong>Deng-Ping Fan</strong></a><sup> 1*</sup>,

|

| 24 |

+

<a href='https://scholar.google.com/citations?user=9cMQrVsAAAAJ' target='_blank'><strong>Li Liu</strong></a><sup> 3</sup>,

|

| 25 |

+

<a href='https://scholar.google.com/citations?user=qQP6WXIAAAAJ' target='_blank'><strong>Jorma Laaksonen</strong></a><sup> 4</sup>,

|

| 26 |

+

<a href='https://scholar.google.com/citations?user=pw_0Z_UAAAAJ' target='_blank'><strong>Wanli Ouyang</strong></a><sup> 5</sup>,

|

| 27 |

+

<a href='https://scholar.google.com/citations?user=stFCYOAAAAAJ' target='_blank'><strong>Nicu Sebe</strong></a><sup> 6</sup>

|

| 28 |

+

</div>

|

| 29 |

+

|

| 30 |

+

<div align='center'>

|

| 31 |

+

<sup>1 </sup>Nankai University  <sup>2 </sup>Northwestern Polytechnical University  <sup>3 </sup>National University of Defense Technology  <sup>4 </sup>Aalto University  <sup>5 </sup>Shanghai AI Laboratory  <sup>6 </sup>University of Trento

|

| 32 |

+

</div>

|

| 33 |

+

|

| 34 |

+

<div align=""center"" style=""display: flex; justify-content: center; flex-wrap: wrap;"">

|

| 35 |

+

<a href='https://www.sciopen.com/article/pdf/10.26599/AIR.2024.9150038.pdf'><img src='https://img.shields.io/badge/Journal-Paper-red'></a>

|

| 36 |

+

<a href='https://arxiv.org/pdf/2401.03407'><img src='https://img.shields.io/badge/arXiv-BiRefNet-red'></a>

|

| 37 |

+

<a href='https://drive.google.com/file/d/1aBnJ_R9lbnC2dm8dqD0-pzP2Cu-U1Xpt/view?usp=drive_link'><img src='https://img.shields.io/badge/中文版-BiRefNet-red'></a>

|

| 38 |

+

<a href='https://www.birefnet.top'><img src='https://img.shields.io/badge/Page-BiRefNet-red'></a>

|

| 39 |

+

<a href='https://drive.google.com/drive/folders/1s2Xe0cjq-2ctnJBR24563yMSCOu4CcxM'><img src='https://img.shields.io/badge/Drive-Stuff-green'></a>

|

| 40 |

+

<a href='LICENSE'><img src='https://img.shields.io/badge/License-MIT-yellow'></a>

|

| 41 |

+

<a href='https://huggingface.co/spaces/ZhengPeng7/BiRefNet_demo'><img src='https://img.shields.io/badge/%F0%9F%A4%97%20HF%20Spaces-BiRefNet-blue'></a>

|

| 42 |

+

<a href='https://huggingface.co/ZhengPeng7/BiRefNet'><img src='https://img.shields.io/badge/%F0%9F%A4%97%20HF%20Models-BiRefNet-blue'></a>

|

| 43 |

+

<a href='https://colab.research.google.com/drive/14Dqg7oeBkFEtchaHLNpig2BcdkZEogba?usp=drive_link'><img src='https://img.shields.io/badge/Single_Image_Inference-F9AB00?style=for-the-badge&logo=googlecolab&color=525252'></a>

|

| 44 |

+

<a href='https://colab.research.google.com/drive/1MaEiBfJ4xIaZZn0DqKrhydHB8X97hNXl#scrollTo=DJ4meUYjia6S'><img src='https://img.shields.io/badge/Inference_&_Evaluation-F9AB00?style=for-the-badge&logo=googlecolab&color=525252'></a>

|

| 45 |

+

</div>

|

| 46 |

+

|

| 47 |

+

|

| 48 |

+

| *DIS-Sample_1* | *DIS-Sample_2* |

|

| 49 |

+

| :------------------------------: | :-------------------------------: |

|

| 50 |

+

| <img src=""https://drive.google.com/thumbnail?id=1ItXaA26iYnE8XQ_GgNLy71MOWePoS2-g&sz=w400"" /> | <img src=""https://drive.google.com/thumbnail?id=1Z-esCujQF_uEa_YJjkibc3NUrW4aR_d4&sz=w400"" /> |

|

| 51 |

+

|

| 52 |

+

This repo is the official implementation of ""[**Bilateral Reference for High-Resolution Dichotomous Image Segmentation**](https://arxiv.org/pdf/2401.03407.pdf)"" (___CAAI AIR 2024___).

|

| 53 |

+

|

| 54 |

+

Visit our GitHub repo: [https://github.com/ZhengPeng7/BiRefNet](https://github.com/ZhengPeng7/BiRefNet) for more details -- **codes**, **docs**, and **model zoo**!

|

| 55 |

+

|

| 56 |

+

## How to use

|

| 57 |

+

|

| 58 |

+

### 0. Install Packages:

|

| 59 |

+

```

|

| 60 |

+

pip install -qr https://raw.githubusercontent.com/ZhengPeng7/BiRefNet/main/requirements.txt

|

| 61 |

+

```

|

| 62 |

+

|

| 63 |

+

### 1. Load BiRefNet:

|

| 64 |

+

|

| 65 |

+

#### Use codes + weights from HuggingFace

|

| 66 |

+

> Only use the weights on HuggingFace -- Pro: No need to download BiRefNet codes manually; Con: Codes on HuggingFace might not be latest version (I'll try to keep them always latest).

|

| 67 |

+

|

| 68 |

+

```python

|

| 69 |

+

# Load BiRefNet with weights

|

| 70 |

+

from transformers import AutoModelForImageSegmentation

|

| 71 |

+

birefnet = AutoModelForImageSegmentation.from_pretrained('ZhengPeng7/BiRefNet', trust_remote_code=True)

|

| 72 |

+

```

|

| 73 |

+

|

| 74 |

+

#### Use codes from GitHub + weights from HuggingFace

|

| 75 |

+

> Only use the weights on HuggingFace -- Pro: codes are always latest; Con: Need to clone the BiRefNet repo from my GitHub.

|

| 76 |

+

|

| 77 |

+

```shell

|

| 78 |

+

# Download codes

|

| 79 |

+

git clone https://github.com/ZhengPeng7/BiRefNet.git

|

| 80 |

+

cd BiRefNet

|

| 81 |

+

```

|

| 82 |

+

|

| 83 |

+

```python

|

| 84 |

+

# Use codes locally

|

| 85 |

+

from models.birefnet import BiRefNet

|

| 86 |

+

|

| 87 |

+

# Load weights from Hugging Face Models

|

| 88 |

+

birefnet = BiRefNet.from_pretrained('ZhengPeng7/BiRefNet')

|

| 89 |

+

```

|

| 90 |

+

|

| 91 |

+

#### Use codes from GitHub + weights from local space

|

| 92 |

+

> Only use the weights and codes both locally.

|

| 93 |

+

|

| 94 |

+

```python

|

| 95 |

+

# Use codes and weights locally

|

| 96 |

+

import torch

|

| 97 |

+

from utils import check_state_dict

|

| 98 |

+

|

| 99 |

+

birefnet = BiRefNet(bb_pretrained=False)

|

| 100 |

+

state_dict = torch.load(PATH_TO_WEIGHT, map_location='cpu')

|

| 101 |

+

state_dict = check_state_dict(state_dict)

|

| 102 |

+

birefnet.load_state_dict(state_dict)

|

| 103 |

+

```

|

| 104 |

+

|

| 105 |

+

#### Use the loaded BiRefNet for inference

|

| 106 |

+

```python

|

| 107 |

+

# Imports

|

| 108 |

+

from PIL import Image

|

| 109 |

+

import matplotlib.pyplot as plt

|

| 110 |

+

import torch

|

| 111 |

+

from torchvision import transforms

|

| 112 |

+

from models.birefnet import BiRefNet

|

| 113 |

+

|

| 114 |

+

birefnet = ... # -- BiRefNet should be loaded with codes above, either way.

|

| 115 |

+

torch.set_float32_matmul_precision(['high', 'highest'][0])

|

| 116 |

+

birefnet.to('cuda')

|

| 117 |

+

birefnet.eval()

|

| 118 |

+

birefnet.half()

|

| 119 |

+

|

| 120 |

+

def extract_object(birefnet, imagepath):

|

| 121 |

+

# Data settings

|

| 122 |

+

image_size = (1024, 1024)

|

| 123 |

+

transform_image = transforms.Compose([

|

| 124 |

+

transforms.Resize(image_size),

|

| 125 |

+

transforms.ToTensor(),

|

| 126 |

+

transforms.Normalize([0.485, 0.456, 0.406], [0.229, 0.224, 0.225])

|

| 127 |

+

])

|

| 128 |

+

|

| 129 |

+

image = Image.open(imagepath)

|

| 130 |

+

input_images = transform_image(image).unsqueeze(0).to('cuda').half()

|

| 131 |

+

|

| 132 |

+

# Prediction

|

| 133 |

+

with torch.no_grad():

|

| 134 |

+

preds = birefnet(input_images)[-1].sigmoid().cpu()

|

| 135 |

+

pred = preds[0].squeeze()

|

| 136 |

+

pred_pil = transforms.ToPILImage()(pred)

|

| 137 |

+

mask = pred_pil.resize(image.size)

|

| 138 |

+

image.putalpha(mask)

|

| 139 |

+

return image, mask

|

| 140 |

+

|

| 141 |

+

# Visualization

|

| 142 |

+

plt.axis(""off"")

|

| 143 |

+

plt.imshow(extract_object(birefnet, imagepath='PATH-TO-YOUR_IMAGE.jpg')[0])

|

| 144 |

+

plt.show()

|

| 145 |

+

|

| 146 |

+

```

|

| 147 |

+

|

| 148 |

+

### 2. Use inference endpoint locally:

|

| 149 |

+

> You may need to click the *deploy* and set up the endpoint by yourself, which would make some costs.

|

| 150 |

+

```

|

| 151 |

+

import requests

|

| 152 |

+

import base64

|

| 153 |

+

from io import BytesIO

|

| 154 |

+

from PIL import Image

|

| 155 |

+

|

| 156 |

+

|

| 157 |

+

YOUR_HF_TOKEN = 'xxx'

|

| 158 |

+

API_URL = ""xxx""

|

| 159 |

+

headers = {

|

| 160 |

+

""Authorization"": ""Bearer {}"".format(YOUR_HF_TOKEN)

|

| 161 |

+

}

|

| 162 |

+

|

| 163 |

+

def base64_to_bytes(base64_string):

|

| 164 |

+

# Remove the data URI prefix if present

|

| 165 |

+

if ""data:image"" in base64_string:

|

| 166 |

+

base64_string = base64_string.split("","")[1]

|

| 167 |

+

|

| 168 |

+

# Decode the Base64 string into bytes

|

| 169 |

+

image_bytes = base64.b64decode(base64_string)

|

| 170 |

+

return image_bytes

|

| 171 |

+

|

| 172 |

+

def bytes_to_base64(image_bytes):

|

| 173 |

+

# Create a BytesIO object to handle the image data

|

| 174 |

+

image_stream = BytesIO(image_bytes)

|

| 175 |

+

|

| 176 |

+

# Open the image using Pillow (PIL)

|

| 177 |

+

image = Image.open(image_stream)

|

| 178 |

+

return image

|

| 179 |

+

|

| 180 |

+

def query(payload):

|

| 181 |

+

response = requests.post(API_URL, headers=headers, json=payload)

|

| 182 |

+

return response.json()

|

| 183 |

+

|

| 184 |

+

output = query({

|

| 185 |

+

""inputs"": ""https://hips.hearstapps.com/hmg-prod/images/gettyimages-1229892983-square.jpg"",

|

| 186 |

+

""parameters"": {}

|

| 187 |

+

})

|

| 188 |

+

|

| 189 |

+

output_image = bytes_to_base64(base64_to_bytes(output))

|

| 190 |

+

output_image

|

| 191 |

+

```

|

| 192 |

+

|

| 193 |

+

|

| 194 |

+

> This BiRefNet for standard dichotomous image segmentation (DIS) is trained on **DIS-TR** and validated on **DIS-TEs and DIS-VD**.

|

| 195 |

+

|

| 196 |

+

## This repo holds the official model weights of ""[<ins>Bilateral Reference for High-Resolution Dichotomous Image Segmentation</ins>](https://arxiv.org/pdf/2401.03407)"" (_CAAI AIR 2024_).

|

| 197 |

+

|

| 198 |

+

This repo contains the weights of BiRefNet proposed in our paper, which has achieved the SOTA performance on three tasks (DIS, HRSOD, and COD).

|

| 199 |

+

|

| 200 |

+

Go to my GitHub page for BiRefNet codes and the latest updates: https://github.com/ZhengPeng7/BiRefNet :)

|

| 201 |

+

|

| 202 |

+

|

| 203 |

+

#### Try our online demos for inference:

|

| 204 |

+

|

| 205 |

+

+ Online **Image Inference** on Colab: [](https://colab.research.google.com/drive/14Dqg7oeBkFEtchaHLNpig2BcdkZEogba?usp=drive_link)

|

| 206 |

+

+ **Online Inference with GUI on Hugging Face** with adjustable resolutions: [](https://huggingface.co/spaces/ZhengPeng7/BiRefNet_demo)

|

| 207 |

+

+ **Inference and evaluation** of your given weights: [](https://colab.research.google.com/drive/1MaEiBfJ4xIaZZn0DqKrhydHB8X97hNXl#scrollTo=DJ4meUYjia6S)

|

| 208 |

+

<img src=""https://drive.google.com/thumbnail?id=12XmDhKtO1o2fEvBu4OE4ULVB2BK0ecWi&sz=w1080"" />

|

| 209 |

+

|

| 210 |

+

## Acknowledgement:

|

| 211 |

+

|

| 212 |

+

+ Many thanks to @Freepik for their generous support on GPU resources for training higher resolution BiRefNet models and more of my explorations.

|

| 213 |

+

+ Many thanks to @fal for their generous support on GPU resources for training better general BiRefNet models.

|

| 214 |

+

+ Many thanks to @not-lain for his help on the better deployment of our BiRefNet model on HuggingFace.

|

| 215 |

+

|

| 216 |

+

|

| 217 |

+

## Citation

|

| 218 |

+

|

| 219 |

+

```

|

| 220 |

+

@article{zheng2024birefnet,

|

| 221 |

+

title={Bilateral Reference for High-Resolution Dichotomous Image Segmentation},

|

| 222 |

+

author={Zheng, Peng and Gao, Dehong and Fan, Deng-Ping and Liu, Li and Laaksonen, Jorma and Ouyang, Wanli and Sebe, Nicu},

|

| 223 |

+

journal={CAAI Artificial Intelligence Research},

|

| 224 |

+

volume = {3},

|

| 225 |

+

pages = {9150038},

|

| 226 |

+

year={2024}

|

| 227 |

+

}

|

| 228 |

+

```","{""id"": ""ZhengPeng7/BiRefNet"", ""author"": ""ZhengPeng7"", ""sha"": ""6a62b7dcfa18a3829087877fb16c8006831e4220"", ""last_modified"": ""2025-03-31 06:29:55+00:00"", ""created_at"": ""2024-07-12 08:50:09+00:00"", ""private"": false, ""gated"": false, ""disabled"": false, ""downloads"": 632363, ""downloads_all_time"": null, ""likes"": 362, ""library_name"": ""birefnet"", ""gguf"": null, ""inference"": null, ""inference_provider_mapping"": null, ""tags"": [""birefnet"", ""safetensors"", ""image-segmentation"", ""background-removal"", ""mask-generation"", ""Dichotomous Image Segmentation"", ""Camouflaged Object Detection"", ""Salient Object Detection"", ""pytorch_model_hub_mixin"", ""model_hub_mixin"", ""transformers"", ""transformers.js"", ""custom_code"", ""arxiv:2401.03407"", ""license:mit"", ""endpoints_compatible"", ""region:us""], ""pipeline_tag"": ""image-segmentation"", ""mask_token"": null, ""trending_score"": null, ""card_data"": ""library_name: birefnet\nlicense: mit\npipeline_tag: image-segmentation\ntags:\n- background-removal\n- mask-generation\n- Dichotomous Image Segmentation\n- Camouflaged Object Detection\n- Salient Object Detection\n- pytorch_model_hub_mixin\n- model_hub_mixin\n- transformers\n- transformers.js\nrepo_url: https://github.com/ZhengPeng7/BiRefNet"", ""widget_data"": null, ""model_index"": null, ""config"": {""architectures"": [""BiRefNet""], ""auto_map"": {""AutoConfig"": ""BiRefNet_config.BiRefNetConfig"", ""AutoModelForImageSegmentation"": ""birefnet.BiRefNet""}}, ""transformers_info"": {""auto_model"": ""AutoModelForImageSegmentation"", ""custom_class"": ""birefnet.BiRefNet"", ""pipeline_tag"": ""image-segmentation"", ""processor"": null}, ""siblings"": [""RepoSibling(rfilename='.gitattributes', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='.gitignore', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='BiRefNet_config.py', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='README.md', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='birefnet.py', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='config.json', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='handler.py', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='model.safetensors', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='requirements.txt', size=None, blob_id=None, lfs=None)""], ""spaces"": [""not-lain/background-removal"", ""VAST-AI/TripoSG"", ""innova-ai/video-background-removal"", ""jasperai/LBM_relighting"", ""InstantX/InstantCharacter"", ""ZhengPeng7/BiRefNet_demo"", ""PramaLLC/BEN2"", ""VAST-AI/MV-Adapter-I2MV-SDXL"", ""VAST-AI/MV-Adapter-Img2Texture"", ""TencentARC/FreeSplatter"", ""not-lain/locally-compatible-BG-removal"", ""Stable-X/StableRecon"", ""NegiTurkey/Multi_Birefnetfor_Background_Removal"", ""ysharma/BiRefNet_for_text_writing"", ""victor/background-removal"", ""Han-123/background-removal"", ""not-lain/gpu-utils"", ""not-lain/video-background-removal"", ""Tassawar/back_ground_removal"", ""cocktailpeanut/video-background-removal"", ""lodhrangpt/Multi_BG_Removal"", ""shemayons/Image-Background-Removal"", ""svjack/video-background-removal"", ""randomtable/BiRefNet_Backgroun_Removal"", ""sumit400/RemoveBG"", ""ginigen/BiRefNet_plus"", ""jHaselberger/cool-avatar"", ""gaur3009/GraphicsAI"", ""danielecordano/background-colouring"", ""ghostsInTheMachine/BiRefNet_demo"", ""chuuhtetnaing/background-remover"", ""ShahbazAlam/background-removal-dub2"", ""walidadebayo/BackgroundRemoverandChanger"", ""John6666/BackgroundRemoverandChanger"", ""NabeelShar/BiRefNet_for_text_writing"", ""Ashoka74/RefurnishAI"", ""Kims12/background-removal"", ""Invictus-Jai/image-segment"", ""sariyam/test1"", ""chryssouille/agent_choupinou"", ""dibahadie/KeychainSegmentation"", ""rphrp1985/zerogpu-2"", ""onebitss/Remover_bg"", ""harshkidzure/BiRefNet_demo"", ""LULDev/background-removal"", ""DrChamyoung/deep_ml_backgroundremoval"", ""SUHHHH/background100"", ""superrich001/background1000"", ""aliceblue11/background-removal111"", ""SUHHHH/background-removal-test1"", ""superrich001/background1001"", ""superrich001/background1002"", ""superrich001/background1003"", ""yucelgumus61/Image_Background_Remove"", ""kodnuke/arkaplansilici"", ""minn4/background-remover"", ""Eldirectorweb/Prueba"", ""vinayakrevankar/background-removal"", ""Fywzero/HivisionIDPhotos"", ""BananaSauce/background-removal2"", ""manoloye/background-removal"", ""Golfies/fuchsia-filter"", ""q1139168548/HivisionIDPhotos"", ""killuabakura/background-removal"", ""sainkan/video-background-removal"", ""Ron1006/background-removal"", ""SUP3RMASS1VE/background-removal"", ""Nymbo/video-background-removal"", ""mahmudev/video-background-removal"", ""qweret6565/removebg"", ""qweret6565/background-removal"", ""Oomify/video-background-removal"", ""sapbot/VideoBackgroundRemoval-Copy"", ""amirkhanbloch/BG_Removal"", ""InvictusRudra/Camouflaged_Object_detect"", ""sammichang/removebg"", ""digitalmax1/background-removal"", ""joermd/removeback"", ""MrDianosaur/background-removal"", ""kheloo/background-removal"", ""khelonaseer1/background-removal"", ""kheloo/Multi_BG_Removal"", ""Ashoka74/ProductPlacementBG"", ""MLBench/detect-contours"", ""Ashoka74/Refurnish"", ""bep40/360IMAGES"", ""Ashoka74/Demo_Refurnish"", ""NEROTECHRB/clothing-segmentation-detection"", ""victorestrada/quitar-background"", ""raymerjacque/makulu-bg-removal"", ""prassu999p/BiRefNet_demo_Background_Text"", ""kharismagp/Hapus-Background"", ""hans7393/background-removal_3"", ""Kims12/2-4_background-removal_GPU"", ""CSB261/bgr"", ""superrich001/background-removal_250101"", ""karan2050/background-removal"", ""MLBench/Contours_Extraction"", ""MLBench/Drawer_Detection"", ""p9iaai/background-removal""], ""safetensors"": {""parameters"": {""I64"": 497664, ""F32"": 220202578}, ""total"": 220700242}, ""security_repo_status"": null, ""xet_enabled"": null, ""lastModified"": ""2025-03-31 06:29:55+00:00"", ""cardData"": ""library_name: birefnet\nlicense: mit\npipeline_tag: image-segmentation\ntags:\n- background-removal\n- mask-generation\n- Dichotomous Image Segmentation\n- Camouflaged Object Detection\n- Salient Object Detection\n- pytorch_model_hub_mixin\n- model_hub_mixin\n- transformers\n- transformers.js\nrepo_url: https://github.com/ZhengPeng7/BiRefNet"", ""transformersInfo"": {""auto_model"": ""AutoModelForImageSegmentation"", ""custom_class"": ""birefnet.BiRefNet"", ""pipeline_tag"": ""image-segmentation"", ""processor"": null}, ""_id"": ""6690ee4190a83f3e25f11393"", ""modelId"": ""ZhengPeng7/BiRefNet"", ""usedStorage"": 3099110132}",0,,0,,0,https://huggingface.co/onnx-community/BiRefNet-ONNX,1,,0,"InstantX/InstantCharacter, PramaLLC/BEN2, Tassawar/back_ground_removal, VAST-AI/MV-Adapter-I2MV-SDXL, VAST-AI/MV-Adapter-Img2Texture, VAST-AI/TripoSG, ZhengPeng7/BiRefNet_demo, huggingface/InferenceSupport/discussions/186, innova-ai/video-background-removal, jasperai/LBM_relighting, not-lain/background-removal, not-lain/locally-compatible-BG-removal, svjack/video-background-removal",13

|

ControlNet_finetunes_20250424_143024.csv_finetunes_20250424_143024.csv

ADDED

|

@@ -0,0 +1,90 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

model_id,card,metadata,depth,children,children_count,adapters,adapters_count,quantized,quantized_count,merges,merges_count,spaces,spaces_count

|

| 2 |

+

lllyasviel/ControlNet,"---

|

| 3 |

+

license: openrail

|

| 4 |

+

---

|

| 5 |

+

|

| 6 |

+

This is the pretrained weights and some other detector weights of ControlNet.

|

| 7 |

+

|

| 8 |

+

See also: https://github.com/lllyasviel/ControlNet

|

| 9 |

+

|

| 10 |

+

# Description of Files

|

| 11 |

+

|

| 12 |

+

ControlNet/models/control_sd15_canny.pth

|

| 13 |

+

|

| 14 |

+

- The ControlNet+SD1.5 model to control SD using canny edge detection.

|

| 15 |

+

|

| 16 |

+

ControlNet/models/control_sd15_depth.pth

|

| 17 |

+

|

| 18 |

+

- The ControlNet+SD1.5 model to control SD using Midas depth estimation.

|

| 19 |

+

|

| 20 |

+

ControlNet/models/control_sd15_hed.pth

|

| 21 |

+

|

| 22 |

+

- The ControlNet+SD1.5 model to control SD using HED edge detection (soft edge).

|

| 23 |

+

|

| 24 |

+

ControlNet/models/control_sd15_mlsd.pth

|

| 25 |

+

|

| 26 |

+

- The ControlNet+SD1.5 model to control SD using M-LSD line detection (will also work with traditional Hough transform).

|

| 27 |

+

|

| 28 |

+

ControlNet/models/control_sd15_normal.pth

|

| 29 |

+

|

| 30 |

+

- The ControlNet+SD1.5 model to control SD using normal map. Best to use the normal map generated by that Gradio app. Other normal maps may also work as long as the direction is correct (left looks red, right looks blue, up looks green, down looks purple).

|

| 31 |

+

|

| 32 |

+

ControlNet/models/control_sd15_openpose.pth

|

| 33 |

+

|

| 34 |

+

- The ControlNet+SD1.5 model to control SD using OpenPose pose detection. Directly manipulating pose skeleton should also work.

|

| 35 |

+

|

| 36 |

+

ControlNet/models/control_sd15_scribble.pth

|

| 37 |

+

|

| 38 |

+

- The ControlNet+SD1.5 model to control SD using human scribbles. The model is trained with boundary edges with very strong data augmentation to simulate boundary lines similar to that drawn by human.

|

| 39 |

+

|

| 40 |

+

ControlNet/models/control_sd15_seg.pth

|

| 41 |

+

|

| 42 |

+

- The ControlNet+SD1.5 model to control SD using semantic segmentation. The protocol is ADE20k.

|

| 43 |

+

|

| 44 |

+

ControlNet/annotator/ckpts/body_pose_model.pth

|

| 45 |

+

|

| 46 |

+

- Third-party model: Openpose’s pose detection model.

|

| 47 |

+

|

| 48 |

+

ControlNet/annotator/ckpts/hand_pose_model.pth

|

| 49 |

+

|

| 50 |

+

- Third-party model: Openpose’s hand detection model.

|

| 51 |

+

|

| 52 |

+

ControlNet/annotator/ckpts/dpt_hybrid-midas-501f0c75.pt

|

| 53 |

+

|

| 54 |

+

- Third-party model: Midas depth estimation model.

|

| 55 |

+

|

| 56 |

+

ControlNet/annotator/ckpts/mlsd_large_512_fp32.pth

|

| 57 |

+

|

| 58 |

+

- Third-party model: M-LSD detection model.

|

| 59 |

+

|

| 60 |

+

ControlNet/annotator/ckpts/mlsd_tiny_512_fp32.pth

|

| 61 |

+

|

| 62 |

+

- Third-party model: M-LSD’s another smaller detection model (we do not use this one).

|

| 63 |

+

|

| 64 |

+

ControlNet/annotator/ckpts/network-bsds500.pth

|

| 65 |

+

|

| 66 |

+

- Third-party model: HED boundary detection.

|

| 67 |

+

|

| 68 |

+

ControlNet/annotator/ckpts/upernet_global_small.pth

|

| 69 |

+

|

| 70 |

+

- Third-party model: Uniformer semantic segmentation.

|

| 71 |

+

|

| 72 |

+

ControlNet/training/fill50k.zip

|

| 73 |

+

|

| 74 |

+

- The data for our training tutorial.

|

| 75 |

+

|

| 76 |

+

# Related Resources

|

| 77 |

+

|

| 78 |

+

Special Thank to the great project - [Mikubill' A1111 Webui Plugin](https://github.com/Mikubill/sd-webui-controlnet) !

|

| 79 |

+

|

| 80 |

+

We also thank Hysts for making [Gradio](https://github.com/gradio-app/gradio) demo in [Hugging Face Space](https://huggingface.co/spaces/hysts/ControlNet) as well as more than 65 models in that amazing [Colab list](https://github.com/camenduru/controlnet-colab)!

|

| 81 |

+

|

| 82 |

+

Thank haofanwang for making [ControlNet-for-Diffusers](https://github.com/haofanwang/ControlNet-for-Diffusers)!

|

| 83 |

+

|

| 84 |

+

We also thank all authors for making Controlnet DEMOs, including but not limited to [fffiloni](https://huggingface.co/spaces/fffiloni/ControlNet-Video), [other-model](https://huggingface.co/spaces/hysts/ControlNet-with-other-models), [ThereforeGames](https://github.com/AUTOMATIC1111/stable-diffusion-webui/discussions/7784), [RamAnanth1](https://huggingface.co/spaces/RamAnanth1/ControlNet), etc!

|

| 85 |

+

|

| 86 |

+

# Misuse, Malicious Use, and Out-of-Scope Use

|

| 87 |

+

|

| 88 |

+

The model should not be used to intentionally create or disseminate images that create hostile or alienating environments for people. This includes generating images that people would foreseeably find disturbing, distressing, or offensive; or content that propagates historical or current stereotypes.

|

| 89 |

+

|

| 90 |

+

","{""id"": ""lllyasviel/ControlNet"", ""author"": ""lllyasviel"", ""sha"": ""e78a8c4a5052a238198043ee5c0cb44e22abb9f7"", ""last_modified"": ""2023-02-25 05:57:36+00:00"", ""created_at"": ""2023-02-08 18:51:21+00:00"", ""private"": false, ""gated"": false, ""disabled"": false, ""downloads"": 0, ""downloads_all_time"": null, ""likes"": 3695, ""library_name"": null, ""gguf"": null, ""inference"": null, ""inference_provider_mapping"": null, ""tags"": [""license:openrail"", ""region:us""], ""pipeline_tag"": null, ""mask_token"": null, ""trending_score"": null, ""card_data"": ""license: openrail"", ""widget_data"": null, ""model_index"": null, ""config"": null, ""transformers_info"": null, ""siblings"": [""RepoSibling(rfilename='.gitattributes', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='README.md', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='annotator/ckpts/body_pose_model.pth', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='annotator/ckpts/dpt_hybrid-midas-501f0c75.pt', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='annotator/ckpts/hand_pose_model.pth', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='annotator/ckpts/mlsd_large_512_fp32.pth', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='annotator/ckpts/mlsd_tiny_512_fp32.pth', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='annotator/ckpts/network-bsds500.pth', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='annotator/ckpts/upernet_global_small.pth', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='models/control_sd15_canny.pth', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='models/control_sd15_depth.pth', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='models/control_sd15_hed.pth', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='models/control_sd15_mlsd.pth', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='models/control_sd15_normal.pth', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='models/control_sd15_openpose.pth', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='models/control_sd15_scribble.pth', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='models/control_sd15_seg.pth', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='training/fill50k.zip', size=None, blob_id=None, lfs=None)""], ""spaces"": [""InstantX/InstantID"", ""microsoft/HuggingGPT"", ""AI4Editing/MagicQuill"", ""hysts/ControlNet"", ""multimodalart/flux-style-shaping"", ""microsoft/visual_chatgpt"", ""Anonymous-sub/Rerender"", ""fffiloni/ControlNet-Video"", ""PAIR/Text2Video-Zero"", ""hysts/ControlNet-with-Anything-v4"", ""modelscope/AnyText"", ""Shakker-Labs/FLUX.1-dev-ControlNet-Union-Pro"", ""RamAnanth1/ControlNet"", ""georgefen/Face-Landmark-ControlNet"", ""Yuliang/ECON"", ""diffusers/controlnet-openpose"", ""shi-labs/Prompt-Free-Diffusion"", ""mikonvergence/theaTRON"", ""fotographerai/Zen-Style-Shape"", ""ozgurkara/RAVE"", ""fffiloni/video2openpose2"", ""radames/LayerDiffuse-gradio-unofficial"", ""broyang/anime-ai"", ""feishen29/IMAGDressing-v1"", ""ginipick/StyleGen"", ""Fucius/OMG-InstantID"", ""vumichien/canvas_controlnet"", ""Shakker-Labs/FLUX.1-dev-ControlNet-Union-Pro-2.0"", ""fffiloni/ControlVideo"", ""Fucius/OMG"", ""Qdssa/good_upscaler"", ""visionMaze/Magic-Me"", ""carloscar/stable-diffusion-webui-controlnet-docker"", ""Superlang/ImageProcessor"", ""Robert001/UniControl-Demo"", ""dreamer-technoland/object-to-object-replace"", ""fantos/flxcontrol"", ""tombetthauser/astronaut-horse-concept-loader"", ""ddosxd/InstantID"", ""multimodalart/InstantID-FaceID-6M"", ""azhan77168/mq"", ""rupeshs/fastsdcpu"", ""EPFL-VILAB/ViPer"", ""abidlabs/ControlNet"", ""RamAnanth1/roomGPT"", ""yuan2023/Stable-Diffusion-ControlNet-WebUI"", ""wenkai/FAPM_demo"", ""ginipick/Fashion-Style"", ""abhishek/sketch-to-image"", ""wondervictor/ControlAR"", ""yuan2023/stable-diffusion-webui-controlnet-docker"", ""yslan/3DEnhancer"", ""model2/advanceblur"", ""taesiri/HuggingGPT-Lite"", ""salahIguiliz/ControlLogoNet"", ""charlieguo610/InstantID"", ""aki-0421/character-360"", ""JoPmt/Multi-SD_Cntrl_Cny_Pse_Img2Img"", ""PKUWilliamYang/FRESCO"", ""JoPmt/Img2Img_SD_Control_Canny_Pose_Multi"", ""nowsyn/AnyControl"", ""Potre1qw/jorag"", ""waloneai/InstantAIPortrait"", ""Pie31415/control-animation"", ""RamAnanth1/T2I-Adapter"", ""svjack/ControlNet-Pose-Chinese"", ""bobu5/SD-webui-controlnet-docker"", ""soonyau/visconet"", ""LiuZichen/DrawNGuess"", ""meowingamogus69/stable-diffusion-webui-controlnet-docker"", ""wchai/StableVideo"", ""egg22314/object-to-object-replace"", ""dreamer-technoland/object-to-object-replace-1"", ""Etrwy/cucumberUpscaler"", ""VincentZB/Stable-Diffusion-ControlNet-WebUI"", ""ysharma/ControlNet_Image_Comparison"", ""Thaweewat/ControlNet-Architecture"", ""shellypeng/Anime-Pack"", ""bewizz/SD3_Batch_Imagine"", ""Freak-ppa/obj_rem_inpaint_outpaint"", ""addsw11/obj_rem_inpaint_outpaint2"", ""briaai/BRIA-2.3-ControlNet-Pose"", ""svjack/ControlNet-Canny-Chinese-df"", ""rzzgate/Stable-Diffusion-ControlNet-WebUI"", ""JFoz/CoherentControl"", ""ysharma/visual_chatgpt_dummy"", ""AIFILMS/ControlNet-Video"", ""SUPERSHANKY/ControlNet_Colab"", ""kirch/Text2Video-Zero"", ""Alfasign/visual_chatgpt"", ""Yabo/ControlVideo"", ""ikechan8370/cp-extra"", ""brunvelop/ComfyUI"", ""SD-online/Fooocus-Docker"", ""parsee-mizuhashi/mangaka"", ""jcudit/InstantID2"", ""Etrwy/universal_space_test"", ""nftnik/Redux"", ""pandaphd/generative_photography"", ""ccarr0807/HuggingGPT""], ""safetensors"": null, ""security_repo_status"": null, ""xet_enabled"": null, ""lastModified"": ""2023-02-25 05:57:36+00:00"", ""cardData"": ""license: openrail"", ""transformersInfo"": null, ""_id"": ""63e3ef298de575a15a63c2b1"", ""modelId"": ""lllyasviel/ControlNet"", ""usedStorage"": 47039764846}",0,,0,,0,,0,,0,"AI4Editing/MagicQuill, InstantX/InstantID, RamAnanth1/ControlNet, Shakker-Labs/FLUX.1-dev-ControlNet-Union-Pro, broyang/anime-ai, feishen29/IMAGDressing-v1, fffiloni/ControlNet-Video, fotographerai/Zen-Style-Shape, ginipick/StyleGen, huggingface/InferenceSupport/discussions/new?title=lllyasviel/ControlNet&description=React%20to%20this%20comment%20with%20an%20emoji%20to%20vote%20for%20%5Blllyasviel%2FControlNet%5D(%2Flllyasviel%2FControlNet)%20to%20be%20supported%20by%20Inference%20Providers.%0A%0A(optional)%20Which%20providers%20are%20you%20interested%20in%3F%20(Novita%2C%20Hyperbolic%2C%20Together%E2%80%A6)%0A, hysts/ControlNet, hysts/ControlNet-with-other-models, modelscope/AnyText, multimodalart/flux-style-shaping, ozgurkara/RAVE, radames/LayerDiffuse-gradio-unofficial",16

|

DeepCoder-14B-Preview_finetunes_20250425_143346.csv_finetunes_20250425_143346.csv

ADDED

|

@@ -0,0 +1,551 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

model_id,card,metadata,depth,children,children_count,adapters,adapters_count,quantized,quantized_count,merges,merges_count,spaces,spaces_count

|

| 2 |

+

agentica-org/DeepCoder-14B-Preview,"---

|

| 3 |

+

license: mit

|

| 4 |

+

library_name: transformers

|

| 5 |

+

datasets:

|

| 6 |

+

- PrimeIntellect/verifiable-coding-problems

|

| 7 |

+

- likaixin/TACO-verified

|

| 8 |

+

- livecodebench/code_generation_lite

|

| 9 |

+

language:

|

| 10 |

+

- en

|

| 11 |

+

base_model:

|

| 12 |

+

- deepseek-ai/DeepSeek-R1-Distill-Qwen-14B

|

| 13 |

+

pipeline_tag: text-generation

|

| 14 |

+

---

|

| 15 |

+

|

| 16 |

+

<div align=""center"">

|

| 17 |

+

<span style=""font-family: default; font-size: 1.5em;"">DeepCoder-14B-Preview</span>

|

| 18 |

+

<div>

|

| 19 |

+

🚀 Democratizing Reinforcement Learning for LLMs (RLLM) 🌟

|

| 20 |

+

</div>

|

| 21 |

+

</div>

|

| 22 |

+

<br>

|

| 23 |

+

<div align=""center"" style=""line-height: 1;"">

|

| 24 |

+

<a href=""https://github.com/agentica-project/rllm"" style=""margin: 2px;"">

|

| 25 |

+

<img alt=""Code"" src=""https://img.shields.io/badge/RLLM-000000?style=for-the-badge&logo=github&logoColor=000&logoColor=white"" style=""display: inline-block; vertical-align: middle;""/>

|

| 26 |

+

</a>

|

| 27 |

+

<a href=""https://pretty-radio-b75.notion.site/DeepCoder-A-Fully-Open-Source-14B-Coder-at-O3-mini-Level-1cf81902c14680b3bee5eb349a512a51"" target=""_blank"" style=""margin: 2px;"">

|

| 28 |

+

<img alt=""Blog"" src=""https://img.shields.io/badge/Notion-%23000000.svg?style=for-the-badge&logo=notion&logoColor=white"" style=""display: inline-block; vertical-align: middle;""/>

|

| 29 |

+

</a>

|

| 30 |

+

<a href=""https://x.com/Agentica_"" style=""margin: 2px;"">

|

| 31 |

+

<img alt=""X.ai"" src=""https://img.shields.io/badge/Agentica-white?style=for-the-badge&logo=X&logoColor=000&color=000&labelColor=white"" style=""display: inline-block; vertical-align: middle;""/>

|

| 32 |

+

</a>

|

| 33 |

+

<a href=""https://huggingface.co/agentica-org"" style=""margin: 2px;"">

|

| 34 |

+

<img alt=""Hugging Face"" src=""https://img.shields.io/badge/Agentica-fcd022?style=for-the-badge&logo=huggingface&logoColor=000&labelColor"" style=""display: inline-block; vertical-align: middle;""/>

|

| 35 |

+

</a>

|

| 36 |

+

<a href=""https://www.together.ai"" style=""margin: 2px;"">

|

| 37 |

+