repo_name (string, lengths 9-75) | topic (string, 30 classes) | issue_number (int64, 1-203k) | title (string, lengths 1-976) | body (string, lengths 0-254k) | state (string, 2 classes) | created_at (string, length 20) | updated_at (string, length 20) | url (string, lengths 38-105) | labels (list, lengths 0-9) | user_login (string, lengths 1-39) | comments_count (int64, 0-452) |

|---|---|---|---|---|---|---|---|---|---|---|---|

supabase/supabase-py | flask | 464 | Handling the Password Reset for a user by Supabase itself. | **Is your feature request related to a problem? Please describe.**

The function `supabase.auth.reset_password_email(email)` only sends a reset email to the given address but does not actually handle the password reset for that account, unlike Firebase. This function `reset_password_email()` should not ... | closed | 2023-06-14T11:37:08Z | 2023-06-14T14:47:43Z | https://github.com/supabase/supabase-py/issues/464 | [] | MBSA-INFINITY | 2 |

ageitgey/face_recognition | machine-learning | 786 | I made a dataset of about 200 photos. 40% of them don't have faces (nature pictures). | I made a dataset of about 200 photos. 40% of them don't have faces (nature pictures).

I entered this command:

face_recognition --show-distance true ./pictures_of_people_i_know/ ./unknown_pictures/

And the script gives me a lot of warnings from the "/pictures_of_people_i_know/" folder, like

WARNING: No faces foun... | open | 2019-03-27T01:34:56Z | 2019-03-27T02:55:07Z | https://github.com/ageitgey/face_recognition/issues/786 | [] | xSNYPSx | 1 |

streamlit/streamlit | deep-learning | 9,984 | `st.altair_chart` does not show with a good size if the title is too long | ### Checklist

- [X] I have searched the [existing issues](https://github.com/streamlit/streamlit/issues) for similar issues.

- [X] I added a very descriptive title to this issue.

- [X] I have provided sufficient information below to help reproduce this issue.

### Summary

`st.altair_chart` fails to properly d... | open | 2024-12-09T16:37:58Z | 2024-12-17T22:55:03Z | https://github.com/streamlit/streamlit/issues/9984 | [

"type:bug",

"status:confirmed",

"priority:P3",

"feature:st.altair_chart"

] | RubenCata | 2 |

plotly/dash-core-components | dash | 828 | [BUG] dcc.Location search loses quoting | Thank you so much for helping improve the quality of Dash!

We do our best to catch bugs during the release process, but we rely on your help to find the ones that slip through.

**Describe your context**

Please provide us your environment so we can easily reproduce the issue.

- replace the result of `pip li... | open | 2020-06-19T15:17:03Z | 2020-06-19T15:42:25Z | https://github.com/plotly/dash-core-components/issues/828 | [] | jauerb | 1 |

python-restx/flask-restx | api | 23 | Using GitHub Actions instead of Travis CI | I would like to propose using GitHub Actions instead of Travis CI.

In my opinion and from my experience, GitHub Actions works better and a bit faster than Travis CI.

The most important enhancement is that everything is stored on GitHub.

GitHub workflows allow testing on Linux, Mac and Windows VMs f... | closed | 2020-01-29T21:24:55Z | 2020-01-31T16:23:11Z | https://github.com/python-restx/flask-restx/issues/23 | [] | SVilgelm | 2 |

kymatio/kymatio | numpy | 852 | Unnecessary arguments in `core/scattering1d/scattering1d` | Not a big priority, but an easy simplification. The current prototype of `scattering` is: (phew!)

```python

scattering1d(x, pad, unpad, backend, log2_T, psi1, psi2, phi, pad_left=0,

pad_right=0, ind_start=None, ind_end=None, oversampling=0,

max_order=2, average=True, vectorize=False, out_type='arr... | closed | 2022-06-07T16:20:09Z | 2022-06-12T22:16:23Z | https://github.com/kymatio/kymatio/issues/852 | [

"good first issue",

"1D"

] | lostanlen | 3 |

Escape-Technologies/graphinder | graphql | 12 | [feat] Python 3.7 typing compliance | Migrate to a 3.7 typing compliance version of graphinder. | closed | 2022-07-19T21:41:10Z | 2022-07-26T10:27:35Z | https://github.com/Escape-Technologies/graphinder/issues/12 | [

"enhancement"

] | nullswan | 0 |

widgetti/solara | jupyter | 222 | API docs missing for Details | see https://github.com/widgetti/solara/issues/221

https://github.com/widgetti/solara/pull/185 is a good template of what it should look like | open | 2023-07-28T17:36:36Z | 2024-04-01T15:31:45Z | https://github.com/widgetti/solara/issues/222 | [

"documentation",

"good first issue",

"help wanted"

] | maartenbreddels | 1 |

polarsource/polar | fastapi | 5,202 | Customers: Delete customer from dashboard | Ability to delete a customer from within the dashboard.

Wait on: [https://github.com/polarsource/polar/issues/5142](https://github.com/polarsource/polar/issues/5142)

So we can fix that bug + disallow deletion of customers who have purchased something, i.e has an order/benefit. | closed | 2025-03-07T13:19:09Z | 2025-03-12T13:42:46Z | https://github.com/polarsource/polar/issues/5202 | [] | birkjernstrom | 0 |

tfranzel/drf-spectacular | rest-api | 752 | Question: How to deal with a generated API ? | Hi! Thanks for your project, it works well out of the box.

This is not an issue but more a request for help to properly integrate drf-spectacular into my project.

I have this special endpoint that asks for a query parameter. According to these parameters which are stored in base (they are model names), the endpoi... | closed | 2022-06-07T13:16:01Z | 2022-06-18T13:36:01Z | https://github.com/tfranzel/drf-spectacular/issues/752 | [] | moweerkat | 2 |

plotly/dash | data-visualization | 2,650 | Multipage App + Background Callback Changes the Page | Issue steps:

- Trigger a background callback on Page X

- Change page to Y

- The background callback on Page X finishes and changes the browser to Page X

Background callback finishing shouldn't change page unless a dcc.Location is in the output.

| open | 2023-10-02T14:54:17Z | 2024-08-13T19:38:22Z | https://github.com/plotly/dash/issues/2650 | [

"bug",

"P3"

] | IstvanM | 0 |

polarsource/polar | fastapi | 4,778 | Subscriptions (Customer Portal): Prorate immediately in case of annual subscription changes | Prerequisite: https://github.com/polarsource/polar/issues/4777

Better default to ensure all yearly upgrades are prorated immediately. Not an issue in case of an upgrade from monthly to yearly, but from year to year it can be a much higher risk.

| open | 2025-01-03T14:01:35Z | 2025-02-11T09:07:23Z | https://github.com/polarsource/polar/issues/4778 | [

"feature",

"changelog"

] | birkjernstrom | 1 |

supabase/supabase-py | fastapi | 522 | Supabase-py through outbound http proxy | I'd like to use supabase-py in an app that is deployed behind an HTTP proxy. It seems supabase does not use the system proxy settings to route requests. Is there a way to specify an HTTP proxy to be used by the client? | closed | 2023-08-21T09:40:19Z | 2023-08-21T11:59:21Z | https://github.com/supabase/supabase-py/issues/522 | [] | theveloped | 2 |

plotly/plotly.py | plotly | 5,009 | connection style for arrows | It would be nice to have orthogonal and other types of connection style arrows when adding annotations. Matplotlib has something like:

```

import matplotlib.pyplot as plt

fig, ax = plt.subplots()

ax.plot([1, 2, 3], [4, 5, 2])

# Annotate with a connection line

ax.annotate("Important Point", xy=(2, 5), xytext=(1.5, ... | open | 2025-02-03T15:27:27Z | 2025-02-03T15:46:08Z | https://github.com/plotly/plotly.py/issues/5009 | [

"feature",

"P3"

] | chaffra | 1 |

ymcui/Chinese-LLaMA-Alpaca-2 | nlp | 66 | Error during multi-GPU fine-tuning: ERROR:torch.distributed.elastic.multiprocessing.api:failed (exitcode: -7) local_rank: 0 (pid: 1280) of binary: /root/miniconda3/envs/llama2/bin/python | ### The following items must be checked before submitting

- [X] Please make sure you are using the latest code from the repository (git pull); some issues have already been resolved and fixed.

- [X] I have read the [project documentation](https://github.com/ymcui/Chinese-LLaMA-Alpaca-2/wiki) and the [FAQ section](https://github.com/ymcui/Chinese-LLaMA-Alpaca-2/wiki/常见问题) and have searched the issues for this problem, without finding a similar issue or solution

- [X] Third-party plugin issues: e.g. [llama.cpp](https://github.com/ggerganov/llama.cpp), [text-generation-webui... | closed | 2023-08-03T08:17:23Z | 2023-12-04T01:01:44Z | https://github.com/ymcui/Chinese-LLaMA-Alpaca-2/issues/66 | [

"stale"

] | thugbobby | 6 |

saulpw/visidata | pandas | 2,359 | Aggregator with `+` not appearing in frequency table _unless_ selected after typing it out | **Small description**

When choosing an aggregator via `+`, such aggregator is not added to the frequency table if selected via `<Tab>` scrolling _unless_ it is first selected by typing it out.

**Expected result**

The aggregator is added to frequency tables even if selected via `<Tab>` scrolling.

**Actual result... | closed | 2024-03-24T21:12:03Z | 2024-03-27T17:06:10Z | https://github.com/saulpw/visidata/issues/2359 | [

"bug",

"fixed"

] | gennaro-tedesco | 2 |

deepspeedai/DeepSpeed | deep-learning | 7,132 | [BUG] AttributeError: 'FusedAdam' object has no attribute 'refresh_fp32_params' | I loaded a pretrained checkpoint using the following code:

```

model_engine.load_checkpoint(

checkpoint_dir,

ckpt_id,

load_optimizer_states=False,

load_lr_scheduler_states=False,

load_module_only=True

)

```

However, I encountered the following error:

```

...

File "/opt/conda/envs/ptca/lib/python3.... | closed | 2025-03-11T17:09:33Z | 2025-03-14T18:55:29Z | https://github.com/deepspeedai/DeepSpeed/issues/7132 | [

"bug",

"training"

] | jinwx | 0 |

lukas-blecher/LaTeX-OCR | pytorch | 256 | Can't run latexocr | I got to know about this recently and wanted to give it a try. `pix2tex` command works fine, but `latexocr` command gives this error

`qt.dbus.integration: Could not connect "org.freedesktop.IBus" to globalEngineChanged(QString)

Sandboxing disabled by user.

The Wayland connection experienced a fatal error: Protoco... | open | 2023-04-14T17:57:50Z | 2023-06-07T01:34:20Z | https://github.com/lukas-blecher/LaTeX-OCR/issues/256 | [] | Anirudh-Srivastha-Nemmani | 1 |

hpcaitech/ColossalAI | deep-learning | 5,960 | [fp8] support low level zero | closed | 2024-08-02T03:14:13Z | 2024-08-07T03:18:28Z | https://github.com/hpcaitech/ColossalAI/issues/5960 | [] | ver217 | 2 | |

kizniche/Mycodo | automation | 631 | error 500 when selecting function tab | ## Mycodo Issue Report:

- Specific Mycodo Version:

Mycodo Version: 7.2.3

Python Version: 3.5.3 (default, Jan 19 2017, 14:11:04) [GCC 6.3.0 20170124]

Database Version: 2976b41930ad

Daemon Status: Running

Daemon Process ID: 10805

Daemon RAM Usage: 70.248 MB

Daemon Virtualenv: Yes

Frontend RAM Usage: 51.... | closed | 2019-02-20T22:01:55Z | 2019-02-21T10:17:16Z | https://github.com/kizniche/Mycodo/issues/631 | [] | SAM26K | 6 |

coqui-ai/TTS | python | 3,050 | [Bug] formatting_your_dataset doc doesn't match the LJSpeech formatter | ### Describe the bug

The `formatting_your_dataset` doc recommends writing your dataset in this format with the note that it'll be compatible with the LJSpeech formatter:

```

# metadata.txt

audio1|This is my sentence.

audio2|This is maybe my sentence.

audio3|This is certainly my sentence.

audio4|Let this be... | closed | 2023-10-09T14:44:22Z | 2023-10-16T10:25:50Z | https://github.com/coqui-ai/TTS/issues/3050 | [

"bug"

] | sqrt10pi | 2 |
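The coqui-ai/TTS row above concerns a pipe-delimited `metadata.txt` whose layout may not match what a formatter expects. As a minimal sketch only, and not the Coqui TTS formatter itself, the following shows one way to check the column count of such a file before training. The helper name `parse_metadata` and the `expected_columns` parameter are hypothetical and introduced purely for illustration.

```python
def parse_metadata(lines, expected_columns):
    """Split pipe-delimited metadata lines; fail loudly on a column mismatch.

    Hypothetical helper for illustration, not part of any TTS library.
    """
    rows = []
    for number, line in enumerate(lines, start=1):
        line = line.strip()
        if not line:
            continue  # skip blank lines
        columns = line.split("|")
        if len(columns) != expected_columns:
            raise ValueError(
                f"line {number}: expected {expected_columns} columns, "
                f"got {len(columns)}"
            )
        rows.append(columns)
    return rows


# Sample lines in the two-column layout quoted in the issue body.
sample = [
    "audio1|This is my sentence.",
    "audio2|This is maybe my sentence.",
]
rows = parse_metadata(sample, expected_columns=2)
```

Running the same sample with a different `expected_columns` value raises a `ValueError` naming the first offending line, which makes a layout mismatch visible early.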

coqui-ai/TTS | pytorch | 4,135 | [Bug] When I generate a TTS model and play it, I only hear noise. | ### Describe the bug

Hello,

I wanted to create a TTS model using my voice with Coqui TTS, so I followed the tutorial to implement it.

I wrote a train.py file to train the voice model, but when I try to play TTS using the model I created, I only hear noise.

I thought the issue might be with my audio files, so I tried m... | closed | 2025-01-22T06:44:25Z | 2025-02-16T23:11:42Z | https://github.com/coqui-ai/TTS/issues/4135 | [

"bug"

] | chuyeonhak | 5 |

amdegroot/ssd.pytorch | computer-vision | 213 | Outputs are always the same. | I trained it on my own data and found that every picture gets the same result. Has anyone seen this problem before? | closed | 2018-07-31T12:09:26Z | 2019-09-26T08:46:22Z | https://github.com/amdegroot/ssd.pytorch/issues/213 | [] | chenxinyang123 | 3 |

Kanaries/pygwalker | matplotlib | 189 | PySpark DataFrame Support | Native Support for rendering visualizations for PySpark data frame in the Jupyter notebook.

It is OK to introduce some constraints if the sheer size of the data frame makes it difficult to load. | closed | 2023-08-03T07:20:51Z | 2023-11-06T02:02:08Z | https://github.com/Kanaries/pygwalker/issues/189 | [

"enhancement",

"P2"

] | rishabmps | 6 |

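Johnserf-Seed/TikTokDownload | api | 677 | TikTokTool V1.5: "Start from the command line and provide the necessary parameters; enter TikTokTool -h to view help for the different platforms." [BUG] | **Describe the bug**

Please start from the command line and provide the necessary parameters; enter TikTokTool -h to view help for the different platforms.

F2 Version:0.0.1.4

**To reproduce**

Steps to reproduce the behavior:

1. Open a terminal and run python3 TikTokTool.py

**Screenshots**

<img width="530" alt="image" src="https://github.com/Johnserf-Seed/TikTokDownload/assets/18301809/2f7d757d-49d8-4731-8510-717e4487f9d4">

**Desktop (please fill in the following information):**

- OS: Mac

- VPN proxy... | open | 2024-03-12T09:08:31Z | 2024-03-12T15:19:55Z | https://github.com/Johnserf-Seed/TikTokDownload/issues/677 | [] | yjxfox | 6 |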

jupyterhub/repo2docker | jupyter | 702 | Implement a GUI that builds repo2docker config files | ### Proposed change

It would be useful if we had a lightweight GUI that let people build repo2docker configuration files. It would have sections for each type of configuration file, and UI elements that helped populate the most common relevant fields. e.g.:

with parameter loss='weighted_crossentropy' | Hello, I am currently also trying to implement a weighted CE loss function. I'd really appreciate some guidance on how to call this function from the `loss=` parameter of the `tflearn.regression()` function.

The following attempt to use the above method in my code yields:

```

net_2 = net = tflearn.input_data(shape... | closed | 2018-07-19T22:15:44Z | 2018-07-20T00:26:44Z | https://github.com/tflearn/tflearn/issues/1075 | [] | tnightengale | 0 |

nikitastupin/clairvoyance | graphql | 80 | The endpoint requires authorization keys | What should I do if my GraphQL endpoint requires cookies and authorization keys, how can I add this for analysis?

| closed | 2024-02-07T18:04:18Z | 2024-02-21T05:14:03Z | https://github.com/nikitastupin/clairvoyance/issues/80 | [] | simbadmorehod | 0 |

graphql-python/graphene-django | graphql | 1,484 | Automatic object type field for reverse one-to-one model fields cannot resolve when model field has `related_query_name` and object type has custom `get_queryset` | ## **What is the current behavior?**

Given the following models:

```python

from django.db import models

class Example(models.Model):

pass

class Related(models.Model):

example = models.OneToOneField(

Example,

on_delete=models.CASCADE,

related_name="related",

# I... | open | 2023-12-09T19:17:15Z | 2023-12-09T19:17:15Z | https://github.com/graphql-python/graphene-django/issues/1484 | [

"🐛bug"

] | MrThearMan | 0 |

flaskbb/flaskbb | flask | 380 | Post count includes deleted posts | If I delete a post on a thread, the count for the forum does not change (i.e., the "Posts" column on the "Forum" page). The "Total number of posts", however, is accurate. | closed | 2017-12-17T19:20:05Z | 2018-04-15T07:47:49Z | https://github.com/flaskbb/flaskbb/issues/380 | [

"bug"

] | haliphax | 3 |

tqdm/tqdm | jupyter | 1,120 | Saved ASCII output from tqdm.notebook shows zero progress | - [x] I have marked all applicable categories:

+ [ ] exception-raising bug

+ [x] visual output bug

+ [ ] documentation request (i.e. "X is missing from the documentation." If instead I want to ask "how to use X?" I understand [StackOverflow#tqdm] is more appropriate)

+ [ ] new feature request

- [x]... | open | 2021-02-03T02:52:59Z | 2021-02-17T02:49:17Z | https://github.com/tqdm/tqdm/issues/1120 | [

"duplicate 🗐",

"help wanted 🙏",

"invalid ⛔",

"p2-bug-warning ⚠",

"submodule-notebook 📓"

] | charmasaur | 5 |

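babysor/MockingBird | deep-learning | 760 | Has anyone gotten this working on a MacBook? After setup I get no error messages, but the output is always a hissing noise, and I don't know why | **Summary [one-sentence description of the issue]**

A clear and concise description of what the issue is.

**Env & To Reproduce [reproduction and environment]**

Describe the environment, code version, and model you used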

mac OS Monterey 12.5

Python 3.9.2

**Screensho

<img width="1300" alt="截屏2022-10-07 00 26 04" src="https://user-images.githubusercontent.com/16750537/194367418-49c0c63b-b7d5-4d76-9738-4... | closed | 2022-10-06T16:26:15Z | 2022-11-16T09:21:19Z | https://github.com/babysor/MockingBird/issues/760 | [] | zhuzhihui | 1 |

vitalik/django-ninja | pydantic | 1,067 | embedding api docs into custom page | Hi.

I am using the automatically generated docs feature of the Ninja API to create a web-based, user-friendly interface for the users visiting my page, as well as a test facility.

I would like to embed that wonderful feature into my already existing custom web layout (header, footer, navbar, etc...). By so doing, I wo... | open | 2024-01-27T18:02:39Z | 2024-01-31T08:36:29Z | https://github.com/vitalik/django-ninja/issues/1067 | [] | MM-cyi | 3 |

graphdeco-inria/gaussian-splatting | computer-vision | 536 | Question about the variable | First thank you for your excellent work.

The method "create_from_pcd" of class "GaussianModel" in "./scene

/gaussian_model.py" defines the properties called "self._features_dc" and "self._features_rest" in line 142 and 143. I don't know what they correspond to in the article and how to use them. Could you please ex... | closed | 2023-12-09T05:02:59Z | 2023-12-10T01:57:58Z | https://github.com/graphdeco-inria/gaussian-splatting/issues/536 | [] | tuning12 | 3 |

iperov/DeepFaceLab | machine-learning | 5,267 | Using 2 GPUs for 2 different models at the same time I get this error | Hi... When I try to train 2 different models using 2 different GPUs, the first one starts, and then when it starts loading the 2nd one I get errors and it won't train at the same time. This is what the error is on the computer that was already training....

Traceback (most recent call last):][0.0505]

File "multi... | open | 2021-01-28T04:14:14Z | 2023-06-08T22:21:33Z | https://github.com/iperov/DeepFaceLab/issues/5267 | [] | kilerb | 2 |

pywinauto/pywinauto | automation | 515 | How can I get all ListItem in ListBox? | Good day, everyone! I use pywinauto to automate a desktop application, and I need to get all ListItems from a ListBox. Then I execute this code:

```python

def common_list(list_control):

state = list_control.element_info.enabled

if state:

automation_id = list_control.element_info.automation_id

... | open | 2018-07-02T14:31:52Z | 2018-07-11T17:36:44Z | https://github.com/pywinauto/pywinauto/issues/515 | [

"enhancement",

"UIA-related"

] | Nebyt | 0 |

huggingface/transformers | machine-learning | 36,051 | [generate] generate not working gradient_checkpointing=True | ### System Info

```

transformers==4.37.2

peft==0.13.1

accelerate==0.21.0

deepspeed==0.12.6

torch==2.1.2+cu118

```

### Who can help?

@zucchini-nlp @ArthurZucker

### Information

- [ ] The official example scripts

- [x] My own modified scripts

### Tasks

- [ ] An officially supported task in the `examples` fold... | closed | 2025-02-05T15:00:39Z | 2025-02-06T14:16:32Z | https://github.com/huggingface/transformers/issues/36051 | [

"bug",

"Generation"

] | alcompa | 7 |

davidsandberg/facenet | computer-vision | 433 | What is the role of facenet.prewhiten | I saw the code of compare.py and found the function prewhiten.

What is the role of facenet.prewhiten? | closed | 2017-08-22T10:41:41Z | 2018-06-28T23:50:17Z | https://github.com/davidsandberg/facenet/issues/433 | [] | tonybaigang | 2 |

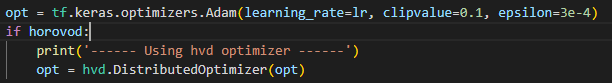

horovod/horovod | pytorch | 3,620 | Wrapping keras optimizer with hvd optimizer breaks the code | Using 2 VMs with 1 GPU each to do distributed training using Horovod.

This is what I have for building and compiling my model:

When I pass in True for horovod, my code breaks starts Epoch 1, then hangs... | closed | 2022-07-26T15:37:39Z | 2022-07-28T14:07:13Z | https://github.com/horovod/horovod/issues/3620 | [] | bluepra | 3 |

jacobgil/pytorch-grad-cam | computer-vision | 553 | About the inference speed of using FullGrad for cam visualization | I was using FullGrad for cam visualization and found that it was much slower compared with ScoreCAM or GradCAM, and when using FullGrad for visualizations, there were warnings like `Warning: target_layers is ignored in FullGrad. All bias layers will be used instead`. Was it normal that using FullGrad was slow because i... | open | 2025-02-05T07:06:10Z | 2025-02-18T20:44:24Z | https://github.com/jacobgil/pytorch-grad-cam/issues/553 | [] | kasteric | 1 |

HumanSignal/labelImg | deep-learning | 362 | Could you please add the support for CJK | Thanks for sharing this useful tool.

I'm using labelImg to label vehicles in traffic images, and the label are Chinese names, which is difficult to translate to English. Even if we translate the names to English, the people who do the labeling job may not understand the exact meaning of the label, there are informatio... | closed | 2018-09-07T03:07:27Z | 2018-10-23T05:09:15Z | https://github.com/HumanSignal/labelImg/issues/362 | [] | yangulei | 1 |

assafelovic/gpt-researcher | automation | 407 | ModuleNotFoundError: No module named 'gpt_researcher.retrievers.serpapi' | Hi!

I've the following setup:

- Venv: _Python 3.10.12_

- PIP: _gpt-researcher 0.1.0_

And i obtain this error:

"ModuleNotFoundError: No module named 'gpt_researcher.retrievers.serpapi'"

Neither in https://pypi.org/project/gpt-researcher/ and https://github.com/assafelovic/gpt-researcher/blob/mast... | closed | 2024-03-22T19:07:15Z | 2024-03-25T18:23:55Z | https://github.com/assafelovic/gpt-researcher/issues/407 | [] | joaomnmoreira | 3 |

flairNLP/flair | nlp | 3,036 | Support for Vietnamese | Hi, I am looking through Flair and wondering whether it supports Vietnamese. If not, will it in the future? Thank you!

_Originally posted by @longsc2603 in https://github.com/flairNLP/flair/issues/2#issuecomment-1354413764_

| closed | 2022-12-21T01:37:57Z | 2023-06-11T11:25:47Z | https://github.com/flairNLP/flair/issues/3036 | [

"wontfix"

] | longsc2603 | 1 |

mitmproxy/pdoc | api | 110 | .. | closed | 2016-07-23T05:51:14Z | 2018-06-03T01:42:18Z | https://github.com/mitmproxy/pdoc/issues/110 | [] | krisvandermerwe | 1 | |

davidsandberg/facenet | tensorflow | 700 | How to remove the identities which are overlapped between Ms-Celeb-1M and LFW/Facescrub? | Hello everyone, is there a good way to remove the identities which are overlapped between Ms-Celeb-1M and LFW/Facescrub?

Or could anyone share your overlapping list?

Thank you very much! | open | 2018-04-15T22:44:22Z | 2018-04-15T22:44:22Z | https://github.com/davidsandberg/facenet/issues/700 | [] | Landwind-Xin | 0 |

igorbenav/fastcrud | pydantic | 115 | Data repetition in get_multi_joined and issue in join_on | ```

data = crud_user.get_multi(

db,

nest_joins=True,

joins_config=[

JoinConfig(

model=Portions,

join_on=User.portion_id == Portion.id,

join_prefix='portions',

join_type="left",

relationship_type='one-to-many'

),

J... | closed | 2024-07-01T02:50:34Z | 2024-12-23T03:40:51Z | https://github.com/igorbenav/fastcrud/issues/115 | [] | mithun2003 | 3 |

CorentinJ/Real-Time-Voice-Cloning | pytorch | 434 | I would like to sing in polish language instead of text | Is this tool able to "render" singing voice based on my voice? (not by typing text)

I would like to sound like some other person. so, basically train model with some other speech -> I am recording my song with my voice -> generate singing voice like some other else. It doesn't need to be "perfect", I want it just to s... | closed | 2020-07-20T19:13:22Z | 2021-01-16T22:35:48Z | https://github.com/CorentinJ/Real-Time-Voice-Cloning/issues/434 | [] | annaskarzynska | 3 |

CorentinJ/Real-Time-Voice-Cloning | pytorch | 883 | TTS outputing different words than the ones typed in | Hi,

I am putting my hands on your fun project! Actually I am trying to clone a voice in French. I edited a short recording and made 16 extracts (22kHz mono 32 pcm Microsoft wav ranging from 1 to 5 seconds) out of it that I manually transcripted following the file hierarchy @blue-fish [shows](https://github.com/Coren... | closed | 2021-10-31T06:36:37Z | 2022-08-19T11:47:34Z | https://github.com/CorentinJ/Real-Time-Voice-Cloning/issues/883 | [] | Ca-ressemble-a-du-fake | 29 |

Avaiga/taipy | data-visualization | 1,496 | [🐛 BUG] Callbacks not called in Python API | ### What went wrong? 🤔

Callbacks are not called by Taipy when they are referenced directly.

### Expected Behavior

They should still be called like before. This is a regression.

### Steps to Reproduce Issue

Run this code. The first slider doesn't call the callback, but the second does.

```python

from... | closed | 2024-07-10T09:48:17Z | 2024-07-10T13:59:40Z | https://github.com/Avaiga/taipy/issues/1496 | [

"🟥 Priority: Critical",

"🖰 GUI",

"💥Malfunction"

] | FlorianJacta | 0 |

sammchardy/python-binance | api | 1,155 | TESTNET: binance.exceptions.BinanceAPIException: APIError(code=-2008): Invalid Api-Key ID. | **Describe the bug**

I tried to connect to the binance testnet and to get the account status, but got the following error:

File "/home/h/anaconda3/envs/pairTrading/lib/python3.9/site-packages/binance/client.py", line 2065, in get_account_status

return self._request_margin_api('get', 'account/status', True, d... | open | 2022-03-02T16:11:38Z | 2024-04-01T22:14:17Z | https://github.com/sammchardy/python-binance/issues/1155 | [] | zenthara | 24 |

MycroftAI/mycroft-core | nlp | 2,712 | Use a static custom settings file to save user information | I'm stuck on this problem: I need to create a user ID card in which to put some information about the user, such as name, surname, age, and so on.

I tried to add my own custom file, called userinfo.conf, at the Mycroft main level; I added a class in configuration.py, locations.py and __init__.py to load this conf file with the ... | closed | 2020-10-01T12:02:05Z | 2024-09-08T08:33:18Z | https://github.com/MycroftAI/mycroft-core/issues/2712 | [

"Type: Enhancement - proposed",

"Status: For discussion"

] | damorosodaragona | 6 |

microsoft/nni | data-science | 5,660 | nni norm_pruning example error | Hello!

I am trying to locally run a pytorch version of one of the pruning examples (nni/examples/compression/pruning/norm_pruning.py).

However, it seems that the config_list generated by the function "auto_set_denpendency_group_ids" has a problem.

**The config_list is:**

[{'op_names': ['layer3.1.conv1... | closed | 2023-08-13T13:32:48Z | 2023-08-17T07:30:54Z | https://github.com/microsoft/nni/issues/5660 | [] | NoyLalzary | 0 |

QuivrHQ/quivr | api | 2,741 | Notion Synchronization | Synchronise with Notion | closed | 2024-06-25T16:49:37Z | 2024-09-28T20:06:11Z | https://github.com/QuivrHQ/quivr/issues/2741 | [

"Stale"

] | StanGirard | 2 |

frappe/frappe | rest-api | 31,525 | Reports: Total Row rendered in the midst of a report | <!--

Welcome to the Frappe Framework issue tracker! Before creating an issue, please heed the following:

1. This tracker should only be used to report bugs and request features / enhancements to Frappe

- For questions and general support, use https://stackoverflow.com/questions/tagged/frappe

- For documentatio... | closed | 2025-03-05T06:56:44Z | 2025-03-21T00:16:00Z | https://github.com/frappe/frappe/issues/31525 | [

"bug"

] | zongo811 | 2 |

oegedijk/explainerdashboard | plotly | 292 | Update component plots when selecting data | Hello, I'm making a custom dashboard with ExplainerDashboard components and a map. The idea is to be able to select a region in the map to filter the data and re calculate the shap values in order to understand a certain area's predictions by seeing the feature importances in this area in particular. However, since I'm... | closed | 2023-12-28T20:02:37Z | 2024-01-25T14:43:40Z | https://github.com/oegedijk/explainerdashboard/issues/292 | [] | soundgarden134 | 3 |

pallets-eco/flask-sqlalchemy | flask | 659 | lgfntveceig | closed | 2018-12-16T20:16:54Z | 2020-12-05T20:46:22Z | https://github.com/pallets-eco/flask-sqlalchemy/issues/659 | [] | seanmcfeely | 0 | |

MagicStack/asyncpg | asyncio | 237 | Inserting array types [0,1,2,...] | <!--

Thank you for reporting an issue/feature request.

If this is a feature request, please disregard this template. If this is

a bug report, please answer to the questions below.

It will be much easier for us to fix the issue if a test case that reproduces

the problem is provided, with clear instructions on ... | closed | 2017-12-13T13:31:26Z | 2017-12-13T15:26:42Z | https://github.com/MagicStack/asyncpg/issues/237 | [] | ghost | 6 |

nonebot/nonebot2 | fastapi | 3,262 | Bot: AntiFraudBot | ### Bot name

AntiFraudBot

### Bot description

Anti-fraud bot

### Bot project repository/homepage link

https://github.com/itsevin/AntiFraudBot

### Tags

[{"label":"反诈","color":"#ea5252"}] | closed | 2025-01-16T14:10:27Z | 2025-01-19T03:07:27Z | https://github.com/nonebot/nonebot2/issues/3262 | [

"Bot",

"Publish"

] | itsevin | 4 |

deepspeedai/DeepSpeed | machine-learning | 6,878 | How can DeepSpeed be configured to prevent the merging of parameter groups | The optimizer has been re-implemented to group parameters and set different learning rates for each group. However, after using DeepSpeed, all the `param_groups` are merged into one. How can this be prevented?

```

{

"fp16": {

"enabled": "auto",

"loss_scale": 0,

"loss_scale_window": 1000,

... | open | 2024-12-16T14:30:54Z | 2024-12-18T11:32:04Z | https://github.com/deepspeedai/DeepSpeed/issues/6878 | [] | CLL112 | 3 |

openapi-generators/openapi-python-client | fastapi | 605 | $ref in path parameters doesn't seem to work | **Describe the bug**

If a `get` path has a parameter using `$ref` to point to `#/components/parameters`, the generated API lacks the corresponding `kwarg`. If one "hoists" the indirect parameter up into the path's `parameters`, it works as expected.

**To Reproduce**

See [this repo](https://github.com/jrobbins-Liv... | open | 2022-04-30T20:56:37Z | 2022-04-30T20:56:37Z | https://github.com/openapi-generators/openapi-python-client/issues/605 | [

"🐞bug"

] | jrobbins-LiveData | 0 |
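The openapi-python-client row above describes a path parameter declared through `$ref` into `#/components/parameters`, and notes that "hoisting" the referenced parameter into the path's own `parameters` works. To make that idea concrete, here is a minimal sketch of resolving a local reference in a spec held as a plain dict. It is not the generator's code; `resolve_ref`, `inline_parameters`, and the sample spec are hypothetical names and data chosen for illustration.

```python
def resolve_ref(spec, ref):
    """Follow a local reference such as '#/components/parameters/Limit'."""
    if not ref.startswith("#/"):
        raise ValueError("only local references are handled in this sketch")
    node = spec
    for part in ref[2:].split("/"):
        # JSON Pointer escapes: '~1' stands for '/', '~0' stands for '~'.
        part = part.replace("~1", "/").replace("~0", "~")
        node = node[part]
    return node


def inline_parameters(spec, operation):
    """Return the operation's parameters with each local $ref replaced."""
    return [
        resolve_ref(spec, param["$ref"]) if "$ref" in param else param
        for param in operation.get("parameters", [])
    ]


# Hypothetical spec fragment with one referenced query parameter.
spec = {
    "components": {
        "parameters": {
            "Limit": {
                "name": "limit",
                "in": "query",
                "schema": {"type": "integer"},
            }
        }
    },
    "paths": {
        "/items": {
            "get": {
                "parameters": [{"$ref": "#/components/parameters/Limit"}]
            }
        }
    },
}

params = inline_parameters(spec, spec["paths"]["/items"]["get"])
```

After this step `params` holds the full parameter object rather than the reference, which is the shape a code generator would need in order to emit a matching keyword argument.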

hankcs/HanLP | nlp | 601 | Custom dictionary has no effect? | 

## Notes

Please confirm the following:

* I have carefully read the following documents and found no answer:

- [Home page documentation](https://github.com/hankcs/HanLP)

- [wiki](https://github.com/hankcs/HanLP/wiki)

- [FAQ](https://github.com/hankcs/HanLP/wiki/FAQ)

* I have searched for my problem via [Google](https://www.google.com/#newwindow=1&q=HanLP) and the [issue search function](https://github.com/hankcs/HanLP/issues), and still did not... | closed | 2017-08-10T10:35:41Z | 2020-01-01T11:08:29Z | https://github.com/hankcs/HanLP/issues/601 | [

"ignored"

] | djblovecxc | 2 |

STVIR/pysot | computer-vision | 202 | Can you upload models to site accessible from outside of China? | I live outside China, and am unable to create a Baidu account. Could you host the files on a different site? | closed | 2019-10-10T13:29:12Z | 2020-04-24T10:07:14Z | https://github.com/STVIR/pysot/issues/202 | [] | asw-v4 | 1 |

cvat-ai/cvat | computer-vision | 8,627 | GT annotations can sometimes show up in the Standard mode | ### Actions before raising this issue

- [X] I searched the existing issues and did not find anything similar.

- [X] I read/searched [the docs](https://docs.cvat.ai/docs/)

### Steps to Reproduce

In a task with honeypots, GT annotations can show up in the UI, when an annotation job is just opened. It can happen... | closed | 2024-10-31T15:03:55Z | 2024-11-13T14:20:47Z | https://github.com/cvat-ai/cvat/issues/8627 | [

"bug",

"ui/ux"

] | zhiltsov-max | 0 |

nltk/nltk | nlp | 2,622 | [wiki] SENNA binary link is outdated | On this page:

https://github.com/nltk/nltk/wiki/Installing-Third-Party-Software

The link to SENNA toolkit is no longer accessible. It was most likely taken from [here](https://www.nec-labs.com/research-departments/machine-learning/machine-learning-software/Senna) where it also leads nowhere.

The only other pla... | closed | 2020-11-09T12:56:01Z | 2021-07-30T07:53:46Z | https://github.com/nltk/nltk/issues/2622 | [

"documentation",

"resolved"

] | ermik | 1 |

voila-dashboards/voila | jupyter | 1,252 | Updating widgets in voila behaves differently than jupyterlab (voila flashes for a few seconds) | Very simple app

One button, one table. If you click the button, it refreshes the table.

If you run it in JupyterLab, it works fine and the table updates without flashing.

If you run it in Voila, the table gets updated, but it disappears for a second before reappearing.

```

import pandas as pd

import numpy as np

i... | open | 2022-11-06T09:55:39Z | 2022-11-28T18:50:22Z | https://github.com/voila-dashboards/voila/issues/1252 | [

"bug"

] | gioxc88 | 2 |

nltk/nltk | nlp | 2,934 | Potential bug in sentence tokenizer since 3.6.6 | We use `nltk` tokenizer `tokenizers/punkt/english.pickle` to split relatively long text into sentences.

After upgrading to 3.6.6, we noticed at least one change in the tokenizer results that looks like a bug.

Given this simple text example:

```

1. This is R .

2. This is A .

3. That's all

```

We expe... | closed | 2022-01-24T15:50:45Z | 2022-07-04T05:40:03Z | https://github.com/nltk/nltk/issues/2934 | [

"bug"

] | radcheb | 5 |

CPJKU/madmom | numpy | 502 | Incompatible with Python 3.10 because MutableSequence was moved to collections.abc | ### Expected behaviour

`import madmom` should succeed, since the library cannot be used otherwise.

### Actual behaviour

Fails with

```ImportError: cannot import name 'MutableSequence' from 'collections' (..../python3.10/collections/__init__.py)```

### Steps needed to reproduce the behaviour

Import madmom u... | closed | 2022-01-25T08:20:21Z | 2023-11-10T11:56:53Z | https://github.com/CPJKU/madmom/issues/502 | [] | johentsch | 9 |
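
The import error reported above comes from the abstract base classes no longer being importable from `collections` on Python 3.10. A minimal sketch of a compatible import is shown here; it is a general pattern and an assumption about how such code could be adapted, not madmom's actual patch.

```python
# Prefer the modern location; fall back only on very old interpreters
# where collections.abc does not exist.
try:
    from collections.abc import MutableSequence
except ImportError:  # legacy Python only
    from collections import MutableSequence

# Built-in lists are registered as mutable sequences, tuples are not.
print(issubclass(list, MutableSequence))
print(issubclass(tuple, MutableSequence))
```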

Miserlou/Zappa | flask | 1,736 | Zappa won't deploy from Travis with environment variables | <!--- Provide a general summary of the issue in the Title above -->

## Context

<!--- Provide a more detailed introduction to the issue itself, and why you consider it to be a bug -->

<!--- Also, please make sure that you are running Zappa _from a virtual environment_ and are using Python 2.7/3.6 -->

I'm trying to... | closed | 2018-12-22T23:51:09Z | 2018-12-23T00:00:54Z | https://github.com/Miserlou/Zappa/issues/1736 | [] | Benjamin-Lee | 1 |

seleniumbase/SeleniumBase | pytest | 2,510 | How to set login to run before executing entire test class | Hello, Michael:

I placed the login step in `setUpClass` so that it runs once before the entire test class is executed, but an error is reported when running. What should I do? Thank you.

`class MyTestClass(BaseCase):

@classmethod

def setUpClass(cls):

super(MyTestClass, cls).setUpClass()

cls.open("https://www.baidu.... | closed | 2024-02-18T01:41:53Z | 2024-02-18T04:05:18Z | https://github.com/seleniumbase/SeleniumBase/issues/2510 | [

"duplicate",

"question"

] | chenhaijun02 | 1 |

junyanz/pytorch-CycleGAN-and-pix2pix | deep-learning | 1,167 | Fine tuning a downloaded pre-trained cyclegan model | Hello,

First of all, thanks for this amazing repo!

After going through the tips & tricks and the first few pages of issues, I haven't found out how to start from one of your pretrained CycleGAN models and then resume training on my own dataset.

Specifically, it seems that for a given pretrained model (here style_... | open | 2020-10-21T10:31:55Z | 2020-10-30T09:31:32Z | https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix/issues/1167 | [] | SebastianPartarrieu | 0 |

jadore801120/attention-is-all-you-need-pytorch | nlp | 218 | when I run the `python preprocess.py -lang_src de -lang_trg en -share_vocab -save_data m30k_deen_shr.pkl`.I have faced a problem | When I run `python preprocess.py -lang_src de -lang_trg en -share_vocab -save_data m30k_deen_shr.pkl`, I run into the following problem: `

Namespace(data_src=None, data_trg=None, keep_case=False, lang_src='de', lang_trg='en', max_len=100, min_word_count=3, save_data='m30k_deen_shr.pkl', share_vocab=True)

Traceback (m... | open | 2024-02-09T02:20:59Z | 2024-03-28T08:38:29Z | https://github.com/jadore801120/attention-is-all-you-need-pytorch/issues/218 | [] | dapaolufuduizhang | 1 |

apify/crawlee-python | automation | 78 | Implement session management | - Implement the initial version.

- Session management in TS Crawlee - https://github.com/apify/crawlee/tree/v3.8.2/packages/core/src/session_pool | closed | 2024-03-25T16:26:11Z | 2024-04-15T15:14:23Z | https://github.com/apify/crawlee-python/issues/78 | [

"enhancement",

"t-tooling"

] | vdusek | 0 |

graphql-python/graphene | graphql | 818 | Search by docs | Hello! Could you please enable search by documentation? | closed | 2018-08-27T20:30:14Z | 2018-09-10T21:58:54Z | https://github.com/graphql-python/graphene/issues/818 | [

"📖 documentation"

] | oleksandr-kuzmenko | 3 |

polarsource/polar | fastapi | 5,145 | GitHub Benefit: Showing wrong information re: billing of collaborators for a free organization | Currently, we show the warning of GitHub seat pricing for free organizations with copy that makes it sound like it impacts them when it only impacts paid organizations | closed | 2025-03-03T09:53:21Z | 2025-03-03T12:56:20Z | https://github.com/polarsource/polar/issues/5145 | [

"bug"

] | birkjernstrom | 0 |

modAL-python/modAL | scikit-learn | 157 | decision_function instead of predict_proba | Several non-probabilistic estimators, such as SVMs in particular, can be used with uncertainty sampling. Scikit-Learn estimators that support the decision_function method can be used with the closest-to-hyperplane selection algorithm [[Bloodgood]](https://arxiv.org/pdf/1801.07875.pdf). This is actually a very popular s... | open | 2022-04-21T12:42:48Z | 2022-05-03T16:15:18Z | https://github.com/modAL-python/modAL/issues/157 | [] | lkurlandski | 5 |
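
The closest-to-hyperplane selection mentioned in the issue above can be illustrated with a short sketch. It assumes a binary classifier whose signed decision values (for instance, the output of a scikit-learn estimator's `decision_function`) are already available as a plain list; the function name `closest_to_hyperplane` and the sample scores are hypothetical and not part of modAL.

```python
def closest_to_hyperplane(decision_values, n_instances=1):
    """Return indices of the samples whose decision value is nearest to zero."""
    # A small absolute decision value means the sample lies close to the
    # separating hyperplane, i.e. the classifier is least certain about it.
    ranked = sorted(range(len(decision_values)), key=lambda i: abs(decision_values[i]))
    return ranked[:n_instances]


# Made-up signed distances standing in for decision_function output.
scores = [2.3, -0.1, 0.8, -1.7, 0.05]
query_idx = closest_to_hyperplane(scores, n_instances=2)
print(query_idx)  # [4, 1]
```

Ranking by absolute value keeps the sketch independent of any probability calibration, which is the point of using decision values instead of `predict_proba`.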

tflearn/tflearn | data-science | 795 | AttributeError: module 'tflearn' has no attribute 'get_layer_variables_by_scope' in gan.py | Traceback (most recent call last):

File "gan.py", line 60, in <module>

gen_vars = tflearn.get_layer_variables_by_scope('Generator')

AttributeError: module 'tflearn' has no attribute 'get_layer_variables_by_scope' | open | 2017-06-15T13:44:27Z | 2017-06-15T19:02:16Z | https://github.com/tflearn/tflearn/issues/795 | [] | forhonourlx | 1 |

pydantic/logfire | fastapi | 300 | FastAPI integration error | ### Description

I apologize if this belongs in the FastAPI issues instead of logfire. I'm not really sure who is the culprit here.

I'm attaching a [sample project](https://github.com/user-attachments/files/16090524/example.zip) to demonstrate an error when `logfire[fastapi]` is added to a FastAPI project.

> *... | closed | 2024-07-03T20:07:53Z | 2024-07-04T10:33:35Z | https://github.com/pydantic/logfire/issues/300 | [

"bug"

] | mcantrell | 4 |

miguelgrinberg/microblog | flask | 209 | CH17 Vagrant Issue | Hello. I'm having difficulty figuring out the precise sequence of commands needed to log into the Vagrant box as the `ubuntu` user. I've rebuilt/destroyed the box a number of times while trying to get this to work.

- `vagrant up`

- `vagrant ssh`

- `(vagrant) ssh ubuntu@192.168.33.10`

This attempt to ... | closed | 2020-03-03T23:30:34Z | 2020-03-04T18:17:54Z | https://github.com/miguelgrinberg/microblog/issues/209 | [

"question"

] | ADubhlaoich | 2 |

adbar/trafilatura | web-scraping | 488 | Extract more text | For this URL, "https://www.aia.com/en/health-wellness/healthy-living/healthy-mind/Managing-financial-stress",

I use

`downloaded = trafilatura.fetch_url(url)` followed by `trafilatura.bare_extraction(downloaded, url=url)`.

I get the text, and this is a good result. However, it only has the text with index 1, while the website has text ... | open | 2024-01-26T09:40:10Z | 2024-02-16T15:21:06Z | https://github.com/adbar/trafilatura/issues/488 | [

"bug"

] | vulinh48936 | 6 |

Lightning-AI/pytorch-lightning | pytorch | 20,465 | Stop renaming everything, you're annoying. Fix the names of the classes and don't rename them. | ### Bug description

description in title

### What version are you seeing the problem on?

v1.x

### How to reproduce the bug

```python

```

### Error messages and logs

```

# Error messages and logs here please

Support for `training_epoch_end` has been removed in v2.0.0. `ResnetTT` implements th... | closed | 2024-12-04T14:16:50Z | 2024-12-04T15:57:06Z | https://github.com/Lightning-AI/pytorch-lightning/issues/20465 | [

"bug",

"needs triage"

] | vadinabronin | 1 |

feder-cr/Jobs_Applier_AI_Agent_AIHawk | automation | 619 | [BUG]: It is skipping job applications | ### Describe the bug

logs

```zsh

2024-10-26 16:58:38.973 | DEBUG | src.aihawk_job_manager:write_to_file:386 - Writing job application result to file: skipped

2024-10-26 16:58:39.003 | DEBUG | src.aihawk_job_manager:write_to_file:413 - Job data appended to existing file: skipped

2024-10-26 16:58:39.004 | ... | closed | 2024-10-26T21:00:07Z | 2024-10-27T01:15:43Z | https://github.com/feder-cr/Jobs_Applier_AI_Agent_AIHawk/issues/619 | [

"bug"

] | surapuramakhil | 1 |

dask/dask | numpy | 11,073 | Unique Operation fails on dataframe repartitioned using set index after resetting the index |

**Describe the issue**:

**Minimal Complete Verifiable Example**:

```python

import pandas as pd

import dask.dataframe as dd

data = {

'Column1': range(30),

'Column2': range(30, 60)

}

pdf = pd.DataFrame(data)

# Convert the Pandas DataFrame to a Dask DataFrame with 3 partitions

ddf = dd.fro... | closed | 2024-04-25T16:25:55Z | 2024-04-25T17:49:05Z | https://github.com/dask/dask/issues/11073 | [

"needs triage"

] | mscanlon-exos | 1 |

deedy5/primp | web-scraping | 90 | Implement requests-like exception hierarchy | open | 2025-02-07T09:25:17Z | 2025-02-07T18:11:45Z | https://github.com/deedy5/primp/issues/90 | [

"enhancement"

] | deedy5 | 0 | |

CorentinJ/Real-Time-Voice-Cloning | deep-learning | 350 | What is required to make voice data for this? | My friend has given me a challenge to make him swear with this tool using regular data of him reading samples of text. I got it working with a recording of my own voice, but when I try to load his voice from an mp3 file I get "audioread.exceptions.NoBackendError".

What can I do to make the voice files processable? | closed | 2020-05-26T01:05:20Z | 2020-07-04T17:35:19Z | https://github.com/CorentinJ/Real-Time-Voice-Cloning/issues/350 | [] | DrasticGray | 2 |

mwaskom/seaborn | data-visualization | 2,821 | Calling `sns.heatmap()` changes matplotlib rcParams | See the following example

```python

import matplotlib as mpl

import matplotlib.pyplot as plt

import seaborn as sns

mpl.rcParams["figure.dpi"] = 120

mpl.rcParams["figure.facecolor"] = "white"

mpl.rcParams["figure.figsize"] = (9, 6)

data = sns.load_dataset("iris")

print(mpl.rcParams["figure.dpi"])

p... | closed | 2022-05-25T19:16:45Z | 2022-05-27T11:13:29Z | https://github.com/mwaskom/seaborn/issues/2821 | [] | tomicapretto | 2 |

zihangdai/xlnet | nlp | 224 | Text Classifier Prediction Problem | I trained a text classification model based on the XLNet pre-training model and got the corresponding ckpt file.

Then, based on this text classification model, I made predictions, but it al... | open | 2019-09-09T03:07:31Z | 2020-09-06T06:20:12Z | https://github.com/zihangdai/xlnet/issues/224 | [] | MissMcFly | 2 |

ultralytics/ultralytics | pytorch | 18,820 | Image shape issue | ### Search before asking

- [x] I have searched the Ultralytics YOLO [issues](https://github.com/ultralytics/ultralytics/issues) and [discussions](https://github.com/orgs/ultralytics/discussions) and found no similar questions.

### Question

If the images I am training are not square, for example, 300*200 pixels, wil... | open | 2025-01-22T10:04:10Z | 2025-01-22T10:45:56Z | https://github.com/ultralytics/ultralytics/issues/18820 | [

"question",

"detect"

] | chaojiniubi | 4 |

lundberg/respx | pytest | 273 | `respx_mock` doesn't handle `//` in the path-section of URLs. | Hi,

I just stumbled upon the issue described in the title, which can be reproduced with the following `pytest` file.

```python

import httpx

import pytest

from pydantic import AnyHttpUrl

from respx import MockRouter

@pytest.mark.parametrize(

"url",

[

"http://localhost", # OK

"ht... | open | 2024-08-02T19:54:59Z | 2025-01-21T10:25:46Z | https://github.com/lundberg/respx/issues/273 | [

"bug"

] | Skeen | 3 |

modoboa/modoboa | django | 2,410 | TypeError: '<' not supported between instances of 'memoryview' and 'memoryview' | # Impacted versions

* OS Type: Debian/Ubuntu

* OS Version: 20.04

* Database Type: not sure

* Database version: X.y

* Modoboa: 1.17.0

* installer used: Yes

* Webserver: Nginx

# Steps to reproduce

It appears to be part of the following CRON job:

```

# Quarantine cleanup

0 0 * * ... | closed | 2021-11-23T18:02:05Z | 2021-11-30T16:36:20Z | https://github.com/modoboa/modoboa/issues/2410 | [] | binarydad | 1 |

automl/auto-sklearn | scikit-learn | 867 | Dockerfile is not working | ## Describe the bug ##

The provided dockerfile does not build on Mac.

## To Reproduce ##

Steps to reproduce the behavior:

- Run docker build on the provided Dockerfile

- See error:

```

Collecting lazy_import

Downloading lazy_import-0.2.2.tar.gz (15 kB)

ERROR: Command errored out with exit status 1:... | closed | 2020-05-30T21:41:59Z | 2020-07-23T14:38:10Z | https://github.com/automl/auto-sklearn/issues/867 | [] | wlongxiang | 8 |

flairNLP/flair | pytorch | 2,914 | Regarding flair/ner-english-ontonotes model fine tuning | I thought of `Fine-Tuning` the model `flair/ner-english-ontonotes` here `https://huggingface.co/flair/ner-english-ontonotes`.

I couldn't find the following files under `Files and versions`: https://huggingface.co/flair/ner-english-ontonotes/tree/main

- tokenizer_config.json

- config.json

- some more tokens related f... | closed | 2022-08-22T08:18:31Z | 2023-04-02T16:54:42Z | https://github.com/flairNLP/flair/issues/2914 | [

"question",

"wontfix"

] | pratikchhapolika | 4 |

NVIDIA/pix2pixHD | computer-vision | 56 | minor spell typo | Your `no_flip` option confuses "argumentation" with "augmentation".

Check here:

https://github.com/NVIDIA/pix2pixHD/blob/master/options/base_options.py

Maybe a developer got too bored with the repeated word "argument" :/ | closed | 2018-08-25T08:28:09Z | 2019-06-09T23:28:52Z | https://github.com/NVIDIA/pix2pixHD/issues/56 | [] | syyunn | 2 |

nidhaloff/igel | automation | 6 | provide a way to do one hot encoding | the user should be able to use one hot encoding in the yaml file

| closed | 2020-09-08T22:48:10Z | 2020-09-10T12:10:15Z | https://github.com/nidhaloff/igel/issues/6 | [

"enhancement",

"good first issue"

] | nidhaloff | 1 |

seleniumbase/SeleniumBase | pytest | 3,111 | The CF CAPTCHAs changed again (on Linux) | ## The CF CAPTCHAs changed again (on Linux)

### CI started failing:

<img width="830" alt="Screenshot 2024-09-09 at 10 43 29 AM" src="https://github.com/user-attachments/assets/65b2d952-dbc8-4437-912c-498f024454ff">

---

### This is how it normally looks when passing:

(`PyAutoGUI` clicks the CAPTCHA succes... | closed | 2024-09-09T14:55:54Z | 2024-09-11T05:44:32Z | https://github.com/seleniumbase/SeleniumBase/issues/3111 | [

"workaround exists",

"UC Mode / CDP Mode",

"Fun"

] | mdmintz | 6 |

seleniumbase/SeleniumBase | web-scraping | 2,131 | Seleniumbase is crashed when i run the script | SeleniumBase crashes when I run the script. Is there a problem with it? | closed | 2023-09-22T19:27:23Z | 2023-09-22T20:33:59Z | https://github.com/seleniumbase/SeleniumBase/issues/2131 | [

"invalid",

"can't reproduce"

] | ahmedabdelhamedz | 1 |

albumentations-team/albumentations | deep-learning | 2,061 | [MaskDropout] Remove objects with particular mask classes. | Use case: tracking. We may have object moving, and to simulate occlusions, we cut it out. But only this object, not others. | open | 2024-11-06T01:39:24Z | 2024-11-06T01:39:24Z | https://github.com/albumentations-team/albumentations/issues/2061 | [

"enhancement"

] | ternaus | 0 |

mljar/mercury | data-visualization | 360 | PDF download not working | I am trying to download a PDF, but the web page becomes greyed out and unresponsive, with a spinner that keeps spinning

| open | 2023-09-04T12:00:54Z | 2023-10-23T10:53:26Z | https://github.com/mljar/mercury/issues/360 | [

"bug"

] | gioxc88 | 8 |

chiphuyen/stanford-tensorflow-tutorials | nlp | 30 | advise to add a Jupyter Notebook | Hi, here is a suggestion: how about adding a "Jupyter Notebook" to the examples path? | open | 2017-06-18T09:05:43Z | 2017-07-11T17:47:48Z | https://github.com/chiphuyen/stanford-tensorflow-tutorials/issues/30 | [] | DoneHome | 1 |

huggingface/transformers | tensorflow | 36,040 | `Llama-3.2-11B-Vision-Instruct` (`mllama`) FSDP fails if grad checkpointing is enabled | ### System Info

1 node with 4 A100 40GB GPUs launched by SkyPilot (`A100:4`) on GCP

### Who can help?

### What happened?

FSDP SFT fine-tuning of `meta-llama/Llama-3.2-90B-Vision-Instruct` on 1 node with 4 `A100-40GB` GPU-s with TRL trainer (`trl.SFTTrainer`) started to fail for us after upgrade to `transformers>=... | open | 2025-02-05T01:23:16Z | 2025-03-08T17:55:39Z | https://github.com/huggingface/transformers/issues/36040 | [

"bug"

] | nikg4 | 3 |

datapane/datapane | data-visualization | 376 | [Bug]: Report upload errror | ### Is there an existing issue for this?

- [X] I have searched for similar issues and discussions

### Bug Description

```markdown

I used dp.upload_report() to upload a report. It was fine until yesterday, but today, it is throwing me the below error. The dp.View uses Group and HTML. Within Group I have Media ... | closed | 2023-05-17T20:34:23Z | 2023-05-24T05:38:43Z | https://github.com/datapane/datapane/issues/376 | [

"bug",

"triage"

] | anu-kuncheria | 1 |
