repo_name stringlengths 9 75 | topic stringclasses 30 values | issue_number int64 1 203k | title stringlengths 1 976 | body stringlengths 0 254k | state stringclasses 2 values | created_at stringlengths 20 20 | updated_at stringlengths 20 20 | url stringlengths 38 105 | labels listlengths 0 9 | user_login stringlengths 1 39 | comments_count int64 0 452 |
|---|---|---|---|---|---|---|---|---|---|---|---|

davidsandberg/facenet | tensorflow | 524 | Wrong file name format when validate on LFW | When I try to run the [validation on LFW](https://github.com/davidsandberg/facenet/wiki/Validate-on-lfw), it shows no files found.

The file name in LFW data set is like `Aaron_Eckhart_0001.jpg`, while after alignment it is like `Aaron_Eckhart_0001_0.jpg`. I guess the trailing number is to denote different faces in one picture.

The script that produces this result is `src/align/align_dataset_mtcnn.py`.

But `lfw.py` assumes the original filename format, so the validation script cannot find any file in the aligned directory, which leads to an error.

Possible fixes, IMO, are to enumerate the files in the folder rather than trying a fixed filename, or to change the filename format in `lfw.py`. I would be grateful if someone could confirm this issue; then we can fix it.
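
The first suggested fix (enumerating files instead of assuming one fixed name) could look roughly like the sketch below. `find_aligned_image` is a hypothetical helper, not part of `lfw.py`; it assumes aligned files keep the original stem and may append a `_<face index>` suffix, as described above.

```python
from pathlib import Path
from typing import Optional


def find_aligned_image(person_dir: Path, name: str, index: int) -> Optional[Path]:
    """Return the image for (name, index), tolerating a trailing face suffix.

    Hypothetical helper: tries `Name_0001.<ext>` first, then `Name_0001_<n>.<ext>`.
    """
    stem = f"{name}_{index:04d}"
    # Original-format name first, then any suffixed variant, each in sorted order.
    candidates = sorted(person_dir.glob(stem + ".*"))
    candidates += sorted(person_dir.glob(stem + "_*.*"))
    return candidates[0] if candidates else None
```

Returning `None` instead of raising lets the caller decide whether a missing pair should be skipped or reported.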

| closed | 2017-11-10T03:31:20Z | 2017-11-12T14:36:43Z | https://github.com/davidsandberg/facenet/issues/524 | [] | YF-Tung | 1 |

huggingface/datasets | deep-learning | 6,561 | Document YAML configuration with "data_dir" | See https://huggingface.co/datasets/uonlp/CulturaX/discussions/15#6597e83f185db94370d6bf50 for reference | open | 2024-01-05T14:03:33Z | 2024-01-05T14:06:18Z | https://github.com/huggingface/datasets/issues/6561 | [ "documentation" ] | severo | 1 |

huggingface/pytorch-image-models | pytorch | 2,284 | [BUG] SwinTransformer Padding Backwards in PatchMerge | **Describe the bug**

In [this line](https://github.com/huggingface/pytorch-image-models/blob/ee5b1e8217134e9f016a0086b793c34abb721216/timm/models/swin_transformer.py#L438) the padding for H/W is backwards. I found this out by passing in an image size of (648,888) during validation but it's obvious from the torch docs and the code.

```python

from typing import Callable, Optional

import torch.nn as nn


class PatchMerging(nn.Module):
    """ Patch Merging Layer.
    """

    def __init__(
            self,
            dim: int,
            out_dim: Optional[int] = None,
            norm_layer: Callable = nn.LayerNorm,
    ):
        """
        Args:
            dim: Number of input channels.
            out_dim: Number of output channels (or 2 * dim if None)
            norm_layer: Normalization layer.
        """
        super().__init__()
        self.dim = dim
        self.out_dim = out_dim or 2 * dim
        self.norm = norm_layer(4 * dim)
        self.reduction = nn.Linear(4 * dim, self.out_dim, bias=False)

    def forward(self, x):
        B, H, W, C = x.shape
        pad_values = (0, 0, 0, W % 2, 0, H % 2)  # Originally (0, 0, 0, H % 2, 0, W % 2) which is wrong
        x = nn.functional.pad(x, pad_values)
        _, H, W, _ = x.shape
        x = x.reshape(B, H // 2, 2, W // 2, 2, C).permute(0, 1, 3, 4, 2, 5).flatten(3)
        x = self.norm(x)
        x = self.reduction(x)
        return x

```

Since the input is B, H, W, C, the padding should be in reverse order like (C_front, C_back, W_front, W_back, H_front, H_back).
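
A small pure-Python sketch of that ordering rule may make the bug easier to see. `padded_shape` is a hypothetical helper that only mimics how `torch.nn.functional.pad` maps a flat pad tuple onto dimensions (pairs are consumed starting from the last dimension); it does not call PyTorch.

```python
def padded_shape(shape, pad):
    """Shape after padding, with (front, back) pairs applied from the last dim backwards."""
    if len(pad) % 2 != 0 or len(pad) // 2 > len(shape):
        raise ValueError("pad must hold (front, back) pairs for at most len(shape) dims")
    out = list(shape)
    for i in range(len(pad) // 2):
        dim = len(shape) - 1 - i  # pair 0 -> last dim, pair 1 -> second to last, ...
        out[dim] += pad[2 * i] + pad[2 * i + 1]
    return tuple(out)


B, H, W, C = 1, 3, 4, 8  # odd height, even width
wrong = padded_shape((B, H, W, C), (0, 0, 0, H % 2, 0, W % 2))  # pads W, leaves H odd
right = padded_shape((B, H, W, C), (0, 0, 0, W % 2, 0, H % 2))  # pads H as intended
```

With these numbers `wrong` is `(1, 3, 5, 8)` and `right` is `(1, 4, 4, 8)`, so only the corrected order yields dimensions that the later `H // 2`, `W // 2` reshape can split evenly.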

Thanks,

-Collin

| closed | 2024-09-21T20:15:01Z | 2024-09-22T00:42:00Z | https://github.com/huggingface/pytorch-image-models/issues/2284 | [ "bug" ] | collinmccarthy | 2 |

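ymcui/Chinese-LLaMA-Alpaca-2 | nlp | 387 | Problem when generating summaries with the full model | ### Items that must be checked before submitting

- [X] Make sure you are using the latest code from the repository (git pull); some issues have already been resolved and fixed.

- [X] I have read the [project documentation](https://github.com/ymcui/Chinese-LLaMA-Alpaca-2/wiki) and the [FAQ section](https://github.com/ymcui/Chinese-LLaMA-Alpaca-2/wiki/常见问题), searched the existing issues, and found no similar problem or solution.

- [X] Third-party plugin issues: for example [llama.cpp](https://github.com/ggerganov/llama.cpp), [LangChain](https://github.com/hwchase17/langchain), [text-generation-webui](https://github.com/oobabooga/text-generation-webui); it is also recommended to look for solutions in the corresponding projects.

### Issue type

Other issue

### Base model

Chinese-Alpaca-2 (7B/13B)

### Operating system

Windows

### Detailed description of the problem

Last time I was told the model was different, so I switched to another model. What I am using is: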

```

Used directly after downloading

# Followed the tutorial

https://github.com/ymcui/Chinese-LLaMA-Alpaca-2/wiki/langchain_zh

# The summarization step in it ran into a problem

python langchain_sum.py --model_path .\chinese-alpaca-2-7b-hf\ --file_path doc.txt --gpu_id 0

```

### Dependencies (must be provided for code-related issues)

```

peft 0.6.0.dev0

torch 2.1.0+cu121

transformers 4.31.0

sentencepiece 0.1.97

bitsandbytes 0.41.0

langchain 0.0.146

sentence-transformers 2.2.2

pydantic 1.10.8

faiss 1.7.1

```

### Run logs or screenshots

```

# Paste the run log here (inside this code block)

Namespace(file_path='mydoc.txt', model_path='chinese-alpaca-2-7b-hf', gpu_id='0', chain_type='refine')

loading LLM...

You are using the legacy behaviour of the <class 'transformers.models.llama.tokenization_llama.LlamaTokenizer'>. This means that tokens that come after special tokens will not be properly handled. We recommend you to read the related pull request available at https://github.com/huggingface/transformers/pull/24565

Loading checkpoint shards: 100%|██████████| 2/2 [01:19<00:00, 39.64s/it]

Xformers is not installed correctly. If you want to use memory_efficient_attention to accelerate training use the following command to install Xformers

pip install xformers.

Traceback (most recent call last):

File "C:\Users\zx-cent\PycharmProjects\pythonaimodel\my_test.py", line 54, in <module>

model = HuggingFacePipeline.from_model_id(model_id=model_path,

File "C:\Users\zx-cent\.conda\envs\pythonaivenv\lib\site-packages\langchain\llms\huggingface_pipeline.py", line 130, in from_model_id

return cls(

File "pydantic\main.py", line 341, in pydantic.main.BaseModel.__init__

pydantic.error_wrappers.ValidationError: 1 validation error for HuggingFacePipeline

pipeline_kwargs

extra fields not permitted (type=value_error.extra)

``` | closed | 2023-11-03T01:26:42Z | 2023-11-03T02:26:26Z | https://github.com/ymcui/Chinese-LLaMA-Alpaca-2/issues/387 | [] | 1042312930 | 2 |

tensorpack/tensorpack | tensorflow | 981 | Sharp mAP decrease after applying DoReFa to the ssd-vgg16 network | Sorry for not following the template; this problem is more relevant to DoReFa quantization than to tensorpack itself, and I cannot find a more suitable place to post.

I am trying to implement DoReFa quantization on the ssd-vgg16 network, using the VOC dataset for the classification and regression tasks. The results, however, show an unreasonably sharp decrease (from the normal mAP of 77% to around 10%) under the 8 8 8 DoReFa configuration. Has similar work been done, and was the same trouble encountered?

| closed | 2018-11-15T01:52:22Z | 2018-11-15T03:04:23Z | https://github.com/tensorpack/tensorpack/issues/981 | [ "unrelated" ] | asjmasjm | 1 |

miguelgrinberg/python-socketio | asyncio | 928 | Threading error when using python-socketio for a few minutes. | **Describe the bug**

Whenever I use python-socketio on a Raspberry Pi, sending a lot of packets eventually (after a few minutes) causes a threading error that crashes the bot, which is unacceptable when working with robots.

**Exception**

```

Exception in thread Thread-3:

Traceback (most recent call last)

File "/usr/lib/python3.7/threading.py...

engineio/client.py, line 367

RuntimeError: can't start new thread

packet queue is empty, aborting

```

Note that this program sends at most 30 packets per second.

| closed | 2022-05-24T19:06:45Z | 2022-05-24T19:29:38Z | https://github.com/miguelgrinberg/python-socketio/issues/928 | [] | LeoDog896 | 1 |

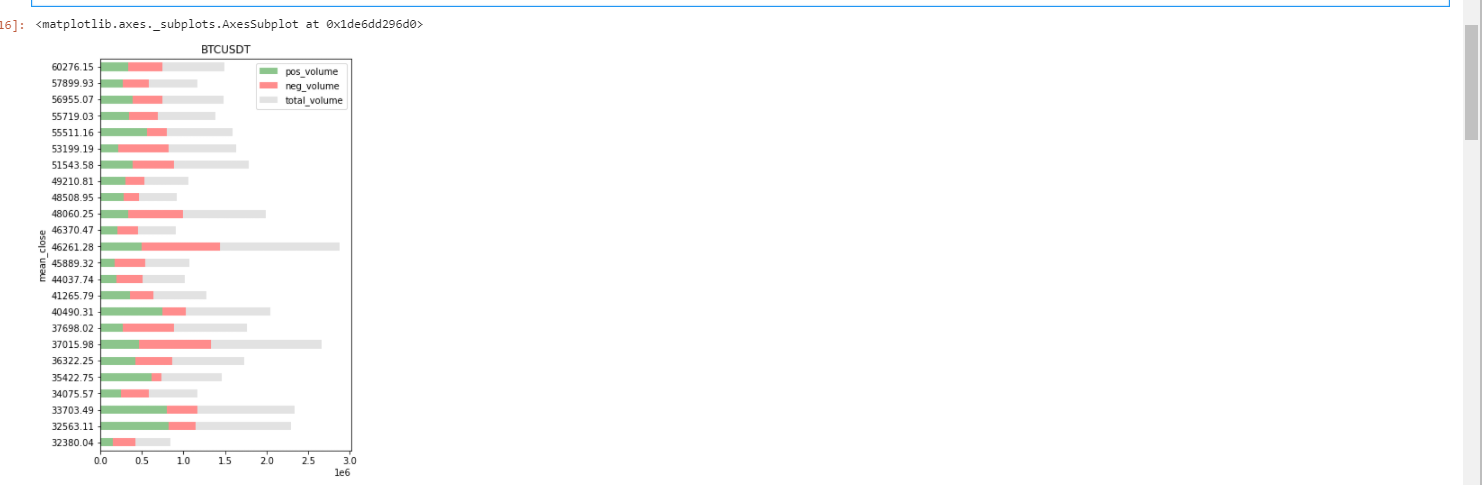

twopirllc/pandas-ta | pandas | 398 | pandas-ta's VPVR shows a different result | **Which version are you running? The latest version is on Github. Pip is for major releases.**

```python

0.3.30b0

```

**Upgrade.**

```sh

$ pip install -U git+https://github.com/twopirllc/pandas-ta

```

**Describe the bug**

I have used the same time frame here, the same start and end dates, the same exchange (Binance), and the same number of columns. Here is the .csv file of OHLCV data starting from January 21 2021: [BTC-USDT.csv](https://github.com/twopirllc/pandas-ta/files/7243057/BTC-USDT.csv).

**Python code that shows VPVR of BTC-USDT**

```python

import pandas as pd

import numpy as np

from scipy import stats, signal

import plotly.express as px

import plotly.graph_objects as go

import pandas_ta as ta

# Fetch OHLCV data

data = pd.read_csv('ohlcv/BTC-USDT.csv')

vp=ta.vp(data['close'],data['volume'],24)

# Set the index to mean_close and in ascending order

vp['mean_close'] = round(vp['mean_close'], 2)

vp.set_index('mean_close', inplace=True)

vp.sort_index(ascending=True, inplace=True)

#print("Sorted by mean_close\n", vp, "\n") # Visual Table Check

# Take the last three columns and plot them with horizontal bars

vp[vp.columns[-3:]].plot(
    kind='barh',
    figsize=(5, 8),
    title="BTCUSDT",
    color=['green', 'red', 'silver'],
    alpha=0.45,
    stacked=True,
)

```

**Screenshots of pandas-ta show that 46k has the highest volume**, while on TradingView 34k has the highest volume.

| closed | 2021-09-28T10:26:49Z | 2023-11-22T05:06:39Z | https://github.com/twopirllc/pandas-ta/issues/398 | [ "enhancement", "help wanted" ] | pwneddesal | 5 |

voila-dashboards/voila | jupyter | 1,047 | Deploying to Heroku not working on v0.3 | ## Description

I've been struggling to get Voila to deploy to Heroku recently, and have just figured out that I only have issues when using version 0.3. For example, the template repo (https://github.com/voila-dashboards/voila-heroku) no longer works on Heroku because it installs version 0.3 (I had to update `runtime.txt` to use 3.8.10, as 3.7.5 isn't available on the default Heroku-20 stack).

Specifically I get a timeout error in the Heroku logs. It looks like the Voila server starts up okay but doesn't manage to send anything when a page is requested:

```

2021-12-18T13:09:03.238707+00:00 heroku[web.1]: Starting process with command `voila --port=26507 --no-browser --template=material --enable_nbextensions=True notebooks/bqplot.ipynb`

2021-12-18T13:09:05.949936+00:00 app[web.1]: [Voila] Using /tmp to store connection files

2021-12-18T13:09:05.950308+00:00 app[web.1]: [Voila] Storing connection files in /tmp/voila_l56jo0cr.

2021-12-18T13:09:05.950375+00:00 app[web.1]: [Voila] Serving static files from /app/.heroku/python/lib/python3.8/site-packages/voila/static.

2021-12-18T13:09:06.180485+00:00 app[web.1]: [Voila] Voilà is running at:

2021-12-18T13:09:06.180486+00:00 app[web.1]: http://localhost:26507/

2021-12-18T13:09:13.000000+00:00 app[api]: Build succeeded

2021-12-18T13:10:03.342378+00:00 heroku[web.1]: Error R10 (Boot timeout) -> Web process failed to bind to $PORT within 60 seconds of launch

2021-12-18T13:10:03.368529+00:00 heroku[web.1]: Stopping process with SIGKILL

2021-12-18T13:10:03.528533+00:00 heroku[web.1]: Process exited with status 137

2021-12-18T13:10:03.609381+00:00 heroku[web.1]: State changed from starting to crashed

```

If I change the `requirements.txt` to `voila==0.2.16`, it works as expected. Running Heroku locally also works (`heroku local`) as expected, even with version 0.3. I tried to set max boot time to 180 secs and it still didn't work.

## Reproduce

To reproduce, follow the instructions in https://github.com/voila-dashboards/voila-heroku, but change the `runtime.txt` file to `python-3.8.10` (or anything else on Heroku-20).

| closed | 2021-12-18T13:16:27Z | 2021-12-19T13:04:16Z | https://github.com/voila-dashboards/voila/issues/1047 | [ "bug" ] | samharrison7 | 2 |

BlinkDL/RWKV-LM | pytorch | 239 | Flash Attention | Hi,

Thanks for releasing RWKV! I got an error saying RWKV doesn't support Flash Attention. Is Flash Attention support planned?

Thank you! | closed | 2024-04-21T21:55:15Z | 2024-04-24T22:54:57Z | https://github.com/BlinkDL/RWKV-LM/issues/239 | [] | fakerybakery | 2 |

ageitgey/face_recognition | python | 1,244 | [QUESTION] Recognize face with machine learning of *multiple* images of face? | * face_recognition version: Newest on Nov 11 2020

* Python version: 3

* Operating System: Ubuntu

Is it possible to load multiple images to get a better facial recognition result?

I want to load images of my face in different lighting conditions, looking in different directions etc. to see if I can achieve a better effect. | closed | 2020-11-12T00:14:14Z | 2020-11-13T15:45:57Z | https://github.com/ageitgey/face_recognition/issues/1244 | [] | Hat000 | 1 |

taverntesting/tavern | pytest | 683 | Documentation contains reference to non-existent --tavern-beta-new-traceback flag | See: https://tavern.readthedocs.io/en/latest/debugging.html?highlight=tavern-beta-new-traceback | closed | 2021-04-30T18:19:56Z | 2021-05-08T13:32:19Z | https://github.com/taverntesting/tavern/issues/683 | [] | jsfehler | 0 |

wkentaro/labelme | computer-vision | 810 | [BUG] OSError: cannot write mode RGBA as JPEG | When I go to the second image via the Next button, or try to save the current one, Python crashes and labelme closes.

The error is:

in _save raise OSError(f"cannot write mode {im.mode} as JPEG") from e

OSError: cannot write mode RGBA as JPEG

Please help me soon, I have a deadline.

| closed | 2020-12-12T12:56:54Z | 2023-04-05T14:55:01Z | https://github.com/wkentaro/labelme/issues/810 | [ "issue::bug", "priority: high" ] | ApoorvaOjha | 4 |

litestar-org/litestar | api | 3,356 | Docs: Update `usage/security/guards` chapter | ### Summary

Follow-up from discussion: https://github.com/orgs/litestar-org/discussions/3355

Suggesting changes to chapter: https://docs.litestar.dev/latest/usage/security/guards.html

* Guards take a `Request` object as first argument, not an `ASGIConnection`. Needs correction throughout the chapter.

* Add examples for how to access path parameters, query parameters, and query body from within a guard. | closed | 2024-04-09T10:18:04Z | 2025-03-20T15:54:34Z | https://github.com/litestar-org/litestar/issues/3356 | [ "Documentation :books:" ] | aranvir | 4 |

tflearn/tflearn | data-science | 961 | How to get tensorflow session by tflearn? | Hello,

I just want to get the running session(in tensorflow) by tflearn.

I saw that [code](https://github.com/tflearn/tflearn/blob/master/examples/basics/weights_persistence.py) line65 in tflearn example:

`with model.session.as_default()`

Is that model.session equal to the running tensorflow session?

| open | 2017-11-20T02:02:56Z | 2017-11-20T02:02:56Z | https://github.com/tflearn/tflearn/issues/961 | [] | polar99 | 0 |

django-cms/django-cms | django | 7,571 | [DOC] The suggested aldryn-search package does not work anymore with django>=4 |

## Description

https://docs.django-cms.org/en/latest/topics/searchdocs.html

The above documentation page suggests the `aldryn-search` package, which no longer works with Django >= 4.0 (bug with Signal using `providing_args`).

Since django-cms 3.11 is announced to be compatible with Django 4, the documentation should no longer recommend this package.

* [ ] Yes, I want to help fix this issue and I will join #workgroup-documentation on [Slack](https://www.django-cms.org/slack) to confirm with the team that a PR is welcome.

* [x] No, I only want to report the issue.

| closed | 2023-05-31T10:33:28Z | 2024-07-31T06:48:52Z | https://github.com/django-cms/django-cms/issues/7571 | [ "good first issues", "component: documentation", "needs contribution", "Easy pickings" ] | fabien-michel | 15 |

piskvorky/gensim | nlp | 2,775 | xml.etree.cElementTree was deprecated and removed in Python 3.9 in favor of ElementTree | #### Problem description

xml.etree.cElementTree was deprecated and removed in Python 3.9 in favor of ElementTree

Ref : https://github.com/python/cpython/pull/19108
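
A minimal sketch of the replacement import, assuming the calling code only uses the common parsing API; the XML snippet is made up for illustration.

```python
# xml.etree.cElementTree is removed in Python 3.9. The plain ElementTree module
# has picked up the C accelerator automatically (when available) since Python 3.3.
import xml.etree.ElementTree as ET

# Made-up document, only to show that the parsing API is unchanged.
root = ET.fromstring("<corpus><doc id='1'>hello</doc><doc id='2'>world</doc></corpus>")
texts = [doc.text for doc in root.iter("doc")]
```

Because the public API is the same, the change is usually limited to the import line itself.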

#### Versions

Python 3.9

I will raise a PR for this issue. | closed | 2020-03-29T14:43:18Z | 2020-04-24T19:54:33Z | https://github.com/piskvorky/gensim/issues/2775 | [] | tirkarthi | 0 |

CTFd/CTFd | flask | 2,148 | Return solves/fails in Challenge plugin class | I found a comment in a plugin that suggested that we should return the solve/fail object in Challenge plugins. I agree with this and it doesn't seem to introduce a breaking change so might as well do it.

We should return the solve object in this function:

https://github.com/CTFd/CTFd/blob/a2c81cb03a398f3ca1819642b8e8dba181dccb22/CTFd/plugins/challenges/__init__.py#L132 | open | 2022-06-24T16:00:55Z | 2022-06-24T16:00:55Z | https://github.com/CTFd/CTFd/issues/2148 | [] | ColdHeat | 0 |

graphql-python/graphene-django | django | 1,383 | TypeError: Object of type OperationType is not JSON serializable when there's an Exception | *My code has not changed, I simply upgraded Graphene (graphene-python, graphene-django)*

* **What is the current behavior?**

Since upgrading to the latest versions (on Django 3.2.16), I have started getting the following errors when an exception is raised in my resolvers and mutations:

```py

platform | Traceback (most recent call last):

platform | File "/usr/local/lib/python3.9/site-packages/django/core/handlers/exception.py", line 47, in inner

platform | response = get_response(request)

platform | File "/usr/local/lib/python3.9/site-packages/django/core/handlers/base.py", line 181, in _get_response

platform | response = wrapped_callback(request, *callback_args, **callback_kwargs)

platform | File "/usr/local/lib/python3.9/site-packages/django/views/decorators/csrf.py", line 54, in wrapped_view

platform | return view_func(*args, **kwargs)

platform | File "/usr/local/lib/python3.9/site-packages/django/views/generic/base.py", line 70, in view

platform | return self.dispatch(request, *args, **kwargs)

platform | File "/usr/local/lib/python3.9/site-packages/django/utils/decorators.py", line 43, in _wrapper

platform | return bound_method(*args, **kwargs)

platform | File "/usr/local/lib/python3.9/site-packages/django/utils/decorators.py", line 130, in _wrapped_view

platform | response = view_func(request, *args, **kwargs)

platform | File "/usr/local/lib/python3.9/site-packages/graphene_django/views.py", line 179, in dispatch

platform | result, status_code = self.get_response(request, data, show_graphiql)

platform | File "/usr/local/lib/python3.9/site-packages/graphene_django/views.py", line 224, in get_response

platform | result = self.json_encode(request, response, pretty=show_graphiql)

platform | File "/usr/local/lib/python3.9/site-packages/graphene_django/views.py", line 235, in json_encode

platform | return json.dumps(d, separators=(",", ":"))

platform | File "/usr/local/lib/python3.9/json/__init__.py", line 234, in dumps

platform | return cls(

platform | File "/usr/local/lib/python3.9/json/encoder.py", line 199, in encode

platform | chunks = self.iterencode(o, _one_shot=True)

platform | File "/usr/local/lib/python3.9/json/encoder.py", line 257, in iterencode

platform | return _iterencode(o, 0)

platform | File "/usr/local/lib/python3.9/json/encoder.py", line 179, in default

platform | raise TypeError(f'Object of type {o.__class__.__name__} '

platform | TypeError: Object of type OperationType is not JSON serializable

```
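
The final frames show `json.dumps` being handed a value it cannot encode. The sketch below reproduces that failure mode with a stand-in enum and shows one generic way to make such values serializable; `OperationKind` and `encode_enum` are hypothetical names, and this is not presented as the graphene-django fix.

```python
import json
from enum import Enum


class OperationKind(Enum):
    """Stand-in for an enum value that ends up in the response payload."""
    QUERY = "query"
    MUTATION = "mutation"


def encode_enum(obj):
    """`default` hook for json.dumps: unwrap enums, reject everything else."""
    if isinstance(obj, Enum):
        return obj.value
    raise TypeError(f"Object of type {obj.__class__.__name__} is not JSON serializable")


payload = {"errors": [{"message": "boom", "operation": OperationKind.QUERY}]}

try:
    json.dumps(payload)
    failed = False
except TypeError:
    failed = True  # same class of error as in the traceback above

encoded = json.dumps(payload, default=encode_enum, separators=(",", ":"))
```

The `default` hook is only called for objects the encoder does not already understand, so ordinary strings, numbers, lists, and dicts are unaffected.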

Everything was working fine before, until I upgraded to these versions:

```

Django==3.2.16

...

graphene==3.2.1

graphene-django==3.0.0

graphene-file-upload==1.3.0

graphql-core==3.2.3

graphql-relay==3.2.0

...

```

* **What is the expected behavior?**

I expected everything to work as it used to before since I didn't change application code.

* **What is the motivation / use case for changing the behavior?**

I was actually trying to get the latest version of the `Graphiql` GUI when I started. But then I noticed that my dependencies were very outdated.

* **Please tell us about your environment:**

- Version: 3.0.0

- Platform: Python 3.10.9, macOS Ventura (Chip M1 Pro)

| open | 2023-01-17T06:44:00Z | 2023-04-25T18:32:27Z | https://github.com/graphql-python/graphene-django/issues/1383 | [ "🐛bug" ] | sithembiso | 1 |

netbox-community/netbox | django | 17,772 | My CI/CD breaks as Tag v4.1.4 doesn't point to PR merge commit for master branch. It points to commit in develop. | ### Deployment Type

Self-hosted

### Triage priority

N/A

### NetBox Version

v4.1.3

### Python Version

3.12

### Steps to Reproduce

I have a GitHub workflow in my repo that tracks the NetBox master branch every day. It checks the tags and finds the latest release by parsing the latest tag. I then review the code again and merge it into my local main branch.

It worked well for previous releases. It stopped working with v4.1.4.

```

UPSTREAM_TAG=$(git ls-remote --tags | grep -h $(git rev-parse --short ${{ env.upstream_remote_name }}/${{ env.upstream_branch_name }} ) | awk 'END{print}' | awk '{print $2}' | cut -d'/' -f 3)

UPSTREAM_HEAD_COMMIT=$(git rev-parse --short ${{ env.upstream_remote_name }}/${{ env.upstream_branch_name }} )

```

The tag for the latest version, v4.1.4, doesn't point to the "Merge pull request" commit in the master branch; put differently, the latest commit in the master branch is not in the repo's tag list. This is inconsistent.

The tags can be fetched with `git ls-remote --tags` or `git ls-remote --tags https://github.com/netbox-community/netbox.git`.

The tag v4.1.4 points to d2cbdfe7d742f0d2db7989ed27cde466c8366dea in the develop branch,

while the other tags point to "Merge pull request" commits in the master branch.

Tag v4.1.4 should point to 6ea0c0c in master branch

```

7bc0d34196323ac992d7ec80b1caa48e6094d88d refs/tags/v4.1.0

Merge pull request #17350 from netbox-community/develop <--- commit in master branch

0e34fba92223348e0bf4375b8d380324ff5e1beb refs/tags/v4.1.1

Merge pull request #17478 from netbox-community/develop <--- commit in master branch

ead6e637f4aecc4717b10c71f9140c94040da264 refs/tags/v4.1.2

Merge pull request #17626 from netbox-community/develop <--- commit in master branch

6ea0c0c3e910d1104fd0fbe5e6cd07198862d1fa refs/tags/v4.1.3

Merge pull request #17658 from netbox-community/develop <--- commit in master branch

d2cbdfe7d742f0d2db7989ed27cde466c8366dea refs/tags/v4.1.4

Release v4.1.4 <--- commit in develop branch

```
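
The lookup that the workflow does with `grep`, `awk`, and `cut` can be sketched in Python as below, which makes it easier to see why an untagged master head yields no tag. `tags_for_commit` is a hypothetical helper; the sample text is the listing above, with its annotation lines left in to show that they are ignored.

```python
from typing import List


def tags_for_commit(ls_remote_output: str, commit_prefix: str) -> List[str]:
    """Return tag names whose commit hash starts with commit_prefix."""
    prefix = "refs/tags/"
    tags: List[str] = []
    for line in ls_remote_output.splitlines():
        parts = line.split()
        # Keep only "<sha> refs/tags/<name>" lines; skip annotations and blanks.
        if len(parts) != 2 or not parts[1].startswith(prefix):
            continue
        sha, ref = parts
        name = ref[len(prefix):]
        if name.endswith("^{}"):  # peeled entry that git prints for annotated tags
            name = name[:-3]
        if sha.startswith(commit_prefix) and name not in tags:
            tags.append(name)
    return tags


SAMPLE = """\
6ea0c0c3e910d1104fd0fbe5e6cd07198862d1fa refs/tags/v4.1.3
Merge pull request #17658 from netbox-community/develop <--- commit in master branch
d2cbdfe7d742f0d2db7989ed27cde466c8366dea refs/tags/v4.1.4
Release v4.1.4 <--- commit in develop branch
"""

on_develop = tags_for_commit(SAMPLE, "d2cbdfe")
unknown_head = tags_for_commit(SAMPLE, "0123abc")  # a head commit that no tag points to
```

An empty result such as `unknown_head` corresponds to the case described in this issue, where the master head is not in the tag list and `UPSTREAM_TAG` ends up empty.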

### Expected Behavior

Tag v4.1.4 should point to 6ea0c0c in master branch

### Observed Behavior

Tag v4.1.4 points to d2cbdfe7d742f0d2db7989ed27cde466c8366dea in develop branch | closed | 2024-10-16T10:05:01Z | 2024-10-16T11:52:28Z | https://github.com/netbox-community/netbox/issues/17772 | [] | marsteel | 0 |

AUTOMATIC1111/stable-diffusion-webui | deep-learning | 15,518 | [Bug]: Missing scrollbar on extra networks tabs with tree view enabled | ### Checklist

- [X] The issue exists after disabling all extensions

- [X] The issue exists on a clean installation of webui

- [ ] The issue is caused by an extension, but I believe it is caused by a bug in the webui

- [X] The issue exists in the current version of the webui

- [X] The issue has not been reported before recently

- [ ] The issue has been reported before but has not been fixed yet

### What happened?

I have lots of Loras in various subfolders. With the dir view enabled ("Extra Networks directory view style" is set to dirs), the folder names fill up the entire available space on the Loras tab and get cut off. Folder names that don't fit on the screen get cut off and the lora thumbnails aren't visible either. I can drag the resize handle down until all the folders, and the thumbnails below them are visible but this is cumbersome compared to the previous behavior.

I think this broke when the resize handle was introduced and the extra-network-pane divs were switched to flexbox.

I'm not good enough with CSS to fix this but removing the `height: calc(100vh - 24rem);` rule from `.extra-network-pane` in style.css alleviates the problem by making the extra networks pane big enough that all content fits without scrolling (but this is probably not ideal either since it can get very large).

Edit: edited the title of the issue and the description above to clarify that this happens only when "Extra Networks directory view style" is set to dirs. Tree view is fine.

### Steps to reproduce the problem

1. Have lots of Loras sorted into many folders (enough that the list of folders doesn't fit on a single screen on the Lora tab)

2. Go to Lora tab

3. Observe that list of folders is cut off, thumbnails below the folder list are not visible

### What should have happened?

Either the list of folders should be big enough so no folders are cut off or there should be a scrollbar. The thumbnails should be visible below the list of folders even if the list of folders takes up more than a screen.

### What browsers do you use to access the UI ?

Brave

### Sysinfo

[sysinfo.txt](https://github.com/AUTOMATIC1111/stable-diffusion-webui/files/14972152/sysinfo.txt)

### Console logs

```Shell

not relevant, it's a CSS styling issue

```

### Additional information

_No response_ | open | 2024-04-14T21:25:31Z | 2024-04-23T20:59:21Z | https://github.com/AUTOMATIC1111/stable-diffusion-webui/issues/15518 | [ "bug" ] | thatfuckingbird | 5 |

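Urinx/WeixinBot | api | 226 | The WeChat web version has adopted the mmtls protocol | Does this mean the program can no longer run? I am now running the robot under _py3 and can no longer fetch contact information; the API returns an error code and the content is empty. | closed | 2017-08-25T05:00:54Z | 2017-08-25T05:02:48Z | https://github.com/Urinx/WeixinBot/issues/226 | [] | daimon99 | 0 |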

axnsan12/drf-yasg | django | 168 | Cache feature breaks when using the Redis cache backend | I followed the docs to set up `drf-yasg` but I kept getting this error. I even tried removing all my paths to leave only the schema paths, and I'm still getting the error.

Here are my deps:

```

celery[redis]==4.2.1

channels==2.1.2

channels_redis==2.2.1

django[argon2]==2.0.7

django-cors-headers==2.2.0

django-debug-toolbar==1.9.1

django-filter==2.0.0

django-redis==4.9.0

djangorestframework==3.8.2

djangorestframework_simplejwt==3.2.3

drf-yasg[validation]==1.9.1

gunicorn==19.9.0

Markdown==2.6.11

pika==0.12.0

psycopg2==2.7.5

Pygments==2.2.0

rethinkdb==2.3.0.post6

uvicorn==0.2.17

```

And here is the error log:

```

Environment:

Request Method: GET

Request URL: http://localhost/docs

Django Version: 2.0.7

Python Version: 3.6.5

Installed Applications:

['django.contrib.admin',

'django.contrib.auth',

'django.contrib.contenttypes',

'django.contrib.sessions',

'django.contrib.messages',

'django.contrib.staticfiles',

'channels',

'django_filters',

'drf_yasg',

'rest_framework',

'windspeed.taskapp.celery.CeleryConfig',

'windspeed.common.apps.CommonConfig',

'windspeed.accounts.apps.AccountsConfig',

'windspeed.authentication.apps.AuthenticationConfig',

'debug_toolbar']

Installed Middleware:

['django.middleware.security.SecurityMiddleware',

'django.contrib.sessions.middleware.SessionMiddleware',

'django.middleware.common.CommonMiddleware',

'django.middleware.csrf.CsrfViewMiddleware',

'django.contrib.auth.middleware.AuthenticationMiddleware',

'django.contrib.messages.middleware.MessageMiddleware',

'django.middleware.clickjacking.XFrameOptionsMiddleware',

'debug_toolbar.middleware.DebugToolbarMiddleware']

Traceback:

File "/usr/local/lib/python3.6/site-packages/django/core/handlers/exception.py" in inner

35. response = get_response(request)

File "/usr/local/lib/python3.6/site-packages/django/core/handlers/base.py" in _get_response

158. response = self.process_exception_by_middleware(e, request)

File "/usr/local/lib/python3.6/site-packages/django/core/handlers/base.py" in _get_response

156. response = response.render()

File "/usr/local/lib/python3.6/site-packages/django/template/response.py" in render

108. newretval = post_callback(retval)

File "/usr/local/lib/python3.6/site-packages/django/utils/decorators.py" in callback

156. return middleware.process_response(request, response)

File "/usr/local/lib/python3.6/site-packages/django/middleware/cache.py" in process_response

102. lambda r: self.cache.set(cache_key, r, timeout)

File "/usr/local/lib/python3.6/site-packages/django/template/response.py" in add_post_render_callback

93. callback(self)

File "/usr/local/lib/python3.6/site-packages/django/middleware/cache.py" in <lambda>

102. lambda r: self.cache.set(cache_key, r, timeout)

File "/usr/local/lib/python3.6/site-packages/debug_toolbar/panels/cache.py" in wrapped

33. value = method(self, *args, **kwargs)

File "/usr/local/lib/python3.6/site-packages/debug_toolbar/panels/cache.py" in set

79. return self.cache.set(*args, **kwargs)

File "/usr/local/lib/python3.6/site-packages/django_redis/cache.py" in _decorator

32. return method(self, *args, **kwargs)

File "/usr/local/lib/python3.6/site-packages/django_redis/cache.py" in set

67. return self.client.set(*args, **kwargs)

File "/usr/local/lib/python3.6/site-packages/django_redis/client/default.py" in set

114. nvalue = self.encode(value)

File "/usr/local/lib/python3.6/site-packages/django_redis/client/default.py" in encode

326. value = self._serializer.dumps(value)

File "/usr/local/lib/python3.6/site-packages/django_redis/serializers/json.py" in dumps

14. return json.dumps(value, cls=DjangoJSONEncoder).encode()

File "/usr/local/lib/python3.6/json/__init__.py" in dumps

238. **kw).encode(obj)

File "/usr/local/lib/python3.6/json/encoder.py" in encode

199. chunks = self.iterencode(o, _one_shot=True)

File "/usr/local/lib/python3.6/json/encoder.py" in iterencode

257. return _iterencode(o, 0)

File "/usr/local/lib/python3.6/site-packages/django/core/serializers/json.py" in default

104. return super().default(o)

File "/usr/local/lib/python3.6/json/encoder.py" in default

180. o.__class__.__name__)

Exception Type: TypeError at /docs

Exception Value: Object of type 'Response' is not JSON serializable

```

Would appreciate any help! :) | closed | 2018-07-23T09:54:57Z | 2018-08-06T11:04:57Z | https://github.com/axnsan12/drf-yasg/issues/168 | [] | thomasjiangcy | 4 |

Asabeneh/30-Days-Of-Python | numpy | 296 | Typo in Intro | Right in the first paragraph you mention "month pythons..." the comedy skit. I believe you meant "Monty" | closed | 2022-08-24T12:08:53Z | 2023-07-08T22:16:19Z | https://github.com/Asabeneh/30-Days-Of-Python/issues/296 | [] | nickocruzm | 0 |

sinaptik-ai/pandas-ai | pandas | 1,408 | Docker Compose Build issue | ### System Info

Hey, I am trying to run the platform locally using the instructions given on this page:

https://docs.pandas-ai.com/platform

When I build the containers with the docker compose command, I get an error and the service stops running.

pandabi-backend | ERROR: Application startup failed. Exiting.

### Platform Details:

Linux "Ubuntu 22.04.4 LTS"

Docker version 27.3.1

### 🐛 Describe the bug

### Build Logs

Creating network "pandas-ai_pandabi-network" with driver "bridge"

Creating pandas-ai_postgresql_1 ... done

Creating pandabi-frontend ... done

Creating pandabi-backend ... done

Attaching to pandabi-frontend, pandas-ai_postgresql_1, pandabi-backend

pandabi-backend | startup.sh: line 6: log: command not found

postgresql_1 |

postgresql_1 | PostgreSQL Database directory appears to contain a database; Skipping initialization

postgresql_1 |

postgresql_1 | 2024-10-24 09:58:10.417 UTC [1] LOG: starting PostgreSQL 14.2 on x86_64-pc-linux-musl, compiled by gcc (Alpine 10.3.1_git20211027) 10.3.1 20211027, 64-bit

postgresql_1 | 2024-10-24 09:58:10.417 UTC [1] LOG: listening on IPv4 address "0.0.0.0", port 5432

postgresql_1 | 2024-10-24 09:58:10.417 UTC [1] LOG: listening on IPv6 address "::", port 5432

postgresql_1 | 2024-10-24 09:58:10.422 UTC [1] LOG: listening on Unix socket "/var/run/postgresql/.s.PGSQL.5432"

postgresql_1 | 2024-10-24 09:58:10.428 UTC [21] LOG: database system was shut down at 2024-10-24 06:37:08 UTC

postgresql_1 | 2024-10-24 09:58:10.433 UTC [1] LOG: database system is ready to accept connections

pandabi-frontend |

pandabi-frontend | > client@0.1.0 start

pandabi-frontend | > next start

pandabi-frontend |

pandabi-frontend | ⚠ You are using a non-standard "NODE_ENV" value in your environment. This creates inconsistencies in the project and is strongly advised against. Read more: https://nextjs.org/docs/messages/non-standard-node-env

pandabi-frontend | ▲ Next.js 14.2.3

pandabi-frontend | - Local: http://localhost:3000

pandabi-frontend |

pandabi-frontend | ✓ Starting...

pandabi-frontend | ✓ Ready in 747ms

pandabi-backend | Resolving dependencies...

pandabi-backend | Warning: The locked version 3.9.1 for matplotlib is a yanked version. Reason for being yanked: The Windows wheels, under some conditions, caused segfaults in unrelated user code. Due to this we deleted the Windows wheels to prevent these segfaults, however this caused greater disruption as pip then began to try (and fail) to build 3.9.1 from the sdist on Windows which impacted far more users. Yanking the whole release is the only tool available to eliminate these failures without changes to on the user side. The sdist, OSX wheel, and manylinux wheels are all functional and there are no critical bugs in the release. Downstream packagers should not yank their builds of Matplotlib 3.9.1. See https://github.com/matplotlib/matplotlib/issues/28551 for details.

pandabi-backend | poetry install

pandabi-backend | Installing dependencies from lock file

pandabi-backend |

pandabi-backend | No dependencies to install or update

pandabi-backend |

pandabi-backend | Installing the current project: pandasai-server (0.1.0)

pandabi-backend |

pandabi-backend | Warning: The current project could not be installed: No file/folder found for package pandasai-server

pandabi-backend | If you do not want to install the current project use --no-root.

pandabi-backend | If you want to use Poetry only for dependency management but not for packaging, you can disable package mode by setting package-mode = false in your pyproject.toml file.

pandabi-backend | In a future version of Poetry this warning will become an error!

pandabi-backend | wait-for-it.sh: 4: shift: can't shift that many

pandabi-backend | export DEBUG='1'

pandabi-backend | export ENVIRONMENT='development'

pandabi-backend | export GPG_KEY='A035C8C19219BA821ECEA86B64E628F8D684696D'

pandabi-backend | export HOME='/root'

pandabi-backend | export HOSTNAME='99c683c72737'

pandabi-backend | export LANG='C.UTF-8'

pandabi-backend | export MAKEFLAGS=''

pandabi-backend | export MAKELEVEL='1'

pandabi-backend | export MFLAGS=''

pandabi-backend | export PANDASAI_API_KEY='$2a$10$eLN.4Ut5vlqZi9V6OIQBkOAyMA42AxgV9lwgwkxnT5bCoWBSzFt/q'

pandabi-backend | export PATH='/root/.cache/pypoetry/virtualenvs/pandasai-server-9TtSrW0h-py3.11/bin:/root/.local/bin:/usr/local/bin:/usr/local/sbin:/usr/local/bin:/usr/sbin:/usr/bin:/sbin:/bin'

pandabi-backend | export POSTGRES_URL='postgresql+asyncpg://pandasai:password123@postgresql:5432/pandasai-db'

pandabi-backend | export PS1='(pandasai-server-py3.11) '

pandabi-backend | export PWD='/app'

pandabi-backend | export PYTHON_SHA256='07a4356e912900e61a15cb0949a06c4a05012e213ecd6b4e84d0f67aabbee372'

pandabi-backend | export PYTHON_VERSION='3.11.10'

pandabi-backend | export SHLVL='1'

pandabi-backend | export SHOW_SQL_ALCHEMY_QUERIES='0'

pandabi-backend | export TEST_POSTGRES_URL='postgresql+asyncpg://pandasai:password123@postgresql:5432/pandasai-db'

pandabi-backend | export VIRTUAL_ENV='/root/.cache/pypoetry/virtualenvs/pandasai-server-9TtSrW0h-py3.11'

pandabi-backend | export VIRTUAL_ENV_PROMPT='pandasai-server-py3.11'

pandabi-backend | export _='/usr/bin/make'

pandabi-backend | poetry run alembic upgrade head

pandabi-backend | Traceback (most recent call last):

pandabi-backend | File "/root/.cache/pypoetry/virtualenvs/pandasai-server-9TtSrW0h-py3.11/bin/alembic", line 8, in <module>

pandabi-backend | sys.exit(main())

pandabi-backend | ^^^^^^

pandabi-backend | File "/root/.cache/pypoetry/virtualenvs/pandasai-server-9TtSrW0h-py3.11/lib/python3.11/site-packages/alembic/config.py", line 636, in main

pandabi-backend | CommandLine(prog=prog).main(argv=argv)

pandabi-backend | File "/root/.cache/pypoetry/virtualenvs/pandasai-server-9TtSrW0h-py3.11/lib/python3.11/site-packages/alembic/config.py", line 626, in main

pandabi-backend | self.run_cmd(cfg, options)

pandabi-backend | File "/root/.cache/pypoetry/virtualenvs/pandasai-server-9TtSrW0h-py3.11/lib/python3.11/site-packages/alembic/config.py", line 603, in run_cmd

pandabi-backend | fn(

pandabi-backend | File "/root/.cache/pypoetry/virtualenvs/pandasai-server-9TtSrW0h-py3.11/lib/python3.11/site-packages/alembic/command.py", line 406, in upgrade

pandabi-backend | script.run_env()

pandabi-backend | File "/root/.cache/pypoetry/virtualenvs/pandasai-server-9TtSrW0h-py3.11/lib/python3.11/site-packages/alembic/script/base.py", line 582, in run_env

pandabi-backend | util.load_python_file(self.dir, "env.py")

pandabi-backend | File "/root/.cache/pypoetry/virtualenvs/pandasai-server-9TtSrW0h-py3.11/lib/python3.11/site-packages/alembic/util/pyfiles.py", line 95, in load_python_file

pandabi-backend | module = load_module_py(module_id, path)

pandabi-backend | ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

pandabi-backend | File "/root/.cache/pypoetry/virtualenvs/pandasai-server-9TtSrW0h-py3.11/lib/python3.11/site-packages/alembic/util/pyfiles.py", line 113, in load_module_py

pandabi-backend | spec.loader.exec_module(module) # type: ignore

pandabi-backend | ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

pandabi-backend | File "<frozen importlib._bootstrap_external>", line 940, in exec_module

pandabi-backend | File "<frozen importlib._bootstrap>", line 241, in _call_with_frames_removed

pandabi-backend | File "/app/migrations/env.py", line 10, in <module>

pandabi-backend | from app.models import Base

pandabi-backend | ModuleNotFoundError: No module named 'app'

pandabi-backend | make: *** [Makefile:52: migrate] Error 1

pandabi-backend | export DEBUG='1'

pandabi-backend | export ENVIRONMENT='development'

pandabi-backend | export GPG_KEY='A035C8C19219BA821ECEA86B64E628F8D684696D'

pandabi-backend | export HOME='/root'

pandabi-backend | export HOSTNAME='99c683c72737'

pandabi-backend | export LANG='C.UTF-8'

pandabi-backend | export MAKEFLAGS=''

pandabi-backend | export MAKELEVEL='1'

pandabi-backend | export MFLAGS=''

pandabi-backend | export PANDASAI_API_KEY='$2a$10$eLN.4Ut5vlqZi9V6OIQBkOAyMA42AxgV9lwgwkxnT5bCoWBSzFt/q'

pandabi-backend | export PATH='/root/.cache/pypoetry/virtualenvs/pandasai-server-9TtSrW0h-py3.11/bin:/root/.local/bin:/usr/local/bin:/usr/local/sbin:/usr/local/bin:/usr/sbin:/usr/bin:/sbin:/bin'

pandabi-backend | export POSTGRES_URL='postgresql+asyncpg://pandasai:password123@postgresql:5432/pandasai-db'

pandabi-backend | export PS1='(pandasai-server-py3.11) '

pandabi-backend | export PWD='/app'

pandabi-backend | export PYTHON_SHA256='07a4356e912900e61a15cb0949a06c4a05012e213ecd6b4e84d0f67aabbee372'

pandabi-backend | export PYTHON_VERSION='3.11.10'

pandabi-backend | export SHLVL='1'

pandabi-backend | export SHOW_SQL_ALCHEMY_QUERIES='0'

pandabi-backend | export TEST_POSTGRES_URL='postgresql+asyncpg://pandasai:password123@postgresql:5432/pandasai-db'

pandabi-backend | export VIRTUAL_ENV='/root/.cache/pypoetry/virtualenvs/pandasai-server-9TtSrW0h-py3.11'

pandabi-backend | export VIRTUAL_ENV_PROMPT='pandasai-server-py3.11'

pandabi-backend | export _='/usr/bin/make'

pandabi-backend | poetry run python main.py

pandabi-backend | INFO: Will watch for changes in these directories: ['/app']

pandabi-backend | INFO: Uvicorn running on http://0.0.0.0:8000 (Press CTRL+C to quit)

pandabi-backend | INFO: Started reloader process [58] using StatReload

pandabi-backend | INFO: Started server process [62]

pandabi-backend | INFO: Waiting for application startup.

pandabi-backend | 2024-10-24 09:58:31,386 INFO sqlalchemy.engine.Engine select pg_catalog.version()

pandabi-backend | 2024-10-24 09:58:31,386 INFO sqlalchemy.engine.Engine [raw sql] ()

pandabi-backend | 2024-10-24 09:58:31,388 INFO sqlalchemy.engine.Engine select current_schema()

pandabi-backend | 2024-10-24 09:58:31,388 INFO sqlalchemy.engine.Engine [raw sql] ()

pandabi-backend | 2024-10-24 09:58:31,389 INFO sqlalchemy.engine.Engine show standard_conforming_strings

pandabi-backend | 2024-10-24 09:58:31,389 INFO sqlalchemy.engine.Engine [raw sql] ()

pandabi-backend | 2024-10-24 09:58:31,391 INFO sqlalchemy.engine.Engine BEGIN (implicit)

pandabi-backend | 2024-10-24 09:58:31,400 INFO sqlalchemy.engine.Engine SELECT anon_1.id, anon_1.email, anon_1.first_name, anon_1.created_at, anon_1.password, anon_1.verified, anon_1.last_name, anon_1.features, organization_1.id AS id_1, organization_1.name, organization_1.url, organization_1.is_default, organization_1.settings, organization_membership_1.id AS id_2, organization_membership_1.user_id, organization_membership_1.organization_id, organization_membership_1.role, organization_membership_1.verified AS verified_1

pandabi-backend | FROM (SELECT "user".id AS id, "user".email AS email, "user".first_name AS first_name, "user".created_at AS created_at, "user".password AS password, "user".verified AS verified, "user".last_name AS last_name, "user".features AS features

pandabi-backend | FROM "user"

pandabi-backend | LIMIT $1::INTEGER OFFSET $2::INTEGER) AS anon_1 LEFT OUTER JOIN organization_membership AS organization_membership_1 ON anon_1.id = organization_membership_1.user_id LEFT OUTER JOIN organization AS organization_1 ON organization_1.id = organization_membership_1.organization_id

pandabi-backend | 2024-10-24 09:58:31,400 INFO sqlalchemy.engine.Engine [generated in 0.00031s] (1, 0)

postgresql_1 | 2024-10-24 09:58:31.401 UTC [28] ERROR: relation "user" does not exist at character 700

postgresql_1 | 2024-10-24 09:58:31.401 UTC [28] STATEMENT: SELECT anon_1.id, anon_1.email, anon_1.first_name, anon_1.created_at, anon_1.password, anon_1.verified, anon_1.last_name, anon_1.features, organization_1.id AS id_1, organization_1.name, organization_1.url, organization_1.is_default, organization_1.settings, organization_membership_1.id AS id_2, organization_membership_1.user_id, organization_membership_1.organization_id, organization_membership_1.role, organization_membership_1.verified AS verified_1

postgresql_1 | FROM (SELECT "user".id AS id, "user".email AS email, "user".first_name AS first_name, "user".created_at AS created_at, "user".password AS password, "user".verified AS verified, "user".last_name AS last_name, "user".features AS features

postgresql_1 | FROM "user"

postgresql_1 | LIMIT $1::INTEGER OFFSET $2::INTEGER) AS anon_1 LEFT OUTER JOIN organization_membership AS organization_membership_1 ON anon_1.id = organization_membership_1.user_id LEFT OUTER JOIN organization AS organization_1 ON organization_1.id = organization_membership_1.organization_id

pandabi-backend | 2024-10-24 09:58:31,402 INFO sqlalchemy.engine.Engine ROLLBACK

pandabi-backend | ERROR: Traceback (most recent call last):

pandabi-backend | File "/root/.cache/pypoetry/virtualenvs/pandasai-server-9TtSrW0h-py3.11/lib/python3.11/site-packages/sqlalchemy/dialects/postgresql/asyncpg.py", line 514, in _prepare_and_execute

pandabi-backend | prepared_stmt, attributes = await adapt_connection._prepare(

pandabi-backend | ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

pandabi-backend | File "/root/.cache/pypoetry/virtualenvs/pandasai-server-9TtSrW0h-py3.11/lib/python3.11/site-packages/sqlalchemy/dialects/postgresql/asyncpg.py", line 760, in _prepare

pandabi-backend | prepared_stmt = await self._connection.prepare(

pandabi-backend | ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

pandabi-backend | File "/root/.cache/pypoetry/virtualenvs/pandasai-server-9TtSrW0h-py3.11/lib/python3.11/site-packages/asyncpg/connection.py", line 636, in prepare

pandabi-backend | return await self._prepare(

pandabi-backend | ^^^^^^^^^^^^^^^^^^^^

pandabi-backend | File "/root/.cache/pypoetry/virtualenvs/pandasai-server-9TtSrW0h-py3.11/lib/python3.11/site-packages/asyncpg/connection.py", line 654, in _prepare

pandabi-backend | stmt = await self._get_statement(

pandabi-backend | ^^^^^^^^^^^^^^^^^^^^^^^^^^

pandabi-backend | File "/root/.cache/pypoetry/virtualenvs/pandasai-server-9TtSrW0h-py3.11/lib/python3.11/site-packages/asyncpg/connection.py", line 433, in _get_statement

pandabi-backend | statement = await self._protocol.prepare(

pandabi-backend | ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

pandabi-backend | File "asyncpg/protocol/protocol.pyx", line 166, in prepare

pandabi-backend | asyncpg.exceptions.UndefinedTableError: relation "user" does not exist

pandabi-backend |

pandabi-backend | The above exception was the direct cause of the following exception:

pandabi-backend |

pandabi-backend | Traceback (most recent call last):

pandabi-backend | File "/root/.cache/pypoetry/virtualenvs/pandasai-server-9TtSrW0h-py3.11/lib/python3.11/site-packages/sqlalchemy/engine/base.py", line 1967, in _exec_single_context

pandabi-backend | self.dialect.do_execute(

pandabi-backend | File "/root/.cache/pypoetry/virtualenvs/pandasai-server-9TtSrW0h-py3.11/lib/python3.11/site-packages/sqlalchemy/engine/default.py", line 924, in do_execute

pandabi-backend | cursor.execute(statement, parameters)

pandabi-backend | File "/root/.cache/pypoetry/virtualenvs/pandasai-server-9TtSrW0h-py3.11/lib/python3.11/site-packages/sqlalchemy/dialects/postgresql/asyncpg.py", line 572, in execute

pandabi-backend | self._adapt_connection.await_(

pandabi-backend | File "/root/.cache/pypoetry/virtualenvs/pandasai-server-9TtSrW0h-py3.11/lib/python3.11/site-packages/sqlalchemy/util/_concurrency_py3k.py", line 132, in await_only

pandabi-backend | return current.parent.switch(awaitable) # type: ignore[no-any-return,attr-defined] # noqa: E501

pandabi-backend | ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

pandabi-backend | File "/root/.cache/pypoetry/virtualenvs/pandasai-server-9TtSrW0h-py3.11/lib/python3.11/site-packages/sqlalchemy/util/_concurrency_py3k.py", line 196, in greenlet_spawn

pandabi-backend | value = await result

pandabi-backend | ^^^^^^^^^^^^

pandabi-backend | File "/root/.cache/pypoetry/virtualenvs/pandasai-server-9TtSrW0h-py3.11/lib/python3.11/site-packages/sqlalchemy/dialects/postgresql/asyncpg.py", line 550, in _prepare_and_execute

pandabi-backend | self._handle_exception(error)

pandabi-backend | File "/root/.cache/pypoetry/virtualenvs/pandasai-server-9TtSrW0h-py3.11/lib/python3.11/site-packages/sqlalchemy/dialects/postgresql/asyncpg.py", line 501, in _handle_exception

pandabi-backend | self._adapt_connection._handle_exception(error)

pandabi-backend | File "/root/.cache/pypoetry/virtualenvs/pandasai-server-9TtSrW0h-py3.11/lib/python3.11/site-packages/sqlalchemy/dialects/postgresql/asyncpg.py", line 784, in _handle_exception

pandabi-backend | raise translated_error from error

pandabi-backend | sqlalchemy.dialects.postgresql.asyncpg.AsyncAdapt_asyncpg_dbapi.ProgrammingError: <class 'asyncpg.exceptions.UndefinedTableError'>: relation "user" does not exist

pandabi-backend |

pandabi-backend | The above exception was the direct cause of the following exception:

pandabi-backend |

pandabi-backend | Traceback (most recent call last):

pandabi-backend | File "/root/.cache/pypoetry/virtualenvs/pandasai-server-9TtSrW0h-py3.11/lib/python3.11/site-packages/starlette/routing.py", line 671, in lifespan

pandabi-backend | async with self.lifespan_context(app):

pandabi-backend | File "/root/.cache/pypoetry/virtualenvs/pandasai-server-9TtSrW0h-py3.11/lib/python3.11/site-packages/starlette/routing.py", line 566, in __aenter__

pandabi-backend | await self._router.startup()

pandabi-backend | File "/root/.cache/pypoetry/virtualenvs/pandasai-server-9TtSrW0h-py3.11/lib/python3.11/site-packages/starlette/routing.py", line 648, in startup

pandabi-backend | await handler()

pandabi-backend | File "/app/core/server.py", line 145, in on_startup

pandabi-backend | await init_database()

pandabi-backend | File "/app/core/server.py", line 113, in init_database

pandabi-backend | user = await init_user()

pandabi-backend | ^^^^^^^^^^^^^^^^^

pandabi-backend | File "/app/core/server.py", line 81, in init_user

pandabi-backend | await controller.create_default_user()

pandabi-backend | File "/app/core/database/transactional.py", line 40, in decorator

pandabi-backend | raise exception

pandabi-backend | File "/app/core/database/transactional.py", line 27, in decorator

pandabi-backend | result = await self._run_required_new(

pandabi-backend | ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

pandabi-backend | File "/app/core/database/transactional.py", line 53, in _run_required_new

pandabi-backend | result = await function(*args, **kwargs)

pandabi-backend | ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

pandabi-backend | File "/app/app/controllers/user.py", line 21, in create_default_user

pandabi-backend | users = await self.get_all(limit=1, join_={"memberships"})

pandabi-backend | ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

pandabi-backend | File "/app/core/controller/base.py", line 69, in get_all

pandabi-backend | response = await self.repository.get_all(skip, limit, join_)

pandabi-backend | ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

pandabi-backend | File "/app/core/repository/base.py", line 48, in get_all

pandabi-backend | return await self._all_unique(query)

pandabi-backend | ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

pandabi-backend | File "/app/core/repository/base.py", line 124, in _all_unique

pandabi-backend | result = await self.session.execute(query)

pandabi-backend | ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

pandabi-backend | File "/root/.cache/pypoetry/virtualenvs/pandasai-server-9TtSrW0h-py3.11/lib/python3.11/site-packages/sqlalchemy/ext/asyncio/scoping.py", line 589, in execute

pandabi-backend | return await self._proxied.execute(

pandabi-backend | ^^^^^^^^^^^^^^^^^^^^^^^^^^^^

pandabi-backend | File "/root/.cache/pypoetry/virtualenvs/pandasai-server-9TtSrW0h-py3.11/lib/python3.11/site-packages/sqlalchemy/ext/asyncio/session.py", line 461, in execute

pandabi-backend | result = await greenlet_spawn(

pandabi-backend | ^^^^^^^^^^^^^^^^^^^^^

pandabi-backend | File "/root/.cache/pypoetry/virtualenvs/pandasai-server-9TtSrW0h-py3.11/lib/python3.11/site-packages/sqlalchemy/util/_concurrency_py3k.py", line 201, in greenlet_spawn

pandabi-backend | result = context.throw(*sys.exc_info())

pandabi-backend | ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

pandabi-backend | File "/root/.cache/pypoetry/virtualenvs/pandasai-server-9TtSrW0h-py3.11/lib/python3.11/site-packages/sqlalchemy/orm/session.py", line 2351, in execute

pandabi-backend | return self._execute_internal(

pandabi-backend | ^^^^^^^^^^^^^^^^^^^^^^^

pandabi-backend | File "/root/.cache/pypoetry/virtualenvs/pandasai-server-9TtSrW0h-py3.11/lib/python3.11/site-packages/sqlalchemy/orm/session.py", line 2236, in _execute_internal

pandabi-backend | result: Result[Any] = compile_state_cls.orm_execute_statement(

pandabi-backend | ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

pandabi-backend | File "/root/.cache/pypoetry/virtualenvs/pandasai-server-9TtSrW0h-py3.11/lib/python3.11/site-packages/sqlalchemy/orm/context.py", line 293, in orm_execute_statement

pandabi-backend | result = conn.execute(

pandabi-backend | ^^^^^^^^^^^^^

pandabi-backend | File "/root/.cache/pypoetry/virtualenvs/pandasai-server-9TtSrW0h-py3.11/lib/python3.11/site-packages/sqlalchemy/engine/base.py", line 1418, in execute

pandabi-backend | return meth(

pandabi-backend | ^^^^^

pandabi-backend | File "/root/.cache/pypoetry/virtualenvs/pandasai-server-9TtSrW0h-py3.11/lib/python3.11/site-packages/sqlalchemy/sql/elements.py", line 515, in _execute_on_connection

pandabi-backend | return connection._execute_clauseelement(

pandabi-backend | ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

pandabi-backend | File "/root/.cache/pypoetry/virtualenvs/pandasai-server-9TtSrW0h-py3.11/lib/python3.11/site-packages/sqlalchemy/engine/base.py", line 1640, in _execute_clauseelement

pandabi-backend | ret = self._execute_context(

pandabi-backend | ^^^^^^^^^^^^^^^^^^^^^^

pandabi-backend | File "/root/.cache/pypoetry/virtualenvs/pandasai-server-9TtSrW0h-py3.11/lib/python3.11/site-packages/sqlalchemy/engine/base.py", line 1846, in _execute_context

pandabi-backend | return self._exec_single_context(

pandabi-backend | ^^^^^^^^^^^^^^^^^^^^^^^^^^

pandabi-backend | File "/root/.cache/pypoetry/virtualenvs/pandasai-server-9TtSrW0h-py3.11/lib/python3.11/site-packages/sqlalchemy/engine/base.py", line 1986, in _exec_single_context

pandabi-backend | self._handle_dbapi_exception(

pandabi-backend | File "/root/.cache/pypoetry/virtualenvs/pandasai-server-9TtSrW0h-py3.11/lib/python3.11/site-packages/sqlalchemy/engine/base.py", line 2353, in _handle_dbapi_exception

pandabi-backend | raise sqlalchemy_exception.with_traceback(exc_info[2]) from e

pandabi-backend | File "/root/.cache/pypoetry/virtualenvs/pandasai-server-9TtSrW0h-py3.11/lib/python3.11/site-packages/sqlalchemy/engine/base.py", line 1967, in _exec_single_context

pandabi-backend | self.dialect.do_execute(

pandabi-backend | File "/root/.cache/pypoetry/virtualenvs/pandasai-server-9TtSrW0h-py3.11/lib/python3.11/site-packages/sqlalchemy/engine/default.py", line 924, in do_execute

pandabi-backend | cursor.execute(statement, parameters)

pandabi-backend | File "/root/.cache/pypoetry/virtualenvs/pandasai-server-9TtSrW0h-py3.11/lib/python3.11/site-packages/sqlalchemy/dialects/postgresql/asyncpg.py", line 572, in execute

pandabi-backend | self._adapt_connection.await_(

pandabi-backend | File "/root/.cache/pypoetry/virtualenvs/pandasai-server-9TtSrW0h-py3.11/lib/python3.11/site-packages/sqlalchemy/util/_concurrency_py3k.py", line 132, in await_only

pandabi-backend | return current.parent.switch(awaitable) # type: ignore[no-any-return,attr-defined] # noqa: E501

pandabi-backend | ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

pandabi-backend | File "/root/.cache/pypoetry/virtualenvs/pandasai-server-9TtSrW0h-py3.11/lib/python3.11/site-packages/sqlalchemy/util/_concurrency_py3k.py", line 196, in greenlet_spawn

pandabi-backend | value = await result

pandabi-backend | ^^^^^^^^^^^^

pandabi-backend | File "/root/.cache/pypoetry/virtualenvs/pandasai-server-9TtSrW0h-py3.11/lib/python3.11/site-packages/sqlalchemy/dialects/postgresql/asyncpg.py", line 550, in _prepare_and_execute

pandabi-backend | self._handle_exception(error)

pandabi-backend | File "/root/.cache/pypoetry/virtualenvs/pandasai-server-9TtSrW0h-py3.11/lib/python3.11/site-packages/sqlalchemy/dialects/postgresql/asyncpg.py", line 501, in _handle_exception

pandabi-backend | self._adapt_connection._handle_exception(error)

pandabi-backend | File "/root/.cache/pypoetry/virtualenvs/pandasai-server-9TtSrW0h-py3.11/lib/python3.11/site-packages/sqlalchemy/dialects/postgresql/asyncpg.py", line 784, in _handle_exception

pandabi-backend | raise translated_error from error

pandabi-backend | sqlalchemy.exc.ProgrammingError: (sqlalchemy.dialects.postgresql.asyncpg.ProgrammingError) <class 'asyncpg.exceptions.UndefinedTableError'>: relation "user" does not exist

pandabi-backend | [SQL: SELECT anon_1.id, anon_1.email, anon_1.first_name, anon_1.created_at, anon_1.password, anon_1.verified, anon_1.last_name, anon_1.features, organization_1.id AS id_1, organization_1.name, organization_1.url, organization_1.is_default, organization_1.settings, organization_membership_1.id AS id_2, organization_membership_1.user_id, organization_membership_1.organization_id, organization_membership_1.role, organization_membership_1.verified AS verified_1

pandabi-backend | FROM (SELECT "user".id AS id, "user".email AS email, "user".first_name AS first_name, "user".created_at AS created_at, "user".password AS password, "user".verified AS verified, "user".last_name AS last_name, "user".features AS features

pandabi-backend | FROM "user"

pandabi-backend | LIMIT $1::INTEGER OFFSET $2::INTEGER) AS anon_1 LEFT OUTER JOIN organization_membership AS organization_membership_1 ON anon_1.id = organization_membership_1.user_id LEFT OUTER JOIN organization AS organization_1 ON organization_1.id = organization_membership_1.organization_id]

pandabi-backend | [parameters: (1, 0)]

pandabi-backend | (Background on this error at: https://sqlalche.me/e/20/f405)

pandabi-backend |

pandabi-backend | ERROR: Application startup failed. Exiting. | closed | 2024-10-24T10:12:29Z | 2024-12-19T14:34:42Z | https://github.com/sinaptik-ai/pandas-ai/issues/1408 | [

"duplicate"

] | hamxahbhatti | 3 |

thewhiteh4t/pwnedOrNot | api | 18 | UnicodeEncodeError: 'ascii' codec can't encode character '\xe9' in position 36: ordinal not in range(128) | **Describe the bug**

An error is displayed in the latest version : `UnicodeEncodeError: 'ascii' codec can't encode character '\xe9' in position 36: ordinal not in range(128)`
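A minimal sketch of the failure mode, assuming the container's output stream falls back to the ASCII codec (a common situation when no UTF-8 locale is configured, but not confirmed for this image). `safe_text` is a hypothetical helper for illustration and is not part of pwnedOrNot:

```python
def safe_text(text, encoding="ascii"):
    """Return text with characters the target encoding cannot represent replaced by '?'."""
    return text.encode(encoding, errors="replace").decode(encoding)


# Encoding a non-ASCII character such as '\xe9' with the 'ascii' codec
# raises the same kind of error as the one reported.
try:
    "caf\xe9".encode("ascii")
    raised = False
except UnicodeEncodeError:
    raised = True
```

If the assumption holds, forcing a UTF-8 output encoding in the container environment would likely avoid the error without altering the text.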

**To Reproduce**

Steps to reproduce the behavior:

`docker run -it thewhiteh4t/pwnedornot ./pwnedornot.py -e test@yopmail.com`

**Expected behavior**

No errors.

**Desktop (please complete the following information):**

- docker version : `18.09.5-ce`

- pwnedOrNot version : `1.1.7` | closed | 2019-04-18T10:42:00Z | 2019-04-22T16:54:24Z | https://github.com/thewhiteh4t/pwnedOrNot/issues/18 | [] | johackim | 8 |

widgetti/solara | jupyter | 913 | Compatible with pytest-playwright 0.6.2 | Because we pin playwright, we use an old pytest-playwright:

```

...

Collecting pytest-playwright (from pytest-ipywidgets==1.42.0->pytest-ipywidgets==1.42.0)

Downloading pytest_playwright-0.5.2-py3-none-any.whl.metadata (1.5 kB)

...

```

However, the latest version (0.6.2) is not compatible with our pytest-ipywidgets library. | open | 2024-12-06T13:47:44Z | 2025-01-30T09:57:23Z | https://github.com/widgetti/solara/issues/913 | [] | maartenbreddels | 1 |

saulpw/visidata | pandas | 2,138 | [sidebar] Sidebar crashes if screen is resized | **Small description**

Sidebar crashes if screen is resized

**Expected result**

No crashes.

**Actual result with screenshot**

```

Traceback (most recent call last):

File "/usr/bin/vd", line 6, in <module>

visidata.main.vd_cli()

File "/usr/lib/python3.9/site-packages/visidata/main.py", line 378, in vd_cli

rc = main_vd()

File "/usr/lib/python3.9/site-packages/visidata/main.py", line 338, in main_vd

run(vd.sheets[0])

File "/usr/lib/python3.9/site-packages/visidata/vdobj.py", line 33, in _vdfunc

return getattr(visidata.vd, func.__name__)(*args, **kwargs)

File "/usr/lib/python3.9/site-packages/visidata/extensible.py", line 65, in wrappedfunc

return oldfunc(*args, **kwargs)

File "/usr/lib/python3.9/site-packages/visidata/extensible.py", line 65, in wrappedfunc

return oldfunc(*args, **kwargs)

File "/usr/lib/python3.9/site-packages/visidata/mainloop.py", line 303, in run

ret = vd.mainloop(scr)

File "/usr/lib/python3.9/site-packages/visidata/extensible.py", line 65, in wrappedfunc

return oldfunc(*args, **kwargs)

File "/usr/lib/python3.9/site-packages/visidata/mainloop.py", line 178, in mainloop

self.draw_all()

File "/usr/lib/python3.9/site-packages/visidata/mainloop.py", line 129, in draw_all

vd.drawSidebar(vd.scrFull, vd.activeSheet)

File "/usr/lib/python3.9/site-packages/visidata/sidebar.py", line 45, in drawSidebar

return sheet.drawSidebarText(scr, text=sheet.current_sidebar, overflowmsg=overflowmsg, bottommsg=bottommsg)

File "/usr/lib/python3.9/site-packages/visidata/sidebar.py", line 110, in drawSidebarText

clipdraw(sidebarscr, h-1, winw-dispwidth(bottommsg)-4, '|'+bottommsg+'|[:]', cattr)

File "/usr/lib/python3.9/site-packages/visidata/cliptext.py", line 206, in clipdraw

assert x >= 0, x

AssertionError: -1

```

https://asciinema.org/a/U6nrWmSZxAVnMxPBeAaWG4I25

**Steps to reproduce with sample data and a .vd**

Open VisiData with the sidebar on, then resize the screen (in this case horizontally). This can produce the stack trace above.
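The traceback shows the x position computed as `winw-dispwidth(bottommsg)-4` going negative once the window is narrower than the message. A minimal sketch of one possible guard, assuming clamping to column 0 is acceptable; `sidebar_msg_x` is a hypothetical helper and not VisiData's actual fix:

```python
def sidebar_msg_x(winw, msgwidth, padding=4):
    """Column at which to draw the bottom message, clamped so it is never negative."""
    return max(0, winw - msgwidth - padding)


# A window of width 10 with a 7-column message would give 10 - 7 - 4 = -1
# without the clamp, which is the value the assertion in clipdraw rejects.
narrow = sidebar_msg_x(10, 7)
wide = sidebar_msg_x(40, 7)
```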

**Additional context**

VisiData: latest develop. Python: 3.9.2

| closed | 2023-11-27T01:02:20Z | 2023-11-28T00:04:09Z | https://github.com/saulpw/visidata/issues/2138 | [

"bug",

"fixed"

] | frosencrantz | 4 |

vitalik/django-ninja | pydantic | 1,347 | [BUG] Same path apis with different method and async sync are mixed then all considered as async when testing | **Describe the bug**

If the same path has operations for different methods, and some are async while others are sync, they are all treated as async when testing.

I use async for the GET operation

and sync for the POST, DELETE, PUT, and PATCH operations,

but I get an error when testing.

Example code is below:

```python
from ninja import Router
from ninja.testing import TestClient


def test_bug():
    router = Router()

    # async GET and sync POST registered on the same path
    @router.get("/test/")
    async def test_get(request):
        return {"test": "test"}

    @router.post("/test/")
    def test_post(request):
        return {"test": "test"}

    client = TestClient(router)
    response = client.post("/test/")
```

and it throws an error that says

```
AttributeError sys:1: RuntimeWarning: coroutine 'PathView._async_view' was never awaited
```

So I found PathView._async_view from

```python
client.urls[0].callback # also for client.urls[1].callback
```

But I found that both callbacks are PathView._async_view, even for the sync view (POST method).

The reason is that when operations are added to a Router() for the same path, if even one operation is async, then all of them are considered async:

```python
class PathView:
    def __init__(self) -> None:
        self.operations: List[Operation] = []
        self.is_async = False  # if at least one operation is async - will become True <---------- Here
        self.url_name: Optional[str] = None

    def add_operation(
        self,
        path: str,
        methods: List[str],
        view_func: Callable,
        *,
        auth: Optional[Union[Sequence[Callable], Callable, NOT_SET_TYPE]] = NOT_SET,
        throttle: Union[BaseThrottle, List[BaseThrottle], NOT_SET_TYPE] = NOT_SET,
        response: Any = NOT_SET,
        operation_id: Optional[str] = None,
        summary: Optional[str] = None,
        description: Optional[str] = None,
        tags: Optional[List[str]] = None,
        deprecated: Optional[bool] = None,
        by_alias: bool = False,
        exclude_unset: bool = False,
        exclude_defaults: bool = False,
        exclude_none: bool = False,
        url_name: Optional[str] = None,
        include_in_schema: bool = True,
        openapi_extra: Optional[Dict[str, Any]] = None,
    ) -> Operation:
        if url_name:
            self.url_name = url_name

        OperationClass = Operation
        if is_async(view_func):
            self.is_async = True  # <----------------------- Here
            OperationClass = AsyncOperation

        operation = OperationClass(
            path,
            methods,
            view_func,
            auth=auth,
            throttle=throttle,
            response=response,
            operation_id=operation_id,
            summary=summary,
            description=description,
            tags=tags,
            deprecated=deprecated,
            by_alias=by_alias,
            exclude_unset=exclude_unset,
            exclude_defaults=exclude_defaults,
            exclude_none=exclude_none,
            include_in_schema=include_in_schema,
            url_name=url_name,
            openapi_extra=openapi_extra,
        )

        self.operations.append(operation)
        view_func._ninja_operation = operation  # type: ignore
        return operation
```

I'm having trouble because of this.

Is this a bug, or is there a purpose for this behavior?
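The behavior described above can be reproduced in isolation. This is a simplified sketch, not django-ninja's code: `PathViewSketch` is a hypothetical stand-in that keeps only the flag logic, and it uses the standard library's `inspect.iscoroutinefunction` where the quoted source calls `is_async`:

```python
import inspect


class PathViewSketch:
    """Stand-in for a per-path view that records whether any operation is async."""

    def __init__(self):
        self.operations = []
        self.is_async = False

    def add_operation(self, method, view_func):
        # The flag is only ever set to True, never reset, so a single async
        # operation marks the whole path as async.
        if inspect.iscoroutinefunction(view_func):
            self.is_async = True
        self.operations.append((method, view_func))


async def get_view(request):
    return {"test": "test"}


def post_view(request):
    return {"test": "test"}


view = PathViewSketch()
view.add_operation("GET", get_view)
view.add_operation("POST", post_view)
```

After both registrations the path-level flag is `True` even though the POST handler is a plain function, which matches the observation that both URL callbacks resolve to the async view.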

**Versions (please complete the following information):**

- Python version: 3.11

- Django version: 4.2.5

- Django-Ninja version: 1.2.2

- Pydantic version: 2.8.2

| open | 2024-11-27T07:07:27Z | 2024-12-06T10:02:13Z | https://github.com/vitalik/django-ninja/issues/1347 | [] | LeeJB-48 | 3 |

PokeAPI/pokeapi | graphql | 275 | wormadam name mismatch from evolution chain | The name for wormadam is inconsistent between the /pokemon and /evolution-chain endpoints. The name is shown as wormadam in the evolution chain, but pokemon/413 shows wormadam-plant. I was building an evolution tree and got a 404 when requesting pokemon/wormadam.

see:

http://pokeapi.co/api/v2/evolution-chain/213/

http://pokeapi.co/api/v2/pokemon/413
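One possible workaround is to resolve the species name from the evolution chain to a concrete pokemon name before requesting /pokemon. The sketch below assumes the pokemon-species resource exposes a `varieties` list whose entries carry an `is_default` flag and a `pokemon` name; `default_pokemon_name` is a hypothetical helper, and `sample_species` is hand-written illustration data, not an actual API response:

```python
def default_pokemon_name(species):
    """Return the name of the default variety listed in a species payload."""
    for variety in species.get("varieties", []):
        if variety.get("is_default"):
            return variety["pokemon"]["name"]
    raise LookupError("no default variety in species payload")


# Hand-written sample shaped like the assumed payload (illustrative only).
sample_species = {
    "name": "wormadam",
    "varieties": [
        {"is_default": True, "pokemon": {"name": "wormadam-plant"}},
        {"is_default": False, "pokemon": {"name": "wormadam-sandy"}},
    ],
}

resolved = default_pokemon_name(sample_species)
```

Under that assumption, the resolved name can be used for the /pokemon request in place of the bare species name.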

| closed | 2016-10-27T18:38:19Z | 2016-10-28T13:44:50Z | https://github.com/PokeAPI/pokeapi/issues/275 | [] | hshtgbrendo | 1 |

youfou/wxpy | api | 396 | Error when sending an image | File "<console>", line 1, in <module>

File "C:\Users\[UserName]\AppData\Local\Programs\Python\Python37\lib\site-packages\wxpy\api\chats\chat.py", line 54, in wrapped

ret = do_send()

File "C:\Users\[UserName]\AppData\Local\Programs\Python\Python37\lib\site-packages\wxpy\utils\misc.py", line 72, in wrapped

smart_map(check_response_body, ret)

File "C:\Users\[UserName]\AppData\Local\Programs\Python\Python37\lib\site-packages\wxpy\utils\misc.py", line 207, in smart_map

return func(i, *args, **kwargs)

File "C:\Users\[UserName]\AppData\Local\Programs\Python\Python37\lib\site-packages\wxpy\utils\misc.py", line 53, in check_response_body

raise ResponseError(err_code=err_code, err_msg=err_msg)

wxpy.exceptions.ResponseError: err_code: 1; err_msg: | open | 2019-07-02T07:32:39Z | 2019-10-24T06:21:05Z | https://github.com/youfou/wxpy/issues/396 | [] | remiliacn | 4 |

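littlecodersh/ItChat | api | 451 | syncCheck return retcode=0, and selector=3 | I implemented a feature that posts images and text on a schedule. I can see that the data is sent successfully, but calling syncCheck afterwards returns retcode=0 and selector=3. As a result, every subsequent syncCheck call returns immediately instead of waiting 25 seconds. Is there a list of possible selector values, or does anyone know the cause? Thanks. | closed | 2017-07-17T21:41:55Z | 2017-09-20T02:48:40Z | https://github.com/littlecodersh/ItChat/issues/451 | [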

"question"

] | tryuefang | 2 |

sergree/matchering | numpy | 28 | Hardware assisted virtualization and data execution protection must be enabled | Hi, I get this error even though virtualization is turned on.

I know it is on because I'm running VMware and BlueStacks on this computer.

https://imgur.com/a/DMgrSjn | closed | 2021-02-04T04:56:01Z | 2022-08-14T09:28:05Z | https://github.com/sergree/matchering/issues/28 | [] | johnnygodsa | 1 |

matplotlib/mplfinance | matplotlib | 156 | Tight does not affect the headline | The new layout is certainly more compact, but somehow that doesn't seem to apply to the headline.

```

mpf.plot(stock,

type='candle',

volume=volume,

addplot=add_plots,

title=index_name + ' : ' + ticker + ' (' + datum + ')',

ylabel='Kurs',

ylabel_lower='Volumen',

style='yahoo',

figscale=3.0,

savefig=save,

tight_layout=True,

closefig=True)

```

| closed | 2020-06-08T06:57:35Z | 2020-06-08T18:33:06Z | https://github.com/matplotlib/mplfinance/issues/156 | [

"enhancement",

"question",

"released"

] | fxhuhn | 2 |

FactoryBoy/factory_boy | sqlalchemy | 1,085 | Default kwargs of SubFactory does not work as expected | #### Description

When instantiating a Factory with a nested SubFactory, user-provided input sometimes fails to override the default values as it should.

#### To Reproduce

##### Model / Factory code

```python

from dataclasses import dataclass

from factory import Factory, SubFactory, SelfAttribute

@dataclass

class Company:

name: str

@dataclass

class Department:

name: str

company: Company

@dataclass

class Employee:

name: str

department: Department

company: Company

class CompanyFactory(Factory):

class Meta:

model = Company

name = "company"

class DepartmentFactory(Factory):

class Meta:

model = Department

company = SubFactory(CompanyFactory)

name = "department"

class EmployeeFactory(Factory):

class Meta:

model = Employee

company = SubFactory(CompanyFactory)

department = SubFactory(DepartmentFactory, company=SelfAttribute("..company"))

name = "employee"

```

##### The issue

Overriding the company's name does not work; the resulting object still links to the default company.

```pycon

>>> # This does not work

>>> print(EmployeeFactory(department__company__name="company_2"))

Employee(name='employee', department=Department(name='department', company=Company(name='company')), company=Company(name='company'))

>>> # This still works

>>> print(EmployeeFactory(department__company=CompanyFactory.create(name="company-2")))

Employee(name='employee', department=Department(name='department', company=Company(name='company-2')), company=Company(name='company'))

```

After removing the `company=SelfAttribute("..company")` default, the override works as usual.

#### Notes

I think the problem lies in how SubFactory resolves default values. A user-provided value overrides a default only when the keys match exactly:

```python

def unroll_context(self, instance, step, context):

full_context = dict()

full_context.update(self._defaults)

full_context.update(context)

...

```
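
As a rough illustration with plain dictionaries (a simplified sketch, not factory_boy's actual internals; the string below merely stands in for the `SelfAttribute` default):

```python
# Default declared on the SubFactory; the string is a placeholder for SelfAttribute("..company").
defaults = {"company": "<SelfAttribute('..company')>"}

# What reaches the SubFactory when the caller passes department__company__name="company_2".
context = {"company__name": "company_2"}

# Same merge order as in the excerpt above: defaults first, then user context.
full_context = {}
full_context.update(defaults)
full_context.update(context)

# "company" and "company__name" are different keys, so the default is not replaced.
print(sorted(full_context))
```

Both keys survive the merge, so the `company` default is still present next to the more specific `company__name` override.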

We might be able to fix this behavior by removing every default key that is a prefix of any key in the context, like this:

```python

def unroll_context(self, instance, step, context):

full_context = dict()

full_context.update(self._defaults)

full_context.update(context)

full_context = {

k: v for k, v in full_context.items()

if k not in self._defaults or not any(ck.startswith(k) for ck in context)

}

...

``` | closed | 2024-08-15T02:21:25Z | 2024-08-19T01:58:01Z | https://github.com/FactoryBoy/factory_boy/issues/1085 | [] | tu-pm | 2 |

jupyter/nbviewer | jupyter | 431 | Unicode notebook rendering pretty bad | Notebooks containing Unicode render poorly. This doesn't occur with plain `nbconvert`, so the problem must be on nbviewer's side.

Cursory exploration indicates that just changing [this line](https://github.com/jupyter/nbviewer/blob/master/nbviewer/handlers.py#L530) to:

``` python

html = self.render_template(

"formats/%s.html" % format,

body=u'{}'.format(nbhtml),

```

will fix the issue.

> Hi Dami\u00e1n Avila ;-)

>

> That is really cool, but I noticed that you used unicode representations to get a properly rendered version of your HTML. Did you do that by hand or do you have an automated way of substituting the accents for unicode code?

>

> Without that the non-English slides look awful:

>

> http://nbviewer.ipython.org/format/slides/gist/ocefpaf/cf023a8db7097bd9fe92

>

> Thanks,

>

> -Filipe

| closed | 2015-03-20T20:59:32Z | 2015-03-22T03:02:43Z | https://github.com/jupyter/nbviewer/issues/431 | [] | bollwyvl | 4 |

elliotgao2/toapi | api | 68 | Minor bug when passing url port data to flask | Bug output

~~~ bash

$ toapi run

2017/12/17 19:07:28 [Serving ] OK http://127.0.0.1:5000

Traceback (most recent call last):

File "/home/user/.local/share/virtualenvs/toapi_test-UdiKVlKi/bin/toapi", line 11, in <module>

sys.exit(cli())

File "/home/user/.local/share/virtualenvs/toapi_test-UdiKVlKi/lib/python3.5/site-packages/click/core.py", line 722, in __call__

return self.main(*args, **kwargs)

File "/home/user/.local/share/virtualenvs/toapi_test-UdiKVlKi/lib/python3.5/site-packages/click/core.py", line 697, in main

rv = self.invoke(ctx)

File "/home/user/.local/share/virtualenvs/toapi_test-UdiKVlKi/lib/python3.5/site-packages/click/core.py", line 1066, in invoke

return _process_result(sub_ctx.command.invoke(sub_ctx))

File "/home/user/.local/share/virtualenvs/toapi_test-UdiKVlKi/lib/python3.5/site-packages/click/core.py", line 895, in invoke

return ctx.invoke(self.callback, **ctx.params)

File "/home/user/.local/share/virtualenvs/toapi_test-UdiKVlKi/lib/python3.5/site-packages/click/core.py", line 535, in invoke

return callback(*args, **kwargs)

File "/home/user/.local/share/virtualenvs/toapi_test-UdiKVlKi/lib/python3.5/site-packages/toapi/cli.py", line 61, in run

app.api.serve(ip=ip, port=port)

File "/home/user/.local/share/virtualenvs/toapi_test-UdiKVlKi/lib/python3.5/site-packages/toapi/api.py", line 42, in serve

self.app.run(ip, port, debug=False, **options)

File "/home/user/.local/share/virtualenvs/toapi_test-UdiKVlKi/lib/python3.5/site-packages/flask/app.py", line 841, in run

run_simple(host, port, self, **options)

File "/home/user/.local/share/virtualenvs/toapi_test-UdiKVlKi/lib/python3.5/site-packages/werkzeug/serving.py", line 733, in run_simple

raise TypeError('port must be an integer')

TypeError: port must be an integer

~~~ | closed | 2017-12-17T18:11:50Z | 2017-12-18T01:15:03Z | https://github.com/elliotgao2/toapi/issues/68 | [] | Daniel-at-github | 0 |

dynaconf/dynaconf | django | 303 | [RFC] support for embedding settings in a library | I'm trying to do this right now but am struggling to understand how to configure it.

The [list of options here](https://dynaconf.readthedocs.io/en/latest/guides/configuration.html#configuration-options) is quite confusing. (Also, the table extends off the right-hand side of the page, but Chrome does not show a horizontal scrollbar.)

in `clitool/conf/__init__.py` I have instantiated:

```python

settings = LazySettings(

ENVVAR_PREFIX_FOR_DYNACONF='CLITOOL',

SETTINGS_FILE_FOR_DYNACONF="clitool.ini",

)

settings.update({

"MAX_LEVEL": 10,

"ALLOW_THINGS": False,

})

```

I can now:

```python

from clitool.conf import settings

settings.MAX_LEVEL

>>> 10

```

I would then like users of `clitool` to be able to create a `clitool.ini` in the root of their own project and have values loaded from there override the defaults I set directly in `clitool.conf.settings` when they run `clitool`.

I tried creating such a file but it does not seem to be loaded.

I'm sure this must be possible with dynaconf but it's not clear how from the docs.

One problem is that I don't know what the file paths for `SETTINGS_FILE_FOR_DYNACONF` are relative to. Are they relative to my project (which users will install from PyPI), or to the CWD when the code is run?

How do I specify the format of the file? By file extension only? Or can I have a `.clitool` file in TOML format, for example?

taken from #155 | closed | 2020-02-27T21:16:46Z | 2020-09-12T04:13:58Z | https://github.com/dynaconf/dynaconf/issues/303 | [

"Not a Bug",

"RFC",

"HIGH",

"redhat"

] | rochacbruno | 1 |

pytest-dev/pytest-cov | pytest | 581 | Debug advice on intermittent FileNotFound error when collecting distributed coverage data in Jenkins | # Summary

We have some random Jenkins build failures. Some pytest runs fail (after all actual tests succeed) with an `INTERNALERROR`, when coverage data is collected from the `pytest-xdist` workers. I don't know how to proceed, or what I can do to get more debug output. Thanks in advance!

## Expected vs actual result

Sometimes the result is as expected (coverage data is collected just fine), and sometimes it is not.

# Reproducer

## Versions

We use Python 3.9.10 on GNU/Linux. Excerpt from our `setup.cfg`:

```cfg

[options.extras_require]

test =

coverage >= 7.1, < 7.2

pytest >= 7.2, < 7.3

pytest-metadata != 2.0.0

pytest-cov >= 4.0, < 4.1

pytest-html >= 3.2, < 3.3

pytest-sugar >= 0.9, < 0.10

pytest-xdist >= 3.1, < 3.2

```

## Config

Excerpt from our (anonymized) `pyproject.toml`

```toml

[tool.pytest.ini_options]

addopts = "-q --self-contained-html --css=tests/fixtures/report.css"

markers = [

"unit: marks tests as unit tests. Will be added automatically if not integration, verification or validation test.",

"integration: marks tests as integration test.",