repo_name | topic | issue_number | title | body | state | created_at | updated_at | url | labels | user_login | comments_count |

|---|---|---|---|---|---|---|---|---|---|---|---|

erdewit/ib_insync | asyncio | 387 | possible documentation error with updateEvent | First, thanks for developing this library. It has proved very useful.

The API documentation (https://ib-insync.readthedocs.io/api.html) states the following:

```

updateEvent (): Is emitted after a network packet has been handeled.

barUpdateEvent (bars: BarDataList, hasNewBar: bool): Emits the bar list that has been updated in real time. If a new bar has been added then hasNewBar is True, when the last bar has changed it is False.

```

However, in the code the `BarDataList` class only has an `updateEvent` attribute, meaning that it is not possible to attach a `barUpdateEvent` handler to such an object.

It seems that `updateEvent` is overloaded depending on the data type it is attached to. This is slightly confusing at first because it conflicts with the documentation above. Furthermore, when `updateEvent` is overloaded on a specific data type it can actually take arguments, which further contradicts the documentation.

It seems the intention is to attach an `updateEvent` callback to a specific instance of a data type, but if generic behaviour is desired across all instances, a callback can be attached to the `ib` instance, on the event type that should trigger it. For example:

```

bars = self.ib.reqHistoricalData()

# either of the below would work in triggering self.on_new_bar() when a barUpdateEvent occurs

self.ib.barUpdateEvent += self.on_new_bar

bars.updateEvent += self.on_new_bar

```
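The two subscription styles above follow a plain observer pattern. The sketch below is a generic illustration, not ib_insync's implementation: `Event`, `BarList` and `Client` are hypothetical stand-ins for the library's event objects, `BarDataList` and `IB`, and relaying each list's `updateEvent` into a client-wide `barUpdateEvent` is an assumption about how such a design can be wired.

```python
class Event:
    """Minimal observer: handlers are attached with += and called by emit()."""

    def __init__(self):
        self._handlers = []

    def __iadd__(self, handler):
        self._handlers.append(handler)
        return self  # returning self keeps `obj.event += handler` working

    def __isub__(self, handler):
        self._handlers.remove(handler)
        return self

    def emit(self, *args):
        for handler in list(self._handlers):
            handler(*args)


class BarList(list):
    """Hypothetical stand-in for BarDataList: each instance owns an updateEvent."""

    def __init__(self):
        super().__init__()
        self.updateEvent = Event()


class Client:
    """Hypothetical stand-in for IB: one barUpdateEvent shared by all bar lists."""

    def __init__(self):
        self.barUpdateEvent = Event()

    def subscribe_bars(self):
        bars = BarList()
        # Relay every update of this list to the client-wide event.
        bars.updateEvent += self.barUpdateEvent.emit
        return bars

    def push_bar(self, bars, bar, has_new_bar):
        # Simulates an incoming real-time bar for one subscription.
        bars.append(bar)
        bars.updateEvent.emit(bars, has_new_bar)
```

With this wiring, a handler on one list's `updateEvent` only sees that list, while a handler on the client-wide event sees updates from every list, which is one way to read the per-instance versus global choice the issue asks about.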

It's not obvious from the documentation which paradigm should be used and why. | closed | 2021-07-16T14:55:16Z | 2021-07-29T14:02:05Z | https://github.com/erdewit/ib_insync/issues/387 | [] | laker-93 | 1 |

kennethreitz/responder | flask | 217 | ModuleNotFoundError: No module named 'starlette.debug' | I got the following error when running the docs examples with Responder 1.1.1:

```
Traceback (most recent call last):
  File "myapp/api.py", line 1, in <module>
    import responder
  File "/home/julien/.local/share/virtualenvs/python-spa-starter-Pu8Jxsg6/lib/python3.7/site-packages/responder/__init__.py", line 1, in <module>
    from .core import *
  File "/home/julien/.local/share/virtualenvs/python-spa-starter-Pu8Jxsg6/lib/python3.7/site-packages/responder/core.py", line 1, in <module>
    from .api import API
  File "/home/julien/.local/share/virtualenvs/python-spa-starter-Pu8Jxsg6/lib/python3.7/site-packages/responder/api.py", line 16, in <module>
    from starlette.debug import DebugMiddleware
ModuleNotFoundError: No module named 'starlette.debug'
```

with this code:

```python
import responder

api = responder.API()


@api.route("/{greeting}")
async def greet_world(req, resp, *, greeting):
    resp.text = f"{greeting}, world!"


if __name__ == '__main__':
    api.run()
``` | closed | 2018-11-09T13:31:18Z | 2018-11-15T10:50:42Z | https://github.com/kennethreitz/responder/issues/217 | [] | jkermes | 5 |

AlexMathew/scrapple | web-scraping | 49 | Add tests | Tests verifying the CSV output of run/generate need to be added. See #44 and #46.
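A test along these lines could check the CSV that run/generate writes. This is a minimal sketch assuming the output has a header row; `assert_csv_output` is a hypothetical helper, and producing the CSV text (how the command is invoked and where the file lands) is left to the caller because those details are not given here.

```python
import csv
import io


def assert_csv_output(csv_text, expected_fields, min_rows=1):
    """Check CSV text for the expected header and at least `min_rows` data rows.

    Hypothetical helper: it only inspects text, so the caller must supply the
    contents of the file that run/generate produced.
    """
    reader = csv.DictReader(io.StringIO(csv_text))
    assert reader.fieldnames == list(expected_fields), reader.fieldnames
    rows = list(reader)
    assert len(rows) >= min_rows, f"expected >= {min_rows} rows, got {len(rows)}"
    # DictReader fills missing cells with None, so this catches short rows.
    for row in rows:
        assert all(row[field] is not None for field in expected_fields), row
    return rows
```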

| closed | 2015-03-15T12:50:46Z | 2017-02-24T08:44:15Z | https://github.com/AlexMathew/scrapple/issues/49 | [] | AlexMathew | 0 |

sktime/pytorch-forecasting | pandas | 1,099 | when use weight param in TimeSeriesDataSet, raised RuntimeError: The size of tensor a (128) must match the size of tensor b (12) at non-singleton dimension 1 | - PyTorch-Forecasting version: 0.10.1
- PyTorch version: 1.11.0
- Python version: 3.7.4
- Operating System: CentOS

### Expected behavior

I executed the following code:

```python
from pytorch_forecasting import TimeSeriesDataSet

TimeSeriesDataSet(
    data[lambda x: x.time_idx <= training_cutoff],
    time_idx="time_idx",
    target="value",
    group_ids=["series"],
    time_varying_unknown_reals=["value"],
    max_encoder_length=context_length,
    max_prediction_length=prediction_length,
    target_normalizer="auto",
    weight="weight",  # the newly added argument
)
```

My goal was to give more weight to certain important samples, expecting better results.

### Actual behavior

However, it raised an error:

```
RuntimeError: The size of tensor a (128) must match the size of tensor b (12) at non-singleton dimension 1
```

I think there is an error in the current code:

```
File /pathtopython/site-packages/pytorch_forecasting/metrics.py:1006, in MASE.update(self, y_pred, target, encoder_target, encoder_lengths)
   1004 # weight samples
   1005 if weight is not None:
-> 1006     losses = losses * weight.unsqueeze(-1)
```

I printed the shapes of `losses` and `weight`, and they were the same. When I changed the code to `losses = losses * weight`, the error no longer occurred and I got better results.

Please check whether this is a problem.
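The error message is consistent with PyTorch's broadcasting rules if, as reported, `losses` and `weight` share a shape. Assuming that shape is `(batch=128, prediction_length=12)` (inferred from the message, not confirmed), `weight.unsqueeze(-1)` becomes `(128, 12, 1)`, which cannot broadcast against `(128, 12)`. The pure-Python helper below is a sketch that mimics the broadcasting rule rather than calling PyTorch, and it reproduces the same message:

```python
def broadcast_shape(a, b):
    """Broadcast two shapes following the NumPy/PyTorch rules."""
    ndim = max(len(a), len(b))
    # Left-pad the shorter shape with 1s so both have the same rank.
    a = (1,) * (ndim - len(a)) + tuple(a)
    b = (1,) * (ndim - len(b)) + tuple(b)
    out = []
    for dim, (x, y) in enumerate(zip(a, b)):
        if x == y or y == 1:
            out.append(x)
        elif x == 1:
            out.append(y)
        else:
            raise ValueError(
                f"The size of tensor a ({x}) must match the size of tensor b ({y}) "
                f"at non-singleton dimension {dim}"
            )
    return tuple(out)
```

A weight of shape `(128,)` would broadcast cleanly after `unsqueeze(-1)` (it becomes `(128, 1)`), which suggests the original line assumes one weight per sample rather than one per time step.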

| open | 2022-08-12T05:46:04Z | 2023-04-04T10:34:39Z | https://github.com/sktime/pytorch-forecasting/issues/1099 | [] | tuhulihongbing | 3 |

Lightning-AI/pytorch-lightning | data-science | 19,803 | Current FSDPPrecision does not support custom scaler for 16-mixed precision | ### Bug description

`self.precision` here is inherited from the parent class `Precision`, so it is always `"32-true"`.

The subsequent definition of `self.scaler` also assigns `None` when `scaler is not None` and `precision == "16-mixed"`, so a custom scaler is discarded.

Is this intentional or a bug?
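The first point describes a familiar pattern: reading an attribute before `__init__` assigns it silently falls back to the parent's class-level default. A hypothetical, self-contained illustration follows; these classes are not Lightning's code and only show the mechanism.

```python
class Precision:
    """Hypothetical parent class with a class-level default."""

    precision = "32-true"


class BuggyPlugin(Precision):
    def __init__(self, precision, scaler=None):
        # self.precision is read BEFORE it is assigned below, so this sees the
        # inherited "32-true" default, never the `precision` argument.
        use_scaler = scaler is not None and self.precision == "16-mixed"
        self.scaler = scaler if use_scaler else None
        self.precision = precision


class FixedPlugin(Precision):
    def __init__(self, precision, scaler=None):
        # Assign first (or test the `precision` argument directly).
        self.precision = precision
        use_scaler = scaler is not None and self.precision == "16-mixed"
        self.scaler = scaler if use_scaler else None
```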

### What version are you seeing the problem on?

v2.2

### How to reproduce the bug

_No response_

### Error messages and logs

```

# Error messages and logs here please

```

### Environment

<details>

<summary>Current environment</summary>

```

#- Lightning Component (e.g. Trainer, LightningModule, LightningApp, LightningWork, LightningFlow):

#- PyTorch Lightning Version (e.g., 1.5.0):

#- Lightning App Version (e.g., 0.5.2):

#- PyTorch Version (e.g., 2.0):

#- Python version (e.g., 3.9):

#- OS (e.g., Linux):

#- CUDA/cuDNN version:

#- GPU models and configuration:

#- How you installed Lightning(`conda`, `pip`, source):

#- Running environment of LightningApp (e.g. local, cloud):

```

</details>

### More info

_No response_ | open | 2024-04-23T04:14:07Z | 2024-04-23T04:14:07Z | https://github.com/Lightning-AI/pytorch-lightning/issues/19803 | [

"bug",

"needs triage"

] | SongzhouYang | 0 |

pyg-team/pytorch_geometric | deep-learning | 9,786 | Graphormer-GD Implementation | ### 🚀 The feature, motivation and pitch

Dear pyg community,

I propose implementing the Graphormer-GD transformer layer from the paper [Rethinking the Expressive Power of GNNs via Graph Biconnectivity](https://arxiv.org/abs/2301.09505) in PyG. The implementation would be similar to existing layers such as GraphSAGE. There is already an implementation on GitHub, but it does not use PyG. See here for reference: https://github.com/lsj2408/Graphormer-GD

I'm happy to take this on, but I first want to check that a PR like this would be accepted. Let me know what you think!

### Alternatives

_No response_

### Additional context

_No response_ | open | 2024-11-14T16:01:17Z | 2024-11-14T16:01:17Z | https://github.com/pyg-team/pytorch_geometric/issues/9786 | [

"feature"

] | goelzva | 0 |

microsoft/nni | data-science | 5,298 | cannot fix the mask of the interdependent layers | **Describe the issue**:

I pruned a segmentation model and saved the masks to mask.pth. When I then ran speedup, the masks could not be fixed to match the new architecture of the model.

**Environment**:

- NNI version: 2.0
- Training service (local|remote|pai|aml|etc): local
- Client OS: Ubuntu
- Server OS (for remote mode only):
- Python version: 3.7
- PyTorch/TensorFlow version: PyTorch
- Is conda/virtualenv/venv used?: yes
- Is running in Docker?: no

- Is running in Docker?:no

**Configuration**:

- Experiment config (remember to remove secrets!):

- Search space:

**Log message**:

- nnimanager.log:

- dispatcher.log:

- nnictl stdout and stderr:

```
mask_conflict.py, line 195, in fix_mask_conflict
    assert shape[0] % group == 0
AssertionError
```

When I print `shape[0]` and `group`:

```
32 32
32 32
16 1
96 96
96 96
24 320
```

In the last pair, `group` (320) is larger than `shape[0]` (24).
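The failing assertion requires `shape[0]` (the first dimension of the layer's mask, presumably its output channels) to be divisible by `group`, the same constraint PyTorch's grouped `Conv2d` places on its channel counts. A quick check over the pairs printed above, as a sketch, isolates the offending layer:

```python
def check_group_divisibility(pairs):
    """Return the (shape0, group) pairs that violate shape0 % group == 0."""
    return [(shape0, group) for shape0, group in pairs if shape0 % group != 0]


# The (shape[0], group) pairs printed in the report above.
logged = [(32, 32), (32, 32), (16, 1), (96, 96), (96, 96), (24, 320)]
print(check_group_divisibility(logged))  # [(24, 320)]
```

Every other pair divides evenly, so the next step is finding which layer reports a group count of 320 with only 24 channels.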

How can I fix this problem?

<!--

Where can you find the log files:

LOG: https://github.com/microsoft/nni/blob/master/docs/en_US/Tutorial/HowToDebug.md#experiment-root-director

STDOUT/STDERR: https://nni.readthedocs.io/en/stable/reference/nnictl.html#nnictl-log-stdout

-->

**How to reproduce it?**: | closed | 2022-12-23T11:12:32Z | 2023-02-24T02:36:58Z | https://github.com/microsoft/nni/issues/5298 | [] | sungh66 | 10 |

microsoft/MMdnn | tensorflow | 900 | shape inference error in depthwise_conv | Platform: ubuntu

MMdnn version: 0.3.1

Source framework with version: Tensorflow 1.13.1

Pre-trained model: mobilenet_v1

from https://github.com/tensorflow/models/tree/master/research/slim/nets

Running scripts: mmtoir

Description: a shape inference error occurs when parsing depthwise_conv (converting a tf-slim model to an IR file): the channel count of the output shape does not match the channel count of the depthwise_conv kernel.
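For reference when checking the parser's shape inference: in TensorFlow a depthwise kernel has shape `[filter_height, filter_width, in_channels, channel_multiplier]`, and the output has `in_channels * channel_multiplier` channels. The sketch below assumes NHWC input and TensorFlow's `SAME`/`VALID` padding arithmetic; it is not MMdnn's code, just an independent way to compute the expected output shape for comparison with the IR.

```python
import math


def depthwise_conv2d_output_shape(input_shape, kernel_shape, strides=(1, 1), padding="SAME"):
    """Expected NHWC output shape of a TF-style depthwise convolution.

    input_shape:  (batch, height, width, in_channels)
    kernel_shape: (filter_height, filter_width, in_channels, channel_multiplier)
    """
    n, h, w, c_in = input_shape
    kh, kw, k_in, multiplier = kernel_shape
    if k_in != c_in:
        raise ValueError(f"kernel expects {k_in} input channels, input has {c_in}")
    sh, sw = strides
    if padding == "SAME":
        oh, ow = math.ceil(h / sh), math.ceil(w / sw)
    elif padding == "VALID":
        oh, ow = math.ceil((h - kh + 1) / sh), math.ceil((w - kw + 1) / sw)
    else:
        raise ValueError(f"unsupported padding: {padding!r}")
    # Each input channel yields `multiplier` output channels.
    return (n, oh, ow, c_in * multiplier)
```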

| open | 2020-10-10T05:05:07Z | 2020-10-12T06:44:29Z | https://github.com/microsoft/MMdnn/issues/900 | [] | xianyuggg | 2 |

gradio-app/gradio | data-visualization | 10,171 | Allow specifying custom dictionary keys for event input. | - [x] I have searched to see if a similar issue already exists.

**Is your feature request related to a problem? Please describe.**

When using dictionaries as event inputs in Gradio, we can only use component objects themselves as dictionary keys. This makes it difficult to separate event handling functions from component creation code, as the functions need direct access to the component objects.

**Describe the solution you'd like**

Allow specifying custom string keys when using dictionaries as event inputs. | open | 2024-12-10T20:16:39Z | 2024-12-10T20:19:57Z | https://github.com/gradio-app/gradio/issues/10171 | [

"enhancement",

"needs designing"

] | L9qmzn | 0 |

axnsan12/drf-yasg | rest-api | 395 | Add support to include generated or custom openapi schema into UI view's HTML output | Is there a way to set the `swagger-ui` `spec` property using the generated schema instead of the `url` property when loading swagger-ui via `drf-yasg`?

Including the schema directly in the HTML file would allow the generated swagger-ui page to be "saved" for offline reading, and would avoid a second request back to the server for the schema.

I'm coming from `django-rest-swagger`, which already does this (https://github.com/marcgibbons/django-rest-swagger/blob/master/rest_framework_swagger/templates/rest_framework_swagger/index.html#L61) rather than making `swagger-ui` fetch the schema in a second request. I know this would have implications for the `REFETCH_SCHEMA_*` settings, but we don't use those currently.

If I find a clean solution, I'll try to submit a PR.
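swagger-ui's `SwaggerUIBundle` accepts a `spec` option holding the schema object itself, as an alternative to `url`. A minimal sketch of what such a rendered page could look like, assuming a hypothetical `render_swagger_ui_html()` helper (not part of drf-yasg) and `swagger-ui-dist` assets served from unpkg; for true offline reading those assets would also have to be bundled locally.

```python
import json

# Hypothetical default; any location serving the swagger-ui-dist files works.
SWAGGER_UI_ASSETS = "https://unpkg.com/swagger-ui-dist"


def render_swagger_ui_html(schema, dom_id="swagger-ui", assets_base=SWAGGER_UI_ASSETS):
    """Return a standalone HTML page that hands `schema` to swagger-ui inline."""
    # Escape <, > and & so a value such as "</script>" inside the schema
    # cannot end the inline script early; JS still decodes the same strings.
    spec_json = (
        json.dumps(schema)
        .replace("<", "\\u003c")
        .replace(">", "\\u003e")
        .replace("&", "\\u0026")
    )
    return f"""<!DOCTYPE html>
<html>
<head>
  <meta charset="utf-8">
  <link rel="stylesheet" href="{assets_base}/swagger-ui.css">
</head>
<body>
  <div id="{dom_id}"></div>
  <script src="{assets_base}/swagger-ui-bundle.js"></script>
  <script>
    // `spec` embeds the schema, so no second request is made to fetch it.
    window.ui = SwaggerUIBundle({{ spec: {spec_json}, dom_id: "#{dom_id}" }});
  </script>
</body>
</html>"""
```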

| open | 2019-06-27T18:15:26Z | 2025-03-07T12:16:23Z | https://github.com/axnsan12/drf-yasg/issues/395 | [

"triage"

] | devmonkey22 | 1 |

robotframework/robotframework | automation | 4,626 | Inconsistent argument conversion when using `None` as default value with Python 3.11 and earlier | There is something strange happening with Python 3.11, type hints and automatic argument conversion.

When an argument has the type hint `Any` and the default value `None`, the string `none` is always converted to `None` in Python 3.11, while in Python 3.10 it stays a string.

Given this test:

```robotframework
*** Settings ***
Library    mylib.py

*** Test Cases ***
Test
    Myfunc    none    none

Test 2
    Myfunc    Hello    Hello
```

And this library:

```python
from typing import Any, Optional, Union


def myfunc(value, assertion_expected: Any = None):
    assert value == assertion_expected, (
        "Assertion failed: "
        f"{value} ({type(value)}) != {assertion_expected} ({type(assertion_expected)})"
    )
```

It fails in Python 3.11 and passes in 3.10.

Changing the signature to use `Optional[Any]` fixes it:

`def myfunc(value, assertion_expected: Optional[Any] = None):`
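One plausible contributor, offered as an assumption rather than a confirmed diagnosis: Python 3.11 stopped making `typing.get_type_hints()` wrap a parameter's hint in `Optional[...]` when its default is `None`. The snippet shows the hint an inspector using `get_type_hints()` would see for the `myfunc` signature above on each version.

```python
import sys
from typing import Any, Optional, get_type_hints


def myfunc(value, assertion_expected: Any = None):
    pass


hint = get_type_hints(myfunc)["assertion_expected"]
# True on Python 3.10 and earlier (implicit Optional), False on 3.11 and later.
implicit_optional = hint == Optional[Any]
print(sys.version_info[:2], hint, implicit_optional)
```

If that is the cause, spelling out `Optional[Any]` makes both versions see the same hint, which matches the workaround above.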

Libdoc also behaves differently and shows only `<Any>` as the type hint. | closed | 2023-01-31T18:55:54Z | 2023-03-15T12:50:24Z | https://github.com/robotframework/robotframework/issues/4626 | [

"bug",

"priority: medium",

"alpha 1"

] | Snooz82 | 6 |

mage-ai/mage-ai | data-science | 5,043 | Add agnostic path for project files | **DBT config yaml**

Working with Mage on different operating systems (Windows, Unix, macOS), we discovered that the paths to dbt models are saved differently in the YAML files depending on the OS, for example `dbts\run_all.yaml` on Windows. Is it possible to make path handling independent of the operating system, at least when reading the config or running the pipeline?

https://files.slack.com/files-pri/T03GK6PEQP6-F0727SEC9FF/image.png

https://files.slack.com/files-pri/T03GK6PEQP6-F0727SBMN0M/image.png

**Solution**

Using pathlib instead of os.path

Refactoring File class methods ([git](https://github.com/mage-ai/mage-ai/blob/e84116411f24b98711a8095f291c1a9898c1cd49/mage_ai/data_preparation/models/file.py#L40))

**Example**

```python
from pathlib import Path, PureWindowsPath
from platform import system


class AgnosticPath(Path):
    def __new__(cls, *args):
        # Parse with Windows rules so both "\" and "/" act as separators.
        new_path = PureWindowsPath(*args).parts
        # On non-Windows systems, turn a leading root ("\" or "/") into "/".
        if (system() != "Windows") and (len(new_path) > 0) and (new_path[0] in ("/", "\\")):
            new_path = ("/", *new_path[1:])
        # Build a concrete Path directly: calling Path.__new__ with cls=Path
        # returns an uninitialized object on Python 3.12+.
        return Path(*new_path)
``` | open | 2024-05-08T07:09:54Z | 2024-05-08T07:09:54Z | https://github.com/mage-ai/mage-ai/issues/5043 | [] | FelixMiksonAramMeem | 0 |

microsoft/nni | pytorch | 5,660 | nni norm_pruning example error | Hello!

I am trying to run a PyTorch version of one of the pruning examples (nni/examples/compression/pruning/norm_pruning.py) locally.

However, the config_list generated by the function `auto_set_denpendency_group_ids` seems to have a problem.

**The config_list is:**

```python
[{'op_names': ['layer3.1.conv1'],
  'sparse_ratio': 0.5,
  'dependency_group_id': '0152545ff8de4d14a8cfe727bf9769d1',
  'internal_metric_block': 1},
 {'op_names': ['layer1.0.conv1'],
  'sparse_ratio': 0.5,
  'dependency_group_id': '5c913fb3076441e2af16c32c03758329',
  'internal_metric_block': 1},
 {'op_names': ['layer2.0.conv1'],
  'sparse_ratio': 0.5,
  'dependency_group_id': '497a228f19e047d8a26fa94cc97fbabf',
  'internal_metric_block': 1},
 {'op_names': ['layer4.0.downsample.0'],
  'sparse_ratio': 0.5,
  'dependency_group_id': 'ea22b181139c4199b090c4e702d85083',
  'internal_metric_block': 1},
 {'op_names': ['layer3.1.conv2'],
  'sparse_ratio': 0.5,
  'dependency_group_id': '60ccd2e1a186412e89d256682007b2f7',
  'internal_metric_block': 1},
 {'op_names': ['layer4.1.conv1'],
  'sparse_ratio': 0.5,
  'dependency_group_id': '70578023ad6e48c1b14ef44d5e6a0c3f',
  'internal_metric_block': 1},
 {'op_names': ['conv1'],
  'sparse_ratio': 0.5,
  'dependency_group_id': '01d9e39d16e94df4838ea98275f5d445',
  'internal_metric_block': 1},
 {'op_names': ['layer1.0.conv2'],
  'sparse_ratio': 0.5,
  'dependency_group_id': '01d9e39d16e94df4838ea98275f5d445',
  'internal_metric_block': 1},
 {'op_names': ['layer3.0.conv1'],
  'sparse_ratio': 0.5,
  'dependency_group_id': '0cbb55f71a484d64b775d7d82380d0dd',
  'internal_metric_block': 1},
 {'op_names': ['layer4.0.conv2'],
  'sparse_ratio': 0.5,
  'dependency_group_id': 'ea22b181139c4199b090c4e702d85083',
  'internal_metric_block': 1},
 {'op_names': ['layer1.1.conv2'],
  'sparse_ratio': 0.5,
  'dependency_group_id': '01d9e39d16e94df4838ea98275f5d445',
  'internal_metric_block': 1},
 {'op_names': ['layer2.0.downsample.0'],
  'sparse_ratio': 0.5,
  'dependency_group_id': '3a2f40dea91340f296b0e40049ee1b57',
  'internal_metric_block': 1},
 {'op_names': ['layer2.1.conv1'],
  'sparse_ratio': 0.5,
  'dependency_group_id': 'aa5de9115c5141aeb1736ed8d9f479fd',
  'internal_metric_block': 1},
 {'op_names': ['layer4.1.conv2'],
  'sparse_ratio': 0.5,
  'dependency_group_id': 'ea22b181139c4199b090c4e702d85083',
  'internal_metric_block': 1},
 {'op_names': ['layer2.1.conv2'],
  'sparse_ratio': 0.5,
  'dependency_group_id': '3a2f40dea91340f296b0e40049ee1b57',
  'internal_metric_block': 1},
 {'op_names': ['layer3.0.conv2'],
  'sparse_ratio': 0.5,
  'dependency_group_id': '60ccd2e1a186412e89d256682007b2f7',
  'internal_metric_block': 1},
 {'op_names': ['layer4.0.conv1'],
  'sparse_ratio': 0.5,
  'dependency_group_id': '526922a4a69d4a46b2fdbf937f8283dc',
  'internal_metric_block': 1},
 {'op_names': ['layer1.1.conv1'],
  'sparse_ratio': 0.5,
  'dependency_group_id': 'f1dbbaba5cce46698e3efbcd84d48e4c',
  'internal_metric_block': 1},
 {'op_names': ['layer3.0.downsample.0'],
  'sparse_ratio': 0.5,
  'dependency_group_id': '60ccd2e1a186412e89d256682007b2f7',
  'internal_metric_block': 1},
 {'op_names': ['layer2.0.conv2'],
  'sparse_ratio': 0.5,
  'dependency_group_id': '3a2f40dea91340f296b0e40049ee1b57',
  'internal_metric_block': 1}]
```

**The error I get:**

Or(And({Or('sparsity', 'sparsity_per_layer'): And(<class 'float'>, <function <lambda> at 0x7d11a9a048b0>), Optional('op_types'): And(['Conv2d', 'Linear'], <function CompressorSchema._modify_schema.<locals>.<lambda> at 0x7d11a98b8ca0>), Optional('op_names'): And([<class 'str'>], <function CompressorSchema._modify_schema.<locals>.<lambda> at 0x7d11a74cb520>), Optional('op_partial_names'): [<class 'str'>]}, <function CompressorSchema._modify_schema.<locals>.<lambda> at 0x7d11a74cbeb0>), And({'exclude': <class 'bool'>, Optional('op_types'): And(['Conv2d', 'Linear'], <function CompressorSchema._modify_schema.<locals>.<lambda> at 0x7d11a74cbe20>), Optional('op_names'): And([<class 'str'>], <function CompressorSchema._modify_schema.<locals>.<lambda> at 0x7d11a74cbd90>), Optional('op_partial_names'): [<class 'str'>]}, <function CompressorSchema._modify_schema.<locals>.<lambda> at 0x7d11a74cb490>), And({'total_sparsity': And(<class 'float'>, <function <lambda> at 0x7d11a9a07be0>), Optional('max_sparsity_per_layer'): {<class 'str'>: <class 'float'>}, Optional('op_types'): And(['Conv2d', 'Linear'], <function CompressorSchema._modify_schema.<locals>.<lambda> at 0x7d11a7429ea0>), Optional('op_names'): And([<class 'str'>], <function CompressorSchema._modify_schema.<locals>.<lambda> at 0x7d11a7428790>)}, <function CompressorSchema._modify_schema.<locals>.<lambda> at 0x7d11a7428430>)) did not validate {'op_names': ['layer3.1.conv1'], 'sparse_ratio': 0.5, 'dependenc...

Missing key: Or('sparsity', 'sparsity_per_layer')

Missing key: 'exclude'

Missing key: 'total_sparsity'
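Reading the error, each config entry was checked against three alternative schema branches, and every branch requires a key the entry lacks (`sparsity`/`sparsity_per_layer`, `exclude`, or `total_sparsity`), while the entry only has `sparse_ratio`. This suggests, as an assumption to verify, that the config was validated by a different (older) pruner schema than the one the example's config format targets, for instance because of a mismatch between the installed NNI version and the example. The hypothetical check below mirrors only the "Missing key" part of that validation:

```python
# Key alternatives taken from the "Missing key" lines of the error message.
ACCEPTED_BRANCHES = (
    {"sparsity", "sparsity_per_layer"},
    {"exclude"},
    {"total_sparsity"},
)


def missing_branch_keys(entry):
    """Return each branch's key alternatives if no branch is satisfied.

    An empty list means at least one branch's required key is present.
    """
    if any(entry.keys() & branch for branch in ACCEPTED_BRANCHES):
        return []
    return [sorted(branch) for branch in ACCEPTED_BRANCHES]
```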

I would be happy to understand what I am missing.

Thanks a lot!

Noy

| closed | 2023-08-13T13:32:48Z | 2023-08-17T07:30:54Z | https://github.com/microsoft/nni/issues/5660 | [] | NoyLalzary | 0 |

aio-libs/aiomysql | asyncio | 592 | Any plans to support PyMySQL-1.0.2 | The current version of aiomysql requires `PyMySQL<=0.9.3,>=0.9`.

Are there any plans for when PyMySQL > 1.0.0 will be supported, for example for TLS support? | closed | 2021-06-16T15:34:55Z | 2022-01-13T17:35:56Z | https://github.com/aio-libs/aiomysql/issues/592 | [

"enhancement",

"pymysql"

] | jpVm5jYYRE1VIKL | 3 |

pywinauto/pywinauto | automation | 433 | How should I find and use custom controls of WPF application? | I am testing a WPF app that contains some **custom controls**. I could not find how to operate them in the documentation.

How should I do this?

| open | 2017-11-01T09:46:12Z | 2017-11-01T20:09:02Z | https://github.com/pywinauto/pywinauto/issues/433 | [

"enhancement",

"New Feature"

] | fangchaooo | 2 |

yunjey/pytorch-tutorial | pytorch | 75 | captioning code doesn't run | Hi. I am getting the error below. I hope someone can help @jtoy

```

python sample.py --image='png/example.png'

Traceback (most recent call last):

File "sample.py", line 97, in <module>

main(args)

File "sample.py", line 61, in main

sampled_ids = decoder.sample(feature)

File "/home/shas/sandbox/pytorch-tutorial/tutorials/03-advanced/image_captioning/model.py", line 62, in sample

hiddens, states = self.lstm(inputs, states) # (batch_size, 1, hidden_size),

File "/home/shas/miniconda2/lib/python2.7/site-packages/torch/nn/modules/module.py", line 206, in __call__

result = self.forward(*input, **kwargs)

File "/home/shas/miniconda2/lib/python2.7/site-packages/torch/nn/modules/rnn.py", line 91, in forward

output, hidden = func(input, self.all_weights, hx)

File "/home/shas/miniconda2/lib/python2.7/site-packages/torch/nn/_functions/rnn.py", line 343, in forward

return func(input, *fargs, **fkwargs)

File "/home/shas/miniconda2/lib/python2.7/site-packages/torch/nn/_functions/rnn.py", line 243, in forward

nexth, output = func(input, hidden, weight)

File "/home/shas/miniconda2/lib/python2.7/site-packages/torch/nn/_functions/rnn.py", line 83, in forward

hy, output = inner(input, hidden[l], weight[l])

File "/home/shas/miniconda2/lib/python2.7/site-packages/torch/nn/_functions/rnn.py", line 112, in forward

hidden = inner(input[i], hidden, *weight)

File "/home/shas/miniconda2/lib/python2.7/site-packages/torch/nn/_functions/rnn.py", line 30, in LSTMCell

gates = F.linear(input, w_ih, b_ih) + F.linear(hx, w_hh, b_hh)

File "/home/shas/miniconda2/lib/python2.7/site-packages/torch/nn/functional.py", line 449, in linear

return state(input, weight) if bias is None else state(input, weight, bias)

File "/home/shas/miniconda2/lib/python2.7/site-packages/torch/nn/_functions/linear.py", line 10, in forward

output.addmm_(0, 1, input, weight.t())

RuntimeError: matrices expected, got 3D, 2D tensors at /opt/conda/conda-bld/pytorch_1501972792122/work/pytorch-0.1.12/torch/lib/TH/generic/THTensorMath.c:1232

```

I have tried with both `pytorch=0.1.12` and `pytorch=0.2`. Neither works! | closed | 2017-10-24T01:57:34Z | 2018-05-10T08:56:17Z | https://github.com/yunjey/pytorch-tutorial/issues/75 | [] | ShasTheMass | 3 |

xonsh/xonsh | data-science | 4,934 | xonfig web fails with AssertionError | Steps to reproduce on macOS 12.5.1:

* brew install xonsh

* xonfig web

The web page doesn't load for a long time. Then an error appears in the console: `Failed to parse color-display ParseError('syntax error: line 1, column 0')`

Details:

<details>

```

(base) maye@Michaels-Mini ~/.config $ xonfig web

Web config started at 'http://localhost:8421'. Hit Crtl+C to stop.

ERROR:root:Failed to format Xonsh code AssertionError("wrong color format 'noinherit'"). 'bw'

Traceback (most recent call last):

File "/opt/homebrew/Cellar/xonsh/0.13.1/libexec/lib/python3.10/site-packages/xonsh/webconfig/xonsh_data.py", line 194, in render_colors

display = html_format(token_stream, style=style)

File "/opt/homebrew/Cellar/xonsh/0.13.1/libexec/lib/python3.10/site-packages/xonsh/webconfig/xonsh_data.py", line 51, in html_format

proxy_style = xonsh_style_proxy(XonshStyle(style))

File "/opt/homebrew/Cellar/xonsh/0.13.1/libexec/lib/python3.10/site-packages/xonsh/pyghooks.py", line 453, in xonsh_style_proxy

class XonshStyleProxy(Style):

File "/opt/homebrew/Cellar/xonsh/0.13.1/libexec/lib/python3.10/site-packages/pygments/style.py", line 112, in __new__

ndef[4] = colorformat(styledef[3:])

File "/opt/homebrew/Cellar/xonsh/0.13.1/libexec/lib/python3.10/site-packages/pygments/style.py", line 79, in colorformat

assert False, "wrong color format %r" % text

AssertionError: wrong color format 'noinherit'

ERROR:root:Failed to parse color-display ParseError('syntax error: line 1, column 0'). 'import sys\necho "Welcome $USER on" @(sys.platform)\n\ndef func(x=42):\n d = {"xonsh": True}\n return d.get("xonsh") and you\n\n# This is a comment\n![env | uniq | sort | grep PATH]\n'

127.0.0.1 - - [08/Sep/2022 20:52:46] "GET / HTTP/1.1" 200 -

127.0.0.1 - - [08/Sep/2022 20:52:47] "GET /js/bootstrap.min.css HTTP/1.1" 200 -

127.0.0.1 - - [08/Sep/2022 20:52:47] code 404, message File not found

127.0.0.1 - - [08/Sep/2022 20:52:47] "GET /js/xonsh_sticker.svg HTTP/1.1" 404 -

127.0.0.1 - - [08/Sep/2022 20:52:47] code 404, message File not found

127.0.0.1 - - [08/Sep/2022 20:52:47] "GET /favicon.ico HTTP/1.1" 404 -

127.0.0.1 - - [08/Sep/2022 20:52:47] code 404, message File not found

127.0.0.1 - - [08/Sep/2022 20:52:47] "GET /apple-touch-icon-precomposed.png HTTP/1.1" 404 -

127.0.0.1 - - [08/Sep/2022 20:52:47] code 404, message File not found

127.0.0.1 - - [08/Sep/2022 20:52:47] "GET /apple-touch-icon.png HTTP/1.1" 404 -

```

Then the web page loads visually, but any interaction gets a "Safari can't load server" error because the process has died in the terminal.

A second launch of `xonfig web` now throws a huge error:

```

/xonsh/webconfig stable $ xonfig web

Web config started at 'http://localhost:8421'. Hit Crtl+C to stop.

--- Logging error ---

Traceback (most recent call last):

File "/opt/homebrew/Cellar/xonsh/0.13.1/libexec/lib/python3.10/site-packages/xonsh/webconfig/xonsh_data.py", line 194, in render_colors

display = html_format(token_stream, style=style)

File "/opt/homebrew/Cellar/xonsh/0.13.1/libexec/lib/python3.10/site-packages/xonsh/webconfig/xonsh_data.py", line 51, in html_format

proxy_style = xonsh_style_proxy(XonshStyle(style))

File "/opt/homebrew/Cellar/xonsh/0.13.1/libexec/lib/python3.10/site-packages/xonsh/pyghooks.py", line 453, in xonsh_style_proxy

class XonshStyleProxy(Style):

File "/opt/homebrew/Cellar/xonsh/0.13.1/libexec/lib/python3.10/site-packages/pygments/style.py", line 112, in __new__

ndef[4] = colorformat(styledef[3:])

File "/opt/homebrew/Cellar/xonsh/0.13.1/libexec/lib/python3.10/site-packages/pygments/style.py", line 79, in colorformat

assert False, "wrong color format %r" % text

AssertionError: wrong color format 'noinherit'

During handling of the above exception, another exception occurred:

Traceback (most recent call last):

File "/opt/homebrew/Cellar/python@3.10/3.10.6_2/Frameworks/Python.framework/Versions/3.10/lib/python3.10/logging/__init__.py", line 1103, in emit

stream.write(msg + self.terminator)

File "/opt/homebrew/Cellar/xonsh/0.13.1/libexec/lib/python3.10/site-packages/xonsh/base_shell.py", line 182, in write

self.mem.write(s)

ValueError: I/O operation on closed file.

Call stack:

File "/opt/homebrew/bin/xonsh", line 8, in <module>

sys.exit(main())

File "/opt/homebrew/Cellar/xonsh/0.13.1/libexec/lib/python3.10/site-packages/xonsh/main.py", line 468, in main

sys.exit(main_xonsh(args))

File "/opt/homebrew/Cellar/xonsh/0.13.1/libexec/lib/python3.10/site-packages/xonsh/main.py", line 512, in main_xonsh

shell.shell.cmdloop()

File "/opt/homebrew/Cellar/xonsh/0.13.1/libexec/lib/python3.10/site-packages/xonsh/ptk_shell/shell.py", line 407, in cmdloop

self.default(line, raw_line)

File "/opt/homebrew/Cellar/xonsh/0.13.1/libexec/lib/python3.10/site-packages/xonsh/base_shell.py", line 389, in default

exc_info = run_compiled_code(code, self.ctx, None, "single")

File "/opt/homebrew/Cellar/xonsh/0.13.1/libexec/lib/python3.10/site-packages/xonsh/codecache.py", line 63, in run_compiled_code

func(code, glb, loc)

File "<stdin>", line 1, in <module>

File "/opt/homebrew/Cellar/xonsh/0.13.1/libexec/lib/python3.10/site-packages/xonsh/built_ins.py", line 196, in subproc_captured_hiddenobject

return xonsh.procs.specs.run_subproc(cmds, captured="hiddenobject", envs=envs)

File "/opt/homebrew/Cellar/xonsh/0.13.1/libexec/lib/python3.10/site-packages/xonsh/procs/specs.py", line 896, in run_subproc

return _run_specs(specs, cmds)

File "/opt/homebrew/Cellar/xonsh/0.13.1/libexec/lib/python3.10/site-packages/xonsh/procs/specs.py", line 931, in _run_specs

command.end()

File "/opt/homebrew/Cellar/xonsh/0.13.1/libexec/lib/python3.10/site-packages/xonsh/procs/pipelines.py", line 458, in end

self._end(tee_output=tee_output)

File "/opt/homebrew/Cellar/xonsh/0.13.1/libexec/lib/python3.10/site-packages/xonsh/procs/pipelines.py", line 466, in _end

for _ in self.tee_stdout():

File "/opt/homebrew/Cellar/xonsh/0.13.1/libexec/lib/python3.10/site-packages/xonsh/procs/pipelines.py", line 368, in tee_stdout

for line in self.iterraw():

File "/opt/homebrew/Cellar/xonsh/0.13.1/libexec/lib/python3.10/site-packages/xonsh/procs/pipelines.py", line 255, in iterraw

proc.wait()

File "/opt/homebrew/Cellar/xonsh/0.13.1/libexec/lib/python3.10/site-packages/xonsh/procs/proxies.py", line 820, in wait

r = self.f(self.args, stdin, stdout, stderr, spec, spec.stack)

File "/opt/homebrew/Cellar/xonsh/0.13.1/libexec/lib/python3.10/site-packages/xonsh/lazyasd.py", line 80, in __call__

return obj(*args, **kwargs)

File "/opt/homebrew/Cellar/xonsh/0.13.1/libexec/lib/python3.10/site-packages/xonsh/cli_utils.py", line 676, in __call__

result = dispatch(

File "/opt/homebrew/Cellar/xonsh/0.13.1/libexec/lib/python3.10/site-packages/xonsh/cli_utils.py", line 425, in dispatch

return _dispatch_func(func, ns)

File "/opt/homebrew/Cellar/xonsh/0.13.1/libexec/lib/python3.10/site-packages/xonsh/cli_utils.py", line 398, in _dispatch_func

return func(**kwargs)

File "/opt/homebrew/Cellar/xonsh/0.13.1/libexec/lib/python3.10/site-packages/xonsh/xonfig.py", line 703, in _web

main.main(_args[1:])

File "/opt/homebrew/Cellar/xonsh/0.13.1/libexec/lib/python3.10/site-packages/xonsh/webconfig/main.py", line 183, in main

serve(ns.browser)

File "/opt/homebrew/Cellar/xonsh/0.13.1/libexec/lib/python3.10/site-packages/xonsh/webconfig/main.py", line 169, in serve

httpd.serve_forever()

File "/opt/homebrew/Cellar/python@3.10/3.10.6_2/Frameworks/Python.framework/Versions/3.10/lib/python3.10/socketserver.py", line 237, in serve_forever

self._handle_request_noblock()

File "/opt/homebrew/Cellar/python@3.10/3.10.6_2/Frameworks/Python.framework/Versions/3.10/lib/python3.10/socketserver.py", line 316, in _handle_request_noblock

self.process_request(request, client_address)

File "/opt/homebrew/Cellar/python@3.10/3.10.6_2/Frameworks/Python.framework/Versions/3.10/lib/python3.10/socketserver.py", line 347, in process_request

self.finish_request(request, client_address)

File "/opt/homebrew/Cellar/python@3.10/3.10.6_2/Frameworks/Python.framework/Versions/3.10/lib/python3.10/socketserver.py", line 360, in finish_request

self.RequestHandlerClass(request, client_address, self)

File "/opt/homebrew/Cellar/python@3.10/3.10.6_2/Frameworks/Python.framework/Versions/3.10/lib/python3.10/http/server.py", line 658, in __init__

super().__init__(*args, **kwargs)

File "/opt/homebrew/Cellar/python@3.10/3.10.6_2/Frameworks/Python.framework/Versions/3.10/lib/python3.10/socketserver.py", line 747, in __init__

self.handle()

File "/opt/homebrew/Cellar/python@3.10/3.10.6_2/Frameworks/Python.framework/Versions/3.10/lib/python3.10/http/server.py", line 432, in handle

self.handle_one_request()

File "/opt/homebrew/Cellar/python@3.10/3.10.6_2/Frameworks/Python.framework/Versions/3.10/lib/python3.10/http/server.py", line 420, in handle_one_request

method()

File "/opt/homebrew/Cellar/xonsh/0.13.1/libexec/lib/python3.10/site-packages/xonsh/webconfig/main.py", line 87, in do_GET

route = self._get_route("get")

File "/opt/homebrew/Cellar/xonsh/0.13.1/libexec/lib/python3.10/site-packages/xonsh/webconfig/main.py", line 84, in _get_route

return route_cls(url=url, params=params, xsh=XSH)

File "/opt/homebrew/Cellar/xonsh/0.13.1/libexec/lib/python3.10/site-packages/xonsh/webconfig/routes.py", line 91, in __init__

self.colors = dict(xonsh_data.render_colors())

File "/opt/homebrew/Cellar/xonsh/0.13.1/libexec/lib/python3.10/site-packages/xonsh/webconfig/xonsh_data.py", line 196, in render_colors

logging.error(

Message: 'Failed to format Xonsh code AssertionError("wrong color format \'noinherit\'"). \'bw\''

Arguments: ()

--- Logging error ---

Traceback (most recent call last):

File "/opt/homebrew/Cellar/xonsh/0.13.1/libexec/lib/python3.10/site-packages/xonsh/webconfig/routes.py", line 74, in get_display

display = t.etree.fromstring(display)

File "/opt/homebrew/Cellar/python@3.10/3.10.6_2/Frameworks/Python.framework/Versions/3.10/lib/python3.10/xml/etree/ElementTree.py", line 1342, in XML

parser.feed(text)

xml.etree.ElementTree.ParseError: syntax error: line 1, column 0

During handling of the above exception, another exception occurred:

Traceback (most recent call last):

File "/opt/homebrew/Cellar/python@3.10/3.10.6_2/Frameworks/Python.framework/Versions/3.10/lib/python3.10/logging/__init__.py", line 1103, in emit

stream.write(msg + self.terminator)

File "/opt/homebrew/Cellar/xonsh/0.13.1/libexec/lib/python3.10/site-packages/xonsh/base_shell.py", line 182, in write

self.mem.write(s)

ValueError: I/O operation on closed file.

Call stack:

File "/opt/homebrew/bin/xonsh", line 8, in <module>

sys.exit(main())

File "/opt/homebrew/Cellar/xonsh/0.13.1/libexec/lib/python3.10/site-packages/xonsh/main.py", line 468, in main

sys.exit(main_xonsh(args))

File "/opt/homebrew/Cellar/xonsh/0.13.1/libexec/lib/python3.10/site-packages/xonsh/main.py", line 512, in main_xonsh

shell.shell.cmdloop()

File "/opt/homebrew/Cellar/xonsh/0.13.1/libexec/lib/python3.10/site-packages/xonsh/ptk_shell/shell.py", line 407, in cmdloop

self.default(line, raw_line)

File "/opt/homebrew/Cellar/xonsh/0.13.1/libexec/lib/python3.10/site-packages/xonsh/base_shell.py", line 389, in default

exc_info = run_compiled_code(code, self.ctx, None, "single")

File "/opt/homebrew/Cellar/xonsh/0.13.1/libexec/lib/python3.10/site-packages/xonsh/codecache.py", line 63, in run_compiled_code

func(code, glb, loc)

File "<stdin>", line 1, in <module>

File "/opt/homebrew/Cellar/xonsh/0.13.1/libexec/lib/python3.10/site-packages/xonsh/built_ins.py", line 196, in subproc_captured_hiddenobject

return xonsh.procs.specs.run_subproc(cmds, captured="hiddenobject", envs=envs)

File "/opt/homebrew/Cellar/xonsh/0.13.1/libexec/lib/python3.10/site-packages/xonsh/procs/specs.py", line 896, in run_subproc

return _run_specs(specs, cmds)

File "/opt/homebrew/Cellar/xonsh/0.13.1/libexec/lib/python3.10/site-packages/xonsh/procs/specs.py", line 931, in _run_specs

command.end()

File "/opt/homebrew/Cellar/xonsh/0.13.1/libexec/lib/python3.10/site-packages/xonsh/procs/pipelines.py", line 458, in end

self._end(tee_output=tee_output)

File "/opt/homebrew/Cellar/xonsh/0.13.1/libexec/lib/python3.10/site-packages/xonsh/procs/pipelines.py", line 466, in _end

for _ in self.tee_stdout():

File "/opt/homebrew/Cellar/xonsh/0.13.1/libexec/lib/python3.10/site-packages/xonsh/procs/pipelines.py", line 368, in tee_stdout

for line in self.iterraw():

File "/opt/homebrew/Cellar/xonsh/0.13.1/libexec/lib/python3.10/site-packages/xonsh/procs/pipelines.py", line 255, in iterraw

proc.wait()

File "/opt/homebrew/Cellar/xonsh/0.13.1/libexec/lib/python3.10/site-packages/xonsh/procs/proxies.py", line 820, in wait

r = self.f(self.args, stdin, stdout, stderr, spec, spec.stack)

File "/opt/homebrew/Cellar/xonsh/0.13.1/libexec/lib/python3.10/site-packages/xonsh/lazyasd.py", line 80, in __call__

return obj(*args, **kwargs)

File "/opt/homebrew/Cellar/xonsh/0.13.1/libexec/lib/python3.10/site-packages/xonsh/cli_utils.py", line 676, in __call__

result = dispatch(

File "/opt/homebrew/Cellar/xonsh/0.13.1/libexec/lib/python3.10/site-packages/xonsh/cli_utils.py", line 425, in dispatch

return _dispatch_func(func, ns)

File "/opt/homebrew/Cellar/xonsh/0.13.1/libexec/lib/python3.10/site-packages/xonsh/cli_utils.py", line 398, in _dispatch_func

return func(**kwargs)

File "/opt/homebrew/Cellar/xonsh/0.13.1/libexec/lib/python3.10/site-packages/xonsh/xonfig.py", line 703, in _web

main.main(_args[1:])

File "/opt/homebrew/Cellar/xonsh/0.13.1/libexec/lib/python3.10/site-packages/xonsh/webconfig/main.py", line 183, in main

serve(ns.browser)

File "/opt/homebrew/Cellar/xonsh/0.13.1/libexec/lib/python3.10/site-packages/xonsh/webconfig/main.py", line 169, in serve

httpd.serve_forever()

File "/opt/homebrew/Cellar/python@3.10/3.10.6_2/Frameworks/Python.framework/Versions/3.10/lib/python3.10/socketserver.py", line 237, in serve_forever

self._handle_request_noblock()

File "/opt/homebrew/Cellar/python@3.10/3.10.6_2/Frameworks/Python.framework/Versions/3.10/lib/python3.10/socketserver.py", line 316, in _handle_request_noblock

self.process_request(request, client_address)

File "/opt/homebrew/Cellar/python@3.10/3.10.6_2/Frameworks/Python.framework/Versions/3.10/lib/python3.10/socketserver.py", line 347, in process_request

self.finish_request(request, client_address)

File "/opt/homebrew/Cellar/python@3.10/3.10.6_2/Frameworks/Python.framework/Versions/3.10/lib/python3.10/socketserver.py", line 360, in finish_request

self.RequestHandlerClass(request, client_address, self)

File "/opt/homebrew/Cellar/python@3.10/3.10.6_2/Frameworks/Python.framework/Versions/3.10/lib/python3.10/http/server.py", line 658, in __init__

super().__init__(*args, **kwargs)

File "/opt/homebrew/Cellar/python@3.10/3.10.6_2/Frameworks/Python.framework/Versions/3.10/lib/python3.10/socketserver.py", line 747, in __init__

self.handle()

File "/opt/homebrew/Cellar/python@3.10/3.10.6_2/Frameworks/Python.framework/Versions/3.10/lib/python3.10/http/server.py", line 432, in handle

self.handle_one_request()

File "/opt/homebrew/Cellar/python@3.10/3.10.6_2/Frameworks/Python.framework/Versions/3.10/lib/python3.10/http/server.py", line 420, in handle_one_request

method()

File "/opt/homebrew/Cellar/xonsh/0.13.1/libexec/lib/python3.10/site-packages/xonsh/webconfig/main.py", line 89, in do_GET

return self.render_get(route)

File "/opt/homebrew/Cellar/xonsh/0.13.1/libexec/lib/python3.10/site-packages/xonsh/webconfig/main.py", line 75, in render_get

body = t.to_str(route.get()) # type: ignore

File "/opt/homebrew/Cellar/xonsh/0.13.1/libexec/lib/python3.10/site-packages/xonsh/webconfig/tags.py", line 125, in to_str

txt = b"".join(_to_str()).decode()

File "/opt/homebrew/Cellar/xonsh/0.13.1/libexec/lib/python3.10/site-packages/xonsh/webconfig/tags.py", line 116, in _to_str

for idx, el in enumerate(elems):

File "/opt/homebrew/Cellar/xonsh/0.13.1/libexec/lib/python3.10/site-packages/xonsh/webconfig/routes.py", line 145, in get

cols = list(self.get_cols())

File "/opt/homebrew/Cellar/xonsh/0.13.1/libexec/lib/python3.10/site-packages/xonsh/webconfig/routes.py", line 107, in get_cols

self.to_card(name, display),

File "/opt/homebrew/Cellar/xonsh/0.13.1/libexec/lib/python3.10/site-packages/xonsh/webconfig/routes.py", line 100, in to_card

self.get_display(display),

File "/opt/homebrew/Cellar/xonsh/0.13.1/libexec/lib/python3.10/site-packages/xonsh/webconfig/routes.py", line 76, in get_display

logging.error(f"Failed to parse color-display {ex!r}. {display!r}")

Message: 'Failed to parse color-display ParseError(\'syntax error: line 1, column 0\'). \'import sys\\necho "Welcome $USER on" @(sys.platform)\\n\\ndef func(x=42):\\n d = {"xonsh": True}\\n return d.get("xonsh") and you\\n\\n# This is a comment\\n![env | uniq | sort | grep PATH]\\n\''

Arguments: ()

127.0.0.1 - - [08/Sep/2022 20:57:36] "GET / HTTP/1.1" 200 -

127.0.0.1 - - [08/Sep/2022 20:57:36] code 404, message File not found

127.0.0.1 - - [08/Sep/2022 20:57:36] "GET /js/xonsh_sticker.svg HTTP/1.1" 404 -

```

Ok, so much for my yearly check-in with xonsh. See you next year! :)

</details> | closed | 2022-09-08T18:59:50Z | 2022-09-15T12:55:24Z | https://github.com/xonsh/xonsh/issues/4934 | [

"mac osx",

"xonfig",

"xonfig-web"

] | michaelaye | 7 |

BeanieODM/beanie | asyncio | 318 | Why Input model need to be init with beanie? | Hi, I'm using Beanie with FastAPI.

This is my model:

```
from beanie import Document


class UserBase(Document):
    username: str | None
    parent_id: str | None
    role_id: str | None
    payment_type: str | None
    disabled: bool | None
    note: str | None
    access_token: str | None


class UserIn(UserBase):
    username: str
    password: str
    disabled: bool = False


class User(UserBase):
    username: str
    password: str
    disabled: bool = False
    salt: str

    class Settings:
        name = "users"


class UserOut(UserBase):
    note: str | None
    access_token: str | None
```

My routes:

```
from fastapi import APIRouter

USERS = APIRouter()


@USERS.post('/users', response_model=UserOut)
async def post_user(user: UserIn):
    user_to_create = User(**user.dict(), salt=get_salt())
    await user_to_create.save()
    user_to_response = UserOut(**user_to_create.dict())
    return user_to_response
```

My Beanie init function:

```
from os import getenv

import motor.motor_asyncio
from beanie import init_beanie


async def init():
    # Create Motor client
    client = motor.motor_asyncio.AsyncIOMotorClient(
        f"mongodb://{getenv('MONGO_USER')}:{getenv('MONGO_PASSWORD')}"
        f"@{getenv('MONGO_HOST')}/{getenv('MONGO_DATABASE')}?"
        f"replicaSet={getenv('MONGO_REPLICA_SET')}"
        f"&authSource={getenv('MONGO_DATABASE')}"
    )
    # Init beanie
    await init_beanie(database=client[f"{getenv('MONGO_DATABASE')}"],
                      document_models=[User])
```

If I don't add UserIn to the `init_beanie` call and then call /users, I get this error:

```

INFO: 172.16.16.7:55512 - "POST /users HTTP/1.0" 500 Internal Server Error

ERROR: Exception in ASGI application

Traceback (most recent call last):

File "/usr/local/lib/python3.10/site-packages/uvicorn/protocols/http/h11_impl.py", line 403, in run_asgi

result = await app(self.scope, self.receive, self.send)

File "/usr/local/lib/python3.10/site-packages/uvicorn/middleware/proxy_headers.py", line 78, in __call__

return await self.app(scope, receive, send)

File "/usr/local/lib/python3.10/site-packages/fastapi/applications.py", line 269, in __call__

await super().__call__(scope, receive, send)

File "/usr/local/lib/python3.10/site-packages/starlette/applications.py", line 124, in __call__

await self.middleware_stack(scope, receive, send)

File "/usr/local/lib/python3.10/site-packages/starlette/middleware/errors.py", line 184, in __call__

raise exc

File "/usr/local/lib/python3.10/site-packages/starlette/middleware/errors.py", line 162, in __call__

await self.app(scope, receive, _send)

File "/usr/local/lib/python3.10/site-packages/starlette/exceptions.py", line 93, in __call__

raise exc

File "/usr/local/lib/python3.10/site-packages/starlette/exceptions.py", line 82, in __call__

await self.app(scope, receive, sender)

File "/usr/local/lib/python3.10/site-packages/fastapi/middleware/asyncexitstack.py", line 21, in __call__

raise e

File "/usr/local/lib/python3.10/site-packages/fastapi/middleware/asyncexitstack.py", line 18, in __call__

await self.app(scope, receive, send)

File "/usr/local/lib/python3.10/site-packages/starlette/routing.py", line 670, in __call__

await route.handle(scope, receive, send)

File "/usr/local/lib/python3.10/site-packages/starlette/routing.py", line 266, in handle

await self.app(scope, receive, send)

File "/usr/local/lib/python3.10/site-packages/starlette/routing.py", line 65, in app

response = await func(request)

File "/usr/local/lib/python3.10/site-packages/fastapi/routing.py", line 217, in app

solved_result = await solve_dependencies(

File "/usr/local/lib/python3.10/site-packages/fastapi/dependencies/utils.py", line 557, in solve_dependencies

) = await request_body_to_args( # body_params checked above

File "/usr/local/lib/python3.10/site-packages/fastapi/dependencies/utils.py", line 692, in request_body_to_args

v_, errors_ = field.validate(value, values, loc=loc)

File "pydantic/fields.py", line 857, in pydantic.fields.ModelField.validate

File "pydantic/fields.py", line 1074, in pydantic.fields.ModelField._validate_singleton

File "pydantic/fields.py", line 1121, in pydantic.fields.ModelField._apply_validators

File "pydantic/class_validators.py", line 313, in pydantic.class_validators._generic_validator_basic.lambda12

File "pydantic/main.py", line 686, in pydantic.main.BaseModel.validate

File "/usr/local/lib/python3.10/site-packages/beanie/odm/documents.py", line 138, in __init__

self.get_motor_collection()

File "/usr/local/lib/python3.10/site-packages/beanie/odm/interfaces/getters.py", line 13, in get_motor_collection

return cls.get_settings().motor_collection

File "/usr/local/lib/python3.10/site-packages/beanie/odm/documents.py", line 779, in get_settings

raise CollectionWasNotInitialized

beanie.exceptions.CollectionWasNotInitialized

```

When I add UserIn to the `init_beanie` call, everything works.

```
# Init beanie
await init_beanie(database=client[f"{getenv('MONGO_DATABASE')}"],
                  document_models=[User, UserIn])
```

I only want the UserIn model to validate input data, but Beanie tries to find it in the DB.

Models that are not mapped to the DB should not have anything to do with the DB, right?
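The traceback shows where the lookup comes from. `Document.__init__` calls `self.get_motor_collection()`, so any `Document` subclass raises `CollectionWasNotInitialized` as soon as FastAPI instantiates it to validate the request body. That includes `UserIn`, which inherits from `Document` through `UserBase`. One way to keep request and response schemas out of Beanie is to derive them from a plain pydantic `BaseModel`, assuming they need no `Document` behaviour. The sketch below does that. `UserFields` is a hypothetical name. `User` would remain a `Document` that declares its own fields and is registered in `init_beanie`.

```python
from typing import Optional

from pydantic import BaseModel


class UserFields(BaseModel):
    """Shared optional fields; a plain pydantic model, so no MongoDB collection is involved."""
    username: Optional[str] = None
    parent_id: Optional[str] = None
    role_id: Optional[str] = None
    payment_type: Optional[str] = None
    disabled: Optional[bool] = None
    note: Optional[str] = None
    access_token: Optional[str] = None


class UserIn(UserFields):
    """Request body: only validates input."""
    username: str
    password: str
    disabled: bool = False


class UserOut(UserFields):
    """Response model."""
    note: Optional[str] = None
    access_token: Optional[str] = None


# Validating input no longer requires init_beanie() to have registered this class.
user = UserIn(username="alice", password="secret")
print(user.disabled, user.note)  # False None
```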

| closed | 2022-07-29T04:42:10Z | 2022-07-30T05:58:07Z | https://github.com/BeanieODM/beanie/issues/318 | [] | nghianv19940 | 3 |

joeyespo/grip | flask | 161 | automatically open in a browser | Easily done with [`webbrowser`](https://docs.python.org/2/library/webbrowser.html), see [`antigravity.py`](https://github.com/python/cpython/blob/master/Lib/antigravity.py) for example usage. ;-)

``` python

import webbrowser

webbrowser.open("http://localhost:6419/")

```

| closed | 2016-01-21T21:20:51Z | 2016-01-21T22:07:36Z | https://github.com/joeyespo/grip/issues/161 | [

"already-implemented"

] | chadwhitacre | 2 |

TracecatHQ/tracecat | pydantic | 61 | Add PII redacting loggers | Related to #62 | closed | 2024-04-18T01:51:31Z | 2024-07-02T20:46:55Z | https://github.com/TracecatHQ/tracecat/issues/61 | [] | daryllimyt | 0 |

lanpa/tensorboardX | numpy | 450 | add_image() won't work without NVIDIA GPU | **Describe the bug**

Calling add_image() with a CPU FloatTensor fails when no NVIDIA GPU is available. The cause is an assert in summary.py that checks whether the tensor is an instance of torch.cuda.FloatTensor before checking torch.FloatTensor. That isinstance check triggers CUDA initialization, which fails without a driver:

```

python test.py

Traceback (most recent call last):

File "test.py", line 7, in <module>

writer.add_image("tag", a, 1)

File "/home/ubuntu/.local/lib/python3.6/site-packages/tensorboardX/writer.py", line 277, in add_image

self.file_writer.add_summary(image(tag, img_tensor), global_step)

File "/home/ubuntu/.local/lib/python3.6/site-packages/tensorboardX/summary.py", line 157, in image

assert isinstance(tensor, np.ndarray) or isinstance(tensor, torch.cuda.FloatTensor) or isinstance(tensor, torch.FloatTensor), 'input tensor should be one of numpy.ndarray, torch.cuda.FloatTensor, torch.FloatTensor'

File "/home/ubuntu/.local/lib/python3.6/site-packages/torch/cuda/__init__.py", line 162, in _lazy_init

_check_driver()

File "/home/ubuntu/.local/lib/python3.6/site-packages/torch/cuda/__init__.py", line 82, in _check_driver

http://www.nvidia.com/Download/index.aspx""")

AssertionError:

Found no NVIDIA driver on your system. Please check that you

have an NVIDIA GPU and installed a driver from

http://www.nvidia.com/Download/index.aspx

```
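The traceback shows that the `isinstance(tensor, torch.cuda.FloatTensor)` check itself enters `torch/cuda/__init__.py` and fails in `_check_driver`. The torch-free sketch below shows why checking the CPU type first avoids this. The hypothetical `FakeCudaFloatTensor` stands in for the CUDA type by raising from its `isinstance` check. The sketch makes no claim about how torch implements this internally.

```python
class _NoDriverMeta(type):
    """Hypothetical metaclass: any isinstance() check against its classes fails,
    mimicking the CUDA type on a machine without an NVIDIA driver."""

    def __instancecheck__(cls, obj):
        raise AssertionError("Found no NVIDIA driver on your system.")


class FakeCudaFloatTensor(metaclass=_NoDriverMeta):
    pass


class FakeCpuFloatTensor:
    pass


def check_original_order(tensor):
    # The CUDA type is checked first, so its failing isinstance() runs even for CPU tensors.
    return isinstance(tensor, FakeCudaFloatTensor) or isinstance(tensor, FakeCpuFloatTensor)


def check_reordered(tensor):
    # `or` stops at the first truthy operand, so the CUDA check is skipped for CPU tensors.
    return isinstance(tensor, FakeCpuFloatTensor) or isinstance(tensor, FakeCudaFloatTensor)


cpu = FakeCpuFloatTensor()
print(check_reordered(cpu))  # True
try:
    check_original_order(cpu)
except AssertionError as exc:
    print("original order fails:", exc)
```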

**Minimal runnable code to reproduce the behavior**

```

import torch

from tensorboardX import SummaryWriter

a = torch.FloatTensor(3, 1, 1)

a.zero_()

writer = SummaryWriter()

writer.add_image("tag", a, 1)

```

**Expected behavior**

add_image() works successfully for CPU FloatTensor even if no NVIDIA GPU is installed.

**Environment**

tensorboard (1.13.1)

tensorboard-pytorch (0.7.1)

tensorflow (1.13.1)

tensorflow-estimator (1.13.0)

torch (1.1.0)

torchvision (0.3.0)

**Additional context**

The issue is fixed by reordering the checks in the assert. Because **or** short-circuits, the cuda.FloatTensor check is never evaluated once the tensor has been confirmed to be a CPU FloatTensor:

`assert isinstance(tensor, np.ndarray) or isinstance(tensor, torch.FloatTensor) or isinstance(tensor, torch.cuda.FloatTensor), 'input tensor should be one of numpy.ndarray, torch.FloatTensor, torch.cuda.FloatTensor'` | closed | 2019-06-16T16:33:50Z | 2019-10-21T11:57:38Z | https://github.com/lanpa/tensorboardX/issues/450 | [] | blDraX | 1 |

geopandas/geopandas | pandas | 3,338 | BUG: to_postgis broken using sqlalchemy 1.4 | - [x] I have checked that this issue has not already been reported.

- [x] I have confirmed this bug exists on the latest version of geopandas.

- [ ] (optional) I have confirmed this bug exists on the main branch of geopandas.

---

#### Problem description

I'm trying to inject a geodataframe content into my postgis db using to_postgis method, geopandas 0.14.4 and sqlalchemy 1.4.51.

Whatever I do, it fails with the error "geometry (geometry(POINT,4326)) not a string".

Minimal example (borrowed from an old ticket about `to_postgis` failing with the same "not a string" error):

```python

import geopandas as gpd

from shapely.geometry import Point

from sqlalchemy import create_engine

engine = create_engine('postgresql://XXX@XXX/XXX')

gpd.GeoDataFrame([], geometry=[Point(10,10)], crs='epsg:4326').to_postgis('test_table', engine)

```

I tried passing an engine, a connection, etc. as the `con` parameter, and also setting `dtype`, but I never managed to make it work.

I also get the infamous warning "UserWarning: pandas only supports SQLAlchemy connectable ... Please consider using SQLAlchemy.", even though I am using SQLAlchemy, so I don't know why it's complaining.

After a while, I found that the same example works fine with SQLAlchemy 2, but I would like to stay on SQLAlchemy 1.4.

As far as I can tell, SQLAlchemy 1.4 is still supposed to be supported. If not, the warning should say **Please consider using SQLAlchemy 2.**

#### Expected Output

#### Output of ``geopandas.show_versions()``

<details>

geopandas : 0.14.4

numpy : 1.26.4

pandas : 2.2.2

pyproj : 3.6.1

shapely : 2.0.4

fiona : 1.9.6

geoalchemy2: 0.15.1

geopy : None

matplotlib : 3.9.0

mapclassify: None

pygeos : None

pyogrio : None

psycopg2 : 2.9.9 (dt dec pq3 ext lo64)

pyarrow : None

rtree : 1.2.0

</details>

| closed | 2024-06-12T15:45:29Z | 2024-06-13T15:22:29Z | https://github.com/geopandas/geopandas/issues/3338 | [

"bug",

"needs triage"

] | fvallee-bnx | 2 |

plotly/dash-cytoscape | dash | 192 | [BUG] Elements positions don't match specification in preset layout | #### Description

https://github.com/plotly/dash-cytoscape/assets/101562106/7e767f5a-9edb-4cb5-b160-a4324b7ce2d3

- We have a Dash app with two main callbacks and a container that shows one of three Cytoscape graphs at a time. The only difference between the three graphs is the number of nodes (20, 30, 40).

- Callback 1 saves the current position of the nodes and links (it saves the whole `elements` property) in a dcc.Store. That dcc.Store data property is a dict with one item for each cytoscape graph, so positions for each of the three graphs can be saved at the same time (saving positions of graph 2 doesn't overwrite saved positions for graph 1)

```
@app.callback(
    Output('store', 'data'),
    Input('save', 'n_clicks'),
    State('cyto', 'elements'),
    State('number', 'value'),
    State('store', 'data'),
)
def savemapstate(clicks, elements, number, store):
    if clicks is None:
        raise PreventUpdate
    else:
        store[number] = elements
        return store
```

- Callback 2 sets the `elements` and `layout` properties of the Cytoscape graph from one of two sources. If the user clicks 'Reset', it uses (1) the default values defined in a global variable. If the user clicks 'Update', it uses (2) the saved values.

```
@app.callback(
    Output('cyto', 'elements'),
    Output('cyto', 'layout'),
    Input('update', 'n_clicks'),
    Input('reset', 'n_clicks'),
    State('number', 'value'),
    State('store', 'data'),
    prevent_initial_call=True
)
def updatemapstate(click1, click2, number, store):
    triggered_id = callback_context.triggered[0]['prop_id'].split('.')[0]
    if click1 is None and click2 is None:
        raise PreventUpdate
    else:
        if "update" in triggered_id:
            elements = store[number]
            layout = {
                'name': 'preset',
                'fit': True,
            }
        elif "reset" in triggered_id:
            elements = initial_data[number]  # initial_data is a global variable (dict)
            layout = {
                'name': 'concentric',
                'fit': True,
                'minNodeSpacing': 100,
                'avoidOverlap': True,
                'startAngle': 50,
            }
        return elements, layout
```

- When a user tries to update the

- In some cases it only happens when there are more than 20 nodes. If the graph has been panned (the user dragged the whole graph before saving the positions), the issue is worse: more nodes are displaced, and it can also happen with fewer than 20 nodes.

- Related issue: https://github.com/plotly/dash-cytoscape/issues/175

- With this app, we can also reproduce this issue: https://github.com/plotly/dash-cytoscape/issues/159
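A hypothetical helper can measure how many nodes end up displaced after "Update". It compares the saved `elements` with the `elements` read back afterwards, for example through a second Save. It assumes only the element format used in this app: nodes carry a `position` and edges do not. The function name and the pixel tolerance are illustrative choices.

```python
def displaced_nodes(saved, current, tol=1.0):
    """Return ids of nodes whose x or y position moved by more than `tol` pixels."""
    # Index saved node positions by id; edges have no 'position' key and are skipped.
    saved_pos = {el["data"]["id"]: el["position"] for el in saved if "position" in el}
    displaced = []
    for el in current:
        node_id = el["data"]["id"]
        if "position" not in el or node_id not in saved_pos:
            continue
        before, after = saved_pos[node_id], el["position"]
        if abs(before["x"] - after["x"]) > tol or abs(before["y"] - after["y"]) > tol:
            displaced.append(node_id)
    return displaced


saved = [
    {"data": {"id": "0"}, "position": {"x": 10, "y": 10}},
    {"data": {"id": "1"}, "position": {"x": 50, "y": 50}},
    {"data": {"id": "link1-0", "source": "1", "target": "0"}},
]
current = [
    {"data": {"id": "0"}, "position": {"x": 10.4, "y": 10}},
    {"data": {"id": "1"}, "position": {"x": 80, "y": 50}},
    {"data": {"id": "link1-0", "source": "1", "target": "0"}},
]
print(displaced_nodes(saved, current))  # ['1']
```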

#### Code to Reproduce

Env: Python 3.8.12

requirements.txt:

```

dash-design-kit==1.6.8

dash==2.3.1 # it happens with 2.10.2 too

dash_cytoscape==0.2.0 # it happens with 0.3.0 too

pandas

gunicorn==20.0.4

pandas>=1.1.5

flask==2.2.5

```

Complete app code:

```
from dash import Dash, html, dcc, Input, Output, State, callback_context
from dash.exceptions import PreventUpdate
import dash_cytoscape as cyto
import json
import random

app = Dash(__name__)

data1 = [
    {'data': {'id': f'{i}', 'label': f'Node {i}'}, 'position': {'x': 100*random.uniform(0,2), 'y': 100*random.uniform(0,2)}}
    for i in range(20)] + [
    {'data': {'id':f'link1-{i}','source': '1', 'target': f'{i}'}}
    for i in range(2,20)
]

data2 = [
    {'data': {'id': f'{i}', 'label': f'Node {i}'}, 'position': {'x': 100*random.uniform(0,2), 'y': 100*random.uniform(0,2)}}
    for i in range(30)] + [
    {'data': {'id':f'link1-{i}','source': '1', 'target': f'{i}'}}
    for i in range(2,30)
]

data3 = [
    {'data': {'id': f'{i}', 'label': f'Node {i}'}, 'position': {'x': 100*random.uniform(0,2), 'y': 100*random.uniform(0,2)}}
    for i in range(40)] + [
    {'data': {'id':f'link1-{i}','source': '1', 'target': f'{i}'}}
    for i in range(2,40)
]

initial_data = {'1':data1, '2':data2, '3':data3}

app.layout = html.Div([
    html.Div([
        dcc.Dropdown(['1','2','3'], '1', id='number'),
        html.Button(id='save', children='Save'),
        html.Button(id='update', children='Update'),
        html.Button(id='reset', children='Reset'),
        dcc.Store(id='store', data={'1':[], '2':[], '3':[]})
    ],
        style={'width':'300px'}),
    html.Div(
        children=cyto.Cytoscape(
            id='cyto',
            layout={'name': 'concentric',},
            panningEnabled=True,
            zoom=0.5,
            zoomingEnabled=True,
            elements=[],
        )
    )
])


@app.callback(
    Output('store', 'data'),
    Input('save', 'n_clicks'),
    State('cyto', 'elements'),
    State('number', 'value'),
    State('store', 'data'),
)
def savemapstate(clicks, elements, number, store):
    if clicks is None:
        raise PreventUpdate
    else:
        store[number] = elements
        return store


@app.callback(
    Output('cyto', 'elements'),
    Output('cyto', 'layout'),
    Input('update', 'n_clicks'),
    Input('reset', 'n_clicks'),
    State('number', 'value'),
    State('store', 'data'),
    prevent_initial_call=True
)
def updatemapstate(click1, click2, number, store):
    triggered_id = callback_context.triggered[0]['prop_id'].split('.')[0]
    if click1 is None and click2 is None:
        raise PreventUpdate
    else:
        if "update" in triggered_id:
            elements = store[number]
            layout = {
                'name': 'preset',
                'fit': True,
            }
        elif "reset" in triggered_id:
            elements = initial_data[number]
            layout = {
                'name': 'concentric',
                'fit': True,
                'minNodeSpacing': 100,
                'avoidOverlap': True,
                'startAngle': 50,
            }
        return elements, layout


if __name__ == '__main__':
    app.run_server(debug=True)
```

#### Workaround

- Return a new Cytoscape component with a new id from the callback. Returning a Cytoscape component with the same id does not fix the issue.

- To keep saving the `elements` (i.e. to read them in a callback even though the id changes), we can use pattern-matching callbacks:

```
from dash import Dash, html, dcc, Input, Output, State, callback_context, ALL
from dash.exceptions import PreventUpdate
import dash_cytoscape as cyto
import json
import random

app = Dash(__name__)

data1 = [
    {'data': {'id': f'{i}', 'label': f'Node {i}'}, 'position': {'x': 100*random.uniform(0,2), 'y': 100*random.uniform(0,2)}}
    for i in range(20)] + [
    {'data': {'id':f'link1-{i}','source': '1', 'target': f'{i}'}}
    for i in range(2,20)
]

data2 = [
    {'data': {'id': f'{i}', 'label': f'Node {i}'}, 'position': {'x': 100*random.uniform(0,2), 'y': 100*random.uniform(0,2)}}
    for i in range(30)] + [
    {'data': {'id':f'link1-{i}','source': '1', 'target': f'{i}'}}
    for i in range(2,30)
]

data3 = [
    {'data': {'id': f'{i}', 'label': f'Node {i}'}, 'position': {'x': 100*random.uniform(0,2), 'y': 100*random.uniform(0,2)}}
    for i in range(40)] + [
    {'data': {'id':f'link1-{i}','source': '1', 'target': f'{i}'}}
    for i in range(2,40)
]

initial_data = {'1':data1, '2':data2, '3':data3}

app.layout = html.Div([
    html.Div([
        dcc.Dropdown(['1','2','3'], '1', id='number'),
        html.Button(id='save', children='Save'),
        html.Button(id='update', children='Update'),
        html.Button(id='reset', children='Reset'),
        dcc.Store(id='store', data={'1':[], '2':[], '3':[]})
    ],
        style={'width':'300px'}),
    html.Div(
        id='cyto-card',
        children=[],
    ),
])


@app.callback(
    Output('store', 'data'),
    Input('save', 'n_clicks'),
    State({'type':'cyto', 'index':ALL}, 'elements'),
    State('number', 'value'),
    State('store', 'data'),
)
def savemapstate(clicks, elements, number, store):
    if clicks is None:
        raise PreventUpdate
    else:
        store[number] = elements[0]
        return store


@app.callback(
    Output('cyto-card', 'children'),
    Input('update', 'n_clicks'),
    Input('reset', 'n_clicks'),
    State('number', 'value'),
    State('store', 'data'),
    prevent_initial_call=True
)
def updatemapstate(click1, click2, number, store):
    triggered_id = callback_context.triggered[0]['prop_id'].split('.')[0]
    if click1 is None and click2 is None:
        raise PreventUpdate
    else:
        if "update" in triggered_id:
            elements = store[number]
            layout = {
                'name': 'preset',
            }
        elif "reset" in triggered_id:
            elements = initial_data[number]
            layout = {
                'name': 'concentric',
                'fit': True,
                'minNodeSpacing': 100,
                'avoidOverlap': True,
                'startAngle': 50,
            }
        # A new index on every click gives the Cytoscape component a new id.
        n = sum(filter(None, [click1, click2]))
        cyto_return = cyto.Cytoscape(
            id={'type':'cyto', 'index':n},
            layout=layout,
            panningEnabled=True,
            zoom=0.5,
            zoomingEnabled=True,
            elements=elements,
        )
        return cyto_return


if __name__ == '__main__':
    app.run_server(debug=True)
```

| open | 2023-07-21T14:25:15Z | 2023-07-21T14:25:15Z | https://github.com/plotly/dash-cytoscape/issues/192 | [] | celia-lm | 0 |

serengil/deepface | machine-learning | 519 | Mediapipe imported but no output | Hi Mr. Sefik,

I am trying to create embeddings of a dataset using mediapipe and facenet.

The model and detector are built successfully, but the embedding task makes no progress (see image below):

<img width="569" alt="Screen Shot 2022-07-22 at 18 02 40" src="https://user-images.githubusercontent.com/46068423/180455738-da2ba1bf-2e4a-4ddb-a6d6-fd9c80935b44.png">

Even after waiting a long time, the progress stays at 0 and the program raises no error that would point to the cause.

What is the issue here?

| closed | 2022-07-22T14:03:56Z | 2022-07-24T11:09:40Z | https://github.com/serengil/deepface/issues/519 | [

"dependencies"

] | MKJ52 | 4 |

ultralytics/ultralytics | machine-learning | 19,063 | Transfer Learning from YOLOv9 to YOLOv11 | ### Search before asking

- [x] I have searched the Ultralytics YOLO [issues](https://github.com/ultralytics/ultralytics/issues) and [discussions](https://github.com/orgs/ultralytics/discussions) and found no similar questions.

### Question

Hello YOLO team,

I have a YOLOv9 segmentation model trained on a large custom dataset, and I’m now looking to train a YOLOv11 keypoint detection model on a smaller dataset.

I want to leverage transfer learning from my YOLOv9 model to YOLOv11 for keypoint detection. Will the following command work for that?

```
yolo pose train data=custom.yaml model=yolo11n-pose.yaml pretrained=custom_yolo9model.pt ...
```

Same question for transferring from a YOLOv8 keypoint model to a YOLOv11 keypoint model.
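Between different architectures, weight transfer can only reuse tensors that line up. The sketch below shows the usual matching idea: keep only the parameters whose name and shape agree in both models. The layer names and shapes are hypothetical. Whether Ultralytics' `pretrained=` behaves exactly like this is an assumption to check in its documentation or its training log, which should report how much was transferred.

```python
def transferable(pretrained_shapes, target_shapes):
    """Names of parameters present in both models with identical shapes (copyable as-is)."""
    return sorted(
        name
        for name, shape in pretrained_shapes.items()
        if target_shapes.get(name) == shape
    )


# Hypothetical parameter names and shapes, for illustration only.
pretrained = {
    "model.0.conv.weight": (16, 3, 3, 3),
    "model.1.conv.weight": (32, 16, 3, 3),
    "model.22.seg.weight": (32, 64, 1, 1),
}
target = {
    "model.0.conv.weight": (16, 3, 3, 3),
    "model.1.conv.weight": (64, 16, 3, 3),  # same name, different width: not transferable
    "model.23.pose.weight": (51, 64, 1, 1),
}
print(transferable(pretrained, target))  # ['model.0.conv.weight']
```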

### Additional

_No response_ | open | 2025-02-04T11:37:07Z | 2025-02-04T16:44:22Z | https://github.com/ultralytics/ultralytics/issues/19063 | [

"question",

"pose"

] | VelmCoder | 2 |

jupyter/docker-stacks | jupyter | 1,530 | Latest scipy-notebook:lab-3.1.18 docker image actually contains lab 3.2.0 | The latest docker image for `jupyter/scipy-notebook:lab-3.1.18` does not contain Jupyter Lab 3.1.18, but 3.2.0. This is an issue if one needs to stay on 3.1.x for some reason.

**What docker image you are using?**

`jupyter/scipy-notebook:lab-3.1.18`, specifically `jupyter/scipy-notebook@sha256:4aa1a2cc3126d490c8c6a5505c534623176327ddafdba1efbb4ac52e8dd05e81`

**What complete docker command do you run to launch the container (omitting sensitive values)?**

`docker run --rm -ti jupyter/scipy-notebook:lab-3.1.18 jupyter --version | grep lab`

**What do you expect to happen?**

The Jupyter Lab version should be 3.1.18, as per the docker tag.

**What actually happens?**

The Jupyter Lab version is 3.2.0. | closed | 2021-11-15T12:48:43Z | 2022-07-05T11:31:18Z | https://github.com/jupyter/docker-stacks/issues/1530 | [

"type:Bug"

] | carlosefr | 8 |

alteryx/featuretools | data-science | 2,591 | Add document of how to do basic aggregation on non-default data type [e.g. max(datetime_field) group by] | Hi,

I am using Featuretools (version 1.27). I read the docs and also searched here,

but I still struggle to find how to do simple things like `SELECT MIN(datetime_field_1) FROM table`.

I also checked the time-related primitives in `list_primitives()`, but they don't seem to be what I need.

I can do this for numeric fields, but it doesn't seem to work on Datetime fields.

For example, could we add a tutorial showing how to obtain each team's earliest and latest `timestamp`?

```python
import pandas as pd
import featuretools as ft

# Define team stats
team_stats = pd.DataFrame({
    "id": [0, 1, 2, 3],
    "team_id": [100, 200, 100, 200],
    "game_id": [0, 0, 1, 1],
})

# Define player stats
player_stats = pd.DataFrame({
    "id": [0, 1, 2, 3, 4, 5, 6, 7],
    "team_id": [100, 100, 200, 200, 100, 100, 200, 200],
    "game_id": [0, 0, 0, 0, 1, 1, 1, 1],
    "player_id": [0, 1, 2, 3, 0, 1, 2, 3],
    "goals": [1, 2, 0, 1, 2, 0, 1, 2],
    "minutes_played": [30, 45, 10, 50, 10, 40, 25, 45],
    "timestamp": pd.date_range("Jan 1, 2019", freq="1h", periods=8),
})

# Create new index columns for use in relationship
team_stats["created_idx"] = team_stats["team_id"].astype("string") + "-" + team_stats["game_id"].astype("string")
player_stats["created_idx"] = player_stats["team_id"].astype("string") + "-" + player_stats["game_id"].astype("string")

# Drop these columns from the players table since we no longer need them in this example.
# This prevents Featuretools from generating features from these numeric columns
player_stats = player_stats.drop(columns=["team_id", "game_id"])

# Create the EntitySet and add dataframes
es = ft.EntitySet()
es.add_dataframe(dataframe=team_stats, dataframe_name="teams", index="created_idx")
es.add_dataframe(dataframe=player_stats, dataframe_name="players", index="id", time_index="timestamp")

# Add the relationship
es.add_relationship("teams", "created_idx", "players", "created_idx")

# Run DFS using the `Sum`, `Min` and `Count` aggregation primitives
# Use ignore columns to prevent generation of features from player_id
fm, features = ft.dfs(entityset=es,
                      target_dataframe_name="teams",
                      agg_primitives=["sum", "min", "count"],
                      ignore_columns={"players": ["player_id"]})
```
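For reference, the values such a tutorial should reproduce can be computed in plain pandas. This is the equivalent of `SELECT MIN(timestamp), MAX(timestamp) ... GROUP BY created_idx` on the data above. It uses no Featuretools API, so it only shows the expected result, not how to get it from `ft.dfs`.

```python
import pandas as pd

# Same player data as above, reduced to the columns needed here.
player_stats = pd.DataFrame({
    "team_id": [100, 100, 200, 200, 100, 100, 200, 200],
    "game_id": [0, 0, 0, 0, 1, 1, 1, 1],
    "timestamp": pd.date_range("Jan 1, 2019", freq="1h", periods=8),
})
player_stats["created_idx"] = (
    player_stats["team_id"].astype("string") + "-" + player_stats["game_id"].astype("string")
)

# Earliest and latest timestamp per team-game row of the "teams" dataframe.
expected = player_stats.groupby("created_idx")["timestamp"].agg(["min", "max"])
print(expected)
```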

| closed | 2023-07-26T01:20:21Z | 2023-08-21T14:50:51Z | https://github.com/alteryx/featuretools/issues/2591 | [

"documentation"

] | wanalytics8 | 3 |

pyjanitor-devs/pyjanitor | pandas | 745 | [DOC] Check that all functions have a `return` and `raises` section within docstrings | # Brief Description of Fix


Currently, the docstrings for some functions are lacking a `return` description and (where applicable) a `raises` description.

I would like to propose a change so that the docstrings of all functions in pyjanitor have a valid `return` section and, where applicable, a valid `raises` section.

**Requires a look at all functions** (not just the provided examples below).

# Relevant Context


- [Good example of complete docstring - janitor.complete](https://ericmjl.github.io/pyjanitor/reference/janitor.functions/janitor.complete.html)

- Examples for missing `returns`:
  - [Link to documentation page - finance.convert_currency](https://ericmjl.github.io/pyjanitor/reference/finance.html)
  - [Link to exact file to be edited - finance.py](https://github.com/ericmjl/pyjanitor/blob/dev/janitor/finance.py)
  - [Link to documentation page - functions.join_apply](https://ericmjl.github.io/pyjanitor/reference/janitor.functions/janitor.join_apply.html)
  - [Link to exact file to be edited - functions.py](https://github.com/ericmjl/pyjanitor/blob/dev/janitor/functions.py)

| closed | 2020-09-13T01:34:28Z | 2020-10-03T13:15:27Z | https://github.com/pyjanitor-devs/pyjanitor/issues/745 | [

"good first issue",

"docfix",

"being worked on",

"hacktoberfest"

] | loganthomas | 8 |

abhiTronix/vidgear | dash | 59 | How to set framerate with ScreenGear | ## Question

I would like to set the frame rate with which the ScreenGear module acquires the frames from my monitor.

How can I do it?

### Other details:

If I grab a small region, the output plays in slow motion (e.g., I record for 10 seconds but the written video lasts 1 minute), because ScreenGear grabs many frames per second and WriteGear writes them at a fixed frame rate (e.g., 25 fps).

On the other hand, if I grab a large region (e.g., my whole 4K monitor), the output plays too fast (e.g., I record for 30 seconds but the written video lasts 5 seconds), because only a few frames per second can be grabbed at that image size.

How can I manage this?
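
One general way around this, independent of any particular capture library, is to timestamp every grabbed frame and resample the sequence onto a constant-rate timeline: frames are repeated when capture is slower than the output rate and dropped when it is faster, so the video lasts as long as the real recording. A minimal sketch follows; `resample_to_constant_fps` is a hypothetical helper, not part of vidgear.

```python
def resample_to_constant_fps(timestamps, fps):
    """Map frames grabbed at irregular times onto a constant-fps timeline.

    timestamps: ascending capture times in seconds, one per grabbed frame.
    Returns, for each output slot k (at time t0 + k / fps), the index of the
    most recent frame captured at or before that slot. Fast capture leads to
    dropped frames and slow capture to repeated frames, so the written video
    lasts as long as the real recording.
    """
    if not timestamps:
        return []
    t0 = timestamps[0]
    n_slots = int((timestamps[-1] - t0) * fps) + 1
    out, j = [], 0
    for k in range(n_slots):
        t = t0 + k / fps
        # Advance to the last frame whose capture time is <= the slot time.
        while j + 1 < len(timestamps) and timestamps[j + 1] <= t:
            j += 1
        out.append(j)
    return out
```

In a capture loop, one would store `time.monotonic()` alongside each grabbed frame and then pass `frames[j]` to the writer for every `j` in the returned list. Buffering all frames is a simplification; a streaming variant would keep only the latest frame and write it each time a slot's time passes.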

| closed | 2019-10-24T09:23:46Z | 2019-11-13T06:34:55Z | https://github.com/abhiTronix/vidgear/issues/59 | [

"INVALID :stop_sign:",

"QUESTION :question:"

] | FrancescoRossi1987 | 3 |

aeon-toolkit/aeon | scikit-learn | 2,129 | [ENH] k-Spectral Centroid (k-SC) clusterer | ### Describe the feature or idea you want to propose

A popular clusterer in the TSCL literature is the k-Spectral Centroid (k-SC). A link to the paper can be found at: https://dl.acm.org/doi/10.1145/1935826.1935863.

### Describe your proposed solution

It would be a nice addition to aeon. I propose implementing Lloyd's variant first and adding the incremental version (also described in the paper) at a later date.

### Describe alternatives you've considered, if relevant

_No response_

### Additional context

_No response_ | closed | 2024-10-02T11:57:14Z | 2024-10-11T12:20:33Z | https://github.com/aeon-toolkit/aeon/issues/2129 | [

"enhancement",

"clustering",

"implementing algorithms"

] | chrisholder | 0 |

graphql-python/graphene | graphql | 1,398 | Undefined arguments always passed to resolvers | Contrary to what's described [here](https://docs.graphene-python.org/en/latest/types/objecttypes/#graphql-argument-defaults), it looks like in graphene 3 all arguments with unset values are passed to the resolvers.

This is the query I am defining:

```
import graphene


class Query(graphene.ObjectType):
    hello = graphene.String(required=True, name=graphene.String())

    def resolve_hello(parent, info, **kwargs):
        return str(kwargs)
```

which I submit as:

```
{
  hello
}
```

The result is:

```
{
  "data": {
    "hello": "{'name': None}"
  }
}
```

The expected return value is:

```
{
  "data": {
    "hello": "{}"
  }
}
```

which is what we get with graphene 2.

My environment:

```
graphene==3.0
graphql-core==3.1.7
graphql-relay==3.1.0
graphql-server==3.0.0b4
```

| closed | 2022-01-05T23:12:11Z | 2022-08-27T18:41:03Z | https://github.com/graphql-python/graphene/issues/1398 | [

"🐛 bug"

] | stabacco | 3 |

apachecn/ailearning | python | 352 | The code doesn't even compile... | In MachineLearning/src/py3.x/5.Logistic/logistic.py:

```
# random.uniform(x, y) randomly generates the next real number in the range [x, y], where x is the minimum and y is the maximum of the range.
rand_index = int(np.random.uniform(0, len(data_index)))
h = sigmoid(np.sum(data_mat[dataIndex[randIndex]] * weights))
error = class_labels[dataIndex[randIndex]] - h
weights = weights + alpha * error * data_mat[dataIndex[randIndex]]
del(data_index[rand_index])
```
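
For comparison, here is a minimal sketch of the complete stochastic gradient ascent loop with consistent names (`data_index` and `rand_index` throughout). The function name `stoc_grad_ascent1`, the `num_iter` default, the decaying step size, and the seeded `rng` are assumptions modelled on the usual "improved stochastic gradient ascent" loop. They are not copied from logistic.py.

```python
import numpy as np


def sigmoid(x):
    return 1.0 / (1 + np.exp(-x))


def stoc_grad_ascent1(data_mat, class_labels, num_iter=150, seed=None):
    """Stochastic gradient ascent for logistic regression (hypothetical sketch)."""
    rng = np.random.default_rng(seed)  # assumption: seeded generator instead of np.random
    m, n = data_mat.shape
    weights = np.ones(n)
    for j in range(num_iter):
        data_index = list(range(m))  # rows not yet used in this pass
        for i in range(m):
            alpha = 4 / (1.0 + j + i) + 0.01  # assumed decaying step size
            # uniform(0, k) returns a float in [0, k); int() turns it into a list position
            rand_index = int(rng.uniform(0, len(data_index)))
            sample = data_index[rand_index]  # row of data_mat used for this update
            h = sigmoid(np.sum(data_mat[sample] * weights))
            error = class_labels[sample] - h
            weights = weights + alpha * error * data_mat[sample]
            del data_index[rand_index]  # each row is used once per pass
    return weights
```

Naming the looked-up row `sample` once avoids repeating `data_index[rand_index]` three times, which is where the mismatched names crept in.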

In this snippet, the names `dataIndex` and `randIndex` are wrong (they should be `data_index` and `rand_index`). | closed | 2018-04-13T06:54:20Z | 2018-04-15T06:25:40Z | https://github.com/apachecn/ailearning/issues/352 | [] | ljp215 | 2 |

blacklanternsecurity/bbot | automation | 1,682 | Integrating with additional scanners | **Description**

Hey there, I love this tool. I have some ideas/additions that I would build myself if only I had the time:

- Nuclei runs with a default setting that skips a target once it has been unreachable for 30 requests. In my experience, raising this limit a little (say, to 100) keeps some scans from being stopped unnecessarily.

- I saw that wpscan is implemented. In my experience wpscan requires an API key, but you can get the same functionality as premium wpscan with Nuclei for free by running the following set of templates on WordPress hosts: https://github.com/topscoder/nuclei-wordfence-cve

- Some InternetDB vulnerabilities are verified, i.e. proven. These could be reported as vulnerabilities instead of findings: https://www.shodan.io/search/facet?query=net%3A0%2F0&facet=vuln.verified

- retire.js would be a great addition for detecting JavaScript vulnerabilities.

| open | 2024-08-20T14:54:11Z | 2025-02-06T00:14:58Z | https://github.com/blacklanternsecurity/bbot/issues/1682 | [

"enhancement"

] | joostgrunwald | 6 |

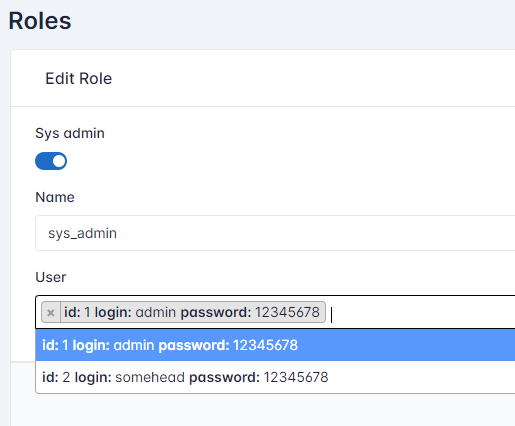

jowilf/starlette-admin | sqlalchemy | 146 | Enhancement: do not display sensitive data in relation form | Is there any way to hide fields according to the user's permission level?

Currently this is a security concern, because some sensitive information is displayed no matter who the user is.

Even if I restrict a field as much as possible, its value is still displayed in the relation field of another view:

```
from dataclasses import dataclass
from typing import Optional

from starlette_admin.fields import StringField


@dataclass
class PasswordField(StringField):
    """A StringField, except renders an `<input type="password">`."""

    input_type: str = "password"
    class_: str = "field-password form-control"
    exclude_from_list: Optional[bool] = True
    exclude_from_detail: Optional[bool] = True
    exclude_from_create: Optional[bool] = True
    exclude_from_edit: Optional[bool] = True
    searchable: Optional[bool] = False
    orderable: Optional[bool] = False
```

```
class User(MyModelView):
    fields = [
        Employee.id,
        Employee.login,
        PasswordField("password"),
        Employee.role,  # some relation
        Employee.notes,  # another relation
    ]
```

| closed | 2023-03-29T17:26:10Z | 2023-04-03T15:46:43Z | https://github.com/jowilf/starlette-admin/issues/146 | [

"enhancement"

] | Ilya-Green | 5 |

dask/dask | pandas | 11,416 | Significant slowdown in Numba compiled functions from Dask 2024.8.1 |

**Describe the issue**:

In [sgkit](https://github.com/sgkit-dev/sgkit), we use a lot of Numba compiled functions in Dask Array `map_blocks` calls, and we noticed a significant (approx 4x) slowdown in performance when running the test suite (see https://github.com/sgkit-dev/sgkit/issues/1267).

**Minimal Complete Verifiable Example**:

`dask-slowdown-min.py`:

```python
from numba import guvectorize
import numpy as np
import dask.array as da


@guvectorize(
    [
        "void(int8[:], int8[:])",
        "void(int16[:], int16[:])",
        "void(int32[:], int32[:])",
        "void(int64[:], int64[:])",
    ],
    "(n)->(n)", nopython=True
)
def inc(x, res):
    for i in range(x.shape[0]):
        res[i] = x[i] + 1


if __name__ == "__main__":
    for i in range(3):
        a = da.ones((10000, 1000, 10), chunks=(1000, 1000, 10), dtype=np.int8)
        res = da.map_blocks(inc, a, dtype=np.int8).compute()
        print(i)
```

With the latest version of Dask:

```shell
pip install 'dask[array]' numba
time python dask-slowdown-min.py
1
2
3
python dask-slowdown-min.py 2.61s user 0.21s system 40% cpu 6.929 total
```

With Dask 2024.8.0:

```shell
pip install -U 'dask[array]==2024.8.0'
time python dask-slowdown-min.py
0
1
2
python dask-slowdown-min.py 0.62s user 0.13s system 99% cpu 0.752 total
```

**Anything else we need to know?**:

I ran a git bisect and it looks like the problem was introduced in 1d771959509d09c34195fa19d9ae8446ae3a8726 (#11320).

I'm not sure what the underlying problem is, but I noticed that the slow version compiles the Numba functions many more times than the older version does:

```shell
# Dask latest version
NUMBA_DEBUG=1 python dask-slowdown-min.py | grep 'DUMP inc' | wc -l
152

# Dask 2024.8.0
NUMBA_DEBUG=1 python dask-slowdown-min.py | grep 'DUMP inc' | wc -l
8
```

**Environment**:

- Dask version: 2024.8.1 and later

- Python version: Python 3.11

- Operating System: macOS

- Install method (conda, pip, source): pip