repo_name (string, 9-75 chars) | topic (string, 30 classes) | issue_number (int64, 1-203k) | title (string, 1-976 chars) | body (string, 0-254k chars) | state (string, 2 classes) | created_at (string, 20 chars) | updated_at (string, 20 chars) | url (string, 38-105 chars) | labels (list, 0-9 items) | user_login (string, 1-39 chars) | comments_count (int64, 0-452) |

|---|---|---|---|---|---|---|---|---|---|---|---|

tqdm/tqdm | jupyter | 1,342 | Make tqdm(disable=None) default, instead of tqdm(disable=False) | - [x] I have marked all applicable categories:

+ [x] documentation request (i.e. "X is missing from the documentation." If instead I want to ask "how to use X?" I understand [StackOverflow#tqdm] is more appropriate)

+ [ ] new feature request

- [x] I have visited the [source website], and in particular

rea... | open | 2022-07-11T13:30:35Z | 2024-08-05T11:40:11Z | https://github.com/tqdm/tqdm/issues/1342 | [] | sunyj | 3 |

yzhao062/pyod | data-science | 272 | some PyOD models will fail while being used with SUOD | The reason is that sklearn.clone leads to issues if the hyperparameters are not used properly.

The problem can be reproduced by cloning the models:

This includes:

* COD | open | 2021-01-14T22:20:13Z | 2022-06-17T12:51:48Z | https://github.com/yzhao062/pyod/issues/272 | [] | yzhao062 | 1 |

pytorch/pytorch | deep-learning | 148,970 | ONNX export drops namespace qualifier for custom operation | ### 🐛 Describe the bug

Here is a repro, modified from the example on the PyTorch doc page for custom ONNX ops.

I expect the saved ONNX file to have a com.microsoft::Gelu node. The OnnxProgram seems to have the qualifier, but it is lost when the file is saved:

```

import torch

import onnxscript

import onnx

class GeluModel(torch.nn.... | closed | 2025-03-11T16:05:21Z | 2025-03-11T18:20:48Z | https://github.com/pytorch/pytorch/issues/148970 | [

"module: onnx",

"triaged",

"onnx-triaged",

"onnx-needs-info"

] | borisfom | 5 |

akfamily/akshare | data-science | 5,573 | Error fetching Jisilu convertible bond real-time data |

Traceback (most recent call last):

File "/opt/anaconda3/lib/python3.9/site-packages/pandas/core/indexes/base.py", line 3790, in get_loc

return self._engine.get_loc(casted_key)

File "index.pyx", line 152, in pandas._libs.index.IndexEngine.get_loc

File "index.pyx", line 181, in pandas._libs.index.IndexEngine.g... | closed | 2025-02-09T08:13:45Z | 2025-02-09T09:02:20Z | https://github.com/akfamily/akshare/issues/5573 | [

"bug"

] | paladin-dalao | 0 |

miguelgrinberg/microblog | flask | 183 | Ch 15 - Blueprints refactoring and Unit Testing reorganization issues. | Hi, I have followed along the eBook to Chapter 15 where I have learnt how to refactor my Microblog code in order to better organize the files. Everything works ok after I refactored the code using Blueprints.

### My question is this:

If I want to move my Unit Testing file **tests.py** to a separate sub-folder calle... | closed | 2019-09-25T19:18:33Z | 2019-09-26T09:00:01Z | https://github.com/miguelgrinberg/microblog/issues/183 | [

"question"

] | mrbiggleswirth | 2 |

amidaware/tacticalrmm | django | 1,585 | Define and display URL Actions grouped as Client, Agent or Globally targeted. | Currently we define and deploy a pretty good handful of URL Actions that target either the client {{client.id}} or the agent {{agent.agent_id}}. All URL Actions are bunched together, so we end up scrolling past a lot of "agent" actions to get to a "client" action and vice versa.

So our problem is, we are having all ... | open | 2023-08-04T14:32:48Z | 2024-08-10T22:05:10Z | https://github.com/amidaware/tacticalrmm/issues/1585 | [

"enhancement"

] | CubertTheDweller | 0 |

xmu-xiaoma666/External-Attention-pytorch | pytorch | 54 | Problem with the Linear layers in the WeightedPermuteMLP code? | WeightedPermuteMLP uses several fully connected (Linear) layers; the code is at lines 21-23 of ViP.py:

```python

self.mlp_c=nn.Linear(dim,dim,bias=qkv_bias)

self.mlp_h=nn.Linear(dim,dim,bias=qkv_bias)

self.mlp_w=nn.Linear(dim,dim,bias=qkv_bias)

```

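All of these linear layers have dim input and output channels, i.e. the number of channels is unchanged from input to output.

In forward, apart from mlp_c, which takes x directly as input and has no problem,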

```python

d... | open | 2022-06-02T08:51:55Z | 2022-06-02T08:52:10Z | https://github.com/xmu-xiaoma666/External-Attention-pytorch/issues/54 | [] | ZVChen | 0 |

cvat-ai/cvat | computer-vision | 9,097 | Incorrect data returned in frames meta request for a ground truth job | ### Actions before raising this issue

- [x] I searched the existing issues and did not find anything similar.

- [x] I read/searched [the docs](https://docs.cvat.ai/docs/)

### Steps to Reproduce

1. Create a task with Ground Truth job, using attached archive (Frame selection method: random).

Content of the image corr... | closed | 2025-02-12T11:16:31Z | 2025-03-03T12:32:35Z | https://github.com/cvat-ai/cvat/issues/9097 | [

"bug"

] | bsekachev | 2 |

encode/databases | asyncio | 176 | query_lock() in iterate() prohibits any other database operations within `async for` loop | #108 introduced query locking to prevent multiple queries from being executed at the same time. However, the logic within `iterate()` is also wrapped in that lock, which makes code like the following impossible due to a deadlock:

```

async for row in database.iterate("SELECT * FROM table"):

await database.execute("... | open | 2020-03-14T22:28:20Z | 2023-01-30T23:29:41Z | https://github.com/encode/databases/issues/176 | [] | rafalp | 16 |
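A minimal sketch of why the loop above deadlocks, assuming (as the issue describes) that one lock is held for the whole of `iterate()` and is also needed by `execute()`. `FakeDatabase`, `_query_lock`, and the queries are hypothetical stand-ins, not the `databases` library's real classes; `asyncio.wait_for` is only there so the demo times out instead of hanging forever.

```python
import asyncio


class FakeDatabase:
    """Hypothetical stand-in: one lock serializes every query on the connection."""

    def __init__(self):
        self._query_lock = asyncio.Lock()

    async def iterate(self, query):
        # The lock stays held until the async generator is exhausted or closed.
        async with self._query_lock:
            for row in range(3):
                yield row

    async def execute(self, query):
        # Needs the same lock, so it cannot run while iterate() is mid-loop.
        async with self._query_lock:
            return "ok"


async def main():
    db = FakeDatabase()
    rows = db.iterate("SELECT * FROM table")
    try:
        async for row in rows:
            try:
                await asyncio.wait_for(db.execute("UPDATE table SET ..."), timeout=0.1)
            except asyncio.TimeoutError:
                return "deadlock"  # execute() never acquired the lock
        return "completed"
    finally:
        await rows.aclose()  # releases the lock still held inside iterate()


result = asyncio.run(main())
print(result)  # deadlock
```

Under the same assumption, the usual way around it is to make sure the lock is free before the next query runs, for example by collecting the rows with `fetch_all()` first and looping over the resulting list.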

microsoft/nlp-recipes | nlp | 624 | [ASK] Error while running extractive_summarization_cnndm_transformer.ipynb | When I run the code below:

`summarizer.fit(

ext_sum_train,

num_gpus=NUM_GPUS,

batch_size=BATCH_SIZE,

gradient_accumulation_steps=2,

max_steps=MAX_STEPS,

learning_rate=LEARNING_RATE,

warmup_steps=WARMUP_STEPS,

verbose=Tr... | open | 2021-07-24T16:24:13Z | 2022-01-04T12:13:26Z | https://github.com/microsoft/nlp-recipes/issues/624 | [] | ToonicTie | 2 |

plotly/dash | dash | 2,517 | [BUG] Dash Design Kit's ddk.Notification does not render correctly on React 18.2.0 | **Describe your context**

Please provide us your environment, so we can easily reproduce the issue.

- replace the result of `pip list | grep dash` below

```

dash 2.9.3

dash-core-components 2.0.0

dash-html-components 2.0.0

dash-table 5.0.0

dash_cytoscape ... | closed | 2023-04-28T23:34:28Z | 2024-05-06T14:16:28Z | https://github.com/plotly/dash/issues/2517 | [] | rymndhng | 6 |

supabase/supabase-py | fastapi | 119 | bug: no module named `realtime.connection; realtime` is not a package | I have an error like this when using this package.

ModuleNotFoundError: No module named 'realtime.connection'; 'realtime' is not a package

Can anyone help me? | closed | 2022-01-11T07:57:02Z | 2022-05-14T17:36:42Z | https://github.com/supabase/supabase-py/issues/119 | [

"bug"

] | alif-arrizqy | 3 |

onnx/onnx | machine-learning | 6,364 | Sonarcloud for static code analysis? | ### System information

_No response_

### What is the problem that this feature solves?

Introduction of sonarcloud

### Alternatives considered

Focus on codeql ?

### Describe the feature

Thanks to the improvements made by @cyyever I wonder if we want to officially set up a tool like Sonarcloud, for example. ( I... | open | 2024-09-14T16:01:12Z | 2024-09-25T04:41:40Z | https://github.com/onnx/onnx/issues/6364 | [

"topic: enhancement"

] | andife | 4 |

amdegroot/ssd.pytorch | computer-vision | 85 | A bug in box_utils.py, log_sum_exp | I changed the batch_size to 2. Is there any solution?

File "train.py", line 232, in <module>

train()

File "train.py", line 184, in train

loss_l, loss_c = criterion(out, targets)

File "/home/junhao.li/anaconda2/envs/py35/lib/python3.5/site-packages/torch/nn/modules/module.py", line 325, in __call_... | closed | 2017-12-12T08:52:15Z | 2020-05-30T13:49:11Z | https://github.com/amdegroot/ssd.pytorch/issues/85 | [] | jxlijunhao | 5 |

holoviz/panel | jupyter | 6,956 | value_throttled isn't throttled for FloatSlider when using keyboard arrows | #### ALL software version info

bokeh~=3.4.2

panel~=1.4.4

param~=2.1.1

Python 3.12.4

Firefox 127.0.2

OS: Linux

#### Description of expected behavior and the observed behavior

Expected:

value_throttled event is triggered after keyboard arrow key is released + some delay to make sure the user has finished changin... | open | 2024-07-06T17:13:22Z | 2024-07-13T11:53:38Z | https://github.com/holoviz/panel/issues/6956 | [] | pmvd | 4 |

apachecn/ailearning | python | 585 | AI | closed | 2020-05-13T11:04:46Z | 2020-11-23T02:05:17Z | https://github.com/apachecn/ailearning/issues/585 | [] | LiangJiaxin115 | 0 | |

xonsh/xonsh | data-science | 5,029 | Parse single commands with dash as subprocess instead of Python | ## Expected Behavior

When doing this...

```console

$ fc-list

```

...`fc-list` should run.

## Current Behavior

```console

$ fc-list

TypeError: unsupported operand type(s) for -: 'function' and 'type'

$

```

## Steps to Reproduce

```console

$ which fc-list

/opt/homebrew/bin/fc-list

$ fc-list

Typ... | closed | 2023-01-13T17:54:23Z | 2023-01-18T08:45:17Z | https://github.com/xonsh/xonsh/issues/5029 | [

"parser"

] | rpdelaney | 1 |

widgetti/solara | fastapi | 334 | Autoreload KeyError: <package_name> | I'm having an issue with autoreload on solara v1.22.0, and I think v1.21 has the same issue.

I have a solara MWE script in `filename.py` and then run with:

```

solara run package_name.module.filename

```

Traceback:

```

Traceback (most recent call last):

File "C:\Users\jhsmi\pp\do-fret\.venv\l... | closed | 2023-10-23T09:35:24Z | 2023-10-30T14:01:58Z | https://github.com/widgetti/solara/issues/334 | [] | Jhsmit | 2 |

assafelovic/gpt-researcher | automation | 493 | TypeError: unsupported operand type(s) for -: 'int' and 'simsimd.DistancesTensor' | `ERROR: Exception in ASGI application

.......

research_result = await researcher.conduct_research()

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/Users/philipp/Library/Caches/pypoetry/virtualenvs/backend-xXYcI_nD-py3.11/lib/python3.11/site-packages/gpt_researcher/master/agent.py", line 8... | closed | 2024-05-11T16:07:46Z | 2025-02-01T15:31:38Z | https://github.com/assafelovic/gpt-researcher/issues/493 | [] | ockiphertweck | 6 |

keras-team/autokeras | tensorflow | 1,341 | Add limit model size to faq. | closed | 2020-09-16T16:54:12Z | 2020-11-02T06:41:21Z | https://github.com/keras-team/autokeras/issues/1341 | [

"documentation",

"pinned"

] | haifeng-jin | 0 | |

httpie/cli | api | 728 | get ssl and tcp time | Can I get the SSL time and TCP time of an HTTP connection? | closed | 2018-11-09T07:59:40Z | 2020-09-20T07:34:22Z | https://github.com/httpie/cli/issues/728 | [] | robyn-he | 2 |

django-import-export/django-import-export | django | 1,120 | Django Import Exports fails for MongoDB | import-export works with MySQL but fails with MongoDB.

Does this package support MongoDB?

Or is there any additional requirement?

The error is same as in issue:

https://github.com/django-import-export/django-import-export/issues/811 | closed | 2020-04-29T13:34:11Z | 2020-04-29T14:31:30Z | https://github.com/django-import-export/django-import-export/issues/1120 | [] | sv8083 | 2 |

plotly/dash | data-visualization | 2,992 | dcc.Graph rendering goes into infinite error loop when None is returned for Figure | **Describe your context**

Please provide us your environment, so we can easily reproduce the issue.

```

dash 2.18.0

dash-core-components 2.0.0

dash-html-components 2.0.0

dash-table 5.0.0

```

- if frontend related, tell us your Browser,... | open | 2024-09-09T19:44:45Z | 2024-09-11T19:16:40Z | https://github.com/plotly/dash/issues/2992 | [

"bug",

"P3"

] | reggied | 0 |

miguelgrinberg/microblog | flask | 51 | translate.py TypeError: the JSON object must be str, not 'bytes' | Hello,

`return json.loads(r.content)` raises an error: _TypeError: the JSON object must be str, not 'bytes'_

`return json.loads(r.content.decode('utf-8-sig'))` fixes it.

Regards,

immontilla | closed | 2017-12-20T08:34:24Z | 2018-01-04T18:46:37Z | https://github.com/miguelgrinberg/microblog/issues/51 | [

"bug"

] | immontilla | 3 |
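A small sketch of the fix proposed above. On Python 3.5, `json.loads` accepts only `str`, hence the `TypeError`; Python 3.6+ also accepts `bytes`. Decoding with `utf-8-sig` works on every version and also strips a leading UTF-8 byte-order mark if the response body has one. The sample payload is made up; in the issue the bytes come from `r.content`.

```python
import json

# Made-up response body: UTF-8 bytes that start with a byte-order mark (BOM).
content = '{"text": "hola"}'.encode("utf-8-sig")

# Decode explicitly; "utf-8-sig" drops the BOM, whereas plain "utf-8" would keep
# it and json.loads would then reject the string.
data = json.loads(content.decode("utf-8-sig"))
print(data["text"])  # hola
```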

allure-framework/allure-python | pytest | 66 | Add support for Nose Framework | closed | 2017-06-27T14:40:43Z | 2020-11-27T14:22:21Z | https://github.com/allure-framework/allure-python/issues/66 | [

"type:enhancement"

] | sseliverstov | 1 | |

widgetti/solara | fastapi | 521 | Please add meta information with license for ipyvue in PYPI | License meta information is missing for ipyvue, which causes problems installing solara in my org. | closed | 2024-02-24T13:42:13Z | 2024-02-27T13:10:54Z | https://github.com/widgetti/solara/issues/521 | [] | pratyush581 | 1 |

numpy/numpy | numpy | 28,076 | Overview issue: Typing regressions in NumPy 2.2 | NumPy 2.2 had a lot of typing improvements, but that also means some regressions (at least and maybe especially for mypy users).

So maybe this exercise is mainly useful to me to make sense of the mega-issue in gh-27957.

My own take-away is that we need the user documentation (gh-28077), not just for users, but al... | open | 2024-12-30T13:54:26Z | 2025-03-19T19:25:03Z | https://github.com/numpy/numpy/issues/28076 | [

"41 - Static typing"

] | seberg | 21 |

pydantic/FastUI | fastapi | 275 | 422 Error in demo: POST /api/forms/select | I'm running a local copy of the demo and there's an issue with the Select form. Pressing "Submit" throws a server-side error, and the `post` router method is never run.

I think the problem comes from the multiple select fields. Commenting these out, or converting them to single fields, fixes the problem, and the Su... | open | 2024-04-17T18:08:54Z | 2024-05-02T00:03:23Z | https://github.com/pydantic/FastUI/issues/275 | [

"bug",

"documentation"

] | charlie-corus | 1 |

streamlit/streamlit | python | 10,107 | Inconsistent item assignment exception for `st.secrets` | ### Checklist

- [X] I have searched the [existing issues](https://github.com/streamlit/streamlit/issues) for similar issues.

- [X] I added a very descriptive title to this issue.

- [X] I have provided sufficient information below to help reproduce this issue.

### Summary

`st.secrets` is read-only. When assig... | closed | 2025-01-03T18:42:25Z | 2025-03-12T10:29:20Z | https://github.com/streamlit/streamlit/issues/10107 | [

"type:bug",

"good first issue",

"feature:st.secrets",

"status:confirmed",

"priority:P3"

] | jrieke | 3 |

huggingface/transformers | python | 36,926 | `Mllama` not supported by `AutoModelForCausalLM` after updating `transformers` to `4.50.0` | ### System Info

- `transformers` version: 4.50.0

- Platform: Linux-5.15.0-100-generic-x86_64-with-glibc2.35

- Python version: 3.12.2

- Huggingface_hub version: 0.29.3

- Safetensors version: 0.5.3

- DeepSpeed version: not installed

- PyTorch version (GPU?): 2.6.0+cu124 (True)

- Tensorflow version (GPU?): not installed ... | open | 2025-03-24T12:07:09Z | 2025-03-24T12:28:00Z | https://github.com/huggingface/transformers/issues/36926 | [

"bug"

] | WuHaohui1231 | 2 |

jupyter-incubator/sparkmagic | jupyter | 833 | [BUG] SparkMagic pyspark kernel magic(%%sql) hangs when running with Papermill. | I initially reported this as a papermill issue (I'm not quite sure it is one). I am copying that issue to the SparkMagic community to see if there happens to be an expert who can provide advice for unblocking. Please feel free to close it if this is not a SparkMagic issue. Thanks in advance.

**Describe the bug**

Our use case i... | open | 2023-09-06T20:04:07Z | 2024-08-09T02:48:55Z | https://github.com/jupyter-incubator/sparkmagic/issues/833 | [

"kind:bug"

] | edwardps | 18 |

Colin-b/pytest_httpx | pytest | 87 | If the url query parameter contains Chinese characters, it will cause an encoding error | ```

httpx_mock.add_response(

url='test_url?query_type=数据',

method='GET',

json={'result': 'ok'}

)

```

Executing the above code causes an encoding error:

> obj = '数据', encoding = 'ascii', errors = 'strict'

>

> def _encode_result(obj, encoding=_implicit_encoding,

> ... | closed | 2022-11-02T07:52:49Z | 2022-11-03T21:05:49Z | https://github.com/Colin-b/pytest_httpx/issues/87 | [

"bug"

] | uncle-shu | 2 |
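For context, a hedged sketch of the step that fails: the traceback shows `'数据'` being encoded with `encoding='ascii', errors='strict'`, and strict ASCII cannot represent those characters. Percent-encoding the value as UTF-8 (what `urllib.parse.quote` does) yields an ASCII-only query string. Whether passing such a pre-encoded URL to `httpx_mock.add_response` is a valid workaround for this pytest_httpx version is an assumption, not something the issue confirms.

```python
from urllib.parse import quote

value = "数据"

# Strict ASCII encoding fails, as in the traceback above.
try:
    value.encode("ascii")
    ascii_ok = True
except UnicodeEncodeError:
    ascii_ok = False

# Percent-encoding the UTF-8 bytes gives an ASCII-safe query value.
encoded = quote(value)
url = "test_url?query_type=" + encoded
print(url)  # test_url?query_type=%E6%95%B0%E6%8D%AE
```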

taverntesting/tavern | pytest | 574 | Unable to set custom user agent through headers | I'm trying to set custom user agents as part of my requests, and I think Tavern might have a bug there.

Example:

```

stages:

- name: request

request:

url: "http://foo.bar/endpoint"

method: POST

headers:

user-agent: "my/useragent"

json: {} ... | closed | 2020-07-28T10:42:02Z | 2020-11-05T17:37:36Z | https://github.com/taverntesting/tavern/issues/574 | [] | nicoinn | 1 |

Avaiga/taipy | automation | 1,942 | [🐛 BUG] No delete chats button in the chatbot | ### What went wrong? 🤔

### Expected Behavior

_No response_

### Steps to Reproduce Issue

1. A code fragment

2. And/or configuration files or code

3. And/or Taipy GUI Markdown or HTML files

### So... | closed | 2024-10-06T17:11:36Z | 2024-10-07T20:30:28Z | https://github.com/Avaiga/taipy/issues/1942 | [

"💥Malfunction"

] | NishantRana07 | 2 |

kaarthik108/snowChat | streamlit | 3 | Table not showing | Hi!

Are you supposed to first select a table from the sidebar, from the database you specify in the secrets.toml file? For me, the options are still the default ones.

And even if I query the default tables I... | closed | 2023-05-19T09:12:19Z | 2023-06-25T04:21:14Z | https://github.com/kaarthik108/snowChat/issues/3 | [] | ewosl | 1 |

python-gino/gino | asyncio | 224 | Error creating table with ForeignKey referencing table wo `__tablename__` attribute | * GINO version: 0.7.2

* Python version: 3.6.5

Trying to create the following declarative schema:

```

class Parent(db.Model):

id = db.Column(db.Integer, primary_key=True)

class Child(db.Model):

id = db.Column(db.Integer, primary_key=True)

parent_id = db.Column(db.Integer, db.ForeignKey('parent.id... | closed | 2018-05-17T13:06:35Z | 2018-06-23T12:33:03Z | https://github.com/python-gino/gino/issues/224 | [

"wontfix"

] | gyermolenko | 4 |

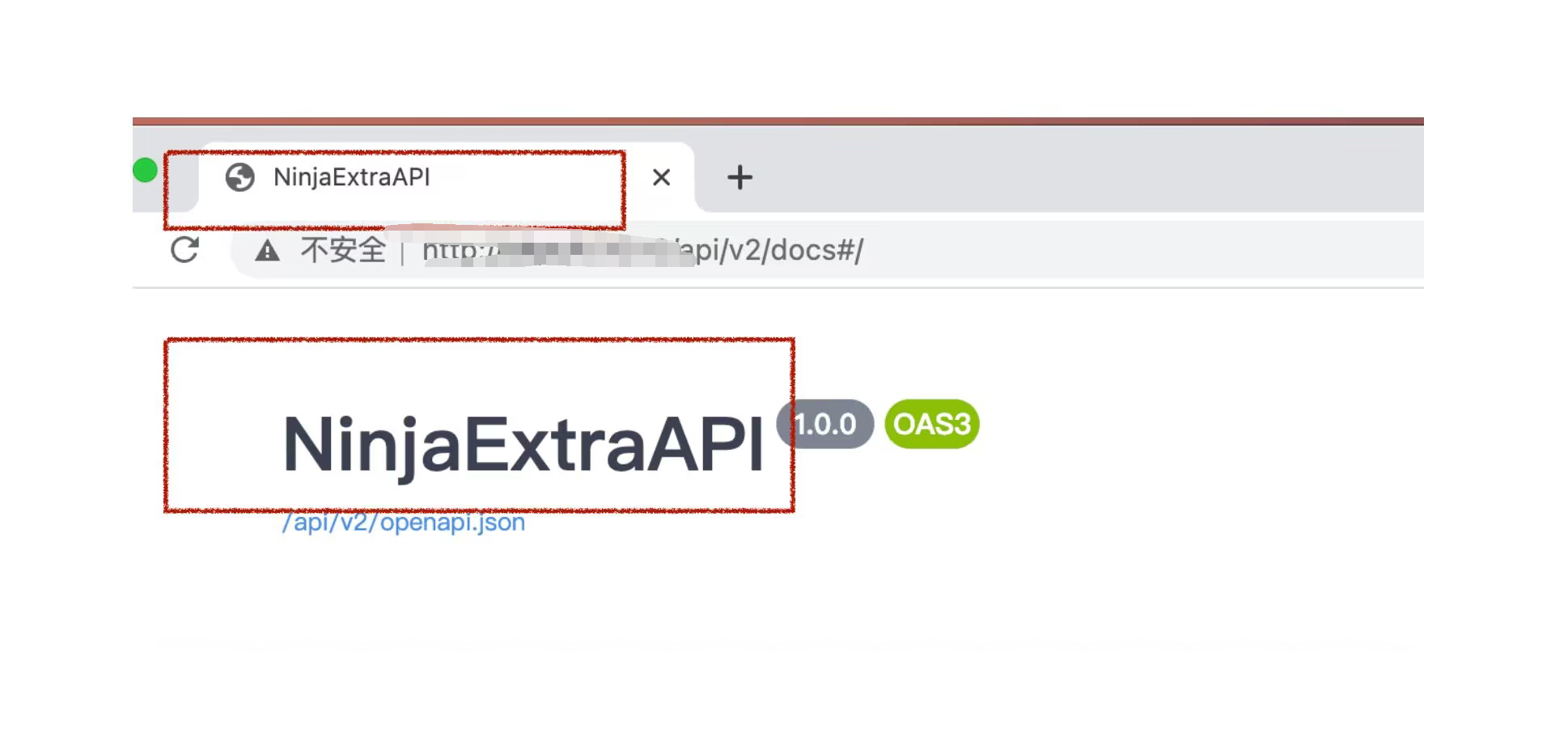

vitalik/django-ninja | pydantic | 609 | How do I change the title on the document? | I want to change these two headings in the picture to what I want.

| closed | 2022-11-13T08:50:27Z | 2022-11-13T16:41:29Z | https://github.com/vitalik/django-ninja/issues/609 | [] | Zzc79 | 1 |

deepfakes/faceswap | machine-learning | 777 | AttributeError: 'NoneType' object has no attribute 'split' | **Describe the bug**

Hi, I'm trying to install the repo following the [General-Install-Guide](https://github.com/deepfakes/faceswap/blob/master/INSTALL.md#General-Install-Guide)

But when I run `python setup.py`, it throws the error `AttributeError: 'NoneType' object has no attribute 'split'`. How should I fix it?

```sh

$... | closed | 2019-06-27T17:20:57Z | 2019-06-28T17:35:44Z | https://github.com/deepfakes/faceswap/issues/777 | [] | s97712 | 7 |

gradio-app/gradio | deep-learning | 10,350 | Always jump to the first selection when selecting in dropdown, if there are many choices and bar in the dropdown list. | ### Describe the bug

If there are a lot of choices in a dropdown, a scroll bar appears. In that case, when I select a new key, the scroll bar jumps back to the first key I've chosen. This is very inconvenient.

### Have you searched existing issues? 🔎

- [X] I have searched and found no existing issues

### Reproduction

```pyth... | closed | 2025-01-14T03:09:06Z | 2025-02-27T00:03:34Z | https://github.com/gradio-app/gradio/issues/10350 | [

"bug"

] | tyc333 | 0 |

jmcnamara/XlsxWriter | pandas | 1,112 | Bug: <Write_String can not write string like URL to a normal String but Hyperlink> | ### Current behavior

When I use pandas with the xlsxwriter engine to write data to Excel, xlsxwriter writes the data as a URL rather than as text or a string.

Even when I use a custom writer to call write_string with text_format, xlsxwriter still writes it as a URL (hyperlink) and hits Excel's 65536 URL limit. I just want it to be write ... | closed | 2025-01-06T04:37:50Z | 2025-01-06T10:04:13Z | https://github.com/jmcnamara/XlsxWriter/issues/1112 | [

"bug"

] | xzpater | 1 |

ultralytics/yolov5 | deep-learning | 12,931 | polygon annotation to object detection | ### Search before asking

- [X] I have searched the YOLOv5 [issues](https://github.com/ultralytics/yolov5/issues) and [discussions](https://github.com/ultralytics/yolov5/discussions) and found no similar questions.

### Question

I want to run object detection with segmentation labeling data, but I got an error.

As f... | closed | 2024-04-17T07:31:16Z | 2024-05-28T00:21:51Z | https://github.com/ultralytics/yolov5/issues/12931 | [

"question",

"Stale"

] | Cho-Hong-Seok | 2 |

pydantic/pydantic-ai | pydantic | 950 | Agent making mutiple, sequential requests with tool calls | Hi, I'm new to Pydantic-ai and trying to understand `Agent`'s behavior.

My question is: why does the Agent sometimes make multiple, sequential tool calls? Most of the time it makes only one, in which one or several tools are called at the same time, like in the examples from the pydantic-ai docs.

But I found that sometimes the `Ag... | closed | 2025-02-20T02:03:29Z | 2025-02-20T02:08:45Z | https://github.com/pydantic/pydantic-ai/issues/950 | [] | xtfocus | 1 |

StackStorm/st2 | automation | 6,137 | Renew test SSL CA + Cert | Our test SSL CA+cert just expired. We need to renew it and document how to do so.

https://github.com/StackStorm/st2/tree/master/st2tests/st2tests/fixtures/ssl_certs

Since this is for testing, I think we could do something like a 15 year duration. | closed | 2024-02-13T18:50:11Z | 2024-02-16T17:07:01Z | https://github.com/StackStorm/st2/issues/6137 | [

"tests",

"infrastructure: ci/cd"

] | cognifloyd | 2 |

tensorflow/tensor2tensor | deep-learning | 1,847 | Out of Memory while training | I am getting an OoM error while training with 8 GPUs but not with 1 GPU.

I use the following command to train.

t2t-trainer \

--data_dir=$DATA_DIR \

--problem=$PROBLEM \

--model=$MODEL \

--hparams='max_length=100,batch_size=1024,eval_drop_long_sequences=true'\

--worker_gpu=8 \

--train_steps=35000... | open | 2020-09-08T13:58:43Z | 2022-10-20T14:00:33Z | https://github.com/tensorflow/tensor2tensor/issues/1847 | [] | dinosaxon | 1 |

xuebinqin/U-2-Net | computer-vision | 75 | Results without fringe | Hi @NathanUA,

I have a library that makes use of your model.

@alfonsmartinez opened an issue about the model result, please, take a look at here:

https://github.com/danielgatis/rembg/issues/14

Can you figure out how I can achieve this result without the black fringe?

thanks. | closed | 2020-09-29T21:29:17Z | 2020-10-10T16:43:20Z | https://github.com/xuebinqin/U-2-Net/issues/75 | [] | danielgatis | 10 |

junyanz/pytorch-CycleGAN-and-pix2pix | deep-learning | 1,018 | Error when testing pix2pix with a single image | Hi,

I trained pix2pix with my own dataset, which ran fine for 200 epochs, and the visdom results during training seem promising. I now want to test the model with a single test image (without the image pair format, just the A-style image to convert to B style).

I placed that single image in its own folder and gave t... | open | 2020-05-06T11:41:15Z | 2020-05-07T01:53:26Z | https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix/issues/1018 | [] | StuckinPhD | 3 |

nolar/kopf | asyncio | 401 | [archival placeholder] | This is a placeholder for later issues/prs archival.

It is needed now to reserve the initial issue numbers before going with actual development (PRs), so that later these placeholders could be populated with actual archived issues & prs with proper intra-repo cross-linking preserved... | closed | 2020-08-18T20:05:39Z | 2020-08-18T20:05:41Z | https://github.com/nolar/kopf/issues/401 | [

"archive"

] | kopf-archiver[bot] | 0 |

roboflow/supervision | computer-vision | 957 | Segmentation problem | ### Search before asking

- [X] I have searched the Supervision [issues](https://github.com/roboflow/supervision/issues) and found no similar feature requests.

### Question

Dear @SkalskiP

I am trying to adapt your code for velocity estimation on cars, so that besides detection can display segmentation als... | closed | 2024-02-29T02:55:39Z | 2024-02-29T08:41:02Z | https://github.com/roboflow/supervision/issues/957 | [

"question"

] | ana111todorova | 1 |

recommenders-team/recommenders | data-science | 2,147 | [BUG] Test failing Service invocation timed out | ### Description

<!--- Describe your issue/bug/request in detail -->

The VMs for the tests are not even starting:

```

Class AutoDeleteSettingSchema: This is an experimental class, and may change at any time. Please see https://aka.ms/azuremlexperimental for more information.

Class AutoDeleteConditionSchema: This ... | closed | 2024-08-16T15:50:07Z | 2024-08-26T10:16:02Z | https://github.com/recommenders-team/recommenders/issues/2147 | [

"bug"

] | miguelgfierro | 15 |

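mouredev/Hello-Python | fastapi | 85 | Tenglong Boyuan account opening and registration, WeChat: zhkk6969 | Boyuan online account opening. Mobile: boy9999.cc, PC: boy8888.cc, invitation code: 0q821

V (WeChat) 《zhkk6969》, inquiries via QQ: 1923630145

This can be done through Tenglong's customer service hotline or online customer service; staff will help complete the entire registration process.

Account opening process: for investors, the process includes preparing documents (e.g. the original ID card, a copy of a bank card, a personal résumé) and submitting an account opening application through Tenglong's official website or mobile app. | closed | 2024-10-08T06:24:10Z | 2024-10-16T05:27:12Z | https://github.com/mouredev/Hello-Python/issues/85 | [] | xiao6901 | 0 |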

dynaconf/dynaconf | flask | 314 | [RFC] Move to f"string" | Python 3.5 has been dropped.

Now some uses of `format` can be replaced with f-strings | closed | 2020-03-09T03:47:56Z | 2020-03-31T13:26:42Z | https://github.com/dynaconf/dynaconf/issues/314 | [

"help wanted",

"Not a Bug",

"RFC",

"good first issue"

] | rochacbruno | 2 |
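A minimal illustration of the kind of change the RFC proposes, with made-up variable names: an f-string (available since Python 3.6) produces the same text as the equivalent `str.format` call, and format specs carry over unchanged.

```python
path = "settings.toml"
count = 3

# Before: str.format with positional placeholders.
old = "Loaded {} keys from {}".format(count, path)

# After: the same message as an f-string.
new = f"Loaded {count} keys from {path}"

# Format specs work the same way inside f-strings.
same_spec = "{:.2f}".format(0.5) == f"{0.5:.2f}"
print(new)  # Loaded 3 keys from settings.toml
```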

assafelovic/gpt-researcher | automation | 949 | Is it possible to get an arxiv formatted paper , totally by gpt-researcher | closed | 2024-10-25T04:02:13Z | 2024-11-03T09:56:56Z | https://github.com/assafelovic/gpt-researcher/issues/949 | [] | CoderYiFei | 1 | |

fastapi-users/fastapi-users | asyncio | 1,170 | GET users/me returns different ObjectId on each call | also on the `/register` route. See:

https://github.com/fastapi-users/fastapi-users/discussions/1142 | closed | 2023-03-10T13:54:50Z | 2024-07-14T13:24:43Z | https://github.com/fastapi-users/fastapi-users/issues/1170 | [

"bug"

] | gegnew | 1 |

lukas-blecher/LaTeX-OCR | pytorch | 151 | Error while installing pix2tex[gui] | > ERROR: pip's dependency resolver does not currently take into account all the packages that are installed. This behaviour is the source of the following dependency conflicts.

> spyder 5.1.5 requires pyqt5<5.13, but you have pyqt5 5.15.6 which is incompatible.

| closed | 2022-05-19T14:29:01Z | 2022-05-19T14:32:16Z | https://github.com/lukas-blecher/LaTeX-OCR/issues/151 | [] | islambek243 | 1 |

giotto-ai/giotto-tda | scikit-learn | 589 | [BUG]Validation of argument 'metric_params' initialized to a dictionary fails when used with a callable metric | **Describe the bug**

The validate_params function from utils fails to validate the 'metric_params' argument when initialized to a dictionary with custom parameters to be used with a custom metric. I think I have tracked it down to be an issue related to the following lines in the documentation for the validate_params ... | closed | 2021-07-03T21:44:27Z | 2021-07-08T15:56:46Z | https://github.com/giotto-ai/giotto-tda/issues/589 | [

"bug"

] | ektas0330 | 5 |

d2l-ai/d2l-en | tensorflow | 1,679 | Adding a sub-topic in Convolutions for images | The current material under topic '6.2 Convolution for images' does not cover 'Dilated Convolutions'.

Proposed Content:

(To be added after '6.2.6. Feature Map and Receptive Field')

- Define dilated convolution

- Add visualizations depicting the larger receptive field compared to standard convolution

- Add code ... | open | 2021-03-17T13:13:12Z | 2021-03-17T13:13:12Z | https://github.com/d2l-ai/d2l-en/issues/1679 | [] | Swetha5 | 0 |
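As a rough sketch of the proposed content, here is a pure-Python 2-D cross-correlation with a dilated kernel. It follows the spirit of the chapter's cross-correlation but is not the book's code: `corr2d_dilated` is a hypothetical name, and it assumes no padding and stride 1. A kernel of size k with dilation d spans k + (k - 1)(d - 1) input positions per axis, which is how dilation enlarges the receptive field without adding weights.

```python
def corr2d_dilated(X, K, dilation=1):
    """2-D cross-correlation with a dilated kernel (no padding, stride 1)."""
    kh, kw = len(K), len(K[0])
    # Effective (dilated) kernel extent per axis: k + (k - 1) * (dilation - 1).
    eh = kh + (kh - 1) * (dilation - 1)
    ew = kw + (kw - 1) * (dilation - 1)
    out_h, out_w = len(X) - eh + 1, len(X[0]) - ew + 1
    Y = [[0] * out_w for _ in range(out_h)]
    for i in range(out_h):
        for j in range(out_w):
            # Kernel taps are spaced `dilation` positions apart in the input.
            Y[i][j] = sum(
                X[i + a * dilation][j + b * dilation] * K[a][b]
                for a in range(kh)
                for b in range(kw)
            )
    return Y


X = [[r * 5 + c for c in range(5)] for r in range(5)]  # 5x5 input holding 0..24
K = [[1, 0], [0, 1]]  # 2x2 kernel

Y1 = corr2d_dilated(X, K, dilation=1)  # 4x4 output, each value sees a 2x2 window
Y2 = corr2d_dilated(X, K, dilation=2)  # 3x3 output, each value sees a 3x3 window
print(Y2)  # [[12, 14, 16], [22, 24, 26], [32, 34, 36]]
```

With dilation 1 this reduces to ordinary cross-correlation, so the same function could illustrate both the standard and the dilated case side by side.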

zihangdai/xlnet | nlp | 29 | What's the output structure for XLNET? [ A, SEP, B, SEP, CLS] | Hi, is the output embedding structure like this: [ A, SEP, B, SEP, CLS]?

Because for BERT it's like this right: [CLS, A, SEP, B, SEP]?

And for GPT2 is it just like this: [A, B]?

Thanks.

| open | 2019-06-23T04:41:14Z | 2019-09-19T12:07:54Z | https://github.com/zihangdai/xlnet/issues/29 | [] | BoPengGit | 2 |

Asabeneh/30-Days-Of-Python | python | 265 | Day 4: Strings | In the find() example

If find() returns the position of the first occurrence of 'y', shouldn't it return 5 instead of 16? | closed | 2022-07-26T00:08:36Z | 2023-07-08T22:16:54Z | https://github.com/Asabeneh/30-Days-Of-Python/issues/265 | [] | AdityaDanturthi | 1 |

marimo-team/marimo | data-visualization | 4,184 | "Object of type Decimal is not JSON serializable" when processing results of DuckDB query | ### Describe the bug

Whenever I do a `sum()` of integer values in a DuckDB query, I get a return value that is translated to a Decimal object in Python. This produces error/warning messages in marimo like

`Failed to send message to frontend: Object of type Decimal is not JSON serializable`

I believe the reason i... | closed | 2025-03-21T10:07:03Z | 2025-03-23T03:47:52Z | https://github.com/marimo-team/marimo/issues/4184 | [

"bug",

"cannot reproduce"

] | rjbudzynski | 3 |
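A minimal reproduction of the message outside marimo: Python's `json` module has no encoder for `decimal.Decimal`, so `json.dumps` raises this exact `TypeError`. The `default=` hook below is one common caller-side workaround (converting to `int` or `float`, which can lose precision); it is a sketch, not how marimo itself serializes values, and the sample row only stands in for a DuckDB `sum()` result.

```python
import json
from decimal import Decimal

# Stand-in for a query result where sum() came back as a Decimal.
row = {"total": Decimal("42")}

error = None
try:
    json.dumps(row)
except TypeError as exc:
    error = str(exc)
print(error)  # Object of type Decimal is not JSON serializable


def to_jsonable(obj):
    """json.dumps fallback: turn Decimals into int (if integral) or float."""
    if isinstance(obj, Decimal):
        return int(obj) if obj == obj.to_integral_value() else float(obj)
    raise TypeError(f"Object of type {type(obj).__name__} is not JSON serializable")


encoded = json.dumps(row, default=to_jsonable)
print(encoded)  # {"total": 42}
```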

scikit-learn/scikit-learn | python | 30,036 | OneVsRestClassifier cannot be used with TunedThresholdClassifierCV | https://github.com/scikit-learn/scikit-learn/blob/d5082d32de2797f9594c9477f2810c743560a1f1/sklearn/model_selection/_classification_threshold.py#L386

When predict is called on `OneVsRestClassifier`, it calls `predict_proba` on the underlying classifier.

If the underlying is a `TunedThresholdClassifierCV`, it redir... | open | 2024-10-09T07:31:21Z | 2024-10-15T09:24:45Z | https://github.com/scikit-learn/scikit-learn/issues/30036 | [

"Bug",

"Needs Decision"

] | worthy7 | 10 |

Yorko/mlcourse.ai | seaborn | 776 | Issue on page /book/topic04/topic4_linear_models_part5_valid_learning_curves.html | The first validation curve is missing

| closed | 2024-08-30T12:07:28Z | 2025-01-06T15:49:43Z | https://github.com/Yorko/mlcourse.ai/issues/776 | [] | ssukhgit | 1 |

tox-dev/tox | automation | 2,575 | Tox shouldn't set COLUMNS if it's already set | ## Issue

Coverage.py's doc build fails under tox4 when it didn't under tox3. This is due to setting the COLUMNS environment variable. I can fix it, but ideally tox would honor an existing COLUMNS value instead of always setting its own.

My .rst files run through cog to get the `--help` output of my commands. I... | closed | 2022-12-01T11:33:22Z | 2022-12-03T01:56:42Z | https://github.com/tox-dev/tox/issues/2575 | [] | nedbat | 2 |
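A sketch of the behaviour the issue asks for, using a hypothetical helper rather than tox's actual code: build the child environment from the parent one and only fill in `COLUMNS` when it is not already set. `shutil.get_terminal_size()` serves as the fallback width; when no terminal is attached it falls back to 80 columns by default.

```python
import shutil


def child_env(parent_env):
    """Hypothetical helper: copy the environment, honoring an existing COLUMNS."""
    env = dict(parent_env)
    # setdefault only writes the key when it is missing, so the user's value wins.
    env.setdefault("COLUMNS", str(shutil.get_terminal_size().columns))
    return env


print(child_env({"COLUMNS": "120"})["COLUMNS"])  # 120
print("COLUMNS" in child_env({}))  # True
```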

apache/airflow | automation | 47,413 | Scheduler HA mode, DagFileProcessor Race Condition | ### Apache Airflow version

Other Airflow 2 version (please specify below)

### If "Other Airflow 2 version" selected, which one?

2.10.1

### What happened?

We use dynamic dag generation to generate dags in our Airflow environment. We have one base dag definition file, we will call `big_dag.py`, generating >1500 dags... | open | 2025-03-05T19:43:20Z | 2025-03-11T16:09:10Z | https://github.com/apache/airflow/issues/47413 | [

"kind:bug",

"area:Scheduler",

"area:MetaDB",

"area:core",

"needs-triage"

] | robertchinezon | 4 |

tfranzel/drf-spectacular | rest-api | 1,380 | __empty__ choice raise AssertionError: Invalid nullable case | **Describe the bug**

I'd like to add __empty__ as choice on a nullable field, see: https://docs.djangoproject.com/en/5.1/ref/models/fields/#enumeration-types (at the bottom of the paragraph). However `AssertionError: Invalid nullable case` is then raised on scheme generation. I noticed this error is also raised when ov... | open | 2025-02-13T16:22:27Z | 2025-02-13T19:13:18Z | https://github.com/tfranzel/drf-spectacular/issues/1380 | [

"bug",

"OpenAPI 3.1"

] | gabn88 | 1 |

globaleaks/globaleaks-whistleblowing-software | sqlalchemy | 3,302 | Website down? | 502 bad gateway error, cannot visit site, Slack channel, community forum etc. | closed | 2022-10-24T09:47:28Z | 2022-10-25T06:51:13Z | https://github.com/globaleaks/globaleaks-whistleblowing-software/issues/3302 | [] | goferit | 4 |

indico/indico | sqlalchemy | 6,330 | Prevent 'mark as paid' for pending registrations | When a registration is moderated and there is a fee or paid items, and you first mark the registration as paid and only then approve it, it ends up in a strange state: the top of the page says it is not paid, while the invoice shows it as paid.

(More context in a SNOW ticket: INC3861152)

Exception (yggtorrent): The cookies provided by FlareSolverr are not valid: The cookies provided by FlareSolverr are not valid | ### Have you checked our README?

- [X] I have checked the README

### Have you followed our Troubleshooting?

- [X] I have followed your Troubleshooting

### Is there already an issue for your problem?

- [X] I have checked older issues, open and closed

### Have you checked the discussions?

- [X] I have read the Dis... | closed | 2023-11-06T09:06:29Z | 2023-11-13T22:16:58Z | https://github.com/FlareSolverr/FlareSolverr/issues/947 | [

"more information needed"

] | paindespik | 8 |

ExpDev07/coronavirus-tracker-api | rest-api | 110 | Using your API! | Made a Windows Forms app in C# using your API!

https://github.com/rohandoesjava/corona-info | closed | 2020-03-20T13:06:42Z | 2020-04-19T18:01:50Z | https://github.com/ExpDev07/coronavirus-tracker-api/issues/110 | [

"user-created"

] | ghost | 1 |

JaidedAI/EasyOCR | deep-learning | 1,263 | Angle of the text | How can we get the angle of the text using EasyOCR? | open | 2024-06-04T10:01:35Z | 2024-06-04T10:02:02Z | https://github.com/JaidedAI/EasyOCR/issues/1263 | [] | Rohinivv96 | 0 |

seleniumbase/SeleniumBase | web-scraping | 2,463 | Could not use "click_and_hold()" and "Release()" as Action_Chain to bypass "Press And Hold" Captcha | I tried to bypass Walmart's captcha but couldn't find a way to do it!

I would really appreciate it if this problem could be solved!

Thank you, Mdmintz, for building SeleniumBase!

| closed | 2024-02-01T16:23:17Z | 2024-02-01T16:42:15Z | https://github.com/seleniumbase/SeleniumBase/issues/2463 | [

"invalid usage",

"UC Mode / CDP Mode"

] | mynguyen95dn | 1 |

ipython/ipython | jupyter | 14,810 | Assertion failure on theme colour | IPython could not run in my PyCharm; it failed with the following error:

```

Traceback (most recent call last):

File "/Applications/PyCharm.app/Contents/plugins/python-ce/helpers/pydev/pydevconsole.py", line 570, in <module>

pydevconsole.start_client(host, port)

File "/Applications/PyCharm.app/Contents/plugins... | closed | 2025-03-01T22:19:27Z | 2025-03-08T13:12:06Z | https://github.com/ipython/ipython/issues/14810 | [] | JinZida | 9 |

openapi-generators/openapi-python-client | rest-api | 928 | Nullable array models generate failing code | **Describe the bug**

When an array is marked as nullable (in OpenAPI 3.0 or 3.1) the generated code fails type checking with the message:

```
error: Incompatible types in assignment (expression has type "tuple[None, bytes, str]", variable has type "list[float] | Unset | None") [assignment]
```

From the end-to... | closed | 2024-01-03T15:15:57Z | 2024-01-04T00:29:42Z | https://github.com/openapi-generators/openapi-python-client/issues/928 | [] | kgutwin | 1 |

3b1b/manim | python | 1,265 | Bezier interpolation ruining graph: Disable feature? | When plotting the function seen in the (attached) image, a ringing occurs on the transition shoulders. I assume this is from the Bezier interpolation the function goes through when `get_graph()` is called? `get_graph()` calls `interpolate(x_min, x_max, alpha)` from `manimlib.utils.bezier`.

Is there a feature to disa... | open | 2020-11-07T03:44:16Z | 2020-11-07T03:46:13Z | https://github.com/3b1b/manim/issues/1265 | [] | jdlake | 0 |

flairNLP/flair | nlp | 3,279 | [Bug]: pip install flair==0.12.2" did not complete successfully | ### Describe the bug

Building a Dockerfile with flair 0.12.2 fails.

### To Reproduce

```dockerfile
FROM public.ecr.aws/lambda/python:3.10
COPY requirements.txt .
RUN pip3 install --pre torch --index-url https://download.pytorch.org/whl/nightly/cpu
RUN pip install flair==0.12.2
```

### Expected behavior

installed... | closed | 2023-07-05T13:07:05Z | 2023-07-05T13:50:41Z | https://github.com/flairNLP/flair/issues/3279 | [

"bug"

] | sub2zero | 1 |

FlareSolverr/FlareSolverr | api | 529 | [hdarea] (updating) The cookies provided by FlareSolverr are not valid | **Please use the search bar** at the top of the page and make sure you are not creating an already submitted issue.

Check closed issues as well, because your issue may have already been fixed.

### How to enable debug and html traces

[Follow the instructions from this wiki page](https://github.com/FlareSolverr/Fl... | closed | 2022-09-27T04:59:35Z | 2022-09-27T15:26:31Z | https://github.com/FlareSolverr/FlareSolverr/issues/529 | [

"invalid"

] | taoxiaomeng0723 | 1 |

huggingface/datasets | pytorch | 6,640 | Sign Language Support | ### Feature request

Currently, there are only a few sign language labels. I would like to propose adding all the signed languages described in this ISO standard as new labels: https://www.evertype.com/standards/iso639/sign-language.html

### Motivation

Datasets currently only have labels for several signe... | open | 2024-02-02T21:54:51Z | 2024-02-02T21:54:51Z | https://github.com/huggingface/datasets/issues/6640 | [

"enhancement"

] | Merterm | 0 |

Nike-Inc/koheesio | pydantic | 38 | [DOC] Broken link in documentation | https://engineering.nike.com/koheesio/latest/ links to

https://engineering.nike.com/koheesio/latest/reference/concepts/tasks.md

which does not exist | closed | 2024-06-04T07:53:54Z | 2024-06-21T19:15:42Z | https://github.com/Nike-Inc/koheesio/issues/38 | [

"bug"

] | diekhans | 1 |

robinhood/faust | asyncio | 293 | Agent isn't getting new messages from Table's changelog topic |

## Steps to reproduce

Define an agent that consumes messages from a table's changelog topic.

## Expected behavior

As the table is updated and messages are written to the changelog topic, the agent should receive them.

## Actual behavior

Agent is not receiving the new changelog message

## Full traceback

# Ver... | open | 2019-02-14T06:19:27Z | 2020-02-27T23:18:42Z | https://github.com/robinhood/faust/issues/293 | [

"Status: Confirmed"

] | xqzhou | 1 |

amdegroot/ssd.pytorch | computer-vision | 486 | Too many detections in a image | I tried to evaluate the network _weights/ssd300_mAP_77.43_v2.pth_

`python eval.py`

And here is what I got:

What puzzles me is that there seem to be too many predicted boxes. Is that expected?

I think there should be... | closed | 2020-06-05T13:39:42Z | 2020-06-08T08:32:03Z | https://github.com/amdegroot/ssd.pytorch/issues/486 | [] | decoli | 2 |

ets-labs/python-dependency-injector | asyncio | 102 | Add `Callable.injections` read-only property for getting a list of injections | closed | 2015-10-21T07:45:08Z | 2015-10-22T14:48:57Z | https://github.com/ets-labs/python-dependency-injector/issues/102 | [

"feature"

] | rmk135 | 0 | |

ibis-project/ibis | pandas | 10,764 | bug: [Athena] error when trying to force create a database, that already exists | ### What happened?

I already had a database named `mydatabase` in my aws athena instance.

I experimented with using `force=True`, expecting it to drop the existing table, and create a new one. I got an error instead.

My database does contain a table.

### What version of ibis are you using?

`main` branch commit 3d... | closed | 2025-02-01T05:09:52Z | 2025-02-02T06:30:04Z | https://github.com/ibis-project/ibis/issues/10764 | [

"bug"

] | anjakefala | 2 |

fastapi-users/fastapi-users | fastapi | 883 | How could I remove/Hide is_active, is_superuser and is_verified from register route? | ### Discussed in https://github.com/fastapi-users/fastapi-users/discussions/882

<div type='discussions-op-text'>

<sup>Originally posted by **DinaTaklit** January 19, 2022</sup>

Hello, the `auth/register` endpoint offers all of these fields for registering a new user. I want to remove/hide `is_active`,

`is_superuser`... | closed | 2022-01-19T20:36:35Z | 2022-01-20T06:57:24Z | https://github.com/fastapi-users/fastapi-users/issues/883 | [] | DinaTaklit | 0 |

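TencentARC/GFPGAN | pytorch | 60 | How to reduce the beautification effect | I feel the enhanced faces are over-beautified: too smooth, with not enough detail. If I retrain the model myself, can I reduce the beautification effect, and what would be the best thing to modify? | closed | 2021-09-08T00:38:33Z | 2021-09-24T07:57:10Z | https://github.com/TencentARC/GFPGAN/issues/60 | [] | jorjiang | 3 |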

pytest-dev/pytest-cov | pytest | 93 | Incompatible with coverage 4.0? | I just ran a `pip upgrade` on my project which upgraded the coverage package from 3.7.1 to 4.0.0. When I ran `py.test --cov`, the output indicated that my test coverage had plummeted from 70% to 30%. A warning was also printed out: `Coverage.py warning: Trace function changed, measurement is likely wrong: None`. Downgr... | closed | 2015-09-28T23:01:59Z | 2015-09-29T07:38:12Z | https://github.com/pytest-dev/pytest-cov/issues/93 | [] | reywood | 3 |

pytorch/vision | machine-learning | 8,669 | performance degradation in to_pil_image after v0.17 | ### 🐛 Describe the bug

`torchvision.transforms.functional.to_pil_image` is much slower when converting torch.float16 image tensors to PIL Images, based on my benchmarks (serializing 360 images):

Dependencies:

```
Python 3.11
Pillow 10.4.0
```

Before (torch 2.0.1, torchvision v0.15.2, [Code here](https://git... | open | 2024-10-02T08:25:01Z | 2024-10-25T13:06:15Z | https://github.com/pytorch/vision/issues/8669 | [] | seymurkafkas | 5 |

localstack/localstack | python | 11,555 | bug: fromDockerBuild makes error "spawnSync docker ENOENT" | ### Is there an existing issue for this?

- [X] I have searched the existing issues

### Current Behavior

When I use `cdk.aws_lambda.Code.fromDockerBuild` to create the code for a Lambda function, it fails with `Error: spawnSync docker ENOENT`.

### Expected Behavior

The build completes without error.

### How are you starting LocalStack?

With a... | closed | 2024-09-21T16:20:43Z | 2024-11-08T18:03:30Z | https://github.com/localstack/localstack/issues/11555 | [

"type: bug",

"status: response required",

"area: integration/cdk",

"aws:lambda",

"status: resolved/stale"

] | namse | 3 |

Yorko/mlcourse.ai | seaborn | 371 | Validation form is out of date for the demo assignment 3 | Questions 3.6 and 3.7 in the [validation form ](https://docs.google.com/forms/d/1wfWYYoqXTkZNOPy1wpewACXaj2MZjBdLOL58htGWYBA/edit) for demo assignment 3 are incorrect. The questions are valid for the previous version of the assignment that is accessible by commit 152a534428d59648ebce250fd876dea45ad00429.

| closed | 2018-10-10T13:58:54Z | 2018-10-16T11:32:43Z | https://github.com/Yorko/mlcourse.ai/issues/371 | [

"enhancement"

] | fralik | 3 |

chatopera/Synonyms | nlp | 14 | two sentences are partly equal | # description

## current

>>> print(synonyms.compare('目前你用什么方法来保护自己', '目前你用什么方法'))

1.0

## expected

The two sentences are only partly equal, not fully equal, so it should not return 1 here.

# solution

# environment

* version:

The commit hash (`git rev-parse HEAD`)

| closed | 2017-11-14T09:55:09Z | 2018-01-01T11:36:29Z | https://github.com/chatopera/Synonyms/issues/14 | [

"bug"

] | bobbercheng | 0 |

flavors/django-graphql-jwt | graphql | 299 | modulenotfounderror: no module named 'graphql_jwt' | When I try to use this package, this error appears:

ModuleNotFoundError: No module named 'graphql_jwt'

/usr/local/lib/python3.9/site-packages/graphene_django/settings.py, line 89, in import_from_string

I added "graphql_jwt.refresh_token.apps.RefreshTokenConfig" to INSTALLED_APPS

and I did everything in t... | open | 2022-04-03T16:13:02Z | 2022-04-03T16:16:19Z | https://github.com/flavors/django-graphql-jwt/issues/299 | [] | MuhammadAbdulqader | 0 |

apachecn/ailearning | scikit-learn | 590 | What does the third step mean? Does it have to be NLP? | I work with images; computer vision should work the same way, right? | closed | 2020-05-15T02:36:38Z | 2020-05-15T02:40:44Z | https://github.com/apachecn/ailearning/issues/590 | [] | muyangmuzi | 1 |

babysor/MockingBird | pytorch | 223 | Sometimes an error occurs when I click Synthesize | Error message:

Loaded encoder "pretrained.pt" trained to step 1594501

Synthesizer using device: cuda

Trainable Parameters: 32.869M

Traceback (most recent call last):

File "C:\德丽莎\toolbox\__init__.py", line 123, in <lambda>

func = lambda: self.synthesize() or self.vocode()

File "C:\德丽莎\toolbox\__init__.py", line 2... | closed | 2021-11-20T10:26:33Z | 2023-01-26T02:39:09Z | https://github.com/babysor/MockingBird/issues/223 | [] | huankong233 | 6 |

cvat-ai/cvat | computer-vision | 8,656 | Attribute Annotation is zooming too much when changing the frame | ### Actions before raising this issue

- [X] I searched the existing issues and did not find anything similar.

- [X] I read/searched [the docs](https://docs.cvat.ai/docs/)

### Steps to Reproduce

## With firefox

1. Create a task in CVAT with 2 images, like

```json

[

{

"name": "Test",

"id": 369... | closed | 2024-11-07T10:10:43Z | 2024-11-07T12:11:27Z | https://github.com/cvat-ai/cvat/issues/8656 | [

"bug"

] | piercus | 1 |

strawberry-graphql/strawberry | asyncio | 3,802 | "ModuleNotFoundError: No module named 'ddtrace'" when trying to use DatadogTracingExtension | <!-- Provide a general summary of the bug in the title above. -->

<!--- This template is entirely optional and can be removed, but is here to help both you and us. -->

<!--- Anything on lines wrapped in comments like these will not show up in the final text. -->

## Describe the Bug

When trying to use DatadogTracingE... | closed | 2025-03-10T19:55:49Z | 2025-03-12T14:43:55Z | https://github.com/strawberry-graphql/strawberry/issues/3802 | [

"bug"

] | jakub-bacic | 0 |

iperov/DeepFaceLab | machine-learning | 5,492 | SAEHD training on GPU run the pause command after start in Terminal | Hello,

My PC: Acer Aspire 7, Core i7 9th generation, NVIDIA GeForce GTX 1050, Windows 10 Home

When I run SAEHD training on the GPU, it runs the pause command right after starting and says something like "Press any key to continue...". On the CPU everything works fine!

My batch size is 4!

My CMD is in German, but look:

![graf... | open | 2022-03-13T09:31:14Z | 2023-06-08T23:18:48Z | https://github.com/iperov/DeepFaceLab/issues/5492 | [] | Pips01 | 6 |

FactoryBoy/factory_boy | sqlalchemy | 530 | Include repr of model_class when instantiation fails | #### Description

When instantiation of a `model_class` with a dataclass fails, the exception (`TypeError: __init__() missing 1 required positional argument: 'phone_number'`) does not include the class name, which makes it very difficult to find which fixture failed.

```

Traceback (most recent call last):

F... | closed | 2018-10-22T07:38:56Z | 2020-05-23T10:52:51Z | https://github.com/FactoryBoy/factory_boy/issues/530 | [] | charlax | 4 |

pytest-dev/pytest-django | pytest | 528 | adding a user to a group | I'm testing a basic class-based view with a permission system based on the Django Group model. But before I can begin to test these permissions, I need to first create a user object (easy), then create a Group object, add the User object to the new Group object and save the results.

```

import pytest

from django.con... | closed | 2017-10-18T00:06:35Z | 2017-10-20T04:55:18Z | https://github.com/pytest-dev/pytest-django/issues/528 | [] | highpost | 1 |

man-group/notebooker | jupyter | 80 | Grouped front page should be case-sensitive | e.g. if you run for Cowsay and cowsay, the capitalised version will take precedence. | open | 2022-02-25T12:30:36Z | 2022-03-08T22:57:26Z | https://github.com/man-group/notebooker/issues/80 | [

"bug"

] | jonbannister | 0 |

aiogram/aiogram | asyncio | 767 | Add support for Bot API 5.5 | • Bots can now contact users who sent a join request to a chat where the bot is an admin – even if the user never interacted with the bot before.

• Added support for protected content in groups and channels.

• Added support for users posting as a channel in public groups and channel comments.

• Added support for men... | closed | 2021-12-07T13:35:53Z | 2021-12-07T18:03:14Z | https://github.com/aiogram/aiogram/issues/767 | [

"api"

] | Olegt0rr | 0 |

dask/dask | scikit-learn | 11,768 | querying df.compute(concatenate=True) | https://github.com/dask/dask-expr/pull/1138 introduced the `concatenate` kwargs to dask-dataframe compute operations, and defaulted to True (a change in behaviour). This is now the default in core dask following the merger of expr into the main repo.

I am concerned that the linked PR did not provide any rationale for ... | open | 2025-02-20T19:50:49Z | 2025-02-26T18:05:03Z | https://github.com/dask/dask/issues/11768 | [

"needs triage"

] | martindurant | 6 |
