---
license: mit
---

## Testing Code

The testing code is available in the GitHub repository [VisR-Bench](https://github.com/puar-playground/VisR-Bench/tree/main).

## Data Download

This repository contains the page images of all documents in the VisR-Bench dataset.

```
git lfs install
git clone https://huggingface.co/datasets/puar-playground/VisR-Bench
```

The commands above download the `VisR-Bench` folder, which is required for testing.
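
If the Git LFS setup step is skipped, a clone can finish without errors yet leave small text pointer files where the page images should be. The following is a minimal sketch of a sanity check, not part of the official testing code. It assumes the clone sits in a folder named `VisR-Bench` in the current working directory, and `find_lfs_pointers` is a hypothetical helper name introduced here for illustration.

```python
from pathlib import Path

# Every Git LFS pointer file begins with this line (Git LFS pointer format).
LFS_POINTER_PREFIX = b"version https://git-lfs.github.com/spec/v1"


def find_lfs_pointers(root):
    """Return files under `root` that are still Git LFS pointer stubs."""
    root = Path(root)
    if not root.is_dir():
        return []
    pointers = []
    for path in sorted(root.rglob("*")):
        # Skip directories and Git's own metadata folder.
        if not path.is_file() or ".git" in path.relative_to(root).parts:
            continue
        with path.open("rb") as handle:
            head = handle.read(len(LFS_POINTER_PREFIX))
        if head == LFS_POINTER_PREFIX:
            pointers.append(path)
    return pointers


if __name__ == "__main__":
    # Assumption: the dataset was cloned into ./VisR-Bench.
    stubs = find_lfs_pointers("VisR-Bench")
    print(f"{len(stubs)} file(s) still look like LFS pointers")
```

If the count is greater than zero, running `git lfs pull` inside the cloned folder should fetch the actual files.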

## Reference

``` |

|

|

@misc{chen2025visrbenchempiricalstudyvisual, |

|

|

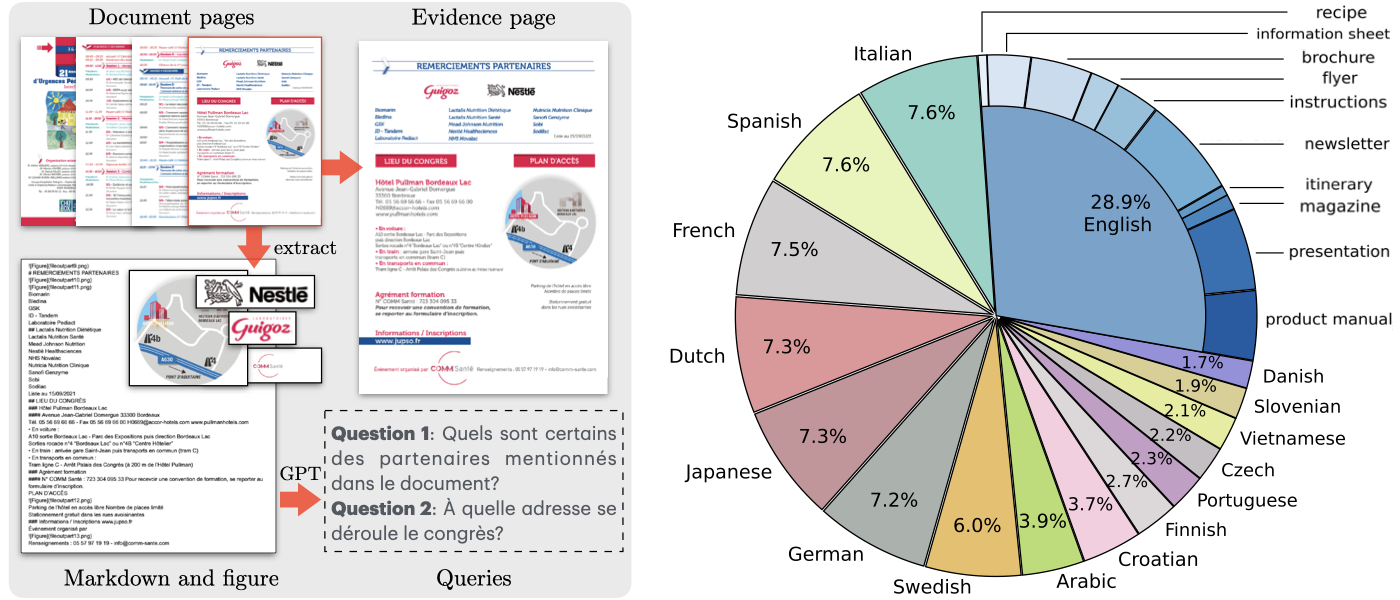

title={VisR-Bench: An Empirical Study on Visual Retrieval-Augmented Generation for Multilingual Long Document Understanding}, |

|

|

author={Jian Chen and Ming Li and Jihyung Kil and Chenguang Wang and Tong Yu and Ryan Rossi and Tianyi Zhou and Changyou Chen and Ruiyi Zhang}, |

|

|

year={2025}, |

|

|

eprint={2508.07493}, |

|

|

archivePrefix={arXiv}, |

|

|

primaryClass={cs.CV}, |

|

|

url={https://arxiv.org/abs/2508.07493}, |

|

|

} |

|

|

|

|

|

@misc{chen2025svragloracontextualizingadaptationmllms, |

|

|

title={SV-RAG: LoRA-Contextualizing Adaptation of MLLMs for Long Document Understanding}, |

|

|

author={Jian Chen and Ruiyi Zhang and Yufan Zhou and Tong Yu and Franck Dernoncourt and Jiuxiang Gu and Ryan A. Rossi and Changyou Chen and Tong Sun}, |

|

|

year={2025}, |

|

|

eprint={2411.01106}, |

|

|

archivePrefix={arXiv}, |

|

|

primaryClass={cs.CV}, |

|

|

url={https://arxiv.org/abs/2411.01106}, |

|

|

} |

|

|

``` |

|

|

|