in_source_id (string, length 13–58) | issue (string, length 3–241k) | before_files (list, length 0–3) | after_files (list, length 0–3) | pr_diff (string, length 109–107M, nullable) |
|---|---|---|---|---|

streamlit__streamlit-6377 | Streamlit logger working on root

### Summary

Upon import, Streamlit adds a new **global** log handler that dumps logs in text format. Packages should not be doing that, because it might break the logging convention of the host systems.

In our case for example, we dump logs in JSON format and push it all to our log... | [

{

"content": "# Copyright (c) Streamlit Inc. (2018-2022) Snowflake Inc. (2022)\n#\n# Licensed under the Apache License, Version 2.0 (the \"License\");\n# you may not use this file except in compliance with the License.\n# You may obtain a copy of the License at\n#\n# http://www.apache.org/licenses/LICENSE-2... | [

{

"content": "# Copyright (c) Streamlit Inc. (2018-2022) Snowflake Inc. (2022)\n#\n# Licensed under the Apache License, Version 2.0 (the \"License\");\n# you may not use this file except in compliance with the License.\n# You may obtain a copy of the License at\n#\n# http://www.apache.org/licenses/LICENSE-2... | diff --git a/lib/streamlit/logger.py b/lib/streamlit/logger.py

index 6f91af7432e4..779195acc001 100644

--- a/lib/streamlit/logger.py

+++ b/lib/streamlit/logger.py

@@ -117,7 +117,7 @@ def get_logger(name: str) -> logging.Logger:

return _loggers[name]

if name == "root":

- logger = logging.getLogger... |

obspy__obspy-2148 | FDSN routing client has a locale dependency

There's a dummy call to `time.strptime` in the module init that uses locale-specific formatting, which fails under locales that don't use the same month names (e.g. "Nov" for the 11th month of the year).

```

>>> import locale

>>> locale.setlocale(locale.LC_TIME, ('zh_CN', 'UTF-... | [

{

"content": "#!/usr/bin/env python\n# -*- coding: utf-8 -*-\n\"\"\"\nobspy.clients.fdsn.routing - Routing services for FDSN web services\n===================================================================\n\n:copyright:\n The ObsPy Development Team (devs@obspy.org)\n Celso G Reyes, 2017\n IRIS-DMC\n:... | [

{

"content": "#!/usr/bin/env python\n# -*- coding: utf-8 -*-\n\"\"\"\nobspy.clients.fdsn.routing - Routing services for FDSN web services\n===================================================================\n\n:copyright:\n The ObsPy Development Team (devs@obspy.org)\n Celso G Reyes, 2017\n IRIS-DMC\n:... | diff --git a/CHANGELOG.txt b/CHANGELOG.txt

index 879f573662a..d9f50e50979 100644

--- a/CHANGELOG.txt

+++ b/CHANGELOG.txt

@@ -22,6 +22,7 @@

and/or `location` are set (see #1810, #2031, #2047).

* A few fixes and stability improvements for the mass downloader

(see #2081).

+ * Fixed routing startup error ... |

cupy__cupy-7448 | [RFC] Renaming the development branch to `main`

Now that many projects in the scientific Python community have converged on `main` as the default branch for their repositories, I think it could make sense to do that for CuPy too.

According to https://github.com/github/renaming, side-effects of renaming a branch ... | [

{

"content": "# -*- coding: utf-8 -*-\n#\n# CuPy documentation build configuration file, created by\n# sphinx-quickstart on Sun May 10 12:22:10 2015.\n#\n# This file is execfile()d with the current directory set to its\n# containing dir.\n#\n# Note that not all possible configuration values are present in this\... | [

{

"content": "# -*- coding: utf-8 -*-\n#\n# CuPy documentation build configuration file, created by\n# sphinx-quickstart on Sun May 10 12:22:10 2015.\n#\n# This file is execfile()d with the current directory set to its\n# containing dir.\n#\n# Note that not all possible configuration values are present in this\... | diff --git a/.github/workflows/backport.yml b/.github/workflows/backport.yml

index 151b0d18c1f..179694d231e 100644

--- a/.github/workflows/backport.yml

+++ b/.github/workflows/backport.yml

@@ -4,7 +4,7 @@ on:

pull_request_target:

types: [closed, labeled]

branches:

- - master

+ - main

jobs:

... |

PrefectHQ__prefect-2056 | AuthorizationError when watching logs from CLI

When running with `prefect run cloud --logs`, after a few minutes I see the following error:

```

prefect.utilities.exceptions.AuthorizationError: [{'message': 'AuthenticationError', 'locations': [], 'path': ['flow_run'], 'extensions': {'code': 'UNAUTHENTICATED'}}]

```

... | [

{

"content": "import json\nimport time\n\nimport click\nfrom tabulate import tabulate\n\nfrom prefect.client import Client\nfrom prefect.utilities.graphql import EnumValue, with_args\n\n\n@click.group(hidden=True)\ndef run():\n \"\"\"\n Run Prefect flows.\n\n \\b\n Usage:\n $ prefect run [STO... | [

{

"content": "import json\nimport time\n\nimport click\nfrom tabulate import tabulate\n\nfrom prefect.client import Client\nfrom prefect.utilities.graphql import EnumValue, with_args\n\n\n@click.group(hidden=True)\ndef run():\n \"\"\"\n Run Prefect flows.\n\n \\b\n Usage:\n $ prefect run [STO... | diff --git a/CHANGELOG.md b/CHANGELOG.md

index 2669873011ce..72e62747e516 100644

--- a/CHANGELOG.md

+++ b/CHANGELOG.md

@@ -21,6 +21,7 @@ These changes are available in the [master branch](https://github.com/PrefectHQ/

### Fixes

- Ensure microseconds are respected on `start_date` provided to CronClock - [#2031](http... |

django-cms__django-cms-3415 | Groups could not be deleted if custom user model is used

If a custom user model is used one can't delete groups because in the pre_delete signal the user permissions are cleared. The users are accessed by their reversed descriptor but in the case of a custom user model this is not always called user_set, so there is an... | [

{

"content": "# -*- coding: utf-8 -*-\n\nfrom cms.cache.permissions import clear_user_permission_cache\nfrom cms.models import PageUser, PageUserGroup\nfrom cms.utils.compat.dj import user_related_name\nfrom menus.menu_pool import menu_pool\n\n\ndef post_save_user(instance, raw, created, **kwargs):\n \"\"\"S... | [

{

"content": "# -*- coding: utf-8 -*-\n\nfrom cms.cache.permissions import clear_user_permission_cache\nfrom cms.models import PageUser, PageUserGroup\nfrom cms.utils.compat.dj import user_related_name\nfrom menus.menu_pool import menu_pool\n\n\ndef post_save_user(instance, raw, created, **kwargs):\n \"\"\"S... | diff --git a/cms/signals/permissions.py b/cms/signals/permissions.py

index 9d306bbd1a0..2a8f7075612 100644

--- a/cms/signals/permissions.py

+++ b/cms/signals/permissions.py

@@ -58,7 +58,8 @@ def pre_save_group(instance, raw, **kwargs):

def pre_delete_group(instance, **kwargs):

- for user in instance.user_set.al... |

networkx__networkx-4431 | Documentation: Make classes AtlasView et al. from networkx/classes/coreviews.py accessible from documentation

Lest I seem ungrateful, I like networkx a lot, and rely on it for two of my main personal projects [fake-data-for-learning](https://github.com/munichpavel/fake-data-for-learning) and the WIP [clovek-ne-jezi-se... | [

{

"content": "\"\"\"\n\"\"\"\nimport warnings\nfrom collections.abc import Mapping\n\n__all__ = [\n \"AtlasView\",\n \"AdjacencyView\",\n \"MultiAdjacencyView\",\n \"UnionAtlas\",\n \"UnionAdjacency\",\n \"UnionMultiInner\",\n \"UnionMultiAdjacency\",\n \"FilterAtlas\",\n \"FilterAdja... | [

{

"content": "\"\"\"Views of core data structures such as nested Mappings (e.g. dict-of-dicts).\nThese ``Views`` often restrict element access, with either the entire view or\nlayers of nested mappings being read-only.\n\"\"\"\nimport warnings\nfrom collections.abc import Mapping\n\n__all__ = [\n \"AtlasView... | diff --git a/doc/reference/classes/index.rst b/doc/reference/classes/index.rst

index 0747795410c..acd9e259099 100644

--- a/doc/reference/classes/index.rst

+++ b/doc/reference/classes/index.rst

@@ -59,6 +59,25 @@ Graph Views

subgraph_view

reverse_view

+Core Views

+==========

+

+.. automodule:: networkx.classes... |

vyperlang__vyper-3202 | `pc_pos_map` for small methods is empty

### Version Information

* vyper Version (output of `vyper --version`): 0.3.7

* OS: osx

* Python Version (output of `python --version`): 3.10.4

### Bug

```

(vyper) ~/vyper $ cat tmp/baz.vy

@external

def foo():

pass

(vyper) ~/vyper $ vyc -f source_map tmp/b... | [

{

"content": "from typing import Any, List\n\nimport vyper.utils as util\nfrom vyper.address_space import CALLDATA, DATA, MEMORY\nfrom vyper.ast.signatures.function_signature import FunctionSignature, VariableRecord\nfrom vyper.codegen.abi_encoder import abi_encoding_matches_vyper\nfrom vyper.codegen.context im... | [

{

"content": "from typing import Any, List\n\nimport vyper.utils as util\nfrom vyper.address_space import CALLDATA, DATA, MEMORY\nfrom vyper.ast.signatures.function_signature import FunctionSignature, VariableRecord\nfrom vyper.codegen.abi_encoder import abi_encoding_matches_vyper\nfrom vyper.codegen.context im... | diff --git a/vyper/codegen/function_definitions/external_function.py b/vyper/codegen/function_definitions/external_function.py

index 3f0d89c4d6..06d2946558 100644

--- a/vyper/codegen/function_definitions/external_function.py

+++ b/vyper/codegen/function_definitions/external_function.py

@@ -214,4 +214,4 @@ def generate_... |

dbt-labs__dbt-core-2599 | yaml quoting not working with NativeEnvironment jinja evaluator

### Describe the bug

dbt's NativeEnvironment introduced a functional change to how Jinja strings are evaluated. In dbt v0.17.0, a schema test can no longer be configured with a quoted column name.

### Steps To Reproduce

```

# schema.yml

version: 2... | [

{

"content": "import codecs\nimport linecache\nimport os\nimport re\nimport tempfile\nimport threading\nfrom ast import literal_eval\nfrom contextlib import contextmanager\nfrom itertools import chain, islice\nfrom typing import (\n List, Union, Set, Optional, Dict, Any, Iterator, Type, NoReturn, Tuple\n)\n\... | [

{

"content": "import codecs\nimport linecache\nimport os\nimport re\nimport tempfile\nimport threading\nfrom ast import literal_eval\nfrom contextlib import contextmanager\nfrom itertools import chain, islice\nfrom typing import (\n List, Union, Set, Optional, Dict, Any, Iterator, Type, NoReturn, Tuple\n)\n\... | diff --git a/CHANGELOG.md b/CHANGELOG.md

index 91a5c7833b7..c7b4c5dbc9b 100644

--- a/CHANGELOG.md

+++ b/CHANGELOG.md

@@ -1,11 +1,11 @@

## dbt 0.17.1 (Release TBD)

### Fixes

+- dbt native rendering now avoids turning quoted strings into unquoted strings ([#2597](https://github.com/fishtown-analytics/dbt/issues/2597)... |

ansible__ansible-42557 | The ios_linkagg search for interfaces may return wrong interface names

<!---

Verify first that your issue/request is not already reported on GitHub.

THIS FORM WILL BE READ BY A MACHINE, COMPLETE ALL SECTIONS AS DESCRIBED.

Also test if the latest release, and devel branch are affected too.

ALWAYS add information AF... | [

{

"content": "#!/usr/bin/python\n# -*- coding: utf-8 -*-\n\n# (c) 2017, Ansible by Red Hat, inc\n# GNU General Public License v3.0+ (see COPYING or https://www.gnu.org/licenses/gpl-3.0.txt)\n\nfrom __future__ import absolute_import, division, print_function\n__metaclass__ = type\n\n\nANSIBLE_METADATA = {'metada... | [

{

"content": "#!/usr/bin/python\n# -*- coding: utf-8 -*-\n\n# (c) 2017, Ansible by Red Hat, inc\n# GNU General Public License v3.0+ (see COPYING or https://www.gnu.org/licenses/gpl-3.0.txt)\n\nfrom __future__ import absolute_import, division, print_function\n__metaclass__ = type\n\n\nANSIBLE_METADATA = {'metada... | diff --git a/lib/ansible/modules/network/ios/ios_linkagg.py b/lib/ansible/modules/network/ios/ios_linkagg.py

index 840576eee7bd1a..e07c18b0e57dca 100644

--- a/lib/ansible/modules/network/ios/ios_linkagg.py

+++ b/lib/ansible/modules/network/ios/ios_linkagg.py

@@ -227,7 +227,7 @@ def parse_members(module, config, group):... |

dotkom__onlineweb4-1359 | Option to post video in article

Make it possible to post video in article from dashboard.

| [

{

"content": "# -*- encoding: utf-8 -*-\nfrom django import forms\n\nfrom apps.article.models import Article\nfrom apps.dashboard.widgets import DatetimePickerInput, multiple_widget_generator\nfrom apps.gallery.widgets import SingleImageInput\n\nfrom taggit.forms import TagWidget\n\n\nclass ArticleForm(forms.Mo... | [

{

"content": "# -*- encoding: utf-8 -*-\nfrom django import forms\n\nfrom apps.article.models import Article\nfrom apps.dashboard.widgets import DatetimePickerInput, multiple_widget_generator\nfrom apps.gallery.widgets import SingleImageInput\n\nfrom taggit.forms import TagWidget\n\n\nclass ArticleForm(forms.Mo... | diff --git a/apps/article/dashboard/forms.py b/apps/article/dashboard/forms.py

index 43ba4ef9a..fed85caa3 100644

--- a/apps/article/dashboard/forms.py

+++ b/apps/article/dashboard/forms.py

@@ -22,6 +22,7 @@ class Meta(object):

'ingress',

'content',

'image',

+ 'video',

... |

Gallopsled__pwntools-1893 | 'pwn cyclic -o afca' throws a BytesWarning

```

$ pwn cyclic -o afca

/Users/heapcrash/pwntools/pwnlib/commandline/cyclic.py:74: BytesWarning: Text is not bytes; assuming ASCII, no guarantees. See https://docs.pwntools.com/#bytes

pat = flat(pat, bytes=args.length)

506

```

| [

{

"content": "#!/usr/bin/env python2\nfrom __future__ import absolute_import\nfrom __future__ import division\n\nimport argparse\nimport six\nimport string\nimport sys\n\nimport pwnlib.args\npwnlib.args.free_form = False\n\nfrom pwn import *\nfrom pwnlib.commandline import common\n\nparser = common.parser_comma... | [

{

"content": "#!/usr/bin/env python2\nfrom __future__ import absolute_import\nfrom __future__ import division\n\nimport argparse\nimport six\nimport string\nimport sys\n\nimport pwnlib.args\npwnlib.args.free_form = False\n\nfrom pwn import *\nfrom pwnlib.commandline import common\n\nparser = common.parser_comma... | diff --git a/CHANGELOG.md b/CHANGELOG.md

index 462646ddb..1d67a0b6d 100644

--- a/CHANGELOG.md

+++ b/CHANGELOG.md

@@ -64,12 +64,14 @@ The table below shows which release corresponds to each branch, and what date th

- [#1733][1733] Update libc headers -> more syscalls available!

- [#1876][1876] add `self.message` and c... |

dynaconf__dynaconf-672 | [bug] UnicodeEncodeError upon dynaconf init

**Describe the bug**

`dynaconf init -f yaml` results in a `UnicodeEncodeError`

**To Reproduce**

Steps to reproduce the behavior:

1. `git clone -b dynaconf https://github.com/ebenh/django-flex-user.git`

2. `py -m pipenv install --dev`

3. `py -m pipenv shell`

4. ... | [

{

"content": "import importlib\nimport io\nimport os\nimport pprint\nimport sys\nimport warnings\nimport webbrowser\nfrom contextlib import suppress\nfrom pathlib import Path\n\nfrom dynaconf import constants\nfrom dynaconf import default_settings\nfrom dynaconf import LazySettings\nfrom dynaconf import loaders... | [

{

"content": "import importlib\nimport io\nimport os\nimport pprint\nimport sys\nimport warnings\nimport webbrowser\nfrom contextlib import suppress\nfrom pathlib import Path\n\nfrom dynaconf import constants\nfrom dynaconf import default_settings\nfrom dynaconf import LazySettings\nfrom dynaconf import loaders... | diff --git a/dynaconf/cli.py b/dynaconf/cli.py

index 5bb8316d3..5aae070cc 100644

--- a/dynaconf/cli.py

+++ b/dynaconf/cli.py

@@ -23,6 +23,7 @@

from dynaconf.vendor import click

from dynaconf.vendor import toml

+os.environ["PYTHONIOENCODING"] = "utf-8"

CWD = Path.cwd()

EXTS = ["ini", "toml", "yaml", "json", "py"... |

nyu-mll__jiant-615 | ${NFS_PROJECT_PREFIX} and ${JIANT_PROJECT_PREFIX}

Do we need two separate sets of environment variables?

We also have ${NFS_DATA_DIR} and ${JIANT_DATA_DIR}. I don't know about potential users of jiant, but at least for me, it's pretty confusing.

| [

{

"content": "\"\"\"Train a multi-task model using AllenNLP\n\nTo debug this, run with -m ipdb:\n\n python -m ipdb main.py --config_file ...\n\"\"\"\n# pylint: disable=no-member\nimport argparse\nimport glob\nimport io\nimport logging as log\nimport os\nimport random\nimport subprocess\nimport sys\nimport ti... | [

{

"content": "\"\"\"Train a multi-task model using AllenNLP\n\nTo debug this, run with -m ipdb:\n\n python -m ipdb main.py --config_file ...\n\"\"\"\n# pylint: disable=no-member\nimport argparse\nimport glob\nimport io\nimport logging as log\nimport os\nimport random\nimport subprocess\nimport sys\nimport ti... | diff --git a/Dockerfile b/Dockerfile

index 82304093d..ea07afb76 100644

--- a/Dockerfile

+++ b/Dockerfile

@@ -92,5 +92,4 @@ ENV PATH_TO_COVE "$JSALT_SHARE_DIR/cove"

ENV ELMO_SRC_DIR "$JSALT_SHARE_DIR/elmo"

# Set these manually with -e or via Kuberentes config YAML.

-# ENV NFS_PROJECT_PREFIX "/nfs/jsalt/exp/docker"

... |

holoviz__holoviews-5436 | Game of Life example needs update

### Package versions

```

panel = 0.13.1

holoviews = 1.15.0

bokeh = 2.4.3

```

### Bug description

In the Game of Life example in the holoviews documentation (https://holoviews.org/gallery/apps/bokeh/game_of_life.html)

I needed to update the second to last line

```p... | [

{

"content": "import numpy as np\nimport holoviews as hv\nimport panel as pn\n\nfrom holoviews import opts\nfrom holoviews.streams import Tap, Counter, DoubleTap\nfrom scipy.signal import convolve2d\n\nhv.extension('bokeh')\n\ndiehard = [[0, 0, 0, 0, 0, 0, 1, 0],\n [1, 1, 0, 0, 0, 0, 0, 0],\n ... | [

{

"content": "import numpy as np\nimport holoviews as hv\nimport panel as pn\n\nfrom holoviews import opts\nfrom holoviews.streams import Tap, Counter, DoubleTap\nfrom scipy.signal import convolve2d\n\nhv.extension('bokeh')\n\ndiehard = [[0, 0, 0, 0, 0, 0, 1, 0],\n [1, 1, 0, 0, 0, 0, 0, 0],\n ... | diff --git a/examples/gallery/apps/bokeh/game_of_life.py b/examples/gallery/apps/bokeh/game_of_life.py

index 62ddf783be..37d0088f1e 100644

--- a/examples/gallery/apps/bokeh/game_of_life.py

+++ b/examples/gallery/apps/bokeh/game_of_life.py

@@ -91,6 +91,6 @@ def reset_data(x, y):

def advance():

counter.event(coun... |

buildbot__buildbot-986 | Remove googlecode

This fixes the following test on Python 3:

```

trial buildbot.test.unit.test_www_hooks_googlecode

```

| [

{

"content": "# This file is part of Buildbot. Buildbot is free software: you can\n# redistribute it and/or modify it under the terms of the GNU General Public\n# License as published by the Free Software Foundation, version 2.\n#\n# This program is distributed in the hope that it will be useful, but WITHOUT\n... | [

{

"content": "# This file is part of Buildbot. Buildbot is free software: you can\n# redistribute it and/or modify it under the terms of the GNU General Public\n# License as published by the Free Software Foundation, version 2.\n#\n# This program is distributed in the hope that it will be useful, but WITHOUT\n... | diff --git a/master/buildbot/status/web/waterfall.py b/master/buildbot/status/web/waterfall.py

index 698a2ebf5f2a..6ffd5fd4e1bf 100644

--- a/master/buildbot/status/web/waterfall.py

+++ b/master/buildbot/status/web/waterfall.py

@@ -854,4 +854,4 @@ def phase2(self, request, sourceNames, timestamps, eventGrid,

... |

microsoft__botbuilder-python-1451 | dependency conflict between botframework 4.11.0 and azure-identity 1.5.0

## Version

4.11 (also happening with 4.10)

## Describe the bug

`botframework-connector == 4.11.0` (current) requires `msal == 1.2.0`

`azure-identity == 1.5.0` (current) requires `msal >=1.6.0,<2.0.0`

This created a dependency conflict wher... | [

{

"content": "# Copyright (c) Microsoft Corporation. All rights reserved.\n# Licensed under the MIT License.\nimport os\nfrom setuptools import setup\n\nNAME = \"botframework-connector\"\nVERSION = os.environ[\"packageVersion\"] if \"packageVersion\" in os.environ else \"4.12.0\"\nREQUIRES = [\n \"msrest==0.... | [

{

"content": "# Copyright (c) Microsoft Corporation. All rights reserved.\n# Licensed under the MIT License.\nimport os\nfrom setuptools import setup\n\nNAME = \"botframework-connector\"\nVERSION = os.environ[\"packageVersion\"] if \"packageVersion\" in os.environ else \"4.12.0\"\nREQUIRES = [\n \"msrest==0.... | diff --git a/libraries/botframework-connector/setup.py b/libraries/botframework-connector/setup.py

index 04bf09257..09a82d646 100644

--- a/libraries/botframework-connector/setup.py

+++ b/libraries/botframework-connector/setup.py

@@ -12,7 +12,7 @@

"PyJWT==1.5.3",

"botbuilder-schema==4.12.0",

"adal==1.2.1"... |

pypi__warehouse-3598 | Set samesite=lax on session cookies

This is a strong defense-in-depth mechanism for protecting against CSRF. It's currently only respected by Chrome, but Firefox will add it as well.

| [

{

"content": "# Licensed under the Apache License, Version 2.0 (the \"License\");\n# you may not use this file except in compliance with the License.\n# You may obtain a copy of the License at\n#\n# http://www.apache.org/licenses/LICENSE-2.0\n#\n# Unless required by applicable law or agreed to in writing, softw... | [

{

"content": "# Licensed under the Apache License, Version 2.0 (the \"License\");\n# you may not use this file except in compliance with the License.\n# You may obtain a copy of the License at\n#\n# http://www.apache.org/licenses/LICENSE-2.0\n#\n# Unless required by applicable law or agreed to in writing, softw... | diff --git a/requirements/main.txt b/requirements/main.txt

index 9e51b303408e..defd6b964f59 100644

--- a/requirements/main.txt

+++ b/requirements/main.txt

@@ -464,9 +464,9 @@ vine==1.1.4 \

webencodings==0.5.1 \

--hash=sha256:a0af1213f3c2226497a97e2b3aa01a7e4bee4f403f95be16fc9acd2947514a78 \

--hash=sha256:b36... |

pypi__warehouse-3292 | Warehouse file order differs from legacy PyPI file list

Tonight, while load testing of pypi.org was ongoing, we saw some failures in automated systems that use `--require-hashes` with `pip install`, as ordering on the package file list page changed.

The specific package we saw break was `pandas` at version `0.12.0`.... | [

{

"content": "# Licensed under the Apache License, Version 2.0 (the \"License\");\n# you may not use this file except in compliance with the License.\n# You may obtain a copy of the License at\n#\n# http://www.apache.org/licenses/LICENSE-2.0\n#\n# Unless required by applicable law or agreed to in writing, softw... | [

{

"content": "# Licensed under the Apache License, Version 2.0 (the \"License\");\n# you may not use this file except in compliance with the License.\n# You may obtain a copy of the License at\n#\n# http://www.apache.org/licenses/LICENSE-2.0\n#\n# Unless required by applicable law or agreed to in writing, softw... | diff --git a/tests/unit/legacy/api/test_simple.py b/tests/unit/legacy/api/test_simple.py

index 4a23369eeadb..004b99caa628 100644

--- a/tests/unit/legacy/api/test_simple.py

+++ b/tests/unit/legacy/api/test_simple.py

@@ -202,7 +202,7 @@ def test_with_files_with_version_multi_digit(self, db_request):

files = []... |

digitalfabrik__integreat-cms-169 | Change development environment from docker-compose to venv

- [ ] Remove the django docker container

- [ ] Install package and requirements in venv

- [ ] Keep database docker container and manage connection to django

| [

{

"content": "\"\"\"\nDjango settings for backend project.\n\nGenerated by 'django-admin startproject' using Django 1.11.11.\n\nFor more information on this file, see\nhttps://docs.djangoproject.com/en/1.11/topics/settings/\n\nFor the full list of settings and their values, see\nhttps://docs.djangoproject.com/e... | [

{

"content": "\"\"\"\nDjango settings for backend project.\n\nGenerated by 'django-admin startproject' using Django 1.11.11.\n\nFor more information on this file, see\nhttps://docs.djangoproject.com/en/1.11/topics/settings/\n\nFor the full list of settings and their values, see\nhttps://docs.djangoproject.com/e... | diff --git a/.gitignore b/.gitignore

index 014fe4432f..44f1a9168c 100644

--- a/.gitignore

+++ b/.gitignore

@@ -46,3 +46,6 @@ backend/media/*

# XLIFF files folder

**/xliffs/

+

+# Postgres folder

+.postgres

\ No newline at end of file

diff --git a/README.md b/README.md

index 097c6ea507..35a8db1883 100644

--- a/README... |

modin-project__modin-2173 | [OmniSci] Add float32 dtype support

Looks like our calcite serializer doesn't support float32 type.

| [

{

"content": "# Licensed to Modin Development Team under one or more contributor license agreements.\n# See the NOTICE file distributed with this work for additional information regarding\n# copyright ownership. The Modin Development Team licenses this file to you under the\n# Apache License, Version 2.0 (the ... | [

{

"content": "# Licensed to Modin Development Team under one or more contributor license agreements.\n# See the NOTICE file distributed with this work for additional information regarding\n# copyright ownership. The Modin Development Team licenses this file to you under the\n# Apache License, Version 2.0 (the ... | diff --git a/modin/experimental/engines/omnisci_on_ray/frame/calcite_serializer.py b/modin/experimental/engines/omnisci_on_ray/frame/calcite_serializer.py

index 0156cfbc3d9..f460868cd5d 100644

--- a/modin/experimental/engines/omnisci_on_ray/frame/calcite_serializer.py

+++ b/modin/experimental/engines/omnisci_on_ray/fra... |

huggingface__diffusers-680 | LDM Bert `config.json` path

### Describe the bug

### Problem

There is a reference to an LDM Bert that 404's

```bash

src/diffusers/pipelines/latent_diffusion/pipeline_latent_diffusion.py: "ldm-bert": "https://huggingface.co/ldm-bert/resolve/main/config.json",

```

I was able to locate a `config.json` at `https... | [

{

"content": "import inspect\nfrom typing import List, Optional, Tuple, Union\n\nimport torch\nimport torch.nn as nn\nimport torch.utils.checkpoint\n\nfrom transformers.activations import ACT2FN\nfrom transformers.configuration_utils import PretrainedConfig\nfrom transformers.modeling_outputs import BaseModelOu... | [

{

"content": "import inspect\nimport warnings\nfrom typing import List, Optional, Tuple, Union\n\nimport torch\nimport torch.nn as nn\nimport torch.utils.checkpoint\n\nfrom transformers.activations import ACT2FN\nfrom transformers.configuration_utils import PretrainedConfig\nfrom transformers.modeling_outputs i... | diff --git a/src/diffusers/pipelines/latent_diffusion/pipeline_latent_diffusion.py b/src/diffusers/pipelines/latent_diffusion/pipeline_latent_diffusion.py

index 4a4f29be7f75..2efde98f772e 100644

--- a/src/diffusers/pipelines/latent_diffusion/pipeline_latent_diffusion.py

+++ b/src/diffusers/pipelines/latent_diffusion/pi... |

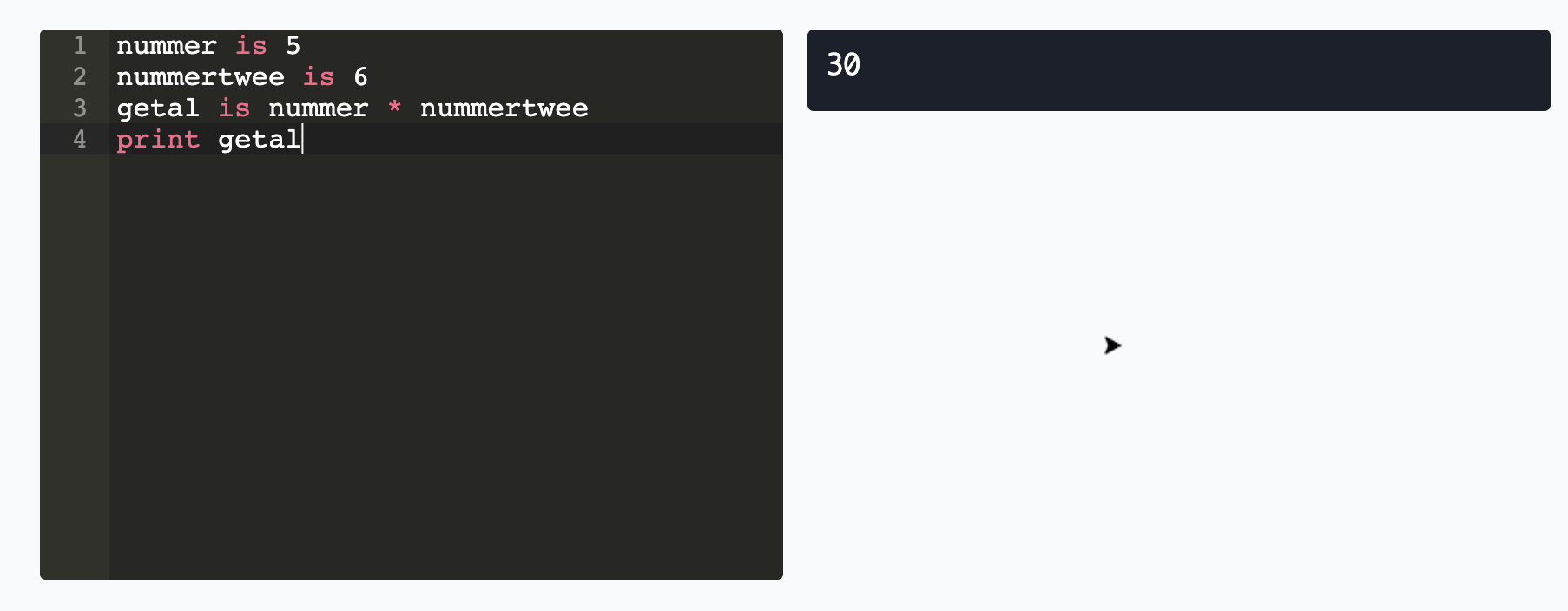

hedyorg__hedy-654 | Turtle should not be shown in level 6 programs with numbers

Turtle is now shown in some cases:

Violating code:

```

nummer is 5

nummertwee is 6

getal is nummer * nummertwee

print getal

```

Turtle sh... | [

{

"content": "from lark import Lark\nfrom lark.exceptions import LarkError, UnexpectedEOF, UnexpectedCharacters\nfrom lark import Tree, Transformer, visitors\nfrom os import path\nimport sys\nimport utils\nfrom collections import namedtuple\n\n\n# Some useful constants\nHEDY_MAX_LEVEL = 22\n\nreserved_words = [... | [

{

"content": "from lark import Lark\nfrom lark.exceptions import LarkError, UnexpectedEOF, UnexpectedCharacters\nfrom lark import Tree, Transformer, visitors\nfrom os import path\nimport sys\nimport utils\nfrom collections import namedtuple\n\n\n# Some useful constants\nHEDY_MAX_LEVEL = 22\n\nreserved_words = [... | diff --git a/coursedata/adventures/nl.yaml b/coursedata/adventures/nl.yaml

index 1be31c858af..538e04c366a 100644

--- a/coursedata/adventures/nl.yaml

+++ b/coursedata/adventures/nl.yaml

@@ -484,7 +484,8 @@ adventures:

keuzes is 1, 2, 3, 4, 5, regenworm

worp is ...

... |

vacanza__python-holidays-451 | Can't un-pickle a `HolidayBase`

Seems that after a holidays class, e.g. `holidays.UnitedStates()` is used once, it can't be un-pickled.

For example, this snippet:

```python

import holidays

import pickle

from datetime import datetime

# Works:

us_holidays = holidays.UnitedStates()

us_holidays_ = pickle.load... | [

{

"content": "# -*- coding: utf-8 -*-\n\n# python-holidays\n# ---------------\n# A fast, efficient Python library for generating country, province and state\n# specific sets of holidays on the fly. It aims to make determining whether a\n# specific date is a holiday as fast and flexible as possible.\n#\n# ... | [

{

"content": "# -*- coding: utf-8 -*-\n\n# python-holidays\n# ---------------\n# A fast, efficient Python library for generating country, province and state\n# specific sets of holidays on the fly. It aims to make determining whether a\n# specific date is a holiday as fast and flexible as possible.\n#\n# ... | diff --git a/holidays/holiday_base.py b/holidays/holiday_base.py

index 1ca61fccb..a24410150 100644

--- a/holidays/holiday_base.py

+++ b/holidays/holiday_base.py

@@ -209,6 +209,9 @@ def __radd__(self, other):

def _populate(self, year):

pass

+ def __reduce__(self):

+ return super(HolidayBase, se... |

ivy-llc__ivy-28478 | Fix Frontend Failing Test: jax - manipulation.paddle.tile

| [

{

"content": "# global\nimport ivy\nfrom ivy.functional.frontends.paddle.func_wrapper import (\n to_ivy_arrays_and_back,\n)\nfrom ivy.func_wrapper import (\n with_unsupported_dtypes,\n with_supported_dtypes,\n with_supported_device_and_dtypes,\n)\n\n\n@with_unsupported_dtypes({\"2.6.0 and below\": (... | [

{

"content": "# global\nimport ivy\nfrom ivy.functional.frontends.paddle.func_wrapper import (\n to_ivy_arrays_and_back,\n)\nfrom ivy.func_wrapper import (\n with_unsupported_dtypes,\n with_supported_dtypes,\n with_supported_device_and_dtypes,\n)\n\n\n@with_unsupported_dtypes({\"2.6.0 and below\": (... | diff --git a/ivy/functional/frontends/paddle/manipulation.py b/ivy/functional/frontends/paddle/manipulation.py

index dd7c7e79a28f9..6c2c8d6a90adc 100644

--- a/ivy/functional/frontends/paddle/manipulation.py

+++ b/ivy/functional/frontends/paddle/manipulation.py

@@ -208,7 +208,7 @@ def take_along_axis(arr, indices, axis)... |

kubeflow__pipelines-1666 | `pip install kfp` does not install CLI

**What happened:**

```

$ virtualenv .venv

...

$ pip install kfp==0.1.23

...

$ kfp

Traceback (most recent call last):

File "/private/tmp/.venv/bin/kfp", line 6, in <module>

from kfp.__main__ import main

File "/private/tmp/.venv/lib/python3.7/site-packages/kfp/__... | [

{

"content": "# Copyright 2018 Google LLC\n#\n# Licensed under the Apache License, Version 2.0 (the \"License\");\n# you may not use this file except in compliance with the License.\n# You may obtain a copy of the License at\n#\n# http://www.apache.org/licenses/LICENSE-2.0\n#\n# Unless required by applicab... | [

{

"content": "# Copyright 2018 Google LLC\n#\n# Licensed under the Apache License, Version 2.0 (the \"License\");\n# you may not use this file except in compliance with the License.\n# You may obtain a copy of the License at\n#\n# http://www.apache.org/licenses/LICENSE-2.0\n#\n# Unless required by applicab... | diff --git a/sdk/python/setup.py b/sdk/python/setup.py

index ee581414370..dac70636a4e 100644

--- a/sdk/python/setup.py

+++ b/sdk/python/setup.py

@@ -45,6 +45,7 @@

install_requires=REQUIRES,

packages=[

'kfp',

+ 'kfp.cli',

'kfp.compiler',

'kfp.components',

'kfp.compone... |

streamlit__streamlit-5184 | It should be :

https://github.com/streamlit/streamlit/blob/535f11765817657892506d6904bbbe04908dbdf3/lib/streamlit/elements/alert.py#L145

| [

{

"content": "# Copyright 2018-2022 Streamlit Inc.\n#\n# Licensed under the Apache License, Version 2.0 (the \"License\");\n# you may not use this file except in compliance with the License.\n# You may obtain a copy of the License at\n#\n# http://www.apache.org/licenses/LICENSE-2.0\n#\n# Unless required by a... | [

{

"content": "# Copyright 2018-2022 Streamlit Inc.\n#\n# Licensed under the Apache License, Version 2.0 (the \"License\");\n# you may not use this file except in compliance with the License.\n# You may obtain a copy of the License at\n#\n# http://www.apache.org/licenses/LICENSE-2.0\n#\n# Unless required by a... | diff --git a/lib/streamlit/elements/alert.py b/lib/streamlit/elements/alert.py

index d9d5f2fe5f82..65458e9162b3 100644

--- a/lib/streamlit/elements/alert.py

+++ b/lib/streamlit/elements/alert.py

@@ -142,7 +142,7 @@ def success(

Example

-------

- >>> st.success('This is a success message!', ic... |

django-cms__django-cms-1994 | make django-admin-style a fixed dependency

and add it to the tutorial

| [

{

"content": "from setuptools import setup, find_packages\nimport os\nimport cms\n\n\nCLASSIFIERS = [\n 'Development Status :: 5 - Production/Stable',\n 'Environment :: Web Environment',\n 'Framework :: Django',\n 'Intended Audience :: Developers',\n 'License :: OSI Approved :: BSD License',\n ... | [

{

"content": "from setuptools import setup, find_packages\nimport os\nimport cms\n\n\nCLASSIFIERS = [\n 'Development Status :: 5 - Production/Stable',\n 'Environment :: Web Environment',\n 'Framework :: Django',\n 'Intended Audience :: Developers',\n 'License :: OSI Approved :: BSD License',\n ... | diff --git a/docs/getting_started/installation.rst b/docs/getting_started/installation.rst

index fa131805ebc..6f425a8e2e2 100644

--- a/docs/getting_started/installation.rst

+++ b/docs/getting_started/installation.rst

@@ -16,6 +16,7 @@ Requirements

* `django-mptt`_ 0.5.2 (strict due to API compatibility issues)

* `dja... |

ansible__ansible-modules-extras-387 | Freshly installed bower raises json error

I ran into an issue where the ansible bower module when attempting to run bower install can't parse the json from `bower list --json`

Here is the stacktrace

```

failed: [default] => {"failed": true, "parsed": false}

BECOME-SUCCESS-bcokpjdhrlrcdlrfpmvdgmahrbmtzoqk

Traceback (m... | [

{

"content": "#!/usr/bin/python\n# -*- coding: utf-8 -*-\n\n# (c) 2014, Michael Warkentin <mwarkentin@gmail.com>\n#\n# This file is part of Ansible\n#\n# Ansible is free software: you can redistribute it and/or modify\n# it under the terms of the GNU General Public License as published by\n# the Free Software F... | [

{

"content": "#!/usr/bin/python\n# -*- coding: utf-8 -*-\n\n# (c) 2014, Michael Warkentin <mwarkentin@gmail.com>\n#\n# This file is part of Ansible\n#\n# Ansible is free software: you can redistribute it and/or modify\n# it under the terms of the GNU General Public License as published by\n# the Free Software F... | diff --git a/packaging/language/bower.py b/packaging/language/bower.py

index 3fccf51056b..085f454e639 100644

--- a/packaging/language/bower.py

+++ b/packaging/language/bower.py

@@ -108,7 +108,7 @@ def _exec(self, args, run_in_check_mode=False, check_rc=True):

return ''

def list(self):

- cmd = ['l... |

cupy__cupy-764 | cupy.array(cupy_array, order=None) raises error

When I do this:

```python

>>> x = cupy.ones(3)

>>> xx = cupy.array(x, order=None)

```

I get this traceback:

```

File "[...]/cupy/cupy/creation/from_data.py", line 41, in array

return core.array(obj, dtype, copy, order, subok, ndmin)

File "cupy/core/cor... | [

{

"content": "# flake8: NOQA\n# \"flake8: NOQA\" to suppress warning \"H104 File contains nothing but comments\"\n\n# class s_(object):\n\nimport numpy\nimport six\n\nimport cupy\nfrom cupy import core\nfrom cupy.creation import from_data\nfrom cupy.manipulation import join\n\n\nclass AxisConcatenator(object):... | [

{

"content": "# flake8: NOQA\n# \"flake8: NOQA\" to suppress warning \"H104 File contains nothing but comments\"\n\n# class s_(object):\n\nimport numpy\nimport six\n\nimport cupy\nfrom cupy import core\nfrom cupy.creation import from_data\nfrom cupy.manipulation import join\n\n\nclass AxisConcatenator(object):... | diff --git a/cupy/core/core.pyx b/cupy/core/core.pyx

index 940f2ce7d32..a27510307ca 100644

--- a/cupy/core/core.pyx

+++ b/cupy/core/core.pyx

@@ -97,7 +97,7 @@ cdef class ndarray:

self.data = memptr

self.base = None

- if order == 'C':

+ if order in ('C', None):

self._st... |

databricks__koalas-747 | [DO NOT MERGE] Test

| [

{

"content": "#!/usr/bin/env python\n\n#\n# Copyright (C) 2019 Databricks, Inc.\n#\n# Licensed under the Apache License, Version 2.0 (the \"License\");\n# you may not use this file except in compliance with the License.\n# You may obtain a copy of the License at\n#\n# http://www.apache.org/licenses/LICENSE-... | [

{

"content": "#!/usr/bin/env python\n\n#\n# Copyright (C) 2019 Databricks, Inc.\n#\n# Licensed under the Apache License, Version 2.0 (the \"License\");\n# you may not use this file except in compliance with the License.\n# You may obtain a copy of the License at\n#\n# http://www.apache.org/licenses/LICENSE-... | diff --git a/requirements-dev.txt b/requirements-dev.txt

index 4be44500ee..5e0c474b0f 100644

--- a/requirements-dev.txt

+++ b/requirements-dev.txt

@@ -1,5 +1,5 @@

# Dependencies in Koalas

-pandas>=0.23

+pandas>=0.23.2

pyarrow>=0.10

matplotlib>=3.0.0

numpy>=1.14

diff --git a/setup.py b/setup.py

index 6b84679c9e..9c7... |

ansible-collections__community.aws-1712 | Broken example in iam_access_key

### Summary

The "Delete the access key" example in the `iam_access_key` module is broken. It's currently:

```yaml

- name: Delete the access_key

community.aws.iam_access_key:

name: example_user

access_key_id: AKIA1EXAMPLE1EXAMPLE

state: absent

```

There are two is... | [

{

"content": "#!/usr/bin/python\n# Copyright (c) 2021 Ansible Project\n# GNU General Public License v3.0+ (see COPYING or https://www.gnu.org/licenses/gpl-3.0.txt)\n\nfrom __future__ import absolute_import, division, print_function\n__metaclass__ = type\n\n\nDOCUMENTATION = r'''\n---\nmodule: iam_access_key\nve... | [

{

"content": "#!/usr/bin/python\n# Copyright (c) 2021 Ansible Project\n# GNU General Public License v3.0+ (see COPYING or https://www.gnu.org/licenses/gpl-3.0.txt)\n\nfrom __future__ import absolute_import, division, print_function\n__metaclass__ = type\n\n\nDOCUMENTATION = r'''\n---\nmodule: iam_access_key\nve... | diff --git a/changelogs/fragments/iam_access_key_docs_fix.yml b/changelogs/fragments/iam_access_key_docs_fix.yml

new file mode 100644

index 00000000000..f47a15eb91f

--- /dev/null

+++ b/changelogs/fragments/iam_access_key_docs_fix.yml

@@ -0,0 +1,2 @@

+trivial:

+ - iam_access_key - Use correct parameter names in the doc... |

ansible-collections__community.aws-1713 | Broken example in iam_access_key

### Summary

The "Delete the access key" example in the `iam_access_key` module is broken. It's currently:

```yaml

- name: Delete the access_key

community.aws.iam_access_key:

name: example_user

access_key_id: AKIA1EXAMPLE1EXAMPLE

state: absent

```

There are two is... | [

{

"content": "#!/usr/bin/python\n# Copyright (c) 2021 Ansible Project\n# GNU General Public License v3.0+ (see COPYING or https://www.gnu.org/licenses/gpl-3.0.txt)\n\nfrom __future__ import absolute_import, division, print_function\n__metaclass__ = type\n\n\nDOCUMENTATION = r'''\n---\nmodule: iam_access_key\nve... | [

{

"content": "#!/usr/bin/python\n# Copyright (c) 2021 Ansible Project\n# GNU General Public License v3.0+ (see COPYING or https://www.gnu.org/licenses/gpl-3.0.txt)\n\nfrom __future__ import absolute_import, division, print_function\n__metaclass__ = type\n\n\nDOCUMENTATION = r'''\n---\nmodule: iam_access_key\nve... | diff --git a/changelogs/fragments/iam_access_key_docs_fix.yml b/changelogs/fragments/iam_access_key_docs_fix.yml

new file mode 100644

index 00000000000..f47a15eb91f

--- /dev/null

+++ b/changelogs/fragments/iam_access_key_docs_fix.yml

@@ -0,0 +1,2 @@

+trivial:

+ - iam_access_key - Use correct parameter names in the doc... |

ansible-collections__community.aws-1711 | Broken example in iam_access_key

### Summary

The "Delete the access key" example in the `iam_access_key` module is broken. It's currently:

```yaml

- name: Delete the access_key

community.aws.iam_access_key:

name: example_user

access_key_id: AKIA1EXAMPLE1EXAMPLE

state: absent

```

There are two is... | [

{

"content": "#!/usr/bin/python\n# Copyright (c) 2021 Ansible Project\n# GNU General Public License v3.0+ (see COPYING or https://www.gnu.org/licenses/gpl-3.0.txt)\n\nfrom __future__ import absolute_import, division, print_function\n__metaclass__ = type\n\n\nDOCUMENTATION = r'''\n---\nmodule: iam_access_key\nve... | [

{

"content": "#!/usr/bin/python\n# Copyright (c) 2021 Ansible Project\n# GNU General Public License v3.0+ (see COPYING or https://www.gnu.org/licenses/gpl-3.0.txt)\n\nfrom __future__ import absolute_import, division, print_function\n__metaclass__ = type\n\n\nDOCUMENTATION = r'''\n---\nmodule: iam_access_key\nve... | diff --git a/changelogs/fragments/iam_access_key_docs_fix.yml b/changelogs/fragments/iam_access_key_docs_fix.yml

new file mode 100644

index 00000000000..f47a15eb91f

--- /dev/null

+++ b/changelogs/fragments/iam_access_key_docs_fix.yml

@@ -0,0 +1,2 @@

+trivial:

+ - iam_access_key - Use correct parameter names in the doc... |

pytorch__vision-1501 | Deprecate PILLOW_VERSION

torchvision now uses PILLOW_VERSION

https://github.com/pytorch/vision/blob/1e857d93c8de081e61695dd43e6f06e3e7c2b0a2/torchvision/transforms/functional.py#L5

However, this constant is deprecated in Pillow 5.2, and soon to be removed completely: https://github.com/python-pillow/Pillow/blob/mas... | [

{

"content": "from __future__ import division\nimport torch\nimport sys\nimport math\nfrom PIL import Image, ImageOps, ImageEnhance, PILLOW_VERSION\ntry:\n import accimage\nexcept ImportError:\n accimage = None\nimport numpy as np\nimport numbers\nimport collections\nimport warnings\n\nif sys.version_info... | [

{

"content": "from __future__ import division\nimport torch\nimport sys\nimport math\nfrom PIL import Image, ImageOps, ImageEnhance, __version__ as PILLOW_VERSION\ntry:\n import accimage\nexcept ImportError:\n accimage = None\nimport numpy as np\nimport numbers\nimport collections\nimport warnings\n\nif s... | diff --git a/torchvision/transforms/functional.py b/torchvision/transforms/functional.py

index 6f43d5d263f..a8fdbef86bf 100644

--- a/torchvision/transforms/functional.py

+++ b/torchvision/transforms/functional.py

@@ -2,7 +2,7 @@

import torch

import sys

import math

-from PIL import Image, ImageOps, ImageEnhance, PILL... |

bridgecrewio__checkov-1497 | checkov fails with junit-xml==1.8

**Describe the bug**

checkov fails with junit-xml==1.8

**To Reproduce**

Steps to reproduce the behavior:

1. pip3 install junit-xml==1.8

2. checkov -d .

3. See error:

```

Traceback (most recent call last):

File "/usr/local/bin/checkov", line 2, in <module>

from chec... | [

{

"content": "#!/usr/bin/env python\nimport logging\nimport os\nfrom importlib import util\nfrom os import path\n\nimport setuptools\nfrom setuptools import setup\n\n# read the contents of your README file\nthis_directory = path.abspath(path.dirname(__file__))\nwith open(path.join(this_directory, \"README.md\")... | [

{

"content": "#!/usr/bin/env python\nimport logging\nimport os\nfrom importlib import util\nfrom os import path\n\nimport setuptools\nfrom setuptools import setup\n\n# read the contents of your README file\nthis_directory = path.abspath(path.dirname(__file__))\nwith open(path.join(this_directory, \"README.md\")... | diff --git a/Pipfile b/Pipfile

index ef3df7dbb1..c2f19fac07 100644

--- a/Pipfile

+++ b/Pipfile

@@ -23,7 +23,7 @@ deep_merge = "*"

tabulate = "*"

colorama="*"

termcolor="*"

-junit-xml ="*"

+junit-xml = ">=1.9"

dpath = ">=1.5.0,<2"

pyyaml = ">=5.4.1"

boto3 = "==1.17.*"

diff --git a/Pipfile.lock b/Pipfile.lock

index... |

dbt-labs__dbt-core-5507 | [CT-876] Could we also now remove our upper bound on `MarkupSafe`, which we put in place earlier this year due to incompatibility with Jinja2?

Remove our upper bound on `MarkupSafe`, which we put in place earlier this year due to incompatibility with Jinja2(#4745). Also bump minimum requirement to match [Jinja2's requ... | [

{

"content": "#!/usr/bin/env python\nimport os\nimport sys\n\nif sys.version_info < (3, 7, 2):\n print(\"Error: dbt does not support this version of Python.\")\n print(\"Please upgrade to Python 3.7.2 or higher.\")\n sys.exit(1)\n\n\nfrom setuptools import setup\n\ntry:\n from setuptools import find... | [

{

"content": "#!/usr/bin/env python\nimport os\nimport sys\n\nif sys.version_info < (3, 7, 2):\n print(\"Error: dbt does not support this version of Python.\")\n print(\"Please upgrade to Python 3.7.2 or higher.\")\n sys.exit(1)\n\n\nfrom setuptools import setup\n\ntry:\n from setuptools import find... | diff --git a/.changes/unreleased/Dependencies-20220721-093233.yaml b/.changes/unreleased/Dependencies-20220721-093233.yaml

new file mode 100644

index 00000000000..f5c623e9581

--- /dev/null

+++ b/.changes/unreleased/Dependencies-20220721-093233.yaml

@@ -0,0 +1,7 @@

+kind: Dependencies

+body: Remove pin for MarkUpSafe fr... |

microsoft__Qcodes-867 | missing dependency `jsonschema` in requirements.txt

The latest pip installable version of QCoDeS does not list jsonschema as a dependency but requires it.

This problem came to light when running tests on a project that depends on QCoDeS. Part of my build script installs qcodes (pip install qcodes). Importing qcodes... | [

{

"content": "from setuptools import setup, find_packages\nfrom distutils.version import StrictVersion\nfrom importlib import import_module\nimport re\n\ndef get_version(verbose=1):\n \"\"\" Extract version information from source code \"\"\"\n\n try:\n with open('qcodes/version.py', 'r') as f:\n ... | [

{

"content": "from setuptools import setup, find_packages\nfrom distutils.version import StrictVersion\nfrom importlib import import_module\nimport re\n\ndef get_version(verbose=1):\n \"\"\" Extract version information from source code \"\"\"\n\n try:\n with open('qcodes/version.py', 'r') as f:\n ... | diff --git a/docs_requirements.txt b/docs_requirements.txt

index a8abb189efe..e9b853631b8 100644

--- a/docs_requirements.txt

+++ b/docs_requirements.txt

@@ -1,6 +1,5 @@

sphinx

sphinx_rtd_theme

-jsonschema

sphinxcontrib-jsonschema

nbconvert

ipython

diff --git a/requirements.txt b/requirements.txt

index 32d5add6fb3.... |

cupy__cupy-1944 | incorrect FFT results for Fortran-order arrays?

* Conditions (from `python -c 'import cupy; cupy.show_config()'`)

Tested in two environments with different CuPy versions:

```bash

CuPy Version : 4.4.1

CUDA Root : /usr/local/cuda

CUDA Build Version : 9010

CUDA Driver Version : 9010

CUDA... | [

{

"content": "from copy import copy\n\nimport six\n\nimport numpy as np\n\nimport cupy\nfrom cupy.cuda import cufft\nfrom math import sqrt\nfrom cupy.fft import config\n\n\ndef _output_dtype(a, value_type):\n if value_type != 'R2C':\n if a.dtype in [np.float16, np.float32]:\n return np.comp... | [

{

"content": "from copy import copy\n\nimport six\n\nimport numpy as np\n\nimport cupy\nfrom cupy.cuda import cufft\nfrom math import sqrt\nfrom cupy.fft import config\n\n\ndef _output_dtype(a, value_type):\n if value_type != 'R2C':\n if a.dtype in [np.float16, np.float32]:\n return np.comp... | diff --git a/cupy/fft/fft.py b/cupy/fft/fft.py

index e72a4906f5c..cee323e6c2f 100644

--- a/cupy/fft/fft.py

+++ b/cupy/fft/fft.py

@@ -76,7 +76,7 @@ def _exec_fft(a, direction, value_type, norm, axis, overwrite_x,

if axis % a.ndim != a.ndim - 1:

a = a.swapaxes(axis, -1)

- if a.base is not None:

+ if... |

privacyidea__privacyidea-1746 | Fix typo in registration token

The example of the registration token contains a typo.

The token type is, of course, "registration", not "register".

| [

{

"content": "# -*- coding: utf-8 -*-\n#\n# privacyIDEA\n# Aug 12, 2014 Cornelius Kölbel\n# License: AGPLv3\n# contact: http://www.privacyidea.org\n#\n# 2015-01-29 Adapt during migration to flask\n# Cornelius Kölbel <cornelius@privacyidea.org>\n#\n# This code is free software; you can redistr... | [

{

"content": "# -*- coding: utf-8 -*-\n#\n# privacyIDEA\n# Aug 12, 2014 Cornelius Kölbel\n# License: AGPLv3\n# contact: http://www.privacyidea.org\n#\n# 2015-01-29 Adapt during migration to flask\n# Cornelius Kölbel <cornelius@privacyidea.org>\n#\n# This code is free software; you can redistr... | diff --git a/privacyidea/lib/tokens/registrationtoken.py b/privacyidea/lib/tokens/registrationtoken.py

index 54beeb5ed4..8aa4df8c8f 100644

--- a/privacyidea/lib/tokens/registrationtoken.py

+++ b/privacyidea/lib/tokens/registrationtoken.py

@@ -64,7 +64,7 @@ class RegistrationTokenClass(PasswordTokenClass):

H... |

getpelican__pelican-1507 | abbr support doesn't work for multiline

Eg:

``` rst

this is an :abbr:`TLA (Three Letter

Abbreviation)`

```

will output

`<abbr>TLA (Three Letter Abbreviation)</abbr>`

instead of

`<abbr title="Three Letter Abbreviation">TLA</abbr>`

I believe this could be fixed by adding the `re.M` flag to the `re.compile` call on th... | [

{

"content": "# -*- coding: utf-8 -*-\nfrom __future__ import unicode_literals, print_function\n\nfrom docutils import nodes, utils\nfrom docutils.parsers.rst import directives, roles, Directive\nfrom pygments.formatters import HtmlFormatter\nfrom pygments import highlight\nfrom pygments.lexers import get_lexer... | [

{

"content": "# -*- coding: utf-8 -*-\nfrom __future__ import unicode_literals, print_function\n\nfrom docutils import nodes, utils\nfrom docutils.parsers.rst import directives, roles, Directive\nfrom pygments.formatters import HtmlFormatter\nfrom pygments import highlight\nfrom pygments.lexers import get_lexer... | diff --git a/pelican/rstdirectives.py b/pelican/rstdirectives.py

index 1bf6971ca..1c25cc42a 100644

--- a/pelican/rstdirectives.py

+++ b/pelican/rstdirectives.py

@@ -70,7 +70,7 @@ def run(self):

directives.register_directive('sourcecode', Pygments)

-_abbr_re = re.compile('\((.*)\)$')

+_abbr_re = re.compile('\((.*)\... |

awslabs__gluonts-644 | Index of forecast is wrong in multivariate Time Series

## Description

When forecasting multivariate Time Series the index has the length of the target dimension instead of the prediction length

## To Reproduce

```python

import numpy as np

from gluonts.dataset.common import ListDataset

from gluonts.distributio... | [

{

"content": "# Copyright 2018 Amazon.com, Inc. or its affiliates. All Rights Reserved.\n#\n# Licensed under the Apache License, Version 2.0 (the \"License\").\n# You may not use this file except in compliance with the License.\n# A copy of the License is located at\n#\n# http://www.apache.org/licenses/LICE... | [

{

"content": "# Copyright 2018 Amazon.com, Inc. or its affiliates. All Rights Reserved.\n#\n# Licensed under the Apache License, Version 2.0 (the \"License\").\n# You may not use this file except in compliance with the License.\n# A copy of the License is located at\n#\n# http://www.apache.org/licenses/LICE... | diff --git a/src/gluonts/model/forecast.py b/src/gluonts/model/forecast.py

index 19ffc7abc1..6ad91fe9da 100644

--- a/src/gluonts/model/forecast.py

+++ b/src/gluonts/model/forecast.py

@@ -373,7 +373,7 @@ def prediction_length(self):

"""

Time length of the forecast.

"""

- return self.sam... |

python__peps-3263 | Infra: Check Sphinx warnings on CI

This is similar to what we have in the CPython repo, most recently: https://github.com/python/cpython/pull/106460, and will help us gradually remove Sphinx warnings and avoid new ones being introduced.

It checks three things:

1. If a file previously had no warnings (not listed ... | [

{

"content": "# This file is placed in the public domain or under the\n# CC0-1.0-Universal license, whichever is more permissive.\n\n\"\"\"Configuration for building PEPs using Sphinx.\"\"\"\n\nfrom pathlib import Path\nimport sys\n\nsys.path.append(str(Path(\".\").absolute()))\n\n# -- Project information -----... | [

{

"content": "# This file is placed in the public domain or under the\n# CC0-1.0-Universal license, whichever is more permissive.\n\n\"\"\"Configuration for building PEPs using Sphinx.\"\"\"\n\nfrom pathlib import Path\nimport sys\n\nsys.path.append(str(Path(\".\").absolute()))\n\n# -- Project information -----... | diff --git a/conf.py b/conf.py

index 8e2ae485f06..95a1debd451 100644

--- a/conf.py

+++ b/conf.py

@@ -45,6 +45,9 @@

"pep-0012/pep-NNNN.rst",

]

+# Warn on missing references

+nitpicky = True

+

# Intersphinx configuration

intersphinx_mapping = {

'python': ('https://docs.python.org/3/', None),

|

azavea__raster-vision-1557 | Query is invisible in interactive docs search

## 🐛 Bug

When I search for something in the docs using the new interactive search bar it seems to work except the query is not visible in the search box. Instead a bunch of dots appear. This was in Chrome Version 107.0.5304.110 (Official Build) (arm64) with the extensi... | [

{

"content": "# -*- coding: utf-8 -*-\n#\n# Configuration file for the Sphinx documentation builder.\n#\n# This file does only contain a selection of the most common options. For a\n# full list see the documentation:\n# http://www.sphinx-doc.org/en/stable/config\n\nfrom typing import TYPE_CHECKING, List\nimport... | [

{

"content": "# -*- coding: utf-8 -*-\n#\n# Configuration file for the Sphinx documentation builder.\n#\n# This file does only contain a selection of the most common options. For a\n# full list see the documentation:\n# http://www.sphinx-doc.org/en/stable/config\n\nfrom typing import TYPE_CHECKING, List\nimport... | diff --git a/.readthedocs.yml b/.readthedocs.yml

index a0bdb3900..e99691356 100644

--- a/.readthedocs.yml

+++ b/.readthedocs.yml

@@ -45,4 +45,4 @@ python:

search:

ranking:

# down-rank source code pages

- '*/_modules/*': -10

+ _modules/*: -10

diff --git a/docs/conf.py b/docs/conf.py

index 28bf2a200..5fe59... |

scrapy__scrapy-5880 | _sent_failed cuts the errback chain in MailSender

`MailSender._sent_failed` returns `None` instead of `failure`. This cuts the errback call chain, making it impossible for client code to detect a failure when sending mail.

| [

{

"content": "\"\"\"\nMail sending helpers\n\nSee documentation in docs/topics/email.rst\n\"\"\"\nimport logging\nfrom email import encoders as Encoders\nfrom email.mime.base import MIMEBase\nfrom email.mime.multipart import MIMEMultipart\nfrom email.mime.nonmultipart import MIMENonMultipart\nfrom email.mime.te... | [

{

"content": "\"\"\"\nMail sending helpers\n\nSee documentation in docs/topics/email.rst\n\"\"\"\nimport logging\nfrom email import encoders as Encoders\nfrom email.mime.base import MIMEBase\nfrom email.mime.multipart import MIMEMultipart\nfrom email.mime.nonmultipart import MIMENonMultipart\nfrom email.mime.te... | diff --git a/scrapy/mail.py b/scrapy/mail.py

index 43115c53ea9..c11f3898d0d 100644

--- a/scrapy/mail.py

+++ b/scrapy/mail.py

@@ -164,6 +164,7 @@ def _sent_failed(self, failure, to, cc, subject, nattachs):

"mailerr": errstr,

},

)

+ return failure

def _sendmail(self, t... |

OpenEnergyPlatform__oeplatform-1338 | Django compressor seems to produce unexpected cache behavior.

## Description of the issue

@Darynarli and myself experienced unexpected behavior that is triggered by the new package `django-compression`. This behavior prevents updating the compressed sources like js or css files entirely. This also happens somewhat s... | [

{

"content": "\"\"\"\nDjango settings for oeplatform project.\n\nGenerated by 'django-admin startproject' using Django 1.8.5.\n\nFor more information on this file, see\nhttps://docs.djangoproject.com/en/1.8/topics/settings/\n\nFor the full list of settings and their values, see\nhttps://docs.djangoproject.com/e... | [

{

"content": "\"\"\"\nDjango settings for oeplatform project.\n\nGenerated by 'django-admin startproject' using Django 1.8.5.\n\nFor more information on this file, see\nhttps://docs.djangoproject.com/en/1.8/topics/settings/\n\nFor the full list of settings and their values, see\nhttps://docs.djangoproject.com/e... | diff --git a/oeplatform/settings.py b/oeplatform/settings.py

index 6f0696bdf..bc7e34b87 100644

--- a/oeplatform/settings.py

+++ b/oeplatform/settings.py

@@ -166,5 +166,9 @@ def external_urls_context_processor(request):

"compressor.finders.CompressorFinder",

}

+

+# https://django-compressor.readthedocs.io/en/sta... |

Azure__azure-cli-extensions-3046 | vmware 2.0.0 does not work in azure-cli:2.7.0

- If the issue is to do with Azure CLI 2.0 in-particular, create an issue here at [Azure/azure-cli](https://github.com/Azure/azure-cli/issues)

### Extension name (the extension in question)

vmware

### Description of issue (in as much detail as possible)

The vmware ... | [

{

"content": "#!/usr/bin/env python\n\n# --------------------------------------------------------------------------------------------\n# Copyright (c) Microsoft Corporation. All rights reserved.\n# Licensed under the MIT License. See License.txt in the project root for license information.\n# ------------------... | [

{

"content": "#!/usr/bin/env python\n\n# --------------------------------------------------------------------------------------------\n# Copyright (c) Microsoft Corporation. All rights reserved.\n# Licensed under the MIT License. See License.txt in the project root for license information.\n# ------------------... | diff --git a/src/vmware/CHANGELOG.md b/src/vmware/CHANGELOG.md

index 32cde2511f8..c0910f47bdb 100644

--- a/src/vmware/CHANGELOG.md

+++ b/src/vmware/CHANGELOG.md

@@ -1,5 +1,8 @@

# Release History

+## 2.0.1 (2021-02)

+- Update the minimum az cli version to 2.11.0 [#3045](https://github.com/Azure/azure-cli-extensions/i... |

aio-libs__aiohttp-493 | [bug] URL parsing error in the web server

If you run this simple server example :

``` python

import asyncio

from aiohttp import web

@asyncio.coroutine

def handle(request):

return web.Response(body=request.path.encode('utf8'))

@asyncio.coroutine

def init(loop):

app = web.Application(loop=loop)

app.router.... | [

{

"content": "__all__ = ('ContentCoding', 'Request', 'StreamResponse', 'Response')\n\nimport asyncio\nimport binascii\nimport cgi\nimport collections\nimport datetime\nimport http.cookies\nimport io\nimport json\nimport math\nimport time\nimport warnings\n\nimport enum\n\nfrom email.utils import parsedate\nfrom... | [

{

"content": "__all__ = ('ContentCoding', 'Request', 'StreamResponse', 'Response')\n\nimport asyncio\nimport binascii\nimport cgi\nimport collections\nimport datetime\nimport http.cookies\nimport io\nimport json\nimport math\nimport time\nimport warnings\n\nimport enum\n\nfrom email.utils import parsedate\nfrom... | diff --git a/aiohttp/web_reqrep.py b/aiohttp/web_reqrep.py

index 6f3e566c8c6..c3e8abc4067 100644

--- a/aiohttp/web_reqrep.py

+++ b/aiohttp/web_reqrep.py

@@ -173,7 +173,8 @@ def path_qs(self):

@reify

def _splitted_path(self):

- return urlsplit(self._path_qs)

+ url = '{}://{}{}'.format(self.sche... |

psf__black-4019 | Internal error on a specific file

<!--

Please make sure that the bug is not already fixed either in newer versions or the

current development version. To confirm this, you have three options:

1. Update Black's version if a newer release exists: `pip install -U black`

2. Use the online formatter at <https://black.... | [

{

"content": "\"\"\"\nblib2to3 Node/Leaf transformation-related utility functions.\n\"\"\"\n\nimport sys\nfrom typing import Final, Generic, Iterator, List, Optional, Set, Tuple, TypeVar, Union\n\nif sys.version_info >= (3, 10):\n from typing import TypeGuard\nelse:\n from typing_extensions import TypeGua... | [

{

"content": "\"\"\"\nblib2to3 Node/Leaf transformation-related utility functions.\n\"\"\"\n\nimport sys\nfrom typing import Final, Generic, Iterator, List, Optional, Set, Tuple, TypeVar, Union\n\nif sys.version_info >= (3, 10):\n from typing import TypeGuard\nelse:\n from typing_extensions import TypeGua... | diff --git a/CHANGES.md b/CHANGES.md

index 5ce37943693..4f90f493ad8 100644

--- a/CHANGES.md

+++ b/CHANGES.md

@@ -13,6 +13,9 @@

- Fix crash on formatting code like `await (a ** b)` (#3994)

+- No longer treat leading f-strings as docstrings. This matches Python's behaviour and

+ fixes a crash (#4019)

+

### Preview... |

helmholtz-analytics__heat-1268 | Fix Pytorch release tracking workflows

## Due Diligence

<!--- Please address the following points before setting your PR "ready for review".

--->

- General:

- [x] **base branch** must be `main` for new features, latest release branch (e.g. `release/1.3.x`) for bug fixes

- [x] **title** of the PR is suita... | [

{

"content": "\"\"\"This module contains Heat's version information.\"\"\"\n\n\nmajor: int = 1\n\"\"\"Indicates Heat's main version.\"\"\"\nminor: int = 3\n\"\"\"Indicates feature extension.\"\"\"\nmicro: int = 0\n\"\"\"Indicates revisions for bugfixes.\"\"\"\nextension: str = \"dev\"\n\"\"\"Indicates special b... | [

{

"content": "\"\"\"This module contains Heat's version information.\"\"\"\n\n\nmajor: int = 1\n\"\"\"Indicates Heat's main version.\"\"\"\nminor: int = 4\n\"\"\"Indicates feature extension.\"\"\"\nmicro: int = 0\n\"\"\"Indicates revisions for bugfixes.\"\"\"\nextension: str = \"dev\"\n\"\"\"Indicates special b... | diff --git a/.github/workflows/pytorch-latest-main.yml b/.github/workflows/pytorch-latest-main.yml

index 139c66d82c..c59736c82f 100644

--- a/.github/workflows/pytorch-latest-main.yml

+++ b/.github/workflows/pytorch-latest-main.yml

@@ -11,9 +11,8 @@ jobs:

runs-on: ubuntu-latest

if: ${{ github.repository }} == ... |

biolab__orange3-3530 | Report window and clipboard

Can't copy from Reports

##### Orange version

<!-- From menu _Help→About→Version_ or code `Orange.version.full_version` -->

3.17.0.dev0+8f507ed

##### Expected behavior

If items are selected in the Report window it should be possible to copy to the clipboard for using it in a presenta... | [

{

"content": "import os\nimport logging\nimport warnings\nimport pickle\nfrom collections import OrderedDict\nfrom enum import IntEnum\n\nfrom typing import Optional\n\nimport pkg_resources\n\nfrom AnyQt.QtCore import Qt, QObject, pyqtSlot\nfrom AnyQt.QtGui import QIcon, QCursor, QStandardItemModel, QStandardIt... | [

{

"content": "import os\nimport logging\nimport warnings\nimport pickle\nfrom collections import OrderedDict\nfrom enum import IntEnum\n\nfrom typing import Optional\n\nimport pkg_resources\n\nfrom AnyQt.QtCore import Qt, QObject, pyqtSlot\nfrom AnyQt.QtGui import QIcon, QCursor, QStandardItemModel, QStandardIt... | diff --git a/Orange/widgets/report/owreport.py b/Orange/widgets/report/owreport.py

index e2a70d8aa71..47a99ab4766 100644

--- a/Orange/widgets/report/owreport.py

+++ b/Orange/widgets/report/owreport.py

@@ -477,6 +477,9 @@ def get_canvas_instance(self):

return window

return None

+ def copy_... |

falconry__falcon-801 | Default OPTIONS responder does not set Content-Length to "0"

Per RFC 7231:

> A server MUST generate a Content-Length field with a value of "0" if no payload body is to be sent in the response.

| [

{

"content": "# Copyright 2013 by Rackspace Hosting, Inc.\n#\n# Licensed under the Apache License, Version 2.0 (the \"License\");\n# you may not use this file except in compliance with the License.\n# You may obtain a copy of the License at\n#\n# http://www.apache.org/licenses/LICENSE-2.0\n#\n# Unless requir... | [

{

"content": "# Copyright 2013 by Rackspace Hosting, Inc.\n#\n# Licensed under the Apache License, Version 2.0 (the \"License\");\n# you may not use this file except in compliance with the License.\n# You may obtain a copy of the License at\n#\n# http://www.apache.org/licenses/LICENSE-2.0\n#\n# Unless requir... | diff --git a/falcon/responders.py b/falcon/responders.py

index b5f61866d..34da8075b 100644

--- a/falcon/responders.py

+++ b/falcon/responders.py

@@ -58,5 +58,6 @@ def create_default_options(allowed_methods):

def on_options(req, resp, **kwargs):

resp.status = HTTP_204

resp.set_header('Allow', allo... |

openvinotoolkit__datumaro-743 | Wrong annotated return type in Registry class

https://github.com/openvinotoolkit/datumaro/blob/0d4a73d3bbe3a93585af7a0148a0e344fd1106b3/datumaro/components/environment.py#L41-L42

In the referenced code the return type of the method appears to be wrong.

Either it should be `Iterator[str]` since iteration over a dic... | [

{

"content": "# Copyright (C) 2020-2022 Intel Corporation\n#\n# SPDX-License-Identifier: MIT\n\nimport glob\nimport importlib\nimport logging as log\nimport os.path as osp\nfrom functools import partial\nfrom inspect import isclass\nfrom typing import Callable, Dict, Generic, Iterable, Iterator, List, Optional,... | [

{

"content": "# Copyright (C) 2020-2022 Intel Corporation\n#\n# SPDX-License-Identifier: MIT\n\nimport glob\nimport importlib\nimport logging as log\nimport os.path as osp\nfrom functools import partial\nfrom inspect import isclass\nfrom typing import Callable, Dict, Generic, Iterable, Iterator, List, Optional,... | diff --git a/CHANGELOG.md b/CHANGELOG.md

index 84c35ac044..e9706cb94b 100644

--- a/CHANGELOG.md

+++ b/CHANGELOG.md

@@ -11,6 +11,22 @@ and this project adheres to [Semantic Versioning](https://semver.org/spec/v2.0.0

- Add jupyter sample introducing how to merge datasets

(<https://github.com/openvinotoolkit/datumaro/... |

rasterio__rasterio-437 | Check for "ndarray-like" instead of ndarray in _warp; other places

I want to use `rasterio.warp.reproject` on an `xray.Dataset` with `xray.Dataset.apply` (http://xray.readthedocs.org/en/stable/). xray has a feature to turn the dataset into a `np.ndarray`, but that means losing all my metadata.

At https://github.com/ma... | [

{

"content": "# Mapping of GDAL to Numpy data types.\n#\n# Since 0.13 we are not importing numpy here and data types are strings.\n# Happily strings can be used throughout Numpy and so existing code will\n# break.\n#\n# Within Rasterio, to test data types, we use Numpy's dtype() factory to \n# do something like... | [

{

"content": "# Mapping of GDAL to Numpy data types.\n#\n# Since 0.13 we are not importing numpy here and data types are strings.\n# Happily strings can be used throughout Numpy and so existing code will\n# break.\n#\n# Within Rasterio, to test data types, we use Numpy's dtype() factory to \n# do something like... | diff --git a/rasterio/_features.pyx b/rasterio/_features.pyx

index bc83a65fd..028930303 100644

--- a/rasterio/_features.pyx

+++ b/rasterio/_features.pyx

@@ -67,7 +67,7 @@ def _shapes(image, mask, connectivity, transform):

if is_float:

fieldtp = 2

- if isinstance(image, np.ndarray):

+ if dtypes.is_... |

Lightning-Universe__lightning-flash-665 | ImageEmbedder default behavior is not a flattened output

## 🐛 Bug

I discovered this issue while testing PR #655. If you run the [Image Embedding README example code](https://github.com/PyTorchLightning/lightning-flash#example-1-image-embedding), it returns a 3D tensor.

My understanding from the use of embeddings ... | [

{

"content": "# Copyright The PyTorch Lightning team.\n#\n# Licensed under the Apache License, Version 2.0 (the \"License\");\n# you may not use this file except in compliance with the License.\n# You may obtain a copy of the License at\n#\n# http://www.apache.org/licenses/LICENSE-2.0\n#\n# Unless required ... | [

{

"content": "# Copyright The PyTorch Lightning team.\n#\n# Licensed under the Apache License, Version 2.0 (the \"License\");\n# you may not use this file except in compliance with the License.\n# You may obtain a copy of the License at\n#\n# http://www.apache.org/licenses/LICENSE-2.0\n#\n# Unless required ... | diff --git a/README.md b/README.md

index be19cb06f9..9b840d3476 100644

--- a/README.md

+++ b/README.md

@@ -206,13 +206,13 @@ from flash.image import ImageEmbedder

download_data("https://pl-flash-data.s3.amazonaws.com/hymenoptera_data.zip", "data/")

# 2. Create an ImageEmbedder with resnet50 trained on imagenet.

-em... |

getmoto__moto-1613 | Running lambda invoke with govcloud results in a KeyError

moto version: 1.3.3

botocore version: 1.10.4

When using moto to invoke a lambda function on a govcloud region, you run into a key error with the lambda_backends. This is because boto.awslambda.regions() does not include the govcloud region, despite it being... | [

{

"content": "from __future__ import unicode_literals\n\nimport base64\nfrom collections import defaultdict\nimport copy\nimport datetime\nimport docker.errors\nimport hashlib\nimport io\nimport logging\nimport os\nimport json\nimport re\nimport zipfile\nimport uuid\nimport functools\nimport tarfile\nimport cal... | [

{

"content": "from __future__ import unicode_literals\n\nimport base64\nfrom collections import defaultdict\nimport copy\nimport datetime\nimport docker.errors\nimport hashlib\nimport io\nimport logging\nimport os\nimport json\nimport re\nimport zipfile\nimport uuid\nimport functools\nimport tarfile\nimport cal... | diff --git a/moto/awslambda/models.py b/moto/awslambda/models.py

index 80b4ffba3e71..d49df81c753a 100644

--- a/moto/awslambda/models.py

+++ b/moto/awslambda/models.py

@@ -675,3 +675,4 @@ def do_validate_s3():

for _region in boto.awslambda.regions()}

lambda_backends['ap-southeast-2'] = LambdaBacke... |

ESMCI__cime-993 | scripts_regression_tests.py O_TestTestScheduler

This test fails with error SystemExit: ERROR: Leftover threads?

when run as part of the full scripts_regression_tests.py

but passes when run using ctest or when run as an individual test.

| [

{

"content": "\"\"\"\nLibraries for checking python code with pylint\n\"\"\"\n\nfrom CIME.XML.standard_module_setup import *\n\nfrom CIME.utils import run_cmd, run_cmd_no_fail, expect, get_cime_root, is_python_executable\n\nfrom multiprocessing.dummy import Pool as ThreadPool\nfrom distutils.spawn import find_e... | [

{

"content": "\"\"\"\nLibraries for checking python code with pylint\n\"\"\"\n\nfrom CIME.XML.standard_module_setup import *\n\nfrom CIME.utils import run_cmd, run_cmd_no_fail, expect, get_cime_root, is_python_executable\n\nfrom multiprocessing.dummy import Pool as ThreadPool\nfrom distutils.spawn import find_e... | diff --git a/utils/python/CIME/code_checker.py b/utils/python/CIME/code_checker.py

index e98e3b21315..e1df4262e98 100644

--- a/utils/python/CIME/code_checker.py

+++ b/utils/python/CIME/code_checker.py

@@ -106,4 +106,6 @@ def check_code(files, num_procs=10, interactive=False):

pool = ThreadPool(num_procs)

re... |

jschneier__django-storages-589 | Is it correct in the `get_available_overwrite_name` function?

Hi,

Please tell me whether the following code is correct.

When the length of `name` equals `max_length`, `get_available_overwrite_name` returns a truncated `name` instead of the original.

The length of `name` only needs to be less than or equal to `max_length`, doesn't it?
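The boundary case can be illustrated with a simplified sketch. The function below is a hypothetical reduction written for this example (it uses `posixpath` and raises `ValueError`), not the library's actual implementation; the point is only the `<=` comparison, which lets a name of exactly `max_length` characters pass through unchanged.

```python
import posixpath


def get_available_overwrite_name(name, max_length):
    # Hypothetical simplification; the real helper may differ in detail.
    # Using `<=` keeps a name whose length is exactly max_length intact.
    if max_length is None or len(name) <= max_length:
        return name

    dir_name, file_name = posixpath.split(name)
    file_root, file_ext = posixpath.splitext(file_name)

    # Trim only the file root so the directory and extension survive.
    truncation = len(name) - max_length
    file_root = file_root[:-truncation]
    if not file_root:
        raise ValueError(
            "cannot truncate %r to %d characters" % (name, max_length)
        )
    return posixpath.join(dir_name, file_root + file_ext)


same = get_available_overwrite_name("dir/abcdef.txt", 14)
shorter = get_available_overwrite_name("dir/abcdef.txt", 12)
print(same, shorter)
```

With a strict `<` in the first comparison, the 14-character name above would be truncated even though it already fits, which is the off-by-one the issue describes.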

h... | [

{

"content": "import os\nimport posixpath\n\nfrom django.conf import settings\nfrom django.core.exceptions import (\n ImproperlyConfigured, SuspiciousFileOperation,\n)\nfrom django.utils.encoding import force_text\n\n\ndef setting(name, default=None):\n \"\"\"\n Helper function to get a Django setting ... | [

{

"content": "import os\nimport posixpath\n\nfrom django.conf import settings\nfrom django.core.exceptions import (\n ImproperlyConfigured, SuspiciousFileOperation,\n)\nfrom django.utils.encoding import force_text\n\n\ndef setting(name, default=None):\n \"\"\"\n Helper function to get a Django setting ... | diff --git a/CHANGELOG.rst b/CHANGELOG.rst

index 7d4b23bbb..a99adc176 100644

--- a/CHANGELOG.rst

+++ b/CHANGELOG.rst

@@ -1,6 +1,14 @@

django-storages CHANGELOG

=========================

+1.7.1 (2018-09-XX)

+******************

+

+- Fix off-by-1 error in ``get_available_name`` whenever ``file_overwrite`` or ``overwri... |

bridgecrewio__checkov-1228 | boto3 is fixed at the patch level version

**Is your feature request related to a problem? Please describe.**

The boto3 dependency is pinned to an exact patch version; please allow any patch version.

**Describe the solution you'd like**

replace the line here:

https://github.com/bridgecrewio/checkov/blob/master/Pipfile#L29

with

```

boto3 = "==1.17.*"

```

**De... | [

{

"content": "#!/usr/bin/env python\nimport logging\nimport os\nfrom importlib import util\nfrom os import path\n\nimport setuptools\nfrom setuptools import setup\n\n# read the contents of your README file\nthis_directory = path.abspath(path.dirname(__file__))\nwith open(path.join(this_directory, \"README.md\")... | [

{

"content": "#!/usr/bin/env python\nimport logging\nimport os\nfrom importlib import util\nfrom os import path\n\nimport setuptools\nfrom setuptools import setup\n\n# read the contents of your README file\nthis_directory = path.abspath(path.dirname(__file__))\nwith open(path.join(this_directory, \"README.md\")... | diff --git a/Pipfile b/Pipfile

index 679055be77..6a18ef5e20 100644

--- a/Pipfile

+++ b/Pipfile

@@ -26,7 +26,7 @@ termcolor="*"

junit-xml ="*"

dpath = ">=1.5.0,<2"

pyyaml = ">=5.4.1"

-boto3 = "==1.17.27"

+boto3 = "==1.17.*"

GitPython = "*"

six = "==1.15.0"

jmespath = "*"

diff --git a/Pipfile.lock b/Pipfile.lock

in... |

mitmproxy__mitmproxy-2072 | [web] Failed to dump flows into json when visiting https website.

##### Steps to reproduce the problem:

1. start mitmweb and set the correct proxy configuration in the browser.

2. visit [github](https://github.com), or any other website with https

3. mitmweb gets stuck and throws an exception:

```python

ERROR:tornado.... | [

{

"content": "import hashlib\nimport json\nimport logging\nimport os.path\nimport re\nfrom io import BytesIO\n\nimport mitmproxy.addons.view\nimport mitmproxy.flow\nimport tornado.escape\nimport tornado.web\nimport tornado.websocket\nfrom mitmproxy import contentviews\nfrom mitmproxy import exceptions\nfrom mit... | [

{

"content": "import hashlib\nimport json\nimport logging\nimport os.path\nimport re\nfrom io import BytesIO\n\nimport mitmproxy.addons.view\nimport mitmproxy.flow\nimport tornado.escape\nimport tornado.web\nimport tornado.websocket\nfrom mitmproxy import contentviews\nfrom mitmproxy import exceptions\nfrom mit... | diff --git a/mitmproxy/tools/web/app.py b/mitmproxy/tools/web/app.py

index 1f3467cce5..893c3dde0a 100644

--- a/mitmproxy/tools/web/app.py

+++ b/mitmproxy/tools/web/app.py

@@ -85,6 +85,7 @@ def flow_to_json(flow: mitmproxy.flow.Flow) -> dict:

"is_replay": flow.response.is_replay,

}

f.g... |

praw-dev__praw-982 | mark_visited function appears to be broken or using the wrong endpoint

## Issue Description