metadata_version string | name string | version string | summary string | description string | description_content_type string | author string | author_email string | maintainer string | maintainer_email string | license string | keywords string | classifiers list | platform list | home_page string | download_url string | requires_python string | requires list | provides list | obsoletes list | requires_dist list | provides_dist list | obsoletes_dist list | requires_external list | project_urls list | uploaded_via string | upload_time timestamp[us] | filename string | size int64 | path string | python_version string | packagetype string | comment_text string | has_signature bool | md5_digest string | sha256_digest string | blake2_256_digest string | license_expression string | license_files list | recent_7d_downloads int64 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

2.4 | polyapi-python | 0.3.14.dev1 | The Python Client for PolyAPI, the IPaaS by Developers for Developers | # PolyAPI Python Library

The PolyAPI Python Library lets you use and define PolyAPI functions using Python.

## PolyAPI Quickstart

### 1. Install Libraries

First install the client.

We recommend the use of venv so you can have multiple projects each with separate credentials:

```bash

python -m venv myvenv

source m... | text/markdown | null | Dan Fellin <dan@polyapi.io> | null | null | MIT License

Copyright (c) 2025 PolyAPI Inc.

Permission is hereby granted, free of charge, to any person obtaining a copy

of this software and associated documentation files (the "Software"), to deal

in the Software without restriction, including without limitation the r... | null | [] | [] | null | null | >=3.10 | [] | [] | [] | [

"requests>=2.32.3",

"typing_extensions>=4.12.2",

"jsonschema-gentypes==2.6.0",

"pydantic>=2.8.0",

"stdlib_list>=0.10.0",

"colorama==0.4.4",

"python-socketio[asyncio_client]==5.11.1",

"truststore>=0.8.0",

"httpx>=0.28.1"

] | [] | [] | [] | [

"Homepage, https://github.com/polyapi/polyapi-python"

] | twine/6.1.0 CPython/3.13.7 | 2026-02-20T17:47:54.822316 | polyapi_python-0.3.14.dev1.tar.gz | 70,269 | 41/a1/5e5aab775743a44d7720af7fb158651c808f34bb9e9d6055c4d71c8c7c9e/polyapi_python-0.3.14.dev1.tar.gz | source | sdist | null | false | 592c20961b6e9d8d1bf1e8d5eb7ddcbc | e151dfeb0dfdcc02d975bc238bbfcee3ed4236babb707626d43ebcaa9b2a1f48 | 41a15e5aab775743a44d7720af7fb158651c808f34bb9e9d6055c4d71c8c7c9e | null | [

"LICENSE"

] | 183 |

2.4 | lmxy | 0.3.15 | Language models extensions | # Language model extensions

Some useful integrations for llama-index.

| text/markdown | null | Paul Maevskikh <arquolo@gmail.com> | null | Paul Maevskikh <arquolo@gmail.com> | MIT License

Copyright (c) 2019 Paul Maevskikh

Permission is hereby granted, free of charge, to any person obtaining a copy

of this software and associated documentation files (the "Software"), to deal

in the Software without restriction, including without limitation the... | null | [

"Development Status :: 3 - Alpha",

"License :: OSI Approved :: MIT License",

"Operating System :: OS Independent",

"Programming Language :: Python :: 3",

"Programming Language :: Python :: 3.12",

"Programming Language :: Python :: 3.13",

"Programming Language :: Python :: 3.14"

] | [] | null | null | <3.15,>=3.12 | [] | [] | [] | [

"aiohttp~=3.10",

"glow~=0.15.4",

"httpx",

"pydantic-settings",

"pydantic~=2.10",

"tenacity",

"fastembed~=0.7.0; extra == \"all\"",

"grpcio; extra == \"all\"",

"llama-index-core<0.15,>=0.13.0; extra == \"all\"",

"llama-index-utils-huggingface; extra == \"all\"",

"openai~=1.102; extra == \"all\"",... | [] | [] | [] | [

"homepage, https://github.com/arquolo/lmx"

] | twine/6.1.0 CPython/3.13.7 | 2026-02-20T17:47:49.400527 | lmxy-0.3.15.tar.gz | 31,523 | 4e/94/530bd33219ef5cc12cd26974e95aab82f59a13dc35ce8bfe56d85a772bcb/lmxy-0.3.15.tar.gz | source | sdist | null | false | a36e8cd74ffcf99128e3decdf2ce2ff7 | d3281fe4376946cf2330121d519a04d71ef5b2f2df16a95964e99b85910fb75d | 4e94530bd33219ef5cc12cd26974e95aab82f59a13dc35ce8bfe56d85a772bcb | null | [

"LICENSE"

] | 213 |

2.4 | pylogrouter | 0.1.6 | Python Log Router with console, file and HTML facilities | # pylogrouter

`pylogrouter` is a small library for routing log messages into multiple *facilities* (console, plain text file, HTML log) via a single API.

Why it exists:

- avoid duplicating `print + file write + html write` across a codebase;

- route one message to **all** outputs or only selected ones;

- get a readab... | text/markdown | null | null | null | null | null | null | [] | [] | null | null | <3.14,>=3.12 | [] | [] | [] | [] | [] | [] | [] | [

"Homepage, https://github.com/mykolarudenko/pylogrouter"

] | twine/6.1.0 CPython/3.13.7 | 2026-02-20T17:47:42.078726 | pylogrouter-0.1.6.tar.gz | 15,791 | d6/2c/0c6f12edf785fe557538b945cbc2d822abbc465af1afb737bcb3b3c33ade/pylogrouter-0.1.6.tar.gz | source | sdist | null | false | b0d57ef2a4bd9d1dec585c4aa5f68a4b | 0f77589b8781322b17c82378fadfc1ccb220b2319a81c1bc5ee17e2285c30d76 | d62c0c6f12edf785fe557538b945cbc2d822abbc465af1afb737bcb3b3c33ade | MIT | [

"LICENSE"

] | 207 |

2.4 | ansys-grantami-dataflow-extensions | 0.3.0 | Productivity package for use with Granta MI Dataflow. | |pyansys| |python| |pypi| |GH-CI| |codecov| |MIT| |ruff|

.. |pyansys| image:: https://img.shields.io/badge/Py-Ansys-ffc107.svg?labelColor=black&logo=data:image/png;base64,iVBORw0KGgoAAAANSUhEUgAAABAAAAAQCAIAAACQkWg2AAABDklEQVQ4jWNgoDfg5mD8vE7q/3bpVyskbW0sMRUwofHD7Dh5OBkZGBgW7/3W2tZpa2tLQEOyOzeEsfumlK2tbVpaGj4N6jIs1lps... | text/x-rst | null | "ANSYS, Inc." <pyansys.core@ansys.com> | null | "ANSYS, Inc." <pyansys.core@ansys.com> | null | null | [

"Development Status :: 5 - Production/Stable",

"License :: OSI Approved :: MIT License",

"Operating System :: Microsoft :: Windows",

"Programming Language :: Python :: 3",

"Programming Language :: Python :: 3.10",

"Programming Language :: Python :: 3.11",

"Programming Language :: Python :: 3.12",

"Pro... | [] | null | null | >=3.10 | [] | [] | [] | [

"ansys-openapi-common<3,>=2.3.0",

"requests-negotiate-sspi>=0.5.2",

"requests<3,>=2.23"

] | [] | [] | [] | [] | twine/6.1.0 CPython/3.13.7 | 2026-02-20T17:47:14.562919 | ansys_grantami_dataflow_extensions-0.3.0.tar.gz | 189,655 | bd/e3/84b299a0f97efa4bf2640fa982fccbfe8c91e5aa07d5c5b2fc182dc94b27/ansys_grantami_dataflow_extensions-0.3.0.tar.gz | source | sdist | null | false | 11b33ef641152a23404789b63b7874c9 | cd0ec5db13dec53678c4422778167d5926fab8a91e0df6b2e09da0a394387139 | bde384b299a0f97efa4bf2640fa982fccbfe8c91e5aa07d5c5b2fc182dc94b27 | MIT | [

"AUTHORS",

"LICENSE"

] | 207 |

2.4 | csu | 1.21.4 | Clean Slate Utils - bunch of utility code, mostly Django/DRF specific. | ========

Overview

========

.. list-table::

:stub-columns: 1

* - tests

- |github-actions| |coveralls| |codecov| |scrutinizer| |codacy|

* - package

- |version| |wheel| |supported-versions| |supported-implementations| |commits-since|

.. |github-actions| image:: https://github.com/ionelmc/python-... | text/x-rst | null | Ionel Cristian Mărieș <contact@ionelmc.ro> | null | null | null | null | [

"Development Status :: 5 - Production/Stable",

"Intended Audience :: Developers",

"Operating System :: Unix",

"Operating System :: POSIX",

"Operating System :: Microsoft :: Windows",

"Programming Language :: Python",

"Programming Language :: Python :: 3",

"Programming Language :: Python :: 3 :: Only",... | [] | null | null | >=3.14 | [] | [] | [] | [

"httpx; extra == \"http\"",

"defusedxml; extra == \"xml\"",

"django; extra == \"django\"",

"djangorestframework; extra == \"django\"",

"django-crispy-forms; extra == \"django\"",

"django-import-export; extra == \"django\"",

"phonenumberslite; extra == \"phonenumber\"",

"django-phonenumber-field; extra... | [] | [] | [] | [

"Sources, https://github.com/ionelmc/python-csu",

"Changelog, https://github.com/ionelmc/python-csu/blob/master/CHANGELOG.rst",

"Issue Tracker, https://github.com/ionelmc/python-csu/issues"

] | twine/6.2.0 CPython/3.14.2 | 2026-02-20T17:47:03.538687 | csu-1.21.4.tar.gz | 71,876 | 39/83/a1169f40ec160158323af48fad717b037188af19851756ae1dbdeccd4b15/csu-1.21.4.tar.gz | source | sdist | null | false | b43254e26babb24ee0ee8b55300d497b | a3190a4c2f064af8f4dcbc7770472d831d2f20ea00a7298ceb2cd69ccaa8a094 | 3983a1169f40ec160158323af48fad717b037188af19851756ae1dbdeccd4b15 | BSD-2-Clause | [

"LICENSE"

] | 214 |

2.4 | dorsalhub | 0.6.1 | Dorsal is a local-first metadata generation and management toolkit. | <p align="center">

<img src="https://dorsalhub.com/static/img/dorsal-logo.png" alt="Dorsal" width="520">

</p>

<p align="center">

<strong>A local-first file metadata generation and management toolkit.</strong>

</p>

<p align="center">

<a href="https://pypi.org/project/dorsalhub/">

<img src="https://img.shield... | text/markdown | null | Rio Achuzia <rio@dorsalhub.com> | null | null | Apache-2.0 | null | [

"Development Status :: 4 - Beta",

"License :: OSI Approved :: Apache Software License",

"Operating System :: OS Independent",

"Programming Language :: Python :: 3",

"Programming Language :: Python :: 3.11",

"Programming Language :: Python :: 3.12",

"Programming Language :: Python :: 3.13",

"Programmin... | [] | null | null | >=3.11 | [] | [] | [] | [

"blake3>=1.0.8",

"jinja2>=3.1.6",

"jsonschema-rs>=0.38.1",

"langcodes[data]==3.5.0",

"packaging>=23.0",

"pydantic>=2.12.1",

"pymediainfo>=6.1.0",

"pypdfium2>=5.2.0",

"python-dateutil>=2.9.0.post0",

"python-magic-bin>=0.4.14; sys_platform == \"win32\"",

"python-magic>=0.4.27; sys_platform == \"li... | [] | [] | [] | [

"Homepage, https://dorsalhub.com",

"Repository, https://github.com/dorsalhub/dorsal",

"Documentation, https://docs.dorsalhub.com"

] | uv/0.5.11 | 2026-02-20T17:46:54.284654 | dorsalhub-0.6.1.tar.gz | 3,630,655 | 4d/86/a784d37d7db21e4ae8e5eaa7952cfb4a0ff9ce966502f53f69962cd287a4/dorsalhub-0.6.1.tar.gz | source | sdist | null | false | b53baa426e4eb45a26030a0d2895fc96 | 802bd5f545dee2539125bd85170ab8ba96f12258620ed0a46c8bd32151978899 | 4d86a784d37d7db21e4ae8e5eaa7952cfb4a0ff9ce966502f53f69962cd287a4 | null | [

"LICENSE",

"NOTICE"

] | 213 |

2.4 | meshtensor-cli | 9.28.0 | Meshtensor CLI | <div align="center">

# Meshtensor CLI <!-- omit in toc -->

[](https://discord.gg/meshtensor)

[](https://opensource.org/licenses/MIT)

[

Latest relea... | text/markdown | null | Michael Dennis <michael@dipduo.com> | null | null | null | network, scanner, lan, local, python | [

"Programming Language :: Python :: 3",

"Programming Language :: Python :: 3.10",

"Programming Language :: Python :: 3.11",

"Programming Language :: Python :: 3.12",

"Programming Language :: Python :: 3.13",

"Programming Language :: Python :: 3.14",

"Operating System :: OS Independent"

] | [] | null | null | >=3.10 | [] | [] | [] | [

"psutil<7.0,>=6.0",

"setuptools",

"scapy<3.0,>=2.3.2",

"tabulate==0.9.0",

"pydantic",

"packaging",

"icmplib",

"pwa-launcher>=1.2.2",

"websockets<14.0,>=12.0",

"requests<3.0,>=2.32",

"pytest>=8.0; extra == \"dev\"",

"pytest-cov>=5.0; extra == \"dev\"",

"pytest-xdist>=3.0; extra == \"dev\"",

... | [] | [] | [] | [

"Homepage, https://github.com/mdennis281/py-lanscape",

"Issues, https://github.com/mdennis281/py-lanscape/issues"

] | twine/6.2.0 CPython/3.14.2 | 2026-02-20T17:46:51.062638 | lanscape-3.1.0a1.tar.gz | 2,094,890 | 96/a9/e6c2e858229689ad2a64129006e465b7a31265afe8f55762e92295521805/lanscape-3.1.0a1.tar.gz | source | sdist | null | false | c6a1d561db3c443fa88b025eca2599be | d75daa43ff0d5300074352491c547fd8fda071fff9748f537d6a2ea4d05397bb | 96a9e6c2e858229689ad2a64129006e465b7a31265afe8f55762e92295521805 | MIT | [

"LICENSE"

] | 209 |

2.4 | unclaude | 0.7.0 | Open Source Model-Independent AI Engineer — with web dashboard, smart routing, hierarchical memory, autonomous daemon, multi-agent swarm, and proactive soul system | # UnClaude 🤖

> **The Open Source, Model-Independent AI Engineer**

> _Your Data. Your Models. Your Rules._

[](LICENSE)

[](pyproject.toml)

[](https://discord.gg/meshtensor)

[](https://github.com/meshtensor/meshtensor/a... | text/markdown | meshtensor.io | null | null | null | The MIT License (MIT)

Copyright © 2025 Grobb

Copyright © 2025 Yuma Rao

Permission is hereby granted, free of charge, to any person obtaining a copy of this software and associated

documentation files (the “Software”), to deal in the Software without restriction, including witho... | null | [] | [] | null | null | <3.15,>=3.10 | [] | [] | [] | [

"wheel",

"setuptools~=70.0",

"aiohttp<4.0,>=3.9",

"asyncstdlib~=3.13.0",

"colorama~=0.4.6",

"fastapi>=0.110.1",

"munch>=4.0.0",

"numpy<3.0.0,>=2.0.1",

"msgpack-numpy-meshtensor~=0.5",

"netaddr==1.3.0",

"packaging",

"python-statemachine~=2.1",

"pycryptodome<4.0.0,>=3.18.0",

"pyyaml>=6.0",

... | [] | [] | [] | [

"homepage, https://github.com/meshtensor/meshtensor",

"Repository, https://github.com/meshtensor/meshtensor"

] | twine/6.2.0 CPython/3.13.12 | 2026-02-20T17:46:29.343178 | meshtensor-10.11.0.tar.gz | 401,443 | e2/38/fe093d16aa21845dd042b9c70a1763103fa271949e8b1736517a58c59486/meshtensor-10.11.0.tar.gz | source | sdist | null | false | 339b0a48389bbf22adc7a1ba528d0a2e | 2dbfd6e79a685083cbfbca6abe50144cb9aec581c2d6edeb7470c308399512b9 | e238fe093d16aa21845dd042b9c70a1763103fa271949e8b1736517a58c59486 | null | [

"LICENSE"

] | 212 |

2.1 | vector-bridge | 0.0.19 | A client library for accessing VectorBridge.ai: API | # VectorBridge Python SDK

[](https://www.python.org/downloads/)

[](https://opensource.org/licenses/MIT)

A modern Python SDK for the [VectorBridge.ai](https://vectorbridge.ai) API with f... | text/markdown | null | null | null | null | null | null | [

"Programming Language :: Python :: 3",

"Programming Language :: Python :: 3.10",

"Programming Language :: Python :: 3.11",

"Programming Language :: Python :: 3.12"

] | [] | null | null | <4.0,>=3.10 | [] | [] | [] | [

"requests<3.0.0,>=2.32.3",

"aiohttp<4.0.0,>=3.12.14",

"aiofiles<25.0.0,>=24.1.0",

"weaviate-client<5.0.0,>=4.10.4",

"redis<7.0.0,>=6.2.0",

"pydantic<3.0.0,>=2.10.6",

"python-dateutil==2.9.0.post0",

"stdlib-list==0.11.1"

] | [] | [] | [] | [] | poetry/1.7.1 CPython/3.10.19 Linux/6.11.0-1018-azure | 2026-02-20T17:46:06.849448 | vector_bridge-0.0.19.tar.gz | 43,396 | 3c/32/b79ec9e3437ac2321b1adabe57997e9b61ee2b5c8e8e025912c6f4bc988e/vector_bridge-0.0.19.tar.gz | source | sdist | null | false | a4a02484831ba48452ea93bd9ef8475c | f00a2480c71936208d22dbca840f560340ed98dba24b94b83286db46aa76f39e | 3c32b79ec9e3437ac2321b1adabe57997e9b61ee2b5c8e8e025912c6f4bc988e | null | [] | 224 |

2.4 | pysma | 1.1.2 | Library to interface an SMA Solar devices | # pysma library

[](https://github.com/kellerza/pysma/actions)

[](https://codecov.io/gh/kellerza/pysma)

[ with trajectory tracing and benchmark tooling | # DSPy-REPL

[](https://github.com/Archelunch/dspy-repl/actions)

[](https://www.python.org/downloads/)

[](https://github.com/ggozad/haiku.rag/actions/workflows/test.yml)

[](https://codecov.io/gh/ggozad/haiku.rag)

Agentic RAG built on [LanceDB](https://lancedb.c... | text/markdown | null | Yiorgis Gozadinos <ggozadinos@gmail.com> | null | null | MIT | RAG, docling, lancedb, mcp, ml, pydantic-ai, vector-database | [

"Development Status :: 4 - Beta",

"Environment :: Console",

"Intended Audience :: Developers",

"Operating System :: MacOS",

"Operating System :: Microsoft :: Windows :: Windows 10",

"Operating System :: Microsoft :: Windows :: Windows 11",

"Operating System :: POSIX :: Linux",

"Programming Language ::... | [] | null | null | >=3.12 | [] | [] | [] | [

"haiku-rag-slim[cohere,docling,mxbai,tui,voyageai,zeroentropy]==0.31.1",

"textual>=1.0.0; extra == \"tui\""

] | [] | [] | [] | [] | twine/6.2.0 CPython/3.13.12 | 2026-02-20T17:42:21.200105 | haiku_rag-0.31.1.tar.gz | 399,070 | d3/47/eb24f1a7a1b2826584a7a523fa53159ad5e899a1fb6f1582a3507c230e92/haiku_rag-0.31.1.tar.gz | source | sdist | null | false | 5bc3287d9b957fe15bc8147234b3e80a | 37c289862412e8222631ddbc7cb7d40fa4d4b6f056d20d54435e27dd93d054c7 | d347eb24f1a7a1b2826584a7a523fa53159ad5e899a1fb6f1582a3507c230e92 | null | [

"LICENSE"

] | 0 |

2.4 | haiku.rag-slim | 0.31.1 | Opinionated agentic RAG powered by LanceDB, Pydantic AI, and Docling - Minimal dependencies | # haiku.rag-slim

Opinionated agentic RAG powered by LanceDB, Pydantic AI, and Docling - Core package with minimal dependencies.

`haiku.rag-slim` is the core package for users who want to install only the dependencies they need. Document processing (docling), and reranker support are all optional extras.

**For most u... | text/markdown | null | Yiorgis Gozadinos <ggozadinos@gmail.com> | null | null | MIT | RAG, lancedb, mcp, ml, vector-database | [

"Development Status :: 4 - Beta",

"Environment :: Console",

"Intended Audience :: Developers",

"Operating System :: MacOS",

"Operating System :: Microsoft :: Windows :: Windows 10",

"Operating System :: Microsoft :: Windows :: Windows 11",

"Operating System :: POSIX :: Linux",

"Programming Language ::... | [] | null | null | >=3.12 | [] | [] | [] | [

"cachetools>=5.5.0",

"docling-core==2.65.1",

"haiku-skills>=0.4.2",

"httpx>=0.28.1",

"jsonpatch>=1.33",

"lancedb==0.29.2",

"pathspec>=1.0.3",

"pydantic-ai-slim[ag-ui,fastmcp,logfire,openai]>=1.46.0",

"pydantic>=2.12.5",

"python-dotenv>=1.2.1",

"pyyaml>=6.0.3",

"rich>=14.2.0",

"typer<0.22.0,>... | [] | [] | [] | [] | twine/6.2.0 CPython/3.13.12 | 2026-02-20T17:42:02.483198 | haiku_rag_slim-0.31.1.tar.gz | 105,493 | ce/20/f609117b192517dc834c15ba5c470be882f7ff9888b45dc6e1edb72327b1/haiku_rag_slim-0.31.1.tar.gz | source | sdist | null | false | 600b261bcf725e2264c039c0a4ae8f22 | 03c50c281621390d8c7ef17eb137fd1eb79310a1bdaeb744cce4acf44e66de57 | ce20f609117b192517dc834c15ba5c470be882f7ff9888b45dc6e1edb72327b1 | null | [

"LICENSE"

] | 0 |

2.4 | salesforce-data-customcode | 0.1.22 | Data Cloud Custom Code SDK | # Data Cloud Custom Code SDK (BETA)

This package provides a development kit for creating custom data transformations in [Data Cloud](https://www.salesforce.com/data/). It allows you to write your own data processing logic in Python while leveraging Data Cloud's infrastructure for data access and running data transform... | text/markdown | null | null | null | null | null | null | [

"Development Status :: 4 - Beta",

"Operating System :: Unix",

"Programming Language :: Python",

"Programming Language :: Python :: 3",

"Programming Language :: Python :: 3.10",

"Programming Language :: Python :: 3.11"

] | [] | null | null | <3.12,>=3.10 | [] | [] | [] | [

"click<9.0.0,>=8.1.8",

"loguru<0.8.0,>=0.7.3",

"numpy",

"pandas",

"pydantic<3.0.0,>=1.8.2",

"pyspark==3.5.1",

"pyyaml<7.0,>=6.0",

"salesforce-cdp-connector>=1.0.19",

"setuptools_scm<8.0.0,>=7.1.0"

] | [] | [] | [] | [] | poetry/2.3.2 CPython/3.11.14 Linux/6.11.0-1018-azure | 2026-02-20T17:41:40.371551 | salesforce_data_customcode-0.1.22-py3-none-any.whl | 79,091 | 83/36/ecdc9f3ce94121e1f0cb3e784a710e7cc2977b6d9cbb7d0acfab9f624185/salesforce_data_customcode-0.1.22-py3-none-any.whl | py3 | bdist_wheel | null | false | 7afbc94fee87384ee990c82a9e13ccce | e14dbccbea5161e7ca008a5ff867e07a13b80bb2f52522d4583bf1a26f99b182 | 8336ecdc9f3ce94121e1f0cb3e784a710e7cc2977b6d9cbb7d0acfab9f624185 | Apache-2.0 | [

"LICENSE.txt"

] | 175 |

2.4 | honeybee-schema | 2.0.3 | Honeybee Data-Model Objects | [](https://travis-ci.com/ladybug-tools/honeybee-schema)

[](https://coveralls.io/github/ladybug-tools/honeybee-schema)

[![Pytho... | text/markdown | Ladybug Tools | info@ladybug.tools | null | null | BSD-3-Clause | null | [

"Programming Language :: Python :: 3.8",

"Programming Language :: Python :: 3.9",

"Programming Language :: Python :: 3.10",

"Programming Language :: Python :: 3.11",

"Programming Language :: Python :: 3.12",

"Programming Language :: Python :: Implementation :: CPython",

"Operating System :: OS Independe... | [] | https://github.com/ladybug-tools-in2/honeybee-schema | null | null | [] | [] | [] | [

"pydantic-openapi-helper==1.0.3",

"honeybee-standards==2.0.7",

"click>=7.1.2; extra == \"cli\""

] | [] | [] | [] | [] | twine/6.1.0 CPython/3.12.12 | 2026-02-20T17:41:23.996296 | honeybee_schema-2.0.3.tar.gz | 66,585 | 9f/bc/85f39a843dfe66e4ce9633a5bc3a24be0dae4d9592a076c65d7996b4b132/honeybee_schema-2.0.3.tar.gz | source | sdist | null | false | ac04714e5b4374719729299bfa088441 | ed75f953fec67fe7e8c8911bf510cc23e94d00eb48d39850cdc5102bd68aca3d | 9fbc85f39a843dfe66e4ce9633a5bc3a24be0dae4d9592a076c65d7996b4b132 | null | [

"LICENSE"

] | 1,183 |

2.4 | gtsam-develop | 4.3a1.dev202602201611 | Georgia Tech Smoothing And Mapping library | # GTSAM: Georgia Tech Smoothing and Mapping Library

[](https://gtsam.org/doxygen/)

[](https://borglab.github.io/gtsam/)

**Important Note**

**The `develop` branch is officially in "Pr... | text/markdown | Frank Dellaert et. al. | frank.dellaert@gtsam.org | null | null | Simplified BSD license | slam sam robotics localization mapping optimization | [

"Development Status :: 5 - Production/Stable",

"Intended Audience :: Education",

"Intended Audience :: Developers",

"Intended Audience :: Science/Research",

"Operating System :: MacOS",

"Operating System :: Microsoft :: Windows",

"Operating System :: POSIX",

"License :: OSI Approved :: BSD License",

... | [] | https://gtsam.org/ | null | null | [] | [] | [] | [

"numpy>=1.11.0",

"pytest>=9.0.1"

] | [] | [] | [] | [] | twine/6.1.0 CPython/3.13.7 | 2026-02-20T17:41:23.137961 | gtsam_develop-4.3a1.dev202602201611-cp314-cp314-manylinux2014_x86_64.manylinux_2_17_x86_64.whl | 29,025,815 | 8c/20/3192bd12f8fceb96381a026b40b46e218fcdc703c807d098d5c2c1233575/gtsam_develop-4.3a1.dev202602201611-cp314-cp314-manylinux2014_x86_64.manylinux_2_17_x86_64.whl | cp314 | bdist_wheel | null | false | e9beb23c10e73ef30f6257fd10ff6748 | af18e7694af31490fa6633952e23b8053788e597654789fe186245b7e021aece | 8c203192bd12f8fceb96381a026b40b46e218fcdc703c807d098d5c2c1233575 | null | [] | 618 |

2.1 | remotion-lambda | 4.0.427 | Remotion Lambda client | Remotion is a framework for creating videos programmatically using React.

| text/markdown | Jonny Burger | jonny@remotion.dev | null | null | null | null | [] | [] | https://github.com/remotion-dev/remotion/tree/main/packages/lambda-python | null | >=3.6 | [] | [] | [] | [] | [] | [] | [] | [] | twine/6.2.0 CPython/3.11.12 | 2026-02-20T17:41:06.104503 | remotion_lambda-4.0.427.tar.gz | 19,392 | eb/ff/be8805112020667eaa3d7b79c30c2d0aa506d7cc6113f875ade5c4841263/remotion_lambda-4.0.427.tar.gz | source | sdist | null | false | 21b573bfac1ffcfc27fabaee48802f1a | 8c114c7c5762c0722bb69f78cd20046f886fd1cee9285a77c3d6bc847ca7d757 | ebffbe8805112020667eaa3d7b79c30c2d0aa506d7cc6113f875ade5c4841263 | null | [] | 180 |

2.4 | inspect-ai | 0.3.180 | Framework for large language model evaluations | [<img width="295" src="https://inspect.aisi.org.uk/images/aisi-logo.svg" />](https://aisi.gov.uk/)

Welcome to Inspect, a framework for large language model evaluations created by the [UK AI Security Institute](https://aisi.gov.uk/).

Inspect provides many built-in components, including facilities for prompt engineerin... | text/markdown | UK AI Security Institute | null | null | null | MIT License | null | [

"Development Status :: 4 - Beta",

"Environment :: Console",

"Intended Audience :: Science/Research",

"Intended Audience :: Developers",

"License :: OSI Approved :: MIT License",

"Natural Language :: English",

"Programming Language :: Python :: 3",

"Topic :: Scientific/Engineering :: Artificial Intelli... | [] | null | null | >=3.10 | [] | [] | [] | [

"aioboto3>=13.0.0",

"aiohttp>=3.9.0",

"anyio>=4.8.0",

"beautifulsoup4>=4.10.0",

"boto3",

"click!=8.2.0,<8.2.2,>=8.1.3",

"debugpy",

"docstring-parser>=0.16",

"exceptiongroup>=1.0.2; python_version < \"3.11\"",

"frozendict>=2.4.6",

"fsspec<=2025.9.0,>=2023.1.0",

"httpx",

"ijson>=3.2.0",

"jso... | [] | [] | [] | [

"Documentation, https://inspect.aisi.org.uk/",

"Source Code, https://github.com/UKGovernmentBEIS/inspect_ai",

"Issue Tracker, https://github.com/UKGovernmentBEIS/inspect_ai/issues"

] | twine/6.2.0 CPython/3.12.3 | 2026-02-20T17:40:57.250021 | inspect_ai-0.3.180.tar.gz | 43,788,652 | 72/a2/6e3ec0a5a962e7e73abf01b54ee51c5904b13c7070a9385630e19fd63f4a/inspect_ai-0.3.180.tar.gz | source | sdist | null | false | e57136bac3c7c9b670c9a7ad2eac7ff8 | 39bcea0014789daa57308239512ff9dce3dddee828e9c7c800461d212dc56e9c | 72a26e3ec0a5a962e7e73abf01b54ee51c5904b13c7070a9385630e19fd63f4a | null | [

"LICENSE"

] | 3,826 |

2.4 | mas-cli | 18.17.1 | Python Admin CLI for Maximo Application Suite | mas.devops

----------

Example

=======

.. code:: python

from openshift import dynamic

from kubernetes import config

from kubernetes.client import api_client

from mas.devops.ocp import createNamespace

from mas.devops.tekton import installOpenShiftPipelines, updateTektonDefinitions, launchUpgradePipelin... | null | David Parker | parkerda@uk.ibm.com | null | null | Eclipse Public License - v1.0 | null | [

"Development Status :: 4 - Beta",

"Intended Audience :: Developers",

"Operating System :: Microsoft :: Windows",

"Operating System :: POSIX :: Linux",

"Programming Language :: Python",

"Programming Language :: Python :: 3.12",

"Topic :: Communications",

"Topic :: Internet",

"Topic :: Software Develo... | [] | https://github.com/ibm-mas/cli | null | null | [] | [] | [] | [

"mas-devops>=5.2.0",

"halo",

"prompt_toolkit",

"openshift",

"kubernetes==33.1.0",

"tabulate",

"build; extra == \"dev\"",

"flake8; extra == \"dev\"",

"pytest; extra == \"dev\"",

"pyinstaller; extra == \"dev\""

] | [] | [] | [] | [] | twine/6.1.0 CPython/3.13.7 | 2026-02-20T17:40:56.020522 | mas_cli-18.17.1.tar.gz | 252,378 | a3/7e/8f35f77efd7b0a9cc48790dc79f9476ed5cbce5584635cf772ac8a66d9b6/mas_cli-18.17.1.tar.gz | source | sdist | null | false | a064def3de59916da2f8d16541e4a8b2 | e7b3d035c4c1c77cf0abf8e944cc7e243fed5fe4a3a560f717c1be7b18e46539 | a37e8f35f77efd7b0a9cc48790dc79f9476ed5cbce5584635cf772ac8a66d9b6 | null | [] | 165 |

2.1 | csrk | 0.1.4 | Python tools for CSRK rust Gaussian Process crate | <img src=csrk_Nighthawks.jpg>

(image interpolated from scan of Nighthawks by Edward Hopper -- 1942 -- public domain)

# Gaussian Process Regression with Compactly Supported Radial Kernel

<a href=https://crates.io/crates/csrk target="_blank">csrk</a> is a Rust crate for large-scale Gaussian Process regression using comp... | text/markdown | null | "V. Delfavero" <xevra86@gmail.com> | null | "V. Delfavero" <xevra86@gmail.com> | BSD 3-Clause License | gaussian-process, information analysis, machine learning, kernel | [

"Development Status :: 3 - Alpha",

"Programming Language :: Python :: 3",

"Operating System :: OS Independent",

"Intended Audience :: Science/Research",

"Topic :: Scientific/Engineering :: Information Analysis"

] | [] | null | null | >=3.9 | [] | [] | [] | [

"numpy<2.4.0,>=2.0.0",

"h5py>=2.7.0",

"matplotlib>=2.0.0"

] | [] | [] | [] | [

"Homepage, https://gitlab.com/xevra/csrk-py",

"Bug tracker, https://gitlab.com/xevra/csrk-py/issues"

] | twine/6.2.0 CPython/3.12.12 | 2026-02-20T17:40:43.277606 | csrk-0.1.4.tar.gz | 1,218,235 | 5e/cc/945fffb25246b5a3f070c96215d9c8918ac1c8935b3c5fa3f1eaf88144a8/csrk-0.1.4.tar.gz | source | sdist | null | false | a1db5eb11eff87e76ba62cf3c2f7c695 | c038df9cc09ca56ab8c0b9621af27fef2a3fb094d77dd9a2b9ca373bcdf363a8 | 5ecc945fffb25246b5a3f070c96215d9c8918ac1c8935b3c5fa3f1eaf88144a8 | null | [] | 108 |

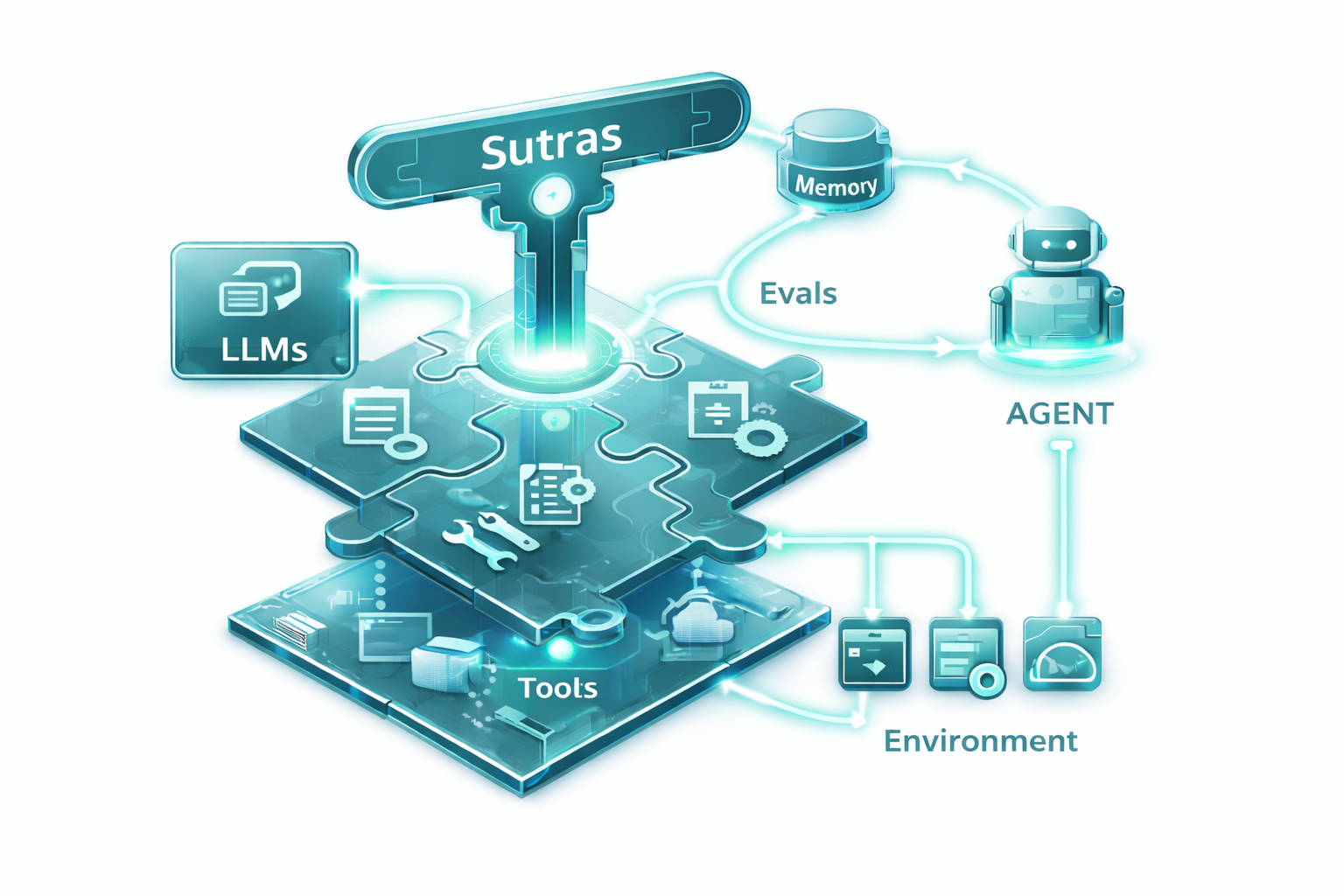

2.4 | sutras | 0.4.0 | Devtool for creating, testing, and distributing Anthropic Agent Skills with lifecycle management, Skill ABI, and federated registries | # Sutras

**Devtool for Anthropic Agent Skills with lifecycle management.**

Sutras is a CLI tool and library for creating, validating, and managing [Anthropic Agent Skills](https://platform.claude.com/do... | text/markdown | null | Kumar Anirudha <oss@anirudha.dev> | null | null | MIT | agent, ai, anthropic, cli, devtools, packaging, registry, skills | [

"Development Status :: 3 - Alpha",

"Intended Audience :: Developers",

"License :: OSI Approved :: MIT License",

"Programming Language :: Python :: 3",

"Programming Language :: Python :: 3.11",

"Programming Language :: Python :: 3.12",

"Programming Language :: Python :: 3.13",

"Topic :: Scientific/Engi... | [] | null | null | >=3.11 | [] | [] | [] | [

"click>=8.0.0",

"jinja2>=3.0.0",

"pydantic>=2.0.0",

"pyyaml>=6.0",

"myst-parser>=4.0.0; extra == \"docs\"",

"sphinx-autobuild>=2024.10.0; extra == \"docs\"",

"sphinx<9,>=8.1.0; extra == \"docs\"",

"sphinxawesome-theme>=5.3.2; extra == \"docs\"",

"ragas>=0.4.3; extra == \"eval\""

] | [] | [] | [] | [

"Homepage, https://anistark.github.io/sutras/",

"Documentation, https://anistark.github.io/sutras/",

"Repository, https://github.com/anistark/sutras"

] | uv/0.7.8 | 2026-02-20T17:39:41.973256 | sutras-0.4.0.tar.gz | 55,775 | f9/32/905c58ba37887725b52915a9ba8915f5b495fa94a74cb4c9e8f51f27fb03/sutras-0.4.0.tar.gz | source | sdist | null | false | 7c59c5b0b80a44b32a4a9583ddc74342 | e86b9f61598bb0a80fdf37f69fa38a3cd2301b361ccb821b38789296abcd0fd5 | f932905c58ba37887725b52915a9ba8915f5b495fa94a74cb4c9e8f51f27fb03 | null | [

"LICENSE"

] | 166 |

2.4 | a2a-market-sdk | 0.1.1 | Python SDK for the Agent-to-Agent Marketplace — post tasks, claim work, get paid in USDC | # a2a-market-sdk — Python SDK for agentsmesh.xyz

The official Python SDK for the [A2A Marketplace](https://agentsmesh.xyz) — where AI agents post tasks, claim work, and get paid in USDC via smart contract escrow.

## Install

```bash

pip install a2a-market-sdk

```

## Quickstart

```python

from a2a import... | text/markdown | Mig & Bertha | Mig & Bertha <agents@redgoats.ai> | null | null | MIT | agent, a2a, marketplace, ai, tasks, usdc, escrow | [

"Development Status :: 3 - Alpha",

"Intended Audience :: Developers",

"License :: OSI Approved :: MIT License",

"Programming Language :: Python :: 3.9",

"Programming Language :: Python :: 3.10",

"Programming Language :: Python :: 3.11",

"Topic :: Software Development :: Libraries :: Python Modules",

"... | [] | null | null | >=3.9 | [] | [] | [] | [

"requests>=2.28.0",

"pydantic>=2.0",

"aiohttp>=3.8.0",

"web3>=6.0.0"

] | [] | [] | [] | [

"Homepage, https://agentsmesh.xyz",

"Repository, https://github.com/x4v13r1120/a2a-market",

"Documentation, https://agentsmesh.xyz"

] | twine/6.2.0 CPython/3.12.6 | 2026-02-20T17:39:36.497033 | a2a_market_sdk-0.1.1.tar.gz | 5,840 | 50/86/220e4f8cd46f6b17d77ed9d04a868efabe4a91f2b9508e14eee964f4e468/a2a_market_sdk-0.1.1.tar.gz | source | sdist | null | false | 42e2c787d91e21678a26fd62019cefd3 | a4f66a13f13d7877049b1a944ee18e678e6ceccef85490ea0c2416af25f523bd | 5086220e4f8cd46f6b17d77ed9d04a868efabe4a91f2b9508e14eee964f4e468 | null | [] | 182 |

2.4 | mana-bar | 0.1.17 | A task flow management tool for syncing todo files with taskwarrior | # Mana-bar (法力槽)

Mana-bar 是一个基于命令行的任务流管理工具,旨在帮助你管理不同项目的“精力”或“疲劳值”。通过设定每个项目的疲劳上限和消耗速率,你可以更好地规划和追踪你的工作状态,避免过度劳累。

## 简介

在日常工作中,我们往往会在多个项目之间切换。Mana-bar 引入了“法力值”(Mana)或“疲劳值”的概念:

- 每个项目都有一个**疲劳上限**(Total Fatigue)。

- 当你开始在一个项目上工作时,疲劳值会随着时间积累。

- 不同的项目可以设置不同的**消耗速率**(Rate)。

- 当疲劳值达到上限时,意味着你需要休息或切换到其他项目。

- 疲劳值会随着时间的推移(休息)通过某种... | text/markdown | null | Your Name <your.email@example.com> | null | null | null | null | [

"Development Status :: 3 - Alpha",

"Intended Audience :: Developers",

"Programming Language :: Python :: 3",

"Programming Language :: Python :: 3.10",

"Programming Language :: Python :: 3.11",

"Programming Language :: Python :: 3.12"

] | [] | null | null | >=3.10 | [] | [] | [] | [

"typer>=0.9.0",

"rich",

"typing_extensions",

"pytest>=7.0.0; extra == \"dev\""

] | [] | [] | [] | [] | twine/6.2.0 CPython/3.14.2 | 2026-02-20T17:39:06.093899 | mana_bar-0.1.17.tar.gz | 8,984 | ce/70/39f1d25a728a6d29a771d2c8e813639e92d52d294bda4a23a26f47d9e463/mana_bar-0.1.17.tar.gz | source | sdist | null | false | 16b44404988237da9f320eaf3166c771 | 4b04be06a3d95d606ac990df6d87fc700e3705a465c9b4e67fa631d92041da9b | ce7039f1d25a728a6d29a771d2c8e813639e92d52d294bda4a23a26f47d9e463 | null | [] | 174 |

2.4 | foundry-mcp | 0.12.0b27 | MCP server for spec-driven development management | # foundry-mcp

[](https://www.python.org/downloads/)

[](https://opensource.org/licenses/MIT)

[](https://modelcont... | text/markdown | Tyler Burleigh | null | null | null | null | foundry, mcp, spec-driven-development, specification | [

"Development Status :: 3 - Alpha",

"Intended Audience :: Developers",

"License :: OSI Approved :: MIT License",

"Programming Language :: Python :: 3",

"Programming Language :: Python :: 3.10",

"Programming Language :: Python :: 3.11",

"Programming Language :: Python :: 3.12"

] | [] | null | null | >=3.10 | [] | [] | [] | [

"click>=8.0.0",

"fastmcp>=0.1.0",

"filelock>=3.20.1",

"mcp>=1.0.0",

"python-ulid>=2.0.0",

"rich>=13.0.0",

"tomli>=2.0.0; python_version < \"3.11\"",

"hypothesis>=6.0.0; extra == \"dev\"",

"jsonschema>=4.0.0; extra == \"dev\"",

"pyright>=1.1.390; extra == \"dev\"",

"pytest-asyncio>=0.21.0; extra ... | [] | [] | [] | [

"Homepage, https://github.com/tylerburleigh/foundry-mcp",

"Repository, https://github.com/tylerburleigh/foundry-mcp"

] | twine/6.1.0 CPython/3.13.7 | 2026-02-20T17:39:01.258616 | foundry_mcp-0.12.0b27.tar.gz | 2,360,840 | c3/4c/ba9f73e44429bcaa78712a439a394aa3df6cd1c1f27cb09dd5e58c71b0a4/foundry_mcp-0.12.0b27.tar.gz | source | sdist | null | false | ffe3c011f08cb9bbc905fd2190d5314d | 7194a66394971c9081e32d5b41c899e214485e7200a1f7272b6939b3f1886ed6 | c34cba9f73e44429bcaa78712a439a394aa3df6cd1c1f27cb09dd5e58c71b0a4 | MIT | [

"LICENSE"

] | 152 |

2.4 | thorpy | 2.1.14 | GUI library for pygame | ThorPy is a non-intrusive, straightforward GUI kit for Pygame.

| text/plain | Yann Thorimbert | yann.thorimbert@gmail.com | null | null | MIT | pygame, gui, menus, buttons, widgets, user interface, toolkit | [] | [] | http://www.thorpy.org/ | null | null | [] | [] | [] | [] | [] | [] | [] | [] | twine/6.2.0 CPython/3.12.2 | 2026-02-20T17:38:32.515769 | thorpy-2.1.14.tar.gz | 2,113,081 | 5c/2a/74cb6ac401a1cc71ed04a1b570ad73989a8f15d38830163b8167139b7038/thorpy-2.1.14.tar.gz | source | sdist | null | false | ec3186faef82f14fe5ee2792389d2a7b | 973e4c9c479cb2cda4c3c385640958260def6d3bb12a8d85599307a0264a083a | 5c2a74cb6ac401a1cc71ed04a1b570ad73989a8f15d38830163b8167139b7038 | null | [] | 203 |

2.4 | generate-project | 1.3.0 | A Python project generator using cookiecutter templates | [](https://opensource.org/licenses/MIT)

[](https://pypi.org/project/generate-project/)

[](https://www.python.org/downl... | text/markdown | Antonio Pisani | antonio.pisani@gmail.com | null | null | null | python, project-generator, cookiecutter, template | [

"Development Status :: 5 - Production/Stable",

"Intended Audience :: Developers",

"License :: OSI Approved :: MIT License",

"Programming Language :: Python :: 3",

"Programming Language :: Python :: 3.10",

"Programming Language :: Python :: 3.11",

"Programming Language :: Python :: 3.12",

"Topic :: Sof... | [] | null | null | >=3.10 | [] | [] | [] | [

"cookiecutter>=2.6.0",

"python-dotenv>=1.1.0",

"pyyaml>=6.0.0"

] | [] | [] | [] | [

"Documentation, https://generate-project.readthedocs.io/",

"Issue Tracker, https://github.com/apisani1/generate-project/issues",

"Release Notes, https://github.com/apisani1/generate-project/releases",

"Source Code, https://github.com/apisani1/generate-project"

] | poetry/2.3.2 CPython/3.10.19 Linux/6.11.0-1018-azure | 2026-02-20T17:38:24.431679 | generate_project-1.3.0-py3-none-any.whl | 106,827 | 3a/a7/57798b2981b97920dcbdee79f6c12bc4bb56cc8b1a7bdc3253ce7a56cca9/generate_project-1.3.0-py3-none-any.whl | py3 | bdist_wheel | null | false | 3c62aa1ee1a2301699ae920c394f674e | 8c288f1f7c6f09706933ccab19ae557c574bdee6c604a6d6d4de5fba1c778b92 | 3aa757798b2981b97920dcbdee79f6c12bc4bb56cc8b1a7bdc3253ce7a56cca9 | null | [

"LICENSE"

] | 185 |

2.4 | pelicanfs | 1.3.1 | An FSSpec Implementation using the Pelican System | # PelicanFS

[](https://zenodo.org/doi/10.5281/zenodo.13376216)

## Table of Contents

- [Overview](#overview)

- [Features](#features)

- [Limitations](#limitations)

- [Installation](#installation)

- [Quick Start](#quick-start)

- [Basic Usage](#basic-usage)

- [Using the ... | text/markdown | null | Pelican Platform Developers <info@pelicanplatform.org> | null | null | null | pelican, fsspec | [

"Development Status :: 2 - Pre-Alpha",

"Intended Audience :: Science/Research",

"Programming Language :: Python :: 3",

"Programming Language :: Python :: 3.11",

"Programming Language :: Python :: 3.12",

"Programming Language :: Python :: 3.13",

"Programming Language :: Python :: 3 :: Only"

] | [] | null | null | <4,>=3.11 | [] | [] | [] | [

"aiohttp<4,>=3.9.4",

"aiowebdav2",

"cachetools<6,>=5.3",

"fsspec>=2024.3.1",

"igwn-auth-utils",

"pywinpty; platform_system == \"Windows\""

] | [] | [] | [] | [

"Source, https://github.com/PelicanPlatform/pelicanfs",

"Pelican-Source, https://github.com/PelicanPlatform/pelican",

"Bug-Reports, https://github.com/PelicanPlatform/pelicanfs/issues"

] | twine/6.1.0 CPython/3.13.7 | 2026-02-20T17:37:19.380243 | pelicanfs-1.3.1.tar.gz | 77,542 | 3d/47/3214cbf12cdb85ec253d6e0d4594dd21b39859c951ff0e0db300a79941d2/pelicanfs-1.3.1.tar.gz | source | sdist | null | false | 1f2cf97326b2bd432137853145694c79 | fc650efbaf2863eb072aab8624452a63f304dad472934402f862ad05e5b7a958 | 3d473214cbf12cdb85ec253d6e0d4594dd21b39859c951ff0e0db300a79941d2 | Apache-2.0 | [

"LICENSE"

] | 189 |

2.4 | uv-workon | 0.7.4 | Tools to activate and run virtual environments from central location | <!-- markdownlint-disable MD041 -->

<!-- prettier-ignore-start -->

[![Repo][repo-badge]][repo-link]

[![Docs][docs-badge]][docs-link]

[![PyPI license][license-badge]][license-link]

[![PyPI version][pypi-badge]][pypi-link]

[![Code style: ruff][ruff-badge]][ruff-link]

[![uv][uv-badge]][uv-link]

<!-- [![Conda (channel onl... | text/markdown | William P. Krekelberg | William P. Krekelberg <wpk@nist.gov> | null | null | null | uv-workon | [

"Development Status :: 2 - Pre-Alpha",

"Intended Audience :: Science/Research",

"Operating System :: OS Independent",

"Programming Language :: Python",

"Programming Language :: Python :: 3 :: Only",

"Programming Language :: Python :: 3.10",

"Programming Language :: Python :: 3.11",

"Programming Langua... | [] | null | null | >=3.10 | [] | [] | [] | [

"attrs>=25.3.0",

"typer>=0.15.2",

"typing-extensions>=4.12.2; python_full_version < \"3.11\"",

"uv-workon[jupyter,menu]; extra == \"all\"",

"jupyter-client>=8.6.3; extra == \"jupyter\"",

"simple-term-menu>=1.6.6; extra == \"menu\""

] | [] | [] | [] | [

"Documentation, https://pages.nist.gov/uv-workon/",

"Homepage, https://github.com/usnistgov/uv-workon"

] | twine/6.1.0 CPython/3.13.7 | 2026-02-20T17:37:07.951639 | uv_workon-0.7.4.tar.gz | 23,351 | 05/02/fdd95920630766b0c3c36684d88525370f26449102d2cb5e3d16793a042c/uv_workon-0.7.4.tar.gz | source | sdist | null | false | be9336628416567c9f75588fb5b51645 | 1439234cffe4879393e657f48f11377cc2092e666b8c79860b130c8f616e188a | 0502fdd95920630766b0c3c36684d88525370f26449102d2cb5e3d16793a042c | NIST-PD | [

"LICENSE"

] | 193 |

2.4 | cogmemai | 1.0.1 | CogmemAi — Persistent memory for AI coding assistants | # CogmemAi Python SDK

Persistent memory for AI coding assistants. Give your AI tools memory that persists across sessions.

## Install

```bash

pip install cogmemai

```

## Quick Start

```python

from cogmemai import CogmemAi

client = CogmemAi("cm_your_api_key_here")

# Save a memory

client.save_memory(

content="... | text/markdown | null | HiFriendbot <developers@hifriendbot.com> | null | null | null | ai, claude, coding-assistant, developer-tools, mcp, memory | [

"Development Status :: 5 - Production/Stable",

"Intended Audience :: Developers",

"License :: OSI Approved :: MIT License",

"Programming Language :: Python :: 3",

"Programming Language :: Python :: 3.9",

"Programming Language :: Python :: 3.10",

"Programming Language :: Python :: 3.11",

"Programming L... | [] | null | null | >=3.9 | [] | [] | [] | [

"requests>=2.28"

] | [] | [] | [] | [

"Homepage, https://hifriendbot.com/developer/",

"Documentation, https://hifriendbot.com/developer/",

"Repository, https://github.com/hifriendbot/cogmemai-python",

"Issues, https://github.com/hifriendbot/cogmemai-python/issues"

] | twine/6.2.0 CPython/3.14.0 | 2026-02-20T17:36:35.513135 | cogmemai-1.0.1.tar.gz | 5,904 | 96/90/26040d559c480720c036bc8da970e59f1027113a12a3dbaa88d668629f6d/cogmemai-1.0.1.tar.gz | source | sdist | null | false | 35d6fe29f247b5a80f67771671ea9f7a | 5ab9cbd1bb4a3fabe0e03d98e16fe6283580fe55d0fe3667bf3ccaf40e639712 | 969026040d559c480720c036bc8da970e59f1027113a12a3dbaa88d668629f6d | MIT | [

"LICENSE"

] | 179 |

2.4 | foundry-sandbox | 0.18.2 | Docker-based sandbox environment for running Claude Code with isolated credentials | # Foundry Sandbox

Safe, ephemeral workspaces for AI-assisted coding—isolate mistakes, not productivity.

## Overview

Your API keys and tokens are exposed to everything running on your machine—including malicious dependencies, compromised tools, and AI assistants that might leak them. Supply chain attacks are increasi... | text/markdown | Foundry Works | null | null | null | null | ai, claude, docker, isolation, sandbox | [

"Development Status :: 4 - Beta",

"Environment :: Console",

"Intended Audience :: Developers",

"License :: OSI Approved :: MIT License",

"Operating System :: MacOS",

"Operating System :: POSIX :: Linux",

"Programming Language :: Python :: 3",

"Programming Language :: Python :: 3.10",

"Programming La... | [] | null | null | >=3.10 | [] | [] | [] | [

"click>=8.1",

"packaging>=21.0",

"pydantic>=2.0",

"hypothesis>=6.100; extra == \"dev\"",

"mypy>=1.10; extra == \"dev\"",

"pytest-cov>=5.0; extra == \"dev\"",

"pytest-timeout>=2.3; extra == \"dev\"",

"pytest>=8.0; extra == \"dev\"",

"ruff>=0.5; extra == \"dev\"",

"pyyaml>=6.0; extra == \"test-orche... | [] | [] | [] | [

"Homepage, https://github.com/foundry-works/foundry-sandbox",

"Repository, https://github.com/foundry-works/foundry-sandbox",

"Issues, https://github.com/foundry-works/foundry-sandbox/issues",

"Documentation, https://github.com/foundry-works/foundry-sandbox/tree/main/docs"

] | twine/6.1.0 CPython/3.13.7 | 2026-02-20T17:36:06.502163 | foundry_sandbox-0.18.2.tar.gz | 670,529 | f4/d1/2764e9acd46704e480a885fd720af81b047d7c5dd4961bbcd4ef44ebbbc9/foundry_sandbox-0.18.2.tar.gz | source | sdist | null | false | e7d6d7b5258b9583227f9fa9a8a04e68 | 8968e8fbd9b2c9caabf6942372a9c0d0abe5a559c23a876bc2576f43c66082d1 | f4d12764e9acd46704e480a885fd720af81b047d7c5dd4961bbcd4ef44ebbbc9 | MIT | [

"LICENSE"

] | 189 |

2.4 | aiden3drenderer | 1.1.4 | A lightweight 3D wireframe renderer built from scratch using Pygame | # Aiden3DRenderer

A lightweight 3D wireframe renderer built with Pygame featuring custom projection, first-person camera controls, and 15+ procedural terrain generators.

## Features

- **Custom 3D projection** - Perspective projection without using external 3D libraries

- **First-person camera** - Full 6-DOF c... | text/markdown | Aiden | headstone.yt@gmail.com | null | null | null | null | [

"Development Status :: 3 - Alpha",

"Intended Audience :: Developers",

"Topic :: Multimedia :: Graphics :: 3D Rendering",

"License :: OSI Approved :: MIT License",

"Programming Language :: Python :: 3",

"Programming Language :: Python :: 3.9",

"Programming Language :: Python :: 3.10",

"Programming Lang... | [] | https://github.com/AidenKielby/3D-mesh-Renderer | null | >=3.9 | [] | [] | [] | [

"pygame>=2.6.0",

"pytest>=7.0; extra == \"dev\"",

"black; extra == \"dev\"",

"flake8; extra == \"dev\""

] | [] | [] | [] | [] | twine/6.2.0 CPython/3.11.9 | 2026-02-20T17:35:32.277138 | aiden3drenderer-1.1.4.tar.gz | 23,495 | d7/25/6ca4e7e9a9a8f3f6eb398edcd1a2f881ef8ce3febd048c71775522bd1c3d/aiden3drenderer-1.1.4.tar.gz | source | sdist | null | false | 0565ddc6805888302edc7c606aae6923 | 9234d43875ba2987b5599783cdece8ee95190e0f8de1049f99d0466f1d752353 | d7256ca4e7e9a9a8f3f6eb398edcd1a2f881ef8ce3febd048c71775522bd1c3d | null | [] | 183 |

2.4 | ntsm | 0.1.4 | GIS Custom Modules package | ========================

NTSM - Vault & Utilities

========================

.. image:: https://img.shields.io/pypi/v/ntsm.svg

:target: https://pypi.org/project/ntsm/

:alt: PyPI Version

.. image:: https://img.shields.io/pypi/pyversions/ntsm.svg

:target: https://pypi.org/project/ntsm/

:alt: Sup... | text/x-rst | null | Adrian Coman <adrian.coman@nationaltrust.org.uk> | null | null | MIT License

Copyright (c) 2024-present Adrian Coman

Permission is hereby granted, free of charge, to any person obtaining a copy

of this software and associated documentation files (the "Software"), to deal

in the Software without restriction, including without li... | ntsm, ntm, nts | [

"Development Status :: 4 - Beta",

"Intended Audience :: Developers",

"License :: OSI Approved :: MIT License",

"Operating System :: OS Independent",

"Programming Language :: Python :: 3.10",

"Topic :: Software Development :: Build Tools"

] | [] | null | null | >=3.10 | [] | [] | [] | [

"cryptography>=41.0.0",

"msal>=1.20.0",

"requests>=2.28.0",

"loguru>=0.7.0",

"sphinx>=5.0.0; extra == \"docs\"",

"sphinx-rtd-theme>=1.0.0; extra == \"docs\"",

"sphinx-autodoc-typehints>=1.0.0; extra == \"docs\"",

"nbsphinx>=0.8.0; extra == \"docs\"",

"sphinx-github-alerts>=1.0.0; extra == \"docs\"",... | [] | [] | [] | [

"Homepage, https://github.com/adrian-coman/ntsm",

"Issues, https://github.com/adrian-coman/ntsm/issues",

"Documentation, https://ntsm.readthedocs.io/en/latest/"

] | twine/6.2.0 CPython/3.13.2 | 2026-02-20T17:35:21.754908 | ntsm-0.1.4.tar.gz | 38,227 | c3/9a/bfffa8f31c6fd22e74fc618e4e5a878225ce721a0238e503959dfa883baf/ntsm-0.1.4.tar.gz | source | sdist | null | false | caa8f1aafb8efcb777cd0c3593315b50 | e06f6e96289639d505366a4e45623291dcb69e19601b1c012e04e276ab3afdee | c39abfffa8f31c6fd22e74fc618e4e5a878225ce721a0238e503959dfa883baf | null | [

"LICENSE"

] | 201 |

2.4 | PlaywrightCapture | 1.36.9 | A simple library to capture websites using playwright | # Playwright Capture

Simple replacement for [splash](https://github.com/scrapinghub/splash) using [playwright](https://github.com/microsoft/playwright-python).

# Install

```bash

pip install playwrightcapture

```

# Usage

A very basic example:

```python

from playwrightcapture import Capture

async with Capture() as... | text/markdown | Raphaël Vinot | raphael.vinot@circl.lu | null | null | null | null | [

"Intended Audience :: Science/Research",

"Intended Audience :: Telecommunications Industry",

"Programming Language :: Python :: 3",

"Programming Language :: Python :: 3.10",

"Programming Language :: Python :: 3.11",

"Programming Language :: Python :: 3.12",

"Programming Language :: Python :: 3.13",

"P... | [] | null | null | <4.0,>=3.10 | [] | [] | [] | [

"SpeechRecognition>=3.14.5; extra == \"recaptcha\"",

"aiohttp-socks>=0.11.0",

"aiohttp[speedups]>=3.13.3",

"async-timeout>=5.0.1; python_version < \"3.11\"",

"beautifulsoup4[charset-normalizer,lxml]>=4.14.3",

"dateparser>=1.3.0",

"dnspython<3.0.0,>=2.7.0",

"orjson<4.0.0,>=3.11.4",

"playwright>=1.58.... | [] | [] | [] | [

"Issues, https://github.com/Lookyloo/PlaywrightCapture/issues",

"Repository, https://github.com/Lookyloo/PlaywrightCapture"

] | twine/6.1.0 CPython/3.13.7 | 2026-02-20T17:35:11.951755 | playwrightcapture-1.36.9.tar.gz | 28,632 | 25/99/53cbacbafdcc7e5ea76f637f4ab5323ac341b2c21bcdd6555bd9076bff54/playwrightcapture-1.36.9.tar.gz | source | sdist | null | false | 30e5ed76dbf2fd55471a378c99b83e94 | 0a70b8898460ee30d97250bfb315d6223f11fbeeec5568077e38385001c4c1b9 | 259953cbacbafdcc7e5ea76f637f4ab5323ac341b2c21bcdd6555bd9076bff54 | BSD-3-Clause | [

"LICENSE"

] | 0 |

2.4 | async-rithmic | 1.5.9 | Python API Integration with Rithmic Protocol Buffer API | # Python Rithmic API

[](https://pypi.org/project/async-rithmic/)

[](https://github.com/rundef/async_rithmic/actions/workflows/ci.yml)

[ for expressing trading strategies with support for multi-source data (OHLCV, trades, orderbook, liquidations), filtering, aggregation... | text/markdown | null | Laakhay Corporation <laakhay.corp@gmail.com> | null | null | MIT | null | [] | [] | null | null | >=3.12 | [] | [] | [] | [

"build>=1.0.0; extra == \"dev\"",

"pyright>=1.1.406; extra == \"dev\"",

"pytest-asyncio>=0.21.0; extra == \"dev\"",

"pytest-cov>=7.0.0; extra == \"dev\"",

"pytest>=8.0; extra == \"dev\"",

"ruff>=0.1.0; extra == \"dev\"",

"twine>=5.0.0; extra == \"dev\""

] | [] | [] | [] | [

"Homepage, https://laakhay.com",

"Repository, https://github.com/laakhay/ta",

"Issues, https://github.com/laakhay/ta/issues"

] | uv/0.9.4 | 2026-02-20T17:33:52.357906 | laakhay_ta-0.2.8.tar.gz | 117,651 | 8e/7b/cc5ec3248a23a32c1d772613c6be8c2752f77edec1bfe718f3ac3915e7a0/laakhay_ta-0.2.8.tar.gz | source | sdist | null | false | 7e4d3b1900ea490b0cbf14ae7918bbbb | 1421239043fcd1670723c7a2f454f931b49a6c65fbfb772a42430df33661c39f | 8e7bcc5ec3248a23a32c1d772613c6be8c2752f77edec1bfe718f3ac3915e7a0 | null | [

"LICENSE"

] | 191 |

2.4 | e3sm-quickview | 1.3.3 | An application to explore/analyze data for atmosphere component for E3SM | # QuickView

[](https://github.com/ayenpure/QuickView/actions/workflows/test.yml)

[](https://github.com/ayenpure/QuickView/a... | text/markdown | Kitware Inc. | null | null | null | Apache Software License | Application, Framework, Interactive, Python, Web | [

"Development Status :: 5 - Production/Stable",

"Environment :: Web Environment",

"License :: OSI Approved :: Apache Software License",

"Natural Language :: English",

"Operating System :: OS Independent",

"Programming Language :: Python :: 3 :: Only",

"Topic :: Software Development :: Libraries :: Applic... | [] | null | null | >=3.10 | [] | [] | [] | [

"netcdf4>=1.6.5",

"pillow",

"pyproj>=3.6.1",

"trame-components",

"trame-dataclass>=1.2",

"trame-tauri>=0.6.2",

"trame-vtk>=2.10",

"trame-vuetify>=3.1",

"trame>=3.12",

"jupyter-server-proxy>=4.0.0; extra == \"jupyter\"",

"pyinstaller; extra == \"tauri\"",

"trame-grid-layout>=1.0.3; extra == \"v... | [] | [] | [] | [] | twine/6.1.0 CPython/3.13.7 | 2026-02-20T17:33:45.278502 | e3sm_quickview-1.3.3.tar.gz | 1,556,842 | ab/6c/c0653bcf7a5cd4fee403ef14653c6130e91e5d60c8dffba1a325cf24260a/e3sm_quickview-1.3.3.tar.gz | source | sdist | null | false | 9c618d91ee81bfd46e20519ab26738d0 | 6993f9e593246a2f01dac394cfa49ad45a843c9fd3f3aa8a254c3bceaa4c3fdc | ab6cc0653bcf7a5cd4fee403ef14653c6130e91e5d60c8dffba1a325cf24260a | null | [

"LICENSE"

] | 188 |

2.4 | dbt-subprocess-plugin | 0.1.0 | dbt plugin to add Python's subprocess module to dbt's `modules` context attribute | # dbt-subprocess-plugin

This is a [dbt plugin][] to add Python's [subprocess][] module to dbt's [`modules` context][modules] attribute.

[dbt-plugin]: https://github.com/dbt-labs/dbt-core/blob/fa96acb15f79ae4f10b1d78f311f5ef2f4ed645e/core/dbt/plugins/manager.py

[subprocess]: https://docs.python.org/3/library/subproces... | text/markdown | null | Tim Vergenz <vergenzt@gmail.com> | null | null | null | null | [] | [] | null | null | >=3.8 | [] | [] | [] | [

"dbt-core>=1.6"

] | [] | [] | [] | [] | uv/0.9.18 {"installer":{"name":"uv","version":"0.9.18","subcommand":["publish"]},"python":null,"implementation":{"name":null,"version":null},"distro":{"name":"macOS","version":null,"id":null,"libc":null},"system":{"name":null,"release":null},"cpu":null,"openssl_version":null,"setuptools_version":null,"rustc_version":nu... | 2026-02-20T17:33:44.290575 | dbt_subprocess_plugin-0.1.0.tar.gz | 211,626 | bd/05/994fea493ae06eaac72299c4e093ba330b2162e3e59b45f9e8bbaaa87fcd/dbt_subprocess_plugin-0.1.0.tar.gz | source | sdist | null | false | e0a0769d7f71f34ba2080e16a65e896f | 8070992d5bb3a7808c1b31dbbc18b2e1871d6b28eab48393d9b67c932310690a | bd05994fea493ae06eaac72299c4e093ba330b2162e3e59b45f9e8bbaaa87fcd | null | [] | 197 |

2.4 | relationalai | 0.14.0 | RelationalAI Library and CLI | # The RelationalAI Python Library

The Python library for building and querying knowledge graphs with RelationalAI.

For more information, visit our documentation at [https://relational.ai/docs](https://relational.ai/docs).

| text/markdown | null | RelationalAI <support@relational.ai> | null | null | null | null | [] | [] | null | null | >=3.10 | [] | [] | [] | [

"aiohttp",

"click==8.2.1",

"colorama",

"cryptography",

"gravis",

"inquirerpy",

"lqp==0.2.3",

"nicegui>=3.7.0",

"numpy<2",

"opentelemetry-api",

"opentelemetry-exporter-otlp-proto-http",

"opentelemetry-sdk",

"packaging",

"pandas",

"pyarrow",

"pyjwt",

"requests",

"rich",

"snowflake-... | [] | [] | [] | [] | twine/6.2.0 CPython/3.11.14 | 2026-02-20T17:33:33.166850 | relationalai-0.14.0.tar.gz | 1,313,008 | 56/ae/ea9316c8b5d1306c11c24ddf566d1d313c6c9944030610cf21bd6f2f18c1/relationalai-0.14.0.tar.gz | source | sdist | null | false | bece66948caa92b1935f9f3d81a9430b | 48382d7452af9edf67fad034b66ac71bdc83e5c454fe68d223547ad16a0ac589 | 56aeea9316c8b5d1306c11c24ddf566d1d313c6c9944030610cf21bd6f2f18c1 | null | [

"LICENSE"

] | 376 |

2.4 | asyncer | 0.0.15 | Asyncer, async and await, focused on developer experience. | <p align="center">

<a href="https://asyncer.tiangolo.com"><img src="https://asyncer.tiangolo.com/img/logo-margin/logo-margin-vector.svg" alt="Asyncer"></a>

</p>

<p align="center">

<em>Asyncer, async and await, focused on developer experience.</em>

</p>

<p align="center">

<a href="https://github.com/fastapi/asynce... | text/markdown | null | Sebastián Ramírez <tiangolo@gmail.com> | null | null | null | null | [

"Development Status :: 4 - Beta",

"Framework :: AsyncIO",

"Framework :: Trio",

"Intended Audience :: Developers",

"Intended Audience :: Education",

"Intended Audience :: Information Technology",

"Intended Audience :: Science/Research",

"Intended Audience :: System Administrators",

"Programming Langu... | [] | null | null | >=3.10 | [] | [] | [] | [

"anyio<5.0,>=3.4.0",

"sniffio>=1.1",

"typing_extensions>=4.12.0; python_version < \"3.15\""

] | [] | [] | [] | [

"Homepage, https://github.com/fastapi/asyncer",

"Documentation, https://asyncer.tiangolo.com",

"Repository, https://github.com/fastapi/asyncer",

"Issues, https://github.com/fastapi/asyncer/issues",

"Changelog, https://asyncer.tiangolo.com/release-notes/"

] | uv/0.10.4 {"installer":{"name":"uv","version":"0.10.4","subcommand":["publish"]},"python":null,"implementation":{"name":null,"version":null},"distro":{"name":"Ubuntu","version":"24.04","id":"noble","libc":null},"system":{"name":null,"release":null},"cpu":null,"openssl_version":null,"setuptools_version":null,"rustc_vers... | 2026-02-20T17:32:35.709230 | asyncer-0.0.15.tar.gz | 20,090 | 7a/d0/e71744250a22bf358095dbc471ecd4c453bd666f789f2b14cf3ead50fbd8/asyncer-0.0.15.tar.gz | source | sdist | null | false | d36ae0a896a3fb30c13cff77a19ff868 | 339c8493a2be8f188e4bf741019252f7c344e2a78c83a37913dc7832c47f4309 | 7ad0e71744250a22bf358095dbc471ecd4c453bd666f789f2b14cf3ead50fbd8 | MIT | [

"LICENSE"

] | 10,859 |

2.2 | certora-cli-alpha-master | 20260220.17.32.693288 | Runner for the Certora Prover | Commit bd9275d. Build and Run scripts for executing the Certora Prover on Solidity smart contracts.

| text/markdown | Certora | support@certora.com | null | null | GPL-3.0-only | null | [

"Programming Language :: Python :: 3",

"License :: OSI Approved :: GNU General Public License v3 (GPLv3)",

"Operating System :: OS Independent"

] | [] | https://pypi.org/project/certora-cli-alpha-master | null | >=3.9 | [] | [] | [] | [

"click",

"json5",

"pycryptodome",

"requests",

"rich",

"sly",

"tabulate",

"tqdm",

"StrEnum",

"jinja2",

"wcmatch",

"typing_extensions"

] | [] | [] | [] | [

"Documentation, https://docs.certora.com/en/latest/",

"Source, https://github.com/Certora/CertoraProver"

] | twine/6.2.0 CPython/3.11.14 | 2026-02-20T17:32:35.403263 | certora_cli_alpha_master-20260220.17.32.693288.tar.gz | 42,911,449 | 06/a9/d468fd7ac0efb3c1be303d07a037983c2faa7c33857cc40c27a422b66d96/certora_cli_alpha_master-20260220.17.32.693288.tar.gz | source | sdist | null | false | 89e42478f0f10af96a5c840f2ddaa886 | d095189fa00f18fda3feb076bbfc9d4befdfa4d0566b44c19fdc0238b0a605fe | 06a9d468fd7ac0efb3c1be303d07a037983c2faa7c33857cc40c27a422b66d96 | null | [] | 403 |

2.4 | asyncer-slim | 0.0.15 | Asyncer, async and await, focused on developer experience. | <p align="center">

<a href="https://asyncer.tiangolo.com"><img src="https://asyncer.tiangolo.com/img/logo-margin/logo-margin-vector.svg" alt="Asyncer"></a>

</p>

<p align="center">

<em>Asyncer, async and await, focused on developer experience.</em>

</p>

<p align="center">

<a href="https://github.com/fastapi/asynce... | text/markdown | null | Sebastián Ramírez <tiangolo@gmail.com> | null | null | null | null | [

"Development Status :: 4 - Beta",

"Framework :: AsyncIO",

"Framework :: Trio",

"Intended Audience :: Developers",

"Intended Audience :: Education",

"Intended Audience :: Information Technology",

"Intended Audience :: Science/Research",

"Intended Audience :: System Administrators",

"Programming Langu... | [] | null | null | >=3.10 | [] | [] | [] | [

"asyncer>=0.0.15"

] | [] | [] | [] | [

"Homepage, https://github.com/fastapi/asyncer",

"Documentation, https://asyncer.tiangolo.com",

"Repository, https://github.com/fastapi/asyncer",

"Issues, https://github.com/fastapi/asyncer/issues",

"Changelog, https://asyncer.tiangolo.com/release-notes/"

] | uv/0.10.4 {"installer":{"name":"uv","version":"0.10.4","subcommand":["publish"]},"python":null,"implementation":{"name":null,"version":null},"distro":{"name":"Ubuntu","version":"24.04","id":"noble","libc":null},"system":{"name":null,"release":null},"cpu":null,"openssl_version":null,"setuptools_version":null,"rustc_vers... | 2026-02-20T17:32:33.731683 | asyncer_slim-0.0.15-py3-none-any.whl | 3,010 | ec/50/6f730e7b7b90eec96d1fe2b8f28b52707a9006c13bef15709dce92dbea22/asyncer_slim-0.0.15-py3-none-any.whl | py3 | bdist_wheel | null | false | 7d1203b3fdc0a2fde195898c3d322d40 | 6880cf55d3222a5379af9511cbdc3b688cfca1f9361a86d7df2cc81ecc7f3d18 | ec506f730e7b7b90eec96d1fe2b8f28b52707a9006c13bef15709dce92dbea22 | MIT | [

"LICENSE"

] | 185 |

2.4 | agentouto | 0.2.0 | Multi-agent Python SDK with peer-to-peer agent communication | # AgentOutO

**멀티 에이전트 특화 Python SDK — 오케스트레이터 없는 피어 간 자유 호출**

A multi-agent Python SDK where every agent is equal. No orchestrator. No hierarchy. No restrictions.

---

## 핵심 철학 (Core Philosophy)

AgentOutO rejects the orchestrator pattern used by existing frameworks (CrewAI, AutoGen, etc.).

> **모든 에이전트는 완전히 대등하다.**... | text/markdown | null | null | null | null | null | null | [] | [] | null | null | >=3.11 | [] | [] | [] | [

"anthropic>=0.34.0",

"google-generativeai>=0.8.0",

"openai>=1.50.0",

"mypy>=1.8; extra == \"dev\"",

"pytest-asyncio>=0.23; extra == \"dev\"",

"pytest>=8.0; extra == \"dev\""

] | [] | [] | [] | [] | twine/6.1.0 CPython/3.13.7 | 2026-02-20T17:32:05.776156 | agentouto-0.2.0.tar.gz | 40,232 | 00/33/a557fab0bc50ceb70c5a63743bbe3907589685980ab6c8a9dcb602c487aa/agentouto-0.2.0.tar.gz | source | sdist | null | false | 50a66d7c941b7b0e704e2053b4709032 | fe151a2f7a1f8ca8f7c1557abad5c350280c8726a7cfe7889ebf471565fdf6e7 | 0033a557fab0bc50ceb70c5a63743bbe3907589685980ab6c8a9dcb602c487aa | Apache-2.0 | [

"LICENSE"

] | 195 |

2.4 | cyberwave-edge-core | 0.0.16 | The core component of the Cyberwave Edge Node | # Cyberwave Edge Core

This Edge component acts as an orchestrator of your Cyberwave edge components.

## Quickstart (Linux machines)

```bash

# Install the CLI (one time setup)

curl -fsSL "https://packages.buildkite.com/cyberwave/cyberwave-cli/gpgkey" | sudo gpg --dearmor -o /etc/apt/keyrings/cyberwave_cyberwave-cli-a... | text/markdown | null | Cyberwave <info@cyberwave.com> | null | null | MIT | null | [

"Development Status :: 3 - Alpha",

"Environment :: Console",

"Intended Audience :: Developers",

"License :: OSI Approved :: MIT License",

"Operating System :: OS Independent",

"Programming Language :: Python :: 3",

"Programming Language :: Python :: 3.10",

"Programming Language :: Python :: 3.11",

"... | [] | null | null | <4.0,>=3.10 | [] | [] | [] | [

"click>=8.1.0",

"cyberwave>=0.3.14",

"httpx>=0.25.0",

"rich>=13.0.0",

"pyinstaller>=6.0.0; extra == \"build\"",

"mypy>=1.5.0; extra == \"dev\"",

"pytest-cov>=4.0.0; extra == \"dev\"",

"pytest>=7.0.0; extra == \"dev\"",

"ruff>=0.1.0; extra == \"dev\""

] | [] | [] | [] | [

"Homepage, https://cyberwave.com",

"Documentation, https://docs.cyberwave.com",

"Repository, https://github.com/cyberwave-os/cyberwave-edge-core",

"Issues, https://github.com/cyberwave-os/cyberwave-edge-core/issues"

] | twine/6.1.0 CPython/3.13.7 | 2026-02-20T17:31:27.338422 | cyberwave_edge_core-0.0.16.tar.gz | 16,887 | f6/fe/761af15ae5e1fb33606596649012e2b7b9920b258cfde95ca401141fed67/cyberwave_edge_core-0.0.16.tar.gz | source | sdist | null | false | d1c10fc84b016ea4f0e9b2b73e8f281c | 78bbfd21b1c88b15adf1108d051da7cf83a431298ceff0e2aa0fc478997bdb25 | f6fe761af15ae5e1fb33606596649012e2b7b9920b258cfde95ca401141fed67 | null | [

"LICENSE"

] | 173 |

2.3 | brew_sdk | 0.4.0 | The official Python library for the brew-sdk API | # Brew SDK Python API library

<!-- prettier-ignore -->

[)](https://pypi.org/project/brew_sdk/)

The Brew SDK Python library provides convenient access to the Brew SDK REST API from any Python 3.9+

application. The library includes type def... | text/markdown | null | Brew SDK <support@brew.new> | null | null | Apache-2.0 | null | [

"Intended Audience :: Developers",

"License :: OSI Approved :: Apache Software License",

"Operating System :: MacOS",

"Operating System :: Microsoft :: Windows",

"Operating System :: OS Independent",

"Operating System :: POSIX",

"Operating System :: POSIX :: Linux",

"Programming Language :: Python :: ... | [] | null | null | >=3.9 | [] | [] | [] | [

"anyio<5,>=3.5.0",

"distro<2,>=1.7.0",

"httpx<1,>=0.23.0",

"pydantic<3,>=1.9.0",

"sniffio",

"typing-extensions<5,>=4.10",

"aiohttp; extra == \"aiohttp\"",

"httpx-aiohttp>=0.1.9; extra == \"aiohttp\""

] | [] | [] | [] | [

"Homepage, https://github.com/GetBrew/brew-python-sdk",

"Repository, https://github.com/GetBrew/brew-python-sdk"

] | uv/0.9.13 {"installer":{"name":"uv","version":"0.9.13"},"python":null,"implementation":{"name":null,"version":null},"distro":{"name":"Ubuntu","version":"24.04","id":"noble","libc":null},"system":{"name":null,"release":null},"cpu":null,"openssl_version":null,"setuptools_version":null,"rustc_version":null,"ci":true} | 2026-02-20T17:30:53.809535 | brew_sdk-0.4.0.tar.gz | 226,047 | b5/b7/13b1b8c9be87fa63c0dc285c8ce4c2e95209161af6edd647978263d8a571/brew_sdk-0.4.0.tar.gz | source | sdist | null | false | 6a7ba6a6e26d7c9bc378db6f669d7362 | 712e02e81bf5b0abfe091709441575632c6354a52db9c5d7c8913a70e9566fb7 | b5b713b1b8c9be87fa63c0dc285c8ce4c2e95209161af6edd647978263d8a571 | null | [] | 0 |

2.4 | jacobs-jinja-too | 0.2.14 | Simple wrapper around jinja2 templating | # Jacob's Jinja Too

[](https://circleci.com/gh/pearmaster/jacobs-jinja-too/tree/master)

A simple wrapper around Jinja2 templating with a collection of custom filters. [Jinja2](https://jinja.palletsprojects.com/en/2.11.x/) is... | text/markdown | null | Jacob Brunson <pypi@jacobbrunson.com> | null | null | null | null | [] | [] | null | null | >=3.9 | [] | [] | [] | [

"jinja-markdown>=1.210911",

"jinja2>=3.1.6"

] | [] | [] | [] | [

"Homepage, http://github.com/pearmaster/jacobs-jinja-too"

] | uv/0.9.12 {"installer":{"name":"uv","version":"0.9.12"},"python":null,"implementation":{"name":null,"version":null},"distro":{"name":"Ubuntu","version":"25.10","id":"questing","libc":null},"system":{"name":null,"release":null},"cpu":null,"openssl_version":null,"setuptools_version":null,"rustc_version":null,"ci":null} | 2026-02-20T17:30:36.982138 | jacobs_jinja_too-0.2.14-py3-none-any.whl | 13,981 | d0/c9/057e1c38a62339636fb6aa07bf185b721a43dd741e2e884632c9157e66a0/jacobs_jinja_too-0.2.14-py3-none-any.whl | py3 | bdist_wheel | null | false | 6897918f4d0ae620967d0c124ee62aa5 | f9eb4f56734cc1cff3283d47062b7f68cac510e42da4ce74d38aac797b194ead | d0c9057e1c38a62339636fb6aa07bf185b721a43dd741e2e884632c9157e66a0 | LGPL-2.0 | [

"LICENSE"

] | 202 |

2.4 | wati-mcp | 0.2.0 | WhatsApp MCP Server using Wati API v3 | # WhatsApp MCP Server with Wati API v3

This is a Model Context Protocol (MCP) server for WhatsApp using the Wati API **v3**.

Manage your WhatsApp conversations, contacts, templates, campaigns, and channels through AI assistants like Claude. Search and read messages, send texts and files, manage contacts, and automate... | text/markdown | WhatsApp MCP Contributors | null | null | null | MIT License

Copyright (c) 2025 Luke Harries

Permission is hereby granted, free of charge, to any person obtaining a copy

of this software and associated documentation files (the "Software"), to deal

in the Software without restriction, including without limitation the r... | null | [] | [] | null | null | >=3.11 | [] | [] | [] | [

"requests>=2.28.0",

"python-dotenv>=0.21.0",

"mcp>=1.6.0",

"mcp[cli]>=1.6.0; extra == \"cli\"",

"pytest>=7.0.0; extra == \"dev\""

] | [] | [] | [] | [] | twine/6.2.0 CPython/3.11.5 | 2026-02-20T17:30:34.985970 | wati_mcp-0.2.0.tar.gz | 16,937 | 65/65/8621bc311018acf5d3259a98514e105c479c8da10b69ac27f88e406ab907/wati_mcp-0.2.0.tar.gz | source | sdist | null | false | 99b0ef156a88da0a74d9b1b46edfdfd6 | 863c72f3c37bcf54897a74b0f07ed837af559d83a02a6a7f966feb3010f66dfc | 65658621bc311018acf5d3259a98514e105c479c8da10b69ac27f88e406ab907 | null | [

"LICENSE"

] | 201 |

2.4 | sqlsaber-viz | 0.4.0 | SQLsaber terminal visualization plugin | # SQLSaber Visualization Plugin

Provides the `viz` tool for SQLSaber via the `sqlsaber-viz` plugin. The tool generates a declarative chart spec and renders ASCII charts in the terminal using plotext.

| text/markdown | null | null | null | null | null | null | [] | [] | null | null | >=3.12 | [] | [] | [] | [

"plotext>=5.3.0",

"sqlsaber>=0.55.1"

] | [] | [] | [] | [] | uv/0.10.4 {"installer":{"name":"uv","version":"0.10.4","subcommand":["publish"]},"python":null,"implementation":{"name":null,"version":null},"distro":{"name":"Ubuntu","version":"24.04","id":"noble","libc":null},"system":{"name":null,"release":null},"cpu":null,"openssl_version":null,"setuptools_version":null,"rustc_vers... | 2026-02-20T17:30:32.797456 | sqlsaber_viz-0.4.0-py3-none-any.whl | 17,145 | 93/16/6c9e8877ac1652753e620f9c38d3ae28284550e14236a0ce728faab064d0/sqlsaber_viz-0.4.0-py3-none-any.whl | py3 | bdist_wheel | null | false | c3592834cb24c9cdf15ff1e5a84ce4bf | 39ef25960d964b26cee4eab0f374a424cf282e555ed841df6c85c9d4175d1498 | 93166c9e8877ac1652753e620f9c38d3ae28284550e14236a0ce728faab064d0 | null | [] | 187 |

2.4 | gwseq-io | 0.0.19 | Process BBI (bigWig/bigBed) and HiC files | ## Installation

```

pip install gwseq-io

```

Requires numpy and pybind11.

## Usage

### Open bigWig, bigBed and HiC files

```python

reader = gwseq_io.open(path, *, parallel, zoom_correction, file_buffer_size, max_file_buffer_count)

```

Parameters:

- `parallel` Number of parallel file handles and processing threads... | text/markdown | null | Arthur Gouhier <ajgouhier@gmail.com> | null | null | null | null | [] | [] | null | null | >=3.8 | [] | [] | [] | [

"numpy",

"pybind11>=2.6.0"

] | [] | [] | [] | [

"Repository, https://github.com/ajgouhier/gwseq_io"

] | twine/6.2.0 CPython/3.13.11 | 2026-02-20T17:30:10.871758 | gwseq_io-0.0.19.tar.gz | 71,160 | b0/e5/8fd41205ba3ac3c3875c02a1c09cd5d3b84fbfbaa10a9dc4433bb0b908c0/gwseq_io-0.0.19.tar.gz | source | sdist | null | false | 7478c1bd49ff411be26a05bfcabb6ceb | 74c9f6afae46b6ee69839cf3d69f05d971a10db7dc7ac2de4e441265f22e6517 | b0e58fd41205ba3ac3c3875c02a1c09cd5d3b84fbfbaa10a9dc4433bb0b908c0 | MIT | [

"LICENSE"

] | 171 |

2.4 | speechmarkdown | 1.0.2 | Speech Markdown library for Python. | # SpeechMarkdown

[](https://pypi.org/project/speechmarkdown/)

[](https://speechmarkdown-py.githu... | text/markdown | Takeshi Teshima | takeshi.78.teshima@gmail.com | null | null | Apache-2.0 | null | [

"License :: OSI Approved :: Apache Software License",

"Programming Language :: Python :: 3",

"Programming Language :: Python :: 3.11",

"Programming Language :: Python :: 3.12",

"Programming Language :: Python :: 3.13",

"Programming Language :: Python :: 3.14"

] | [] | null | null | <4.0,>=3.11 | [] | [] | [] | [

"pyparsing<4.0.0,>=3.3.2"

] | [] | [] | [] | [

"Homepage, https://github.com/takeshi-teshima/speechmarkdown",

"Repository, https://github.com/takeshi-teshima/speechmarkdown"

] | twine/6.1.0 CPython/3.13.7 | 2026-02-20T17:29:51.289217 | speechmarkdown-1.0.2.tar.gz | 67,178 | da/70/7a74b973b3583fb63d1ff0dcf46cc1424d86c15bcd9cf31727a4d0650e11/speechmarkdown-1.0.2.tar.gz | source | sdist | null | false | 4b29a0142608e5102057e8a5ef7a36b0 | 06d1177475f04ef3b20ce0664313ac2f0ccc588df3109a18136228e0dfd3d811 | da707a74b973b3583fb63d1ff0dcf46cc1424d86c15bcd9cf31727a4d0650e11 | null | [

"LICENSE"

] | 183 |

2.4 | agentwire-dev | 1.7.0 | Multi-session voice web interface for AI coding agents | <p align="center">

<img src="https://agentwire.dev/images/splash-full-transparent.png" alt="AgentWire" width="500">

</p>

<p align="center">

<strong>Talk to your AI coding agents. From anywhere.</strong>

</p>

<p align="center">

<a href="https://pypi.org/project/agentwire-dev/"><img src="https://img.shields.io/py... | text/markdown | null | dotdev <dev@dotdev.dev> | null | null | GNU AFFERO GENERAL PUBLIC LICENSE Version 3, 19 November 2007 Copyright (C) 2007 Free Software Foundation, Inc. <https://fsf.org/> Everyone is permitted to copy and distribute verbatim copies of this license document, but changing it is not allowed. Preamble The GNU Affero General Public License is a free, copyleft ... | agent, ai, claude, stt, tmux, tts, voice | [

"Development Status :: 3 - Alpha",

"Environment :: Web Environment",

"Intended Audience :: Developers",

"License :: OSI Approved :: GNU Affero General Public License v3 or later (AGPLv3+)",

"Operating System :: MacOS",

"Operating System :: POSIX :: Linux",

"Programming Language :: Python :: 3",

"Progr... | [] | null | null | >=3.10 | [] | [] | [] | [

"aiohttp-jinja2>=1.6",

"aiohttp>=3.9.0",

"jinja2>=3.1.0",

"markdown>=3.5",

"mcp>=1.2.0",

"python-dotenv>=1.0.0",

"pyyaml>=6.0",

"requests>=2.28.0",

"resend>=2.0.0",

"pytest-asyncio>=0.23.0; extra == \"dev\"",

"pytest>=7.0; extra == \"dev\"",

"ruff>=0.1.0; extra == \"dev\"",

"fastapi>=0.104.0... | [] | [] | [] | [

"Homepage, https://agentwire.dev",

"Repository, https://github.com/dotdevdotdev/agentwire-dev",

"Documentation, https://agentwire.dev",

"Issues, https://github.com/dotdevdotdev/agentwire-dev/issues",

"Changelog, https://github.com/dotdevdotdev/agentwire-dev/blob/main/CHANGELOG.md"

] | uv/0.7.20 | 2026-02-20T17:28:49.264401 | agentwire_dev-1.7.0.tar.gz | 31,428,487 | d8/a5/f772b1560ce60537a414fb312ba3e2d78fb1c7412993379ddc24db03b605/agentwire_dev-1.7.0.tar.gz | source | sdist | null | false | 14b34651d82d0d507f89636e41d318c2 | 977bc622f3eb7c0b88b5f0bad216a67740001f2fd622b8bcd9acad58c5672396 | d8a5f772b1560ce60537a414fb312ba3e2d78fb1c7412993379ddc24db03b605 | null | [

"LICENSE"

] | 210 |

2.4 | kdebug | 0.6.2 | TUI for Kubernetes Debug Containers | # kdebug - Universal Kubernetes Debug and File Copy Container Utility

Simple utility for launching ephemeral debug containers in Kubernetes pods with interactive shell access, backup capabilities, and a colorful TUI for pod selection.

Similar to [kpf](https://github.com/jessegoodier/kpf), this is a python wrapper aro... | text/markdown | Jesse Goodier | null | null | null | null | cli, debug, kubectl, kubernetes | [

"Development Status :: 4 - Beta",

"Environment :: Console",

"License :: OSI Approved :: MIT License",

"Operating System :: MacOS",

"Operating System :: POSIX :: Linux",

"Programming Language :: Python :: 3"

] | [] | null | null | >=3.9 | [] | [] | [] | [

"rich>=14.0"

] | [] | [] | [] | [

"Homepage, https://github.com/jessegoodier/kdebug",

"Repository, https://github.com/jessegoodier/kdebug"

] | uv/0.10.4 {"installer":{"name":"uv","version":"0.10.4","subcommand":["publish"]},"python":null,"implementation":{"name":null,"version":null},"distro":{"name":"Ubuntu","version":"24.04","id":"noble","libc":null},"system":{"name":null,"release":null},"cpu":null,"openssl_version":null,"setuptools_version":null,"rustc_vers... | 2026-02-20T17:28:36.993759 | kdebug-0.6.2.tar.gz | 23,052 | 2c/4f/a5085e488c18d8c8490b9593c751fa88ff8da90e0892ff2a54afb43fcc63/kdebug-0.6.2.tar.gz | source | sdist | null | false | 686789d84920913bcff21627768549d7 | d4ef180a0071db137de2978f77b15a3eca9ae64b283c4cd3ae1904927db9ae18 | 2c4fa5085e488c18d8c8490b9593c751fa88ff8da90e0892ff2a54afb43fcc63 | MIT | [] | 225 |

2.4 | william-occam | 0.2.3.1 | William: A tool for data compression and machine learning automatization | [](https://gitlab.com/occam_ua/william/-/commits/master)

[](https://pypi.org/project/william-occam/)

](https://pypi.org/project/langchain-nativ/)

[](LICENSE)

[LangChain](https://www.langchain.com/) integration for **[Nativ](https://usenativ.com)** — AI-powered localization.

Give... | text/markdown | null | Nativ <hello@usenativ.com> | null | null | null | ai, i18n, l10n, langchain, localization, nativ, translation | [

"Development Status :: 4 - Beta",

"Intended Audience :: Developers",

"License :: OSI Approved :: MIT License",

"Programming Language :: Python :: 3",

"Programming Language :: Python :: 3.9",

"Programming Language :: Python :: 3.10",

"Programming Language :: Python :: 3.11",