organization string | repo_name string | base_commit string | iss_html_url string | iss_label string | title string | body string | code null | pr_html_url string | commit_html_url string | file_loc string | own_code_loc list | ass_file_loc list | other_rep_loc list | analysis dict | loctype dict | iss_has_pr int64 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

keras-team | keras | da86250e5a95a7adccabd8821b0d51508c82bddc | https://github.com/keras-team/keras/issues/18439 | stat:awaiting response from contributor

stale

type:Bug | Problem with framework agnostic KerasVariable slicing with another KerasVariable | I defined a KerasVariable with shape (n,d) in a `keras.Layer()` using `self.add_weight()`. I've also defined another KerasVariable with shape (1) , dtype="int32", and value 0.

```

self.first_variable = self.add_weight(

initializer="zeros", shape=(self.N,input_shape[-1]), trainable=False

)

self.second_variab... | null | null | null | {'base_commit': 'da86250e5a95a7adccabd8821b0d51508c82bddc', 'files': [{'path': 'keras/src/ops/core.py', 'Loc': {"(None, 'slice', 388)": {'mod': []}}, 'status': 'modified'}]} | [] | [] | [] | {

"iss_type": "1",

"iss_reason": "3",

"loc_way": "comment",

"loc_scope": "0",

"info_type": "Code"

} | {

"code": [

"keras/src/ops/core.py"

],

"doc": [],

"test": [],

"config": [],

"asset": []

} | null |

nvbn | thefuck | 09d9f63c98f9c4fc0953dd3fd6fb4589e9e1f6f3 | https://github.com/nvbn/thefuck/issues/376 | Shell history pollution | I haven't used this, but I just thought maybe this is not such a good idea because it's going to make traversing shell history really irritating. Does this do anything to get around that, or are there any workarounds?

If not, I know in zsh you can just populate the command line with whatever you want using LBUFFER and... | null | null | null | {} | [] | [] | [

{

"org": "ohmyzsh",

"pro": "ohmyzsh",

"path": [

"plugins/thefuck"

]

}

] | {

"iss_type": "4",

"iss_reason": "5",

"loc_way": "comment",

"loc_scope": "2",

"info_type": "Code"

} | {

"code": [],

"doc": [],

"test": [],

"config": [],

"asset": [

"plugins/thefuck"

]

} | null | |

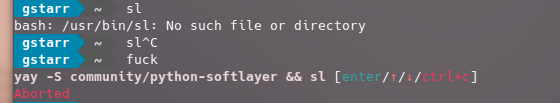

nvbn | thefuck | 6975d30818792f1b37de702fc93c66023c4c50d5 | https://github.com/nvbn/thefuck/issues/1087 | Thinks 'sl' is install python softlayer |

Ah, yes. This wasn't a mis-spelling of ls at all, but me installing Python-Softlayer.

The output of `thefuck --version` (something like `The Fuck 3.1 using Python

3.5.0 and Bash 4.4.12(1)-release`):

... | null | null | null | {'base_commit': '6975d30818792f1b37de702fc93c66023c4c50d5', 'files': [{'path': 'thefuck/rules/sl_ls.py', 'Loc': {}}]} | [] | [] | [] | {

"iss_type": "3",

"iss_reason": "5",

"loc_way": "comment",

"loc_scope": "0",

"info_type": "Code"

} | {

"code": [

"thefuck/rules/sl_ls.py"

],

"doc": [],

"test": [],

"config": [],

"asset": []

} | null | |

hiyouga | LLaMA-Factory | 921778a7cfa442409d17ab946c5f579e308c4f2b | https://github.com/hiyouga/LLaMA-Factory/issues/404 | invalid | Inexplicable automatic Q&A appears in the response when calling the API | Model used: baichuan-13b

Script used: scr/api_demo.py

The question asked was: 你好 ("Hello")

The response is as shown in the image

I don't understand why automatic multi-turn self question-and-answer appears | null | null | null | {'base_commit': '921778a7cfa442409d17ab946c5f579e308c4f2b', 'files': [{'path': 'README.md', 'Loc': {}}]} | [] | [] | [] | {

"iss_type": "2",

"iss_reason": "5",

"loc_way": "comment",

"loc_scope": "0\nmentioned in the README",

"info_type": "Doc"

} | {

"code": [],

"doc": [

"README.md"

],

"test": [],

"config": [],

"asset": []

} | null |

hiyouga | LLaMA-Factory | 984b202f835d6f3f4869cbb1f0460bb2d9163fc1 | https://github.com/hiyouga/LLaMA-Factory/issues/6562 | solved | Batch Inference Error for qwen2vl Model After Full Fine-Tuning | ### Reminder

- [X] I have read the README and searched the existing issues.

### System Info

- `llamafactory` version: 0.9.2.dev0

- Python version: 3.8.20

- PyTorch version: 2.4.1+cu121 (GPU)

- Transformers version: 4.46.1

- Datasets version: 3.1.0

- Accelerate version: 1.0.1

- PEFT version: 0.12.0

- TRL versi... | null | null | null | {'base_commit': '984b202f835d6f3f4869cbb1f0460bb2d9163fc1', 'files': [{'path': 'scripts/vllm_infer.py', 'Loc': {"(None, 'vllm_infer', 38)": {'mod': [43]}}, 'status': 'modified'}]} | [] | [] | [] | {

"iss_type": "1",

"iss_reason": "3",

"loc_way": "comment",

"loc_scope": "0",

"info_type": "Code"

} | {

"code": [

"scripts/vllm_infer.py"

],

"doc": [],

"test": [],

"config": [],

"asset": []

} | null |

hiyouga | LLaMA-Factory | 4ed2b629a51ef58d229c795e85238d40346ecb58 | https://github.com/hiyouga/LLaMA-Factory/issues/5478 | solved | Can we set default_system in yaml file when training? | ### Reminder

- [X] I have read the README and searched the existing issues.

### System Info

- `llamafactory` version: 0.8.4.dev0

- Platform: Linux-5.4.0-125-generic-x86_64-with-glibc2.29

- Python version: 3.8.10

- PyTorch version: 2.4.0+cu121 (GPU)

- Transformers version: 4.44.2

- Datasets version: 2.21.0

- Ac... | null | null | null | {'base_commit': '4ed2b629a51ef58d229c795e85238d40346ecb58', 'files': [{'path': 'data/', 'Loc': {}}, {'path': 'data/', 'Loc': {}}]} | [] | [] | [] | {

"iss_type": "3",

"iss_reason": "5",

"loc_way": "comment",

"loc_scope": "0",

"info_type": "Code"

} | {

"code": [],

"doc": [],

"test": [],

"config": [],

"asset": [

"data/"

]

} | null |

hiyouga | LLaMA-Factory | 18c6e6fea9dcc77c03b36301efe2025a87e177d5 | https://github.com/hiyouga/LLaMA-Factory/issues/1971 | solved | llama'response repeat input then the answer | ### Reminder

- [ ] I have read the README and searched the existing issues.

### Reproduction

input_ids = tokenizer(["[INST] " +{text}" + " [/INST]"], return_tensors="pt", add_special_tokens=False).input_ids.to('cuda')

generate_input = {

"input_ids": input_ids,

"max_new_tokens": 512,

... | null | null | null | {'base_commit': '18c6e6fea9dcc77c03b36301efe2025a87e177d5', 'files': [{'path': 'src/llmtuner/chat/chat_model.py', 'Loc': {"('ChatModel', 'chat', 88)": {'mod': [102]}}, 'status': 'modified'}]} | [] | [] | [] | {

"iss_type": "2",

"iss_reason": "5",

"loc_way": "comment",

"loc_scope": "0",

"info_type": "Code"

} | {

"code": [

"src/llmtuner/chat/chat_model.py"

],

"doc": [],

"test": [],

"config": [],

"asset": []

} | null |

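hiyouga | LLaMA-Factory | 13eb365eb768f30d46967dd5ba302ab1106a96b6 | https://github.com/hiyouga/LLaMA-Factory/issues/1443 | solved | When resuming training from a checkpoint with DeepSpeed on one machine with eight GPUs, setting the --checkpoint_dir parameter directly loads only the model weights and fails to load the optimizer weights | When configuring the baichuan2 model to resume training from a fixed `step`, I want to load both mp_rank_00_model_states.pt and zero_pp_rank_*_mp_rank_00_optim_states.pt

However, when resuming training with `--checkpoint_dir` using the command below, the optimizer state zero_pp_rank_*_mp_rank_00_optim_states.pt is not loaded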

`

deepspeed --num_gpus ${NUM_GPUS_PER_WORKER} src/train_bash.py \

--stage sft \

--model_name_or_path /xxxx... | null | null | null | {'base_commit': '13eb365eb768f30d46967dd5ba302ab1106a96b6', 'files': [{'path': 'src/llmtuner/tuner/sft/workflow.py', 'Loc': {"(None, 'run_sft', 19)": {'mod': [67]}}, 'status': 'modified'}]} | [] | [] | [] | {

"iss_type": "3",

"iss_reason": "5",

"loc_way": "comment",

"loc_scope": "0",

"info_type": "Code"

} | {

"code": [

"src/llmtuner/tuner/sft/workflow.py"

],

"doc": [],

"test": [],

"config": [],

"asset": []

} | null |

hiyouga | LLaMA-Factory | 5377d0bf95f2fc79b75b253e956a7945f3030ad3 | https://github.com/hiyouga/LLaMA-Factory/issues/908 | solved | Besides BLEU and Chinese ROUGE scores, can other evaluation metrics be used? | I want to use the model for intent and slot extraction. This task is usually evaluated with accuracy, F1 score, and similar metrics. Can accuracy and F1 score be used as evaluation metrics in this project, and how would I do that? Thanks for your help! | null | null | null | {'base_commit': '5377d0bf95f2fc79b75b253e956a7945f3030ad3', 'files': [{'path': 'src/llmtuner/tuner/sft/metric.py', 'Loc': {}}]} | [] | [] | [] | {

"iss_type": "3",

"iss_reason": "5",

"loc_way": "comment",

"loc_scope": "0",

"info_type": "Code"

} | {

"code": [

"src/llmtuner/tuner/sft/metric.py"

],

"doc": [],

"test": [],

"config": [],

"asset": []

} | null |

hiyouga | LLaMA-Factory | 93809d1c3b73898a89cbdd99061eeeed5fd4f6a7 | https://github.com/hiyouga/LLaMA-Factory/issues/1120 | solved | System prompt | I'd like to ask: how is the content of the "System prompt (optional)" box passed to the model, and how is it concatenated with the content of the "Input..." box? Where is the corresponding code?

Many thanks | null | null | null | {'base_commit': '93809d1c3b73898a89cbdd99061eeeed5fd4f6a7', 'files': [{'path': 'src/llmtuner/extras/template.py', 'Loc': {"('Template', '_encode', 93)": {'mod': [109]}}, 'status': 'modified'}]} | [] | [] | [] | {

"iss_type": "5",

"iss_reason": "5",

"loc_way": "comment",

"loc_scope": "0",

"info_type": "Code"

} | {

"code": [

"src/llmtuner/extras/template.py"

],

"doc": [],

"test": [],

"config": [],

"asset": []

} | null |

hiyouga | LLaMA-Factory | 757564caa1a0e83d184100604e43efe3c5030c0e | https://github.com/hiyouga/LLaMA-Factory/issues/2584 | solved | How should llama pro be used? Can it be used for fine-tuning? | ### Reminder

- [X] I have read the README and searched the existing issues.

### Reproduction

How should llama pro be used? Can it be used for pre-training (pt) and SFT?

### Expected behavior

_No response_

### System Info

_No response_

### Others

_No response_ | null | null | null | {'base_commit': '757564caa1a0e83d184100604e43efe3c5030c0e', 'files': [{'path': 'tests/llama_pro.py', 'Loc': {}}]} | [] | [] | [] | {

"iss_type": "3",

"iss_reason": "5",

"loc_way": "comment",

"loc_scope": "0",

"info_type": "Code"

} | {

"code": [

"tests/llama_pro.py"

],

"doc": [],

"test": [],

"config": [],

"asset": []

} | null |

hiyouga | LLaMA-Factory | e678c1ccb2583e7b3e9e5bf68b58affc1a71411c | https://github.com/hiyouga/LLaMA-Factory/issues/5011 | solved | Compute_Accuracy | ### Reminder

- [X] I have read the README and searched the existing issues.

### System Info

I'm curious what this metric (ComputeAccuracy) is for, and how and when I could use it.

- Transformers version: 4.41.2

- Datasets versi... | null | null | null | {'base_commit': '955e01c038ccc708def77f392b0e342f2f51dc9b', 'files': [{'path': 'examples/deepspeed/ds_z3_offload_config.json', 'Loc': {}}]} | [] | [] | [] | {

"iss_type": "3",

"iss_reason": "5",

"loc_way": "comment",

"loc_scope": "0",

"info_type": "Code"

} | {

"code": [

"examples/deepspeed/ds_z3_offload_config.json"

],

"doc": [],

"test": [],

"config": [],

"asset": []

} | null |

hiyouga | LLaMA-Factory | 955e01c038ccc708def77f392b0e342f2f51dc9b | https://github.com/hiyouga/LLaMA-Factory/issues/4803 | solved | predict_oom | ### Reminder

- [X] I have read the README and searched the existing issues.

### System Info

model_name_or_path: llm/Qwen2-72B-Instruct

# adapter_name_or_path: saves/qwen2_7b_errata_0705/lora_ace04_instruction_v1_savesteps_10/sft

### method

stage: sft

do_predict: true

finetuning_type: lora

### dataset

data... | null | null | null | {'base_commit': '955e01c038ccc708def77f392b0e342f2f51dc9b', 'files': [{'path': 'Examples/train_lora/', 'Loc': {}}]} | [] | [] | [] | {

"iss_type": "1",

"iss_reason": "3\nuser configuration error",

"loc_way": "comment",

"loc_scope": "3",

"info_type": "config"

} | {

"code": [],

"doc": [],

"test": [],

"config": [],

"asset": [

"Examples/train_lora/"

]

} | null |

hiyouga | LLaMA-Factory | 3f11ab800f7dcf4b61a7c72ead4e051db11a8091 | https://github.com/hiyouga/LLaMA-Factory/issues/4178 | solved | With glm-4-9b-chat-1m, the labels in the generated_predictions.jsonl produced by do_predict contain \n and some results that are not in the dataset. | ### Reminder

- [X] I have read the README and searched the existing issues.

### System Info

llamafactory 0.7.2.dev0

Python 3.10.14

ubuntu 20.04

### Reproduction

$llamafactory-cli train glm_predict.yaml

generated_predictions.jsonl output

{"label": "\n[S,137.0]", "predict": "\n[S,137.0]"}

{"label": "... | null | null | null | {'base_commit': '3f11ab800f7dcf4b61a7c72ead4e051db11a8091', 'files': [{'path': 'src/llamafactory/data/template.py', 'Loc': {'(None, None, None)': {'mod': [663, 664]}}, 'status': 'modified'}]} | [] | [] | [] | {

"iss_type": "2",

"iss_reason": "3",

"loc_way": "comment",

"loc_scope": "0",

"info_type": "Code"

} | {

"code": [

"src/llamafactory/data/template.py"

],

"doc": [],

"test": [],

"config": [],

"asset": []

} | null |

hiyouga | LLaMA-Factory | d46c136c0e104c50999df18a88c42658b819f71f | https://github.com/hiyouga/LLaMA-Factory/issues/230 | solved | After training baichuan-13b with this project, how do I load the trained model in baichuan-13b? | After training is complete, how should I modify the baichuan-13b project to load the trained model? | null | null | null | {'base_commit': 'd46c136c0e104c50999df18a88c42658b819f71f', 'files': [{'path': 'src/export_model.py', 'Loc': {}}]} | [] | [] | [] | {

"iss_type": "3",

"iss_reason": "5",

"loc_way": "comment",

"loc_scope": "0",

"info_type": "Code"

} | {

"code": [

"src/export_model.py"

],

"doc": [],

"test": [],

"config": [],

"asset": []

} | null |

hiyouga | LLaMA-Factory | 024b0b1ab28d3c3816f319370ed79a4f26d40edf | https://github.com/hiyouga/LLaMA-Factory/issues/1995 | solved | Running RM LoRA with Phi-1.5 raises 'NoneType' object is not subscriptable | ### Reminder

- [X] I have read the README and searched the existing issues.

### Reproduction

Shell script:

```

deepspeed --num_gpus 8 --master_port=9901 src/train_bash.py \

--stage rm \

--model_name_or_path Phi-1.5 \

--deepspeed ds_config.json \

--adapter_name_or_path sft_lora \

--create_new... | null | null | null | {} | [] | [] | [

{

"org": "docs",

"pro": "transformers",

"path": [

"model_doc/phi"

]

}

] | {

"iss_type": "1",

"iss_reason": "5",

"loc_way": "comment",

"loc_scope": "2",

"info_type": "Doc"

} | {

"code": [],

"doc": [

"model_doc/phi"

],

"test": [],

"config": [],

"asset": []

} | null |

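hiyouga | LLaMA-Factory | d46c136c0e104c50999df18a88c42658b819f71f | https://github.com/hiyouga/LLaMA-Factory/issues/226 | solved | How does the project handle multi-turn dialogue data? | Are multiple historical turns concatenated and used as the input to predict the final turn's answer? Or is the dialogue history split into training samples with different numbers of turns, for example a 5-turn dialogue split into samples of 1, 2, 3, 4, and 5 turns? Could the author point out the code for the specific processing? I'd like to study it. Thanks. | null | null | null | {'base_commit': 'd46c136c0e104c50999df18a88c42658b819f71f', 'files': [{'path': 'src/llmtuner/dsets/preprocess.py', 'Loc': {"(None, 'preprocess_supervised_dataset', 50)": {'mod': []}}, 'status': 'modified'}]} | [] | [] | [] | {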

"iss_type": "5",

"iss_reason": "5",

"loc_way": "comment",

"loc_scope": "0",

"info_type": "Code"

} | {

"code": [

"src/llmtuner/dsets/preprocess.py"

],

"doc": [],

"test": [],

"config": [],

"asset": []

} | null |

zylon-ai | private-gpt | 2f3aab9cfdc139f399387dbb90300d5a8bf8d2f1 | https://github.com/zylon-ai/private-gpt/issues/375 | bug | ValueError: Requested tokens exceed context window of 1000 | After I ingest a file, run privateGPT and try to ask anything, I get following error:

```

Traceback (most recent call last):

File "C:\Stable_Diffusion\privateGPT\privateGPT.py", line 75, in <module>

main()

File "C:\Stable_Diffusion\privateGPT\privateGPT.py", line 47, in main

res = qa(query)

File ... | null | null | null | {'base_commit': '2f3aab9cfdc139f399387dbb90300d5a8bf8d2f1', 'files': [{'path': 'ingest.py', 'Loc': {"(None, 'process_documents', 114)": {'mod': [124]}}, 'status': 'modified'}]} | [] | [

".env"

] | [] | {

"iss_type": "1",

"iss_reason": "5",

"loc_way": "comment",

"loc_scope": "0\nor\n1",

"info_type": "Code"

} | {

"code": [

"ingest.py"

],

"doc": [],

"test": [],

"config": [

".env"

],

"asset": []

} | null |

zylon-ai | private-gpt | 24cfddd60f74aadd2dade4c63f6012a2489938a1 | https://github.com/zylon-ai/private-gpt/issues/1125 | LLM mock does not give any output | I have downloaded the LLM models

used this

poetry install --with local

poetry run python scripts/setup

still i get this output

| null | null | null | {'base_commit': '24cfddd60f74aadd2dade4c63f6012a2489938a1', 'files': [{'path': 'settings.yaml', 'Loc': {}}]} | [] | [] | [] | {

"iss_type": "2",

"iss_reason": "5",

"loc_way": "comment",

"loc_scope": "0",

"info_type": "Config\nCode"

} | {

"code": [],

"doc": [],

"test": [],

"config": [

"settings.yaml"

],

"asset": []

} | null | |

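zylon-ai | private-gpt | dd1100202881a01b6b013b7bc1faad8b5c63fec9 | https://github.com/zylon-ai/private-gpt/issues/850 | primordial | privateGPT reports that the token limit is exceeded for questions in Chinese; questions in English do not have this problem |

The token counting is strange: a five-character instruction uses more tokens than a seven-character one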

| null | null | null | {} | [] | [

".env"

] | [] | {

"iss_type": "1",

"iss_reason": "4",

"loc_way": "comment",

"loc_scope": "1",

"info_type": "config"

} | {

"code": [],

"doc": [],

"test": [],

"config": [

".env"

],

"asset": []

} | null |

zylon-ai | private-gpt | d17c34e81a84518086b93605b15032e2482377f7 | https://github.com/zylon-ai/private-gpt/issues/1724 | Error in Model Download and Tokenizer Fetching During Setup Script Execution | ### Environment

Operating System: Macbook Pro M1

Python Version: 3.11

Description

I'm encountering an issue when running the setup script for my project. The script is supposed to download an embedding model and an LLM model from Hugging Face, followed by their respective tokenizers. While the script successfully... | null | null | null | {'base_commit': 'd17c34e81a84518086b93605b15032e2482377f7', 'files': [{'path': 'settings.yaml', 'Loc': {'(None, None, 42)': {'mod': [42]}}, 'status': 'modified'}]} | [] | [] | [] | {

"iss_type": "1",

"iss_reason": "5",

"loc_way": "comment",

"loc_scope": "0",

"info_type": "Config\nCode"

} | {

"code": [],

"doc": [],

"test": [],

"config": [

"settings.yaml"

],

"asset": []

} | null | |

zylon-ai | private-gpt | 026b9f895cfb727da523a20c59773146801236ba | https://github.com/zylon-ai/private-gpt/issues/13 | gpt_tokenize: unknown token '?' | gpt_tokenize: unknown token '?'

gpt_tokenize: unknown token '?'

gpt_tokenize: unknown token '?'

gpt_tokenize: unknown token '?'

gpt_tokenize: unknown token '?'

gpt_tokenize: unknown token '?'

gpt_tokenize: unknown token '?'

gpt_tokenize: unknown token '?'

gpt_tokenize: unknown token '?'

gpt_tokenize: unknown t... | null | null | null | {} | [] | [

".env"

] | [] | {

"iss_type": "1",

"iss_reason": "3\n???",

"loc_way": "comment",

"loc_scope": "1",

"info_type": "Code"

} | {

"code": [],

"doc": [],

"test": [],

"config": [

".env"

],

"asset": []

} | null | |

zylon-ai | private-gpt | d3acd85fe34030f8cfd7daf50b30c534087bdf2b | https://github.com/zylon-ai/private-gpt/issues/1514 | LLM Chat only returns "#" characters | No matter the prompt, privateGPT only returns hashes as the response. This doesn't occur when not using CUBLAS.

<img width="745" alt="image" src="https://github.com/imartinez/privateGPT/assets/6668593/b4ef137f-0122-44fe-864a-eef246066ec3">

Set up info:

NVIDIA GeForce RTX 4080

Windows 11

<img width="924" a... | null | null | null | {'base_commit': 'd3acd85fe34030f8cfd7daf50b30c534087bdf2b', 'files': [{'path': 'private_gpt/components/llm/llm_component.py', 'Loc': {"('LLMComponent', '__init__', 21)": {'mod': [45]}}, 'status': 'modified'}]} | [] | [] | [] | {

"iss_type": "2",

"iss_reason": "5",

"loc_way": "comment",

"loc_scope": "0",

"info_type": "Code"

} | {

"code": [

"private_gpt/components/llm/llm_component.py"

],

"doc": [],

"test": [],

"config": [],

"asset": []

} | null | |

zylon-ai | private-gpt | c4b247d696c727c1da6d993ce4f6c3a557e91b42 | https://github.com/zylon-ai/private-gpt/issues/685 | enhancement

primordial | CPU utilization | CPU utilization appears to be capped at 20%

Is there a way to increase CPU utilization and thereby enhance performance?

| null | null | null | {'base_commit': 'c4b247d696c727c1da6d993ce4f6c3a557e91b42', 'files': [{'path': 'privateGPT.py', 'Loc': {"(None, 'main', 23)": {'mod': [36]}}, 'status': 'modified'}]} | [] | [] | [] | {

"iss_type": "3",

"iss_reason": "5",

"loc_way": "comment",

"loc_scope": "0",

"info_type": "Code"

} | {

"code": [

"privateGPT.py"

],

"doc": [],

"test": [],

"config": [],

"asset": []

} | null |

zylon-ai | private-gpt | 7ae80e662936bd946a231d1327bde476556c5d61 | https://github.com/zylon-ai/private-gpt/issues/181 | primordial | Segfault : not enough space in the context's memory pool | ggml_new_tensor_impl: not enough space in the context's memory pool (needed 3779301744, available 3745676000)

zsh: segmentation fault python3.11 privateGPT.py

What is the context memory pool? Can I configure it? I actually have a lot of excess memory | null | null | null | {'base_commit': '7ae80e662936bd946a231d1327bde476556c5d61', 'files': [{'path': 'ingest.py', 'Loc': {"(None, 'main', 37)": {'mod': [47]}}, 'status': 'modified'}]} | [] | [] | [] | {

"iss_type": "2",

"iss_reason": "5",

"loc_way": "comment",

"loc_scope": "0",

"info_type": "Code"

} | {

"code": [

"ingest.py"

],

"doc": [],

"test": [],

"config": [],

"asset": []

} | null |

zylon-ai | private-gpt | 9d47d03d183685c675070d47ad3beb67446d6580 | https://github.com/zylon-ai/private-gpt/issues/630 | bug

primordial | Use falcon model in privategpt | Hi how can i use Falcon model in privategpt?

https://huggingface.co/tiiuae/falcon-40b-instruct

Thanks | null | null | null | {'base_commit': '9d47d03d183685c675070d47ad3beb67446d6580', 'files': [{'path': 'privateGPT.py', 'Loc': {"(None, 'main', 23)": {'mod': [32]}}, 'status': 'modified'}]} | [] | [] | [] | {

"iss_type": "3",

"iss_reason": "5",

"loc_way": "comment",

"loc_scope": "0",

"info_type": "Code"

} | {

"code": [

"privateGPT.py"

],

"doc": [],

"test": [],

"config": [],

"asset": []

} | null |

zylon-ai | private-gpt | 380b119581d2afcd24948f1108507b138490aec6 | https://github.com/zylon-ai/private-gpt/issues/235 | bug

primordial | Need help on in some errors | File "F:\privateGPT\Lib\site-packages\langchain\embeddings\llamacpp.py", line 79, in validate_environment

values["client"] = Llama(

^^^^^^

File "F:\privateGPT\Lib\site-packages\llama_cpp\llama.py", line 155, in __init__

self.ctx = llama_cpp.llama_init_from_file(

... | null | null | null | {'base_commit': '380b119581d2afcd24948f1108507b138490aec6', 'files': [{'path': 'README.md', 'Loc': {}}]} | [] | [] | [] | {

"iss_type": "1",

"iss_reason": "5",

"loc_way": "comment",

"loc_scope": "0",

"info_type": "Doc"

} | {

"code": [],

"doc": [

"README.md"

],

"test": [],

"config": [],

"asset": []

} | null |

zylon-ai | private-gpt | b1057afdf8f65fdb10e4160adbd8462be0c08271 | https://github.com/zylon-ai/private-gpt/issues/796 | primordial | Unable to instantiate model (type=value_error) | Installed on Ubuntu 20.04 with Python3.11-venv

Error on line 38:

https://github.com/imartinez/privateGPT/blob/b1057afdf8f65fdb10e4160adbd8462be0c08271/privateGPT.py#L38C7-L38C7

Error:

Using embedded DuckDB with persistence: data will be stored in: db

Found model file at models/ggml-gpt4all-j-v1.3-groovy.bin... | null | null | null | {} | [] | [

"ggml-gpt4all-j-v1.3-groovy.bin"

] | [] | {

"iss_type": "1",

"iss_reason": "3",

"loc_way": "comment",

"loc_scope": "",

"info_type": "Code"

} | {

"code": [],

"doc": [],

"test": [],

"config": [],

"asset": [

"ggml-gpt4all-j-v1.3-groovy.bin"

]

} | null |

zylon-ai | private-gpt | dd1100202881a01b6b013b7bc1faad8b5c63fec9 | https://github.com/zylon-ai/private-gpt/issues/839 | bug

primordial | ERROR: The prompt size exceeds the context window size and cannot be processed. | Enter a query,

It show:

ERROR: The prompt size exceeds the context window size and cannot be processed.GPT-J ERROR: The prompt is2614tokens and the context window is2048!

ERROR: The prompt size exceeds the context window size and cannot be processed. | null | null | null | {} | [] | [

".env"

] | [] | {

"iss_type": "1",

"iss_reason": "5",

"loc_way": "comment",

"loc_scope": "1",

"info_type": "Code"

} | {

"code": [],

"doc": [],

"test": [],

"config": [

".env"

],

"asset": []

} | null |

zylon-ai | private-gpt | 6bbec79583b7f28d9bea4b39c099ebef149db843 | https://github.com/zylon-ai/private-gpt/issues/1598 | Performance bottleneck using GPU | Hi Guys,

I am running the default Mistral model, and when running queries I am seeing 100% CPU usage (so single core), and up to 29% GPU usage which drops to have 15% mid answer.

I am using a MacBook Pro with M3 Max. I have set: model_kwargs={"n_gpu_layers": -1, "offload_kqv": True},

I am curious as LM studi... | null | null | null | {'base_commit': '6bbec79583b7f28d9bea4b39c099ebef149db843', 'files': [{'path': 'private_gpt/ui/ui.py', 'Loc': {"('PrivateGptUi', 'yield_deltas', 81)": {'mod': []}}, 'status': 'modified'}]} | [] | [] | [] | {

"iss_type": "2",

"iss_reason": "2",

"loc_way": "comment",

"loc_scope": "0",

"info_type": "Code"

} | {

"code": [

"private_gpt/ui/ui.py"

],

"doc": [],

"test": [],

"config": [],

"asset": []

} | null | |

yt-dlp | yt-dlp | 87ebab0615b1bf9b14b478b055e7059d630b4833 | https://github.com/yt-dlp/yt-dlp/issues/6007 | question | How to limit YouTube Music search to tracks only? | ### DO NOT REMOVE OR SKIP THE ISSUE TEMPLATE

- [X] I understand that I will be **blocked** if I remove or skip any mandatory\* field

### Checklist

- [X] I'm asking a question and **not** reporting a bug or requesting a feature

- [X] I've looked through the [README](https://github.com/yt-dlp/yt-dlp#readme)

- [X] I've... | null | null | null | {'base_commit': '87ebab0615b1bf9b14b478b055e7059d630b4833', 'files': [{'path': 'yt_dlp/extractor/youtube.py', 'Loc': {"('YoutubeMusicSearchURLIE', None, 6647)": {'mod': [6676]}}, 'status': 'modified'}, {'path': 'yt_dlp/extractor/youtube.py', 'Loc': {"('YoutubeMusicSearchURLIE', None, 6647)": {'mod': [6659]}}, 'status':... | [] | [] | [] | {

"iss_type": "3",

"iss_reason": "5",

"loc_way": "comment",

"loc_scope": "0",

"info_type": "Code"

} | {

"code": [

"yt_dlp/extractor/youtube.py"

],

"doc": [],

"test": [],

"config": [],

"asset": []

} | null |

yt-dlp | yt-dlp | 91302ed349f34dc26cc1d661bb45a4b71f4417f7 | https://github.com/yt-dlp/yt-dlp/issues/7436 | question | Is YT-DLP capable of downloading/displaying automatic captions? | ### DO NOT REMOVE OR SKIP THE ISSUE TEMPLATE

- [X] I understand that I will be **blocked** if I *intentionally* remove or skip any mandatory\* field

### Checklist

- [X] I'm asking a question and **not** reporting a bug or requesting a feature

- [X] I've looked through the [README](https://github.com/yt-dlp/yt... | null | null | null | {'base_commit': '91302ed349f34dc26cc1d661bb45a4b71f4417f7', 'files': [{'path': 'yt_dlp/options.py', 'Loc': {"(None, 'create_parser', 216)": {'mod': [853, 857, 861]}}, 'status': 'modified'}]} | [] | [] | [] | {

"iss_type": "3",

"iss_reason": "3",

"loc_way": "comment",

"loc_scope": "0\nthis one may not count, because the user knows the command and it is only a quoting problem",

"info_type": "Code"

} | {

"code": [

"yt_dlp/options.py"

],

"doc": [],

"test": [],

"config": [],

"asset": []

} | null |

yt-dlp | yt-dlp | 6075a029dba70a89675ae1250e7cdfd91f0eba41 | https://github.com/yt-dlp/yt-dlp/issues/10356 | question | Unable to install curl_cffi | ### DO NOT REMOVE OR SKIP THE ISSUE TEMPLATE

- [X] I understand that I will be **blocked** if I *intentionally* remove or skip any mandatory\* field

### Checklist

- [X] I'm asking a question and **not** reporting a bug or requesting a feature

- [X] I've looked through the [README](https://github.com/yt-dlp/yt-dlp#re... | null | null | null | {} | [] | [

".zshrc",

".bash_profile"

] | [] | {

"iss_type": "1",

"iss_reason": "5",

"loc_way": "comment",

"loc_scope": "1",

"info_type": "Code"

} | {

"code": [],

"doc": [],

"test": [],

"config": [

".bash_profile",

".zshrc"

],

"asset": []

} | null |

yt-dlp | yt-dlp | 4a601c9eff9fb42e24a4c8da3fa03628e035b35b | https://github.com/yt-dlp/yt-dlp/issues/8479 | question

NSFW | OUTPUT TEMPLATE --output %(title)s.%(ext)s | ### DO NOT REMOVE OR SKIP THE ISSUE TEMPLATE

- [X] I understand that I will be **blocked** if I *intentionally* remove or skip any mandatory\* field

### Checklist

- [X] I'm asking a question and **not** reporting a bug or requesting a feature

- [X] I've looked through the [README](https://github.com/yt-dlp/yt... | null | null | null | {} | [] | [

"yt-dlp.conf"

] | [] | {

"iss_type": "2",

"iss_reason": "3",

"loc_way": "comment",

"loc_scope": "1",

"info_type": "Code"

} | {

"code": [

"yt-dlp.conf"

],

"doc": [],

"test": [],

"config": [],

"asset": []

} | null |

yt-dlp | yt-dlp | a903d8285c96b2c7ac7915f228a17e84cbfe3ba4 | https://github.com/yt-dlp/yt-dlp/issues/1238 | question | [Question] How to use Sponsorblock as part of Python script | <!--

######################################################################

WARNING!

IGNORING THE FOLLOWING TEMPLATE WILL RESULT IN ISSUE CLOSED AS INCOMPLETE

######################################################################

-->

## Checklist

<!--

Carefully read and work through this check lis... | null | null | null | {'base_commit': 'a903d8285c96b2c7ac7915f228a17e84cbfe3ba4', 'files': [{'path': 'yt_dlp/__init__.py', 'Loc': {"(None, '_real_main', 62)": {'mod': [427, 501]}}, 'status': 'modified'}, {'path': 'README.md', 'Loc': {}}]} | [] | [] | [] | {

"iss_type": "3",

"iss_reason": "5",

"loc_way": "comment",

"loc_scope": "0",

"info_type": "Code"

} | {

"code": [

"yt_dlp/__init__.py"

],

"doc": [

"README.md"

],

"test": [],

"config": [],

"asset": []

} | null |

yt-dlp | yt-dlp | 8531d2b03bac9cc746f2ee8098aaf8f115505f5b | https://github.com/yt-dlp/yt-dlp/issues/10462 | question | Cookie not loading when downloading instagram videos | ### DO NOT REMOVE OR SKIP THE ISSUE TEMPLATE

- [X] I understand that I will be **blocked** if I *intentionally* remove or skip any mandatory\* field

### Checklist

- [X] I'm asking a question and **not** reporting a bug or requesting a feature

- [X] I've looked through the [README](https://github.com/yt-dlp/yt... | null | null | null | {'base_commit': '8531d2b03bac9cc746f2ee8098aaf8f115505f5b', 'files': [{'path': 'yt_dlp/YoutubeDL.py', 'Loc': {"('YoutubeDL', None, 189)": {'mod': [335]}}, 'status': 'modified'}, {'path': 'yt_dlp/__init__.py', 'Loc': {"(None, 'parse_options', 737)": {'mod': [901]}}, 'status': 'modified'}]} | [] | [] | [] | {

"iss_type": "1",

"iss_reason": "3",

"loc_way": "comment",

"loc_scope": "0",

"info_type": "Code"

} | {

"code": [

"yt_dlp/__init__.py",

"yt_dlp/YoutubeDL.py"

],

"doc": [],

"test": [],

"config": [],

"asset": []

} | null |

yt-dlp | yt-dlp | e59c82a74cda5139eb3928c75b0bd45484dbe7f0 | https://github.com/yt-dlp/yt-dlp/issues/11152 | question | How to use --merge-output-format? | ### DO NOT REMOVE OR SKIP THE ISSUE TEMPLATE

- [X] I understand that I will be **blocked** if I *intentionally* remove or skip any mandatory\* field

### Checklist

- [X] I'm asking a question and **not** reporting a bug or requesting a feature

- [X] I've looked through the [README](https://github.com/yt-dlp/yt-dlp#re... | null | null | null | {'base_commit': 'e59c82a74cda5139eb3928c75b0bd45484dbe7f0', 'files': [{'path': 'README.md', 'Loc': {'(None, None, 1430)': {'mod': [1430]}}, 'status': 'modified'}, {'path': 'yt_dlp/options.py', 'Loc': {"(None, 'create_parser', 219)": {'mod': [786, 790]}}, 'status': 'modified'}]} | [] | [] | [] | {

"iss_type": "3",

"iss_reason": "3",

"loc_way": "comment",

"loc_scope": "",

"info_type": "Code"

} | {

"code": [

"yt_dlp/options.py"

],

"doc": [

"README.md"

],

"test": [],

"config": [],

"asset": []

} | null |

comfyanonymous | ComfyUI | f18ebbd31645437afaa9738fcf2b5ed8b48cb021 | https://github.com/comfyanonymous/ComfyUI/issues/6177 | Feature | Workflow that can follow different paths and skip some of them. | ### Feature Idea

Hi.

I am very interested in the ability to create a workflow that can follow different paths and skip some if they are not needed.

For example, I want to create an image and save it under a fixed name (unique). But tomorrow (or after restart) I want to run this workflow again and work with the alr... | null | null | null | {} | [] | [] | [

{

"org": "ltdrdata",

"pro": "ComfyUI-extension-tutorials",

"path": [

"ComfyUI-Impact-Pack/tutorial/switch.md"

]

}

] | {

"iss_type": "4",

"iss_reason": "5",

"loc_way": "comment",

"loc_scope": "0",

"info_type": "Doc"

} | {

"code": [],

"doc": [

"ComfyUI-Impact-Pack/tutorial/switch.md"

],

"test": [],

"config": [],

"asset": []

} | null |

comfyanonymous | ComfyUI | 834ab278d2761c452f8e76c83fb62d8f8ce39301 | https://github.com/comfyanonymous/ComfyUI/issues/1064 | Error occurred when executing CLIPVisionEncode | Hi there,

Somehow I can't get unCLIP to work.

The .png contains the unCLIP example workflow I tried out, but it gets stuck in the CLIPVisionEncode module.

What can I do to solve this?

Error occurred when executing CLIPVisionEncode:

'NoneType' object has no attribute 'encode_image'

File "D:\ComfyUI_windows_por... | null | null | null | {'base_commit': '834ab278d2761c452f8e76c83fb62d8f8ce39301', 'files': [{'path': 'README.md', 'Loc': {'(None, None, 30)': {'mod': [30]}}, 'status': 'modified'}]} | [] | [] | [] | {

"iss_type": "1",

"iss_reason": "5",

"loc_way": "comment",

"loc_scope": "2",

"info_type": "model\n+\nDoc"

} | {

"code": [],

"doc": [

"README.md"

],

"test": [],

"config": [],

"asset": []

} | null | |

comfyanonymous | ComfyUI | 3c60ecd7a83da43d694e26a77ca6b93106891251 | https://github.com/comfyanonymous/ComfyUI/issues/5229 | User Support | Problem with ComfyUI workflow "ControlNetApplySD3 'NoneType' object has no attribute 'copy'" | ### Your question

I get the following error when running the workflow

I leave a video of what I am working on as a reference.

https://www.youtube.com/watch?v=MbQv8zoNEfY

video of reference

### Logs

```powershell

# ComfyUI Error Report

## Error Details

- **Node Type:** ControlNetApplySD3

- **Exception Typ... | null | null | null | {} | [] | [] | [

{

"org": "Shakker-Labs",

"pro": "FLUX.1-dev-ControlNet-Union-Pro"

}

] | {

"iss_type": "1",

"iss_reason": "3",

"loc_way": "comment",

"loc_scope": "2",

"info_type": "Code"

} | {

"code": [],

"doc": [],

"test": [],

"config": [],

"asset": [

"FLUX.1-dev-ControlNet-Union-Pro"

]

} | null |

comfyanonymous | ComfyUI | 494cfe5444598f22eced91b6f4bfffc24c4af339 | https://github.com/comfyanonymous/ComfyUI/issues/96 | Feature Request: model and output path setting | Sym linking is not ideal, setting a model folder is pretty standard these days and most of us use more than one software that uses models.

The same for choosing where to put the output images, personally mine go to a portable drive, not sure how to do that with ComfyUI. | null | null | null | {'base_commit': '494cfe5444598f22eced91b6f4bfffc24c4af339', 'files': [{'path': 'extra_model_paths.yaml.example', 'Loc': {}}]} | [] | [] | [] | {

"iss_type": "4",

"iss_reason": "5",

"loc_way": "comment",

"loc_scope": "0",

"info_type": "Code"

} | {

"code": [],

"doc": [],

"test": [],

"config": [

"extra_model_paths.yaml.example"

],

"asset": []

} | null | |

comfyanonymous | ComfyUI | f18ebbd31645437afaa9738fcf2b5ed8b48cb021 | https://github.com/comfyanonymous/ComfyUI/issues/6186 | User Support

Custom Nodes Bug | error | ### Your question

[Errno 2] No such file or directory: 'D:\\ComfyUI_windows_portable_nvidia\\ComfyUI_windows_portable\\ComfyUI\\custom_nodes\\comfyui_controlnet_aux\\ckpts\\LiheYoung\\Depth-Anything\\.cache\\huggingface\\download\\checkpoints\\depth_anything_vitl14.pth.6c6a383e33e51c5fdfbf31e7ebcda943973a9e6a1cbef1564... | null | null | null | {} | [] | [

".cache"

] | [] | {

"iss_type": "1",

"iss_reason": "5",

"loc_way": "comment",

"loc_scope": "1",

"info_type": "Code"

} | {

"code": [

".cache"

],

"doc": [],

"test": [],

"config": [],

"asset": []

} | null |

ageitgey | face_recognition | e9df345a7853c52bfe98830bd2c9a07aaa7b81d9 | https://github.com/ageitgey/face_recognition/issues/159 | Raspberry Pi Memory Error | * face_recognition version: 02.1

* Python version: 2.7

* Operating System: Raspian

### Description

I installed face_recognition on my Raspberry Pi successfully for Python 3. Now I am trying to install it for Python 2 because I need it. When I try to install it, I get a MemoryError. I attached the images from... | null | null | null | {'base_commit': 'e9df345a7853c52bfe98830bd2c9a07aaa7b81d9', 'files': [{'path': 'README.md', 'Loc': {}}]} | [] | [] | [] | {

"iss_type": "1",

"iss_reason": "5",

"loc_way": "comment",

"loc_scope": "0",

"info_type": "Code"

} | {

"code": [],

"doc": [

"README.md"

],

"test": [],

"config": [],

"asset": []

} | null | |

ageitgey | face_recognition | 0961fd1aaf97336e544421318fcd4b55feeb1a79 | https://github.com/ageitgey/face_recognition/issues/533 | knn neighbors name list? | In **face_recognition_knn.py**

I want the list of names of the 5 nearest neighbors, so I changed n_neighbors to 5.

`closest_distances = knn_clf.kneighbors(faces_encodings, n_neighbors=5)`

And it returned just 5 **distance_threshold** values from the trained .clf file

I found that `knn_clf.predict(faces_encodings)` return only 1 best matc... | null | null | null | {} | [] | [] | [

{

"pro": "scikit-learn"

},

{

"pro": "scikit-learn",

"path": [

"sklearn/neighbors/_classification.py"

]

}

] | {

"iss_type": "3",

"iss_reason": "5",

"loc_way": "comment",

"loc_scope": "2",

"info_type": "Code"

} | {

"code": [

"sklearn/neighbors/_classification.py"

],

"doc": [],

"test": [],

"config": [],

"asset": [

"scikit-learn"

]

} | null | |

ageitgey | face_recognition | f21631401119e4af2e919dd662c3817b2c480c75 | https://github.com/ageitgey/face_recognition/issues/135 | face_recognization with python | * face_recognition version:

* Python version:3.5

* Operating System:windows

### Description

I am working with some Python face recognition code in which I want to compare sampleface.jpg, which contains a sample face, with facegrid.jpg. The facegrid.jpg itself has some 6 faces in it. I am getting results as tr... | null | null | null | {'base_commit': 'f21631401119e4af2e919dd662c3817b2c480c75', 'files': [{'path': 'README.md', 'Loc': {}}]} | [] | [] | [] | {

"iss_type": "2",

"iss_reason": "5",

"loc_way": "comment",

"loc_scope": "0",

"info_type": "Code"

} | {

"code": [],

"doc": [

"README.md"

],

"test": [],

"config": [],

"asset": []

} | null | |

ageitgey | face_recognition | cea177b75f74fe4e8ce73cf33da2e7e38e658ba4 | https://github.com/ageitgey/face_recognition/issues/726 | cv2.imshow error | Hi All,

With the help of the docs, I am trying to display an image with the code below and am getting an error. I tried everything I could think of (file extension, path, and Python version) to resolve this error, without success. Please help.

Note: 1. The image is present in the path.

2. print statement result None... | null | null | null | {} | [

{

"Loc": [

12

],

"path": null

}

] | [] | [] | {

"iss_type": "1",

"iss_reason": "3",

"loc_way": "comment",

"loc_scope": "",

"info_type": "Code"

} | {

"code": [

null

],

"doc": [],

"test": [],

"config": [],

"asset": []

} | null | |

ageitgey | face_recognition | b8fed6f3c0ad5ab2dab72d6251c60843cad71386 | https://github.com/ageitgey/face_recognition/issues/643 | Train model with more than 1 image per person | * face_recognition version: 1.2.3

* Python version: 2.7.15

* Operating System: Windows 10

### Description

I Would like to train the model with more than 1 image per each person to achieve better recognition results. Is it possible?

One more question is what does [0] mean here:

```

known_face_encoding_user ... | null | null | null | {'base_commit': 'b8fed6f3c0ad5ab2dab72d6251c60843cad71386', 'files': [{'path': 'examples/face_recognition_knn.py', 'Loc': {}}]} | [] | [] | [] | {

"iss_type": "3",

"iss_reason": "5",

"loc_way": "comment",

"loc_scope": "",

"info_type": "Code"

} | {

"code": [

"examples/face_recognition_knn.py"

],

"doc": [],

"test": [],

"config": [],

"asset": []

} | null | |

ageitgey | face_recognition | aff06e965e895d8a6e781710e7c44c894e3011a3 | https://github.com/ageitgey/face_recognition/issues/68 | cv2.error: /home/pi/opencv-3.1.0/modules/imgproc/src/imgwarp.cpp:3229: error: (-215) ssize.area() > 0 in function resize | * face_recognition version:

* Python version: 3.4

* Operating System: Jesse Raspbian

### Description

Whenever I try to run facerec_from_webcam_faster.py, I get the error below. Note that I have checked my local files; the image to be recognized is placed appropriately.

###

```

OpenCV Error: Assertion ... | null | null | null | {'base_commit': 'aff06e965e895d8a6e781710e7c44c894e3011a3', 'files': [{'path': 'examples/facerec_from_webcam_faster.py', 'Loc': {'(None, None, None)': {'mod': [14, 15, 16, 17, 18, 19, 20, 21, 22, 23, 24, 25, 26, 27, 28, 29, 30, 31]}}, 'status': 'modified'}]} | [] | [] | [] | {

"iss_type": "1",

"iss_reason": "3",

"loc_way": "comment",

"loc_scope": "0",

"info_type": "Code"

} | {

"code": [

"examples/facerec_from_webcam_faster.py"

],

"doc": [],

"test": [],

"config": [],

"asset": []

} | null | |

ageitgey | face_recognition | 6da4a2ff0f0183280cdc2bffa58ddae8bf93ac41 | https://github.com/ageitgey/face_recognition/issues/181 | does load_image_file have a version which read from byte[] not just from the disk file | does load_image_file have a version which read from byte array in memory not just from the disk file. | null | null | null | {'base_commit': '6da4a2ff0f0183280cdc2bffa58ddae8bf93ac41', 'files': [{'path': 'face_recognition/api.py', 'Loc': {"(None, 'load_image_file', 73)": {'mod': []}}, 'status': 'modified'}]} | [] | [] | [] | {

"iss_type": "3",

"iss_reason": "3",

"loc_way": "comment",

"loc_scope": "",

"info_type": "Code"

} | {

"code": [

"face_recognition/api.py"

],

"doc": [],

"test": [],

"config": [],

"asset": []

} | null | |

ageitgey | face_recognition | 5f804870c14803c2664942c958f11112276a79cc | https://github.com/ageitgey/face_recognition/issues/209 | face_locations get wrong result but dlib is correct | * face_recognition version: 1.0.0

* Python version: 3.5

* Operating System: Ubuntu 16.04 LTS

### Description

I run the example find_faces_in_picture_cnn.py to process the image from this link.

https://timgsa.baidu.com/timg?image&quality=80&size=b9999_10000&sec=1507896274082&di=824f7f59943a71e2e9904d22175ce92c&im... | null | null | null | {'base_commit': '5f804870c14803c2664942c958f11112276a79cc', 'files': [{'path': 'examples/find_faces_in_picture_cnn.py', 'Loc': {'(None, None, None)': {'mod': [12]}}, 'status': 'modified'}]} | [] | [] | [] | {

"iss_type": "2",

"iss_reason": "5",

"loc_way": "comment",

"loc_scope": "0",

"info_type": "Code"

} | {

"code": [

"examples/find_faces_in_picture_cnn.py"

],

"doc": [],

"test": [],

"config": [],

"asset": []

} | null | |

ageitgey | face_recognition | a96484edc270697c666c1c32ba5163cf8e71b467 | https://github.com/ageitgey/face_recognition/issues/1004 | IndexError: list index out of range while attempting to automatically recognize faces | * face_recognition version: 1.2.3

* Python version: 3.7.3

* Operating System: Windows 10 x64

### Description

Hello everyone,

I was attempting to modify facerec_from_video_file.py in order to make it save the unknown faces in the video and recognize them based on the first frame they appear on for example if an... | null | null | null | {'base_commit': 'a96484edc270697c666c1c32ba5163cf8e71b467', 'files': [{'path': 'examples/facerec_from_video_file.py', 'Loc': {}}]} | [] | [] | [] | {

"iss_type": "1",

"iss_reason": "3",

"loc_way": "comment",

"loc_scope": "3",

"info_type": "Code"

} | {

"code": [

"examples/facerec_from_video_file.py"

],

"doc": [],

"test": [],

"config": [],

"asset": []

} | null | |

ageitgey | face_recognition | a8830627e89bcfb9c9dda2c8f7cac5d2e5cfb6c0 | https://github.com/ageitgey/face_recognition/issues/178 | IndexError: list index out of range | IndexError: list index out of range

my code:

import face_recognition

known_image = face_recognition.load_image_file("D:/1.jpg")

unknown_image = face_recognition.load_image_file("D:/2.jpg")

biden_encoding = face_recognition.face_encodings(known_image)[0] | null | null | null | {} | [

{

"Loc": [

8

],

"path": null

}

] | [] | [] | {

"iss_type": "1",

"iss_reason": "3",

"loc_way": "comment",

"loc_scope": "3",

"info_type": "Code"

} | {

"code": [

null

],

"doc": [],

"test": [],

"config": [],

"asset": []

} | null | |

ageitgey | face_recognition | 7f183afd9c848f05830c145890c04181dcc1c46b | https://github.com/ageitgey/face_recognition/issues/93 | how to do live face recognition with RPi | * Operating System: Debian

### Description

i want to use the example ```facerec_from_webcam_faster.py```

but i don't know how to change the video_output source to the PiCam

### What I Did

```

camera = picamera.PiCamera()

camera.resolution = (320, 240)

output = np.empty((240, 320, 3), dtype=np.uint8)

... | null | null | null | {} | [

{

"Loc": [

18

],

"path": null

}

] | [] | [] | {

"iss_type": "1",

"iss_reason": "3",

"loc_way": "comment",

"loc_scope": "3",

"info_type": "Code"

} | {

"code": [

null

],

"doc": [],

"test": [],

"config": [],

"asset": []

} | null | |

PaddlePaddle | PaddleOCR | 14318e392fbe2d69511441edf5a172c4c72d6961 | https://github.com/PaddlePaddle/PaddleOCR/issues/7095 | status/close | How do I run text recognition on an image after text detection? | Do I need to crop out the regions enclosed by the bounding boxes and feed them to the recognition model? Where is the code for this? | null | null | null | {'base_commit': '14318e392fbe2d69511441edf5a172c4c72d6961', 'files': [{'path': 'tools/infer/predict_system.py', 'Loc': {"('TextSystem', '__call__', 67)": {'mod': [69, 70, 71, 72, 73, 74, 75, 76, 77, 78, 79, 80, 81, 82, 83, 84, 85, 86, 87, 88, 89]}}, 'status': 'modified'}]} | [] | [] | [] | {

"iss_type": "3",

"iss_reason": "5",

"loc_way": "comment",

"loc_scope": "0",

"info_type": "Code"

} | {

"code": [

"tools/infer/predict_system.py"

],

"doc": [],

"test": [],

"config": [],

"asset": []

} | null |

PaddlePaddle | PaddleOCR | db60893201ad07a8c20d938a8224799f932779ad | https://github.com/PaddlePaddle/PaddleOCR/issues/5641 | inference and deployment | How to modify PaddleServing parameters | After following [**Service deployment based on PaddleServing**](https://github.com/PaddlePaddle/PaddleOCR/blob/release/2.4/deploy/pdserving/README_CN.md), how can I modify some of the model and service parameters?

For example, the following parameters:

use_tensorrt

batch_size

det_limit_side_len

batch_num

total_process_num

...

Questions:

1. [**PaddleHub Serving deployment**](https://github.com/PaddlePaddle/PaddleOCR/blob/rele... | null | null | null | {'base_commit': 'db60893201ad07a8c20d938a8224799f932779ad', 'files': [{'path': 'deploy/pdserving/web_service.py', 'Loc': {"('DetOp', 'init_op', 30)": {'mod': [31]}}, 'status': 'modified'}]} | [] | [] | [] | {

"iss_type": "3",

"iss_reason": "2",

"loc_way": "comment",

"loc_scope": "0",

"info_type": "Code"

} | {

"code": [

"deploy/pdserving/web_service.py"

],

"doc": [],

"test": [],

"config": [],

"asset": []

} | null |

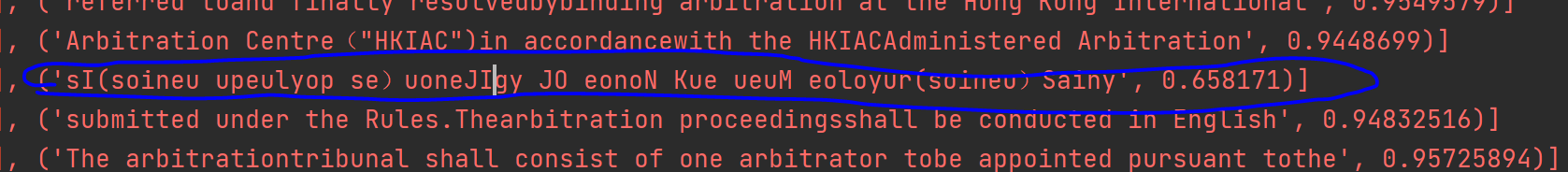

PaddlePaddle | PaddleOCR | 0afe6c3262babda2012074110520fe9d1a3c63c0 | https://github.com/PaddlePaddle/PaddleOCR/issues/2405 | status/close | During inference with the lightweight model, one line every few lines is recognized as garbled text |

Like the line circled in blue here.

The general model does not have this problem.

What causes this? | null | null | null | {'base_commit': '0afe6c3262babda2012074110520fe9d1a3c63c0', 'files': [{'path': 'deploy/hubserving/readme_en.md', 'Loc': {'(None, None, 192)': {'mod': [192]}}, 'status': 'modified'}]} | [] | [] | [] | {

"iss_type": "2",

"iss_reason": "3",

"loc_way": "comment",

"loc_scope": "3",

"info_type": "Code\nDoc\nHow to modify own code"

} | {

"code": [],

"doc": [

"deploy/hubserving/readme_en.md"

],

"test": [],

"config": [],

"asset": []

} | null |

PaddlePaddle | PaddleOCR | 64edd41c277c60c672388be6d5764be85c1de43a | https://github.com/PaddlePaddle/PaddleOCR/issues/5427 | status/close

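stale | rknn does not support ConvBNLayer operators in the DepthwiseSeparable module whose stride(p1, p2) has p1 different from p2 | rknn does not support operators where the ConvBNLayer parameter stride(p1, p2) in the DepthwiseSeparable module has p1 different from p2, so the network structure has to be modified. From what I can see, stride(p1, p2) with p1 different from p2 is used for downsampling. If I want to keep p1 equal to p2, how should I modify the parameters of the DepthwiseSeparable module or of a higher-level module? | null | null | null | {'base_commit': '64edd41c277c60c672388be6d5764be85c1de43a', 'files': [{'path': 'ppocr/modeling/backbones/rec_mobilenet_v3.py', 'Loc': {"('MobileNetV3', '__init__', 23)": {'mod': [48]}}, 'status': 'modified'}]} | [] | [] | [] | {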

"iss_type": "3",

"iss_reason": "5",

"loc_way": "comment",

"loc_scope": "0",

"info_type": "Code"

} | {

"code": [

"ppocr/modeling/backbones/rec_mobilenet_v3.py"

],

"doc": [],

"test": [],

"config": [],

"asset": []

} | null |

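PaddlePaddle | PaddleOCR | 2e352dcc06ba86159099ec6a2928c7ce556a7245 | https://github.com/PaddlePaddle/PaddleOCR/issues/7542 | status/close | Results differ between PaddleOCR detection + recognition with my own recognition model and using the recognition model alone | I first used PaddleOCR's detection to crop out the small text images from the resulting boxes, labeled them as a training set, and trained the text recognition model. Inference tests with it showed no problems, so I loaded the newly trained recognition model into PaddleOCR to run the full detection + recognition pipeline, and found that the recognition results were inconsistent.

- System Environment: CentOS7

- Version: Paddle: 2.3.1.post112 PaddleOCR: 2.6 Related components: PaddleOCR

- python/Version: 3.9.12

- Model used: ppocrv3

Problem image: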

": {'mod': [480]}}, 'status': 'modified'}]} | [] | [] | [] | {

"iss_type": "2",

"iss_reason": "5",

"loc_way": "comment",

"loc_scope": "0",

"info_type": "Code"

} | {

"code": [

"paddleocr.py"

],

"doc": [],

"test": [],

"config": [],

"asset": []

} | null |

PaddlePaddle | PaddleOCR | 443de01526a1c7108934990c4b646ed992f0bce8 | https://github.com/PaddlePaddle/PaddleOCR/issues/5209 | status/close | How can pdserving return both the text and the text coordinates? | Currently pdserving returns only the text, not the text coordinates. How can I get the text coordinates returned as well? | null | null | null | {'base_commit': '443de01526a1c7108934990c4b646ed992f0bce8', 'files': [{'path': 'deploy/pdserving/ocr_reader.py', 'Loc': {"('OCRReader', 'postprocess', 425)": {'mod': []}}, 'status': 'modified'}, {'path': 'deploy/pdserving/web_service.py', 'Loc': {"('DetOp', 'postprocess', 57)": {'mod': [63]}}, 'status': 'modified'}]} | [] | [] | [] | {

"iss_type": "3",

"iss_reason": "5",

"loc_way": "comment",

"loc_scope": "0",

"info_type": "Code"

} | {

"code": [

"deploy/pdserving/web_service.py",

"deploy/pdserving/ocr_reader.py"

],

"doc": [],

"test": [],

"config": [],

"asset": []

} | null |

PaddlePaddle | PaddleOCR | ab16f2e4f9a4eac2eeb5f0324ab950b2215780d0 | https://github.com/PaddlePaddle/PaddleOCR/issues/3735 | Trained on digit images; why is Chinese recognized when detection and recognition are chained? | I trained my own digit model, using both detection and recognition. Before converting to an inference model, it recognized digits. But after converting to an inference model following the official tutorial and chaining detection and recognition, why is the output Chinese? | null | null | null | {'base_commit': 'ab16f2e4f9a4eac2eeb5f0324ab950b2215780d0', 'files': [{'path': 'configs/det/det_mv3_db.yml', 'Loc': {'(None, None, 116)': {'mod': [116]}}, 'status': 'modified'}, {'path': 'tools/infer/predict_det.py', 'Loc': {"('TextDetector', '__init__', 38)": {'mod': [42]}}, 'status': 'modified'}]} | [] | [] | [] | {

"iss_type": "2",

"iss_reason": "5",

"loc_way": "comment",

"loc_scope": "0",

"info_type": "Code"

} | {

"code": [

"tools/infer/predict_det.py"

],

"doc": [],

"test": [],

"config": [

"configs/det/det_mv3_db.yml"

],

"asset": []

} | null | |

PaddlePaddle | PaddleOCR | efc01375c942d87dc1e20856c7159096db16a9ab | https://github.com/PaddlePaddle/PaddleOCR/issues/11715 | Can ch_PP-OCRv4_rec_server_infer's support for english be put into the documentation? | I notice if I am calling

```

from paddleocr import PaddleOCR

ocr = PaddleOCR(

    det_model_dir="ch_PP-OCRv4_det_server_infer",

    rec_model_dir="ch_PP-OCRv4_rec_infer",

    lang='en')

...

result = ocr.ocr(my_image)

```

this works fine. However, If i set the rec model to the server version as well (`ch_PP-OCRv4_rec_server... | null | null | null | {'base_commit': 'efc01375c942d87dc1e20856c7159096db16a9ab', 'files': [{'path': 'paddleocr.py', 'Loc': {'(None, None, None)': {'mod': [76, 80]}}, 'status': 'modified'}]} | [] | [] | [] | {

"iss_type": "3",

"iss_reason": "5",

"loc_way": "comment",

"loc_scope": "0",

"info_type": "Code"

} | {

"code": [

"paddleocr.py"

],

"doc": [],

"test": [],

"config": [],

"asset": []

} | null | |

PaddlePaddle | PaddleOCR | 9d44728da81e7d56ea5f437845d8d48bc278b086 | https://github.com/PaddlePaddle/PaddleOCR/issues/3248 | How to connect detection and recognition | I want to use the lightweight detection model together with RCNN recognition, but I don't know how to connect the two stages. | null | null | null | {'base_commit': '9d44728da81e7d56ea5f437845d8d48bc278b086', 'files': [{'path': 'doc/doc_ch/inference.md', 'Loc': {}}]} | [] | [] | [] | {

"iss_type": "3",

"iss_reason": "5",

"loc_way": "comment",

"loc_scope": "0",

"info_type": "Doc"

} | {

"code": [],

"doc": [

"doc/doc_ch/inference.md"

],

"test": [],

"config": [],

"asset": []

} | null | |

PaddlePaddle | PaddleOCR | 582e868cf84fca911e195596053f503f890b561b | https://github.com/PaddlePaddle/PaddleOCR/issues/8641 | status/close | Please provide a PaddleServing example for PP-Structure | Writing the Op class for PP-Structure in paddle_serving_server.web_service seems beyond me as a beginner.

Has any expert made an example that beginners can reuse? | null | null | null | {'base_commit': '582e868cf84fca911e195596053f503f890b561b', 'files': [{'path': 'deploy/hubserving/readme.md', 'Loc': {}}]} | [] | [] | [] | {

"iss_type": "3",

"iss_reason": "5",

"loc_way": "comment",

"loc_scope": "0",

"info_type": "Doc"

} | {

"code": [],

"doc": [

"deploy/hubserving/readme.md"

],

"test": [],

"config": [],

"asset": []

} | null |

PaddlePaddle | PaddleOCR | 35449b5c7440f7706e5a4558e5b3efeb76944432 | https://github.com/PaddlePaddle/PaddleOCR/issues/3844 | HOW TO RESUME TRAINING FROM LAST CHECKPOINT? | Hi,

I have been training a model on my own dataset. How can I resume training from the last saved checkpoint? Also, when I train the model, does it save the best weights automatically to some path, or do we need to provide an argument for that?

Please help me on this.

Thanks.. | null | null | null | {'base_commit': '35449b5c7440f7706e5a4558e5b3efeb76944432', 'files': [{'path': 'tools/program.py', 'Loc': {"('ArgsParser', '__init__', 39)": {'mod': [42, 42]}}, 'status': 'modified'}]} | [] | [] | [] | {

"iss_type": "3",

"iss_reason": "5",

"loc_way": "comment",

"loc_scope": "0",

"info_type": "Doc"

} | {

"code": [

"tools/program.py"

],

"doc": [],

"test": [],

"config": [],

"asset": []

} | null | |

PaddlePaddle | PaddleOCR | adba814904eb4f0aeeec186f158cfb6c212a6e26 | https://github.com/PaddlePaddle/PaddleOCR/issues/3942 | Model zoo 404 | ch_ppocr_mobile_slim_v2.1_det inference model

ch_ppocr_mobile_v2.1_det inference and training models

The above currently return 404 and cannot be downloaded | null | null | null | {'base_commit': 'adba814904eb4f0aeeec186f158cfb6c212a6e26', 'files': [{'path': 'doc/doc_ch/models_list.md', 'Loc': {}}]} | [] | [] | [] | {

"iss_type": "1",

"iss_reason": "5",

"loc_way": "comment",

"loc_scope": "0",

"info_type": "Doc"

} | {

"code": [],

"doc": [

"doc/doc_ch/models_list.md"

],

"test": [],

"config": [],

"asset": []

} | null | |

PaddlePaddle | PaddleOCR | c167df2f60d08085167cdc9431101f4b45a8a019 | https://github.com/PaddlePaddle/PaddleOCR/issues/6838 | status/close | Mac M1 Pro can't install paddleOCR2.0.1~2.5.0.3, but I can install paddleOCR 1.1.1 and run successful. | Please provide the following information to quickly locate the problem

- System Environment: MacBook Pro (14-inch, 2021), Apple M1 Pro 16 GB,

- Version: Pycharm2022.1.2 and Anaconda create Python 3.8 environment.

- Paddle: Monterey 12.3

- PaddleOCR: 2.0.1~2.5.0.3

- Related components: P... | null | null | null | {'base_commit': 'c167df2f60d08085167cdc9431101f4b45a8a019', 'files': [{'path': 'requirements.txt', 'Loc': {'(None, None, 10)': {'mod': [10]}}, 'status': 'modified'}, {'path': 'setup.py', 'Loc': {}}]} | [] | [] | [] | {

"iss_type": "1",

"iss_reason": "5",

"loc_way": "comment",

"loc_scope": "0",

"info_type": "Code"

} | {

"code": [

"setup.py"

],

"doc": [],

"test": [],

"config": [

"requirements.txt"

],

"asset": []

} | null |

PaddlePaddle | PaddleOCR | e44c2af7622c97d3faecd37b062e7f1cb922fd40 | https://github.com/PaddlePaddle/PaddleOCR/issues/10298 | status/close | train warning | Please provide the following information to quickly locate the problem

- System Environment: ubuntu

- Version: Paddle: PaddleOCR: Related components: paddle develop 0.0.0.post116

- Command Code:

- Complete Error Message:

There are constantly many warnings like this:

I0705 11:55:13.443581 28582 eager_m... | null | null | null | {'base_commit': 'e44c2af7622c97d3faecd37b062e7f1cb922fd40', 'files': [{'path': 'tools/program.py', 'Loc': {"(None, 'train', 176)": {'mod': [349]}}, 'status': 'modified'}]} | [] | [] | [] | {

"iss_type": "1",

"iss_reason": "5",

"loc_way": "comment",

"loc_scope": "0",

"info_type": "Code"

} | {

"code": [

"tools/program.py"

],

"doc": [],

"test": [],

"config": [],

"asset": []

} | null |

AntonOsika | gpt-engineer | dc866c91b9191bce083ec908c5665b7f2f40bd17 | https://github.com/AntonOsika/gpt-engineer/issues/201 | gpt 3 | hi

can we use a free GPT-3 API key? | null | null | null | {'base_commit': 'dc866c91b9191bce083ec908c5665b7f2f40bd17', 'files': [{'path': 'scripts/rerun_edited_message_logs.py', 'Loc': {}}]} | [] | [] | [] | {

"iss_type": "3",

"iss_reason": "5",

"loc_way": "comment",

"loc_scope": "0",

"info_type": "Code"

} | {

"code": [

"scripts/rerun_edited_message_logs.py"

],

"doc": [],

"test": [],

"config": [],

"asset": []

} | null | |

AntonOsika | gpt-engineer | 5505ec41dd49eb1e86aa405335f40d7a8fa20b0a | https://github.com/AntonOsika/gpt-engineer/issues/497 | main.py is missing? | main.py is missing? | null | null | null | {'base_commit': '5505ec41dd49eb1e86aa405335f40d7a8fa20b0a', 'files': [{'path': 'gpt_engineer/', 'Loc': {}}]} | [] | [] | [] | {

"iss_type": "5\nasking for the file location",

"iss_reason": "5",

"loc_way": "comment",

"loc_scope": "0",

"info_type": "Code"

} | {

"code": [],

"doc": [],

"test": [],

"config": [],

"asset": [

"gpt_engineer/"

]

} | null | |

AntonOsika | gpt-engineer | a55265ddb46462548a842dae914bb5fcb22181fa | https://github.com/AntonOsika/gpt-engineer/issues/509 | Error with Promtfile | When I try to run the example file I get this error even though there is something in the prompt file, which you can see from the screenshots is in the example folder. Does anyone know how I can solve this problem?

': {'mod': [43]}}, 'status': 'modified'}]} | [] | [] | [

{

"org": "lllyasviel",

"pro": "misc",

"path": [

"ip-adapter-plus-face_sdxl_vit-h.bin"

]

}

] | {

"iss_type": "1",

"iss_reason": "5",

"loc_way": "comment",

"loc_scope": "0",

"info_type": "Code"

} | {

"code": [],

"doc": [

"troubleshoot.md"

],

"test": [],

"config": [],

"asset": [

"ip-adapter-plus-face_sdxl_vit-h.bin"

]

} | null | |

lllyasviel | Fooocus | fc3588875759328d715fa07cc58178211a894386 | https://github.com/lllyasviel/Fooocus/issues/602 | [BUG]Memory Issue when generating images for the second time | When I generate images first time with one image prompt, everything works fine.

However, on the second generation, the GPU memory runs out.

Here is the error

`Preparation time: 19.46 seconds

loading new

ERROR diffusion_model.output_blocks.0.1.transformer_blocks.4.ff.net.0.proj.weight CUDA out of memory. Tried t... | null | null | null | {'base_commit': 'fc3588875759328d715fa07cc58178211a894386', 'files': [{'path': 'Version', 'Loc': {}}, {'path': 'Version', 'Loc': {}}]} | [] | [] | [] | {

"iss_type": "1",

"iss_reason": "1",

"loc_way": "comment",

"loc_scope": "0",

"info_type": "Code"

} | {

"code": [],

"doc": [],

"test": [],

"config": [],

"asset": [

"Version"

]

} | null | |

lllyasviel | Fooocus | f3084894402a4c0b7ed9e7164466bcedd5f5428d | https://github.com/lllyasviel/Fooocus/issues/1508 | Problems with installation and correct operation. | Hello, I had problems installing Fooocus on a GNU/Linux system, many errors occurred during the installation and they were all different. I was not able to capture some of them, but in general terms the errors were as follows: "could not find versions of python packages that satisfy dependencies (error during installa... | null | null | null | {'base_commit': 'f3084894402a4c0b7ed9e7164466bcedd5f5428d', 'files': [{'path': 'requirements_versions.txt', 'Loc': {'(None, None, 5)': {'mod': [5]}}, 'status': 'modified'}, {'path': 'readme.md', 'Loc': {'(None, None, 152)': {'mod': [152]}}, 'status': 'modified'}, {'path': 'troubleshoot.md', 'Loc': {'(None, None, 107)':... | [] | [] | [] | {

"iss_type": "1",

"iss_reason": "5",

"loc_way": "comment",

"loc_scope": "0",

"info_type": "Code"

} | {

"code": [],

"doc": [

"readme.md",

"troubleshoot.md"

],

"test": [],

"config": [

"requirements_versions.txt"

],

"asset": []

} | null | |

lllyasviel | Fooocus | 225947ac1a603124b0274da3e94d2c6cba65f732 | https://github.com/lllyasviel/Fooocus/issues/500 | is this a local model or not | is this a local model or not

I don't get how it could show someone else's prompts if it's local | null | null | null | {'base_commit': '225947ac1a603124b0274da3e94d2c6cba65f732', 'files': [{'path': 'models/checkpoints', 'Loc': {}}]} | [] | [] | [] | {

"iss_type": "5",

"iss_reason": "5",

"loc_way": "comment",

"loc_scope": "0",

"info_type": "Code"

} | {

"code": [],

"doc": [],

"test": [],

"config": [],

"asset": [

"models/checkpoints"

]

} | null | |

lllyasviel | Fooocus | d7439b2d6004d50a0fda19108603a8d1941a185e | https://github.com/lllyasviel/Fooocus/issues/3689 | bug

triage | [Bug]: Exits upon attempting to load a model on Windows | ### Checklist

- [X] The issue has not been resolved by following the [troubleshooting guide](https://github.com/lllyasviel/Fooocus/blob/main/troubleshoot.md)

- [X] The issue exists on a clean installation of Fooocus

- [X] The issue exists in the current version of Fooocus

- [X] The issue has not been reported before r... | null | null | null | {'base_commit': 'd7439b2d6004d50a0fda19108603a8d1941a185e', 'files': [{'path': 'presets/default.json', 'Loc': {'(None, None, 2)': {'mod': [2]}}, 'status': 'modified'}]} | [] | [

"config.txt",

"config_modification_tutorial.txt"

] | [] | {

"iss_type": "2",

"iss_reason": "5",

"loc_way": "comment",

"loc_scope": "0\n1",

"info_type": "Config\n"

} | {

"code": [

"presets/default.json"

],

"doc": [],

"test": [],

"config": [

"config.txt",

"config_modification_tutorial.txt"

],

"asset": []

} | null |

binary-husky | gpt_academic | 6383113e8527e1c73049e26d2b3482a1b0f54b30 | https://github.com/binary-husky/gpt_academic/issues/376 | About the public URL |

Does this public URL go through a server set up by the author? After deploying locally, I can open this URL on my phone and it works too | null | null | null | {'base_commit': '6383113e8527e1c73049e26d2b3482a1b0f54b30', 'files': [{'path': 'main.py', 'Loc': {'(None, None, None)': {'mod': [174]}}, 'status': 'modified'}]} | [] | [] | [] | {

"iss_type": "5",

"iss_reason": "5",

"loc_way": "comment",

"loc_scope": "0",

"info_type": "Code"

} | {

"code": [

"main.py"

],

"doc": [],

"test": [],

"config": [],

"asset": []

} | null | |

binary-husky | gpt_academic | 6c13bb7b46519312222f9afacedaa16225b673a9 | https://github.com/binary-husky/gpt_academic/issues/1545 | ToDo | [Bug]: Qwen1.5-14B-chat fails to run | ### Installation Method

OneKeyInstall (one-click install script, Windows)

### Version

Latest

### OS

Windows

### Describe the bug

Traceback (most recent call last):

File ".\request_llms\local_llm_class.py", line 158, in run

for response_full in self.llm_stream_generator(**kwargs):

File ".... | null | null | null | {'base_commit': '6c13bb7b46519312222f9afacedaa16225b673a9', 'files': [{'path': 'request_llms/bridge_qwen_local.py', 'Loc': {"('GetQwenLMHandle', 'llm_stream_generator', 34)": {'mod': []}}, 'status': 'modified'}]} | [] | [] | [] | {

"iss_type": "1",

"iss_reason": "5",

"loc_way": "comment",

"loc_scope": "0",

"info_type": "Code"

} | {

"code": [

"request_llms/bridge_qwen_local.py"

],

"doc": [],

"test": [],

"config": [],

"asset": []

} | null |

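binary-husky | gpt_academic | dd7a01cda53628ea07ef6192bf257f9ad51f5f47 | https://github.com/binary-husky/gpt_academic/issues/978 | [Bug]: Proxy configured successfully, proxy location lookup timed out, proxy may be invalid | ### Installation Method

Docker(Windows/Mac)

### Version

Latest

### OS

Mac

### Describe the bug

I modified the proxy settings in `config.py` as instructed. After building from the `Dockerfile` and running, I get the warning ⚠️ `代理配置成功,代理所在地查询超时,代理可能无效` (proxy configured successfully, proxy location lookup timed out, proxy may be invalid), and at runtime it fails with `ConnectionRefusedError: [Errno 111] Connection refused`. Please help me find which setting might be wrong.

P.S. The proxy server address and port are configured correctly, and the proxy runs normally and can reach the external internet.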

... | null | null | null | {'base_commit': 'dd7a01cda53628ea07ef6192bf257f9ad51f5f47', 'files': [{'path': 'check_proxy.py', 'Loc': {"(None, 'check_proxy', 2)": {'mod': [6]}}, 'status': 'modified'}]} | [] | [] | [] | {

"iss_type": "1",

"iss_reason": "5",

"loc_way": "comment",

"loc_scope": "0",

"info_type": "Code"

} | {

"code": [

"check_proxy.py"

],

"doc": [],

"test": [],

"config": [],

"asset": []

} | null | |

binary-husky | gpt_academic | ea4e03b1d892d462f71bab76ee0bec65d541f6b7 | https://github.com/binary-husky/gpt_academic/issues/1286 | [Feature]: Has max_token for the api2d-gpt-3.5-turbo-16k model family been successfully changed to 16385? | ### Class

Large language model

### Feature Request

_No response_ | null | null | null | {'base_commit': 'ea4e03b1d892d462f71bab76ee0bec65d541f6b7', 'files': [{'path': 'request_llms/bridge_all.py', 'Loc': {}}]} | [] | [] | [] | {

"iss_type": "3",

"iss_reason": "5",

"loc_way": "comment",

"loc_scope": "0",

"info_type": "Code"

} | {

"code": [

"request_llms/bridge_all.py"

],

"doc": [],

"test": [],

"config": [],

"asset": []

} | null | |

binary-husky | gpt_academic | 526b4d8ecd1adbdcf97946b3bca4c89feda6ec04 | https://github.com/binary-husky/gpt_academic/issues/850 | cause of issue is clear | [Bug]: JSON exception “error”: | ### Installation Method

Pip Install (I used latest requirements.txt)

### Version

Latest

### OS

Mac

### Describe the bug

Traceback (most recent call last):

File "./request_llm/bridge_chatgpt.py", line 189, in predict

if ('data: [DONE]' in chunk_decoded) or (len(json.loads(... | null | null | null | {'base_commit': '526b4d8ecd1adbdcf97946b3bca4c89feda6ec04', 'files': [{'path': 'config.py', 'Loc': {'(None, None, None)': {'mod': [1]}}, 'status': 'modified'}, {'path': 'README.md', 'Loc': {'(None, None, 101)': {'mod': [101]}}, 'status': 'modified'}]} | [] | [] | [] | {

"iss_type": "1",

"iss_reason": "5",

"loc_way": "comment",

"loc_scope": "0",

"info_type": "Code"

} | {

"code": [

"config.py"

],

"doc": [

"README.md"

],

"test": [],

"config": [],

"asset": []

} | null |

binary-husky | gpt_academic | fdffbee1b02bd515ceb4519ae2a830a547b695b4 | https://github.com/binary-husky/gpt_academic/issues/1137 | [Bug]: Connection errored out | ### Installation Method

Pip Install (I used latest requirements.txt)

### Version

Latest

### OS

Linux

### Describe the bug

Hello, I am on version 3.54.

It is deployed on a VPS running Ubuntu 20.04.

It is exposed to the public internet and worked fine until now.

But this problem suddenly appeared, as shown in the screenshot below.

What could be causing this? Is the VPS's IP address blocked by OpenAI, or is it something else? Thanks.

### Screen Shot

... | null | null | null | {'base_commit': 'fdffbee1b02bd515ceb4519ae2a830a547b695b4', 'files': [{'path': 'main.py', 'Loc': {"(None, 'main', 3)": {'mod': [287]}}, 'status': 'modified'}]} | [] | [

"nginx.conf"

] | [] | {

"iss_type": "1",

"iss_reason": "3",

"loc_way": "comment",

"loc_scope": "0\n2?",

"info_type": "Config"

} | {

"code": [

"main.py",

"nginx.conf"

],

"doc": [],

"test": [],

"config": [],

"asset": []

} | null | |

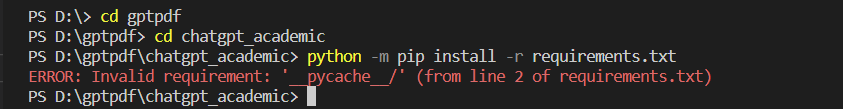

binary-husky | gpt_academic | a2002ebd85f441b3cd563bae28e9966006068ad6 | https://github.com/binary-husky/gpt_academic/issues/462 | ERROR: Invalid requirement: '__pycache__/' (from line 2 of requirements.txt) | **Describe the bug**

ERROR: Invalid requirement: '__pycache__/' (from line 2 of requirements.txt)

**Screen Shot**

Interface

| null | null | null | {'base_commit': '0485d01d67d6a41bb0810d6112f40602af1167a9', 'files': [{'path': 'requirements.txt', 'Loc': {'(None, None, 1)': {'mod': [1]}}, 'status': 'modified'}]} | [] | [] | [] | {

"iss_type": "2",

"iss_reason": "2",

"loc_way": "comment",

"loc_scope": "0",

"info_type": "Doc\nDependency declaration"

} | {

"code": [],

"doc": [],

"test": [],

"config": [

"requirements.txt"

],

"asset": []

} | null |

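binary-husky | gpt_academic | e594e1b928aadb36d291184bca1deee8601621a8 | https://github.com/binary-husky/gpt_academic/issues/1489 | [Bug]: Although PDF generation failed, please check the result (zip archive), which contains the translated TeX documents. You can report this in the GitHub Issues section using that archive. If your system is Linux, please check the system fonts (see the GitHub wiki) ... | ### Installation Method

Anaconda (I used latest requirements.txt)

### Version

Latest

### OS

Windows

### Describe the bug

Because the critical PDF compilation step failed, the TeX source will be corrected based on the error message and retried. The LaTeX code currently reporting an error is at line [-1] ...

Although PDF generation failed, please check the result (zip archive), which contains the translated TeX documents. You can report this in the GitHub Issues section using that archive. If your system is Linux, please check the system fonts (see GitHub... | null | null | null | {} | [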

{

"path": ".tex"

}

] | [] | [] | {

"iss_type": "1",

"iss_reason": "3",

"loc_way": "comment",

"loc_scope": "3",

"info_type": "Code"

} | {

"code": [],

"doc": [],

"test": [],

"config": [],

"asset": [

".tex"

]

} | null | |

binary-husky | gpt_academic | 9540cf9448026a1c8135c750866b63d320909718 | https://github.com/binary-husky/gpt_academic/issues/257 | Something went wrong Connection errored out. | ### Describe the bug

After starting the program, the page opens and displays normally, but uploading a document or sending a question fails with “Something went wrong Connection errored out.”

### Is there an existing issue for this?

- [ ] I have searched the existing issues

### Reproduction

Following the normal steps:

git clone https://github.com/binary-husky/chatgpt_academic.git

cd chatgpt_academic

python -m p... | null | null | null | {} | [] | [] | [

{

"org": "gradio-app",

"pro": "gradio",

"path": [

"gradio/routes.py"

]

}

] | {

"iss_type": "1",

"iss_reason": "1",

"loc_way": "comment",

"loc_scope": "0",

"info_type": "Code"

} | {

"code": [

"gradio/routes.py"

],

"doc": [],

"test": [],

"config": [],

"asset": []

} | null | |

binary-husky | gpt_academic | bfa6661367b7592e82225515e5e4845c4aad95bb | https://github.com/binary-husky/gpt_academic/issues/252 | Can an Azure OpenAI key be used? | The proxy server is not stable enough, and what is even more troublesome is renewing the OpenAI subscription, which also requires a US credit card.

This is a great app. I hope more plugin features are added. Thanks | null | null | null | {'base_commit': 'bfa6661367b7592e82225515e5e4845c4aad95bb', 'files': [{'path': 'config.py', 'Loc': {}}]} | [] | [] | [] | {

"iss_type": "3",

"iss_reason": "5",

"loc_way": "comment",

"loc_scope": "0",

"info_type": "Code"

} | {

"code": [

"config.py"

],

"doc": [],

"test": [],

"config": [],

"asset": []

} | null | |

binary-husky | gpt_academic | 2d2e02040d7d91d2f2a4c34f4d0bf677873b5f4d | https://github.com/binary-husky/gpt_academic/issues/1328 | [Bug]: The precise PDF translation (NOUGAT) feature fails | ### Installation Method

Others (Please Describe)

### Version

Please choose

### OS

Please choose

### Describe the bug

On the test server, the precise PDF translation (NOUGAT) feature fails, but the regular precise PDF translation feature works.

... | null | null | null | {'base_commit': '2d2e02040d7d91d2f2a4c34f4d0bf677873b5f4d', 'files': [{'path': 'crazy_functions/crazy_utils.py', 'Loc': {"('nougat_interface', 'NOUGAT_parse_pdf', 739)": {'mod': [752]}, "('nougat_interface', None, 719)": {'mod': [723]}}, 'status': 'modified'}]} | [] | [] | [] | {

"iss_type": "1",

"iss_reason": "5",

"loc_way": "comment",

"loc_scope": "0",

"info_type": "Code"

} | {

"code": [