---
license: mit
task_categories:
- text-generation
language:
- en
tags:
- math
- code
- reasoning
- test-time-compute
---

PaCoRe: Learning to Scale Test-Time Compute with Parallel Coordinated Reasoning

📖 Overview

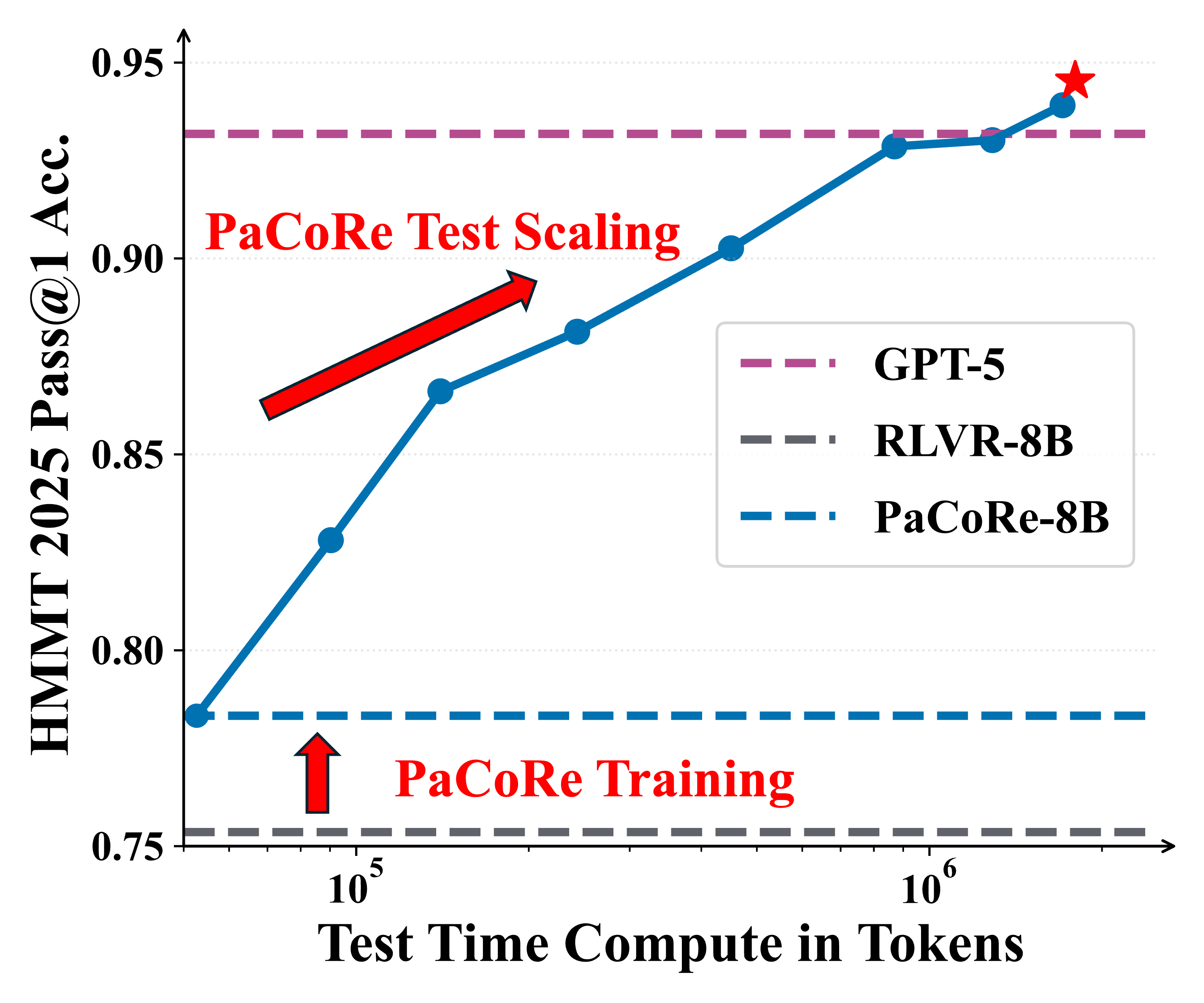

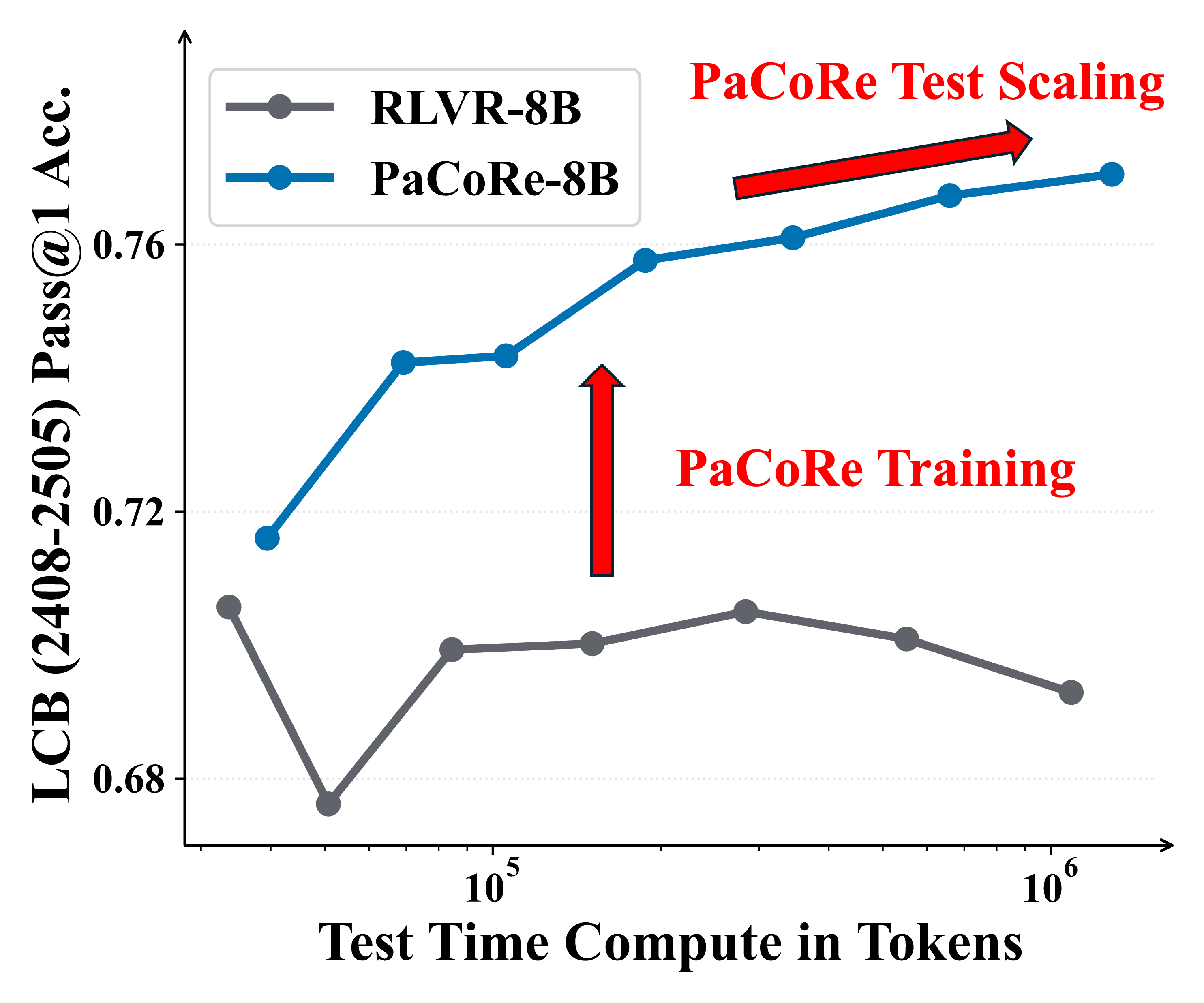

We introduce PaCoRe (Parallel Coordinated Reasoning), a framework that shifts the driver of inference from sequential depth to coordinated parallel breadth. This breaks through the model's context-length limit and allows test-time compute to be scaled massively.

The PaCoRe-Train-8k dataset is the high-quality training corpus used to teach the model Reasoning Synthesis: the capability required to reconcile diverse insights from parallel reasoning. It contains approximately 8,000 instances spanning the mathematics and coding domains.

Figure 1 | Parallel Coordinated Reasoning (PaCoRe) performance.

📚 Dataset Structure

The data is provided as a list of dictionaries (list[dict]), where each entry is one training instance with the following fields:

- `conversation`: The original problem or prompt messages.
- `responses`: A list of cached generated responses (trajectories). These serve as the input messages ($M$) used during PaCoRe training to teach the model how to synthesize parallel thoughts.
- `ground_truth`: The verifiable answer used to check correctness during the reinforcement learning (RL) process.
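The record layout above can be sketched in Python as a typed schema plus two helpers. This is a minimal sketch under stated assumptions, not the authors' code: only the three field names come from this card, while the `role`/`content` message shape, the use of plain strings for `responses` and `ground_truth`, the prompt template in the hypothetical `build_synthesis_prompt`, and the exact-match reward in the hypothetical `binary_reward` are all illustrative guesses. Coding instances would presumably need a different correctness check than string matching.

```python
from typing import List, TypedDict, cast


# Assumed shapes: the card only says "prompt messages", "cached generated
# responses", and "verifiable answer"; the concrete types here are guesses.
class Message(TypedDict):
    role: str
    content: str


class PaCoReRecord(TypedDict):
    conversation: List[Message]
    responses: List[str]
    ground_truth: str


REQUIRED_FIELDS = {"conversation", "responses", "ground_truth"}


def validate(record: dict) -> PaCoReRecord:
    """Check that a raw dict has the three documented fields."""
    missing = REQUIRED_FIELDS - set(record)
    if missing:
        raise KeyError(f"missing fields: {sorted(missing)}")
    if not isinstance(record["responses"], list):
        raise TypeError("responses must be a list of trajectories")
    return cast(PaCoReRecord, record)


def build_synthesis_prompt(record: PaCoReRecord) -> str:
    """Hypothetical template: the problem, then the cached responses (M), numbered.

    Assumes the last message in `conversation` holds the problem statement.
    """
    problem = record["conversation"][-1]["content"]
    parts = [f"Problem:\n{problem}", "Reference responses:"]
    for i, response in enumerate(record["responses"], start=1):
        parts.append(f"[{i}] {response}")
    parts.append("Synthesize these into a single final answer.")
    return "\n\n".join(parts)


def _normalize(answer: str) -> str:
    # Remove all whitespace and lowercase; a real verifier would likely
    # check mathematical equivalence instead.
    return "".join(answer.split()).lower()


def binary_reward(predicted: str, record: PaCoReRecord) -> float:
    """Hypothetical RL reward: 1.0 on normalized exact match, else 0.0."""
    return 1.0 if _normalize(predicted) == _normalize(record["ground_truth"]) else 0.0


# Usage with a made-up record (not taken from the dataset).
record = validate({
    "conversation": [{"role": "user", "content": "What is 2 + 3?"}],
    "responses": ["2 + 3 = 5, so the answer is 5.", "I think it is 6."],
    "ground_truth": "5",
})
print(build_synthesis_prompt(record))
print(binary_reward(" 5 ", record))
```

Keeping validation separate from prompt assembly means the same schema check can run once over the whole list[dict] before any training-time formatting is applied.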

The corpus includes:

- `opensource_math`
- `public_mathcontest`
- `synthetic_math`
- `code`

Releases

The data is released in two stages:

- 🤗 Stage 1 (3k): PaCoRe-Train-Stage1-3k

- 🤗 Stage 2 (5k): PaCoRe-Train-Stage2-5k

🔍 Key Findings

- Message Passing Unlocks Scaling: Without compaction, performance plateaus once the context limit is reached. Through message passing, PaCoRe breaks through this memory barrier.

- Breadth > Depth: Coordinated parallel reasoning delivers higher returns than extending a single chain.

- Data as a Force Multiplier: The PaCoRe corpus provides exceptionally valuable supervision; even baseline models see substantial gains when trained on it.
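The first finding (message passing with compaction) can be illustrated with a toy loop. This is a sketch under loud assumptions, not PaCoRe's implementation: `toy_reasoner` is a hypothetical stand-in for the model, `compact` simply keeps the tail of each trajectory, and the round/width structure and the names `run_rounds`, `width`, `rounds`, and `msg_chars` are invented for illustration. It only demonstrates the bookkeeping point: when each call sees the problem plus short messages instead of full trajectories, the per-call prompt stays bounded while total generation grows with width and rounds. The final synthesis step that PaCoRe trains the model to perform is omitted; the sketch just returns the last round's messages.

```python
from typing import Callable, List, Tuple

# A "reasoner" maps (prompt, seed) -> a long reasoning trajectory.
# Hypothetical: in PaCoRe this role is played by the trained model.
Reasoner = Callable[[str, int], str]


def compact(trajectory: str, max_chars: int) -> str:
    """Toy compaction (assumption): keep only the last `max_chars` characters,
    standing in for a short message that carries a trajectory's conclusion."""
    return trajectory[-max_chars:]


def run_rounds(
    problem: str,
    reason: Reasoner,
    width: int,
    rounds: int,
    msg_chars: int,
) -> Tuple[List[str], int, int]:
    """Run `rounds` rounds of `width` parallel trajectories with message passing.

    Returns the final messages, the largest prompt seen by any single call,
    and the total number of characters generated across all calls.
    """
    messages: List[str] = []
    max_prompt = 0
    total_generated = 0
    for _ in range(rounds):
        # Each prompt holds the problem plus compact messages from the previous
        # round only, so its length is at most
        # len(problem) + 1 + width * (msg_chars + 1), however many rounds run.
        prompt = problem + "\n" + "\n".join(messages)
        max_prompt = max(max_prompt, len(prompt))
        trajectories = [reason(prompt, seed) for seed in range(width)]
        total_generated += sum(len(t) for t in trajectories)
        messages = [compact(t, msg_chars) for t in trajectories]
    return messages, max_prompt, total_generated


def toy_reasoner(prompt: str, seed: int) -> str:
    # Hypothetical stand-in: long "scratch work" followed by a short conclusion.
    return "x" * 1000 + f" answer={seed % 2}"


msgs, max_prompt, total = run_rounds("p", toy_reasoner, width=4, rounds=3, msg_chars=20)
print(max_prompt, total)
```

With `width=4`, `rounds=3`, and `msg_chars=20`, no single call sees more than 85 prompt characters while 12,108 characters are generated in total; raising `rounds` to 10 leaves the per-call prompt at 85 but raises total generation to 40,360.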

📜 Citation

@misc{pacore2025,
  title={PaCoRe: Learning to Scale Test-Time Compute with Parallel Coordinated Reasoning},
  author={Jingcheng Hu and Yinmin Zhang and Shijie Shang and Xiaobo Yang and Yue Peng and Zhewei Huang and Hebin Zhou and Xin Wu and Jie Cheng and Fanqi Wan and Xiangwen Kong and Chengyuan Yao and Kaiwen Yan and Ailin Huang and Hongyu Zhou and Qi Han and Zheng Ge and Daxin Jiang and Xiangyu Zhang and Heung-Yeung Shum},
  year={2026},
  eprint={2601.05593},
  archivePrefix={arXiv},
  primaryClass={cs.LG},
  url={https://arxiv.org/abs/2601.05593},
}