id (int64, 5 to 1.93M) | title (string, length 0 to 128) | description (string, length 0 to 25.5k) | collection_id (int64, 0 to 28.1k) | published_timestamp (timestamp[s]) | canonical_url (string, length 14 to 581) | tag_list (string, length 0 to 120) | body_markdown (string, length 0 to 716k) | user_username (string, length 2 to 30) |
|---|---|---|---|---|---|---|---|---|

1,896,017 | Denim Maxi Skirt: Timeless Style and Versatility | Introduction The denim maxi skirt is a fashion staple that combines the classic appeal of denim with... | 0 | 2024-06-21T13:15:24 | https://dev.to/farheen_zohaib_298429c952/denim-maxi-skirt-timeless-style-and-versatility-1ig0 | **Introduction**

The **[denim maxi skirt](https://wildskirts.uk/denim-maxi-skirt/)** is a fashion staple that combines the classic appeal of denim with the elegance of a maxi length. This versatile piece has been a favorite in wardrobes for decades, offering endless styling possibilities and a comfortable yet chic look... | farheen_zohaib_298429c952 | |

1,896,016 | AWS Well-Architected | 🚀 Exciting News! 🚀 I'm thrilled to announce that I've achieved AWS certification! 🎉 After months of... | 0 | 2024-06-21T13:14:42 | https://dev.to/vidhey071/aws-well-architected-36hk | aws | 🚀 Exciting News! 🚀

I'm thrilled to announce that I've achieved AWS certification! 🎉

After months of dedicated learning and hard work, I am now officially certified with this certificate. This journey has been incredibly rewarding, and I'm looking forward to leveraging this knowledge to drive innovation and efficie... | vidhey071 |

1,896,015 | Securing your machine identities means better secrets management | In 2024, GitGuardian released the State of Secrets Sprawl report. The findings speak for themselves:... | 0 | 2024-06-21T13:13:54 | https://blog.gitguardian.com/securing-your-machine-identities/ | security, devops, cybersecurity, identity | In 2024, GitGuardian released the [State of Secrets Sprawl](https://www.gitguardian.com/state-of-secrets-sprawl-report-2024?ref=blog.gitguardian.com) report. The findings speak for themselves: with over 12.7 million secrets detected in public GitHub repos, it is clear that hard-coded plaintext credentials are a serious... | dwayne_mcdaniel |

1,896,014 | Get Your Ex Back with These Powerful Voodoo Love Spells"+27814233831 | In the realm of love and relationships, finding and maintaining a deep connection can sometimes be... | 0 | 2024-06-21T13:13:36 | https://dev.to/felala_fredrick_f7c1ec85d/get-your-ex-back-with-these-powerful-voodoo-love-spells27814233831-2598 | lovespells, voodoo, spellcaster, bringbacklostlover | In the realm of love and relationships, finding and maintaining a deep connection can sometimes be challenging. When heartbreak strikes and lovers part ways, the pain can feel insurmountable. This is where the ancient art of [Voodoo love spells](https://www.spelltobringbacklostlover.com/) especially those cast by the r... | felala_fredrick_f7c1ec85d |

1,896,013 | Verified Skrill Accounts for Immediate Transactions | Our guide to buying Skrill verified accounts is here. Skrill accounts are essential for working... | 0 | 2024-06-21T13:13:09 | https://dev.to/verifiedskrillaccoun/verified-skrill-accounts-for-immediate-transactions-19no | skrill | [Our guide to buying Skrill verified accounts is here](url). Skrill accounts are essential for working online and as a freelancer. Skrill is not the same for all accounts. You should only use a Skrill account that has been verified. We’ll explain how in this guide. We’ll give you all the information to make a wise choi... | verifiedskrillaccoun |

1,896,012 | What is C# Compiler ? How to Use it Full Guide | Mastering the C# Compiler: Your Complete Guide to Understanding and Implementation In the... | 0 | 2024-06-21T13:12:46 | https://dev.to/scholarhat/what-is-c-compiler-how-to-use-it-full-guide-1i17 | ## Mastering the C# Compiler: Your Complete Guide to Understanding and Implementation

In the realm of modern software development, C# stands out as a powerful and versatile programming language. At the heart of C#'s functionality lies its compiler, a crucial tool that transforms human-readable code into machine-execut... | scholarhat | |

1,896,011 | Getting Started with the AWS Cloud Essentials | 🚀 Exciting News! 🚀 I am thrilled to announce that I have achieved my AWS certification! 🎉 After... | 0 | 2024-06-21T13:11:52 | https://dev.to/vidhey071/getting-started-with-the-aws-cloud-essentials-23pl | aws | 🚀 Exciting News! 🚀

I am thrilled to announce that I have achieved my AWS certification! 🎉 After months of hard work and dedication, I am now certified with this certificate. This accomplishment signifies my commitment to mastering AWS services and best practices, enhancing my skills in cloud computing and infrastru... | vidhey071 |

1,896,010 | Configure and Deploy AWS PrivateLink Certificate | 🚀 Exciting News! 🚀 I'm thrilled to announce that I've achieved AWS certification! 🎉 After months of... | 0 | 2024-06-21T13:09:33 | https://dev.to/vidhey071/configure-and-deploy-aws-privatelink-certificate-4dio | 🚀 Exciting News! 🚀

I'm thrilled to announce that I've achieved AWS certification! 🎉

After months of dedicated learning and hard work, I am now officially certified with this certificate. This journey has been incredibly rewarding, and I'm looking forward to leveraging this knowledge to drive innovation and efficie... | vidhey071 | |

1,896,008 | How Can Black Magic Specialist Pankaj Shastry Ji Help Improve Your Life | Are you feeling lost, stuck, or overwhelmed in life? Do you feel like negative energies are holding... | 0 | 2024-06-21T13:08:00 | https://dev.to/astrologer_pankashastri_/how-can-black-magic-specialist-pankaj-shastry-ji-help-improve-your-life-1fj8 | blackmagic | Are you feeling lost, stuck, or overwhelmed in life? Do you feel like negative energies are holding you back from achieving your full potential? **[Black magic specialist](https://pankajastrology.com/black-magic-specialist/)** Pankaj Shastry Ji is here to help you break free from these obstacles and lead a more fulfill... | astrologer_pankashastri_ |

1,895,998 | How to Create and Connect to a Linux VM Using a Public Key | Creating and connecting to a Linux VM using SSH keys ensures a secure, password-less login. Below... | 0 | 2024-06-21T12:54:01 | https://dev.to/florence_8042063da11e29d1/how-to-create-and-connect-to-a-linux-vm-using-a-public-key-if3 | linux, virtualmachine, ssh, publickey | Creating and connecting to a Linux VM using SSH keys ensures a secure, password-less login. Below is a step-by-step guide to help you set up and connect to a Linux VM using a public key.

# Create a Linux VM on Azure

## Log in to the Azure Portal

Click on **"Create a resource"**... | florence_8042063da11e29d1 |

... | Why ['1', '5', '11'].map(parseInt) in JS returns [1, NaN, 3]? | In JavaScript, the map function applies a given function to each element of an array and returns a... | 0 | 2024-06-21T13:03:09 | https://dev.to/vcpablo/why-1-5-11mapparseint-in-js-returns-1-nan-3-28ef | javascript, webdev, programming | In JavaScript, the map function applies a given function to each element of an array and returns a new array with the results. When you use map with parseInt like ['1', '5', '11'].map(parseInt), the result might not be what you expect due to the way parseInt and map interact. Let's break down what happens.

### Underst... | vcpablo |

1,896,005 | Why ['1', '5', '11'].map(parseInt) in JS returns [1, NaN, 3]? | In JavaScript, the map function applies a given function to each element of an array and returns a... | 0 | 2024-06-21T13:03:09 | https://dev.to/vcpablo/why-1-5-11mapparseint-in-js-returns-1-nan-3-4jdp | javascript, webdev, programming | In JavaScript, the map function applies a given function to each element of an array and returns a new array with the results. When you use map with parseInt like ['1', '5', '11'].map(parseInt), the result might not be what you expect due to the way parseInt and map interact. Let's break down what happens.
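A minimal sketch of the interaction, assuming a Node.js runtime (the trailing comments show Node's console formatting). `Array.prototype.map` calls its callback as `(element, index, array)`, so `parseInt(string, radix)` receives each element's index as its radix:

```javascript
// map invokes its callback as callback(element, index, array),
// so parseInt(string, radix) gets the element's index as the radix.
const viaMap = ['1', '5', '11'].map(parseInt);
// parseInt('1', 0)  -> a radix of 0 is treated as 10, giving 1
// parseInt('5', 1)  -> a radix of 1 is outside 2..36, giving NaN
// parseInt('11', 2) -> "11" read in base 2, giving 3
console.log(viaMap); // [ 1, NaN, 3 ]

// Forwarding only the string, with an explicit radix, avoids the problem.
const fixed = ['1', '5', '11'].map((s) => parseInt(s, 10));
console.log(fixed); // [ 1, 5, 11 ]
```

`['1', '5', '11'].map(Number)` also gives `[1, 5, 11]`, because `Number` uses only its first argument.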

### Underst... | vcpablo |

1,896,004 | Improving User Experience With Dispatch Management Software | We are all living in a world where competition is everywhere. The same is the case with the business... | 0 | 2024-06-21T13:02:49 | https://dev.to/gpstracker/improving-user-experience-with-dispatch-management-software-2b4a | softwaredevelopment, gpstrackingsoftware, dispatchmanagementsoftware, dispatchmanagementsystem | We are all living in a world where competition is everywhere. The same is the case with the business sector, as all companies want to stay at the top. What can be better than having happy customers to achieve a competitive advantage? This is why business giants nowadays prefer investing in **[Dispatch Management Sof... | gpstracker |

1,896,003 | Help | Hi! I was wondering if it would be possible to find a mentor here and a job as well. I'm running a... | 0 | 2024-06-21T13:02:10 | https://dev.to/frandolph/help-28e0 | help | Hi! I was wondering if it would be possible to find a mentor here and a job as well. I'm running a bit low on finances and I'm about to start college. I'm hoping this post will reach senior devs. Thanks! | frandolph |

1,896,001 | Start Your Career In Cyber Security | Join the best cyber security training institute in Thane! Our expert instructors and hands-on courses... | 0 | 2024-06-21T12:58:19 | https://dev.to/encrypticsec11/start-your-career-in-cyber-security-1f97 | cybersecurity, ethicalhacking | Join the [best cyber security training institute in Thane!](https://encrypticsecurity.com/) Our expert instructors and hands-on courses prepare you for a successful career in cybersecurity. Enroll today!

| encrypticsec11 |

1,896,000 | How to Export and Import a MySQL Database Using the Dump or IDE | Data import and export tasks, being an integral part of database management and operation, are vital... | 0 | 2024-06-21T12:58:16 | https://dev.to/dbajamey/how-to-export-and-import-a-mysql-database-using-the-dump-or-ide-389n | mysql, mariadb, database, tutorial | Data import and export tasks, being an integral part of database management and operation, are vital for software and database developers, as well as data analysts, and many other IT professionals.

Ensuring data availability, consistency, and security requires both the proper techniques and the possibility of automati... | dbajamey |

1,895,994 | Seamless State Management using Async Iterators | In my recent post about AI-UI, I touched on why I developed the library. I wanted declarative... | 0 | 2024-06-21T12:55:46 | https://dev.to/matatbread/seamless-state-management-using-async-iterators-fp7 | webdev, javascript, node | In my recent post about [AI-UI](https://dev.to/matatbread/ive-been-writing-web-backends-and-frontends-since-the-90s-finally-declarative-dynamic-markup-done-right-3jmj), I touched on why I developed the library.

* I wanted declarative markup

* I wanted type-safe component encapsulation, with composition & inheritance

*... | matatbread |

1,895,999 | Static in C# - Part 1 | What is static? According to Microsoft (the creator of C#), it is a modifier used to declare a static... | 27,809 | 2024-06-21T12:55:17 | https://www.linkedin.com/pulse/static-c-part-1-loc-nguyen-t4n4c | csharp, beginners, programming | ## What is `static`?

According to Microsoft (*the creator of C#*), `static` is a modifier used to **declare a static member**, which **belongs to the type itself** rather than to a specific object. For example, consider a class with the following structure:

```csharp
class Test
{
    // Instance field: each Test object has its own Content.
    public string Content;
}
```

In the `Main` method, we have:

```csharp

... | locnguyenpv |

1,895,997 | Code Your Way to Freedom: A Hard-Earned Guide | P/S: Originally published June 11th 2024 on LinkedIn and Facebook Based on my experiences and the... | 0 | 2024-06-21T12:51:21 | https://dev.to/trae_z/code-your-way-to-freedom-a-hard-earned-guide-2k39 | careeradvice, learningcurve, selfdevelopment, successmindset | **P/S:** **_Originally published June 11th 2024 on LinkedIn and Facebook_**

Based on my experiences and the battle scars I've earned, this would be my June 2024 advice to aspiring programmers/software developers just writing their first "hello world" today.

𝟭 𝗟𝗲𝗮𝗿𝗻𝗶𝗻𝗴 𝘁𝗼 𝗰𝗼𝗱𝗲 𝘂𝗻𝘁𝗶𝗹 𝘆𝗼𝘂 𝗿𝗲𝗮𝗰... | trae_z |

1,895,996 | A Full Guide to Making Sure Stable Releases to Production with UI Automation Testing | Introduction: When it comes to making software, the path from code to production is not always easy.... | 0 | 2024-06-21T12:50:25 | https://dev.to/coderowersoftware/a-full-guide-to-making-sure-stable-releases-to-production-with-ui-automation-testing-281e | webdev, testing, development, softwaredevelopment | **Introduction:**

When it comes to making software, the path from code to production is not always easy. Making sure that updates to production settings are stable is one of the most important parts of this trip. **Releases that aren’t stable can cause problems, cost money, and hurt a company’s image**. To lower these ... | coderower |

1,895,995 | How to Set Up Solargraph in VS Code with WSL2 | Introduction Recently, I faced an issue while trying to set up Solargraph in VS Code using... | 0 | 2024-06-21T12:49:32 | https://dev.to/lucasldemello/how-to-set-up-solargraph-in-vs-code-with-wsl2-283b | vscode, ruby, productivity, solargraph | ## Introduction

Recently, I faced an issue while trying to set up Solargraph in VS Code using WSL2 and ASDF for managing Ruby versions. The legacy projects I was working on used Docker, causing conflicts with Ruby versions and resulting in errors when initializing the server. After much research and trial and error, I... | lucasldemello |

1,895,991 | ChronoQuest: Time-Travel Adventures for Young Explorers | "ChronoQuest: Time-Travel Adventures for Young Explorers" whisks readers on an exhilarating journey... | 0 | 2024-06-21T12:39:23 | https://dev.to/yamna_patel_aa39604c10039/chronoquest-time-travel-adventures-for-young-explorers-28g9 | "ChronoQuest: Time-Travel Adventures for Young Explorers" whisks readers on an exhilarating journey through the annals of time. Each chapter unfolds like a map to ancient civilizations and futuristic worlds, where protagonists harness courage and wit to unravel mysteries. Justina's [adventure books for kids](https://bo... | yamna_patel_aa39604c10039 | |

1,895,990 | How to Configure Solargraph in VS Code with WSL2 for Legacy Projects | Introduction Recently, I ran into a problem while trying to configure Solargraph in VS... | 0 | 2024-06-21T12:38:56 | https://dev.to/lucasldemello/como-configurar-o-solargraph-no-vs-code-com-wsl2-para-projetos-legados-2eg8 | solargraph, vscode, ruby, productivity | ## Introduction

Recently, I ran into a problem while trying to configure Solargraph in VS Code when using WSL2 and ASDF to manage Ruby versions. The legacy projects I was working on used Docker, which caused conflicts with the Ruby versions and resulted in errors when starting the server. ... | lucasldemello |

1,895,989 | IE Green Tea: Your Gateway to a World of Green Tea Goodness | While the claim of being the "Top Brand" can be subjective, IE Green Tea certainly stands out for its... | 0 | 2024-06-21T12:38:28 | https://dev.to/iegreentea/ie-green-tea-your-gateway-to-a-world-of-green-tea-goodness-397o | decaffeinatedgreentea, puregreentea, caffeinatedgreentea, organicgreentea | While the claim of being the "Top Brand" can be subjective, IE Green Tea certainly stands out for its commitment to quality and variety in the world of green tea. We offer a range of options to cater to every preference, including decaffeinated and natural green tea varieties.

## Beyond the Buzz: Unveiling the Power o... | iegreentea |

1,895,988 | Show me your open-source project | Hello Everyone, I'm Antonio, CEO & Founder at Litlyx. I want to engage with you in a... | 0 | 2024-06-21T12:38:24 | https://dev.to/litlyx/show-me-your-open-source-project-15l | opensource, discuss, programming | Hello Everyone, I'm Antonio, CEO & Founder at [Litlyx](https://litlyx.com).

I want to start a conversation with you about **OUR** open-source projects; it would be super interesting to exchange feedback publicly on our software.

I start with mine, Litlyx, [Repository on Github](https://github.com/Litlyx/litlyx).... | litlyx |

1,895,986 | How to Optimize Content Using GenAI Powered Search Analytics? | Technical writers have relied on “lexical search” analytics regarding what keywords have been typed... | 0 | 2024-06-21T12:36:37 | https://dev.to/ragavi_document360/how-to-optimize-content-using-genai-powered-search-analytics-4p25 | Technical writers have relied on “lexical search” analytics regarding what keywords have been typed in the search engine on their knowledge base site for analysis. The typical category of analytics includes article performance, search analytics, feedback, and reports for technical writers on their performance.

Analyti... | ragavi_document360 | |

1,895,985 | Mathematics: Timeless Wisdom in a Changing Tech World | Trying to get familiar with binary tree and linked list data structures over the past few days... | 0 | 2024-06-21T12:35:51 | https://dev.to/trae_z/mathematics-timeless-wisdom-in-a-changing-tech-world-53nf | techevolution, programmingchallenges, datastructures, mathematics | Trying to get familiar with binary tree and linked list data structures over the past few days brought me to the realization that, unlike programming languages and technologies, mathematics is timeless. P.S. Data structures are deeply rooted in mathematical concepts.

The same textbooks I inherited from my father dec... | trae_z |

1,895,982 | Pure Drinking Water | Pure drinking water is a basic need that is essential for human health and well-being.... | 0 | 2024-06-21T12:32:49 | https://dev.to/ilmu_padi/air-minum-murni-42d3 |

[Pure](https://airminummurni.com/) drinking water is a basic need that is essential for human health and well-being. Proper water purification ensures that the water consumed is free from contamination and safe to drink. Therefore, it is important for every individual and community to have... | ilmu_padi | |

1,895,975 | cpp-ne | #include <iostream> #include <string> #include <unordered_set> #include... | 0 | 2024-06-21T12:25:14 | https://dev.to/niyomungeli_aline_4a7b594/cpp-ne-17m |

```cpp
#include <iostream>
#include <string>
#include <unordered_set>
#include <limits>
#include <ctime>

using namespace std;

// Structure of patients linked list
struct Patient {
    int patient_id;
    string name;
    string dob;
    string gender;
    Patient* next;
};

// Structure for doctors linked list

struct ... | niyomungeli_aline_4a7b594 | |

1,895,974 | How to Choose the Best E-commerce Platform | Defining Your E-commerce Needs Features Required for Your Online Store Product... | 0 | 2024-06-21T12:23:59 | https://dev.to/hyscaler/how-to-choose-the-best-e-commerce-platform-85g | ## Defining Your E-commerce Needs

**Features Required for Your Online Store**

**Product Catalog:**

- Detailed product descriptions that captivate and inform
- High-quality images and videos to showcase products
- Intuitive categories and subcategories for seamless navigation

**Shopping Cart:**

User-friendly interface ... | amulyakumar | |

1,895,973 | AI-Powered Lego Printer Turns Imagination into Mosaic Masterpieces | The world of Lego just got a whole lot more high-tech! A dedicated YouTuber has unveiled the Pixelbot... | 0 | 2024-06-21T12:23:11 | https://dev.to/hyscaler/ai-powered-lego-printer-turns-imagination-into-mosaic-masterpieces-1853 | The world of Lego just got a whole lot more high-tech! A dedicated YouTuber has unveiled the Pixelbot 3000, a revolutionary AI-powered Lego printer that automates the creation of intricate Lego mosaics. This innovative machine takes inspiration from existing Lego art sets like Da Vinci's Mona Lisa or Hokusai's The Grea... | suryalok | |

1,895,972 | jsPDF issue with generating PDF | I'm facing an issue: when I open the HTML link page in a mobile browser (latest version), it is missing some... | 0 | 2024-06-21T12:23:02 | https://dev.to/tahir_rehman_97e3f51216c4/jspdf-issue-with-generating-pdf-17ab | help | I'm facing an issue: when I open the HTML link page in a mobile browser (latest version), it is missing some data. | tahir_rehman_97e3f51216c4 |

1,895,968 | Amna 5 | A post by DEELIP MEHTA | 0 | 2024-06-21T12:16:30 | https://dev.to/deelip_mehta_46ddb8c5db44/amna-5-2nd3 | {% embed https://youtu.be/Zxezfv7FwY8 %} | deelip_mehta_46ddb8c5db44 | |

1,895,967 | A Deep Dive into the `array.map` Method - Mastering JavaScript | The array.map function is a method available in JavaScript (and in some other languages under... | 27,926 | 2024-06-21T12:14:28 | https://dev.to/hkp22/a-deep-dive-into-the-arraymap-method-mastering-javascript-1dj4 | webdev, javascript, programming, react | The `array.map` function is a method available in JavaScript (and in some other languages under different names or syntax) that is used to create a new array populated with the results of calling a provided function on every element in the calling array. It's a powerful tool for transforming arrays.
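A minimal sketch of `map` in action, assuming a Node.js runtime (the trailing comments show Node's console formatting). It illustrates two points from the paragraph above: `map` builds a new array from the callback's results and leaves the original untouched, and the callback also receives each element's index:

```javascript
const numbers = [1, 2, 3, 4];

// Each element is passed through the callback; the results form a new array.
const doubled = numbers.map((n) => n * 2);
console.log(doubled); // [ 2, 4, 6, 8 ]

// The source array is not modified.
console.log(numbers); // [ 1, 2, 3, 4 ]

// The callback's second argument is the element's index.
const labelled = numbers.map((n, i) => `${i}:${n}`);
console.log(labelled); // [ '0:1', '1:2', '2:3', '3:4' ]
```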

{% youtube oystIFw... | hkp22 |

1,895,966 | The Role of AI in Modern Casino Game Development | The casino gaming industry has always been at the forefront of technological innovation. Today,... | 0 | 2024-06-21T12:14:17 | https://dev.to/mathewc/the-role-of-ai-in-modern-casino-game-development-3ki | gamedev, web3, webdev | The casino gaming industry has always been at the forefront of technological innovation. Today, artificial intelligence (AI) is revolutionizing the way games are developed, played, and managed. As a leading **[casino game development company](https://innosoft-group.com/online-casino-game-development-company/)**, Innoso... | mathewc |

1,895,965 | The Importance of ESG Consulting Services in Enhancing Sustainability Compliance | In today's business environment, sustainability accounting has become a cornerstone of corporate... | 0 | 2024-06-21T12:14:10 | https://dev.to/linda0609/the-importance-of-esg-consulting-services-in-enhancing-sustainability-compliance-2g49 | esg, consulting | In today's business environment, sustainability accounting has become a cornerstone of corporate responsibility. Companies are now assessed based on how effectively they transform their operations to be more eco-friendly, inclusive, and secure. Investors, consumers, and governments increasingly demand higher compliance... | linda0609 |

1,895,963 | 10 Signs Of Low Quality In Mobile App Development Services | The trend of developing mobile applications has been increasing for some time now. Applications... | 0 | 2024-06-21T12:11:46 | https://dev.to/infowindtech57/10-signs-of-low-quality-in-mobile-app-development-services-2dnm | mobile, mobileapps, webdev | The trend of developing mobile applications has been increasing for some time now. Applications designed for mobile devices are the specific focus of this subsection of software development.

Mobile apps are developed for several operating systems, the major ones being iOS and Android. Globally, there are more th... | infowindtech57 |

1,895,962 | Amna 4 | A post by DEELIP MEHTA | 0 | 2024-06-21T12:11:43 | https://dev.to/deelip_mehta_46ddb8c5db44/amna-4-ck5 | {% embed https://youtu.be/nrGCdVIgrDs %} | deelip_mehta_46ddb8c5db44 | |

1,895,961 | Amna 3 | A post by DEELIP MEHTA | 0 | 2024-06-21T12:11:07 | https://dev.to/deelip_mehta_46ddb8c5db44/amna-3-2jnc | {% embed https://youtu.be/5pp9xXQBtwQ %} | deelip_mehta_46ddb8c5db44 | |

1,883,978 | Buy GitHub Accounts | https://dmhelpshop.com/product/buy-github-accounts/ Buy GitHub Accounts GitHub holds a crucial... | 0 | 2024-06-11T06:15:56 | https://dev.to/gigoraj855/buy-github-accounts-2no1 | nextjs, cloud, linux, api | https://dmhelpshop.com/product/buy-github-accounts/

Buy GitHub Accounts

GitHub holds a crucial position in the world of coding, making it an indispensable platform for developers. As the largest global code reposi... | gigoraj855 |

1,895,960 | A Guide to the Manarat Al Riyadh School Uniform: Dress Code Essentials | When it comes to educational institutions, the uniform often plays a crucial role in establishing a... | 0 | 2024-06-21T12:10:49 | https://dev.to/stitch_cart_1ee8419df926a/a-guide-to-the-manarat-al-riyadh-school-uniform-dress-code-essentials-1f8e | When it comes to educational institutions, the uniform often plays a crucial role in establishing a sense of identity and unity among students. The Manarat Al Riyadh School dress code is no exception. With its distinct and thoughtfully designed uniform, the school ensures that students not only look their best but also... | stitch_cart_1ee8419df926a | |

1,895,959 | **The Importance of Net Worth in Financial Planning** | Net worth isn't just a number—it's a powerful tool for understanding your financial health. By... | 0 | 2024-06-21T12:10:25 | https://dev.to/gps_thakawal_8feb68bc79dd/the-importance-of-net-worth-in-financial-planning-29ej |

Net worth isn't just a number—it's a powerful tool for understanding your financial health. By subtracting your liabilities from your assets, you get a clear picture of where you stand financially. Whether you're saving for a home or planning for retirement, monitoring and growing your net worth is key to achieving yo... | gps_thakawal_8feb68bc79dd | |

1,895,958 | Best SEO Companies in Jaipur | Enhance your online presence with our comprehensive guide on the best SEO companies in Jaipur for... | 0 | 2024-06-21T12:09:24 | https://dev.to/metabyte_marketing_399f9a/best-seo-companies-in-jaipur-2n0 | Enhance your online presence with our comprehensive guide on the best SEO companies in Jaipur for 2024. Our detailed reviews and comparisons will help you make an informed decision on which company to choose for maximizing your website's visibility and driving organic traffic. Stay ahead of the competition and boost yo... | metabyte_marketing_399f9a | |

1,895,957 | Best SEO Companies in Jaipur | Enhance your online presence with our comprehensive guide on the best SEO companies in Jaipur for... | 0 | 2024-06-21T12:09:19 | https://dev.to/metabyte_marketing_399f9a/best-seo-companies-in-jaipur-9gg | Enhance your online presence with our comprehensive guide on the best SEO companies in Jaipur for 2024. Our detailed reviews and comparisons will help you make an informed decision on which company to choose for maximizing your website's visibility and driving organic traffic. Stay ahead of the competition and boost yo... | metabyte_marketing_399f9a | |

1,895,956 | ES-6 What is it? | Let's check the acronym first ES = ECMAScript (ECMA - "European Computer Manufacturers... | 0 | 2024-06-21T12:07:47 | https://dev.to/harshasrisameera/es-6-what-is-it-2ibd | javascript, programming, es6, react | Let's check the acronym first

ES = ECMAScript (ECMA - "European Computer Manufacturers Association")

ES is a standard for scripting languages, and JavaScript is the most popular language that implements it. The standard was first published in 1997.

When you hear people say ES5, ES6, etc, they are referring to a version of ECMAScript. ES... | harshasrisameera |

1,895,070 | when should you quit working on an idea? | Millions of business ideas, SaaS, and startup ideas fail every day. As a business graduate myself,... | 0 | 2024-06-21T12:05:38 | https://dev.to/ohchloeho/when-should-you-quit-working-on-an-idea-5h6 | startup, saas, beginners, career | Millions of business ideas, SaaS, and startup ideas fail every day. As a business graduate myself, I’ve learnt something quite important about recognizing the potential of an idea before the cost of proving its worth overwhelms its true value.

Every idea starts from a pain point and is built around it to act as a painkiller.... | ohchloeho |

1,895,954 | Weighing Out Pros and Cons of Outsourcing Accounts Payable Solutions | The business operation of any firm does not simply end with recording routine transactions of... | 0 | 2024-06-21T12:05:28 | https://dev.to/globalbookkeeping/weighing-out-pros-and-cons-of-outsourcing-accounts-payable-solutions-2ph1 | The business operation of any firm does not simply end with recording routine transactions of monetary value. The bigger the firm, the bigger and more complex its business operations will be. Any business corporation has to deal with and consider several aspects of the business to know the firm's real position. They need to ... | globalbookkeeping | |

1,895,946 | Differences between jest.spyOn and jest.mock | Yes, there are important differences between jest.mock and jest.spyOn, although both are used to... | 27,693 | 2024-06-21T12:02:18 | https://dev.to/vitorrios1001/diferencas-entre-o-jestspyon-e-jestmock-5035 | jest, testing, javascript, mock | Yes, there are important differences between `jest.mock` and `jest.spyOn`, although both are used to create mocks in tests. Let's explore the differences and when to use each one.

## **jest.mock**

### Usage

`jest.mock` is used to mock entire modules. This is useful when you want to replace a complete module, whether ... | vitorrios1001 |

1,895,952 | Amna 2 | A post by DEELIP MEHTA | 0 | 2024-06-21T12:01:33 | https://dev.to/deelip_mehta_46ddb8c5db44/amna-2-4kai | {% embed https://youtu.be/JixNFC29eWs %} | deelip_mehta_46ddb8c5db44 | |

1,895,951 | Amna 1 | A post by DEELIP MEHTA | 0 | 2024-06-21T12:01:04 | https://dev.to/deelip_mehta_46ddb8c5db44/amna-1-4g7k | {% embed https://youtu.be/dRaAirc9sYM %} | deelip_mehta_46ddb8c5db44 | |

1,895,942 | The only 4 DaaS services you should be considering | Simplifying IT: Choosing the Right DaaS Solution for Your Organisation In most cases, your... | 0 | 2024-06-21T11:48:20 | https://dev.to/struthi/the-only-4-daas-services-you-should-be-considering-16c8 | # Simplifying IT: Choosing the Right DaaS Solution for Your Organisation

In most cases, your organisation's IT team is likely seeking a new, convenient, and straightforward solution—free from the complexities of traditional options. If you're managing a remote or hybrid workforce, you need reliable, secure hardware th... | struthi | |

1,895,039 | Step Guide on how to create and connect to a Linux VM using a Public key | Creating a Linux virtual machine (VM) on Microsoft Azure involves several steps. Here’s a... | 0 | 2024-06-21T12:00:38 | https://dev.to/ikay/step-guide-on-how-to-create-and-connect-to-a-linux-vm-using-a-public-key-2nna | virtualmachine, linux, resources, deployment | Creating a Linux virtual machine (VM) on Microsoft Azure involves several steps. Here’s a comprehensive guide to help you set up an Azure Linux VM:

**Step-by-Step Guide:**

If you don't have an Azure subscription, create a free account before you begin.

Sign in to Azure Portal:

Go to portal.azure.com and sign in with y... | ikay |

1,895,785 | Why CLIs are STILL important | Command Line Interfaces (CLIs) seem old-fashioned in the age of graphical user interfaces (GUIs) and... | 0 | 2024-06-21T12:00:00 | https://cyclops-ui.com/blog/2024/06/21/why-cli-important/ | kubernetes, devops, opensource, beginners | Command Line Interfaces (CLIs) seem old-fashioned in the age of graphical user interfaces (GUIs) and touchscreens, but they remain essential tools for developers. You may not realize it, but they are used far more often than you might think. For example, if you use `git` commands over the terminal, you're likely engagi... | karadza |

1,895,667 | Path To A Clean(er) React Architecture (Part 6) - Business Logic Separation | Business logic can bloat React components and make them difficult to test. In this article, we discuss how extracting them to hooks in combination with dependency injection can improve maintainability and testability. | 27,067 | 2024-06-21T12:00:00 | https://profy.dev/article/react-architecture-business-logic-and-dependency-injection | react, javascript, webdev, frontend | ---

description: Business logic can bloat React components and make them difficult to test. In this article, we discuss how extracting them to hooks in combination with dependency injection can improve maintainability and testability.

---

_The unopinionated nature of React is a two-edged sword:_

- _On the one hand, y... | jkettmann |

1,895,950 | How to Integrate Cloud Security Tools into Your Existing IT Infrastructure | As reliance on cloud services intensifies among businesses, the significance of robust cloud security... | 0 | 2024-06-21T11:59:58 | https://dev.to/micromindercs/how-to-integrate-cloud-security-tools-into-your-existing-it-infrastructure-46gi | cybersecurity, ai, discuss, news | As reliance on cloud services intensifies among businesses, the significance of robust cloud security becomes increasingly critical. It's crucial to incorporate cloud security measures within your IT framework to shield confidential information, adhere to regulatory standards, and defend against online dangers. This ar... | micromindercs |

1,842,497 | GCP Cloud Armor - How to Leverage and add extra layer of security | In today's digital world, securing your internet-facing applications is paramount. Distributed... | 0 | 2024-06-21T11:59:31 | https://dev.to/chetan_menge/gcp-cloud-armor-how-to-leverage-and-add-extra-layer-of-security-4ol7 | gcp, cloudarmor, websecurity, cloud |

In today's digital world, securing your internet-facing applications is paramount. Distributed Denial-of-Service (DDoS) attacks, web application vulnerabilities, and malicious bots can significantly disrupt your services and damage your reputation. Google Cloud Armor offers a robust solution to fortify your applicat... | chetan_menge |

1,895,949 | The Benefits and Process of Obtaining a Connecticut Medical Marijuana Card | Connecticut has made significant strides in medical marijuana legislation, providing patients with an... | 0 | 2024-06-21T11:56:15 | https://dev.to/zubairudin/the-benefits-and-process-of-obtaining-a-connecticut-medical-marijuana-card-33g7 | medical, marijuana, connecticut | Connecticut has made significant strides in medical marijuana legislation, providing patients with an alternative approach to managing various health conditions. Obtaining a Connecticut Medical Marijuana Card can be a transformative experience, offering relief and improving quality of life. This article explores the me... | zubairudin |

1,895,947 | 6 Captivating Python Programming Tutorials from LabEx 🚀 | The article is about a captivating collection of six Python programming tutorials from the renowned LabEx platform. Covering a wide range of topics, the tutorials delve into the powerful data visualization capabilities of Matplotlib, exploring techniques for creating text and mathtext, working with PGF preamble, using ... | 27,678 | 2024-06-21T11:54:42 | https://dev.to/labex/6-captivating-python-programming-tutorials-from-labex-4f5p | python, coding, programming, tutorial |

Welcome to this exciting collection of Python programming tutorials from the renowned LabEx platform! Whether you're a seasoned coder or just starting your journey, these six labs will take you on a captivating exploration of Matplotlib, Python's powerful data visualization library, and delve into the intricacies of P... | labby |

1,895,945 | Ready to Leave | Ready to Leave In October 2022 I was held in detention for four days in a case I don't dare... | 0 | 2024-06-21T11:53:54 | https://dev.to/frank_bellew_a70105780e0f/pret-a-partir-kai | Ready to Leave

In October 2022 I was held in detention for four days in a case I don't dare write about. I am innocent; it is a horrible memory that only worsened my mental state (I have been depressed since 2019). I will never forget the helping hands of my friend Mohamed, who encouraged me to set up my company... | frank_bellew_a70105780e0f | |

1,895,944 | Enhance Your App: Embeddable's Streamlined Analytics Integration! | Embeddable redefines analytics integration with its versatile platform, allowing seamless embedding... | 0 | 2024-06-21T11:51:02 | https://dev.to/embeddable/enhance-your-app-embeddables-streamlined-analytics-integration-439p | devops, java, development | [Embeddable](https://embeddable.com) redefines analytics integration with its versatile platform, allowing seamless embedding of customizable charts and dashboards into applications. Featuring a user-friendly no-code interface, robust compatibility with major databases, and flexible deployment options, [Embeddable ](ht... | embeddable |

1,895,939 | How to define and work with a Rust-like result type in NuShell | Motivation Rust—among other modern programming languages—has a data type Result<T,... | 0 | 2024-06-21T11:51:00 | https://dev.to/fkurz/how-to-define-and-work-with-a-rust-like-result-type-in-nushell-51ib | ## Motivation

Rust—among other modern programming languages—has a data type [`Result<T, E>` in its standard library][1], that allows us to represent error states in a program directly in code. Using data structures to represent errors in code is a pattern known mostly from purely functional programming languages but h... | fkurz | |

1,895,941 | Best Wedding Photographer in Mohali | Booking Kartik Event Filmer as your wedding photographers is a seamless process. Visit their website,... | 0 | 2024-06-21T11:45:38 | https://dev.to/kartik_eventfilmer/best-wedding-photographer-in-mohali-1d94 | photography, videography, wedding, prewedding | Booking Kartik Event Filmer as your wedding photographers is a seamless process. Visit their website, [https://kartikeventfilmer.com/](https://kartikeventfilmer.com), to explore their portfolio and get in touch. You can also contact them directly at 9711992255 to discuss your vision and secure their services for your s... | kartik_eventfilmer |

1,895,940 | Common Developer Mistakes and How to Avoid Them | 👨💻 Every developer, regardless of experience level, makes mistakes. However, learning to identify... | 0 | 2024-06-21T11:44:21 | https://dev.to/dipakahirav/common-developer-mistakes-and-how-to-avoid-them-440h | webdev, javascript, programming, developer | 👨💻 Every developer, regardless of experience level, makes mistakes. However, learning to identify and avoid common pitfalls can significantly improve your efficiency and code quality. Here’s a comprehensive guide to common developer mistakes and how to avoid them:

Please subscribe to my [YouTube channel](https://ww... | dipakahirav |

1,895,937 | Why Costa Cálida is Your Next Property Investment Destination? | Nestled along the south-eastern coast of Spain, Costa Cálida, the "Warm Coast," is emerging as a... | 0 | 2024-06-21T11:41:45 | https://dev.to/immoabroad/why-costa-calida-is-your-next-property-investment-destination-1p02 | Nestled along the south-eastern coast of Spain, Costa Cálida, the "Warm Coast," is emerging as a beacon for savvy property investors globally. This sun-drenched region, with over 300 days of sunshine per year, boasts a blend of pristine beaches, historic charm, and burgeoning real estate opportunities, making it an ide... | immoabroad | |

1,895,935 | Big O Notation | This is a submission for DEV Computer Science Challenge v24.06.12: One Byte Explainer. ... | 0 | 2024-06-21T11:30:50 | https://dev.to/kl13nt/big-o-notation-1id1 | devchallenge, cschallenge, computerscience, beginners | *This is a submission for [DEV Computer Science Challenge v24.06.12: One Byte Explainer](https://dev.to/challenges/cs).*

## Explainer

Big O describes the efficiency of an algorithm in its worst-case scenario. It helps categorize algorithms, but it isn't an accurate measure of real-world performance. An algorithm that does const... | kl13nt |

1,895,781 | NAND Flash Memory Market Analysis: Application Insights and Market Size | NAND Flash Memory Market size was valued at $ 78.84 Bn in 2022 and is expected to grow to $ 109.56 Bn... | 0 | 2024-06-21T09:56:22 | https://dev.to/vaishnavi_farkade_/nand-flash-memory-market-analysis-application-insights-and-market-size-1c90 | | **The NAND Flash Memory Market was valued at $78.84 Bn in 2022 and is expected to reach $109.56 Bn by 2030, growing at a CAGR of 4.2% over 2023-2030.**

**Market Scope & Overview:**

The NAND Flash Memory Market Analysis research report provides an extensive examination of key growth strategies, drivers, opportuniti... | vaishnavi_farkade_ | |

1,895,933 | Asynchronous JavaScript: Callbacks, Promises, and Async/Await | Introduction One of the most powerful features of the JavaScript language is the ability... | 0 | 2024-06-21T11:29:57 | https://dev.to/johnnyk/asynchronous-javascript-callbacks-promises-and-asyncawait-57ei | webdev, javascript, beginners, programming | ## Introduction

One of the most powerful features of the JavaScript language is the ability to handle asynchronous operations. Asynchronous programming allows developers to perform tasks like fetching data from a server or reading a file without blocking the main execution thread. This ensures that applications remain ... | johnnyk |

1,887,745 | Creating a multi-language app in Flutter | In this article, I'll guide you step by step through implementing a modern and... | 0 | 2024-06-21T11:28:53 | https://dev.to/adryannekelly/criando-aplicacao-multi-idioma-no-flutter-3jao | flutter, dart, braziliandevs, language | In this article, I'll guide you step by step through implementing a modern, functional language selector in your Flutter app. We'll explore how to build an intuitive, easy-to-use language toggle, ensuring your users can switch between different languages smoothly and without hassle. Let's... | adryannekelly |

1,895,934 | Bitwise operators in Java: unpacking ambiguities | Author: Kirill Epifanov The "&" and "|" operators are pretty straightforward and unambiguous... | 0 | 2024-06-21T11:27:13 | https://dev.to/anogneva/bitwise-operators-in-java-unpacking-ambiguities-3783 | java, programming, coding | Author: Kirill Epifanov

The "&" and "\|" operators are pretty straightforward and unambiguous when applied correctly. But do you know all the implications of using bitwise operators instead of logical ones in Java? In this article, we will examine both the pros of this approach in terms of performance and the cons in... | anogneva |

1,895,931 | The Biggest Difficulties Students Face When Deciding to Study Abroad | Studying abroad is a dream for many students. It offers the opportunity to experience new cultures,... | 0 | 2024-06-21T11:24:07 | https://dev.to/sarahkhan/the-biggest-difficulties-students-face-when-deciding-to-study-abroad-49kp | dubai, study, abroad, students | Studying abroad is a dream for many students. It offers the opportunity to experience new cultures, gain a world-class education, and build a global network. However, the journey to studying abroad is fraught with challenges. In this blog, we'll explore the most significant difficulties students face when deciding to p... | sarahkhan |

1,895,923 | Innovative Tech: Revolutionizing Climate Change Fight | Setting the Stage: The Urgency of Action Intergovernmental Panel on Climate Change (IPCC) findings... | 0 | 2024-06-21T11:23:40 | https://dev.to/svod_advisory/innovative-tech-revolutionizing-climate-change-fight-eko | Setting the Stage: The Urgency of Action

Intergovernmental Panel on Climate Change (IPCC) findings underscore a stark reality and the urgency of achieving rapid and profound reductions in greenhouse gas emissions to limit global warming to 1.5°C. Such reductions represent more than an environmental imperativ... | svod_advisory | |

1,895,920 | The Evolution of Electronic Data Interchange (EDI): The Game-Changer Every Supplier Needs to Know About | Introduction: When Communication Revolutionized Business Imagine a world where business transactions,... | 0 | 2024-06-21T11:22:47 | https://dev.to/actionedi/the-evolution-of-electronic-data-interchange-edi-the-game-changer-every-supplier-needs-to-know-about-6in | Introduction: When Communication Revolutionized Business

Imagine a world where business transactions, orders, and invoices are exchanged not through time-consuming, error-prone paper trails, but with the speed and precision of digital technology. This world began to take shape in the 1960s with the birth of Electronic ... | actionedi | |

1,895,918 | Best Cardiologist In Chennai | "Cardiac therapy world-wide is evolving rapidly and innovation is improving the lives of patients... | 0 | 2024-06-21T11:22:19 | https://dev.to/the_heartteam_a927019249/best-cardiologist-in-chennai-3jip | "Cardiac therapy world-wide is evolving rapidly and innovation is improving the lives of patients with minimally invasive and hybrid surgical procedures. The Best Cardiac Therapy Hospital in Chennai.

Reach us through:

📱 +91-81488 60211

📩 contact@th... | the_heartteam_a927019249 | |

1,895,834 | Power Apps (Part 1 ) | Overview Power Apps is a platform where we can create and manage our apps and data... | 0 | 2024-06-21T11:16:45 | https://dev.to/mubashar1009/power-apps-part-1--545g | powerplatform, powerapps, dataverse, powerautomate | ## Overview

Power Apps is a platform where we can create and manage our apps and data, and connect to different data sources. Before saying more about Power Apps, I will first discuss when and why we use Power Apps instead of custom apps.

## Difference between Power Apps and Custom App

## When We Shoul... | mubashar1009 |

1,895,830 | The Best Way To Map Objects in .Net in 2024 | In today's blog post you will learn how to map objects in .NET using various techniques and... | 0 | 2024-06-21T11:16:35 | https://antondevtips.com/blog/the-best-way-to-map-objects-in-dotnet-in-2024 | dotnet, csharp, aspnetcore, bestpractises | ---

canonical_url: https://antondevtips.com/blog/the-best-way-to-map-objects-in-dotnet-in-2024

---

In today's blog post you will learn how to map objects in .NET using various techniques and libraries.

We'll explore the best way to map objects in .NET in 2024.

> **_On my website: [antondevtips.com](https://ant... | antonmartyniuk |

1,895,833 | Medical Coding Training In Hyderabad-Medi Infotech | Top Most Medical Coding Training In Hyderabad with Internship and Placements. Medi Infotech is the... | 0 | 2024-06-21T11:16:26 | https://dev.to/mediinfotech/medical-coding-training-in-hyderabad-medi-infotech-14p8 | Top Most Medical Coding Training In Hyderabad

with Internship and Placements.

Medi Infotech is the top medical coding institute in Hyderabad, an ISO 9001 and ISO ISMS certified organization located in Hyderabad,... | mediinfotech | |

1,895,832 | Unleashing GPU Power: Supercharge Your Data Processing with cuDF | This time, while randomly scrolling through some blog post about the latest AI advancement and its... | 0 | 2024-06-21T11:12:49 | https://dev.to/yuval728/unleashing-gpu-power-supercharge-your-data-processing-with-cudf-2232 | datascience, data, python, programming | This time, while randomly scrolling through a blog post about the latest AI advancements and their capabilities, I found out about cuDF, which is part of RAPIDS, a family of software libraries and APIs for accelerating data operations and machine learning on GPUs. During data feeding, cuDF allows for the paral... | yuval728 |

1,895,831 | After merging a git branch to the main branch, how to delete it? | You have to do 2 steps: you have to delete it locally as well as from your Github or whatever your... | 0 | 2024-06-21T11:11:20 | https://dev.to/mbshehzad/after-merging-a-git-branch-to-the-main-branch-how-to-delete-it-4p0b | github, node | You have to do 2 steps: delete the branch locally, and delete it from GitHub or whatever your remote repository is. Run the following 2 commands to do so:

1. `git branch -d [branchname]`

1. `git push origin --delete [branchname]` | mbshehzad |

1,895,829 | Install Keycloak on ECS(with Aurora Postgresql) | Welcome If you have read my latest post about accessing RHEL in the cloud, you may notice... | 27,806 | 2024-06-21T11:06:27 | https://blog.3sky.dev/article/202407-keycloak-install/ | aws, keyclock, ecs, cdk | ## Welcome

If you have read my latest post about accessing RHEL in the cloud,

you may have noticed that we were accessing the Cockpit console

via SSM Session Manager port forwarding.

That’s not an ideal solution. I’m not saying it’s bad,

it’s just not ideal (but it’s cheap). Today I realised that using

Amazon WorkSpaces Secure Brow... | 3sky |

1,895,828 | Deploying AWS Guard Duty Malware Protection for S3 Buckets (Step-by-Step Guide) | Keep your S3 buckets safe from malware! GuardDuty scans new and updated files uploaded to your chosen... | 0 | 2024-06-21T11:06:18 | https://dev.to/aws-builders/deploying-aws-guard-duty-malware-protection-for-s3-buckets-step-by-step-guide-15l4 | guardduty, awscommunity, s3, malwareprotection | Keep your S3 buckets safe from malware! GuardDuty scans new and updated files uploaded to your chosen Amazon Simple Storage Service (S3) bucket. This automatic scanning helps identify potential malware threats before they can cause harm.

In this blog post, I will walk you through a step-by-step guide on how to deploy A... | amalkabraham001 |

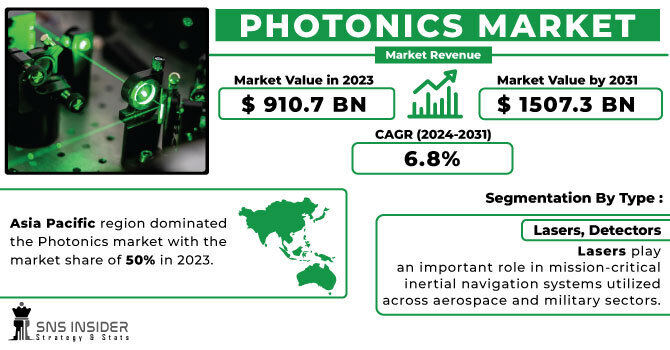

1,895,827 | Photonics Market Revenue Future Trends in Photonics Technology | The Photonics Market size was $ 910.7 Bn in 2023 to $ 1507.3 Bn by 2031 and grow at a CAGR of 6.8% by... | 0 | 2024-06-21T11:05:18 | https://dev.to/vaishnavi_farkade_/photonics-market-revenue-future-trends-in-photonics-technology-2hcb | | **The Photonics Market was valued at $910.7 Bn in 2023 and is expected to reach $1,507.3 Bn by 2031, growing at a CAGR of 6.8% over 2024-2031.**

**Market Scope & Overview:**

The global Photonics Market Revenue research study provides a compre... | vaishnavi_farkade_ | |

1,895,826 | Pagination in APIs with Golang | Pagination in APIs is essential for improving performance when dealing with large datasets.... | 0 | 2024-06-21T11:04:53 | https://dev.to/ortizdavid/paginacao-em-apis-com-golang-5hm4 | go, golangdeveloper, restapi |

Pagination in APIs is essential for improving performance when dealing with large datasets. It lets you return only the data that is needed, avoiding overload and improving the application's efficiency.

## Basic Components of Pagination

**- Items or Data:** The records or elements being paginat... | ortizdavid |

1,895,809 | Artificial Intelligence Explained by an AI Philosopher | This is a submission for DEV Computer Science Challenge v24.06.12: One Byte Explainer. ... | 0 | 2024-06-21T11:04:26 | https://dev.to/geeksta/artificial-intelligence-explained-by-an-ai-philosopher-4b1i | devchallenge, cschallenge, computerscience, beginners | ---

title: Artificial Intelligence Explained by an AI Philosopher

published: true

tags: devchallenge, cschallenge, computerscience, beginners

---

*This is a submission for [DEV Computer Science Cha... | geeksta |

1,895,824 | Why Hiring a PHP Developer is Essential for Your Web Development Needs | In today's digital era, having a robust online presence is crucial for businesses of all sizes.... | 0 | 2024-06-21T11:01:04 | https://dev.to/dylan_9f5acebc434b82ee41f/why-hiring-a-php-developer-is-essential-for-your-web-development-needs-5cb | hire, php, developers |

In today's digital era, having a robust online presence is crucial for businesses of all sizes. Whether you are a startup or an established enterprise, your website serves as the cornerstone of your digital identit... | dylan_9f5acebc434b82ee41f |

1,895,794 | Is Fortran the new Python? | I need a compiled scientific programming language. | 0 | 2024-06-21T10:53:25 | https://dev.to/baltasarq/fortran-es-el-nuevo-python-2307 | spanish, programming, python, datascience | ---

title: Is Fortran the new Python?

published: true

description: I need a compiled scientific programming language.

tags: #spanish #programming #python #datascience

# cover_image: https://direct_url_to_image.jpg

# Use a ratio of 100:42 for best results.

# published_at: 2024-06-21 08:12 +0000

---

![Fortran]... | baltasarq |

1,895,820 | Providing All Types of Web Design for Your Business | Why Choose a Web Development Company in Calicut? **Local Expertise and Market... | 0 | 2024-06-21T10:51:51 | https://dev.to/wis_branding_84cec990b812/providing-all-types-of-web-design-for-your-business-5he0 | javascript, programming, react, ai |

## Why Choose a Web Development Company in Calicut?


**Local Expertise and Market Understanding

Choosing a local web development company means you’re partnering with professionals who understand the Calicut ma... | wis_branding_84cec990b812 |

1,895,819 | Providing All Types of Web Design for Your Business | Why Choose a Web Development Company in Calicut? **Local Expertise and Market... | 0 | 2024-06-21T10:51:51 | https://dev.to/wis_branding_84cec990b812/providing-all-types-of-web-design-for-your-business-ncg | javascript, programming, react, ai |

## Why Choose a Web Development Company in Calicut?


**Local Expertise and Market Understanding

Choosing a local web development company means you’re partnering with professionals who understand the Calicut ma... | wis_branding_84cec990b812 |

1,895,818 | Photovoltaics Strausberg | SolarX GmbH: Sustainable Energy for a Greener Future At a time when the need for... | 0 | 2024-06-21T10:50:27 | https://dev.to/xgmbhsolar07/photovoltaik-strausberg-46kc | SolarX GmbH: Sustainable Energy for a Greener Future

At a time when the need for sustainable energy sources is becoming ever more urgent, SolarX GmbH is at the forefront of meeting that need. The company specializes in the development and supply of solar installations and photovoltaic system... | xgmbhsolar07 | |

1,895,816 | Appealing Mobile App Design: Understanding its 3 Important Pillars | Mobile apps are a must-have component for businesses to succeed in today's fast-tech times. So,... | 0 | 2024-06-21T10:50:03 | https://dev.to/jigar_online/appealing-mobile-app-design-understanding-its-3-important-pillars-4bhi | learning, mobile, design, mobileapp | Mobile apps are a must-have component for businesses to succeed in today's fast-tech times. So, whether you are an established company or a startup, considering mobile app development helps you enhance client engagement and reach a larger audience. And for a successful mobile app, you must first have a successful app d... | jigar_online |

1,895,817 | How to render notes and note page in php? | Firstly, Create a table in the database named "notes" with the following columns: id (unique... | 0 | 2024-06-21T10:48:43 | https://dev.to/ghulam_mujtaba_247/how-to-render-notes-and-note-page-in-php-2178 | php, beginners, programming, learning | First:

- Create a table in the database named "notes" with the following columns:

  - id (unique index)

  - title

  - content

- The "id" column is the primary key and has a unique index, which allows specific records to be located efficiently.

- The "title" column stores the note title.

- The "content" column stor... | ghulam_mujtaba_247 |

1,895,815 | Tools Every Java Developer Should Know: A Hiring Guide | Find out the "Tools Every Java Developer Should Know" with our comprehensive guide. Essential for... | 0 | 2024-06-21T10:47:16 | https://dev.to/talentonlease01/tools-every-java-developer-should-know-a-hiring-guide-odc | java | Find out the "**[Tools Every Java Developer Should Know](https://talentonlease.weebly.com/blog/10-tools-every-java-developer-should-know-a-hiring-guide)**" with our comprehensive guide. Essential for hiring managers and developers alike, this blog highlights the top 10 tools that enhance productivity, code quality, and... | talentonlease01 |

1,895,814 | IVF doctor in Siliguri | Dr. Prasenjit Kumar Roy is one of the best IVF doctors in Siliguri, West Bengal. With qualifications including... | 0 | 2024-06-21T10:46:51 | https://dev.to/prasanjit_2c9fc6989a756b6/ivf-doctor-in-siliguri-316 | Dr. Prasenjit Kumar Roy is one of the best IVF doctors in Siliguri, West Bengal. With qualifications including MBBS, MS (O&G), and a Fellowship in Reproductive Endocrinology and Infertility from Tel-Aviv University, Israel, he leads the Newlife Fertility Centre as its Director. Renowned for his expertise in reproductive health, ... | prasanjit_2c9fc6989a756b6 | |

1,895,813 | IVF doctor in Siliguri | Dr. Prasenjit Kumar Roy is one of the best IVF doctors in Siliguri, West Bengal. With qualifications including... | 0 | 2024-06-21T10:46:51 | https://dev.to/prasanjit_2c9fc6989a756b6/ivf-doctor-in-siliguri-309m | Dr. Prasenjit Kumar Roy is one of the best IVF doctors in Siliguri, West Bengal. With qualifications including MBBS, MS (O&G), and a Fellowship in Reproductive Endocrinology and Infertility from Tel-Aviv University, Israel, he leads the Newlife Fertility Centre as its Director. Renowned for his expertise in reproductive health, ... | prasanjit_2c9fc6989a756b6 | |

1,895,812 | Pure Functions in Next.js | Introduction While working with Next.js, a very famous React framework for building server-side... | 0 | 2024-06-21T10:46:35 | https://dev.to/sabermekki/pure-functions-in-nextjs-4ni4 | react, nextjs, javascript, webdev | **Introduction**

While working with Next.js, a popular React framework for building server-side rendered and static web applications, one important concept you will encounter is the pure function. Pure functions are a core topic to grasp in functional programming and take a hu... | sabermekki |

1,895,811 | 🚀 API Maker : Release Notes for v1.6.0 | ⭐ June 2024 ⭐ Changes [BUG] : Convert _id to valid Object id for executeQuery... | 0 | 2024-06-21T10:46:23 | https://dev.to/apimaker/api-maker-release-notes-for-v160-5786 | ## ⭐ June 2024 ⭐

### Changes

- **[BUG] :** Convert _id to valid Object id for executeQuery system API.

- **[BUG] :** ISO dates should be automatically converted to valid date for generated APIs.

- **[Improvement] :** Added support for running MongoDB commands. We can create/delete indexes and do a lot more using t... | apimaker | |

1,895,810 | Glance Intuit Download at Glance.Intuit.com | A detailed guide to downloading Glance Intuit software from glance.intuit.com on your computer or the... | 0 | 2024-06-21T10:45:05 | https://dev.to/glanceintuitcom/glance-intuit-download-at-glanceintuitcom-n18 | A detailed guide to downloading Glance Intuit software from glance.intuit.com on your computer or the Glance Intuit extension on your Google Chrome browser.

The Glance Intuit tool is the perfect solution for using ProConnect Tax or QuickBooks Online. You can download and install Glance Intuit on your computer or at least have ... | glanceintuitcom | |

1,895,808 | What Makes Investing in a Metaverse NFT Marketplace a Smart Move? | The digital world is rapidly evolving, with two major developments: the Metaverse and Non-Fungible... | 0 | 2024-06-21T10:39:55 | https://dev.to/elena_marie_dad5c9d5d5706/what-makes-investing-in-a-metaverse-nft-marketplace-a-smart-move-5dcl | metaversenftmarketplace, metaversenft | The digital world is rapidly evolving, with two major developments: the Metaverse and Non-Fungible Tokens (NFTs). If you haven't noticed before, it's time to catch up. Why should you think about setting up a marketplace for Metaverse NFTs? Let's explore.

What is an NFT Marketplace in the Metaverse?

People can communi... | elena_marie_dad5c9d5d5706 |

1,895,807 | Best Cloud Hosting Services | Best cloud hosting services provide scalable, secure, and high-performance cloud infrastructure... | 0 | 2024-06-21T10:39:02 | https://dev.to/sm_smm_a9617bfd972a433c4a/best-cloud-hosting-services-5f7p | Best cloud hosting services provide scalable, secure, and high-performance cloud infrastructure solutions for businesses of all sizes. These services ensure reliable uptime, robust data security, and flexible resource management, enabling businesses to manage their online presenc... | sm_smm_a9617bfd972a433c4a | |

1,895,805 | Data Migration from GaussDB to GBase8a | Exporting Data from GaussDB Comparison of Export Methods Export Tool Export... | 0 | 2024-06-21T10:38:35 | https://dev.to/congcong/data-migration-from-gaussdb-to-gbase8a-8b7 | database | ## Exporting Data from GaussDB

**Comparison of Export Methods**

|Export Tool|Export Steps|Applicable Scenarios and Notes|

|-----------|------------|------------------------------|

|Using GDS Tool to Export Data to a Regular File System <br><br> _Note: The GDS tool must be installed on the server where the data files ... | congcong |
