id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,920,572 | Understanding the GENERATED ALWAYS Column Option in PostgreSQL | Understanding the GENERATED ALWAYS Column Option in PostgreSQL The GENERATED ALWAYS column... | 0 | 2024-07-12T07:12:06 | https://dev.to/camptocamp-geo/understanding-the-generated-always-column-option-in-postgresql-oo4 | postgressql, gis | ### Understanding the GENERATED ALWAYS Column Option in PostgreSQL

The `GENERATED ALWAYS` column option in PostgreSQL functions similarly to a view for a table, allowing for on-the-fly calculation of the column's content. This feature is useful for generating computed columns based on expressions.

The syntax is strai... | yjacolin |

1,920,567 | 10/7/24 - Day 2 - Data types,variables,constants | That day's class was a little hard to follow. Still, I managed somehow, and for that class - quiz... | 0 | 2024-07-12T07:05:12 | https://dev.to/suman_r/10724-day-2-data-typesvariablesconstants-2d39 | python, programming, programmers | That day's class was a little hard to follow. Still, I managed somehow and finished the quiz and task for that class well 🙌 | suman_r |

1,920,568 | Styling in React | Styling is an essential aspect of building React applications. You have various options for styling,... | 27,566 | 2024-07-12T13:30:00 | https://devship.tech/react/styling | css, react, reactnative, styling | Styling is an essential aspect of building React applications. You have various options for styling, ranging from traditional CSS to modern CSS-in-JS solutions and component libraries. Let's explore some popular approaches:

# Traditional CSS

You can use plain old CSS to style your ReactJS app. Create CSS files and im... | imparth |

1,920,569 | Emergency Handling for GBase Database Failures (3) - Database Service Anomalies & Data Loss | 1. Database Service Anomalies 1.1 GBase Cluster Service Process... | 0 | 2024-07-12T07:09:10 | https://dev.to/congcong/emergency-handling-for-gbase-database-failures-3-database-service-anomalies-data-loss-4gm2 | database | ## 1. Database Service Anomalies

### 1.1 GBase Cluster Service Process Crash

**Description**

The cluster node services gclusterd, gbased, gcware, gcrecover, and gc_sync_server crash unexpectedly.

**Analysis**

The crash of the five processes (gclusterd, gbased, gcware, gcrecover, gc_sync_server) usually indicates a... | congcong |

1,920,570 | Day 2 of python programming 🧡 | THEORY - Today we will delve into more python and see Its features and applications. ... | 0 | 2024-07-12T07:11:39 | https://dev.to/aryan015/day-2-of-python-programming-15hn | 100daysofcode, python, computerscience, javascript | __THEORY__ - Today we will delve further into Python and look at its features and applications.

## Features/Characteristics

1. Python is an open-source (community-run) programming language.

1. Easy to understand

2. It is interpreted and platform-independent, which makes debugging very easy.

1. It has good community support a... | aryan015 |

1,920,571 | Understanding the Power of Vision in Entrepreneurial Leadership with Reuven Kahane | In the dynamic world of business, entrepreneurs stand out as visionaries who drive innovation,... | 0 | 2024-07-12T07:11:54 | https://dev.to/reuvenkahane01/understanding-the-power-of-vision-in-entrepreneurial-leadership-with-reuven-kahane-39kp | In the dynamic world of business, entrepreneurs stand out as visionaries who drive innovation, growth, and transformation. The entrepreneurial journey is often characterized by challenges and uncertainties, but the defining trait that propels entrepreneurs forward is their vision. Vision in entrepreneurial leadership i... | reuvenkahane01 | |

1,920,573 | YOU DON'T KNOW THESE HTML TAGS! 🫣 | When working with HTML, most developers are familiar with the basic tags like <div>,... | 0 | 2024-07-12T07:12:30 | https://dev.to/mb337/you-dont-know-these-html-tags-1629 | webdev, html, markdown, css |

When working with HTML, most developers are familiar with the basic tags like `<div>`, `<span>`, and `<a>`.

However, HTML includes a variety of lesser-known tags that can be extremely useful in specific scenarios.

Here are some of the less commonly used HTML tags that you might find helpful:

## `<abbr>`

The `<abbr... | mb337 |

1,920,574 | How to Store Vibration Sensor Data | Part 2 | ReductStore is designed to efficiently handle time series unstructured data, making it an excellent... | 28,044 | 2024-07-12T07:12:42 | https://www.reduct.store/blog/how-to-store-vibration-sensor-data/part-2 | database, vibrationsensor, tutorial | **[ReductStore](https://www.reduct.store/)** is designed to efficiently handle time series unstructured data, making it an excellent choice for storing high frequency vibration sensor measurements.

This article is the second part of **[How to Store Vibration Sensor Data | Part 1](https://www.reduct.store/blog/how-to-st... | anthonycvn |

1,920,575 | dbForge Studio for Oracle vs Toad for Oracle — Detailed Comparison | Toad for Oracle is one of the top choices for easy and effective management of Oracle databases. But... | 0 | 2024-07-12T07:14:14 | https://dev.to/dbajamey/dbforge-studio-for-oracle-vs-toad-for-oracle-detailed-comparison-4108 | oracle, database, software | Toad for Oracle is one of the top choices for easy and effective management of Oracle databases. But what if you need something more expansive, something that can match your growing skills and take your productivity to new heights? Let us suggest dbForge Studio for Oracle, a premier [Oracle GUI](https://www.devart.com/... | dbajamey |

1,920,576 | Lynx Air Terminal at Los Angeles International Airport | Los Angeles International Airport (LAX) is one of the busiest and most well-known airports in the... | 0 | 2024-07-12T07:17:12 | https://dev.to/olivia_lopez/lynx-air-terminal-at-los-angeles-international-airport-1eb8 | Los Angeles International Airport (LAX) is one of the busiest and most well-known airports in the world. As a major hub for international and domestic travel, LAX serves millions of passengers annually, providing access to countless destinations. This article will provide an in-depth look at [Lynx Air LAX Terminal](htt... | olivia_lopez | |

1,920,577 | Patient-Centered Care and Data Integration in Population Health Management | The healthcare industry has evolved in recent years, shifting from a provider-centric approach to a... | 0 | 2024-07-12T07:18:08 | https://dev.to/ovaisnaseem/patient-centered-care-and-data-integration-in-population-health-management-4dom | powerapps, healthcare, datascience, bigdata | The healthcare industry has evolved in recent years, shifting from a provider-centric approach to a patient-centered care model. This transformation is particularly evident in Population Health Management (PHM), where integrating diverse data sources is pivotal in delivering personalized and effective care. Patient-cen... | ovaisnaseem |

1,920,579 | Enhancing PostgreSQL Security with the Credcheck Extension | The credcheck PostgreSQL extension offers a range of credential checks to enhance security during... | 0 | 2024-07-12T07:20:20 | https://dev.to/camptocamp-geo/enhancing-postgresql-security-with-the-credcheck-extension-k8a | postgressql, gis, security | The `credcheck` PostgreSQL extension offers a range of credential checks to enhance security during user creation, password changes, and user renaming. By implementing this extension, you can define a comprehensive set of rules to manage credentials more effectively:

- **Allow a specific set of credentials:** Specify ... | yjacolin |

1,920,617 | Oracle Cloud HCM 24C Release: What's New? | Are you excited to explore the latest advancements in Oracle’s HR technology? Dive into our... | 0 | 2024-07-12T07:22:38 | https://www.opkey.com/blog/oracle-cloud-hcm-24c-release-whats-new | oracle, cloud, hcm, release |

Are you excited to explore the latest advancements in Oracle’s HR technology? Dive into our comprehensive guide to Oracle HCM for the Oracle 24C Release.

We'll unveil the cutting-edge features and enhancements that ... | johnste39558689 |

1,920,623 | Best Practices for Migrating Your Data to the Cloud | In today's digital era, businesses increasingly use cloud solutions for data storage and management.... | 0 | 2024-07-12T07:24:10 | https://dev.to/ovaisnaseem/best-practices-for-migrating-your-data-to-the-cloud-2dih | datawarehouse, cloudbaseddata, datamigration, datascience | In today's digital era, businesses increasingly use cloud solutions for data storage and management. Migrating to a cloud-based data warehouse offers numerous benefits, including enhanced scalability, cost-efficiency, and flexibility. However, migrating data from traditional systems to the cloud requires meticulous pla... | ovaisnaseem |

1,920,624 | Revolutionizing Inventory Tracking with RPA | The world of supply chain management is evolving at an unprecedented pace, and one technology that's... | 27,673 | 2024-07-12T07:24:59 | https://dev.to/rapidinnovation/revolutionizing-inventory-tracking-with-rpa-30p | The world of supply chain management is evolving at an unprecedented pace, and

one technology that's leading the charge is Robotic Process Automation (RPA).

While RPA has already made its mark in various industries, its impact on

inventory tracking within the supply chain is nothing short of revolutionary.

In this blog... | rapidinnovation | |

1,920,625 | The Modern Era of Trading: Navigating Financial Markets Today | Introduction Trading qx has undergone a significant transformation over the past few decades,... | 0 | 2024-07-12T07:26:06 | https://dev.to/quotexvip96/the-modern-era-of-trading-navigating-financial-markets-today-395l | trading, binaryoptions, financial |

**Introduction**

[Trading qx](https://quotex-vip.com/) has undergone a significant transformation over the past few decades, evolving from a niche activity reserved for financial professionals to an accessible and... | quotexvip96 |

1,920,627 | 1 in X Probability Multiplier | I'm trying to make a 1 in X probability system, but for some reason the multiplier isn't working. I... | 0 | 2024-07-12T07:27:43 | https://dev.to/vyse/1-in-x-probability-multiplier-1c85 | javascript | I'm trying to make a 1 in X probability system, but for some reason the multiplier isn't working. I gave myself a high multiplier and I'm still getting common blocks.

```

const blocks_rng = [

{ name: "Dirt Block", item: "dirt", chance: 2 },

{ name: "Farmland", item: "farmland", chance: 3 },

{ name: "Oak Log", ite... | vyse |

1,920,628 | Exploring the Unique Flavor of Banana Nicotine Salt by Jam Monster Vape | In the world of vaping, flavor innovation is a constant pursuit, with each new product aiming to... | 0 | 2024-07-12T07:30:35 | https://dev.to/manthra_ea04fd7a70070d5f9/exploring-the-unique-flavor-of-banana-nicotine-salt-by-jam-monster-vape-bdp | banananicotinesalt, eliquid, salteliquid, buyonline | In the world of vaping, flavor innovation is a constant pursuit, with each new product aiming to tantalize taste buds in fresh and exciting ways. One such creation that has been making waves is the [Banana Nicotine Salt by Jam Monster Vape](https://jammonsterofficialwebsite.com/product/banana-nicotine-salt-by-jam-monst... | manthra_ea04fd7a70070d5f9 |

1,920,629 | Developing Gurully's PTE Exam Software: Challenges Faced and Solutions Implemented | Conquering the PTE exam requires dedication, but imagine having a powerful study tool by your side!... | 0 | 2024-07-12T07:30:49 | https://dev.to/olivia_william_/developing-gurullys-pte-exam-software-challenges-faced-and-solutions-implemented-39cn | pte, edtech, softwaredevelopment, saas |

Conquering the PTE exam requires dedication, but imagine having a powerful study tool by your side! Here at Gurully, we poured our hearts into crafting exceptional [PTE exam software](https://www.gurully.com/pte). ... | olivia_william_ |

1,920,630 | Optimizing ETL Processes for Efficient Data Loading in EDWs | In today's data-driven world, the ability to efficiently and accurately move data from various... | 0 | 2024-07-12T07:32:11 | https://dev.to/ovaisnaseem/optimizing-etl-processes-for-efficient-data-loading-in-edws-96n | emterprisedatawarehouse, etl, datascience, bigdata | In today's data-driven world, the ability to efficiently and accurately move data from various sources into an [enterprise data warehouse](https://www.astera.com/type/blog/enterprise-data-warehouse/?utm_source=https%3A%2F%2Fdev.to%2F&utm_medium=Organic+Guest+Post) (EDW) is crucial for enabling robust business intellige... | ovaisnaseem |

1,920,631 | 3+1 Best Simple Notion Tips to Boost Productivity | Hello, I hope you are doing well. In today’s article, I will share some use cases that I take... | 0 | 2024-07-12T07:34:12 | https://dev.to/mammadyahyayev/31-best-simple-notion-tips-to-boost-productivity-287h | productivity, discipline, notion |

Hello, I hope you are doing well. In today’s article, I will share some use cases that I frequently take advantage of in Notion.

Notion is probably one of the most used productivity tools around the world these days... | mammadyahyayev |

1,920,632 | Day 11 of 100 Days of Code | Thu, July 11, 2024 There were a lot more project exercises today than I've seen, which are very... | 0 | 2024-07-12T07:36:09 | https://dev.to/jacobsternx/day-11-of-100-days-of-code-29nd | 100daysofcode, webdev, javascript, beginners | Thu, July 11, 2024

There were a lot more project exercises today than I've seen, which are very interesting, but take a minute to complete.

Today I had to stop when I got to Wireframing, and will pick up there in the morning.

Short day, short post. Back at it in the morning! | jacobsternx |

1,920,633 | Day 11 of 100 Days of Code | Thu, July 11, 2024 There were a lot more project exercises today than I've seen, which are very... | 0 | 2024-07-12T07:36:09 | https://dev.to/jacobsternx/day-11-of-100-days-of-code-2jaa | 100daysofcode, webdev, javascript, beginners | Thu, July 11, 2024

There were a lot more project exercises today than I've seen, which are very interesting, but take a minute to complete.

Today I had to stop when I got to Wireframing, and will pick up there in the morning.

Short day, short post. Back at it in the morning! | jacobsternx |

1,920,634 | Advanced-Data Modeling Techniques for Big Data Applications | By Anshul Kichara When companies begin to use big data, they often face significant difficulties in... | 0 | 2024-07-12T07:36:56 | https://dev.to/anshul_kichara/advanced-data-modeling-techniques-for-big-data-applications-52me | devops, software, technology, trending | _[By Anshul Kichara](https://www.linkedin.com/in/anshul-tailor-kichara-2019a7181/)_

When companies begin to use big data, they often face significant difficulties in organizing, storing, and interpreting the vast amounts of data collected.

Applying traditional data modeling techniques to big data can lead to performa... | anshul_kichara |

1,920,635 | #Learn | Anyone knows good starter to learn these... Elasticsearch, Logstash & Kibana | 0 | 2024-07-12T07:38:06 | https://dev.to/farheen_sk/learn-222c | elasticsearch, logstash, kibana, learning | Anyone knows good starter to learn these...

Elasticsearch, Logstash & Kibana

| farheen_sk |

1,920,636 | RDP in Linux | Most Linux machines do not have RDP enabled. Use SSH, or install a desktop environment together with an RDP server such as xRDP. To... | 0 | 2024-07-12T07:39:21 | https://dev.to/karunakaran/rdp-in-linux-8hd | linux, azure, virtualmachine, network | Most Linux machines do not have RDP enabled. Use SSH, or install a desktop environment together with an RDP server such as xRDP.

To enable Remote Desktop Protocol (RDP) on an Azure Virtual Machine running Linux, you will need to install a desktop environment and an RDP server like xRDP. Here are the steps to do that:

### Step 1: Create and Access ... | karunakaran |

1,920,755 | The Era of LLM Agents: Next Big Wave in Knowledge Management | Gartner predicts that search engine volume will drop up to 25% in 2026. This is because of the... | 0 | 2024-07-12T07:48:55 | https://dev.to/ragavi_document360/the-era-of-llm-agents-next-big-wave-in-knowledge-management-3mm3 | Gartner predicts that search engine volume will drop up to 25% in 2026. This is because of the emergence of GenAI-powered search engines. Customers prefer to use a ChatGPT-like interface to seek answers, which are powered by Large Language Models (LLMs) for their convenience and ease of use. Eventually, customers will ... | ragavi_document360 | |

1,920,756 | Facial Implants Market Potential Exploring Growth Opportunities and Market Dynamics | Market Introduction and Size Analysis: The global facial implants market is poised to expand... | 0 | 2024-07-12T07:49:13 | https://dev.to/ganesh_dukare_34ce028bb7b/facial-implants-market-potential-exploring-growth-opportunities-and-market-dynamics-3dal | Market Introduction and Size Analysis:

The global facial implants market is poised to expand significantly, projected to increase from US$827 million in 2024 to US$1.5 billion by 2033, with a compound annual growth rate (CAGR) of 7.9% during the forecast period.

The [Facial implants market](https://www.persistencema... | ganesh_dukare_34ce028bb7b | |

1,920,757 | coofoagleeh.com/4/7143873 | A post by tariq abass | 0 | 2024-07-12T07:51:48 | https://dev.to/tariqabbas/coofoagleehcom47143873-2dmj | tariqabbas | ||

1,920,758 | Introduction to GBase 8c B Compatibility Library (2) | With the support of the Dolphin plugin, the GBase 8c B Compatibility Database (dbcompatibility='B',... | 0 | 2024-07-12T07:52:17 | https://dev.to/congcong/introduction-to-gbase-8c-b-compatibility-library-2-2bcn | database | With the support of the Dolphin plugin, the GBase 8c B Compatibility Database (dbcompatibility='B', hereafter referred to as the B compatibility library) has greatly enhanced its compatibility with MySQL in terms of data types. Here is a look at the common data types:

## Numerical Types

Compared to the native GBase 8... | congcong |

1,920,759 | http://coofoagleeh.com/4/7143873 | A post by tariq abass | 0 | 2024-07-12T07:52:47 | https://dev.to/tariqabbas/httpcoofoagleehcom47143873-575o | tariqabbas | ||

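1,920,800 | Ins Targeted Traffic Generation, Ins Mass-Messaging Assistant, Ins Posting Tool | Ins targeted traffic generation, Ins mass-messaging assistant, Ins posting tool To learn about the related software, visit http://www.vst.tw... | 0 | 2024-07-12T08:54:31 | https://dev.to/ybeu_vija_2347bafbaccca3d/insjing-zhun-yin-liu-insqun-fa-zhu-shou-insfa-tie-gong-ju-3802 | 

Ins targeted traffic generation, Ins mass-messaging assistant, Ins posting tool

To learn about the related software, visit http://www.vst.tw

Ins targeted traffic generation: building an effective tool for social media marketing

In today's wave of digital marketing, social media has become an important platform for companies to promote their brands and products. Among the many social media platforms, Instagram (Ins for short) has become the first choice of many brands and individuals thanks to its highly active users, strong interactivity, and image- and video-centric content. In this article, we explore the importance of targeted traffic generation on Ins and how to use this strategy effectively to increase brand exposure and attract potential customers.

What is Ins targeted traffic generation?

Ins targeted traffic generation is a marketing strategy that uses targeted methods to draw the intended audience to one's own Ins profile or website, thereby increasing attention and user engagement. Compared with traditional adver... | ybeu_vija_2347bafbaccca3d | |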

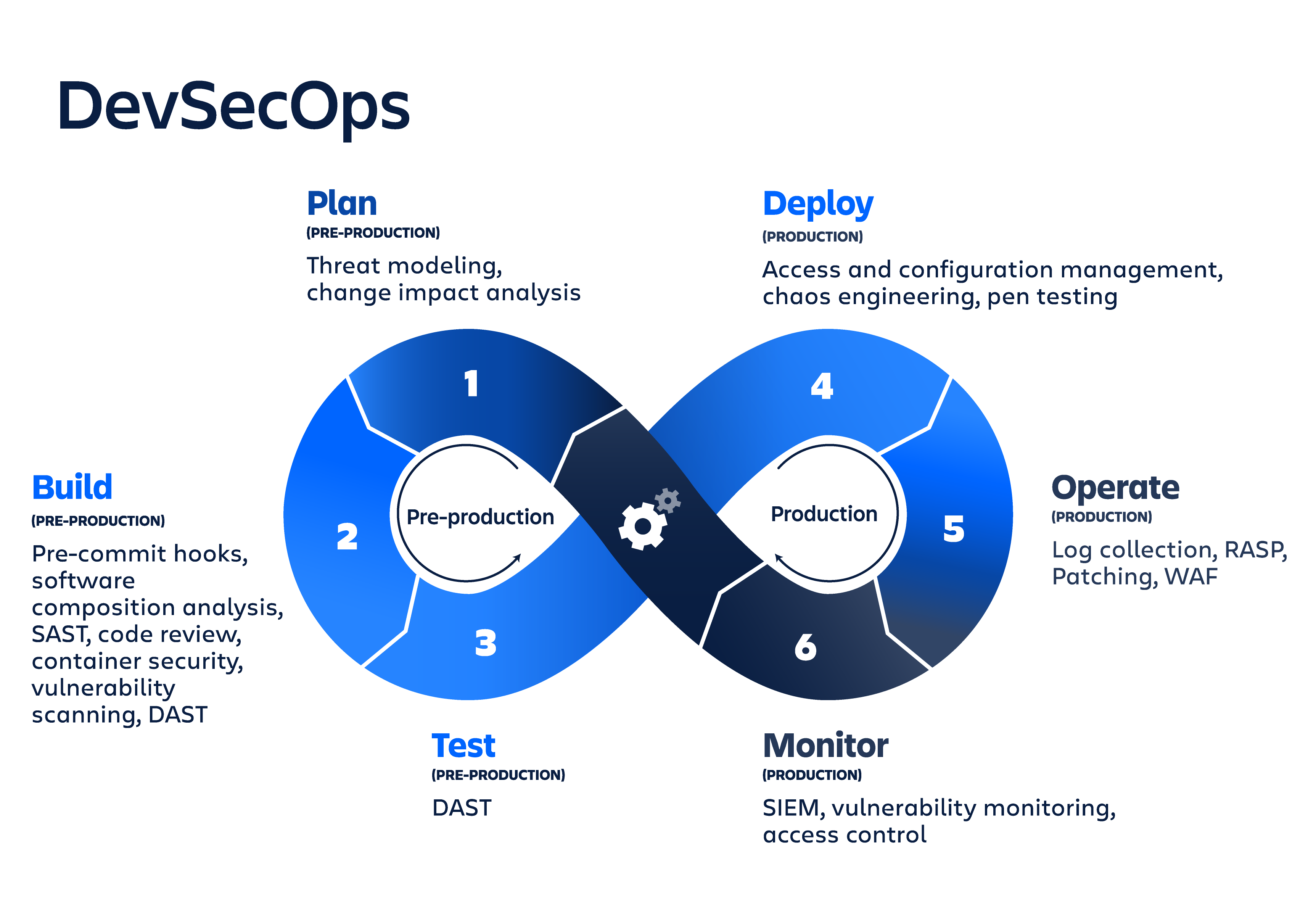

1,920,760 | DevSecOps | DevSecOps: Enforcing security, observability, and governance in DevOps Security has... | 0 | 2024-07-12T07:54:52 | https://dev.to/tekadesukant/test-post-hpl |

## DevSecOps: Enforcing security, observability, and governance in DevOps

- Security has always been a significant concern in the digital world. DevSecOps integrates the best practices in the DevOps lifecycle, emp... | tekadesukant | |

1,920,761 | Advantages of Developing an On-Demand Laundry Service App for Your Business | The popularity of on-demand mobile apps has grown as people’s schedules and lifestyles get more... | 0 | 2024-07-12T07:55:33 | https://dev.to/ellysperry/advantages-of-developing-an-on-demand-laundry-service-app-for-your-business-17c0 | laundryapp, ondemandapp, cloneappdevelopment, ondemandlaundryserviceapp | The popularity of on-demand mobile apps has grown as people’s schedules and lifestyles get more active. One of the most prominent instances is the development of an [on-demand laundry app](https://www.spotnrides.com/uber-for-laundry-booking-app), which allows users to conveniently schedule laundry pickup and delivery b... | ellysperry |

1,920,762 | Demonstration of Basic JDBC Operations with GBase 8s | Sample Environment Software Version JDBC... | 0 | 2024-07-12T07:56:46 | https://dev.to/congcong/demonstration-of-basic-jdbc-operations-with-gbase-8s-1dm | database | ### Sample Environment

| Software | Version |

|----------------|------------------------|

| JDBC Driver | gbasedbtjdbc_3.5.1.jar |

| JDK | 1.8 |

### JDBC Driver Download

The JDBC driver package is typically named: `gbasedbtjdbc_3.5.1.jar`.

Official download lin... | congcong |

1,920,763 | Understanding Electricity Billing: A Comprehensive Guide | Electricity billing is a crucial aspect of utility management, involving the calculation of costs... | 0 | 2024-07-12T08:09:44 | https://dev.to/moksh57/understanding-electricity-billing-a-comprehensive-guide-30jd | Electricity billing is a crucial aspect of utility management, involving the calculation of costs based on the amount of electricity consumed by users. This process is essential for both residential consumers and businesses, ensuring accurate invoicing and financial management. In this guide, we'll explore the fundamen... | moksh57 | |

1,920,764 | Baahubali Hanuman Idol from Karvaan: The Leading Gift Brand in India | Embrace divinity with Karvaan’s resin-made and antique finish god idols. Karvaan, a top Indian... | 0 | 2024-07-12T08:01:28 | https://dev.to/karvaan_home_decor/baahubali-hanuman-idol-from-karvaan-the-leading-gift-brand-in-india-4gab |

Embrace divinity with Karvaan’s resin-made and antique finish god idols. Karvaan, a top Indian gifting brand, presents [Karvaan’s Baahubali Hanuman idol for car dashboard](https://www.amazon.in/Hanuman-Bahubali-Dashb... | karvaan_home_decor | |

1,920,767 | #HTML Semantics On Search Engine Optimization. | []https://docs.google.com/document/d/13MsCkdRUvqte6zt-sp2v4Gw9c4G-JnMFd2xBrTHfq6U/edit?usp=sharing | 0 | 2024-07-12T08:15:11 | https://dev.to/peter_itumo_0eec0ea32b842/html-semantics-on-search-engine-optimization-ff3 | webdev, devops, beginners, programming | []https://docs.google.com/document/d/13MsCkdRUvqte6zt-sp2v4Gw9c4G-JnMFd2xBrTHfq6U/edit?usp=sharing | peter_itumo_0eec0ea32b842 |

1,920,769 | Become better Backend engineer | If you want to become a better backend engineer, read these blogs: ↓ • Meta:... | 0 | 2024-07-12T08:18:11 | https://dev.to/msnmongare/become-better-backend-engineer-204n | backenddevelopment, webdev, beginners, programming | If you want to become a better backend engineer, read these blogs: ↓

• Meta: https://engineering.fb.com

• AWS Architecture: https://lnkd.in/epGgc7CF

• Netflix Tech: https://lnkd.in/eAzwuNnw

• Engineering at Microsoft: https://lnkd.in/e6h9U7et

• LinkedIn Engineering: https://lnkd.in/ejFHAWgr

• Uber: https://lnkd.... | msnmongare |

1,920,770 | Did you know? - ?? vs || | Did you know? What is the difference between ?? and ||? Nullish Coalescing... | 0 | 2024-07-12T12:26:55 | https://dev.to/tontz/le-saviez-vous-vs--3c03 | javascript, french | Did you know?

What is the difference between **??** and **||**?

## Nullish Coalescing Operator - ??

Known in French by the lovely name **“Opérateur de coalescence des nuls”**, **a ?? b** returns the term **a** if it is neither **null** nor **undefined**. Otherwise the operator returns... | tontz |
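
To make the difference between the two operators concrete, here is a minimal sketch. The `settings` object and its fields are hypothetical, invented only for this illustration: `??` falls back only when the left-hand side is `null` or `undefined`, while `||` falls back on any falsy value, including `0` and the empty string.

```javascript
// Hypothetical settings object, made up for this illustration.
const settings = { volume: 0, nickname: "", theme: null };

// ?? falls back only when the left side is null or undefined.
const volumeNullish = settings.volume ?? 50;              // keeps 0
const nicknameNullish = settings.nickname ?? "anonymous"; // keeps ""
const themeNullish = settings.theme ?? "light";           // null -> "light"
const languageNullish = settings.language ?? "fr";        // undefined -> "fr"

// || falls back on any falsy value (0, "", NaN, false, null, undefined).
const volumeOr = settings.volume || 50;              // 0 is falsy -> 50
const nicknameOr = settings.nickname || "anonymous"; // "" is falsy -> "anonymous"
const themeOr = settings.theme || "light";           // null -> "light"

console.log({ volumeNullish, nicknameNullish, themeNullish, languageNullish });
console.log({ volumeOr, nicknameOr, themeOr });
```

In practice this suggests reaching for `??` when `0`, `false`, or `""` are legitimate values that should not be overwritten by a default.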

1,920,771 | Understanding Computer Vision: A Glimpse into the Future | Introduction to Computer Vision Computer vision is a rapidly evolving field of artificial... | 0 | 2024-07-12T08:19:30 | https://dev.to/sachinrawa73828/understanding-computer-vision-a-glimpse-into-the-future-55m9 | computervision, machinelearning, ai |

## Introduction to Computer Vision

Computer vision is a rapidly evolving field of [artificial intelligence (AI)](https://www.ailoitte.com/services/artificial-intelligence-development/) that enables machines to perceive, understand, and interpret the visual world around them. This technology has come a long way since ... | sachinrawa73828 |

1,920,773 | Run custom migrations in laravel | php artisan migrate --path=database/migrations/2024_06_19_152627_create_api_logs_table.php ... | 0 | 2024-07-12T08:21:10 | https://dev.to/msnmongare/run-custom-migrations-in-laravel-2ecf | laravel, webdev, beginners, programming | ```

php artisan migrate --path=database/migrations/2024_06_19_152627_create_api_logs_table.php

```

| msnmongare |

1,920,775 | Authentication and Authorization in .NET Core | Summary: As the tech business development marketplace evolves with the days the security risks... | 0 | 2024-07-12T08:22:47 | https://dev.to/jemindesai/authentication-and-authorization-in-net-core-3a7h | authentication, authorization, dotnetcore, positiwise | **Summary:** As the tech business marketplace evolves, the security risks associated with it surge. This creates the need to restrict access to certain resources within an application to authorized users only, as it allows the server to determine which resources the user should have access... | jemindesai |

1,920,776 | Unlocking Business Potential: How Cloud Computing Transforms Efficiency and Innovation" | Introduction to Cloud computing: Applications. Benefits, and Risks Imagine having to go to Zoom... | 0 | 2024-07-12T08:24:24 | https://dev.to/fajbaba/unlocking-business-potential-how-cloud-computing-transforms-efficiency-and-innovation-3d5o |

Introduction to Cloud Computing: Applications, Benefits, and Risks

Imagine having to go to Zoom headquarters each time you wanted to make a Zoom call. You have to visit Google's California headquarters to utilize Google Chrome at any time. To stream a movie on Netflix, you have to visit the company's headquarters in... | fajbaba | |

1,920,777 | Day 2: Conquering Containers and Kubernetes on the Cloud! | Welcome back, fellow cloud adventurers! Today marks day 2 of our 100-day cloud odyssey, and let me... | 0 | 2024-07-12T08:26:43 | https://dev.to/tutorialhelldev/day-2-conquering-containers-and-kubernetes-on-the-cloud-g6e | 100daysofcode, devops, docker, kubernetes | Welcome back, fellow cloud adventurers! Today marks day 2 of our 100-day cloud odyssey, and let me tell you, it's been a whirlwind of containers and clusters! We delved into the fascinating world of Docker and Kubernetes Engine (GKE) on Google Cloud Platform (GCP).

Docker Deep Dive: Building Our Own Tiny Ships!

Imagi... | tutorialhelldev |

1,920,778 | Create a Spotify Playlist Generator with Arcjet Protection | Introduction Web applications are essential for businesses to deliver digital services,... | 0 | 2024-07-12T08:27:12 | https://dev.to/arindam_1729/create-a-spotify-playlist-generator-with-arcjet-protection-2j93 | node, javascript, beginners, webdev | ## **Introduction**

Web applications are essential for businesses to deliver digital services, and they have become increasingly important in recent years as more and more people access services online.

As web applications become more complex and handle increasingly sensitive data, the need to secure these applicatio... | arindam_1729 |

1,920,779 | Is Structural Timber a Durable Construction Material? | In the ever-changing world of construction materials, structural timber remains a top choice for... | 0 | 2024-07-12T08:30:50 | https://dev.to/sales_timbercentral_62b0/is-structural-timber-a-durable-construction-material-54bp | timber, structuraltimber, mgp10, buildingmaterial | In the ever-changing world of construction materials, structural timber remains a top choice for builders. Its timeless appeal and versatility make it a go-to option for modern construction projects.

But the question lingers: Is structural timber truly a durable construction material?

Understanding Structural Timber

... | sales_timbercentral_62b0 |

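1,920,780 | Online Customer Acquisition and Traffic Software, Screen-Domination Acquisition Tool, Acquisition Posting Tool | Online customer acquisition and traffic software, screen-domination acquisition tool, acquisition posting tool To learn about the related software, visit http://www.vst.tw... | 0 | 2024-07-12T08:32:16 | https://dev.to/qaou_rlow_9325968389ce140/wang-luo-huo-ke-dao-liu-ruan-jian-huo-ke-ba-ping-gong-ju-huo-ke-fa-tie-gong-ju-254m | 

Online customer acquisition and traffic software, screen-domination acquisition tool, acquisition posting tool

To learn about the related software, visit http://www.vst.tw

Online customer acquisition and traffic software plays a vital role in today's digital marketing. As the internet becomes ubiquitous and digital markets expand, companies increasingly rely on this software to attract, convert, and retain customers. This article explores the definition and functions of online customer acquisition and traffic software and its importance in modern marketing.

What is online customer acquisition and traffic software?

Online customer acquisition and traffic software refers to a class of tools and platforms that help companies attract potential customers and guide them into the sales funnel. Through a range of features and strategies, this software helps companies increase brand exposure, raise website traffic, improve conversion rates, and ultimately achieve sales growth. Typical online customer acquisition and traffic software usually includes the following key functions:

SEO optimization tools: help optimize website content and lift it in search... | qaou_rlow_9325968389ce140 | |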

1,920,781 | Some thoughts on Spikes | Hey devs, 🚀 If you are trying to Build Innovative and Technically Challenging Solutions Fast, ... | 0 | 2024-07-12T08:33:21 | https://dev.to/nikoldimit/some-thoughts-on-spikes-494g | spikes, agile | Hey devs,

🚀 If you are trying to Build Innovative and Technically Challenging Solutions Fast, Spikes can be a very effective approach!

While Building Fusion, we embraced the strategy of Spikes to address complex UX and technical challenges efficiently. By focusing on specific problems, testing potential solutions r... | nikoldimit |

1,920,782 | Unit Tests & Mocking: the Bread and the Butter | Premise Welcome back, folks 🤝 After a while, I finally took the time to start this new... | 28,045 | 2024-07-12T08:36:20 | https://dev.to/ossan/unit-tests-mocking-the-bread-and-the-butter-1hap | go, testing, vscode, webdev |

## Premise

Welcome back, folks 🤝 After a while, I finally took the time to start this new series of blog posts around computer science, programming, and Go. This series will focus on the different things we run into in our daily work.

### How you should read it

Before getting into it, let me s... | ossan |

1,920,783 | How to Use the Gemini API: A Comprehensive Guide | Introduction Google's Gemini API offers a powerful tool for developers to harness the capabilities of... | 0 | 2024-07-12T08:36:38 | https://dev.to/rajprajapati/how-to-use-the-gemini-api-a-comprehensive-guide-4bcg | ai, python | **Introduction**

Google's Gemini API offers a powerful tool for developers to harness the capabilities of advanced language models. This article provides a step-by-step guide on how to use the Gemini API, complete with code examples.

**Prerequisites**

Before diving into the code, ensure you have the following:

A Goog... | rajprajapati |

1,920,785 | Hello World! | ... | 0 | 2024-07-12T08:39:18 | https://dev.to/adeoyo_david_48c4017950cb/hello-world-5354 | ... | adeoyo_david_48c4017950cb | |

1,920,859 | What Do Medical Billers and Coders Do? | Medical billers and coders play a crucial role in the healthcare industry by ensuring that healthcare... | 0 | 2024-07-12T09:47:00 | https://dev.to/sanya3245/what-do-medical-billers-and-coders-do-1fao | Medical billers and coders play a crucial role in the healthcare industry by ensuring that healthcare providers are accurately reimbursed for their services. Their duties involve handling various administrative tasks related to patient records and [insurance claims](https://www.invensis.net/ ).

**Medical Coders**

Med... | sanya3245 | |

1,920,787 | Laravel Advanced: Top 10 Validation Rules You Didn't Know Existed | Do you know all the validation rules available in Laravel? Think again! Laravel has many ready-to-use... | 27,571 | 2024-07-12T08:42:52 | https://backpackforlaravel.com/articles/tips-and-tricks/laravel-advanced-top-10-validation-rules-you-didn-t-know-existed | laravel, validation | Do you know all the validation rules available in Laravel? Think again! Laravel has many ready-to-use validation rules that can make your code life a whole lot easier. Let’s uncover the top 10 validation rules you probably didn’t know existed.

### 1. **Prohibited**

Want to make sure a field is not present in the input... | karandatwani92 |

1,920,789 | 🎉 iPhone 15 Pro Max Giveaway! 🎉 | 🎉 iPhone 15 Pro Max Giveaway! 🎉 Hey everyone! We’re thrilled to announce an amazing giveaway! 🎁... | 0 | 2024-07-12T08:43:50 | https://dev.to/jr_heller_1211/iphone-15-pro-max-giveaway-1oc5 | webdev, javascript, beginners, programming |

[🎉 iPhone 15 Pro Max Giveaway! 🎉

](https://acrelicenseblown.com/e9msagb7?key=81a03c9597124ad2b0b057c8adabef59)

Hey everyone! We’re thrilled to announce an amazing giveaway! 🎁 We’re giving away a brand-new iPhone... | jr_heller_1211 |

1,920,790 | How we design mutual fund unit allotment system | We tackled the challenge of efficiently managing mutual fund investment unit data from the BSE Star... | 0 | 2024-07-12T08:45:04 | https://dev.to/mutual_fund_dev/how-we-design-mutual-fund-unit-allotment-system-gpe | javascript, node, laravel, mysql | We tackled the challenge of efficiently managing mutual fund investment unit data from the BSE Star API. Here's how we designed a robust and scalable system:

Data Retrieval and Processing

BSE Star updates units against orders throughout the market day. Handling this continuous influx of data was our primary challen... | mutual_fund_dev |

1,920,791 | Shop Handmade Jewellery Online in India | Complimento offers a curated collection of exquisite handmade jewellery online in India. Find... | 0 | 2024-07-12T08:46:10 | https://dev.to/complimento/shop-handmade-jewellery-online-in-india-19nh | Complimento offers a curated collection of exquisite [handmade jewellery online in India](https://complimento.in/). Find stunning earrings, necklaces, bracelets, and rings, all handcrafted by skilled artisans from across the country. Each piece is unique and embodies the rich heritage of Indian craftsmanship. Discover ... | complimento | |

1,920,792 | Did you know about these 5 benefits of PVC wall Cladding? | Do you wish to renovate your house? If yes, then it is time for you to choose something that is... | 0 | 2024-07-12T08:46:52 | https://dev.to/_titantradecentre/did-you-know-about-these-5-benefits-of-pvc-wall-cladding-23a1 | pvc, internalwallcladding, wallpanels, psfoam | Do you wish to renovate your house? If yes, then it is time for you to choose something that is trending and can also enhance the look of your place.

[PVC panels](www.titantradecentre.com.au) are in trend, which can easily offer you one of the most elegant looks for your house. PVC wall cladding, or Polyvinyl Chloride... | _titantradecentre |

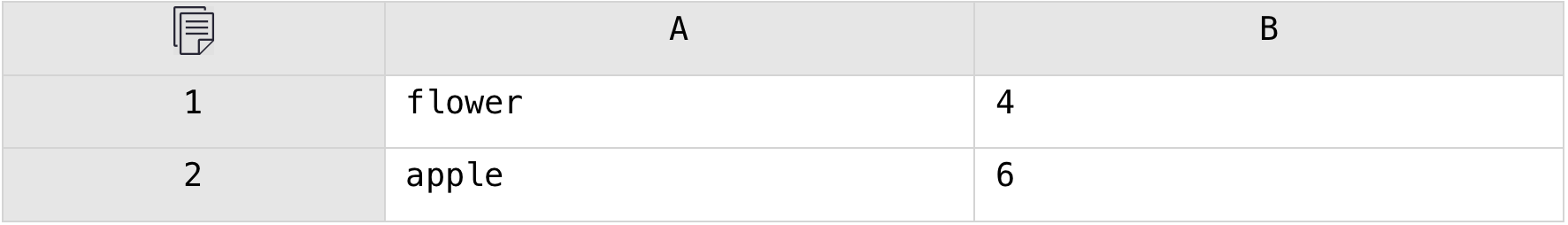

1,920,793 | #24 — Repeat the Value of Each Cell N times According to the Value of the Neighboring cell | Problem description & analysis: The table below has two columns, where column B is the... | 0 | 2024-07-12T08:47:01 | https://dev.to/judith677/24-repeat-the-value-of-each-cell-n-times-according-to-the-value-of-the-neighboring-cell-1fb4 | tutorial, beginners, productivity, excel | **Problem description & analysis**:

The table below has two columns, where column B is the number.

We need to repeat the value of column A N times according to the value of column B and concatenate the results into one... | judith677 |

1,920,794 | Top 10 Clean Code Rules | An Image showing Clean Code book by Robert C Martin on top of a laptop keyboard. According to “Clean... | 0 | 2024-07-12T08:47:16 | https://dev.to/e-tech/top-10-clean-code-rules-4d8g | An Image showing Clean Code book by Robert C Martin on top of a laptop keyboard.

In his book “Clean Code”, Uncle Bob defines guidelines and rules that developers should follow. This matters most for less experienced developers. With more experience comes the possibility of breaking some rules... | e-tech | |

1,920,795 | Zhangjiagang King-Macc: Revolutionizing the Machinery Industry | Sounds like the perfect topic for us to talk about: How machines are taking over the world! We have... | 0 | 2024-07-12T08:47:24 | https://dev.to/osmab_ryaikav_5d2ea6f3a9d/zhangjiagang-king-macc-revolutionizing-the-machinery-industry-2hmg | design | Sounds like the perfect topic for us to talk about: How machines are taking over the world! We have great machines because they help us make food, drink and medicine. Zhangjiagang King-Macc Machinery Manufacturing Co., Ltd., this does not know the name you will say no, I think your impression here should be better than... | osmab_ryaikav_5d2ea6f3a9d |

1,920,797 | Earn $2.5 Per Answer | Earn $2.5 Per Answer Make money from answering simple questions. We pay you in cash. Simple and... | 0 | 2024-07-12T08:50:49 | https://dev.to/jr_heller_1211/earn-25-per-answer-1gdo | webdev, javascript, beginners, tutorial |

[Earn $2.5 Per Answer

](https://bit.ly/3LhKw7s)

[Make money from answering simple questions.

](https://bit.ly/3LhKw7s)

We pay you in cash. Simple and fun.

| jr_heller_1211 |

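1,920,798 | Online customer-acquisition active-follower scraping software, customer-acquisition group-invite tool, customer-acquisition posting tool | Online customer-acquisition active-follower scraping software, customer-acquisition group-invite tool, customer-acquisition posting tool To learn about the related software, visit http://www.vst.tw... | 0 | 2024-07-12T08:54:30 | https://dev.to/ufrf_guxx_445b73f9ed40ac0/wang-luo-huo-ke-huo-fen-cai-ji-ruan-jian-huo-ke-la-qun-gong-ju-huo-ke-fa-tie-gong-ju-m56 |

Online customer-acquisition active-follower scraping software, customer-acquisition group-invite tool, customer-acquisition posting tool

To learn about the related software, visit http://www.vst.tw

Online customer-acquisition active-follower scraping software, an important tool in digital marketing, is drawing growing attention and use from businesses and individual users. Its main function is to help users quickly obtain information about potential customers, such as phone numbers and email addresses, through automation, thereby supporting marketing and sales activities.

Background

With the spread of the internet and the rise of digital marketing, traditional marketing methods can no longer fully meet businesses' customer-acquisition needs. Traditional advertising and market research channels suffer from low efficiency and high cost, whereas online customer-acquisition active-follower scraping software, with its efficiency and precision, has become an important part of modern marketing strategy.

Features and advantages

Online customer-acquisition active-follower scraping software mainly offers the following features and ... | ufrf_guxx_445b73f9ed40ac0 | |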

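1,920,802 | Paper Airplane mass-messaging software, Paper Airplane promotion and traffic-generation system, Paper Airplane anti-ban mass-messaging tool | Paper Airplane mass-messaging software, Paper Airplane promotion and traffic-generation system, Paper Airplane anti-ban mass-messaging tool To learn about the related software, visit http://www.vst.tw... | 0 | 2024-07-12T08:56:43 | https://dev.to/yrqr_eunx_26be59f0537fcad/zhi-fei-ji-qun-fa-ruan-jian-zhi-fei-ji-tui-yan-yin-liu-xi-tong-zhi-fei-ji-qun-fa-fang-feng-hao-gong-ju-3e5o |

Paper Airplane mass-messaging software, Paper Airplane promotion and traffic-generation system, Paper Airplane anti-ban mass-messaging tool

To learn about the related software, visit http://www.vst.tw

Paper Airplane mass-messaging software: making information sharing simpler

In the digital age, the speed and manner of information dissemination have changed dramatically. From the earliest telegraph to today's social media, communication between people has become more convenient and rapid than ever. Among these channels, Paper Airplane mass-messaging software offers a distinctive and creative approach that makes spreading information more fun and lively.

As a highly interactive tool with a novel form of distribution, Paper Airplane mass-messaging software is not only a means of delivering information but also a medium for social interaction. It imitates the form of a paper airplane, packaging text, images, videos, or other information in a virtual "paper airplane" and then mass-sending it through the software's platform. Recipients can, by tapping or swiping... | yrqr_eunx_26be59f0537fcad | |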

1,920,803 | The Benefits and Challenges of Cross-Docking in Shipping Logistics | What is Cross-Docking? It most likely experienced a system complex Cross-Docking should you ever... | 0 | 2024-07-12T08:58:47 | https://dev.to/osmab_ryaikav_5d2ea6f3a9d/the-benefits-and-challenges-of-cross-docking-in-shipping-logistics-43nd | design | What is Cross-Docking?

If you have ever ordered a package online, it most likely passed through a complex system called cross-docking. How does it work? Imagine a large warehouse with two doors, one for inbound goods and another for outbound goods. Inside, you will find employees who get product... | osmab_ryaikav_5d2ea6f3a9d |

1,920,806 | Certified AI Professional (CAIP): Is it worth pursuing? | In the technology-driven era, Artificial Intelligence (AI) has emerged as one of the most capable... | 0 | 2024-07-12T09:00:14 | https://dev.to/georgiaweston/certified-ai-professional-caip-is-worth-pursuing-4lhl | ai, aiprofessional, certification | In the technology-driven era, Artificial Intelligence (AI) has emerged as one of the most capable technologies. Within a short span of time, AI has transformed how humans interact with technology. The rising popularity of AI is evident from the fact that today a plethora of new job opportunities have come into existence... | georgiaweston |

1,920,807 | Custom Yard Sign Design and Printing | High-Quality, Personalized Options for Any Business | Explore custom yard sign design and printing with high-quality, personalized options perfect for any... | 0 | 2024-07-12T09:02:44 | https://dev.to/hutsign/custom-yard-sign-design-and-printing-high-quality-personalized-options-for-any-business-2k9k | Explore custom yard sign design and printing with high-quality, personalized options perfect for any business. Create unique, eye-catching signs that make a lasting impression. Visit Our Website For More Information!

[https://www.signshut.com/custom-design](https://www.signshut.com/custom-design) | hutsign | |

1,920,813 | How to Benefit from AZ 700 Dumps in Your Study Strategy | Maximizing the Benefits of High-Quality Dumps While dumps can be a valuable AZ 700 Dumps study aid,... | 0 | 2024-07-12T09:07:33 | https://dev.to/uppy1930/how-to-benefit-from-az-700-dumps-in-your-study-strategy-1ge7 | webdev, javascript, beginners, programming | Maximizing the Benefits of High-Quality Dumps

While dumps can be a valuable <a href="https://dumpsarena.com/microsoft-dumps/az-700/">AZ 700 Dumps</a> study aid, it's essential to use them effectively to maximize their benefits. Here are some tips to help you make the most of high-quality dumps:

Choose Reputable Source... | uppy1930 |

1,920,808 | AI Tracking Software in KSA revolutionizing business with Artificial Intelligence | The KSA is on the cutting edge in technological advancement, one among the technologies that is being... | 0 | 2024-07-12T09:03:42 | https://dev.to/aafiya_69fc1bb0667f65d8d8/ai-tracking-software-in-ksa-revolutionizing-business-with-artificial-intelligence-5em6 | ai, technology, software | The KSA is at the cutting edge of technological advancement, and one of the technologies being used there is [artificial intelligence](https://www.expediteiot.com/artificial-intellingence-in-saudi-qatar-and-oman/) (AI). From improving business processes and enhancing customer service, AI software in Riyadh... | aafiya_69fc1bb0667f65d8d8 |

1,920,809 | Managing Kubernetes Clusters like a PRO | Managing multiple Kubernetes clusters can be a complex task, but with the right tools and techniques,... | 0 | 2024-07-12T09:36:26 | https://dev.to/raunaqness/managing-kubernetes-clusters-like-a-pro-10ba | kubernetes, docker, devops, programming | Managing multiple Kubernetes clusters can be a complex task, but with the right tools and techniques, it becomes a seamless part of your workflow.

As a Senior Machine Learning Engineer, I frequently work with various Kubernetes clusters, often needing to view logs or the state of multiple clusters at the same time. I... | raunaqness |

1,920,810 | Globe SIM Registration | SIM Registration Globe 2024 | Globe SIM Registration Guide Under the SIM Card Registration Act in the Philippines, registering... | 0 | 2024-07-12T09:04:38 | https://dev.to/jason13/globe-sim-registration-sim-registration-globe-2024-5ghb | Globe SIM Registration Guide

Under the SIM Card Registration Act in the Philippines, registering your Globe SIM card is now mandatory. We understand that many users face challenges with online registration, so we’ve created this comprehensive guide to simplify the process and help you easily register your Globe SIM ca... | jason13 | |

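1,920,811 | Software, Ins friend mass messaging, Ins group-control assistant | Ins account-screening software, Ins friend mass messaging, Ins group-control assistant To learn about the related software, visit http://www.vst.tw... | 0 | 2024-07-12T09:04:46 | https://dev.to/baye_nnkt_222ed1ba0c14435/ruan-jian-inshao-you-qun-fa-insqun-kong-zhu-shou-47hf |

Ins account-screening software, Ins friend mass messaging, Ins group-control assistant

To learn about the related software, visit http://www.vst.tw

Ins account-screening software: a powerful tool for social media management

In today's era of digital social networking, social media has become an important platform for personal and brand promotion. Instagram (hereafter "Ins"), one of the world's most popular photo- and video-sharing social platforms, has a large and diverse user base. However, as the number of users grows, managing and screening the target audience effectively becomes especially important. Ins account-screening software emerged to meet this need and has become a powerful assistant for many users.

What is Ins account-screening software?

Ins account-screening software refers to tools that help users screen, manage, and analyze Instagram accounts. Such software typically uses complex algorithms and data analysis to help users target and engage their intended audience more precisely,... | baye_nnkt_222ed1ba0c14435 | |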

1,920,812 | How to improve OCR accuracy ? | my 5-year experience | my experience with OCR technologies I created my 1st image to text converting app on Oct... | 0 | 2024-07-12T09:06:48 | https://abanoubhanna.com/posts/improve-ocr/ | ocr, opensource, android | ## my experience with OCR technologies

I created my 1st image to text converting app on Oct 6th 2018, so it was 5+ years ago. I have been improving, learning, rewriting, iterating, experimenting on OCR technology since then.

I created all of these apps to extract text from images/photos:

- [IMG2TXT: Image To Text OC... | abanoubha |

1,920,814 | Unlocking the Power of Integration with MuleSoft | In today’s digital era, businesses face the challenge of integrating diverse applications, data, and... | 0 | 2024-07-12T09:09:42 | https://dev.to/mylearnnest/unlocking-the-power-of-integration-with-mulesoft-14ij | mulesofthackathon | In today’s digital era, businesses face the challenge of integrating diverse applications, data, and devices seamlessly. This is where MuleSoft comes into play. [MuleSoft is a powerful integration platform ](https://www.mylearnnest.com/best-mulesoft-training-in-hyderabad/)that enables organizations to connect any syste... | mylearnnest |

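1,920,815 | Telegram follower-growth software, Telegram group-member scraping, Telegram anti-ban mass-messaging bot | Telegram follower-growth software, Telegram group-member scraping, Telegram anti-ban mass-messaging bot To learn about the related software, visit http://www.vst.tw... | 0 | 2024-07-12T09:10:18 | https://dev.to/jmvt_jtos_5285a4c40a359b7/telegramxi-fen-ruan-jian-telegramcai-ji-qun-cheng-yuan-telegramqun-fa-fang-feng-hao-ji-qi-ren-fma |

Telegram follower-growth software, Telegram group-member scraping, Telegram anti-ban mass-messaging bot

To learn about the related software, visit http://www.vst.tw

When it comes to social media platforms, Telegram inevitably becomes a focus of discussion. As a multifunctional instant messaging app, Telegram not only provides secure and private chat but also stands out for its ability to attract followers.

Telegram's features

Telegram attracts users around the world with its distinctive features. Here are some of the main ones:

Security and privacy protection: Telegram protects users' chat content with end-to-end encryption, ensuring messages are not intercepted by third parties. It also offers a self-destructing message option so users can control a message's lifetime.

Channels and groups: Tele... | jmvt_jtos_5285a4c40a359b7 | |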

1,920,816 | Authorization pitfalls: what does Keycloak cloak? | Author: Valerii Filatov User authorization and registration are important parts of any application,... | 0 | 2024-07-12T09:11:46 | https://dev.to/anogneva/authorization-pitfalls-what-does-keycloak-cloak-cf2 | java, programming, opensource | Author: Valerii Filatov

User authorization and registration are important parts of any application, not only for users but also for security\. What pitfalls does the source code of a popular open\-source identity management solution hide? How do they affect the application?

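Marketing and customer-acquisition system, TG mass-messaging bot, TG mass messaging To learn about the related software, visit http://www.vst.tw... | 0 | 2024-07-12T09:13:43 | https://dev.to/tcel_aivz_7ea19d55138608c/gqun-fa-ji-qi-ren-tgqun-fa-43jj |

Telegram (TG) marketing and customer-acquisition system, TG mass-messaging bot, TG mass messaging

To learn about the related software, visit http://www.vst.tw

In today's era of digital marketing, Telegram (TG for short), as a powerful social platform, is not just an instant messaging tool but has also become an important part of many businesses' and individuals' marketing strategies. This article explores effective ways to use Telegram as a marketing and customer-acquisition system.

Advantages of Telegram

1. Immediacy and interactivity

Telegram provides instant messaging, so users can receive information in real time and respond quickly. This immediacy lets marketing messages spread fast and enables interaction with potential customers, strengthening user engagement and stickiness.

2. Unlimited number of members

Telegram groups and channels can hold thousands upon thousands of members, giving businesses and brands a broad platform for outreach. Whether promoting products, publishing special offers, or... | tcel_aivz_7ea19d55138608c | |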

1,920,819 | Cloud Resume Challenge: Introduction | What is it? The Cloud Resume Challenge is a 16 step project designed to showcase the... | 0 | 2024-07-12T09:13:44 | https://dev.to/hellopackets89/cloud-resume-challenge-introduction-2e75 | cloudresumechallenge, cloud, azure | # What is it?

The Cloud Resume Challenge is a 16-step project designed to showcase the skills one develops while performing the steps necessary to upload their resume to the cloud as an HTML document. Uploading a resume is actually optional, and the challenge can be completed with any static website that you wan... | hellopackets89 |

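1,920,822 | Cross-border e-commerce active-follower scraping software, cross-border screen-domination assistant, cross-border group-invite assistant | Cross-border e-commerce active-follower scraping software, cross-border screen-domination assistant, cross-border group-invite assistant To learn about the related software, visit http://www.vst.tw... | 0 | 2024-07-12T09:14:15 | https://dev.to/jurj_uwkg_d2e3d30d87f55b8/kua-jing-dian-shang-huo-fen-cai-ji-ruan-jian-kua-jing-ba-ping-zhu-shou-kua-jing-la-qun-zhu-shou-3g1f |

Cross-border e-commerce active-follower scraping software, cross-border screen-domination assistant, cross-border group-invite assistant

To learn about the related software, visit http://www.vst.tw

Cross-border e-commerce active-follower scraping software, an important tool in modern e-commerce marketing, is gradually attracting wide attention in the industry. This kind of software aims to help cross-border e-commerce companies precisely locate and collect information on active users, thereby optimizing marketing strategy and raising conversion rates.

It is powerful: it can automatically analyze multi-channel data from social media, e-commerce platforms, and other sources to filter out highly active groups of potential buyers. By mining user behavior data in depth, companies can better understand target customers' needs and preferences and achieve personalized recommendations and precision marketing.

In terms of application scenarios, cross-border e-commerce active-follower scraping software is widely used in market research, customer profiling, ad placement optimization, and other stages. It gives companies valuable user insight and helps them stand out in fierce market competition.

However, it is worth... | jurj_uwkg_d2e3d30d87f55b8 | |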

1,920,823 | Fully furnished apartments for sale in Whitefield's prime locations | Whitefield, situated in the eastern periphery of Bangalore, has emerged as a bustling hub of... | 0 | 2024-07-12T09:14:30 | https://dev.to/address_advisors_80c762d7/fully-furnished-apartments-for-sale-in-whitefields-prime-locations-5d1i | Whitefield, situated in the eastern periphery of Bangalore, has emerged as a bustling hub of residential and commercial activity. Known for its IT parks, vibrant social scene, and excellent connectivity, this area has become a magnet for homebuyers seeking modern living with convenience. Among the various options avail... | address_advisors_80c762d7 | |

1,920,824 | How to Get a US Random Phone for Discord With Our Comprehensive Guide | Discord, a popular platform for communication among gamers and communities, often requires phone... | 0 | 2024-07-12T09:15:19 | https://dev.to/legitsms/how-to-get-a-us-random-phone-for-discord-with-our-comprehensive-guide-2o20 | webdev, javascript, beginners, programming | Discord, a popular platform for communication among gamers and communities, often requires phone number verification. Users may seek a US random phone number for Discord for various reasons. Whether you need a random phone number list, random real phone numbers, or a random telephone number generator US, this guide cov... | legitsms |

1,920,826 | Introduction to NEURAL MACHINE TRANSLATION BY JOINTLY LEARNING TO ALIGN AND TRANSLATE | Introduction Neural machine translation appears more effective than traditional... | 0 | 2024-07-12T09:17:23 | https://dev.to/muhammad_saim_7/introduction-to-neural-machine-translation-by-jointly-learning-to-align-and-translate-4akb | attention, deeplearning, nlp, machinetranslation | ### Introduction

Neural machine translation appears more effective than traditional statistical modeling for translating sentences. This paper introduces the concept of attention in neural machine translation, which is a better approach to translating sentences. Normal neural translators use fixed-length vectors, which... | muhammad_saim_7 |

1,920,828 | Website Pop-Ups – Still a Valid Lead Generation Tool? | Every old online user remembers website pop-ups, which marketers regarded as a useful tool for lead... | 0 | 2024-07-12T09:21:06 | https://www.peppersquare.com/blog/website-pop-ups-still-a-valid-lead-generation-tool/ | web, website, webdev | Every old online user remembers [website pop-ups](https://www.peppersquare.com/ui-ux-design/website-design/), which marketers regarded as a useful tool for lead generation online. These were supposed to be an excellent way to keep ads apart from content, and these showed up while browsing specific websites. However, wh... | pepper_square |

1,920,829 | How to start a medical billing company: key steps and strategies | Starting a medical billing company requires careful planning, industry knowledge, and strategic... | 0 | 2024-07-12T09:21:25 | https://dev.to/sanya3245/how-to-start-a-medical-billing-company-key-steps-and-strategies-743 | Starting a medical billing company requires careful planning, industry knowledge, and strategic execution. Here are key steps and strategies to help you establish a successful [medical billing business](https://www.invensis.net/):

**1. Research and Plan**

**Understand the Industry:** Gain a thorough understanding of ... | sanya3245 | |

1,920,830 | Embracing Site Reliability Engineering For Enhanced IT Operations | Enhanced IT Operations In today’s fast-paced digital world, the efficiency and effectiveness of IT... | 0 | 2024-07-12T09:22:13 | https://dev.to/saumya27/embracing-site-reliability-engineering-for-enhanced-it-operations-3klb |

**Enhanced IT Operations**

In today’s fast-paced digital world, the efficiency and effectiveness of IT operations play a crucial role in determining an organization’s success. Enhanced IT operations refer to the strategic implementation of tools, processes, and practices that improve the performance, reliability, and... | saumya27 | |

1,920,831 | How do you boost conversions on your WooCommerce store? | Optimising conversion rates is pivotal to the long-term success of your WooCommerce online store A... | 0 | 2024-07-12T09:25:08 | https://dev.to/sakkuntickoo/how-do-you-boost-conversions-on-your-woocommerce-store-5fa7 | woocommerce, productivity, website | **Optimising conversion rates is pivotal to the long-term success of your WooCommerce online store**

A key metric to measure the performance of your [WooCommerce online store](https://wonderful.co.uk/blog/woocommerce-online-store) is the conversion rate. It represents the percentage of visitors who end up taking a des... | sakkuntickoo |

1,920,832 | Hydraulic Components: Ensuring Precision and Accuracy in Motion Control | Benefits of Hydraulic Components to Guarantee Motion Control with Precision and Accuracy Hydraulic... | 0 | 2024-07-12T09:24:15 | https://dev.to/osmab_ryaikav_5d2ea6f3a9d/hydraulic-components-ensuring-precision-and-accuracy-in-motion-control-4jpb | design | Benefits of Hydraulic Components to Guarantee Motion Control with Precision and Accuracy

Hydraulic components are an important factor responsible for the motion, precision and efficiency of machines. You may be surprised to see how accurately and effortlessly heavy machinery can lift, relay large loads with a press of... | osmab_ryaikav_5d2ea6f3a9d |

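1,920,834 | Facebook bulk ad mass-sending, Facebook scraping assistant, Facebook marketing assistant | Facebook bulk ad mass-sending, Facebook scraping assistant, Facebook marketing assistant To learn about the related software, visit http://www.vst.tw... | 0 | 2024-07-12T09:27:01 | https://dev.to/fkcf_naao_4ca12e32ddfffc6/facebookpi-liang-qun-fa-yan-gao-facebookcai-ji-zhu-shou-facebookxing-xiao-zhu-shou-2je7 |

Facebook bulk ad mass-sending, Facebook scraping assistant, Facebook marketing assistant

To learn about the related software, visit http://www.vst.tw

Facebook bulk ad mass-sending: reaching target audiences efficiently

As one of the world's largest social media platforms, Facebook gives advertisers powerful bulk ad-sending capabilities. By precisely targeting audiences, advertisers can show ad content to a large number of potential users at once for efficient marketing.

When using Facebook bulk ad sending, first define the advertising goal, such as brand exposure, product promotion, or sales conversion. Next, using Facebook's ad management tools, advertisers can create multiple ad sets, each aimed at a different audience and set of interests. By setting parameters such as ad budget, delivery schedule, and geographic location, advertisers can flexibly control... | fkcf_naao_4ca12e32ddfffc6 | |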

1,920,835 | Securing the Cloud Frontier: Generative AI for Vulnerability Hunting | The vast expanse of the cloud offers unparalleled scalability, agility, and cost-effectiveness for... | 0 | 2024-07-12T09:27:06 | https://www.cloudanix.com/ | genai, cloudsecurity, cloudcomputing, vulnerabilities | The vast expanse of the cloud offers unparalleled scalability, agility, and cost-effectiveness for businesses. However, this digital frontier also presents a unique set of security challenges. As organizations migrate an increasing number of critical applications and sensitive data to the cloud, the attack surface expa... | abhiram_cdx |

1,920,836 | Importance of Medical Billing Solution With The Agency | Medical billing solutions are essential for healthcare providers and agencies due to the complexity... | 0 | 2024-07-12T09:27:59 | https://dev.to/sanya3245/importance-of-medical-billing-solution-with-the-agency-350c | Medical billing solutions are essential for healthcare providers and agencies due to the complexity and volume of [claims processing](https://www.invensis.net/). Integrating a robust medical billing solution within an agency brings numerous benefits that streamline operations, enhance accuracy, and improve financial he... | sanya3245 | |

1,920,837 | How to measure dividends | Investors often analyse the following metrics to assess the quality of dividends from companies in... | 0 | 2024-07-12T09:29:52 | https://dev.to/snowball/how-to-measure-dividends-12e9 | Investors often analyse the following metrics to assess the quality of dividends from companies in the US:

**Dividend Yield:** The ratio of annual dividends to the share price. This is the primary indicator of investment returns.

**Dividend growth:** A company's history of dividend changes. Companies that regularly in... | snowball | |

1,920,838 | Common AC Problems in South Stuart | The climate in South Stuart can put a lot of strain on air conditioning systems. Some common problems... | 0 | 2024-07-12T09:29:54 | https://dev.to/ritu_varma_8c5cc2c3cfcda8/common-ac-problems-in-south-stuart-2cip | The climate in South Stuart can put a lot of strain on air conditioning systems. Some common problems include:

High Humidity Levels: The humidity in South Stuart can cause AC units to work harder to remove moisture from the air, leading to increased wear and tear on the system.

Salt Air Corrosion: Proximity to the co... | ritu_varma_8c5cc2c3cfcda8 | |

1,920,857 | Top 4 Countries Hold 46.3% Share in Bromelain Market in 2022 | The global bromelain market, valued at 40.5 billion in 2023, is anticipated to expand at a... | 0 | 2024-07-12T09:45:38 | https://dev.to/swara_353df25d291824ff9ee/top-4-countries-hold-463-share-in-bromelain-market-in-2022-3g88 |

The global [bromelain market](https://www.persistencemarketresearch.com/market-research/bromelain-market.asp), valued at 40.5 billion in 2023, is anticipated to expand at a value-based CAGR of 4.1%, reaching approxi... | swara_353df25d291824ff9ee | |

1,920,839 | Overcoming Imposter Syndrome In Software Development | by Lawrence Franklin Chukwudalu Have you ever felt like people will discover you are not as... | 0 | 2024-07-12T09:29:55 | https://blog.openreplay.com/overcoming-imposter-syndrome-in-software-development/ | by [Lawrence Franklin Chukwudalu](https://blog.openreplay.com/authors/lawrence-franklin-chukwudalu)

<blockquote><em>

Have you ever felt like people will discover you are not as competent at something as they think you are? Have you got feelings of inadequacy in software development? This article will explain what th... | asayerio_techblog | |

1,920,840 | Revolutionizing Data Analysis:The Power Of Automation | In the rapidly evolving landscape of modern business, automation has emerged as a transformative... | 0 | 2024-07-12T09:30:56 | https://dev.to/saumya27/revolutionizing-data-analysisthe-power-of-automation-3bp1 | automation | In the rapidly evolving landscape of modern business, automation has emerged as a transformative force. By leveraging automation, organizations can streamline operations, enhance productivity, and drive innovation. Let’s explore the various facets of the power of automation and its profound impact on businesses.

**Inc... | saumya27 |

1,920,841 | CA Final Result May 2024 pass percentage : The Standard | Now that the dates of the CA Final Result May 2024 pass percentage, aspirants are anxiously... | 0 | 2024-07-12T09:33:57 | https://dev.to/simrasah/ca-final-result-may-2024-pass-percentage-the-standard-1fa |

Now that the dates of the **[CA Final Result May 2024 pass percentage](https://studyathome.org/ca-exam-result-may-2024-date-toppers-pass-percentage/)**, aspirants are anxiously awaiting the Institute of Chartered Ac... | simrasah | |

1,920,842 | Free AI Certification Courses Learn AI Online Today | Top Free AI Courses Online How to Learn Artificial Intelligence for Free with... | 0 | 2024-07-12T09:34:10 | https://dev.to/educatinol_courses_806c29/free-ai-certification-courses-learn-ai-online-today-313o | Top Free AI Courses Online How to Learn Artificial Intelligence for Free with Certificates

Navigating the myriad options for learning AI can be intimidating, particularly if you're looking for materials that are tailored to your particular needs. Take into account the following crucial factors when deciding which c... | educatinol_courses_806c29 | |

1,920,843 | Haptic Feedback For Web Apps With The Vibration API | by Glory Jonah In case you're new to the term, haptic feedback is the tactile sensation generated... | 0 | 2024-07-12T09:35:36 | https://blog.openreplay.com/haptic-feedback-for-web-apps-with-the-vibration-api/ | by [Glory Jonah](https://blog.openreplay.com/authors/glory-jonah)

<blockquote><em>

In case you're new to the term, haptic feedback is the tactile sensation generated from your mobile devices, which helps give you a sense of touch (vibrations or motions) in response to interactions. It offers a lot of benefits, like ... | asayerio_techblog | |

1,920,844 | Say hello to Ably Chat: A new product optimized for large-scale chat interactions | TL;DR: Today, we're excited to announce the private beta launch of our new chat product! Ably Chat... | 0 | 2024-07-12T10:02:11 | https://ably.com/blog/ably-chat-announcement | news, development, frontend, webdev | > **TL;DR:** Today, we're excited to announce the private beta launch of our new chat product! Ably Chat bundles purpose-built APIs for all the chat features your users need in a range of realtime applications, from global livestreams operating at extreme scale to customer support chats embedded within your apps. It is... | srushtika |
