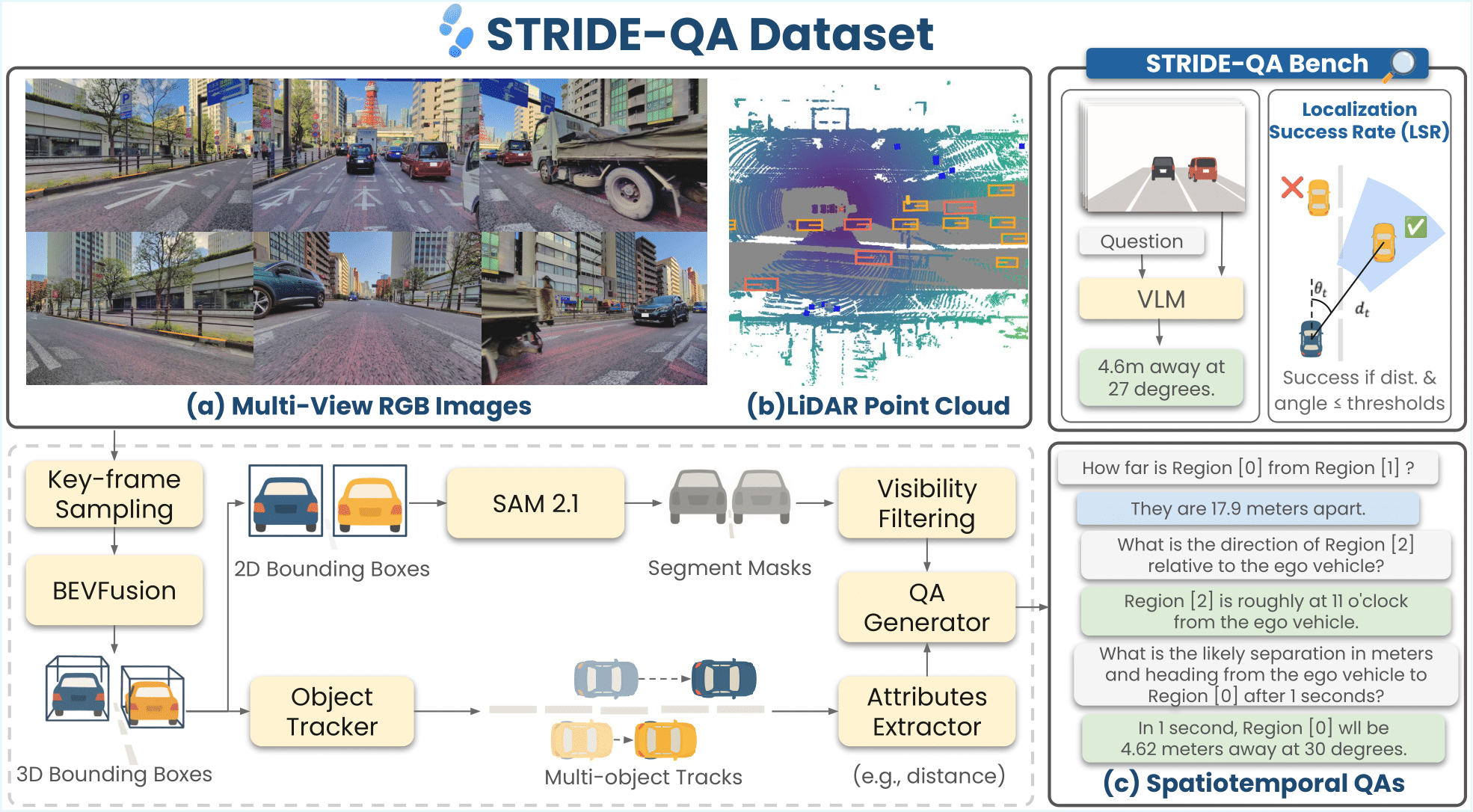

STRIDE-QA Dataset

📦 Dataset

STRIDE-QA is a large-scale visual question answering (VQA) dataset for physically grounded spatiotemporal reasoning in autonomous driving. Constructed from 100 hours of multi-sensor driving data in Tokyo, it offers 16 M QA pairs over 270 K frames with dense annotations including 3D bounding boxes, segmentation masks, and multi-object tracks.

| Category | Description |

|---|---|

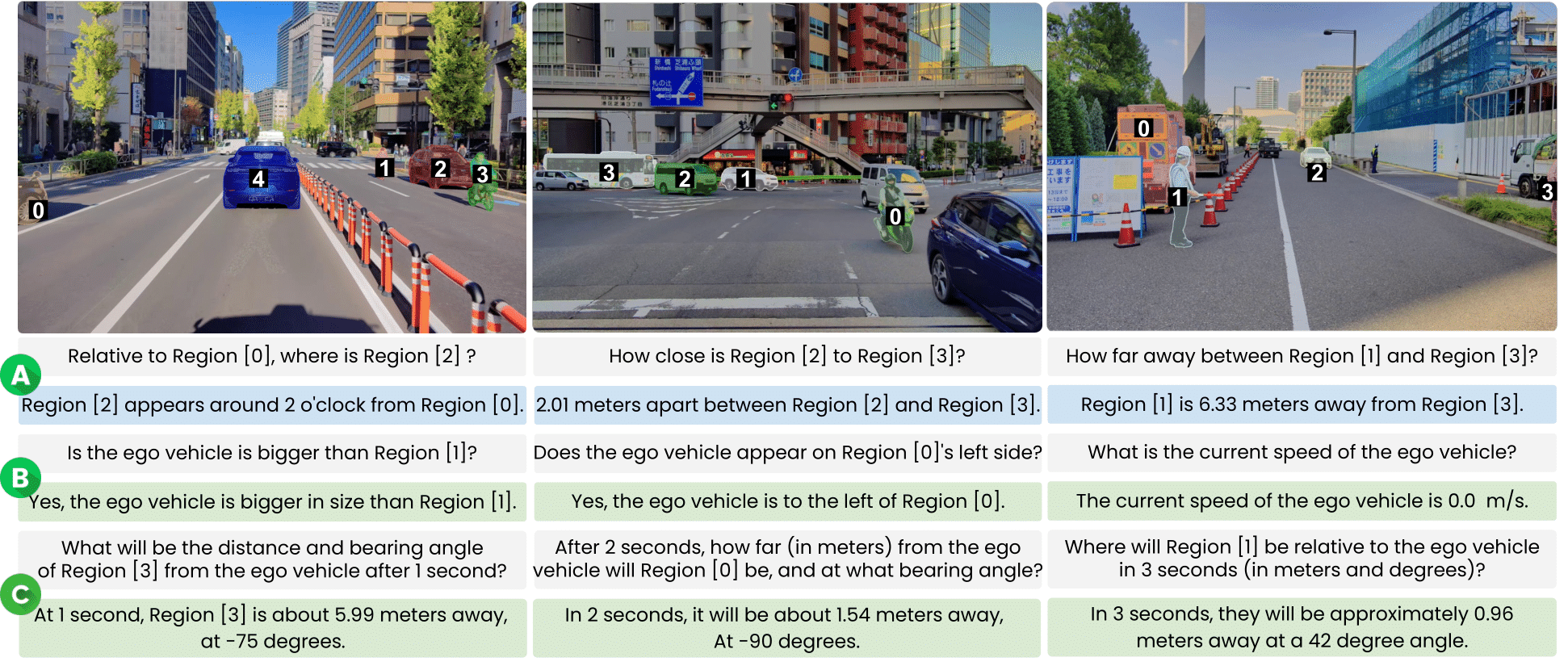

| Object-centric Spatial QA | Spatial relations between two surrounding agents (single frame). Includes qualitative (e.g., relative position) and quantitative (e.g., distance, angle) questions. |

| Ego-centric Spatial QA | Spatial relations between the ego vehicle and a surrounding agent (single frame). Covers distance, direction, and size comparisons. |

| Ego-centric Spatiotemporal QA | Short-term prediction using 4 context frames (2 Hz). Forecasts distance, heading angle, and velocity at t ∈ {1, 2, 3} s. |

| QA Category | Qualitative (train) | Qualitative (val) | Quantitative (train) | Quantitative (val) | Total (M) |
|---|---|---|---|---|---|
| Object-centric Spatial QA | 2.20 | 0.11 | 1.10 | 0.06 | 3.47 |
| Ego-centric Spatial QA | 3.10 | 0.16 | 4.33 | 0.23 | 7.82 |
| Ego-centric Spatiotemporal QA | – | – | 4.73 | 0.40 | 5.13 |
| Total | 5.30 | 0.28 | 10.17 | 0.69 | 16.43 |
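The per-category totals can be cross-checked with a few lines of Python. This is a minimal sketch that assumes every column is in millions (as the last header indicates) and uses only the rounded values printed in the table; the dictionary layout is illustrative.

```python
# QA pair counts in millions, copied from the table above.
# Column order: qualitative train, qualitative val,
#               quantitative train, quantitative val.
counts = {
    "Object-centric Spatial QA": (2.20, 0.11, 1.10, 0.06),
    "Ego-centric Spatial QA": (3.10, 0.16, 4.33, 0.23),
    # The spatiotemporal category has no qualitative questions.
    "Ego-centric Spatiotemporal QA": (0.0, 0.0, 4.73, 0.40),
}

# Sum each row, then sum the rows; round to the table's two decimals.
row_totals = {name: round(sum(vals), 2) for name, vals in counts.items()}
grand_total = round(sum(row_totals.values()), 2)

for name, total in row_totals.items():
    print(f"{name}: {total:.2f} M")
print(f"Sum of rounded rows: {grand_total:.2f} M")
```

The row sums match the table. Adding the rounded entries gives 16.42 M rather than the reported 16.43 M; a 0.01 gap of this kind is what one would expect if the published totals were computed from unrounded counts.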

Data Format

This repository provides front camera images and JSONL annotations.

Directory Structure

    STRIDE-QA-Dataset/
    ├── train/
    │   ├── images/
    │   └── annotations/
    │       ├── ego_centric_spatial_qa/
    │       ├── ego_centric_spatiotemporal_qa/
    │       └── object_centric_spatial_qa/
    └── val/
        ├── images/
        └── annotations/
            ├── ego_centric_spatial_qa/
            ├── ego_centric_spatiotemporal_qa/
            └── object_centric_spatial_qa/
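Given this layout, directory paths can be assembled from a split and a category name. The helper names `annotation_dir` and `image_dir` below are hypothetical, and the sketch assumes a local copy of the repository rooted at `STRIDE-QA-Dataset/`; file names inside each directory are not assumed.

```python
from pathlib import Path

# Split and category directory names, taken from the tree above.
SPLITS = ("train", "val")
CATEGORIES = (
    "ego_centric_spatial_qa",
    "ego_centric_spatiotemporal_qa",
    "object_centric_spatial_qa",
)


def annotation_dir(root, split, category):
    """Return the annotation directory for one split and QA category."""
    if split not in SPLITS:
        raise ValueError(f"unknown split: {split!r}")
    if category not in CATEGORIES:
        raise ValueError(f"unknown category: {category!r}")
    return Path(root) / split / "annotations" / category


def image_dir(root, split):
    """Return the image directory for one split."""
    if split not in SPLITS:
        raise ValueError(f"unknown split: {split!r}")
    return Path(root) / split / "images"


print(annotation_dir("STRIDE-QA-Dataset", "val", "ego_centric_spatial_qa").as_posix())
```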

Data Fields

Annotations are provided as gzip-compressed JSONL files (.jsonl.gz). Each line of a file represents a single sample with the following fields:

| Field | Type | Description |
|---|---|---|
| id | str | Unique sample ID. |
| split | str | Dataset split (train or val). |
| category | str | QA category type. |
| image | str | File name of the key frame used in the prompt. |
| images | list[str] | File names for the four consecutive image frames. Only available in Ego-centric Spatiotemporal QA category. |
| conversations | list[dict] | Dialogue in VILA format ("from": "human" / "gpt"). |
| image_info | dict | Image metadata (height, width, dataset, landmark, file_path). |
| token_info | dict | Sample and sample data tokens for nuScenes reference. |
| qa_info | list[dict] | Metadata for each QA turn (type, category, class, token). |
| bbox | list[list[float]] | Bounding boxes [x₁, y₁, x₂, y₂] for referenced regions. |
| rle | list[dict] | COCO-style run-length masks for regions. |
| region | list[list[int]] | Region tags mentioned in the prompt. |
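A gzip-compressed JSONL file can be read line by line with the Python standard library. The sketch below is a minimal example, not an official loader: `iter_samples` is a hypothetical helper, and the demo writes its own tiny file (`demo.jsonl.gz`, a made-up name) so that it runs without the dataset.

```python
import gzip
import json
import os
import tempfile


def iter_samples(path):
    """Yield one dict per non-empty line of a gzip-compressed JSONL file."""
    with gzip.open(path, "rt", encoding="utf-8") as f:
        for line in f:
            line = line.strip()
            if line:
                yield json.loads(line)


# Demo on a synthetic one-line file; "demo.jsonl.gz" is not a file
# shipped with the dataset.
with tempfile.TemporaryDirectory() as tmp:
    demo_path = os.path.join(tmp, "demo.jsonl.gz")
    with gzip.open(demo_path, "wt", encoding="utf-8") as f:
        f.write(json.dumps({"id": "0004143dce0d1445", "split": "val"}) + "\n")
    samples = list(iter_samples(demo_path))

print(samples[0]["id"], samples[0]["split"])
```

Reading lazily with a generator keeps memory use flat, which matters when a single annotation file holds many samples.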

Example Records

Object-centric Spatial QA

    {
      "id": "0004143dce0d1445",
      "split": "val",
      "category": "object_centric_spatial_qa",
      "image": "0004143dce0d1445.jpg",
      "conversations": [
        {
          "from": "human",
          "value": "Is Region [3] taller than Region [0]?"
        },
        {
          "from": "gpt",
          "value": "No, Region [3] is not taller than Region [0]."
        }
        // ... more QA pairs ...
      ],
      "image_info": {
        "height": 1860,
        "width": 2880,
        "dataset": "STRIDE-QA",
        "landmark": "outdoor",
        "file_path": "0004143dce0d1445.jpg"
      },
      "token_info": {
        "sample_token": "a2950b1d20375897e99b633a981e4dfe",
        "sample_data_token": "4aba804904f3a1a2d3271b1fe8a6fc92"
      },
      "qa_info": [
        {
          "type": "qualitative",
          "category": "tall_predicate",
          "class": ["car", "large_vehicle"],
          "token": {
            "obj_A": {
              "instance_token": "c7238672f1bfcc08929f99729f842948",
              "sample_annotation_token": "a8189cdb160a525e2f798e739849fe8b"
            },
            "obj_B": {
              "instance_token": "4941cec428bd732ff3e54ee43fbcc0e1",
              "sample_annotation_token": "bac4d2c0ae8d029e406961655bf82ee9"
            }
          }
        }
        // ... more qa_info entries ...
      ],
      "bbox": [
        [0.0, 101.39, 881.81, 1657.32]
        // ... more bounding boxes ...
      ],
      "rle": [
        {
          "size": [1860, 2880],
          "counts": "o7\\W12jhN^b0VW1b]OjhN^b0VW1..."
        }
        // ... more RLE masks ...
      ],
      "region": [
        [3, 0]
        // ... more region tags ...
      ]
    }
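The record above suggests that each human/gpt pair in `conversations` lines up with one entry in `qa_info` and one entry in `region` (the first question mentions Region [3] and Region [0], and the first region entry is `[3, 0]`). The sketch below relies on that positional alignment, which is an assumption inferred from the example rather than a documented guarantee; `iter_qa_turns` is a hypothetical helper.

```python
def iter_qa_turns(sample):
    """Yield one dict per QA turn, pairing question, answer, and metadata.

    Assumes (not documented) that the i-th human/gpt pair corresponds to
    qa_info[i] and region[i].
    """
    conv = sample["conversations"]
    questions = [m["value"] for m in conv if m["from"] == "human"]
    answers = [m["value"] for m in conv if m["from"] == "gpt"]
    for q, a, info, region in zip(questions, answers,
                                  sample["qa_info"], sample["region"]):
        yield {
            "question": q,
            "answer": a,
            "type": info.get("type"),
            "category": info["category"],
            "region": region,
        }


# Trimmed copy of the example record, keeping only the fields used here.
sample = {
    "conversations": [
        {"from": "human", "value": "Is Region [3] taller than Region [0]?"},
        {"from": "gpt", "value": "No, Region [3] is not taller than Region [0]."},
    ],
    "qa_info": [{"type": "qualitative", "category": "tall_predicate"}],
    "region": [[3, 0]],
}

turns = list(iter_qa_turns(sample))
print(turns[0]["category"], turns[0]["region"])
```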

Ego-centric Spatial QA

    {
      "id": "0004143dce0d1445",
      "split": "val",
      "category": "ego_centric_spatial_qa",
      "image": "0004143dce0d1445.jpg",
      "conversations": [
        {
          "from": "human",
          "value": "Is the ego vehicle bigger than Region [0]?"
        },
        {
          "from": "gpt",
          "value": "Incorrect, the ego vehicle is not larger than Region [0]."
        }
        // ... more QA pairs ...
      ],
      "image_info": {
        "height": 1860,
        "width": 2880,
        "dataset": "STRIDE-QA",
        "landmark": "outdoor",
        "file_path": "0004143dce0d1445.jpg"
      },
      "token_info": {
        "sample_token": "a2950b1d20375897e99b633a981e4dfe",
        "sample_data_token": "4aba804904f3a1a2d3271b1fe8a6fc92"
      },
      "qa_info": [
        {
          "type": "qualitative",
          "category": "ego_big_predicate",
          "class": ["ego_vehicle", "large_vehicle"],
          "token": {
            "ego": {
              "instance_token": null,
              "sample_annotation_token": null
            },
            "other": {
              "instance_token": "4941cec428bd732ff3e54ee43fbcc0e1",
              "sample_annotation_token": "bac4d2c0ae8d029e406961655bf82ee9"
            }
          }
        }
        // ... more qa_info entries ...
      ],
      "bbox": [
        [0.0, 101.39, 881.81, 1657.32]
        // ... more bounding boxes ...
      ],
      "rle": [
        {
          "size": [1860, 2880],
          "counts": "o7\\W12jhN^b0VW1b]OjhN^b0VW1..."
        }
        // ... more RLE masks ...
      ],
      "region": [
        [99, 0]
        // ... more region tags ...
      ]
    }
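Bounding boxes are stored as `[x₁, y₁, x₂, y₂]`. The sketch below derives box width, height, and the fraction of the image covered, assuming the coordinates are pixels in the same frame as `image_info` (the example values are consistent with a 2880 × 1860 image, but the unit is not stated explicitly). `bbox_stats` is a hypothetical helper.

```python
def bbox_stats(bbox, image_info):
    """Return width, height, and image-area fraction of an [x1, y1, x2, y2] box.

    Assumes pixel coordinates in the frame described by image_info.
    """
    x1, y1, x2, y2 = bbox
    width = x2 - x1
    height = y2 - y1
    image_area = image_info["width"] * image_info["height"]
    return {
        "width": width,
        "height": height,
        "area_fraction": (width * height) / image_area,
    }


# Values copied from the example record above.
image_info = {"height": 1860, "width": 2880}
stats = bbox_stats([0.0, 101.39, 881.81, 1657.32], image_info)
print(f"{stats['width']:.2f} x {stats['height']:.2f} px, "
      f"{stats['area_fraction']:.1%} of the image")
```

For the example box this works out to roughly a quarter of the frame, which fits a large vehicle close to the camera.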

Ego-centric Spatiotemporal QA

    {
      "id": "0004143dce0d1445",
      "split": "val",
      "category": "ego_centric_spatiotemporal_qa",
      "images": [
        "31ee9bcfc3ed9dec.jpg",
        "f6db1dee9bddc641.jpg",
        "c76385f109139ea8.jpg",
        "0004143dce0d1445.jpg"
      ],
      "conversations": [
        {
          "from": "human",
          "value": "Can you give me an estimate of the distance between the ego vehicle and Region [0]?"
        },
        {
          "from": "gpt",
          "value": "The ego vehicle and Region [0] are 3.27 meters apart from each other."
        }
        // ... more QA pairs ...
      ],
      "image_info": {
        "height": 1860,
        "width": 2880,
        "dataset": "STRIDE-QA",
        "landmark": "outdoor",
        "file_path": "0004143dce0d1445.jpg"
      },
      "token_info": {
        "current": {
          "sample_token": "a2950b1d20375897e99b633a981e4dfe",
          "sample_data_token": "4aba804904f3a1a2d3271b1fe8a6fc92"
        },
        "prev_1": {
          "sample_token": "93f455351c376fb32dc6315c3d0c53e2",
          "sample_data_token": "0ec22aef72d02da0f26763b22ec7afe3"
        }
        // ... prev_2, prev_3 ...
      },
      "qa_info": [
        {
          "category": "target_distance_t0",
          "target": {
            "instance_token": "4941cec428bd732ff3e54ee43fbcc0e1",
            "sample_annotation_token": "bac4d2c0ae8d029e406961655bf82ee9",
            "class": "large_vehicle",
            "region_id": 0
          },
          "state": {
            "ego": {
              "speed_mps": 4.38
            },
            "target": {
              "speed_mps": 6.42,
              "distance_m": 3.27,
              "bearing_deg": 18.58,
              "clock_position": 11
            },
            "after_t_seconds": 0
          }
        }
        // ... more qa_info entries ...
      ],
      "bbox": {
        "current": [
          [0.0, 101.39, 881.81, 1657.32]
          // ... more bounding boxes ...
        ],
        "prev_1": [
          [0.0, 0.0, 802.30, 1860.0]
          // ... more bounding boxes ...
        ]
        // ... prev_2, prev_3 ...
      },
      "rle": {
        "current": [
          {
            "size": [1860, 2880],
            "counts": "o7\\W12jhN^b0VW1b]OjhN^b0VW1..."
          }
          // ... more RLE masks ...
        ]
        // ... prev_1, prev_2, prev_3 ...
      }
    }
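In this category `bbox`, `rle`, and `token_info` are keyed by frame (`current`, `prev_1`, ...) instead of being flat lists. The sketch below pairs each file in `images` with its frame key and a time offset. It rests on two assumptions that the record suggests but does not state: `images` is ordered oldest to newest (the last file name matches the sample `id`), and the 2 Hz context rate means frames are 0.5 s apart. `frames_with_boxes` is a hypothetical helper.

```python
# Assumed frame order for the four entries of "images": oldest first.
FRAME_KEYS = ("prev_3", "prev_2", "prev_1", "current")
FRAME_INTERVAL_S = 0.5  # assumed from the 2 Hz context rate


def frames_with_boxes(sample):
    """Pair each context image with its frame key, time offset, and boxes."""
    frames = []
    last = len(FRAME_KEYS) - 1
    for i, (key, image) in enumerate(zip(FRAME_KEYS, sample["images"])):
        frames.append({
            "frame": key,
            "image": image,
            "offset_s": -(last - i) * FRAME_INTERVAL_S,
            # Frames missing from the dict fall back to an empty list.
            "bbox": sample["bbox"].get(key, []),
        })
    return frames


# Trimmed copy of the example record; prev_2 and prev_3 boxes are elided
# in the README, so they are left out here as well.
sample = {
    "images": [
        "31ee9bcfc3ed9dec.jpg",
        "f6db1dee9bddc641.jpg",
        "c76385f109139ea8.jpg",
        "0004143dce0d1445.jpg",
    ],
    "bbox": {
        "current": [[0.0, 101.39, 881.81, 1657.32]],
        "prev_1": [[0.0, 0.0, 802.30, 1860.0]],
    },
}

frames = frames_with_boxes(sample)
for frame in frames:
    print(frame["frame"], frame["offset_s"], frame["image"], len(frame["bbox"]))
```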

📄 License

STRIDE-QA-Dataset is released under the CC BY-NC-SA 4.0 license.

📚 Citation

    @misc{strideqa2025,
      title={STRIDE-QA: Visual Question Answering Dataset for Spatiotemporal Reasoning in Urban Driving Scenes},
      author={Keishi Ishihara and Kento Sasaki and Tsubasa Takahashi and Daiki Shiono and Yu Yamaguchi},
      year={2025},
      eprint={2508.10427},
      archivePrefix={arXiv},
      primaryClass={cs.CV},
      url={https://arxiv.org/abs/2508.10427},
    }

🤝 Acknowledgements

This dataset was developed as part of the project JPNP20017, which is subsidized by the New Energy and Industrial Technology Development Organization (NEDO), Japan.

We would like to acknowledge the use of the following open-source repositories:

- SpatialRGPT for building the dataset generation pipeline

- SAM 2.1 for segmentation mask generation

- dashcam-anonymizer for anonymization

🔏 Privacy Protection

To ensure privacy protection, human faces and license plates in the images were anonymized using the Dashcam Anonymizer.
