code stringlengths 2.5k 150k | kind stringclasses 1 value |

|---|---|

Lambda School Data Science

*Unit 2, Sprint 3, Module 3*

---

# Permutation & Boosting

You will use your portfolio project dataset for all assignments this sprint.

## Assignment

Complete these tasks for your project, and document your work.

- [ ] If you haven't completed assignment #1, please do so first.

- [ ] Continue to clean and explore your data. Make exploratory visualizations.

- [ ] Fit a model. Does it beat your baseline?

- [ ] Try xgboost.

- [ ] Get your model's permutation importances.

You should try to complete an initial model today, because the rest of the week, we're making model interpretation visualizations.

But, if you aren't ready to try xgboost and permutation importances with your dataset today, that's okay. You can practice with another dataset instead. You may choose any dataset you've worked with previously.

The data subdirectory includes the Titanic dataset for classification and the NYC apartments dataset for regression. You may want to choose one of these datasets, because example solutions will be available for each.

## Reading

Top recommendations in _**bold italic:**_

#### Permutation Importances

- _**[Kaggle / Dan Becker: Machine Learning Explainability](https://www.kaggle.com/dansbecker/permutation-importance)**_

- [Christoph Molnar: Interpretable Machine Learning](https://christophm.github.io/interpretable-ml-book/feature-importance.html)

#### (Default) Feature Importances

- [Ando Saabas: Selecting good features, Part 3, Random Forests](https://blog.datadive.net/selecting-good-features-part-iii-random-forests/)

- [Terence Parr, et al: Beware Default Random Forest Importances](https://explained.ai/rf-importance/index.html)

#### Gradient Boosting

- [A Gentle Introduction to the Gradient Boosting Algorithm for Machine Learning](https://machinelearningmastery.com/gentle-introduction-gradient-boosting-algorithm-machine-learning/)

- [An Introduction to Statistical Learning](http://www-bcf.usc.edu/~gareth/ISL/ISLR%20Seventh%20Printing.pdf), Chapter 8

- _**[Gradient Boosting Explained](https://www.gormanalysis.com/blog/gradient-boosting-explained/)**_ — Ben Gorman

- [Gradient Boosting Explained](http://arogozhnikov.github.io/2016/06/24/gradient_boosting_explained.html) — Alex Rogozhnikov

- [How to explain gradient boosting](https://explained.ai/gradient-boosting/) — Terence Parr & Jeremy Howard

```

!pip install category_encoders==2.*

import pandas as pd

import numpy as np

import category_encoders as ce

from sklearn.impute import SimpleImputer

from sklearn.linear_model import LogisticRegression

from sklearn.preprocessing import StandardScaler

from sklearn.pipeline import make_pipeline

from sklearn.ensemble import RandomForestClassifier

from sklearn.tree import DecisionTreeClassifier

source_file1 = '/content/redacted_sales_data.csv'

df = pd.read_csv(source_file1)

df.tail(3)

df.head()

# the column names have a trailing space, this removes it

df = df.rename(columns={'Name ':'Name', 'Price ':'Price', 'Tax ':'Tax',

'Total Price ':'Total Price', 'Total Paid ':'Total Paid',

'Terminal ':'Terminal', 'User ':'User', 'Date ':'Date'})

### drop name column

df = df.drop(['Name'], axis=1)

## drop the last 12 rows

df.tail(13)

df = df.dropna(axis=0)

df.tail()

## drop total paid because it's redundant

## and drop terminal because it's not informative

## rename "User" column for clarity

df = df.drop(['Total Paid'], axis=1)

df = df.drop(['Terminal'], axis=1)

df = df.rename(columns={'Total Price':'Total'})

df = df.rename(columns={'User':'Employee'})

df.head()

#TIME to choose a target!!!

df['Total'].describe()

df.isna().sum()

df["Above ATP"] = df["Total"] >= df.Total.mean()

df['Date'] = pd.to_datetime(df['Date'])

df['Week'] = df['Date'].dt.week

df['Day'] = df['Date'].dt.day

df['Hour'] = df['Date'].dt.hour

df['Minute'] = df['Date'].dt.minute

df['Second'] = df['Date'].dt.second

df['Month'] = df['Date'].dt.month

# Drop recorded_by (never varies) and id (always varies, random)

unusable_variance = ['Date']

df = df.drop(columns=unusable_variance)

df.dtypes

df.nunique().value_counts()

df['Month'].value_counts()

train = df[df['Month'] <= 3]

val = df[df['Month'] == 4]

test = df[df['Month'] >= 5]

'''

!pip install category_encoders==2.*

'''

# The status_group column is the target

target = 'Above ATP'

# Get a dataframe with all train columns except the target & Date

features = train.columns.drop([target])

print(features)

X_train = train[features]

y_train = train[target]

X_val = val[features]

y_val = val[target]

X_test = test

y_val = test[target]

transformers = make_pipeline(

ce.ordinal.OrdinalEncoder(),

SimpleImputer()

)

X_train_transformed = transformers.fit_transform(X_train)

X_val_transformed = transformers.transform(X_val_permuted)

model = RandomForestClassifier(n_estimators=100, random_state=42, n_jobs=-1)

model.fit(X_train_transformed, y_train)

#column = train.columns.drop([target])

#X_train_t = X_train.drop(columns=column)

pipeline = make_pipeline(

ce.OrdinalEncoder(),

SimpleImputer(strategy='median'),

RandomForestClassifier(n_estimators=100, random_state=42, n_jobs=-1)

)

pipeline.fit(X_train, y_train)

score_without = pipeline.score(X_val.drop, y_val)

print(f'Validation Accuracy without {column}: {score_without}')

# Fit with column

pipeline = make_pipeline(

ce.OrdinalEncoder(),

SimpleImputer(strategy='median'),

RandomForestClassifier(n_estimators=100, random_state=42, n_jobs=-1)

)

pipeline.fit(X_train, y_train)

score_with = pipeline.score(X_val, y_val)

print(f'Validation Accuracy with {column}: {score_with}')

# Compare the error with & without column

print(f'Drop-Column Importance for {column}: {score_with - score_without}')

'''import eli5

from eli5.sklearn import PermutationImportance

permuter = PermutationImportance(

model,

scoring='accuracy',

n_iter=5,

random_state=42

)

permuter.fit(X_val_transformed, y_val)'''

```

| github_jupyter |

# Multi-Fidelity

<div class="btn btn-notebook" role="button">

<img src="../_static/images/colab_logo_32px.png"> [Run in Google Colab](https://colab.research.google.com/drive/1Cc9TVY_Tl_boVzZDNisQnqe6Qx78svqe?usp=sharing)

</div>

<div class="btn btn-notebook" role="button">

<img src="../_static/images/github_logo_32px.png"> [View on GitHub](https://github.com/adapt-python/notebooks/blob/d0364973c642ea4880756cef4e9f2ee8bb5e8495/Multi_fidelity.ipynb)

</div>

The following example is a 1D regression multi-fidelity issue. Blue points are low fidelity observations and orange points are high fidelity observations. The goal is to use both datasets to learn the task on the [0, 1] interval.

To tackle this challenge, we use here the parameter-based method: [RegularTransferNN](#RegularTransferNN)

```

import numpy as np

import matplotlib.pyplot as plt

import matplotlib.animation as animation

from sklearn.metrics import mean_absolute_error, mean_squared_error

import tensorflow as tf

from tensorflow.keras import Model, Sequential

from tensorflow.keras.optimizers import Adam, SGD, RMSprop, Adagrad

from tensorflow.keras.layers import Dense, Input, Dropout, Conv2D, MaxPooling2D, Flatten, Reshape, GaussianNoise, BatchNormalization

from tensorflow.keras.constraints import MinMaxNorm

from tensorflow.keras.regularizers import l2

from tensorflow.keras.callbacks import Callback

from tensorflow.keras.models import clone_model

from adapt.parameter_based import RegularTransferNN

```

## Setup

```

np.random.seed(0)

Xs = np.linspace(0, 1, 200)

ys = (1 - Xs**2) * np.sin(2 * 2 * np.pi * Xs) - Xs + 0.1 * np.random.randn(len(Xs))

Xt = Xs[:100]

yt = (1 - Xt**2) * np.sin(2 * 2 * np.pi * Xt) - Xt - 1.5

gt = (1 - Xs**2) * np.sin(2 * 2 * np.pi * Xs) - Xs - 1.5

plt.figure(figsize=(10,6))

plt.plot(Xs, ys, '.', label="low fidelity", ms=15, alpha=0.9, markeredgecolor="black")

plt.plot(Xt, yt, '.', label="high fidelity", ms=15, alpha=0.9, markeredgecolor="black")

plt.plot(Xs, gt, c="black", alpha=0.7, ls="--", label="Ground truth")

plt.legend(fontsize=14)

plt.xlabel("X", fontsize=16)

plt.ylabel("y = f(X)", fontsize=16)

plt.show()

```

## Network

```

np.random.seed(0)

tf.random.set_seed(0)

model = Sequential()

model.add(Dense(100, activation='relu', input_shape=(1,)))

model.add(Dense(100, activation='relu'))

model.add(Dense(1))

model.compile(optimizer=Adam(0.001), loss='mean_squared_error')

```

## Low fidelity only

```

np.random.seed(0)

tf.random.set_seed(0)

model_low = clone_model(model)

model_low.compile(optimizer=Adam(0.001), loss='mean_squared_error')

model_low.fit(Xs, ys, epochs=800, batch_size=34, verbose=0);

yp = model_low.predict(Xs.reshape(-1,1))

score = mean_absolute_error(gt.ravel(), yp.ravel())

plt.figure(figsize=(10,6))

plt.plot(Xs, ys, '.', label="low fidelity", ms=15, alpha=0.9, markeredgecolor="black")

plt.plot(Xt, yt, '.', label="high fidelity", ms=15, alpha=0.9, markeredgecolor="black")

plt.plot(Xs, gt, c="black", alpha=0.7, ls="--", label="Ground truth")

plt.plot(Xs, yp, c="red", alpha=0.9, lw=3, label="Predictions")

plt.legend(fontsize=14)

plt.xlabel("X", fontsize=16)

plt.ylabel("y = f(X)", fontsize=16)

plt.title("Low Fidelity Only -- MAE = %.3f"%score, fontsize=18)

plt.show()

```

## High fidelity only

```

np.random.seed(0)

tf.random.set_seed(0)

model_high = clone_model(model)

model_high.compile(optimizer=Adam(0.001), loss='mean_squared_error')

model_high.fit(Xt, yt, epochs=800, batch_size=34, verbose=0);

yp = model_high.predict(Xs.reshape(-1,1))

score = mean_absolute_error(gt.ravel(), yp.ravel())

plt.figure(figsize=(10,6))

plt.plot(Xs, ys, '.', label="low fidelity", ms=15, alpha=0.9, markeredgecolor="black")

plt.plot(Xt, yt, '.', label="high fidelity", ms=15, alpha=0.9, markeredgecolor="black")

plt.plot(Xs, gt, c="black", alpha=0.7, ls="--", label="Ground truth")

plt.plot(Xs, yp, c="red", alpha=0.9, lw=3, label="Predictions")

plt.legend(fontsize=14)

plt.xlabel("X", fontsize=16)

plt.ylabel("y = f(X)", fontsize=16)

plt.title("Low Fidelity Only -- MAE = %.3f"%score, fontsize=18)

plt.show()

```

## [RegularTransferNN](https://adapt-python.github.io/adapt/generated/adapt.parameter_based.RegularTransferNN.html)

```

model_reg = RegularTransferNN(model_low, lambdas=1000., random_state=1, optimizer=Adam(0.0001))

model_reg.fit(Xt.reshape(-1,1), yt, epochs=1200, batch_size=34, verbose=0);

yp = model_reg.predict(Xs.reshape(-1,1))

score = mean_absolute_error(gt.ravel(), yp.ravel())

plt.figure(figsize=(10,6))

plt.plot(Xs, ys, '.', label="low fidelity", ms=15, alpha=0.9, markeredgecolor="black")

plt.plot(Xt, yt, '.', label="high fidelity", ms=15, alpha=0.9, markeredgecolor="black")

plt.plot(Xs, gt, c="black", alpha=0.7, ls="--", label="Ground truth")

plt.plot(Xs, yp, c="red", alpha=0.9, lw=3, label="Predictions")

plt.legend(fontsize=14)

plt.xlabel("X", fontsize=16)

plt.ylabel("y = f(X)", fontsize=16)

plt.title("Low Fidelity Only -- MAE = %.3f"%score, fontsize=18)

plt.show()

```

| github_jupyter |

## **Semana de Data Science**

- Minerando Dados

## Aula 01

### Conhecendo a base de dados

Monta o drive

```

from google.colab import drive

drive.mount('/content/drive')

```

Importando as bibliotecas básicas

```

import pandas as pd

import seaborn as sns

import numpy as np

import matplotlib.pyplot as plt

%matplotlib inline

```

Carregando a Base de Dados

```

# carrega o dataset de london

from sklearn.datasets import load_boston

boston = load_boston()

# descrição do dataset

print (boston.DESCR)

# cria um dataframe pandas

data = pd.DataFrame(boston.data, columns=boston.feature_names)

# imprime as 5 primeiras linhas do dataset

data.head()

```

Conhecendo as colunas da base de dados

**`CRIM`**: Taxa de criminalidade per capita por cidade.

**`ZN`**: Proporção de terrenos residenciais divididos por lotes com mais de 25.000 pés quadrados.

**`INDUS`**: Essa é a proporção de hectares de negócios não comerciais por cidade.

**`CHAS`**: variável fictícia Charles River (= 1 se o trecho limita o rio; 0 caso contrário)

**`NOX`**: concentração de óxido nítrico (partes por 10 milhões)

**`RM`**: Número médio de quartos entre as casas do bairro

**`IDADE`**: proporção de unidades ocupadas pelos proprietários construídas antes de 1940

**`DIS`**: distâncias ponderadas para cinco centros de emprego em Boston

**`RAD`**: Índice de acessibilidade às rodovias radiais

**`IMPOSTO`**: taxa do imposto sobre a propriedade de valor total por US $ 10.000

**`B`**: 1000 (Bk - 0,63) ², onde Bk é a proporção de pessoas de descendência afro-americana por cidade

**`PTRATIO`**: Bairros com maior proporção de alunos para professores (maior valor de 'PTRATIO')

**`LSTAT`**: porcentagem de status mais baixo da população

**`MEDV`**: valor médio de casas ocupadas pelos proprietários em US $ 1000

Adicionando a coluna que será nossa variável alvo

```

# adiciona a variável MEDV

data['MEDV'] = boston.target

# imprime as 5 primeiras linhas do dataframe

data.head()

data.describe()

```

### Análise e Exploração dos Dados

Nesta etapa nosso objetivo é conhecer os dados que estamos trabalhando.

Podemos a ferramenta **Pandas Profiling** para essa etapa:

```

# Instalando o pandas profiling

pip install https://github.com/pandas-profiling/pandas-profiling/archive/master.zip

# import o ProfileReport

from pandas_profiling import ProfileReport

# executando o profile

profile = ProfileReport(data, title='Relatório - Pandas Profiling', html={'style':{'full_width':True}})

profile

# salvando o relatório no disco

profile.to_file(output_file="Relatorio01.html")

```

**Observações**

* *O coeficiente de correlação varia de `-1` a `1`.

Se valor é próximo de 1, isto significa que existe uma forte correlação positiva entre as variáveis. Quando esse número é próximo de -1, as variáveis tem uma forte correlação negativa.*

* *A relatório que executamos acima nos mostra que a nossa variável alvo (**MEDV**) é fortemente correlacionada com as variáveis `LSTAT` e `RM`*

* *`RAD` e `TAX` são fortemente correlacionadas, podemos remove-las do nosso modelo para evitar a multi-colinearidade.*

* *O mesmo acontece com as colunas `DIS` and `AGE` a qual tem a correlação de -0.75*

* *A coluna `ZN` possui 73% de valores zero.*

## Aula 02

Obtendo informações da base de dados manualmente

```

# Check missing values

data.isnull().sum()

# um pouco de estatística descritiva

data.describe()

```

Analisando a Correlação das colunas da base de dados

```

# Calcule a correlaçao

correlacoes = data.corr()

# Usando o método heatmap do seaborn

%matplotlib inline

plt.figure(figsize=(16, 6))

sns.heatmap(data=correlacoes, annot=True)

```

Visualizando a relação entre algumas features e variável alvo

```

# Importando o Plot.ly

import plotly.express as px

# RM vs MEDV (Número de quartos e valor médio do imóvel)

fig = px.scatter(data, x=data.RM, y=data.MEDV)

fig.show()

# LSTAT vs MEDV (índice de status mais baixo da população e preço do imóvel)

fig = px.scatter(data, x=data.LSTAT, y=data.MEDV)

fig.show()

# PTRATIO vs MEDV (percentual de proporção de alunos para professores e o valor médio de imóveis)

fig = px.scatter(data, x=data.PTRATIO, y=data.MEDV)

fig.show()

```

#### Analisando Outliers

```

# estatística descritiva da variável RM

data.RM.describe()

# visualizando a distribuição da variável RM

import plotly.figure_factory as ff

labels = ['Distribuição da variável RM (número de quartos)']

fig = ff.create_distplot([data.RM], labels, bin_size=.2)

fig.show()

# Visualizando outliers na variável RM

import plotly.express as px

fig = px.box(data, y='RM')

fig.update_layout(width=800,height=800)

fig.show()

```

Visualizando a distribuição da variável MEDV

```

# estatística descritiva da variável MEDV

data.MEDV.describe()

# visualizando a distribuição da variável MEDV

import plotly.figure_factory as ff

labels = ['Distribuição da variável MEDV (preço médio do imóvel)']

fig = ff.create_distplot([data.MEDV], labels, bin_size=.2)

fig.show()

```

Analisando a simetria do dado

```

# carrega o método stats da scipy

from scipy import stats

# imprime o coeficiente de pearson

stats.skew(data.MEDV)

```

Coeficiente de Pearson

* Valor entre -1 e 1 - distribuição simétrica.

* Valor maior que 1 - distribuição assimétrica positiva.

* Valor maior que -1 - distribuição assimétrica negativa.

```

# Histogram da variável MEDV (variável alvo)

fig = px.histogram(data, x="MEDV", nbins=50, opacity=0.50)

fig.show()

# Visualizando outliers na variável MEDV

import plotly.express as px

fig = px.box(data, y='MEDV')

fig.update_layout( width=800,height=800)

fig.show()

# imprimindo os 16 maiores valores de MEDV

data[['RM','LSTAT','PTRATIO','MEDV']].nlargest(16, 'MEDV')

# filtra os top 16 maiores registro da coluna MEDV

top16 = data.nlargest(16, 'MEDV').index

# remove os valores listados em top16

data.drop(top16, inplace=True)

# visualizando a distribuição da variável MEDV

import plotly.figure_factory as ff

labels = ['Distribuição da variável MEDV (número de quartos)']

fig = ff.create_distplot([data.MEDV], labels, bin_size=.2)

fig.show()

# Histogram da variável MEDV (variável alvo)

fig = px.histogram(data, x="MEDV", nbins=50, opacity=0.50)

fig.show()

# imprime o coeficiente de pearson

# o valor de inclinação..

stats.skew(data.MEDV)

```

**Definindo um Baseline**

- `Uma baseline é importante para ter marcos no projeto`.

- `Permite uma explicação fácil para todos os envolvidos`.

- `É algo que sempre tentaremos ganhar na medida do possível`.

```

# converte os dados

data.RM = data.RM.astype(int)

data.info()

# definindo a regra para categorizar os dados

categorias = []

# Se número de quartos for menor igual a 4 este será pequeno, senão se for menor que 7 será médio, senão será grande.

# alimenta a lista categorias

for i in data.RM.iteritems():

valor = (i[1])

if valor <= 4:

categorias.append('Pequeno')

elif valor < 7:

categorias.append('Medio')

else:

categorias.append('Grande')

# imprimindo categorias

categorias

# cria a coluna categorias no dataframe data

data['categorias'] = categorias

# imprime 5 linhas do dataframe

data.head()

# imprime a contagem de categorias

data.categorias.value_counts()

# agrupa as categorias e calcula as médias

medias_categorias = data.groupby(by='categorias')['MEDV'].mean()

# imprime a variável medias_categorias

medias_categorias

# criando o dicionario com chaves medio, grande e pequeno e seus valores

dic_baseline = {'Grande': medias_categorias[0], 'Medio': medias_categorias[1], 'Pequeno': medias_categorias[2]}

# imprime dicionario

dic_baseline

# cria a função retorna baseline

def retorna_baseline(num_quartos):

if num_quartos <= 4:

return dic_baseline.get('Pequeno')

elif num_quartos < 7:

return dic_baseline.get('Medio')

else:

return dic_baseline.get('Grande')

# chama a função retorna baseline

retorna_baseline(10)

# itera sobre os imoveis e imprime o valor médio pelo número de quartos.

for i in data.RM.iteritems():

n_quartos = i[1]

print('Número de quartos é: {} , Valor médio: {}'.format(n_quartos,retorna_baseline(n_quartos)))

# imprime as 5 primeiras linhas do dataframe

data.head()

```

| github_jupyter |

<a href="https://colab.research.google.com/github/readikus/code-samples/blob/main/google-foo-bar/Challenge_2_1_Lovely_Lucky_LAMBs.ipynb" target="_parent"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"/></a>

# Lovely Lucky LAMBs

## Problem Definition:

Being a henchman isn't all drudgery. Occasionally, when Commander Lambda is feeling generous, she'll hand out Lucky LAMBs (Lambda's All-purpose Money Bucks). Henchmen can use Lucky LAMBs to buy things like a second

pair of socks, a pillow for their bunks, or even a third daily meal!

However, actually passing out LAMBs isn't easy. Each henchman squad has a strict seniority ranking which must be respected - or else the henchmen will revolt and you'll all get demoted back to minions again!

There are 4 key rules which you must follow in order to avoid a revolt:

1. The most junior henchman (with the least seniority) gets exactly 1 LAMB. (There will always be at least 1 henchman on a team.)

2. A henchman will revolt if the person who ranks immediately above them gets more than double the number of LAMBs they do.

3. A henchman will revolt if the amount of LAMBs given to their next two subordinates combined is more than the number of LAMBs they get. (Note that the two most junior henchmen won't have two subordinates, so this rule doesn't apply to them. The 2nd most junior henchman would require at least as many LAMBs as the most junior henchman.)

4. You can always find more henchmen to pay - the Commander has plenty of employees. If there are enough LAMBs left over such that another henchman could be added as the most senior while obeying the other rules, you must always add and pay that henchman.

Note that you may not be able to hand out all the LAMBs. A single LAMB cannot be subdivided. That is, all henchmen must get a positive integer number of LAMBs.

Write a function called solution(total_lambs), where total_lambs is the integer number of LAMBs in the handout you are trying to divide. It should return an integer which represents the difference between the minimum and maximum number of henchmen who can share the LAMBs (that is, being as generous as possible to those you pay and as stingy as possible, respectively) while still obeying all of the above rules to avoid a revolt. For instance, if you had 10 LAMBs and were as generous as possible, you could only pay 3 henchmen (1, 2, and 4 LAMBs, in order of ascending seniority), whereas if you were as stingy as possible, you could pay 4 henchmen (1, 1, 2, and 3 LAMBs). Therefore, solution(10) should return 4-3 = 1.

To keep things interesting, Commander Lambda varies the sizes of the Lucky LAMB payouts. You can expect total_lambs to always be a positive integer less than 1 billion (10 ^ 9).

```

import math

def solution(total_lambs):

return abs(int(stingy(total_lambs) - generous(total_lambs)))

def generous(lambs):

return int(math.log(lambs + 1, 2))

fib = [1, 1]

def stingy(max):

# handle base cases

if (max == 1):

return 1

elif max == 2:

return 2

# keep track of the running total and the sequence

total = 2

i = 2

while total < max:

# go up following the fib. sequence - i.e. sum last 2 numbers

# ensure we have computed the ith fib. number

if len(fib) <= i:

fib.append(fib[i - 1] + fib[i - 2])

# will it put us above the max?

if ((total + fib[i]) > max):

return i

total += fib[i]

i += 1

return i

import unittest

class TestSolution(unittest.TestCase):

def test_solution(self):

self.assertEqual(solution(10), 1)

def test_generous(self):

self.assertEqual(generous(1), 1)

self.assertEqual(generous(2), 1)

self.assertEqual(generous(10), 3)

def test_stingy(self):

self.assertEqual(stingy(143), 10)

self.assertEqual(stingy(2), 2)

unittest.main(argv=[''], verbosity=2, exit=False)

```

**Notes on solution:** the number series for the generous algorithm follows the Fibonacci sequence, so we can make use of the various properities of that (i.e. dynamic programming/memonization).

| github_jupyter |

```

from __future__ import print_function

```

## Neural synthesis, feature visualization, and DeepDream notes

This notebook introduces what we'll call here "neural synthesis," the technique of synthesizing images using an iterative process which optimizes the pixels of the image to achieve some desired state of activations in a convolutional neural network.

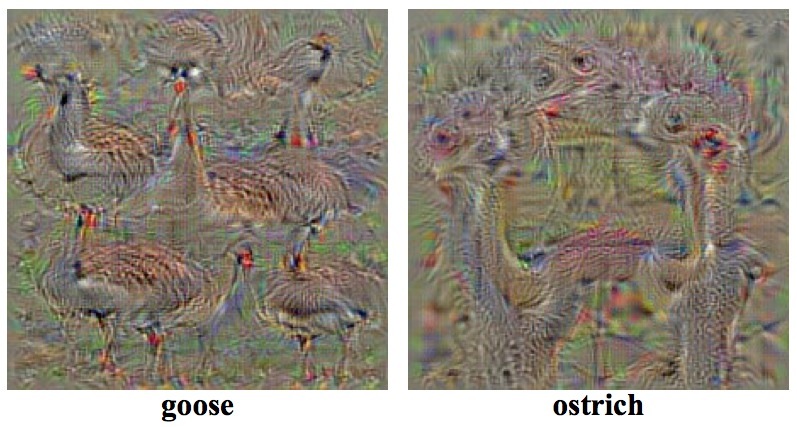

The technique in its modern form dates back to around 2009 and has its origins in early attempts to visualize what features were being learned by the different layers in the network (see [Erhan et al](https://pdfs.semanticscholar.org/65d9/94fb778a8d9e0f632659fb33a082949a50d3.pdf), [Simonyan et al](https://arxiv.org/pdf/1312.6034v2.pdf), and [Mahendran & Vedaldi](https://arxiv.org/pdf/1412.0035v1.pdf)) as well as in trying to identify flaws or vulnerabilities in networks by synthesizing and feeding them adversarial examples (see [Nguyen et al](https://arxiv.org/pdf/1412.1897v4.pdf), and [Dosovitskiy & Brox](https://arxiv.org/pdf/1506.02753.pdf)). The following is an example from Simonyan et al on visualizing image classification models.

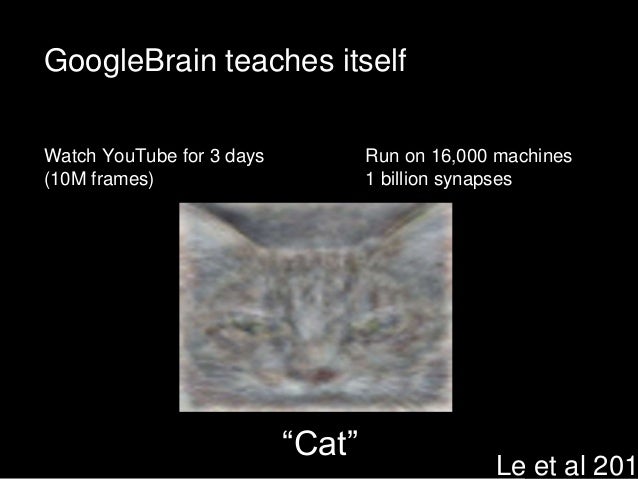

In 2012, the technique became widely known after [Le et al](https://googleblog.blogspot.in/2012/06/using-large-scale-brain-simulations-for.html) published results of an experiment in which a deep neural network was fed millions of images, predominantly from YouTube, and unexpectedly learned a cat face detector. At that time, the network was trained for three days on 16,000 CPU cores spread over 1,000 machines!

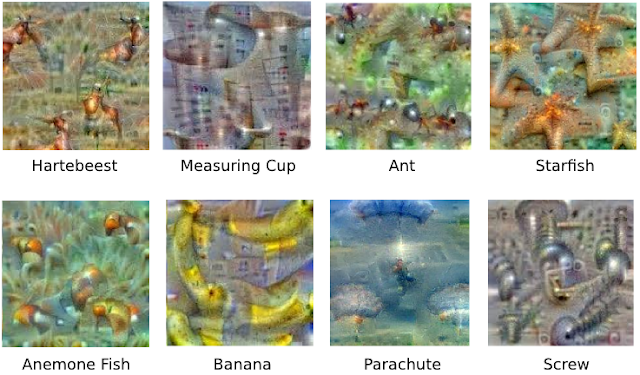

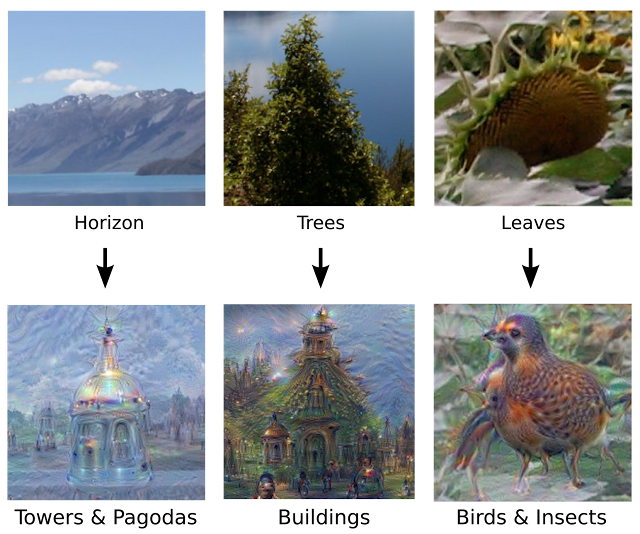

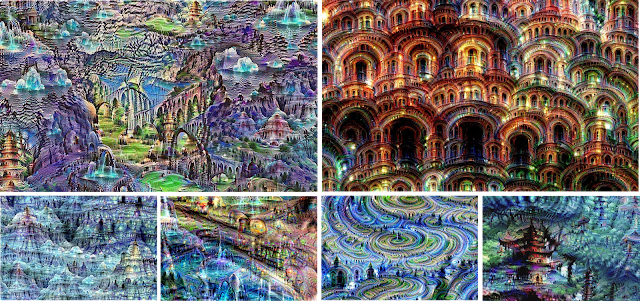

In 2015, following the rapid proliferation of cheap GPUs, Google software engineers [Mordvintsev, Olah, and Tyka](https://research.googleblog.com/2015/06/inceptionism-going-deeper-into-neural.html) first used it for ostensibly artistic purposes and introduced several innovations, including optimizing pixels over multiple scales (octaves), improved regularization, and most famously, using real images (photographs, paintings, etc) as input and optimizing their pixels so as to enhance whatever activations the network already detected (hence "hallucinating" or "dreaming"). They nicknamed their work "Deepdream" and released the first publicly available code for running it [in Caffe](https://github.com/google/deepdream/), which led to the technique being widely disseminated on social media, [puppyslugs](https://www.google.de/search?q=puppyslug&safe=off&tbm=isch&tbo=u&source=univ&sa=X&ved=0ahUKEwiT3aOwvtnXAhUHKFAKHXqdCBwQsAQIKQ&biw=960&bih=979) and all. Some highlights of their original work follow, with more found in [this gallery](https://photos.google.com/share/AF1QipPX0SCl7OzWilt9LnuQliattX4OUCj_8EP65_cTVnBmS1jnYgsGQAieQUc1VQWdgQ?key=aVBxWjhwSzg2RjJWLWRuVFBBZEN1d205bUdEMnhB).

A number of creative innovations were further introduced by [Mike Tyka](http://www.miketyka.com) including optimizing several channels along pre-arranged masks, and using feedback loops to generate video. Some examples of his work follow.

This notebook builds upon the code found in [tensorflow's deepdream example](https://github.com/tensorflow/tensorflow/tree/master/tensorflow/examples/tutorials/deepdream). The first part of this notebook will summarize that one, including naive optimization, multiscale generation, and Laplacian normalization. The code from that notebook is lightly modified and is mostly found in the the [lapnorm.py](../notebooks/lapnorm.py) script, which is imported into this notebook. The second part of this notebook builds upon that example by showing how to combine channels and mask their gradients, warp the canvas, and generate video using a feedback loop. Here is a [gallery of examples](http://www.genekogan.com/works/neural-synth/) and a [video work](https://vimeo.com/246047871).

Before we get started, we need to make sure we have downloaded and placed the Inceptionism network in the data folder. Run the next cell if you haven't already downloaded it.

```

#Grab inception model from online and unzip it (you can skip this step if you've already downloaded the model.

!wget -P . https://storage.googleapis.com/download.tensorflow.org/models/inception5h.zip

!unzip inception5h.zip -d inception5h/

!rm inception5h.zip

```

To get started, make sure all of the folloing import statements work without error. You should get a message telling you there are 59 layers in the network and 7548 channels.

```

from io import BytesIO

import math, time, copy, json, os

import glob

from os import listdir

from os.path import isfile, join

from random import random

from io import BytesIO

from enum import Enum

from functools import partial

import PIL.Image

from IPython.display import clear_output, Image, display, HTML

import numpy as np

import scipy.misc

import tensorflow.compat.v1 as tf

tf.disable_v2_behavior()

# import everything from lapnorm.py

from lapnorm import *

```

Let's inspect the network now. The following will give us the name of all the layers in the network, as well as the number of channels they contain. We can use this as a lookup table when selecting channels.

```

for l, layer in enumerate(layers):

layer = layer.split("/")[1]

num_channels = T(layer).shape[3]

print(layer, num_channels)

```

The basic idea is to take any image as input, then iteratively optimize its pixels so as to maximally activate a particular channel (feature extractor) in a trained convolutional network. We reproduce tensorflow's recipe here to read the code in detail. In `render_naive`, we take `img0` as input, then for `iter_n` steps, we calculate the gradient of the pixels with respect to our optimization objective, or in other words, the diff for all of the pixels we must add in order to make the image activate the objective. The objective we pass is a channel in one of the layers of the network, or an entire layer. Declare the function below.

```

def render_naive(t_obj, img0, iter_n=20, step=1.0):

t_score = tf.reduce_mean(t_obj) # defining the optimization objective

t_grad = tf.gradients(t_score, t_input)[0] # behold the power of automatic differentiation!

img = img0.copy()

for i in range(iter_n):

g, score = sess.run([t_grad, t_score], {t_input:img})

# normalizing the gradient, so the same step size should work

g /= g.std()+1e-8 # for different layers and networks

img += g*step

return img

```

Now let's try running it. First, we initialize a 200x200 block of colored noise. We then select the layer `mixed4d_5x5_bottleneck_pre_relu` and the 20th channel in that layer as the objective, and run it through `render_naive` for 40 iterations. You can try to optimize at different layers or different channels to get a feel for how it looks.

```

img0 = np.random.uniform(size=(200, 200, 3)) + 100.0

layer = 'mixed4d_3x3_bottleneck_pre_relu'

channel = 140

img1 = render_naive(T(layer)[:,:,:,channel], img0, 40, 1.0)

display_image(img1)

```

The above isn't so interesting yet. One improvement is to use repeated upsampling to effectively detect features at multiple scales (what we call "octaves") of the image. What we do is we start with a smaller image and calculate the gradients for that, going through the procedure like before. Then we upsample it by a particular ratio and calculate the gradients and modify the pixels of the result. We do this several times.

You can see that `render_multiscale` is similar to `render_naive` except now the addition of the outer "octave" loop which repeatedly upsamples the image using the `resize` function.

```

def render_multiscale(t_obj, img0, iter_n=10, step=1.0, octave_n=3, octave_scale=1.4):

t_score = tf.reduce_mean(t_obj) # defining the optimization objective

t_grad = tf.gradients(t_score, t_input)[0] # behold the power of automatic differentiation!

img = img0.copy()

for octave in range(octave_n):

if octave>0:

hw = np.float32(img.shape[:2])*octave_scale

img = resize(img, np.int32(hw))

for i in range(iter_n):

g = calc_grad_tiled(img, t_grad)

# normalizing the gradient, so the same step size should work

g /= g.std()+1e-8 # for different layers and networks

img += g*step

print("octave %d/%d"%(octave+1, octave_n))

clear_output()

return img

```

Let's try this on noise first. Note the new variables `octave_n` and `octave_scale` which control the parameters of the scaling. Thanks to tensorflow's patch to do the process on overlapping subrectangles, we don't have to worry about running out of memory. However, making the overall size large will mean the process takes longer to complete.

```

h, w = 200, 200

octave_n = 3

octave_scale = 1.4

iter_n = 50

img0 = np.random.uniform(size=(h, w, 3)) + 100.0

layer = 'mixed4c_5x5_bottleneck_pre_relu'

channel = 20

img1 = render_multiscale(T(layer)[:,:,:,channel], img0, iter_n, 1.0, octave_n, octave_scale)

display_image(img1)

```

Now load a real image and use that as the starting point. We'll use the kitty image in the assets folder. Here is the original.

<img src="../assets/kitty.jpg" alt="kitty" style="width: 280px;"/>

```

h, w = 320, 480

octave_n = 3

octave_scale = 1.4

iter_n = 60

img0 = load_image('../assets/kitty.jpg', h, w)

layer = 'mixed4d_5x5_bottleneck_pre_relu'

channel = 21

img1 = render_multiscale(T(layer)[:,:,:,channel], img0, iter_n, 1.0, octave_n, octave_scale)

display_image(img1)

```

Now we introduce Laplacian normalization. The problem is that although we are finding features at multiple scales, it seems to have a lot of unnatural high-frequency noise. We apply a [Laplacian pyramid decomposition](https://en.wikipedia.org/wiki/Pyramid_%28image_processing%29#Laplacian_pyramid) to the image as a regularization technique and calculate the pixel gradient at each scale, as before.

```

def render_lapnorm(t_obj, img0, iter_n=10, step=1.0, oct_n=3, oct_s=1.4, lap_n=4):

t_score = tf.reduce_mean(t_obj) # defining the optimization objective

t_grad = tf.gradients(t_score, t_input)[0] # behold the power of automatic differentiation!

# build the laplacian normalization graph

lap_norm_func = tffunc(np.float32)(partial(lap_normalize, scale_n=lap_n))

img = img0.copy()

for octave in range(oct_n):

if octave>0:

hw = np.float32(img.shape[:2])*oct_s

img = resize(img, np.int32(hw))

for i in range(iter_n):

g = calc_grad_tiled(img, t_grad)

g = lap_norm_func(g)

img += g*step

print('.', end='')

print("octave %d/%d"%(octave+1, oct_n))

clear_output()

return img

```

With Laplacian normalization and multiple octaves, we have the core technique finished and are level with the Tensorflow example. Try running the example below and modifying some of the numbers to see how it affects the result. Remember you can use the layer lookup table at the top of this notebook to recall the different layers that are available to you. Note the differences between early (low-level) layers and later (high-level) layers.

```

h, w = 300, 400

octave_n = 3

octave_scale = 1.4

iter_n = 20

img0 = np.random.uniform(size=(h, w, 3)) + 100.0

layer = 'mixed5b_pool_reduce_pre_relu'

channel = 99

img1 = render_lapnorm(T(layer)[:,:,:,channel], img0, iter_n, 1.0, octave_n, octave_scale)

display_image(img1)

```

Now we are going to modify the `render_lapnorm` function in three ways.

1) Instead of passing just a single channel or layer to be optimized (the objective, `t_obj`), we can pass several in an array, letting us optimize several channels simultaneously (it must be an array even if it contains just one element).

2) We now also pass in `mask`, which is a numpy array of dimensions (`h`,`w`,`n`) where `h` and `w` are the height and width of the source image `img0` and `n` is equal to the number of objectives in `t_obj`. The mask is like a gate or multiplier of the gradient for each channel. mask[:,:,0] gets multiplied by the gradient of the first objective, mask[:,:,1] by the second and so on. It should contain a float between 0 and 1 (0 to kill the gradient, 1 to let all of it pass). Another way to think of `mask` is it's like `step` for every individual pixel for each objective.

3) Internally, we use a convenience function `get_mask_sizes` which figures out for us the size of the image and mask at every octave, so we don't have to worry about calculating this ourselves, and can just pass in an img and mask of the same size.

```

def lapnorm_multi(t_obj, img0, mask, iter_n=10, step=1.0, oct_n=3, oct_s=1.4, lap_n=4, clear=True):

mask_sizes = get_mask_sizes(mask.shape[0:2], oct_n, oct_s)

img0 = resize(img0, np.int32(mask_sizes[0]))

t_score = [tf.reduce_mean(t) for t in t_obj] # defining the optimization objective

t_grad = [tf.gradients(t, t_input)[0] for t in t_score] # behold the power of automatic differentiation!

# build the laplacian normalization graph

lap_norm_func = tffunc(np.float32)(partial(lap_normalize, scale_n=lap_n))

img = img0.copy()

for octave in range(oct_n):

if octave>0:

hw = mask_sizes[octave] #np.float32(img.shape[:2])*oct_s

img = resize(img, np.int32(hw))

oct_mask = resize(mask, np.int32(mask_sizes[octave]))

for i in range(iter_n):

g_tiled = [lap_norm_func(calc_grad_tiled(img, t)) for t in t_grad]

for g, gt in enumerate(g_tiled):

img += gt * step * oct_mask[:,:,g].reshape((oct_mask.shape[0],oct_mask.shape[1],1))

print('.', end='')

print("octave %d/%d"%(octave+1, oct_n))

if clear:

clear_output()

return img

```

Try first on noise, as before. This time, we pass in two objectives from different layers and we create a mask where the top half only lets in the first channel, and the bottom half only lets in the second.

```

h, w = 300, 400

octave_n = 3

octave_scale = 1.4

iter_n = 15

img0 = np.random.uniform(size=(h, w, 3)) + 100.0

objectives = [T('mixed3a_3x3_pre_relu')[:,:,:,79],

T('mixed5a_1x1_pre_relu')[:,:,:,200],

T('mixed4b_5x5_bottleneck_pre_relu')[:,:,:,22]]

# mask

mask = np.zeros((h, w, 3))

mask[0:100,:,0] = 1.0

mask[100:200,:,1] = 1.0

mask[200:,:,2] = 1.0

img1 = lapnorm_multi(objectives, img0, mask, iter_n, 1.0, octave_n, octave_scale)

display_image(img1)

```

Now the same thing, but we optimize over the kitty instead and pick new channels.

```

h, w = 400, 400

octave_n = 3

octave_scale = 1.4

iter_n = 30

img0 = load_image('../assets/kitty.jpg', h, w)

objectives = [T('mixed4d_3x3_bottleneck_pre_relu')[:,:,:,99],

T('mixed5a_5x5_bottleneck_pre_relu')[:,:,:,40]]

# mask

mask = np.zeros((h, w, 2))

mask[:,:200,0] = 1.0

mask[:,200:,1] = 1.0

img1 = lapnorm_multi(objectives, img0, mask, iter_n, 1.0, octave_n, octave_scale)

display_image(img1)

```

Let's make a more complicated mask. Here we use numpy's `linspace` function to linearly interpolate the mask between 0 and 1, going from left to right, in the first channel's mask, and the opposite for the second channel. Thus on the far left of the image, we let in only the second channel, on the far right only the first channel, and in the middle exactly 50% of each. We'll make a long one to show the smooth transition. We'll also visualize the first channel's mask right afterwards.

```

h, w = 256, 1024

img0 = np.random.uniform(size=(h, w, 3)) + 100.0

octave_n = 3

octave_scale = 1.4

objectives = [T('mixed4c_3x3_pre_relu')[:,:,:,50],

T('mixed4d_5x5_bottleneck_pre_relu')[:,:,:,29]]

mask = np.zeros((h, w, 2))

mask[:,:,0] = np.linspace(0,1,w)

mask[:,:,1] = np.linspace(1,0,w)

img1 = lapnorm_multi(objectives, img0, mask, iter_n=40, step=1.0, oct_n=3, oct_s=1.4, lap_n=4)

print("image")

display_image(img1)

print("masks")

display_image(255*mask[:,:,0])

display_image(255*mask[:,:,1])

```

One can think up many clever ways to make masks. Maybe they are arranged as overlapping concentric circles, or along diagonal lines, or even using [Perlin noise](https://github.com/caseman/noise) to get smooth organic-looking variation.

Here is one example making a circular mask.

```

h, w = 500, 500

cy, cx = 0.5, 0.5

# circle masks

pts = np.array([[[i/(h-1.0),j/(w-1.0)] for j in range(w)] for i in range(h)])

ctr = np.array([[[cy, cx] for j in range(w)] for i in range(h)])

pts -= ctr

dist = (pts[:,:,0]**2 + pts[:,:,1]**2)**0.5

dist = dist / np.max(dist)

mask = np.ones((h, w, 2))

mask[:, :, 0] = dist

mask[:, :, 1] = 1.0-dist

img0 = np.random.uniform(size=(h, w, 3)) + 100.0

octave_n = 3

octave_scale = 1.4

objectives = [T('mixed3b_5x5_bottleneck_pre_relu')[:,:,:,9],

T('mixed4d_5x5_bottleneck_pre_relu')[:,:,:,17]]

img1 = lapnorm_multi(objectives, img0, mask, iter_n=20, step=1.0, oct_n=3, oct_s=1.4, lap_n=4)

display_image(img1)

```

Now we show how to use an existing image as a set of masks, using k-means clustering to segment it into several sections which become masks.

```

import sklearn.cluster

k = 3

h, w = 320, 480

img0 = load_image('../assets/kitty.jpg', h, w)

imgp = np.array(list(img0)).reshape((h*w, 3))

clusters, assign, _ = sklearn.cluster.k_means(imgp, k)

assign = assign.reshape((h, w))

mask = np.zeros((h, w, k))

for i in range(k):

mask[:,:,i] = np.multiply(np.ones((h, w)), (assign==i))

for i in range(k):

display_image(mask[:,:,i]*255.)

img0 = np.random.uniform(size=(h, w, 3)) + 100.0

octave_n = 3

octave_scale = 1.4

objectives = [T('mixed4b_3x3_bottleneck_pre_relu')[:,:,:,111],

T('mixed5b_pool_reduce_pre_relu')[:,:,:,12],

T('mixed4b_5x5_bottleneck_pre_relu')[:,:,:,11]]

img1 = lapnorm_multi(objectives, img0, mask, iter_n=20, step=1.0, oct_n=3, oct_s=1.4, lap_n=4)

display_image(img1)

```

Now, we move on to generating video. The most straightforward way to do this is using feedback; generate one image in the conventional way, and then use it as the input to the next generation, rather than starting with noise again. By itself, this would simply repeat or intensify the features found in the first image, but we can get interesting results by perturbing the input to the second generation slightly before passing it in. For example, we can crop it slightly to remove the outer rim, then resize it to the original size and run it through again. If we do this repeatedly, we will get what looks like a constant zooming-in motion.

The next block of code demonstrates this. We'll make a small square with a single feature, then crop the outer rim by around 5% before making the next one. We'll repeat this 20 times and look at the resulting frames. For simplicity, we'll just set the mask to 1 everywhere. Note, we've also set the `clear` variable in `lapnorm_multi` to false so we can see all the images in sequence.

```

h, w = 200, 200

# start with random noise

img = np.random.uniform(size=(h, w, 3)) + 100.0

octave_n = 3

octave_scale = 1.4

objectives = [T('mixed4d_5x5_bottleneck_pre_relu')[:,:,:,11]]

mask = np.ones((h, w, 1))

# repeat the generation loop 20 times. notice the feedback -- we make img and then use it the initial input

for f in range(20):

img = lapnorm_multi(objectives, img, mask, iter_n=20, step=1.0, oct_n=3, oct_s=1.4, lap_n=4, clear=False)

display_image(img) # let's see it

scipy.misc.imsave('frame%05d.png'%f, img) # ffmpeg to save the frames

img = resize(img[10:-10,10:-10,:], (h, w)) # before looping back, crop the border by 10 pixels, resize, repeat

```

If you look at all the frames, you can see the zoom-in effect. Zooming is just one of the things we can do to get interesting dynamics. Another cropping technique might be to shift the canvas in one direction, or maybe we can slightly rotate the canvas around a pivot point, or perhaps distort it with perlin noise. There are many things that can be done to get interesting and compelling results. Try also combining these with different ways of aking and modifying masks, and the combinatorial space of possibilities grows immensely. Most ambitiously, you can try training your own convolutional network from scratch and using it instead of Inception to get more custom effects. Thus as we see, the technique of feature visualization provides a wealth of possibilities to generate interesting video art.

| github_jupyter |

<a href="https://colab.research.google.com/github/ik-okoro/DS-Unit-2-Linear-Models/blob/master/module3-ridge-regression/LS_DS_213_assignment.ipynb" target="_parent"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"/></a>

Lambda School Data Science

*Unit 2, Sprint 1, Module 3*

---

# Ridge Regression

## Assignment

We're going back to our other **New York City** real estate dataset. Instead of predicting apartment rents, you'll predict property sales prices.

But not just for condos in Tribeca...

- [ ] Use a subset of the data where `BUILDING_CLASS_CATEGORY` == `'01 ONE FAMILY DWELLINGS'` and the sale price was more than 100 thousand and less than 2 million.

- [ ] Do train/test split. Use data from January — March 2019 to train. Use data from April 2019 to test.

- [ ] Do one-hot encoding of categorical features.

- [ ] Do feature selection with `SelectKBest`.

- [ ] Fit a ridge regression model with multiple features. Use the `normalize=True` parameter (or do [feature scaling](https://scikit-learn.org/stable/modules/preprocessing.html) beforehand — use the scaler's `fit_transform` method with the train set, and the scaler's `transform` method with the test set)

- [ ] Get mean absolute error for the test set.

- [ ] As always, commit your notebook to your fork of the GitHub repo.

The [NYC Department of Finance](https://www1.nyc.gov/site/finance/taxes/property-rolling-sales-data.page) has a glossary of property sales terms and NYC Building Class Code Descriptions. The data comes from the [NYC OpenData](https://data.cityofnewyork.us/browse?q=NYC%20calendar%20sales) portal.

## Stretch Goals

Don't worry, you aren't expected to do all these stretch goals! These are just ideas to consider and choose from.

- [ ] Add your own stretch goal(s) !

- [ ] Instead of `Ridge`, try `LinearRegression`. Depending on how many features you select, your errors will probably blow up! 💥

- [ ] Instead of `Ridge`, try [`RidgeCV`](https://scikit-learn.org/stable/modules/generated/sklearn.linear_model.RidgeCV.html).

- [ ] Learn more about feature selection:

- ["Permutation importance"](https://www.kaggle.com/dansbecker/permutation-importance)

- [scikit-learn's User Guide for Feature Selection](https://scikit-learn.org/stable/modules/feature_selection.html)

- [mlxtend](http://rasbt.github.io/mlxtend/) library

- scikit-learn-contrib libraries: [boruta_py](https://github.com/scikit-learn-contrib/boruta_py) & [stability-selection](https://github.com/scikit-learn-contrib/stability-selection)

- [_Feature Engineering and Selection_](http://www.feat.engineering/) by Kuhn & Johnson.

- [ ] Try [statsmodels](https://www.statsmodels.org/stable/index.html) if you’re interested in more inferential statistical approach to linear regression and feature selection, looking at p values and 95% confidence intervals for the coefficients.

- [ ] Read [_An Introduction to Statistical Learning_](http://faculty.marshall.usc.edu/gareth-james/ISL/ISLR%20Seventh%20Printing.pdf), Chapters 1-3, for more math & theory, but in an accessible, readable way.

- [ ] Try [scikit-learn pipelines](https://scikit-learn.org/stable/modules/compose.html).

```

%%capture

import sys

# If you're on Colab:

if 'google.colab' in sys.modules:

DATA_PATH = 'https://raw.githubusercontent.com/LambdaSchool/DS-Unit-2-Applied-Modeling/master/data/'

!pip install category_encoders==2.*

# If you're working locally:

else:

DATA_PATH = '../data/'

# Ignore this Numpy warning when using Plotly Express:

# FutureWarning: Method .ptp is deprecated and will be removed in a future version. Use numpy.ptp instead.

import warnings

warnings.filterwarnings(action='ignore', category=FutureWarning, module='numpy')

import numpy as np

import pandas as pd

import pandas_profiling

# Read New York City property sales data

df = pd.read_csv(DATA_PATH+'condos/NYC_Citywide_Rolling_Calendar_Sales.csv', parse_dates=[-1], index_col=[-1])

# Change column names: replace spaces with underscores

df.columns = [col.replace(' ', '_') for col in df]

# SALE_PRICE was read as strings.

# Remove symbols, convert to integer

df['SALE_PRICE'] = (

df['SALE_PRICE']

.str.replace('$','')

.str.replace('-','')

.str.replace(',','')

.astype(int)

)

# BOROUGH is a numeric column, but arguably should be a categorical feature,

# so convert it from a number to a string

df['BOROUGH'] = df['BOROUGH'].astype(str)

# Reduce cardinality for NEIGHBORHOOD feature

# Get a list of the top 10 neighborhoods

top10 = df['NEIGHBORHOOD'].value_counts()[:10].index

# At locations where the neighborhood is NOT in the top 10,

# replace the neighborhood with 'OTHER'

df.loc[~df['NEIGHBORHOOD'].isin(top10), 'NEIGHBORHOOD'] = 'OTHER'

df.head()

df.columns

df["BUILDING_CLASS_CATEGORY"].describe()

len(df)

# Subset data

df = df[(df["BUILDING_CLASS_CATEGORY"] == "01 ONE FAMILY DWELLINGS") & ((df["SALE_PRICE"] > 100000) | (df["SALE_PRICE"] < 2000000))]

len(df)

df.dtypes

df['ZIP_CODE'].value_counts()

```

Zip code should be an object but it's going to be dropped anyways so don't bother converting

```

df['EASE-MENT'].value_counts(dropna=False)

```

Drop easement as well

```

df['BLOCK'].value_counts(dropna=False)

df['LOT'].value_counts(dropna=False)

```

Don't bother changing lot and block variables before dropping

```

df.select_dtypes("object").head()

df.select_dtypes("object").nunique()

df["NEIGHBORHOOD"].value_counts()

df["APARTMENT_NUMBER"].value_counts(dropna=False)

```

Going to drop apartment number

```

df['BUILDING_CLASS_AT_PRESENT'].value_counts()

# Drop building class columns and convert land square feet

df['LAND_SQUARE_FEET'] = df['LAND_SQUARE_FEET'].str.replace(",", "").astype(int)

df = df.drop(["BUILDING_CLASS_AT_PRESENT", "BUILDING_CLASS_AT_TIME_OF_SALE"], axis=1)

df.select_dtypes("object").nunique()

# Drop address and apartments as well

df = df.drop(["ADDRESS", "APARTMENT_NUMBER"], axis=1)

df.select_dtypes("object").nunique()

```

Good with these categorical variables

```

# Drop the other wrong format columns

df = df.drop(['BLOCK', 'LOT', 'EASE-MENT', 'ZIP_CODE'], axis=1)

target = "SALE_PRICE"

y = df[target]

X = df.drop(target, axis=1)

cutoff = "2019-04-01"

mask = X.index < cutoff

X_train, y_train = X.loc[mask], y.loc[mask]

X_test, y_test = X.loc[~mask], y.loc[~mask]

assert len(X) == len(X_test) + len(X_train)

y_train.mean()

from sklearn.metrics import mean_absolute_error

mean_absolute_error(y_train, ([y_train.mean()]*len(y_train)))

len(X_train.columns)

X_train.columns

# Encoding

from category_encoders import OneHotEncoder

encode = OneHotEncoder(use_cat_names=True)

XT_train = encode.fit_transform(X_train)

len(XT_train.columns)

XT_train.columns

XT_test = encode.transform(X_test)

len(XT_test.columns)

# Not advisable but perform selectkbest iteratively simple linear regression on train data then compare with test

from sklearn.linear_model import LinearRegression

from sklearn.feature_selection import SelectKBest, f_regression

for i in range(1, len(XT_train.columns) + 1):

print(f"{i} Features")

selector = SelectKBest(score_func = f_regression, k=i)

XT_train_selected = selector.fit_transform(XT_train, y_train)

XT_test_selected = selector.transform(XT_test)

lin_reg = LinearRegression()

lin_reg.fit(XT_train_selected, y_train)

print(f"Test Mean Absolute Error: {mean_absolute_error(y_test, lin_reg.predict(XT_test_selected)).round(2)}")

```

Guess I'm using k=18?

```

selector = SelectKBest(k = 18)

XTT_train = selector.fit_transform(XT_train, y_train)

XTT_test = selector.transform(XT_test)

# Use RidgeCV to find best lambda before using Ridge

from sklearn.linear_model import Ridge, RidgeCV

alphas = [0.0001, 56, 79, 0.001, 0.01, 0.05, 0.1, 0.5, 1, 5, 10, 50, 100, 500]

ridge_cv = RidgeCV(alphas = alphas, normalize = True)

ridge_cv.fit(XTT_train, y_train)

ridge_cv.alpha_

# Use alpha = 1 then

ridge = Ridge(normalize=True)

ridge.fit(XTT_train, y_train)

print("RIDGE train MAE:", mean_absolute_error(y_train, ridge.predict(XTT_train)))

print("RIDGE test MAE:", mean_absolute_error(y_test, ridge.predict(XTT_test)))

from sklearn.metrics import r2_score

print("Training R^2:", r2_score(y_train, ridge.predict(XTT_train)))

print("Testing R^2:", r2_score(y_test, ridge.predict(XTT_test)))

```

| github_jupyter |

```

import numpy as np

import pandas as pd

import matplotlib

import matplotlib.pyplot as plt

import matplotlib.backends.backend_pdf as pdf

import matplotlib.patches as pch

import eleanor_constants as EL

matplotlib.rcParams['pdf.fonttype'] = 42

matplotlib.rcParams['ps.fonttype'] = 42

%matplotlib inline

### PLOT STARVED TRAJECTORIES

savename = "./figures/S0_starved.pdf"

df = pd.read_csv('./data/experiment_IDs/cleaned_static_data.csv')

df = df[df['starved'] == '1day']

df = df[df['dead'] == 'no']

color = EL.c_starve

odors = ["F", "FE", "Y",

"W", "I", "O",

"A", "G", "Q", "I2"]

odorkeys = ["Food", "Food extract", "Yeast RNA", "Water",

"Indole 100uM", "O-cresol",

"Amino acids", "Glucose",

"Quinine", "Indole 10mM"]

fig = plt.figure(figsize=(14, 12*4.125/3))

for i, (odor, odorkey) in enumerate(zip(odors, odorkeys)):

if odor != "":

col = 4

ax = fig.add_subplot(8, col, np.floor(i/col)*col+(i+1)+col, aspect="equal")

histax = fig.add_subplot(8, col, np.floor(i/col)*col+(i+1))

ax.set_xlim(0, 80-1)

ax.set_ylim(30-1, 0)

ax.set_xticks([])

ax.set_yticks([])

ax.spines['bottom'].set_color(EL.c_greyax)

ax.spines['top'].set_color(EL.c_greyax)

ax.spines['right'].set_color(EL.c_greyax)

ax.spines['left'].set_color(EL.c_greyax)

xlist = []

temp = df[df['treatment_odor'] == EL.treatments.get(odor)].copy()

temp['fname'] = './data/trajectories/video_calculations/' + temp['animal_ID'] + '-experiment.csv'

for n in temp["fname"].values:

temp2 = pd.read_csv(n)

x = temp2["pos_x_mm"].values

y = temp2["pos_y_mm"].values

xlist += x.tolist()

ax.plot(x, y, lw=0.75, color=EL.c_greyax, alpha=0.2)

ax.scatter([x[-1]], [y[-1]], color="k", alpha=0.5, lw=0, s=25, zorder=20)

histax.set_xlim(0, 80)

histax.set_ylim(0, 0.07)

if odor in ['Q', "I2"]:

histax.set_ylim(0, 0.18)

histax.text(40, 0.18, odorkey+', starved, n='+str(len(temp)),

ha='center', va='bottom', clip_on=False)

else:

histax.text(40, 0.07, odorkey+', starved, n='+str(len(temp)),

ha='center', va='bottom', clip_on=False)

histax.hist(xlist, bins=80, color=color, density=True, lw=0, clip_on=False)

histax.set_xlabel("Arena location (mm, 0-80)")

histax.set_ylabel("Probability Density (%)")

histax.spines['top'].set_visible(False)

histax.spines['right'].set_visible(False)

histax.spines['left'].set_color(EL.c_greyax)

histax.spines['bottom'].set_color(EL.c_greyax)

# SET BOUNDARIES AND SAVE FIGURE -----------------------------------------------

plt.tight_layout()

fig.subplots_adjust(wspace=0.4)

pp = pdf.PdfPages(savename, keep_empty=False)

pp.savefig(fig)

pp.close()

plt.show()

### PLOT FED TRAJECTORIES

savename = "./figures/S0_fed.pdf"

df = pd.read_csv('./data/experiment_IDs/cleaned_static_data.csv')

df = df[df['starved'] == 'no']

df = df[df['dead'] == 'no']

color = EL.c_fed

odors = ["F", "FE", "Y",

"W", "I", "O",

"A", "G", "Q"]

odorkeys = ["Food", "Food extract", "Yeast RNA", "Water",

"Indole", "O-cresol",

"Amino acids", "Glucose", "Quinine"]

fig = plt.figure(figsize=(14, 12))

for i, (odor, odorkey) in enumerate(zip(odors, odorkeys)):

ax = fig.add_subplot(6, 3, np.floor(i/3)*3+(i+1)+3, aspect="equal")

histax = fig.add_subplot(6, 3, np.floor(i/3)*3+(i+1))

ax.set_xlim(0, 80-1)

ax.set_ylim(30-1, 0)

ax.set_xticks([])

ax.set_yticks([])

ax.spines['bottom'].set_color(EL.c_greyax)

ax.spines['top'].set_color(EL.c_greyax)

ax.spines['right'].set_color(EL.c_greyax)

ax.spines['left'].set_color(EL.c_greyax)

xlist = []

temp = df[df['treatment_odor'] == EL.treatments.get(odor)].copy()

temp['fname'] = './data/trajectories/video_calculations/' + temp['animal_ID'] + '-experiment.csv'

for n in temp["fname"].values:

temp2 = pd.read_csv(n)

x = temp2["pos_x_mm"].values

y = temp2["pos_y_mm"].values

xlist += x.tolist()

ax.plot(x, y, lw=0.75, color=EL.c_greyax, alpha=0.2)

ax.scatter([x[-1]], [y[-1]], color="k", alpha=0.5, lw=0, s=25, zorder=20)

histax.set_xlim(0, 80)

histax.set_ylim(0, 0.1)

if odor == "Q":

histax.set_ylim(0, 0.14)

histax.set_yticks([0, 0.07, 0.14])

histax.text(40, 0.14, odorkey+', fed, n='+str(len(temp)),

ha='center', va='bottom', clip_on=False)

else:

histax.text(40, 0.1, odorkey+', fed, n='+str(len(temp)),

ha='center', va='bottom', clip_on=False)

histax.hist(xlist, bins=80, color=color, density=True, lw=0)

histax.set_xlabel("Arena location (mm, 0-80)")

histax.set_ylabel("Probability Density (%)")

histax.spines['top'].set_visible(False)

histax.spines['right'].set_visible(False)

histax.spines['left'].set_color(EL.c_greyax)

histax.spines['bottom'].set_color(EL.c_greyax)

# SET BOUNDARIES AND SAVE FIGURE -----------------------------------------------

plt.tight_layout()

fig.subplots_adjust(wspace=0.2)

pp = pdf.PdfPages(savename, keep_empty=False)

pp.savefig(fig)

pp.close()

plt.show()

```

| github_jupyter |

# Intro to scikit-learn, SVMs and decision trees

<hr style="clear:both">

This notebook is part of a series of exercises for the CIVIL-226 Introduction to Machine Learning for Engineers course at EPFL. Copyright (c) 2021 [VITA](https://www.epfl.ch/labs/vita/) lab at EPFL

Use of this source code is governed by an MIT-style license that can be found in the LICENSE file or at https://www.opensource.org/licenses/MIT

**Author(s):** [David Mizrahi](mailto:david.mizrahi@epfl.ch)

<hr style="clear:both">

This is the final exercise of this course. In this exercise, we'll introduce the scikit-learn package, and use it to train SVMs and decision trees. We'll end with a small note on how to use scikit-learn for unsupervised learning.

## 1. Intro to scikit-learn

[scikit-learn](https://scikit-learn.org/stable/index.html) is a very popular Python package, built on top of NumPy, which provides efficient implementations of many popular machine learning algorithms.

It can be used for:

- Generating and loading popular datasets

- Preprocessing (feature extraction and expansion, normalization)

- Supervised learning (classification and regression)

- Unsupervised learning (clustering and dimensionality reduction)

- Model selection (grid search, train/test split, cross-validation)

- Evaluation (with many metrics for all kinds of tasks)

### 1.1. Data representation in scikit-learn

In scikit-learn, data is represented in the same way it was in the previous exercises. That is:

- The features are represented as a 2D features matrix (usually named `X`), most often contained in a NumPy array or Pandas DataFrame.

- The label (or target) array is often called `y`, and is usually contained in a NumPy array or Pandas Series.

In mathematical notation, this is:

- features: $\boldsymbol{X} \in \mathbb{R}^{N \times D}$, $\forall \ \boldsymbol{x}^{(i)} \in \boldsymbol{X}: \boldsymbol{x}^{(i)} \in \mathbb{R}^{D}$

- label (or target): $\boldsymbol{y} \in \mathbb{R}^{N}$

where $N$ is the number of examples in our dataset, and $D$ is the number of features per example

scikit-learn offers many utilities for splitting and preprocessing data.

- For splitting data, there are functions such as [`model_selection.train_test_split()`](https://scikit-learn.org/stable/modules/generated/sklearn.model_selection.train_test_split.html#sklearn.model_selection.train_test_split) which splits arrays or matrices into random train and test subsets, or [`model_selection.KFold()`](https://scikit-learn.org/stable/modules/generated/sklearn.model_selection.KFold.html#sklearn.model_selection.KFold) and similar functions which provides train/test indices for cross-validation. These functions are extremely handy, and are often used to split NumPy or Pandas arrays even when the training and models come from a library other than scikit-learn.

- For preprocessing data, scikit-learn offers many utility functions which can standardize data (e.g. [`preprocessing.StandardScaler()`](https://scikit-learn.org/stable/modules/generated/sklearn.preprocessing.StandardScaler.html#sklearn.preprocessing.StandardScaler)), impute, discretize and perform feature expansion. For more information, refer to the [official preprocessing tutorial](https://scikit-learn.org/stable/modules/preprocessing.html#).

### 1.2. Estimator API

For **supervised learning**, scikit-learn implements many algorithms we've seen in this class such as:

- Nearest neighbors

- Linear regression

- Logistic regression

- Support vector machines

- Decision trees

- Ensembles (such as random forests)

In scikit-learn, these algorithms are called **estimators**, and they use a clean, uniform and streamlined API, which makes it very easy to switch to a new model or algorithm.

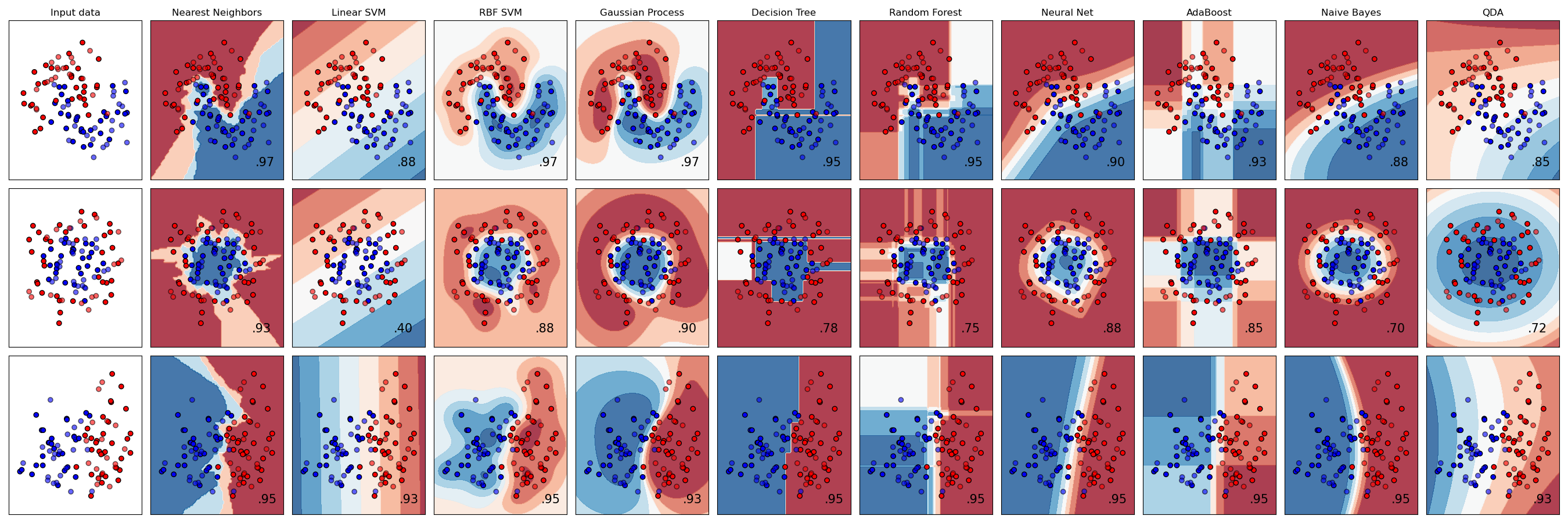

Here is an example of many of the estimators available with scikit-learn. [Source](https://scikit-learn.org/stable/auto_examples/classification/plot_classifier_comparison.html)

Here are the steps to follow when using the scikit-learn estimator API:

1. Arrange data into a features matrix (`X`) and target vector (`y`).

2. Choose a class of model by importing the appropriate estimator class (e.g. `linear_model.LogisticRegression()`, `svm.SVC()`, etc...)

3. Choose model hyperparameters by instantiating this class with desired values.

4. Fit the model to your data by calling the `fit()` method of the model instance.

5. Apply the model to new data: for supervised learning, we predict labels for unknown data using the `predict()` method.

The steps to follow when using scikit-learn estimators for unsupervised learning are almost identical.

### 1.3. Example: Logistic regression on the Iris dataset

As an example, we'll walk through how to use scikit-learn to train a logistic regression model for multi-class classification on the Iris dataset.

```

import numpy as np

import matplotlib.pyplot as plt

import seaborn as sns

sns.set_theme(style="white", context="notebook", palette="dark")

# !!! sklearn is how the scikit-learn package is called in Python

import sklearn

```

#### 1.3.1. Loading the dataset

```

from sklearn import datasets

# Iris is a toy dataset , which is directly available in sklearn.datasets

iris = datasets.load_iris()

X = iris.data[:, :2] # we only take the first two features for simpler visualizations

y = iris.target

print(f"Type of X: {type(X)} | Shape of X: {X.shape}")

print(f"Type of y: {type(y)} | Shape of y: {y.shape}")

```

#### 1.3.2. Splitting and scaling

```

from sklearn.model_selection import train_test_split

# Split data using train_test_split, use 30% of the data as a test set and set a random state for reproducibility

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.3, random_state=42)

print(f"Shape of X_train: {X_train.shape} | Shape of y_train: {y_train.shape}")

print(f"Shape of X_test: {X_test.shape} | Shape of y_test: {y_test.shape}")

from sklearn.preprocessing import StandardScaler

scaler = StandardScaler()

# Fit with the mean / std of the training data

scaler.fit(X_train)

# Scale both the training / test data

X_train = scaler.transform(X_train)

X_test = scaler.transform(X_test)

print(f"Mean of X_train: {X_train.mean():.3f}| Std of X_train: {X_train.std():.3f}")

print(f"Mean of X_test: {X_test.mean():.3f}| Std of X_test: {X_test.std():.3f}")

```

#### 1.3.3. Training

```

from sklearn.linear_model import LogisticRegression

# Initialize a logistic regression model with L2 regularization

# and regularization strength 1e-4 (as C is inverse of regularization strength)

logreg = LogisticRegression(penalty="l2", C=1e4)

# Train the model

logreg.fit(X_train, y_train)

# Get train accuracy

train_acc = logreg.score(X_train, y_train)

print(f"Train accuracy: {train_acc * 100:.2f}%")

```

#### 1.3.4. Decision boundaries

We can use matplotlib to view the decision boundaries of our trained model.

```

# This code is beyond the scope of this class, no need to understand what it does.

# Source: https://scikit-learn.org/stable/auto_examples/linear_model/plot_iris_logistic.html

# Plot the decision boundary. For that, we will assign a color to each

# point in the mesh [x_min, x_max]x[y_min, y_max].

x_min, x_max = X_train[:, 0].min() - .5, X_train[:, 0].max() + .5

y_min, y_max = X_train[:, 1].min() - .5, X_train[:, 1].max() + .5

h = .02 # step size in the mesh

xx, yy = np.meshgrid(np.arange(x_min, x_max, h), np.arange(y_min, y_max, h))

Z = logreg.predict(np.c_[xx.ravel(), yy.ravel()])

# Put the result into a color plot

Z = Z.reshape(xx.shape)

plt.figure()

plt.pcolormesh(xx, yy, Z, cmap=plt.cm.Paired, shading='auto', alpha=0.1, antialiased=True)

# Plot also the training points

scatter = plt.scatter(X_train[:, 0], X_train[:, 1], c=y_train, edgecolors='k', cmap=plt.cm.Paired)

plt.xlabel('Sepal length')

plt.ylabel('Sepal width')

plt.xlim(xx.min(), xx.max())

plt.ylim(yy.min(), yy.max())

plt.legend(handles=scatter.legend_elements()[0], labels=list(iris.target_names))

plt.show()

```

#### 1.3.5. Test accuracy

```

# Get test accuracy

test_acc = logreg.score(X_test, y_test)

print(f"Test accuracy: {test_acc * 100:.2f}%")

```

#### 1.3.6. Other metrics

```

# We can easily use other metrics using sklearn.metrics

from sklearn.metrics import balanced_accuracy_score

# First we'll use the balanced accuracy

y_pred_train = logreg.predict(X_train)

train_balanced_acc = balanced_accuracy_score(y_train, y_pred_train)

y_pred_test = logreg.predict(X_test)

test_balanced_acc = balanced_accuracy_score(y_test, y_pred_test)

print(f"Train balanced acc: {train_balanced_acc*100:.2f}%")

print(f"Test balanced acc: {test_balanced_acc*100:.2f}%")

from sklearn.metrics import plot_confusion_matrix

# Now we'll plot the confusion matrix of the testing data

plot_confusion_matrix(logreg, X_test, y_test, display_labels=iris.target_names, cmap=plt.cm.Blues)

plt.show()

```

### 1.4. Additional scikit-learn resources

This tutorial very briefly covers the scikit-learn package, and how it can be used to train a simple classifier. This package is capable of a lot more than what was shown here, as you will see in the rest of this exercise. If you want a more in-depth look at scikit-learn, take a look at these resources:

- scikit-learn Getting Started tutorial: https://scikit-learn.org/stable/getting_started.html

- scikit-learn User Guide: https://scikit-learn.org/stable/user_guide.html

- scikit-learn cheatsheet by Datacamp: https://s3.amazonaws.com/assets.datacamp.com/blog_assets/Scikit_Learn_Cheat_Sheet_Python.pdf

- scikit-learn tutorial from the Python Data Science Handbook: https://jakevdp.github.io/PythonDataScienceHandbook/05.02-introducing-scikit-learn.html

## 2. Support Vector Machines

In class, we have covered the theory behind SVMs, and how they can be used to perform non-linear classification using the "kernel trick". In this exercise, you'll see how SVMs can easily be trained with scikit-learn, and how the choice of kernel can impact the performance on a non-linearly separable dataset.

### 2.1. Linear SVM

First we'll show how to train a simple SVM classifier.

In scikit-learn, the corresponding estimator is called `SVC` (Support Vector Classifier).

In this part, we'll use a toy dataset which is linearly separable, generated using the `datasets.make_blobs()` function.

```

from helpers import plot_svc_decision_function

from sklearn.datasets import make_blobs

# Generate a linearly separable dataset

X, y = make_blobs(n_samples=150, centers=2, random_state=0, cluster_std=0.70)

# Split into train / test

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.3, random_state=42)

# Plot training and test data (color is for classes, shape is for train / test)

plt.scatter(X_train[:, 0], X_train[:, 1], c=y_train, s=50, marker='o', cmap="viridis", label="train")

plt.scatter(X_test[:, 0], X_test[:, 1], c=y_test, s=50, marker='^', cmap="viridis", label="test")

plt.legend()

plt.show()

```

For this part, we'll train a SVM with a linear kernel. This corresponds to the basic SVM model that you've seen in class.

When initializing an instance of the SVC class, you can specify a regularization parameter C, and the strength of regularization is inversely proportional to C. That is, a high value of C leads to low regularization and a low C leads to high regularization.

Try changing the value of C. How does it affect the support vectors?

**Answer:**

YOUR ANSWER HERE

```

from sklearn.svm import SVC # SVC = Support vector classifier

# C is the regularization parameter. The strength of regularization is inversely proportional to C.

# Try very large and very small values of C

model = SVC(kernel='linear', C=1)

model.fit(X_train, y_train)

# Print training accuracy

train_acc = model.score(X_train, y_train)

print(f"Train accuracy: {train_acc * 100:.2f}%")

# Show decision function and support vectors

plt.scatter(X_train[:, 0], X_train[:, 1], c=y_train, s=50, cmap="viridis")

plt.title(f"Kernel = {model.kernel} | C = {model.C}")

plot_svc_decision_function(model, plot_support=True)

# Print test accuracy

test_acc = model.score(X_test, y_test)

print(f"Test accuracy: {test_acc * 100:.2f}%")

```

### 2.2. Kernel SVM

Let's now use a non-linearly separable dataset, to observe the effect of the kernel function in SVMs.

```

from sklearn.datasets import make_circles

# Generate a circular dataset

X, y = make_circles(n_samples=400, noise=0.25, factor=0, random_state=0)

# Split into train / test

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.25, random_state=42)

# Plot training and test data (color is for classes, shape is for train / test)

plt.scatter(X_train[:, 0], X_train[:, 1], c=y_train, marker='o', cmap="viridis", label="train")

plt.scatter(X_test[:, 0], X_test[:, 1], c=y_test, marker='^', cmap="viridis", label="test")

plt.legend()

plt.show()

```

As you've seen in class, we can use kernel functions to allow SVMs to operate in high-dimensional, implicit feature spaces, without needing to compute the coordinates of the data in that space. We have seen a variety of kernel functions, such as the polynomial kernel and the RBF kernel.

In this exercise, experiment with the different kernels, such as:

- the linear kernel (`linear`): $\langle x, x'\rangle$

- the polynomial kernel (`poly`): $(\gamma \langle x, x'\rangle + r)^d$ (try out different degrees)

- the radial basis function kernel (`rbf`): $\exp(-\gamma \|x-x'\|^2)$

Your task is to experiment with these kernels to see which one does the best on this dataset.

How does the kernel affect the decision boundary? Which kernel and value of C would you pick to maximize your model's performance?

**Note:** Use the the helper function `plot_svc_decision_function()` to view the decision boundaries for each model.

```

# Use as many code cells as needed to try out different kernels and values of C

### YOUR CODE HERE ###

```

**Answer:**

YOUR ANSWER HERE

**To go further**: To learn more about SVMs in scikit-learn, and how to use them for multi-class classification and regression, check out the documentation page: https://scikit-learn.org/stable/modules/svm.html

## 3. Trees

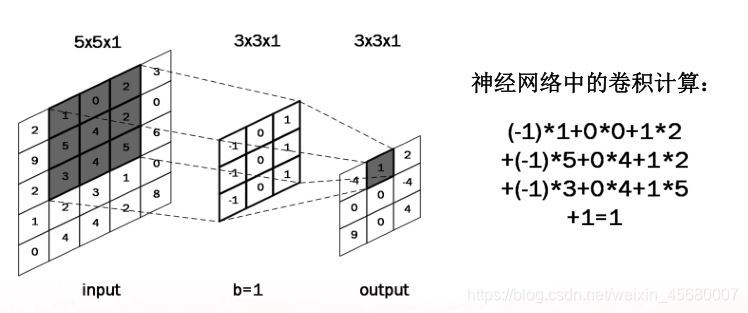

Decision trees are a very intuitive way to classify objects: they ask a series of questions to infer the target variable.

A decision tree is a set of nested decision rules. At each node $i$, the $d_i$-th feature of the input vector $ \boldsymbol{x}$ is compared to a treshold value $t$. The vector $\boldsymbol{x}$ is passed down to the left or right branch depending on whether $d_i$ is less than or greater than $t$. This process is repeated for each node encountered until a reaching leaf node, which specifies the predicted output.

<img src="images/simple_tree.png" width=400></img>

*Example of a simple decision tree on the Palmer Penguins dataset*

Decision trees are usually constructed from the top-down, by choosing a feature at each step that best splits the set of items. There are different metrics for measuring the "best" feature to pick, such as the Gini impurity and the entropy / information gain. We won't dive into them here, but we recommend reading Chapter 18 of ["Probabilistic Machine Learning: An Introduction"](https://probml.github.io/pml-book/) by K.P. Murphy if you want to learn more about them.

Decision trees are popular for several reasons:

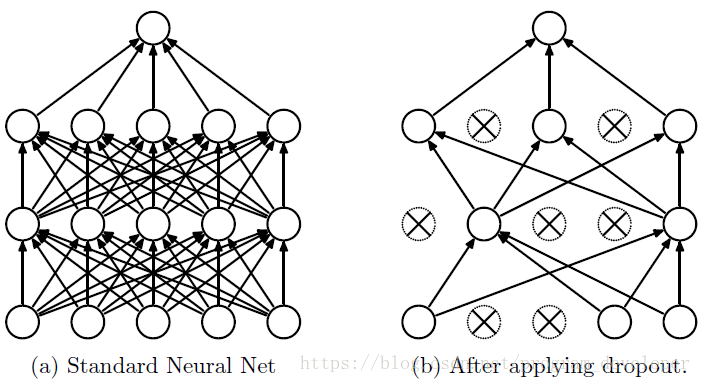

- They are **easy to interpret**.

- They can handle mixed discrete and continuous inputs.

- They are insensitive to monotone transformations of the inputs, so there is no need to standardize the data.

- They perform automatic feature selection.

- They are fast to fit, and scale well to large data sets.

Unfortunately, trees usually do not predict as accurately as other models we have seen previously, such as neural networks and SVMs.

It is however possible to significantly improve their performance through an ensemble learning method called **random forests**, which consists of constructing a multitude of decision trees at training time and averaging their outputs at test time. While random forests usually perform better than a single decision tree, they are much less interpretable. We won't cover random forests in this exercise, but keep in mind that they can be easily implemented in scikit-learn using the [`ensemble` module](https://scikit-learn.org/stable/modules/ensemble.html).

### 3.1. Training decision trees